System And Method For Signature-enhanced Multimedia Content Searching


U.S. patent application number 16/783189 was filed with the patent office on 2020-08-06 for system and method for signature-enhanced multimedia content searching. This patent application is currently assigned to Cortica Ltd. The applicant listed for this patent is Igal Odinaev Raichelgauz. Invention is credited to Karina Odinaev, Igal Raichelgauz, Yehoshua Y. Zeevi.

| Application Number | 20200250218 16/783189 |

| Document ID | / |

| Family ID | 54065620 |

| Filed Date | 2020-08-06 |

| United States Patent Application | 20200250218 |

| Kind Code | A1 |

| Raichelgauz; Igal ; et al. | August 6, 2020 |

SYSTEM AND METHOD FOR SIGNATURE-ENHANCED MULTIMEDIA CONTENT SEARCHING

Abstract

A method for signature-enhanced multimedia content searching. The method includes searching through a plurality of input multimedia content elements by comparing a query to at least one tag assigned to each input multimedia content element, wherein at least one of the at least one tag assigned to each input multimedia content element is assigned based on at least one signature generated for the input multimedia content element, wherein each signature represents a concept, wherein each concept is a collection of signatures and metadata representing the concept.

| Inventors: | Raichelgauz; Igal; (Tel Aviv, IL) ; Odinaev; Karina; (Tel Aviv, IL) ; Zeevi; Yehoshua Y.; (Haifa, IL) |
|---|---|
| Applicant: | |
| Assignee: | Cortica Ltd. Tel Aviv IL |
| Family ID: | 54065620 |
| Appl. No.: | 16/783189 |
| Filed: | February 6, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15611019 | Jun 1, 2017 | |
| 16783189 | | |
| 62347160 | Jun 8, 2016 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 16/685 20190101; G06F 16/40 20190101; G06N 20/00 20190101; H04N 7/17318 20130101; G06N 5/02 20130101; Y10S 707/99943 20130101; G10L 15/32 20130101; H04H 20/26 20130101; Y10S 707/99948 20130101; G06F 16/4393 20190101; G06F 40/134 20200101; G06N 5/04 20130101; H04L 67/327 20130101; G06F 16/7847 20190101; H04H 60/33 20130101; H04N 21/466 20130101; G06F 16/285 20190101; H04H 60/66 20130101; H04L 67/306 20130101; G06F 3/048 20130101; G06F 16/951 20190101; G06F 16/9558 20190101; G06K 2209/27 20130101; G06F 16/35 20190101; G06T 19/006 20130101; H04H 20/93 20130101; G10L 15/26 20130101; G06F 16/904 20190101; G06N 5/022 20130101; G06F 16/284 20190101; H04H 60/56 20130101; G06F 16/51 20190101; G06F 16/41 20190101; G06F 16/43 20190101; G06F 16/487 20190101; G06Q 30/0201 20130101; G06F 16/2228 20190101; G06F 16/48 20190101; G06F 16/7844 20190101; G06F 16/433 20190101; G06K 9/00281 20130101; G06N 5/025 20130101; G06Q 30/0246 20130101; G06Q 30/0261 20130101; G06F 3/0484 20130101; G06F 3/0488 20130101; G06F 16/172 20190101; G06F 16/435 20190101; G06F 16/683 20190101; G06F 16/783 20190101; G06N 7/005 20130101; G06F 16/1748 20190101; H04H 60/46 20130101; H04L 65/601 20130101; H04N 21/8106 20130101; G06F 16/14 20190101; G06F 16/7834 20190101; H04N 21/2668 20130101; G06K 9/00744 20130101; G06K 9/6267 20130101; G06F 16/434 20190101; H04H 60/37 20130101; H04H 60/59 20130101; H04N 21/25891 20130101; G06F 16/438 20190101; G06K 9/00711 20130101; G09B 19/0092 20130101; G10L 25/51 20130101; H04H 60/49 20130101; H04H 2201/90 20130101; H04L 67/10 20130101; G06K 9/00758 20130101; H04H 60/58 20130101; G06F 16/152 20190101; H04H 20/103 20130101; H04L 67/22 20130101; H04H 60/71 20130101 |

| International Class: | G06F 16/41 20060101 G06F016/41; G06F 16/14 20060101 G06F016/14; G06F 16/48 20060101 G06F016/48 |

Claims

1. A method for signature-enhanced multimedia content searching, comprising: searching through a plurality of input multimedia content elements by comparing a query to at least one tag assigned to each input multimedia content element, wherein at least one of the at least one tag assigned to each input multimedia content element is assigned based on at least one signature generated for the input multimedia content element, wherein each signature represents a concept, wherein each concept is a collection of signatures and metadata representing the concept.

2. The method of claim 1, further comprising: assigning the at least one tag to at least one of the at least one input multimedia content element.

3. The method of claim 2, wherein assigning the at least one tag to one of the at least one input multimedia content element further comprises: generating at least one signature for the input multimedia content element; and comparing the generated at least one signature to a plurality of signatures of a plurality of reference multimedia content elements to determine at least one matching reference multimedia content element, wherein each reference multimedia content element is associated with at least one predetermined tag, wherein the at least one tag assigned to each input multimedia content element includes the at least one predetermined tag of each matching reference multimedia content element.

4. The method of claim 3, wherein the signatures of each matching reference multimedia content element match the at least one signature generated for the input multimedia content element above a predetermined threshold.

5. The method of claim 2, wherein assigning the at least one tag to one of the at least one input multimedia content element further comprises: sending, to a deep content classification system, at least one of: the input multimedia content element, and the at least one signature generated for the input multimedia content element; receiving, from the deep content classification system, at least one concept matching the input multimedia content element; and creating at least one tag for the input multimedia content element, wherein the created at least one tag includes the metadata representing the matching at least one concept, wherein the created at least one tag is assigned to the input multimedia content element.

6. The method of claim 1, further comprising: determining, based on the comparison, at least one search result multimedia content element, wherein each search result multimedia content element is one of the at least one input multimedia content element having at least one tag matching the query above a predetermined threshold.

7. The method of claim 6, further comprising: sending the at least one search result multimedia content element to a user device.

8. The method of claim 1, wherein each input multimedia content element is at least one of: an image, graphics, a video stream, a video clip, an audio stream, an audio clip, a video frame, a photograph, images of signals, and a portion thereof.

9. The method of claim 1, wherein each signature is generated by a signature generator system, wherein the signature generator system includes a plurality of at least partially statistically independent computational cores, wherein the properties of each core are set independently of the properties of each other core.

10. A non-transitory computer readable medium having stored thereon instructions for causing a processing circuitry to execute a process, the process comprising: searching through a plurality of input multimedia content elements by comparing a query to at least one tag assigned to each input multimedia content element, wherein at least one of the at least one tag assigned to each input multimedia content element is assigned based on at least one signature generated for the input multimedia content element, wherein each signature represents a concept, wherein each concept is a collection of signatures and metadata representing the concept.

11. A system for signature-enhanced multimedia content searching, comprising: a processing circuitry; and a memory connected to the processing circuitry, the memory containing instructions that, when executed by the processing circuitry, configure the system to: search through a plurality of input multimedia content elements by comparing a query to at least one tag assigned to each input multimedia content element, wherein at least one of the at least one tag assigned to each input multimedia content element is assigned based on at least one signature generated for the input multimedia content element, wherein each signature represents a concept, wherein each concept is a collection of signatures and metadata representing the concept.

12. The system of claim 11, wherein the system is further configured to: assign the at least one tag to at least one of the at least one input multimedia content element.

13. The system of claim 12, wherein the system is further configured to: generate at least one signature for each input multimedia content element; and compare the generated at least one signature for each input multimedia content element to a plurality of signatures of a plurality of reference multimedia content elements to determine at least one matching reference multimedia content element, wherein each reference multimedia content element is associated with at least one predetermined tag, wherein the at least one tag assigned to each input multimedia content element includes the at least one predetermined tag of each matching reference multimedia content element.

14. The system of claim 13, wherein the signatures of each matching reference multimedia content element match the at least one signature generated for the input multimedia content element above a predetermined threshold.

15. The system of claim 12, wherein the system is further configured to: send, to a deep content classification system, at least one of: each input multimedia content element, and the at least one signature generated for each input multimedia content element; receive, from the deep content classification system, at least one concept matching each input multimedia content element; and create at least one tag for each input multimedia content element, wherein the created at least one tag for each input multimedia content element includes the metadata representing the at least one concept matching the input multimedia content element, wherein the created at least one tag is assigned to the respective input multimedia content element.

16. The system of claim 11, wherein the system is further configured to: determine, based on the comparison, at least one search result multimedia content element, wherein each search result multimedia content element is one of the at least one input multimedia content element having at least one tag matching the query above a predetermined threshold.

17. The system of claim 16, wherein the system is further configured to: send the at least one search result multimedia content element to a user device.

18. The system of claim 11, wherein each input multimedia content element is at least one of: an image, graphics, a video stream, a video clip, an audio stream, an audio clip, a video frame, a photograph, images of signals, and a portion thereof.

19. The system of claim 11, wherein each signature is generated by a signature generator system, wherein the signature generator system includes a plurality of at least partially statistically independent computational cores, wherein the properties of each core are set independently of the properties of each other core.

20. The system of claim 11, further comprising: a signature generator system, wherein each signature is generated by the signature generator system, wherein the signature generator system includes a plurality of at least partially statistically independent computational cores, wherein the properties of each core are set independently of the properties of each other core.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application claims the benefit of U.S. Provisional Application No. 62/347,160 filed on Jun. 8, 2016. This application is also a continuation-in-part (CIP) of U.S. patent application Ser. No. 14/050,991 filed on Oct. 10, 2013, now pending, which claims the benefit of U.S. Provisional Application No. 61/860,261 filed on Jul. 31, 2013. The Ser. No. 14/050,991 application is also a CIP of U.S. patent application Ser. No. 13/602,858 filed Sep. 4, 2012, now U.S. Pat. No. 8,868,619, which is a continuation of U.S. patent application Ser. No. 12/603,123, filed on Oct. 21, 2009, now U.S. Pat. No. 8,266,185. The Ser. No. 12/603,123 application is a CIP of:

[0002] (1) U.S. patent application Ser. No. 12/084,150 having a filing date of Apr. 7, 2009, now U.S. Pat. No. 8,655,801, which is the National Stage of International Application No. PCT/IL2006/001235, filed on Oct. 26, 2006, which claims foreign priority from Israeli Application No. 171577 filed on Oct. 26, 2005, and Israeli Application No. 173409 filed on Jan. 29, 2006;

[0003] (2) U.S. patent application Ser. No. 12/195,863 filed on Aug. 21, 2008, now U.S. Pat. No. 8,326,775, which claims priority under 35 USC 119 from Israeli Application No. 185414, filed on Aug. 21, 2007, and which is also a CIP of the above-referenced U.S. patent application Ser. No. 12/084,150;

[0004] (3) U.S. patent application Ser. No. 12/348,888 filed on Jan. 5, 2009, now pending, which is a CIP of the above-referenced U.S. patent application Ser. Nos. 12/084,150 and 12/195,863; and

[0005] (4) U.S. patent application Ser. No. 12/538,495 filed on Aug. 10, 2009, now U.S. Pat. No. 8,312,031, which is a CIP of the above-referenced U.S. patent application Ser. Nos. 12/084,150; 12/195,863; and 12/348,888.

[0006] All of the applications referenced above are herein incorporated by reference for all that they contain.

TECHNICAL FIELD

[0007] The present disclosure relates generally to the analysis of multimedia content, and more specifically to enhancing a user's search experience based on analysis of multimedia content.

BACKGROUND

[0008] Search engines are used for searching for information over the World Wide Web. Search engines are also utilized to search locally over the user device. A search query refers to a query that a user enters into such a search engine in order to receive search results. The search query may be in a form of a textual query, an image, or an audio query.

[0009] Searching for multimedia content elements (e.g., pictures, video clips, audio clips, etc.) stored locally on the user device as well as on the web may not be an easy task. In prior art solutions, a search responsive to an input query is performed over the metadata of the available multimedia content elements. The metadata is typically associated with a multimedia content element and includes parameters such as the element's size, type, name, short description, and so on. The description and name of the element are typically provided by the creator of the element or by a person saving or placing the element in a local device and/or a website. Therefore, metadata of an element, in most cases, is not sufficiently descriptive of the multimedia element. For example, a user may save a picture of a cat under the file name "weekend fun"; the metadata would then not be descriptive of the contents of the picture.

[0010] As a result, searching for multimedia content elements based solely on their metadata may not provide the most accurate results. Following the above example, the input query "cat" would not return the picture saved under "weekend fun". In computer science, a tag is a non-hierarchical keyword or term assigned to a piece of information, such as multimedia content elements.

[0011] Tagging has gained wide popularity due to the growth of social networking, photograph sharing, and bookmarking of websites. Some websites allow users to create and manage tags that categorize content using simple keywords. The users of such sites manually add and define the description of the tags. However, some websites limit the tagging options of multimedia elements, for example, by only allowing tagging of people shown in a picture. Thus, existing solutions for tagging often result in inaccurate or incomplete tags which, consequently, lead to many inappropriate search results or missed appropriate search results.

[0012] It would be therefore advantageous to provide a solution that overcomes the deficiencies of the prior art.

SUMMARY

[0013] A summary of several example embodiments of the disclosure follows. This summary is provided for the convenience of the reader to provide a basic understanding of such embodiments and does not wholly define the breadth of the disclosure. This summary is not an extensive overview of all contemplated embodiments, and is intended to neither identify key or critical elements of all embodiments nor to delineate the scope of any or all aspects. Its sole purpose is to present some concepts of one or more embodiments in a simplified form as a prelude to the more detailed description that is presented later. For convenience, the term "some embodiments" or "certain embodiments" may be used herein to refer to a single embodiment or multiple embodiments of the disclosure.

[0014] Certain embodiments disclosed herein include a method for signature-enhanced multimedia content searching. The method comprises: searching through a plurality of input multimedia content elements by comparing a query to at least one tag assigned to each input multimedia content element, wherein at least one of the at least one tag assigned to each input multimedia content element is assigned based on at least one signature generated for the input multimedia content element, wherein each signature represents a concept, wherein each concept is a collection of signatures and metadata representing the concept.

[0015] Certain embodiments disclosed herein also include a non-transitory computer readable medium having stored thereon instructions for causing a processing circuitry to execute a process, the process comprising: searching through a plurality of input multimedia content elements by comparing a query to at least one tag assigned to each input multimedia content element, wherein at least one of the at least one tag assigned to each input multimedia content element is assigned based on at least one signature generated for the input multimedia content element, wherein each signature represents a concept, wherein each concept is a collection of signatures and metadata representing the concept.

[0016] Certain embodiments disclosed herein also include a system for signature-enhanced multimedia content searching. The system comprises: a processing circuitry; and a memory, the memory containing instructions that, when executed by the processing circuitry, configure the system to: search through a plurality of input multimedia content elements by comparing a query to at least one tag assigned to each input multimedia content element, wherein at least one of the at least one tag assigned to each input multimedia content element is assigned based on at least one signature generated for the input multimedia content element, wherein each signature represents a concept, wherein each concept is a collection of signatures and metadata representing the concept.

BRIEF DESCRIPTION OF THE DRAWINGS

[0017] The subject matter disclosed herein is particularly pointed out and distinctly claimed in the claims at the conclusion of the specification. The foregoing and other objects, features, and advantages of the disclosed embodiments will be apparent from the following detailed description taken in conjunction with the accompanying drawings.

[0018] FIG. 1 is a schematic block diagram of a network system utilized to describe the various embodiments disclosed herein.

[0019] FIG. 2 is a flowchart illustrating a method for tagging multimedia content elements according to an embodiment.

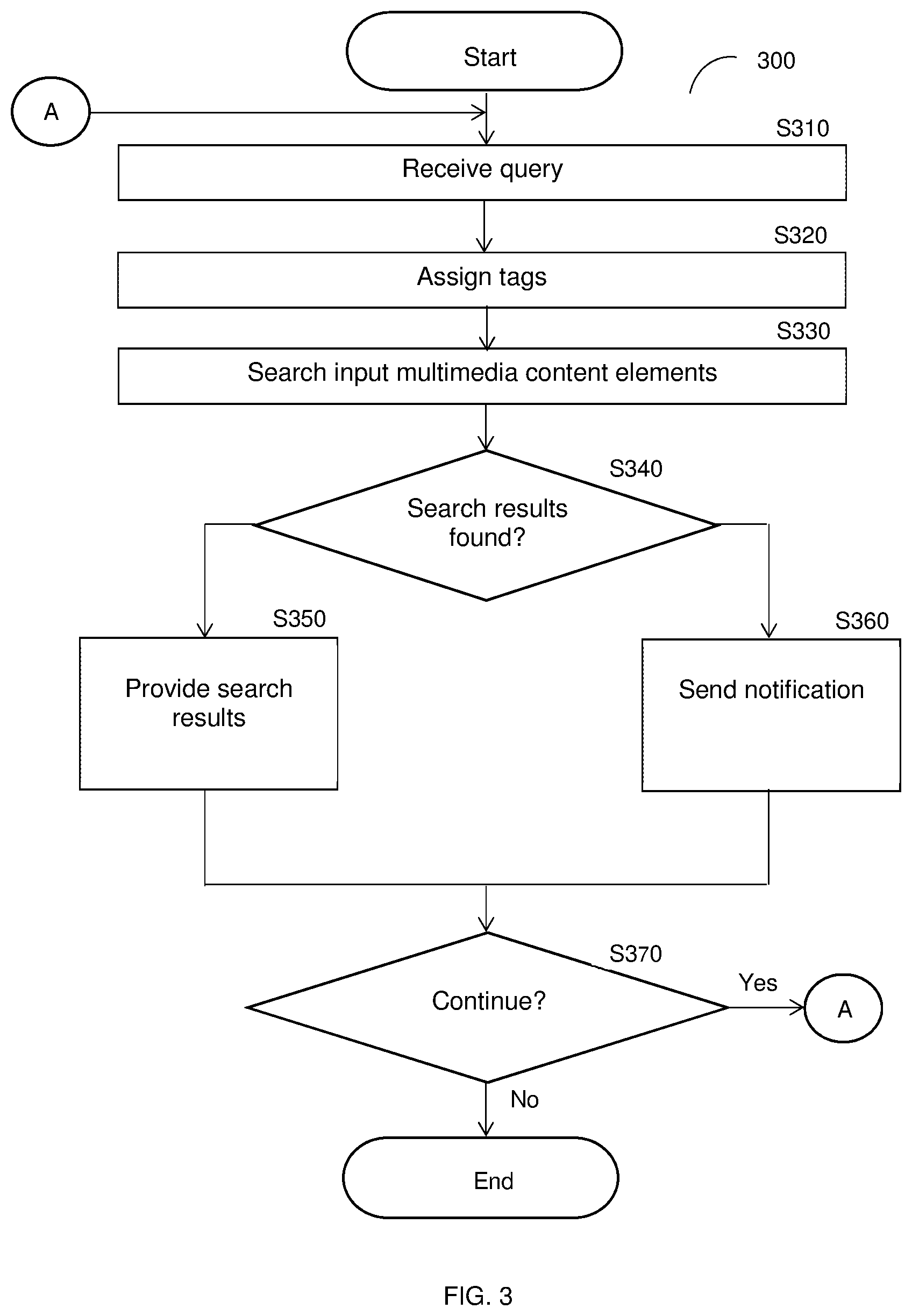

[0020] FIG. 3 is a flowchart illustrating a method for signature-enhanced multimedia content searching according to an embodiment.

[0021] FIG. 4 is a block diagram depicting the basic flow of information in the signature generator system.

[0022] FIG. 5 is a diagram showing the flow of patches generation, response vector generation, and signature generation in a large-scale speech-to-text system.

[0023] FIG. 6 is a schematic diagram of a multimedia content finder according to an embodiment.

DETAILED DESCRIPTION

[0024] It is important to note that the embodiments disclosed herein are only examples of the many advantageous uses of the innovative teachings herein. In general, statements made in the specification of the present application do not necessarily limit any of the various claimed embodiments. Moreover, some statements may apply to some disclosed features but not to others. In general, unless otherwise indicated, singular elements may be in plural and vice versa with no loss of generality. In the drawings, like numerals refer to like parts through several views.

[0025] The various disclosed embodiments include a system and a method for signature-enhanced multimedia content searching. At least a portion of a query is received. At least one tag may be assigned to at least one of a plurality of input multimedia content elements based on signatures generated for the input multimedia content elements. The plurality of input multimedia content elements is searched by comparing the query to tags generated for the plurality of input multimedia content elements. At least one matching input multimedia content element found during the search may be provided as search results.

[0026] In an embodiment, assigning the tags includes generating at least one signature for each of the at least one of the plurality of input multimedia content elements and matching the generated signatures to a plurality of signatures of reference multimedia content elements associated with predetermined tags. Each tag associated with a reference multimedia content element matching an input multimedia content element as determined based on the signature matching may be assigned to the input multimedia content element.

[0027] FIG. 1 shows an example network diagram 100 utilized to describe the various disclosed embodiments. The network diagram includes a user device 120, a multimedia content (MMC) finder 130, a database 150, a deep content classification (DCC) system 160, and a plurality of data sources 170-1 through 170-m (hereinafter referred to individually as a data source 170 and collectively as data sources 170, merely for simplicity purposes). The network 110 may be the Internet, the world-wide-web (WWW), a local area network (LAN), a wide area network (WAN), a metro area network (MAN), and other networks capable of enabling communication between the elements of the network diagram 100.

[0028] The user device 120 may be, but is not limited to, a personal computer (PC), a personal digital assistant (PDA), a mobile phone, a smart phone, a tablet computer, an electronic wearable device (e.g., glasses, a watch, etc.), and other kinds of wired and mobile appliances, equipped with browsing, viewing, capturing, storing, listening, filtering, and managing capabilities enabled as further discussed herein below. The user device 120 may include or be communicatively connected to a local storage (not shown) storing multimedia content elements. As a non-limiting example, when the user device 120 is a smart phone including a camera, the local storage may store images and videos captured by the camera.

[0029] A multimedia content element may include, for example, an image, a graphic, a video stream, a video clip, an audio stream, an audio clip, a video frame, a photograph, and an image of signals (e.g., spectrograms, phasograms, scalograms, etc.), a combination thereof, or a portion thereof.

[0030] The user device 120 may further include an application (App) 125 installed thereon. The application 125 may be downloaded from an application repository, such as the AppStore®, Google Play®, or any repositories hosting software applications. The application 125 may be pre-installed in the user device 120. In an embodiment, the application 125 may be a web-browser. The application 125 may be configured to receive queries via an interface (not shown) of the user device 120 and to send the received queries to the multimedia content finder 130. It should be noted that only one user device 120 and one application 125 are discussed with reference to FIG. 1 merely for the sake of simplicity. However, the embodiments disclosed herein are applicable to a plurality of user devices each having an application installed thereon.

[0031] The database 150 stores at least reference multimedia content elements, tags associated with the reference multimedia content elements, and so on. In the example network diagram 100, the multimedia content finder 130 communicates with the database 150 through the network 110. In other non-limiting configurations, the multimedia content finder 130 may be directly connected to the database 150.

[0032] Each of the data sources 170 may store multimedia content elements that may be searched. To this end, the data sources 170 may include, but are not limited to, servers or data repositories of entities such as, for example, social media platforms, remote storage providers (e.g., cloud storage service providers), and any other entities storing multimedia content elements.

[0033] The signature generator system (SGS) 140 and the deep-content classification (DCC) system 160 may be utilized by the multimedia content finder 130 to perform the various disclosed embodiments. Each of the SGS 140 and the DCC system 160 may be connected to the multimedia content finder 130 directly or through the network 110. In certain configurations, the DCC system 160 and the SGS 140 may be embedded in the multimedia content finder 130.

[0034] In an embodiment, the multimedia content finder 130 is configured to receive a query and to search for relevant multimedia content elements based on the query. The searching may be among input multimedia content elements stored in, e.g., the user device 120 (e.g., based on multimedia content elements stored in a local storage of the user device 120), one or more of the data sources 170, or both. In a further embodiment, the multimedia content finder 130 is configured to search for the relevant multimedia content elements by comparing text of the query to text of tags created for the input multimedia content elements. In yet a further embodiment, each tag is created based on signatures of the respective input multimedia content element and of a plurality of reference multimedia content elements.
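The text comparison between a query and the tags of the input multimedia content elements can be illustrated with a minimal sketch. The disclosure does not specify how query text is scored against tag text, so the word-overlap measure, the `Element` class, the `tag_score` and `search` functions, and the 0.5 threshold below are all hypothetical choices made only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Element:
    """Hypothetical stand-in for an input multimedia content element."""
    name: str
    tags: list = field(default_factory=list)


def tag_score(query: str, tag: str) -> float:
    """Fraction of query words that also appear in the tag (assumed measure)."""
    query_words = set(query.lower().split())
    tag_words = set(tag.lower().split())
    return len(query_words & tag_words) / len(query_words) if query_words else 0.0


def search(query: str, elements: list, threshold: float = 0.5) -> list:
    """Return elements having at least one tag that matches the query above the threshold."""
    return [
        element
        for element in elements
        if any(tag_score(query, tag) > threshold for tag in element.tags)
    ]


# The picture saved as "weekend fun" is found because its tag, not its file name, says "cat".
elements = [
    Element("weekend fun.jpg", tags=["cat", "sofa"]),
    Element("IMG_0042.jpg", tags=["dog on beach"]),
]
results = [element.name for element in search("cat", elements)]
```

In this sketch the file name plays no part in the comparison, which mirrors the motivation given in the background: the tags, rather than creator-supplied metadata, carry the description of the content.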

[0035] The tags may include previously created tags of each input multimedia content element. Alternatively or collectively, the multimedia content finder 130 may be configured to create tags for at least one of the input multimedia content elements when a query is received. In an example implementation, the multimedia content finder 130 may be configured to create tags only for input multimedia content elements that are not associated with at least one tag.
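As a rough illustration of the example implementation in which tags are created only for elements lacking any tag, the following sketch skips elements that already carry tags. The dictionary layout, the `ensure_tags` function, and the `create_tags` callback are hypothetical; the callback merely stands in for whichever tagging mechanism is used.

```python
def ensure_tags(elements: dict, create_tags) -> list:
    """Create tags only for elements with no tags; return the names that were newly tagged."""
    newly_tagged = []
    for name, tags in elements.items():
        if not tags:  # element is not associated with at least one tag
            elements[name] = create_tags(name)
            newly_tagged.append(name)
    return newly_tagged


# Hypothetical tagging callback used only for this example.
def fake_create_tags(name: str) -> list:
    return ["auto:" + name]


library = {"a.jpg": ["cat"], "b.jpg": [], "c.mp4": []}
newly = ensure_tags(library, fake_create_tags)
```

Elements that were tagged earlier are left untouched, so repeated queries would not trigger repeated signature generation for them.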

[0036] In an embodiment, the multimedia content finder 130 is configured to send the input multimedia content elements to the signature generator system 140, to the deep content classification system 160, or both. In a further embodiment, the multimedia content finder 130 is configured to receive a plurality of signatures generated for the multimedia content element from the signature generator system 140, to receive a plurality of signatures (e.g., signature reduced clusters) of concepts matched to the multimedia content element from the deep content classification system 160, or both. In another embodiment, the multimedia content finder 130 may be configured to generate the plurality of signatures, identify the plurality of signatures (e.g., by determining concepts associated with the signature reduced clusters matching each input multimedia content element), or a combination thereof.

[0037] In an embodiment, creating the tags for an input multimedia content element includes causing generation of at least one signature for the input multimedia content element and comparing the generated at least one signature to a plurality of signatures generated for reference multimedia content elements stored in, e.g., the database 150. Each reference multimedia content element is associated with at least one predetermined tag such that tags of the reference multimedia content element are likely to be appropriate for each matching input multimedia content element. In an embodiment, an input multimedia content element and a reference multimedia content element may be matching if signatures generated for the input multimedia content element match signatures of the reference multimedia content element above a predetermined threshold. The process of matching between signatures of multimedia content elements is discussed in detail below with respect to FIGS. 4 and 5.
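The threshold-based matching against reference elements can be sketched as follows. This is only an illustration under stated assumptions: signatures are modeled as sets of integers and compared with a Jaccard overlap, neither of which is taken from the disclosure, and the `overlap` and `assign_tags` functions and the 0.6 threshold are hypothetical.

```python
def overlap(sig_a: frozenset, sig_b: frozenset) -> float:
    """Jaccard overlap between two signatures (assumed similarity measure)."""
    union = sig_a | sig_b
    return len(sig_a & sig_b) / len(union) if union else 0.0


def assign_tags(input_sig: frozenset, references: list, threshold: float = 0.6) -> list:
    """Collect the predetermined tags of every reference matching above the threshold."""
    tags = []
    for ref_sig, ref_tags in references:
        if overlap(input_sig, ref_sig) > threshold:
            for tag in ref_tags:
                if tag not in tags:  # avoid assigning the same tag twice
                    tags.append(tag)
    return tags


# Each reference pairs a signature with its predetermined tags.
references = [
    (frozenset({1, 2, 3, 4, 5}), ["cat"]),
    (frozenset({10, 11, 12, 13}), ["car"]),
]
input_signature = frozenset({1, 2, 3, 4, 6})
assigned = assign_tags(input_signature, references)
```

Here the input signature shares four of six distinct values with the first reference, so only that reference's tag is inherited.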

[0038] Each signature represents a concept structure (hereinafter referred to as a "concept"). A concept is a collection of signatures representing elements of the unstructured data and metadata describing the concept. As a non-limiting example, a "Superman concept" is a signature-reduced cluster of signatures describing elements (such as multimedia elements) related to, e.g., a Superman cartoon, together with a set of metadata providing a textual representation of the Superman concept. Techniques for generating concept structures are also described in the above-referenced U.S. Pat. No. 8,266,185.

[0039] In another embodiment, the multimedia content finder 130 is configured to create the tags by sending the input multimedia content elements to the DCC system 160 to match each input multimedia content element to at least one concept structure. If such a match is found, then the metadata of the concept structure may be used to generate a tag to be assigned to the input multimedia content element. The identification of a concept matching the received multimedia content element includes matching at least one signature generated for the received element (such signature(s) may be produced either by the SGS 140 or the DCC system 160) to signatures representing a concept structure. The matching can be performed across all concept structures maintained by the DCC system 160.
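A concept structure and the matching of an element against all maintained concepts might be modeled as in the sketch below. It is not the DCC system's actual interface: the `Concept` class, the set-based signatures, the Jaccard `similarity` function, and the 0.5 threshold are assumptions introduced only to make the idea concrete.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Concept:
    """Hypothetical concept structure: a cluster of signatures plus textual metadata."""
    signatures: tuple
    metadata: str


def similarity(sig_a: frozenset, sig_b: frozenset) -> float:
    """Jaccard overlap, used here as an assumed signature similarity."""
    union = sig_a | sig_b
    return len(sig_a & sig_b) / len(union) if union else 0.0


def match_concepts(element_sigs: list, concepts: list, threshold: float = 0.5) -> list:
    """Return the metadata of each concept matched by any element signature above the threshold."""
    matched = []
    for concept in concepts:  # matching runs across all maintained concepts
        best = max(
            (similarity(e, c) for e in element_sigs for c in concept.signatures),
            default=0.0,
        )
        if best > threshold:
            matched.append(concept.metadata)
    return matched


concepts = [
    Concept(signatures=(frozenset({1, 2, 3}), frozenset({2, 3, 4})), metadata="Superman"),
    Concept(signatures=(frozenset({20, 21, 22}),), metadata="flowers"),
]
element_signatures = [frozenset({2, 3, 4, 5})]
concept_tags = match_concepts(element_signatures, concepts)
```

The returned metadata strings are what would be turned into tags for the element.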

[0040] It should be noted that, if the DCC system 160 returns multiple concept structures, a correlation for matching concept structures may be performed to generate a tag that best describes the element. The correlation can be achieved by identifying a ratio between signatures' sizes, a spatial location of each signature, and using probabilistic models.

[0041] It should further be noted that using signatures generated for multimedia content elements enables accurate tagging of the elements, because the signatures generated for the multimedia content elements, according to the disclosed embodiments, allow for recognition and classification of multimedia content.

[0042] FIG. 2 depicts an example flowchart 200 describing a method for tagging multimedia content elements according to an embodiment.

[0043] At S210, at least one input multimedia content element is received. Alternatively or collectively, the at least one input multimedia content element may be retrieved from, e.g., a user device, one or more data sources, both, and the like.

[0044] At S220, at least one signature is generated for one of the input multimedia content elements. The signature(s) are generated by a signature generator system (e.g., the SGS 140) as described below with respect to FIGS. 4 and 5.

[0045] At S230, at least one tag is assigned to the input multimedia content element based on the generated signatures. In an embodiment, S230 includes searching for at least one matching reference multimedia content element and assigning at least one tag of the matching reference multimedia content element to the input multimedia content element. Two multimedia content elements are determined to be matching if their respective signatures at least partially match (e.g., above a predetermined threshold). In another embodiment, S230 includes querying a DCC system with the generated signature or the input multimedia content element to identify at least one matching concept structure. The metadata of the matching concept structure is used to tag the received multimedia element.

[0046] At optional S240, the input multimedia content element and its respective at least one tag may be stored.

[0047] At S250, it is checked whether additional input multimedia content elements are to be tagged and, if so, execution continues with S220, where a new input multimedia content element is tagged; otherwise, execution terminates.
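As a non-limiting illustration, the reference-matching embodiment of S230 can be sketched as follows. The sketch assumes that signatures are equal-length binary vectors and that "at least partially match" means the fraction of agreeing positions exceeds a threshold; the names `overlap` and `assign_tags`, the data layout, and the threshold value are hypothetical and are not taken from the disclosure.

```python
from typing import Iterable, Sequence, Set, Tuple


def overlap(a: Sequence[int], b: Sequence[int]) -> float:
    """Fraction of positions at which two equal-length binary signatures agree.

    This similarity measure is an assumption made for illustration only.
    """
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("signatures must be non-empty and of equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def assign_tags(
    input_signature: Sequence[int],
    references: Iterable[Tuple[Sequence[int], Set[str]]],
    threshold: float = 0.8,
) -> Set[str]:
    """Collect the tags of every reference whose signature matches the input
    signature above the (assumed) threshold."""
    tags: Set[str] = set()
    for reference_signature, reference_tags in references:
        if overlap(input_signature, reference_signature) > threshold:
            tags.update(reference_tags)
    return tags


# Hypothetical reference elements, each a (signature, tags) pair.
references = [
    ((1, 0, 1, 1), {"dog"}),
    ((0, 1, 0, 0), {"car"}),
]
tags = assign_tags((1, 0, 1, 0), references, threshold=0.7)
```

In this example only the first reference agrees with the input signature in more than 70% of positions, so only its tag is assigned. An empty result would correspond to an input element for which no matching reference was found.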

[0048] FIG. 3 depicts an example flowchart 300 illustrating a method for signature-enhanced multimedia content searching according to an embodiment. In an embodiment, the method may be performed by the multimedia content finder 130, FIG. 1.

[0049] At S310, a query or a portion thereof is received. The query includes text representing, e.g., a search intent of a user.

[0050] In an embodiment, S310 may further include supplementing the received query using one or more supplementing rules. The supplementing rules may define terms to be added, removed, or modified, in received queries. The supplementing rules may include general rules (i.e., rules that apply regardless of user), rules that are specific to particular users, or both. As a non-limiting example, the supplementing rules may include rules for changing the term "me" included in a query to any of "selfies," "images showing me," "profile images," and the like. As another non-limiting example, the supplementing rules may include rules for changing the term "me" to a name of the user.
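As a non-limiting illustration, supplementing rules that replace terms in a received query can be sketched as a whole-word substitution table. The function name `supplement_query`, the dictionary representation of the rules, and the choice that user-specific rules take precedence over general rules are all assumptions made for this sketch; the disclosure does not specify how conflicts between rules are resolved.

```python
import re
from typing import Dict


def supplement_query(
    query: str,
    general_rules: Dict[str, str],
    user_rules: Dict[str, str],
) -> str:
    """Replace whole-word terms in the query according to the rules.

    User-specific rules override general rules for the same term
    (an assumption of this sketch).
    """
    merged = {**general_rules, **user_rules}
    rules = {term.lower(): replacement for term, replacement in merged.items()}
    if not rules:
        return query
    # Longer terms are tried first so that multi-word terms are not shadowed.
    alternatives = "|".join(
        re.escape(term) for term in sorted(rules, key=len, reverse=True)
    )
    pattern = re.compile(r"\b(?:" + alternatives + r")\b", re.IGNORECASE)
    return pattern.sub(lambda match: rules[match.group(0).lower()], query)


general = {"me": "images showing me"}
john_smith = {"me": "John Smith", "Lucky": "my dog"}
modified = supplement_query("me and Lucky", general, john_smith)
```

Matching on word boundaries keeps a rule for "me" from altering unrelated words such as "home".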

[0051] At optional S320, at least one tag may be assigned to each of at least one input multimedia content element. The input multimedia content elements may include, but are not limited to, multimedia content elements stored in a user device, one or more data sources, both, and the like, for example as described further herein above with respect to FIG. 1.

[0052] In an embodiment, S320 may include generating a signature for each input multimedia content element to be tagged and comparing the input multimedia content element signatures to a plurality of signatures of reference multimedia content elements associated with predetermined tags. In a further embodiment, S320 further includes assigning, to each input multimedia content element, tags associated with at least one matching reference multimedia content element having signatures matching the signatures of the input multimedia content element above a predetermined threshold.

[0053] In another embodiment, S320 may include sending the input multimedia content elements to be tagged or signatures therefor to a DCC system and receiving, from the DCC system, at least one concept matching each input multimedia content element. In a further embodiment, the assigned tags may be created based on metadata of the matching concepts.

[0054] Assigning tags to multimedia content elements based on signatures is described further herein above with respect to FIG. 2.

[0055] In an embodiment, tags may be assigned to input multimedia content elements that are currently lacking tags or lacking tags assigned based on signatures. As a non-limiting example, if a video was recently uploaded to a social media website, the video may lack tags or may only have tags assigned manually rather than based on signatures. Assigning tags only to input multimedia content elements lacking tags allows for conserving computing resources when one or more of the input multimedia content elements has, e.g., already been accurately tagged.

[0056] At S330, the input multimedia content elements are searched using the received query with respect to the assigned tags of each input multimedia content element. As noted above, each searched input multimedia content element is associated with at least one tag assigned based on signature matching to at least one reference multimedia content element.

[0057] At S340, it is determined whether at least one search result multimedia content element was found during the search and, if so, execution continues with S350; otherwise, execution continues with S360.

[0058] In an embodiment, each search result multimedia content element is an input multimedia content element associated with at least one tag matching the query above a predetermined threshold. In some embodiments, a limited number (e.g., 1, 5, 10, etc.) of input multimedia content elements may be found. In a further embodiment, the search result multimedia content elements may include one or more best matching input multimedia content elements as determined by comparing the query to the tags of each input multimedia content element.

[0059] At S350, the search result multimedia content elements may be provided, e.g., to a user device from which the query was received. In an embodiment, S350 may include sending the search result multimedia content elements, a notification identifying the search result multimedia content elements, or both.

[0060] At optional S360, a notification indicating that no search result multimedia content elements were found may be generated and sent, e.g., to a user device from which the query was received.

[0061] At S370, it is determined if additional queries have been received and, if so, execution continues with S310; otherwise, execution terminates. Additional queries may be processed in order to, e.g., handle queries from a plurality of users, handle revised queries from a user as the user changes the queries, and the like.
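As a non-limiting illustration, S330 through S360 can be sketched as scoring each input multimedia content element by how well its tags match the query, keeping those above a threshold, and returning a limited number of best matches. The scoring function (fraction of query words that appear in the tags), the names `tag_match_score` and `search`, and the default threshold and limit are assumptions made for this sketch only.

```python
from typing import Dict, List, Set, Tuple


def tag_match_score(query: str, tags: Set[str]) -> float:
    """Fraction of query words found among the words of the tags.

    This word-overlap measure is an assumed stand-in for the comparison
    between the query and the tags.
    """
    terms = set(query.lower().split())
    if not terms:
        return 0.0
    tag_words = {word for tag in tags for word in tag.lower().split()}
    return len(terms & tag_words) / len(terms)


def search(
    query: str,
    tagged_elements: Dict[str, Set[str]],
    threshold: float = 0.5,
    limit: int = 5,
) -> List[Tuple[str, float]]:
    """Return up to `limit` (element id, score) pairs scoring above the
    threshold, best match first. An empty list corresponds to the
    'no results found' branch (S360)."""
    hits = [
        (element_id, score)
        for element_id, tags in tagged_elements.items()
        if (score := tag_match_score(query, tags)) > threshold
    ]
    hits.sort(key=lambda pair: (-pair[1], pair[0]))
    return hits[:limit]


# Hypothetical tagged elements keyed by an illustrative identifier.
elements = {
    "img1": {"John Smith", "dog"},
    "img2": {"beach"},
}
results = search("john smith dog", elements)
```

Sorting by descending score and then by identifier keeps the order of equally scored results deterministic.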

[0062] As a non-limiting example, a query of "me and Lucky" is received from a user device utilized by a user "John Smith." Based on supplementing rules, the received query is modified to be "image of John Smith and my dog." Tags for images stored in the user device are assigned based on signatures generated for the images. One of the stored images features John Smith and a dog and, consequently, signatures generated for the image represent at least the concepts of "dog" and "John Smith." The signatures of the stored images are compared to signatures of a plurality of reference images, and a reference image showing John Smith and a dog is determined to be matching. Accordingly, tags of "John Smith" and "dog" are assigned to the respective stored image. The modified query is compared to tags of each of the stored images, and it is determined that the tags of the stored image showing John Smith and a dog match the query above a predetermined threshold. Accordingly, the stored image of John Smith and a dog is determined as a search result, and may be sent to the user device.

[0063] It should be noted that the steps of FIG. 3 are described in a particular order merely for example purposes and without limitation on the disclosed embodiments. Other orders may be equally utilized without departing from the scope of the disclosure. In particular, assigning the tags to input multimedia content elements is described as occurring after receiving the query merely as an example. The tags may be equally assigned prior to or at the same time as receiving queries (e.g., at predetermined time intervals, as new input multimedia content elements are uploaded or otherwise stored, etc.) without departing from the scope of the disclosed embodiments.

[0064] It should be further noted that handling of multiple queries as described in FIG. 3 is discussed with respect to handling queries in series merely for simplicity purposes and without limitation on the disclosed embodiments. Queries may be handled in parallel without departing from the scope of the disclosure.

[0065] FIGS. 4 and 5 illustrate the generation of signatures for the multimedia content elements by the SGS 140 according to one embodiment. An exemplary high-level description of the process for large scale matching is depicted in FIG. 4. In this example, the matching is for video content.

[0066] Video content segments 2 from a Master database (DB) 6 and a Target DB 1 are processed in parallel by a large number of independent computational Cores 3 that constitute an architecture for generating the Signatures (hereinafter the "Architecture"). Further details on the computational Cores generation are provided below. The independent Cores 3 generate a database of Robust Signatures and Signatures 4 for Target content-segments 5 and a database of Robust Signatures and Signatures 7 for Master content-segments 8. An exemplary and non-limiting process of signature generation for an audio component is shown in detail in FIG. 5. Finally, Target Robust Signatures and/or Signatures are effectively matched, by a matching algorithm 9, to the Master Robust Signatures and/or Signatures database to find all matches between the two databases.

[0067] To demonstrate an example of the signature generation process, it is assumed, merely for the sake of simplicity and without limitation on the generality of the disclosed embodiments, that the signatures are based on a single frame, leading to certain simplification of the computational cores generation. The Matching System is extensible for signatures generation capturing the dynamics in-between the frames. In an embodiment, the SGS 140 is configured with a plurality of computational cores to perform matching between signatures.

[0068] The Signatures' generation process is now described with reference to FIG. 5. The first step in the process of signatures generation from a given speech-segment is to break down the speech-segment into K patches 14 of random length P and random position within the speech segment 12. The breakdown is performed by the patch generator component 21. The values of the number of patches K, the random length P, and the random position parameters are determined based on optimization, considering the tradeoff between accuracy rate and the number of fast matches required in the flow process of the multimedia content finder 130 and SGS 140. Thereafter, all the K patches are injected in parallel into all computational Cores 3 to generate K response vectors 22, which are fed into a signature generator system 23 to produce a database of Robust Signatures and Signatures 4.
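As a non-limiting illustration, the breakdown of a segment into K patches of random length and random position can be sketched as follows. The sketch assumes the segment is a sequence of samples, that patch lengths are drawn uniformly between two bounds, and that patches may overlap; the name `generate_patches`, the bounds, and the optional seed are hypothetical, since the disclosure states only that these parameters are determined by optimization.

```python
import random
from typing import List, Optional, Sequence


def generate_patches(
    segment: Sequence[float],
    k: int,
    min_length: int,
    max_length: int,
    seed: Optional[int] = None,
) -> List[List[float]]:
    """Cut k contiguous patches of random length and random position
    out of the segment. Patches may overlap (an assumption)."""
    if not 1 <= min_length <= max_length <= len(segment):
        raise ValueError("require 1 <= min_length <= max_length <= len(segment)")
    rng = random.Random(seed)
    patches: List[List[float]] = []
    for _ in range(k):
        length = rng.randint(min_length, max_length)      # random length P
        start = rng.randint(0, len(segment) - length)     # random position
        patches.append(list(segment[start:start + length]))
    return patches


# Illustrative segment of 100 samples; values 0..99 make positions visible.
segment = list(range(100))
patches = generate_patches(segment, k=8, min_length=5, max_length=20, seed=0)
```

Each resulting patch would then be injected into all of the computational cores to produce one response vector per patch.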

[0069] In order to generate Robust Signatures, i.e., Signatures that are robust to additive noise, by the L Computational Cores 3 (where L is an integer equal to or greater than 1), a frame 'i' is injected into all the Cores 3. Then, the Cores 3 generate two binary response vectors: $\vec{S}$, which is a Signature vector, and $\vec{RS}$, which is a Robust Signature vector.

[0070] For generation of signatures robust to additive noise, such as White-Gaussian-Noise, scratch, etc., but not robust to distortions, such as crop, shift and rotation, etc., a core $C_i = \{n_i\}$ ($1 \le i \le L$) may consist of a single leaky integrate-to-threshold unit (LTU) node or more nodes. The node $n_i$ equations are:

$$V_i = \sum_j w_{ij} k_j$$

$$n_i = \theta(V_i - Th_x)$$

[0071] where $\theta$ is a Heaviside step function; $w_{ij}$ is a coupling node unit (CNU) between node i and image component j (for example, grayscale value of a certain pixel j); $k_j$ is an image component 'j' (for example, grayscale value of a certain pixel j); $Th_x$ is a constant Threshold value, where 'x' is 'S' for Signature and 'RS' for Robust Signature; and $V_i$ is a Coupling Node Value.
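As a non-limiting illustration, the node equations above can be evaluated for a small set of nodes as follows. The weights, image components, and threshold values are made-up numbers, the function names are hypothetical, and the convention that the Heaviside step function returns 0 at exactly zero is an assumption of this sketch.

```python
from typing import List, Sequence, Tuple


def coupling_value(weights: Sequence[float], components: Sequence[float]) -> float:
    """V_i = sum over j of w_ij * k_j for one node i."""
    if len(weights) != len(components):
        raise ValueError("one weight is required per image component")
    return sum(w * k for w, k in zip(weights, components))


def node_response(value: float, threshold: float) -> int:
    """n_i = theta(V_i - Th_x), with theta(0) taken as 0 (assumed convention)."""
    return 1 if value - threshold > 0 else 0


def core_responses(
    weight_rows: Sequence[Sequence[float]],
    components: Sequence[float],
    th_s: float,
    th_rs: float,
) -> Tuple[List[int], List[int]]:
    """Return the binary Signature and Robust Signature vectors obtained by
    thresholding the same coupling values at Th_S and Th_RS respectively."""
    values = [coupling_value(row, components) for row in weight_rows]
    signature = [node_response(v, th_s) for v in values]
    robust_signature = [node_response(v, th_rs) for v in values]
    return signature, robust_signature


# Three illustrative nodes over two image components (e.g., pixel values).
weights = [[1.0, 0.0], [0.5, 0.5], [0.0, -1.0]]
frame = [2.0, 4.0]
signature, robust_signature = core_responses(weights, frame, th_s=1.0, th_rs=2.5)
```

Here the coupling values are 2, 3, and -4. With the higher Robust Signature threshold only the second node fires, so every node in the Robust Signature is also in the Signature, which holds whenever $Th_{RS} \ge Th_S$.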

[0072] The Threshold values $Th_x$ are set differently for Signature generation and for Robust Signature generation. For example, for a certain distribution of $V_i$ values (for the set of nodes), the thresholds for Signature ($Th_S$) and Robust Signature ($Th_{RS}$) are set apart, after optimization, according to at least one of the following criteria:

1: For $V_i > Th_{RS}$: $1 - p(V > Th_S) - 1 - (1 - e)^l \ll 1$

i.e., given that l nodes (cores) constitute a Robust Signature of a certain image I, the probability that not all of these l nodes will belong to the Signature of the same, but noisy, image is sufficiently low (according to a system's specified accuracy).

2: $p(V_i > Th_{RS}) \approx l/L$

i.e., approximately l out of the total L nodes can be found to generate a Robust Signature according to the above definition.

[0073] 3: Both Robust Signature and Signature are generated for a certain frame i.
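As a non-limiting illustration of criterion 2 only, a Robust Signature threshold can be chosen empirically so that approximately l of the L coupling values exceed it. The sketch places the threshold midway between the l-th and (l+1)-th largest observed values; this placement, the function name `robust_threshold`, and the sample values are assumptions, and the sketch does not address criterion 1 or the optimization mentioned above.

```python
from typing import Sequence


def robust_threshold(values: Sequence[float], l: int) -> float:
    """Pick Th_RS so that about l of the L values exceed it,
    i.e., the empirical p(V_i > Th_RS) is close to l / L.

    With tied values the resulting count may differ from l.
    """
    total = len(values)
    if not 0 < l < total:
        raise ValueError("require 0 < l < L")
    ordered = sorted(values, reverse=True)
    # Midpoint between the l-th and (l+1)-th largest coupling values.
    return (ordered[l - 1] + ordered[l]) / 2


# Illustrative coupling values for L = 5 nodes.
coupling_values = [0.1, 0.9, 0.4, 0.7, 0.3]
th_rs = robust_threshold(coupling_values, l=2)
nodes_above = sum(v > th_rs for v in coupling_values)
```

For these sample values two of the five nodes exceed the chosen threshold, giving an empirical fraction of 2/5.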

[0074] It should be understood that the generation of a signature is unidirectional, and typically yields lossy compression, where the characteristics of the compressed data are maintained but the uncompressed data cannot be reconstructed. Therefore, a signature can be used for the purpose of comparison to another signature without the need of comparison to the original data. The detailed description of the Signature generation can be found in U.S. Pat. Nos. 8,326,775 and 8,312,031, assigned to common assignee, which are hereby incorporated by reference for all the useful information they contain.

[0075] A Computational Core generation is a process of definition, selection, and tuning of the parameters of the cores for a certain realization in a specific system and application. The process is based on several design considerations, such as:

[0076] (a) The Cores should be designed so as to obtain maximal independence, i.e., the projection from a signal space should generate a maximal pair-wise distance between any two cores' projections into a high-dimensional space.

[0077] (b) The Cores should be optimally designed for the type of signals, i.e., the Cores should be maximally sensitive to the spatio-temporal structure of the injected signal, for example, and in particular, sensitive to local correlations in time and space. Thus, in some cases a core represents a dynamic system, such as in state space, phase space, edge of chaos, etc., which is uniquely used herein to exploit their maximal computational power.

[0078] (c) The Cores should be optimally designed with regard to invariance to a set of signal distortions, of interest in relevant applications.

[0079] The Computational Core generation and the process for configuring such cores are discussed in more detail in the above-referenced U.S. Pat. No. 8,655,801.

[0080] FIG. 6 is an example schematic diagram of the multimedia content finder 130 according to an embodiment. The multimedia content finder 130 includes a processing circuitry 610 coupled to a memory 620, a storage 630, and a network interface 640. In an embodiment, the components of the multimedia content finder 130 may be communicatively connected via a bus 650.

[0081] The processing circuitry 610 may be realized as one or more hardware logic components and circuits. For example, and without limitation, illustrative types of hardware logic components that can be used include field programmable gate arrays (FPGAs), application-specific integrated circuits (ASICs), application-specific standard products (ASSPs), system-on-a-chip systems (SOCs), general-purpose microprocessors, microcontrollers, digital signal processors (DSPs), and the like, or any other hardware logic components that can perform calculations or other manipulations of information. In an embodiment, the processing circuitry 610 may be realized as an array of at least partially statistically independent computational cores. The properties of each computational core are set independently of those of each other core, as described further herein above.

[0082] The memory 620 may be volatile (e.g., RAM, etc.), non-volatile (e.g., ROM, flash memory, etc.), or a combination thereof. In one configuration, computer readable instructions to implement one or more embodiments disclosed herein may be stored in the storage 630.

[0083] In another embodiment, the memory 620 is configured to store software. Software shall be construed broadly to mean any type of instructions, whether referred to as software, firmware, middleware, microcode, hardware description language, or otherwise. Instructions may include code (e.g., in source code format, binary code format, executable code format, or any other suitable format of code). The instructions, when executed by the processing circuitry 610, cause the processing circuitry 610 to perform the various processes described herein. Specifically, the instructions, when executed, cause the processing circuitry 610 to perform signature-enhanced multimedia content searching as described herein.

[0084] The storage 630 may be magnetic storage, optical storage, and the like, and may be realized, for example, as flash memory or other memory technology, CD-ROM, Digital Versatile Disks (DVDs), or any other medium which can be used to store the desired information.

[0085] The network interface 640 allows the multimedia content finder 130 to communicate with the signature generator system 140 for the purpose of, for example, sending multimedia content elements, receiving signatures, and the like. Further, the network interface 640 allows the multimedia content finder 130 to receive queries, send search results, store tags and associated multimedia content elements or signatures, and the like.

[0086] It should be understood that the embodiments described herein are not limited to the specific architecture illustrated in FIG. 6, and other architectures may be equally used without departing from the scope of the disclosed embodiments. In particular, the multimedia content finder 130 may further include a signature generator system configured to generate signatures, a tag generator configured to generate tags for multimedia content elements based on signatures, or both, as described herein, without departing from the scope of the disclosed embodiments.

[0087] The various embodiments disclosed herein can be implemented as hardware, firmware, software, or any combination thereof. Moreover, the software is preferably implemented as an application program tangibly embodied on a program storage unit or computer readable medium consisting of parts, or of certain devices and/or a combination of devices. The application program may be uploaded to, and executed by, a machine comprising any suitable architecture. Preferably, the machine is implemented on a computer platform having hardware such as one or more central processing units ("CPUs"), a memory, and input/output interfaces. The computer platform may also include an operating system and microinstruction code. The various processes and functions described herein may be either part of the microinstruction code or part of the application program, or any combination thereof, which may be executed by a CPU, whether or not such a computer or processor is explicitly shown. In addition, various other peripheral units may be connected to the computer platform such as an additional data storage unit and a printing unit. Furthermore, a non-transitory computer readable medium is any computer readable medium except for a transitory propagating signal.

[0088] All examples and conditional language recited herein are intended for pedagogical purposes to aid the reader in understanding the disclosed embodiments and the concepts contributed by the inventor to furthering the art, and are to be construed as being without limitation to such specifically recited examples and conditions. Moreover, all statements herein reciting principles, aspects, and embodiments of the invention, as well as specific examples thereof, are intended to encompass both structural and functional equivalents thereof. Additionally, it is intended that such equivalents include both currently known equivalents as well as equivalents developed in the future, i.e., any elements developed that perform the same function, regardless of structure.

* * * * *
