Ophthalmologic Image Processing Device And Non-transitory Computer-readable Storage Medium Storing Computer-readable Instruction

U.S. patent application number 16/778257, filed on 2020-01-31, was published on 2020-08-06 for ophthalmologic image processing device and non-transitory computer-readable storage medium storing computer-readable instruction. This patent application is currently assigned to NIDEK CO., LTD. The applicant listed for this patent is NIDEK CO., LTD. Invention is credited to Yoshiki Kumagai, Sohei Miyazaki, Yusuke Sakashita, Ryosuke Shiba, Naoki Takeno.

| Application Number | 16/778257 |

| Document ID | 20200245858 / US20200245858 |

| Family ID | 1000004666144 |

| Filed Date | 2020-01-31 |


| United States Patent Application | 20200245858 |

| Kind Code | A1 |

| Takeno; Naoki ; et al. | August 6, 2020 |

OPHTHALMOLOGIC IMAGE PROCESSING DEVICE AND NON-TRANSITORY COMPUTER-READABLE STORAGE MEDIUM STORING COMPUTER-READABLE INSTRUCTIONS

Abstract

A processor of an ophthalmologic image processing device acquires an ophthalmologic image photographed by an ophthalmologic image photographing device. The processor inputs the ophthalmologic image into a mathematical model trained by a machine learning algorithm to acquire a result of an analysis relating to at least one of a specific disease and a specific structure of a subject eye. The processor acquires information of a distribution of weight relating to an analysis by a mathematical model, as supplemental distribution information, for which an image area of the ophthalmologic image input into the mathematical model is set as a variable. The processor sets a part of the image area of the ophthalmologic image, as an attention area, based on the supplemental distribution information. The processor acquires an image of a tissue including the attention area among a tissue of the subject eye and displays the image on a display unit.

| Inventors: | Takeno; Naoki (Gamagori-shi, JP); Shiba; Ryosuke (Gamagori-shi, JP); Miyazaki; Sohei (Gamagori-shi, JP); Sakashita; Yusuke (Okazaki-shi, JP); Kumagai; Yoshiki (Toyokawa-shi, JP) |
|---|---|
| Applicant: | NIDEK CO., LTD. |
| Assignee: | NIDEK CO., LTD. (Gamagori-shi, JP) |
| Family ID: | 1000004666144 |
| Appl. No.: | 16/778257 |
| Filed: | January 31, 2020 |
| Current U.S. Class: | 1/1 |
| Current CPC Class: | A61B 3/0025 20130101; G06N 20/00 20190101; A61B 3/102 20130101; A61B 3/0041 20130101; G06K 9/3233 20130101; G16H 30/40 20180101 |
| International Class: | A61B 3/00 20060101 A61B003/00; G16H 30/40 20180101 G16H030/40; G06N 20/00 20190101 G06N020/00; G06K 9/32 20060101 G06K009/32; A61B 3/10 20060101 A61B003/10 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Jan 31, 2019 | JP | 2019-16062 |
| Jan 31, 2019 | JP | 2019-16063 |

Claims

1. An ophthalmologic image processing device that processes an ophthalmologic image of a tissue of a subject eye, the ophthalmologic image processing device comprising a processor, wherein the processor: acquires the ophthalmologic image photographed by an ophthalmologic image photographing device; inputs the ophthalmologic image into a mathematical model trained by a machine learning algorithm and acquires a result of an analysis relating to at least one of a specific disease and a specific structure of a subject eye; acquires information of a distribution of weight relating to the analysis by the mathematical model, as supplemental distribution information, for which an image area of the ophthalmologic image input into the mathematical model is set as a variable; sets a part of the image area of the ophthalmologic image, as an attention area, based on the supplemental distribution information; acquires an image of a tissue including the attention area among a tissue of the subject eye; and displays the image on a display unit.

2. The ophthalmologic image processing device according to claim 1, wherein the supplemental distribution information includes information indicating a distribution of a degree of influence that affects the result of the analysis output by the mathematical model.

3. The ophthalmologic image processing device according to claim 1, wherein the supplemental distribution information includes information indicating a distribution of a degree of certainty of the analysis by the mathematical model.

4. The ophthalmologic image processing device according to claim 3, wherein the processor sets an area of which the degree of certainty of the analysis relating to a specific disease or a specific structure is equal to or smaller than a threshold, within the image area of the ophthalmologic image, as the attention area.

5. The ophthalmologic image processing device according to claim 1, wherein the processor sets an area including a position of which the weight shown by the supplemental distribution is the largest or an area including a position of which the weight is equal to or larger than a threshold, within the image area of the ophthalmologic image, as the attention area.

6. The ophthalmologic image processing device according to claim 1, wherein the processor acquires a tomographic image of a part passing the attention area, among a tissue of the subject eye and displays the tomographic image on the display unit.

7. The ophthalmologic image processing device according to claim 1, wherein the processor extracts an image area including the attention area from the ophthalmologic image for which a tissue of the subject eye is photographed and displays the image on the display unit.

8. The ophthalmologic image processing device according to claim 1, wherein the processor switches whether the image of a tissue including the attention area is displayed or is not displayed on the display unit, in accordance with an instruction input by a user.

9. An ophthalmologic image processing device that processes an ophthalmologic image of a tissue of a subject eye, the ophthalmologic image processing device comprising a processor, wherein the processor: acquires the ophthalmologic image photographed by an ophthalmologic image photographing device; inputs the ophthalmologic image into a mathematical model trained by a machine learning algorithm and acquires results of analyses relating to diseases or structures of a subject eye; acquires a supplemental map, for each of the results of the analyses, that indicates a distribution of weight relating to the analysis by the mathematical model, for which an image area of the ophthalmologic image input into the mathematical model is set as a variable; generates an integration map in which the supplemental maps are integrated within an identical area; and displays the integration map on a display unit.

10. The ophthalmologic image processing device according to claim 9, wherein the supplemental map indicates a distribution of a degree of influence that affects the result of the analysis output by the mathematical model.

11. The ophthalmologic image processing device according to claim 9, wherein the supplemental map indicates a distribution of a degree of certainty of the analysis by the mathematical model.

12. The ophthalmologic image processing device according to claim 9, wherein the processor changes a display mode of each of the supplemental maps and integrates the supplemental maps to generate the integration map.

13. The ophthalmologic image processing device according to claim 9, wherein the processor displays the integration map to be superimposed on the ophthalmologic image of the subject eye.

14. The ophthalmologic image processing device according to claim 13, wherein the processor executes at least one of switching whether the integration map is displayed to be superimposed on the ophthalmologic image of the subject eye or is not displayed and changing a transparency of the integration map to be superimposed on the ophthalmologic image, in accordance with an instruction input by a user.

15. A non-transitory computer-readable storage medium storing computer-readable instructions that, when executed by a processor of an ophthalmologic image processing device, cause the ophthalmologic image processing device to perform processes comprising: acquiring an ophthalmologic image photographed by an ophthalmologic image photographing device; inputting the ophthalmologic image into a mathematical model trained by a machine learning algorithm and acquiring a result of an analysis relating to at least one of a specific disease and a specific structure of a subject eye; acquiring information of a distribution of weight relating to the analysis by the mathematical model, as supplemental distribution information, for which an image area of the ophthalmologic image input into the mathematical model is set as a variable; setting a part of the image area of the ophthalmologic image, as an attention area, based on the supplemental distribution information; acquiring an image of a tissue including the attention area among a tissue of the subject eye; and displaying the image on a display unit.

Description

CROSS REFERENCE TO RELATED APPLICATION

[0001] This application is based upon and claims the benefit of priority of Japanese Patent Application No. 2019-016062 and Japanese Patent Application No. 2019-016063, filed on Jan. 31, 2019, the contents of which are incorporated herein by reference in their entirety.

BACKGROUND

[0002] The present disclosure relates to an ophthalmologic image processing device that processes an ophthalmic image of a subject eye and a non-transitory computer-readable storage medium storing computer-readable instructions.

[0003] In recent years, a technique that acquires various medical information using a mathematical model trained by a machine learning algorithm has been proposed. For example, an ophthalmologic device disclosed in Japanese Unexamined Patent Application Publication JP 2018-051223 inputs an eye shape parameter into a mathematical model trained by a machine learning algorithm so as to acquire intraocular lens (IOL) related information (for example, a predicted postoperative anterior chamber depth) of a subject eye and calculates a power of an IOL based on the acquired IOL related information.

SUMMARY

[0004] It may be considered to input an ophthalmologic image into a mathematical model trained by a machine learning algorithm so as to acquire a result of an analysis relating to at least one of a disease and a structure of a subject eye. However, presence/absence of the disease, a degree of the disease, a structural characteristic or the like in a subject eye is different depending on the subject eye, and therefore variation of certainty might be caused in the result of the analysis. Consequently, it might occur that an operation such as a diagnosis of a user (for example, a doctor or the like) is not appropriately assisted by merely providing the result of the analysis output through the mathematical model to the user.

[0005] An object of a first aspect of the present disclosure is to provide an ophthalmologic image processing device and a non-transitory computer-readable storage medium storing computer-readable instructions that can appropriately assist an operation of a user.

[0006] Further, it may be possible to acquire results of analyses relating to diseases and structures from one ophthalmologic image. However, in such a case, it might be difficult for a user to recognize a plurality of the results of the analyses efficiently unless a plurality of the results of the analyses is appropriately provided to the user.

[0007] An object of a second aspect of the present disclosure is to provide an ophthalmologic image processing device and a non-transitory computer-readable storage medium storing computer-readable instructions that can appropriately provide a result of an analysis relating to a subject eye to a user.

[0008] Embodiments of the first aspect provide an ophthalmologic image processing device that processes an ophthalmologic image of a tissue of a subject eye. The processor of the ophthalmologic image processing device: acquires the ophthalmologic image photographed by an ophthalmologic image photographing device; inputs the ophthalmologic image into a mathematical model trained by a machine learning algorithm and acquires a result of an analysis relating to at least one of a specific disease and a specific structure of a subject eye; acquires information of a distribution of weight relating to the analysis by the mathematical model, as supplemental distribution information, for which an image area of the ophthalmologic image input into the mathematical model is set as a variable; sets a part of the image area of the ophthalmologic image, as an attention area, based on the supplemental distribution information; acquires an image of a tissue including the attention area among a tissue of the subject eye; and displays the image on a display unit.

[0009] Embodiments of the first aspect provide a non-transitory computer-readable medium storing computer-readable instructions that, when executed by a processor of an ophthalmologic image processing device, cause the ophthalmologic image processing device to perform processes including: acquiring an ophthalmologic image photographed by an ophthalmologic image photographing device; inputting the ophthalmologic image into a mathematical model trained by a machine learning algorithm and acquiring a result of an analysis relating to at least one of a specific disease and a specific structure of a subject eye; acquiring information of a distribution of weight relating to the analysis by the mathematical model, as supplemental distribution information, for which an image area of the ophthalmologic image input into the mathematical model is set as a variable; setting a part of the image area of the ophthalmologic image, as an attention area, based on the supplemental distribution information; acquiring an image of a tissue including the attention area among a tissue of the subject eye; and displaying the image on a display unit.

[0010] According to the ophthalmologic image processing device and the non-transitory computer-readable medium storing the computer-readable instructions according to the first aspect of the present disclosure, an operation of a user can be appropriately assisted.

[0011] Embodiments of the second aspect provide an ophthalmologic image processing device that processes an ophthalmologic image of a tissue of a subject eye. The processor of the ophthalmologic image processing device: acquires the ophthalmologic image photographed by an ophthalmologic image photographing device; inputs the ophthalmologic image into a mathematical model trained by a machine learning algorithm and acquires results of analyses relating to diseases or structures of a subject eye; acquires a supplemental map, for each of the results of the analyses, that indicates a distribution of weight relating to the analysis by the mathematical model, for which an image area of the ophthalmologic image input into the mathematical model is set as a variable; generates an integration map in which the supplemental maps are integrated within an identical area; and displays the integration map on a display unit.

[0012] Embodiments of the second aspect provide a non-transitory computer-readable medium storing computer-readable instructions that, when executed by a processor of an ophthalmologic image processing device, cause the ophthalmologic image processing device to perform processes including: acquiring an ophthalmologic image photographed by an ophthalmologic image photographing device; inputting the ophthalmologic image into a mathematical model trained by a machine learning algorithm and acquiring results of analyses relating to diseases or structures of a subject eye; acquiring a supplemental map, for each of the results of the analyses, that indicates a distribution of weight relating to the analysis by the mathematical model, for which an image area of the ophthalmologic image input into the mathematical model is set as a variable; generating an integration map in which the supplemental maps are integrated within an identical area; and displaying the integration map on a display unit.

[0013] According to the ophthalmologic image processing device and the non-transitory computer-readable medium storing the computer-readable instructions according to the second aspect of the present disclosure, the result of the analysis relating to a subject eye can be appropriately provided to a user.

BRIEF DESCRIPTION OF THE DRAWINGS

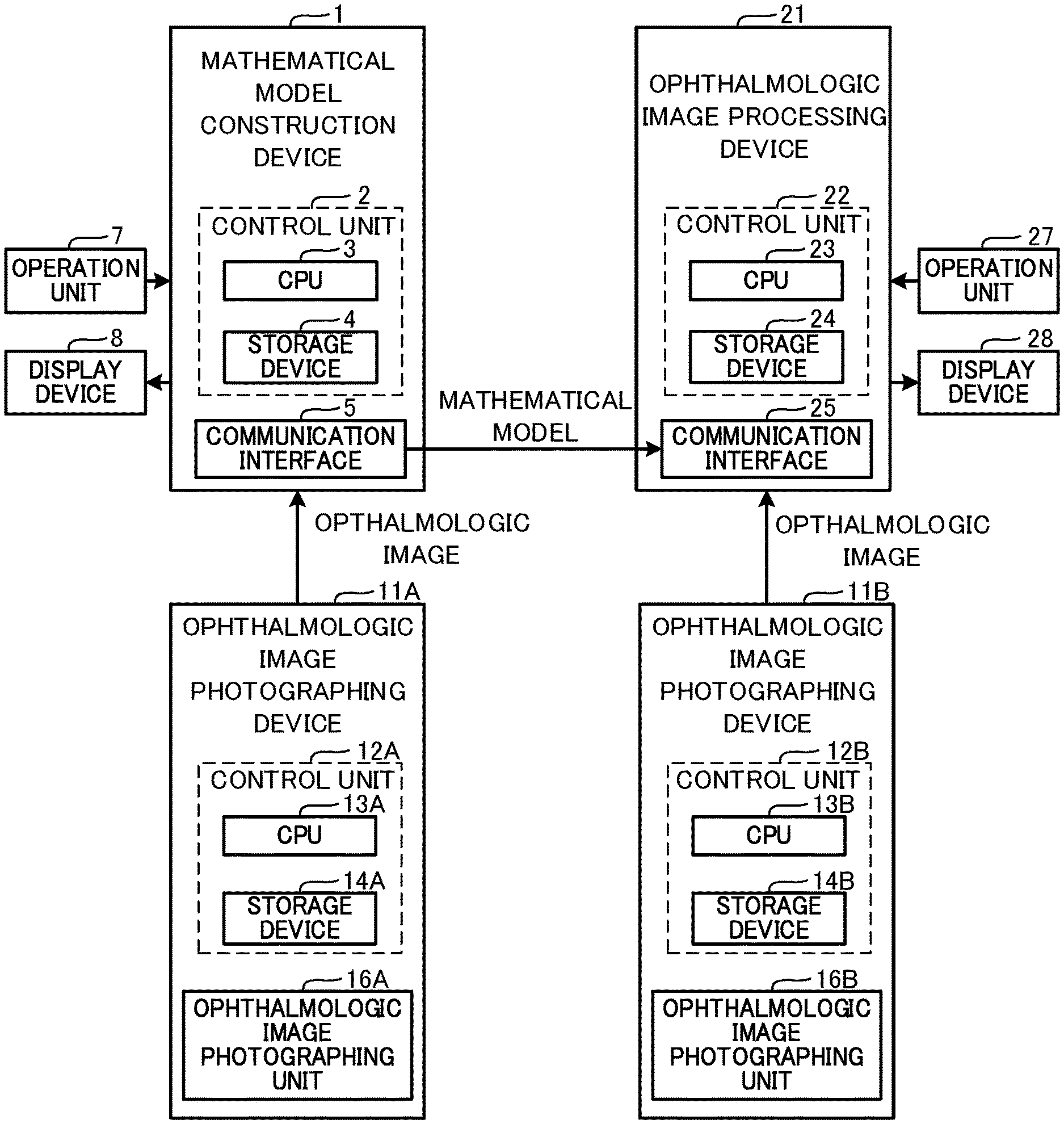

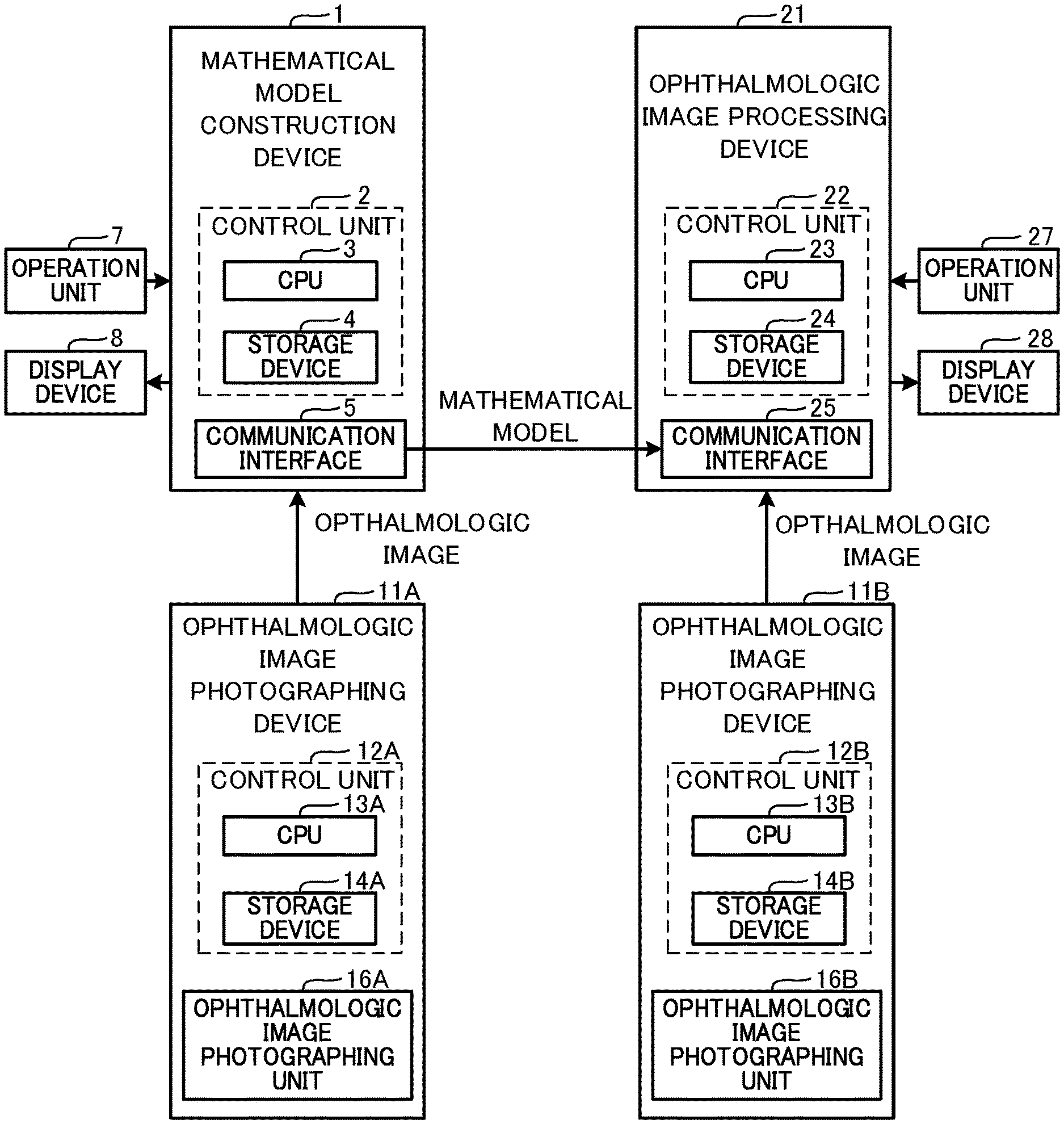

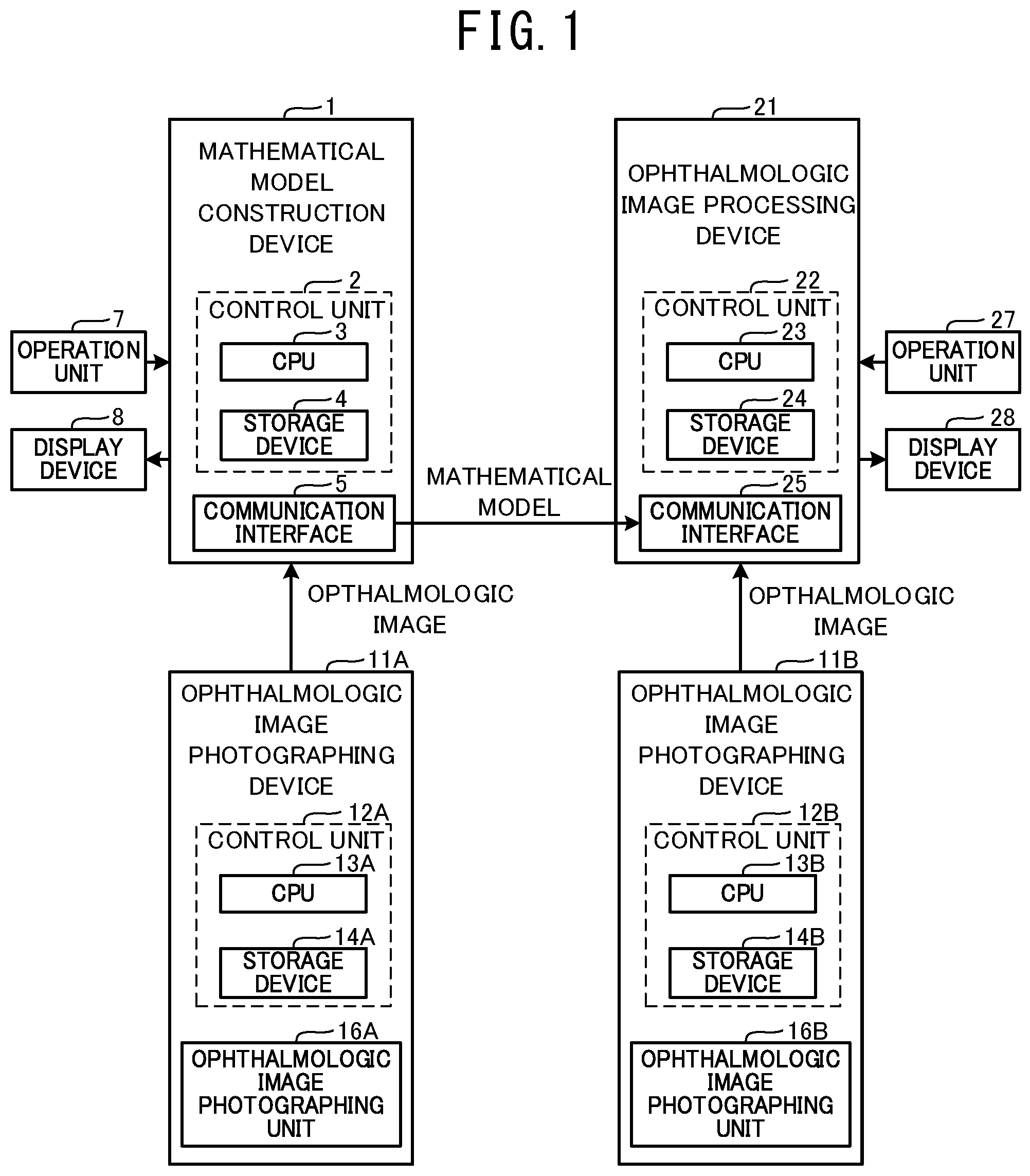

[0014] FIG. 1 is a block diagram illustrating a schematic configuration of a mathematical model construction device 1, an ophthalmologic image processing device 21, and ophthalmologic image photographing devices 11A and 11B.

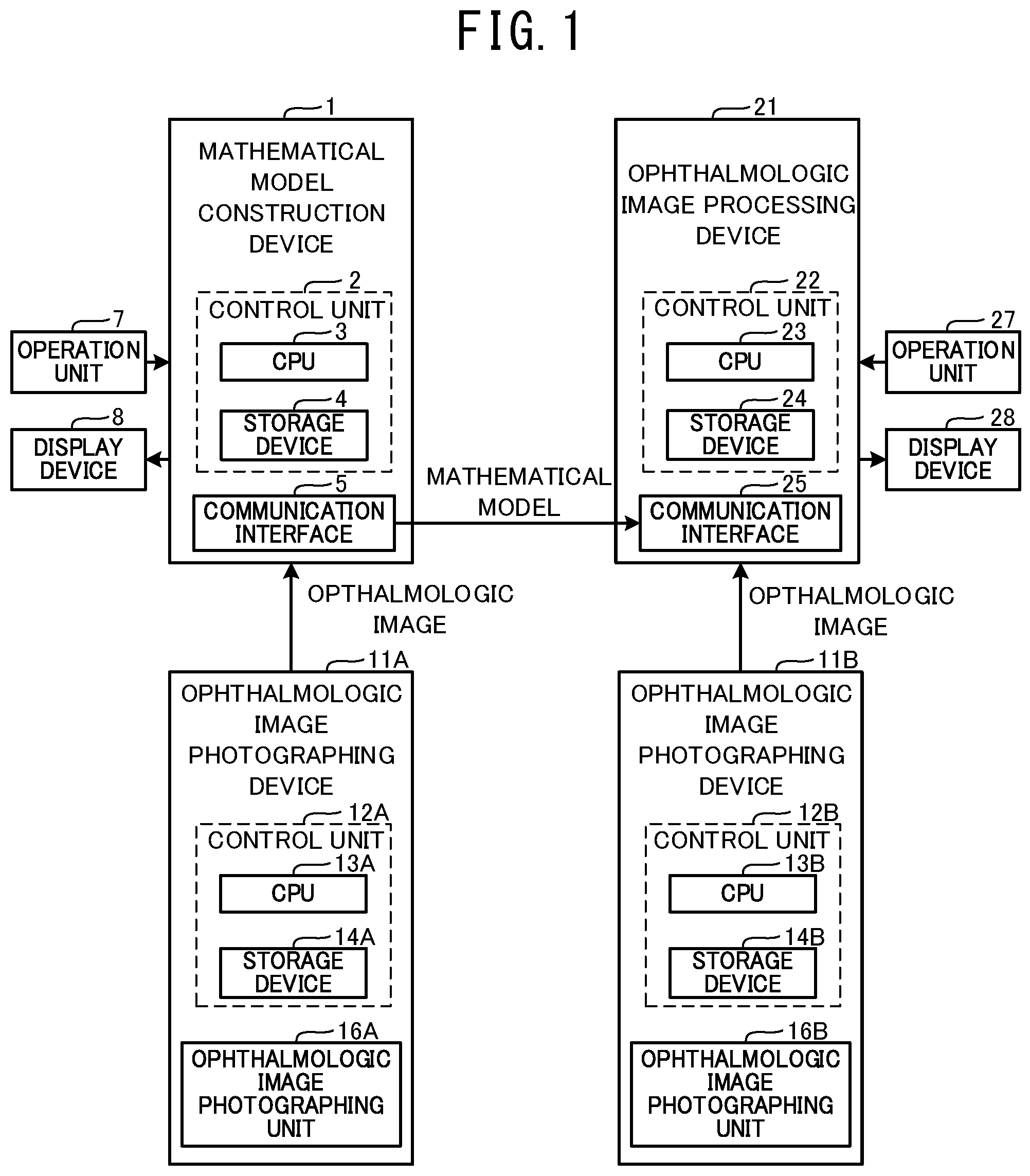

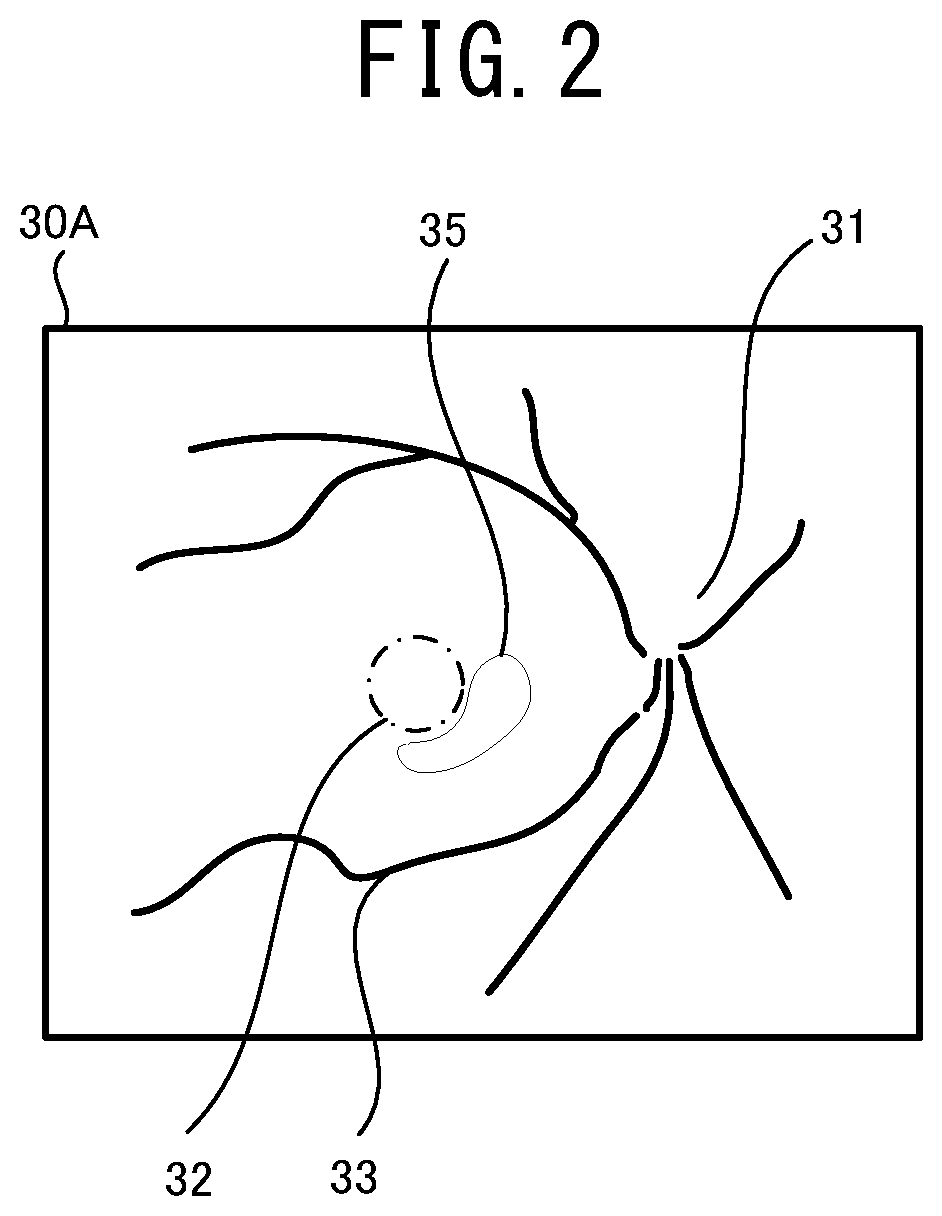

[0015] FIG. 2 illustrates one example of a front image of a fundus suffering from age-related macular degeneration.

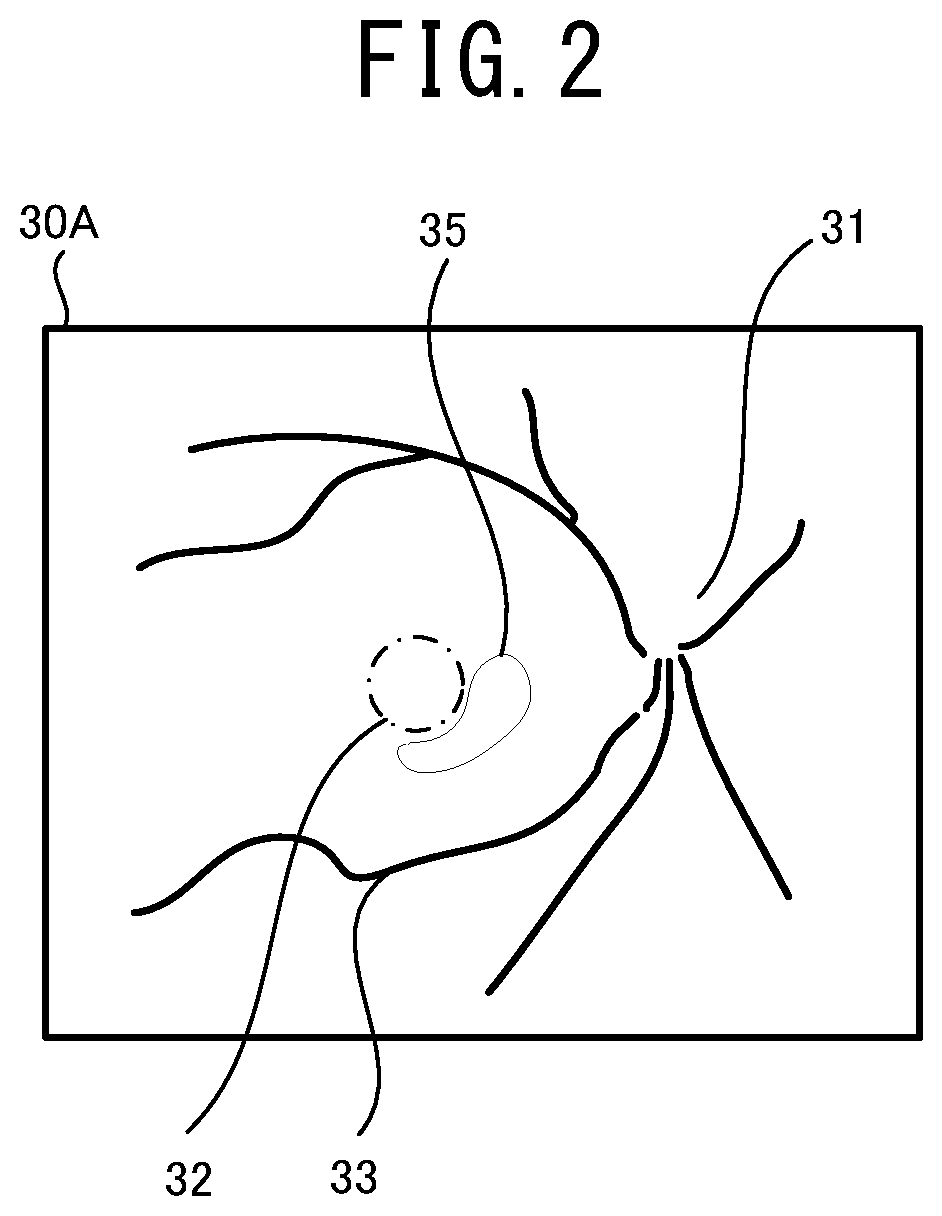

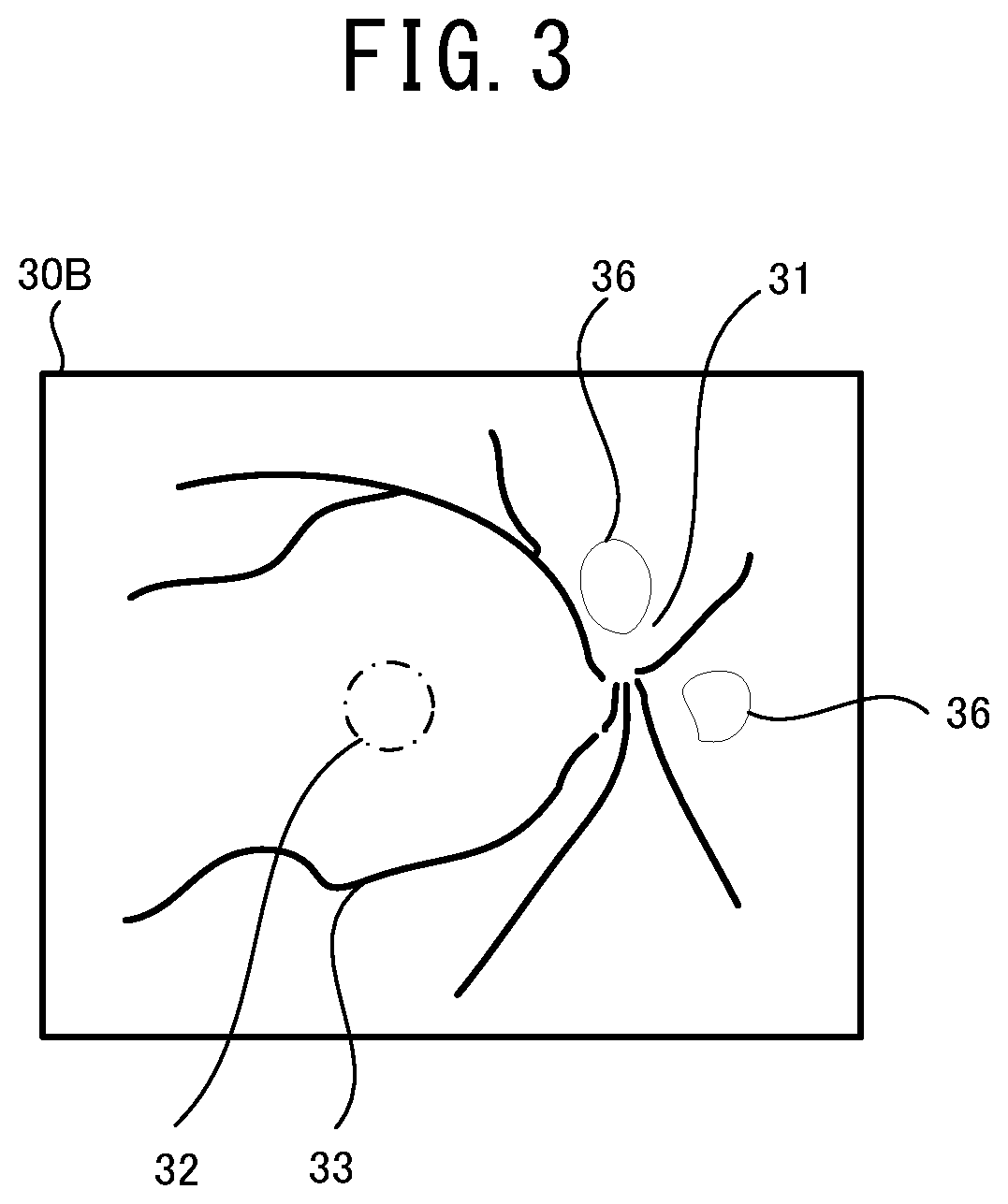

[0016] FIG. 3 illustrates one example of a front image of a fundus suffering from diabetic retinopathy.

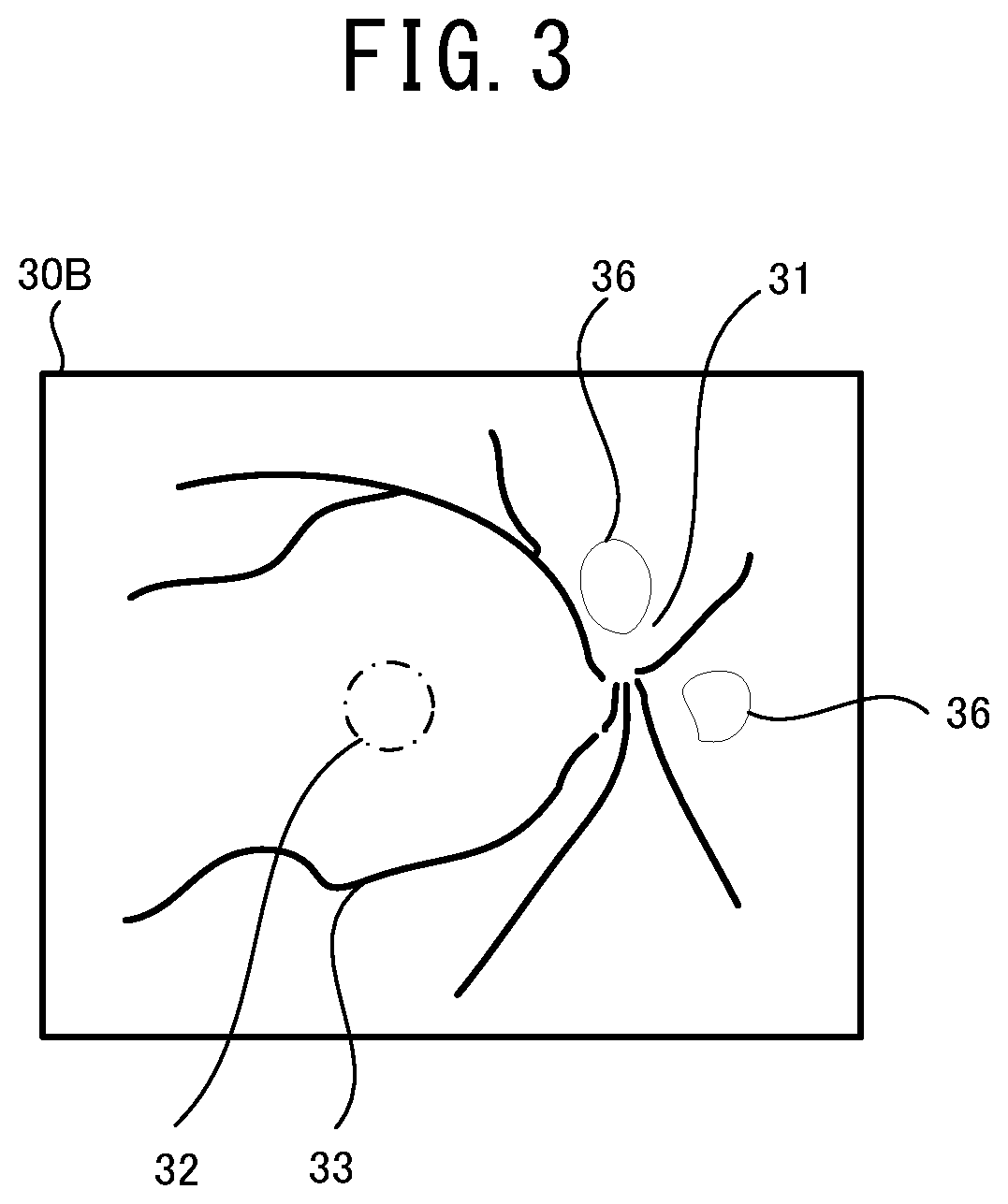

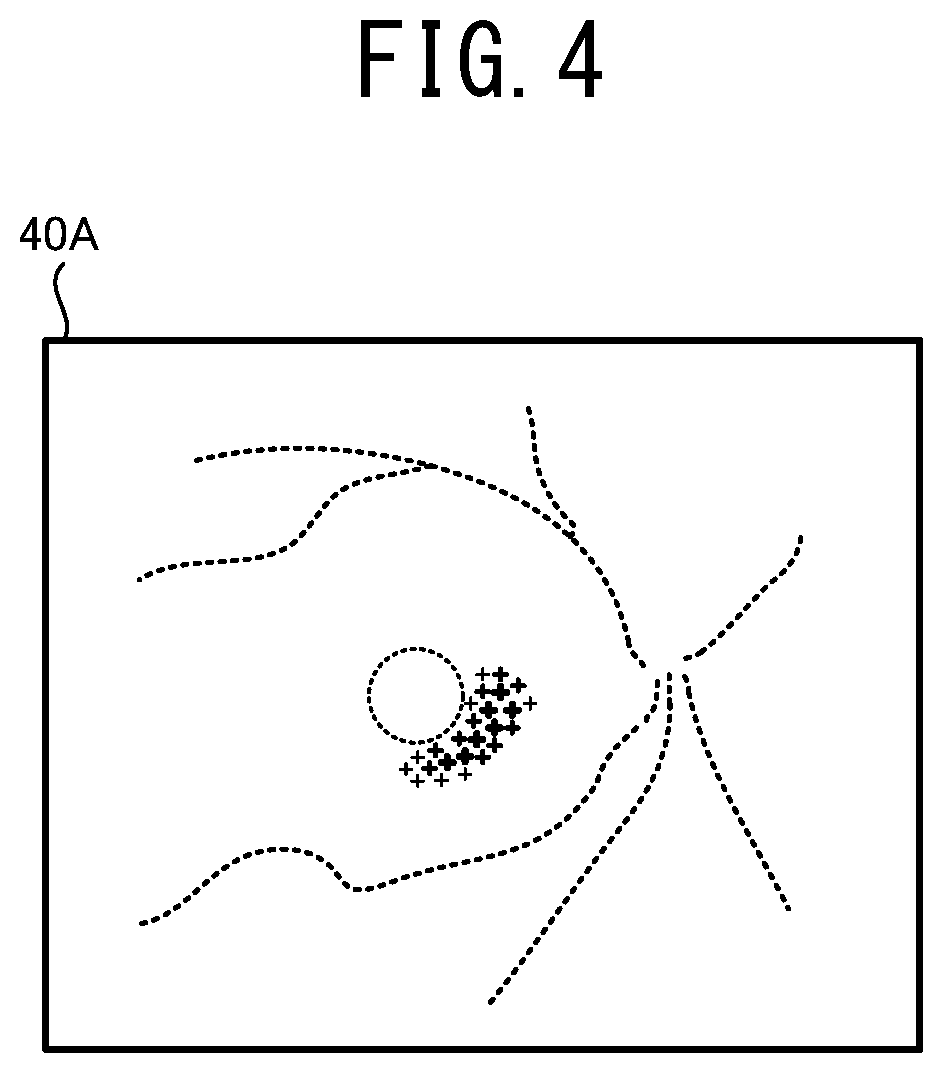

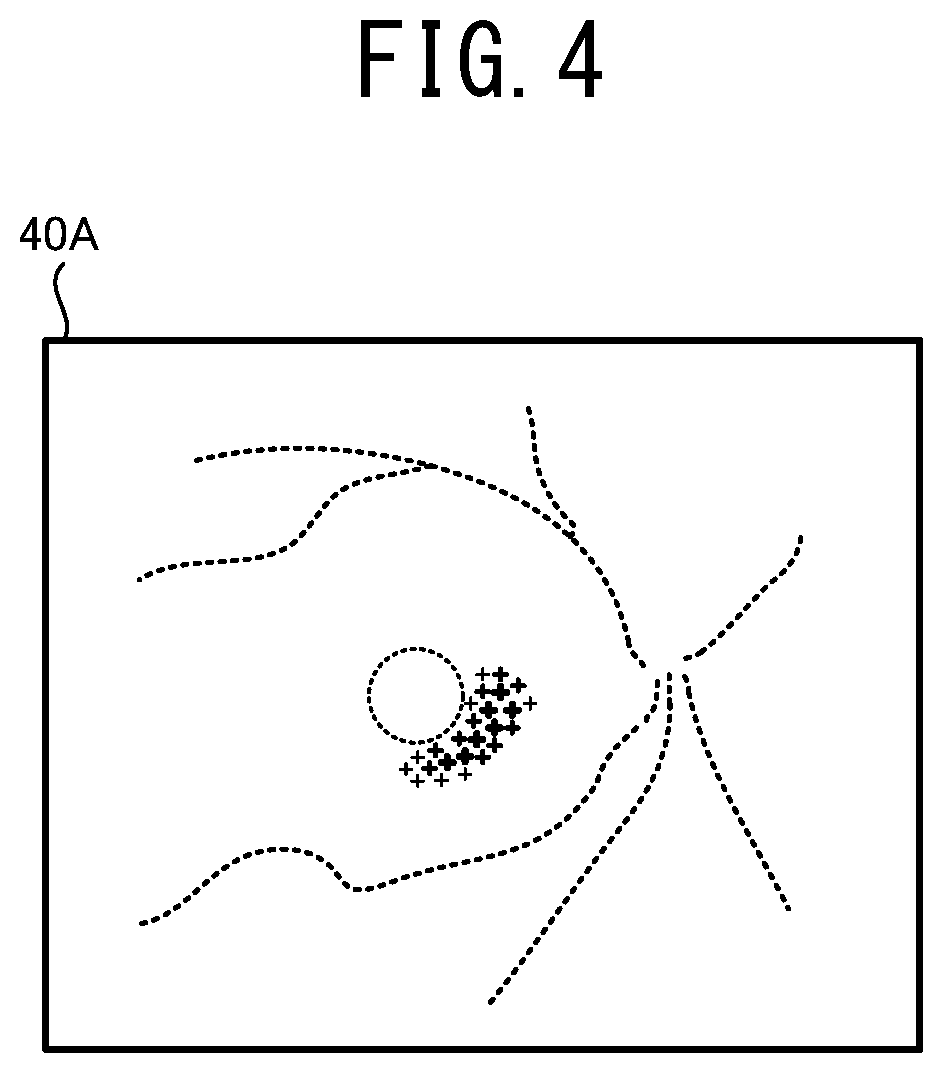

[0017] FIG. 4 illustrates one example of a supplemental map 40A in a case in which an ophthalmologic image 30A shown in FIG. 2 is subjected to an automatic analysis.

[0018] FIG. 5 illustrates one example of a supplemental map 40B in a case in which an ophthalmologic image 30B shown in FIG. 3 is subjected to an automatic analysis.

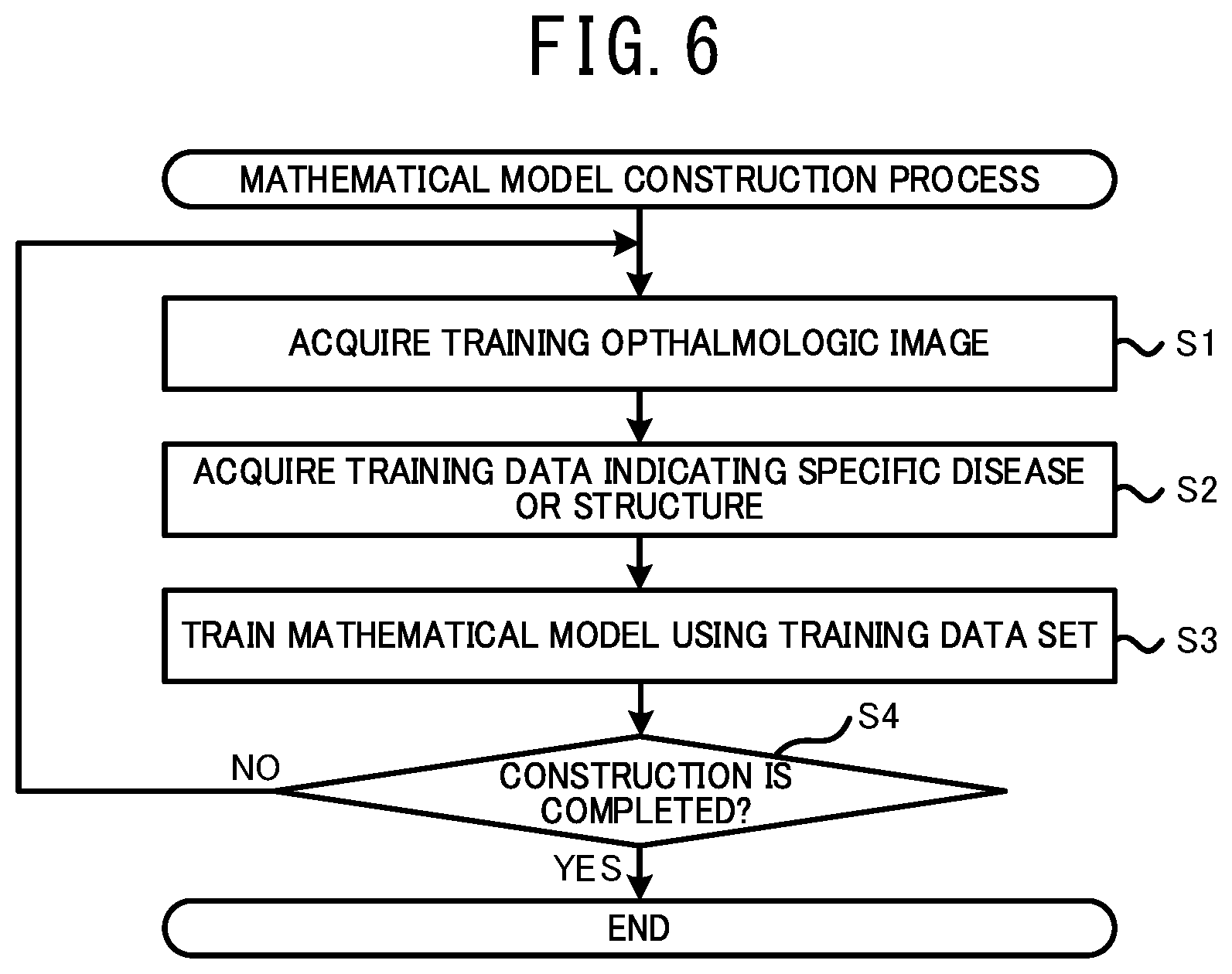

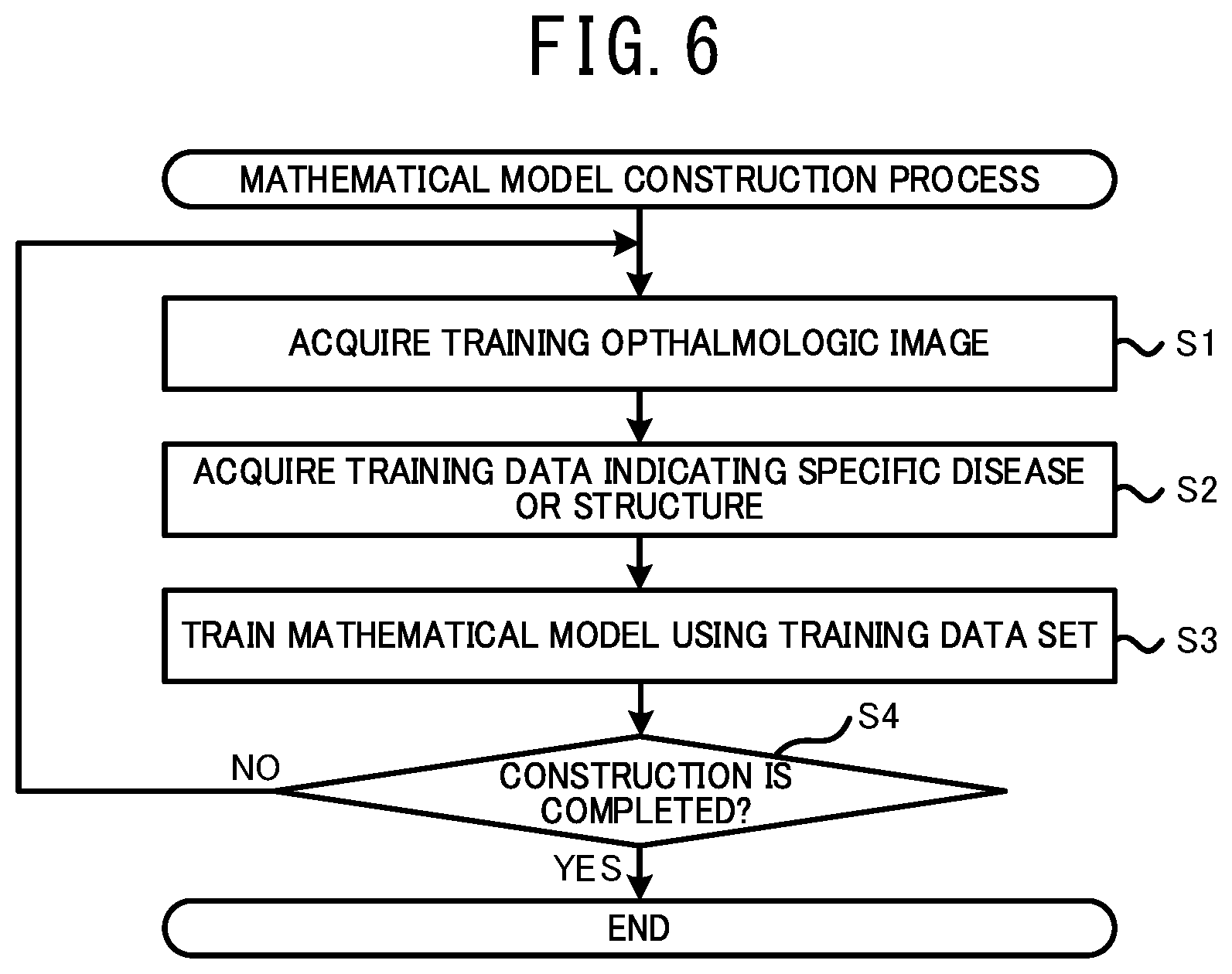

[0019] FIG. 6 is a flowchart illustrating a mathematical model construction process executed by the mathematical model construction device 1.

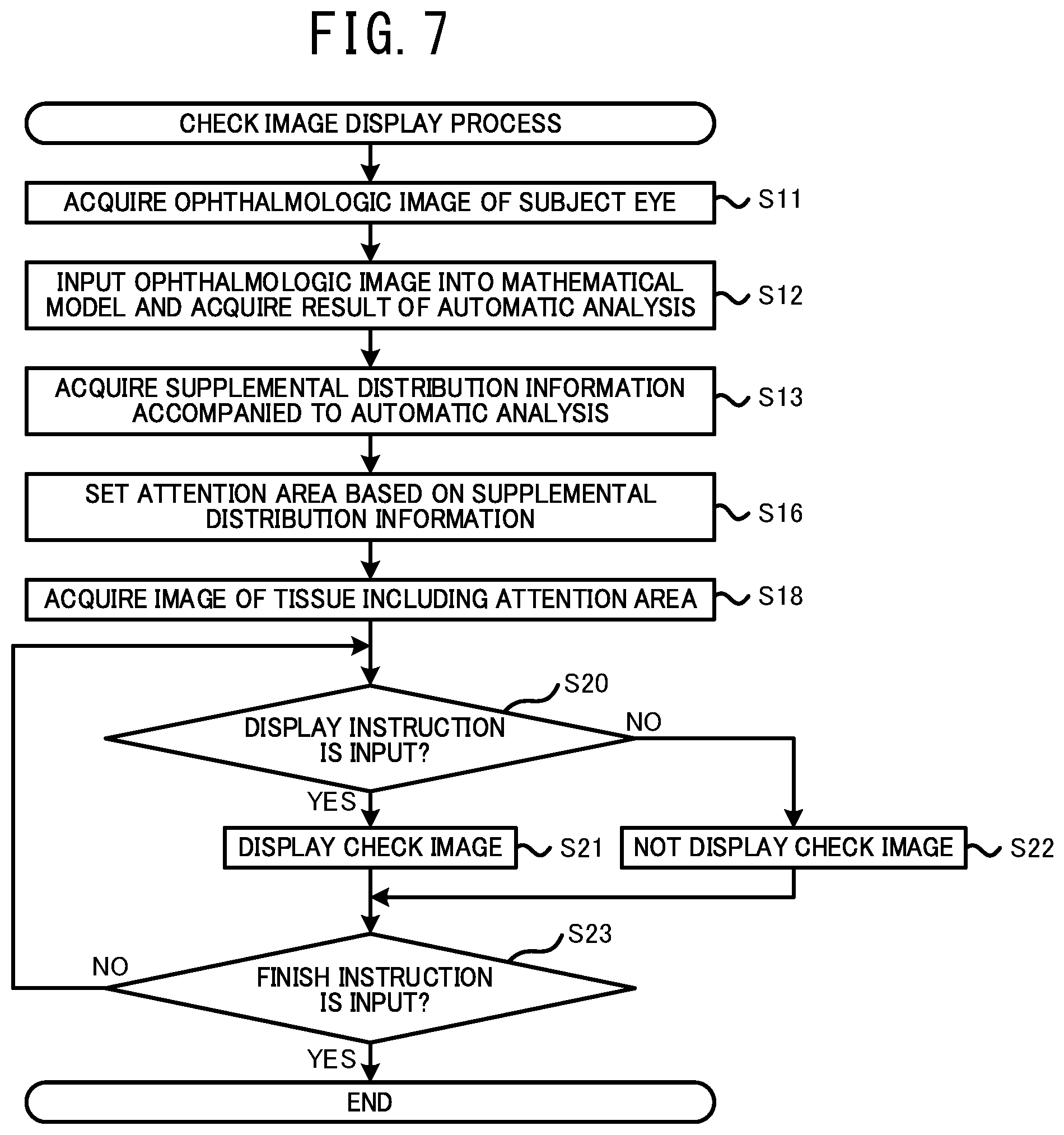

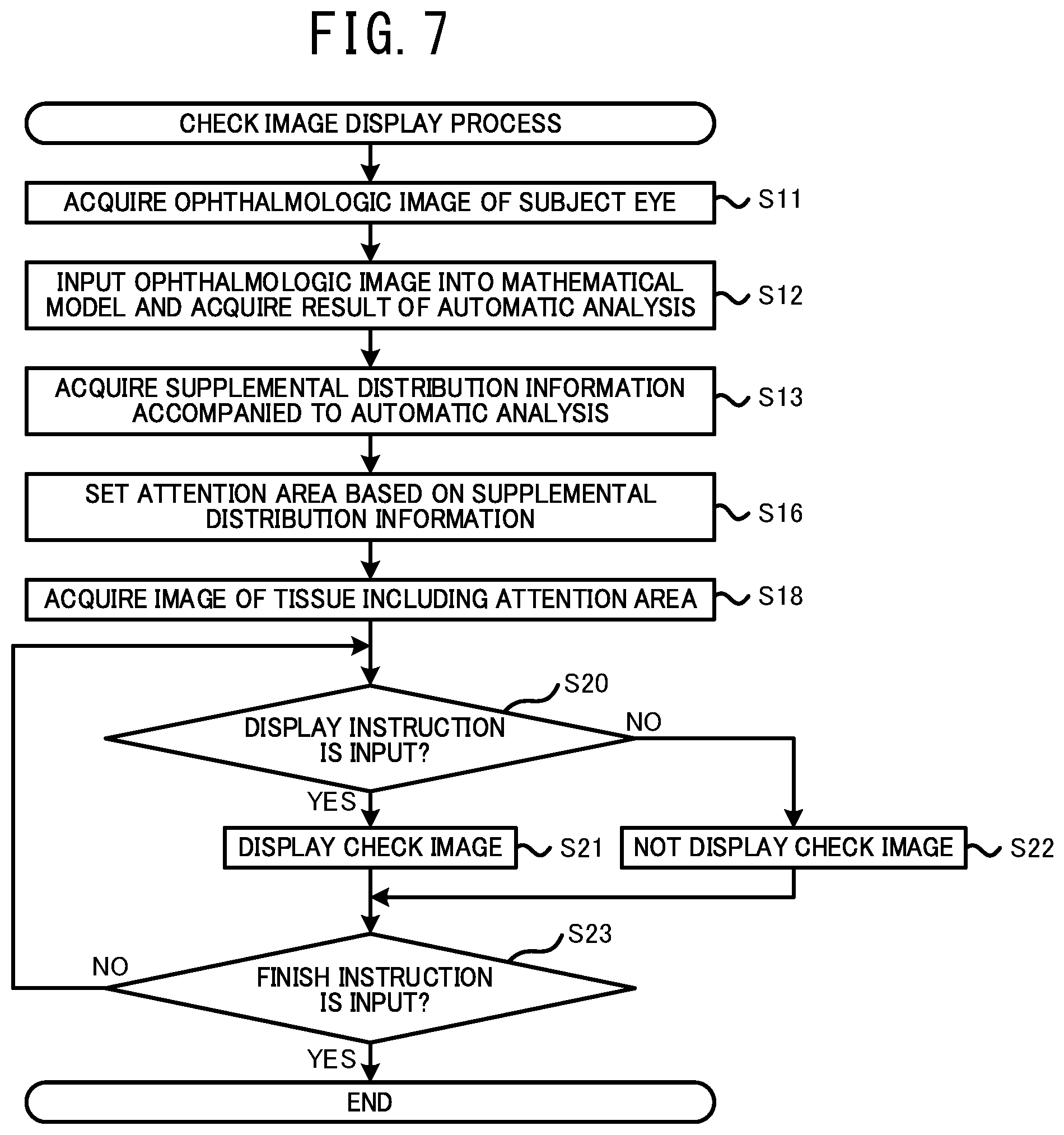

[0020] FIG. 7 is a flowchart illustrating a check image display process executed by the ophthalmologic image processing device 21.

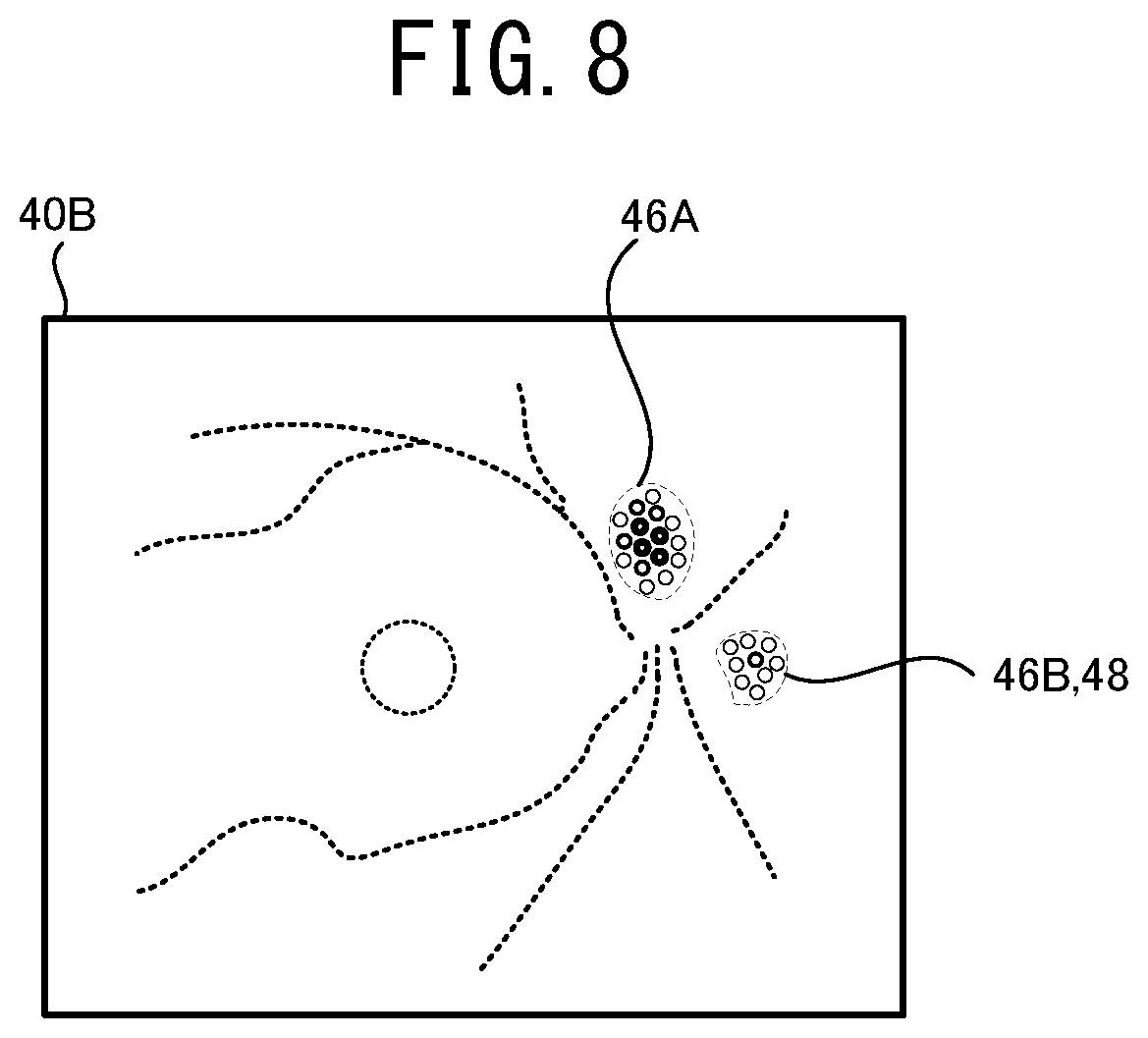

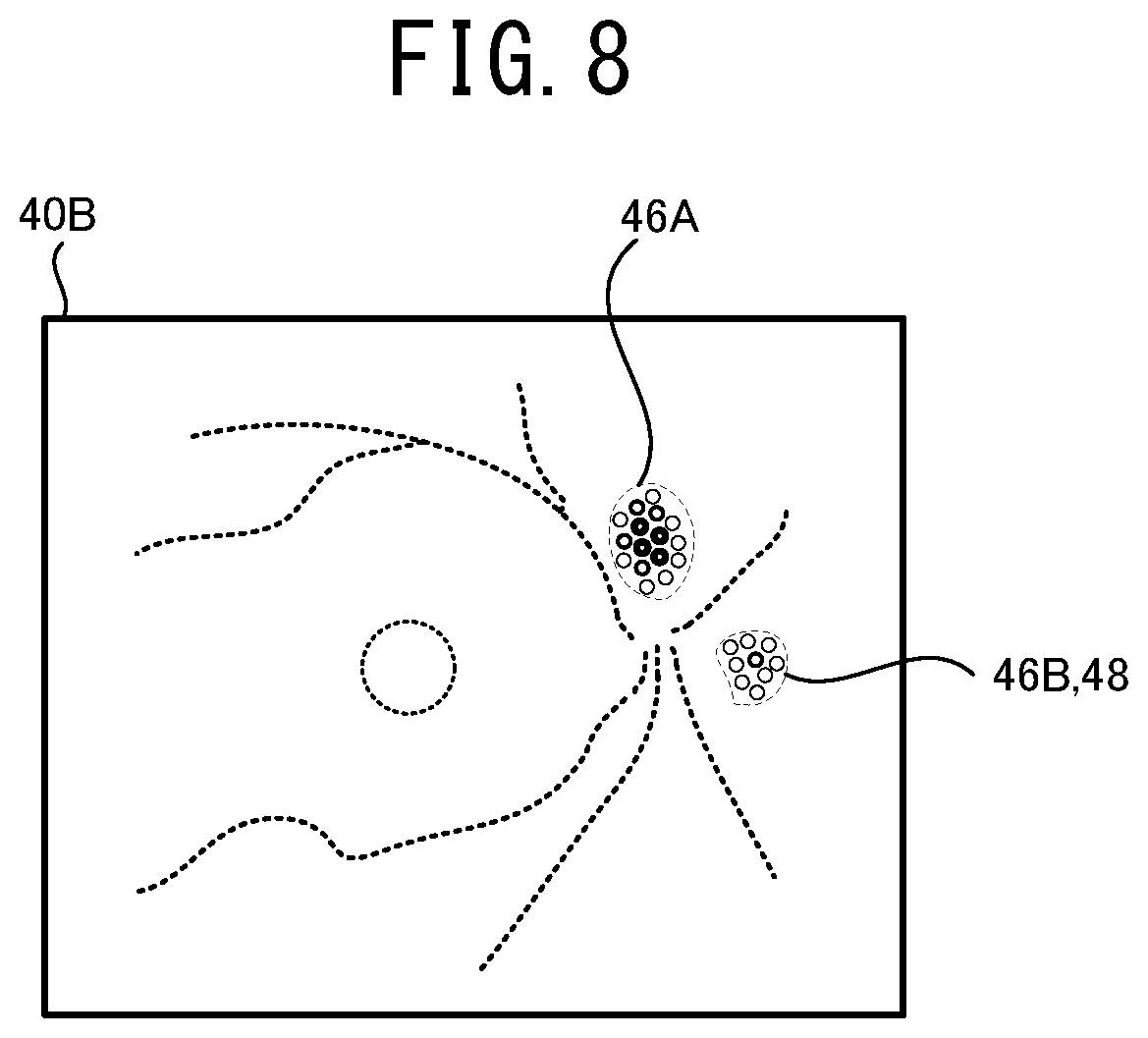

[0021] FIG. 8 illustrates one example of a method for setting areas 46A, 46B and 48 based on the supplemental map 40A.

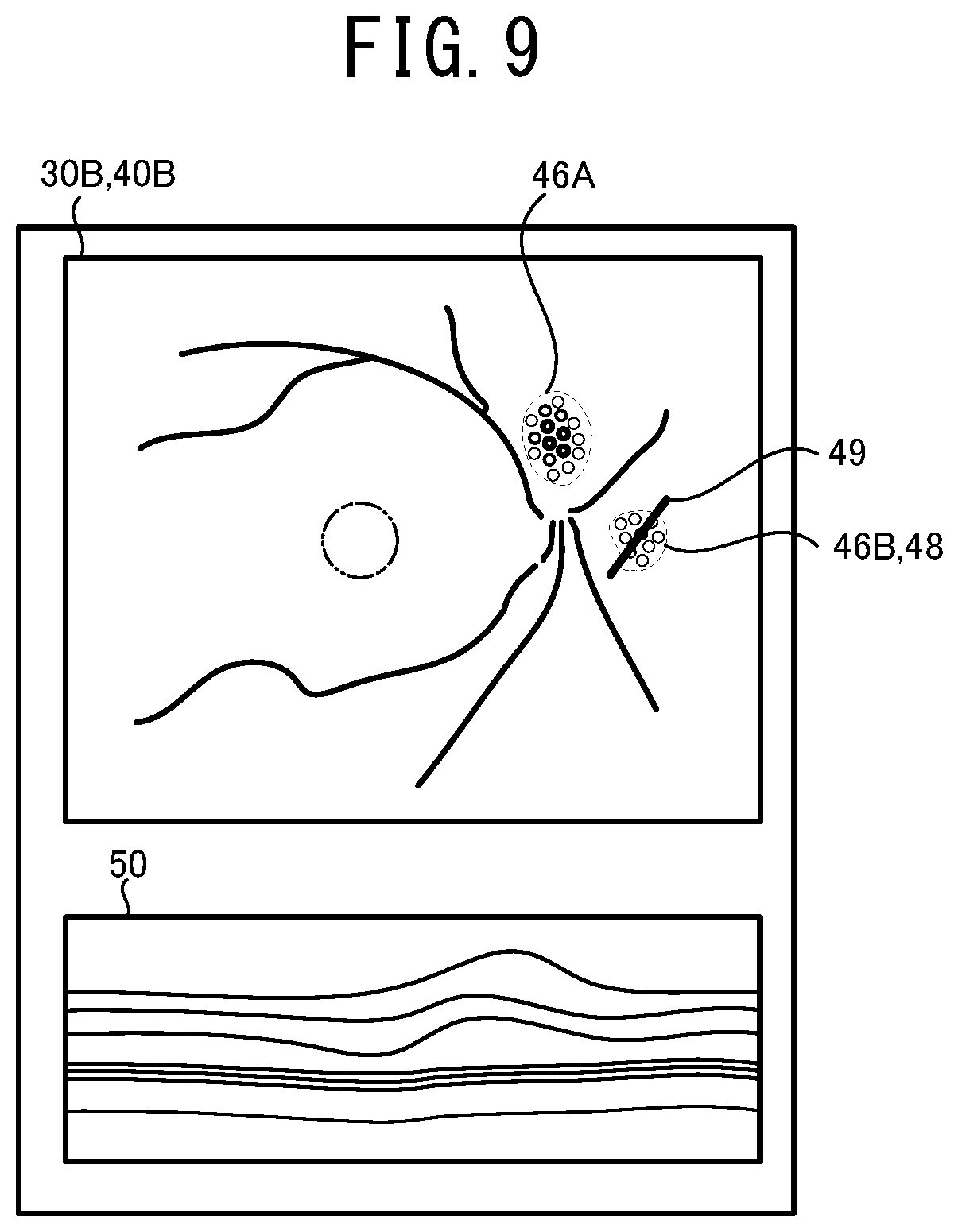

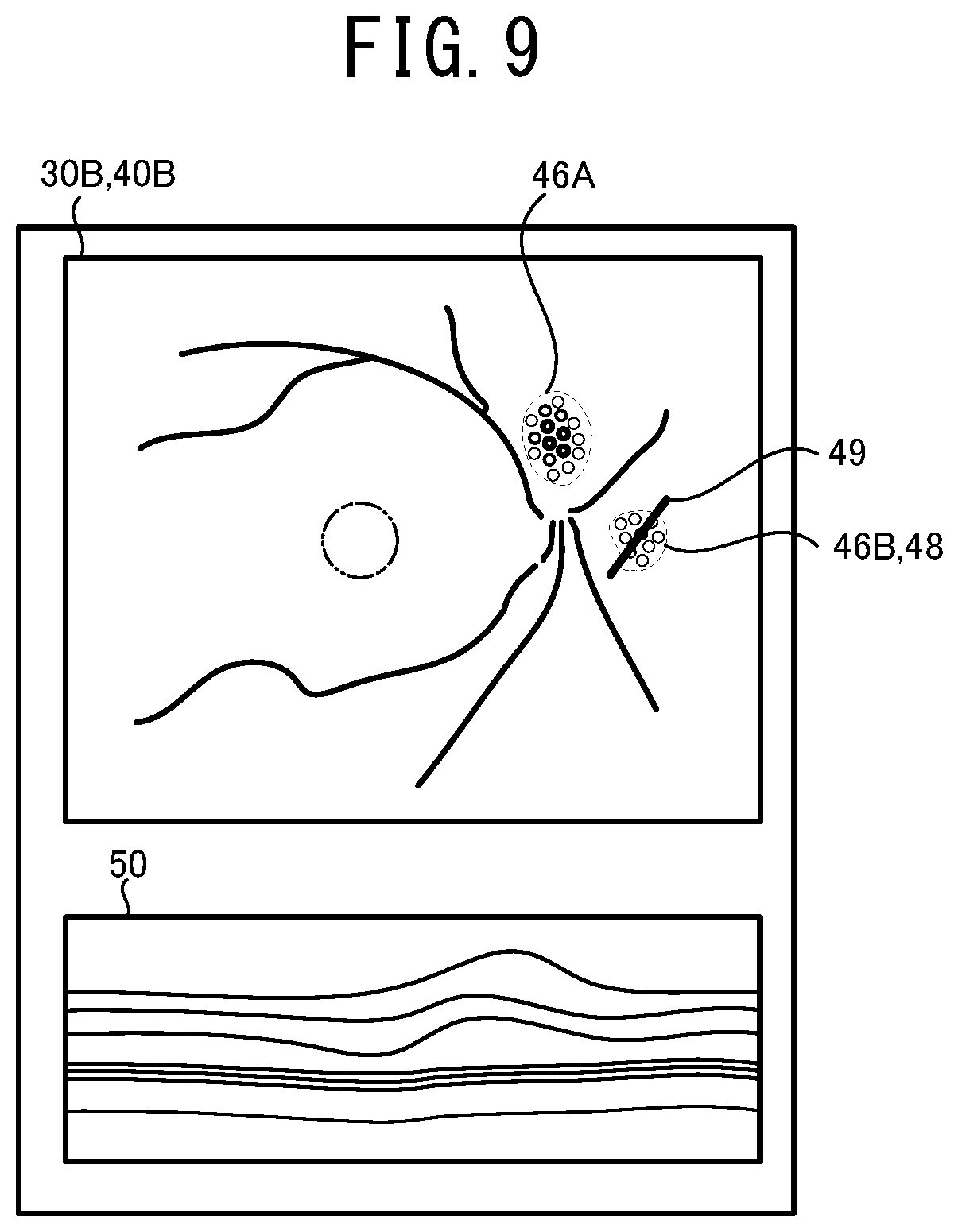

[0022] FIG. 9 illustrates one example of a display screen of a display device 28 displaying the ophthalmologic image 30B, the supplemental map 40B, and a check image 50.

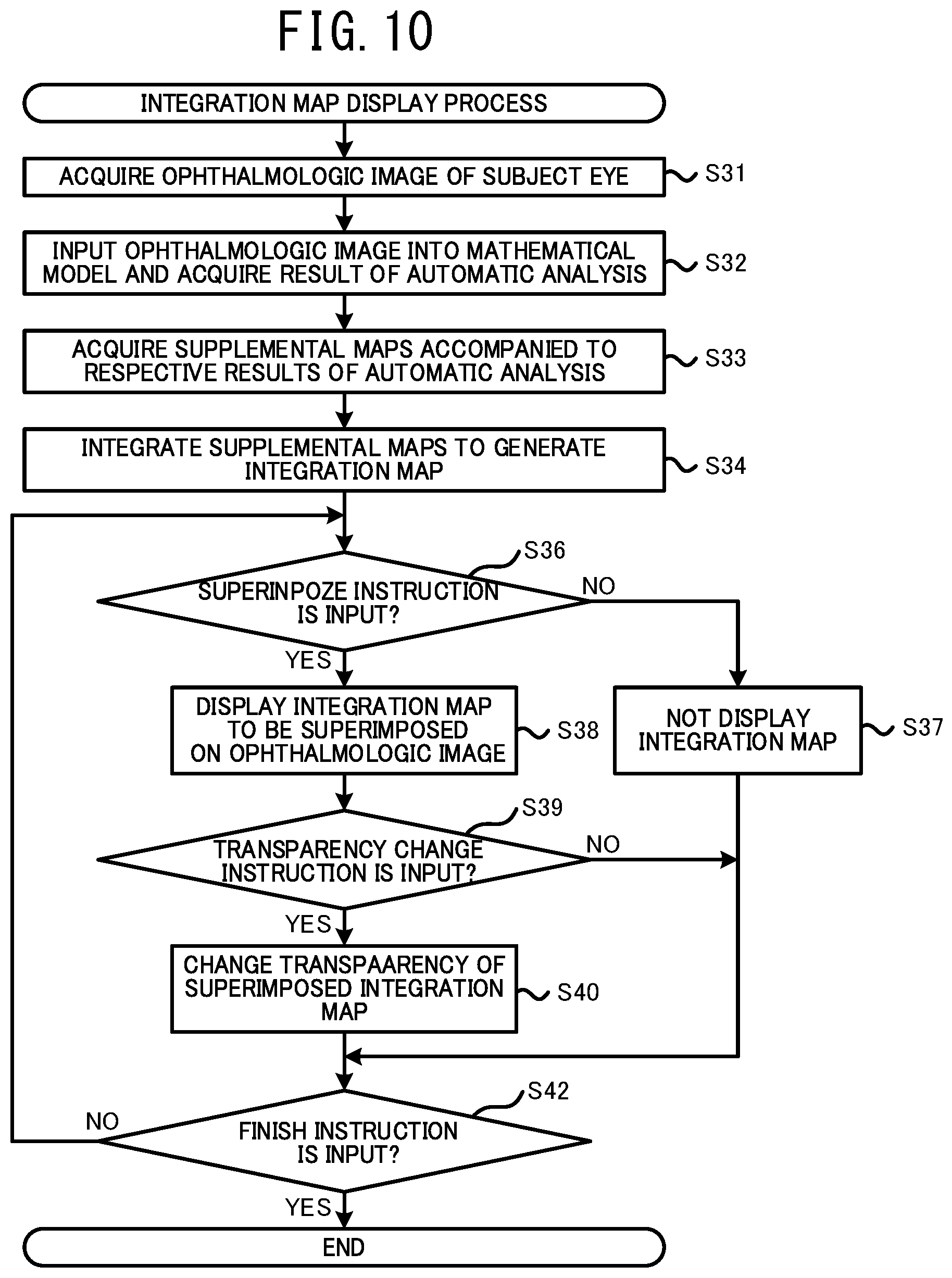

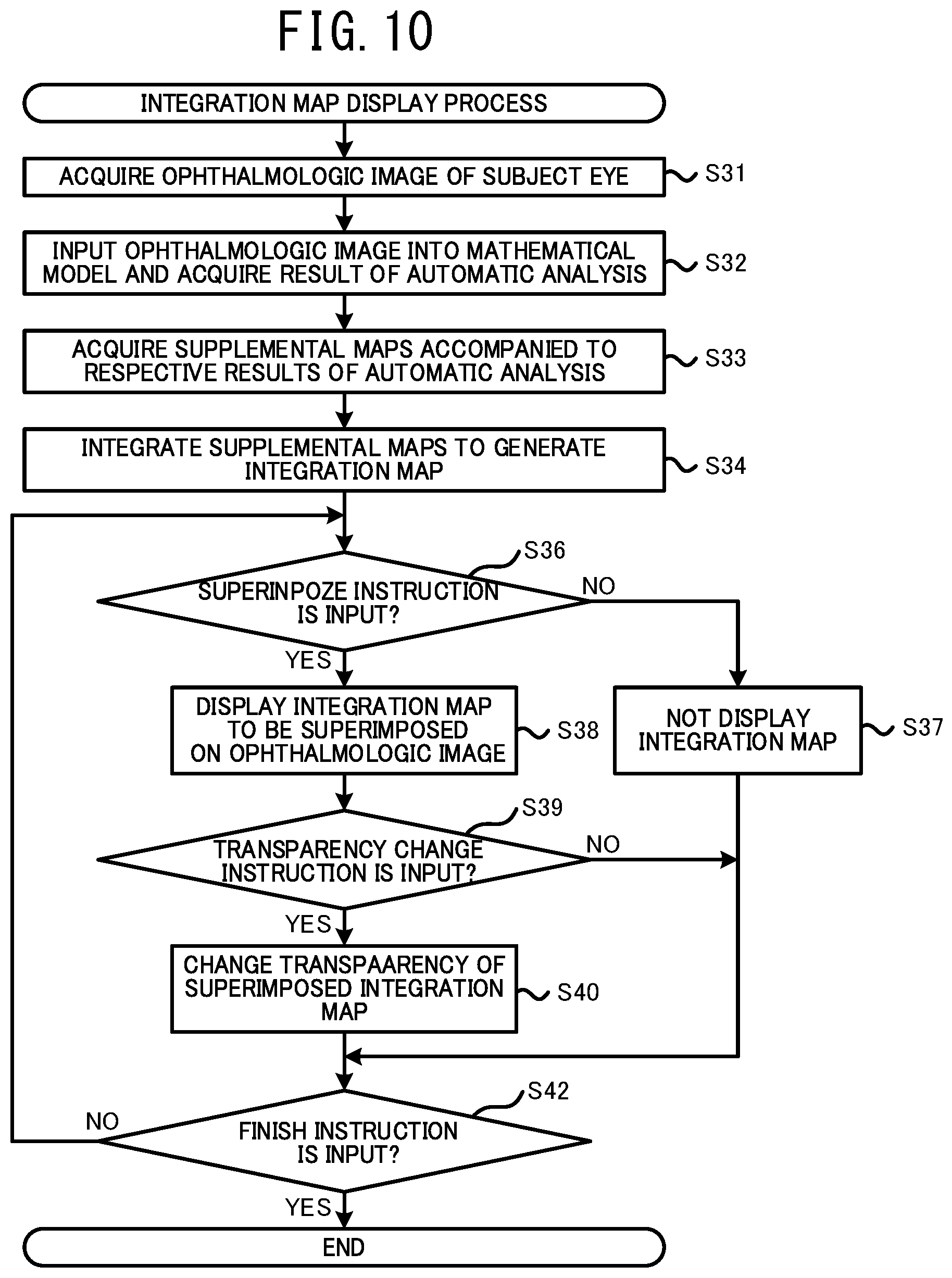

[0023] FIG. 10 is a flowchart illustrating an integration map display process executed by the ophthalmologic image processing device 21.

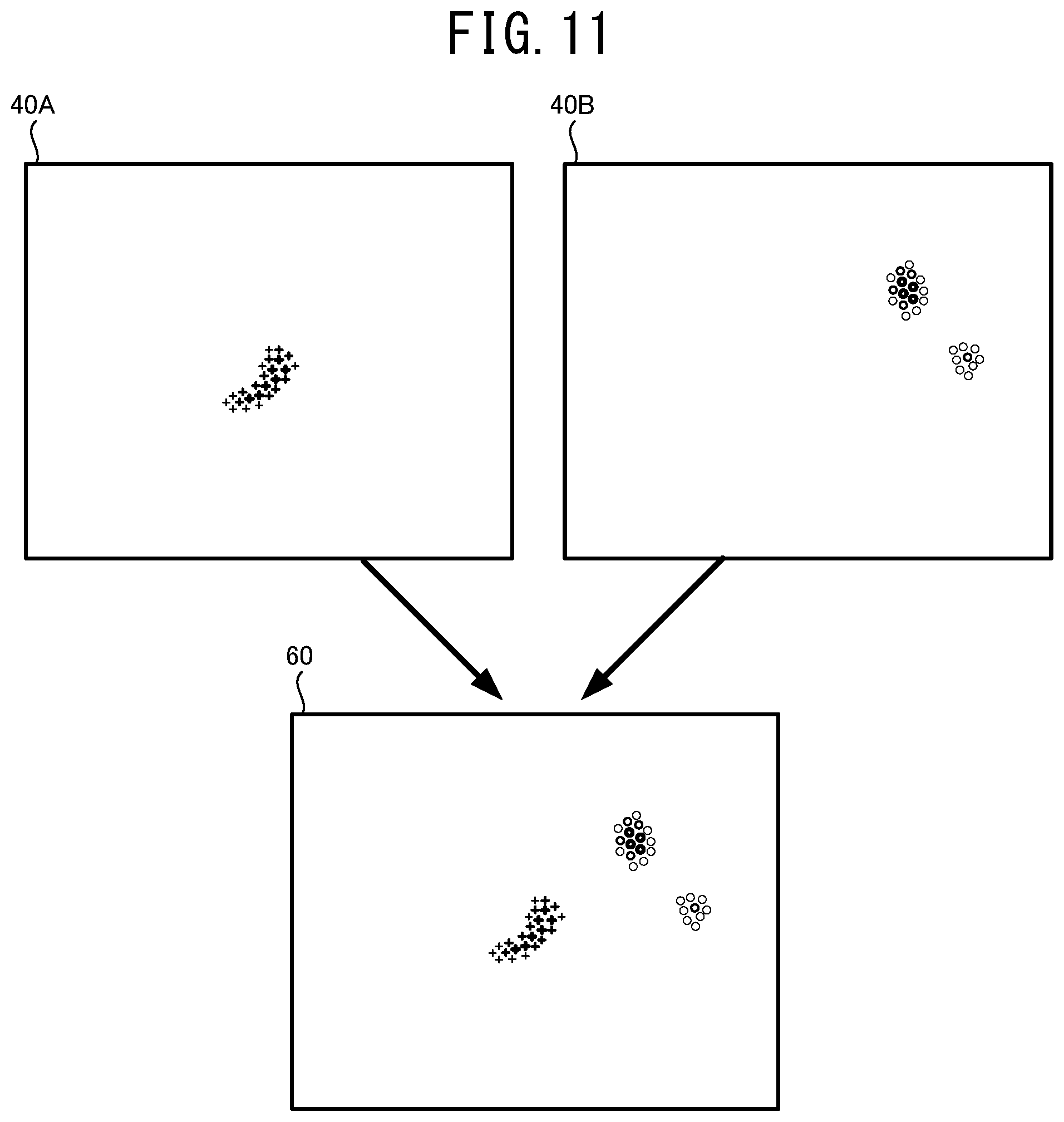

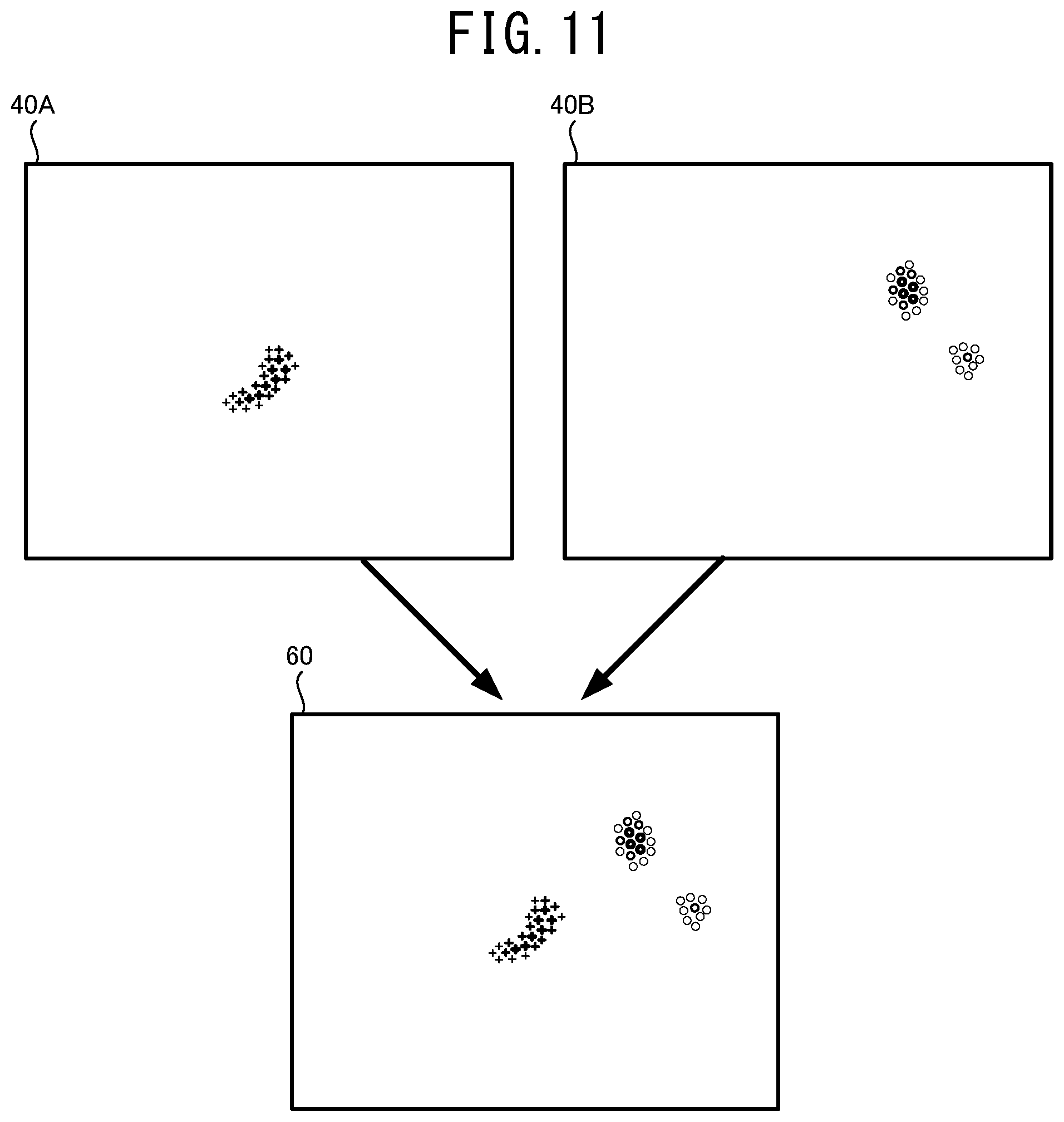

[0024] FIG. 11 illustrates one example of a method for generating an integration map 60 by integrating two supplemental maps 40A and 40B.

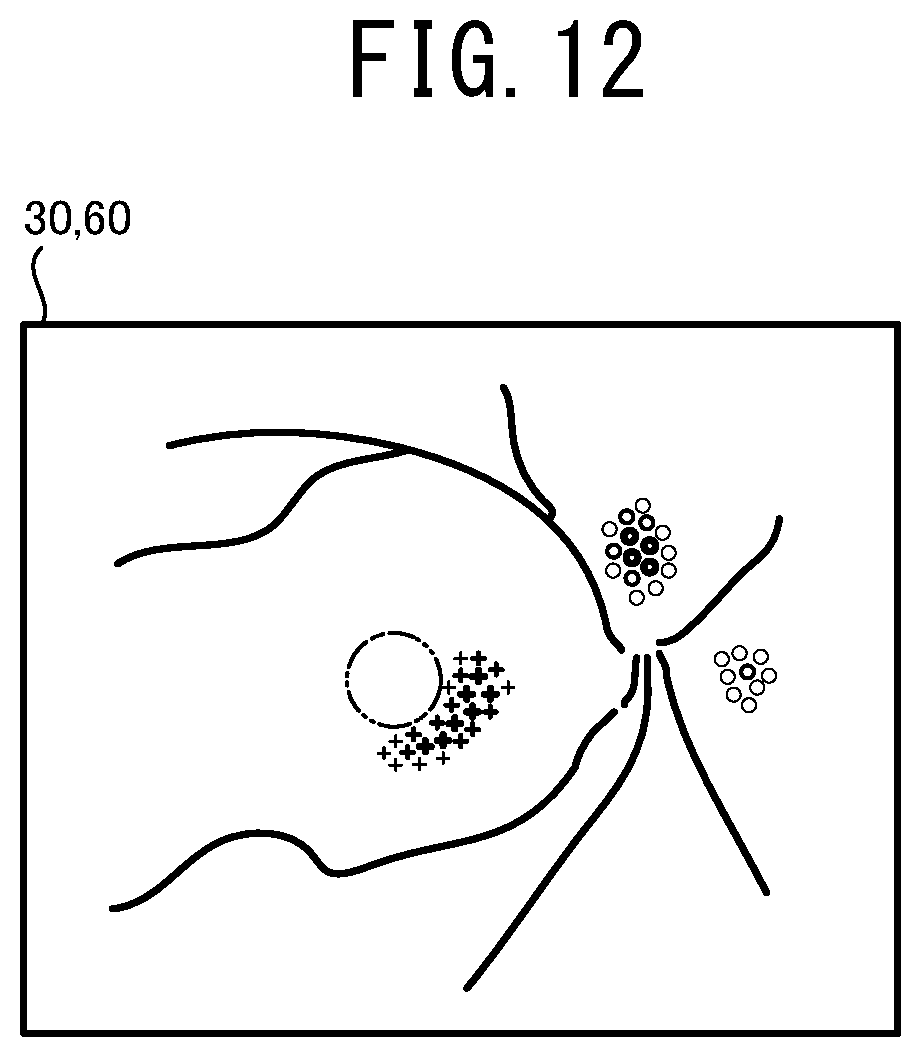

[0025] FIG. 12 illustrates one example of the ophthalmologic image 30 on which the integration map 60 is superimposed.

DETAILED DESCRIPTION

[0026] A processor of an ophthalmologic image processing device exemplarily disclosed in the present disclosure acquires an ophthalmologic image photographed by an ophthalmologic image photographing device. The processor inputs the ophthalmologic image into a mathematical model trained by a machine learning algorithm and acquires a result of an analysis relating to at least one of a specific disease and a specific structure of a subject eye. The processor acquires information of a distribution of weight relating to the analysis by the mathematical model, as supplemental distribution information, for which an image area (namely, coordinates in the image area) of the ophthalmologic image input into the mathematical model is set as a variable. The processor sets a part of the image area of the ophthalmologic image, as an attention area, based on the supplemental distribution information. The processor acquires an image of a tissue including the attention area among a tissue of the subject eye and displays the image on a display unit.

[0027] In this case, a user (for example, a doctor or the like) can appropriately check the tissue in the attention area that is set based on the supplemental distribution information among the tissue of the subject eye, through the image to be displayed. For example, in a case in which the image of the tissue in an area where the weight relating to the analysis is large is displayed, the user can easily check the image of an important part where the weight is large. Conversely, in a case in which the image of the tissue in an area where the weight is small is displayed, the user can easily check, for example, the image of a part where the reliability of the analysis is low. Consequently, an operation such as a diagnosis or the like by the user can be appropriately assisted.

[0028] The ophthalmologic image photographing device that photographs the ophthalmologic image to be input into the mathematical model and the ophthalmologic image photographing device that photographs the image of the tissue including the attention area may be the identical device or different devices.

[0029] The number of the ophthalmologic images to be input into the mathematical model may be one or two or more. In a case in which a plurality of the ophthalmologic images is input into the mathematical model, the ophthalmologic images may be photographed by the identical device or different devices. Further, a dimension of the ophthalmologic image to be input into the mathematical model is not especially limited. The ophthalmologic image may be a static image or a moving image.

[0030] The number of the attention areas to be set may be one or two or more. In a case in which a plurality of the attention areas is set, an image of all of the attention areas may be displayed or an image of a certain attention area(s) (for example, the attention area selected by a user or the like) may be displayed.

[0031] The supplemental distribution information may include information (may be also called an "attention map") indicating a distribution of a degree of influence (a degree of attention) that affects the result of the analysis output by the mathematical model. In this case, the attention area is properly set in accordance with the degree of influence that affects an automatic analysis by the mathematical model.

[0032] Further, the supplemental distribution information may include information (may be also called a "certainty degree map") indicating a distribution of a degree of certainty of the analysis by the mathematical model. In this case, the attention area is properly set in accordance with the degree of certainty of the analysis by the mathematical model.

[0033] The "degree of certainty" may be defined by a highness of reliability of the analysis, or alternatively a reciprocal of a lowness of the reliability (degree of uncertainty). In a case in which the degree of uncertainty is x %, the degree of certainty may use a value of (100-x) %. Further, the "degree of certainty" denotes a degree of confidence when the mathematical model executes the analysis. The degree of certainty and accuracy of the result of the analysis are not always proportional.

[0034] A specific content of the certainty degree map can be selected as needed. For example, the degree of certainty may include the entropy of a probability distribution (average information amount) in the automatic analysis by the mathematical model. The entropy denotes uncertainty, randomness, and a degree of disorder. In a case in which the degree of certainty of the automatic analysis is the maximum, the entropy of the probability distribution is zero. Further, as the degree of certainty is decreased, the entropy is increased. Accordingly, when the entropy of the probability distribution is adopted as the degree of certainty, the certainty degree map is properly generated. Further, a value other than the entropy may be adopted as the degree of certainty. For example, at least one of standard deviation, coefficient of variation, variance and the like that indicates a degree of dispersion of the probability distribution in the automatic analysis may be adopted as the degree of certainty. Kullback-Leibler divergence or the like, which is a measure of a difference between the probability distributions, may be adopted as the degree of certainty. The maximum value of the probability distribution may be adopted as the degree of certainty. Further, in a case in which ranking the diseases or the like is performed using the automatic analysis, a probability of the first rank, or a difference between the probability of the first rank and a probability of other rank (for example, a probability of the second rank or the sum of probabilities of ranks other than the first rank) may be adopted as the degree of certainty. Further, variation of outputs between mathematical models that differ from each other in the data, conditions, or the like used in the learning may be adopted as the degree of certainty. This variation may be adopted even if the output of the mathematical model is not a probability distribution.
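
As a non-limiting illustration, an entropy-based certainty degree map may be sketched as follows. The function names, the per-pixel list-of-probabilities layout, and the normalization of the entropy into a certainty value between 0 and 1 are assumptions made for this sketch and are not taken from the disclosure.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a discrete probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def certainty_from_entropy(probs):
    """Convert entropy into a certainty value in [0, 1].

    Assumed convention for this sketch: certainty is 1 when one class has
    probability 1 (entropy zero) and 0 when the distribution is uniform
    (entropy maximal).
    """
    n = len(probs)
    if n < 2:
        return 1.0
    return 1.0 - entropy(probs) / math.log(n)


def certainty_map(prob_map):
    """Build a certainty degree map.

    prob_map[y][x] is assumed to hold the list of class probabilities
    output for the pixel at row y, column x.
    """
    return [[certainty_from_entropy(p) for p in row] for row in prob_map]
```

With this convention, a pixel whose output is concentrated on a single class receives the maximum certainty, and the certainty decreases as the entropy increases, which matches the relationship described above.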

[0035] In a case in which the certainty degree map is adopted as the supplemental distribution information, the processor may set an area of which the degree of certainty of the automatic analysis relating to a specific disease or a specific structure is equal to or smaller than a threshold, within the image area of the ophthalmologic image, as the attention area. In this case, an image of a part where the degree of certainty is small (namely, a part where the reliability of the analysis is low) in a specific analysis (for example, an analysis relating to a specific disease) among the tissue of the subject eye is displayed on the display unit. Accordingly, the user can check a state of the part where the degree of certainty of the analysis is small, by himself/herself using the displayed image. Consequently, an operation such as a diagnosis or the like of the user is appropriately assisted.
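
As a non-limiting illustration, setting the attention area from a certainty degree map by thresholding may be sketched as follows. Representing the attention area as an inclusive bounding box `(top, left, bottom, right)` and the name `low_certainty_area` are assumptions of this sketch; the disclosure does not prescribe the shape of the area.

```python
def low_certainty_area(certainty, threshold):
    """Return the bounding box of pixels whose certainty is <= threshold.

    certainty is a 2D list (rows of floats). The result is an inclusive
    (top, left, bottom, right) tuple, or None when no pixel qualifies.
    The bounding-box representation is an assumption of this sketch.
    """
    coords = [
        (y, x)
        for y, row in enumerate(certainty)
        for x, value in enumerate(row)
        if value <= threshold
    ]
    if not coords:
        return None
    ys = [y for y, _ in coords]
    xs = [x for _, x in coords]
    return (min(ys), min(xs), max(ys), max(xs))
```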

[0036] The processor may set an area including a position of which the weight (for example, at least one of the degree of influence and the degree of certainty) shown by the supplemental distribution information is the largest or an area of which the weight is equal to or larger than a threshold, within the image area of the ophthalmologic image, as the attention area. In this case, an image of a part where the weight in the automatic analysis is large, among the tissue of the subject eye, is displayed on the display unit. Accordingly, the user can check a state of the important part where the weight is large, by himself/herself using the displayed image. Consequently, an operation such as a diagnosis or the like of the user is appropriately assisted.
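
As a non-limiting illustration, setting an area that includes the position of the largest weight may be sketched as follows. The square window, its `half_size` parameter, and the name `area_around_peak` are assumptions of this sketch.

```python
def area_around_peak(weight_map, half_size):
    """Return a window centered on the position of the largest weight.

    weight_map is a 2D list of weights. The result is an inclusive
    (top, left, bottom, right) tuple clipped to the image bounds. The
    square window and half_size are assumptions of this sketch.
    """
    height, width = len(weight_map), len(weight_map[0])
    # Locate the pixel with the largest weight (first one on ties).
    peak_y, peak_x = max(
        ((y, x) for y in range(height) for x in range(width)),
        key=lambda yx: weight_map[yx[0]][yx[1]],
    )
    top = max(0, peak_y - half_size)
    left = max(0, peak_x - half_size)
    bottom = min(height - 1, peak_y + half_size)
    right = min(width - 1, peak_x + half_size)
    return (top, left, bottom, right)
```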

[0037] A method for setting the attention area may be modified. For example, the processor may set an area where the weight is relatively larger than other area, within the image area of the ophthalmologic image, as the attention area. Also in this case, the user can appropriately check a state of the important area where the weight is large.

[0038] The processor may display the image of the supplemental map indicating the distribution of the weight, on the display unit. The processor may set an area corresponding to an area designated by the user on the displayed supplemental map, within the image area of the ophthalmologic image input into the mathematical model, as the attention area. The processor may acquire the image of the tissue including the attention area and display the image on the display unit. In this case, the user can easily display the image of the area that the user wants to check based on the distribution of the weight, in the ophthalmologic image processing device.

[0039] The processor may acquire a tomographic image of a part passing the set attention area, among the tissue of the subject eye, and display the tomographic image on the display unit. In this case, the user can appropriately check a state of the tissue in a depth direction at the part passing the attention area, based on the displayed image. Consequently, the user can further appropriately perform the operation such as a diagnosis. Here, the tomographic image may be a two dimensional tomographic image or a three dimensional tomographic image. The tomographic image may be photographed by, for example, an OCT device or the like.
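
As a non-limiting illustration, selecting a two dimensional tomographic image that passes the attention area may be sketched as follows. The sketch assumes that a three dimensional volume has already been photographed and registered to the front image, so that `volume[y]` is the tomographic image at row `y` of the front image. This layout and the name `b_scan_through_area` are assumptions and are not taken from the disclosure.

```python
def b_scan_through_area(volume, area):
    """Pick the tomographic image passing the center row of the attention area.

    volume[y] is assumed to be the 2D tomographic image (depth x width)
    at row y of the front image. area is an inclusive
    (top, left, bottom, right) tuple in front-image coordinates.
    Returns (row_index, tomographic_image).
    """
    top, _left, bottom, _right = area
    center_row = (top + bottom) // 2
    return center_row, volume[center_row]
```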

[0040] The processor may extract an image area including the attention area from the ophthalmologic image for which a tissue of the subject eye is photographed and display the image on the display unit. In this case, the user can further appropriately check a state of the tissue in the attention area based on the image for which the area including the attention area is extracted. An image from which the image area is extracted may be identical to or different from the ophthalmologic image input into the mathematical model.
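
As a non-limiting illustration, extracting an image area including the attention area may be sketched as follows. The optional `margin` parameter and the name `crop_area` are assumptions of this sketch.

```python
def crop_area(image, area, margin=0):
    """Extract the image area that includes the attention area.

    image is a 2D list of pixel values. area is an inclusive
    (top, left, bottom, right) tuple. margin (an assumption of this
    sketch) widens the extracted area on every side, clipped to the
    image bounds.
    """
    top, left, bottom, right = area
    height, width = len(image), len(image[0])
    top = max(0, top - margin)
    left = max(0, left - margin)
    bottom = min(height - 1, bottom + margin)
    right = min(width - 1, right + margin)
    return [row[left:right + 1] for row in image[top:bottom + 1]]
```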

[0041] Further, a display mode of the image to be displayed on the display unit based on the attention area may be modified. For example, the processor may display the ophthalmologic image for which the tissue of the subject eye is photographed, on the display unit such that image quality (for example, at least one of contrast, resolution, transparency and the like) of an image area including the attention area is set to be higher than that of an image area other than the attention area. Further, the processor may display the ophthalmologic image on the display device in a state in which the image area including the attention area is enlarged. Also in this case, the user can appropriately check a state of the tissue in the attention area. Further, the processor may display the ophthalmologic image for which the tissue of the subject eye is photographed, on the display unit in a state in which an area other than the attention area is masked.
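
As a non-limiting illustration, displaying the ophthalmologic image with the area other than the attention area masked may be sketched as follows. The fill value used for masked pixels and the name `mask_outside` are assumptions of this sketch.

```python
def mask_outside(image, area, fill=0):
    """Return a copy of the image with pixels outside the attention area masked.

    image is a 2D list of pixel values. area is an inclusive
    (top, left, bottom, right) tuple. fill is the value written to
    masked pixels (an assumption of this sketch).
    """
    top, left, bottom, right = area
    return [
        [
            pixel if top <= y <= bottom and left <= x <= right else fill
            for x, pixel in enumerate(row)
        ]
        for y, row in enumerate(image)
    ]
```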

[0042] The processor may switch whether the image of a tissue including the attention area is displayed or is not displayed on the display unit, in accordance with an instruction input by a user. In this case, the user can check a state of the tissue including the attention area at appropriate timing.

[0043] Further, even in a case in which the result of the analysis is acquired based on the ophthalmologic image without using the mathematical model trained by the machine learning algorithm, the processor can acquire the supplemental distribution information accompanied to the result of the analysis. In this case, the ophthalmologic image processing device can be represented as below. An ophthalmologic image processing device processes an ophthalmologic image of a tissue of a subject eye. The processor of the ophthalmologic image processing device: acquires the ophthalmologic image photographed by an ophthalmologic image photographing device; acquires a result of an analysis relating to at least one of a specific disease and a specific structure of the subject eye, based on the ophthalmologic image; acquires information of a distribution of weight relating to the analysis, as supplemental distribution information, for which an image area of the ophthalmologic image is set as a variable; sets a part of the image area of the ophthalmologic image, as an attention area, based on the supplemental distribution information; acquires an image of a tissue including the attention area among a tissue of the subject eye; and displays the image on a display unit.

[0044] A processor of an ophthalmologic image processing device exemplarily disclosed in the present disclosure acquires an ophthalmologic image photographed by an ophthalmologic image photographing device. The processor inputs the ophthalmologic image into a mathematical model trained by a machine learning algorithm and acquires results of analyses relating to diseases or structures of a subject eye. The processor acquires a supplemental map, for each of the results of the analyses, that indicates a distribution of weight relating to the analysis by the mathematical model, for which an image area (namely, coordinates in the image area) of the ophthalmologic image input into the mathematical model is set as a variable. The processor generates an integration map in which the supplemental maps are integrated within an identical area and displays the integration map on a display unit.

[0045] In this case, a user can easily recognize the distribution of the weight relating to each of the automatic analyses, from one integration map. Accordingly, a state of each of the diseases or the structures can be further appropriately recognized.

[0046] One ophthalmologic image, or two or more ophthalmologic images, may be input into the mathematical model. In a case in which a plurality of the ophthalmologic images is input into the mathematical model, the ophthalmologic images may be photographed by a single device or by different devices. Further, the dimension of the ophthalmologic image to be input into the mathematical model is not particularly limited. The ophthalmologic image may be a static image or a moving image.

[0047] The supplemental map (may be also called an "attention map") may indicate a distribution of a degree of influence (a degree of attention) that affects the result of the analysis output by the mathematical model. In this case, the degree of influence that affects each of the automatic analyses can be appropriately recognized from the integration map.

[0048] Further, the supplemental map (may be also called a "certainty degree map") may indicate a distribution of a degree of certainty of the automatic analysis by the mathematical model. In this case, the distribution of the degree of certainty of each of the automatic analyses by the mathematical model can be appropriately represented on the integration map.

[0049] The "degree of certainty" may be defined by a highness of reliability of the analysis, or alternatively a reciprocal of a lowness of the reliability (degree of uncertainty). In a case in which the degree of uncertainty is x %, the degree of certainty may use a value of (100-x) %. Further, the "degree of certainty" denotes a degree of confidence when the mathematical model executes the analysis. The degree of certainty and accuracy of the result of the analysis are not always proportional.

[0050] A specific content of the certainty degree map can be selected as needed. For example, the degree of certainty may include the entropy of a probability distribution (average information amount) in the automatic analysis by the mathematical model. The entropy denotes uncertainty, randomness, and a degree of disorder. In a case in which the degree of certainty of the automatic analysis is the maximum, the entropy of the probability distribution is zero. Further, as the degree of certainty is decreased, the entropy is increased. Accordingly, when the entropy of the probability distribution is adopted as the degree of certainty, the certainty degree map is properly generated. Further, a value other than the entropy may be adopted as the degree of certainty. For example, at least one of standard deviation, coefficient of variation, variance and the like that indicates a degree of dispersion of the probability distribution in the automatic analysis may be adopted as the degree of certainty. Kullback-Leibler divergence or the like, which is a measure of a difference between the probability distributions, may be adopted as the degree of certainty. The maximum value of the probability distribution may be adopted as the degree of certainty. Further, in a case in which ranking the diseases or the like is performed using the automatic analysis, a probability of the first rank, or a difference between the probability of the first rank and a probability of other rank (for example, a probability of the second rank or the sum of probabilities of ranks other than the first rank) may be adopted as the degree of certainty.
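
The alternatives to the entropy listed above can be sketched in Python as follows. This is an illustrative sketch only: the function names are hypothetical, and the choice of a uniform distribution as the reference for the Kullback-Leibler divergence is an assumption, since the disclosure does not specify which distributions are compared.

```python
import math


def max_probability(probs):
    """Maximum value of the probability distribution."""
    return max(probs)


def top_margin(probs):
    """Difference between the first-rank and second-rank probabilities."""
    first, second = sorted(probs, reverse=True)[:2]
    return first - second


def kl_from_uniform(probs):
    """Kullback-Leibler divergence (bits) from a uniform distribution.

    Assumption: the uniform distribution is used as the reference, so the
    value is 0 for a completely undecided analysis and log2(n) for a
    completely decided one.
    """
    n = len(probs)
    return sum(p * math.log2(p * n) for p in probs if p > 0)


probs = [0.7, 0.2, 0.1]  # hypothetical probabilities of three ranked diseases
measures = (max_probability(probs), top_margin(probs), kl_from_uniform(probs))
```

Each of these values increases as the analysis becomes more decided, so each can be used directly as a degree of certainty without taking a reciprocal.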

[0051] The processor may change a display mode of each of the supplemental maps and integrate the supplemental maps to generate the integration map. In this case, the user can appropriately recognize each of the supplemental maps integrated into the integration map by a difference of the display modes thereof.

[0052] The display mode changed for each supplemental map may be defined by, for example, at least one of a color that shows the weight (the degree of influence, the degree of certainty, or the like), a type of a contour line, a thickness of the contour line, and the like.

[0053] In a case in which the weight (for example, the degree of influence, the degree of certainty, or the like) is shown by a color, the processor may show a magnitude of the weight using the depth of the color. In this case, the user can appropriately recognize the magnitude of the weight of each area through the depth of the color for each area.
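
One way the supplemental maps could be integrated with a different color for each map, with the depth of the color following the magnitude of the weight, is sketched below in Python. The function name `integrate_maps`, the white background, and the blending order are assumptions for illustration. Plain lists are used to keep the sketch free of dependencies.

```python
def integrate_maps(supplemental_maps, colors):
    """Integrate several supplemental maps into one RGB integration map.

    supplemental_maps: list of 2D lists with weights in [0, 1], all the same size.
    colors: one (r, g, b) base color per map, each channel in [0, 1].
    The depth of each color at a pixel is proportional to that map's weight.
    A white background is assumed where all weights are zero.
    """
    height, width = len(supplemental_maps[0]), len(supplemental_maps[0][0])
    integration = [[(1.0, 1.0, 1.0)] * width for _ in range(height)]
    for weight_map, color in zip(supplemental_maps, colors):
        for y in range(height):
            for x in range(width):
                w = weight_map[y][x]
                # Blend the map color over what is already there, by weight.
                integration[y][x] = tuple(
                    (1.0 - w) * old + w * new
                    for old, new in zip(integration[y][x], color)
                )
    return integration


# Hypothetical maps for two analyses, shown in red and blue respectively.
map_a = [[1.0, 0.0]]
map_b = [[0.0, 0.5]]
integration_map = integrate_maps([map_a, map_b], [(1.0, 0.0, 0.0), (0.0, 0.0, 1.0)])
```

In this sketch, a later map is blended over an earlier one where the two overlap, so the order of the maps affects the appearance of overlapping areas.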

[0054] The processor may display the integration map to be superimposed on the ophthalmologic image of the subject eye. In this case, the user can easily compare several kinds of the distributions of the weight with the distribution of the tissue of the subject eye. Consequently, the user can further properly perform the operation such as a diagnosis.

[0055] Meanwhile, the method for displaying the integration map may be changed. For example, the processor may display the ophthalmologic image of the subject eye and the integration map side by side on the display unit. In this case, the processor may display the ophthalmologic image and the integration map such that their image areas are identical to each other. In a case in which the image areas are identical to each other, the distribution of the weight and the distribution of the tissue can be compared more easily. Alternatively, the processor may display only the integration map on the display unit.

[0056] The processor may execute at least one of switching whether the integration map is displayed to be superimposed on the ophthalmologic image of the subject eye or is not displayed and changing a transparency of the integration map to be superimposed on the ophthalmologic image, in accordance with an instruction input by a user. In this case, the user can further easily compare the several kinds of the distributions of the weight with the distribution of the tissue of the subject eye. Further, the user also can check only the tissue of the subject eye by deleting the integration map displayed to be superimposed.
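
The switching and the change of transparency described above can be sketched as a simple alpha blend. The function name `superimpose` and its parameters are hypothetical, and a grayscale ophthalmologic image with luminance values in [0, 1] is assumed.

```python
def superimpose(image, integration_map, transparency=0.5, show_map=True):
    """Superimpose an RGB integration map on a grayscale ophthalmologic image.

    image: 2D list of luminance values in [0, 1].
    integration_map: 2D list of (r, g, b) tuples, same size as image.
    transparency: 0.0 = opaque map, 1.0 = fully transparent map.
    show_map: False returns only the tissue image (map hidden).
    """
    opacity = (1.0 - transparency) if show_map else 0.0
    return [
        [
            tuple((1.0 - opacity) * gray + opacity * c for c in rgb)
            for gray, rgb in zip(image_row, map_row)
        ]
        for image_row, map_row in zip(image, integration_map)
    ]


fundus = [[0.2]]                # hypothetical one-pixel image
overlay = [[(1.0, 0.0, 0.0)]]   # hypothetical one-pixel integration map
blended = superimpose(fundus, overlay, transparency=0.5)
tissue_only = superimpose(fundus, overlay, show_map=False)
```

Setting `show_map=False` corresponds to deleting the superimposed integration map so that only the tissue of the subject eye is checked.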

[0057] Further, even in a case in which the result of the analysis is acquired based on the ophthalmologic image without using the mathematical model trained by the machine learning algorithm, the processor can acquire the supplemental distribution information accompanied to the result of the analysis. In this case, the ophthalmologic image processing device can be represented as below. An ophthalmologic image processing device processes an ophthalmologic image of a tissue of a subject eye. The processor of the ophthalmologic image processing device: acquires the ophthalmologic image photographed by an ophthalmologic image photographing device; acquires results of analyses relating to diseases or structures of a subject eye, based on the ophthalmologic image; acquires a supplemental map, for each of the results of the analyses, that indicates a distribution of weight relating to the analysis, for which an image area of the ophthalmologic image is set as a variable; generates an integration map in which the supplemental maps are integrated within an identical area; and displays the integration map on a display unit.

[0058] Device Configuration

[0059] Hereinafter, one typical embodiment of the present disclosure will be described with reference to the drawings. As shown in FIG. 1, in the present embodiment, a mathematical model construction device 1, an ophthalmologic image processing device 21, and ophthalmologic image photographing devices 11A and 11B are used. The mathematical model construction device 1 constructs a mathematical model by training the mathematical model using a machine learning algorithm. The constructed mathematical model outputs a result of an analysis relating to at least one of a specific disease and a specific structure of a subject eye, based on the input ophthalmologic image. The ophthalmologic image processing device 21 acquires the result of the analysis by using the mathematical model and acquires supplemental distribution information (the details thereof are described below) indicating a distribution of weight (for example, at least one of a distribution of a degree of influence to the automatic analysis and a distribution of a degree of certainty of the automatic analysis) having an area (coordinate) of the input image as a variable against a specific confirmation item in the automatic analysis. The ophthalmologic image processing device 21 generates various information for assisting an operation of a user (for example, a doctor or the like) based on the supplemental distribution information and provides the information to the user. Each of the ophthalmologic image photographing devices 11A and 11B photographs an ophthalmologic image of a tissue of a subject eye.

[0060] As one example, a personal computer (hereinafter, referred to as PC) is adopted as the mathematical model construction device 1 of the present embodiment. Although the details thereof are described below, the mathematical model construction device 1 trains the mathematical model by using the data of the ophthalmologic image of a subject eye (hereinafter, referred to as training ophthalmologic image) acquired from the ophthalmologic image photographing device 11A and the data indicating at least one of the disease and the structure of the subject eye of which the training ophthalmologic image is photographed. As a result, the mathematical model is constructed. However, a device served as the mathematical model construction device 1 is not limited to the PC. For example, the ophthalmologic image photographing device 11A may be served as the mathematical model construction device 1. Further, processors of several devices (for example, a CPU of the PC and a CPU 13A of the ophthalmologic image photographing device 11A) may work together to construct the mathematical model.

[0061] Further, a PC is adopted as the ophthalmologic image processing device 21 of the present embodiment. However, a device served as the ophthalmologic image processing device 21 is not limited to the PC. For example, the ophthalmologic image photographing device 11B, a server, or the like may be served as the ophthalmologic image processing device 21. In a case in which the ophthalmologic image photographing device 11B is served as the ophthalmologic image processing device 21, the ophthalmologic image photographing device 11B photographs the ophthalmologic image and then acquires the result of the analysis and the supplemental distribution information from the photographed ophthalmologic image. Further, the ophthalmologic image photographing device 11B may photograph the image of an appropriate part of a subject eye, based on the acquired supplemental distribution information. Further, a mobile terminal such as a tablet and a smartphone may be served as the ophthalmologic image processing device 21. Processors of several devices (for example, a CPU of the PC and a CPU 13B of the ophthalmologic image photographing device 11B) may work together to execute various processes.

[0062] In the present embodiment, a configuration that adopts a CPU as one example of a controller that executes various processes is exemplarily described. However, it should be obvious that a controller other than the CPU may be adopted in at least a part of each device. For example, a GPU may be adopted as the controller to accelerate the processes.

[0063] Hereinafter, the mathematical model construction device 1 is described. The mathematical model construction device 1 is arranged in, for example, a manufacturer or the like that provides a user with the ophthalmologic image processing device 21 or an ophthalmologic image processing program. The mathematical model construction device 1 is provided with a control unit 2 that executes various control processes, and a communication interface 5. The control unit 2 includes a CPU 3 serving as a controller, and a storage device 4 that can store a program, data, and the like. A mathematical model construction program for executing a mathematical model construction process (see FIG. 6) described below is stored in the storage device 4. The communication interface 5 connects the mathematical model construction device 1 to other devices (for example, the ophthalmologic image photographing device 11A, the ophthalmologic image processing device 21, and the like).

[0064] The mathematical model construction device 1 is connected to an operation unit 7 and a display device 8. The operation unit 7 is operated by a user that inputs various instructions to the mathematical model construction device 1. For example, at least one of a keyboard, a mouse, a touch panel and the like may be adopted as the operation unit 7. Further, a microphone or the like may be adopted together with or instead of the operation unit 7 in order to input various instructions. The display device 8 displays various images. Various devices (for example, at least one of a monitor, a display, a projector, and the like) that can display images may be adopted as the display device 8. The "image" of the present disclosure includes both of a static image and a moving image.

[0065] The mathematical model construction device 1 acquires the data of the ophthalmologic image (hereinafter, also referred to as merely "ophthalmologic image") from the ophthalmologic image photographing device 11A. The mathematical model construction device 1 may acquire the data of the ophthalmologic image from the ophthalmologic image photographing device 11A through, for example, at least one of wired communication, wireless communication, a detachable storage medium (for example, USB memory) and the like.

[0066] Next, the ophthalmologic image processing device 21 is described. The ophthalmologic image processing device 21 is arranged in facilities (for example, hospitals, medical check facilities, or the like) that, for example, diagnose or examine a subject. The ophthalmologic image processing device 21 is provided with a control unit 22, and a communication interface 25. The control unit 22 includes a CPU 23 serving as a controller and a storage device 24 that can store a program, data, and the like. An ophthalmologic image processing program for executing an ophthalmologic image process described below (a check image display process shown in FIG. 7 and an integration map display process shown in FIG. 10) is stored in the storage device 24. The ophthalmologic image processing program includes a program that executes the mathematical model constructed by the mathematical model construction device 1. The communication interface 25 connects the ophthalmologic image processing device 21 to other devices (for example, the ophthalmologic image photographing device 11B, the mathematical model construction device 1, and the like).

[0067] The ophthalmologic image processing device 21 is connected to an operation unit 27 and a display device 28. Various devices may be adopted as the operation unit 27 and the display device 28, similar to the operation unit 7 and the display device 8 described above.

[0068] The ophthalmologic image processing device 21 acquires the ophthalmologic image from the ophthalmologic image photographing device 11B. The ophthalmologic image processing device 21 may acquire the ophthalmologic image from the ophthalmologic image photographing device 11B through, for example, at least one of wired communication, wireless communication, a detachable storage medium (for example, USB memory) and the like. The ophthalmologic image processing device 21 may acquire a program or the like that executes the mathematical model constructed by the mathematical model construction device 1, through communication.

[0069] Next, the ophthalmologic image photographing devices 11A and 11B are described. As one example, in the present embodiment, a configuration that adopts the ophthalmologic image photographing device 11A that provides an ophthalmologic image to the mathematical model construction device 1 and the ophthalmologic image photographing device 11B that provides an ophthalmologic image to the ophthalmologic image processing device 21 is exemplarily described. However, the number of the ophthalmologic image photographing devices is not limited to two. For example, each of the mathematical model construction device 1 and the ophthalmologic image processing device 21 may acquire an ophthalmologic image from a plurality of the ophthalmologic image photographing devices. Further, the mathematical model construction device 1 and the ophthalmologic image processing device 21 may acquire an ophthalmologic image from one ophthalmologic image photographing device.

[0070] In the present embodiment, an OCT device is exemplarily adopted as the ophthalmologic image photographing device 11 (11A, 11B). However, an ophthalmologic image photographing device other than the OCT device (for example, a scanning laser ophthalmoscope (SLO), a fundus camera, a Scheimpflug camera, a corneal endothelial cell photographing device (CEM), or the like) may be adopted.

[0071] The ophthalmologic image photographing device 11 (11A, 11B) is provided with a control unit 12 (12A, 12B) that executes various control processes, and an ophthalmologic image photographing unit 16 (16A, 16B). The control unit 12 includes a CPU 13 (13A, 13B) served as a controller, and a storage device 14 (14A, 14B) that can store a program, a data, or the like.

[0072] The ophthalmologic image photographing unit 16 includes various components necessary for photographing an ophthalmologic image of a subject eye. The ophthalmologic image photographing unit 16 of the present embodiment includes an OCT light source, a branching optical element that branches an OCT light emitted from the OCT light source into a measurement light and a reference light, a scanning unit that scans an object with the measurement light, an optical system that irradiates a subject eye with the measurement light, a light receiving element that receives a synthetic light of the light reflected by a tissue of a subject eye and the reference light, and the like.

[0073] The ophthalmologic image photographing device 11 photographs a two dimensional tomographic image and a three dimensional tomographic image of a fundus of a subject eye. Specifically, the CPU 13 scans a scanning line with the OCT light (measurement light) so as to photograph the two dimensional tomographic image of a section crossing the scanning line. The two dimensional tomographic image may be a weighted average image generated through a weighted average process applied to a plurality of tomographic images of an identical part. Further, the CPU 13 scans the tissue with the OCT light in a two dimensional manner so as to photograph the three dimensional tomographic image of the tissue. For example, the CPU 13 scans respective scanning lines of which positions are different from each other in a two dimensional region when seen from a front of the tissue, with the measurement light so as to acquire a plurality of the two dimensional tomographic images. Thereafter, the CPU 13 combines the photographed two dimensional tomographic images so as to acquire the three dimensional tomographic image. Further, the ophthalmologic image photographing device 11 of the present embodiment may photograph the two dimensional front image of the fundus of the subject eye when seen from a front (Z direction along a light axis of the measurement light). The data of the two dimensional front image may be, for example, an integrated image data in which luminance values are integrated in a depth direction at each position in an X-Y direction crossing the Z direction, an integrated value of a spectrum data at each position in the X-Y direction, a luminance data at a certain identical depth at each position in the X-Y direction, a luminance data on either of layers of the retina (for example, retina surface layer) at each position in the X-Y direction, or the like.
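
Two of the image generation steps described above, namely the integration of luminance values in the depth direction and the weighted average process, can be sketched in Python as follows. The function names and the list-based data layout are assumptions for illustration and do not represent the actual processing of the OCT device.

```python
def front_image_from_volume(volume):
    """Integrate luminance along the depth (Z) direction at each X-Y position.

    volume: 3D list of luminance values indexed [y][x][z].
    Returns a 2D front image indexed [y][x].
    """
    return [[sum(a_scan) for a_scan in row] for row in volume]


def weighted_average_image(tomograms, weights):
    """Weighted average of several tomographic images of an identical part.

    tomograms: list of 2D lists of equal size, indexed [z][x].
    weights: one non-negative weight per tomographic image.
    """
    total = sum(weights)
    height, width = len(tomograms[0]), len(tomograms[0][0])
    return [
        [
            sum(w * t[z][x] for t, w in zip(tomograms, weights)) / total
            for x in range(width)
        ]
        for z in range(height)
    ]


volume = [[[1, 2, 3], [0, 0, 1]]]  # hypothetical 1 x 2 x 3 volume
front = front_image_from_volume(volume)
averaged = weighted_average_image([[[2.0]], [[4.0]]], [1, 3])
```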

[0074] Automatic Analysis

[0075] One example of the automatic analysis executed by the ophthalmologic image processing device 21 is described with reference to FIG. 2 and FIG. 3. As described above, the ophthalmologic image processing device 21 executes the automatic analysis relating to at least one of the disease and the structure of the subject eye, by using the mathematical model. As one example, in the present embodiment, the automatic analysis relating to the disease of the subject eye (namely, automatic diagnosis) is exemplarily described. The kind of disease to which the automatic analysis is applied can be selected as needed. The ophthalmologic image processing device 21 of the present embodiment automatically analyzes presence/absence of each of the diseases including age-related macular degeneration and diabetic retinopathy, by inputting the ophthalmologic image into the mathematical model.

[0076] FIG. 2 illustrates one example of a front image of a fundus suffering from the age-related macular degeneration. An ophthalmologic image 30A exemplarily shown in FIG. 2 shows an optic papilla 31, a macula 32, and fundus blood vessels 33 of the fundus, and also shows a lesion 35 due to the age-related macular degeneration near the macula 32. The mathematical model is trained in advance by the data (input training data) of the ophthalmologic image 30A exemplarily shown in FIG. 2 and the data (output training data) indicating that the disease of the subject eye for which the ophthalmologic image 30A has been photographed is the age-related macular degeneration. Accordingly, when an ophthalmologic image similar to the illustration shown in FIG. 2 is input into the mathematical model, a result of the automatic analysis indicating that the subject eye is likely suffering from the age-related macular degeneration is output.

[0077] FIG. 3 illustrates one example of a front image of a fundus suffering from the diabetic retinopathy. An ophthalmologic image 30B exemplarily shown in FIG. 3 shows the optic papilla 31, the macula 32, and the fundus blood vessels 33 of the fundus, and also shows a lesion 36 due to the diabetic retinopathy near the fundus blood vessels 33. The mathematical model is trained in advance by the data (input training data) of the ophthalmologic image 30B exemplarily shown in FIG. 3 and the data (output training data) indicating that the disease of the subject eye for which the ophthalmologic image 30B has been photographed is the diabetic retinopathy. Accordingly, when an ophthalmologic image similar to the illustration shown in FIG. 3 is input into the mathematical model, a result of the automatic analysis indicating that the subject eye is likely suffering from the diabetic retinopathy is output.

[0078] Here, with the mathematical model of the present embodiment, the automatic analysis relating to a plurality of diseases is executed to one ophthalmologic image. Accordingly, a plurality of the diseases (for example, the age-related macular degeneration and the diabetic retinopathy) may be automatically determined as possible diseases.
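
A minimal sketch of how a plurality of diseases may be determined as possible diseases from one ophthalmologic image is given below. It assumes, for illustration only, that the mathematical model outputs one independent score per disease and that each score is converted by a sigmoid function and compared with a threshold. The function names, the threshold, and the scores are hypothetical.

```python
import math


def sigmoid(x):
    """Convert a raw score into a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def possible_diseases(scores, threshold=0.5):
    """Return every disease whose independent probability reaches the threshold.

    scores: dict mapping a disease name to the raw score of the model.
    Because each disease is judged independently, zero, one, or several
    diseases may be returned for a single ophthalmologic image.
    """
    return [name for name, s in scores.items() if sigmoid(s) >= threshold]


# Hypothetical raw scores for one ophthalmologic image.
scores = {
    "age-related macular degeneration": 2.0,
    "diabetic retinopathy": 0.3,
    "other disease": -3.0,
}
candidates = possible_diseases(scores)
```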

[0079] Further, the ophthalmologic image processing device 21 may execute the automatic analysis relating to the structure (for example, at least one of a layer, a macula, an optic papilla, and a fundus blood vessel of the fundus) of the subject eye instead of or together with the automatic analysis relating to the disease. Specifically, the ophthalmologic image processing device 21 may execute the automatic analysis relating to a specific layer or a boundary of the specific layer in the fundus of the subject eye, by inputting the tomographic image (at least one of the two dimensional tomographic image and the three dimensional tomographic image) into the mathematical model. Further, the ophthalmologic image processing device 21 may execute the automatic analysis relating to a structure of a tissue (for example, at least one of the macula and the optic papilla) of the subject eye, by inputting the front image or the tomographic image of the fundus into the mathematical model. Further, the ophthalmologic image processing device 21 may execute the automatic analysis relating to the fundus blood vessels of the subject eye, by inputting the front image or the tomographic image of the fundus into the mathematical model. In this case, the ophthalmologic image processing device 21 may execute the automatic analysis relating to an artery and a vein of the fundus of the subject eye.

[0080] Supplemental Distribution Information

[0081] One example of the supplemental distribution information is described with reference to FIG. 4 and FIG. 5. In the present embodiment, the ophthalmologic image of the two dimensional image is input into the mathematical model. Thus, the supplemental distribution information is also the two dimensional information (map). However, for example, the ophthalmologic image of the three dimensional image may be input into the mathematical model. In such a case, the supplemental distribution information is the three dimensional information.

[0082] In the present embodiment, at least one of an attention map and a certainty degree map is adopted as the supplemental distribution information (supplemental map).

[0083] The attention map is a distribution of a degree of influence (a degree of attention) to respective positions within an image area, that affects the result of the analysis output by the mathematical model. An area with a large degree of influence strongly affects the result of the automatic analysis, compared to an area with a small degree of influence. One example of the attention map is disclosed in, for example, the document described below.

[0084] Ramprasaath R. Selvaraju, et al., "Grad-CAM: Visual Explanations from Deep Networks via Gradient-based Localization" Proceedings of the IEEE International Conference on Computer Vision, 2017-Oct, pp. 618-626.
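
The core computation of the Grad-CAM technique cited above can be sketched as follows: the gradients of the analyzed score are averaged over the spatial positions of each feature map to obtain a weight for each channel, and the weighted sum of the feature maps is passed through a ReLU. The function name and the list-based layout are assumptions for illustration. The sketch assumes that the feature maps and the gradients have already been obtained from the mathematical model, and it is not asserted to be the implementation of the present embodiment.

```python
def grad_cam(activations, gradients):
    """Grad-CAM map from one convolutional layer.

    activations: feature maps A_k, indexed [k][y][x].
    gradients: d(score)/d(A_k) for the analyzed class, same shape.
    Returns ReLU(sum_k alpha_k * A_k), where alpha_k is the spatial mean of
    the gradients of channel k.
    """
    height, width = len(activations[0]), len(activations[0][0])
    alphas = [
        sum(sum(row) for row in grad) / (height * width) for grad in gradients
    ]
    return [
        [
            max(0.0, sum(a * act[y][x] for a, act in zip(alphas, activations)))
            for x in range(width)
        ]
        for y in range(height)
    ]


# Hypothetical two-channel, 1 x 2 feature maps and their gradients.
activations = [[[1.0, 0.0]], [[0.0, 2.0]]]
gradients = [[[0.5, 0.5]], [[-1.0, -1.0]]]
attention = grad_cam(activations, gradients)
```

The resulting map has the resolution of the feature maps and is typically enlarged to the size of the input image before being displayed.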

[0085] The certainty degree map is a distribution of a degree of certainty of the automatic analysis at respective pixels within the image area when the mathematical model executes the automatic analysis. The degree of certainty may be defined by a highness of reliability of the automatic analysis, or alternatively a reciprocal of a lowness of the reliability (degree of uncertainty). For example, in a case in which the degree of uncertainty is x %, the degree of certainty may use a value of (100-x) %. As one example, in the present embodiment, the entropy of a probability distribution (average information amount) in the automatic analysis is adopted as the degree of certainty. In a case in which the degree of certainty of the automatic analysis is the maximum, the entropy of the probability distribution is zero. Further, as the degree of certainty is decreased, the entropy is increased. Accordingly, for example, when a reciprocal of the entropy of the probability distribution or the like is adopted as the degree of certainty, the certainty degree map is properly generated. However, information other than the entropy of the probability distribution (for example, standard deviation, coefficient of variation, variance or the like of the probability distribution) may be used for generating the certainty degree map. In a case in which the probability distribution in the automatic analysis is adopted as the degree of certainty, the probability distribution may be defined by a probability distribution in the automatic analysis for each pixel, or a probability distribution having one or more dimensional coordinates as a variable. Further, in a case in which ranking is performed using the automatic analysis, a probability of the first rank, or a difference between the probability of the first rank and a probability of other rank (for example, a probability of the second rank or the sum of probabilities of ranks other than the first rank) may be adopted as the degree of certainty.
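
As an illustrative sketch, a certainty degree map based on the entropy of a probability distribution for each pixel could be computed as follows. The entropy is expressed here as a degree of uncertainty x in percent by dividing it by its maximum possible value, and the degree of certainty is (100-x) %. The function name and this normalization are assumptions, and a reciprocal of the entropy or another conversion may be used instead.

```python
import math


def certainty_degree_map(probability_maps):
    """Per-pixel degree of certainty in percent.

    probability_maps: indexed [y][x], each entry a list of two or more class
    probabilities that sum to 1 for that pixel.
    """
    def certainty(probs):
        entropy = -sum(p * math.log2(p) for p in probs if p > 0)
        # Degree of uncertainty x in percent: entropy relative to its maximum,
        # which is reached by the uniform distribution (assumed normalization).
        uncertainty = 100.0 * entropy / math.log2(len(probs))
        return 100.0 - uncertainty

    return [[certainty(probs) for probs in row] for row in probability_maps]


# Hypothetical 1 x 2 image with two classes per pixel.
pixel_probs = [[[1.0, 0.0], [0.5, 0.5]]]
certainty_map = certainty_degree_map(pixel_probs)
```

In this example, the first pixel is analyzed with the maximum degree of certainty (entropy of zero), and the second pixel with the minimum.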

[0086] FIG. 4 illustrates one example of a supplemental map 40A in a case in which the automatic analysis based on the mathematical model is executed to the ophthalmologic image 30A shown in FIG. 2. In FIG. 4, a tissue of the fundus blood vessels 33 and the like in the ophthalmologic image 30A (see FIG. 2) is schematically illustrated by a dotted line in order for facilitating comparison between an image area of the ophthalmologic image 30A and an area of the supplemental map 40A. In the supplemental map 40A shown in FIG. 4, a magnitude of the weight (the degree of influence or the degree of certainty in the present embodiment) at each position relating to the result of the automatic analysis indicating that the probability of the age-related macular degeneration is high, is shown by the depth of a color. That is, in a part with a deep color, the degree of influence or the degree of certainty relating to the result of the automatic analysis is large, compared to a part with a light color (in FIG. 4 and FIG. 5, the depth of the color is represented by the thickness of a line of the illustration for convenience of the description). In the example shown in FIG. 4, the degree of influence or the degree of certainty in the lesion 35 (see FIG. 2) is large. Further, the degree of influence or the degree of certainty of the center in the lesion 35 is larger than that of a peripheral part in the lesion 35.

[0087] FIG. 5 illustrates one example of a supplemental map 40B in a case in which the automatic analysis based on the mathematical model is executed to the ophthalmologic image 30B shown in FIG. 3. In FIG. 5, similar to FIG. 4, a tissue of the fundus blood vessels and the like in the ophthalmologic image 30B (see FIG. 3) is schematically illustrated by a dotted line. In the supplemental map 40B shown in FIG. 5, a magnitude of the degree of influence or the degree of certainty at each position relating to the result of the automatic analysis indicating that the probability of the diabetic retinopathy is high, is shown by the depth of a color. In a part with a deep color, the degree of influence or the degree of certainty relating to the result of the automatic analysis is large. Also in the example shown in FIG. 5, the degree of influence or the degree of certainty in the lesion 36 (see FIG. 3) is large.

[0088] In each of the supplemental maps 40A and 40B of the present embodiment, a display mode of the degree of influence or the degree of certainty is changed in accordance with the disease or the structure to which the automatic analysis is applied. In the present embodiment, a color indicating the degree of influence or the degree of certainty is changed in accordance with the disease or the structure to which the automatic analysis is applied. However, a specific display mode of the supplemental map may be changed. For example, a contour line linking the positions having the same magnitude of the degree of influence or the degree of certainty may be generated to display the magnitude of the degree of influence or the degree of certainty. In this case, at least one of a color, a type, a thickness and the like of the line forming the contour line may be changed in accordance with the disease or the structure to which the automatic analysis is applied.

[0089] In the present embodiment, when the ophthalmologic image is input into the mathematical model, the mathematical model outputs both of the result of the automatic analysis of the ophthalmologic image and the supplemental distribution information (the supplemental map in the present embodiment) accompanied to the automatic analysis. However, a method for generating the supplemental distribution information may be changed. For example, the CPU 23 of the ophthalmologic image processing device 21 may generate the supplemental distribution information based on the result of the automatic analysis output by the mathematical model.

[0090] In the present embodiment, a configuration in which the automatic analysis is executed to the disease of the subject eye is exemplarily described. However, also in a case in which the automatic analysis is executed to the structure of the subject eye, the supplemental distribution information similar to that described above may be generated. For example, in a case in which the automatic analysis is executed to an artery and a vein of the fundus of the subject eye, the supplemental distribution information for a result of the automatic analysis relating to the artery and the supplemental distribution information for a result of the automatic analysis relating to the vein may be generated. In this case, regarding a part having a small degree of certainty of the automatic analysis relating to the artery and the vein among the fundus blood vessels, the supplemental distribution information indicating the blood vessel having a small degree of certainty may be generated.

[0091] Mathematical Model Construction Process

[0092] A mathematical model construction process executed by the mathematical model construction device 1 is described with reference to FIG. 6. The mathematical model construction process is executed by the CPU 3 in accordance with the mathematical model construction program stored in the storage device 4. In the mathematical model construction process, the mathematical model is trained with a training data set, so that the mathematical model for executing the automatic analysis relating to the disease or the structure of the subject eye is constructed. The training data set includes input data (input training data) and output data (output training data).

[0093] As shown in FIG. 6, the CPU 3 acquires the data of the training ophthalmologic image, which is an ophthalmologic image photographed by the ophthalmologic image photographing device 11A, as the input training data (S1). In the present embodiment, the data of the training ophthalmologic image is generated by the ophthalmologic image photographing device 11A and then acquired by the mathematical model construction device 1. However, the CPU 3 may acquire the data of the training ophthalmologic image by acquiring a signal (for example, OCT signal), which is a basis for generating the training ophthalmologic image, from the ophthalmologic image photographing device 11A, and then generating the ophthalmologic image based on the acquired signal.

[0094] In S1 of the present embodiment, a two dimensional front image (so-called Enface image) of a fundus photographed by the ophthalmologic image photographing device 11A serving as the OCT device is acquired as the training ophthalmologic image. However, the training ophthalmologic image may be photographed by a device other than the OCT device (for example, at least one of an SLO device, a fundus camera, an infrared camera, a corneal endothelial cell photographing device and the like). Further, the training ophthalmologic image is not limited to a two dimensional front image of a fundus. For example, a two dimensional tomographic image or a three dimensional tomographic image may be acquired as the training ophthalmologic image. Alternatively, a moving image may be acquired as the training ophthalmologic image.

[0095] Next, the CPU 3 acquires the data indicating at least one of the disease and the structure (disease in the present embodiment) of the subject eye for which the training ophthalmologic image is photographed, as the output training data (S2). As one example, in the present embodiment, an operator (for example, a doctor or the like) diagnoses the disease by checking the training ophthalmologic image and inputs a kind of the disease if applicable, into the mathematical model construction device 1 by operating the operation unit 7, so that the output training data is generated. The output training data may include the data indicating a position of the lesion in addition to the data of presence/absence of the disease and a kind of the disease.

[0096] The output training data may be modified. For example, in a case in which the automatic analysis is executed to the structure of the subject eye using the mathematical model, the data indicating a position of a specific structure (for example, at least one of a position of a layer, a position of a boundary, a position of a specific tissue and the like) in the training ophthalmologic image may be adopted as the output training data.

[0097] Next, the CPU 3 trains the mathematical model using the training data set by the machine learning algorithm (S3). As the machine learning algorithm, for example, a neural network, a random forest, boosting, a support vector machine (SVM), and the like are generally known.

[0098] The neural network is a technique that imitates the behavior of a neuron network of a living organism. Examples of the neural network include a feedforward neural network, a radial basis function (RBF) network, a spiking neural network, a convolutional neural network, a recurrent neural network (a feedback neural network and the like), and a probabilistic neural network (a Boltzmann machine, a Bayesian network, and the like).

[0099] The random forest is a method to generate multiple decision trees, by performing learning on the basis of training data that is randomly sampled. When the random forest is used, branches of a plurality of the decision trees learned in advance as discriminators are followed, and an average (or a majority) of results obtained from the decision trees is calculated.
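The aggregation step described above (taking a majority or an average of the per-tree results) can be sketched as follows. This is only an illustrative sketch: the function names `aggregate_majority` and `aggregate_average`, the label strings, and the sample values are assumptions introduced here and do not appear in the embodiment.

```python
from collections import Counter
from statistics import mean


def aggregate_majority(tree_labels):
    """Return the class label output by the largest number of decision trees."""
    return Counter(tree_labels).most_common(1)[0][0]


def aggregate_average(tree_scores):
    """Return the mean of the numeric outputs of the decision trees."""
    return mean(tree_scores)


# Hypothetical outputs of three already-trained decision trees for one image.
votes = ["disease", "no_disease", "disease"]
scores = [0.9, 0.4, 0.8]

majority = aggregate_majority(votes)
average = aggregate_average(scores)
```

A majority suits a case in which each tree outputs a class label, while an average suits a case in which each tree outputs a numeric score such as a probability.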

[0100] The boosting is a method to generate a strong discriminator by combining a plurality of weak discriminators. By causing sequential learning of simple and weak discriminators, the strong discriminator is constructed.

[0101] The SVM is a method to configure two-class pattern discriminators using linear input elements. For example, the SVM learns linear input element parameters from training data, using a reference (a hyperplane separation theorem) that calculates a maximum margin hyperplane at which a distance from each of data points is the maximum.

[0102] The mathematical model indicates, for example, a data structure for predicting a relationship between input data (the data of the two dimensional front image similar to the training ophthalmologic image in the present embodiment) and output data (the data of the result of the automatic analysis relating to the disease in the present embodiment). The mathematical model is constructed as a result of training using the training data set. As described above, the training data set is a set of the input training data and the output training data. For example, as a result of the training, correlation data (for example, weights) between the inputs and outputs is updated. The mathematical model in the present embodiment is trained to output the result of the automatic analysis, and also the supplemental distribution information (the supplemental map in the present embodiment) accompanying the automatic analysis.

[0103] In the present embodiment, a multi-layer neural network is adopted as the machine learning algorithm. The neural network includes an input layer for inputting data, an output layer for generating the data of the result of the automatic analysis to be predicted, and one or more hidden layers between the input layer and the output layer. A plurality of nodes (also known as units) is arranged in each of the layers. Specifically, a convolutional neural network (CNN) that is a type of the multi-layer neural network is adopted in the present embodiment.

[0104] Here, another machine learning algorithm may be adopted. For example, a generative adversarial network (GAN) using two competing neural networks may be adopted as the machine learning algorithm.

[0105] The processes of S1 to S3 are repeated until the construction of the mathematical model is completed (S4: NO). That is, the mathematical model is repeatedly trained by the multiple training data sets including the training data set of the subject eye suffering from a disease (for example, see FIG. 2 and FIG. 3) and the training data set of the subject eye not suffering from a disease. When the construction of the mathematical model is completed (S4: YES), the mathematical model construction process is finished. The program and the data that execute the constructed mathematical model are installed in the ophthalmologic image processing device 21.

[0106] Check Image Display Process

[0107] A check image display process executed by the ophthalmologic image processing device 21 is described with reference to FIG. 7 to FIG. 9. A check image denotes an image suitable for a user to check it directly. In the present embodiment, the check image is automatically acquired based on the supplemental distribution information. The check image display process is executed by the CPU 23 in accordance with the ophthalmologic image processing program stored in the storage device 24.

[0108] Firstly, the CPU 23 acquires the ophthalmologic image of the subject eye (S11). It is preferable that a kind of the ophthalmologic image acquired in S11 is similar to the ophthalmologic image used as the input training data in the mathematical model construction process (see FIG. 6) described above. As one example, in S11 of the present embodiment, the two dimensional front image of the fundus of the subject eye is acquired. The CPU 23 may acquire a signal (for example, OCT signal), which is a basis of the ophthalmologic image, from the ophthalmologic image photographing device 11B and generate the ophthalmologic image based on the acquired signal.

[0109] The CPU 23 inputs the acquired ophthalmologic image into the mathematical model and acquires the result of the automatic analysis output by the mathematical model (S12). As described above, in the present embodiment, the results of the automatic analyses relating to the specific respective diseases are output by the mathematical model.

[0110] The CPU 23 acquires the supplemental distribution information accompanying the automatic analysis in S12 (S13). As described above, in the present embodiment, at least one of the attention map and the certainty degree map is acquired as the supplemental distribution information (supplemental map). Further, in the present embodiment, the mathematical model outputs both the result of the automatic analysis and the supplemental distribution information. However, the CPU 23 may generate the supplemental distribution information based on the result of the automatic analysis.

[0111] Next, the CPU 23 sets an attention area in a part of the image area of the ophthalmologic image acquired in S11, based on the supplemental distribution information (S16). Specifically, in a case in which the certainty degree map is acquired as the supplemental distribution information, the ophthalmologic image processing device 21 of the present embodiment displays an image of a part where the degree of certainty is small (namely, in the present embodiment, a part where the reliability of the automatic analysis relating to a specific disease is considered to be low), on the display device 28. In this case, a user can check a state of the part where the degree of certainty of the specific automatic analysis is small, by himself/herself using the check image to be displayed. Further, the ophthalmologic image processing device 21 of the present embodiment displays an image of a part where the weight (at least one of the degree of influence and the degree of certainty) shown by the supplemental distribution information is large, on the display device 28. In this case, a user can appropriately check a state of the important part where the weight is large (namely in the present embodiment, a state of the part where the possibility of a specific disease is determined high), based on the check image. The user operates the operation unit 27 so as to input either of an instruction to display the part where the degree of certainty is small and an instruction to display the part where the degree of influence or the degree of certainty is large, into the ophthalmologic image processing device 21.

[0112] In a case in which the instruction to display the part where the degree of certainty is small is input, the CPU 23 sets an area where the degree of certainty shown by the supplemental distribution information (specifically, the certainty degree map) is equal to or smaller than a threshold, as the attention area (S16). That is, the CPU 23 sets the area where the degree of certainty of the automatic analysis relating to a specific disease or structure (a specific disease in the present embodiment) is equal to or smaller than the threshold, as the attention area.
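The thresholding described above can be sketched as follows. This is a minimal sketch under stated assumptions: the certainty degree map is assumed to be a two dimensional list of values between 0 and 1, and the function name `low_certainty_mask` and the sample values are hypothetical.

```python
def low_certainty_mask(certainty_map, threshold):
    """Mark each position whose degree of certainty is equal to or smaller
    than the threshold (True = belongs to the attention area)."""
    return [[value <= threshold for value in row] for row in certainty_map]


# Hypothetical 2 x 3 certainty degree map.
certainty_map = [
    [0.9, 0.8, 0.2],
    [0.7, 0.3, 0.1],
]

mask = low_certainty_mask(certainty_map, threshold=0.3)
```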

[0113] A specific content of the process for setting the attention area may be selected as needed. As one example, as shown in FIG. 8, the CPU 23 of the present embodiment separately extracts each continuous area where the degree of certainty in the automatic analysis relating to the specific disease or structure is equal to or larger than a first threshold. In the example shown in FIG. 8, two areas 46A and 46B where the degrees of certainty are equal to or larger than the first threshold are extracted. Thereafter, the CPU 23 sets, among the extracted areas, an area where the maximum value of the degree of certainty is equal to or smaller than a second threshold (second threshold>first threshold), as the attention area. In the example shown in FIG. 8, since the maximum value of the degree of certainty of the area 46A is larger than the second threshold, it is considered that the reliability of the automatic analysis relating to the specific disease is high. By contrast, since the maximum value of the degree of certainty of the area 46B is equal to or smaller than the second threshold, it is considered that the reliability of the automatic analysis relating to the specific disease is low. Accordingly, in the example shown in FIG. 8, of the two areas 46A and 46B, the area 46B where the maximum value of the degree of certainty is equal to or smaller than the second threshold is set as an attention area 48. In this case, among the areas 46A and 46B analyzed as likely suffering from the specific disease, only the area where the reliability of the automatic analysis is lower is set as the attention area.
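The two-threshold selection described above can be sketched as follows. This is an illustrative sketch, not the implementation of the embodiment: the certainty degree map is assumed to be a two dimensional list, continuity is assumed to mean 4-connectivity (the text does not specify a connectivity), and the names `extract_regions` and `select_attention_regions` and the sample values are hypothetical.

```python
from collections import deque


def extract_regions(certainty_map, first_threshold):
    """Collect continuous (assumed 4-connected) areas whose degree of
    certainty is equal to or larger than the first threshold."""
    rows, cols = len(certainty_map), len(certainty_map[0])
    seen = [[False] * cols for _ in range(rows)]
    regions = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or certainty_map[r][c] < first_threshold:
                continue
            # Breadth-first flood fill starting from an unvisited position.
            region, queue = [], deque([(r, c)])
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                region.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx]
                            and certainty_map[ny][nx] >= first_threshold):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            regions.append(region)
    return regions


def select_attention_regions(certainty_map, first_threshold, second_threshold):
    """Keep only the extracted areas whose maximum degree of certainty is
    equal to or smaller than the second threshold."""
    if second_threshold <= first_threshold:
        raise ValueError("second threshold must be larger than first threshold")
    attention = []
    for region in extract_regions(certainty_map, first_threshold):
        peak = max(certainty_map[y][x] for y, x in region)
        if peak <= second_threshold:
            attention.append(region)
    return attention


# Hypothetical map: a left area with a high peak (like area 46A) and
# a right area with a low peak (like area 46B).
certainty_map = [
    [0.9, 0.8, 0.0, 0.0, 0.0],
    [0.7, 0.6, 0.0, 0.5, 0.4],
    [0.0, 0.0, 0.0, 0.4, 0.3],
]

attention = select_attention_regions(certainty_map, 0.3, 0.6)
```

With these sample values, the left area is extracted but rejected because its maximum value exceeds the second threshold, and only the right area remains as the attention area.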

[0114] The content of the process for setting the attention area may be modified. For example, the CPU 23 may set all areas where the degrees of certainty are in a range between the first threshold and the second threshold, as the attention area. The first threshold may be set as needed to a value larger than zero in accordance with various conditions.
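The modified process described above can be sketched as follows. This is a sketch under assumptions: the range is treated as inclusive at both ends (the text does not state whether the bounds are included), and the function name `band_mask` and the sample values are hypothetical.

```python
def band_mask(certainty_map, first_threshold, second_threshold):
    """Mark each position whose degree of certainty lies between the first
    and second thresholds (bounds assumed inclusive)."""
    return [
        [first_threshold <= value <= second_threshold for value in row]
        for row in certainty_map
    ]


# Hypothetical 2 x 3 certainty degree map.
certainty_map = [
    [0.9, 0.5, 0.1],
    [0.3, 0.6, 0.0],
]

mask = band_mask(certainty_map, first_threshold=0.3, second_threshold=0.6)
```

Unlike the per-area selection, this variant judges each position independently, so a part of a high-certainty area whose values fall within the range is also marked.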