Vehicular Driving Assistance System


U.S. patent application number 16/846456 was filed with the patent office on 2020-04-13 and published on 2020-07-30 for a vehicular driving assistance system. The applicant listed for this patent is DONNELLY CORPORATION. Invention is credited to Joseph Camilleri, Joel S. Gibson, Kenneth Schofield.

| Application Number | 20200244929 16/846456 |

| Document ID | 20200244929 / US20200244929 |

| Family ID | 1000004752438 |

| Filed Date | 2020-04-13 |


| United States Patent Application | 20200244929 |

| Kind Code | A1 |

| Camilleri; Joseph ; et al. | July 30, 2020 |

VEHICULAR DRIVING ASSISTANCE SYSTEM

Abstract

A vehicular driver assistance system includes an imager disposed at a vehicle and viewing exterior the vehicle. A control includes an image processor that processes captured image data for at least a first application and a second application and that is operable to adjust the imager to first and second settings and to process captured image data via first and second processing techniques. The control adjusts the imager to the first or second setting to capture image data for the first or second application, respectively, and the image processor processes image data captured by the imager at the first or second setting via the respective first or second processing technique for the respective first or second application. The first application includes vehicle detection or lane departure warning or object detection and the second application includes headlamp control.

| Inventors: | Camilleri; Joseph (Brighton, MI); Gibson; Joel S. (Linden, MI); Schofield; Kenneth (Holland, MI) |

| Applicant: | DONNELLY CORPORATION |

| Family ID: | 1000004752438 |

| Appl. No.: | 16/846456 |

| Filed: | April 13, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Child Application |
|---|---|---|---|
| 14641814 | Mar 9, 2015 | 10623704 | 16846456 |
| 13936701 | Jul 8, 2013 | 8977008 | 14641814 |
| 13481100 | May 25, 2012 | 8483439 | 13936701 |
| 13017353 | Jan 31, 2011 | 8189871 | 13481100 |
| 11239980 | Sep 30, 2005 | 7881496 | 13017353 |
| 60628709 | Nov 17, 2004 | | |
| 60614644 | Sep 30, 2004 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 7/185 20130101; B60R 2300/106 20130101; Y10S 348/904 20130101; B60R 2300/301 20130101; H04N 7/188 20130101; B60R 2001/1253 20130101; G06K 9/00791 20130101; B60R 1/00 20130101; G08G 1/16 20130101; B60R 2300/304 20130101; G08G 1/166 20130101; B60R 2300/302 20130101; H04N 7/183 20130101; B60R 2300/305 20130101; B60R 2300/101 20130101; B60R 2300/804 20130101; B60R 2300/802 20130101; G08G 1/167 20130101; B60R 2300/8053 20130101; B60R 2300/8066 20130101; G08G 1/165 20130101 |

| International Class: | H04N 7/18 20060101 H04N007/18; G08G 1/16 20060101 G08G001/16; G06K 9/00 20060101 G06K009/00; B60R 1/00 20060101 B60R001/00 |

Claims

1. A vehicular driving assistance system, said vehicular driving assistance system comprising: an imager disposed at a vehicle equipped with said vehicular driving assistance system; wherein said imager is disposed at a windshield of the equipped vehicle and views forward of the equipped vehicle through the windshield of the equipped vehicle, and wherein said imager is operable to capture image data; wherein said imager comprises a CMOS photosensor array of photosensor elements; a control comprising an image processor; wherein said control processes captured image data for at least a first application and a second application; wherein said control is operable to adjust said imager to at least a first setting and a second setting; wherein said control is operable to process captured image data via a first processing technique and a second processing technique; wherein said control adjusts said imager to the first setting to capture image data for the first application, and wherein said image processor processes image data captured by said imager at the first setting via the first processing technique for the first application; wherein said control adjusts said imager to the second setting to capture image data for the second application, and wherein said image processor processes image data captured by said imager at the second setting via the second processing technique for the second application; and wherein the first application comprises vehicle detection, and wherein the second application comprises headlamp control.

2. The vehicular driving assistance system of claim 1, wherein said control is operable to process captured image data for the first application from a first set of captured image data in a first image processing to generate a first output in response to the first image processing, and wherein said control is operable to process captured image data for the second application from a second set of captured image data in a second image processing to generate a second output in response to the second image processing.

3. The vehicular driving assistance system of claim 1, wherein, responsive to processing by said control of captured image data, said control determines an ambient light level exterior the equipped vehicle.

4. The vehicular driving assistance system of claim 1, wherein said imager and at least a part of said control are disposed in an accessory module of the equipped vehicle.

5. The vehicular driving assistance system of claim 1, wherein said imager is incorporated as part of an interior rearview mirror assembly of the equipped vehicle.

6. The vehicular driving assistance system of claim 1, wherein image data captured by said imager is output via low-voltage differential signaling (LVDS).

7. The vehicular driving assistance system of claim 1, comprising a lens and a spectral filter at said imager.

8. The vehicular driving assistance system of claim 1, wherein image data captured for the first application is at a frame rate that differs from the frame rate used to capture image data for the second application.

9. The vehicular driving assistance system of claim 1, wherein said control is operable to provide enhanced imaging of an object viewed by said imager.

10. The vehicular driving assistance system of claim 1, wherein said control is operable to provide enhanced processing of at least a portion of an image captured by said imager that corresponds to a region exterior the equipped vehicle at which an object is viewed by said imager.

11. The vehicular driving assistance system of claim 1, wherein said control is operable to episodically adjust said imager to the first setting and the second setting for extracting information from the captured image data for the first application and the second application.

12. The vehicular driving assistance system of claim 11, wherein said control is operable to episodically adjust processing of captured image data via the first processing technique and the second processing technique for extracting information from the captured image data for the first application and the second application.

13. A vehicular driving assistance system, said vehicular driving assistance system comprising: an imager disposed at a vehicle equipped with said vehicular driving assistance system; wherein said imager is disposed at a windshield of the equipped vehicle and views forward of the equipped vehicle through the windshield of the equipped vehicle, and wherein said imager is operable to capture image data; wherein said imager comprises a CMOS photosensor array of photosensor elements; a control comprising an image processor; wherein said control processes captured image data for at least a first application and a second application; wherein said control is operable to adjust said imager to at least a first setting and a second setting; wherein said control is operable to process captured image data via a first processing technique and a second processing technique; wherein said control adjusts said imager to the first setting to capture image data for the first application, and wherein said image processor processes image data captured by said imager at the first setting via the first processing technique for the first application; wherein said control adjusts said imager to the second setting to capture image data for the second application, and wherein said image processor processes image data captured by said imager at the second setting via the second processing technique for the second application; and wherein the first application comprises lane departure warning, and wherein the second application comprises headlamp control.

14. The vehicular driving assistance system of claim 13, wherein said control is operable to process captured image data for the first application from a first set of captured image data in a first image processing to generate a first output in response to the first image processing, and wherein said control is operable to process captured image data for the second application from a second set of captured image data in a second image processing to generate a second output in response to the second image processing.

15. The vehicular driving assistance system of claim 13, wherein, responsive to processing by said control of captured image data, said control determines an ambient light level exterior the equipped vehicle.

16. The vehicular driving assistance system of claim 13, wherein image data captured by said imager is output via low-voltage differential signaling (LVDS).

17. The vehicular driving assistance system of claim 13, wherein image data captured for the first application is at a frame rate that differs from the frame rate used to capture image data for the second application.

18. The vehicular driving assistance system of claim 13, wherein said control is operable to episodically adjust said imager to the first setting and the second setting for extracting information from the captured image data for the first application and the second application.

19. The vehicular driving assistance system of claim 18, wherein said control is operable to episodically adjust processing of captured image data via the first processing technique and the second processing technique for extracting information from the captured image data for the first application and the second application.

20. A vehicular driving assistance system, said vehicular driving assistance system comprising: an imager disposed at a vehicle equipped with said vehicular driving assistance system; wherein said imager is disposed at a windshield of the equipped vehicle and views forward of the equipped vehicle through the windshield of the equipped vehicle, and wherein said imager is operable to capture image data; wherein said imager comprises a CMOS photosensor array of photosensor elements; a control comprising an image processor; wherein said control processes captured image data for at least a first application and a second application; wherein said control is operable to adjust said imager to at least a first setting and a second setting; wherein said control is operable to process captured image data via a first processing technique and a second processing technique; wherein said control adjusts said imager to the first setting to capture image data for the first application, and wherein said image processor processes image data captured by said imager at the first setting via the first processing technique for the first application; wherein said control adjusts said imager to the second setting to capture image data for the second application, and wherein said image processor processes image data captured by said imager at the second setting via the second processing technique for the second application; and wherein the first application comprises object detection, and wherein the second application comprises headlamp control.

21. The vehicular driving assistance system of claim 20, wherein said control is operable to process captured image data for the first application from a first set of captured image data in a first image processing to generate a first output in response to the first image processing, and wherein said control is operable to process captured image data for the second application from a second set of captured image data in a second image processing to generate a second output in response to the second image processing.

22. The vehicular driving assistance system of claim 20, wherein, responsive to processing by said control of captured image data, said control determines an ambient light level exterior the equipped vehicle.

23. The vehicular driving assistance system of claim 20, wherein image data captured by said imager is output via low-voltage differential signaling (LVDS).

24. The vehicular driving assistance system of claim 20, wherein image data captured for the first application is at a frame rate that differs from the frame rate used to capture image data for the second application.

25. The vehicular driving assistance system of claim 20, wherein said control is operable to episodically adjust said imager to the first setting and the second setting for extracting information from the captured image data for the first application and the second application.

26. The vehicular driving assistance system of claim 25, wherein said control is operable to episodically adjust processing of captured image data via the first processing technique and the second processing technique for extracting information from the captured image data for the first application and the second application.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] The present application is a continuation of U.S. patent application Ser. No. 14/641,814, filed Mar. 9, 2015, now U.S. Pat. No. 10,623,704, which is a continuation of U.S. patent application Ser. No. 13/936,701, filed Jul. 8, 2013, now U.S. Pat. No. 8,977,008, which is a continuation of U.S. patent application Ser. No. 13/481,100, filed May 25, 2012, now U.S. Pat. No. 8,483,439, which is a continuation of U.S. patent application Ser. No. 13/017,353, filed Jan. 31, 2011, now U.S. Pat. No. 8,189,871, which is a continuation of U.S. patent application Ser. No. 11/239,980, filed Sep. 30, 2005, now U.S. Pat. No. 7,881,496, which claims the benefit of U.S. provisional applications, Ser. No. 60/628,709, filed Nov. 17, 2004, and Ser. No. 60/614,644, filed Sep. 30, 2004, which are all hereby incorporated herein by reference in their entireties.

FIELD OF THE INVENTION

[0002] The present invention relates to imaging systems for vehicles and, more particularly, to reverse aid imaging systems with a rearward facing imaging device or camera and a display. However, aspects of the present invention are equally suitable for other vehicle imaging systems, such as side object detection systems, and forward facing imaging systems and the like.

BACKGROUND OF THE INVENTION

[0003] The advent of low cost, reliable imaging devices, based on a variety of silicon technologies, and in particular CMOS technology, combined with an improved cost/performance ratio for displays capable of meeting automotive specifications, and an increasing application rate of video monitor displays for automotive navigation systems or as part of the driver interface to a wide variety of vehicle systems, has led to an increasing use of cameras or imaging sensors designed to give the driver a view of those areas around the vehicle which are not in the normal direct field of view of the driver, typically referred to as "blind spots." These areas include the region close to the front of the vehicle, typically obscured by the forward structure of the vehicle, the region along the passenger side of the vehicle, the region along the driver side of the vehicle rearward of the driver, and the area immediately rearward of the vehicle which cannot be seen directly or indirectly through the rear view mirror system. The camera or imaging sensor may capture an image of the rearward (or sideward or other blind spot area) field of view, and the image may be displayed to the driver of the vehicle to assist the driver in backing up or reversing or otherwise driving or maneuvering the vehicle. The use of electronic cameras in these applications significantly increases the driver's knowledge of the space immediately surrounding the vehicle, which may be of importance prior to and during low speed maneuvers, and thus contributes to the safe completion of such maneuvers. However, in order to provide user controls or user inputs to allow the driver or user to control various functions of the camera at the rear or side or front of the vehicle, additional wiring or connections or communication links may be needed between a control and user input in the vehicle and the camera or imaging device at the rear or side or front of the vehicle.

[0004] It is known to provide a headlamp control system having an imaging sensor positioned on a vehicle and having a forward field of view. The system may detect headlamps and taillights in the images captured by the imaging sensor. It is also known to provide a lane departure warning system that includes an imaging sensor positioned on a vehicle and having a forward field of view. The lane departure warning system detects lane markers and the like along the road surface in front of the vehicle and determines when the vehicle is drifting out of the lane, and may provide an alert to the driver of the vehicle when such drifting is detected. Such systems typically are separate systems with different, independently operable controls and image processors and imaging sensors.

SUMMARY OF THE INVENTION

[0005] The present invention provides an imaging and display system or vision system for a vehicle that captures images of a scene occurring exteriorly of the vehicle, such as rearward of the vehicle, and displays the captured images at a display device in the vehicle. The imaging and display or vision system includes an imaging device or camera that is positioned at the vehicle with an exterior field of view (such as a rearward field of view) for capturing images of the exterior scene. The display device is positioned within the vehicle and remote from the camera and is operable to display the captured images in the vehicle where they are readily viewable by the driver or occupant of the vehicle. The vision system includes one or more user inputs at the display device and is operable to adjust or control at least one function or mode or feature of the camera in response to actuation of the user input or inputs. The vision system is operable to communicate imaging signals from the imaging device to the display device and to communicate camera control signals from the display device to the imaging device along common connections or wiring or communication links between the imaging device and the display device.

[0006] According to an aspect of the present invention, a vision system for a vehicle includes an imaging device having an imaging sensor, a camera microcontroller, a display device having a display element, a display microcontroller, and at least one user input selectively actuatable by a user. The imaging device communicates an image signal to the display device via a communication link. The display microcontroller affects the image signal in response to the at least one user input. The camera microcontroller monitors the image signal on the communication link and adjusts a function of the imaging device in response to a detection of the affected image signal.

[0007] The imaging sensor may have a field of view exteriorly of the vehicle, such as rearwardly of the vehicle, for capturing an image of the scene occurring exteriorly of the vehicle. The vision system may include control circuitry at or near or associated with an interior rearview mirror assembly of the vehicle or a windshield electronics module or accessory module of the vehicle.

[0008] The display microcontroller may affect the image signal by disabling a video termination at the display device, and the imaging device may stop communicating the image signal when the video termination is disabled. The display microcontroller may selectively apply at least two voltage levels across the communication link in response to at least two user inputs, and the camera microcontroller may adjust a function of the imaging device in response to a respective one of the voltage levels.
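The voltage-level signaling described above can be sketched from the camera microcontroller's side. This is only an illustration under stated assumptions: the specification gives no voltage values, tolerance, or function names, so `LEVEL_TO_FUNCTION`, `TOLERANCE_V` and `decode_link_voltage` are hypothetical, and the sketch covers only the decoding step that would follow the image signal being stopped.

```python
from typing import Optional

# Hypothetical nominal voltage levels (volts) that a display microcontroller
# might apply across the link, one per user input. The source names no values.
LEVEL_TO_FUNCTION = {
    1.0: "toggle_color_mode",
    2.0: "toggle_night_mode",
    3.0: "zoom_in",
}
TOLERANCE_V = 0.25  # assumed acceptance window around each nominal level


def decode_link_voltage(measured_v: float) -> Optional[str]:
    """Return the camera function selected by the measured link voltage.

    Returns None when the measurement matches no nominal level, so the
    camera leaves its current configuration unchanged.
    """
    for nominal_v, function in LEVEL_TO_FUNCTION.items():
        if abs(measured_v - nominal_v) <= TOLERANCE_V:
            return function
    return None
```

Returning `None` for an unmatched level is a design choice of this sketch: a noisy or intermediate reading on the link then causes no change rather than an unintended one.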

[0009] The imaging device may include a video encoder that communicates the image signal to the display device via the communication link. The communication link may comprise a video plus and a video return wire.

[0010] According to another aspect of the present invention, a vision system for a vehicle includes an imaging device having an imaging sensor, a camera microcontroller, a display device having a display element, a display microcontroller, and at least one user input selectively actuatable by a user. The imaging device communicates an image signal to the display device via a communication link, and the display microcontroller communicates a control signal to the imaging device via the communication link in response to the user input. The camera microcontroller receives the control signal and adjusts a function of the imaging device in response to the control signal. The image signal and the control signal utilize a common link between the imaging device and the display device.

[0011] According to another aspect of the present invention, an imaging and display system or vision system may be operable in combination with or in conjunction with an auxiliary or triggering or initial sensing device or system or distance sensing/measuring/determining system. The auxiliary sensing device or system may provide additional sensing areas to cover blind spots that may not be encompassed by the imaging sensor or camera. Optionally, the auxiliary sensing system, such as ultrasonic sensors, radar, lidar, and the like, may detect an object exteriorly of the vehicle, and may determine or measure or detect the distance to the object, whereby the imaging and display system may be adjusted or controlled to provide enhanced imaging of the exterior scene or enhanced processing of the captured images or enhanced displaying of the images of the exterior scene and the detected object, in response to such an object detection by the auxiliary sensing system. For example, the imaging and display system may adjust a camera setting to provide enhanced imaging of the detected object, or may adjust the processor or control to provide enhanced processing of a portion of the image data that corresponds to the region of the scene at which the object was detected, or may adjust the display to highlight or enhance the displayed images of the detected object or of the region of the display that corresponds to the region of the exterior scene at which the object was detected.
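One way to picture the "enhanced processing of a portion of the image data" step is a mapping from the bearing reported by an auxiliary sensor to a band of pixel columns. The sketch below is an assumption-laden illustration, not the disclosed method: the image width, horizontal field of view, linear angle-to-pixel mapping and the function name `region_for_bearing` are all hypothetical, and a real wide-angle lens would need a distortion model.

```python
from typing import Optional, Tuple


def region_for_bearing(
    bearing_deg: float,
    image_width: int = 640,     # assumed sensor width in pixels
    hfov_deg: float = 120.0,    # assumed horizontal field of view
    half_width_px: int = 40,    # assumed half-width of the region of interest
) -> Optional[Tuple[int, int]]:
    """Map an object bearing (0 = optical axis, positive = right) to a
    (left, right) pixel column range, or None if the object lies outside
    the camera's field of view."""
    half_fov = hfov_deg / 2.0
    if abs(bearing_deg) > half_fov:
        return None
    # Linear angle-to-column mapping; an approximation for illustration only.
    center = int((bearing_deg + half_fov) / hfov_deg * (image_width - 1))
    left = max(0, center - half_width_px)
    right = min(image_width - 1, center + half_width_px)
    return left, right
```

A `None` result corresponds to the case noted above in which the auxiliary sensor covers an area not encompassed by the camera.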

[0012] Therefore, the present invention provides an imaging and display system or vision system that includes an imaging device and a display device connected via video connections or communication links. The display device includes user inputs and the imaging and display system is operable to selectively control or adjust the imaging device in response to the user inputs. The imaging and display system or vision system is operable to communicate imaging signals from the imaging device to the display device and to communicate camera control signals from the display device to the imaging device along common connections or wiring or communication links between the imaging device and the display device. The imaging and display system or vision system thus may provide various optional features or functions or modes without having to change or add wiring or connections between the imaging device and the display device.

[0013] The present invention also provides an imaging system or vision system for a vehicle that is operable to capture images of a scene occurring exteriorly of the vehicle, such as forward of the vehicle, and that captures and/or processes selective image data differently than other image data to extract and analyze the desired data for different applications or systems or accessories. The imaging system includes an imaging device or camera that is positioned at the vehicle with an exterior field of view (such as a forward field of view) for capturing images of the exterior scene. The imaging system may be operable to selectively or intermittently capture and/or process some image data in a manner suitable for a lane detection and/or to capture and/or process other image data in a manner suitable for headlamp detection and/or to capture and/or process other image data in a manner suitable for ambient light detection and/or other functions, systems or features or the like.

[0014] According to another aspect of the present invention, an imaging system for a vehicle includes an image sensor and a control. The image sensor is positioned at a vehicle and has an exterior field of view. The image sensor is operable to capture images of the exterior field of view and to generate image data. The control is operable to adjust the image sensor to at least two settings, and to process the image data via at least two processing techniques. The control is operable to synchronize the image sensor settings and the processing techniques to extract respective or appropriate information from the captured images for at least two applications of the imaging system.

[0015] The image sensor may have a field of view forwardly of the vehicle for capturing an image of the scene occurring forwardly of the vehicle.

[0016] The control may adjust the image sensor setting to capture image data suitable for a particular application, and may process those captured images via a processing technique suitable for the particular application. The control may adjust the image sensor to other settings and may correspondingly process those captured images via other processing techniques depending on the desired or appropriate or particular or respective application or function of the imaging system. For example, the control may selectively or intermittently or occasionally adjust the image sensor setting and processing technique to extract information from the captured image data for a headlamp control and/or a lane departure warning system and/or a rain sensor and/or a navigational system and/or an ambient light sensor and/or a collision avoidance system and/or a driving separation indicator and/or a backup aid and/or an object detection system and/or the like.
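The pairing of image sensor settings with processing techniques can be sketched as a simple round-robin schedule. This is a minimal illustration under assumptions, not the disclosed implementation: the exposure and gain values, the fake `capture` function, the threshold-counting techniques and all names are hypothetical, and a real control might schedule applications at different rates.

```python
from dataclasses import dataclass
from itertools import cycle, islice
from typing import Callable, List, Sequence, Tuple


@dataclass(frozen=True)
class ImagerSetting:
    exposure_ms: float  # hypothetical setting parameter
    gain: float         # hypothetical setting parameter


@dataclass(frozen=True)
class Application:
    name: str
    setting: ImagerSetting
    technique: Callable[[List[int]], int]


def capture(setting: ImagerSetting) -> List[int]:
    """Stand-in for the imager: scales a fixed fake scene by the setting."""
    scene = [10, 40, 200]
    scale = setting.gain * setting.exposure_ms / 10.0
    return [min(255, int(p * scale)) for p in scene]


def count_lane_pixels(frame: List[int]) -> int:
    return sum(1 for p in frame if p > 100)  # assumed threshold


def count_light_sources(frame: List[int]) -> int:
    return sum(1 for p in frame if p > 30)   # assumed threshold


APPLICATIONS = [
    Application("lane_departure_warning", ImagerSetting(10.0, 1.0), count_lane_pixels),
    Application("headlamp_control", ImagerSetting(2.0, 1.0), count_light_sources),
]


def run_schedule(apps: Sequence[Application], n_frames: int) -> List[Tuple[str, float, int]]:
    """Alternate between applications, always processing a frame with the
    technique that belongs to the setting it was captured at."""
    results = []
    for app in islice(cycle(apps), n_frames):
        frame = capture(app.setting)          # imager adjusted to this setting
        output = app.technique(frame)         # matching processing technique
        results.append((app.name, app.setting.exposure_ms, output))
    return results
```

Keeping the setting and the technique in one `Application` record is what keeps them synchronized in this sketch: a frame cannot reach a technique other than the one tied to the setting it was captured at.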

[0017] Therefore, the present invention also provides an imaging system or vision system that includes an imaging sensor and a control that are operable to provide multiple functions or control of multiple accessories or the like via selective capturing of image data and processing of the captured image data. The control is thus operable to selectively capture images and process image data to provide multiple functions or applications with a common image sensor and image processor. The imaging system may synchronize the settings of the image sensor with the processing techniques applied to the image data by the image processor, in order to perform and/or optimize two or more functions of the imaging system. The present invention thus provides a multi-tasking capability to an image sensor and image processor while providing enhanced capturing of the images and processing of the image data for the desired functions or applications.

[0018] These and other objects, advantages, purposes and features of the present invention will become apparent upon review of the following specification in conjunction with the drawings.

BRIEF DESCRIPTION OF THE DRAWINGS

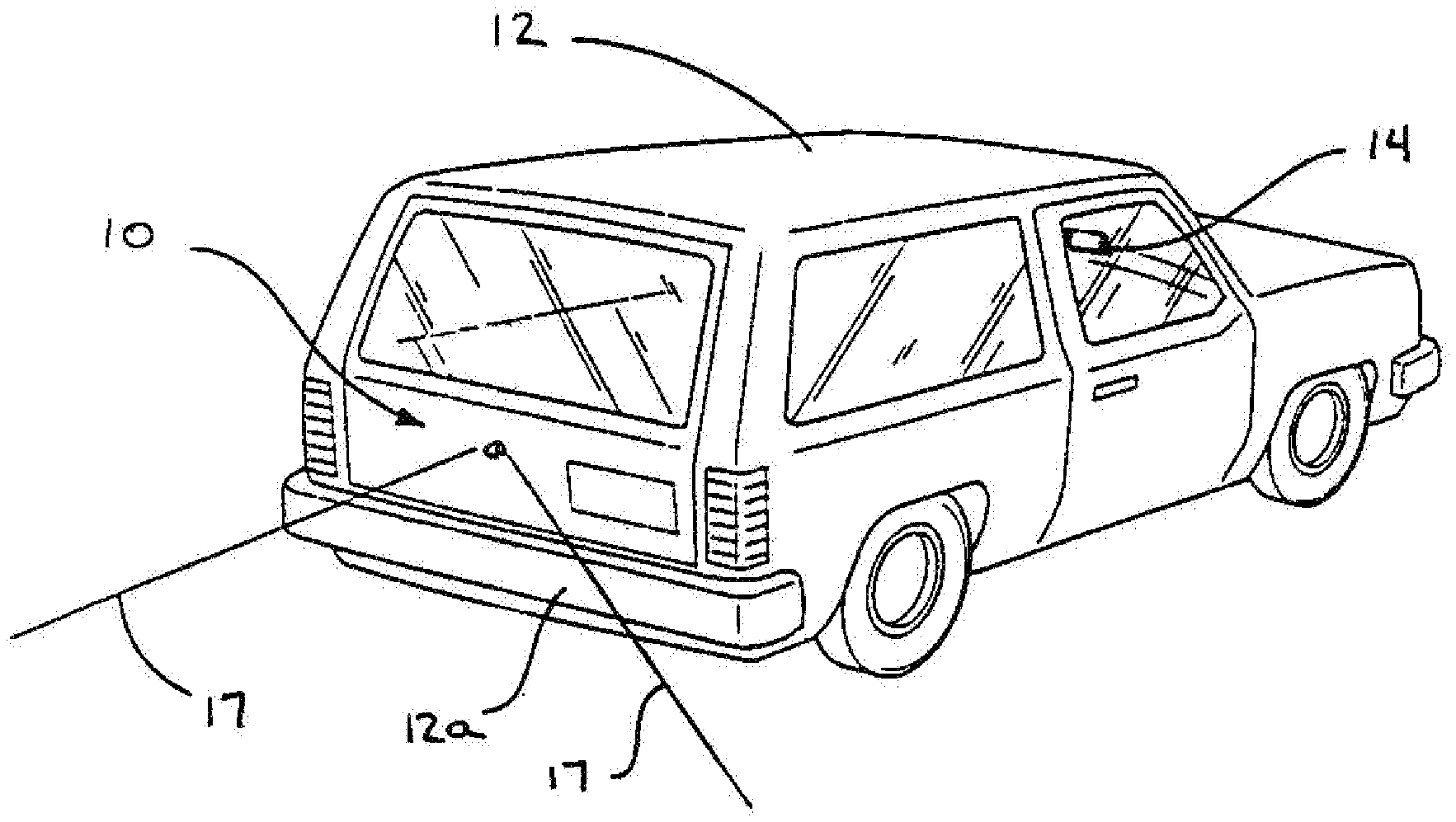

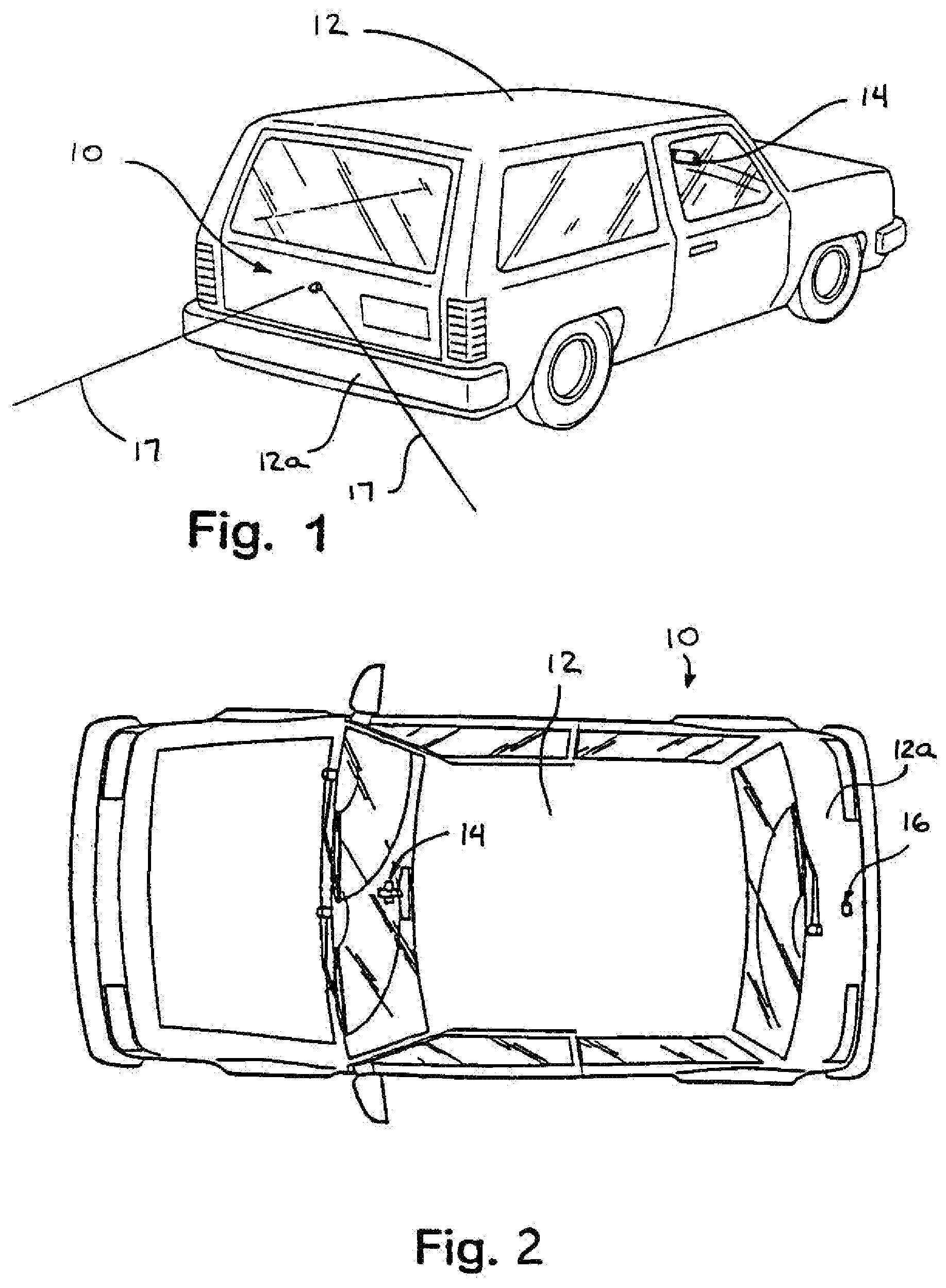

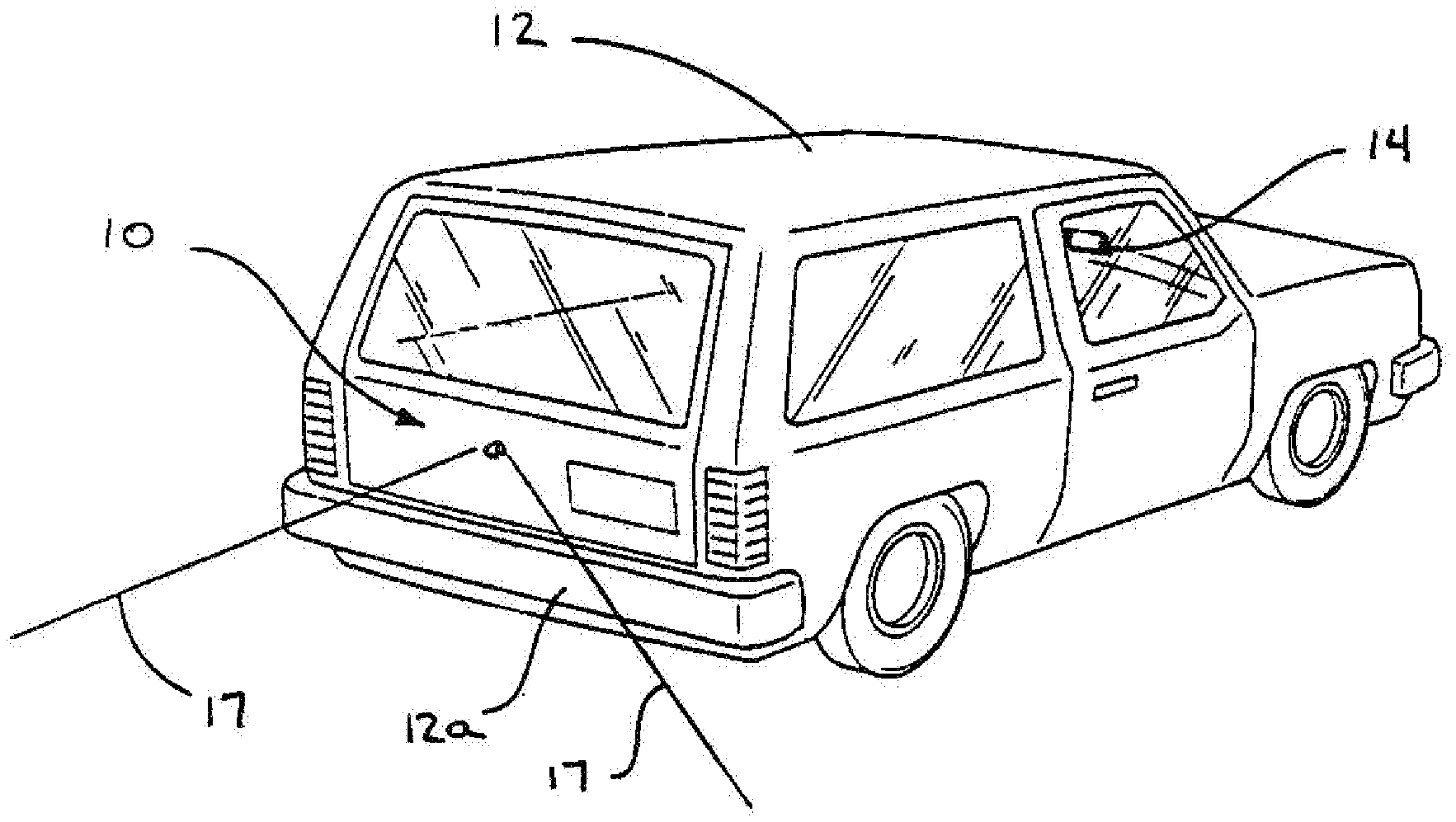

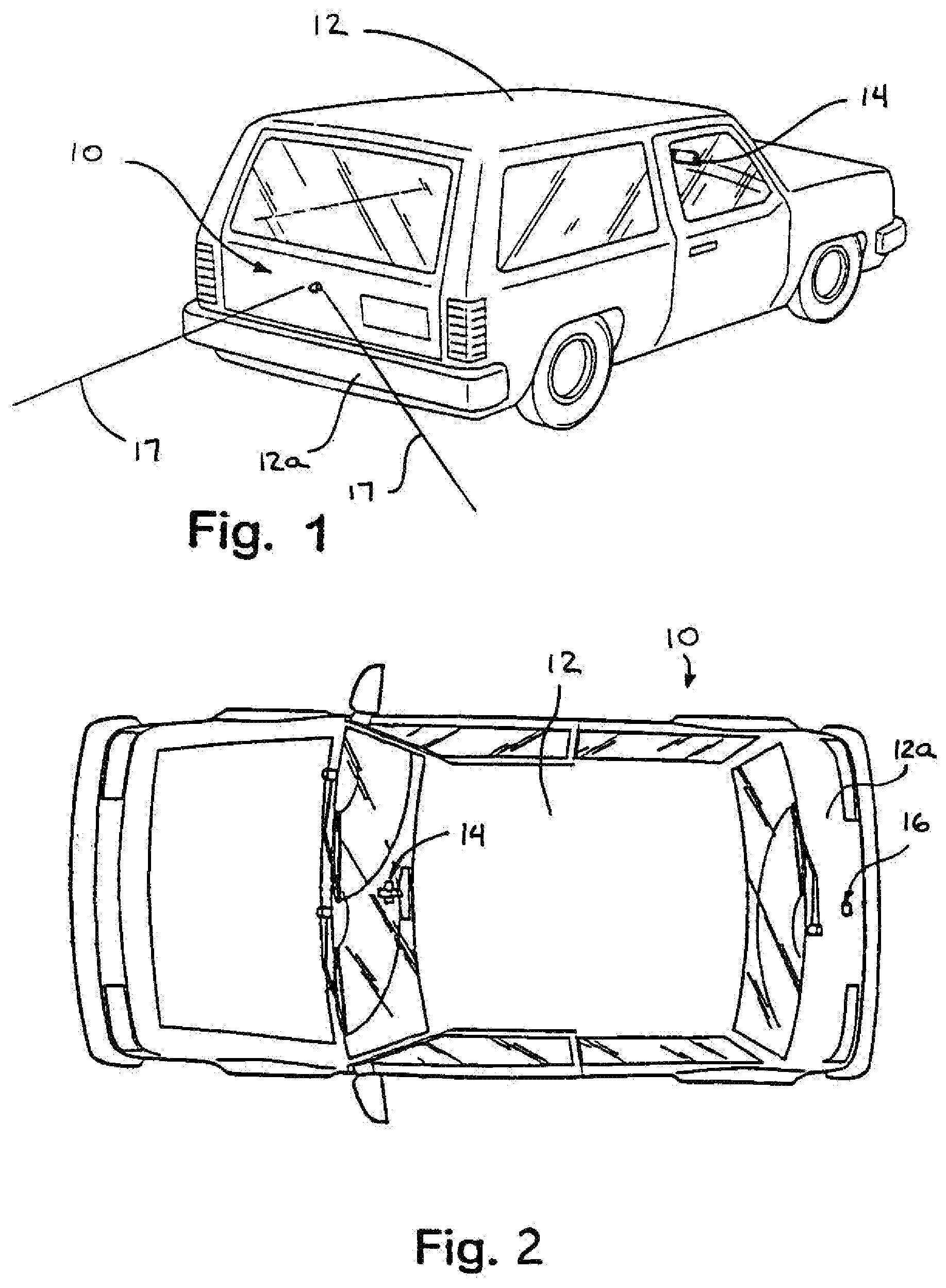

[0019] FIG. 1 is a rear perspective view of a vehicle having an imaging and display system thereon in accordance with the present invention;

[0020] FIG. 2 is a plan view of the vehicle of FIG. 1;

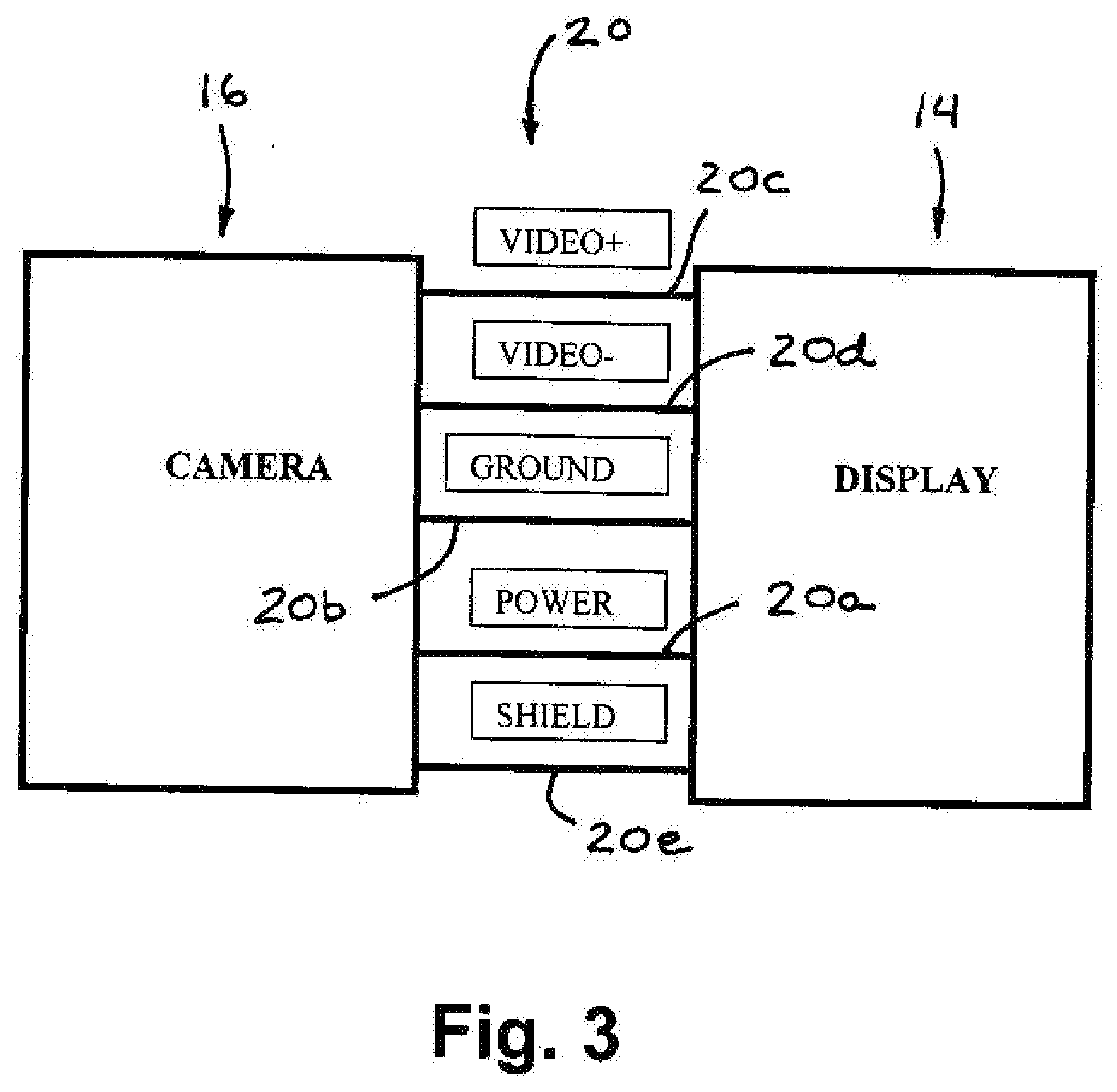

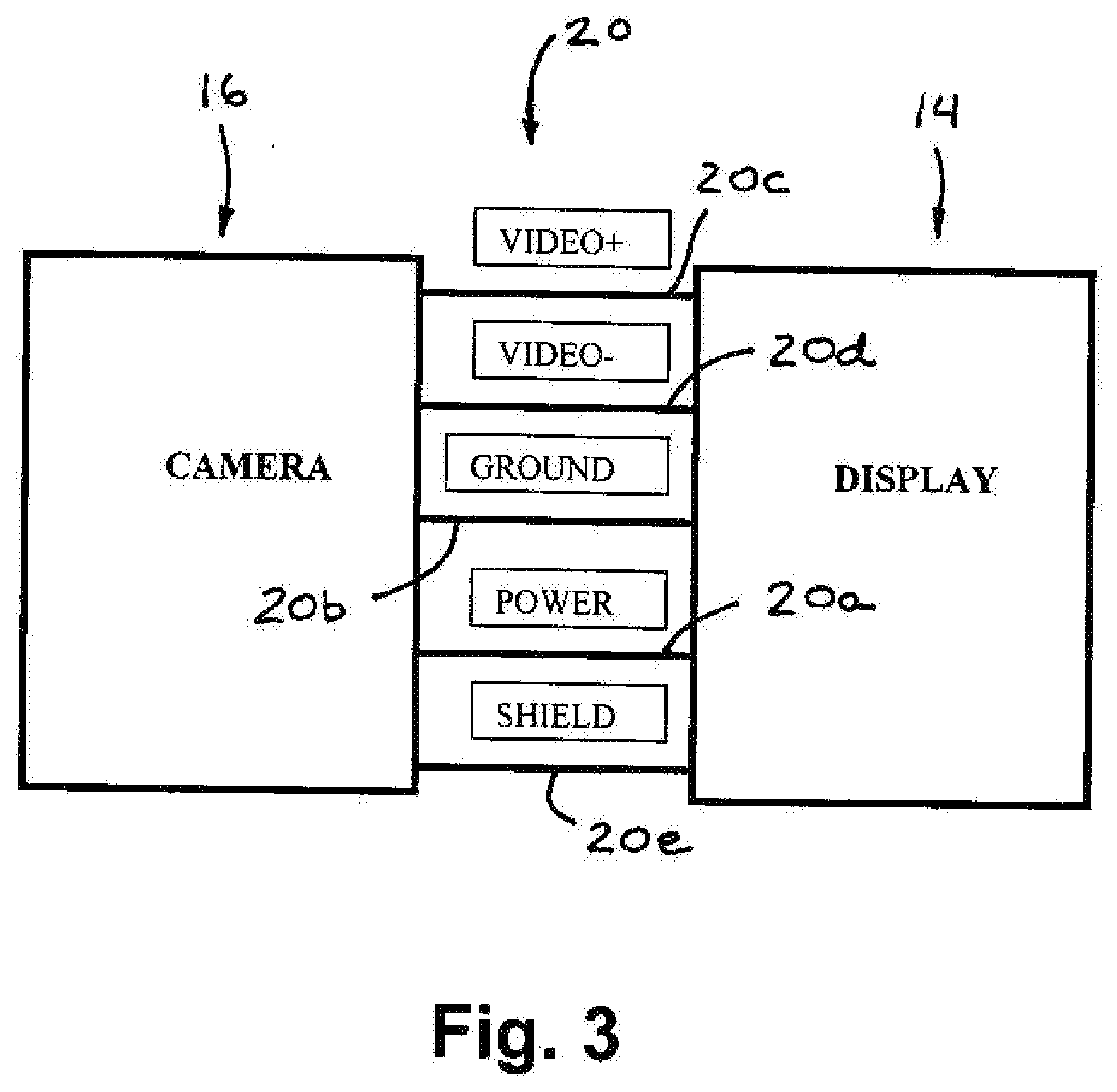

[0021] FIG. 3 is a block diagram of a camera and display device, showing the connections or wiring typically used to connect the camera to the display device;

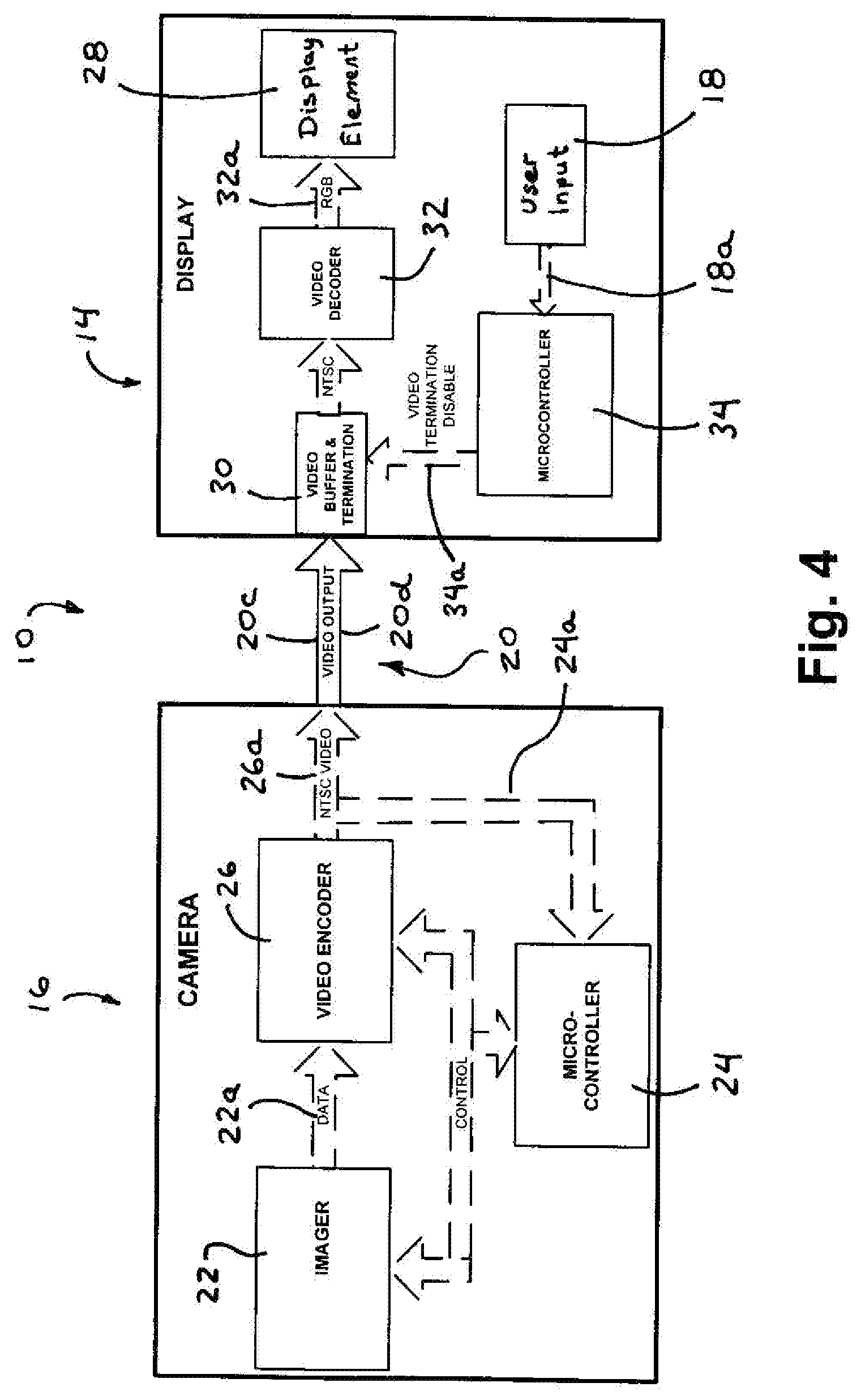

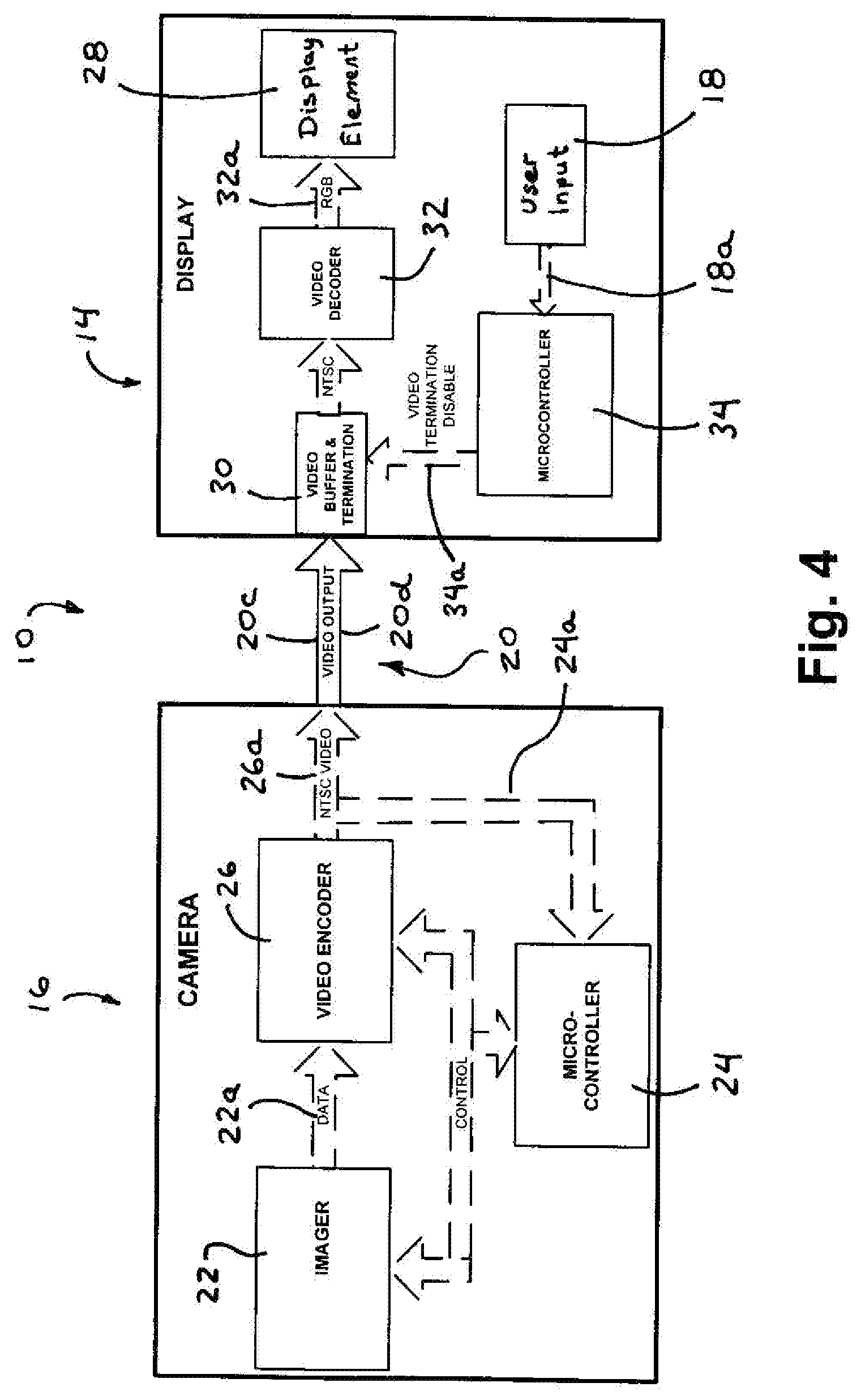

[0022] FIG. 4 is a block diagram of an imaging and display system in accordance with the present invention;

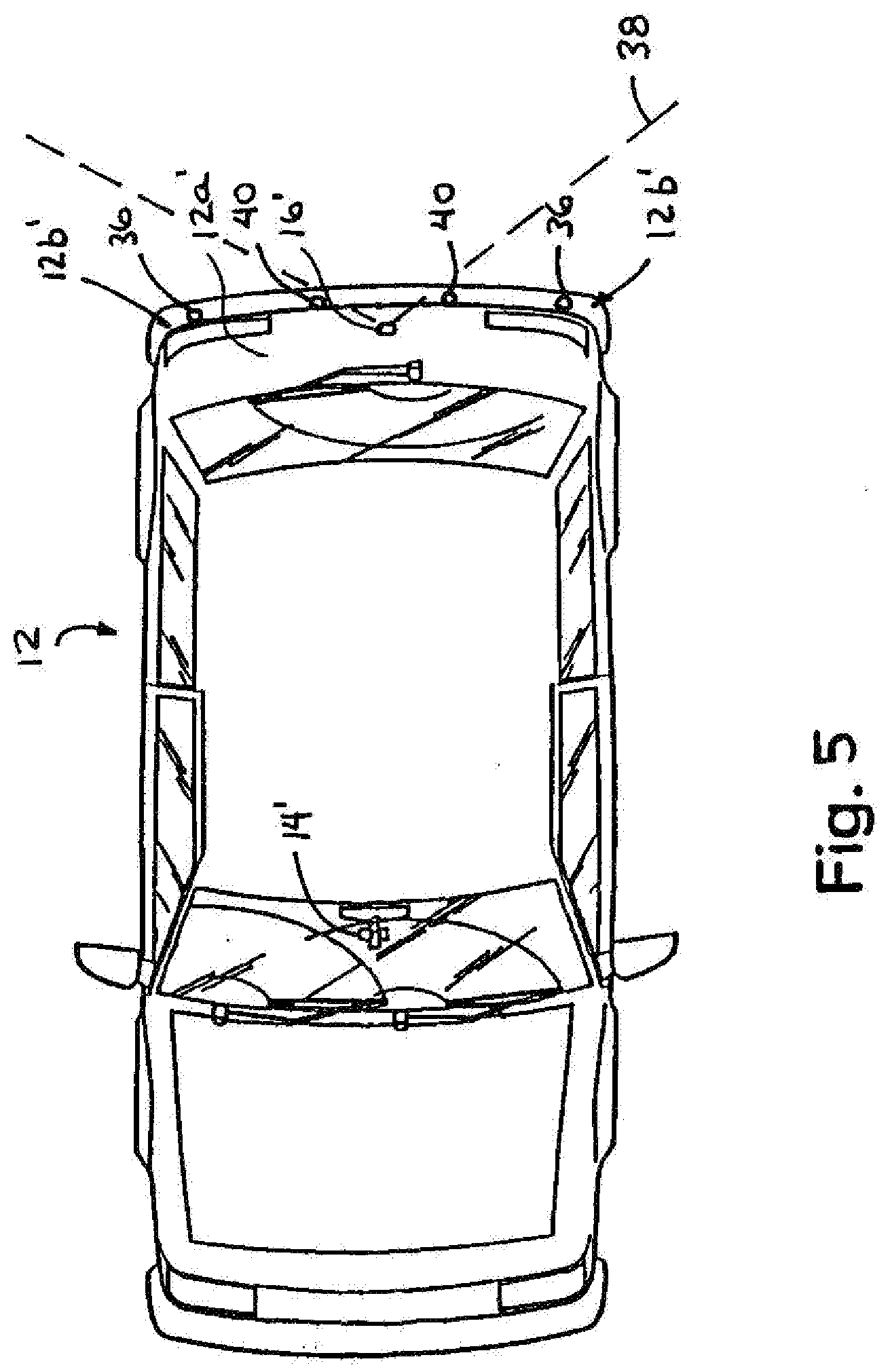

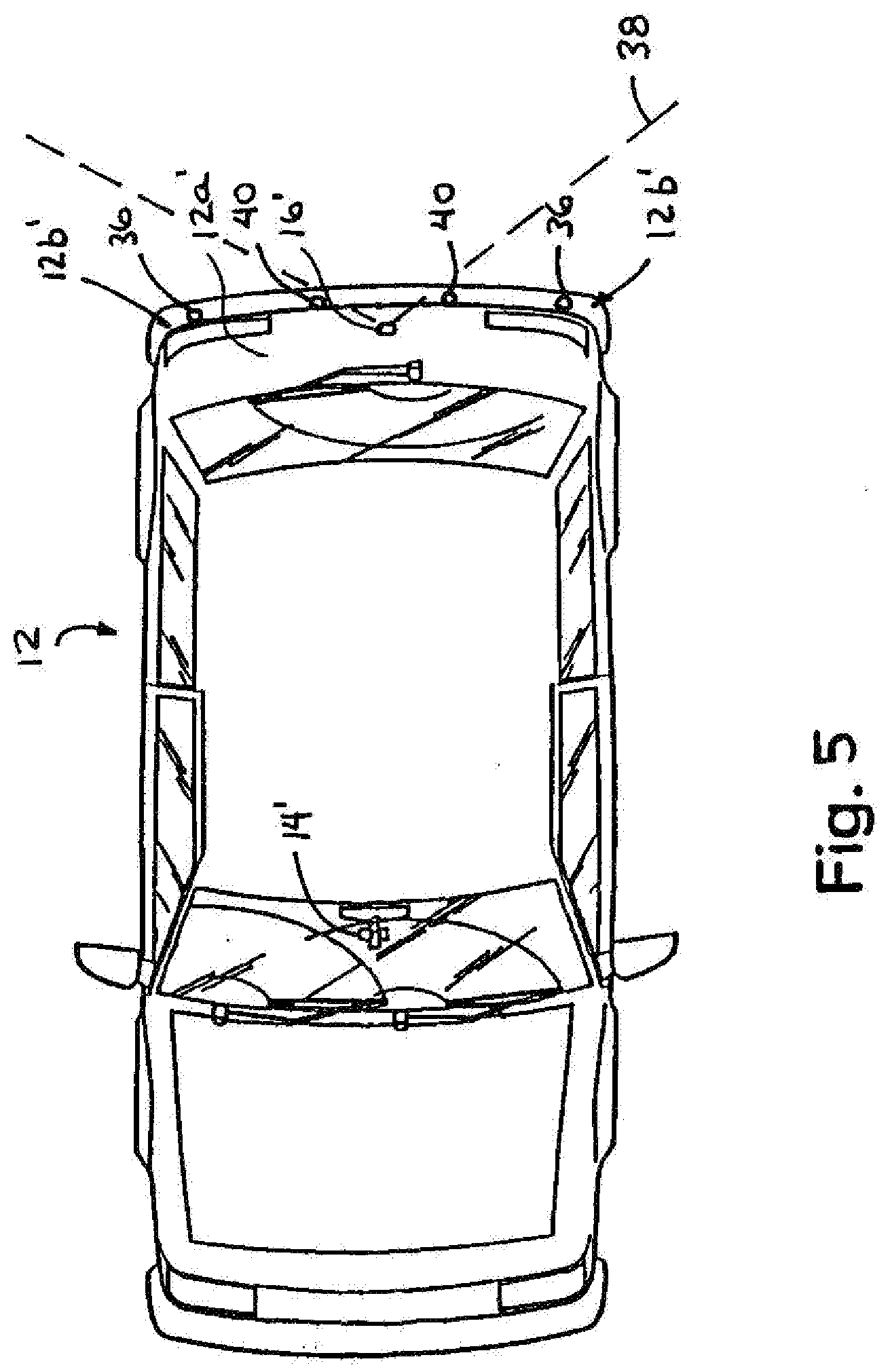

[0023] FIG. 5 is a plan view of a vehicle incorporating an imaging system in accordance with the present invention;

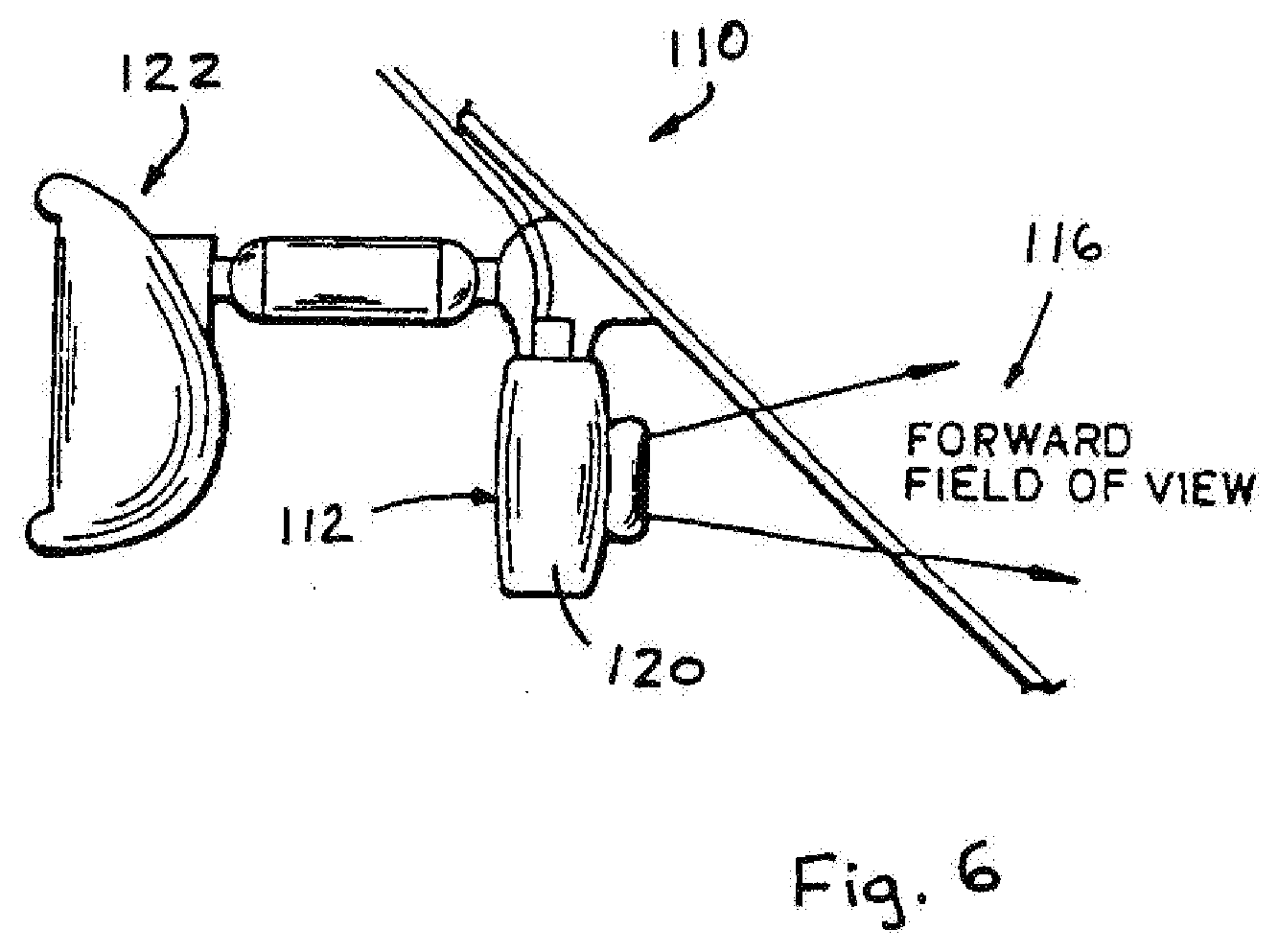

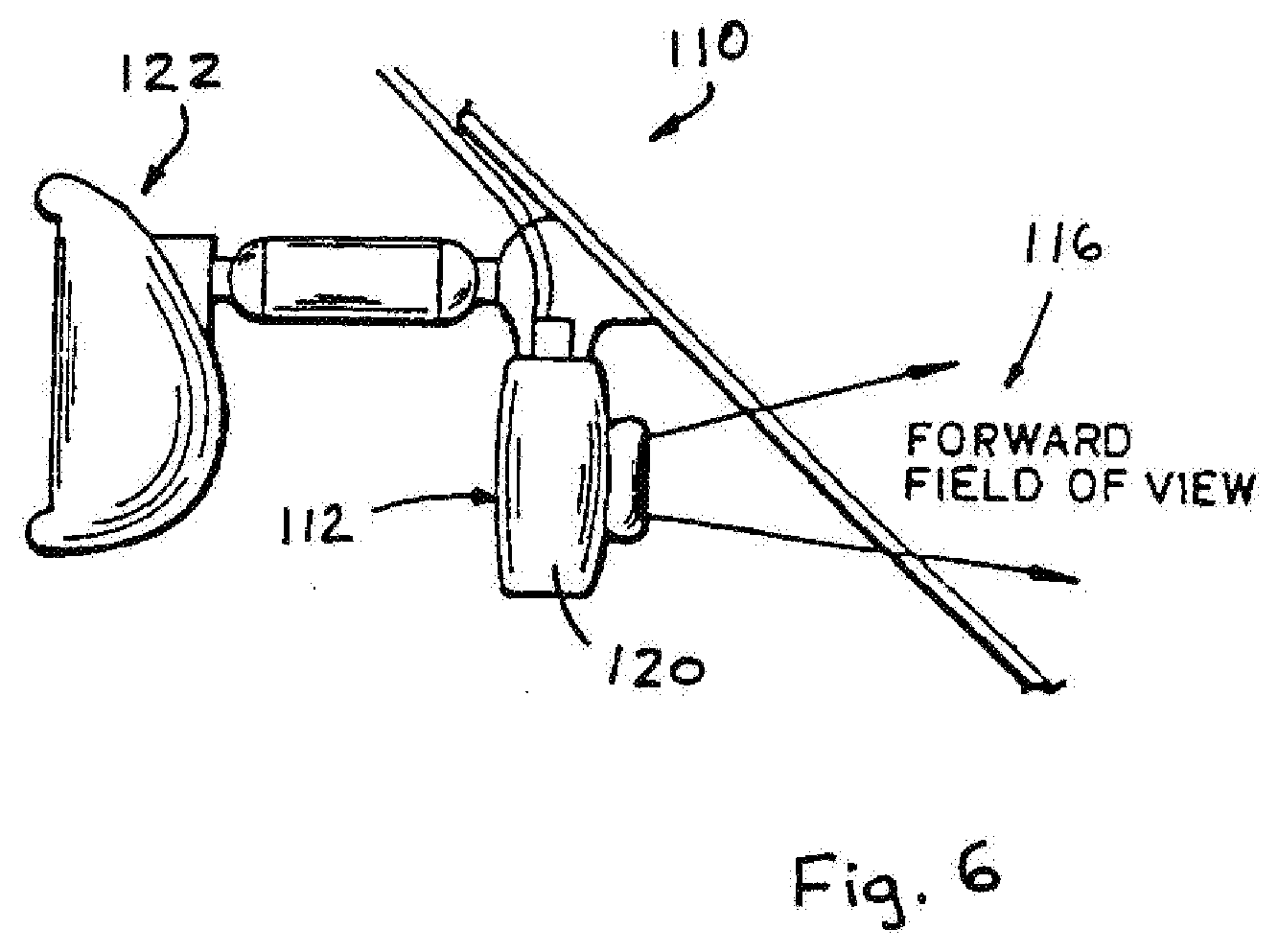

[0024] FIG. 6 is a side elevation of a portion of a vehicle embodying an imaging system in accordance with the present invention;

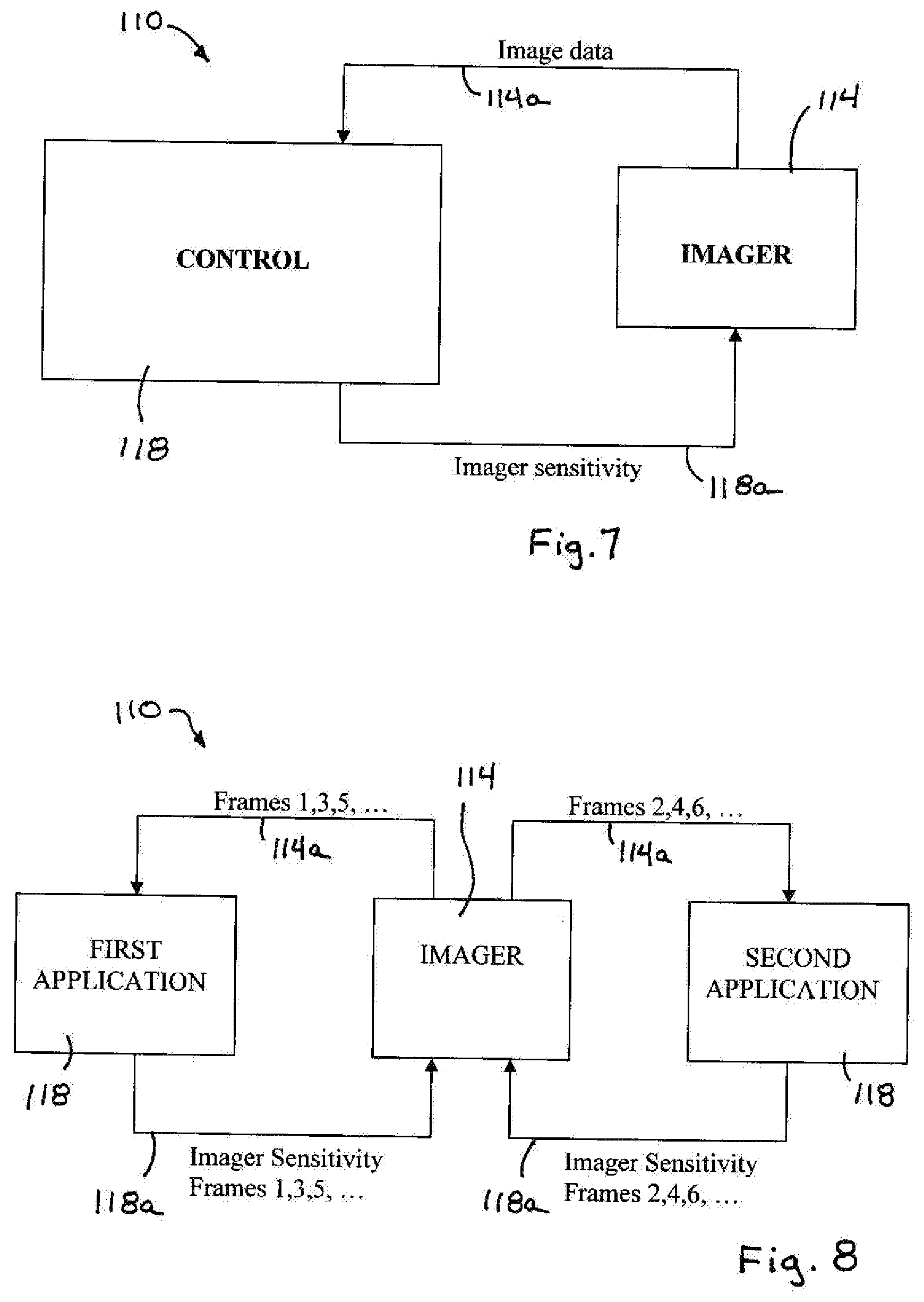

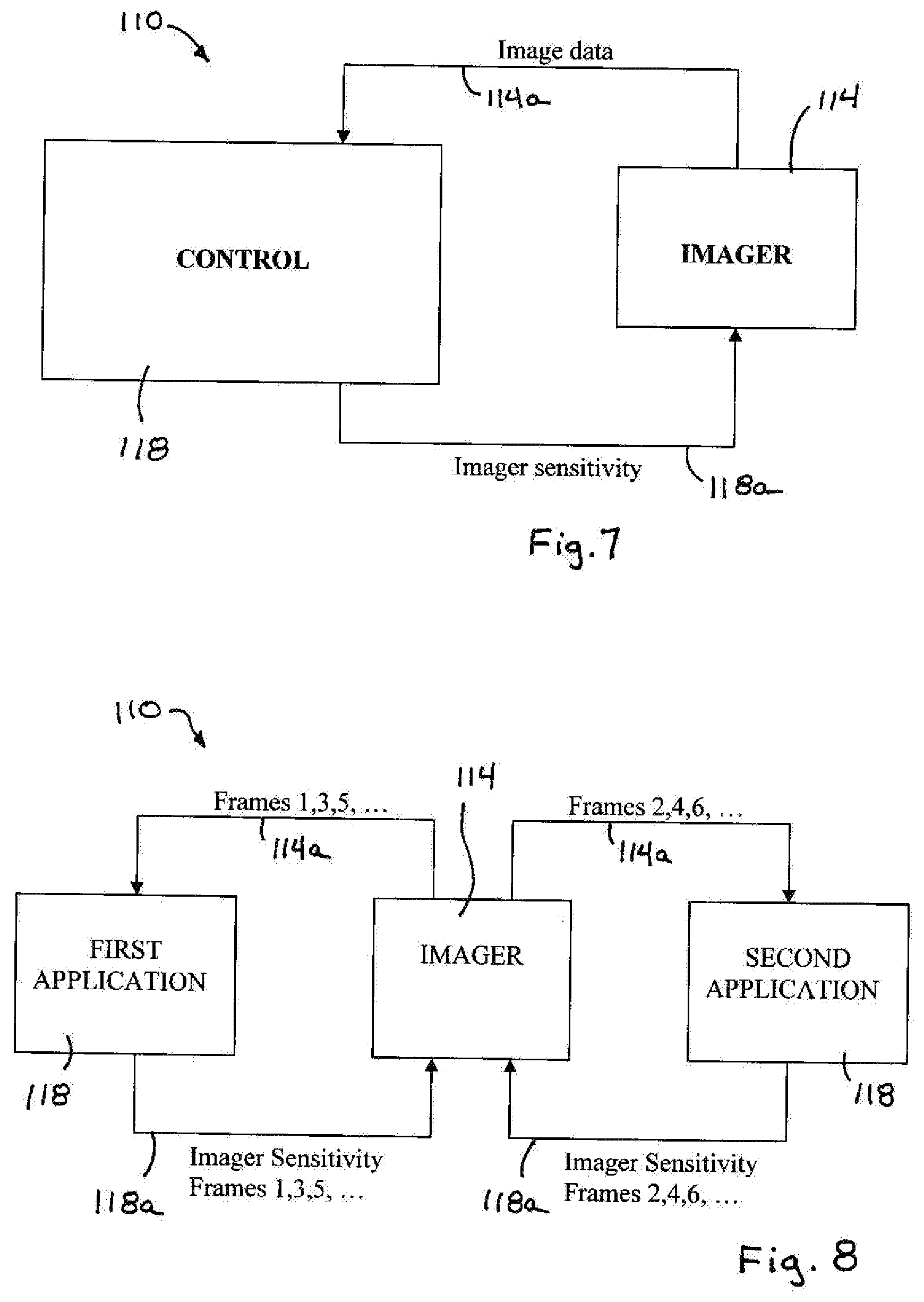

[0025] FIG. 7 is a block diagram of an imaging system having an imager and a control;

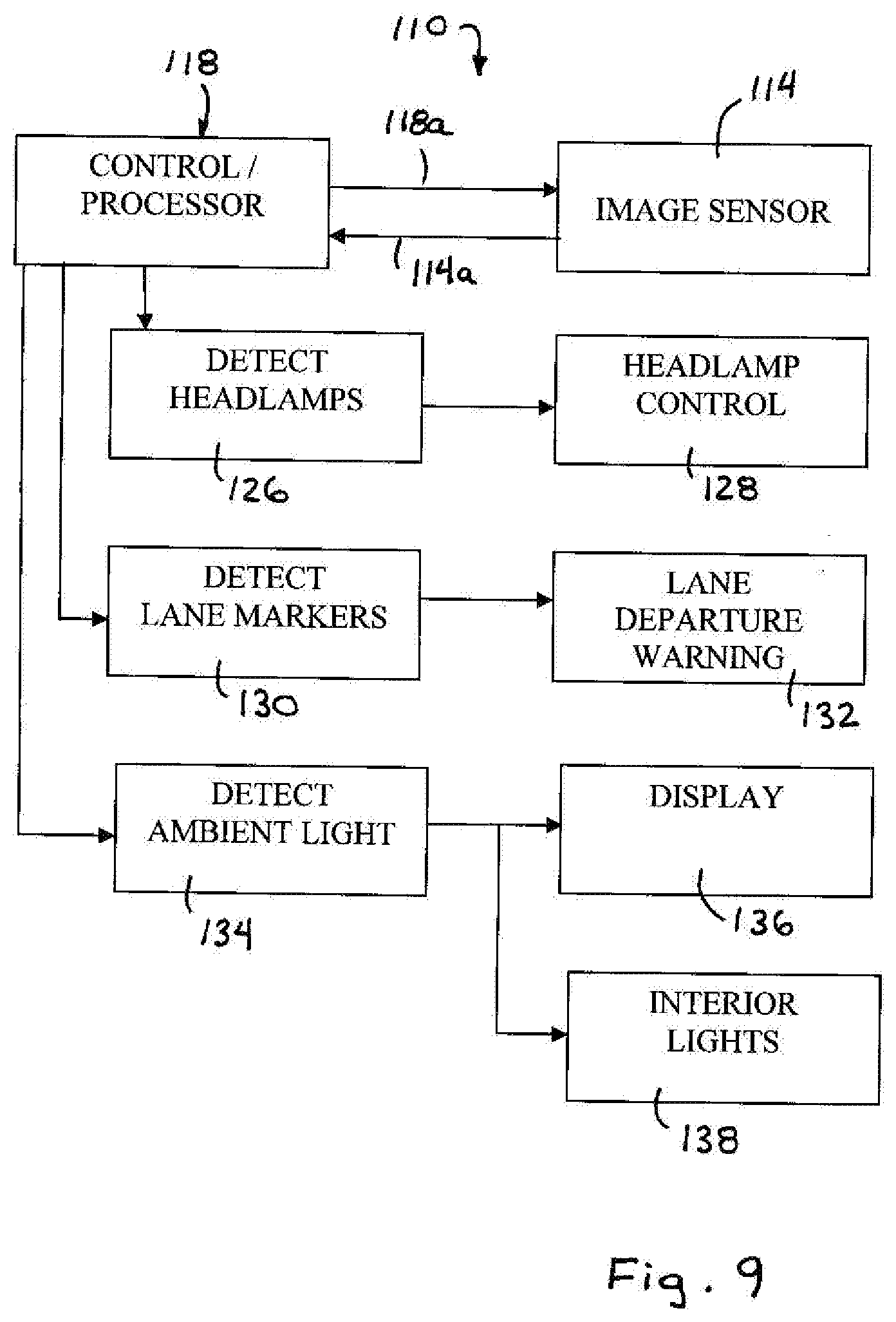

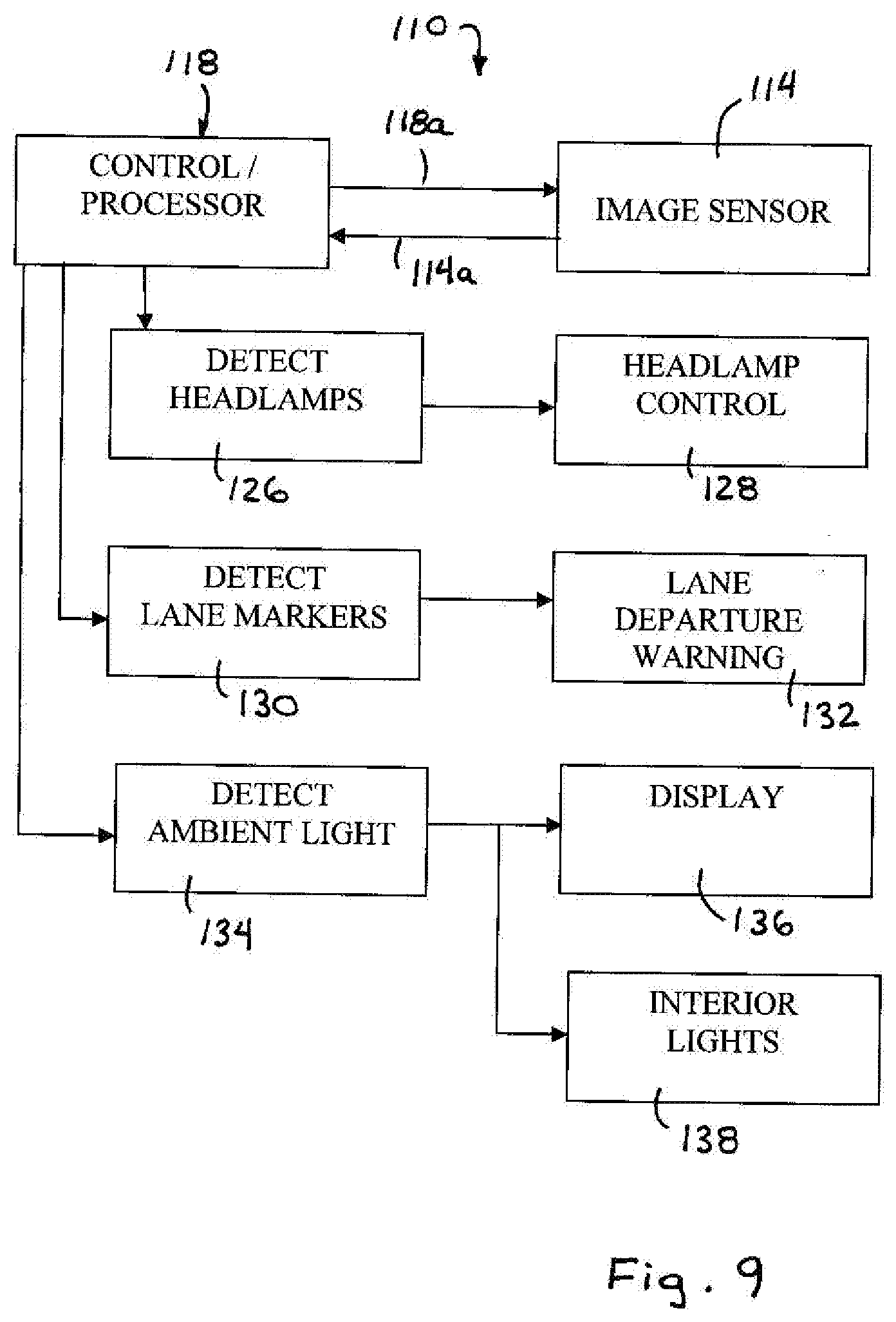

[0026] FIG. 8 is a block diagram of an imaging system in accordance with the present invention, showing different exemplary applications of the control; and

[0027] FIG. 9 is another block diagram of the imaging system of the present invention.

DESCRIPTION OF THE PREFERRED EMBODIMENTS

[0028] Referring now to the drawings and the illustrative embodiments depicted therein, an image capture system or vision system or imaging and display system 10 is positioned at an exterior portion 12a of a vehicle 12, such as at a rearward portion 12a of the vehicle 12, and is operable to capture an image of a scene occurring exteriorly of the vehicle, such as rearwardly of the vehicle, and to display the image at a display device or display system 14 of the vehicle which is viewable by a driver of the vehicle (FIGS. 1 and 2). Vision system 10 includes display device or system 14 and an imaging system or imaging device 16, which includes an imager or image capture device or camera 22 that is directed exteriorly of the vehicle and has an exterior field of view 17 which preferably at least partially encompasses a "blind spot" area exteriorly of the vehicle. The images or frames captured by imaging system 16 are displayed at a display element 28 of display system 14 to assist the driver in viewing the blind spot areas. The display system 14 includes one or more user inputs 18 (FIG. 4) that are actuatable by a user of the system to control or adjust a function of the imaging system 16, which is remote from the display system 14. The vision system 10 is operable to communicate imaging signals from the imaging device or system to the display device or system and control signals from the display device or system to the imaging device or system along common connections or wiring.

[0029] Imaging system or imaging device 16 may be positioned at the exterior portion of the vehicle and directed generally exteriorly of the vehicle for capturing images of the exterior scene to assist the driver in maneuvering or driving the vehicle. Vision system 10 and/or imaging system 16 may utilize principles of other vehicle vision or imaging systems, such as a vision or imaging system of the types disclosed in U.S. Pat. Nos. 6,757,109; 6,717,610; 6,396,397; 6,201,642; 5,550,677; 5,670,935; 5,877,897; 5,796,094; 6,097,023 and 6,498,620, and U.S. patent application Ser. No. 09/441,341, filed Nov. 16, 1999, now U.S. Pat. No. 7,339,149; and/or U.S. provisional applications, Ser. No. 60/614,644, filed Sep. 30, 2004; and Ser. No. 60/618,686, filed Oct. 14, 2004, which are hereby incorporated herein by reference. In the illustrated embodiment, the vision system is operable to capture and display images of the rearward area immediately behind the vehicle, so as to assist the driver in backing up or otherwise driving or maneuvering the vehicle rearwardly. However, the vision system may be operable to capture and display images of other areas exteriorly of the vehicle to provide images of other blind spot areas around or near the vehicle, without affecting the scope of the present invention. The vision system thus may be operable to capture images of the scene immediately rearward of the vehicle to assist the driver of the vehicle in backing up or maneuvering the vehicle in reverse. The backup assist system may be operable in response to the reverse gear of the vehicle being selected.

[0030] Preferably, the display element or display device may be located at an upper windshield area of the vehicle so as to be readily viewable by the driver of the vehicle. The display element may be located at or near the interior rearview mirror assembly of the vehicle or may be associated with the interior rearview mirror assembly of the vehicle. Optionally, the display element may be located at or near an accessory module or pod or windshield electronics module of the vehicle or may be associated with the accessory module or pod or windshield electronics module of the vehicle.

[0031] The imaging system may process the captured image data to detect objects or the like in the exterior scene. Such data processing may utilize aspects of the image processing techniques described in U.S. Pat. Nos. 6,353,392 and 6,313,454; and/or U.S. patent application Ser. No. 10/427,051, filed Apr. 30, 2003, now U.S. Pat. No. 7,038,577; and/or U.S. provisional application Ser. No. 60/638,687, filed Dec. 23, 2004, which are hereby incorporated herein by reference. The display device or element may display the image of the scene or may display the detected objects and/or may display graphic overlays or the like (such as distance measurements, icons, text or the like) to enhance the display for viewing by the driver or occupant of the vehicle. The vision system may be selectively operable to provide different functions or modes or features as selected by the user via one or more user inputs, as discussed below.

[0032] The imaging system 16 of vision system 10 thus may be operable in two or more modes and/or may provide various functions or features that are selectable by a user of the system (such as the driver of the vehicle) via one or more user inputs at or near or associated with the display system. For example, the imaging system may be selectively operable in a color mode or a black and white mode or a daytime or nighttime mode, or may be selectively operable to zoom in on a detected object or to pan across a scene or to adjust the gain, exposure and/or white balance in the captured images, in response to actuation of a respective user input by a user within the vehicle. Optionally, the imaging system may be selectively operable to process the images and display the images of the exterior scene in an outline form, where only the outlines of detected objects are shown at the display element, such as in a black and white line format. The image thus may be manipulated to provide an outline form and thus may be suitable for display on a low cost display element, such as a dot matrix display or the like, such as the type described in U.S. patent application Ser. No. 10/418,486, filed Apr. 18, 2003, now U.S. Pat. No. 7,005,974, which is hereby incorporated herein by reference. Also, or otherwise, the vision system may provide user inputs that enable a user to selectively actuate an overlay image to overlay images, such as distance measurements, text, icons, an image representation of the back of the vehicle, or other overlay images or icons, onto or over the image of the exterior scene, in order to enhance the display of the exterior scene for the user.

[0033] As shown in FIG. 3, and as is typical for various known imaging devices and vision systems, there are five connections or wires or leads or communication links 20 between the display system 14 and the imaging system 16. The connections or links include a camera power connection 20a, a ground connection 20b, a video+ connection 20c, a video return connection 20d and a shield 20e. In known reverse aid systems, providing a control of the camera, such as to activate a digital zoom or pan mode for the camera (or to otherwise control or adjust a camera setting or mode), from the display area (and a user input at or near or associated with the display device) requires additional wiring for the secondary communication or communications between the user input of the display device and the camera and, thus, is a costly option for the automotive market and makes retrofitting a reverse aid system on a vehicle very difficult and costly. Controlling or adjusting or actuating these camera functions or modes or settings from the display area within the vehicle increases the overall system level performance and adds additional functionality to the system.

[0034] As shown in FIGS. 3 and 4, vision system 10 includes the communication links 20 (only the video+ connection 20c and the video return connection 20d are separately shown in FIG. 4 for purposes of clarity) between the imaging system 16 and display system 14. As shown in FIG. 4, the imaging system 16 of vision system 10 includes an imaging sensor or imager 22, a camera microcontroller 24 and a video encoder 26. The imager or imaging array sensor 22 captures image data indicative of the exterior scene and generates a data signal 22a and outputs the data signal to the video encoder 26, which encodes the data and communicates an encoded video signal 26a to the display system 14 via the video+ and video return connections 20c, 20d. Under normal operation, the camera typically outputs an NTSC video signal on the video+ and video- or return connections 20c, 20d to the display system 14. This is typically done at about a 30 hertz frame rate. The camera microcontroller 24 controls the imager 22 and the video encoder 26, and monitors (as shown via monitoring link 24a in FIG. 4) the encoded video signal 26a from the encoder 26 to the display system 14. When a change in the signal 26a is detected by the camera microcontroller, the camera microcontroller 24 may adjust or control the imager 22 and/or video encoder 26 to set the imaging device or system to a different mode or function, as discussed below.

[0035] Display system or display device 14 includes a display element 28 and a display microcontroller 34. The display system 14 receives the encoded video signal 26a from the imaging system 16 via the video+ and video return connections 20c, 20d, and displays the captured image on the display element 28, such as an LCD panel or the like (or other types of displays or display elements as discussed below). As shown in FIG. 4, display system 14 includes a video buffer and termination 30, a video decoder 32 and the display element 28. The video buffer and termination 30 receives the video signal 26a and passes the signal to the video decoder 32, which decodes the encoded video signal and communicates an image signal or RGB signal 32a to the display element 28. The display element 28 displays the captured image within the vehicle where it is readily viewable by a driver or occupant of the vehicle.

[0036] Display system 14 also includes display microcontroller 34 that is operable to receive a user input signal 18a from one or more user inputs 18 and to adjust or control the display element 28 and/or to enable or disable the video termination 30 in response to the user input signal 18a. When the video termination is disabled, the camera microcontroller 24 detects the change in the signal along the video+ and video return connections 20c, 20d, and may adjust or control the imager 22 and/or video encoder 26 in response to such detection.

[0037] During normal operation of the imaging system, the imaging system 16 may output the NTSC video signal (or any other video signal protocol whether analog or digital) on the video+ and video- or return connections to the display system 14. The camera microcontroller 24 may continuously or substantially continuously monitor the NTSC video output signal 26a for a normal output that is about one volt peak into a 75 ohm load (provided at the video buffer and termination 30). When an appropriate user input [such as a button or switch or the like at or near or associated with the display element or a voice command or a consequence of a user action (such as selection of a reverse gear of the vehicle or the like) or a vehicle action or condition or characteristic or status (such as in response to the vehicle reaching a particular or threshold forward or reverse vehicle speed or the vehicle encountering a braking condition or the like) or other input or condition or characteristic or status or the like] is activated by a user action (such as a user input for selectively adjusting a camera function or mode, such as a digital zoom function or pan function or an adjustment of a gain or exposure or white balance function or a selection of a color mode or a black and white mode or a night vision mode or the like), the display microcontroller 34 disables the video termination 30 at the display system 14 via a signal 34a. The disabling of the video termination 30 at the display system 14 causes the video encoder 26 of the imaging system 16 to stop outputting the NTSC video signal 26a. The camera microcontroller 24 senses the change in the output signal or voltage along the connections 20c, 20d, and may control or adjust the imager to a different or new camera setting in response to such a detected change in signal output/voltage, or may control or adjust the video encoder if appropriate in response to such a detected change in the video signal along the connections 20c, 20d.
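The monitoring step described above can be illustrated with a minimal sketch. It assumes the camera microcontroller has access to sampled voltages on the video+ connection relative to the return connection; the function name `termination_present`, the sampling interface and the 0.2 volt tolerance are hypothetical and are not taken from the disclosure.

```python
from typing import Sequence

NOMINAL_PEAK_VOLTS = 1.0  # "about one volt peak into a 75 ohm load" per the text
TOLERANCE_VOLTS = 0.2     # assumed acceptance band, not specified in the text


def termination_present(samples: Sequence[float]) -> bool:
    """Return True when the sampled line voltage looks like a normally
    terminated video signal, i.e. its peak is close to the nominal level."""
    if not samples:
        # No samples means no evidence of a normal signal.
        return False
    peak = max(samples)
    return abs(peak - NOMINAL_PEAK_VOLTS) <= TOLERANCE_VOLTS
```

In such a sketch, a False result would be the cue for the camera microcontroller to consider a change of camera setting; how the samples are acquired is left open here.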

[0038] Optionally, when multiple functions or modes are desired, the display microcontroller 34 may disable the video termination and apply a particular voltage across the connections 20c, 20d (via signal 34a) that corresponds to the particular function or adjustment selected by the user at the user inputs, and the camera microcontroller 24 may be operable to determine the voltage applied on the connections and to adjust or control the imager and/or encoder accordingly. The camera microcontroller 24 may be preset or programmed to recognize the particular voltage across the connections and to control or adjust or activate the respective function or feature or mode in response to such detection and recognition. The camera adjustments or settings (such as activation of a digital zoom feature, a panning feature, a gain or exposure or white balance function or a black and white mode or night vision mode or the like) thus may be predetermined and based on the DC voltage level applied across the connections 20c, 20d by the display microcontroller 34 and sensed across the connections 20c, 20d by the camera microcontroller 24.

[0039] After the camera adjustment is made by the camera microcontroller, the display microcontroller may enable the video termination and remove the applied DC voltage if applicable, so that the encoder 26 will again communicate the video signal 26a to the display system 14. Optionally, the display microcontroller 34 may wait a predetermined amount of time before enabling the video termination again and removing the DC voltage level. This delay allows the camera microcontroller enough time to sense that the termination has been removed, to upload the new programmed settings to the imager, and for the settings to take effect. Once the delay is completed, the video termination is enabled at the display, and the camera microcontroller and video encoder resume the NTSC signal to the display system to communicate the captured image data to the display system.
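The display-side sequence (disable the termination, optionally apply a DC level, wait, then restore) may be sketched as follows. The class `DisplaySideLink`, its fields and the tick-based delay are hypothetical names chosen for illustration; a real display microcontroller would drive hardware rather than append to a log.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DisplaySideLink:
    """Hypothetical model of the display end of the video+/video return pair."""
    termination_enabled: bool = True
    applied_dc_volts: Optional[float] = None
    log: List[str] = field(default_factory=list)

    def request_camera_change(self, dc_volts: Optional[float], settle_ticks: int) -> None:
        # Step 1: disable the video termination so the camera senses the change.
        self.termination_enabled = False
        self.log.append("termination disabled")
        # Step 2 (optional): apply a DC level identifying the requested function.
        if dc_volts is not None:
            self.applied_dc_volts = dc_volts
            self.log.append(f"dc applied {dc_volts:.2f} V")
        # Step 3: wait the predetermined time so the new settings can take effect.
        for _ in range(settle_ticks):
            self.log.append("wait")
        # Step 4: remove the DC level if one was applied, then restore termination.
        if self.applied_dc_volts is not None:
            self.applied_dc_volts = None
            self.log.append("dc removed")
        self.termination_enabled = True
        self.log.append("termination enabled")
```

The ordering is the point of the sketch: the termination is restored only after the delay, so the camera is not asked to resume video before its new settings are in place.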

[0040] This process may be repeated for invoking and removal of special camera modes or functions. For example, for different user inputs and associated functions, the display microcontroller may disable the video termination and may apply a different voltage to the connections 20c, 20d. The camera microcontroller may then determine the voltage in the connections 20c, 20d and may adjust the imager and/or video encoder appropriately and in response to the detected voltage. The number of special modes of operation or functions that are desired to control or activate/deactivate at the display will dictate the number of voltages that are to be resolved by the camera microcontroller at the camera in order to activate or control or adjust the multiple camera settings from the display inputs. For example, if four different functions or modes and associated user inputs were desired, the display microcontroller may apply different voltages, such as at 1/4 volt increments or the like, for the different inputs and functions, and the camera microcontroller may be operable to discern or resolve the different voltages and control or adjust the imager and/or video encoder according to the detected voltage.
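The four-function example with 1/4 volt increments can be sketched as a lookup on the camera side. The assignment of particular functions to particular voltage steps, the 0.05 volt tolerance and the name `decode_mode` are assumptions for illustration only; the text does not specify them.

```python
from typing import Optional

STEP_VOLTS = 0.25        # "1/4 volt increments" per the text
TOLERANCE_VOLTS = 0.05   # assumed acceptance window around each step

# Hypothetical assignment of voltage steps to camera functions.
MODE_BY_STEP = {
    1: "digital_zoom",
    2: "pan",
    3: "black_and_white",
    4: "night_vision",
}


def decode_mode(measured_volts: float) -> Optional[str]:
    """Map a DC level sensed across the connections to a camera function,
    or return None when the level does not match any known step."""
    step = round(measured_volts / STEP_VOLTS)
    if abs(measured_volts - step * STEP_VOLTS) > TOLERANCE_VOLTS:
        return None  # too far from any step to be resolved reliably
    return MODE_BY_STEP.get(step)
```

Under these assumptions, a reading of 0.52 volts resolves to the second function, while a reading midway between two steps is rejected rather than guessed.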

[0041] The vision system of the present invention thus provides user selectable control or adjustment of an exterior facing camera via user inputs at an interior display of the vehicle, without requiring additional wiring for the connection between the inputs and the camera. The present invention thus is highly suitable for retrofitting imaging and display systems or vision systems and/or selecting an appropriate or desired camera and/or display device or system that provides the desired features without concerns as to the wiring or connections between the camera and imaging system and the display element and display system. A user may select an optional camera that has desired features and may install the camera at the vehicle and connect to the existing wiring, which may be the base wiring for a base camera that may not have the features of the new camera. The present invention thus provides for upgrading of a camera and/or display and/or vision system either as an option installed at the vehicle assembly plant, or as an aftermarket device, without the cost and difficulties associated with rewiring or wiring new connectors or links between a user input or inputs and the camera. Different optional cameras and display systems and imaging systems thus may be selected by an owner of a vehicle and may be readily installed in the vehicle without having to re-wire the vehicle or provide complicated communication links between the camera and the display device or system. The present invention thus allows for selection of a camera and display device or system having the desired features or content, without the costs and difficulties of implementing the high content cameras, since any selected camera and display device or system may utilize the same connections or communication links.

[0042] Optionally, the vision system may include or may be associated with an ultrasonic or radar device that determines the distance from the rear of the vehicle to a detected object. Optionally, other means for determining the distance to a detected object may be implemented, such as the means described in U.S. patent application Ser. No. 10/427,051, filed Apr. 30, 2003, now U.S. Pat. No. 7,038,577; and/or Ser. No. 11/105,757, filed Apr. 14, 2005, now U.S. Pat. No. 7,526,103; and/or U.S. provisional application Ser. No. 60/607,963, filed Sep. 8, 2004, which are hereby incorporated herein by reference. The vision system may detect the distance to objects in various zones or regions behind the vehicle and may provide a graphic or video overlay of the displayed image to indicate to the driver the distance to one or more detected objects. The overlay may be enhanced, such as by flashing or the like, to enhance the viewability of the overlay, such as when a hazardous condition is encountered, such as when the vehicle is within a threshold distance to a detected object rearward of the vehicle and in the vehicle's path of travel. The graphic overlay may be initiated or activated in response to the vehicle being shifted into the reverse gear or may be selectively activated via a user input or the like, without affecting the scope of the present invention.

[0043] Optionally, the imaging system and/or camera and/or display system may be operable in conjunction with or in combination with a distance sensing/measuring/determining system, such as an ultrasonic sensing system or the like. For example, and with reference to FIG. 5, an imaging and display system or vision system 10' may include an imaging sensor or camera or imaging system 16', which may be generally centrally positioned at the rear portion of the vehicle 12', and one or more secondary or auxiliary sensing devices, such as ultrasonic sensors 36, which may be also positioned at the rear portion of the vehicle. In the illustrated embodiment, an ultrasonic sensor 36 may be positioned at or near each rear corner region 12b' of the vehicle 12'. Typically, an imaging sensor or camera may provide a wide angle field of view (shown generally at 38 in FIG. 5) rearward of the vehicle that is approximately a 120 degree field of view. Such a field of view may not fully encompass the regions immediately rearward of the vehicle and at or toward the side corners of the vehicle, whereby blind spots may still exist in those regions. In order to provide enhanced object detection of objects that may be positioned at those regions, such as when the vehicle is backing up, the ultrasonic sensors 36 may be operable to sense or detect objects in those regions. The imaging and display system may then display an indication at the display device or display system 14' of a detected object in response to such detection by the ultrasonic sensors. For example, the display may provide an iconistic display of the object superimposed upon the video image of the rearward scene as captured by the rearward facing camera, or may provide a flashing of the display at the image on the screen and in the region or regions of the detected object, and/or may provide a graphical overlay at the regions of the display that represent the detected object to indicate that an object has been detected in one of the rear corner regions or elsewhere rearward of the vehicle.

[0044] Optionally, an ultrasonic sensing system may include a plurality of sensors, such as three or more ultrasonic sensors, positioned and spaced across a rear portion of the vehicle, such as along a rear fender of the vehicle, or along a rear lift gate of the vehicle, or along a rear spoiler of the vehicle, or along a light bar associated with the license plate of the vehicle or the like. For example, and as shown in FIG. 5, the sensors may include two side or corner sensors 36 and one or more sensors 40 (such as two sensors 40 in FIG. 5) interspaced across the rear portion of the vehicle and between the corner sensors 36. Preferably, a light bar is provided at the license plate of the vehicle and includes the imaging sensor or camera, the ultrasonic sensors, and preferably a light for illuminating the license plate of the vehicle, such that the imaging sensor and ultrasonic sensors may be provided as a module at the rear of the vehicle.

[0045] The ultrasonic sensors 36, 40 may provide adjacent and overlapping sensing regions to provide a sensing range that encompasses substantially the entire region behind the vehicle and about eight feet or thereabouts rearward of the vehicle. The imaging sensor or camera 16' may capture images of the rearward exterior scene that is encompassed by the field of view of the camera, and the display may display images of the scene for viewing by the driver of the vehicle when the vehicle is backing up. The display may be adjusted or modified or enhanced in one or more of the regions associated with one or more of the ultrasonic sensors in response to a detection of an object by one or more of the ultrasonic sensors.

[0046] For example, the display may be divided into four zones, each zone of the display corresponding to a zone or region covered by a respective ultrasonic sensor. If that ultrasonic sensor detects an object, then the corresponding zone of the display may be adjusted or enhanced to indicate to the driver of the vehicle that an object is detected in a particular zone or region rearward of the vehicle. For example, one or more of the zones in the display may flash or modulate or may change intensity to indicate that an object has been detected in the region or zone rearward of the vehicle that corresponds to the enhanced or flashing or modulating display zone. Optionally, the display may provide a graphic overlay, such as a measurement bar or the like, to show a distance to a detected object.
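The correspondence between ultrasonic sensors and display zones can be illustrated with a short sketch. It assumes four equal-width vertical strips ordered left to right in the same order as the sensors; the sensor names, the strip layout and both function names are hypothetical and not taken from the disclosure.

```python
from typing import Dict, List, Tuple

# Hypothetical left-to-right sensor names (two corner sensors, two interspaced).
SENSOR_ORDER = ["left_corner", "left_inner", "right_inner", "right_corner"]


def zones_to_highlight(detections: Dict[str, bool]) -> List[int]:
    """Return the indices of the display zones whose sensor reports an object."""
    return [i for i, name in enumerate(SENSOR_ORDER) if detections.get(name, False)]


def zone_bounds(zone: int, display_width: int) -> Tuple[int, int]:
    """Return the (left, right) pixel columns of a zone, assuming equal strips.
    The last zone absorbs any remainder so the strips cover the full width."""
    zone_width = display_width // len(SENSOR_ORDER)
    left = zone * zone_width
    is_last = zone == len(SENSOR_ORDER) - 1
    right = display_width if is_last else left + zone_width
    return (left, right)
```

A display routine could then flash or modulate only the columns returned by `zone_bounds` for each index returned by `zones_to_highlight`.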

[0047] Optionally, the ultrasonic sensor or auxiliary sensing device may provide a triggering or initial sensing function, and the imaging system may adjust a camera setting or characteristic in response to a detection of an object by one or more of the ultrasonic sensors. For example, the camera may be controlled or adjusted to electronically zoom to the appropriate zone or region of the captured image in response to a detection of an object by a respective ultrasonic sensor. Because the captured image may have distortion due to the wide angle lens or optic of the imaging system and camera, it may be desirable to adjust the imaging sensor or camera to provide enhanced imaging of an object detected in the field of view. The camera thus may be zoomed in on the appropriate zone or region to provide enhanced imaging of that zone or region and to provide reduced distortion of that zone or region and, thus, enhanced imaging and reduced distortion of the detected object when it is displayed on the display. The imaging and display system may optionally provide a dynamic overlay at the display to highlight the detected object in the display, and may display the distance (which may be detected or determined in response to the ultrasonic sensing devices) to the object.
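As a rough illustration of electronically zooming to a zone, the sketch below computes a crop window centered as closely as possible on a point of interest while staying inside the captured frame. The function name `zoom_window`, the pixel-coordinate convention and the way the center point is obtained from a sensor detection are all assumptions; the text does not describe how the zoom region is computed.

```python
from typing import Tuple


def zoom_window(img_w: int, img_h: int, center_x: int, center_y: int,
                zoom: float) -> Tuple[int, int, int, int]:
    """Return (left, top, width, height) of the crop for a given zoom factor.
    The window is clamped so that it never extends past the image edges."""
    if zoom < 1.0:
        raise ValueError("zoom factor must be at least 1.0")
    win_w = int(img_w / zoom)
    win_h = int(img_h / zoom)
    # Center the window on the point of interest, then clamp to the frame.
    left = min(max(center_x - win_w // 2, 0), img_w - win_w)
    top = min(max(center_y - win_h // 2, 0), img_h - win_h)
    return (left, top, win_w, win_h)
```

For a detection near the left edge of a 640 by 480 frame, for instance, the clamping keeps the window at the left border instead of shifting it off the image.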

[0048] Optionally, the imaging system may process particular zones or regions of the image data corresponding to the exterior scene more than other zones or regions, such as by utilizing aspects of the object detection system described in U.S. patent application Ser. No. 10/427,051, filed Apr. 30, 2003, now U.S. Pat. No. 7,038,577; and/or Ser. No. 11/105,757, filed Apr. 14, 2005, now U.S. Pat. No. 7,526,103; and/or U.S. provisional application Ser. No. 60/607,963, filed Sep. 8, 2004, which are hereby incorporated herein by reference. Optionally, the imaging system may process particular zones or regions in response to a detection of an object by one or more of the ultrasonic sensors. For example, if one of the ultrasonic sensors initially detects an object at the left rear corner of the vehicle, the control may process the image data that corresponds to that area or region, or may enhance the processing of the image data that corresponds to that area or region, in order to identify or classify or detect the detected object in that area or region. The imaging and display system may then highlight or enhance the display at the detected object, such as by a graphic overlay at the display or an adjustment or modulation of the display in the region of the detected object. The ultrasonic sensors thus may function to detect an object and to direct the image processor to process the image data that corresponds to the area in which the object was detected. The image processor thus may process the image data for the regions that may have an object therein, and may provide reduced processing of other regions, and thus may provide efficient and enhanced processing of the captured images.
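One way to picture sensor-directed processing is a per-frame schedule in which regions flagged by an ultrasonic sensor are analyzed on every frame and the remaining regions are visited only occasionally. The round-robin policy, the `background_interval` parameter and the name `regions_to_process` are assumptions made for this sketch; the text states only that other regions may receive reduced processing.

```python
from typing import List, Sequence, Set


def regions_to_process(frame_index: int, flagged: Set[str],
                       all_regions: Sequence[str],
                       background_interval: int = 4) -> List[str]:
    """Return the regions to analyze on this frame.
    Flagged regions are always included; each unflagged region is included
    once every `background_interval` frames, staggered by its position."""
    selected = []
    for offset, region in enumerate(all_regions):
        if region in flagged:
            selected.append(region)
        elif (frame_index + offset) % background_interval == 0:
            selected.append(region)
    return selected
```

With four regions and the default interval, an unflagged region is still examined once every four frames, so an object missed by the sensors would not go unprocessed indefinitely.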

[0049] Optionally, the display may be actuated in response to a detection of an object behind the vehicle when the vehicle is backing up or in reverse. For example, when a driver shifts the vehicle into reverse, the ultrasonic sensors at the rear portion of the vehicle may be activated to sense the area rearward of the vehicle. The imaging and display system may monitor the ultrasonic sensors or may receive an input from the ultrasonic sensors or system, and may initially be in a non-active or non-display mode, where the display is deactivated. When an object rearward of the vehicle is detected by the ultrasonic sensors, the detection by the ultrasonic sensor or sensors may trigger the imaging and display system to be activated or set to an active mode, whereby the imaging sensor may begin capturing images of the rearward scene and the display may begin displaying images of the rearward scene (which may include enhanced imaging or processing or display or highlighting of the detected object such as discussed above). The activation of the display may provide an alert function to the driver of the vehicle that an object is detected behind the vehicle and/or in the path of the vehicle, so as to draw the driver's attention to the activated display and to the object being displayed in the display.
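The activation behavior described above can be sketched as a small state machine. The state names and the choice to keep the display active until reverse gear is left are assumptions for illustration; the text does not say when an activated display is deactivated again.

```python
from enum import Enum


class DisplayState(Enum):
    OFF = "off"          # vehicle not in reverse
    STANDBY = "standby"  # reverse selected, sensors active, display not showing
    ACTIVE = "active"    # object detected, display showing the rearward scene


def next_state(state: DisplayState, reverse_selected: bool,
               object_detected: bool) -> DisplayState:
    """Compute the next display state from the gear and sensor inputs."""
    if not reverse_selected:
        return DisplayState.OFF
    if object_detected:
        return DisplayState.ACTIVE
    if state is DisplayState.ACTIVE:
        # Assumption: once activated, stay active until reverse is deselected.
        return DisplayState.ACTIVE
    return DisplayState.STANDBY
```

In this sketch the transition from STANDBY to ACTIVE is what serves as the alert to the driver, since the display turns on only when an object has been detected.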

[0050] Optionally, the imaging and display system and ultrasonic sensing system may be implemented in conjunction with a movable video display, such as a slide out or extendable/retractable display screen that extends and retracts from the interior rearview mirror assembly or from an accessory module or overhead console or the like within the vehicle cabin and in the field of view of the driver of the vehicle. For example, the video display may comprise a slide out video display such as described in PCT Application No. PCT/US03/40611, filed Dec. 19, 2003 and published on Jul. 15, 2004 as International Publication No. WO 2004/058540; and/or U.S. provisional applications, Ser. No. 60/630,061, filed Nov. 22, 2004; and Ser. No. 60/667,048, filed Mar. 31, 2005, which are hereby incorporated herein by reference. The display screen thus may be automatically extended and activated in response to a detection of an object rearward of the vehicle by the ultrasonic sensors, whereby the extension of the display screen provides an alert to the driver so that the driver's attention is readily drawn to the now extended and activated display screen. The imaging and display system and ultrasonic sensing system of the present invention thus may provide a display only when there is an object detected and may provide an alert function to the driver to view the display when an object is detected. In such an application, the video display may not be extended and activated in response to the shifting into reverse, but would extend and activate after detection of an object exteriorly or rearward of the vehicle by the auxiliary sensor or sensors.

[0051] Although described above as ultrasonic sensors or sensing devices, it is envisioned that the imaging and display system of the present invention may be combined with other types of sensing systems or auxiliary or secondary or triggering or initial sensing devices or sensing techniques. For example, the imaging and display system may be operable in conjunction with or in combination with radar devices, sonar devices, laser sensing or lidar devices or laser scanning devices or the like, without affecting the scope of the present invention. The initial or triggering or auxiliary sensing device or devices may initiate or trigger enhanced imaging or zooming or other characteristics of the camera, or may initiate or trigger enhanced processing of the captured images, or may initiate or trigger enhanced display features or the like, in response to a detection of an object rearward of the vehicle by one of the initial or triggering or auxiliary sensing devices. Although shown and described as being combined with or operating in conjunction with a rearward facing camera and associated display, it is envisioned that aspects of the auxiliary sensing devices or system of the present invention may be equally suitable for use in conjunction with sideward facing imaging systems, such as side object detection systems or lane change assist systems or the like, or forward facing imaging systems, such as lane departure warning systems, adaptive speed control, headlamp controls, rain sensors or the like.

[0052] Optionally, and desirably, the image capture device or imaging device may be at least partially contained within an imaging module or camera module, which includes an imaging sensor or imager and a lens positioned within a housing which defines a transparent window (which may comprise an at least substantially transparent glass or polycarbonate or acrylic (or other suitable material) window or panel) at the end of the lens (such as described in PCT Application No. PCT/US2003/036177, filed Nov. 14, 2003 and published Jun. 3, 2004 as International Publication No. WO 2004/047421, which is hereby incorporated herein by reference). The imaging module may include the circuitry and controls or camera microcontroller and video encoder for the imaging sensor, such as on one or more printed circuit boards contained within the housing.

[0053] Optionally, the camera module may comprise a fully sealed module, which protects the imaging device and microcontroller and circuitry from exposure to the elements at the exterior of the vehicle, such as dust, dirt, mud, water, ice, salt and the like. The lens may provide part of the sealing of the module. For example, the camera module may include a lens that may be purchased or obtained as a subassembly that is separate from the imaging sensor and mounted to the module. The lens may be loaded into the module, such as via insertion of the lens or threading of the lens into an aperture or opening in the module wall or adjacent to a transparent cap or cover of the module wall. The lens assembly (which is typically a five element lens set, but may include more or fewer optic elements without affecting the scope of the present invention) may be positioned at the opening or aperture or cover such that the lens is positioned at the appropriate or precise location relative to the imaging sensor or chip to properly focus the image onto the imaging sensor.

[0054] Optionally, the lens may be adjustably positioned, such as via threading into a threaded opening, to precisely position the lens to focus the image onto the imaging sensor. Once positioned at the precise or appropriate location, the lens may be retained or secured in the precise position, and the module may then be sealed with a back plate and gasket to seal the lens and imaging sensor and associated microprocessor and circuitry within the module. The module thus provides enhanced positioning of the lens relative to the imaging sensor, since the lens may be readily adjusted to provide proper focusing relative to a fixed imaging sensor.

[0055] The module may include a transparent cap or cover through which the field of view of the imaging sensor and lens is directed. The transparent cap may comprise a molded polycarbonate material or the like and may provide a substantially transparent and durable cover at the lens. Optionally, the transparent cap may include an anti-reflective coating or a hydrophobic or hydrophilic coating or the like, such as the coatings described in PCT Application No. PCT/US2003/036177, filed Nov. 14, 2003 and published Jun. 3, 2004 as International Publication No. WO 2004/047421, which is hereby incorporated herein by reference. The transparent cap thus may provide functionality and sealing to the camera module.

[0056] Because the lens assembly may be selected and positioned at the imaging sensor as a separate sub-assembly or as an aftermarket sub-assembly, the lens assembly may be selected to provide the desired effect depending on the particular application of the camera module. The lens may then be assembled to the module and the appropriate module, with the desired lens and features or content, may be mounted to the vehicle and connected to the existing connector (such as a five wire connector as described above). The display system may further process the image data or encoded signal to digitally correct for distortion in the image due to lens distortion and the like.

[0057] The present invention thus provides a customized imaging system that provides various features or functions or modes or content to the imaging device or system without requiring costly communication or data wires connecting between the imaging device and the user inputs within the vehicle. The present invention thus provides a vision system that is suitable for economically configuring the system to the desired content for a particular application of the imaging system. A user thus may select a vision system with a particular camera and/or display, with little or no effect on the wiring or connections between the camera and the display.

[0058] Optionally, the imaging system may be operable to function as a lane departure warning (LDW) system utilizing image processing of the images captured by the rearward facing imaging sensor or camera. The imaging system may include controls and/or circuitry for operating as such a lane departure warning system and thus may process the images to detect the lane markers and the like along the road surface, or the imaging system may provide image data to a separate image processor or microcontroller for processing the image data to detect the lane markers and the like along the road surface. The imaging system may utilize image processing techniques such as those described in U.S. patent application Ser. No. 10/427,051, filed Apr. 30, 2003, now U.S. Pat. No. 7,038,577; and/or Ser. No. 11/105,757, filed Apr. 14, 2005, now U.S. Pat. No. 7,526,103; and/or U.S. provisional applications, Ser. No. 60/607,963, filed Sep. 8, 2004; and/or Ser. No. 60/638,687, filed Dec. 23, 2004, which are hereby incorporated herein by reference.

[0059] The imager or imaging sensor 22 of the imaging system 16 of the imaging system 10 may comprise an imaging array sensor or a pixelated imaging array, such as a multi-pixel array such as a CMOS sensor or a CCD sensor or the like, such as the types disclosed in commonly assigned U.S. Pat. Nos. 5,550,677; 5,670,935; 5,796,094; 5,877,897; 6,097,023; 6,498,620 and 6,690,268, and U.S. patent application Ser. No. 09/441,341, filed Nov. 16, 1999, now U.S. Pat. No. 7,339,149, which are hereby incorporated herein by reference, or such as an extended dynamic range camera, such as the types disclosed in PCT Application No. PCT/US2003/036177, filed Nov. 14, 2003 and published Jun. 3, 2004 as International Publication No. WO 2004/047421, which is hereby incorporated herein by reference. For example, the imaging sensor may comprise a CMOS camera, such as the OV7930 single chip CMOS color NTSC camera available from OmniVision Technologies Inc. of Sunnyvale, Calif. Such color cameras may have the performance characteristics identified above and may additionally provide RGB and/or YCrCb video signals. Preferably, the color video camera operates at a minimum illumination (3000 K) of less than about 5 lux at f1.2, more preferably of less than about 3 lux at f1.2, and most preferably of less than about 2 lux at f1.2. Such CMOS imaging sensors typically may have a peak sensitivity in the near infrared range, such as at approximately 850 nm to 900 nm.

[0060] Such pixelated imaging sensors may include a plurality of pixels, with at least some of the pixels masked or covered with a particular color filter, such that the individual pixels function to capture a particular color, such as red, green and blue colors or the like, such as disclosed in U.S. Pat. Nos. 5,550,677; 5,670,935; 5,796,094; 6,097,023 and/or 6,498,620, referenced above. For example, the imaging sensor may comprise an individual blue or a green or a red color filter over each pixel element of the CMOS multi-pixel element array. The imaging sensor is thus operable to provide color images to the display. Such RGB filters enable the capture of a color image by the CMOS detector, but necessarily result in a reduced or decreased low light level sensitivity for a color camera compared to a monochromatic or black and white camera. Optionally, and preferably, the imaging sensor may be capable of selectively operating in either a color mode, in which a color image may be displayed at display element 28 of display system 14, or a monochromatic or black and white mode, in which a monochromatic or black and white image may be displayed at display element 28 of display system 14, such as by utilizing aspects of the imaging sensor disclosed in U.S. Pat. No. 6,498,620; and/or PCT Application No. PCT/US2003/036177, filed Nov. 14, 2003 and published Jun. 3, 2004 as International Publication No. WO 2004/047421, which are hereby incorporated herein by reference.

[0061] Although described as a CMOS type camera, clearly other types of imaging arrays or imaging sensors or cameras may be implemented with the imaging system of the present invention. For example, the imaging sensor may comprise a CCD or other type of sensor, without affecting the scope of the present invention. Preferably, the selected imaging sensor has a low dark current and thus provides enhanced ruggedness and enhanced performance at higher temperatures. Optionally, the dark current (the current through the pixels when they are not sensing light) may be used to detect the temperature at the imaging sensor, such as described in U.S. patent application Ser. No. 11/105,757, filed Apr. 14, 2005, now U.S. Pat. No. 7,526,103; and U.S. provisional application Ser. No. 60/607,963, filed Sep. 8, 2004, which is hereby incorporated herein by reference.

[0062] Optionally, the imaging device or system may communicate the video signals to the display device or system via other types of signal communicating means. For example, the imaging device or system may communicate the video signals to the display device or system via an LVDS output of the imaging device or system. Optionally, the imaging system and the display system may share common components or circuitry or a common microprocessor to reduce components and cost of the vision system.

[0063] Referring now to FIG. 6, an image capture system or imaging system 110 is positioned at a vehicle, such as at or in an interior portion of the vehicle, such as at or in an accessory module or pod or attachment 112 of the vehicle, and is operable to capture an image of a scene occurring exteriorly of the vehicle, such as forwardly of the vehicle. Imaging system 110 includes an image capture device or imaging device or imaging system or camera or sensor 114 that is directed exteriorly of the vehicle and has an exterior field of view 116. The images or frames captured by image sensor 114 are processed by a control 118 to detect objects or items of interest in the captured images. The control may process the captured image data to determine if headlamps or taillights of other vehicles are present in the images, such as for a headlamp control system, and/or the control may process the captured images to detect lane markers along the road surface, such as for a lane departure warning system, and/or may process the image data to detect other characteristics or objects, as discussed below (such as by utilizing aspects of the imaging system described in U.S. provisional application Ser. No. 60/618,686, filed Oct. 14, 2004, which is hereby incorporated herein by reference in its entirety). The control may synchronize the processing techniques and the camera or image sensor settings to enhance and/or optimize processing of images for the particular system or function, as also discussed below.

[0064] Imaging sensor 114 may be positioned at the vehicle and directed or oriented with a field of view generally exteriorly of the vehicle for capturing images of the exterior scene for processing by the control, as discussed below. Imaging system 110 may utilize principles of other vehicle vision or imaging systems, such as a vision or imaging system or control of the types disclosed in U.S. Pat. Nos. 6,757,109; 6,717,610; 6,396,397; 6,201,642; 6,353,392; 6,313,454; 5,550,677; 5,670,935; 5,796,094; 5,877,897; 6,097,023 and 6,498,620, and U.S. patent application Ser. No. 09/441,341, filed Nov. 16, 1999, now U.S. Pat. No. 7,339,149, and Ser. No. 10/427,051, filed Apr. 30, 2003, now U.S. Pat. No. 7,038,577, which are all hereby incorporated herein by reference. In a preferred embodiment, the imaging system 110 may include a lens element or optic between the imaging device 114 and the exterior scene. The optic may comprise an asymmetric optic, which focuses a generally central portion of the scene onto the imaging device, while providing classical distortion on the periphery of the scene or field of view.

[0065] In the illustrated embodiment, the image sensor 114 is mounted at or in an accessory module or windshield electronics module or mirror attachment or pod 120 and is arranged to have a field of view 116 forward of the vehicle, such as through the windshield of the vehicle and preferably through a portion of the windshield that is wiped by a windshield wiper of the vehicle. The image sensor 114 thus may capture images of a forward scene as the vehicle is traveling forwardly along a road or street or highway or the like. Optionally, the imaging device or sensor may be positioned elsewhere, such as at or in the interior rearview mirror assembly 122, or at or in an accessory module or windshield electronics module or the like (as discussed below), without affecting the scope of the present invention. Optionally, the image sensor and imaging system may be operable to capture images of other areas exteriorly of the vehicle to provide image data of other areas around or near the vehicle, without affecting the scope of the present invention. The imaging system thus may be operable to capture images of the scene sidewardly of the vehicle for a blind spot or side object detection or lane change assist systems or immediately rearward of the vehicle to detect objects rearward of the vehicle, such as for assisting the driver of the vehicle in backing up or maneuvering the vehicle in reverse.

[0066] As shown in FIG. 7, control 118 may control the imager or image sensor 114 via a control signal 118a, while the control may receive image data from the image sensor via an image data signal 114a. The control or microprocessor 118 is operable to process the image data generated by the image sensor 114 to analyze the image data and detect objects or light sources of interest in the captured image. The control or imaging system may selectively process the captured image data to detect objects or light sources or lane markers or the like in the exterior scene, and may process particular portions or regions of the captured image in accordance with the respective function of the control, as discussed below.
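
The selective processing of particular regions of the captured image may be sketched as follows. This is a minimal illustration only: the region boundaries, the function names, and the representation of a frame as a list of pixel rows are hypothetical and are not taken from the application.

```python
# Hypothetical row ranges (as percentages of frame height) per function.
# Assumption: light sources of other vehicles appear mostly in the upper
# part of a forward-facing frame, lane markers in the lower part.
REGION_PERCENT = {
    "headlamp_control": (0, 60),
    "lane_departure_warning": (50, 100),
}


def region_for(frame, function):
    """Return only the rows of `frame` that the given function processes.

    `frame` is a list of rows, each row a list of pixel values.
    """
    top_pct, bottom_pct = REGION_PERCENT[function]
    height = len(frame)
    top = height * top_pct // 100
    bottom = height * bottom_pct // 100
    return frame[top:bottom]


# A toy 10-row frame whose pixel values equal the row index.
frame = [[row] * 4 for row in range(10)]
lane_rows = region_for(frame, "lane_departure_warning")
lamp_rows = region_for(frame, "headlamp_control")
```

Restricting each function to its own region in this way would reduce the amount of image data the control has to analyze per frame.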

[0067] The control may be operable to control various accessories (or to generate an output to a control or circuitry of one or more accessories) in response to the image processing. For example, the control may control or adjust a headlamp high-low beam setting in response to a detection of headlamps or taillights in the captured images, and/or may actuate an alert device, such as a visible display or audible alert or the like, in response to a detection of the vehicle drifting out of its lane along the road, and/or may adjust an interior lighting or display intensity of a light or display within the vehicle in response to an ambient light detection, and/or may generate other outputs or actuate/control other accessories in response to detections of other objects or light sources of interest via such image processing.
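
One way to picture how detection results could be mapped to accessory outputs is the sketch below. The data structure, the field names, and the ambient light threshold are assumptions introduced for illustration, and the simple rules shown are not asserted to be the control logic of the described system.

```python
from dataclasses import dataclass


@dataclass
class Detections:
    """Hypothetical summary of one processed frame."""
    other_vehicle_lights: bool  # headlamps or taillights detected
    lane_drift: bool            # vehicle drifting out of its lane
    ambient_lux: float          # estimated ambient light level


def decide_outputs(d, dim_threshold_lux=50.0):
    """Map detections to accessory commands (illustrative rules only)."""
    return {
        # Select low beams whenever other vehicles' lights are detected.
        "high_beams": not d.other_vehicle_lights,
        # Actuate the visible or audible alert on lane drift.
        "lane_alert": d.lane_drift,
        # Dim interior lighting or displays in low ambient light.
        "display_dimmed": d.ambient_lux < dim_threshold_lux,
    }


outputs = decide_outputs(Detections(True, True, 10.0))
```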

[0068] The imaging system thus may be operable to function as or in conjunction with a lane departure warning system, and may generate an alert to the driver of the vehicle in response to a detection that the vehicle is drifting or moving out of a detected lane along the road. Such an application may utilize principles of systems of the types described in U.S. Pat. Nos. 6,353,392 and 6,313,454; and/or U.S. patent application Ser. No. 10/427,051, filed Apr. 30, 2003, now U.S. Pat. No. 7,038,577; Ser. No. 11/105,757, filed Apr. 14, 2005, now U.S. Pat. No. 7,526,103; and/or Ser. No. 10/209,173, filed Jul. 31, 2002, now U.S. Pat. No. 6,882,287, and/or U.S. provisional applications, Ser. No. 60/607,963, filed Sep. 8, 2004; and/or Ser. No. 60/638,687, filed Dec. 23, 2004, which are hereby incorporated herein by reference. Optionally, the imaging system may capture and process images for a headlamp control application, and may utilize principles of systems of the types described in U.S. Pat. Nos. 6,824,281; 5,796,094; 6,097,023; 5,320,176 and 6,559,435, which are hereby incorporated herein by reference, and/or may capture and process images for a rain sensing function or rain sensor application, and may utilize principles of the systems of the types described in U.S. Pat. Nos. 6,824,281; 6,320,176; 6,353,392; 6,313,454; 6,516,664; 6,341,523 and 6,250,148; and/or in U.S. patent application Ser. No. 10/348,514, filed Jan. 21, 2003, now U.S. Pat. No. 6,968,736, which are all hereby incorporated herein by reference, and/or may capture and process images for an ambient light detection or the like, and may utilize principles of systems of the types described in U.S. Pat. Nos. 5,550,677 and/or 5,670,935, which are hereby incorporated herein by reference, and/or may capture and process images for a collision avoidance system or vehicle separation system, and may utilize principles of systems of the types described in U.S. Pat. Nos. 6,411,204; 6,396,397; 6,124,647; 6,291,906 and 6,534,884, and/or U.S. patent application Ser. No. 10/422,512, filed Apr. 24, 2003, now U.S. Pat. No. 7,123,168, which are all hereby incorporated herein by reference, and/or may capture and process images for a navigational system, and may utilize principles of systems of the types described in U.S. Pat. Nos. 6,477,464; 5,924,212; 4,862,594; 4,937,945; 5,131,154; 5,255,442; 6,678,614 and/or 5,632,092, and/or U.S. patent application Ser. No. 10/456,599, filed Jun. 6, 2003, now U.S. Pat. No. 7,004,593; Ser. No. 10/645,762, filed Aug. 20, 2003, now U.S. Pat. No. 7,167,796; Ser. No. 11/105,757, filed Apr. 14, 2005, now U.S. Pat. No. 7,526,103; and Ser. No. 10/422,378, filed Apr. 24, 2003, now U.S. Pat. No. 6,946,978; and/or PCT Application No. PCT/US03/40611, filed Dec. 19, 2003 and published Jul. 15, 2004 as International Publication No. WO 2004/058540; and/or PCT Application No. PCT/US04/015424, filed May 18, 2004 and published Dec. 2, 2004 as International Publication No. WO 2004/103772, and/or U.S. provisional application Ser. No. 60/607,963, filed Sep. 8, 2004, which are all hereby incorporated herein by reference. Other accessories or functions or applications may also or otherwise be provided or controlled or adjusted by the imaging system, without affecting the scope of the present invention.

[0069] The control may adjust one or more characteristics or settings of the image sensor or camera and/or may operate an iris and/or an optical zoom and/or a digital zoom or the like so that the image sensor is adapted or set to enhance image capturing for a particular function of the imaging system or control. Optionally, the control may adjust a focus of the image sensor (such as via adjustment of the lens or optic) to provide a clear captured image focused on the desired or appropriate objects for the particular application of the imaging system.
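
The pairing of an imager setting with a processing technique for each application may be illustrated by the following minimal sketch. The exposure and gain values, the `FakeImager` stand-in, the processing stubs, and the simple alternating schedule are all hypothetical; the application does not disclose specific values or a software interface.

```python
from dataclasses import dataclass
from itertools import cycle


@dataclass(frozen=True)
class ImagerSetting:
    exposure_ms: float  # hypothetical exposure period
    gain: float         # hypothetical analog gain


class FakeImager:
    """Stand-in for an image sensor; records the setting it captured with."""

    def __init__(self):
        self.setting = None

    def apply(self, setting):
        self.setting = setting

    def capture(self):
        return {"exposure_ms": self.setting.exposure_ms, "gain": self.setting.gain}


def process_headlamp(frame):
    # Placeholder for a light-source detection technique.
    return ("headlamp_control", frame["exposure_ms"])


def process_lane(frame):
    # Placeholder for a lane-marker detection technique.
    return ("lane_departure_warning", frame["exposure_ms"])


# Each application pairs an imager setting with its processing technique.
PROFILES = {
    "headlamp_control": (ImagerSetting(2.0, 1.0), process_headlamp),
    "lane_departure_warning": (ImagerSetting(16.0, 2.0), process_lane),
}


def run(imager, schedule, n_frames):
    """Capture n_frames, switching setting and technique per scheduled application."""
    results = []
    for _, app in zip(range(n_frames), cycle(schedule)):
        setting, technique = PROFILES[app]
        imager.apply(setting)  # adjust the imager before the capture
        results.append(technique(imager.capture()))
    return results


results = run(FakeImager(), ["lane_departure_warning", "headlamp_control"], 4)
```

Keeping the setting and the technique in one table entry means a frame captured for one application is never handed to the processing technique of another, which is the synchronization the control is described as providing.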