Methods And Systems For Processing An Ultrasound Image

SHIN; JUN SEOB ; et al.

U.S. patent application number 16/622393 was filed with the patent office on 2020-07-30 for methods and systems for processing an ultrasound image. The applicant listed for this patent is KONINKLIJKE PHILIPS N.V. Invention is credited to MAN NGUYEN, JEAN-LUC FRANCOIS-MARIE ROBERT, JUN SEOB SHIN, FRANCOIS GUY GERARD MARIE VIGNON.

| Application Number | 20200237345 16/622393 |

| Document ID | 20200237345 / US20200237345 |

| Family ID | 1000004777033 |

| Filed Date | 2020-07-30 |


| United States Patent Application | 20200237345 |

| Kind Code | A1 |

| SHIN; JUN SEOB ; et al. | July 30, 2020 |

METHODS AND SYSTEMS FOR PROCESSING AN ULTRASOUND IMAGE

Abstract

The invention provides methods and systems for generating an ultrasound image. In a method, the generation of an ultrasound image comprises: obtaining channel data, the channel data defining a set of imaged points; for each imaged point: isolating the channel data; performing a spectral estimation on the isolated channel data; and selectively attenuating the spectral estimation channel data, thereby generating filtered channel data; and summing the filtered channel data, thereby forming a filtered ultrasound image. In some examples, the method comprises aperture extrapolation. The aperture extrapolation improves the lateral resolution of the ultrasound image. In other examples, the method comprises transmit extrapolation. The transmit extrapolation improves the contrast of the image. In addition, the transmit extrapolation improves the frame rate and reduces the motion artifacts in the ultrasound image. In further examples, the aperture and transmit extrapolations may be combined.

| Inventors: | SHIN; JUN SEOB; (MEDFORD, MA) ; VIGNON; FRANCOIS GUY GERARD MARIE; (ANDOVER, MA) ; NGUYEN; MAN; (MELROSE, MA) ; ROBERT; JEAN-LUC FRANCOIS-MARIE; (CAMBRIDGE, MA) |
|---|---|
| Applicant: | KONINKLIJKE PHILIPS N.V. |
| Family ID: | 1000004777033 |
| Appl. No.: | 16/622393 |
| Filed: | June 11, 2018 |
| PCT Filed: | June 11, 2018 |
| PCT NO: | PCT/EP2018/065258 |
| 371 Date: | December 13, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62520233 | Jun 15, 2017 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | A61B 8/4461 20130101; A61B 8/5207 20130101; A61B 8/4494 20130101; G01S 7/52046 20130101; G01S 15/8997 20130101 |

| International Class: | A61B 8/08 20060101 A61B008/08; A61B 8/00 20060101 A61B008/00; G01S 15/89 20060101 G01S015/89; G01S 7/52 20060101 G01S007/52 |

Claims

1. A method for generating an ultrasound image, the method comprising: obtaining beam-summed data using an ultrasonic probe, wherein the beam-summed data is formed from a plurality of steering angles; for each steering angle of the beam-summed data, segmenting the beam-summed data based on an axial imaging depth of the beam-summed data; for each segment of the segmented beam-summed data: estimating an extrapolation filter, based on the segmented beam-summed data, the extrapolation filter having a filter order; and extrapolating, by an extrapolation factor, the segmented beam-summed data based on the extrapolation filter, thereby generating extrapolated beam-summed data; and coherently compounding the extrapolated beam-summed data across all segments, thereby generating the ultrasound image.

2. A method as claimed in claim 1, the method further comprising: for each axial segment of the beam-summed data, applying a Fourier transform to this axial segment of the beam-summed data; and performing an inverse Fourier transform on the extrapolated beam-summed data.

3. A method as claimed in claim 1, wherein the beam-summed data is obtained by way of at least one of plane wave imaging and diverging wave imaging.

4. A method as claimed in claim 1, wherein the axial segment is less than 4 wavelengths of a transmit signal that provides the beam-summed data in depth, for example less than or equal to 2 wavelengths in depth.

5. A method as claimed in claim 1, wherein the plurality of steering angles comprises fewer than 20 angles, for example fewer than or equal to 10 angles.

6. A method as claimed in claim 1, wherein the filter order is less than or equal to half the number of steering angles.

7. A method as claimed in claim 1, wherein the extrapolation factor is less than or equal to 10, for example less than or equal to 8.

8. A method as claimed in claim 1, wherein the estimation of the extrapolation filter is performed using an autoregressive model.

9. A method as claimed in claim 1, wherein the estimation of the extrapolation filter is performed using the Burg technique.

10. A method as claimed in claim 1, wherein the extrapolation occurs in an axial direction of the beam-summed data.

11. A computer program comprising computer program code means which is adapted, when said computer program is run on a computer, to implement the method of claim 1.

12. A controller for controlling the generation of an ultrasound image, wherein the controller is adapted to: obtain beam-summed data by way of an ultrasonic probe, wherein the beam-summed data is formed from a plurality of steering angles; for each steering angle of the beam-summed data, segment the beam-summed data based on an axial depth of the beam-summed data; for each segment of the segmented beam-summed data: estimate an extrapolation filter, based on the segmented beam-summed data, the extrapolation filter having a filter order; and extrapolate, by an extrapolation factor, the segmented beam-summed data based on the extrapolation filter, thereby generating extrapolated beam-summed data; and coherently compound the extrapolated beam-summed data across all segments, thereby generating the ultrasound image.

13. An ultrasound system, the system comprising: an ultrasonic probe; a controller as claimed in claim 12; and a display device for displaying the high contrast ultrasound image.

14. A system as claimed in claim 13, wherein the system further comprises a user interface having a user input.

15. A system as claimed in claim 14, wherein the user input is adapted to adjust at least one of: the axial depth of the axial segment; the extrapolation factor; and the filter order.

Description

RELATED APPLICATION

[0001] This application claims the benefit of and priority to U.S. Provisional Application No. 62/520,233, filed Jun. 15, 2017, which is incorporated by reference in its entirety.

FIELD OF THE INVENTION

[0002] This invention relates to the field of ultrasound imaging, and more specifically to the field of ultrasound image filtering.

BACKGROUND OF THE INVENTION

[0003] Ultrasound imaging is increasingly being employed in a variety of different applications. It is important that the image produced by the ultrasound system is as clear and accurate as possible so as to give the user a realistic interpretation of the subject being scanned. This is especially the case when the subject in question is a patient undergoing a medical ultrasound scan. In this situation, the ability of a doctor to make an accurate diagnosis is dependent on the quality of the image produced by the ultrasound system.

[0004] Off-axis clutter is a significant cause of image degradation in ultrasound. Adaptive beamforming techniques, such as minimum variance (MV) beamforming, have been developed and applied to ultrasound imaging to achieve an improvement in image quality; however, MV beamforming is computationally intensive as an inversion of the spatial covariance matrix is required for each pixel of the image. In addition, even though MV beamforming is developed primarily for an improvement in spatial resolution, and is not ideal for reducing off-axis clutter, its performance in terms of improving spatial resolution often needs to be sacrificed by reducing the subarray size. Otherwise, image artifacts may occur in the speckle due to signal cancellation.

[0005] Adaptive weighting techniques, such as: the coherence factor (CF); the generalized coherence factor (GCF); the phase coherence factor (PCF); and the short-lag spatial coherence (SLSC), have been proposed but all require access to per-channel data to compute a weighting mask to be applied to the image. Further, these methods would only work for conventional imaging with focused transmit beams and are not suitable for plane wave imaging (PWI) or diverging wave imaging (DWI) involving only a few transmits.

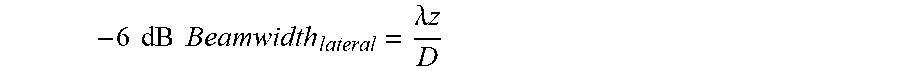

[0006] In addition, spatial resolution in ultrasound images, particularly the lateral resolution, is often suboptimal. The -6 dB lateral beamwidth at the focal depth is determined by the following equation:

$$\text{Beamwidth}_{\text{lateral}}^{-6\,\text{dB}} = \frac{\lambda z}{D}$$

where $\lambda$ is wavelength, z is transmit focal depth, and D is the aperture size. The smaller the wavelength (or the higher center frequency), the better the lateral resolution will be; however, a smaller wavelength is achieved at the cost of penetration depth. On the other hand, a larger aperture size D is needed to achieve a better lateral resolution; however, the aperture size is often limited by the human anatomy, hardware considerations, and system cost.
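By way of illustration only, the beamwidth relation above may be evaluated numerically. The following is a minimal sketch; the speed of sound, center frequency, focal depth, and aperture sizes are assumed example values that do not come from this application, and the function name is hypothetical.

```python
SPEED_OF_SOUND_M_S = 1540.0  # assumed soft-tissue value, not taken from the source


def lateral_beamwidth_m(wavelength_m: float, focal_depth_m: float, aperture_m: float) -> float:
    """Return the -6 dB lateral beamwidth (lambda * z / D) in metres."""
    if aperture_m <= 0:
        raise ValueError("aperture size D must be positive")
    return wavelength_m * focal_depth_m / aperture_m


# Hypothetical example: 3 MHz center frequency, 60 mm focal depth.
wavelength_m = SPEED_OF_SOUND_M_S / 3.0e6
narrow = lateral_beamwidth_m(wavelength_m, focal_depth_m=0.06, aperture_m=0.02)
wide = lateral_beamwidth_m(wavelength_m, focal_depth_m=0.06, aperture_m=0.04)
print(f"D = 20 mm: {narrow * 1e3:.2f} mm, D = 40 mm: {wide * 1e3:.2f} mm")
```

Doubling the aperture size D halves the beamwidth, which is the motivation for extending the aperture beyond its physical extent.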

[0007] Adaptive beamforming techniques, such as the previously mentioned minimum variance (MV) beamforming, have been a topic of active research. These methods are data-dependent beamforming methods that seek to adaptively estimate the apodization function that yields lateral resolution beyond the diffraction limit.

[0008] In addition to standard ultrasound imaging techniques, plane wave imaging (PWI) or diverging wave imaging (DWI) in the case of phased arrays is a relatively new imaging technique, which has the potential to perform imaging at very high frame rates above 1 kHz and possibly several kHz. These techniques have also opened up many new possible imaging modes for different applications which were previously not possible with conventional focused transmit beams. For this reason, they have been some of the most actively researched topics in academia in recent years.

[0009] PWI/DWI can achieve high frame rates by coherently compounding images obtained from broad transmit beams at different angles. Since the spatial resolution in PWI/DWI is generally known to be only slightly worse than, or comparable to, conventional imaging with focused transmit beams, its main drawback is degradation in the image contrast, which is directly related to the number of transmit angles. The image contrast is generally low for a small number of transmit angles in PWI/DWI; therefore, many transmit angles are needed to maintain image quality comparable to that of conventional imaging with focused transmit beams.

[0010] PWI/DWI also suffers from motion artifacts, particularly when imaging fast-moving organs such as the heart, as individual image pixels are constructed from signals from different transmit beams. The effect of motion becomes more severe with increasing numbers of transmit angles; therefore, the dilemma in PWI/DWI systems is clear: more transmit angles are required to achieve high image contrast, but also result in more motion artifacts that degrade image quality.
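The trade-off between the number of transmit angles and the frame rate can be made concrete with a short calculation. The sketch below assumes that each transmit must wait for the round-trip time to the maximum imaging depth, so that the pulse repetition frequency is bounded by c / (2 x depth); the depth, speed of sound, and function name are illustrative assumptions rather than values from this application.

```python
def max_frame_rate_hz(imaging_depth_m: float, n_transmit_angles: int,
                      speed_of_sound_m_s: float = 1540.0) -> float:
    """Upper bound on the compounded frame rate for a given number of transmit angles."""
    if imaging_depth_m <= 0 or n_transmit_angles < 1:
        raise ValueError("depth must be positive and at least one angle is required")
    # One transmit event per round trip to the deepest imaged point.
    max_prf_hz = speed_of_sound_m_s / (2.0 * imaging_depth_m)
    # One compounded frame consumes one transmit event per angle.
    return max_prf_hz / n_transmit_angles


# Hypothetical 77 mm imaging depth.
frame_rates = {n: max_frame_rate_hz(0.077, n) for n in (1, 5, 10, 40)}
for n, rate in frame_rates.items():
    print(f"{n:2d} angles: about {rate:.0f} frames per second")
```

Under these assumptions the achievable frame rate falls in proportion to the number of angles, while the time over which one frame is acquired, and hence the exposure to motion, grows with it.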

[0011] Further, regardless of the number of transmit angles, PWI/DWI does not reduce reverberation clutter, which is one of the main sources of image quality degradation in fundamental B-mode ultrasound images.

SUMMARY OF THE INVENTION

[0012] The present invention provides systems and methods capable of performing off-axis clutter filtering, improving spatial resolution, and improving image contrast. In certain aspects, the present invention proposes an extrapolation technique of the transmitted plane waves based on a linear prediction scheme, which allows for an extremely high frame rate while significantly improving image contrast. An additional benefit of the invention is that these benefits may be accomplished without significantly increasing the computational burden of the ultrasound system.

[0013] According to examples in accordance with an aspect of the invention, there is provided a method for performing off-axis clutter filtering in an ultrasound image, the method comprising:

[0014] obtaining channel data from an ultrasonic probe defining a set of imaged points, wherein the ultrasonic probe comprises an array of transducer elements;

[0015] for each imaged point in a region of interest:

[0016] isolating the channel data associated with said imaged point;

[0017] performing a spectral estimation of the isolated channel data; and

[0018] selectively attenuating the isolated channel data by way of an attenuation coefficient based on the spectral estimation, thereby generating filtered channel data; and

[0019] summing the filtered channel data, thereby generating a filtered ultrasound image.

[0020] This method performs off-axis clutter filtering in an ultrasound image. By performing a spectral estimation of the channel data it is possible to identify the frequency content of the channel data. Typically, off-axis clutter signal will possess a high spatial frequency, which may be identified in the spectral estimation of the channel data. In this way, it is possible to selectively attenuate signals having high spatial frequencies, thereby reducing and/or eliminating off-axis clutter signals in the final ultrasound image.

[0021] In an embodiment, the isolating of the channel data comprises processing a plurality of observations of the imaged point.

[0022] In this way, it is possible to perform the spectral estimation on channel data that has been averaged over a plurality of measurements, which increases the accuracy of the channel data and hence of the final ultrasound image.

[0023] In an arrangement, the spectral estimation comprises decomposing the channel data into a finite sum of complex exponentials.

[0024] By decomposing the channel data into a finite sum of complex exponentials, it is simple to identify the components with high spatial frequencies, thereby making it easier to attenuate the off-axis clutter signals in the ultrasound image.

[0025] In a further arrangement, the complex exponentials comprise:

[0026] a first model parameter; and

[0027] a second model parameter.

[0028] In a further embodiment, the first model parameter is complex.

[0029] In a yet further embodiment, the second model parameter is inversely proportional to the distance between adjacent transducer elements of the ultrasonic probe.

[0030] The first and second model parameters may be used to describe the nature of the channel data. In the case that the first model parameter is complex, the imaginary component relates to the phase of the signals and the modulus, which may be a real, positive number, relates to the amplitude of the signal. The second model parameter may relate to the spatial frequency of the signal.
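The signal model described above may be sketched as follows. This is a minimal illustration that assumes the channel data across the aperture takes the form s[n] = sum_i a_i * exp(j * k_i * n), where a_i plays the role of the complex first model parameter and k_i the role of the second model parameter expressed in radians per element; the exact parameterization, the function name, and the example values are assumptions, not taken from this application.

```python
import cmath


def synthesize_channel_data(components, n_elements):
    """Build channel data as a finite sum of complex exponentials.

    components: list of (first_param, second_param) pairs, where first_param is
                complex (modulus = amplitude, argument = phase) and second_param
                is a spatial frequency in radians per element (assumed units).
    """
    return [
        sum(a * cmath.exp(1j * k * n) for a, k in components)
        for n in range(n_elements)
    ]


# Hypothetical example: an on-axis component (zero spatial frequency) plus a
# weaker off-axis component with a high spatial frequency and a 0.3 rad phase.
components = [(1.0 + 0.0j, 0.0), (0.5 * cmath.exp(0.3j), 1.2)]
channel = synthesize_channel_data(components, n_elements=8)

amplitude = abs(components[1][0])      # modulus of the first model parameter
phase = cmath.phase(components[1][0])  # argument of the first model parameter
```

Once the channel data is expressed in this form, each component can be inspected and weighted individually according to its spatial frequency.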

[0031] In a still yet further arrangement, the first and second model parameters are estimated by way of spectral estimation.

[0032] In some designs, the attenuation coefficient is Gaussian.

[0033] In this way, it is simple to implement attenuation for signals approaching higher spatial frequencies. The aggressiveness of the filtering may be tuned by altering the width of the Gaussian used.
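A Gaussian attenuation coefficient of this kind might be sketched as shown below. The functional form exp(-k^2 / (2 * width^2)), the representation of the channel data as (amplitude, spatial frequency) pairs, and all names are assumptions made for illustration; this passage does not specify the exact expression.

```python
import math


def gaussian_attenuation(spatial_frequency: float, width: float) -> float:
    """Weight of 1 at zero spatial frequency, decaying for higher frequencies."""
    if width <= 0:
        raise ValueError("width must be positive")
    return math.exp(-(spatial_frequency ** 2) / (2.0 * width ** 2))


def filter_components(components, width):
    """Scale each component's complex amplitude by the Gaussian weight of its frequency."""
    return [(a * gaussian_attenuation(k, width), k) for a, k in components]


# Hypothetical components: on-axis signal at k = 0 and off-axis clutter at k = 1.2.
components = [(1.0 + 0.0j, 0.0), (1.0 + 0.0j, 1.2)]
filtered = filter_components(components, width=0.4)
```

A smaller width gives more aggressive filtering: the on-axis component passes unchanged while the high spatial frequency component is strongly suppressed.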

[0034] In an embodiment, the attenuation coefficient is depth dependent.

[0035] By making the attenuation coefficient depth dependent, it is possible to control the amount of off-axis clutter signal filtering for different depths.

[0036] In an arrangement, the attenuation coefficient is dependent on the second model parameter.

[0037] In this way, the attenuation coefficient may be directly dependent on the spatial frequency of the signal, meaning that the attenuation coefficient may adapt to the signals on an individual basis rather than requiring an input from the user.

[0038] In some embodiments, the attenuation coefficient is adapted to attenuate the channel data to half the width of the receive beampattern.

[0039] In this way, it is possible to both improve the lateral resolution and decrease the off-axis clutter in the filtered ultrasound image.

[0040] In some arrangements, the spectral estimation is based on an autoregressive model.

[0041] According to examples in accordance with a further aspect of the invention, there is provided a computer program comprising computer program code means which is adapted, when said computer program is run on a computer, to implement the method described above.

[0042] According to examples in accordance with a further aspect of the invention, there is provided a controller for controlling the filtering of off-axis clutter in an ultrasound image, wherein the controller is adapted to:

[0043] obtain channel data from an ultrasonic probe defining a set of imaged points;

[0044] for each imaged point in a region of interest:

[0045] isolate the channel data associated with said imaged point;

[0046] perform a spectral estimation of the isolated channel data; and

[0047] selectively attenuate the isolated channel data by way of an attenuation coefficient based on the spectral estimation, thereby generating filtered channel data; and

[0048] sum the filtered channel data, thereby generating a filtered ultrasound image.

[0049] According to examples in accordance with a further aspect of the invention, there is provided an ultrasound system, the system comprising:

[0050] an ultrasonic probe, the ultrasonic probe comprising an array of transducer elements;

[0051] a controller as defined above; and

[0052] a display device for displaying the filtered ultrasound image.

[0053] According to examples in accordance with a further aspect of the invention, there is provided a method for generating an ultrasound image, the method comprising:

[0054] obtaining channel data by way of an ultrasonic probe;

[0055] for each channel of the channel data, segmenting the channel data based on an axial imaging depth of the channel data;

[0056] for each segment of the segmented channel data:

[0057] estimating an extrapolation filter based on the segmented channel data, the extrapolation filter having a filter order; and

[0058] extrapolating, by an extrapolation factor, the segmented channel data based on the extrapolation filter, thereby generating extrapolated channel data; and

[0059] summing the extrapolated channel data across all segments, thereby generating the ultrasound image.

[0060] This method performs aperture extrapolation on the channel data, thereby increasing the lateral resolution of the ultrasound image. By estimating the extrapolation filter based on the segmented channel data, the extrapolation filter may directly correspond to the channel data. In this way, the accuracy of the extrapolation performed on the segmented channel data is increased. In other words, this method predicts channel data from transducer elements that do not physically exist within the ultrasonic probe by extrapolating the existing channel data.
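The prediction of channel data for elements that do not physically exist can be sketched with a linear prediction filter. The example below assumes that the filter coefficients are already available, that the extrapolation factor is the ratio of the output length to the input length, that the added samples are split between the two ends of the aperture, and that backward prediction uses the complex conjugates of the forward coefficients; these conventions and all names are illustrative assumptions.

```python
import cmath


def extrapolate_aperture(channel, coeffs, factor):
    """Extend per-element samples on both sides by linear prediction.

    channel: complex samples, one per physical transducer element
    coeffs:  prediction coefficients c[1..p] with x[n] ~ sum_k c[k] * x[n - k]
    factor:  integer ratio of output length to input length (assumed definition)
    """
    p = len(coeffs)
    if p == 0 or p > len(channel) or factor < 1:
        raise ValueError("need 1 <= filter order <= len(channel) and factor >= 1")
    coeffs = [complex(c) for c in coeffs]
    n_extra = (factor - 1) * len(channel)
    n_forward = n_extra // 2
    n_backward = n_extra - n_forward
    data = [complex(v) for v in channel]
    for _ in range(n_forward):
        # Forward prediction from the p most recent samples.
        data.append(sum(c * data[-k] for k, c in enumerate(coeffs, start=1)))
    for _ in range(n_backward):
        # Backward prediction from the p earliest samples.
        data.insert(0, sum(c.conjugate() * data[k - 1]
                           for k, c in enumerate(coeffs, start=1)))
    return data


# Hypothetical check: a single complex exponential is continued exactly by an
# order-1 filter whose coefficient is exp(j * omega).
omega = 0.4
physical = [cmath.exp(1j * omega * n) for n in range(4)]
extended = extrapolate_aperture(physical, [cmath.exp(1j * omega)], factor=3)
```

In this example a 4-element aperture is extended to 12 synthetic elements, with the original samples occupying the middle of the extended array.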

[0061] In an embodiment, the method further comprises:

[0062] for each axial segment of the channel data, applying a Fourier transform to an axial segment of the channel data; and

[0063] performing an inverse Fourier transform on the extrapolated channel data.

[0064] By performing the extrapolation of the segmented channel data in the temporal frequency domain, the accuracy of the extrapolated channel data may be further increased.
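The sequence of Fourier transform, extrapolation, and inverse Fourier transform can be outlined as below. This sketch assumes a segment stored as a list of channels, each holding the axial samples of that channel, and takes the extrapolation step as a caller-supplied function; the direct DFT is used only to keep the example self-contained, and all names are hypothetical.

```python
import cmath


def dft(x):
    """Direct discrete Fourier transform along the axial (time) samples."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * f * t / n) for t in range(n))
            for f in range(n)]


def idft(spectrum):
    """Inverse of dft()."""
    n = len(spectrum)
    return [sum(spectrum[f] * cmath.exp(2j * cmath.pi * f * t / n) for f in range(n)) / n
            for t in range(n)]


def process_segment(segment, extrapolate):
    """Extrapolate one axial segment across channels in the temporal frequency domain.

    segment:     segment[channel][sample], one axial segment of the channel data
    extrapolate: function mapping the per-channel values of one frequency bin
                 to a longer list (the extrapolated aperture)
    """
    spectra = [dft(channel) for channel in segment]
    n_bins = len(spectra[0])
    extended = [extrapolate([s[f] for s in spectra]) for f in range(n_bins)]
    n_out = len(extended[0])
    return [idft([extended[f][ch] for f in range(n_bins)]) for ch in range(n_out)]


# Hypothetical placeholder extrapolator: repeats the last channel once.
segment = [[1.0, 2.0, 3.0, 4.0], [0.5, -0.5, 0.5, -0.5]]
result = process_segment(segment, lambda values: values + [values[-1]])
```

A real extrapolator would replace the placeholder with a linear prediction step applied to each frequency bin.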

[0065] In an arrangement, the axial segment is less than 4 wavelengths in depth, for example less than or equal to 2 wavelengths in depth.

[0066] In this way, the performance of the system, which is largely dependent on the number of segments of channel data to be extrapolated, may be improved whilst maintaining the improvement in lateral resolution of the image, which is inversely proportional to the size of the axial segment.
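The axial segmentation may be illustrated with a short sketch. It assumes sampled pulse-echo data, for which a depth of one wavelength corresponds to a round-trip time of 2 / f0 and therefore to 2 * fs / f0 samples; the sampling rate, center frequency, and function names are example assumptions and are not taken from this application.

```python
def samples_per_segment(n_wavelengths: float, center_frequency_hz: float,
                        sampling_rate_hz: float) -> int:
    """Number of axial samples spanning n_wavelengths of depth (round-trip timing)."""
    samples = n_wavelengths * 2.0 * sampling_rate_hz / center_frequency_hz
    return max(1, round(samples))


def split_axially(samples, segment_len):
    """Split one channel's samples into consecutive axial segments."""
    return [samples[i:i + segment_len] for i in range(0, len(samples), segment_len)]


# Hypothetical settings: 5 MHz center frequency sampled at 40 MHz, 2-wavelength segments.
segment_len = samples_per_segment(2, 5.0e6, 40.0e6)
segments = split_axially(list(range(70)), segment_len)
```

Shorter segments produce more segments per channel, and each segment requires its own filter estimation and extrapolation.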

[0067] In an embodiment, the extrapolation factor is less than or equal to 10×, for example less than or equal to 8×.

[0068] In this way, it is possible to achieve a substantial improvement in the lateral resolution of the ultrasound image whilst preserving the speckle texture within the image.

[0069] In some designs, the estimation of the extrapolation filter is performed using an autoregressive model.

[0070] In an arrangement, the estimation of the extrapolation filter is performed using the Burg technique.

[0071] In this way, the extrapolation filter may be simply estimated without requiring a significant amount of processing power.
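For illustration, a compact version of Burg's method for complex data is sketched below. It follows the standard textbook recursion (reflection coefficients computed from forward and backward prediction errors, followed by a Levinson-type coefficient update) and is not asserted to be the implementation used in this application; the function name and the sign convention of the returned coefficients are assumptions.

```python
import cmath


def burg_prediction_coefficients(x, order):
    """Estimate c[1..order] such that x[n] ~ sum_k c[k] * x[n - k] (Burg's method)."""
    n = len(x)
    if not 1 <= order < n:
        raise ValueError("order must satisfy 1 <= order < len(x)")
    f = [complex(v) for v in x]  # forward prediction errors
    b = list(f)                  # backward prediction errors
    a = [1.0 + 0.0j]             # prediction-error filter, a[0] = 1
    for m in range(1, order + 1):
        num = sum(f[i] * b[i - 1].conjugate() for i in range(m, n))
        den = sum(abs(f[i]) ** 2 + abs(b[i - 1]) ** 2 for i in range(m, n))
        k = -2.0 * num / den if den > 0.0 else 0.0j  # reflection coefficient
        a_prev = a + [0.0j]
        a = [a_prev[i] + k * a_prev[m - i].conjugate() for i in range(m + 1)]
        new_f, new_b = list(f), list(b)
        for i in range(m, n):
            new_f[i] = f[i] + k * b[i - 1]
            new_b[i] = b[i - 1] + k.conjugate() * f[i]
        f, b = new_f, new_b
    # Prediction coefficients are the negated error-filter taps.
    return [-coef for coef in a[1:]]


# Hypothetical check: for a single complex exponential, the order-1 coefficient
# should be close to exp(j * omega).
omega = 0.4
samples = [cmath.exp(1j * omega * i) for i in range(16)]
coeffs = burg_prediction_coefficients(samples, order=1)
```

Each additional order costs only a few passes over the data, with no matrix inversion, which is consistent with the low processing requirement noted above.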

[0072] In some embodiments, the filter order is less than or equal to 5, for example less than or equal to 4.

[0073] In this way, it is possible to achieve a substantial improvement in the lateral resolution of the ultrasound image whilst preserving the speckle texture within the image.

[0074] In an embodiment, the extrapolation occurs in the azimuthal direction in the aperture domain.

[0075] According to examples in accordance with a further aspect of the invention, there is provided a computer program comprising computer program code means which is adapted, when said computer program is run on a computer, to implement the method defined above.

[0076] According to examples in accordance with a further aspect of the invention, there is provided a controller for controlling the generation of an ultrasound image, wherein the controller is adapted to:

[0077] obtain channel data by way of an ultrasonic probe;

[0078] for each channel of the channel data, segment the channel data based on an axial imaging depth of the channel data;

[0079] for each segment of the segmented channel data:

[0080] estimate an extrapolation filter based on the segmented channel data, the extrapolation filter having a filter order; and

[0081] extrapolate, by an extrapolation factor, the segmented channel data based on the extrapolation filter, thereby generating extrapolated channel data; and

[0082] sum the extrapolated channel data across all segments, thereby generating the ultrasound image.

[0083] According to examples in accordance with a further aspect of the invention, there is provided an ultrasound system, the system comprising:

[0084] an ultrasonic probe;

[0085] a controller as defined above; and

[0086] a display device for displaying the ultrasound image.

[0087] In an embodiment, the system further comprises a user interface having a user input.

[0088] In this way, it is possible for a user to provide an instruction to the ultrasound system.

[0089] In an arrangement, the user input is adapted to adjust the axial depth of the axial segment.

[0090] In a further arrangement, the user input is adapted to alter the extrapolation factor.

[0091] In a yet further arrangement, the user input is adapted to alter the filter order.

[0092] In this way, the user may empirically adapt the various parameters of the extrapolation method in order to maximize the image quality according to their subjective opinion.

[0093] According to examples in accordance with a yet further aspect of the invention, there is provided a method for generating an ultrasound image, the method comprising:

[0094] obtaining beam-summed data by way of an ultrasonic probe, wherein the beam-summed data comprises a plurality of steering angles;

[0095] for each steering angle of the beam-summed data, segmenting the beam-summed data based on an axial imaging depth of the beam-summed data;

[0096] for each segment of the segmented beam-summed data:

[0097] estimating an extrapolation filter, based on the segmented beam-summed data, the extrapolation filter having a filter order; and

[0098] extrapolating, by an extrapolation factor, the segmented beam-summed data based on the extrapolation filter, thereby generating extrapolated beam-summed data; and

[0099] coherently compounding the extrapolated beam-summed data across all segments, thereby generating the ultrasound image.

[0100] This method performs transmit extrapolation on the beam-summed data, thereby improving the contrast of the ultrasound image. The beam-sum data corresponds to data summed across the aperture for several transmit beams overlapping on a point of interest. In addition, this method provides an increase in ultrasound image frame rate and a reduction in motion artifacts in the final ultrasound image by retaining contrast and resolution with fewer actual transmit events. By estimating the extrapolation filter based on the segmented beam-summed data, the extrapolation filter may directly correspond to the beam-summed data. In this way, the accuracy of the extrapolation performed on the segmented beam-summed data is increased. In other words, this method predicts beam-summed data from transmit angles outside of the range of angles used to obtain the original beam-summed data by extrapolation.
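The coherent compounding step can be illustrated with a small sketch. It assumes that the beam-summed data for each steering angle, measured or extrapolated, is available as a list of complex pixel values, and that compounding consists of summing these values across angles before taking the magnitude; the data layout and names are assumptions made for this example.

```python
import cmath


def coherent_compound(per_angle_images):
    """Sum complex beam-summed pixel values across angles, then take the magnitude."""
    n_pixels = len(per_angle_images[0])
    if any(len(image) != n_pixels for image in per_angle_images):
        raise ValueError("all per-angle images must have the same number of pixels")
    return [abs(sum(image[p] for image in per_angle_images)) for p in range(n_pixels)]


# Hypothetical two-pixel image seen from four steering angles. Pixel 0 has the
# same phase at every angle (coherent signal); the phase of pixel 1 rotates
# from angle to angle, as may be expected of clutter.
n_angles = 4
images = [
    [1.0 + 0.0j, cmath.exp(2j * cmath.pi * angle / n_angles)]
    for angle in range(n_angles)
]
compounded = coherent_compound(images)
```

Contributions that are in phase across angles add up, whereas contributions whose phase varies with angle tend to cancel, so each additional angle, including an extrapolated one, can increase the contrast between the two.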

[0101] In an embodiment, the method further comprises:

[0102] for each axial segment of the beam-summed data, applying a Fourier transform to an axial segment of the beam-summed data; and

[0103] performing an inverse Fourier transform on the extrapolated beam-summed data.

[0104] By performing the extrapolation of the segmented beam-summed data in the temporal frequency domain, the accuracy of the extrapolated beam-summed data may be further increased.

[0105] In an arrangement, the beam-summed data is obtained by way of at least one of plane wave imaging and diverging wave imaging.

[0106] In this way, it is possible to produce an ultrafast ultrasound imaging method with increased image contrast and frame rate.

[0107] In some embodiments, the axial segment is less than 4 wavelengths in depth, for example less than or equal to 2 wavelengths in depth.

[0108] In this way, the performance of the system, which is largely dependent on the number of segments of beam-summed data to be extrapolated, may be improved whilst maintaining the improvement in image quality, which is inversely proportional to the size of the axial segment.

[0109] In some arrangements, the plurality of steering angles comprises fewer than 20 angles, for example fewer than or equal to 10 angles.

[0110] In this way, the computational performance of the ultrasound system may be improved, as there are fewer steering angles to process, whilst maintaining the detail of the final ultrasound image, which is proportional to the number of steering angles used.

[0111] In some designs, the filter order is less than or equal to half the number of steering angles.

[0112] In an embodiment, the extrapolation factor is less than or equal to 10×, for example less than or equal to 8×.

[0113] In this way, it is possible to achieve a substantial improvement in the contrast resolution of the ultrasound image whilst preserving the speckle texture within the image.
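The example parameter ranges given above for transmit extrapolation can be gathered into a simple check. The sketch below is illustrative only: the class and method names are hypothetical, and the ranges are treated as the example values of the described embodiments rather than as hard limits.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TransmitExtrapolationParams:
    n_steering_angles: int
    filter_order: int
    extrapolation_factor: float
    segment_wavelengths: float

    def outside_example_ranges(self) -> List[str]:
        """List the parameters that fall outside the example ranges in the text."""
        issues = []
        if self.n_steering_angles >= 20:
            issues.append("number of steering angles is not fewer than 20")
        if self.filter_order > self.n_steering_angles / 2:
            issues.append("filter order exceeds half the number of steering angles")
        if self.extrapolation_factor > 10:
            issues.append("extrapolation factor exceeds 10x")
        if self.segment_wavelengths >= 4:
            issues.append("axial segment is not shorter than 4 wavelengths")
        return issues


# Hypothetical settings: the first set lies inside all example ranges, the second does not.
inside = TransmitExtrapolationParams(10, 3, 8, 2.0).outside_example_ranges()
outside = TransmitExtrapolationParams(10, 6, 12, 2.0).outside_example_ranges()
```

A check of this kind could, for instance, be applied to values entered by way of a user input before they are passed to the extrapolation.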

[0114] In an arrangement, the estimation of the extrapolation filter is performed using an autoregressive model.

[0115] In an embodiment, the estimation of the extrapolation filter is performed using the Burg technique.

[0116] In this way, the extrapolation filter may be simply estimated without requiring a significant amount of processing power.

[0117] In an arrangement, the extrapolation occurs in the transmit direction.

[0118] According to examples in accordance with a further aspect of the invention, there is provided a computer program comprising computer program code means which is adapted, when said computer program is run on a computer, to implement the method defined above.

[0119] According to examples in accordance with a further aspect of the invention, there is provided a controller for controlling the generation of an ultrasound image, wherein the controller is adapted to:

[0120] obtain beam-summed data by way of an ultrasonic probe, wherein the beam-summed data comprises a plurality of steering angles;

[0121] for each steering angle of the beam-summed data, segment the beam-summed data based on an axial depth of the beam-summed data;

[0122] for each segment of the segmented beam-summed data:

[0123] estimate an extrapolation filter, based on the segmented beam-summed data, the extrapolation filter having a filter order; and

[0124] extrapolate, by an extrapolation factor, the segmented beam-summed data based on the extrapolation filter, thereby generating extrapolated beam-summed data; and

[0125] coherently compound the extrapolated beam-summed data across all segments, thereby generating the ultrasound image.

[0126] According to examples in accordance with a further aspect of the invention, there is provided an ultrasound system, the system comprising:

[0127] an ultrasonic probe;

[0128] a controller as defined above; and

[0129] a display device for displaying the high contrast ultrasound image.

[0130] In an embodiment, the system further comprises a user interface having a user input.

[0131] In this way, it is possible for a user to provide an instruction to the ultrasound system.

[0132] In a further embodiment, the user input is adapted to adjust at least one of: the axial depth of the axial segment; the extrapolation factor; and the filter order.

[0133] In this way, the user may empirically adapt the various parameters of the extrapolation method in order to maximize the image quality according to their subjective opinion.

BRIEF DESCRIPTION OF THE DRAWINGS

[0134] Examples of the invention will now be described in detail with reference to the accompanying drawings, in which:

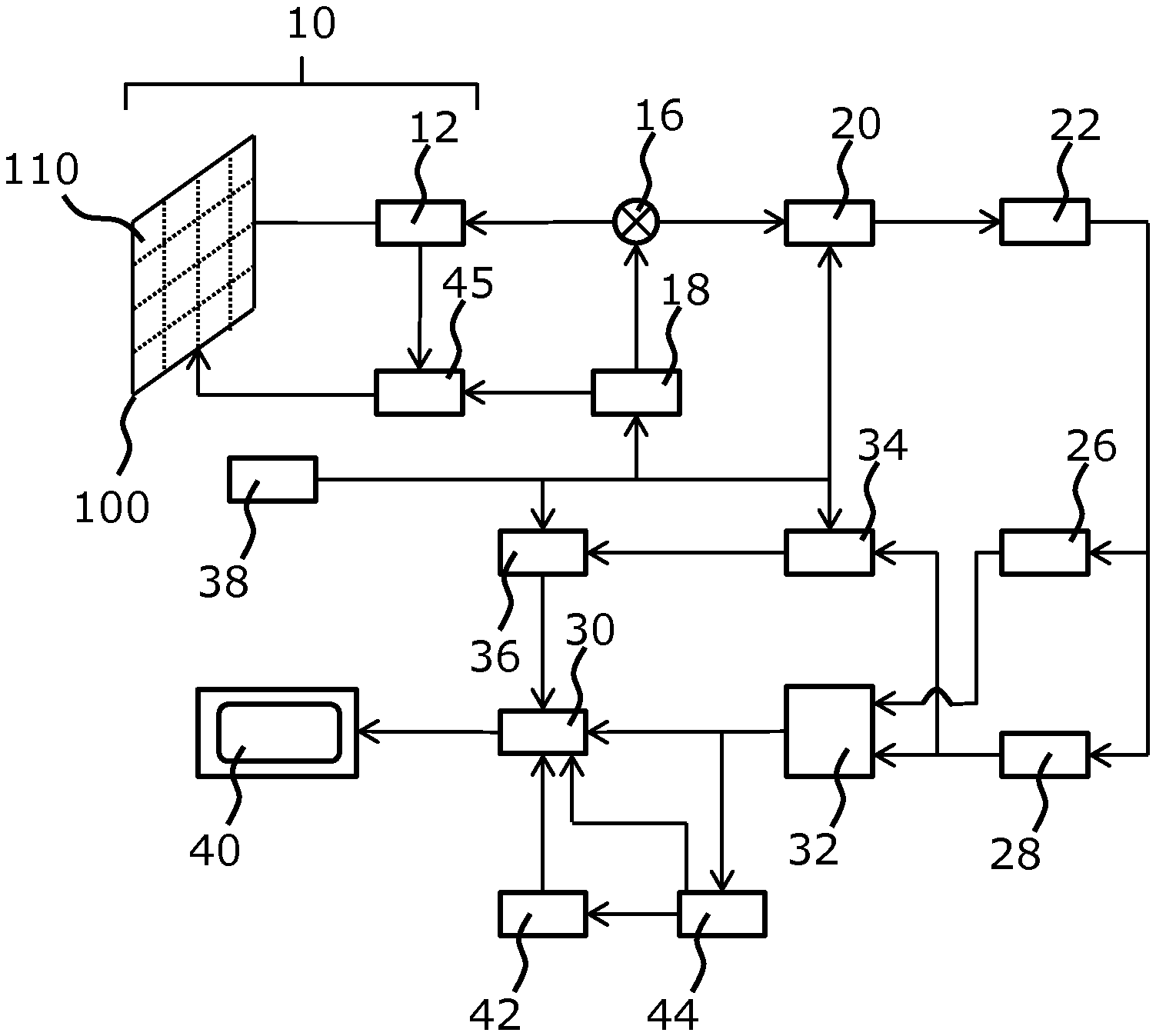

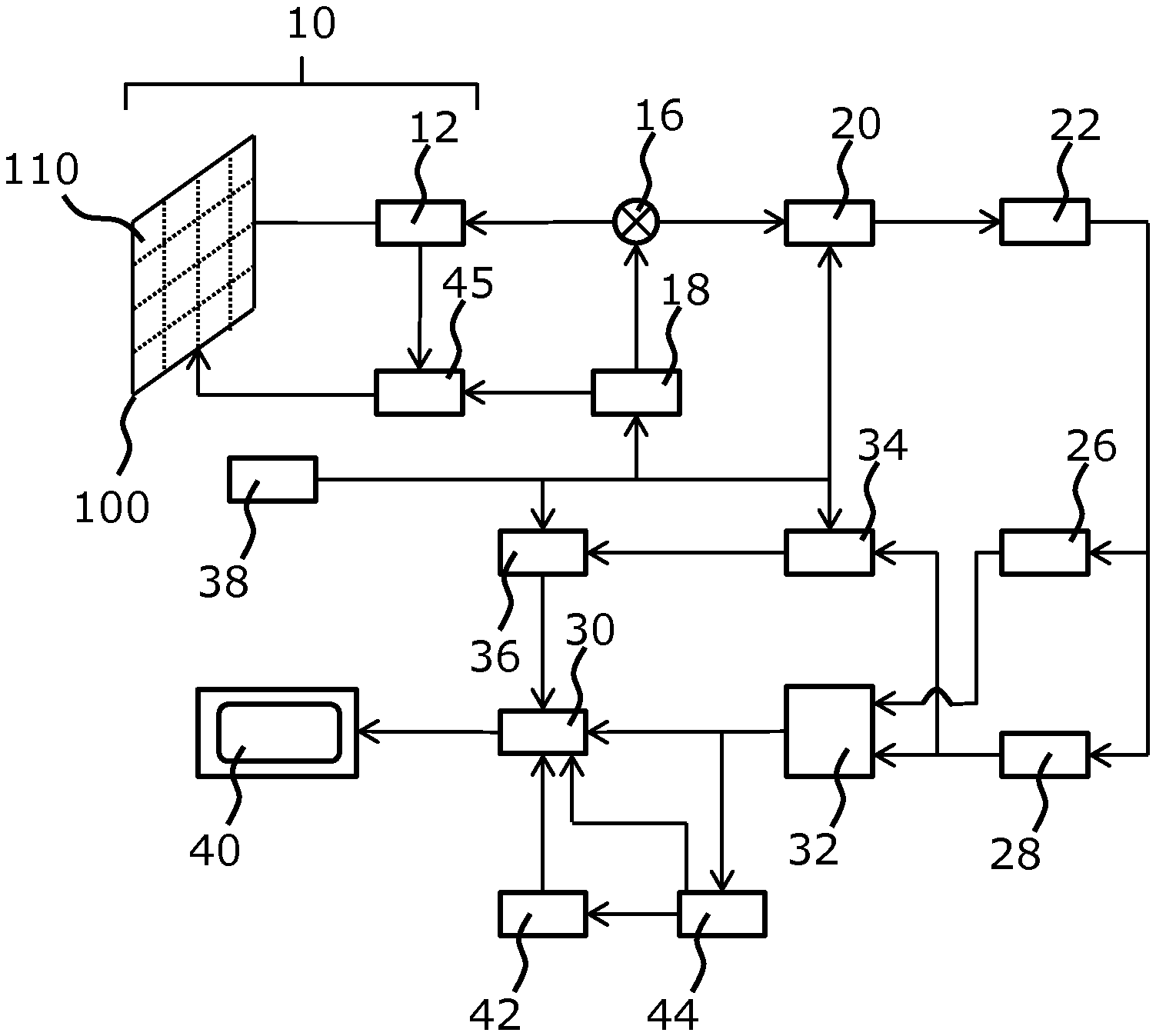

[0135] FIG. 1 shows an ultrasound diagnostic imaging system to explain the general operation;

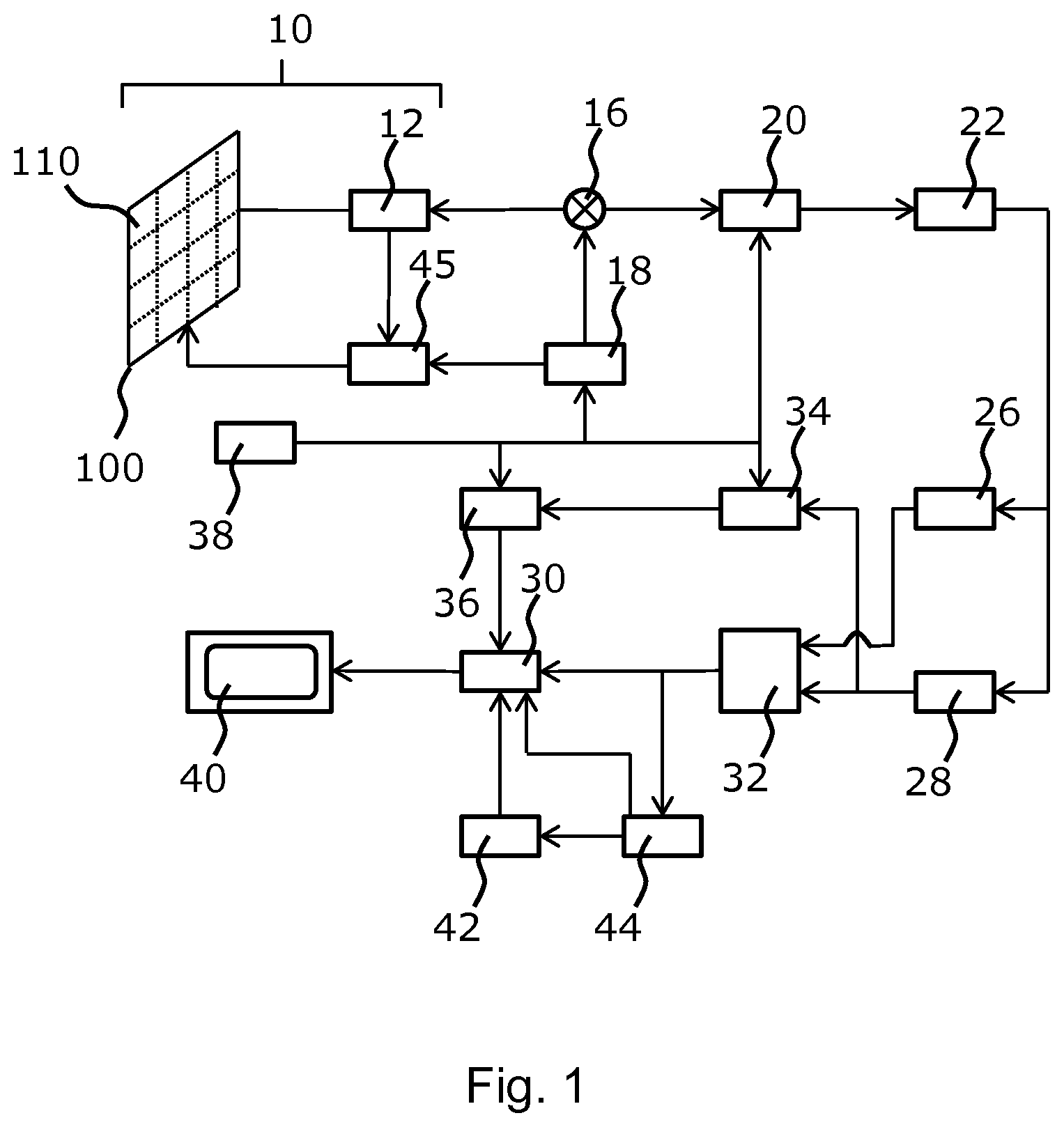

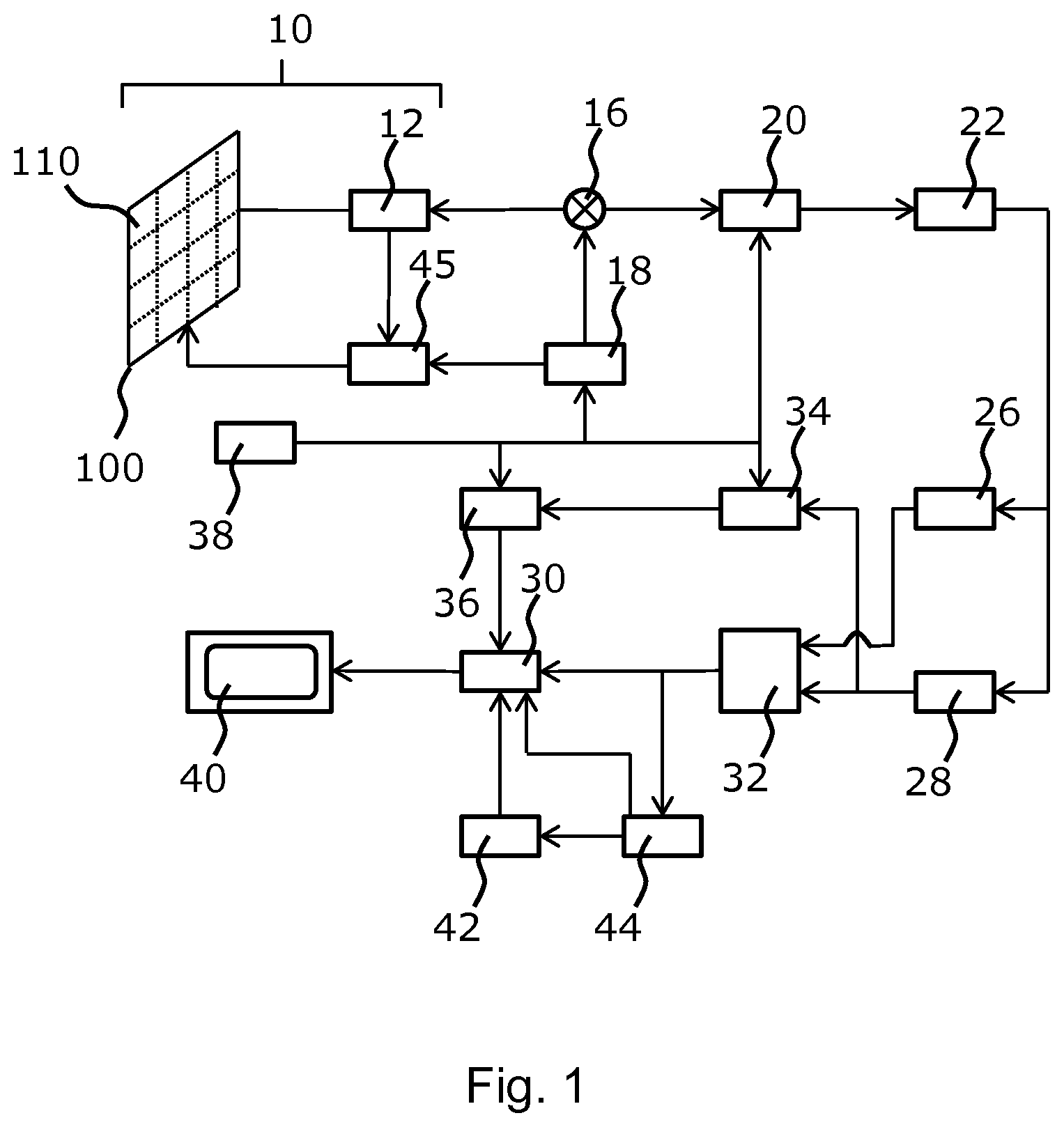

[0136] FIG. 2 shows a method of selectively attenuating channel data of an ultrasound image;

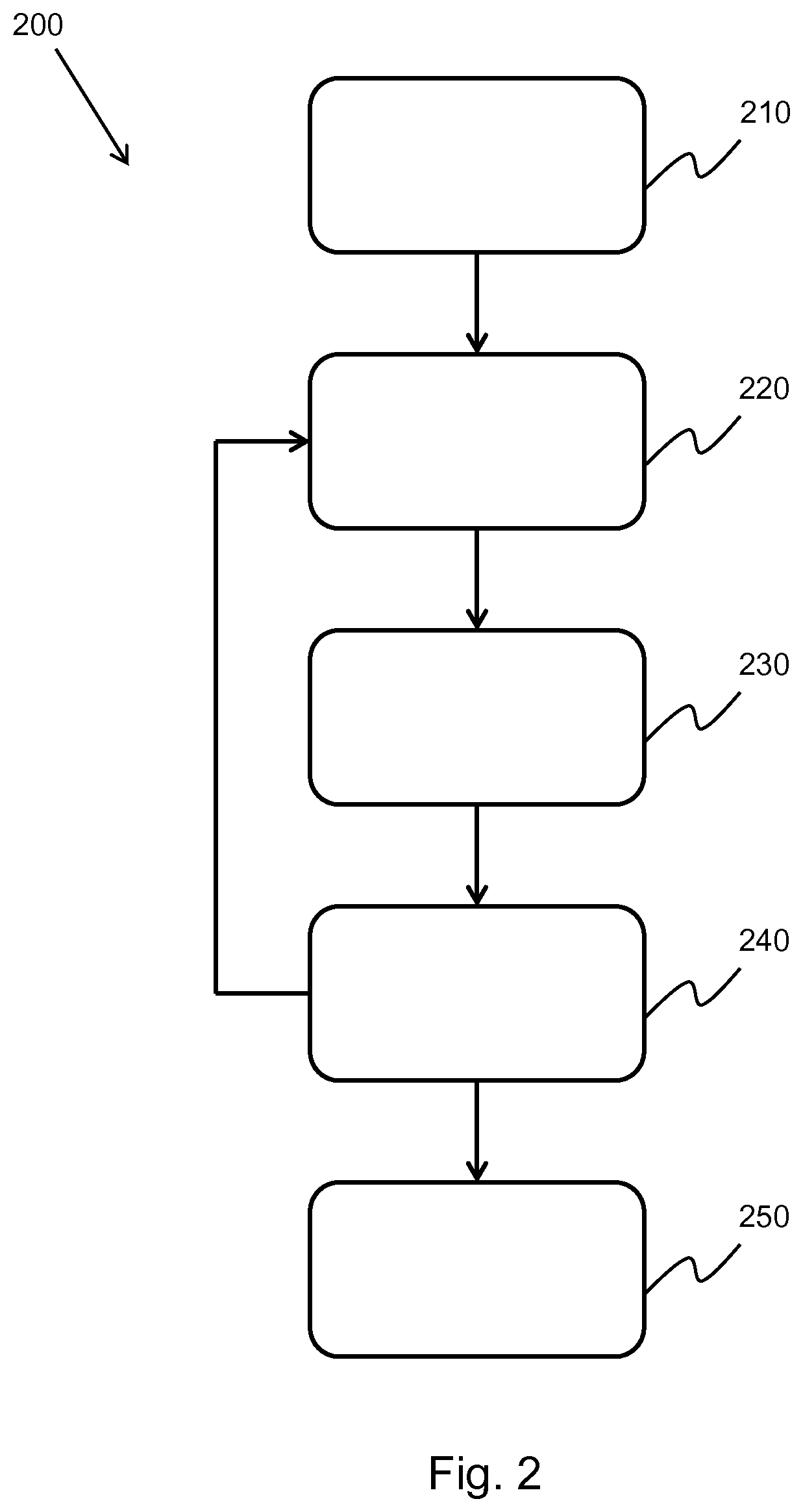

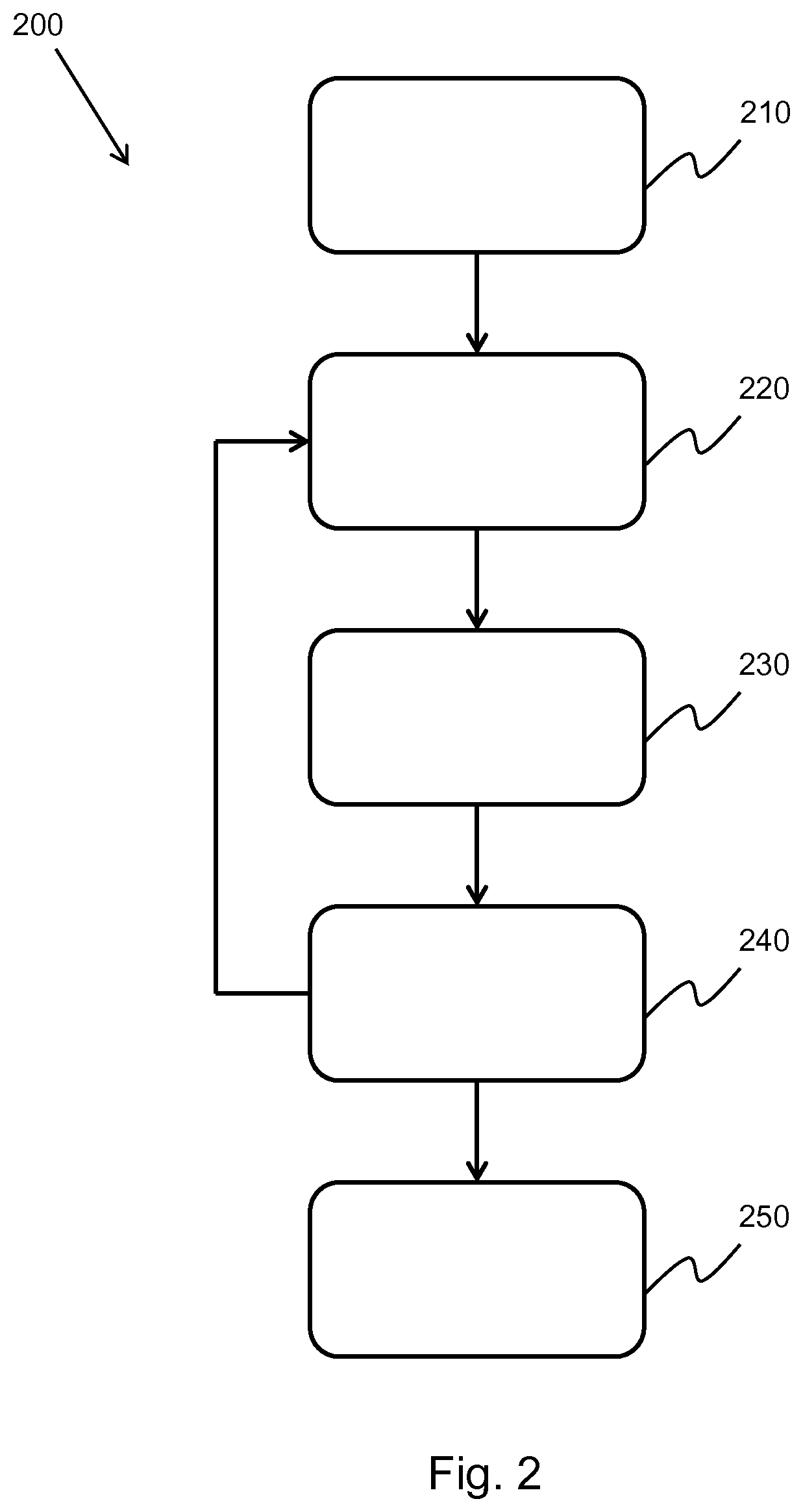

[0137] FIG. 3 shows an illustration of the implementation of the method of FIG. 2;

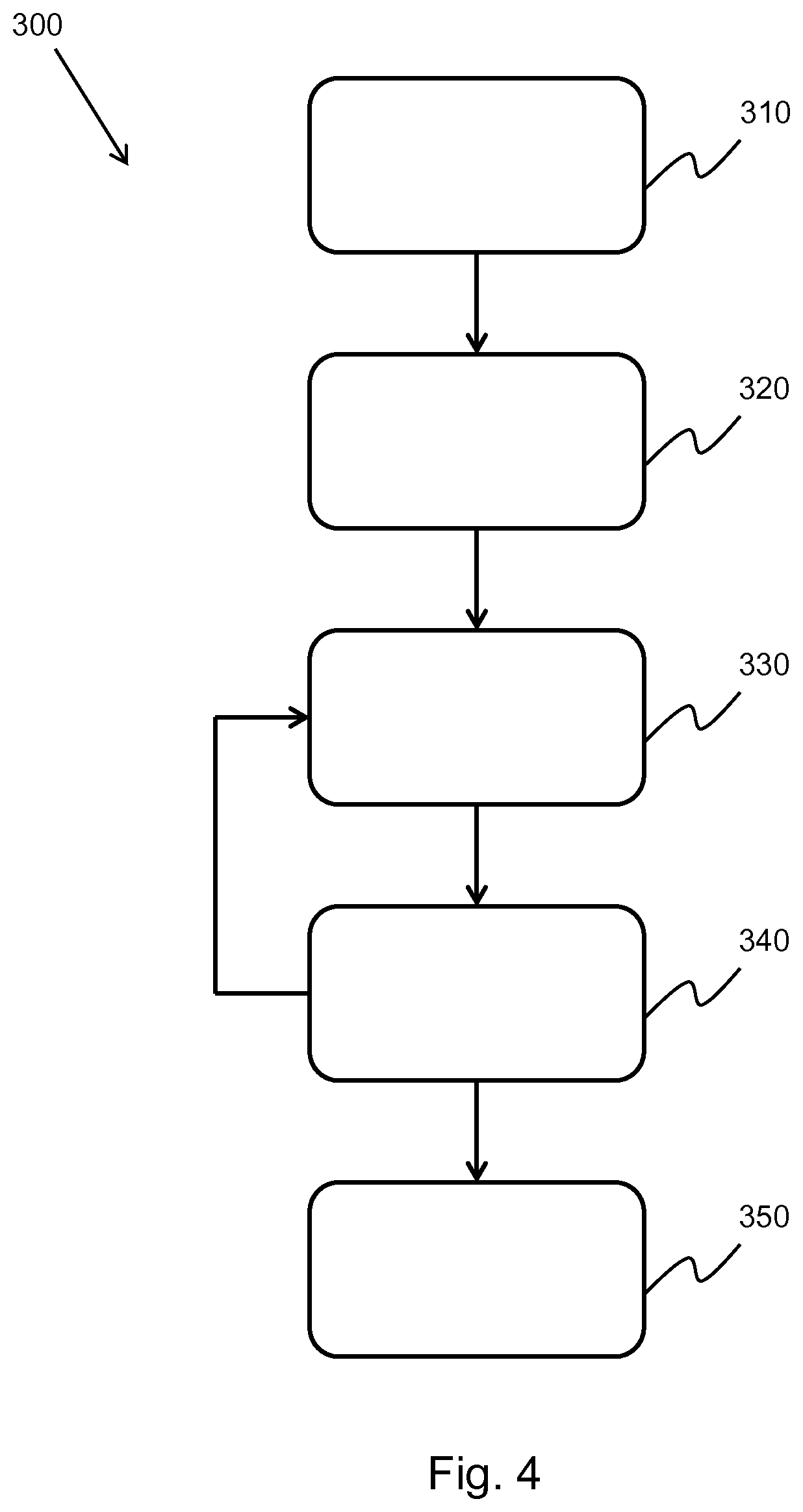

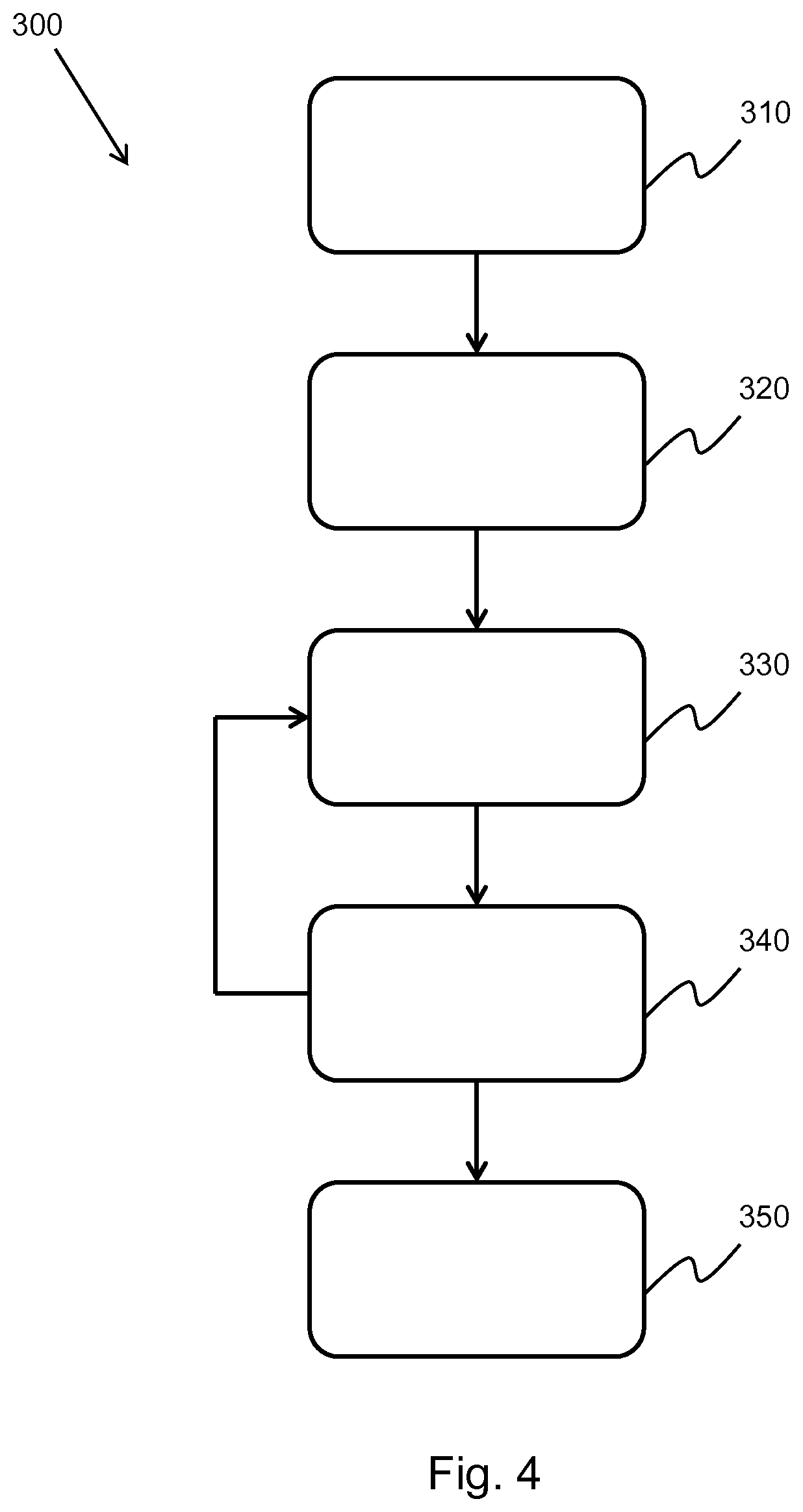

[0138] FIG. 4 shows a method of performing aperture extrapolation on an ultrasound image;

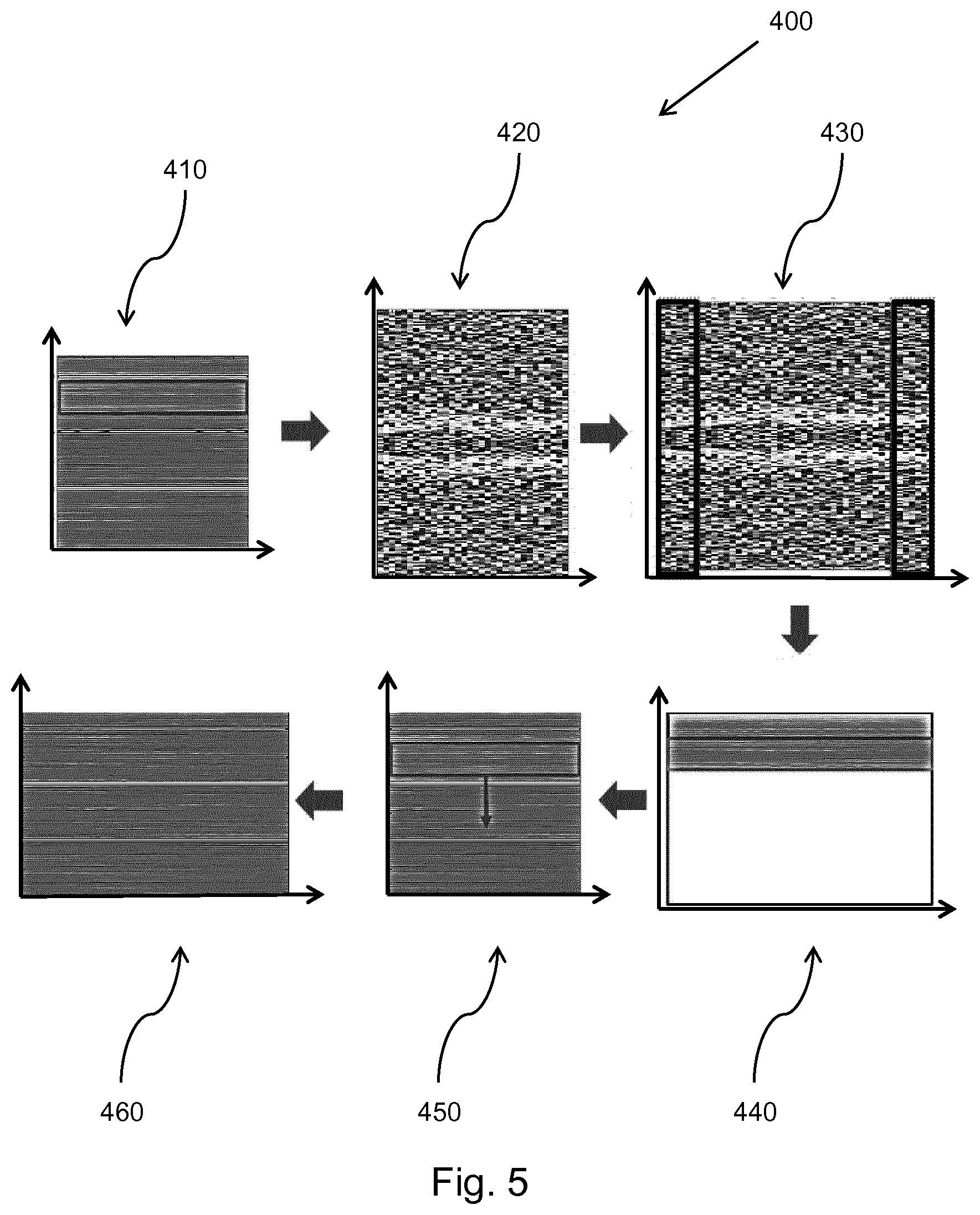

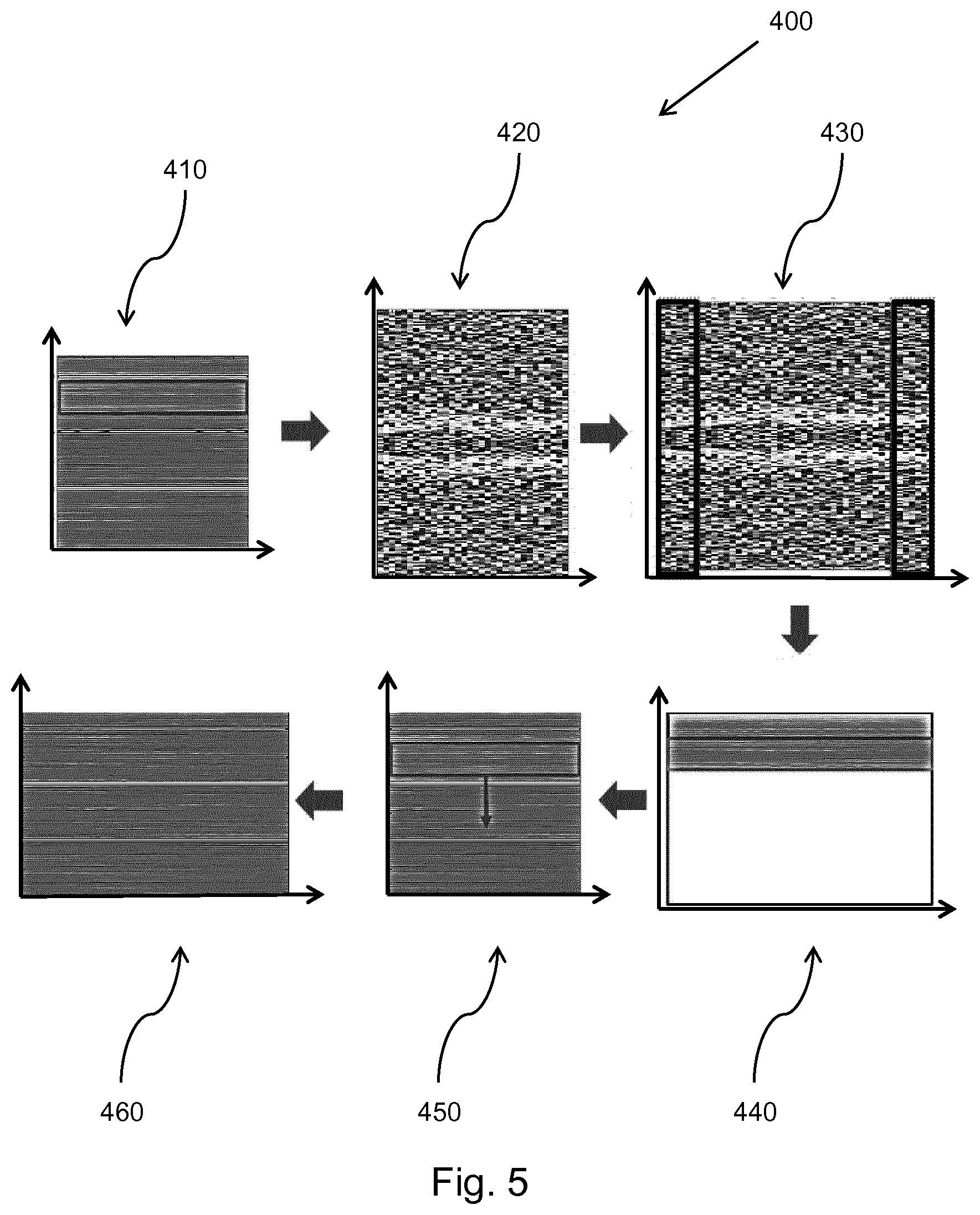

[0139] FIG. 5 shows an illustration of the method of FIG. 4;

[0140] FIG. 6, FIG. 7, FIG. 8 and FIG. 9 show examples of the implementation of the method of FIG. 4;

[0141] FIG. 10 shows a method of performing transmit extrapolation on an ultrasound image;

[0142] FIG. 11 shows an illustration of the method of FIG. 10; and

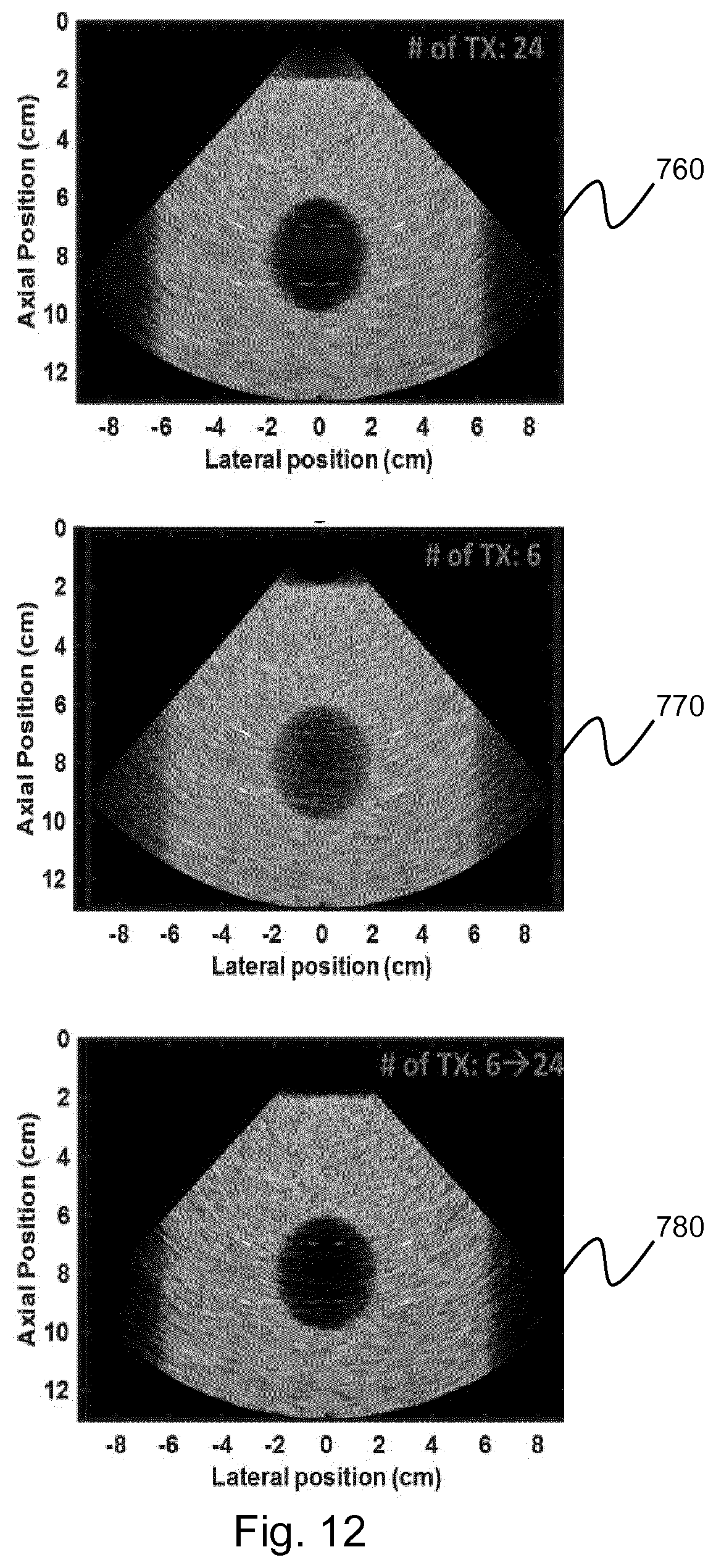

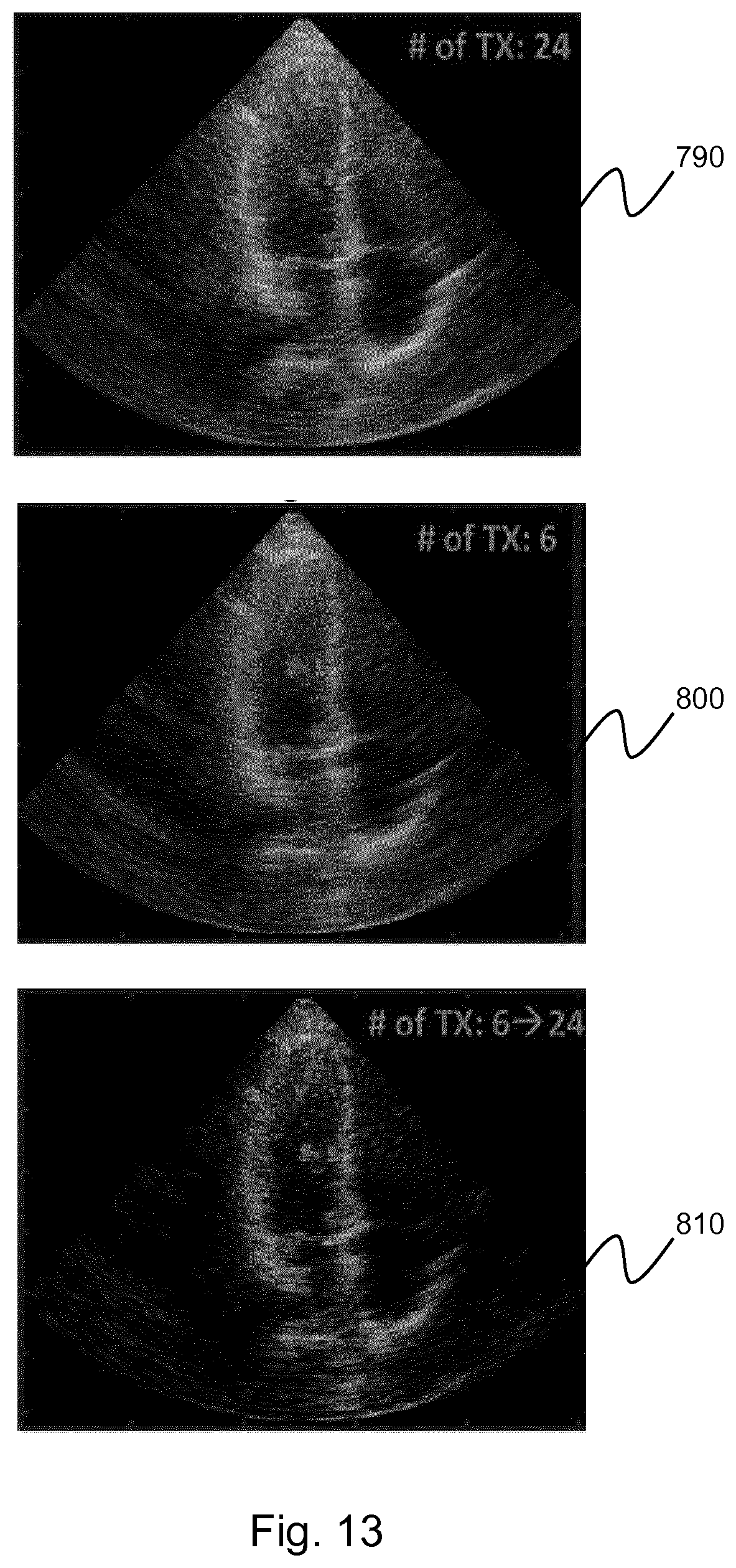

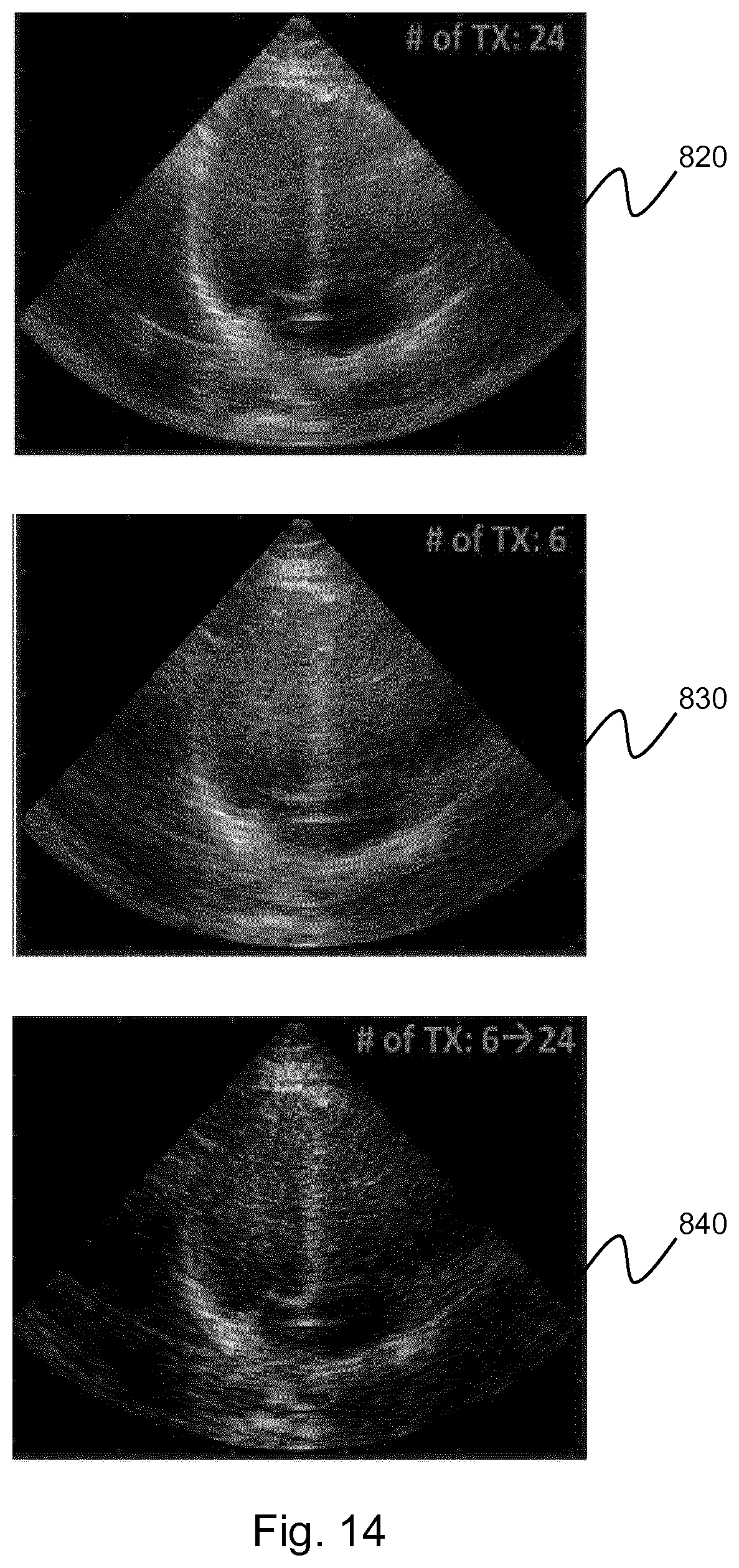

[0143] FIG. 12, FIG. 13, and FIG. 14 show examples of the implementation of the method of FIG. 10.

DETAILED DESCRIPTION OF THE EMBODIMENTS

[0144] The invention provides methods and systems for generating an ultrasound image. In a method, the generation of an ultrasound image comprises: obtaining channel data, the channel data defining a set of imaged points; for each imaged point: isolating the channel data; performing a spectral estimation on the isolated channel data; and selectively attenuating the spectral estimation channel data, thereby generating filtered channel data; and summing the filtered channel data, thereby forming a filtered ultrasound image.

[0145] In some examples, the method comprises aperture extrapolation. The aperture extrapolation improves the lateral resolution of the ultrasound image. In other examples, the method comprises transmit extrapolation. The transmit extrapolation improves the contrast of the image. In addition, the transmit extrapolation improves the frame rate and reduces the motion artifacts in the ultrasound image. In further examples, the aperture and transmit extrapolations may be combined.

[0146] The general operation of an exemplary ultrasound diagnostic imaging system will first be described, with reference to FIG. 1, and with emphasis on the signal processing function of the system since this invention relates to the processing of the signals measured by the transducer array.

[0147] The system comprises an array transducer probe 10 which has a CMUT transducer array 100 for transmitting ultrasound waves and receiving echo information. The transducer array 100 may alternatively comprise piezoelectric transducers formed of materials such as PZT or PVDF. The transducer array 100 is a two-dimensional array of transducers 110 capable of scanning in a 2D plane or in three dimensions for 3D imaging. In another example, the transducer array may be a 1D array.

[0148] The transducer array 100 is coupled to a microbeamformer 12 in the probe which controls reception of signals by the CMUT array cells or piezoelectric elements. Microbeamformers are capable of at least partial beamforming of the signals received by sub-arrays (or "groups" or "patches") of transducers as described in U.S. Pat. No. 5,997,479 (Savord et al.), U.S. Pat. No. 6,013,032 (Savord), and U.S. Pat. No. 6,623,432 (Powers et al.).

[0149] Note that the microbeamformer is entirely optional. The examples below assume no analog beamforming.

[0150] The microbeamformer 12 is coupled by the probe cable to a transmit/receive (T/R) switch 16 which switches between transmission and reception and protects the main beamformer 20 from high energy transmit signals when a microbeamformer is not used and the transducer array is operated directly by the main system beamformer. The transmission of ultrasound beams from the transducer array 10 is directed by a transducer controller 18 coupled to the microbeamformer by the T/R switch 16 and a main transmission beamformer (not shown), which receives input from the user's operation of the user interface or control panel 38.

[0151] One of the functions controlled by the transducer controller 18 is the direction in which beams are steered and focused. Beams may be steered straight ahead from (orthogonal to) the transducer array, or at different angles for a wider field of view. The transducer controller 18 can be coupled to control a DC bias control 45 for the CMUT array. The DC bias control 45 sets DC bias voltage(s) that are applied to the CMUT cells.

[0152] In the reception channel, partially beamformed signals are produced by the microbeamformer 12 and are coupled to a main receive beamformer 20 where the partially beamformed signals from individual patches of transducers are combined into a fully beamformed signal. For example, the main beamformer 20 may have 128 channels, each of which receives a partially beamformed signal from a patch of dozens or hundreds of CMUT transducer cells or piezoelectric elements. In this way the signals received by thousands of transducers of a transducer array can contribute efficiently to a single beamformed signal.

[0153] The beamformed reception signals are coupled to a signal processor 22. The signal processor 22 can process the received echo signals in various ways, such as band-pass filtering, decimation, I and Q component separation, and harmonic signal separation which acts to separate linear and nonlinear signals so as to enable the identification of nonlinear (higher harmonics of the fundamental frequency) echo signals returned from tissue and micro-bubbles. The signal processor may also perform additional signal enhancement such as speckle reduction, signal compounding, and noise elimination. The band-pass filter in the signal processor can be a tracking filter, with its pass band sliding from a higher frequency band to a lower frequency band as echo signals are received from increasing depths, thereby rejecting the noise at higher frequencies from greater depths where these frequencies are devoid of anatomical information.

[0154] The beamformers for transmission and for reception are implemented in different hardware and can have different functions. Of course, the receiver beamformer is designed to take into account the characteristics of the transmission beamformer. In FIG. 1 only the receiver beamformers 12, 20 are shown, for simplicity. In the complete system, there will also be a transmission chain with a transmission microbeamformer and a main transmission beamformer.

[0155] The function of the microbeamformer 12 is to provide an initial combination of signals in order to decrease the number of analog signal paths. This is typically performed in the analog domain.

[0156] The final beamforming is done in the main beamformer 20 and is typically after digitization.

[0157] The transmission and reception channels use the same transducer array 10' which has a fixed frequency band. However, the bandwidth that the transmission pulses occupy can vary depending on the transmission beamforming that has been used. The reception channel can capture the whole transducer bandwidth (which is the classic approach) or by using bandpass processing it can extract only the bandwidth that contains the useful information (e.g. the harmonics of the main harmonic).

[0158] The processed signals are coupled to a B mode (i.e. brightness mode, or 2D imaging mode) processor 26 and a Doppler processor 28. The B mode processor 26 employs detection of an amplitude of the received ultrasound signal for the imaging of structures in the body such as the tissue of organs and vessels in the body. B mode images of structure of the body may be formed in either the harmonic image mode or the fundamental image mode or a combination of both as described in U.S. Pat. No. 6,283,919 (Roundhill et al.) and U.S. Pat. No. 6,458,083 (Jago et al.). The Doppler processor 28 processes temporally distinct signals from tissue movement and blood flow for the detection of the motion of substances such as the flow of blood cells in the image field. The Doppler processor 28 typically includes a wall filter with parameters which may be set to pass and/or reject echoes returned from selected types of materials in the body.

[0159] The structural and motion signals produced by the B mode and Doppler processors are coupled to a scan converter 32 and a multi-planar reformatter 44. The scan converter 32 arranges the echo signals in the spatial relationship from which they were received in a desired image format. For instance, the scan converter may arrange the echo signal into a two dimensional (2D) sector-shaped format, or a pyramidal three dimensional (3D) image. The scan converter can overlay a B mode structural image with colors corresponding to motion at points in the image field with their Doppler-estimated velocities to produce a color Doppler image which depicts the motion of tissue and blood flow in the image field. The multi-planar reformatter will convert echoes which are received from points in a common plane in a volumetric region of the body into an ultrasound image of that plane, as described in U.S. Pat. No. 6,443,896 (Detmer). A volume renderer 42 converts the echo signals of a 3D data set into a projected 3D image as viewed from a given reference point as described in U.S. Pat. No. 6,530,885 (Entrekin et al.).

[0160] The 2D or 3D images are coupled from the scan converter 32, multi-planar reformatter 44, and volume renderer 42 to an image processor 30 for further enhancement, buffering and temporary storage for display on an image display 40. In addition to being used for imaging, the blood flow values produced by the Doppler processor 28 and tissue structure information produced by the B mode processor 26 are coupled to a quantification processor 34. The quantification processor produces measures of different flow conditions such as the volume rate of blood flow as well as structural measurements such as the sizes of organs and gestational age. The quantification processor may receive input from the user control panel 38, such as the point in the anatomy of an image where a measurement is to be made. Output data from the quantification processor is coupled to a graphics processor 36 for the reproduction of measurement graphics and values with the image on the display 40, and for audio output from the display device 40. The graphics processor 36 can also generate graphic overlays for display with the ultrasound images. These graphic overlays can contain standard identifying information such as patient name, date and time of the image, imaging parameters, and the like. For these purposes the graphics processor receives input from the user interface 38, such as patient name. The user interface is also coupled to the transmit controller 18 to control the generation of ultrasound signals from the transducer array 10' and hence the images produced by the transducer array and the ultrasound system. The transmit control function of the controller 18 is only one of the functions performed. The controller 18 also takes account of the mode of operation (given by the user) and the corresponding required transmitter configuration and band-pass configuration in the receiver analog to digital converter. The controller 18 can be a state machine with fixed states.

[0161] The user interface is also coupled to the multi-planar reformatter 44 for selection and control of the planes of multiple multi-planar reformatted (MPR) images which may be used to perform quantified measures in the image field of the MPR images.

[0162] FIG. 2 shows a method 200 of performing selective attenuation on an ultrasound image.

[0163] In step 210, channel data is obtained from an ultrasonic probe. The channel data defines a set of imaged points within a region of interest.

[0164] In step 220, the channel data is isolated for a given imaged point, meaning that it may be operated on independently of the remaining channel data.

[0165] In step 230, a spectral estimation is performed on the isolated channel data. For example, the channel data may be decomposed into a finite sum of complex exponentials as follows:

$$S(x) \approx \sum_{i=1}^{N} a_i e^{i k_i x},$$

[0166] where: x is the lateral coordinate along the array of transducer elements of the ultrasonic probe; S(x) is the measured channel data signal at x; N is the model order, which is the number of sinusoidal components used to describe the channel data; $a_i$ is the first model parameter; and $k_i$ is the second model parameter. Any spectral estimation method may be performed on the isolated channel data. For example, a Fourier transform may be performed in combination with a Total Variation method. In another example, a complex L1/L2 minimization may be used to decompose the channel data signal as a sparse sum of off-axis and off-range components. In the example above, an autoregressive model is used.

[0167] In this case, the $a_i$ are complex parameters, wherein the phase may be between $-\pi$ and $\pi$ and wherein the modulus is a real, positive number, indicative of the strength of the channel data signal.

[0168] The $k_i$ may also theoretically be complex; however, in this example they are real numbers. They may theoretically range from $-\infty$ to $\infty$ but in practice, due to sampling restrictions, they range from $-\frac{1}{dx}$ to $\frac{1}{dx}$, where dx is the element spacing in the transducer array of the ultrasonic probe.

[0169] In this example, the first and second model parameters may be estimated through any spectral estimation technique known in the art. For example, the parameters may be estimated by way of a non-parametric method, such as a fast Fourier transform (FFT) or discrete Fourier transform (DFT), or a parametric method, such as the autoregressive (AR) or autoregressive moving average (ARMA) methods.
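As an illustration of the non-parametric option, the following minimal sketch estimates the model parameters with a plain DFT across the aperture and keeps the N strongest bins. It is not taken from the disclosure: the function names `dft_components` and `reconstruct`, the element spacing, and the test signal are assumptions chosen for illustration, and the mapping k = 2πm/(M·dx) for bin m follows from the $e^{i k x}$ convention of the decomposition above. The test signal is placed exactly on the DFT grid so that the decomposition is exact.

```python
import cmath
import math

def dft_components(s, dx, n_components):
    """Return the n_components strongest (a_i, k_i) pairs of s via a DFT.

    s  : complex channel samples across the aperture at one depth
    dx : element spacing (assumed uniform)
    """
    m_total = len(s)
    comps = []
    for m in range(m_total):
        # Normalised DFT bin: a pure tone of amplitude A returns A
        a = sum(s[n] * cmath.exp(-2j * math.pi * m * n / m_total)
                for n in range(m_total)) / m_total
        # Bins above the midpoint represent negative spatial frequencies
        m_signed = m if m <= m_total // 2 else m - m_total
        k = 2 * math.pi * m_signed / (m_total * dx)
        comps.append((a, k))
    comps.sort(key=lambda c: abs(c[0]), reverse=True)
    return comps[:n_components]

def reconstruct(comps, x):
    """Evaluate S(x) = sum_i a_i exp(i k_i x) at lateral position x."""
    return sum(a * cmath.exp(1j * k * x) for a, k in comps)

# Hypothetical 32-element aperture with 0.3 mm element spacing
dx = 0.3e-3
M = 32
k_on = 2 * math.pi * 3 / (M * dx)       # low spatial frequency component
k_off = 2 * math.pi * (-10) / (M * dx)  # higher spatial frequency component
signal = [1.0 * cmath.exp(1j * k_on * n * dx)
          + 0.4 * cmath.exp(1j * k_off * n * dx) for n in range(M)]

components = dft_components(signal, dx, 2)
```

A parametric estimator (AR or ARMA) would replace `dft_components` while leaving the reconstruction unchanged, since both produce a list of amplitude and spatial frequency pairs.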

[0170] In step 240, the channel data is selectively attenuated by including an attenuation coefficient in the above formulation:

$$S_f(x) \approx \sum_{i=1}^{N} w(k_i)\, a_i e^{i k_i x},$$

[0171] where: $S_f(x)$ is the filtered channel data signal at x; and $w(k_i)$ is the attenuation coefficient, wherein $w(k_i)$ may be a real number $\le 1$.

[0172] $w(k_i)$ is inversely related to $k_i$, meaning that at higher spatial frequencies, the value of $w(k_i)$ decreases, thereby attenuating the high spatial frequency clutter signals. In other words, the attenuation coefficient may be dependent on the spatial frequency of the channel data. The attenuation coefficient is applied across the entire frequency spectrum of the channel data, thereby attenuating any high spatial frequency signals included within the channel data. $w(k_i)$ may, for example, be Gaussian in shape as shown in the following equation:

$$w(k_i) = e^{-k_i^2 / k_0^2},$$

[0173] where $k_0$ is an additional parameter, which dictates the width of the Gaussian and so the aggressiveness of the high spatial frequency signal attenuation. For lower values of $k_0$, the Gaussian is thinner and so the clutter filtering is more aggressive. For larger values of $k_0$, the width of the Gaussian is increased leading to less aggressive clutter filtering, which in turn allows more signals to contribute to the final ultrasound image. In this example, the value of $k_0$ may be selected to be of the order of magnitude of the inverse of the aperture size, for example $k_0 = \frac{\pi}{a}$, where a is the aperture size. In addition, the value of $k_0$ may be altered depending on the axial depth of the current channel data segment.
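The Gaussian weighting can be sketched as follows. This is a minimal illustration rather than the disclosed implementation: the function names, the aperture size and the two example components are assumptions. Each component is a pair of amplitude and spatial frequency, and the filtered signal is evaluated with the weight applied to every component.

```python
import cmath
import math

def gaussian_weight(k, k0):
    """Attenuation coefficient w(k) = exp(-k^2 / k0^2); equals 1 at k = 0."""
    return math.exp(-(k * k) / (k0 * k0))

def filtered_signal(components, x, k0):
    """Evaluate S_f(x) = sum_i w(k_i) a_i exp(i k_i x)."""
    return sum(gaussian_weight(k, k0) * a * cmath.exp(1j * k * x)
               for a, k in components)

aperture = 9.6e-3            # hypothetical aperture size a, in metres
k0 = math.pi / aperture      # width parameter chosen as pi / a

# One on-axis component (k = 0) and one clutter component at 3 * k0
components = [(1.0 + 0j, 0.0), (0.4 + 0j, 3.0 * k0)]
value = filtered_signal(components, 0.0, k0)

# Halving k0 narrows the Gaussian, so the same component is attenuated more
weight_default = gaussian_weight(3.0 * k0, k0)
weight_aggressive = gaussian_weight(3.0 * k0, k0 / 2)
```

In this example the on-axis component passes unchanged, while the component at 3·k0 is scaled by exp(-9) and contributes almost nothing to the filtered signal.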

[0174] Alternative functions may also be used as an attenuation coefficient. For example, it may be possible to estimate the angular transmit, or round-trip, beampattern of the channel data, such as through simulation, to use as a weighting mask. Further, a rectangular function may be used, wherein the width of the function dictates a cutoff frequency above which all signals are rejected. Further still, an exponential decay function may also be used.
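Two of the alternative weighting functions mentioned above could be written as follows. This is a sketch under assumptions: the function names are hypothetical, and the exact form of the exponential decay (decay in the magnitude of k, scaled by a parameter `k0`) is an assumption, since the text does not specify it.

```python
import math

def rectangular_weight(k, k_cutoff):
    """Pass components with |k| <= k_cutoff unchanged and reject the rest."""
    return 1.0 if abs(k) <= k_cutoff else 0.0

def exponential_weight(k, k0):
    """Exponential decay in |k|; this particular form is an assumption."""
    return math.exp(-abs(k) / k0)

k_cutoff = 500.0   # hypothetical cutoff spatial frequency
inside = rectangular_weight(300.0, k_cutoff)    # kept
outside = rectangular_weight(-800.0, k_cutoff)  # rejected
decayed = exponential_weight(1000.0, 500.0)     # exp(-2)
```

Both functions return values no greater than 1, so either can be substituted for the Gaussian without changing the rest of the filtering step.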

[0175] Steps 220 to 240 are repeated for each axial segment of the channel data. When the final segment of channel data has undergone selective attenuation, the method may progress to step 250.

[0176] In step 250, the filtered channel data is summed to form the final clutter filtered ultrasound image.

[0177] FIG. 3 shows a comparison between ultrasound images of a heart at various stages in the method described above with reference to FIG. 2.

[0178] The first image 260 shows the original ultrasound image captured from the raw channel data. As can be seen, the image contains a high level of noise and the details are difficult to make out.

[0179] The second image 270 shows the ultrasound image at stage 230 of the method, where the image has been reconstructed with the sparse sinusoidal decomposition as described above. In this example, the order of the model is N=4, meaning that the channel data at each depth is modeled as a sum of 4 sinusoids. The improvement in image clarity can already be seen; however, the signal is still noisy and the finer details, particularly towards the top of the image, remain largely unclear.

[0180] The third image 280 shows the ultrasound image at stage 250 of the method, after the application of the selective attenuation, thereby eliminating the sinusoidal signals with the highest spatial frequencies. As can be seen from the image, the signal noise has been significantly reduced by the attenuation of the high spatial frequency signals.

[0181] FIG. 4 shows a method 300 for applying aperture extrapolation to an ultrasound image.

[0182] In step 310, channel data is obtained by way of an ultrasonic probe.

[0183] In step 320, the channel data is segmented based on an axial imaging depth of the channel data.

[0184] The axial window size of the segmented channel data may be empirically determined to suit the visual preferences of the user. For example, an axial window size in the range of 1-2 wavelengths may produce a preferred image quality improvement. A larger axial window may provide a more reliable improvement in image quality and better preservation of speckle texture; however, it may adversely affect the axial resolution of the ultrasound image.
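The axial segmentation can be sketched as below. The text does not state how a window expressed in wavelengths maps to samples, so this sketch assumes that one wavelength corresponds to one period of the centre frequency on the sampled time axis; the function names, the centre frequency and the sampling rate are likewise hypothetical.

```python
def window_length_samples(n_wavelengths, center_freq_hz, sample_rate_hz):
    """Axial window length in samples.

    Assumes one wavelength equals one period of the centre frequency
    on the sampled time axis.
    """
    return max(1, round(n_wavelengths * sample_rate_hz / center_freq_hz))

def segment_axially(channel_data, window, step=None):
    """Split channel_data[depth][channel] into axial windows.

    Returns a list of (start_index, rows) pairs. With step == window the
    segments do not overlap; a smaller step gives overlapping segments.
    """
    step = window if step is None else step
    return [(start, channel_data[start:start + window])
            for start in range(0, len(channel_data) - window + 1, step)]

# Hypothetical acquisition: 2.5 MHz centre frequency sampled at 20 MHz
window = window_length_samples(2, 2.5e6, 20e6)
channel_data = [[0.0] * 4 for _ in range(64)]   # 64 depths x 4 channels
segments = segment_axially(channel_data, window)
overlapping = segment_axially(channel_data, window, step=window // 2)
```

A longer window gives the filter estimation more samples to work with, at the cost of axial resolution, which matches the trade-off described above.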

[0185] In step 330, an extrapolation filter of order p, $a_j$ where $1 \le j \le p$, is estimated based on the segmented channel data. In this case, the extrapolation filter may be estimated by way of the well-known Burg technique for autoregressive (AR) parameter estimation.
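A compact sketch of Burg's method for complex data is given below. It is a textbook-style formulation written for illustration, not the disclosed implementation; the function name `burg_prediction_filter` is hypothetical. The function returns prediction coefficients, so that a sample is approximated by the weighted sum of the p samples before it; these are the negated coefficients of the AR polynomial that Burg's recursion builds.

```python
import cmath

def burg_prediction_filter(x, p):
    """Estimate order-p linear prediction coefficients with Burg's method.

    Returns a list a such that x[n] is approximated by
    sum over j = 1..p of a[j - 1] * x[n - j].
    """
    ef = list(x[1:])     # forward prediction errors
    eb = list(x[:-1])    # backward prediction errors, one sample behind
    poly = [1 + 0j]      # AR polynomial 1 + A_1 z^-1 + ... + A_m z^-m
    for _ in range(p):
        num = -2 * sum(f * b.conjugate() for f, b in zip(ef, eb))
        den = sum(abs(f) ** 2 + abs(b) ** 2 for f, b in zip(ef, eb))
        k = num / den if den > 0 else 0j   # reflection coefficient
        # Levinson-style order update of the AR polynomial
        ext = poly + [0j]
        poly = [ext[i] + k * ext[len(ext) - 1 - i].conjugate()
                for i in range(len(ext))]
        # Update both error sequences, then re-align them by one sample
        new_ef = [f + k * b for f, b in zip(ef, eb)]
        new_eb = [b + k.conjugate() * f for f, b in zip(ef, eb)]
        ef, eb = new_ef[1:], new_eb[:-1]
    # Prediction coefficients are the negated polynomial coefficients
    return [-c for c in poly[1:]]

# Noise-free test: one complex exponential across 16 channels
theta = 0.3
x = [cmath.exp(1j * theta * n) for n in range(16)]
a = burg_prediction_filter(x, 1)
predicted = a[0] * x[-1]   # one-step prediction of the 17th sample
```

For this single-exponential input the order-1 filter is the phase step between neighbouring channels, so the prediction continues the exponential exactly.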

[0186] In step 340, the segmented channel data is extrapolated using the extrapolation filter estimated in step 330. In this case, a 1-step linear prediction extrapolator is used to obtain the first forward-extrapolated sample $X_{N+1}$, using the extrapolation filter and the previous p samples, as shown below:

$$X_{N+1} = \sum_{j=1}^{p} X_{N+1-j}\, a_j,$$

[0187] where: $X_N$ is the current sample; $X_{N+1}$ is the forward-extrapolated sample; and p is the order of the extrapolation filter, $a_j$.

[0188] The forward extrapolation may be generalized as follows:

$$X_k = \sum_{j=1}^{p} X_{k-j}\, a_j, \quad k > N \quad \text{(Forward Extrapolation)},$$

[0189] where k is the index of the extrapolated sample, which increases by one with each forward extrapolation performed.

[0190] By reversing the filter order and taking the complex conjugate of the filter coefficients, it is possible to backward-extrapolate the value up to the k-th channel as a linear combination of the first p channels:

$$X_k = \sum_{j=1}^{p} X_{k+j}\, a_j^{*}, \quad k < N \quad \text{(Backward Extrapolation)}.$$

[0191] Using both the forward and backward extrapolation formulae, it is possible to fully extrapolate the segmented channel data. Steps 330 and 340 are repeated for each axial segment of the segmented channel data.
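The forward and backward formulae can be sketched together as follows. This is an illustrative sketch only: the function name `extrapolate`, the symmetric split of new samples between the two sides, and the hand-chosen order-2 coefficients (exact for a noise-free sum of two unit-modulus complex exponentials) are assumptions, not values from the disclosure.

```python
import cmath

def extrapolate(x, a, n_forward, n_backward):
    """Extend x on both sides using prediction coefficients a_1..a_p."""
    p = len(a)
    data = list(x)
    for _ in range(n_forward):
        # X_k = sum_j X_{k-j} a_j, using the p most recent samples
        data.append(sum(data[-j] * a[j - 1] for j in range(1, p + 1)))
    for _ in range(n_backward):
        # X_k = sum_j X_{k+j} conj(a_j), using the p earliest samples
        data.insert(0, sum(data[j - 1] * a[j - 1].conjugate()
                           for j in range(1, p + 1)))
    return data

def true_signal(n):
    """Noise-free two-component test signal at channel index n."""
    return cmath.exp(0.2j * n) + 0.5 * cmath.exp(-0.5j * n)

# 32 measured channels, extrapolated by a factor of 2 to 64 channels
z1, z2 = cmath.exp(0.2j), cmath.exp(-0.5j)
a = [z1 + z2, -z1 * z2]          # exact order-2 predictor for this signal
measured = [true_signal(n) for n in range(32)]
extended = extrapolate(measured, a, n_forward=16, n_backward=16)

# extended[i] corresponds to channel index i - 16
max_error = max(abs(extended[i] - true_signal(i - 16)) for i in range(64))
```

In practice the coefficients would come from an estimator such as the Burg technique rather than being known in advance, and the prediction error would grow with the distance from the measured aperture.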

[0192] In step 350, the fully extrapolated channel data segments are summed to obtain the beamsum signal and generate the final ultrasound image.

[0193] FIG. 5 shows an illustration 400 of an embodiment of the method of FIG. 4.

[0194] In step 410, channel data is obtained by way of an ultrasonic probe. The plot shows signal intensity, by way of the shading, for each channel at a given axial depth. In the plots of steps 410, 440, 450 and 460, the horizontal axis represents the channel being measured and the vertical axis represents the axial depth of the measured channel data, wherein the axial depth is inversely proportional to the height of the vertical axis. In the plots of steps 420 and 430, the horizontal axis represents the channel being measured and the vertical axis represents the temporal frequency of the channel data.

[0195] In step 420, a fast Fourier transform (FFT) is applied to the channel data, thereby transforming the channel data into the temporal frequency domain. In this case, the extrapolation filter is also estimated in the temporal frequency domain. The estimation is once again performed by the Burg technique.

[0196] In step 430, the estimated extrapolation filter is used to extrapolate the temporal frequency domain channel data beyond the available aperture. The extrapolated data is highlighted by the boxes to the right, representing the forward extrapolated channel data, and the left, representing the backward extrapolated channel data, of the plot.

[0197] In step 440, an inverse Fourier transform is applied to the extrapolated temporal frequency channel data, thereby generating spatial channel data. As can be seen from the plot, the channel data now covers a wider aperture than in step 410.

[0198] In step 450, the axial window is moved to a new axial segment and steps 420 to 440 are repeated.

[0199] In step 460, the fully extrapolated channel data is obtained. This may then be used to generate the final ultrasound image.
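Steps 420 to 440 for a single axial window can be sketched as below. To keep the example short it uses a direct DFT and an order-1 Burg estimate, which is a simplification of the order-p filter described above; all function names and the separable test window are assumptions made for illustration.

```python
import cmath
import math

def dft(seq, inverse=False):
    """Direct DFT of seq; the inverse includes the 1/n normalisation."""
    n = len(seq)
    sign = 1 if inverse else -1
    out = [sum(seq[t] * cmath.exp(sign * 2j * math.pi * f * t / n)
               for t in range(n)) for f in range(n)]
    return [v / n for v in out] if inverse else out

def order1_filter(x):
    """Burg estimate of a single prediction coefficient a_1."""
    pairs = [(x[n + 1], x[n]) for n in range(len(x) - 1)]
    num = 2 * sum(f * b.conjugate() for f, b in pairs)
    den = sum(abs(f) ** 2 + abs(b) ** 2 for f, b in pairs)
    return num / den if den > 0 else 0j

def extrapolate_window(window, n_extra):
    """window[t][c] holds axial samples by channel; adds n_extra channels per side."""
    n_t, n_c = len(window), len(window[0])
    # Step 420: temporal DFT of every channel
    spectra = [dft([window[t][c] for t in range(n_t)]) for c in range(n_c)]
    wide = []
    for f in range(n_t):
        across = [spectra[c][f] for c in range(n_c)]
        a1 = order1_filter(across)
        # Step 430: extrapolate across channels at this temporal frequency
        for _ in range(n_extra):
            across.append(across[-1] * a1)
            across.insert(0, across[0] * a1.conjugate())
        wide.append(across)
    # Step 440: inverse DFT back to the axial (time) domain
    n_wide = n_c + 2 * n_extra
    cols = [dft([wide[f][c] for f in range(n_t)], inverse=True)
            for c in range(n_wide)]
    return [[cols[c][t] for c in range(n_wide)] for t in range(n_t)]

# Hypothetical window: 4 axial samples x 8 channels, one plane-wave-like echo
g = [1.0, 0.5, -0.25, 2.0]
window = [[g[t] * cmath.exp(0.4j * c) for c in range(8)] for t in range(4)]
wide_window = extrapolate_window(window, 4)
```

Step 450 would then repeat `extrapolate_window` for each axial segment, and the widened segments together form the fully extrapolated channel data of step 460.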

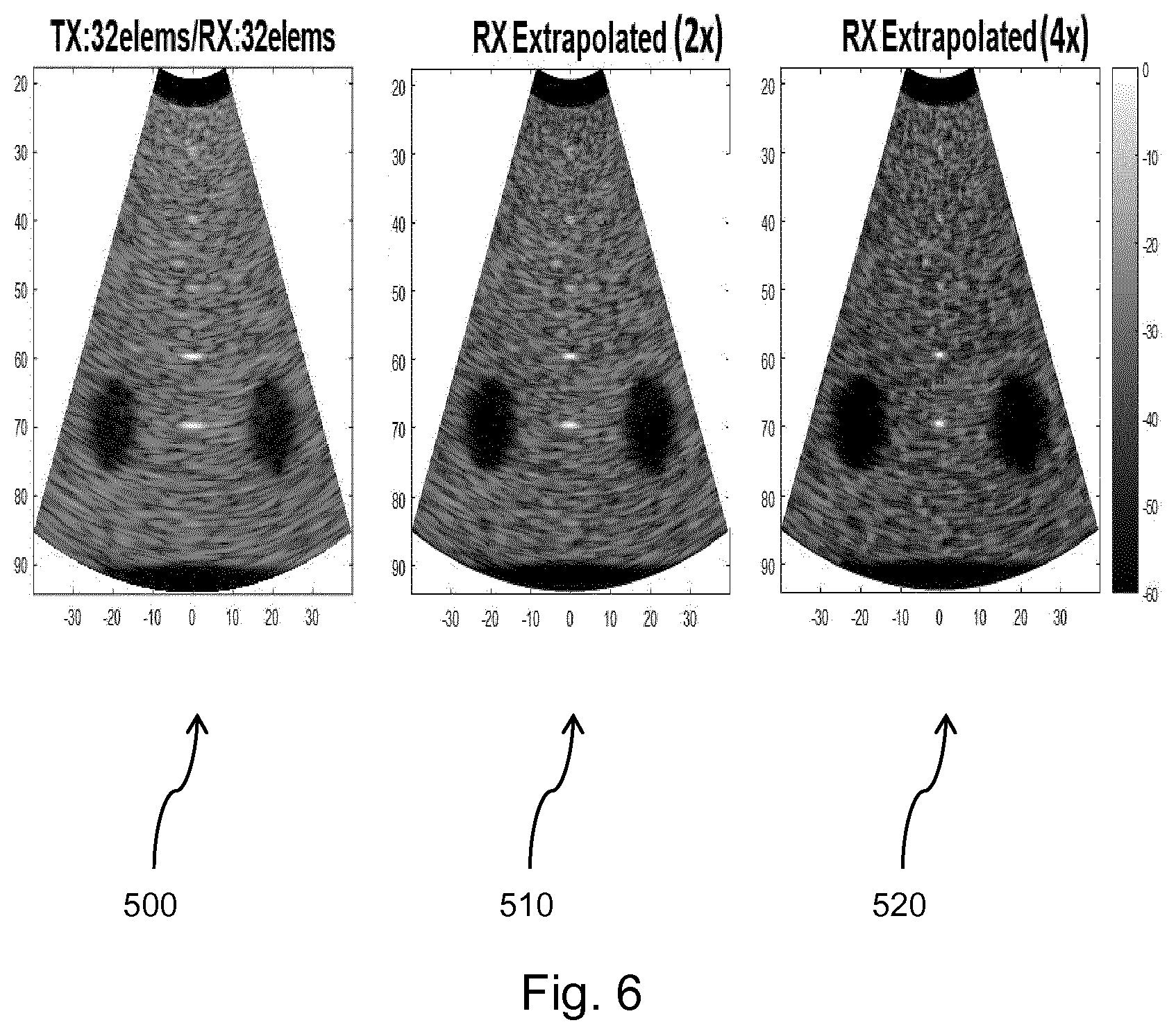

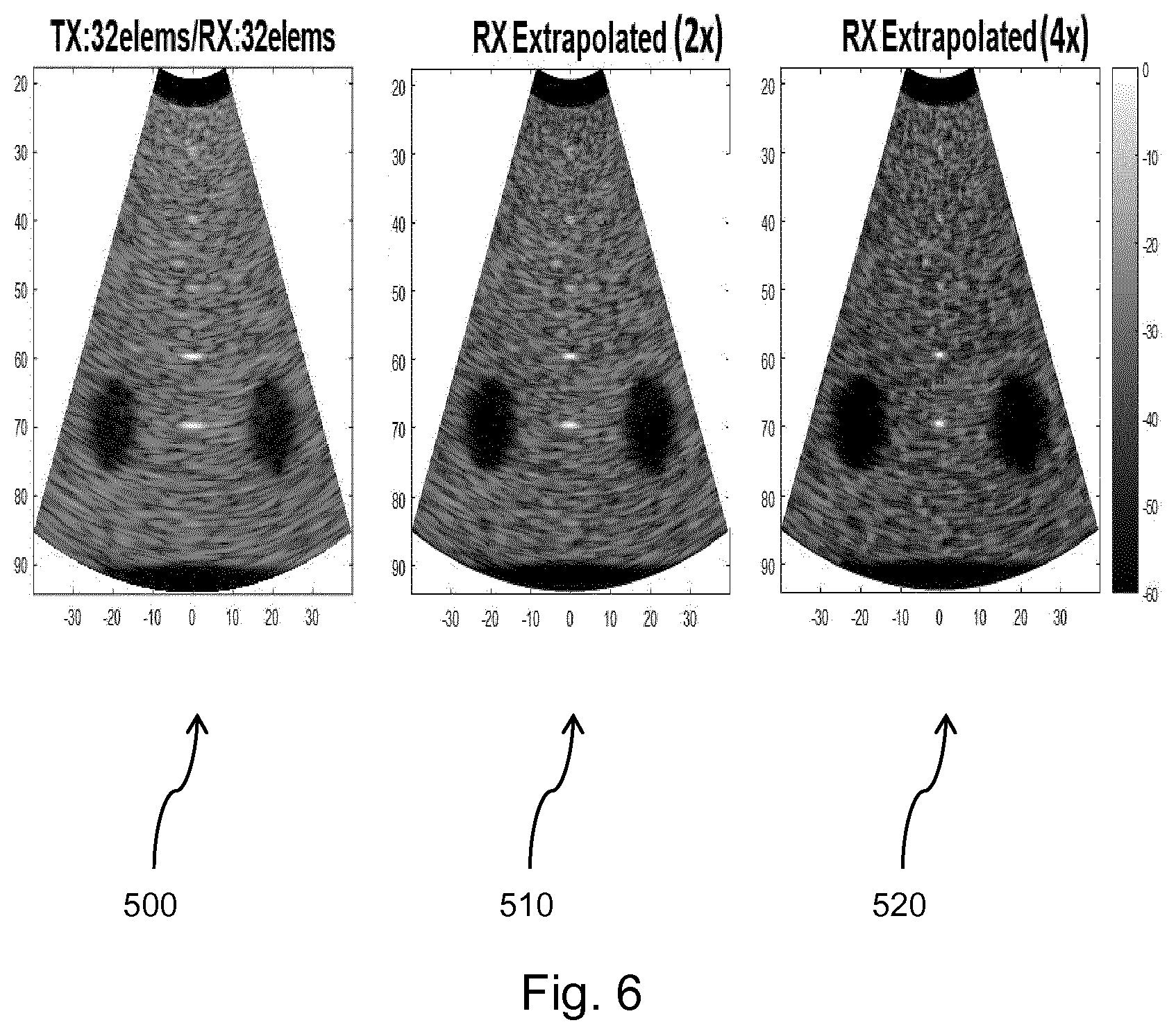

[0200] FIGS. 6 to 9 show examples of the implementation of embodiments of the method of FIG. 4.

[0201] FIG. 6 shows a comparison between an ultrasound image before and after the implementation of two embodiments of the method of FIG. 4. In FIGS. 6 to 8, the horizontal axes of the images represent the lateral position, measured in mm, of the signals and the vertical axes represent the axial position, measured in mm, of the signals. The gradient scales indicate the signal intensity at a given signal location.

[0202] The first image 500 shows a conventional delay and sum (DAS) beamformed image of a simulated phantom. In this case, a 32-element aperture was used in both transmit and receive steps. As can be seen from the image, the two simulated cysts introduce heavy scattering and cause a large amount of noise in the ultrasound image. This results in the cysts appearing poorly defined and unclear.

[0203] The second image 510 shows the same ultrasound image after the application of the aperture extrapolation technique described above. In this case, the receive aperture was extrapolated by a factor of 2 using an extrapolation filter of order 4. As can be seen from the image, the extrapolation has substantially improved the lateral resolution of the image whilst maintaining the quality of the speckle texture.

[0204] The third image 520 once again shows the same ultrasound image after the application of the aperture extrapolation technique described above; however, in this case, the receive aperture was extrapolated by a factor of 4 using an extrapolation filter of order 4. As can be seen from the image, the extrapolation by this additional factor has further increased the lateral resolution of the ultrasound image.

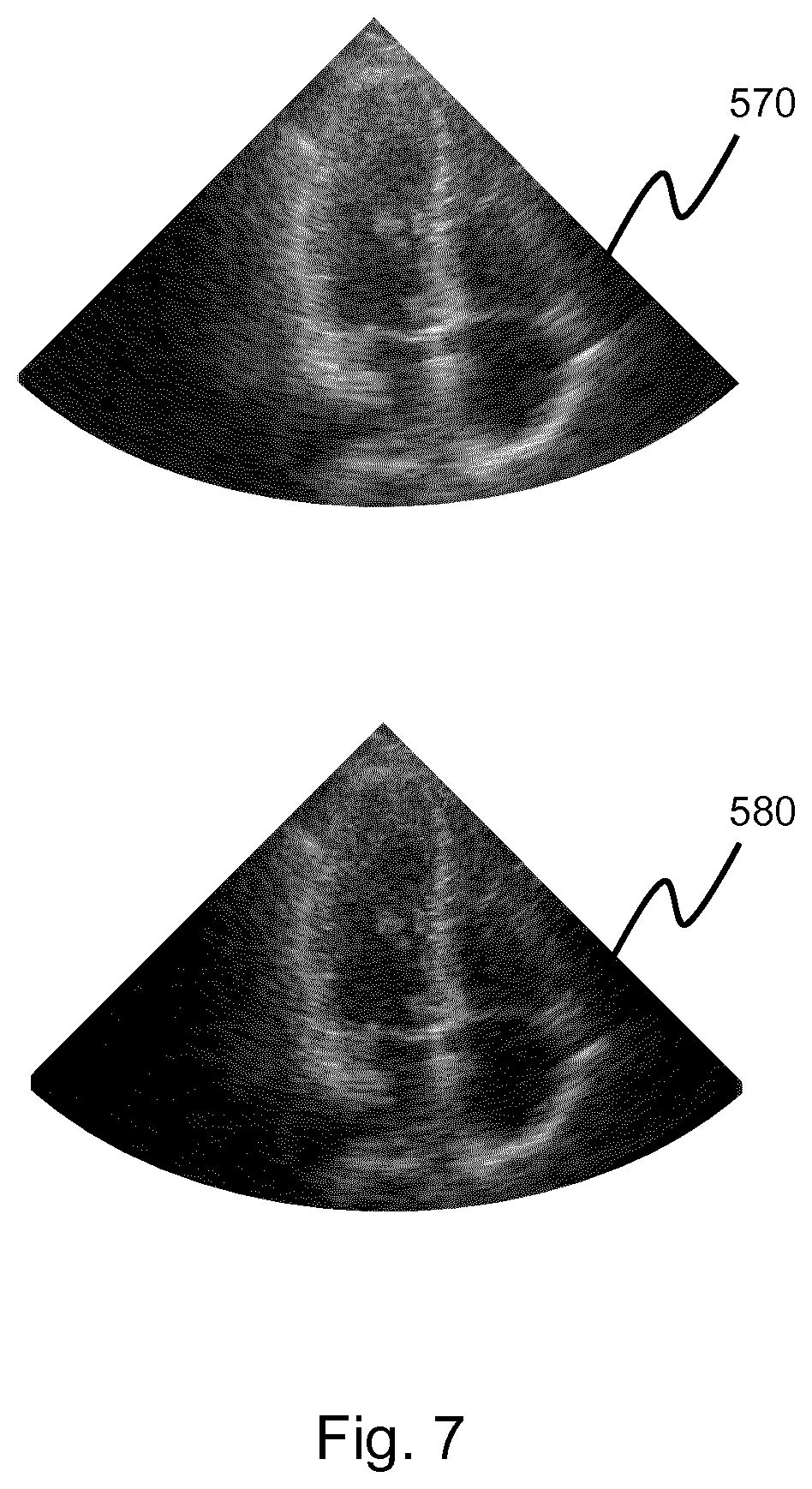

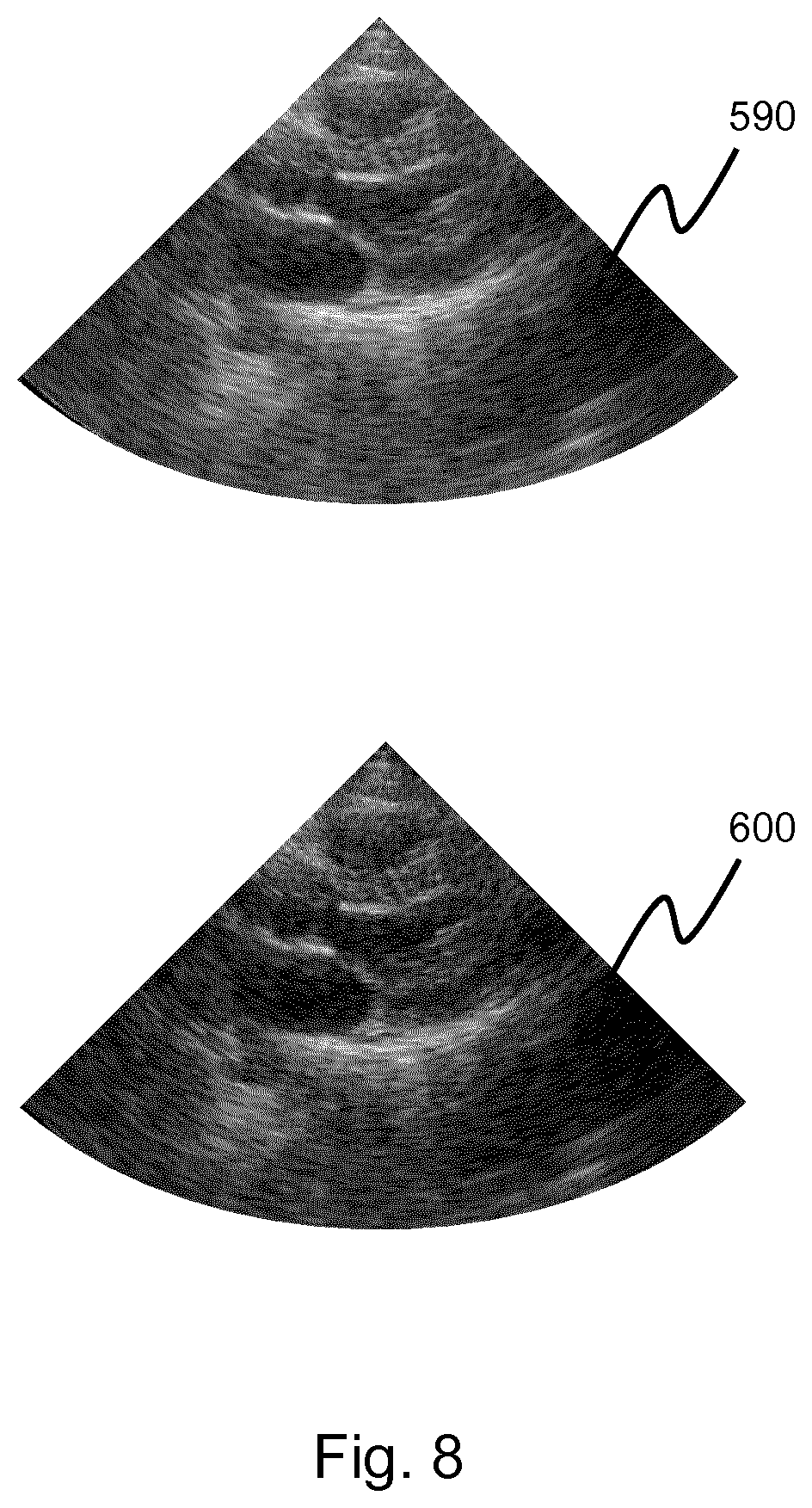

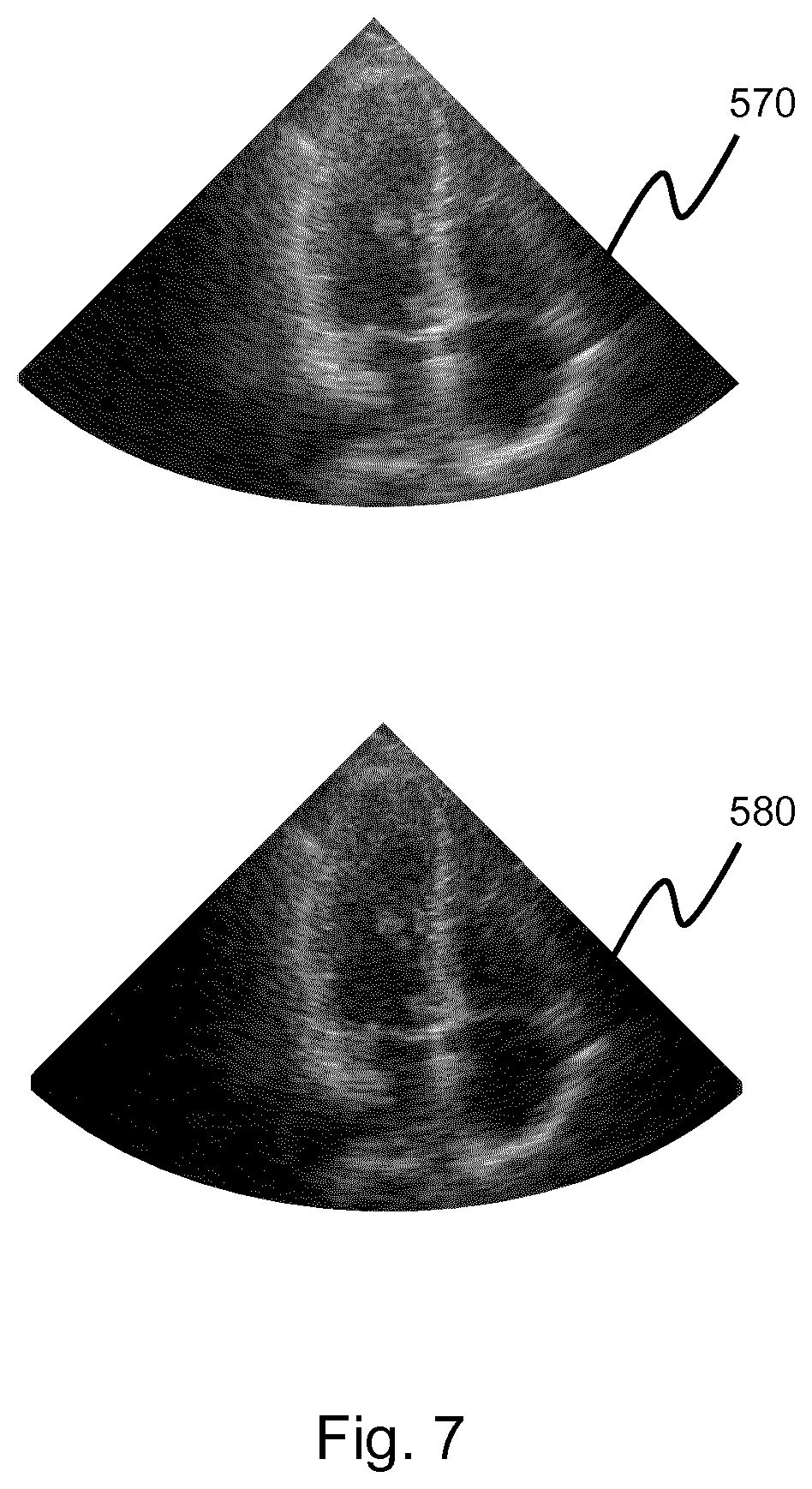

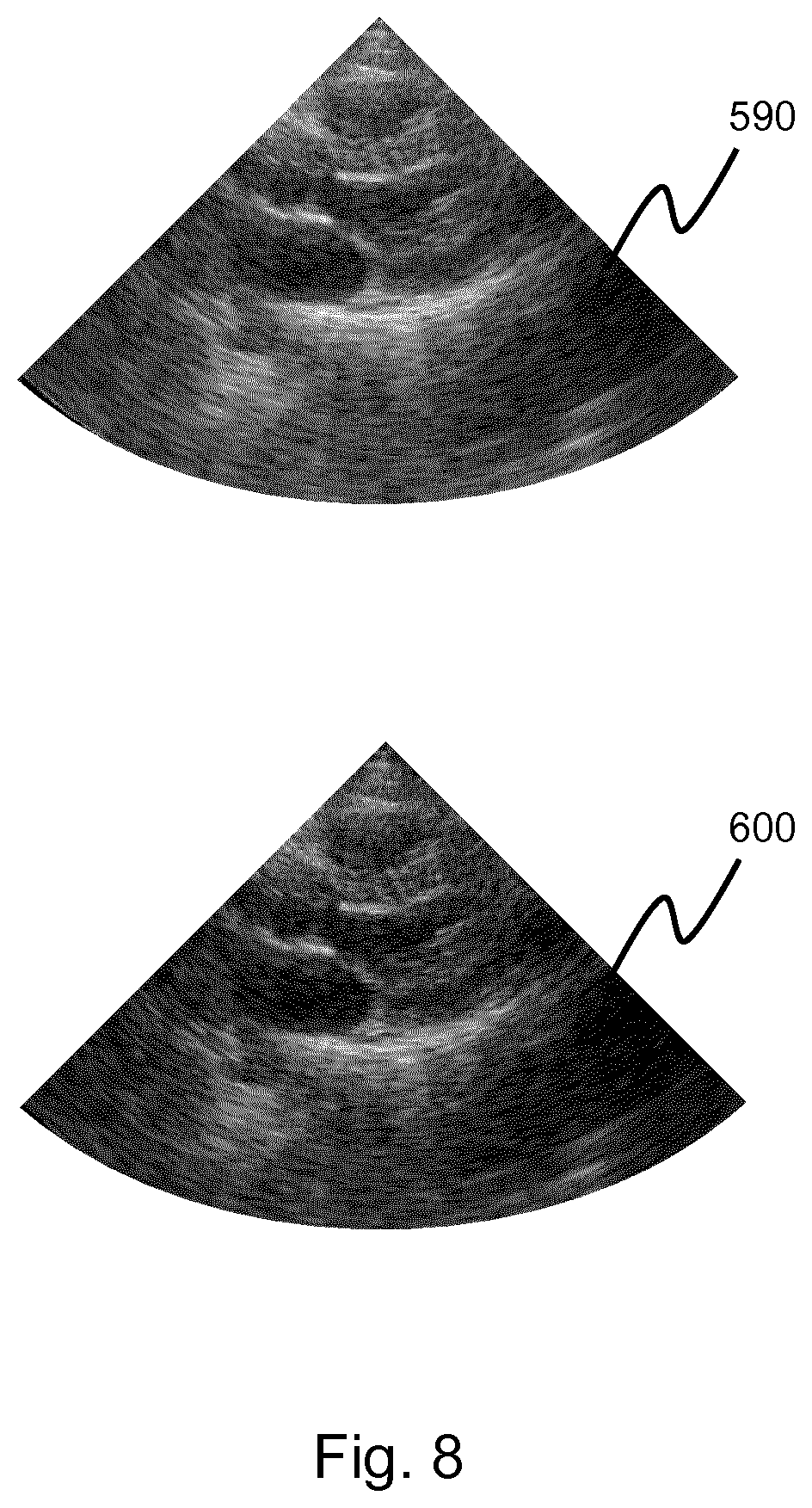

[0205] FIGS. 7 and 8 show a comparison between an ultrasound image before and after the application of an aperture extrapolation method as described above. The images are shown in the 60 dB dynamic range.

[0206] In both cases, the top images, 570 and 590, show a conventional DAS beamformed cardiac image. The bottom images, 580 and 600, show the ultrasound images after an aperture extrapolation by a factor of 8. In both Figures, it can be seen that the aperture extrapolation leads to an improvement in both lateral resolution and image contrast.

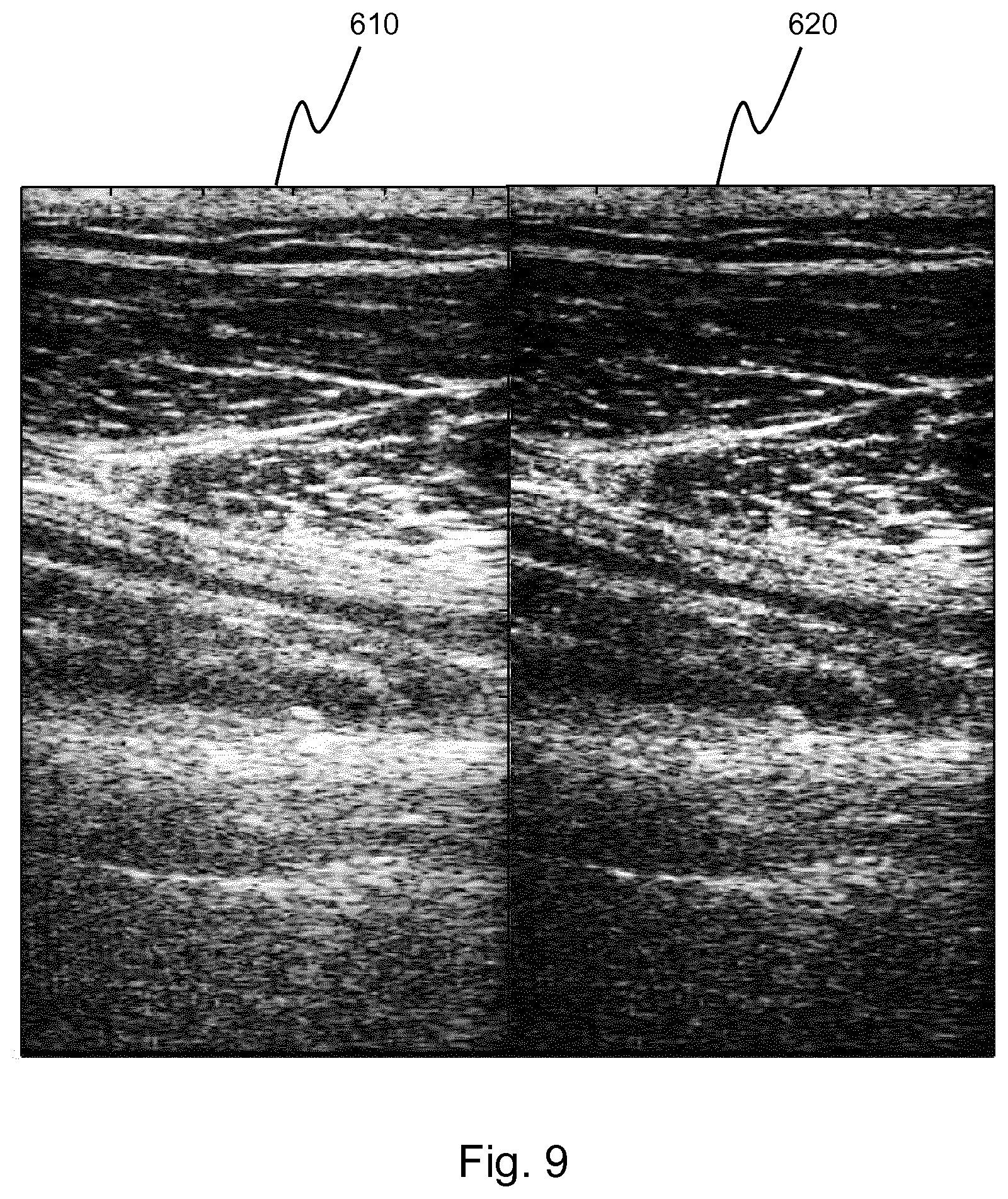

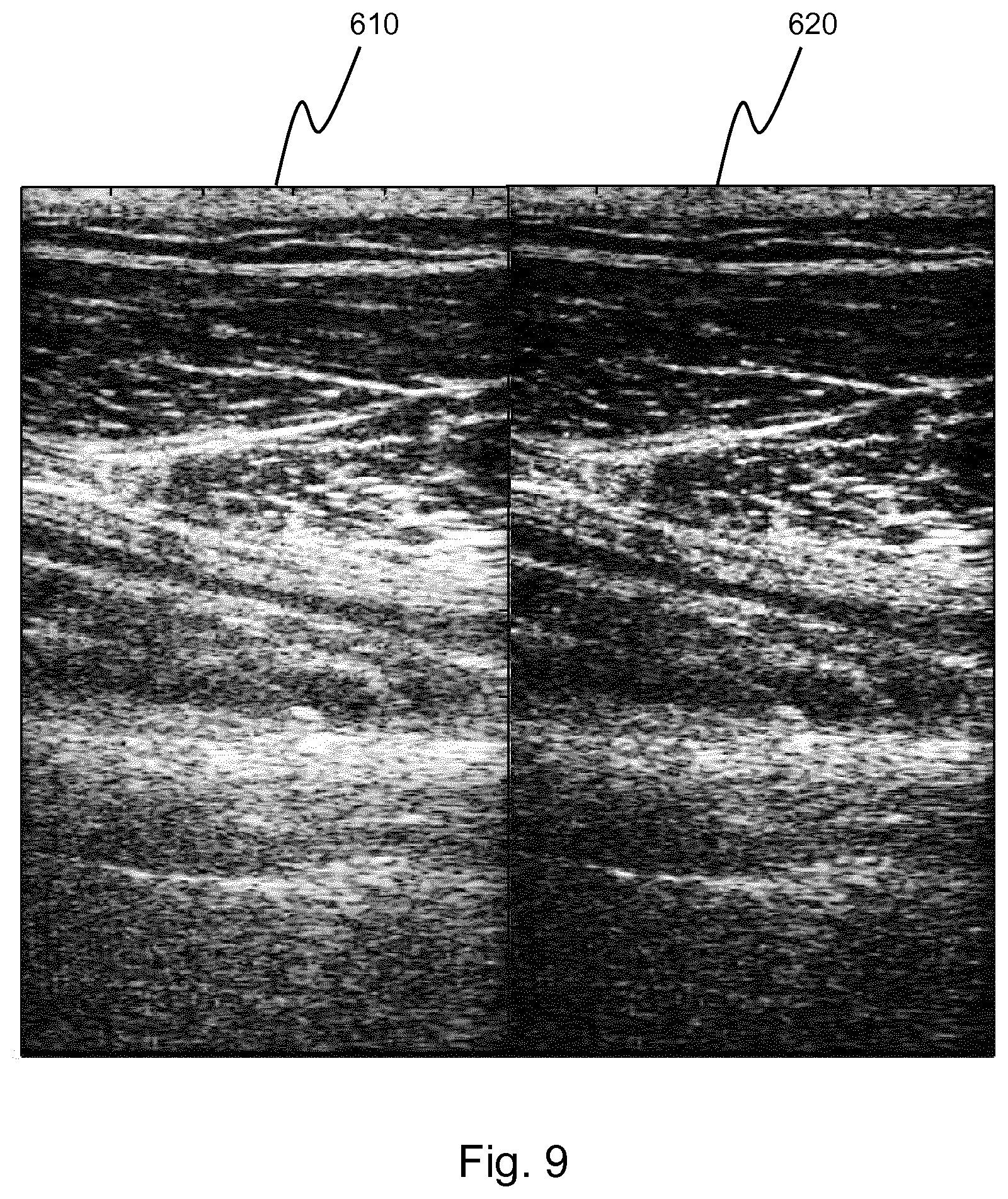

[0207] FIG. 9 shows a comparison between an ultrasound image of a leg before and after the application of an aperture extrapolation method as described above. The images are shown in the 60 dB dynamic range.

[0208] The first image 610 shows a conventional DAS beamformed ultrasound image. The second image 620 shows the same ultrasound image after the application of an aperture extrapolation method as described above. The aperture was extrapolated by a factor of 8. As can be seen from the second image, the aperture extrapolation leads to an improvement in the lateral resolution of the ultrasound image.

[0209] FIGS. 6 to 9 show an improvement in lateral resolution due to the aperture extrapolation method across a wide variety of imaging scenarios.

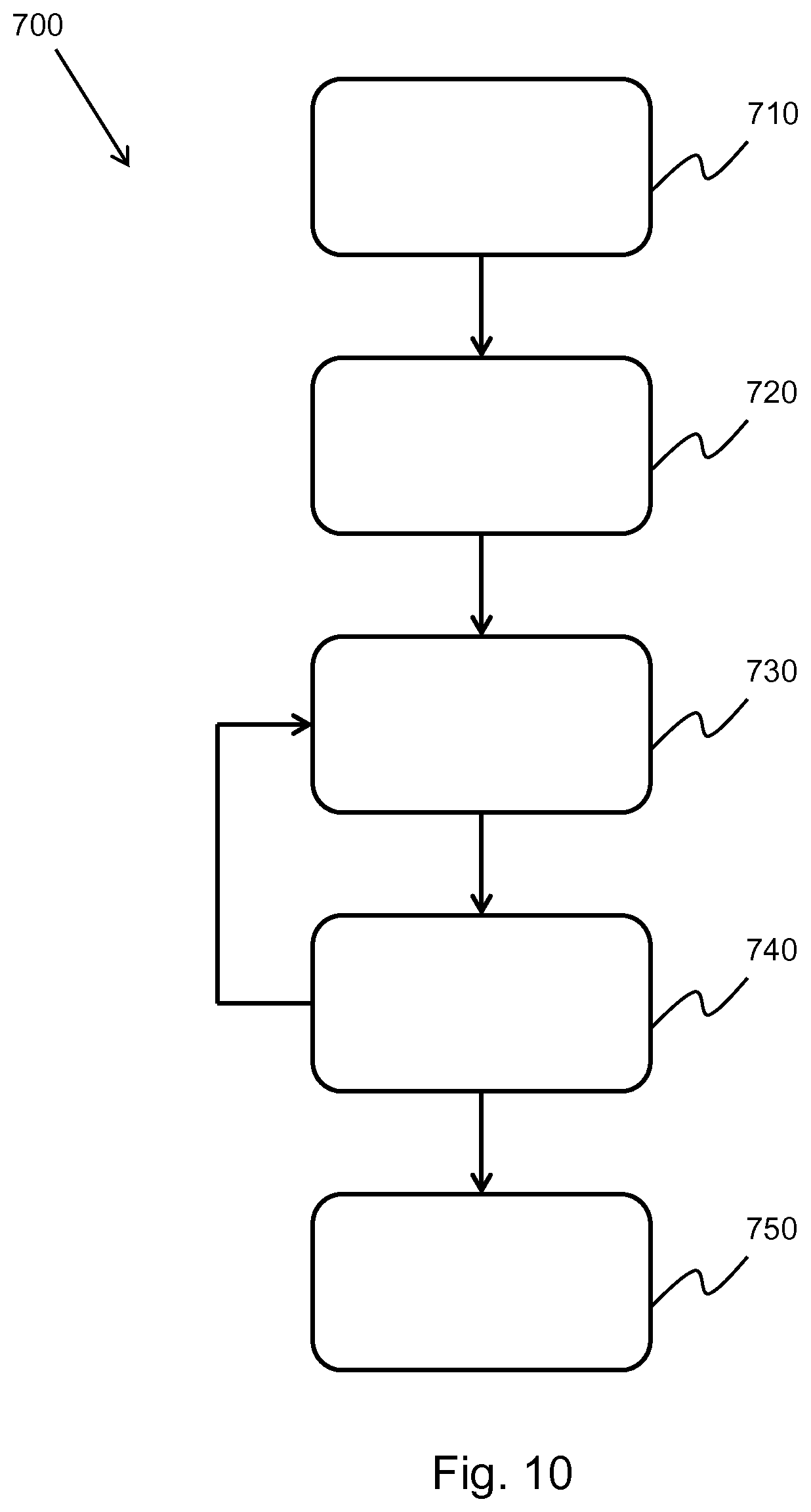

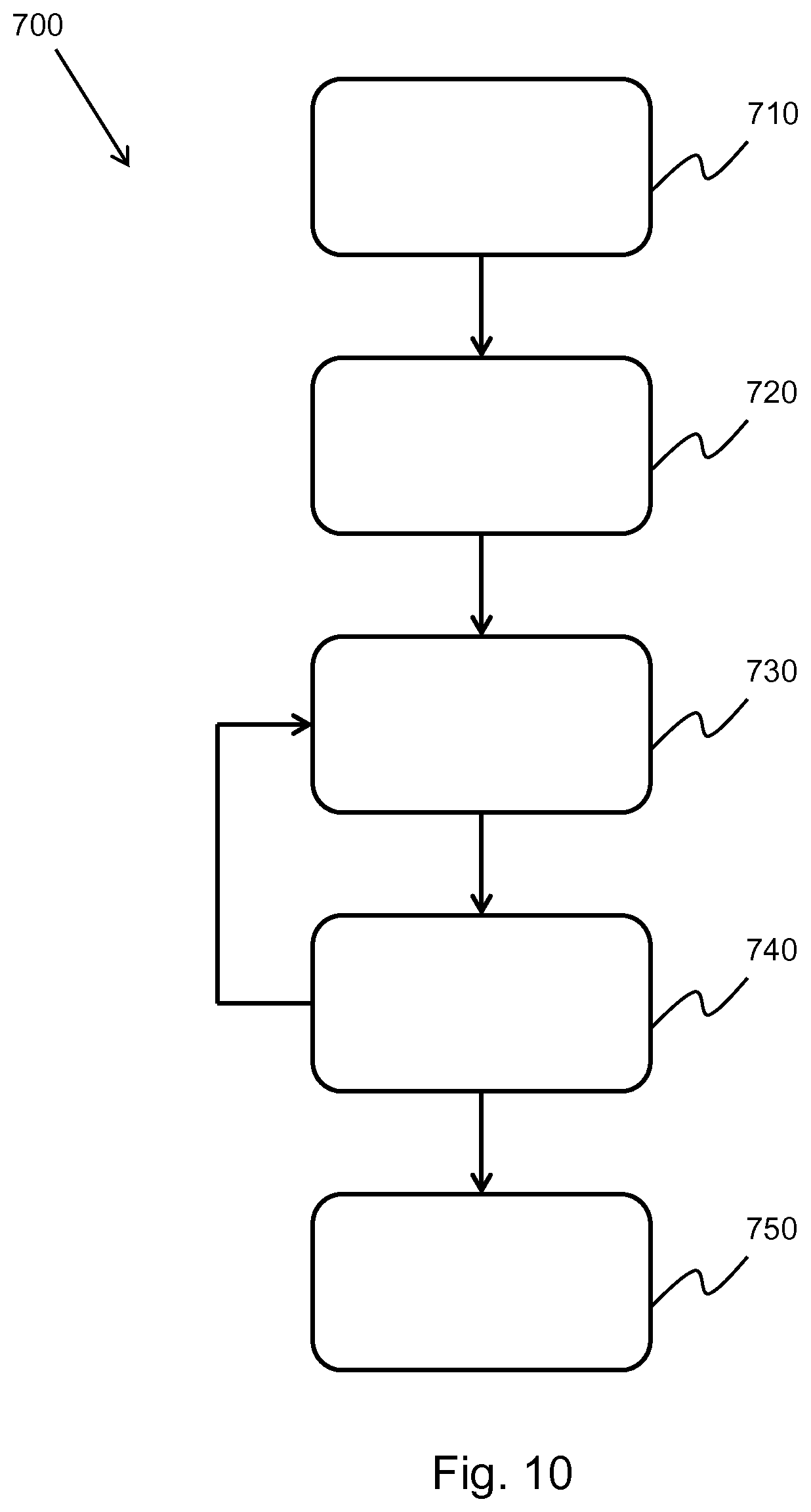

[0210] FIG. 10 shows a method 700 for applying transmit extrapolation to an ultrasound image.

[0211] In step 710, beam-summed data is obtained by way of an ultrasonic probe, wherein the beam-summed data comprises a plurality of steering angles.

[0212] In step 720, the beam-summed data is segmented for each steering angle based on the axial depth of the beam-summed data.

[0213] In step 730, an extrapolation filter of order p, $a_j$ where $1 \le j \le p$, is estimated based on the segmented beam-summed data. In this case, the extrapolation filter may be estimated by way of the well-known Burg technique for autoregressive (AR) parameter estimation.

[0214] In step 740, the segmented beam-summed data is extrapolated using the extrapolation filter estimated in step 730. In this case, a 1-step linear prediction extrapolator is used to obtain the first forward-extrapolated sample $X_{N+1}$, using the extrapolation filter and the previous p samples of the available transmit angles, as shown below:

$$X_{N+1} = \sum_{j=1}^{p} X_{N+1-j}\, a_j,$$

[0215] where: $X_N$ is the current sample; $X_{N+1}$ is the forward-extrapolated sample; and p is the order of the extrapolation filter, $a_j$.

[0216] The forward extrapolation may be generalized as follows:

$$X_k = \sum_{j=1}^{p} X_{k-j}\, a_j, \quad k > N \quad \text{(Forward Extrapolation)},$$

[0217] where k is the index of the extrapolated sample, which increases by one with each forward extrapolation performed.

[0218] By reversing the filter order and taking the complex conjugate of the filter coefficients, it is possible to backward-extrapolate the value up to the k-th transmit angle as a linear combination of the first p transmit angles:

$$X_k = \sum_{j=1}^{p} X_{k+j}\, a_j^{*}, \quad k < N \quad \text{(Backward Extrapolation)}.$$

[0219] Using both the forward and backward extrapolation formulae, it is possible to fully extrapolate the segmented beam-summed data. Steps 730 and 740 are repeated for each axial segment of the segmented beam-summed data.

[0220] In step 750, the fully extrapolated beam-summed data segments are coherently compounded to obtain the final beamsum signal and generate the final ultrasound image.

[0221] The transmit scheme used in the above method may be: planar; diverging; single-element; or focused.

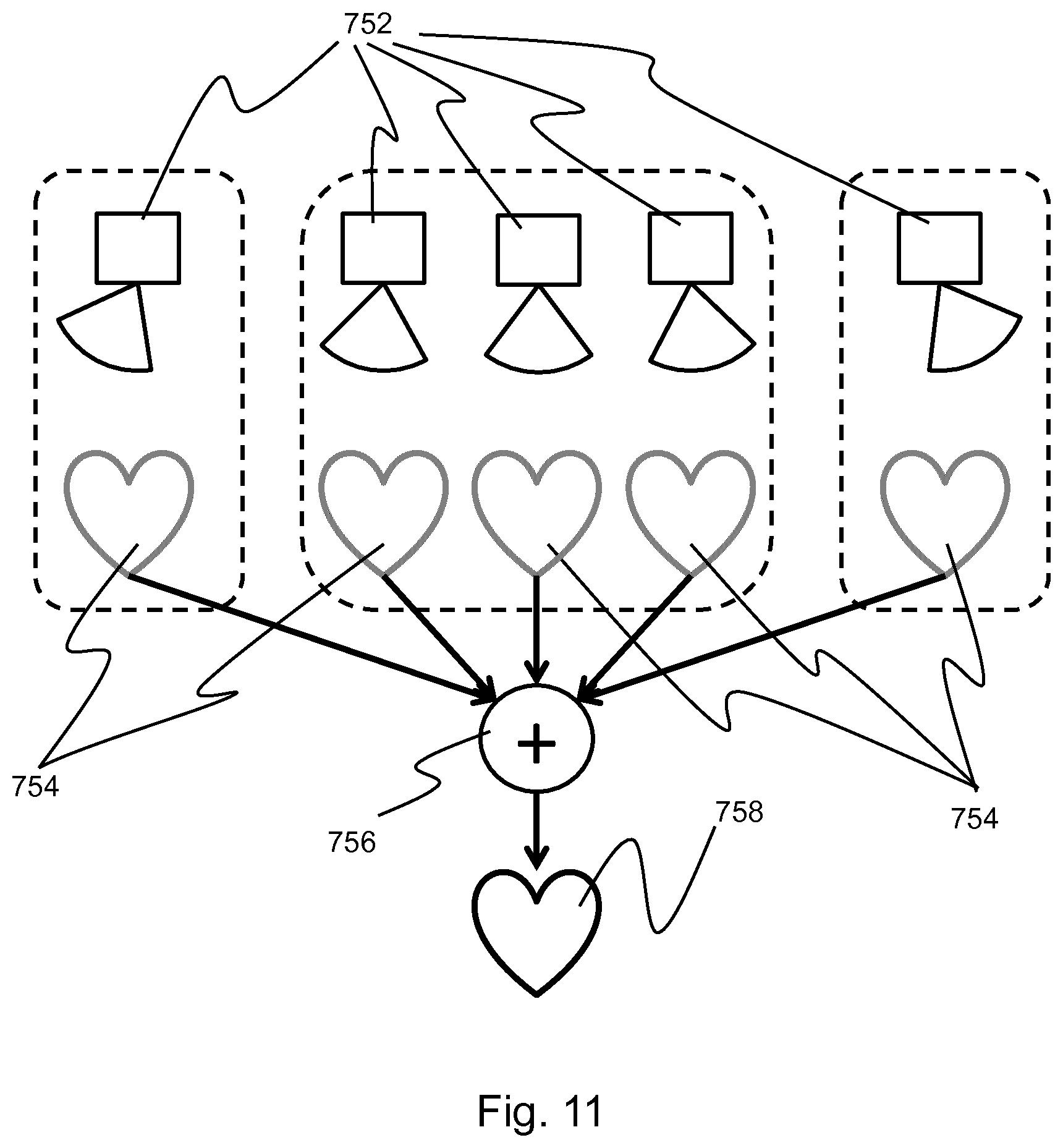

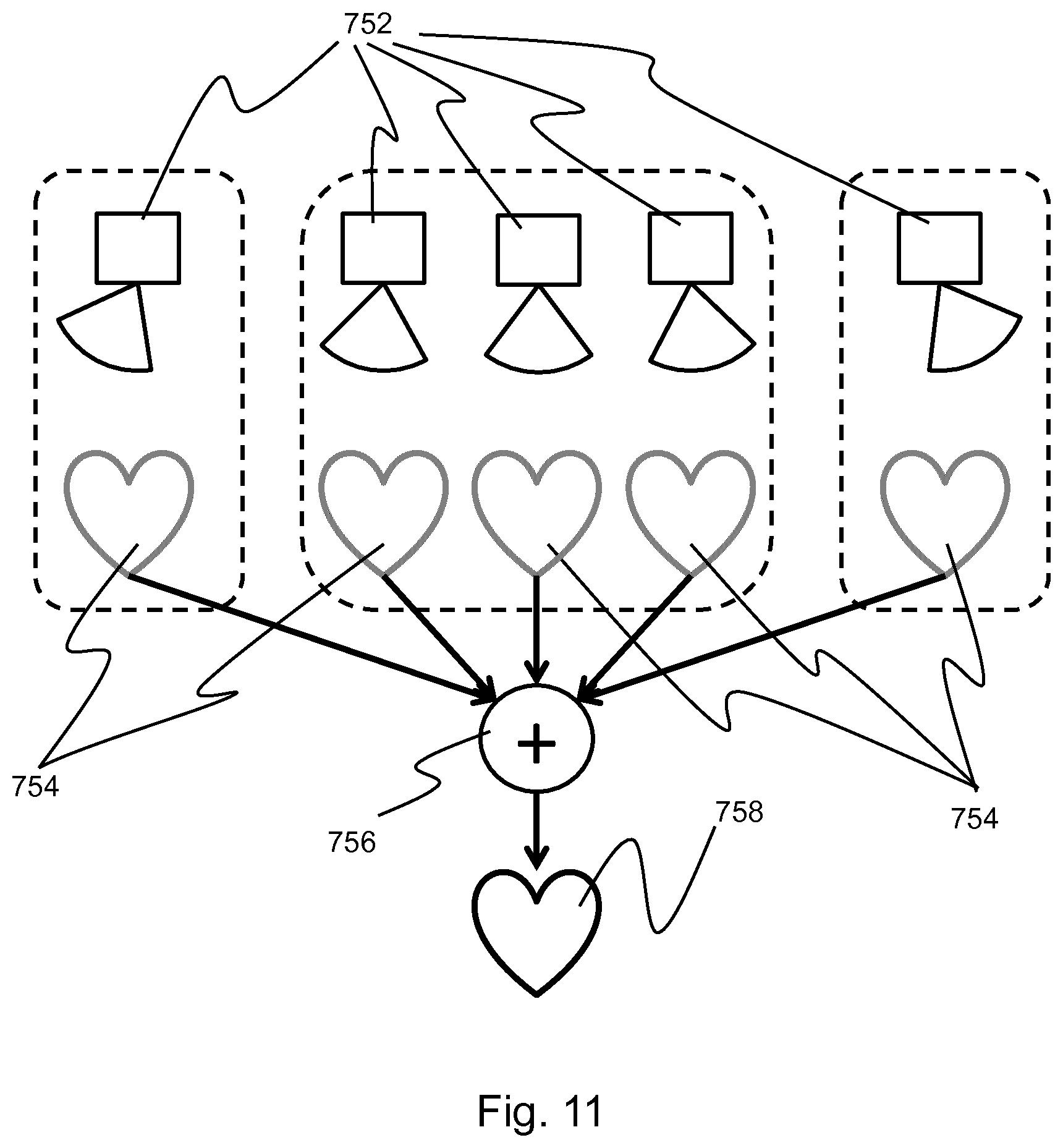

[0222] FIG. 11 shows an illustration of the method of FIG. 10. For each of a plurality of steering angles 752, a low contrast ultrasound image 754 is obtained. By coherently compounding 756 the plurality of low contrast ultrasound images, it is possible to generate a single high contrast ultrasound image 758. The improvement in image contrast is proportional to the number of steering angles used, and so the number of low contrast ultrasound images coherently compounded to generate the high contrast ultrasound image; however, a large number of initial steering angles may result in a decreased computational performance of the ultrasound system. By extrapolating from a small number of initial steering angles, it is possible to increase the image contrast without significantly degrading the performance of the ultrasound system.
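The effect of coherent compounding on contrast can be illustrated with an idealised single-pixel example. The sketch below is an assumption-laden toy model, not data from the disclosure: the on-axis echo is taken to have the same phase for every steering angle, while the off-axis clutter is given a phase that rotates evenly from angle to angle.

```python
import cmath
import math

def coherent_compound(per_angle_values):
    """Sum the complex beamsum values of one pixel across steering angles."""
    return sum(per_angle_values)

n_angles = 6

# On-axis echo: identical phase at every steering angle (idealised)
signal = [1 + 0j for _ in range(n_angles)]

# Off-axis clutter: phase rotates by 2*pi/n_angles per angle (idealised)
clutter = [cmath.exp(2j * math.pi * n / n_angles) for n in range(n_angles)]

signal_sum = abs(coherent_compound(signal))    # adds up to n_angles
clutter_sum = abs(coherent_compound(clutter))  # cancels almost completely
```

Because the summation is performed on complex values before detection, phase-aligned echoes add in amplitude while misaligned contributions tend to cancel, which is why adding more angles, measured or extrapolated, raises the contrast.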

[0223] FIG. 12 shows a comparison between an ultrasound image before and after the implementation of the transmit extrapolation and a reference image.

[0224] The first image 760 shows a reference image of a simulated phantom containing a 40 mm diameter anechoic cyst lesion. The phantom contains 4 strong point scatterers in the speckle background and 4 weak point scatterers inside of the anechoic lesion. The image was formed using 24 diverging waves.

[0225] The second image 770 shows an image of the same simulated phantom formed from only the 6 central angles of the 24 steering angles of the reference image. The 24 steering angles are evenly spaced between -45° and 45°, meaning that the central 6 steering angles are separated by an angle of 3.91°. It is clear to see from this image that the reduced number of steering angles results in a lower image contrast.

[0226] The third image 780 shows the result of applying the transmit extrapolation described above to the second image 770. In this case, an extrapolation filter of order 3 was used to extrapolate the number of transmit angles by a factor of 4. The image is generated by coherently compounding the data from the initial 6 transmit angles with the 18 predicted beamsum data from the extrapolation. As can be seen from the third image, the contrast is significantly improved from the second image and is comparable to that of the reference image. In addition, the contrast enhancement does not result in any artifacts suppressing the weak point scatterers inside the anechoic lesion.
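The angle arithmetic of this example can be checked with a few lines. The function name `steering_angles` is hypothetical; the numbers (24 angles between -45° and 45°, the central 6 retained, extrapolation by a factor of 4) are those stated above.

```python
def steering_angles(n_angles, min_deg, max_deg):
    """Evenly spaced steering angles and the spacing between neighbours."""
    step = (max_deg - min_deg) / (n_angles - 1)
    return [min_deg + i * step for i in range(n_angles)], step

angles, step = steering_angles(24, -45.0, 45.0)

# Keep the 6 central angles of the 24
n_measured = 6
start = (len(angles) - n_measured) // 2
central = angles[start:start + n_measured]

# Extrapolating the number of transmit angles by a factor of 4
n_total = n_measured * 4
n_predicted = n_total - n_measured
```

Here `step` is 90/23, or about 3.91°, the central angles are symmetric about 0°, and `n_predicted` is 18, matching the 18 predicted beamsum data sets that are compounded with the 6 measured ones.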

[0227] FIG. 13 shows a comparison between an ultrasound image before and after the implementation of the transmit extrapolation and a reference image.

[0228] The first image 790 shows a reference image of an apical 4-chamber view of the heart from a patient. The image was formed using 24 diverging waves.

[0229] The second image 800 shows an image of the same data set formed with only 6 diverging waves. As before, the 6 diverging waves were selected as the central 6 of the 24 steering angles. As can be seen from the image, the image contrast of the second image is significantly lower than that of the first image.

[0230] The third image 810 shows the result of applying a transmit extrapolation to the second image as described above. In this case, an extrapolation filter of order 3 was used to extrapolate the number of steering angles by a factor of 4. Once again, the image is generated by coherently compounding the data from the initial 6 transmit angles with the 18 predicted beamsum data from the extrapolation. It is clear to see from the third image that the image contrast has been improved by the transmit extrapolation.

[0231] FIG. 14 shows a comparison between an ultrasound image before and after the implementation of the transmit extrapolation and a reference image.

[0232] The first image 820 shows a reference image of an apical 4-chamber view of the heart from a different patient to FIG. 13. The image was formed using 24 diverging waves. Unlike FIG. 13, the initial reference image for this patient has a poor image contrast.

[0233] The second image 830 shows an image of the same data set formed with only 6 diverging waves. As before, the 6 diverging waves were selected as the central 6 of the 24 steering angles. As can be seen from the image, the image contrast of the second image is significantly lower than that of the first image, which in this case renders a lot of the finer detail extremely unclear.

[0234] The third image 840 shows the result of applying a transmit extrapolation to the second image as described above. In this case, an extrapolation filter of order 3 was used to extrapolate the number of steering angles by a factor of 4. Once again, the image is generated by coherently compounding the data from the initial 6 transmit angles with the 18 predicted beamsum data from the extrapolation. It is clear to see from the third image that the image contrast has been improved by the transmit extrapolation.

[0235] It should be noted that any combination of extrapolation factor and extrapolation filter order may be used in any of the methods described above. In addition, any number of steering angles may be selected in the final method.

[0236] In some ultrasound systems, a combination of the above methods may be employed in order to further increase the image quality of the final ultrasound image.

[0237] For example, the aperture extrapolation method, described with reference to FIGS. 4 to 9, may be performed on a set of channel data followed by the transmit extrapolation method, described with reference to FIGS. 10 to 14. In this case, the aperture extrapolation may be performed for each transmit signal of the ultrasonic probe and the extrapolated channel data summed over the aperture, thereby generating a set of aperture extrapolated channel data. Following the summation, the transmit extrapolation may be performed on the aperture extrapolated channel data. In this way, the lateral resolution and image contrast of the final ultrasound image may be improved. In addition, for PWI and DWI ultrasound systems, the use of the transmit extrapolation method may allow for an increase in the frame rate of the ultrasound image.

[0238] In another example, the transmit extrapolation method may be performed before the aperture extrapolation method. In this case, the transmit extrapolation may be performed for each transducer element of the ultrasonic probe and the extrapolated channel data summed over all transmit angles, thereby generating a set of transmit extrapolated channel data. The aperture extrapolation method may then be performed over the transmit extrapolated channel data.

[0239] In both cases, the selective attenuation method, described with reference to FIGS. 2 and 3, may be employed to reduce the amount of off-axis clutter in the final ultrasound image. As this method simply attenuates the signals possessing a high spatial frequency, it may be performed in any order with the aperture extrapolation and transmit extrapolation methods. Alternatively, the selective attenuation method may be combined with only the aperture extrapolation method or the transmit extrapolation method.

[0240] It should be noted that the signals used to form the channel data and the beam-summed data may be geometrically aligned prior to performing the methods described above.

[0241] As discussed above, embodiments make use of a controller for performing the data processing steps.

[0242] The controller can be implemented in numerous ways, with software and/or hardware, to perform the various functions required. A processor is one example of a controller which employs one or more microprocessors that may be programmed using software (e.g., microcode) to perform the required functions. A controller may however be implemented with or without employing a processor, and also may be implemented as a combination of dedicated hardware to perform some functions and a processor (e.g., one or more programmed microprocessors and associated circuitry) to perform other functions.

[0243] Examples of controller components that may be employed in various embodiments of the present disclosure include, but are not limited to, conventional microprocessors, application specific integrated circuits (ASICs), and field-programmable gate arrays (FPGAs).

[0244] In various implementations, a processor or controller may be associated with one or more storage media, such as volatile and non-volatile computer memory (e.g., RAM, PROM, EPROM, and EEPROM). The storage media may be encoded with one or more programs that, when executed on one or more processors and/or controllers, perform the required functions. Various storage media may be fixed within a processor or controller or may be transportable, such that the one or more programs stored thereon can be loaded into a processor or controller.

[0245] Other variations to the disclosed embodiments can be understood and effected by those skilled in the art in practicing the claimed invention, from a study of the drawings, the disclosure, and the appended claims. In the claims, the word "comprising" does not exclude other elements or steps, and the indefinite article "a" or "an" does not exclude a plurality. The mere fact that certain measures are recited in mutually different dependent claims does not indicate that a combination of these measures cannot be used to advantage. Any reference signs in the claims should not be construed as limiting the scope.

* * * * *

