Security System for Detecting Hazardous Events and Occupants in a Building

Coles; Aaron Brent

U.S. patent application number 16/740124 was filed with the patent office on 2020-01-10 and published on 2020-07-16 for security system for detecting hazardous events and occupants in a building. The applicant listed for this patent is DRIFT NET. Invention is credited to Aaron Brent Coles.

| Application Number | 20200225313 16/740124 |

| Family ID | 69650693 |

| Filed Date | 2020-07-16 |

| United States Patent Application | 20200225313 |

| Kind Code | A1 |

| Coles; Aaron Brent | July 16, 2020 |

Security System for Detecting Hazardous Events and Occupants in a Building

Abstract

A gunshot detection/security system for detecting dangerous events in a school or other building includes one or several pods placed throughout the building premises. Each pod includes a camera, a thermal camera, and an acoustic sensor for detecting video, images, heat signatures, and sound within a detection area for the respective pod. The sensor data is then analyzed to identify a dangerous event in the building, and provide alerts regarding the dangerous event via the pods or client computing devices of students/occupants in the building, administrators, parents, and emergency responders. A server computing device generates digital maps of the interior and exterior of the building having location information regarding the occupants of the building and a danger zone indicating the epicenter for the dangerous event.

| Inventors: | Coles; Aaron Brent (St. Charles, IL) |

| Applicant: | DRIFT NET |

| Family ID: | 69650693 |

| Appl. No.: | 16/740124 |

| Filed: | January 10, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62804511 | Feb 12, 2019 | |
| 62796224 | Jan 24, 2019 | |
| 62791459 | Jan 11, 2019 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G08B 29/188 20130101; H04N 7/181 20130101; G08B 5/38 20130101; H04N 7/188 20130101; G08B 13/19619 20130101; G08B 13/1672 20130101; G08B 25/006 20130101; G08B 25/016 20130101; G08B 13/19656 20130101; G06Q 90/205 20130101; G08B 13/19697 20130101; G08B 29/183 20130101; G01S 5/22 20130101; G08B 25/14 20130101 |

| International Class: | G01S 5/22 20060101 G01S005/22; H04N 7/18 20060101 H04N007/18; G08B 13/16 20060101 G08B013/16; G08B 13/196 20060101 G08B013/196; G08B 25/14 20060101 G08B025/14; G08B 25/01 20060101 G08B025/01 |

Claims

1. A security system comprising: a multi-sensor device including: a camera configured to capture images within a detection area of the multi-sensor device; and a thermal camera configured to detect heat signatures from objects within the detection area; and a computing device including: one or more processors; and a non-transitory computer-readable memory coupled to the one or more processors and storing instructions thereon that, when executed by the one or more processors, cause the computing device to: receive image data, and heat signatures from the camera, and the thermal camera, respectively; analyze the image data, and heat signatures to identify a dangerous event at the detection area; and provide an alert indicating the dangerous event at the detection area.

2. The security system of claim 1, wherein the multi-sensor device is encased in a bulletproof material.

3. The security system of claim 1, wherein the instructions further cause the computing device to: detect a person within the detection area by: analyzing the image data to identify an object within the image having features corresponding to a person; analyzing the heat signature for the object to determine whether the heat signature for the person is within a body temperature range; and detecting the person when the object within the image has features corresponding to the person and the heat signature is within the body temperature range.

4. The security system of claim 3, wherein the instructions further cause the computing device to: generate a map of the detection area including indications of people within the detection area and an indication of a dangerous person or object within the detection area.

5. The security system of claim 1, wherein the multi-sensor device further includes an acoustic sensor configured to detect sound within the detection area, wherein to analyze the image data, heat signatures, and sound data to identify a dangerous event at the detection area, the instructions cause the computing device to: compare a heat signature to a threshold explosion temperature; compare a sound to a threshold noise level range; and detect a gunshot based on the heat signature being above the threshold explosion temperature and the sound being within the threshold noise level range.

6. The security system of claim 5, wherein the instructions further cause the computing device to: in response to detecting the gunshot: identify a number of blasts based on the sound data.

7. The security system of claim 6, wherein the instructions further cause the computing device to: identify a noise level associated with each blast from the sound data; and identify a type of weapon corresponding to the gunshot based on the number of blasts and the noise level associated with each blast.

8. The security system of claim 5, wherein the instructions further cause the computing device to: in response to detecting the gunshot: analyze a change in position of the heat signatures that are above the threshold explosion temperature over time to determine a trajectory of a projectile.

9. The security system of claim 1, wherein the instructions further cause the computing device to: store the image data in response to identifying the dangerous event at the detection area.

10. The security system of claim 1, wherein the security system includes a plurality of multi-sensor devices dispersed throughout a building.

11. The security system of claim 1, wherein the plurality of multi-sensor devices communicate with each other to track a location of a danger zone within a predetermined threshold distance of the dangerous event.

12. The security system of claim 1, wherein the multi-sensor device further includes a speaker and the instructions further cause the computing device to: provide, via the speaker, an alert specific to the detection area for the multi-sensor device.

13. The security system of claim 1, wherein the alert includes information regarding the dangerous event.

14. The security system of claim 13, wherein the information regarding the dangerous event includes a location of an active shooter.

15. The security system of claim 1, wherein the multi-sensor device further includes a communication interface configured to communicate with portable devices via a short-range communication link and configured to communicate with the computing device via a long-range communication link.

16. The security system of claim 15, wherein one of the portable devices determines that the portable device is within the detection area of the multi-sensor device in response to receiving a transmission from the multi-sensor device via the short-range communication link.

17. The security system of claim 16, wherein the transmission includes a unique identifier for the multi-sensor device to identify the multi-sensor device of a plurality of multi-sensor devices and a corresponding detection area.

18. The security system of claim 15, wherein one of the portable devices is a visitor badge.

19. The security system of claim 1, wherein to analyze the image data, and heat signatures to identify a dangerous event at the detection area, the instructions cause the computing device to: compare a heat signature of the heat signatures to a threshold fire temperature; and detect a fire based on the heat signature being above the threshold fire temperature.

20. The security system of claim 1, wherein the multi-sensor device further includes: a gas sensor configured to detect gas within the detection area.

21. The security system of claim 20, wherein the instructions further cause the computing device to: receive an indication of a gas leak from the gas sensor; and provide an alert of the gas leak within the detection area.

22. The security system of claim 20, wherein the gas sensor is configured to detect air quality within the detection area.

23. The security system of claim 20, wherein the gas sensor is configured to detect at least one of: carbon monoxide, carbon dioxide, acetylene, methanol, or ethanol.

24. The security system of claim 1, wherein the multi-sensor device further includes: a microphone configured to receive voice communications within the detection area for interacting with an administrator or emergency responder.

25. The security system of claim 1, wherein to analyze the image data, and heat signatures to identify a dangerous event at the detection area, the instructions cause the computing device to: analyze the image data to identify an object within the image having features corresponding to a weapon prior to detecting a gunshot.

26. The security system of claim 1, wherein the multi-sensor device further includes: a rechargeable power cell configured to provide power to sensors within the multi-sensor device; and one or more generators configured to generate additional power and provide the additional power to recharge the rechargeable power cell.

27. The security system of claim 1, wherein the multi-sensor device further includes: one or more fasteners for attaching a casing to the multi-sensor device; a sensor communicatively coupled to at least one of the fasteners configured to detect whether the at least one fastener has been removed; wherein in response to the sensor detecting that the at least one fastener has been removed, the instructions further cause the computing device to transmit an alert indicating that the multi-sensor device has been tampered with.

28. The security system of claim 1, wherein the multi-sensor device further includes: one or more light emitting elements; wherein in response to the sensor detecting that the fastener has been removed, the instructions further cause the computing device to activate the one or more light emitting elements.

29. The security system of claim 1, wherein the dangerous event includes at least one of: a gunshot, a weapon, a fire, a gas leak or other airborne contaminant, a water leak, a suspicious bag, a fight, or vandalism.

30. The security system of claim 1, wherein the instructions further cause the computing device to: identify a danger zone within building premises corresponding to locations within a predetermined threshold distance of the dangerous event; determine a route from a location of one or more occupants to a building exit which avoids the danger zone; and communicate the route to the one or more occupants.

31. The security system of claim 1, wherein the multi-sensor device further includes a speaker and the instructions further cause the computing device to: provide, via the speaker, announcements for a building which includes the multi-sensor device.

32. A gunshot detection system comprising: an acoustic sensor configured to detect sound within a detection area of the gunshot detection system; and a thermal camera configured to detect heat signatures from objects within the detection area, wherein a gunshot is detected in response to detecting a heat signature above a threshold temperature and a sound within a threshold noise level range.

33. The gunshot detection system of claim 32, further comprising: one or more processors; and a non-transitory computer-readable memory coupled to the one or more processors and storing instructions thereon that, when executed by the one or more processors, cause the one or more processors to: compare the sound to the threshold noise level range; compare the heat signature to the threshold temperature; and detect the gunshot in response to determining that the sound is in the threshold noise level range, the heat signature is above the threshold temperature, and the sound and the heat signature are detected within a same time period.

34. The gunshot detection system of claim 32, wherein the threshold noise level range is between 140 and 190 decibels (dB) and the threshold temperature is 200 degrees Celsius.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application claims the benefit of priority under 35 U.S.C. § 119 of (1) U.S. Provisional Patent Application No. 62/791,459, entitled "Security System for Detecting Gunshots and Locating an Active Shooter and Occupants in a Building," filed Jan. 11, 2019, (2) U.S. Provisional Patent Application No. 62/796,224, entitled "Security System for Detecting Gunshots and Locating an Active Shooter and Occupants in a Building," filed Jan. 24, 2019, and (3) U.S. Provisional Patent Application No. 62/804,511, entitled "Security System for Detecting Gunshots and Locating an Active Shooter and Occupants in a Building," filed Feb. 12, 2019, the disclosure of each of which is hereby expressly incorporated by reference herein in its entirety.

FIELD OF THE DISCLOSURE

[0002] The present disclosure relates to security systems and more particularly, to a security system for identifying occupants in a building during a dangerous event.

BACKGROUND

[0003] In recent years, there has been a significant increase in the number of mass shootings and the number of injuries and fatalities caused by these mass shootings. When a mass shooting occurs in a school or other building, emergency responders such as police officers may be alerted of the mass shooting and may be provided with the name of the school or name of the building in which the shooting takes place. However, typically the police officers are not made aware of the location of the shooter within the building or the locations of other occupants relative to the shooter. Accordingly, police officers enter a building with little information regarding the whereabouts of the shooter or the whereabouts of other occupants who need to be evacuated from the building. Other emergency responders such as paramedics are also unaware of the locations of injured occupants who require immediate attention and may be suffering from life-threatening injuries.

[0004] Furthermore, many occupants in the building such as students and teachers are unaware of the location of the shooter within the building. As a result, they do not know whether they need to hide or can safely exit the building and/or which exits are safe.

[0005] Additionally, other dangerous events may occur in a building such as fires, gas leaks, water leaks, weapons, suspicious bags which may contain weapons, fights, vandalism, etc. While current systems may be used to alert occupants of some types of dangerous events such as a fire, the current systems require manual input such as pulling a fire alarm. Additionally, the current systems do not provide occupants and emergency responders with the precise location of a dangerous event, with escape routes for safely evacuating the building, and/or with the locations of injured occupants who require immediate attention.

SUMMARY OF THE DISCLOSURE

[0006] To locate a shooter and other occupants in a building during a dangerous event, a gunshot detection system includes several pods installed or dispersed at various locations throughout the interior and/or exterior of a building, such as a school, airport, office building, hospital, shopping mall, sports complex, retail store, department store, train station, food court, place of worship, etc. For example, a pod may be installed in each room within the building and several pods may be installed throughout a hallway. Pods may also be installed on light poles, the roof of the building, or other structures on the building premises exterior to the building. A pod is a multi-sensor device including several sensors collecting data from the same detection area. Each of the pods may include a camera, a thermal camera, and an acoustic sensor to detect image data, heat signatures, and sound data, respectively, in the detection area for the pod. For example, when the pod is installed within a room of the building, the detection area for the pod may span the area of the room. Additionally, each of the pods may also include a communication interface having long-range and short-range communication links, a speaker, a microphone, and/or a gas sensor.

[0007] The sensor data collected at a pod is then analyzed by a computing device within the pod to detect dangerous events such as gunshots, gas leaks or other airborne contaminants, water leaks, fires, weapons, suspicious bags which may contain weapons, fights, vandalism, and/or other events in the building which may cause harm or bodily injury to occupants. When a dangerous event is detected in the building, the computing device further analyzes the sensor data to identify the location of a shooter, the type of weapon used, the number of rounds fired, and locations of other occupants in the building. Using this information received from the pods 100, along with additional information previously stored (e.g., floor plans, map displays, etc.), the computing device in the pod or a server computing device may generate a two-dimensional (2D) or three-dimensional (3D) map display of the building including a display of each of the rooms in the building, indications of entrances and exits to and from the building, indications of the number of occupants in various rooms within the building and/or the movements of the occupants, and an indication of a danger zone or a dangerous person or object based on the location of the shooter, the type of weapon being fired, and the number of rounds that have been fired.

[0008] The server computing device may also generate audio alerts or messages and provide the audio alerts or messages to the pods to be presented via the speakers. In some embodiments, the audio alerts or messages are generated specifically for a particular detection area, and the server computing device may provide the audio alert or message to the pod corresponding to the particular detection area. For example, the server computing device may generate a first audio alert for pods having detection areas outside of the danger zone. The first audio alert may indicate that there is an active shooter in the building and may instruct occupants to exit from the nearest exit. The first audio alert may also provide a description of the nearest exit, such as the science wing doorway. The server computing device may also generate a second audio alert for pods having detection areas in the danger zone. The second audio alert may indicate that there is an active shooter nearby and may instruct occupants in the danger zone to lock all doors and hide.
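By way of illustration only, the selection of an area-specific audio alert described above may be sketched in Python as follows. The function name `build_audio_alerts`, its parameters, and the exact message wording are assumptions introduced for this sketch and are not taken from the disclosure; the sketch only mirrors the first and second audio alerts described in this paragraph.

```python
from __future__ import annotations


def build_audio_alerts(
    pod_areas: dict[str, str],
    danger_zone: set[str],
    nearest_exit: dict[str, str],
) -> dict[str, str]:
    """Map each pod identifier to the alert text for its detection area.

    pod_areas:    hypothetical mapping of pod identifier -> detection area name
    danger_zone:  hypothetical set of detection area names inside the danger zone
    nearest_exit: hypothetical mapping of detection area -> exit description
    """
    alerts = {}
    for pod_id, area in pod_areas.items():
        if area in danger_zone:
            # Second audio alert: pods whose detection areas are in the danger zone.
            alerts[pod_id] = (
                "There is an active shooter nearby. Lock all doors and hide."
            )
        else:
            # First audio alert: pods outside the danger zone, with an exit description.
            exit_name = nearest_exit.get(area, "the nearest exit")
            alerts[pod_id] = (
                "There is an active shooter in the building. "
                f"Exit from {exit_name}."
            )
    return alerts
```

In such a sketch, a server computing device would call `build_audio_alerts` once per update of the danger zone and send each resulting message to the pod whose identifier it is keyed under.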

[0009] The server computing device may provide the map display to client computing devices of occupants in the building, such as students and teachers. The server computing device may also provide the map display to school administrators and emergency responders, such as police officers, fire fighters, paramedics, etc. Additionally, the server computing device may provide alerts to client computing devices of parents or other relatives of the occupants.

[0010] In an embodiment, a security system comprises a multi-sensor device including a camera configured to capture images within a detection area of the security system, a thermal camera configured to detect heat signatures from objects within the detection area, and an acoustic sensor configured to detect sound within the detection area. The security system also includes a computing device including one or more processors and a non-transitory computer-readable memory coupled to the one or more processors and storing instructions thereon. When executed by the one or more processors, the instructions cause the computing device to receive image data, heat signatures, and sound data from the camera, the thermal camera, and the acoustic sensor, respectively, analyze the image data, heat signatures, and sound data to identify a dangerous event at the detection area, and provide an alert indicating the dangerous event at the detection area.

[0011] In another embodiment, a gunshot detection system comprises an acoustic sensor configured to detect sound within a detection area of the gunshot detection system, and a thermal camera configured to detect heat signatures from objects within the detection area, where a gunshot is detected in response to detecting a heat signature above a threshold temperature and a sound within a threshold noise level range.
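As a non-limiting illustration of the detection rule in this embodiment, the following Python sketch reports a gunshot when a heat signature above a threshold temperature and a sound within a threshold noise level range are detected within the same time period. The 200 degrees Celsius and 140 to 190 dB values are those recited in claim 34; the `Reading` type, the function name `detect_gunshot`, and the 0.5 second coincidence window are assumptions made for this sketch only.

```python
from __future__ import annotations

from dataclasses import dataclass

# Thresholds recited in claim 34.
THRESHOLD_TEMP_C = 200.0
NOISE_RANGE_DB = (140.0, 190.0)
# Assumed coincidence window; the disclosure only requires "a same time period".
WINDOW_S = 0.5


@dataclass(frozen=True)
class Reading:
    """A single timestamped sensor value (hypothetical type)."""
    timestamp_s: float
    value: float  # degrees Celsius for thermal readings, dB for acoustic readings


def detect_gunshot(thermal: list[Reading], acoustic: list[Reading]) -> bool:
    """Return True if a hot heat signature and an in-range sound coincide in time."""
    hot = [r for r in thermal if r.value > THRESHOLD_TEMP_C]
    loud = [
        r for r in acoustic
        if NOISE_RANGE_DB[0] <= r.value <= NOISE_RANGE_DB[1]
    ]
    return any(
        abs(h.timestamp_s - s.timestamp_s) <= WINDOW_S
        for h in hot
        for s in loud
    )
```

Requiring both conditions, rather than sound alone, is what lets the rule reject loud noises that have no accompanying heat signature.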

BRIEF DESCRIPTION OF THE DRAWINGS

[0012] The following detailed description will be more easily and better understood when considered in conjunction with the following figures, in which like reference numbers are employed to designate like structures. It should be understood that, with the exception of magnified images, the drawings are not to scale, as scaled drawings would not facilitate an understanding of the depicted structures.

[0013] FIG. 1 illustrates an example pod installed within building premises implementing the gunshot detection system;

[0014] FIG. 2A illustrates a block diagram of an example pod;

[0015] FIG. 2B illustrates a block diagram of an example recharging system for recharging a rechargeable battery;

[0016] FIG. 2C is a flow diagram of an example method for recharging the rechargeable battery which can be implemented in a processor or controller communicatively coupled to the rechargeable battery;

[0017] FIG. 3 illustrates an example layout of pods installed in various rooms and hallways of a building and the respective detection areas for the pods;

[0018] FIG. 4 illustrates an example communication system in which techniques for detecting gunshots and providing alerts can be implemented;

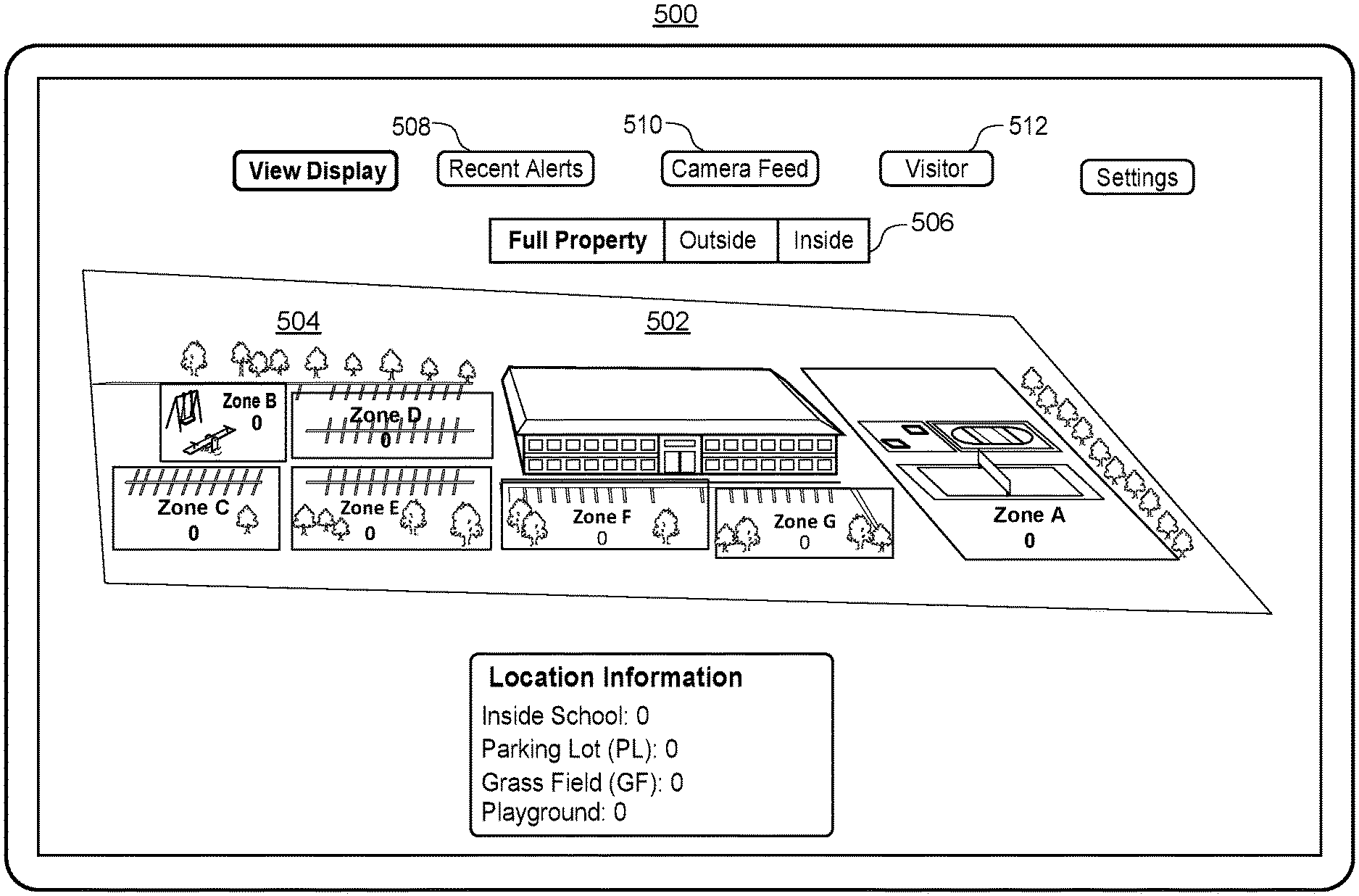

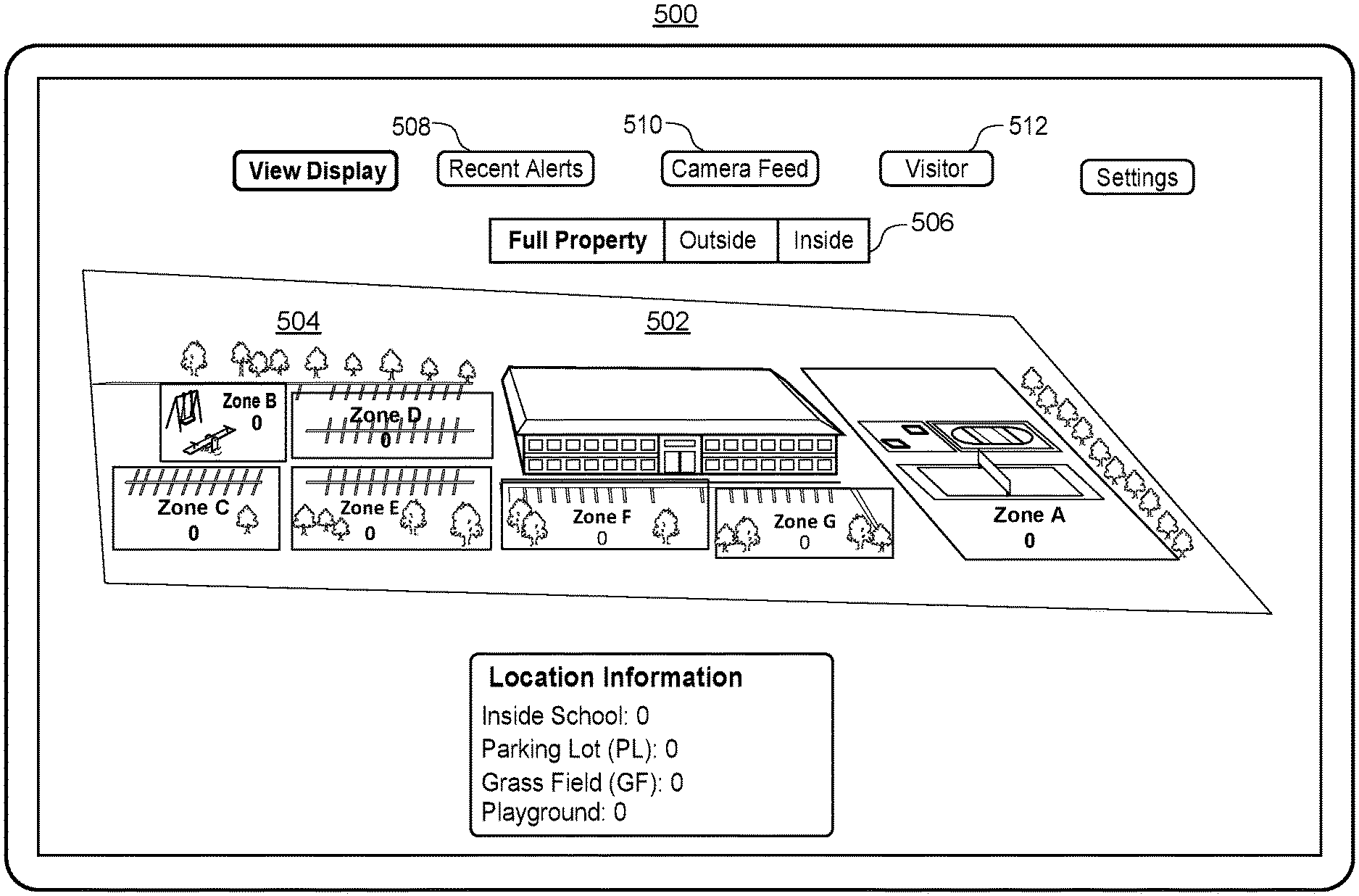

[0019] FIG. 5 illustrates an example outdoor map display screen of an administrator application or emergency responder application;

[0020] FIG. 6A illustrates an example gunshot alert screen of an administrator application;

[0021] FIG. 6B illustrates an example indoor map display screen of an administrator application;

[0022] FIG. 6C illustrates an example search screen of an administrator application;

[0023] FIG. 6D illustrates an example indoor map display screen of an administrator application including highlighted search results;

[0024] FIG. 6E illustrates an example message screen of an administrator application for sending a message to all users;

[0025] FIG. 6F illustrates an example room message screen of an administrator application for sending a room-specific message to corresponding users;

[0026] FIG. 6G illustrates an example guest search screen of an administrator application for searching for the location of a visitor;

[0027] FIG. 6H illustrates an example indoor map display screen of an administrator application which includes indications of the locations of visitors;

[0028] FIG. 7A illustrates an example gunshot alert screen of a student/occupant application;

[0029] FIG. 7B illustrates an example indoor map display screen of a student/occupant application;

[0030] FIG. 7C illustrates an example navigation route to the nearest and/or safest exit overlaid on the example indoor map display screen of FIG. 7B;

[0031] FIG. 7D illustrates an example instruction screen of a student/occupant application when there is no safe exit;

[0032] FIG. 7E illustrates an example status message screen of a student/occupant application for alerting administrators, parents, and/or emergency responders of the status of the user or other users;

[0033] FIG. 8A illustrates an example login screen of a parent application;

[0034] FIG. 8B illustrates an example indoor map display screen of a parent application;

[0035] FIG. 8C illustrates another example indoor map display screen of a parent application with a status update indicating information regarding the user's child;

[0036] FIG. 9A illustrates an example outdoor map display screen of an emergency responder application which includes an example navigation route to a recommended entrance to the building;

[0037] FIG. 9B illustrates an example three-dimensional model of an area surrounding an emergency responder's current location implemented in an emergency responder application for directing the emergency responder to a recommended entrance to the building;

[0038] FIG. 10A illustrates an example gunshot alert screen of an emergency responder application;

[0039] FIG. 10B illustrates an example indoor map display screen of an emergency responder application which highlights a recommended entrance for the emergency responder to enter;

[0040] FIG. 11 illustrates a flow diagram of an example method for generating an indoor map display of a building during a dangerous event which can be implemented in a server computing device;

[0041] FIG. 12 illustrates a flow diagram of an example method for presenting an indoor map display of a building during a dangerous event which can be implemented in a client computing device;

[0042] FIG. 13 illustrates a flow diagram of an example method for generating an outdoor map display of building premises during a dangerous event which can be implemented in a server computing device;

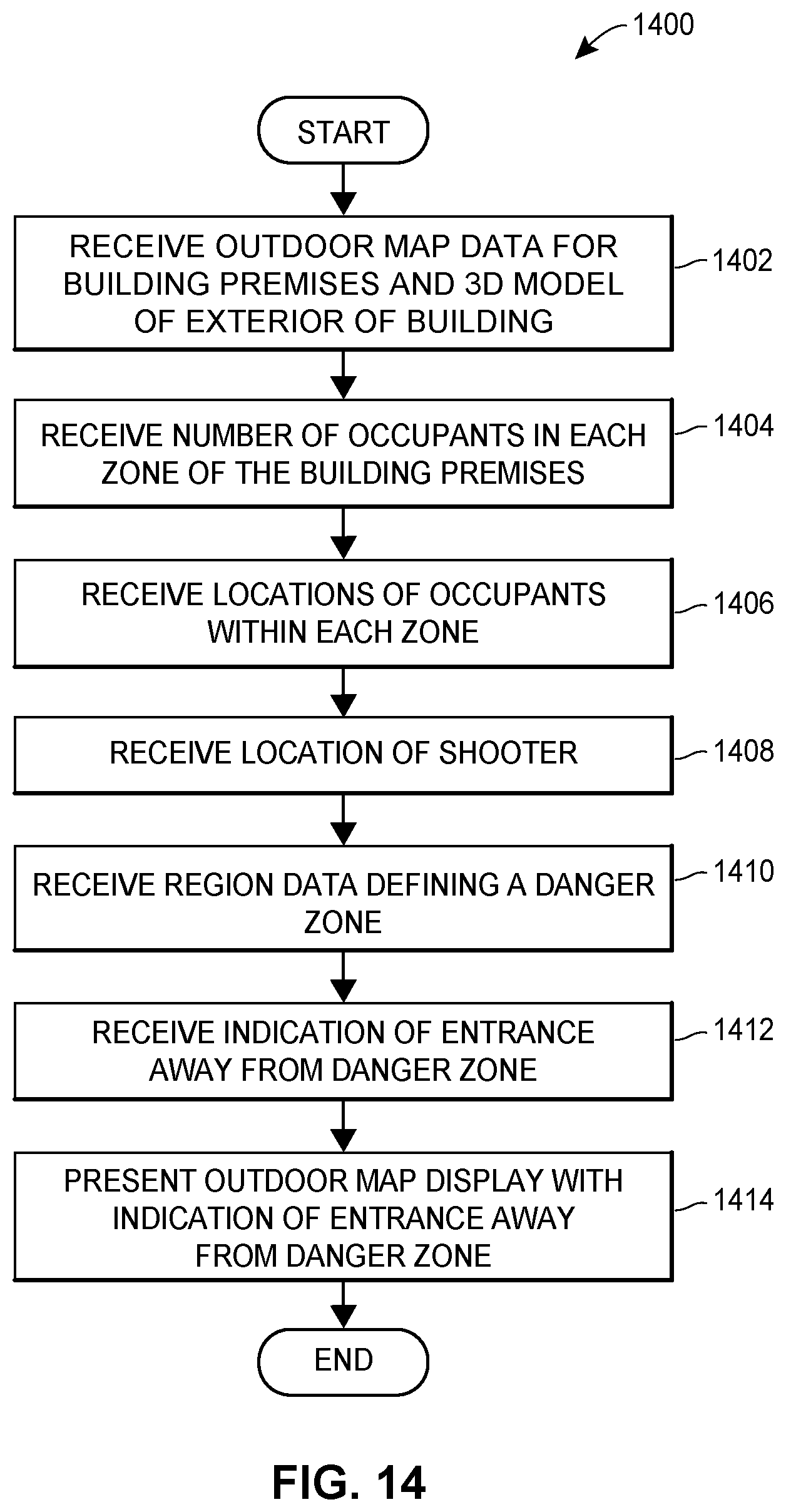

[0043] FIG. 14 illustrates a flow diagram of an example method for presenting an outdoor map display of building premises during a dangerous event which can be implemented in a client computing device;

[0044] FIG. 15 illustrates a flow diagram of an example method for routing a user to a building exit during a dangerous event which can be implemented in one or more pods and/or a server computing device;

[0045] FIG. 16 illustrates a flow diagram of an example method for automatically locking a door in a building during a dangerous event which can be implemented in one or more pods and/or a server computing device; and

[0046] FIG. 17 illustrates a flow diagram of an example method for broadcasting a notification of a dangerous event to an emergency responder device which can be implemented in one or more pods and/or a server computing device.

DETAILED DESCRIPTION

Pod Hardware

[0047] Referring to FIGS. 1 and 2A, FIG. 1 illustrates an embodiment of an example housing of a pod 100 (also referred to herein as a "multi-sensor device"). FIG. 2A is a block diagram of example elements which may be included in the pod 100. As shown in FIGS. 1 and 2A, the pod 100 includes a high-definition imaging camera 104 (also referred to herein as a "camera"), a thermal camera 106, an acoustic sensor 114, a speaker 108, a microphone 110, a gas sensor 112, one or more light emitting elements 120 such as a light emitting diode (LED), a communication interface 122, and pan and tilt servomotors 102. As used herein, thermal imaging cameras capturing infrared light in the infrared spectrum may be referred to as "thermal cameras" and the term "camera" or "imaging camera" may be used to refer to a high-definition or high-speed imaging camera capturing images in the visible light spectrum.

[0048] The pan and tilt servomotors 102 may be configured to control the camera 104 and/or the thermal camera 106 so that the camera 104 and/or the thermal camera 106 may rotate side-to-side and up and down. In some embodiments, when an object is detected within the detection area of the pod 100 such as an occupant, a visitor, a shooter, a weapon, a fire, etc., the pan and tilt servomotors 102 may control the camera 104 and/or the thermal camera 106 to track the movement of the object. In some embodiments, the camera 104 and/or the thermal camera 106 may be controlled manually by sending signals from a client computing device of a building administrator or a server computing device to the pan and tilt servomotors 102. For example, the building administrator may be monitoring a live video feed from the camera 104 and may control the camera 104 to view objects within or near the detection area of the pod 100.

[0049] The light emitting elements 120 may illuminate a surface on which the pod 100 is mounted, such as a wall, or may illuminate a surface adjacent to the surface on which the pod 100 is mounted. In some embodiments, as shown in FIG. 1, the light emitting elements 120 may be placed on a portion of a surface of the pod 100, where the portion is moveable and is configured to pivot from a position parallel to the surface of the pod 100 to protrude outward away from the surface. The portion is also configured to retract to the position parallel to the surface of the pod 100. The portion may be configured to protrude and retract automatically, for example, via a motor which may be controlled by a computing device in the pod 100, or manually, such as by a user pressing on the portion.

[0050] In some embodiments, the speaker 108 is placed on the back surface of the pod 100 which may be facing a wall, such that the wall may be used as a resonance board to amplify the sound from the speaker 108. This configuration may amplify the sound from the speaker 108 by about 10 decibels (dB). Like the light emitting elements 120, in some embodiments, the speaker 108 may be placed on a portion of a surface of the pod 100, where the portion is moveable and is configured to pivot from a position parallel to the surface of the pod 100 to protrude outward away from the surface. The portion is also configured to retract to the position parallel to the surface of the pod 100. The portion may be configured to protrude and retract automatically, for example, via a motor which may be controlled by a computing device in the pod 100, or manually, such as by a user pressing on the portion. The speaker 108 and the light emitting elements 120 may be placed on the same portions of the same surfaces of the pod 100 or may be placed on different portions and/or different surfaces of the pod 100.
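For reference, and assuming the stated figure refers to a gain in sound level, an amplification of about 10 dB corresponds to roughly a tenfold increase in sound intensity, or about a 3.2-fold increase in sound pressure. Here $I_1$ and $I_2$ denote the sound intensity without and with the wall acting as a resonance board, and $p_1$ and $p_2$ the corresponding sound pressures; these symbols are introduced only for this worked example.

$$
10\log_{10}\frac{I_2}{I_1} = 10\ \text{dB} \;\Rightarrow\; \frac{I_2}{I_1} = 10, \qquad \frac{p_2}{p_1} = \sqrt{\frac{I_2}{I_1}} = \sqrt{10} \approx 3.16
$$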

[0051] The pod 100 may also include a computing device having one or more processors 128 and a memory 130 to process the data received from the sensors 104-120. The processor(s) 128 may be a specially programmed general processing unit. In other embodiments, the processor(s) 128 may be a specially programmed field programmable gate array (FPGA), an application-specific integrated circuit (ASIC), etc. The memory 130 can be a non-transitory memory and can include one or several suitable memory modules, such as random access memory (RAM), read-only memory (ROM), flash memory, other types of persistent memory, etc. The memory 130 stores instructions executable on the processors 128 that make up a gunshot detection module 132 for detecting a gunshot within a detection area of the pod 100, a weapon detection module 134 for detecting a weapon within the detection area for the pod 100, and an occupant detection module 136 for detecting the number of occupants within the detection area for the pod 100, and/or the precise locations of the occupants within the detection area. The gunshot detection module 132, weapon detection module 134, and occupant detection module 136 are described in more detail below.

[0052] In some embodiments, the pod 100 is encased in bulletproof material such as Kevlar®-infused plastic or another material with significant heat and impact resistance to prevent the pod 100 from being damaged during a shooting. The bulletproof material may be attached to the pod 100 via one or more fasteners, such as screws, nuts, bolts, pins, rivets, etc.

[0053] In some embodiments, the pod 100 includes a sensor communicatively coupled to one or more of the fasteners that is configured to detect whether the respective fastener has been removed or tampered with in any way. If the fastener has been tampered with, the computing device in the pod 100 may activate the LEDs and cause them to blink. For example, the LEDs may blink in a red color signaling an alarm. Additionally, the computing device in the pod 100 may transmit an alert to an administrator indicating that the pod 100 has been tampered with.
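The tamper response described above may be sketched, purely as an illustrative example, in Python as follows. The class name `TamperMonitor`, the method `on_fastener_reading`, the `led_state` strings, and the in-memory `alerts` list (standing in for transmission to an administrator) are hypothetical names chosen for this sketch and do not appear in the disclosure.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TamperMonitor:
    """Tracks fastener state for one pod (hypothetical helper)."""
    pod_id: str
    led_state: str = "off"
    # Stand-in for alerts transmitted to an administrator.
    alerts: list[str] = field(default_factory=list)

    def on_fastener_reading(self, fastener_id: str, intact: bool) -> bool:
        """Handle one fastener sensor reading; return True if tampering was detected."""
        if intact:
            return False
        # Blink the LEDs in red to signal an alarm.
        self.led_state = "blinking_red"
        # Record an alert indicating that the pod has been tampered with.
        self.alerts.append(
            f"Pod {self.pod_id} has been tampered with (fastener {fastener_id})."
        )
        return True
```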

[0054] Also in some embodiments, the pod 100 is powered via a rechargeable power cell 124 included within the housing of the pod, such as a 2600 mAh rechargeable power bank. Accordingly, the high-definition imaging camera 104, thermal camera 106, acoustic sensor 114, speaker 108, microphone 110, gas sensor 112, and communication interface 122 may receive power via the rechargeable power cell 124.

Rechargeable Power Cell

[0055] The pod 100 may also reuse energy by including one or several generators 126 within the pod housing which are rotated (e.g., at 3600 rpm) to provide additional power that is used to recharge the power cell 124 while the power cell 124 is in use. In this manner, each pod 100 does not need extra wires to receive power from an electrical outlet.

[0056] FIG. 2B is a block diagram of a recharging system 150 for recharging a rechargeable battery 164. The rechargeable battery 164 may be used to power a resistive load/battery-powered device 167 such as the pod 100 of FIG. 1. The system 150 includes a backup battery 152 that provides DC electrical power to a voltage regulator 154. The voltage regulator 154 further provides the regulated DC power to a DC to AC power inverter 157. The voltage regulator 154 ensures that the DC voltage provided to the DC to AC power inverter 157 is within a certain voltage range. For example, it may be desirable for the DC voltage provided to the DC to AC power inverter 157 to be 3±0.5 V, 3±0.2 V, 3±0.1 V, 3±0.05 V, 3±0.01 V, or another voltage range or tolerance as required by the DC to AC power inverter 157.

[0057] In some embodiments, the voltage regulator 154 may be a low-dropout (LDO) linear voltage regulator that can regulate a DC output voltage when the input voltage to the regulator 154 is very close to the output voltage of the regulator 154. Employing an LDO regulator as the regulator 154 may reduce electrical switching noise, reduce the form factor of the system 150, and simplify the design of the system 150.

[0058] The DC to AC power inverter 157 converts the DC power to AC power, and provides AC power to a generator 160. The generator 160 generates AC electrical power at higher voltages than the DC power provided by the backup battery 152. The AC power generated by the generator 160 is provided to an AC to DC rectifier 162, and the AC to DC rectifier 162 converts the generated AC power to DC power. The AC to DC rectifier 162 provides the DC power to the rechargeable battery 164 to recharge the rechargeable battery 164. The power provided by the backup battery 152, in cooperation with the other elements of the system 150 of FIG. 2B, enables recharging of the rechargeable battery 164 to provide power to the device 167.

[0059] In some embodiments, the system 150 may be configured to selectively provide power to the rechargeable battery 164 to recharge the rechargeable battery 164. For example, the system 150 may initiate recharging of the rechargeable battery 164 when the voltage of the rechargeable battery 164 has reached a threshold value. For example, if the device 167 requires 3V to operate, the system 150 may be configured to provide power to the rechargeable battery 164 when the voltage of the rechargeable battery 164 reaches a voltage value below 3.1 V, 3.2 V, 3.5 V or another voltage threshold value. In some embodiments, the voltage threshold value for selectively providing power to the rechargeable battery 164 may depend on the voltage required for operation of the device 167, a rate of discharge of the voltage from the rechargeable battery 164, a rate of recharge of the rechargeable battery 164, another factor associated with discharging or recharging of the rechargeable battery 164, and/or another factor associated with operation of the device 167. In some embodiments, the system 150 of FIG. 2B may include other components such as a controller or processor for determining when to provide power to the rechargeable battery 164, and controlling the selective recharging of the rechargeable battery 164.

[0060] In some embodiments, the rechargeable battery 164 and/or the backup battery 152 may be a battery with multiple battery cells. For example, the rechargeable battery 164 and/or the backup battery 152 may each be 7.4 V batteries with a first and second 3.7 V battery cell. In embodiments where the rechargeable battery 164 has first and second battery cells, the system 150 may be configured to selectively provide power from the first or second battery cell to the device 167. The system 150 may provide power to the device 167 from the first or second battery cell based on a voltage threshold of the first or second battery cell.

[0061] FIG. 2C is a flow diagram of a method 170 for recharging the rechargeable battery 164 having multiple battery cells. In some embodiments, a processor or controller communicatively coupled to the rechargeable battery 164 may execute the method 170 and/or the method may be executed by the rechargeable battery 164. While the method 170 is discussed with reference to a first and second battery cell of a rechargeable battery, a rechargeable battery 164 and a backup battery 152 may also be used to execute the method.

[0062] At block 172, the first battery cell of the rechargeable battery 164 may provide power to the device 167 until the voltage of the first battery cell reaches a threshold value (e.g., 3 V, 3.1 V, 3.2 V, etc.). Then at block 174, the processor or controller may evaluate whether the first battery cell has reached a voltage threshold. If the voltage threshold has not been reached, then the first battery cell may continue to provide power to the device 167. If at block 174 it is determined that the first battery cell has reached the voltage threshold, then the processor or controller may provide the device 167 with power from the second battery cell of the rechargeable battery 164.

[0063] At block 178, the processor or controller may recharge the first battery cell of the rechargeable battery 164 while simultaneously, at block 176, providing power to the device 167 from the second cell of the rechargeable battery 164. At block 182, the processor or controller may determine whether the first battery cell has recharged to a recharged voltage threshold, and at block 180, the processor or controller may determine whether the second battery cell has reached a voltage threshold. At block 184, the processor or controller may determine whether to power the device 167 from the first or second battery cell based on the determinations from blocks 180 and 182.

[0064] Then at block 172, the processor or controller may provide power to the device 167 from the recharged first battery cell of the rechargeable battery 164 when the voltage of the second battery cell reaches a threshold (e.g., 3 V, 3.1 V, 3.2 V, etc.), and/or when the first cell reaches a recharged voltage threshold (e.g., 3.2 V, 3.3 V, 3.4 V, 3.7 V, etc.). By selectively powering the device 167 from a first or second battery cell, and selectively recharging the first or second battery cells of the rechargeable battery 164, the present embodiments advantageously allow for continuous and/or extended operation of the device 167 due to the extended battery lifetime of the rechargeable battery 164 and the rechargeable battery cells.
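By way of non-limiting illustration, the cell-selection logic of the method 170 might be sketched in Python as follows. The threshold constants, the drain and charge rates, and the function names `select_cell` and `step` are assumptions made for this example only. The sketch also adopts one reading of the "and/or" condition above, switching only when the active cell is depleted and the standby cell has recharged.

```python
LOW_V = 3.1        # assumed discharge threshold; the text lists 3 V, 3.1 V, 3.2 V as examples
RECHARGED_V = 3.7  # assumed recharged threshold; the text lists 3.2 V to 3.7 V as examples


def select_cell(active, voltages):
    """Return the index (0 or 1) of the cell that should power the device next."""
    standby = 1 - active
    # Switch only when the active cell is depleted and the standby cell is recharged.
    if voltages[active] <= LOW_V and voltages[standby] >= RECHARGED_V:
        return standby
    return active


def step(active, voltages, drain=0.1, charge=0.2):
    """Simulate one time step: drain the active cell and recharge the standby cell."""
    standby = 1 - active
    voltages[active] = round(voltages[active] - drain, 3)
    voltages[standby] = round(min(RECHARGED_V, voltages[standby] + charge), 3)
    return select_cell(active, voltages)


voltages = [3.3, 3.4]
active = 0
for _ in range(2):
    active = step(active, voltages)
print(active, voltages)
```

In this sketch the device keeps drawing from the first cell until that cell reaches the discharge threshold, at which point the recharged second cell takes over.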

Imaging Camera

[0065] The imaging camera 104 may be a high definition camera (e.g., a 1080p camera, an 8 megapixel camera, etc.) and is configured to detect visible light images and video within a detection area of the pod. For example, each pod 100 within a building may have a detection area that spans a portion of the building. More specifically, when a pod 100 is installed in a room of the building, the pod 100 may be installed in a corner of the room, such that the detection area for the pod 100 is the entire room and the images detected by the imaging camera 104 cover the entire room. When a pod 100 is installed in a hallway, the detection area for the pod 100 may be a rectangular area covering a portion of the hallway, such as the entire width of the hallway but a portion of the length of the hallway. Accordingly, in the aggregate the pods 100 may be installed such that the respective detection areas for the pods 100 cover the area of the entire building. The layout of the pods 100 within a building and the respective detection areas for each pod is described in more detail below with reference to FIG. 3. In some embodiments, the imaging camera 104 may include several imaging cameras, such as a dual camera, which may be used to detect depth information. In any event, images may be detected continuously or periodically (e.g., 10 frames per second, 60 frames per second, 120 frames per second, etc.) and then provided to a server computing device or the computing device within the pod 100 for further processing as described in more detail below.

High-Resolution Thermal Camera

[0066] The thermal camera 106 may be a high-contrast thermal camera (e.g., a thermal camera having more than a threshold number of pixels such as a 300,000 pixel thermal camera) and is configured to detect infrared (IR) light within the detection area of the pod 100 to generate heat signatures from objects within the detection area. IR images may be detected continuously or periodically (e.g., 10 frames per second, 60 frames per second, 120 frames per second, etc.) and then provided to a server computing device or the computing device within the pod 100 for further processing as described in more detail below. In other embodiments, the computing device within the pod 100 analyzes the visible light images and IR images.

[0067] In some embodiments, the imaging camera 104 and the thermal camera 106 are the same camera and/or the imaging camera 104 and the thermal camera 106 share the same lens. In other embodiments, the imaging camera 104 and the thermal camera are different cameras and/or the imaging camera 104 and the thermal camera 106 include separate lenses.

[0068] In some embodiments, the server computing device or the computing device 128 within the pod 100 (e.g., the occupant detection module 136) analyzes the visible light images and IR images over the same time period (e.g., over the previous second, over the previous ten seconds, etc.) to identify objects, such as people in the detection area. To identify a person, the server computing device or the computing device 128 within the pod 100 compares temperature data or heat signatures from the IR images to a threshold body temperature range corresponding to a human (e.g., 92° F./33° C. to 112° F./44° C.). If a portion of each image includes temperatures within the threshold body temperature range, the server computing device or the computing device 128 within the pod 100 analyzes the same portion (also referred to herein as an "object") of the visible light images and identifies features of the object within the visible light images, such as the geometry of the edges of the object, and RGB pixel values or colors within the object.
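As a simplified illustration, the temperature screening described above might be sketched in Python as follows for a single IR frame. The frame is assumed to be a list of rows of temperatures in degrees Celsius that is pixel-aligned with the visible light frame; the function names `body_temperature_region` and `crop` are hypothetical.

```python
BODY_TEMP_RANGE_C = (33.0, 44.0)  # example range given in the text


def body_temperature_region(ir_frame):
    """Return the bounding box (top, left, bottom, right) of all pixels whose
    temperature lies within the body temperature range, or None if there are none."""
    lo, hi = BODY_TEMP_RANGE_C
    hits = [(r, c)
            for r, row in enumerate(ir_frame)
            for c, temp in enumerate(row)
            if lo <= temp <= hi]
    if not hits:
        return None
    rows = [r for r, _ in hits]
    cols = [c for _, c in hits]
    return (min(rows), min(cols), max(rows), max(cols))


def crop(frame, box):
    """Cut the same portion (the "object") out of an aligned visible light frame."""
    top, left, bottom, right = box
    return [row[left:right + 1] for row in frame[top:bottom + 1]]


ir = [[20, 20, 20, 20],
      [20, 36, 37, 20],
      [20, 35, 36, 20],
      [20, 20, 20, 20]]
visible = [["a", "b", "c", "d"],
           ["e", "f", "g", "h"],
           ["i", "j", "k", "l"],
           ["m", "n", "o", "p"]]
box = body_temperature_region(ir)
print(box, crop(visible, box))
```

The cropped portion would then be passed to feature extraction. The sketch uses one bounding box for the whole frame and does not separate multiple people.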

[0069] The features of the object may also include facial features such as the geometry and RGB pixel values or colors for eyes, a mouth, and a nose. Additionally, the features of the object may include body features such as the geometry and RGB pixel values or colors for heads, arms, legs, etc. These features may be identified by detecting stable regions within the object that are detectable regardless of blur, motion, distortion, orientation, illumination, scaling, and/or other changes in camera perspective. The stable regions may be extracted from the object using a scale-invariant feature transform (SIFT), speeded up robust features (SURF), fast retina keypoint (FREAK), binary robust invariant scalable keypoints (BRISK), or any other suitable computer vision techniques. In some embodiments, keypoints may be located at high-contrast regions of the object, such as edges within the object. A bounding box may be formed around a keypoint and the portion of the object created by the bounding box may be a feature.

[0070] In any event, the server computing device or the computing device 128 within the pod 100 may compare the features identified for the object to features from template objects (also referred to herein as "template features") using image classification and/or machine learning techniques, where at least some of the template objects represent a person. The machine learning techniques may include linear regression, polynomial regression, logistic regression, random forests, boosting, nearest neighbors, Bayesian networks, neural networks, support vector machines, or any other suitable machine learning technique. For example, the widths and heights of people may be stored as template features along with skin tones for people, the widths and heights of noses, mouths, eyes, and their respective positions relative to each other. The template objects may also include other representations which are not of humans, such as representations of computers, other electronic devices, heaters, etc., which may also generate heat detected by the thermal camera 106 and/or which may be within the threshold body temperature range. Then each of these template features may be compared to the features for an object.

[0071] In some embodiments, the template features may be compared to the features for an object using a nearest neighbors algorithm. The nearest neighbors algorithm may identify template features which are the closest to the features of the object by creating numerical representations of the features to generate feature vectors, such as a pixel width and height of a nose, RGB pixel values for the nose, and pixel distances from the edges of the face to the top, bottom, and sides of the nose, for example. The numerical representations of the features or feature vectors of the object may be compared to the feature vectors of template objects to determine a vector distance between the features of the object and each template object. The server computing device or the computing device 128 within the pod 100 may then determine whether the object is a human based on the amount of similarity, or the vector distance in the nearest neighbors algorithm, between the features for the object and the features for template objects that represent a human. If the closest template objects represent a human and the temperature data from the IR images is within the threshold body temperature range, the object is identified as a human.
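For illustration only, a nearest neighbors comparison of this kind might be sketched in Python as follows. The three-element feature vectors, the template labels, and the function names are assumptions for the example; an actual embodiment would use feature vectors derived from the keypoints described above.

```python
import math


def nearest_template(features, templates):
    """Return (label, distance) for the template vector closest to `features`,
    using Euclidean distance as the vector distance."""
    return min(((label, math.dist(features, vec)) for label, vec in templates),
               key=lambda pair: pair[1])


def is_human(features, templates, object_temp_c, body_range=(33.0, 44.0)):
    """An object is treated as human when the closest template represents a human
    and the object's temperature is within the body temperature range."""
    label, _ = nearest_template(features, templates)
    lo, hi = body_range
    return label == "human" and lo <= object_temp_c <= hi


# Hypothetical template features: (pixel height, pixel width, skin-tone score)
templates = [("human", [170.0, 45.0, 0.8]),
             ("heater", [60.0, 60.0, 0.1])]
print(is_human([165.0, 50.0, 0.7], templates, object_temp_c=36.5))
```

A heater within the body temperature range would be rejected in this sketch because its closest template is not a human, which mirrors the role of the non-human template objects described above.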

[0072] The server computing device or the computing device 128 within the pod 100 (e.g., the weapon detection module 134) may also analyze visible light images using image classification and/or machine learning techniques to identify a weapon in the building premises or a suspicious bag which may contain a weapon (e.g., a rifle bag). Weapons may include firearms such as rifles, pistols, handguns, automatic weapons, etc., knives, or other dangerous objects. Weapons may also include other objects which are not designed as weapons but may be used in a dangerous manner to cause harm, such as baseball bats, hammers, etc. Weapons may be identified before a gunshot is fired/detected. For example, the server computing device or the computing device 128 within the pod 100 may obtain template objects representing guns and other weapons and may identify features from these template objects. When the server computing device or the computing device 128 within the pod 100 obtains an image detected by the imaging camera 104, the server computing device or the computing device 128 within the pod 100 may identify features of the image using the techniques described above, such as SIFT, SURF, FREAK, BRISK, or any other suitable computer vision techniques. The features of the image may be compared to the template features of template objects representing weapons. The server computing device may then determine whether the image includes a weapon based on the amount of similarity, or the vector distance in the nearest neighbors algorithm, between the features of the image and the features for template objects that represent a weapon. In other embodiments, the computing device 128 within the pod 100 performs the analysis described above to identify objects, such as people or weapons in the detection area. In this manner, weapons may be identified by the pods 100 without requiring metal detectors.

[0073] The weapon detection module 134 or the server computing device may also identify a weapon based on temperature data from the thermal camera 106. To identify the weapon, the weapon detection module 134 or the server computing device identifies an occupant in the detection area in the manner described above, by comparing temperature data or heat signatures from the IR images to a threshold body temperature range corresponding to a human (e.g., 92° F./33° C. to 112° F./44° C.) to identify an object including temperatures within the threshold body temperature range, and comparing features of the object to features from template objects using image classification and/or machine learning techniques, where at least some of the template objects represent a person.

[0074] In response to identifying a person, the weapon detection module 134 or the server computing device may identify a weapon attached to the person, such as a weapon being carried by the person or attached to the person's clothing based on differences in temperature data within the object corresponding to the person. More specifically, the weapon detection module 134 or the server computing device may identify a portion of the object which includes temperatures outside of the body temperature range to generate a heat signature. For example, each image of a person may include several contiguous portions which are outside of the body temperature range. The weapon detection module 134 or the server computing device may generate a heat signature for each contiguous portion. The weapon detection module 134 or the server computing device may then identify features of each heat signature, such as the geometry of the edges of the heat signature, a pixel width and height of the heat signature, etc. The features of each heat signature may be compared to template features of template objects representing weapons. The weapon detection module 134 or the server computing device may then determine whether each heat signature corresponds to a weapon (e.g., whether the heat signature is in the shape of a weapon) based on the amount of similarity, or the vector distance in the nearest neighbors algorithm, between the features of each heat signature and the features for template objects that represent a weapon.
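As one possible illustration, the contiguous portions outside the body temperature range might be found with a flood fill, as in the Python sketch below. The use of 4-connectivity, the grid-of-temperatures input, and the function names `off_body_regions` and `region_size` are assumptions for this example.

```python
from collections import deque


def off_body_regions(person_temps, body_range=(33.0, 44.0)):
    """Group pixels outside the body temperature range into 4-connected regions.
    Each region is a list of (row, col) pixels and stands in for one heat signature."""
    lo, hi = body_range
    rows, cols = len(person_temps), len(person_temps[0])
    seen = set()
    regions = []
    for r in range(rows):
        for c in range(cols):
            if (r, c) in seen or lo <= person_temps[r][c] <= hi:
                continue
            queue, region = deque([(r, c)]), []
            seen.add((r, c))
            while queue:
                y, x = queue.popleft()
                region.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and (ny, nx) not in seen
                            and not (lo <= person_temps[ny][nx] <= hi)):
                        seen.add((ny, nx))
                        queue.append((ny, nx))
            regions.append(region)
    return regions


def region_size(region):
    """Return the (pixel width, pixel height) of a region."""
    ys = [y for y, _ in region]
    xs = [x for _, x in region]
    return (max(xs) - min(xs) + 1, max(ys) - min(ys) + 1)


temps = [[36, 36, 36, 36],
         [36, 25, 25, 25],
         [36, 36, 36, 36],
         [22, 36, 36, 36]]
print([region_size(region) for region in off_body_regions(temps)])
```

The width and height of each region are examples of the heat signature features that could then be compared to the template features for weapons.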

[0075] Additionally or alternatively, the weapon detection module 134 or the server computing device may identify a weapon based on any suitable combination of an analysis of visible light images and thermal images. For example, the weapon detection module 134 or the server computing device may determine that a heat signature corresponds to a weapon based on an analysis of a thermal image. The weapon detection module 134 or the server computing device may then verify that the person has a weapon by analyzing the same portion of a visible light image as the portion of the thermal image that includes the heat signature.

[0076] Turning back to the pod, the acoustic sensor 114 is configured to detect sound within the detection area of the pod 100. The sound detected by the acoustic sensor 114 may be a noise level measured in decibels (dB). Sound data may be collected continuously, periodically (e.g., every second, every minute, 10 times per second, 60 times per second, 100 times per second, etc.), or for periods when the noise level exceeds a threshold noise level (e.g., 50 dB). Then the sound data is provided to a server computing device or the computing device within the pod 100 for further processing as described in more detail below.

[0077] In some embodiments, the computing device 128 in each pod 100 performs an analysis of the sensor data within the detection area of the pod 100 to, for example, identify a dangerous event within the detection area, identify the location of a shooter within the detection area, identify the type of weapon used, identify the number of rounds fired, identify the number of occupants within the detection area, identify the locations of the occupants within the detection area, etc. The computing device 128 in each pod 100 then provides these interpretations of the sensor data to the server computing device which uses the data from the detection area of each pod 100 to generate data regarding larger areas, such as the exterior of the building, the interior of the building, a floor of the building, etc. This data may include a representation of a danger zone for the dangerous event, indoor or outdoor map data including indications of the locations of the occupants within an indoor or outdoor map of the building, a representation of a route to a safe exit from the building, a representation of a recommended entrance to the building for an emergency responder to enter, etc. In other embodiments, the sensor data, the interpretations of the sensor data, and the representations of the sensor data may be analyzed and/or generated by the computing device 128 in a pod 100, the server computing device, or any suitable combination of these devices.

Gunshot Detection System

[0078] In some embodiments, the server computing device, the computing device 128 within the pod 100, or a gunshot detection system or module within the server computing device or the computing device 128 within the pod 100, analyzes the sound data and IR images over the same time period (e.g., over the previous second, over the previous ten seconds, etc.) to identify a gunshot. More specifically, to identify a gunshot the server computing device or the computing device 128 within the pod 100 (e.g., the gunshot detection module 132) compares the noise level from the sound data during a particular time period to a threshold noise level range indicative of a gunshot (e.g., 140-190 dB). If the noise level during the particular time period is within the threshold noise level range, the server computing device or the computing device 128 within the pod 100 compares temperature data from the IR images during the same time period to a threshold explosion temperature indicative of a blast from a bullet exiting the barrel of a gun (e.g., 200° C.). If the IR images during the same time period include temperatures or heat signatures exceeding the threshold explosion temperature and the sound data includes a noise level within the threshold noise level range, the server computing device or the computing device 128 within the pod 100 identifies a gunshot within the building.
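By way of example, the two-stage comparison described above might be sketched in Python as follows. The input formats (a list of noise levels in dB and a list of IR frames holding temperatures in degrees Celsius for the same time period) and the function name `is_gunshot` are assumptions; the threshold values are the examples given above.

```python
GUNSHOT_DB_RANGE = (140.0, 190.0)  # example noise level range from the text
EXPLOSION_TEMP_C = 200.0           # example explosion temperature from the text


def is_gunshot(noise_levels_db, ir_frames):
    """Return True when the period contains a noise level within the gunshot range
    and at least one IR pixel exceeding the explosion temperature."""
    lo, hi = GUNSHOT_DB_RANGE
    if not any(lo <= db <= hi for db in noise_levels_db):
        return False  # the temperature check is only reached after the sound check
    return any(temp > EXPLOSION_TEMP_C
               for frame in ir_frames
               for row in frame
               for temp in row)


quiet_room = [[22.0, 23.0], [22.5, 36.0]]
muzzle_blast = [[22.0, 240.0], [22.5, 36.0]]
print(is_gunshot([55.0, 150.0], [quiet_room, muzzle_blast]))
```

Requiring both conditions means that a loud noise without a matching heat signature, or a hot object without a matching noise level, is not reported as a gunshot in this sketch.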

[0079] In some embodiments, the server computing device or the computing device 128 within the pod 100 may also compare a change in temperature during the particular time period to a threshold change in temperature to identify a gunshot. For example, the blast created by firing the bullet may cause a sudden increase in temperature and then the heat from the blast may quickly dissipate upon the bullet leaving the barrel. These rapid changes in temperature may be compared to threshold temperature changes to identify the gunshot.
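As a rough illustration, the rapid rise and dissipation might be checked on the peak temperature of successive IR frames, as in the Python sketch below. The per-frame peak temperature input, the 100° C. rise and fall thresholds, and the function name `has_thermal_spike` are hypothetical.

```python
def has_thermal_spike(peak_temps_c, rise_threshold_c=100.0, fall_threshold_c=100.0):
    """Return True when the frame-to-frame peak temperature jumps by at least
    rise_threshold_c and a later frame-to-frame change drops by at least
    fall_threshold_c."""
    deltas = [later - earlier
              for earlier, later in zip(peak_temps_c, peak_temps_c[1:])]
    for i, delta in enumerate(deltas):
        if delta >= rise_threshold_c:
            if any(d <= -fall_threshold_c for d in deltas[i + 1:]):
                return True
    return False


print(has_thermal_spike([25.0, 26.0, 240.0, 60.0, 30.0]))
```

A heat source that rises and then stays hot, such as a heater switching on, would not satisfy the second condition in this sketch.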

[0080] In some embodiments, the server computing device, the computing device 128 within the pod 100, or the gunshot detection system or module within the server computing device or the computing device 128 within the pod 100 identifies a gunshot when the IR images include temperatures or heat signatures exceeding the threshold explosion temperature before the noise level is within the threshold noise level range, because light travels faster than sound. Accordingly, the server computing device or the computing device 128 within the pod 100 may detect the gunshot when the threshold noise level is detected at least a threshold time offset after the threshold explosion temperature. In other embodiments, the computing device 128 within the pod 100, and more specifically, the gunshot detection module 132, performs the analysis described above to identify a gunshot in the detection area.
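For illustration, the ordering condition might be expressed in Python as follows, assuming each detection carries a timestamp in seconds. The function name `flash_precedes_sound` and the 1 ms default offset are hypothetical; the text does not specify an offset value.

```python
def flash_precedes_sound(flash_time_s, sound_time_s, min_offset_s=0.001):
    """Return True when the threshold noise level was detected at least
    min_offset_s seconds after the threshold explosion temperature."""
    return sound_time_s - flash_time_s >= min_offset_s


print(flash_precedes_sound(10.000, 10.030))
```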

[0081] In addition to identifying the gunshot, the server computing device or the computing device 128 within the pod 100 may analyze sound data from the acoustic sensor 114 and/or temperature data from the thermal camera 106 to identify the type of weapon and the number of rounds fired. The server computing device or the computing device within the pod 100 may identify the number of rounds fired based on the number of times the noise level from the sound data reaches the threshold noise level range. More specifically, the server computing device or the computing device within the pod 100 may identify that an additional round has been fired each time the noise level from the sound data decreases from the threshold noise level range below a second threshold noise level and then increases once again to the threshold noise level range. The server computing device or the computing device within the pod 100 may identify the type of weapon based on the frequency at which rounds are fired (e.g., when the frequency exceeds a threshold frequency, the weapon may be an automatic weapon) and/or the number of rounds fired, the particular noise level within the noise level range associated with each blast (e.g., the noise level for a rifle may be 140 dB while the noise level for a pistol may be 175 dB), the temperature of the blast, and/or other sensor data characteristics such as visual characteristics of the weapon determined by analyzing the visible light images.
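As a minimal sketch, the round-counting rule described above behaves like a counter with hysteresis and might be written in Python as follows. The 100 dB value for the second threshold noise level and the function name `count_rounds` are assumptions for the example.

```python
def count_rounds(noise_levels_db, gunshot_range=(140.0, 190.0), reset_db=100.0):
    """Count a round each time the noise level enters the gunshot range after
    having dropped below the second (reset) threshold."""
    lo, hi = gunshot_range
    rounds = 0
    armed = True  # ready to count the next entry into the gunshot range
    for db in noise_levels_db:
        if armed and lo <= db <= hi:
            rounds += 1
            armed = False  # ignore further samples belonging to the same blast
        elif db < reset_db:
            armed = True   # the level fell below the second threshold
    return rounds


samples = [60, 150, 155, 80, 60, 160, 120, 150, 70, 145]
print(count_rounds(samples))  # prints 3
```

In the sample data the reading of 150 dB that follows 120 dB is not counted as a new round, because the noise level never fell below the second threshold between the two readings.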

[0082] In some embodiments, the server computing device or the computing device 128 within the pod 100 obtains characteristics of several types of weapons and compares the characteristics of each type of weapon to the characteristics of the weapon that was fired. The server computing device or the computing device within the pod 100 then identifies the type of weapon as the type having matching characteristics to the characteristics of the weapon that was fired. In other embodiments, the type of weapon is identified using machine learning techniques, such as random forests, boosting, nearest neighbors, Bayesian networks, neural networks, support vector machines, etc.

[0083] Moreover, the server computing device or the computing device 128 within the pod 100 may analyze the temperature data from the thermal camera 106 to identify the trajectory of the projectile fired from the weapon. The server computing device or the computing device 128 within the pod 100 may perform a frame-by-frame analysis of the IR images to detect changes in the position of the projectile over time based on the portion of each IR image having a heat signature exceeding the threshold explosion temperature range. The server computing device or the computing device 128 within the pod 100 may then determine the trajectory of the projectile based on the changes in the position of the projectile over time from the frame-by-frame analysis. In this manner, the server computing device or the computing device 128 within the pod 100 may alert an administrator or emergency responders of the direction in which the projectiles are being fired. The pods 100 may also announce the direction in which the projectiles are being fired from the speakers 108.
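For illustration only, the frame-by-frame analysis might be sketched in Python as follows, tracking the centroid of the pixels that exceed the explosion temperature in each IR frame. The grid-of-temperatures input and the function names `hot_centroid` and `trajectory_direction` are assumptions, and the resulting direction is expressed in image pixels per frame rather than in building coordinates.

```python
def hot_centroid(ir_frame, threshold_c=200.0):
    """Return the (x, y) centroid of pixels hotter than threshold_c, or None."""
    points = [(x, y)
              for y, row in enumerate(ir_frame)
              for x, temp in enumerate(row)
              if temp > threshold_c]
    if not points:
        return None
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)


def trajectory_direction(ir_frames, threshold_c=200.0):
    """Return the average (dx, dy) per frame between the first and last frames
    in which a hot region was detected, or None if fewer than two were found."""
    track = []
    for index, frame in enumerate(ir_frames):
        centroid = hot_centroid(frame, threshold_c)
        if centroid is not None:
            track.append((index, centroid))
    if len(track) < 2:
        return None
    (i0, (x0, y0)), (i1, (x1, y1)) = track[0], track[-1]
    frames_elapsed = i1 - i0
    return ((x1 - x0) / frames_elapsed, (y1 - y0) / frames_elapsed)


frames = [[[250, 20, 20, 20]],
          [[20, 250, 20, 20]],
          [[20, 20, 250, 20]]]
print(trajectory_direction(frames))  # prints (1.0, 0.0)
```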

[0084] Furthermore, the server computing device or the computing device 128 within the pod 100 determines the location of the gunshot based on the pod 100 that captured the sound data and IR images indicative of a gunshot. The locations of each pod 100 within the building may be stored within a database. The server computing device or the computing device 128 within the pod 100 may retrieve the location for the pod 100 that captured the sound data and IR images to identify the location of the gunshot. The server computing device or the computing device 128 within the pod 100 then determines the location of the shooter based on the location of the gunshot and may track the location of the shooter to provide real-time updates on the shooter's location. More specifically, the server computing device or the computing device 128 within the pod 100 may determine the shooter's location based on movements from the shooter's initial location where the gunshot was fired or based on the locations of additional gunshots that are subsequently fired. The movements may be detected, for example, by continuously or periodically (e.g., every second, every minute, 10 times per second, 60 times per second, 100 times per second, etc.) identifying the location of the weapon based on visible light images from each pod 100. In other embodiments, the computing device within the pod 100 performs the analysis described above to identify the type of weapon, the number of rounds fired, and the location of the gunshot. The pods 100 may communicate with each other to track the location of the shooter based on movements from the shooter's initial location where the gunshot was fired or based on the locations of additional gunshots that are subsequently fired.

[0085] In some embodiments, the server computing device or the computing device 128 within the pod 100 may also determine the identity of the shooter. For example, images of each of the students, teachers, and/or other employees of the building may be stored within a database. The server computing device or the computing device within the pod 100 may retrieve these images and/or additional images from criminal or other public databases, such as public social networking databases. Then the server computing device or the computing device within the pod 100 may analyze visible light images of the shooter using image classification and/or machine learning techniques to determine the identity of the shooter. The visible light images of the shooter may be identified based on the images captured from the pod 100 where a gunshot or weapon is detected, and/or where a person is identified using the techniques described above. The server computing device or the computing device within the pod 100 may identify features of the images of the shooter using the techniques described above, such as SIFT, SURF, FREAK, BRISK, or any other suitable computer vision techniques. The features of the images of the shooter may be compared to the features of the images of the students, teachers, and/or other employees of the building. The server computing device or the computing device within the pod 100 may then determine the identity of the shooter based on the amount of similarity, or the vector distance in the nearest neighbors algorithm, between the features of the images of the shooter and the features of the images of the students, teachers, and/or other employees of the building. The student, teacher, or other employee having features which are the closest to the features of the shooter may be identified as the shooter, for example, if the vector distance is below a threshold. If the vector distance of the closest features is not within the threshold distance, the shooter may not be identified. In other embodiments, the computing device within the pod 100 performs the analysis described above to determine the identity of the shooter.
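As a simplified illustration, the thresholded nearest-neighbor match might be sketched in Python as follows. The two-element feature vectors, the placeholder record names, the threshold value, and the function name `closest_match` are hypothetical.

```python
import math


def closest_match(query_features, stored_features, max_distance):
    """Return the key of the stored feature vector closest to query_features,
    or None when even the closest vector is not within max_distance."""
    best_key, best_distance = None, math.inf
    for key, vector in stored_features.items():
        distance = math.dist(query_features, vector)
        if distance < best_distance:
            best_key, best_distance = key, distance
    return best_key if best_distance < max_distance else None


stored = {"record_a": [0.1, 0.9], "record_b": [0.8, 0.2]}
print(closest_match([0.15, 0.85], stored, max_distance=0.2))
print(closest_match([0.5, 0.5], stored, max_distance=0.2))
```

Returning no match when the closest vector falls outside the threshold corresponds to the case above in which the shooter may not be identified.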

Speaker

[0086] Once again turning back to the pod 100, the speaker 108 is configured to provide audio alerts to occupants within the detection area. The alerts may be specific to the particular detection area for the pod 100. In some scenarios, a first set of pods 100 in the building may provide a first audio alert and a second set of pods 100 may provide a second audio alert. More specifically, pods 100 within a threshold range of the shooter or other dangerous person or object (also referred to herein as a "danger zone") may provide audio alerts instructing occupants to hide, lock doors, stay away from the windows, etc. Pods 100 outside of the danger zone may provide audio alerts instructing occupants to exit the building immediately from the nearest exit. The audio alerts may also include a description of the nearest exit and/or directions for reaching the nearest exit.
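By way of example, the selection of an audio alert for each pod might be sketched in Python as follows. The floor-plan coordinates in meters, the 30 m threshold range, the pod identifiers, and the function name `alerts_for_pods` are assumptions made for illustration.

```python
import math

HIDE_ALERT = "Hide, lock doors, and stay away from the windows."
EXIT_ALERT = "Exit the building immediately from the nearest exit."


def alerts_for_pods(pod_locations, threat_location, danger_radius_m=30.0):
    """Map each pod to the alert it should play, depending on whether the pod
    lies within the danger zone around the threat location."""
    alerts = {}
    for pod_id, location in pod_locations.items():
        in_danger_zone = math.dist(location, threat_location) <= danger_radius_m
        alerts[pod_id] = HIDE_ALERT if in_danger_zone else EXIT_ALERT
    return alerts


pods = {"pod_1": (0.0, 0.0), "pod_2": (10.0, 5.0), "pod_3": (80.0, 40.0)}
print(alerts_for_pods(pods, threat_location=(5.0, 5.0)))
```

Straight-line distance is used here for simplicity; an embodiment could instead measure the threshold range along hallways or by floor.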

[0087] Additionally, the audio alerts may include information regarding the dangerous event, such as a description of the type of dangerous event (e.g., a gunshot, a weapon, a fire, a gas leak, etc.), a location of an active shooter or a location of the dangerous event, etc. In some embodiments, the server computing device or the computing device within the pod 100 generates audio alerts specific to each detection area and provides each generated audio alert to a respective pod 100 for the corresponding detection area. In other embodiments, the computing device within the pod 100 generates the audio alert specific to that pod 100. In other scenarios, the speaker 108 is configured to provide announcements, such as general announcements in a public address (PA) system.

Microphone

[0088] The microphone 110 is configured to receive voice communications from occupants within the detection area. In this manner, the occupants may communicate with the pod 100. For example, the audio alert from a particular pod may instruct the occupants to exit the building from Entrance A. The occupants may then inform the security system that Entrance A is inaccessible, and the server computing device or the computing device within the particular pod 100 may generate an additional audio alert with different instructions. The voice communications may also include status information regarding the occupants, such as "Bob is injured," or "Jim is safe." In some embodiments, the voice communications from the occupants may be transmitted to emergency responders or school/building administrators so that the occupants may assist and communicate with the emergency responders or the school/building administrators.

Gas Sensor

[0089] In addition to the high-definition imaging camera 104, thermal camera 106, acoustic sensor 114, speaker 108, and microphone 110, the pod 100 also includes a gas sensor 112 such as a spectrometer configured to detect a gas leak in the detection area. The spectrometer 112 may test for carbon monoxide, carbon dioxide, acetylene, methanol, ethanol, or any other chemicals. Additionally, the spectrometer 112 may be used to measure air quality.

[0090] The server computing device or the computing device within the pod 100 may analyze sensor data from the thermal camera 106 to identify a fire. For example, the server computing device or the computing device 128 within the pod 100 may compare temperature data from the IR images during a particular time period to a threshold fire temperature (e.g., 400 °C) indicative of a fire. The server computing device or the computing device 128 within the pod 100 may then detect a fire when the temperature data within the detection area includes temperatures which exceed the threshold fire temperature. To verify the existence of a fire, the server computing device or the computing device 128 within the pod 100 may analyze images detected by the imaging camera 104 or temperature data from the thermal camera 106 to, for example, determine the size of the flame. If the size of the flame exceeds a threshold size (e.g., six inches), the server computing device or the computing device 128 within the pod 100 detects a fire.

[0091] Furthermore, if the gas sensor 112 indicates that there is a gas leak, the server computing device or the computing device within the pod 100 provides an alert of the gas leak within the detection area for the corresponding pod 100. The alert may be an audio alert provided via the speakers 108 of the pod 100 or a text-based alert provided to client computing devices. In other embodiments, the computing device 128 within the pod 100 performs the analysis described above to identify a fire or a gas leak and/or generate an alert.

[0092] More generally, the pod 100 may use any combination of two sensors to detect and verify a dangerous event, such as a fire, a gas leak or other airborne contaminant, a water leak, a gunshot, a weapon, etc. In some embodiments, a first type of sensor data from the first sensor may be used to detect the dangerous event, and a second type of sensor data from the second sensor may be used to verify that the dangerous event is occurring.

[0093] In one example, when the dangerous event is a fire, the server computing device or the computing device 128 within the pod 100 may compare temperature data from the thermal camera 106 during a particular time period to a threshold fire temperature (e.g., 400 °C) indicative of a fire. The server computing device or the computing device 128 within the pod 100 may then detect a fire when the temperature data within the detection area includes temperatures which exceed the threshold fire temperature. To verify the existence of a fire, the server computing device or the computing device 128 within the pod 100 may analyze images detected by the imaging camera 104 or temperature data from the thermal camera 106 to, for example, determine the size of the flame. If the size of the flame exceeds a threshold size (e.g., six inches), the server computing device or the computing device 128 within the pod 100 detects a fire. Otherwise, the server computing device or the computing device 128 within the pod 100 does not detect a fire, and flames less than the threshold size may be candles or burners. The server computing device or the computing device 128 within the pod 100 may analyze any suitable combination of two or more types of sensor data from the imaging camera 104 and the thermal camera 106 to detect and verify a fire in the detection area of the pod 100.
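
A minimal sketch of this detect-then-verify fire check is given below. It uses the example thresholds from the text (400 °C and six inches). Estimating the flame size as the larger bounding-box dimension of the hot pixels in a thermal frame, along with the `inches_per_pixel` calibration value and the function names, are assumptions for illustration.

```python
FIRE_TEMP_C = 400.0        # example threshold fire temperature from the text
MIN_FLAME_SIZE_IN = 6.0    # example threshold flame size from the text


def flame_extent_in(thermal_frame_c, inches_per_pixel):
    """Estimate flame size from pixels hotter than the fire threshold.

    `thermal_frame_c` is a list of rows of temperatures in degrees C.
    The size estimate (larger side of the bounding box around the hot
    pixels) is an assumed heuristic. Returns 0.0 if nothing is hot.
    """
    hot = [
        (r, c)
        for r, row in enumerate(thermal_frame_c)
        for c, temp in enumerate(row)
        if temp > FIRE_TEMP_C
    ]
    if not hot:
        return 0.0
    rows = [r for r, _ in hot]
    cols = [c for _, c in hot]
    height = max(rows) - min(rows) + 1
    width = max(cols) - min(cols) + 1
    return max(height, width) * inches_per_pixel


def detect_fire(thermal_frame_c, inches_per_pixel):
    """Detect on temperature, then verify on flame size."""
    return flame_extent_in(thermal_frame_c, inches_per_pixel) > MIN_FLAME_SIZE_IN
```

Under these assumptions a single hot pixel, such as a candle, stays below the size threshold and is not reported as a fire.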

[0094] In another example, when the dangerous event is a water leak, the server computing device or the computing device 128 within the pod 100 may compare temperature data from the thermal camera 106 during a particular time period to a threshold water temperature range (e.g., 10-15 °C and 40-50 °C) indicative of water. The water may need to be hot or cold water for the server computing device or the computing device 128 within the pod 100 to distinguish the water from the environment at room temperature. The server computing device or the computing device 128 within the pod 100 may then detect a water leak when the temperature data within the detection area includes temperatures which are within the threshold water temperature range. To verify the existence of the water leak, the server computing device or the computing device 128 within the pod 100 may analyze images detected by the imaging camera 104 to, for example, determine the size of the water leak. If the size of the water leak exceeds a threshold size (e.g., one foot), the server computing device or the computing device 128 within the pod 100 detects a water leak. Otherwise, the server computing device or the computing device 128 within the pod 100 does not detect a water leak, and the water may be from a faucet, for example.
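
The band-based water check differs from the fire check in that a temperature must fall inside one of two ranges rather than exceed a single threshold. The sketch below uses the example values from the text. The function names are hypothetical, and the leak size is assumed to be supplied by a separate image-analysis step that is not shown.

```python
# Example cold and hot water bands from the text, in degrees C.
WATER_BANDS_C = ((10.0, 15.0), (40.0, 50.0))
MIN_LEAK_SIZE_FT = 1.0  # example threshold leak size from the text


def in_water_band(temp_c):
    """True if the temperature lies in the cold or the hot water band."""
    return any(low <= temp_c <= high for low, high in WATER_BANDS_C)


def detect_water_leak(temps_c, leak_size_ft):
    """Detect on temperature bands, then verify on the measured size."""
    candidate = any(in_water_band(temp) for temp in temps_c)
    return candidate and leak_size_ft > MIN_LEAK_SIZE_FT
```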

[0095] In yet another example, as described above, when the dangerous event is a gunshot, the server computing device or the computing device 128 within the pod 100 compares temperature data from IR images from the thermal camera 106 to a threshold explosion temperature indicative of a blast from a bullet exiting the barrel of a gun (e.g., 200 °C). If the IR images include temperatures or heat signatures exceeding the threshold explosion temperature, the server computing device or the computing device 128 within the pod 100 detects a gunshot. To verify that the gunshot occurred, the server computing device or the computing device 128 within the pod 100 may compare the noise level from sound data from the acoustic sensor 114 captured during the same time period as the IR images to a threshold noise level range indicative of a gunshot (e.g., 140-190 dB). If the noise level during the particular time period is within the threshold noise level range, the server computing device or the computing device 128 within the pod 100 verifies that the gunshot occurred.
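
The pairing of a thermal detection with an acoustic verification from the same time period might be sketched as below, using the example thresholds from the text (200 °C and 140-190 dB). The timestamped sample format, the `window_s` tolerance used to decide that two samples belong to the same time period, and the function name are assumptions.

```python
GUNSHOT_TEMP_C = 200.0             # example threshold explosion temperature
GUNSHOT_DB_RANGE = (140.0, 190.0)  # example threshold noise level range


def detect_gunshots(thermal_samples, sound_samples, window_s=0.5):
    """Return timestamps of thermally detected, acoustically verified gunshots.

    thermal_samples: list of (timestamp_s, peak_temp_c) from IR images.
    sound_samples:   list of (timestamp_s, level_db) from the acoustic sensor.
    `window_s` is an assumed tolerance for "the same time period".
    """
    low_db, high_db = GUNSHOT_DB_RANGE
    verified = []
    for t_thermal, peak_c in thermal_samples:
        if peak_c <= GUNSHOT_TEMP_C:
            continue  # no thermal detection at this timestamp
        for t_sound, level_db in sound_samples:
            same_period = abs(t_sound - t_thermal) <= window_s
            if same_period and low_db <= level_db <= high_db:
                verified.append(t_thermal)
                break
    return verified
```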

[0096] In another example, as described above, when the dangerous event is a weapon, the server computing device or the computing device 128 within the pod 100 may compare temperature data or heat signatures from the IR images from the thermal camera 106 to a threshold body temperature range corresponding to a human (e.g., 33 °C-44 °C) to identify an object including temperatures within the threshold body temperature range, and compare features of the object to features from template objects using image classification and/or machine learning techniques, where at least some of the template objects represent a person. In response to identifying a person, the server computing device or the computing device 128 within the pod 100 may identify a weapon attached to the person, such as a weapon being carried by the person or attached to the person's clothing, based on differences in temperature data within the object corresponding to the person. More specifically, the server computing device or the computing device 128 may identify a portion of the object which includes temperatures outside of the body temperature range to generate a heat signature. For example, each image of a person may include several contiguous portions which are outside of the body temperature range. The server computing device or the computing device 128 may generate a heat signature for each contiguous portion. The server computing device or the computing device 128 may then identify features of each heat signature which may be compared to template features of template objects representing weapons. The server computing device or the computing device 128 may then determine whether each heat signature corresponds to a weapon (e.g., whether the heat signature is in the shape of a weapon) based on the amount of similarity, or the vector distance in the nearest neighbors algorithm, between the features of each heat signature and the features for template objects that represent a weapon. To verify that there is a weapon in the building premises, the server computing device or the computing device 128 within the pod 100 may analyze the same portion of the visible light image as the portion of the thermal image that includes the heat signature corresponding to a weapon using image classification and/or machine learning techniques. If the same portion of the visible light image is identified as a weapon, the server computing device or the computing device 128 within the pod 100 verifies that there is a weapon in the building premises.
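
The step of splitting a person's thermal image into contiguous portions outside the body temperature range can be illustrated with a simple connected-component search. In this sketch the 4-connectivity rule, the use of `None` to mark pixels that do not belong to the person, and the function name `contiguous_regions` are assumptions. Feature extraction and template matching on each region are not shown.

```python
from collections import deque

BODY_RANGE_C = (33.0, 44.0)  # example body temperature range from the text


def contiguous_regions(person_temps_c):
    """Group pixels outside the body temperature range into regions.

    `person_temps_c` is a grid (list of rows) of temperatures in degrees C
    covering the object identified as a person; None marks pixels that are
    not part of that object (assumed convention). Returns a list of sets of
    (row, col) coordinates, one set per 4-connected region.
    """
    low, high = BODY_RANGE_C
    n_rows, n_cols = len(person_temps_c), len(person_temps_c[0])

    def is_candidate(r, c):
        temp = person_temps_c[r][c]
        return temp is not None and not (low <= temp <= high)

    seen, regions = set(), []
    for r in range(n_rows):
        for c in range(n_cols):
            if (r, c) in seen or not is_candidate(r, c):
                continue
            # Breadth-first flood fill from this unvisited candidate pixel.
            region, queue = set(), deque([(r, c)])
            seen.add((r, c))
            while queue:
                cr, cc = queue.popleft()
                region.add((cr, cc))
                for nr, nc in ((cr + 1, cc), (cr - 1, cc), (cr, cc + 1), (cr, cc - 1)):
                    inside = 0 <= nr < n_rows and 0 <= nc < n_cols
                    if inside and (nr, nc) not in seen and is_candidate(nr, nc):
                        seen.add((nr, nc))
                        queue.append((nr, nc))
            regions.append(region)
    return regions
```

Each returned region would correspond to one heat signature whose shape features could then be compared against weapon templates.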

[0097] As mentioned above, the pod 100 includes one or more light emitting elements 120, such as LEDs, which are configured to emit monochromatic light. The light emitting elements 120 may include several sets of light emitting elements each configured to emit a different color (e.g., red, green, blue, etc.). In some embodiments, the light emitting elements 120 are positioned on the pod 100 so that the light from the light emitting elements 120 projects onto a wall behind the pod 100. In response to detecting a dangerous event, the server computing device or the computing device 128 within the pod 100 may activate one or more of the LEDs 120 to act as an alert to occupants within the building premises. For example, the server computing device or the computing device 128 within the pod 100 may activate the LEDs 120 that emit a red color. The server computing device or the computing device 128 within the pod 100 may cause the LEDs 120 to turn on and off or blink at a particular frequency to signal an alert to the occupants. In some embodiments, when the dangerous event has ended, the server computing device or the computing device 128 within the pod 100 may activate the LEDs 120 that emit a green color to signal that it is safe to resume normal activities and move throughout the building premises. The server computing device or the computing device 128 within the pod 100 may also control the LEDs 120 within multiple pods 100 to signal a path leading to a safe exit for the occupants. This is described in more detail below.

[0098] Still further, the pod 100 includes a communication interface 122 having long-range and short-range communication links. The short-range communication link may be wired (e.g., wired Universal Serial Bus (USB)) or wireless (e.g., Bluetooth®, Bluetooth® Low Energy (LE), Wi-Fi Direct, wireless USB, radio-frequency identification (RFID)). The long-range communication link may be a third-, fourth-, or fifth-generation cellular network (3G, 4G, or 5G respectively) and/or the Internet. For example, the communication interface 122 may include a Wi-Fi transceiver for connecting to the Internet via Wi-Fi. The communication interface 122 may also include a 3G, 4G, or 5G transceiver for connecting to the Internet via a cellular network, for example if there is a connectivity issue with the Wi-Fi in the area. In this manner, the pod 100 may transmit, via long-range communication links, sensor data including image data, temperature data, heat signatures, sound data, flame data, gas leak data, and voice data from the imaging camera 104, thermal camera 106, acoustic sensor 114, gas sensor 112, and microphone 110 respectively, to the server computing device or any other suitable remote computing device. In other embodiments, the pod 100 may transmit an interpretation of the sensor data to the server computing device or any other suitable remote computing device. The interpretations of the sensor data may include an indication that a dangerous event has been detected, indications of the danger zone, indications of the locations of occupants within the building premises, an indication of the location of an active shooter, etc. The server computing device may then analyze the sensor data, or interpretations of the sensor data provided by the pod 100, and generate and transmit various representations of the sensor data to client computing devices of emergency responders such as police officers, firefighters, and paramedics, occupants of the building such as students and teachers, building/school administrators, and parents of the students. In other embodiments, the computing device 128 within the pod 100 may generate and transmit various representations of the sensor data to client computing devices.
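
One way to picture an interpretation message and the Wi-Fi-to-cellular fallback is sketched below. The field names, the JSON encoding, and the helpers `choose_link` and `build_message` are hypothetical. The specification does not define a message format or a link-selection procedure.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Interpretation:
    """Hypothetical container for a pod's interpretation of its sensor data."""
    pod_id: str
    event_detected: bool
    event_type: str = ""
    danger_zone: list = field(default_factory=list)
    occupant_locations: list = field(default_factory=list)
    shooter_location: str = ""


def choose_link(wifi_ok, cellular_ok):
    """Prefer Wi-Fi and fall back to cellular if Wi-Fi is unavailable."""
    if wifi_ok:
        return "wifi"
    if cellular_ok:
        return "cellular"
    return None


def build_message(interpretation):
    """Serialize an interpretation to JSON (assumed wire format)."""
    return json.dumps(asdict(interpretation), sort_keys=True)
```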