Virtual Playbook With User Controls

Parker; Jason ; et al.

U.S. patent application number 16/741674 was filed with the patent office on 2020-07-16 for virtual playbook with user controls. The applicant listed for this patent is Electronic Arts Inc. Invention is credited to Tommy Jacob, Kevin Kralian, Rob Moore, Hitoshi Nishimura, Jason Parker, Kevin Proctor.

| Application Number | 20200222803 16/741674 |

| Document ID | / |

| Family ID | 55314494 |

| Filed Date | 2020-07-16 |

| United States Patent Application | 20200222803 |

| Kind Code | A1 |

| Parker; Jason ; et al. | July 16, 2020 |

VIRTUAL PLAYBOOK WITH USER CONTROLS

Abstract

A computerized method operable on a computer system for compositing data streams to generate a playable composite stream includes receiving a plurality of independent data streams that are included in a broadcast stream. The independent data streams include a video stream and a metadata stream. The metadata stream includes a plurality of user selectable graphics metadata for a plurality of graphics options. The computerized method further includes receiving a user selection for at least one of the graphics options; and compositing the at least one graphics option with the video stream to generate a composite video stream, which includes the at least one graphics option and the video stream.

| Inventors: | Parker; Jason; (Winter Garden, FL) ; Kralian; Kevin; (Maitland, FL) ; Moore; Rob; (Longwood, FL) ; Proctor; Kevin; (Orlando, FL) ; Jacob; Tommy; (Deltona, FL) ; Nishimura; Hitoshi; (West Vancouver, CA) |

| Applicant: | Electronic Arts Inc. |

| Family ID: | 55314494 |

| Appl. No.: | 16/741674 |

| Filed: | January 13, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15012792 | Feb 1, 2016 | 10532283 |
| 16741674 | | |
| 12630768 | Dec 3, 2009 | 9266017 |
| 15012792 | | |
| 61119705 | Dec 3, 2008 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | A63F 13/26 20140902; A63F 2011/0097 20130101; H04N 21/23614 20130101; A63F 13/86 20140902; A63F 13/87 20140902; H04N 21/8545 20130101; A63F 13/23 20140902; A63F 13/497 20140902; H04N 21/21805 20130101; A63F 13/355 20140902; A63F 13/825 20140902; H04N 21/4781 20130101; A63F 13/54 20140902; H04N 21/2187 20130101; A63F 13/77 20140902; A63F 13/65 20140902; G09G 2340/12 20130101; A63F 13/00 20130101; H04N 21/8549 20130101; H04N 21/2665 20130101 |

| International Class: | A63F 13/497 20060101 A63F013/497; A63F 13/00 20060101 A63F013/00; H04N 21/2187 20060101 H04N021/2187; A63F 13/86 20060101 A63F013/86; H04N 21/218 20060101 H04N021/218; H04N 21/236 20060101 H04N021/236; H04N 21/8545 20060101 H04N021/8545; A63F 13/65 20060101 A63F013/65; H04N 21/8549 20060101 H04N021/8549; A63F 13/23 20060101 A63F013/23; A63F 13/26 20060101 A63F013/26; A63F 13/355 20060101 A63F013/355; A63F 13/54 20060101 A63F013/54; A63F 13/77 20060101 A63F013/77; A63F 13/825 20060101 A63F013/825; A63F 13/87 20060101 A63F013/87; H04N 21/2665 20060101 H04N021/2665; H04N 21/478 20060101 H04N021/478 |

Claims

1. A method comprising: posting, by a media server system, a link to initiate streaming of real-time live gameplay of a video game that is being played by at least one game player on at least one game player device; enabling selection of the link by a plurality of spectators on a plurality of spectator computers coupled to the media server system; receiving in real time by the media server system via a first network connection a video game stream of the real-time live gameplay of the video game as the at least one game player is playing the video game; streaming in real time by the media server system the video game stream of the real-time live gameplay of the video game via a second network connection to one or more commentator computer systems as the video game stream is being received by the media server system; receiving in real time by the media server system contemporaneous comments associated with the real-time live gameplay of the video game from at least one of the one or more commentator computer systems as at least one commentator is generating the contemporaneous comments on the at least one of the one or more commentator computer systems; generating by the media server system a composite stream of at least a portion of the video game stream and at least some of the contemporaneous comments associated with the real-time live gameplay of the video game; receiving at a particular moment in time by the media server system a selection of the link from a particular spectator computer of the plurality of spectator computers; and in response to receiving the selection of the link from the particular spectator computer, initiating over a third network connection to the particular spectator computer of the plurality of spectator computers a real-time streaming of the composite stream being generated by the media server system at substantially the particular moment in time and thereafter.

2. The method of claim 1, wherein the video game is a multiplayer video game, the at least one game player on the at least one game player device includes a plurality of game players on a plurality of game player devices, and the receiving in real time the video game stream of the real-time live game play includes receiving the video game stream from at least one of the plurality of game player devices.

3. The method of claim 1, wherein the spectators have capability to be commentators.

4. The method of claim 1, wherein the contemporaneous comments include telestration information.

5. The method of claim 1, wherein the contemporaneous comments include chat messages.

6. The method of claim 1, wherein the contemporaneous comments include audio commentary.

7. The method of claim 1, wherein at least two of the first network connection, the second network connection and the third network connection overlap.

8. The method of claim 1, wherein the generating the composite stream includes combining and synchronizing the at least a portion of the video game stream and the at least some of the contemporaneous comments.

9. The method of claim 1, wherein the initiating the real-time streaming of the composite stream includes initiating real-time streaming of a first independent stream including the at least a portion of the video game stream and of a second independent stream including the at least some of the contemporaneous comments.

10. The method of claim 1, wherein the posting the link to initiate streaming of real-time live gameplay of the video game that is being played by the at least one game player on the at least one game player device includes posting a plurality of links, each link to initiate streaming of real-time live gameplay of a different video game instance that is being played by at least one different game player on at least one different game player device.

11. A media server system, comprising: at least one processor, at least one network interface; and memory storing computer program code configured to cause the at least one processor to perform the steps of: posting a link to initiate streaming of real-time live gameplay of a video game that is being played by at least one game player on at least one game player device; enabling selection of the link by a plurality of spectators on a plurality of spectator computers; receiving in real time by the at least one network interface via a first network connection a video game stream of the real-time live gameplay of the video game as the at least one game player is playing the video game; streaming in real time by the at least one network interface the video game stream of the real-time live gameplay of the video game via a second network connection to one or more commentator computer systems as the video game stream is being received by the media server system; receiving in real time by the at least one network interface contemporaneous comments associated with the real-time live gameplay of the video game from at least one of the one or more commentator computer systems as at least one commentator is generating the contemporaneous comments on the at least one of the one or more commentator computer systems; generating a composite stream of at least a portion of the video game stream and at least some of the contemporaneous comments associated with the real-time live gameplay of the video game; receiving at a particular moment in time by the at least one network interface a selection of the link from a particular spectator computer of the plurality of spectator computers; and in response to receiving the selection of the link from the particular spectator computer, initiating over a third network connection to the particular spectator computer of the plurality of spectator computers a real-time streaming of the composite stream being generated at substantially the particular moment in time and thereafter.

12. The media server system of claim 11, wherein the video game is a multiplayer video game, the at least one game player on the at least one game player device includes a plurality of game players on a plurality of game player devices, and the computer program code configured to cause the at least one processor to perform the step of receiving in real time the video game stream of the real-time live game play comprises computer program code configured to cause the at least one processor to perform the step of receiving the video game stream from at least one of the plurality of game player devices.

13. The media server system of claim 11, wherein the spectators have capability to be commentators.

14. The media server system of claim 11, wherein the contemporaneous comments include telestration information.

15. The media server system of claim 11, wherein the contemporaneous comments include chat messages.

16. The media server system of claim 11, wherein the contemporaneous comments include audio commentary.

17. The media server system of claim 11, wherein at least two of the first network connection, the second network connection and the third network connection overlap.

18. The media server system of claim 11, wherein the computer program code configured to cause the at least one processor to perform the step of generating the composite stream comprises computer program code configured to cause the at least one processor to perform the steps of combining and synchronizing the at least a portion of the video game stream and the at least some of the contemporaneous comments.

19. The media server system of claim 11, wherein the computer program code configured to cause the at least one processor to perform the step of initiating the real-time streaming of the composite stream comprises computer program code configured to cause the at least one processor to perform the step of initiating real-time streaming of a first independent stream including the at least a portion of the video game stream and of a second independent stream including the at least some of the contemporaneous comments.

20. The media server system of claim 11, wherein the computer program code configured to cause the at least one processor to perform the step of posting the link to initiate streaming of real-time live gameplay of the video game that is being played by the at least one game player on the at least one game player device includes computer program code configured to cause the at least one processor to perform the step of posting a plurality of links, each link to initiate streaming of real-time live gameplay of a different video game instance that is being played by at least one different game player on at least one different game player device.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] Any and all applications for which a foreign or domestic priority claim is identified in the Application Data Sheet as filed with the present application are incorporated by reference under 37 CFR 1.57 and made a part of this specification.

FIELD OF THE INVENTION

[0002] Embodiments of the present invention relate generally to interactive entertainment, such as video games, and in particular to systems and methods for providing interactive entertainment based replays.

BACKGROUND OF THE INVENTION

[0003] Interactive entertainment such as video games often depicts game characters in a virtual world, where the game characters are performing some generally pre-defined tasks, typically under the direction of the person who is playing the game. In the context of a sports game, such as Madden™, published by Electronic Arts Inc., the person playing the game directs a football team and competes by running various football plays against his opponent. The opponent can be another person or the artificial intelligence of the game.

[0004] In sports broadcasting, it is desirable to comment on replays of selected events in a game, for example, a specific running play or the execution of a touchdown in football. Typically, this is accomplished by broadcasting a previously recorded video of the play and adding audio from a commentator who describes what is happening in the replay, which the viewer sees on his display.

[0005] As part of the commentary, additional indicators such as arrows may be added to the video to show particular directions of players and play movements that are involved in the replay. Such indicators may also be used to show hypothetical situations, for example, if a different result would have occurred had a particular player moved along a different, hypothetical route. If the hypothetical scenario is sufficiently complicated and involves many players, however, then such indicators may simply confuse the viewer further.

[0006] For complicated hypothetical scenarios, a sports broadcaster might be able to show the scenario using archived video footage of players executing the particular scenario, but since the number of different game play combinations is very high, it is inconvenient to search for and retrieve such material. Another option would be to get teams of players together for the purpose of executing the hypothetical scenario on a real field and to record the action; however, this is also inconvenient and generally not available in real time.

[0007] Video games provide the ability to create multiple scenarios very easily because the game may be played over and over again, each time with a new scenario in mind. The content of a particular scenario generally depends in part on how the user plays the game and in part how the artificial intelligence of the game reacts to the user's game play. These in-game scenarios can be recorded by the game camera. However, there are challenges with using the game camera for this purpose. Generally, the game cameras are designed to provide the game player with a field of view that is appropriate for the game genre (sports, adventure, shooter, racing, etc.) and the presentation style (first person, third person detached, cinematic, etc.). This field of view may not be the same view that is desired for viewing the hypothetical scenarios described above. For example, the game camera in a sports game may follow a particular character (the character that is being played by the user), and only show that portion of the field, basketball court, etc. to the exclusion of events, which may be happening outside of that field of view. Game cameras can be difficult and time-consuming to design because the designer needs to take into account the design of the game world and how the player character moves within that world in order to prevent undesirable effects that will cause a user to become distracted or disoriented. Designers are reluctant to make changes to software code associated with game cameras because breaking this code can be disastrous for a game and the game team's production schedule.

[0008] Thus, it would be desirable to provide a means for editing cameras in real time and to provide real time playback to combine three dimensional video game graphics with broadcast images to produce interactive "replays" of game scenarios.

BRIEF SUMMARY OF THE INVENTION

[0009] In accordance with an embodiment of the invention, systems and methods for providing interactive entertainment replays are provided. Systems and methods for using data-driven real time camera editing and playback to combine three dimensional video game graphics and broadcast images are also provided.

[0010] According to one embodiment of the present invention, a computerized method operable on a computer system is provided for compositing data streams to generate a playable composite stream. The method includes receiving a plurality of independent data streams that are included in a broadcast stream. The independent data streams include a video stream and a metadata stream. The metadata stream includes a plurality of user selectable graphics metadata for a plurality of graphics options. The computerized method further includes receiving a user selection for at least one of the graphics options; and compositing the at least one graphics option with the video stream to generate a composite video stream, which includes the at least one graphics option and the video stream.
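By way of a non-limiting illustration, the selection and compositing steps of this embodiment might be sketched as follows. The sketch is not taken from the specification: the names `GraphicsOption`, `VideoFrame`, and `composite`, and the representation of a composited graphic as an entry in an `overlays` list, are assumptions made only for this example.

```python
from dataclasses import dataclass, field


@dataclass
class GraphicsOption:
    """One user selectable graphics option described by the metadata stream."""
    option_id: str  # hypothetical identifier, e.g. "trail"
    label: str      # text shown to the user when choosing options


@dataclass
class VideoFrame:
    """A video frame; overlays names the graphics composited onto it."""
    timestamp: float
    pixels: bytes
    overlays: list = field(default_factory=list)


def composite(frames, options, selected_ids):
    """Return new frames carrying only the graphics options the user selected."""
    available = {opt.option_id: opt for opt in options}
    unknown = [sid for sid in selected_ids if sid not in available]
    if unknown:
        # The user may only select options offered by the metadata stream.
        raise ValueError(f"selection not offered by metadata stream: {unknown}")
    chosen = [available[sid].option_id for sid in selected_ids]
    # Build new frames so the received video stream itself is left unmodified.
    return [VideoFrame(f.timestamp, f.pixels, f.overlays + chosen) for f in frames]
```

In this sketch the received video stream is never altered; each user selection yields a separate composite stream, so different viewers could composite different graphics onto the same broadcast.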

[0011] According to a specific embodiment of the method, the plurality of graphics options include a plurality of graphics. The plurality of graphics may include text, telestrations, and ads. The method may further include displaying the composite video stream.

[0012] According to another specific embodiment of the method, the plurality of data streams further includes an audio stream and the metadata stream includes a plurality of user selectable audio metadata for a plurality of audio segments. The method may further include receiving a user selection for at least one audio segment identified by the user selectable audio metadata, and compositing the at least one audio segment with the audio stream and the composite video stream to generate a composite event stream.

[0013] According to another specific embodiment of the method, the first mentioned compositing step includes combining and synchronizing the at least one graphic and the video stream, and the second mentioned compositing step includes combining and synchronizing the at least one audio segment with the audio stream and the composited video stream. The metadata stream includes information for a three dimensional model of a player in a game, and the user selection for the at least one graphics option includes a field of view of the player, which is different from a field of view of the video stream. The method may further include simulating a video stream for the player for the field of view; and displaying the simulated video stream. The video stream is of a recorded event or a live event. The live event may be a live sports event or a live game event.

[0014] According to one embodiment of the present invention, a computer program product on a computer readable medium is provided for compositing data streams to generate a playable composite stream. Another embodiment of the present invention includes a computer readable medium that includes code for executing a method for compositing data streams to generate a playable composite stream.

[0015] According to one embodiment of the present invention, a computer system for compositing three dimensional video game graphics onto broadcast studio images includes a video camera for recording a view of a studio, and a network interface coupled to the studio video camera for translating the studio video camera inputs into real-time camera commands. The computer system further includes a network interface for receiving metadata. A game device is coupled to the network interface for receiving the real-time camera commands as input. The game device includes a game camera module for producing a game video output in accordance with the real-time camera commands, and a green screen module for extracting the game video output associated with game play from the game environment. The computer system further includes a compositing device for combining the extracted game video output with the studio video camera input.

[0016] According to one embodiment of the present invention, a computerized method for editing a game camera in real time includes parsing a plurality of real-time camera commands, receiving metadata, and determining a plurality of edit parameters. The computerized method further includes creating a game camera in accordance with the parsed real-time camera commands and the plurality of edit parameters.
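By way of a non-limiting illustration, the parsing of real-time camera commands and the creation of a game camera in accordance with edit parameters might be sketched as follows. The specification does not define a command format; the "name value" text commands, the `pan`, `tilt`, and `zoom` fields, and the `min_zoom` and `max_zoom` edit parameters are all hypothetical and chosen only for this example.

```python
from dataclasses import dataclass


@dataclass
class GameCamera:
    pan: float = 0.0   # degrees, accumulated from pan commands
    tilt: float = 0.0  # degrees, accumulated from tilt commands
    zoom: float = 1.0  # magnification factor, set by the latest zoom command


def parse_commands(lines):
    """Parse hypothetical 'name value' command lines into (name, value) pairs."""
    commands = []
    for line in lines:
        name, value = line.split()
        if name not in ("pan", "tilt", "zoom"):
            raise ValueError(f"unknown camera command: {name}")
        commands.append((name, float(value)))
    return commands


def create_camera(commands, edit_params):
    """Create a camera from parsed commands, limited by the edit parameters."""
    camera = GameCamera()
    for name, value in commands:
        if name == "zoom":
            camera.zoom = value  # zoom is absolute
        else:
            # pan and tilt are relative adjustments
            setattr(camera, name, getattr(camera, name) + value)
    # Clamp zoom to the range allowed by the (assumed) edit parameters.
    camera.zoom = min(max(camera.zoom, edit_params["min_zoom"]), edit_params["max_zoom"])
    return camera
```

For example, `create_camera(parse_commands(["pan 10", "zoom 8"]), {"min_zoom": 1.0, "max_zoom": 4.0})` would yield a camera panned by 10 degrees with its zoom limited to 4.0.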

[0017] One benefit of the various embodiments of the present invention includes providing users with a user definable video experience of a game where the user is permitted to select one or more of a number of user selectable options for changing the video and/or audio output of a game while viewing the game. Giving the user more control over their game viewing experience provides an engaging gaming experience. These and other benefits of the embodiments of the present invention will be apparent from review of the following specification and attached figures.

[0018] The following detailed description together with the accompanying drawings will provide a better understanding of the nature and advantages of the present invention.

BRIEF DESCRIPTION OF THE DRAWINGS

[0019] FIG. 1 is a simplified schematic of a spectator mode system according to one embodiment of the present invention.

[0020] FIG. 2 is a schematic of a stream-selection user interface that may be displayed on the commentator computers and may include a set of viewing options.

[0021] FIG. 3 is a schematic of a messaging user interface that may be displayed on the commentator computer.

[0022] FIG. 4 is a simplified schematic of a text-grab user interface.

[0023] FIG. 5 is a simplified schematic of an audio-grab user interface that may be displayed on the commentator computer and includes a set of audio selections.

[0024] FIG. 6 is a simplified schematic of a graphics-grab user interface that may be displayed on the commentator computer and includes a set of graphics.

[0025] FIG. 7 is a further detailed view of the data streams that may be included in an event stream and/or a broadcast stream.

[0026] FIG. 8 is a schematic of various video segments in a video stream, various audio segments in an audio stream, and various metadata in a metadata stream that may be composited.

[0027] FIG. 9 is a simplified schematic of a screen captured image of a video that has been composited with various graphics, which may be available for selection and composition via the director code executed on a spectator computer or a commentator computer.

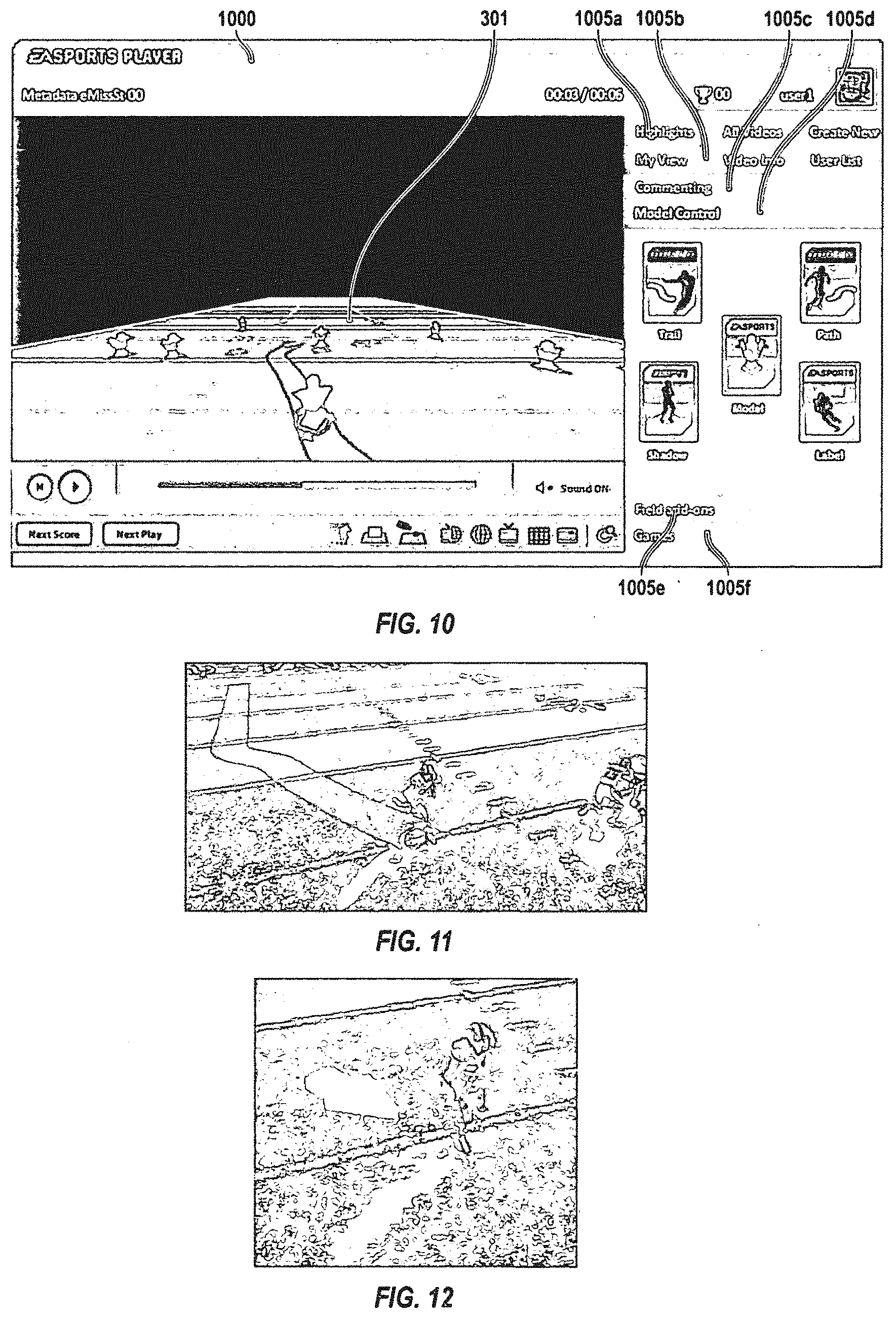

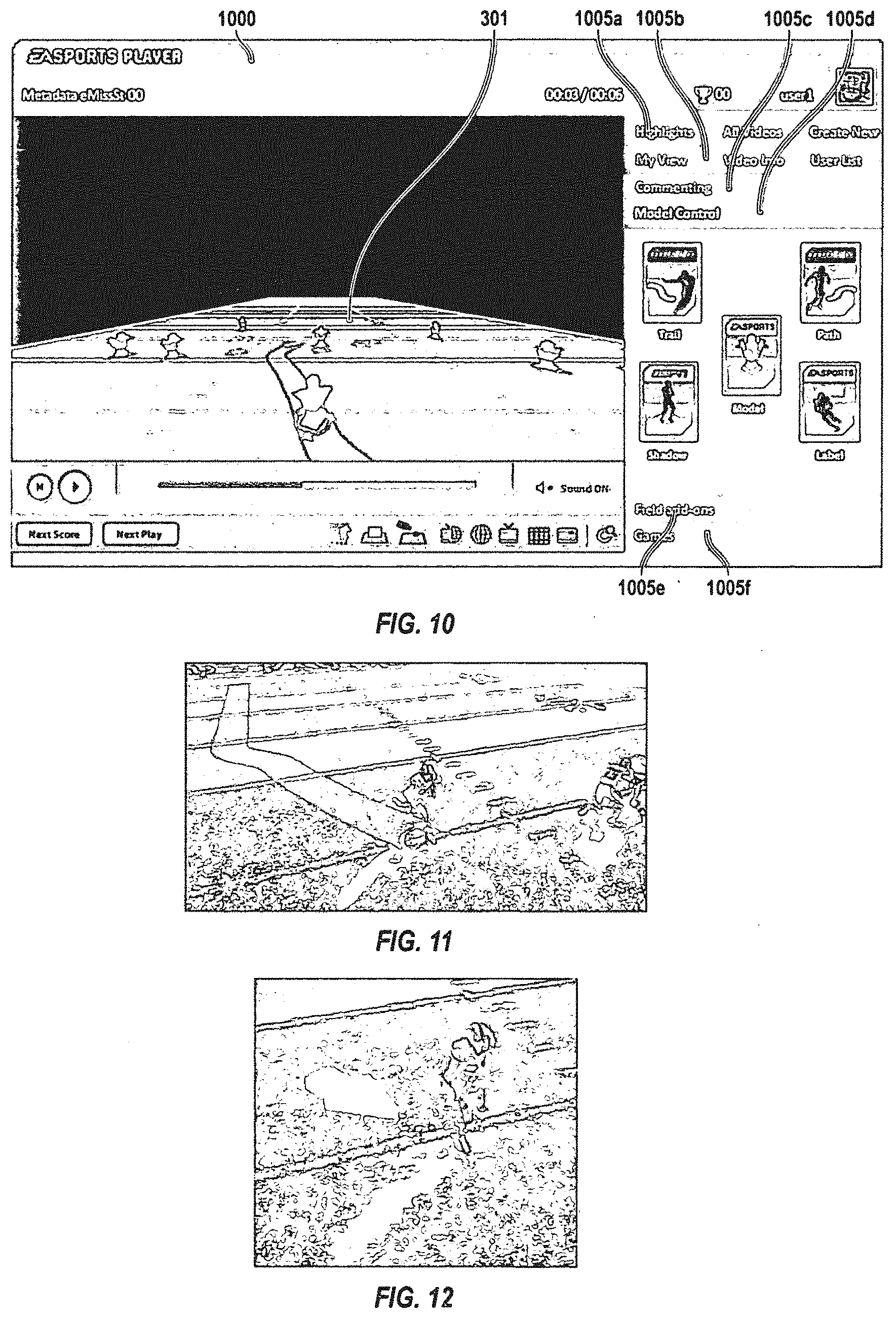

[0028] FIG. 10 is an example user interface having a video display, and a plurality of user selectable options.

[0029] FIG. 11 is an example screen captured image of a composite video where a video stream of a game is composited with a trail for a player.

[0030] FIG. 12 is an example screen captured image of a composite video where a video stream of a game is composited with an arrow that is configured to trail for a player.

[0031] FIG. 13 is an example screen captured image of a composite video where a video stream of a game is composited with a strobe image of a player moving in a game.

[0032] FIG. 14 is a screen captured image of another user selectable option that the director code or game code may provide.

[0033] FIG. 15 is a screen captured image of a top down view of a video stream.

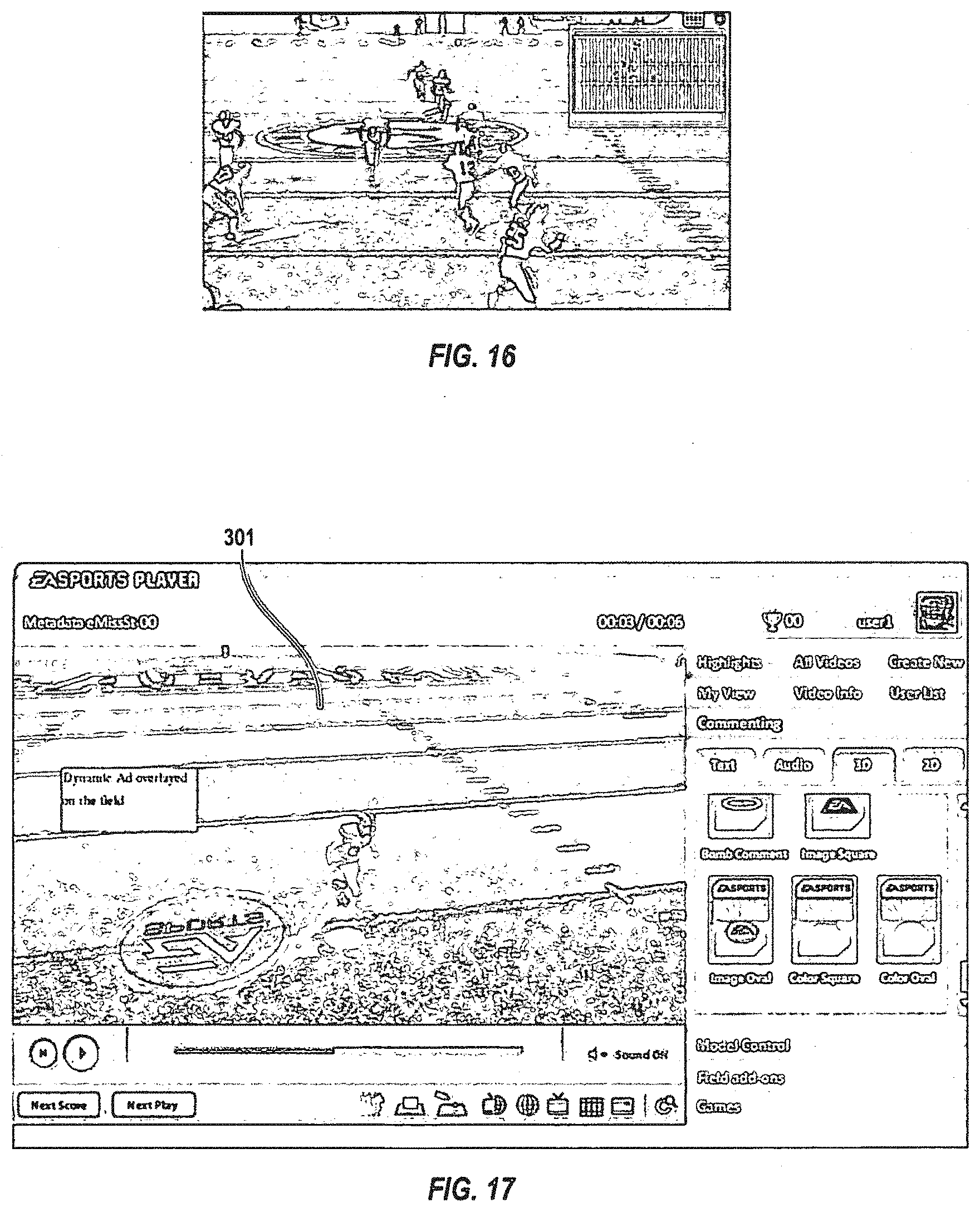

[0034] FIG. 16 is a screen captured image of the screen captured image shown in FIG. 15 in a picture-in-picture configuration with another video stream.

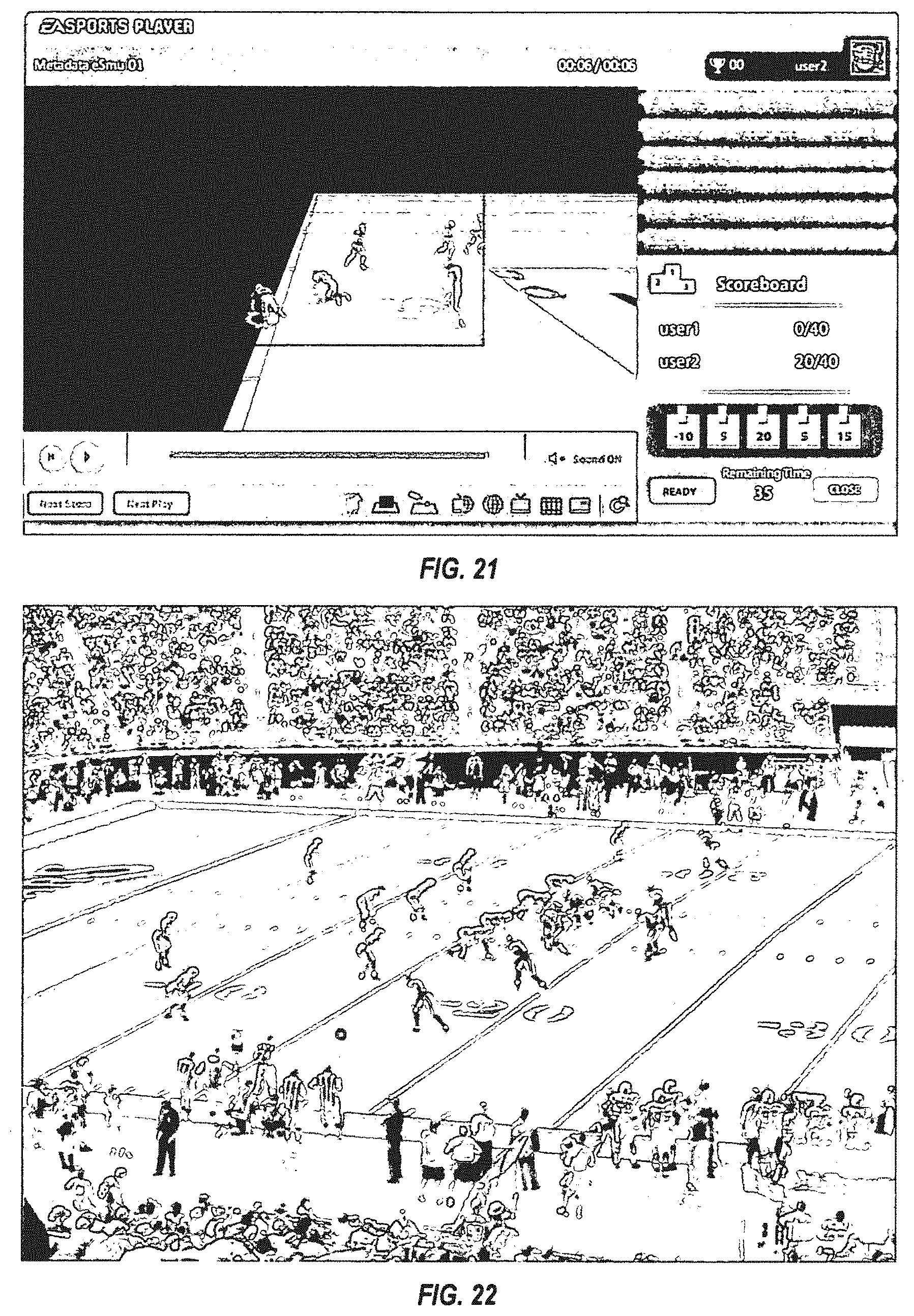

[0035] FIG. 17 is an alternative view of the user interface shown in FIG. 10.

[0036] FIG. 18 is another alternative view of the user interface shown in FIG. 10.

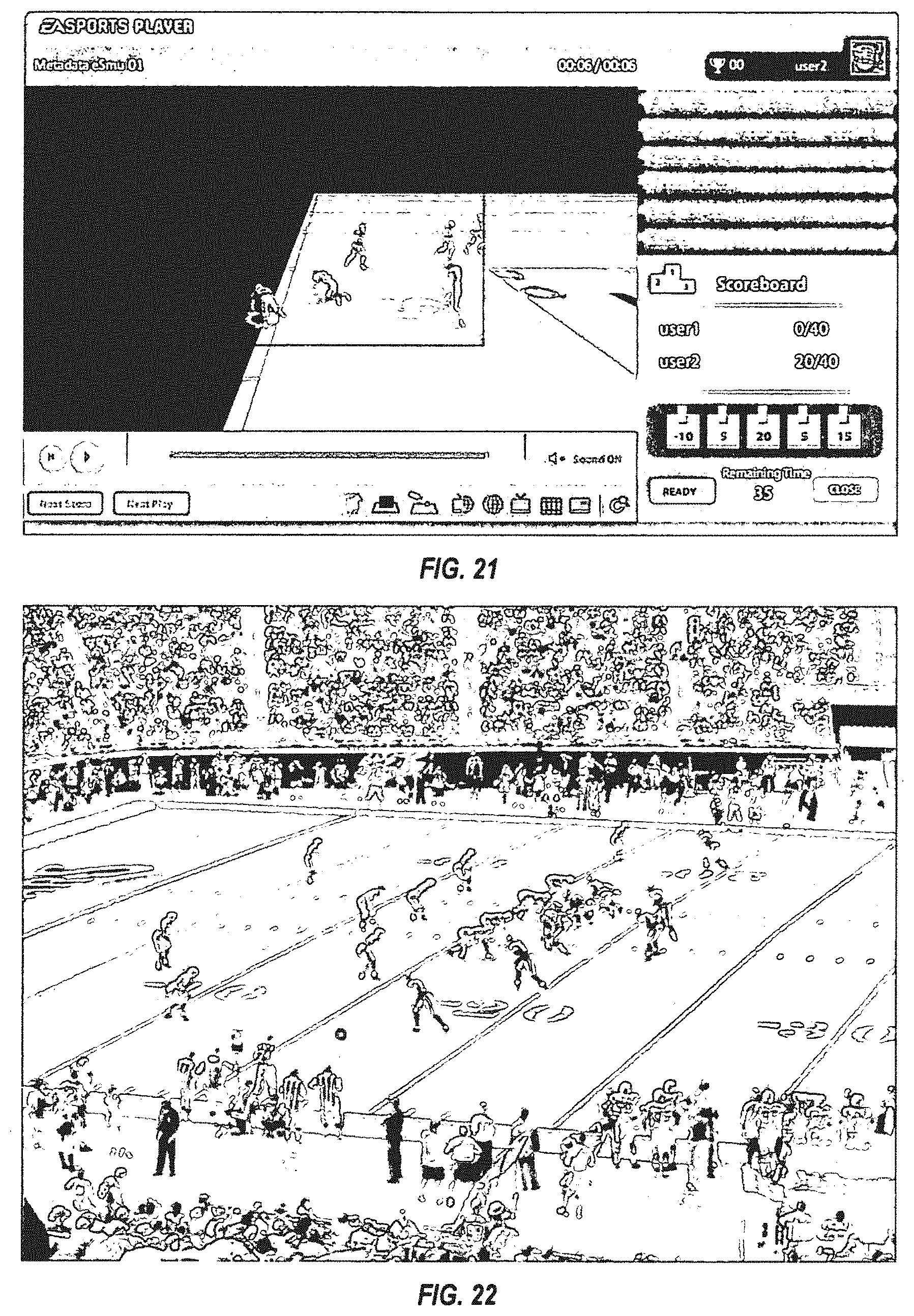

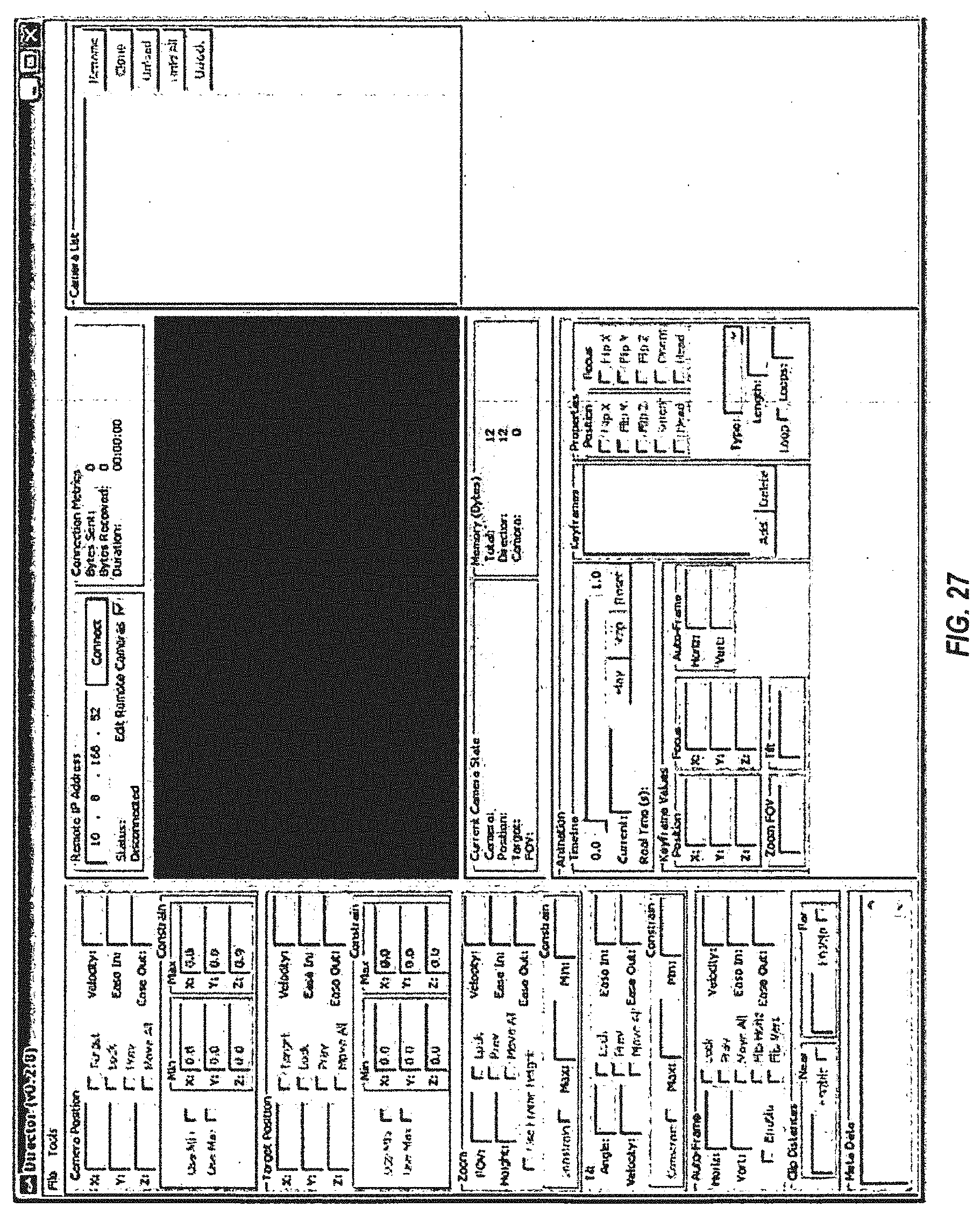

[0037] FIGS. 19a-19b, 20, and 21 are screen captured images of a "meta-game" that may be provided by the game code in conjunction with a game that is displayed on spectator computers or a commentator computer.

[0038] FIG. 22 is a screen captured image of a video stream having a message composited with the video stream that may be played on the losing player's computer for a select period of time.

[0039] FIG. 23 is a simplified schematic of a spectator mode system 2300 according to another embodiment of the present invention.

[0040] FIG. 24 is a diagram illustrating, in accordance with an embodiment of the invention, a system for using data-driven real time game camera editing and playback to combine three dimensional video game graphics with broadcast images taken in a studio.

[0041] FIGS. 25A and 25B are diagrams illustrating a combined view of three dimensional game elements (football players) from a video game and a commentator (person pointing) who is being filmed in a broadcast studio.

[0042] FIG. 26 is an exemplary block diagram illustrating a system for providing video game based replays in a broadcast environment such as that pictured in FIG. 24.

[0043] FIG. 27 is a diagram illustrating an exemplary graphical user interface for providing input to the system of FIG. 26.

[0044] FIG. 28A is a UML diagram illustrating the architecture of the camera server module, a.k.a., the "Director." FIG. 28B is a UML diagram illustrating an architecture of a director system and a camera system, according to some embodiments. FIG. 28C is a UML diagram illustrating an architecture of an application interface and a director interface, according to some embodiments.

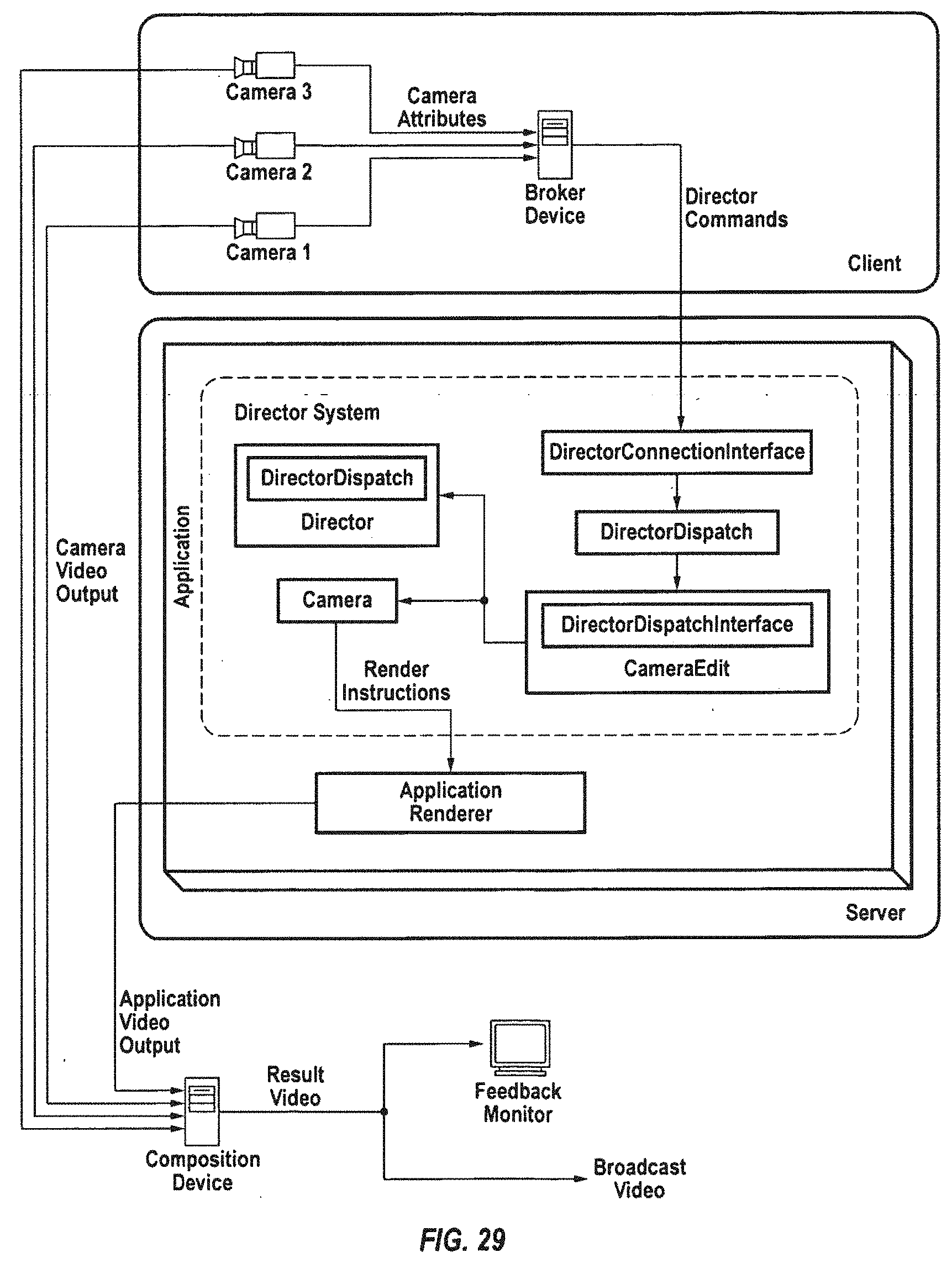

[0045] FIG. 29 is a block diagram illustrating an exemplary application of the Director architecture, such as that of the system described in FIGS. 24 and 26.

[0046] FIG. 30 is a block diagram illustrating an exemplary application of the Director architecture showing multiple Director dispatch interfaces used for processing multiple

[0047] FIG. 31 illustrates a game system for providing one or more games for a user according to one embodiment of the present invention.

[0048] FIG. 32 illustrates a game device according to another embodiment of the present invention.

[0049] FIG. 33 illustrates an example of an application of a system incorporating the Director architecture described in FIG. 29.

DETAILED DESCRIPTION OF THE INVENTION

[0050] Systems and methods for providing video game based replays are described herein. The systems and methods described herein may also be used for other forms of interactive entertainment and digital content, as will be described in further detail below.

[0051] FIG. 1 is a simplified schematic of a spectator mode system 100 according to one embodiment of the present invention. Various alternative embodiments of the spectator mode system may not include all of the components and systems shown in FIG. 1. These alternative embodiments are described below. According to the embodiment shown in FIG. 1, spectator mode system 100 includes first and second player computers 105 and 110, and a set of spectator computers 115 (e.g., first and second spectator computers 115a and 115b). A set as referred to herein includes one or more elements. Spectator mode system 100 further includes a commentator computer 120, a media server 125, and a game lobby server 130. Media server 125 may have access to a content management server 135, which is configured to provide to the media server content management information 140, a program guide 145 for content, and the like. The media server may be configured to in turn provide the content management information and the program guide to the spectator computers and/or the commentator computer. Spectator mode system 100 may also include a recorded streams server 150, and a live streams server 155. According to one embodiment, the spectator mode system does not include recorded streams server 150, live streams server 155, and the systems used with these servers. According to another embodiment, the spectator mode system does not include first and second player computers 105 and 110, and game lobby server 130. According to another embodiment, the spectator mode system does not include first and second player computers 105 and 110, game lobby server 130, and recorded streams server 150. According to another embodiment, the spectator mode system does not include first and second player computers 105 and 110, game lobby server 130, and live streams server 155.

[0052] The first and second player computers 105 and 110 may be configured to play a game in a peer-to-peer configuration. Both the first and second player computers may include a memory device (not shown) for storing game code and a processor for executing the game code. The executed game code provides for play of the game between the first and second computers.

[0053] According to one embodiment, the first and second player computers may locate one another via game lobby server 130. That is, a player A, using the first player computer, and player B, using the second player computer, may access (e.g., log into) the game lobby server to locate other players interested in playing a game. Player A and player B operating their respective player computers may be facilitated by the game lobby server to locate one another for game play in a peer-to-peer configuration.

[0054] One or both of the player computers may be configured to generate a live game stream 160 for the game as the game is being played on the first and second player computers. A live game stream is one example of an event stream. Other examples of event streams include a recorded stream 161 (stream for a recorded event) and a live sports stream 162 (stream from a live sporting event broadcast by a broadcaster 163). Recorded streams and live sports streams are described in further detail below. Live game stream 160 may include one or more types of data. For example, the live game stream may include a video stream, an audio stream, and/or a metadata stream. The video stream may include information for the video for the game being played. For example, the video stream may include video encoded in a variety of formats, such as one or more of the MPEG formats. The video stream may be for the field of view of the game shown on the monitors of the first and second player computers. The audio stream may include information for the audio of the game being played. The audio may also be encoded in a variety of formats, such as one of the MPEG formats. The metadata stream may include a variety of information for the game being played. The metadata might include information that may be used by the game code operating on another computer system (e.g., one of the spectator computers) to recreate the game (or to recreate portions of the game) on the other computer system. The video stream, the audio stream, and the metadata stream may be independent streams transmitted in the live game stream.
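By way of a non-limiting illustration, carrying independent video, audio, and metadata streams within a single event stream might be sketched as follows. The `Packet` structure, the per-packet `kind` tag, and the timestamp-ordered interleaving are assumptions made only for this example; the specification does not prescribe a container format.

```python
import heapq
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    timestamp: float  # presentation time in seconds
    kind: str         # "video", "audio", or "metadata"
    payload: bytes


def multiplex(*streams):
    """Merge independently ordered streams into one timestamp-ordered stream."""
    # Each input stream is assumed to be sorted by timestamp already.
    return list(heapq.merge(*streams, key=lambda p: p.timestamp))


def demultiplex(event_stream):
    """Split an event stream back into its independent per-kind streams."""
    out = {"video": [], "audio": [], "metadata": []}
    for packet in event_stream:
        out[packet.kind].append(packet)
    return out
```

Because each packet keeps its own kind tag, a receiving computer in this sketch can recover any one stream without decoding the others, which is one way the streams could remain independent while travelling together.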

[0055] Live game stream 160 may be transmitted from one or both of the player computers to media server 125. The media server may broadcast the live game stream in a broadcast stream 164 over a network to one or more computers, which have permission to receive the broadcast stream. For example, the spectator computers may be configured to receive the broadcast stream if these computers appropriately access (e.g., log into) the media server and select the game for viewing. The media server may be configured to allow users of the spectator computers to select from a variety of broadcast streams for a variety of games being played.
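By way of a non-limiting illustration, a media server that delivers a broadcast stream only to computers that have logged in and selected the corresponding game might be sketched as follows. The class `MediaServer`, its method names, and the in-memory `delivered` record standing in for network delivery are hypothetical and chosen only for this example.

```python
class MediaServer:
    """Toy media server; delivery is recorded in memory instead of sent over a network."""

    def __init__(self):
        self.logged_in = set()
        self.selections = {}  # spectator id -> selected game id
        self.delivered = {}   # spectator id -> packets delivered so far

    def log_in(self, spectator_id):
        self.logged_in.add(spectator_id)
        self.delivered.setdefault(spectator_id, [])

    def select_game(self, spectator_id, game_id):
        # Only computers that have accessed the server may select a game.
        if spectator_id not in self.logged_in:
            raise PermissionError(f"{spectator_id} is not logged in")
        self.selections[spectator_id] = game_id

    def broadcast(self, game_id, packet):
        """Deliver one packet to every spectator who selected this game."""
        recipients = sorted(s for s, g in self.selections.items() if g == game_id)
        for spectator_id in recipients:
            self.delivered[spectator_id].append(packet)
        return recipients
```

A spectator who is logged in but has selected a different game receives nothing from `broadcast`, which mirrors the selection among a variety of broadcast streams described above.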

[0056] Each spectator computer may receive the broadcast stream from the media server so that a game being played between the first and second player computers may be displayed on the spectator computers. More specifically, the spectator computer may include the same, or substantially the same, game code that is being executed on the first and second player computers. The spectator computer may be configured to supply the broadcast stream (i.e., the video stream, the audio stream, and/or the metadata stream) to the game code executed on the spectator computer. The game code may be configured to use the video stream, the audio stream, and/or the metadata stream to reconstruct the game that is being played on the first and second computers. The spectator computer may then direct the output of the game code to the spectator computer's monitor and/or speaker system. Thereby, a user using the spectator computer may view and hear the game being played on the first and second computers. Use of the broadcast stream by the spectator computers and the game code is described in further detail below.

[0057] According to a further embodiment, commentator computer 120 may also be configured to receive the broadcast stream if, for example, the commentator computer is logged into the media server. The commentator computer may include the same, or substantially the same, game code that is being executed on the first and second player computers. The game code may be configured to use the video stream, the audio stream, and/or the metadata stream to reconstruct the game that is being played on the first and second computers. The commentator computer may then direct the output of the game code to the commentator computer's monitor and/or speaker system. Thereby, a user (e.g., a commentator) using the commentator computer may view and hear the game being played on the first and second computers.

[0058] According to one embodiment, the commentator computer is configured to provide a plurality of viewing options for viewing and/or commenting on the game being played. The commentator computer may be configured to store and execute "director" code, which provides a user interface by which a commentator may select one or more of the viewing options. The director code may be a code module of the game code. The director code is described in further detail below.

[0059] A commentator operating the commentator computer may interact with the director code executed on the commentator computer to comment on the game being played. FIGS. 2-6 are examples of user interfaces that the executed director code may be configured to provide for display on the monitor of the commentator computer. The user interfaces provide a number of screen selectable options that a commentator may be permitted to select from for commenting on the game.

[0060] Specifically, FIG. 2 is a schematic of a stream-selection user interface that may be displayed on the commentator computers and may include a set of viewing options 200. The set of viewing options 200 may include viewing options for selecting a specific broadcast stream that the commentator may provide comments for. The broadcast stream options may include a live-stream option 205 for selecting "live" events. The live events from which a commentator may make a selection may include the game that is being played in the peer-to-peer connection between the first and second game computers, a live sporting event (such as a football game, a tennis match, a soccer game, a basketball game or the like), or other live event. The term "live," as used herein, refers to an event that is occurring as the broadcast stream for the event is being broadcast and consumed by a computer, such as a spectator computer or a commentator computer. It will be understood by those of skill in the art that there may be a delay (e.g., 1 second, 5 seconds, 15 seconds, etc.) between actual activity occurring in an event and the broadcast for the live event. Broadcast streams for live sporting events are discussed in further detail below. The user interface shown in FIG. 2 also includes viewing options 210 for selecting a recorded stream.

[0061] FIG. 3 is a schematic of a messaging user interface that may be displayed on the commentator computer. The messaging user interface may include a video display 301 of an event (e.g., the game being played peer-to-peer on the first and second player computers) selected for viewing via the stream-selection user interface shown in FIG. 2. The messaging user interface may also include a "chat" box 302 for chatting with other users who may be watching the event on the users' respective computers (e.g., other commentator computers, other spectator computers or the like). The chat box may be configured for typing in a chat message that may be delivered to other user computers (e.g., other commentator computers, other spectator computers, or the like), and for displaying chat messages received from other user computers. Each user computer (e.g., other commentator computers, other spectator computers, or the like) may include a copy of the director code for displaying the user interfaces shown in FIGS. 2-6, and for providing the user selectable options provided via the user interfaces (e.g., chat). The messaging user interface may include a viewer list 305, which displays identifiers for users who are streaming the game to their computers. Users identified in the viewer list may include friends of the commentator, users that have selected the particular commentator for viewing the commentator's commentary, or the like.

[0062] The director code operating on the commentator computer may be configured to generate metadata for the commentator's chat message. The commentator computer may be configured to transmit the metadata for the commentator's chat message from the commentator computer in an on-air commentator stream 167 to the media server. The media server may be configured to combine and synchronize (referred to herein as compositing) the commentator's chat message with the live game stream (or alternatively the live sports stream or the recorded stream if one of these streams is selected) into the broadcast stream, which may be transmitted to the spectator computers. The spectator computers, also displaying a messaging user interface, may be configured to display the commentator's chat message. The metadata for the commentator's chat message may include time information for the temporal position in the game at which the chat message was generated. The media server may use the time information to composite the commentator's chat message with the live game stream, such that the chat message appears on the spectator computers at the same temporal position in the game at which the commentator entered the chat message. According to an alternative embodiment, each spectator computer and commentator computer may be configured to composite the metadata and the chat message with the live game stream. Each instance herein where the media server is described as being configured to composite various data streams, the announcer computer and/or the spectator computers may be configured to perform the described compositing. As described briefly above, the broadcast stream might be temporally delayed from the actual events occurring in the game to provide processing time for the media server to composite the chat message with the live game stream, and so that the chat message may be displayed at the correct temporal position in the game on the spectator computers. According to some embodiments, each spectator computer executing the director code may be a commentator computer.
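The time-based compositing described above can be illustrated with a minimal sketch. The application does not specify a data format, so the `VideoSegment` and `ChatMetadata` types, their field names, and the `composite_chat` function are hypothetical names introduced only for illustration. The sketch assumes each chat message carries the game time at which it was entered and that the stream is delayed enough for all relevant messages to have arrived.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VideoSegment:
    start: float  # game time in seconds at which the segment begins
    end: float    # game time in seconds at which the segment ends (exclusive)
    name: str


@dataclass(frozen=True)
class ChatMetadata:
    game_time: float  # temporal position in the game when the message was entered
    author: str
    text: str


def composite_chat(segments, chats):
    """Pair each video segment with the chat messages whose game_time falls inside it."""
    composite = []
    for segment in sorted(segments, key=lambda s: s.start):
        attached = sorted(
            (c for c in chats if segment.start <= c.game_time < segment.end),
            key=lambda c: c.game_time,
        )
        composite.append((segment, attached))
    return composite


# Hypothetical example data: messages may arrive out of order.
segments = [VideoSegment(0.0, 5.0, "kickoff"), VideoSegment(5.0, 10.0, "first down")]
chats = [
    ChatMetadata(7.2, "commentator", "Great block on the left side"),
    ChatMetadata(1.0, "commentator", "Here we go"),
]
broadcast = composite_chat(segments, chats)
for segment, messages in broadcast:
    print(segment.name, [m.text for m in messages])
```

Because messages are matched by game time rather than arrival time, a message that reaches the compositing computer late still appears at the moment in the game at which it was entered, which is the reason the paragraph gives for delaying the broadcast stream.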

[0063] FIG. 4 is a simplified schematic of a text-grab user interface, which may be displayed on the commentator computer and includes a set of phrases 400 where each phrase may be dragged and dropped (or otherwise selected) onto the video display for the event displayed in video display 301. The executed director code may be configured to generate metadata for a selected phrase that is dragged and dropped onto the video display. The metadata may be sent out from the commentator computer in the on-air commentator stream to the media server. The media server may be configured to composite the metadata for the selected phrase with the live game stream into the broadcast stream, and transmit the broadcast stream to the spectator computers. As described above, the metadata for the selected phrase may include time information so that the selected phrase may be displayed on the spectator computers at the same temporal position in the game at which the commentator dragged and dropped the selected phrase onto the video display of the game. Each phrase selected for display may be played on the spectator computers for a period of time. The period of time may be a predetermined period of time (e.g., two or three seconds) or a period of time selected by the commentator. The metadata may include information for the period of time.
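As a rough illustration of the display period described above, the following sketch decides which selected phrases are visible at a given playback time. The `OverlayMetadata` type, its fields, and the three-second default are assumptions made for the example; the application states only that the metadata may include time information and a period of time.

```python
from dataclasses import dataclass

DEFAULT_DURATION = 3.0  # assumed predetermined display period, in seconds


@dataclass(frozen=True)
class OverlayMetadata:
    game_time: float                    # game time at which the phrase was dropped
    content: str                        # the selected phrase
    duration: float = DEFAULT_DURATION  # display period; may be set by the commentator


def visible_overlays(overlays, playback_time):
    """Return the phrases whose display window contains playback_time."""
    return [
        o.content
        for o in overlays
        if o.game_time <= playback_time < o.game_time + o.duration
    ]


# Hypothetical example data.
overlays = [
    OverlayMetadata(10.0, "What a catch!"),
    OverlayMetadata(11.0, "Touchdown!", duration=5.0),
]
print(visible_overlays(overlays, 12.0))
```

The same window test would apply to any time-stamped item with a display period, whether the period is the default or one chosen by the commentator.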

[0064] FIG. 5 is a simplified schematic of an audio-grab user interface that may be displayed on the commentator computer and includes a set of audio selections 500. Each audio selection may be associated with a piece of audio that may be played on the spectator computers. Specifically, each audio selection may be dragged and dropped (or otherwise selected) onto the video display for the event displayed in video display 301. The executed director code may be configured to generate metadata for a selected piece of audio that is dragged and dropped onto the video display. The metadata may include encoded audio encoded in a variety of formats. Alternatively, the metadata may include information to identify the piece of audio that might form a portion of the game code, which is resident on each spectator computer. The metadata may be sent out from the commentator computer in the on-air commentator stream to the media server. The media server may be configured to composite the metadata for the selected piece of audio with the live game stream into the broadcast stream, and transmit the broadcast stream to the spectator computers. As described above, the metadata for the selected piece of audio may include time information so that the selected piece of audio may be played on the spectator computers at the same temporal position in the game at which the commentator dragged and dropped the selected piece of audio onto the video display of the game. According to a further embodiment, an interface may be provided via which a commentator may verbally comment on a game. This commentary may be digitized and transmitted in the on-air commentator stream for inclusion in the broadcast stream transmitted to the spectator computers, and played thereon with the game.

[0065] FIG. 6 is a simplified schematic of a graphics-grab user interface that may be displayed on the commentator computer and includes a set of graphics 600. Each graphics selection from the set of graphics 600 may be played on the spectator computers in conjunction with the video for the game displayed on the spectator computers. Specifically, one or more graphics selections may be dragged and dropped (or otherwise selected) onto the video display for the event displayed in video display 301. The executed director code may be configured to generate metadata for a selected graphic that is dragged and dropped onto the video display. The metadata may be sent out from the commentator computer in the on-air commentator stream to the media server. The media server may be configured to composite the metadata for the selected graphic with the live game stream into the broadcast stream, and transmit the broadcast stream to the spectator computers. As described above, the metadata for the selected graphic may include time information so that the selected graphic may be played on the spectator computers at the same temporal position in the game at which the commentator dragged and dropped the selected graphic onto the video display of the game. Each selected graphic may be played on the spectator computers for a period of time. The period of time may be a predetermined period of time (e.g., two or three seconds) or a period of time selected by the commentator. The metadata may include information for the period of time. According to one embodiment, the graphics-grab user interface is configured to permit a commentator to select multiple graphics (e.g., the three graphics shown in the example of FIG. 6) for display on a game on the spectator computers.

[0066] As discussed briefly above, the media server may be configured to receive recorded streams 161 from the recorded streams server 150 and receive live sports streams 162 from the live sports server 155. Similar to live game streams, both recorded streams and live sports streams may include a video stream, an audio stream, and/or a metadata stream. Also similar to the live game stream, one or more of a video stream, an audio stream, and a metadata stream included in recorded streams and live sports streams may be transmitted in the broadcast stream to the spectator computers and/or the commentator computer. Game code and/or other computer code operable on a spectator computer or a commentator computer may be configured to use the video stream, the audio stream, and/or the metadata stream for recorded streams or live sports streams for playing the recorded streams or the live sports streams on the spectator computer or commentator computer.

[0067] According to one embodiment of the present invention, a recorded stream might be a stream that is recorded from a live event or a simulated event. A simulated event (e.g., a simulated game event) might be a live event. For example, the game played on the first and second player computers may be a live event, and the game code for the game may generate simulated video for one or more players in the game, a background in the game, game pieces (e.g., a football, a basketball, a sword, a car, etc.) in the game or the like. Each of the user interfaces shown in FIGS. 2-6 may be used in conjunction with a recorded stream or a live sports stream.

[0068] FIG. 7 is a further detailed view of the data streams (video stream, audio stream, and metadata stream) that may be included in an event stream (e.g., a live game stream, a recorded stream, and/or a live sports stream) and/or a broadcast stream. FIG. 7 also shows a further detailed view of a spectator computer according to one embodiment of the present invention. FIG. 7 also shows various content that might be provided by content manager system 135 to the spectator computers and/or the commentator computer.

[0069] As shown in FIG. 7, a video stream might include time coded and packetized video information for an event. An audio stream might include encoded audio information for commentary, coach audio (e.g., if a coach is being audio monitored), player audio (e.g., if a player is being audio monitored), fan audio, music, etc.

[0070] Various types of metadata that might be included in a data stream include: motion metadata, motion enhancement metadata, situation metadata, plan metadata, outcome metadata, player situation metadata, homography metadata, presentation metadata, statistics metadata, rosters metadata, models metadata, environments metadata, user interface metadata, comments metadata, ads metadata, etc.

[0071] More specifically, motion metadata may include position information (e.g., (x, y, and/or z) coordinate data) for the players in a game, and may include direction information for the direction each player is moving in a game. For example, the position information may specify a player's position on a football field, and the direction information may specify the direction each football player is moving in a given play. Motion enhancement metadata may include information for the acceleration and deceleration of players in a game, information for where players may be looking, information for where a player places her feet, information and/or models for articulated skeleton motion, etc. Situation metadata may include information for a specific time remaining in a game, information for a down number in a football game, information for the number of yards from a first down, information for the specific down number in a football game, information for the time left on the clock for a quarter in a game, information for the quarter number, or the like. Plan metadata may include information for a particular play that was called. For example, the plan information may indicate that a quarterback sneak was planned in a football game, a Hail Mary pass was planned in a football game, etc. Outcome metadata may include information for the outcome of a given situation in a game, such as the outcome of a particular down played in a football game. For example, the outcome information may indicate that a pass was completed, a pass was intercepted, a field goal kick was successful, a turnover of a football occurred, etc. Player substitution metadata may include information for a player removed from a game and another player put in place of the removed player.

[0072] Player telestration metadata may include information for placing a graphic on a video and displaying the video on a spectator computer or a commentator computer. Example telestrations include the graphics shown in video display 301 in FIG. 6. Telestration metadata might also include information that identifies a player (e.g., a football player) or an object (e.g., football, a soccer ball, a hockey puck, etc.) whose movement is to be tracked as play occurs in a game. For example, as play in a game occurs, a telestration for a tracking path of the movement of the player or object may be displayed on the monitor of the spectator computers and/or the commentator computer. Homographic metadata may include information for a camera that is shooting a game. For example, the homographic metadata may include information for the field of view of the camera, the camera angle, the camera position, etc.

[0073] Presentation metadata may include information for how a game is to be presented. Statistic metadata may include statistics for a game such as scores, individual player statistics, etc. Roster metadata may include information for the group of players who are eligible to play a game, players that play a game, substitute players for a game, etc. The roster metadata may include player numbers, player positions (e.g., quarterback, tight end, etc.), and the like. Models metadata may include three dimensional (3D) models for players (bodies, limbs, heads, feet, etc.), stadiums, ads, characters, etc.

[0074] Environment metadata may include information for a stadium in which a game is played. Environmental metadata might also include weather information or geographic coordinates of where a game is being played, so that weather information may be retrieved for the location. Environmental metadata might also include information for automobile traffic near a location where a game is played. User interface metadata may include information for the user interface against which a game is presented. Comment metadata may include information for comments for a commentator or the like. Ads metadata may include information for an ad that is to be displayed, and may include position information for the location at which an ad is to be placed (e.g., on a football field).

[0075] As shown in FIG. 7, a spectator computer may include a media player 705, which includes a streaming playback coordinator, a navigation tool, a network I/F controller, a network transport and streaming module, a codec abstraction layer, and one or more decoders. The director code may include a data synchronization engine for synchronizing data streams received in a broadcast stream. The data synchronization engine may be configured to synchronize the video and audio streams with the metadata (e.g., chat messages, user selected graphics, user selected phrases, user selected audio clips, or commentator commentary). The director code may also include a run time assert manager, and a composite engine. The composite engine is configured to composite the video stream, the audio stream, and/or one or more pieces of the metadata. According to one embodiment, the executed director code is configured to provide a user interface via which a user may select the specific pieces of metadata that the user wants to have composited with the video stream and/or the audio stream. For example, the executed director code may be configured to provide an option where a user may select that a video stream is to be composited with metadata for a telestration of a player executing a play. The game code may be configured to use the composited video stream and metadata to display the video in the video stream with a telestration of the player. FIG. 8 is a schematic of various video segments in a video stream, various audio segments in an audio stream, and various metadata in a metadata stream that may be composited. Various specific examples of composited video streams, audio streams, and user selected metadata streams are discussed in further detail below.
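The user-driven selection performed by the composite engine might be sketched as follows. This is only an illustration under stated assumptions: the dictionary layout of the metadata items, the `kind` labels, integer frame times, and the `composite` function are all hypothetical and are not taken from the application.

```python
def composite(video_frames, metadata_stream, selected_kinds):
    """Attach to each frame only the metadata whose kind the user selected.

    video_frames: list of (time, frame_name) pairs, with integer frame times.
    metadata_stream: list of dicts with "time", "kind", and "payload" keys.
    selected_kinds: set of metadata kinds chosen through the user interface.
    """
    composited = []
    for time, frame in video_frames:
        graphics = [
            item["payload"]
            for item in metadata_stream
            if item["time"] == time and item["kind"] in selected_kinds
        ]
        composited.append({"time": time, "frame": frame, "graphics": graphics})
    return composited


# Hypothetical example data: two frames and three metadata items.
video_frames = [(0, "frame-0"), (1, "frame-1")]
metadata_stream = [
    {"time": 0, "kind": "telestration", "payload": "arrow on player 13"},
    {"time": 0, "kind": "ads", "payload": "sponsor logo"},
    {"time": 1, "kind": "telestration", "payload": "arrow on player 13"},
]
result = composite(video_frames, metadata_stream, {"telestration"})
print(result)
```

Because the video and metadata arrive as independent streams, the selection can differ on each receiving computer without changing what is broadcast.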

[0076] As shown in FIG. 7, the spectator computer might include, or be coupled to, a local cache, which is configured to store graphic templates, models, and environments. The graphics templates may include the graphics for the user interfaces discussed above with respect to FIGS. 2-6. The models may include two dimensional models for players, three dimensional models for players, and other models. The models for the players are relatively sophisticated and allow a player recorded from one angle in a game (e.g., a live sports game), to be viewed from other angles (i.e., rotated by the director code) so that a player moving in a game may be displayed on a computer monitor executing the recorded move from any angle, or more generally from any field of view. According to one embodiment, the director code operating on the spectator computers and/or the commentator computer provides a user interface via which a user may select a field of view (different from the angle at which a player was recorded). The executed game code may generate the selected field of view for display on the spectator computer's monitor.

[0077] As described briefly above, the director code may be configured to display one or more user interfaces via which a user (e.g., a commentator or a spectator user) may select various data streams received in a broadcast stream for compositing. The director code may be configured to receive a user selection for compositing and may generate a composited data stream for display based on the user selection. The director code may provide for the display of a user interface, such as the user interface shown in FIG. 2, via which a variety of event streams (e.g., video streams) may be selected for compositing. The director interface may be configured to provide a variety of other similar user interfaces via which various audio streams and/or metadata may be chosen for compositing with a video stream.

[0078] FIG. 9 is a simplified schematic of a screen captured image of a video that has been composited with various graphics, which may be available for selection and composition via the director code executed on a spectator computer or a commentator computer. The screen captured image may be a simulated image of a football game that might be generated from game code executed on the first or second player computers during peer-to-peer game play. Alternatively, the screen captured image may be a simulated image of a football game that might be generated from game code executed on a single computer, such as the first player computer during game play. According to another alternative embodiment, the screen captured image may be from a real football game, which may be live or recorded. According to another alternative embodiment, the screen captured image may be from a motion capture of a real football game, which may be live or recorded. According to one embodiment, various computers in spectator mode system 100 may be configured to perform motion capture on a video stream. For example, live sports server 155 may be configured to perform motion capture of a broadcast of a live sports event to generate live sports streams 162. Alternatively, recorded streams server 150 may be configured to perform motion capture on a recorded stream to generate recorded streams 161. Alternatively, media server 125 may be configured to perform motion capture on recorded streams 161 or live sports streams 162.

[0079] The screen captured image shown in FIG. 9 includes a plurality of graphics composited with the video stream from which the screen captured image is taken. For example, the screen captured image includes a dark horizontal line 900, which indicates the foot position on the playing field of the player (e.g., player 13) who is in possession of the football. In the composited video from which the screen captured image was taken, the horizontal line may be configured to follow the foot position of the player in possession of the football as the player moves. A highlighted rectangle 905 is also shown in the screen captured image. Note that the highlighted rectangle is transparent and is shown as being above the plane of the field but behind the players. A commentator, or the like, interacting with the user interface might select to have the highlighted rectangle composited with the video stream to draw attention to some action in the football game that is about to occur or that is occurring in that area of the field. Another graphic that is composited with the video stream and shown in the screen captured image includes arrow 910. Arrow 910 may be configured to point at a player and follow the player as the player moves in the game. For example, the arrow may be configured to point at the player in possession of the football and move to follow this player.

[0080] According to one embodiment, the metadata stream may include information and data for the plurality of graphics composited with the video stream. Each of the graphics displayed may be user selectable via selection of one or more pieces of metadata from the metadata stream. Subsequent to selection of a piece of metadata, the relevant portions of the metadata stream corresponding to the selected metadata may be composited with the video stream. Additional or alternative graphics, sound clips, or the like, which may be composited with the video stream, may also be user selectable via the user interface provided by the director code. Further, the field of view for a game may also be user selectable. The field of view may be selected based on portions of the video stream captured and generated by various cameras capturing the game. The field of view may also be selected for a virtual camera (referred to herein as a game camera). The virtual camera operating in conjunction with the game code, the director code, 2D or 3D player models, and the like, may be configured to generate simulated video of a game for the user selected field of view. The simulated video may be composited with the graphics discussed above, audio clips, and the like.

[0081] FIG. 10 is an example user interface 1000 having a video display 301 thereon, and a plurality of user selectable options 1005. The user selectable options may be tabbed. The tabs are labeled with the reference numeral 1005 and alphabetic suffixes. In example user interface 1000 the model option tab is selected. According to one embodiment, the model option tab may include user selectable options for a trail, a path, a model, a shadow, and a label. The user selectable options are associated with the metadata stream and may represent the various metadata in the metadata stream. Video display 301 includes a screen captured image of a video stream of a game where the user selectable option for a model (e.g., a 3D model) of the players is selected. Models of the players may be simulated by the game code. According to one embodiment, the models of the players may be simulated based on a video stream of a live sports game, for example, where motion capture of the players in the live sports game has been performed. That is, the simulated players may follow the paths, and perform the actions of the "real" players in the live sports game. According to the specific embodiment of user interface 1000, the path option in the model option tab has been selected and a path has been composited with simulated video of one of the modeled players. A path shows not only positions that a player has been at previously, but also shows the position that a player will be at in the future. A path differs from a trail option, in that a trail shows where a player has been previously, but does not show where a player will be in the future.
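The distinction between a trail and a path can be made concrete with a short sketch. It assumes, hypothetically, that motion metadata is available as time-ordered `(time, x, y)` samples covering the whole play, as might be the case for a recorded stream; the `trail` and `path` function names and the sample values are illustrative only.

```python
def trail(samples, now):
    """Positions the player has occupied up to and including the current time."""
    return [(x, y) for t, x, y in samples if t <= now]


def path(samples, now):
    """Past positions together with the positions the player will occupy later."""
    past = trail(samples, now)
    future = [(x, y) for t, x, y in samples if t > now]
    return past, future


# Hypothetical motion metadata for one player: (time in seconds, x, y).
samples = [(0.0, 10.0, 5.0), (1.0, 12.0, 6.0), (2.0, 15.0, 8.0), (3.0, 19.0, 8.5)]

print(trail(samples, 1.5))
print(path(samples, 1.5))
```

Returning the past and future portions separately would let a renderer draw them differently, for example a solid line behind the player and a dashed line ahead.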

[0082] FIG. 11 is an example screen captured image of a composite video where a video stream of a game is composited with a trail for a player. FIG. 12 is an example screen captured image of a composite video where a video stream of a game is composited with an arrow that is configured to trail a player. FIG. 13 is an example screen captured image of a composite video where a video stream of a game is composited with a strobe image of a player moving in a game. As can be seen in the screen captured images shown in FIGS. 9-13, embodiments of the present invention provide a variety of user selectable options where a user is permitted to choose how to view an event. More specifically, as embodiments of the present invention provide user selectable options for allowing a user to choose various metadata in a metadata stream to composite with a video stream and/or an audio stream, the user can control her experience in viewing an event, such as a live or recorded sporting event, a game played between two computers or the like.

[0083] FIG. 14 is a screen captured image of another user selectable option that the director code or game code may provide. The screen captured image includes a border 1405. Inside of border 1405, the screen captured image may be from a video stream for a game for which the video stream is available from a live game stream, a live sports stream, or a recorded stream. Outside of border 1405, the screen captured image may be from a simulated video stream. That is, a video stream for the portion of the screen captured image outside of the border may not be available in a received live game stream, a live sports stream, or a recorded stream. However, the metadata stream provided with a live game stream, a live sports stream, or a recorded stream may include position information for players and the directions the players traveled during play. If the user chooses (via a user interface supplied by the director code or the game code) to view the extended field of view that is outside of border 1405, the game code may be configured to generate a simulated video stream of the players' positions and movements based on the received metadata stream. The executed game code may be configured to composite this simulated video stream with the video stream for the live game stream (or the like). The simulated video stream may include simple circular graphics 1410 for the players as shown in FIG. 14 or may include 2D or 3D simulations of the players (not shown).
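One way to picture the extended field of view is as a test of each player's position against the border of the available video. The sketch below assumes field coordinates in arbitrary units and a rectangular border; the `Border` type, the `players_to_simulate` function, and the example positions are hypothetical and serve only to show which players would need a simulated graphic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Border:
    left: float
    right: float
    bottom: float
    top: float

    def contains(self, x, y):
        """True if the field position lies inside the region covered by real video."""
        return self.left <= x <= self.right and self.bottom <= y <= self.top


def players_to_simulate(positions, border):
    """Return the player numbers whose positions fall outside the border.

    positions maps a player number to an (x, y) field position taken from
    the position metadata.
    """
    return sorted(
        number for number, (x, y) in positions.items() if not border.contains(x, y)
    )


# Hypothetical example: the camera covers x in [20, 60] and y in [0, 40].
border = Border(left=20.0, right=60.0, bottom=0.0, top=40.0)
positions = {13: (30.0, 20.0), 80: (75.0, 35.0), 21: (10.0, 5.0)}
print(players_to_simulate(positions, border))
```

Players returned by this test have no pixels in the received video stream, so they would be drawn from metadata alone, for example as the simple circular graphics mentioned above.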

[0084] FIG. 15 is a screen captured image of a top down view of a video stream. The video stream from which the screen captured image is taken may be a simulated video stream, which is simulated by the game code. The game code may generate the simulated video stream based on position metadata of players provided in the metadata stream and may also be based on a live game stream, a live sports stream, or a recorded stream. FIG. 16 is a screen captured image 1600 of the screen captured image shown in FIG. 15 in a picture-in-picture configuration with another video stream.

[0085] FIG. 17 is an alternative view of user interface 1000, which is shown in FIG. 10. In the view of user interface 1000 shown in FIG. 17, the commentating tab is selected. The commentating tab provides a plurality of user selectable options for compositing an ad with a video stream. Video display 301 shows an ad (e.g., an EA Sports ad) composited with a video stream of a football game. A commentator who may be commentating on a game (e.g., an EA Sports game played between two player computers) may select a screen option in the commentating tab for placing the ad so that each spectator using her spectator computer may see the ad when viewing a video stream for the game.

[0086] FIG. 18 is another alternative view of user interface 1000, which is shown in FIG. 10. In the view of user interface 1000 shown in FIG. 18, the model control tab is selected. As discussed above, the model control tab provides a plurality of user selectable options for compositing a graphic with a video stream. In the particular example of FIG. 18, a label is selected for compositing with a video stream. The label is shown above a player on the right hand side of video display 301. The label may be composited with the video stream for a predetermined period of time (2 seconds, 5 seconds, etc.). The label may follow the player. The game code may use position metadata for the player to generate a composited video stream where the label follows the player.

[0087] FIGS. 19a-19b, 20, and 21 are screen captured images of a "meta-game" that may be provided by the game code in conjunction with a game (e.g., a football game) that is displayed on spectator computers or a commentator computer. The specific meta-game shown in FIGS. 19a-19b, 20, and 21 divides a playing field (e.g., a football field) into a grid having a number of boxes. The meta-game is configured to receive a set of user selections for one or more boxes in the grid. Each selected box may be a portion of the playing field at which a user might guess that a game event is going to occur. A game event may be a completed pass in a football game, a tackle in a football game, or the like. The game code may provide a set of betting weights 1900 that a user might place on the boxes that the user has selected. If a game event occurs in one of the boxes selected by the user, the user might be awarded the points of the betting weights placed on that box. One or more players using their player computers, for example, may play a meta-game against one another. For example, two players might play the meta-game shown in FIGS. 19a-19b, 20, and 21 to a selected point total (e.g., 200 points). The game code may be configured to provide a reward to the player who wins the meta-game (i.e., first accumulates 200 points). For example, the winner of the meta-game may be provided with a user selectable option for placing a message in the losing player's video streams for a select period of time (e.g., a week). The message might be configured to remind the losing player of the loss. FIG. 22 is a screen captured image of a video stream having a message (e.g., "loser") composited with the video stream that may be played on the losing player's computer for the select period of time.
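
By way of illustration only, the grid scoring of the meta-game might be sketched as follows. The field dimensions, grid size, betting weights, and function names are hypothetical; the sketch assumes each game event is reported as a single (x, y) position on the playing field.

```python
from typing import Dict, Tuple

Box = Tuple[int, int]  # (row, column) of one grid box


def box_for_position(x: float, y: float, field_length: float, field_width: float,
                     rows: int, cols: int) -> Box:
    """Map a game-event position on the field to the grid box that contains it."""
    # Clamp so that a position exactly on the far edge falls in the last box.
    col = min(int(x * cols / field_length), cols - 1)
    row = min(int(y * rows / field_width), rows - 1)
    return (row, col)


def points_for_event(bets: Dict[Box, int], event_box: Box) -> int:
    """Award the betting weight placed on the box, or nothing if it was not selected."""
    return bets.get(event_box, 0)


# A user places weights on two boxes of a 5 x 10 grid over a 100 x 50 field.
bets = {(1, 3): 50, (2, 7): 20}
events = [(37.0, 12.0), (90.0, 49.0), (75.0, 25.0)]
total = sum(
    points_for_event(bets, box_for_position(x, y, 100.0, 50.0, 5, 10))
    for x, y in events
)
```

In this sketch the first and third events land in selected boxes, so the user accumulates 70 points toward the selected point total.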

[0088] FIG. 23 is a simplified schematic of a spectator mode system 2300 according to another embodiment of the present invention. Spectator mode system 2300 is substantially similar to spectator mode system 100 shown in FIG. 1, except that spectator mode system 2300 is configured to provide video streams and audio streams 2305, and metadata streams 2310 to a web client operating on a web console 2315. Web console 2315 may be a web-enabled game console, a web-enabled portable device (e.g., a smart phone), a computer, or the like. The web client operating on web console 2315 may be configured to provide substantially similar user selectable options for compositing video streams, audio streams, and/or metadata streams as the user selectable options described above with respect to FIGS. 1-21. According to one embodiment, spectator mode system 2300 includes first and second player computers 105 and 110, and a set of spectator computers 115. The first and second player computers 105 and 110 may be a source of a live game stream 160. Spectator mode system 2300 may include other sources (e.g., a sports broadcaster, a recorded video archive, etc.) of event streams, such as live sports streams and recorded streams. The spectator computers may be game consoles, such as Play Station Three.TM. game consoles. Spectator mode system 2300 may also include a commentator computer 120, a media server 125, and a game lobby server 130. According to one embodiment, the metadata streams generated by the spectator computers and/or the commentator computer may be transmitted from these computers to a game broadcast web server 2325, which may be configured to transmit the metadata stream in a web formatted transport stream to the web client. The video streams and audio streams generated by the player computers and/or the spectator computers may be transmitted from these computers to the media server, which in turn may be configured to transmit these event streams to a streaming partner server 2330, which in turn is configured to transmit these event streams to the web client. According to one embodiment, the game broadcast web server 2325 and the streaming partner server 2330 may be the same server.

[0089] Various embodiments configured to composite two or more video streams are described immediately below. FIG. 24 is a diagram illustrating, in accordance with an embodiment of the invention, a system for using data-driven real time game camera editing and playback to combine three dimensional video game graphics with broadcast images taken in a studio. The system as shown is located in a television broadcast studio. A commentator 101 ("talent") is standing in front of an LED wall that can be used for displaying graphics used in the television broadcast. A graphic representing a football field is located on the floor of the studio set. A first camera (Camera 1) is aimed at the studio scene as shown and records the football field graphic along with the commentator 101. A second camera (Camera 2) is aimed at the studio scene as shown and records the football field graphic along with the commentator 101 from a different angle. Camera parameters are sent from the first camera (Camera 1) and the second camera (Camera 2) to a computer that is running a camera application, which translates the camera parameters into real-time camera commands that can be understood by an in-game camera module installed on game device 14, for example, a game console. Game device 14 is described in more detail with respect to FIGS. 27 and 28A-28C below.

[0090] Game console 14 provides a green screen version of video recorded by the game camera. For this example, the game console has been loaded with a football game, such as Madden.TM., published by Electronic Arts Inc., and the green screen video shows a particular play being executed. Particular plays can be selected through the game user interface, and multiple game play scenarios can be recorded by the game camera. It should be noted that Camera 1 and Camera 2 are different from the game camera. Examples of Cameras 1 and 2 include video camera hardware such as that used by a television studio to record events. The game camera is used for showing a field of view in a video game, and can be programmed to show various views.

[0091] The game play that is recorded by the in-game camera can be processed as a green screen video. The green screen video shows the game play without the game environment (for example, a particular football stadium as can be selected within the game), so that the game characters are more easily seen when they are projected onto a different scene. In the example shown in FIG. 24, the green screen video from the game is combined with the video obtained from Camera 1 and Camera 2 to create a composite video which is a combination of the studio scene and the green-screen video from the game console. The composite video output is displayed to workstation 105 and monitor 104 and appears as a football game being played inside the studio with the commentator 101 standing near the game players, the graphics on the LED wall being displayed behind him. In an embodiment of the invention, the compositing is two dimensional and the commentator generally appears to be located behind the players, rather than in the middle of the game play.

[0092] FIGS. 25A and 25B are diagrams each illustrating a combined view of a plurality of three dimensional game elements (football players) from a video game and a commentator (person pointing) who is being filmed in a broadcast studio. This combined view appears such that the commentator 101 is present on the field with the football players 102, 103, and is in the midst of the action of the play currently being executed. This provides the commentator 101 with the ability to "step into" the game action and point out areas of interest. For example, in FIG. 25A the commentator 101 in this scenario can point out that player 102 is getting tackled by player 103. In FIG. 25B, players 204 and 205 are running away from the commentator 101.

[0093] In an embodiment of the invention, the commentator can be "placed" in the middle of game play by wearing motion capture markers 201, 202 in a real-time motion capture space that can be used to capture his movement around the studio. In one example, the real-time motion capture space can be approximately 30 feet by 30 feet in size. It is understood that numerous other motion capture space sizes may also be appropriate. Motion capture markers (or sensors) can be located at various points on the commentator's body; for example, motion capture marker 201 is on the top of the commentator's head, and motion capture marker 202 is on the commentator's wrist. Any motion capture technology is suitable for use with this embodiment of the invention, and the embodiment is not intended to be limited to methods which require the use of motion capture markers.

[0094] The three dimensional position data associated with the commentator can be fed into the video game simulation running on game console 14. The game simulation can then process the commentator's position data so that the commentator will appear in the right position relative to the rest of the players, thus providing more realism in the scene. If the game simulation is running live, then the commentator could interact with other virtual players by way of the position data input. For example, the commentator could slap a virtual player in the head, and that virtual player could be shown as responding to the commentator's actions.

[0095] FIG. 26 is an exemplary block diagram which illustrates a system 300 for providing video game based replays in a broadcast environment such as that pictured in FIG. 24. The system as shown includes a video game console 301, a client user interface 302 (shown as a personal computer), a studio camera 303, and a broadcast system 304. The video game console includes a camera server module 305 (a.k.a. the "director") and a game code module 306. The game code module 306 is the video game software, for example, the game Madden.TM. described above. The camera server module 305 provides in-game camera views in response to inputs received from the client user interface 302. These in-game camera views are used in connection with the video game to produce various replays of the action happening in the game.

[0096] The inputs 307 entered into the client user interface 302 determine what data is passed back and forth between the client user interface 302 and the camera server module 305. These inputs 307 include, for example, virtual playbook commands which utilize a standard network socket for communication. Virtual playbook commands and examples of their application are described in further detail below. In the example shown in FIG. 24, virtual playbook commands are entered into the personal computer and are used to select virtual replay content 308 from the game. The camera server module 305 responds to virtual playbook commands by directing the in-game camera to provide requested replays 308 from the game. In the example shown in FIG. 24, the requested replays 308 include videos of the players against a green background. The game environment (football stadium background) is removed from the video so that only the players remain. This virtual replay content 308 provides one input to a broadcast system 304, as shown.
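
By way of illustration only, an exchange of virtual playbook commands over a standard network socket might be sketched as follows. The JSON line format, the command name select_replay, and its parameters are hypothetical and are not the actual command set; a locally connected socket pair stands in for the connection between the client user interface and the camera server module.

```python
import json
import socket


def encode_command(name: str, **params) -> bytes:
    """Serialize one hypothetical virtual playbook command as a JSON line."""
    return (json.dumps({"command": name, "params": params}) + "\n").encode("utf-8")


def decode_command(line: bytes) -> dict:
    """Parse one JSON line back into a command dictionary."""
    return json.loads(line.decode("utf-8"))


def handle_command(message: dict) -> str:
    """Stand-in for the camera server module: describe the replay it would request."""
    if message["command"] == "select_replay":
        params = message["params"]
        return f"replay play={params['play_id']} camera={params['camera']}"
    return "unsupported"


# Round trip over a connected socket pair, so no real network is needed.
client, server = socket.socketpair()
try:
    client.sendall(encode_command("select_replay", play_id=12, camera="sideline"))
    with server.makefile("rb") as reader:
        received = reader.readline()
finally:
    client.close()
    server.close()

result = handle_command(decode_command(received))
```

A newline-delimited text format is used here only because it is simple to frame on a stream socket; any framing understood by both ends would serve equally well.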

[0097] The other input to the broadcast system 304 is a studio image 309. The studio image 309 is the background image for the composited video output 310 from the broadcast system 304. The studio image 309 is the video taken by the studio cameras 303, i.e., Camera 1 and Camera 2, described above with respect to FIG. 24. In the example of FIG. 24, the studio cameras 303 provide real world video of a commentator standing in a broadcast studio as a background image. The studio cameras 303 send current location and camera-related property information 311 to the client user interface 302, shown as a personal computer.

[0098] The data from the studio cameras describes the location, orientation and zoom of each physical camera. This data is captured by a device that is specifically set up to gather and interpret this data. Once this information is gathered, it can be adjusted and translated into commands that the director uses to position the in-game virtual cameras. These commands are then sent to the director either directly from the capture device or via a broker that is connected remotely to the director. The director then uses the commands to set the virtual camera so that the application view correlates with the physical camera view. The application renders the involved objects against a green screen and outputs this video image. The application green screen image is then processed to make the green transparent, and the resulting image is then overlaid on top of the physical camera image. The result is that the virtual world appears as if it is a part of the real world. As the physical camera moves, the director camera moves in step, creating the illusion that the virtual objects are physically present in the real world. The broker or camera data capture device can potentially track multiple physical cameras and switch which data feed is translated and sent to the director through the broker. A setup as described would allow the resulting image to cut between multiple physical cameras while still maintaining the illusion that the virtual objects were "physically" present in the real world.
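
By way of illustration only, a broker that tracks several physical cameras and translates the active camera's data into a command for the director might be sketched as follows. The class names, the set_camera command, and the single uniform scale factor between studio units and game units are hypothetical assumptions; a real translation would likely involve a fuller calibration.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class CameraState:
    """Location, orientation and zoom reported for one physical camera."""
    x: float
    y: float
    z: float
    pan: float   # degrees
    tilt: float  # degrees
    zoom: float


class CameraBroker:
    """Tracks several physical cameras and forwards the active camera's data."""

    def __init__(self, scale: float = 1.0) -> None:
        self.scale = scale  # assumed studio-to-game unit conversion
        self.latest: Dict[str, CameraState] = {}
        self.active: Optional[str] = None

    def update(self, camera_id: str, state: CameraState) -> None:
        """Record the most recent data received from a physical camera."""
        self.latest[camera_id] = state

    def switch_to(self, camera_id: str) -> None:
        """Choose which camera's data feed is translated for the director."""
        if camera_id not in self.latest:
            raise KeyError(f"no data received yet for {camera_id}")
        self.active = camera_id

    def director_command(self) -> dict:
        """Translate the active camera's state into a hypothetical director command."""
        if self.active is None:
            raise RuntimeError("no active camera selected")
        s = self.latest[self.active]
        return {
            "command": "set_camera",
            "position": (s.x * self.scale, s.y * self.scale, s.z * self.scale),
            "pan": s.pan,
            "tilt": s.tilt,
            "zoom": s.zoom,
        }
```

Calling switch_to between frames changes which physical camera drives the virtual camera, which corresponds to cutting between physical cameras in the composited output.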

[0099] The studio cameras are distinguished from the in-game cameras described above. The in-game cameras record what is happening in the video game, while the studio cameras record what is happening in the broadcast studio.

[0100] The broadcast system then composites the two images, the studio (background) image and the virtual replay content, into a final image which is shown on the monitor 104 and workstation 105 shown in FIG. 24. This final image can be broadcast to a cable television audience via known broadcast channels.
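
By way of illustration only, the compositing of the green screen virtual replay content over the studio image might be sketched as follows. The pixel representation, the green-dominance test, and the threshold value are hypothetical simplifications; production keyers typically handle soft edges and color spill, which this sketch does not.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue), each 0-255
Image = List[List[Pixel]]     # rows of pixels


def is_key_green(pixel: Pixel, threshold: int = 100) -> bool:
    """Treat a pixel as green screen background if green clearly dominates."""
    r, g, b = pixel
    return g - max(r, b) > threshold


def overlay_green_screen(game: Image, studio: Image) -> Image:
    """Overlay game pixels on the studio frame, treating key green as transparent."""
    if len(game) != len(studio) or any(
        len(g_row) != len(s_row) for g_row, s_row in zip(game, studio)
    ):
        raise ValueError("frames must have the same dimensions")
    return [
        [s_px if is_key_green(g_px) else g_px for g_px, s_px in zip(g_row, s_row)]
        for g_row, s_row in zip(game, studio)
    ]


# One-row example: the green pixel is replaced by the studio pixel behind it.
game_frame = [[(0, 255, 0), (200, 30, 30)]]
studio_frame = [[(10, 10, 10), (20, 20, 20)]]
final_frame = overlay_green_screen(game_frame, studio_frame)
```

In this sketch the studio image serves as the background, and only the non-green game pixels (the players) are drawn over it.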