Multi-camera Device

Love; Russell S. ; et al.

U.S. patent application number 16/586095 was published by the patent office on 2020-07-09 for a multi-camera device. The applicant listed for this patent is Intel Corporation. Invention is credited to Yu-Tseh Chi, James Granger, Russell S. Love, Kabeer R. Manchanda, Ali Mehdizadeh, Varun Nasery, Gerald A. Pham, Peter W. Winer, Ka-Kei Wong.

| Publication Number | 20200218933 |
|---|---|
| Application Number | 16/586095 |
| Family ID | 55438736 |
| Publication Date | 2020-07-09 |
| Kind Code | A1 |
| Inventors | Love; Russell S.; et al. |

MULTI-CAMERA DEVICE

Abstract

Apparatuses, methods and storage medium associated with multi-camera devices are disclosed herein. In embodiments, a multi-camera device may include 3 or more camera sensors disposed on a world facing side of the multi-camera device. Further, the multi-camera device may be configured to provide a soft shutter button at a location on the side opposite the world facing side, coordinated with the locations of the 3 or more camera sensors so as to reduce the likelihood of blocking one or more of the 3 or more camera sensors. Other embodiments may be disclosed or claimed.

| Inventors: | Love; Russell S.; (Palo Alto, CA) ; Winer; Peter W.; (Los Altos, CA) ; Granger; James; (Larkspur, CA) ; Pham; Gerald A.; (San Jose, CA) ; Wong; Ka-Kei; (Washington, DC) ; Nasery; Varun; (Santa Clara, CA) ; Manchanda; Kabeer R.; (Sunnyvale, CA) ; Chi; Yu-Tseh; (Santa Clara, CA) ; Mehdizadeh; Ali; (Belmont, CA) |
|---|---|
| Applicant: | Intel Corporation |
| Family ID: | 55438736 |
| Appl. No.: | 16/586095 |
| Filed: | September 27, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Child Application |
|---|---|---|---|
| 15900359 | Feb 20, 2018 | 10460202 | 16586095 |
| 15617816 | Jun 8, 2017 | 9898684 | 15900359 |
| 14818987 | Aug 5, 2015 | 9710724 | 15617816 |
| 62046398 | Sep 5, 2014 | | 14818987 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06K 9/6212 20130101; G06K 9/4642 20130101; H04N 5/23293 20130101; H04N 5/23245 20130101; H04N 5/2258 20130101; G06K 9/4652 20130101 |

| International Class: | G06K 9/62 20060101 G06K009/62; H04N 5/232 20060101 H04N005/232; G06K 9/46 20060101 G06K009/46; H04N 5/225 20060101 H04N005/225 |

Claims

1. (canceled)

2. A mobile computing device, comprising: a housing having: a first edge; a second edge; a first corner joining the first and second edges; a third edge opposite the first edge; a second corner joining the second and third edges; a fourth edge opposite the second edge, the first and third edges having a first length, the second and fourth edges having a second length greater than the first length; a third corner joining the third and fourth edges; a fourth corner joining the first and fourth edges; a first face bordered by the first, second, third, and fourth edges and the first, second, third, and fourth corners; and a second face opposite the first face, the second face bordered by the first, second, third, and fourth edges and the first, second, third, and fourth corners; a touchscreen on the first face and facing in a first direction; a first camera sensor facing in a second direction opposite the first direction; a second camera sensor facing in the second direction; a third camera sensor facing in the second direction, the first, second, and third camera sensors arranged in a triangular pattern, the first, second, and third camera sensors closer to the first edge than to the third edge; at least one storage device to store instructions; and at least one processor to execute the instructions to: cause a soft shutter button to be displayed on the touchscreen, the soft shutter button to be displayed closer to the third edge than to the first edge and closer to the second edge than to the fourth edge; cause the touchscreen to display first content sensed by the first camera sensor and second content sensed by the second camera sensor, the first content and the second content to be displayed concurrently in a preview; enable switching between different camera modes; and process, when in a first one of the different camera modes, image data captured concurrently by at least two of the first, second, and third camera sensors.

3. The mobile computing device of claim 2, wherein the soft shutter button is to be displayed closer to the second corner than to the first, third, and fourth corners.

4. The mobile computing device of claim 2, wherein the processor is to generate depth information based on data from at least two of the first, second, and third camera sensors.

5. The mobile computing device of claim 2, wherein the first one of the different camera modes enables an effect based on depth.

6. The mobile computing device of claim 2, wherein the processor is to cause the touchscreen to display the first and second content while the first and second camera sensors continue to sense the first and second content.

7. The mobile computing device of claim 2, wherein the processor is to synchronize the first and second camera sensors when the first and second camera sensors are sensing the first and second content.

8. The mobile computing device of claim 2, further including network interface circuitry.

9. The mobile computing device of claim 2, wherein the mobile computing device is a smartphone.

10. A mobile computing device, comprising: a housing having: a first corner; a second corner; a third corner; a fourth corner; a first edge between the first and second corners; a second edge between the second and third corners; a third edge opposite the first edge, the third edge between the third and fourth corners; a fourth edge opposite the second edge, the fourth edge between the first and fourth corners, the first and third edges having a first length, the second and fourth edges having a second length greater than the first length; a first face circumscribed by the first, second, third, and fourth edges and the first, second, third, and fourth corners; and a second face opposite the first face, the second face circumscribed by the first, second, third, and fourth edges and the first, second, third, and fourth corners; a touchscreen on the first face and facing in a first direction; a first camera facing in a second direction opposite the first direction; a second camera facing in the second direction; a third camera facing in the second direction, the first, second, and third cameras arranged in a triangular pattern, the first, second, and third cameras closer to the first edge than to the third edge; at least one storage device to store instructions; and at least one processor to execute the instructions to: cause a soft shutter button to be displayed on the touchscreen, the soft shutter button to be closer to the third edge than to the first edge and closer to the second edge than to the fourth edge; cause the touchscreen to display first content sensed by the first camera and second content sensed by the second camera, the first content and the second content to be displayed concurrently; enable switching between different camera modes; process, when in a first one of the different camera modes, image data captured concurrently by at least two of the first, second, and third cameras; and generate depth information based on the image data captured by the at least two of the first, second, and third cameras.

11. The mobile computing device of claim 10, wherein the soft shutter button is to be closer to the third corner than to the first, second, and fourth corners.

12. The mobile computing device of claim 10, further including a modem to provide wireless communications.

13. The mobile computing device of claim 10, wherein the touchscreen is to display the first content and the second content as portions of a single image.

14. The mobile computing device of claim 10, wherein the touchscreen is to display the first and second content in an overlapping manner.

15. The mobile computing device of claim 14, wherein the first and second content are displayed as one image.

16. The mobile computing device of claim 10, wherein the first one of the different camera modes enables an effect based on depth.

17. The mobile computing device of claim 10, wherein the processor is to cause the touchscreen to display the first and second content while the first and second cameras continue to sense the first and second content.

18. The mobile computing device of claim 10, wherein the processor is to synchronize the first and second cameras when the first and second cameras are sensing the first and second content.

19. A mobile computing device, comprising: a housing having: a first edge; a second edge; a first corner joining the first and second edges; a third edge opposite the first edge; a second corner joining the second and third edges; a fourth edge opposite the second edge, the first and third edges having a first length, the second and fourth edges having a second length greater than the first length; a third corner joining the third and fourth edges; a fourth corner joining the first and fourth edges; a first face bordered by the first, second, third, and fourth edges and the first, second, third, and fourth corners; and a second face opposite the first face, the second face bordered by the first, second, third, and fourth edges and the first, second, third, and fourth corners; means for displaying on the first face, the displaying means facing in a first direction; first means for sensing, the first sensing means facing in a second direction opposite the first direction; second means for sensing on the second face, the second sensing means facing in the second direction; third means for sensing on the second face, the third sensing means facing in the second direction, the first, second, and third sensing means arranged in a triangular pattern, the first, second, and third sensing means closer to the first edge than to the third edge; at least one means for storing instructions; and at least one means for executing the instructions to: cause a soft shutter button to be displayed on the touchscreen, the soft shutter button to be closer to the third edge than to the first edge and closer to the second edge than to the fourth edge; cause the displaying means to display a preview of first content sensed by the first sensing means and second content sensed by the second sensing means, the first content and the second content to be displayed concurrently; enable switching between different camera modes; and process, when in a first one of the different camera modes, image data captured concurrently by at least two of the first, second, and third sensing means.

20. The mobile computing device of claim 19, wherein the soft shutter button is to be closer to the second corner than to the first, third, and fourth corners.

21. The mobile computing device of claim 19, wherein the executing means is to generate depth information based on data from at least two of the first, second, and third sensing means.

22. The mobile computing device of claim 19, further including means for wirelessly communicating.

23. The mobile computing device of claim 19, further including network interface circuitry.

24. The mobile computing device of claim 19, wherein the displaying means is to display the preview of the first and second content in substantially real-time to when the first and second sensing means sense the first and second content.

25. The mobile computing device of claim 19, wherein the first one of the different camera modes enables a photography effect based on depth.

Description

RELATED APPLICATION

[0001] The present application is a continuation of U.S. patent application Ser. No. 15/900,359, entitled "MULTI-CAMERA DEVICE," filed Feb. 20, 2018, which is a continuation of U.S. patent application Ser. No. 15/617,816, entitled "MULTI-CAMERA DEVICE," filed Jun. 8, 2017, which is a divisional application of U.S. patent application Ser. No. 14/818,987, entitled "MULTI-CAMERA DEVICE," filed Aug. 5, 2015, which is a non-provisional application of U.S. provisional application 62/046,398, entitled "Multi-Camera Device," filed on Sep. 5, 2014. The present application claims priority to the Ser. No. 15/900,359, the Ser. No. 15/617,816, the Ser. No. 14/818,987, and the 62/046,398 applications. The Ser. No. 15/900,359, the Ser. No. 15/617,816, the Ser. No. 14/818,987, and the 62/046,398 applications are hereby fully incorporated by reference.

TECHNICAL FIELD

[0002] The present disclosure relates to the field of photography, in particular, to apparatuses, methods and storage medium associated with multi-camera devices for depth photography and/or depth video applications.

BACKGROUND

[0003] The background description provided herein is for the purpose of generally presenting the context of the disclosure. Unless otherwise indicated herein, the materials described in this section are not prior art to the claims in this application and are not admitted to be prior art by inclusion in this section.

[0004] Depth photography and depth video applications require multi-camera devices with 2 or more world-facing cameras. Further, for proper multi-camera, depth mode operation, the 2 or more world-facing cameras need to be running concurrently with their captured frames synchronized and numbered in sequence.

BRIEF DESCRIPTION OF THE DRAWINGS

[0005] Embodiments will be readily understood by the following detailed description in conjunction with the accompanying drawings. To facilitate this description, like reference numerals designate like structural elements. Embodiments are illustrated by way of example, and not by way of limitation, in the figures of the accompanying drawings.

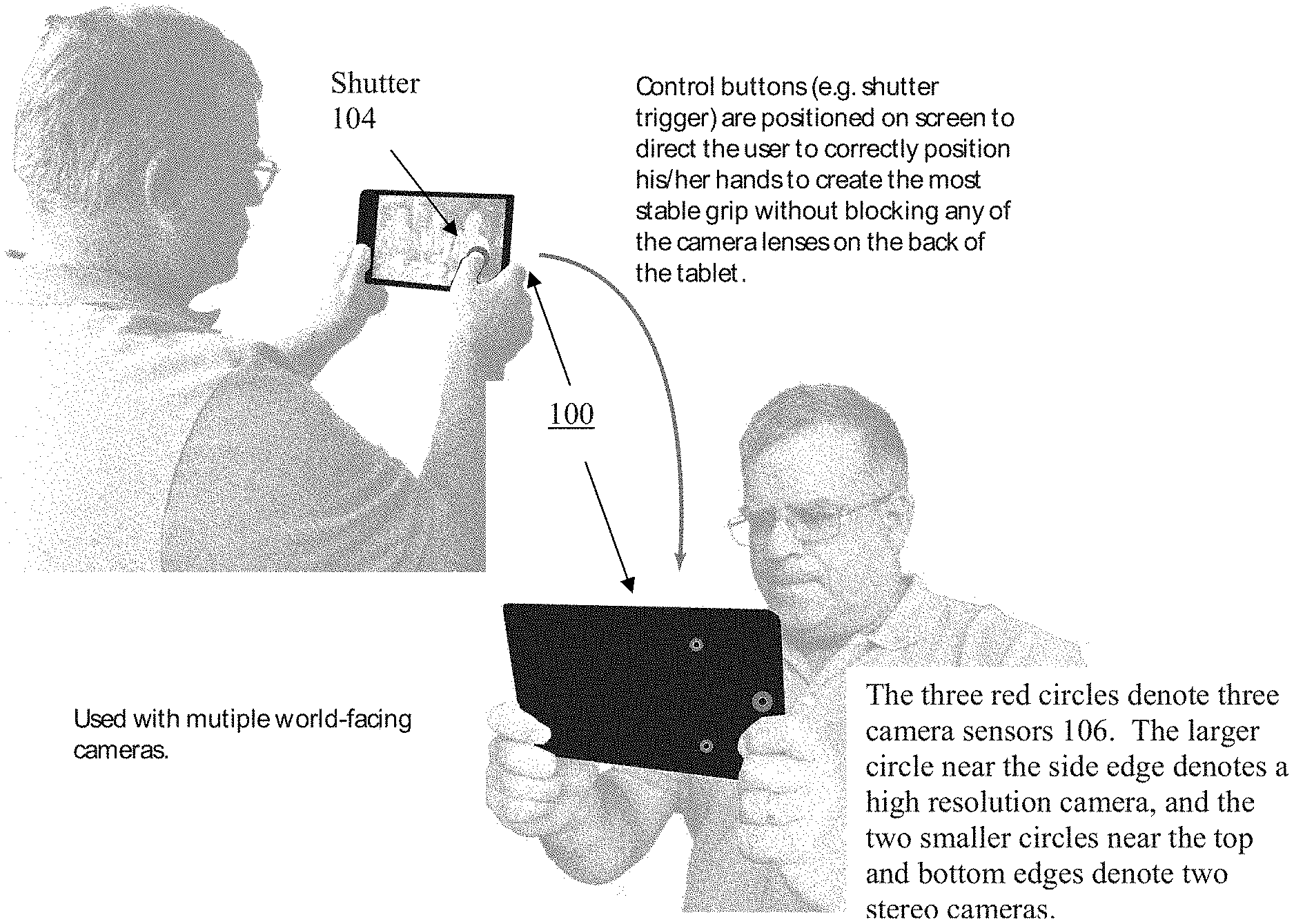

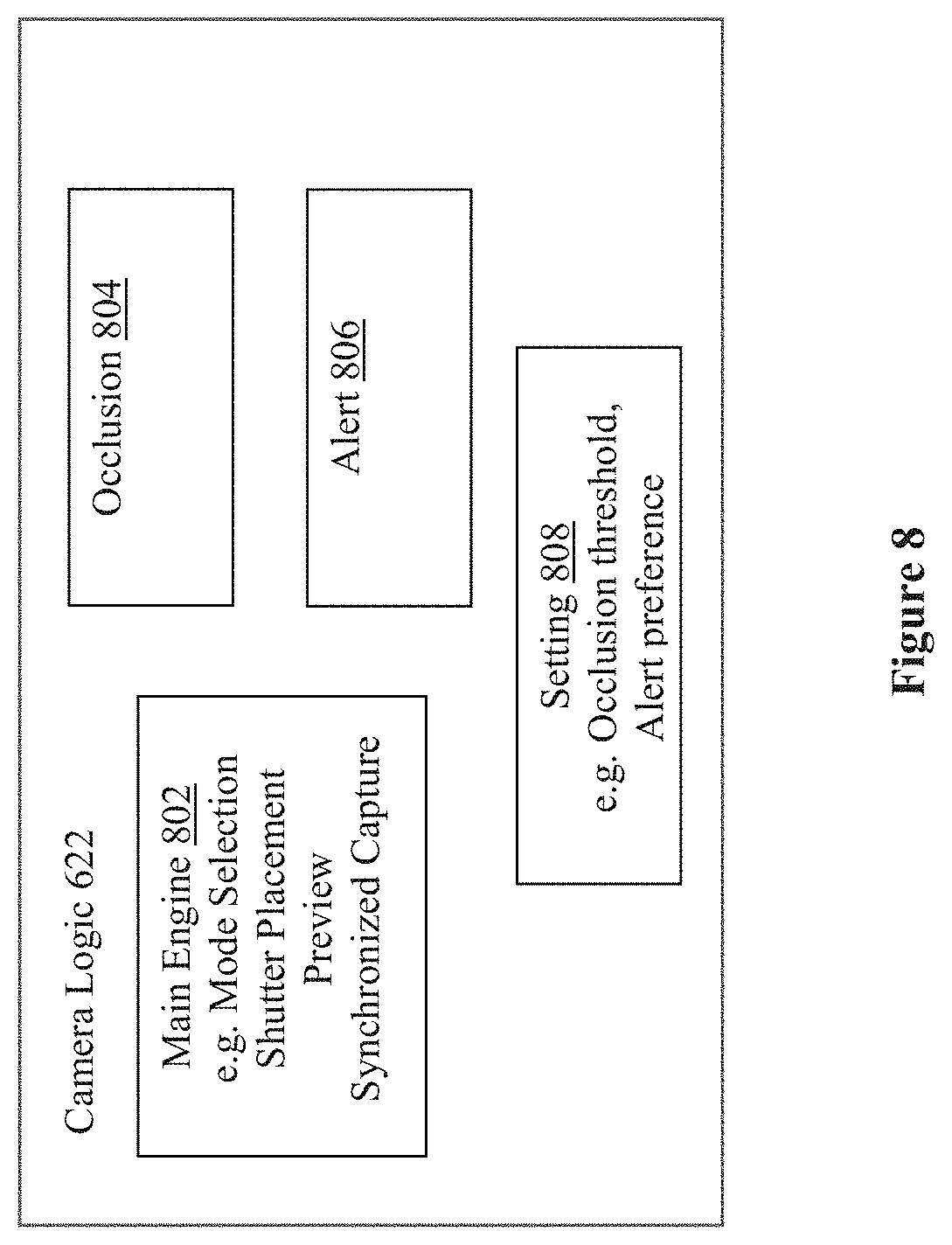

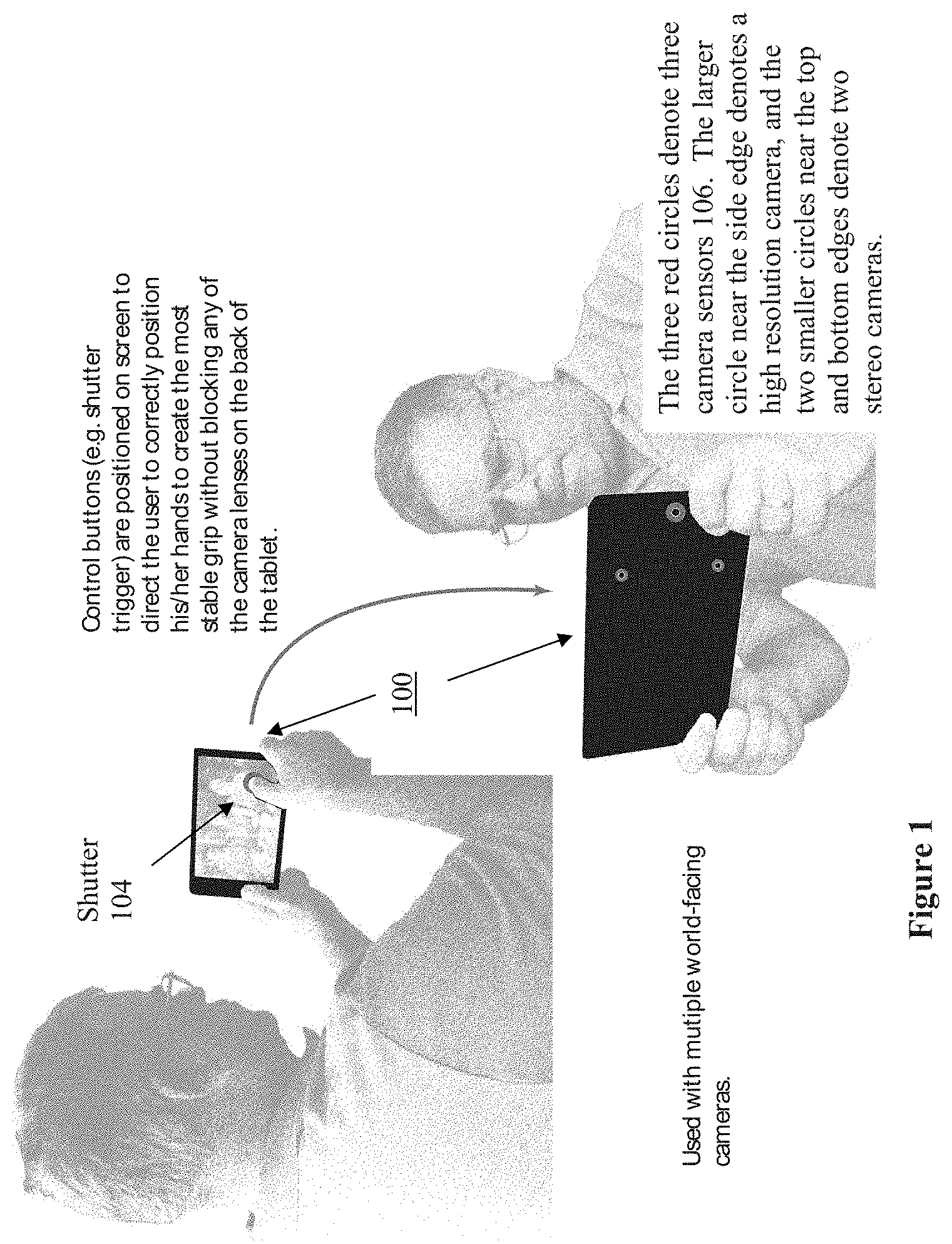

[0006] FIG. 1 illustrates a user facing view and a world facing view of a multi-camera device of the present disclosure for depth photography and depth video applications, in accordance with embodiments.

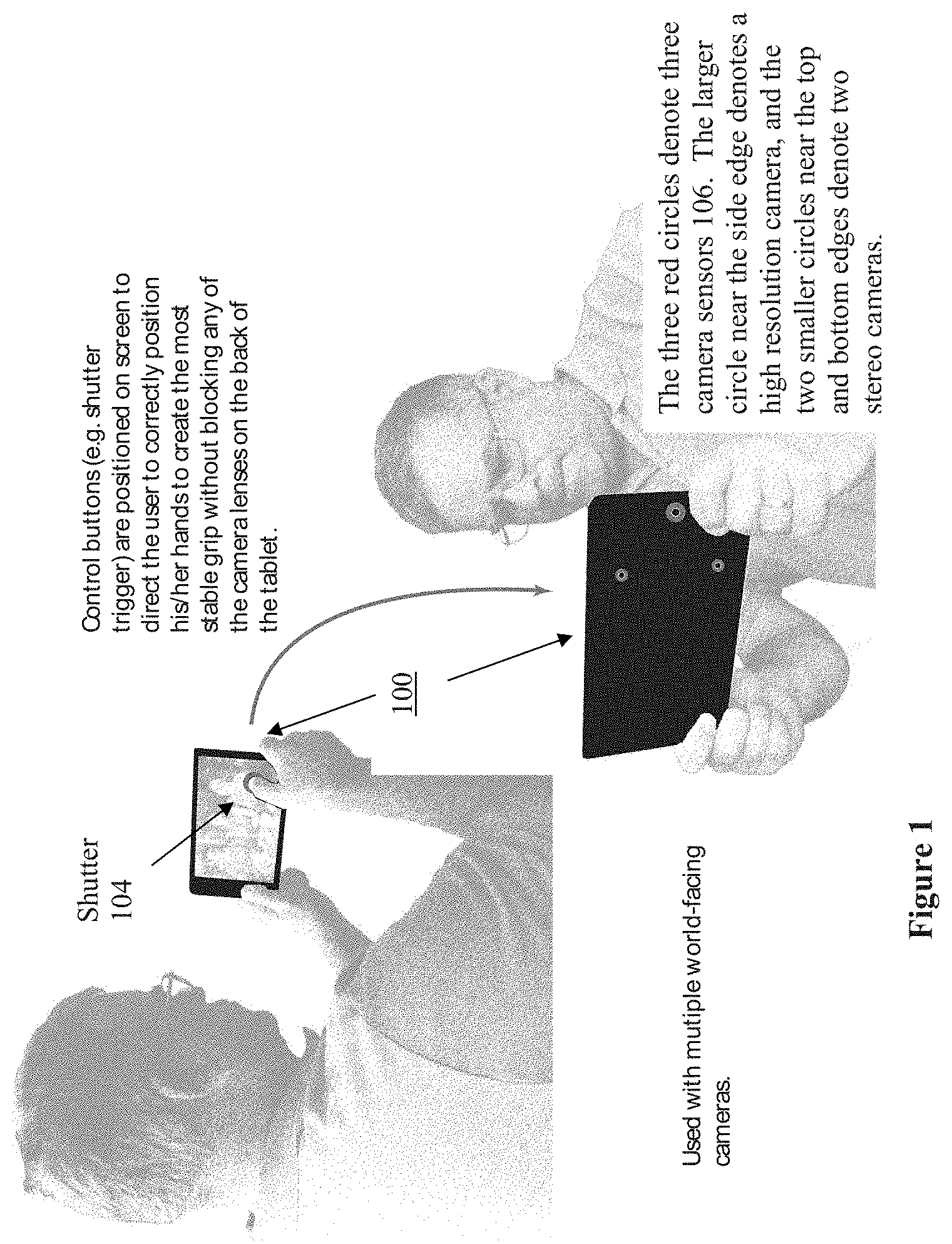

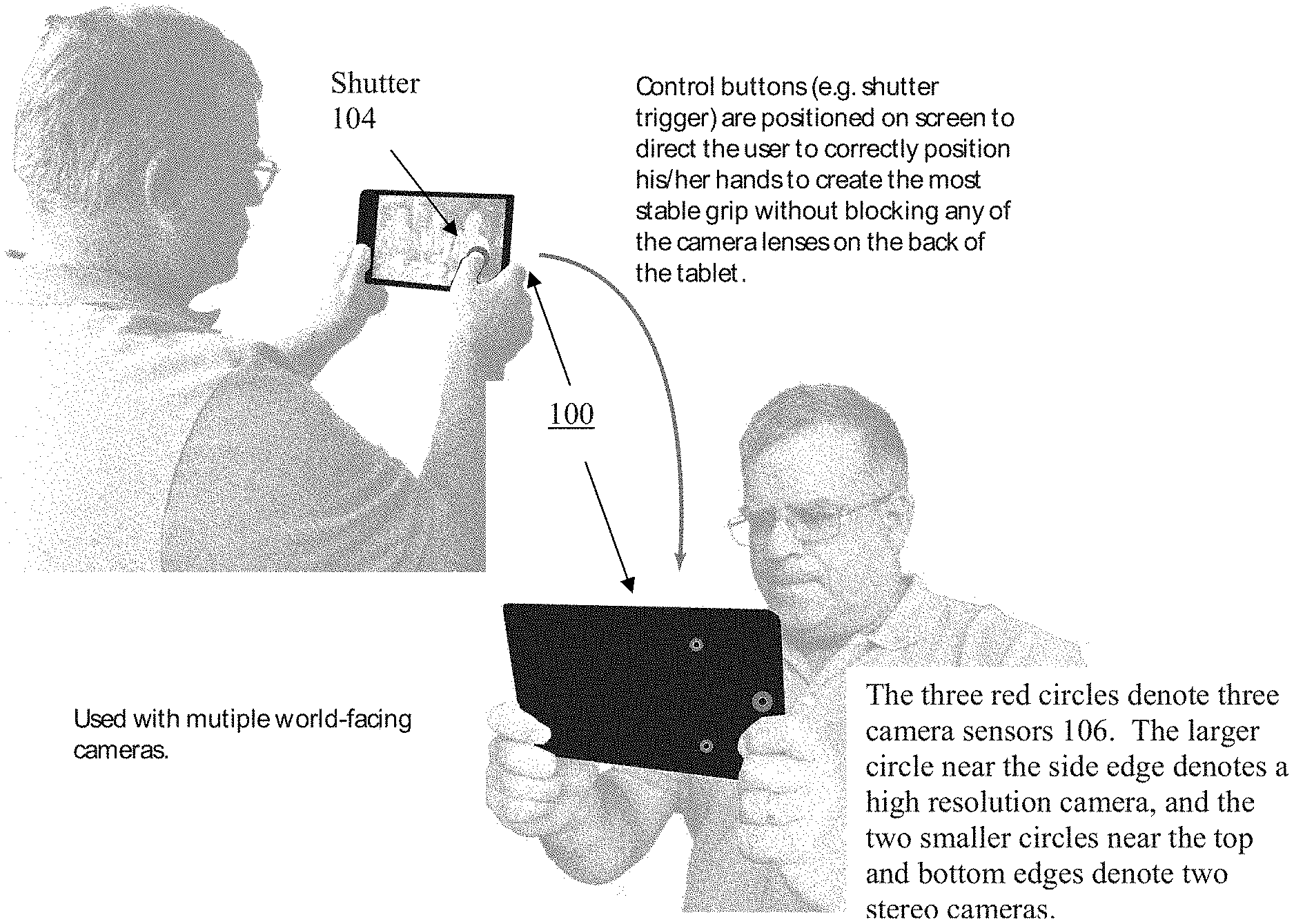

[0007] FIG. 2 illustrates a front view of the multi-camera device, further depicting placement of a soft shutter button, in accordance with embodiments.

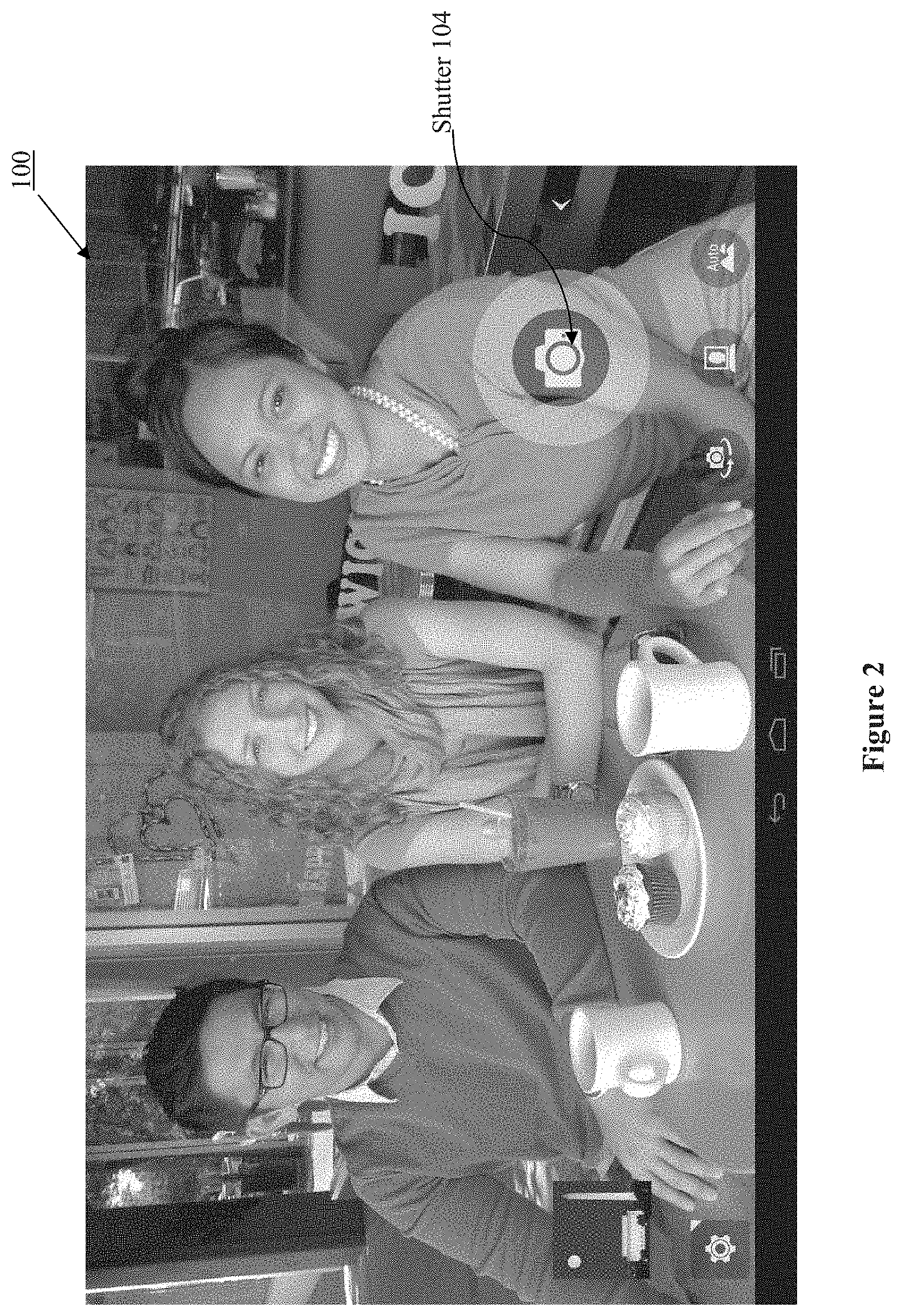

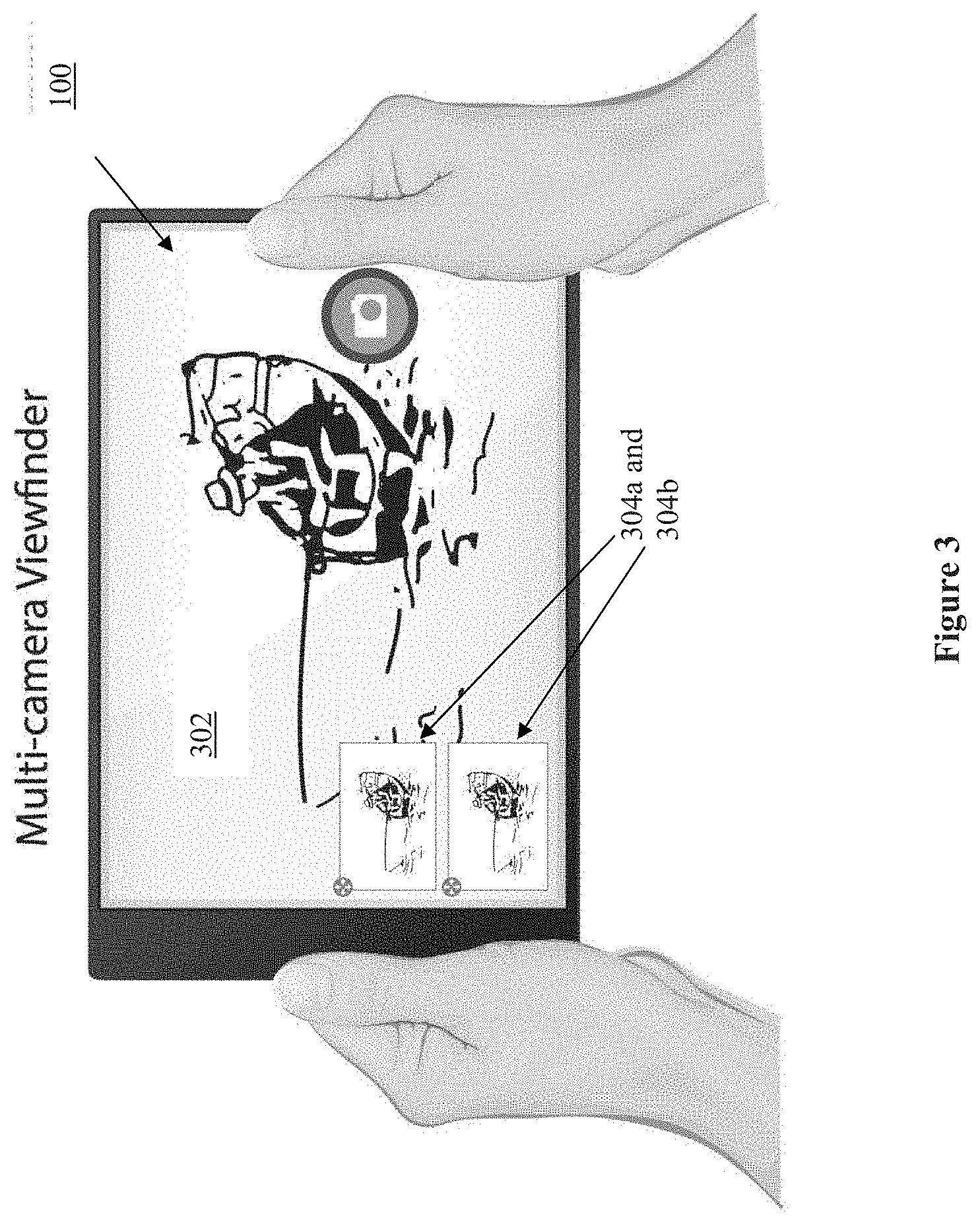

[0008] FIG. 3 illustrates another front view of the multi-camera device, depicting viewfinders of the multi-camera device, in accordance with embodiments.

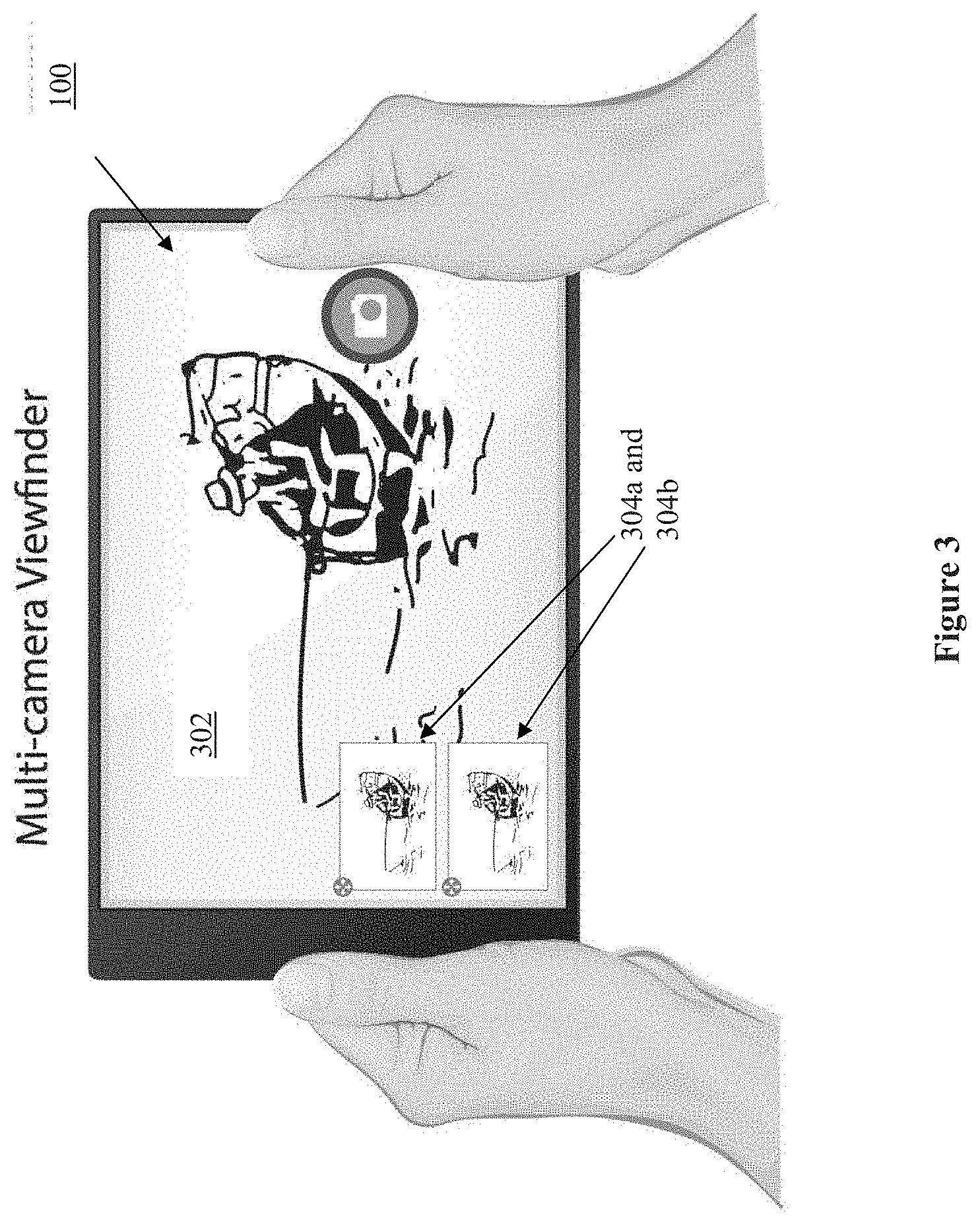

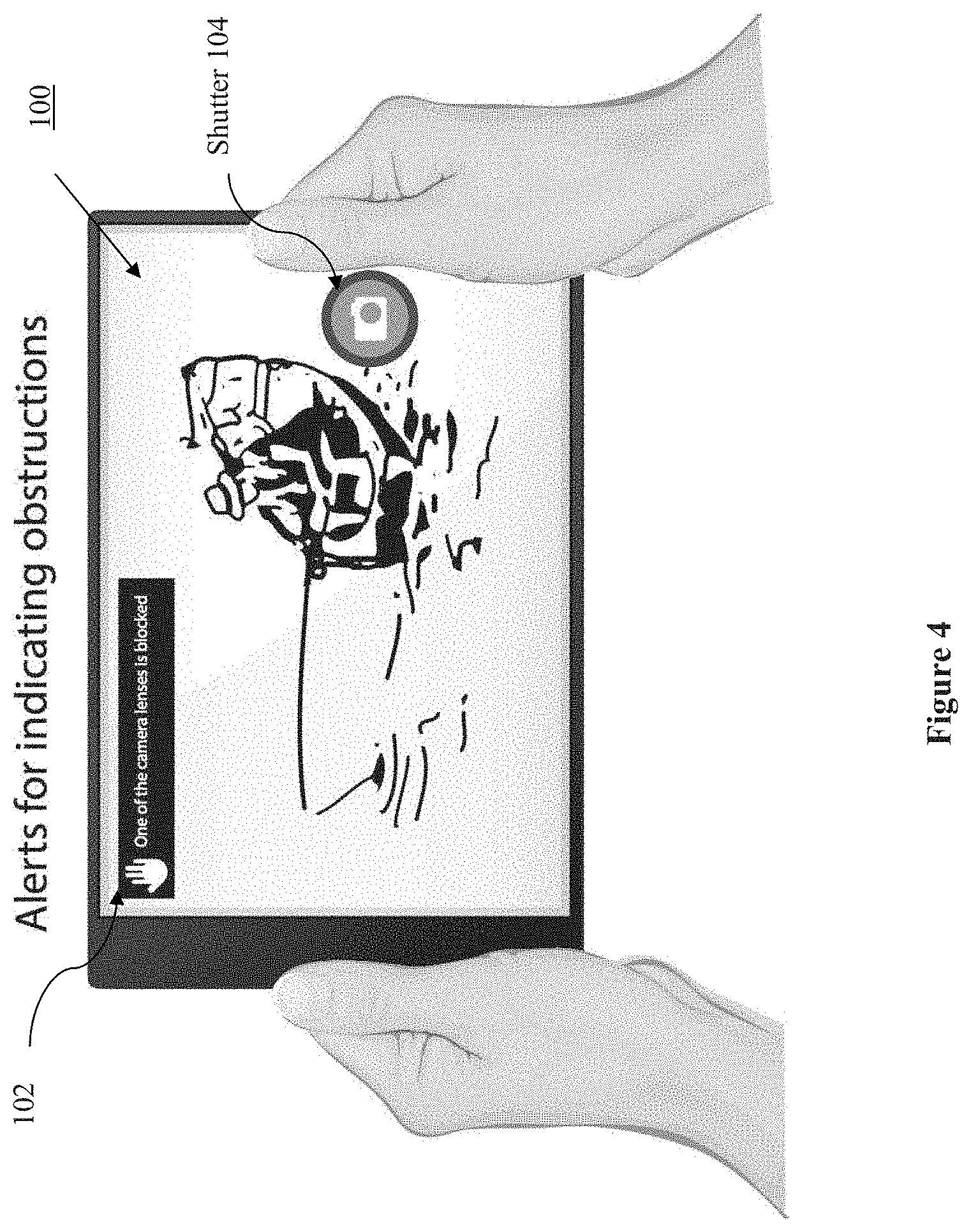

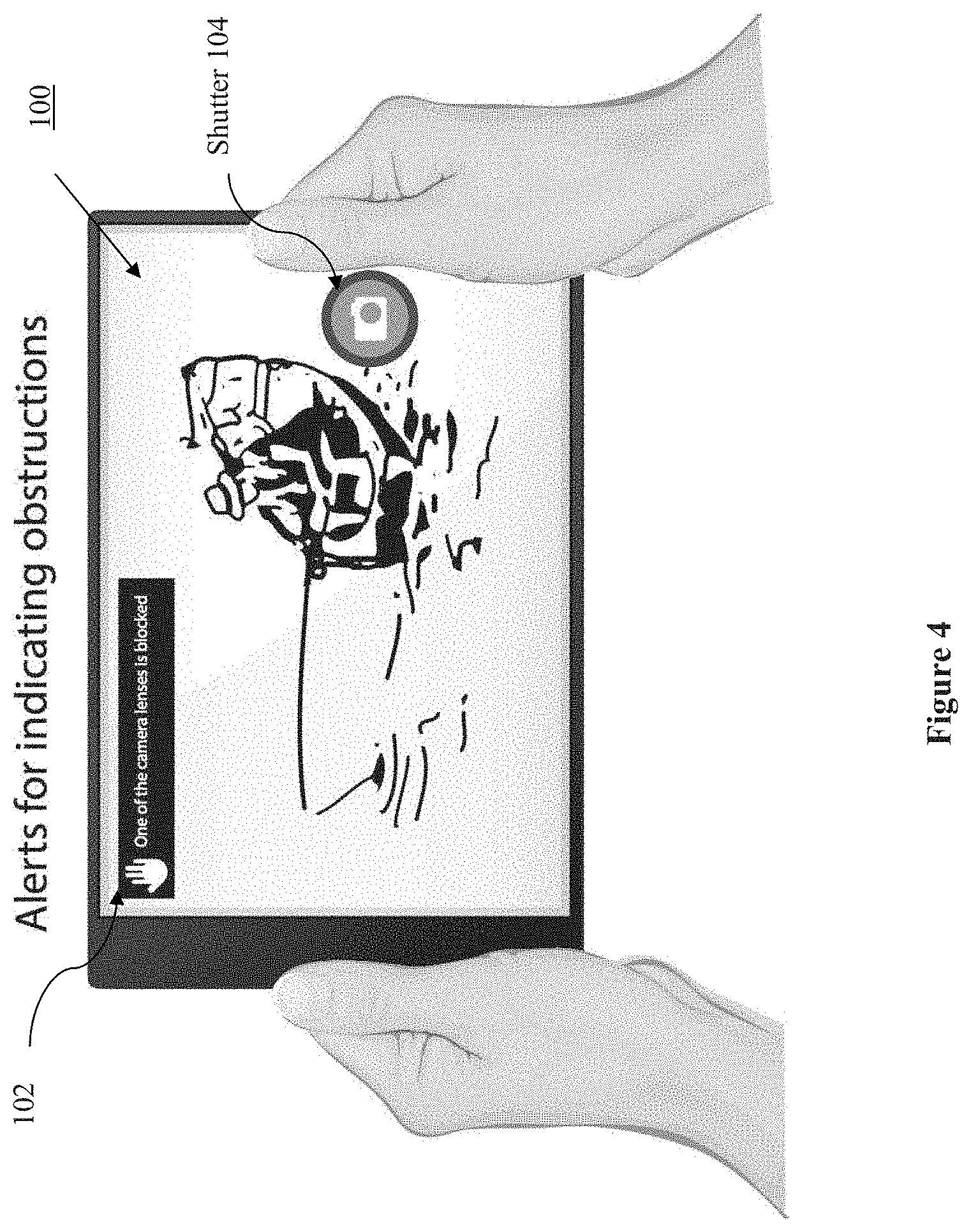

[0009] FIG. 4 illustrates still another front view of the multi-camera device, depicting provision of an alert when one or more of the camera sensors are blocked or obscured, in accordance with embodiments.

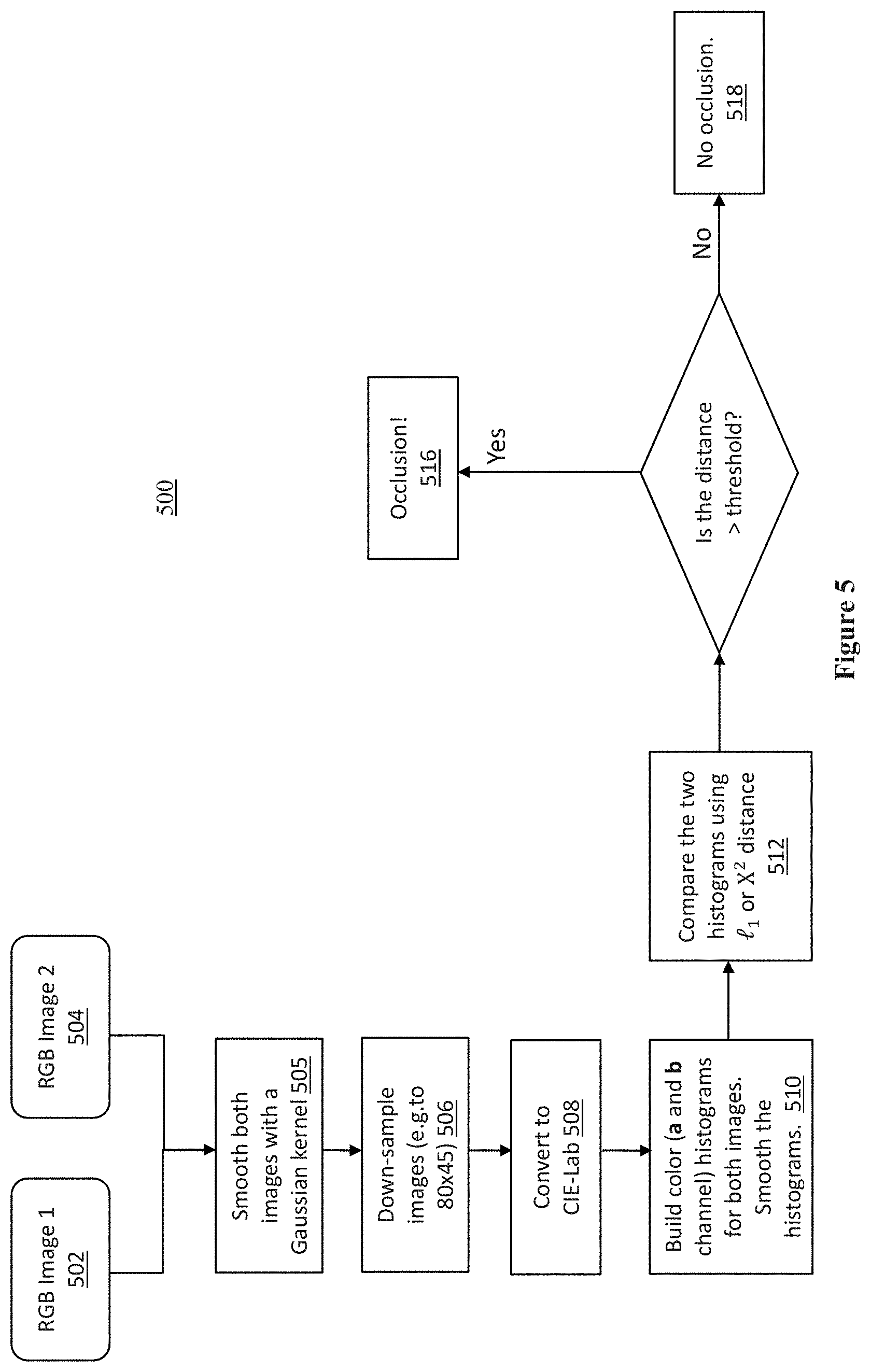

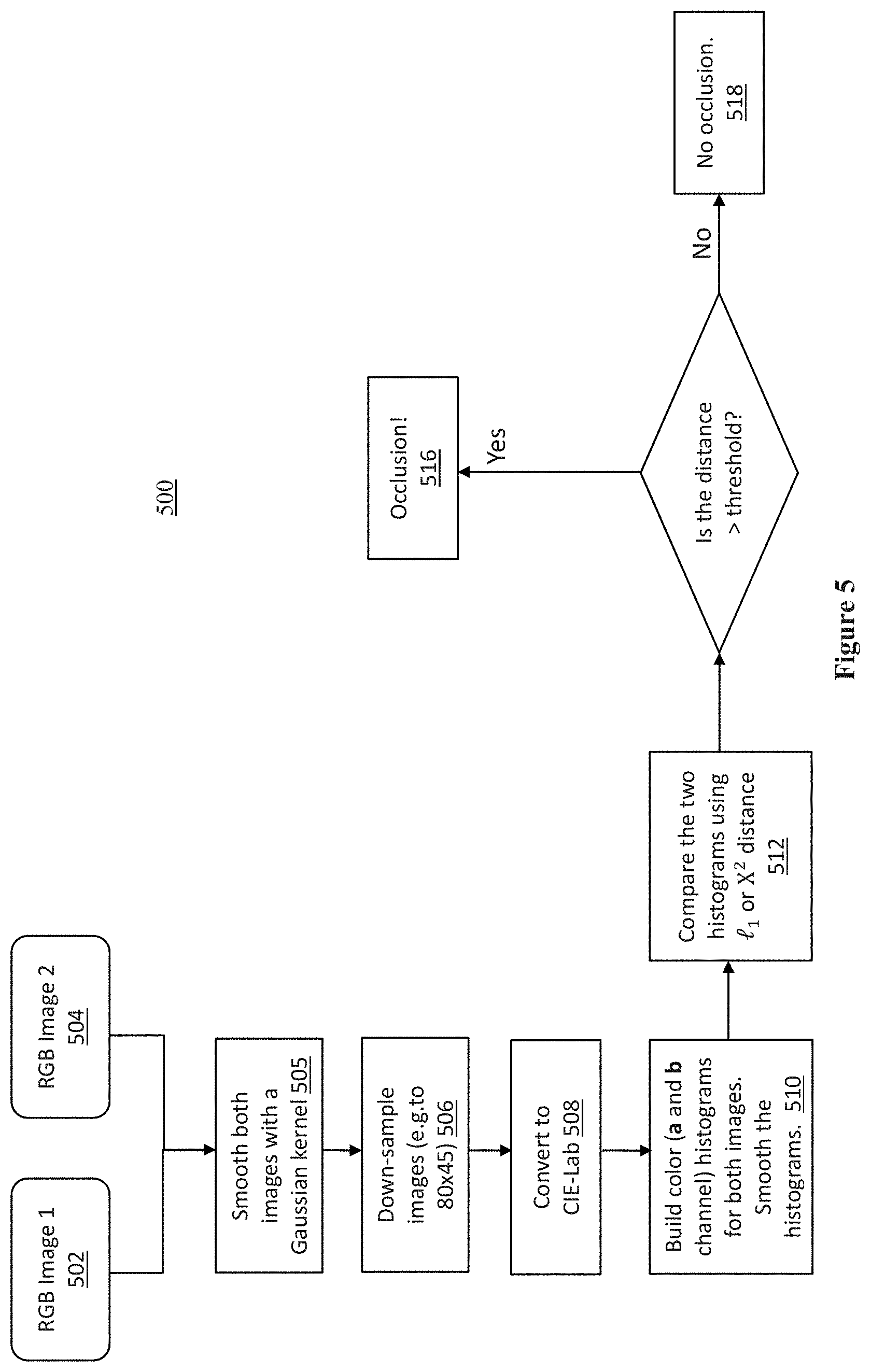

[0010] FIG. 5 illustrates a process for determining occlusion, i.e., blocking, of a camera sensor, in accordance with embodiments.

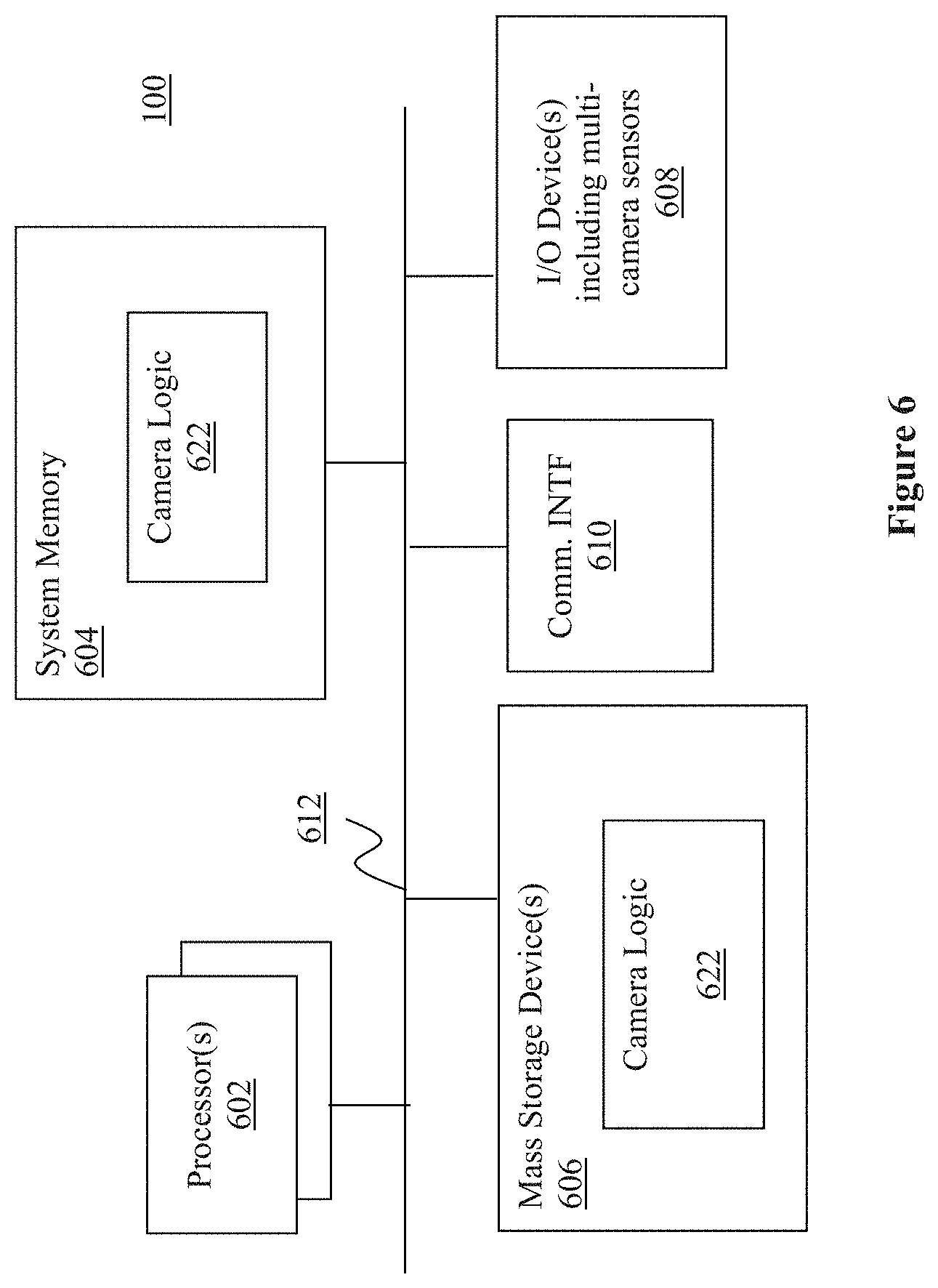

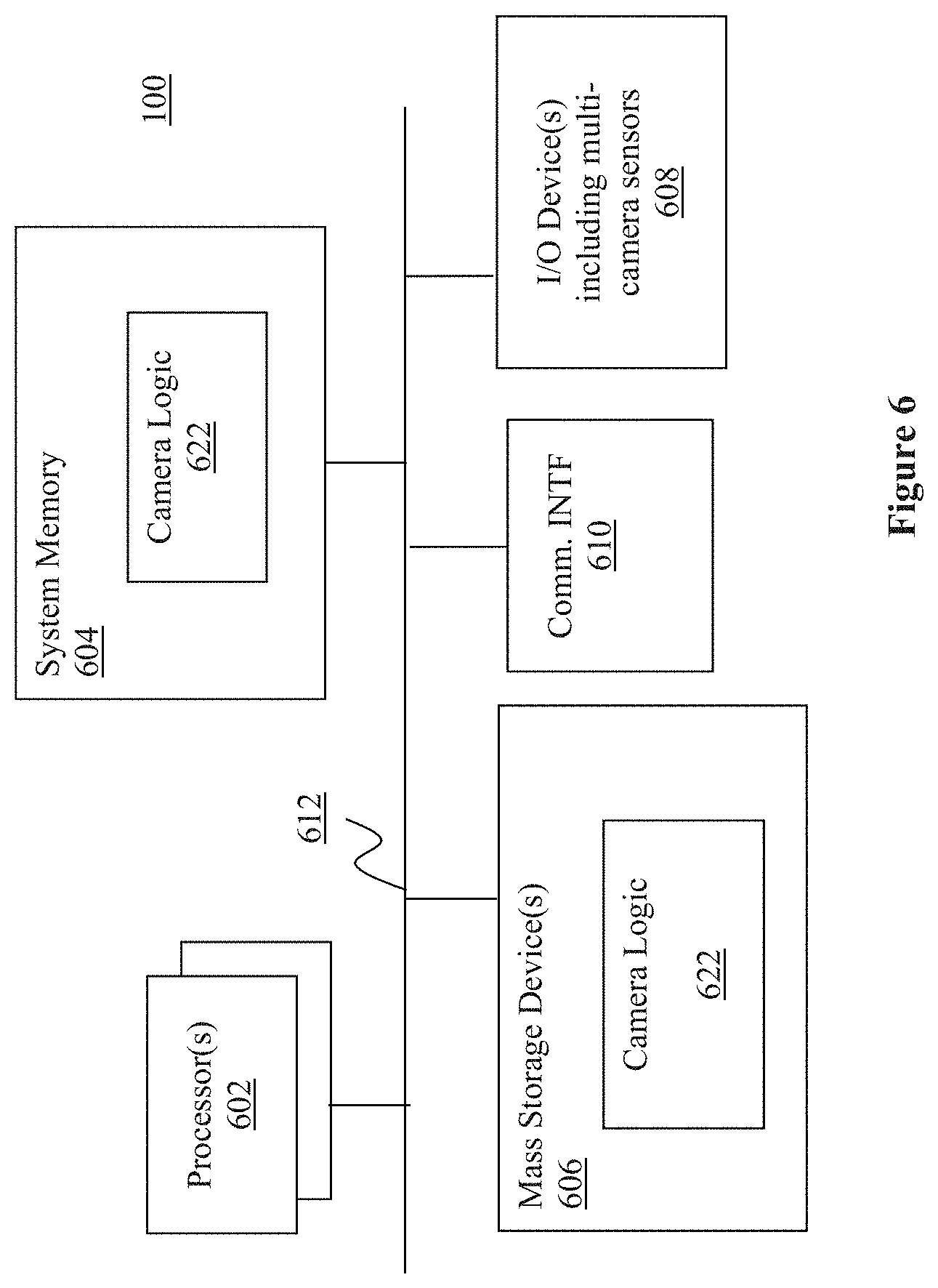

[0011] FIG. 6 illustrates a block diagram of the multi-camera device of FIGS. 1-5, in accordance with various embodiments.

[0012] FIG. 7 illustrates an example storage medium with instructions configured to enable a multi-camera device to practice the present disclosure, in accordance with various embodiments.

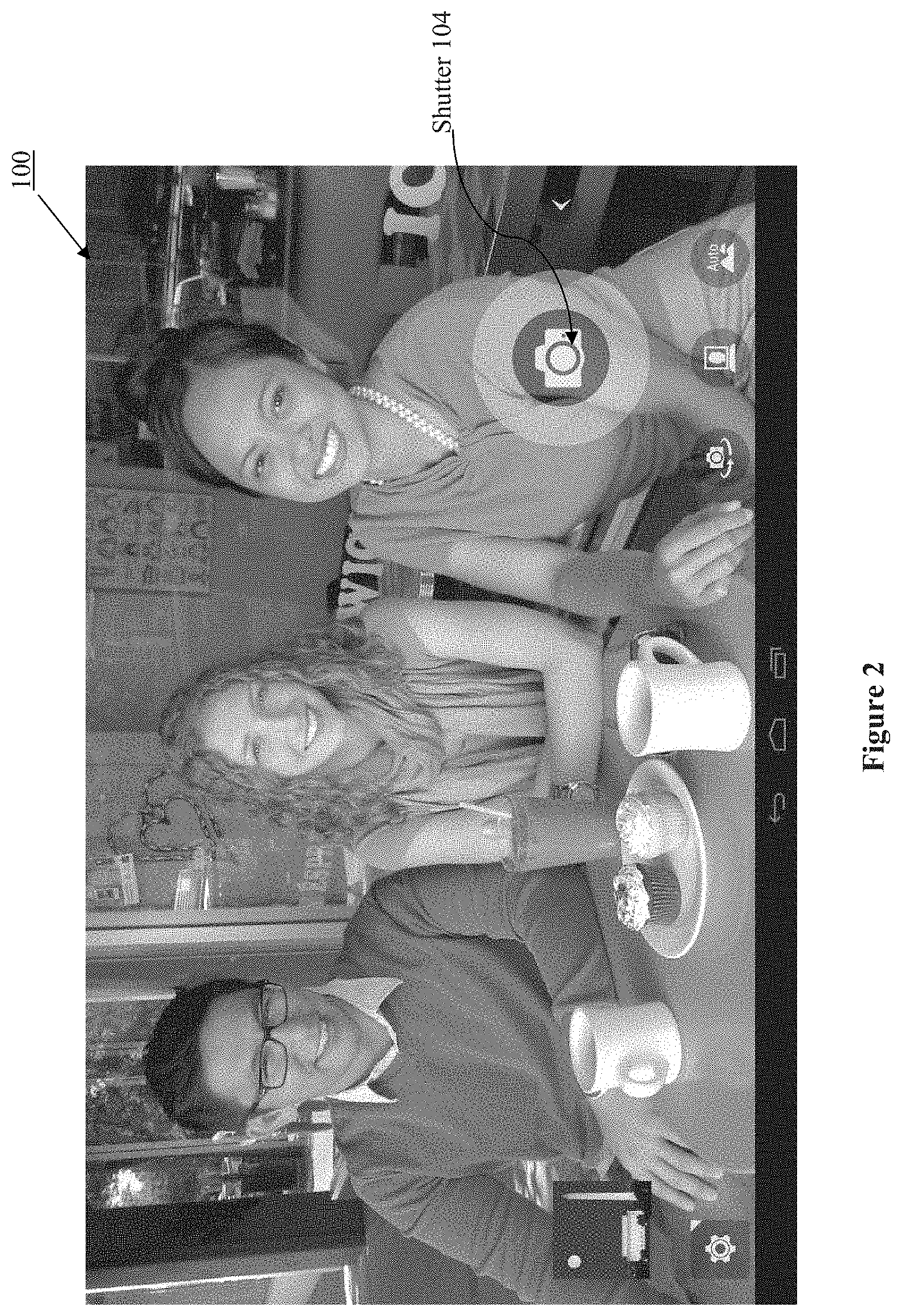

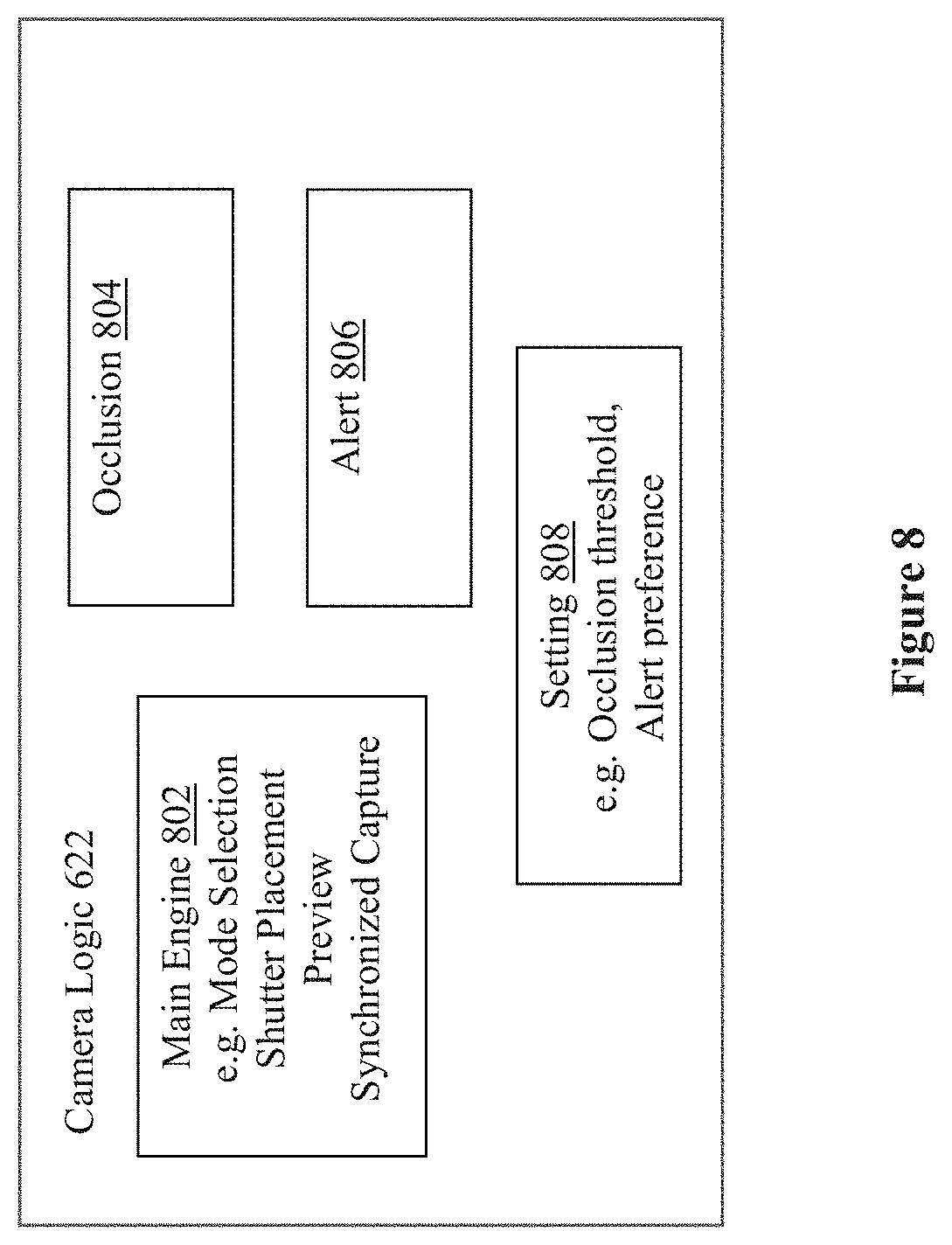

[0013] FIG. 8 illustrates a function block view of the camera logic, in accordance with various embodiments.

DETAILED DESCRIPTION

[0014] Apparatuses, methods and storage medium associated with multi-camera devices are disclosed herein. In embodiments, a multi-camera device may include 3 or more camera sensors disposed on a world facing side of the multi-camera device for depth photography or depth video applications. Further, the multi-camera device may be configured to provide a soft shutter button to be disposed at a location on the side opposite the world facing side, coordinated with the locations of the 3 or more camera sensors so as to reduce the likelihood of blocking one or more of the 3 or more camera sensors. When the soft shutter button is activated, the multi-camera device operates with all camera sensors sensing concurrently and their captured frames synchronized and numbered in sequence.

[0015] In embodiments, the 3 or more camera sensors may include one high resolution camera sensor and two stereo camera sensors, and the multi-camera device is further configured with view finder logic to provide a main view finder for the one high resolution camera sensor, and two picture-in-picture viewfinders for the two stereo camera sensors.

[0016] Still further, in embodiments, the multi-camera device may include blocking determination logic to determine whether one or more of the 3 or more camera sensors is blocked, and provide an alert if at least one of the 3 or more camera sensors is blocked.

[0017] In the description to follow, reference is made to the accompanying drawings which form a part hereof wherein like numerals designate like parts throughout, and in which is shown by way of illustration embodiments that may be practiced. It is to be understood that other embodiments may be utilized and structural or logical changes may be made without departing from the scope of the present disclosure. Therefore, the following detailed description is not to be taken in a limiting sense, and the scope of embodiments is defined by the appended claims and their equivalents.

[0018] Operations of various methods may be described as multiple discrete actions or operations in turn, in a manner that is most helpful in understanding the claimed subject matter. However, the order of description should not be construed as to imply that these operations are necessarily order dependent. In particular, these operations may not be performed in the order of presentation. Operations described may be performed in a different order than the described embodiments. Various additional operations may be performed and/or described operations may be omitted, split or combined in additional embodiments.

[0019] For the purposes of the present disclosure, the phrase "A and/or B" means (A), (B), or (A and B). For the purposes of the present disclosure, the phrase "A, B, and/or C" means (A), (B), (C), (A and B), (A and C), (B and C), or (A, B and C).

[0020] The description may use the phrases "in an embodiment," or "in embodiments," which may each refer to one or more of the same or different embodiments. Furthermore, the terms "comprising," "including," "having," and the like, as used with respect to embodiments of the present disclosure, are synonymous.

[0021] As used hereinafter, including the claims, the term "module" may refer to, be part of, or include an Application Specific Integrated Circuit (ASIC), an electronic circuit, a processor (shared, dedicated, or group) and/or memory (shared, dedicated, or group) that execute one or more software or firmware programs, a combinational logic circuit, and/or other suitable components that provide the described functionality.

[0022] FIG. 1 illustrates a user facing view and a world facing view of a multi-camera device of the present disclosure, in accordance with embodiments. As illustrated, in embodiments, multi-camera device 100 may include 3 camera sensors 106 co-disposed on a world facing side of multi-camera device 100 for depth photography or depth video applications during a depth camera mode of operation. A main one of the 3 camera sensors 106 may be employed for conventional photography during a single camera mode of operation.

[0023] Unlike traditional smartphones or computing tablets, where the shutter button is typically horizontally centered and below the centerfold of the device, the position of the shutter button 104 of multi-camera device 100 intelligently accounts for the physical locations of the world-facing camera sensors. More specifically, in embodiments, multi-camera device 100 may be configured to provide a soft shutter button 102 on the side opposite the world facing side, i.e., the user facing side, at a location coordinated with the locations of the 3 camera sensors 106 that reduces the likelihood of blocking one or more of the 3 camera sensors 106. The user facing side may also be referred to as the front side of multi-camera device 100, and the world facing side may be referred to as the rear or back side of multi-camera device 100.

[0024] In embodiments, the 3 camera sensors 106 may include one high resolution camera sensor (8 MP) and two stereo camera sensors (720p). All 3 camera sensors are employed during the depth camera mode of operation and are synchronized by logic to concurrently capture frames with identical frame sequence numbers, whereas only the high resolution camera sensor is employed during the single camera mode of operation. In embodiments, the 3 camera sensors 106 may be arranged in a triangular pattern, with the high resolution camera sensor disposed proximally at the center of a side edge, and the two stereo camera sensors disposed near that side edge, at the top and bottom edges respectively.
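The synchronization described above can be illustrated with a small grouping routine. This is a minimal sketch, not the device's actual logic: the `Frame` record, the sensor names, and the `max_skew_us` tolerance are hypothetical. It keeps only those sequence numbers for which every sensor delivered a frame, and only when the capture times of that set lie within the tolerance.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Frame:
    sensor: str        # hypothetical sensor id, e.g. "main", "left", "right"
    seq: int           # frame sequence number shared across sensors
    timestamp_us: int  # capture time in microseconds


def synchronized_sets(frames, sensors, max_skew_us=1000):
    """Group frames into per-sequence-number sets containing every sensor.

    A set is kept only if all required sensors produced a frame with that
    sequence number and their capture times lie within max_skew_us.
    """
    by_seq = defaultdict(dict)
    for frame in frames:
        by_seq[frame.seq][frame.sensor] = frame

    result = {}
    for seq in sorted(by_seq):
        group = by_seq[seq]
        if not all(s in group for s in sensors):
            continue  # incomplete set: some sensor missed this frame
        times = [group[s].timestamp_us for s in sensors]
        if max(times) - min(times) <= max_skew_us:
            result[seq] = {s: group[s] for s in sensors}
    return result
```

For example, if the right stereo sensor drops frame 2, or frame 3 from one sensor arrives 2.5 ms late, only frame 1 survives as a usable depth triple.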

[0025] In embodiments, multi-camera device 100 may be configured with camera logic to place the soft shutter button 102 (also referred to as trigger) at the lower right corner of the user facing (front) side of multi-camera device 100 to guide a user in holding the multi-camera device 100 to reduce the likelihood of the user's hands or fingers blocking one or more of the 3 camera sensors 106. See also FIG. 2 for the complementary placement of the soft shutter button 102 or trigger, when the multi-camera device 100 is operated in a landscape orientation. When the soft shutter button is activated, the multi-camera device operates with all camera sensors sensing concurrently and their captured frames synchronized and numbered in sequence.
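A minimal sketch of how camera logic might derive the shutter location from the camera positions follows. The normalized coordinate convention, the `margin` value, and the rule "short edge opposite the camera cluster, lower corner" are assumptions distilled from the placement described above, not an algorithm specified in the disclosure.

```python
def soft_shutter_position(rear_camera_xy, margin=0.08):
    """Return a normalized (x, y) front-face position for the soft shutter.

    rear_camera_xy: camera centers as seen looking at the rear face, with
    x in [0, 1] left-to-right and y in [0, 1] top-to-bottom (landscape).
    The returned position uses the same convention, seen from the front.
    """
    if not rear_camera_xy:
        raise ValueError("need at least one camera position")
    # Seen from the front, the rear face is mirrored left-to-right.
    front_xs = [1.0 - x for x, _ in rear_camera_xy]
    centroid_x = sum(front_xs) / len(front_xs)
    # Put the button near the short edge opposite the camera cluster ...
    x = 1.0 - margin if centroid_x < 0.5 else margin
    # ... and in the lower corner, where the thumb of the holding hand rests.
    y = 1.0 - margin
    return (x, y)
```

With the three cameras clustered along the right-hand edge of the rear face (as seen from behind), the function returns a point near the lower right corner of the front face, which keeps the holding hand away from the lenses.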

[0026] Referring now to FIG. 3, wherein another user facing or front view of multi-camera device 100, in accordance with embodiments, is illustrated. As shown, in embodiments, multi-camera device 100 may be configured with camera logic (e.g., view finder logic) to provide a main view finder 302 for the one high resolution camera sensor, and two picture-in-picture viewfinders 304a and 304b for the two stereo camera sensors.

[0027] In embodiments, multi-camera device 100 may be configured with a camera preview window (not shown) for a user to select the depth camera mode of operation or the single camera mode of operation. In response to a user selecting the depth camera mode, a new preview display that shows the output of all of the camera sensors 106 in real time will be presented. The background of the main preview surface 302 will display the main high resolution camera frame, while two smaller picture-in-picture windows 304a and 304b will display the stereo camera previews.
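The preview arrangement can be expressed as a simple layout computation. This sketch is illustrative only: the `Rect` type, the quarter-size `pip_scale`, the padding, and stacking both picture-in-picture windows in the top-left corner (away from a lower-right soft shutter) are all assumptions, not details given in the disclosure.

```python
from typing import NamedTuple


class Rect(NamedTuple):
    x: int
    y: int
    w: int
    h: int


def depth_preview_layout(screen_w, screen_h, pip_scale=0.25, pad=16):
    """Return (main, pip_a, pip_b) rectangles in screen pixels.

    The main viewfinder fills the screen with the high resolution preview;
    the two stereo previews are stacked as picture-in-picture windows.
    """
    main = Rect(0, 0, screen_w, screen_h)
    pip_w, pip_h = int(screen_w * pip_scale), int(screen_h * pip_scale)
    pip_a = Rect(pad, pad, pip_w, pip_h)                # first stereo preview
    pip_b = Rect(pad, 2 * pad + pip_h, pip_w, pip_h)    # second, just below
    return main, pip_a, pip_b
```

On a 1920 × 1080 landscape display this yields two 480 × 270 windows, the second starting at y = 302.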

[0028] Referring now to FIG. 4, wherein still another user facing or front view of multi-camera device 100, in accordance with embodiments, is illustrated. As shown, in embodiments, the multi-camera device 100 may be configured with camera logic (e.g., blocking determination logic) to determine whether one or more of the 3 camera sensors 106 is blocked or obscured by either the user's hands, fingers or by another object, and provide an alert 102 if at least one of the 3 camera sensors 106 is blocked. In alternate embodiments, in addition to or in lieu of a visual alert 102, an audio and/or mechanical alert, such as vibration, may be provided.

[0029] In embodiments, in response to a user selection of the depth camera mode, the multi-camera device 100 may switch from the single camera mode to the depth (multi-camera) mode of operation. At this time, a scan may begin to evaluate whether any or all of the stereo camera sensors 106 are blocked or obscured. If a blocked or obscured state is flagged, an alert message in the camera preview (or a sound/voice or vibration alert) may be provided to indicate that one or more camera sensors of the multi-camera device 100 are blocked or obscured. In embodiments, the alert message and/or signals may persist until the user has moved their hands, fingers, or any other object so that all of the camera sensors 106 are unblocked.
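The mode switch and the persistent alert can be modelled as a small state holder. This is a sketch under assumptions: the `Mode` and `CameraSession` names are hypothetical, and the per-frame set of blocked sensors is taken as an input (for instance, from the occlusion test of FIG. 5) rather than computed here.

```python
from enum import Enum


class Mode(Enum):
    SINGLE = "single"
    DEPTH = "depth"


class CameraSession:
    """Tracks the camera mode and a blocking alert that persists until cleared."""

    def __init__(self):
        self.mode = Mode.SINGLE
        self.alert_active = False

    def select_mode(self, mode):
        self.mode = mode
        if mode is Mode.SINGLE:
            self.alert_active = False  # the blocking scan only runs in depth mode

    def on_preview_frame(self, blocked_sensors):
        """blocked_sensors: ids of sensors flagged as blocked in this frame."""
        if self.mode is Mode.DEPTH:
            # The alert stays up for as long as any sensor is still blocked.
            self.alert_active = bool(blocked_sensors)
        return self.alert_active
```

The alert is re-evaluated on every preview frame, so it clears only on the first frame in which no sensor is flagged.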

[0030] In embodiments, multi-camera device 100 may be configured to perform a threshold scan of the preview image to determine if one or more of camera sensors 106 is blocked, thus ensuring all three world-facing camera sensors are unobscured at the same time for depth photography or depth video application.

[0031] FIG. 5 illustrates a process for determining occlusion, blocking or obscuring of one or more of the camera sensors, in accordance with embodiments. Process 500 may be performed by e.g., a camera application within multi-camera device 100.

[0032] At blocks 502 and 504, RGB images of two stereo camera sensors may be received.

[0033] At block 505, the images of the two cameras may be smoothed, e.g., using a Gaussian kernel. At block 506, the images of the two cameras may be down-sampled, e.g., to 80×45.
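Blocks 505 and 506 can be sketched in plain NumPy as follows. The kernel width `sigma`, the edge padding, and nearest-neighbour sampling at output pixel centres are assumptions; the disclosure only specifies a Gaussian kernel and an example output size of 80×45.

```python
import numpy as np


def gaussian_kernel_1d(sigma, radius=None):
    """Normalized 1-D Gaussian taps covering about +/- 3 sigma."""
    radius = int(3 * sigma) if radius is None else radius
    x = np.arange(-radius, radius + 1, dtype=np.float64)
    k = np.exp(-(x ** 2) / (2 * sigma ** 2))
    return k / k.sum()


def smooth_and_downsample(img, out_w=80, out_h=45, sigma=2.0):
    """Blur an H x W x 3 image with a separable Gaussian, then resample it."""
    img = np.asarray(img, dtype=np.float64)
    h, w = img.shape[:2]
    k = gaussian_kernel_1d(sigma)
    r = len(k) // 2
    # Pad with edge values so the blurred image keeps the input size.
    padded = np.pad(img, ((r, r), (r, r), (0, 0)), mode="edge")
    # The 2-D Gaussian is separable: a horizontal pass, then a vertical pass.
    tmp = sum(k[i] * padded[:, i:i + w, :] for i in range(len(k)))
    blurred = sum(k[i] * tmp[i:i + h, :, :] for i in range(len(k)))
    # Sample at the centres of the output pixels.
    ys = ((np.arange(out_h) + 0.5) * h / out_h).astype(int)
    xs = ((np.arange(out_w) + 0.5) * w / out_w).astype(int)
    return blurred[ys][:, xs]
```

Blurring before decimation suppresses fine texture and sensor noise, so the later histogram comparison reacts to large colour changes (such as a fingertip over a lens) rather than to detail.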

[0034] At block 508, the down-sampled images may be converted to CIE-LAB color space images (CIE=International Commission on Illumination).
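For block 508, the conversion below assumes the cameras deliver sRGB values in [0, 1] and uses the standard sRGB-to-XYZ matrix with a D65 white point; the disclosure does not state which RGB space or reference illuminant is used.

```python
import numpy as np

# sRGB (D65) linear RGB -> CIE XYZ matrix, and the D65 reference white.
_RGB_TO_XYZ = np.array([[0.4124564, 0.3575761, 0.1804375],
                        [0.2126729, 0.7151522, 0.0721750],
                        [0.0193339, 0.1191920, 0.9503041]])
_WHITE_D65 = np.array([0.95047, 1.00000, 1.08883])


def srgb_to_lab(rgb):
    """Convert sRGB values in [0, 1], shape (..., 3), to CIE-LAB (L*, a*, b*)."""
    rgb = np.asarray(rgb, dtype=np.float64)
    # Undo the sRGB transfer curve to obtain linear light.
    linear = np.where(rgb <= 0.04045, rgb / 12.92, ((rgb + 0.055) / 1.055) ** 2.4)
    # Linear RGB -> XYZ, normalized by the reference white.
    xyz = linear @ _RGB_TO_XYZ.T / _WHITE_D65
    # CIE nonlinearity: cube root above (6/29)^3, linear segment below.
    delta = 6 / 29
    f = np.where(xyz > delta ** 3, np.cbrt(xyz), xyz / (3 * delta ** 2) + 4 / 29)
    L = 116 * f[..., 1] - 16
    a = 500 * (f[..., 0] - f[..., 1])
    b = 200 * (f[..., 1] - f[..., 2])
    return np.stack([L, a, b], axis=-1)
```

White maps to approximately (100, 0, 0) and black to (0, 0, 0); the a* and b* channels carry the chromatic information used in the next block.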

[0035] At block 510, color histograms of both the a and b channels may be built for both images.
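Block 510 might look like this. The bin count of 32 and the [-128, 128) range for a* and b* are assumptions. Only the a* and b* channels are binned, as described; one plausible reading is that leaving out L* makes the comparison less sensitive to brightness differences between the two sensors.

```python
import numpy as np


def ab_histograms(lab, bins=32, ab_range=(-128.0, 128.0)):
    """Return normalized histograms of the a* and b* channels of a LAB image."""
    lab = np.asarray(lab, dtype=np.float64)
    hists = []
    for channel in (1, 2):  # index 1 = a*, index 2 = b*; L* (index 0) is ignored
        h, _ = np.histogram(lab[..., channel], bins=bins, range=ab_range)
        # Normalize to unit sum so that image size does not affect distances.
        hists.append(h / max(h.sum(), 1))
    return hists[0], hists[1]
```

Normalizing each histogram lets the two stereo images be compared bin by bin even if they were down-sampled to different sizes.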

[0036] At block 512, the two histograms may be compared using the $\ell_1$ distance or the $\chi^2$ distance, where the $\ell_1$ distance is the sum of the absolute bin-wise differences between the histograms, and the $\chi^2$ distance is the chi-squared distance between the histograms.
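The two distance measures of block 512 can be written as below. The chi-squared variant shown is the symmetric form $\sum_i (h_1(i) - h_2(i))^2 / (h_1(i) + h_2(i))$; some references include an extra factor of 1/2, and the disclosure does not say which form is meant. Summing the a* and b* distances into one score in `ab_distance` is likewise an assumption.

```python
import numpy as np


def l1_distance(h1, h2):
    """Sum of absolute bin-wise differences between two histograms."""
    return float(np.abs(np.asarray(h1, dtype=np.float64) -
                        np.asarray(h2, dtype=np.float64)).sum())


def chi2_distance(h1, h2, eps=1e-12):
    """Symmetric chi-squared distance: sum of (h1 - h2)^2 / (h1 + h2)."""
    h1 = np.asarray(h1, dtype=np.float64)
    h2 = np.asarray(h2, dtype=np.float64)
    # eps avoids 0/0 in bins that are empty in both histograms.
    return float(((h1 - h2) ** 2 / (h1 + h2 + eps)).sum())


def ab_distance(hists_left, hists_right, metric=l1_distance):
    """Combine the a* and b* distances of two cameras by summing them."""
    return sum(metric(p, q) for p, q in zip(hists_left, hists_right))
```

Identical histograms give 0 under both measures; for normalized histograms the $\ell_1$ distance is at most 2, reached when the two images share no colour bins at all.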

[0037] At block 514, a determination may be made on whether the distance is greater than a threshold. The threshold value may vary depending on the application, e.g., the quality desired, and/or on the lighting environment, e.g., low light, or high, intense, or reflective light. The threshold values may be determined empirically.

[0038] At block 516, if the determination indicates that the threshold is exceeded, it may be concluded that occlusion, i.e., blocking, has occurred. Further, on a conclusion of occlusion, an alert action (audio, visual, and/or mechanical) may be taken as earlier described.

[0039] At block 518, on the other hand, if the determination indicates that the threshold is not exceeded, it may be concluded that no occlusion, i.e., no blocking, has occurred.
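Blocks 514 to 518 reduce to a single comparison. Because the threshold may be determined empirically, the sketch also includes a hypothetical calibration helper that picks, from labelled example distances (for instance, recorded separately for low-light and bright scenes), the candidate threshold with the highest accuracy. The calibration strategy is an illustration, not a procedure given in the disclosure.

```python
def is_occluded(distance, threshold):
    """Blocks 514-518: occlusion is concluded only if distance exceeds threshold."""
    return distance > threshold


def calibrate_threshold(clear_distances, blocked_distances):
    """Pick the threshold that best separates labelled example distances.

    Candidates are the observed distances themselves; ties favour the lower
    threshold. Returns (threshold, accuracy).
    """
    samples = ([(d, False) for d in clear_distances] +
               [(d, True) for d in blocked_distances])
    if not samples:
        raise ValueError("need labelled distances")
    best = None
    for t in sorted({d for d, _ in samples}):
        correct = sum(is_occluded(d, t) == label for d, label in samples)
        accuracy = correct / len(samples)
        if best is None or accuracy > best[1]:
            best = (t, accuracy)
    return best
```

Running the calibration once per lighting condition yields one threshold per environment, which matches the note in block 514 that the threshold may vary with lighting.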

[0040] Before further describing multi-camera device 100, it should be noted that while for ease of understanding, multi-camera device 100 has been described as having 3 camera sensors 106, the present disclosure is not so limited. In alternate embodiments, multi-camera device 100 may be configured with more than 3 camera sensors.

[0041] Referring now to FIG. 6, wherein a block diagram of the multi-camera device 100 of FIG. 1, in accordance with various embodiments, is illustrated. As shown, multi-camera device 100 may include one or more processors or processor cores 602, and system memory 604. In embodiments, multiple processor cores 602 may be disposed on one die. For the purpose of this application, including the claims, the terms "processor" and "processor cores" may be considered synonymous, unless the context clearly requires otherwise. Additionally, multi-camera device 100 may include mass storage device(s) 606 (such as solid state drives), input/output device(s) 608 (such as camera sensors 106, display, and so forth) and communication interfaces 610 (such as network interface cards, modems and so forth). In embodiments, the display may be touch sensitive. In embodiments, communication interfaces 610 may support wired or wireless communication, including near field communication. The elements may be coupled to each other via system bus 612, which may represent one or more buses. In the case of multiple buses, they may be bridged by one or more bus bridges (not shown).

[0042] Each of these elements may perform its conventional functions known in the art. In particular, system memory 604 and mass storage device(s) 606 may be employed to store a working copy and a permanent copy of the programming instructions implementing the operations described earlier, e.g., but not limited to, operations associated with placement of the shutter button, provision of the previews, determination of blocking, provision of alert, capturing frames, synchronization of captured frames, numbering the synchronized frames in sequence, and so forth, denoted as camera logic 622. The various elements may be implemented by assembler instructions supported by processor(s) 602 or high-level languages, such as, for example, C, that can be compiled into such instructions.

[0043] The permanent copy of the programming instructions may be placed into permanent mass storage device(s) 606 in the factory, or in the field, through, for example, a distribution medium (not shown), such as a compact disc (CD), or through communication interface 610 (from a distribution server (not shown)).

[0044] The number, capability and/or capacity of these elements 610-612 may vary, depending on the intended use of example multi-camera device 100, e.g., whether example multi-camera device 100 is a smartphone, tablet, ultrabook, or a laptop. The constitutions of these elements 610-612 are otherwise known, and accordingly will not be further described.

[0045] FIG. 7 illustrates an example non-transitory computer-readable storage medium having instructions configured to practice all or selected ones of the operations associated with placement of the shutter button, provision of the previews, determination of blocking, provision of alert, and so forth, earlier described, in accordance with various embodiments. As illustrated, non-transitory computer-readable storage medium 702 may include a number of programming instructions 704. Programming instructions 704 may be configured to enable a device, e.g., multi-camera device 100, in response to execution of the programming instructions, to perform, e.g., various operations associated with placement of the shutter button, provision of the previews, determination of blocking, provision of alert, capturing frames, synchronization of captured frames, numbering the synchronized frames in sequence, and so forth, described with references to FIGS. 1-5. In alternate embodiments, programming instructions 704 may be disposed on multiple non-transitory computer-readable storage media 702 instead. In still other embodiments, programming instructions 704 may be encoded in transitory computer readable medium, such as signals.

[0046] Referring back to FIG. 6, for one embodiment, at least one of processors 602 may be packaged together with a computer-readable storage medium having camera logic 622 (in lieu of storing in system memory 604 and/or mass storage device 606) configured to practice all or selected ones of the operations earlier described with references to FIGS. 1-5. For one embodiment, at least one of processors 602 may be packaged together with a computer-readable storage medium having camera logic 622 to form a System in Package (SiP). For one embodiment, at least one of processors 602 may be integrated on the same die with a computer readable storage medium having camera logic 622. For one embodiment, at least one of processors 602 may be packaged together with a computer-readable storage medium having camera logic 622 to form a System on Chip (SoC). For at least one embodiment, the SoC may be utilized in, e.g., but not limited to, a hybrid computing tablet/laptop.

[0047] Referring now to FIG. 8, wherein a function block view of the camera logic, in accordance with various embodiments, is shown. As illustrated, in embodiments, camera logic 622 may include main engine 802, and a number of auxiliary function blocks, such as occlusion function block 804, alert function block 806, and setting function block 808. Main engine 802 may be configured with the main logic to operate multi-camera device 100, including, but not limited to, operation mode selection, soft shutter placement, preview, capturing of images, synchronization of captured frames, numbering the synchronized frames in sequence, and so forth. Occlusion function block 804 may be configured to determine obstruction/occlusion as earlier described. Alert function block 806 may be configured to provide alerts as earlier described. Setting function block 808 may be configured to set various configuration parameters, including, but not limited to, threshold values for determining occlusion, alert preferences, and so forth. In alternate embodiments, camera logic 622 may include more or fewer functions distributed in more or fewer function blocks.
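The division of camera logic 622 into a main engine and auxiliary blocks can be pictured as plain object composition. All class and field names below are hypothetical, and the occlusion block takes an already computed histogram distance rather than raw images to keep the sketch short.

```python
from dataclasses import dataclass, field


@dataclass
class Settings:
    """Setting block: tunable parameters (names are hypothetical)."""
    occlusion_threshold: float = 0.5
    alert_modes: set = field(default_factory=lambda: {"visual"})


class OcclusionBlock:
    def __init__(self, settings):
        self.settings = settings

    def check(self, distance):
        # Occlusion when the stereo histogram distance exceeds the threshold.
        return distance > self.settings.occlusion_threshold


class AlertBlock:
    def __init__(self, settings):
        self.settings = settings

    def emit(self):
        # Return the alert actions that would fire (e.g. visual, audio, vibration).
        return sorted(self.settings.alert_modes)


class MainEngine:
    """Main engine: coordinates the auxiliary blocks for each preview frame."""

    def __init__(self, settings=None):
        self.settings = settings or Settings()
        self.occlusion = OcclusionBlock(self.settings)
        self.alert = AlertBlock(self.settings)

    def on_stereo_distance(self, distance):
        return self.alert.emit() if self.occlusion.check(distance) else []
```

Because all blocks share one `Settings` object, changing a threshold or alert preference through the setting block takes effect in the other blocks immediately.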

[0048] Example 1 may be a multi-camera device, comprising: 3 or more camera sensors disposed on a world facing side of the multi-camera device; and a soft shutter button to be disposed on an opposite side to the world facing side, at a location coordinated with locations of the 3 or more camera sensors that reduces likelihood of a user of the multi-camera device blocking one or more of the 3 or more camera sensors.

[0049] Example 2 may be example 1, wherein the 3 or more camera sensors are disposed in a triangular pattern on one edge of the world facing side of the multi-camera device, and the soft shutter button may be disposed in a lower corner of an opposite edge of the opposite side.

[0050] Example 3 may be example 2, wherein a first of the 3 or more camera sensors may be disposed proximally at a center location of a side edge of the world facing side of the multi-camera device, a second of the 3 or more camera sensors may be disposed proximally at a location biased towards the side edge, at a top edge of the world facing side of the multi-camera device, and a third of the 3 or more camera sensors may be disposed proximally at a location biased towards the side edge, at a bottom edge of the world facing side of the multi-camera device.
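Examples 2 and 3 describe the geometry but not how the shutter location is chosen from it. One way to sketch the coordination, assuming it reduces to maximizing the distance between the shutter and the nearest camera sensor (as a proxy for the gripping hand wrapping around to the world facing side), is shown below. Coordinates are in millimetres from the bottom-left corner of whichever face is being viewed; the mirroring step, the `inset` margin, and all function names are illustrative assumptions.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) in mm, origin at the bottom-left of the viewed face


def mirror_to_display_side(p: Point, width: float) -> Point:
    """Express a world-facing-side point in display-side coordinates.
    Turning the device over flips it left to right, so x becomes width - x."""
    x, y = p
    return (width - x, y)


def place_soft_shutter(cameras: List[Point], width: float,
                       inset: float = 10.0) -> Point:
    """Pick the lower display-side corner (moved `inset` mm inward) that is
    farthest from the nearest camera sensor."""
    mirrored = [mirror_to_display_side(c, width) for c in cameras]
    lower_corners = [(inset, inset), (width - inset, inset)]

    def clearance(p: Point) -> float:
        # Distance from a candidate corner to the closest camera sensor.
        return min(math.dist(p, c) for c in mirrored)

    return max(lower_corners, key=clearance)
```

For a hypothetical 250 mm wide device with the first sensor at the centre of the right side edge, (240, 85), and the other two sensors biased toward that edge at (220, 165) and (220, 5), `place_soft_shutter` returns (240.0, 10.0): the lower corner at the opposite edge as seen from the display side, consistent with Example 2.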

[0051] Example 4 may be example 3, wherein the first camera sensor has a first resolution, and the second and third camera sensors have second and third resolutions that are lower than the first resolution.

[0052] Example 5 may be example 4, wherein the second and third camera sensors form a stereo camera pair.

[0053] Example 6 may be example 4, wherein the first camera sensor has a first resolution of 8 MP, and the second and third camera sensors have second and third resolutions, both of 720p.
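For scale, assuming 720p refers to the common 1280 × 720 frame and 8 MP to roughly 8 × 10^6 pixels, each sensor of the stereo pair in Example 6 captures under one-eighth as many pixels as the first sensor:

```latex
\[
N_{720p} = 1280 \times 720 = 921\,600 \approx 0.92\ \text{MP},
\qquad
\frac{N_{\text{first}}}{N_{720p}} \approx \frac{8 \times 10^{6}}{9.216 \times 10^{5}} \approx 8.7
\]
```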

[0054] Example 7 may be example 4, further comprising view finder logic to provide a main view finder for the first camera sensor.

[0055] Example 8 may be example 7, wherein the multi-camera device has at least a single camera mode of operation, and the view finder logic may provide the main view finder in at least the single camera mode of operation.

[0056] Example 9 may be example 8, wherein the multi-camera device has at least another depth camera mode of operation, and the view finder logic may also provide the main view finder during the depth camera mode of operation.

[0057] Example 10 may be example 7, wherein the view finder logic is to further provide two picture-in-picture viewfinders for the second and third camera sensors.

[0058] Example 11 may be example 10, wherein the multi-camera device has at least a depth camera mode of operation, and the view finder logic may also provide the two picture-in-picture viewfinders during the depth camera mode of operation.
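Examples 7 to 11 tie the view finders to operation modes: the main view finder for the first sensor appears in both the single camera and depth camera modes, and two picture-in-picture view finders for the second and third sensors appear in the depth camera mode. A minimal sketch of that mapping follows; the screen-space rectangles, the `pip_frac` and `margin` values, and the choice to stack the insets along the left edge (top inset for the top-edge sensor, bottom inset for the bottom-edge sensor) are assumptions for illustration only.

```python
from enum import Enum, auto
from typing import Dict, Tuple

Rect = Tuple[int, int, int, int]  # (x, y, width, height) in screen pixels, origin top-left


class Mode(Enum):
    SINGLE_CAMERA = auto()
    DEPTH_CAMERA = auto()


def viewfinder_layout(mode: Mode, screen_w: int, screen_h: int,
                      pip_frac: float = 0.25, margin: int = 16) -> Dict[str, Rect]:
    """Map each previewed sensor to the screen rectangle of its view finder."""
    # The main view finder for the first (highest-resolution) sensor fills the screen in every mode.
    layout = {"first": (0, 0, screen_w, screen_h)}
    if mode is Mode.DEPTH_CAMERA:
        pw, ph = int(screen_w * pip_frac), int(screen_h * pip_frac)
        # Picture-in-picture insets for the stereo pair, placed to echo the
        # top-edge (second) and bottom-edge (third) sensor positions.
        layout["second"] = (margin, margin, pw, ph)
        layout["third"] = (margin, screen_h - margin - ph, pw, ph)
    return layout
```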

[0059] Example 12 may be any one of examples 1-11, further comprising blocking determination logic to determine whether one or more of the 3 or more camera sensors is blocked, and to provide an alert when at least one of the 3 or more camera sensors is determined to be blocked.

[0060] Example 13 may be example 12, wherein the alert may comprise a selected one of a visual alert, an audio alert or a mechanical alert.
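Examples 12 and 13 separate deciding that a sensor is blocked from alerting the user, and paragraph [0047] places alert preferences in the setting function block. A minimal sketch of the alerting side follows, assuming the preferred alert kind is a stored setting and that each kind is backed by a platform-specific handler (for example, a vibration motor for the mechanical alert); `AlertKind`, `make_alerter`, and the handler signature are hypothetical.

```python
from enum import Enum
from typing import Callable, Dict, Iterable


class AlertKind(Enum):
    VISUAL = "visual"          # e.g. an on-screen banner (assumption)
    AUDIO = "audio"            # e.g. a tone (assumption)
    MECHANICAL = "mechanical"  # e.g. vibration (assumption)


def make_alerter(handlers: Dict[AlertKind, Callable[[str], None]],
                 preferred: AlertKind) -> Callable[[Iterable[str]], bool]:
    """Return a function that raises the preferred alert when any sensor is blocked."""
    def alert_if_blocked(blocked: Iterable[str]) -> bool:
        names = sorted(blocked)
        if not names:
            return False  # nothing blocked: stay silent
        handlers[preferred]("Camera sensor blocked: " + ", ".join(names))
        return True
    return alert_if_blocked
```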

[0061] Example 14 may be example 12, wherein the blocking determination logic may receive and analyze images from at least 2 of the 3 or more cameras to determine whether one or more of the 3 or more camera sensors is blocked.

[0062] Example 15 may be example 14, wherein to analyze images from at least 2 camera sensors, the blocking determination logic may build respective histograms for one or more color channels for the images, and compare the histograms.

[0063] Example 16 may be example 15, wherein to compare the histograms, the blocking determination logic may compute distances between the histograms.
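Examples 14 to 16 compare per-channel color histograms from different sensors. The reasoning is that unobstructed sensors viewing the same scene should produce similar color distributions, while a covered sensor typically sees a very different, often much darker, distribution. The sketch below assumes 8-bit RGB pixels, 16 bins per channel, normalized histograms (so that images of different resolutions are comparable), and L1 distance averaged over channels. The bin count, the distance measure, the threshold, and all names are assumptions; measures such as chi-square or Bhattacharyya distance would fit the same slot.

```python
from typing import Dict, List, Sequence, Tuple

Pixel = Tuple[int, int, int]    # 8-bit (R, G, B)
Histograms = List[List[float]]  # one normalized histogram per channel


def channel_histograms(pixels: Sequence[Pixel], bins: int = 16) -> Histograms:
    """Build a normalized histogram for each of the R, G and B channels."""
    hists = [[0.0] * bins for _ in range(3)]
    for px in pixels:
        for ch, value in enumerate(px):
            hists[ch][value * bins // 256] += 1  # map 0..255 onto 0..bins-1
    n = len(pixels)
    return [[count / n for count in h] for h in hists]


def histogram_distance(h1: Histograms, h2: Histograms) -> float:
    """Average over channels of the L1 distance (0 = identical, 2 = disjoint)."""
    per_channel = [sum(abs(a - b) for a, b in zip(c1, c2)) for c1, c2 in zip(h1, h2)]
    return sum(per_channel) / len(per_channel)


def blocked_sensors(images: Dict[str, Sequence[Pixel]],
                    threshold: float = 1.0) -> List[str]:
    """Flag each sensor whose histograms differ from every other sensor's by more than threshold."""
    hists = {name: channel_histograms(px) for name, px in images.items()}
    flagged = []
    for name, h in hists.items():
        distances = [histogram_distance(h, hists[other]) for other in hists if other != name]
        if distances and min(distances) > threshold:
            flagged.append(name)
    return flagged
```

With exactly two images, a large distance suggests that one of them is blocked but not which, and this sketch would flag both; a third image lets it single out the outlier.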

[0064] Example 17 may be example 14, wherein to analyze images from at least 2 camera sensors, the blocking determination logic may smooth or down sample the images.
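Example 17 smooths or down samples the images before analysis. Averaging each k × k block of pixels does both at once: it is a box filter followed by decimation, which suppresses sensor noise, reduces the cost of building histograms, and brings frames from sensors of different resolutions closer in pixel count. The block-average approach, the `factor` parameter, and the list-of-rows image layout are assumptions for this sketch.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # 8-bit (R, G, B)
Image = List[List[Pixel]]     # rows of pixels


def box_downsample(img: Image, factor: int) -> Image:
    """Replace each factor x factor block with its (floored) mean color.
    Rows and columns left over at the right/bottom edges are dropped."""
    height, width = len(img), len(img[0])
    out: Image = []
    for top in range(0, height - factor + 1, factor):
        row: List[Pixel] = []
        for left in range(0, width - factor + 1, factor):
            block = [img[y][x]
                     for y in range(top, top + factor)
                     for x in range(left, left + factor)]
            n = len(block)
            r, g, b = (sum(p[c] for p in block) // n for c in range(3))
            row.append((r, g, b))
        out.append(row)
    return out
```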

[0065] Example 18 may be at least one computer-readable storage medium comprising a plurality of instructions configured to cause a multi-camera device having 3 or more camera sensors, in response to execution of the instructions by the multi-camera device, to provide a soft shutter button at a location on an opposite side to a world facing side of the multi-camera device where the 3 or more camera sensors are disposed, wherein the location of the soft shutter button may be selected in view of locations of the 3 or more camera sensors on the world facing side to reduce likelihood of a user of the multi-camera device blocking one or more of the 3 or more camera sensors.

[0066] Example 19 may be example 18, wherein the 3 or more camera sensors are disposed in a triangular pattern on one edge of the world facing side of the multi-camera device, and the multi-camera device may be caused to place the soft shutter button in a lower corner of an opposite edge of the opposite side.

[0067] Example 20 may be example 18, wherein a first of the 3 or more camera sensors has a first resolution, and a second and a third of the 3 or more camera sensors have a second and a third resolution that are lower than the first resolution; wherein the multi-camera device may be further caused to provide a main view finder for the first camera sensor, and two picture-in-picture viewfinders for the second and third camera sensors.

[0068] Example 21 may be example 20, wherein the multi-camera device has at least a single camera mode of operation, and the multi-camera device may be caused to provide the main view finder in at least a single camera mode of operation.

[0069] Example 22 may be example 21, wherein the multi-camera device has at least another depth camera mode of operation, and the multi-camera device may be caused to also provide the main view finder during the depth camera mode of operation.

[0070] Example 23 may be example 20, wherein the multi-camera device has at least a depth camera mode of operation, and the multi-camera device may provide the two picture-in-picture viewfinders during the depth camera mode of operation.

[0071] Example 24 may be any one of examples 18-23, wherein the multi-camera device may be further caused to determine whether one or more of the 3 or more camera sensors is blocked, and provide an alert, when at least one of the 3 or more camera sensors is blocked.

[0072] Example 25 may be example 24, wherein the multi-camera device may be further caused to provide one or more of a visual alert, an audio alert or a mechanical alert, when at least one of the 3 or more camera sensors is blocked.

[0073] Example 26 may be example 24, wherein the multi-camera device may be further caused to analyze images from at least 2 of the 3 or more cameras to determine whether one or more of the 3 or more camera sensors is blocked.

[0074] Example 27 may be example 26, wherein the multi-camera device may be further caused to build respective histograms for one or more color channels for the images, and compare the histograms, to analyze images from at least 2 camera sensors.

[0075] Example 28 may be example 27, wherein the multi-camera device may be further caused to compute distances between the histograms, to compare the histograms.

[0076] Example 29 may be example 26, wherein the multi-camera device may be further caused to smooth or down sample the images, to analyze images from at least 2 camera sensors.

[0077] Example 30 may be a method for operating a multi-camera device, comprising: providing, by the multi-camera device, a soft shutter button at a location on an opposite side to a world facing side of the multi-camera device where 3 or more camera sensors are disposed, wherein the location may be coordinated with locations of the 3 or more camera sensors to reduce likelihood of blocking one or more of the 3 or more camera sensors; determining, by the multi-camera device, whether one or more of the 3 or more camera sensors is blocked; and providing, by the multi-camera device, an alert when a result of the determination indicates at least one of the 3 or more camera sensors is blocked.

[0078] Example 31 may be example 30, wherein providing an alert may comprise providing, by the multi-camera device, a selected one of a visual alert, an audio alert or a mechanical alert.

[0079] Example 32 may be example 30 or 31, wherein a first of the 3 or more camera sensors has a first resolution, and a second and a third of the 3 or more camera sensors have a second and a third resolution that are lower than the first resolution; wherein the method may further comprise providing a main view finder for the first camera sensor, and two picture-in-picture viewfinders for the second and third camera sensors.

[0080] Example 33 may be example 32, wherein the multi-camera device has at least a single camera mode of operation, and providing the main view finder may comprise providing the main view finder in at least a single camera mode of operation.

[0081] Example 34 may be example 33, wherein the multi-camera device has at least another depth camera mode of operation, and providing the main view finder may comprise providing the main view finder during the depth camera mode of operation.

[0082] Example 35 may be example 32, wherein the multi-camera device has at least a depth camera mode of operation, and providing the two picture-in-picture view finders may comprise providing the two picture-in-picture viewfinders during the depth camera mode of operation.

[0083] Example 36 may be example 30 or 31, wherein determining whether one or more of the 3 or more camera sensors is blocked may comprise analyzing images from at least 2 of the 3 or more cameras to determine whether one or more of the 3 or more camera sensors is blocked.

[0084] Example 37 may be example 36, wherein analyzing images from at least 2 camera sensors may comprise building respective histograms for one or more color channels for the images, and comparing the histograms.

[0085] Example 38 may be example 37, wherein comparing the histograms may comprise computing distances between the histograms.

[0086] Example 39 may be example 38, wherein analyzing images from at least 2 camera sensors comprises smoothing or down sampling the images.

[0087] Example 40 may be a multi-camera apparatus, comprising: 3 or more camera sensors disposed on a world facing side of the multi-camera apparatus; and means for providing a soft shutter button at a location on an opposite side to the world facing side of the multi-camera apparatus, wherein the location may be coordinated with locations of the 3 or more camera sensors to reduce likelihood of blocking one or more of the 3 or more camera sensors.

[0088] Example 41 may be example 40, further comprising means for determining whether one or more of the 3 or more camera sensors is blocked; and means for providing an alert when a result of the determination indicates at least one of the 3 or more camera sensors is blocked.

[0089] Example 42 may be example 41, wherein means for providing an alert comprises means for providing a selected one of a visual alert, an audio alert or a mechanical alert.

[0090] Example 43 may be example 40, 41 or 42, wherein a first of the 3 or more camera sensors has a first resolution, and a second and a third of the 3 or more camera sensors have a second and a third resolution that are lower than the first resolution; wherein the apparatus further comprises means for providing a main view finder for the first camera sensor, and means for providing two picture-in-picture viewfinders for the second and third camera sensors.

[0091] Example 44 may be example 43, wherein the multi-camera apparatus has at least a single camera mode of operation, and means for providing the main view finder comprises means for providing the main view finder in at least a single camera mode of operation.

[0092] Example 45 may be example 44, wherein the multi-camera apparatus has at least another depth camera mode of operation, and means for providing the main view finder comprises means for providing the main view finder during the depth camera mode of operation.

[0093] Example 46 may be example 43, wherein the multi-camera apparatus has at least a depth camera mode of operation, and means for providing the two picture-in-picture view finders comprises means for providing the two picture-in-picture viewfinders during the depth camera mode of operation.

[0094] Example 47 may be example 41 or 42, wherein means for determining whether one or more of the 3 or more camera sensors is blocked comprises means for analyzing images from at least 2 of the 3 or more cameras to determine whether one or more of the 3 or more camera sensors is blocked.

[0095] Example 48 may be example 47, wherein means for analyzing images from at least 2 camera sensors comprises means for building respective histograms for one or more color channels for the images, and means for comparing the histograms.

[0096] Example 49 may be example 48, wherein means for comparing the histograms may comprise means for computing distances between the histograms.

[0097] Example 50 may be example 49, wherein means for analyzing images from at least 2 camera sensors may comprise means for smoothing or down sampling the images.

[0098] Although certain embodiments have been illustrated and described herein for purposes of description, a wide variety of alternate and/or equivalent embodiments or implementations calculated to achieve the same purposes may be substituted for the embodiments shown and described without departing from the scope of the present disclosure. This application is intended to cover any adaptations or variations of the embodiments discussed herein. Therefore, it is manifestly intended that embodiments described herein be limited only by the claims.

[0099] Where the disclosure recites "a" or "a first" element or the equivalent thereof, such disclosure includes one or more such elements, neither requiring nor excluding two or more such elements. Further, ordinal indicators (e.g., first, second or third) for identified elements are used to distinguish between the elements, and do not indicate or imply a required or limited number of such elements, nor do they indicate a particular position or order of such elements unless otherwise specifically stated.

* * * * *

