Virtualization Of A Reconfigurable Data Processor

Grohoski; Gregory Frederick ; et al.

U.S. patent application number 16/239252 was filed with the patent office on 2019-01-03 and published on 2020-07-09 for virtualization of a reconfigurable data processor. This patent application is currently assigned to SambaNova Systems, Inc. The applicant listed for this patent is SambaNova Systems, Inc. Invention is credited to Gregory Frederick Grohoski, Sumti Jairath, Mark Luttrell, Raghu Prabhakar, Manish K. Shah, Ram Sivaramakrishnan.

| Application Number | 20200218683 16/239252 |

| Family ID | 69400643 |

| Publication Date | 2020-07-09 |

| United States Patent Application | 20200218683 |

| Kind Code | A1 |

| Grohoski; Gregory Frederick ; et al. | July 9, 2020 |

VIRTUALIZATION OF A RECONFIGURABLE DATA PROCESSOR

Abstract

A reconfigurable data processor comprises an array of configurable units and a bus system configurable to define virtual machines. The system can partition the array of configurable units into a plurality of sets of configurable units, and block communications via the bus system between configurable units within a particular set and configurable units outside the particular set. A memory access controller can be connected to the bus system, configurable to confine access to memory outside the array of configurable units originating from within the particular set to memory space allocated to the particular set.

| Inventors: | Grohoski; Gregory Frederick; (Bee Cave, TX) ; Jairath; Sumti; (Santa Clara, CA) ; Luttrell; Mark; (Cedar Park, TX) ; Prabhakar; Raghu; (Sunnyvale, CA) ; Sivaramakrishnan; Ram; (San Jose, CA) ; Shah; Manish K.; (Austin, TX) |
|---|---|
| Applicant: | SambaNova Systems, Inc. |
| Assignee: | SambaNova Systems, Inc. (Palo Alto, CA) |
| Family ID: | 69400643 |
| Appl. No.: | 16/239252 |
| Filed: | January 3, 2019 |
| Current U.S. Class: | 1/1 |
| Current CPC Class: | G06F 13/1668 20130101; G06F 15/7839 20130101; G06F 13/4027 20130101; G06F 2212/657 20130101; G06F 15/7871 20130101; G06F 15/7882 20130101; G06F 12/10 20130101; G06F 9/45533 20130101 |
| International Class: | G06F 13/40 20060101 G06F013/40; G06F 15/78 20060101 G06F015/78; G06F 9/455 20060101 G06F009/455; G06F 13/16 20060101 G06F013/16; G06F 12/10 20060101 G06F012/10 |

Claims

1. A reconfigurable data processor, comprising: an array of configurable units on one die or multichip module; a bus system connected to the array of configurable units, configurable to partition the array of configurable units into a plurality of sets of configurable units, and block communications via the bus system between configurable units within a particular set and configurable units outside the particular set; and a memory access controller connected to the bus system and configurable to confine access to memory outside the array of configurable units originating from within the particular set to memory space allocated to the particular set in the memory outside the array of configurable units.

2. The processor of claim 1, including a plurality of memory access controllers, including the first mentioned memory access controller, memory access controllers in the plurality of memory access controllers being addressable nodes on the bus system, and the memory access controllers are configurable to confine access to memory outside the array of configurable units originating from within corresponding sets of configurable units in the plurality of sets of configurable units to memory space in the memory outside the array of configurable units allocated to the corresponding sets.

3. The processor of claim 1, wherein sets of configurable units in the plurality of sets of configurable units are configurable to execute application graphs using virtual addresses, and the memory access controller includes or has access to a configurable table to translate virtual addresses in requests originating from an application graph executing within the particular set, to addresses in the memory space allocated to the particular set.

4. The processor of claim 1, wherein the bus system comprises a grid of switches connected to configurable units in the array of configurable units, switches in the grid including circuits to partition the bus system.

5. The processor of claim 1, wherein the bus system comprises a grid of switches connected to configurable units in the array of configurable units, switches in the grid including circuits configurable using port parameters, that enable and disable ports on the switches according to the port parameters.

6. The processor of claim 1, wherein the bus system includes a top level network and an array level network, the top level network connected to an external data interface for communication with memory outside of the array, the array level network connected to configurable units in the array of configurable units, and wherein the memory access controller is connected to the array level network and to the top level network, and includes logic to route data transfers between the top level network and the array level network.

7. The processor of claim 6, wherein the array level network comprises a grid of switches, and the switches in the grid, the configurable units in the array of configurable units and the memory access controller are addressable nodes on the array level network.

8. The processor of claim 6, including a plurality of memory access controllers, including said first mentioned memory access controller, memory access controllers in the plurality of memory access controllers being addressable nodes in the array level network.

9. The processor of claim 8, wherein the array level network comprises a grid of switches, and the switches in the grid, the configurable units in the array of configurable units and the memory access controllers in the plurality of memory access controllers are addressable nodes on the array level network.

10. The processor of claim 1, wherein the array of configurable units includes a plurality of tiles of configurable units, and the bus system comprises switches on boundaries between the tiles including circuits to partition the bus system on the tile boundaries.

11. The processor of claim 10, including a plurality of memory access controllers, including said first mentioned memory access controller, at least one memory access controller in the plurality of memory access controllers being operatively coupled to each tile in the plurality of tiles.

12. The processor of claim 1, including a configuration controller connected to the bus system, including logic to execute a configuration load process, including distributing configuration files to configurable units in individual sets of the configurable units in the array, wherein an application graph in one of the sets of configurable units is executable during the configuration load process in another set of configurable units.

13. The processor of claim 1, including a configuration controller connected to the bus system, including logic to execute a configuration unload process, including unloading state information from configurable units in individual sets, wherein an application graph in one of the sets of configurable units is executable during the configuration unload process in another set of configurable units.

14. A reconfigurable data processor, comprising: an array of configurable units including a plurality of tiles of configurable units on one die or a multichip module; a bus system connected to the array of configurable units, comprising boundary switches on tile boundaries between the tiles including circuits configurable to partition the bus system on the tile boundaries, and block communications via the bus system between configurable units within a particular tile and configurable units outside the particular tile; and a plurality of memory access controllers connected to the bus system, memory access controllers in the plurality of memory access controllers being configurable to confine access to memory outside the array of configurable units originating from within corresponding tiles to memory space allocated to the corresponding tile in the memory outside the array of configurable units, and wherein at least one memory access controller in the plurality of memory access controllers is operatively coupled to each tile in the plurality of tiles.

15. The processor of claim 14, wherein the bus system includes a top level network and an array level network, the top level network connected to an external data interface for communication with memory outside of the array, the array level network connected to configurable units in the array of configurable units, and wherein the memory access controllers in the plurality of memory access controllers are connected to the array level network and to the top level network, and include logic to route data transfers between the top level network and the array level network.

16. The processor of claim 15, wherein the array level network comprises a grid of switches including said boundary switches, and the switches in the grid, the configurable units in the array of configurable units and the memory access controllers are addressable nodes on the array level network.

17. The processor of claim 14, including a configuration controller connected to the bus system, including logic to execute a configuration load process, including distributing configuration files to configurable units in individual sets of the configurable units in the array, wherein an application graph in one of the sets of configurable units is executable during the configuration load process in another set of configurable units; and logic to execute a configuration unload process, including unloading state information from configurable units in individual sets, wherein an application graph in one of the sets of configurable units is executable during the configuration unload process in another set of configurable units.

18. A method for configuring a reconfigurable data processor, comprising an array of configurable units and a bus system connected to the array of configurable units on one die or multichip module; comprising partitioning the array of configurable units on the die or multichip module into a plurality of sets of configurable units, by blocking communications via the bus system between configurable units within a particular set and configurable units outside the particular set; and confining access to memory outside the array of configurable units originating from within the particular set to memory space allocated to the particular set in the memory outside the array of configurable units.

19. The method of claim 18, including partitioning the array of configurable units by loading configuration data in switches on boundaries of the sets of configurable units to block communications across the boundaries.

20. The method of claim 18, wherein sets of configurable units in the plurality of sets of configurable units are configurable to execute application graphs using virtual addresses, including confining access to memory outside the array by translating virtual addresses in requests originating from an application graph executing within the particular set, to addresses in the memory space allocated to the particular set.

21. The method of claim 18, including: loading application graphs in respective sets of configurable units, and starting the loaded application graphs; and stopping and unloading a selected application graph in one of the sets of configurable units, and loading another application graph in said one of the sets, while other application graphs in other sets of configurable units in the array of configurable units continue executing.

22. The method of claim 21, wherein the selected application graph includes a checkpoint, and stopping the selected application graph includes waiting until it reaches the checkpoint.

Description

BACKGROUND

Technological Field

[0001] The present technology relates to virtualization of reconfigurable architectures, which can be particularly applied to coarse-grain reconfigurable architectures.

Description of Related Art

[0002] Reconfigurable processors, including field programmable gate arrays (FPGAs), can be configured to implement a variety of functions more efficiently or faster than might be achieved using a general purpose processor executing a computer program. So-called coarse-grain reconfigurable architectures (e.g. CGRAs) are being developed in which the configurable units in the array are more complex than used in typical, more fine-grained FPGAs, and may enable faster or more efficient execution of various classes of functions. For example, CGRAs have been proposed that can enable implementation of energy-efficient accelerators for machine learning and artificial intelligence workloads. See, Prabhakar, et al., "Plasticine: A Reconfigurable Architecture for Parallel Patterns," ISCA '17, Jun. 24-28, 2017, Toronto, ON, Canada.

[0003] Configuration of reconfigurable processors involves compilation of a configuration description to produce an application graph represented by a configuration file, referred to sometimes as a bitstream or bit file, and distributing the configuration file to the configurable units on the processor. To start a process implemented using an application graph, the configuration file must be loaded for that process. To change a process implemented using an application graph, the configuration file must be replaced with a new configuration file.

[0004] The procedures and supporting structures for distributing and loading configuration files can be complex, and the execution of the procedures can be time consuming.

[0005] In some environments, it may be desirable to execute multiple application graphs simultaneously in a single reconfigurable processor.

[0006] It is desirable therefore to provide technologies supporting virtualization of reconfigurable processors.

SUMMARY

[0007] A technology is described which enables execution of multiple, unrelated application graphs in a Coarse-Grained Reconfigurable Array processor and in other types of reconfigurable processors, which contain an array of configurable units.

[0008] Technology described herein provides for a reconfigurable data processor, comprising an array of configurable units; a bus system connected to the array of configurable units, which is configurable to partition the array of configurable units into a plurality of sets of configurable units, and block communications via the bus system between configurable units within a particular set and configurable units outside the particular set. In addition, a memory access controller connected to the bus system is configurable to confine access to memory outside the array of configurable units, such as mass DRAM, SRAM and other memory classes, originating from within the particular set to memory space allocated to the particular set in the memory outside the array of configurable units.

[0009] In embodiments described herein a plurality of memory access controllers includes memory access controllers connected as addressable nodes on the bus system, and configurable to confine access to memory outside the array of configurable units originating from within corresponding sets of configurable units to memory space allocated to the corresponding sets.

[0010] An example of the bus system comprises a grid of switches connected to configurable units in the array of configurable units, switches in the grid including circuits to partition the bus system. Switches in the grid can include circuits configurable using port parameters, that enable and disable ports on the switches according to the port parameters.
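The effect of port parameters on a grid of switches can be illustrated with a small software model. The sketch below is only an illustration under stated assumptions: the four-port `Switch` class, the one-enable-bit-per-port encoding, and the helper names `make_grid`, `partition_columns` and `reachable` are hypothetical and are not taken from the described device.

```python
from dataclasses import dataclass, field

# Hypothetical four-port switch model; the described switches may have more ports.
PORTS = ("N", "E", "S", "W")
OPPOSITE = {"N": "S", "S": "N", "E": "W", "W": "E"}
STEP = {"N": (-1, 0), "S": (1, 0), "E": (0, 1), "W": (0, -1)}


@dataclass
class Switch:
    # Assumed port parameters: one enable bit per port, all enabled by default.
    enabled: dict = field(default_factory=lambda: {p: True for p in PORTS})


def make_grid(rows, cols):
    """Build a rows x cols grid of switches keyed by (row, col)."""
    return {(r, c): Switch() for r in range(rows) for c in range(cols)}


def partition_columns(grid, boundary_col):
    """Disable the ports that cross the boundary between boundary_col - 1 and boundary_col."""
    for (_, c), sw in grid.items():
        if c == boundary_col - 1:
            sw.enabled["E"] = False
        elif c == boundary_col:
            sw.enabled["W"] = False


def reachable(grid, start):
    """Return the set of switches reachable from start through enabled ports."""
    seen, stack = {start}, [start]
    while stack:
        r, c = stack.pop()
        for port, (dr, dc) in STEP.items():
            nxt = (r + dr, c + dc)
            if nxt not in grid or nxt in seen:
                continue
            # A link is usable only if both facing ports are enabled.
            if grid[(r, c)].enabled[port] and grid[nxt].enabled[OPPOSITE[port]]:
                seen.add(nxt)
                stack.append(nxt)
    return seen


grid = make_grid(2, 4)
partition_columns(grid, 2)
left = reachable(grid, (0, 0))
right = reachable(grid, (0, 3))
```

In this model, disabling the ports on both sides of a boundary leaves two disjoint groups of switches, which corresponds to two sets of configurable units that cannot exchange traffic over the bus system.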

[0011] Sets of configurable units in the plurality of sets of configurable units can be configurable to execute application graphs using virtual addresses. The memory access controller includes or has access to a configurable table to translate virtual addresses in requests originating from an application graph executing within the particular set, to addresses in the memory space allocated to the particular set. A physical address for the purposes of this description is an address used by a memory interface on the bus system that identifies locations in memory space in the external memory, and a virtual address is an address used by an application graph in a particular virtual machine that is translated to a physical address, such as by a memory access controller. In a device described herein, the bus system includes a top level network and an array level network. The top level network is connected to an external data interface for communication with memory outside of the array using physical addresses. The array level network is connected to configurable units in the array of configurable units. In a two level bus system like that described herein, the memory access controller is connected to the array level network and to the top level network, and includes logic to route data transfers between the top level network and the array level network.
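The translation from virtual addresses to physical addresses in allocated memory space can be sketched in software as follows. This is a minimal sketch, assuming a segment-style table with a base and a size per entry; the names `Segment`, `MemoryAccessController`, `allocate`, `translate` and `AccessFault` are hypothetical, and the description does not specify the table format.

```python
from dataclasses import dataclass


class AccessFault(Exception):
    """Raised when a request falls outside the memory space allocated to its set."""


@dataclass(frozen=True)
class Segment:
    virt_base: int  # first virtual address covered by this entry
    phys_base: int  # physical address that virt_base maps to
    size: int       # number of addressable units covered


class MemoryAccessController:
    """Assumed model: one configurable table of segments per set of configurable units."""

    def __init__(self):
        self._table = {}  # set identifier -> list of Segment

    def allocate(self, set_id, virt_base, phys_base, size):
        self._table.setdefault(set_id, []).append(Segment(virt_base, phys_base, size))

    def translate(self, set_id, virt_addr, length=1):
        """Return the physical address, or raise if the access leaves the allocation."""
        for seg in self._table.get(set_id, []):
            offset = virt_addr - seg.virt_base
            if offset >= 0 and offset + length <= seg.size:
                return seg.phys_base + offset
        raise AccessFault(f"set {set_id!r}: virtual address {virt_addr:#x} not allocated")


mac = MemoryAccessController()
mac.allocate("VM1", virt_base=0x0, phys_base=0x1000_0000, size=0x1000)
mac.allocate("VM2", virt_base=0x0, phys_base=0x2000_0000, size=0x1000)
```

Both sets here use the same virtual address range, yet each request resolves only into the physical range allocated to the set it originated from, and an out-of-range request is rejected rather than forwarded.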

[0012] The array level network can comprise a grid of switches, in which the switches in the grid, the configurable units in the array of configurable units and the memory access controller are addressable nodes on the array level network.

[0013] In some embodiments, a device comprises an array of configurable units including a plurality of tiles of configurable units. The device including such plurality of tiles can be implemented on a single integrated circuit or single multichip module. The bus system can comprise switches on boundaries between the tiles including circuits to partition the bus system on the tile boundaries. More generally, an array of configurable units can include blocks of configurable units which for the purposes of partitioning comprise partitionable groups in the array. In some embodiments, a partitionable group may comprise more than one type of configurable unit. In some embodiments, the array can include atomic partitionable groups which include a minimum set of configurable units usable for composing virtual machines. Also, the bus system can be configured to isolate configurable units in the array on boundaries of the partitionable groups.

[0014] A device is described in which a configuration controller is connected to the bus system which can be used to swap application graphs in a set of configurable units without interfering with application graphs executing in other sets of configurable units on the same reconfigurable processor. The reconfigurable processor including such configuration controller can be implemented on a single integrated circuit or single multichip module. A configuration controller can include logic to execute a configuration load process, including distributing configuration files to configurable units in individual sets of the configurable units in the array, wherein an application graph in one of the sets of configurable units is executable during the configuration load process in another set of configurable units. Also, a configuration controller can include logic to execute a configuration unload process, including unloading state information from configurable units in individual sets, wherein an application graph in one of the sets of configurable units is executable during the configuration unload process in another set of configurable units. A configuration controller can execute configuration load and unload operations on individual configurable units independently of other sets of configurable units.

[0015] In general, technology is described that includes a method for configuring a reconfigurable data processor, comprising an array of configurable units and a bus system connected to the array of configurable units. The method can comprise partitioning the array of configurable units into a plurality of sets of configurable units, by blocking communications via the bus system between configurable units within a particular set and configurable units outside the particular set; and confining access to memory outside the array of configurable units originating from within the particular set to memory space allocated to the particular set in the memory outside the array of configurable units.

[0016] Technology described herein provides for dynamic reconfiguration of a CGRA or other type of array of configurable units. A runtime application or service in a host can include a routine for allocation and reallocation of resources within a reconfigurable processor. In one such routine, a host can load application graphs in respective sets of configurable units, and start the loaded application graphs to cause a plurality of application graphs to execute at the same time, or in parallel. When it is desirable to change or update an executing application graph, the host can stop and unload a selected application graph in one of the sets of configurable units, and load another application graph in said one of the sets, while other application graphs in other sets of configurable units in the array of configurable units continue executing.
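The host routine described above can be modeled as simple bookkeeping over the sets of configurable units. The following is a sketch only, assuming a host-side view in which each set holds at most one application graph; the class and method names (`Host`, `load_and_start`, `stop_and_unload`, `swap`) are hypothetical and do not represent an actual runtime API.

```python
class GraphSlot:
    """Host-side record for one set of configurable units (assumed model)."""

    def __init__(self, name):
        self.name = name
        self.graph = None
        self.running = False


class Host:
    def __init__(self, set_names):
        self.slots = {name: GraphSlot(name) for name in set_names}

    def load_and_start(self, set_name, graph):
        slot = self.slots[set_name]
        if slot.running:
            raise RuntimeError(f"{set_name} is already executing {slot.graph}")
        slot.graph = graph
        slot.running = True

    def stop_and_unload(self, set_name):
        slot = self.slots[set_name]
        old = slot.graph
        slot.running = False
        slot.graph = None
        return old

    def swap(self, set_name, new_graph):
        """Replace the graph in one set; other sets are not touched."""
        old = self.stop_and_unload(set_name)
        self.load_and_start(set_name, new_graph)
        return old

    def running_graphs(self):
        return {n: s.graph for n, s in self.slots.items() if s.running}


host = Host(["VM1", "VM2"])
host.load_and_start("VM1", "graph_a")
host.load_and_start("VM2", "graph_b")
replaced = host.swap("VM1", "graph_c")
```

The point of the sketch is that `swap` reads and writes only the slot for the selected set, so the graph recorded for the other set stays in the running state throughout.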

[0017] Other aspects and advantages of the technology described herein can be seen on review of the drawings, the detailed description and the claims, which follow.

BRIEF DESCRIPTION OF THE DRAWINGS

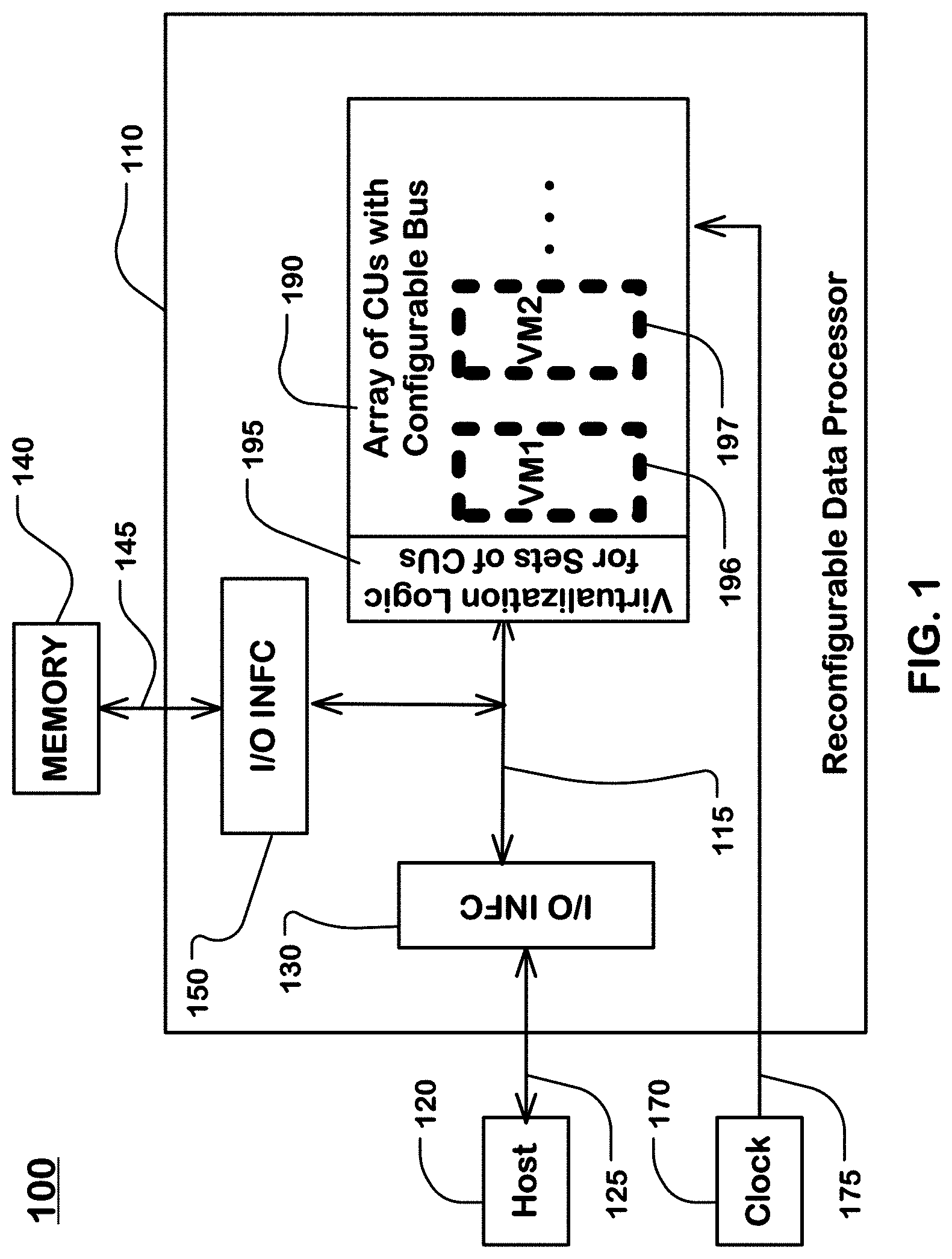

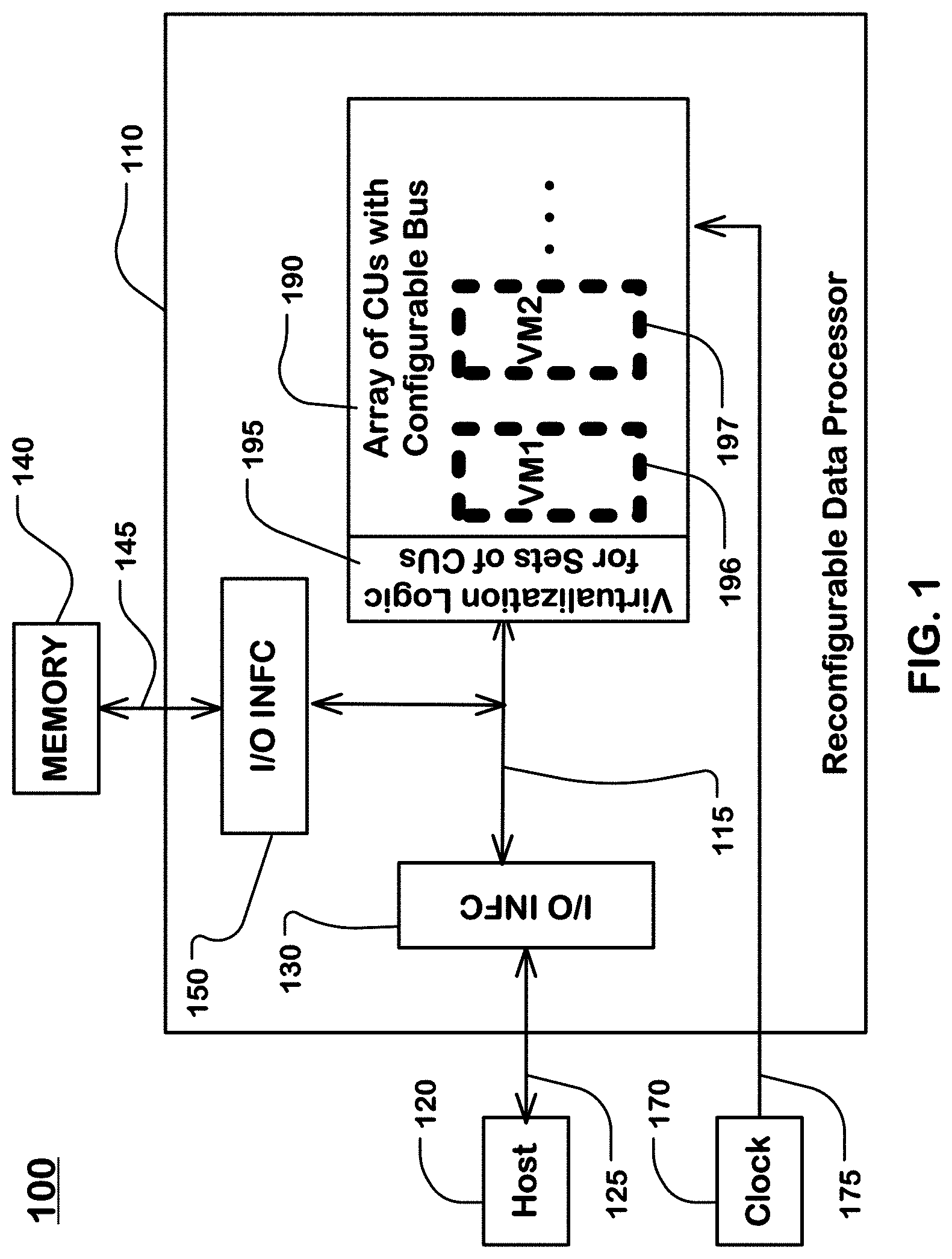

[0018] FIG. 1 is a system diagram illustrating a system including a host, a memory, and a reconfigurable data processor.

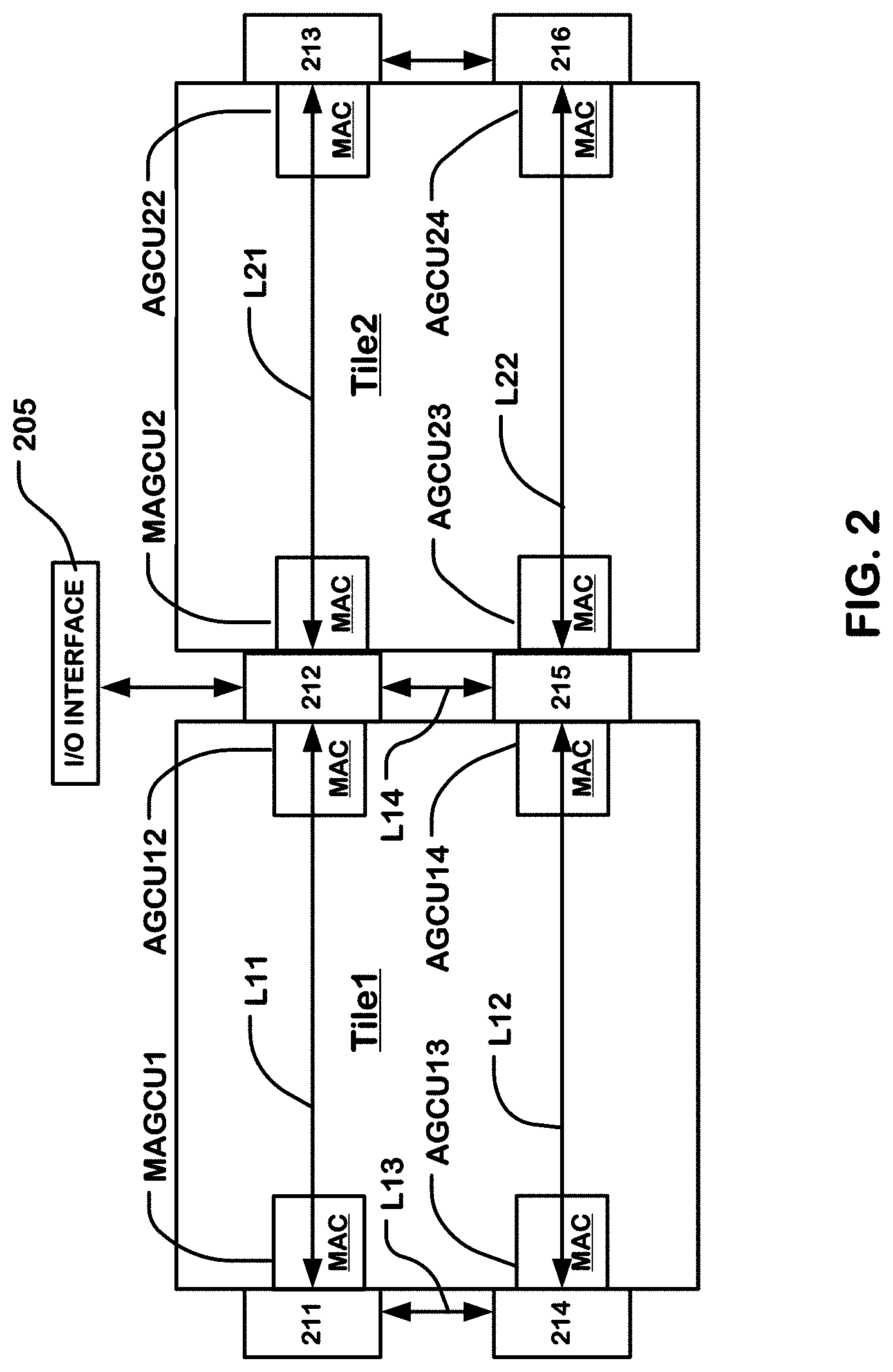

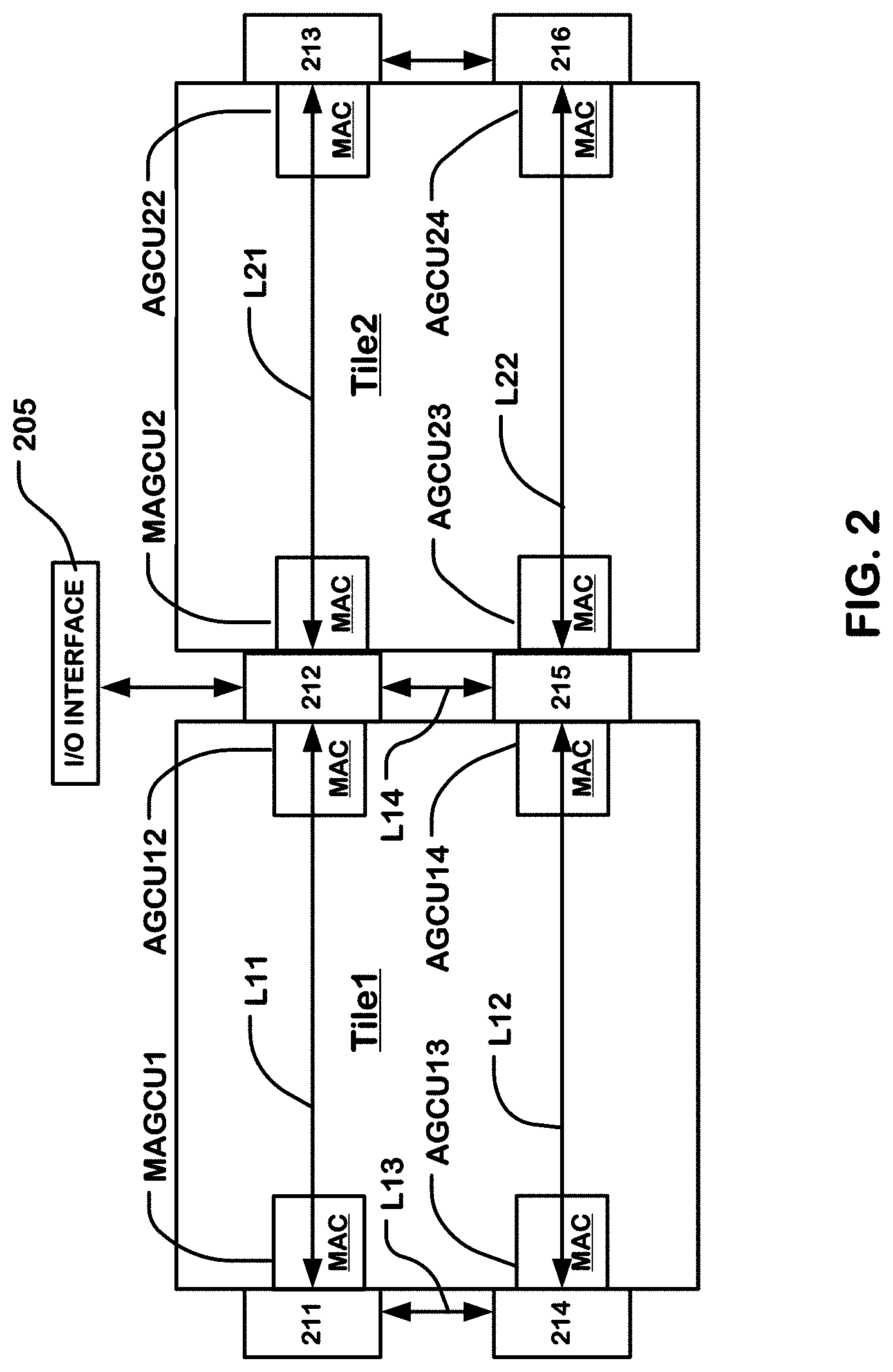

[0019] FIG. 2 is a simplified block diagram of a top level network and components of a CGRA (Coarse Grain Reconfigurable Architecture).

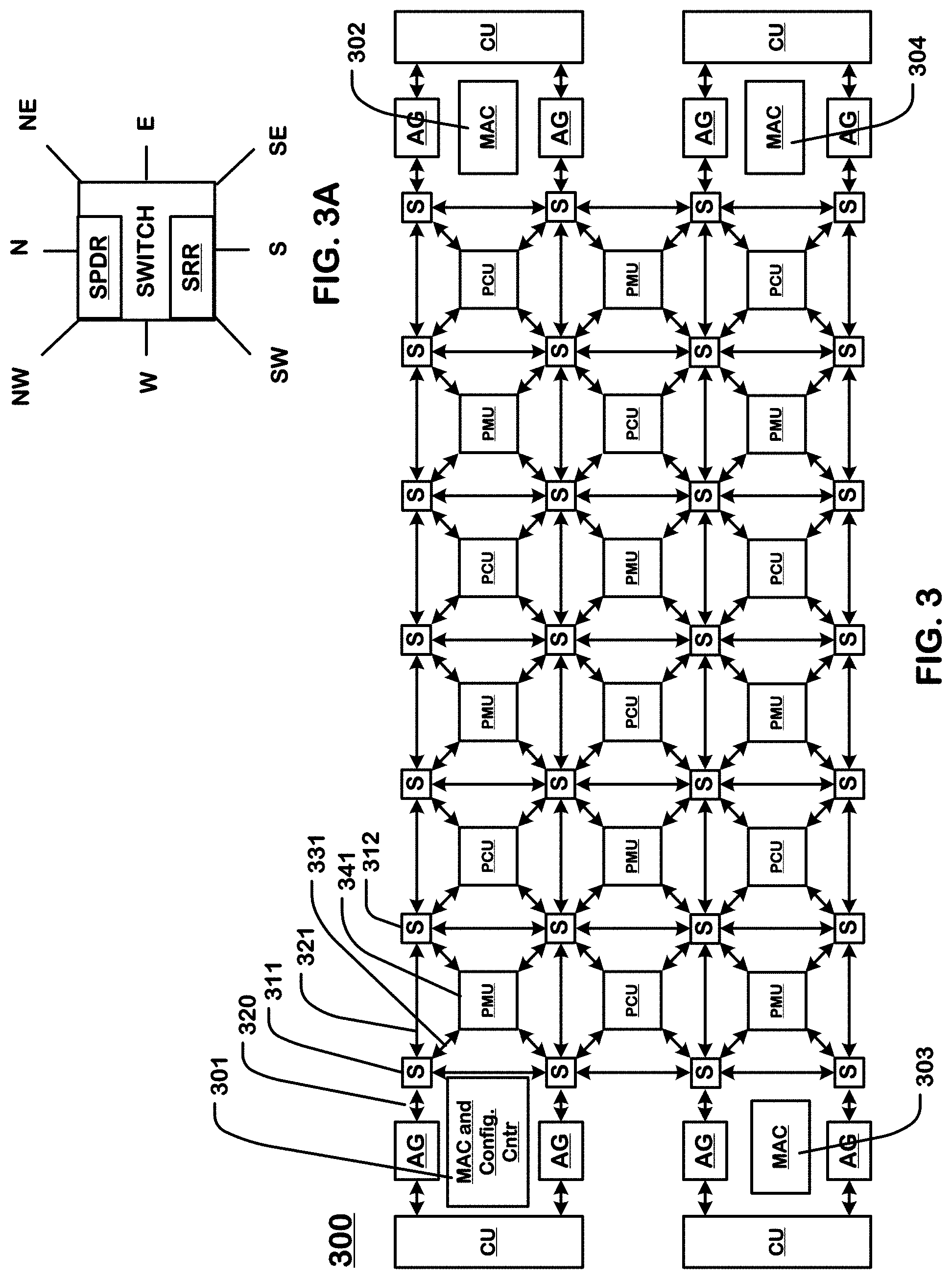

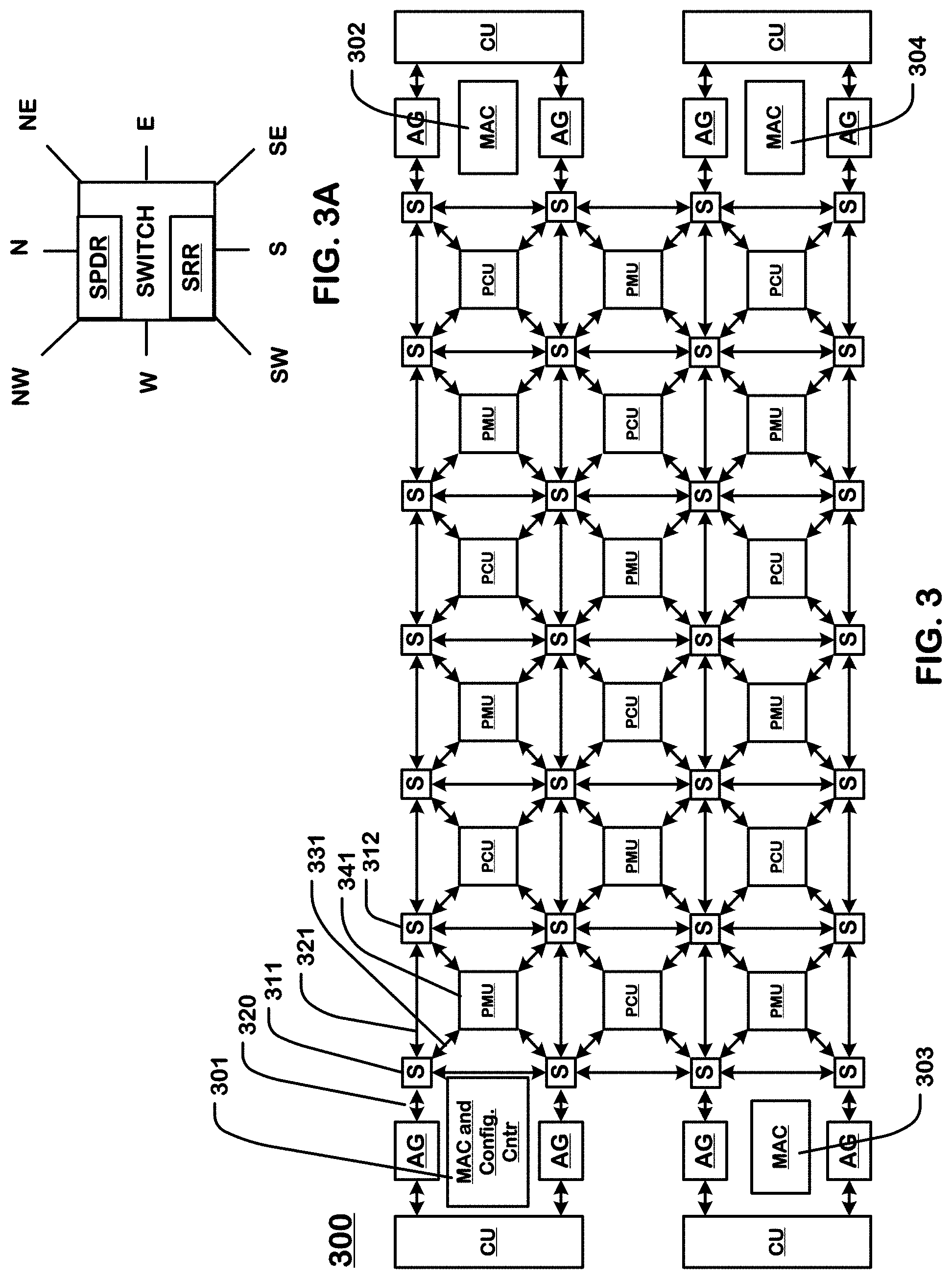

[0020] FIG. 3 is a simplified diagram of a tile and an array level network usable in the configuration of FIG. 2, where the configurable units in the array are nodes on the array level network.

[0021] FIG. 3A illustrates an example switch unit connecting elements in an array level network.

[0022] FIG. 4 is a block diagram of a system including an array of configurable units and resources implementing virtual machines.

[0023] FIG. 5 is a flowchart of a procedure executed by a memory access controller in a system like that of FIG. 4.

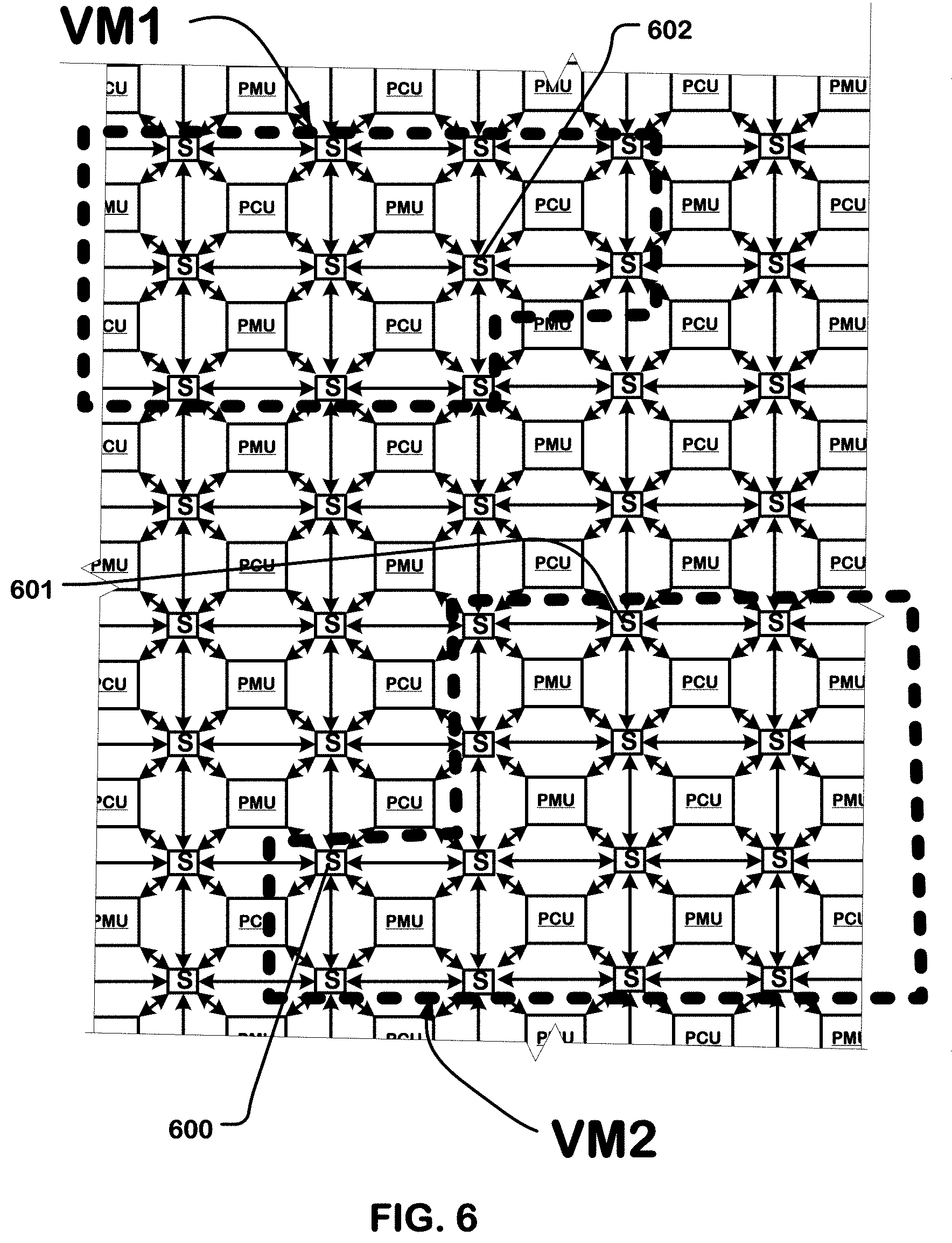

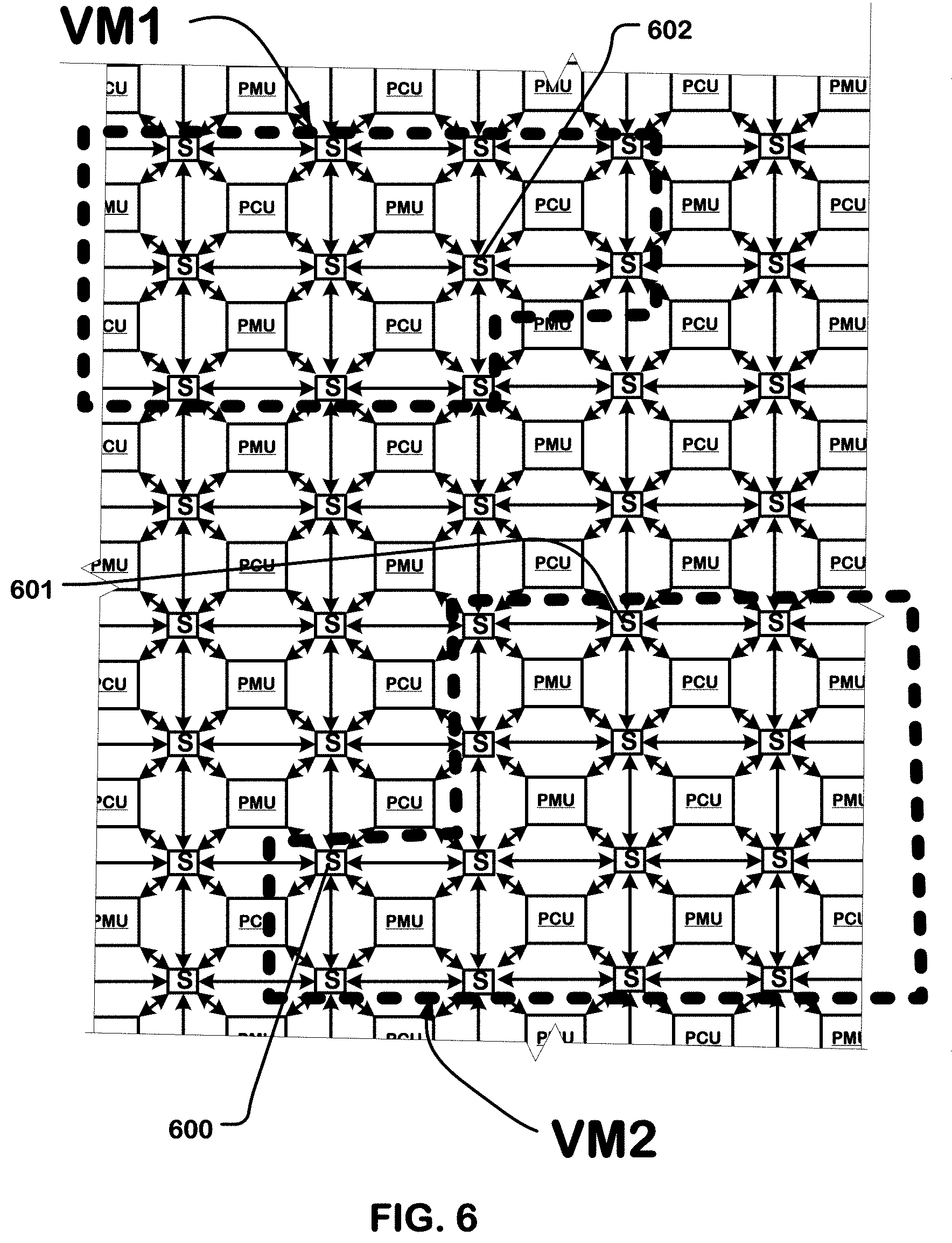

[0024] FIG. 6 illustrates a portion of an array of configurable units partitioned into a plurality of sets of configurable units.

[0025] FIG. 7 illustrates a portion of an array of configurable units including a plurality of tiles, partitioned into a plurality of sets of configurable units according to another embodiment.

[0026] FIG. 8 illustrates one example of an array of configurable units partitioned into a plurality of sets of configurable units on tile boundaries.

[0027] FIG. 9 illustrates another example of an array of configurable units partitioned into a plurality of sets of configurable units.

[0028] FIG. 10 illustrates implementation of virtual machines across a plurality of CGRA devices.

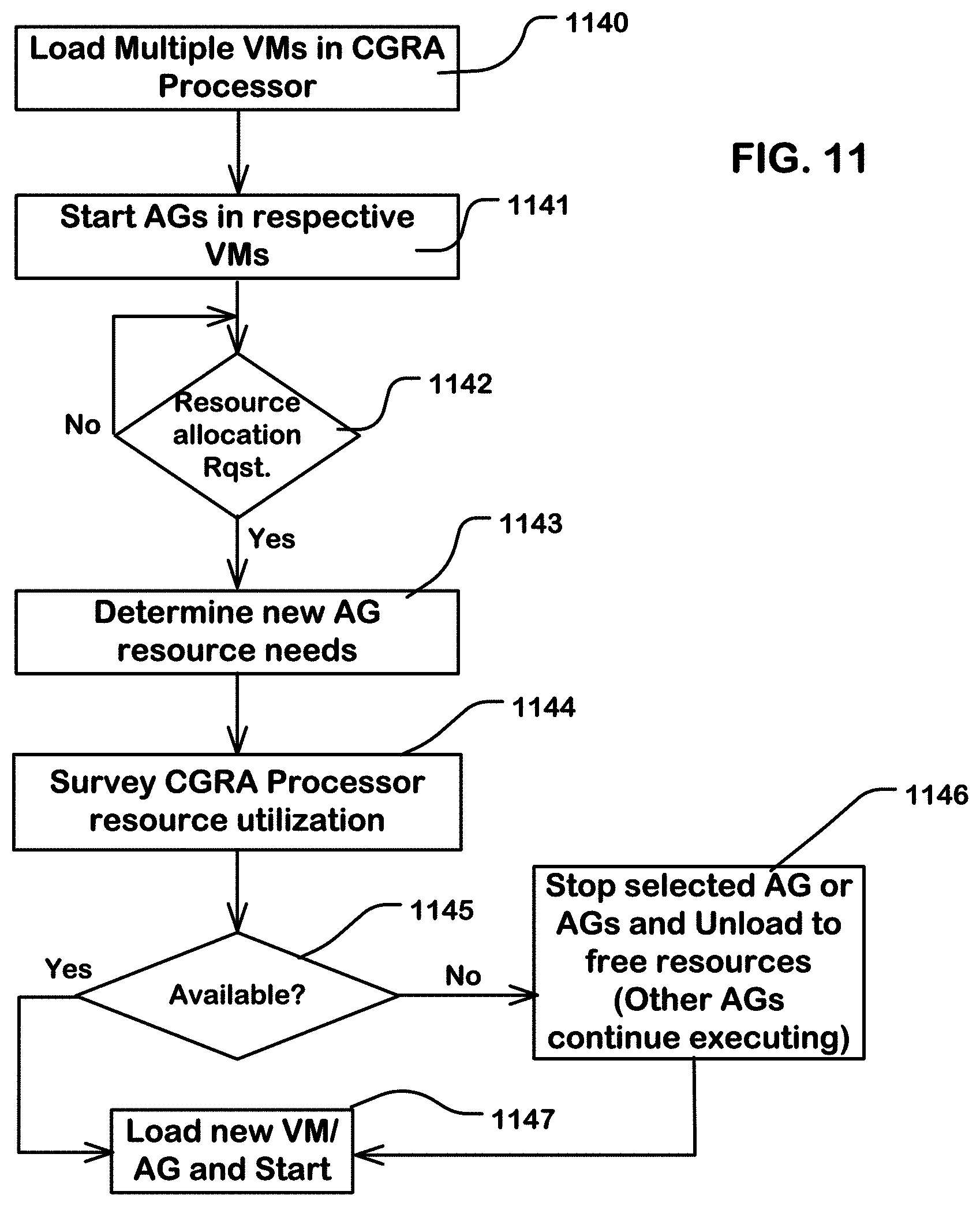

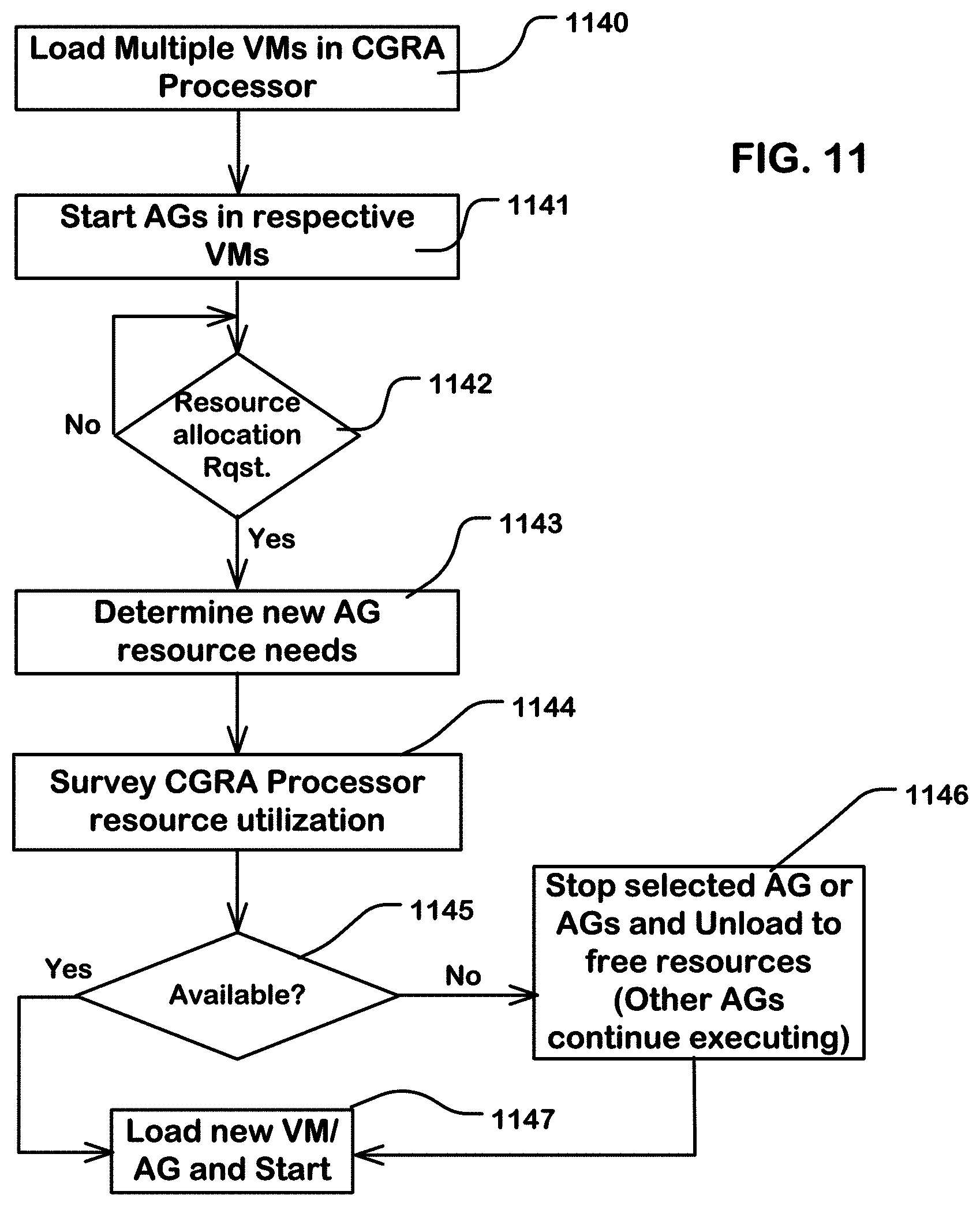

[0029] FIG. 11 is a flowchart of a procedure for allocating virtual machine resources in an array of configurable units.

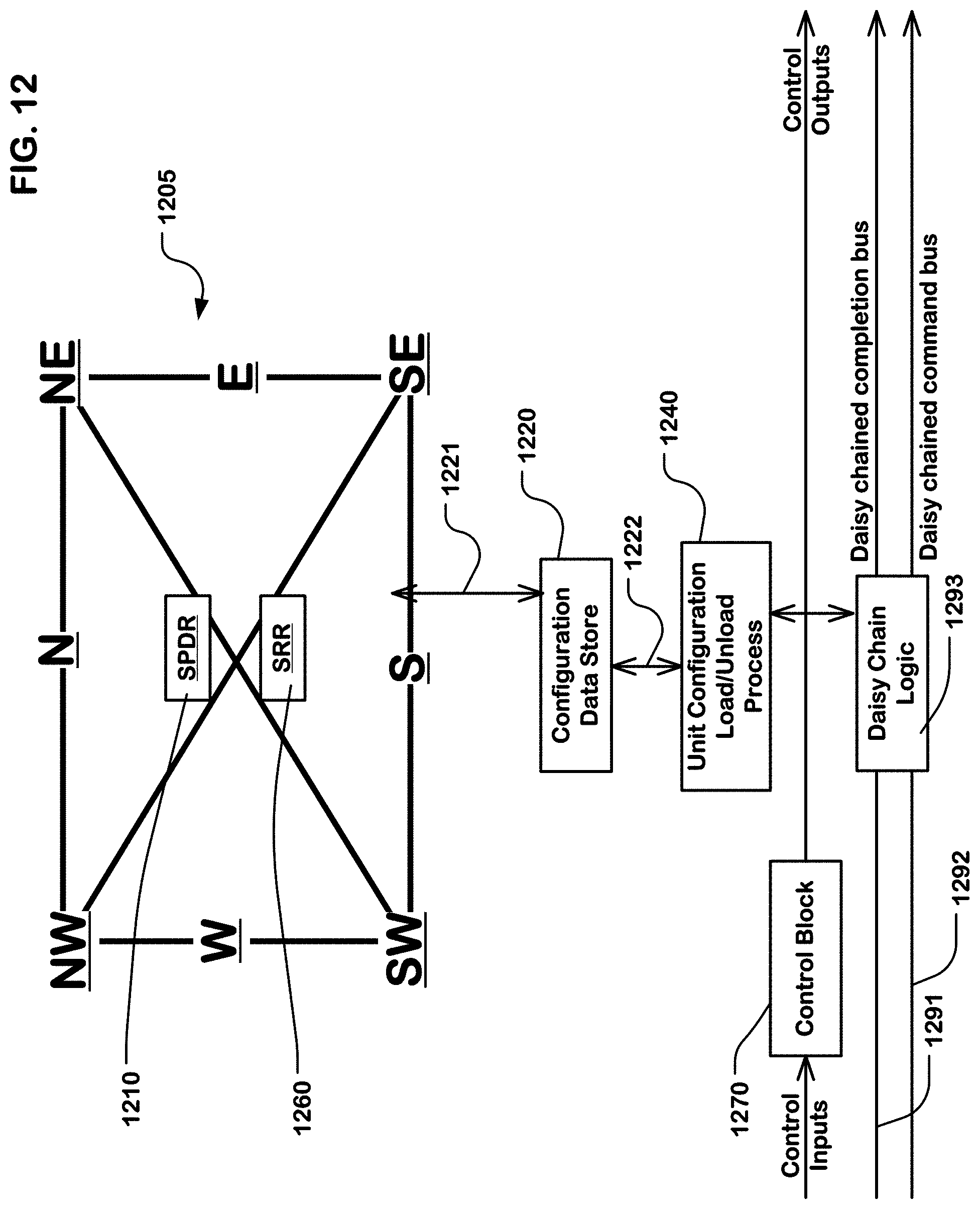

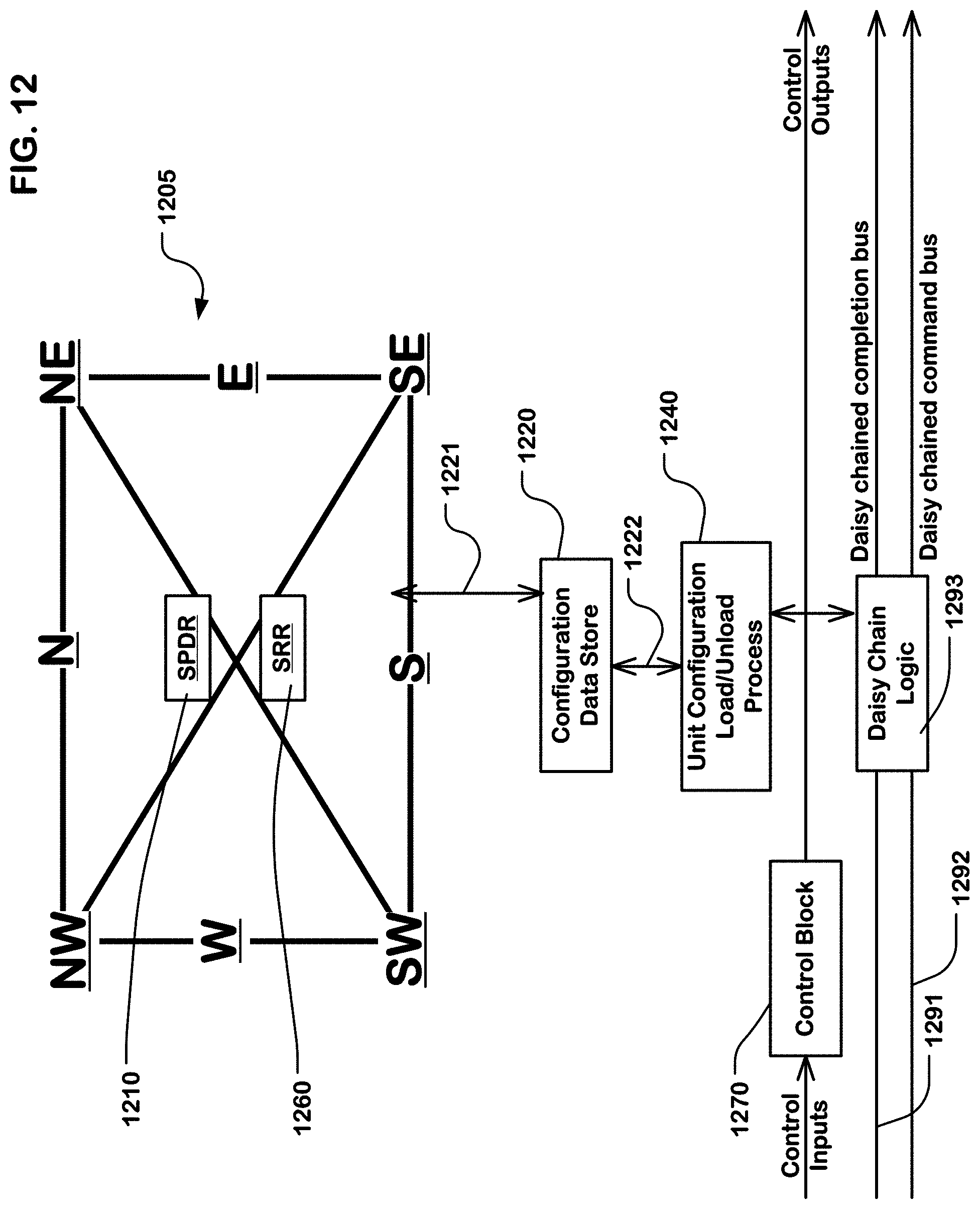

[0030] FIG. 12 is a block diagram illustrating an example of a configurable switch usable in an array of configurable units.

[0031] FIG. 13 is a block diagram illustrating an example configurable unit.

[0032] FIG. 14 illustrates one example of a state machine diagram that can be used to control a unit configuration load process in a configurable unit.

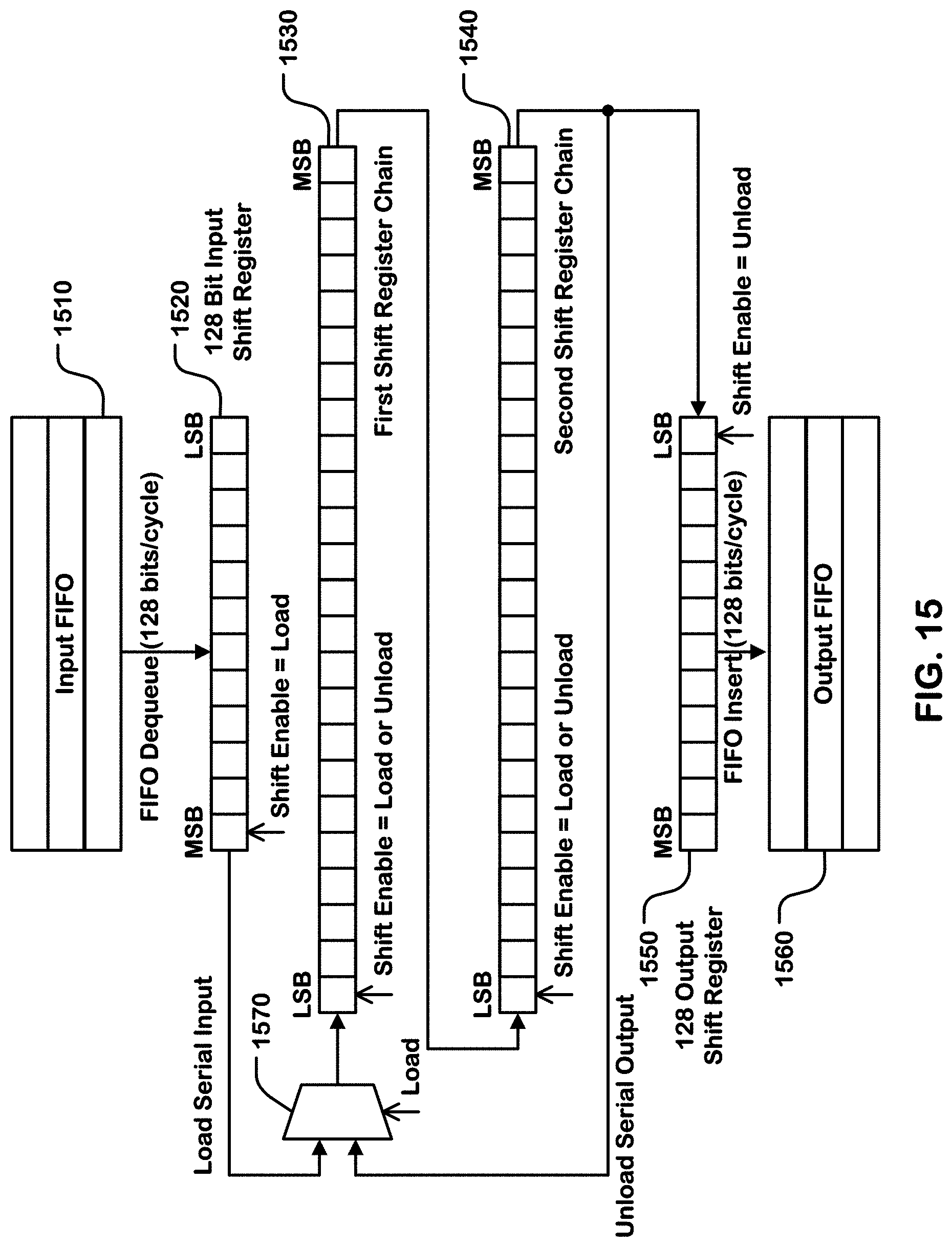

[0033] FIG. 15 is a logical representation of a structure supporting load of a configuration store in a configurable unit.

[0034] FIG. 16 is a flowchart illustrating operations of a host coupled to a reconfigurable data processor.

[0035] FIG. 17 is a flowchart illustrating operations of the configuration load controller, which can be part of a master AGCU or otherwise in communication with the array of configurable units in a tile.

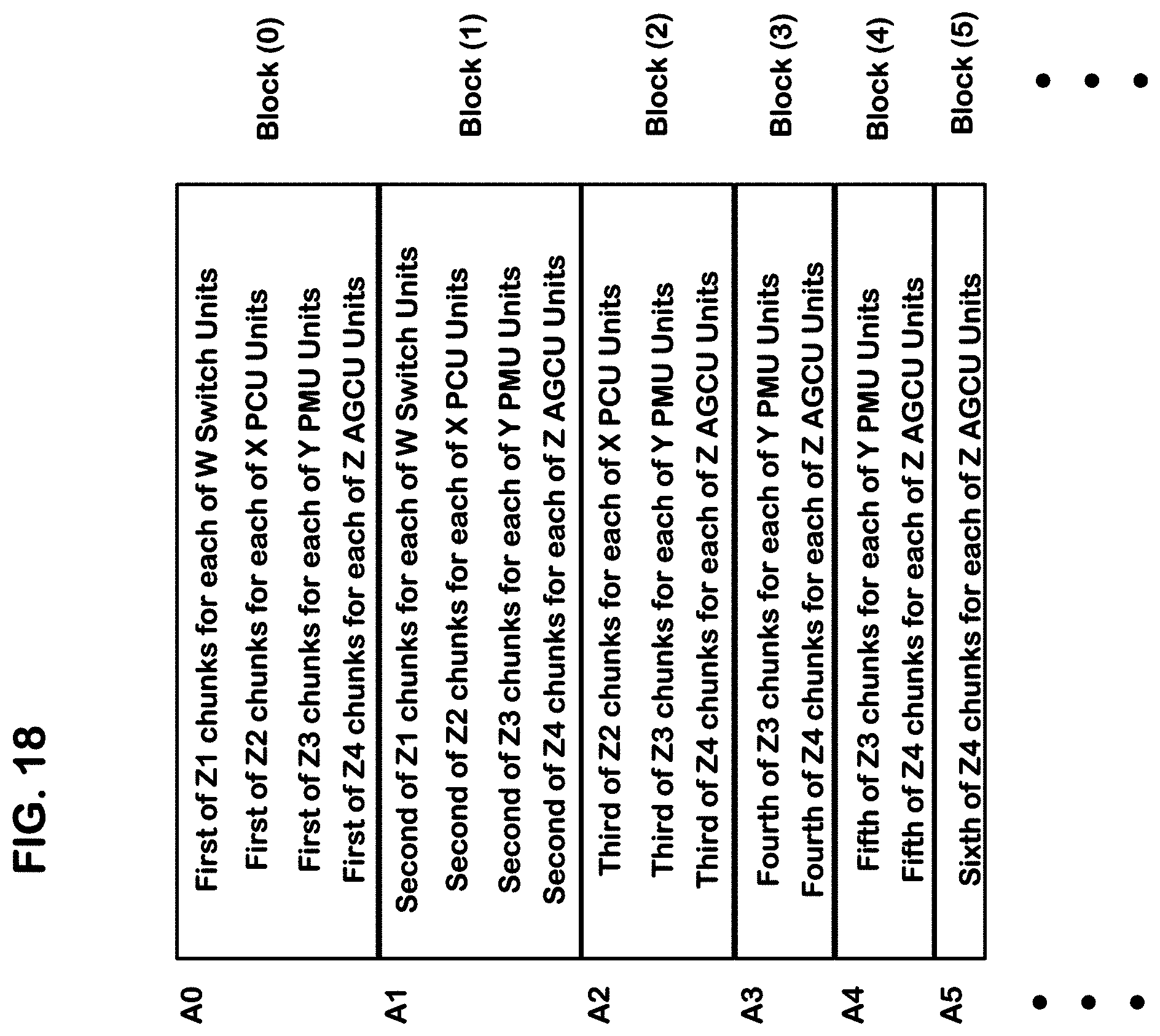

[0036] FIG. 18 illustrates an example organization of a configuration file.

[0037] FIG. 19 is a state machine diagram illustrating one example of logic to execute an array configuration load process for a system like that of FIGS. 2 and 3.

[0038] FIG. 20 is a timing diagram illustrating a timing of early rounds of a distribution sequence like that of FIG. 19.

[0039] FIG. 21 is a flowchart illustrating a unit configuration load process in a configurable unit.

[0040] FIG. 22 is a state machine diagram illustrating one example of logic to execute an array configuration unload process for a system like that of FIGS. 2 and 3.

[0041] FIG. 23 is a flowchart illustrating a unit configuration unload process in a configurable unit.

DETAILED DESCRIPTION

[0042] The following description will typically be with reference to specific structural embodiments and methods. It is to be understood that there is no intention to limit the technology to the specifically disclosed embodiments and methods but that the technology may be practiced using other features, elements, methods and embodiments. Preferred embodiments are described to illustrate the present technology, not to limit its scope, which is defined by the claims. Those of ordinary skill in the art will recognize a variety of equivalent variations on the description that follows.

[0043] FIG. 1 is a system diagram illustrating a system including a host 120, a memory 140, and a reconfigurable data processor 110. As shown in the example of FIG. 1, the reconfigurable data processor 110 includes an array 190 of configurable units (CUs) and virtualization logic 195. The virtualization logic 195 can include resources that support or enable simultaneous execution of multiple, unrelated application graphs (or related ones) in an array of configurable units on one die or one multichip module. In the illustration, a first application graph is implemented in virtual machine VM1 in a particular set 196 of configurable units, and a second application graph is implemented in virtual machine VM2 in another set 197 of configurable units.

[0044] An application graph for the purposes of this description includes the configuration file for configurable units in the array compiled to execute a mission function procedure or set of procedures using the device, such as inferencing or learning in an artificial intelligence or machine learning system. A virtual machine for the purposes of this description comprises a set of resources (including elements of virtualization logic 195 and of bus system 115) configured to support execution of an application graph in an array of configurable units in a manner that appears to the application graph as if there were a physical constraint on the resources available, such as would be experienced in a physical machine. The virtual machine can be established as a part of the application graph of the mission function that uses the virtual machine, or it can be established using a separate configuration mechanism. In embodiments described herein, virtual machines are implemented using resources of the array of configurable units that are also used in the application graphs, and so the configuration file for the application graph includes the configuration data for its corresponding virtual machine, and links the application graph to a particular set of configurable units in the array of configurable units.

[0045] The virtualization logic 195 can include a number of logical elements, including circuits for partitioning the array 190, one or multiple memory access controllers and one or multiple configuration load/unload controllers, as described in more detail below.

[0046] The phrase "configuration load/unload controller", as used herein, refers to a combination of a configuration load controller and a configuration unload controller. The configuration load controller and the configuration unload controller may be implemented using separate logic and data path resources, or may be implemented using shared logic and data path resources as suits a particular embodiment.

[0047] The processor 110 can be implemented on a single integrated circuit die or on a multichip module. An integrated circuit can be packaged in a single chip module or a multi-chip module (MCM). An MCM is an electronic package consisting of multiple integrated circuit die assembled into a single package, configured as a single device. The various die of an MCM are mounted on a substrate, and the bare die are connected to the surface of the substrate or to each other using, for example, wire bonding, tape bonding or flip-chip bonding.

[0048] The processor 110 includes an external I/O interface 130 connected to the host 120 via lines 125, and external I/O interface 150 connected to the memory 140. The I/O interfaces 130, 150 connect via a bus system 115 to the array 190 of configurable units and to the virtualization logic 195. The bus system 115 may have a bus width of one chunk of data, which can be for this example 128 bits (references to 128 bits throughout can be considered as an example chunk size more generally). In general, a chunk of the configuration file can have a number N of bits of data, and the bus system can be configured to transfer N bits of data in one bus cycle, where N is any practical bus width. A sub-file distributed in the distribution sequence can consist of one chunk, or other amounts of data as suits a particular embodiment. Procedures are described herein using sub-files consisting of one chunk of data each. Of course, the technology can be configured to distribute sub-files of different sizes, including sub-files that may consist of two chunks distributed in two bus cycles for example.
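The relationship between a configuration file, chunks of N bits, and sub-files can be illustrated with a short sketch. It assumes that a file whose length is not a multiple of the chunk size is zero-padded, which the description does not state; the function names `split_into_chunks` and `group_subfiles` are hypothetical.

```python
CHUNK_BITS = 128  # example chunk size used in the description


def split_into_chunks(config_file: bytes, chunk_bits: int = CHUNK_BITS):
    """Split a configuration file into chunks of chunk_bits each.

    Assumption: the last chunk is zero-padded to a full chunk.
    """
    if chunk_bits <= 0 or chunk_bits % 8:
        raise ValueError("chunk_bits must be a positive multiple of 8 in this sketch")
    chunk_bytes = chunk_bits // 8
    padded_len = -(-len(config_file) // chunk_bytes) * chunk_bytes  # round up
    padded = config_file.ljust(padded_len, b"\x00")
    return [padded[i:i + chunk_bytes] for i in range(0, padded_len, chunk_bytes)]


def group_subfiles(chunks, chunks_per_subfile: int = 1):
    """Group chunks into sub-files; each chunk still takes one bus cycle to transfer."""
    return [chunks[i:i + chunks_per_subfile]
            for i in range(0, len(chunks), chunks_per_subfile)]


chunks = split_into_chunks(bytes(range(40)))  # 40 bytes -> three 16-byte chunks
subfiles = group_subfiles(chunks, chunks_per_subfile=2)
```

With one chunk per sub-file, the number of sub-files equals the number of bus cycles needed; with two chunks per sub-file, each sub-file occupies two bus cycles.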

[0049] To configure configurable units in the array 190 of configurable units with a configuration file for an application graph and a virtual machine, the host 120 can send the configuration file to the memory 140 via the interface 130, the bus system 115, and the interface 150 in the reconfigurable data processor 110. The configuration file can be loaded in many ways, as suits a particular architecture, including in data paths outside the configurable processor 110. The configuration file can be retrieved from the memory 140 via the memory interface 150. Chunks of the configuration file for an application graph in a virtual machine can then be sent in a distribution sequence as described herein to configurable units in the set of configurable units in array 190 corresponding to the virtual machine, while application graphs in other sets of configurable units, or other virtual machines, can continue to simultaneously execute. In support of virtualization, the configuration file can include parameters used by circuits to partition the array and parameters used by memory access controllers and configuration load and unload logic allocated to particular virtual machines.

[0050] An external clock generator 170 or other internal or external clock signal sources can provide a clock signal 175 or clock signals to elements in the reconfigurable data processor 110, including the array 190 of configurable units, and the bus system 115, and the external data I/O interfaces.

[0051] FIG. 2 is a simplified block diagram of components of a CGRA (Coarse Grain Reconfigurable Architecture) processor which can be implemented on a single integrated circuit die or on a multichip module. In this example, the CGRA processor has 2 tiles (Tile1, Tile2). Each tile comprises a set of configurable units connected to a bus system, including an array level network in this example. The bus system includes a top level network connecting the tiles to external I/O interface 205 (or any number of interfaces). In other embodiments, different bus system configurations may be utilized. The configurable units in each tile are addressable nodes on the array level network in this embodiment.

[0052] Each of the two tiles has 4 AGCUs (Address Generation and Coalescing Units) (e.g. MAGCU1, AGCU12, AGCU13, AGCU14). The AGCUs are nodes on the top level network and nodes on the array level networks, and include resources for routing data among nodes on the top level network and nodes on the array level network in each tile. In other embodiments, different numbers of AGCUs may be used, or their function may be combined with other components in the CGRA processor or reconfigurable elements in the tile.

[0053] Nodes on the top level network in this example include one or more external I/O interfaces, including interface 205. The interfaces to external devices include resources for routing data among nodes on the top level network and external devices, such as high-capacity memory, host processors, other CGRA processors, FPGA devices and so on, that are connected to the interfaces.

[0054] One of the AGCUs in a tile is configured in this example to be a master AGCU, which includes an array configuration load/unload controller for the tile. In other embodiments, more than one array configuration load/unload controller can be implemented and one array configuration load/unload controller may be implemented by logic distributed among more than one AGCU. All of the AGCUs in a tile include a memory access controller (MAC) in this example. In other embodiments, a memory access controller can be implemented as a separate node on the array level and top level networks, and includes logic to act as a gateway between the array level and top level networks that confines communications with a set of configurable units executing a graph to memory space allocated to the set of configurable units, and optionally other allocated resources, accessible using the top level network. The memory access controller can include address registers and address translation logic configurable to confine accesses to memory outside the array of configurable units to memory space allocated to sets of configurable units from which the accesses originate, or to which data from memory outside the array of configurable units is directed.

[0055] The MAGCU1 includes a configuration load/unload controller for Tile1, and MAGCU2 includes a configuration load/unload controller for Tile2 in this example. In other embodiments, a configuration load/unload controller can be designed for loading and unloading configuration of more than one tile. In other embodiments, more than one configuration controller can be designed for configuration of a single tile. Also, the configuration load/unload controller can be implemented in other portions of the system, including as a stand-alone node on the top level network and the array level network or networks.

[0056] The top level network is constructed using top level switches (211-216) connecting to each other as well as to other nodes on the top level network, including the AGCUs, and I/O interface 205. The top level network includes links (e.g. L11, L12, L21, L22) connecting the top level switches. Data travels in packets between the top level switches on the links, and from the switches to the nodes on the network connected to the switches. For example, top level switches 211 and 212 are connected by a link L11, top level switches 214 and 215 are connected by a link L12, top level switches 211 and 214 are connected by a link L13, and top level switches 212 and 213 are connected by a link L21. The links can include one or more buses and supporting control lines, including for example a chunk-wide bus (vector bus). For example, the top level network can include data, request and response channels operable in coordination for transfer of data in a manner analogous to an AXI compatible protocol. See, AMBA® AXI and ACE Protocol Specification, ARM, 2017.

[0057] Top level switches can be connected to AGCUs. For example, top level switches 211, 212, 214 and 215 are connected to MAGCU1, AGCU12, AGCU13 and AGCU14 in the tile Tile1, respectively. Top level switches 212, 213, 215 and 216 are connected to MAGCU2, AGCU22, AGCU23 and AGCU24 in the tile Tile2, respectively.

[0058] Top level switches can be connected to one or more external I/O interfaces (e.g. interface 205).

[0059] FIG. 3 is a simplified diagram of a tile and an array level network usable in the configuration of FIG. 2, where the configurable units in the array are nodes on the array level network.

[0060] In this example, the array of configurable units 300 includes a plurality of types of configurable units. The types of configurable units in this example, include Pattern Compute Units (PCU), Pattern Memory Units (PMU), switch units (S), and Address Generation and Coalescing Units (each including two address generators AG and a shared CU). For an example of the functions of these types of configurable units, see, Prabhakar et al., "Plasticine: A Reconfigurable Architecture For Parallel Patterns", ISCA '17, Jun. 24-28, 2017, Toronto, ON, Canada, which is incorporated by reference as if fully set forth herein. Each of these configurable units contains a configuration store comprising a set of registers or flip-flops that represent either the setup or the sequence to run a program, and can include the number of nested loops, the limits of each loop iterator, the instructions to be executed for each stage, the source of the operands, and the network parameters for the input and output interfaces.

[0061] Additionally, each of these configurable units contains a configuration store comprising a set of registers or flip-flops that store status usable to track progress in nested loops or otherwise. A configuration file contains a bit-stream representing the initial configuration, or starting state, of each of the components that execute the program. This bit-stream is referred to as a bit-file. Program load is the process of setting up the configuration stores in the array of configurable units based on the contents of the bit file to allow all the components to execute a program (i.e., a machine). Program Load may also require the load of all PMU memories.

[0062] The array level network includes links interconnecting configurable units in the array. The links in the array level network include one or more and, in this case three, kinds of physical buses: a chunk-level vector bus (e.g. 128 bits of data), a word-level scalar bus (e.g. 32 bits of data), and a multiple bit-level control bus. For instance, interconnect 321 between switch units 311 and 312 includes a vector bus interconnect with vector bus width of 128 bits, a scalar bus interconnect with a scalar bus width of 32 bits, and a control bus interconnect.

[0063] The three kinds of physical buses differ in the granularity of data being transferred. In one embodiment, the vector bus can carry a chunk that includes 16-Bytes (=128 bits) of data as its payload. The scalar bus can have a 32-bit payload, and carry scalar operands or control information. The control bus can carry control handshakes such as tokens and other signals. The vector and scalar buses can be packet switched, including headers that indicate a destination of each packet and other information such as sequence numbers that can be used to reassemble a file when the packets are received out of order. Each packet header can contain a destination identifier that identifies the geographical coordinates of the destination switch unit (e.g. the row and column in the array), and an interface identifier that identifies the interface on the destination switch (e.g. North, South, East, West, etc.) used to reach the destination unit. The control network can be circuit switched based on timing circuits in the device, for example. The configuration load/unload controller can generate a header for each chunk of configuration data of 128 bits. The header is transmitted on a header bus to each configurable unit in the array of configurable units.

[0064] In one example, a chunk of data of 128 bits is transmitted on the vector bus that provides the chunk as vector inputs to a configurable unit. The vector bus can include 128 payload lines, and a set of header lines. The header can include a sequence ID for each chunk, which can include:

[0065] A bit to indicate if the chunk contains scratchpad memory or configuration store data.

[0066] Bits that form a chunk number.

[0067] Bits that indicate a column identifier.

[0068] Bits that indicate a row identifier.

[0069] Bits that indicate a component identifier.

[0070] For a load operation, the configuration load controller can send the number N of chunks to a configurable unit in order from N-1 to 0. For this example, the 6 chunks are sent out in most significant bit first order of Chunk 5->Chunk 4->Chunk 3->Chunk 2->Chunk 1->Chunk 0. (Note that this most significant bit first order results in Chunk 5 being distributed in round 0 of the distribution sequence from the array configuration load controller.) For an unload operation, the configuration unload controller can write the unload data out of order to the memory. For both load and unload operations, the shifting in the configuration serial chains in a configuration data store in a configurable unit is from LSB (least-significant-bit) to MSB (most-significant-bit), or MSB out first.
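The load ordering described above can be sketched in a few lines of Python. This is an illustration only: the function name `load_order` and the `rounds` dictionary are hypothetical and do not appear in the specification.

```python
def load_order(num_chunks: int) -> list[int]:
    """Return chunk indices in the order they are sent: N-1 down to 0."""
    if num_chunks < 0:
        raise ValueError("num_chunks must be non-negative")
    return list(range(num_chunks - 1, -1, -1))


# Map each round of the distribution sequence to the chunk sent in that round
# for the 6-chunk example in the text.
rounds = {round_no: chunk for round_no, chunk in enumerate(load_order(6))}
print(rounds)  # round 0 carries Chunk 5, round 5 carries Chunk 0
```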

[0071] FIG. 3A illustrates an example switch unit connecting elements in an array level network. As shown in the example of FIG. 3A, a switch unit can have 8 interfaces. The North, South, East and West interfaces of a switch unit are used for connections between switch units. The Northeast, Southeast, Northwest and Southwest interfaces of a switch unit are each used to make connections to PCU or PMU instances. A set of 2 switch units in each tile quadrant have connections to an Address Generation and Coalescing Unit (AGCU) that include multiple address generation (AG) units and a coalescing unit (CU) connected to the multiple address generation units. The coalescing unit (CU) arbitrates between the AGs and processes memory requests. Each of the 8 interfaces of a switch unit can include a vector interface, a scalar interface, and a control interface to communicate with the vector network, the scalar network, and the control network.

[0072] In an embodiment of logic to partition the array of configurable switches, the switches include configuration data such as a switch port disable register SPDR and a switch routing register SRR. In one embodiment, each switch in the array is configurable using the configuration load and unload processes, to block communications using one or more of the switch ports on the switch. Thereby a set of switches surrounding a set of configurable units can be configured to partition the tile into a plurality of sets of configurable units, usable by different application graphs.

[0073] In another embodiment in which there are multiple tiles, only switches on outer rows and outer columns of the tiles are configurable using the configuration load and unload processes, to allow or to block communications using one or more of the switch ports across tile boundaries. For example, a switch port disable register can be set to disable communication across tile boundaries.

[0074] During execution of a virtual machine after configuration, data can be sent via one or more unit switches and one or more links between the unit switches to the configurable units using the vector bus and vector interface(s) of the one or more switch units on the array level network.

[0075] In embodiments described herein, a configuration file or bit file, before configuration of the tile, can be sent from the configuration load controller using the same vector bus, via one or more unit switches and one or more links between the unit switches to the configurable unit using the vector bus and vector interface(s) of the one or more switch units on the array level network. For instance, a chunk of configuration data in a unit file particular to a configurable unit PMU 341 can be sent from the configuration load/unload controller 301 to the PMU 341, via a link 320 between the configuration load/unload controller 301 and the West (W) vector interface of the switch unit 311, the switch unit 311, and a link 331 between the Southeast (SE) vector interface of the switch unit 311 and the PMU 341.

[0076] In this example, one of the AGCUs is configured to be a master AGCU, which includes a configuration load/unload controller (e.g. 301). The master AGCU implements a register through which the host (120, FIG. 1) can send commands via the bus system to the master AGCU. The master AGCU controls operations on an array of configurable units in a tile and implements a program control state machine to track the state of the tile based on the commands it receives from the host through writes to the register. For every state transition, the master AGCU issues commands to all components on the tile over a daisy-chained command bus (FIG. 4). The commands include a program reset command to reset configurable units in an array of configurable units in a tile, and a program load command to load a configuration file to the configurable units.

[0077] The configuration load controller in the master AGCU is responsible for reading the configuration file from the memory and sending the configuration data to every configurable unit of the tile. The master AGCU can read the configuration file from the memory at preferably the maximum throughput of the top level network. The data read from memory are transmitted by the master AGCU over the vector interface on the array level network to the corresponding configurable unit according to a distribution sequence described herein.

[0078] In one embodiment, in a way that can reduce the wiring requirements within a configurable unit, configuration and status registers holding unit files to be loaded in a configuration load process, or unloaded in a configuration unload process, in a component are connected in a serial chain and can be loaded through a process of shifting bits through the serial chain. In some embodiments, there may be more than one serial chain arranged in parallel or in series. When a configurable unit receives, for example, 128 bits of configuration data from the master AGCU in one bus cycle, the configurable unit shifts this data through its serial chain at the rate of 1 bit per cycle, where shifter cycles can run at the same rate as the bus cycle. It will take 128 shifter cycles for a configurable unit to load 128 configuration bits with the 128 bits of data received over the vector interface. The 128 bits of configuration data are referred to as a chunk. A configurable unit can require multiple chunks of data to load all its configuration bits. An example shift register structure is shown in FIG. 6.
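The cost of the serial load can be illustrated with a small Python simulation. It is a sketch under stated assumptions: the chain is modeled as a fixed-length queue of bits, each chunk is shifted in most significant bit first, and the names `load_chunks` and `CHUNK_BITS` are hypothetical. The only property taken from the text is that each 128-bit chunk costs 128 shifter cycles.

```python
from collections import deque

CHUNK_BITS = 128  # chunk width given in the text


def load_chunks(chunks, chain_bits):
    """Shift each chunk into a serial chain at 1 bit per cycle.

    Returns the final chain contents and the number of shifter cycles used.
    The bit order (MSB of each chunk first) is an assumption of this sketch.
    """
    chain = deque([0] * chain_bits, maxlen=chain_bits)
    cycles = 0
    for chunk in chunks:
        for i in range(CHUNK_BITS - 1, -1, -1):
            # A new bit enters at the head; the oldest bit falls off the tail.
            chain.appendleft((chunk >> i) & 1)
            cycles += 1
    return list(chain), cycles


# A unit whose configuration store holds two chunks needs 2 * 128 cycles.
state, cycles = load_chunks([0x1, 0x0], chain_bits=256)
print(cycles)
```

Under these assumptions the load time of a unit grows linearly with the number of chunks it requires.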

[0079] The configurable units interface with the memory through multiple memory interfaces (150, FIG. 1). Each of the memory interfaces can be accessed using several AGCUs. Each AGCU contains a reconfigurable scalar datapath to generate requests for the off-chip memory. Each AGCU contains buffers for organizing data to buffer outgoing commands, data, and incoming responses from the off-chip memory.

[0080] The address generators AGs in the AGCUs can generate memory commands that are either dense or sparse. Dense requests can be used to bulk transfer contiguous off-chip memory regions, and can be used to read or write chunks of data from/to configurable units in the array of configurable units. Dense requests can be converted to multiple off-chip memory burst requests by the coalescing unit (CU) in the AGCUs. Sparse requests can enqueue a stream of addresses into the coalescing unit. The coalescing unit uses a coalescing cache to maintain metadata on issued off-chip memory requests and combines sparse addresses that belong to the same off-chip memory request to minimize the number of issued off-chip memory requests.
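The dense and sparse request handling can be sketched as follows. This Python fragment is illustrative only: the 64-byte burst size, the function names, and the use of a dictionary in place of the coalescing cache are all assumptions that the specification does not state.

```python
BURST_BYTES = 64  # assumed off-chip burst size, not specified in the text


def dense_to_bursts(start, length, burst_bytes=BURST_BYTES):
    """Split a contiguous region into the burst-aligned requests that cover it."""
    if length <= 0:
        return []
    first = start - (start % burst_bytes)
    return list(range(first, start + length, burst_bytes))


def coalesce_sparse(addresses, burst_bytes=BURST_BYTES):
    """Group sparse byte addresses by the burst that contains them.

    Each key is one off-chip request; the value lists the addresses it serves.
    """
    bursts = {}
    for addr in addresses:
        base = addr - (addr % burst_bytes)
        bursts.setdefault(base, []).append(addr)
    return bursts


print(dense_to_bursts(32, 100))            # bursts covering bytes 32..131
print(coalesce_sparse([0, 8, 70, 64, 130]))  # five addresses, three requests
```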

[0081] As mentioned above, each of the AGCUs in the illustrated embodiment includes a memory access controller MAC 301, 302, 303 and 304. Each of the memory access controllers can be dedicated to all of the configurable units in the tile. Alternatively, different graphs set up by a configuration file may reside on different partitioned sets of configurable units in the tile, and each of the partitioned sets can be allocated by the configuration file to one of the memory access controllers connected to a switch inside the partitioned set.

[0082] FIG. 4 illustrates a system including a CGRA device 400 coupled to an external host 401 and external memory 402. There can be a plurality of interfaces 405 coupling the device 400 to the host 401, including for example PCIE type interfaces or other types of interfaces suitable to a particular implementation. One or more of the interfaces 405 can be network connections, including Internet-based network connections. The host 401 can comprise any system, including cloud-based systems capable of executing a runtime program in coordination with the device 400.

[0083] There can be a plurality of interfaces 406 coupling the device 400 to memory 402, including for example high-speed double data rate interfaces suitable for connection to high density DRAM memory, or other types of interfaces suitable for a particular implementation.

[0084] The CGRA device 400 includes an array 410 of configurable units that is coupled to an array level network 411. In this illustration, the array is partitioned into a plurality of sets of configurable units VM1, VM2, . . . VMn. The array of configurable units can support different "shapes" of virtual machines. For example, in some embodiments, there can be a minimum-sized set of configurable units and other CGRA resources (atomic group) that can support a virtual machine whose resource needs match the resources of the atomic group. Larger VMs can be composed of aggregate sets of atomic groups to compose different VM shapes. In one example, the atomic group is a tile as illustrated in FIG. 3, and VMs can span several tiles. In other embodiments, the atomic group can be a fraction of a tile (a subset of rows and columns), with logic to isolate VM communications, memory, and load/unloading of VMs composed of different configurations of atomic groups from each other.

[0085] The array level network 411 is also coupled to one or more address generation and coalescing units AGCUs 420. The AGCUs 420 are coupled to a top level network 412, which in turn is coupled to the interfaces 405, 406 for communication with external resources including the external host 401 and the external memory 402.

[0086] The AGCUs 420 include logic that acts as a gateway between the array level network 411 and the top level network 412.

[0087] The gateway function of the AGCUs includes a memory access controller that utilizes, in this example, a mapping table 422 that maps virtual machine memory space, and that is used to confine communications of the sets of configurable units in the array 410 to the regions in memory allocated to the virtual machines that are implemented by the corresponding sets of configurable units.

[0088] In one embodiment, any memory accesses through the AGCU are filtered by the mapping table 422. If a memory access is attempted from a particular set of configurable units outside the memory space allocated to that particular set of configurable units, then it is blocked. In some embodiments, in addition to blocking the access, an exception message can be sent to the host indicating a memory access violation by the particular application graph running in that set of configurable units.

[0089] The AGCUs in this example also include a configuration controller 421, used to load and unload graphs from corresponding sets of configurable units in the array, in support of the virtual machines.

[0090] The mapping table 422 can be configured as part of a configuration file for the corresponding AGCU 420.

[0091] FIG. 5 is a flowchart illustrating logic executed by a memory access controller in an AGCU, such as can be used in the systems described above. In this flow, the AGCU during execution of an application graph in a set of configurable units that includes the AGCU can monitor communications on the array level network for a memory access (510). When a memory access is received, the virtual address (i.e. the address used by the application graph executing in the particular virtual machine) in the request is parsed (511). The source of the request is matched to a particular virtual machine (512). The virtual address is translated to a physical address (i.e. an address to memory outside of the array of configurable units) using the mapping table 422. This can be done by looking up a memory map MM using the identifier VMID of the virtual machine allocated to the particular set of configurable units (513). The memory access controller determines whether the access maps to a physical address that is out of bounds of the memory space allocated to the virtual machine (515). If the access is not out of bounds, then a memory access request is issued on the top level network using the physical address (516). If the access is out of bounds, then an exception is issued on the top level network, and the request is dropped (517).
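The flow of FIG. 5 can be sketched in Python as shown below. The sketch assumes that each virtual machine is allocated a single contiguous physical range and that the virtual address is an offset into that range; the names `MemoryMap`, `AccessViolation`, `handle_access` and `vmid_of_source` are hypothetical. The numbers in the comments refer to the flowchart steps.

```python
from dataclasses import dataclass


class AccessViolation(Exception):
    """Raised when an access falls outside the memory space of its virtual machine."""


@dataclass(frozen=True)
class MemoryMap:
    base: int  # first physical address allocated to the virtual machine
    size: int  # number of bytes allocated


def handle_access(mapping_table, vmid_of_source, source, vaddr, length=1):
    """Translate one request, or raise AccessViolation if it is out of bounds."""
    vmid = vmid_of_source[source]   # (512) match the source to a virtual machine
    mm = mapping_table[vmid]        # (513) look up the memory map by VMID
    paddr = mm.base + vaddr         # virtual to physical translation
    in_bounds = vaddr >= 0 and length > 0 and paddr + length <= mm.base + mm.size
    if not in_bounds:               # (515) bounds check
        # (517) exception raised, request dropped
        raise AccessViolation(f"VM {vmid}: access at {vaddr:#x} out of bounds")
    return paddr                    # (516) issue on the top level network


table = {1: MemoryMap(base=0x1000, size=0x100), 2: MemoryMap(base=0x2000, size=0x100)}
sources = {"unit_a": 1, "unit_b": 2}
print(hex(handle_access(table, sources, "unit_a", 0x10)))
```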

[0092] This flowchart describes the logic for a request from inside a particular set of configurable units in which a particular virtual machine is executing. A similar process can be executed for data transfers from the top level network destined to the particular set of configurable units.

[0093] Sets of configurable units in the plurality of sets of configurable units are configurable to execute application graphs using virtual addresses, and the memory access controller includes or has access to a configurable table to translate virtual addresses in requests originating from an application graph executing within the particular set, to addresses in the memory space allocated to the particular set. In some examples, the virtual addresses are generated in an AGCU in response to communications from other units in the array. These virtual addresses are converted to physical addresses in the memory access controller.

[0094] In one embodiment, the memory access controller is configurable to allocate each application graph to numerous memory regions. The mapping can be implemented according to a maximum number of regions to be included in the allocated memory space using a base/bounds approach. Assuming for example that memory space allocated to each application graph can include a maximum of 16 memory regions, the compiler can assign a region ID statically using bits 63:60 of address registers in the AGCU.

[0095] In one approach a compiler assigns a base memory address of 0 and a size to each region. It also assigns an indicator to validate the use of each assigned region.

[0096] The assigned regions are contained within the configuration file generated by the compiler. This could take the form of an X-entry region table where X is an integer, and each table entry is a structure with the following fields:

[0097] Valid--the table entry is assigned;

[0098] Size--the N-bit size of the region; the region may be a non-power-of-two in size; and

[0099] A list of associations for each region that binds the AGCU memory address generators (counter/ALU outputs) to a given region.

[0100] At bitfile load time, entries from the region table are used to program the valid, base, and bounds registers in each AGCU linked to the set of configurable units used to implement the application graph. Depending on the region binding mechanism, each AGCU address generator can also be bound to a particular region. Base addresses can be assigned by the runtime in some examples.

[0101] When the AGCU generates a memory address and length in response to access request originating from configurable units within its set of configurable units, the following actions are performed, prior to sending a transaction with the address on the top level network.

[0102] The Z-bit address in this X region example can include 2 parts. A number of upper most bits (Z-1:W) identify a region access register. The lower bits (W-1:0) identify the relative virtual offset in the region.

[0103] The AGCU looks up the base/bounds of the physical region using bits (Z-1:W) of the virtual address. The offset in bits is added to the base register for that region to produce the actual DDR physical address. The base value is incremented by the length, and the resulting extent address is checked against the limit register. If the extent address is less than or equal to the limit, the address and request are passed to the DDR via the top level network for processing.

[0104] If the extent address exceeds the limit, a run-time exception is generated, the top level network request is suppressed, and the AGCU stops processing further address requests from that address generator.
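The base/bounds check can be sketched in Python for the 16-region example, where the region ID occupies bits 63:60 (Z = 64, W = 60). The sketch reads the extent address as the translated physical address plus the request length, which is one interpretation of the text, and it treats an access to an unassigned region as a fault, which is an assumption. The names `Region`, `RegionFault` and `translate_region` are hypothetical.

```python
from dataclasses import dataclass

REGION_SHIFT = 60                      # W: the region ID sits in bits 63:60
OFFSET_MASK = (1 << REGION_SHIFT) - 1  # bits W-1:0 hold the virtual offset


class RegionFault(Exception):
    """Run-time exception for an invalid region or an out-of-bounds extent."""


@dataclass(frozen=True)
class Region:
    valid: bool = False
    base: int = 0
    limit: int = 0  # highest permitted extent address (assumed base + size)


def translate_region(regions, vaddr, length):
    """Return the physical address for a request, or raise RegionFault."""
    region = regions[(vaddr >> REGION_SHIFT) & 0xF]
    if not region.valid:
        raise RegionFault("region not assigned")
    paddr = region.base + (vaddr & OFFSET_MASK)
    extent = paddr + length
    if extent > region.limit:
        raise RegionFault("extent address exceeds limit")
    return paddr


regions = [Region() for _ in range(16)]
regions[2] = Region(valid=True, base=0x8000, limit=0x8000 + 0x1000)
print(hex(translate_region(regions, (2 << 60) | 0x10, 0x20)))
```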

[0105] In an alternative embodiment, the memory access controller can implement a virtual address (VA) to physical address (PA) mapping using virtual to physical translation using other approaches. For example, in a VA-PA Buffer translation approach, the runtime software maps compiler generated address regions to available memory space in physical memory through the process of VA-PA translation. This allows runtime to partition a large virtual address space into multiple physical address spaces which could be spread across multiple arrays of configurable units. The physical address space can be partitioned into segments, having in some cases a minimum size (e.g., 1 MB) and a maximum size (e.g., 1 TB).

[0106] In order to efficiently translate the virtual address to a physical address, each memory access controller can implement a 16 entry VA-PA Buffer which holds the mapping information for 16 virtual segments. The VA-PA Buffer in this example is fully associative and is loaded from the bit-file (configuration file) during configuration load. Each entry of the VA-PA Buffer can also be written through register writes. Each VA-PA Buffer entry can consist of 2 sub-fields: VSID_PA and V_SSIZE. Each of these sub-fields is described in the tables below:

VSID_PA:

TABLE-US-00001 [0107]

| Bits | Field | Description |
|---|---|---|
| N-1:0 | Physical Address | Translated physical address specifying the base physical address of the continuous virtual segment, whose bits are significant down to the segment size boundary. |
| M:N | VSID | Virtual Segment ID. Corresponds to the upper bits of the virtual address. |

V_SSIZE:

TABLE-US-00002 [0108]

| Bits | Field | Description |
|---|---|---|
| P-1:0 | SSIZE | Specifies the size of the segment. The size of the segment is a function of SSIZE. |
| P | Valid | Specifies a valid entry. |

[0109] The AGCU populates its VA-PA Buffer from the VA-PA Buffer structure in the bit-file in the configuration load process.

[0110] During execution of the application graph in the virtual machine, a virtual address is generated based on the application graph in the set of configurable units linked to the AGCU.

[0111] The memory access controller translates the virtual address by searching each VA-PA Buffer entry for a matching virtual segment.

[0112] Translation errors can be detected by the memory access controller, including for example:

[0113] 1. Translation not present: The VA of the request does not match the VSID bits of any of the VA-PA Buffer entries.

[0114] 2. Multiple matches: The VA of the request matches the VSID bits of multiple entries.

[0115] 3. SSIZE greater than a maximum: The SSIZE of the matching entries is greater than a set maximum.

[0116] These errors can be recorded in a status register along with the VA which caused the error. Also, on a translation error, the memory access controller can send an interrupt or other type of notification to the Host, drop the request which caused the error, and stop processing any new requests.
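The VA-PA Buffer lookup and its three error cases can be sketched in Python. The specification says only that the segment size is a function of SSIZE, so the encoding used here (segment size equals 1 MB shifted left by SSIZE, with a maximum SSIZE of 20 giving 1 TB) is an assumption chosen to match the example minimum and maximum sizes. The names `Entry`, `TranslationError` and `translate_segment` are hypothetical.

```python
from dataclasses import dataclass

MIN_SEGMENT_SHIFT = 20  # 1 MB, the example minimum segment size
MAX_SSIZE = 20          # assumed encoding: 1 MB << 20 = 1 TB, the example maximum


class TranslationError(Exception):
    """Translation error; the reason is carried in the exception message."""


@dataclass(frozen=True)
class Entry:
    valid: bool
    vsid: int   # upper bits of the virtual address, above the segment offset
    ssize: int  # assumed encoding: segment size = 1 MB << ssize
    pbase: int  # base physical address, aligned to the segment size


def translate_segment(buffer, vaddr):
    """Search every entry (fully associative) and translate vaddr."""
    matches = [e for e in buffer
               if e.valid and (vaddr >> (MIN_SEGMENT_SHIFT + e.ssize)) == e.vsid]
    if not matches:
        raise TranslationError("translation not present")
    if len(matches) > 1:
        raise TranslationError("multiple matches")
    entry = matches[0]
    if entry.ssize > MAX_SSIZE:
        raise TranslationError("SSIZE greater than maximum")
    offset = vaddr & ((1 << (MIN_SEGMENT_SHIFT + entry.ssize)) - 1)
    return entry.pbase + offset


buffer = [Entry(valid=True, vsid=3, ssize=0, pbase=0x4000_0000)]
print(hex(translate_segment(buffer, (3 << 20) | 0x123)))
```

A hardware implementation would also record the faulting VA in a status register and stop processing new requests, which this sketch leaves to the caller.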

[0117] FIG. 6 illustrates an array of configurable units such as those described in reference to FIG. 3, including PCUs, PMUs and switch units. The configuration load logic and memory access controller logic implemented in other addressable nodes (such as in AGCUs) in the array are not shown. The array level network comprises the grid of switches, which are configurable to connect to other configurable units in the region.

[0118] A configuration file can be used to configure the switches to block communications out of selected ports on the switches, in order to partition the array into partitioned sets of configurable units. In the example shown in FIG. 6, the array is configured to include a first set of configurable units labeled VM1 and a second set of configurable units labeled VM2. The switches on the boundaries of the sets of configurable units are configured to block communications from units inside the corresponding set to units outside. For example, the switch 600 can be configured to block the Southwest port, the West port, the Northwest port, the North Port, and the Northeast port, while enabling the East port, the Southeast port, and the South port. The switch 601 can be configured to block the Northwest port, the North port and the Northeast port, while enabling the other ports on the switch. The switch 602 can be configured to block only the Southeast port, while enabling the other ports on the switch. In this manner, the partition of the array of configurable units can be composed to form isolated sets of configurable units. In the embodiments described herein, each of the isolated sets is coupled to a memory access controller and a configuration controller as described above.
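The port settings given for switches 600, 601 and 602 can be expressed as values of a switch port disable register. The Python sketch below assumes one bit per port, with a set bit meaning the port is blocked; the bit positions and the names `Port`, `spdr_value` and `enabled_ports` are hypothetical, since the specification does not define the register layout.

```python
from enum import IntFlag


class Port(IntFlag):
    # Bit positions are an assumption of this sketch.
    N = 0x01
    NE = 0x02
    E = 0x04
    SE = 0x08
    S = 0x10
    SW = 0x20
    W = 0x40
    NW = 0x80


def spdr_value(blocked_ports):
    """Combine the blocked ports into one register value (set bit = blocked)."""
    value = 0
    for port in blocked_ports:
        value |= int(port)
    return value


def enabled_ports(spdr):
    """List the ports that remain enabled for a given register value."""
    return [p for p in Port if not spdr & int(p)]


switch_600 = spdr_value([Port.SW, Port.W, Port.NW, Port.N, Port.NE])
switch_601 = spdr_value([Port.NW, Port.N, Port.NE])
switch_602 = spdr_value([Port.SE])
print([p.name for p in enabled_ports(switch_600)])  # East, Southeast and South stay enabled
```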

[0119] More generally, an array of configurable units can include blocks of configurable units which for the purposes of partitioning comprise partitionable groups in the array. In some embodiments, a partitionable group may comprise more than one type of configurable unit, including as illustrated in FIG. 3, PMUs, PCUs, Switches and AGCUs. The bus system can be configured to provide for partitioning the array into sets of configurable units on boundaries of partitionable groups within the array of any size suitable for a given implementation.

[0120] FIG. 7 illustrates an array of configurable units such as those described in reference to FIG. 3, including PCUs, PMUs and switch units. In this example the array of configurable units includes a plurality of tiles of configurable units. The bus system comprises switches on boundaries between the tiles that include circuits to partition the bus system on tile boundaries. In this example, the sets of configurable units that can be allocated to particular virtual machines or graphs executing in the corresponding sets are partitioned on tile boundaries, and can include one or more tiles.

[0121] Thus, as illustrated a first virtual machine VM1 is isolated within a set of configurable units that includes at least parts of tiles T1 and T3. A second virtual machine VM2 is isolated within a set of configurable units that includes at least parts of tiles T2, T4, T5 and T6.

[0122] The configuration load logic and memory access controller logic implemented in other addressable nodes (such as in AGCUs) in the array, including at least one in each tile, are not shown.

[0123] The switches on the tile boundaries can be referred to as boundary switches which are configurable to block communications on selected ports using a configuration file as discussed above. Other switches in the grid may or may not have the ability to disable ports. For example, the switch 700 can be configured to block all ports that lead from the set of configurable units in tile T5 to the set of configurable units in tile T3. Likewise, the switch 701 can be configured to block ports that cross the boundary from tiles T5 to T3. In this illustrated embodiment, each tile includes a grid of switches arranged in rows and columns. A boundary switch is disposed at the end of each row. All switches in the top and bottom rows of each tile can be boundary switches. Boundary switches can be configured to route to each other as follows. Switches at the innermost chip column of each tile can join to their left/right neighbor, creating a bi-directional east/west link to their neighboring tile, thereby fusing into a single logical tile. Similarly, switches along the innermost chip rows in each tile can join to their north/south neighbor, fusing a north/south tile pair into a single tile. In some embodiments, only one row or column of boundary switches can be implemented on tile boundaries, rather than one row and one column in each tile as illustrated.

[0124] The sets of configurable units partitioned in an array of configurable units can have a variety of configurations. FIG. 8 illustrates an example configuration in a system in which the array 800 of configurable units includes a plurality of tiles T1 to T6, with boundary switches configurable to enable or block communication on tile boundaries. In this example, a first virtual machine VM1 is configured within tiles T1 and T3, and a second virtual machine VM2 is configured within tiles T2, T4, T5 and T6. The sets of configurable units configured as virtual machines VM1 and VM2 each include a memory access controller MAC and the configuration controller CC (804, 805) on an addressable node on the array level network accessible from within the set of configurable units.
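The relationship between a tile-to-virtual-machine assignment and the tile boundaries that must be blocked can be sketched as follows. This is a minimal illustration, not the described implementation: the 3-by-2 arrangement of tiles in `TILE_GRID` is an assumption made for the example (the figures are not reproduced here), and the names `TILE_GRID`, `VM_OF_TILE`, `adjacent_tile_pairs` and `boundaries_to_block` are hypothetical.

```python
# Hypothetical tile layout: 3 rows by 2 columns (an assumption for illustration).
TILE_GRID = [["T1", "T2"],
             ["T3", "T4"],
             ["T5", "T6"]]

# Tile-to-VM assignment taken from the FIG. 8 example in the text.
VM_OF_TILE = {"T1": "VM1", "T3": "VM1",
              "T2": "VM2", "T4": "VM2", "T5": "VM2", "T6": "VM2"}


def adjacent_tile_pairs(grid):
    """Yield each pair of tiles sharing an east/west or north/south boundary."""
    for r, row in enumerate(grid):
        for c, tile in enumerate(row):
            if c + 1 < len(row):          # east neighbor
                yield tile, row[c + 1]
            if r + 1 < len(grid):         # south neighbor
                yield tile, grid[r + 1][c]


def boundaries_to_block(grid, vm_of_tile):
    """Return the tile boundaries whose two sides belong to different VMs."""
    return sorted((a, b) for a, b in adjacent_tile_pairs(grid)
                  if vm_of_tile[a] != vm_of_tile[b])


blocked = boundaries_to_block(TILE_GRID, VM_OF_TILE)
print(blocked)
```

Under the assumed layout, boundary switches on the T1/T2, T3/T4 and T3/T5 boundaries would be configured to block traffic, while boundaries inside each virtual machine remain open.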

[0125] FIG. 9 illustrates an example configuration in a system in which the array of configurable units may not include tiles, or within a single tile of an array of configurable units, where switches on the array level network are configured to partition the array into a plurality of sets having more flexible boundaries. In this illustration, virtual machine VM1 is implemented in a set of configurable units in the illustrated region in the upper left, and virtual machine VM2 is implemented in a set of configurable units in the illustrated region in the lower part of the array 900. The sets of configurable units configured as virtual machines VM1 and VM2 each include a memory access controller MAC and the configuration controller CC (904, 905) on an addressable node on the array level network accessible from within the set of configurable units.

[0126] FIG. 10 illustrates a system including a plurality of CGRAs in which virtual machines can be configured across CGRA1 and CGRA2. The CGRAs can each be implemented on a single integrated circuit. The CGRAs can have an array of configurable units like that discussed above in connection with FIG. 3 and other figures, or any other type of array of configurable units that are partitionable into sets of configurable units with supporting virtualization logic.

[0127] The system in FIG. 10 includes a host 1000 which executes a runtime program. Also, a first array of configurable units 1010 is implemented on the CGRA1 and a second array of configurable units 1020 is implemented on the CGRA2. Each of the CGRAs is coupled to a corresponding memory 1110, 1120. A host system bus 1005 interconnects the host 1000 with the arrays of configurable units 1010, 1020 on the two CGRAs. High-speed memory interfaces 1015, 1025 couple the CGRAs to the corresponding memory 1110, 1120.

[0128] In some embodiments, the host 1000 can communicate with the memory 1110 and the memory 1120 via top-level networks in the CGRAs.

[0129] In FIG. 10, a first virtual machine VM1 is configured in a set of configurable units confined to the array 1010 on CGRA1. Also, a second virtual machine VM2 is configured in a set of configurable units that includes configurable units in the array 1010 on CGRA1, and configurable units on the array 1020 on CGRA2.

[0130] FIG. 11 is a flowchart illustrating the ability of a system supporting virtual machines as described herein to perform resource allocation routines to make optimal use of the arrays of configurable units in the system. In the simplified flowchart, an application executing on a host at runtime, or other monitoring service, can load multiple VMs and corresponding application graphs (AGs) in an array of configurable units of a reconfigurable processor (1140). The host application can send commands to initiate execution of loaded AGs so that multiple AGs are simultaneously executing within their respective VMs (1141). While the AGs are executing, the host application can monitor for a request to allocate resources to implement a virtual machine for a particular application graph (1142), such as when an executing AG needs to be updated with a new AG, or a higher priority AG needs to be initiated. The service then determines the needs of a new virtual machine which can implement the new AG (1143). Also, the service can survey resource utilization in the array of configurable units in the reconfigurable processor, and more generally in all available reconfigurable processors in the system (1144). The needs of the new AG and the utilization of resources by executing AGs are compared to determine whether there are available resources to be allocated in response to the request (1145). If resources are not available, then the service can select an application graph having lower priority, stop the selected AG (using a checkpoint if available) and unload the AG to free the resources (1154). In some embodiments, the service can wait until resources become available for other reasons. In systems implementing VMs for executing AGs, the other AGs can remain executing while the selected AG is stopped and unloaded. If the resources are available, then a VM can be provisioned using the newly available set of configurable units, and the new AG configuration file can be loaded and started (1155).
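The decision portion of this flow (steps 1143 to 1155) can be sketched as below. This is a simplified illustration under stated assumptions, not the described runtime: resources are counted in whole tiles, a larger `priority` value means higher priority, and the names `Graph` and `allocate` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Graph:
    name: str
    priority: int   # assumed convention: larger value means higher priority
    tiles: int      # assumed resource unit: whole tiles held or needed


def allocate(total_tiles, running, new_graph):
    """Decide how to place new_graph.

    Returns (graphs running afterwards, names of graphs stopped and unloaded),
    or None if resources cannot be freed and the caller should wait.
    """
    # Survey utilization (1144) and compare with the new graph's needs (1145).
    free = total_tiles - sum(g.tiles for g in running)
    victims = []
    # Select lower-priority graphs to stop and unload (1154), lowest first.
    for g in sorted(running, key=lambda g: g.priority):
        if free >= new_graph.tiles:
            break
        if g.priority < new_graph.priority:
            victims.append(g)
            free += g.tiles
    if free < new_graph.tiles:
        return None  # wait until resources become available for other reasons
    # Provision the VM and load the new graph (1155); other graphs keep running.
    survivors = [g for g in running if g not in victims]
    return survivors + [new_graph], [v.name for v in victims]


running = [Graph("A", priority=1, tiles=2), Graph("B", priority=5, tiles=4)]
result = allocate(6, running, Graph("C", priority=3, tiles=2))
print(result)
```

In this example the array is fully occupied, so the lower-priority graph A is unloaded to make room for C, while the higher-priority graph B continues executing.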

[0131] In general, virtualization enables allocation and reallocation of resources in a manner that can change during execution of an application graph. In order to fully checkpoint an application graph, application graphs can be compiled with quiescent points where the application graph has no outstanding memory or host transactions, and in which it can be stopped in a consistent fashion across tiles and chips. In one approach, the compiler can insert checkpoint operations at particular points in the graph execution. These may correspond to completion of a certain number of outermost loops or other execution-related events.

[0132] At a checkpoint, the application graph is stopped, and a configuration unload can be executed that includes state information of the configurable units usable to restart the paused application graph. Thus, the configuration unload process can include dumping scratchpad memory (e.g. PMU memory in the Plasticine example), pipeline and control register state, and switch and memory access controller or AGCU state.

[0133] FIG. 12 is a block diagram illustrating an example configurable switch usable in an array of configurable units as described herein. The configurable switch includes circuitry 1205 for arbitrating communications among vector, scalar and control buses in the array level network. In this embodiment, each switch includes circuitry 1205 having eight ports, including North, South, East and West ports configured for connection to adjacent switches in the grid of switches. Also, the eight ports include Northeast, Southeast, Southwest and Northwest ports configured for connection to other types of configurable units, such as PCUs, PMUs, and AGCUs (which can include memory access controllers and configuration controllers as described herein).

[0134] In order to partition the array into sets of configurable units, configurable switches include a switch port disable register SPDR[0:7]. In one implementation, the SPDR register contains 1 bit for each north/south/east/west port in each direction; bit assignment is as follows.

[0135] [0]: If set to `1`, disable outbound transactions on the North port. Any outgoing transactions will be silently dropped. Otherwise, outbound transactions on the North port are enabled.

[0136] [1]: If set to `1`, disable inbound transactions on the North port. Any inbound transactions will be silently dropped. Otherwise, inbound transactions on the North port are enabled.

[0137] [2]: If set to `1`, disable outbound transactions on the South port. Any outgoing transactions will be silently dropped. Otherwise, outbound transactions on the South port are enabled.

[0138] [3]: If set to `1`, disable inbound transactions on the South port. Any inbound transactions will be silently dropped. Otherwise, inbound transactions on the South port are enabled.

[0139] [4]: If set to `1`, disable outbound transactions on the East port. Any outgoing transactions will be silently dropped. Otherwise, outbound transactions on the East port are enabled.

[0140] [5]: If set to `1`, disable inbound transactions on the East port. Any inbound transactions will be silently dropped. Otherwise, inbound transactions on the East port are enabled.

[0141] [6]: If set to `1`, disable outbound transactions on the West port. Any outgoing transactions will be silently dropped. Otherwise, outbound transactions on the West port are enabled.

[0142] [7]: If set to `1`, disable inbound transactions on the West port. Any inbound transactions will be silently dropped. Otherwise, inbound transactions on the West port are enabled.
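The bit assignment above can be modeled as a set of flags. The following is a minimal sketch: mapping index [n] to the integer value `1 << n` is an assumption about bit ordering made for illustration, and the names `SPDR`, `block_port` and `forwards` are hypothetical.

```python
from enum import IntFlag


class SPDR(IntFlag):
    """Switch port disable register; index [n] is modeled as 1 << n (assumed)."""
    NORTH_OUT = 1 << 0
    NORTH_IN = 1 << 1
    SOUTH_OUT = 1 << 2
    SOUTH_IN = 1 << 3
    EAST_OUT = 1 << 4
    EAST_IN = 1 << 5
    WEST_OUT = 1 << 6
    WEST_IN = 1 << 7


def block_port(port):
    """Return the register value that disables both directions on one port."""
    return SPDR[f"{port}_OUT"] | SPDR[f"{port}_IN"]


def forwards(spdr, port, direction):
    """True if a transaction is forwarded; False if it is silently dropped."""
    return not (spdr & SPDR[f"{port}_{direction}"])


# A boundary switch that isolates itself from the tile to its north.
spdr = block_port("NORTH")
print(int(spdr), forwards(spdr, "NORTH", "IN"), forwards(spdr, "SOUTH", "OUT"))
```

Keeping separate inbound and outbound bits allows one-directional blocking; a switch that only needs symmetric isolation would set both bits for a port together, as `block_port` does.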

[0143] This design could be simplified by having a single bit per port direction, if individual inbound and outbound port controls are unnecessary. Also, a smaller number of predefined configurations might be indicated in a configuration register using even fewer bits.

[0144] Configurable switches in the grid include configuration data stores 1220 (e.g. serial chains that can include a port disable register) to store unit files comprising a plurality of chunks (or sub-files of other sizes) of configuration data particular to the corresponding switch. The configuration data store 1220 is connected to circuitry 1205 via line 1221. Also, the configurable switches in the grid each include a unit configuration load logic 1240 connected to the configuration data store 1220 via line 1222. The unit configuration load logic 1240 executes a unit configuration load process. The unit configuration load process includes receiving via the bus system (e.g. the vector inputs), chunks of a unit file particular to the configurable switch, and loading the received chunks into the configuration data store 1220 of the configurable switch. The unit configuration load process is further described with reference to FIG. 15.

[0145] The configuration data stores in the configurable switches in the grid in this example comprise serial chains of latches, where the latches store bits that control configuration of the resources in the switch. A serial chain in a configuration data store can include a first shift register chain for configuration data, such as port enable and disable parameters, and a second shift register chain for state information and counter values connected in series.
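A serial chain of this kind behaves like a shift register: bits are shifted in at one end to load, and shifted out at the other end to unload. The sketch below is a behavioral model only. The chain lengths, the placement of the configuration chain ahead of the state chain, and the name `SerialChain` are assumptions for illustration.

```python
class SerialChain:
    """Behavioral model of two shift register chains connected in series."""

    def __init__(self, config_len, state_len):
        self.config_len = config_len
        self.bits = [0] * (config_len + state_len)

    def shift_in(self, bit):
        """Shift one bit in at the head; return the bit pushed out of the tail."""
        out = self.bits[-1]
        self.bits = [bit] + self.bits[:-1]
        return out

    def shift(self, bits):
        """Shift a sequence of bits in; return the bits shifted out, in order."""
        return [self.shift_in(b) for b in bits]

    @property
    def config(self):
        return self.bits[:self.config_len]   # first chain: configuration data

    @property
    def state(self):
        return self.bits[self.config_len:]   # second chain: state and counters


chain = SerialChain(config_len=4, state_len=2)
chain.shift([1, 1, 0, 0, 1, 0])              # load: six bits fill the chain
print(chain.config, chain.state)
unloaded = chain.shift([0] * 6)              # unload: contents emerge at the tail
print(unloaded)
```

Because the two chains are connected in series, a single shift sequence can load or unload both configuration data and state, and the bits emerge in the same order in which they were shifted in.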

[0146] Each port in the configurable switch can interface with the scalar, vector and control buses using three corresponding sets of inputs and outputs. Also, the configurable switch can interface with the scalar, vector and control buses for communications directed to the switch, including for example for communications associated with configuration load and unload processes.

[0147] Although not shown, each of the ports can include FIFO buffers and other resources to support packet-based and/or route-based communication in the grid of switches.

[0148] In this embodiment, the configuration load and unload process uses a daisy-chained completion bus to indicate when a load/unload command to configure the configurable switches in the grid completes. As shown in the example of FIG. 12, a daisy-chained completion bus 1291 and a daisy-chained command bus 1292 are connected to daisy-chained logic 1293. The daisy-chained logic 1293 communicates with unit configuration load logic 1240. The daisy-chained logic 1293 can include load complete status logic as described below. The daisy-chained completion bus is further described below. Control inputs are received by control block 1270, and control outputs are provided by the control block 1270. Other topologies for the command and completion buses are possible but not described here.

[0149] FIG. 13 is a block diagram illustrating an example configurable unit 1300, such as a Pattern Compute Unit (PCU). Configurable units in the array of configurable units include configuration data stores 1320 (e.g. serial chains) to store unit files comprising a plurality of chunks (or sub-files of other sizes) of configuration data particular to the corresponding configurable units. Configurable units in the array of configurable units each include unit configuration load logic 1340 connected to the configuration data store 1320 via line 1322, to execute a unit configuration load process. The unit configuration load process includes receiving via the bus system (e.g. the vector inputs), chunks of a unit file particular to the configurable unit, and loading the received chunks into the configuration data store 1320 of the configurable unit. The unit configuration load process is further described in reference to FIG. 14.

[0150] The configuration data stores in configurable units in the plurality of configurable units in this example comprise serial chains of latches, where the latches store bits that control configuration of the resources in the configurable unit. A serial chain in a configuration data store can include a first shift register chain for configuration data and a second shift register chain for state information and counter values connected in series. A configuration store is further described in reference to FIG. 15.

[0151] A configurable unit can interface with the scalar, vector, and control buses using three corresponding sets of inputs and outputs (IO): scalar inputs/outputs, vector inputs/outputs, and control inputs/outputs. Scalar IOs can be used to communicate single words of data (e.g. 32 bits). Vector IOs can be used to communicate chunks of data (e.g. 128 bits), in cases such as receiving configuration data in a unit configuration load process, and transmitting and receiving data during operation after configuration across a long pipeline between multiple PCUs. Control IOs can be used to communicate control signals such as the start or end of execution of a configurable unit. Control inputs are received by control block 1370, and control outputs are provided by the control block 1370.
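As an illustration of how a unit file might be delivered over the vector inputs, the sketch below splits a byte string into 128-bit chunks. The 128-bit width comes from the example above; padding the final chunk with zero bytes, and the names `CHUNK_BYTES` and `split_into_chunks`, are assumptions made for this sketch.

```python
CHUNK_BYTES = 128 // 8  # one vector transfer, using the 128-bit example width


def split_into_chunks(unit_file):
    """Split a unit file into fixed-size chunks, zero-padding the last one.

    Zero padding is an assumption for illustration; the text does not say
    how a unit file that is not a multiple of the chunk size is handled.
    """
    n_chunks = -(-len(unit_file) // CHUNK_BYTES)       # ceiling division
    padded = unit_file.ljust(n_chunks * CHUNK_BYTES, b"\x00")
    return [padded[i:i + CHUNK_BYTES]
            for i in range(0, len(padded), CHUNK_BYTES)]


chunks = split_into_chunks(bytes(range(40)))
print(len(chunks), [len(c) for c in chunks])
```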

[0152] Each vector input is buffered using a vector FIFO in a vector FIFO block 1360 which can include one or more vector FIFOs. Each scalar input is buffered using a scalar FIFO 1350. Using input FIFOs decouples timing between data producers and consumers, and simplifies inter-configurable-unit control logic by making it robust to input delay mismatches.
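The decoupling effect of input FIFOs can be illustrated with a small cycle-by-cycle model. In this sketch, a consumer combines two inputs whose data arrives at different times; it fires only when both FIFOs hold data. The FIFO depth, the addition operation and the names `InputFifo` and `run` are assumptions for illustration.

```python
from collections import deque


class InputFifo:
    """Bounded FIFO; push reports False when full so a producer can stall."""

    def __init__(self, depth):
        self.depth = depth
        self.items = deque()

    def push(self, item):
        if len(self.items) >= self.depth:
            return False
        self.items.append(item)
        return True

    def pop(self):
        return self.items.popleft()


def run(arrivals_a, arrivals_b, depth=4):
    """arrivals_x[t] is the value arriving on input x at cycle t, or None."""
    fifo_a, fifo_b = InputFifo(depth), InputFifo(depth)
    results = []
    for a, b in zip(arrivals_a, arrivals_b):
        # This sketch ignores the push result; a real producer would stall.
        if a is not None:
            fifo_a.push(a)
        if b is not None:
            fifo_b.push(b)
        # The consumer fires only when both operands are available.
        if fifo_a.items and fifo_b.items:
            results.append(fifo_a.pop() + fifo_b.pop())
    return results


# Input A arrives two cycles ahead of input B; operands are still paired in order.
sums = run([1, 2, 3, None, None], [None, None, 10, 20, 30])
print(sums)
```

The early values on input A wait in the FIFO until their counterparts arrive on input B, so the consumer needs no knowledge of the relative delay between the two producers.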