Fully Automated SEM Sampling System For E-beam Image Enhancement

ZHOU; Wentian ; et al.

U.S. patent application number 16/718706 was filed with the patent office on 2020-07-02 for fully automated sem sampling system for e-beam image enhancement. The applicant listed for this patent is ASML Netherlands B.V.. Invention is credited to Wei FANG, Lingling PU, Teng WANG, Liangjiang YU, Wentian ZHOU.

| Application Number | 20200211178 16/718706 |

| Document ID | / |

| Family ID | 69061340 |

| Filed Date | 2020-07-02 |

| United States Patent Application | 20200211178 |

| Kind Code | A1 |

| ZHOU; Wentian ; et al. | July 2, 2020 |

FULLY AUTOMATED SEM SAMPLING SYSTEM FOR E-BEAM IMAGE ENHANCEMENT

Abstract

Disclosed herein is a method of automatically obtaining training images to train a machine learning model that improves image quality. The method may comprise analyzing a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations on a sample of the product to use in relation to training the machine learning model. The method may comprise obtaining a first image having a first quality for each of the plurality of training locations, and obtaining a second image having a second quality for each of the plurality of training locations, the second quality being higher than the first quality. The method may comprise using the first image and the second image to train the machine learning model.

| Inventors: | ZHOU; Wentian (San Jose, CA); YU; Liangjiang (San Jose, CA); WANG; Teng (San Jose, CA); PU; Lingling (San Jose, CA); FANG; Wei (Milpitas, CA) |

| Applicant: | ASML Netherlands B.V. |

| Family ID: | 69061340 |

| Appl. No.: | 16/718706 |

| Filed: | December 18, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62787031 | Dec 31, 2018 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06T 7/0006 20130101; G06T 2207/30148 20130101; G06T 2207/10061 20130101; G06T 2207/20081 20130101; G06K 9/6262 20130101; G06K 9/036 20130101; G06K 9/6256 20130101 |

| International Class: | G06T 7/00 20060101 G06T007/00; G06K 9/62 20060101 G06K009/62 |

Claims

1. An apparatus for automatically obtaining training images to train a machine learning model that improves image quality, the apparatus comprising: a memory; and at least one processor coupled to the memory and configured to: analyze a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations to use in relation to training the machine learning model; obtain a first image having a first quality for each of the plurality of training locations; obtain a second image having a second quality for each of the plurality of training locations, the second quality being higher than the first quality; and use the first image and the second image to train the machine learning model.

2. The apparatus of claim 1 wherein the data is in a database.

3. The apparatus of claim 2, wherein the database is any one of a graphic database system (GDS), an Open Artwork System Interchange Standard, or a Caltech Intermediate Form.

4. The apparatus of claim 3, where the GDS includes GDS formatted data or GDSII formatted data.

5. The apparatus of claim 1 wherein the at least one processor is further configured to obtain more than one first image having a first quality for each of the plurality of training locations.

6. The apparatus of claim 1, wherein the at least one processor is further configured to obtain more than one second image having a second quality for each of the plurality of training locations.

7. The apparatus of claim 1, wherein the at least one processor is further configured to classify the plurality of patterns into a plurality of subsets of patterns.

8. The apparatus of claim 1, wherein the at least one processor is further configured to extract a feature from the plurality of patterns.

9. The apparatus of claim 8, wherein the extracted feature includes a shape, a size, a density, or a neighborhood layout.

10. The apparatus of claim 7, wherein the at least one processor is further configured to classify the plurality of patterns into a plurality of subsets of patterns based on the extracted feature.

11. The apparatus of claim 7, wherein each subset of the plurality of subsets of patterns is associated with information relating to a location, a type, a shape, a size, a density or a neighborhood layout.

12. The apparatus of claim 1, wherein the at least one processor is further configured to identify the plurality of training locations based on a field of view, a local alignment point, or an auto-focus point.

13. The apparatus of claim 1, wherein the at least one processor is further configured to determine a first scanning path including a first scan for obtaining the first image, the first scanning path based on an overall scan area for the plurality of training locations.

14. The apparatus of claim 13, wherein the at least one processor is further configured to determine a second scanning path including a second scan for obtaining the second image, the second scanning path based on an overall scan area for the plurality of training locations.

15. A non-transitory computer readable medium storing a set of instructions that is executable by a controller of a device to cause the device to perform a method comprising: analyzing a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations to use in relation to training the machine learning model; obtaining a first image having a first quality for each of the plurality of training locations; obtaining a second image having a second quality for each of the plurality of training locations, the second quality being higher than the first quality; and using the first image and the second image to train the machine learning model.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application claims priority of U.S. application 62/787,031 which was filed on Dec. 31, 2018, and which is incorporated herein in its entirety by reference.

FIELD

[0002] The present disclosure relates generally to systems for image acquisition and image enhancement methods, and more particularly, to systems for and methods of improving metrology by automatically obtaining training images to train a machine learning model that improves image quality.

BACKGROUND

[0003] In manufacturing processes used to make integrated circuits (ICs), unfinished or finished circuit components are inspected to ensure that they are manufactured according to design and are free of defects. Inspection systems utilizing optical microscopes or charged particle (e.g., electron) beam microscopes, such as a scanning electron microscope (SEM), can be employed. As the physical sizes of IC components continue to shrink, accuracy and yield in defect detection become more and more important. However, imaging resolution and throughput of inspection tools struggle to keep pace with the ever-decreasing feature size of IC components. Further improvements in the art are desired.

SUMMARY

[0004] The following presents a simplified summary of one or more aspects in order to provide a basic understanding of such aspects. This summary is not an extensive overview of all contemplated aspects, and is intended to neither identify key or critical elements of all aspects nor delineate the scope of any or all aspects. Its sole purpose is to present some concepts of one or more aspects in a simplified form as a prelude to the more detailed description that is presented later.

[0005] In an aspect of the disclosure, there is provided a method of automatically obtaining training images to train a machine learning model. The method may comprise analyzing a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations on a sample of the product to use in relation to training the machine learning model. The method may comprise obtaining a first image having a first quality for each of the plurality of training locations, and obtaining a second image having a second quality for each of the plurality of training locations, the second quality being higher than the first quality. The method may comprise using the first image and the second image to train the machine learning model.

[0006] In another aspect of the disclosure, there is provided an apparatus for automatically obtaining training images to train a machine learning model. The apparatus may comprise a memory, and one or more processors coupled to the memory. The processor(s) may be configured to analyze a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations on a sample of the product to use in relation to training the machine learning model. The processor(s) may be further configured to obtain a first image having a first quality for each of the plurality of training locations, and obtain a second image having a second quality for each of the plurality of training locations, the second quality higher than the first quality. The processor(s) may be further configured to use the first image and the second image to train the machine learning model.

[0007] In another aspect of the disclosure, there is provided a non-transitory computer readable medium storing a set of instructions that is executable by a controller of a device to cause the device to perform a method comprising: analyzing a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations on a sample of the product to use in relation to training the machine learning model; obtaining a first image having a first quality for each of the plurality of training locations; obtaining a second image having a second quality for each of the plurality of training locations, the second quality higher than the first quality; and using the first image and the second image to train the machine learning model.

[0008] In another aspect of the disclosure, there is provided an electron beam inspection apparatus comprising a controller having circuitry to cause the electron beam inspection apparatus to perform: analyzing a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations on a sample of the product to use in relation to training the machine learning model; obtaining a first image having a first quality for each of the plurality of training locations; obtaining a second image having a second quality for each of the plurality of training locations, the second quality being higher than the first quality; and using the first image and the second image to train the machine learning model.

[0009] To accomplish the foregoing and related ends, aspects of embodiments comprise the features hereinafter described and particularly pointed out in the claims. The following description and the annexed drawings set forth in detail certain illustrative features of the one or more aspects. These features are indicative, however, of but a few of the various ways in which the principles of various aspects may be employed, and this description is intended to include all such aspects and their equivalents.

BRIEF DESCRIPTION OF FIGURES

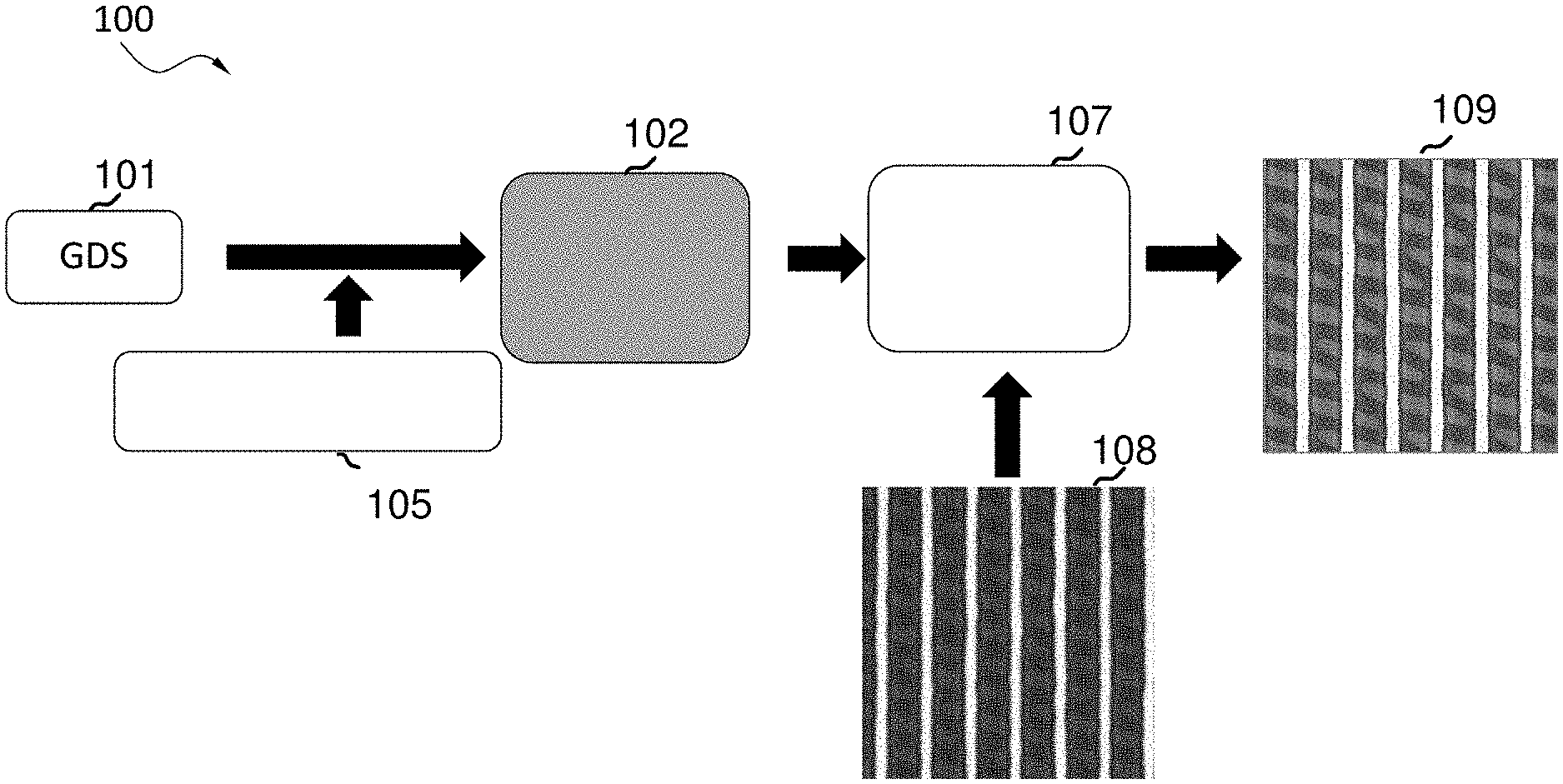

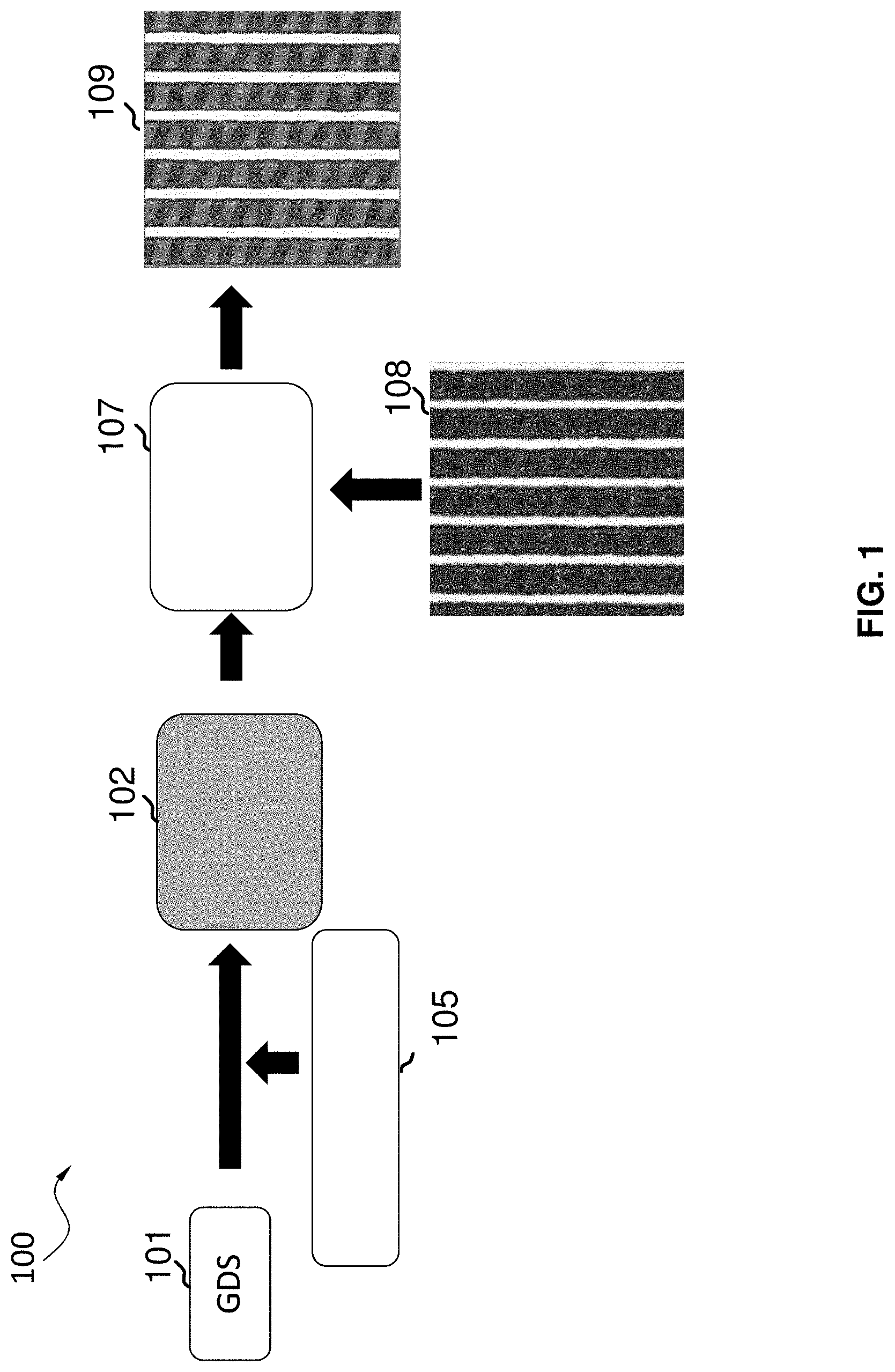

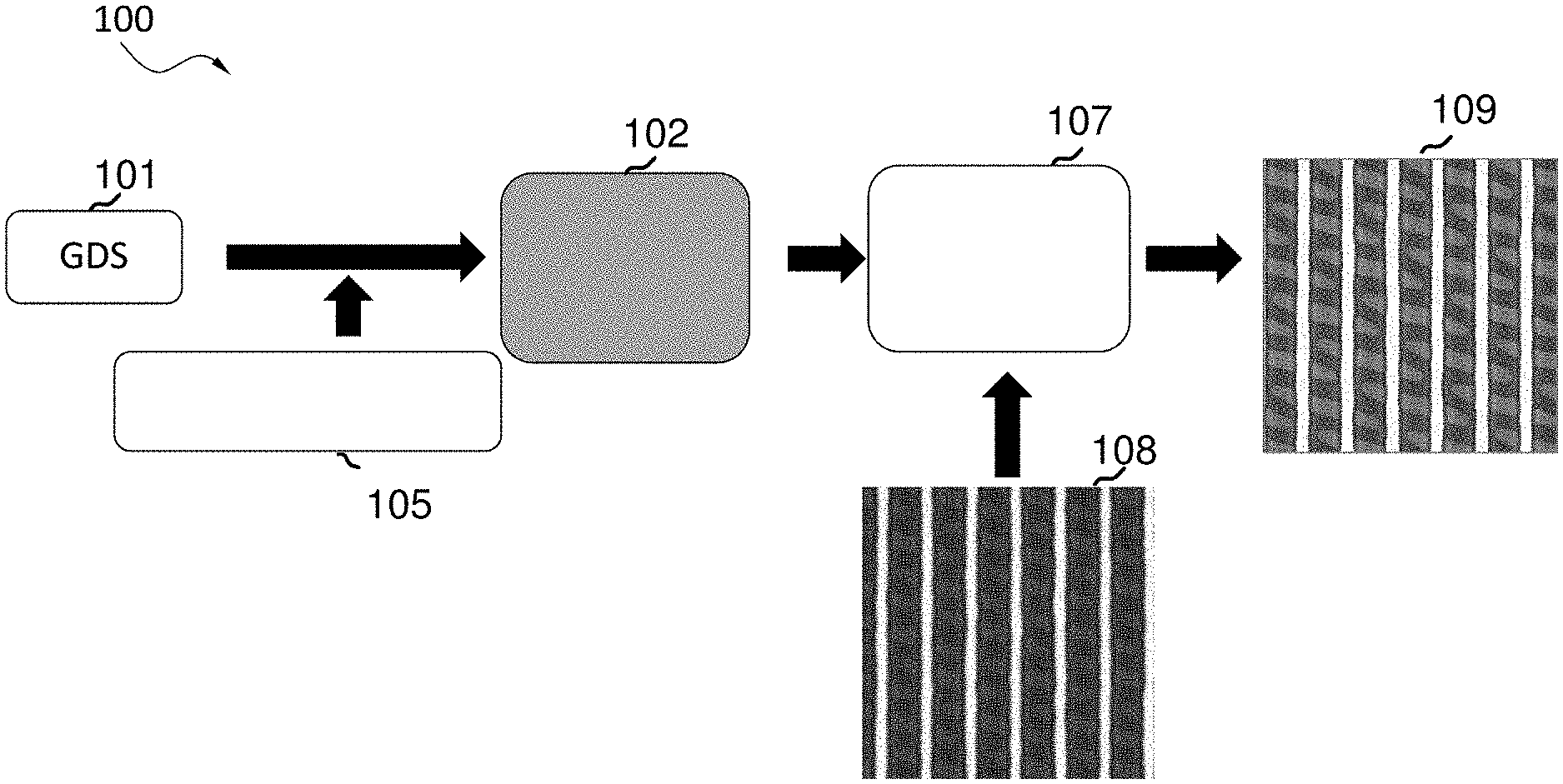

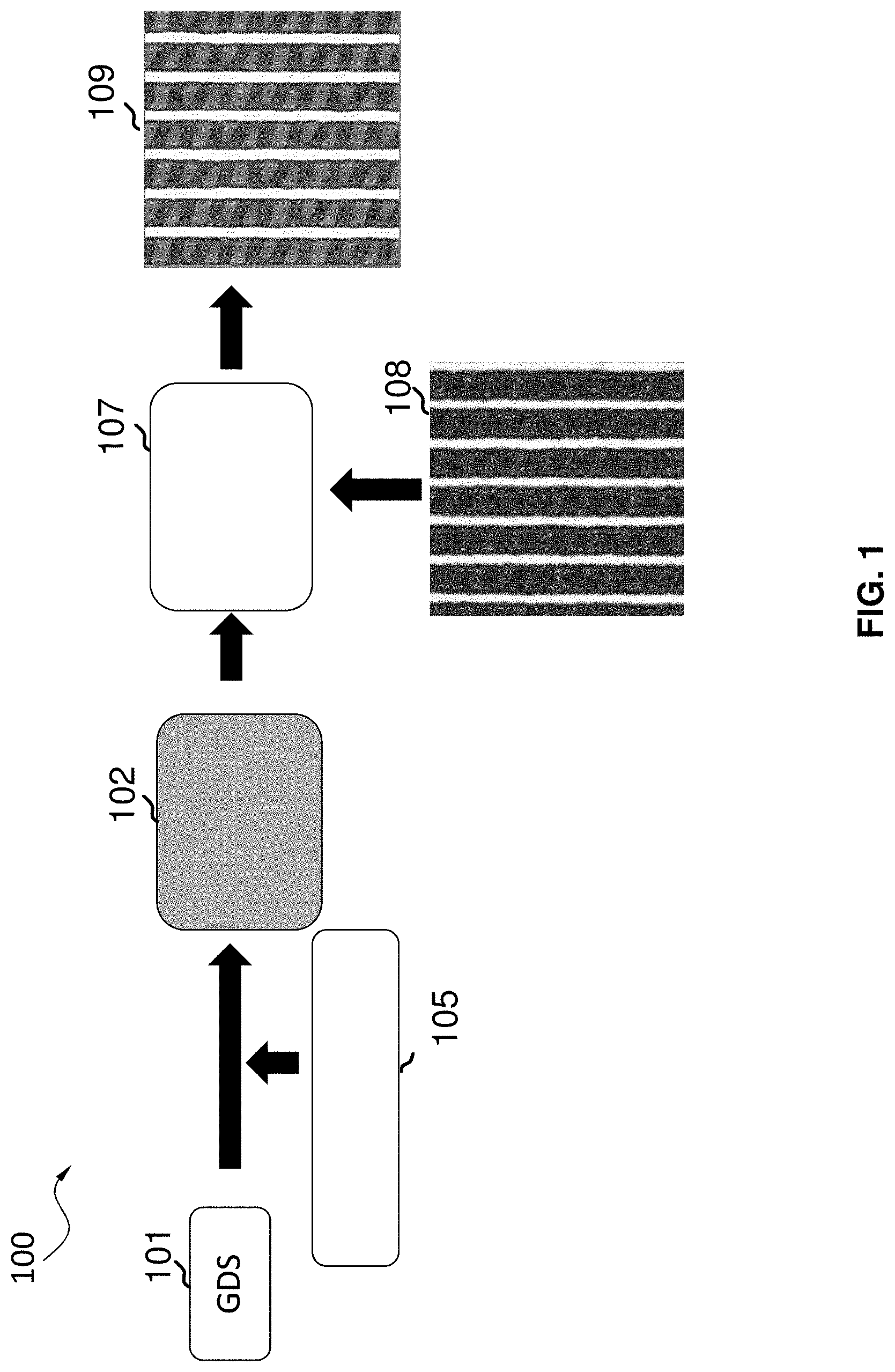

[0010] FIG. 1 is a flow diagram of a process for improving images obtained by a SEM sampling system.

[0011] FIG. 2 is a block diagram illustrating an example of an automatic SEM sampling system, according to some aspects of the present disclosure.

[0012] FIG. 3 is a schematic diagram illustrating an example of an electron beam inspection (EBI) system, according to some aspects of the present disclosure.

[0013] FIG. 4 is a schematic diagram illustrating an example of an electron beam tool that can be a part of the example electron beam inspection (EBI) system of FIG. 3, according to some aspects of the present disclosure.

[0014] FIGS. 5A-5C illustrate a plurality of design patterns of a graphic database system (GDS) of a product, according to some aspects of the present disclosure.

[0015] FIG. 5D is a diagram illustrating a plurality of training locations, according to some aspects of the present disclosure.

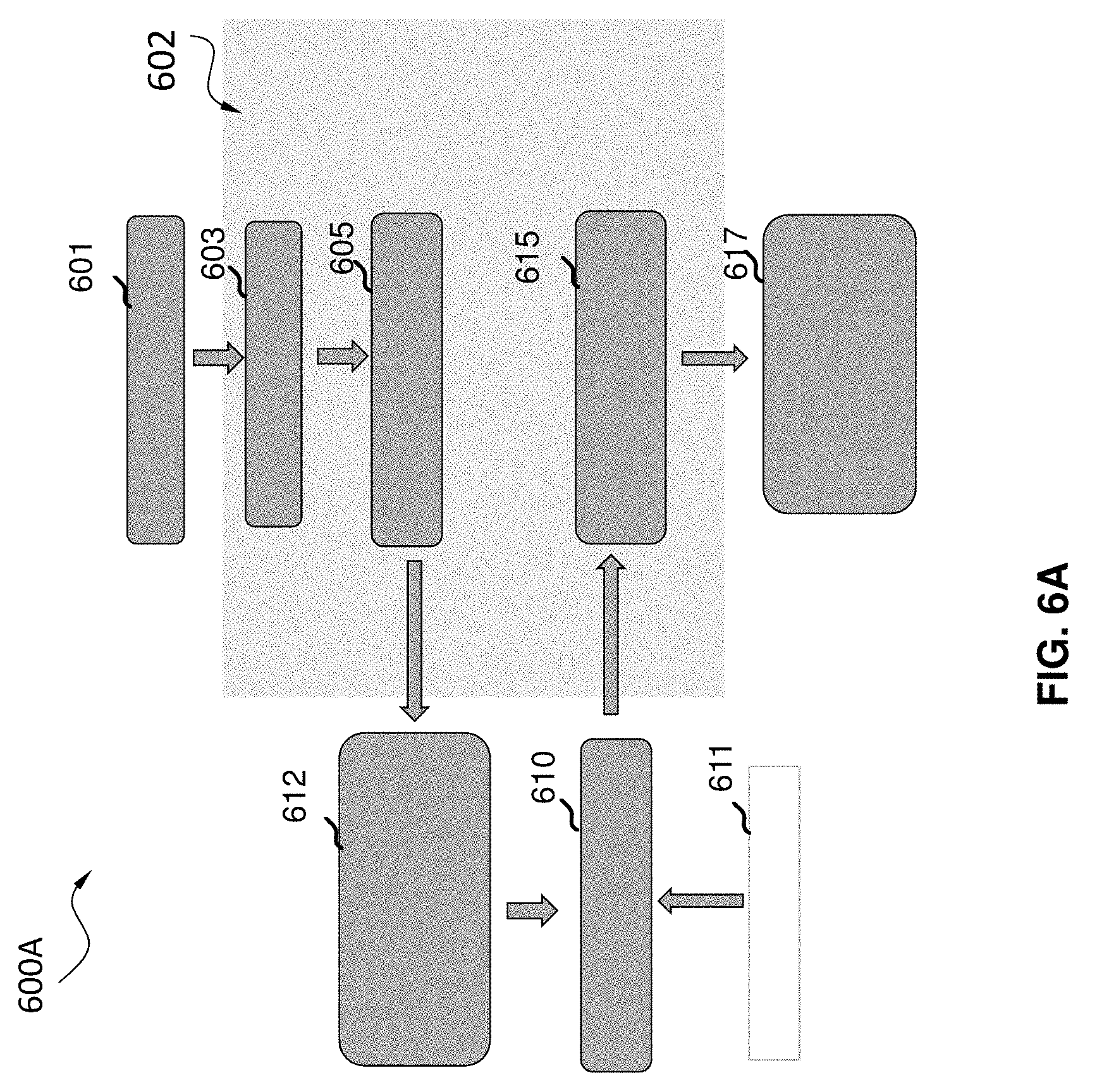

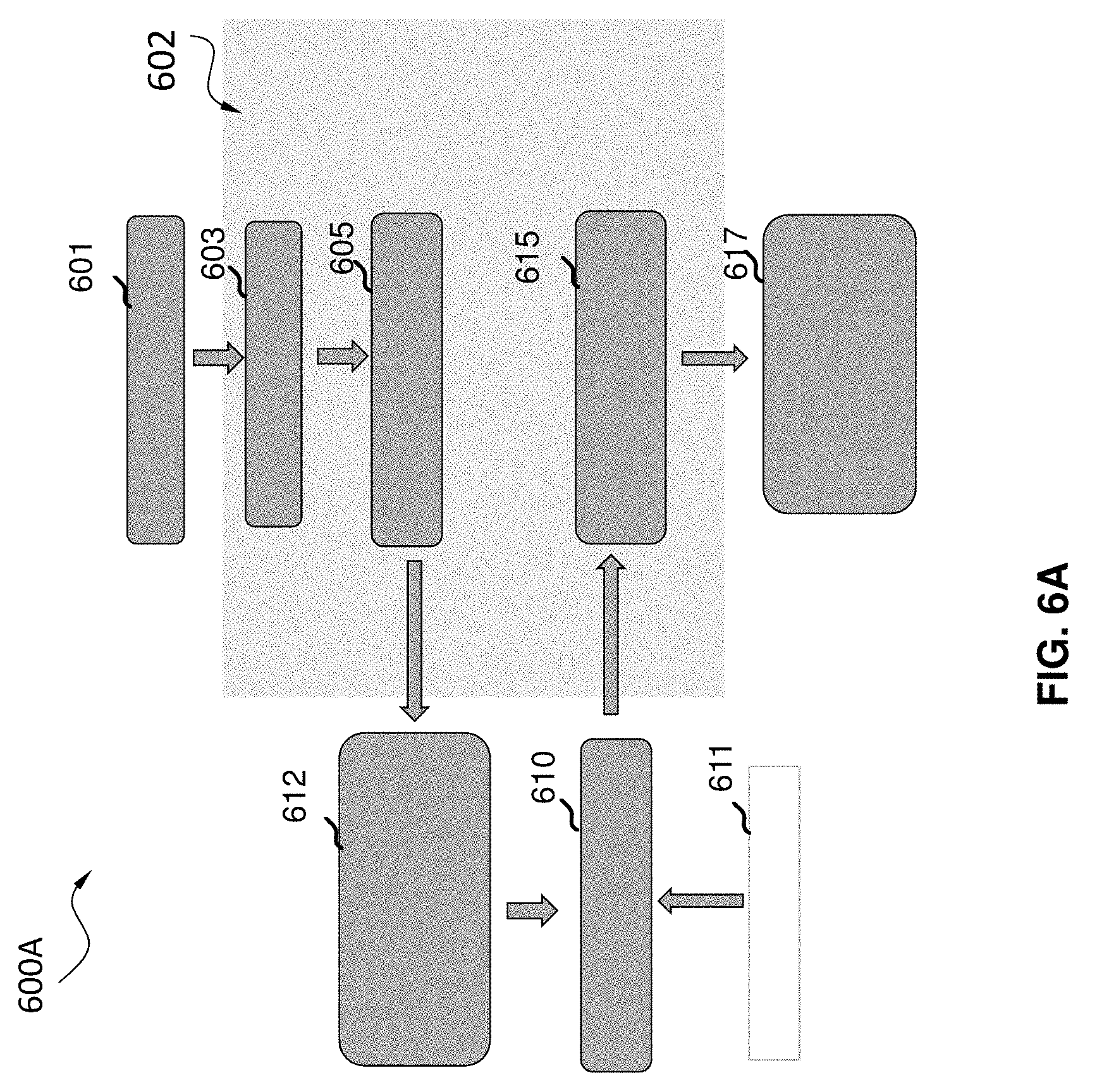

[0016] FIG. 6A is a flow diagram illustrating an example of a method of automatically obtaining training images to train a machine learning model, according to some aspects of the present disclosure.

[0017] FIG. 6B is a block diagram illustrating details of an automatic SEM sampling system, according to some aspects of the present disclosure.

[0018] FIG. 7 is a block diagram illustrating an example of a method of automatically obtaining training images to train a machine learning model that improves image quality, according to some aspects of the present disclosure.

DETAILED DESCRIPTION

[0019] Reference will now be made in detail to example aspects of embodiments, examples of which are illustrated in the accompanying drawings. The following description refers to the accompanying drawings in which the same numbers in different drawings represent the same or similar elements unless otherwise represented. The implementations set forth in the following description of example aspects of embodiments do not represent all implementations consistent with the invention. Instead, they are merely examples of apparatuses and methods consistent with aspects of embodiments related to the invention as recited in the claims. For example, although some aspects of embodiments are described in the context of utilizing an electron beam inspection (EBI) system such as a scanning electron microscope (SEM) for generation of a wafer image, the disclosure is not so limited. Other types of inspection systems and image generation systems may be similarly applied.

[0020] The enhanced computing power of electronic devices, while reducing the physical size of the devices, can be accomplished by significantly increasing the packing density of circuit components such as transistors, capacitors, diodes, etc. on an IC chip. For example, in a smart phone, an IC chip (which is the size of a thumbnail) may include over 2 billion transistors, the size of each transistor being less than 1/1000th of a human hair. Not surprisingly, semiconductor IC manufacturing is a complex process, with hundreds of individual steps. Errors in even one step have the potential to dramatically affect the functioning of the final product. Even one "killer defect" can cause device failure. The goal of the manufacturing process is to improve the overall yield of the process. For example, for a 50-step process to get 75% yield, each individual step must have a yield greater than 99.4%, and if the individual step yield is 95%, the overall process yield drops to about 7.7%.
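The yield figures above follow from compounding per-step yields. The following is a minimal sketch of that arithmetic, assuming every step has the same yield and that steps fail independently (an assumption made here for illustration; the function names are hypothetical).

```python
def overall_yield(step_yield: float, steps: int) -> float:
    """Overall yield when each of `steps` steps has the same independent yield."""
    return step_yield ** steps


def required_step_yield(target_overall: float, steps: int) -> float:
    """Per-step yield needed so that `steps` identical steps reach the target."""
    return target_overall ** (1.0 / steps)


# Per-step yield needed for a 75% overall yield across 50 steps.
print(f"{required_step_yield(0.75, 50):.4f}")
# Overall yield of a 50-step process when each step yields 95%.
print(f"{overall_yield(0.95, 50):.4f}")
```

Under these assumptions the required per-step yield comes out just above 99.4%, and a 95% per-step yield compounds to an overall yield below 8%.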

[0021] In various steps of the semiconductor manufacturing process, pattern defects can appear on at least one of a wafer, a chip, or a mask, which can cause a manufactured semiconductor device to fail, thereby reducing the yield to a great degree. As semiconductor device sizes continually become smaller and smaller (along with any defects), identifying defects becomes more challenging and costly. Currently, engineers in semiconductor manufacturing lines usually spend hours (and sometimes even days) identifying locations of small defects to minimize their impact on the final product.

[0022] Conventional optical inspection techniques are ineffective in inspecting for small defects (e.g., nanometer scale defects). Advanced electron-beam inspection (EBI) tools, such as an SEM with high resolution and large depth-of-focus, have been developed to meet the need in the semiconductor industry. E-beam images may be used in monitoring semiconductor manufacturing processes, especially for more advanced nodes where optical inspection falls short of providing enough information.

[0023] An E-beam image may be characterized according to one or more qualities, such as contrast, brightness, noise level, etc. In general, a low quality image often requires less parameter tuning and fewer scans, but the information embedded in low quality images (such as defect types and locations) is hard to extract, which may have a negative impact on the analyses. High quality images, which do not suffer from this problem, may be obtained by an increased number of scans. However, obtaining high quality images may result in a low throughput.

[0024] Further, an E-beam image acquisition procedure may go through many steps, such as identifying the patterns of interest, setting up scanning areas for inspection, tuning SEM conditions, determining quality enhancement methods, etc. Many of these settings and parameters are contributing factors to both the system throughput and the E-beam image quality. There may be a trade-off between the throughput and image quality.

[0025] In order to obtain high quality images and at the same time achieve a high throughput, an operator generally needs to set many parameters and make decisions as to how the images should be obtained. However, determining these parameters is often not straightforward. To minimize possible operator-to-operator variation, machine learning based enhancement methods can be trained to learn the enhancement framework/network. In such cases, the acquisition of sufficient and representative training samples is advantageous to increase the final performance of the trained system. However, common procedures to obtain SEM image samples require significant human intervention, including searching for the proper design patterns for scanning, determining a number of images to collect and various imaging conditions, etc. Such intensive human involvement impedes full utilization of the advanced machine learning based enhancement methods. Therefore, there is a need to develop a fully automated smart sampling system for E-beam image quality enhancement.

[0026] Disclosed herein, among other things, is equipment that automatically obtains training images to train a machine learning model that improves image quality, and methods used by the equipment. Electron-beam (E-beam) imaging plays an important role in inspecting very small defects (e.g., nanometer scale defects, with a nanometer being 0.000000001 meters) in semiconductor manufacturing processes. In general, it is possible to obtain many relatively low quality E-beam images very quickly, but the images may not provide enough useful information about issues such as defect types and locations. On the other hand, it is possible to obtain high quality images, but doing so takes more time and so decreases the speed at which devices can be analyzed. This increases manufacturing costs. Samples may be scanned multiple times to improve image quality, such as by reducing noise by averaging over multiple images. However, scanning each sample multiple times reduces the system throughput and may cause charge build up or may damage the sample.

[0027] Some of the systems and methods disclosed herein embody ways to achieve high quality images with a reduced number of scans of a sample, in some embodiments by automatically selecting sample locations to use to generate training images for a machine learning (ML) algorithm. The term "quality" refers to a resolution, a contrast, a sensitivity, a brightness, or a noise level, etc. Some of the systems and methods disclosed herein may obtain the benefit of higher quality images without excessively slowing down production. Some embodiments of the systems may automatically analyze a plurality of patterns of data relating to a layout of a product, in order to identify multiple training locations on a sample of the product to use in relation to training a machine learning model. The patterns of data may be SEM images of the product, or a layout design representation of the product (e.g., graphics database system (GDS), Open Artwork System Interchange Standard (OASIS), Caltech Intermediate Format (CIF), etc.). As an example, a GDS analyzer may be used to determine good locations on a sample to use for training the ML algorithm. These locations are scanned multiple times, resulting in a set of images, some of which incrementally improve with each scan, such as due to noise averaging or using a higher resolution setting on the SEM. These images (e.g., lower quality images and associated higher quality images) are used as training samples to train the ML algorithm. Other locations on the sample are scanned a reduced number of times, and the ML algorithm modifies the image to approximate how it would incrementally improve with additional scans or with a scan using a higher resolution setting.

[0028] The disclosure provides, among others, a method of automatically obtaining training images to train a machine learning model that improves image quality. The method may comprise analyzing a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations on a sample of the product to use in relation to training the machine learning model. For example, the method may comprise analyzing a plurality of patterns from a graphic database system (GDS) of a product, and identifying a plurality of training locations on a sample of the product to use in relation to training the machine learning model based on the analyzing.

[0029] As an example, the method may obtain one or more low quality images and one or more high quality images for each of the multiple training locations. The method may use the low quality image(s) and the high quality image(s) to train the machine learning model. For example, the machine learning model can learn how an image changes between a low quality image and a high quality image, and can be trained to generate an image that approximates a high quality image from a low quality image. After the training, the machine learning model may be used to automatically generate high quality images from low quality images for the product. In this way, the high quality images may be obtained quickly. Further, the method may minimize the amount of human supervision required, prevent inconsistency that would otherwise result from use by different operators, and avoid various human errors. Therefore, the method may increase inspection accuracy. Accordingly, the method may increase manufacturing efficiency and reduce the manufacturing cost.

[0030] As an example, the method may further comprise using the machine learning model to modify an image to approximate a result obtained with an increased number of scans. The term "approximate" refers to coming close to, or being similar to, a reference in quality. For example, a quality of an image produced using the machine learning model may be within 5%, 10%, 15%, or 20% of a quality of an image obtained with an increased number of scans.
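One way to read the "within 5%, 10%, 15%, or 20%" criterion is as a relative difference between two scalar quality scores. The sketch below assumes such a scalar score exists (for example, a signal-to-noise figure); the disclosure does not specify the metric, and the function name and default tolerance are hypothetical.

```python
def approximates(model_quality: float, reference_quality: float,
                 tolerance: float = 0.10) -> bool:
    """Return True if the model-enhanced quality score is within a relative
    tolerance of the score of an image obtained with more scans.

    The scalar quality score is an assumption made for illustration.
    """
    if reference_quality == 0:
        raise ValueError("reference quality must be non-zero")
    relative_gap = abs(model_quality - reference_quality) / abs(reference_quality)
    return relative_gap <= tolerance


print(approximates(28.0, 30.0, tolerance=0.10))  # gap of about 6.7%
print(approximates(20.0, 30.0, tolerance=0.20))  # gap of about 33%
```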

[0031] Some of the methods disclosed herein are advantageous for generating high quality images with high throughput. Further, some of the methods may require minimal human intervention, reducing or eliminating inconsistency from different operators and various human errors, and thereby increasing the accuracy of the inspection. In this way, manufacturing efficiency may be increased and the manufacturing cost may be reduced.

[0032] Some disclosed embodiments provide a fully automated smart E-beam image sampling system for E-beam image enhancement, which comprises a GDS pattern analyzer and a smart inspection sampling planner for collecting sample images to feed into a machine learning quality enhancement system to generate non-parameterized quality enhancement modules. The automated smart E-beam image sampling system can be used to enhance lower quality images collected in a higher throughput mode. The automated smart E-beam image sampling system advantageously requires minimal human intervention and generates high quality images with high throughput for inspection and metrology analysis. Advantageously, the automated smart E-beam image sampling system can increase manufacturing efficiency and reduce manufacturing cost.

[0033] FIG. 1 is a flow diagram 100 of a process for improving images obtained by SEM sampling system 102, according to some aspects of the disclosure. The automated smart SEM sampling system 102 may, for example, be EBI system 300 of FIG. 3. The SEM sampling system 102 may be configured to obtain training images to train a machine learning model that improves image quality. The automated smart SEM sampling system 102 may be configured to analyze a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations on a sample of the product to use in relation to training the machine learning model. For example, the data may be in a database. For example, the database may be any one of a GDS, an Open Artwork System Interchange Standard (OASIS), or a Caltech Intermediate Format (CIF). For example, the GDS may include GDS formatted data or GDSII formatted data. Further, the automated smart SEM sampling system 102 may comprise a GDS pattern analyzer that is configured to analyze a plurality of patterns from a graphic database system (GDS) 101 of a product. The automated smart SEM sampling system 102 may additionally comprise a smart inspection sampling planner that is configured to identify a plurality of training locations on a sample of the product to use in relation to training the machine learning model based on the analyzing.
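As an illustration of how a pattern analyzer and a sampling planner could cooperate, the sketch below groups layout patterns into subsets by extracted features (shape, size, density, as named in the claims) and then picks a few locations from each subset. The `Pattern` record, the bin widths, and the "first N per subset" rule are all assumptions for illustration and are not taken from the disclosure.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Pattern:
    """Hypothetical record for one layout pattern read from a design database."""
    x_um: float
    y_um: float
    shape: str
    size_nm: float
    density: float  # local pattern density as a fraction between 0 and 1


FeatureKey = Tuple[str, int, int]


def feature_key(p: Pattern, size_bin_nm: float = 10.0,
                density_bin: float = 0.25) -> FeatureKey:
    """Bucket a pattern by shape, binned size, and binned density."""
    return (p.shape, int(p.size_nm // size_bin_nm), int(p.density // density_bin))


def select_training_locations(patterns: List[Pattern],
                              per_subset: int = 2) -> List[Tuple[float, float]]:
    """Classify patterns into subsets, then take up to `per_subset` locations
    from each subset so every pattern type is represented."""
    subsets: Dict[FeatureKey, List[Pattern]] = defaultdict(list)
    for p in patterns:
        subsets[feature_key(p)].append(p)
    locations: List[Tuple[float, float]] = []
    for key in sorted(subsets):
        for p in subsets[key][:per_subset]:
            locations.append((p.x_um, p.y_um))
    return locations


patterns = [
    Pattern(0.0, 0.0, "line", 22.0, 0.30),
    Pattern(1.0, 0.0, "line", 24.0, 0.30),
    Pattern(2.0, 0.0, "line", 28.0, 0.40),
    Pattern(3.0, 0.0, "contact", 40.0, 0.10),
]
print(select_training_locations(patterns, per_subset=2))
```

Sampling per subset rather than uniformly over the layout keeps rare pattern types in the training set, which is one plausible reading of "representative" training samples.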

[0034] The automated smart SEM sampling system 102 may be configured to obtain a first image having a first quality for each of the plurality of training locations. For example, the system 102 may be configured to enable a first scan for each of the plurality of training locations to obtain a first image for each of the plurality of training locations. The automated smart SEM sampling system 102 may be configured to obtain the first image for each of the plurality of training locations based on the first scan. Further, the system 102 may be configured to obtain more than one first image having a first quality for each of the plurality of training locations. For example, the first scan may include a low number of scans and the first image may be a low quality image. The low number of scans may be a number of scans in a range of, e.g., about 1 to about 10. The automated smart SEM sampling system 102 may be configured to obtain a second image for each of the plurality of training locations, wherein the second image has a second quality higher than the first quality. For example, the second image may be a high quality image. The second image may be a higher quality image due to having a higher resolution, a higher contrast, a higher sensitivity, a higher brightness, or a lower noise level, etc., or some combination of these.

[0035] For example, the system 102 may be configured to obtain more than one second image having a second quality for each of the plurality of training locations. As an example, the second image may be obtained by enabling a second set (or series) of scans, where the set (or series) of scans can include an increased number of scans, thereby resulting in a higher quality image. For example, the increased number of scans may be a number of scans in the range of about 32 to about 256. As another example, a higher quality image may be obtained by taking a number of low quality images, and averaging the images, to result in a higher quality image (due to, e.g., less noise as a result of the averaging). As still another example, a higher quality image may be obtained by combining a number of low quality images to result in a higher quality image. As yet another example, the second image may be received as a reference image by an optional user input, as illustrated at 105. As one more example, the second image may be obtained based on an improved quality scan, such as a scan with a higher resolution or other setting change(s) that result in an improved quality scan.
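The averaging example above can be illustrated with a small simulation. This sketch assumes additive, zero-mean Gaussian noise on a synthetic one-dimensional "image" and uses only the Python standard library; the noise model, image contents, and function names are assumptions, and real SEM noise may behave differently.

```python
import random
from typing import List

Image = List[float]


def noisy_frame(truth: Image, sigma: float, rng: random.Random) -> Image:
    """Simulate one scan: the true signal plus independent Gaussian noise."""
    return [pixel + rng.gauss(0.0, sigma) for pixel in truth]


def average_frames(frames: List[Image]) -> Image:
    """Pixel-wise mean of several scans of the same location."""
    count = len(frames)
    return [sum(pixels) / count for pixels in zip(*frames)]


def rms_error(image: Image, truth: Image) -> float:
    """Root-mean-square difference between an image and the true signal."""
    return (sum((a - b) ** 2 for a, b in zip(image, truth)) / len(truth)) ** 0.5


rng = random.Random(0)  # fixed seed so the simulation is repeatable
truth = [float(i % 16) for i in range(1024)]
single = noisy_frame(truth, 4.0, rng)
averaged = average_frames([noisy_frame(truth, 4.0, rng) for _ in range(64)])

print(rms_error(single, truth), rms_error(averaged, truth))
```

For independent noise, the error of the average falls roughly with the square root of the number of frames, which is why 64 scans give a visibly cleaner image than one scan at the cost of 64 times the acquisition time.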

[0036] The automated smart SEM sampling system 102 may use the first image and the second image for each of the plurality of training locations as training images to train the machine learning model. In some aspects, the automated smart SEM sampling system 102 may be configured to enable scanning each of the training locations a plurality of times to create a plurality of training images for each location, where some of the training images reflect an improvement in image quality that results from an additional number of scans of a training location. For example, a plurality of low quality image and high quality image pairs may be obtained and used as training images to train the machine learning model. For example, the automated smart SEM sampling system 102 may collect sample images (e.g., the training image pairs) to feed into a machine learning based quality enhancement system 107 to generate non-parameterized quality enhancement modules. The automated smart SEM sampling system can be used to enhance low quality images 108 collected during operation in a high throughput mode to generate enhanced high quality images 109. For example, the automated smart SEM sampling system 102 may be configured to use the machine learning model to modify an image to approximate a result obtained with an increased number of scans. The fully automated smart SEM sampling system 102 has the advantage of requiring a minimal amount of human intervention while being able to generate high quality images with high throughput for inspection and metrology analysis.
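A minimal sketch of how several low quality and high quality image pairs could be derived from repeated scans of one training location follows. It assumes the high quality target is the average of all scans and each low quality input is the average of only the first few scans; this pairing scheme, the `TrainingPair` record, and the scan counts are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import List, Sequence

Image = List[float]


@dataclass(frozen=True)
class TrainingPair:
    """Hypothetical record pairing a low quality input with its target."""
    location: str
    low_quality: Image
    high_quality: Image
    low_scans: int
    high_scans: int


def average_first(frames: Sequence[Image], count: int) -> Image:
    """Pixel-wise mean of the first `count` scans."""
    return [sum(pixels) / count for pixels in zip(*frames[:count])]


def build_pairs(location: str, frames: Sequence[Image],
                low_counts: Sequence[int] = (1, 2, 4, 8)) -> List[TrainingPair]:
    """Pair partial averages (fewer scans) with the full average (all scans)."""
    high_scans = len(frames)
    high = average_first(frames, high_scans)
    return [
        TrainingPair(location, average_first(frames, k), high, k, high_scans)
        for k in low_counts
        if k < high_scans
    ]


frames = [[1.0, 3.0], [3.0, 5.0], [5.0, 7.0], [7.0, 9.0]]
pairs = build_pairs("loc-0", frames)
for pair in pairs:
    print(pair.low_scans, pair.low_quality, "->", pair.high_quality)
```

Reusing the same scans for inputs and target means one visit to a location yields several pairs, each reflecting the improvement gained from additional scans.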

[0037] FIG. 2 is a block diagram 200 illustrating an example of an automated SEM sampling system, according to some aspects of the present disclosure. As shown in FIG. 2, the automated SEM sampling system 200 may comprise a computer system 202 (e.g., computer system 309 in FIG. 3), which is in communication with an inspection system 212 and a reference storage device 210. For example, the inspection system 212 may be an EBI tool (e.g., EBI system 300 of FIG. 3). The computer system 202 may comprise a processor 204, a storage medium 206 and a user interface 208. The processor 204 can comprise multiple processors, and the storage medium 206 and the reference storage device 210 can be a same single storage medium. The computer system 202 may be in communication with the inspection system 212 and the reference storage device 210 via wired or wireless communications. For example, the computer system 202 may be a controller of the EBI tool, and the controller may have circuitry to cause the EBI tool to perform automated SEM sampling.

[0038] The computer system 202 may include, but is not limited to, a personal computer, a workstation, a network computer or any device having one or more processors. The storage medium 206 stores SEM sampling instructions and the processor 204 is configured (via its circuitry) to execute the SEM sampling instructions to control the automated SEM sampling process. The processor 204 may be configured to obtain training images to train a machine learning model that improves image quality, as described in connection with FIG. 1. For example, the processor 204 may be configured to analyze a plurality of GDS patterns of a product and identify a plurality of training locations on a sample of the product. The processor 204 may communicate with the inspection system 212 to enable a first scan for each of the plurality of training locations to obtain a first image for each of the plurality of training locations. For example, the processor 204 may instruct the inspection system 212 to perform the first scan to obtain the first image, which may be a low quality image with a lower number of scans. The processor 204 may obtain the first image for each of the plurality of training locations based on the first scan from the inspection system 212. The processor 204 may further obtain a second image, which may be a high quality image, for each of the plurality of training locations. For example, the processor 204 may instruct the inspection system 212 to perform a second scan with an increased number of scans to obtain the high quality image. As another example, the processor 204 may obtain the high quality image as a reference image from reference storage device 210, by an optional user input. The processor 204 may be configured to use the first image (e.g., low quality image) and the second image (e.g., high quality image) for each of the plurality of training locations as training images to train the machine learning model. In some aspects, a plurality of low quality image and high quality image pairs may be obtained and used as training images to train the machine learning model. The processor 204 may be further configured to use the machine learning model to modify a first image of a new location with a low quality and generate a high quality image of the new location.
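The acquisition of low quality and high quality image pairs described above may be sketched as follows. This is a minimal illustration only, not the disclosed implementation: the names `TrainingPair`, `collect_training_pairs`, `fake_scan`, and the scan counts 1 and 16 are hypothetical, and the inspection system is represented by a callable supplied by the caller.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

Location = Tuple[float, float]  # hypothetical (x, y) wafer coordinates
Image = List[List[float]]       # hypothetical grayscale image as nested lists


@dataclass
class TrainingPair:
    location: Location
    low_quality: Image
    high_quality: Image


def collect_training_pairs(
    locations: List[Location],
    scan: Callable[[Location, int], Image],
    low_scans: int = 1,     # assumed value, not taken from the disclosure
    high_scans: int = 16,   # assumed value, not taken from the disclosure
    references: Optional[Dict[Location, Image]] = None,
) -> List[TrainingPair]:
    """Acquire one low/high quality image pair per training location."""
    references = references or {}
    pairs = []
    for loc in locations:
        low = scan(loc, low_scans)
        # Use a stored reference image when one was supplied; otherwise rescan
        # the same location with the larger number of scans.
        high = references[loc] if loc in references else scan(loc, high_scans)
        pairs.append(TrainingPair(loc, low, high))
    return pairs


def fake_scan(loc: Location, n_scans: int) -> Image:
    """Stand-in for the inspection system: returns a 1x1 image."""
    return [[float(n_scans)]]


pairs = collect_training_pairs(
    [(0.0, 0.0), (1.0, 2.0)],
    fake_scan,
    references={(1.0, 2.0): [[99.0]]},
)
```

In this sketch the second location takes its high quality image from the supplied reference, so the second scan is skipped for that location.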

[0039] The user interface 208 may include a display configured to display an image of a wafer, an input device configured to transmit user commands to computer system 202, etc. The display may be any type of computer output surface and projecting mechanism that shows text and graphic images, including but not limited to, cathode ray tube (CRT), liquid crystal display (LCD), light-emitting diode (LED), gas plasma, a touch screen, or other image projection technologies, for displaying information to a computer user. The input device may be any type of computer hardware equipment used to provide data and control signals from an operator to computer system 202. The input device may include, but is not limited to, a keyboard, a mouse, a scanner, a digital camera, a joystick, a trackball, cursor direction keys, a touchscreen monitor, or audio/video commanders, etc., for communicating direction information and command selections to the processor or for controlling cursor movement on the display.

[0040] The reference storage device 210 may store a reference file database that is accessed by computer system 202 during the automated SEM sampling process. In some embodiments, reference storage device 210 may be a part of computer system 202. The reference image file for inspection of the wafer can be manually provided to computer system 202 by a human operator. Alternatively, reference storage device 210 may be implemented with a processor and the reference image file can be automatically provided to computer system 202 by reference storage device 210. Reference storage device 210 may be a remote server computer configured to store and provide any reference images, may be cloud storage, etc.

[0041] Inspection system 212 can be any inspection system that can generate an image of a wafer. For example, the wafer can be a sample of the product, of which the plurality of design patterns of the GDS is analyzed by the processor 204. The wafer can be a semiconductor wafer substrate, a semiconductor wafer substrate having one or more epitaxial layers or process films, etc. The embodiments of the present disclosure are not limited to use in a specific type of wafer inspection system 212 as long as the wafer inspection system can generate a wafer image having a resolution high enough to observe key features on the wafer (e.g., less than 20 nm), consistent with contemporary semiconductor foundry technologies. In some aspects of the present disclosure, inspection system 212 is an electron beam inspection (EBI) system 300 described with respect to FIG. 3.

[0042] Once a wafer image is acquired by inspection system 212, the wafer image may be transmitted to computer system 202. Computer system 202 and reference storage device 210 may be part of or remote from inspection system 212.

[0043] In some aspects, the automated SEM sampling system 202 may further comprise the inspection system 212 and the reference storage device 210. For example, the automated SEM sampling system 202 may be further configured to perform a first scan for each of the plurality of training locations to obtain at least one first image for each of the plurality of training locations. As another example, the automated SEM sampling system 202 may be further configured to perform a second scan for each of the plurality of training locations to obtain at least one second image for each of the plurality of training locations. For example, the at least one second image may have an enhanced quality resulting from an increased number of scans.

[0044] FIG. 3 is a schematic diagram illustrating an example electron beam inspection system, according to some aspects of the present disclosure. As shown in FIG. 3, electron beam inspection system 300 includes a main chamber 302, a load/lock chamber 304, an electron beam tool 306, a computer system 309, and an equipment front end module 308. The computer system 309 may be a controller of the electron beam inspection system 300. Electron beam tool 306 is located within main chamber 302. Equipment front end module 308 includes a first loading port 308a and a second loading port 308b. Equipment front end module 308 may include additional loading port(s). First loading port 308a and second loading port 308b receive wafer cassettes that contain wafers (e.g., semiconductor wafers or wafers made of other material(s)) or samples to be inspected (wafers and samples are collectively referred to as "wafers" hereafter). One or more robot arms (not shown) in equipment front end module 308 transport the wafers to load/lock chamber 304. Load/lock chamber 304 is connected to a load/lock vacuum pump system (not shown) which removes gas molecules in load/lock chamber 304 to reach a first pressure below the atmospheric pressure. After reaching the first pressure, one or more robot arms (not shown) transport the wafer from load/lock chamber 304 to main chamber 302. Main chamber 302 is connected to a main chamber vacuum pump system (not shown) which removes gas molecules in main chamber 302 to reach a second pressure below the first pressure. After reaching the second pressure, the wafer is subject to inspection by electron beam tool 306. The electron beam tool 306 may scan a location a plurality of times to obtain an image. In general, a low quality image may be obtained by a low number of scans with a high throughput, and a high quality image may be obtained by a high number of scans with a low throughput.
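The trade-off between the number of scans and image quality may be illustrated with a small simulation. The sketch below rests on assumptions that are not stated in the disclosure: repeated scans of a location are combined by averaging, and each scan carries independent Gaussian noise. Under those assumptions the residual noise falls roughly as the inverse square root of the number of scans, while acquisition time grows with the number of scans. The function names and values are hypothetical.

```python
import random
import statistics


def acquire(true_signal, n_scans, noise_sigma=1.0, rng=None):
    """Average n_scans noisy readings of each pixel (assumed frame averaging)."""
    if rng is None:
        rng = random.Random(0)
    image = []
    for value in true_signal:
        frames = [value + rng.gauss(0.0, noise_sigma) for _ in range(n_scans)]
        image.append(sum(frames) / n_scans)
    return image


def residual_noise(image, true_signal):
    """Standard deviation of the difference between an image and the truth."""
    return statistics.pstdev([a - b for a, b in zip(image, true_signal)])


true_signal = [0.5] * 2000  # flat, made-up signal for illustration
low = acquire(true_signal, n_scans=1, rng=random.Random(1))
high = acquire(true_signal, n_scans=16, rng=random.Random(2))

noise_low = residual_noise(low, true_signal)
noise_high = residual_noise(high, true_signal)
```

With 16 scans instead of 1, the simulated noise is expected to be about one quarter as large, at the cost of roughly 16 times the scan time per location.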

[0045] FIG. 4 is a schematic diagram illustrating an example of an electron beam tool 400 (e.g., 306) that can be a part of the example electron beam inspection system of FIG. 3, according to some aspects of the present disclosure. FIG. 4 illustrates examples of components of electron beam tool 306, according to some aspects of the present disclosure. As shown in FIG. 4, the electron beam tool 400 may include a motorized stage 400, and a wafer holder 402 supported by motorized stage 400 to hold a wafer 403 to be inspected. Electron beam tool 400 further includes an objective lens assembly 404, electron detector 406 (which includes electron sensor surfaces), an objective aperture 408, a condenser lens 410, a beam limit aperture 412, a gun aperture 414, an anode 416, and a cathode 418. Objective lens assembly 404, in some aspects, can include a modified swing objective retarding immersion lens (SORIL), which includes a pole piece 404a, a control electrode 404b, a deflector 404c, and an exciting coil 404d. The electron beam tool 400 may additionally include an energy dispersive X-ray spectrometer (EDS) detector (not shown) to characterize the materials on the wafer.

[0046] A primary electron beam 420 is emitted from cathode 418 by applying a voltage between anode 416 and cathode 418. Primary electron beam 420 passes through gun aperture 414 and beam limit aperture 412, both of which can determine the size of the electron beam entering condenser lens 410, which resides below beam limit aperture 412. Condenser lens 410 focuses primary electron beam 420 before the beam enters objective aperture 408 to set the size of the electron beam before entering objective lens assembly 404. Deflector 404c deflects primary electron beam 420 to facilitate beam scanning on the wafer. For example, in a scanning process, deflector 404c can be controlled to deflect primary electron beam 420 sequentially onto different locations of the top surface of wafer 403 at different time points, to provide data for image reconstruction for different parts of wafer 403. Moreover, deflector 404c can also be controlled to deflect primary electron beam 420 onto different sides of wafer 403 at a particular location, at different time points, to provide data for stereo image reconstruction of the wafer structure at that location. Further, in some aspects, anode 416 and cathode 418 may be configured to generate multiple primary electron beams 420, and electron beam tool 400 may include a plurality of deflectors 404c to project the multiple primary electron beams 420 to different parts/sides of the wafer at the same time, to provide data for image reconstruction for different parts of wafer 403.

[0047] Exciting coil 404d and pole piece 404a generate a magnetic field that begins at one end of pole piece 404a and terminates at the other end of pole piece 404a. A part of wafer 403 being scanned by primary electron beam 420 can be immersed in the magnetic field and can be electrically charged, which, in turn, creates an electric field. The electric field reduces the energy of impinging primary electron beam 420 near the surface of the wafer before it collides with the wafer. Control electrode 404b, being electrically isolated from pole piece 404a, controls an electric field on the wafer to prevent micro-arcing of the wafer and to ensure proper beam focus.

[0048] A secondary electron beam 422 can be emitted from the part of wafer 403 upon receiving primary electron beam 420. Secondary electron beam 422 can form a beam spot on a surface of a sensor of electron detector 406. Electron detector 406 can generate a signal (e.g., a voltage, a current, etc.) that represents an intensity of the beam spot and provide the signal to a processing system (not shown). The intensity of secondary electron beam 422, and the resultant beam spot, can vary according to the external or internal structure of wafer 403. Moreover, as discussed above, primary electron beam 420 can be projected onto different locations of the top surface of the wafer to generate secondary electron beams 422 (and the resultant beam spot) of different intensities. Therefore, by mapping the intensities of the beam spots with the locations of wafer 403, the processing system can reconstruct an image that reflects the internal or external structures of wafer 403. Once a wafer image is acquired by electron beam tool 400, the wafer image may be transmitted to computer system 402 (e.g., 202, as shown in FIG. 2).
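The mapping of beam spot intensities to wafer locations may be sketched as follows. This is an illustrative simplification only: the function name `reconstruct_image`, the integer pixel coordinates, and the intensity values are hypothetical, and a real processing system would also handle calibration, timing, and detector response.

```python
from typing import Iterable, List, Tuple

Sample = Tuple[Tuple[int, int], float]  # ((column, row), detector intensity)


def reconstruct_image(
    samples: Iterable[Sample], width: int, height: int, fill: float = 0.0
) -> List[List[float]]:
    """Place each detector intensity at the pixel where the beam was aimed."""
    image = [[fill for _ in range(width)] for _ in range(height)]
    for (col, row), intensity in samples:
        image[row][col] = intensity
    return image


# Raster scan of a 3 x 2 area; the intensity values are made up.
samples = [
    ((0, 0), 0.1), ((1, 0), 0.9), ((2, 0), 0.1),
    ((0, 1), 0.2), ((1, 1), 0.8), ((2, 1), 0.2),
]
image = reconstruct_image(samples, width=3, height=2)
```

Here the brighter middle column stands in for a structure that emits a stronger secondary electron signal than its surroundings.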

[0049] FIGS. 5A-5C illustrate a plurality of design patterns of a database such as a GDS database of a product, according to some aspects of the present disclosure. The automated SEM sampling system disclosed herein may be configured to perform a method of automatically obtaining training images to train a machine learning model that improves image quality. For example, the automated SEM sampling system may be a controller of the EBI tool, and the controller may have circuitry to cause the EBI tool to perform automated SEM sampling. For example, the automated SEM sampling system may comprise a GDS analyzer (e.g., a GDS analyzer component). The GDS analyzer may be configured to perform pattern analysis and classification based on various features, e.g., line pattern, logic pattern, 1D/2D pattern, dense/isolated pattern, etc. Same or similar patterns may be grouped together via pattern grouping.

[0050] For example, a plurality of manufacture design patterns may be rendered from a GDS input. At this stage, the plurality of patterns are scattered patterns. Various features of each pattern may be analyzed and extracted, such as a pattern location within a die, a shape, a size, a density, a neighborhood layout, a pattern type, etc.

[0051] Further, the plurality of design patterns may be classified into different categories based on the extracted features. As illustrated in FIGS. 5A-5C, a subset of patterns with a similar or same shape may be grouped together via pattern grouping. For example, a first subset of patterns, Group 1, may include patterns with a same or similar shape to pattern 501a. For example, a second subset of patterns, Group 2, may include patterns with a same or similar shape to pattern 501b. For example, a third subset of patterns, Group 3, may include patterns with a same or similar shape to pattern 501c. Each pattern group may be associated with corresponding metadata, which may include information of a pattern location within a die, pattern type, shape, size and other extracted features.
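Pattern grouping with per-group metadata may be sketched as follows. The sketch assumes that features have already been extracted from the layout and groups patterns by an exact match on shape and pattern type; this exact-match key is a simplification standing in for whatever "same or similar" criterion an actual analyzer applies. The `Pattern` fields and the function names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Pattern:
    name: str
    shape: str                          # e.g. "line", "contact"
    pattern_type: str                   # e.g. "1D", "2D"
    size_nm: float
    die_location: Tuple[float, float]   # location within a die


GroupKey = Tuple[str, str]


def group_patterns(patterns: List[Pattern]) -> Dict[GroupKey, List[Pattern]]:
    """Group patterns that share the same shape and pattern type."""
    groups = defaultdict(list)
    for pattern in patterns:
        groups[(pattern.shape, pattern.pattern_type)].append(pattern)
    return dict(groups)


def group_metadata(groups: Dict[GroupKey, List[Pattern]]) -> Dict[GroupKey, dict]:
    """Summarize each group: shape, type, die locations, distinct sizes."""
    return {
        key: {
            "shape": key[0],
            "pattern_type": key[1],
            "die_locations": [p.die_location for p in members],
            "sizes_nm": sorted({p.size_nm for p in members}),
        }
        for key, members in groups.items()
    }


patterns = [
    Pattern("p1", "line", "1D", 20.0, (10.0, 5.0)),
    Pattern("p2", "line", "1D", 20.0, (40.0, 5.0)),
    Pattern("p3", "contact", "2D", 30.0, (25.0, 60.0)),
]
groups = group_patterns(patterns)
metadata = group_metadata(groups)
```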

[0052] The automated SEM sampling system may comprise a smart inspection sampling planner (e.g., an inspection sampling planner component). The smart inspection sampling planner may identify a plurality of training locations on a sample of the product to use in relation to training the machine learning model based on the analyzing results of the analyzer. The GDS database of the product may have information regarding a location associated with each pattern group. Thus, the design patterns rendered from the GDS may contain location information. Therefore, by analyzing and recognizing pattern groups from the GDS, locations of corresponding pattern groups on a wafer of the product may be determined.

[0053] FIG. 5D is a diagram 500d illustrating a plurality of training locations 506t on a wafer 503 (e.g. 403, described in connection with FIG. 4). For each pattern group, there are many potential locations 506 to acquire training images, as illustrated in FIG. 5D. The automated SEM sampling system may be further configured to determine one or more specific training locations 506t for obtaining training images. For example, the SEM sampling system may identify the one or more training locations based on one or more of a location within a die, an inspection area, a field of view (FOV), or other imaging parameters such as local alignment points (LAPs) and auto-focus points on the covered area in the wafer, from the analyzing results of the analyzer. For example, for each pattern group, the SEM sampling system may determine the one or more training locations based on, at least in part, a location within a die. A planner may automatically generate die sampling across the wafer.
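Selecting training locations 506t from the potential locations 506 of each pattern group may be sketched as follows. The selection rule used here, evenly spaced picks from a sorted candidate list, is an assumption made purely for illustration; the disclosure leaves the rule open and names factors such as location within a die, inspection area, and FOV. The function name and the `per_group` parameter are hypothetical.

```python
from typing import Dict, List, Tuple

Location = Tuple[float, float]


def pick_training_locations(
    candidates_by_group: Dict[str, List[Location]], per_group: int = 2
) -> Dict[str, List[Location]]:
    """Pick up to per_group evenly spaced candidates for each pattern group."""
    chosen = {}
    for group, candidates in candidates_by_group.items():
        ordered = sorted(candidates)
        if len(ordered) <= per_group:
            # Too few candidates to subsample: keep them all.
            chosen[group] = ordered
            continue
        step = len(ordered) / per_group
        chosen[group] = [ordered[int(i * step)] for i in range(per_group)]
    return chosen


candidates = {
    "Group 1": [(3.0, 0.0), (0.0, 0.0), (2.0, 0.0), (1.0, 0.0)],
    "Group 2": [(5.0, 5.0)],
}
training_locations = pick_training_locations(candidates, per_group=2)
```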

[0054] A scanning path may be analyzed and created based on the overall scan areas for all the pattern groups. The scanning path may be determined by a parameter such as location, FOV, etc., or some combination of these. Furthermore, the scanning path along with other parameters, such as FOV, shape, type, etc., may be used according to a recipe for an electron beam tool. The electron beam tool may be configured to follow the recipe to automatically scan and capture training images for the machine learning module. For example, LAPs and auto-focus points may be determined based on the factors such as a number of field of views (FOVs) and a distance between each FOV, etc.
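One possible way to order scan areas into a scanning path is a serpentine (boustrophedon) traversal, sketched below. The disclosure does not specify how the path is computed; the serpentine ordering, the function name `serpentine_path`, and the `row_height` parameter are assumptions chosen to keep stage travel short in a simple case.

```python
from typing import Dict, List, Tuple

Location = Tuple[float, float]


def serpentine_path(locations: List[Location], row_height: float) -> List[Location]:
    """Order locations row by row, reversing direction on alternate rows."""
    rows: Dict[int, List[Location]] = {}
    for x, y in locations:
        rows.setdefault(int(y // row_height), []).append((x, y))
    path: List[Location] = []
    for i, row_index in enumerate(sorted(rows)):
        # Even rows run left to right, odd rows right to left.
        path.extend(sorted(rows[row_index], reverse=(i % 2 == 1)))
    return path


scan_areas = [(0.0, 0.0), (2.0, 0.0), (1.0, 0.0), (0.0, 1.0), (2.0, 1.0)]
path = serpentine_path(scan_areas, row_height=1.0)
```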

[0055] FIG. 6A is a block diagram 600a illustrating a flow diagram of a system for automatically obtaining training images 610 to train a machine learning model 615 that improves image quality, according to some aspects of the present disclosure. FIG. 6B is a block diagram 600b illustrating details of an automatic SEM sampling system, according to some aspects of the present disclosure. Referring to FIG. 6A and FIG. 6B, the method implemented by the system may be performed by an automated SEM sampling system 602 (e.g., a processor 604, the computer system 309) communicating with an EBI tool 612 (e.g., the EBI system 300). For example, the automated SEM sampling system may be a controller of the EBI tool, and the controller may have circuitry to cause the EBI tool to perform the method. For example, the method may include performing pattern analysis and classification by a GDS analyzer 603 (e.g., a GDS analyzer component 603 of the processor). The pattern analysis and classification may be performed based on various features, for example, line pattern, logic pattern, 1D/2D pattern, dense/isolated pattern, etc. The method may further comprise grouping same or similar patterns together via pattern grouping.

[0056] The method may comprise, by a sampling planner 605 (e.g., a sampling planner component 605 of the processor), determining scan areas, which are training locations, based on the analyzing results of the step of analyzing. The analyzing results of the step of analyzing may include pattern locations within a die, inspection areas, FOV sizes, and LAP points and auto-focus points and other imaging parameters based on the covered area in the wafer.

[0057] The method may comprise, by a user interface 608, enabling scanning each of the training locations a plurality of times to create a plurality of training images 610 for each training location, and obtaining the plurality of training images 610 from the EBI tool 612. For example, some of the plurality of images may be low quality images, e.g., generated using a low number of scans. For example, some of the plurality of images may have enhanced image quality, e.g., generated using an increased number of scans. The enhanced image quality may refer to a higher resolution, a higher contrast, a higher sensitivity, a higher brightness, or a lower noise level, etc. For example, some of the training images 610 may reflect an improvement in image quality that results from the additional number of scans of a training location. In some aspects, a plurality of low quality image and high quality image pairs may be obtained, via the user interface 608, and be used as training images 610 to train the machine learning model 615. For example, a low quality SEM imaging mode may be based on default setting or user-input throughput requirements. For example, a high quality SEM image mode may be based on default setting or user-input quality requirements. In some aspects, a user may also have the option of directly inputting high quality reference image 611. For example, the high quality reference images 611 may be stored in a storage medium 606. In such cases, acquisition of high quality images may be skipped.

[0058] The method may further comprise using the machine learning model 615 (e.g., a machine learning model component of the processor) to modify an image to approximate a result obtained with an increased number of scans. Various machine learning methods can be employed in the machine learning model 615 to learn the enhancement framework from the training image pairs 610. The machine learning model 615 may be parametric. Data may be collected for the machine learning model 615.
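Learning a parametric enhancement from training image pairs may be illustrated with a deliberately tiny stand-in model. The disclosure does not name a specific model; the sketch below assumes a two-parameter model (a global gain and offset applied to each pixel) fitted by ordinary least squares, which is far simpler than what a practical system would use. The function names are hypothetical.

```python
from typing import List, Tuple

Image = List[List[float]]


def fit_gain_offset(pairs: List[Tuple[Image, Image]]) -> Tuple[float, float]:
    """Least-squares fit of high = gain * low + offset over all pixels."""
    xs = [p for low, _ in pairs for row in low for p in row]
    ys = [p for _, high in pairs for row in high for p in row]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in xs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    gain = cov_xy / var_x
    offset = mean_y - gain * mean_x
    return gain, offset


def enhance(image: Image, gain: float, offset: float) -> Image:
    """Apply the learned parameters to a new low quality image."""
    return [[gain * p + offset for p in row] for row in image]


# One made-up training pair in which high = 2 * low + 1 exactly.
low_image = [[0.0, 1.0], [2.0, 3.0]]
high_image = [[1.0, 3.0], [5.0, 7.0]]
gain, offset = fit_gain_offset([(low_image, high_image)])
enhanced = enhance([[4.0]], gain, offset)
```

Once the two parameters are fitted, the training pairs are no longer needed to enhance a new image, which mirrors the train-then-apply split described above.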

[0059] A quality enhancement module 617 (e.g., a quality enhancement module 617 of the processor) may be learned at the end of the step of using the machine learning model for each type of pattern-of-interest. The quality enhancement module 617 can be used directly for inspection or metrology purposes in a high throughput mode. After being trained based on images sampled from the automatic sampling system 602, the quality enhancement module 617 may be used for image enhancement without training data. Therefore, the quality enhancement module 617 may be non-parameterized, which does not involve the use of an excessive number of parameter settings that may result in too much overhead. Accordingly, the quality enhancement module 617 is advantageous to generate high quality images with high throughput, thereby increasing manufacturing efficiency and reducing manufacturing cost.

[0060] FIG. 7 is a flowchart 700 illustrating an example of a method of automatically obtaining training images to train a machine learning model that improves image quality, according to some aspects of the present disclosure. The method may be performed by an automated SEM sampling system (e.g., 102, 202, 602) communicating with an EBI tool (e.g., 212, 612). For example, the automated SEM sampling system may be a controller of the EBI tool, and the controller may have circuitry to cause the EBI tool to perform the method.

[0061] As shown in FIG. 7, at step 702, the method may comprise analyzing a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations on a sample of the product to use in relation to training the machine learning model. For example, the data may be in a database. For example, the database may be any one of a graphic database system (GDS), an Open Artwork System Interchange Standard, or a Caltech Intermediate Form, among others. For example, the GDS may include both GDS and GDSII.

[0062] For example, the step of analyzing the plurality of patterns of data relating to layout of the product may further comprise classifying the plurality of patterns into a plurality of subsets of patterns. For example, the step of analyzing a plurality of patterns of data relating to layout of a product further may comprise extracting a feature from the plurality of patterns. For example, the classifying the plurality of patterns into a plurality of subsets of patterns may be based on the extracted feature. For example, each subset of the plurality of subsets of patterns may be associated with information relating to a location, a type, a shape, a size, a density or a neighborhood layout. For example, identifying the plurality of training locations may be based on a field of view, a local alignment point, or an auto-focus point. For example, identifying the plurality of training locations may comprise identifying one or more training locations for each subset of patterns.

[0063] At step 704, the method may comprise obtaining a first image having a first quality for each of the plurality of training locations. For example, the step of obtaining a first image having a first quality for each of the plurality of training locations comprises obtaining more than one first image having a first quality for each of the plurality of training locations.

[0064] For example, the method may further comprise determining a first scanning path including a first scan for obtaining the first image. For example, the first scanning path may be based on an overall scan area for the plurality of training locations. For example, the first scanning path may be determined by some of the parameters such as location, FOV, etc. Furthermore, the first scanning path along with other parameters, such as FOV, shape, type, etc., may provide a first recipe to an electron beam tool. The electron beam tool may be configured to follow the first recipe to automatically scan and capture images for the machine learning module.

[0065] At step 706, the method may comprise obtaining a second image having a second quality for each of the plurality of training locations. For example, the second quality may be higher than the first quality. For example, the step of obtaining a second image having a second quality for each of the plurality of training locations comprises obtaining more than one second image having a second quality for each of the plurality of training locations.

[0066] For example, the method may comprise determining a second scanning path including a second scan for obtaining the second image. For example, the second scanning path may be based on an overall scan area for the plurality of training locations. For example, the second scanning path may be determined by some of the parameters such as location, FOV, etc. Furthermore, the second scanning path along with other parameters, such as FOV, shape, type, etc., may provide a second recipe to the electron beam tool. The electron beam tool may be configured to follow the second recipe to automatically scan and capture images for the machine learning module. For example, the first scan may include a first number of scans and the second scan may include a second number of scans, where the second number of scans may be larger than the first number of scans.
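The first and second recipes may be sketched as two records that share a scanning path and differ in the number of scans. The `Recipe` fields, the function `build_recipes`, and the default scan counts are hypothetical, and the sketch enforces a larger second scan count as an assumption, whereas the text above states only that it may be larger.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple

Location = Tuple[float, float]


@dataclass(frozen=True)
class Recipe:
    name: str
    scan_path: Tuple[Location, ...]
    fov_um: float   # assumed unit for the field of view
    n_scans: int


def build_recipes(
    scan_path: Iterable[Location],
    fov_um: float,
    first_scans: int = 1,
    second_scans: int = 16,
) -> Tuple[Recipe, Recipe]:
    """Build a low quality (first) and high quality (second) recipe."""
    if second_scans <= first_scans:
        raise ValueError("second scan must use more scans than the first")
    path = tuple(scan_path)
    return (
        Recipe("first", path, fov_um, first_scans),
        Recipe("second", path, fov_um, second_scans),
    )


first, second = build_recipes([(0.0, 0.0), (5.0, 0.0)], fov_um=2.0)
```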

[0067] For example, the second image may be obtained as a reference image by an optional user input.

[0068] At step 708, the method may comprise using the first image and the second image to train the machine learning model.

[0069] At step 710, the method may comprise using the machine learning model to modify an image to approximate a result obtained with an increased number of scans.

[0070] For example, the method may further comprise using the machine learning model to modify a first image of a location to obtain a second image of the location, where the second image has an enhanced quality compared to the first image. In this way, the method is advantageous to obtain high quality images with high throughput, thereby increasing manufacturing efficiency and reducing manufacturing cost. Further, the method is fully automatic. Thus, the method may prevent human error and inconsistency from different operators. Therefore, the method is further advantageous to increase inspection accuracy.

[0071] Now referring back to FIG. 2, the computer system 202 may be a controller of inspection system 212 (e.g., e-beam inspection system) and the controller may include circuitry for: analyzing a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations on a sample of the product to use in relation to training the machine learning model; obtaining a first image having a first quality for each of the plurality of training locations; obtaining a second image having a second quality for each of the plurality of training locations, the second quality higher than the first quality; and using the first image and the second image to train the machine learning model.

[0072] Further referring to FIG. 2, the storage medium 206 may be a non-transitory computer readable medium storing a set of instructions that is executable by a controller of a device to cause the device to perform a method comprising: analyzing a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations on a sample of the product to use in relation to training the machine learning model; obtaining a first image having a first quality for each of the plurality of training locations; obtaining a second image having a second quality for each of the plurality of training locations, the second quality higher than the first quality; and using the first image and the second image to train the machine learning model.

[0073] The embodiments may further be described using the following clauses:

1. A method of automatically obtaining training images for use in training a machine learning model, the method comprising:

[0074] analyzing a plurality of patterns of data relating to layout of a product to identify a plurality of training locations to use in relation to training the machine learning model;

[0075] obtaining a first image having a first quality for each of the plurality of training locations;

[0076] obtaining a second image having a second quality for each of the plurality of training locations, the second quality being higher than the first quality; and

[0077] using the first image and the second image to train the machine learning model.

2. The method of clause 1 wherein the data is in a database.

3. The method of clause 2 wherein the database is any one of a graphic database system (GDS), an Open Artwork System Interchange Standard, or a Caltech Intermediate Form.

4. The method of clause 3 wherein the GDS includes GDS formatted data or GDSII formatted data.

5. The method of clause 1 wherein the step of obtaining a first image having a first quality for each of the plurality of training locations comprises obtaining more than one first image having a first quality for each of the plurality of training locations.

6. The method of clause 1 wherein the step of obtaining a second image having a second quality for each of the plurality of training locations comprises obtaining more than one second image having a second quality for each of the plurality of training locations.

7. The method of clause 1, wherein the step of analyzing the plurality of patterns of data relating to layout of the product further comprises classifying the plurality of patterns into a plurality of subsets of patterns.

8. The method of any one of clauses 1 to 7, wherein the step of analyzing a plurality of patterns of data relating to layout of a product further comprises extracting a feature from the plurality of patterns.

9. The method of clause 8, wherein the extracted feature includes a shape, a size, a density, or a neighborhood layout.

10. The method of clause 7 wherein the classifying the plurality of patterns into a plurality of subsets of patterns is based on the extracted feature.

11. The method of clause 7 wherein each subset of the plurality of subsets of patterns is associated with information relating to a location, a type, a shape, a size, a density or a neighborhood layout.

12. The method of any one of clauses 1 to 11, wherein identifying the plurality of training locations is based on a field of view, a local alignment point, or an auto-focus point.

13. The method of any one of clauses 1 to 12, wherein the method further comprises determining

[0078] a first scanning path including a first scan for obtaining the first image, the first scanning path based on an overall scan area for the plurality of training locations.

14. The method of clause 13, wherein the method further comprises

[0079] determining a second scanning path including a second scan for obtaining the second image, the second scanning path based on an overall scan area for the plurality of training locations.

15. The method of clause 14, wherein the first scan includes a first number of scans, wherein the second scan includes a second number of scans, and wherein the second number of scans is larger than the first number of scans.

16. The method of any one of clauses 1 to 13, wherein the second image is obtained as a reference image by an optional user input.

17. The method of any one of clauses 1 to 16, further comprising using the machine learning model to modify a first image of a location to obtain a second image of the location, wherein the second image has an enhanced quality than the first image.

18. The method of any one of clauses 1 to 17, wherein identifying the plurality of training locations comprises identifying one or more training locations for each subset of patterns.

19. The method of any one of clauses 1 to 18, wherein the quality includes a resolution, a contrast, a brightness, or a noise level.

20. The method of any one of clauses 1 to 19, further comprising

[0080] using the machine learning model to modify an image to approximate a result obtained with an increased number of scans.

21. An apparatus for automatically obtaining training images to train a ML model that improves image quality, the apparatus comprising:

[0081] a memory; and

[0082] at least one processor coupled to the memory and configured to:

[0083] analyze a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations to use in relation to training the machine learning model;

[0084] obtain a first image having a first quality for each of the plurality of training locations;

[0085] obtain a second image having a second quality for each of the plurality of training locations, the second quality being higher than the first quality; and

[0086] use the first image and the second image to train the machine learning model.

22. The apparatus of clause 21 wherein the data is in a database.

23. The apparatus of clause 22, wherein the database is any one of a graphic database system (GDS), an Open Artwork System Interchange Standard, or a Caltech Intermediate Form.

24. The apparatus of clause 23, where the GDS includes GDS formatted data or GDSII formatted data.

25. The apparatus of clause 21 wherein the at least one processor is further configured to obtain more than one first image having a first quality for each of the plurality of training locations.

26. The apparatus of clause 21, wherein the at least one processor is further configured to obtain more than one second image having a second quality for each of the plurality of training locations.

27. The apparatus of clause 21, wherein the at least one processor is further configured to classify the plurality of patterns into a plurality of subsets of patterns.

28. The apparatus of clause 21, wherein the at least one processor is further configured to extract a feature from the plurality of patterns.

29. The apparatus of clause 28, wherein the extracted feature includes a shape, a size, a density, or a neighborhood layout.

30. The apparatus of clause 27, wherein the at least one processor is further configured to classify the plurality of patterns into a plurality of subsets of patterns based on the extracted feature.

31. The apparatus of clause 27, wherein each subset of the plurality of subsets of patterns is associated with information relating to a location, a type, a shape, a size, a density or a neighborhood layout.

32. The apparatus of any one of clauses 21 to 31, wherein the at least one processor is further configured to identify the plurality of training locations based on a field of view, a local alignment point, or an auto-focus point.

33. The apparatus of any one of clauses 21 to 32, wherein the at least one processor is further configured to

[0087] determine a first scanning path including a first scan for obtaining the first image, the first scanning path based on an overall scan area for the plurality of training locations.

34. The apparatus of clause 33, wherein the at least one processor is further configured to

[0088] determine a second scanning path including a second scan for obtaining the second image, the second scanning path based on an overall scan area for the plurality of training locations.

35. The apparatus of clause 34, wherein the first scan includes a first number of scans, wherein the second scan includes a second number of scans, and wherein the second number of scans is larger than the first number of scans.

36. The apparatus of any one of clauses 21 to 33, wherein the second image is received as a reference image by an optional user input.

37. The apparatus of any one of clauses 21 to 36, wherein the at least one processor is further configured to

[0089] use the machine learning model to modify a first image of a location to obtain a second image of the location, wherein the second image has a higher quality than the first image.

38. The apparatus of any one of clauses 21 to 37, wherein the at least one processor is further configured to

[0090] identify one or more training locations for each subset of patterns.

39. The apparatus of any one of clauses 21 to 38, wherein the quality includes a resolution, a contrast, a brightness, or a noise level.

40. The apparatus of any one of clauses 21 to 39, wherein the at least one processor is further configured to

[0091] use the machine learning model to modify an image to approximate a result obtained with an increased number of scans.

41. A non-transitory computer readable medium storing a set of instructions that is executable by a controller of a device to cause the device to perform a method comprising:

[0092] analyzing a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations to use in relation to training a machine learning model;

[0093] obtaining a first image having a first quality for each of the plurality of training locations;

[0094] obtaining a second image having a second quality for each of the plurality of training locations, the second quality being higher than the first quality; and

[0095] using the first image and the second image to train the machine learning model.

42. The non-transitory computer readable medium of clause 41, wherein the data is in a database.

43. The non-transitory computer readable medium of clause 42, wherein the database is any one of a graphic database system (GDS), an Open Artwork System Interchange Standard, a Caltech Intermediate Form, or Electronic Design Interchange Format.

44. The non-transitory computer readable medium of clause 43, wherein the GDS includes at least one of GDS or GDSII.

45. The non-transitory computer readable medium of clause 41, wherein the step of obtaining a first image having a first quality for each of the plurality of training locations further comprises obtaining more than one first image having a first quality for each of the plurality of training locations.

46. The non-transitory computer readable medium of clause 41, wherein the step of obtaining a second image having a second quality for each of the plurality of training locations comprises obtaining more than one second image having a second quality for each of the plurality of training locations.

47. The non-transitory computer readable medium of clause 41, wherein the step of analyzing the plurality of patterns of data relating to the layout of the product further comprises classifying the plurality of patterns into a plurality of subsets of patterns.

48. The non-transitory computer readable medium of any one of clauses 41 to 47, wherein the step of analyzing the plurality of patterns of data relating to the layout of the product further comprises extracting a feature from the plurality of patterns.

49. The non-transitory computer readable medium of clause 48, wherein the extracted feature includes a shape, a size, a density, or a neighborhood layout.

50. The non-transitory computer readable medium of clause 47, wherein classifying the plurality of patterns into a plurality of subsets of patterns is based on the extracted feature.

51. The non-transitory computer readable medium of clause 47, wherein each subset of the plurality of subsets of patterns is associated with information relating to a location, a type, a shape, a size, a density, or a neighborhood layout.

52. The non-transitory computer readable medium of any one of clauses 41 to 51, wherein identifying the plurality of training locations is based on a field of view, a local alignment point, or an auto-focus point.

53. The non-transitory computer readable medium of any one of clauses 41 to 52, wherein the method further comprises

[0096] determining a first scanning path including a first scan for obtaining the first image, the first scanning path based on an overall scan area for the plurality of training locations.

54. The non-transitory computer readable medium of clause 53, wherein the method further comprises

[0097] determining a second scanning path including a second scan for obtaining the second image, the second scanning path based on an overall scan area for the plurality of training locations.

55. The non-transitory computer readable medium of clause 54, wherein the first scan includes a first number of scans, wherein the second scan includes a second number of scans, and wherein the second number of scans is larger than the first number of scans.

56. The non-transitory computer readable medium of any one of clauses 41 to 53, wherein the second image is obtained as a reference image by an optional user input.

57. The non-transitory computer readable medium of any one of clauses 41 to 56, wherein the method further comprises using the machine learning model to modify a first image of a location to obtain a second image of the location, wherein the second image has a higher quality than the first image.

58. The non-transitory computer readable medium of any one of clauses 41 to 57, wherein identifying the plurality of training locations comprises identifying one or more training locations for each subset of patterns.

59. The non-transitory computer readable medium of any one of clauses 41 to 58, wherein the quality includes a resolution, a contrast, a brightness, or a noise level.

60. The non-transitory computer readable medium of any one of clauses 41 to 59, wherein the method further comprises

[0098] using the machine learning model to modify an image to approximate a result obtained with an increased number of scans.
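As an illustration of clauses 55 and 60, the following is a minimal sketch of why an image built from a larger number of scans can serve as the higher-quality training target. It assumes, purely for illustration, that each scan adds independent Gaussian noise to the true signal and that repeated scans are averaged pixel by pixel; the names `acquire_frame`, `average_scans`, and `rmse`, the noise level, and the synthetic line/space pattern are hypothetical and are not taken from the disclosure.

```python
import math
import random


def acquire_frame(truth, noise_sigma, rng):
    """Simulate one scan: the true signal plus Gaussian noise per pixel (assumed noise model)."""
    return [value + rng.gauss(0.0, noise_sigma) for value in truth]


def average_scans(truth, num_scans, noise_sigma, rng):
    """Average num_scans simulated frames pixel by pixel."""
    accumulated = [0.0] * len(truth)
    for _ in range(num_scans):
        frame = acquire_frame(truth, noise_sigma, rng)
        for i, value in enumerate(frame):
            accumulated[i] += value
    return [total / num_scans for total in accumulated]


def rmse(image, truth):
    """Root-mean-square error of an image against the noise-free signal."""
    squared = sum((a - b) ** 2 for a, b in zip(image, truth))
    return math.sqrt(squared / len(truth))


rng = random.Random(0)  # fixed seed so the sketch is repeatable
# Hypothetical one-dimensional line/space pattern standing in for a training location.
truth = [float((i // 8) % 2) for i in range(1024)]

# First image: a first (small) number of scans. Second image: a larger number of scans.
first_image = average_scans(truth, num_scans=1, noise_sigma=0.5, rng=rng)
second_image = average_scans(truth, num_scans=16, noise_sigma=0.5, rng=rng)

print("first image RMSE:", rmse(first_image, truth))
print("second image RMSE:", rmse(second_image, truth))
```

Under this noise assumption, averaging N scans reduces the noise standard deviation by roughly a factor of the square root of N, so the second image is a cleaner target for the same location. A trained model would then be asked to approximate the second image from the first without the extra scans.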

61. An electron beam inspection apparatus, comprising:

[0099] a controller having circuitry to cause the electron beam inspection apparatus to perform:

[0100] analyzing a plurality of patterns of data relating to a layout of a product to identify a plurality of training locations to use in relation to training a machine learning model;

[0101] obtaining a first image having a first quality for each of the plurality of training locations;

[0102] obtaining a second image having a second quality for each of the plurality of training locations, the second quality being higher than the first quality; and

[0103] using the first image and the second image to train the machine learning model.

62. The electron beam inspection apparatus of clause 61, wherein the data is in a database.

63. The electron beam inspection apparatus of clause 62, wherein the database is any one of a graphic database system (GDS), an Open Artwork System Interchange Standard, or a Caltech Intermediate Form.

64. The electron beam inspection apparatus of clause 63, wherein the GDS includes at least one of GDS or GDSII.

65. The electron beam inspection apparatus of clause 61, wherein the step of obtaining a first image having a first quality for each of the plurality of training locations comprises obtaining more than one first image having a first quality for each of the plurality of training locations.

66. The electron beam inspection apparatus of clause 61, wherein the step of obtaining a second image having a second quality for each of the plurality of training locations comprises obtaining more than one second image having a second quality for each of the plurality of training locations.

67. The electron beam inspection apparatus of clause 61, wherein the step of analyzing the plurality of patterns of data relating to the layout of the product further comprises classifying the plurality of patterns into a plurality of subsets of patterns.

68. The electron beam inspection apparatus of any one of clauses 61 to 67, wherein the step of analyzing the plurality of patterns of data relating to the layout of the product further comprises extracting a feature from the plurality of patterns.

69. The electron beam inspection apparatus of clause 68, wherein the extracted feature includes a shape, a size, a density, or a neighborhood layout.

70. The electron beam inspection apparatus of clause 67, wherein classifying the plurality of patterns into a plurality of subsets of patterns is based on the extracted feature.

71. The electron beam inspection apparatus of clause 67, wherein each subset of the plurality of subsets of patterns is associated with information relating to a location, a type, a shape, a size, a density, or a neighborhood layout.

72. The electron beam inspection apparatus of any one of clauses 61 to 71, wherein identifying the plurality of training locations is based on a field of view, a local alignment point, or an auto-focus point.

73. The electron beam inspection apparatus of any one of clauses 61 to 72, wherein the controller has circuitry to cause the electron beam inspection apparatus to further perform:

[0104] determining a first scanning path including a first scan for obtaining the first image, the first scanning path based on an overall scan area for the plurality of training locations.

74. The electron beam inspection apparatus of clause 73, wherein the controller has circuitry to cause the electron beam inspection apparatus to further perform:

[0105] determining a second scanning path including a second scan for obtaining the second image, the second scanning path based on an overall scan area for the plurality of training locations.

75. The electron beam inspection apparatus of clause 74, wherein the first scan includes a first number of scans, wherein the second scan includes a second number of scans, and wherein the second number of scans is larger than the first number of scans.

76. The electron beam inspection apparatus of any one of clauses 61 to 73, wherein the second image is obtained as a reference image by an optional user input.

77. The electron beam inspection apparatus of any one of clauses 61 to 76, wherein the controller has circuitry to cause the electron beam inspection apparatus to further perform:

[0106] using the machine learning model to modify a first image of a location to obtain a second image of the location, wherein the second image has a higher quality than the first image.

78. The electron beam inspection apparatus of any one of clauses 61 to 77, wherein identifying the plurality of training locations comprises identifying one or more training locations for each subset of patterns.

79. The electron beam inspection apparatus of any one of clauses 61 to 78, wherein the quality includes a resolution, a contrast, a brightness, or a noise level.

80. The electron beam inspection apparatus of any one of clauses 61 to 79, wherein the controller has circuitry to cause the electron beam inspection apparatus to further perform:

[0107] using the machine learning model to modify an image to approximate a result obtained with an increased number of scans.
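The classification and location-selection steps recited in clauses 67 to 71 and 78 can be sketched as follows. This is a minimal illustration, not the disclosed implementation: it assumes that each layout pattern has already been reduced to a shape label, a size, and a density, and it groups patterns by coarse bins of those features before taking the first pattern(s) of each subset as training locations. The `Pattern` record, the bin widths, and the function names are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Pattern:
    """Hypothetical record for one layout pattern (fields chosen for illustration)."""
    x: float
    y: float
    shape: str
    size: float
    density: float


def feature_key(pattern, size_bin=50.0, density_bin=0.25):
    """Extract a coarse feature tuple: shape label plus binned size and density."""
    return (
        pattern.shape,
        int(pattern.size // size_bin),
        int(pattern.density // density_bin),
    )


def classify(patterns):
    """Classify patterns into subsets that share the same extracted feature tuple."""
    subsets = defaultdict(list)
    for pattern in patterns:
        subsets[feature_key(pattern)].append(pattern)
    return dict(subsets)


def pick_training_locations(subsets, per_subset=1):
    """Identify one or more training locations (x, y) for each subset of patterns."""
    locations = []
    for key in sorted(subsets):
        for pattern in subsets[key][:per_subset]:
            locations.append((pattern.x, pattern.y))
    return locations


patterns = [
    Pattern(0.0, 0.0, "line", 40.0, 0.5),
    Pattern(10.0, 0.0, "line", 45.0, 0.6),
    Pattern(0.0, 20.0, "contact", 30.0, 0.2),
    Pattern(5.0, 25.0, "contact", 120.0, 0.2),
]

subsets = classify(patterns)
locations = pick_training_locations(subsets)
print(locations)  # [(0.0, 20.0), (5.0, 25.0), (0.0, 0.0)]
```

Here the two similar line patterns fall into one subset and contribute a single training location, while the two contact patterns differ in size and are sampled separately. Selecting per subset rather than per pattern keeps the number of scanned locations small while still covering each kind of pattern in the layout.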