Systems And Methods For Adaptive And Responsive Video

Bloch; Yoni; et al.

U.S. patent application number 16/800994, for systems and methods for adaptive and responsive video, was filed on February 25, 2020 and published on June 18, 2020. The applicant listed for this patent is JBF Interlude 2009 LTD. The invention is credited to Yoni Bloch, Barak Feldman, Yuval Hofshy, and Tal Zubalsky.

| Application Number | 16/800994 |
|---|---|
| United States Patent Application | 20200194037 |
| Kind Code | A1 |
| Family ID | 58096138 |
| Filed Date | February 25, 2020 |
| Publication Date | June 18, 2020 |

Abstract

Systems and methods for providing adaptive and responsive media are disclosed. In various implementations, a video for playback is received at a user device having a plurality of associated properties. Based on at least one of the properties, a first state of the video is configured, and the video is presented according to the first state. During playback of the video, a change in one of the device properties is detected, and the video is seamlessly transitioned to a second state based on the change.

| Inventors: | Bloch; Yoni; (Brooklyn, NY); Zubalsky; Tal; (Brooklyn, NY); Hofshy; Yuval; (Kfar Saba, IL); Feldman; Barak; (Tenafly, NJ) |
|---|---|
| Applicant: | JBF Interlude 2009 LTD |
| Family ID: | 58096138 |
| Appl. No.: | 16/800994 |
| Filed: | February 25, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Related Application |
|---|---|---|---|
| 16559082 | Sep 3, 2019 | | 16800994 |
| 14835857 | Aug 26, 2015 | 10460765 | 16559082 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 21/00 20130101; G11B 27/102 20130101; G11B 27/36 20130101; H04N 21/8541 20130101; G11B 27/10 20130101; G11B 27/34 20130101; G06F 2200/1614 20130101; H04N 21/8456 20130101 |

| International Class: | G11B 27/34 20060101 G11B027/34; G11B 27/10 20060101 G11B027/10; G11B 27/36 20060101 G11B027/36 |

Claims

1-20. (canceled)

21. A computer-implemented method comprising: receiving, from a sensor embedded within a mobile device, an indication of a physical orientation of the user device; simultaneously receiving at the user device over a network a first video file having a first aspect ratio and a second, different video file having a second aspect ratio; storing, on the user device, the first video file and the second video file; presenting the first video file on the user device based on the orientation associated with the user device; and during presentation of the first video file on the user device: receiving, from the sensor, an indication that a change in orientation of the user device has occurred; and in response to the change in orientation, retrieving the second video file from storage on the user device and seamlessly transitioning from presentation of the first video file to presentation of the second video file on the user device.

22. The method of claim 21, wherein presenting the first video file according to the orientation of the device further comprises setting a quality of presentation of the first video file.

23. The method of claim 21, wherein presenting the first video file according to the orientation of the device further comprises setting a viewing region of the first video file to a partial dimensional area of the first video file.

24. The method of claim 21, wherein presenting the first video file according to the orientation of the device further comprises setting a viewing region of the first video file to a full dimensional area of the first video file.

25. The method of claim 21, further comprising: playing a first audio file during presentation of the first video file on the user device; and in response to the change in the orientation of the device, seamlessly transitioning from playing the first audio file to playing a second, different audio file on the user device.

26. The method of claim 21, further comprising, in transitioning to presentation of the second video file, modifying a position, a shape, and/or a size of a viewing region of the second video file.

27. A system comprising: at least one memory storing computer-executable instructions; and at least one processor for executing the instructions stored on the memory, wherein execution of the instructions programs the at least one processor to perform operations comprising: receiving, from a sensor embedded within a mobile device, an indication of a physical orientation of the user device; simultaneously receiving at the user device over a network a first video file having a first aspect ratio and a second, different video file having a second aspect ratio; storing, on the user device, the first video file and the second video file; presenting the first video file on the user device based on the orientation associated with the user device; and during presentation of the first video file on the user device: receiving, from the sensor, an indication that a change in orientation of the user device has occurred; and in response to the change in orientation, retrieving the second video file from storage on the user device and seamlessly transitioning from presentation of the first video file to presentation of the second video file on the user device.

28. The system of claim 27, wherein presenting the first video file according to the orientation of the device further comprises setting a quality of presentation of the first video file.

29. The system of claim 27, wherein presenting the first video file according to the orientation of the device further comprises setting a viewing region of the first video file to a partial dimensional area of the first video file.

30. The system of claim 27, wherein presenting the first video file according to the orientation of the device further comprises setting a viewing region of the first video file to a full dimensional area of the first video file.

31. The system of claim 27, wherein the operations further comprise: playing a first audio file during presentation of the first video file on the user device; and in response to the change in the orientation of the device, seamlessly transitioning from playing the first audio file to playing a second, different audio file on the user device.

32. The system of claim 27, wherein the operations further comprise, in transitioning to presentation of the second video file, modifying a position, a shape, and/or a size of a viewing region of the second video file.

Description

FIELD OF THE INVENTION

[0001] The present disclosure relates generally to dynamic video and, more particularly, to systems and methods for dynamically modifying a video state based on changes in user device properties.

BACKGROUND

[0002] The rise of the mobile web and the vast increase in platforms and devices with different screen sizes, resolutions, and orientations have necessitated new web design techniques, such as enabling a website to display differently depending on the device or screen on which it is displayed. These capabilities are supported by technologies such as HyperText Markup Language (HTML), Cascading Style Sheets (CSS), and JavaScript, which enable designers and developers to implement responsive and adaptive websites.

[0003] However, the responsive and adaptive features used in website design do not similarly apply to video presentations. Digital videos have fixed resolutions, fixed proportions, and fixed content. Dynamic changes to digital video are limited to adaptations in video size and quality to accommodate, for example, different device screen sizes or available communications bandwidth. These adaptations have their own disadvantages. For example, videos scaled to fit a screen with a different aspect ratio are typically either cropped, which results in a loss of content, or letterboxed, with mattes abutting the video.

SUMMARY

[0004] Systems and methods for responsive and adaptive video are described. In general, the present disclosure describes a "smart video response" technique, in which video content (streaming or otherwise) can adapt in real-time, with targeted, customized, or other responsive content, to changes in properties associated with a user device, all without scaling, letterboxing, or other noted disadvantages of the prior art.

[0005] Accordingly, in one aspect a video for playback is received at a user device having a plurality of identified associated properties. The device properties can include, for example, physical orientation, model, physical screen size, screen resolution, and window size. Based on at least one of the properties, a first state of the video is configured, and the video is presented according to the first state. During playback of the video, a change in one of the device properties is detected, and the video is seamlessly transitioned to a second state based on the change.

[0006] The first state of the video is configured by, for example, setting which video and/or audio content will be played, setting the dimensional ratio and/or quality of the video, and/or setting the viewing region of the video to a particular partial area of the video. Similarly, seamlessly transitioning the video to the second state can involve changing audio/video content playback, video dimensional ratio, video quality, and/or the position, shape and/or size of the video viewing region. The seamless transition to the second state can also include seamlessly transitioning from a first to a second video in a plurality of videos that are simultaneously received.

[0007] In one implementation, a plurality of videos associated with a particular one of the properties is provided, and each video is associated with a different value of the particular property. Upon determining that a change in a device property has occurred, the video can be seamlessly transitioned to a second video that is associated with the new value of the changed property.

[0008] Aspects of these inventions also include corresponding systems and computer programs. Further aspects and advantages of the invention will become apparent from the following drawings, detailed description, and claims, all of which illustrate the principles of the invention, by way of example only.

BRIEF DESCRIPTION OF THE DRAWINGS

[0009] A more complete appreciation of the invention and many attendant advantages thereof will be readily obtained as the same becomes better understood by reference to the following detailed description when considered in connection with the accompanying drawings. In the drawings, like reference characters generally refer to the same parts throughout the different views. Further, the drawings are not necessarily to scale, with emphasis instead generally being placed upon illustrating the principles of the invention.

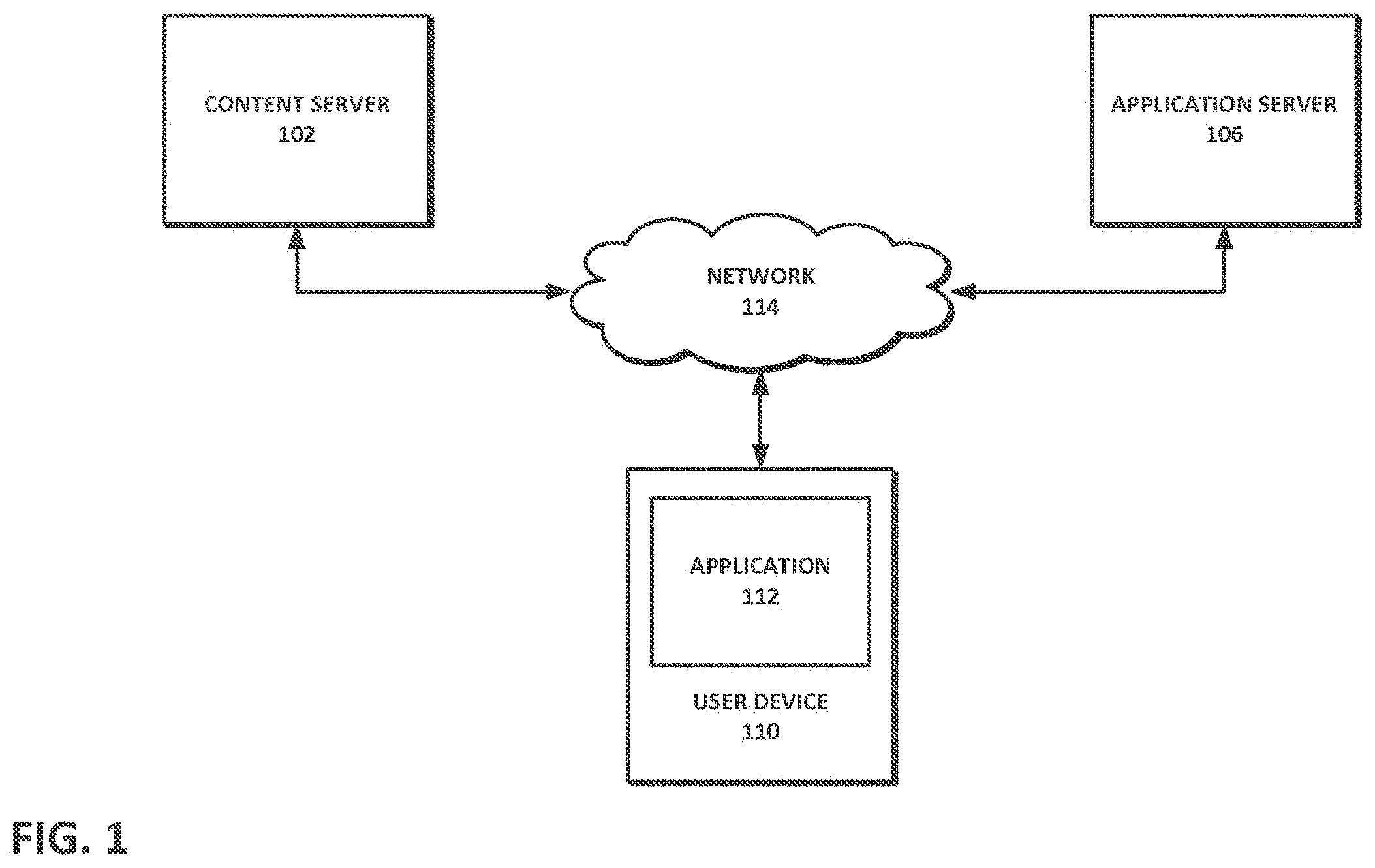

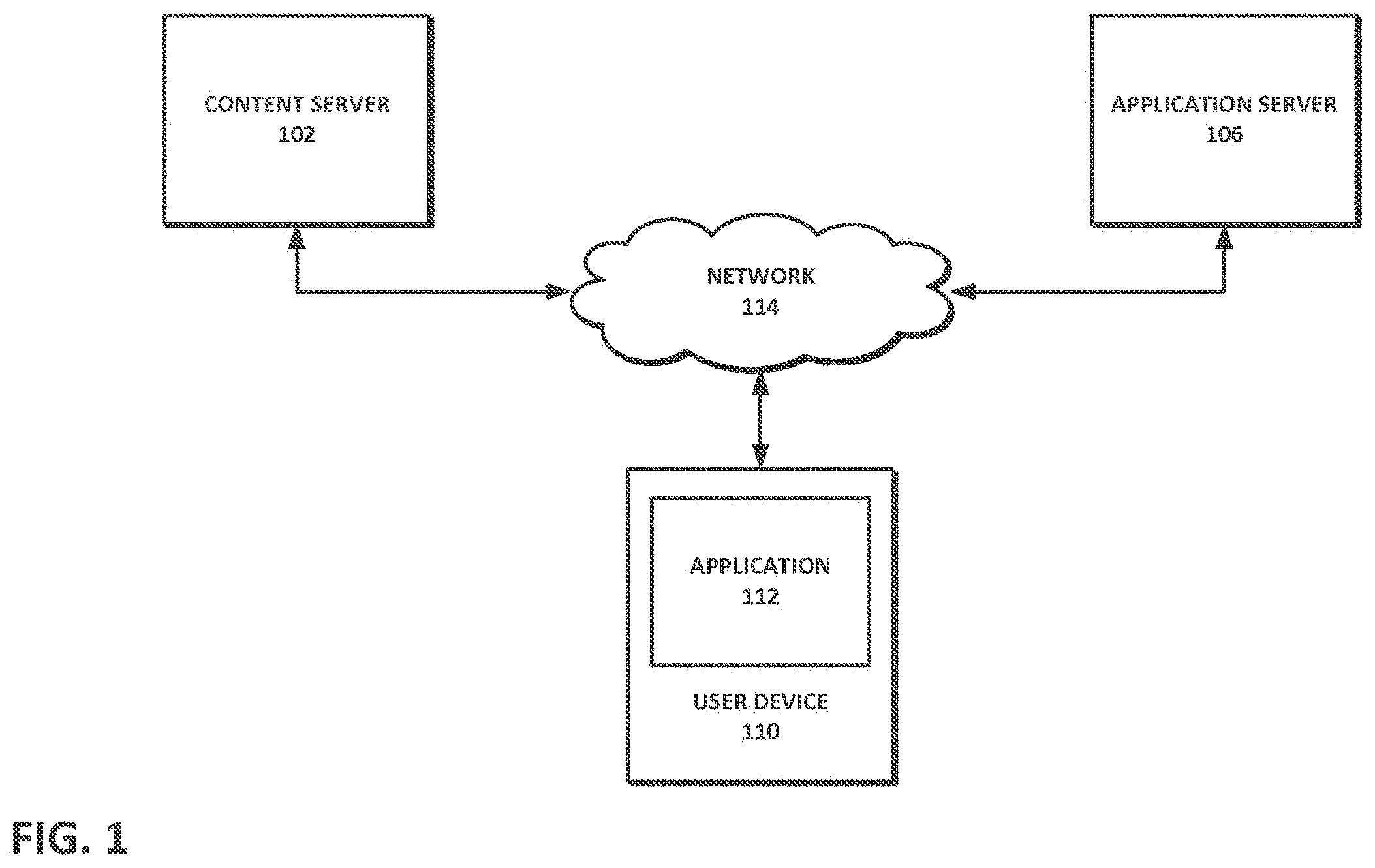

[0010] FIG. 1 depicts a high-level diagram of a system architecture according to an implementation.

[0011] FIG. 2 depicts a video state change responsive to a rotation of a user device.

[0012] FIG. 3 depicts a video state change responsive to a window resizing.

[0013] FIGS. 4A-4D depict a viewport location modification responsive to a change in a user device property.

[0014] FIGS. 5A and 5B depict viewport size and location modifications responsive to a change in a user device property.

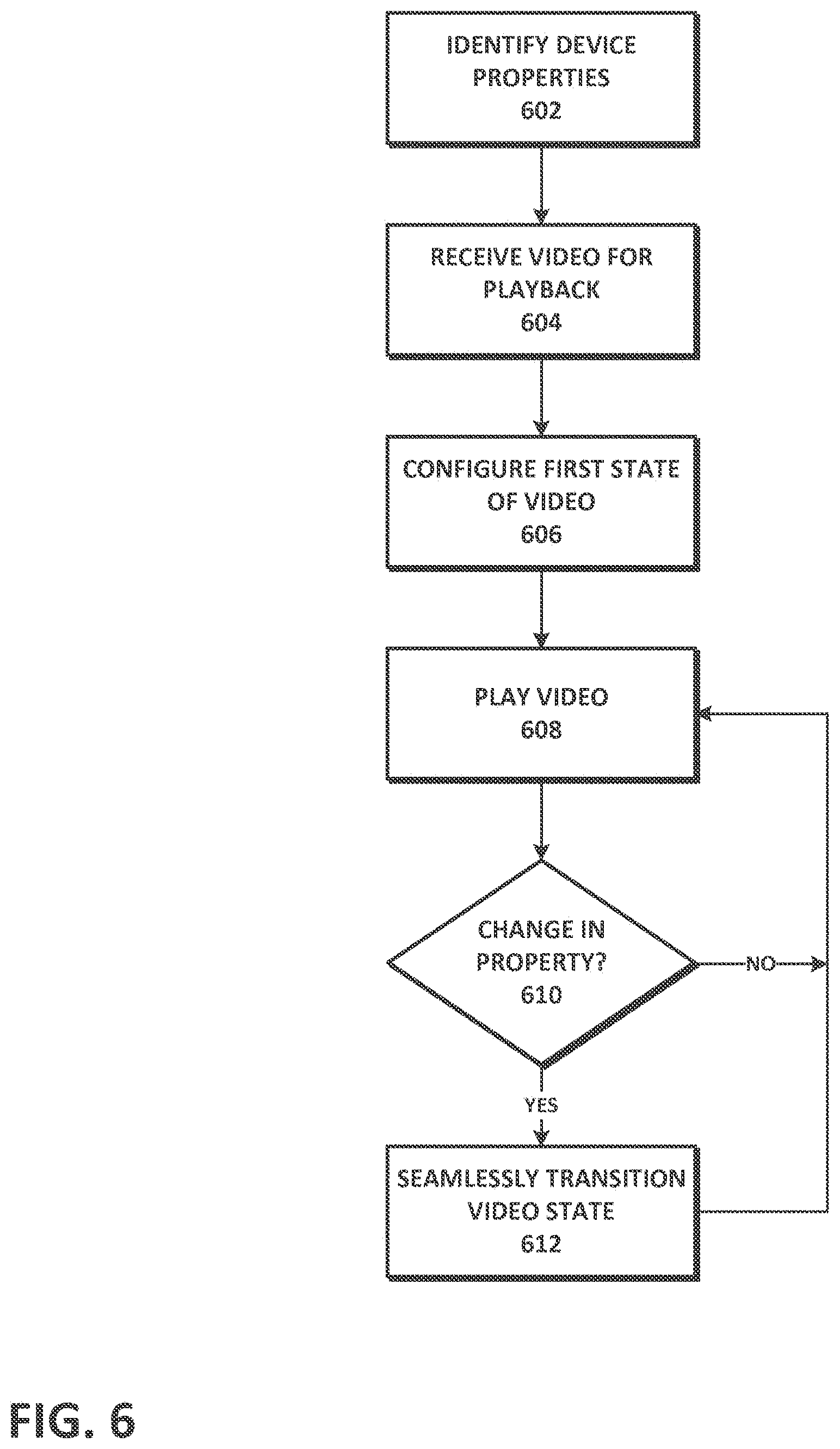

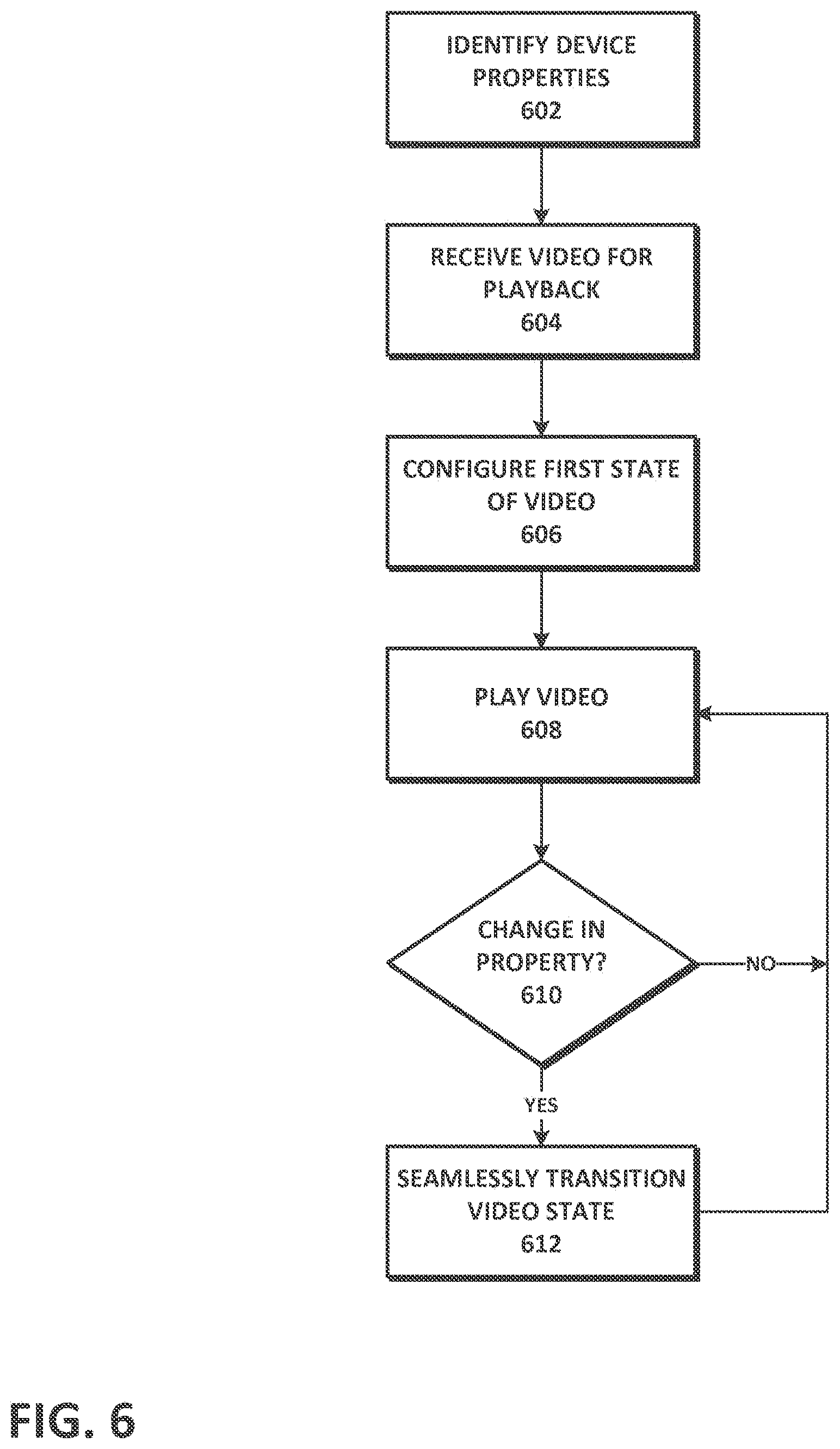

[0015] FIG. 6 depicts a flowchart of a method for providing adaptive and responsive media according to an implementation.

[0016] FIG. 7 depicts a flowchart of a method for providing parallel tracks in a media presentation according to an implementation.

[0017] FIG. 8 depicts an appending of a video portion from one of a number of parallel tracks.

DETAILED DESCRIPTION

[0018] Described herein are various implementations of systems and methods for adaptive and responsive media, in which a media presentation (e.g., video) playing on a user device responds in real-time to a change in one or more properties of the user device by altering the content, viewport, or other characteristic relating to the presentation.

[0019] Referring to FIG. 1, media content can be presented to a user on a user device 110 having an application 112 capable of playing and/or editing the content. The user device 110 can be, for example, a smartphone, tablet, laptop, palmtop, wireless telephone, television, gaming device, music player, mobile telephone, information appliance, workstation, a smart or dumb terminal, network computer, personal digital assistant, wireless device, minicomputer, mainframe computer, or other computing device, that is operated as a general purpose computer or a special purpose hardware device that can execute the functionality described herein.

[0020] The application 112 can be a video player and/or editor that is implemented as a native application, web application, or other form of software. In some implementations, the application 112 is in the form of a web page, widget, and/or Java, JavaScript, .Net, Silverlight, Flash, and/or other applet or plug-in that is downloaded to the device and runs in conjunction with a web browser. The application 112 and the web browser can be part of a single client-server interface; for example, the application 112 can be implemented as a plugin to the web browser or to another framework or operating system. Any other suitable client software architecture, including but not limited to widget frameworks and applet technology, can also be employed.

[0021] Media content can be provided to the user device 110 by content server 102, which can be a web server, media server, a node in a content delivery network, or other content source. Application server 106 can provide the application 112 (or a portion thereof) to the user device 110. For example, some or all of the described functionality of the application 112 can be implemented in software downloaded to or existing on the user device 110 and, in some instances, some or all of the functionality exists remotely. For example, certain video encoding and processing functions can be performed on one or more remote servers, such as application server 106. In some implementations, the user device 110 serves only to provide output and input functionality, with the remainder of the processes being performed remotely.

[0022] The user device 110, content server 102, application server 106, and/or other devices and servers can communicate with each other through communications network 114. The communication can take place via any media such as standard telephone lines, LAN or WAN links (e.g., T1, T3, 56 kb, X.25), broadband connections (ISDN, Frame Relay, ATM), wireless links (802.11, Bluetooth, GSM, CDMA, etc.), and so on. The network 114 can carry TCP/IP protocol communications and HTTP/HTTPS requests made by a web browser, and the connection between clients and servers can be communicated over such TCP/IP networks. The type of network is not a limitation, however, and any suitable network can be used.

[0023] As a general matter, the techniques described herein can be implemented in any appropriate hardware or software. If implemented as software, the processes can execute on a system capable of running one or more commercial operating systems such as the Microsoft Windows® operating systems, the Apple OS X® operating systems, the Apple iOS® platform, the Google Android™ platform, the Linux® operating system and other variants of UNIX® operating systems, and the like. The software can be implemented on a general purpose computing device in the form of a computer including a processing unit, a system memory, and a system bus that couples various system components including the system memory to the processing unit.

[0024] If implemented as software, such software can include a plurality of software modules stored in a memory and executed on one or more processors. The modules can be in the form of a suitable programming language, which is converted to machine language or object code to allow the processor or processors to read the instructions. The software can be in the form of a standalone application, implemented in any suitable programming language or framework.

[0025] Method steps of the techniques described herein can be performed by one or more programmable processors executing a computer program to perform functions of the invention by operating on input data and generating output. Method steps can also be performed by, and apparatus of the invention can be implemented as, special purpose logic circuitry, e.g., an FPGA (field programmable gate array) or an ASIC (application-specific integrated circuit). Modules can refer to portions of the computer program and/or the processor/special circuitry that implements that functionality.

[0026] Processors suitable for the execution of a computer program include, by way of example, both general and special purpose microprocessors, and any one or more processors of any kind of digital computer. Generally, a processor will receive instructions and data from a read-only memory or a random access memory or both. The essential elements of a computer are a processor for executing instructions and one or more memory devices for storing instructions and data. Information carriers suitable for embodying computer program instructions and data include all forms of non-volatile memory, including by way of example semiconductor memory devices, e.g., EPROM, EEPROM, and flash memory devices; magnetic disks, e.g., internal hard disks or removable disks; magneto-optical disks; and CD-ROM and DVD-ROM disks. One or more memories can store media assets (e.g., audio, video, graphics, interface elements, and/or other media files), configuration files, and/or instructions that, when executed by a processor, form the modules, engines, and other components described herein and perform the functionality associated with the components. The processor and the memory can be supplemented by, or incorporated in special purpose logic circuitry.

[0027] It should also be noted that the present implementations can be provided as one or more computer-readable programs embodied on or in one or more articles of manufacture. The article of manufacture can be any suitable hardware apparatus, such as, for example, a floppy disk, a hard disk, a CD-ROM, a CD-RW, a CD-R, a DVD-ROM, a DVD-RW, a DVD-R, a flash memory card, a PROM, a RAM, a ROM, or a magnetic tape. In general, the computer-readable programs can be implemented in any programming language. The software programs can be further translated into machine language or virtual machine instructions and stored in a program file in that form. The program file can then be stored on or in one or more of the articles of manufacture.

[0028] FIG. 2 depicts a user device in the form of a smartphone 200 having a number of associated properties. One example property of the smartphone 200 is its physical orientation, which can refer to the alignment of the smartphone screen in a portrait or landscape mode. The orientation can also include a rotational position of the smartphone 200 in three-dimensional space determined based on readings from a sensor (e.g., gyroscope) in the device. Other properties of user devices, such as smartphone 200, can include screen resolution, aspect ratio, display proportions, and physical screen size. Device properties can also include the type of device (e.g., smartphone, smart watch, desktop, laptop, gaming device, television, etc.), model, brand, and other physical characteristics of the device. In some implementations, the existence of a particular device property depends on the device type and/or the software running on the device (e.g., operating system). For example, for device operating systems that support windowed applications (e.g., desktops, laptops, televisions, or other devices supporting Microsoft Windows® operating systems or Apple OS X® operating systems), one device property can be the window size (e.g., height and width values) of a media player application (e.g., native application, browser, or otherwise), or the window state (e.g., minimized, maximized, thumbnailed) of a media player application.
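A player that tracks these properties needs some record of them and a way to tell which ones changed. The following is a minimal Python sketch under that assumption; the `DeviceProperties` fields and the `changed_properties` helper are hypothetical names, not part of the disclosure, and the window fields are simply left empty on platforms without windowed applications.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass(frozen=True)
class DeviceProperties:
    """Snapshot of device properties a player might track (hypothetical schema)."""
    device_type: str                    # e.g. "smartphone", "desktop"
    model: str
    orientation: str                    # e.g. "portrait", "landscape"
    screen_width_px: int
    screen_height_px: int
    # Only meaningful where the OS supports windowed applications; None elsewhere.
    window_width_px: Optional[int] = None
    window_height_px: Optional[int] = None
    window_state: Optional[str] = None  # e.g. "minimized", "maximized", "thumbnailed"


def changed_properties(old: DeviceProperties, new: DeviceProperties) -> set[str]:
    """Return the names of the properties whose values differ between two snapshots."""
    return {f.name for f in fields(DeviceProperties)
            if getattr(old, f.name) != getattr(new, f.name)}
```

Comparing a portrait snapshot against a landscape snapshot of the same phone yields `{"orientation"}`, which is the kind of signal a player could use to decide whether a state change is warranted.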

[0029] As shown in FIG. 2, smartphone 200 is rotatable between a portrait mode A and a landscape mode B. In a typical mode of operation, when a mobile device, such as a smartphone or tablet, is displaying a video, photograph, webpage, or the like, rotating the device between portrait and landscape results in a rotation of the item displayed on the device screen in order to maintain the orientation of the item while, in some cases, simultaneously resizing the item to fit to the current screen proportions. For example, an image that occupies the entire device screen in landscape mode will retain its orientation when the device is rotated to portrait mode, but is resized so that the width of the image fits within the narrower width of the portrait screen, resulting in mattes displayed above and below the image.
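The size of the mattes in this conventional behavior follows from simple arithmetic. As a sketch, assume a hypothetical 1920x1080 landscape screen rotated to 1080x1920 portrait: fitting the image to the portrait width scales it by 1080/1920 = 0.5625, so it becomes 1080x607.5 and leaves 656.25 pixels of matte above and below. The helper name `fit_to_width` is illustrative only.

```python
def fit_to_width(src_w: float, src_h: float, screen_w: float, screen_h: float):
    """Scale an item to the screen width while preserving its aspect ratio.

    Returns (scaled_width, scaled_height, matte_height), where matte_height is
    the height of each bar drawn above and below the scaled item.
    """
    scale = screen_w / src_w
    out_w, out_h = screen_w, src_h * scale
    matte = max(0.0, (screen_h - out_h) / 2)  # no matte if the item overflows
    return out_w, out_h, matte
```

For the example above, `fit_to_width(1920, 1080, 1080, 1920)` returns `(1080, 607.5, 656.25)`; these mattes are the wasted screen area the present technique aims to avoid.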

[0030] Advantageously, the present technique provides further enhancements to the user's media viewing experience beyond simple rotation or resizing of images or videos. For instance, in one implementation, the rotation of the smartphone 200 from portrait mode A to landscape mode B results in a change in the state of the video presentation (in this example, a change in the video and/or audio content). Still referring to FIG. 2, a smartphone user watching a music video can seamlessly alternate between two distinct views of the video by switching between portrait and landscape modes. As depicted, when the smartphone 200 is positioned in portrait mode A, video of the lead singer 210 is shown to the user. Upon rotating the smartphone 200 to landscape mode B, the video changes to show the rest of the band 220. In some implementations, the audio plays continuously and seamlessly when changing between modes, such that no user-perceptible gaps, pauses, or buffering occur. The same audio can be played independent of the display mode of the smartphone 200 or, in some instances, the audio can be altered, enhanced, or otherwise differ among modes (e.g., when in portrait mode A, the volume of the lead singer's vocals can be emphasized relative to the musical instruments of the band 220 and, when in landscape mode B, the sound of the instruments can be emphasized).
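One way to model the music-video example is to attach a video choice and a pair of audio stem gains to each display mode, and to leave the shared playback position untouched when the mode changes, which is what keeps the audio continuous. This is a hedged sketch: the `ModeState` record, the gain values, and the two-stem mix are illustrative assumptions, not details given in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModeState:
    video: str          # video track shown in this mode
    vocal_gain: float   # gain applied to the vocal stem
    band_gain: float    # gain applied to the instrument stem


# Hypothetical values: portrait mode A favors the singer, landscape mode B the band.
MODES = {
    "portrait": ModeState(video="singer", vocal_gain=1.0, band_gain=0.5),
    "landscape": ModeState(video="band", vocal_gain=0.5, band_gain=1.0),
}


def mix(vocals: list[float], band: list[float], state: ModeState) -> list[float]:
    """Mix two time-aligned audio stems sample by sample using the mode's gains."""
    return [state.vocal_gain * v + state.band_gain * b for v, b in zip(vocals, band)]


class MusicVideoPlayer:
    def __init__(self, orientation: str):
        self.position_s = 0.0            # one clock shared by every track
        self.state = MODES[orientation]

    def advance(self, dt_s: float) -> None:
        self.position_s += dt_s

    def on_orientation_change(self, orientation: str) -> None:
        # Swap the video track and the mix, but keep position_s, so playback
        # resumes from the same instant in the new mode.
        self.state = MODES[orientation]
```

Because the stems share one clock, rotating the phone changes only which picture is shown and how the stems are weighted, never where in the song playback is.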

[0031] It should be appreciated that the present technique is not limited to two display modes (i.e., landscape and portrait). Rather, various combinations of audio, video, and/or other media content can be shown based on any rotation or positioning of a user device. For example, a first video may be shown when in portrait mode, a second video when changing to landscape mode by rotating the device counter-clockwise, a third video when changing to landscape mode by rotating the device clockwise, a fourth video when tilting the device away from the user, a fifth video when tilting the device toward the user, a sixth video when laying the device flat, and so on.
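Distinguishing these poses usually starts from the device's gravity (accelerometer) reading. The sketch below assumes an Android-style axis convention (x toward the right edge of the screen, y toward the top, z out of the screen, with roughly +9.81 m/s² on whichever axis points up); the thresholds, pose names, and `VIDEO_FOR_POSE` table are hypothetical. The two landscape directions are told apart by the sign of the x reading, and the two tilts by the pitch angle.

```python
import math

# Hypothetical mapping of poses to the six videos in the example.
VIDEO_FOR_POSE = {
    "portrait": "video_1",
    "landscape_ccw": "video_2",
    "landscape_cw": "video_3",
    "tilted_away": "video_4",
    "tilted_toward": "video_5",
    "flat": "video_6",
}


def classify_pose(gx: float, gy: float, gz: float,
                  flat_threshold: float = 0.9, tilt_deg: float = 30.0) -> str:
    """Classify a device pose from a gravity reading (assumed axis convention above)."""
    n = math.sqrt(gx * gx + gy * gy + gz * gz)
    if n == 0:
        raise ValueError("zero-length gravity vector")
    x, y, z = gx / n, gy / n, gz / n
    if abs(z) >= flat_threshold:
        return "flat"                    # screen facing (nearly) straight up or down
    if abs(x) > abs(y):
        # Rotating counter-clockwise raises the device's +x axis.
        return "landscape_ccw" if x > 0 else "landscape_cw"
    if y < 0:
        return "upside_down"             # not mapped to a video in this sketch
    pitch = math.degrees(math.atan2(z, y))  # 0 upright; positive as the top tips away
    if pitch > tilt_deg:
        return "tilted_away"
    if pitch < -tilt_deg:
        return "tilted_toward"
    return "portrait"
```

A player could then look up `VIDEO_FOR_POSE.get(classify_pose(gx, gy, gz))` on each sensor update and switch only when the result differs from the video currently playing.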

[0032] FIG. 3 depicts a concept similar to that shown in FIG. 2, with a windowed media player 300 on a desktop computer, laptop, or other user device supporting windowed applications. In this instance, rather than physically rotating or repositioning the user device, the user changes the window size or state (e.g., minimized, maximized, thumbnailed) of the media player 300 using an input device (e.g., mouse, keyboard, touchscreen, etc.). In some instances, the media player 300 is resizable to fixed dimensions and will "snap to" the closest size as a user resizes the associated window. Different media content can be associated with each fixed window dimension (defined height and width). For example, using the same music video example as described with respect to FIG. 2, upon changing from fixed dimensions X to fixed dimensions Y, the video shown in the media player 300 can change from the singer 210 to the band 220. There can be multiple fixed dimensions with varying audio and/or video content associated with particular fixed dimensions.
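Snapping can be implemented by choosing the fixed size nearest to the size the user dragged the window to, then looking up the content for that size. A minimal sketch with hypothetical pixel sizes standing in for fixed dimensions X and Y; measuring nearness by squared distance in (width, height) is an assumed choice, not one the text prescribes.

```python
# Hypothetical fixed player sizes (width, height) in pixels and their content.
FIXED_SIZES = {
    (640, 360): "singer",   # stands in for fixed dimensions X
    (1280, 720): "band",    # stands in for fixed dimensions Y
}


def snap(width: int, height: int) -> tuple[int, int]:
    """Return the fixed size closest to the requested window size."""
    return min(FIXED_SIZES,
               key=lambda size: (size[0] - width) ** 2 + (size[1] - height) ** 2)


def content_for_window(width: int, height: int) -> tuple[tuple[int, int], str]:
    """Snap the window and return the snapped size with its associated content."""
    size = snap(width, height)
    return size, FIXED_SIZES[size]
```

Dragging the window to 1200x700, for instance, snaps it to 1280x720 and switches the content to the band.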

[0033] In some implementations, instead of limiting the windowed media player 300 to fixed dimensions, ranges for window heights and/or widths can be defined and associated with differing media content. For example, assuming the height and width of a particular window can be individually resized to occupy between 5% and 100% of a screen, Table 1 indicates which of three different videos is presented depending on current window dimensions.

TABLE 1

| Window Height Range | Window Width Range | Video |
|---|---|---|
| 5% to <50% | 5% to 100% | Video 1 |
| 50% to 100% | 5% to <25% | Video 2 |
| 50% to 100% | 25% to 100% | Video 3 |
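The ranges in Table 1 reduce to two comparisons: the height range alone decides Video 1, and the width range then separates Video 2 from Video 3. A sketch; the function name is hypothetical, and both dimensions are taken as percentages of the screen.

```python
def video_for_window(height_pct: float, width_pct: float) -> str:
    """Select a video from the current window dimensions according to Table 1."""
    if not (5 <= height_pct <= 100 and 5 <= width_pct <= 100):
        raise ValueError("window dimensions outside the 5%-100% range")
    if height_pct < 50:
        return "Video 1"            # any width
    return "Video 2" if width_pct < 25 else "Video 3"
```

Calling this on every resize event and switching only when the returned video differs from the current one keeps transitions limited to actual range crossings.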

[0034] In addition to changes in audio and video content, as described above, other states of playing media can be dynamically modified in real-time based on a change to a device property (or a combination of device properties). Such states can include, but are not limited to, video aspect ratio, video dimensions, video and/or audio quality, viewport (i.e., the portion of the video visible to the user), video and/or audio playback speed, audio volume, and audio/video sound mix.

[0035] In one example, a change in a property associated with a user device can result in a change in the size and/or position of the viewport. Referring to FIGS. 4A-4D, a video 400 of a family is provided to a user device 402; however, only a portion of the video 400 is viewable by the user at any point in time during playback of the video 400. The viewable portion is defined by the viewport 410, which can be resized, rotated, or moved around the video 400 during playback in response to a change in a device property. In one implementation, the viewport is a mask layered over the video that includes a resizable transparent area allowing the user to see a portion of the underlying video. To reposition the viewport 410, the mask can be moved with respect to the video 400 and/or the video 400 can be moved with respect to the mask.
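Treating the viewport as a rectangle in video coordinates makes the mask behavior concrete. The sketch below, with hypothetical `Rect`, `clamp_viewport`, and `move_mask` names, keeps the transparent area inside the video frame whenever it is moved or resized. It does not cover rotating the viewport, which would need a transform rather than an axis-aligned rectangle.

```python
from dataclasses import dataclass


@dataclass
class Rect:
    x: float   # left edge, in video coordinates
    y: float   # top edge, in video coordinates
    w: float
    h: float


def clamp_viewport(vp: Rect, video_w: float, video_h: float) -> Rect:
    """Shrink and shift a viewport so that it stays inside the video frame."""
    w, h = min(vp.w, video_w), min(vp.h, video_h)
    x = min(max(vp.x, 0.0), video_w - w)
    y = min(max(vp.y, 0.0), video_h - h)
    return Rect(x, y, w, h)


def move_mask(vp: Rect, dx: float, dy: float, video_w: float, video_h: float) -> Rect:
    """Move the mask's transparent area by (dx, dy) over the video.

    Moving the video by (-dx, -dy) under a fixed mask exposes the same region.
    """
    return clamp_viewport(Rect(vp.x + dx, vp.y + dy, vp.w, vp.h), video_w, video_h)
```

For a 450x300 video, asking to move a 200x300 viewport at the origin 500 units to the right stops it at x = 250, flush with the right edge of the frame.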

[0036] As depicted, initially, the viewport 410 allows the user to see video playback of the mother 422 (FIG. 4A). Upon the user tilting the device 402 in a clockwise direction (e.g., in the case of a smartphone, briefly rotating the smartphone clockwise and returning it to the 12 o'clock position), the viewport 410 can change to show video of the father 424 (FIG. 4B). The viewport 410 can move from the mother 422 to the father 424 while the device 402 is being tilted, or can directly switch to the father 424 upon completing the tilting motion. Similarly, the user can tilt the device 402 again in the clockwise direction to change the viewport 410 to video of the young boy 426 (FIG. 4C), or can tilt the device 402 repeatedly in the counter-clockwise direction to change the viewport 410 to video of the young girl 428 (FIG. 4D). In some implementations, a single rotational motion can move the viewport 410 among family members that are one or more persons apart, depending on the amount of rotation (e.g., a 90-degree rotation clockwise moves the viewport from the mother 422 to the boy 426, a 180-degree rotation counter-clockwise moves the viewport from the boy 426 to the girl 428, and so on).
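The amount-of-rotation behavior can be expressed as a step count over an ordered list of subjects. The text does not give the layout of the family or the angle per step; the sketch assumes a left-to-right order of girl, mother, father, boy, 45 degrees per step, clockwise as positive, and clamping at the ends of the list. Together these hypothetical choices reproduce both examples above: 90 degrees clockwise from the mother reaches the boy, and 180 degrees counter-clockwise from the boy stops at the girl.

```python
# Hypothetical layout of the family in video 400 and hypothetical step size.
SUBJECTS = ["girl", "mother", "father", "boy"]
DEGREES_PER_STEP = 45.0


def next_subject(current: str, rotation_deg: float) -> str:
    """Map a signed rotation (clockwise positive) to the subject to show next."""
    steps = int(rotation_deg / DEGREES_PER_STEP)   # truncates toward zero
    index = SUBJECTS.index(current) + steps
    # Clamp so that over-rotation stops at the first or last subject.
    return SUBJECTS[max(0, min(len(SUBJECTS) - 1, index))]
```

Rotations smaller than one step (for example, 30 degrees) leave the viewport where it is, which helps ignore incidental hand movement.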

[0037] FIGS. 5A and 5B depict a change in a device property that causes the viewport to a lecture video 500 to change in both size and location. Initially, as shown in FIG. 5A, the viewport 510a allows the user to view the full height (300 units) and width (450 units) of the video 500, thereby displaying the full dimensions of the lecture video 500, including the speaker, presentation screen, and audience. The viewport 510a is a rectangular shape (although other shapes are contemplated), and the upper left-hand corner of the viewport 510a is positioned at coordinates (0, 0). Referring now to FIG. 5B, upon detecting a change in a property of the device (e.g., the device is rotated from landscape to portrait mode), the viewport 510b is modified in size and repositioned to better accommodate the modified state of the device. Specifically, the viewport 510b is modified to a size that better fills the screen of the user device (height=300 units, width=200 units) and is positioned with the upper left-hand corner at coordinates (200, 0), to better focus on the speaker. The video and viewport may be zoomed out or in so that the viewport fills the height and/or width of the device display.
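The zoom mentioned at the end of the paragraph is a single scale factor. As a sketch, assume a hypothetical 1080x1920 portrait display: fitting the 200x300 viewport 510b gives min(1080/200, 1920/300) = min(5.4, 6.4) = 5.4, so the viewport is drawn at 1080x1620 and fills the display width; scaling by the larger factor, 6.4, would instead fill the height and crop the sides. The function name and `fill` flag are illustrative.

```python
def viewport_scale(vp_w: float, vp_h: float, disp_w: float, disp_h: float,
                   fill: bool = False) -> float:
    """Zoom factor that makes a viewport fit (or fill) the display.

    fill=False: the viewport fills the display's width or height, uncropped.
    fill=True: the viewport covers the whole display, cropping any overflow.
    """
    sx, sy = disp_w / vp_w, disp_h / vp_h
    return max(sx, sy) if fill else min(sx, sy)
```

The same function applied to viewport 510a (450x300) on a hypothetical 1920x1080 landscape display gives a fit factor of 1080/300 = 3.6.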

[0038] It should be noted that changes in various combinations of media states can occur based on a change in one or more device properties. For example, rotating a device so that it changes from portrait to landscape mode can result in a combined change in video content, audio volume, and viewport size for a particular media presentation. As another example, the audio content of a media presentation can change upon the occurrence of multiple property changes simultaneously or within a particular time period, such as two tilt movements in the same direction within three seconds.
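The "two tilt movements in the same direction within three seconds" trigger is a small sliding-window check. A sketch with a hypothetical `DoubleTiltDetector` class; tilts are assumed to arrive already classified by direction and timestamped in seconds, and a detected pair is consumed so that a third tilt starts a new pair.

```python
from collections import deque


class DoubleTiltDetector:
    """Report when two tilts in the same direction occur within a time window."""

    def __init__(self, window_s: float = 3.0):
        self.window_s = window_s
        self.recent = deque()   # (timestamp_s, direction) of recent unmatched tilts

    def on_tilt(self, t: float, direction: str) -> bool:
        # Forget tilts that are now older than the window.
        while self.recent and t - self.recent[0][0] > self.window_s:
            self.recent.popleft()
        matched = any(d == direction for _, d in self.recent)
        if matched:
            self.recent.clear()   # consume the pair
        else:
            self.recent.append((t, direction))
        return matched
```

When `on_tilt` returns `True`, the player could apply the combined state change, such as switching the audio content.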

[0039] In addition to the music and lecture videos described above, the techniques described herein have wide applicability and are useful in a variety of situations. In one example, a movie watched in landscape mode on a user device includes a director's commentary audio track in which, from time to time, the director provides commentary on scenes in the movie currently being watched. During playback of the video and audio, upon changing the orientation of the device to portrait mode, accompanying video of the director providing the commentary is shown instead of the movie. For example, the director can be shown sitting in front of a monitor and pointing out various details in the film as he comments. Of note, the transition between landscape and portrait mode, and vice-versa, is seamless, such that the audio commentary is continuously synchronized and continues playback without buffering or delay from the same point in time where the switch is made. In another example, a full-screen video includes video thumbnails (e.g., picture-in-picture) of parallel video tracks. A user interacting with the full-screen video can select one of the thumbnails to switch seamlessly to the parallel track.

[0040] In accordance with the systems and techniques described herein, FIG. 6 depicts one implementation of a method for providing adaptive and responsive video. In STEP 602, an application on a user device, such as a media player on a smartphone or tablet, identifies one or more properties associated with the device. The identified properties can be limited to a subset of device properties that the application considers in determining whether to change the state of the video (e.g., orientation, window size, and/or other device properties). A video for playback is received at the device (STEP 604), and the first state of the video is configured based on one or more of the identified properties (STEP 606). For example, if the device is currently in landscape mode, a video suitable for landscape mode can be set for initial playback. In STEP 608, the video is played according to the configured first state.

[0041] During presentation of the video, the application determines whether there has been a change in one or more of the identified properties associated with the device (STEP 610). For example, the application may determine that the device has been rotated from a landscape orientation to a portrait orientation. If a change in a relevant property is detected, and there is a different video state associated with the change, the application seamlessly transitions the video to a second state based on the change. Referring to the previous example, if there is different video content associated with the portrait orientation, the different video content can be seamlessly and instantly switched to upon the change in the device orientation property from landscape to portrait. The video can continue to play uninterrupted (return to STEP 608), and subsequent property changes can be detected and state transitions made.
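The FIG. 6 flow can be summarized as a loop over property-change events. This sketch assumes a hypothetical `STATES` table keyed by (property, value) and, for brevity, configures the first state from orientation alone and omits receipt of the video (STEP 604); it records which states would be shown rather than driving a real player.

```python
from typing import Dict, Iterable, Tuple

# Hypothetical video states keyed by (property, value).
STATES: Dict[Tuple[str, str], str] = {
    ("orientation", "landscape"): "landscape_cut",
    ("orientation", "portrait"): "portrait_cut",
}


def run_player(initial: Dict[str, str],
               property_events: Iterable[Tuple[str, str]]) -> list[str]:
    """Walk through the FIG. 6 flow and return the sequence of states shown.

    initial: identified device properties (STEP 602).
    property_events: (property, new_value) observations during playback.
    """
    props = dict(initial)
    # STEP 606: configure the first state from an identified property.
    state = STATES[("orientation", props["orientation"])]
    shown = [state]                       # STEP 608: play in the first state
    for name, value in property_events:   # STEP 610: check for a change
        if props.get(name) == value:
            continue                      # reading unchanged; keep playing
        props[name] = value
        new_state = STATES.get((name, value))
        if new_state is not None and new_state != state:
            state = new_state             # seamless transition to the new state
            shown.append(state)
        # Otherwise no state is associated with the change; keep playing.
    return shown
```

A change with no associated state (here, the window state) is recorded but does not interrupt playback, matching the condition that a transition occurs only when a different video state is associated with the change.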

[0042] Various techniques can be used for real-time modification of the state of a media presentation (e.g., switching currently playing media content) in response to a change in a user device property or properties. For example, in addition to the masking/viewport technique applied to a single video, as described above, a media presentation can be dynamically modified using "parallel tracks," as disclosed in U.S. patent application Ser. No. 14/534,626, filed on Nov. 6, 2014, and entitled "Systems and Methods for Parallel Track Transitions," the entirety of which is incorporated by reference herein.

[0043] For example, referring to FIG. 7, to facilitate near-instantaneous switching among parallel "tracks" or "channels", multiple media tracks (e.g., video streams) can be downloaded simultaneously to a user's device, in separate data streams and/or combined together in container structures with associated metadata. Upon selecting a streaming video for playback, an upcoming portion of the video stream is typically buffered by a video player prior to commencing playback of the video, and the video player can continue buffering as the video is playing. Accordingly, in one implementation, if an upcoming segment of a video presentation (including the beginning of the presentation) includes two or more parallel tracks, an application on the user device (e.g., a video player) can initiate download of the upcoming parallel tracks (in this example, three tracks) substantially simultaneously (STEP 702). The application can then simultaneously receive and/or retrieve video data portions of each track (STEP 712). The receipt and/or retrieval of upcoming video portions of each track can be performed prior to playback of any particular parallel track as well as during playback of a parallel track. The downloading of video data in parallel tracks can be achieved in accordance with smart downloading techniques such as those described in U.S. Pat. No. 8,600,220, issued on Dec. 3, 2013, and entitled "Systems and Methods for Loading More than One Video Content at a Time," the entirety of which is incorporated by reference herein.
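One simple way to keep several parallel tracks buffered by roughly equal amounts is to order chunk requests least-buffered-first. The sketch below is a stand-in policy chosen for illustration; it is not the smart-downloading technique of U.S. Pat. No. 8,600,220, and the chunk counts and track names are hypothetical.

```python
from typing import Dict, List, Tuple


def interleaved_download_order(chunks_per_track: Dict[str, int]) -> List[Tuple[str, int]]:
    """Order chunk requests so every parallel track's buffer grows at about the same rate.

    Least-buffered-first policy: always fetch the next chunk of whichever
    unfinished track has the fewest chunks downloaded (ties go to the track
    listed first).
    """
    downloaded = {track: 0 for track in chunks_per_track}
    order: List[Tuple[str, int]] = []
    while True:
        pending = [t for t, n in chunks_per_track.items() if downloaded[t] < n]
        if not pending:
            return order
        track = min(pending, key=lambda t: downloaded[t])
        order.append((track, downloaded[track]))
        downloaded[track] += 1
```

With three equal tracks `{"A": 2, "B": 2, "C": 2}` the requests alternate A0, B0, C0, A1, B1, C1, so at any moment the tracks differ by at most one buffered chunk. In practice the requests would be issued concurrently rather than strictly in sequence.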

[0044] Upon reaching a segment of the video presentation that includes parallel tracks, the application makes a determination in real time of which track to play (STEP 720). The determination can be based on the state of one or more device properties. For example, in one implementation, each parallel track is mapped to one or more device properties, such as screen size, window size, or device orientation, and/or to a particular value of a device property, such as screen size = 3 in. × 4.5 in., window size = 1024 × 768 pixels, or orientation = "landscape". This mapping information can be included in the metadata associated with each track that is transmitted to the user device. Upon initially playing the parallel video, the initial or current state of one or more device properties is determined, and the track associated with that property or properties is played. For example, if the device is oriented in portrait mode when the video commences, a parallel track associated with the device property value "portrait" can be selected as the track to play.
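The property-to-track mapping described above could be carried in per-track metadata and resolved as in this sketch. The metadata layout (`"track"`, `"properties"`) and the first-match rule are assumptions made for illustration; the text only says the mapping can be included in the metadata transmitted with each track.

```python
from typing import Dict, List, Optional

# Hypothetical per-track metadata: each parallel track lists the
# device-property values it is mapped to.
TRACK_METADATA: List[Dict] = [
    {"track": "wide", "properties": {"orientation": "landscape"}},
    {"track": "tall", "properties": {"orientation": "portrait"}},
    {"track": "small", "properties": {"window_size": (320, 240)}},
]


def select_track(metadata: List[Dict], device: Dict) -> Optional[str]:
    """Return the first track whose mapped property values all match the device (STEP 720)."""
    for entry in metadata:
        if all(device.get(name) == value for name, value in entry["properties"].items()):
            return entry["track"]
    return None  # no mapped track; the caller keeps the current one or uses a default
```

A device reporting `{"orientation": "portrait", "window_size": (1024, 768)}` resolves to `"tall"`; re-running `select_track` whenever a property changes gives the "return to STEP 720" behavior described below.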

[0045] In STEP 724, based on the determined track to play, the application appends a portion of the video data from the determined track to the current video being presented. The appended portion can be in temporal correspondence with an overall timeline of the video presentation. For example, if two parallel tracks are 30 seconds long and begin at the same time, a switch from the first track (e.g., at 10 seconds in) to the second track results in playback continuing with the second track at the same point in time (i.e., at 10 seconds in). One will appreciate, however, that tracks can overlap in various manners and need not correspond in length. Following the appending, playback of the video continues using the appended video data from the determined track (STEP 728). As the video is playing, the relevant properties of the device can be monitored to detect any changes that may affect which parallel track should be selected for playback (return to STEP 720). If, for example, the device is rotated into landscape mode, the property change is identified, and playback can switch to the parallel track associated with landscape mode, either immediately or after a delay. Switching among tracks can be seamless, such that no noticeable delays, buffering, or gaps in audio and/or video playback occur.
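"Temporal correspondence" amounts to translating a position on the overall presentation timeline into a position inside the newly selected track. The sketch below assumes each track is described by a start time on that timeline and a duration; the `ParallelTrack` type is hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ParallelTrack:
    name: str
    start: float     # where the track begins on the overall presentation timeline (s)
    duration: float  # track length (s)


def offset_in_track(track: ParallelTrack, timeline_position: float) -> Optional[float]:
    """Map a presentation-timeline position to a position inside `track`.

    Returns None when the track does not cover that moment, since tracks can
    overlap only partially or differ in length.
    """
    local = timeline_position - track.start
    if 0.0 <= local < track.duration:
        return local
    return None
```

For the 30-second example, switching to `ParallelTrack("second", 0.0, 30.0)` at 10 s yields an offset of 10.0 s. A track starting at 5 s yields 5.0 s for the same moment, and `None` outside its span.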

[0046] FIG. 8 provides an abstracted visual representation of the process in FIG. 7. Specifically, three parallel tracks 802 of the same length are downloaded simultaneously, and, in this example, approximately the same amount of each of the tracks 802 has been downloaded (represented by downloaded video 812), with approximately the same amount of each track remaining (represented by remaining video to download 816). The video player or other application includes a function 820 that determines which track should be selected and played, and a portion 808 of the selected track is appended to the currently playing video, immediately after the video 804 played up to that point.

[0047] In one implementation, the appended portion 808 is relatively short in length (e.g., 100 milliseconds, 500 milliseconds, 1 second, 1.5 seconds, etc.). Advantageously, the short length of the appended portion 808 provides for near-instantaneous switching to a different parallel track. For example, while the video is playing, small portions of the selected parallel track are continuously appended onto the video. In one instance, this appending occurs one portion at a time and is performed at the start of or during playback of the most recently appended portion 808. If a determination is made that a different parallel track has been selected, the next appended portion(s) will come from the different track. Thus, if the appended video portion 808 is 500 milliseconds long and a selection of a different track is made at the start of or during playback of the portion 808, then the next portion from the different track will be appended on the video and presented to the user no more than 500 milliseconds after the selection of the different track. As such, for appended portions of short length, the switch from one parallel track to another can be achieved with an imperceptible delay.
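The latency bound in the 500-millisecond example can be checked with a small simulation. The sketch assumes fixed-length chunks and that the track for each chunk is resolved just before that chunk is appended, using the most recent selection. Under that assumption, a new selection first plays at the next chunk boundary. Both function names are hypothetical.

```python
from typing import List, Tuple


def chunk_tracks(selections: List[Tuple[int, str]], chunk_ms: int, total_ms: int) -> List[str]:
    """Return the track used for each appended chunk.

    `selections` holds (time_ms, track) pairs sorted by time, the first at 0.
    The chunk starting at time t comes from the latest selection made at or
    before t.
    """
    tracks: List[str] = []
    i = 0
    current = selections[0][1]
    for start in range(0, total_ms, chunk_ms):
        # Apply every selection made up to this chunk boundary.
        while i < len(selections) and selections[i][0] <= start:
            current = selections[i][1]
            i += 1
        tracks.append(current)
    return tracks


def switch_latency_ms(selection_ms: int, chunk_ms: int) -> int:
    """Delay from a selection to the next chunk boundary; always < chunk_ms."""
    return (-selection_ms) % chunk_ms
```

With 500 ms chunks, a switch to track B selected at 1,200 ms plays from the chunk starting at 1,500 ms, a 300 ms delay. No selection ever waits as long as a full chunk, which is why shorter appended portions make switching feel instantaneous.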

[0048] In one implementation, dynamically adapting media content to changes in device properties can be incorporated into branched media presentations, such as interactive video structured in a video tree, hierarchy, or other form. A video tree can be formed by nodes that are connected in a branching, hierarchical, or other linked form. Nodes can each have an associated video segment, audio segment, graphical user interface (GUI) elements, and/or other associated media. Users (e.g., viewers) can watch a video that begins from a starting node in the tree and proceeds along connected nodes in a branch or path. Upon reaching a point during playback of the video where multiple video segments branch off from a segment, the next video segment to watch can be selected based on the state of a device property. For example, the user can interactively select the branch or path to traverse by physically manipulating the orientation of the user device (e.g., tilting or rotating a smartphone or tablet). As another example, the branch to traverse can be automatically determined based on, e.g., the current device orientation, screen size, window size, or other device property.

[0049] As referred to herein, a particular branch or path in an interactive media structure, such as a video tree, can refer to a set of consecutively linked nodes between a starting node and ending node, inclusively, or can refer to some or all possible linked nodes that are connected subsequent to (e.g., sub-branches) or that include a particular node. Branched video can include seamlessly assembled and selectably presentable multimedia content such as that described in U.S. patent application Ser. No. 13/033,916, filed on Feb. 24, 2011, and entitled "System and Method for Seamless Multimedia Assembly" (the "Seamless Multimedia Assembly application"), and U.S. patent application Ser. No. 14/107,600, filed on Dec. 16, 2013, and entitled "Methods and Systems for Unfolding Video Pre-Roll," the entireties of which are hereby incorporated by reference.
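Both senses of "branch or path" defined above can be computed from an adjacency list. The sketch assumes the structure is acyclic, while still allowing a node to be shared by intertwining paths. The function names are hypothetical.

```python
from typing import Dict, List, Set


def paths(tree: Dict[str, List[str]], start: str, end: str) -> List[List[str]]:
    """All sequences of consecutively linked nodes from start to end, inclusive."""
    if start == end:
        return [[start]]
    result: List[List[str]] = []
    for child in tree.get(start, []):
        for tail in paths(tree, child, end):
            result.append([start] + tail)
    return result


def subsequent_nodes(tree: Dict[str, List[str]], node: str) -> Set[str]:
    """Every node reachable after `node` (its sub-branches)."""
    found: Set[str] = set()
    stack = list(tree.get(node, []))
    while stack:
        current = stack.pop()
        if current not in found:
            found.add(current)
            stack.extend(tree.get(current, []))
    return found
```

In `{"a": ["b", "c"], "b": ["d"], "c": ["d"], "d": []}`, node `d` is shared. The paths from `a` to `d` are `a-b-d` and `a-c-d`.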

[0050] The prerecorded video segments in a video tree or other structure can be selectably presentable multimedia content; that is, some or all of the video segments in the video tree can be individually or collectively played for a user based upon the user's selection of a particular video segment, an interaction with a previous or playing video segment, or other interaction that results in a particular video segment or segments being played. The video segments can include, for example, one or more predefined, separate multimedia content segments that can be combined in various manners to create a continuous, seamless presentation such that there are no noticeable gaps, jumps, freezes, delays, or other visual or audible interruptions to video or audio playback between segments. In addition to the foregoing, "seamless" can refer to a continuous playback of content that gives the user the appearance of watching a single, linear multimedia presentation, as well as a continuous playback of multiple content segments that have smooth audio and/or video transitions (e.g., fade-out/fade-in, linking segments) between two or more of the segments.

[0051] In some instances, the user is permitted to make choices or otherwise interact in real-time at decision points or during decision periods interspersed throughout the multimedia content. This can be accomplished, for example, by the user interacting with a user interface or changing a property of the user device. Decision points and/or decision periods can occur at any time and in any number during a multimedia segment, including at or near the beginning and/or the end of the segment. Decision points and/or periods can be predefined, occurring at fixed points or during fixed periods in the multimedia content segments. Based at least in part on the user's choices made before or during playback of content, one or more subsequent multimedia segment(s) associated with the choices can be presented to the user. In some implementations, the subsequent segment is played immediately and automatically following the conclusion of the current segment, whereas in other implementations, the subsequent segment is played immediately upon the user's interaction with the video, without waiting for the end of the decision period or the end of the segment itself.
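The two timing behaviors described above differ only in when the next segment starts. In one, playback moves on at the end of the current segment. In the other, it moves on as soon as the user interacts. The sketch below assumes times in seconds within the current segment and ignores interactions outside the decision period. The parameter names are hypothetical.

```python
from typing import Optional


def transition_time(segment_end: float, decision_start: float, decision_end: float,
                    interaction_time: Optional[float], immediate: bool) -> float:
    """Time at which playback moves to the next segment.

    `immediate=True` models implementations that switch on interaction;
    otherwise the choice takes effect when the current segment concludes.
    """
    in_period = (interaction_time is not None
                 and decision_start <= interaction_time <= decision_end)
    if in_period and immediate:
        return interaction_time
    return segment_end
```

For a 30-second segment with a decision period from 20 s to 28 s, an interaction at 22 s switches at 22 s in immediate mode and at 30 s otherwise.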

[0052] If a user does not make a selection at a decision point or during a decision period, a device property-based, default, previously identified selection, or random selection can be automatically made by the system. In some instances, the user is not provided with options; rather, the system automatically selects the segments that will be shown based on information that is associated with the device, the user, other users, or other factors, such as the current date. For example, the present system can automatically select subsequent segments based on the device type, orientation, screen resolution, aspect ratio, display proportions, physical screen size, window size, window state, and other device properties. As another example, the system can automatically select subsequent segments based on the user's IP address, location, time zone, the weather in the user's location, social networking ID, saved selections, stored user profiles, preferred products or services, and so on. The system can also automatically select segments based on previous selections made by other users, such as the most popular suggestion or shared selections. The information can also be displayed to the user in the video, e.g., to show the user why an automatic selection is made. As one example, video segments can be automatically selected for presentation based on the geographical location of three different users: a user in Canada will see a twenty-second beer commercial segment followed by an interview segment with a Canadian citizen; a user in the US will see the same beer commercial segment followed by an interview segment with a US citizen; and a user in France is shown only the beer commercial segment.
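The fallback order in this paragraph (use the user's own selection if one was made, otherwise select automatically from available information, otherwise use a default) can be sketched with the location example. Resolving the viewer's location to a country code is assumed to happen elsewhere. The segment identifiers and the `choose_segments` name are hypothetical.

```python
from typing import Dict, List, Optional

# Segment lists per country, following the three-user example in the text.
SEGMENTS_BY_COUNTRY: Dict[str, List[str]] = {
    "CA": ["beer_commercial_20s", "interview_canadian_citizen"],
    "US": ["beer_commercial_20s", "interview_us_citizen"],
    "FR": ["beer_commercial_20s"],
}


def choose_segments(user_choice: Optional[List[str]], country: str,
                    default: List[str]) -> List[str]:
    """Prefer the user's own selection; otherwise select by location, then by default."""
    if user_choice:
        return list(user_choice)
    return list(SEGMENTS_BY_COUNTRY.get(country, default))
```

A viewer in France with no selection receives only the commercial. A viewer from a country not in the table receives the default list.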

[0053] Multimedia segment(s) selected automatically or by a user can be presented immediately following a currently playing segment, or can be shown after other segments are played. Further, the selected multimedia segment(s) can be presented to the user immediately after selection, after a fixed or random delay, at the end of a decision period, and/or at the end of the currently playing segment. Two or more combined segments can form a seamless multimedia content path or branch, and users can take multiple paths over multiple play-throughs, and experience different complete, start-to-finish, seamless presentations. Further, one or more multimedia segments can be shared among intertwining paths while still ensuring a seamless transition from a previous segment and to the next segment. The content paths can be predefined, with fixed sets of possible transitions in order to ensure seamless transitions among segments. The content paths can also be partially or wholly undefined, such that, in some or all instances, the user can switch to any known video segment without limitation. There can be any number of predefined paths, each having any number of predefined multimedia segments. Some or all of the segments can have the same or different playback lengths, including segments branching from a single source segment.

[0054] Traversal of the nodes along a content path in a tree can be performed by selecting among options that appear on and/or around the video while the video is playing or by automatic path selection, as described above. In some implementations, user-selectable options are presented to users at a decision point and/or during a decision period in a content segment. Some or all of the displayed options can hover and then disappear when the decision period ends or when an option has been selected. Further, a timer, countdown or other visual, aural, or other sensory indicator can be presented during playback of a content segment to inform the user of the point by which he should (or, in some cases, must) make his selection. For example, the countdown can indicate when the decision period will end, which can be at a different time than when the currently playing segment will end. If a decision period ends before the end of a particular segment, the remaining portion of the segment can serve as a non-interactive seamless transition to one or more other segments. Further, during this non-interactive end portion, the next multimedia content segment (and other potential next segments) can be downloaded and buffered in the background for later playback (or potential playback).
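The relationship between the decision period, its countdown, and the non-interactive end portion can be summarized by classifying a playback time within a segment. This is an illustrative sketch with hypothetical phase names. It assumes the decision period lies wholly inside the segment.

```python
from typing import Optional, Tuple


def playback_phase(t: float, decision_start: float, decision_end: float,
                   segment_end: float) -> Tuple[str, Optional[float]]:
    """Classify time t within a segment; return (phase, countdown seconds or None)."""
    if t < decision_start:
        return "before_decision", None
    if t < decision_end:
        # The countdown runs to the end of the decision period, not the segment.
        return "deciding", decision_end - t
    if t < segment_end:
        # Decision period over: the rest of the segment is a non-interactive
        # transition, a window for buffering the chosen (or possible) next segments.
        return "non_interactive_tail", None
    return "segment_ended", None
```

With a decision period from 20 s to 28 s in a 30-second segment, the countdown reads 3 s at 25 s. The final 2 s are non-interactive and available for background buffering.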

[0055] A segment that is played after (immediately after or otherwise) a currently playing segment can be determined based on an option selected or other interaction with the video. Each available option can result in a different video and audio segment being played. As previously mentioned, the transition to the next segment can occur immediately upon selection, at the end of the current segment, or at some other predefined or random point. Notably, the transition between content segments can be seamless. In other words, the audio and video continue playing regardless of whether a segment selection is made, and no noticeable gaps appear in audio or video playback between any connecting segments. In some instances, the video continues on to another segment after a certain amount of time if none is chosen, or can continue playing in a loop.

[0056] Although the systems and methods described herein relate primarily to audio and video playback, the invention is equally applicable to various streaming and non-streaming media, including animation, video games, interactive media, and other forms of content usable in conjunction with the present systems and methods. There can be more than one audio, video, and/or other media content stream played in synchronization with other streams. Streaming media can include, for example, multimedia content that is continuously presented to a user while it is received from a content delivery source, such as a remote video server. If a source media file is in a format that cannot be streamed and/or does not allow for seamless connections between segments, the media file can be transcoded or converted into a format supporting streaming and/or seamless transitions.

[0057] While various implementations of the present invention have been described herein, it should be understood that they have been presented by example only. Where methods and steps described above indicate certain events occurring in certain order, those of ordinary skill in the art having the benefit of this disclosure would recognize that the ordering of certain steps can be modified and that such modifications are in accordance with the given variations. For example, although various implementations have been described as having particular features and/or combinations of components, other implementations are possible having any combination or sub-combination of any features and/or components from any of the implementations described herein.

* * * * *
