Biometric Identification Techniques

Ralston; Tyler S. ; et al.

U.S. patent application number 16/712843 was filed with the patent office on 2019-12-12 and published on 2020-06-18 for biometric identification techniques. This patent application is currently assigned to Tesseract Health, Inc. The applicant listed for this patent is Tesseract Health, Inc. Invention is credited to Maurizio Arienzo, Jacobus Coumans, Owen Kaye-Kauderer, Christopher Thomas McNulty, Tyler S. Ralston, Benjamin Rosenbluth, Jonathan M. Rothberg, Lawrence C. West.

| Application Number | 20200193156 16/712843 |

| Family ID | 71071494 |

| Publication Date | 2020-06-18 |

| United States Patent Application | 20200193156 |

| Kind Code | A1 |

| Ralston; Tyler S. ; et al. | June 18, 2020 |

BIOMETRIC IDENTIFICATION TECHNIQUES

Abstract

The present disclosure provides techniques and apparatus for capturing an image of a person's retina fundus, identifying the person, accessing various electronic records (including health records) or accounts or devices associated with the person, determining the person's predisposition to certain diseases, and/or diagnosing health issues of the person. Some embodiments provide imaging apparatus having one or more imaging devices for capturing one or more images of a person's eye(s). Imaging apparatus described herein may include electronics for analyzing and/or exchanging captured image and/or health data with other devices. In accordance with various embodiments, imaging apparatus described herein may be alternatively or additionally configured for biometric identification and/or health status determination techniques, as described herein.

| Inventors: | Ralston; Tyler S. (Clinton, CT); Arienzo; Maurizio (New York, NY); Kaye-Kauderer; Owen (Brooklyn, NY); Rosenbluth; Benjamin (Hamden, CT); Rothberg; Jonathan M. (Guilford, CT); West; Lawrence C. (San Jose, CA); Coumans; Jacobus (Old Lyme, CT); McNulty; Christopher Thomas (Guilford, CT) |
|---|---|
| Applicant: | Tesseract Health, Inc. |
| Assignee: | Tesseract Health, Inc., Guilford, CT |
| Family ID: | 71071494 |
| Appl. No.: | 16/712843 |
| Filed: | December 12, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62833179 | Apr 12, 2019 | |
| 62833210 | Apr 12, 2019 | |
| 62833239 | Apr 12, 2019 | |
| 62778494 | Dec 12, 2018 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06N 20/00 20190101; A61B 5/117 20130101; G06T 7/0014 20130101; G06T 2207/30041 20130101; A61B 3/1208 20130101; G06T 7/0012 20130101; G01N 2021/1787 20130101; A61B 5/0022 20130101; G06T 2207/20084 20130101; G06T 2207/20081 20130101; G16H 50/20 20180101; H04L 9/3231 20130101; A61B 5/0066 20130101; G16H 30/40 20180101; G06K 9/0061 20130101; G06K 9/6215 20130101; G16H 15/00 20180101; G01N 21/17 20130101; A61B 5/0071 20130101; H04L 63/00 20130101; G06F 21/6245 20130101; G16H 50/70 20180101; G06K 9/00617 20130101; G06K 9/4671 20130101; G16H 10/60 20180101; A61B 3/102 20130101; G06N 3/0445 20130101; G01B 9/02091 20130101; G06N 7/005 20130101; G06F 21/32 20130101; G06K 9/00926 20130101; G06K 9/685 20130101; G06N 3/0454 20130101 |

| International Class: | G06K 9/00 20060101 G06K009/00; G06K 9/62 20060101 G06K009/62; G06N 20/00 20060101 G06N020/00; G06N 7/00 20060101 G06N007/00 |

Claims

1.-129. (canceled)

130. A system comprising at least one processor configured to, based on first image and/or measurement data associated with and/or including a first image and/or measurement of a person's retina fundus, identify the person and/or update stored image or measurement data associated with the person.

131. The system of claim 130, further comprising a computer-readable storage medium having stored thereon the stored data.

132. The system of claim 130, further comprising: an imaging and/or measurement apparatus configured to capture the first image and/or measurement, wherein the at least one processor is configured to obtain the first image and/or measurement from the imaging and/or measurement apparatus.

133. The system of claim 130, wherein the at least one processor is configured to update the stored data at least in part by storing, with the stored data, the first image and/or measurement data.

134. The system of claim 130, wherein the at least one processor is further configured to associate the first image and/or measurement data with identification information.

135. The system of claim 132, wherein the imaging apparatus is further configured to capture a first plurality of images and/or measurements of the person's retina fundus, wherein the first plurality of images and/or measurements comprises the first image and/or measurement.

136. The system of claim 130, wherein the at least one processor is configured to identify the person in part by: comparing the first image data to the stored data, wherein the stored data comprises second image and/or measurement data having at least a predetermined degree of similarity to the first image and/or measurement data; and obtaining identification information associated with the second image and/or measurement data.

137. The system of claim 136, wherein the predetermined degree of similarity is between 70% and 90%.

138. The system of claim 136, wherein the predetermined degree of similarity is at least 99%.

139. The system of claim 136, wherein the first image and/or measurement data is further associated with at least a second of the first plurality of images and/or measurements.

140. The system of claim 135, wherein the at least one processor is further configured to: obtain another image and/or measurement of the first plurality of images and/or measurements; and identify the person based on second image and/or measurement data associated with the another image and/or measurement at least in part by: comparing the second image and/or measurement data to the first image and/or measurement data; and obtaining identification information associated with the first image and/or measurement data.

141. The system of claim 136, wherein the at least one processor is further configured to extract the first image and/or measurement data from the first image and/or measurement, wherein the first image and/or measurement data is indicative of features of the person's retina fundus.

142. The system of claim 136, wherein the at least one processor is further configured to perform template matching between at least a portion of the first image and/or measurement data and at least a portion of the second image and/or measurement data to generate a similarity measure, wherein the similarity measure indicates that the second image and/or measurement data has at least the predetermined degree of similarity to the first image and/or measurement data.

143. The system of claim 136, wherein the first image and/or measurement data comprises translationally and rotationally invariant features of the person's retina fundus.

144. The system of claim 143, wherein the at least one processor is further configured to compare relative positions and orientations of the translationally and rotationally invariant features of the first image and/or measurement data with relative positions and orientations of translationally and rotationally invariant features of the second image and/or measurement data to generate a similarity measure, wherein the similarity measure indicates that the second image and/or measurement data has at least the predetermined degree of similarity to the first image and/or measurement data.

145. The system of claim 130, further comprising: a first device including a first processor of the at least one processor configured to transmit, over a communication network, the first image and/or measurement data; and a second device including a second processor of the at least one processor configured to: receive, over the communication network, the first image and/or measurement data; identify the person; and update the stored data.

146. The system of claim 145, wherein the first processor is further configured to encrypt the first image and/or measurement data before transmitting, over the communication network, the first image and/or measurement data.

147. The system of claim 132, further comprising: a first device including a first processor of the at least one processor configured to: obtain the first image and/or measurement from the imaging apparatus; identify the person; and update the stored data.

148. The system of claim 136, wherein the second image and/or measurement data is associated with multiple images and/or measurements of the plurality of retina fundus images and/or measurements, and wherein each of the multiple images and/or measurements is associated with the person.

149. The system of claim 132, wherein the imaging and/or measurement apparatus comprises a digital camera having an imaging and/or measuring field-of-view between 30 degrees and 45 degrees.

150-153. (canceled)

154. A device configured to, based on first image and/or measurement data associated with and/or including a first image and/or based on a measurement of a person's retina fundus, identify the person and/or update image and/or measurement data associated with the person.

155. The device of claim 154, further comprising: an imaging apparatus configured to capture the first image and/or measurement; and a processor configured to identify the person.

156. The device of claim 154, further comprising a computer-readable storage medium having stored thereon the stored data.

157. The device of claim 155, wherein the processor is configured to update the stored data at least in part by storing, with the stored data, the first image and/or measurement data.

158. The device of claim 157, wherein the processor is further configured to associate the first image and/or measurement data with identification information.

159. The device of claim 155, wherein the imaging and/or measurement apparatus is further configured to capture a first plurality of images and/or measurements of the person's retina fundus, wherein the first plurality of images and/or measurements comprises the first image and/or measurement.

160. The device of claim 154, wherein the processor is configured to identify the person at least in part by: comparing the first image and/or measurement data to the stored data, wherein the stored data comprises second image and/or measurement data having at least a predetermined degree of similarity to the first image and/or measurement data; and obtaining identification information associated with the second image and/or measurement data.

161. The device of claim 160, wherein the predetermined degree of similarity is between 70% and 90%.

162. The device of claim 160, wherein the predetermined degree of similarity is at least 99%.

163. The device of claim 160, wherein the first image and/or measurement data is further associated with at least a second of the first plurality of images and/or measurements.

164. The device of claim 159, wherein the processor is further configured to: obtain a second image and/or measurement of the first plurality of images and/or measurements; and identify the person based on second image and/or measurement data associated with the second image and/or measurement at least in part by: comparing the second image and/or measurement data to the first image and/or measurement data; and obtaining identification information associated with the first image and/or measurement data.

165. The device of claim 155, wherein the processor is further configured to extract the first image and/or measurement data from the first image and/or measurement, wherein the first image and/or measurement data is indicative of features of the person's retina fundus.

166. The device of claim 160, wherein the processor is further configured to perform template matching between at least a portion of the first image and/or measurement data and at least a portion of the second image and/or measurement data to generate a similarity measure, wherein the similarity measure indicates that the second image and/or measurement data has at least the predetermined degree of similarity to the first image and/or measurement data.

167. The device of claim 160, wherein the first image and/or measurement data comprises translationally and rotationally invariant features of the person's retina fundus.

168. The device of claim 167, wherein the processor is further configured to compare relative positions and orientations of the translationally and rotationally invariant features of the first image and/or measurement data with relative positions and orientations of translationally and rotationally invariant features of the second image and/or measurement data to generate a similarity measure, wherein the similarity measure indicates that the second image and/or measurement data has at least the predetermined degree of similarity to the first image and/or measurement data.

169. The device of claim 160, wherein the second image and/or measurement data is associated with multiple images and/or measurement of the plurality of retina fundus images and/or measurements, and wherein each of the multiple images and/or measurements is associated with the person.

170. The device of claim 155, wherein the imaging apparatus comprises a digital camera having an imaging and/or measuring field-of-view between 30 degrees and 45 degrees.

171. The device of claim 154, wherein the device is portable.

172. The device of claim 154, wherein the device is configured to be held in a user's hand.

173. The device of claim 155, wherein the device is a mobile phone, and wherein the imaging and/or measurement apparatus is a camera integrated with the mobile phone.

174. The device of claim 154, wherein the device is wearable.

175. A method comprising, based on first image data associated with and/or including a first image and/or measurement of a person's retina fundus, identifying the person and updating stored data associated with a plurality of retina fundus images and/or measurements.

176. The method of claim 175, further comprising obtaining, from an imaging and/or measurement apparatus, the first image and/or measurement.

177. The method of claim 175, wherein updating the stored data comprises storing, with the stored data, first image and/or measurement data associated with the first image and/or measurement.

178. The method of claim 177, wherein updating the stored data based on the first image and/or measurement data further comprises associating the first image and/or measurement data with identification information.

179. The method of claim 175, further comprising capturing the first image and/or measurement.

180. The method of claim 175, further comprising capturing a first plurality of images of the person's retina fundus, wherein the first plurality of images and/or measurements comprises the first image and/or measurement.

181. The method of claim 175, wherein identifying the person comprises: comparing the first image and/or measurement data to the stored data, wherein the stored data comprises second image and/or measurement data having at least a predetermined degree of similarity to the first image and/or measurement data; and obtaining identification information associated with the second image and/or measurement data.

182. The method of claim 181, wherein the predetermined degree of similarity is between 70% and 90%.

183. The method of claim 181, wherein the predetermined degree of similarity is at least 99%.

184. The method of claim 181, wherein the first image and/or measurement data is further associated with at least a second of the first plurality of images and/or measurements.

185. The method of claim 180, further comprising: obtaining second image and/or measurement data from a second of the first plurality of images and/or measurements, wherein identifying the person comprises identifying the person at least in part by: comparing the second image and/or measurement data to the first image and/or measurement data; obtaining identification information associated with the first image and/or measurement data.

186. The method of claim 175, further comprising extracting the first image and/or measurement data from the first image and/or measurement, wherein the first image and/or measurement data is indicative of features of the person's retina fundus.

187. The method of claim 181, further comprising template matching between at least a portion of the first image and/or measurement data and at least a portion of the second image and/or measurement data to generate a similarity measure, wherein the similarity measure indicates that the second image and/or measurement data has at least the predetermined degree of similarity to the first image and/or measurement data.

188. The method of claim 181, wherein the first image and/or measurement data comprises translationally and rotationally invariant features of the person's retina fundus.

189. The method of claim 188, further comprising comparing relative positions and orientations of the translationally and rotationally invariant features of the first image and/or measurement data with relative positions and orientations of translationally and rotationally invariant features of the second image and/or measurement data to generate a similarity measure, wherein the similarity measure indicates that the second image and/or measurement data has at least the predetermined degree of similarity to the first image and/or measurement data.

190.-257. (canceled)

Description

FIELD OF THE DISCLOSURE

[0001] The present application relates to biometric identification, such as using a person's retina fundus.

BACKGROUND

[0002] Present techniques for identifying a person, accessing a person's private devices or accounts, determining a health status of a person, and/or diagnosing a health condition of the person would benefit from improvement.

BRIEF SUMMARY

[0003] Some aspects of the present disclosure provide a system comprising at least one processor configured to, based on multiple types of features indicated in first image and/or measurement data associated with and/or included in a first image and/or based on a measurement of a person's retina fundus, identify the person.

[0004] Some aspects of the present disclosure provide a device configured to obtain, based on first image and/or measurement data indicative of multiple types of features of a person's retina fundus, an identity of the person.

[0005] Some aspects of the present disclosure provide a method comprising, based on first image and/or measurement data associated with and/or including a first image and/or measurement of a person's retina fundus indicative of multiple types of features of the person's retina fundus, identifying the person.

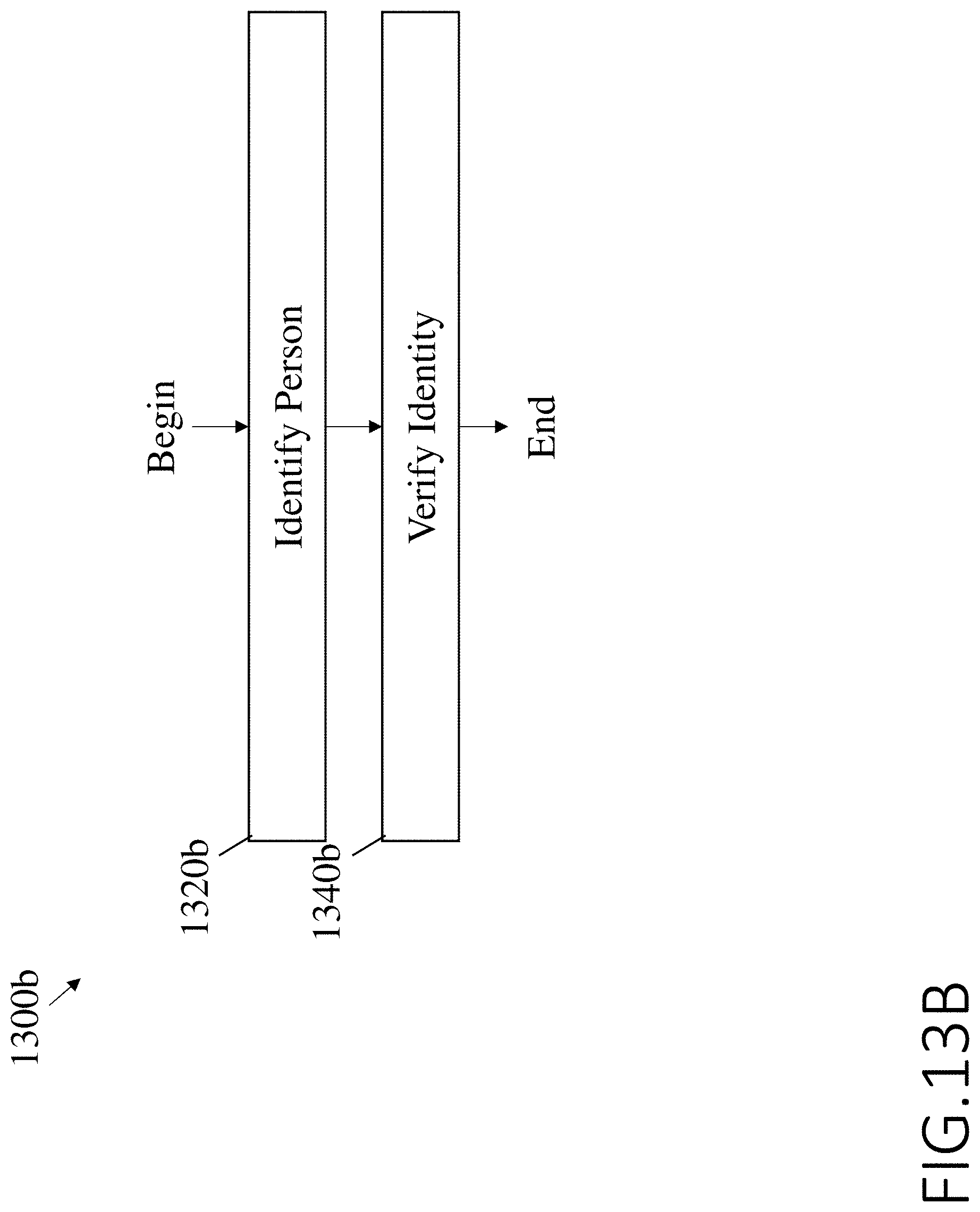

[0006] Some aspects of the present disclosure provide a system comprising at least one processor configured to, based on first image and/or measurement data associated with and/or including a first image and/or measurement of a person's retina fundus, identify the person, and, based on a first biometric characteristic of the person, verify an identity of the person.

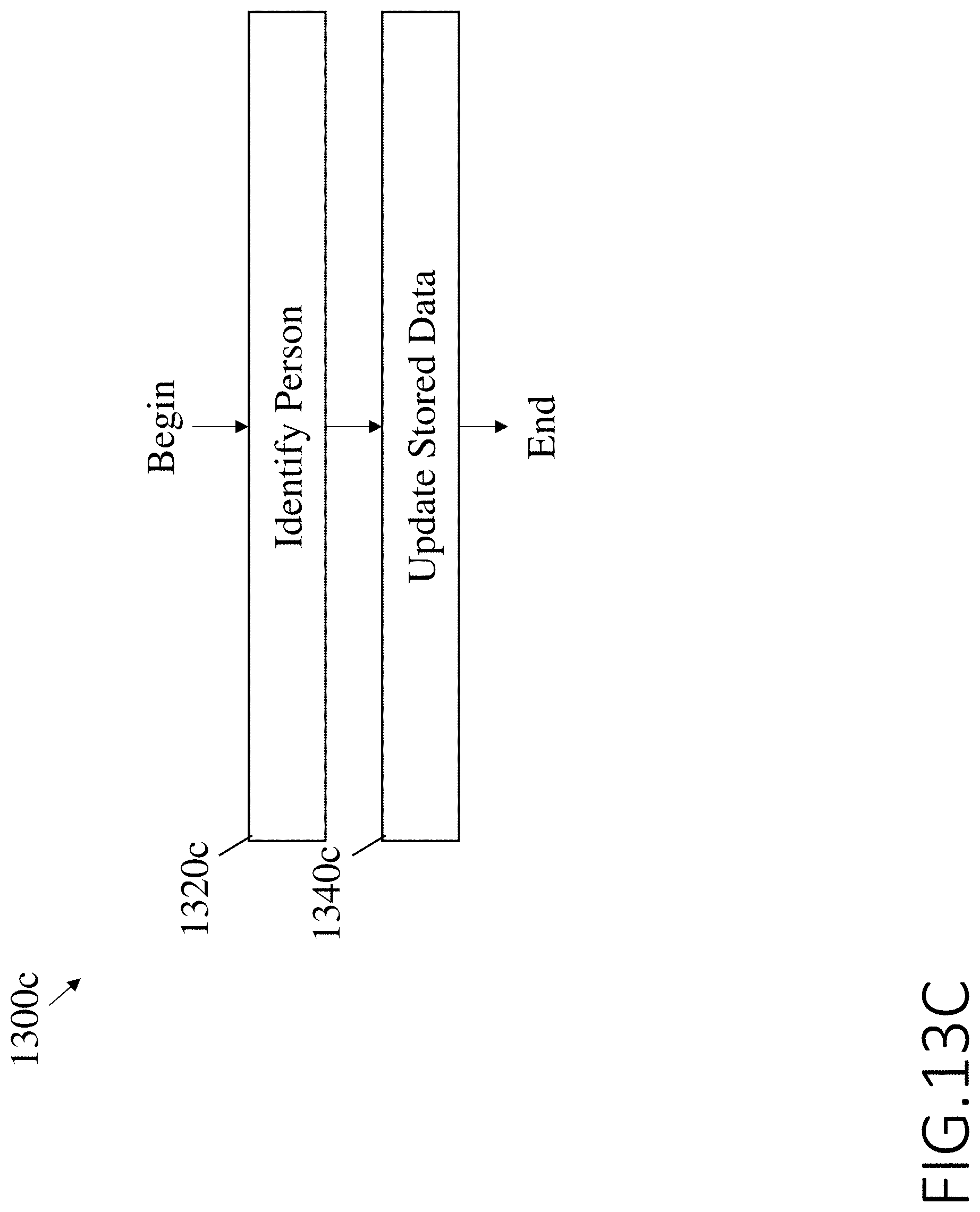

[0007] Some aspects of the present disclosure provide a system comprising at least one processor configured to, based on first image and/or measurement data associated with and/or including a first image and/or measurement of a person's retina fundus, identify the person and update stored data associated with a plurality of retina fundus images and/or measurements.

[0008] Some aspects of the present disclosure provide a device configured to, based on first image and/or measurement data associated with and/or including a first image and/or based on a measurement of a person's retina fundus, identify the person and update stored data associated with a plurality of retina fundus images and/or measurements.

[0009] Some aspects of the present disclosure provide a device configured to provide, as a first input to a trained statistical classifier (TSC), first image and/or measurement data associated with and/or including a first image and/or based on measurement of a person's retina fundus and, based on at least one output from the TSC, identify the person.

[0010] Some aspects of the present disclosure provide a method comprising providing, as a first input to a trained statistical classifier (TSC), first image and/or measurement data associated with and/or including a first image and/or based on a measurement of a person's retina fundus and, based on at least one output from the TSC, identifying the person.

[0011] The foregoing summary is not intended to be limiting. In addition, various embodiments may include any aspects of the disclosure either alone or in combination with other aspects.

BRIEF DESCRIPTION OF DRAWINGS

[0012] The accompanying drawings are not intended to be drawn to scale. In the drawings, each identical or nearly identical component that is illustrated in various figures is represented by a like numeral. For purposes of clarity, not every component may be labeled in every drawing. In the drawings:

[0013] FIG. 1 is a block diagram of a cloud-connected system for biometric identification and health or other account access, in accordance with some embodiments of the technology described herein.

[0014] FIG. 2 is a block diagram of an exemplary device for local biometric identification and health or other account access, in accordance with some embodiments of the system illustrated in FIG. 1.

[0015] FIG. 3 is a flow diagram illustrating an exemplary method for capturing one or more retina fundus images and extracting image data from the captured image(s), in accordance with the embodiments of FIGS. 1-2.

[0016] FIG. 4 is a side view of a person's retina fundus including various features which may be captured in one or more image(s) and/or indicated in data extracted from the image(s), in accordance with the method of FIG. 3.

[0017] FIG. 5A is a block diagram of an exemplary convolutional neural network (CNN), in accordance with some embodiments of the method of FIG. 3.

[0018] FIG. 5B is a block diagram of an exemplary convolutional neural network (CNN), in accordance with some embodiments of the CNN of FIG. 5A.

[0019] FIG. 5C is a block diagram of an exemplary recurrent neural network (RNN) including a long short-term memory (LSTM) network, in accordance with alternative embodiments of the CNN of FIG. 5A.

[0020] FIG. 6 is a block diagram of an exemplary fully convolutional neural network (FCNN), in accordance with some embodiments of the method of FIG. 3.

[0021] FIG. 7 is a block diagram of an exemplary convolutional neural network (CNN), in accordance with alternative embodiments of the method of FIG. 3.

[0022] FIG. 8 is a block diagram of an exemplary convolutional neural network (CNN), in accordance with further alternative embodiments of the method of FIG. 3.

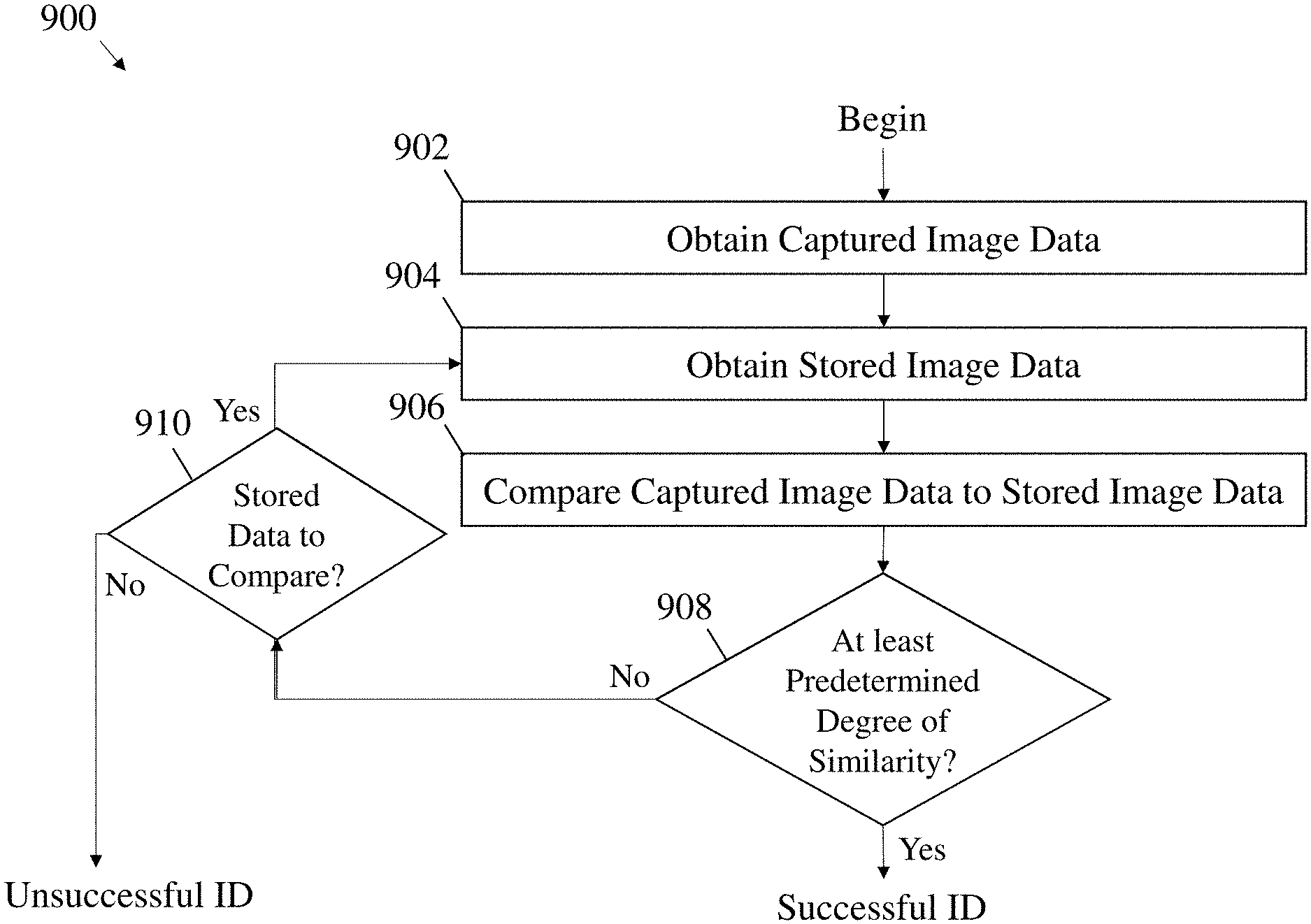

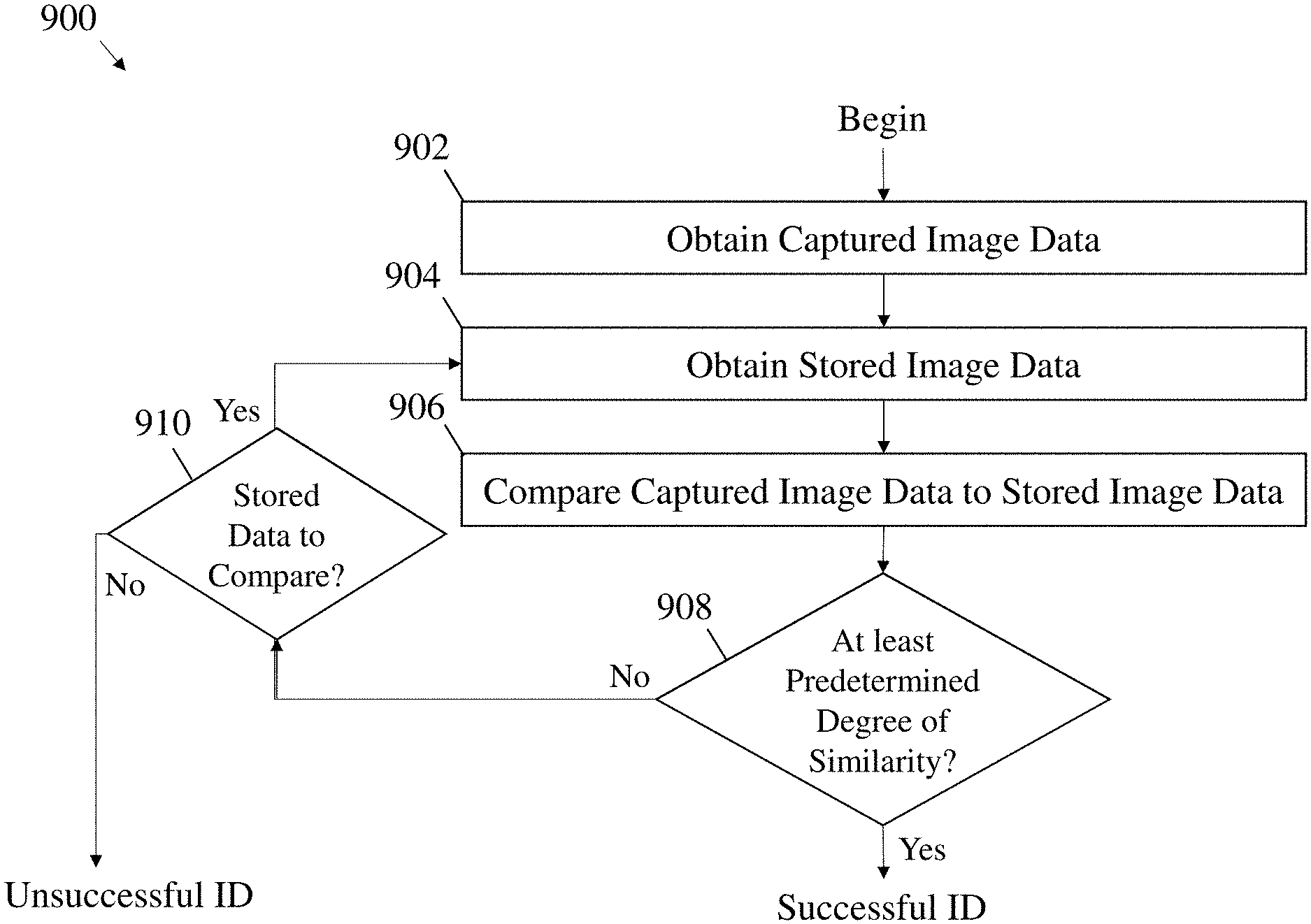

[0023] FIG. 9 is a flow diagram illustrating an exemplary method for identifying a person, in accordance with the embodiments of FIGS. 1-2.

[0024] FIG. 10A is a flow diagram of a method for template-matching retina fundus features, in accordance with some embodiments of the method of FIG. 9.

[0025] FIG. 10B is a flow diagram of a method for comparing translationally and rotationally invariant features of a person's retina fundus, in accordance with some embodiments of the method of FIG. 9.

[0026] FIG. 11 is a block diagram illustrating an exemplary user interface in accordance with the embodiments of FIGS. 1-2.

[0027] FIG. 12 is a block diagram illustrating an exemplary distributed ledger, components of which are accessible over a network, in accordance with some embodiments of the technology described herein.

[0028] FIG. 13A is a flow diagram illustrating an exemplary method including transmitting, over a communication network, first image data associated with and/or including a first image of a person's retina fundus, and receiving, over the communication network, an identity of the person, in accordance with some embodiments of the technology described herein.

[0029] FIG. 13B is a flow diagram illustrating an exemplary method including, based on first image data associated with and/or including a first image of a person's retina fundus, identifying the person, and, based on a first biometric characteristic of the person, verifying an identity of the person, in accordance with some embodiments of the technology described herein.

[0030] FIG. 13C is a flow diagram illustrating an exemplary method including, based on first image data associated with and/or including a first image of a person's retina fundus, identifying the person and updating stored data associated with a plurality of retina fundus images, in accordance with some embodiments of the technology described herein.

[0031] FIG. 13D is a flow diagram illustrating an exemplary method including providing, as a first input to a trained statistical classifier (TSC), first image data associated with and/or including a first image of a person's retina fundus, and, based on at least one output from the TSC, identifying the person, in accordance with some embodiments of the technology described herein.

[0032] FIG. 13E is a flow diagram illustrating an exemplary method including, based on first image data associated with and/or including a first image of a person's retina fundus, identifying the person, and determining a medical condition of the person, in accordance with some embodiments of the technology described herein.

[0033] FIG. 13F is a flow diagram illustrating an exemplary method including providing, as a first input to a trained statistical classifier (TSC), first image data associated with and/or including a first image of a person's retina fundus, and, based on at least one output from the TSC, identifying the person and determining a medical condition of the person, in accordance with some embodiments of the technology described herein.

[0034] FIG. 14A is a front perspective view of an exemplary imaging apparatus, in accordance with some embodiments of the technology described herein.

[0035] FIG. 14B is a rear perspective and partly transparent view of the imaging apparatus of FIG. 14A, in accordance with some embodiments of the technology described herein.

[0036] FIG. 15 is a bottom view of an alternative exemplary imaging apparatus, in accordance with some embodiments of the technology described herein.

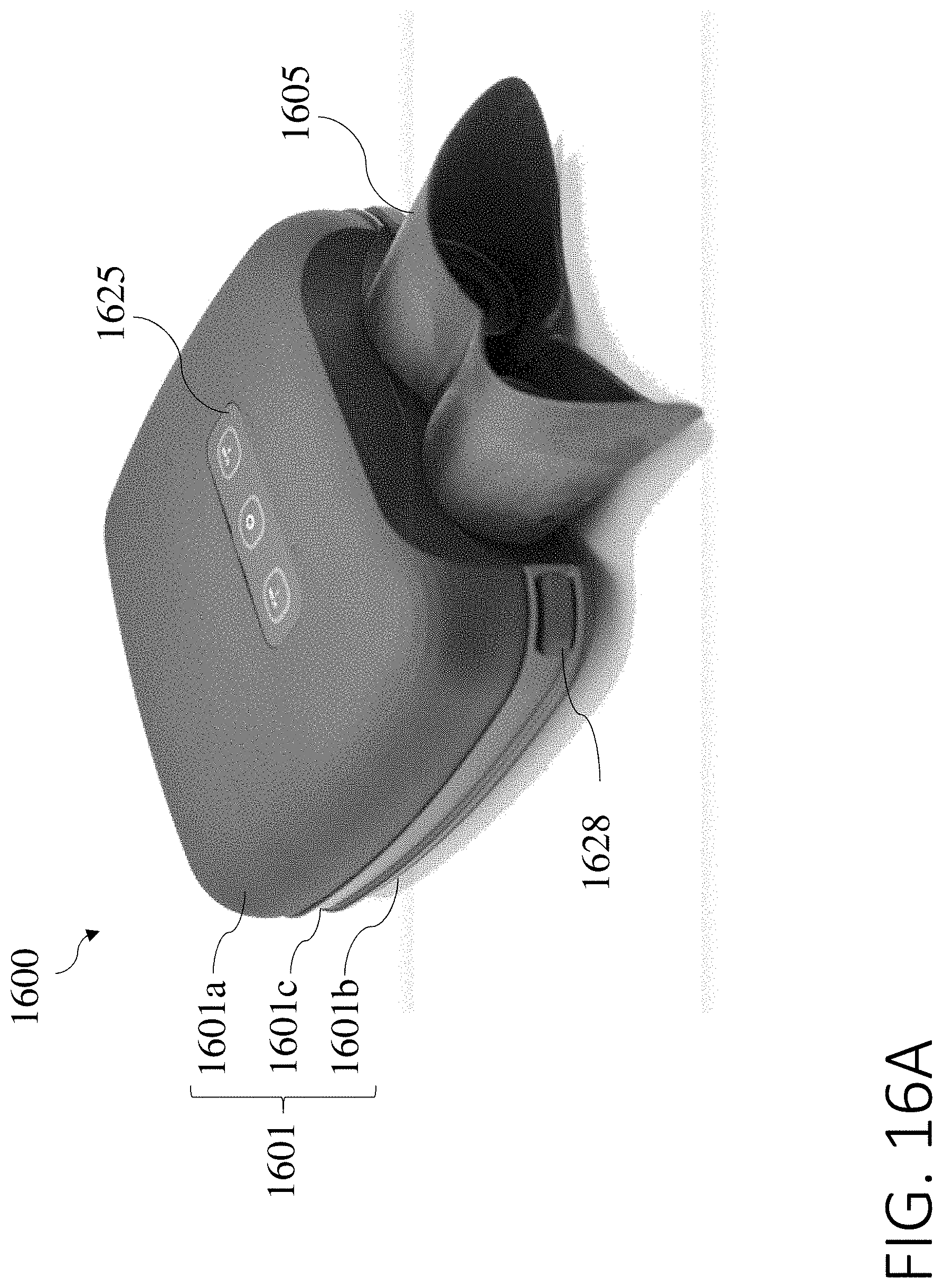

[0037] FIG. 16A is a rear perspective view of a further exemplary imaging apparatus, in accordance with some embodiments of the technology described herein.

[0038] FIG. 16B is an exploded view of the imaging apparatus of FIG. 16A, in accordance with some embodiments of the technology described herein.

[0039] FIG. 16C is a side view of a person using the imaging apparatus of FIG. 16A to image one or each of the person's eyes, in accordance with some embodiments of the technology described herein.

[0040] FIG. 16D is a perspective view of the imaging apparatus of FIG. 16A supported by a stand, in accordance with some embodiments of the technology described herein.

DETAILED DESCRIPTION

[0041] The inventors have discovered that a captured image of a person's retina fundus can be used to identify a person, determine the person's predisposition to certain diseases, and/or diagnose health issues of the person. Accordingly, the inventors have developed techniques for capturing an image of a person's retina fundus. Further, the inventors have developed techniques for identifying a person, accessing various electronic records (including health records) or accounts or devices associated with the person, determining the person's predisposition to certain diseases, and/or diagnosing health issues of the person.

[0042] Some embodiments of the technology described herein provide systems for cloud-based biometric identification capable of protecting sensitive data such as electronic records or accounts stored on the cloud. Some embodiments provide systems for storing health information associated with various patients on the cloud, and/or for protecting patients' health information with a biometric identification system such that the health information may be more accessible to patients without sacrificing security or confidentiality. In some embodiments, a biometric identification system may be integrated together with a system for storing health information and/or for determining a medical condition of the patients, such that data from one or more captured image(s) used to identify a person may also be used to update the person's health information, and/or to determine a medical condition of the person.

[0043] The inventors have recognized several problems in current security systems such as for authentication using alphanumeric password or passcode systems and various forms of biometric security. Alphanumeric password or passcode systems may be susceptible to hacking, for example by brute force (e.g., attempting every possible alphanumeric combination). In such cases, users may strengthen their passwords by using a long sequence of characters or by using a greater diversity of characters (such as punctuation or a mix of letters and numbers). However, in such methods, passwords are more difficult for users to remember. In other cases, users may select passwords or passcodes which incorporate personal information (e.g., birth dates, anniversary dates, or pet names), which may be easier to remember but also may be easier for a third party to guess.
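To make the brute-force point concrete, the following is a minimal sketch (not part of the application) that counts how many candidate strings an exhaustive search would have to cover for a given alphabet size and length. The function name `search_space` and the two example policies are illustrative assumptions.

```python
import string


def search_space(alphabet_size: int, length: int) -> int:
    """Number of distinct strings of exactly `length` characters
    drawn from an alphabet of `alphabet_size` symbols."""
    if alphabet_size < 1 or length < 0:
        raise ValueError("alphabet_size must be >= 1 and length >= 0")
    return alphabet_size ** length


# Hypothetical policy 1: a 4-digit numeric passcode.
pin_space = search_space(len(string.digits), 4)

# Hypothetical policy 2: 12 characters mixing letters, digits, and punctuation.
mixed_alphabet = string.ascii_letters + string.digits + string.punctuation
mixed_space = search_space(len(mixed_alphabet), 12)

print(f"4-digit passcode: {pin_space} candidates")
print(f"12-character mixed password: {mixed_space} candidates")
```

The count grows exponentially with length, which is why longer and more varied passwords resist exhaustive search while also becoming harder to remember.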

[0044] While some biometric security systems configured for authentication, such as by voiceprint, face, fingerprint, and iris identification, may provide improved fraud protection compared to password and passcode systems, the inventors have recognized that these systems end up being inefficient at identifying the correct person. Typically, these systems will have either a high false acceptance rate or a high false rejection rate. A high false acceptance rate makes fraudulent activity easier, and a high false rejection rate makes it more difficult to positively identify the patient. In addition, while other systems such as DNA identification are effective at identifying the correct person, the inventors have recognized that such systems are overly invasive. For example, DNA identification requires an invasive testing procedure such as a blood or saliva sample, which becomes increasingly impractical and expensive as identification is done with increasing frequency. Further, DNA identification is expensive and may be susceptible to fraud by stealing an artifact such as a hair containing DNA.
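The trade-off between false acceptance and false rejection can be illustrated with a short sketch. It assumes a matcher that outputs a similarity score and accepts any score at or above a threshold; the function `error_rates` and the toy scores are hypothetical and are not measurements of any real system.

```python
from typing import Sequence, Tuple


def error_rates(
    genuine: Sequence[float],
    impostor: Sequence[float],
    threshold: float,
) -> Tuple[float, float]:
    """Return (false acceptance rate, false rejection rate).

    `genuine` holds scores from comparisons of the same person,
    `impostor` holds scores from comparisons of different people.
    A comparison is accepted when its score is >= threshold.
    """
    if len(genuine) == 0 or len(impostor) == 0:
        raise ValueError("both score lists must be non-empty")
    false_accepts = sum(1 for s in impostor if s >= threshold)
    false_rejects = sum(1 for s in genuine if s < threshold)
    return false_accepts / len(impostor), false_rejects / len(genuine)


# Toy scores, invented for illustration only.
genuine_scores = [0.95, 0.91, 0.88, 0.72]
impostor_scores = [0.10, 0.35, 0.60, 0.81]

lenient = error_rates(genuine_scores, impostor_scores, threshold=0.5)
strict = error_rates(genuine_scores, impostor_scores, threshold=0.9)
print("lenient threshold (FAR, FRR):", lenient)
print("strict threshold (FAR, FRR):", strict)
```

With these toy scores, lowering the threshold admits more impostors while raising it rejects more genuine attempts, which is the tension the paragraph above describes.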

[0045] To solve the problems associated with existing systems, the inventors have developed biometric identification systems configured to identify a person using a captured image of the person's retina fundus. Such systems provide a minimally invasive imaging method with a low false acceptance rate and a low false rejection rate.

[0046] Moreover, biometric identification as described herein is further distinguished from authentication techniques of conventional systems in that biometric identification systems described herein may be configured to not only confirm the person's identity but actually to determine the person's identity without needing any information from the person. Authentication typically requires that the person provide identification information along with a password, passcode, or biometric measure to determine whether the identification information given matches the password, passcode, or biometric measure. In contrast, systems described herein may be configured to determine the identity of a person based on one or more captured images of the person's retina fundus. In some embodiments, further security methods such as a password, passcode, or biometric measure such as voiceprint, face, fingerprint, and iris of the person may be obtained for further authentication to supplement the biometric identification. In some embodiments, a person may provide identification information to a biometric identification system in addition to the captured image(s) of the person's retina fundus.
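The distinction between authentication (the person supplies an identity to be confirmed) and identification (the identity is determined from the captured data alone) can be sketched as a one-to-one check versus a one-to-many search. The sketch below is an assumption-laden illustration: it represents extracted retina fundus data as plain numeric feature vectors and uses cosine similarity as a stand-in similarity measure, neither of which is specified by this passage. The names `verify`, `identify`, and the enrolled records are hypothetical.

```python
import math
from typing import Dict, Optional, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def verify(claimed_id: str, probe: Sequence[float],
           enrolled: Dict[str, Sequence[float]], threshold: float) -> bool:
    """Authentication (1:1): the person supplies `claimed_id`."""
    template = enrolled.get(claimed_id)
    return template is not None and cosine_similarity(probe, template) >= threshold


def identify(probe: Sequence[float],
             enrolled: Dict[str, Sequence[float]], threshold: float) -> Optional[str]:
    """Identification (1:N): no identity is supplied; return the best
    enrolled match meeting the threshold, or None if there is none."""
    best_id, best_score = None, threshold
    for person_id, template in enrolled.items():
        score = cosine_similarity(probe, template)
        if score >= best_score:
            best_id, best_score = person_id, score
    return best_id


# Hypothetical enrolled feature vectors and a new capture.
enrolled = {"alice": [0.9, 0.1, 0.3], "bob": [0.2, 0.8, 0.5]}
probe = [0.88, 0.12, 0.31]

print(identify(probe, enrolled, threshold=0.99))
print(verify("bob", probe, enrolled, threshold=0.99))
```

Here `identify` needs only the probe data, whereas `verify` cannot run without a claimed identity, mirroring the contrast drawn in the paragraph above.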

[0047] The inventors have further recognized that retina fundus features which may be used to identify a person from a captured image may also be used as indicators of the person's predisposition to certain diseases, and even to diagnose a medical condition of the person. Accordingly, systems described herein may be alternatively or additionally configured to determine the person's predisposition to various diseases, and to diagnose some health issues of the person. For example, upon capturing or otherwise obtaining one or more images of the person's retina fundus for identification, the system may also make such determinations or diagnoses based on the image(s).

[0048] Turning to the figures, FIGS. 1-2 illustrate exemplary systems and devices configured to implement techniques for any or each of biometric identification, health information management, medical condition determination, and/or electronic account access. The description of these techniques which follows the description of the systems and devices will refer back to the systems and devices illustrated in FIGS. 1-2.

[0049] Referring to FIGS. 1-2, FIG. 1 illustrates a cloud-connected system in which a device may communicate with a remote computer to perform various operations associated with the techniques described herein. In contrast to FIG. 1, FIG. 2 illustrates a device which may be configured to perform any or all of the techniques described herein locally on the device.

[0050] With reference to FIG. 1, the inventors have recognized that the processing required for biometric identification, health information management, and other tasks on a user-end device may require, at least in some circumstances, power-hungry and/or expensive processing and/or memory components. To solve these problems, the inventors have developed cloud-connected systems and devices which may offload some or all of the most demanding processing and/or memory intensive tasks onto a remote computer, such that user-end devices may be implemented having less expensive and more power efficient hardware. In some instances, the device may only need to capture an image of a person and transmit data associated with the image to the remote computer. In such instances, the computer may perform biometric identification, access/update health information and/or account information, and/or determine a medical condition based on the image data, and transmit the resulting data back to the device. Because the device may only capture an image and transmit data associated with the image to the computer, the device may require very little processing power and/or memory, which facilitates a corresponding decrease in both cost and power consumption at the device end. Thus, the device may have an increased battery life and may be more affordable to the end user.
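The division of work between the device and the remote computer described above can be sketched as a simple request and response exchange. This is an illustrative sketch only: the JSON message layout, the field names, and the functions `build_request` and `handle_request` are hypothetical and are not specified by the disclosure. The `identify` callable stands in for whatever identification routine the remote computer runs.

```python
import base64
import json
from typing import Callable, Optional


def build_request(image_bytes: bytes, device_id: str) -> str:
    """Device side: package the captured image for transmission, with no analysis."""
    return json.dumps({
        "device_id": device_id,
        "image_b64": base64.b64encode(image_bytes).decode("ascii"),
    })


def handle_request(request_json: str,
                   identify: Callable[[bytes], Optional[str]]) -> str:
    """Remote side: decode the image, run the heavy identification step, and reply."""
    request = json.loads(request_json)
    image_bytes = base64.b64decode(request["image_b64"])
    person_id = identify(image_bytes)
    return json.dumps({"identified": person_id is not None, "person_id": person_id})
```

The device-side function does nothing beyond encoding, which reflects the point that the user-end hardware may need very little processing power when identification runs remotely.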

[0051] FIG. 1 is a block diagram of exemplary system 100 including device 120a and computer 140, which are connected to communication network 160.

[0052] Device 120a includes imaging apparatus 122a and processor 124a. In some embodiments, device 120a may be a portable device such as a mobile phone, a tablet computer, and/or a wearable device such as a smart watch. In some embodiments, device 120a may include a standalone network controller for communicating over communication network 160. Alternatively, the network controller may be integrated with processor 124a. In some embodiments, device 120a may include one or more displays for providing information via a user interface. In some embodiments, imaging apparatus 122a may be packaged separately from other components of device 120a. For example, imaging apparatus 122a may be communicatively coupled to the other components, such as via an electrical cable (e.g., universal serial bus (USB) cable) and/or a wired or wireless network connection. In other embodiments, imaging apparatus 122a may be packaged together with other components of device 120a, such as within a same mobile phone or tablet computer housing, as examples.

[0053] Computer 140 includes storage medium 142 and processor 144. Storage medium 142 may contain images and/or data associated with images for identifying a person. For example, in some embodiments, storage medium 142 may contain retina fundus images and/or data associated with retina fundus images for comparing to retina fundus images of the person to be identified.

[0054] In accordance with various embodiments, communication network 160 may be a local area network (LAN), a cell phone network, a Bluetooth network, the internet, or any other such network. For example, computer 140 may be positioned in a remote location relative to device 120a, such as a separate room from device 120a, and communication network 160 may be a LAN. In some embodiments, computer 140 may be located in a different geographical region from device 120a, and may communicate over the internet.

[0055] It should be appreciated that, in accordance with various embodiments, multiple devices may be included in place of or in addition to device 120a. For example, an intermediary device may be included in system 100 for communicating between device 120a and computer 140. Alternatively or additionally, multiple computers may be included in place of or in addition to computer 140 to perform various tasks herein attributed to computer 140.

[0056] FIG. 2 is a block diagram of exemplary device 120b, in accordance with some embodiments of the technology described herein. Similar to device 120a, device 120b includes imaging apparatus 122b and processor 124b, which may be configured in the manner described for device 120a. Device 120b may include one or more displays for providing information via a user interface. Device 120b also includes storage medium 126. Data stored on storage medium 126, such as image data, health information, account information, or other such data may facilitate local identification, health information management, medical condition determination, and/or account access on device 120b. It should be appreciated that device 120b may be configured to perform any or all operations associated with the techniques described herein locally, and in some embodiments may transmit data to a remote computer such as computer 140 so as to perform such operations remotely. For example, device 120b may be configured to connect to communication network 160.

[0057] I. Techniques and Apparatus for Obtaining an Image of and/or Measuring a Person's Retina

[0058] The inventors have developed techniques for capturing one or more images of a person's retina fundus and/or obtaining data associated with the images, aspects of which are described with reference to FIGS. 1-2.

[0059] Imaging apparatus 122a or 122b may be configured to capture a single image of the person's retina fundus. Alternatively, imaging apparatus 122a or 122b may be configured to capture multiple images of the person's retina fundus. In some embodiments, imaging apparatus 122a or 122b may be a 2-Dimensional (2D) imaging apparatus such as a digital camera. In some embodiments, imaging apparatus 122a or 122b may be more advanced, such as incorporating Optical Coherence Tomography (OCT) and/or Fluorescence Lifetime Imaging Microscopy (FLIM). For example, in some embodiments, imaging apparatus 122a or 122b may be a retinal sensing device configured for widefield or scanning retina fundus imaging such as using white light or infrared (IR) light, fluorescence intensity, OCT, or fluorescence lifetime data. Alternatively or additionally, imaging apparatus 122a or 122b may be configured for one-dimensional (1D), 2-dimensional (2D), 3-dimensional (3D) or other dimensional contrast imaging. Herein, fluorescence and lifetime are considered different dimensions of contrast. Images described herein may be captured using any or each of a red information channel (e.g., having a wavelength between 633-635 nm), a green information channel (e.g., having a wavelength of approximately 532 nm), or any other suitable light imaging channel(s). As a non-limiting example, a fluorescence excitation wavelength may be between 480-510 nm with an emission wavelength from 480-800 nm.

[0060] Imaging apparatus 122a or 122b may be packaged separately from other components of device 120a or 120b, such that it may be positioned near a person's eye(s). In some embodiments, device 120a or device 120b may be configured to accommodate (e.g., conform to, etc.) a person's face, such as specifically around the person's eye(s). Alternatively, device 120a or 120b may be configured to be held in front of the person's eye(s). In some embodiments, a lens of imaging apparatus 122a or 122b may be placed in front of the user's eye during imaging of the person's retina fundus. In some embodiments, imaging apparatus 122a or 122b may be configured to capture one or more images in response to a user pressing a button on device 120a or 120b. In some embodiments, imaging apparatus 122a or 122b may be configured to capture the image(s) responsive to a voice command from the user. In some embodiments, imaging apparatus 122a may be configured to capture the image(s) responsive to a command from computer 140. In some embodiments, imaging apparatus 122a or 122b may be configured to capture the image(s) automatically upon device 120a or 120b sensing the presence of the person, such as by detecting the person's retina fundus in view of imaging apparatus 122a or 122b.

[0061] The inventors have also developed novel and improved imaging apparatus having enhanced imaging functionality and a versatile form factor. In some embodiments, imaging apparatus described herein may include two or more imaging devices, such as OCT and/or FLIM devices within a common housing. For example, a single imaging apparatus may include a housing shaped to support OCT and FLIM devices within the housing along with associated electronics for performing imaging and/or accessing the cloud for image storage and/or transmission. In some embodiments, electronics onboard the imaging apparatus may be configured to perform various processing tasks described herein, such as identifying a user of the imaging apparatus (e.g., by imaging the person's retina fundus), accessing a user's electronic health records, and/or determining a health status or medical condition of the user.

[0062] In some embodiments, imaging apparatus described herein may have a form factor that is conducive to imaging both of a person's eyes (e.g., simultaneously). In some embodiments, imaging apparatus described herein may be configured for imaging each eye with a different imaging device of the imaging apparatus. For example, as described further below, the imaging apparatus may include a pair of lenses held in a housing of the imaging apparatus for aligning with a person's eyes, and the pair of lenses may also be aligned with respective imaging devices of the imaging apparatus. In some embodiments, the imaging apparatus may include a substantially binocular shaped form factor with an imaging device positioned on each side of the imaging apparatus. During operation of the imaging apparatus, a person may simply flip the vertical orientation of the imaging apparatus (e.g., by rotating the device about an axis parallel to the direction in which imaging is performed). Accordingly, the imaging apparatus may transition from imaging the person's right eye with a first imaging device to imaging the right eye with a second imaging device, and likewise, transition from imaging the person's left eye with the second imaging device to imaging the left eye with the first imaging device. In some embodiments, imaging apparatus described herein may be configured for mounting on a table or desk, such as on a stand. For example, the stand may permit rotation of the imaging apparatus about one or more axes to facilitate rotation by a user during operation.

[0063] It should be appreciated that aspects of the imaging apparatus described herein may be implemented using a different form factor than substantially binocular shaped. For instance, embodiments having a form factor different than substantially binocular shaped may be otherwise configured in the manner described herein in connection with the exemplary imaging apparatus described below. For example, such imaging apparatus may be configured to image one or both of a person's eyes simultaneously using one or more imaging devices of the imaging apparatus.

[0064] One example of an imaging apparatus according to the technology described herein is illustrated in FIGS. 14A-14B. As shown in FIG. 14A, imaging apparatus 1400 includes a housing 1401 with a first housing section 1402 and a second housing section 1403. In some embodiments, the first housing section 1402 may accommodate a first imaging device 1422 of the imaging apparatus 1400, and the second housing section 1403 may accommodate a second imaging device 1423 of the imaging apparatus. As illustrated in FIGS. 14A-14B, housing 1401 is substantially binocular shaped.

[0065] In some embodiments, the first and second imaging devices 1422 and 1423 may include an optical imaging device, a fluorescent imaging device, and/or an OCT imaging device. For example, in one embodiment, the first imaging device 1422 may be an OCT imaging device, and the second imaging device 1423 may be an optical and fluorescent imaging device. In some embodiments, the imaging apparatus 1400 may include only a single imaging device 1422 or 1423, such as only an optical imaging device or only a fluorescent imaging device. In some embodiments, first and second imaging devices 1422 and 1423 may share one or more optical components such as lenses (e.g., convergent, divergent, etc.), mirrors, and/or other imaging components. For instance, in some embodiments, first and second imaging devices 1422 and 1423 may share a common optical path. It is envisioned that the devices may operate independently or in common. Each may be an OCT imaging device, each may be a fluorescent imaging device, or both may be one or the other. Both eyes may be imaged and/or measured simultaneously, or each eye may be imaged and/or measured separately.

[0066] Housing sections 1402 and 1403 may be connected at a front end of the housing 1401 by a front housing section 1405. In the illustrative embodiment, the front housing section 1405 is shaped to accommodate the facial profile of a person, such as having a shape that conforms to a human face. When accommodating a person's face, the front housing section 1405 may further provide sight-lines from the person's eyes to the imaging devices 1422 and/or 1423 of the imaging apparatus 1400. For example, the front housing section 1405 may include a first opening 1410 and a second opening 1411 that correspond with respective openings in the first housing section 1402 and the second housing section 1403 to provide minimally obstructed optical paths between the first and second imaging devices 1422 and 1423 and the person's eyes. In some embodiments, the openings 1410 and 1411 may be covered with one or more transparent windows (e.g., each having its own window, having a shared window, etc.), which may include glass or plastic.

[0067] First and second housing sections 1402 and 1403 may be connected at a rear end of the housing 1401 by a rear housing section 1404. The rear housing section 1404 may be shaped to cover the end of the first and second housing sections 1402 and 1403 such that light in an environment of the imaging apparatus 1400 does not enter the housing 1401 and interfere with the imaging devices 1422 or 1423.

[0068] In some embodiments, imaging apparatus 1400 may be configured for communicatively coupling to another device, such as a mobile phone, desktop, laptop, or tablet computer, and/or smart watch. For example, imaging apparatus 1400 may be configured for establishing a wired and/or wireless connection to such devices, such as by USB and/or a suitable wireless network. In some embodiments, housing 1401 may include one or more openings to accommodate one or more electrical (e.g., USB) cables. In some embodiments, housing 1401 may have one or more antennas disposed thereon for transmitting and/or receiving wireless signals to or from such devices. In some embodiments, imaging devices 1422 and/or 1423 may be configured for interfacing with the electrical cables and/or antennas. In some embodiments, imaging devices 1422 and/or 1423 may receive power from the cables and/or antennas, such as for charging a rechargeable battery disposed within the housing 1401.

[0069] During operation of the imaging apparatus 1400, a person using the imaging apparatus 1400 may place the front housing section 1405 against the person's face such that the person's eyes are aligned with openings 1410 and 1411. In some embodiments, the imaging apparatus 1400 may include a gripping member (not shown) coupled to the housing 1401 and configured for gripping by a person's hand. In some embodiments, the gripping member may be formed using a soft plastic material, and may be ergonomically shaped to accommodate the person's fingers. For instance, the person may grasp the gripping member with both hands and place the front housing section 1405 against the person's face such that the person's eyes are in alignment with openings 1410 and 1411. Alternatively or additionally, the imaging apparatus 1400 may include a mounting member (not shown) coupled to the housing 1401 and configured for mounting the imaging apparatus 1400 to a mounting arm, such as for mounting the imaging apparatus 1400 to a table or other equipment. For instance, when mounted using the mounting member, the imaging apparatus 1400 may be stabilized in one position for use by a person without the person needing to hold the imaging apparatus 1400 in place.

[0070] In some embodiments, the imaging apparatus 1400 may employ a fixator, such as a visible light projection from the imaging apparatus 1400 towards the person's eyes, such as along a direction in which the openings 1410 and 1411 are aligned with the person's eyes, for example. In accordance with various embodiments, the fixator may be a bright spot, such as a circular or elliptical spot, or an image, such as an image of a house or some other object. The inventors recognized that a person will typically move both eyes in a same direction to focus on an object even when only one eye perceives the object. Accordingly, in some embodiments, the imaging apparatus 1400 may be configured to provide the fixator to only one eye, such as using only one opening 1410 or 1411. In other embodiments, fixators may be provided to both eyes, such as using both openings 1410 and 1411.

[0071] FIG. 15 illustrates a further embodiment of an imaging apparatus 1500, in accordance with some embodiments. As shown, imaging apparatus 1500 includes housing 1501, within which one or more imaging devices (not shown) may be disposed. Housing 1501 includes first housing section 1502 and second housing section 1503 connected to a central housing portion 1504. The central housing portion 1504 may include and/or operate as a hinge connecting the first and second housing sections 1502 and 1503, and about which the first and second housing sections 1502 and 1503 may rotate. By rotating the first and/or second housing sections 1502 and/or 1503 about the central housing portion 1504, a distance separating the first and second housing sections 1502 and 1503 may be increased or decreased accordingly. Before and/or during operation of the imaging apparatus 1500, a person may rotate the first and second housing sections 1502 and 1503 to accommodate a distance separating the person's eyes, such as to facilitate alignment of the person's eyes with openings of the first and second housing sections 1502 and 1503.

[0072] The first and second housing sections 1502 and 1503 may be configured in the manner described for first and second housing sections 1402 and 1403 in connection with FIGS. 14A-14B. For instance, each housing section may accommodate one or more imaging devices therein, such as an optical imaging device, a fluorescent imaging device, and/or an OCT imaging device. In FIG. 15, each housing section 1502 and 1503 is coupled to a separate one of front housing sections 1505A and 1505B. Front housing sections 1505A and 1505B may be shaped to conform to the facial profile of a person using the imaging apparatus 1500, such as conforming to portions of the person's face proximate the person's eyes. In one example, the front housing sections 1505A and 1505B may be formed using a pliable plastic that may conform to the person's facial profile when placed against the person's face. Front housing sections 1505A and 1505B may have respective openings 1511 and 1510 that correspond with openings of first and second housing sections 1502 and 1503, such as in alignment with the openings of the first and second housing sections 1502 and 1503 to provide minimally obstructed optical paths from the person's eyes to the imaging devices of the imaging apparatus 1500. In some embodiments, the openings 1510 and 1511 may be covered with a transparent window made using glass or plastic.

[0073] In some embodiments, the central housing section 1504 may include one or more electronic circuits (e.g., integrated circuits, printed circuit boards, etc.) for operating the imaging apparatus 1500. In some embodiments, one or more processors of device 120a and/or 120b may be disposed in central housing section 1504, such as for analyzing data captured using the imaging devices. The central housing section 1504 may include wired and/or wireless means of electrically communicating to other devices and/or computers, such as described for imaging apparatus 1400. For instance, further processing (e.g., as described herein) may be performed by the devices and/or computers communicatively coupled to imaging apparatus 1500. In some embodiments, the electronic circuits onboard the imaging apparatus 1500 may process captured image data based on instructions received from such communicatively coupled devices or computers. In some embodiments, the imaging apparatus 1500 may initiate an image capture sequence based on instructions received from devices and/or computers communicatively coupled to the imaging apparatus 1500. In some embodiments, processing functionality described herein for device 120a and/or 120b may be performed using one or more processors onboard the imaging apparatus.

[0074] As described herein including in connection with imaging apparatus 1400, imaging apparatus 1500 may include a gripping member and/or a mounting member, and/or a fixator.

[0075] FIGS. 16A-16D illustrate a further embodiment of an imaging apparatus 1600, according to some embodiments. As shown in FIG. 16A, imaging apparatus 1600 has a housing 1601, including multiple housing portions 1601a, 1601b, and 1601c. Housing portion 1601a has a control panel 1625 including multiple buttons for turning imaging apparatus 1600 on or off, and for initiating scan sequences. FIG. 16B is an exploded view of imaging apparatus 1600 illustrating components disposed within housing 1601, such as imaging devices 1622 and 1623 and electronics 1620. Imaging devices 1622 and 1623 may include an optical imaging device, a fluorescent imaging device, and/or an OCT imaging device, in accordance with various embodiments, as described herein in connection with FIGS. 14A-14B and 15. Imaging apparatus 1600 further includes front housing portion 1605 configured to receive a person's eyes for imaging, as illustrated, for example, in FIG. 16C. FIG. 16D illustrates imaging apparatus 1600 seated in stand 1650, as described further herein.

[0076] As shown in FIGS. 16A-16D, housing portions 1601a and 1601b may substantially enclose imaging apparatus 1600, such as by having all or most of the components of imaging apparatus 1600 disposed between housing portions 1601a and 1601b. Housing portion 1601c may be mechanically coupled to housing portions 1601a and 1601b, such as using one or more screws fastening the housing 1601 together. As illustrated in FIG. 16B, housing portion 1601c may have multiple housing portions therein, such as housing portions 1602 and 1603 for accommodating imaging devices 1622 and 1623. For example, in some embodiments, the housing portions 1602 and 1603 may be configured to hold imaging devices 1622 and 1623 in place. Housing portion 1601c further includes a pair of lens portions in which lenses 1610 and 1611 are disposed. Housing portions 1602 and 1603 and the lens portions may be configured to hold imaging devices 1622 and 1623 in alignment with lenses 1610 and 1611. Housing portions 1602 and 1603 may accommodate focusing parts 1626 and 1627 for adjusting the foci of lenses 1610 and 1611. Some embodiments may further include securing tabs 1628. By adjusting (e.g., pressing, pulling, pushing, etc.) securing tabs 1628, housing portions 1601a, 1601b, and/or 1601c may be decoupled from one another, such as for access to components of imaging apparatus 1600 for maintenance and/or repair purposes.

[0077] Electronics 1620 may be configured in the manner described for the electronic circuits of imaging apparatus 1500 in connection with FIG. 15. Control panel 1625 may be electrically coupled to electronics 1620. For example, the scan buttons of control panel 1625 may be configured to communicate a scan command to electronics 1620 to initiate a scan using imaging device 1622 and/or 1623. As another example, the power button of control panel 1625 may be configured to communicate a power on or power off command to electronics 1620. As illustrated in FIG. 16B, imaging apparatus 1600 may further include electromagnetic shielding 1624 configured to isolate electronics 1620 from sources of electromagnetic interference (EMI) in the surrounding environment of imaging apparatus 1600. Including electromagnetic shielding 1624 may improve operation (e.g., noise performance) of electronics 1620. In some embodiments, electromagnetic shielding 1624 may be coupled to one or more processors of electronics 1620 to dissipate heat generated in the one or more processors.

[0078] In some embodiments, imaging apparatus described herein may be configured for mounting to a stand, as illustrated in the example of FIG. 16D. In FIG. 16D, imaging apparatus 1600 is supported by stand 1650, which includes base 1652 and holding portion 1658. Base 1652 is illustrated including a substantially U-shaped support portion and has multiple feet 1654 attached to an underside of the support portion. Base 1652 may be configured to support imaging apparatus 1600 above a table or desk, such as illustrated in the figure. Holding portion 1658 may be shaped to accommodate housing 1601 of imaging apparatus 1600. For example, an exterior facing side of holding portion 1658 may be shaped to conform to housing 1601.

[0079] As illustrated in FIG. 16D, base 1652 may be coupled to holding portion 1658 by a hinge 1656. Hinge 1656 may permit rotation about an axis parallel to a surface supporting base 1652. For instance, during operation of imaging apparatus 1600 and stand 1650, a person may rotate holding portion 1658, having imaging apparatus 1600 seated therein, to an angle comfortable for the person to image one or both eyes. For example, the person may be seated at a table or desk supporting stand 1650. In some embodiments, a person may rotate imaging apparatus 1600 about an axis parallel to an optical axis along which imaging devices within imaging apparatus 1600 image the person's eye(s). For instance, in some embodiments, stand 1650 may alternatively or additionally include a hinge parallel to the optical axis.

[0080] In some embodiments, holding portion 1658 (or some other portion of stand 1650) may include charging hardware configured to transmit power to imaging apparatus 1600 through a wired or wireless connection. In one example, the charging hardware in stand 1650 may include a power supply coupled to one or a plurality of wireless charging coils, and imaging apparatus 1600 may include wireless charging coils configured to receive power from the coils in stand 1650. In another example, charging hardware in stand 1650 may be coupled to an electrical connector on an exterior facing side of holding portion 1658 such that a complementary connector of imaging apparatus 1600 interfaces with the connector of stand 1650 when imaging apparatus 1600 is seated in holding portion 1658. In accordance with various embodiments, the wireless charging hardware may include one or more power converters (e.g., AC to DC, DC to DC, etc.) configured to provide an appropriate voltage and current to imaging apparatus 1600 for charging. In some embodiments, stand 1650 may house at least one rechargeable battery configured to provide the wired or wireless power to imaging apparatus 1600. In some embodiments, stand 1650 may include one or more power connectors configured to receive power from a standard wall outlet, such as a single-phase wall outlet.

[0081] In some embodiments, front housing portion 1605 may include multiple portions 1605a and 1605b. Portion 1605a may be formed using a mechanically resilient material whereas front portion 1605b may be formed using a mechanically compliant material, such that front housing portion 1605 is comfortable for a user to wear. For example, in some embodiments, portion 1605a may be formed using plastic and portion 1605b may be formed using rubber or silicone. In other embodiments, front housing portion 1605 may be formed using a single mechanically resilient or mechanically compliant material. In some embodiments, portion 1605b may be disposed on an exterior side of front housing portion 1605, and portion 1605a may be disposed within portion 1605b.

[0082] The inventors have recognized several advantages which may be gained by capturing multiple images of the person's retina fundus. For instance, extracting data from multiple captured images facilitates biometric identification techniques which are less costly to implement while also being less susceptible to fraud. As described herein including with reference to section III, data extracted from captured images may be used to identify a person by comparing the captured image data against stored image data. In some embodiments, a positive identification may be indicated when the captured image data has at least a predetermined degree of similarity to some portion of the stored image data. While a high predetermined degree of similarity (e.g., close to 100%) may be desirable to prevent the system from falsely identifying a person, such a high degree of required similarity conventionally results in a high false-rejection ratio (FRR), meaning that it is more difficult for the correct person to be positively identified. This may be because, when identifying a person using a single captured image of the person having a low resolution and/or a low field-of-view, the captured image may not achieve the high predetermined degree of similarity, for example due to missing or distorted features in the image. As a result, an imaging apparatus capable of capturing images with a high resolution and a high field-of-view may be desirable to allow use of a high predetermined degree of similarity without compromising FRR. However, a high quality imaging apparatus capable of supporting a high predetermined degree of similarity is typically more expensive than a simple digital camera. The conventional alternative to using a more expensive imaging apparatus is to use a lower predetermined degree of similarity. However, such a system may be more susceptible to fraud.
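The trade-off described above between a high required degree of similarity and the false rejection rate can be illustrated numerically. The sketch below is an assumption-laden example rather than anything taken from the disclosure: the function name `error_rates` is hypothetical, and the score lists are made-up toy values chosen only to show the direction of the effect, not measured results.

```python
from typing import Sequence, Tuple


def error_rates(genuine_scores: Sequence[float],
                impostor_scores: Sequence[float],
                threshold: float) -> Tuple[float, float]:
    """Return (false acceptance rate, false rejection rate) at a similarity threshold.

    genuine_scores: similarity scores for comparisons of the same person.
    impostor_scores: similarity scores for comparisons of different people.
    A comparison is accepted when its score is at or above the threshold.
    """
    false_accepts = sum(1 for s in impostor_scores if s >= threshold)
    false_rejects = sum(1 for s in genuine_scores if s < threshold)
    return (false_accepts / len(impostor_scores),
            false_rejects / len(genuine_scores))


# Toy, hypothetical scores for illustration only.
genuine = [0.97, 0.92, 0.88, 0.99]
impostor = [0.40, 0.75, 0.91, 0.60]

strict = error_rates(genuine, impostor, threshold=0.95)   # fewer false accepts
lenient = error_rates(genuine, impostor, threshold=0.80)  # fewer false rejects
```

With these toy values, the strict threshold rejects half of the genuine comparisons while accepting no impostors, and the lenient threshold accepts every genuine comparison but lets one impostor through.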

[0083] To solve this problem, the inventors have developed techniques for biometric identification which may be performed using an ordinary digital camera for enhanced flexibility. In contrast to single-image comparison systems, the inventors have developed systems which may capture multiple images for comparison, which facilitates use of a higher degree of similarity without requiring a higher resolution or field-of-view imaging apparatus. In some embodiments, data may be extracted from multiple images of the person's retina fundus and combined into a single set for comparison. For example, multiple images may be captured by imaging apparatus 122a or 122b, each of which may be slightly rotated from one another so as to capture different portions of the person's retina fundus. In some embodiments, the person's eye(s) may rotate and/or may track imaging apparatus 122a or 122b. Accordingly, data indicative of features of the person's retina fundus may be extracted from the images and combined into a dataset indicative of locations of the various features. Because multiple images are combined for use, no single captured image needs to be high resolution or have a high field of view. Rather, a simple digital camera, such as a digital camera integrated with a mobile phone, may be used for imaging as described herein.
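Combining feature data from several partially overlapping images into a single dataset, as described above, might look like the following sketch. It rests on assumptions that the disclosure does not specify: features are represented as 2D points, each image comes with a known rigid pose (rotation and translation) into a common frame, and points closer together than a `tolerance` are treated as the same feature. The name `combine_features` is hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
# (rotation in radians, dx, dy) mapping image coordinates to a common frame.
# Assumed to be known, e.g. from a separate registration step not shown here.
Pose = Tuple[float, float, float]


def combine_features(images: Sequence[Tuple[Pose, Sequence[Point]]],
                     tolerance: float = 2.0) -> List[Point]:
    """Merge feature locations from several images into one deduplicated set."""
    merged: List[Point] = []
    for (theta, dx, dy), points in images:
        cos_t, sin_t = math.cos(theta), math.sin(theta)
        for x, y in points:
            # Rotate and translate the point into the common frame.
            gx = x * cos_t - y * sin_t + dx
            gy = x * sin_t + y * cos_t + dy
            # Skip the point if an equivalent feature was already recorded.
            is_duplicate = any(math.hypot(gx - mx, gy - my) <= tolerance
                               for mx, my in merged)
            if not is_duplicate:
                merged.append((gx, gy))
    return merged
```

Each image contributes only the features it happens to show, so the merged set can cover more of the retina fundus than any single low field-of-view capture.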

[0084] In some embodiments, system 100 or device 120b may be configured to verify retina fundus identification using recorded biometric characteristics (e.g., multi-factor identification). For example, device 120a or 120b may also include one or more biometric sensors such as a fingerprint reader and/or a microphone. Thus, device 120a or 120b may record one or more biometric characteristics of a person, such as a fingerprint and/or a voiceprint of the person. Data indicative of features of the biometric characteristic(s) may be extracted in the manner described for retina fundus images, and in the case of device 120a, the data may be transmitted to computer 140 for verification. Accordingly, once an identification is made based on the retina fundus image(s), the biometric characteristic data may be compared against stored characteristic data associated with the person to verify the retina fundus identification for added security.
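
The identify-then-verify sequence described above may be sketched as follows. This is a minimal illustration only: it assumes each biometric has already been reduced to a set of quantized feature values, and it uses Jaccard overlap as a stand-in similarity measure. The names `Enrollment`, `jaccard`, and `identify_then_verify`, and the two threshold values, are hypothetical and are not specified by this disclosure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Enrollment:
    """Stored data for one enrolled person (hypothetical layout)."""
    person_id: str
    retina: frozenset   # quantized retina fundus feature values
    voice: frozenset    # quantized voiceprint feature values


def jaccard(a, b):
    """Overlap between two feature sets, from 0.0 (disjoint) to 1.0 (equal)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def identify_then_verify(retina, voice, enrolled, retina_min=0.8, voice_min=0.6):
    """Identify by retina data, then verify with a second biometric.

    Returns the matching person_id, or None if either step fails.
    """
    # Step 1: pick the enrolled person whose retina data is most similar.
    best = max(enrolled, key=lambda e: jaccard(retina, e.retina), default=None)
    if best is None or jaccard(retina, best.retina) < retina_min:
        return None
    # Step 2: confirm that identity using the second biometric characteristic.
    if jaccard(voice, best.voice) < voice_min:
        return None
    return best.person_id


enrolled = [
    Enrollment("person-A", frozenset({1, 2, 3, 4, 5}), frozenset({10, 11, 12})),
    Enrollment("person-B", frozenset({6, 7, 8, 9}), frozenset({20, 21})),
]
print(identify_then_verify(frozenset({1, 2, 3, 4, 5}), frozenset({10, 11, 12}), enrolled))
print(identify_then_verify(frozenset({1, 2, 3, 4, 5}), frozenset({20, 21}), enrolled))
```

In this sketch the second call returns `None` because the retina data matches one enrolled person while the voiceprint data does not match that same person.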

[0085] II. Techniques for Identifying a Person based on a Retinal Image

[0086] The inventors have developed techniques for identifying a person based on a retinal image of the person. The technique may include comparing data extracted from one or more captured images of the person's retina fundus to stored data extracted from other retina fundus images. Techniques for extracting data from one or more captured images are described herein including with reference to FIGS. 3-4. FIG. 3 provides an illustrative method for capturing one or more images of a person's retina fundus and extracting data from the captured image(s), and FIG. 4 illustrates some features of a person's retina fundus which may be indicated in data extracted from the image(s).

[0087] FIG. 3 is a flow diagram illustrating exemplary method 300 including capturing one or more retina fundus images at step 302 and extracting image data from the image(s) at step 304. In accordance with the embodiment of FIG. 1, method 300 may be performed by device 120a, or alternatively may be performed in part by device 120a and in part by computer 140. In accordance with the embodiment of FIG. 2, method 300 may be performed entirely by device 120b.

[0088] Capturing the image(s) at step 302 may be performed in accordance with any or all embodiments of the technology described in section I. Extracting image data from the image(s) at step 304 may include processor 124a or 124b obtaining the captured image(s) from imaging apparatus 122a or 122b and extracting data indicative of features of the person's retina fundus from the image(s). For example, the data may include relative positions and orientations of the features. In some embodiments, feature data may be extracted from multiple captured images and combined into a single feature dataset. It should be appreciated that feature extraction at step 304 may be performed by computer 140. For example, in some embodiments of system 100, device 120a may be configured to capture the image(s) and to transmit the image(s) to computer 140 for data extraction.

[0089] Also during step 304, the extracted data may be recorded on a storage medium, such as storage medium 124 of device 120b. In some embodiments of cloud-based system 100, imaging apparatus 122a may capture the image(s) and/or extract data from the image(s) when device 120a does not have access to communication network 160, and so processor 124a may store the image(s) and/or data on the storage medium at least until a time when it may be transmitted over communication network 160. In such cases, processor 124a may obtain the image(s) and/or data from the storage medium shortly before transmitting the image(s) and/or data to computer 140. In some embodiments, the retina fundus image(s) may not be captured by device 120a or 120b, but by a separate device. The image(s) may be transferred to device 120a or 120b, from which data may be extracted and stored on the storage medium. Alternatively, the data may also be extracted by the separate device and transferred to device 120a or to device 120b. For example, device 120a may be tasked with passing the data to computer 140, or device 120b may identify a person or perform some other task based on the data.
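
The store-until-connected behavior described above for device 120a may be sketched as a simple queue. This is a minimal illustration under stated assumptions: an in-memory `deque` stands in for the storage medium, and the caller supplies a `send` function that transmits one record over the communication network. The class name `StoreAndForward` and its methods are hypothetical.

```python
from collections import deque


class StoreAndForward:
    """Hold records locally until they can be transmitted."""

    def __init__(self, send):
        self._send = send         # callable that transmits a single record
        self._pending = deque()   # stand-in for the device's storage medium

    @property
    def pending(self):
        """Number of records still waiting to be transmitted."""
        return len(self._pending)

    def submit(self, record, network_available):
        """Store a record, then transmit everything stored if possible.

        Returns the number of records transmitted by this call.
        """
        self._pending.append(record)
        return self.flush() if network_available else 0

    def flush(self):
        """Transmit stored records in order, oldest first."""
        sent = 0
        while self._pending:
            # If send raises, the record stays stored for a later attempt.
            self._send(self._pending[0])
            self._pending.popleft()
            sent += 1
        return sent


received = []
queue = StoreAndForward(received.append)
queue.submit("features-from-image-1", network_available=False)
queue.submit("features-from-image-2", network_available=True)
print(received)
```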

[0090] FIG. 4 is a side view of retina fundus 400 including various features which may be captured in one or more images at step 302 during method 300 of FIG. 3, and/or may be indicated in data extracted from the image(s) at step 304. For example, features of veins and arteries of retina fundus 400 may be used to identify a person. Such features may include branch endings 410 and bifurcations 420 of the veins and arteries. The inventors have recognized that, as in fingerprinting, locations of branch endings 410 and bifurcations 420 (sometimes referred to as "minutiae") may be used as unique identifiers. Accordingly, in some embodiments, relative locations of branch endings 410 and/or bifurcations 420 may be extracted from a single captured image and recorded in one or more datasets. In some instances, relative locations of branch endings 410 and/or bifurcations 420 may be extracted from multiple captured images and combined into a single dataset. For example, an average relative location of each branch ending 410 and/or bifurcation 420 may be recorded in the dataset. In some embodiments, relative locations of specific veins or arteries such as nasal artery 430, nasal vein 440, temporal artery 450, and/or temporal vein 460 may be recorded in one or more datasets.
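
Combining minutiae from multiple captured images into a single dataset of average locations may be sketched as follows. This is a minimal illustration that assumes the images have already been registered into a common coordinate frame, so that the same branch ending or bifurcation appears at nearly the same (x, y) location in each image. The function name `merge_minutiae`, the greedy grouping rule, and the `tolerance` value are hypothetical choices and are not specified by this disclosure.

```python
import math


def merge_minutiae(images, tolerance=3.0):
    """Merge per-image minutiae into one list of averaged locations.

    images: list of lists of (x, y) points, one inner list per image,
            all expressed in the same coordinate frame (assumed).
    tolerance: maximum distance at which two detections are treated
               as the same physical feature.
    """
    clusters = []  # each entry is [sum_x, sum_y, count]
    for points in images:
        for x, y in points:
            for cluster in clusters:
                mean_x = cluster[0] / cluster[2]
                mean_y = cluster[1] / cluster[2]
                if math.hypot(x - mean_x, y - mean_y) <= tolerance:
                    # Same feature seen again: fold it into the running average.
                    cluster[0] += x
                    cluster[1] += y
                    cluster[2] += 1
                    break
            else:
                # No nearby feature yet: start a new one.
                clusters.append([x, y, 1])
    return [(sx / n, sy / n) for sx, sy, n in clusters]


image_1 = [(10.0, 10.0), (50.0, 40.0)]
image_2 = [(12.0, 10.0), (90.0, 90.0)]
print(merge_minutiae([image_1, image_2]))
```

Here the feature seen near (10, 10) in both images is recorded once at its average location, while features seen in only one image are carried through unchanged, so the combined dataset covers more of the fundus than either image alone.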

[0091] In some embodiments, data indicative of other features may be extracted instead of or in addition to data for branch endings 410 and/or bifurcations 420 at step 304. For example, aspects of optic disc 470 or optic disc edges such as a relative position within retina fundus 400 may be recorded in a dataset. In some embodiments, data associated with optic disc 470 may be recorded in a separate dataset from data associated with veins or arteries. Alternatively or additionally, data indicative of a relative position of fovea 480 and/or macula 490 may be recorded in a dataset. Further features which may be indicated in data extracted from the captured image(s) include the optic nerve, blood vessel surroundings, AV nicks, drusen, retinal pigmentations, and others.

[0092] In some embodiments, extracting any or all of the features described above may include segmenting the image(s) into a full spatial map including relative positions and orientations of the individual features. For example, the spatial map may include a binary mask indicative of whether features such as branch endings 410 or bifurcations 420 are present at any particular location in the map. In some embodiments, a relative angle indicating locations of the features may be calculated based on the spatial map. To conserve storage space and/or simplify computing of the spatial map, thickness of some features such as veins may be reduced to a single pixel width. Alternatively or additionally, redundant data may be removed from the spatial map, such as data resulting from a combination of multiple images.
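
Once vessels have been reduced to a single pixel width, branch endings and bifurcations may be located from the binary mask alone. The following is a minimal sketch using the crossing-number test commonly applied to thinned fingerprint images; its use here is an assumption and is not stated in this disclosure. The function name `find_minutiae` and the small Y-shaped test mask are hypothetical, and the input is assumed to be already segmented and thinned.

```python
# The eight neighbors of a pixel, listed clockwise starting from directly above.
NEIGHBOR_OFFSETS = [(-1, 0), (-1, 1), (0, 1), (1, 1),
                    (1, 0), (1, -1), (0, -1), (-1, -1)]


def find_minutiae(skeleton):
    """Classify pixels of a one-pixel-wide binary mask.

    skeleton: list of rows, each a list of 0/1 values.
    Returns (endings, bifurcations) as lists of (row, col) positions.
    """
    rows, cols = len(skeleton), len(skeleton[0])

    def pixel(r, c):
        # Positions outside the mask are treated as background.
        return skeleton[r][c] if 0 <= r < rows and 0 <= c < cols else 0

    endings, bifurcations = [], []
    for r in range(rows):
        for c in range(cols):
            if not skeleton[r][c]:
                continue
            ring = [pixel(r + dr, c + dc) for dr, dc in NEIGHBOR_OFFSETS]
            # Crossing number: half the count of 0/1 changes around the ring.
            crossing = sum(abs(ring[i] - ring[(i + 1) % 8]) for i in range(8)) // 2
            if crossing == 1:
                endings.append((r, c))
            elif crossing >= 3:
                bifurcations.append((r, c))
    return endings, bifurcations


# Hypothetical thinned vessel: two branches joining into one trunk.
picture = [
    ".......",
    ".#...#.",
    "..#.#..",
    "...#...",
    "...#...",
    "...#...",
    ".......",
]
mask = [[1 if ch == "#" else 0 for ch in row] for row in picture]
endings, bifurcations = find_minutiae(mask)
print("endings:", endings)
print("bifurcations:", bifurcations)
```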

[0093] In some embodiments, the feature data may include relative positions and orientations of translationally and rotationally invariant features to facilitate a Scale Invariant Feature Transform (SIFT) and/or Speeded Up Robust Features (SURF) comparison, as described herein including with reference to section III. For example, the extracted features described above may be SIFT features and/or SURF features.

[0094] The inventors have also developed techniques for extracting data from one or more captured images using a trained statistical classifier (TSC), in accordance with the embodiments illustrated in FIGS. 5A-5C, 6, and 7A-7B. For example, in some embodiments, step 304 of method 300 may be performed by a TSC such as illustrated in the embodiments of FIGS. 5A-5C, 6, and 7A-7B. One or more image(s) captured by imaging apparatus 122a or 122b may be input to the TSC. The captured image(s) may include data from one or more widefield or scanned retinal images collected from imaging apparatus 122a or 122b such as by white light, IR, fluorescence intensity, OCT, or 1D, 2D or 3D fluorescence lifetime data. The TSC may be configured to identify and output aspects of various retina fundus features in the image(s). The inventors have recognized that implementing TSCs for extracting feature data from captured images facilitates identification using multiple captured images. For example, TSCs described herein may be configured to form predictions based on individual images or groups of images. The predictions may be in the form of one or more outputs from the TSC. Each output may correspond to a single image or to multiple images. For example, one output may indicate the likelihood of a particular retina fundus feature appearing in one or more locations in a given image. Alternatively, the output may indicate the likelihood of multiple features appearing in one or more locations of the image. Further, the output may indicate the likelihood of a single feature or of multiple features appearing in one or more locations in multiple images.
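
One way to read such outputs is as a map of likelihoods, one value per location, which may be combined across images before deciding where a feature is present. The following is a minimal sketch, assuming the maps are equally sized, refer to the same locations, and are combined by simple averaging. The function names `fuse_likelihoods` and `locations_above`, the 0.6 threshold, and the likelihood values are hypothetical and do not represent the output of any particular TSC.

```python
def fuse_likelihoods(maps):
    """Average per-location likelihoods over several equally sized maps."""
    count = len(maps)
    rows, cols = len(maps[0]), len(maps[0][0])
    return [[sum(m[r][c] for m in maps) / count for c in range(cols)]
            for r in range(rows)]


def locations_above(likelihood_map, threshold=0.6):
    """Return (row, col) positions whose likelihood meets the threshold."""
    return [(r, c)
            for r, row in enumerate(likelihood_map)
            for c, p in enumerate(row)
            if p >= threshold]


# Hypothetical likelihoods of one feature type in two images of the same region.
image_a = [[0.9, 0.1],
           [0.2, 0.8]]
image_b = [[0.7, 0.3],
           [0.4, 0.2]]

print(locations_above(image_a))                               # single image
print(locations_above(fuse_likelihoods([image_a, image_b])))  # both images
```

In this illustration, the location that is supported by only one of the two images falls below the threshold once the maps are averaged, while the location supported by both is retained.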

[0095] TSCs described herein may be implemented in software, in hardware, or using any suitable combination of software and hardware. For example, a TSC may be executed on processor 124a of device 120a, processor 144 of computer 140, and/or processor 124b of device 120b. In some embodiments, one or more machine learning software libraries may be used to implement TSCs as described herein such as Theano, Torch, Caffe, Keras, and TensorFlow. These libraries may be used for training a statistical classifier such as a neural network, and/or for implementing a trained statistical classifier.

[0096] In some embodiments, data extraction using a TSC may take place on device 120a, which may transmit the output of the TSC to computer 140 over communication network 160. Alternatively, computer 140 may obtain the captured image(s) from device 120a and extract the captured image data from the captured image(s), for example using a TSC executed on computer 140. In accordance with the latter embodiment, device 120a may be configured to transmit the captured image(s) to computer 140 in the form of one or more lossy-compressed versions of the image(s), such as in the format standardized by the Joint Photographic Experts Group (JPEG), or alternatively as one or more losslessly compressed versions, such as in the Portable Network Graphics (PNG) format. In the embodiment of FIG. 2, device 120b may obtain the captured image data from the captured image by extraction, such as using a TSC executed on processor 124b.

[0097] FIGS. 5A-5C, 6, and 7-8 illustrate aspects of neural network statistical classifiers for use in biometric security systems described herein. In accordance with the illustrative embodiments of FIGS. 5A-5B, a neural network statistical classifier may include a convolutional neural network (CNN). In accordance with the illustrative embodiments of FIGS. 5A and 5C, the neural network statistical classifier may further include a recurrent neural network (RNN), such as a long short-term memory (LSTM) network. Alternatively, in accordance with the illustrative embodiment of FIG. 6, the neural network statistical classifier may include a fully convolutional neural network (FCNN). FIG. 7 illustrates an FCNN configured to identify boundaries of features in an image of a person's retina fundus. FIG. 8 illustrates a CNN configured to identify individual voxels, which has the advantage of higher invariance to locations of various retina fundus features such as blood vessels.

[0098] FIGS. 5A and 5B are block diagrams of portions 500a and 500b forming an exemplary convolutional neural network (CNN) configured to extract data from a captured image of a person's retina fundus. In the illustrative embodiment of FIGS. 5A and 5B, portion 500a may be operatively coupled to portion 500b, such as with an output of portion 500a coupled to an input of portion 500b.

[0099] As shown in FIG. 5A, portion 500a of the CNN includes an alternating series of convolutional layers 510a-510g and pooling layers 520a-520c. Image 530, which may be a 256 pixel by 256 pixel (256x256) image of a person's retina fundus, is provided as an input to portion 500a. Portion 500a may be configured to obtain feature map 540 from image 530, and to output feature map 540 to portion 500b. Portion 500b may be configured to generate predictions 570 to indicate aspects of image 530, such as locations of retina fundus features.