Image Processing Apparatus And Method

NAKAGAMI; Ohji

U.S. patent application number 16/783716 was filed with the patent office on 2020-02-06 and published on 2020-06-04 for image processing apparatus and method. This patent application is currently assigned to SONY CORPORATION. The applicant listed for this patent is SONY CORPORATION. Invention is credited to Ohji NAKAGAMI.

| Application Number | 16/783716 |
|---|---|
| Publication Number | 20200177894 |
| Family ID | 53478426 |
| Filed Date | 2020-02-06 |


| United States Patent Application | 20200177894 |
|---|---|
| Kind Code | A1 |
| NAKAGAMI; Ohji | June 4, 2020 |

IMAGE PROCESSING APPARATUS AND METHOD

Abstract

The present disclosure relates to an image processing apparatus and method capable of suppressing a decrease in the coding efficiency. A residual predicting unit that performs a prediction with bit depths of residual data arranged to be uniform among components when the prediction is performed among the components for the residual data between an input image configured by a plurality of the components and a predicted image and a coding unit that codes predicted residual data generated through the prediction performed by the residual predicting unit are included. The present disclosure, for example, may be applied to an image processing apparatus such as an image coding apparatus that codes image data or an image decoding apparatus that decodes coded data acquired by performing coding of the image data.

| Inventors: | NAKAGAMI; Ohji; (Tokyo, JP) |
|---|---|
| Applicant: | SONY CORPORATION |
| Assignee: | SONY CORPORATION, Tokyo, JP |
| Family ID: | 53478426 |
| Appl. No.: | 16/783716 |
| Filed: | February 6, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15034753 | May 5, 2016 | 10623752 |
| PCT/JP2014/082923 | Dec 12, 2014 | |
| 16783716 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 19/146 20141101; H04N 19/184 20141101; H04N 19/186 20141101; H04N 19/136 20141101; H04N 19/105 20141101; H04N 19/172 20141101; H04N 19/119 20141101; H04N 19/70 20141101 |

| International Class: | H04N 19/186 20060101 H04N019/186; H04N 19/105 20060101 H04N019/105; H04N 19/70 20060101 H04N019/70; H04N 19/136 20060101 H04N019/136; H04N 19/184 20060101 H04N019/184; H04N 19/146 20060101 H04N019/146; H04N 19/172 20060101 H04N019/172 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Dec 27, 2013 | JP | 2013-272941 |

Claims

1. An image processing apparatus for processing an input image, a color space of the input image comprising a luminance component and a chrominance component, the apparatus comprising: a setting unit configured to arrange a bit depth of residual data of the chrominance component and a bit depth of residual data of the luminance component to be uniform in a case where the bit depth of the residual data of the chrominance component and the bit depth of the residual data of the luminance component are different from each other, the residual data of the luminance component being a difference between the luminance component of the input image and a luminance component of a predicted image and the residual data of the chrominance component being another difference between the chrominance component of the input image and a chrominance component of the predicted image; a residual predicting unit configured to predict the residual data of the chrominance component in accordance with the bit depth of the residual data of the chrominance component by using the residual data of the luminance component to predict the residual data of the chrominance component; and a coding unit that codes the residual data predicted by the residual predicting unit, wherein the setting unit, the residual predicting unit, and the coding unit are each implemented via at least one processor.

2. The image processing apparatus according to claim 1, wherein the setting unit is configured to arrange the bit depth of the residual data of the chrominance component and the bit depth of the residual data of the luminance component to be uniform by performing a bit shift of the bit depth of the residual data of the luminance component such that the bit depth of the residual data of the chrominance component and the bit depth of the residual data of the luminance component are the same.

3. The image processing apparatus according to claim 1, wherein the setting unit is configured to arrange the bit depth of the residual data of the chrominance component and the bit depth of the residual data of the luminance component to be uniform by performing a bit shift of the bit depth of the residual data of the luminance component such that the bit depth of the residual data of the luminance component matches the bit depth of the residual data of the chrominance component.

4. The image processing apparatus according to claim 2, wherein the setting unit is configured to arrange the bit depth of the residual data of the chrominance component and the bit depth of the residual data of the luminance component to be uniform by performing a bit shift of the bit depth of the residual data of the luminance component such that the bit depth of the residual data of the luminance component matches the bit depth of the residual data of the chrominance component.

5. The image processing apparatus according to claim 1, wherein the setting unit is configured to arrange the bit depth of the residual data of the chrominance component and the bit depth of the residual data of the luminance component to be uniform by performing a bit shift of the bit depth of the residual data of the luminance component such that the bit depth of the residual data of the luminance component matches the bit depth of the residual data of the chrominance component in a case where a difference between the bit depth of the residual data of the luminance component and the bit depth of the residual data of the chrominance component has a positive value.

6. The image processing apparatus according to claim 2, wherein the setting unit is configured to arrange the bit depth of the residual data of the chrominance component and the bit depth of the residual data of the luminance component to be uniform by performing a bit shift of the bit depth of the residual data of the luminance component such that the bit depth of the residual data of the luminance component matches the bit depth of the residual data of the chrominance component in a case where a difference between the bit depth of the residual data of the luminance component and the bit depth of the residual data of the chrominance component has a positive value.

7. The image processing apparatus according to claim 5, wherein the input image is an image of a 4:4:4 format in which a number of pixels of the luminance component and a number of pixels of the chrominance component are the same.

8. The image processing apparatus according to claim 6, wherein the input image is an image of a 4:4:4 format in which a number of pixels of the luminance component and a number of pixels of the chrominance component are the same.

9. An image processing method for processing an input image, a color space of the input image comprising a luminance component and a chrominance component, the method comprising: arranging a bit depth of residual data of the chrominance component and a bit depth of residual data of the luminance component to be uniform in a case where the bit depth of the residual data of the chrominance component and the bit depth of the residual data of the luminance component are different from each other, the residual data of the luminance component being a difference between the luminance component of the input image and a luminance component of a predicted image and the residual data of the chrominance component being another difference between the chrominance component of the input image and a chrominance component of the predicted image; predicting the residual data of the chrominance component in accordance with the bit depth of the residual data of the chrominance component by using the residual data of the luminance component to predict the residual data of the chrominance component; and coding the predicted residual data.

10. A non-transitory computer-readable medium having embodied thereon a program, which when executed by a computer causes the computer to execute a method, the method comprising: arranging a bit depth of residual data of the chrominance component and a bit depth of residual data of the luminance component to be uniform in a case where the bit depth of the residual data of the chrominance component and the bit depth of the residual data of the luminance component are different from each other, the residual data of the luminance component being a difference between the luminance component of the input image and a luminance component of a predicted image and the residual data of the chrominance component being another difference between the chrominance component of the input image and a chrominance component of the predicted image; predicting the residual data of the chrominance component in accordance with the bit depth of the residual data of the chrominance component by using the residual data of the luminance component to predict the residual data of the chrominance component; and coding the predicted residual data.

Description

CROSS REFERENCE TO PRIOR APPLICATION

[0001] This application is a continuation of U.S. patent application Ser. No. 15/034,753 (filed on May 5, 2016), which is a National Stage Patent Application of PCT International Patent Application No. PCT/JP2014/082923 (filed on Dec. 12, 2014) under 35 U.S.C. § 371, which claims priority to Japanese Patent Application No. 2013-272941 (filed on Dec. 27, 2013), which are all hereby incorporated by reference in their entirety.

TECHNICAL FIELD

[0002] The present disclosure relates to an image processing apparatus and method, and more particularly, to an image processing apparatus and method capable of suppressing a decrease in the coding efficiency.

BACKGROUND ART

[0003] Recently, demand has been increasing for the compression of raw data, that is, image data generated by an image sensor or the like before a demosaic process and the like are executed.

[0004] As a coding system of image data, there is MPEG-4 Part 10 (Advanced Video Coding, hereinafter referred to as AVC). Recently, in order to improve the coding efficiency, standardization of a coding system called High Efficiency Video Coding (HEVC) has been in progress by the Joint Collaboration Team - Video Coding (JCTVC), which is a joint standards organization of the International Telecommunication Union Telecommunication Standardization Sector (ITU-T) and the International Organization for Standardization/International Electrotechnical Commission (ISO/IEC) (for example, see Non-Patent Document 1).

[0005] In such a coding system, a method for making a prediction of a residual signal between components has been considered (for example, see Non-patent Document 2).

CITATION LIST

Non-Patent Document

[0006] Non-Patent Document 1: Benjamin Bross, Woo-Jin Han, Jens-Rainer Ohm, Gary J. Sullivan, Ye-Kui Wang, Thomas Wiegand, "High Efficiency Video Coding (HEVC) text specification draft 10 (for FDIS & Last Call)", JCTVC-L1003_version 34, 2013 Mar. 19

[0007] Non-Patent Document 2: Wei Pu, Woo-Shik Kim, Jianle Chen, Joel Sole, Marta Karczewicz, "RCE1: Descriptions and Results for Experiments 1, 2, 3, and 4", JCTVC-O0202, 2013 Nov. 12

SUMMARY OF THE INVENTION

Problems to be Solved by the Invention

[0008] However, the bit depths of the data of the components are generally independent of each other, and accordingly, mutually different values may be set for them. The method disclosed in Non-Patent Document 2 does not consider a prediction between components whose bit depths differ, so there is concern that the prediction is not made correctly and that the coding efficiency is lowered.

[0009] The present disclosure is made in consideration of such a situation and is for suppressing a decrease in the coding efficiency.

Solutions to Problems

[0010] According to one embodiment of the present technology, there is provided an image processing apparatus including: a residual predicting unit that performs a prediction with bit depths of residual data arranged to be uniform among components when the prediction is performed among the components for the residual data between an input image configured by a plurality of the components and a predicted image; and a coding unit that codes predicted residual data generated through the prediction performed by the residual predicting unit.

[0011] The residual predicting unit may arrange the bit depths of the residual data to be uniform through a bit shift.

[0012] The residual predicting unit may perform the prediction with the bit depths of the residual data arranged to be uniform among the components through the bit shift in a case where a difference between the bit depths of two components for which the prediction is performed is not zero.

[0013] The residual predicting unit may perform the prediction with the bit depths of the residual data arranged to be uniform among the components through the bit shift in a case where the difference between the bit depths is positive and omit the prediction in a case where the difference between the bit depths is negative.
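The case split described in this paragraph can be sketched as a small decision function. This is only an illustration under stated assumptions: the names `Action` and `choose_action` are hypothetical, the difference is assumed to be taken as the bit depth of the reference component minus that of the component being predicted, and the zero-difference case (predict without any shift) is inferred from the preceding paragraph rather than stated here.

```python
from enum import Enum


class Action(Enum):
    """Hypothetical labels for the three possible outcomes."""
    PREDICT_WITH_SHIFT = "predict_with_shift"
    PREDICT_WITHOUT_SHIFT = "predict_without_shift"
    SKIP_PREDICTION = "skip_prediction"


def choose_action(bit_depth_reference: int, bit_depth_target: int) -> Action:
    """Pick an action from the sign of the bit depth difference.

    Assumption: difference = reference bit depth - target bit depth.
    """
    delta = bit_depth_reference - bit_depth_target
    if delta > 0:
        # Positive difference: align the bit depths by a shift, then predict.
        return Action.PREDICT_WITH_SHIFT
    if delta < 0:
        # Negative difference: the prediction is omitted.
        return Action.SKIP_PREDICTION
    # Zero difference: the bit depths already match (assumed behaviour).
    return Action.PREDICT_WITHOUT_SHIFT
```

For example, a 10-bit reference component and an 8-bit target component would select the shifted prediction, whereas the reverse combination would skip the prediction.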

[0014] The residual predicting unit may perform the prediction with the bit depths of the residual data arranged to be uniform among the components through the bit shift in a case where a color space of the input image is not an RGB space and omit the prediction in a case where the color space of the input image is the RGB space.

[0015] A color space of the input image may be a YUV space, and the residual predicting unit may perform the prediction with the bit depths of the residual data arranged to be uniform through the bit shift between a luminance component and a color difference component.

[0016] A color space of the input image may be an RGB space, and the residual predicting unit may perform the prediction with the bit depths of the residual data arranged to be uniform through the bit shift between a G component and an R component or a B component.

[0017] The residual predicting unit may perform the prediction by acquiring a difference between the bit depths of two components for which the prediction is performed, performing the bit shift of the residual data of one component out of the two components that corresponds to the difference between the bit depths, multiplying the bit-shifted residual data by a predetermined weighting coefficient, performing a bit shift of a result of the multiplication that corresponds to a predetermined number of bits, and acquiring a difference between the residual data of the other component and the bit-shifted result of the multiplication.
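The sequence of operations in this paragraph can be sketched for a single sample as follows. This is a minimal sketch, not the disclosed implementation: the function and parameter names are hypothetical, the luminance residual is assumed to be the component that is shifted, the difference is assumed to be luminance bit depth minus chrominance bit depth, and the default of 3 bits for the "predetermined number of bits" is an assumption, since the paragraph does not give a value.

```python
def predict_chroma_residual(r_luma: int, r_chroma: int,
                            bit_depth_luma: int, bit_depth_chroma: int,
                            alpha: int, weight_shift: int = 3) -> int:
    """Return the predicted residual for one chrominance sample.

    All names are illustrative; weight_shift=3 is an assumed value.
    """
    # Step 1: acquire the difference between the two bit depths.
    delta = bit_depth_luma - bit_depth_chroma

    # Step 2: shift the luminance residual so both residuals share a bit depth.
    if delta > 0:
        aligned = r_luma >> delta      # luminance is deeper: scale down
    elif delta < 0:
        aligned = r_luma << -delta     # luminance is shallower: scale up
    else:
        aligned = r_luma               # bit depths already match

    # Steps 3 and 4: multiply by the weighting coefficient, then shift the product.
    weighted = (alpha * aligned) >> weight_shift

    # Step 5: the difference between the chrominance residual and that value.
    return r_chroma - weighted
```

With a 10-bit luminance residual of 40, an 8-bit chrominance residual of 7, and a weighting coefficient of 4, the luminance residual is first shifted to 10, weighted to 5, and the value handed to the coder is 2. Python's `>>` rounds toward negative infinity for negative integers, which a real codec would need to match on both the coding and decoding sides.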

[0018] The residual predicting unit may set the weighting coefficient that is common to a plurality of components.

[0019] According to one embodiment of the present technology, there is provided an image processing method including: performing a prediction with bit depths of residual data arranged to be uniform among components when the prediction is performed among the components for the residual data between an input image configured by a plurality of the components and a predicted image; and coding predicted residual data generated through the prediction.

[0020] According to another embodiment of the present technology, there is provided an image processing apparatus including: a decoding unit that decodes coded data in which predicted residual data, which is a result of a prediction of residual data between an image configured by a plurality of components and a predicted image thereof among the components, is coded; and a residual restoring unit that performs restoration with bit depths of the residual data arranged to be uniform among the components when the restoration of the residual data is performed using the predicted residual data acquired by the decoding unit by decoding the coded data.

[0021] The residual restoring unit may arrange the bit depths of the residual data to be uniform through a bit shift.

[0022] A reception unit that receives information relating to the bit depths may be further included, and the residual restoring unit may arrange the bit depths of the residual data to be uniform by acquiring a difference between the bit depths of two components for which the prediction is performed based on information relating to the bit depths received by the reception unit and performing the bit shift based on the acquired difference between the bit depths.

[0023] The residual restoring unit, in a case where the acquired difference between the bit depths is not zero, may arrange the bit depths of the residual data to be uniform through the bit shift.

[0024] The residual restoring unit may perform restoration with the bit depths of the residual data arranged to be uniform through the bit shift in a case where the acquired difference between the bit depths is positive and omit the restoration in a case where the difference between the bit depths is negative.

[0025] The reception unit may further receive information relating to a color space of the image, and the residual restoring unit may perform the restoration with the bit depths of the residual data arranged to be uniform among the components through the bit shift in a case where the color space of the image is not an RGB space based on the information relating to the color space of the image received by the reception unit and omit the restoration in a case where the color space of the image is the RGB space.

[0026] A color space of the image may be a YUV space, and the residual restoring unit may perform the restoration with the bit depths of the residual data arranged to be uniform between a luminance component and a color difference component through the bit shift.

[0027] A color space of the image may be an RGB space, and the residual restoring unit may perform the restoration with the bit depths of the residual data arranged to be uniform through the bit shift between a G component and an R component or a B component.

[0028] The residual restoring unit, by acquiring a difference between the bit depths of two components for which the restoration is performed, performing the bit shift of the restored residual data of one component out of the two components that corresponds to the difference between the bit depths, multiplying the bit-shifted residual data by a predetermined weighting coefficient, performing a bit shift of a result of the multiplication that corresponds to a predetermined number of bits, and adding the bit-shifted result of the multiplication and the predicted residual data, may perform the restoration of the residual data of the other component.
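The restoration steps in this paragraph mirror the prediction on the coding side, with the final subtraction replaced by an addition. The following single-sample sketch rests on the same kind of assumptions: the names are hypothetical, the restored luminance residual is assumed to be the shifted component, the difference is assumed to be luminance bit depth minus chrominance bit depth, and the 3-bit default for the "predetermined number of bits" is an assumed value.

```python
def align_luma_residual(r_luma: int, bit_depth_luma: int,
                        bit_depth_chroma: int) -> int:
    """Shift the restored luminance residual to the chrominance bit depth."""
    delta = bit_depth_luma - bit_depth_chroma
    if delta > 0:
        return r_luma >> delta
    if delta < 0:
        return r_luma << -delta
    return r_luma


def restore_chroma_residual(predicted_residual: int, r_luma: int,
                            bit_depth_luma: int, bit_depth_chroma: int,
                            alpha: int, weight_shift: int = 3) -> int:
    """Restore one chrominance residual sample from its predicted residual.

    All names are illustrative; weight_shift=3 is an assumed value.
    """
    aligned = align_luma_residual(r_luma, bit_depth_luma, bit_depth_chroma)
    # Multiply by the weighting coefficient and shift, as on the coding side.
    weighted = (alpha * aligned) >> weight_shift
    # Add the decoded predicted residual back to recover the residual.
    return predicted_residual + weighted
```

Because the decoder recomputes the same weighted term from the restored luminance residual, adding it to the decoded value recovers the original chrominance residual exactly, provided both sides use the same bit depths, weighting coefficient, and shift.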

[0029] According to another embodiment of the present technology, there is provided an image processing method including: decoding coded data in which predicted residual data, which is a result of a prediction of residual data between an image configured by a plurality of components and a predicted image thereof among the components, is coded; and performing restoration with bit depths of the residual data arranged to be uniform among the components when the restoration of the residual data is performed using the predicted residual data acquired by decoding the coded data.

[0030] According to one embodiment of the present technology, a prediction is performed with bit depths of residual data arranged to be uniform among components when the prediction is performed among the components for the residual data between an input image configured by a plurality of the components and a predicted image, and predicted residual data generated through the prediction is coded.

[0031] According to another embodiment of the present technology, coded data is decoded in which predicted residual data, which is a result of a prediction of residual data between an image configured by a plurality of components and a predicted image thereof among the components, is coded, and restoration is performed with bit depths of the residual data arranged to be uniform among the components when the restoration of the residual data is performed using the predicted residual data acquired by decoding the coded data.

Effects of the Invention

[0032] According to the present disclosure, an image can be coded and decoded. Particularly, a decrease in the coding efficiency can be suppressed.

BRIEF DESCRIPTION OF DRAWINGS

[0033] FIG. 1 is a diagram that illustrates a configuration example of a coding unit.

[0034] FIG. 2 is a diagram that illustrates an example of semantics.

[0035] FIG. 3 is a block diagram that illustrates an example of the main configuration of an image coding apparatus.

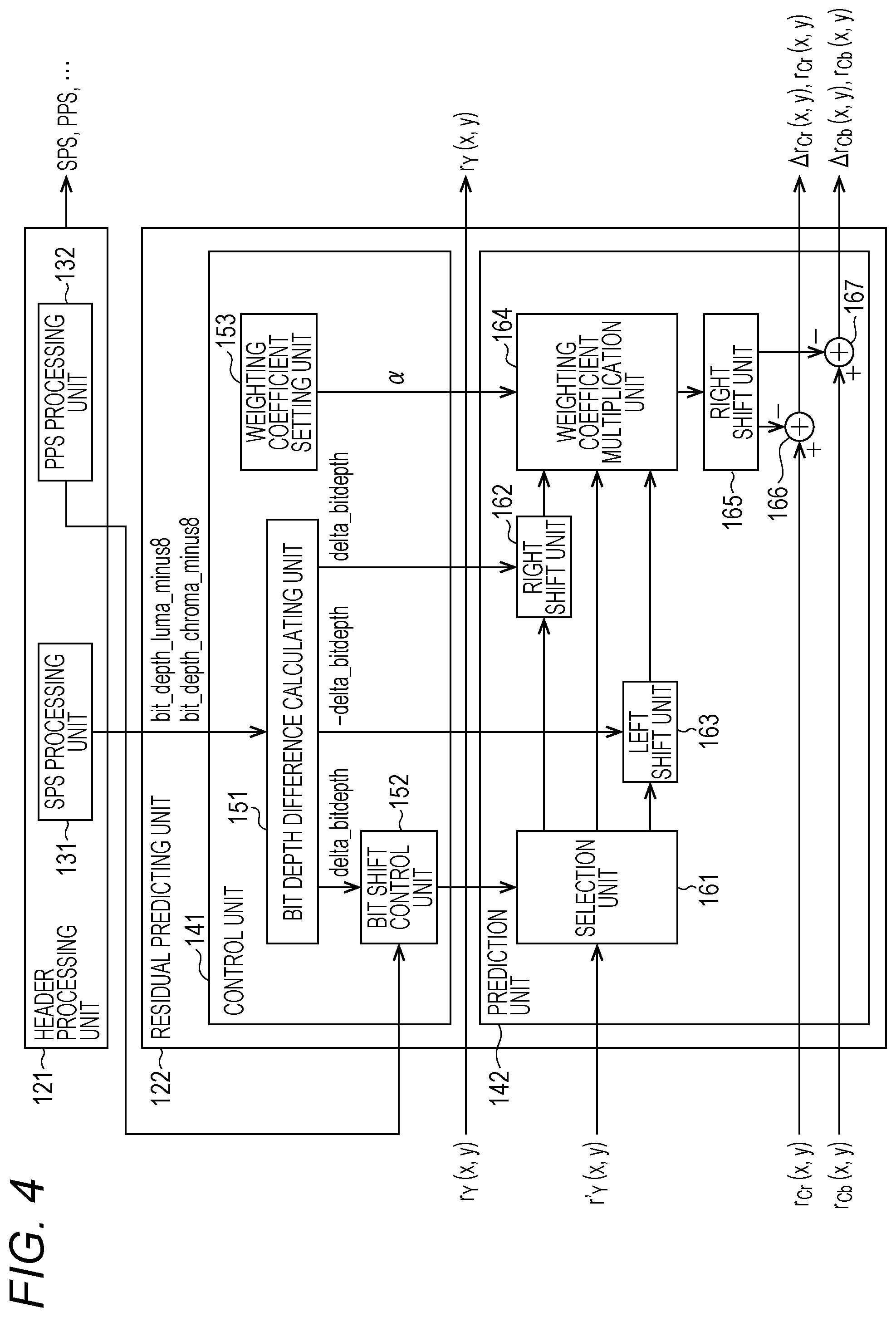

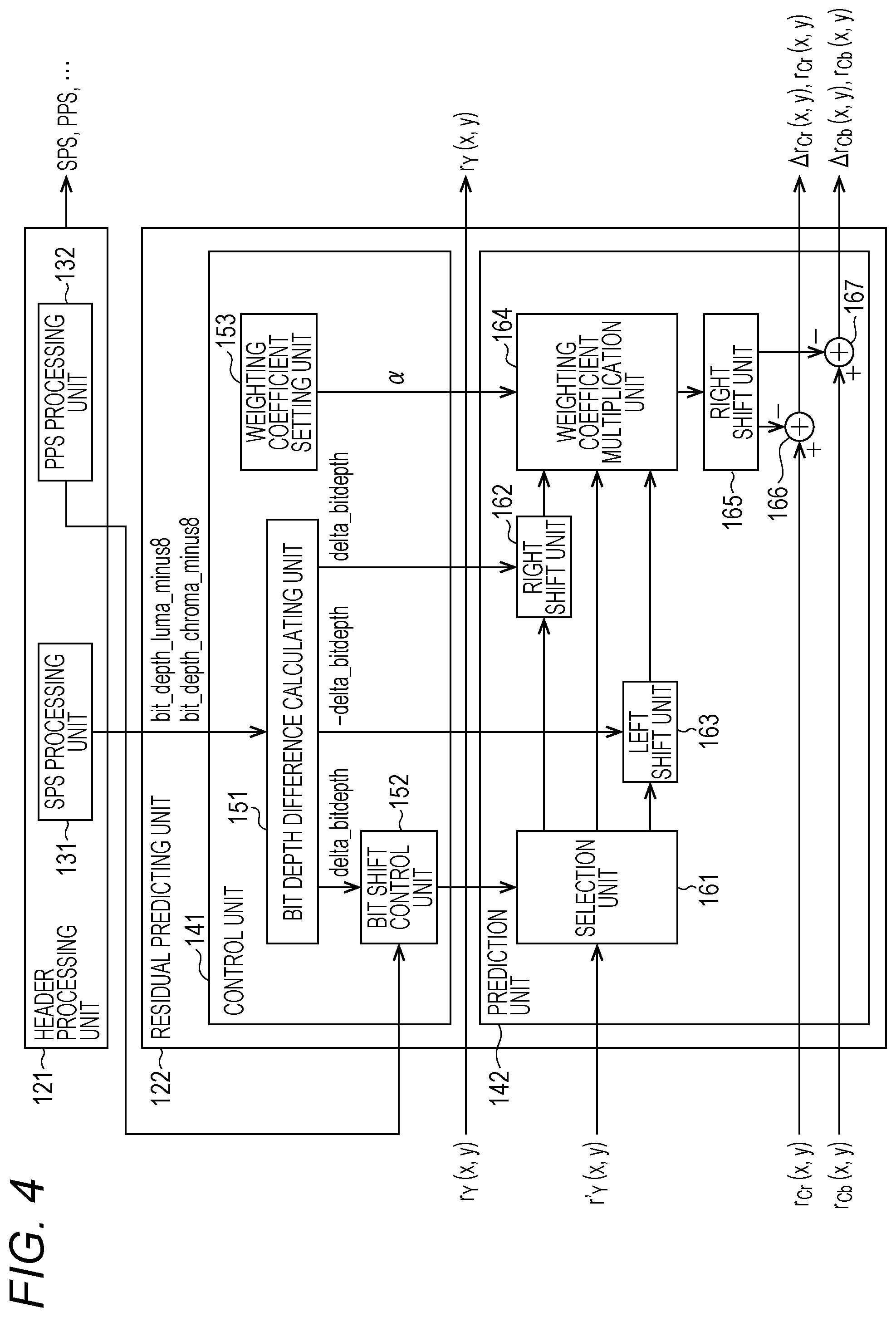

[0036] FIG. 4 is a block diagram that illustrates an example of the main configurations of a header processing unit and a residual predicting unit.

[0037] FIG. 5 is a flowchart that illustrates an example of the flow of a coding process.

[0038] FIG. 6 is a flowchart that illustrates an example of the flow of a residual predicting process.

[0039] FIG. 7 is a flowchart that illustrates an example of the flow of a predicted residual data generating process.

[0040] FIG. 8 is a flowchart that illustrates an example of the flow of a predicted residual data generating process.

[0041] FIG. 9 is a flowchart that illustrates an example of the flow of a predicted residual data generating process.

[0042] FIG. 10 is a block diagram that illustrates an example of the main configuration of an image decoding apparatus.

[0043] FIG. 11 is a block diagram that illustrates an example of the main configurations of a header acquiring unit and a residual restoring unit.

[0044] FIG. 12 is a flowchart that illustrates an example of the flow of a decoding process.

[0045] FIG. 13 is a flowchart that illustrates an example of the flow of a residual restoring process.

[0046] FIG. 14 is a flowchart that illustrates an example of the flow of a residual data restoring process.

[0047] FIG. 15 is a flowchart that illustrates an example of the flow of a residual data restoring process.

[0048] FIG. 16 is a flowchart that illustrates an example of the flow of a residual data restoring process.

[0049] FIG. 17 is a diagram that illustrates an example of semantics.

[0050] FIG. 18 is a block diagram that illustrates an example of the main configurations of a header processing unit and a residual predicting unit.

[0051] FIG. 19 is a flowchart that illustrates an example of the flow of a residual predicting process.

[0052] FIG. 20 is a block diagram that illustrates an example of the main configurations of a header acquiring unit and a residual restoring unit.

[0053] FIG. 21 is a flowchart that illustrates an example of the flow of a residual restoring process.

[0054] FIG. 22 is a diagram that illustrates an example of semantics.

[0055] FIG. 23 is a block diagram that illustrates an example of the main configurations of a header processing unit and a residual predicting unit.

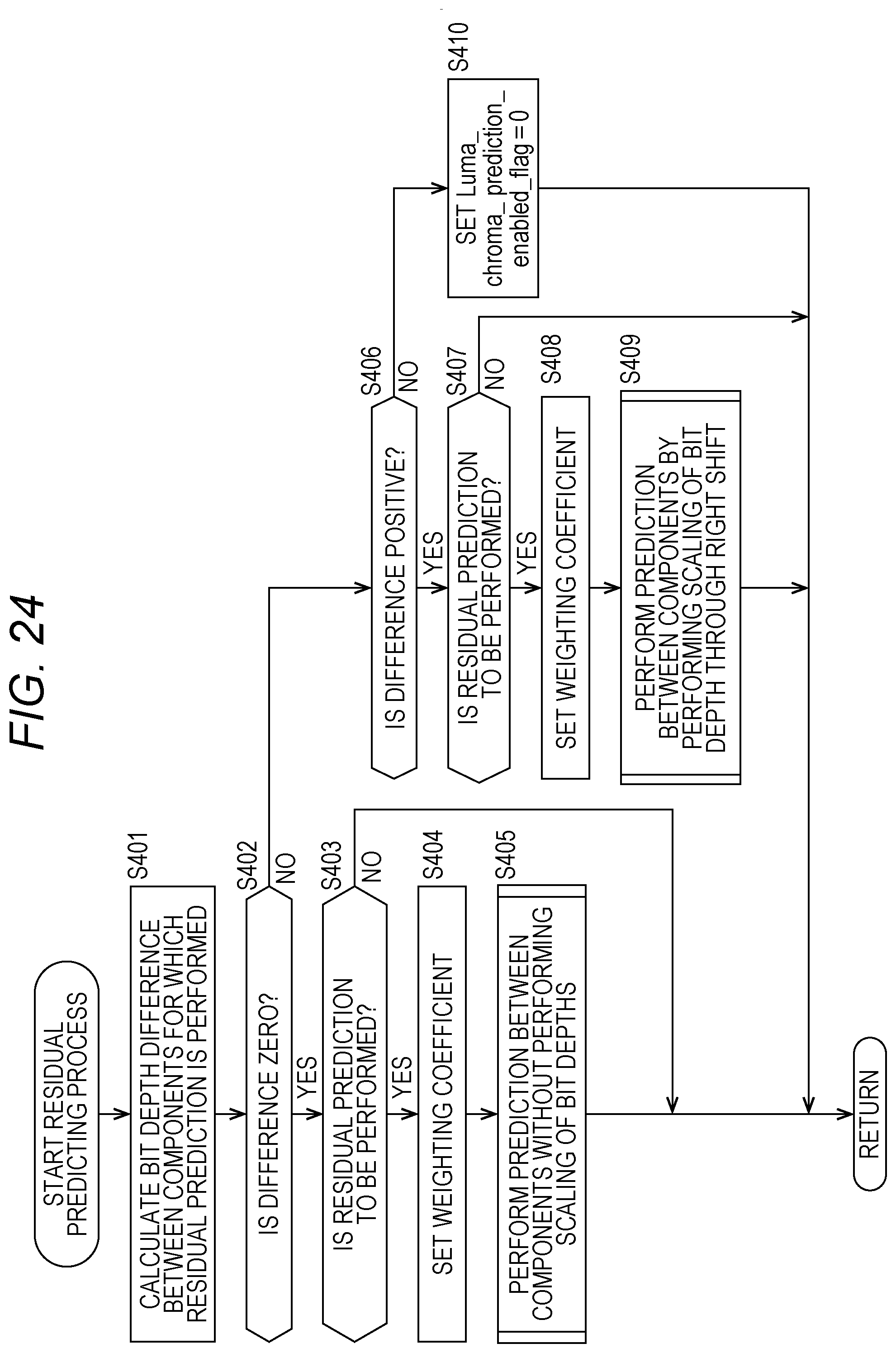

[0056] FIG. 24 is a flowchart that illustrates an example of the flow of a residual predicting process.

[0057] FIG. 25 is a block diagram that illustrates an example of the main configuration of a header acquiring unit and a residual restoring unit.

[0058] FIG. 26 is a flowchart that illustrates an example of the flow of a residual restoring process.

[0059] FIG. 27 is a diagram that illustrates an example of semantics.

[0060] FIG. 28 is a block diagram that illustrates an example of the main configurations of a header processing unit and a residual predicting unit.

[0061] FIG. 29 is a flowchart that illustrates an example of the flow of a residual predicting process.

[0062] FIG. 30 is a block diagram that illustrates an example of the main configurations of a header acquiring unit and a residual restoring unit.

[0063] FIG. 31 is a flowchart that illustrates an example of the flow of a residual restoring process.

[0064] FIG. 32 is a diagram that illustrates an example of syntax.

[0065] FIG. 33 is a diagram that illustrates an example of semantics.

[0066] FIG. 34 is a diagram that illustrates an example of semantics.

[0067] FIG. 35 is a diagram that illustrates an example of semantics.

[0068] FIG. 36 is a diagram that illustrates an example of syntax.

[0069] FIG. 37 is a diagram that illustrates an example of semantics.

[0070] FIG. 38 is a diagram that illustrates an example of semantics.

[0071] FIG. 39 is a diagram that illustrates an example of semantics.

[0072] FIG. 40 is a diagram that illustrates an example of a multiple viewpoint image coding system.

[0073] FIG. 41 is a diagram that illustrates an example of the main configuration of a multiple viewpoint image coding apparatus according to the present technology.

[0074] FIG. 42 is a diagram that illustrates an example of the main configuration of a multiple viewpoint image decoding apparatus according to the present technology.

[0075] FIG. 43 is a diagram that illustrates an example of a hierarchical image coding system.

[0076] FIG. 44 is a diagram that illustrates an example of spatial scalable coding.

[0077] FIG. 45 is a diagram that illustrates an example of temporal scalable coding.

[0078] FIG. 46 is a diagram that illustrates an example of scalable coding of a signal-to-noise ratio.

[0079] FIG. 47 is a diagram that illustrates an example of the main configuration of a hierarchical image coding apparatus according to the present technology.

[0080] FIG. 48 is a diagram that illustrates an example of the main configuration of a hierarchical image decoding apparatus according to the present technology.

[0081] FIG. 49 is a block diagram that illustrates an example of the main configuration of a computer.

[0082] FIG. 50 is a block diagram that illustrates an example of the schematic configuration of a television apparatus.

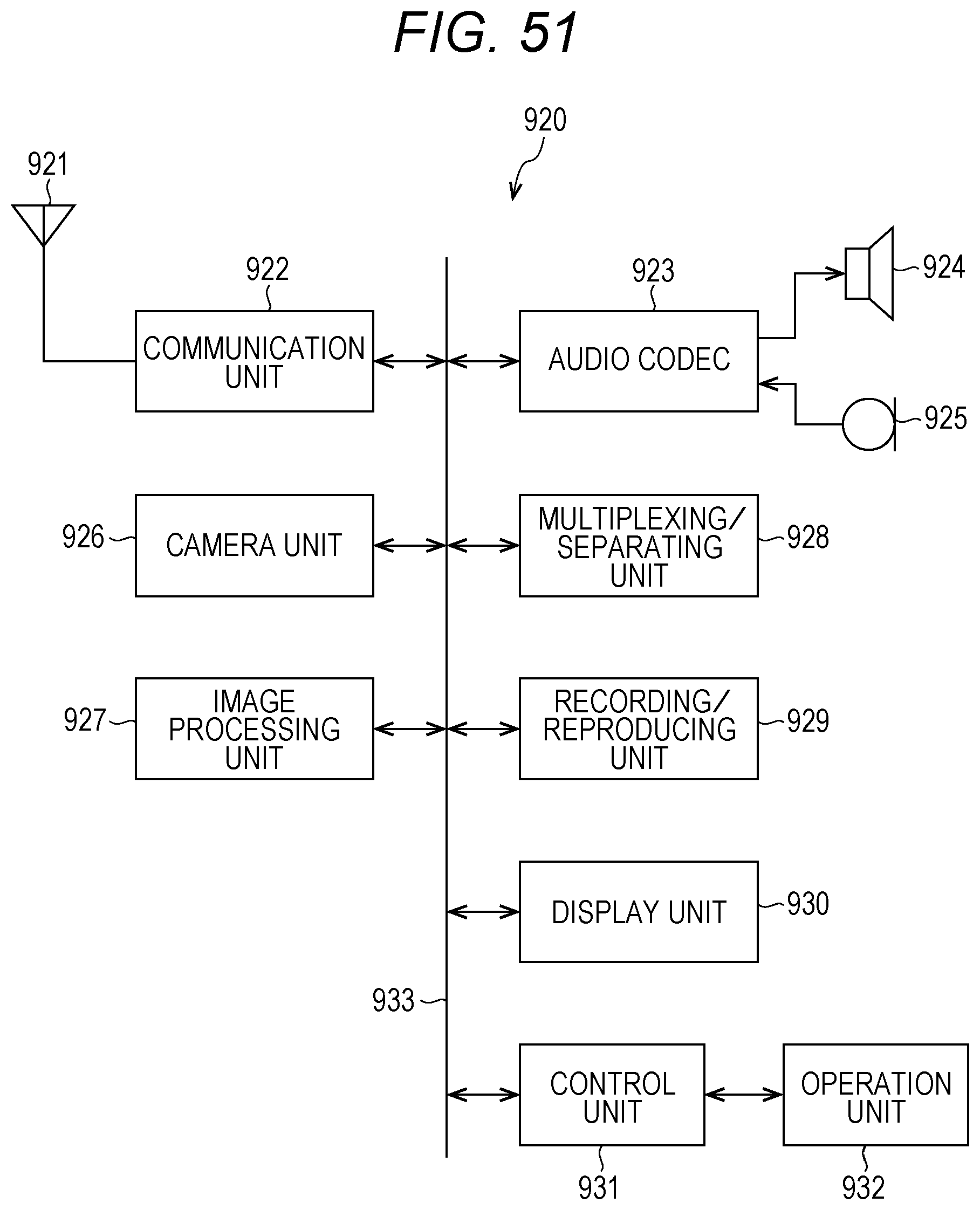

[0083] FIG. 51 is a block diagram that illustrates an example of the schematic configuration of a mobile phone.

[0084] FIG. 52 is a block diagram that illustrates an example of the schematic configuration of a recording/reproducing apparatus.

[0085] FIG. 53 is a block diagram that illustrates an example of the schematic configuration of an imaging apparatus.

[0086] FIG. 54 is a block diagram that illustrates an example of the schematic configuration of a video set.

[0087] FIG. 55 is a block diagram that illustrates an example of the schematic configuration of a video processor.

[0088] FIG. 56 is a block diagram that illustrates another example of the schematic configuration of a video processor.

MODE FOR CARRYING OUT THE INVENTION

[0089] Hereinafter, modes for carrying out the present disclosure (hereinafter referred to as embodiments) will be described. The description will be presented in the following order.

[0090] 1. First Embodiment (Image Coding Apparatus/Image Decoding Apparatus)

[0091] 2. Second Embodiment (Image Coding Apparatus/Image Decoding Apparatus)

[0092] 3. Third Embodiment (Image Coding Apparatus/Image Decoding Apparatus)

[0093] 4. Fourth Embodiment (Image Coding Apparatus/Image Decoding Apparatus)

[0094] 5. Fifth Embodiment (Commonization of Weighting Coefficient)

[0095] 6. Sixth Embodiment (Multiple Viewpoint Image Coding Apparatus/Multiple Viewpoint Image Decoding Apparatus)

[0096] 7. Seventh Embodiment (Hierarchical Image Coding Apparatus/Hierarchical Image Decoding Apparatus)

[0097] 8. Eighth Embodiment (Computer)

[0098] 9. Ninth Embodiment (Application Example)

[0099] 10. Tenth Embodiment (Set/Unit/Module/Processor)

1. First Embodiment

Flow of Standardization of Image Coding

[0100] Recently, image information has come to be handled as digital data, and, for the purpose of transmitting and accumulating that information with high efficiency, devices that compress and code images are widely used. Such devices employ a coding system that exploits redundancy specific to image information and compresses data through an orthogonal transform, such as a discrete cosine transform, and motion compensation. Examples of such a coding system include Moving Picture Experts Group (MPEG) and the like.

[0101] Particularly, MPEG2 (ISO/IEC 13818-2) is defined as a general-purpose image coding system and is a standard covering both interlaced scanning images and sequential scanning images, as well as both standard resolution images and high definition images. For example, MPEG2 is currently used widely in a broad range of professional and consumer applications. By using the MPEG2 compression system, for example, a code amount (bit rate) of 4 to 8 Mbps is assigned to an interlaced scanning image of the standard resolution having 720×480 pixels. In addition, a code amount (bit rate) of 18 to 22 Mbps is assigned to an interlaced scanning image of high resolution having 1920×1088 pixels. Accordingly, a high compression rate and a satisfactory picture quality can be realized.

[0102] MPEG2 mainly targets high image quality coding appropriate for broadcasting and does not support a code amount (bit rate) lower than that of MPEG1, in other words, a compression rate higher than that of MPEG1. However, demand for such coding was expected to increase with the wide use of portable terminals, and the MPEG4 coding system was standardized accordingly. Its standard relating to an image coding system was approved in December 1998 as ISO/IEC 14496-2.

[0103] Furthermore, in recent years, H.26L (International Telecommunication Union Telecommunication Standardization Sector (ITU-T) Q6/16 Video Coding Expert Group (VCEG)) has been standardized, initially for the purpose of image coding for television conferences. It is known that H.26L requires a larger calculation amount for its coding and decoding processes than a conventional coding system such as MPEG2 or MPEG4 but realizes higher coding efficiency. In addition, as part of the activities of MPEG4, a standard that is based on H.26L and realizes higher coding efficiency by introducing functions not supported by H.26L has been developed as the Joint Model of Enhanced-Compression Video Coding.

[0104] As for the schedule of that standardization, in March 2003 it became an international standard under the names H.264 and MPEG-4 Part 10 (Advanced Video Coding; hereinafter referred to as AVC).

[0105] In addition, as an extension of H.264/AVC, standardization of the Fidelity Range Extension (FRExt), which includes coding tools required for business use, such as RGB, 4:2:2, and 4:4:4, as well as the 8×8 DCT and the quantization matrix defined in MPEG-2, was completed in February 2005. In this way, H.264/AVC became a coding system capable of representing the film noise included in a movie, and it has come to be used in a broad range of applications such as Blu-Ray Disc (trademark).

[0106] However, recently, demand has been increasing for coding with an even higher compression rate, for example, to compress an image of about 4000×2000 pixels, which is four times the number of pixels of a high definition image, or to deliver a high definition image in an environment having a limited transmission capacity such as the Internet. For this reason, reviews for improving the coding efficiency have been continuously made by the VCEG affiliated with the ITU-T described above.

[0107] Thus, currently, for the purpose of improving the coding efficiency beyond that of the AVC, standardization of a coding system called High Efficiency Video Coding (HEVC) is being advanced by the Joint Collaboration Team - Video Coding (JCTVC), which is a joint standardization organization of the ITU-T and the International Organization for Standardization/International Electrotechnical Commission (ISO/IEC). Regarding the HEVC standard, a committee draft, which is a draft-edition specification, was issued in January 2013 (for example, see Non-Patent Document 1).

Coding System

[0108] Hereinafter, the present technology will be described in the case of being applied to image coding/decoding of the High Efficiency Video Coding (HEVC) system as an example.

Coding Unit

[0109] In the Advanced Video Coding (AVC) system, a hierarchical structure configured by a macro block and a sub macro block is defined. However, a macro block of 16×16 pixels is not optimal for a large image frame called Ultra High Definition (UHD; 4000 pixels × 2000 pixels) that becomes a target for a next-generation coding system.

[0110] In contrast to this, in the HEVC system, as illustrated in FIG. 1, a coding unit (CU) is defined.

[0111] A CU is also called a Coding Tree Block (CTB) and is a partial area of an image in units of pictures that plays a role similar to that of the macro block in the AVC system. While the macro block is fixed to the size of 16×16 pixels, the size of the CU is not fixed but is designated in image compression information in each sequence.

[0112] For example, in the sequence parameter set (SPS) included in coded data that is an output, a maximum size (Largest Coding Unit (LCU)) and a minimum size (Smallest Coding Unit (SCU)) of the CU are defined.

[0113] Within each LCU, by setting split_flag=1 in a range not falling below the size of the SCU, the LCU can be split into CUs having a smaller size. In the example illustrated in FIG. 1, the size of the LCU is 128, and the largest hierarchical depth is 5. When the value of split_flag is "1", a CU having a size of 2N×2N is split into CUs each having a size of N×N at the hierarchy one level below.
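
As an illustration of the splitting described above, the following minimal sketch lists the CU size at each hierarchical depth and splits one 2N×2N CU into four N×N CUs. The function names `cu_sizes` and `split_cu` are hypothetical, and the sketch assumes that a largest hierarchical depth of 5 means five levels starting at the LCU size; the order of the four sub-CUs is likewise an assumption made for illustration.

```python
def cu_sizes(lcu_size: int, max_depth: int) -> list[int]:
    """Return the CU edge length at each hierarchical depth, largest first.

    Assumption: max_depth counts the levels including the LCU itself.
    """
    sizes = []
    size = lcu_size
    for _ in range(max_depth):
        sizes.append(size)
        size //= 2  # split_flag = 1 turns a 2Nx2N CU into four NxN CUs
    return sizes


def split_cu(x: int, y: int, size: int) -> list[tuple[int, int, int]]:
    """Split the 2Nx2N CU at (x, y) into four NxN CUs given as (x, y, size)."""
    half = size // 2
    return [
        (x, y, half),                # top-left
        (x + half, y, half),         # top-right
        (x, y + half, half),         # bottom-left
        (x + half, y + half, half),  # bottom-right
    ]


print(cu_sizes(128, 5))     # [128, 64, 32, 16, 8]
print(split_cu(0, 0, 128))
```

Under these assumptions, an LCU of size 128 with five levels would reach a smallest CU size of 8.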

[0114] Furthermore, the CU is split into prediction units (PUs), which are areas (partial areas of an image in units of pictures) serving as processing units of an intra prediction or an inter prediction, and into transform units (TUs), which are areas (partial areas of an image in units of pictures) serving as processing units of an orthogonal transform. At present, in the HEVC system, 16×16 and 32×32 orthogonal transforms can be used in addition to 4×4 and 8×8 orthogonal transforms.

[0115] As in the HEVC system described above, in the case of a coding system in which a CU is defined, and various processes are performed in units of CUs, it may be considered that a macro block of the AVC system corresponds to an LCU, and a block (sub block) corresponds to a CU. In addition, a motion compensation block of the AVC system may be considered to correspond to a PU. However, since the CU has a hierarchical structure, the size of the LCU of the highest hierarchy is generally set to be larger than that of the macro block of the AVC system, for example, 128×128 pixels.

[0116] Thus, hereinafter, an LCU is assumed to include a macro block of the AVC system as well, and a CU is assumed to include a block (sub block) of the AVC system as well. In other words, a "block" used in the description presented below represents an arbitrary partial area within the picture, and the size, the shape, the characteristic, and the like thereof are not specifically limited. In other words, a "block", for example, includes arbitrary areas such as a TU, a PU, an SCU, a CU, an LCU, a sub block, a macro block, and a slice. It is apparent that a partial area (processing unit) other than those is also included therein. In addition, a case where the size, the processing unit, or the like needs to be limited will be appropriately described.

[0117] In this specification, a Coding Tree Unit (CTU) is assumed to be a unit that includes a Coding Tree Block (CTB) of a CU of the largest size (LCU) and a parameter at the time of processing at the LCU base (level) thereof. In addition, a Coding Unit (CU) configuring the CTU is assumed to be a unit that includes a Coding Block (CB) and a parameter at the time of processing at the CU base (level) thereof.

Mode Selection

[0118] In the AVC and HEVC coding systems, in order to attain higher coding efficiency, selection of an appropriate prediction mode is significant.

[0119] As an example of such a selection system, there is a method implemented in the reference software of H.264/MPEG-4 AVC called the JM (Joint Model) (published at http://iphome.hhi.de/suehring/tml/index.htm).

[0120] In the JM, one of two mode determination methods, a high complexity mode and a low complexity mode described below, can be selected. In either mode, a cost function value relating to each prediction mode Mode is calculated, and the prediction mode having the smallest cost function value is selected as the optimal mode for the block or the macro block.

[0121] The cost function in the high complexity mode is represented below as in Equation (1).

[Mathematical Formula 1]

$$\mathrm{Cost}(\mathrm{Mode} \in \Omega) = D + \lambda \cdot R \tag{1}$$

[0122] Here, $\Omega$ is the universal set of candidate modes used for coding the block or the macro block, and D is the differential energy between a decoded image and an input image in a case where coding is performed in the prediction mode. In addition, $\lambda$ is a Lagrange undetermined multiplier given as a function of a quantization parameter. R is the total code amount, including the orthogonal transform coefficients, in a case where coding is performed in the mode.

[0123] In other words, when coding is performed in the high complexity mode, in order to calculate the above-described parameters D and R, a provisional encoding process needs to be performed once for all the candidate modes, and accordingly, a larger calculation amount is necessary.

[0124] A cost function in the low complexity mode is represented below as in Equation (2).

[Mathematical Formula 2]

$$\mathrm{Cost}(\mathrm{Mode} \in \Omega) = D + \mathrm{QP2Quant}(QP) \cdot \mathrm{HeaderBit} \tag{2}$$

[0125] Here, D, different from that of the case of the high complexity mode, is differential energy between a predicted image and an input image. In addition, QP2Quant (QP) is given as a function of a quantization parameter QP, and HeaderBit is a code amount relating to information belonging to a header such as a motion vector and a mode that does not include an orthogonal transform coefficient.

[0126] In other words, in the low complexity mode, while a prediction process needs to be performed for each candidate mode, a decoded image is not necessary, and the coding process does not need to be performed. For this reason, the low complexity mode can realize a calculation amount lower than that of the high complexity mode.
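
The two cost functions and the selection of the mode having the smallest cost function value can be sketched as follows. This is a minimal illustration and not the JM implementation: the function names, the candidate mode names, and all numeric values are hypothetical, and the values of D, R, HeaderBit, the Lagrange multiplier, and QP2Quant(QP) are assumed to have been computed elsewhere.

```python
def high_complexity_cost(distortion: float, rate_bits: float, lam: float) -> float:
    """Equation (1): D + lambda * R, with D measured against the decoded image."""
    return distortion + lam * rate_bits


def low_complexity_cost(distortion: float, header_bits: float, qp2quant: float) -> float:
    """Equation (2): D + QP2Quant(QP) * HeaderBit, with D measured against the predicted image."""
    return distortion + qp2quant * header_bits


def select_mode(costs: dict[str, float]) -> str:
    """Return the candidate mode with the smallest cost function value."""
    return min(costs, key=costs.get)


# Hypothetical candidate modes with made-up (D, R) pairs and a made-up lambda.
candidates = {
    "intra_dc": (1200.0, 40.0),
    "intra_planar": (900.0, 95.0),
    "inter_2Nx2N": (700.0, 110.0),
}
lam = 4.0
costs = {mode: high_complexity_cost(d, r, lam) for mode, (d, r) in candidates.items()}
best = select_mode(costs)
print(best, costs[best])  # inter_2Nx2N 1140.0
```

In the high complexity mode, obtaining each (D, R) pair would require a provisional encoding of the block in that mode, which is where the larger calculation amount comes from.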

Residual Prediction

[0127] Meanwhile, in the HEVC, a method for making a prediction (also referred to as a residual prediction) of a residual signal between components at the time of 444 coding has been considered (for example, see Non-patent Document 2).

[0128] In a method disclosed in this Non-patent Document 2, a color difference component (Cb/Cr) (or an R component or a B component) is predicted using a luminance component (Y) (or a G component) as in the following Equation (3).

[Mathematical Formula 3]

$$\Delta r_c(x, y) = r_c(x, y) - (\alpha \times r_L(x, y)) >> 3 \tag{3}$$

[0129] Here, $r_c(x, y)$ represents residual data (a difference between an input image and a predicted image) of a color difference component (Cb or Cr). In addition, $r_L(x, y)$ represents residual data (a difference between an input image and a predicted image) of a luminance component (Y). Furthermore, $\Delta r_c(x, y)$ represents the result of the residual prediction, in which the residual data of the color difference component (Cb or Cr) is predicted using the residual data of the luminance component (Y) (this result is also referred to as predicted residual data). In these, "x, y" represents a position (coordinates) within an image.

[0130] In addition, $\alpha$ represents a weighting coefficient and takes a value of one of ±(0, 1, 2, 4, 8). This value is set in units of TUs (in other words, the value is transmitted to the decoding side in units of TUs). Here, ">>" represents a bit shift to the right side (right shift). For example, ">>n" represents a right shift corresponding to n bits.
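
For a single sample position, Equation (3) can be sketched as follows. The function name `predict_residual` is hypothetical. The sketch assumes that the right shift applies to the weighted luminance term before the subtraction and that the shift of a negative value is an arithmetic shift, which is how Python's `>>` behaves on integers.

```python
def predict_residual(r_c: int, r_l: int, alpha: int) -> int:
    """Equation (3): predicted residual = r_c - ((alpha * r_L) >> 3).

    alpha is assumed to be one of 0, +-1, +-2, +-4, +-8.
    """
    return r_c - ((alpha * r_l) >> 3)


# Chroma residual 10, luminance residual 16, weight 4: (4 * 16) >> 3 = 8.
print(predict_residual(10, 16, 4))   # 2
# A negative luminance residual: (4 * -16) >> 3 = -8.
print(predict_residual(10, -16, 4))  # 18
```

With the weighting coefficient set to 0, the predicted residual data equals the original residual data of the color difference component.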

[0131] Whether or not such a residual prediction is made is controlled in units of picture parameter sets (PPS). In other words, in a PPS, an on/off flag controlling whether or not such a residual prediction is made is transmitted to the decoding side.

[0132] Meanwhile, generally, the bit depths of data of components are independent from each other, and thus, there is a possibility that mutually-different values are set. However, as illustrated in Equation (3) described above, in the prediction method disclosed in Non-patent Document 2, there is a premise that the bit depths of the residual data (luminance components or color difference components) of the components are the same, and a case where the bit depths of the residual data are different between the components is not considered. In other words, in a prediction as represented in Equation (3), in a case where the bit depths of the residual data are different from each other between the components, a correct prediction is not made, and there is concern that the coding efficiency is lowered.

Scaling Bit Depth

[0133] Thus, when a prediction (residual prediction) among components is made for residual data between an input image configured by a plurality of the components and a predicted image, the bit depths of the residual data are arranged to be uniform (scaling is performed) among the components. By arranging as such, also in a case where the bit depths of residual data are different among the components, a prediction can be made with the bit depths of the residual data of the components being uniform, and accordingly, the residual prediction can be correctly made. Accordingly, a decrease in the coding efficiency can be suppressed.

[0134] While a method for arranging the bit depths of the residual data to be uniform among the components is arbitrary, for example, the bit depths of the residual data may be arranged to be uniform among the components by performing a bit shift of the residual data. By configuring as such, the bit depths of the residual data can be easily arranged to be uniform among the components. In other words, the processing load and the processing time can be decreased.

[0135] Here, the color space of the input image in which the bit depths of the residual data are arranged to be uniform is arbitrary. For example, the color space may be a YUV space (YUV444 or the like) or an RGB space (RGB444 or the like). For example, in a case where the color space of an input image is the YUV space, the prediction may be made with the bit depths of residual data being arranged to be uniform between a luminance component and a color difference component through a bit shift. More specifically, for example, the prediction may be made after the bit depth of the luminance component is arranged to be uniform with respect to the bit depth of the color difference component through a bit shift. In addition, for example, in a case where the color space of the input image is the RGB space, the prediction may be performed with the bit depths of the residual data being arranged to be uniform between a G component and an R component or a B component through a bit shift. More specifically, for example, the prediction may be made after the bit depth of the G component is arranged to be uniform with respect to the bit depth of the R component or the B component through a bit shift.

Shift Operation

[0136] A specific example of the bit shift (shift operation) for arranging the bit depths to be uniform as described above will be described. A method for the shift operation is arbitrary. For example, in a case where a bit depth difference between two components to be predicted is not zero, a prediction may be made with the bit depths of residual data being arranged to be uniform between the components through a bit shift. For example, as represented in the following Equation (4), it may be configured such that a difference (bit depth difference) of bit depths of residual data between the components is acquired, and, as represented in Equations (5) to (8), a bit shift corresponding to the bit depth difference is made. At that time, the bit shift corresponding to the absolute value of the bit depth difference, as represented in Equation (6) or (8), may be made. In such a case, for example, a shift direction may be determined as represented in Equation (6) and Equation (8) based on whether or not the conditions of Equation (5) and Equation (7) are satisfied.

[Mathematical Formula 4]

$$\Delta\mathrm{bitdepth} = \mathrm{BitDepth}_Y - \mathrm{BitDepth}_C \tag{4}$$

$$\text{if } (\Delta\mathrm{bitdepth} \geq 0) \tag{5}$$

$$\Delta r_c(x, y) = r_c(x, y) - (\alpha \times (r_L(x, y) >> \Delta\mathrm{bitdepth})) >> 3 \tag{6}$$

$$\text{else} \tag{7}$$

$$\Delta r_c(x, y) = r_c(x, y) - (\alpha \times (r_L(x, y) << -\Delta\mathrm{bitdepth})) >> 3 \tag{8}$$

[0137] In Equation (4) to Equation (8), $\mathrm{BitDepth}_Y$ represents the bit depth of the residual data of the luminance component (Y), and $\mathrm{BitDepth}_C$ represents the bit depth of the residual data of the color difference component (Cb or Cr). In addition, $\Delta\mathrm{bitdepth}$ represents a bit depth difference between components (a difference between the bit depth ($\mathrm{BitDepth}_Y$) of the residual data of the luminance component (Y) and the bit depth ($\mathrm{BitDepth}_C$) of the residual data of the color difference component (Cb or Cr)).

[0138] In addition, $r_c(x, y)$ represents the residual data of the color difference component (Cb or Cr). Furthermore, $r_L(x, y)$ represents the residual data of the luminance component (Y). In addition, $\Delta r_c(x, y)$ represents predicted residual data between components (the residual data of the color difference component (Cb or Cr) is predicted using the residual data of the luminance component (Y)). In these, "x, y" represents a position (coordinates) within an image.

[0139] In addition, $\alpha$ represents a weighting coefficient. The value of $\alpha$ is arbitrary and, for example, is set to a value of one of ±(0, 1, 2, 4, 8). This value is set in units of TUs (in other words, the value is transmitted to the decoding side in units of TUs). Here, ">>" represents a bit shift to the right side (right shift), and "<<" represents a bit shift to the left side (left shift). For example, ">>n" represents a right shift corresponding to n bits, and "<<m" represents a left shift corresponding to m bits.

[0140] In the case of this example, as represented in Equation (4) to Equation (8), a bit depth difference ($\Delta\mathrm{bitdepth}$) between components is calculated using Equation (4). Then, in a case where this value satisfies the condition of Equation (5), in other words, in a case where $\Delta\mathrm{bitdepth}$ is zero or more, predicted residual data ($\Delta r_c(x, y)$) is calculated as in Equation (6). As represented in Equation (6), although the calculation method of this case is basically the same as Equation (3), the predicted residual data ($\Delta r_c(x, y)$) is calculated by shifting the residual data ($r_L(x, y)$) of the luminance component (Y) to the right side in correspondence with the bit depth difference ($\Delta\mathrm{bitdepth}$).

[0141] On the other hand, in a case where the bit depth difference ($\Delta\mathrm{bitdepth}$) between the components has a negative value (a value less than zero) and does not satisfy the condition of Equation (5) (the condition of Equation (7) is satisfied), predicted residual data ($\Delta r_c(x, y)$) is calculated as in Equation (8). As represented in Equation (8), although the calculation method of this case is basically the same as Equation (3), the predicted residual data ($\Delta r_c(x, y)$) is calculated by shifting the residual data ($r_L(x, y)$) of the luminance component (Y) to the left side in correspondence with the bit depth difference ($-\Delta\mathrm{bitdepth}$).

[0142] In other words, in such a case, the bit depth ($\mathrm{BitDepth}_Y$) of the residual data ($r_L(x, y)$) of the luminance component (Y) can be arranged to be uniform with the bit depth ($\mathrm{BitDepth}_C$) of the residual data ($r_c(x, y)$) of the color difference component (Cb or Cr), and predicted residual data ($\Delta r_c(x, y)$) is calculated. More specifically, a difference between the bit depths of two components used for a residual prediction is acquired, a bit shift (scaling) corresponding to the difference between the bit depths is made for the residual data of one component of the two components, the residual data that is bit-shifted is multiplied by a predetermined weighting coefficient, a bit shift corresponding to a predetermined number of bits is made for a result of the multiplication, and a difference between the residual data of the other component and the result of the multiplication that has been bit-shifted is acquired. A prediction may be made as above.

[0143] In a case where $\Delta\mathrm{bitdepth}$ is zero, the bit shift amount of the residual data ($r_L(x, y)$) of the luminance component (Y) is zero, and thus, the predicted residual data ($\Delta r_c(x, y)$) can be regarded as being calculated as in Equation (3).

[0144] By calculating as described above, also in a case where the bit depths of the residual data are different between components, predicted residual data ($\Delta r_c(x, y)$) can be correctly calculated. Accordingly, a decrease in the coding efficiency can be suppressed.
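
The shift operation of Equation (4) to Equation (8) can be sketched for a single sample position as follows. The function names `scale_luma` and `predict_residual_scaled` are hypothetical, and the sketch assumes arithmetic right shifts for negative values, which is how Python's `>>` behaves on integers.

```python
def scale_luma(r_l: int, delta_bitdepth: int) -> int:
    """Bring the luminance residual to the bit depth of the color difference component."""
    if delta_bitdepth >= 0:          # Equation (5)
        return r_l >> delta_bitdepth  # right shift used in Equation (6)
    return r_l << -delta_bitdepth     # left shift used in Equation (8)


def predict_residual_scaled(r_c: int, r_l: int, alpha: int,
                            bit_depth_y: int, bit_depth_c: int) -> int:
    """Residual prediction with the bit depths arranged to be uniform."""
    delta_bitdepth = bit_depth_y - bit_depth_c  # Equation (4)
    return r_c - ((alpha * scale_luma(r_l, delta_bitdepth)) >> 3)


# 10-bit luminance, 8-bit color difference: r_L = 64 is scaled down to 16.
print(predict_residual_scaled(10, 64, 4, 10, 8))  # 2
# 8-bit luminance, 10-bit color difference: r_L = 16 is scaled up to 64.
print(predict_residual_scaled(40, 16, 4, 8, 10))  # 8
# Equal bit depths: no scaling, which matches Equation (3).
print(predict_residual_scaled(10, 16, 4, 8, 8))   # 2
```

In the first two calls, the luminance residual is brought to the scale of the color difference residual before the weighting coefficient is applied, which is the point of the scaling.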

Semantics

[0145] In order to perform scaling of the bit depth as described above, semantics may be described as an example illustrated in FIG. 2. A portion of the semantics illustrated in FIG. 2 to which an underline is applied is a description corresponding to the scaling (for example, Equation (5) to Equation (8)) described above.

Image Coding Apparatus

[0146] FIG. 3 is a block diagram that illustrates an example of the configuration of an image coding apparatus that is one aspect of an image processing apparatus according to the present technology. The image coding apparatus 100 illustrated in FIG. 3 codes image data of a moving image, for example, by using a prediction process of the HEVC or a prediction process of a system that is compliant therewith. Hereinafter, a case will be described as an example in which the color space of an input image is YUV444.

[0147] As illustrated in FIG. 3, the image coding apparatus 100 includes: a screen rearranging buffer 102; a calculation unit 103; an orthogonal transform unit 104; a quantization unit 105; a reversible coding unit 106; an accumulation buffer 107; an inverse quantization unit 108; and an inverse orthogonal transform unit 109. In addition, the image coding apparatus 100 includes: a calculation unit 110; a loop filter 111; a frame memory 112; an intra prediction unit 113; an inter prediction unit 114; a predicted image selecting unit 115; and a rate control unit 116. Furthermore, the image coding apparatus 100 includes: a header processing unit 121; a residual predicting unit 122; and a residual restoring unit 123.

[0148] The screen rearranging buffer 102 stores images of frames of input image data in order of display, rearranges the stored images of the frames into the order for coding in accordance with a Group Of Pictures (GOP) structure, and supplies the images acquired by rearranging the order of frames to the calculation unit 103. In addition, the screen rearranging buffer 102 supplies the images acquired by rearranging the order of frames also to the intra prediction unit 113 and the inter prediction unit 114.

[0149] The calculation unit 103 subtracts a predicted image supplied from the intra prediction unit 113 or the inter prediction unit 114 through the predicted image selecting unit 115 from an image read from the screen rearranging buffer 102 and supplies difference information (residual data) thereof to the residual predicting unit 122. For example, in the case of an image for which intra coding is performed, the calculation unit 103 subtracts a predicted image supplied from the intra prediction unit 113 from an image read from the screen rearranging buffer 102. On the other hand, for example, in the case of an image for which inter coding is performed, the calculation unit 103 subtracts a predicted image supplied from the inter prediction unit 114 from an image read from the screen rearranging buffer 102.

[0150] The orthogonal transform unit 104 performs an orthogonal transform such as a discrete cosine transform or a Karhunen-Loeve transform for the residual data of the luminance component or the predicted residual data of the color difference component supplied from the residual predicting unit 122. The orthogonal transform unit 104 supplies transform coefficients acquired through the orthogonal transform to the quantization unit 105.

[0151] The quantization unit 105 quantizes the transform coefficients supplied from the orthogonal transform unit 104. The quantization unit 105 sets quantization parameters based on information relating to a target value of a coding amount that is supplied from the rate control unit 116 and performs the quantization thereof. The quantization unit 105 supplies the quantized transform coefficients to the reversible coding unit 106.

[0152] The reversible coding unit 106 codes the quantized transform coefficients quantized by the quantization unit 105 using an arbitrary coding system. Since the coefficient data is quantized under the control of the rate control unit 116, the coding amount becomes the target value (or a value close to the target value) set by the rate control unit 116.

[0153] In addition, the reversible coding unit 106 acquires information representing the mode of the intra prediction and the like from the intra prediction unit 113 and acquires the information representing the mode of the inter prediction, differential motion vector information, and the like from the inter prediction unit 114.

[0154] The reversible coding unit 106 codes such various information items using an arbitrary coding system and configures (multiplexes) the coded information as a part of header information of coded data (also referred to as a coded stream). The reversible coding unit 106 supplies the coded data acquired by the coding process to the accumulation buffer 107, thereby accumulating the coded data therein.

[0155] Examples of the coding system of the reversible coding unit 106 include variable length coding and arithmetic coding. An example of the variable length coding is Context-Adaptive Variable Length Coding (CAVLC) defined in the H.264/AVC system. An example of the arithmetic coding is Context-Adaptive Binary Arithmetic Coding (CABAC).

[0156] The accumulation buffer 107 temporarily maintains the coded data supplied from the reversible coding unit 106. In addition, the accumulation buffer 107 outputs the maintained coded data to the outside of the image coding apparatus 100 at predetermined timing. In other words, the accumulation buffer 107 is a transmission unit that transmits the coded data as well.

[0157] In addition, the transform coefficients quantized by the quantization unit 105 are supplied also to the inverse quantization unit 108. The inverse quantization unit 108 performs inverse quantization of the quantized transform coefficients by using a method corresponding to the quantization process performed by the quantization unit 105. The inverse quantization unit 108 supplies the transform coefficients acquired by the inverse quantization process to the inverse orthogonal transform unit 109.

[0158] The inverse orthogonal transform unit 109 performs an inverse orthogonal transform of the transform coefficients supplied from the inverse quantization unit 108 by using a method corresponding to the orthogonal transform process performed by the orthogonal transform unit 104. The inverse orthogonal transform unit 109 supplies output (the residual data of the restored luminance component and the predicted residual data of the color difference component) for which the inverse orthogonal transform is performed to the residual restoring unit 123. In addition, the inverse orthogonal transform unit 109 supplies the residual data of the restored luminance component to the residual predicting unit 122 as well.

[0159] The calculation unit 110 adds a predicted image supplied from the intra prediction unit 113 or the inter prediction unit 114 through the predicted image selecting unit 115 to the restored residual data of each component supplied from the residual restoring unit 123, thereby acquiring an image (hereinafter, referred to as a reconstruction image) that is locally reconstructed. The reconstruction image is supplied to the loop filter 111 or the intra prediction unit 113.

[0160] The loop filter 111 includes a de-blocking filter, an adaptive loop filter, or the like and appropriately performs a filter process for a reconstruction image supplied from the calculation unit 110. For example, the loop filter 111 removes a block distortion of the reconstruction image by performing a de-blocking filter process for the reconstruction image. In addition, for example, the loop filter 111 performs a loop filter process using a Wiener filter for a result (a reconstruction image from which the block distortion has been removed) of the de-blocking filter process, thereby improving the image quality.

[0161] Furthermore, the loop filter 111 may be configured to perform any other additional arbitrary filter process for the reconstruction image. In addition, the loop filter 111, as is necessary, may be configured to supply information such as filter coefficients used for the filter process and the like to the reversible coding unit 106 so as to code the information.

[0162] The loop filter 111 supplies a filter process result (hereinafter, referred to as a decoded image) to the frame memory 112.

[0163] The frame memory 112 stores the supplied decoded image and supplies the stored decoded image to the inter prediction unit 114 at predetermined timing as a reference image.

[0164] The intra prediction unit 113 performs an intra prediction (intra-screen prediction) in which a predicted image is generated by using pixel values within a processing target picture that is a reconstruction image supplied from the calculation unit 110 as a reference image. The intra prediction unit 113 performs the intra prediction using a plurality of intra prediction modes prepared in advance.

[0165] The intra prediction unit 113 generates predicted images in all the intra prediction modes that are candidates, evaluates a cost function value of each predicted image by using an input image supplied from the screen rearranging buffer 102, and selects an optimal mode. When the optimal intra prediction mode is selected, the intra prediction unit 113 supplies the predicted image generated in the optimal mode to the predicted image selecting unit 115.

[0166] In addition, as described above, the intra prediction unit 113 appropriately supplies intra prediction mode information representing the employed intra prediction mode and the like to the reversible coding unit 106 so as to code them.

[0167] The inter prediction unit 114 performs an inter prediction process (a motion prediction process and a compensation process) by using an input image supplied from the screen rearranging buffer 102 and a reference image supplied from the frame memory 112. More specifically, the inter prediction unit 114 performs a motion compensation process in accordance with a motion vector detected by performing a motion prediction as an inter prediction process, thereby generating a predicted image (inter prediction image information). The inter prediction unit 114 performs such an inter prediction in a plurality of inter prediction modes prepared in advance.

[0168] The inter prediction unit 114 generates predicted images in all the inter prediction modes that are candidates. The inter prediction unit 114 evaluates a cost function value of each predicted image by using the input image supplied from the screen rearranging buffer 102, information of the generated difference motion vector, and the like and selects an optimal mode. When the optimal inter prediction mode is selected, the inter prediction unit 114 supplies the predicted image generated in the optimal mode to the predicted image selecting unit 115.

[0169] The inter prediction unit 114 supplies information representing an employed inter prediction mode, information required for performing the process in the inter prediction mode at the time of decoding the coded data, and the like to the reversible coding unit 106 so as to code the information. As the required information, for example, there are information of the generated difference motion vector, a flag representing an index of a predicted motion vector as predicted motion vector information, and the like.

[0170] The predicted image selecting unit 115 selects a supply source of a predicted image to be supplied to the calculation unit 103 and the calculation unit 110. For example, in the case of the intra coding, the predicted image selecting unit 115 selects the intra prediction unit 113 as a supply source of a predicted image and supplies the predicted image supplied from the intra prediction unit 113 to the calculation unit 103 and the calculation unit 110. On the other hand, for example, in the case of the inter coding, the predicted image selecting unit 115 selects the inter prediction unit 114 as a supply source of a predicted image and supplies the predicted image supplied from the inter prediction unit 114 to the calculation unit 103 and the calculation unit 110.

[0171] The rate control unit 116 controls the rate of the quantization operation performed by the quantization unit 105 based on the coding amount of the coded data accumulated in the accumulation buffer 107 such that an overflow or an underflow does not occur.

[0172] The header processing unit 121, for example, generates additional information (also referred to as header information) other than the image information, such as a video parameter set (VPS), a sequence parameter set (SPS), a picture parameter set (PPS), supplemental enhancement information (SEI), and a slice header. The header processing unit 121 supplies the generated header information to the reversible coding unit 106 so that the header information is included in a bit stream and transmitted to the decoding side. In addition, the header processing unit 121 supplies necessary information among the generated header information to the residual predicting unit 122. Details thereof will be described later.

[0173] The residual predicting unit 122 performs a residual prediction by using the residual data of a color difference component supplied from the calculation unit 103 and the residual data of a restored luminance component supplied from the inverse orthogonal transform unit 109. More specifically, the residual predicting unit 122 performs a prediction of the residual data of the color difference component by using the residual data of the restored luminance component, thereby generating predicted residual data. At that time, the residual predicting unit 122 performs a prediction with the bit depths of the residual data arranged to be uniform between the components. Details thereof will be described later. The residual predicting unit 122 supplies the predicted residual data of the color difference component acquired through such a residual prediction and the residual data of the luminance component supplied from the calculation unit 103 to the orthogonal transform unit 104. On the other hand, in a case where the residual prediction is not performed, the residual predicting unit 122 supplies the residual data of each component supplied from the calculation unit 103 to the orthogonal transform unit 104.

[0174] The residual restoring unit 123 restores the residual data of the color difference component by using the residual data of the luminance component and the predicted residual data of the color difference component supplied from the inverse orthogonal transform unit 109 (also referred to as residual restoration). At that time, the residual restoring unit 123 performs restoration by arranging the bit depths of the residual data to be uniform between the components. The process of the residual restoration is basically similar to the process of the residual restoration performed on the decoding side, and thus will be described together with the description of the decoding side. The residual restoring unit 123 supplies the residual data of each component that has been restored to the calculation unit 110.

Header Processing Unit and Residual Predicting Unit

[0175] FIG. 4 is a block diagram that illustrates an example of the main configurations of the header processing unit 121 and the residual predicting unit 122 illustrated in FIG. 3. As illustrated in FIG. 4, the header processing unit 121 includes: an SPS processing unit 131; and a PPS processing unit 132.

[0176] The SPS processing unit 131, for example, performs a process relating to the generation of a sequence parameter set (SPS). In addition, the SPS processing unit 131, for example, supplies the following information included in the sequence parameter set (SPS) to the residual predicting unit 122 (a bit depth difference calculating unit 151 to be described later): information representing the bit depth of the luminance component (for example, bit_depth_luma_minus8), or information including that information; and information representing the bit depth of the color difference component (Cb/Cr) (for example, bit_depth_chroma_minus8), or information including that information.

[0177] The PPS processing unit 132, for example, performs a process relating to the generation of a picture parameter set (PPS). In addition, the PPS processing unit 132, for example, supplies information included in the picture parameter set (PPS) that is used for controlling whether or not a residual prediction is performed (for example, luma_chroma_prediction_enabled_flag) to the residual predicting unit 122 (a bit shift control unit 152 to be described later).

[0178] The residual predicting unit 122 supplies the residual data (r.sub.Y(x, y)) of the luminance component supplied from the calculation unit 103 to the orthogonal transform unit 104. The residual predicting unit 122 includes a control unit 141 and a prediction unit 142. The control unit 141 performs a process relating to the control of calculation of a residual prediction. The prediction unit 142 is controlled by the control unit 141 and performs calculation relating to the prediction thereof. For example, the prediction unit 142, under the control of the control unit 141, generates predicted residual data (.DELTA.r.sub.Cr(x, y) and .DELTA.r.sub.Cb(x, y)) by making a prediction (residual prediction) of the residual data (r.sub.Cr(x, y) and r.sub.Cb(x, y)) of the color difference component, which is acquired from the calculation unit 103, by using the restored residual component (r'.sub.Y(x, y)) of the luminance component, which is acquired from the inverse orthogonal transform unit 109, and supplies the generated predicted residual data to the orthogonal transform unit 104. In addition, the prediction unit 142, under the control of the control unit 141, supplies the residual data (r.sub.Cr(x, y) and r.sub.Cb(x, y)) of the color difference component acquired from the calculation unit 103 to the orthogonal transform unit 104 without making a residual prediction.

[0179] The control unit 141 includes: a bit depth difference calculating unit 151; a bit shift control unit 152; and a weighting coefficient setting unit 153.

[0180] The bit depth difference calculating unit 151 calculates a bit depth difference between the residual data of the components used for a residual prediction. For example, the bit depth difference calculating unit 151 acquires, from the SPS processing unit 131, the information representing the bit depth of the luminance component (for example, bit_depth_luma_minus8), or information including that information, and the information representing the bit depth of the color difference component (Cb/Cr) (for example, bit_depth_chroma_minus8), or information including that information. By using such information, the bit depth difference calculating unit 151 performs the calculation represented in Equation (4) to obtain a bit depth difference (delta_bitdepth) between the components. The bit depth difference calculating unit 151 supplies the calculated bit depth difference (delta_bitdepth) to the bit shift control unit 152.
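The calculation performed by the bit depth difference calculating unit 151 may be sketched as follows. This is only an illustrative sketch: the function name bit_depth_difference is hypothetical, and the sign convention (luminance bit depth minus color difference bit depth) is an assumption chosen so that a positive difference corresponds to a right shift of the luminance residual data, as in the description of the bit shift control unit 152. The "minus8" fields are taken to store the bit depth minus 8.

```python
def bit_depth_difference(bit_depth_luma_minus8: int,
                         bit_depth_chroma_minus8: int) -> int:
    """Return delta_bitdepth between the luminance and color difference components.

    Assumption: delta_bitdepth = luma bit depth - chroma bit depth.
    """
    bit_depth_luma = bit_depth_luma_minus8 + 8      # e.g. 2 -> 10 bits
    bit_depth_chroma = bit_depth_chroma_minus8 + 8  # e.g. 0 -> 8 bits
    return bit_depth_luma - bit_depth_chroma


# 10-bit luminance with 8-bit color difference gives a positive difference.
delta_bitdepth = bit_depth_difference(2, 0)
```

Under this assumption, a luminance component that is deeper than the color difference component yields a positive delta_bitdepth, and a shallower one yields a negative value.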

[0181] In addition, the bit depth difference calculating unit 151, under the control of the bit shift control unit 152, supplies the calculated bit depth difference (delta_bitdepth) to the prediction unit 142. For example, in a case where a right shift of the residual data is performed at the time of scaling the bit depth, the bit depth difference calculating unit 151 supplies the calculated bit depth difference (delta_bitdepth) to the right shift unit 162. On the other hand, in a case where a left shift of the residual data is performed at the time of scaling the bit depth, the bit depth difference calculating unit 151 supplies the calculated bit depth difference (-delta_bitdepth) to the left shift unit 163.

[0182] The bit shift control unit 152 controls the calculation performed by the prediction unit 142 based on the value of the bit depth difference (delta_bitdepth) between the components supplied from the bit depth difference calculating unit 151. For example, in a case where the bit depth difference (delta_bitdepth) is zero, the bit shift control unit 152 performs control of the prediction unit 142 (the selection unit 161 thereof) such that a bit shift (the scaling of the bit depth) of the residual data is not performed. In addition, at that time, the bit shift control unit 152 also performs control of the bit depth difference calculating unit 151 such that the bit depth difference (delta_bitdepth) is not supplied to the prediction unit 142.

[0183] In addition, for example, in a case where the bit depth difference (delta_bitdepth) has a positive value (>0), the bit shift control unit 152 performs control of the prediction unit 142 (the selection unit 161 thereof) such that the residual data is shifted to the right side (the scaling of the bit depth is performed). In addition, at that time, the bit shift control unit 152 also performs control of the bit depth difference calculating unit 151 such that the bit depth difference (delta_bitdepth) is supplied to the prediction unit 142 (the right shift unit 162).

[0184] On the other hand, for example, in a case where the bit depth difference (delta_bitdepth) has a negative value (<0), the bit shift control unit 152 performs control of the prediction unit 142 (the selection unit 161 thereof) such that the residual data is shifted to the left side (the scaling of the bit depth is performed). In addition, at that time, the bit shift control unit 152 also performs control of the bit depth difference calculating unit 151 such that the bit depth difference (-delta_bitdepth) is supplied to the prediction unit 142 (the left shift unit 163).
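The three cases handled by the bit shift control unit 152 (zero, positive, and negative bit depth difference) may be summarized by the following sketch. The function name select_bit_shift and the string labels are hypothetical and are introduced only for illustration; the returned amount corresponds to the value supplied to the right shift unit 162 (delta_bitdepth) or to the left shift unit 163 (-delta_bitdepth).

```python
from typing import Optional, Tuple


def select_bit_shift(delta_bitdepth: int) -> Tuple[str, Optional[int]]:
    """Return (shift direction, shift amount) for the luminance residual data."""
    if delta_bitdepth == 0:
        # No scaling of the bit depth; no difference is supplied.
        return ("none", None)
    if delta_bitdepth > 0:
        # Right shift by delta_bitdepth (right shift unit 162).
        return ("right", delta_bitdepth)
    # Left shift by -delta_bitdepth (left shift unit 163).
    return ("left", -delta_bitdepth)
```

In every case where a shift is performed, the shift amount supplied to the prediction unit 142 is a positive number of bits.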

[0185] In addition, the bit shift control unit 152, for example, acquires information (for example, luma_chroma_prediction_enabled_flag) used for controlling whether or not a residual prediction is performed from the PPS processing unit 132 and controls whether or not a residual prediction is performed based on the value thereof. For example, in a case where it is represented that a residual prediction is not performed based on the information used for controlling whether or not a residual prediction is performed, the bit shift control unit 152 performs control of the prediction unit 142 such that calculation relating to the prediction is not performed. In addition, in such a case, the bit shift control unit 152 performs control of the bit depth difference calculating unit 151 such that the supply of the bit depth difference (delta_bitdepth or -delta_bitdepth) is stopped. Furthermore, the bit shift control unit 152, in such a case, performs control of the weighting coefficient setting unit 153 such that the weighting coefficient .alpha. is not set.

[0186] On the other hand, for example, in a case where it is represented that a residual prediction is performed based on the information used for controlling whether or not a residual prediction is performed, the bit shift control unit 152 performs control of the prediction unit 142 such that calculation relating to the prediction is performed. In addition, in such a case, the bit shift control unit 152 performs control of the bit depth difference calculating unit 151 such that the bit depth difference (delta_bitdepth or -delta_bitdepth) is supplied to the prediction unit 142. Furthermore, in such a case, the bit shift control unit 152 performs control of the weighting coefficient setting unit 153 to set the weighting coefficient .alpha. and supplies the weighting coefficient to the prediction unit 142 (weighting coefficient multiplication unit 164).
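The control based on the information used for controlling whether or not a residual prediction is performed may be sketched as follows. The names PredictionControl, control_prediction, and choose_alpha are hypothetical; because the method of setting the weighting coefficient .alpha. is arbitrary, it is represented here by a caller-supplied function.

```python
from typing import Callable, NamedTuple, Optional


class PredictionControl(NamedTuple):
    predict: bool                # whether the prediction calculation is performed
    shift_amount: Optional[int]  # value supplied to a shift unit, if any
    alpha: Optional[int]         # weighting coefficient, if set


def control_prediction(luma_chroma_prediction_enabled_flag: int,
                       delta_bitdepth: int,
                       choose_alpha: Callable[[], int]) -> PredictionControl:
    """Decide what is supplied to the prediction unit for one control decision."""
    if not luma_chroma_prediction_enabled_flag:
        # Prediction is not performed: no bit depth difference, no coefficient.
        return PredictionControl(False, None, None)
    # delta_bitdepth (right shift) or -delta_bitdepth (left shift) is supplied;
    # nothing is supplied when the bit depths are already equal.
    shift_amount = abs(delta_bitdepth) if delta_bitdepth != 0 else None
    return PredictionControl(True, shift_amount, choose_alpha())
```

For example, control_prediction(1, -2, lambda: 4) indicates that the prediction is performed with a shift amount of 2 and a weighting coefficient of 4, whereas a flag value of 0 suppresses all three.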

[0187] The weighting coefficient setting unit 153 sets the weighting coefficient .alpha. under the control of the bit shift control unit 152. The method of setting the weighting coefficient .alpha. and the value thereof are arbitrary. For example, the weighting coefficient setting unit 153 may be configured to independently set the weighting coefficient .alpha. for each component. In addition, in a case where the weighting coefficient .alpha. is set, the weighting coefficient setting unit 153 supplies the weighting coefficient .alpha. to the prediction unit 142 (the weighting coefficient multiplication unit 164). This weighting coefficient .alpha. is also used for residual restoration and thus is included in a bit stream and provided to the decoding side.

[0188] The prediction unit 142 includes: a selection unit 161; a right shift unit 162; a left shift unit 163; a weighting coefficient multiplication unit 164; a right shift unit 165; a calculation unit 166; and a calculation unit 167.

[0189] The selection unit 161 selects a supply destination of the restored residual data (r'.sub.Y(x, y)) of the luminance component supplied from the inverse orthogonal transform unit 109, under the control of the bit shift control unit 152. For example, in a case where scaling (right shift) of the bit depth is performed, the selection unit 161 supplies the restored residual data (r'.sub.Y(x, y)) of the luminance component to the right shift unit 162. In this case, calculation represented in Equation (6) is performed. In addition, in a case where scaling (left shift) of the bit depth is performed, the selection unit 161 supplies the restored residual data (r'.sub.Y(x, y)) of the luminance component to the left shift unit 163. In this case, calculation represented in Equation (8) is performed. On the other hand, for example, in a case where the scaling of the bit depth is not performed, the selection unit 161 supplies the restored residual data (r'.sub.Y(x, y)) of the luminance component to the weighting coefficient multiplication unit 164. In this case, calculation represented in Equation (3) is performed.

[0190] The right shift unit 162 performs scaling of the bit depth by shifting the restored residual data (r'.sub.Y(x, y)) of the luminance component, which is acquired from the selection unit 161, to the right side in correspondence with a bit depth difference (delta_bitdepth) acquired from the bit depth difference calculating unit 151. The right shift unit 162 supplies a result (r'.sub.Y(x, y)>>delta_bitdepth) of the right shift of the residual data of the luminance component to the weighting coefficient multiplication unit 164.

[0191] The left shift unit 163 performs scaling of the bit depth by shifting the residual data (r'.sub.Y(x, y)) of the luminance component, which has been restored, acquired from the selection unit 161 to the left side in correspondence with a bit depth difference (-delta_bitdepth) acquired from the bit depth difference calculating unit 151. The left shift unit 163 supplies a result (r'.sub.Y(x, y)<<-delta_bitdepth) of the left shift of the residual data of the luminance component to the weighting coefficient multiplication unit 164.
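The scaling performed by the right shift unit 162 and the left shift unit 163 may be sketched as follows. The function name scale_luma_residual is hypothetical, and the residual data is represented as a flat list of integers for simplicity. The sketch assumes an arithmetic right shift, which is how the Python >> operator behaves for negative integers.

```python
from typing import List


def scale_luma_residual(restored_luma: List[int], delta_bitdepth: int) -> List[int]:
    """Align the bit depth of the restored luminance residual data r'_Y."""
    if delta_bitdepth > 0:
        # Right shift unit 162: r'_Y >> delta_bitdepth
        return [value >> delta_bitdepth for value in restored_luma]
    if delta_bitdepth < 0:
        # Left shift unit 163: r'_Y << -delta_bitdepth
        return [value << -delta_bitdepth for value in restored_luma]
    # No scaling when the bit depths already match.
    return list(restored_luma)


# 10-bit luminance residuals scaled for an 8-bit color difference component.
scaled = scale_luma_residual([8, -8, 5], 2)
```

In either direction, the result is residual data of the luminance component whose bit depth matches that of the color difference component.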

[0192] The weighting coefficient multiplication unit 164 acquires the restored residual data of the luminance component from one of the selection unit 161, the right shift unit 162, and the left shift unit 163. For example, the weighting coefficient multiplication unit 164 acquires the restored residual data (r'.sub.Y(x, y)) of the luminance component that has not been bit-shifted from the selection unit 161. In addition, for example, the weighting coefficient multiplication unit 164 acquires the restored residual data (r'.sub.Y(x, y)>>delta_bitdepth) of the luminance component that has been shifted to the right side from the right shift unit 162. In addition, for example, the weighting coefficient multiplication unit 164 acquires the restored residual data (r'.sub.Y(x, y)<<-delta_bitdepth) of the luminance component that has been shifted to the left side from the left shift unit 163.
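The flow from the three possible inputs of the weighting coefficient multiplication unit 164 to the predicted residual data may be sketched as follows. The function name predict_chroma_residual is hypothetical. The stages after the multiplication are assumptions made only for this sketch: the weighted value is assumed to be normalized by a right shift of 3 bits and then subtracted from the residual data of the color difference component.

```python
from typing import List


def predict_chroma_residual(chroma_residual: List[int],
                            restored_luma: List[int],
                            delta_bitdepth: int,
                            alpha: int) -> List[int]:
    """Return predicted residual data for one color difference component."""
    predicted = []
    for r_c, r_y in zip(chroma_residual, restored_luma):
        if delta_bitdepth > 0:
            scaled = r_y >> delta_bitdepth    # input from the right shift unit 162
        elif delta_bitdepth < 0:
            scaled = r_y << -delta_bitdepth   # input from the left shift unit 163
        else:
            scaled = r_y                      # input from the selection unit 161
        weighted = alpha * scaled             # weighting coefficient multiplication
        # Assumption: normalize by >> 3, then subtract from the chroma residual.
        predicted.append(r_c - (weighted >> 3))
    return predicted


# Luminance is 1 bit deeper than the color difference component.
delta_r = predict_chroma_residual([10], [16], delta_bitdepth=1, alpha=4)
```

With a weighting coefficient of zero, the sketch returns the residual data of the color difference component unchanged, which corresponds to no contribution from the luminance component.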