Methods And Apparatus For Malware Threat Research

Morris; Melvyn ; et al.

U.S. patent application number 16/786692 was filed with the patent office on 2020-02-10 and published on 2020-06-04 for methods and apparatus for malware threat research. The applicant listed for this patent is Webroot Inc. Invention is credited to Joseph Jaroch and Melvyn Morris.

| Application Number | 20200177552 16/786692 |


| Family ID | 45688475 |

| Filed Date | 2020-02-10 |


| United States Patent Application | 20200177552 |

| Kind Code | A1 |

| Morris; Melvyn ; et al. | June 4, 2020 |

METHODS AND APPARATUS FOR MALWARE THREAT RESEARCH

Abstract

Methods for classifying computer objects as malware and the associated apparatus are disclosed. An exemplary method includes, at a base computer, receiving data about a computer object from each of plural remote computers on which the object or similar objects are stored and/or processed, and counting the number of times in a given time period that objects having one or more common attributes or behaviors have been seen by the remote computers. The counted number is then compared with the expected number based on past observations, and if the comparison exceeds a predetermined threshold, the objects are flagged as unsafe or as suspicious.

| Inventors: | Morris; Melvyn; (Belper, GB); Jaroch; Joseph; (Elk Grove Village, IL) |
|---|---|
| Applicant: | Webroot Inc. |
| Family ID: | 45688475 |
| Appl. No.: | 16/786692 |
| Filed: | February 10, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 13372433 | Feb 13, 2012 | 10574630 |
| 16786692 | | |
| 61443095 | Feb 15, 2011 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 63/145 20130101; G06F 21/566 20130101; H04L 63/0263 20130101; G06F 21/56 20130101; H04L 63/14 20130101 |

| International Class: | H04L 29/06 20060101 H04L029/06; G06F 21/56 20060101 G06F021/56 |

Claims

1. A computer program product comprising a non-transitory computer-readable medium storing thereon a set of instructions executable by a processor, the set of instructions comprising instructions for: receiving checksum data about a computer object from each of plural remote computers on which the computer object is located; storing said checksum data in a database; and presenting, on a display and in response to receiving a selection of a first group of plural objects having commonality amongst an attribute, information relating to a second group of plural objects including the first group of plural objects and additional objects not in the first group of plural objects, and information relating to one or more checksummed attributes of the objects of the second group of plural objects from the database, the information relating to the second group of plural objects being arranged such that one or more values of the one or more checksummed attributes and one or more symbols are shown, wherein the one or more symbols are assigned to the one or more values based on at least one of a uniqueness and a commonality among the one or more values of the one or more checksummed attributes of the second group of plural objects, wherein information relating to another group of plural objects comprises a number of known objects that are not malware, a number of known malware objects, and a number of unknown objects; presenting on the display, a first symbol assigned to one or more values based on the uniqueness of the one or more values among the second group of plural objects when one or more values of the one or more checksummed attributes is unique amongst the second group of plural objects; and presenting on the display, a second symbol, different from the first symbol, when one or more values of the one or more checksummed attributes is common amongst the second group of plural objects.

2. The computer program product of claim 1, wherein the information relating to the second group of plural objects is displayed in tabular form with rows of the table corresponding to objects and columns of the table corresponding to attributes of the objects.

3. The computer program product of claim 1, wherein at least one of the first and second symbols comprises a symbol having at least one of a shape and a color different than another symbol.

4. The computer program product of claim 1, wherein the set of instructions further comprises instructions for: identifying commonality of one or more attribute values between the second group of plural objects; and refining a query in accordance with said identified commonality.

5. The computer program product of claim 1, wherein the set of instructions further comprises instructions for creating a rule from a user query if it is determined that the user query is deterministic in identifying malware.

6. The computer program product of claim 5, wherein the set of instructions further comprises instructions for: monitoring user groupings of objects along with any and all user actions taken such as classifying the objects of the second group of plural objects as being safe or unsafe; and automatically applying said groupings and actions in generating new rules for classifying objects as malware.

7. The computer program product of claim 5, wherein the set of instructions further comprises instructions for applying the rule to an object at a first computer.

8. The computer program product of claim 7, wherein the set of instructions further comprises instructions for: storing a classification of the object as safe or unsafe according to the rule in the database.

9. The computer program product of claim 8, wherein the set of instructions further comprises instructions for: receiving an indication from a remote computer that an object classified as malware by said rule is believed not to be malware; and amending or deleting the rule in accordance with said indication.

10. The computer program product of claim 5, wherein the set of instructions further comprises instructions for sending the rule to a remote computer such that the remote computer can apply the rule to an object at the remote computer.

11. The computer program product of claim 10, wherein the set of instructions further comprises instructions for: storing a classification of the object as safe or unsafe according to the rule in the database.

12. The computer program product of claim 11, wherein the set of instructions further comprises instructions for: receiving an indication from the remote computer that an object classified as malware by said rule is believed not to be malware; and amending or deleting the rule in accordance with said indication.

13. The computer program product of claim 1, wherein the set of instructions further comprises instructions for receiving actor information pertaining to an actor object performing an act and victim information pertaining to a victim object upon which the act is being performed.

14. The computer program product of claim 1, wherein the one or more checksummed attributes correspond to an object pathname and an object filename.

15. The computer program product of claim 1, where the set of instructions further comprises instructions for displaying a third symbol, different from the first symbol and the second symbol, when one or more values of the one or more checksummed attributes is common amongst the second group of plural objects.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of, and claims a benefit of priority under 35 U.S.C. § 120 from U.S. patent application Ser. No. 13/372,433 filed Feb. 13, 2012, entitled "METHODS AND APPARATUS FOR DEALING WITH MALWARE," which claims the benefit of priority under 35 U.S.C. § 119 to U.S. Provisional Patent Application No. 61/443,095 filed Feb. 15, 2011, entitled "METHODS AND APPARATUS FOR DEALING WITH MALWARE," which are hereby fully incorporated herein by reference for all purposes. This application is related to U.S. patent application Ser. No. 13/372,375 filed Feb. 13, 2012, entitled "METHODS AND APPARATUS FOR DEALING WITH MALWARE," issued as U.S. Patent No. 9,413,721, and U.S. patent application Ser. No. 14/286,786 filed May 23, 2014, entitled "METHODS AND APPARATUS FOR AGENT-BASED MALWARE MANAGEMENT," now abandoned, which are hereby fully incorporated herein by reference for all purposes.

BACKGROUND

1. Field

[0002] The present invention relates generally to methods and apparatus for dealing with malware, and more specifically to systems and methods for protection against malware.

2. Background

[0003] The term "malware" is used herein to refer generally to any executable computer file or, more generally, "object" that is or contains malicious code, and thus includes viruses, Trojans, worms, spyware, adware and the like.

[0004] A typical anti-malware product, such as virus scanning software, scans objects or the results of an algorithm applied to the object or part thereof to look for signatures in the object that are known to be indicative of the presence of malware. Generally, the method of dealing with malware is that when new types of malware are released, for example via the Internet, these are eventually detected. Once new items of malware have been detected, then the service providers in the field generate signatures that attempt to deal with these and these signatures are then released as updates to their anti-malware programs. Heuristic methods have also been employed.

[0005] These systems work well for protecting against known malicious objects. However, since they rely on signature files being generated and/or updated, there is inevitably a delay between a new piece of malware coming into existence or being released and the signatures for identifying that malware being generated or updated and supplied to users. Thus, users are at risk from new malware for a certain period of time which might be up to a week or even more.

[0006] More recently, so-called "cloud" based techniques have been developed for fighting malware/viruses. In these techniques, protection is provided by signatures that are stored in the cloud, i.e. in a central server to which the remote computers are connected. Thus, a remote computer can be given protection as soon as a new malware object is spotted and its signature stored in the central server, so that the remote computer is protected from it without the need to wait for the latest signatures to be downloaded and installed on the remote computer. This technique can also give the advantage of moving the processing burden from the remote computer to the central server. However, this technique is limited by sending only signature information or very basic information about an object to the central server. Therefore, in order to analyse whether or not an unknown object is malware, a copy of that object is normally sent to the central server where it is investigated by a human analyst. This is a time-consuming and laborious task, introducing considerable delay in classifying objects as safe or unsafe. Also, given the considerable volume of new objects that can be seen daily across a community, it is unrealistic to have a skilled human analyst investigate each new object thoroughly. Accordingly, malevolent objects may escape investigation and detection for considerable periods of time during which time they can carry out their malevolent activity in the community.

[0007] We refer in the following to our previous application US-2007/0016953, published 18 Jan. 2007, entitled "METHODS AND APPARATUS FOR DEALING WITH MALWARE," the entire contents of which are hereby incorporated by reference. In this document, various new and advantageous cloud-based strategies for fighting malware are disclosed. In particular, a cloud-based approach is outlined where the central server receives information about objects and their behaviour on remote computers throughout the community and builds a picture of objects and their behaviour seen throughout the community. This information is compared across the community in developing and applying various heuristics and/or rules to determine whether a newly seen object is malevolent or not.

[0008] This approach to fighting malware involves communicating, storing and managing vast amounts of data at the central server, which is a challenging problem in itself. It is also challenging to develop new schemes for more accurately and more efficiently detecting malware given the vast amount of data collected about objects seen in the community and the constantly evolving strategies used by malware writers to evade detection. It is also desirable to improve the processes for analysing the data to make the best use of the time and specialised skills of the human malware analysts.

[0009] Malware is also becoming increasingly adept at self-defence by interfering with the operation of security programs installed on a computer. This is another problem that security software must contend with.

SUMMARY

[0010] According to a first aspect of the present invention, there is provided a method of classifying a computer object as malware, the method comprising:

[0011] at a base computer, receiving data about a computer object from each of plural remote computers on which the object or similar objects are stored and/or processed;

[0012] counting the number of times in a given time period that objects having one or more common attributes or behaviours have been seen by the remote computers;

[0013] comparing the counted number with the expected number based on past observations; and

[0014] if the comparison exceeds some predetermined threshold, flagging the objects as unsafe or as suspicious.

[0015] As will be appreciated, the amount of data received from an agent program running on remote computers can be vast and therefore suited to being processed by computer. It would be impractical to have human operators look at all of this data to determine malware. This aspect allows greater focus to be placed on researching outliers, i.e. groups of objects whose observed counts depart from the counts expected from past observations. Processes can be readily developed that can use this outlier information to automatically group, identify or prioritise research effort and even make malware determinations. Where automated rules are used to identify malware, this information can be used to automatically heighten sensitivity of these rules when applied to objects in the group that has been identified as an outlier.

[0016] The attribute of the object may be one or any combination of: the file name, the file type, the file location, vendor information contained in the file, the file size, registry keys relating to that file, or any other system derived data, or event data relating to the object.
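The counting and comparison steps of the first aspect can be illustrated with a short sketch. The following Python fragment is illustrative only and is not taken from the disclosure: the function name `flag_outliers`, the use of a simple observed-to-expected ratio, the floor of 1 on the baseline and the threshold value of 3 are all assumptions chosen for the example.

```python
from collections import Counter

def flag_outliers(sightings, expected, threshold=3.0):
    """Return attribute values seen far more often than past observations suggest.

    sightings: attribute values reported by remote computers in the current period
    expected:  dict mapping attribute value -> expected count for such a period
    threshold: hypothetical observed/expected ratio above which a value is flagged
    """
    counts = Counter(sightings)
    flagged = {}
    for value, observed in counts.items():
        # Assumption: a floor of 1 avoids division by zero for unseen values.
        baseline = max(expected.get(value, 0.0), 1.0)
        ratio = observed / baseline
        if ratio > threshold:
            flagged[value] = ratio
    return flagged

# Hypothetical data: file names reported during one period.
reports = ["svch0st.exe"] * 40 + ["notepad.exe"] * 12
history = {"svch0st.exe": 2.0, "notepad.exe": 10.0}
suspicious = flag_outliers(reports, history)
```

In this example the first file name is reported twenty times more often than its history suggests and is flagged, whereas the second stays within its expected range.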

[0017] According to a second aspect of the present invention, there is provided apparatus for classifying a computer object as malware, the apparatus comprising a base computer arranged to receive data about a computer object from each of plural remote computers on which the object or similar objects are stored and/or processed; the base computer being arranged to:

[0018] count the number of times in a given time period that objects having one or more common attributes or behaviours have been seen by the remote computers;

[0019] compare the counted number with the expected number based on past observations; and,

[0020] if the comparison exceeds some predetermined threshold, flag the objects as unsafe or as suspicious.

[0021] According to a third aspect of the present invention, there is provided a method of classifying a computer object as malware, the method comprising:

[0022] at a base computer, receiving data about a computer object from each of plural remote computers on which the object or similar objects are stored and/or processed;

[0023] storing said data in a database; and,

[0024] presenting the user with a display of information relating to a group of plural objects and various attributes of those objects, the display being arranged such that commonality is shown between objects, wherein the group of objects displayed correspond to a user query of the database.

[0025] Thus, by being able to query and analyse the collective view of an object, i.e. its metadata and behaviours, across all remote computers that have seen it, a more informed view can be derived, whether by human or computer, of the object. In addition it is possible to cross-group objects based on any of their criteria, i.e. metadata and behaviour.

[0026] The users employed in grouping objects according to this scheme need not be skilled malware analysts. The users need only basic training in use of the tool and in grouping objects by running queries and looking for commonality. The final analysis of objects covered by the query can be undertaken by a skilled malware analyst. A key advantage of this system is that the computer is doing the work in processing the raw data, which is too large a task to be practical for a human operator to complete. The human operators grouping the data need not be skilled, which reduces costs for the business and leaves the skilled malware analysts to concentrate on queries that are found to be good candidates for further investigation. Thus, the skill of these analysts is most effectively deployed in using their experience to identify malware.

[0027] In an embodiment, the information is displayed in tabular form with rows of the table corresponding to objects and columns of the table corresponding to attributes of the objects. Nonetheless, other forms of display can be used e.g. graphs, charts, 3D-visualizations etc.

[0028] In some embodiments, at least one attribute of the objects is presented to the user pictorially such that objects with common attributes are given the same pictorial representation. In at least one embodiment, the pictorial representation comprises symbols having different shapes and/or colours. This allows the user to spot commonality in the attribute at a glance. This works well for attributes where commonality is important and the actual value of the attribute is relatively unimportant.
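One way the pictorial representation of commonality might be computed is sketched below in Python. This is an illustration under stated assumptions rather than the disclosed implementation: the function name `symbolise`, the particular glyphs and the rule that a value held by only one object receives a separate "unique" marker are all hypothetical choices.

```python
from collections import Counter
from itertools import cycle

SHARED_SYMBOLS = ["#", "@", "%", "&"]  # hypothetical glyphs for shared values
UNIQUE_SYMBOL = "."                    # hypothetical glyph for a one-off value

def symbolise(rows, attribute):
    """Return one symbol per row so that equal attribute values look alike."""
    counts = Counter(row[attribute] for row in rows)
    glyphs = cycle(SHARED_SYMBOLS)
    assigned = {}
    symbols = []
    for row in rows:
        value = row[attribute]
        if counts[value] == 1:
            symbols.append(UNIQUE_SYMBOL)      # value is unique in the group
        else:
            if value not in assigned:
                assigned[value] = next(glyphs)  # first sighting of a shared value
            symbols.append(assigned[value])
    return symbols

# Hypothetical query result: rows are objects, columns are attributes.
rows = [
    {"name": "a.exe", "vendor": "Acme"},
    {"name": "b.exe", "vendor": "Acme"},
    {"name": "c.exe", "vendor": "Other"},
    {"name": "d.exe", "vendor": "Foo"},
    {"name": "e.exe", "vendor": "Foo"},
]
symbols = symbolise(rows, "vendor")
```

Because the symbol depends only on which rows share a value, the user can see groupings without reading the underlying values.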

[0029] An exemplary method consistent with some embodiments includes: identifying commonality in one or more attributes between objects; and, refining the query in accordance with said identified commonality. By refining the query according to discovered commonality the user can quickly and simply investigate malware. The data grouped by the queries can be flagged for further investigation by a human analyst or be immediately flagged as benign or malevolent.

[0030] The method may include creating a rule from a user query if it is determined that the query is deterministic in identifying malware.

[0031] In an exemplary embodiment, the method comprises monitoring user groupings of objects along with any and all user actions taken, such as classifying the objects of the group as being safe or unsafe, and automatically applying said groupings and actions in generating new rules for classifying objects as malware. Thus, by tracking these actions it is possible for the server to learn from human analysts how to identify and determine objects automatically. The application can remember queries that have been run by users. If the same query is being run repeatedly by a user and returning malware objects, or if a researcher consistently takes the action of determining the objects to be malware, then the system can automatically identify this condition and create a rule from the criteria and the action to determine matching objects as malicious.
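The idea of promoting a repeatedly run query to a rule can be sketched as follows. The Python fragment is a minimal illustration, not the disclosed mechanism: the class name `QueryTracker`, the representation of a query as a dictionary of attribute criteria and the promotion threshold `min_runs` are assumptions made for the example.

```python
from collections import defaultdict

class QueryTracker:
    """Remembers user queries and the verdict the user reached each time."""

    def __init__(self, min_runs=3):
        self.min_runs = min_runs          # hypothetical promotion threshold
        self.history = defaultdict(list)  # query criteria -> list of verdicts

    def record(self, criteria, verdict):
        """criteria: dict of attribute -> value; verdict: 'malware' or 'safe'."""
        key = frozenset(criteria.items())
        self.history[key].append(verdict)

    def rules(self):
        """Criteria run often enough and judged malware on every run."""
        return [
            dict(key)
            for key, verdicts in self.history.items()
            if len(verdicts) >= self.min_runs and set(verdicts) == {"malware"}
        ]

tracker = QueryTracker(min_runs=3)
for _ in range(3):
    tracker.record({"vendor": "", "folder": "temp"}, "malware")
tracker.record({"vendor": "Acme"}, "malware")
tracker.record({"vendor": "Acme"}, "safe")
learned = tracker.rules()
```

Only the first query is promoted here, since the second produced mixed verdicts and was run too few times.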

[0032] The method may include applying the rule to an object at the base computer and/or sending the rule to a remote computer and applying the rule to an object at the remote computer to classify the object as safe or unsafe. Thus, protection can be given in real time against previously unseen objects by applying the rules to the objects. Agent software running at the remote computer can apply the rules against objects, for example in the event that contact with the base computer is lost, allowing the agent software to carry on providing protection against new malware objects even when the base computer is "offline".

[0033] The method may include storing the classification of an object as safe or unsafe according to the rule in the database at the base computer. This information can be taken into account in evaluating the performance of rules and in formulating new rules.

[0034] In an exemplary embodiment, the method comprises receiving information from a remote computer that an object classified as malware by said rule is believed not to be malware; and amending or deleting the rule in accordance with said information. This allows feedback on the performance of the rule to the central server which can be taken into account in amending or deleting the rule if necessary and which may be taken into account in determining future rules.
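A sketch of how feedback from remote computers might lead to a rule being deleted is given below. It is an assumption-laden illustration: the classes `Rule` and `RuleSet`, the exact-match semantics of a rule and the policy of deleting a rule after a fixed number of false-positive reports are hypothetical, and the disclosure leaves open whether a rule is amended or deleted.

```python
class Rule:
    """A rule that marks an object unsafe when all criteria match exactly."""

    def __init__(self, criteria):
        self.criteria = criteria
        self.false_positives = 0

    def matches(self, obj):
        return all(obj.get(k) == v for k, v in self.criteria.items())

class RuleSet:
    def __init__(self, max_false_positives=2):
        self.rules = []
        self.max_false_positives = max_false_positives  # hypothetical policy

    def add(self, rule):
        self.rules.append(rule)

    def classify(self, obj):
        return "unsafe" if any(r.matches(obj) for r in self.rules) else "unknown"

    def report_false_positive(self, obj):
        """A remote computer reports that obj is believed not to be malware."""
        for rule in list(self.rules):
            if rule.matches(obj):
                rule.false_positives += 1
                if rule.false_positives >= self.max_false_positives:
                    self.rules.remove(rule)  # delete the misfiring rule

rule_set = RuleSet(max_false_positives=2)
rule_set.add(Rule({"vendor": "", "folder": "temp"}))
sample = {"name": "x.exe", "vendor": "", "folder": "temp"}

before = rule_set.classify(sample)
rule_set.report_false_positive(sample)
after_one = rule_set.classify(sample)
rule_set.report_false_positive(sample)
after_two = rule_set.classify(sample)
```

A real system would probably weigh such reports against confirmed detections before discarding a rule; the fixed count is used here only to keep the example short.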

[0035] According to a fourth aspect of the present invention, there is provided apparatus for classifying a computer object as malware, comprising a base computer arranged to receive data about a computer object from each of plural remote computers on which the object or similar objects are stored and/or processed; the base computer being arranged to:

[0036] store said data in a database; and,

[0037] present the user with a display of information relating to a group of plural objects and various attributes of those objects, the display being arranged such that commonality is shown between objects, wherein the group of objects displayed correspond to a user query of the database.

[0038] As will become apparent in view of the following disclosure, the various aspects and embodiments of the invention can be combined.

BRIEF DESCRIPTION OF THE DRAWINGS

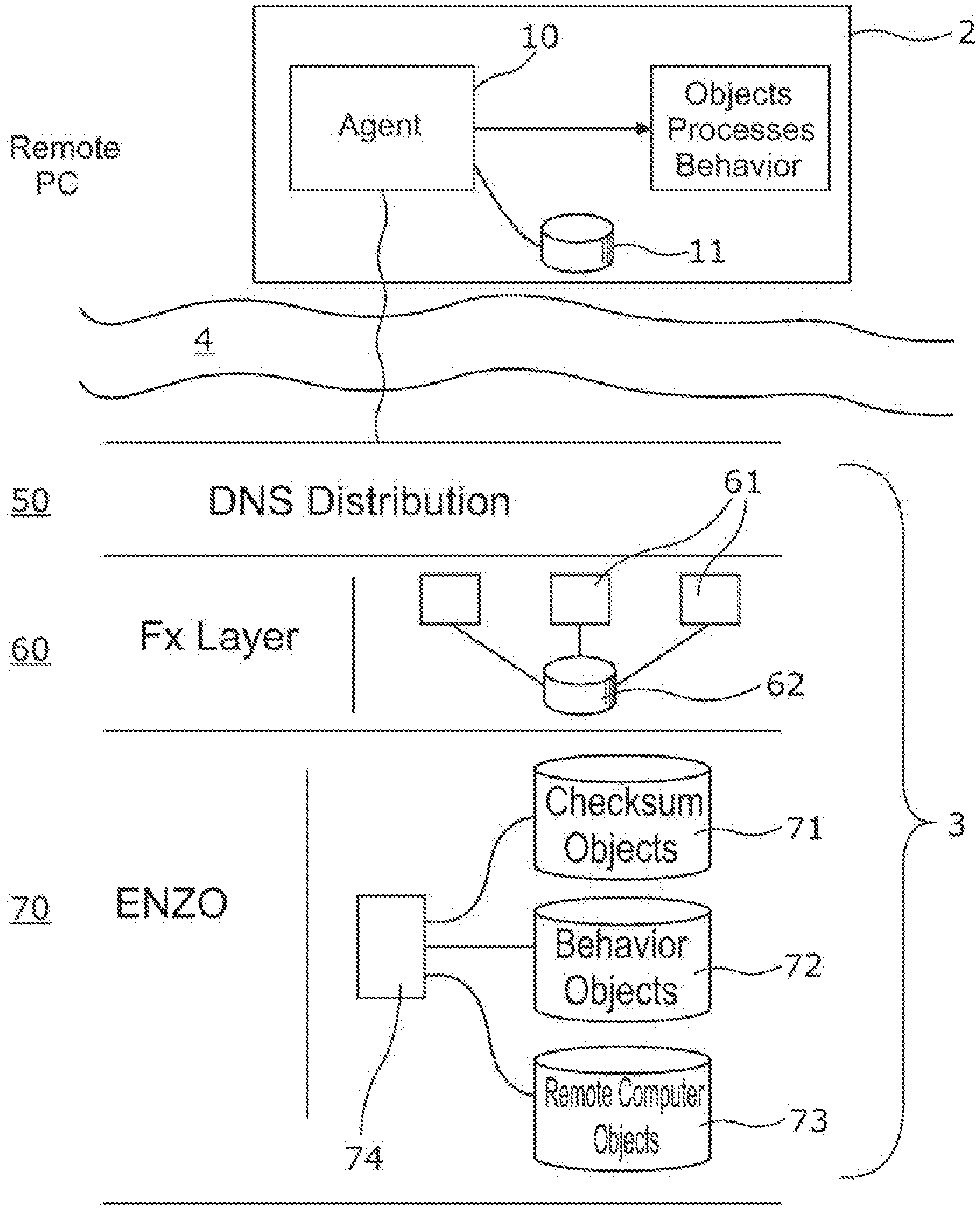

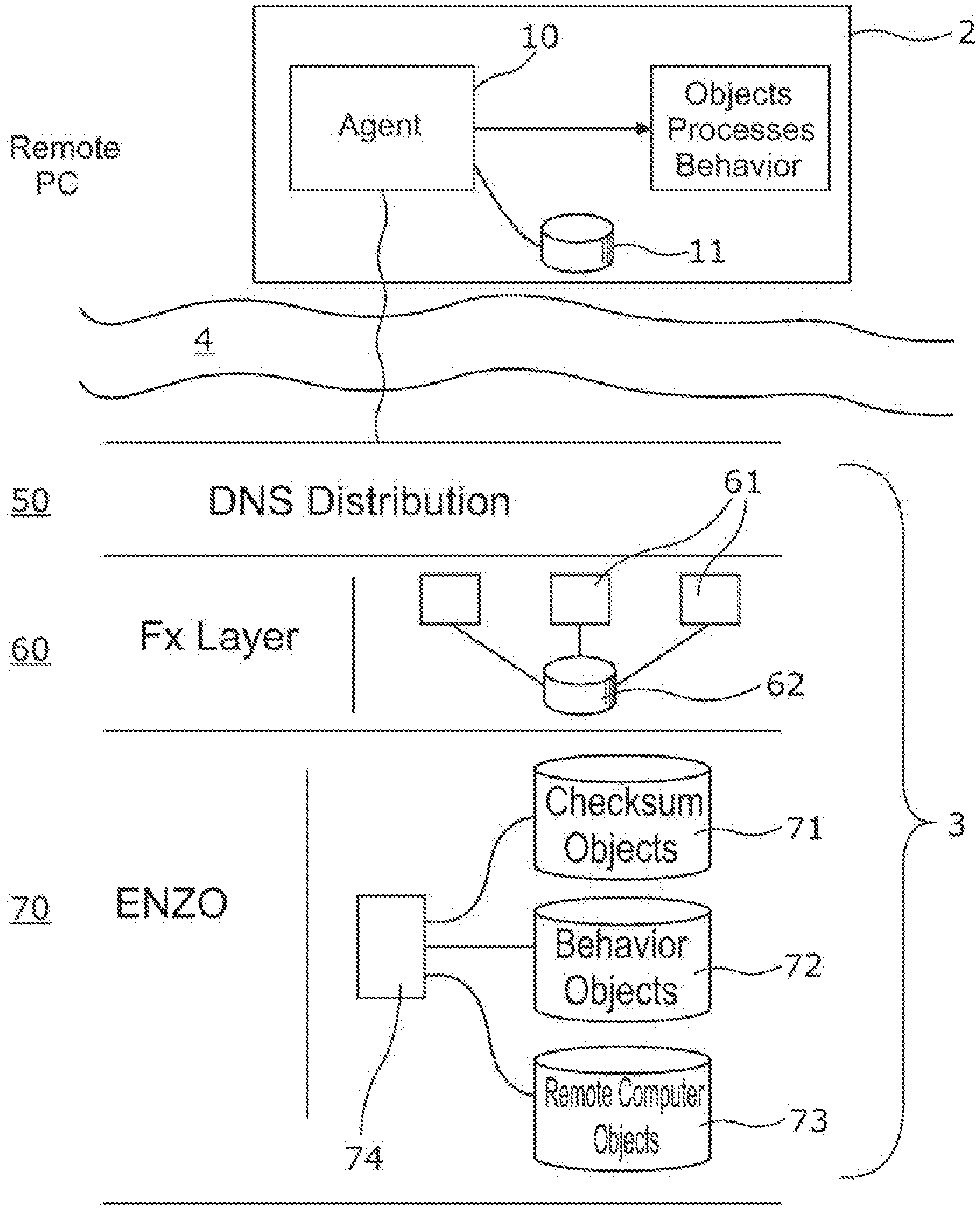

[0039] FIG. 1 shows schematically apparatus in which an embodiment of the present invention may be implemented;

[0040] FIG. 2 is a flowchart showing schematically the operation of an example of a method according to an embodiment of the present invention;

[0041] FIG. 3 shows a more detailed view of an example of a base computer 3 according to an embodiment of the present invention;

[0042] FIG. 4 shows an example where web servers are located in different geographical locations;

[0043] FIG. 5 shows schematically the apparatus of FIG. 3 in more detail and in particular the way in which data can be moved from the FX layer to the ENZO layer in an embodiment;

[0044] FIG. 6 shows schematically an example in which a new instance of an ENZO server is created in accordance with an embodiment of the invention;

[0045] FIG. 7 shows schematically an example of apparatus by which an ENZO server shares out its workload between plural servers in accordance with an embodiment of the invention;

[0046] FIG. 8 is a chart showing an example of a scheme for analysing data about objects according to an embodiment of the present invention;

[0047] FIG. 9 shows schematically an example of a scheme for processing data about objects according to an embodiment of the invention;

[0048] FIGS. 10 to 13 show graphical user interfaces of an example of a computer program for researching objects according to an embodiment of the present invention;

[0049] FIG. 14 shows an example of an agent program on a remote computer according to an embodiment of the present invention; and

[0050] FIG. 15 shows the hierarchy on a computer system.

DETAILED DESCRIPTION

Overview

[0051] Referring to FIG. 1, a computer network is generally shown as being based around a distributed network such as the Internet 1. Embodiments of the present invention may however be implemented across or use other types of network, such as a LAN. Plural local or "remote" computers 2 are connected via the Internet 1 to a "central" or "base" computer 3. The computers 2 may each be variously a personal computer, a server of any type, a PDA, mobile phone, an interactive television, or any other device capable of loading and operating computer objects. An object in this sense may be a computer file, part of a file or a sub-program, macro, web page or any other piece of code to be operated by or on the computer, or any other event whether executed, emulated, simulated or interpreted. An object 4 is shown schematically in the figure and may for example be downloaded to a remote computer 2 via the Internet 1 as shown by lines 5 or applied directly as shown by line 6. The object 4 may reside in computer RAM, on the hard disk drive of the computer, on removable storage connected to the computer, such as a USB pen drive, an email attachment, etc.

[0052] In one exemplary embodiment, the base computer 3 is in communication with a database 7 with which the remote computers 2 can interact when the remote computers 2 run an object 4 to determine whether the object 4 is safe or unsafe. The community database 7 is populated, over time, with information relating to each object run on all of the connected remote computers 2. As will be discussed further below, data representative of each object 4 may take the form of a so-called signature or key relating to the object, its attributes and behaviour.

[0053] Referring now to FIG. 2, at the start point 21, a computer object 4 such as a process is run at a remote computer 2. At step 22, by operation of a local "agent" program or software running on the remote computer 2, the operation of the process is hooked so that the agent program can search a local database stored at the remote computer 2 for a signature or key representing that particular process, its related objects and/or the event. If the local signature is present, it will indicate either that the process is considered to be safe or that the process is considered unsafe. An unsafe process might be one that has been found to be malware or to have unforeseen or known unsafe or malevolent results arising from its running. If the signature indicates that the process is safe, then that process or event is allowed by the local agent program on the remote computer 2 to run at step 23. If the signature indicates that the process is not safe, then the process or event is stopped at step 24.

[0054] It will be understood that there may be more states than just "safe" and "not-safe", and choices may be given to the user. For example, if an object is considered locally to be not safe, the user may be presented with an option to allow the related process to run nevertheless. It is also possible for different states to be presented to each remote computer 2. The state can be varied by the base computer to take account of the location, status or ownership of the remote computer or timeframe.

[0055] Furthermore, the agent software at the remote computer 2 may be arranged to receive rules or heuristics from the base computer 3 for classifying objects as safe or unsafe. If the object is unknown locally, the agent software may apply the rules or heuristics to the details of the object to try to classify the object as safe or unsafe. If a classification is made, details of the object and classification are passed to the base computer 3 to be stored in the community database 7. This means that the agent software is capable of providing protection against previously unseen objects even if it is "offline", e.g. it is unable to connect to the base computer for any reason. This mechanism is described in more detail later in the disclosure.

[0056] If the object is still not classified locally, then details of the object are passed over the Internet 1 or other network to the base computer 3 for storing in the community database 7 and for further analysis at the base computer 3. In that case, the community database 7 is then searched at step 25 for a signature for that object that has already been stored in the community database 7. The community database 7 is supplied with signatures representative of objects, such as programs or processes, run by each monitored remote computer 2. In a typical implementation in the field, there may be several thousands or even millions of remote computers 2 connected or connectable to the base computer 3 and so any objects that are newly released upon the Internet 1 or that otherwise are found on any of these remote computers 2 will soon be found and signatures created and sent to the base computer 3 by the respective remote computers 2.

[0057] When the community database 7 is searched for the signature of the object that was not previously known at the remote computer 2 concerned, then if the signature is found and indicates that that object is safe, then a copy of the signature or at least a message that the object is safe is sent to the local database of the remote computer 2 concerned at step 26 to populate the local database. In this way, the remote computer 2 has this information immediately to hand the next time the object 4 is encountered. A separate message is also passed back to the remote computer 2 to allow the object to run in the current instance.

[0058] If the signature is found in the community database 7 and this indicates for some reason that the object is unsafe, then again the signature is copied back to the local database and marked "unsafe" at step 27, and/or a message is sent to the remote computer 2 so that running of the object is stopped (or it is not allowed to run) and/or the user given an informed choice whether to run it or not.

[0059] If after the entire community database 7 has been searched the object is still unknown, then it is assumed that this is an entirely new object which has never been seen before in the field. A signature is therefore created representative of the object at step 28, or a signature sent by the remote computer 2 is used for this purpose.

[0060] At this point, rules or heuristics may be applied by the base computer 3 to the details of the object to try to classify the object as safe or unsafe. If a classification is made, the signature is marked as safe or unsafe accordingly in the community database 7. The signature is copied to the local database of the remote computer 2 that first ran the object. This mechanism is described in more detail later in the disclosure.

[0061] If the object is still not classified, the signature may be initially marked as bad or unsafe in the community database 7 at step 29. The signature is copied to the local database of the remote computer 2 that first ran the object at step 30. A message may then be passed to the remote computer 2 to instruct the remote computer 2 not to run the object, or alternatively the user may be given an informed choice as to whether to allow the object to run or not. In addition, a copy of the object itself may be requested at step 31 by the community database 7 from the remote computer 2.
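The order of the checks described with reference to FIG. 2 can be summarised in a short sketch. The Python fragment below is a simplified illustration and not the disclosed implementation: the function name `decide`, the use of plain dictionaries for the local and community databases and the representation of a rule as a function returning a verdict or `None` are all assumptions.

```python
def decide(key, details, local_db, community_db, local_rules=(), base_rules=()):
    """Return (verdict, source) for an object identified by its key."""
    # 1. Local signature lookup (steps 22 to 24).
    if key in local_db:
        return local_db[key], "local database"
    # 2. Rules held by the agent, usable even when the base computer is offline.
    for rule in local_rules:
        verdict = rule(details)
        if verdict is not None:
            community_db[key] = verdict  # classification passed to the base computer
            return verdict, "local rule"
    # 3. Community database lookup (steps 25 to 27); result copied back locally.
    if key in community_db:
        local_db[key] = community_db[key]
        return local_db[key], "community database"
    # 4. Rules applied at the base computer to a never-before-seen object.
    for rule in base_rules:
        verdict = rule(details)
        if verdict is not None:
            community_db[key] = local_db[key] = verdict
            return verdict, "base rule"
    # 5. Still unclassified: provisionally marked unsafe (steps 29 and 30).
    community_db[key] = local_db[key] = "unsafe"
    return "unsafe", "default for new object"

# Hypothetical data and a hypothetical rule: objects with no vendor are unsafe.
local_db = {"k1": "safe"}
community_db = {"k2": "unsafe"}
no_vendor = lambda d: "unsafe" if d.get("vendor") == "" else None

r1 = decide("k1", {}, local_db, community_db)
r2 = decide("k2", {}, local_db, community_db)
r3 = decide("k3", {"vendor": ""}, local_db, community_db, base_rules=[no_vendor])
r4 = decide("k4", {"vendor": "Acme"}, local_db, community_db, base_rules=[no_vendor])
```

The sketch omits the user's option to override a verdict and the request for a copy of the object at step 31.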

[0062] If the user at the remote computer 2 chooses to run a process that is considered unsafe because it is too new, then that process may be monitored by the remote computer 2 and/or community database 7 and, if no ill effect occurs or is exhibited after a period of time of n days for example, it may then be considered to be safe. Alternatively, the community database 7 may keep a log of each instance of the process which is found by the many remote computers 2 forming part of the network and after a particular number of instances have been recorded, possibly with another particular number of instances of the process being allowed to run and running safely, the signature in the community database 7 may then be marked as safe rather than unsafe. Many other variations of monitoring safety may be done within this concept.
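The two promotion criteria described above, a quiet observation period or a sufficient number of safe runs, might be combined as in the following sketch. The function name `reassess` and the default values for `n_days` and `min_safe_runs` are assumptions for illustration; the disclosure does not specify particular numbers.

```python
from datetime import datetime, timedelta

def reassess(first_seen, now, ill_effects, safe_runs, n_days=7, min_safe_runs=1000):
    """Decide whether a provisionally unsafe signature may be marked safe."""
    if ill_effects > 0:
        return "unsafe"  # any observed ill effect keeps the object unsafe
    observed_long_enough = now - first_seen >= timedelta(days=n_days)
    seen_often_enough = safe_runs >= min_safe_runs
    return "safe" if observed_long_enough or seen_often_enough else "unsafe"

start = datetime(2020, 1, 1)
early = reassess(start, start + timedelta(days=2), ill_effects=0, safe_runs=10)
later = reassess(start, start + timedelta(days=8), ill_effects=0, safe_runs=10)
popular = reassess(start, start + timedelta(days=2), ill_effects=0, safe_runs=5000)
harmful = reassess(start, start + timedelta(days=30), ill_effects=1, safe_runs=5000)
```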

[0063] The database 7 may further include a behaviour mask for the object 4 that sets out the parameters of the object's performance and operation. If an object is allowed to run on a remote computer 2, even if the initial signature search 22 indicates that the object is safe, then operation of that object may be monitored within the parameters of the mask. Any behaviour that extends beyond that permitted by the mask is identified and can be used to continually assess whether the object continues to be safe or not.
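As a minimal illustration of monitoring against a behaviour mask, the sketch below treats the mask as a set of permitted behaviours and reports anything observed outside it. The function name `violations` and the behaviour labels are hypothetical; the disclosure does not define the form of the mask.

```python
def violations(mask, observed):
    """Return the observed behaviours that fall outside the permitted mask."""
    return sorted(set(observed) - set(mask))

# Hypothetical mask and observations for one object.
mask = {"open_window", "read_own_folder"}
observed = ["open_window", "write_registry_run_key", "open_window"]
outside = violations(mask, observed)
```

A non-empty result would prompt reassessment of the object even though its signature was initially considered safe.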

[0064] The details of an object 4 that are passed to the base computer 3 may be in the form of a signature or "key" that uniquely identifies the object 4. This is mainly to keep the data storage and transmission requirements as minimal as possible. This key may be formed by a hashing function operating on the object at the remote computer 2.

[0065] The key in the exemplary embodiment is specially arranged to have at least three severable components, a first of said components representing executable instructions contained within or constituted by the object, a second of said components representing data about said object, and a third of said components representing the physical size of the object. The data about the object in the second component may be any or all of the other forms of identity such as the file's name, its physical and folder location on disk, its original file name, its creation and modification dates, resource information such as vendor, product and version, and any other information stored within the object, its file header or header held by the remote computer 2 about it; and, events initiated by or involving the object when the object is created, configured or runs on the respective remote computers. In general, the information provided in the key may include at least one of these elements or any two or more of these elements in any combination.

[0066] In one embodiment, a checksum is created for all executable files, such as (but not limited to) .exe and .dll files, which are of the type PE (Portable Executable file as defined by Microsoft). Three types of checksums are generated depending on the nature of the file:

[0067] Type 1: five different sections of the file are check summed. These include the import table, a section at the beginning and a section at the end of the code section, and a section at the beginning and a section at the end of the entire file. This type applies to the vast majority of files that are analysed;

[0068] Type 2: for old DOS or 16 bit executable files, the entire file is check summed;

[0069] Type 3: for files over a certain predefined size, the file is sampled into chunks which are then check summed. For files less than a certain predefined size, the whole file is check summed.
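
A minimal sketch of the Type 3 approach is given below, assuming MD5 as the hashing function and evenly spaced sampling. The size threshold `max_full`, the chunk size and the number of chunks are hypothetical; the disclosure does not specify them.

```python
import hashlib


def sampled_checksum(data: bytes, max_full: int = 1 << 20,
                     chunk: int = 4096, n_chunks: int = 16) -> str:
    """Hash small inputs whole; hash evenly spaced chunks of large inputs."""
    h = hashlib.md5()
    if len(data) <= max_full:
        # Below the threshold the whole file is check summed.
        h.update(data)
        return h.hexdigest()
    # Above the threshold only n_chunks samples are fed to the hash.
    stride = (len(data) - chunk) // (n_chunks - 1)
    for i in range(n_chunks):
        start = i * stride
        h.update(data[start:start + chunk])
    return h.hexdigest()
```

The trade-off in this sketch is that a change lying wholly between two sampled chunks does not alter the checksum.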

[0070] For the check summing process, in principle any technique is possible. MD5 (Message-Digest algorithm 5) is a widely-used cryptographic hash function that may be used for this purpose.

[0072] This allows a core checksum to be generated by viewing only the executable elements of the checksum and making a comparison between two executables that share common executable code.

[0073] For the type 1 checksum mentioned above, three signature processes may be used. The first defines the entire file and will change with almost any change to the file's content. In particular, the first defines a representative sampling of the contents of the program in that if any fundamental change is made to the program, the checksum will change, but trivial changes can be identified as such, allowing correlation back to the original program body. The second attempts to define only the processing instructions of the process, which changes much less. The third utilises the file's size, which massively reduces the potential of collisions for objects of differing sizes. By tracking the occurrences of all signatures individually appearing with different counterparts, it is possible to identify processes that have been changed or have been created from a common point but that have been edited to perform new, possibly malevolent functionality.
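
The three signature processes might be combined into a single severable key along the following lines. This is a sketch under stated assumptions: the executable code is taken to have been extracted already (parsing of the PE file is not shown), the content sample is simply the head and tail of the file, and the function names and the `-` separator are hypothetical.

```python
import hashlib


def make_key(file_bytes: bytes, code_bytes: bytes, sample: int = 4096) -> str:
    """Build a three-part key: content-sample hash, code hash, file size."""
    # Component 1: a representative sampling of the file's contents.
    head, tail = file_bytes[:sample], file_bytes[-sample:]
    content_part = hashlib.md5(head + tail).hexdigest()
    # Component 2: only the processing instructions.
    code_part = hashlib.md5(code_bytes).hexdigest()
    # Component 3: the file's size.
    size_part = str(len(file_bytes))
    return "-".join((content_part, code_part, size_part))


def split_key(key: str):
    """Recover the three severable components of a key."""
    content_part, code_part, size_part = key.split("-")
    return content_part, code_part, int(size_part)
```

Two files that share the same code component but differ in the other components would, in this sketch, be candidates for having been derived from a common point.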

[0074] As well as checksum data, in many embodiments metadata about the object is captured and sent to the base computer 3. Amongst other types, the types of metadata captured and sent to the base computer 3 might be:

[0075] "Events": these define the actions or behaviours of an object acting upon another object or some other entity. The event may include three principal components: the key of the object performing the act (the "Actor"), the act being performed (the "Event Type"), and the key of the object or identity of another entity upon which the act is being performed (the "Victim"). For example, the event data might capture the identity, e.g. the IP address or URL, of a network entity with which an object is communicating, another program acting on the object or being acted on by the object, a database or IP registry entry being written to by the object, etc. While simple, this structure allows a limitless series of behaviours and relationships to be defined. Examples of the three components of an event might be:

[0076] TABLE-US-00001

| Actor | Event Type | Victim |
|---|---|---|
| Object 1 | Creates Program | Object 2 |
| Object 1 | Sends data | IP Address 3 |
| Object 1 | Deletes Program | Object 4 |
| Object 1 | Executes | Object 2 |
| Object 2 | Creates registry key | Object 4 |

[0077] "Identities:" these define the attributes of an object. They include items such as the file's name, its physical location on the disk or in memory, its logical location on the disk within the file system (its path), the file's header details which include when the file was created, when it was last accessed, when it was last modified, the information stored as the vendor, the product it is part of and the version number of the file and its contents, its original file name, and its file size.

[0078] "Genesisactor": the key of an object that is not the direct Actor of an event but which is the ultimate parent of the event being performed. For example in the case of a software installation, this would be the key of the object that the user or system first executed and that initiated the software installation process, e.g. Setup.exe.

[0079] "Ancillary data": many events may require ancillary data, for example an event such as that used to record the creation of a registry run key. In this situation the "event" would identify the Actor object creating the registry run key, the event type itself (e.g. "regrunkey"), and the Victim or subject of the registry run key. The ancillary data in this case would define the run key entry itself; the Hive, Key name and Value.

[0080] "Event Checksums": because the event data can be quite large extending to several hundred bytes of information for a single event, its identities for the Actor and Victim and any ancillary data, the system allows for this data itself to be summarised by the Event Checksums. Two event checksums are used utilising a variety of algorithms, such as CRC and Adler. The checksums are of the core data for an event. This allows the remote computer 2 to send the checksums of the data to the central computer 3 which may already have the data relating to those checksums stored. In this case, it does not require further information from the remote computer 2. Only if the central computer 3 has never received the checksums will it request the associated data from the remote computer 2. This affords a considerable improvement in performance for both the remote and central computers 2,3 allowing much more effective scaling.
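
The exchange described above might be sketched as follows, using the CRC-32 and Adler-32 functions from Python's standard `zlib` module as the two checksums. The `Event` layout, the `|` field separator and the `CentralStore` class are hypothetical and serve only to illustrate the idea.

```python
import zlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    actor: str           # key of the object performing the act
    event_type: str      # e.g. "regrunkey"
    victim: str          # key or identity of the entity acted upon
    ancillary: str = ""  # e.g. the run key entry

    def core_bytes(self) -> bytes:
        fields = (self.actor, self.event_type, self.victim, self.ancillary)
        return "|".join(fields).encode("utf-8")

    def checksums(self):
        """Return the (CRC-32, Adler-32) pair summarising the core data."""
        data = self.core_bytes()
        return zlib.crc32(data), zlib.adler32(data)


class CentralStore:
    """Hypothetical central-side store keyed by the checksum pair."""

    def __init__(self):
        self._events = {}

    def needs_full_data(self, sums) -> bool:
        # Only request the event data if this pair has never been received.
        return sums not in self._events

    def store(self, event: Event) -> None:
        self._events[event.checksums()] = event
```

In this sketch the remote computer would first send only `event.checksums()` and transmit the full event solely when `needs_full_data` returns true.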

[0081] Thus, the metadata derived from the remote computers 2 can be used at the community database 7 to define the behaviour of a process across the community. As mentioned, the data may include at least one of the elements mentioned above (file size, location, etc.) or two or three or four or five or six or all seven (or more elements not specifically mentioned here). The data stored in the community database 7 provides an extensive corollary of an object's creation, configuration, execution, behaviour, identities and relationships to other objects or entities that either act upon it or are acted upon by it. This may be used accordingly to model, test and create new automated rules and heuristics for use in the community database 7 and as rules that may be added to those held and used in the local database of the remote computers 2 to identify and determine the response of the remote computers 2 to new or unknown processes and process activity.

[0082] Moreover, it is possible to monitor a process along with any optional sub-processes as a homogenous entity and then compare the activities of the top level process throughout the community and deduce that certain, potentially malevolent practices only occur when one or more specific sub-processes are also loaded. This allows effective monitoring (without unnecessary blocking) of programs, such as Internet Explorer or other browsers, whose functionality may be easily altered by downloadable optional code that users acquire from the Internet, which is of course the principal source of malevolent code today.

[0083] Distributed Architecture

[0084] The system as described so far is generally as described in our previous application US-A-2007/0016953. There now follows a description of new, advantageous schemes in operating such a system. It will be appreciated that in the description in relation to FIGS. 1 and 2 the central computer and the community database are presented for convenience as single entities. As will be apparent from the following discussion, the base computer 3 can be comprised of multiple computers and servers, etc. and the community database 7 can be made up of multiple databases and storage distributed around this central system.

[0085] FIG. 3 shows an example of an arrangement for the base computer 3. The remote computer 2 has an agent program 10, which generally includes the same functionality as the agent program described above in relation to FIGS. 1 and 2. In short, the agent program 10 monitors objects on that computer 2 and their behaviour and communicates with the base computer 3 to send details of new objects and behaviour found on the remote computer 2 and to receive determinations of whether the objects are safe or not. The agent program 10 optionally communicates with a local database 11, which holds a local copy of signatures relating to the objects found on the remote computer 2.

[0086] The agent program 10 can be comparatively small compared with other commercially available anti-malware software. The download size for the agent program 10 can be less than 1 MB and occupy about 3 MB of memory when running, using more memory only to temporarily hold an image of a file(s) being scanned. In comparison, other anti-malware packages will typically have a download of 50 MB and occupy 50 MB to 200 MB of computer memory whilst scanning files. Thus, the exemplary architecture can occupy less than 2% of system resources compared with other products.

[0087] This is achieved primarily by the exemplary agent program 10 being developed in a low-level language, having direct access to system resources such as the video display, disk, memory, the network(s), and without the incorporation of many standard code or dynamic linked libraries to perform these functions. Memory usage is optimised by storing data in the local database structure that provides the ability to refer to objects by a unique identifier rather than requiring full filenames or signatures. All unnecessary dynamic link libraries are unloaded from the process immediately as they are identified as no longer being used, and background threads are merged to reduce CPU usage. A small, efficient agent can be deployed more quickly and can be used alongside other programs, including other security programs, with less load on or impact on the computer's performance. This approach also has the advantage of having less surface area for attack by malware, making it inherently more secure.

[0088] The agent program 10 communicates with the base computer 3 over the Internet 1 via the Internet's Domain Name Resolution System (DNS) 50.

[0089] The base computer 3 has a first layer comprising one or more servers 61, which in this example are web servers. In the present disclosure, the web servers 61 are referred to as "FX servers" or "threat servers" and the first layer is referred to as the "FX layer". However, it will be appreciated that any type of suitable server may be used according to need. The remote computers 2 are allocated to one of the web servers 61 as explained in more detail below.

[0090] The FX layer 60 makes real time decisions as to whether or not an object is benign or malevolent based on the details of that object sent from the remote computer 2. Each web server 61 of the FX layer 60 is connected to a database 62 which contains entries for all of the objects known to the base computer 3 and a classification of whether the object is safe or unsafe. The database 62 also stores rules for deciding whether unknown objects are safe or unsafe based on the information received from the remote computer 2. The web server 61 first searches the database 62 for matching signatures to determine if the object is safe or unsafe or unknown. As described above, the signature can be a function of the hashes (checksum data) derived from the file itself, event data involving the object and/or metadata about the object in any combination. If the object is unknown, the web server 61 then determines if any of the rules classify the object as unsafe. The web server 61 then communicates back to the remote computer 2 whether the object is safe and can be allowed to run, unsafe and is to be prevented from running, or unknown in which case the object can be allowed to run or not according to user preference.
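
The decision sequence described above might be sketched as follows. The dictionary standing in for the database 62, the representation of rules as callables, and the function names are all assumptions made for the purpose of the example.

```python
def classify(signature, details, known, rules):
    """Return 'safe', 'unsafe' or 'unknown' for an object."""
    # Step 1: search the signature database for a known classification.
    verdict = known.get(signature)
    if verdict in ("safe", "unsafe"):
        return verdict
    # Step 2: unknown signature, so check whether any rule marks it unsafe.
    if any(rule(details) for rule in rules):
        return "unsafe"
    return "unknown"


def agent_action(verdict, allow_unknown):
    """Map the server's verdict to the action taken on the remote computer."""
    if verdict == "safe":
        return "run"
    if verdict == "unsafe":
        return "block"
    # Unknown objects run or not according to user preference.
    return "run" if allow_unknown else "block"
```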

[0091] Thus, the FX layer 60 reacts in real time to threats to determine whether or not malware should be allowed to run on a remote computer 2.

[0092] Sitting behind the FX layer 60 is a second layer (called the "ENZO layer" 70 herein). Information about objects received by the FX layer 60 from the remote computers 2 is sent to the ENZO layer 70 such that a master record is maintained at the ENZO layer 70 of all data received from all remote computers 2. This process is described in more detail below.

[0093] The ENZO layer 70 has one or more servers 74 (referred to as "central servers" or "ENZO servers" in this disclosure) connected to one or more "master" databases which aggregate all of the information received from all web servers 61 from all remote computers 2. The databases may comprise any combination of the following three databases and in one exemplary embodiment has all three.

[0095] 1) An object database 71, which has entries containing object signatures (e.g. their MD5 checksums) and including metadata received about objects, e.g. file name, file location, or any other metadata collected by the system as described above.

[0096] 2) A behaviour database 72 for capturing details of the behaviour of objects observed on a remote computer 2. This database 72 is populated by the event data sent from the remote computers 2 and allows a picture to be built up of the behaviour and relationships an object has across the community.

[0097] 3) A computer-object database 73 which associates remote computers 2 in the community with objects observed on those remote computers 2 by associating objects with identification codes sent from the remote computer 2 during object detail transmission. The computer may be identified by at least the following three identifiers sent from the remote computer: one which relates to the physical system, one which relates to the operating system instance and one which relates to the logged on user.

[0098] As will be appreciated, in a community having for example 10 million or more remote computers 2, each having on average hundreds to thousands of objects, each having a large number of behaviours and associations with other objects, the amount of data held in the databases 71,72,73 is enormous.

[0099] The ENZO servers 74 can query the databases 71,72,73 and are operable, either by automation or by input from a human analyst or both, in monitoring object behaviour across the community and in investigating objects and developing rules for spotting malware. These rules are fed back to the FX layer 60 and/or the agent program 10 running on remote computers 2 and, as discussed elsewhere in this document, are used by the web servers 61 or the agent program 10 in real time to stop malware from running on remote computers 2. The performance of those rules in accurately stopping malware on the remote computers 2 is fed back to the ENZO layer 70 and can be used to refine the rules. The techniques used are described further in the following disclosure.

[0100] In an exemplary embodiment, some or all of the servers 61,74 and databases 62,71,72,73 of the FX and ENZO layers 60,70 are implemented using cloud computing. Cloud computing is a means of providing location-independent computing, whereby shared servers provide resources, software, and data to computers and other devices on demand. Generally, cloud computing customers do not own the physical infrastructure, instead avoiding capital expenditure by renting usage from a third-party provider. Cloud computing users avoid capital expenditure on hardware, software, and services when they pay a provider only for what they use. New resources can quickly be put online. This provides a large degree of flexibility for the user.

[0101] An example of cloud computing is the Amazon Elastic Compute Cloud (EC2), which is a central part of the Amazon.com cloud computing platform, Amazon Web Services (AWS). Another example is the Windows Azure Platform, which is a Microsoft cloud platform that enables customers to deploy applications and data into the cloud. EC2 is used in the present example to provide cloud computing. Nonetheless, it will be appreciated that, in principle, any suitable cloud architecture could be used to implement the base computer 3. Alternatively conventional data centres could be used to implement the base computer 3, or a mixture of conventional data centres and cloud-based architecture could be used.

[0102] EC2 allows users to rent virtual computers on which to run their own computer applications. EC2 allows scalable deployment of applications by providing a web service through which a user can boot an Amazon Machine Image to create a virtual machine, which Amazon calls an "instance," containing any software desired.

[0103] A user can create, launch, and terminate server instances as needed, paying by the hour for active servers, allowing the provision of "elastic" computing. EC2 provides users with control over the geographical location of instances, which allows for latency optimization and high levels of redundancy. For example, to minimize downtime, a user can set up server instances in multiple zones which are insulated from each other for most causes of failure such that one backs up the other. In this way, the cloud provides complete control of a user's computing resources and its configuration, i.e. the operating system and software packages installed on the resource. Amazon EC2 allows the user to select a configuration of memory, CPU, instance storage, and the boot partition size that is optimal for the operating system and application. EC2 reduces the time required to obtain and boot new server instances to minutes, allowing the user to quickly scale capacity, both up and down, as their computing requirements change.

[0104] EC2 maintains a number of data centres in different geographical regions, including: US East Coast, US West Coast, EU (Ireland), and Asia Pacific (APAC) (Singapore). As shown by FIG. 4, in an exemplary embodiment, the servers and databases of the base computer 3 are implemented in a plurality of these geographical locations 80.

[0105] The important question therefore arises of how to allocate remote computers 2 to a particular instance of web server 61 in the FX layer 60. Important considerations in this decision are to minimise latency and to share the load evenly across the web servers. Ideally, the load on resources would be shared approximately equally across all instances of web servers in order to avoid web servers being under utilised and to minimise the number of web servers required. It is also desirable to implement a simple solution which does not add significantly to the speed or size of the agent program 10 on the remote computers, or place an overhead on the base computer 3.

[0106] 1) In an exemplary embodiment, the agent program 10 uses the geographical location of the remote computer 2 in allocating the remote computer 2 to a web server 61, i.e. based on its proximity to a geographical region 80 of the cloud. The location of the remote computer can be determined by any convenient means, such as examining the IP address of the remote computer 2 against a database of known location ranges, or performing a network route analysis to determine the number of "hops" required to reach the destination server. This functionality can be performed by the agent program 10 running on the remote computer 2 when it is first installed on the computer 2. And by allocating based on location, latency can be minimised.

[0107] 2) In an exemplary embodiment, the agent program 10 generates a random number based on the date and time of its installation seeded with unique identifiers of the remote computer to decide to which web server 61 it should be mapped. This provides a simple, pseudo random way of mapping remote computers 2 to web servers 61, which shares the load evenly between web servers 61 even if several thousand remote computers are being installed simultaneously.

[0108] 3) The DNS distribution layer then maps the URL to the physical data centre. The Domain Name System (DNS) is a hierarchical naming system built on a distributed database for computers, services, or any resource connected to the Internet or a private network. It associates various information with domain names assigned to each of the participating entities. The DNS distribution network 50 allows load balancing to plural servers 61 making up the FX layer 60.

[0109] Any combination of these techniques can be used by the agent program 10 to allocate a remote computer 2 to a web server 61. And all three may be used in combination.
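
The pseudo random mapping of item 2), combined with a region chosen as in item 1), might be sketched as follows. Note that this sketch substitutes a SHA-256 hash of the installation time and machine identifier for a seeded random number generator, which gives the same deterministic, evenly spread result. The host naming scheme and the `example.com` domain are hypothetical.

```python
import hashlib
from datetime import datetime


def pick_server_index(install_time: datetime, machine_id: str,
                      n_servers: int) -> int:
    """Derive a pseudo random server index from install time and machine id."""
    material = f"{install_time.isoformat()}|{machine_id}".encode("utf-8")
    digest = hashlib.sha256(material).digest()
    # Use the first eight bytes of the digest as an unsigned integer.
    return int.from_bytes(digest[:8], "big") % n_servers


def server_url(region: str, index: int) -> str:
    """Hypothetical naming scheme; DNS would map this to a data centre."""
    return f"https://fx{index}.{region}.example.com/"
```

Because the index depends on identifiers unique to each remote computer, many agents installed at the same instant would still be spread across the servers in this sketch.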

[0110] The agent program may also utilize a secondary algorithm to re-map the remote computer 2 to a different URL in case the primary URL is unavailable for any reason, e.g. if the servers go down. Also, in the event that malware has been able to disable the DNS mechanism then the agent program 10 can make direct contact with the FX servers 61 by referring directly to a series of direct IP addresses reserved for this purpose.
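
One possible ordering of these fallbacks is sketched below. The `is_reachable` callable stands in for whatever connectivity check the agent performs and, like the function name, is an assumption of the example.

```python
def choose_endpoint(primary, secondary, direct_ips, is_reachable):
    """Try the primary URL, then the re-mapped URL, then reserved direct IPs."""
    for candidate in (primary, secondary, *direct_ips):
        if is_reachable(candidate):
            return candidate
    # Nothing reachable: the caller decides whether to retry later.
    return None
```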

[0111] Optionally, the agent program 10 is arranged so that it can reassign the remote computer 2 to a different server 61 by receiving feedback/instruction from the server 61 the computer is already connected to. This can be used to add central control to the allocation of agent program 10 to servers 61, which adds more flexibility to the system.

[0112] Thus, this scheme allows a simple, agent managed way of dynamically load balancing between servers and providing resiliency, whilst dealing with geographical location. In known prior art arrangements, specialised hardware load balancers are employed in the network to divide load to other servers, or else agents are manually allocated to web servers 61 when the agent program 10 is installed.

[0113] Using a cloud-based distributed architecture for the base computer 3 presents challenges in trying to maintain integrity of data across the servers and in managing the update of data across the many servers. As will be clear from the foregoing, the web servers 61 in a typical implementation will be dealing with huge amounts of data. There will be large amounts of commonality in the data, which can be used for determining rules on what objects are malware. However, it is impractical to store all data on all web servers 61 in all regions. This presents a problem in determining whether or not the data is common in real time.

[0114] To address these issues, an exemplary embodiment adopts the following scheme. Each web server 61 in the FX layer 60 examines an incoming packet of data from a remote computer 2 about an object seen on that remote computer 2. The agent program 10 generates a checksum of the data packet and sends it to the web server 61. The web server 61 has a database 62 of objects that have previously been seen and which have already been sent to the ENZO server 74. The web server 61 checks its database 62 for the checksum of the incoming data. If the checksum is found, then the data has already been seen by the ENZO server 74. In this case, the web server 61 just increases the count associated with that checksum in its database of the number of times that data has been seen to assist in determining the popularity of a piece of software or the frequency of the occurrence of a specific event. This information can then be forwarded to the ENZO server 74. If the checksum is not found in the FX database 62, then the web server requests the full data packet from the remote computer and then forwards this to the ENZO server 74. The ENZO server 74 then stores this data in the appropriate databases 71,72,73. Thus, the ENZO layer 70 keeps a master list in the object database 71 of all data objects, their metadata and behaviour seen on all of the remote computers 2. This master list is propagated back to all of the web servers 61 in all of the geographical locations 80. Thus, all web servers 61 are updated with information about which objects have been seen by the community.
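
The checksum-first handling at the web server might be sketched as follows. The class and callback names are hypothetical, and an in-memory dictionary stands in for the database 62.

```python
class FxServer:
    """Hypothetical web server that forwards only previously unseen data."""

    def __init__(self, forward_to_enzo):
        self.seen = {}                       # checksum -> times seen
        self.forward_to_enzo = forward_to_enzo

    def on_checksum(self, checksum, fetch_full_packet):
        """Handle a checksum from an agent; fetch the packet only on a miss."""
        if checksum in self.seen:
            # Already known to the ENZO server: just bump the popularity count.
            self.seen[checksum] += 1
            return "counted"
        # Previously unseen: request the full packet and pass it on.
        packet = fetch_full_packet()
        self.seen[checksum] = 1
        self.forward_to_enzo(checksum, packet)
        return "forwarded"
```

In this sketch the full data packet crosses the network once per distinct checksum, however many remote computers report it.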

[0115] This scheme has been found to reduce workload and traffic in the network by a factor of about 50 compared with a conventional scheme where typically each piece of data on being received by a web server would immediately be propagated to all other servers in the system.

[0116] It is desirable that the data held in the master databases 71,72,73 in the ENZO layer 70 provide scalability, accessibility and resilience to the data for users. It will be appreciated that if just one live ENZO server 74 is used, then if that server becomes inaccessible, all live data is lost. For this reason, in many embodiments at least one ENZO server 74 resides in each region 80 of the cloud to provide redundancy. This means that the web servers 61 are not all directly linked to the same ENZO server 74, but rather the web servers 61 are only linked to the ENZO server 74 in their own region 80. This creates a need for an extensible method of providing data to multiple database servers.

[0117] FIG. 5 shows schematically a scheme of updating data from a web server 61 to an ENZO server 74. Each web server 61 has two temporary databases 63a, 63b linked to it. At an initial starting point, one database 63a is part full (active) and the other 63b is empty (inactive). As information about objects is received from a remote computer 2 by the web server 61, it posts the information into the active database 63a. This information will be checksum information received from the remote computers 2 about objects or events seen on the remote computer. If the checksum is previously unseen at the web server, the associated data about that object or event is posted to the database along with its checksum.

[0118] Once the active database 63a reaches a predetermined size or some other predetermined criterion is met, the contents of the active database 63a are put into long term storage 66 together with a time stamp of the point in time when this happens. While this is occurring, the inactive database 63b is made active and starts to fill with new data as it arrives, and the formerly active database 63a is cleared and made inactive. Again, when this database is full, then the process of time stamping and moving the contents of the database to long term storage is repeated and the databases are swapped over. In some embodiments, the FX layer 60 has an S3 sub-layer 65, which is responsible for managing input/output to the long term storage 66. S3 (Simple Storage Service) is an online storage web service offered by Amazon Web Services. S3 is an Amazon service for the storage of high volume data in a secure and non-volatile environment through a simple web services interface. S3 is used to store the history of the FX server data being sent from the FX servers to the ENZO servers. When a block of data is put into long term storage 66, the S3 layer 65 also forwards this data to the ENZO server 74 or servers so that the ENZO server can update its databases. All FX servers feed all ENZO servers via the S3 storage service.
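
The swapping of the two temporary databases might be sketched as follows, with Python lists standing in for the databases 63a, 63b. The `store_block` callback represents the write to long term storage and `clock` supplies the time stamp; both names, and the use of an item count as the swap criterion, are assumptions of the example.

```python
class DoubleBuffer:
    """Two temporary buffers: one fills while the other is flushed."""

    def __init__(self, max_items, store_block, clock):
        self.active, self.inactive = [], []
        self.max_items = max_items
        self.store_block = store_block   # e.g. writes to long term storage
        self.clock = clock               # returns the current time stamp

    def post(self, item):
        self.active.append(item)
        if len(self.active) >= self.max_items:
            self.swap()

    def swap(self):
        # The empty buffer becomes active so that new data can keep arriving.
        full, self.active = self.active, self.inactive
        # The full buffer is stored as one time-stamped block, then cleared.
        self.store_block(self.clock(), list(full))
        full.clear()
        self.inactive = full
```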

[0119] The databases 63a, 63b may be emptied into long term storage 66 for example every few minutes or even every few seconds. However, as will be appreciated, the actual time period used can be selected according to the load and size of the databases and may be chosen dynamically based on for example the load on the database or the size of the data. Thus, the S3 layer 65 holds all of the data received by all of the web servers 61 in the community. This has a number of advantages as illustrated by FIG. 6.

[0120] Firstly, this can be used to allow the master ENZO databases 71,72,73 to be rebuilt for example in the event that an ENZO server 74 develops a problem. Also, if the data held by an ENZO server 74 is corrupted somehow, for example by a problem in a software upgrade on the ENZO server 74, it is possible to roll back the databases at the ENZO server 74 to a point before the corruption occurred. By using the timestamps associated with the blocks of data in the S3 layer 65, the ENZO server 74 can request the data blocks in long term storage 66 at the S3 layer 65 to be resent to the ENZO server 74 so the server's databases 71,72,73 can be brought up to date.

[0121] Furthermore, using this scheme, the ENZO servers 74 in multiple regions 80 can create backup databases of the ENZO databases by receiving the data from the S3 layer. This can be done without affecting the live ENZO databases 74, so these can carry on functioning seamlessly.

[0122] The alternative would be to post to databases at a single ENZO server which is designated the master server and to propagate the data from the master server to other servers in the community. However, this in effect creates one "live" server and one or more backups. The live server therefore does most of the work, e.g. pre-processing data received from the agents, updating the databases and analysing the data for threats, whilst the backup servers are under utilised. Thus, the live server must be more powerful than the backup servers. This tends to be wasteful and inefficient of resources. In comparison, the exemplary embodiment shares the workload around the servers equally.

[0123] It is also desirable for operators, human malware analysts, etc., to have a copy of the data from a live server for research, etc. Image servers may be created in the same way as the live servers and the backup servers. The user of the system can take the image server offline when he needs to take an image for offline use. Once the image is taken, the image database can be brought up to date using the blocks of data from the S3 layer.

[0124] Cloud computing can recreate instances of web servers very quickly, e.g., in a matter of minutes. Cloud computing is therefore advantageous in implementations where the volume of transactions grows (or shrinks). In this situation, a new server instance can be quickly created to handle the new load. However, it is necessary to take an image of a live server to create the image. This is disruptive to the processes running on that instance during imaging. It is therefore clearly not acceptable to take a live server out of commission for this period. Therefore, in many embodiments, a special dedicated image server is maintained behind the ENZO server, which is updated in a similar way to the live and backup servers. In this case, the image server is taken off-line and imaged. This process might take for example 30 minutes. During this time, the image server is not receiving updates from the S3 server and therefore becomes out of date. However, since the exact time when the server went offline is known, it can be updated by requesting data blocks from the S3 server with a timestamp later than when it went offline. Thus, an image can be taken of an updated server for offline use etc. and brought back up to date without affecting the running of the system.
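
Bringing a server back up to date from the time-stamped blocks might be sketched as follows. The representation of stored blocks as `(timestamp, items)` pairs and the `apply_block` callback are assumptions of the example.

```python
def replay_since(blocks, offline_at, apply_block):
    """Apply, in time order, every stored block stamped later than offline_at.

    Returns the time stamp of the last block applied, or offline_at if none.
    """
    latest = offline_at
    for ts, items in sorted(blocks, key=lambda b: b[0]):
        if ts > offline_at:
            apply_block(items)
            latest = ts
    return latest
```

The same routine would serve in this sketch for rebuilding a database from scratch, by passing a time stamp earlier than the first stored block.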

[0125] It is also desirable to be able to scale out the architecture of the ENZO layer 70. For example, a live server 74 may be running out of processing power due to the increase in traffic it is seeing from web servers 61 or agents 10. For example, more remote computers 2 may be connected to the system, or a common software package may be updated leading to a large volume of new data being sent to the ENZO layer 70. In this situation, a second or further live server instance can be created in the ENZO layer 70 in order to scale out horizontally (as opposed to adding more CPU/RAM to an existing server). As described above, it is relatively straightforward to create an image of an ENZO server 74 in a cloud-computing environment. As can be seen from FIG. 7, the data on each server 74 is divided into a plurality of sections. In this case the data on the server 74 is divided into eight sections 0-7. Each section does a share of the processing on that server. When the server is divided, the sections are allocated among the resulting servers. So, for example, in the example of FIG. 7, the original server L1 processes data 0-3, and the newly created server L2 processes data 4-7. Each server then handles pre-processing and storing its own data. For the purposes of analysing the data, in many embodiments the data from these multiple servers is aggregated so that the human analyst is working on the complete set of data when investigating malware (as described in more detail below). Of course, the data can be divided in different ways among the servers according to need.
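By way of illustration only, the allocation of the eight sections to servers can be sketched as follows. How an object is mapped to a section is not specified above; mapping by a hash of an object key is an assumption made purely for this sketch, and the function names are hypothetical.

```python
import hashlib

NUM_SECTIONS = 8  # the eight sections 0-7 described above


def section_for(object_key):
    """Map an object key to a section (hash-based mapping is an assumption)."""
    digest = hashlib.sha256(object_key.encode("utf-8")).digest()
    return digest[0] % NUM_SECTIONS


def allocate_sections(servers):
    """Give each server a contiguous run of sections, as evenly as possible."""
    base, extra = divmod(NUM_SECTIONS, len(servers))
    allocation, start = {}, 0
    for index, name in enumerate(servers):
        count = base + (1 if index < extra else 0)
        allocation[name] = list(range(start, start + count))
        start += count
    return allocation


def server_for(object_key, allocation):
    """Find the server currently responsible for an object's section."""
    section = section_for(object_key)
    for name, sections in allocation.items():
        if section in sections:
            return name
    raise LookupError(f"section {section} is not allocated")


before = allocate_sections(["L1"])        # one server holds sections 0-7
after = allocate_sections(["L1", "L2"])   # split as in the FIG. 7 example
print(after)  # {'L1': [0, 1, 2, 3], 'L2': [4, 5, 6, 7]}
```

Since the section of an object does not change when a server is added, only the mapping from sections to servers needs to be updated on a split.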

[0126] So, instead of holding a single database and then having to dissect it when the load must be distributed across multiple servers, in many embodiments the database is already dissected, making it much easier to transition to further distribution of the database workload across multiple servers.

[0127] The number of data sections may be selected to be eight, because this is enough to give reasonable flexibility and ability to expand: it is rare that a business needs to expand by more than 800% at any time. Nonetheless, other plural numbers of data sections can be used as appropriate.

[0128] This architecture allows vast amounts of data to be managed across multiple locations and servers. The architecture can be scaled and servers copied without incurring extra work or affecting the running of the system. The data can be exchanged across servers that can change dynamically.

[0129] Using Trend Data

[0130] Malware evolves at a significant pace. Historically, malware used a single infection (module or program) to infect many computers. As this allowed malware researchers to quickly become aware of the infection, signatures could be quickly deployed to identify and block the threat. In the last decade, malware authors have made their threats contain many varied modules, which share a common purpose. Detecting and blocking these new threats is a much greater challenge for malware research, as is creating and distributing signatures to identify and block all of the variations, any one of which could infect one or more computers. Due to the varied and distributed nature of these modern threats, malware researchers are often late in identifying that a threat even exists.

[0131] By collecting hashes, signatures and statistics of new programs and modules correlated by path and file details, and aggregating this information at a central location, it is possible to use a method of analysis that will allow faster identification of new threats.

[0132] On any given day the number of new commercial programs and modules that are created can be considered almost constant. This data can be measured against any of the metadata or event data collected about the object. In one example, the data is measured against the Path (Folder) location of new files created. The data can also be measured against their filenames and file types (e.g. .exe, .dll, .drv, etc.) along with details such as vendor, product and version information, registry keys, or any other system-derived data forwarded to the base computer. Any combination of these factors is possible. In whichever way it is chosen to group the data, by establishing a baseline for the pattern and distribution of this data across the groups it is possible to quickly identify outliers.

[0133] FIG. 8 shows an example of collected data over a time period grouped according to folders in the file system compared with the established normal distribution (baseline). If the number of new programs in the Windows System32 subfolder is normally x in any given time period t (e.g., in a given day) and in a particular time period t1 under consideration it is 1.4x (or exceeds some other predetermined ratio), then this indicates an abnormal increase in the number of new files, which signifies possible malware activity. This identification allows greater focus to be placed on researching these outliers. Processes can be readily developed that use this outlier information to automatically group, identify or prioritise research effort and even make malware determinations. Where automated rules are used to identify malware, this information can be used to automatically heighten the sensitivity of these rules when applied to objects in the group that has been identified as an outlier.
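By way of illustration only, the comparison of observed counts against the baseline can be sketched as follows. The folder names and counts are invented for the example, the function name find_outliers is hypothetical, and the treatment of groups that have no baseline is an assumption not addressed above.

```python
def find_outliers(baseline, observed, ratio=1.4):
    """Return groups whose observed count reaches `ratio` times the baseline.

    baseline and observed map a group (here, a folder) to a count of new
    objects seen in one time period. The result maps each outlier group to
    the observed/expected ratio.
    """
    outliers = {}
    for group, count in observed.items():
        expected = baseline.get(group, 0)
        if expected == 0:
            # Assumption: a group with no baseline is flagged if anything
            # new appears in it at all.
            if count > 0:
                outliers[group] = float("inf")
        elif count / expected >= ratio:
            outliers[group] = count / expected
    return outliers


# Invented figures: x = 100 new programs per day is normal for system32.
baseline = {"windows/system32": 100, "program files": 400}
observed = {"windows/system32": 150, "program files": 410}

print(find_outliers(baseline, observed))  # {'windows/system32': 1.5}
```

The same function applies unchanged if the data is grouped by filename, file type, vendor or any other attribute, since only the keys of the two mappings change.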

[0134] Another example of this technique is to focus on a filename and compare the number of new objects (as represented by their hashes) that utilise that name, or the number of new objects all of which have new names. A high number of new objects sharing a name is contrary to the general principles of most commercial software, which needs consistency to enable manageability of its products. Most commercial applications have only a few names and each file name has only a few variants as each new release is made available. In contrast, some malware has thousands of variants using the same, similar or similarly structured names, which are observed by the community within a very short window of time (e.g., seconds to weeks).

[0135] In principle, any length of time for the window can be selected. In practice, this is likely to be of the order of every 15 minutes, or every few hours, or a day, rather than longer periods like every week.
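By way of illustration only, counting the distinct new objects that use each filename within a window can be sketched as follows. The tuple layout of a sighting, the case-insensitive comparison of names and the function name variants_per_name are assumptions made for the sketch.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def variants_per_name(sightings, window_start, window):
    """Count distinct hashes seen under each filename inside the window.

    Each sighting is a (seen_at, filename, object_hash) tuple. Names are
    compared case-insensitively, which is an assumption of this sketch.
    """
    window_end = window_start + window
    hashes = defaultdict(set)
    for seen_at, name, digest in sightings:
        if window_start <= seen_at < window_end:
            hashes[name.lower()].add(digest)
    return {name: len(found) for name, found in hashes.items()}


t0 = datetime(2020, 1, 1, 0, 0)
sightings = [
    (t0 + timedelta(minutes=1), "update.exe", "h1"),
    (t0 + timedelta(minutes=2), "update.exe", "h2"),
    (t0 + timedelta(minutes=3), "Update.exe", "h3"),
    (t0 + timedelta(minutes=5), "notepad.exe", "h9"),
    (t0 + timedelta(minutes=5), "notepad.exe", "h9"),   # same object seen twice
    (t0 + timedelta(minutes=20), "update.exe", "h4"),   # outside the window
]

counts = variants_per_name(sightings, t0, timedelta(minutes=15))
print(counts)  # {'update.exe': 3, 'notepad.exe': 1}
```

A name with many distinct hashes in a short window is then a candidate for comparison against the baseline in the manner described above.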

[0136] In some embodiments, techniques are provided to give researchers context. In detecting malware, human analysts and computer automated analysis have different strengths and are often used together to some degree to harness the capabilities of both. Computers are good at applying rules to and processing large amounts of data. In the present application, the amount of data received from agent program 10 running on remote computers 2 is vast and therefore suited to being processed by computer. It would be impractical to have human operators look at all of this data to determine malware. On the other hand, experienced human analysts are highly adept at spotting malware given appropriately focussed data about an object, similar objects and their context. Often a human analyst can "intuitively" spot malware, in a way that is difficult to reduce to a set of rules and teach to a machine. For example, kernel32.dll is a file associated with the Windows (RTM) operating system. A malware program may be called something similar, e.g. kernel64.dll, which would immediately be apparent to a user as being suspicious, but which it would be difficult to teach a machine to spot.

[0137] The pattern may be obvious in retrospect when an analyst is presented with the relevant data. However, this is a rear-view-mirror approach. The problem is finding the relevant data in the vast amount of data being received at the base computer 3 so that the analyst can focus his/her time on the important data. As illustrated by FIG. 9, what is needed is some way of distilling the raw data 100 into groups of related data 101 to provide a focussed starting point for a malware researcher to investigate and take necessary action 102 to flag objects as bad or safe 103 accordingly.

[0138] As will be appreciated, a single object on a remote computer 2 across the community can have a high degree of associations with other objects on remote computers 2. Some tools currently exist which can map links between entities to create a network of associations. However, when used for a malware object and its associations with other objects, these will result in a diagram that is so dense and complicated that it is practically useless for the purpose of allowing a human analyst to identify malware. The problem is sifting this data so useful features are extracted for the analyst so that he can quickly focus on the likely candidates for being malware.

[0139] An exemplary embodiment, illustrated by FIGS. 10 to 13, provides a software program running on the ENZO server 74 and giving the user a visual tool for running queries against the databases. A query language such as T-SQL may be used, for example, to query the database based on the user's input. As mentioned in the foregoing, the ENZO layer 70 has a complete record of the properties and activities of all objects seen on all remote computers 2 in its databases. FIG. 10 shows an example of an on-screen display and user interface including a table 105 in which the rows represent the objects (determined by their signatures) that match the results of the current query. The user interface provides tools for creating new queries 106, for displaying current and recent queries 107 and for sorting the data displayed in the table for the current query 108. Clearly there may be more results than will fit on one screen. There may therefore be more than one page of results, between which the user can navigate through appropriate controls 109 provided by the user interface.

[0140] The table 105 has a plurality of columns of data representing information about the objects. The information may include any of the data collected about an object, its metadata or its behaviour, sent to the base computer 3 by the remote computers 2. This may include any of the following for example: the name of the file, and the location (path) of the file, the country where the object was first encountered, the identity of the remote computer that encountered the object, its operating system and version number, details of the web browser and any other security products installed on the computer, the creator of the file, the icon associated with the file, etc. The information may include metadata about the file, such as the author or the size of the object or information relating to executable instructions in the object. The data may contain "actor" and "victim" information and details of its registry key. The information may include information about whether or not the object has been identified as safe or unsafe at the remote computer 2 or at the FX server 61.

[0141] The information displayed may contain counts of how often a particular attribute is present among all the objects returned by the query or across the community. In addition, the information may contain less easily understood information. As discussed above, the information collected about an object can include checksums of parts of the executable, e.g. the first 500 bytes of the executable part of the file. In many cases, the actual value of the checksum or other data is of little or no interest to the user.

[0142] Therefore, in many implementations some or all of this information is presented to the user in a graphical way, utilising colour, shape and/or different symbols, which makes spotting patterns in the data more intuitive and speedy for the user.

[0143] In some embodiments, at least one column of data is presented according to the following scheme:

[0144] 1) if the value is unique in that column between the rows of data representing objects then the numeral "1" is presented.

[0145] 2) if the value is replicated between the rows of data on the screen, or between all the rows of data matching that query, then a coloured symbol (e.g. a green triangle or a red letter "P" in a blue square, etc.) is allocated for that value and displayed in that column for all rows that share that value. Thus, without needing to know what the symbols represent, the user can see at a glance which rows on the screen have commonality of an attribute.
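By way of illustration only, the two-rule presentation scheme above can be sketched as follows. The symbol identifiers ("S0", "S1", etc.) are placeholders for whatever coloured symbols the user interface draws, and the function name render_column is hypothetical.

```python
from collections import Counter


def render_column(values):
    """Render one column of the results table under the scheme above.

    A value that occurs once among the rows is shown as "1". A value shared
    by several rows is shown as a symbol identifier that is the same for
    every row holding that value.
    """
    counts = Counter(values)
    assigned = {}   # shared value -> symbol identifier
    rendered = []
    for value in values:
        if counts[value] == 1:
            rendered.append("1")
        else:
            if value not in assigned:
                assigned[value] = f"S{len(assigned)}"
            rendered.append(assigned[value])
    return rendered


# Invented checksum values for five rows of a query result.
checksums = ["a1f3", "77c0", "a1f3", "9e2b", "77c0"]
print(render_column(checksums))  # ['S0', 'S1', 'S0', '1', 'S1']
```

The underlying checksum values never reach the display; only the fact that rows share a value is conveyed, which is the property the user relies on.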

[0146] This provides a starting point for running further queries. For example, the user can quickly see any commonality between objects that looks interesting. As explained elsewhere in this document, a typical trait of malware is that the objects use a large number of file names, file locations, similar file sizes, similar code portions, etc. The program allows a user to refine a query by, for example, clicking on columns in the table and using the query creating tools 106 to add a particular attribute value to the predicate of the query, or to start a new query with this column value in the predicate, etc.