Omnidirectional Obstacle Avoidance Method For Vehicles

LEE; TSUNG-HAN ; et al.

U.S. patent application number 16/662342 was filed with the patent office on 2019-10-24 and published on 2020-06-04 for omnidirectional obstacle avoidance method for vehicles. The applicant listed for this patent is METAL INDUSTRIES RESEARCH & DEVELOPMENT CENTRE. Invention is credited to TSU-KUN CHANG, SHIH-CHUN HSU, JINN-FENG JIANG, TSUNG-HAN LEE, HUNG-YUAN WEI.

| Application Number | 20200175288 16/662342 |

| Document ID | / |

| Family ID | 70767137 |

| Filed Date | 2019-10-24 |


| United States Patent Application | 20200175288 |

| Kind Code | A1 |

| LEE; TSUNG-HAN ; et al. | June 4, 2020 |

OMNIDIRECTIONAL OBSTACLE AVOIDANCE METHOD FOR VEHICLES

Abstract

A method for avoiding obstacles surrounding a mobile vehicle is disclosed. According to the method, environment information is constructed more accurately when images are merged after depth information has been obtained. The mobile vehicle is equipped with an omnidirectional depth sensing module comprising a plurality of depth sensors, which captures depth information of the environment surrounding the mobile vehicle and identifies obstacles on the road from that depth information. The smoothness of the road is also scanned to identify static obstacles and moving obstacles. Thereby, a control circuit controls the mobile vehicle to reduce speed or steer around the danger.

| Inventors: | LEE; TSUNG-HAN; (KAOHSIUNG CITY, TW) ; WEI; HUNG-YUAN; (KAOHSIUNG CITY, TW) ; JIANG; JINN-FENG; (KAOHSIUNG CITY, TW) ; HSU; SHIH-CHUN; (KAOHSIUNG CITY, TW) ; CHANG; TSU-KUN; (KAOHSIUNG CITY, TW) |

| Applicant: | METAL INDUSTRIES RESEARCH & DEVELOPMENT CENTRE |

| Family ID: | 70767137 |

| Appl. No.: | 16/662342 |

| Filed: | October 24, 2019 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | B60Q 9/008 20130101; G06K 9/00805 20130101; G08G 1/16 20130101; B60W 50/14 20130101; B60W 2420/42 20130101 |

| International Class: | G06K 9/00 20060101 G06K009/00; G08G 1/16 20060101 G08G001/16; B60Q 9/00 20060101 B60Q009/00; B60W 50/14 20060101 B60W050/14 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| Nov 29, 2018 | TW | 107142647 |

Claims

1. An omnidirectional obstacle avoidance method for vehicles, comprising steps of capturing a plurality of peripheral images using a plurality of depth camera units of a vehicle and acquiring a plurality pieces of peripheral depth information according to a sensing algorithm, said plurality of peripheral images including one or more obstacle, and said one or more obstacle located on one side of said vehicle; fusing said plurality of peripheral images to a plurality of omnidirectional environment images according to a fusion algorithm and dividing said side of said vehicle into a plurality of detection regions; operating said plurality pieces of peripheral depth information and said plurality of omnidirectional environment images according to said plurality of detection regions to give acceleration vector information and distance information; estimating a moving path of said one or more obstacle according to said acceleration vector information and said distance information of said one or more obstacle; and producing alarm information according to said moving path of said one or more obstacle.

2. The method of claim 1, wherein in said step of capturing a plurality of peripheral images using a plurality of depth camera units of a vehicle and acquiring a plurality pieces of peripheral depth information according to a sensing algorithm, said plurality pieces of peripheral depth information is deduced according to the sensing result of said plurality of depth camera units and said sensing algorithm.

3. The method of claim 1, wherein fusing said plurality of peripheral images to a plurality of omnidirectional environment images according to a fusion algorithm and dividing said side of said vehicle into a plurality of detection regions, comprises eliminating a plurality of overlap regions of said plurality of peripheral images according to said fusion algorithm and fusing to said omnidirectional environment images.

4. The method of claim 1, wherein operating said plurality pieces of peripheral depth information and said plurality of omnidirectional environment images according to said plurality of detection regions, comprises using the image difference method to calculate according to said plurality pieces of peripheral depth information and said plurality of omnidirectional environment images for giving a plurality of optical flow vectors, and further giving said acceleration vector information and said distance information according to said plurality of optical flow vectors.

5. The method of claim 1, wherein estimating a moving path of said one or more obstacle according to said acceleration vector information and said distance information of said one or more obstacle, further comprises estimating a moving speed and a moving distance of said one or more obstacle.

6. The method of claim 1, wherein producing alarm information according to said moving path of said one or more obstacle, further comprises controlling said vehicle to avoid said one or more obstacle.

7. The method of claim 1, wherein before said step of producing alarm information according to said moving path of said one or more obstacle, further comprises classifying and labelling said one or more obstacle.

8. The method of claim 1, wherein said alarm information includes obstacle alarm information and avoidance information.

Description

FIELD OF THE INVENTION

[0001] The present invention relates generally to an obstacle avoidance method, and particularly to an omnidirectional obstacle avoidance method for vehicles.

BACKGROUND OF THE INVENTION

[0002] Installing image capture devices on a vehicle is by now a mature technology. One example is a 3D automobile panorama image system that uses distance parameters to calibrate image correctness, which installs capture units, sensing units, and a processing unit on a vehicle. The capture units capture and transmit a plurality of omnidirectional aerial images surrounding the automobile. The sensing units sense the distances between the automobile and one or more surrounding objects, and generate and transmit a plurality of distance signals. The processing unit includes a calibration module, a stitch module, and an operational module, and receives the aerial images transmitted by the capture units and the distance signals transmitted by the sensing units. The calibration module calibrates the coordinate system of the aerial images according to the distance signals. The operational module then uses algorithms as well as insertion and patch techniques to give 3D automobile panorama images. Nonetheless, since ultrasonic sensors cannot obtain complete distance information unless the object is close, and their sensing accuracy is low, they are not applicable to obstacle detection for moving automobiles.

[0003] To improve the sensing accuracy, the prior art provides a camera lens set formed by multiple camera lenses. The camera lenses are disposed at different locations surrounding an automobile for taking and outputting multiple external images. A three-dimensional image processing module receives the images, synthesizes three-dimensional panorama projection images from them, captures partial three-dimensional panorama projection images according to the driver's field of vision corresponding to the driver's viewing angle, and outputs partial three-dimensional external images. Unfortunately, the images at other angles cannot be observed concurrently during movement. Besides, the distance information of the obstacles surrounding a vehicle cannot be acquired in real time.

[0004] Although improvements have since been made in detecting and monitoring the obstacles surrounding a vehicle, objects other than vehicles might also influence the movement of a vehicle. For example, pedestrians, animals, and moving objects can be regarded as obstacles for a vehicle. They might cause emergency conditions for a moving vehicle. This influence is most serious in the crowded streets of a city.

[0005] To solve the above problem, the present invention provides an omnidirectional obstacle avoidance method for vehicles. By acquiring omnidirectional images and omnidirectional depth information, the obstacle information surrounding a vehicle can be given. Then the vehicle is controlled to avoid the obstacles with higher risks, thus achieving the purpose of omnidirectional obstacle avoidance.

SUMMARY

[0006] An objective of the present invention is to provide an omnidirectional obstacle avoidance method for vehicles, which provides a fused omnidirectional image along with depth information for detecting omnidirectional obstacles and alarming for avoidance.

[0007] Another objective of the present invention is to provide an omnidirectional obstacle avoidance method for vehicles, which provides an alarm for omnidirectional obstacle avoidance and further controls a vehicle to avoid obstacles.

[0008] The present invention discloses an omnidirectional obstacle avoidance method for vehicles, which comprises steps of: capturing a plurality of peripheral images using a plurality of depth camera units of a vehicle and acquiring a plurality pieces of peripheral depth information according to a sensing algorithm; fusing the plurality of peripheral images to a plurality of omnidirectional environment images according to a fusion algorithm and dividing one side of the vehicle into a plurality of detection regions; operating the plurality pieces of peripheral depth information and the plurality of omnidirectional environment images according to the plurality of detection regions to give acceleration vector information and distance information of one or more obstacle; estimating a moving path of the one or more obstacle according to the acceleration vector information and the distance information of the one or more obstacle; and producing alarm information according to the moving path of the one or more obstacle. Thereby, the present invention can provide avoidance alarms for obstacles in the omnidirectional environment for a vehicle, so that the driver of the vehicle can prevent danger caused by obstacles in the environment.

[0009] According to an embodiment of the present invention, in the step of using a plurality of depth camera units of a vehicle and acquiring a plurality pieces of peripheral depth information according to a sensing algorithm, the plurality pieces of peripheral three-dimensional depth information are given according to the sensing result of the plurality of depth camera units and the sensing algorithm.

[0010] According to an embodiment of the present invention, in the step of fusing the plurality of peripheral images to a plurality of omnidirectional environment images, a plurality of overlap regions of the plurality of peripheral images are eliminated according to a fusion algorithm and the peripheral images are fused into the plurality of omnidirectional environment images.

[0011] According to an embodiment of the present invention, the fusion algorithm further detects the edges of the plurality of peripheral images according to a plurality of detection ranges of the image capture module for giving and eliminating the plurality of overlap regions.

[0012] According to an embodiment of the present invention, in the step of estimating a moving path of the one or more obstacle according to the acceleration vector information and the distance information of the one or more obstacle, a moving speed and a moving distance of the one or more obstacle are further estimated.

[0013] According to an embodiment of the present invention, in the step of estimating a moving path of the one or more obstacle according to the acceleration vector information and the distance information of the one or more obstacle, a plurality of optical flow vectors are calculated using the image difference method, and the acceleration vector information, the distance information, the moving speed, and the moving distance of the one or more obstacle are estimated according to the plurality of optical flow vectors.

[0014] According to an embodiment of the present invention, in the step of producing alarm information according to the moving path of the one or more obstacle, the vehicle is further controlled to avoid the one or more obstacle.

[0015] According to an embodiment of the present invention, before the step of producing alarm information according to the moving path of the one or more obstacle, the one or more obstacle is further classified and labeled.

[0016] According to an embodiment of the present invention, the alarm information includes obstacle alarm information and avoidance information.

BRIEF DESCRIPTION OF THE DRAWINGS

[0017] FIG. 1 shows a flowchart according to an embodiment of the present invention;

[0018] FIG. 2A shows a schematic diagram of the depth sensing module according to an embodiment of the present invention;

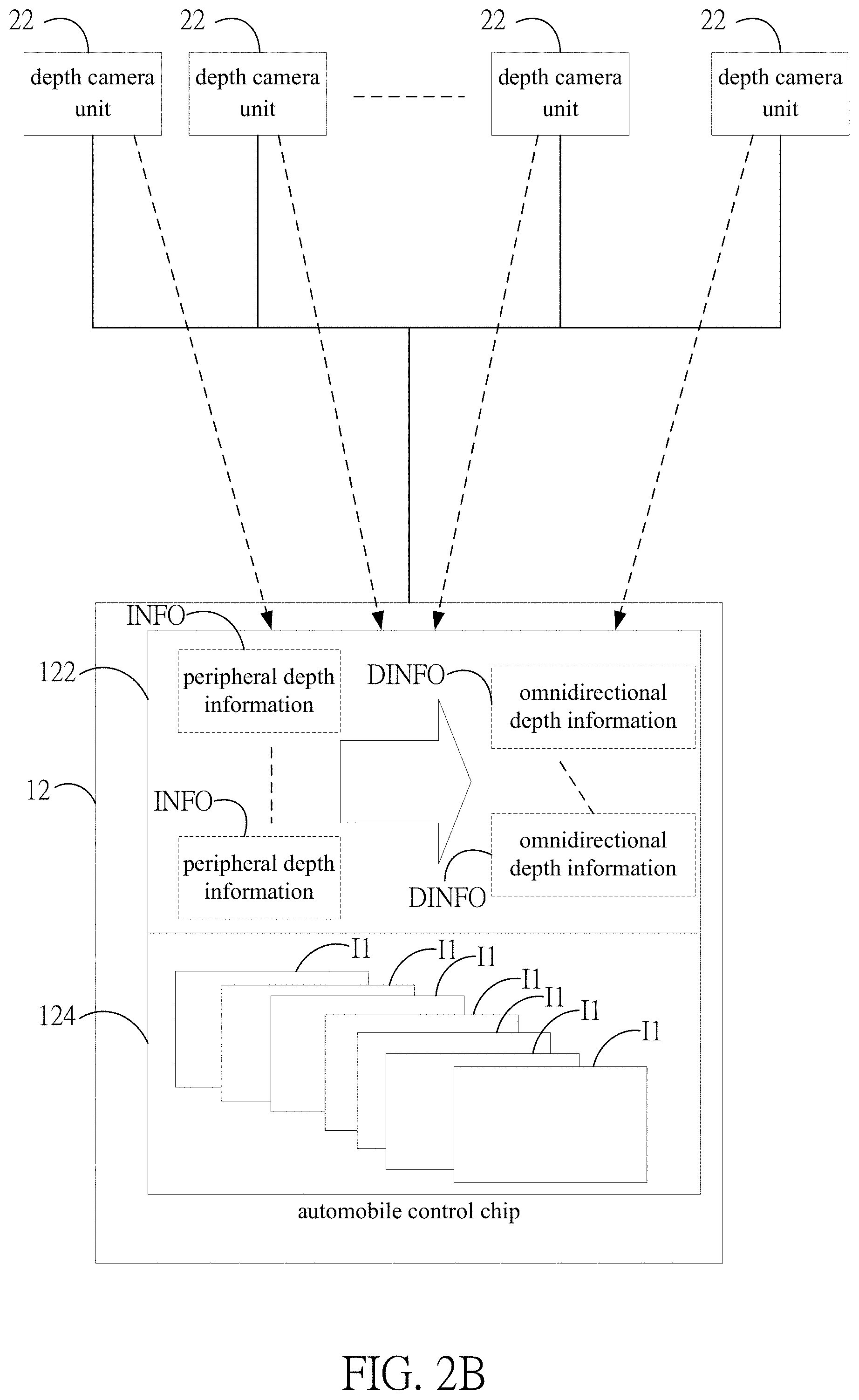

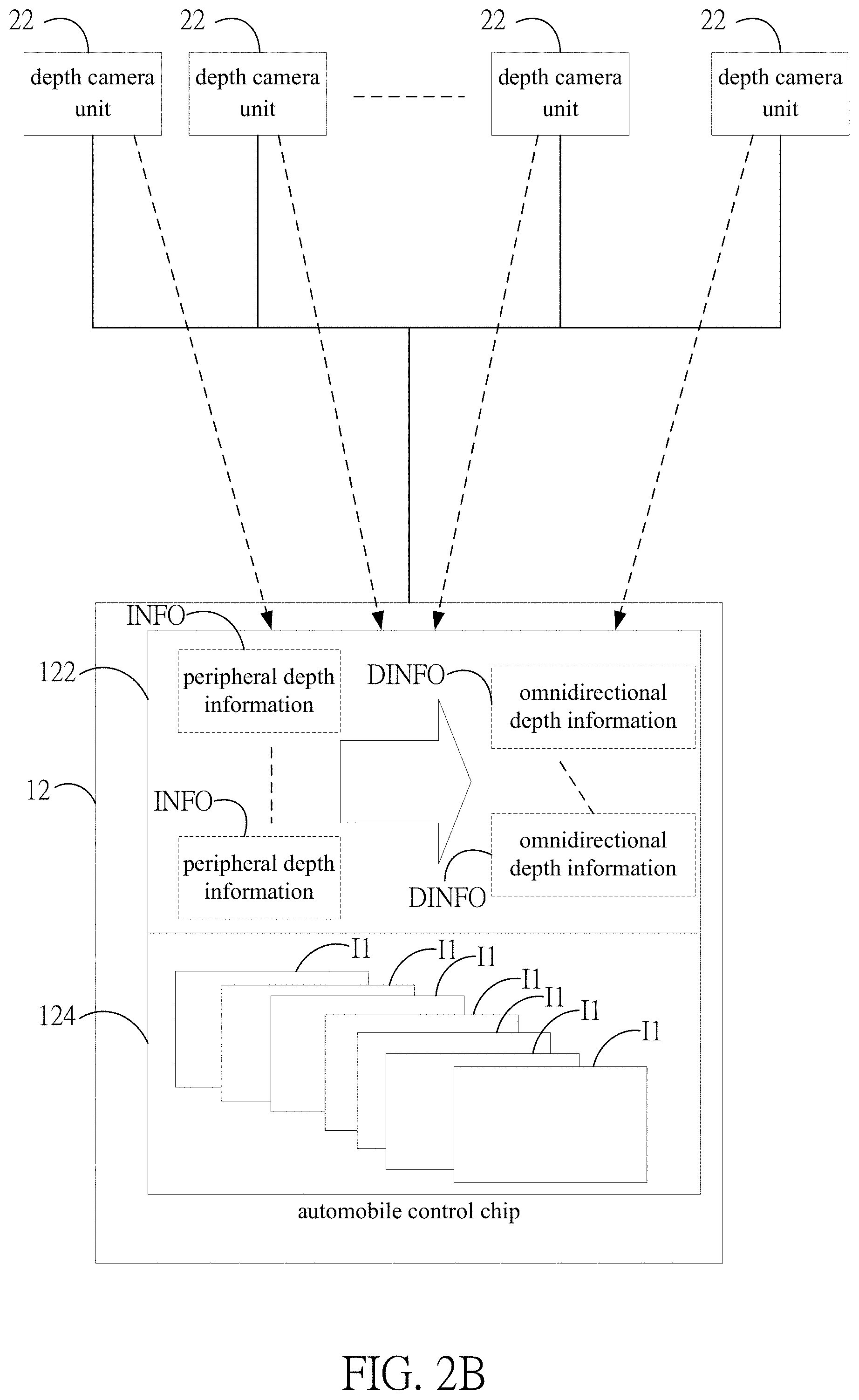

[0019] FIG. 2B shows a schematic diagram of integrating depth information according to an embodiment of the present invention;

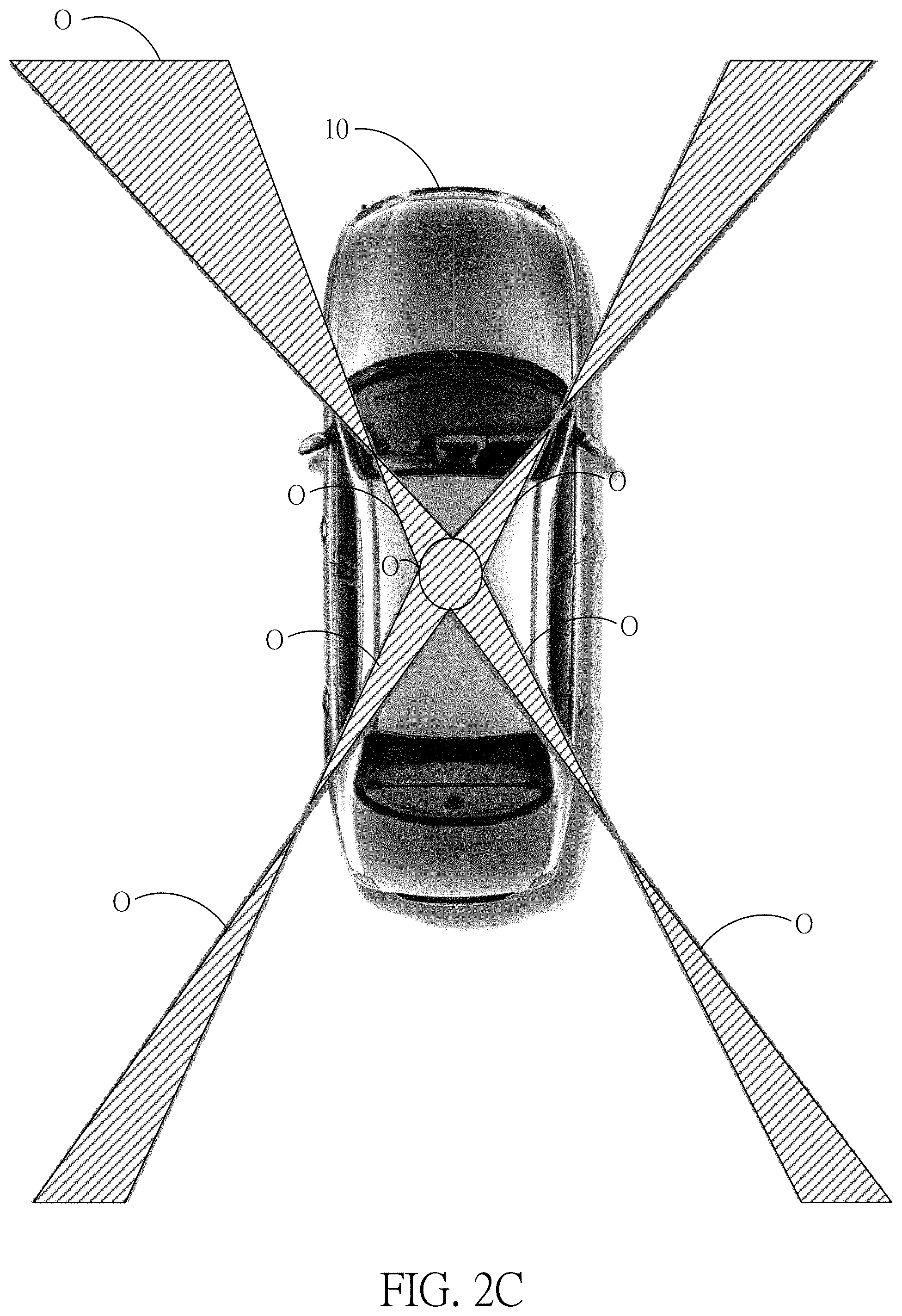

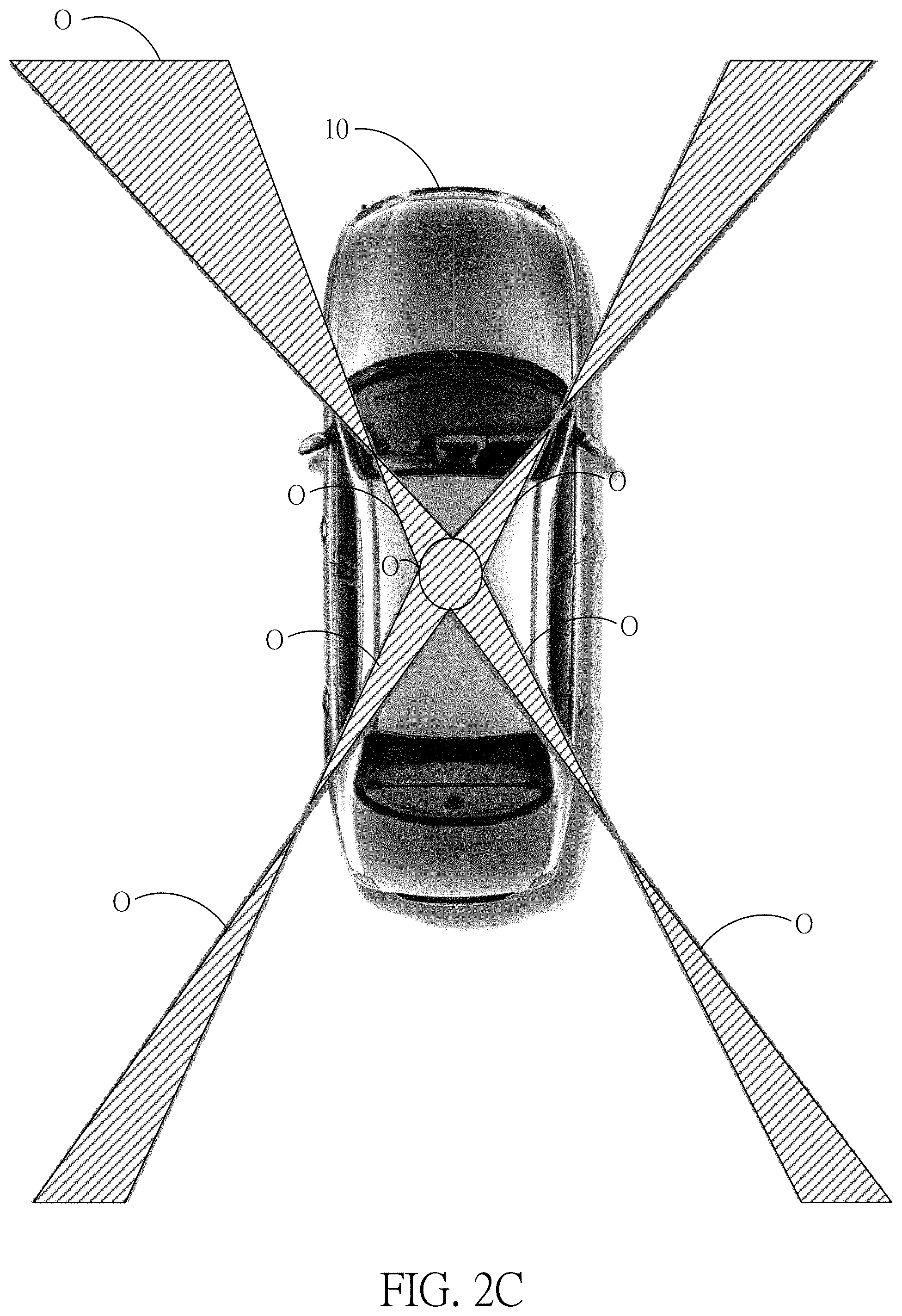

[0020] FIG. 2C shows a schematic diagram of the overlap regions according to an embodiment of the present invention;

[0021] FIG. 2D shows a schematic diagram of image fusion according to an embodiment of the present invention;

[0022] FIG. 2E shows a schematic diagram of detecting obstacle according to an embodiment of the present invention; and

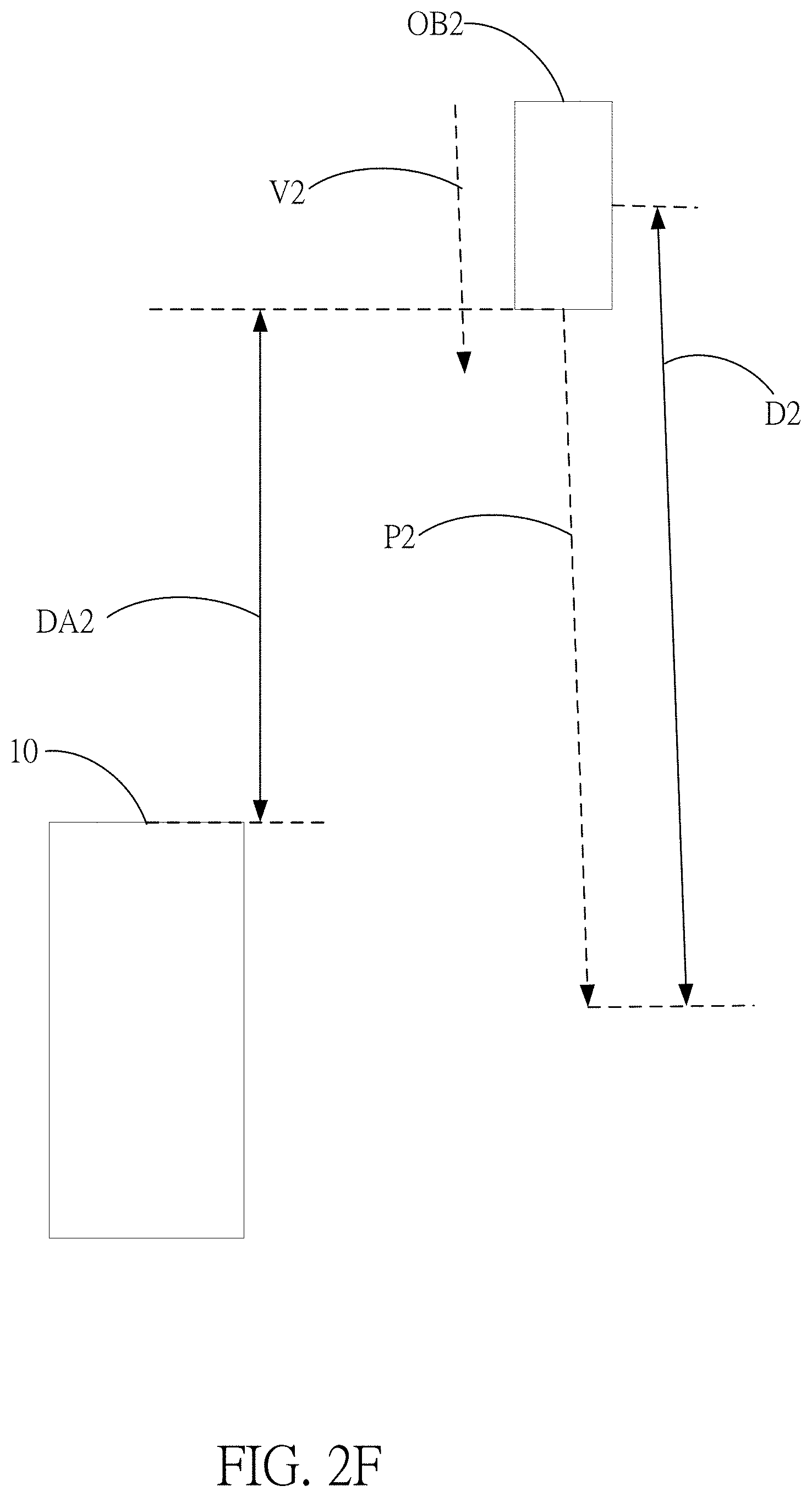

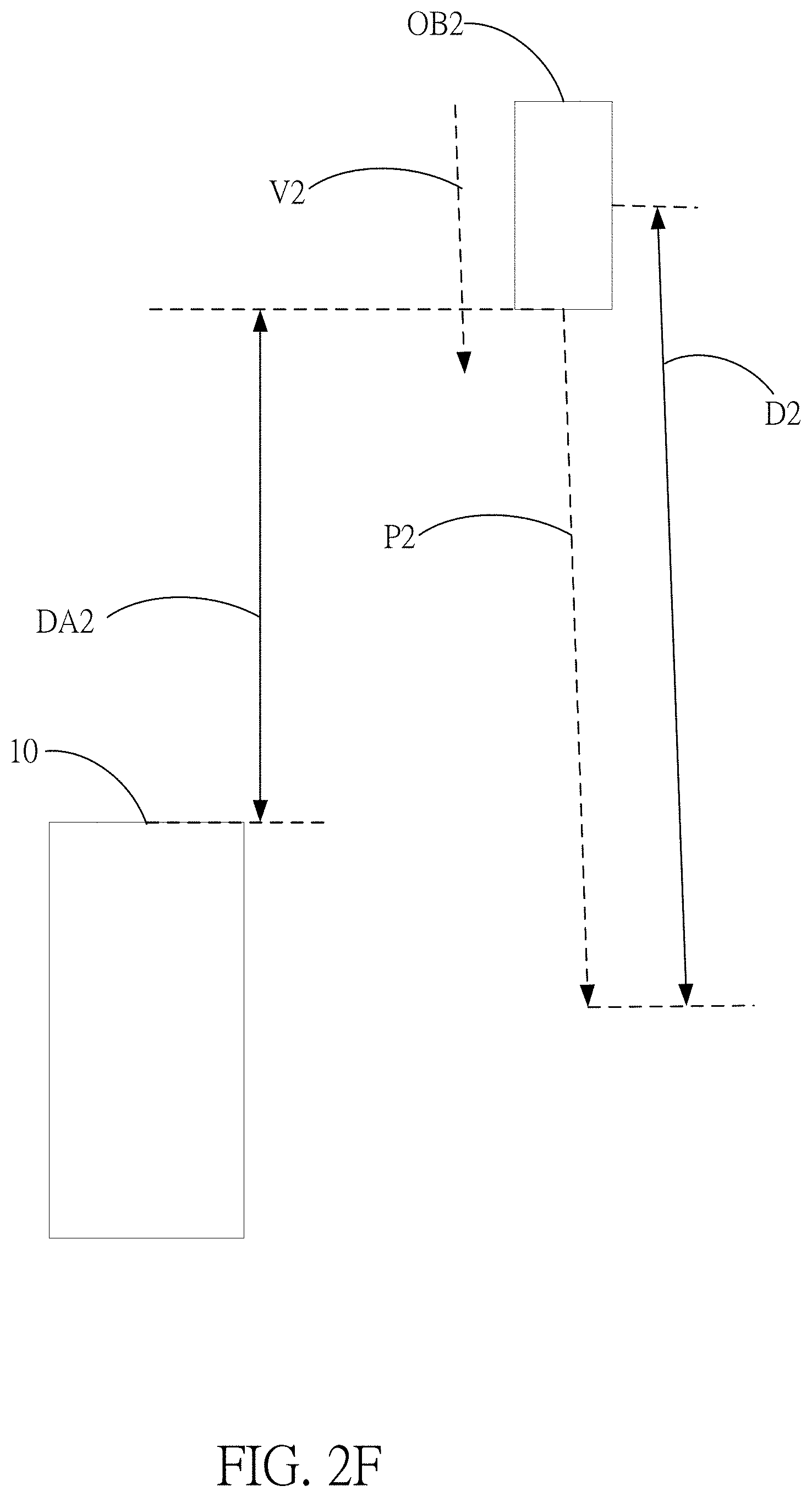

[0023] FIG. 2F shows a schematic diagram of detecting obstacle according to another embodiment of the present invention.

DETAILED DESCRIPTION

[0024] In order to make the structure and characteristics as well as the effectiveness of the present invention to be further understood and recognized, the detailed description of the present invention is provided as follows along with embodiments and accompanying figures.

[0025] Please refer to FIG. 1, which shows a flowchart according to an embodiment of the present invention. As shown in the figure, the omnidirectional obstacle avoidance method according to the present invention comprises steps of:

[0026] Step S10: Capturing peripheral images using depth camera units of a vehicle and acquiring peripheral depth information according to a sensing algorithm;

[0027] Step S20: Fusing the peripheral images to omnidirectional environment images according to a fusion algorithm and dividing one side of the vehicle into detection regions;

[0028] Step S30: Operating the peripheral depth information and the omnidirectional environment images according to the detection regions to give acceleration vector information and distance information of the obstacle;

[0029] Step S40: Estimating a moving path of the obstacle according to the acceleration vector information and the distance information of the obstacle; and

[0030] Step S50: Producing alarm information according to the moving path of the obstacle.

[0031] In the step S10, as shown in FIG. 2A, a vehicle 10 takes peripheral images using a plurality of depth camera units 22 in a depth sensing module 20. By using the operational processing unit 122 of the automobile control chip 12, a plurality pieces of peripheral depth information INFO are acquired, as shown in FIG. 2B. By using the image processing unit 124 of the automobile control chip 12, a plurality of peripheral images I1 are acquired, as shown in FIG. 2B. According to the present embodiment, the plurality of depth camera units 22 are located on the periphery of the vehicle 10, respectively, for capturing the plurality of peripheral images surrounding the vehicle 10 at a time. In addition, according to the present embodiment, the depth sensing module 20 includes a plurality of high-resolution depth camera units 22 for acquiring depth images within a radius of 15 meters. The plurality of depth camera units 22 acquire the plurality pieces of peripheral depth information INFO.

[0032] The automobile control chip 12 is connected with the plurality of depth camera units 22 to form the depth sensing module 20 for acquiring omnidirectional depth images. After the operations and processing of the operational processing unit 122 and the image processing unit 124, the plurality pieces of peripheral depth information INFO and the plurality of peripheral images I1 are given. The plurality of depth camera units 22 can further sense using infrared or ultrasonic methods. In addition, as shown in FIG. 2B, according to the present embodiment, the sensing result of the plurality of depth camera units 22 is operated on by the operational processing unit 122 according to a sensing algorithm for producing the plurality pieces of peripheral depth information INFO, which are integrated by the operational processing unit 122 to form a plurality pieces of omnidirectional depth information DINFO.

[0033] The sensing algorithm executed by the operational processing unit 122 is described as follows:

Sobel Edge Detection

[0034] Each pixel in an image and its neighboring pixels are represented by a $3 \times 3$ matrix and expressed by $P_1, P_2, P_3, P_4, \ldots, P_9$ in the following equations:

$$\nabla f = G_x + G_y \tag{Equation 1}$$

$$\begin{bmatrix} P_1 & P_2 & P_3 \\ P_4 & P_5 & P_6 \\ P_7 & P_8 & P_9 \end{bmatrix} \tag{Equation 2}$$

$$G_x = \begin{bmatrix} P_1 & P_2 & P_3 \\ P_4 & P_5 & P_6 \\ P_7 & P_8 & P_9 \end{bmatrix} \tag{Equation 3}$$

$$G_y = \begin{bmatrix} P_1 & P_2 & P_3 \\ P_4 & P_5 & P_6 \\ P_7 & P_8 & P_9 \end{bmatrix} \tag{Equation 4}$$

$$\begin{bmatrix} f(x-1, y-1) & f(x, y-1) & f(x+1, y-1) \\ f(x-1, y) & f(x, y) & f(x+1, y) \\ f(x-1, y+1) & f(x, y+1) & f(x+1, y+1) \end{bmatrix} \tag{Equation 5}$$

$$E(h, O) = D(O, h, C) + \lambda_k \cdot K_c(h) \tag{Equation 6}$$

$$D(O, h, C) = \frac{\sum \min\left(\lvert o_d - r_d \rvert,\, d_M\right)}{\sum (o_s \vee r_m) + \varepsilon} + \lambda \left( 1 - \frac{2 \sum (o_s \wedge r_m)}{\sum (o_s \wedge r_m) + \sum (o_s \vee r_m)} \right) \tag{Equation 7}$$
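The text above does not list the coefficients applied to the $3 \times 3$ neighbourhood, so the following is only a minimal sketch that assumes the standard Sobel kernels and the common $|G_x| + |G_y|$ magnitude approximation. The names `SOBEL_X`, `SOBEL_Y`, and `sobel_at` are illustrative and do not come from the application.

```python
# Standard 3x3 Sobel kernels (an assumption: the text does not list them).
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1],
           [0, 0, 0],
           [1, 2, 1]]


def sobel_at(image, x, y):
    """Return (gx, gy, magnitude) for the 3x3 neighbourhood centred on (x, y).

    `image` is a list of rows, so image[y][x] plays the role of f(x, y).
    """
    gx = gy = 0
    for dy in (-1, 0, 1):
        for dx in (-1, 0, 1):
            pixel = image[y + dy][x + dx]
            gx += SOBEL_X[dy + 1][dx + 1] * pixel
            gy += SOBEL_Y[dy + 1][dx + 1] * pixel
    # |Gx| + |Gy| is a cheap approximation of the gradient magnitude.
    return gx, gy, abs(gx) + abs(gy)


# A vertical step edge: dark on the left, bright on the right.
step_edge = [[0, 0, 10],
             [0, 0, 10],
             [0, 0, 10]]
gx, gy, magnitude = sobel_at(step_edge, 1, 1)
```

For the vertical step edge, only the horizontal response `gx` is non-zero, which is the behaviour an edge detector relies on to separate object boundaries from flat regions.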

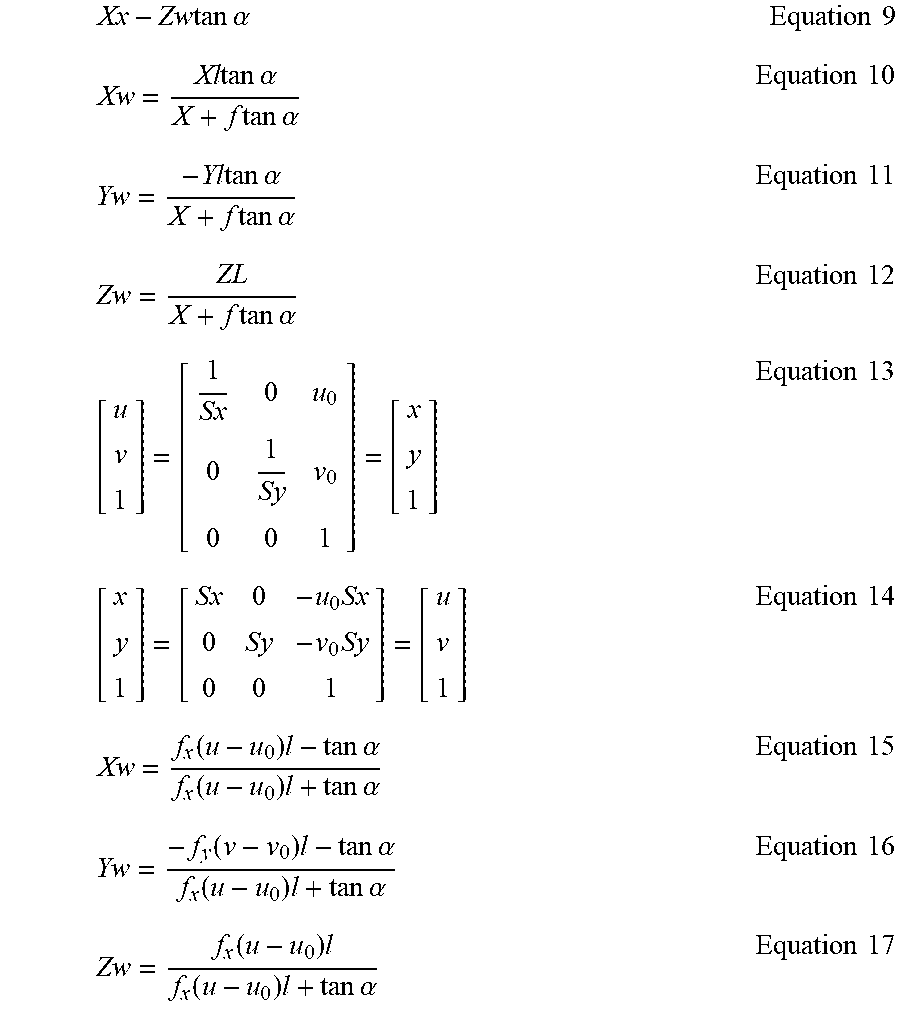

[0035] Images are formed according to the angle $\alpha$ between two depth sensors corresponding to the world coordinates. The world coordinates are defined as $O_w$-$X_w Y_w Z_w$, where $X_c = X_w$, $Y_c = -Y_w$, $Z_c = L - Z_w$, and A is the image A'. Then:

$$\frac{X_w}{X} = -\frac{Y_w}{y} = \frac{L - Z_w}{f} \tag{Equation 8}$$

[0036] Thereby, Equation 8 is rewritten according to the world coordinates. The previous plane equations are expressed in the world coordinates to deduce the sensing result for the peripheral environment, giving:

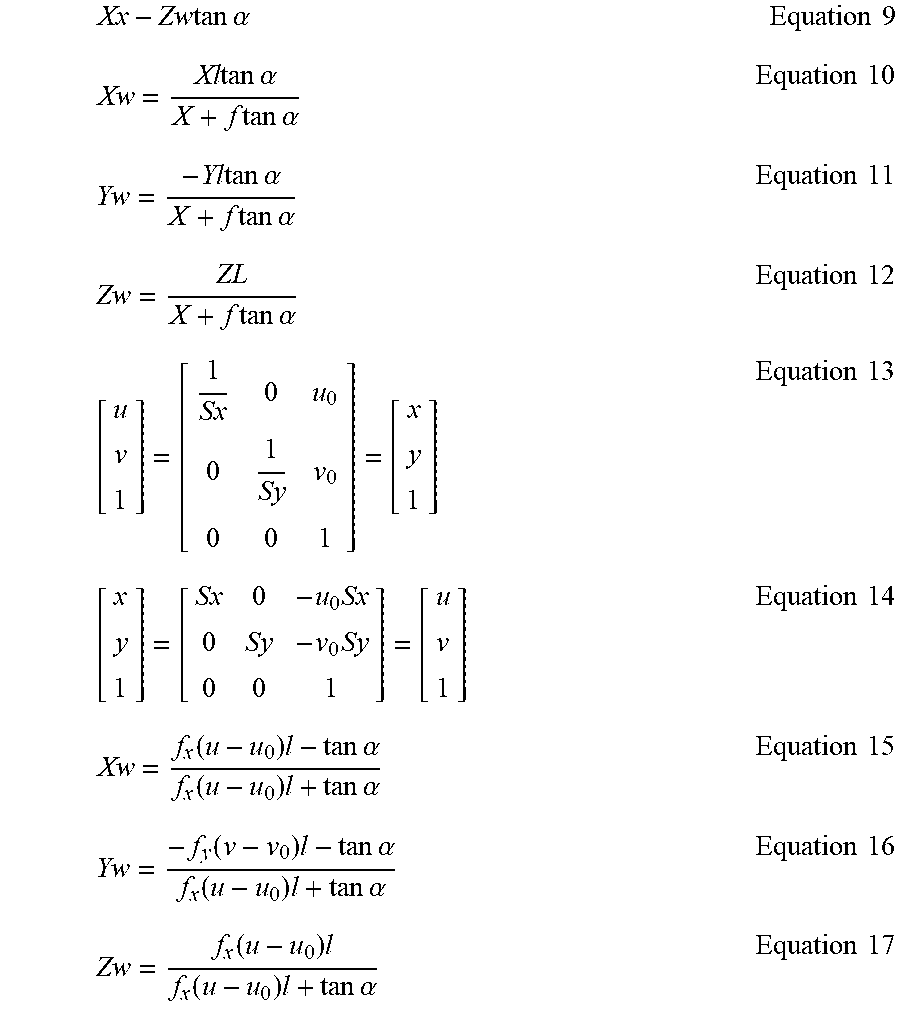

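$$X_x - Z_w \tan\alpha \tag{Equation 9}$$

$$X_w = \frac{X L \tan\alpha}{X + f \tan\alpha} \tag{Equation 10}$$

$$Y_w = -\frac{Y L \tan\alpha}{X + f \tan\alpha} \tag{Equation 11}$$

$$Z_w = \frac{Z L}{X + f \tan\alpha} \tag{Equation 12}$$

$$\begin{bmatrix} u \\ v \\ 1 \end{bmatrix} = \begin{bmatrix} \frac{1}{S_x} & 0 & u_0 \\ 0 & \frac{1}{S_y} & v_0 \\ 0 & 0 & 1 \end{bmatrix} \begin{bmatrix} x \\ y \\ 1 \end{bmatrix} \tag{Equation 13}$$

$$\begin{bmatrix} x \\ y \\ 1 \end{bmatrix} = \begin{bmatrix} S_x & 0 & -u_0 S_x \\ 0 & S_y & -v_0 S_y \\ 0 & 0 & 1 \end{bmatrix} \begin{bmatrix} u \\ v \\ 1 \end{bmatrix} \tag{Equation 14}$$

$$X_w = \frac{f_x (u - u_0) L - \tan\alpha}{f_x (u - u_0) L + \tan\alpha} \tag{Equation 15}$$

$$Y_w = -\frac{f_y (v - v_0) L - \tan\alpha}{f_x (u - u_0) L + \tan\alpha} \tag{Equation 16}$$

$$Z_w = \frac{f_x (u - u_0) L}{f_x (u - u_0) L + \tan\alpha} \tag{Equation 17}$$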
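Equations 13 and 14 are inverse mappings between image-plane coordinates $(x, y)$ and pixel coordinates $(u, v)$. The sketch below illustrates that round trip. It assumes $S_x$ and $S_y$ are the physical pixel sizes and $(u_0, v_0)$ is the principal point, which is the usual reading of these symbols but is not stated in the text; the function names and sample values are hypothetical.

```python
def image_to_pixel(x, y, sx, sy, u0, v0):
    """Equation 13: image-plane coordinates -> pixel coordinates."""
    return x / sx + u0, y / sy + v0


def pixel_to_image(u, v, sx, sy, u0, v0):
    """Equation 14: pixel coordinates -> image-plane coordinates."""
    return sx * (u - u0), sy * (v - v0)


# Hypothetical sensor: 0.25 units per pixel, principal point at (320, 240).
SX, SY, U0, V0 = 0.25, 0.25, 320.0, 240.0

u, v = image_to_pixel(2.0, -1.0, SX, SY, U0, V0)
x_back, y_back = pixel_to_image(u, v, SX, SY, U0, V0)
```

Applying one function after the other returns the starting point, which is a quick way to check that the two matrices are consistent with each other.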

[0037] In the step S20, as shown in FIG. 2D, the plurality of peripheral images I1 are fused into an omnidirectional environment image I2. According to the present embodiment, the fusion algorithm adopts the characteristic functions $f_i(x, y)$ shown in Equation 18. When x or y satisfies a certain fact, the characteristic function is 1; otherwise it is 0.

$$f_i(x, y) \in \{0, 1\}, \quad i = 1, 2, \ldots, m \tag{Equation 18}$$

[0038] The corresponding hidden status of an observation value is determined by the context (observation, status). By using characteristic functions, the environment characteristics (the combination of observation or status) can be selected. Namely, characteristics (observation combinations) are used to replace observations, avoiding the limitation imposed by the naive Bayes assumption of independent observations in the hidden Markov model (HMM).

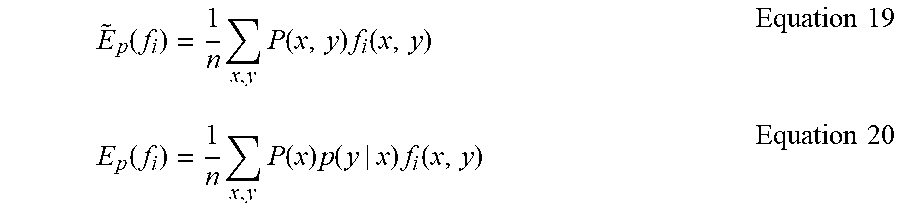

[0039] According to the training data $D = \{(x^{(1)}, y^{(1)}), (x^{(2)}, y^{(2)}), \ldots, (x^{(N)}, y^{(N)})\}$ with the size T, the empirical expectation value is expressed in Equation 19 and the model expectation value is expressed in Equation 20. The learning of the maximum entropy model is equivalent to constraint optimization.

$$\tilde{E}_p(f_i) = \frac{1}{n} \sum_{x, y} P(x, y)\, f_i(x, y) \tag{Equation 19}$$

$$E_p(f_i) = \frac{1}{n} \sum_{x, y} P(x)\, p(y \mid x)\, f_i(x, y) \tag{Equation 20}$$

[0040] Assuming the empirical expectation value is identical to the model expectation value, there are multiple sets C satisfying the constraint related to the conditional probability distribution of arbitrary characteristic function $f_i$, expressed as in Equation 21 below:

$$C = \{P \mid E_p(f_i) = \tilde{E}_p(f_i),\ i = 1, 2, \ldots, m\} \tag{Equation 21}$$

[0041] According to the maximum entropy theory, to deduce the only reasonable probability distribution from incomplete information (such as limited training data), the entropy should be maximized given the constraints imposed by the information are satisfied. Namely, the distribution of maximum entropy is the optimized distribution given the probability set of limited conditions. Thereby, the maximum entropy model becomes a constraint optimization problem for convex functions.

$$\max_{P \in C} H(P) = -\sum_{x, y} P(x)\, P(y \mid x) \log P(y \mid x) \tag{Equation 22}$$

$$\text{s.t.} \quad E_p(f_i) = \tilde{E}_p(f_i), \quad i = 1, 2, \ldots, m \tag{Equation 23}$$

$$\text{s.t.} \quad \sum_{y} P(y \mid x) = 1 \tag{Equation 24}$$

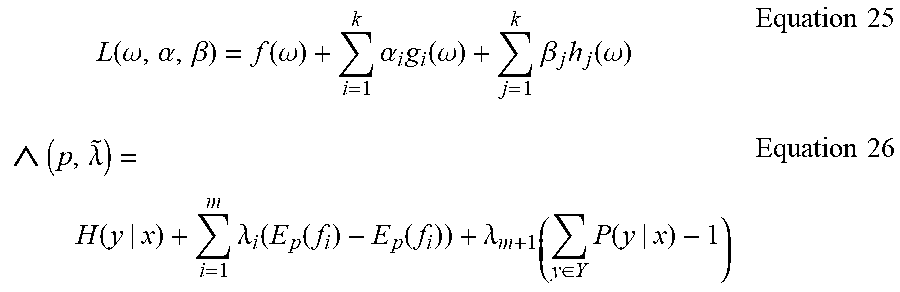

[0042] The Lagrange duality is generally adopted to transform a constrained problem into an unconstrained one for solving for extreme values:

$$L(\omega, \alpha, \beta) = f(\omega) + \sum_{i=1}^{k} \alpha_i\, g_i(\omega) + \sum_{j=1}^{k} \beta_j\, h_j(\omega) \tag{Equation 25}$$

$$L(p, \tilde{\lambda}) = H(y \mid x) + \sum_{i=1}^{m} \lambda_i \left( E_p(f_i) - \tilde{E}_p(f_i) \right) + \lambda_{m+1} \left( \sum_{y \in Y} P(y \mid x) - 1 \right) \tag{Equation 26}$$

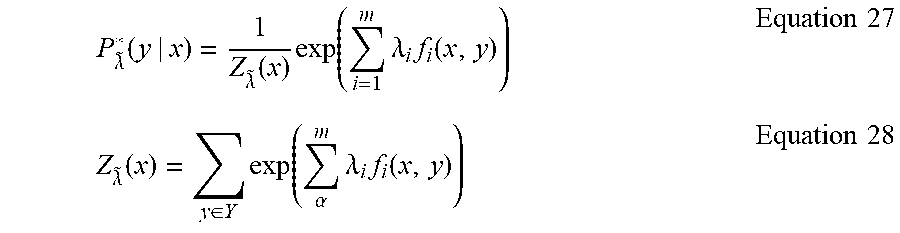

[0043] Calculate the partial derivative of the Lagrange function with respect to p, set it equal to 0, and solve the equation. Omitting several steps, the following equations are derived:

$$P^{*}_{\tilde{\lambda}}(y \mid x) = \frac{1}{Z_{\tilde{\lambda}}(x)} \exp\left( \sum_{i=1}^{m} \lambda_i\, f_i(x, y) \right) \tag{Equation 27}$$

$$Z_{\tilde{\lambda}}(x) = \sum_{y \in Y} \exp\left( \sum_{i=1}^{m} \lambda_i\, f_i(x, y) \right) \tag{Equation 28}$$
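As an illustration of Equations 27 and 28, the sketch below evaluates the maximum entropy conditional distribution for a small label set. The characteristic functions, labels, and weights are all hypothetical examples chosen for readability; the application does not specify them, and the weights here are not learned from data.

```python
import math


def maxent_conditional(x, labels, features, weights):
    """Return {y: P(y | x)} following Equations 27 and 28."""
    # Unnormalised score exp(sum_i lambda_i * f_i(x, y)) for every label y.
    scores = {
        y: math.exp(sum(w * f(x, y) for f, w in zip(features, weights)))
        for y in labels
    }
    z = sum(scores.values())  # Z(x), the normaliser of Equation 28
    return {y: score / z for y, score in scores.items()}


# Hypothetical binary characteristic functions over (depth in metres, label).
def near_and_obstacle(depth_m, label):
    return 1 if depth_m < 5.0 and label == "obstacle" else 0


def far_and_free(depth_m, label):
    return 1 if depth_m >= 5.0 and label == "free" else 0


LABELS = ("obstacle", "free")
FEATURES = (near_and_obstacle, far_and_free)
WEIGHTS = (2.0, 1.0)  # illustrative lambda values, not learned from data

posterior = maxent_conditional(3.0, LABELS, FEATURES, WEIGHTS)
```

With all weights set to zero every label receives the same score, so the distribution falls back to uniform, which matches the maximum entropy result derived later in the text.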

Maximum Entropy Markov Model (MEMM)

[0044]

$$P_{y_{i-1}}(y_i \mid x_i) = \frac{1}{Z_{\tilde{\lambda}}(x_i, y_{i-1})} \exp\left( \sum_{\alpha=1}^{m} \lambda_\alpha\, f_\alpha(x_i, y_i) \right), \quad i = 1, 2, \ldots, T \tag{Equation 29}$$

[0045] Use the distribution $P(y_i \mid y_{i-1}, x_i)$ to replace the two conditional probability distributions in the HMM. That is, the probability of the current state is calculated according to the previous state and the current observation. Each such distribution function $P_{y_{i-1}}(y_i \mid x_i)$ is an exponential model complying with maximum entropy.

[0046] To find the discrete probability distribution $\{p_1, p_2, \ldots, p_n\}$ with the maximum information entropy, the entropy function to be maximized is:

$$f(p_1, p_2, \ldots, p_n) = -\sum_{j=1}^{n} p_j \log_2 p_j \tag{Equation 30}$$

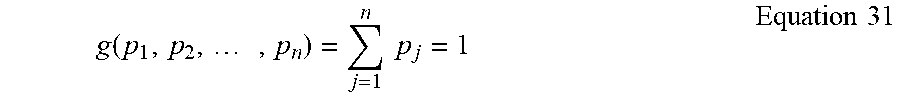

[0047] The sum of the probability distribution $p_i$ at each point $x_i$ must be 1:

$$g(p_1, p_2, \ldots, p_n) = \sum_{j=1}^{n} p_j = 1 \tag{Equation 31}$$

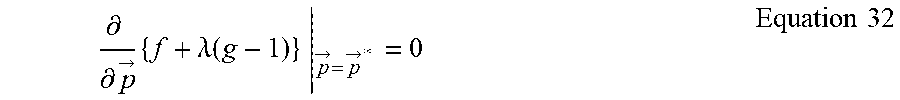

[0048] By using the Lagrange multiplier, the point of maximum entropy $\vec{p}^{\,*}$ can be deduced, where $\vec{p}$ ranges over all discrete probability distributions on $\{x_1, x_2, \ldots, x_n\}$:

$$\left. \frac{\partial}{\partial \vec{p}} \left\{ f + \lambda (g - 1) \right\} \right|_{\vec{p} = \vec{p}^{\,*}} = 0 \tag{Equation 32}$$

[0049] A system of n equations, where $k = 1, \ldots, n$, is given, to make:

$$\left. \frac{\partial}{\partial p_k} \left\{ -\left( \sum_{j=1}^{n} p_j \log_2 p_j \right) + \lambda \left( \sum_{j=1}^{n} p_j - 1 \right) \right\} \right|_{p_k = p_k^{*}} = 0 \tag{Equation 33}$$

[0050] By expanding these n equations, it gives:

$$-\left( \frac{1}{\ln 2} + \log_2 p_k^{*} \right) + \lambda = 0 \tag{Equation 34}$$
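The step from the system of equations to Equation 34 relies on the product rule and on the derivative of the base-2 logarithm. The following worked derivation is a sketch of that omitted step, using only the symbols already defined above:

```latex
\frac{\partial}{\partial p_k}\bigl(p_k \log_2 p_k\bigr)
  = \log_2 p_k + p_k \cdot \frac{1}{p_k \ln 2}
  = \log_2 p_k + \frac{1}{\ln 2},
\qquad
\frac{\partial}{\partial p_k}\,\lambda\Bigl(\sum_{j=1}^{n} p_j - 1\Bigr) = \lambda .
```

Only the term with $j = k$ depends on $p_k$, so every other summand vanishes. Setting the combined derivative to zero at $p_k = p_k^{*}$ yields Equation 34, and solving it gives $p_k^{*} = 2^{\lambda - 1/\ln 2}$, which is the same value for every k.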

[0051] It means that all $p_k^{*}$ are equal, since they depend on $\lambda$ only. By using the following constraint:

$$\sum_{j} p_j = n \times p_k^{*} = 1 \tag{Equation 35}$$

it gives

$$p_k^{*} = \frac{1}{n} \tag{Equation 36}$$

[0052] Thereby, the uniform distribution is the distribution for maximum entropy.
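The conclusion can be checked numerically. The short sketch below computes the base-2 entropy of Equation 30 for a uniform distribution and for an arbitrarily chosen skewed one; the function name and the sample distributions are illustrative only.

```python
import math


def entropy_bits(p):
    """Entropy of Equation 30: -sum_j p_j * log2(p_j), skipping zero terms."""
    return -sum(pj * math.log2(pj) for pj in p if pj > 0)


uniform = [0.25, 0.25, 0.25, 0.25]   # p_k = 1/n with n = 4
skewed = [0.7, 0.1, 0.1, 0.1]        # any other distribution on 4 points

h_uniform = entropy_bits(uniform)
h_skewed = entropy_bits(skewed)
```

For four points the uniform distribution reaches $\log_2 4 = 2$ bits, and any other distribution on the same points yields a smaller value.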

$$\text{Maximum entropy distribution} = H = \sum_{j=1}^{n} P_j - P_k \sum_{j=1}^{n} P_j \tag{Equation 37}$$

[0053] By using Equation 37, the maximum entropy distribution can be deduced. As shown in FIG. 2C, during the process of image fusion, the image overlap regions O are eliminated and an omnidirectional environment image I2 is produced, as shown in FIG. 2D. In other words, by using the maximum entropy distribution given by Equation 37, all peripheral images I1 are fused, the overlap regions O are eliminated, and the omnidirectional environment image I2 is produced.

[0054] Besides, as shown in FIG. 2A and FIG. 2B, in the step S20, the peripheral region of the vehicle 10 is divided into a plurality of detection regions A according to the plurality of omnidirectional environment images I2 and the plurality pieces of omnidirectional depth information DINFO.
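The text does not state how the detection regions A are laid out. As one possible illustration, the sketch below assumes equal angular sectors around the vehicle and assigns a point, given in vehicle-centred coordinates, to a sector by its bearing. The sector count, the coordinate convention (x forward, y to the left), and the function name are all assumptions.

```python
import math


def detection_region(x_m, y_m, region_count=8):
    """Return the index of the angular sector that contains the point.

    Sector 0 is centred on the +x axis (assumed to point forward) and
    indices increase counter-clockwise.
    """
    bearing = math.degrees(math.atan2(y_m, x_m)) % 360.0
    width = 360.0 / region_count
    # Shift by half a sector so that sector 0 straddles the forward axis.
    return int((bearing + width / 2) // width) % region_count


front = detection_region(5.0, 0.0)
left = detection_region(0.0, 5.0)
rear = detection_region(-5.0, 0.0)
right = detection_region(0.0, -5.0)
```

With eight sectors, points directly ahead, to the left, behind, and to the right fall in sectors 0, 2, 4, and 6 respectively.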

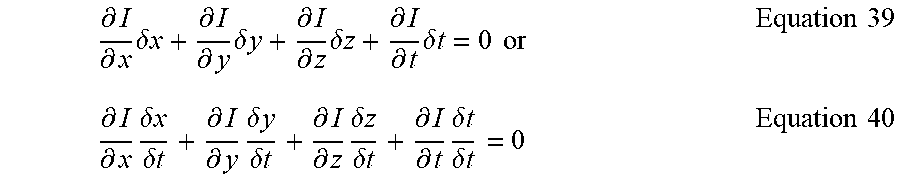

[0055] In the step S30, referring to FIG. 2A and FIG. 2B, the obstacle is estimated by using the plurality of detection regions A and the Lucas-Kanade optical flow algorithm. First, by using the image difference method, apply the Taylor expansion to the image constraint equation to give:

$$I(x + \delta x, y + \delta y, z + \delta z, t + \delta t) = I(x, y, z, t) + \frac{\partial I}{\partial x} \delta x + \frac{\partial I}{\partial y} \delta y + \frac{\partial I}{\partial z} \delta z + \frac{\partial I}{\partial t} \delta t + \text{H.O.T.} \tag{Equation 38}$$

[0056] H.O.T. represents the higher order terms, which can be ignored when the movement is small enough. From Equation 38, the following is obtained:

$$\frac{\partial I}{\partial x} \delta x + \frac{\partial I}{\partial y} \delta y + \frac{\partial I}{\partial z} \delta z + \frac{\partial I}{\partial t} \delta t = 0 \tag{Equation 39}$$

or

$$\frac{\partial I}{\partial x} \frac{\delta x}{\delta t} + \frac{\partial I}{\partial y} \frac{\delta y}{\delta t} + \frac{\partial I}{\partial z} \frac{\delta z}{\delta t} + \frac{\partial I}{\partial t} \frac{\delta t}{\delta t} = 0 \tag{Equation 40}$$

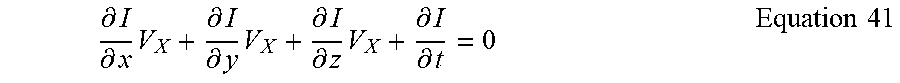

giving:

$$\frac{\partial I}{\partial x} V_x + \frac{\partial I}{\partial y} V_y + \frac{\partial I}{\partial z} V_z + \frac{\partial I}{\partial t} = 0 \tag{Equation 41}$$

[0057] $V_x$, $V_y$, and $V_z$ are the optical flow vector components of $I(x, y, z, t)$ in the x, y, and z directions, respectively. $\frac{\partial I}{\partial x}$, $\frac{\partial I}{\partial y}$, $\frac{\partial I}{\partial z}$, and $\frac{\partial I}{\partial t}$ are the differences in the corresponding directions for a point $(x, y, z, t)$ in an image. Thereby, Equation 41 can be converted to the following equation:

$$I_x V_x + I_y V_y + I_z V_z = -I_t \tag{Equation 42}$$

[0058] Equation 42 can be further rewritten as:

$$\nabla I^{T} \cdot \vec{V} = -I_t \tag{Equation 43}$$

[0059] Since there are three unknowns (Vx,Vy,Vz) in Equation 41, the subsequent algorithm is used to deduce the unknowns.

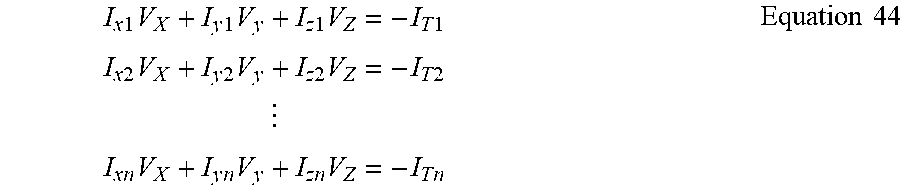

[0060] First, assume the optical flow $(V_x, V_y, V_z)$ is constant in a small window of $m \times m \times m$ ($m > 1$). Then, according to the pixels 1 to n, $n = m^3$, the following set of equations is given:

$$\begin{aligned} I_{x_1} V_x + I_{y_1} V_y + I_{z_1} V_z &= -I_{t_1} \\ I_{x_2} V_x + I_{y_2} V_y + I_{z_2} V_z &= -I_{t_2} \\ &\;\;\vdots \\ I_{x_n} V_x + I_{y_n} V_y + I_{z_n} V_z &= -I_{t_n} \end{aligned} \tag{Equation 44}$$

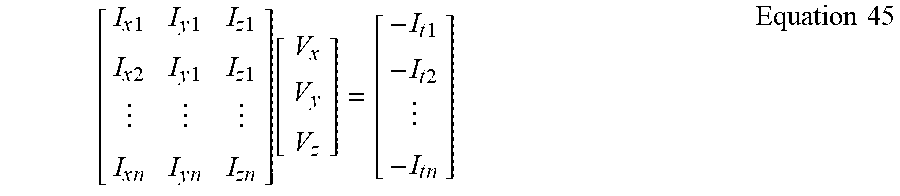

[0061] The above equations all include the same three unknowns and form an equation set. The equation set is overdetermined, and can be expressed as:

$$\begin{bmatrix} I_{x_1} & I_{y_1} & I_{z_1} \\ I_{x_2} & I_{y_2} & I_{z_2} \\ \vdots & \vdots & \vdots \\ I_{x_n} & I_{y_n} & I_{z_n} \end{bmatrix} \begin{bmatrix} V_x \\ V_y \\ V_z \end{bmatrix} = \begin{bmatrix} -I_{t_1} \\ -I_{t_2} \\ \vdots \\ -I_{t_n} \end{bmatrix} \tag{Equation 45}$$

which is denoted as

$$A \vec{v} = -b \tag{Equation 46}$$

[0062] To solve this overdetermined problem, Equation 46 is solved using the least squares method:

$$A^{T} A \vec{v} = A^{T}(-b) \tag{Equation 47}$$

or

$$\vec{v} = (A^{T} A)^{-1} A^{T}(-b) \tag{Equation 48}$$

giving

$$\begin{bmatrix} V_x \\ V_y \\ V_z \end{bmatrix} = \begin{bmatrix} \sum I_{x_i}^2 & \sum I_{x_i} I_{y_i} & \sum I_{x_i} I_{z_i} \\ \sum I_{x_i} I_{y_i} & \sum I_{y_i}^2 & \sum I_{y_i} I_{z_i} \\ \sum I_{x_i} I_{z_i} & \sum I_{y_i} I_{z_i} & \sum I_{z_i}^2 \end{bmatrix}^{-1} \begin{bmatrix} -\sum I_{x_i} I_{t_i} \\ -\sum I_{y_i} I_{t_i} \\ -\sum I_{z_i} I_{t_i} \end{bmatrix} \tag{Equation 49}$$

[0063] Substitute the result of Equation 49 into Equation 41 for estimating acceleration vector information and distance information of the one or more obstacle.
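The least squares solution for the optical flow can be sketched in a few lines. The example below accumulates the normal equations from per-pixel gradients $(I_x, I_y, I_z, I_t)$ and solves the resulting $3 \times 3$ system by Gaussian elimination. It is a minimal illustration on synthetic gradients, not the processing unit's implementation; the function names and the sample data are assumptions.

```python
def solve_3x3(m, rhs):
    """Solve m * v = rhs by Gaussian elimination with partial pivoting."""
    a = [row[:] + [r] for row, r in zip(m, rhs)]  # augmented 3x4 matrix
    n = 3
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < 1e-12:
            raise ValueError("A^T A is singular: gradients do not constrain the flow")
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n + 1):
                a[r][c] -= factor * a[col][c]
    v = [0.0] * n
    for r in range(n - 1, -1, -1):
        v[r] = (a[r][n] - sum(a[r][c] * v[c] for c in range(r + 1, n))) / a[r][r]
    return v


def lucas_kanade_flow(gradients):
    """Estimate (Vx, Vy, Vz) from (Ix, Iy, Iz, It) tuples of one window."""
    ata = [[0.0] * 3 for _ in range(3)]   # A^T A
    atb = [0.0] * 3                       # A^T (-b)
    for ix, iy, iz, it in gradients:
        g = (ix, iy, iz)
        for r in range(3):
            atb[r] += -g[r] * it
            for c in range(3):
                ata[r][c] += g[r] * g[c]
    return solve_3x3(ata, atb)


# Synthetic 2x2x2 window: pick a flow, then build It so that
# Ix*Vx + Iy*Vy + Iz*Vz = -It holds for every pixel.
true_flow = (1.0, -2.0, 0.5)
spatial = [(1, 0, 0), (0, 1, 0), (0, 0, 1), (1, 1, 0),
           (1, 0, 1), (0, 1, 1), (1, 1, 1), (2, 1, 0)]
samples = [(ix, iy, iz, -(ix * true_flow[0] + iy * true_flow[1] + iz * true_flow[2]))
           for ix, iy, iz in spatial]
estimated = lucas_kanade_flow(samples)
```

Because the synthetic data satisfies the constraint exactly, the estimate recovers the chosen flow up to rounding. On real images the window gradients are noisy, and a nearly singular $A^{T}A$ signals a window with too little texture to determine the flow.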

[0064] In the step S40, as shown in FIG. 2E, by using the acceleration vector information and the distance information given by the previous step S30, the moving direction of the one or more obstacle surrounding the vehicle 10 can be deduced, and a moving path P1 of the one or more obstacle OB is thereby estimated. Besides, the vehicle 10 according to the present embodiment can use the operational processing unit 122 in the automobile control chip 12 to further estimate a moving speed V1 and a moving distance D1 of a first obstacle OB1. The first obstacle OB1 according to the present embodiment will pass in front of the vehicle 10, and the vehicle 10 will pass across the moving path P1 of the first obstacle OB1.
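The text does not specify the motion model used to turn the estimated vectors into a moving path. One simple possibility, shown here purely as an assumption, is constant-acceleration extrapolation of the obstacle position over a short horizon. All names, units, and sample values are hypothetical.

```python
def predict_path(position, velocity, acceleration, horizon_s, step_s):
    """Extrapolate positions with p(t) = p0 + v*t + 0.5*a*t^2.

    Each argument is a tuple of coordinates in the vehicle frame (metres,
    metres per second, metres per second squared).
    """
    steps = int(round(horizon_s / step_s))
    path = []
    for k in range(1, steps + 1):
        t = k * step_s
        path.append(tuple(p + v * t + 0.5 * a * t * t
                          for p, v, a in zip(position, velocity, acceleration)))
    return path


# An obstacle 10 m ahead and 4 m to the side, moving sideways at 2 m/s.
path = predict_path((10.0, -4.0), (0.0, 2.0), (0.0, 0.0),
                    horizon_s=2.0, step_s=0.5)
```

In this example the obstacle reaches the vehicle's forward axis after two seconds, which corresponds to the case in which the vehicle would pass across the obstacle's moving path.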

[0065] In the step S50, the automobile control chip 12 in the vehicle 10 generates alarm information according to the moving path of the one or more obstacle. According to the present embodiment, the automobile control chip 12 judges that a first alarm distance DA1 between the vehicle 10 and the first obstacle OB1 falls within a first threshold value and transmits the alarm information for a general obstacle. For example, the alarm information is displayed on a head-up display (HUD). When the first alarm distance DA1 enters a second threshold value, the automobile control chip 12 outputs a single alarm sound and controls the HUD to display the alarm information. When the first alarm distance DA1 enters a third threshold value, the automobile control chip 12 outputs an intermittent alarm sound and controls the HUD (not shown in the figure) to display the alarm information. When the first alarm distance DA1 enters a fourth threshold value, the automobile control chip 12 outputs a continuous alarm sound and controls the HUD (not shown in the figure) to display the alarm information. Since the fourth threshold value means that the obstacle OB is quite close to the vehicle 10, the automobile control chip 12 uses an urgent method to express the alarm information.

[0066] As shown in FIG. 2F, another embodiment of the present invention is illustrated. By using the omnidirectional obstacle avoidance method for vehicles according to the present invention, a second obstacle OB2 is detected and the moving path P2 of the second obstacle OB2 is estimated. The automobile control chip 12 judges that the vehicle will not pass across the moving path P2 of the second obstacle OB2. In addition, after judgement, the moving distance D2 and the moving speed V2 of the second obstacle OB2 do not change. Thereby, when the distance between the vehicle 10 and the second obstacle OB2 is a second alarm distance DA2 and the second alarm distance DA2 is within the first, or even the fourth, threshold value, the automobile control chip 12 only controls the HUD to display the alarm information. The HUD described above is a mature technology for vehicles. Since the emphasis of the present invention is not on the HUD, it is used only to illustrate a display method. According to the present invention, the alarm information can be further displayed on the display on the dashboard of the vehicle 10, such as a car audio and video display.
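The graded alarm behaviour of the two embodiments can be summarised as a small decision function. The sketch below assumes the four threshold values are distances ordered from far to near; the numeric values, the `Alarm` names, and the `crosses_path` flag are hypothetical, since the text gives no concrete thresholds.

```python
from enum import Enum


class Alarm(Enum):
    NONE = 0
    DISPLAY_ONLY = 1          # first threshold: HUD display only
    SINGLE_SOUND = 2          # second threshold: one alarm sound plus HUD
    INTERMITTENT_SOUND = 3    # third threshold: intermittent sound plus HUD
    CONTINUOUS_SOUND = 4      # fourth threshold: continuous sound plus HUD


# Hypothetical threshold distances in metres, first to fourth (far to near).
THRESHOLDS_M = (15.0, 10.0, 5.0, 2.0)


def alarm_level(distance_m, crosses_path, thresholds_m=THRESHOLDS_M):
    """Map an alarm distance to an alarm level.

    `crosses_path` is True when the vehicle will pass across the obstacle's
    estimated moving path (first embodiment) and False otherwise (second
    embodiment, where only the display is used).
    """
    # Count how many thresholds the obstacle has already entered.
    entered = sum(1 for limit in thresholds_m if distance_m <= limit)
    if entered == 0:
        return Alarm.NONE
    if not crosses_path:
        return Alarm.DISPLAY_ONLY
    return Alarm(entered)
```

For example, `alarm_level(3.0, crosses_path=True)` yields the intermittent sound level, while the same distance with `crosses_path=False` yields a display-only alarm.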

[0067] Besides, according to the present invention, before the step S50, the automobile control chip 12 can classify and label obstacles. For example, the first obstacle OB1 is classified as a dangerous obstacle and the second obstacle OB2 as a non-dangerous one. They are labeled in the omnidirectional environment image I2, displayed on the HUD, or displayed on the car audio and video display.

[0068] To sum up, the present invention provides an omnidirectional obstacle avoidance method for vehicles to achieve preventive avoidance. According to the distance, location, speed, acceleration of obstacles provided by the sensors, multiple types of information such as moving direction can be estimated. According to the information, driving risks can be evaluated and provided to drivers for reference. In addition, the obstacle information for vehicles encountering danger during the moving process is provided as reference data and acts as important data for prevention and judgement. Thereby, the vehicles can avoid high risk behaviors in advance, reduce risks effectively, and achieve the following effects:

[0069] 1. The omnidirectional avoidance technology can be applied to various environments, such as reversing a car with a reversing radar. In addition to acquiring more detailed distance information and a broader identification range, the moving direction of the vehicles can be alarmed in advance for preventing accidents. Thereby, better alarm efficacy can be achieved.

[0070] 2. Side blind spots reminder can provide alarms before the driver opens the door and gets off the car for reminding the driver to watch out for pedestrians. The present invention can be applied to turning and lane change. Compared to current methods, the present invention can provide more driving information beneficial to the driver's judgement. Currently, car accidents are mostly caused by driver's distraction. The present invention can prevent driving risks caused by distracted driving.

[0071] Accordingly, the foregoing description is only embodiments of the present invention, not used to limit the scope and range of the present invention. Those equivalent changes or modifications made according to the shape, structure, feature, or spirit described in the claims of the present invention are included in the appended claims of the present invention.

* * * * *
