Technique For Automatically Tracking An Object By A Camera Based On Identification Of An Object

Holtz; Alex ; et al.

U.S. patent application number 16/693774 was filed with the patent office on 2020-05-21 for technique for automatically tracking an object by a camera based on identification of an object. The applicant listed for this patent is GVBB HOLDINGS S.A.R.L. Invention is credited to Alex Holtz, Scott Raymond Matics, Lionel Oisel.

| Application Number | 20200160536 16/693774 |

| Family ID | 40897615 |

| Filed Date | 2020-05-21 |

| United States Patent Application | 20200160536 |

| Kind Code | A1 |

| Holtz; Alex ; et al. | May 21, 2020 |

TECHNIQUE FOR AUTOMATICALLY TRACKING AN OBJECT BY A CAMERA BASED ON IDENTIFICATION OF AN OBJECT

Abstract

Automatic tracking by a camera of an object such as on-air talent appearing in a television show commences by first determining whether the object lies within the camera field of view matches a reference object. If so, tracking of the object then occurs to maintain the object in fixed relationship to a pre-set location in the camera's field of view, provided the designated object has moved more than a threshold distance from the pre-set location.

| Inventors: | Holtz; Alex (Jacksonville, FL); Matics; Scott Raymond (Jacksonville, FL); Oisel; Lionel (La Nouaye, FR) |

| Applicant: | GVBB HOLDINGS S.A.R.L. |

| Family ID: | 40897615 |

| Appl. No.: | 16/693774 |

| Filed: | November 25, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15637166 | Jun 29, 2017 | 10489917 |
| 16693774 | | |
| 12736227 | Sep 20, 2010 | 9741129 |
| PCT/US2009/002286 | Apr 13, 2009 | |
| 15637166 | | |
| 61124094 | Apr 14, 2008 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G01S 3/7865 20130101; G06T 7/20 20130101; G06T 2207/30201 20130101; H04N 5/23203 20130101; G06T 2207/10016 20130101; H04N 5/23216 20130101 |

| International Class: | G06T 7/20 20060101 G06T007/20; G01S 3/786 20060101 G01S003/786; H04N 5/232 20060101 H04N005/232 |

Claims

1. A system for automatically tracking objects in a video feed generated, the system comprising: a camera configured to generate the video feed for the production of a television program; an object detector configured to compare an image characteristic in the video feed with a stored image of an object to detect whether the object is captured in the video feed; a tolerance determiner configured to calculate whether a distance between a pre-set position in a field of view of the camera and the detected object therein is within a tolerance radius; and an object tracker configured to automatically control a robotic camera pedestal to physically adjust a positioning of the camera and a corresponding position of the object within the field of view of the camera when the distance between the pre-set position and the detected object is outside the tolerance radius.

2. The system according to claim 1, further comprising a tracking window setting module configured to define a tracking window in the field of view of the camera based on an operator defined region of interest received by a user interface, such that the system will not track the object in the video feed when the object is outside the defined tracking window.

3. The system according to claim 2, further comprising a tracking location setting module configured to define the pre-set position in the field of view of the camera for the identified object; and wherein the object tracker is configured to automatically track the identified object by controlling the robotic camera pedestal when the camera generates the video feed to maintain the identified and tracked object in a fixed relationship relative to the defined pre-set position in the field of view of the camera.

4. The system according to claim 1, wherein the object tracker is configured to automatically control the robotic camera pedestal by displacing the camera in an X and Y axis to physically adjust the positioning of the camera.

5. The system according to claim 1, wherein the object tracker is configured to automatically control at least one of a panning operation and a tilting operation of the camera to physically adjust the positioning of the camera.

6. The system according to claim 1, wherein the object detector includes a processor configured to execute instructions stored in memory to compare the image characteristic with the stored image, the tolerance determiner includes a processor configured to execute instructions stored in memory to calculate whether the distance between the pre-set position in the field of view and the detected object therein is within the tolerance radius, and the object tracker includes a processor configured to execute instructions stored in memory to automatically control the robotic camera pedestal to physically adjust the positioning of the camera.

7. An apparatus for automatically tracking objects in a video feed generated by a camera, the apparatus comprising: a memory; and a processor configured to implement instructions stored on the memory so as to provide: an object detector configured to compare an image characteristic in frames of the video feed with a stored image of an object to detect the object in the video feed; a tolerance determiner configured to calculate whether a distance between a pre-set position in a field of view of the camera and the detected object therein is within a tolerance radius; and an object tracker configured to automatically control a robotic camera pedestal to physically adjust a positioning of the camera and a corresponding position of the object within the field of view of the camera when the distance between the pre-set position and the detected object is outside the tolerance radius in at least one frame of the video feed.

8. The apparatus according to claim 7, further comprising the camera configured to capture the frames of the video feed for the production of a television program.

9. The apparatus according to claim 7, wherein the processor is configured to implement instructions stored on the memory so as to provide a tracking window setting module configured to define a tracking window in the field of view of the camera, such that the system will not track the object in the video feed when the object is outside the defined tracking window.

10. The apparatus according to claim 9, wherein the tracking window setting module is configured to define the tracking window based on an operator defined region of interest received by a user interface.

11. The apparatus according to claim 9, wherein the processor is configured to implement instructions stored on the memory so as to provide a tracking location setting module configured to define the pre-set position in the field of view of the camera for the identified object.

12. The apparatus according to claim 11, wherein the object tracker is configured to automatically track the identified object by controlling the robotic camera pedestal when the camera captures the frames of the video feed to maintain the identified and tracked object in a fixed relationship relative to the defined pre-set position in the field of view of the camera.

13. The apparatus according to claim 7, wherein the object tracker is configured to automatically control the robotic camera pedestal by displacing the camera in an X and Y axis to physically adjust the positioning of the camera.

14. The apparatus according to claim 7, wherein the object tracker is configured to automatically control at least one of a panning operation and a tilting operation of the camera to physically adjust the positioning of the camera.

15. A system for automatically tracking objects in a video feed generated by a camera, the system comprising: an object detector configured to compare an image characteristic in frames of the video feed with a stored image of an object to detect the object in the video feed; a tolerance determiner configured to calculate whether a distance between a pre-set position in a field of view of the camera and the detected object therein is within a tolerance radius; and an object tracker configured to automatically control a robotic camera pedestal to physically adjust a positioning of the camera and a corresponding position of the object within the field of view of the camera when the distance between the pre-set position and the detected object is outside the tolerance radius in at least one frame of the video feed.

16. The system according to claim 15, further comprising the camera configured to capture the frames of the video feed for the production of a television program.

17. The system according to claim 15, further comprising a tracking window setting module configured to define a tracking window in the field of view of the camera, such that the system will not track the object in the video feed when the object is outside the defined tracking window.

18. The system according to claim 16, wherein the tracking window setting module is configured to define the tracking window based on an operator defined region of interest received by a user interface.

19. The system according to claim 17, further comprising a tracking location setting module configured to define the pre-set position in the field of view of the camera for the identified object; and wherein the object tracker is configured to automatically track the identified object by controlling the robotic camera pedestal when the camera captures the frames of the video feed to maintain the identified and tracked object in a fixed relationship relative to the defined pre-set position in the field of view of the camera.

20. The system according to claim 15, wherein the object tracker is configured to automatically control the robotic camera pedestal by displacing the camera in an X and Y axis to physically adjust the positioning of the camera.

21. The system according to claim 15, wherein the object tracker is configured to automatically control at least one of a panning operation and a tilting operation of the camera to physically adjust the positioning of the camera.

22. The system according to claim 15, wherein the object detector includes a processor configured to execute instructions stored in memory to compare the image characteristic with the stored image, the tolerance determiner includes a processor configured to execute instructions stored in memory to calculate whether the distance between the pre-set position in the field of view and the detected object therein is within the tolerance radius, and the object tracker includes a processor configured to execute instructions stored in memory to automatically control the robotic camera pedestal to physically adjust the positioning of the camera.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. application Ser. No. 15/637,166, filed Jun. 29, 2017, which is a continuation of U.S. application Ser. No. 12/736,227, filed Sep. 20, 2010, which is a National Stage Entry of PCT/US2009/002286, filed Apr. 13, 2009, which claims priority under 35 U.S.C. § 119 to U.S. Provisional Patent Application Ser. No. 61/124,094, filed Apr. 14, 2008, the entire contents of each of which are hereby incorporated by reference in their entirety.

TECHNICAL FIELD

[0002] This invention relates to a technique for tracking an object whose image is captured by a camera or the like.

BACKGROUND

[0003] Live production of a television program such as a news show often requires one or more television cameras to capture the image of different "on-air" talent, such as a news anchor, weather reporter and/or sports reporter. In the past, a camera operator would manually operate each television camera. Such manual operation often entailed moving the camera to different positions within a television studio to make sure that the particular on-air talent appeared in the center of the camera's field of view. During broadcasting, the on-air talent often will make slight lateral movements, forcing the camera operator to displace the camera by a corresponding amount to maintain the on-air talent within the center of the camera's field of view. The camera operator will generally observe the image of the on-air talent in the camera's view finder so the operator will have immediate knowledge of the movement of the talent and move the camera accordingly.

[0004] Advances in technology have led to the development of robotic television cameras, such as the "Cameraman", available from Thomson Grass Valley, Jacksonville, Fla. Such robotic cameras operate under the control of one or more computers which manage functions such as camera displacement along the x, y, and z axes, pan, tilt, zoom and focus. By appropriately programming the computer(s), the camera will operate automatically, thus obviating the need for manual control. Typical robotic cameras have the ability to move from a known home position to one or more pre-set positions, each pre-set position enabling a particular camera shot of an on-air talent. Generally, the pre-set camera positions remain static. In other words, if the on-air talent moves even slightly to the right or left while the robotic camera remains static, then the on-air talent will appear off-center within the field of view of the camera.

[0005] To overcome this difficulty, robotic cameras can include automatic tracking technology, such as the tracking system described in U.S. Pat. No. 5,668,629 issued in the name of Jeffrey Parker et al. The automatic tracking system described in the '629 patent employs an Infra-Red (IR) transmitter carried by the moving object (e.g., the on-air talent) for transmitting signals to an IR receiver carried by the robotic camera. By detecting the deviation in the signal transmitted by the transmitter as it moves with the object, the IR receiver can establish the new position of the moving object and provide that information to the computer(s) controlling the robotic camera to displace the camera accordingly.

[0006] The IR tracking technology described in the '629 patent works well for tracking a single moving object. However, tracking of multiple objects can prove problematic, such as in the case when a single robotic camera serves to capture the image of several different on-air talent, as occurs when the camera moves to capture the image of a news anchor at one instant, and a weather reporter at a different instant. Each different on-air talent would need to carry a separate IR transmitter to avoid interference, thus necessitating multiple IR receivers on the camera. This IR system also suffers from the disadvantage that the anchor person has to wear an embedded system that should be located at the center of the head to yield an accurate estimate of the head position.

[0007] Thus, a need exists for a tracking technique that overcomes the aforementioned disadvantage of the prior art.

SUMMARY OF THE INVENTION

[0008] Briefly, in accordance with a preferred embodiment, there is provided a method for tracking an object within the field of view of a robotically controlled camera. The method commences by first determining whether the object lying within the camera field of view matches a reference object. If so, tracking of the object commences to maintain the object in fixed relationship to a pre-set location in the camera's field of view, provided the designated object has moved more than a threshold distance from the pre-set location. In this way, tracking occurs in accordance with the camera's field of view, and does not depend on any apparatus worn by the object being tracked.

BRIEF DESCRIPTION OF THE DRAWINGS

[0009] FIG. 1 depicts a block schematic diagram of an exemplary system for practicing the automatic tracking technique of the present principles;

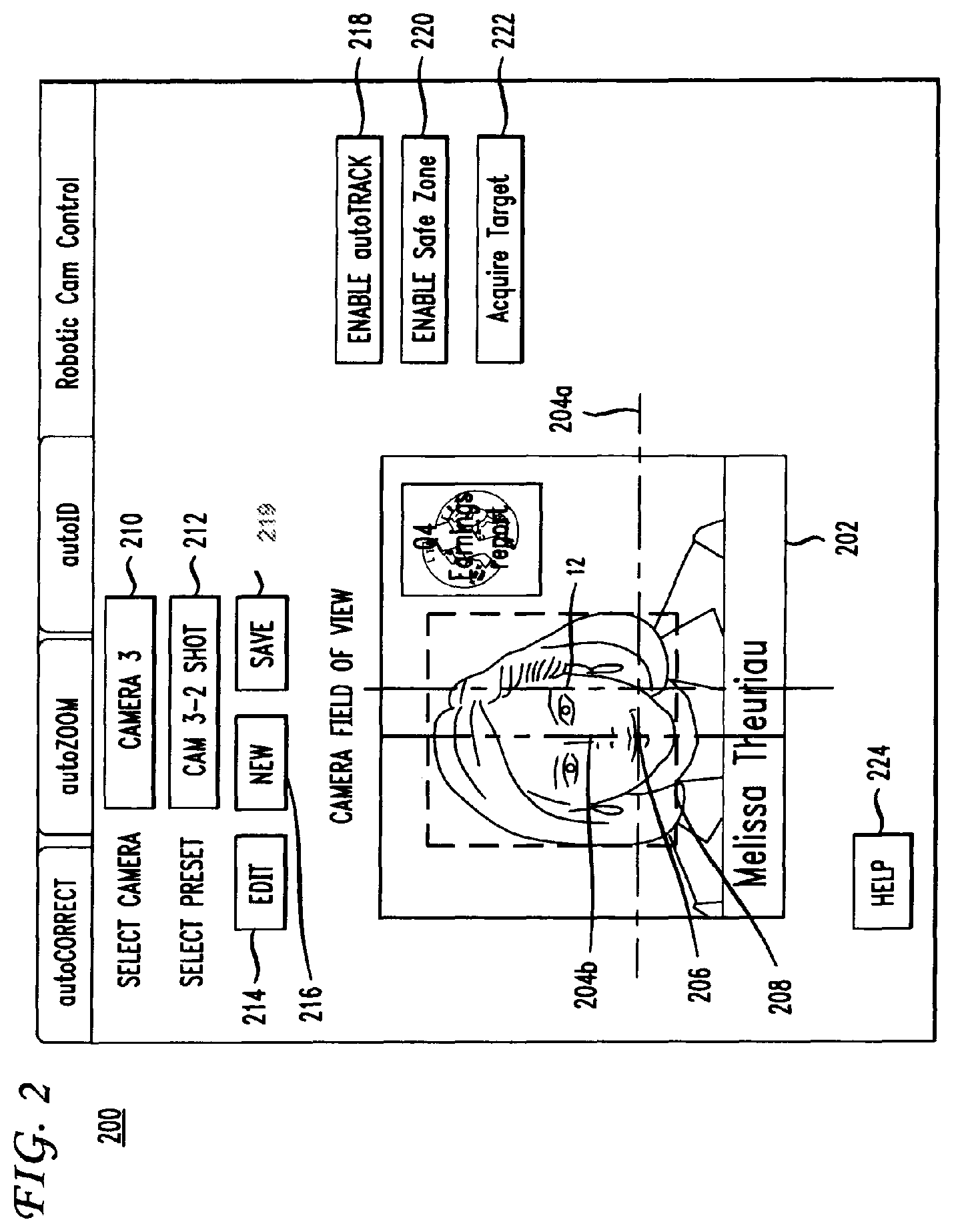

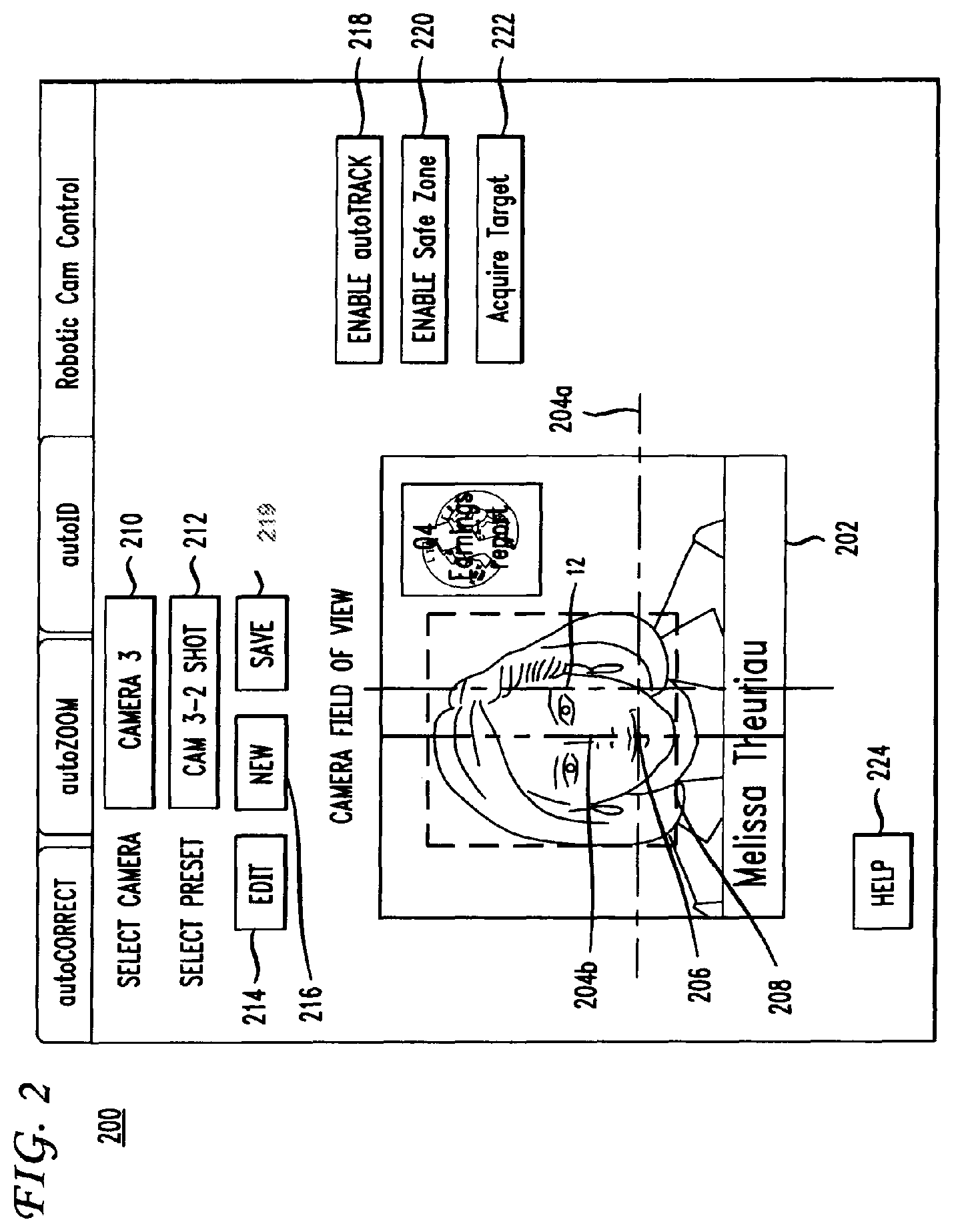

[0010] FIG. 2 depicts a Graphical User Interface (GUI) through which an operator can control the system of FIG. 1;

[0011] FIG. 3 depicts an enlarged portion of the GUI of FIG. 2 showing the manner in which an operator can manipulate a camera offset; and

[0012] FIG. 4 depicts in flow chart form the steps of a method practiced by the apparatus of FIG. 1 for performing the automatic tracking technique of the present principles.

DETAILED DESCRIPTION

[0013] FIG. 1 depicts a block schematic diagram of an exemplary system 10 for automatically tracking an object 12, such as on-air talent, in accordance with the present principles. The on-air talent 12 could take the form of a newscaster, sports reporter, or weatherman in connection with a production of a television news program, or on-air talent in connection with other types of television programming (e.g., a game show host).

[0014] The system 10 includes a robotic camera assembly 14, such as the "Cameraman" robotic camera assembly available from Thomson Grass Valley, Jacksonville, Fla. The robotic camera assembly 14 typically includes a television camera 16 that carries a zoom lens 18 whose functions, such as iris and zoom, respond to signals supplied by a processor 20, such as, but not limited to, a personal computer or the like. Thus, the lens 18 has a variable zoom function. The processor 20 also controls a robotic camera pedestal 22 which has the capability of displacing the camera 16 along the x and y axes as well as panning and tilting the camera responsive to signals from the processor. The processor 20 operates to control the movement of the robotic camera pedestal 22 as well as the functions of the lens 18 in accordance with the video signal from the camera 16. Although the robotic camera assembly 14 is depicted with a single camera 16, the system could include multiple cameras controlled by a single processor or by individual processors.

[0015] FIG. 2 depicts a display of a Graphical User Interface (GUI) 200 via which an operator enters data to, and receives information from, a program executed by the processor 20 to carry out automatic tracking of an object (e.g., the on-air talent 12 of FIG. 1) in the manner described hereinafter. The GUI 200 of FIG. 2 includes a video screen 202 which displays the image of a selected television camera, such as the camera 16 of FIG. 1. The image displayed in the video screen 202 includes horizontal and vertical lines 204a and 204b, whose intersection 206 represents an offset associated with the tracking technique of the present principles. The offset constitutes the difference in position between the center of the object (e.g., the on-air talent 12 of FIG. 1) and the intersection 206 of the lines 204a and 204b. An operator can manipulate the location of the lines 204a and 204b by touching and dragging the lines to manipulate the offset. The video screen 202 also displays a "safe zone box", in the form of a border 208 which defines the region within which automatic tracking occurs. No tracking occurs for any object appearing outside the border 208. Thus, if the on-air talent 12 of FIG. 1 appears outside of the border 208, the camera 16 will not respond to movement of the on-air talent.
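The safe zone and offset described above can be sketched in a few lines. This is only an illustrative sketch, not the patented implementation: the names `SafeZone`, `should_track` and `offset_from_crosshair`, the pixel coordinate convention, and the rectangular zone are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafeZone:
    """Hypothetical rectangle standing in for the border 208 (pixel units)."""
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, x, y):
        return self.left <= x <= self.right and self.top <= y <= self.bottom


def should_track(object_center, zone):
    """No tracking occurs for an object outside the safe zone."""
    return zone.contains(*object_center)


def offset_from_crosshair(object_center, crosshair):
    """Offset between the object center and the line intersection 206."""
    return (object_center[0] - crosshair[0], object_center[1] - crosshair[1])


zone = SafeZone(left=100, top=50, right=540, bottom=430)
inside = should_track((320, 240), zone)
outside = should_track((50, 240), zone)
offset = offset_from_crosshair((330, 250), (320, 240))
```

Here an object centered at (50, 240) falls outside the assumed border and would simply be ignored by the tracker.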

[0016] In addition to the video screen 202, the GUI 200 includes a plurality of "toggle buttons" 210-224, each taking the form of a particular region within the GUI, which when activated, triggers a particular action as described hereinafter. In practice, actuation of a particular one of the toggle buttons 210-224 can occur by the use of a computer mouse (not shown). Alternatively, the GUI 200 could undergo display on a touch screen so that touching the particular toggle button would trigger the corresponding action associated with that button. The toggle button 210 triggers selection of a particular one of several cameras, whereas the toggle button 212 selects a preset shot for the camera selected by the toggle button 210. Toggle button 214 triggers an edit capability to allow the operator to adjust various parameters, including but not limited to the speed of camera movement. In this way, the operator can adjust the sensitivity of the automatic tracking. Toggle button 216 triggers a new tracking session.

[0017] Toggle button 219 triggers a save of the various settings and other information associated with a current tracking session, including but not limited to related safe zone settings for particular preset camera locations. Toggle button 218 enables automatic tracking of an object (e.g., the on-air talent 12 of FIG. 1) in accordance with the method of the present principles. Toggle button 220 enables creation of a safe zone defined by the border 208 to define a region outside of which no tracking will occur. Toggle button 222, when actuated, initiates automatic tracking, by entering into an "auto find" mode, whereupon the processor 20 of FIG. 1 will search the currently selected camera's field of view for a suitable object to begin tracking. Toggle button 222 automatically enables both automatic tracking and the Safe Zone without operator intervention. Lastly, toggle button 224, when actuated, triggers a help screen to assist the operator.

[0018] The GUI 200 advantageously enables an operator to set a tracking window (i.e., the border 208) as well as x and y offsets (as defined by the intersection 206 of the lines 204a and 204b in FIG. 2). In this way, the operator can maintain the object (the on-air talent 12 of FIG. 1) in a particular perspective, depending on graphics that appear in the same field of view as the on-air talent. For example, the graphics could appear over the right or left shoulder of the on-air talent 12 of FIG. 1, as indicated in the image depicted in the video screen 202 of FIG. 2, resulting in a "right OTS" or "left OTS" shot. Upon operator selection of the automatic tracking function following actuation of the auto track toggle button 218, the video screen 202 within the GUI 200 will display the image of the camera 16 of FIG. 1 with the current position of the offset. As described previously, the operator can make adjustments by touching the lines 204a and 204b and dragging them to the desired location. After saving the position of the lines 204a and 204b as a preset, the intersection 206 now becomes the x and y offset associated with that particular location preset. The camera 16 of FIG. 1 will track the object (e.g., the on-air talent 12 of FIG. 1) and re-adjust the position of the camera based on the difference between the stored offset and the location preset without operator intervention. FIG. 3 represents an enlarged view of the video screen 202 of FIG. 2 and more clearly depicts a tracking window having an "offset" from the center of the object in the field of view of the camera 16 of FIG. 1.

[0019] FIG. 4 depicts in flow chart form the steps of an exemplary process 400 by which the processor 20 of FIG. 1 can control the robotic camera assembly 14 of FIG. 1 to carry out automatic tracking of the on-air talent 12 of FIG. 1 in accordance with the present principles. The auto-tracking method 400 commences by first executing step 402 to create or re-set an object for tracking. Initial execution of step 402 serves to create an "empty" object. For tracking purposes, an object possesses certain characteristics, such as a shape and location, as well as certain content-based characteristics, such as color and feature points. Initially, all of the object characteristics have zero values.

[0020] Execution of step 402 also serves to reset the position of the camera 16 of FIG. 1 in the x, y and z coordinates to locate the camera at a pre-defined (e.g., a pre-set) position. Similarly, the pan, tilt, zoom and iris are set to pre-defined values.

[0021] Following step 402, execution of step 404 occurs whereupon the processor 20 detects the object (e.g., the on-air talent 12 of FIG. 1) by comparing characteristics of the image (e.g., color, feature points, etc.) in a current video frame captured by camera 16 of FIG. 1 to a stored image of the object. Upon detecting the object (which occurs when the characteristics of the captured frame substantially match the corresponding characteristics of the stored image), the processor 20 executes step 406 to determine stability of the object. Upon failing to detect the object, step 404 undergoes re-execution upon capture of the next video frame. In practice, step 404 will undergo re-execution to detect the object for a succession of captured video frames until reaching a time-out interval to avoid the execution of an endless loop. Although not shown in FIG. 2, an operator could intervene at this point to either continue object detection, or end the process.
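As a rough illustration of the detection loop of step 404, the sketch below retries detection on successive frames and gives up after a time-out. The function name `detect_with_timeout`, the `matches_reference` callback, and the use of a frame count as the time-out interval are assumptions made for the example; the text does not specify how the time-out is measured.

```python
def detect_with_timeout(frames, matches_reference, max_frames):
    """Return the index of the first frame containing the object, or None.

    `matches_reference` is a hypothetical predicate that compares a frame's
    characteristics with the stored image of the object.
    """
    for index, frame in enumerate(frames):
        if index >= max_frames:
            break  # time-out reached: stop instead of looping forever
        if matches_reference(frame):
            return index
    return None


frames = ["empty", "empty", "anchor"]
found_at = detect_with_timeout(frames, lambda f: f == "anchor", max_frames=10)
timed_out = detect_with_timeout(frames, lambda f: f == "anchor", max_frames=2)
```

With a budget of ten frames the object is found on the third frame; with a budget of two the loop times out and returns `None`, at which point an operator could decide whether to continue.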

[0022] Tracking of the object (i.e., displacement of the camera) generally requires that the object remain stable. In other words, the object should not undergo significant motion when attempting automatic tracking. Attempting automatic tracking while the object undergoes significant motion could result in movement of the camera 16 to a location from which the object has already moved, which could lead to the camera 16 of FIG. 1 "chasing" the object. To avoid such a possibility, the operator will typically select an interval during which the object must remain generally at the same position before the processor 20 will initiate movement of the camera 16 of FIG. 1. If the object remains substantially motionless for the specified interval, then the object is considered stable for purposes of the stability determination of step 406. The object stabilization step is needed because, at the initial step, the camera moves in open loop (i.e., no images are processed during this time). This initial displacement can take one second or more to reach the desired preset position (the zoom command is not that fast), and when the camera finally converges to this position, an object that was still moving can be far away from it, leading either to an object tracking failure or to a new, very large camera displacement, which is not the desired behavior.
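One way to picture the stability test of step 406 is a counter that restarts whenever the object drifts too far from where it was first seen. The sketch below is an assumption-laden illustration: the class name `StabilityMonitor`, the `max_drift` parameter, and counting the interval in frames rather than seconds are not taken from the text.

```python
import math


class StabilityMonitor:
    """Reports stability once the object has stayed put for enough frames."""

    def __init__(self, max_drift, required_frames):
        self.max_drift = max_drift              # allowed movement, in pixels
        self.required_frames = required_frames  # operator-selected interval
        self._anchor = None
        self._count = 0

    def update(self, position):
        """Feed one detected position; return True when the object is stable."""
        if self._anchor is None or math.dist(position, self._anchor) > self.max_drift:
            # Object moved significantly: restart the interval from here.
            self._anchor = position
            self._count = 0
        else:
            self._count += 1
        return self._count >= self.required_frames


monitor = StabilityMonitor(max_drift=5.0, required_frames=3)
history = [monitor.update(p) for p in [(0, 0), (1, 0), (2, 1), (1, 1)]]
after_jump = monitor.update((50, 50))
```

The object is reported stable only on the fourth observation, and a sudden jump resets the counter so that the camera does not start "chasing".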

[0023] If the processor 20 of FIG. 1 finds the object stable during step 406, then the processor displaces the camera 16 of FIG. 1 to the desired pre-set position, and likewise commands the lens 18 of FIG. 1 to zoom to a desired pre-set position during step 408. The operator can change these parameters using the preset modification ability available in the GUI 200 of FIG. 2. For each preset, the operator can modify the location of the center of the captured image and the image size. The operator can also change the preset using the preset selector of the GUI 200. During step 410, the processor 20 updates the object characteristics and resets the object position counter used for stability determination purposes to zero. In particular, the processor 20 of FIG. 1 updates the object characteristics by establishing the position of the object in the current image. The object's characteristics include its shape (for example, a rectangle or an ellipse). Using the shape information, the processor 20 extracts content-based characteristics for tracking the object. In the event of an inability to detect object stability during step 406, process execution branches back to step 404.

[0024] Following step 410, the processor 20 of FIG. 1 executes step 412 to detect whether object tracking occurs with sufficient confidence. Object tracking occurs with sufficient confidence when the actual position of the object as detected from its characteristics lies within a given probability of its expected position, denoting the tracking confidence. An example of a tracking technique suitable for tracking objects is described infra. If the tracking confidence equals or exceeds a given threshold, the processor 20 of FIG. 1 assumes successful tracking and then proceeds to execute step 418 to test convergence. Otherwise, if the tracking confidence does not equal or exceed the threshold, then the processor 20 assumes the object to be lost.

[0025] Under such circumstances, process execution branches to step 414 to look for the object, using the position of the object in the previous frame as a reference position. The processor 20 looks for the object throughout the overall image, typically in a random manner by enlarging image sampling. A check then occurs during step 416 to determine whether the object has been found. To determine if it has found the object, the processor 20 checks whether the distance between the object characteristics and the object candidate characteristics remains lower than half of the tracking confidence. If so, then process execution branches back to step 412 to check for successful tracking. Otherwise, step 414 undergoes re-execution until the processor 20 of FIG. 1 locates the object. To avoid an endless loop, the process 400 could time out after a given interval in the absence of finding the object. Note that the operator can change the tracking confidence in real time via the GUI 200 of FIG. 2.
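The two decisions of steps 412 and 416 can be sketched as simple comparisons. This is a hedged illustration only: representing the object characteristics as a numeric feature vector and measuring the distance between them with a Euclidean norm are assumptions, as are the function names `tracking_is_confident` and `candidate_is_object`.

```python
import math


def tracking_is_confident(confidence, threshold):
    """Step 412: tracking succeeds when confidence equals or exceeds the threshold."""
    return confidence >= threshold


def candidate_is_object(object_features, candidate_features, tracking_confidence):
    """Step 416: the candidate counts as found when the feature distance
    stays below half of the tracking confidence (Euclidean distance assumed)."""
    distance = math.dist(object_features, candidate_features)
    return distance < tracking_confidence / 2


confident = tracking_is_confident(0.8, 0.8)
lost = not tracking_is_confident(0.79, 0.8)
near = candidate_is_object((0.2, 0.4), (0.2, 0.5), tracking_confidence=0.4)
far = candidate_is_object((0.0, 0.0), (0.3, 0.4), tracking_confidence=0.4)
```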

[0026] Upon execution of step 418 of FIG. 4, the processor 20 of FIG. 1 determines convergence by determining if the position of the object corresponds to the desired pre-set position. At each instant in time, the object will have a convergence state, either TRUE or FALSE, depending on whether the distance between the actual position of the object and the desired pre-set position does not exceed a threshold value. Initially, the object has a FALSE convergence state. Upon detecting a FALSE convergence state, the processor 20 launches a test of convergence. If the convergence state remains FALSE when checked during step 418, then step 420 undergoes execution, whereupon the processor 20 causes the camera 16 to move to a selected preset position. The processor 20 can separately control the pan and tilt speed, with the direction determined by using different values for pan and tilt speed. An operator can change the magnitude of the camera speed via the GUI 200 of FIG. 2.
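To illustrate how separate pan and tilt speeds can encode direction, the sketch below derives a signed speed per axis from the position error. The proportional control law, the `gain` value, and the function name `pan_tilt_speeds` are assumptions made for this example; the text only states that pan and tilt speeds are controlled separately and that the operator sets the speed magnitude.

```python
def pan_tilt_speeds(object_pos, preset_pos, max_speed, gain=0.01):
    """Return (pan_speed, tilt_speed); the sign of each value gives the direction.

    The proportional law and `gain` are illustrative assumptions.
    """
    def clamp(value):
        return max(-max_speed, min(max_speed, value))

    error_x = object_pos[0] - preset_pos[0]
    error_y = object_pos[1] - preset_pos[1]
    return clamp(gain * error_x), clamp(gain * error_y)


fast_pan = pan_tilt_speeds((420, 240), (320, 240), max_speed=0.5)
small_move = pan_tilt_speeds((310, 250), (320, 240), max_speed=0.5)
```

A large horizontal error saturates at the operator-selected maximum speed, while a small diagonal error produces small speeds of opposite sign on the two axes.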

[0027] To avoid the possibility of shaking caused by the camera 16 of FIG. 1 rapidly moving back and forth over a short distance during tracking, the processor 20 performs a tolerance check during step 422 following a determination during step 418 of a TRUE convergence state. During step 422, the processor 20 checks for tolerance by making use of a tolerance radius about each preset position. If the distance between the desired pre-set position and the current object position remains less than the tolerance radius, then no further movement of the camera 16 of FIG. 1 becomes necessary and the process ends at step 424. Otherwise, if the object (e.g., the on-air talent 12 of FIG. 1) lies outside the tolerance radius, then the processor 20 resets the convergence state to FALSE and step 420 undergoes re-execution to move the camera 16 to match object position and desired preset position.
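The interplay between the convergence test of step 418 and the tolerance check of step 422 can be sketched as a small state update. This is a simplified reading of the flow chart, not the actual implementation: the function name `convergence_step` is hypothetical, and the sketch assumes the tolerance radius is at least as large as the convergence threshold, which is what keeps the camera from shaking.

```python
def convergence_step(distance, converged, convergence_threshold, tolerance_radius):
    """Return (new_converged_state, move_camera) for one pass through steps 418-422.

    `distance` is the distance between the object and the desired pre-set position.
    """
    if not converged and distance <= convergence_threshold:
        converged = True  # step 418: object has reached the pre-set position
    if converged and distance < tolerance_radius:
        return True, False  # step 422: inside the tolerance radius, hold still
    return False, True  # step 420: reset to FALSE and move the camera


far_away = convergence_step(50.0, False, convergence_threshold=5.0, tolerance_radius=20.0)
arrived = convergence_step(3.0, False, convergence_threshold=5.0, tolerance_radius=20.0)
small_drift = convergence_step(12.0, True, convergence_threshold=5.0, tolerance_radius=20.0)
large_drift = convergence_step(25.0, True, convergence_threshold=5.0, tolerance_radius=20.0)
```

Note the hysteresis: a drift of 12 units does not move the camera once convergence has been reached, even though the same distance would keep the camera moving before convergence.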

[0028] An example of a tracking technique includes, e.g., a method of image processing adapted for tracking any general moving object marked by a user in a sequence of images. An example of such a method is a randomized template tracking method. In the starting frame of the video sequence, a hand marked object or a detected object is identified. Then, in the same frame, a set of $N$ template locations is drawn using, for example, uniform random number generation. A template of predefined maximum size is associated with each of these locations. The templates $\{T_i\}_{i=1}^{N}$ are possibly trimmed so that each of them lies within the marked object boundary. The following steps are then applied sequentially in the same order on every frame following the starting frame unless a tracking failure is signaled.
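The template initialization just described can be sketched as follows. The function name `draw_templates`, the axis-aligned bounding box used for the marked object, and square templates centered on each drawn location are assumptions made for the example.

```python
import random


def draw_templates(object_box, n, max_size, rng):
    """Draw n template boxes, each trimmed to stay inside object_box.

    object_box is (left, top, right, bottom); each result uses the same layout.
    """
    left, top, right, bottom = object_box
    half = max_size / 2
    templates = []
    for _ in range(n):
        # Uniformly distributed template location inside the marked object.
        cx = rng.uniform(left, right)
        cy = rng.uniform(top, bottom)
        # Trim the template so it never crosses the object boundary.
        templates.append((
            max(left, cx - half), max(top, cy - half),
            min(right, cx + half), min(bottom, cy + half),
        ))
    return templates


box = (100, 50, 200, 250)
templates = draw_templates(box, n=8, max_size=16, rng=random.Random(0))
```

Passing a seeded `random.Random` instance keeps the sketch reproducible; a real tracker would presumably draw fresh locations for each new object.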

[0029] Each template $T_i$ is tracked in the current frame using, for example, a common normalized cross-correlation tracking method in a predefined search space. Such a method is further described in an article by J. P. Lewis entitled "Fast Template Matching", published in Vision Interface in 1995, pp. 120-123. This method associates every template with a correlation surface. The grid location corresponding to the maximum of the correlation surface is the estimated new location of the template. Consequently, a translation vector, denoted $V_i$, is derived for each template $T_i$ in the set.
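A minimal sketch of template tracking by normalized cross-correlation follows. It evaluates the correlation exhaustively over a small search window in pure Python; it is not the fast method of the cited article, and the function names `ncc` and `track_template`, the list-of-lists grayscale frame, and the (row, column) convention are assumptions made for the example.

```python
import math


def ncc(patch, template):
    """Normalized cross-correlation of two equal-length flat pixel lists."""
    n = len(template)
    mean_p = sum(patch) / n
    mean_t = sum(template) / n
    num = sum((p - mean_p) * (t - mean_t) for p, t in zip(patch, template))
    den = math.sqrt(
        sum((p - mean_p) ** 2 for p in patch)
        * sum((t - mean_t) ** 2 for t in template)
    )
    return num / den if den else 0.0  # a flat patch carries no correlation


def track_template(frame, template, top_left, search_radius):
    """Return ((dx, dy), score) of the best match near top_left=(row, col)."""
    th, tw = len(template), len(template[0])
    flat_t = [v for row in template for v in row]
    row0, col0 = top_left
    best_score, best_shift = -2.0, (0, 0)
    for dy in range(-search_radius, search_radius + 1):
        for dx in range(-search_radius, search_radius + 1):
            r, c = row0 + dy, col0 + dx
            if r < 0 or c < 0 or r + th > len(frame) or c + tw > len(frame[0]):
                continue  # candidate window falls outside the frame
            patch = [frame[r + i][c + j] for i in range(th) for j in range(tw)]
            score = ncc(patch, flat_t)
            if score > best_score:  # maximum of the correlation surface
                best_score, best_shift = score, (dx, dy)
    return best_shift, best_score


frame = [[0] * 8 for _ in range(8)]
frame[3][4], frame[3][5] = 1, 2
frame[4][4], frame[4][5] = 3, 4
shift, score = track_template(frame, [[1, 2], [3, 4]], top_left=(2, 3), search_radius=2)
```

In this toy frame the template previously located at row 2, column 3 is found one pixel to the right and one pixel down, so the derived translation vector is (1, 1).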

[0030] A subset of these templates is then possibly discarded in the current frame, i.e. outliers are removed from the set $\{V_i\}_{i=1}^{N}$. The step 12 is referred to as the rejection control step on FIG. 1. A robust clustering step is used to derive a first subset of the set $\{V_i\}_{i=1}^{N}$. To this aim, each motion vector in the set $\{V_i\}_{i=1}^{N}$ is compared with the remaining vectors using a Euclidean distance measure. All bin counts are set to 1 initially for all the templates. A corresponding bin-count $B_i$ is incremented for every vector that lies within a small predefined clustering radius r of this vector. A subset $\{V_i\}_{i \in I}$, $|I| \le N$, of the set $\{V_i\}_{i=1}^{N}$ is formed by selecting all the motion vectors with associated bin-count $B_i \ge N/2$. If N is odd the relationship is defined as $B_i \ge (N+1)/2$. A second subset is then derived. To this aim, the two-dimensional mean of the first subset $\{V_i\}_{i \in I}$ and the resulting covariance matrix, denoted $\{\mu, \Sigma\}$, are computed. For ease of computation, the cross variances are assumed to be zero. A Gaussian distribution g(V) is defined in motion space with these parameters. A weight $w_i = g(V_i)$ is assigned to each motion vector in the set $\{V_i\}_{i=1}^{N}$. The set $\{T_i, w_i\}_{i=1}^{N}$ is then sorted in descending order of weights. The weight of the element with index (N/2)-1, if N is even, or (N-1)/2, if N is odd, is selected. This weight is denoted as C. Each template is then assigned a probability $p_i = \min\{1.0, w_i/C\}$. Templates are finally discarded based on a threshold on this probability, i.e. templates with an assigned probability lower than a predefined threshold are discarded. At the end of this step, only M templates are retained. This subset is denoted $\{T_i\}_{i=1}^{M}$, where $M \le N$.
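The two-stage rejection described above may be sketched as follows. The function and parameter names, the small variance floor `eps` and the handling of an empty first subset are assumptions of this sketch; the thresholds and index rules follow the text.

```python
import math

def reject_outliers(vectors, radius, p_min, eps=1e-6):
    """Return the indices of the templates retained after rejection control."""
    n = len(vectors)
    # Stage 1: bin counts start at 1 and grow by one for every other
    # motion vector lying within the clustering radius.
    counts = [1 + sum(1 for j, v in enumerate(vectors)
                      if j != i and math.dist(u, v) <= radius)
              for i, u in enumerate(vectors)]
    need = n / 2 if n % 2 == 0 else (n + 1) / 2
    first = [vectors[i] for i in range(n) if counts[i] >= need]
    if not first:
        return []  # assumption: no dominant cluster means nothing is kept
    # Stage 2: Gaussian with zero cross variances fitted to the first subset.
    mu = [sum(v[d] for v in first) / len(first) for d in (0, 1)]
    var = [sum((v[d] - mu[d]) ** 2 for v in first) / len(first) + eps
           for d in (0, 1)]  # eps avoids a zero variance (assumption)

    def g(v):
        expo = sum((v[d] - mu[d]) ** 2 / var[d] for d in (0, 1))
        return math.exp(-0.5 * expo) / (2 * math.pi * math.sqrt(var[0] * var[1]))

    weights = [g(v) for v in vectors]
    ranked = sorted(weights, reverse=True)
    c = ranked[n // 2 - 1] if n % 2 == 0 else ranked[(n - 1) // 2]
    kept = []
    for i, w in enumerate(weights):
        p = 1.0 if c == 0 else min(1.0, w / c)  # guard against underflow
        if p >= p_min:
            kept.append(i)
    return kept
```

With five motion vectors clustered near (2, 1) and one stray vector at (15, -9), only the stray template is discarded, so M = 5 templates are retained out of N = 6.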

[0031] A check then determines whether the tracking is successful. The tracking is considered successful if at least N/2 templates (i.e. M>N/2) are retained when N is even, or at least (N+1)/2 templates (i.e. M>(N+1)/2) are retained when N is odd, and is considered to have failed otherwise.

[0032] If the tracking is successful, the correlation surfaces of the retained templates are combined into a probability distribution p(x) from which new templates are resampled to replenish the template set.

[0033] With $\{T_i\}_{i=1}^{M}$, the target state $x_T$ is estimated to be the mean of these template locations. The target state is the location of the target object on the image grid. The minimum bounding rectangle, denoted $B_{min}$, is also constructed around these templates and acts as the spatial bound for the state distribution computed below. Given $x_T$, the set of correlation surfaces associated with $\{T_i\}_{i=1}^{M}$ is translated to this location, summed and normalized, all within $B_{min}$, to result in a probability distribution p(x). We consider p(x) as the distribution of target state $x_T$ generated by the randomized template tracking method. N-M template locations, denoted $y_k$, are sampled from p(x) as follows:

$$y_k \sim p(x), \quad 1 \le k \le (N-M)$$

To each sampled location in the set {y.sub.k}, Gaussian noise is added to increase sample diversity as shown below:

$$y_k = y_k + \eta(0, \sigma^2)$$
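As an illustration, the sampling of the N-M replacement locations and the addition of Gaussian noise might look as follows. Representing p(x) as a dictionary mapping grid locations to probabilities, and the function and parameter names, are assumptions of this sketch.

```python
import random

def resample_template_locations(p, n_new, sigma, rng=None):
    """Draw `n_new` locations from the discrete distribution `p` and add
    zero-mean Gaussian noise of standard deviation `sigma` to each.

    `p` maps (x, y) grid locations to probabilities (assumed layout).
    """
    rng = rng or random.Random()
    locations = list(p.keys())
    probabilities = list(p.values())
    draws = rng.choices(locations, weights=probabilities, k=n_new)
    return [(x + rng.gauss(0.0, sigma), y + rng.gauss(0.0, sigma))
            for x, y in draws]

# Hypothetical use: N = 10 templates, M = 7 retained, so 3 are replenished.
p_x = {(40, 32): 0.6, (41, 32): 0.3, (40, 33): 0.1}
new_locations = resample_template_locations(p_x, 10 - 7, sigma=1.5)
```

The noise term spreads samples that would otherwise fall on the same few grid cells, which is the stated purpose of increasing sample diversity.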

[0034] Finally, an image patch is associated with each sample location $y_k$, centered around it, as described below. In the absence of a natural scale estimation scheme here, we are forced to remain conservative in choosing the dimensions of the new template. The chosen template is trimmed to fit $\alpha B_{min}$, $\alpha \ge 1$. The value $\alpha = 1$ is the most conservative estimate, which we employ when tracking very small sized objects in aerial videos and the like. Here $\alpha$ must not be misconstrued to be a scale estimate of the tracked object.

[0035] If the tracking failed, all templates in the set $\{T_i\}_{i=1}^{N}$ are retained and their positions are extrapolated by the last successfully registered object translation, meaning the translation of the target state at the step in the past when tracking was signaled as successful. A target state $x_T$ is however estimated, at step 23, mainly for display purposes. In this case the target state $x_T$ is estimated to be the mean of the template locations after extrapolation. Control then resumes when the next frame arrives. Such a scheme is found to be useful in handling very short occlusions of a few frames.

[0036] Probabilistic tracking methods are also known from the prior art. Some of them are based on particle filtering. An example of such a color model based particle filtering method is given in the document from P. Perez et al. entitled "Data fusion for visual tracking with particles" and published in Proc. IEEE, 92(3):495-513, 2004.

[0037] For example, initially, the particle positions, denoted $\{x_i\}_{i=1}^{K}$, in the current frame are predicted from the positions estimated in the past frame using, for example, a random walk approach. A weight denoted $\pi_i$ is also computed for each particle position by matching the local histogram around the particle to the constant color histogram model of the target object, stored in a memory from the first frame. This weight is higher if the location matches the color model. The weights $\{\pi_i\}$ sum to unity. The set $\{\pi_i, x_i\}_{i=1}^{K}$ defines a probability distribution from which the target state $x_c$, i.e. the location of the target object on the image grid, is estimated.
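A minimal sketch of the prediction and weighting step is given below. The text does not specify how the histogram match is scored, so the `similarity` callback is a hypothetical stand-in for that comparison; the function names and the fallback to uniform weights are likewise assumptions.

```python
import random

def predict_and_weight(particles, step_sigma, similarity, rng=None):
    """Random-walk prediction followed by weight computation.

    `similarity(position)` is a hypothetical callback returning a
    non-negative score for the color match at that position.
    """
    rng = rng or random.Random()
    moved = [(x + rng.gauss(0.0, step_sigma), y + rng.gauss(0.0, step_sigma))
             for x, y in particles]
    raw = [similarity(pos) for pos in moved]
    total = sum(raw)
    if total > 0:
        weights = [r / total for r in raw]        # weights sum to unity
    else:
        weights = [1.0 / len(moved)] * len(moved)  # assumption: fall back to uniform
    return moved, weights

def mean_state(particles, weights):
    """Estimate the target state as the weighted mean of the particles."""
    return (sum(w * x for w, (x, _) in zip(weights, particles)),
            sum(w * y for w, (_, y) in zip(weights, particles)))
```

Particles landing on regions that resemble the stored color model receive larger weights and therefore pull the estimated target state toward them.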

[0038] A rejection probability is computed. This rejection probability is further used to make a binary decision for the color based tracking method. To this aim, the covariance matrix $C_\pi$ of the distribution $\{\pi_i, x_i\}_{i=1}^{K}$ and the covariance matrix $C_S$ of the distribution $\{x_i, 1/K\}_{i=1}^{K}$ are computed. Then the determinants of these covariance matrices are computed. From the property of determinants we know that this scalar quantity measures the effective volume of the parallelepiped constructed by the row vectors of the matrix. This motivates the use of this quantity as a scalar confidence measure to determine if the tracking is successful or not. With the above notations, the rejection probability $p_r$ is defined as follows:

$$p_r = \min\left\{1.0, \frac{\det[C_\pi]}{\det[C_S]}\right\}$$

$p_r$ tends to 1 as the uncertainty in the distribution increases and tends towards 0 as the distribution becomes more peaked. It is interesting to note that it can be inconsistent to analyze the performance of the filter based solely on the evolution of its covariance over time. This is because the spread (covariance) of the samples at each tracking step is not constant, and even with resampling there are bound to be some fluctuations. Therefore, it is necessary to account for this variable spread via a factor like $C_S$.
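The rejection probability can be illustrated for two-dimensional particle positions as follows. The sketch reads the normalizing covariance as that of the same positions with uniform weights 1/K, which is one interpretation of the text; the function names and the treatment of a degenerate (zero-determinant) sample spread are assumptions.

```python
def cov_det(points, weights):
    """Determinant of the weighted 2x2 covariance matrix of `points`."""
    total = sum(weights)
    mx = sum(w * x for w, (x, _) in zip(weights, points)) / total
    my = sum(w * y for w, (_, y) in zip(weights, points)) / total
    sxx = sum(w * (x - mx) ** 2 for w, (x, _) in zip(weights, points)) / total
    syy = sum(w * (y - my) ** 2 for w, (_, y) in zip(weights, points)) / total
    sxy = sum(w * (x - mx) * (y - my) for w, (x, y) in zip(weights, points)) / total
    return sxx * syy - sxy ** 2

def rejection_probability(points, weights):
    """min{1, det[C_pi] / det[C_S]} with C_S taken over uniform weights."""
    k = len(points)
    det_pi = cov_det(points, weights)
    det_s = cov_det(points, [1.0 / k] * k)
    if det_s == 0:
        return 1.0  # assumption: a degenerate spread is treated as a failure
    return min(1.0, det_pi / det_s)
```

For four particles at the corners of a square, uniform weights give a rejection probability of 1, while concentrating 97% of the weight on one corner drives it close to 0, matching the behavior described above.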

[0039] The tracking is considered successful if pr is less than an empirical threshold and is considered failing otherwise.

[0040] If the tracking is successful, the filtering distribution is resampled and the target state xc is estimated to be the mean of the estimated distribution.

[0041] If the tracking failed, the resampling of the filtering distribution is stopped. This causes the samples to become more and more spread out at each tracking step. In the absence of clutter, the sample weights tend to be uniform, which results in the probability pr tending to 1. But once a subset of the samples gains distinctly more weight a few frames later (say in a relock scenario after a few frames of occlusion), the rejection probability pr tends towards 0, leading to a success signal. In this case, the target state xT is estimated to be the mean of the filtering distribution.

The particle filtering recommences when the next frame arrives.

[0042] Image processing adapted for tracking an object in a sequence of images may comprise the following steps applied on each image of the sequence:

[0043] determining the location of N templates in the current image on the basis of locations of the N templates in a preceding image, with N an integer, the step being called first tracking step;

[0044] determining, according to a first predefined criterion, if the first tracking step is successful or is not successful; and

[0045] determining a probability distribution, called first probability distribution, representing the probability distribution of the object location in the current image.

The method further comprises the following steps:

[0046] determining the location of K particles in the current image on the basis of locations of the K particles in the preceding image, with K an integer, and assigning each particle a weight representing the level of match between an observation made for the particle in the current image and a predefined model, the K particles' locations and associated weights defining a second probability distribution, the step being called second tracking step;

[0047] determining, according to a second predefined criterion, if the second tracking step is successful or is not successful;

[0048] if the first tracking step is not successful and the second tracking step is successful:

[0049] determining the location of N new templates in the current image based on the second probability distribution;

[0050] replacing the N templates in the current image with the N new templates;

[0051] determining the location of the object in the current image based on the second probability distribution; and

[0052] if the first tracking step is successful and the second tracking step is not successful: determining the location of K new particles in the current image based on the first probability distribution;

[0053] replacing the K particles in the current image with the K new particles and assigning each particle a same predefined weight;

[0054] determining the location of the object in the current image based on the first probability distribution; and

[0055] if both first and second tracking steps are successful or if both first and second tracking steps are not successful, determining the location of the object in the current image on the basis of the first probability distribution or on the basis of the second probability distribution.

[0056] The predefined model may be a color histogram of the object and the observation may be a color histogram computed in a patch centered on the particle location and the predefined weight equals 1 divided by K.

[0057] The first tracking step may comprise:

[0058] determining, for each template of the preceding image, an image area in the current image, called correlation surface, whose correlation with a previously defined correlation surface centered on the template is maximum; and

[0059] determining the template positions in the current image as the centers of the correlation surfaces in the current image.

[0060] The method may further comprise a step for assigning a priority level to the first tracking step and to the second tracking step, and:

[0061] if the priority level of the first tracking step is higher than the priority level of the second tracking step, then:

[0062] if the first tracking step is not successful and the second tracking step is successful, switching the priority levels between the first tracking step and the second tracking step;

[0063] if the first tracking step is successful and the second tracking step is not successful, leaving the priority levels unchanged;

[0064] if both first and second tracking steps are successful or if both first and second tracking steps are not successful, determining the location of the object in the current image on the basis of the first probability distribution and leaving the priority levels unchanged; and

[0065] if the priority level of the first tracking step is lower than the priority level of the second tracking step, then:

[0066] if the first tracking step is not successful and the second tracking step is successful, leaving the priority levels unchanged;

[0067] if the first tracking step is successful and the second tracking step is not successful, switching the priority levels between the first tracking step and the second tracking step;

[0068] if both first and second tracking steps are successful or if both first and second tracking steps are not successful, determining the location of the object in the current image on the basis of the second probability distribution.
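The priority rules above reduce to a small decision function, sketched here for illustration. The labels "T" and "C" for the first (template) and second (particle) tracking steps, the function name and the string codes for the cross-sampling direction are assumptions of this sketch.

```python
def update_priority(priority, t_ok, c_ok):
    """Return (new_priority, cross_sampling) for one image.

    `priority` is "T" or "C"; `t_ok` and `c_ok` are the success flags of
    the first and second tracking steps. `cross_sampling` is None, "C->T"
    (new templates drawn from the second distribution) or "T->C" (new
    particles drawn from the first distribution). The object location is
    taken from the distribution of the method named by `new_priority`.
    """
    if t_ok and not c_ok:
        return "T", "T->C"
    if c_ok and not t_ok:
        return "C", "C->T"
    return priority, None  # both succeeded or both failed: nothing changes

# Hypothetical sequence of per-frame outcomes, starting with priority T.
priority = "T"
history = []
for t_ok, c_ok in [(True, True), (False, True), (False, False), (True, False)]:
    priority, cross = update_priority(priority, t_ok, c_ok)
    history.append((priority, cross))
```

When exactly one step succeeds, the priority always ends up with the successful step, whether that means switching or leaving the levels unchanged, which is why the two branches of the rule collapse into the same outcome.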

[0069] A tracking method may combine two known tracking methods. As an example, the two tracking methods described may be used. However, any other tracking methods may be used provided that one of them relies on an adaptive set/catalogue model of the object and the other relies on a constant holistic model like a color histogram of the object. In the following, the two methods are referred to as method T and method C, where T stands for template tracking and C for color based tracking. The method C may be replaced by any particle filtering based tracking method, i.e. not necessarily color based.

[0070] At the initialization stage of tracking, a priority denoted P is assigned to one of the two tracking methods. This priority P is then switched between the two methods depending on their success or failure at each tracking step. Furthermore, at each instant the method that has the priority makes an estimation of the target state denoted X.

[0071] Both methods are run independently without any interaction or information exchange except possibly at the very end. Therefore, both methods may run in parallel.

[0072] As a first example, it is assumed that at the initialization stage method T has been given the priority, i.e. P=T.

[0073] if T is successful, the target state X is made from T;

[0074] otherwise (i.e. if T failed) it is checked if C is successful:

[0075] if C is successful, the cross sampling described below applies, P is set to C and the target state X is made from C;

[0076] otherwise (i.e. if C failed), resampling is interrupted and the target state is made from T.

[0077] if C is successful, the target state is estimated from the T side (if T is also successful) or from the C side (if T failed);

[0078] otherwise:

[0079] if T also failed, resampling is interrupted at step 34 but the target state is made from T since P=T;

[0080] otherwise, cross-sampling applies, and the target state is estimated from T.

[0081] As a second example, it is assumed that at the initialization stage method C has been given the priority, i.e. P=C.

[0082] if C is successful, the target state X is made from C;

[0083] otherwise (i.e. if C failed) it is checked if T is successful:

[0084] if T is successful, the cross sampling step 38 described below applies, P is set to T and the target state X is made from T;

[0085] otherwise (i.e. if T failed), resampling is interrupted and the target state is made from C.

[0086] It should be understood that even if P=C:

[0087] if T is successful, the target state is estimated from the C side (if C is also successful) or from the T side (if C failed);

[0088] otherwise: if C also failed, resampling is interrupted, but the target state is made from C since P=C;

[0089] otherwise, cross-sampling applies, and the target state is estimated from C.

[0090] At cross-sampling steps both tracking methods interact and exchange information in only one direction, i.e. the successful tracking method provides information in the form of a probability distribution of locations of the target object to the other tracking method. There are two types of cross sampling. Both steps are further detailed below.

[0091] If P=T and method T is successful, then the state estimate as stated earlier is made from method T. Now say at some later instant method T fails but method C is successful, possibly when there is partial occlusion and/or jerky out-of-image-plane rotation. The target state is now estimated from method C, the priority is switched to method C and the following cross sampling applies. The entire template set is discarded, i.e. erased from memory, and a new set of N template locations is sampled from the color filtering distribution (i.e. $\{\pi_i, x_i\}_{i=1}^{K}$) at the corresponding instant, and each location is assigned an image template, e.g. a rectangle of a predefined size. The templates are then trimmed, if necessary, to fit the bounds of the object as decided by method C. Therefore, the trimming step may change the size of each template. It is also to be noticed that the past object model composed of the templates is totally discarded and updated at the current instant from the color distribution. This step gives the template tracking method, on the whole, a new set/catalogue model of the target object.
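This cross sampling from method C to method T might be sketched as follows. The rectangle layout (x0, y0, x1, y1), the function name and the parameter names are assumptions of this sketch; extracting the image content of each template is left out.

```python
import random

def templates_from_particles(particles, weights, n, size, bounds, rng=None):
    """Sample `n` template centers from the particle distribution and give
    each a rectangle of predefined `size`, trimmed to the object `bounds`.

    `particles` are (x, y) positions with matching `weights`; `size` is
    (width, height); `bounds` and the returned rectangles are
    (x0, y0, x1, y1). All of these layouts are assumed.
    """
    rng = rng or random.Random()
    half_w, half_h = size[0] / 2, size[1] / 2
    bx0, by0, bx1, by1 = bounds
    rects = []
    for cx, cy in rng.choices(particles, weights=weights, k=n):
        rects.append((max(bx0, cx - half_w), max(by0, cy - half_h),
                      min(bx1, cx + half_w), min(by1, cy + half_h)))
    return rects
```

Because trimming clips each rectangle against the object bounds, templates sampled near the edge of the object come out smaller than the predefined size.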

[0092] If P=C and method C is successful, then the state estimate as stated earlier is made from method C. Now say at some later instant method C fails but method T is successful, typically when there are extreme illumination changes and/or nearby clutter. The state estimate is now made from method T, the priority is switched to method T and the following cross sampling applies. The current samples $\{\pi_i, x_i\}_{i=1}^{K}$ which compose the filtering distribution are discarded, i.e. erased from memory, and replaced by new samples drawn from the target state distribution p(x) defined by the combination of correlation surfaces output by method T. Each sample is weighted equally, i.e. the new weights are all set to 1/K, to result in the distribution given as

$$\left\{\frac{1.0}{K}, x_i\right\}_{i=1}^{K}.$$

The color model, i.e. color histogram, is however not updated. The constant color model of the target object derived from the first frame is stored in a memory and is never updated.
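For illustration, the cross sampling from method T to method C could be sketched as follows, again assuming that p(x) is held as a dictionary from grid locations to probabilities. The function name is hypothetical, and the color histogram is deliberately not touched, in line with the text.

```python
import random

def particles_from_template_distribution(p, k, rng=None):
    """Replace the particle set with `k` samples drawn from p(x), each
    carrying the same weight 1/K.

    `p` maps (x, y) grid locations to probabilities (assumed layout).
    Returns a list of (weight, position) pairs.
    """
    rng = rng or random.Random()
    positions = rng.choices(list(p.keys()), weights=list(p.values()), k=k)
    return [(1.0 / k, pos) for pos in positions]

# Hypothetical use with K = 4 particles.
p_x = {(3, 4): 0.5, (3, 5): 0.5}
new_particles = particles_from_template_distribution(p_x, 4)
```

Only the particle positions and weights are rebuilt; the stored color model from the first frame stays exactly as it was.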

[0093] If both methods T and C fail at the same tracking stage (i.e. on the same image), then the resampling steps in both tracking methods are interrupted. This case may occur in a complete occlusion scenario where the occluding object shares no commonality with the target reference model.

[0094] According to another embodiment, no priority is set, but T is the main tracking method and C is used to update its state distribution, especially when T failed and C is successful. This solution is equivalent to setting P=T and never updating P.

[0095] In a third embodiment, no priority is set, but C is the main tracking method and T is used to update its state distribution, especially when C failed and T is successful. This solution is equivalent to setting P=C and never updating P.

[0096] The target model which is employed in the method according to the invention is a multi-part model. Advantageously, the method of the invention does not require a single fused target state distribution but uses instead two target state distributions: one coming from the template tracking method and the other one coming from the color based particle filtering method. The first part is composed of the gray level templates. Due to the sequential resampling procedure, at any tracking instant, the age of each element in the template set is possibly different from the rest. Therefore, this set consists of templates having lifespans of a few to several tens of frames and thus plays the role of the dynamically adapted part of the entire target model. The second part of the model is the constant color histogram of the target object. This is the static part of the two part target appearance model and does not interact with the first part. The histogram is deliberately kept constant to avoid false adaptation due to illumination, orientation and size changes.

[0097] The foregoing describes a technique for automatically tracking an object.

* * * * *
