Integrated Configuration Engine For Interference Mitigation In Cloud Computing

Maji; Amiya Kumar ; et al.

U.S. patent application number 16/431686 was filed with the patent office on 2019-06-04 and published on 2020-05-21 for integrated configuration engine for interference mitigation in cloud computing. This patent application is currently assigned to Purdue Research Foundation. The applicant listed for this patent is Purdue Research Foundation. Invention is credited to Saurabh Bagchi, Amiya Kumar Maji, Subrata Mitra.

| Field | Value |
|---|---|
| United States Patent Application | 20200159559 |
| Application Number | 16/431686 |
| Family ID | 57731036 |
| Filed Date | 2019-06-04 |
| Publication Date | May 21, 2020 |
| Kind Code | A1 |
| Inventors | Maji; Amiya Kumar ; et al. |

INTEGRATED CONFIGURATION ENGINE FOR INTERFERENCE MITIGATION IN CLOUD COMPUTING

Abstract

A cloud computing system which is configured to monitor operating status of a plurality of virtual computing machines running on a physical computing machine, wherein said monitoring includes monitoring a cycles per instruction (CPI) parameter and a cache miss rate (CMR) parameter of at least one of the plurality of virtual computing machines. The system detects interference in the operation of the at least one virtual machine, with the detection including determining when at least one of the CPI and CMR values exceed a predetermined threshold. When interference is detected, the system reconfigures a load balancing module associated with the virtual machine in question to send fewer requests to the virtual machine.

| Field | Value |
|---|---|
| Inventors: | Maji; Amiya Kumar (West Lafayette, IN); Mitra; Subrata (West Lafayette, IN); Bagchi; Saurabh (West Lafayette, IN) |
| Applicant: | Purdue Research Foundation |
| Assignee: | Purdue Research Foundation, West Lafayette, IN |
| Family ID: | 57731036 |
| Appl. No.: | 16/431686 |
| Filed: | June 4, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15203733 | Jul 6, 2016 | 10310883 |
| 16431686 | | |
| 62188957 | Jul 6, 2015 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 9/5083 20130101; G06F 9/45558 20130101; G06F 2009/4557 20130101; G06F 2009/45591 20130101; G06F 2009/45595 20130101 |

| International Class: | G06F 9/455 20060101 G06F009/455; G06F 9/50 20060101 G06F009/50 |

Government Interests

STATEMENT REGARDING GOVERNMENT FUNDING

[0002] This invention was made with government support under CNS1405906 awarded by the National Science Foundation. The government has certain rights in the invention.

Claims

1. A cloud computing system, comprising: a) a computing engine configured to: i) monitor operating status of a plurality of virtual computing machines running on a physical computing machine, wherein said monitoring includes monitoring a cycles per instruction (CPI) parameter and a cache miss rate (CMR) parameter of at least one of the plurality of virtual computing machines; ii) detect interference in the operation of the at least one virtual machine, said detection including determining when at least one of said CPI and CMR values exceed a predetermined threshold; and iii) reconfigure a load balancing module associated with the at least one virtual machine to send fewer requests to said one of said virtual machines when said interference is detected.

2. The system of claim 1, wherein said reconfiguring the load balancing module comprises reducing a request scheduling weight associated with said virtual machine in said load balancing module.

3. The system of claim 2, wherein said reconfiguring the load balancing module comprises determining an estimated processor utilization of a web server running on said virtual computing machine, determining a request rate for the virtual machine that will reduce the processor utilization below a utilization threshold, and reducing said request scheduling weight to achieve said request rate.

4. The system of claim 1, wherein the computing engine is further configured to: i) collect training data relating to the at least one virtual machine; and ii) utilize said training data to determine said estimated processor utilization.

5. The system of claim 1, wherein the computing engine is further configured to: i) reconfigure a web server associated with the at least one virtual machine to improve performance of the at least one virtual machine if the interference exceeds a predetermined interference duration.

6. The system of claim 5, wherein said reconfiguration comprises reducing a maximum clients parameter of said web server, said maximum clients parameter representing a maximum number of worker threads that the web server can initiate.

7. The system of claim 6, wherein said reconfiguration further comprises increasing a keep alive timeout parameter of said web server, said keep alive timeout parameter representing a duration a client connection to the web server is persisted in an idle state before being terminated.

8. A cloud computing system, comprising: a plurality of physical computing machines having a processor and a memory, each of the physical computing machines comprising a plurality of virtual computing machines, each of the virtual computing machines running a web server module; a computing engine, the computing engine configured to: monitor operating status of a plurality of virtual computing machines running on a physical computing machine, wherein said monitoring includes monitoring a cycles per instruction (CPI) parameter and a cache miss rate (CMR) parameter of at least one of the plurality of virtual computing machines; detect interference in the operation of the at least one virtual machine, said detection including determining when at least one of said CPI and CMR values exceed a predetermined threshold; and reconfigure a load balancing module associated with the at least one virtual machine to send fewer requests to said one of said virtual machines when said interference is detected.

9. The system of claim 8, wherein said reconfiguring the load balancing module comprises reducing a request scheduling weight associated with said virtual machine in said load balancing module.

10. The system of claim 9, wherein said reconfiguring the load balancing module comprises determining an estimated processor utilization of a web server running on said virtual computing machine, determining a request rate for the virtual machine that will reduce the processor utilization below a utilization threshold, and reducing said request scheduling weight to achieve said request rate.

11. The system of claim 8, wherein the computing engine is further configured to: i) collect training data relating to the at least one virtual machine; and ii) utilize said training data to determine said estimated processor utilization.

12. The system of claim 8, wherein the computing engine is further configured to: i) reconfigure a web server associated with the at least one virtual machine to improve performance of the at least one virtual machine if the interference exceeds a predetermined interference duration.

13. The system of claim 12, wherein said reconfiguration comprises reducing a maximum clients parameter of said web server, said maximum clients parameter representing a maximum number of worker threads that the web server can initiate.

14. The system of claim 13, wherein said reconfiguration further comprises increasing a keep alive timeout parameter of said web server, said keep alive timeout parameter representing a duration a client connection to the web server is persisted in an idle state before being terminated.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] The present patent application is a continuation of U.S. patent application Ser. No. 15/203,733, filed Jul. 6, 2016, which is related to and claims the priority benefit of U.S. Provisional Patent Application Ser. No. 62/188,957, filed Jul. 6, 2015, the contents of which are hereby incorporated by reference in their entirety into the present disclosure.

TECHNICAL FIELD

[0003] The present application relates to cloud computing, and more specifically to interference mitigation in cloud computing hardware and data processing.

BACKGROUND

[0004] Performance issues in web service applications are notoriously hard to detect and debug. In many cases, these performance issues arise due to incorrect configurations or incorrect programs. Web servers running in virtualized environments also suffer from issues that are specific to the cloud, such as interference or incorrect resource provisioning. Among these, performance interference and its more visible counterpart, performance variability, cause significant concern among IT administrators. Interference also poses a significant threat to the usability of Internet-enabled devices that rely on hard latency bounds on server response (imagine the suspense if Siri took minutes to answer your questions!). Existing research shows that interference is a frequent occurrence in large scale data centers. Therefore, web services hosted in the cloud must be aware of such issues and adapt when needed.

[0005] Interference happens because of sharing of low level hardware resources such as cache, memory bandwidth, and network. Partitioning these resources is practically infeasible without incurring high degrees of overhead (in terms of compute, memory, or even reduced utilization). Existing solutions primarily try to solve the problem from the point of view of a cloud operator. The core techniques used by these solutions include a combination of one or more of the following: a) scheduling, b) live migration, and c) resource containment. Research on novel scheduling policies looks at the problem at two abstraction levels. Cluster schedulers (consolidation managers) try to optimally place VMs on physical machines such that there is minimal resource contention among VMs on the same physical machine. Novel hypervisor schedulers try to schedule VM threads so that only non-contending threads run in parallel. Live migration involves moving a VM from a busy physical machine to a free machine when interference is detected. Resource containment is generally applicable to containers such as LXC, where the CPU cycles allocated to batch jobs are reduced during interference. Note that all these approaches require access to the hypervisor (or the kernel in the case of LXC), which is beyond the reach of a cloud consumer. Therefore, improvements are needed in the field.

SUMMARY

[0006] According to one aspect, a cloud computing system comprising a computing engine configured to monitor operating status of a plurality of virtual computing machines running on a physical computing machine is provided, wherein the monitoring includes monitoring a cycles per instruction (CPI) parameter and a cache miss rate (CMR) parameter of at least one of the plurality of virtual computing machines. The computing engine detects interference in the operation of the at least one virtual machine, said detection including determining when at least one of said CPI and CMR values exceed a predetermined threshold; and reconfigures a load balancing module associated with the at least one virtual machine to send fewer requests to said one of said virtual machines when said interference is detected. The reconfiguring of the load balancing module may comprise reducing a request scheduling weight associated with said virtual machine in said load balancing module. The reconfiguring of the load balancing module may also comprise determining an estimated processor utilization of a web server running on said virtual computing machine, determining a request rate for the virtual machine that will reduce the processor utilization below a utilization threshold, and reducing said request scheduling weight to achieve said request rate. The computing engine may be further configured to collect training data relating to the at least one virtual machine; and utilize said training data to determine said estimated processor utilization. The computing engine may be further configured to reconfigure a web server associated with the at least one virtual machine to improve performance of the at least one virtual machine if the interference exceeds a predetermined interference duration.

BRIEF DESCRIPTION OF THE DRAWINGS

[0007] The above and other objects, features, and advantages of the present invention will become more apparent when taken in conjunction with the following description and drawings wherein identical reference numerals have been used, where possible, to designate identical features that are common to the figures, and wherein:

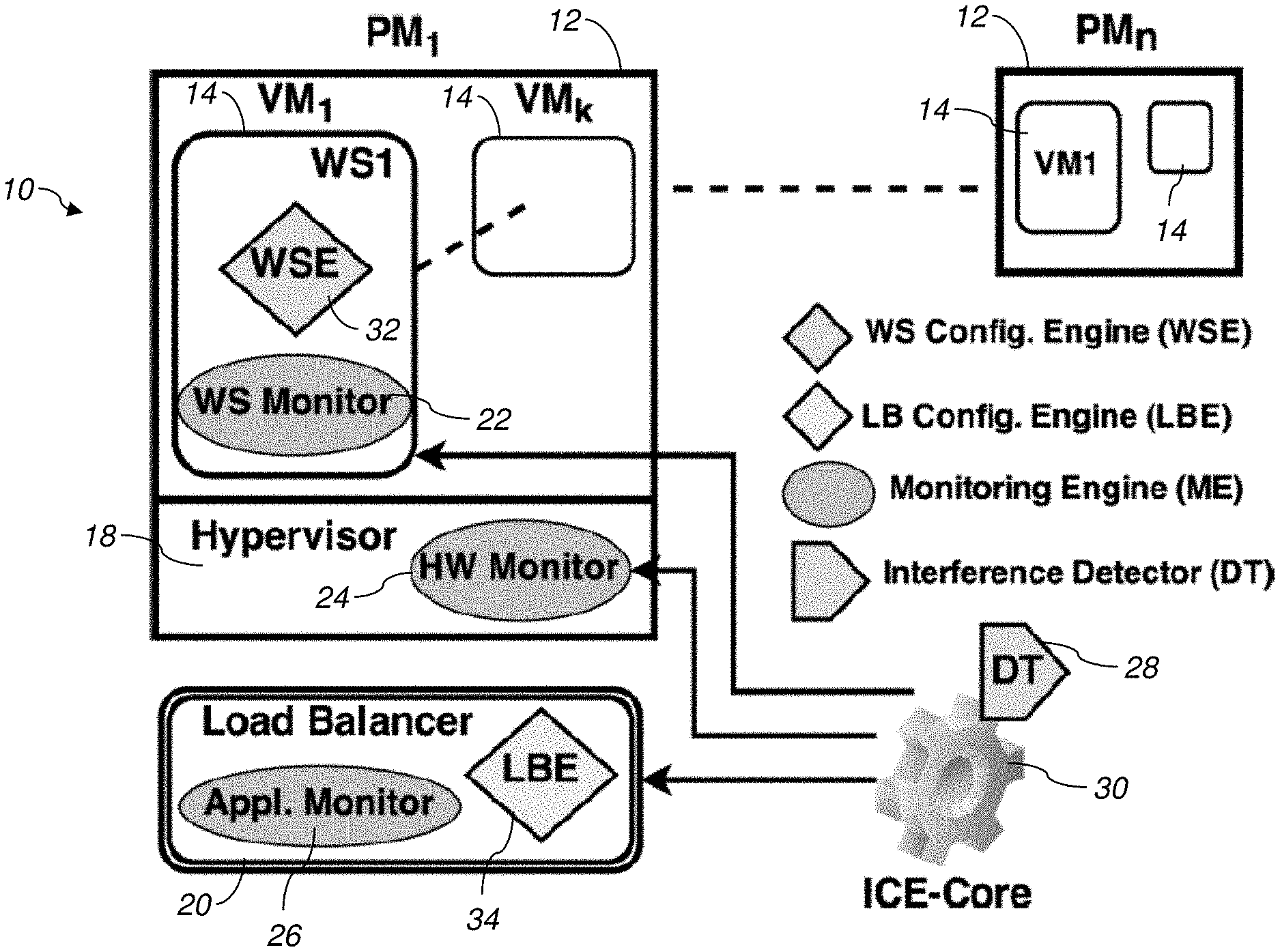

[0008] FIG. 1 is a schematic diagram of an integrated configuration engine system according to one embodiment.

[0009] FIG. 2 is a process flow diagram for the system of FIG. 1 according to one embodiment.

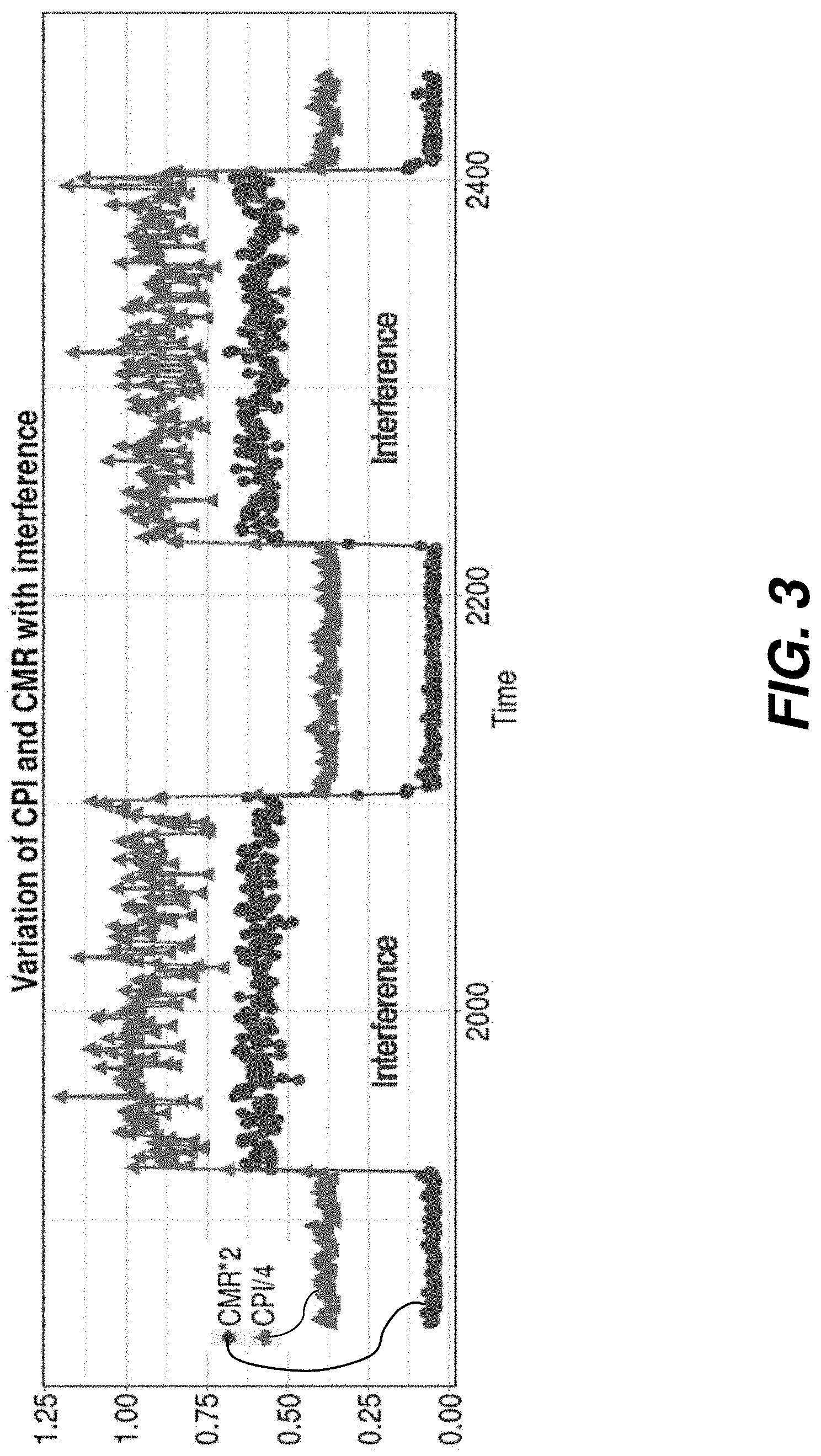

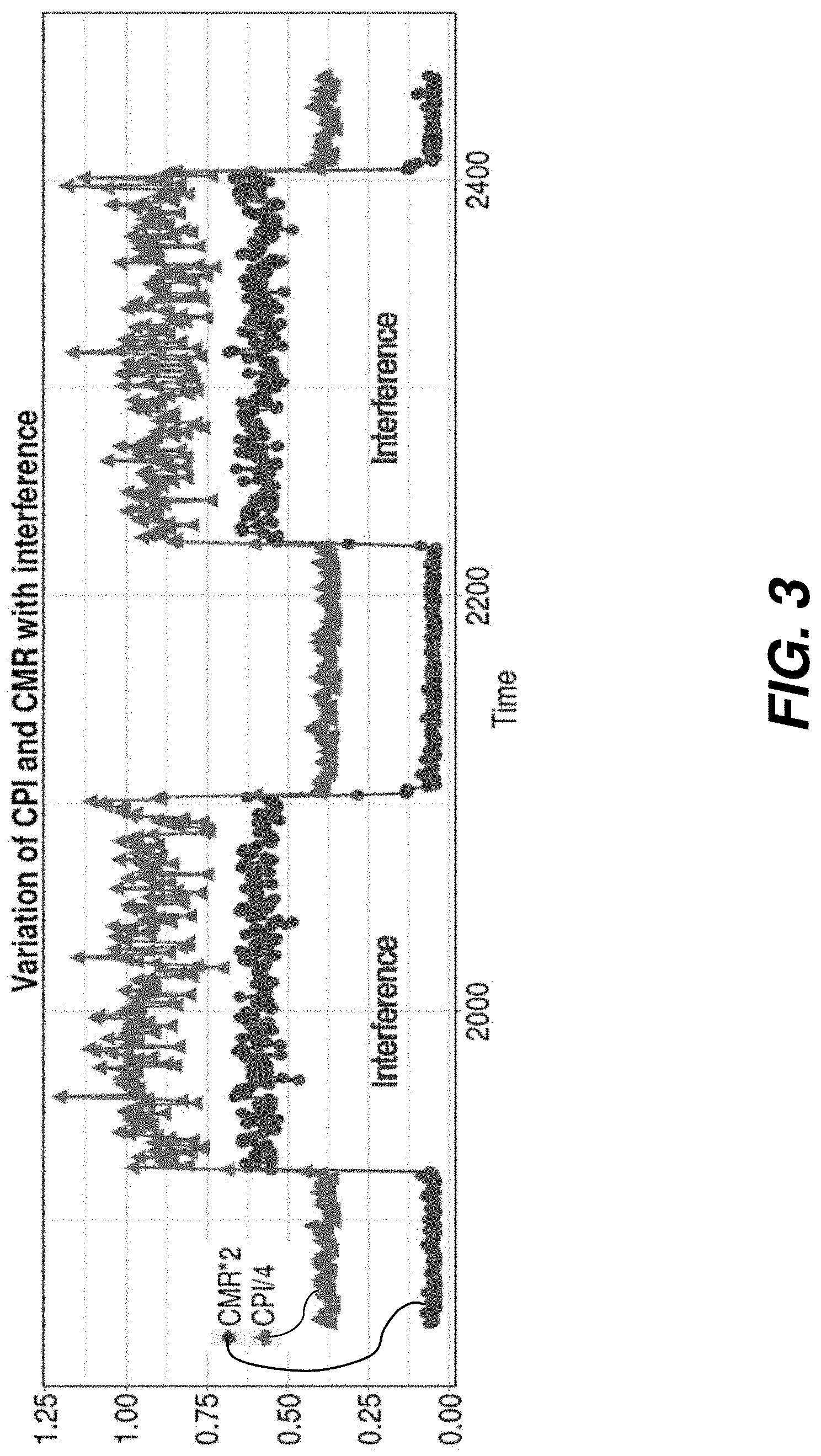

[0010] FIG. 3 is a plot illustrating variation in cycles per instruction (CPI) and cache miss rate (CMR) with interference according to one embodiment.

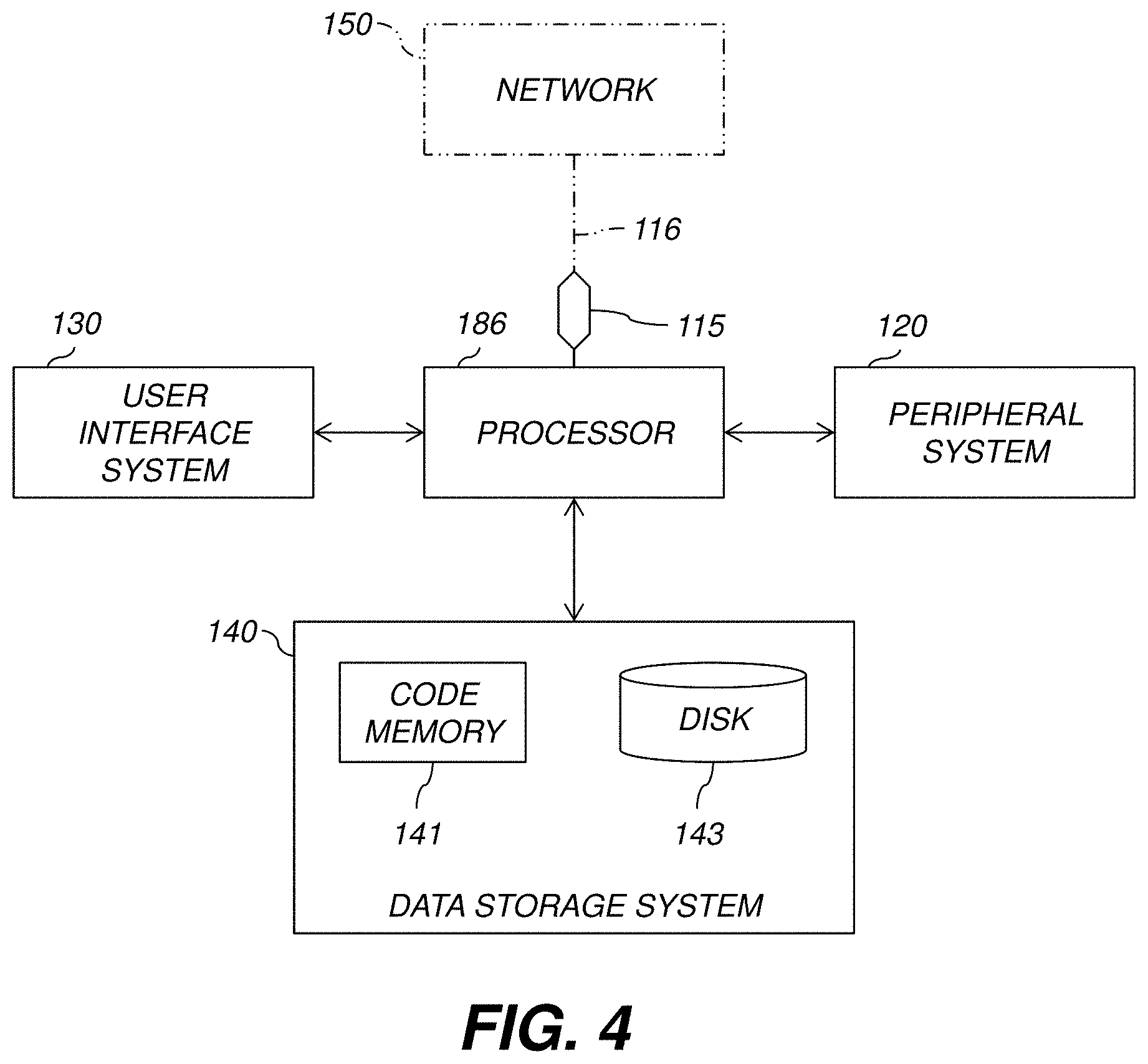

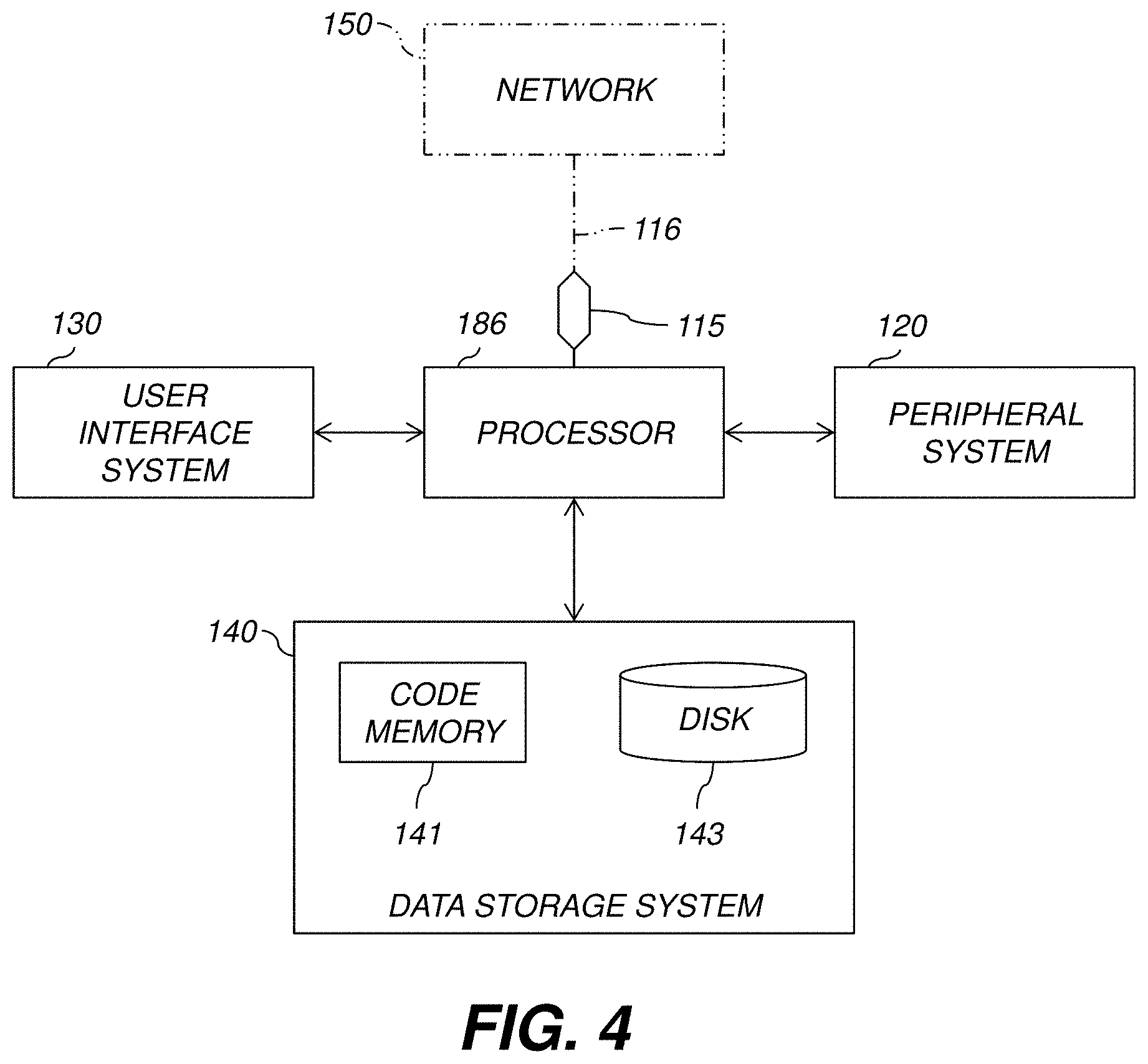

[0011] FIG. 4 is a high-level diagram showing the components of a data-processing system according to one embodiment.

[0012] The attached drawings are for purposes of illustration and are not necessarily to scale.

DETAILED DESCRIPTION

[0013] In the following description, some aspects will be described in terms that would ordinarily be implemented as software programs. Those skilled in the art will readily recognize that the equivalent of such software can also be constructed in hardware, firmware, or micro-code. Because data-manipulation algorithms and systems are well known, the present description will be directed in particular to algorithms and systems forming part of, or cooperating more directly with, systems and methods described herein. Other aspects of such algorithms and systems, and hardware or software for producing and otherwise processing the signals involved therewith, not specifically shown or described herein, are selected from such systems, algorithms, components, and elements known in the art. Given the systems and methods as described herein, software not specifically shown, suggested, or described herein that is useful for implementation of any aspect is conventional and within the ordinary skill in such arts.

[0014] In an example embodiment, a novel system and method for the management of interference induced delay in cloud computing is presented. The system may be implemented in a cloud computing environment as shown in FIG. 1, which typically includes a plurality of physical computing machines (e.g., computer servers) 12 connected together by an electronic network. The physical machines (PMs) 12 include a plurality of virtual computing machines (VMs) 14 which each run their own operating system and appear to outside clients to have their own processor, memory, and other resources, although they in fact share hardware in a physical machine 12. Each physical computing machine 12 typically has a hypervisor module 18, which routes electronic messages and other data to and from the various virtual machines 14 within a physical machine 12. Each virtual machine 14 includes one or more web server modules, which receive requests from outside client nodes and send information back to the clients as needed. Some non-limiting examples of applications for such web server software programs are website hosting, e-commerce applications, distributed computing, and data mining. A load balancer 20, which may be implemented on a separate physical computing machine or on one of the physical machines 12, may be included. The load balancer 20 is used to scale websites being hosted in the cloud computing environment to serve a larger number of users by distributing load across multiple physical machines 12, or multiple virtual machines 14.

[0015] As shown in FIG. 1, the present disclosure provides a system 10 which operates in a cloud computing environment and includes an integrated configuration engine (ICE) 30, an interference detector 28, a monitoring engine (ME), a load balancer configuration engine (LBE) 34, and a web server configuration engine (WSE) 32. The monitoring engine may include a web server monitor 22, a hardware monitor 24, and an application monitor 26. The system utilizes these components to monitor operating statistics, detect interference, reconfigure the load balancer if necessary, further monitor operations to determine load data, and reconfigure the web server to improve performance, as shown in FIG. 2.

[0016] The integrated configuration engine 30 and interference detector 28 may comprise software modules which reside on a physical computing machine separate from the physical machines 12, or on the same physical machines 12, or distributed across multiple physical machines 12.

[0017] In one embodiment, the integrated configuration engine 30 monitors the performance metrics of WS VMs 14 at three levels of the system 10. The hardware monitor 24 collects values for cycles per instruction (CPI) and cache miss rate (CMR) for all monitored VMs 14, whereas CPU utilization (CPU) of each VM 14 is monitored inside the VM 14 by the web server monitor 22. The application monitor 26 inside the load balancer 20 gives the average response time (RT) and requests/second (RPS) metrics for each VM 14. While the hardware counter values are primarily used for interference detection, system and application metrics are used for reconfiguration actions.

[0018] CPI and CMR values of affected WS VMs increase significantly during phases of interference. This is shown in FIG. 3 where the red vertical lines (start of interference) are followed by a sudden rise in CPI and CMR. Both these metrics are leading indicators of interference (as opposed to RT which is a lagging indicator) and therefore allow us to reconfigure quickly and prevent the web server from going into a death spiral (i.e., one where the performance degrades precipitously and does not recover for many subsequent requests). The ICE 30 senses the rise in CPI and CMR information to determine when interference is occurring. In certain embodiments, the ICE 30 may evaluate only CPI, only CMR, or CPI and CMR in combination to determine that interference is occurring (stage 54).
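By way of non-limiting illustration, the threshold test described above may be sketched as follows. The names `HardwareSample`, `interference_detected`, and the `mode` argument are hypothetical and do not appear in the disclosure, and the threshold values are placeholders that would be chosen per deployment.

```python
from dataclasses import dataclass


@dataclass
class HardwareSample:
    """One monitoring interval for one VM (hypothetical container)."""
    cpi: float  # cycles per instruction
    cmr: float  # cache miss rate


def interference_detected(sample, cpi_threshold, cmr_threshold, mode="either"):
    """Return True when the selected metric(s) exceed their thresholds.

    mode selects which metrics are evaluated: "cpi" only, "cmr" only,
    "both" (CPI and CMR must both exceed), or "either" (at least one exceeds).
    """
    cpi_high = sample.cpi > cpi_threshold
    cmr_high = sample.cmr > cmr_threshold
    if mode == "cpi":
        return cpi_high
    if mode == "cmr":
        return cmr_high
    if mode == "both":
        return cpi_high and cmr_high
    if mode == "either":
        return cpi_high or cmr_high
    raise ValueError(f"unknown mode: {mode!r}")
```

The "either" mode corresponds to the claim language "at least one of said CPI and CMR values exceed a predetermined threshold"; the other modes reflect the embodiments that evaluate only CPI, only CMR, or both in combination.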

[0019] FIG. 2 shows a process 50 for monitoring and reconfiguring components in the system 10 in order to reduce interference and improve response time according to one embodiment. First, the monitoring engine collects performance metrics from the web servers running on the virtual machines 14 using the web server monitor 22 (stage 52). The metrics are then fed as inputs to the interference detector 28 and integrated configuration engine 30. In the second stage, the interference detector analyzes observed metrics to find which WS VM(s) 14 are suffering from interference (stage 54). Once the affected VM(s) 14 are identified, the load balancer configuration engine 34 then reduces the load on these VMs 14 by diverting some traffic to other VMs 14 in the cluster (stage 56). If interference lasts for a long time (stage 58), the web server configuration engine 32 reconfigures the web server parameters (e.g., maximum clients, keep alive timeout) to improve response time (RT) further (stage 60). When interference goes away, the load balancer and web server configurations may be reset to their default values.
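By way of non-limiting illustration, the ordering of stages 54 through 60 may be sketched as a small planning function over per-interval detection results. The function name `plan_actions`, the action labels, and the `long_after` parameter (the number of consecutive interfered intervals treated as "a long time") are hypothetical; the disclosure does not specify a particular duration.

```python
def plan_actions(interference_flags, long_after=3):
    """Map per-interval interference flags to reconfiguration actions.

    interference_flags: iterable of booleans, one per monitoring interval
        (the output of stage 54).
    long_after: consecutive interfered intervals after which the web server
        is also reconfigured (stage 58 -> stage 60). Assumed value.
    Returns one list of action labels per interval.
    """
    plan = []
    streak = 0  # length of the current run of interfered intervals
    for interfered in interference_flags:
        if interfered:
            streak += 1
            step = ["update_lb_weight"]  # stage 56
            if streak > long_after:
                step.append("reconfigure_web_server")  # stage 60
        else:
            # Interference has gone away: restore defaults once, then idle.
            step = ["reset_defaults"] if streak > 0 else []
            streak = 0
        plan.append(step)
    return plan
```

In this sketch the load balancer weight continues to be updated during a long interference episode, and the web server reconfiguration is layered on top of it once the episode exceeds the assumed duration.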

[0020] In certain embodiments, the load balancer reconfiguration (stage 56) is performed as follows: When interference is detected, a scheduling weight of the corresponding VM 14 is updated (typically lowered). A scheduling weight is a factor that is assigned to the particular VM 14 as part of the algorithm performed by the load balancer 20 that assigns incoming requests or messages to a particular VM 14 among the plurality of VMs 14. By lowering the scheduling weight, a particular VM 14 will generally receive fewer requests. Note that the number of requests forwarded to a WS is a function of its scheduling weight. Formally,

r=f(w,T),

[0021] where r is the requests per second (RPS) received by a WS, w is the scheduling weight, and T is total requests received at front-end (HAProxy).
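By way of non-limiting illustration, one possible form of f is a proportional share, in which each WS receives a fraction of the total requests equal to its weight divided by the sum of all weights. This form is an assumption made for the example (it is the long-run behavior of a weighted round-robin policy); the disclosure only states that r is a function of w and T. The name `expected_rps` is hypothetical.

```python
def expected_rps(weights, total_rps):
    """Expected requests/second per web server under proportional sharing.

    weights: dict mapping server name -> scheduling weight (w).
    total_rps: total requests/second arriving at the front end (T).
    Assumes r_i = T * w_i / sum(w), which is an illustrative choice of f.
    """
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("at least one server must have a positive weight")
    return {name: total_rps * w / total_weight for name, w in weights.items()}
```

For example, with two servers of equal weight and T = 400, each receives 200 requests per second; halving the weight of one server shifts its share to one third of T and the other server's share to two thirds.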

[0022] Similarly, the CPU utilization (u) of a WS is a function of its load, i.e., u=g(r). The degree of interference on a WS can be approximated by its CPI measurements (c). Therefore, u=h(c). We approximate the dependence of u on r and c with the empirical function

$$u = \xi(r, c)$$

[0023] During our experiments we also found that CPU utilization $u_t$ at time $t$ is often dependent on the utilization $u_{t-1}$ at time $t-1$. This happens because execution of a task may often last multiple intervals. For example, a request that started executing just before taking the measurement for interval $t$ may be served in interval $t+1$. Therefore, the measurements in intervals $t$ and $t+1$ are not independent. This dependence is captured by taking into account the CPU utilization at the previous interval (we denote this as OldCPU or $o$) in our empirical function. The final function for estimating $u$ is represented as

$$u = \xi(o, r, c)$$

[0024] The LBE works as shown in Algorithm 1 of Table 1 below:

TABLE 1: Algorithm 1, LB reconfiguration function for ICE

| Line | Statement |
|---|---|
| 1 | procedure UPDATE_LB_WEIGHT( ) |
| 2 | if DT detected interference then |
| 3 | Estimate $\hat{u}_t \leftarrow \xi(u_{t-1}, r_t, c_t)$ |
| 4 | if $\hat{u}_t > u_{thres}$ then |
| 5 | Estimate $\hat{r}_t$, s.t. $u_{thres} = \xi(u_{t-1}, \hat{r}_t, c_t)$ |
| 6 | Compute $\delta \leftarrow (r_t - \hat{r}_t)/r_t$ |
| 7 | Set weight $w_{new} \leftarrow (1 - \delta)\, w_{current}$ |
| 8 | Check Max-Min Bounds ($w_{new}$) |
| 9 | end if |
| 10 | else |
| 11 | Reset default weight |
| 12 | end if |
| 13 | end procedure |

[0025] Assume that the CPI, RPS, and CPU values at time $t$ are $c_t$, $r_t$, and $u_t$. When the DT 28 detects interference, it sends a reconfiguration trigger to the LBE 34. The LBE then computes the predicted CPU utilization ($\hat{u}_t$), given the current metrics $c_t$, $r_t$, and $u_{t-1}$. Notice that we compare the estimated CPU utilization $\hat{u}_t$ with the setpoint $u_{thres}$, since a rise in $u$ often lags behind a rise in $c$. If $\hat{u}_t$ is found to exceed the setpoint, we predict a new RPS value $\hat{r}_t$ such that the CPU utilization falls below the setpoint. To predict a new load balancer weight, we first compute the relative reduction in RPS ($\delta$) that is required to achieve this. This is then used to reduce the weight $w$ proportionately. Note that during periods of heavy load, the estimated $\delta$ may be very high, practically marking the affected server offline (very low $w_{new}$). To avoid this, we limit the change in $w$ within a maximum bound (40% of its default weight).
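By way of non-limiting illustration, the weight update of Algorithm 1 may be sketched as follows. Two assumptions are made that the disclosure does not state: the estimator is taken to be linear, u = b0 + b1·o + b2·r + b3·c with b2 > 0, so that the target request rate can be solved in closed form; and the "maximum bound" is interpreted as a floor of 60% of the default weight (a change of at most 40%) with the default weight as the ceiling. The names `xi`, `update_lb_weight`, `coef`, and `max_change` are hypothetical.

```python
def xi(o, r, c, coef):
    """Assumed linear estimator of CPU utilization: b0 + b1*o + b2*r + b3*c."""
    b0, b1, b2, b3 = coef
    return b0 + b1 * o + b2 * r + b3 * c


def update_lb_weight(interference, u_prev, r_t, c_t, w_current, w_default,
                     coef, u_thres, max_change=0.40):
    """Return the new scheduling weight for one web server VM.

    interference: True when the detector flagged this VM in the interval.
    u_prev, r_t, c_t: previous-interval CPU utilization, current RPS, current CPI.
    coef: (b0, b1, b2, b3) of the assumed linear estimator, with b2 > 0.
    """
    if not interference:
        return w_default  # Algorithm 1, line 11: reset default weight
    u_hat = xi(u_prev, r_t, c_t, coef)  # line 3
    if u_hat <= u_thres:  # line 4
        return w_current
    b0, b1, b2, b3 = coef
    if b2 <= 0 or r_t <= 0:
        raise ValueError("need b2 > 0 and r_t > 0 to solve for a target rate")
    # Line 5: solve u_thres = xi(u_prev, r_hat, c_t) for r_hat.
    r_hat = (u_thres - b0 - b1 * u_prev - b3 * c_t) / b2
    delta = (r_t - r_hat) / r_t  # line 6
    w_new = (1.0 - delta) * w_current  # line 7
    # Line 8: keep the weight within the assumed bounds.
    w_min = w_default * (1.0 - max_change)
    return max(w_min, min(w_default, w_new))
```

With `coef = (0.0, 0.5, 0.2, 10.0)`, `u_prev = 60`, `r_t = 200`, `c_t = 2.0`, and `u_thres = 80`, the predicted utilization is 90, the target rate is 150 requests per second, δ is 0.25, and a current weight of 100 becomes 75.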

[0026] In certain embodiments, $\xi(\cdot)$ is estimated using multi-variate regression on variables $r$, $c$, and $o$, where $o$ is a time-shifted version of the vector $u$ (observed CPU utilization).
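By way of non-limiting illustration, such a regression may be sketched as an ordinary least-squares fit of a linear model u_t ≈ b0 + b1·o_t + b2·r_t + b3·c_t, where o_t = u_{t-1}. The linear form, the intercept term, and the names `solve_linear_system` and `fit_xi` are assumptions for the example; the disclosure does not specify the regression model. The sketch solves the normal equations directly to stay dependency-free; in practice a library least-squares routine would normally be preferred for numerical robustness.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda i: abs(m[i][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system; training data lacks variation")
        m[col], m[pivot] = m[pivot], m[col]
        for row in range(n):
            if row != col:
                factor = m[row][col] / m[col][col]
                for k in range(col, n + 1):
                    m[row][k] -= factor * m[col][k]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_xi(u, r, c):
    """Fit (b0, b1, b2, b3) from training series of equal length.

    u: observed CPU utilization per interval; r: requests/second; c: CPI.
    The OldCPU regressor o is u shifted by one interval, so the first
    sample is used only as history.
    """
    rows = [[1.0, u[t - 1], r[t], c[t]] for t in range(1, len(u))]
    targets = [u[t] for t in range(1, len(u))]
    k = 4
    # Normal equations: (X^T X) b = X^T y
    xtx = [[sum(row[i] * row[j] for row in rows) for j in range(k)]
           for i in range(k)]
    xty = [sum(row[i] * y for row, y in zip(rows, targets)) for i in range(k)]
    return solve_linear_system(xtx, xty)
```

The returned coefficients can be passed to any code that evaluates the fitted estimator on new values of o, r, and c.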

[0027] In certain embodiments, the stage 60 of reconfiguring the web server is achieved by adjusting the parameters of the web server. For example, the maximum number of worker threads that the web server can initiate may be lowered in times of detected interference (e.g., the MaxClients parameter in Apache web servers), and the time that client connections are persisted in an idle state before terminating (e.g., the KeepAliveTimeout parameter in Apache web servers) may be increased.
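By way of non-limiting illustration, the direction of these adjustments may be sketched as follows. The function names, the scaling factor, and the added timeout are hypothetical placeholders; the disclosure states only that MaxClients is reduced and KeepAliveTimeout is increased, not by how much.

```python
def interference_web_server_settings(defaults, max_clients_scale=0.5,
                                     keepalive_extra_seconds=5):
    """Derive web server settings to apply during prolonged interference.

    defaults: dict with the default "MaxClients" and "KeepAliveTimeout" values.
    max_clients_scale: assumed factor (< 1) by which MaxClients is reduced.
    keepalive_extra_seconds: assumed increase of KeepAliveTimeout, in seconds.
    """
    return {
        # Fewer worker threads, but never fewer than one.
        "MaxClients": max(1, int(defaults["MaxClients"] * max_clients_scale)),
        # Idle client connections are kept open longer.
        "KeepAliveTimeout": defaults["KeepAliveTimeout"] + keepalive_extra_seconds,
    }


def render_apache_directives(settings):
    """Render settings as 'Name value' lines in Apache configuration syntax."""
    return "\n".join(f"{name} {value}" for name, value in settings.items())
```

Applying the rendered directives to a running server (for example, by rewriting a configuration file and reloading) is deployment specific and is not shown. When interference goes away, the default values would be rendered and applied in the same way.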

[0028] In view of the foregoing, various aspects provide a cloud computing platform with improved performance. A technical effect is to improve response times and performance in cloud computing environments.

[0029] It shall be understood that each of the physical machines 12, the load balancer 20, and the integrated configuration engine 30 may comprise computer server components, such as those shown in FIG. 4. FIG. 4 is a high-level diagram showing the components of an exemplary data-processing system for analyzing data and performing other analyses described herein, and related components. The system, which represents any of the physical machines 12, load balancer 20, or integrated configuration engine 30, includes a processor 186, a peripheral system 120, a user interface system 130, and a data storage system 140. The peripheral system 120, the user interface system 130 and the data storage system 140 are communicatively connected to the processor 186. Processor 186 can be communicatively connected to network 150 (shown in phantom), e.g., the Internet or a leased line, as discussed below. Processor 186, and other processing devices described herein, can each include one or more microprocessors, microcontrollers, field-programmable gate arrays (FPGAs), application-specific integrated circuits (ASICs), programmable logic devices (PLDs), programmable logic arrays (PLAs), programmable array logic devices (PALs), or digital signal processors (DSPs). It shall be understood that the system 120 may also be implemented in a virtual machine (VM) environment, such as with the physical computing machines 12 and other components shown in FIG. 1.

[0030] Processor 186 can implement processes of various aspects described herein. Processor 186 can be or include one or more device(s) for automatically operating on data, e.g., a central processing unit (CPU), microcontroller (MCU), desktop computer, laptop computer, mainframe computer, personal digital assistant, digital camera, cellular phone, smartphone, or any other device for processing data, managing data, or handling data, whether implemented with electrical, magnetic, optical, biological components, or otherwise. Processor 186 can include Harvard-architecture components, modified-Harvard-architecture components, or Von-Neumann-architecture components.

[0031] The phrase "communicatively connected" includes any type of connection, wired or wireless, for communicating data between devices or processors. These devices or processors can be located in physical proximity or not. For example, subsystems such as peripheral system 120, user interface system 130, and data storage system 140 are shown separately from the data processing system 186 but can be stored completely or partially within the data processing system 186.

[0032] The peripheral system 120 can include one or more devices configured to provide digital content records to the processor 186. The processor 186, upon receipt of digital content records from a device in the peripheral system 120, can store such digital content records in the data storage system 140.

[0033] The user interface system 130 can include a mouse, a keyboard, another computer (connected, e.g., via a network or a null-modem cable), or any device or combination of devices from which data is input to the processor 186. The user interface system 130 also can include a display device, a processor-accessible memory, or any device or combination of devices to which data is output by the processor 186. The user interface system 130 and the data storage system 140 can share a processor-accessible memory.

[0034] In various aspects, processor 186 includes or is connected to communication interface 115 that is coupled via network link 116 (shown in phantom) to network 150. For example, communication interface 115 can include an integrated services digital network (ISDN) terminal adapter or a modem to communicate data via a telephone line; a network interface to communicate data via a local-area network (LAN), e.g., an Ethernet LAN, or wide-area network (WAN); or a radio to communicate data via a wireless link, e.g., WiFi or GSM. Communication interface 115 sends and receives electrical, electromagnetic or optical signals that carry digital or analog data streams representing various types of information across network link 116 to network 150. Network link 116 can be connected to network 150 via a switch, gateway, hub, router, or other networking device.

[0035] Processor 186 can send messages and receive data, including program code, through network 150, network link 116 and communication interface 115. For example, a server can store requested code for an application program (e.g., a JAVA applet) on a tangible non-volatile computer-readable storage medium to which it is connected. The server can retrieve the code from the medium and transmit it through network 150 to communication interface 115. The received code can be executed by processor 186 as it is received, or stored in data storage system 140 for later execution.

[0036] Data storage system 140 can include or be communicatively connected with one or more processor-accessible memories configured to store information. The memories can be, e.g., within a chassis or as parts of a distributed system. The phrase "processor-accessible memory" is intended to include any data storage device to or from which processor 186 can transfer data (using appropriate components of peripheral system 120), whether volatile or nonvolatile; removable or fixed; electronic, magnetic, optical, chemical, mechanical, or otherwise. Exemplary processor-accessible memories include but are not limited to: registers, floppy disks, hard disks, tapes, bar codes, Compact Discs, DVDs, read-only memories (ROM), erasable programmable read-only memories (EPROM, EEPROM, or Flash), and random-access memories (RAMs). One of the processor-accessible memories in the data storage system 140 can be a tangible non-transitory computer-readable storage medium, i.e., a non-transitory device or article of manufacture that participates in storing instructions that can be provided to processor 186 for execution.

[0037] In an example, data storage system 140 includes code memory 141, e.g., a RAM, and disk 143, e.g., a tangible computer-readable rotational storage device such as a hard drive. Computer program instructions are read into code memory 141 from disk 143. Processor 186 then executes one or more sequences of the computer program instructions loaded into code memory 141, as a result performing process steps described herein. In this way, processor 186 carries out a computer implemented process. For example, steps of methods described herein, blocks of the flowchart illustrations or block diagrams herein, and combinations of those, can be implemented by computer program instructions. Code memory 141 can also store data, or can store only code.

[0038] Various aspects described herein may be embodied as systems or methods. Accordingly, various aspects herein may take the form of an entirely hardware aspect, an entirely software aspect (including firmware, resident software, micro-code, etc.), or an aspect combining software and hardware aspects. These aspects can all generally be referred to herein as a "service," "circuit," "circuitry," "module," or "system."

[0039] Furthermore, various aspects herein may be embodied as computer program products including computer readable program code stored on a tangible non-transitory computer readable medium. Such a medium can be manufactured as is conventional for such articles, e.g., by pressing a CD-ROM. The program code includes computer program instructions that can be loaded into processor 186 (and possibly also other processors), to cause functions, acts, or operational steps of various aspects herein to be performed by the processor 186 (or other processor). Computer program code for carrying out operations for various aspects described herein may be written in any combination of one or more programming language(s), and can be loaded from disk 143 into code memory 141 for execution. The program code may execute, e.g., entirely on processor 186, partly on processor 186 and partly on a remote computer connected to network 150, or entirely on the remote computer.

[0040] The invention is inclusive of combinations of the aspects described herein. References to "a particular aspect" and the like refer to features that are present in at least one aspect of the invention. Separate references to "an aspect" (or "embodiment") or "particular aspects" or the like do not necessarily refer to the same aspect or aspects; however, such aspects are not mutually exclusive, unless so indicated or as are readily apparent to one of skill in the art. The use of singular or plural in referring to "method" or "methods" and the like is not limiting. The word "or" is used in this disclosure in a non-exclusive sense, unless otherwise explicitly noted.

[0041] The invention has been described in detail with particular reference to certain preferred aspects thereof, but it will be understood that variations, combinations, and modifications can be effected by a person of ordinary skill in the art within the spirit and scope of the invention.

* * * * *
