Building System With Semantic Modeling Based Custom Logic Generation

Goyal; Siddharth

U.S. patent application number 16/379652 was filed with the patent office on 2019-04-09 and published on 2020-05-21 for building system with semantic modeling based custom logic generation. This patent application is currently assigned to Johnson Controls Technology Company. The applicant listed for this patent is Johnson Controls Technology Company. Invention is credited to Siddharth Goyal.

| Application Number | 20200159173 16/379652 |

| Family ID | 70726554 |

| Filed Date | 2019-04-09 |

| United States Patent Application | 20200159173 |

| Kind Code | A1 |

| Goyal; Siddharth | May 21, 2020 |

BUILDING SYSTEM WITH SEMANTIC MODELING BASED CUSTOM LOGIC GENERATION

Abstract

A building system for implementing user defined logic includes one or more memory devices configured to store instructions thereon, that, when executed by one or more processors, cause the one or more processors to receive user input from a user device, the user input providing a natural language description of a building logic application for a piece of building equipment. The instructions cause the one or more processors to retrieve a semantic logic application comprising a semantic description of the building logic application based on the natural language description and a semantic building model comprising a semantic building description of the piece of building equipment, generate a custom logic application based on the semantic logic application, the natural language description, and the semantic building model, and operate the custom logic application.

| Inventors: | Goyal; Siddharth (Germantown, WI) |

| Applicant: | Johnson Controls Technology Company |

| Assignee: | Johnson Controls Technology Company (Auburn Hills, MI) |

| Family ID: | 70726554 |

| Appl. No.: | 16/379652 |

| Filed: | April 9, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62769447 | Nov 19, 2018 | |
| 62791991 | Jan 14, 2019 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G05B 13/0265 20130101; G05B 2219/25011 20130101; G06F 16/248 20190101; G06F 40/30 20200101; G05B 19/0426 20130101; G06F 16/242 20190101; G06F 2111/20 20200101; G05B 2219/23008 20130101; G06F 40/35 20200101; G06F 8/38 20130101; G06F 3/0484 20130101; G06F 3/0481 20130101; G06F 3/0482 20130101; G06F 30/13 20200101; G05B 15/02 20130101; G05B 2219/2642 20130101; G06F 9/451 20180201; G06F 3/04817 20130101 |

| International Class: | G05B 13/02 20060101 G05B013/02; G06F 17/27 20060101 G06F017/27 |

Claims

1. A building system for implementing user defined logic, the building system comprising one or more memory devices configured to store instructions thereon, that, when executed by one or more processors, cause the one or more processors to: receive user input from a user device, the user input providing a natural language description of a building logic application for a piece of building equipment; retrieve a semantic logic application comprising a semantic description of the building logic application based on the natural language description and a semantic building model comprising a semantic building description of the piece of building equipment; generate a custom logic application based on the semantic logic application, the natural language description, and the semantic building model; and operate the custom logic application.

2. The building system of claim 1, wherein the instructions cause the one or more processors to: identify a conflict between the custom logic application and a second logic application occurring when the custom logic application and the second logic application operate; generate a resolution operation to resolve the conflict; and operate the custom logic application based on the resolution operation.

3. The building system of claim 1, wherein the instructions cause the one or more processors to: generate a user interface comprising an indication of the custom logic application; and cause the user device to display the user interface.

4. The building system of claim 1, wherein the building logic application is at least one of a control application configured to control the piece of building equipment or a fault detection application configured to detect a fault associated with the piece of building equipment.

5. The building system of claim 1, wherein the semantic description of the semantic logic application describes the semantic logic application with a first graph comprising a first plurality of nodes, each node of the first plurality of nodes describing a first characteristic of the semantic logic application, wherein the first graph further comprises a first plurality of relationships, the first plurality of relationships relating the first plurality of nodes to an identifier of the semantic logic application; wherein the semantic building description of the semantic building model describes the piece of building equipment with a second graph comprising a second plurality of nodes, each node of the second plurality of nodes describing a second characteristic of the piece of building equipment, wherein the second graph further comprises a second plurality of relationships, the second plurality of relationships relating the second plurality of nodes to the piece of building equipment.

6. The building system of claim 1, wherein the instructions cause the one or more processors to generate an update to the semantic building model, where the update comprises an indication of the custom logic application.

7. The building system of claim 6, wherein the instructions cause the one or more processors to generate a second custom logic application based on a second semantic logic application, second user input, and the indication of the custom logic application of the semantic building model.

8. The building system of claim 1, wherein the instructions cause the one or more processors to generate one or more logic inputs describing the building logic application by analyzing the natural language description; wherein the instructions cause the one or more processors to retrieve the semantic logic application based on the one or more logic inputs; wherein the instructions cause the one or more processors to generate the custom logic application based on the one or more logic inputs.

9. The building system of claim 8, wherein the one or more logic inputs comprise: a logic purpose describing a purpose of the building logic application, wherein the purpose is at least one of fault detection or equipment control; a logic condition, wherein the logic condition describes one or more conditions to check with an input; and a logic action to perform in response to the logic condition being a particular state.

10. The building system of claim 1, wherein the instructions cause the one or more processors to retrieve the semantic logic application by selecting the semantic logic application from a plurality of different semantic logic applications based on the natural language description.

11. The building system of claim 10, wherein the instructions cause the one or more processors to retrieve the semantic logic application from the plurality of different semantic logic applications and generate the custom logic application by performing semantic reasoning with the natural language description.

12. The building system of claim 10, wherein the natural language description comprises an indication of an action to perform, a conditional requirement for performing the action, and a purpose of the building logic application in a natural language form; wherein the instructions cause the one or more processors to select the semantic logic application from the plurality of different semantic logic applications based on the natural language description by identifying, based on a particular semantic description of each of the plurality of different semantic logic applications, the semantic logic application, wherein the semantic description of the semantic logic application indicates the action to perform, the conditional requirement for performing the action, and the purpose of the building logic application.

13. A method of implementing user defined logic, the method comprising: receiving, by a processing circuit, user input from a user device, the user input providing a natural language description of a building logic application for a piece of building equipment; retrieving, by the processing circuit, a semantic logic application comprising a semantic description of the building logic application based on the natural language description and a semantic building model comprising a semantic building description of the piece of building equipment; generating, by the processing circuit, a custom logic application based on the semantic logic application, the natural language description, and the semantic building model; and operating, by the processing circuit, the custom logic application.

14. The method of claim 13, wherein the semantic description of the semantic logic application describes the semantic logic application with a first graph comprising a first plurality of nodes, each node of the first plurality of nodes describing a first characteristic of the semantic logic application, wherein the first graph further comprises a first plurality of relationships, the first plurality of relationships relating the first plurality of nodes to an identifier of the semantic logic application; wherein the semantic building description of the semantic building model describes the piece of building equipment with a second graph comprising a second plurality of nodes, each node of the second plurality of nodes describing a second characteristic of the piece of building equipment, wherein the second graph further comprises a second plurality of relationships, the second plurality of relationships relating the second plurality of nodes to the piece of building equipment.

15. The method of claim 13, wherein the method further comprises generating, by the processing circuit, one or more logic inputs describing the building logic application by analyzing the natural language description; wherein retrieving, by the processing circuit, the semantic logic application is based on the one or more logic inputs; wherein generating, by the processing circuit, the custom logic application is based on the one or more logic inputs.

16. The method of claim 15, wherein the one or more logic inputs comprise: a logic purpose describing a purpose of the building logic application, wherein the purpose is at least one of fault detection or equipment control; a logic condition, wherein the logic condition describes one or more conditions to check with an input; and a logic action to perform in response to the logic condition being a particular state.

17. The method of claim 13, wherein retrieving, by the processing circuit, the semantic logic application comprises selecting, by the processing circuit, the semantic logic application from a plurality of different semantic logic applications based on the natural language description.

18. The method of claim 17, wherein the natural language description comprises an indication of an action to perform, a conditional requirement for performing the action, and a purpose of the building logic application in a natural language form; wherein selecting, by the processing circuit, the semantic logic application from the plurality of different semantic logic applications based on the natural language description comprises identifying, based on a particular semantic description of each of the plurality of different semantic logic applications, the semantic logic application, wherein the semantic description of the semantic logic application indicates the action to perform, the conditional requirement for performing the action, and the purpose of the building logic application.

19. A system for implementing user defined logic, the system comprising: one or more memory devices configured to store instructions thereon; and one or more processors configured to execute the instructions to: receive user input from a user device, the user input providing a natural language description of a logic application for a piece of equipment; retrieve a semantic logic application comprising a semantic description of the logic application based on the natural language description and a semantic model comprising a semantic building description of the piece of building equipment by selecting the semantic logic application from a plurality of different semantic logic applications based on the natural language description; generate a custom logic application based on the semantic logic application, the natural language description, and the semantic model; and operate the custom logic application.

20. The system of claim 19, wherein the system is at least one of: a local system located within one or more physical buildings; or a remote processing system located outside the one or more physical buildings.

Description

CROSS-REFERENCE TO RELATED PATENT APPLICATION

[0001] This application claims the benefit of and priority to U.S. Provisional Patent Application No. 62/791,991 filed Jan. 14, 2019 and U.S. Provisional Patent Application No. 62/769,447 filed Nov. 19, 2018, the entirety of each of which is incorporated by reference herein.

BACKGROUND

[0002] The present disclosure generally relates to building systems of a building. More particularly, the present disclosure relates to information management of building data, control of building equipment, and analytics generation for the building equipment.

[0003] In a building, multiple different sub-systems (e.g., environmental control, security, fire suppression, lighting, etc.) may exist. Furthermore, across multiple buildings, the same or similar systems may also be implemented. Even though the sub-systems in a single building, or across multiple buildings, may be similar, there is no efficient mechanism for implementing control applications, defining custom operating logic, generating user interface graphics or visualizations to display building data, and searching through and understanding building information. Software applications for performing building operations may be developed in a time-inefficient manner, i.e., similar software may be developed multiple times for each of multiple sub-system implementations and/or for each of multiple different buildings.

SUMMARY

Semantic Building Applications

[0004] One implementation of the present disclosure is a building system for implementing building applications. The building system includes one or more memory devices configured to store instructions thereon, that, when executed by one or more processors, cause the one or more processors to receive a static semantic building application, wherein the static semantic building application comprises one or more operations and a first semantic description of the static semantic building application. The instructions cause the one or more processors to determine, based on building information describing a building and the first semantic description of the static semantic building application, one or more implementation details of a particular implementation of the static semantic building application, generate, based on the one or more implementation details, a dynamic semantic building application comprising the one or more operations and a second semantic description of the dynamic semantic building application, and operate the dynamic semantic building application.

[0005] In some embodiments, the dynamic semantic building application includes a performance requirement indicating whether performance results of the dynamic semantic building application operating are normal or abnormal. In some embodiments, the instructions cause the one or more processors to identify whether the static semantic building application is available to be implemented based on the building information and generate the dynamic semantic building application in response to a determination that the static semantic building application is available to be implemented. In some embodiments, the instructions cause the one or more processors to generate a resolution to resolve an operating conflict caused by the dynamic semantic building application and a second building application operating and operate the dynamic semantic building application based on the resolution.

[0006] In some embodiments, the first semantic description of the static semantic building application describes the static semantic building application with a graph including nodes, each node of the nodes describing a characteristic of the static semantic building application, wherein the graph further includes relationships, the relationships relating the nodes to an identifier of the static semantic building application.
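By way of a minimal, non-limiting sketch, such a graph could be held as (subject, relationship, object) triples. The triple encoding and every identifier below (`app:TemperatureControl`, `hasInput`, and so on) are hypothetical and are not taken from the disclosure.

```python
# Hypothetical semantic description of a static application as
# (subject, relationship, object) triples. All names are illustrative.
static_app = [
    ("app:TemperatureControl", "hasType", "ControlApplication"),
    ("app:TemperatureControl", "hasInput", "ZoneTemperature"),
    ("app:TemperatureControl", "hasOutput", "DamperCommand"),
    ("app:TemperatureControl", "targets", "VAVBox"),
]


def characteristics(triples, app_id):
    """Return {relationship: [nodes]} for every node related to app_id."""
    result = {}
    for subject, relationship, obj in triples:
        if subject == app_id:
            result.setdefault(relationship, []).append(obj)
    return result


desc = characteristics(static_app, "app:TemperatureControl")
```

Each relationship ties one characteristic node back to the application identifier, so looking up `desc["hasInput"]` yields the input characteristic recorded for that application.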

[0007] In some embodiments, the static semantic building application is at least one of a control application configured to control a piece of building equipment or an analytics application configured to analyze data of the piece of building equipment.

[0008] In some embodiments, the static semantic building application is a generic description of the dynamic semantic building application, wherein the dynamic semantic building application is a specific implementation of the static semantic building application, wherein the second semantic description of the dynamic semantic building application comprises implementation details to operate the dynamic semantic building application.

[0009] In some embodiments, the instructions cause the one or more processors to determine the one or more implementation details of the static semantic building application by performing semantic reasoning with the building information describing the building and the first semantic description of the static semantic building application.

[0010] In some embodiments, the first semantic description of the static semantic building application comprises one or more performance criteria, wherein the one or more performance criteria define normal or abnormal behavior of the static semantic building application. In some embodiments, the instructions cause the one or more processors to generate the dynamic semantic building application by causing the second semantic description of the dynamic semantic building application to include the one or more performance criteria. In some embodiments, the instructions cause the one or more processors to receive operational data of the dynamic semantic building application resulting from an operation of the dynamic semantic building application and determine a performance result of the dynamic semantic building application based on the one or more performance criteria and the operational data.
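As an illustrative sketch only, performance criteria might be represented as normal ranges that are compared against operational data. The range-based form, the metric names, and the "unknown" label for missing data are assumptions made for this example and are not specified by the disclosure.

```python
from dataclasses import dataclass


@dataclass
class PerformanceCriterion:
    metric: str   # name of an operational data point (hypothetical)
    low: float    # lowest value treated as normal
    high: float   # highest value treated as normal


def performance_result(criteria, operational_data):
    """Label each metric 'normal' or 'abnormal'; missing data is 'unknown'."""
    result = {}
    for criterion in criteria:
        value = operational_data.get(criterion.metric)
        if value is None:
            result[criterion.metric] = "unknown"
        elif criterion.low <= value <= criterion.high:
            result[criterion.metric] = "normal"
        else:
            result[criterion.metric] = "abnormal"
    return result


criteria = [
    PerformanceCriterion("zone_temp_error_degF", -1.0, 1.0),
    PerformanceCriterion("settling_time_min", 0.0, 15.0),
]
result = performance_result(
    criteria, {"zone_temp_error_degF": 0.4, "settling_time_min": 22.0}
)
```

Because the criteria travel with the semantic description, the same check could in principle be applied to any dynamic application generated from the static one.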

[0011] In some embodiments, the one or more implementation details comprise a conflict between a first operation of a building application and a second operation of the dynamic semantic building application. In some embodiments, the instructions cause the one or more processors to generate the dynamic semantic building application by causing the second semantic description of the dynamic semantic building application to include one or more conflict resolution operations resolving the conflict between the first operation of the building application and the second operation of the dynamic semantic building application.

[0012] In some embodiments, the one or more implementation details comprise a dependency between a building application and the dynamic semantic building application. In some embodiments, the instructions cause the one or more processors to generate, based on the one or more implementation details, the dynamic semantic building application by causing the second semantic description of the dynamic semantic building application to include one or more dependency operations based on the dependency between the building application and the dynamic semantic building application.

[0013] In some embodiments, the dependency between the building application and the dynamic semantic building application indicates at least one of (i) that the dynamic semantic building application operates if the building application fails to meet a goal or (ii) that the dynamic semantic building application receives an output of the building application as an input into the dynamic semantic building application.

[0014] In some embodiments, the building information defining the building is a semantic building model comprising a semantic building description of one or more systems of the building. In some embodiments, the instructions cause the one or more processors to determine the one or more implementation details by identifying a system target of the building defined by the semantic building model based on the first semantic description of the static semantic building application and the semantic building description.

[0015] In some embodiments, the first semantic description of the static semantic building application comprises system target criteria. In some embodiments, the instructions cause the one or more processors to identify the system target by determining particular systems of the building meeting the system target criteria.
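One way to picture this matching step, offered as a sketch under stated assumptions: the semantic building model is a list of triples, and the system target criteria list the relationships a system must have. The equipment names, relationship names, and subset-matching rule are all hypothetical.

```python
# Hypothetical semantic building model as (subject, relationship, object) triples.
building_model = [
    ("VAV-1", "isA", "VAVBox"),
    ("VAV-1", "hasPoint", "ZoneTemperature"),
    ("VAV-1", "hasPoint", "DamperCommand"),
    ("VAV-2", "isA", "VAVBox"),
    ("VAV-2", "hasPoint", "ZoneTemperature"),
    ("AHU-1", "isA", "AirHandlingUnit"),
    ("AHU-1", "hasPoint", "SupplyAirTemperature"),
]

# Hypothetical criteria: a target must be a VAV box exposing both points.
target_criteria = {
    "isA": {"VAVBox"},
    "hasPoint": {"ZoneTemperature", "DamperCommand"},
}


def find_system_targets(model, criteria):
    """Return systems whose described facts include every required fact."""
    described = {}
    for subject, relationship, obj in model:
        described.setdefault(subject, {}).setdefault(relationship, set()).add(obj)
    return sorted(
        system
        for system, facts in described.items()
        if all(required <= facts.get(rel, set()) for rel, required in criteria.items())
    )


targets = find_system_targets(building_model, target_criteria)
```

Here only `VAV-1` qualifies, since `VAV-2` lacks a damper command point and `AHU-1` is a different equipment type.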

[0016] In some embodiments, the instructions cause the one or more processors to determine one or more second implementation details by identifying a second system target of the building defined by the semantic building model, generate, based on the one or more second implementation details, a second dynamic semantic building application comprising one or more second operations and a third semantic description of the second dynamic semantic building application, and operate the second dynamic semantic building application.

[0017] In some embodiments, the instructions cause the one or more processors to operate the dynamic semantic building application to control the system target, wherein the system target is located in the building. In some embodiments, the instructions cause the one or more processors to operate the second dynamic semantic building application to control the second system target, wherein the second system target is located in a second building.

[0018] Another implementation of the present disclosure is a method of implementing building applications. The method includes receiving, by a processing circuit, a static semantic building application, wherein the static semantic building application comprises one or more operations and a first semantic description of the static semantic building application and determining, by the processing circuit, based on building information describing a building and the first semantic description of the static semantic building application, one or more implementation details of a particular implementation of the static semantic building application. The method includes generating, by the processing circuit, based on the one or more implementation details, a dynamic semantic building application comprising the one or more operations and a second semantic description of the dynamic semantic building application and operating, by the processing circuit, the dynamic semantic building application.

[0019] In some embodiments, the first semantic description of the static semantic building application describes the static semantic building application with a graph comprising a plurality of nodes, each node of the plurality of nodes describing a characteristic of the static semantic building application, wherein the graph further comprises a plurality of relationships, the plurality of relationships relating the plurality of nodes to an identifier of the static semantic building application.

[0020] In some embodiments, the static semantic building application is a generic description of the dynamic semantic building application, wherein the dynamic semantic building application is a specific implementation of the static semantic building application, wherein the second semantic description of the dynamic semantic building application comprises implementation details to operate the dynamic semantic building application.

[0021] In some embodiments, determining, by the processing circuit, the one or more implementation details of the static semantic building application comprises performing semantic reasoning with the building information describing the building and the first semantic description of the static semantic building application.

[0022] In some embodiments, the first semantic description of the static semantic building application comprises one or more performance criteria, wherein the one or more performance criteria define normal or abnormal behavior of the static semantic building application. In some embodiments, generating, by the processing circuit, the dynamic semantic building application comprises causing the second semantic description of the dynamic semantic building application to include the one or more performance criteria. In some embodiments, the method further includes receiving, by the processing circuit, operational data of the dynamic semantic building application resulting from an operation of the dynamic semantic building application and determining, by the processing circuit, a performance result of the dynamic semantic building application based on the one or more performance criteria and the operational data.

[0023] In some embodiments, the building information defining the building is a semantic building model comprising a semantic building description of one or more systems of the building. In some embodiments, determining, by the processing circuit, the one or more implementation details comprises identifying a system target of the building defined by the semantic building model based on the first semantic description of the static semantic building application and the semantic building description.

[0024] Another implementation of the present disclosure is a building system for implementing building applications. The building system includes one or more memory devices configured to store instructions thereon and one or more processors configured to execute the instructions to receive a static semantic building application, wherein the static semantic building application comprises one or more operations and a first semantic description of the static semantic building application. The one or more processors are configured to execute the instructions to receive a semantic building model comprising a semantic building description of one or more systems of the building, determine, based on the semantic building description and the first semantic description of the static semantic building application, one or more implementation details of a particular implementation of the static semantic building application, wherein determining the one or more implementation details comprises identifying a system target of the building defined by the semantic building model based on the first semantic description of the static semantic building application and the semantic building description, generate, based on the one or more implementation details, a dynamic semantic building application comprising the one or more operations and a second semantic description of the dynamic semantic building application, and operate the dynamic semantic building application.

[0025] In some embodiments, the building system is at least one of a local system located within one or more physical buildings or a remote processing system located outside the one or more physical buildings.

Custom Logic Generation

[0026] Another implementation of the present disclosure is a building system for implementing user defined logic. The building system includes one or more memory devices configured to store instructions thereon, that, when executed by one or more processors, cause the one or more processors to receive user input from a user device, the user input providing a natural language description of a building logic application for a piece of building equipment, retrieve a semantic logic application comprising a semantic description of the building logic application based on the natural language description and a semantic building model comprising a semantic building description of the piece of building equipment, generate a custom logic application based on the semantic logic application, the natural language description, and the semantic building model, and operate the custom logic application.

[0027] In some embodiments, the instructions cause the one or more processors to identify a conflict between the custom logic application and a second logic application occurring when the custom logic application and the second logic application operate, generate a resolution operation to resolve the conflict, and operate the custom logic application based on the resolution operation.
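The following sketch shows one possible shape for conflict identification and resolution. The disclosure does not state a resolution policy at this point; the priority-based policy, the dictionary layout, and the point names used here are assumptions chosen purely for illustration.

```python
def find_conflicts(app_a, app_b):
    """Return points that both applications command with different values."""
    shared = app_a["commands"].keys() & app_b["commands"].keys()
    return sorted(p for p in shared if app_a["commands"][p] != app_b["commands"][p])


def resolve(app_a, app_b):
    """Assumed policy: the higher-priority application wins each conflicting point."""
    winner = app_a if app_a["priority"] >= app_b["priority"] else app_b
    return {point: winner["name"] for point in find_conflicts(app_a, app_b)}


# Hypothetical applications; names, priorities, and points are illustrative.
custom = {
    "name": "custom",
    "priority": 2,
    "commands": {"VAV-1.damper": 100, "VAV-1.setpoint": 70},
}
existing = {
    "name": "schedule",
    "priority": 1,
    "commands": {"VAV-1.damper": 20},
}
resolution = resolve(custom, existing)
```

Only the damper point is contested in this example, so the resolution operation names a single winner for that point and leaves the setpoint command untouched.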

[0028] In some embodiments, the instructions cause the one or more processors to generate a user interface including an indication of the custom logic application and cause the user device to display the user interface.

[0029] In some embodiments, the building logic application is at least one of a control application configured to control the piece of building equipment or a fault detection application configured to detect a fault associated with the piece of building equipment.

[0030] In some embodiments, the semantic description of the semantic logic application describes the semantic logic application with a first graph including a first plurality of nodes, each node of the first plurality of nodes describing a first characteristic of the semantic logic application, wherein the first graph further includes a first plurality of relationships, the first plurality of relationships relating the first plurality of nodes to an identifier of the semantic logic application. In some embodiments, the semantic building description of the semantic building model describes the piece of building equipment with a second graph comprising a second plurality of nodes, each node of the second plurality of nodes describing a second characteristic of the piece of building equipment, wherein the second graph further comprises a second plurality of relationships, the second plurality of relationships relating the second plurality of nodes to the piece of building equipment.

[0031] In some embodiments, the instructions cause the one or more processors to generate an update to the semantic building model, where the update comprises an indication of the custom logic application.

[0032] In some embodiments, the instructions cause the one or more processors to generate a second custom logic application based on a second semantic logic application, second user input, and the indication of the custom logic application of the semantic building model.

[0033] In some embodiments, the instructions cause the one or more processors to generate one or more logic inputs describing the building logic application by analyzing the natural language description. In some embodiments, the instructions cause the one or more processors to retrieve the semantic logic application based on the one or more logic inputs. In some embodiments, the instructions cause the one or more processors to generate the custom logic application based on the one or more logic inputs.

[0034] In some embodiments, the one or more logic inputs include a logic purpose describing a purpose of the building logic application, wherein the purpose is at least one of fault detection or equipment control, a logic condition, wherein the logic condition describes one or more conditions to check with an input, and a logic action to perform in response to the logic condition being a particular state.
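To make the three logic inputs concrete, the sketch below extracts a purpose, a condition, and an action from a sentence of the form "action if condition". The regular-expression approach, the keyword test for the purpose, and the `LogicInputs` type are simplifying assumptions; the disclosure does not prescribe a particular natural language analysis technique.

```python
import re
from dataclasses import dataclass


@dataclass
class LogicInputs:
    purpose: str    # "fault detection" or "equipment control"
    condition: str  # condition to check with an input
    action: str     # action to perform when the condition holds


def parse_logic_inputs(text):
    """Split '<action> if/when <condition>' and guess the purpose from keywords."""
    match = re.match(
        r"(?P<action>.+?)\s+(?:if|when)\s+(?P<condition>.+?)\.?$",
        text.strip(),
        re.IGNORECASE,
    )
    if match is None:
        raise ValueError("expected '<action> if <condition>'")
    action = match.group("action")
    is_fault = re.search(r"\b(fault|alarm)\b", action, re.IGNORECASE)
    purpose = "fault detection" if is_fault else "equipment control"
    return LogicInputs(purpose, match.group("condition"), action)


inputs = parse_logic_inputs(
    "Raise a fault if the zone temperature is above 80 degrees F."
)
```

A production system would likely need a far more robust language model than this pattern match, but the output structure mirrors the purpose, condition, and action described above.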

[0035] In some embodiments, the instructions cause the one or more processors to retrieve the semantic logic application by selecting the semantic logic application from a plurality of different semantic logic applications based on the natural language description.

[0036] In some embodiments, the instructions cause the one or more processors to retrieve the semantic logic application from the plurality of different semantic logic applications and generate the custom logic application by performing semantic reasoning with the natural language description.

[0037] In some embodiments, the natural language description includes an indication of an action to perform, a conditional requirement for performing the action, and a purpose of the building logic application in a natural language form. In some embodiments, the instructions cause the one or more processors to select the semantic logic application from the plurality of different semantic logic applications based on the natural language description by identifying, based on a particular semantic description of each of the plurality of different semantic logic applications, the semantic logic application, wherein the semantic description of the semantic logic application indicates the action to perform, the conditional requirement for performing the action, and the purpose of the building logic application.
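One possible way to picture the selection described above is an exact match on the action, the conditional requirement, and the purpose. The sketch below is a simplified assumption: it presumes those three items have already been extracted from the natural language description into a dictionary, and the candidate names and field values are hypothetical. An actual embodiment may instead rely on semantic reasoning rather than string equality.

```python
from typing import Dict, Optional

# Hypothetical semantic descriptions of several stored logic applications.
candidates: Dict[str, Dict[str, str]] = {
    "HighZoneTempFault": {
        "action": "raise alarm",
        "condition": "zone temperature above limit",
        "purpose": "fault detection",
    },
    "NightSetback": {
        "action": "lower setpoint",
        "condition": "building unoccupied",
        "purpose": "equipment control",
    },
}


def select_logic_application(
    request: Dict[str, str], descriptions: Dict[str, Dict[str, str]]
) -> Optional[str]:
    """Return the first application whose description indicates the same
    action, conditional requirement, and purpose as the request."""
    keys = ("action", "condition", "purpose")
    for name, description in descriptions.items():
        if all(description.get(key) == request.get(key) for key in keys):
            return name
    return None
```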

[0038] Another implementation of the present disclosure is a method of implementing user defined logic. The method includes receiving, by a processing circuit, user input from a user device, the user input providing a natural language description of a building logic application for a piece of building equipment, retrieving, by the processing circuit, a semantic logic application comprising a semantic description of the building logic application based on the natural language description and a semantic building model comprising a semantic building description of the piece of building equipment, generating, by the processing circuit, a custom logic application based on the semantic logic application, the natural language description, and the semantic building model, and operating, by the processing circuit, the custom logic application.

[0039] In some embodiments, the semantic description of the semantic logic application describes the semantic logic application with a first graph including a first plurality of nodes, each node of the first plurality of nodes describing a first characteristic of the semantic logic application, wherein the first graph further comprises a first plurality of relationships, the first plurality of relationships relating the first plurality of nodes to an identifier of the semantic logic application. In some embodiments, the semantic building description of the semantic building model describes the piece of building equipment with a second graph comprising a second plurality of nodes, each node of the second plurality of nodes describing a second characteristic of the piece of building equipment, wherein the second graph further comprises a second plurality of relationships, the second plurality of relationships relating the second plurality of nodes to the piece of building equipment.

[0040] In some embodiments, the method further includes generating, by the processing circuit, one or more logic inputs describing the building logic application by analyzing the natural language description. In some embodiments, retrieving, by the processing circuit, the semantic logic application is based on the one or more logic inputs. In some embodiments, generating, by the processing circuit, the custom logic application is based on the one or more logic inputs.

[0041] In some embodiments, the one or more logic inputs include a logic purpose describing a purpose of the building logic application, wherein the purpose is at least one of fault detection or equipment control, a logic condition, wherein the logic condition describes one or more conditions to check with an input, and a logic action to perform in response to the logic condition being a particular state.

[0042] In some embodiments, retrieving, by the processing circuit, the semantic logic application comprises selecting, by the processing circuit, the semantic logic application from a plurality of different semantic logic applications based on the natural language description.

[0043] In some embodiments, retrieving, by the processing circuit, the semantic logic application from the plurality of different semantic logic applications and generating, by the processing circuit, the custom logic application includes performing semantic reasoning with the natural language description. In some embodiments, the semantic reasoning is performed with a machine readable description which may be independent of, or derived from, the natural language description.

[0044] In some embodiments, the natural language description includes an indication of an action to perform, a conditional requirement for performing the action, and a purpose of the building logic application in a natural language form. In some embodiments, selecting, by the processing circuit, the semantic logic application from the plurality of different semantic logic applications based on the natural language description comprises identifying, based on a particular semantic description of each of the plurality of different semantic logic applications, the semantic logic application, wherein the semantic description of the semantic logic application indicates the action to perform, the conditional requirement for performing the action, and the purpose of the building logic application.

[0045] Another implementation of the present disclosure is a system for implementing user defined logic. The system includes one or more memory devices configured to store instructions thereon. The system includes one or more processors configured to execute the instructions to receive user input from a user device, the user input providing a natural language description of a logic application for a piece of equipment, retrieve a semantic logic application comprising a semantic description of the logic application based on the natural language description and a semantic model comprising a semantic building description of the piece of building equipment by selecting the semantic logic application from a plurality of different semantic logic applications based on the natural language description, generate a custom logic application based on the semantic logic application, the natural language description, and the semantic model, and operate the custom logic application.

[0046] In some embodiments, the one or more processors execute the instructions to retrieve the semantic logic application from the plurality of different semantic logic applications and generate the custom logic application by performing semantic reasoning with the natural language description.

[0047] In some embodiments, the natural language description includes an indication of an action to perform, a conditional requirement for performing the action, and a purpose of the logic application in a natural language form. In some embodiments, the one or more processors execute the instructions to select the semantic logic application from the plurality of different semantic logic applications based on the natural language description by identifying, based on a particular semantic description of each of the plurality of different semantic logic applications, the semantic logic application, wherein the semantic description of the semantic logic application indicates the action to perform, the conditional requirement for performing the action, and the purpose of the logic application.

[0048] In some embodiments, the system is at least one of a local system located within one or more physical buildings or a remote processing system located outside the one or more physical buildings.

User Interface Generation Based on Semantic Models

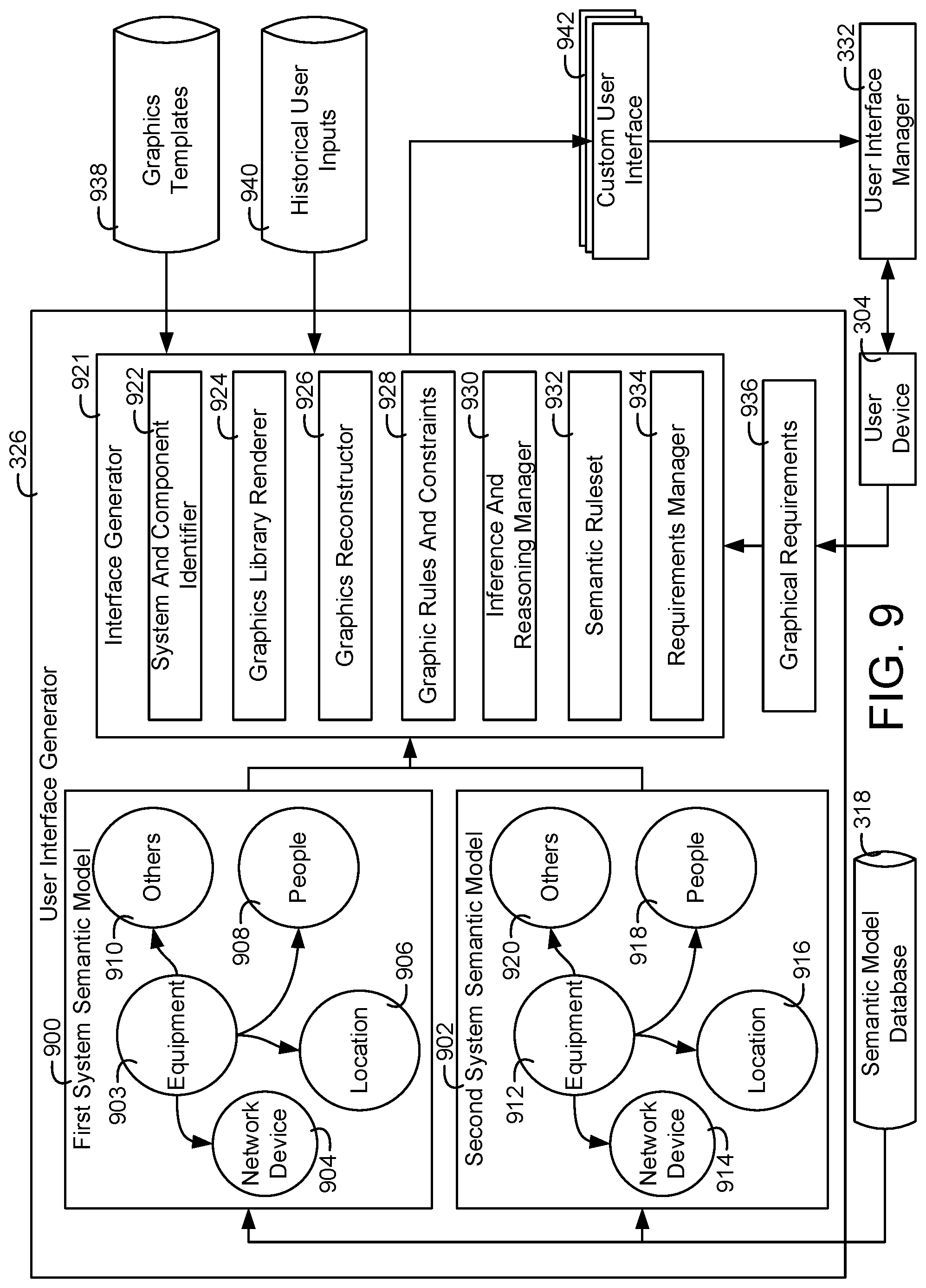

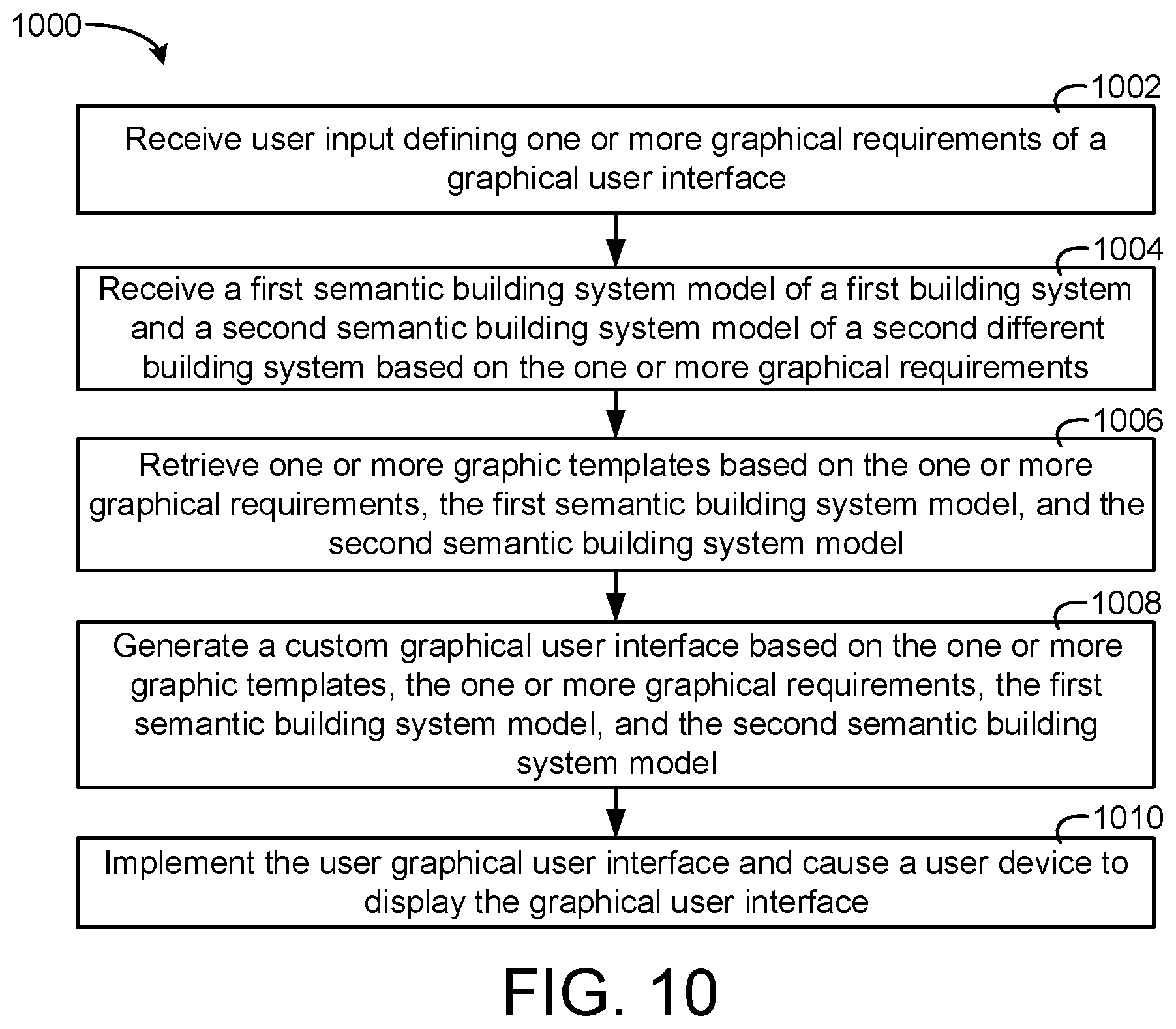

[0049] One implementation of the present disclosure is a building interface system for generating a custom user interface. The building interface system includes one or more memory devices configured to store instructions thereon, the instructions causing one or more processors to receive user input defining one or more graphical requirements from a user device. The instructions cause the one or more processors to retrieve a semantic building system model based on the one or more graphical requirements, wherein the semantic building system model comprises semantic information describing a building system, retrieve one or more graphic templates based on the semantic building system model and the one or more graphical requirements, the one or more graphic templates providing a generic description of one or more user interface elements, generate the custom user interface based on the one or more graphic templates and the semantic building system model, and cause the user device to display the custom user interface.

[0050] In some embodiments, the instructions cause the one or more processors to determine whether the user input has not been received, retrieve one or more default graphic templates in response to a determination that the user input has not been received, and generate a user interface based on the one or more default graphic templates and the semantic building system model.
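The fallback to default graphic templates described above can be sketched as follows. This is an assumed, simplified illustration: the template names, the `purposes` field, and the `choose_templates` helper are hypothetical and are not part of the disclosure.

```python
from typing import Dict, List, Optional

# Hypothetical template store: each template lists the purposes it suits.
templates: Dict[str, Dict[str, List[str]]] = {
    "fault_table": {"purposes": ["fault detection diagnostics"]},
    "trend_chart": {"purposes": ["system monitoring"]},
    "floor_plan": {"purposes": ["system visualization", "system monitoring"]},
}

DEFAULT_TEMPLATE_NAMES = ["floor_plan"]


def choose_templates(
    user_requirements: Optional[Dict[str, str]],
    store: Dict[str, Dict[str, List[str]]],
    default_names: List[str],
) -> List[str]:
    """Return template names matching the requested purpose, or the
    defaults when no user input has been received."""
    if not user_requirements:
        return list(default_names)
    purpose = user_requirements.get("purpose")
    return [name for name, tpl in store.items() if purpose in tpl["purposes"]]
```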

[0051] In some embodiments, the one or more graphic templates are master templates for multiple implementations. In some embodiments, the instructions cause the one or more processors to generate multiple custom user interfaces based on one or more templates and multiple semantic building models, wherein each of the multiple custom user interfaces is generated based on one of the semantic building models.

[0052] In some embodiments, the instructions cause the one or more processors to retrieve the semantic building system model by determining an identity of the building system based on the one or more graphical requirements of the custom user interface and selecting the semantic building system model from a plurality of other semantic building system models based on the identity of the building system.

[0053] In some embodiments, the instructions cause the one or more processors to receive one or more modifications to the custom user interface and update the custom user interface based on the one or more modifications.

[0054] In some embodiments, the one or more graphic templates comprise a first template defining user interface elements for a particular type of building system, wherein the building system is the particular type of building system, wherein the semantic information comprises a node indicating the building system is the particular type of building system.

[0055] In some embodiments, the instructions cause the one or more processors to receive historical user input defining feedback on previous custom user interfaces. In some embodiments, the instructions cause the one or more processors to generate the custom user interface further based on the historical user input.

[0056] In some embodiments, the one or more graphical requirements include an indication of a purpose of the custom user interface, wherein the indication of the purpose is at least one of a first indication of fault detection diagnostics, a second indication of system monitoring, or a third indication of system visualization.

[0057] In some embodiments, the semantic information of the semantic building system model describes the building system with a graph comprising a plurality of nodes, each node of the plurality of nodes describing a characteristic of the building system, wherein the graph further comprises a plurality of relationships, the plurality of relationships relating the plurality of nodes to an identifier of the building system.

[0058] In some embodiments, the instructions cause the one or more processors to generate the custom user interface based on the one or more graphic templates and the semantic building system model based on a first characteristic of the building system by identifying the first characteristic based on a first relationship between the identifier of the building system and a first node representing the first characteristic of the building system.

[0059] In some embodiments, the one or more memory devices store a plurality of templates comprising the one or more graphic templates, wherein the plurality of templates are predefined reusable templates.

[0060] In some embodiments, the instructions cause the one or more processors to receive second user input defining one or more second graphical requirements of a second custom user interface, retrieve a second semantic building system model comprising second semantic information describing a second building system, retrieve the one or more graphic templates based on the second semantic building system model and the one or more second graphical requirements, and generate the second custom user interface based on the one or more graphic templates and the second semantic building system model.

[0061] In some embodiments, the instructions cause the one or more processors to retrieve a second semantic building system model comprising second semantic information describing a second building system different than the building system. In some embodiments, the instructions cause the one or more processors to retrieve the one or more graphic templates further based on the second building system. In some embodiments, the instructions cause the one or more processors to generate the custom user interface further based on the second semantic building system model.

[0062] In some embodiments, the custom user interface includes interface information for the building system and the second building system.

[0063] In some embodiments, the building system and the second building system are at least one of a fire suppression system, a security system, a lighting system, or an environmental control system.

[0064] Another implementation of the present disclosure is a method of generating a custom user interface. The method includes receiving, by a processing circuit, user input defining one or more graphical requirements from a user device, retrieving, by the processing circuit, a semantic building system model based on the one or more graphical requirements, wherein the semantic building system model comprises semantic information describing a building system, and retrieving, by the processing circuit, one or more graphic templates based on the semantic building system model and the one or more graphical requirements, the one or more graphic templates providing a generic description of one or more user interface elements. The method includes generating, by the processing circuit, the custom user interface based on the one or more graphic templates and the semantic building system model and causing, by the processing circuit, the user device to display the custom user interface.

[0065] In some embodiments, retrieving, by the processing circuit, the semantic building system model includes determining, by the processing circuit, an identity of the building system based on the one or more graphical requirements of the custom user interface and selecting, by the processing circuit, the semantic building system model from a plurality of other semantic building system models based on the identity of the building system.

[0066] In some embodiments, the one or more graphic templates include a first template defining user interface elements for a particular type of building system, wherein the building system is the particular type of building system, wherein the semantic information comprises a node indicating the building system is the particular type of building system.

[0067] In some embodiments, the method includes receiving, by the processing circuit, historical user input defining feedback on previous custom user interfaces. In some embodiments, generating, by the processing circuit, the custom user interface is based on the historical user input.

[0068] In some embodiments, the semantic information of the semantic building system model describes the building system with a graph comprising a plurality of nodes, each node of the plurality of nodes describing a characteristic of the building system, wherein the graph further comprises a plurality of relationships, the plurality of relationships relating the plurality of nodes to an identifier of the building system.

[0069] In some embodiments, generating, by the processing circuit, the custom user interface based on the one or more graphic templates and the semantic building system model is based on a first characteristic of the building system by identifying the first characteristic based on a first relationship between the identifier of the building system and a first node representing the first characteristic of the building system.

[0070] Another implementation of the present disclosure is a system for generating a custom user interface. The system includes one or more memory devices configured to store instructions thereon and one or more processors configured to execute the instructions to receive user input defining one or more graphical requirements from a user device. The one or more processors are configured to execute the instructions to retrieve a semantic building system model based on the one or more graphical requirements, wherein the semantic building system model comprises semantic information describing a building system, wherein the semantic information of the semantic building system model describes the building system with a graph comprising a plurality of nodes, each node of the plurality of nodes describing a characteristic of the building system, wherein the graph further comprises a plurality of relationships, the plurality of relationships relating the plurality of nodes to an identifier of the building system. The one or more processors are configured to execute the instructions to retrieve one or more graphic templates based on the semantic building system model and the one or more graphical requirements, the one or more graphic templates providing a generic description of one or more user interface elements, generate the custom user interface based on the one or more graphic templates and the semantic building system model based on a first characteristic of the building system by identifying the first characteristic based on a first relationship between the identifier of the building system and a first node representing the first characteristic of the building system, and cause the user device to display the custom user interface.

[0071] In some embodiments, the system is at least one of a local system located within one or more physical buildings or a remote processing system located outside the one or more physical buildings.

Building Semantic Model Searching

[0072] Another implementation of the present disclosure is a building system for searching a semantic building model. The building system includes one or more memory devices configured to store instructions thereon, wherein the instructions, when executed by one or more processors, cause the one or more processors to cause a user interface of a user device to display a plurality of nodes of the semantic building model and a plurality of relationships between the plurality of nodes of the semantic building model. The instructions cause the one or more processors to receive a search input from the user device, generate a search result based on the search input, wherein the search result comprises an indication of a portion of nodes of the plurality of nodes and a portion of relationships of the plurality of relationships, and cause the user interface to display the portion of nodes of the plurality of nodes and the portion of relationships of the plurality of relationships.

[0073] In some embodiments, the instructions cause the one or more processors to receive a selection via the user device, wherein the selection is at least one of a selection of one or more of the portion of nodes, a selection of one or more relationships of the portion of relationships, or a subgraph of a graph defined by the portion of nodes and the portion of relationships and generate and execute an application based on an application definition and the selection.

[0074] In some embodiments, the instructions cause the one or more processors to receive historical user input indicating feedback of a user of previous search results at a first point in time, the user input indicating preferred search results and store the historical user input. In some embodiments, the instructions cause the one or more processors to generate the search result further based on a learning model and the historical user input, wherein the search result is generated at a second point in time after the first point in time and is based on preferred search results.

[0075] In some embodiments, the search input is a natural language input defined by a user of the user device. In some embodiments, the instructions cause the one or more processors to generate a query based on the natural language input and generate the search result by generating the indication of the portion of nodes of the plurality of nodes by querying the semantic building model with the query.

[0076] In some embodiments, the search input includes a first indication of a first node of the plurality of nodes. In some embodiments, the instructions cause the one or more processors to generate the search result by identifying, based on the first node, the portion of nodes by identifying a plurality of first relationships between the first node and the portion of nodes of the plurality of nodes.
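For illustration, a search seeded by a first node can be sketched as collecting every relationship that touches that node together with the nodes at the other end. The triple layout, the sample model, and the `related_nodes` helper below are assumptions made for this example only.

```python
from typing import List, Tuple

Relationship = Tuple[str, str, str]  # (source node, relationship type, target node)

# Hypothetical fragment of a semantic building model.
model_relationships: List[Relationship] = [
    ("AHU-1", "feeds", "VAV-1"),
    ("AHU-1", "feeds", "VAV-2"),
    ("VAV-1", "serves", "Room-101"),
]


def related_nodes(
    relationships: List[Relationship], first_node: str
) -> Tuple[List[str], List[Relationship]]:
    """Return the nodes directly related to `first_node` and the
    relationships that connect them to it."""
    found_rels = [rel for rel in relationships if first_node in (rel[0], rel[2])]
    found_nodes = sorted(
        {node for (src, _, dst) in found_rels for node in (src, dst) if node != first_node}
    )
    return found_nodes, found_rels
```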

[0077] In some embodiments, the search input further includes a particular indication of a node type. In some embodiments, the instructions cause the one or more processors to generate the search result by identifying, based on the particular indication of the node type, the portion of nodes by identifying that the portion of nodes are the node type.

[0078] In some embodiments, the search input includes a first indication of a first node type, a second indication of a second node type, and a relationship indication of a relationship type relating the first node type and the second node type. In some embodiments, the instructions cause the one or more processors to determine whether the plurality of nodes includes one or more first nodes of the first node type and one or more second nodes of the second node type, determine whether the one or more first nodes are related to the one or more second nodes by one or more relationships of the plurality of relationships of the relationship type, and cause the portion of nodes of the plurality of nodes to include the one or more first nodes and the one or more second nodes and cause the portion of relationships of the plurality of relationships to include the one or more relationships in response to a determination that the plurality of nodes include the one or more first nodes of the first node type and the one or more second nodes of the second node type and the one or more first nodes are related to the one or more second nodes by the one or more relationships of the plurality of relationships of the relationship type.
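The typed search described above can be pictured as filtering relationships on three criteria at once: the relationship type, the type of the node at one end, and the type of the node at the other end. The following sketch is a hedged illustration; the node types, the `feeds` relationship, and the function name `search_by_types` are hypothetical.

```python
from typing import Dict, List, Tuple

Relationship = Tuple[str, str, str]  # (source node, relationship type, target node)

# Hypothetical model: node identifier -> node type, plus typed relationships.
node_types: Dict[str, str] = {
    "AHU-1": "AirHandlingUnit",
    "VAV-1": "VAVBox",
    "VAV-2": "VAVBox",
    "Room-101": "Room",
}
typed_relationships: List[Relationship] = [
    ("AHU-1", "feeds", "VAV-1"),
    ("AHU-1", "feeds", "VAV-2"),
    ("VAV-1", "serves", "Room-101"),
]


def search_by_types(
    nodes: Dict[str, str],
    relationships: List[Relationship],
    first_type: str,
    second_type: str,
    relationship_type: str,
) -> Tuple[List[str], List[Relationship]]:
    """Return the portion of nodes and relationships where a node of
    `first_type` is related to a node of `second_type` by a relationship
    of `relationship_type`. Both lists are empty when nothing matches."""
    matched_rels = [
        (src, rel, dst)
        for (src, rel, dst) in relationships
        if rel == relationship_type
        and nodes.get(src) == first_type
        and nodes.get(dst) == second_type
    ]
    matched_nodes = sorted({n for (src, _, dst) in matched_rels for n in (src, dst)})
    return matched_nodes, matched_rels
```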

[0079] In some embodiments, the search input is a shape drawn by a user on the user interface, wherein the instructions cause the one or more processors to generate the search result based on the shape.

[0080] In some embodiments, the instructions cause the one or more processors to determine whether the portion of nodes of the plurality of nodes displayed by the user interface are graphically within the shape drawn by the user. In some embodiments, the instructions cause the one or more processors to generate the search result to include the portion of nodes in response to a determination that the portion of nodes are graphically within the shape drawn by the user.
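One conventional way to decide whether a displayed node lies graphically within a drawn shape is a ray-casting point-in-polygon test. The disclosure does not specify a particular test; the sketch below is an assumption that treats the drawn shape as a closed polygon of screen coordinates, and the node positions and function names are hypothetical.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def point_in_polygon(x: float, y: float, polygon: List[Point]) -> bool:
    """Ray-casting test: count how many polygon edges a horizontal ray
    from (x, y) toward +x crosses; an odd count means the point is inside."""
    inside = False
    count = len(polygon)
    for i in range(count):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % count]
        # Only edges that straddle the ray's y coordinate can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def nodes_within_shape(positions: Dict[str, Point], shape: List[Point]) -> List[str]:
    """Return the displayed nodes whose screen position falls inside the shape."""
    return sorted(
        name for name, (x, y) in positions.items() if point_in_polygon(x, y, shape)
    )


# Assumed screen positions of displayed nodes and a user-drawn square.
node_positions: Dict[str, Point] = {
    "AHU-1": (5.0, 5.0),
    "VAV-1": (8.0, 2.0),
    "Room-101": (15.0, 5.0),
}
drawn_square: List[Point] = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0), (0.0, 10.0)]
```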

[0081] In some embodiments, the instructions cause the one or more processors to receive historical user input indicating feedback of a user of previous search results. In some embodiments, the instructions cause the one or more processors to generate the search result further based on a learning model and the historical user input.

[0082] In some embodiments, the instructions cause the one or more processors to receive one or more interactions with the user interface causing the user interface to display one or more other nodes of the plurality of nodes and one or more other relationships of the plurality of relationships and update the historical user input to include the one or more interactions, the one or more other nodes, the one or more other relationships, and the search input.

[0083] Another implementation of the present disclosure is a method of searching a semantic building model. The method includes causing, by a processing circuit, a user interface of a user device to display a plurality of nodes of the semantic building model and a plurality of relationships between the plurality of nodes of the semantic building model, receiving, by the processing circuit, a search input from the user device, generating, by the processing circuit, a search result based on the search input, wherein the search result comprises an indication of a portion of nodes of the plurality of nodes and a portion of relationships of the plurality of relationships, and causing, by the processing circuit, the user interface to display the portion of nodes of the plurality of nodes and the portion of relationships of the plurality of relationships.

[0084] In some embodiments, the search input is a natural language input defined by a user of the user device. In some embodiments, the method further includes generating, by the processing circuit, a query based on the natural language input and generating, by the processing circuit, the search result by generating the indication of the portion of nodes of the plurality of nodes by querying the semantic building model with the query.

[0085] In some embodiments, the search input includes a first indication of a first node of the plurality of nodes. In some embodiments, generating, by the processing circuit, the search result comprises identifying, based on the first node, the portion of nodes by identifying a plurality of first relationships between the first node and the portion of nodes of the plurality of nodes.

[0086] In some embodiments, the search input further includes a particular indication of a node type. In some embodiments, generating, by the processing circuit, the search result comprises identifying, based on the particular indication of the node type, the portion of nodes by identifying that the portion of nodes are the node type.

[0087] In some embodiments, the search input includes a first indication of a first node type, a second indication of a second node type, and a relationship indication of a relationship type relating the first node type and the second node type. In some embodiments, the method further includes determining, by the processing circuit, whether the plurality of nodes includes one or more first nodes of the first node type and one or more second nodes of the second node type, determining, by the processing circuit, whether the one or more first nodes are related to the one or more second nodes by one or more relationships of the plurality of relationships of the relationship type, and causing, by the processing circuit, the portion of nodes of the plurality of nodes to include the one or more first nodes and the one or more second nodes and causing the portion of relationships of the plurality of relationships to include the one or more relationships in response to a determination that the plurality of nodes include the one or more first nodes of the first node type and the one or more second nodes of the second node type and the one or more first nodes are related to the one or more second nodes by the one or more relationships of the plurality of relationships of the relationship type.

[0088] In some embodiments, the search input is a shape drawn by a user on the user interface, wherein the method further comprises generating, by the processing circuit, the search result based on the shape.

[0089] In some embodiments, the method further includes determining, by the processing circuit, whether the portion of nodes of the plurality of nodes displayed by the user interface are graphically within the shape drawn by the user. In some embodiments, generating, by the processing circuit, the search result comprises causing the search result to include the portion of nodes in response to a determination that the portion of nodes are graphically within the shape drawn by the user.

[0090] In some embodiments, the method further includes receiving, by the processing circuit, historical user input indicating feedback of a user of previous search results. In some embodiments, generating, by the processing circuit, the search result is based on a learning model and the historical user input.

[0091] In some embodiments, the method further includes receiving, by the processing circuit, one or more interactions with the user interface causing the user interface to display one or more other nodes of the plurality of nodes and one or more other relationships of the plurality of relationships and updating, by the processing circuit, the historical user input to include the one or more interactions, the one or more other nodes, the one or more other relationships, and the search input.

[0092] Another implementation of the present disclosure is a building system for searching a semantic building model. The building system includes one or more memory devices configured to store instructions and the semantic building model, wherein the semantic building model comprises a plurality of nodes and a plurality of relationships between the plurality of nodes and one or more processors configured to execute the instructions to cause a user interface of a user device to display the plurality of nodes of the semantic building model and the plurality of relationships between the plurality of nodes of the semantic building model. The one or more processors are configured to execute instructions to receive a search input from the user device, wherein the search input is a natural language input defined by a user of the user device, generate a search result based on the search input, wherein the search result comprises an indication of a portion of nodes of the plurality of nodes and a portion of relationships of the plurality of relationships, and cause the user interface to display the portion of nodes of the plurality of nodes and the portion of relationships of the plurality of relationships.

[0093] In some embodiments, the search input includes a first indication of a first node type, a second indication of a second node type, and a relationship indication of a relationship type relating the first node type and the second node type. In some embodiments, the one or more processors are configured to execute the instructions to determine whether the plurality of nodes includes one or more first nodes of the first node type and one or more second nodes of the second node type, determine whether the one or more first nodes are related to the one or more second nodes by one or more relationships of the plurality of relationships of the relationship type, and cause the portion of nodes of the plurality of nodes to include the one or more first nodes and the one or more second nodes and cause the portion of relationships of the plurality of relationships to include the one or more relationships in response to a determination that the plurality of nodes include the one or more first nodes of the first node type and the one or more second nodes of the second node type and the one or more first nodes are related to the one or more second nodes by the one or more relationships of the plurality of relationships of the relationship type. The building system is at least one of a local system located within a physical building or a remote processing system located outside the physical building.

BRIEF DESCRIPTION OF THE DRAWINGS

[0094] Various objects, aspects, features, and advantages of the disclosure will become more apparent and better understood by referring to the detailed description taken in conjunction with the accompanying drawings, in which like reference characters identify corresponding elements throughout. In the drawings, like reference numbers generally indicate identical, functionally similar, and/or structurally similar elements.

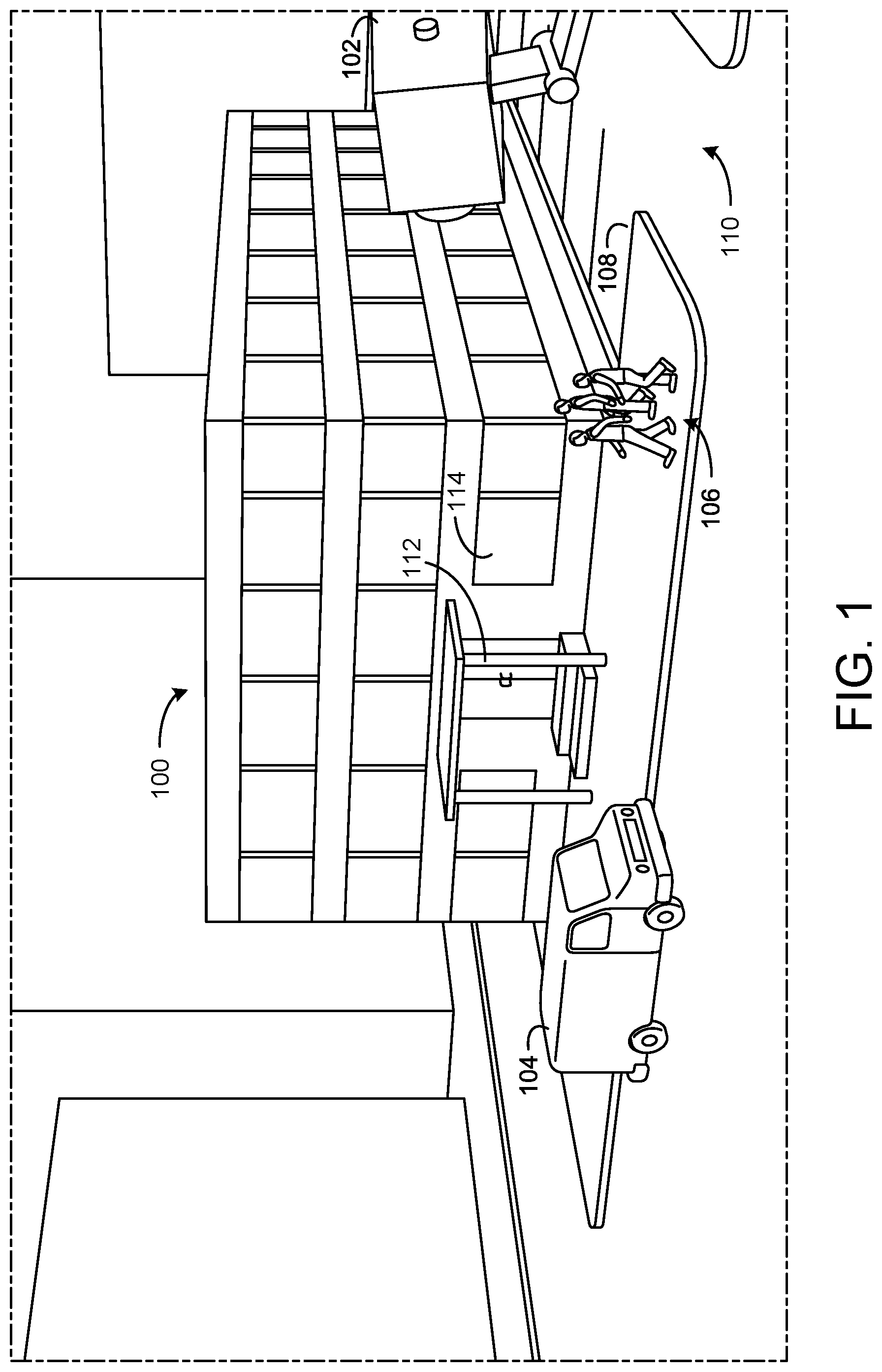

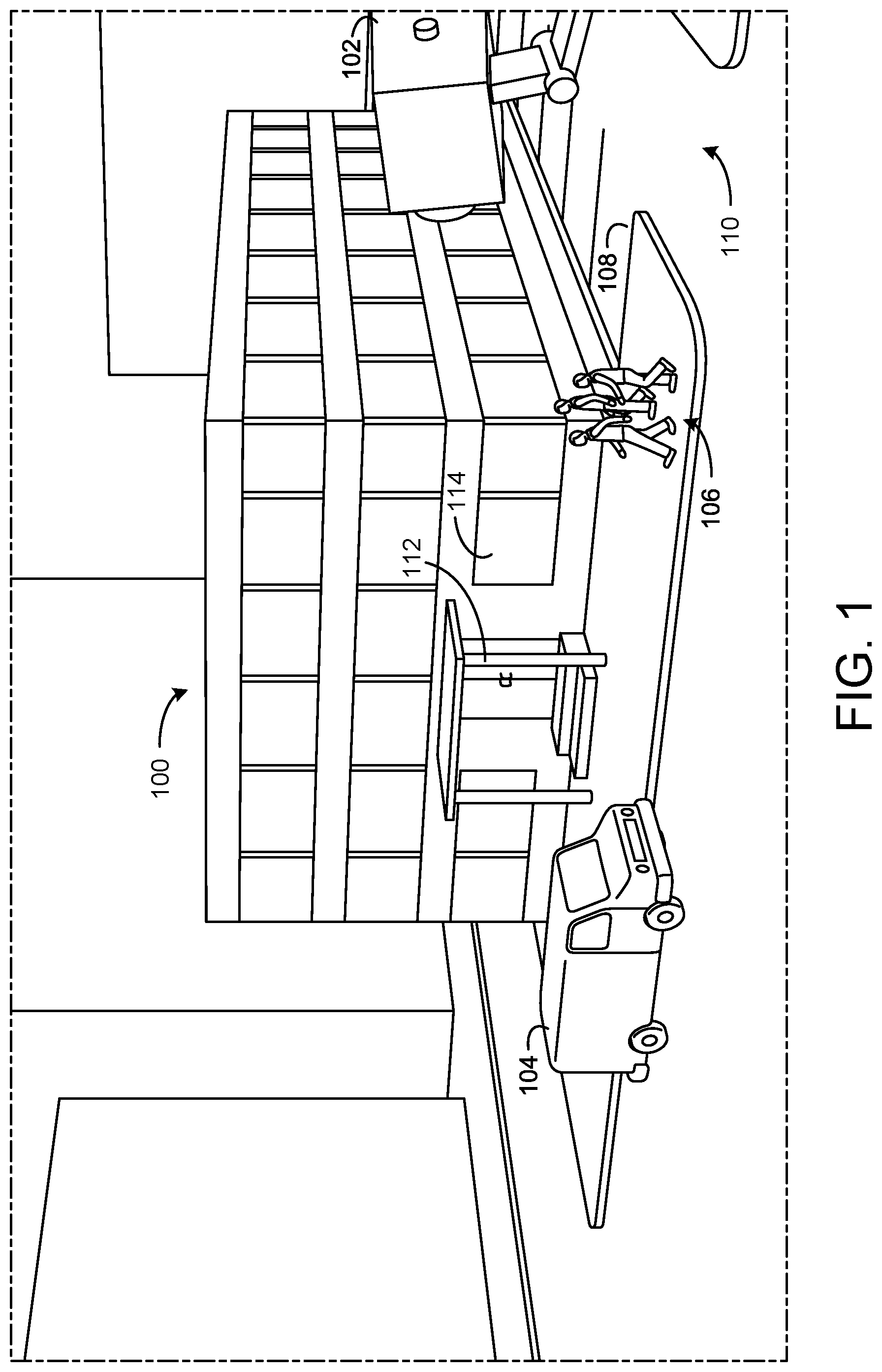

[0095] FIG. 1 is a perspective view schematic drawing of a building with building systems, according to an exemplary embodiment.

[0096] FIG. 2 is a block diagram of a BMS which can be used to monitor and control the building of FIG. 1, according to an exemplary embodiment.

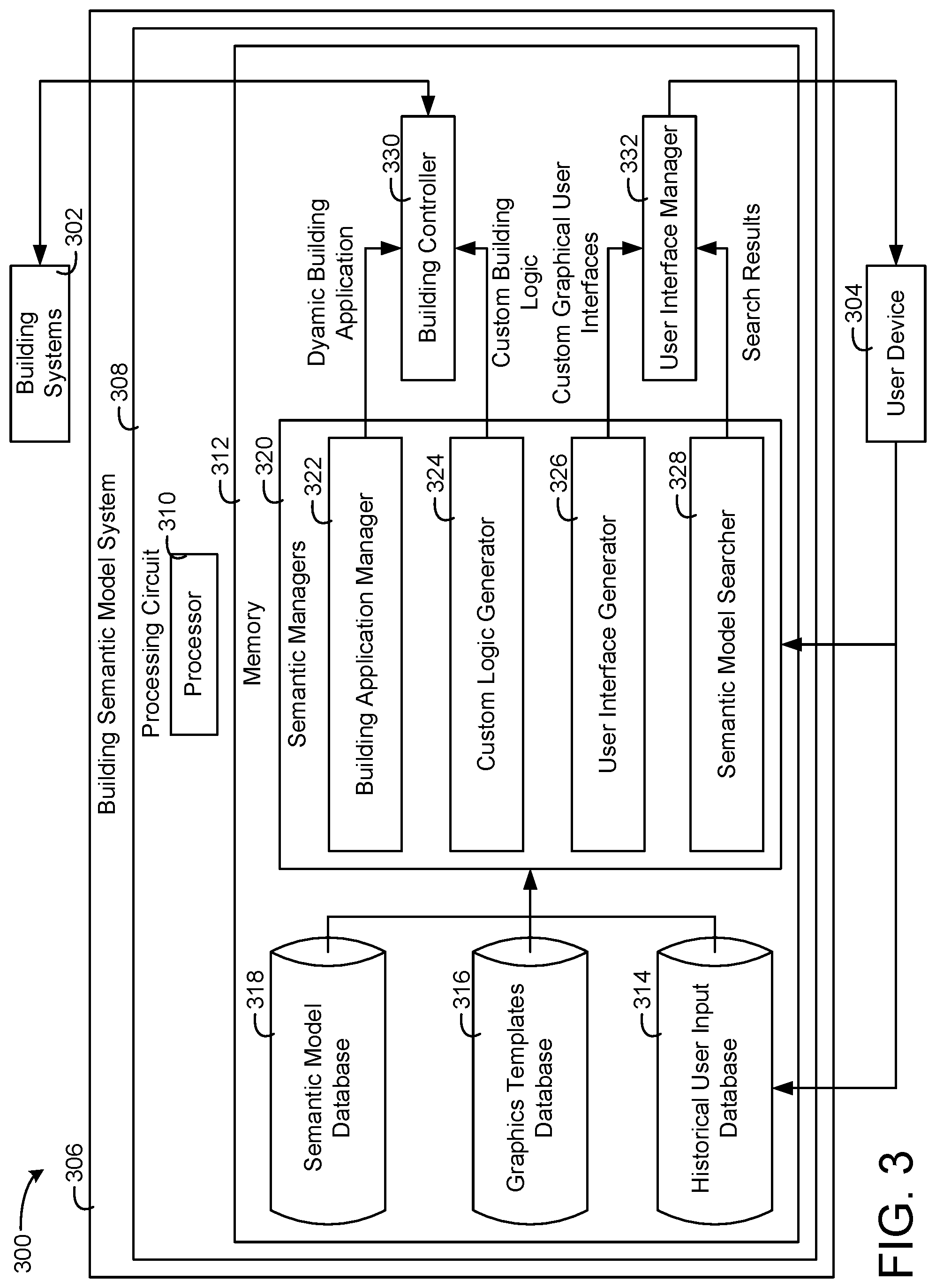

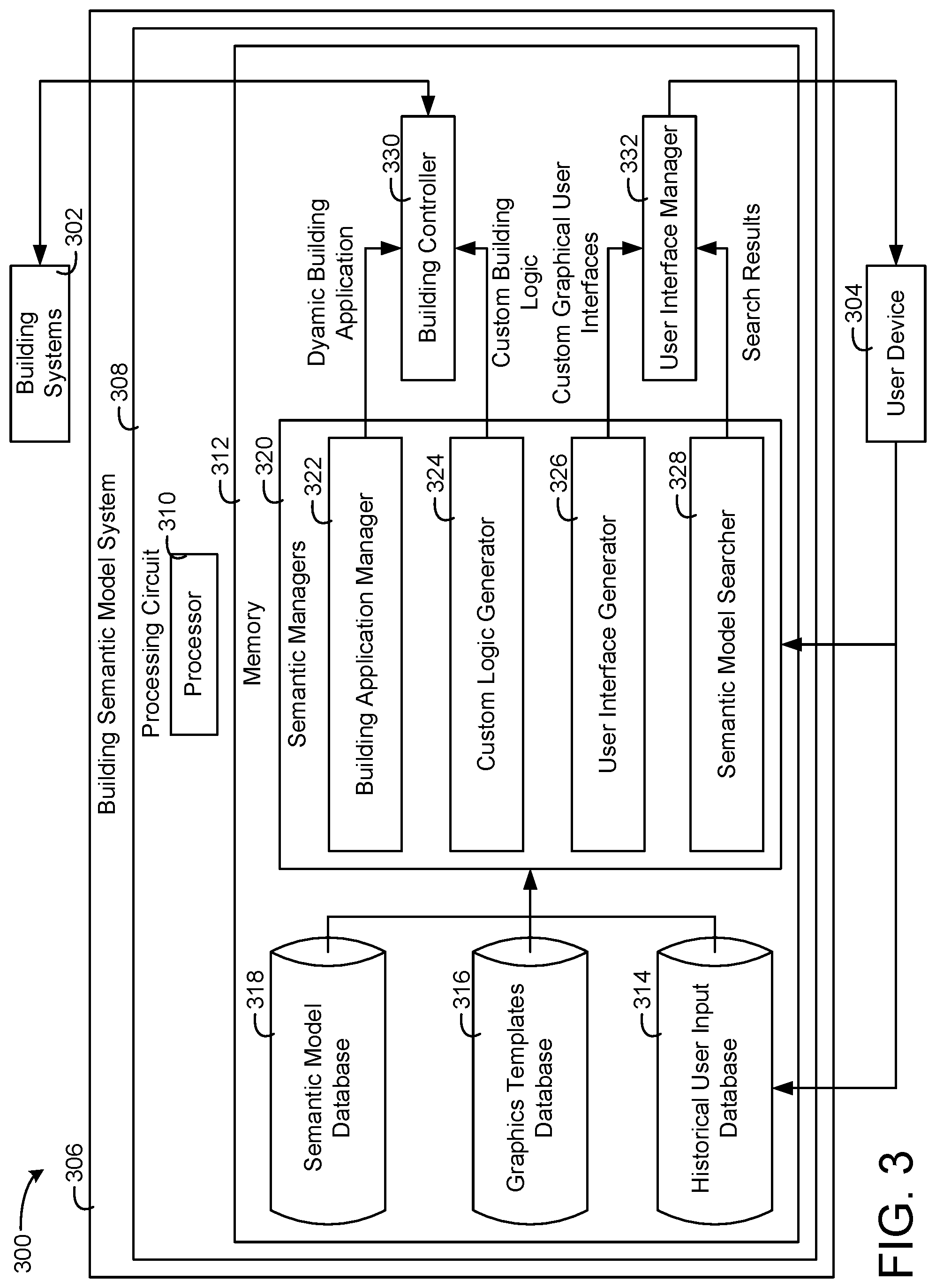

[0097] FIG. 3 is a building semantic model system that can be implemented in the building of FIG. 1, the building semantic model system including a building application manager, a custom logic generator, a user interface generator, and a semantic model searcher, according to an exemplary embodiment.

[0098] FIG. 4 is a block diagram of the building application manager configured to specify a building application in a form of semantic model and use the building semantic model to enable plug-and-play of the building application, according to an exemplary embodiment.

[0099] FIG. 5 is a block diagram of components of the building application manager of FIG. 4 implementing a dynamic semantic control application based on a static semantic control application and a semantic building model, according to an exemplary embodiment.

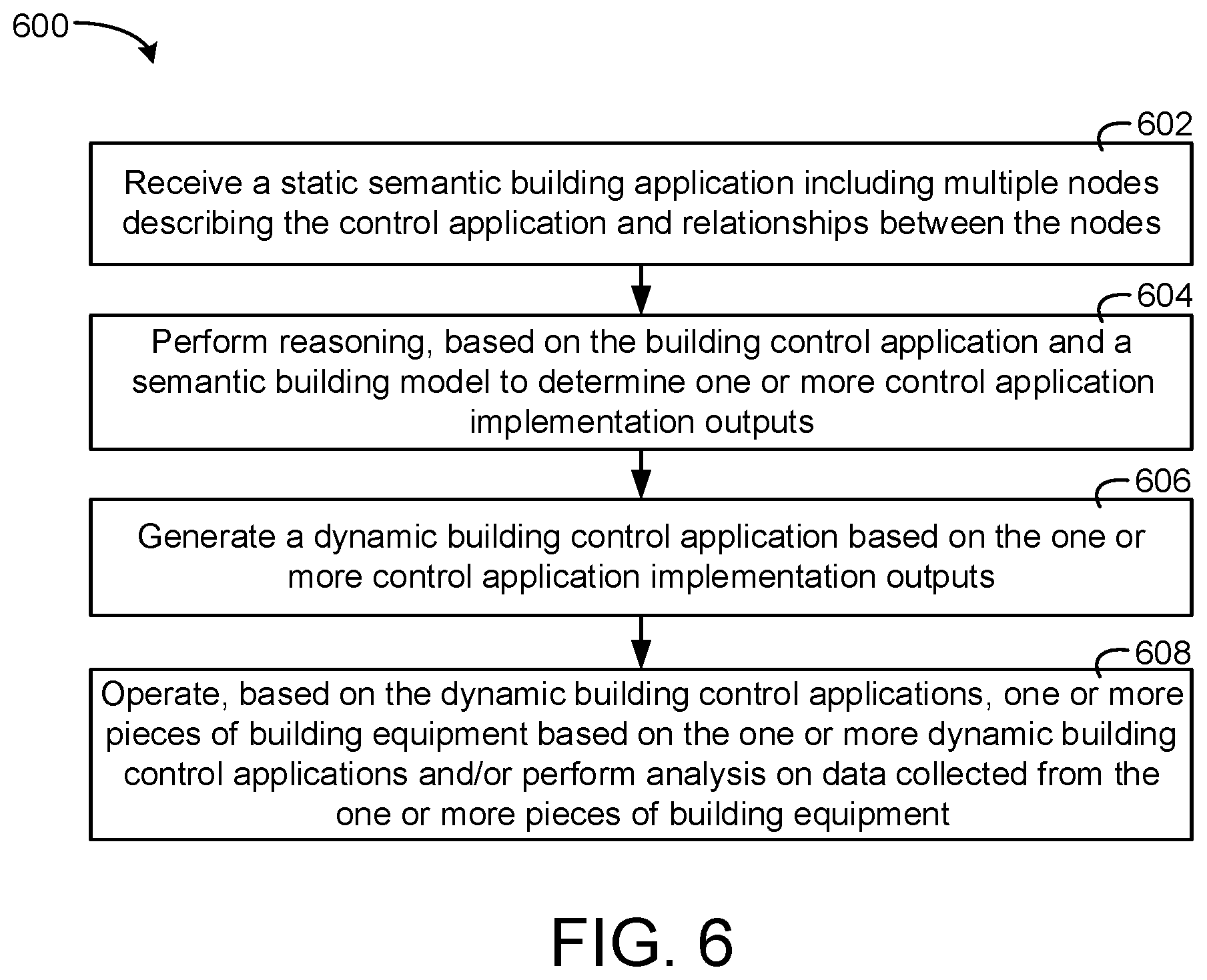

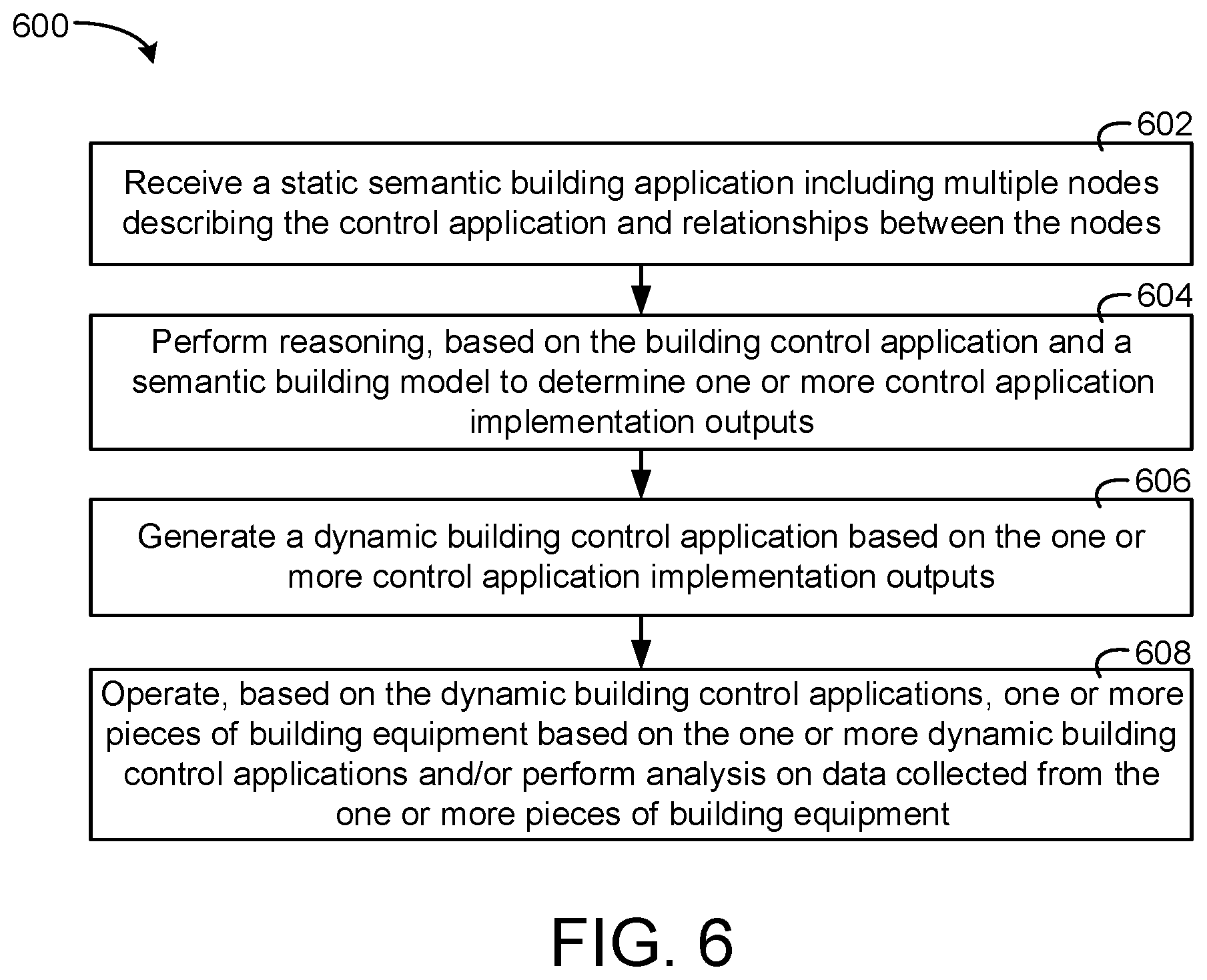

[0100] FIG. 6 is a flow diagram of a process of implementing the dynamic semantic control application with the static semantic control application and the semantic building model that can be performed by the building application manager of FIG. 4, according to an exemplary embodiment.

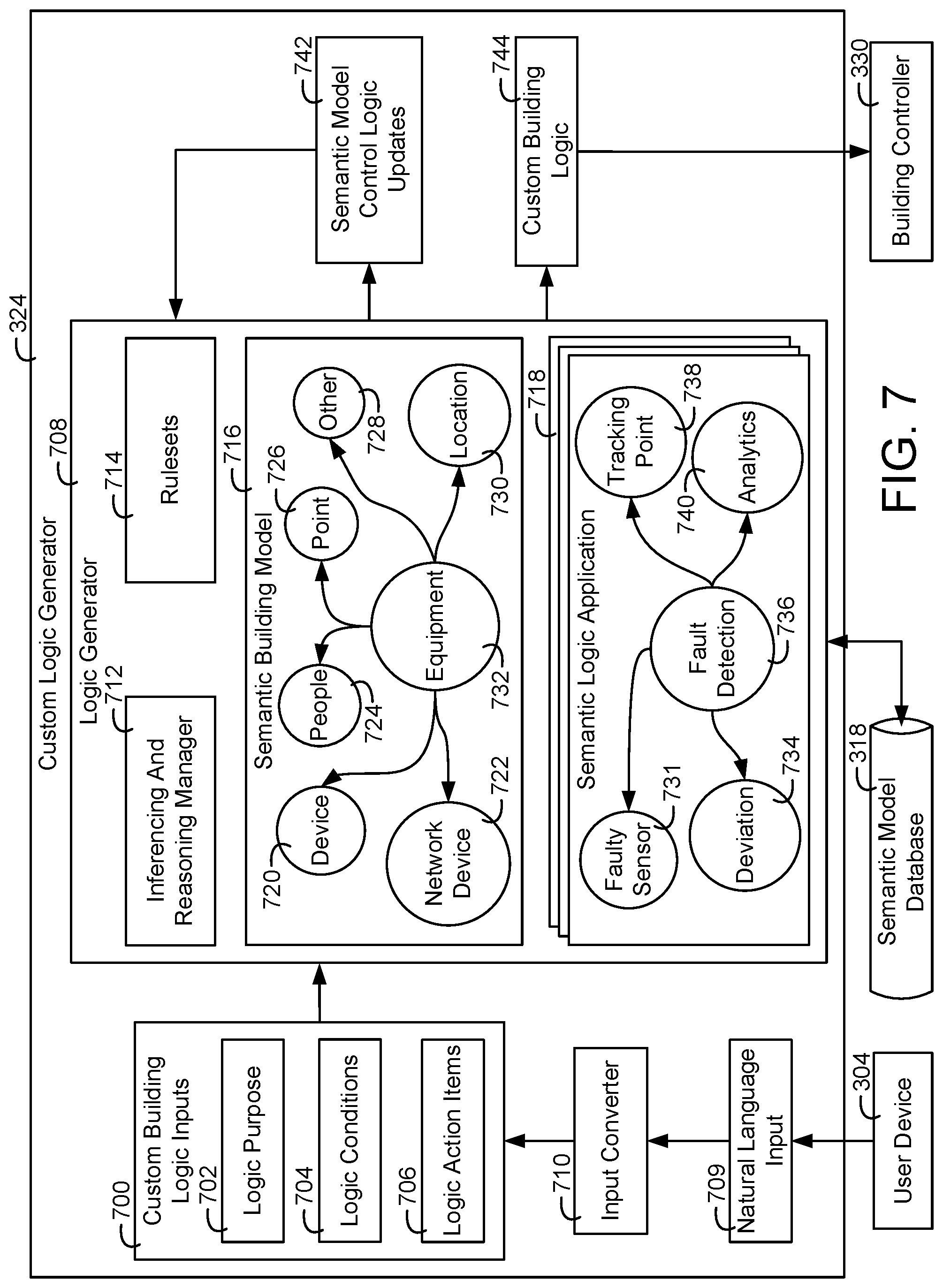

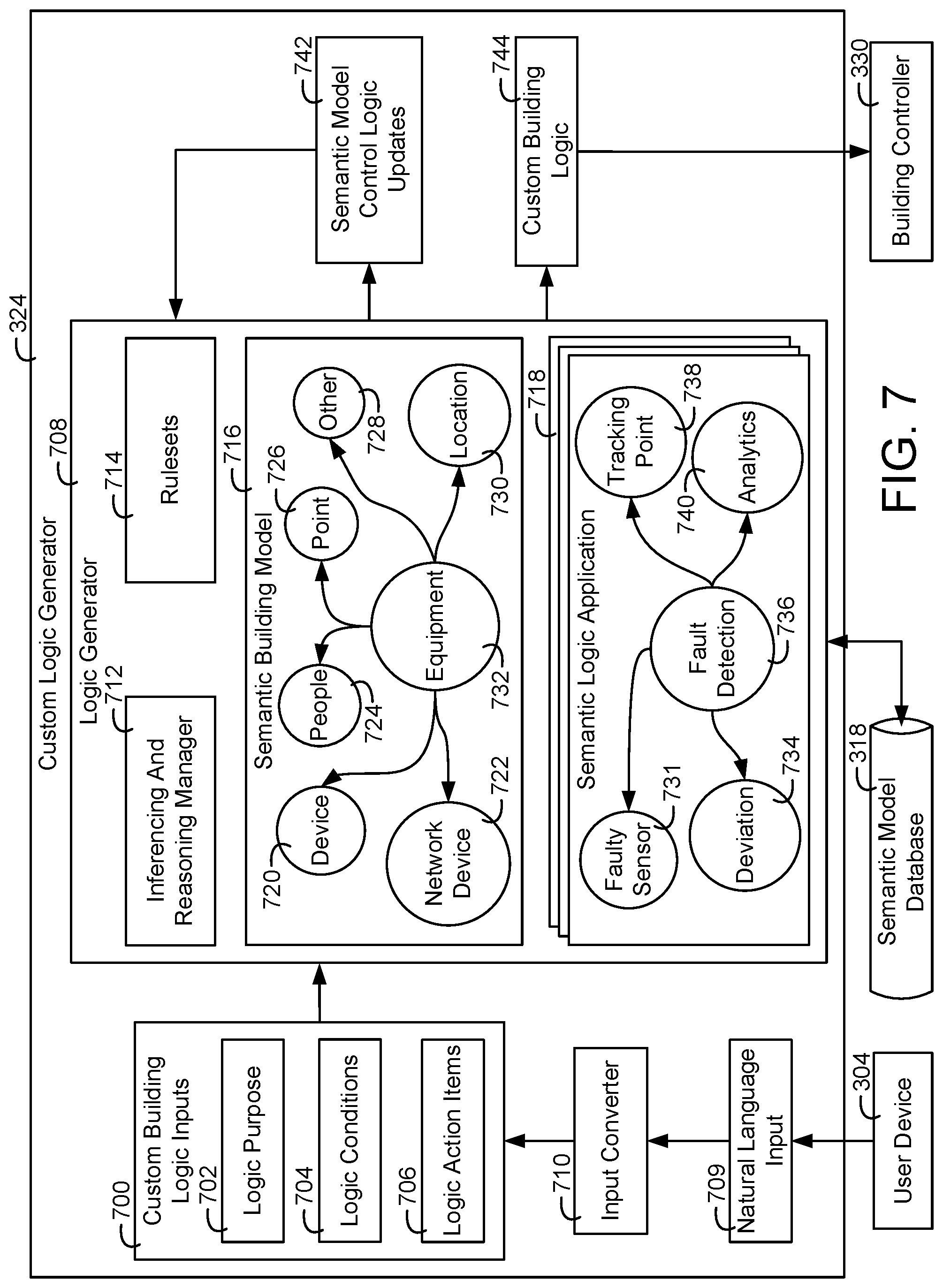

[0101] FIG. 7 is a block diagram of the custom logic generator of the building semantic model system of FIG. 3 in greater detail configured to receive input from a user device and generate custom building logic based on the input, according to an exemplary embodiment.

[0102] FIG. 8 is a flow diagram of a process of receiving input from a user device and generating custom building logic based on the input that can be performed by the custom logic generator of FIG. 3, according to an exemplary embodiment.

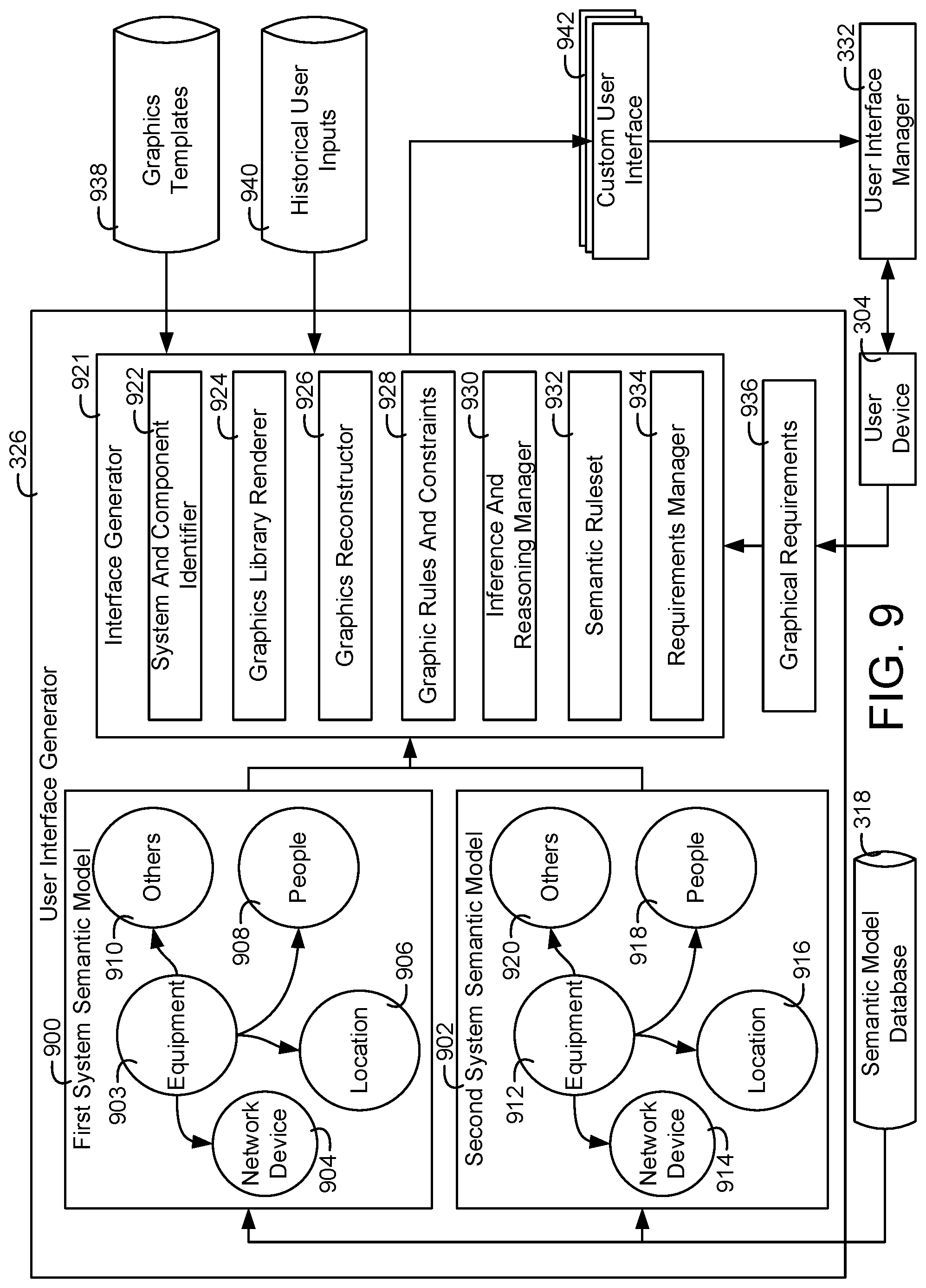

[0103] FIG. 9 is a block diagram of the user interface generator of FIG. 3 in greater detail configured to automatically generate custom graphical user interfaces based on one or more semantic building models, according to an exemplary embodiment.

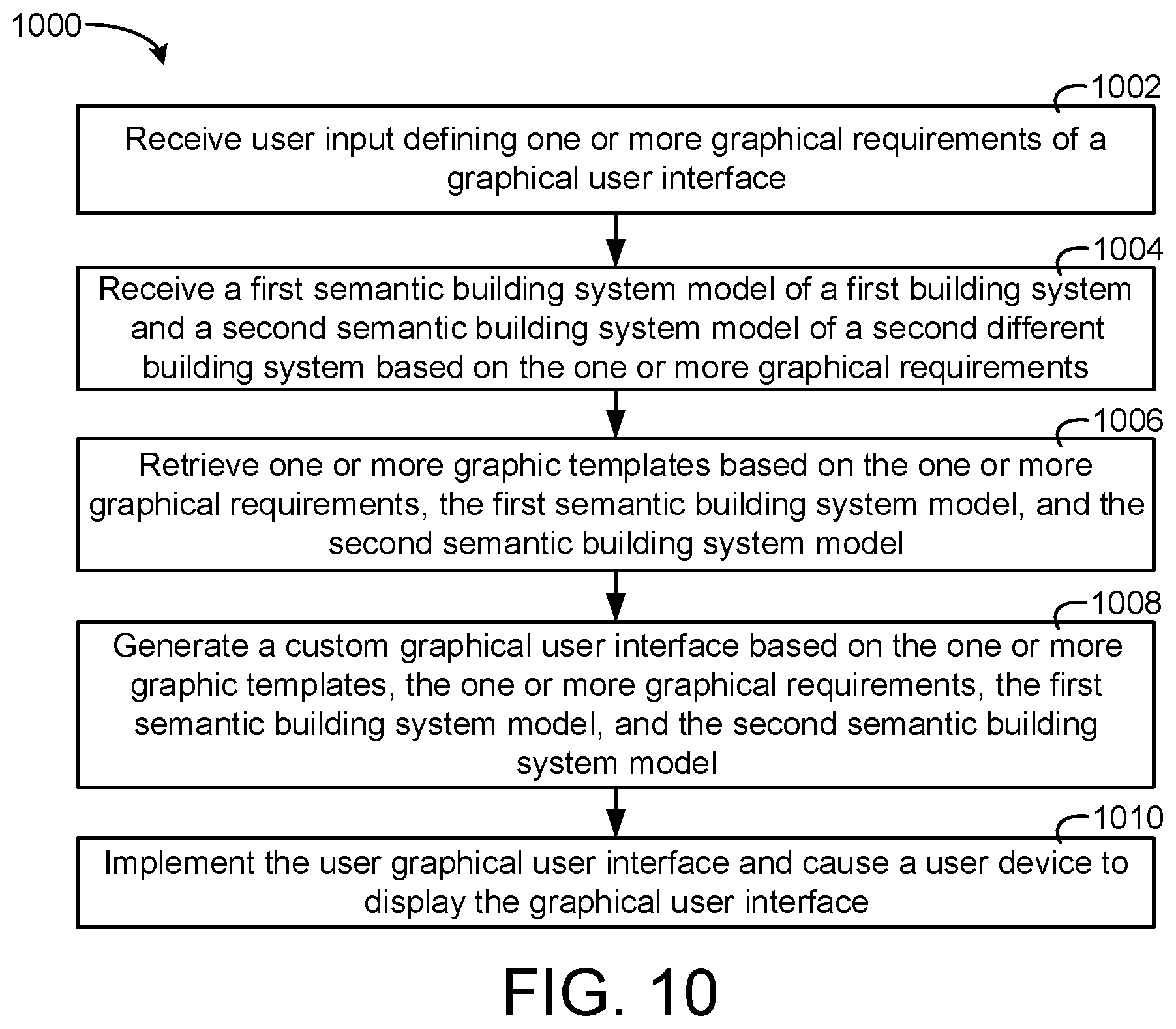

[0104] FIG. 10 is a flow diagram of a process of generating the custom graphical user interfaces based on the one or more semantic building models that can be performed by the user interface generator of FIG. 9, according to an exemplary embodiment.

[0105] FIG. 11 is a user interface including environmental control components that can be generated by the user interface generator of FIG. 9, according to an exemplary embodiment.

[0106] FIG. 12A is a user interface including security video feed components and some of the environmental control components of FIG. 11 that can be generated by the user interface generator of FIG. 9, according to an exemplary embodiment.

[0107] FIG. 12B is a user interface including graphic visualization of equipment of a building that can be generated by the user interface generator of FIG. 9 based on a particular semantic building model, according to an exemplary embodiment.

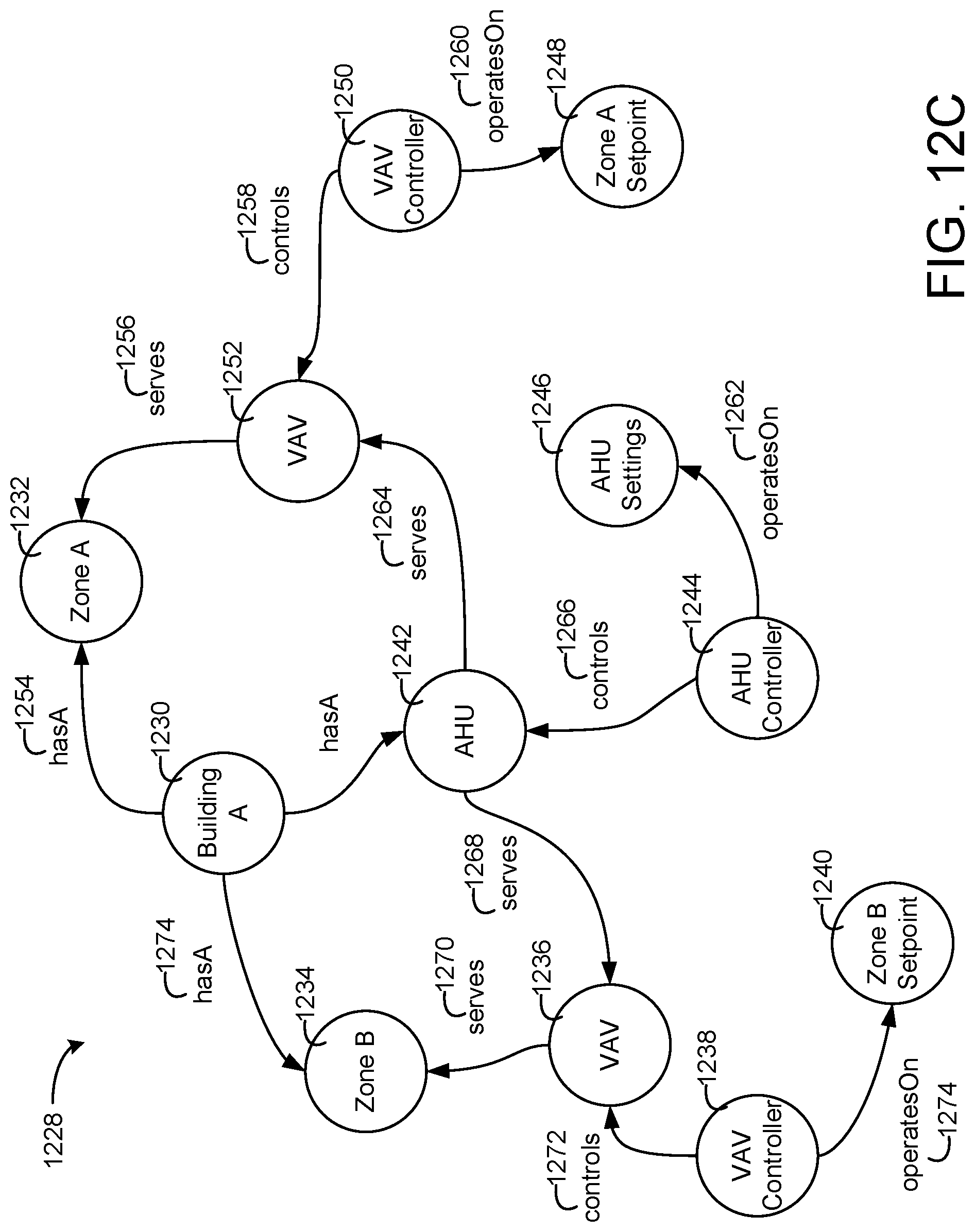

[0108] FIG. 12C is a block diagram of the particular semantic building model used to generate the user interface of FIG. 12B by the user interface generator of FIG. 9, according to an exemplary embodiment.

[0109] FIG. 13 is a user interface for selecting one of multiple generated user interfaces and making modifications to the user interfaces that can be generated by the user interface generator of FIG. 9, according to an exemplary embodiment.

[0110] FIG. 14 is a block diagram of the semantic model searcher of the building semantic model system of FIG. 3 configured to receive a user search input and query semantic models to generate a search result, according to an exemplary embodiment.

[0111] FIG. 15 is a flow diagram of a process of receiving a user search input and querying the semantic models to generate the search result that can be performed by the semantic model searcher of FIG. 3, according to an exemplary embodiment.

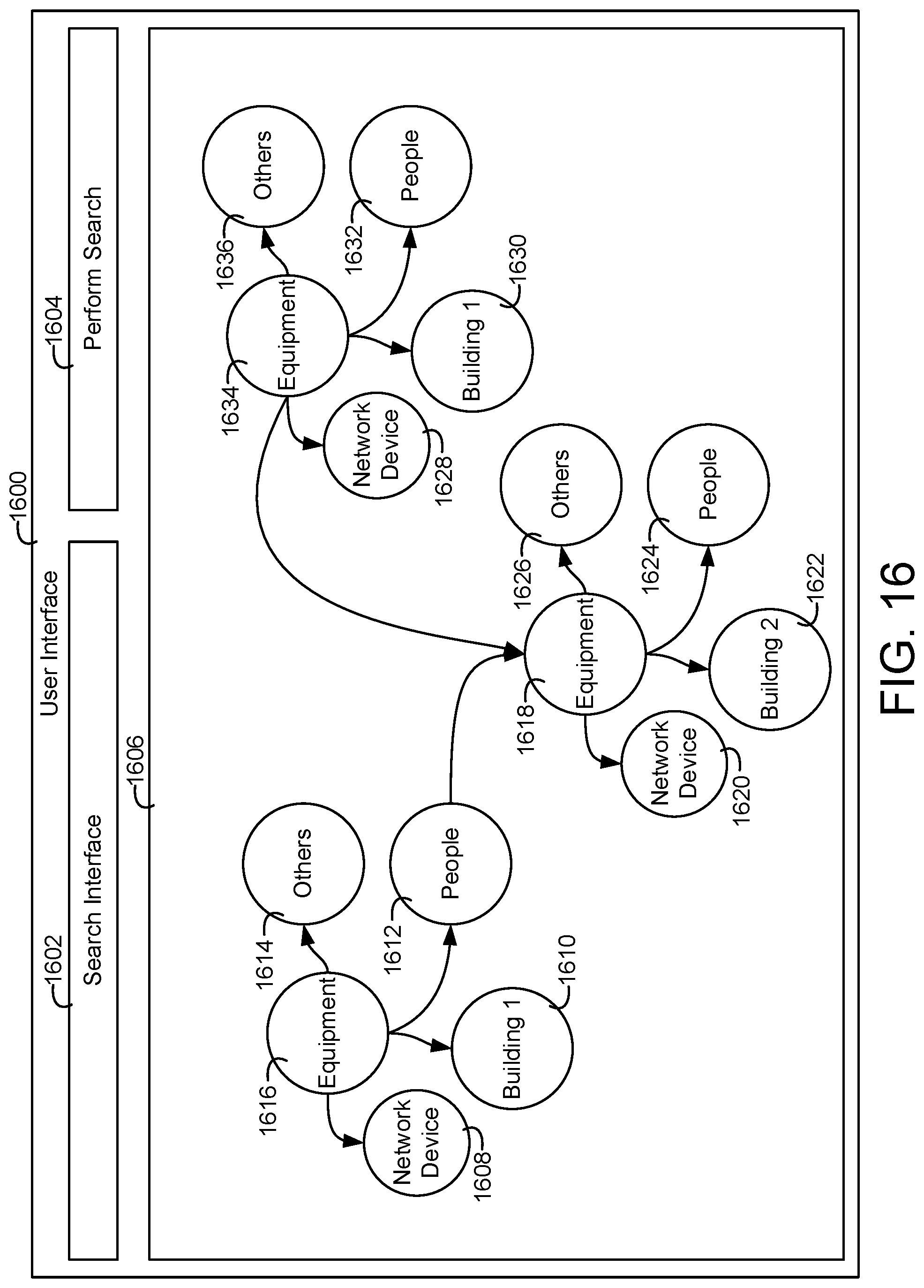

[0112] FIG. 16 is a user interface generated by the user interface manager of the building semantic model system of FIG. 3 allowing a user to search through a semantic building model, according to an exemplary embodiment.

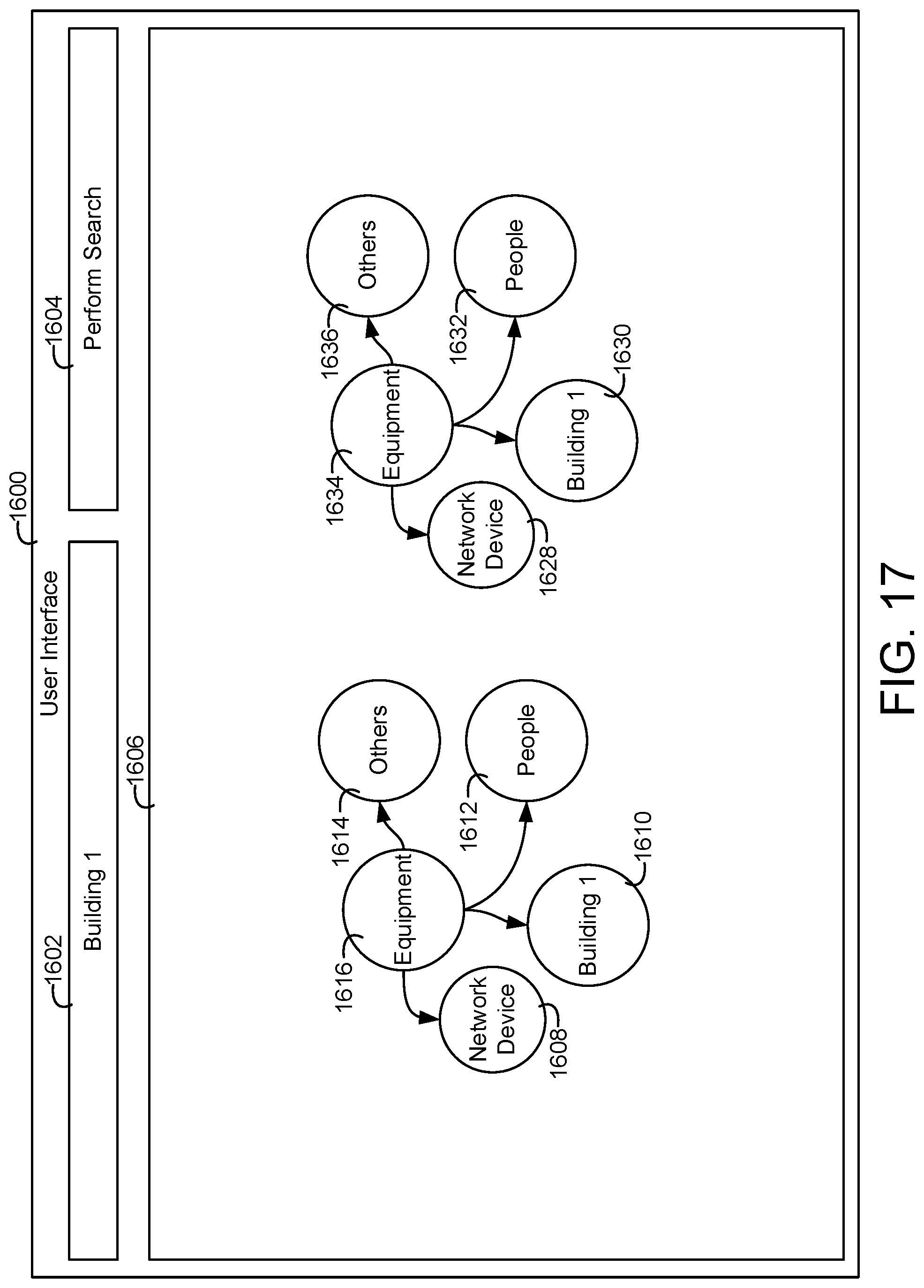

[0113] FIG. 17 is the user interface of FIG. 13 where a user searches for information of a particular building, according to an exemplary embodiment.

[0114] FIG. 18 is the user interface of FIG. 13 where a user searches for information of a particular building component within a particular building, according to an exemplary embodiment.

[0115] FIG. 19 is the user interface of FIG. 13 where a user searches for information of particular building components associated with other building components located within a particular building, according to an exemplary embodiment.

[0116] FIG. 20 is the user interface of FIG. 13 where the user provides a graphical input to filter information of the semantic building model where the results or selection can be dragged and dropped into a graphically defined building application, according to an exemplary embodiment.

[0117] FIG. 21 is the user interface of FIG. 13 where a filtering result of the graphical input of FIG. 17 is displayed to the user, according to an exemplary embodiment.

[0118] FIG. 22 is a semantic model of a building application, according to an exemplary embodiment.

[0119] FIG. 23 is a semantic model of a building and building equipment, according to an exemplary embodiment.

[0120] FIG. 24 is a block diagram of a system including a building management system (BMS) and a building cloud platform that provides collected building data and ingested building data to an artificial intelligence (AI) platform, according to an exemplary embodiment.

[0121] FIG. 25 is a block diagram of the system of FIG. 24 where the BMS includes smart equipment and an analysis gateway configured to flag collected data, generate reliability timeseries, and publish equipment manufacturer information, according to an exemplary embodiment.

[0122] FIG. 26 is a block diagram of the system of FIG. 24 where the building cloud platform includes a building model and an analysis platform for cleansing building data and providing the cleansed building data to the AI platform, according to an exemplary embodiment.

[0123] FIG. 27 is a block diagram of the system of FIG. 24 where the system includes an input learning system for receiving user input and cleansing collected building data based on the user input, according to an exemplary embodiment.

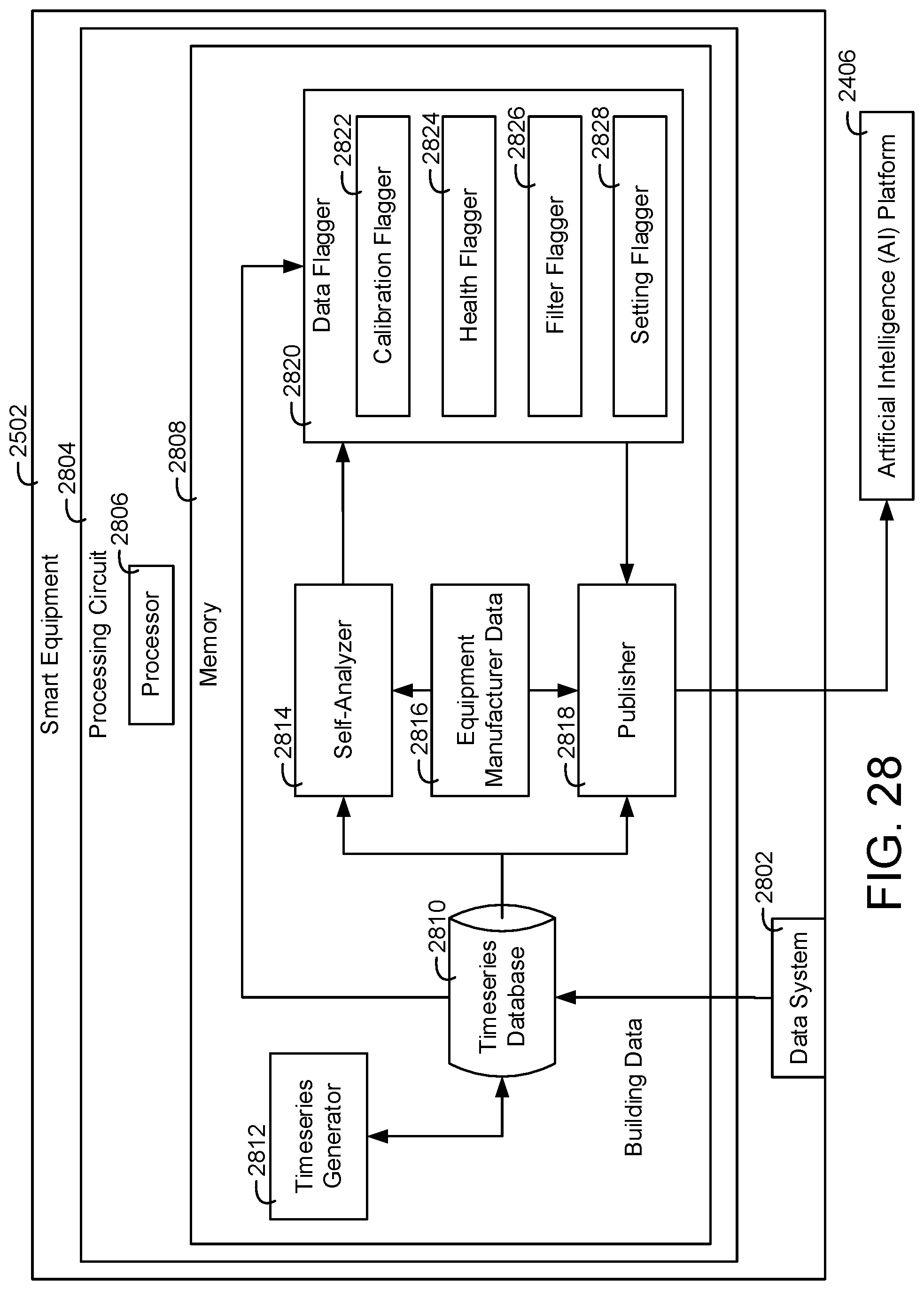

[0124] FIG. 28 is a block diagram illustrating the smart equipment of FIG. 25 in greater detail, according to an exemplary embodiment.

[0125] FIG. 29 is a block diagram of a timeseries generator that can be implemented by the smart equipment of FIG. 25 or the building cloud platform of FIG. 24 for generating a reliability timeseries, according to an exemplary embodiment.

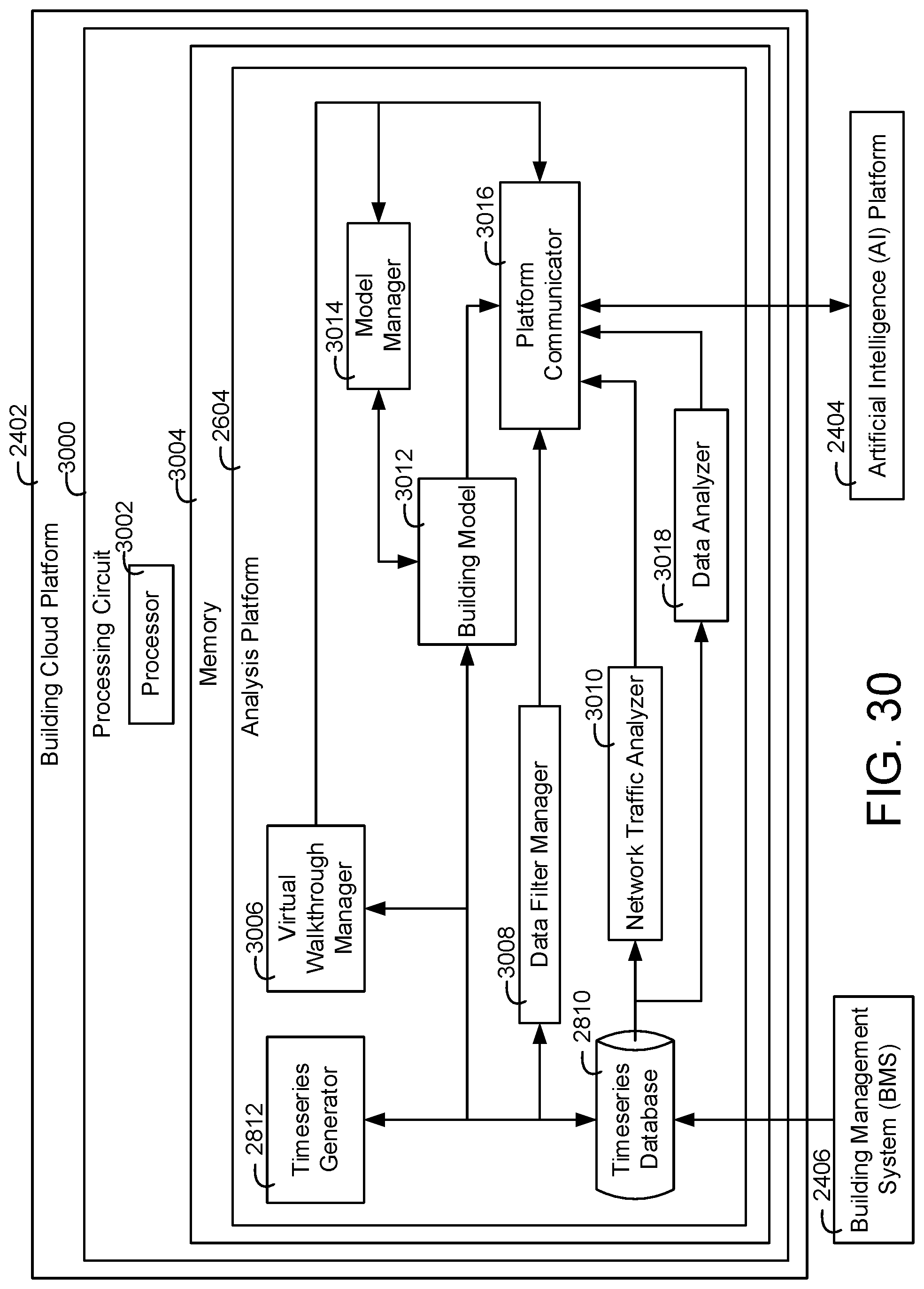

[0126] FIG. 30 is a block diagram illustrating the building cloud platform of FIG. 24 in greater detail, according to an exemplary embodiment.

[0127] FIG. 31 is a block diagram illustrating the AI platform in greater detail, according to an exemplary embodiment.

DETAILED DESCRIPTION

Overview

[0128] Referring generally to the FIGURES, systems and methods for semantic modeling in building systems are shown and described, according to various exemplary embodiments. A semantic model can be a conceptual data model which includes semantic information, e.g., relational logic. In some embodiments, a building system can generate and manage semantic building models which represent building information and utilize the semantic building models to implement control operations in a building, analyze data collected in the building, etc. The semantic building models can define physical building systems, for example, heating, ventilation, and air conditioning (HVAC) systems, security systems, lighting systems, fire suppression systems, etc. Furthermore, the semantic building models can define building applications, for example, control applications configured to operate the building systems, fault analysis applications configured to identify faults in the building systems, analytics applications configured to generate analytics for the building systems, etc.

[0129] The semantic building model can be defined by a semantic model framework, e.g., the Resource Description Framework (RDF), the Web Ontology Language (OWL), RDF/OWL, RDF Schema (RDFS), Smart Appliances REFerence (SAREF) ontology, etc. In some embodiments, a semantic building model can include multiple nodes and relationships in a graph format. The nodes can represent physical building components, e.g., buildings, systems, equipment, people, etc. and semantic information defining relationships between the buildings, systems, equipment, and people, e.g., an indication that one building component is located within another component, an indication that a first building component controls a second building component, etc. Furthermore, the nodes can represent building applications, e.g., the input to a building application, an output to a building application, a set of operations to perform on the input to generate the output, fault detection thresholds, etc.
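
By way of illustration only, a graph of nodes and relationships of the kind described above can be approximated with subject-predicate-object triples, which is the basic structure used by RDF. The sketch below uses plain Python tuples rather than an RDF library, and every identifier in it (e.g., `isLocatedIn`, `controls`, `FaultApp-1`, the `match` helper) is a hypothetical label, not a term from any particular ontology.

```python
# Each triple is (subject, predicate, object); all labels are hypothetical.
triples = {
    ("Building-1", "type", "Building"),
    ("AHU-1", "type", "AirHandlingUnit"),
    ("VAV-1", "type", "VAVBox"),
    ("AHU-1", "isLocatedIn", "Building-1"),
    ("AHU-1", "controls", "VAV-1"),
    # A building application can be described in the same graph.
    ("FaultApp-1", "type", "FaultDetectionApplication"),
    ("FaultApp-1", "hasInput", "VAV-1/ZoneTemperature"),
    ("FaultApp-1", "hasThreshold", "2.0"),
}


def match(triples, subject=None, predicate=None, obj=None):
    """Return sorted triples matching the pattern; None acts as a wildcard."""
    return sorted(
        t for t in triples
        if (subject is None or t[0] == subject)
        and (predicate is None or t[1] == predicate)
        and (obj is None or t[2] == obj)
    )


controlled = match(triples, subject="AHU-1", predicate="controls")
located = match(triples, predicate="isLocatedIn", obj="Building-1")
```

Here `controlled` holds the single relationship indicating that one component controls another, and `located` holds the relationship indicating that a component is located within a building.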

Semantic Building Applications

[0130] Buildings are a major energy consumer. In some countries, buildings account for approximately 40% of energy use. Advanced control applications (e.g., distributed control, optimal control, adaptive control, artificial intelligence (AI) based control) can significantly reduce energy consumption of buildings (e.g., by 10-30% of total energy consumption in buildings). Furthermore, the control applications can improve occupant comfort, improve employee productivity, improve indoor air quality, reduce carbon emissions, etc.

[0131] In some cases, implementing energy savings control applications can be difficult in view of the expertise and configuration time required to deploy the control applications. For example, an individual implementing a control application may need to manually look up different components in the building systems and/or the interconnections between the systems to understand how to implement the control applications. Furthermore, the individual may need to review control-related requirements of the building system to determine if a particular control application is applicable for the building system.

[0132] In order to efficiently implement building applications in a building, in some embodiments, a building system is configured to model pieces of control applications in a semantic framework, i.e., a semantic building control model. Based on inferencing techniques, the building system is configured to relate the control application to semantic building models and/or external ontologies (SSO) so that the control application can fully describe itself (e.g., describe itself in a complete manner providing appropriate equipment targets, operational results, inputs, outputs, etc.), which the building system can be configured to utilize to identify target systems to run the control application on. In this regard, the building system can automatically deploy the control applications on particular systems without requiring substantial manual intervention.
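
As one simplified, hypothetical illustration of identifying target systems, the sketch below treats a control application's self-description as a set of required equipment types and treats each candidate system as the set of equipment types it contains. Plain set containment stands in here for the inferencing techniques mentioned above, which this example does not attempt to reproduce; the function name `find_target_systems` and all system and equipment labels are assumptions.

```python
def find_target_systems(app_requirements, systems):
    """Return names of systems whose equipment types cover every required type."""
    return [
        name for name, equipment in systems.items()
        if app_requirements <= equipment  # subset test: all requirements present
    ]


# Hypothetical semantic descriptions reduced to sets of equipment types.
systems = {
    "Lab-Wing": {"FanCoilUnit", "AirCooledChiller", "RoomTemperatureSensor"},
    "Office-Wing": {"VAVBox", "AirHandlingUnit"},
}
required = {"FanCoilUnit", "AirCooledChiller"}

targets = find_target_systems(required, systems)
```

In this example only the system containing both required equipment types is selected as a deployment target.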

[0133] Furthermore, the semantic model, in some embodiments, is used by the building system to describe the expected behavior of the building application, e.g., appropriate performance metric values identifying the performance of the building application. The building system is configured to use the semantic model of the building application to verify that the building application is successfully initiated and behaving as expected. A user can predefine the building application in a semantic manner to identify application requirements for the control application. For example, the requirements may indicate what systems an application is applicable for (e.g., applicable for systems with fan-coil units connected to air-cooled chillers for laboratory rooms, applicable for systems with two room temperature sensors with certain precision levels, etc.). The control application can also include an indication of the expected performance and performance metrics to be used in identifying whether the application is behaving as expected. For example, the performance metric could be maximum water flow deviation and the expected behavior may be that the maximum water flow deviation is within a particular range (e.g., within 30% of a particular value).
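
The expected-behavior check described above can be sketched as a simple tolerance test. The example below assumes, for illustration, that "within 30% of a particular value" means the measured metric differs from a reference value by no more than 30% of that reference; the function name, parameter names, and numeric values are hypothetical.

```python
def within_expected_range(measured, reference, tolerance=0.30):
    """True when `measured` deviates from `reference` by at most `tolerance`
    expressed as a fraction of the reference value."""
    return abs(measured - reference) <= tolerance * abs(reference)


# Hypothetical maximum water flow deviation values (arbitrary units).
reference_deviation = 10.0
behaving_as_expected = within_expected_range(12.0, reference_deviation)
out_of_range = within_expected_range(14.0, reference_deviation)
```

A building system could evaluate such a check after deploying an application and treat a failed check as an indication that the application is not behaving as expected.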

[0134] The building system is configured to deploy independent, portable building applications, in some embodiments. The building system can be configured to identify dependency between multiple different building applications, identify connections between the building applications, and/or automatically resolve conflicts between multiple building applications. For example, an application to optimize supply-fan operation and an application to optimize the cooling coils could conflict with each other although they control two different components. The building system can be configured to identify the conflict (e.g., a temperature control application may conflict with a supervisory temperature setpoint control application) and modify one or both of the control applications to resolve the conflict, e.g., identify a proper sequence of execution for the control applications that resolves the conflict. In some embodiments, the building system can configure the first running application to take precedence over any other subsequent application (a first come, first operation scheme). In some embodiments, the building system can configure the second application to run if the first application fails to meet a performance goal.
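
The precedence schemes described above can be sketched as follows. This example makes several assumptions that are not part of the disclosure: each application declares the set of variables it influences, two applications conflict when those sets overlap, and the two described embodiments (first come, first operation, and running a later application when the earlier one misses its performance goal) are combined into a single selection routine. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class App:
    name: str
    started_at: int          # order in which the application began running
    affects: set             # hypothetical: variables the application influences
    meets_goal: bool = True  # whether the application meets its performance goal


def conflicts(a, b):
    """Two applications conflict when they influence a common variable."""
    return bool(a.affects & b.affects)


def select_active(apps):
    """Earlier applications take precedence; a later conflicting application
    runs only if every earlier application it conflicts with misses its goal."""
    active = []
    for app in sorted(apps, key=lambda a: a.started_at):
        blockers = [x for x in active if conflicts(x, app)]
        if not blockers:
            active.append(app)
        elif all(not x.meets_goal for x in blockers):
            for x in blockers:
                active.remove(x)
            active.append(app)
    return [a.name for a in active]


fan = App("supply_fan_opt", 1, {"supply_air_temp"})
coil = App("cooling_coil_opt", 2, {"supply_air_temp"})
lighting = App("lighting_opt", 3, {"lighting_level"})

first_come = select_active([coil, lighting, fan])

failing_fan = App("supply_fan_opt", 1, {"supply_air_temp"}, meets_goal=False)
fallback = select_active([failing_fan, coil, lighting])
```

In this sketch the fan and coil applications conflict even though they control different components, because both influence the same variable; the non-conflicting lighting application runs in either case.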

Custom Logic Generation