Write Buffering

Bruce; Rolando H. ; et al.

U.S. patent application number 16/601327, for write buffering, was filed with the patent office on 2019-10-14 and published on 2020-05-14. This patent application is currently assigned to BITMICRO LLC. The applicant listed for this patent is BITMICRO LLC. Invention is credited to Mark Ian Alcid Arcedera, Rolando H. Bruce, Elmer Paule Dela Cruz.

| Application Number | 16/601327 |

| Publication Number | 20200151098 |

| Family ID | 68165030 |

| Publication Date | 2020-05-14 |

| Kind Code | A1 |

WRITE BUFFERING

Abstract

A hybrid storage system is described having a mixture of different types of storage devices comprising rotational drives, flash devices, SDRAM, and SRAM. The rotational drives are used as the main storage, providing the lowest cost per unit of storage memory. Flash memory is used as a higher-level cache for the rotational drives. Methods for managing multiple levels of cache for this storage system are provided, with a very fast Level 1 cache consisting of volatile memory (SRAM or SDRAM) and a non-volatile Level 2 cache using an array of flash devices. A method of distributing the data across the rotational drives to make caching more efficient is described. Also described are efficient techniques for flushing data from L1 cache and L2 cache to the rotational drives, taking advantage of concurrent flash device operations and concurrent rotational drive operations, and maximizing sequential accesses to the rotational drives rather than random accesses, which are relatively slower. The methods provided here may be extended to systems that have more than two cache levels.

| Inventors: | Bruce; Rolando H. (South San Francisco, CA); Dela Cruz; Elmer Paule (Pasay City, PH); Arcedera; Mark Ian Alcid (Paranaque, PH) |

| Applicant: | BITMICRO LLC |

| Assignee: | BITMICRO LLC, Reston, VA |

| Family ID: | 68165030 |

| Appl. No.: | 16/601327 |

| Filed: | October 14, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Child Application |
|---|---|---|---|
| 15665321 | Jul 31, 2017 | 10445239 | 16601327 |
| 14689045 | Apr 16, 2015 | 9734067 | 15665321 |
| 14217436 | Mar 17, 2014 | 9430386 | 14689045 |
| 61980561 | Apr 16, 2014 | | |
| 61801422 | Mar 15, 2013 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 13/28 20130101; G06F 2212/452 20130101; G06F 2212/1016 20130101; G06F 11/1076 20130101; G06F 12/0875 20130101; G06F 2212/62 20130101; G06F 12/0804 20130101; G06F 12/0897 20130101; G06F 2212/225 20130101; G06F 12/0833 20130101; G06F 12/0868 20130101; G06F 2212/262 20130101; G06F 2212/312 20130101 |

| International Class: | G06F 12/0831 20060101 G06F012/0831; G06F 12/0875 20060101 G06F012/0875 |

Claims

1. Apparatus for storing data comprising: a write buffering scheme comprising a plurality of cache devices, wherein write data is moved from a first cache device to a second cache device when a pre-defined threshold of unused cache lines is reached in the first cache device; wherein the second cache is slower than the first cache; wherein the second cache has a greater storage capacity than the first cache.

Description

CROSS-REFERENCE(S) TO RELATED APPLICATIONS

[0001] This application is a continuation of application Ser. No. 15/665,321, filed Jul. 31, 2017 and issuing Oct. 15, 2019 as U.S. Pat. No. 10,445,239, which is a continuation of application Ser. No. 14/689,045, filed Apr. 16, 2015 and issued as U.S. Pat. No. 9,734,067 on Aug. 15, 2017, which claims the benefit of and priority to U.S. Provisional App. No. 61/980,561, filed Apr. 16, 2014. This U.S. Provisional Application 61/980,561 is hereby fully incorporated herein by reference. U.S. application Ser. No. 14/689,045 is a continuation-in-part of application Ser. No. 14/217,436, filed Mar. 17, 2014 and issued as U.S. Pat. No. 9,430,386 on Aug. 30, 2016, which claims the benefit of and priority to App. No. 61/801,422, filed Mar. 15, 2013. U.S. application Ser. Nos. 15/665,321 and 14/689,045 and 14/217,436 and U.S. Provisional Application 61/801,422 are each hereby fully incorporated by reference herein.

BACKGROUND

Field

[0002] This invention relates to the management of data in a storage system having both volatile and non-volatile caches. It relates more specifically to the methods and algorithms used in managing multiple levels of caches for improving the performance of storage systems that make use of Flash devices as higher-level cache.

Description of Related Art

[0003] Typical storage systems comprising multiple storage devices usually assign a dedicated rotational or solid state drive as cache to a larger number of data drives. In such systems, the management of the drive cache is done by the host, and the overhead brought about by this contributes to degradation of the caching performance of the storage system. Prior approaches to improving the caching performance focus on the cache replacement policy being used. The most common replacement policy or approach to selecting victim data in a cache is the Least Recently Used (LRU) algorithm. Other solutions consider the frequency of access to the cached data, replacing less frequently used data first. Still other solutions keep track of the number of times the data has been written while in cache so that it is only flushed to the media once it reaches a certain write threshold. Others even separate read cache from write cache, offering the possibility for parallel read and write operations.

[0004] The use of non-volatile storage as cache has also been described in prior art, declaring that response time for such storage systems approaches that of a solid state storage rather than a mechanical drive. However, prior solutions that made use of non-volatile memory as cache did not take advantage of the architecture of the non-volatile memories that could have further increased the caching performance of the system. The storage system does not make any distinction between a rotational drive and a solid-state drive cache, thus failing to recognize possible improvements that can be brought about by the architecture of the solid-state drive. Accordingly, there is a need for a cache management method for a hybrid storage system that takes advantage of the characteristics of flash memory and the architecture of the solid-state drive.

SUMMARY

[0005] The present invention describes cache management methods for a hybrid storage device having volatile and non-volatile caches. Maximizing concurrent data transfer operations to and from the different cache levels especially to and from flash-based L2 cache results in increased performance over conventional methods. Distributed striping is implemented across the rotational drives maximizing parallel operations on multiple drives. The use of Fastest-To-Fetch and Fastest-To-Flush victim data selection algorithms side-by-side with the LRU algorithm results in further improvements in performance.

[0006] Flow of data to and from the caches and the storage medium is managed using a cache state-based algorithm, allowing the firmware application to choose the necessary state transitions that produce the most efficient data flow.

[0007] The present invention is described in several exemplary hybrid storage systems illustrated in FIGS. 1, 2, 3, and 4. The present invention is applicable to additional hybrid storage device architectures, wherein more details can be found in U.S. Pat. No. 7,613,876, entitled "Hybrid Multi-Tiered Caching Storage System", which is incorporated herein by reference.

[0008] The methods through which read and write operations to the flash devices are improved are discussed in U.S. Pat. No. 7,506,098, entitled "Optimized Placement Policy for Solid State Storage Devices," which is incorporated herein by reference. The present invention uses such access optimizations in caching.

BRIEF DESCRIPTION OF DRAWINGS

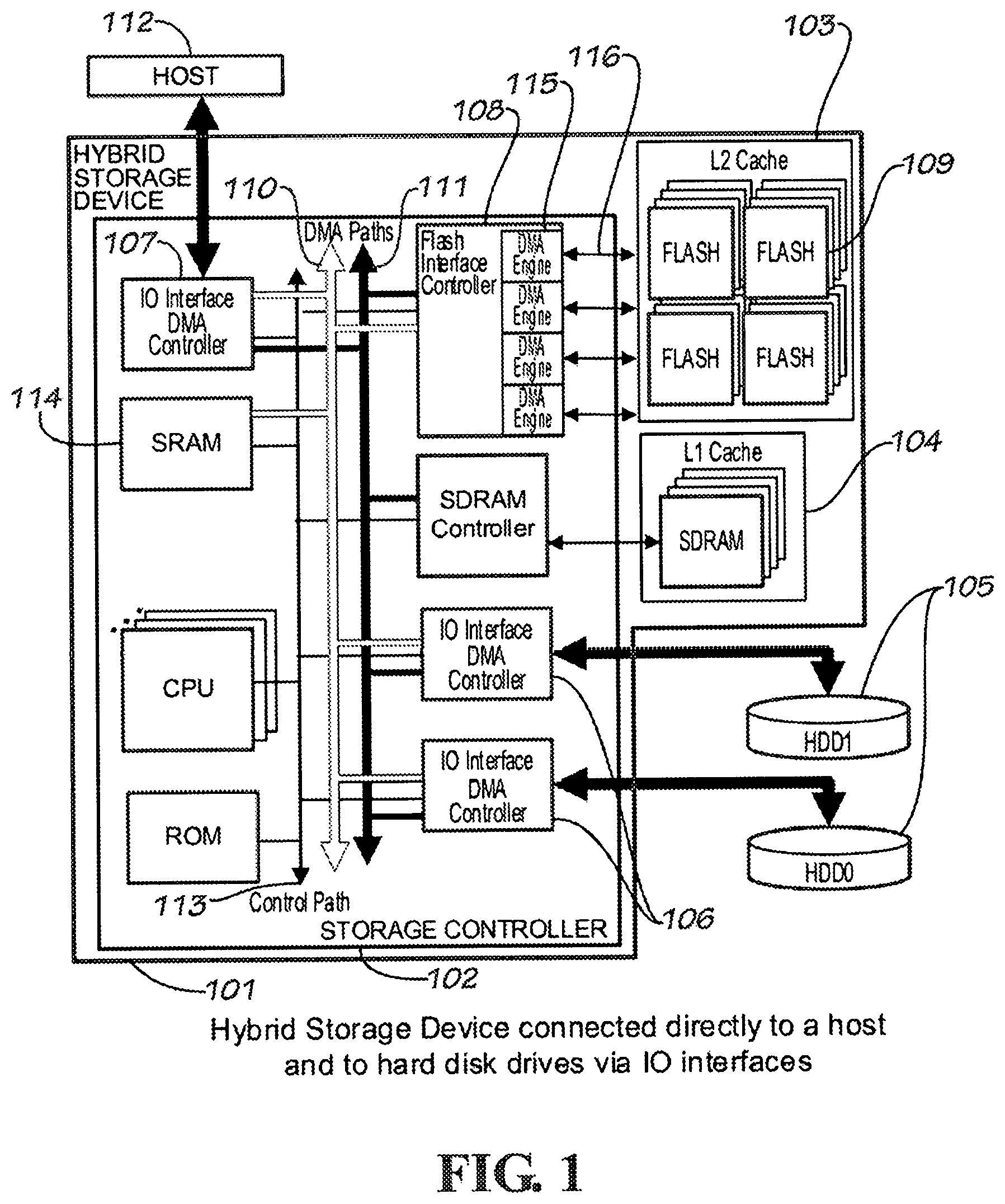

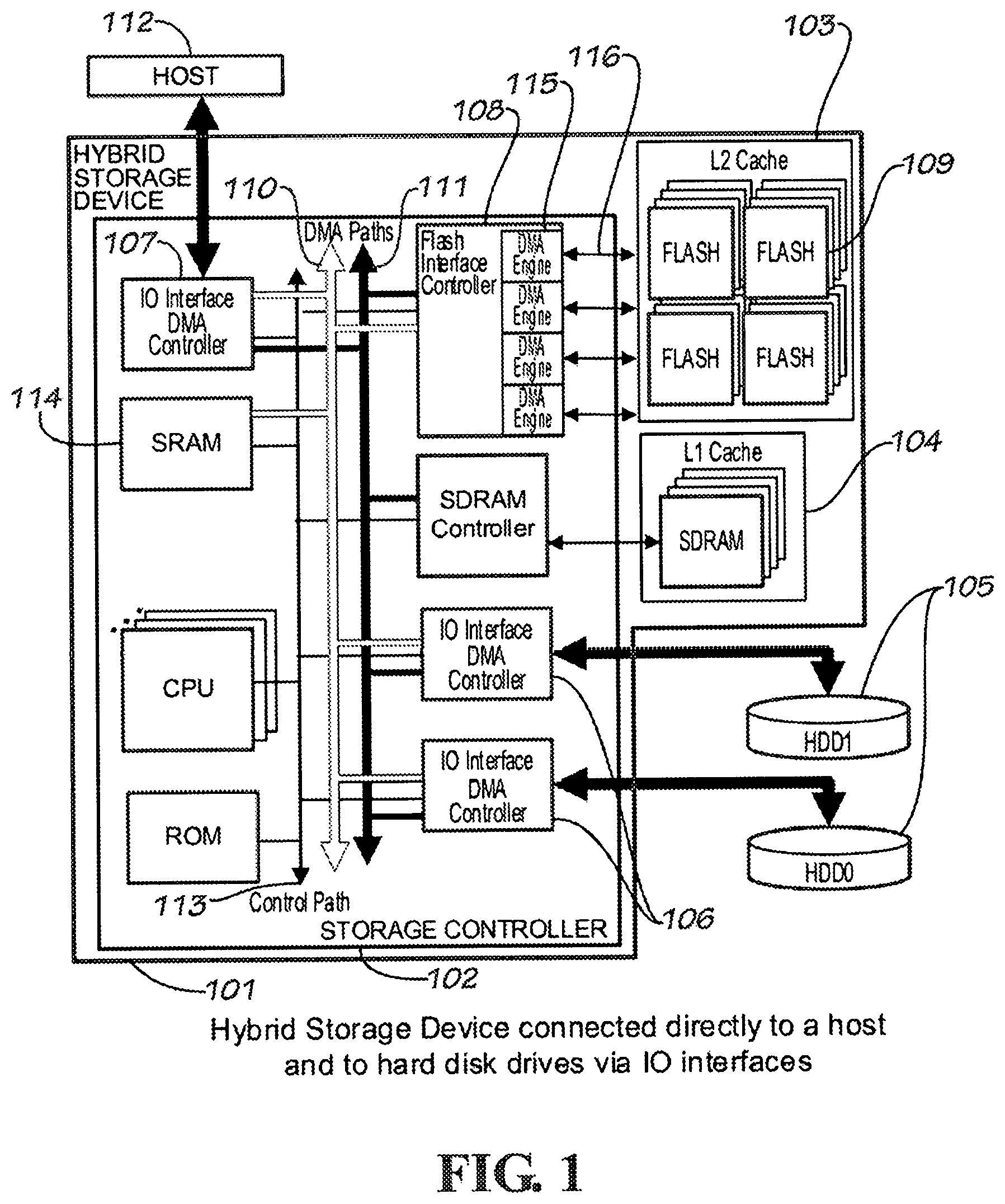

[0009] FIG. 1 is a diagram illustrating a hybrid storage device connected directly to the host and to the rotational drives through the storage controller's available IO interfaces according to an embodiment of the present invention.

[0010] FIG. 2 is a diagram illustrating a hybrid storage device that is part of the host and connected directly or indirectly to hard disk drives through its IO interfaces according to an embodiment of the present invention.

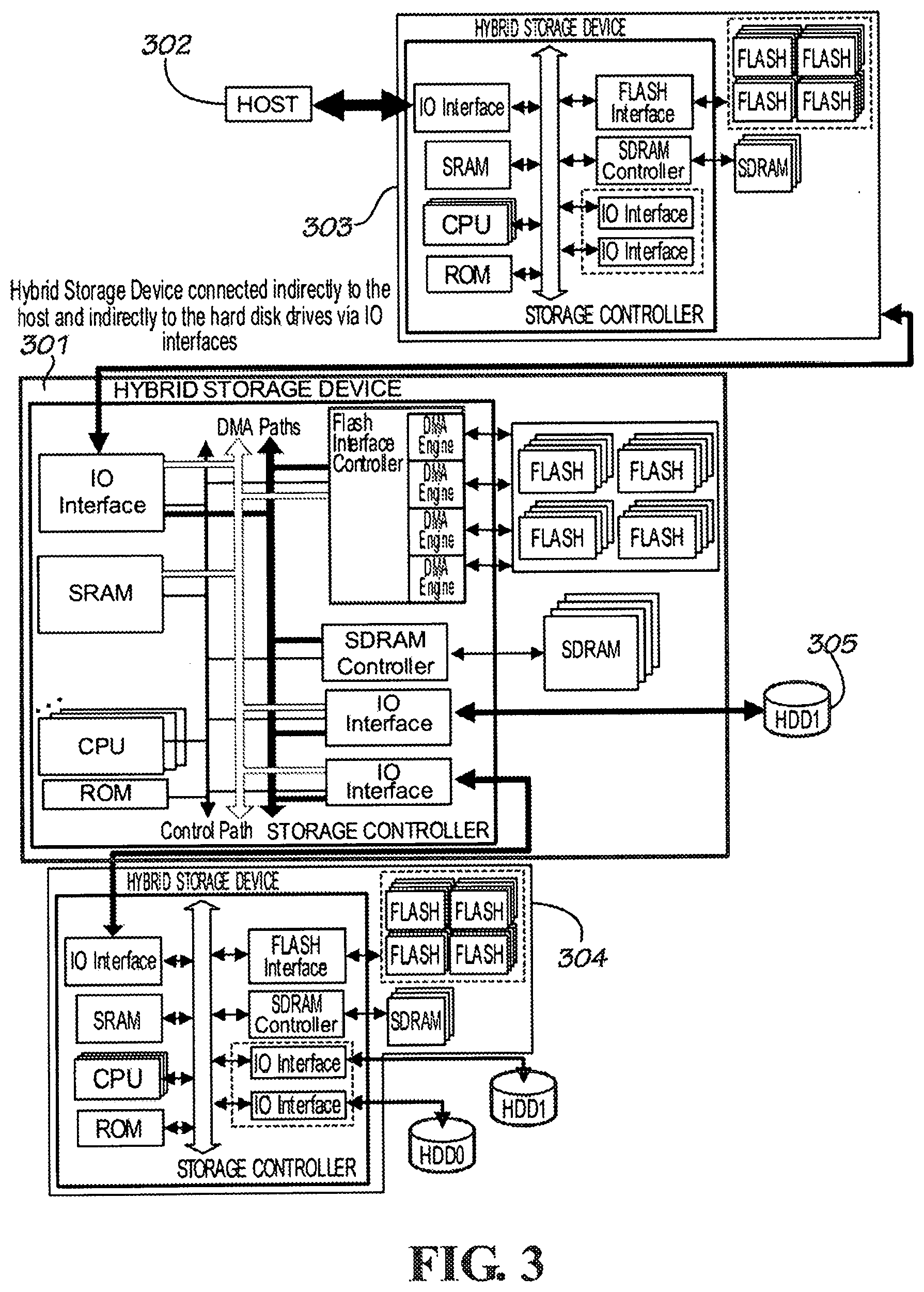

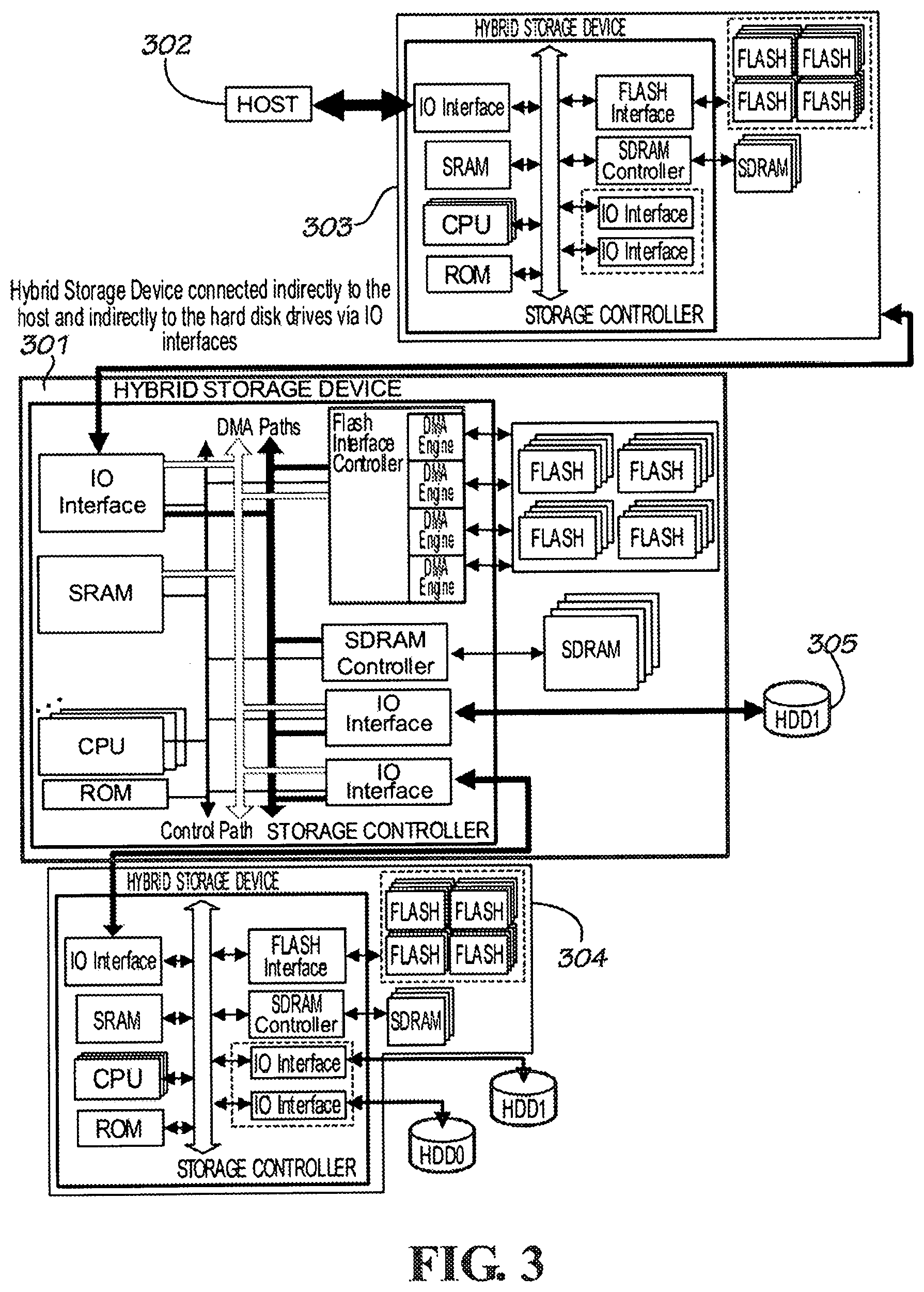

[0011] FIG. 3 is a diagram illustrating a hybrid storage device connected indirectly to the host and indirectly to the hard disk drives through its IO interfaces according to an embodiment of the present invention.

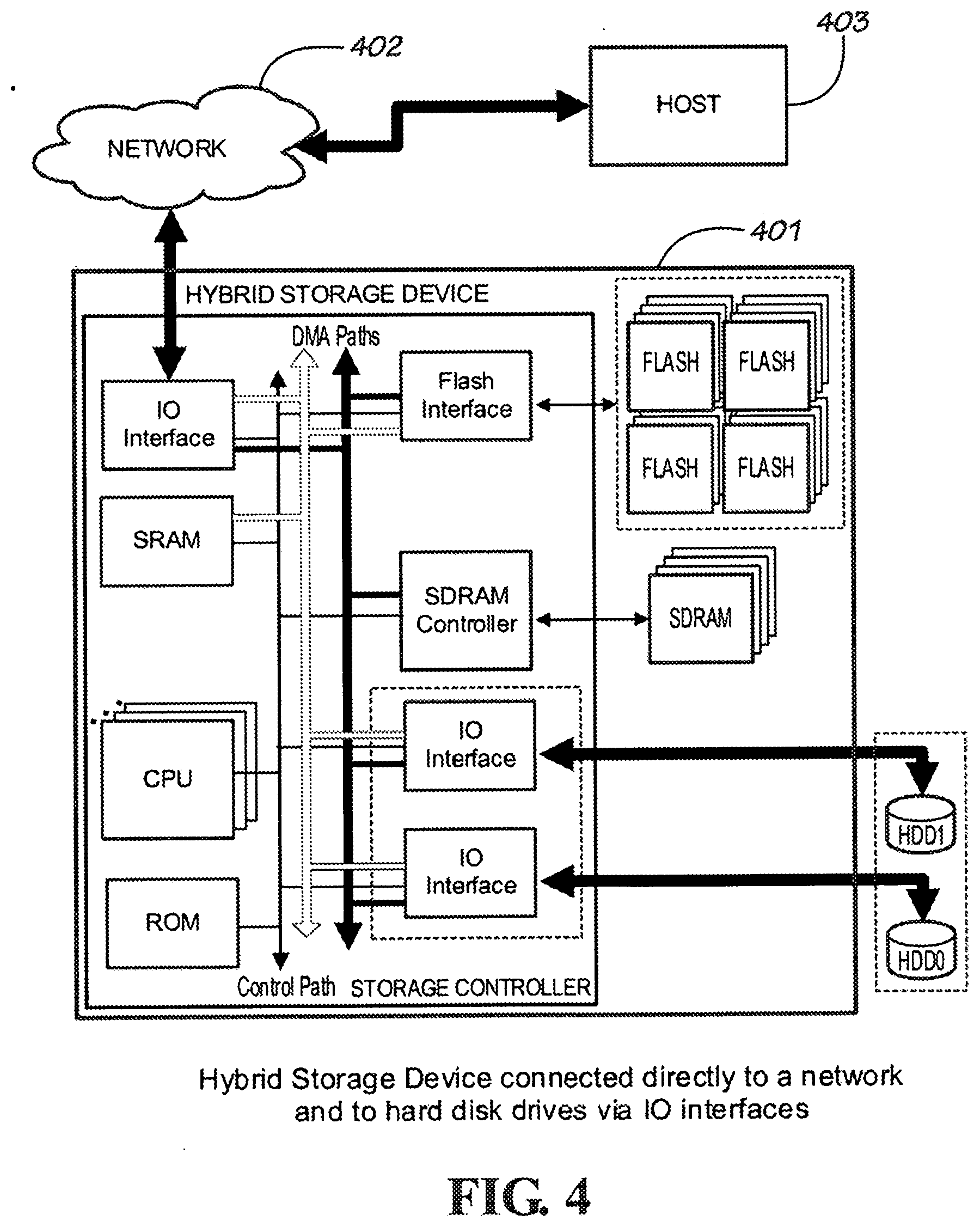

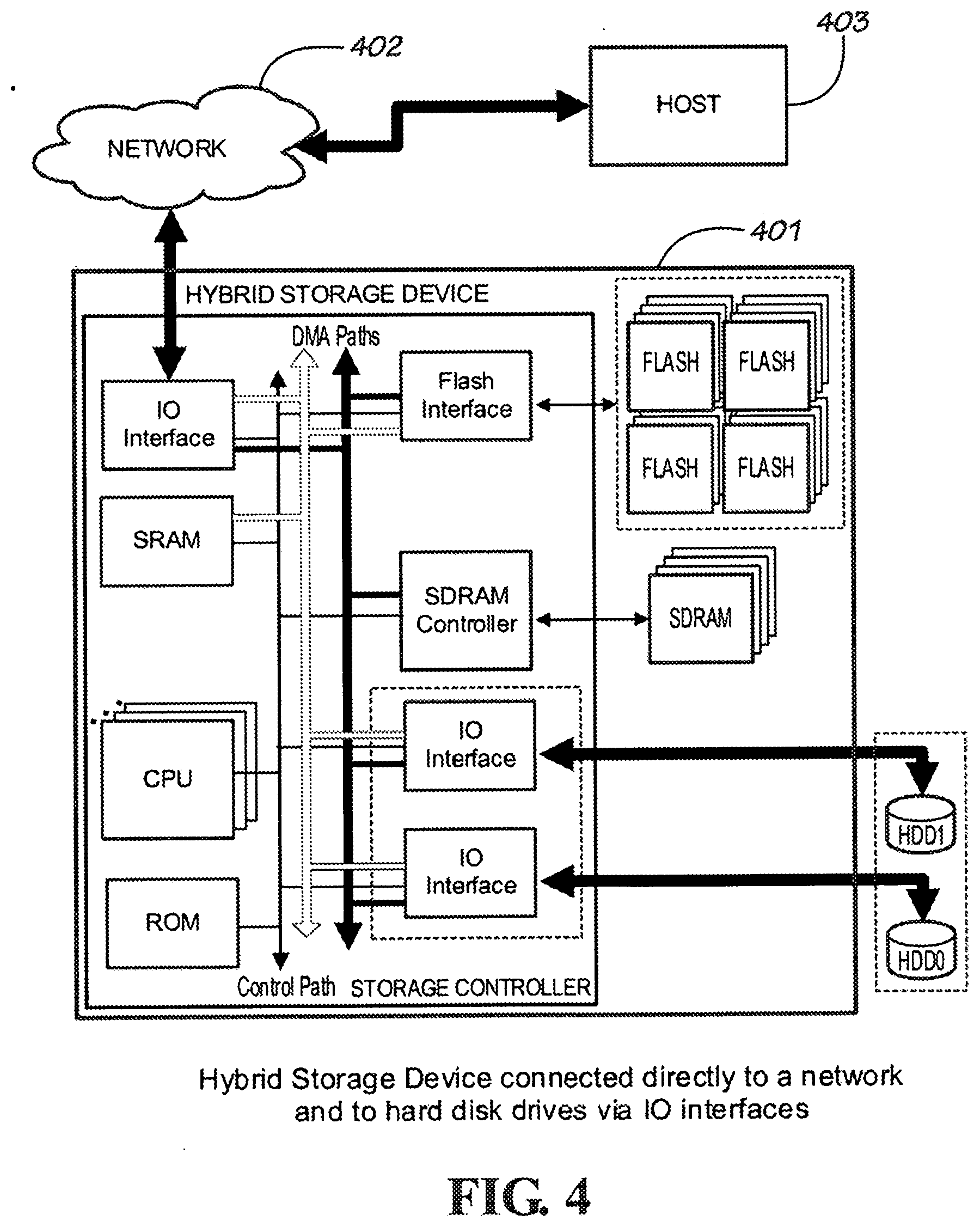

[0012] FIG. 4 is a diagram illustrating a hybrid storage device connected indirectly to the host through a network and directly to the hard disk drives through its IO interfaces according to an embodiment of the present invention.

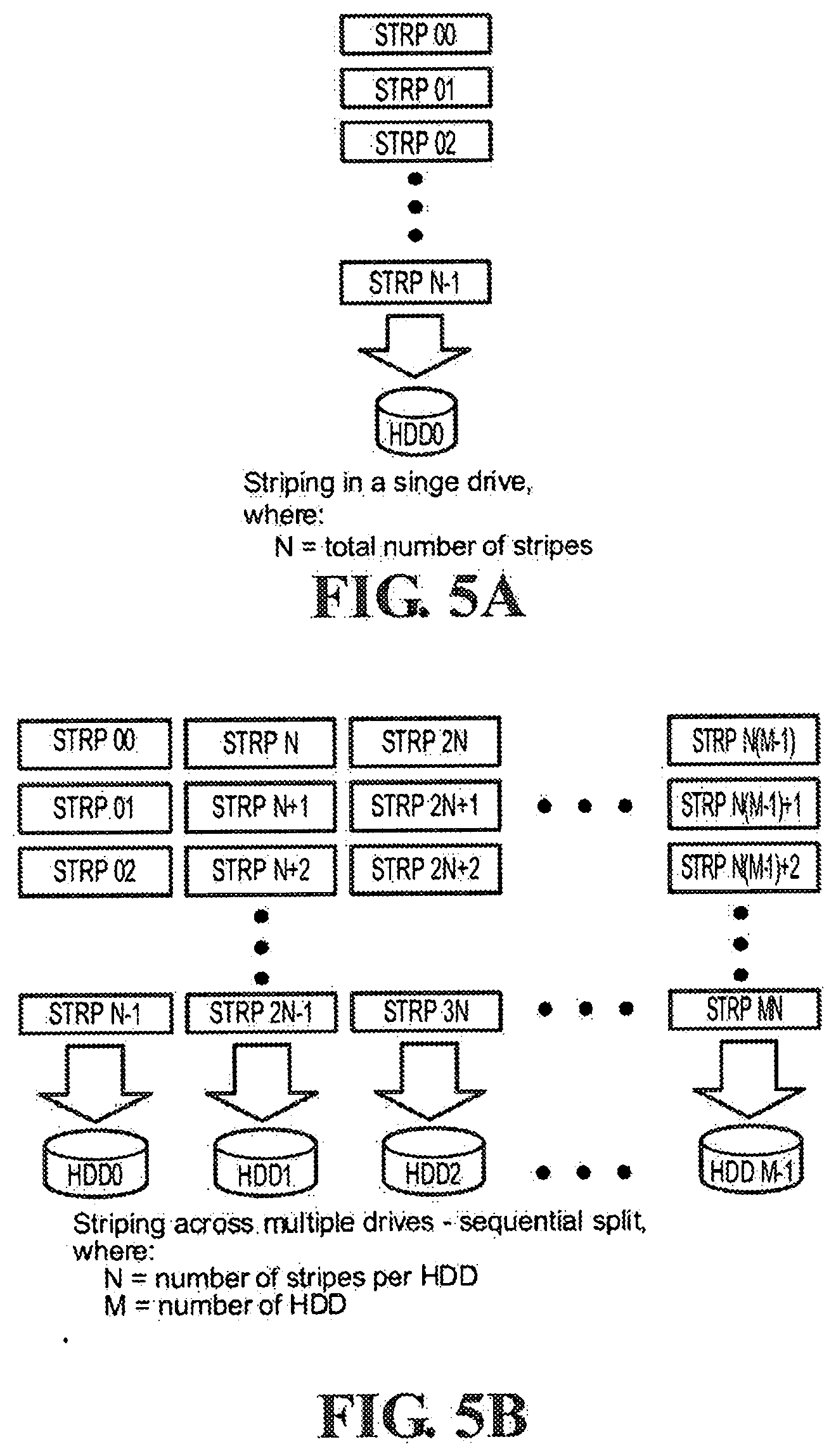

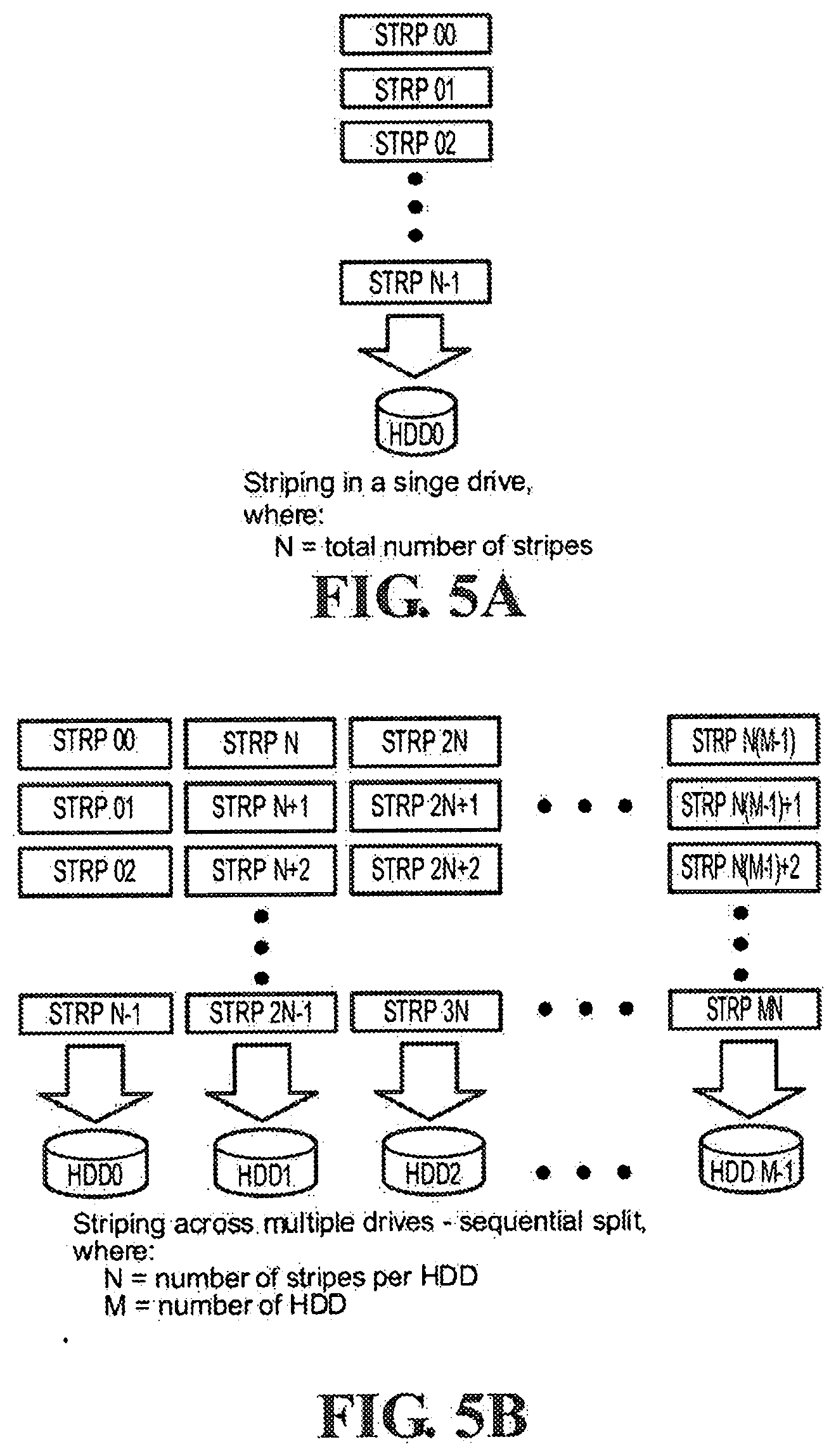

[0013] FIG. 5A shows data striping in a single drive storage system according to an embodiment of the present invention.

[0014] FIG. 5B shows data striping in a multiple drive storage system using sequential split without implementing parity checking according to an embodiment of the present invention.

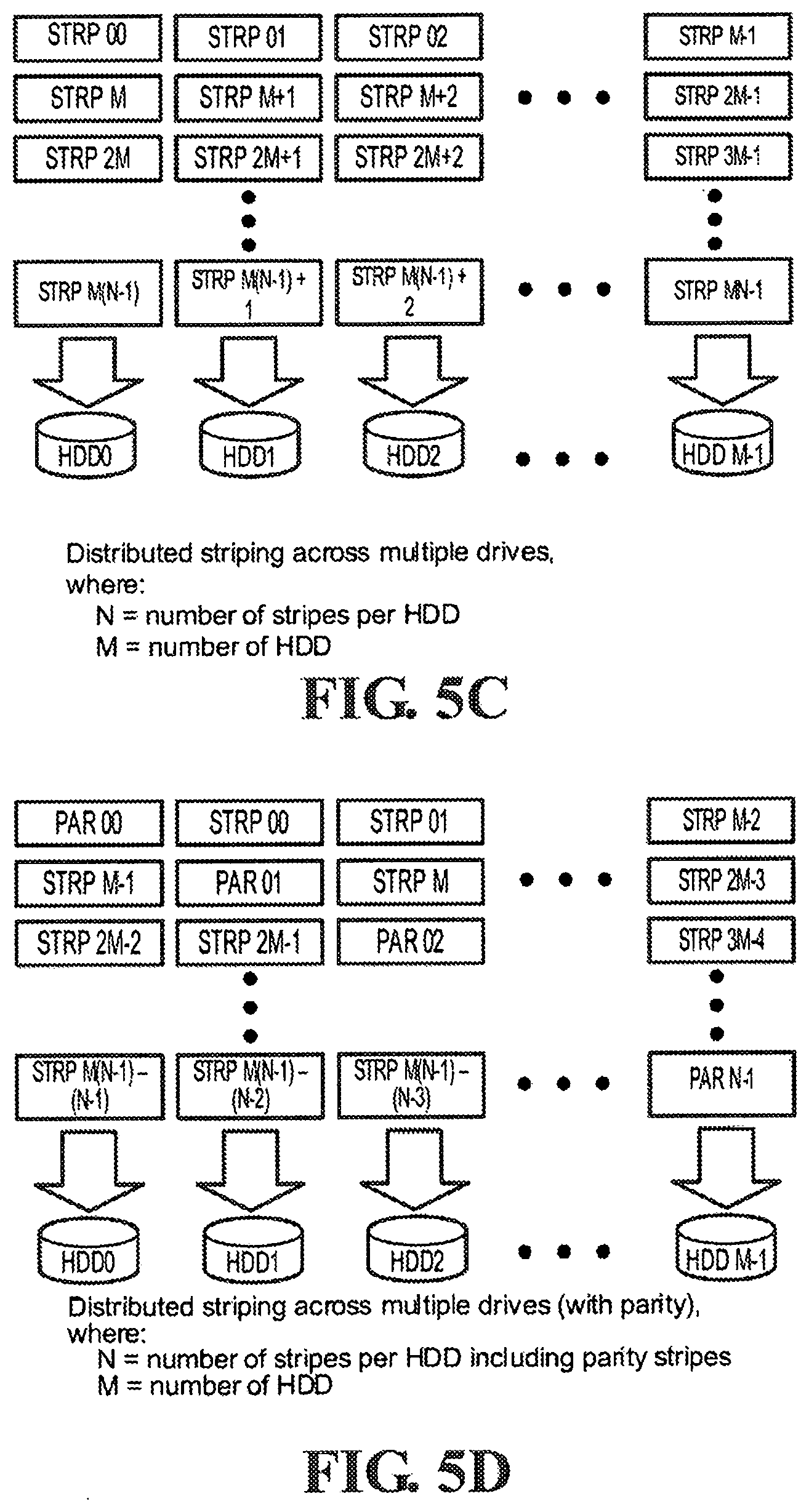

[0015] FIG. 5C shows data striping in a multiple drive storage system using distributed stripes without implementing parity checking according to an embodiment of the present invention.

[0016] FIG. 5D shows data striping in a multiple drive storage system using distributed stripes and implementing parity checking according to an embodiment of the present invention.

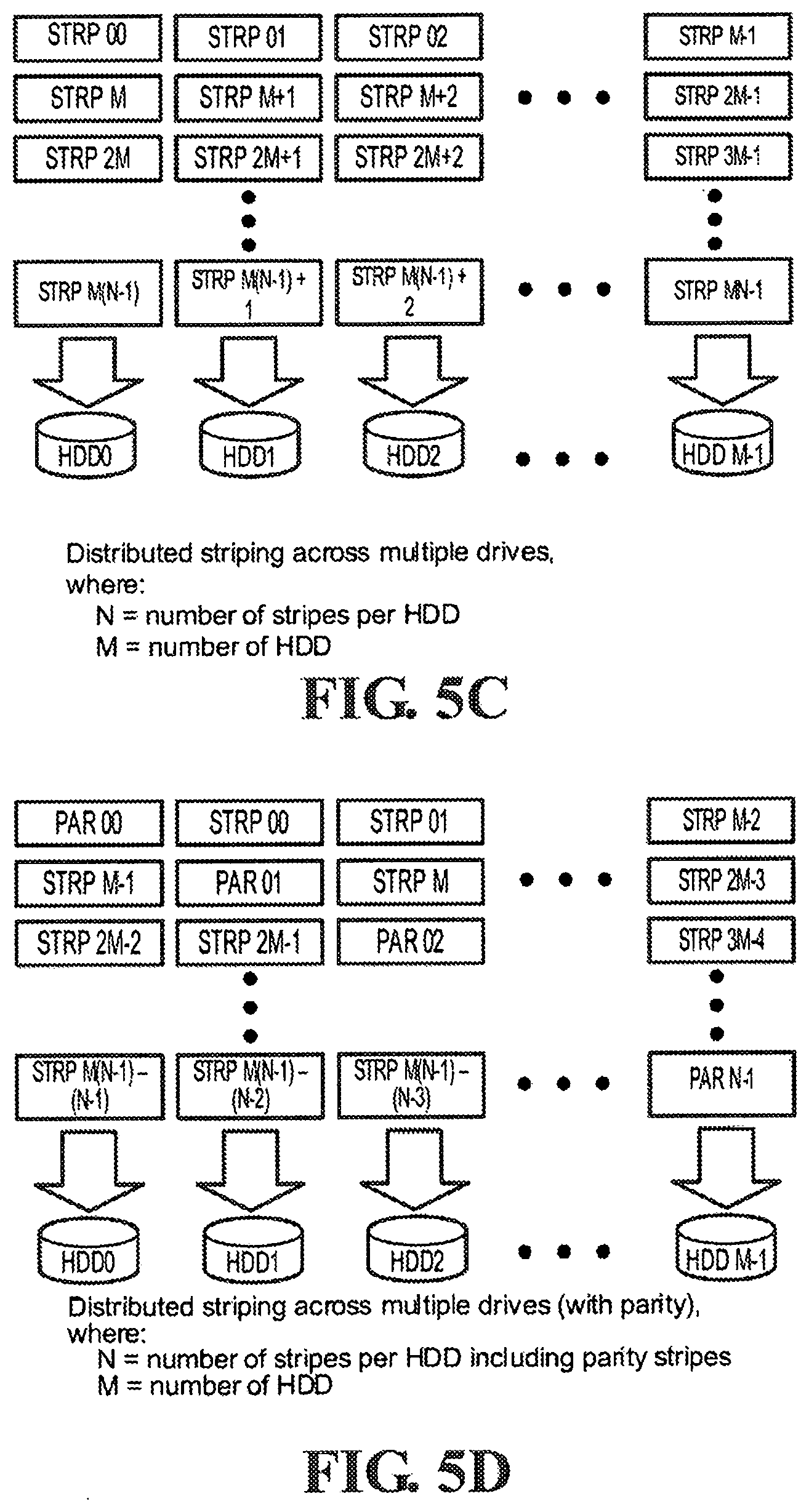

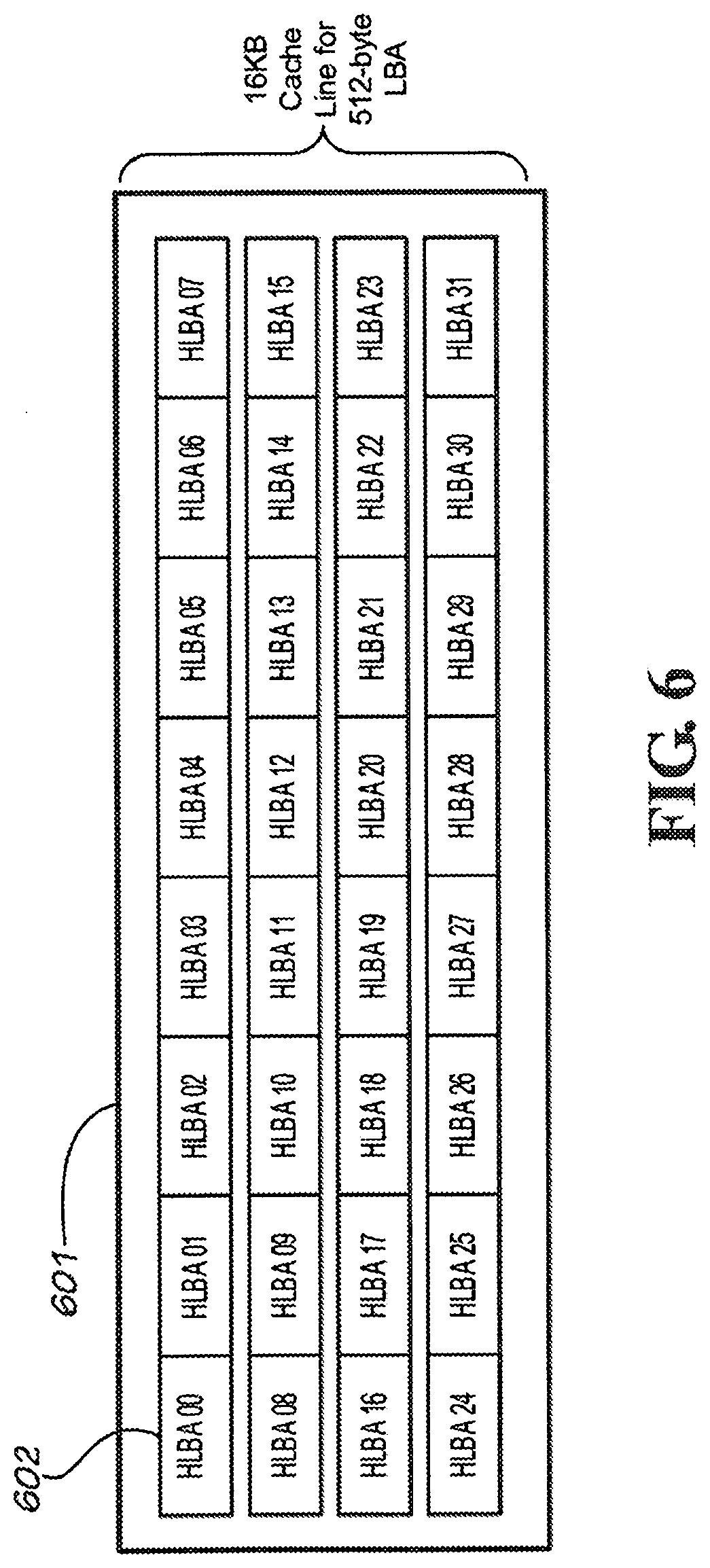

[0017] FIG. 6 shows a cache line consisting of a collection of host LBA units according to an embodiment of the present invention.

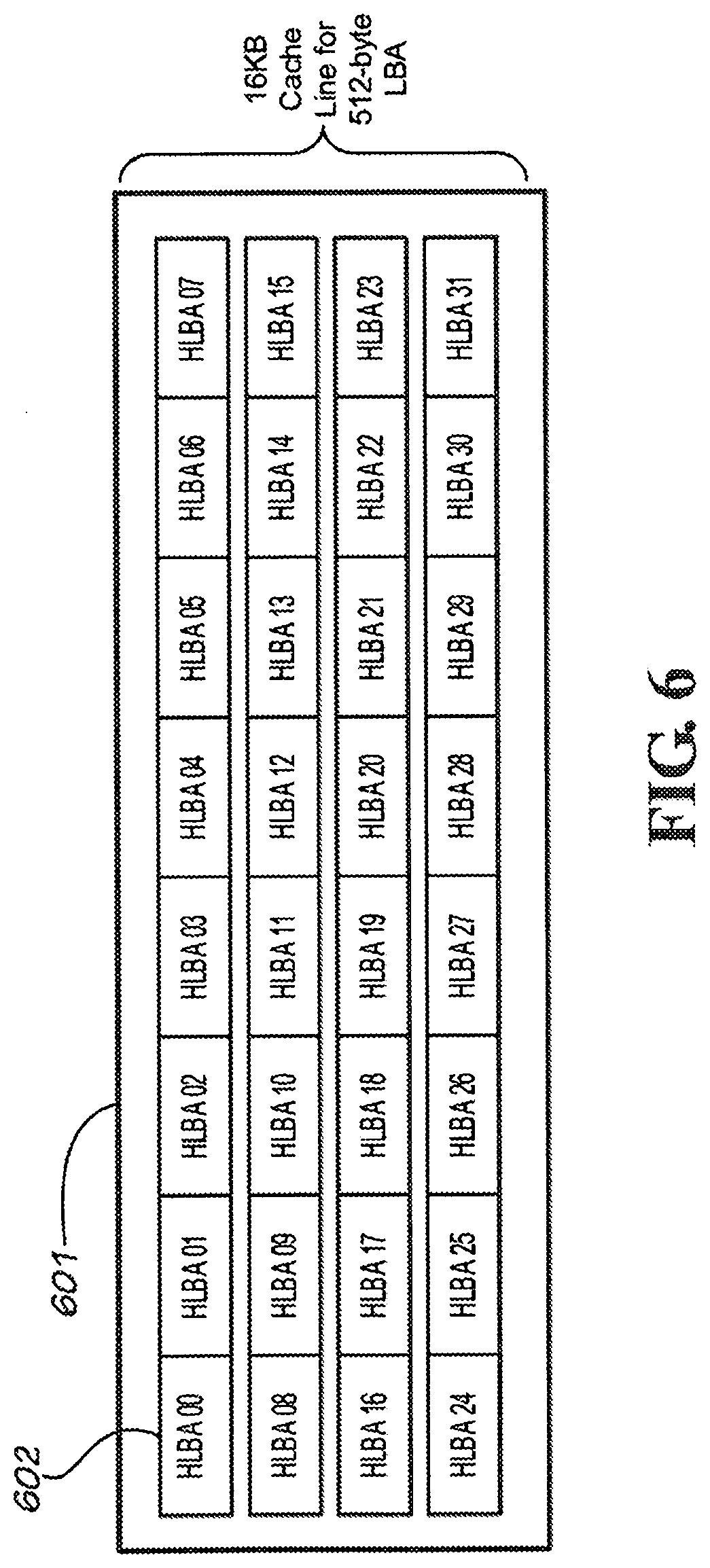

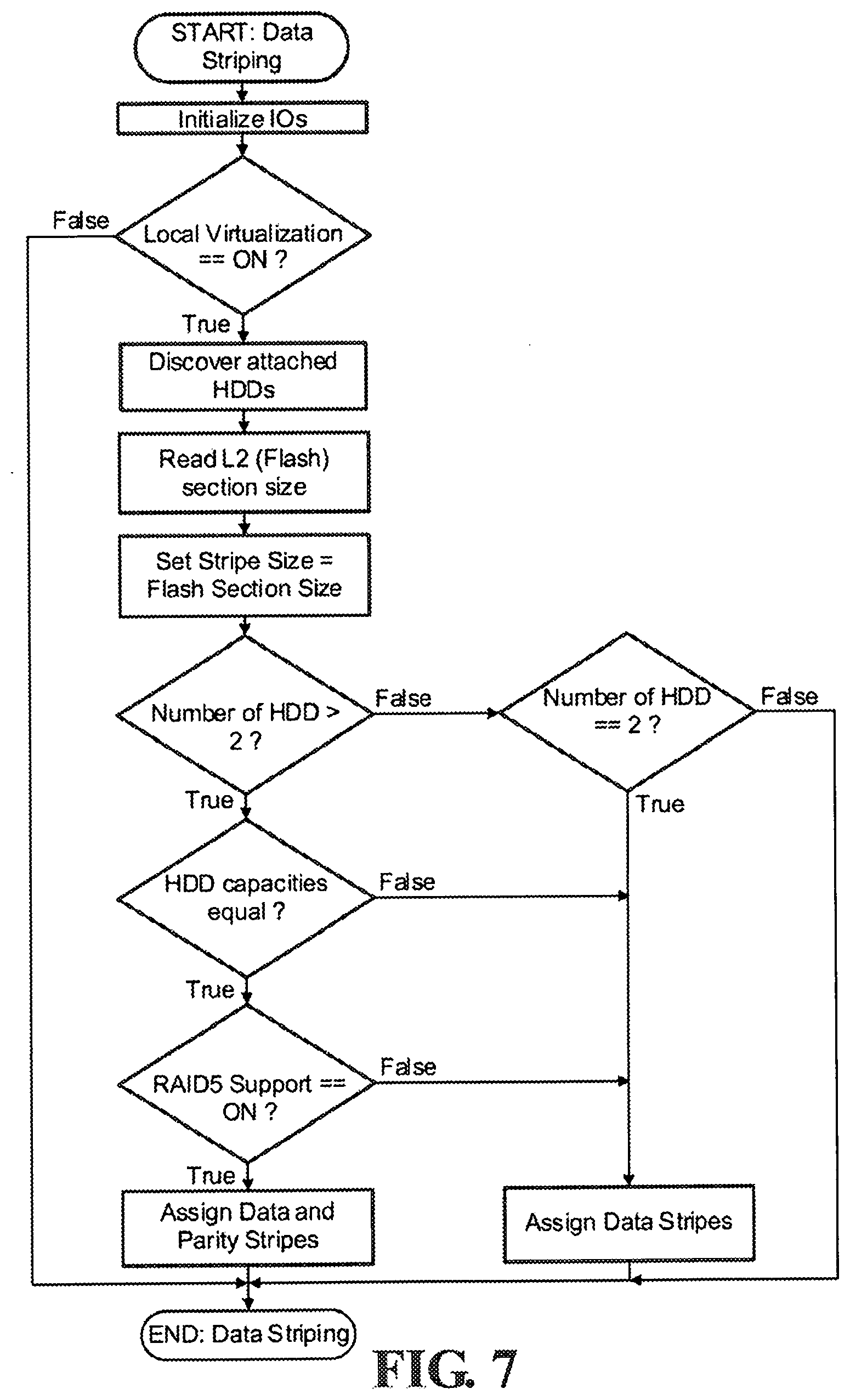

[0018] FIG. 7 shows a process flow for initializing a hybrid storage device supporting data striping according to an embodiment of the present invention.

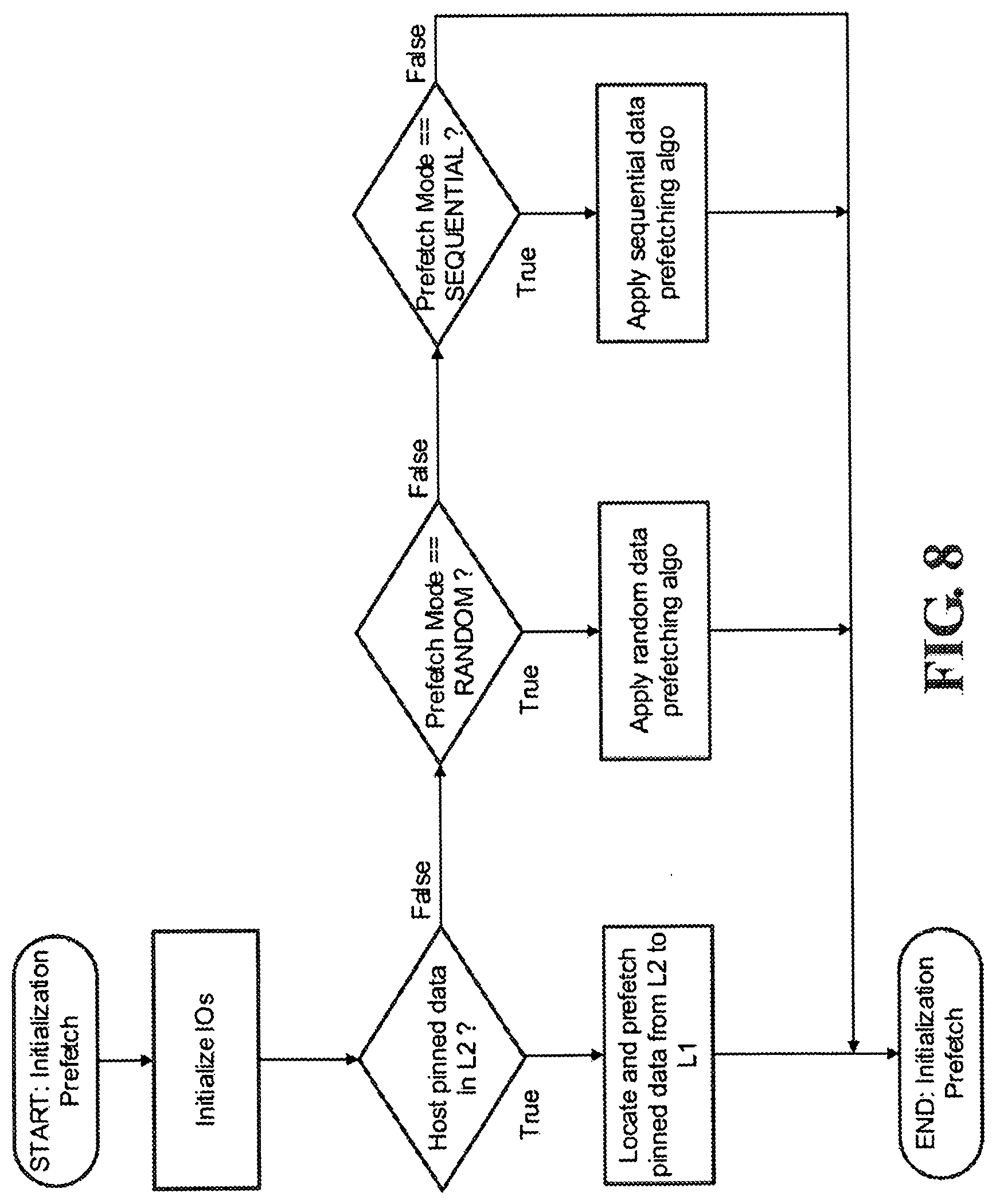

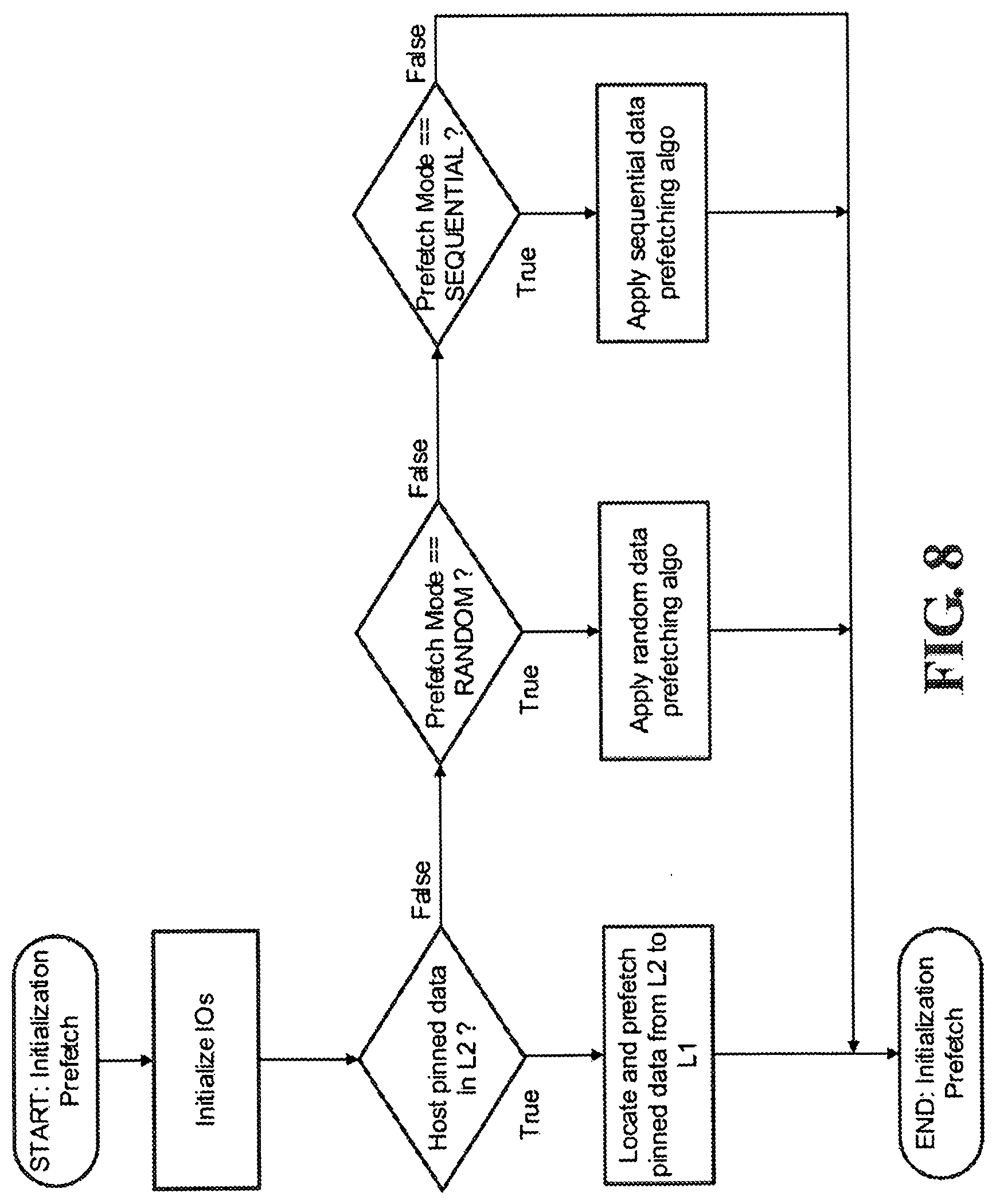

[0019] FIG. 8 shows a process flow for initializing a hybrid storage device supporting pre-fetch of data at boot-up according to an embodiment of the present invention.

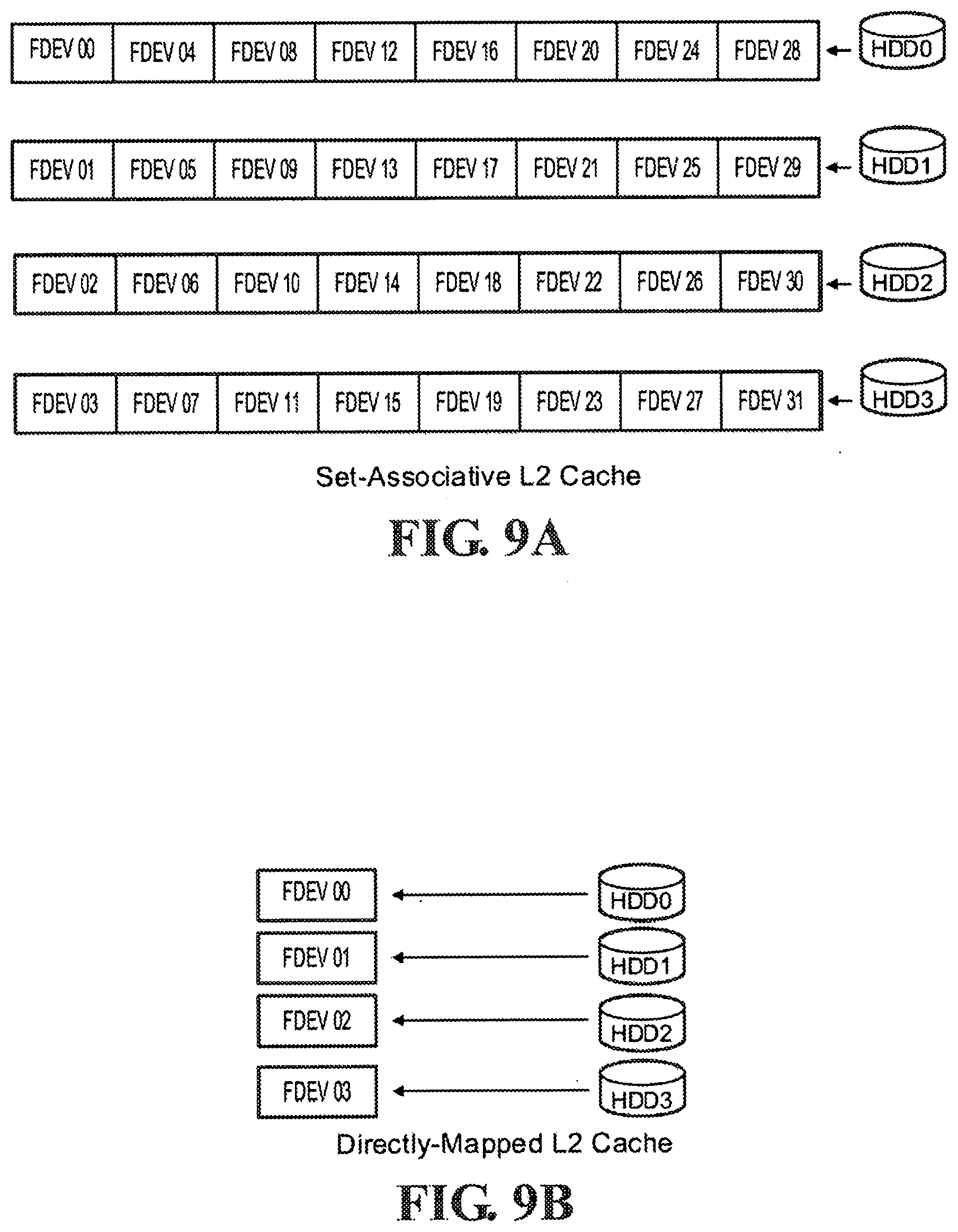

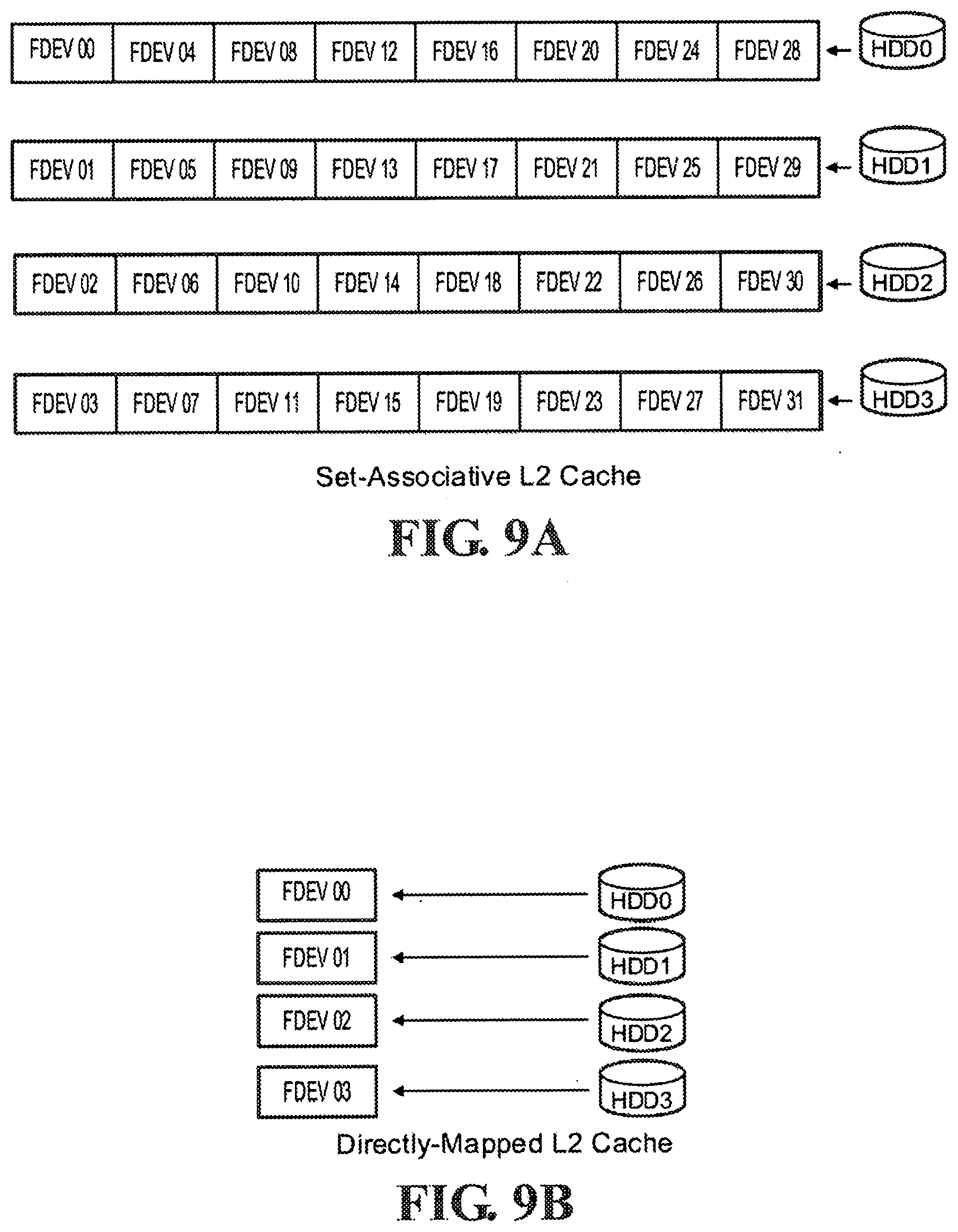

[0020] FIG. 9A is a diagram illustrating a set-associative L2 cache according to an embodiment of the present invention.

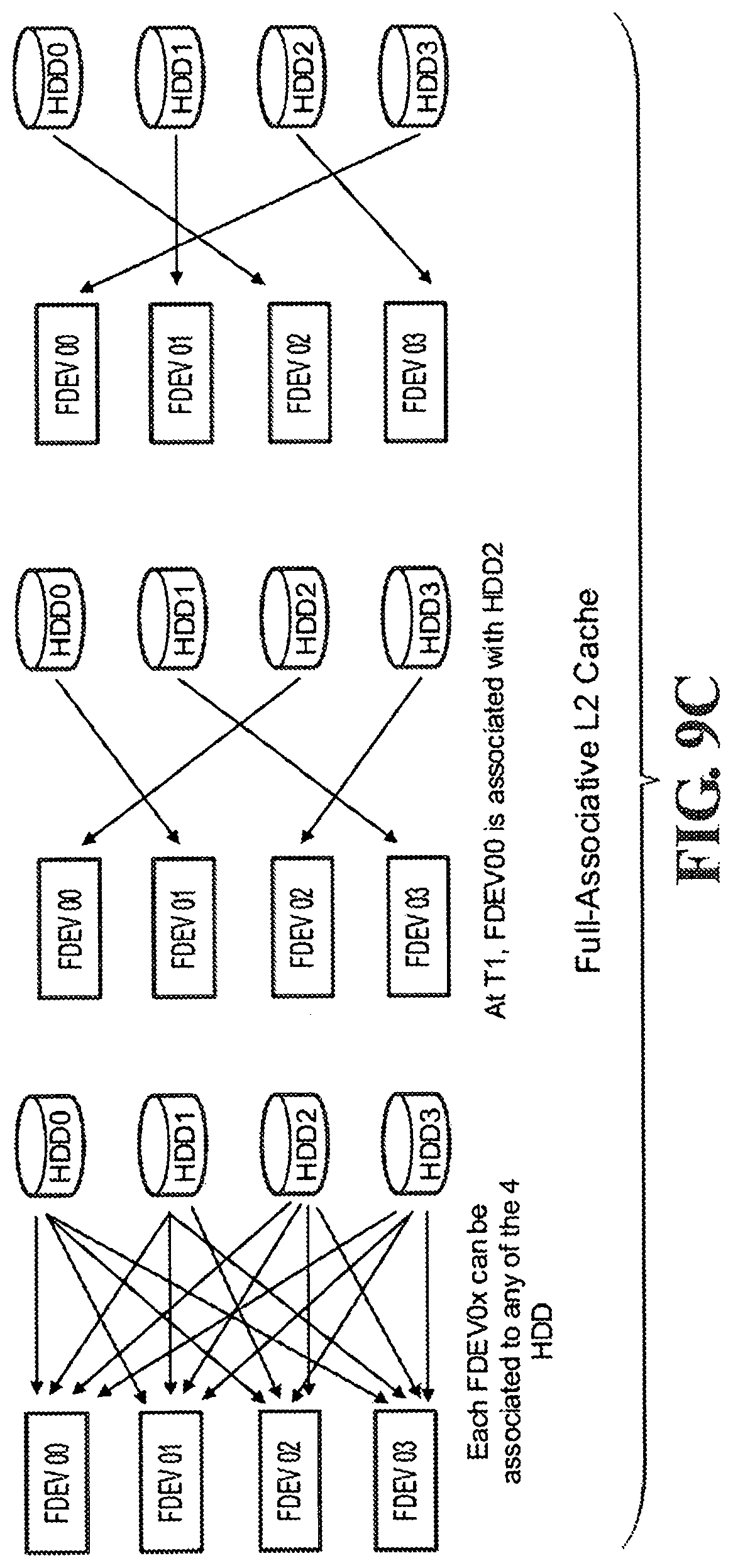

[0021] FIG. 9B is a diagram illustrating a directly-mapped L2 cache according to an embodiment of the present invention.

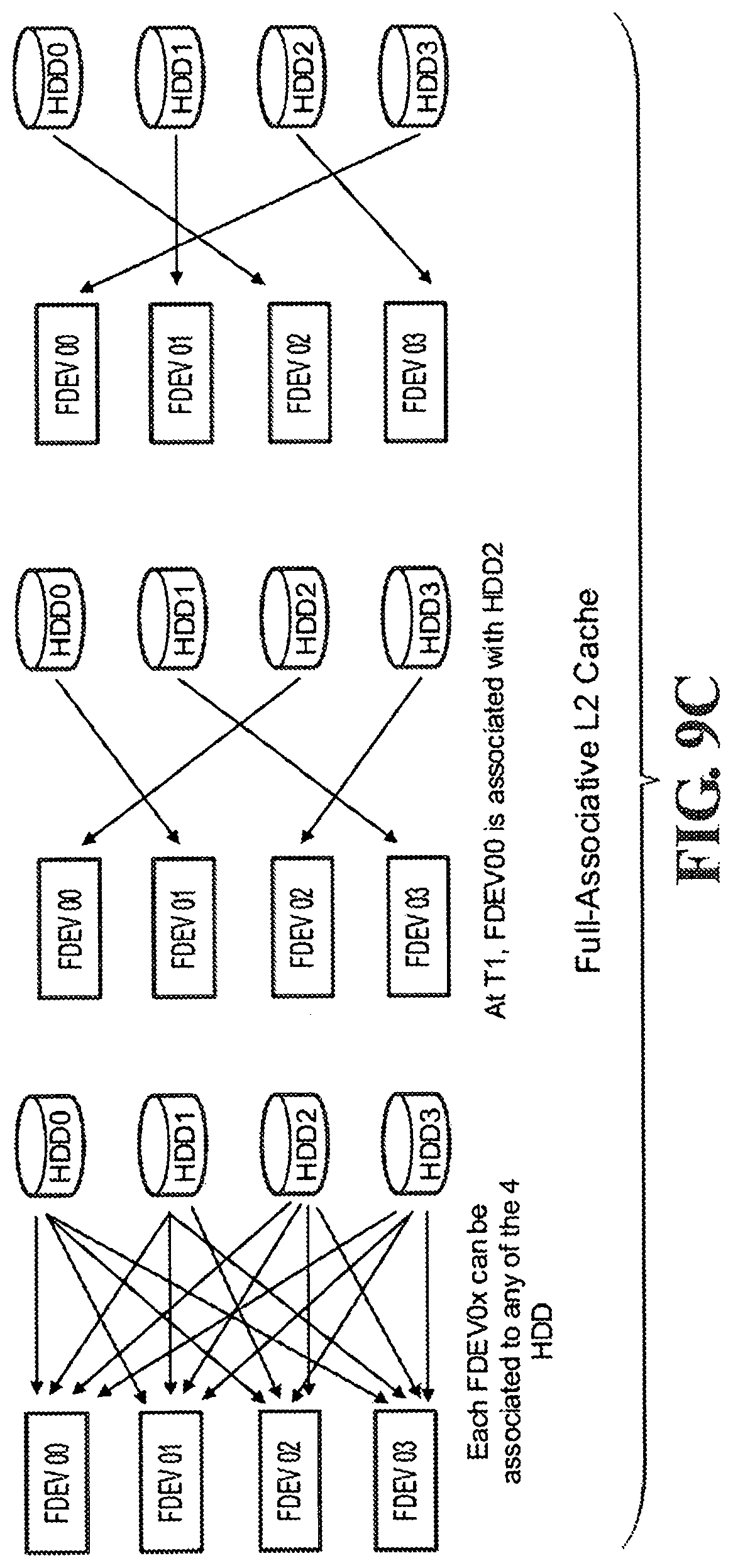

[0022] FIG. 9C is a diagram illustrating a full-associative L2 cache according to an embodiment of the present invention.

[0023] FIG. 10 shows a cache line information table according to an embodiment of the present invention.

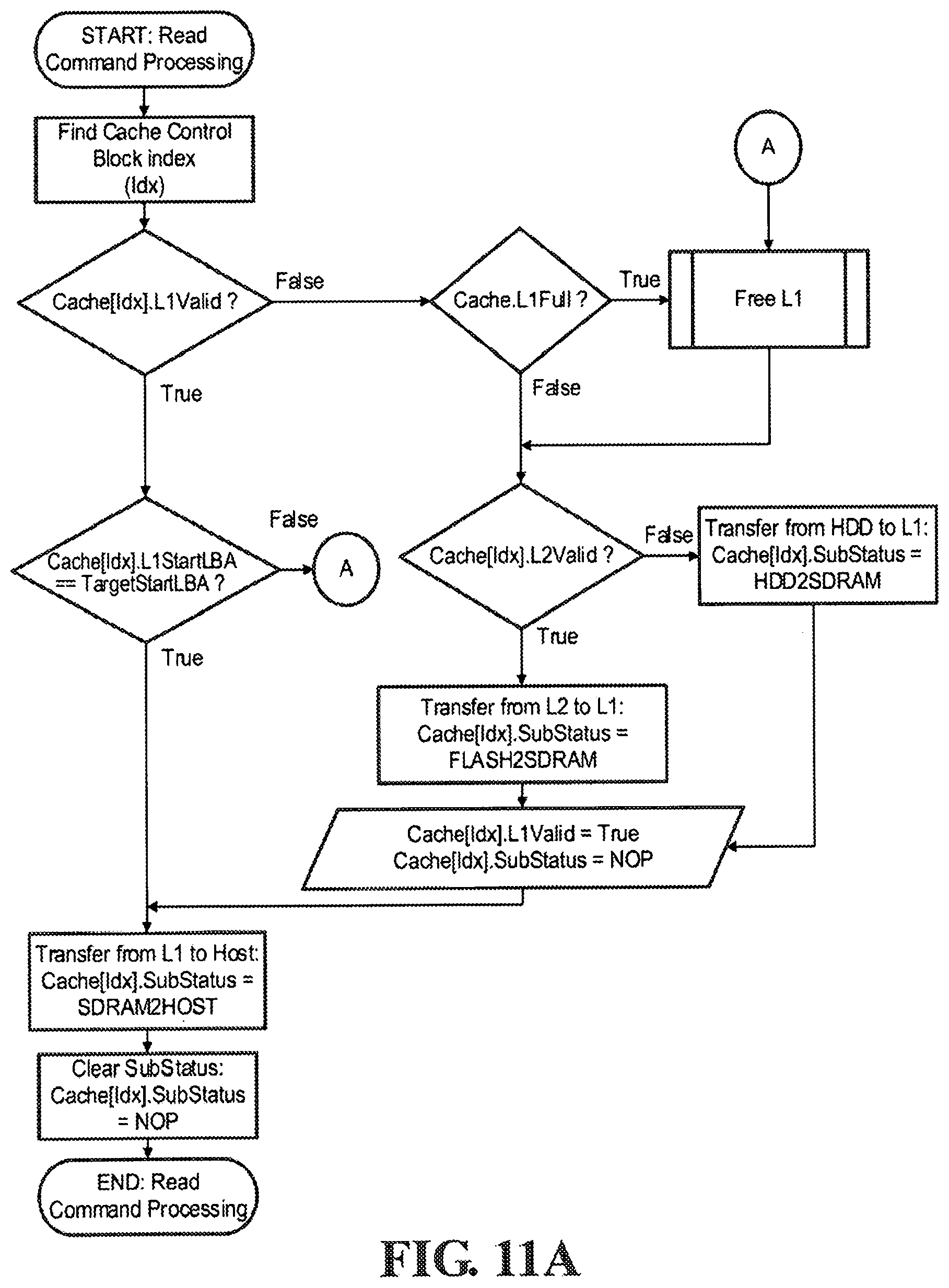

[0024] FIG. 11A shows a process flow for servicing host read commands according to an embodiment of the present invention.

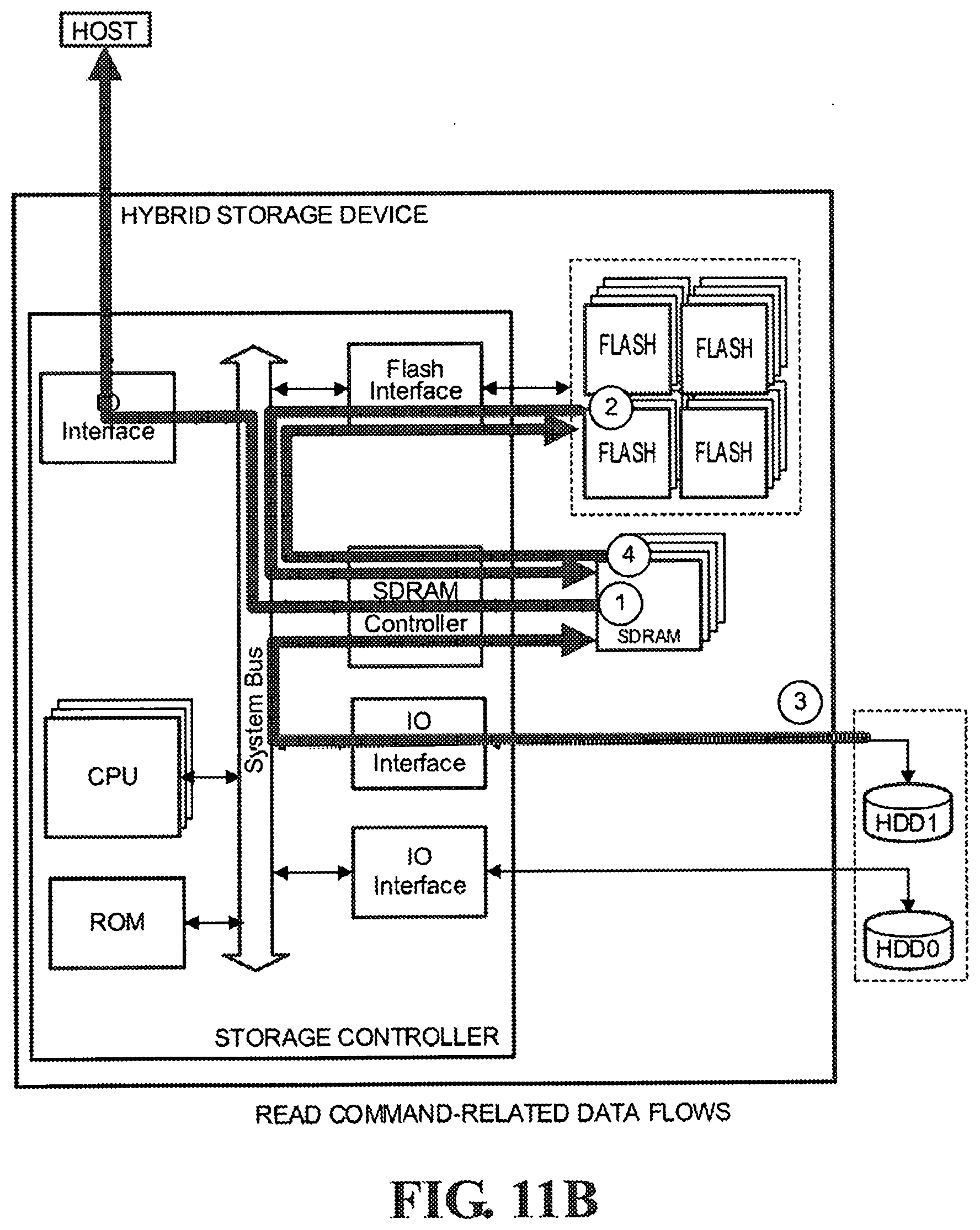

[0025] FIG. 11B is a diagram illustrating host read command-related data flows according to an embodiment of the present invention.

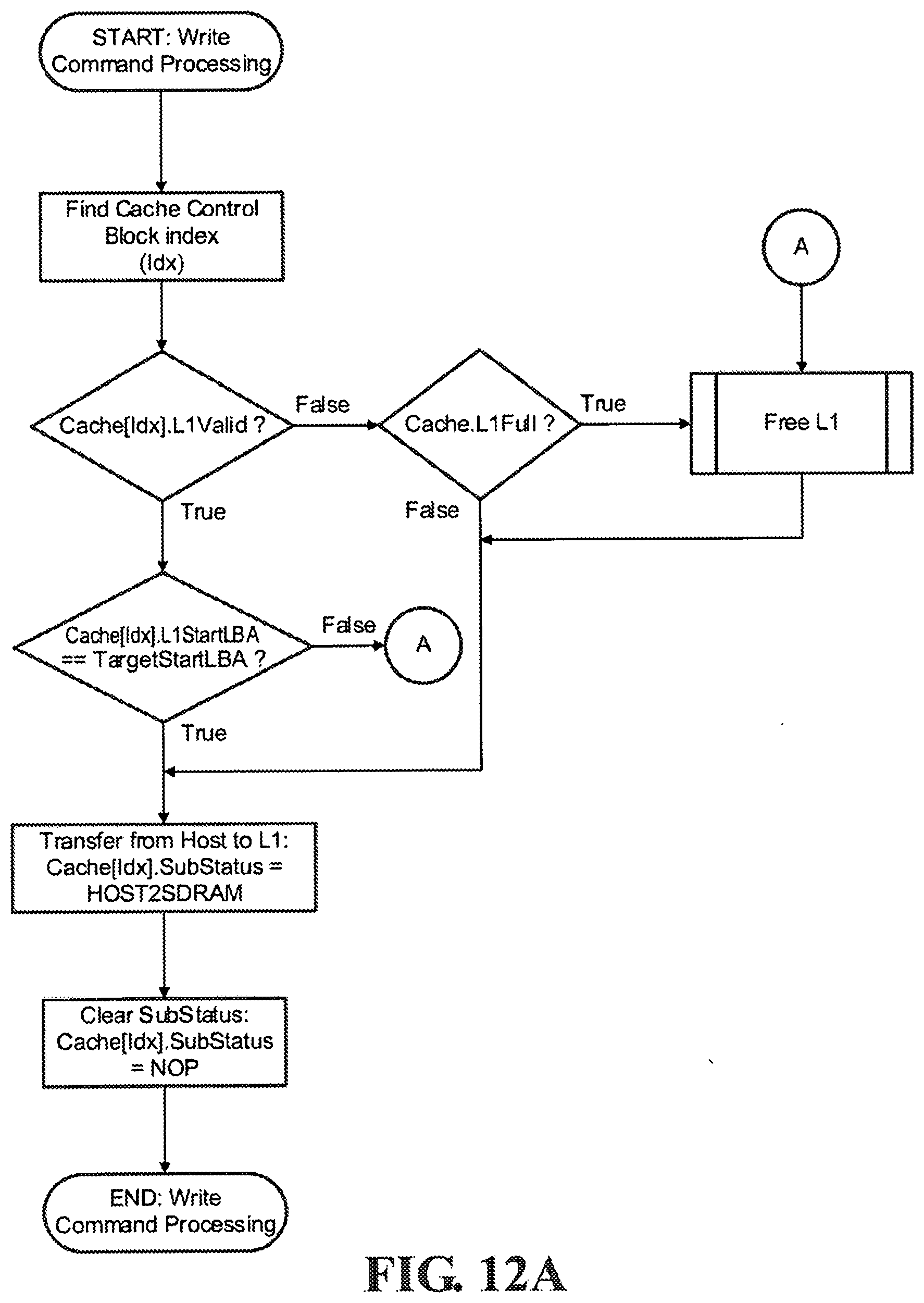

[0026] FIG. 12A shows a process flow for servicing host write commands according to an embodiment of the present invention.

[0027] FIG. 12B is a diagram illustrating write command-related data flows according to an embodiment of the present invention.

[0028] FIG. 13 shows a process flow for freeing L1 cache according to an embodiment of the present invention.

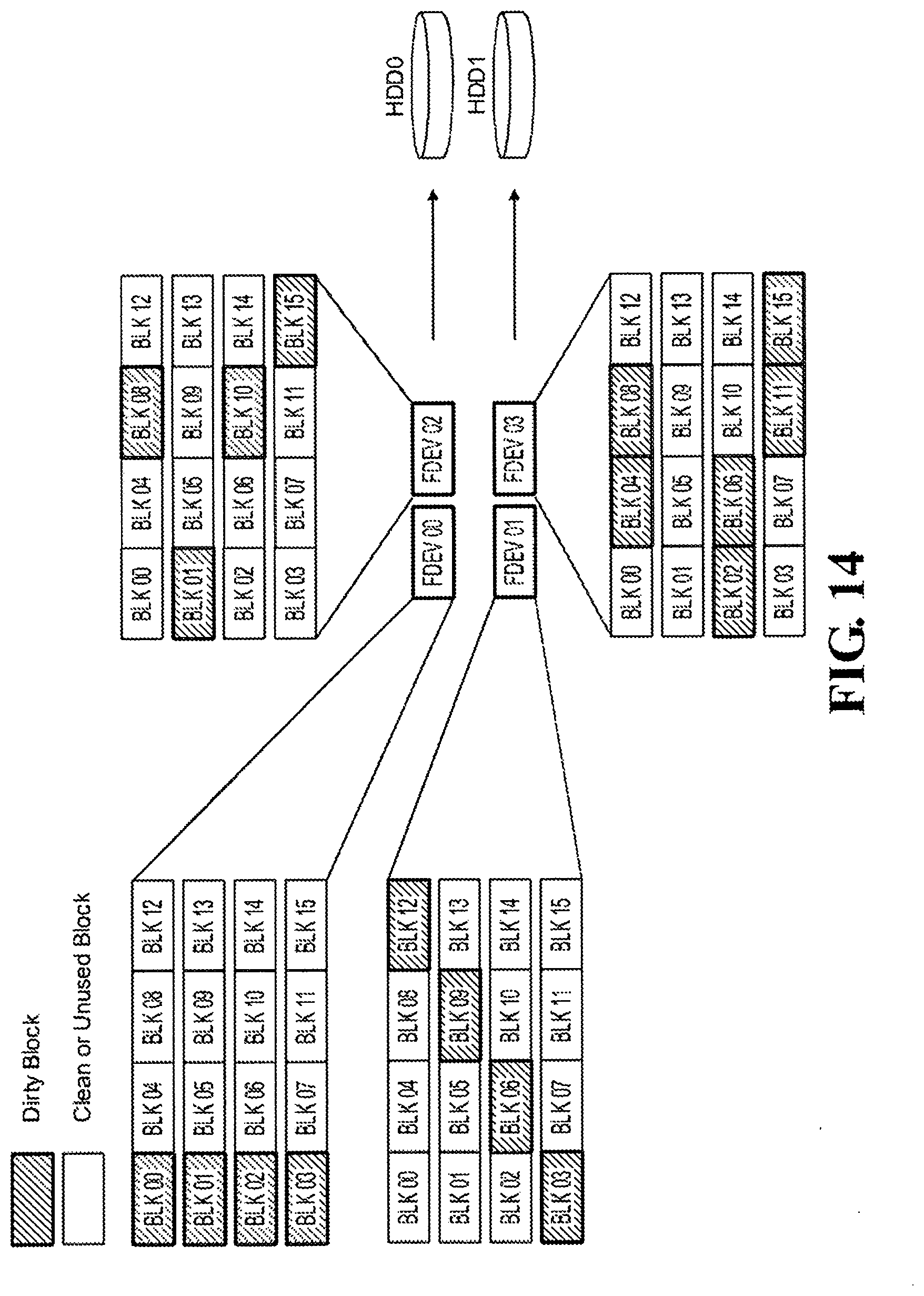

[0029] FIG. 14 shows a diagram illustrating optimized fetching of data from L2 and flushing to HDD according to an embodiment of the present invention.

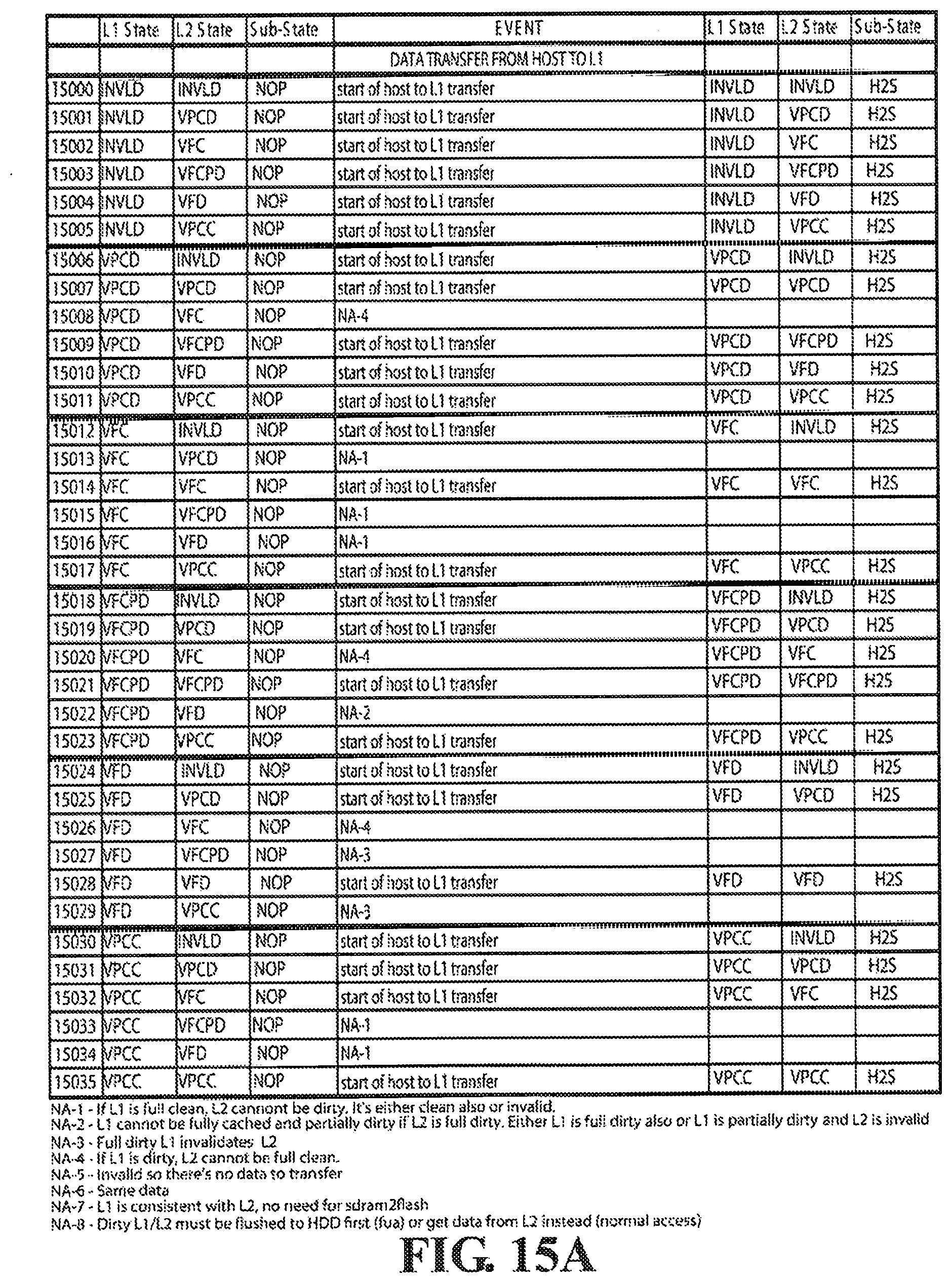

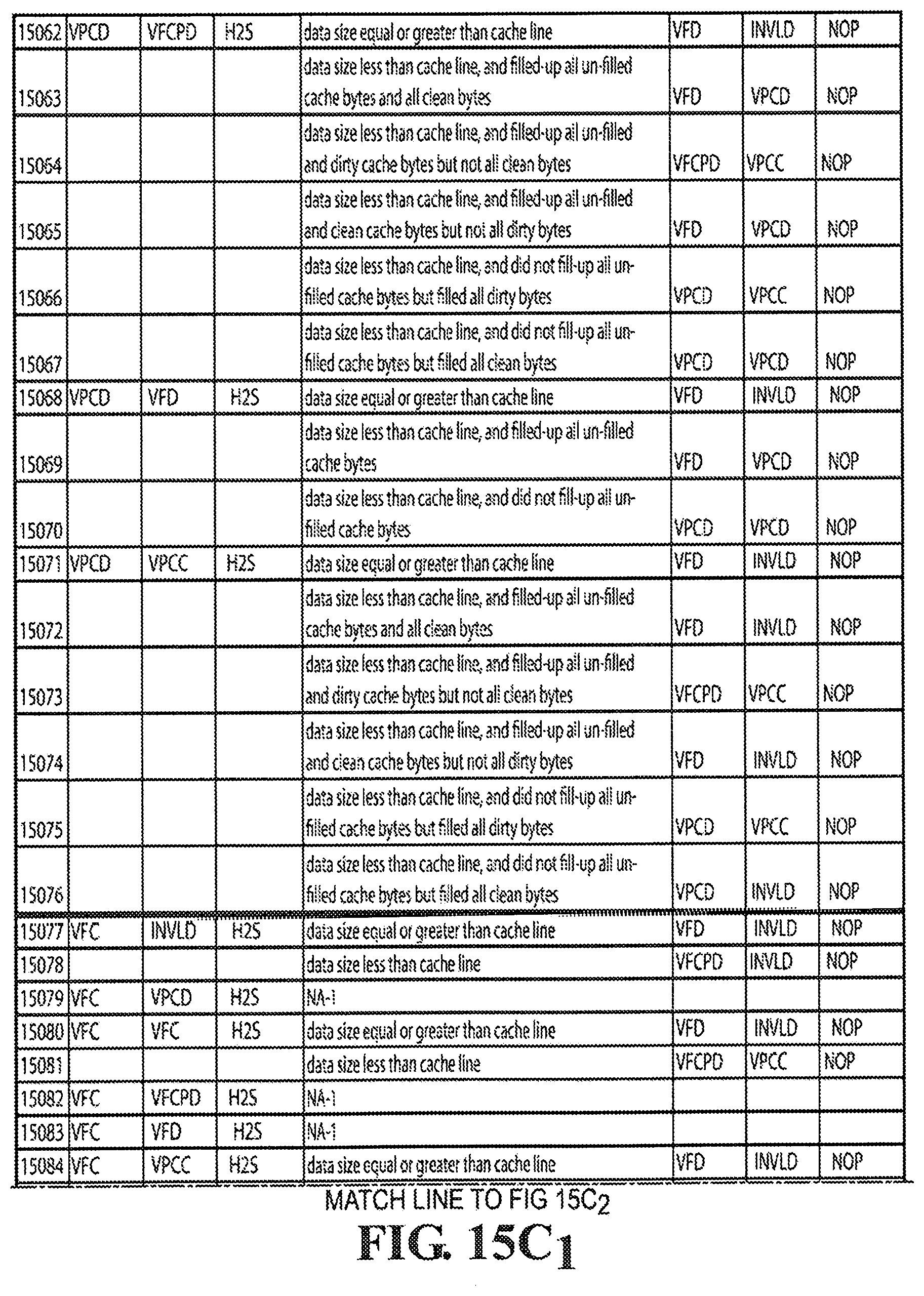

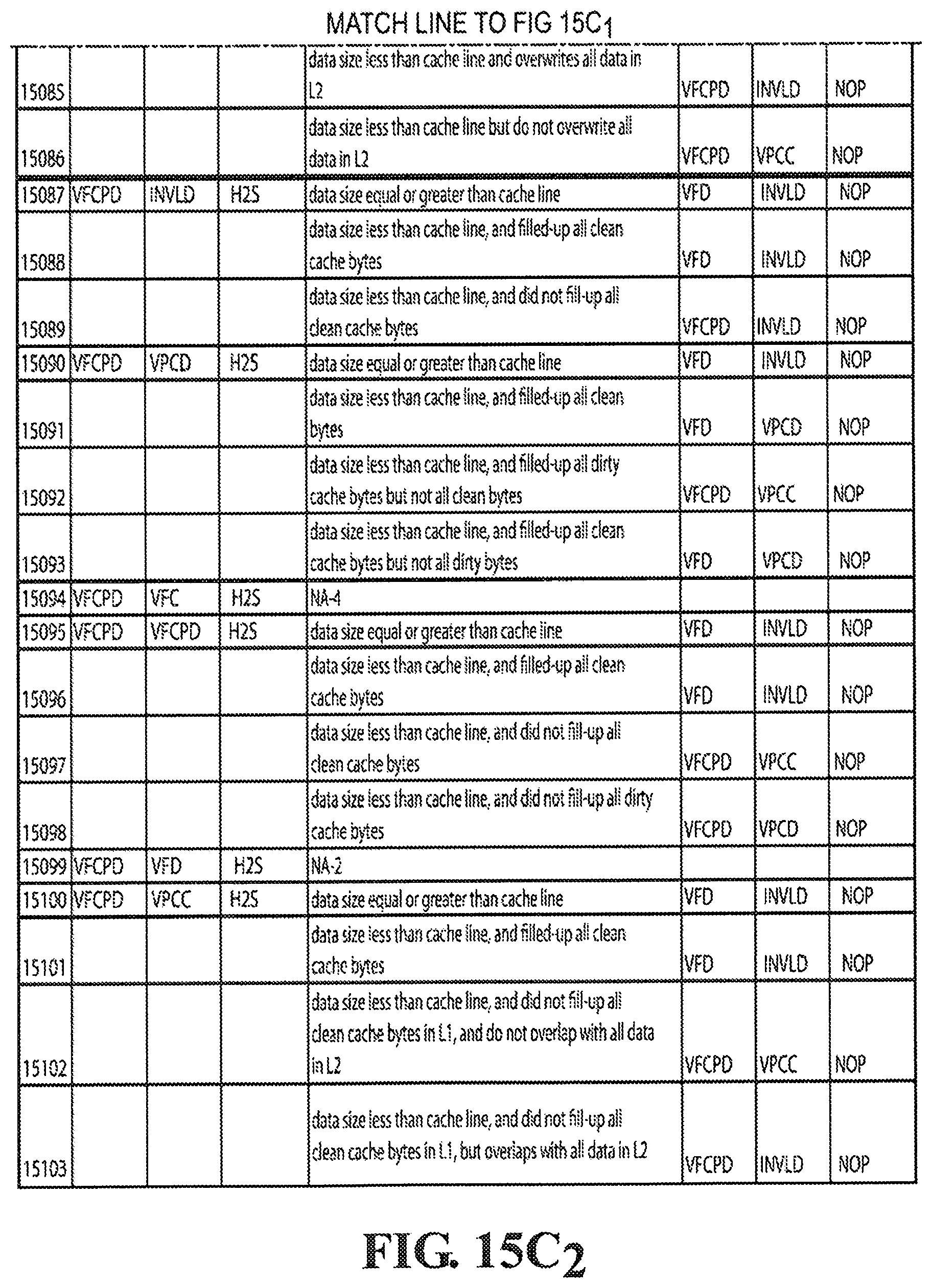

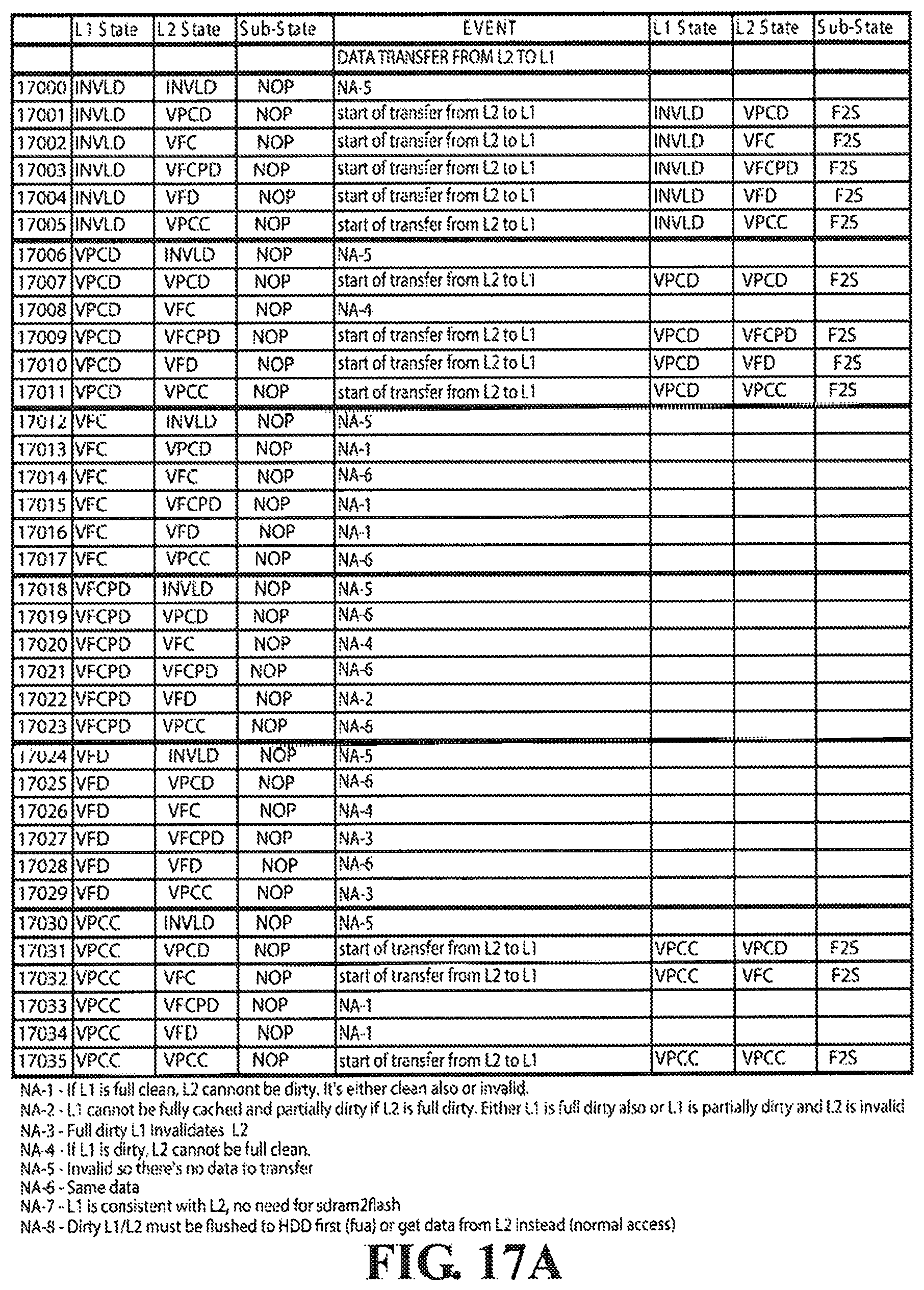

[0030] FIGS. 15A, 15B, 15C1, 15C2, and 15D show the cache state transition table for Host to L1 data transfer according to an embodiment of the present invention.

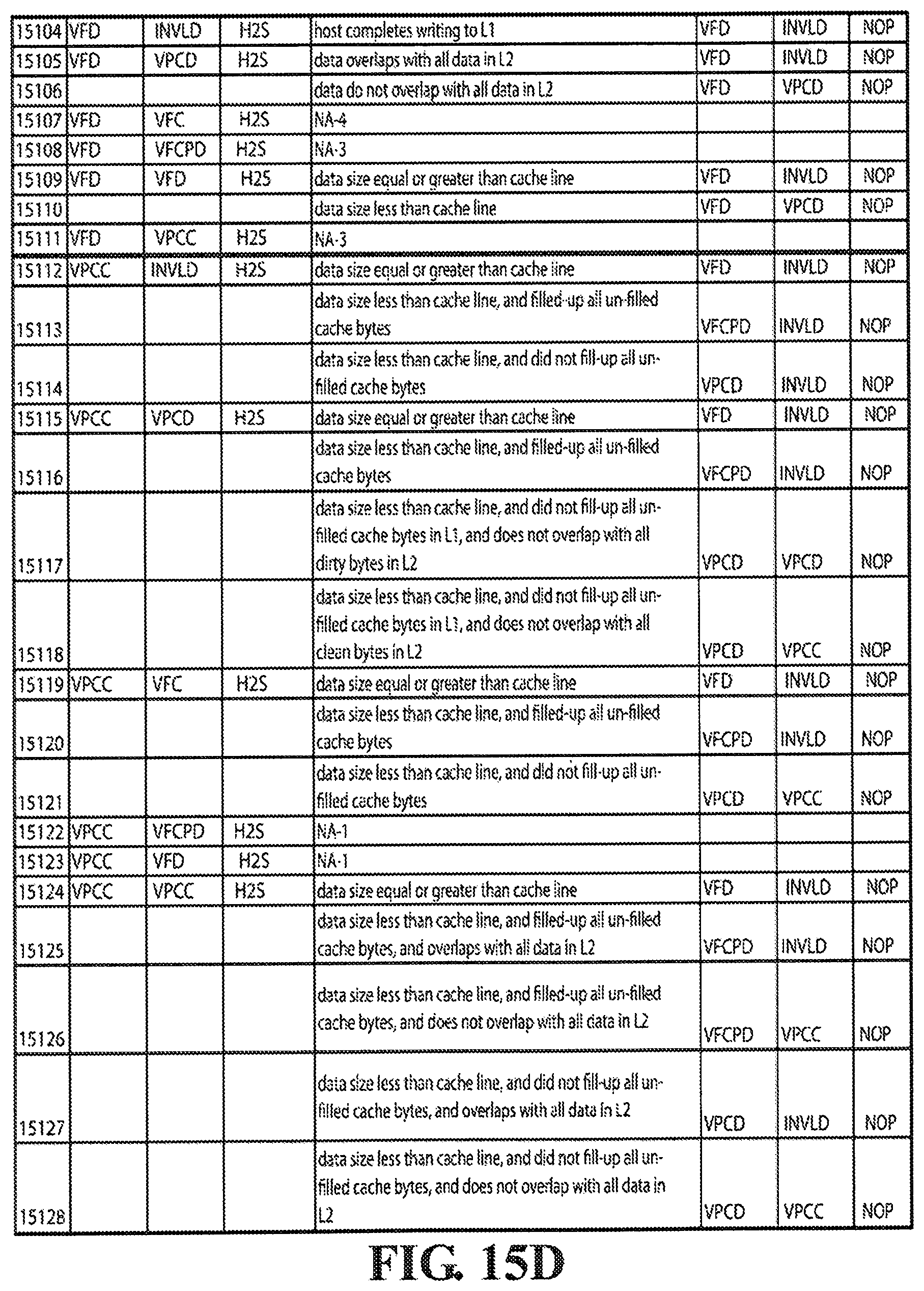

[0031] FIGS. 16A and 16B show the cache state transition table for L1 to Host data transfer according to an embodiment of the present invention.

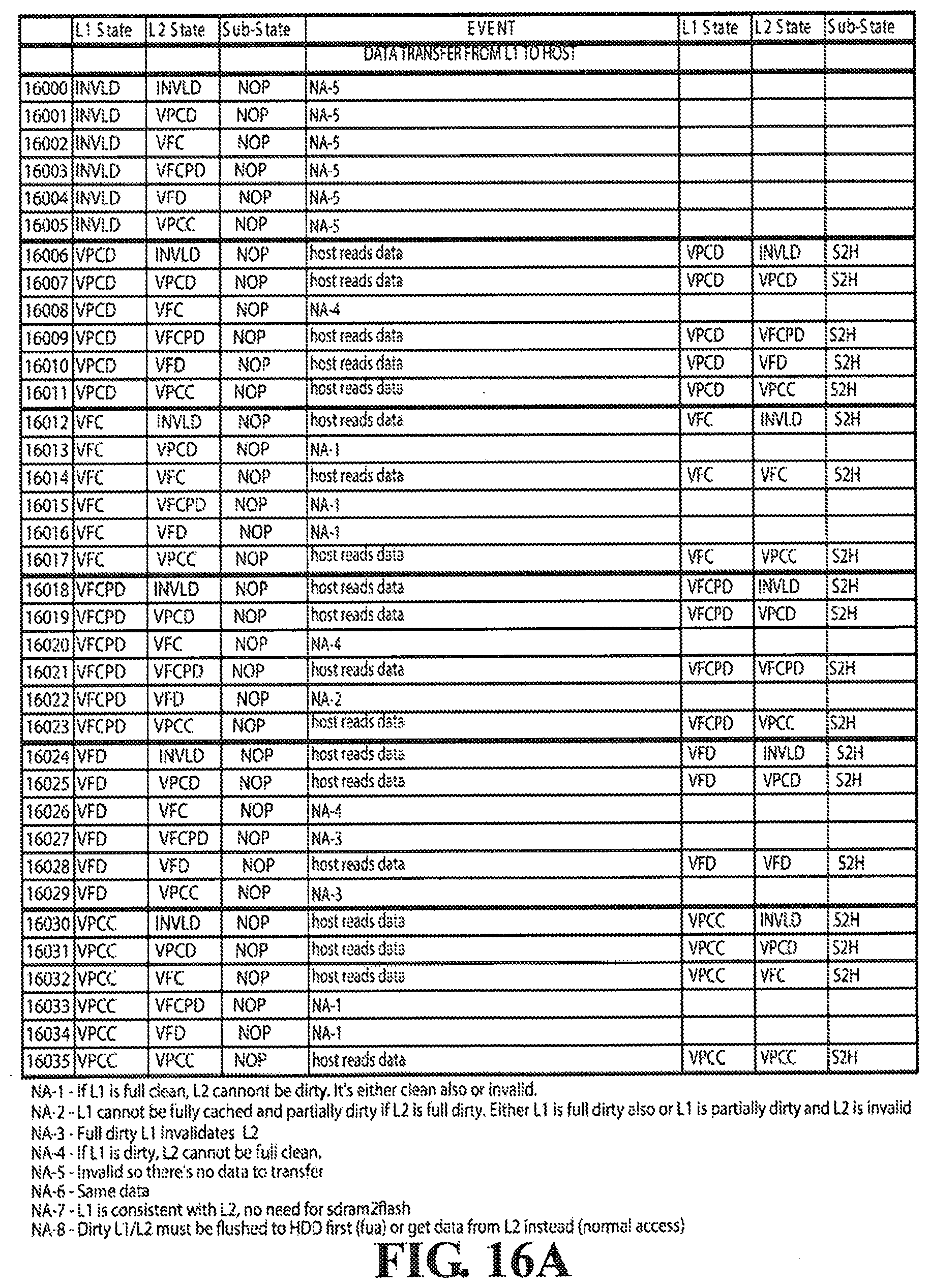

[0032] FIGS. 17A and 17B show the cache state transition table for L2 to L1 data transfer according to an embodiment of the present invention.

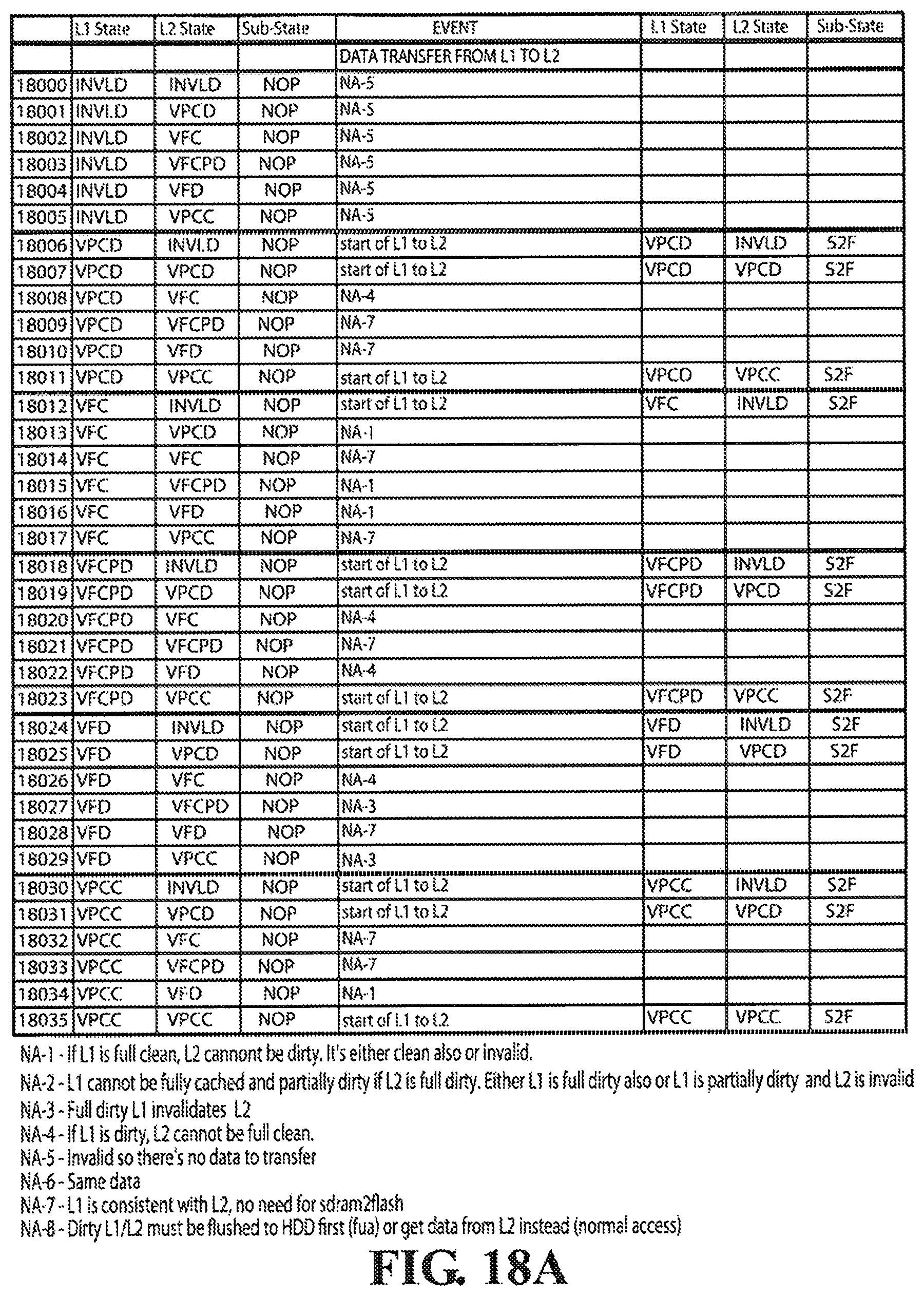

[0033] FIGS. 18A and 18B show the cache state transition table for L1 to L2 data transfer according to an embodiment of the present invention.

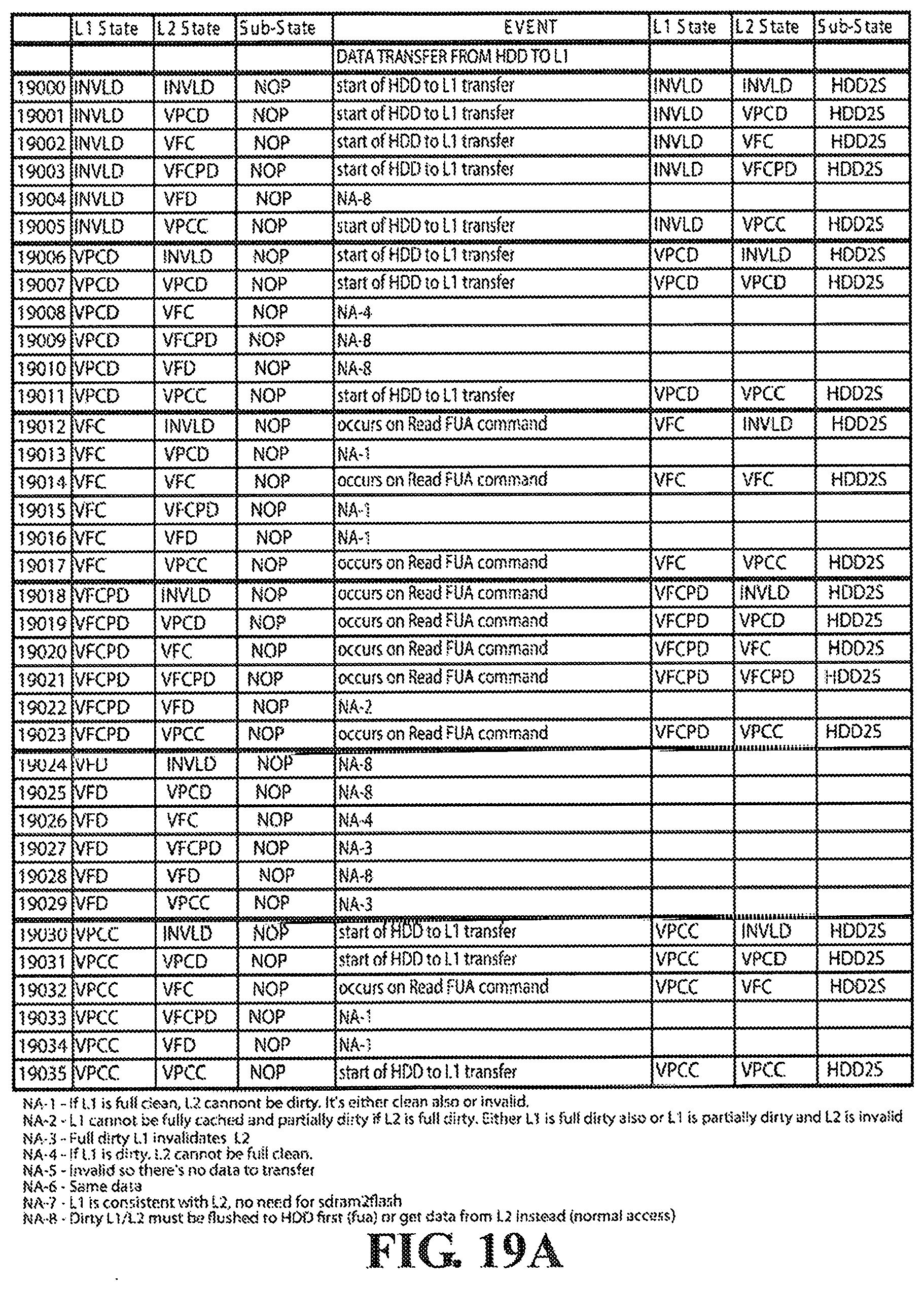

[0034] FIGS. 19A and 19B show the cache state transition table for hard disk drive to L1 data transfer according to an embodiment of the present invention.

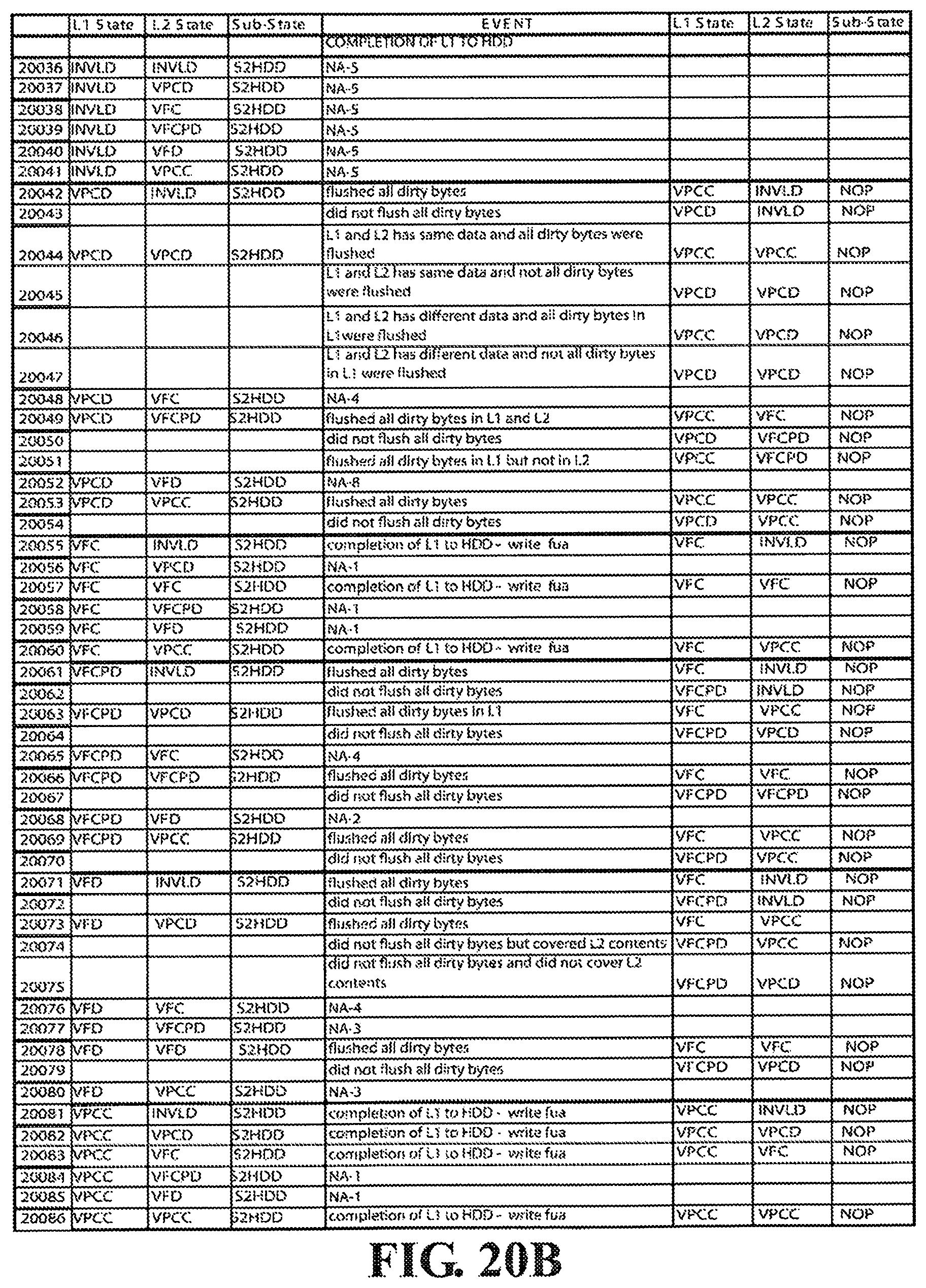

[0035] FIGS. 20A and 20B show the cache state transition table for L1 to hard disk drive data transfer according to an embodiment of the present invention.

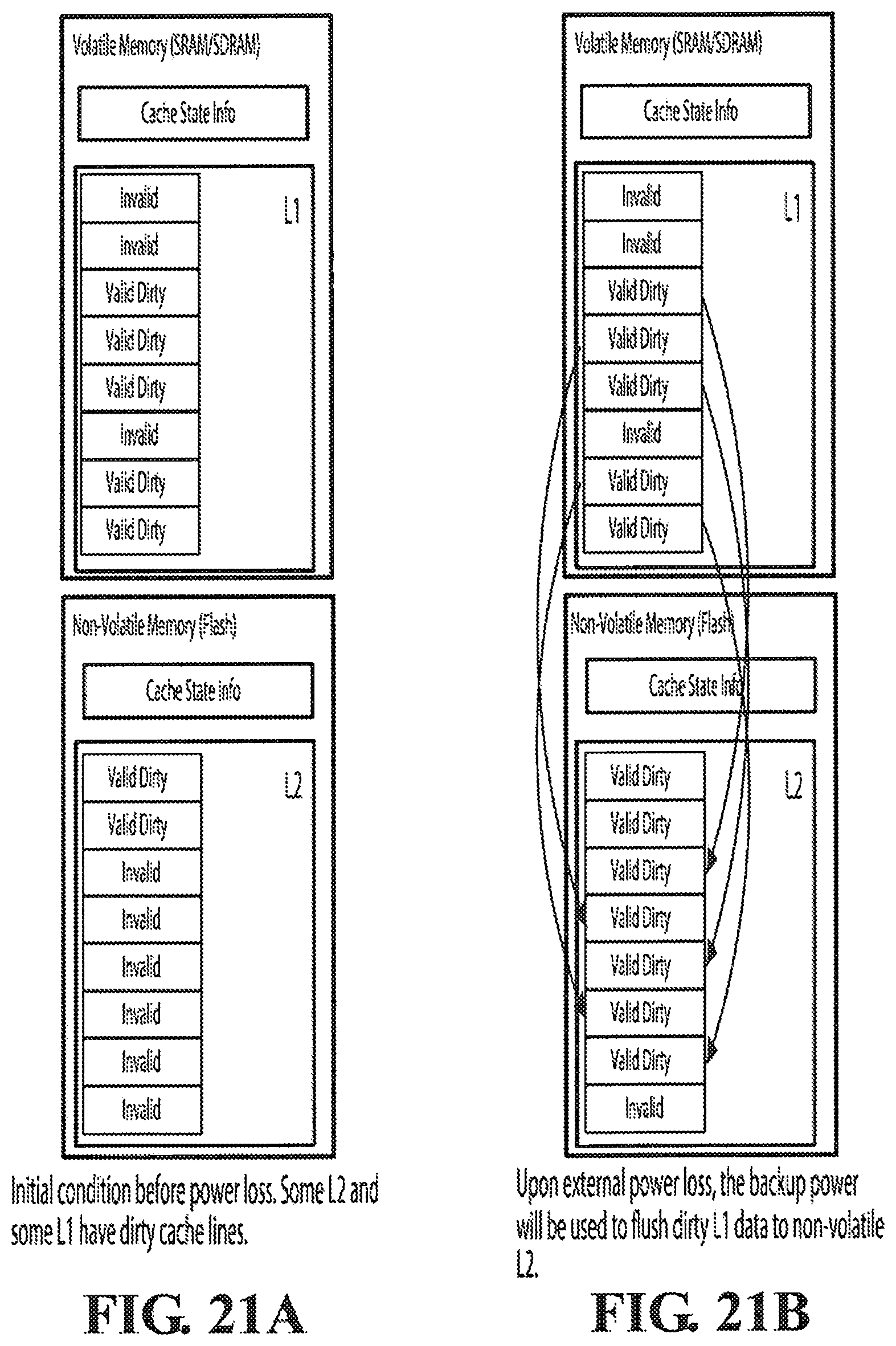

[0036] FIG. 21A shows an example initial state of L1 and L2 during normal operation before a power loss occurs.

[0037] FIG. 21B illustrates the step of flushing valid dirty data from L1 to L2 upon detection of external power loss, using a backup power source.

[0038] FIG. 21C shows the state of L1 and L2 before the backup power source is completely used up.

[0039] FIG. 21D shows the state of L1 and L2 upon next boot-up coming from an external power interruption. It also shows the step of copying valid dirty data from L2 to L1 in preparation for flushing to rotational drives or transferring to host.

[0040] FIG. 21E shows the state of L1 and L2 after the valid dirty data from L2 have been copied to L1.

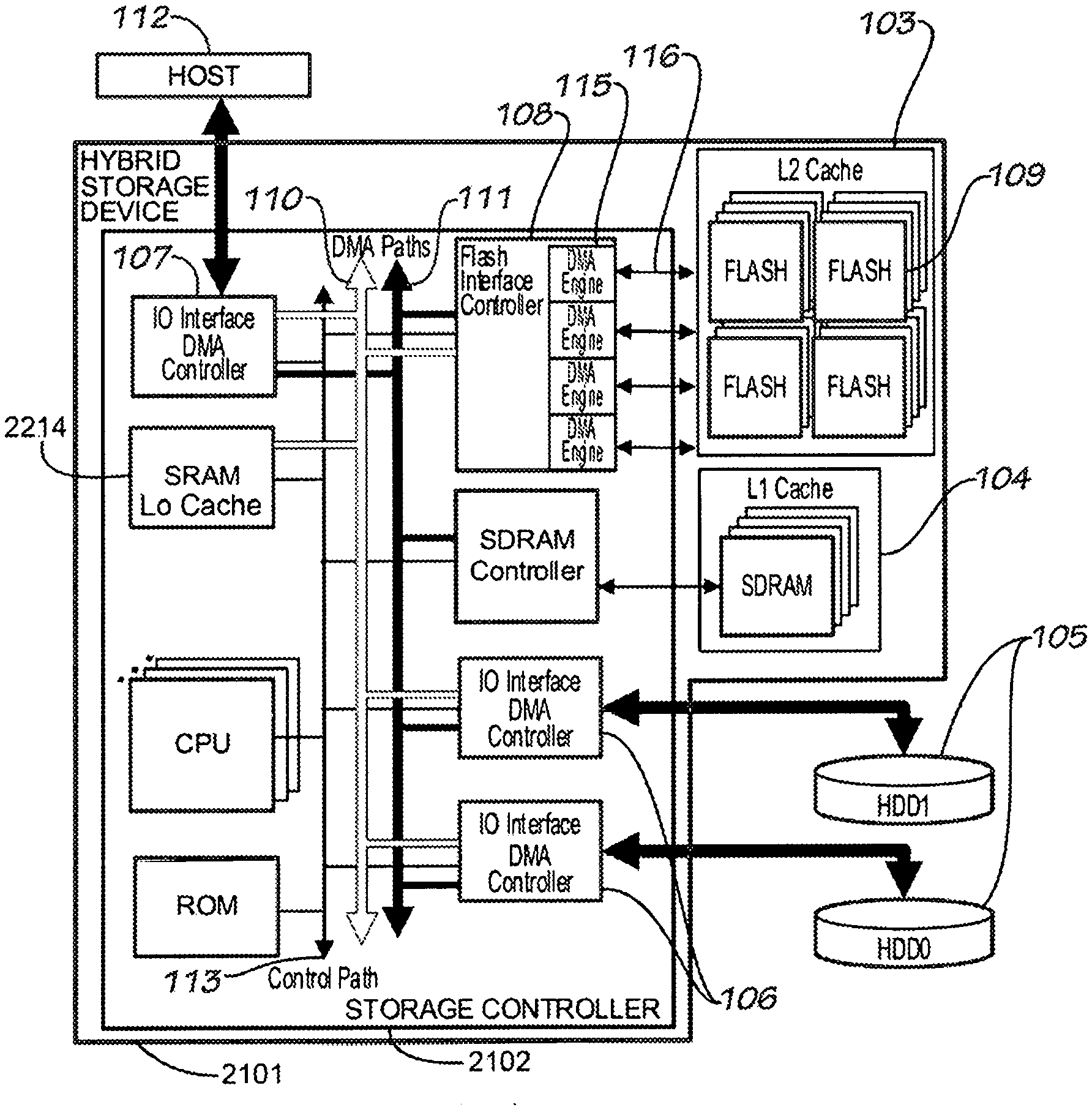

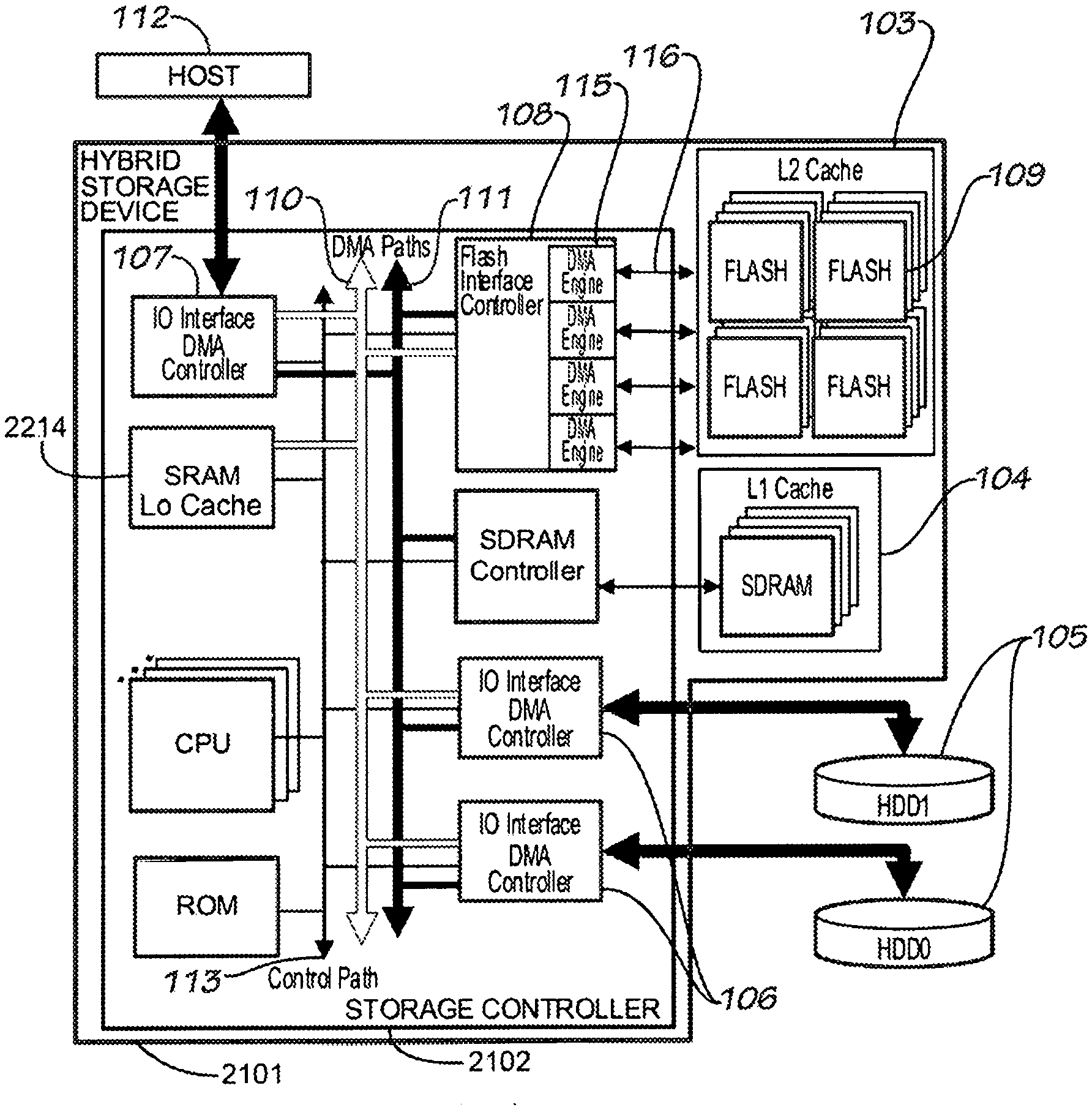

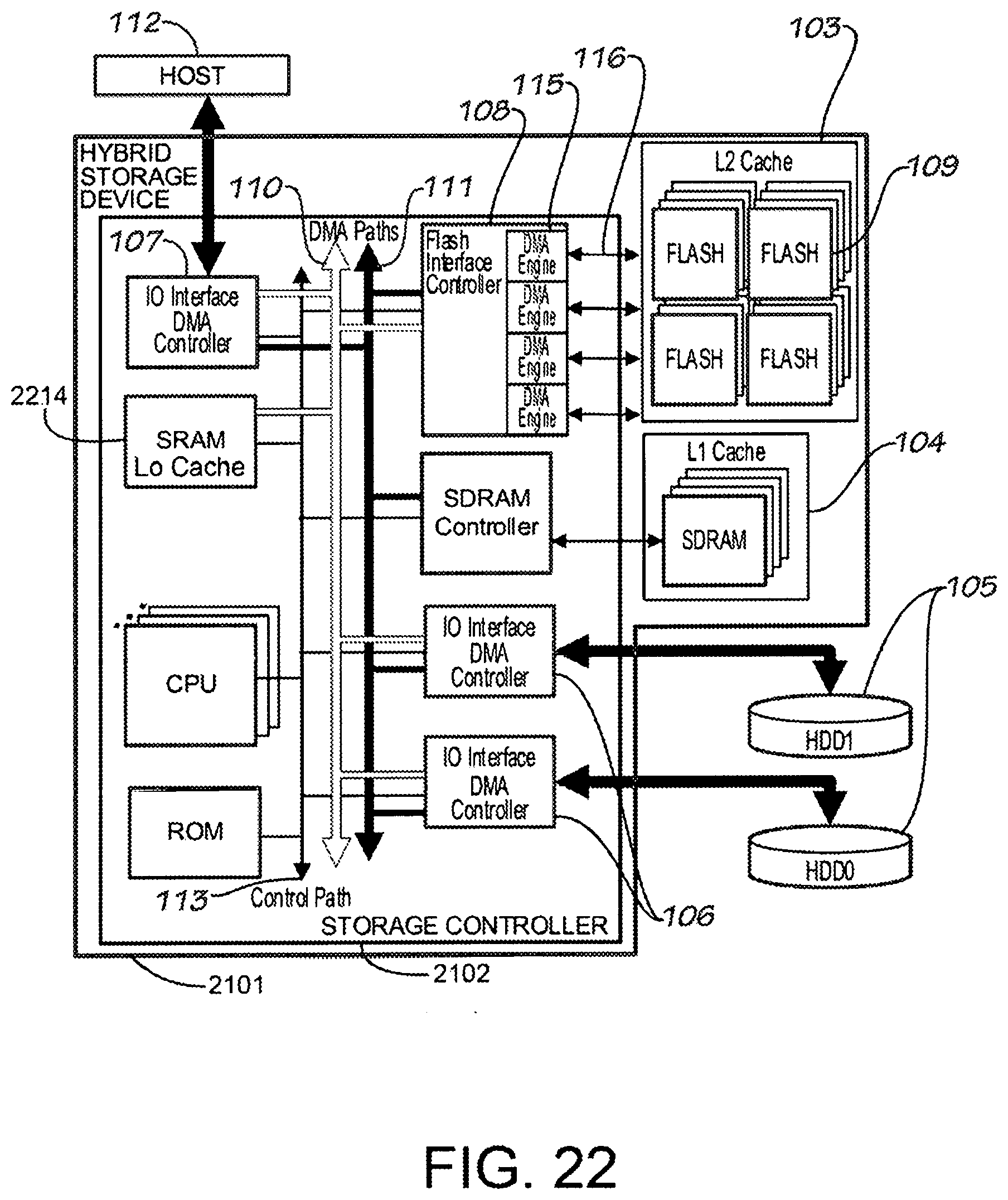

[0041] FIG. 22 illustrates a hybrid storage device connected directly to the host and to the rotational drives through the storage controller's available IO interface DMA controllers, in accordance with an embodiment of the invention.

DETAILED DESCRIPTION

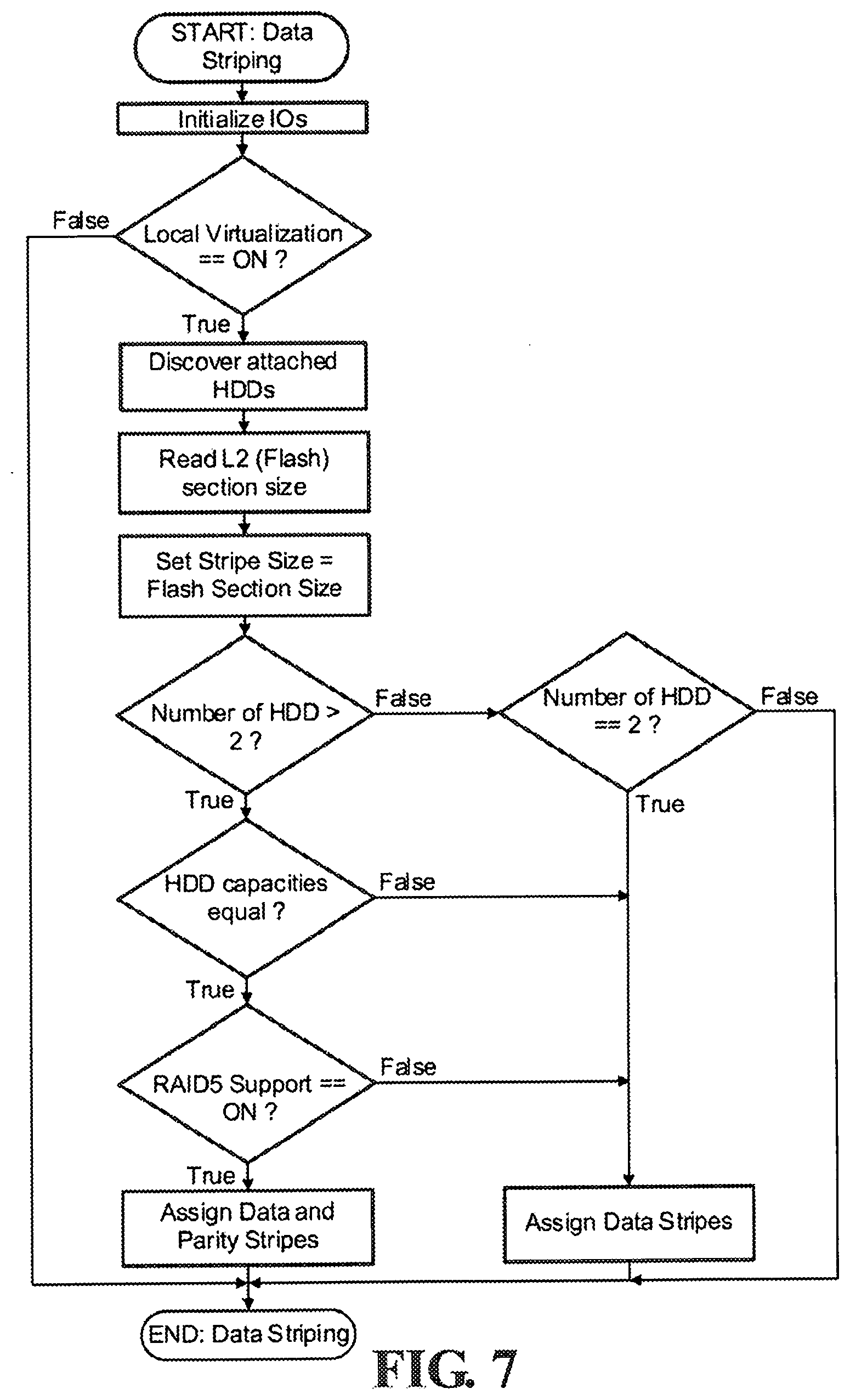

[0042] A cache line is a unit of cache memory identified by a unique tag. A cache line consists of a number of host logical blocks identified by host logical block addresses (LBAs). Host LBA is the address of a unit of storage as seen by the host system. The size of a host logical block unit depends on the configuration set by the host. The most common size of a host logical block unit is 512 bytes, in which case the host sees storage in units of 512 bytes. The Cache Line Index is the sequential index of the cache line to which a specific LBA is mapped.

[0043] HDD LBA (Hard-Disk Drive LBA) is the address of a unit of storage as seen by the hard disk. In a system with a single drive, there is a one-to-one correspondence between the host LBA and the HDD LBA. In the case of multiple drives, host LBAs are usually distributed across the hard drives to take advantage of concurrent IO operations.

[0044] HDD Stripe is the unit of storage by which data are segmented across the hard drives. For example, if 32 block data striping is implemented across 4 hard drives, the first stripe (32 logical blocks) is mapped to the first drive, the second stripe is mapped to the second drive, and so on.

[0045] A Flash Section is a logical allocation unit in the flash memory which can be relocated independently. The section size is the minimum amount of allocation which can be relocated.

[0046] Directly-mapped, set-associative, and full-associative caching schemes can be used for managing the multiple cache levels. A cache line information table is used to store the multi-level cache states and to track valid locations of data. The firmware implements a set of cache state transition guidelines that dictates the sequences of data movements during host reads, host writes, and background operations.

[0047] FIG. 1 illustrates a hybrid storage device 101 connected directly to the host 112 and to the rotational drives 105 through the storage controller's available IO interface DMA controllers 107 and 106 respectively. The rotational drives 105 are connected to one or more IO interface DMA controllers 106 capable of transferring data between the drives 105 and the high-speed L1 cache (SDRAM) 104. Another set of IO interface DMA controllers 107 is connected to the host 112 for transferring data between the host 112 and the L1 cache 104. The Flash interface controller 108 on the other hand, is capable of transferring data between the L1 cache 104 and the L2 cache (flash devices) 103.

[0048] Multiple DMA controllers can be activated at the same time both in the storage IO interface and the Flash interface sides. Thus, it is possible to have simultaneous operations on multiple flash devices, and simultaneous operations on multiple rotational drives.

[0049] Data is normally cached in L1 104, being the fastest among the available cache levels. The IO interface DMA engine 107 connected between the host 112 and the DMA buses 110 and 111 is responsible for high-speed transfer of data between the host 112 and the L1 cache 104. There can be multiple IO interface ports connected to a single host and there can be multiple IO interface ports connected to different hosts. In the presence of multiple IO interface to host connections, dedicated engines are available in each IO interface ports allowing simultaneous data transfer operations between hosts and the hybrid device. The engines operate directly on the L1 cache memory eliminating the need for temporary buffers and the extra data transfer operations associated with them.

[0050] For each level of cache, the firmware keeps track of the number of cache lines available for usage. It defines a maximum threshold of unused cache lines, which when reached causes it to either flush some of the used cache lines to the medium or copy them to a different cache level which has more unused cache lines available. When the system reaches that pre-defined threshold of unused L1 cache, it starts moving data from L1 104 to L2 cache 103. L2 cache is slower than L1 but usually has greater capacity. L2 cache 103 consists of arrays of flash devices 109. Flash interface 108 consists of multiple DMA engines 115 connected to multiple buses 116, which in turn connect to the flash devices. Multiple operations on different flash devices, or on the same flash device, can be triggered in the flash interface. Each engine operation involves a source and a destination memory. For L1 to L2 data movements, the flash interface engines copy data directly from the memory location of the source L1 cache to the physical flash blocks of the destination flash. For L2 to L1 data movements, the flash interface engines copy data directly from the physical flash blocks of the source flash to the memory location of the destination L1 cache.
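The threshold rule described in this paragraph can be sketched in a few lines. The following is a minimal Python illustration under stated assumptions, not the firmware's actual implementation: the class name `WriteBuffer`, the `unused_threshold` parameter, and the oldest-first choice of which lines to move are hypothetical. The description only states that data moves from L1 to L2 once the pre-defined threshold of unused L1 cache lines is reached.

```python
from collections import OrderedDict


class WriteBuffer:
    """Toy two-level write buffer: a small, fast L1 backed by a larger, slower L2."""

    def __init__(self, l1_lines, unused_threshold):
        self.l1_lines = l1_lines                  # total number of L1 cache lines
        self.unused_threshold = unused_threshold  # hypothetical name for the pre-defined threshold
        self.l1 = OrderedDict()                   # tag -> data, oldest write first
        self.l2 = {}                              # tag -> data

    def unused_l1_lines(self):
        return self.l1_lines - len(self.l1)

    def write(self, tag, data):
        self.l2.pop(tag, None)        # any older copy in L2 is now stale
        self.l1[tag] = data
        self.l1.move_to_end(tag)      # most recently written line goes last
        # When the count of unused L1 lines reaches the threshold,
        # move the oldest lines to L2 until L1 has headroom again.
        while self.l1 and self.unused_l1_lines() <= self.unused_threshold:
            old_tag, old_data = self.l1.popitem(last=False)
            self.l2[old_tag] = old_data


buf = WriteBuffer(l1_lines=4, unused_threshold=1)
for tag in range(5):
    buf.write(tag, f"data{tag}")

print(list(buf.l1))    # [3, 4]
print(sorted(buf.l2))  # [0, 1, 2]
```

In the device described here the move itself would be carried out by the flash interface DMA engines, and the victim selection could follow any of the policies named in the summary; the sketch only shows when the move is triggered.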

[0051] Transfers of data from L1 104 to hard disk drives 105 and vice versa are handled by the DMA controllers of the IO interfaces 106 connected to the hard disk drives 105. These DMA controllers operate directly on the L1 cache memories, again eliminating the need for temporary buffers. Data transfers between L2 103 and the hard disk drives 105 always go through L1 104. This requires synchronization between L2 and L1 be built into the caching scheme.

[0052] Although FIG. 1 shows a system where the rotational drives 105 are outside the hybrid storage device 101 connected via IO interfaces 106, slightly different architectures can also be used. For example, the rotational drives 105 can be part of the hybrid storage device 101 itself, connected to the storage controller 102 via a disk controller. Another option is to connect the rotational drives 105 to an IO controller connected to the hybrid storage controller 102 through one of its IO interfaces 106. Similarly, the connection to the host is not in any way limited to what is shown in FIG. 1. The hybrid storage device can also attach to the host through an external IO controller. It can also be attached directly to the host's network domain. More details of these various configurations can be found in FIGS. 1, 3, 4, 7, and 9 of U.S. Pat. No. 7,613,876, entitled "Hybrid Multi-Tiered Caching Storage System".

[0053] In FIG. 2, the hybrid storage device 201 is part of the host system 202, acting as cache for a group of storage devices 203 and 205. In the example given, one of the IO interfaces 206 is connected directly to a hard disk drive 203. Another IO interface 207 is connected to another hybrid device 204 which is connected directly to another set of hard disk drives 205. Contrary to the example in FIG. 1 where the hybrid storage device is a slave device receiving IO commands from the host and translating them to subcommands delivered to the hard disk drives, and handling the caching in between these processes, FIG. 2 shows a host hybrid device doing caching of data on the host side using its own dedicated L1 and L2 caches. An example of this is a multi-ported HBA (Host Bus Adapter) with integrated L1 and L2 caches. From the HBA's point of view, it is connected to, and thus capable of caching, multiple storage devices regardless of whether or not the attached storage devices are also doing caching internally. The hybrid device intercepts IO requests coming from the host application and utilizes its built-in caches as necessary.

[0054] FIG. 3 is another variation of the architecture. In this case, a hybrid storage device 301 acts as a caching switch/bridge connected to the host 302 via another hybrid storage device 303, which is shown as an HBA. The hybrid storage device 301 is connected to a hybrid storage device 304 and also to a plain rotational drive 305. In this example, all three devices 301, 303, and 304 are capable of L1 and L2 caching.

[0055] In FIG. 4, the hybrid storage device 401 is directly connected to the network 402, to which the host 403 is also connected. In this mode, the hybrid storage device can be a network-attached storage or a network-attached cache to other more remote storage devices. If it is used as a pure cache, it can implement up to three levels of caches, L1 (SDRAM), L2 (Flash), and L3 (HDD).

[0056] In the example architectures illustrated such as FIG. 1, the host can configure the hybrid storage device to handle virtualization locally. The hybrid storage device presents the whole storage system to the host as a single large storage without the host knowing the number and exact geometry of the attached rotational drives.

[0057] A firmware application running inside the hybrid storage device is responsible for the multi-level cache management.

[0058] Data Striping

[0059] If virtualization is implemented locally in the hybrid storage device, the device firmware can control the mapping of data across one or more rotational drives. Initially at first boot-up, the firmware will initialize the IO interfaces and detect the number and capacity of attached hard drives. It then selects the appropriate host LBA to HDD LBA mapping that will most likely improve the performance of the system. In its simplest form, the mapping could be a straightforward sequential split of the host LBA among the drives. FIG. 5A shows division of data into stripes in a single rotational drive. FIG. 5B shows sequential division of stripes among multiple rotational drives. In this mapping scheme, given for example, 3 drives with 80 GB capacity each, the first 80 GB seen by the host will be mapped to the first drive, the second 80 GB to the second drive, and the last 80 GB mapped to the third drive.

[0060] This mapping scheme is the simplest but not very efficient. A better mapping would spread the data across the drives to maximize the possibility of concurrent operations. In this type of mapping, the firmware will distribute the stripes across the drives such that sequential stripes are stored in multiple drives. FIG. 5C shows distributed stripes across multiple hard drives. In a system with 3 or more hard drives, distributed parity can be added for a RAID5-like implementation as shown in FIG. 5D.
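The difference between the sequential split of FIG. 5B and the distributed stripes of FIG. 5C shows up in which drives a run of consecutive stripes touches. The sketch below is a hedged Python illustration: the function names are hypothetical, the 80 GB drives are assumed to be decimal gigabytes with 512-byte blocks, and parity is not modeled.

```python
def drive_sequential_split(host_lba, blocks_per_drive):
    """FIG. 5B style: drive 0 is filled completely, then drive 1, and so on."""
    return host_lba // blocks_per_drive


def drive_distributed(host_lba, stripe_blocks, num_drives):
    """FIG. 5C style: consecutive stripes rotate across the drives (no parity)."""
    return (host_lba // stripe_blocks) % num_drives


BLOCKS_PER_DRIVE = 80 * 10**9 // 512   # assumed: 80 GB drive, 512-byte blocks
request = range(0, 128, 32)            # first LBA of four consecutive 32-block stripes

seq_drives = [drive_sequential_split(lba, BLOCKS_PER_DRIVE) for lba in request]
dist_drives = [drive_distributed(lba, 32, 3) for lba in request]

print(seq_drives)   # [0, 0, 0, 0]
print(dist_drives)  # [0, 1, 2, 0]
```

With the sequential split, all four stripes queue on one drive; with distributed stripes, three of the four can be serviced by different drives at the same time, which is the concurrency the paragraph refers to.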

[0061] The size of each stripe is configured at first boot-up. An example configuration is setting stripe size equal to the cache line size and setting cache line size equal to the native flash block size or to the flash section size. In a system with a host LBU of X bytes and flash devices with a block size of Y bytes, a data stripe and a cache line will each consist of Y divided by X host logical blocks. FIG. 6 shows an example cache line for a 16 KB flash section and 512 byte LBU.

[0062] FIG. 7 is a flowchart for the initialization part of data striping in the hybrid storage device. If local virtualization is active, firmware initiates discovery of attached hard drives, and gets the flash section size to be used as reference size for the stripe. If the number of detected drives is greater than two and the drives have equal capacities and RAID5 feature is set to on, RAID5 configuration is selected and parity stripes are assigned in addition to data stripes. If there are only two drives, plain striping is implemented.
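The layout decision in this flow can be written as a small function. This is a sketch under assumptions: the function name and the returned labels are hypothetical, and the source does not say what happens with more than two drives when RAID5 is off or capacities differ, or with a single drive, so those branches are guesses marked in the comments.

```python
def select_layout(drive_capacities, raid5_enabled):
    """Pick a striping layout from the detected drives (capacities in any one unit)."""
    count = len(drive_capacities)
    if count == 0:
        raise ValueError("no rotational drives detected")
    equal_capacities = len(set(drive_capacities)) == 1
    if count > 2 and equal_capacities and raid5_enabled:
        return "raid5"            # data stripes plus distributed parity stripes
    if count >= 2:
        return "plain_striping"   # stated for two drives; assumed for the other cases
    return "single_drive"         # assumed: nothing to distribute across


print(select_layout([80, 80, 80], raid5_enabled=True))   # raid5
print(select_layout([80, 80], raid5_enabled=True))       # plain_striping
```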

[0063] Pre-Fetching

[0064] At initialization, the hybrid storage device firmware offers the option to pre-fetch data from the rotational drives to L1 cache. Since rotational drives are slow on random accesses, firmware by default may choose to pre-fetch from random areas in rotational drives. A more flexible option is for the firmware to provide an external service in the form of a vendor-specific interface command to allow the host to configure the pre-fetching method to be used by the firmware at boot-up.

[0065] If the system is being used for storing large contents such as video, the firmware can be configured to pre-fetch sequential data. If the system is being used for database applications, it can be configured to pre-fetch random data. If fastest boot-up time is required, pre-fetch may also be disabled.

[0066] In another possible configuration, the system may support a host-controlled Non-Volatile Cache command set. This allows the host to lock specific data in the non-volatile L2 cache so that they are immediately available at boot-up time. When the firmware detects that data was pinned by the host in the L2 non-volatile cache, it automatically pre-fetches those data.

[0067] FIG. 8 shows the flowchart for doing data pre-fetching at boot-up time.

[0068] Caching Mode

[0069] In FIG. 1, the rotational drives 105 have the largest storage capacity. The flash devices 103, acting as second level cache, may have less capacity. The SDRAM 104, acting as first level cache, may have the least capacity. Both L1 cache and L2 cache can be full-associative, set-associative, or directly-mapped. In a full-associative cache, data from any address can be stored to any of the cache lines. In a set-associative cache, data from a specific address can be mapped to a certain set of cache lines. In a directly-mapped cache, each address in storage can be cached only to one specific cache line.

[0070] FIG. 9A shows an illustration of a set-associative L2 cache, where the flash devices are divided among the rotational drives. Data from HDD0 can be cached to any of the 8 flash devices assigned to HDD0 (FDEV 00, FDEV 04, FDEV 08, FDEV 12, FDEV 16, FDEV20, FDEV24, and FDEV28), data from HDD1 can be cached to any of the 8 flash devices assigned to HDD1, and so on.

[0071] FIG. 9B shows an illustration of a directly-mapped L2 cache. In this setup, each of the four hard drives has dedicated flash devices where their data can be cached. In this example, data from HDD0 can only be cached in FDEV00, HDD1 in FDEV01, and so on.

[0072] FIG. 9C is an illustration of a full-associative L2 cache. In this setup, data from any of the four drives can be cached to any of the four flash devices.
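The three L2 arrangements of FIGS. 9A to 9C differ only in which flash devices may hold data from a given drive. The following Python sketch makes that explicit. The function name and mode strings are hypothetical, and the interleaved assignment for the set-associative case (every fourth device) is inferred from the device numbers listed for HDD0; the assignments for the other drives are assumed to follow the same pattern.

```python
def candidate_flash_devices(hdd, num_hdd, num_fdev, mode):
    """Return the flash device numbers allowed to cache data from drive `hdd`."""
    if mode == "direct":
        return [hdd]                               # FIG. 9B: one dedicated device
    if mode == "set":
        return list(range(hdd, num_fdev, num_hdd)) # FIG. 9A: every num_hdd-th device
    if mode == "full":
        return list(range(num_fdev))               # FIG. 9C: any device
    raise ValueError(f"unknown caching mode: {mode}")


print(candidate_flash_devices(0, num_hdd=4, num_fdev=32, mode="set"))
# [0, 4, 8, 12, 16, 20, 24, 28]
print(candidate_flash_devices(1, num_hdd=4, num_fdev=4, mode="direct"))
# [1]
```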

[0073] Full-associative caching has the advantage of cache usage efficiency since all cache lines will be used regardless of the locations of data being accessed. In the full-associative caching scheme, the firmware keeps cache line information for each set of available storage. In a system with N number of cache lines, where N is computed as the available cache memory divided by the size of each cache line, the firmware will store information for M number of cache lines, where M is computed as the total storage capacity of the system divided by the cache line size. This information is used to keep track of the state of each storage stripe.
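To give a feel for the sizes involved, the two counts N and M can be computed directly from the definitions above. The figures used here (512 MiB of cache, 240 GiB of total storage, 16 KiB cache lines) are illustrative assumptions, not values taken from the description.

```python
def cache_table_sizes(cache_bytes, storage_bytes, line_bytes):
    """Return (N, M): cache lines available, and table entries for full-associative caching."""
    n = cache_bytes // line_bytes     # N = available cache memory / cache line size
    m = storage_bytes // line_bytes   # M = total storage capacity / cache line size
    return n, m


KIB, MIB, GIB = 2**10, 2**20, 2**30
n, m = cache_table_sizes(512 * MIB, 240 * GIB, 16 * KIB)
print(n)  # 32768
print(m)  # 15728640
```

Under these assumed figures the full-associative table needs 480 times as many entries as there are cache lines, which is the storage cost that the direct-mapped scheme avoids.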

[0074] FIG. 10 shows an example table for storing cache line information in a full-associative caching system. Since each storage stripe has its own entry in the table, firmware can easily determine a stripe's caching state and location.

[0075] L1 Index is the cache line/cache control block number. HDD ID is the sequential index of the rotational drive where the data resides. HDD LBA is the first hard-disk LBA assigned to the cache line. L1 Address is the actual memory address where data resides in L1, and L2 Address is the physical address of the location of data in L2.

[0076] The HDD ID and HDD LBA can be derived at runtime to minimize memory usage of the table. L1 Cache State and L2 Cache State specify whether the SDRAM and/or the flash contain valid data. If valid, it also specifies if data is clean or dirty. A dirty cache contains a more up-to-date copy of data than the one in the actual storage media, which in this case is the rotational drive. Cache Sub-State specifies whether cache is locked because of on-going transfer between SDRAM and Host (sdram2host or host2sdram), SDRAM and Flash (sdram2flash or flash2sdram), or SDRAM and rotational drive (sdram2hdd or hdd2sdram).
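One way to picture an entry of the cache line information table is as a small record. The sketch below is an assumption-laden Python illustration rather than the layout of FIG. 10: the field names, the use of separate valid and dirty flags per cache level, and the enum are hypothetical. The `NOP` member (no transfer in progress) follows the sub-state list given later in the description, and the HDD ID and HDD LBA are left out because the text says they can be derived at runtime.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class SubState(Enum):
    NOP = "nop"                  # no transfer in progress, line is not locked
    HOST2SDRAM = "host2sdram"
    SDRAM2HOST = "sdram2host"
    FLASH2SDRAM = "flash2sdram"
    SDRAM2FLASH = "sdram2flash"
    HDD2SDRAM = "hdd2sdram"
    SDRAM2HDD = "sdram2hdd"


@dataclass
class CacheLineInfo:
    l1_index: int                        # cache line / cache control block number
    l1_address: Optional[int] = None     # memory address of the data in L1, if any
    l2_address: Optional[int] = None     # physical address of the data in L2, if any
    l1_valid: bool = False
    l1_dirty: bool = False               # newer than the copy on the rotational drive
    l2_valid: bool = False
    l2_dirty: bool = False
    sub_state: SubState = SubState.NOP

    def is_locked(self):
        """A line is locked while any transfer involving it is ongoing."""
        return self.sub_state is not SubState.NOP


entry = CacheLineInfo(l1_index=7, l1_address=0x1C000, l1_valid=True, l1_dirty=True)
entry.sub_state = SubState.SDRAM2FLASH
print(entry.is_locked())  # True
```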

[0077] Direct-mapping is less efficient in terms of cache memory usage, but takes less storage for keeping cache line information. In a system with N number of cache lines, where N is computed as the available cache memory divided by the size of each cache line, the firmware can store information for as few as N number of cache lines. When checking for cache hits, firmware derives the cache line index from the host LBA, and looks directly to the assigned cache line information. Firmware compares the cache-aligned host LBA to the start of the currently cached LBA range and declares a hit if they are the same.
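The direct-mapped hit check can be sketched as follows. This is a minimal Python illustration, assuming 32 host blocks per cache line (16 KB lines, 512-byte blocks) and assuming the cache line index is the line-aligned block number modulo the number of lines; the source does not state how the index is derived, and the class and method names are hypothetical.

```python
class DirectMappedCache:
    """Tracks, per cache line, the start LBA of the range it currently holds."""

    def __init__(self, num_lines, blocks_per_line=32):
        self.num_lines = num_lines
        self.blocks_per_line = blocks_per_line
        self.cached_start = [None] * num_lines   # one entry per cache line (N entries)

    def _locate(self, host_lba):
        aligned = host_lba - host_lba % self.blocks_per_line       # cache-aligned host LBA
        index = (aligned // self.blocks_per_line) % self.num_lines  # assumed index rule
        return index, aligned

    def is_hit(self, host_lba):
        index, aligned = self._locate(host_lba)
        return self.cached_start[index] == aligned

    def fill(self, host_lba):
        index, aligned = self._locate(host_lba)
        self.cached_start[index] = aligned        # replaces whatever the line held


cache = DirectMappedCache(num_lines=4)
cache.fill(40)             # caches LBAs 32..63 in line 1
print(cache.is_hit(63))    # True
print(cache.is_hit(160))   # False
```

LBA 160 maps to the same line as LBA 40 but belongs to a different range, so the comparison of start addresses correctly reports a miss.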

[0078] At initialization, firmware allocates memory for storing the cache information. The amount of memory required for this depends on the caching method used as discussed above.

[0079] Address Translation

[0080] Cache states stored in the cache line information or cache control block outlined in FIG. 10 specify the validity of data copy in L1 and L2 caches. After inspection of cache states, the next step in processing an IO command is to locate the exact address of the target data, which is stored also in the cache line table in FIG. 10. If data is neither in L1 nor in L2, a HostLBA2HDDLBA translation formula is used to derive the addresses of the hard disk logical blocks where the data is stored.

[0081] The host LBA size is usually smaller than the flash block of L2, thus a set of logical blocks is addressed by a single physical block. For example, given a 512-byte host LBA and 16 KB flash block, 32 LBAs will fit into one flash block. Therefore, only one entry in the table is needed for each set of 32 host logical blocks.

[0082] The cache information table is stored in non-volatile memory and fetched at boot-up time. In systems with very large storage capacities, it might not be practical to copy the entire table to volatile memory at boot-up time due to boot-up speed requirements and the limitation of available volatile memory. At boot-up only a small percentage of the table is copied to volatile memory. In effect, the cache control block table is also cached. If the table entry associated with an IO command being serviced is not in volatile memory, it will be fetched from the non-volatile memory and will replace a set of previously cached entries.

[0083] The HostLBA2HDDLBA translation formula depends on the mapping method used to distribute the host logical blocks across the rotational drives. For example, if host data is striped across 4 rotational drives and parity is not implemented, the formula would look like the following:

HDDLBA=StripeSz*(NumHDD/SDRAMIdx)+HostLBA % StripeSz.

[0084] The index to the rotational drive can be derived through the formula:

HDDIdx=SDRAMIdx % NumHDD

[0085] In the first equation, StripeSz is specified in terms of logical block units.
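A hedged sketch of parity-free striping is given below. It assumes that the index the text calls SDRAMIdx counts stripe-sized units of host address space (HostLBA / StripeSz), and that the stripe row on each drive is the integer quotient of that index by NumHDD, which is the conventional layout and pairs naturally with the HDDIdx formula. Both points are assumptions made for illustration rather than a reading of the first equation, and the function name is hypothetical.

```python
def host_lba_to_hdd(host_lba: int, stripe_sz: int, num_hdd: int):
    """Return (hdd_idx, hdd_lba) for a host LBA under plain striping.

    stripe_sz is in logical block units, as stated in the text.
    """
    stripe_idx = host_lba // stripe_sz      # assumed meaning of SDRAMIdx
    hdd_idx = stripe_idx % num_hdd          # HDDIdx = SDRAMIdx % NumHDD
    stripe_row = stripe_idx // num_hdd      # assumed: integer quotient
    hdd_lba = stripe_sz * stripe_row + host_lba % stripe_sz
    return hdd_idx, hdd_lba
```

With 4 drives and a 32-block stripe, consecutive stripes rotate across drives 0 to 3 and then wrap to the next row on drive 0, so sequential host accesses spread over all four drives.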

[0086] Cache State Transitions

[0087] The firmware keeps track of data in the L1 and L2 caches using a set of cache states which specifies the validity and span of data in each cache line. The cache state information is part of the cache information table in FIG. 10. Each cache level has its own cache state, and in addition, the field cache sub-state specifies whether the cache line is locked due to an ongoing data transfer between caches, between the medium and a cache, or between the host and a cache. Although the cache states are presented in the table as one data field, the representation in the actual implementation is not restricted to using a single variable for each cache state. For example, it may be a collection of flags and page bitmaps which, when treated collectively, still equate to one of the possible distinct states. The page bitmap is the accurate representation of which parts of the cache line are valid and which are dirty. As an example, the cache line 601 of FIG. 6 has 32 host LBAs and the state of each LBA (whether valid, invalid, clean, or dirty) can be tracked by using two 32-bit bitmaps, ValidBitmap and DirtyBitmap. Each bit in the two variables represents one LBA in the cache line. For ValidBitmap, a bit set to one means the data in the corresponding LBA is valid. For DirtyBitmap, a bit set to one means the data in the corresponding LBA is more up to date than what is stored in the medium. The six possible cache states are: Invalid, Valid Partially Cached Dirty, Valid Fully Cached Partial Dirty, Valid Full Dirty, Valid Full Clean, and Valid Partially Cached Clean. The seven possible cache sub-states are: NOP, H2S, S2H, F2S, S2F, HDD2S and S2HDD.
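The relationship between the two bitmaps and the six cache states can be sketched as a small classification function. The function name and the choice to ignore dirty bits that fall outside the valid region are assumptions made for this illustration.

```python
LBAS_PER_LINE = 32
FULL = (1 << LBAS_PER_LINE) - 1  # all 32 LBAs of the cache line


def cache_state(valid_bitmap: int, dirty_bitmap: int) -> str:
    """Collapse ValidBitmap and DirtyBitmap into one of the six states."""
    valid = valid_bitmap & FULL
    dirty = dirty_bitmap & valid  # assumed: dirty only counts where valid
    if valid == 0:
        return "Invalid"
    if valid == FULL:
        if dirty == FULL:
            return "Valid Full Dirty"
        if dirty == 0:
            return "Valid Full Clean"
        return "Valid Fully Cached Partial Dirty"
    if dirty:
        return "Valid Partially Cached Dirty"
    return "Valid Partially Cached Clean"
```

Keeping the bitmaps as the primary record and deriving the state on demand is one way to satisfy the remark that the state need not be stored as a single variable.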

[0088] A sub-state of NOP (No Operation) indicates that the cache is idle. H2S indicates that the cache line is locked due to an ongoing transfer of data from the host to L1. S2H indicates that the cache line is locked due to an ongoing transfer of data from L1 to host. F2S indicates that the cache line is locked due to an ongoing transfer from L2 to L1. S2F indicates that the cache line is locked due to an ongoing transfer from L1 to L2. HDD2S indicates that the cache line is locked due to an ongoing transfer from the hard disk to L1. Finally, S2HDD indicates the cache line is locked due to an ongoing transfer from L1 to the hard disk drive.
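The locking role of the sub-state can be sketched as below. The seven sub-state names come from the text; the `CacheLine` class and its `try_begin` and `complete` methods are hypothetical, and the sketch ignores concurrency between firmware contexts.

```python
from enum import Enum


class SubState(Enum):
    NOP = "no operation"
    H2S = "host to L1"
    S2H = "L1 to host"
    F2S = "L2 to L1"
    S2F = "L1 to L2"
    HDD2S = "hard disk to L1"
    S2HDD = "L1 to hard disk"


class CacheLine:
    def __init__(self):
        self.sub_state = SubState.NOP

    def try_begin(self, op: SubState) -> bool:
        """Lock the line for a transfer; refuse if another one is ongoing."""
        if self.sub_state is not SubState.NOP:
            return False
        self.sub_state = op
        return True

    def complete(self) -> None:
        """Release the lock when the transfer finishes."""
        self.sub_state = SubState.NOP
```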

[0089] An Invalid cache line does not contain any data or contains stale data. Initially, all caches are invalid until filled-up with data during pre-fetching or processing of host read and write commands. A cache line is invalidated when a more up-to-date copy of data is written to a lower-level cache thus making the copy of the data in higher level caches invalid (15038, 15040, 15041, 15043, 15046, 15048, 15050, 15055, 15057, 15059, 15060, 15062, 15068, 15071, 15072, 15074, 15076, 15080, 15084, 15085, 15090, 15095, 15096, 15100, 15101, 15103, 15105, 15109, 15115, 15116, 15119, 15120, 15124, 15125, 15127, 17049 and 17050). For example, if a dirty cache line in L1 is copied to L2 so that L1 can be freed up during an L1 cache full condition, and later a new version of that data is written to L1 by the host, the copy in L2 becomes old and unusable, so the firmware invalidates the cache line in L2. From an invalid state, a write to an L1 cache line by the host will result in a switch of state to either Valid Partially Cached Dirty (15037, 15039, 15040, 15042, 15044, 15045, 15047, 15049 and 15050) or Valid Full Dirty (15036, 15038, 15041, 15043, 15046 and 15048), depending on whether the data spans the entire cache line or not. On the other hand, a read from the medium to L1 makes an Invalid cache line either Valid Partially Cached Clean (19036, 19038, 19039, 19041 and 19043) or Valid Full Clean (19037, 19040 and 19044). Finally, a read from L2 to an invalid L1 could result in inheritance of L2's state by L1 (17037, 17038, 17040, 17042 and 17044). However, if the data from L2 is not enough to fill the entire L1 cache line, the resulting state of L1 would either be Valid Partially Cached Clean (17039) or Valid Partially Cached Dirty (17041 and 17043). From an invalid state, a write to an L2 cache line will result in inheritance of state from L1 to L2 (18042, 18050, 18056, 18062, and 18068).

[0090] Valid Partially Cached Dirty state indicates that the cache line is partially filled with data and some or all of these data are dirty. A dirty copy of data is more up-to-date than what is stored in the actual medium. An example sequence that will result in this state is a partial Write FUA command to an Invalid cache line followed by a partial normal Write command. The Write FUA command partially fills the L1 cache line with clean data (19036, 19038, 19039, 19041 and 19043), and the normal Write command makes the partial L1 cache line dirty (15114, 15117, 15118, 15121, 15127 and 15128). L1 cache will take on a Valid Partially Cached Dirty state whenever new data transferred from the host or L2 cache is not enough to fill its entire cache line (15037, 15039, 15040, 15042, 15044, 15045, 15047, 15049, 15050, 15053, 15058, 15059, 15066, 15067, 15070, 15075, 15076, 15114, 15117, 15118, 15121, 15127, 15128, 17037, 17041, 17043, 17047, 17052, 17054 and 17075). Transfer of data from hard disk drive or from L2 to a Valid Partially Cached Dirty L1 occurs when the firmware wants to fill-up the un-cached portions of the L1 cache. When the transfer completes, the L1 cache either becomes Valid Full Dirty (17049 and 17051) or Valid Fully Cached Partial Dirty (17046, 17050, 17053, 19046, 19048 and 19053), depending on whether the entire cache line became dirty or not. However, for cases wherein data transferred from the hard disk drive or L2 cache is not enough to fill all un-cached portions of the L1 cache, its state remains Valid Partially Cached Dirty (17047, 17052, 17054, 19045, 19047 and 19052). Flushing of dirty bytes from a Valid Partially Cached Dirty L1 to the medium will either cause its state to change to Valid Partially Cached Clean (20042, 20044, 20046, 20049, 20051 and 20053) or stay in Valid Partially Cached Dirty (20043, 20045, 20047, 20050 and 20054) depending on whether all dirty bytes were flushed to the medium or just a portion of them. 
A host write to a Valid Partially Cached Dirty L1 either makes it Valid Full Dirty, makes it Valid Fully Cached Partial Dirty, or leaves it as Valid Partially Cached Dirty, depending on the span of data written by the host. If the new data covers the entire cache, it naturally becomes Valid Full Dirty (15051, 15055, 15062, 15068, and 15071). If the new data fills all un-cached bytes and all clean bytes, L1 still becomes Valid Full Dirty (15052, 15056, 15057, 15063, 15065, 15069, 15072 and 15074). If the new data fills all un-cached bytes but some bytes remained clean, L1 becomes Valid Fully Cached Partial Dirty (15054, 15060, 15064 and 15073). Finally, if the new data does not fill all un-cached bytes, L1 stays as Valid Partially Cached Dirty (15053, 15058, 15059, 15066, 15067, 15070, 15075 and 15076). L2 will switch to Valid Partially Cached Dirty state if a Valid Partially Cached Dirty L1 is copied to it (18042, 18043 and 18048) and copied data does not fill the entire cache line of L2. Data transfer from the host to L1 could invalidate some of the data in L2 effectively causing L2's state to switch to Valid Partially Cached Dirty (15044, 15047, 15063, 15065, 15067, 15069, 15070, 15098 and 15110). L2 will likewise switch to Valid Partially Cached Dirty if it shares the same set of data with L1, and some of the dirty bytes in L1 were flushed to the medium (20079). When new data written by the host to L1 overlaps with the data in L2, the L2 copy becomes invalid (15038, 15040, 15055, 15057, 15059, 15060, 15090, 15105, 15115 and 15116) or Valid Partially Cached Clean (15092 and 15118), otherwise it will stay in its Valid Partially Cached Dirty state (15039, 15056, 15058, 15091, 15093, 15106 and 15117). 
A transfer from L1 to L2 could also change L2's state from Valid Partially Cached Dirty to Valid Full Dirty (18044 and 18063) or Valid Fully Cached Partial Dirty (18057, 18069 and 18070), depending on whether the entire cache line became dirty or not as a result of the data transfer. If the dirty bytes in L1 that are flushed to the medium coincide with the dirty bytes in L2, the L2 copy becomes Valid Partially Cached Clean (20044, 20062, 20072 and 20073).

[0091] A Valid Full Clean state indicates that the entire cache line is filled with data that is identical to what is stored in the actual medium. This happens when un-cached data is read from the medium to L1 (19037, 19040, 19044, 19073, 19076 and 19080), or when data in L1 is flushed to the medium (20049, 20060, 20062, 20065, 20068, 20070, 20072 and 20077). A data transfer from L1 could also result in a Valid Full Clean state for L2 if data copied to L2 matches what is stored in the hard disk (18050 and 18075). Likewise, L1 will switch to a Valid Full Clean state (17038, 17076 and 17080) following a transfer from L2, if cached data in L2 matches what is stored in the hard disk and transferred data from L2 is enough to fill the entire L1 cache line. When written with new data, a Valid Full Clean cache line either becomes Valid Full Dirty (15077, 15080, and 15084) or Valid Fully Cached Partial Dirty (15078, 15081, 15085 and 15086), depending on whether the new data spans the entire cache line or not. A Valid Full Clean L2 could become Valid Partially Cached Clean (15042, 15081 and 15121) or could be invalidated (15041, 15080, 15119 and 15120) depending on whether new data written to L1 by the host invalidates a portion or the entire content of L2.

[0092] The Valid Fully Cached Partial Dirty state indicates that the entire cache line is filled up with data and some of the data are dirty. An example sequence that will result in such a state is a Read FUA command of the entire cache line followed by a partial Write command. The Read FUA command copies the data from the medium to L1, making L1 Valid Full Clean (19037, 19040, 19044, 19054, 19056, 19059, 19073, 19076 and 19080), and the following partial Write command makes some of the data in the cache line dirty (15078, 15081, 15085 and 15086). Writing data to un-cached portions of a partially filled L1 could likewise result in a Valid Fully Cached Partial Dirty state (15054, 15060, 15064, 15073, 15113, 15116, 15120, 15125, 15126, 17046, 17050, 17053, 17074, 19046, 19048 and 19053). Writing this L1 cache line to L2 in turn makes L2 inherit the state of L1 as Valid Fully Cached Partial Dirty (18056, 18057 and 18061). Similarly, copying a Valid Fully Cached Partial Dirty L2 to L1 will make L1 inherit the state of L2 (17040 and 17050). Transferring data from L1 to un-filled portions of L2 would likewise cause L2's state to switch to Valid Fully Cached Partial Dirty (18049, 18069 and 18070). A Valid Fully Cached Partial Dirty L1 will remain in this state until a portion of the dirty bytes in L1 were flushed to the medium after which it would shift to a Valid Full Clean state (20061, 20063, 20066 and 20069). Furthermore, when the host writes new data to the L1 cache, L1 either stays as Valid Fully Cached Partial Dirty (15089, 15092, 15097, 15098, 15102 and 15103) or becomes Valid Full Dirty (15087, 15088, 15090, 15091, 15093, 15095, 15096, 15100 and 15101). 
A Valid Fully Cached Partial Dirty L2 cache, on the other hand, either gets invalidated (15043, 15062, 15095 and 15096), switches to Valid Partially Cached Dirty state (15044, 15063, 15065, 15067 and 15098) or changes state to Valid Partially Cached Clean (15045, 15064, 15066 and 15097) following a transfer from the host to L1. Copying data from a Valid Fully Cached Partial Dirty L2 to L1 would likewise invalidate the contents of L2 (17049 and 17050). Flushing all cached dirty bytes from L1 will cause L2's state to change from Valid Fully Cached Partial Dirty to Valid Full Clean (20049 and 20065), otherwise, L2 stays in Valid Fully Cached Partial Dirty state (20050, 20051 and 20067).

[0093] The Valid Full Dirty state indicates that the entire cache line contains newer data than what is stored in the medium. L1 may become Valid Full Dirty from any state (i.e. Invalid: 15036, 15038, 15041, 15043, 15046 and 15048; VPCD: 15051, 15052, 15055, 15056, 15057, 15062, 15063, 15065, 15068, 15069, 15071, 15072 and 15074; VFCPD: 15087, 15088, 15090, 15091, 15093, 15095, 15096, 15100 and 15101; VFD: 15104, 15105, 15106, 15109 and 15110; VFC: 15077, 15080 and 15084; VPCC: 15112, 15115, 15119 and 15124) once the host writes enough data to it to make all its data dirty. Aside from this, a Valid Full Dirty L1 may also be a result of a previously empty or Valid Partially Cached Dirty L1 that has been filled up with dirty bytes from L2 (17042, 17049 and 17051). A Valid Full Dirty L1 will stay at this state until flushed out to the medium, after which it will become Valid Full Clean (20071, 20073 and 20078) or Valid Fully Cached Partial Dirty (20072, 20074, 20075 and 20079). A Valid Full Dirty L2 is a result of data transfer from a Valid Full Dirty L1 to L2 (18062 and 18063) or when new data copied from L1 is enough to fill all portions of L2 (18044). L2 will stay at this state until the host writes new data to L1 which effectively invalidates portions or the entire data in L2. If only a portion of cached data in L2 is invalidated a Valid Full Dirty L2 switches to Valid Partially Cached Dirty state (15047, 15069, 15070 and 15110), otherwise it switches to Invalid state (15046, 15068 and 15109). The state of L2 could also change from Valid Full Dirty to Valid Partially Cached Dirty (20079) or Valid Full Clean (20078) depending on whether all or just a portion of the dirty bytes in L1 was flushed to the medium.

[0094] The Valid Partially Cached Clean state indicates that the cache is partially filled with purely clean data. For L1, this may be a result of a partial Write FUA (20081, 20082, 20083 and 20086), or partial Read FUA command (19036, 19038, 19039, 19041, 19043, 19072, 19074, 19075 and 19079), or flushing of a partially cached dirty L1 to the hard disk drive (20042, 20044, 20046, 20049, 20051 and 20053) or data transferred from L2 to L1 cache did not fill entire L1 cache line (17039, 17044, 17077 and 17081). A Valid Partially Cached Clean will transition to Valid Full Clean if remaining un-cached data are read from the medium (19073, 19076 and 19080) or from L2 (17076 and 17080) to L1. When host writes to a Valid Partially Cached Clean L1, the L1 state will transition to Valid Full Dirty, Valid Fully Cached Partial Dirty, or Valid Partially Cached Dirty. If the written data covers the entire cache line, the L1 becomes Valid Full Dirty (15112, 15115, 15119 and 15124). If the new data does not cover the entire cache line, L1 becomes Valid Partially Cached Dirty (15114, 15117, 15118, 15121, 15127 and 15128). If the new data does not cover the entire cache line but was able to fill all un-cached data, L1 becomes Valid Fully Cached Partial Dirty (15113, 15116, 15120, 15125 and 15126). When data from L2 is copied to a Valid Partially Cached Clean L1, it could likewise transition to Valid Partially Cached Dirty state (17075), Valid Fully Cached Partial Dirty (17074), Valid Full Clean (17076 and 17080), or Valid Partially Cached Clean (17077 and 17081). A Valid Partially Cached Clean L2 is the result of a Valid Partially Cached Clean L1 being written to L2 (18068 and 18074), or a Valid Partially Cached Dirty L1 being flushed out to the medium (20044, 20053 and 20054). A Valid Partially Cached Clean L2 could likewise result from a host to L1 transfer whenever some of the data in L2 gets invalidated (15042, 15045, 15064, 15066, 15081, 15092, 15097, 15118 and 15121). 
When host writes to L1, the entire contents of a Valid Partially Cached Clean L2 would be invalidated if the data transferred by the host overlaps with the contents of L2 (15038, 15040, 15055, 15057, 15059, 15060, 15090, 15085, 15105, 15115 and 15116) otherwise it stays in Valid Partially Cached Clean state (15092 and 15118). Transferring new data bytes from L1 will cause a transition of L2's state from Valid Partially Cached Clean to Valid Partially Cached Dirty state (18048) or Valid Fully Cached Partial Dirty state (18049 and 18061) depending on whether copied data from L1 fills the entire L2 cache line or not. A Valid Partially Cached Clean L2 could also transition to Valid Full Clean (18075) if data transferred from L1 fills empty cache bytes of L2, otherwise, L2 stays in Valid Partially Cached Clean state (18074).

[0095] FIGS. 15A, 15B, 15C1, 15C2, and 15B, FIGS. 16A and 16B, FIGS. 17A and 17B, FIGS. 18A and 18B, FIGS. 19A and 19B and FIGS. 20A and 20B show the complete tables of the state transitions that occur in a hybrid storage system with two levels of cache. For systems with more than two cache levels, the additional table entries can easily be derived using the same concepts used in the existing table.

[0096] Read Command

[0097] The succeeding paragraphs discuss in detail the processing of a Read command by a hybrid storage device as described by the flow chart illustrated in FIG. 11A. The process performs different types of cache operations which make use of different cache transition tables. The cache transition tables used are also discussed.

[0098] When the firmware receives a Read command from the host, it derives the cache control block index (SDRAM Index) based on the host LBA. Then it checks the designated cache control block if the requested LBA is in L1 cache.

[0099] If L1 cache is valid and the associated cache control block entry is for the requested block, firmware starts data transfer from L1 cache to host and updates cache sub-status to S2H (SDRAM to host). Note that there are 5 defined valid cache states (valid full clean (VFC), valid full dirty (VFD), valid partially cached clean (VPCC), valid partially cached dirty (VPCD), and valid fully cached partial dirty (VFCPD)), and before firmware can initiate L1 cache to host data transfer and update the cache sub-state to S2H, it must check first if there is an ongoing locked cache operation. Should there be any ongoing locked cache operation, the firmware will wait until the operation is finished (or current cache sub-state becomes NOP) before initiating the data transfer from L1 cache to host. FIGS. 16A and 16B list the 5 defined valid states for L1 cache (and other states) and the combination with L2 cache state and cache sub-state values for allowable and non-allowable data transfer from L1 cache to host. As an example, assume the data targeted by the Read command received from the host is LBA 0-99 and is determined to be in L1 cache based on the cache line information table. Based on FIGS. 16A and 16B, firmware may execute read from L1 cache to host provided that current cache sub-state is NOP. Note also that S2H operation can be initiated regardless of the valid current state of L2 cache since content of the L1 cache is always the latest or most updated copy.

[0100] If L1 cache is valid but a different entry is stored in the associated cache control block (for the case of directly mapped cache), the firmware initiates the freeing of that cache. If that cache is clean, it can be freed instantly without any flush operation. But if the cache is dirty, firmware gets the associated flash physical location of data from cache control info and initiates copying of data to L2 cache after determining that there is enough space for the L1 cache content to be flushed, which is faster than flushing to rotational drive. Then it updates sub-status to sdram2flash (S2F). Refer to "movement from L1 cache to L2 cache" for detailed discussion on this cache operation. FIGS. 18A and 18B list the cache state transitions for L1 cache to L2 cache data transfer.

[0101] However, if L2 cache is full, flushing to rotational drive will be initiated instead, and sub-status will be set to S2HDD (SDRAM to hard disk drive). Flushing of L2 cache to rotational drives can also be done in the background when firmware is not busy servicing host commands. After flushing of L1, firmware proceeds with the steps below as if data is not in L1 cache. Refer to "flushing of L1 cache" subsection of this document for a detailed discussion on the flushing of L1 cache mentioned in the Read operation. FIGS. 20A and 20B list the cache state transitions for L1 cache to rotational drive data transfer.

[0102] If data is not in L1 cache, firmware checks state of L2 cache.

[0103] If L2 cache is valid, firmware gets the physical location of data based on the L2 address field of the cache control info table and starts transfer from L2 cache to L1 cache, and updates sub-status to F2S (flash to SDRAM). FIGS. 17A and 17B list the current L1 cache state, L2 cache state, and cache sub-state condition requirements for F2S operation. Based on the table, F2S operation can be initiated when current cache sub-state is NOP and current L1 cache state is INVLD, VPCD, or VPCC. As with the previously mentioned cache operations, F2S can only be initiated by firmware if there is no ongoing locked cache operation. If there is no available L1 cache (L1 cache full), firmware selects an L1 cache victim. If the selected victim is clean, or if it is dirty but consistent with the copy in L2 cache, it is freed instantly. Otherwise, it is flushed to the rotational drive. The cache is then invalidated and assigned to the current command being serviced. For example, the read command is requesting LBA 20-25 which is located in L2 cache.

[0104] Assuming the configuration is 10 LBAs per L1 cache line or index, the requested LBAs are mapped to L1 index #2 of the cache control information table. To start the transfer of the data from L2 cache to L1 cache, firmware checks whether L1 cache is full. If it is not full, firmware searches for an available L1 address (e.g. 0x0000_3000), assigns it to L1 index #2, and sets the cache sub-state value from NOP to F2S. However, if current L1 cache is full (VFC, VFD, or VFCPD), an L1 address is selected. Assuming the selected L1 address is 0x0001_0000, firmware checks from the L1 segment bitmap if the content of this address is clean or dirty. If clean, then the address is invalidated. If dirty, firmware flushes to the rotational drive if needed, before invalidating the selected L1 address. Once invalidated, firmware initiates LBA 20-29 transfer from L2 cache to L1 cache address 0x0001_0000 once the current cache sub-state is NOP. After completing the data transfer, firmware updates the L1 cache state and sets cache sub-state back to NOP.

[0105] If L2 cache is invalid, the firmware determines physical location of data in rotational drives, starts transfer of data from rotational drive to L1 cache, and updates sub-status to HDD2S (hard disk drive to SDRAM). For example, LBA 100-199 is being requested by a received Read command from the host, and based on the cache control information table, this LBA range is not in the cache (L1 and L2). After determining the physical location in the hard disk using the HostLBA2HDDLBA translation formula, firmware selects a free L1 cache address and initiates the data transfer from the hard disk to the selected L1 cache address when no L1 cache operation is happening.

[0106] Note that HDD2S cache operation can also be initiated for other values of L2 cache state. FIG. 19 lists L1 cache state, L2 cache state, and cache sub-state current values, the allowable event for each cache combination, and the resulting states per event. Based on the figure, HDD2S can be initiated when--(1) the current L1 or L2 cache state is not full dirty, (2) current L1 cache state is VPCD and current L2 cache state is valid full, (3) current L1 cache state is VFC and current L2 cache state is dirty, (4) current L1 cache state is VFCPD and current L2 cache state is VFD, (5) current L1 cache state is VPCC and current L2 cache state is VFCPD or VFP, and (6) there's no ongoing cache operation. The case when current L1 cache state is VFC or VFCPD, and HDD2S is initiated, is applicable only when the received command is Read FUA where clean data is read directly from the hard disk regardless of whether there's a cache hit or not. Note also that if L1 cache is full, flushing of L1 cache is done before fetching from HDD can occur.
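The order of cache operations for a normal Read command, as walked through in the preceding paragraphs, can be condensed into the following sketch. It is a simplification under stated assumptions: the function name and boolean parameters are hypothetical, partial hits and the wait for a NOP sub-state are not modeled, and a dirty L1 line holding a different entry is always copied to L2 when L2 has room.

```python
def read_plan(l1_valid, l1_holds_request, l1_dirty, l2_valid, l2_full):
    """Return the sequence of sub-states used to serve a Read command.

    l1_holds_request: the cache control block entry is for the requested block.
    l2_valid: L2 holds the requested data.
    """
    if l1_valid and l1_holds_request:
        return ["S2H"]                      # L1 hit: transfer straight to host
    steps = []
    if l1_valid and l1_dirty:
        # L1 holds a different, dirty entry: free it first.
        steps.append("S2HDD" if l2_full else "S2F")
    # Proceed as if the data is not in L1.
    steps.append("F2S" if l2_valid else "HDD2S")
    steps.append("S2H")
    return steps
```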

[0107] Upon completion of S2H, firmware clears cache sub-status (NOP), sends command status to host, and completes command. FIGS. 16A and 16B also list the cache state transitions when completing the data transfer from L1 cache to host. The figure details the corresponding next L1 and L2 cache states based on their current states after finishing the data transfer. Based on the figure, L1 cache and L2 cache states are retained even after the host completed reading from L1 cache (16042, 16043, 16045-16048, 16050, 16053-16055, 16057, 16059-16061, 16064, 16066-16068, and 16071). However, cache sub-state transitions to NOP after the operation.

[0108] Upon completion of F2S, firmware updates cache control block (L1 cache is now valid) and starts transfer from L1 cache to host. Sub-status is marked as S2H. FIGS. 17A and 17B also list the cache state transitions when completing the data transfer from L2 cache to L1 cache. The figure details the corresponding next L1 and L2 cache states based on their current states after finishing the data transfer. As illustrated in the figure, cache sub-state always transitions to NOP after the operation.

[0109] If current L1 cache state is invalid, its next state is set depending on the current L2 cache state and the type of L2 to L1 data transfer. If current L2 cache state is VPCD or VPCC, the L1 cache state is also set to the L2 cache state after the operation (17037 and 17044). If current L2 cache state is VFC, VFCPD, or VFD, the L1 cache state is set depending on the 2 types of L2 to L1 data transfer event--(1) entire L1 cache is filled after transferring the data from L2 cache and (2) L1 cache is not filled after the data transfer. If (1), L1 cache is set to the L2 cache state (17038, 17040, and 17042). If (2), L1 cache state is set to VPCC if current L2 cache state is VFC (17039), set to VPCD if current L2 cache state is VFCPD (17041), or set to VPCD if current L2 cache state is VFD (17043).

[0110] If current L1 cache state is VPCD, its next state is set depending on the current L2 cache state. If current L2 cache state is also VPCD, L1 cache state is set based on the 2 events described in the previous paragraph. If (1), L1 cache state is set to VFCPD (17046). If (2), L1 cache state is set to VPCD (17047). If current L2 cache state is VFCPD, L1 cache state is set based on another 2 L2 to L1 data transfer events--(1) all un-cached bytes in L1 are dirty in L2 and (2) not all un-cached bytes in L1 are dirty in L2. If (1), L1 cache state is set to VFD (17049). If (2), L1 cache state is set to VFCPD (17050). For the 2 cases, L2 cache state is set to INVLD after F2S operation. If current L2 cache state is VFD, L1 cache is set based on the former 2 events--(1) entire L1 cache is filled after the operation and (2) L1 cache is not filled after the operation. If (1), L1 cache state is set to VFD (17051). If (2), L1 cache state is set to VPCD (17052). If current L2 cache state is VPCC, L1 cache state is set based also on the 2 previous events. If (1), L1 cache state is set to VFCPD (17053). If (2), L1 cache state is set to VPCD (17054).

[0111] If current L1 cache state is VPCC, its next state is set to VFCPD or VFC if current L2 cache state is VPCD or valid clean, respectively (17074 or 17076/17080) for the case when the entire L1 cache is filled after the data transfer. L1 cache state is set to VPCD or VPCC if current L2 cache state is VPCD or valid clean, respectively (17075 or 17077/17081) for the case when the entire L1 cache is not filled after the data transfer.
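Several of the F2S outcomes above fall out naturally if the transfer is modeled on the ValidBitmap and DirtyBitmap of each level. The sketch below is one such model, not the tabulated transitions themselves: it assumes that only un-cached L1 LBAs take data from L2 (since L1 always holds the latest copy), it does not model the resulting L2 state, and the names `f2s_fill`, `xfer_mask`, and `cache_state` are hypothetical.

```python
LBAS_PER_LINE = 32
FULL = (1 << LBAS_PER_LINE) - 1


def cache_state(valid: int, dirty: int) -> str:
    """Map a (ValidBitmap, DirtyBitmap) pair to a state abbreviation."""
    dirty &= valid
    if valid == 0:
        return "INVLD"
    if valid == FULL:
        if dirty == FULL:
            return "VFD"
        return "VFCPD" if dirty else "VFC"
    return "VPCD" if dirty else "VPCC"


def f2s_fill(l1, l2, xfer_mask):
    """Copy L2 data into un-cached L1 LBAs; return the new L1 bitmaps.

    l1 and l2 are (valid, dirty) bitmap pairs; xfer_mask selects the LBAs
    included in the transfer.
    """
    l1_valid, l1_dirty = l1
    l2_valid, l2_dirty = l2
    fill = xfer_mask & l2_valid & ~l1_valid   # never overwrite newer L1 data
    return l1_valid | fill, l1_dirty | (fill & l2_dirty)
```

For instance, filling an Invalid L1 with part of a VFC L2 line yields VPCC, and filling the un-cached part of a VPCD L1 from a VFD L2 yields VFD, in line with the cases described for 17039 and 17051.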

[0112] Upon completion of HDD2S, firmware updates cache control block (L1 cache is now valid) and starts transfer from L1 cache to host. FIGS. 19A and 19B also list the cache state transitions when completing the data transfer from rotational disks to L1 cache. The figure details the corresponding next L1 and L2 cache states based on their current states after finishing the data transfer. Cache sub-state always transitions to NOP after the operation. Note that although L2 cache state is not affected since the operation only involves the L1 cache and the rotational drive, its current state affects the succeeding L1 cache state as listed in the figures.

[0113] If current L1 cache state is INVLD, its next state is set depending on the current L2 cache state. If current L2 cache state is INVLD, VFC, or VPCC, L1 cache state is set based on 2 events--(1) data from the hard drive did not fill the entire cache and (2) data from the hard drive filled the entire cache. If (1), L1 cache state is set to VPCC (19036, 19039, and 19043). If (2), L1 cache state is set to VFC (19037, 19040, and 19044). If current L2 cache state is VPCD, L1 cache state is set to VPCC (19038). If current L2 cache state is VFCPD, L1 cache state is set to VPCC (19041).

[0114] If current L1 cache state is VPCD and current L2 cache state is INVLD, VPCD, or VPCC, the L1 cache state is set based also on the 2 events discussed in the previous paragraph. If (1), L1 cache state is set to VPCD (19045, 19047, and 19052). If (2), L1 cache state is set to VFCPD (19046, 19048, and 19053).

[0115] If current L1 cache state is VFC or VFCPD, the state is retained after the operation (19054, 19056, 19059-19063, and 19065).

[0116] If current L1 cache state is VPCC, its next state is set depending on the current L2 cache state. If current L2 cache state is INVLD, VFC, or VPCC, the L1 cache state is set based also on the 2 events discussed in a previous paragraph. If (1), L1 cache state is set to VPCC (19072, 19075, and 19079). If (2), L1 cache state is set to VFC (19073, 19076, and 19080). If current L2 cache state is VPCD, L1 cache state is retained (19074).

[0117] In the background, when the interface is not busy, firmware initiates copying of L1 cache to L2 cache, flushing of L1 cache to rotational drives, and flushing of L2 cache to rotational drives.

[0118] Note that when the received command is Read FUA, the data is fetched from the rotational drive regardless of whether there is a cache hit or not. If there is, however, a cache hit for the Read FUA command and the cache is dirty, the cache is flushed to the rotational drive before the data is fetched.
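The Read FUA ordering can be sketched in a few lines. The function name and parameters are hypothetical, and the sketch assumes the flush is an L1 to rotational drive transfer (S2HDD) and that the fetched data passes through L1 on its way to the host.

```python
def read_fua_plan(cache_hit: bool, cache_dirty: bool):
    """Return the sequence of sub-states used to serve a Read FUA command."""
    steps = []
    if cache_hit and cache_dirty:
        steps.append("S2HDD")   # flush dirty data so the medium is current
    steps.append("HDD2S")       # always fetch from the rotational drive
    steps.append("S2H")         # then transfer to the host
    return steps
```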

[0119] Write Command

[0120] When firmware receives a Write command from the host, it derives the cache control block index (SDRAM Index) based on the host LBA. Then it checks the designated cache control block if requested LBA is in L1 cache.

[0121] If L1 cache state is invalid (INVLD) and there is no ongoing locked operation (NOP), firmware starts transfer from host to L1 cache and updates cache sub-status to H2S. After completion of host2sdram transfer, firmware updates cache sub-status to NOP. If the write data uses all of the cache line space, L1 cache state becomes VFD (e.g. 15036), otherwise L1 cache state becomes VPCD (e.g. 15037). For the case when write data uses all of the L1 cache line space, the copy in L2 cache becomes INVLD (e.g. 15038).

[0122] If L1 cache state is valid (VPCD, VFC, VFCPD, VFD, VPCC), there is no ongoing locked operation (NOP), and the associated cache contains the correct set of data, firmware starts transfer from host to L1 cache and updates cache sub-status to host2sdram. After completion of host2sdram transfer, firmware updates cache sub-status to NOP.

[0123] If L1 previous cache state is VPCD, there are 4 options: (1) if write data uses all of the cache line space, L1 cache state becomes VFD (e.g. 15055). (2) If write data is less than the cache line space, there's no more free cache line space, and there's no more clean cache area, L1 cache state becomes VFD (e.g. 15057). (3) If write data is less than the cache line space and there's still some free cache line space, L1 cache state becomes VPCD (e.g. 15058). (4) If write data is less than the cache line space, there's no more free cache line space, and there's still some clean cache area, L1 cache state becomes VFCPD (e.g. 15060).

[0124] If L1 previous cache state is VFC, there are 2 options: (1) If write data uses all of the cache line space, L1 cache state becomes VFD (e.g. 15080). (2) If write data is less than the cache line space, L1 cache state becomes VFCPD (e.g. 15081), since not all the cache data were overwritten.

[0125] If L1 previous cache state is VFCPD, there are 3 options: (1) If write data uses all of the cache line space, L1 cache state becomes VFD (e.g. 15087). (2) If write data is less than the cache line space and there's no more clean cache line space, L1 cache state becomes VFD (e.g. 15088). (3) If write data is less than the cache line space, and there's still some clean cache area, L1 cache state becomes VFCPD (e.g. 15089).

[0126] If L1 previous cache state is VFD, there is only 1 option: (1) L1 cache state remains at VFD no matter what the write data size is (e.g. 15105).

[0127] If L1 previous cache state is VPCC, there are 3 options: (1) if write data uses all of the cache line space, L1 cache state becomes VFD (e.g. 15115), (2) If write data is less than the cache line space and there's still some free cache line space, L1 cache state becomes VPCD (e.g. 15117). (3) If write data is less than the cache line space, there's no more free cache line space, and there's still some clean cache area, L1 cache state becomes VFCPD (e.g. 15116).
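The L1 state transitions listed above for a completed host write can be condensed into one function. The following is a minimal sketch, not the firmware's implementation: the names `L1State` and `l1_state_after_write` and the three boolean flags are hypothetical. The combination "previous state VPCC, partial write, no free space, no clean area" is not listed in the text; the sketch assumes it resolves to VFD, as it does for VPCD.

```python
from enum import Enum

class L1State(Enum):
    INVLD = "INVLD"
    VPCC = "VPCC"
    VPCD = "VPCD"
    VFC = "VFC"
    VFCPD = "VFCPD"
    VFD = "VFD"

def l1_state_after_write(prev, full_line, free_left, clean_left):
    """Next L1 state once a host write (H2S) completes.

    full_line:  the write covers the entire cache line
    free_left:  free (uncached) line space remains after the write
    clean_left: some clean cached data remains after the write
    """
    if full_line or prev is L1State.VFD:
        return L1State.VFD
    if prev is L1State.INVLD:
        return L1State.VPCD
    if prev is L1State.VFC:
        # A partial write cannot overwrite all of the clean data.
        return L1State.VFCPD
    if prev is L1State.VFCPD:
        return L1State.VFCPD if clean_left else L1State.VFD
    # prev is VPCD or VPCC
    if free_left:
        return L1State.VPCD
    # Assumption: VPCC with no free and no clean area also becomes VFD.
    return L1State.VFCPD if clean_left else L1State.VFD
```

For example, `l1_state_after_write(L1State.VPCD, False, False, True)` yields `L1State.VFCPD`, matching option (4) for a previous state of VPCD.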

[0128] If L1 cache state is valid but the associated cache block does not contain the correct set of data (for the case of a directly-mapped cache), the firmware initiates freeing of that cache block. If that cache is clean, it can be freed instantly without any flush operation. But if the cache is dirty, firmware gets the associated flash physical location of data from LBA2FlashPBA table and initiates copying of data to L2 cache, which is faster than flushing to rotational drive. Then it updates sub-status to sdram2flash. However, if L2 cache is full, flushing to rotational drive will be initiated instead, and sub-status will be set to sdram2hdd. Flushing of L2 cache to rotational drives can be done in the background when firmware is not busy servicing host commands. After flushing of L1, firmware proceeds with the steps below as if data is not in L1 cache.

[0129] If data is not in L1 cache, firmware requests an available L1 cache. If there is no available L1 cache (L1 cache full), firmware selects an L1 cache victim. If the selected victim is clean, or if it is dirty but consistent with the copy in L2 cache, it is freed instantly. Otherwise, it is flushed to the rotational drive. The cache is invalidated (INVLD) and then assigned to the current command being serviced. Processing of the firmware continues as if the L1 cache state is INVLD (see discussion above).
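The victim handling in this paragraph can be sketched as a small decision function. The function name and action labels are hypothetical and serve only to illustrate the order of operations.

```python
def release_victim(dirty, consistent_with_l2):
    """Actions needed before a selected L1 victim can be reassigned."""
    actions = []
    if dirty and not consistent_with_l2:
        # Only a dirty victim without a matching L2 copy needs a flush.
        actions.append("flush_to_hdd")
    actions.append("invalidate")  # state becomes INVLD before reassignment
    return actions
```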

[0130] After the L1 cache state is updated due to a host-write (H2S), L2 cache state is also updated. For the case when write data occupies only a part of the L1 cache line space and the write data did not cover all the copy in L2 cache, the copy in L2 cache becomes partially valid (VPCC, VPCD), since some parts of the L2 cache copy are invalidated (whether partially or fully dirty previously) (e.g. 15039). For the case when write data occupies only a part of the L1 cache line space and the write data covered all the copy in L2 cache, the copy in L2 cache becomes INVLD (e.g. 15038).
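One way to picture the L2 update is with per-page sets, in the spirit of the cache page bitmap. The sketch below is an assumption-laden illustration: the function name and the set-based representation are hypothetical, and the rule used to choose between VPCC and VPCD (any surviving dirty page makes the copy VPCD) is inferred, since the text only states that the copy becomes partially valid.

```python
def l2_state_after_host_write(l2_valid, l2_dirty, written):
    """L2 state after a host write lands in L1.

    l2_valid: set of page indexes currently valid in the L2 copy
    l2_dirty: subset of l2_valid that is dirty
    written:  set of page indexes covered by the host write
    """
    remaining = l2_valid - written  # pages overwritten in L1 are stale in L2
    if not remaining:
        return "INVLD"              # the write covered the whole L2 copy
    # Assumption: VPCD if any surviving page is dirty, otherwise VPCC.
    return "VPCD" if remaining & l2_dirty else "VPCC"
```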

[0131] Upon completion of host2sdram (H2S), firmware sends command status to host and completes the command. But if the write command is of the write FUA (force unit access) type, host2sdram (H2S) and sdram2hdd (S2HDD) are done first before the command completion status is sent to the host. Once all L1 cache data is written to the HDD, L1 cache state becomes clean (VFC, VPCC) (e.g. 20060, 20042).
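The difference between a regular write and a write FUA lies in when status is returned. A minimal sketch follows; `service_write`, `CLEAN_STATE`, and the step labels are hypothetical, and the mapping of VFD to VFC is inferred from the statement that a fully flushed L1 cache becomes clean.

```python
# Dirty L1 states and the clean states they become once fully flushed.
CLEAN_STATE = {"VPCD": "VPCC", "VFCPD": "VFC", "VFD": "VFC"}

def service_write(l1_state_after_h2s, is_fua):
    """Return (ordered steps, final L1 state) for a write command."""
    steps = ["H2S"]
    state = l1_state_after_h2s
    if is_fua:
        # FUA: data must be on the rotational drive before status is sent.
        steps.append("S2HDD")
        state = CLEAN_STATE.get(state, state)
    steps.append("send_status")
    return steps, state
```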

[0132] In the background, when interface is not busy, firmware initiates flushing of L1 cache to L2 cache, L2 cache to rotational drives, and L1 cache to rotational drives.

[0133] Flushing Algorithm

[0134] For a fully associative cache implementing a write-back policy, flushing is usually done when there is new data to be placed in cache, but the cache is full and the selected victim data to be evicted from the cache is still dirty. Flushing will clean the dirty cache and allow it to be replaced with new data.

[0135] Flushing increases access latency due to the required data transfer from L1 volatile cache to the much slower rotational drive. The addition of L2 nonvolatile cache allows faster transfers from L1 to L2 cache when the L1 cache is full, effectively postponing the flushing operation and allowing it to be more optimized.

[0136] To reduce latency and enhance the cache performance, flushing can be done as a background operation. LRU and LFU are the usual algorithms used to identify the victim data candidates, but the addition of a Fastest-to-Flush algorithm takes advantage of the random access performance of the L2 cache. It optimizes the flushing operation by selecting dirty victim data that can be written concurrently to the L2 cache, thus minimizing access time. The overhead brought about by flushing of cache can then be reduced by running concurrent flush operations whenever possible. Depending on processor availability, flushing may be scheduled regularly or during idle times when there are no data transfers between the hybrid storage system and the host or external device.
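A Fastest-to-Flush selection layered on top of LRU could look like the sketch below. It is not the patented algorithm itself: it assumes, hypothetically, that each dirty line maps to one flash device (`flash_dev`) and that writes to distinct flash devices can proceed concurrently. The `Line` type and `pick_fastest_to_flush` are illustrative names.

```python
from dataclasses import dataclass

@dataclass
class Line:
    line_id: int
    last_used: int  # smaller value means less recently used
    flash_dev: int  # hypothetical: L2 flash device the line maps to
    dirty: bool

def pick_fastest_to_flush(lines, max_batch):
    """Pick up to max_batch dirty lines, oldest first, one per flash device."""
    batch, busy_devs = [], set()
    for ln in sorted(lines, key=lambda l: l.last_used):
        if len(batch) >= max_batch:
            break
        if ln.dirty and ln.flash_dev not in busy_devs:
            batch.append(ln)
            busy_devs.add(ln.flash_dev)  # this device is taken for this batch
    return batch
```

With this choice a batch never queues two writes on the same flash device, so the whole batch can be issued in parallel; the strictly least recently used line is still always included when it is dirty.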

[0137] Flushing of L1 Cache

[0138] Flushing of L1 cache will occur only if the copy of data in L1 cache is more recent than the copy in the rotational drive. This may occur, for example, when a non-FUA write command hits the L1 cache.

[0139] Flushing of L1 cache is triggered by the following conditions:

[0140] 1. Eviction caused by shared cache line--In set-associative or directly-mapped caching mode, if the cache or cache set assigned to a specific address is valid but contains other data, that old data must be evicted to give way to the new data that needs to be cached. If the old data is clean, the cache is simply overwritten. If the old data is dirty, the cache is flushed first before writing the new data.

[0141] 2. L1 cache is full--If an IO command being processed cannot obtain a cache due to a cache-full condition, a victim must be selected to give way to the current command. If the victim data is clean, the cache is simply overwritten. If the victim data is dirty, the cache is flushed first before writing the new data.

[0142] In either (1) or (2), the victim data will be moved to either L2 cache or rotational drive. Ideally in this case, firmware will move L1 cache data to L2 cache first, since movement to L2 cache is faster. Refer to "Movement from L1 Cache to L2 Cache" for a detailed discussion. In case the L2 Cache is full, firmware will have to move L1 cache data to the rotational drive.

[0143] 3. Interface is not busy--Flushing may also be done in the background when the drive is not busy servicing host commands. L1 cache is flushed directly to the rotational drive first, then if the number of available L1 caches has reached a pre-defined threshold, data is also copied to L2 cache, in anticipation of more flushing due to the L1 cache full condition. Refer to "Movement from L1 Cache to L2 Cache" for a detailed discussion.
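The three triggers and their destinations for a dirty line can be summarized in a short sketch. The trigger labels, parameter names, and function name are hypothetical. Two points are assumptions rather than statements from the text: "reached a pre-defined threshold" is read as the number of available L1 caches having dropped to or below a low-water mark, and the background copy to L2 is skipped when L2 is full.

```python
def flush_targets(trigger, l2_full, free_l1=0, low_water_mark=0):
    """Destinations for a dirty L1 line under each flush trigger."""
    if trigger in ("shared_line_eviction", "l1_full"):
        # Foreground eviction: prefer the faster L2 cache.
        return ["HDD"] if l2_full else ["L2"]
    if trigger == "idle":
        targets = ["HDD"]  # background flush goes to the drive first
        # Assumption: copy to L2 as well once free L1 caches run low.
        if free_l1 <= low_water_mark and not l2_full:
            targets.append("L2")
        return targets
    raise ValueError(f"unknown trigger: {trigger}")
```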

[0144] When moving data from L1 cache to rotational drive, the firmware takes advantage of concurrent drive operations by selecting cache lines that can be flushed in parallel among the least recently used candidates. The firmware also takes into consideration the resulting access type to the destination drives. The firmware queues the request according to the values of the destination addresses such that the resulting access is a sequential type.
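The queuing idea can be illustrated by grouping flush candidates per destination drive and ordering each group by destination address. This is a sketch under assumptions: the tuple layout `(line_id, drive, dest_lba)` and the function name are hypothetical, and the mapping from host LBA to drive is taken as already computed.

```python
from collections import defaultdict

def build_flush_queues(candidates):
    """Group candidates by drive and sort each queue by destination LBA.

    candidates: iterable of (line_id, drive, dest_lba) tuples.
    Returns {drive: [line_id, ...]} with ascending destination addresses.
    """
    queues = defaultdict(list)
    for line_id, drive, dest_lba in candidates:
        queues[drive].append((dest_lba, line_id))
    # Separate drives can be serviced in parallel; within one drive,
    # ascending addresses make the resulting access sequential.
    return {drive: [lid for _, lid in sorted(reqs)]
            for drive, reqs in queues.items()}
```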

[0145] Before firmware can initiate the flushing operation from L1 cache to rotational drive, it must first check whether there is an ongoing locked cache operation. If there is an ongoing locked cache operation, the firmware will have to wait until the operation is finished before initiating the data transfer. When the current cache sub-state finally becomes NOP, it will be changed to S2HDD and the L1 cache flushing will start. This change in cache sub-state indicates a new locked cache operation. After the L1 cache is flushed, cache sub-state goes back to NOP to indicate that the cache is ready for another operation.
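The sub-state acts as a per-cache lock. A minimal sketch of that handshake is shown below; the class and method names are hypothetical, and a real firmware would retry from its scheduler rather than from the caller.

```python
class CacheLine:
    def __init__(self):
        self.sub_state = "NOP"  # NOP means no locked operation is ongoing

    def try_begin_flush(self):
        """Start an L1-to-drive flush only if no locked operation is active."""
        if self.sub_state != "NOP":
            return False          # caller must wait and try again later
        self.sub_state = "S2HDD"  # marks a new locked operation
        return True

    def end_flush(self):
        """Release the lock once the flush has completed."""
        if self.sub_state != "S2HDD":
            raise RuntimeError("no flush in progress")
        self.sub_state = "NOP"
```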

[0146] FIGS. 20A and 20B list the valid combinations of L1 and L2 cache states and cache sub-state values that will allow data transfers from L1 cache to rotational drive. They also show the resulting cache states and cache sub-state values when an L1 cache to rotational drive data transfer is initiated, and when it is completed. The L1 cache to rotational drive data transfer may be initiated by an L1 cache flushing operation or write FUA operation. The succeeding discussion will focus on the L1 cache flushing operation.

[0147] The L1 cache flushing operation may be initiated only for valid but dirty L1 cache states--either VPCD (rows 2006, 2007, 2009, 20011), VFCPD (rows 20018, 20019, 20021, 20023) or VFD (rows 20024, 20025, 20028). Upon completion of the flushing operation, the L1 cache is declared clean. If the flushing operation was not completed, the cache page bitmap is updated to reflect the dirty bytes that were cleaned. The L2 cache state and cache page bitmap are also updated accordingly.

[0148] In the first case 2006, L1 cache state is VPCD and L2 cache state is INVLD. An example case is when the partially cached data in L1 was updated by a write operation and is now inconsistent with the data in the rotational drive, but there is no copy yet in the L2 cache. If all the dirty data are flushed 20042, L1 cache state is changed to VPCC to indicate that the partially cached data is now consistent with data in the rotational drive. However, if not all dirty bytes were flushed 20043, L1 cache state stays at VPCD, with the cache page bitmap updated to reflect the dirty bytes that were cleaned. L2 cache state stays INVLD.

[0149] In the second case 2007, both L1 and L2 cache states are VPCD. An example case is when the partially cached dirty data in L1 was initially evicted to L2, then a cache miss happens and data is partially cached in L1. L1 was then updated by a write operation. This will also occur when the partially cached dirty data in L1 was initially evicted to L2, then an L2 cache hit occurs and L2 data is copied back to L1. L1 and L2 can have the same data, but they can also have different data if the L1 cache is subsequently updated by a write operation. If L1 and L2 have the same data and all the dirty data are flushed 20044, both L1 and L2 cache states are changed to VPCC to indicate that the partially cached data is now consistent with data in the rotational drive. If L1 and L2 have the same data but not all dirty bytes in L1 were flushed 20045, L1 and L2 cache states stay at VPCD, with the cache page bitmap updated to reflect which pages were cleaned. If L1 and L2 have different data and all the dirty data are flushed 20046, L1 cache state is changed to VPCC to indicate that the partially cached data is now consistent with data in the rotational drive. Since L2 cache contains different data, L2 cache state stays at VPCD. If L1 and L2 have different data and not all dirty bytes were flushed 20047, L1 cache state stays at VPCD but the cache page bitmap is updated to reflect which dirty bytes were cleaned. Since L2 cache contains different data, L2 cache state stays at VPCD.

[0150] In the third case 2009, L1 cache state is VPCD and L2 cache state is VFCPD. An example case is when the fully cached dirty data in L1 was initially evicted to L2, then an L2 cache hit occurs and some L2 cache data is copied back to L1. L1 dirty data can initially be the same as L2 dirty data, but they can have different dirty data if the L1 cache is subsequently updated by a write operation. If all the dirty data in L1 and L2 are flushed 20049, L1 cache state is changed to VPCC and L2 cache state is changed to VFC to indicate that cached data in both locations are now consistent with data in the rotational drive. If not all dirty bytes in L1 were flushed 20050, L1 cache state stays at VPCD and L2 cache state stays at VFCPD with the cache page bitmap updated to reflect which dirty bytes were cleaned. If all dirty bytes in L1 were flushed but they do not cover all the dirty bytes in L2 20051, only the L1 cache state is changed to VPCC. L2 cache state stays at VFCPD with cache page bitmap updated to reflect which dirty bytes were cleaned.

[0151] In the fourth case 20011, L1 cache state is VPCD and L2 cache state is VPCC. An example case is when clean, partially cached data in L1 was initially evicted to L2, then an L2 cache hit occurs, L2 cache data is copied back to L1 and a subsequent write operation updated the data in L1 cache. This will also occur when a cache miss occurs, data is partially cached in L1, and a subsequent write operation updated the data in L1 cache. If all the dirty data are flushed 20053, L1 cache state is changed to VPCC to indicate that the partially cached data is now consistent with data in the rotational drive. However if not all dirty bytes were flushed 20054, L1 cache state stays at VPCD with the cache page bitmap updated to reflect which dirty bytes were cleaned. In both cases, L2 cache state stays at VPCC since it is not affected by the L1 cache flushing operation.

[0152] In the fifth case 20018, L1 cache state is VFCPD and L2 cache state is INVLD. An example case is when fully cached data in L1 is updated by a write operation and is now inconsistent with data in the rotational drive, but there is no copy yet in the L2 cache. If all the dirty data are flushed 20061, L1 cache state is changed to VFC to indicate that the fully cached data is now consistent with data in the rotational drive. If not all dirty bytes were flushed 20062, L1 cache state stays at VFCPD with the cache page bitmap updated to reflect which dirty bytes were cleaned. L2 cache state stays INVLD.
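The outcomes of the cases described so far follow a common pattern, which the sketch below tries to capture. It is an illustration only: the function and table names are hypothetical, cache page bitmap updates are not modeled, and the flag `l2_dirty_covered` (true when the flushed L1 data is identical to the L2 copy and covers all of its dirty bytes) is an abstraction introduced here. The entry mapping VFD to VFC is inferred from the statement that a completed flush leaves the L1 cache clean.

```python
# Dirty states and the clean states they become once fully flushed.
L1_CLEAN = {"VPCD": "VPCC", "VFCPD": "VFC", "VFD": "VFC"}
L2_CLEAN = {"VPCD": "VPCC", "VFCPD": "VFC"}

def states_after_l1_flush(l1, l2, all_l1_dirty_flushed, l2_dirty_covered):
    """Return (new_l1, new_l2) after an L1-to-rotational-drive flush.

    all_l1_dirty_flushed: every dirty byte in L1 reached the drive
    l2_dirty_covered:     the flush also cleaned every dirty byte in L2
    """
    if l1 not in L1_CLEAN:
        raise ValueError("flush is only initiated for dirty L1 states")
    new_l1 = L1_CLEAN[l1] if all_l1_dirty_flushed else l1
    # INVLD and already-clean L2 states are unaffected by the flush.
    new_l2 = L2_CLEAN.get(l2, l2) if l2_dirty_covered else l2
    return new_l1, new_l2
```

For instance, `states_after_l1_flush("VPCD", "VFCPD", True, False)` returns `("VPCC", "VFCPD")`, which corresponds to outcome 20051 of the third case.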