Handheld or Wearable Device for Recording or Sonifying Brain Signals

Parvizi; Josef ; et al.

U.S. patent application number 16/700578 was filed with the patent office on 2019-12-02 and published on 2020-05-07 for a handheld or wearable device for recording or sonifying brain signals. The applicant listed for this patent is The Board of Trustees of the Leland Stanford Junior University. Invention is credited to Christopher D. Chafe, Xinghuan Chao, Ronald C. Eddington, JR., Josef Parvizi.

| Application Number | 16/700578 (Publication No. 20200138320) |

| Family ID | 70458193 |

| Publication Date | 2020-05-07 |

| United States Patent Application | 20200138320 |

| Kind Code | A1 |

| Parvizi; Josef ; et al. | May 7, 2020 |

Handheld or Wearable Device for Recording or Sonifying Brain Signals

Abstract

A handheld device for sonifying electrical signals obtained from a subject is provided. The device can utilize at least one of several operations including (but not limited to) digitizing signals from electrodes, adjusting the signals based on accelerometer input, filtering the signals, conditioning the signals according to conditioning parameters, modulating the signal according to sound synthesis parameters, and generating sound from the representations of the signals to accomplish sonification. The device can include an analog-to-digital (A/D) converter to digitize the one or more electrical signals and a processor that receives the one or more digitized electrical signals and produces a representation of an acoustic signal. The device further includes a speaker system that sonifies the representation of the acoustic signal.

| Inventors: | Parvizi; Josef (Palo Alto, CA); Chafe; Christopher D. (Woodside, CA); Chao; Xinghuan (Palo Alto, CA); Eddington, JR.; Ronald C. (Los Gatos, CA) |

| Applicant: | The Board of Trustees of the Leland Stanford Junior University |

| Family ID: | 70458193 |

| Appl. No.: | 16/700578 |

| Filed: | December 2, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15159759 | May 19, 2016 | |
| 16700578 | | |
| 62163637 | May 19, 2015 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | A61B 5/04017 20130101; A61B 5/4094 20130101; A61B 2562/0219 20130101; A61B 5/0478 20130101 |

| International Class: | A61B 5/04 20060101 A61B005/04; A61B 5/0478 20060101 A61B005/0478; A61B 5/00 20060101 A61B005/00 |

Claims

1. A method for sonifying and playing bioelectrical signals, the method comprising: digitizing at least one bioelectrical signal; performing a set of operations to produce a sonification of the at least one bioelectrical signal, the set of operations including: (i) conditioning the digitized at least one bioelectrical signal by: (a) rejecting signal components with an amplitude below a threshold, (b) scaling signal components above the threshold to create a fixed range signal, and (c) compressing the fixed range signal to raise the prominence of small features, and (ii) producing an audio signal by using the conditioned and digitized at least one bioelectrical signal to modulate sound synthesis parameters; and generating sound based upon the audio signal using a speaker system.

2. The method of claim 1, wherein the at least one bioelectrical signal comprises at least one electroencephalogram signal (EEG) that is indicative of brain activity of a subject.

3. The method of claim 2, further comprising filtering non-seizure-related brain wave features from the at least one EEG signal.

4. The method of claim 3, wherein the non-seizure-related brain wave features are filtered using a dual stage filter comprising a first stage with a DC-blocking high pass filter and a second stage with a bandpass filter with a passband of 0.1-3.0 Hz to 5.0-15.0 Hz.

5. The method of claim 3, wherein at least one of DC-bias, AC line contamination, and non-seizure-related brain wave features are rejected by the dual stage filter.

6. The method of claim 1, wherein the sonification of the at least one bioelectrical signal is performed in real-time.

7. The method of claim 1, wherein the set of operations to condition the digitized at least one bioelectrical signal further includes boosting said signal to enhance contrast.

8. The method of claim 7, wherein said signal is boosted by taking the power-law exponent of said signal.

9. The method of claim 8, wherein absolute value rectification is applied to the at least one digitized signal prior to taking the power-law exponent.

10. The method of claim 7, wherein the set of operations to condition the digitized at least one bioelectrical signal further includes applying absolute value signal rectification to said signal to double signal frequency.

11. The method of claim 7, wherein said signal is boosted prior to (a) rejecting signal components with the amplitude below the threshold, (b) scaling signal components above the threshold to create the fixed range signal, and (c) compressing the fixed range signal to raise the prominence of small features.

12. The method of claim 1, wherein the sound synthesis parameters are modulated by continuously modulating vocal sound parameters according to at least one of pitch, loudness, and vowel quality.

13. The method of claim 1, wherein producing the audio signal comprises: performing a formant pitch mapping on the conditioned and digitized at least one bioelectrical signal using a midi-to-frequency function; and performing an inverse pitch frequency mapping on the at least one bioelectrical signal using an interpolated look-up table for the inverse of the pitch frequency.

14. The method of claim 1, wherein the fixed range signal is compressed by a factor of between 1.5 and 3.0.

15. The method of claim 1, wherein the audio signal is produced by performing at least one process selected from the group consisting of: applying a pitch offset in the range of 50-150 Hz; performing pitch scaling to a pitch scale in the range of 110-440 Hz; applying an amplitude offset in the range of 0.0001-0.01; performing amplitude scaling in the range of 0.05-2.0; applying a vowel offset in the range of 0.0-1.0; performing vowel scaling in the range of 0.05-2.0; and mapping the at least one digitized signal to a vowel lookup table comprising the sounds: "iii", "ahh", "ehh", "eee", "ohh", and "ooo".

16. The method of claim 14, wherein the audio signal is produced by performing the processes of: applying a pitch offset in the range of 50-150 Hz; performing pitch scaling to a pitch scale in the range of 110-440 Hz; applying an amplitude offset in the range of 0.0001-0.01; performing amplitude scaling in the range of 0.05-2.0; applying a vowel offset in the range of 0.0-1.0; performing vowel scaling in the range of 0.05-2.0; and mapping the at least one digitized signal to a vowel lookup table comprising the sounds: "iii", "ahh", "ehh", "eee", "ohh", and "ooo".

17. The method of claim 1, further comprising: detecting occurrence of a seizure by ear based upon the sound generated from the speaker system; and administering treatment in response to the detection of a seizure to interrupt the seizure.

18. The method of claim 1, wherein the at least one bioelectrical signal comprises at least one electrocardiogram signal (ECG) that is indicative of cardiac activity of a subject.

19. The method of claim 1, wherein the at least one bioelectrical signal comprises at least one electromyogram signal (EMG) that is indicative of muscle activity of a subject.

20. A system for sonifying and playing bioelectrical signals, the system comprising: an input port configured to receive the at least one bioelectrical signal; a processor configured to digitize the at least one bioelectrical signal and perform the set of operations to produce the sonification of the at least one bioelectrical signal according to claim 1; and a speaker system configured to generate sound according to claim 1.
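By way of illustration only, the parameter ranges recited in claims 15 and 16 could be applied to a conditioned sample as in the following minimal sketch. The claims do not state how the offsets and scales are combined, so the affine form `offset + scale * x`, the clamping of the vowel position, the linear blend between neighboring table entries, the default values, and the names `vowel_blend` and `synth_params` are all assumptions made for this example.

```python
# Vowel lookup table in the order recited in claim 15.
VOWELS = ["iii", "ahh", "ehh", "eee", "ohh", "ooo"]

def vowel_blend(x, offset=0.0, scale=1.0):
    """Map a conditioned sample x (nominally in [0, 1]) to a pair of
    neighboring vowels and a blend weight between them (assumed scheme)."""
    # Clamp the affine-mapped position to [0, 1], then stretch over the table.
    pos = min(1.0, max(0.0, offset + scale * x)) * (len(VOWELS) - 1)
    i = min(int(pos), len(VOWELS) - 2)
    return VOWELS[i], VOWELS[i + 1], pos - i

def synth_params(x, pitch_offset=100.0, pitch_scale=220.0,
                 amp_offset=0.001, amp_scale=1.0,
                 vowel_offset=0.0, vowel_scale=1.0):
    """Derive pitch, amplitude and vowel parameters from one sample.
    Defaults sit inside the claimed ranges but are otherwise arbitrary."""
    return {
        "pitch_hz": pitch_offset + pitch_scale * x,
        "amplitude": amp_offset + amp_scale * x,
        "vowel": vowel_blend(x, vowel_offset, vowel_scale),
    }
```

In this sketch a single conditioned sample drives all three parameters at once, which is one possible reading of using the conditioned signal to modulate sound synthesis parameters.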

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 15/159,759 filed May 19, 2016, which is incorporated herein by reference. U.S. patent application Ser. No. 15/159,759 claims priority from U.S. Provisional Patent Application 62/163,637 filed May 19, 2015, which is incorporated herein by reference.

FIELD OF THE INVENTION

[0002] The disclosed embodiments relate generally to the field of sonifying signals detected from a living subject (e.g., electrical signals indicative of brain activity and/or heart activity), and in particular, to a handheld or wearable device for sonifying signals from a living subject.

BACKGROUND OF THE INVENTION

[0003] The ability to measure signals from a living subject (e.g., relating to the living subject's bodily functions) is beneficial for medical and diagnostic applications. For example, from a diagnostic point of view, measuring brain signals helps to ascertain brain activity related to abnormal brain function, to monitor spatial and/or temporal progression of brain disease, to aid surgical or nonsurgical intervention by localizing disease-sites in the brain, and to monitor brain activity of a healthy subject or a subject of unknown health status when the subject experiences a variety of stimuli and lack of stimuli.

[0004] However, the use of electrical signals received from, for example, the brain (e.g., electroencephalography (EEG) signals) often requires a great deal of resources. Conventional EEG tests are typically performed at specialized centers (e.g., tertiary care centers), by specialized technicians, and the results are interpreted by specialized doctors (e.g., neurologists). Thus, conventional EEG is not typically available to, e.g., first responders in an acute emergency. Instead, the first responders must rely on external signs (e.g., level of consciousness or shaking) when deciding whether a patient may have a neurological problem. Because conventional EEG is beyond the resources of even some hospitals, a patient with suspected neurological problems will often be taken to a specialized center. Even at a specialized center, it may take hours to obtain EEG results and have the results interpreted by a neurologist.

[0005] Every year in the United States alone, about 10 million people are seen in emergency departments (ED) for evaluation of altered mental state (AMS). Additionally, 5 million patients with critical conditions are admitted to intensive care units (ICU). Some of these are admitted through EDs but a majority of the patients are either transferred directly from other hospitals or are cases with postsurgical complications. In these patients, electroencephalography (EEG) is the gold-standard test for detecting seizures. While there are many causes of AMS, seizures are one of the most frequently suspected. About 10-20% of ICU patients are subject to seizures, and 90% of seizures in ICUs are non-convulsive. Where EEG is available, physicians order it to rule in/out ongoing non-convulsive status epilepticus (NCSE). If the diagnosis of NCSE is made quickly, it will precipitate appropriate acute management, and will reduce unnecessary diagnostic procedures, length of hospitalization, and morbidity.

[0006] In the US alone, about 20,000 patients die of NCSE. These patients have other severe co-morbidities but ongoing non-convulsive seizures will be a significant contributing factor to their extremely high mortality rate. In fact, NCSE has a mortality rate higher than the mortality rate of convulsive status epilepticus partly because of lack of obvious behavioral signs of seizures (e.g., convulsions), which delays detection and treatment. EEG is the only way to detect ongoing seizures. Early diagnosis of NCSE is life-saving for these patients and every hour of delay in diagnosis counts. Mortality of patients with NCSE treated with a delay of 20 hours is twice as high as those treated within 30 minutes.

[0007] Because EEG is one of the oldest diagnostic tools in neurology, and because it has shown promise in saving lives, one would assume that it is widely integrated into medical practice everywhere and one might think that it is equally available to all populations at risk. This is unfortunately not the case. Inequality of access exists even in the United States, and at a wider scale on the global stage. Many hospitals in the US cannot offer an EEG platform. In addition to purchasing expensive EEG platforms, a given hospital has to hire certified EEG techs and neurologists with training in clinical electrophysiology and maintain an on-call schedule leading to a substantial management cost. For hospitals without a large number of neurological cases, this is simply not a wise investment. If they have patients with AMS in whom they suspect seizures, the patient is transferred to a larger tertiary hospital. As a result, a large number of patients may be held in NCSE condition before they are transferred.

[0008] In hospitals with EEG capability, acquiring an EEG may take hours. There may also be a longer delay from the time the EEG is acquired to the time the ordering physician receives the diagnostic information. If the EEG tech is not on duty in the hospital premises, it may take even longer. There is also a significant financial waste in using the EEG platform where it is available (especially in the United States). Many more EEGs are ordered and acquired than the number of seizures detected by these EEGs. In a retrospective review of 300 EEGs, only 1% of EEGs acquired in the ED had ongoing seizures, yet more than 95% of EEGs were ordered to confirm or rule out seizures.

[0009] The current EEG practice in the outpatient ambulatory setting also suffers from significant limitations. Patients with paroxysmal events (e.g., transient confusional state or loss of awareness) are referred to 12-48 hours of EEG monitoring at home. The recording may yield useful information only if it captures one of the infrequent paroxysmal events. Moreover, patients with dementia cannot even be tested with continuous EEG for a long period of time given their inability to follow instructions. Patients with dementia have increased risk of subclinical seizures which may in part contribute to worsening of their memory loss. About 3 million people in the United States suffer from unprovoked seizures whose occurrence is unpredictable. When patients with seizure do not recover fully, there is always a concern that they may be having indolent subclinical seizures. Parents of infants and children with such seizures take them for evaluation in local hospitals. By the time they reach the hospital, seizures may have stopped.

[0010] Traditional approaches to measuring signals from a living subject (e.g., location-specific brain signals, or electrocardiography (ECG) signals corresponding to heart activity) typically involve recording and visually displaying electrical signals acquired from the brain or other organs. Moreover, these approaches are typically used for diagnostic or scientific purposes. When represented in visual or graphical form, subtle features and attributes--and subtle changes in features and attributes--of the electrical signals may not be easily discernible. However, when sonified or converted to auditory form, these subtle features and attributes can become more apparent. Furthermore, sonification methodologies that transform the signals acquired from the living subject into vocal patterns and vocal parameters--and changes in vocal patterns and vocal parameters--that resemble a human voice make it easier to discern, upon auditory inspection, subtleties in the underlying electrical signals that correspond to bodily functions.

[0011] Additionally, traditional approaches to measuring signals from a living subject have not focused on applications beyond diagnostics and scientific research. To that end, a method of sonifying signals obtained from a living subject is provided. In particular, in some embodiments, the method transforms signals acquired from the living subject into vocal patterns and vocal parameters that can be used for applications in entertainment as well as medical diagnostics and research.

[0012] Therefore, there is a need for devices and methods that increase the availability of the diagnostic benefits of analyzing electrical signals received from a living subject's brain. In addition, traditional methods of measuring and analyzing signals from a living subject have not focused on sonification (e.g., aural presentation) of the signals.

SUMMARY OF THE INVENTION

[0013] Systems and methods for sonification of electroencephalogram signals using sonification devices in accordance with embodiments of the invention are disclosed herein. In one embodiment, a device for sonifying signals includes an input port configured to receive at least one electroencephalogram signal produced by a plurality of electrodes, where the electroencephalogram signals are indicative of brain activity; an analog-to-digital converter to digitize the at least one electroencephalogram signal; an accelerometer to indicate mechanical movement affecting the at least one electroencephalogram signal; a filter, where the filter is configured to filter non-seizure-related brain wave features from the at least one digitized signal; and a processor that receives the at least one digitized signal and is directed by machine readable instructions to produce a sonification of the at least one electroencephalogram signal by performing a set of operations in real time. The set of operations includes conditioning the at least one digitized signal according to conditioning parameters, and modulating the at least one digitized signal according to sound synthesis parameters to produce an audio signal. The device for sonifying signals further includes a speaker system configured to generate sound based upon an audio signal output by the processor. The processor of the device interrupts sonification of the at least one electroencephalogram signal when the accelerometer indicates mechanical movement affecting the at least one electroencephalogram signal above a particular threshold. The processor of the device resumes sonification of the at least one electroencephalogram signal when the accelerometer indicates mechanical movement affecting the at least one electroencephalogram signal has returned below the particular threshold.

[0014] In a further embodiment, the filter filters the at least one digitized signal utilizing filter bandpass cutoffs as part of a dual-stage filter. In another embodiment, at least one of DC-bias, AC line contamination, and non-seizure-related brain wave features are rejected by the filter bandpass cutoffs. In a still further embodiment, the dual-stage filter comprises a first stage with a first-order pole-zero infinite impulse response DC-blocking filter and a second stage with a finite impulse response filter.
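A dual-stage filter of the kind described above can be sketched as follows. This is a minimal illustration under stated assumptions and not the tuned filter of any embodiment: the pole radius `r`, the 250 Hz sample rate used in the usage note, the 3 Hz and 12 Hz cutoffs (chosen from within the claimed 0.1-3.0 Hz and 5.0-15.0 Hz ranges), the tap count, and the Hamming-windowed sinc design for the finite impulse response stage are all illustrative choices, and every function name is hypothetical.

```python
import math

def dc_block(x, r=0.995):
    """Stage 1: first-order pole-zero IIR, y[n] = x[n] - x[n-1] + r*y[n-1]."""
    y, prev_x, prev_y = [], 0.0, 0.0
    for s in x:
        prev_y = s - prev_x + r * prev_y
        prev_x = s
        y.append(prev_y)
    return y

def _ideal_lowpass(k, fc, fs):
    """Ideal low-pass impulse response at offset k from the kernel centre."""
    w = 2.0 * fc / fs  # cutoff as a fraction of the Nyquist frequency
    return w if k == 0 else math.sin(math.pi * w * k) / (math.pi * k)

def bandpass_taps(low_hz, high_hz, fs, num_taps=251):
    """Stage 2 coefficients: Hamming-windowed difference of two low-pass kernels."""
    mid = (num_taps - 1) / 2
    taps = []
    for n in range(num_taps):
        window = 0.54 - 0.46 * math.cos(2.0 * math.pi * n / (num_taps - 1))
        ideal = (_ideal_lowpass(n - mid, high_hz, fs)
                 - _ideal_lowpass(n - mid, low_hz, fs))
        taps.append(ideal * window)
    return taps

def fir(x, taps):
    """Direct-form FIR convolution, assuming zero input before the first sample."""
    return [sum(t * x[n - j] for j, t in enumerate(taps) if n - j >= 0)
            for n in range(len(x))]

def dual_stage_filter(x, fs, low_hz=3.0, high_hz=12.0):
    """Apply the DC blocker, then the band-pass FIR stage."""
    return fir(dc_block(x), bandpass_taps(low_hz, high_hz, fs))
```

For example, `dual_stage_filter(samples, 250.0)` would pass rhythms of a few hertz while strongly attenuating both a constant electrode offset and 60 Hz line interference. With so short a kernel, a cutoff near the bottom of the claimed low range (for example 0.1 Hz) would be poorly resolved, so a practical design would likely use more taps or a lower sample rate.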

[0015] In still another embodiment, conditioning the at least one digitized signal brings the at least one digitized signal into range for sonification and enhances contrasts within the at least one digitized signal. In a yet further embodiment, modulating the at least one digitized signal according to sound synthesis parameters further includes continuously modulating vocal sound parameters according to sound synthesis parameters including at least one of pitch, loudness and vowel quality. In a further embodiment again, the processor sonifies the representation of the at least one electroencephalogram signal using at least one lookup table that consolidates formant pitch mapping and inverse pitch frequency mapping.

[0016] In another embodiment again, a device for sonifying signals includes an input port configured to receive at least one electroencephalogram signal produced by a plurality of electrodes, where the electroencephalogram signals are indicative of brain activity, an analog-to-digital converter to digitize the at least one electroencephalogram signal, an accelerometer to indicate mechanical movement affecting the at least one electroencephalogram signal, a filter, where the filter is configured to filter non-seizure-related brain wave features from the at least one digitized signal, and a processor that receives the at least one digitized signal and is directed by machine readable instructions to produce a sonification of the at least one electroencephalogram signal by performing a set of operations in real time. The set of operations includes conditioning the at least one digitized signal by bringing the at least one digitized signal into a predetermined range for sonification and enhancing contrast between components within the at least one digitized signal, and modulating the at least one digitized signal by continuously modulating vocal sound parameters according to sound synthesis parameters including at least one of pitch, loudness and vowel quality to produce an audio signal. The device includes a speaker system configured to generate sound based upon an audio signal output by the processor. The processor interrupts sonification of the at least one electroencephalogram signal when the accelerometer indicates mechanical movement affecting the at least one electroencephalogram signal above a particular threshold. The processor resumes sonification of the at least one electroencephalogram signal when the accelerometer indicates mechanical movement affecting the at least one electroencephalogram signal has returned below the particular threshold.

[0017] In another embodiment again, a method for sonifying signals using a sonification device is provided. The method includes receiving at least one electroencephalogram signal produced by a plurality of electrodes using an input port of a sonification device, where the electroencephalogram signals are indicative of brain activity, digitizing the at least one electroencephalogram signal using an analog-to-digital converter of the sonification device, receiving an indication of mechanical movement affecting the at least one electroencephalogram signal using an accelerometer of the sonification device, filtering non-seizure-related brain wave features from the at least one digitized signal using a filter of the sonification device, and producing a sonification of the at least one electroencephalogram signal by performing a set of operations in real time using a processor of the sonification device. The set of operations includes conditioning the at least one digitized signal according to conditioning parameters, and modulating the at least one digitized signal according to sound synthesis parameters to produce an audio signal. The method further includes generating sound based upon an audio signal output using a speaker system of the sonification device, interrupting sonification of the at least one electroencephalogram signal when the accelerometer indicates mechanical movement affecting the at least one electroencephalogram signal above a particular threshold, and resuming sonification of the at least one electroencephalogram signal when the accelerometer indicates mechanical movement affecting the at least one electroencephalogram signal has returned below the particular threshold.
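The interrupt-and-resume behavior described above can be illustrated with the following minimal sketch. It assumes that the audio is processed in blocks, that one scalar motion level per block is available from the accelerometer, and that an interruption is rendered as silence; none of these details is specified above, and the name `gate_sonification` is hypothetical.

```python
def gate_sonification(audio_blocks, motion_levels, threshold):
    """Yield audio blocks, substituting silence while motion exceeds threshold."""
    for block, motion in zip(audio_blocks, motion_levels):
        if motion > threshold:
            # Movement above the threshold: interrupt sonification.
            yield [0.0] * len(block)
        else:
            # Movement back at or below the threshold: resume sonification.
            yield block
```

Because the decision is made independently for each block, sonification resumes on the first block whose motion level has returned below the threshold.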

[0018] In another additional embodiment, the filter of the sonification device filters the at least one digitized signal utilizing filter bandpass cutoffs as part of a dual-stage filter. In a still yet further embodiment, at least one of DC-bias, AC line contamination, and non-seizure-related brain wave features are rejected by the filter bandpass cutoffs. In still yet another embodiment, the dual-stage filter comprises a first stage with a first-order pole-zero infinite impulse response DC-blocking filter and a second stage with a finite impulse response filter.

[0019] In a still further embodiment again, conditioning the at least one digitized signal brings the at least one digitized signal into range for sonification and enhances contrasts within the at least one digitized signal. In still another embodiment again, modulating the at least one digitized signal according to sound synthesis parameters further comprises continuously modulating vocal sound parameters according to sound synthesis parameters including at least one of pitch, loudness and vowel quality. In a still further additional embodiment, the processor sonifies the representation of the at least one electroencephalogram signal using at least one lookup table that consolidates formant pitch mapping and inverse pitch frequency mapping.

BRIEF DESCRIPTION OF THE DRAWINGS

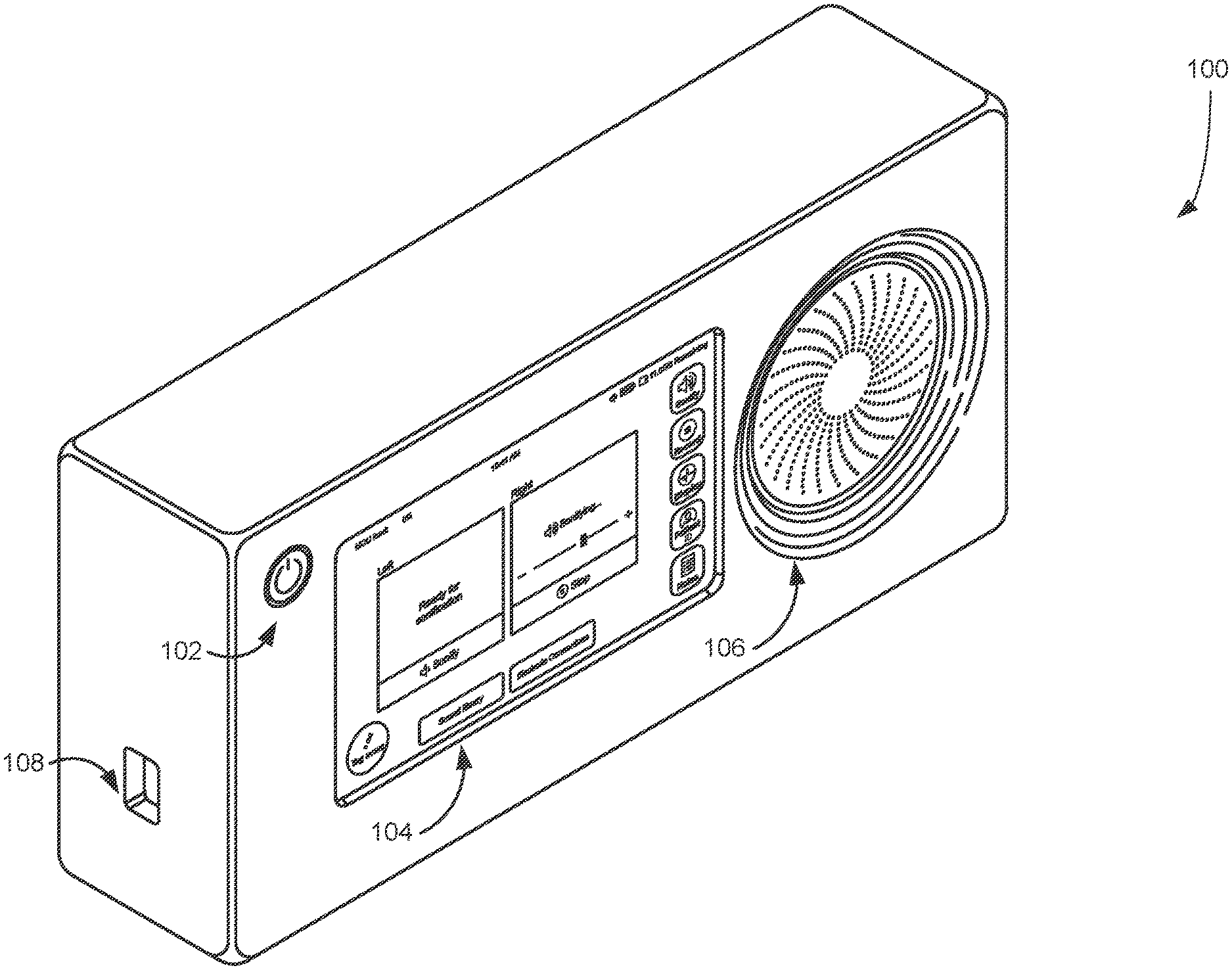

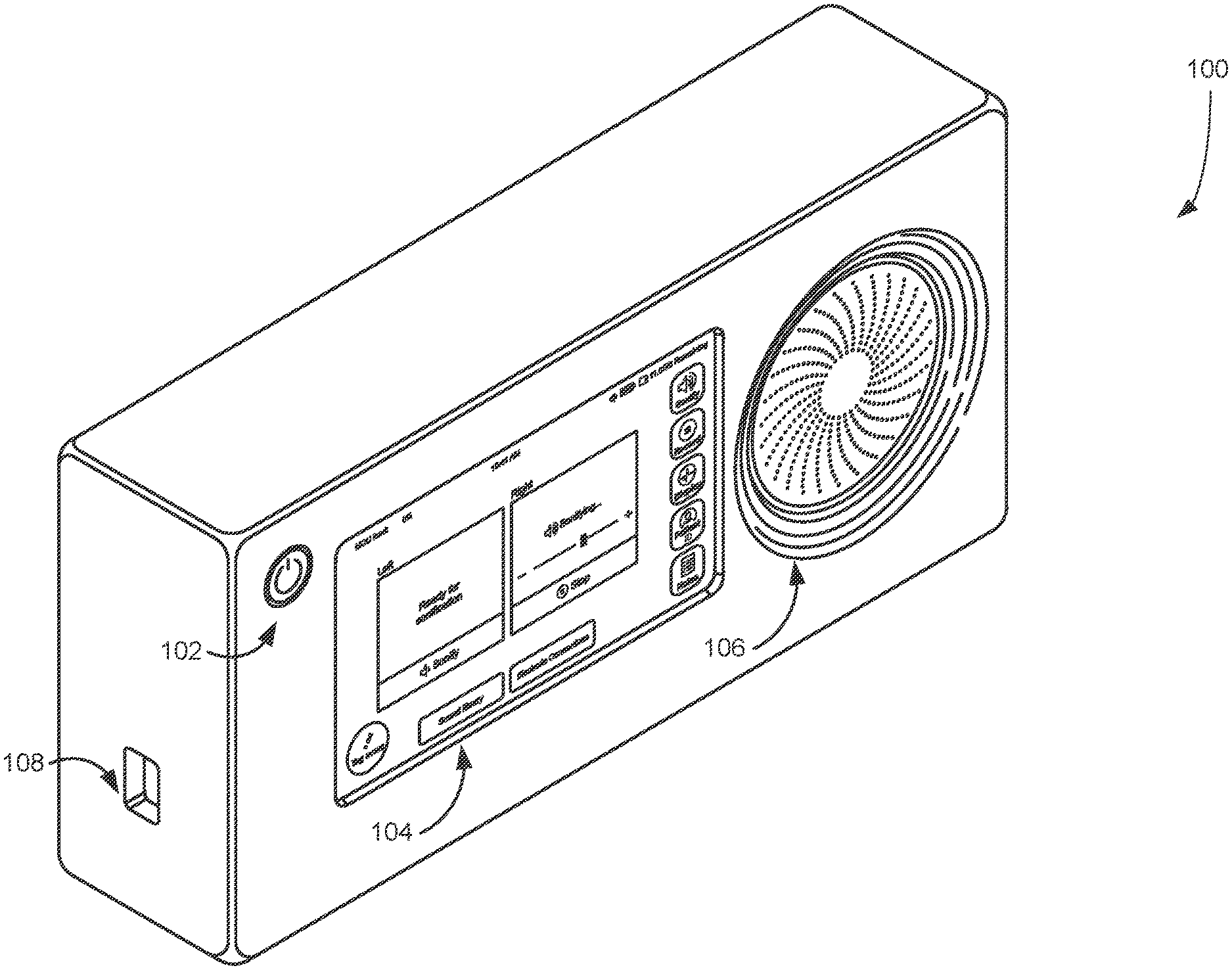

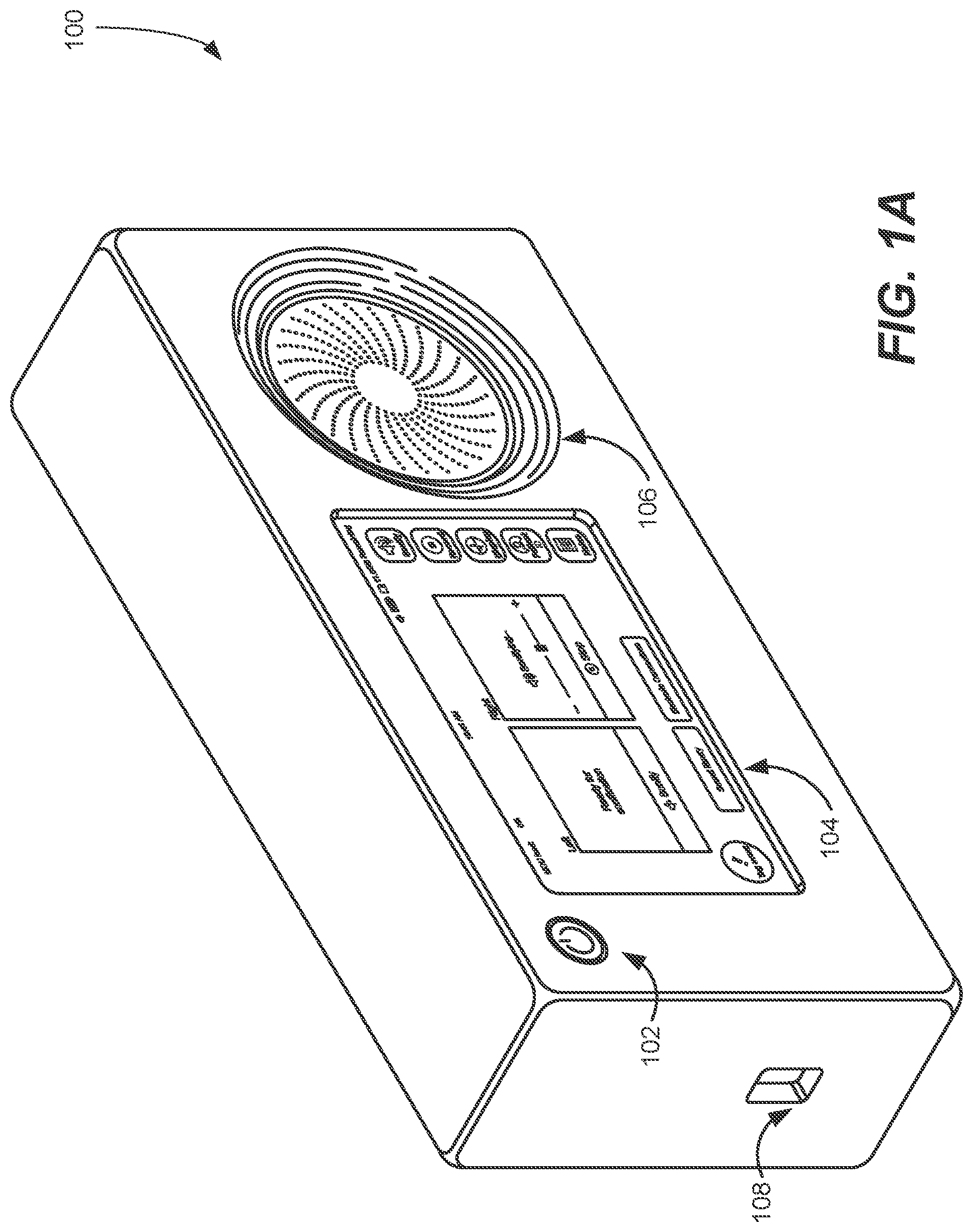

[0020] FIGS. 1A-1B are illustrations of a handheld device for sonifying electrical signals obtained from a subject, in accordance with some embodiments of the invention.

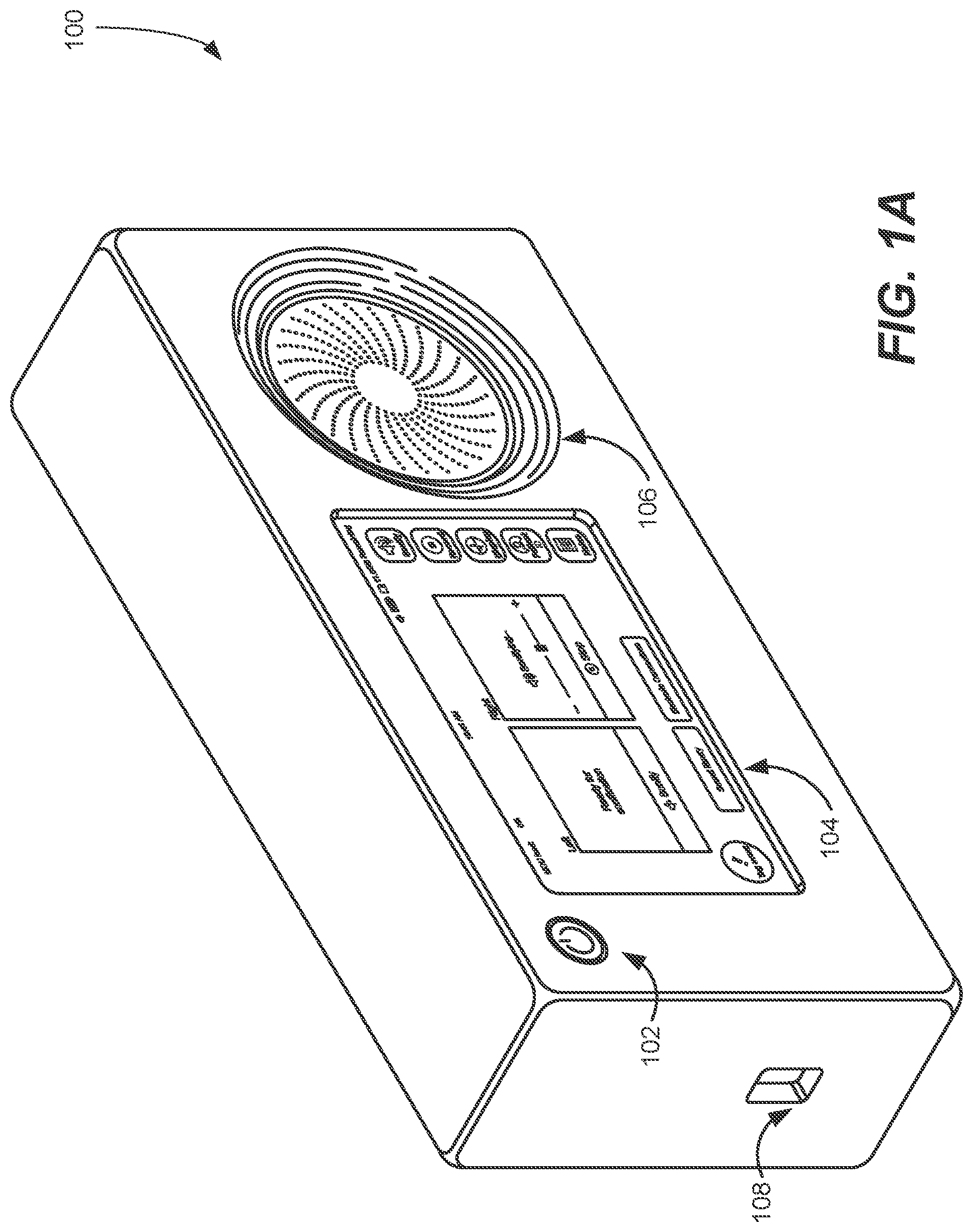

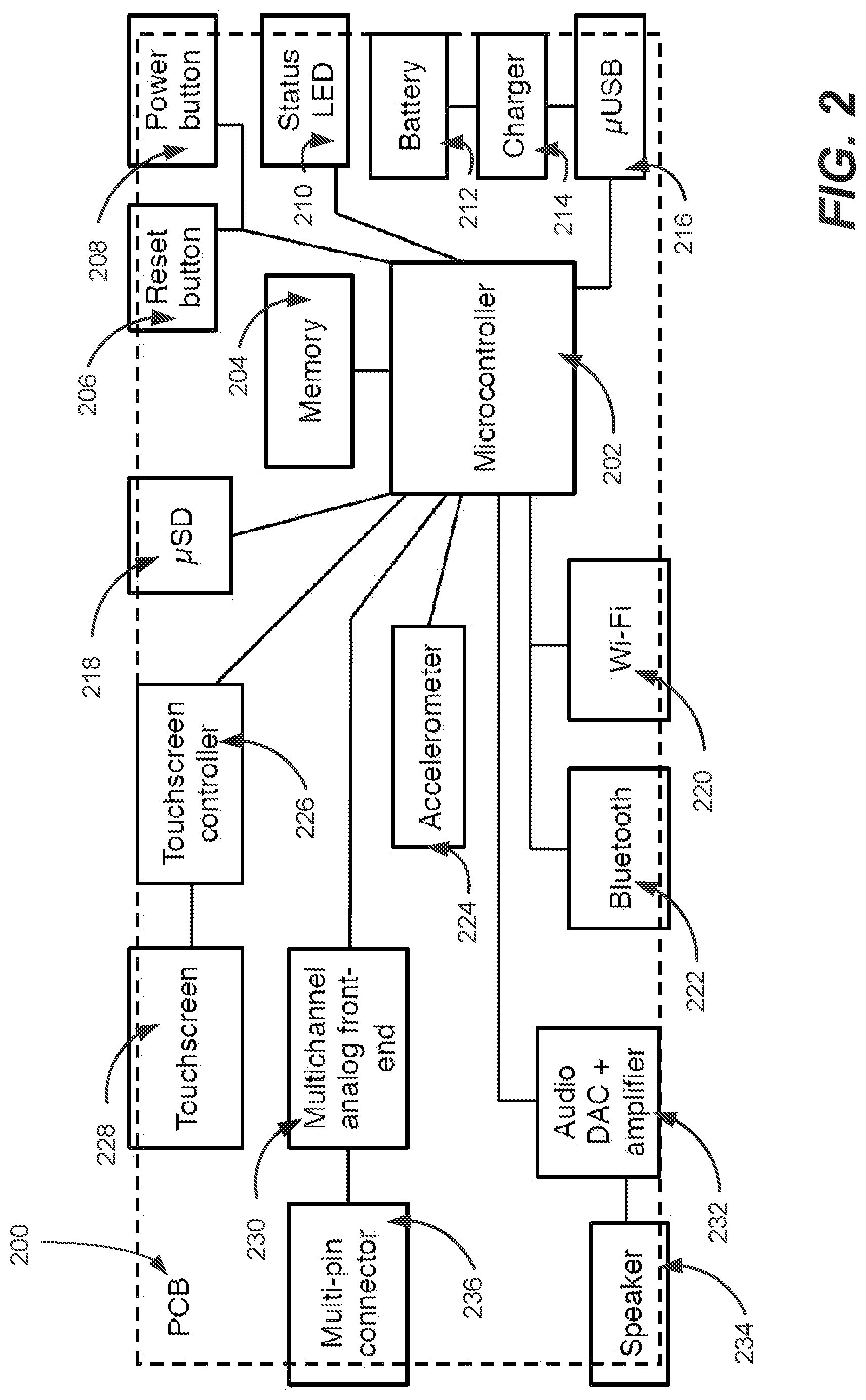

[0021] FIG. 2 is a circuit diagram for a sonification device, in accordance with some embodiments of the invention.

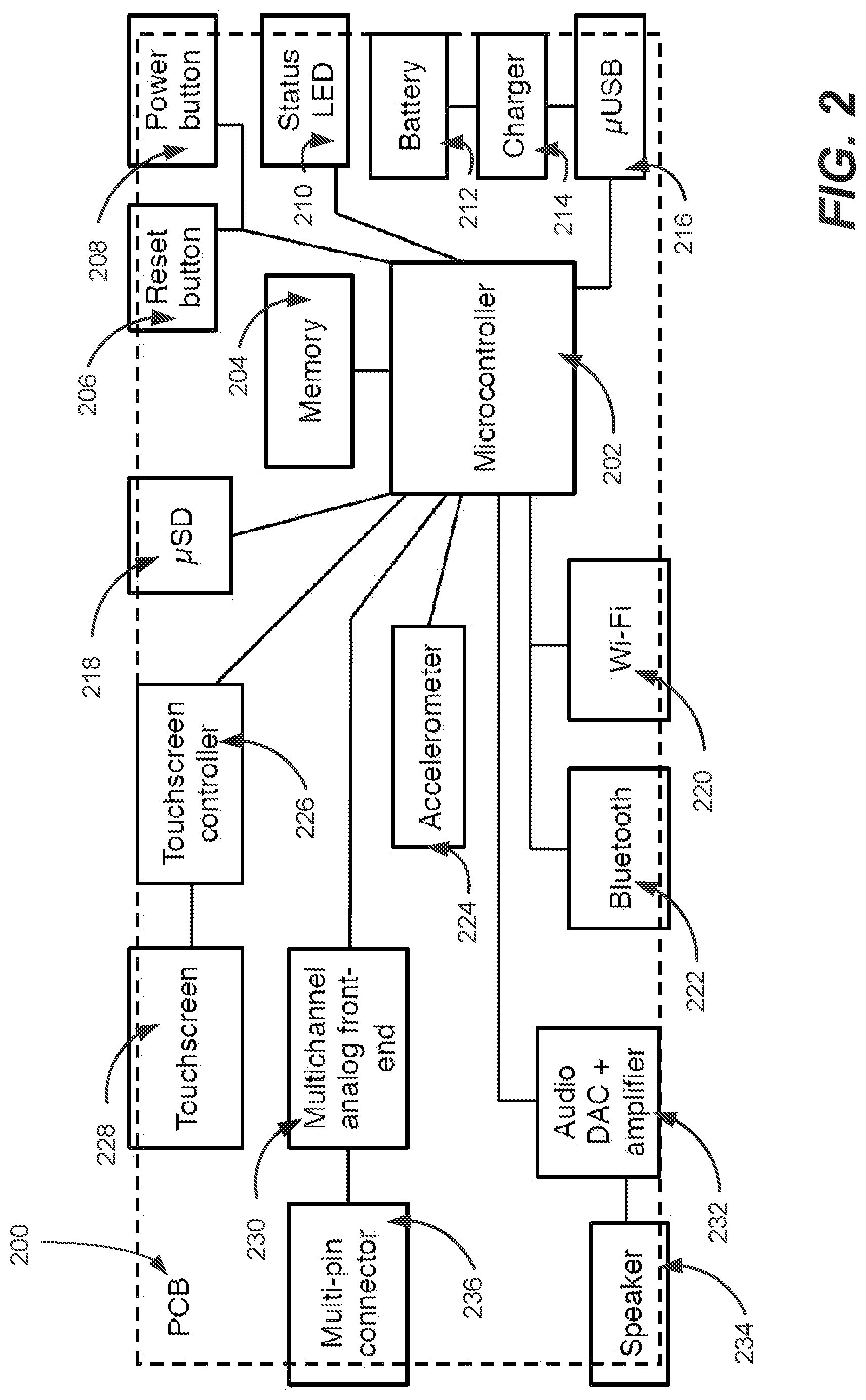

[0022] FIG. 3 is a flow chart illustrating a process for sonifying signals capable of being performed by a sonification device in accordance with some embodiments of the invention.

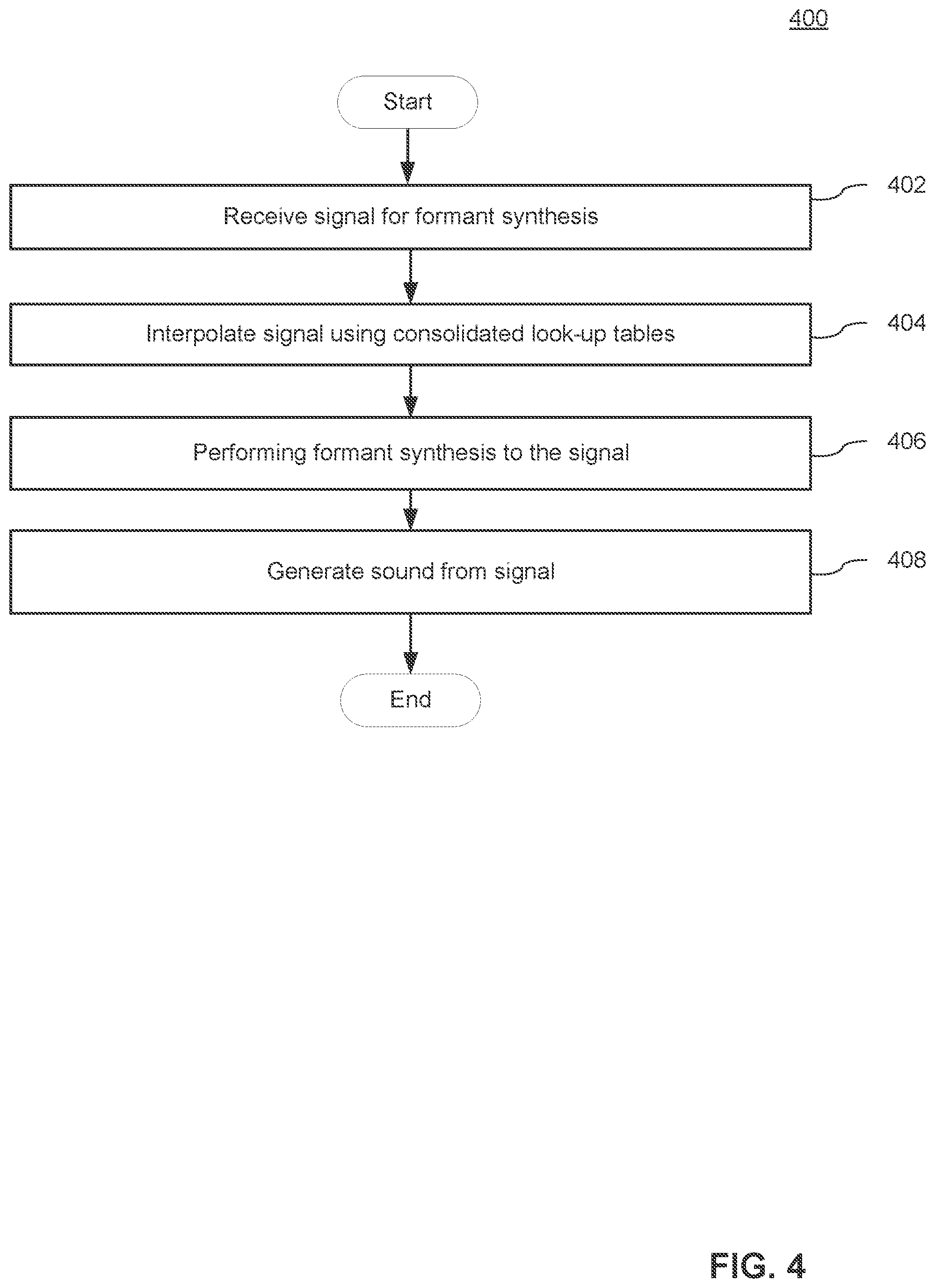

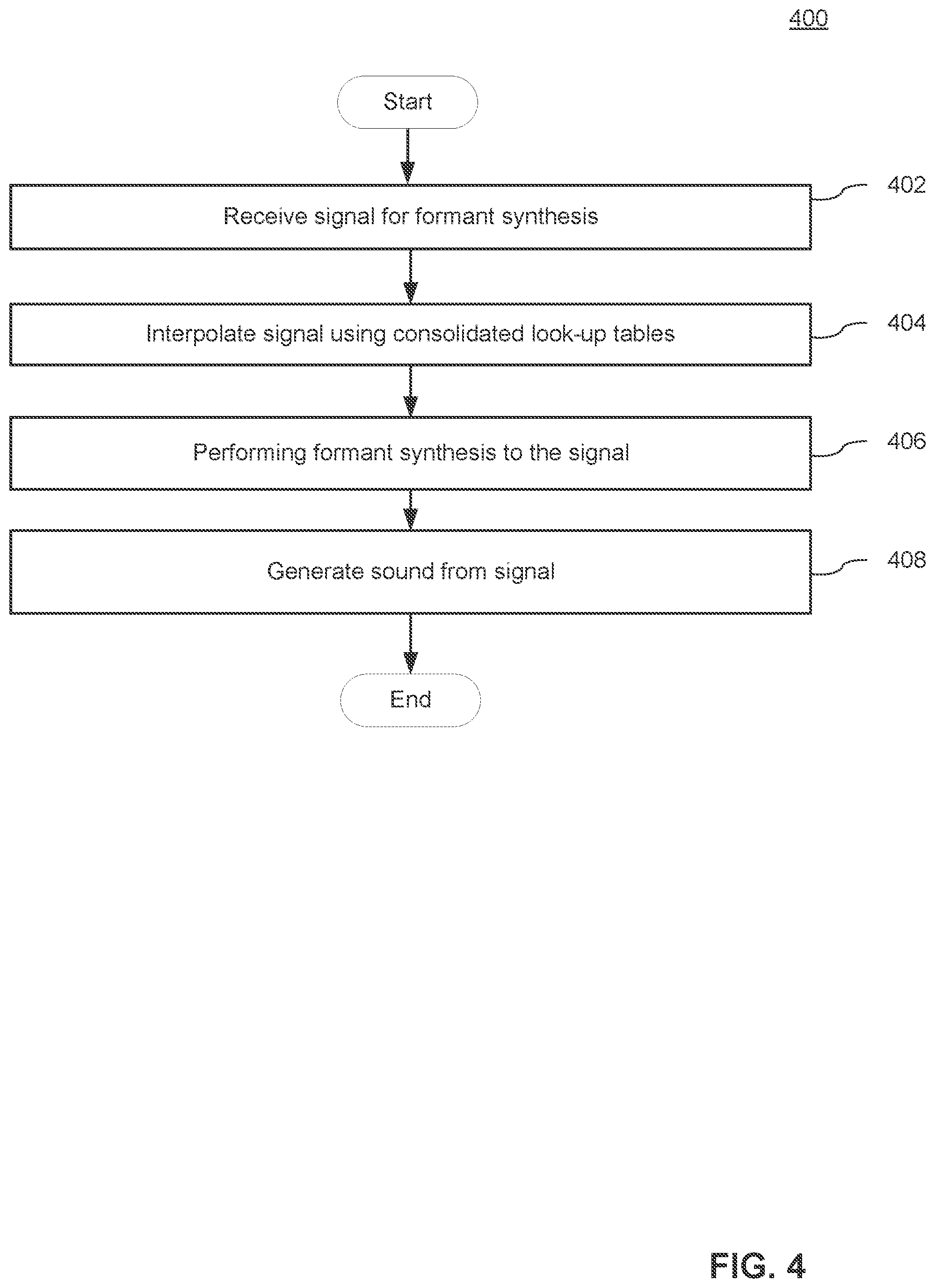

[0023] FIG. 4 is a flow chart illustrating a process for sonifying signals that takes advantage of certain hardware optimizations in accordance with some embodiments of the invention.

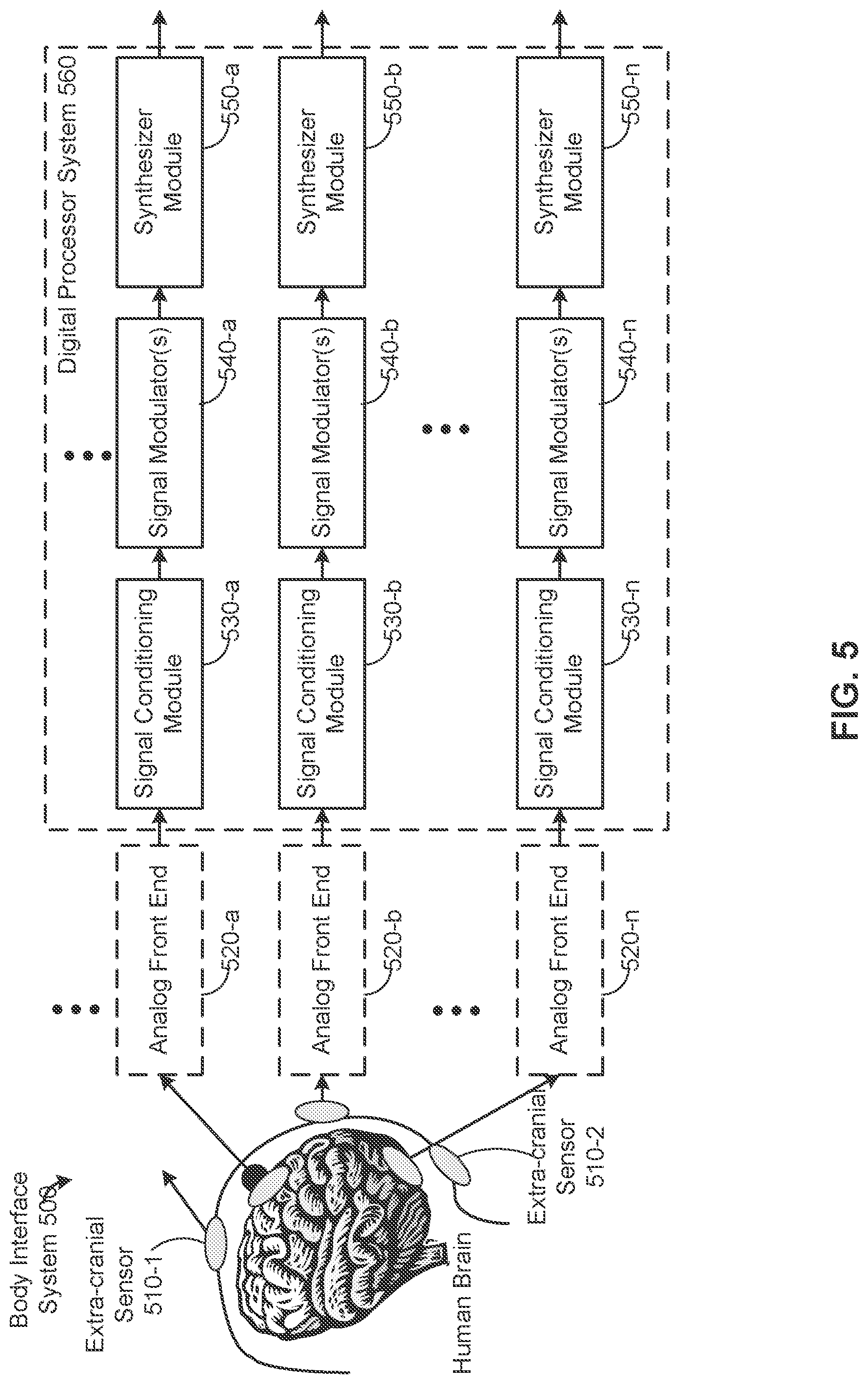

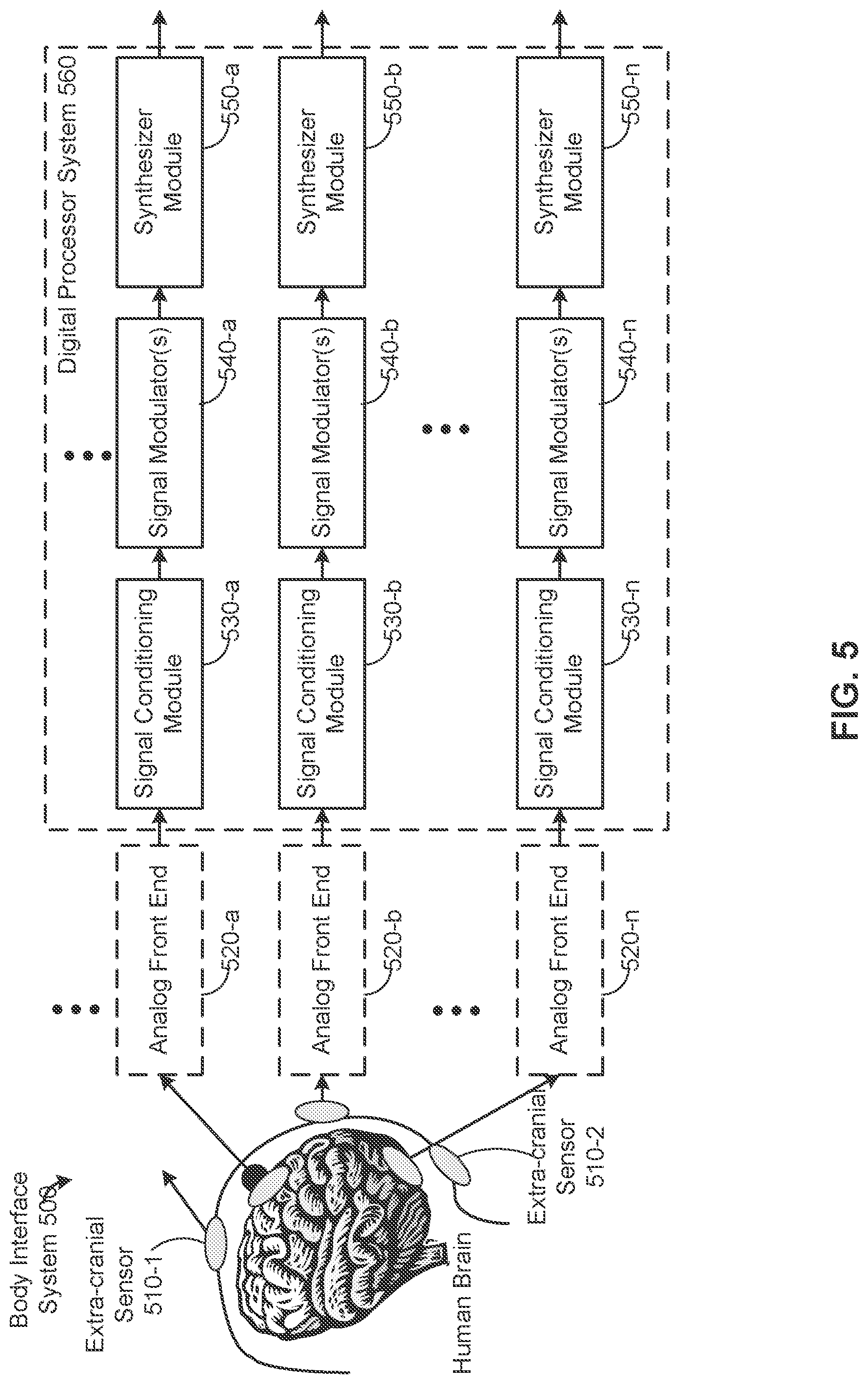

[0024] FIG. 5 illustrates a body interface system for acquiring and processing signals from a living subject, in accordance with some embodiments of the invention.

[0025] FIG. 6A is a block diagram illustrating an analog front end used for pre-processing electrical signals obtained from a living subject, in accordance with some embodiments of the invention.

[0026] FIG. 6B is a block diagram illustrating a signal conditioning module used for processing electrical signals obtained from a living subject, in accordance with some embodiments of the invention.

[0027] FIG. 6C is a block diagram illustrating signal modulators and a synthesizer module used for processing electrical time-domain signals obtained from a living subject to produce a representation of an acoustic signal, in accordance with some embodiments of the invention.

[0028] FIG. 7A is a block diagram illustrating a digital processor used for processing signals representing bodily functions, in accordance with some embodiments of the invention.

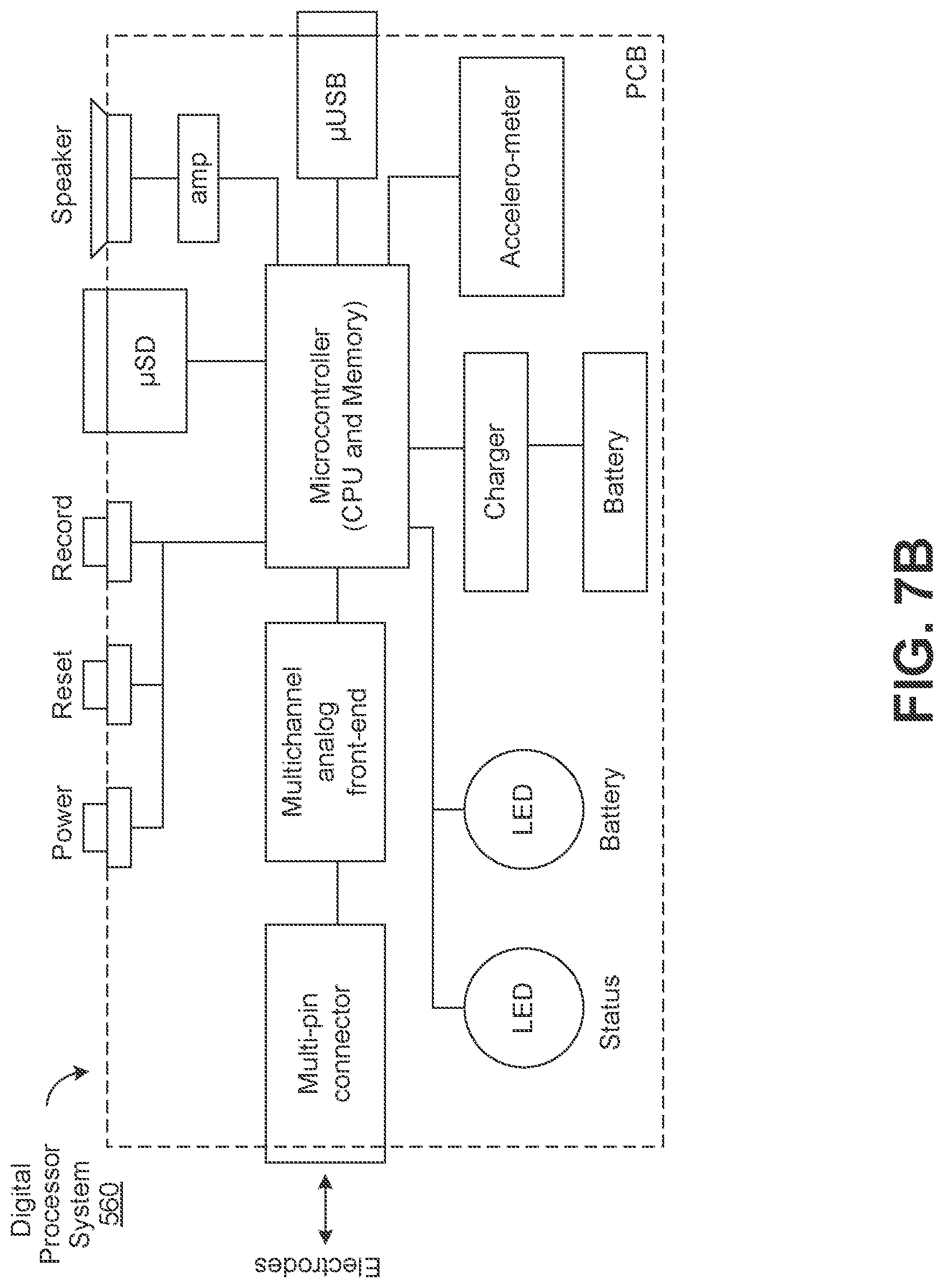

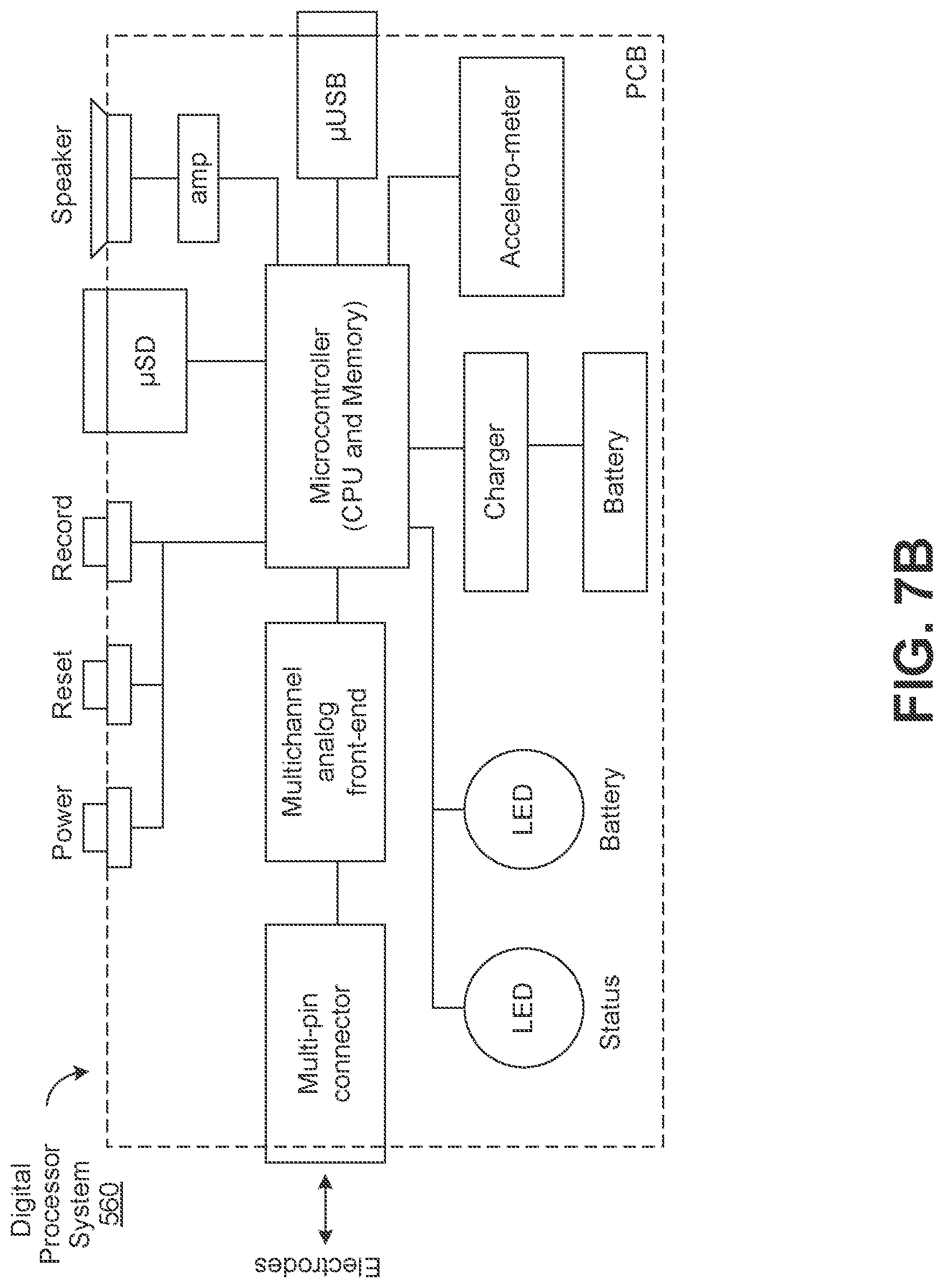

[0029] FIG. 7B is a schematic diagram of circuitry in a portable, pocket-sized handheld device for sonifying electrical signals, in accordance with some embodiments of the invention.

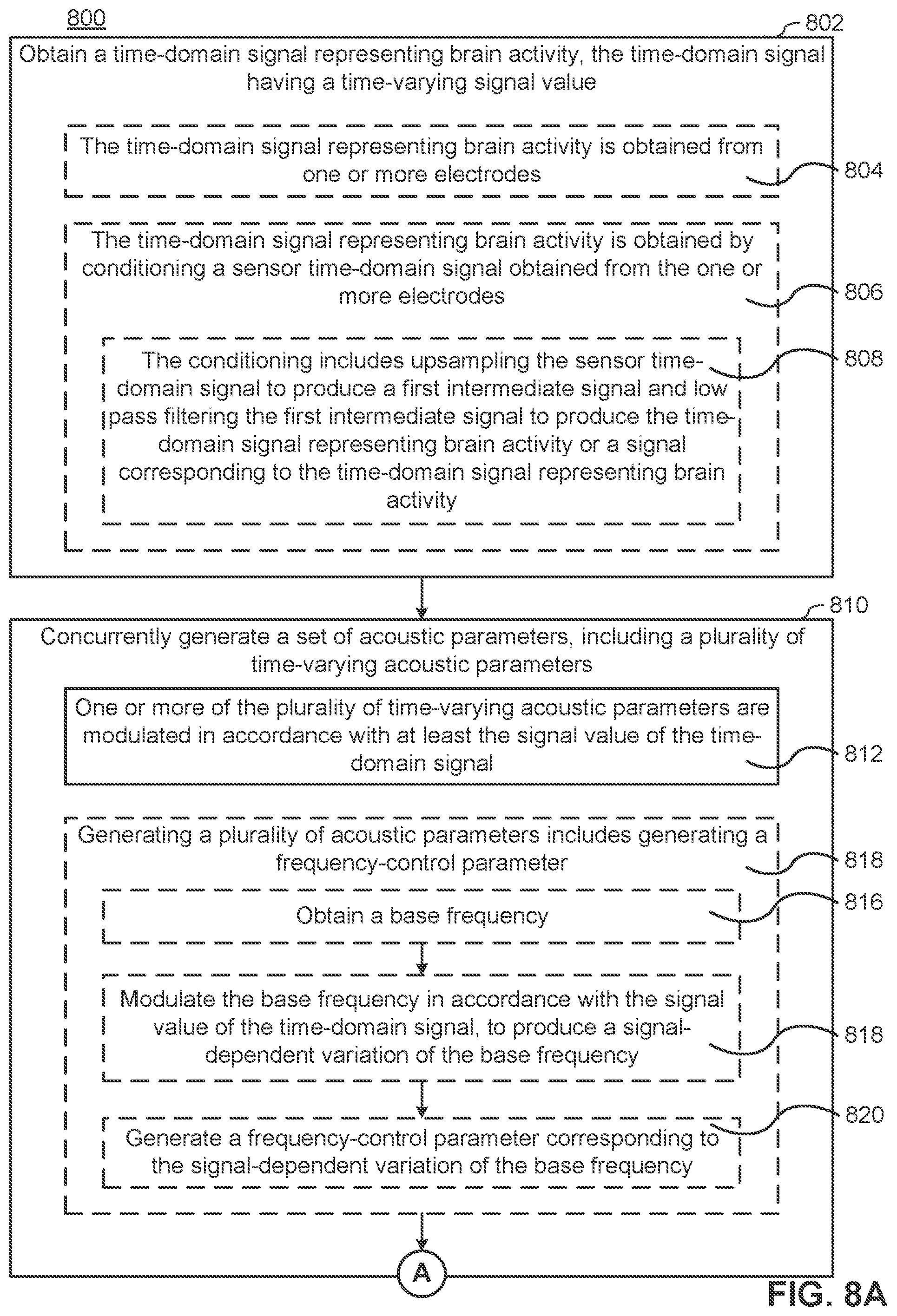

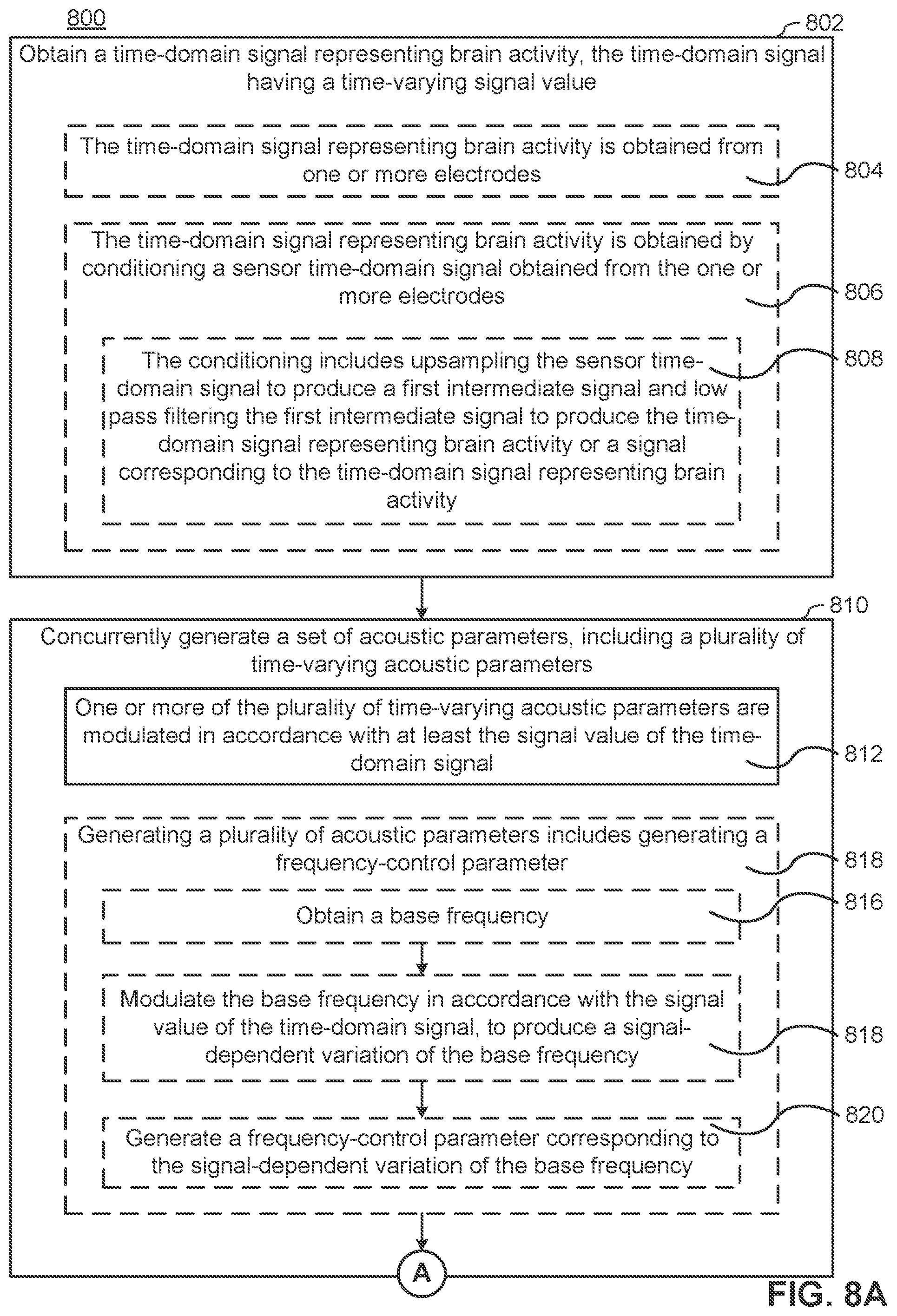

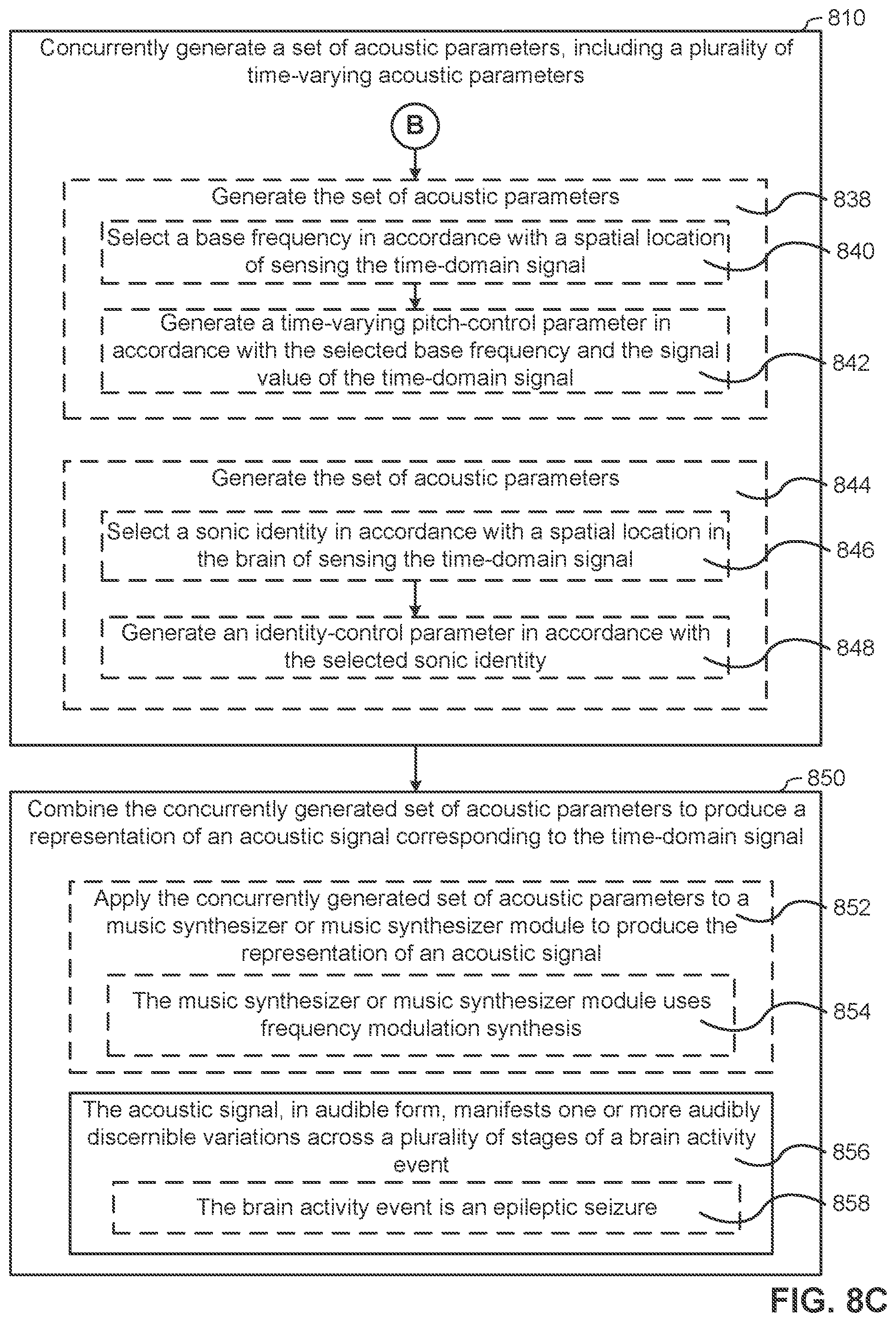

[0030] FIGS. 8A-8C include a flow chart illustrating a method for sonifying brain electrical activity, in accordance with some embodiments of the invention.

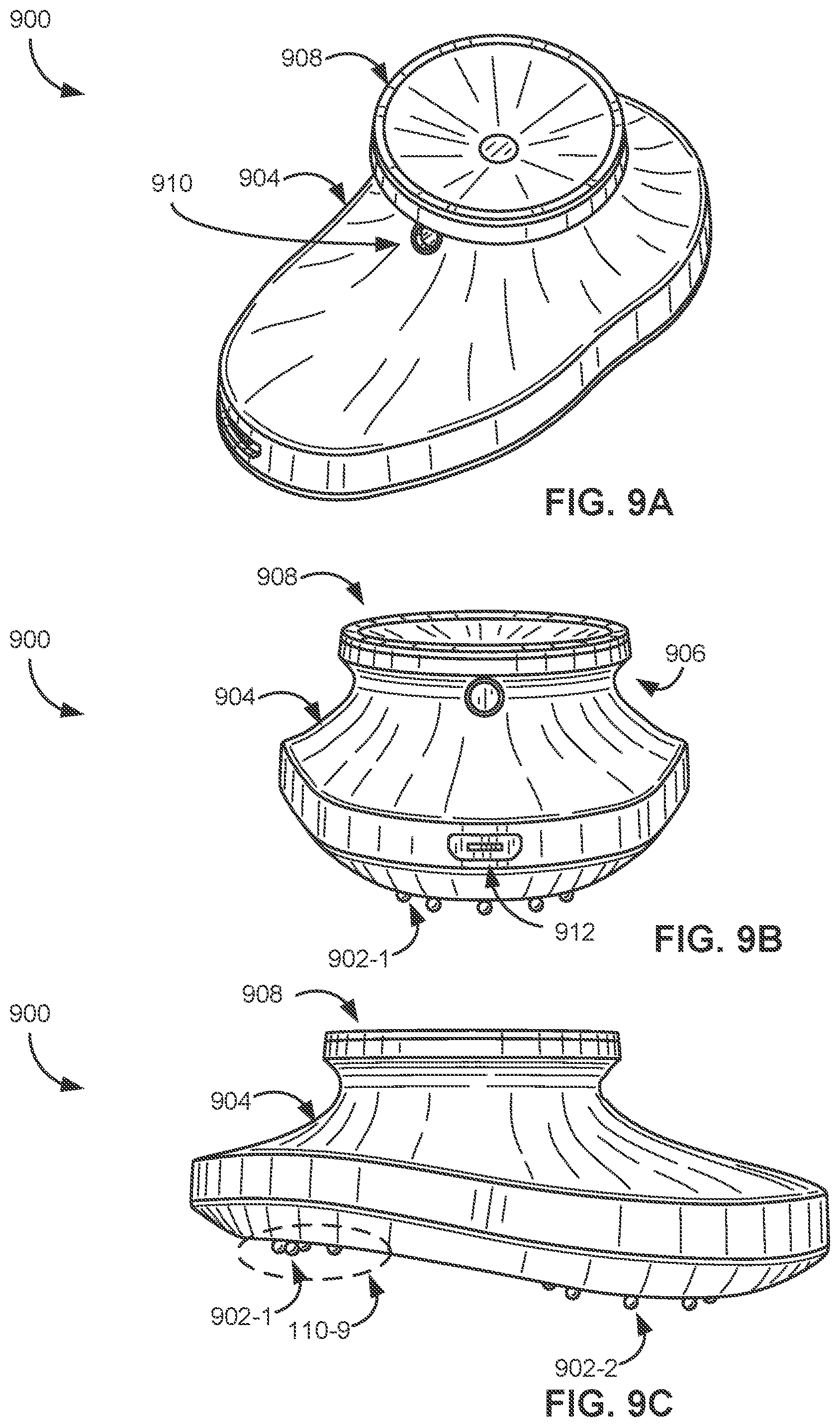

[0031] FIGS. 9A-9C are illustrations from a variety of perspectives of a handheld device for sonifying electrical signals obtained from a subject, in accordance with some embodiments of the invention.

[0032] FIGS. 10A-10B are illustrations of another handheld device for sonifying electrical signals obtained from a subject, in accordance with some embodiments of the invention.

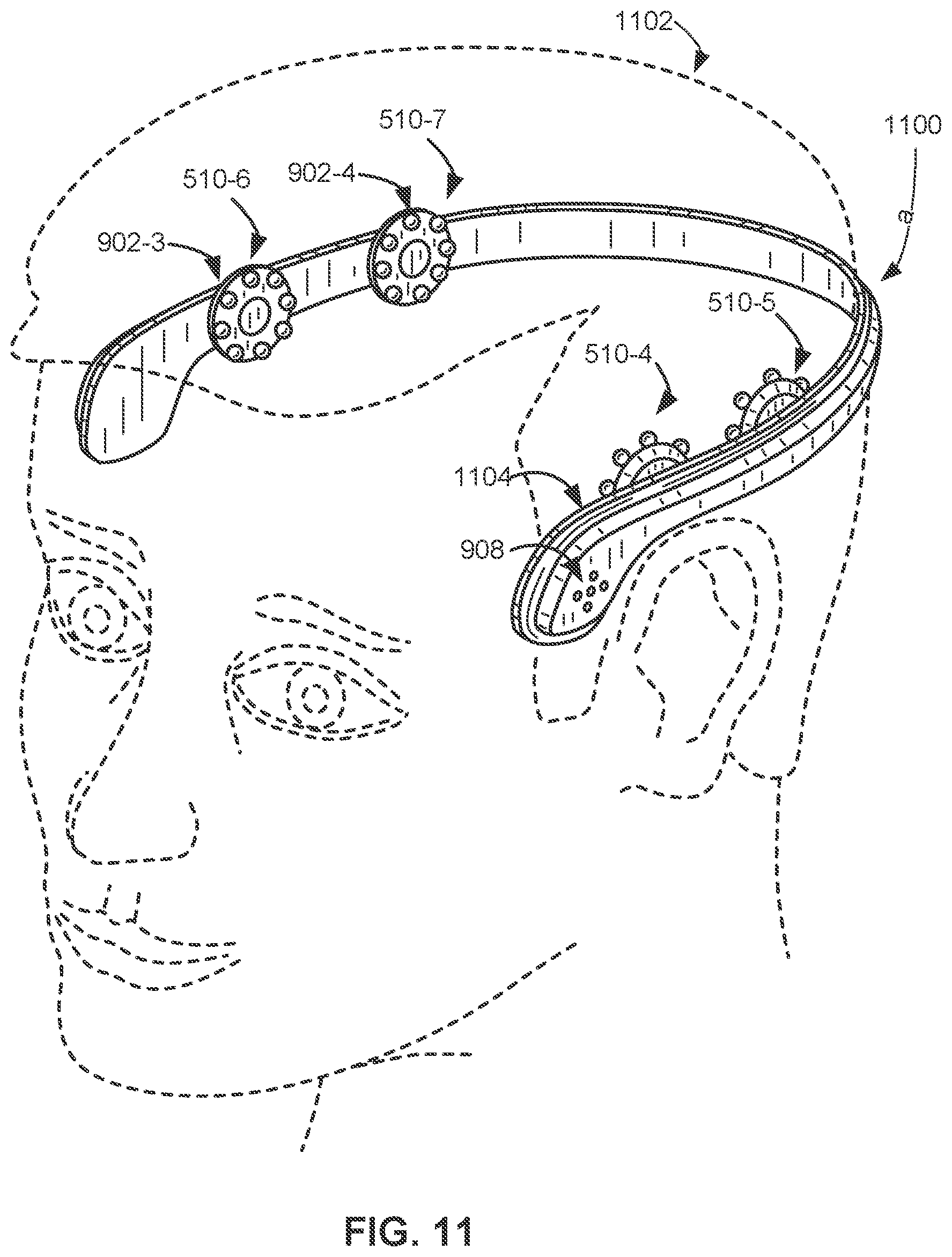

[0033] FIG. 11 is an illustration of a wearable device for sonifying electrical signals obtained from a subject, in accordance with some embodiments of the invention.

[0034] FIG. 12 provides several tables showing experimental results in accordance with some embodiments of the invention.

[0035] FIG. 13 is a computer system diagram in accordance with embodiments of the invention.

[0036] Like reference numerals refer to corresponding parts throughout the drawings.

DETAILED DESCRIPTION

[0037] Turning now to the drawings, handheld sonification devices that sonify signals (e.g., EEG signals received from electrodes on a living subject) in real time to audio signals for instant diagnostic evaluation in accordance with various embodiments of the invention are illustrated. The sonified signals help listeners differentiate seizures from non-seizures. Previous experimentation has shown accuracy ranging from greater than 85% to 97% by even lay individuals in differentiating seizures from non-seizures when utilizing sonification devices in accordance with some embodiments of the invention.

[0038] The sonification devices can include embedded software and/or hardware instructions that direct the sonification devices to perform a number of operations during sonification. Sonification devices in accordance with many embodiments utilize a unique combination of three core operations to produce human-comprehensible sound that indicates the presence or absence of seizure symptoms from electrical signals. The produced sounds have human-recognizable vowel and tonal sound. These three core operations can include signal conditioning, parameter modulation, and sound generation. Moreover, a multitude of embodiments of particular importance expand upon these three core operations and can optionally further include new schemes for digitization of the signal, adjustments to the signal based on accelerometer input, and further techniques for filtration of the signal.

[0039] Signal conditioning can be an initial stage in some embodiments, which acquires raw sensor data before analyzing it. During signal conditioning, many embodiments process incoming real-time signals (e.g., EEG signals gathered from electrodes placed on a living subject) to bring the signals into range for sonification and enhance contrasts. Signal conditioning can also include filtering out less important frequencies and DC-bias (i.e., the mean value of the waveform of the signal). In a number of embodiments, a combination of different filters, compressor processes, and upsampling processes are utilized as appropriate to the requirements of different sonification applications. Moreover, sonification devices in accordance with many embodiments of the invention utilize particular coefficient tunings for filtering, scaling and thresholds determined through exhaustive testing to measure changes in ranges of these values against the specificity and sensitivity of listeners' ability to distinguish seizure from non-seizure states.
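One way to picture the conditioning stage is the following minimal sketch, which follows the three steps recited in claim 1 (rejecting components below a threshold, scaling the remainder to a fixed range, and compressing that range). The absolute-value rectification, the per-block peak normalization, the power-law form of the compressor, and the default values are assumptions made for illustration; they are not the coefficient tunings referred to above, and `condition` is a hypothetical name.

```python
def condition(samples, threshold=0.05, compression=2.0):
    """Gate, rescale and compress one block of filtered samples.

    threshold and compression are illustrative values only.
    """
    # Rectify so amplitudes are compared regardless of sign (assumed step).
    rectified = [abs(s) for s in samples]
    peak = max(rectified, default=0.0)
    if peak <= threshold:
        # Nothing in this block rises above the threshold.
        return [0.0] * len(rectified)
    out = []
    for r in rectified:
        if r < threshold:
            out.append(0.0)  # (a) reject components below the threshold
            continue
        fixed = (r - threshold) / (peak - threshold)  # (b) scale to [0, 1]
        out.append(fixed ** (1.0 / compression))      # (c) compress
    return out
```

With `compression=2.0`, a mid-sized value such as 0.47 in the fixed range is lifted to roughly 0.69 while the peak stays at 1.0, which is the sense in which compression raises the prominence of small features.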

[0040] Parameter modulation uses the conditioned signal to continuously modulate vocal sound parameters according to matrices of sound synthesis parameters such as (but not limited to) pitch, loudness and vowel (timbral) quality. Parameter modulation can further include audification. Audification usually is thought to refer to time-base manipulation such as speeding up or slowing down playback rate so that inaudible low-frequency or high-frequency signals are shifted into our range of hearing. With this technique a wide range of time series data becomes audible (from slow seismic to ultra-fast physics). Previous audification methods rely on time-base shifting (e.g., by compressing the EEG signals approximately 60 times) whereas various embodiments of the invention can utilize direct listening to low-frequency without distorting the temporal information.
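The arithmetic behind time-base shifting can be made concrete with a short sketch. The 3 Hz rhythm and 60 second recording in the usage note are illustrative figures, not values taken from this disclosure, and `audify` is a hypothetical helper.

```python
def audify(freq_hz, duration_s, speedup=60.0):
    """Return (perceived frequency, playback duration) after time compression."""
    # Playing a recording `speedup` times faster multiplies every frequency
    # by that factor and divides the elapsed time by the same factor.
    return freq_hz * speedup, duration_s / speedup
```

For instance, `audify(3.0, 60.0)` gives `(180.0, 1.0)`: a 3 Hz rhythm becomes an audible 180 Hz tone, but a minute of recording is heard in a single second, which is the temporal distortion that direct listening avoids.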

[0041] By comparison to audification, "sonification" typically refers to translation of data to sound through manipulation of parameters in some sound-producing instrument. For example, a piano can be played by quarterly gross domestic product (GDP) values. Translation of these values into a sequence of piano notes can be accomplished by mapping the GDP range to a desired range of pitches. In several embodiments, the range of the EEG signal is mapped to a pitch range but without distorting the EEG signal by directly applying it as a pitch modulation. Some embodiments further map the same signal to loudness and vowel parameters, in parallel.
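Mapping a data range onto a pitch range can be sketched as follows, using the standard equal-temperament MIDI-to-frequency relation (note 69 = 440 Hz). The linear mapping, the clamping at the ends of the range, and the default note range of 45 to 69 (110 Hz to 440 Hz) are assumptions chosen for illustration, and `map_to_pitch` is a hypothetical name.

```python
def midi_to_frequency(note):
    """Equal-temperament conversion: MIDI note 69 corresponds to 440 Hz."""
    return 440.0 * 2.0 ** ((note - 69.0) / 12.0)

def map_to_pitch(value, lo, hi, note_lo=45.0, note_hi=69.0):
    """Linearly map value in [lo, hi] to a MIDI note, then to a frequency."""
    t = (value - lo) / (hi - lo)
    t = min(1.0, max(0.0, t))  # clamp out-of-range data to the pitch range
    return midi_to_frequency(note_lo + t * (note_hi - note_lo))
```

Because the interpolation is done in note numbers rather than in hertz, equal steps in the data produce equal musical intervals: the midpoint of the data range lands on 220 Hz, one octave above the bottom of the range and one octave below the top.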

[0042] In a number of embodiments, the sound generation process produces audible sound via vocal synthesizers. The produced sound can be computer-generated sung vowels in real time. This end result is a continuous sound that can provide a listener with exactly what is needed to distinguish seizure and seizure-like states from non-seizure states. In certain embodiments, the sonification device is capable of executing these operations in real time on a handheld device to perform EEG-to-sound sonification.

[0043] As noted above, sonification devices in accordance with many embodiments of the invention can be handheld or wearable devices that sonify electrical signals obtained from a subject (e.g., a living subject such as a human or a non-human animal). In some embodiments, the device includes a plurality of electrodes configured to be placed at respective locations inside the brain during neurosurgical procedures. The plurality of electrodes produces one or more electrical signals corresponding to brain activity. In several embodiments, the device includes an input port configured to couple a plurality of electrodes to the device and to receive the one or more electrical signals produced by the plurality of electrodes. In certain embodiments, the plurality of electrodes includes a first electrode (or a first set of electrodes) that is configured to be placed at any of a plurality of locations on the subject's head. That is to say, the first electrode (or first set of electrodes) is capable of being moved (e.g., intended to be moved) to different locations on the subject's head (e.g., as described with reference to FIGS. 9A-9C and FIGS. 10A-10B). The device further includes an analog-to-digital (A/D) converter to digitize the one or more electrical signals, a processor that receives the one or more digitized electrical signals and produces a digital representation of an acoustic signal, and a speaker system that converts the digital representation of the acoustic signal to an output sound (and/or an output port through which to pass the produced representation of the acoustic signal to an external speaker).

[0044] In a number of embodiments, the device is intended for use by patients, patients' family members, emergency medical personnel and/or medical doctors who are not neurologists (e.g., emergency room physicians). As can readily be appreciated, however, the device can also be a vital tool for neurologists. In some embodiments, the device includes memory (and/or makes use of cloud-based memory external to the device) that stores the EEG data. The stored EEG data allows a specialist (e.g., a neurologist) to review the EEG data (and/or sonified data) after an acute episode has passed. For example, consider a patient who visits a neurologist complaining of occasional episodes of altered mental status (AMS). In a conventional medical test for AMS, a neurologist will send the patient home with adhesive electrodes (e.g., ten or more electrodes) applied to her head along with a device to record the data from the electrodes. This type of test is sub-optimal for a number of reasons. First, it is unpleasant for the patient to have the adhesive electrodes applied to their head, resulting in a maximum of twenty-four to forty-eight hours during which the electrodes can stay on the patient. Second, if the patient's episodes only occur on average once a month, there's a high probability that the portable EEG device will not capture an episode and the inconvenience will be for naught.

[0045] In accordance with some embodiments, the electrodes of the devices described herein are applied adhesively or non-adhesively (e.g., with wet or dry electrodes) by the patient when an episode begins, thus addressing both problems. Furthermore, in some circumstances, the patient is instructed to place the handheld device at a plurality of positions on her head. For example, the patient may be instructed by the neurologist to, upon noticing the onset of an episode, place the handheld device's electrodes on the left side of her head for a short period of time (seconds to minutes) and then place the handheld device's electrodes on the right side of her head for the same amount of time. Alternatively, if the patient suffers from a condition that renders her unconscious, unresponsive, and/or unreliable during such episodes, a friend or family member can be instructed to do the same.

[0046] Consider, as another example, use of the handheld device by emergency medical personnel (e.g., emergency department physicians and/or field medical personnel such as emergency medical technicians). When a patient that is unconscious, unresponsive, and/or unreliable (more generally known as having an altered mental status) arrives in the emergency department or is discovered in the field, in some circumstances, the emergency medical personnel will apply the electrodes of the devices described herein to one or more locations on the patient's head (e.g., both sides of the patient's head). The sonified electrical signals obtained from various locations on the patient's head will manifest differently depending on the patient's brain condition. For example, bilateral silence and/or quiet are, in some embodiments, representative of brain death. Sonified electrical signals that are quieter on one side of the patient's head than the other in some circumstances represent a stroke. Rhythmic activity in the sonified electrical signals in some circumstances represents a seizure. Thus, the sonified electrical signals produced by the devices described herein, in accordance with some embodiments, provide early clues to medical providers that may help direct the patient to an appropriate medical center (e.g., a stroke center) and/or obviate the need for expensive, time-consuming, and potentially risky medical procedures (e.g., lumbar punctures, colloquially known as spinal taps). The devices described herein also provide an inexpensive option to medical facilities that do not have access to EEG capabilities.

[0047] In addition, sonification devices in accordance with various embodiments of the invention can be useful for assessing neurological and/or other conditions in non-human animals. Thus, a veterinarian may, in some circumstances, use the devices described herein to "auscultate" (e.g., by sonifying, as described below) brain signals from non-human animals.

[0048] Reference will now be made in detail to various embodiments, examples of which are illustrated in the accompanying drawings. In the following detailed description, numerous specific details are set forth in order to provide a thorough understanding of the invention and the described embodiments. However, the invention is optionally practiced without these specific details. In other instances, well-known methods, procedures, components, and circuits have not been described in detail so as not to unnecessarily obscure aspects of the embodiments.

[0049] For ease of explanation, various embodiments are described below with reference to sonification of signals representing brain activity (e.g., electroencephalography (EEG) signals) of a living subject. However, one of skill in the art will recognize that signals representing other bodily functions (e.g., an electrocardiography (ECG) signal, an electromyography (EMG) signal, an electronystagmography (ENG) signal, a pulse oximetry signal, a capnography signal, or a photoplethysmography signal), and/or any other detectable signal may be substituted for, or used in addition to (e.g., in conjunction with), one or more signals representing brain activity.

A. Sonification Devices

[0050] FIGS. 1A-1B are illustrations of a handheld sonification device 100 for sonifying electrical signals obtained from a subject, in accordance with some embodiments of the invention. Sonification device 100 can receive signals from signal capturing devices. Several signal capturing devices are described below in accordance with embodiments of the invention; specifically, device 900 (FIGS. 9A-9C), device 1000 (FIGS. 10A-10B), and/or device 1100 (FIG. 11). In various embodiments, device 100 may share any of the features described below with reference to device 900 (FIGS. 9A-9C), device 1000 (FIGS. 10A-10B), and/or device 1100 (FIG. 11), unless context makes clear that such features are incompatible with device 100. Likewise, device 900, device 1000, and/or device 1100 may share any of the features described with reference to sonification device 100 unless context makes clear that such features are incompatible with a respective one of those signal capturing devices.

[0051] Sonification device 100 can include an input port 808 configured to couple a plurality of electrodes (not shown) to sonification device 100 and to receive electrical signals produced by the plurality of electrodes. The electrical signals correspond to the subject's brain activity. Sonification device 100 can include an analog-to-digital (A/D) converter (e.g., analog-to-digital converter 608, FIG. 6C) to digitize the electrical signals. Sonification device 100 can further include one or more processors that receive the digitized electrical signals and produce a representation of an acoustic signal based on the digitized signals (e.g., in accordance with process 800, FIGS. 8A-8C and/or process 300, FIG. 3). The sonification device can include a speaker system 806 that sonifies the representation of the acoustic signal.

[0052] Sonification device 100 can be a "pocket sized" device. Sonification device 100 can include a power button 802 and a display 804 (e.g., an LCD display/touch screen). The electrodes can be tethered to the sonification device 100 through input port 808. Sonification device 100 can receive electrical signals via the input port 808. The received electrical signals can correspond to a subject's brain activity. The received electrical signals can include a first electrical signal corresponding to left-hemisphere brain activity and a second electrical signal corresponding to right-hemisphere brain activity. The output acoustic signal can include independently audible voices corresponding to each of the first electrical signal and the second electrical signal.

[0053] Display 804 can display a graphical user interface that can enable a user of the sonification device 100 to independently control the two voices (acoustic signals or acoustic signal portions) corresponding to the distinct sensors, one for each hemisphere of the subject's brain, and their time-domain signals. For example, display 804 includes display region 810-a corresponding to the left-hemisphere signal and display region 810-b corresponding to the right hemisphere signal. In some embodiments, display regions 810 include affordances (e.g., buttons, touch screen buttons) for controlling sonification of the respective signals (e.g., turning on/off the sonification of the respective signals and/or controlling other properties of the respective sonified signals). In the example shown in FIGS. 1A-1B, the user is sonifying the right-hemisphere signal (e.g., display region 810-b says "Sonifying"), but not the left-hemisphere signal (e.g., display region 810-a says "Ready for sonification"). The graphical user interface also includes other display regions/affordances for navigating the graphical user interface. For example, the graphical user interface can display EEG signals graphically, set parameters for recording EEG signals, input information about the patient, write notes, etc. The graphical user interface also can include a button to tag events, e.g., so that a non-specialist can tag a particular time in a recording of a sonified signal, which can be reviewed by a specialist later.

[0054] FIG. 2 conceptually illustrates a circuit board 200 of a sonification device in accordance with some embodiments. The example circuit board 200 includes a microcontroller 202, a memory 204, a reset button 206, a power button 208, a status LED 210, a battery 212, a charger 214, a micro-USB interface 216, a micro-SD card reader 218, a Wi-Fi interface 220, a Bluetooth interface 222, an accelerometer 224, a touchscreen controller 226, a touchscreen 228, a multichannel analog front-end 230, an audio Digital-to-Analog Converter (DAC) and amplifier 232, a speaker 234, and a multi-pin connector 236. Different embodiments can include different combinations and/or sub-combinations of components than those shown in the example circuit board 200 in FIG. 2. Real-time deadlines for digital sound generation are exacting. If the firmware misses a deadline, annoying clicks (brief sound dropouts) can be heard. Circuit 200 was architected to support consistent audio on a relatively inexpensive processor. The relatively inexpensive processor suggested the use of a firmware interrupt scheme for performing sonification operations. As can readily be appreciated, modifications to the circuitry can be contemplated as more powerful processors and/or real time operating systems are utilized in the implementation of the sonification device. The sonification operations of some embodiments are discussed in detail below.

B. Processes for Sonifying Signals

[0055] FIG. 3 is a flowchart illustrating a process 300 for sonifying signals according to an embodiment of the invention. Process 300 can be applied in sonifying electrical activity (e.g., electrical signals) obtained from a subject. Process 300 can be performed by a handheld and/or wearable sonification device in accordance with multiple embodiments of the invention (such as device 900 of FIGS. 9A-9C, device 1000 of FIGS. 10A-10B, device 1100 of FIG. 11, and/or device 100 of FIGS. 1A-1B). Process 300 is optionally governed by instructions that are stored in a computer readable storage medium and that are executed by a digital processor system (or, optionally, one or more digital processor systems) (e.g., digital processor system 560, which in various embodiments is or is a component of any of the aforementioned handheld or wearable devices). The operations shown in FIG. 3 optionally correspond to instructions stored in a computer memory or computer readable storage medium. The computer readable storage medium optionally includes a magnetic or optical disk storage device, solid state storage devices such as flash memory, or other non-volatile memory device or devices. The computer readable instructions stored on the computer readable storage medium are in source code, assembly language code, object code, or another instruction format that is interpreted by one or more processors. Various embodiments of the invention can implement portions and/or all of process 300 in sonifying signals. Moreover, the operations of process 300 can be implemented as sub-processes or in connection with other processes described herein in accordance with embodiments of the invention.

[0056] Process 300 can include receiving (302) one or more signals. The received signals can be electrical signals produced by a plurality of electrodes and received via input ports to a handheld sonification device. The one or more electrical signals can correspond to a subject's brain activity. In some embodiments, the device includes a plurality of electrodes, while in several other embodiments the device includes an input port that is coupled to the plurality of electrodes (e.g., the electrodes are tethered to the device). In certain embodiments, a plurality of representations of acoustic signals is combined to produce a combined acoustic signal. Alternatively, a combined acoustic signal can be generated by combining acoustic signals corresponding to the plurality of representations of acoustic signals produced by the digital processor system. In yet another alternative, a plurality of acoustic signals, each corresponding to one or more of the aforementioned representations of acoustic signals, are recorded on distinct tracks, where the distinct tracks are configured to enable concurrent playback of the acoustic signals recorded in those tracks.

[0057] Process 300 can include digitizing (304) the received signal. The digitization can be accomplished on one or more electrical signals using an analog-to-digital converter. An analog-to-digital converter converts a continuous analog input signal (e.g., an electrical signal) to a digital number that represents the quantity's amplitude. Most embodiments of the invention, when implemented as a sonification device, include hardware analog-to-digital converters of sufficient quality and/or number of quantization levels to minimize errors introduced by the digitization. In several embodiments, the digitization process involves oversampling, which refers to sampling at a rate that is significantly higher than the Nyquist rate (i.e., twice the highest frequency component) of the bandlimited signal being digitized.
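
As a rough illustration of the two quantities mentioned above (quantization levels and oversampling), the following sketch models a uniform quantizer and computes an oversampling ratio relative to twice the highest frequency component. The function names, the symmetric full-scale range, and the numeric values are assumptions for illustration; they do not describe the converter of any particular embodiment.

```python
def quantize(value, full_scale, bits):
    """Round value to the nearest level of a uniform quantizer spanning [-full_scale, +full_scale)."""
    levels = 2 ** bits
    step = 2.0 * full_scale / levels
    code = round(value / step)
    # Clamp to the representable signed code range.
    code = max(-(levels // 2), min(levels // 2 - 1, code))
    return code * step


def oversampling_ratio(sample_rate_hz, highest_freq_hz):
    """Ratio of the sampling rate to twice the highest frequency component."""
    return sample_rate_hz / (2.0 * highest_freq_hz)
```

For an in-range input the quantization error of this model is at most half a step, so more bits (more quantization levels) give a smaller worst-case error.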

[0058] In order to mitigate against subject mechanical movement, process 300 can include adjusting (306) the signals based on accelerometer input. In some circumstances, the mechanical movement is due to a medical condition of the patient (e.g., a seizure and/or head shaking). In some embodiments, the mechanical movement is due to an environment in which the device is being used (e.g., the device is being used in the back of a moving ambulance). The mechanical movement can introduce artifacts that are in fact rhythmic and thus the listener may mistake the artifact rhythms for seizures.

[0059] In some embodiments, the devices performing process 300 can include an accelerometer that produces the one or more signals indicative of mechanical movement of a subject (e.g., the accelerometer is within the device's housing). In some embodiments, the sonification device performing process 300 includes an accelerometer interface for receiving the one or more signals indicative of mechanical movement of a subject. In some embodiments, the sonification device performing process 300 includes a second input port (e.g., coupled with the accelerometer interface) for receiving one or more signals indicative of mechanical movement of the subject (e.g., the accelerometer is external to the devices performing process 300 and is tethered to the devices performing process 300 through the second input port). In some embodiments, the accelerometer is located on the subject (e.g., attached to the subject or attached to an article of clothing worn by the subject).

[0060] Process 300 can then adjust (306) the one or more digitized signals based on the one or more signals indicative of mechanical movement. In some embodiments, adjusting the one or more digitized electrical signals based on the one or more signals indicative of mechanical movement includes: in accordance with a determination that an amplitude of the one or more signals indicative of mechanical movement is above a predefined movement threshold, forgoing sonification of the acoustic signal (e.g., the devices performing process 300 reject the EEG signals by forgoing sonification). In other words, process 300 interrupts and pauses sonification of the signal when the accelerometer indicates mechanical movement above the threshold. Conversely, in accordance with a determination that the amplitude of the one or more signals indicative of mechanical movement is below the predefined movement threshold, process 300 sonifies the acoustic signal (e.g., process 300 accepts the EEG signal). In some embodiments, process 300 displays an indication of whether the EEG signal is being accepted or rejected (e.g., on a display or using a blinking LED).
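
The accept/reject behavior described above can be sketched as a simple block-wise gate. The function name `gate_by_movement`, the block-based framing, the use of peak absolute accelerometer value as the "amplitude", and the decision to reject when the amplitude equals the threshold are all assumptions made for illustration.

```python
def gate_by_movement(eeg_blocks, accel_blocks, movement_threshold):
    """Return (block, accepted) pairs; rejected EEG blocks are replaced by silence."""
    results = []
    for eeg, accel in zip(eeg_blocks, accel_blocks):
        # Assumed amplitude measure: peak absolute accelerometer value in the block.
        peak = max((abs(a) for a in accel), default=0.0)
        accepted = peak < movement_threshold
        block = list(eeg) if accepted else [0.0] * len(eeg)
        results.append((block, accepted))
    return results
```

The `accepted` flag in each pair could drive the accept/reject indication (for example, a display message or LED state).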

[0061] Process 300 can include filtering (308) the one or more signals. In several embodiments, filtering the one or more signals includes configuring a first filter (e.g., a high-pass filter) in accordance with the one or more signals indicative of mechanical movement and applying the configured first filter to the one or more digitized electrical signals. In some embodiments, process 300 detects frequencies in mechanical movement and configures a frequency response of the first filter to attenuate the detected frequencies within the digitized electrical signals (e.g., the devices performing process 300 reshape the frequency response of the first filter, so that there is a change in the response of at least a first frequency with respect to the response of a second frequency). In many embodiments, configuring the first filter includes adjusting a cutoff frequency of the first filter. In various embodiments, configuring the first filter includes detecting prominent frequency modes in the mechanical movement and configuring the first filter to reject the prominent frequency modes in the digitized electrical signals (e.g., rejecting a fixed number of frequency modes, such as 3-5 modes, or rejecting any mode that has a power spectral density value in the mechanical movement above a predefined power threshold).
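
One way to picture the detection of prominent frequency modes is a plain discrete Fourier transform of a block of accelerometer samples, keeping the strongest bins above a power threshold. The sketch below is an assumption-laden illustration: the function name `prominent_modes`, the naive O(n^2) transform, the power normalization, and the default limits are hypothetical and are not taken from the described embodiments.

```python
import cmath
import math


def prominent_modes(accel, sample_rate_hz, max_modes=3, power_threshold=0.0):
    """Return up to max_modes frequencies (Hz) whose power exceeds power_threshold."""
    n = len(accel)
    mean = sum(accel) / n
    centered = [a - mean for a in accel]  # remove the constant (gravity/DC) component
    powers = []
    for k in range(1, n // 2 + 1):
        # Naive DFT bin k; a real device would more likely use an FFT.
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(centered))
        powers.append((abs(acc) ** 2 / n, k * sample_rate_hz / n))
    powers.sort(reverse=True)  # strongest bins first
    return [freq for power, freq in powers[:max_modes] if power > power_threshold]
```

The returned frequencies could then be used as the center frequencies that the first filter is configured to reject.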

[0062] In several embodiments, filtering (308) the one or more signals includes, in accordance with one or more predefined criteria, applying a low-pass filter (e.g., a second filter) to the one or more digitized electrical signals. In yet further embodiments, the low-pass filter is intended to remove artifacts that are the results of convulsive muscle movements in the range of 70 Hz. In various embodiments, the low-pass filter has a cutoff frequency selected to remove artifacts that are the result of the convulsive muscle movements (e.g., a cutoff frequency below 70 Hz). In still yet more embodiments, the one or more predefined criteria are met when a user selects an option, via a user interface (e.g., the graphical user interface shown in FIGS. 1A-1B), to apply the low-pass filter. In other embodiments, the low-pass filter is applied in accordance with a determination that the subject is experiencing a seizure (e.g., the predefined criteria are seizure-detection criteria that are met when the process 300 determines that the subject is experiencing a seizure). In further additional embodiments, the devices performing process 300 are configured to detect a seizure using information garnered from the accelerometer signal (e.g., by analyzing the one or more signals indicative of mechanical movement of the subject). In further embodiments, the devices performing process 300 are configured to detect a seizure using the electrical signals corresponding to brain activity. In many embodiments, the devices performing process 300 use a combination of accelerometer information and brain activity information to detect a seizure. In additional embodiments, the low-pass filter is a digital low-pass filter. The digital low-pass filter can be implemented using software, firmware, hardware, or a combination thereof. In additional embodiments, the low-pass filter is an analog low-pass filter and the one or more signals are filtered before being digitized by the A/D converter.

[0063] In several embodiments, the filters employed by process 300 include EEG signal filter bandpass cutoffs. The EEG signal filter bandpass cutoffs can, according to some embodiments of the invention, be determined through testing. The EEG signal filter bandpass cutoffs can be utilized as part of a dual-stage filter which is selective of only the signal features needed for recognition. DC-bias, AC line contamination, and non-seizure-related brain wave features can be rejected by employing EEG signal filter bandpass cutoffs. The first stage can be a first-order pole-zero infinite impulse response (IIR) DC-blocker with a cutoff in the range of 0.1 Hz to 1.0 Hz. Experimentally, this configuration was found to be the optimal choice for rejecting analog front-end DC-bias. The second stage can be a 501-tap finite impulse response (FIR) filter with a passband extending from a lower cutoff in the range of 0.1-3.0 Hz to an upper cutoff in the range of 5.0-15.0 Hz. An IIR equivalent can be less suitable because of resonance problems, which created low-frequency ringing that was confused with signal features of interest. While particular embodiments, ranges, and values are described herein with respect to specific filter values, reasonable variation on the values presented herein can be utilized as appropriate to the requirements of specific applications in accordance with various embodiments of the invention.
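
A minimal sketch of such a dual-stage filter follows. It assumes a 250 Hz sampling rate, a 0.5 Hz DC-blocker cutoff, and a 1.0-10.0 Hz passband, all chosen from within the stated ranges purely for illustration. The pole-radius approximation in `dc_block`, the Hamming-windowed sinc design in `bandpass_taps`, and every function name are assumptions and are not the tuned coefficients of any embodiment.

```python
import cmath
import math


def dc_block(samples, sample_rate_hz, cutoff_hz=0.5):
    """First-order pole-zero DC blocker: y[n] = x[n] - x[n-1] + r * y[n-1]."""
    # Common approximation of the pole radius, valid when cutoff_hz << sample_rate_hz.
    r = 1.0 - 2.0 * math.pi * cutoff_hz / sample_rate_hz
    out, prev_x, prev_y = [], 0.0, 0.0
    for x in samples:
        y = x - prev_x + r * prev_y
        out.append(y)
        prev_x, prev_y = x, y
    return out


def _sinc(x):
    return 1.0 if x == 0.0 else math.sin(math.pi * x) / (math.pi * x)


def bandpass_taps(sample_rate_hz, low_hz=1.0, high_hz=10.0, n_taps=501):
    """Hamming-windowed sinc band-pass: difference of two ideal low-pass responses."""
    mid = (n_taps - 1) / 2.0
    lo = 2.0 * low_hz / sample_rate_hz
    hi = 2.0 * high_hz / sample_rate_hz
    taps = []
    for n in range(n_taps):
        k = n - mid
        ideal = hi * _sinc(hi * k) - lo * _sinc(lo * k)
        window = 0.54 - 0.46 * math.cos(2.0 * math.pi * n / (n_taps - 1))
        taps.append(ideal * window)
    return taps


def fir_filter(samples, taps):
    """Direct-form FIR convolution, treating samples before the start as zero."""
    out = []
    for n in range(len(samples)):
        acc = 0.0
        for k, h in enumerate(taps):
            if n - k < 0:
                break
            acc += h * samples[n - k]
        out.append(acc)
    return out


def gain_at(taps, freq_hz, sample_rate_hz):
    """Magnitude response of the FIR taps at freq_hz."""
    w = 2.0 * math.pi * freq_hz / sample_rate_hz
    return abs(sum(h * cmath.exp(-1j * w * n) for n, h in enumerate(taps)))
```

Under these assumptions, `fir_filter(dc_block(eeg, 250.0), bandpass_taps(250.0))` would apply both stages in sequence, and `gain_at` can be used to check that the passband is near unity while DC and line-frequency components are attenuated.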

[0064] Process 300 can include conditioning (310) the signals according to conditioning parameters. Signal conditioning can be an initial stage in some embodiments, which acquires raw sensor data before analyzing it. During signal conditioning, many embodiments process incoming real-time signals (e.g., EEG signals gathered from electrodes placed on a living subject) to bring the signals into range for sonification and to enhance contrasts. Signal conditioning can include EEG rate processing according to particular signal conditioning parameters. EEG signal filter coefficients can be derived through offline testing on (actual, recorded) device signals and the best filter settings ported to the signal conditioning stage. Moreover, parameters specific to signal conditioning can be adapted according to various firmware configurations.

[0065] Other conditioning parameters of various other embodiments can include the following values as bases for ranges of success: thresholds of 1-25 .mu.V, full-range scaling of 30-60 .mu.V and compression of 1.5-3.0. Signal rectification (i.e., taking the absolute value) can be applied to the filtered signal, and then thresholding can be used to reject low-amplitude signal noise. Signal values below a threshold can be set to zero. Signal values above the threshold can be scaled with full-range scaling to create a fixed range of 0.0-1.0 and then can be compressed to raise the prominence of small features. Signal values can be boosted by taking the power-law exponent of the signal. In many instances, values which exceed full scale after compression can be clipped to full scale (1.0).
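
The conditioning chain described above (rectify, threshold, scale, compress, clip) can be sketched per sample as follows. The default values are picked from within the stated ranges for illustration only, and the specific compression formula (raising the scaled value to the power 1/compression) is an assumption; the text states only that a power-law exponent is applied.

```python
def condition(sample_uv, threshold_uv=10.0, full_scale_uv=50.0, compression=2.0):
    """Map one filtered EEG sample (in microvolts) to a conditioned value in 0.0-1.0."""
    rectified = abs(sample_uv)  # rectification
    if rectified < threshold_uv:
        return 0.0  # reject low-amplitude noise
    scaled = rectified / full_scale_uv  # full-range scaling
    # Assumed power-law compression: an exponent below 1 boosts small values.
    compressed = scaled ** (1.0 / compression)
    return min(compressed, 1.0)  # clip to full scale
```

With these assumed defaults, a 12.5 microvolt sample scales to 0.25 and compresses to 0.5, showing how small features are raised in prominence.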

[0066] Process 300 can include modulating (312) the one or more signals according to sound synthesis parameters. Parameter modulation can include continuously modulating vocal sound parameters according to matrices of sound synthesis parameters such as pitch, loudness and vowel (timbral) quality. Parameter modulation refers to the matrix of sound synthesis parameters (pitch, loudness, vowel) and how a single conditioned EEG signal modulates each parameter in the group. Relationships can be expressed as offset and scale coefficients. The offset and scale coefficients can in many embodiments be determined ahead of device development in software-based research. The matrices determine the perceived identity of the vocal sound. While not necessarily a realistic replica of a singer, there is a recognizable "device identity" that can result from any particular matrix. The set of parameter coefficients chosen can make this identity invariant and almost trademark-like (for example, in the range of: "111", "ahh", "ehh", "eee", "ohh", "ooo"). As an example, a sample matrix provided herein will result in the following sonified output:

[0067] Pitch offset in the range of 50-150 Hz--This sets the "hum" base pitch of the vocalist for below-threshold EEG.

[0068] Pitch scale in the range of 110-440 Hz--This sets the pitch excursion for full-range EEG.

[0069] Amplitude offset in the range of 0.0001-0.01--This sets the "hum" amplitude for below-threshold EEG.

[0070] Amplitude scale in the range of 0.05-2.0--This sets the maximum loudness for full-range EEG.

[0071] Vowel offset in the range of 0.0-1.0--This sets the "hum" vowel for below-threshold EEG.

[0072] Vowel scale in the range of 0.05-2.0--This sets the vowel interpolation amount for full-range EEG.

[0073] Vowel lookup table--a combination of these 6 vowels is used.
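
The offset-and-scale relationship can be sketched as a small table applied to a single conditioned value in the range 0.0-1.0. The coefficient values below are picked from within the stated ranges for illustration, and the names `MATRIX`, `modulate`, `vowel_index`, and `vowel_mix`, as well as the way the vowel value is turned into a position between adjacent lookup-table entries, are assumptions rather than details of any embodiment.

```python
# Hypothetical (offset, scale) pairs chosen from within the stated ranges.
MATRIX = {
    "pitch": (100.0, 220.0),     # Hz
    "amplitude": (0.001, 1.0),
    "vowel": (0.0, 1.0),
}


def modulate(conditioned, matrix=MATRIX, n_vowels=6):
    """Apply offset + scale * conditioned to each synthesis parameter."""
    params = {name: offset + scale * conditioned for name, (offset, scale) in matrix.items()}
    # Assumed vowel handling: position between two adjacent lookup-table entries.
    pos = min(1.0, max(0.0, params["vowel"])) * (n_vowels - 1)
    lower = min(int(pos), n_vowels - 2)
    params["vowel_index"] = lower      # index of the lower table entry
    params["vowel_mix"] = pos - lower  # interpolation weight toward the next entry
    return params
```

With a conditioned value of 0.0 the result is the "hum" voice defined by the offsets alone; with 1.0 each parameter reaches its offset plus its full scale.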

[0074] Process 300 can include generating (314) sound from the one or more signals. Device sound generation can be real-time and heard via onboard loudspeakers of devices performing process 300. The loudspeaker and device enclosure can affect sound quality. Most embodiments utilize particular speaker types and enclosures for better acoustic linearity. For instance, a CUI Inc. MODEL: GF0401M can be utilized for good performance in several embodiments. This model provides for a speaker that is open to the front and to the inside of the enclosure. A different model, 1 cm smaller in diameter, was 12 dB quieter and had a more nasal sound (the bottom octave of sound compared to the best choice was mostly missing). Enclosures which have been prototyped and tested included fully open, sandwich with open edges, and ported boxes. Ported boxes have been found to yield the best sound for most embodiments, the best sound being loud and resonant for rendering the vocalist "identity" as described above. As can readily be appreciated, the specific speaker and enclosure utilized in a sonification device largely depend upon the requirements of a given application.

[0075] Device sound generation is real-time and heard via an onboard loudspeaker. The loudspeaker and device enclosure affect sound quality. Real-time deadlines for digital sound generation are exacting. If the firmware misses a deadline, annoying clicks (brief sound dropouts) can be heard. Firmware in preferred embodiments was architected to support consistent audio on the relatively inexpensive processor chosen. Sound generation can take advantage of numerous hardware design techniques to speed up and enhance performance in numerous embodiments. The following discussion describes some of these techniques.

[0076] Sonification often requires mapping of data with a linear range into pitch frequencies of the human voice. The most common and natural way to do this is with the well-defined MIDI-to-frequency function: 440*2^((midinote-69)/12), which requires both a power and a divide operation. Sonification process 300 creates formant audio that avoids fringing effects, discontinuity clicks, phase distortion, spectral modulation, and other problems with simpler algorithms. However, the operations of process 300 utilize a division to calculate each formant harmonic as shown in the MIDI-to-frequency function. Thus a two-formant synthesis with interpolation between upper and lower frequencies involves 4 division operations (typically multiple clock cycle processor operations) per sample. Process 400 can eliminate these division operations using an interpolated look-up table for the inverse of the pitch frequency (look up of 10), thus requiring only a multiply for each formant. Process 400 can be executed in conjunction with process 300 or as a sub-process of process 300.
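
The idea of replacing per-sample power and divide operations with interpolated table lookups can be sketched as follows. The table bounds (MIDI notes 36 to 84), the resolution of four entries per semitone, and the names `midi_to_hz`, `build_tables`, and `lookup` are assumptions for illustration; the tables are built once ahead of time, so the expensive operations happen only during table construction.

```python
def midi_to_hz(note):
    """Reference MIDI-to-frequency function: 440 * 2^((note - 69) / 12)."""
    return 440.0 * 2.0 ** ((note - 69) / 12.0)


def build_tables(lo_note=36, hi_note=84, steps_per_note=4):
    """Precompute pitch-frequency and inverse-pitch-frequency tables."""
    n = (hi_note - lo_note) * steps_per_note + 1
    freq = [midi_to_hz(lo_note + i / steps_per_note) for i in range(n)]
    inv = [1.0 / f for f in freq]
    return freq, inv


def lookup(table, note, lo_note=36, steps_per_note=4):
    """Linearly interpolated lookup; uses only multiply, add, and subtract at run time."""
    pos = (note - lo_note) * steps_per_note
    pos = min(max(pos, 0.0), len(table) - 1)  # clamp to the table range
    i = min(int(pos), len(table) - 2)
    frac = pos - i
    return table[i] + frac * (table[i + 1] - table[i])


FREQ_TABLE, INV_FREQ_TABLE = build_tables()
```

With these tables, a formant harmonic ratio can be approximated as `formant_hz * lookup(INV_FREQ_TABLE, note)`, a single multiply in place of a divide. At four entries per semitone the linear interpolation error for this sketch is on the order of a few parts in 100,000.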

[0077] Formant synthesis refers to creating audio signals that sound like a human singing voice or, in more general terms, signals that have an acoustic resonance like the human vocal tract. Formant synthesis is useful in sonification applications where complex non-audio data is mapped to a human-like singing voice that can clarify or distinguish features in the data that may otherwise be difficult to detect. Process 400 provides a fast method of synthesizing formant audio signals that achieves high quality real-time performance with a smaller, more efficient implementation in real-time embedded CPU/DSP processor firmware, FPGA, or ASIC devices. Process 400 receives (402) a signal for formant synthesis. This receipt can in many embodiments be in CPU/DSP processor firmware, FPGA, or ASIC devices.

[0078] Process 400 can include interpolating (404) signals using consolidated lookup tables. This consolidates interpolation for look-up into two tables for the formant pitch mapping and inverse pitch frequency mapping. The consolidation eliminates computationally expensive divide and power operations while maintaining synchronous operation and the interpolation of the pitch and formant frequencies from linear data.

[0079] Process 400 can include performing formant synthesis (406). High quality formant synthesis can in many embodiments be performed using a bank of linked oscillators, such as the phase-synchronous oscillators, where a single phasor is shared by the modulator and all carriers. In a typical implementation the bank is constructed with any number of harmonic outputs that are tapped off of a single common phasor. In practice, a bank of four (or more) carrier oscillators of this kind can be used to generate a vocal sound. These can create human voice phonemes of 2 (or more) formants represented by a time-varying distribution of pitch, harmonic, and amplitude. Process 400 can then complete sonification by generating (408) sound comparably to process 300 as discussed above.
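
The shared-phasor idea can be sketched as follows. This Python example is a simplified illustration, not the described implementation: it assumes two carriers per formant (the harmonics just below and just above the formant frequency, crossfaded), omits the modulator, and uses hypothetical names and parameter values throughout.

```python
import math


def formant_bank(f0, formants, amps, sample_rate=8000, n_samples=8000):
    """Render a tone whose carriers are all tapped off one phasor."""
    out = []
    phase = 0.0                    # the single phasor shared by every carrier
    inc = f0 / sample_rate         # phasor increment per sample
    for _ in range(n_samples):
        sample = 0.0
        for fc, amp in zip(formants, amps):
            # A table look-up and multiply could replace this divide.
            ratio = fc / f0
            lower = int(ratio)     # harmonic just below the formant
            frac = ratio - lower   # crossfade weight toward the upper harmonic
            sample += amp * (1.0 - frac) * math.sin(2 * math.pi * lower * phase)
            sample += amp * frac * math.sin(2 * math.pi * (lower + 1) * phase)
        out.append(sample)
        phase = (phase + inc) % 1.0   # wrapping keeps harmonics phase-locked
    return out


if __name__ == "__main__":
    # Two formants, so four carriers in total (values are illustrative).
    tone = formant_bank(100.0, [700.0, 1150.0], [1.0, 0.5], n_samples=160)
    print(len(tone), max(abs(s) for s in tone))
```

Because every carrier is an integer multiple of the same phase value, changing the pitch or moving a formant does not introduce phase discontinuities between carriers.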

[0080] Although specific processes for sonification of EEG signals are described above with reference to FIGS. 3 and 4, any of a variety of signal processing and sonification processes can be utilized as appropriate to the requirements of a given application in accordance with various embodiments of the invention. Sonification devices and the interfacing of sonification devices in accordance with a number of embodiments of the invention are discussed further below.

C. Exemplary Body Interface System

[0081] FIG. 5 illustrates body interface system 500 for sensing, acquiring and processing one or more signals obtained from a living subject (e.g., obtained from a human or animal's brain and/or heart) to produce a representation of an acoustic signal (also referred to herein as an "output acoustic signal") corresponding to the one or more signals (e.g., representing brain and/or heart activity). In some circumstances, body interface system 500 is deployed in a clinical setting (e.g., during or before surgical interventions and/or during diagnosis and/or treatment of conditions, such as epileptic seizures) for aural (e.g., auditory) measurement or monitoring of brain activity. Alternatively, or in addition, body interface system 500 is deployed as part of a user interface for a handheld or wearable device (e.g., a smart-phone, tablet, or the like) for diagnostic, entertainment, biofeedback, monitoring, therapeutic or other purposes. In some embodiments, one or more components of body interface system 500 constitute a handheld or wearable device for sonifying electrical signals obtained from a subject. Three examples of handheld devices for sonifying electrical signals obtained from a subject are shown in FIGS. 9A-9C, FIGS. 10A-10B, and FIG. 11, respectively. An example of a wearable device for sonifying electrical signals obtained from a subject is shown in FIG. 11. In some implementations of the wearable device, shown in FIG. 11, digital processor system 560 is embedded in the wearable device, for example in a "headband housing" that also holds dry or wet electrodes that contact both sides (left and right sides) of the subject's head. In some other implementations, digital processor system 560 is not embedded in a headband housing, and is instead coupled to electrodes in (or held in position by) a headband by one or more electrical wires or connectors. 
Optionally, digital processor system 560 has a separate housing that includes a clip for attachment to the headband.

[0082] In some embodiments, as shown in FIG. 5, body interface system 500 includes one or more sensors 510 (e.g., sensor 510-1 and sensor 510-2), optionally includes one or more analog front ends 520 (e.g., one or more analog front end modules) and a digital processor system 560 (herein often called digital processor 560 for ease of reference) for receiving and processing signals from sensors 510. In some embodiments, digital processor system 560 includes the one or more analog front ends.

[0083] In some embodiments, sensors 510 are provided to interface with a living subject's brain to obtain (e.g., sense and/or acquire) sensor time-domain signals (e.g., sensor time-domain signal 601, FIG. 6A) corresponding to brain electrical activity. In some embodiments, sensors 510 are a component of a handheld or wearable device for sonifying electrical signals (see FIGS. 9A-9C, 10A-10B, and 11). Alternatively, in some embodiments, the handheld or wearable device is configured to interface with the sensors 510 (e.g., the sensors 510 are disposable and plug into the handheld or wearable device). In some embodiments, the sensors 510 include one or more electrodes.

[0084] As an example, signals (e.g., sensor time-domain signal 601, FIG. 6A) corresponding to brain electrical activity are obtained from a human brain and correspond to electrical signals obtained from a single neuron or from a plurality of neurons. In some embodiments, the one or more electrical signals represent electroencephalography (EEG) data that are concordant with laboratory EEG data. In some embodiments, sensors 510 include one or more sensors affixed (e.g., taped, attached, glued) externally to a human scalp (e.g., extra-cranial sensor 510-1). For example, extra-cranial sensor 510-1 includes an electrode (e.g., electroencephalography (EEG) electrode) or a plurality of electrodes (e.g., electroencephalography (EEG) electrodes) affixed externally to the scalp (e.g., glued to the skin via conductive gel), or more generally positioned at respective positions external to the scalp. Alternatively, dry electrodes can be used in some implementations (e.g., conductive sensors that are mechanically placed against a living subject's body rather than implanted within the living subject's body or held in place with a conductive gel). An example of a dry-electrode is a headband with one or more metallic sensors (e.g., electrodes) that is worn by the living subject during use (FIG. 11). The signals obtained from an extra-cranial sensor 510-1 are sometimes herein called EEG signals or time-domain EEG signals.

[0085] In some embodiments, although not shown in FIG. 5, sensors 510 are heartbeat pulse sensors. In some embodiments, sensors 510 can be used both as EEG sensors (e.g., by placing sensors 510 on the subject's head) and as heartbeat pulse sensors (e.g., by placing sensors 510 on the subject's chest or another location where a heart signal is detectable). The heartbeat pulse sensors are provided to interface with a living subject's heart to obtain (e.g., sense and/or acquire) sensor time-domain signals corresponding to heart electrical activity. For example, signals corresponding to heart electrical activity are obtained from a human heart and correspond to electrical signals obtained from a single cardiomyocyte or from a plurality of cardiomyocytes (e.g., a sinoatrial (SA) node of a human subject). In some embodiments, the heartbeat pulse sensors include one or more sensing elements affixed (e.g., taped, attached, glued) externally to a human body (e.g., a human subject's chest, abdomen, arm, or leg). For example, the heartbeat pulse sensors include an electrode (e.g., electrocardiography (ECG) electrode) or a plurality of electrodes (e.g., electrocardiography (ECG) electrodes) affixed externally to the human body (e.g., glued to the skin via conductive gel), or more generally positioned at respective positions external to the human body. Alternatively, dry electrodes can be used in some implementations (e.g., conductive sensors that are mechanically placed against a human body rather than being implanted within the human body or held in place with a conductive gel). An example of a dry-electrode is a chest strap with one or more metallic sensors (e.g., electrodes) that is worn by the living subject during use. 
Another example of a dry-electrode is a thumb apparatus or a hand apparatus with one or more metallic sensing elements (e.g., electrodes) that is touched (e.g., with the living subject's thumbs) and/or held onto (e.g., with the living subject's hands) by the living subject during use. The signals obtained from heartbeat pulse sensors are sometimes herein called ECG signals or time-domain ECG signals.

[0086] In some embodiments, heartbeat pulse sensors sense voltages corresponding to heart electrical activity. In alternative embodiments, heartbeat pulse sensors sense electrical currents corresponding to heart electrical activity. In some implementations, heartbeat pulse sensors sense differential voltages (e.g., differences in voltage values) between two measurement locations (e.g., between two sensing elements). For example, when a respective heartbeat pulse sensor includes two or more sensing elements (e.g., electrodes) positioned at respective positions external to the human body, the respective heartbeat pulse sensor senses differential voltages (e.g., bipolar voltages) between the two or more sensing elements located at the respective positions. In some implementations, a "twelve-lead electrocardiogram" is constructed by referencing each sensing element of a set of sensing elements to one or more other sensing elements to produce a corresponding set of differential voltage signals (e.g., a twelve-lead set of differential voltage signals), each of which is a respective sensor time-domain signal 601 (FIG. 6A).
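
The referencing of one sensing element to another can be illustrated with a short Python sketch. It derives the three standard limb leads (I = LA - RA, II = LL - RA, III = LL - LA), which form part of a twelve-lead set; the function names, the dictionary layout, and the sample values are assumptions for this example.

```python
def bipolar(v_pos, v_neg):
    """Differential voltage between two sensing elements, sample by sample."""
    return [p - n for p, n in zip(v_pos, v_neg)]


# Standard limb-lead definitions: lead name -> (positive, negative) electrode.
LIMB_MONTAGE = {
    "I": ("LA", "RA"),
    "II": ("LL", "RA"),
    "III": ("LL", "LA"),
}


def derive_leads(electrodes, montage):
    """Build one differential time-domain signal per lead in the montage."""
    return {name: bipolar(electrodes[pos], electrodes[neg])
            for name, (pos, neg) in montage.items()}


if __name__ == "__main__":
    # Made-up electrode potentials in microvolts, for illustration only.
    electrodes = {"RA": [10, 12, 11], "LA": [15, 20, 13], "LL": [18, 30, 14]}
    print(derive_leads(electrodes, LIMB_MONTAGE))
```

Each resulting list plays the role of one sensor time-domain signal; the remaining leads of a twelve-lead set would be added to the montage in the same way.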

[0087] In some embodiments, arrays of sensors (e.g., sensors 510) are designed to record intracranial EEG and produce a plurality of sensor time-domain signals (e.g., sensor time-domain signals 601, FIG. 6A). In some embodiments, sensor time-domain signals (e.g., sensor time-domain signal 601, FIG. 6A) include wideband features including high-gamma bursts in the range of 80-150 Hz. In some embodiments, sensor time-domain signals (e.g., sensor time-domain signal 601, FIG. 6A) include frequencies (sometimes called frequency components) below (e.g., lower than or in the lowest ranges of) the human audible frequency-range.

[0088] In some implementations, analog front end 520 receives sensor time-domain signals (e.g., sensor time-domain signal 601, FIG. 6A) from sensors 510 and optionally pre-processes the sensor time-domain signals to produce filtered sensor time-domain signals (e.g., filtered sensor time-domain signals 607, FIG. 6A). In some embodiments, a separate (e.g., independent) analog front end is provided for interfacing with each of a set of sensors 510. In some embodiments, a first analog front end is provided for interfacing with a set of EEG sensors 510, and a second (i.e., distinct) electrocardiography (ECG) analog front end is provided for interfacing with a set of heartbeat pulse sensors 510. In such embodiments, body interface system 500 comprises a plurality of analog front end modules (e.g., analog front end 520-a, analog front end 520-b, through analog front end 520-n) for interfacing with a plurality of sensors 510.

[0089] As shown in FIG. 5, body interface system 500 includes digital processor system 560 for processing signals obtained from the living subject (e.g., signals corresponding to electrical activity of the brain or heart), optionally after the signals are pre-processed by analog front end 520. Digital processor 560 includes signal conditioning modules 530, signal modulators 540, and synthesizer modules 550. In some embodiments, a separate (e.g., independent) signal conditioning module, a separate (e.g., independent) signal modulator, and/or a separate (e.g., independent) synthesizer module is provided for interfacing with each sensor 510 in a set of two or more sensors 510 (optionally through a separate analog front end module). In such embodiments, body interface system 500 comprises a plurality of signal conditioning modules (e.g., signal conditioning module 530-a, signal conditioning module 530-b, through signal conditioning module 530-n), a plurality of signal modulators (e.g., signal modulators 540-a, signal modulators 540-b, through signal modulators 540-n), and/or a plurality of synthesizer modules (e.g., synthesizer module 550-a, synthesizer module 550-b, through synthesizer module 550-n) for interfacing with a plurality of sensors 510 and processing signals obtained from those sensors.

[0090] In some embodiments, a respective signal conditioning module 530 includes a data converter (e.g., an analog-to-digital converter for converting an analog filtered sensor time-domain signal obtained from sensors 510 to a corresponding digital representation), an up-sampler, and a digital low-pass filter. In some implementations, signal modulators 540 receive the digitized time-domain signals output by signal conditioning modules 530, and concurrently generate a set of acoustic parameters, including a plurality of time-varying acoustic parameters, from (e.g., using) the digitized time-domain signals. One or more of the plurality of time-varying acoustic parameters is modulated in accordance with at least the signal value of the time-domain signal (e.g., time-domain signal 618, FIG. 6B, produced by signal conditioning module 530). In some embodiments, a synthesizer module (e.g., synthesizer module 550) combines the concurrently generated set of acoustic parameters to produce a representation of an acoustic signal corresponding to the time-domain signal (e.g., time-domain signal 618, FIG. 6B, produced by signal conditioning module 530). As used herein, the term "representation of an acoustic signal" is used interchangeably with the term "output acoustic signal".
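
The up-sampler and digital low-pass filter stages can be sketched in Python as zero-stuffing followed by an FIR filter. The triangular filter taps used here (which amount to linear interpolation) and the up-sampling factor of 4 are assumptions chosen to keep the example short; the text above does not specify a particular filter design.

```python
def upsample_zero_stuff(x, factor):
    """Insert factor - 1 zeros after every input sample."""
    out = []
    for v in x:
        out.append(v)
        out.extend([0.0] * (factor - 1))
    return out


def triangular_taps(factor):
    """Low-pass FIR taps 1/L ... 1 ... 1/L (equivalent to linear interpolation)."""
    return [1.0 - abs(k) / factor for k in range(-(factor - 1), factor)]


def fir_filter(x, taps):
    """Causal FIR filter: y[n] = sum over k of taps[k] * x[n - k]."""
    out = []
    for n in range(len(x)):
        acc = 0.0
        for k, h in enumerate(taps):
            if n - k >= 0:
                acc += h * x[n - k]
        out.append(acc)
    return out


def condition(x, factor=4):
    """Up-sample a digitized signal and smooth it with the low-pass filter."""
    return fir_filter(upsample_zero_stuff(x, factor), triangular_taps(factor))


if __name__ == "__main__":
    print(condition([0.0, 4.0, 8.0], factor=4))
```

The causal filter delays the output by factor - 1 samples; a sharper low-pass design would need more taps and would add more delay.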

[0091] In some embodiments, a plurality of representations of acoustic signals is combined to produce a combined acoustic signal. Alternatively, a combined acoustic signal is generated by combining acoustic signals corresponding to the plurality of representations of acoustic signals produced by digital processor system 560. In yet another alternative, a plurality of acoustic signals, each corresponding to one or more of the aforementioned representations of acoustic signals, are recorded on distinct tracks, where the distinct tracks are configured to enable concurrent playback of the acoustic signals recorded in those tracks.
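
Combining several representations of acoustic signals into one can be as simple as a weighted sum of their samples. The Python sketch below assumes each representation is a list of samples at a common sample rate and defaults to equal gains; both choices, and the function name, are illustrative assumptions.

```python
def mix(tracks, gains=None):
    """Sum several sample lists into one combined signal.

    Shorter tracks are treated as silent after their last sample.
    """
    if gains is None:
        gains = [1.0 / len(tracks)] * len(tracks)   # equal-weight mix
    mixed = [0.0] * max(len(t) for t in tracks)
    for track, gain in zip(tracks, gains):
        for i, sample in enumerate(track):
            mixed[i] += gain * sample
    return mixed


if __name__ == "__main__":
    left_channel = [1.0, 1.0]
    right_channel = [0.0, 1.0, 1.0]
    print(mix([left_channel, right_channel]))
```

Keeping the signals on distinct tracks instead, as in the last alternative above, corresponds to storing the lists separately and applying `mix` only at playback time.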

[0092] FIG. 6A illustrates a block diagram of an analog front end (e.g., analog front end 520, FIG. 5) optionally included in body interface system 500. In some embodiments, analog front end 520 receives a sensor time-domain signal (e.g., sensor time-domain signal 601) from a respective sensor 510 and pre-processes the sensor time-domain signal to produce a filtered sensor time-domain signal (e.g., filtered sensor time-domain signal 607). When body interface system 500 includes a plurality of analog front ends 520, the analog front ends 520 process a corresponding number of sensor time-domain signals in parallel to produce filtered sensor time-domain signals.

[0093] In some embodiments, analog front end 520 includes interface circuitry (e.g., interface analog circuitry 602) to interface with a respective sensor 510, for example, by way of providing bias voltages and/or currents to the respective sensor 510, buffering signals (e.g., using a buffer amplifier) received from sensors 510 and/or providing appropriate coupling conditions (e.g., providing appropriate input impedance) for interfacing with the signals received from sensors 510.

[0094] Alternatively, or in addition, according to some implementations, analog front end 520 includes one or more amplifiers 604 and/or filters 606 to pre-process (e.g., amplify and/or filter) sensor time-domain signals corresponding to brain electrical activity or heart electrical activity (e.g., sensor time-domain signal 601, FIG. 6A) obtained (e.g., sensed and/or acquired) from one or more sensors 510. As noted above, in some embodiments, analog front end 520 produces a filtered sensor time-domain signal (e.g., filtered sensor time-domain signal 607).