Regional-level Representation Of User Location On A Social Media Platform

Amitay; Daniel ; et al.

U.S. patent application number 15/965744 was filed with the patent office on April 27, 2018 and published on April 16, 2020 for regional-level representation of user location on a social media platform. The applicant listed for this patent is Daniel Brody Amitay. The invention is credited to Daniel Amitay, Jonathan Brody, Timothy Jordan Garcia, Leonid Gorkin, Andrew Lin, Walton Lin, Evan Spiegel.

| Application Number | 15/965744 |


| Family ID | 63918792 |

| Publication Date | 2020-04-16 |


| United States Patent Application | 20200117339 |

| Kind Code | A1 |

| Amitay; Daniel ; et al. | April 16, 2020 |

REGIONAL-LEVEL REPRESENTATION OF USER LOCATION ON A SOCIAL MEDIA PLATFORM

Abstract

A social media platform provides a map-based graphical user interface (GUI) for accessing social media content submitted to a social media platform supported by the map-based GUI. User icons are displayed on a map of the GUI to represent locations of friend users. The locations of at least some users are represented by their user icons at a regional granularity, such that the displayed locations that are different from their accurate locations, but that are located within an accurate geographical region such as a city or a town. Users can select the granularity level at which their icons are displayed to others.

| Inventors: | Amitay; Daniel; (New York City, NY) ; Brody; Jonathan; (Venice, CA) ; Garcia; Timothy Jordan; (Venice, CA) ; Gorkin; Leonid; (Chappaqua, NY) ; Lin; Andrew; (Long Island City, NY) ; Lin; Walton; (New York, NY) ; Spiegel; Evan; (Venice, CA) |
|---|---|
| Applicant: | |
| Family ID: | 63918792 |
| Appl. No.: | 15/965744 |
| Filed: | April 27, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62556134 | Sep 8, 2017 | |
| 62552958 | Aug 31, 2017 | |
| 62491115 | Apr 27, 2017 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 41/22 20130101; H04L 67/306 20130101; H04L 67/12 20130101; H04W 4/21 20180201; G06F 16/9537 20190101; G06Q 50/01 20130101; G06T 2200/24 20130101; H04L 63/107 20130101; G06F 3/04817 20130101; H04L 63/101 20130101; G06F 9/547 20130101; H04W 4/02 20130101; H04L 67/22 20130101; H04W 4/021 20130101; G06F 16/29 20190101; H04L 67/18 20130101; G06F 16/9535 20190101; G06T 11/60 20130101; H04L 51/32 20130101; H04L 41/28 20130101; G06T 11/206 20130101; H04W 4/029 20180201 |

| International Class: | G06F 3/0481 20060101 G06F003/0481; G06F 17/30 20060101 G06F017/30; H04L 29/08 20060101 H04L029/08; H04W 4/02 20060101 H04W004/02 |

Claims

1. A system comprising: a user location mechanism comprising one or more computer processors configured to perform operations comprising: determining an actual location of a user device associated with a user of a social media application; identifying a defined geographical region within which the actual location of the user device is situated, the defined geographical region having geographical boundaries that are agnostic to the actual location of the user device, so that the defined geographical region remains consistent between different instances irrespective of changes in the actual location of the user device; and determining a display location for the user such that the display location differs from the actual location of the user device, and such that the display location is within the defined geographical region; and a map engine comprising one or more computer processor devices configured to perform operations comprising: causing generation of a map-based graphical user interface (GUI) for a social media application, the map-based GUI including an interactive map of a geographical area that includes at least part of the defined geographical region; and causing display on the interactive map of the GUI of a user icon at the display location, the user icon being representative of the user associated with the user device.

2. The system of claim 1, wherein the map-based GUI is generated on a friend user device that is associated with a friend user who is a member of a social network of the user.

3. The system of claim 2, wherein the map engine is configured to, in response to generation of an instance of the map-based GUI on the user device, cause display of the user icon for the user substantially at the actual location, so that the display location of the user icon is different on the user device than it is on the friend user device.

4. The system of claim 1, wherein the defined geographical region is a cartographically defined area.

5. The system of claim 4, wherein the defined geographical region is a city.

6. The system of claim 4, wherein the defined geographical region is a neighborhood.

7. The system of claim 2, wherein the user location mechanism is configured to determine the display location by generating the display location in an at least partially randomized procedure.

8. The system of claim 2, wherein the user location mechanism is configured to determine the display location by generating a random location within the defined geographical region.

9. The system of claim 2, wherein the user location mechanism is further configured to maintain, while the user device is located in the defined geographical region, the display location consistent between different instances of generation of the map-based GUI.

10. The system of claim 2, wherein the map engine is configured to display in association with the user icon a label indicating the identified defined geographical region.

11. A method comprising: determining an actual location of a user device associated with a user of a social media application; identifying a defined geographical region within which the actual location of the user device is situated, the defined geographical region having geographical boundaries that are agnostic to the actual location of the user device, so that the defined geographical region remains consistent between different instances irrespective of changes in the actual location of the user device; determining a display location for the user such that the display location differs from the actual location of the user device, and such that the display location is within the defined geographical region; causing generation of a map-based graphical user interface (GUI) for a social media application, the map-based GUI including an interactive map of a geographical area that includes at least part of the defined geographical region; and causing display on the interactive map of the GUI of a user icon at the display location, the user icon being representative of the user associated with the user device.

12. The method of claim 11, wherein the map-based GUI is generated on a friend user device that is associated with a friend user who is a member of a social network of the user.

13. The method of claim 12, further comprising, in response to generation of an instance of the map-based GUI on the user device, causing display of the user icon for the user substantially at the actual location, so that the display location of the user icon is different on the user device than it is on the friend user device.

14. The method of claim 13, wherein the defined geographical region is a city.

15. The method of claim 13, wherein the defined geographical region is a neighborhood.

16. The method of claim 12, wherein determining the display location comprises generating the display location in an at least partially randomized procedure.

17. The method of claim 12, wherein the determining of the display location comprises generating a random location within the defined geographical region.

18. The method of claim 12, further comprising, while the user device is located in the defined geographical region, maintaining the display location consistent between different instances of generation of the map-based GUI.

19. The method of claim 12, wherein the displaying of the user icon comprises displaying in association with the user icon a label indicating the defined geographical region.

20. A non-transitory computer-readable storage medium having stored thereon instructions for causing a machine, when executing the instructions, to perform operations comprising: determining an actual location of a user device associated with a user of a social media application; identifying a defined geographical region within which the actual location of the user device is situated, the defined geographical region having geographical boundaries that are agnostic to the actual location of the user device, so that the defined geographical region remains consistent between different instances irrespective of changes in the actual location of the user device; determining a display location for the user such that the display location differs from the actual location of the user device, and such that the display location is within the defined geographical region; causing generation of a map-based graphical user interface (GUI) for a social media application, the map-based GUI including an interactive map of a geographical area that includes at least part of the defined geographical region; and causing display on the interactive map of the GUI of a user icon at the display location, the user icon being representative of the user associated with the user device.

Description

PRIORITY APPLICATIONS

[0001] This application is a non-provisional application which claims the benefit of priority to U.S. Provisional Application Ser. No. 62/556,134, filed Sep. 8, 2017; U.S. Provisional Application Ser. No. 62/552,958, filed Aug. 31, 2017; and U.S. Provisional Application Ser. No. 62/491,115, filed Apr. 27, 2017, the contents of which are incorporated herein by reference in their entireties.

BACKGROUND

[0002] Social media applications implement computer-mediated technologies that allow the creation and sharing of content that communicates information, ideas, career interests, and other forms of expression via virtual communities and networks. Social media platforms use web-based technologies, desktop computers, and mobile technologies (e.g., smart phones and tablet computers) to create highly interactive platforms through which individuals, communities, and organizations can share, co-create, discuss, and modify user-generated content or pre-made content posted online.

[0003] Mobile electronic devices on which end-user social media applications can be executed typically provide geolocation services that determine the geographic location of the mobile electronic device, by extension indicating the geographic location of the associated user. Social media content posted by users is often geo-tagged based on the geolocation of the mobile electronic device (such as a mobile phone) with which the social media content is captured and/or posted to the social media platform. In other cases, social media content may be explicitly geo-tagged by a user using a computer device that does not have activated geolocation services and/or that is not a mobile device (such as a desktop PC).

[0004] In many social media platforms, the total number of individual social media items that are available for viewing by any particular user can be very large. Search mechanisms that enable users to locate social media content that may be of interest to them can consume significant server-side resources and often provide less than satisfactory search results.

BRIEF DESCRIPTION OF THE DRAWINGS

[0005] Some aspects of the disclosure are illustrated in the appended drawings. Note that the appended drawings illustrate example embodiments of the present disclosure and are not to be considered as limiting its scope.

[0006] FIG. 1 is a block diagram showing an example social media platform system for exchanging, posting, and consuming social media data (e.g., messages and associated content) over a network.

[0007] FIG. 2 is a block diagram illustrating further details regarding a social media platform system, according to example embodiments.

[0008] FIG. 3 is a schematic diagram illustrating data which may be stored in a database of the social media platform system, according to certain example embodiments.

[0009] FIG. 4 is a schematic diagram illustrating a structure of a message generated by a social media client application, according to example embodiments.

[0010] FIG. 5 is a schematic diagram illustrating an example access-limiting process, in terms of which access to content (e.g., an ephemeral message, and associated multimedia payload of data) or a content collection (e.g., an ephemeral message gallery or story) may be time-limited (e.g., made ephemeral).

[0011] FIGS. 6A and 6B are respective schematic views of a client device providing a map-based graphical user interface for a social media application, according to different respective example embodiments.

[0012] FIGS. 7A-7C are respective schematic views of a client device providing a destination selection interface forming part of a map-based graphical user interface for a social media application, according to some example embodiments.

[0013] FIGS. 8A-8C are respective screenshots of a map-based graphical user interface, providing features relating to display of user icons in a map forming part of the interface, according to an example embodiment.

[0014] FIGS. 9A and 9B are respective screenshots of the functionalities of a map-based graphical user interface that provides access to a chat interface and to friend content via a friend icon displayed as part of the map, according to an example embodiment.

[0015] FIGS. 11A-11B are a series of schematic screenshots illustrating a location-based search mechanism provided by a map-based graphical user interface, according to one example embodiment.

[0016] FIGS. 10A-10D are respective schematic screenshots of a search interface forming part of a map-based graphical user interface, according to one example embodiment.

[0017] FIG. 12 is a schematic view of a social media platform system for providing a map-based graphical user interface for a social media application, according to one example embodiment.

[0018] FIGS. 13A-13D are a series of schematic flow charts illustrating an example embodiment of a method of providing a map-based graphical user interface for a social media application that includes location-based search functionality, according to an example embodiment.

[0019] FIG. 14 is a schematic flowchart showing a method of providing a map-based graphical user interface that includes display of user location at a regional granularity level, according to an example embodiment.

[0020] FIGS. 15A and 15B are a pair of schematic flowcharts illustrating a method of providing a social media platform GUI that provides one or more friend-level access mechanisms for location-agnostic friend content, according to an example embodiment.

[0021] FIG. 16 is a schematic flowchart illustrating a method of providing a social media platform GUI that includes one or more mechanisms which enable searching for social media content based on a location and/or attribute(s) of a selected friend user, according to an example embodiment.

[0022] FIGS. 17A and 17B are screenshots of a map-based graphical user interface, according to an example embodiment.

[0023] FIG. 18 is a flowchart illustrating a method of providing for friend icon clustering, according to example embodiments.

[0024] FIG. 19 is a screenshot of a map-based graphical user interface, according to an example embodiment.

[0025] FIG. 20 is a block diagram illustrating a representative software architecture, which may be used in conjunction with various hardware architectures herein described.

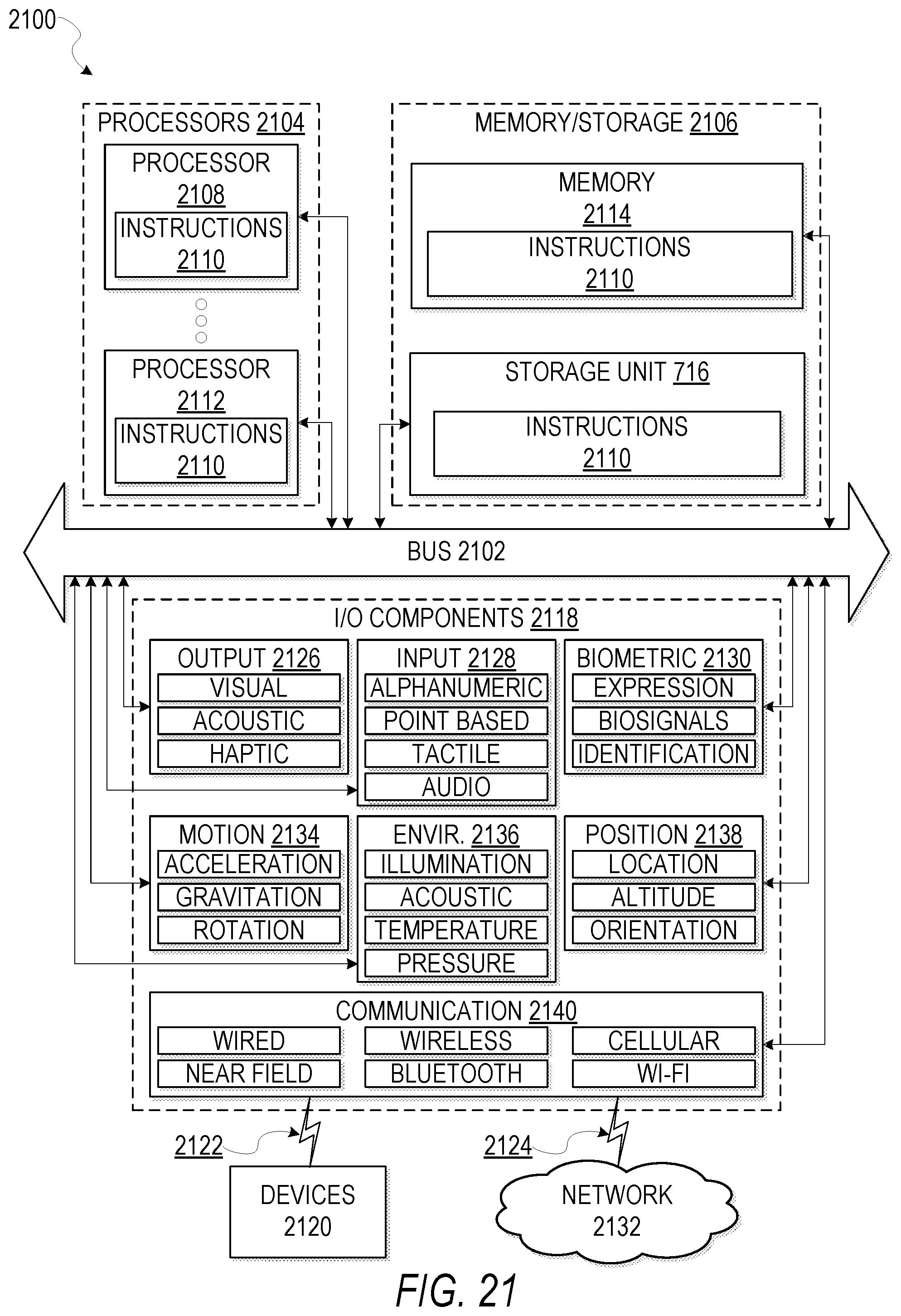

[0026] FIG. 21 is a block diagram illustrating components of a machine, according to some example embodiments, able to read instructions from a machine-readable medium (e.g., a machine-readable storage medium) and perform any one or more of the methodologies discussed herein.

[0027] The headings provided herein are merely for convenience and do not necessarily affect the scope or meaning of the terms used.

DESCRIPTION

[0028] One aspect of the disclosure provides a geographical map-based graphical user interface (GUI) for a social media platform or application, to allow user access via the map-based GUI to ephemeral social media content. Such an interface is also referred to herein as a "map GUI."

[0029] As will be described in greater detail below, ephemeral social media content comprises social media items that are available for viewing via the social media application for only a limited period. For example, an ephemeral social media item or message (also referred to herein as a "snap") submitted by a user to the social media application may be available for viewing by other users via the map GUI of the social media application for only a predefined period subsequent to submission. In one example embodiment, each ephemeral item or snap has an availability lifetime (also referred to herein as a "gallery participation timer") of 24 hours after submission, after which the ephemeral item "disappears" and is no longer available for viewing by other users via the map GUI. Such ephemeral social media items (also referred to herein as ephemeral messages) typically comprise photographic or video content, which may be submitted with or without augmentations made by the user to the underlying photographic or video content.
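The availability rule in [0029] reduces to a simple time-window check. The Python sketch below assumes the 24-hour lifetime from the example embodiment. The names `is_available` and `prune_expired`, and the dictionary shape of an item, are hypothetical and are not taken from the platform.

```python
from datetime import datetime, timedelta, timezone

# Assumed from the example embodiment: a 24-hour gallery participation timer.
GALLERY_PARTICIPATION_TIMER = timedelta(hours=24)


def is_available(submitted_at: datetime, now: datetime,
                 lifetime: timedelta = GALLERY_PARTICIPATION_TIMER) -> bool:
    """True while an ephemeral item is still viewable via the map GUI."""
    return submitted_at <= now < submitted_at + lifetime


def prune_expired(items, now):
    """Keep only items (dicts with a 'submitted_at' key) that have not yet disappeared."""
    return [item for item in items if is_available(item["submitted_at"], now)]
```

For example, an item submitted at noon stops being available at noon on the following day.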

[0030] Ephemeral messages submitted by multiple different users may be available on a map forming part of the map GUI based at least in part on respective location information (e.g., geotag information) of the ephemeral messages. In some embodiments, the map GUI may provide location-based access to one or more collections of ephemeral social media items (also known as and referred to herein as galleries or "stories"). In some example embodiments, a plurality of ephemeral messages submitted by different users are included in a common geo-anchored gallery or story based at least in part on respective geotagging information of the plurality of ephemeral messages. Such a location-based gallery or story is in some embodiments represented on the map GUI by a respective gallery icon displayed at a corresponding map location, the gallery icon being selectable by the user to trigger automated sequential display of the plurality of ephemeral messages in the gallery on the user device on which the map GUI is rendered.
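One way to picture geo-anchored galleries is to bucket geotagged messages by location and to place a gallery icon at the centre of each bucket. The sketch below rests on an assumption: the disclosure does not say how messages are grouped. The fixed latitude/longitude grid, the `CELL_DEG` cell size, and the `min_size` threshold are all hypothetical choices.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Message:
    message_id: str
    lat: float
    lon: float


CELL_DEG = 0.01  # hypothetical grid cell size in degrees (about 1 km of latitude)


def cell_key(lat: float, lon: float, cell_deg: float = CELL_DEG) -> tuple:
    # Floor (not truncation) so negative coordinates map to consistent cells.
    return (math.floor(lat / cell_deg), math.floor(lon / cell_deg))


def build_galleries(messages, min_size: int = 2):
    """Group messages by grid cell; return {cell: (icon_lat, icon_lon, [message ids])}."""
    cells = defaultdict(list)
    for m in messages:
        cells[cell_key(m.lat, m.lon)].append(m)
    galleries = {}
    for key, members in cells.items():
        if len(members) < min_size:
            continue  # too few messages to form a gallery
        # Gallery icon placed at the mean position of its messages.
        icon_lat = sum(m.lat for m in members) / len(members)
        icon_lon = sum(m.lon for m in members) / len(members)
        galleries[key] = (icon_lat, icon_lon, [m.message_id for m in members])
    return galleries
```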

[0031] In some embodiments, such a map GUI includes representations (e.g. by means of friend icons or bitmojis) of at least approximate respective positions of a user's friends in a social network graph accessed by the social media application, with the social media application enabling the user to explore the world around friends' locations by use of the GUI. Thus, the map GUI can in some embodiments enable the user to explore uploaded social media content (e.g., individual photos or video clips/snaps, or social media galleries such as stories comprising respective collections of photos, messages, or snaps).

[0032] One aspect of the disclosure provides for display of user locations in the map GUI at a regional level of granularity, such that the user location is displayed at an intentionally inaccurate location within a defined geographical region in which the user is actually located. Some embodiments enable a user to selectively vary the level of display granularity applying to their user icon when the map GUI is rendered on the user devices of other users, for example enabling the user to switch between a precise and a regional level of granularity. In one example embodiment, the regional level of granularity displays the user location accurately at a city or town level.
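The following is a minimal sketch of regional-level display. It assumes that a region is given as a polygon whose boundary does not depend on the user's position (compare claim 1). A pseudo-random point is sampled inside the region using a seed derived from the user and region identifiers. Because the seed is fixed, the displayed location stays the same while the user remains in that region (compare claims 8 and 9). The user's own device is shown the actual location (compare claim 3). `Region`, `display_location`, the seeding scheme, and the `"precise"`/`"regional"` setting values are hypothetical.

```python
import hashlib
import random
from dataclasses import dataclass
from typing import Tuple

Point = Tuple[float, float]  # (latitude, longitude)


@dataclass(frozen=True)
class Region:
    """A defined geographical region such as a city (hypothetical structure)."""
    region_id: str
    polygon: Tuple[Point, ...]  # boundary vertices, fixed regardless of user position


def contains(polygon: Tuple[Point, ...], p: Point) -> bool:
    """Ray-casting point-in-polygon test; the ray runs toward increasing latitude."""
    lat, lon = p
    inside = False
    n = len(polygon)
    for i in range(n):
        lat1, lon1 = polygon[i]
        lat2, lon2 = polygon[(i + 1) % n]
        if (lon1 > lon) != (lon2 > lon):
            # Latitude at which this edge crosses the point's longitude.
            cross = lat1 + (lon - lon1) * (lat2 - lat1) / (lon2 - lon1)
            if lat < cross:
                inside = not inside
    return inside


def regional_display_location(user_id: str, region: Region, max_tries: int = 1000) -> Point:
    """Sample a point inside the region, reproducible for the same user and region."""
    digest = hashlib.sha256(f"{user_id}:{region.region_id}".encode()).digest()
    rng = random.Random(int.from_bytes(digest[:8], "big"))
    lats = [v[0] for v in region.polygon]
    lons = [v[1] for v in region.polygon]
    for _ in range(max_tries):
        # Rejection sampling: draw from the bounding box, keep points inside the polygon.
        p = (rng.uniform(min(lats), max(lats)), rng.uniform(min(lons), max(lons)))
        if contains(region.polygon, p):
            return p
    raise ValueError("could not sample a point inside the region")


def display_location(actual: Point, region: Region, user_id: str,
                     granularity: str, viewer_is_self: bool) -> Point:
    """Location at which the user's icon is drawn on a given viewer's map."""
    if viewer_is_self or granularity == "precise":
        return actual
    return regional_display_location(user_id, region)
```

Seeding from a hash, rather than from Python's built-in `hash()`, keeps the sampled point stable across processes. Python randomizes string hashing per process, so `hash()` would not give a repeatable seed.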

[0033] These and additional aspects of the disclosure will be described below with reference to specific example embodiments. First, platform architecture and a technical background to implementation of the various embodiments will be described with reference to FIGS. 1-5. Thereafter, specific example embodiments are described with reference to FIGS. 6A-19. Finally, FIGS. 20-21 describe aspects of software and hardware components that are in some instances used in the implementation of the described example embodiments.

DETAILED DESCRIPTION

[0034] The description that follows includes systems, methods, devices, techniques, instruction sequences, and computing machine program products that embody illustrative embodiments of the disclosure. In the following description, for the purposes of explanation, numerous specific details are set forth in order to provide an understanding of various embodiments of the inventive subject matter. It will be evident, however, to those skilled in the art, that embodiments of the disclosed subject matter may be practiced without these specific details. In general, well-known instruction instances, protocols, structures, and techniques are not necessarily shown in detail.

System Architecture and Operating Environment

[0035] FIG. 1 is a block diagram showing an example social media platform system 100 for exchanging data (e.g., social media items or messages and associated content) over a network. In this description, items communicated from one user to one or more other users via a social media application or platform, as well as items uploaded or provided by users to a social media application or platform for availability to or consumption by other users via the social media application or platform, are referred to as "messages." Thus, the term "messages" as used herein is not limited to communications from one user to specified recipient users, but includes messages made available for public consumption via the relevant social media platform.

[0036] The social media platform system 100 includes multiple client devices 102, each of which hosts a number of applications including a social media client application 104. Each social media client application 104 is communicatively coupled to other instances of the social media client application 104 and a social media application server system 108 via a network 106 (e.g., the Internet).

[0037] Accordingly, each social media client application 104 is able to communicate and exchange data with another social media client application 104 and with the social media application server system 108 via the network 106. The data exchanged between social media client applications 104, and between a social media client application 104 and the social media application server system 108, includes functions (e.g., commands to invoke functions) as well as payload data (e.g., text, audio, video, or other multimedia data).

[0038] The social media application server system 108 provides server-side functionality via the network 106 to a particular social media client application 104. While certain functions of the social media platform system 100 are described herein as being performed by either a social media client application 104 or by the social media application server system 108, it will be appreciated that the location of certain functionality either within the social media client application 104 or the social media application server system 108 is a design choice. For example, it may be technically expedient to initially deploy certain technology and functionality within the social media application server system 108, but to later migrate this technology and functionality to the social media client application 104 where a client device 102 has a sufficient processing capacity.

[0039] The social media application server system 108 supports various services and operations that are provided to the social media client application 104. Such operations include transmitting data to, receiving data from, and processing data generated by the social media client application 104. This data may include message content, client device information, geolocation information, media annotations and overlays, message content persistence conditions, social network information, and live event information, as examples. Data exchanges within the social media platform system 100 are invoked and controlled through functions available via user interfaces (UIs) of the social media client application 104.

[0040] Turning now specifically to the social media application server system 108, an application programming interface (API) server 110 is coupled to, and provides a programmatic interface to, an application server 112. The application server 112 is communicatively coupled to a database server 118, which facilitates access to a database 120 in which is stored data associated with messages processed by the application server 112.

[0041] Dealing specifically with the API server 110, this server receives and transmits message data (e.g., commands and message payloads) between the client device 102 and the application server 112. Specifically, the API server 110 provides a set of interfaces (e.g., routines and protocols) that can be called or queried by the social media client application 104 in order to invoke functionality of the application server 112. The API server 110 exposes various functions supported by the application server 112, including account registration; login functionality; the sending of messages, via the application server 112, from a particular social media client application 104 to another social media client application 104; the sending of media files (e.g., images or video) from a social media client application 104 to a social media server application 114, for possible access by another social media client application 104; the setting of a collection of media data (e.g., a story or gallery); the retrieval of such collections; the retrieval of a list of friends of a user of a client device 102; the retrieval of messages and content; the adding and deletion of friends to and from a social graph; the location of friends within a social graph; opening an application event (e.g., relating to the social media client application 104); and so forth.
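The API server's role of exposing a fixed set of application-server functions can be sketched as a table of named commands. The command names, the `ApplicationServer` stand-in, and its in-memory friend store are hypothetical. They cover only the friend-management functions listed above and do not reflect the platform's actual interface.

```python
from typing import Any, Callable, Dict, List, Set


class ApplicationServer:
    """Hypothetical stand-in for application server 112 (friend functions only)."""

    def __init__(self) -> None:
        self._friends: Dict[str, Set[str]] = {}

    def add_friend(self, user_id: str, friend_id: str) -> None:
        # Friendship is stored in both directions (an assumption).
        self._friends.setdefault(user_id, set()).add(friend_id)
        self._friends.setdefault(friend_id, set()).add(user_id)

    def delete_friend(self, user_id: str, friend_id: str) -> None:
        self._friends.get(user_id, set()).discard(friend_id)
        self._friends.get(friend_id, set()).discard(user_id)

    def list_friends(self, user_id: str) -> List[str]:
        return sorted(self._friends.get(user_id, set()))


class ApiServer:
    """Maps command names from client requests onto application-server calls."""

    def __init__(self, app: ApplicationServer) -> None:
        self._routes: Dict[str, Callable[..., Any]] = {
            "friends.add": app.add_friend,
            "friends.delete": app.delete_friend,
            "friends.list": app.list_friends,
        }

    def call(self, command: str, **payload: Any) -> Any:
        handler = self._routes.get(command)
        if handler is None:
            raise KeyError(f"unsupported command: {command}")
        return handler(**payload)
```

Only functions registered in the route table can be invoked by a client. This mirrors the API server exposing a defined set of application-server functionality rather than the server itself.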

[0042] The application server 112 hosts a number of applications and subsystems, including the social media server application 114, an image processing system 116, and a social network system 122. The social media server application 114 implements a number of message processing technologies and functions, particularly related to the aggregation and other processing of content (e.g., textual and multimedia content) included in messages received from multiple instances of the social media client application 104. As will be described in further detail, the text and media content from multiple sources may be aggregated into collections of content (e.g., called "stories" or "galleries"). These collections are then made available, by the social media server application 114, to the social media client application 104. Other processor- and memory-intensive processing of data may also be performed server-side by the social media server application 114, in view of the hardware requirements for such processing.

[0043] The application server 112 also includes the image processing system 116, which is dedicated to performing various image processing operations, typically with respect to images or video received within the payload of a message at the social media server application 114.

[0044] The social network system 122 supports various social networking functions and services, and makes these functions and services available to the social media server application 114. To this end, the social network system 122 maintains and accesses an entity graph 304 (described below with reference to FIG. 3) within the database 120. Examples of functions and services supported by the social network system 122 include the identification of other users of the social media platform system 100 with whom a particular user has relationships or whom the particular user is "following," and also the identification of other attributes and interests of a particular user. In some embodiments, the social network system 122 includes an identification of other users whose location is available for viewing by a particular user via a map-based GUI displayable on a client device 102 using the corresponding social media client application 104.
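Identifying the users whose location a given user may view can be sketched as a filter over the entity graph. The sketch makes two hypothetical assumptions that the source does not define. First, friendships must be mutual. Second, each user has a sharing setting that is one of all friends, nobody, or a chosen subset.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Set


@dataclass
class User:
    user_id: str
    friends: Set[str] = field(default_factory=set)
    # Hypothetical sharing setting: None = all friends, empty set = nobody,
    # otherwise only the listed friends may see this user's location.
    share_with: Optional[Set[str]] = None


def visible_users(viewer_id: str, users: Dict[str, User]) -> Set[str]:
    """Ids of users whose location the viewer may see on the map GUI."""
    visible = set()
    for friend_id in users[viewer_id].friends:
        friend = users.get(friend_id)
        if friend is None or viewer_id not in friend.friends:
            continue  # require a mutual friendship
        if friend.share_with is None or viewer_id in friend.share_with:
            visible.add(friend_id)
    return visible
```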

[0045] FIG. 2 is a block diagram illustrating further details regarding the social media platform system 100, according to example embodiments. Specifically, the social media platform system 100 is shown to comprise the social media client application 104 and the application server 112, which in turn embody a number of subsystems, namely an ephemeral timer system 202, a collection management system 204, and an annotation system 206.

[0046] The ephemeral timer system 202 is responsible for enforcing the temporary access to content permitted by the social media client application 104 and the social media server application 114. To this end, the ephemeral timer system 202 incorporates a number of timers that, based on duration and display parameters associated with a message, or collection/gallery of messages (e.g., a SNAPCHAT story), selectively display and enable access to messages and associated content via the social media client application 104. Further details regarding the operation of the ephemeral timer system 202 are provided below.
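The sketch below illustrates how message timers and collection timers interact. It assumes that each message carries its own availability lifetime. It also assumes that a gallery remains accessible until its last message expires, which is one of the options [0047] gives for event galleries. The class and function names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class EphemeralMessage:
    posted_at: datetime
    lifetime: timedelta  # hypothetical per-message availability parameter

    @property
    def expires_at(self) -> datetime:
        return self.posted_at + self.lifetime


def gallery_expires_at(messages: List[EphemeralMessage]) -> Optional[datetime]:
    """A gallery stays accessible until its last message expires (None if empty)."""
    return max((m.expires_at for m in messages), default=None)


def accessible(messages: List[EphemeralMessage], now: datetime) -> List[EphemeralMessage]:
    """Messages within the gallery that are still viewable at time `now`."""
    return [m for m in messages if now < m.expires_at]
```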

[0047] The collection management system 204 is responsible for managing collections of media (e.g., collections of text, image, video, and audio data). In some examples, a collection of content (e.g., messages, including images, video, text, and audio) may be organized into an "event gallery" or an "event story." Such a collection may be made available for a specified time period, such as the duration of an event to which the content relates, or until expiry of the last message or snap in the gallery. For example, content relating to a music concert may be made available as a "story" for the duration of that music concert. The collection management system 204 may also be responsible for publishing an icon that provides notification of the existence of a particular collection to the user interface of the social media client application 104. As will be described in greater detail with reference to the specific example embodiments that follow, the collection management system 204 may also be responsible for compiling and managing multiple location-based social media galleries based at least in part on geo-tag data of social media items or messages uploaded to the social media platform by multiple users. Other types of galleries that may be provided by the collection management system 204 include a "place story" that collects ephemeral messages having geotag data indicating a location within a predefined associated geographical area; and an ad-hoc story or spike story that is dynamically surfaced on a map GUI as described herein based on underlying location-based social media activity, e.g., based on geo-temporal volume or anomality/unusualness of social media items submitted by users for public consumption (e.g., for inclusion in a "Live Story" or "Our Story"). Here, "anomality" refers to a metric indicating how anomalous something is.
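The disclosure defines anomality only as a measure of how anomalous activity is. One plausible metric is a standard z-score of the current submission count for an area against that area's historical counts. It is shown here as an assumption, not as the metric the platform uses. The threshold of 3.0 is arbitrary.

```python
import math
import statistics
from typing import Sequence


def anomality(current_count: int, history: Sequence[int]) -> float:
    """z-score of the current submission count against past counts for the same area."""
    if len(history) < 2:
        return 0.0  # too little history to judge (an assumption)
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        # Flat history: any deviation is treated as infinitely anomalous.
        return 0.0 if current_count == mean else math.copysign(math.inf, current_count - mean)
    return (current_count - mean) / stdev


def should_surface_spike(current_count: int, history: Sequence[int],
                         threshold: float = 3.0) -> bool:
    """Surface an ad-hoc (spike) story when activity is unusually high."""
    return anomality(current_count, history) >= threshold
```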

[0048] The collection management system 204 furthermore includes a curation interface 208 that allows a human operator (e.g., a collection manager) to manage and curate a particular collection of content. For example, the curation interface 208 enables an event organizer to curate a collection of content relating to a specific event (e.g., to delete inappropriate content or redundant messages). Instead, or in addition, the collection management system 204 may employ machine vision (or image recognition technology), geotag data, and/or content rules to automatically compile and/or curate a content collection. In certain embodiments, compensation may be paid to a user for inclusion of user-generated content into a collection. In such cases, the curation interface 208 operates to automatically make payments to such users for the use of their content.
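Automatic curation by content rules could, for example, drop redundant submissions and any item matched by a rule. The sketch below treats byte-identical media as redundant, using a SHA-256 digest, and accepts rules as plain predicates. Both choices, and all names, are hypothetical.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Item:
    item_id: str
    media: bytes
    caption: str = ""


Rule = Callable[[Item], bool]  # returns True if the item should be removed


def curate(items: Iterable[Item], rules: List[Rule]) -> List[Item]:
    """Keep items in order, skipping duplicate media and items matched by any rule."""
    seen = set()
    kept = []
    for item in items:
        digest = hashlib.sha256(item.media).hexdigest()
        if digest in seen:
            continue  # redundant: identical media is already in the collection
        if any(rule(item) for rule in rules):
            continue  # removed by a content rule
        seen.add(digest)
        kept.append(item)
    return kept
```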

[0049] The annotation system 206 provides various functions that enable a user to annotate or otherwise augment, modify, or edit media content associated with a message. For example, the annotation system 206 provides functions related to the generation and publishing of media overlays for messages processed by the social media platform system 100. The annotation system 206 operatively supplies a media overlay (e.g., a SNAPCHAT filter) to the social media client application 104 based on a geolocation of the client device 102. In another example, the annotation system 206 operatively supplies a media overlay to the social media client application 104 based on other information, such as social network information of the user of the client device 102. A media overlay may include audio and visual content and visual effects. Examples of audio and visual content include pictures, texts, logos, animations, and sound effects. An example of a visual effect includes color overlaying. The audio and visual content or the visual effects can be applied to a media content item (e.g., a photo) at the client device 102. For example, the media overlay includes text that can be overlaid on top of a photograph taken by the client device 102. In another example, the media overlay includes a location identification (e.g., Venice Beach), the name of a live event, or the name of a merchant (e.g., Beach Coffee House). In another example, the annotation system 206 uses the geolocation of the client device 102 to identify a media overlay that includes the name of a merchant at the geolocation of the client device 102. The media overlay may include other indicia associated with the merchant. The media overlays may be stored in the database 120 and accessed through the database server 118.

[0050] In one example embodiment, the annotation system 206 provides a user-based publication platform that enables users to select a geolocation on a map, and upload content associated with the selected geolocation. The user may also specify circumstances under which a particular media overlay should be offered to other users. The annotation system 206 generates a media overlay that includes the uploaded content and associates the uploaded content with the selected geolocation.

[0051] In another example embodiment, the annotation system 206 provides a merchant-based publication platform that enables merchants to select a particular media overlay associated with a geolocation via a bidding process. For example, the annotation system 206 associates the media overlay of a highest-bidding merchant with a corresponding geolocation for a predefined amount of time.
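The bidding example can be sketched as follows. This is a minimal illustration, not the platform's implementation: `Bid`, `OverlayAssignment`, and `award_overlay` are hypothetical names, the geolocation is treated as an opaque string key, and breaking ties in favour of the earlier bid is an assumption the source does not address.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Bid:
    merchant: str
    overlay_id: str
    amount: float


@dataclass
class OverlayAssignment:
    geolocation: str
    overlay_id: str
    merchant: str
    expires_at: datetime


def award_overlay(geolocation: str, bids: list[Bid], now: datetime,
                  duration: timedelta) -> Optional[OverlayAssignment]:
    """Bind the highest bidder's overlay to a geolocation for a fixed period."""
    if not bids:
        return None
    # max() keeps the first of several equal bids, i.e. earlier bids win ties.
    winner = max(bids, key=lambda b: b.amount)
    return OverlayAssignment(geolocation, winner.overlay_id,
                             winner.merchant, now + duration)
```

After `expires_at` passes, the geolocation would be open for a new round of bidding.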

[0052] FIG. 3 is a schematic diagram illustrating data 300 which may be stored in the database 120 of the social media application server system 108, according to certain example embodiments. While the content of the database 120 is shown to comprise a number of tables, it will be appreciated that the data could be stored in other types of data structures (e.g., as an object-oriented database).

[0053] The database 120 includes message data stored within a message table 314. An entity table 302 stores entity data, including an entity graph 304. Entities for which records are maintained within the entity table 302 may include individuals, corporate entities, organizations, objects, places, events, etc. Regardless of type, any entity regarding which the social media application server system 108 stores data may be a recognized entity. Each entity is provided with a unique identifier, as well as an entity type identifier (not shown).

[0054] The entity graph 304 furthermore stores information regarding relationships and associations between entities. Such relationships may be social, professional (e.g., work at a common corporation or organization), interest-based, or activity-based, merely for example.

[0055] The database 120 also stores annotation data, in the example form of filters, in an annotation table 312. Filters for which data is stored within the annotation table 312 are associated with and applied to videos (for which data is stored in a video table 310) and/or images (for which data is stored in an image table 308). Filters, in one example, are overlays that are displayed over an image or video during presentation to a recipient user. Filters may be of various types, including user-selected filters from a gallery of filters presented to a sending user by the social media client application 104 when the sending user is composing a message. Other types of filters include geolocation filters (also known as geo-filters), which may be presented to a sending user based on geographic location. For example, geolocation filters specific to a neighborhood or special location may be presented within a user interface by the social media client application 104, based on geolocation information determined by a Global Positioning System (GPS) unit of the client device 102. Another type of filter is a data filter, which may be selectively presented to a sending user by the social media client application 104, based on other inputs or information gathered by the client device 102 during the message creation process. Examples of data filters include a current temperature at a specific location, a current speed at which a sending user is traveling, a battery life for a client device 102, or the current time.

[0056] Other annotation data that may be stored within the image table 308 is so-called "lens" data. A "lens" may be a real-time special effect and sound that may be added to an image or a video.

[0057] Yet further annotation data that may be stored within the annotation table 312 is user-generated annotations or augmentations provided by the user to overlay an underlying photographic image or video. Such augmentations/annotations can include, for example, text annotations and drawing annotations or augmentations provided by the user, e.g., via a client device touchscreen.

[0058] As mentioned above, the video table 310 stores video data which, in one embodiment, is associated with messages for which records are maintained within the message table 314. Similarly, the image table 308 stores image data associated with messages for which message data is stored in the message table 314. The entity table 302 may associate various annotations from the annotation table 312 with various images and videos stored in the image table 308 and the video table 310.

[0059] A story table 306 stores data regarding collections of messages and associated image, video, or audio data, which are compiled into a collection (e.g., a SNAPCHAT story or a gallery). The creation of a particular collection may be initiated by a particular user (e.g., any user for whom a record is maintained in the entity table 302). A user may create a "personal story" in the form of a collection of content that has been created and sent/broadcast by that user. To this end, the user interface of the social media client application 104 may include an icon that is user-selectable to enable a sending user to add specific content to his or her personal story. In the context of this description, such messages and stories/galleries are understood to be for private consumption, being limited for viewing via the social media application to particular users identified by the submitting user or to users who are members of a social network of the submitting user. This is to be contrasted with social media items provided for public or non-private consumption via the social media application, which are not limited to a user-specific or user-specified subset of all users of the social media application. An example of a publicly viewable collection or gallery is a "Live Story" or "Our Story."

[0060] As mentioned, a collection may also constitute a "Live Story," which is a collection of content from multiple users that is created manually, automatically, or using a combination of manual and automatic techniques. For example, a "Live Story" may constitute a curated stream of user-submitted content from various locations and events. Users whose client devices have location services enabled and are at a common event location at a particular time may, for example, be presented with an option, via a user interface of the social media client application 104, to contribute content to a particular Live Story. The Live Story may be identified to the user by the social media client application 104, based on his or her location. The end result is a "Live Story" told from a community perspective. In accordance with some example embodiments of this disclosure, a submitting user can submit social media items or messages to a non-specific common Live Story. Such content is accessible to other users via a map-based graphical user interface, with such social media items or messages being accessible via the map GUI based on a respective location indicated by corresponding geo-tag data, either by forming part of a location-based gallery or story, or by such other users using location-based search mechanisms forming part of the map GUI.

[0061] A further type of content collection is known as a "location story," which enables a user whose client device 102 is located within a specific geographic location (e.g., on a college or university campus) to contribute to a particular collection. In some embodiments, a contribution to a location story may require a second degree of authentication to verify that the end user belongs to a specific organization or other entity (e.g., is a student on the university campus). In some embodiments of this disclosure, a message uploaded to a Live Story or Our Story generally, without the user specifying a particular location story in which the message is to be included, can automatically or semi-automatically be included in a location story based at least in part on geo-tag data of the message.
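Automatic inclusion in a location story based on geo-tag data can be sketched as a geofence test. The sketch assumes each location story is bounded by a circle (a centre point plus a radius in metres), which is a simplification; a real campus boundary would more likely be a polygon. `LocationStory` and `matching_location_stories` are hypothetical names, and the second-degree authentication step is not modelled.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two latitude/longitude points."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


@dataclass
class LocationStory:
    story_id: str
    center_lat: float
    center_lon: float
    radius_m: float  # hypothetical circular geofence


def matching_location_stories(lat: float, lon: float,
                              stories: list[LocationStory]) -> list[str]:
    """IDs of location stories whose geofence contains a message's geotag."""
    return [s.story_id for s in stories
            if haversine_m(lat, lon, s.center_lat, s.center_lon) <= s.radius_m]
```

A message submitted to Our Story with a geotag inside a campus geofence would then be a candidate for that campus's location story, subject to any manual review in the semi-automatic case.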

[0062] A map tile table 320 stores multiple map tiles that can be used for presenting a map in a map viewport of a map-based GUI, according to some embodiments of this disclosure. In a particular example embodiment, each map view is composed of 9 or 16 map tiles stitched together. A plurality of sets of map tiles may be maintained for different map zoom levels. In some example embodiments, a superset of map tiles is maintained server-side, being forwarded to a requesting client device 102 for composing a map representation of specific requested areas.
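The disclosure does not name a tiling scheme. Assuming the widely used Web Mercator "XYZ" (slippy map) tile addressing, the following sketch computes which tiles a client would request for a 3 x 3 (9-tile) or 4 x 4 (16-tile) map view centred on a point. The function names and the `grid` parameter are hypothetical.

```python
import math


def lonlat_to_tile(lon: float, lat: float, zoom: int) -> tuple[int, int]:
    """XYZ tile column/row containing a point at a given zoom level."""
    n = 2 ** zoom
    x = int((lon + 180.0) / 360.0 * n)
    y = int((1.0 - math.asinh(math.tan(math.radians(lat))) / math.pi) / 2.0 * n)
    # Clamp to the valid range (e.g. lon=180 or extreme latitudes).
    return min(max(x, 0), n - 1), min(max(y, 0), n - 1)


def viewport_tiles(lon: float, lat: float, zoom: int,
                   grid: int = 3) -> list[tuple[int, int, int]]:
    """(zoom, x, y) keys for a grid x grid block of tiles around the point."""
    n = 2 ** zoom
    cx, cy = lonlat_to_tile(lon, lat, zoom)
    half = grid // 2
    tiles = []
    for dy in range(-half, grid - half):
        for dx in range(-half, grid - half):
            x = (cx + dx) % n  # wrap horizontally across the antimeridian
            y = cy + dy
            if 0 <= y < n:     # no wrap vertically
                tiles.append((zoom, x, y))
    return tiles
```

Keeping one tile set per zoom level, keyed by `(zoom, x, y)`, matches the description of maintaining a plurality of tile sets for different map zoom levels, from which the server forwards only the tiles a client requests.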

[0063] A user location table 326 stores current or most recent user location data for multiple users of the social media application. The user location data may be based on location data received from respective client devices 102 associated with the respective users. In some example embodiments, such user location data is used to display, in a map-based GUI, the respective locations of a plurality of users who form part of the social network of the requesting user and/or who have provided permission for the requesting user to view their locations. Each such user may be represented on a map forming part of the map GUI by a respective user icon or bitmoji.
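A minimal in-memory sketch of such a table, keeping only the most recent report per user and returning the locations a requesting user is permitted to see, might look as follows. `LocationRecord`, `UserLocationTable`, `report`, `grant`, and `visible_to` are hypothetical; the sketch models permission only as explicit grants and does not address how locations are rendered on the map.

```python
from dataclasses import dataclass


@dataclass
class LocationRecord:
    user_id: str
    lat: float
    lon: float
    updated_at: float  # seconds since epoch, as reported by the client device


class UserLocationTable:
    """In-memory stand-in for a user location table (hypothetical API)."""

    def __init__(self) -> None:
        self._latest: dict[str, LocationRecord] = {}
        self._viewers: dict[str, set[str]] = {}  # owner -> users allowed to see them

    def report(self, rec: LocationRecord) -> None:
        # Keep only the current or most recent location per user; ignore stale reports.
        cur = self._latest.get(rec.user_id)
        if cur is None or rec.updated_at >= cur.updated_at:
            self._latest[rec.user_id] = rec

    def grant(self, owner: str, viewer: str) -> None:
        self._viewers.setdefault(owner, set()).add(viewer)

    def visible_to(self, requester: str) -> list[LocationRecord]:
        """Locations the requester may see, e.g. to place friend icons on the map."""
        return [rec for uid, rec in self._latest.items()
                if requester in self._viewers.get(uid, set())]
```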

[0064] FIG. 4 is a schematic diagram illustrating a structure of a social media item or message 400, according to some embodiments, generated by one instance of the social media client application 104 for communication to a further instance of the social media client application 104 or to the social media server application 114. The content of a particular message 400 is used to populate the message table 314 stored within the database 120, accessible by the social media server application 114. Similarly, the content of a message 400 is stored in memory as "in-transit" or "in-flight" data of the client device 102 or the application server 112. The message 400 is shown to include the following components:

[0065] A message identifier 402: a unique identifier that identifies the message 400.

[0066] A message text payload 404: text, to be generated by a user via a user interface of the client device 102 and that is included in the message 400.

[0067] A message image payload 406: image data, captured by a camera component of a client device 102 or retrieved from memory of a client device 102, and that is included in the message 400.

[0068] A message video payload 408: video data, captured by a camera component or retrieved from a memory component of the client device 102 and that is included in the message 400.

[0069] A message audio payload 410: audio data, captured by a microphone or retrieved from the memory component of the client device 102, and that is included in the message 400.

[0070] A message annotation 412: annotation data (e.g., filters, stickers, or other enhancements) that represents annotations to be applied to the message image payload 406, message video payload 408, or message audio payload 410 of the message 400.

[0071] A display duration parameter 414: a parameter value indicating, in seconds, the amount of time for which content of the message (e.g., the message image payload 406, message video payload 408, and message audio payload 410) is to be presented or made accessible to a user via the social media client application 104. The display duration parameter 414 is also referred to herein as a "display duration timer."

[0072] A message geolocation parameter 416: geolocation data or geo-tag data (e.g., latitudinal and longitudinal coordinates) associated with the content payload of the message 400. Multiple message geolocation parameter 416 values may be included in the payload, each of these parameter values being associated with respective content items included in the content (e.g., a specific image within the message image payload 406, or a specific video in the message video payload 408).

[0073] A message story identifier 418: identifier values identifying one or more content collections (e.g., "stories") with which a particular content item in the message image payload 406 of the message 400 is associated. For example, multiple images within the message image payload 406 may each be associated with multiple content collections using identifier values. An example of such a message story identifier 418 can in some embodiments comprise one or more thumbnail images.

[0074] A message tag 420: each message 400 may be tagged with multiple tags, each of which is indicative of the subject matter of content included in the message payload. For example, where a particular image included in the message image payload 406 depicts an animal (e.g., a lion), a tag value may be included within the message tag 420 that is indicative of the relevant animal. Tag values may be generated manually, based on user input, or may be automatically generated using, for example, image recognition.

[0075] A message sender identifier 422: an identifier (e.g., a messaging system identifier, email address, or device identifier) indicative of a user of the client device 102 on which the message 400 was generated and from which the message 400 was sent.

[0076] A message receiver identifier 424: an identifier (e.g., a messaging system identifier, email address, or device identifier) indicative of a user of the client device 102 to which the message 400 is addressed.

[0077] The contents (e.g., values) of the various components of the message 400 may be pointers to locations in tables within which content data values are stored. For example, an image value in the message image payload 406 may be a pointer to (or address of) a location within an image table 308. Similarly, values within the message video payload 408 may point to data stored within a video table 310, values stored within the message annotation 412 may point to data stored in an annotation table 312, values stored within the message story identifier 418 may point to data stored in a story table 306, and values stored within the message sender identifier 422 and the message receiver identifier 424 may point to user records stored within an entity table 302.
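The message components listed above, together with the pointer-based storage just described, can be sketched as a Python dataclass. The field names are hypothetical; each comment gives the corresponding reference numeral, and the `*_refs` fields hold keys into the respective tables rather than the payload bytes themselves.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Message:
    """Hypothetical in-memory form of message 400; *_refs fields hold table keys."""
    message_id: str                                           # message identifier 402
    text: Optional[str] = None                                # message text payload 404
    image_refs: list[str] = field(default_factory=list)       # 406 -> image table 308
    video_refs: list[str] = field(default_factory=list)       # 408 -> video table 310
    audio_refs: list[str] = field(default_factory=list)       # 410
    annotation_refs: list[str] = field(default_factory=list)  # 412 -> annotation table 312
    display_duration_s: Optional[float] = None                # display duration parameter 414
    # 416: per-content-item geotags (lat, lon), keyed by the item's reference
    geotags: dict[str, tuple[float, float]] = field(default_factory=dict)
    story_ids: list[str] = field(default_factory=list)        # 418 -> story table 306
    tags: list[str] = field(default_factory=list)             # message tag 420
    sender_id: str = ""                                       # 422 -> entity table 302
    receiver_id: str = ""                                     # 424 -> entity table 302


def resolve_images(msg: Message, image_table: dict[str, bytes]) -> list[bytes]:
    """Dereference the image pointers of a message against an image table."""
    return [image_table[ref] for ref in msg.image_refs]
```

Storing references keeps the message record small and lets several messages share one stored image or video.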

[0078] FIG. 5 is a schematic diagram illustrating an access-limiting process 500, in terms of which access to content (e.g., an ephemeral message 502, and associated multimedia payload of data) or a content collection (e.g., an ephemeral message story 504) may be time-limited (e.g., made ephemeral).

[0079] An ephemeral message 502 is shown to be associated with a display duration parameter 506, the value of which determines an amount of time that the ephemeral message 502 will be displayed to a receiving user of the ephemeral message 502 by the social media client application 104. In one embodiment, where the social media client application 104 is a SNAPCHAT client application, an ephemeral message 502 is viewable by a receiving user for up to a maximum of 10 seconds, depending on the amount of time that the sending user specifies using the display duration parameter 506. In some embodiments, the system automatically attaches a default display duration parameter 506 to photographic or still-image messages, e.g., having a default display duration of 5 seconds. The display duration parameter 506 of video-based messages may automatically correspond to the duration of the underlying video, with an automatically enforced upper limit. Thus, in an example embodiment in which an upper limit of 10 seconds is enforced, a 7-second video message will have a display duration parameter of 7 seconds.
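The example values in this paragraph (a 10-second upper limit, a 5-second default for still images, and video duration following clip length) can be expressed as a small function. The function and parameter names are hypothetical, and the constants are the example values above rather than fixed platform settings.

```python
from typing import Optional

MAX_DISPLAY_S = 10.0    # enforced upper limit in the example embodiment
DEFAULT_PHOTO_S = 5.0   # default for photographic/still-image messages


def display_duration(kind: str, sender_choice_s: Optional[float] = None,
                     video_length_s: Optional[float] = None) -> float:
    """Display duration parameter for a message, using the example values."""
    if kind == "video":
        if video_length_s is None:
            raise ValueError("video messages need a length")
        # Video duration follows the clip length, capped at the upper limit.
        return min(video_length_s, MAX_DISPLAY_S)
    if kind == "photo":
        chosen = DEFAULT_PHOTO_S if sender_choice_s is None else sender_choice_s
        return min(chosen, MAX_DISPLAY_S)
    raise ValueError(f"unknown message kind: {kind!r}")
```

For instance, `display_duration("video", video_length_s=7.0)` yields 7.0 seconds, matching the 7-second video example, while a 12-second clip is capped at 10.0.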

[0080] The display duration parameter 506 and the message receiver identifier 424 are shown to be inputs to a message timer 512, which is responsible for determining the amount of time that the ephemeral message 502 is shown to a particular receiving user identified by the message receiver identifier 424. In particular, the ephemeral message 502 will only be shown to the relevant receiving user for a time period determined by the value of the display duration parameter 506. The message timer 512 is shown to provide output to a more generalized ephemeral timer system 202, which is responsible for the overall timing of display of content (e.g., an ephemeral message 502) to a receiving user.

[0081] The ephemeral message 502 is shown in FIG. 5 to be included within a social media gallery in the form of an ephemeral message story 504 (e.g., a personal SNAPCHAT story, or an event story). The ephemeral message story 504 has a story duration parameter 508, a value of which determines a time duration for which the ephemeral message story 504 is made available and is accessible to users of the social media platform system 100. The story duration parameter 508, for example, may be the duration of a music concert, where the ephemeral message story 504 is a collection of content pertaining to that concert. Alternatively, a user (either the owning user or a curator user) may specify the value for the story duration parameter 508 when performing the setup and creation of the ephemeral message story 504. In some embodiments, the story duration parameter 508 is determined based at least in part on respective story participation parameters 510 (or lifetimes) of one or more of the ephemeral messages 502 forming part of the particular ephemeral message story 504. In one example embodiment, the story duration parameter 508 corresponds to a story participation parameter 510 or lifetime of a last-posted one of the ephemeral messages 502 in the relevant ephemeral message story 504. In such a case, the ephemeral message story 504 expires (e.g., by becoming unavailable for viewing via the social media platform) when the last-posted ephemeral message 502 therein expires (e.g., when a story participation parameter 510 or lifetime of the last ephemeral message 502 expires).

[0082] As alluded to above, each ephemeral message 502 within the ephemeral message story 504 has an associated story participation parameter 510 (also referred to herein as a "gallery participation parameter" or a "gallery participation timer"), a value of which determines the duration of time for which the ephemeral message 502 will be accessible within the context of the ephemeral message story 504. Accordingly, a particular ephemeral message 502 may "expire" and become inaccessible within the context of the ephemeral message story 504, prior to the ephemeral message story 504 itself expiring in terms of the story duration parameter 508. The story duration parameter 508, story participation parameter 510, and message receiver identifier 424 each provide input to a story timer 514, which operationally determines, first, whether a particular ephemeral message 502 of the ephemeral message story 504 will be displayed to a particular receiving user, and, if so, for how long. Note that the ephemeral message story 504 is also aware of the identity of the particular receiving user as a result of the message receiver identifier 424.

[0083] Accordingly, the story timer 514 in some embodiments operationally controls the overall lifespan of an associated ephemeral message story 504, as well as an individual ephemeral message 502 included in the ephemeral message story 504. In one embodiment, each and every ephemeral message 502 within the ephemeral message story 504 remains viewable and accessible for a time period specified by the story duration parameter 508. In a further embodiment, a certain ephemeral message 502 may expire, within the context of the ephemeral message story 504, based on a story participation parameter 510. Note that a respective display duration parameter 506 may still determine the duration of time for which a particular ephemeral message 502 is displayed to a receiving user upon replay of the ephemeral message 502, even within the context of the ephemeral message story 504. Accordingly, the display duration parameter 506 determines the duration of time that a particular ephemeral message 502 is displayed to a receiving user, regardless of whether the receiving user is viewing that ephemeral message 502 inside or outside the context of an ephemeral message story 504.

[0084] The ephemeral timer system 202 may furthermore operationally remove a particular ephemeral message 502 from the ephemeral message story 504 based on a determination that it has exceeded an associated story participation parameter 510. For example, when a sending user has established a story participation parameter 510 of 24 hours from posting, the ephemeral timer system 202 will remove the relevant ephemeral message 502 from the ephemeral message story 504 after the specified 24 hours. The ephemeral timer system 202 also operates to remove an ephemeral message story 504 either when the story participation parameter 510 for each and every ephemeral message 502 within the ephemeral message story 504 has expired, or when the ephemeral message story 504 itself has expired in terms of the story duration parameter 508. Note that in this disclosure, at least some ephemeral messages 502 may be submitted by the user to the social media application for general or public viewing via the map-based GUI, without being included by the user in any particular event gallery and without being included in any location-based gallery represented by a respective gallery icon on the map GUI. Such ephemeral messages 502 in some embodiments also have respective story participation parameters 510 specifying time periods for which the ephemeral messages 502 are accessible via the map GUI as part of a collective Live Story or Our Story, as described with reference to specific example embodiments below. In a particular example embodiment, each ephemeral message 502 thus submitted for public or non-private view has a default gallery participation parameter or story participation parameter 510 of 24 hours. Such ephemeral messages 502 are thus viewable via the map GUI for only 24 hours after submission.
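The interplay of the story duration parameter 508, the story participation parameter 510, and the display duration parameter 506 described in the preceding paragraphs can be sketched as follows. The class and function names are hypothetical, times are plain seconds, and per-recipient targeting through the message receiver identifier 424 is omitted for brevity.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class StoryMessage:
    message_id: str
    posted_at: float        # seconds since epoch
    participation_s: float  # story participation parameter 510
    display_s: float        # display duration parameter 506

    def expires_at(self) -> float:
        return self.posted_at + self.participation_s


@dataclass
class Story:
    story_id: str
    messages: list[StoryMessage] = field(default_factory=list)
    expires_at: Optional[float] = None  # from story duration parameter 508; None = indefinite


def story_timer(story: Story, msg: StoryMessage, now: float) -> Optional[float]:
    """Seconds to display `msg` within `story` at `now`, or None if not shown."""
    if story.expires_at is not None and now >= story.expires_at:
        return None  # the whole story has expired
    if now >= msg.expires_at():
        return None  # message expired within the story before the story did
    # The display duration still governs on-screen time inside a story.
    return msg.display_s


def prune(story: Story, now: float) -> bool:
    """Remove expired messages; return True if the story itself should remain."""
    story.messages = [m for m in story.messages if now < m.expires_at()]
    story_expired = story.expires_at is not None and now >= story.expires_at
    return not story_expired and bool(story.messages)
```

With the 24-hour default participation parameter, a message posted at time 0 is displayable until 86,400 seconds, after which `prune` drops it; the story is removed once no messages remain or its own expiry passes.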

[0085] In certain use cases, a creator of a particular ephemeral message story 504 may specify an indefinite story duration parameter 508. In this case, the expiration of the story participation parameter 510 for the last remaining ephemeral message 502 within the ephemeral message story 504 will determine when the ephemeral message story 504 itself expires. In this case, a new ephemeral message 502, added to the ephemeral message story 504, with a new story participation parameter 510, effectively extends the life of an ephemeral message story 504 to equal the value of the story participation parameter 510.
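The indefinite-duration case reduces to a simple rule: a story with no explicit duration lives until its longest-lived remaining message expires, so each newly added message can push the expiry later. A minimal sketch, with hypothetical function names and the 24-hour default participation parameter mentioned earlier:

```python
from typing import Optional

DEFAULT_PARTICIPATION_S = 24 * 3600  # 24-hour default story participation parameter


def story_expiry(explicit_expiry: Optional[float],
                 message_expiries: list[float]) -> Optional[float]:
    """Expiry of a story: its own duration if set, else its last message's."""
    if explicit_expiry is not None:
        return explicit_expiry
    # Indefinite story: lives as long as its longest-lived remaining message.
    return max(message_expiries, default=None)


def add_message(message_expiries: list[float], posted_at: float,
                participation_s: float = DEFAULT_PARTICIPATION_S) -> None:
    """Record a newly added message's participation expiry."""
    message_expiries.append(posted_at + participation_s)
```

Adding a message at 3,600 seconds to an indefinite story whose only message expires at 86,400 seconds extends the story's expiry to 90,000 seconds.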

[0086] In response to the ephemeral timer system 202 determining that an ephemeral message story 504 has expired (e.g., is no longer accessible), the ephemeral timer system 202 communicates with the social media platform system 100 (and, for example, specifically the social media client application 104) to cause an indicium (e.g., an icon) associated with the relevant ephemeral message story 504 to no longer be displayed within a user interface of the social media client application 104. Similarly, when the ephemeral timer system 202 determines that the story participation parameter 510 for a particular ephemeral message 502 has expired, the ephemeral timer system 202 causes the social media client application 104 to no longer display an indicium (e.g., an icon or textual identification) associated with the ephemeral message 502.

Example Embodiments of Map GUI Functionality

[0087] First, various aspects and features of the disclosure will be described conceptually with respect to specific example embodiments discussed with reference to and illustrated in FIGS. 6A-11B.

Basic Map GUI Architecture

[0088] FIG. 6A shows an example embodiment of a map-based graphical user interface, further referred to as a map GUI 612, displayed on a client device 102 in the example form of a mobile phone. In this example embodiment, the map GUI 612 is generated on a display in the form of a touchscreen 606 capable of receiving haptic input. The map GUI 612 includes an interactive map 618 showing a stylized aerial or satellite representation of a particular geographical area. The map 618 is displayed within a map viewport 621 which, in this example embodiment, uses the full available area of the touchscreen 606. In other example embodiments, the map viewport 621 may be a bounded panel or window within a larger display screen. The map GUI 612 further comprises a plurality of user-selectable graphical user interface elements displayed at specific respective geographic locations on the map 618. Each such geo-anchored GUI element is in this example embodiment represented by a respective indicium or icon overlaid on the map 618. The different types of icons and their respective functionalities will be described in greater detail below. As will also be described briefly, the map GUI 612 may further include one or more informational overlays rendered over the underlying geographical map 618, in this example embodiment including a heatmap 625 representative of the geographical distribution of underlying social media activity on the social media platform provided by the relevant social media application.

[0089] As mentioned, the map GUI 612 includes a number of different user-selectable icons or UI elements that indicate different geographically based content or information. In this example embodiment, the map GUI 612 includes a plurality of different gallery icons, also referred to in this description as "story icons." Each story icon corresponds in location on the map 618 to a respective location-based social media gallery or collection, in this example embodiment corresponding to a location-based story of ephemeral messages in the example form of so-called "snaps," as discussed elsewhere herein. Each of these stories that are represented by respective story icons on the map 618 consists of a respective set of snaps (respectively comprising augmented or unaugmented photographic or video content) that are grouped together based at least in part on respective geo-tag data associated with respective snaps.

[0090] In the example embodiment of FIG. 6A, the map GUI 612 includes two different types of gallery icons for two different respective types of location-based social media galleries, namely place icons 631 for place galleries/stories, and spike icons 633 for spike galleries/stories that are dynamically surfaced on the map GUI 612 based on one or more metrics of underlying social media activity relating to the submission of social media items/snaps to the social media platform with geo-tag data indicating the respectively associated geographical areas. Note that these different types of galleries are represented by different types of icons 631, 633. The differences between these different types of galleries and the corresponding visually distinct gallery icons 631, 633 are discussed later herein. The map GUI 612 in this example embodiment further includes friend icons in the example form of bitmojis 640 that are displayed on the map GUI 612 based on the current or last known geographic location of respective friends of the user associated with the client device 102.

Message and/or Story Ephemerality

[0091] In this example embodiment, the social media items that are selectively playable by selection of the corresponding story icons 631, 633 in the map GUI 612 are ephemeral social media items or messages. As described previously, ephemeral content is social media content (e.g., augmented and/or unaugmented video clips, pictures, and/or other messages) that is available for viewing by social media users via the map GUI 612 for only a predetermined limited period, also referred to herein as a respective gallery participation parameter or timer. After expiry of a respective gallery participation parameter or timer for any ephemeral message or snap uploaded by a particular user, that ephemeral message or snap is no longer available for viewing by other users via the map GUI 612 generated on their respective client devices 102. Current examples of such ephemeral social media content include the respective snaps or messages included in so-called "stories" in the SNAPCHAT or the INSTAGRAM social media applications.

[0092] Instead of, or in addition to, management of ephemerality on a per-snap level using respective gallery participation timers, availability of the ephemeral messages via the map GUI 612 can in some instances be managed collectively, e.g., on a per-story level. In such instances, each story can have a respective story duration parameter 508 (e.g., being based on a corresponding story timer 514; see FIG. 5), at the expiry of which availability of the corresponding story for viewing via the map GUI 612 is terminated. In some embodiments, the story duration parameter 508 is calculated based on the story participation parameter 510 of one of the ephemeral messages included in the relevant story. For example, a story may in some embodiments expire when a last uploaded item within the story expires, in response to which the corresponding story icon 631, 633 is no longer displayed on the map GUI 612. In one example embodiment, the map GUI 612 may include one or more event icons (e.g., similar in appearance to the place icons 631 of FIG. 6A) corresponding to respective event stories, with the story duration parameter 508 of the event story being set to expire a predetermined period of time from the start or conclusion of the underlying event. At expiry of the story duration parameter 508, the corresponding gallery icon 631, 633 is removed from the map GUI 612, irrespective of individual timers associated with respective snaps included in the event story.

Story Playback

[0093] The user can select any one of the gallery icons 631, 633 by haptic contact with the touchscreen 606 at the on-screen location of the selected gallery icon 631/633. In response to such selection, automated sequential playback of the corresponding set of ephemeral messages or snaps in the selected story is performed by the client device 102 on the touchscreen 606. Such automated sequential playback of the selected story consists of:

[0094] displaying on the touchscreen 606 the content or media payload of a first one of the ephemeral messages for a corresponding display duration (e.g., a default value of five seconds for photo-based messages and a maximum value of 10 seconds for video-based snaps), in this example embodiment temporarily replacing the map GUI 612 on the touchscreen 606 with a full-screen replay of the relevant snap;

[0095] at expiry of the display duration, displaying the content of the next snap/message for its display duration; and

[0096] thus progressing in sequence through all of the ephemeral messages in the selected story until all of the snaps in the story have been replayed or until the user selectively dismisses the playback sequence.
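The auto-advancing playback sequence can be sketched as a schedule of start and end times. This is an illustration under stated assumptions: `Snap` and `playback_schedule` are hypothetical names, photos use the 5-second default, videos use their clip length capped at 10 seconds, and dismissal is modelled as a single optional time at which playback stops.

```python
from dataclasses import dataclass
from typing import Optional

PHOTO_DEFAULT_S = 5.0  # default display duration for photo-based snaps
VIDEO_MAX_S = 10.0     # upper limit for video-based snaps


@dataclass
class Snap:
    snap_id: str
    kind: str              # "photo" or "video"
    length_s: float = 0.0  # clip length; ignored for photos


def playback_schedule(snaps: list[Snap],
                      dismissed_at: Optional[float] = None) -> list[tuple[str, float, float]]:
    """(snap_id, start_s, end_s) for auto-advancing playback of a story."""
    schedule = []
    t = 0.0
    for snap in snaps:
        if dismissed_at is not None and t >= dismissed_at:
            break  # user dismissed playback before this snap started
        d = PHOTO_DEFAULT_S if snap.kind == "photo" else min(snap.length_s, VIDEO_MAX_S)
        end = t + d if dismissed_at is None else min(t + d, dismissed_at)
        schedule.append((snap.snap_id, t, end))
        t += d  # next snap starts when this one's display duration expires
    return schedule
```

For a story of one photo, a 7-second video, and a 12-second video, playback runs 0 to 5, 5 to 12, and 12 to 22 seconds; dismissing at 8 seconds truncates the second snap and skips the third.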

[0097] In some embodiments, not all of the snaps in a particular story/gallery are necessarily included in the replay sequence. For example, if there are many overlapping snaps (e.g., snaps showing substantially identical content), some of those snaps are automatically skipped to keep a continuous narrative and to avoid repeating sections of an event commonly captured by the different snaps. Instead, or in addition, the social media server application 114 can in some embodiments be programmed to automatically identify and curate overlapping or contemporaneous snaps based on timestamp information associated with respective snaps.

[0098] In this example embodiment, the snaps automatically collected together in a replayable spike story or place story are arranged automatically to be played back in chronological order based on respective timestamps (e.g., being played in sequence from oldest to newest or earliest posted to most recently posted). A benefit of such chronological playback is that viewing of the story provides a user with sequentially arranged views of events transpiring at the relevant location. In some instances, however, a human curator may choose to rearrange snaps out of chronological order, for example to improve the narrative flow of the story. In other embodiments, the snaps may be played in reverse chronological order, from newest to oldest.
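
One way to combine the timestamp-based curation of [0097] with the chronological ordering of [0098] is sketched below. The rule used to detect overlap (skipping any snap posted within `min_gap_seconds` of the previously kept snap) is a hypothetical heuristic chosen for illustration; the source does not specify how contemporaneous snaps are identified.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Snap:
    snap_id: str
    timestamp: float  # posting time in seconds (hypothetical field)


def curate_for_playback(snaps: List[Snap],
                        min_gap_seconds: float = 2.0,
                        newest_first: bool = False) -> List[Snap]:
    """Order snaps by timestamp and drop near-contemporaneous ones."""
    ordered = sorted(snaps, key=lambda s: s.timestamp)
    kept: List[Snap] = []
    for snap in ordered:
        # Hypothetical heuristic: a snap posted within min_gap_seconds of the
        # last kept snap is treated as overlapping and skipped.
        if kept and snap.timestamp - kept[-1].timestamp < min_gap_seconds:
            continue
        kept.append(snap)
    if newest_first:
        kept.reverse()  # reverse chronological playback variant
    return kept
```

Setting `min_gap_seconds=0` disables skipping and reduces the function to plain chronological ordering.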

[0099] It can thus be seen that the example map GUI 612 includes multiple location-based gallery icons in the example form of story icons 631, 633 that are user-selectable to trigger playback of respective collections of ephemeral social media items, in this example embodiment being respective ephemeral stories consisting of respective sets of ephemeral messages (also referred to in this description as "snaps"). In this example embodiment, each of the plurality of location-based stories represented by the respective story icons 631, 633 may comprise media content contributed by multiple different users.

Our Story and Publication of Ephemeral Messages to Our Story

[0100] The respective ephemeral stories are in this example embodiment compiled from ephemeral messages submitted by multiple users based at least in part on geo-tagging of the respective snaps. Note that the ephemeral messages made available for viewing via the map GUI 612 are in this example embodiment not limited to content provided by other users who are members of an in-application social network of the user on whose client device 102 the map GUI 612 is generated. Instead, the social media content to which the map GUI 612 allows access is in this example embodiment provided by snaps uploaded or submitted by any user to be publicly accessible via the map GUI 612.

[0101] One aspect of the example map GUI 612 provides functionality for users to submit social media content that is publicly viewable via the map GUI 612. Turning briefly to FIG. 7A, therein is shown an example embodiment of a destination selection interface 707, forming part of the map GUI 612, that gives the user a selectable option to make a snap publicly viewable via the map GUI 612 upon capture of the snap.

[0102] In this example embodiment, snaps can be captured via the map GUI 612 while the map viewport 621 is displayed (as seen in FIG. 6A) by operation of a camera soft button 650 (FIG. 6A) forming part of the map GUI 612. After photo or video content is captured by operation of the camera soft button 650, the captured media content is displayed on the touchscreen 606 (FIG. 7A) together with the destination selection interface 707. In this example embodiment, the user can select one or both destination options identified in FIG. 7A as "My Story" and "Our Story," represented in FIG. 7A by respective radio buttons 714, 721. By selecting the Our Story radio button 721 and thereafter selecting a "Send" soft button 728, the user can submit the snap over the network 106 to the application server 112 with an indication that the snap is available for non-private publication via the map GUI 612. If the snap is not so marked by the user, for example being associated with selection of the My Story radio button 714 only, then the snap is not available for inclusion in any of the stories associated with the story icons 631, 633 and is not available for inclusion in search results of a location-based search via the map GUI 612, as described later herein. Snaps included only in the My Story gallery are available only to friends of the user (e.g., members of the uploading user's social network). The My Story gallery is a per-user, location-agnostic gallery of ephemeral messages available to friend users only, thus being a non-public or private gallery.
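
The visibility consequences of the two destination options can be summarized in a small sketch. The `Snap` class, its boolean flags, and the helper names are hypothetical; the sketch only encodes the rules stated above: Our Story snaps are public and eligible for map stories and location-based search, while My Story-only snaps are visible to the uploader's friends.

```python
from dataclasses import dataclass
from typing import Set


@dataclass
class Snap:
    owner: str
    my_story: bool = False   # "My Story" radio button selected (hypothetical flag)
    our_story: bool = False  # "Our Story" radio button selected (hypothetical flag)


def eligible_for_map(snap: Snap) -> bool:
    """Only Our Story snaps may feed map stories, search results, or the heatmap."""
    return snap.our_story


def can_view(snap: Snap, viewer: str, friends_of_owner: Set[str]) -> bool:
    if snap.our_story:
        return True  # publicly viewable via the map GUI
    if snap.my_story:
        # My Story is a private, location-agnostic gallery for friends only.
        return viewer in friends_of_owner
    return False
```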

[0103] In other example embodiments described herein, the superset of ephemeral messages made available by multiple users for public viewing via the map GUI 612 is alternatively referred to as the "Live Story" or simply as a "Live" gallery. For the purposes of the description of example embodiments herein, "Live Story" and "Our Story" are thus to be read as being synonymous. In the present example embodiment, the compilation and/or surfacing of gallery icons 631, 633 and the rendering of the heatmap 625 are based exclusively on publicly available social media content provided by snaps uploaded to Our Story. Calculation of metrics or attributes of social media activity upon which one or more aspects of the map GUI 612 are based (e.g., an unusualness or anomaly metric indicating geo-temporal unusualness or anomaly of social media activity within respective geographical areas) is in this example embodiment likewise based exclusively on snaps uploaded to Our Story.

Visual Distinctions Between Story Icons for Different Story Types

[0104] Returning now to the visual distinctions or differences between the different types of gallery icons 631, 633, it will be noted that each gallery icon 631/633 in this example embodiment comprises a circular graphical user interface element bearing a thumbnail image provided by one of the snaps contained in the respective story. Each place icon 631, however, also includes an associated label 635 bearing a text indication of the associated place. In this example embodiment, the labels 635 indicate the respective places of the place stories surfaced in the geographic window presently displayed in the example map 618 as being Rockefeller Center, Bryant Park, and the Empire State Building, respectively.

[0105] In other example embodiments, the visual distinctions between different types of story icons may be provided in a different manner. FIG. 6B, for example, shows another example embodiment of a map GUI 612 that is analogous to the example embodiment of FIG. 6A, a major difference being that the visual distinction between place icons 631 and spike icons 633 is provided at least in part by differently shaped thumbnail images. In the example of FIG. 6B, the thumbnails of the place icons 631 are rectangular, while the thumbnails of the spike icons 633 are circular.

[0106] The respective thumbnail images that are used for the spike icons 633 are in the example embodiments of FIGS. 6A and 6B automatically selected by the social media server application 114. In this instance, the thumbnail image for a spike icon 633 is automatically selected based on the posting time of the respective snaps forming part of the corresponding spike story, in this case being selected as a most recently posted snap in the relevant story. In other embodiments, automatic selection of the thumbnail image to be used in the spike icon 633 can be based on selecting the earliest-posted ephemeral message/snap that is still available for viewing as part of the spike story. The thumbnail images for the place icons 631 (or for icons associated with other curated stories, such as event stories) can in some embodiments likewise be selected automatically. In this embodiment, however, the thumbnail images for the place icons 631 can be selected from the snaps included in the corresponding story/gallery by a human operator via a content management interface forming part of the social media server application 114. Absent such an explicit designation of a particular snap to be used for the thumbnail image, thumbnail selection may revert to an automatic default selection as described previously.
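
The thumbnail selection rules of [0106] can be sketched as follows. The `Snap` fields and the `operator_choice` parameter are hypothetical; the fallback when an operator-designated snap is no longer available is an assumption extending the stated rule that selection reverts to the automatic default absent an explicit designation.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Snap:
    snap_id: str
    posted_at: float       # posting time (hypothetical field)
    expired: bool = False  # no longer available for viewing


def select_thumbnail(snaps: List[Snap],
                     operator_choice: Optional[str] = None,
                     prefer_latest: bool = True) -> Optional[Snap]:
    """Pick the snap whose image is used as the story icon thumbnail."""
    live = [s for s in snaps if not s.expired]
    if not live:
        return None
    if operator_choice is not None:
        # Curated stories: honor a snap explicitly designated by an operator.
        for s in live:
            if s.snap_id == operator_choice:
                return s
        # Assumption: fall back to the automatic default if it is unavailable.
    if prefer_latest:
        return max(live, key=lambda s: s.posted_at)  # most recently posted
    return min(live, key=lambda s: s.posted_at)      # earliest still available
```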

Differences Between Different Story Types

Place Stories

[0107] Returning to FIG. 6A, the differences between the different types of social media galleries or stories accessible via respective story icons 631, 633 on the map GUI 612 will now be briefly discussed.

[0108] Place stories, represented by respective place icons 631, are social media galleries for defined locations or places, typically being places that consistently have relatively large activity volumes (e.g., Times Square, Universal Studios, etc.). Note that, in this example embodiment, not all defined places are by default surfaced in the map GUI 612 by respective place icons 631. Instead, the geo-anchored place stories are surfaced based on the amount of activity (e.g., the raw number of uploaded snaps) captured within a defined geographical area associated with the relevant place, as indicated by associated geo-tag data. This ensures that places that regularly or always attract relatively large volumes of snaps are identified as such on the map 618.

[0109] Defined places for which place icons 631 may be surfaced in the map GUI 612 are in this example embodiment manually created by one or more human operators using a server-side gallery management system or content management system (CMS) 1224 provided by a server-side social media platform system 1200 (FIG. 12). In this example embodiment, each defined place has:

[0110] (a) an associated operator-defined polygon marking its geographical boundaries, which specifies a particular geographical area for the place story;

[0111] (b) a thumbnail location or icon location, typically lying within the associated polygon, that specifies the on-map position at which the place icon 631 for a gallery or story associated with that place is displayed in the map 618; and

[0112] (c) a name by which the place is identified. In the example embodiment of FIG. 6A, this is the name that is displayed on the associated label 635 of the place icon 631. In other embodiments, such places and associated place stories are automatically identified by historical snap volume. In some such embodiments, the defined places and their associated stories/galleries are created and curated automatically by server-side procedures.

[0113] In some instances, each place story includes all of the snaps having geotag information indicating a geographic location lying within the associated polygon. Selection of a particular place icon 631 (e.g., by clicking in a desktop application or by tapping on the touchscreen 606 in the example embodiment of FIG. 6A) in such cases plays all the snaps from within the corresponding polygon. In this example embodiment, the CMS 1224 provides functionality to operators or administrators to curate the collection of snaps associated with any operator-selected defined place (e.g., a particular geographical area defined by the corresponding polygon). The operator or one or more automated procedures can thus, for example, delete individual snaps from the place story, or can select individual snaps for inclusion in the place story.
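
Polygon membership ([0113]) and volume-based surfacing ([0108]) can be sketched together. This sketch treats (longitude, latitude) as planar coordinates and uses the standard even-odd ray-casting test; the `Place` class, the `min_snaps` threshold, and the snap tuple layout are hypothetical, since the source specifies neither the containment method nor a threshold value.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y), e.g. (longitude, latitude), treated as planar


@dataclass
class Place:
    name: str              # shown on the label 635
    polygon: List[Point]   # operator-defined boundary
    icon_location: Point   # on-map position of the place icon


def point_in_polygon(pt: Point, polygon: List[Point]) -> bool:
    """Even-odd ray casting: count edge crossings of a ray toward +x."""
    x, y = pt
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge straddles the ray (so y1 != y2)
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def place_story(place: Place, snaps: List[Tuple[str, Point]]) -> List[str]:
    """IDs of snaps whose geotag lies inside the place polygon."""
    return [sid for sid, loc in snaps if point_in_polygon(loc, place.polygon)]


def should_surface(place: Place, snaps: List[Tuple[str, Point]],
                   min_snaps: int = 100) -> bool:
    # Hypothetical rule: surface the place icon once raw volume reaches a threshold.
    return len(place_story(place, snaps)) >= min_snaps
```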

[0114] When snaps are played in response to selection of a place icon 631, the name of the place appears on-screen together with the replayed content or payload of the respective snaps. As mentioned, in this example embodiment, the snap represented by a corresponding thumbnail within the relevant place icon 631 is played first, then the rest in time order.
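
The "thumbnail snap first, then the rest in time order" rule of [0114] (and of [0116] for spike stories) amounts to a simple reordering, sketched here with a hypothetical `Snap` class.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Snap:
    snap_id: str
    timestamp: float  # posting time (hypothetical field)


def playback_order(snaps: List[Snap], thumbnail_id: str) -> List[Snap]:
    """Thumbnail snap first, then the remaining snaps in chronological order."""
    first = [s for s in snaps if s.snap_id == thumbnail_id]
    rest = sorted((s for s in snaps if s.snap_id != thumbnail_id),
                  key=lambda s: s.timestamp)
    return first + rest
```

If the thumbnail snap is absent (for example, because it has expired), the function simply returns all snaps in time order.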

Spike Stories

[0115] The unlabeled circular spike icons 633 are automatically surfaced for geographical areas of unusually high activity, with the respective associated spike stories or ad hoc galleries including unexpired snaps within the associated geographical area. In the example embodiment of FIG. 6A, all ad hoc galleries associated with spike icons 633 are unmoderated, so that selecting a spike icon 633 triggers automated sequential replay of all of the snaps within a geographical area associated with the spike icon 633. In a particular example embodiment, the geographical area associated with the spike icon 633 includes all geographical points located within a predefined radius of the on-map location of the selected spike icon 633.
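
Membership of a spike story, defined in [0115] as all geotagged snaps within a predefined radius of the spike icon, can be sketched with a great-circle distance. The haversine formula is a swapped-in choice of distance measure, and the function names, the snap tuple layout, and any particular radius value are hypothetical.

```python
import math
from typing import List, Tuple

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in meters


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    # Clamp guards against tiny floating-point overshoot above 1.
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(min(1.0, a)))


def spike_story(icon_lat: float, icon_lon: float,
                snaps: List[Tuple[str, float, float]],
                radius_m: float) -> List[str]:
    """IDs of snaps whose geotag lies within radius_m of the spike icon."""
    return [sid for sid, lat, lon in snaps
            if haversine_m(icon_lat, icon_lon, lat, lon) <= radius_m]
```

One degree of latitude corresponds to roughly 111 km under this model, so a snap 0.01 degrees north of the icon (about 1.1 km away) falls outside a 500 m radius.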

[0116] Thus, clicking or tapping on the spike icon 633 plays all the snaps in that cluster, showing the snap in the thumbnail first and then the rest of the snaps in time order. Note, again, that the snaps clustered under the common spike icon 633 are in this example uploaded by multiple different respective social media users to Our Story, and are uncurated by moderators. In other embodiments, the stories collected under such spike thumbnails may be curated.