Automated Umbrella

Gharabegian; Armen

U.S. patent application number 16/449462 was filed with the patent office on 2019-06-24 and published on 2020-04-16 for automated umbrella. This patent application is currently assigned to Shadecraft, Inc. The applicant listed for this patent is Shadecraft, Inc. Invention is credited to Armen Gharabegian.

| Application Number | 20200113297 16/449462 |

| Family ID | 57324673 |

| Filed Date | 2019-06-24 |

| United States Patent Application | 20200113297 |

| Kind Code | A1 |

| Gharabegian; Armen | April 16, 2020 |

AUTOMATED UMBRELLA

Abstract

An intelligent shading object, comprises a plurality of shading elements, a shading element deployment mechanism, a support structure, and a base unit. A shading element deployment mechanism deploys a plurality of shading elements independently of each other. A support structure is coupled to the shading element deployment mechanism. A base unit is coupled to the support structure to provide stability to the support structure, the shading element deployment mechanism, and the plurality of shading elements.

| Inventors: | Gharabegian; Armen; (Glendale, CA) |
|---|---|
| Applicant: | Shadecraft, Inc. |
| Assignee: | Shadecraft, Inc. |
| Family ID: | 57324673 |
| Appl. No.: | 16/449462 |
| Filed: | June 24, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 14810380 | Jul 27, 2015 | 10327521 |
| 16449462 | | |
| 62165859 | May 22, 2015 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | A45B 19/04 20130101; G01J 2001/4266 20130101; A45B 2023/0031 20130101; A45B 19/08 20130101; A45B 2023/0012 20130101; A45B 17/00 20130101; G01S 3/7861 20130101; A45B 23/00 20130101; G01S 19/14 20130101; A45B 2023/0093 20130101; G05B 15/02 20130101; A45B 2025/003 20130101; A45B 2200/1027 20130101; G01J 1/4204 20130101; A45B 19/02 20130101; A45B 25/165 20130101; A45B 2019/026 20130101; A45B 2017/005 20130101; A45B 2019/002 20130101; G01J 1/42 20130101; A45B 25/143 20130101; A45B 25/16 20130101; G01J 1/44 20130101; A45B 2200/1018 20130101; G01C 17/00 20130101; A45B 25/18 20130101 |

| International Class: | A45B 19/04 20060101 A45B019/04; G01J 1/44 20060101 G01J001/44; G01S 19/14 20060101 G01S019/14; G01J 1/42 20060101 G01J001/42; G01C 17/00 20060101 G01C017/00; A45B 25/18 20060101 A45B025/18; A45B 25/16 20060101 A45B025/16; A45B 19/08 20060101 A45B019/08; G05B 15/02 20060101 G05B015/02; G01S 3/786 20060101 G01S003/786; A45B 25/14 20060101 A45B025/14; A45B 17/00 20060101 A45B017/00; A45B 23/00 20060101 A45B023/00; A45B 19/02 20060101 A45B019/02 |

Claims

1-22. (canceled)

23. An automated umbrella, comprising: a base assembly; a support assembly coupled to the base assembly, the support assembly comprising an upper support assembly and a lower support assembly; one or more arms coupled to the support assembly; a first motor, the first motor configurable to cause the support assembly to rotate in a clockwise direction about the base assembly; a second motor, the second motor configurable to cause the upper support assembly to rotate about the lower support assembly; a third motor, the third motor configurable to cause opening or closing of the one or more arms.

24. The automated umbrella of claim 23, further comprising a processor, one or more memory devices, and computer-readable instructions, the computer-readable instructions executable by the processor to send commands or signals to the first motor to rotate the support assembly about the base assembly, the second motor to rotate the upper support assembly about the lower support assembly and the third motor to open or close the one or more arms.

25. The automated umbrella of claim 24, further comprising a keypad or a touch screen to receive input to control operations of the automated umbrella.

26. The automated umbrella of claim 24, further comprising a display, the computer-readable instructions further executable by the processor to communicate status messages to the display.

27. The automated umbrella of claim 24, further comprising an audio amplifier and a speaker, the computer-readable instructions further executable by the processor to communicate music files to the audio amplifier and the speaker to produce sound at the speaker.

28. The automated umbrella of claim 23, further comprising a GNSS receiver, a Bluetooth transceiver and a WiFi transceiver.

29. The automated umbrella of claim 23, further comprising a rechargeable power source and an AC adapter, the AC adapter to convert power from an external power source to recharge the rechargeable power source.

30. The automated umbrella of claim 29, further comprising a USB interface, the USB interface to receive power from the rechargeable power source and to provide power to mobile computing devices.

31. The automated umbrella of claim 23, further comprising a misting system, the misting system to cool an area around the automated umbrella.

32. The automated umbrella of claim 23, further comprising a fan assembly, the fan assembly to cool an area around the automated umbrella.

33. The automated umbrella of claim 23, further comprising a wind sensor configurable to capture a wind speed measurement.

34. The automated umbrella of claim 23, further comprising a gyroscope or a tilt sensor, the gyroscope or the tilt sensor to capture a wind resistance measurement.

35. The automated umbrella of claim 23, further comprising an accelerometer, the accelerometer to capture a wind resistance measurement.

36. The automated umbrella of claim 23, the base assembly further comprising an accelerometer, the accelerometer configurable to determine if the base is in a stable position.

37. The automated umbrella of claim 23, further comprising a temperature sensor, the temperature sensor configurable to capture a temperature measurement in an area around the automated umbrella.

38. The automated umbrella of claim 23, further comprising a light detector, the light detector to capture a light brightness measurement.

39. The automated umbrella of claim 24, further comprising a camera, the camera to capture an image of an object in an area around the automated umbrella.

40. The automated umbrella of claim 39, the computer-readable instructions further executable by the processor to: capture an image, by the camera, of an individual; receive the captured image; retrieve reference images from the one or more memory devices; compare the captured image to the reference images to determine if the captured image matches one reference image of the reference images; and retrieve personalized settings corresponding to the one reference image if the one reference image matches the captured image.

41. The automated umbrella of claim 40, the computer-readable instructions further executable by the processor to: utilize the retrieved personal settings and communicate signals or instructions to components of the automated umbrella to adjust the components based at least in part on the retrieved personal settings.
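By way of illustration only, the operations recited in claims 40 and 41 might be sketched as follows. The sketch is not the applicant's implementation: each "image" is reduced to a short feature vector, matching is done by Euclidean distance against a threshold, and the names `REFERENCE_IMAGES`, `PERSONAL_SETTINGS`, `match_reference`, and `settings_for`, the sample values, and the 0.5 threshold are all assumptions introduced here.

```python
import math

# Hypothetical stand-ins: each "image" is a short feature vector, and the
# 0.5 distance threshold is an arbitrary illustrative value.
REFERENCE_IMAGES = {"user_a": (0.1, 0.9, 0.3), "user_b": (0.8, 0.2, 0.5)}
PERSONAL_SETTINGS = {"user_a": {"tilt_deg": 15}, "user_b": {"tilt_deg": 30}}


def match_reference(captured, references, max_distance=0.5):
    """Return the name of the closest reference within max_distance, or None."""
    best_name, best_distance = None, max_distance
    for name, reference in references.items():
        distance = math.dist(captured, reference)
        if distance <= best_distance:
            best_name, best_distance = name, distance
    return best_name


def settings_for(captured):
    """Retrieve personalized settings only if a reference image matches."""
    name = match_reference(captured, REFERENCE_IMAGES)
    return PERSONAL_SETTINGS.get(name) if name is not None else None


print(settings_for((0.12, 0.88, 0.31)))  # close to the "user_a" reference
print(settings_for((5.0, 5.0, 5.0)))     # no reference within the threshold
```

In this sketch, settings are returned only when a match is found, mirroring the conditional retrieval in claim 40; the returned values would then be used to adjust components as in claim 41.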

Description

BACKGROUND

1. Field

[0001] The subject matter disclosed herein relates to methods and systems for providing shade or protection from weather and more specifically to an intelligent sun shading object.

2. Information/Background of the Invention

[0002] Conventional sun shading devices usually are comprised of a supporting frame and an awning or fabric mounted on the supporting frame to cover a predefined area. For example, a conventional sun shading device may be an outdoor umbrella or an outdoor awning.

[0003] However, current sun shading devices do not appear to be flexible or modifiable or able to adapt to changing environmental conditions or user's desires. Many of the current sun shading devices appear to require manual operation in order to change inclination angle of the frame to more fully protect an individual from the environment. In addition, the current sun shading devices appear to cover a set area that is defined by an area of the awning or umbrella. Further, the current sun shading devices appear to have one (or a single) awning or fabric piece that is mounted to an interconnected unitary frame. An interconnected unitary frame may not be able to be opened or deployed in a situation where only a portion or several portions of the shading object are necessary to be deployed. Accordingly, alternative embodiments may be desired.

BRIEF DESCRIPTION OF DRAWINGS

[0004] Non-limiting and non-exhaustive aspects are described with reference to the following figures, wherein like reference numerals refer to like parts throughout the various figures unless otherwise specified.

[0005] FIG. 1A illustrates a shading object according to an embodiment.

[0006] FIG. 1B illustrates a shading object comprising deployed shading objects according to an embodiment.

[0007] FIG. 1C illustrates a deployment of shading elements according to an embodiment.

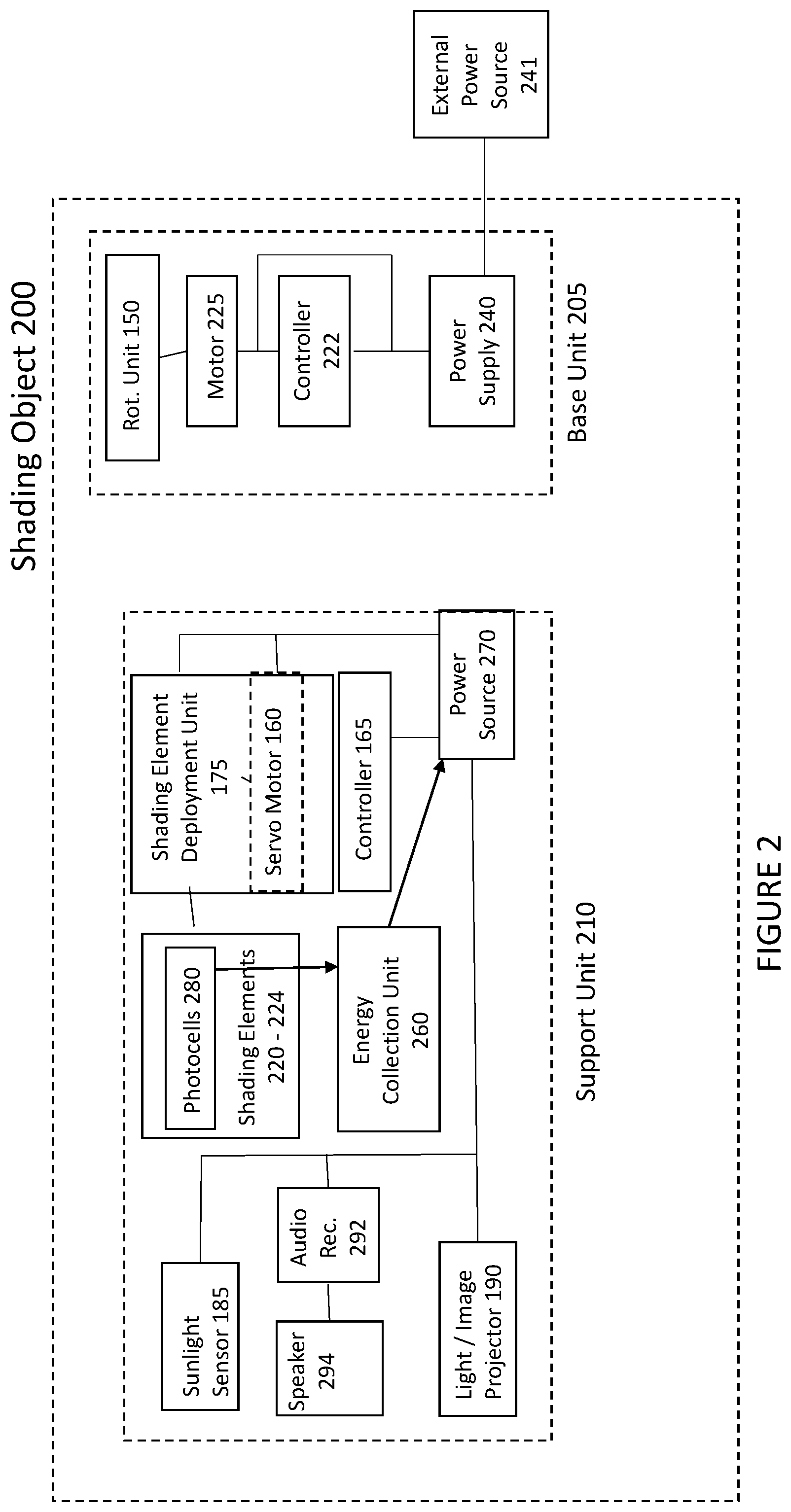

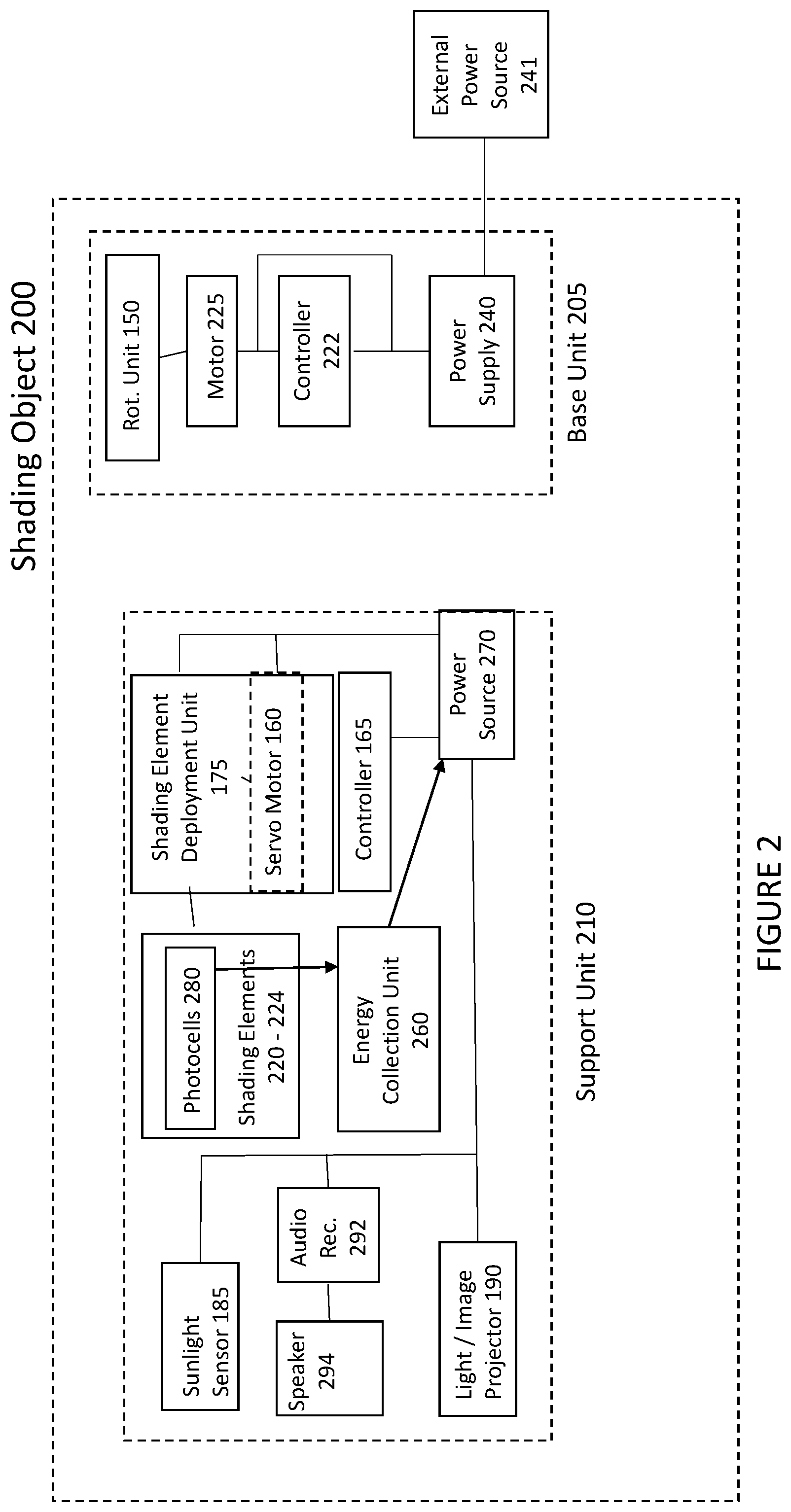

[0008] FIG. 2 illustrates a schematic diagram of a shading object according to an embodiment.

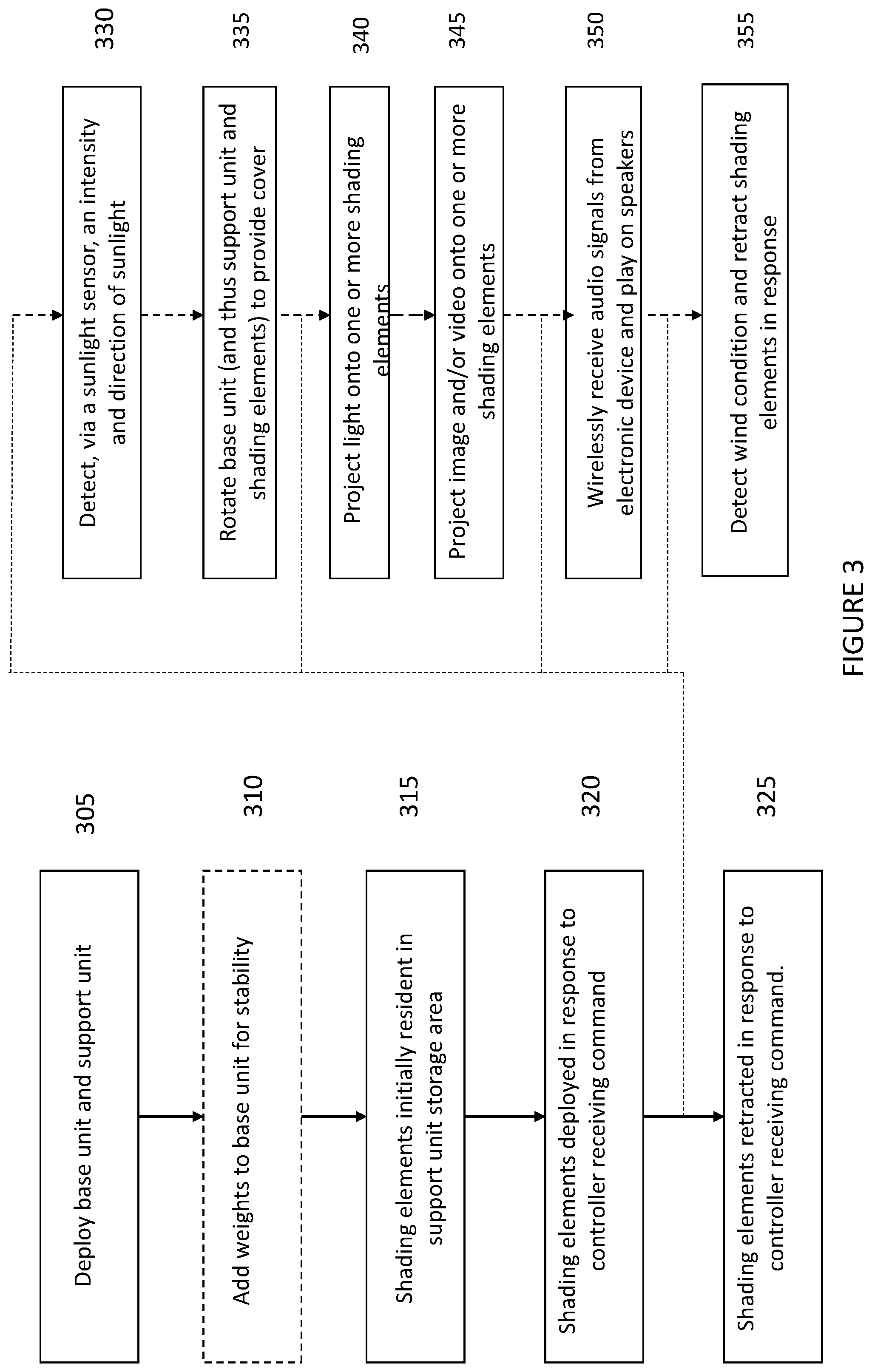

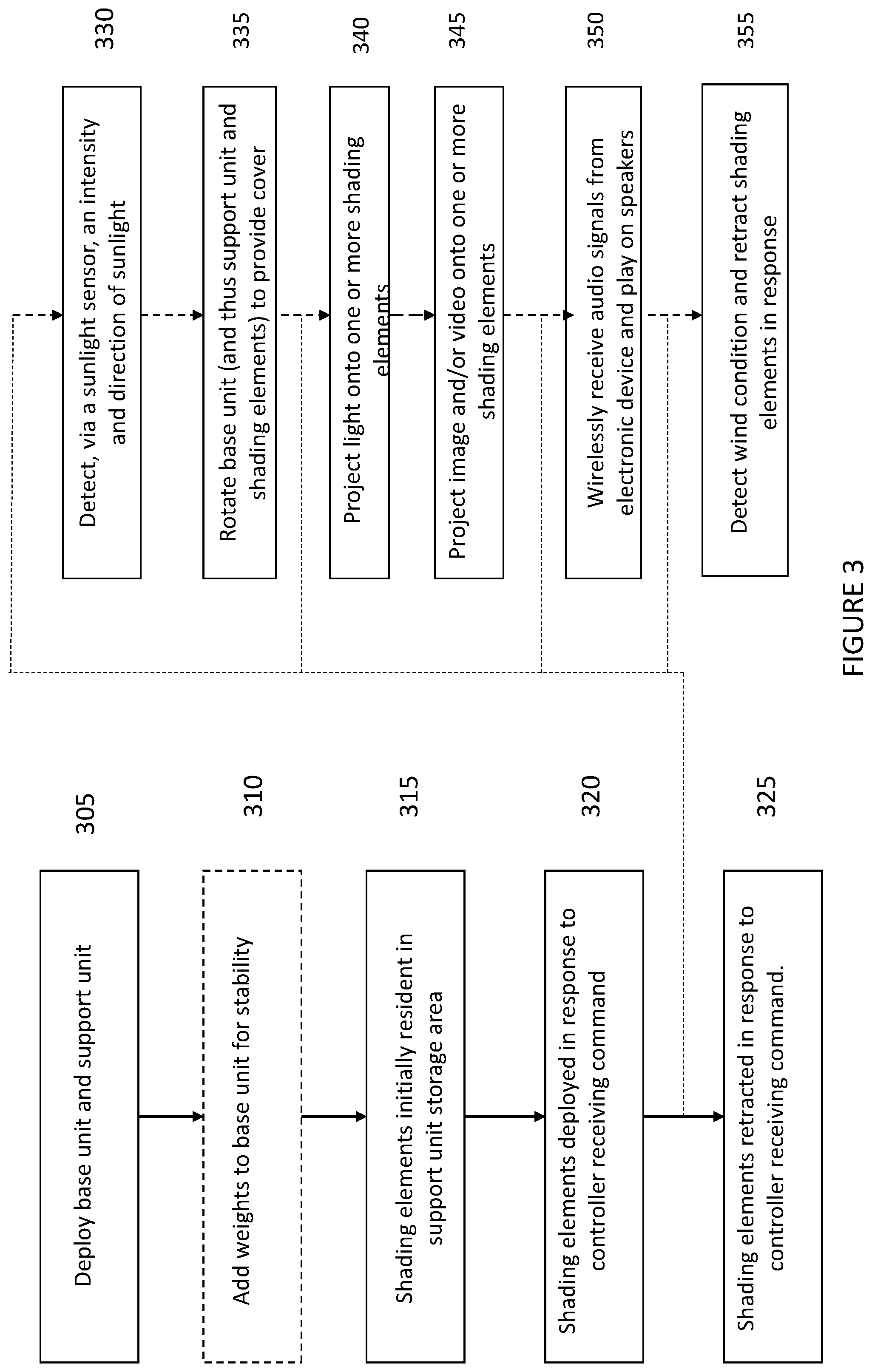

[0009] FIG. 3 illustrates a method of deploying a shading object.

[0010] FIG. 4A illustrates a second shading object according to an example embodiment.

[0011] FIG. 4B is a side view of a shading object with a deployed shading element according to an embodiment.

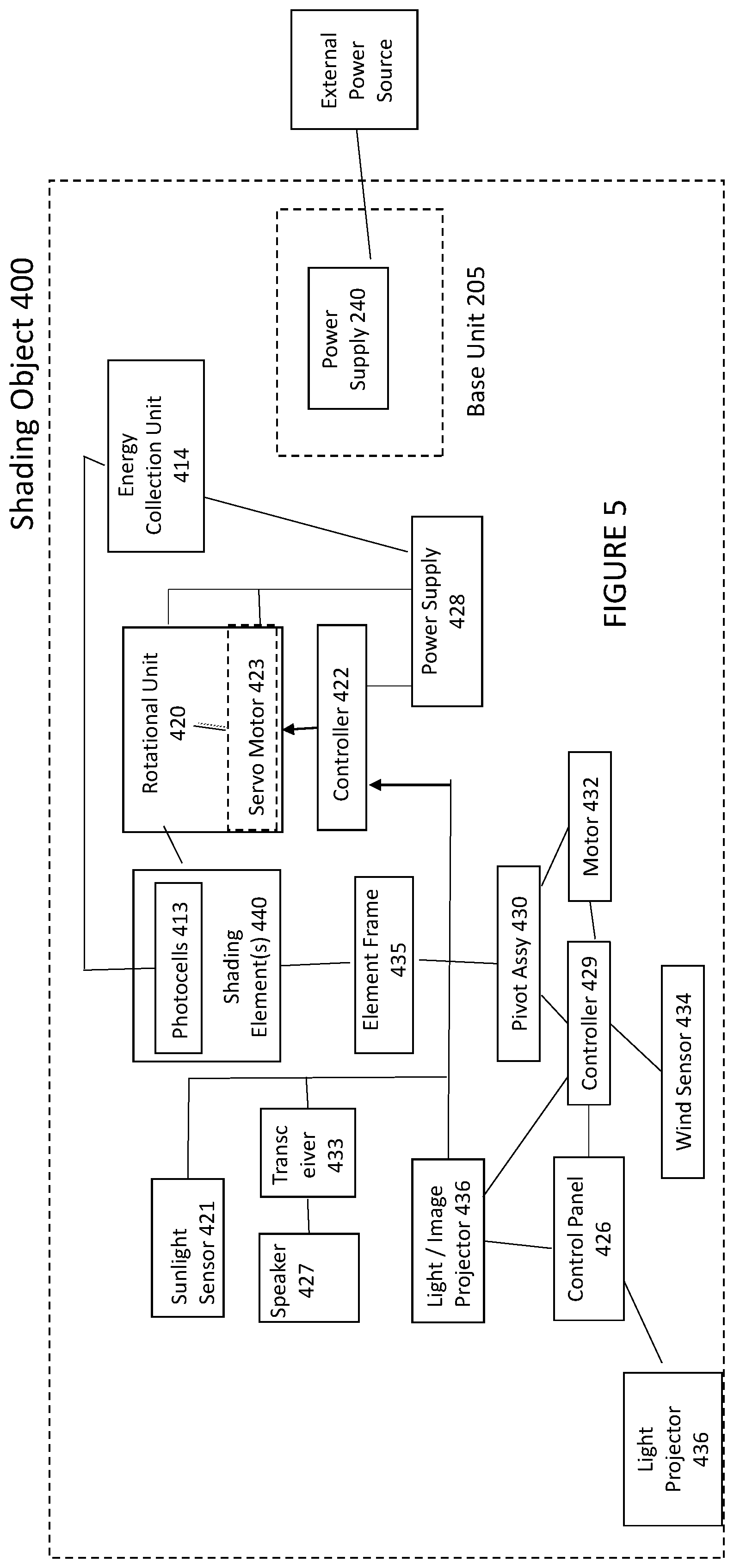

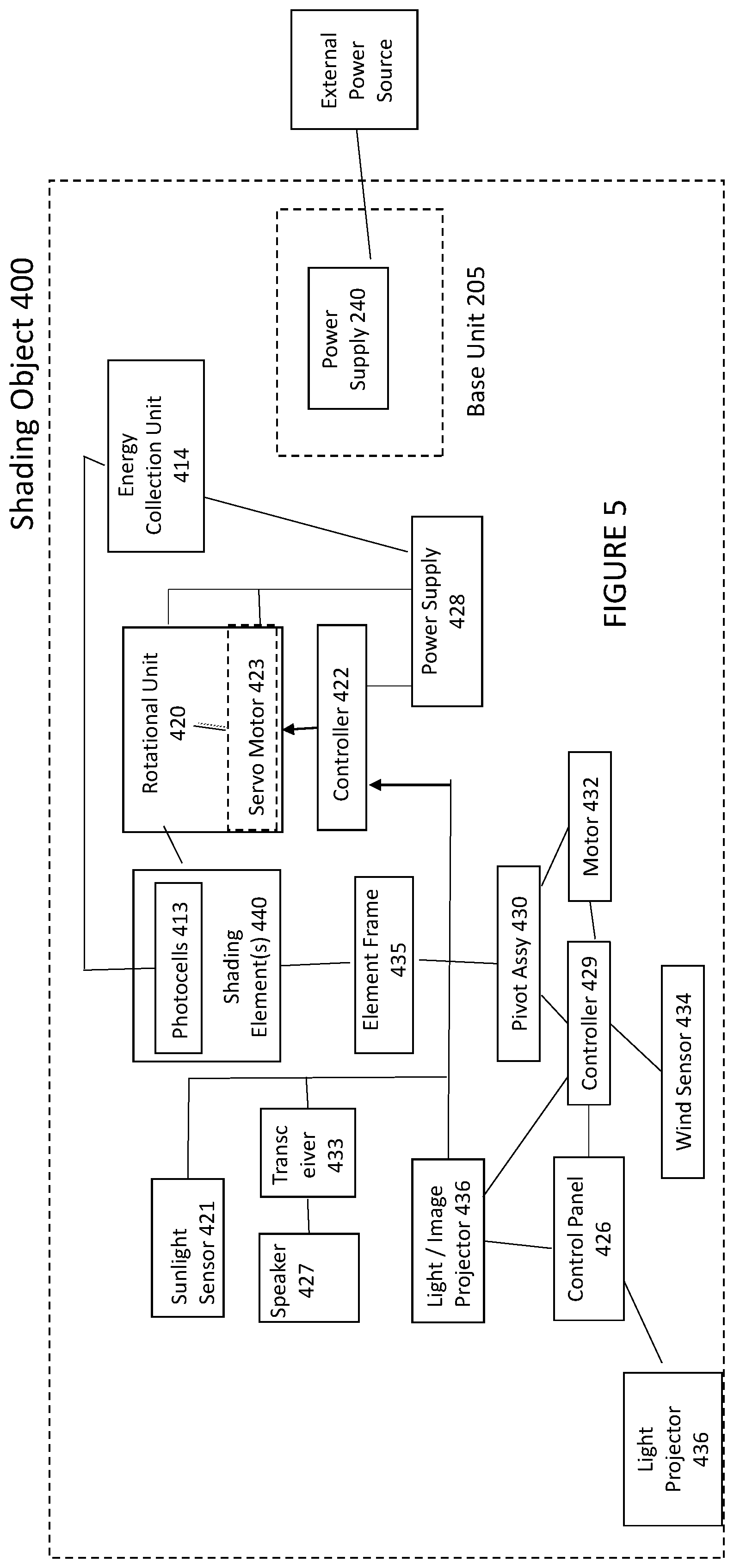

[0012] FIG. 5 illustrates a block diagram of a second shading object according to an embodiment.

[0013] FIG. 6 illustrates a method of operating a second shading object according to an embodiment.

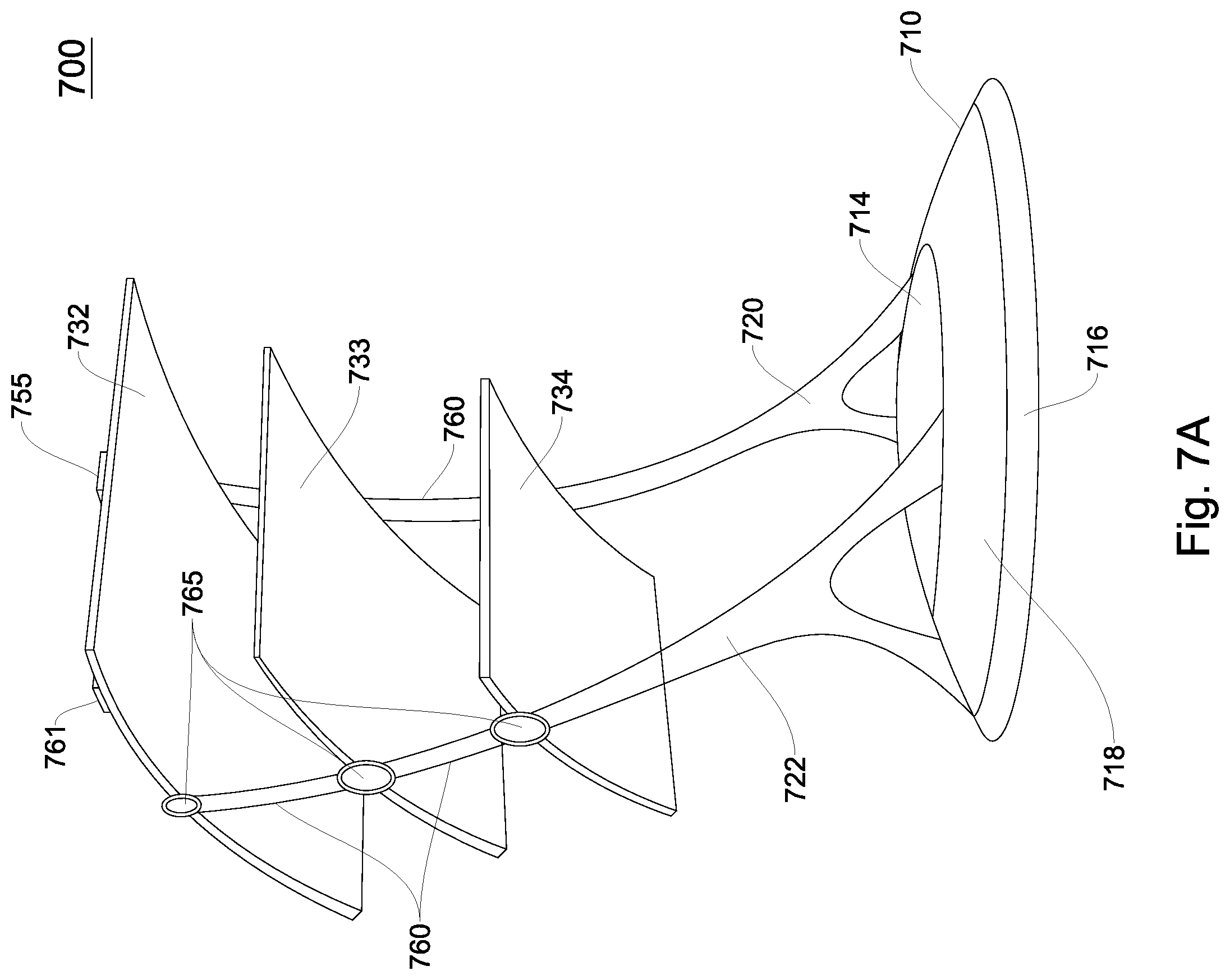

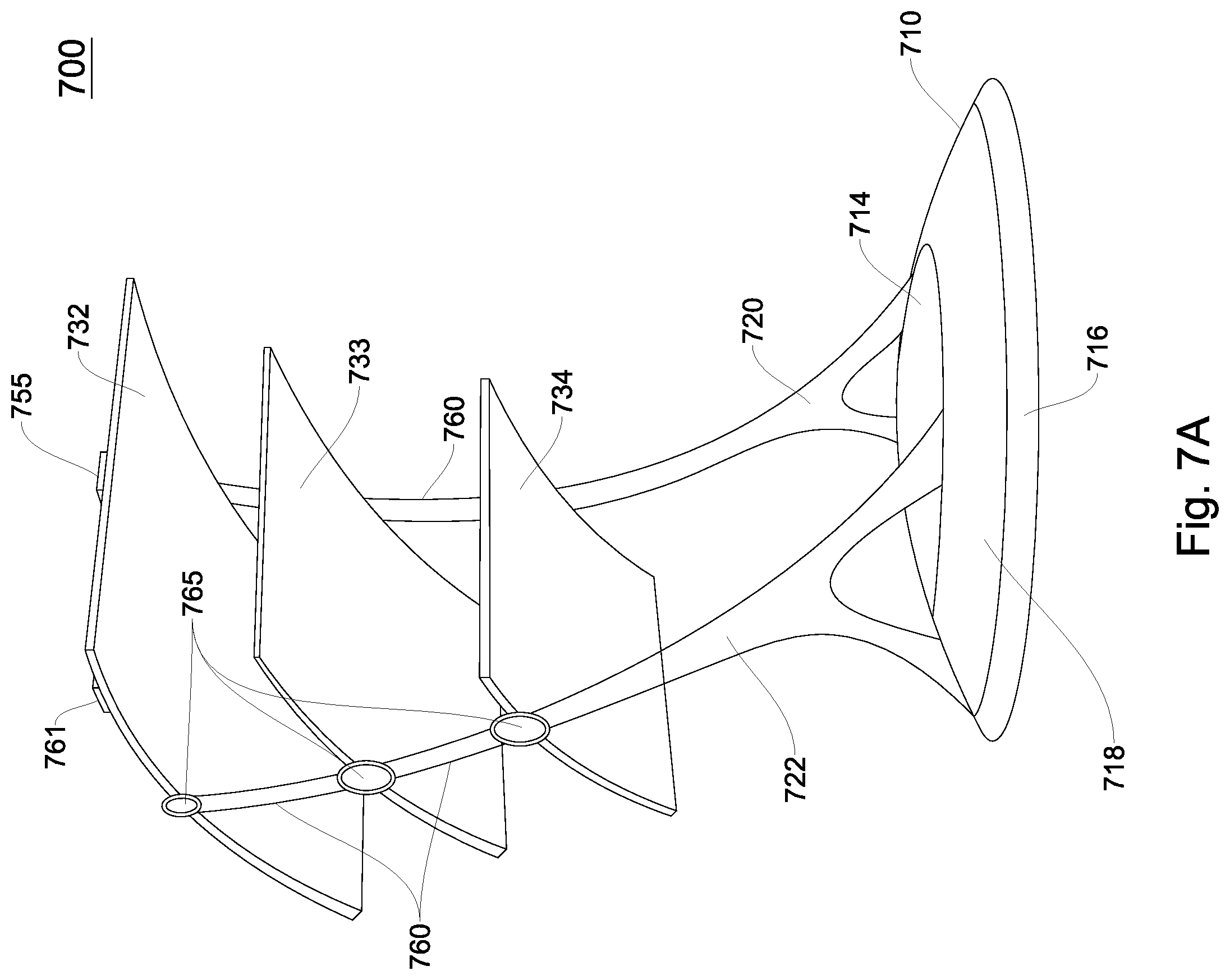

[0014] FIG. 7A illustrates a third shading object according to an embodiment.

[0015] FIG. 7B illustrates a side view of a third shading object according to an embodiment.

[0016] FIG. 8 illustrates a block diagram of a shading object including a louvre system according to an embodiment.

[0017] FIG. 9 discloses a method of operation for a third shading object according to an embodiment of the invention.

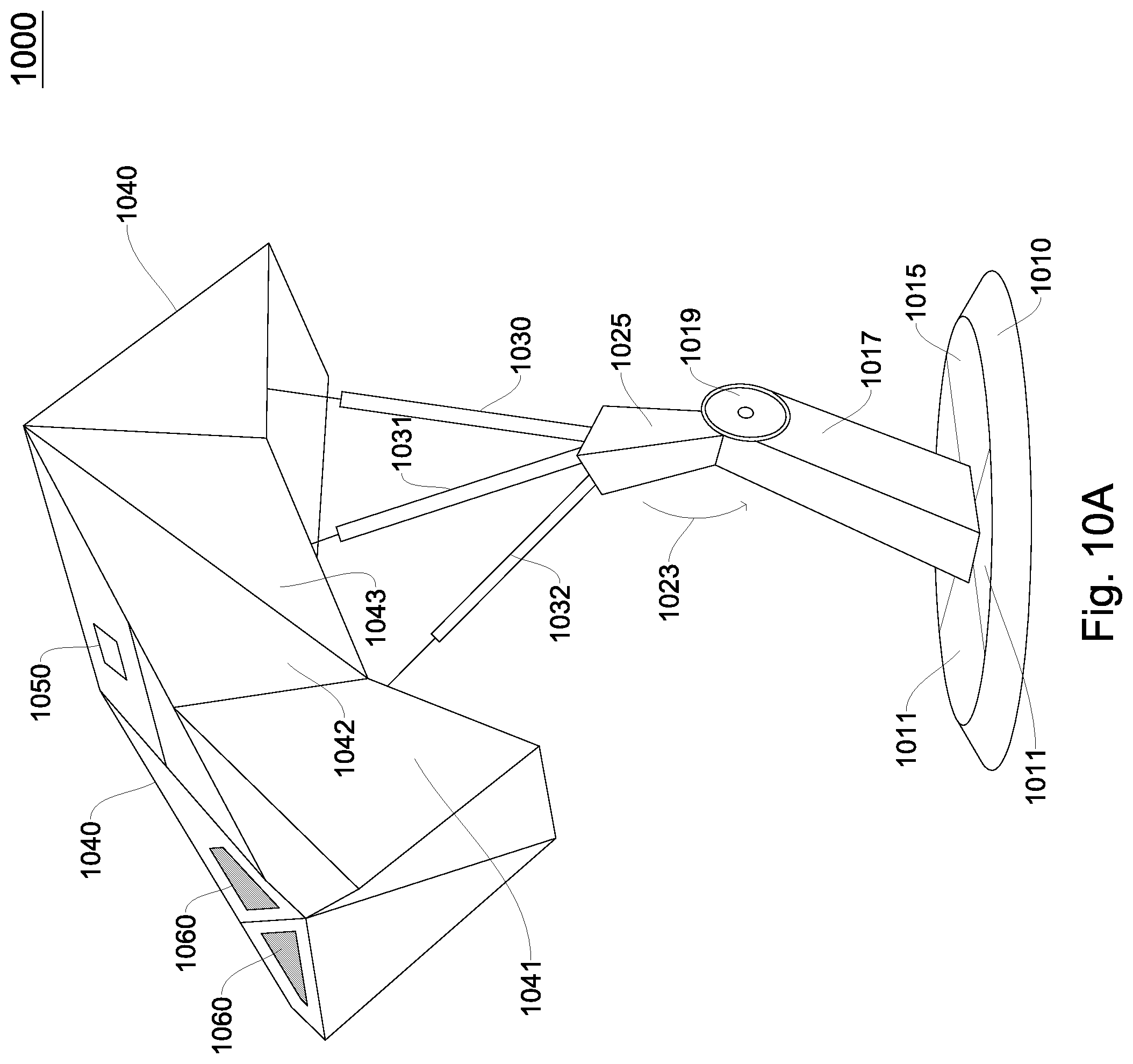

[0018] FIG. 10A illustrates a fourth shading object according to an embodiment.

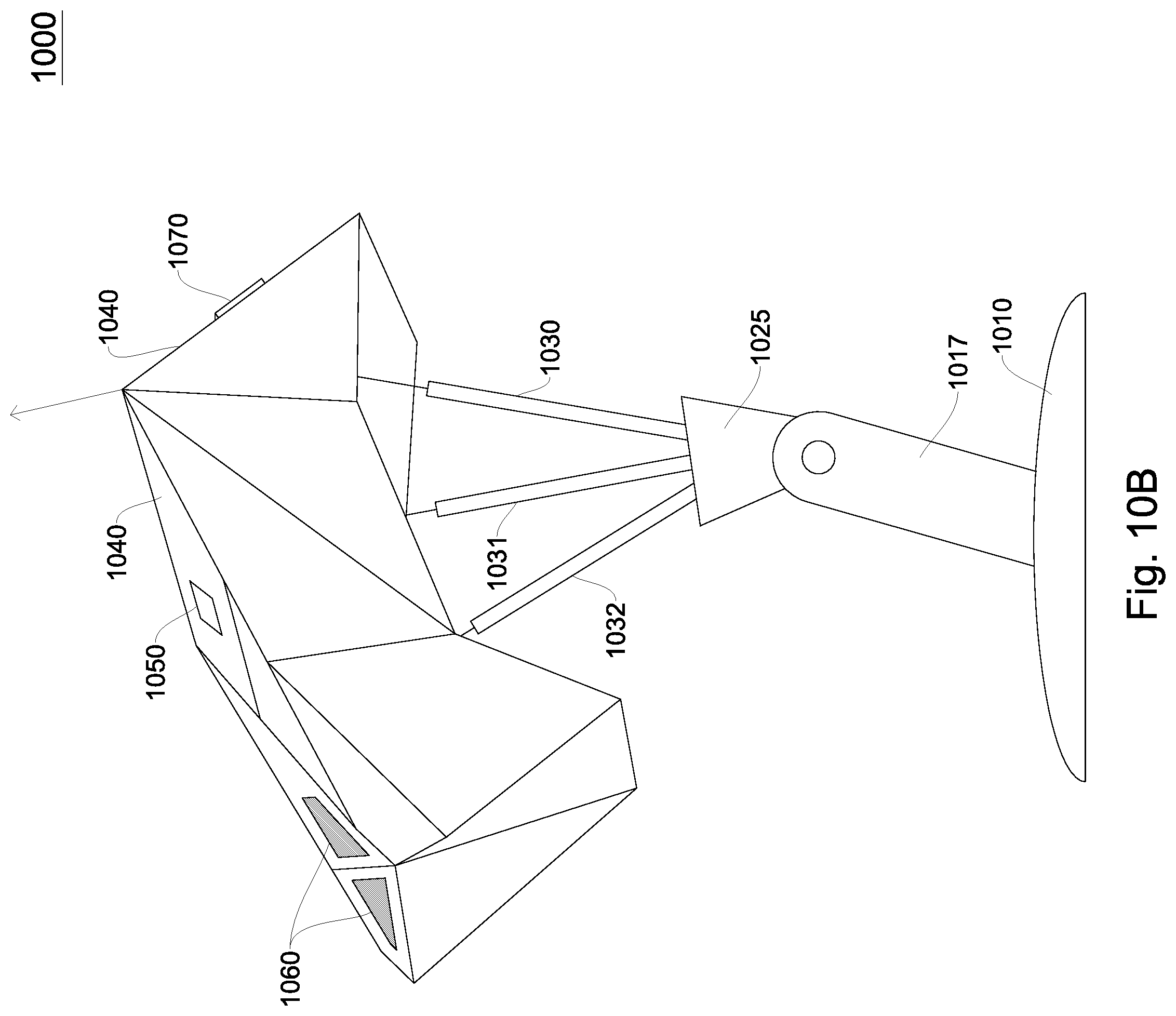

[0019] FIG. 10B is a side view of a fourth shading object according to an embodiment.

[0020] FIG. 11 is a block diagram of a fourth shading object according to an embodiment.

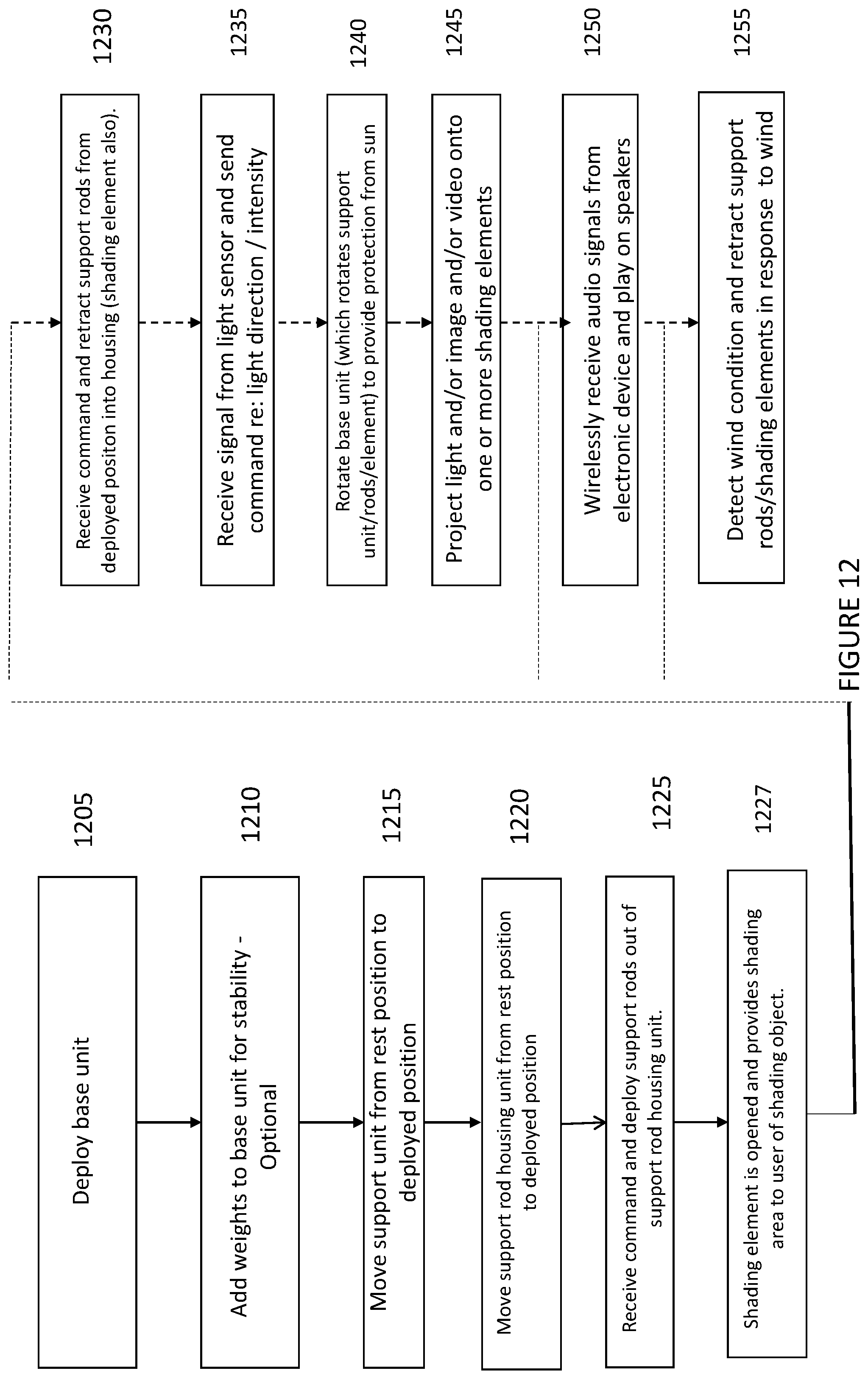

[0021] FIG. 12 illustrates a method of operating a fourth shading object according to an embodiment.

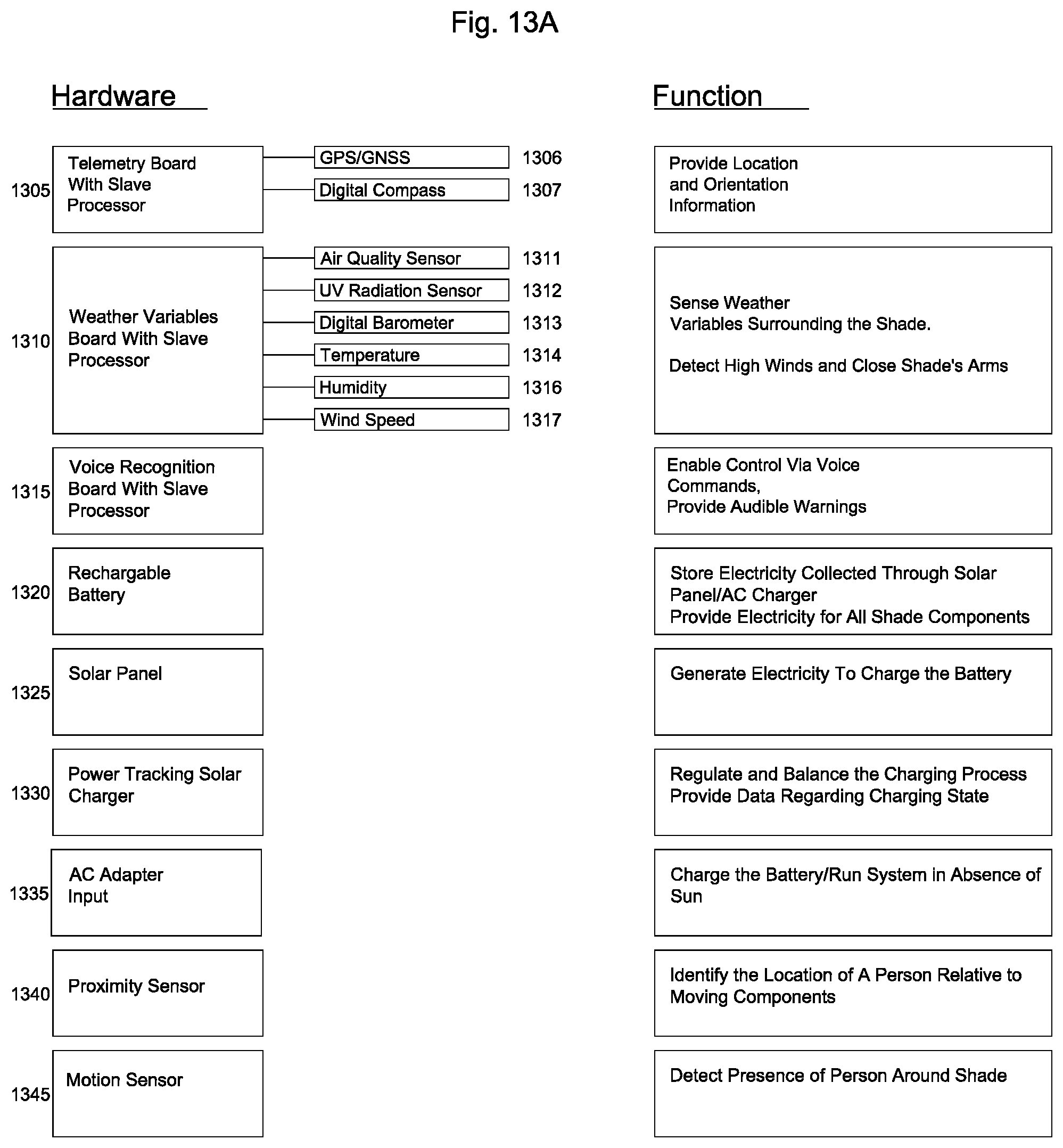

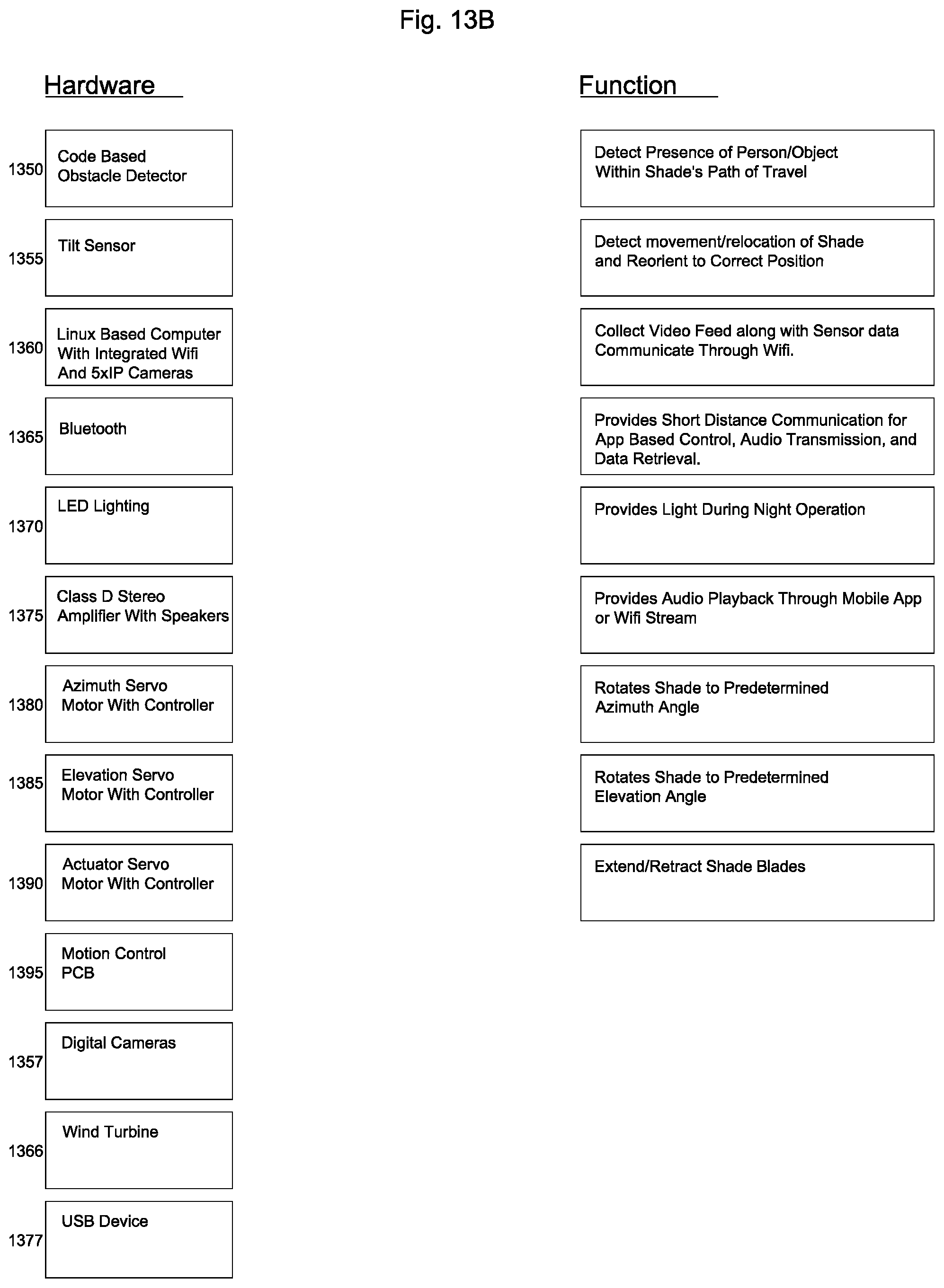

[0022] FIG. 13 is a block diagram of multiple components within a shading object.

[0023] FIG. 14 is a flow diagram of an embodiment of a process to position a shading object in a shading element.

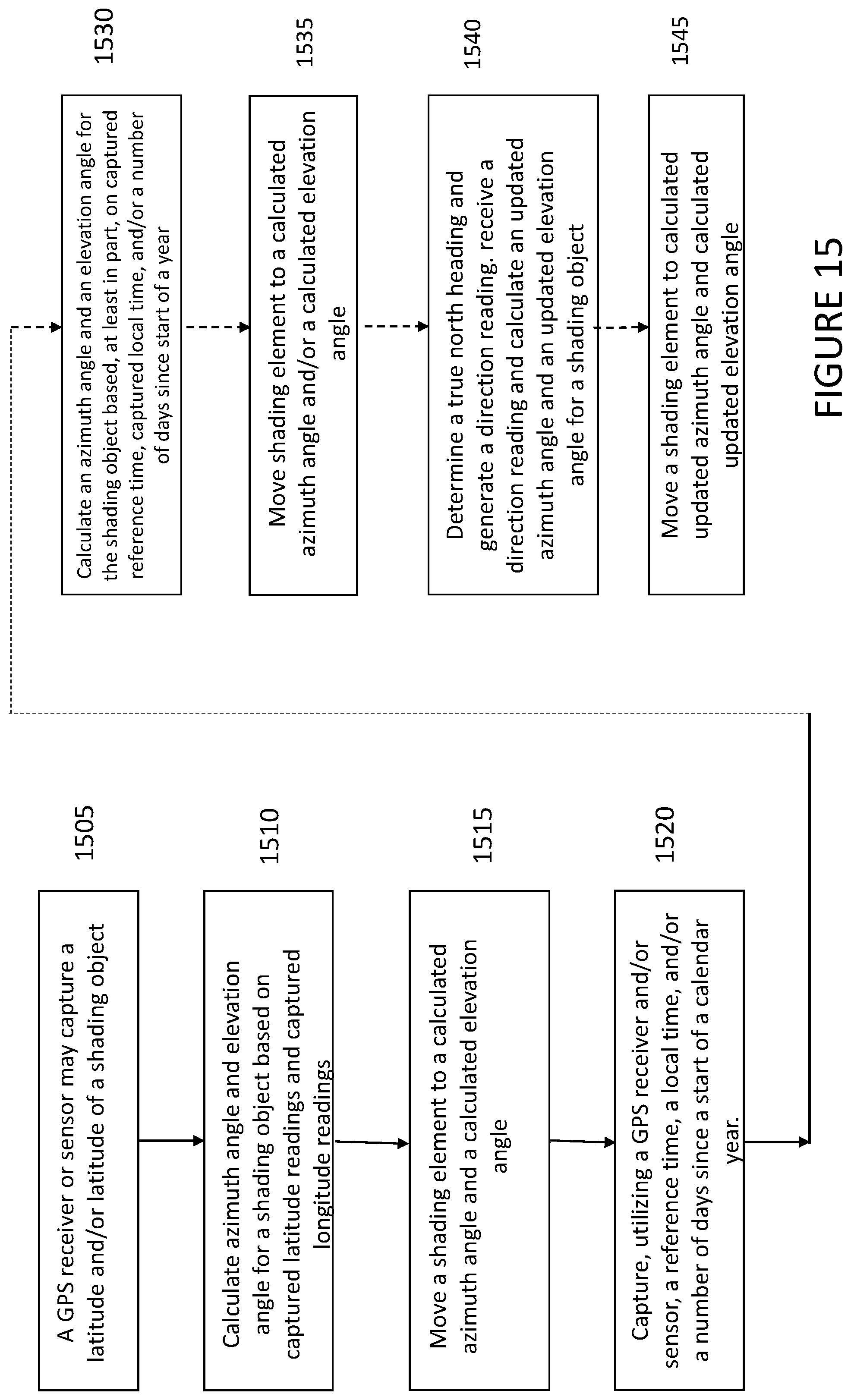

[0024] FIG. 15 is a flow diagram of an embodiment of a process to position a shading object in a shading element utilizing a global positioning sensor or receiver.

[0025] FIG. 16 is a flow diagram of an embodiment of a process to apply personal settings to a shading object.

DETAILED DESCRIPTION

[0026] In the following detailed description, numerous specific details are set forth to provide a thorough understanding of claimed subject matter. For purposes of explanation, specific numbers, systems and/or configurations are set forth, for example. However, it should be apparent to one skilled in the relevant art having benefit of this disclosure that claimed subject matter may be practiced without specific details. In other instances, well-known features may be omitted and/or simplified so as not to obscure claimed subject matter. While certain features have been illustrated and/or described herein, many modifications, substitutions, changes and/or equivalents may occur to those skilled in the art. It is, therefore, to be understood that appended claims are intended to cover any and all modifications and/or changes as fall within claimed subject matter.

[0027] References throughout this specification to one implementation, an implementation, one embodiment, an embodiment and/or the like means that a particular feature, structure, and/or characteristic described in connection with a particular implementation and/or embodiment is included in at least one implementation and/or embodiment of claimed subject matter. Thus, appearances of such phrases, for example, in various places throughout this specification are not necessarily intended to refer to the same implementation or to any one particular implementation described. Furthermore, it is to be understood that particular features, structures, and/or characteristics described are capable of being combined in various ways in one or more implementations and, therefore, are within intended claim scope, for example. In general, of course, these and other issues vary with context. Therefore, particular context of description and/or usage provides helpful guidance regarding inferences to be drawn.

[0028] With advances in technology, it has become more typical to employ distributed computing approaches in which portions of a problem, such as signal processing of signal samples, for example, may be allocated among computing devices, including one or more clients and/or one or more servers, via a computing and/or communications network, for example. A network may comprise two or more network devices and/or may couple network devices so that signal communications, such as in the form of signal packets and/or frames (e.g., comprising one or more signal samples), for example, may be exchanged, such as between a server and a client device and/or other types of devices, including between wireless devices coupled via a wireless network, for example.

[0029] A network may comprise two or more network devices and/or may couple network devices so that signal communications, such as in the form of signal packets, for example, may be exchanged, such as between a server and a client device and/or other types of devices, including between wireless devices coupled via a wireless network, for example.

[0030] In this context, the term network device refers to any device capable of communicating via and/or as part of a network and may comprise a computing device. While network devices may be capable of sending and/or receiving signals (e.g., signal packets and/or frames), such as via a wired and/or wireless network, they may also be capable of performing arithmetic and/or logic operations, processing and/or storing signals (e.g., signal samples), such as in memory as physical memory states, and/or may, for example, operate as a server in various embodiments. Network devices capable of operating as a server, or otherwise, may include, as examples, dedicated rack-mounted servers, desktop computers, laptop computers, set top boxes, tablets, netbooks, smart phones, wearable devices, integrated devices combining two or more features of the foregoing devices, the like or any combination thereof. As mentioned, signal packets and/or frames, for example, may be exchanged, such as between a server and a client device and/or other types of network devices, including between wireless devices coupled via a wireless network, for example. It is noted that the terms, server, server device, server computing device, server computing platform and/or similar terms are used interchangeably. Similarly, the terms client, client device, client computing device, client computing platform and/or similar terms are also used interchangeably. While in some instances, for ease of description, these terms may be used in the singular, such as by referring to a "client device" or a "server device," the description is intended to encompass one or more client devices and/or one or more server devices, as appropriate. Along similar lines, references to a "database" are understood to mean, one or more databases and/or portions thereof, as appropriate.

[0031] It should be understood that for ease of description a network device (also referred to as a networking device) may be embodied and/or described in terms of a computing device. However, it should further be understood that this description should in no way be construed that claimed subject matter is limited to one embodiment, such as a computing device or a network device, and, instead, may be embodied as a variety of devices or combinations thereof, including, for example, one or more illustrative examples.

[0032] Operations and/or processing, such as in association with networks, such as computing and/or communications networks, for example, may involve physical manipulations of physical quantities. Typically, although not necessarily, these quantities may take the form of electrical and/or magnetic signals capable of, for example, being stored, transferred, combined, processed, compared and/or otherwise manipulated. It has proven convenient, at times, principally for reasons of common usage, to refer to these signals as bits, data, values, elements, symbols, characters, terms, numbers, numerals and/or the like. It should be understood, however, that all of these and/or similar terms are to be associated with appropriate physical quantities and are intended to merely be convenient labels.

[0033] Likewise, in this context, the terms "coupled", "connected," and/or similar terms are used generically. It should be understood that these terms are not intended as synonyms. Rather, "connected" is used generically to indicate that two or more components, for example, are in direct physical, including electrical, contact; while, "coupled" is used generically to mean that two or more components are potentially in direct physical, including electrical, contact; however, "coupled" is also used generically to mean that two or more components are not necessarily in direct contact, but nonetheless are able to co-operate and/or interact. The term coupled is also understood generically to mean indirectly connected, for example, in an appropriate context. In a context of this application, if signals, instructions, and/or commands are transmitted from one component (e.g., a controller or processor) to another component (or assembly), it is understood that signals, instructions, and/or commands may be transmitted directly to a component, or may pass through a number of other components on a way to a destination component. For example, a signal transmitted from a controller or processor to a motor may pass through glue logic, an amplifier, and/or an interface. Similarly, a signal transmitted through a cooling system may pass through an air conditioning module, and a signal transmitted from a sensor to a controller or processor may pass through a conditioning module, an analog-to-digital controller, and/or a comparison module.
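As a non-limiting illustration of the direct and indirect signal paths described above, the following sketch passes a sensor value either straight to its destination or through a chain of intermediate stages. The stage functions, the 0 to 5 V input range, the 10-bit resolution, and the threshold of 512 are hypothetical choices made here and are not taken from the specification.

```python
from functools import reduce


def conditioning(volts: float) -> float:
    """Hypothetical conditioning stage: clamp to a 0-5 V input range."""
    return max(0.0, min(5.0, volts))


def analog_to_digital(volts: float) -> int:
    """Hypothetical 10-bit conversion over a 0-5 V range."""
    return round(volts / 5.0 * 1023)


def comparison(count: int, threshold: int = 512) -> bool:
    """Hypothetical comparison stage: report whether the count meets a threshold."""
    return count >= threshold


def send(signal, stages):
    """Pass a signal through zero or more intermediate stages, in order."""
    return reduce(lambda value, stage: stage(value), stages, signal)


direct = send(3.0, [])  # transmitted directly, unchanged
indirect = send(3.0, [conditioning, analog_to_digital, comparison])
print(direct, indirect)
```

Either path ends at the same destination; only the number of intermediate components differs, which is the sense in which components are described as "coupled".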

[0034] The terms, "and", "or", "and/or" and/or similar terms, as used herein, include a variety of meanings that also are expected to depend at least in part upon the particular context in which such terms are used. Typically, "or" if used to associate a list, such as A, B or C, is intended to mean A, B, and C, here used in the inclusive sense, as well as A, B or C, here used in the exclusive sense. In addition, the term "one or more" and/or similar terms is used to describe any feature, structure, and/or characteristic in the singular and/or is also used to describe a plurality and/or some other combination of features, structures and/or characteristics. Likewise, the term "based on" and/or similar terms are understood as not necessarily intending to convey an exclusive set of factors, but to allow for existence of additional factors not necessarily expressly described. Of course, for all of the foregoing, particular context of description and/or usage provides helpful guidance regarding inferences to be drawn. It should be noted that the following description merely provides one or more illustrative examples and claimed subject matter is not limited to these one or more illustrative examples; however, again, particular context of description and/or usage provides helpful guidance regarding inferences to be drawn.

[0035] A network may also include now known, and/or to be later developed arrangements, derivatives, and/or improvements, including, for example, past, present and/or future mass storage, such as network attached storage (NAS), a storage area network (SAN), and/or other forms of computing and/or device readable media, for example. A network may include a portion of the Internet, one or more local area networks (LANs), one or more wide area networks (WANs), wire-line type connections, wireless type connections, other connections, or any combination thereof. Thus, a network may be worldwide in scope and/or extent.

[0036] The Internet refers to a decentralized global network of interoperable networks that comply with the Internet Protocol (IP). It is noted that there are several versions of the Internet Protocol. Here, the term Internet Protocol, IP, and/or similar terms, is intended to refer to any version, now known and/or later developed of the Internet Protocol. The Internet includes local area networks (LANs), wide area networks (WANs), wireless networks, and/or long haul public networks that, for example, may allow signal packets and/or frames to be communicated between LANs. The term World Wide Web (WWW or Web) and/or similar terms may also be used, although it refers to a part of the Internet that complies with the Hypertext Transfer Protocol (HTTP). For example, network devices may engage in an HTTP session through an exchange of appropriately compatible and/or compliant signal packets and/or frames. It is noted that there are several versions of the Hypertext Transfer Protocol. Here, the term Hypertext Transfer Protocol, HTTP, and/or similar terms is intended to refer to any version, now known and/or later developed. It is likewise noted that in various places in this document substitution of the term Internet with the term World Wide Web (`Web`) may be made without a significant departure in meaning and may, therefore, not be inappropriate in that the statement would remain correct with such a substitution.

[0037] Although claimed subject matter is not in particular limited in scope to the Internet and/or to the Web; nonetheless, the Internet and/or the Web may without limitation provide a useful example of an embodiment at least for purposes of illustration. As indicated, the Internet and/or the Web may comprise a worldwide system of interoperable networks, including interoperable devices within those networks. The Internet and/or Web has evolved to a public, self-sustaining facility that may be accessible to tens of millions of people or more worldwide. Also, in an embodiment, and as mentioned above, the terms "WWW" and/or "Web" refer to a part of the Internet that complies with the Hypertext Transfer Protocol. The Internet and/or the Web, therefore, in this context, may comprise a service that organizes stored content, such as, for example, text, images, video, etc., through the use of hypermedia, for example. A HyperText Markup Language ("HTML"), for example, may be utilized to specify content and/or to specify a format for hypermedia type content, such as in the form of a file and/or an "electronic document," such as a Web page, for example. An Extensible Markup Language ("XML") may also be utilized to specify content and/or format of hypermedia type content, such as in the form of a file or an "electronic document," such as a Web page, in an embodiment. Of course, HTML and/or XML are merely example languages provided as illustrations. Furthermore, HTML and/or XML (and/or similar terms) is intended to refer to any version, now known and/or later developed of these languages. Likewise, claimed subject matter is not intended to be limited to examples provided as illustrations, of course.

[0038] Also as used herein, one or more parameters may be descriptive of a collection of signal samples, such as one or more electronic documents, and exist in the form of physical signals and/or physical states, such as memory states. For example, one or more parameters, such as referring to an electronic document comprising an image, may include parameters, such as time of day at which an image was captured, latitude and longitude of an image capture device, such as a camera, for example, etc. In another example, one or more parameters relevant to content, such as content comprising a technical article, may include one or more authors, for example. Claimed subject matter is intended to embrace meaningful, descriptive parameters in any format, so long as the one or more parameters comprise physical signals and/or states, which may include, as parameter examples, name of the collection of signals and/or states (e.g., file identifier name), technique of creation of an electronic document, purpose of an electronic document, time and date of creation of an electronic document, logical path of an electronic document (or portion thereof), encoding formats and/or standards used for encoding an electronic document, and so forth.

[0039] Some portions of the detailed description which follow are presented in terms of algorithms or symbolic representations of operations on binary digital signals stored within a memory of a specific apparatus or special purpose computing device or platform. In the context of this particular specification, the term specific apparatus or the like includes a general purpose computer once it is programmed to perform particular functions pursuant to instructions from program software. Algorithmic descriptions or symbolic representations are examples of techniques used by those of ordinary skill in the signal processing or related arts to convey the substance of their work to others skilled in the art. An algorithm is here, and generally, considered to be a self-consistent sequence of operations or similar signal processing leading to a desired result. In this context, operations or processing involve physical manipulation of physical quantities. Typically, although not necessarily, such quantities may take the form of electrical or magnetic signals capable of being stored, transferred, combined, compared or otherwise manipulated.

[0040] It has proven convenient at times, principally for reasons of common usage, to refer to such signals as bits, data, values, elements, symbols, characters, terms, numbers, numerals or the like. It should be understood, however, that all of these or similar terms are to be associated with appropriate physical quantities and are merely convenient labels. Unless specifically stated otherwise, as apparent from the following discussion, it is appreciated that throughout this specification discussions utilizing terms such as "processing," "computing," "calculating," "determining" or the like refer to actions or processes of a specific apparatus, such as a special purpose computer or a similar special purpose electronic computing device. In the context of this specification, therefore, a special purpose computer or a similar special purpose electronic computing device is capable of manipulating or transforming signals, typically represented as physical electronic or magnetic quantities within memories, registers, or other information storage devices, transmission devices, or display devices of the special purpose computer or similar special purpose electronic computing device.

[0041] In an embodiment, a controller typically performs a series of instructions resulting in data manipulation. In an embodiment, a microcontroller may be a compact microcomputer designed to govern the operation of embedded systems in motor vehicles, robots, office machines, complex medical devices, mobile radio transceivers, vending machines, home appliances, and various other devices. In an embodiment, a microcontroller may include a processor, a memory, and/or peripherals. In an embodiment, a controller may be a commercially available processor such as an Intel Pentium, Motorola PowerPC, SGI MIPS, Sun UltraSPARC, or Hewlett-Packard PA-RISC processor, but may be any type of application-specific and/or specifically designed processor or controller as many other processors and/or controllers are available. In an embodiment, a controller may be connected to other system elements, including one or more memory devices, by a bus. Usually, a processor or controller may execute an operating system which may be, for example, a Windows-based operating system (e.g., Windows NT, Windows 2000 (Windows ME), Windows XP operating systems) available from the Microsoft Corporation, a MAC OS System X operating system available from Apple Computer, one of many Linux-based operating system distributions (e.g., the Enterprise Linux operating system available from Red Hat Inc.), a Solaris operating system available from Sun Microsystems, or a UNIX operating system available from various sources. Many other operating systems may be used, and embodiments are not limited to any particular implementation.

[0042] The specification may refer to a shading object as an apparatus that provides shade to a user from weather elements. The apparatus may also be referred to as a parasol, umbrella, sun shade, outdoor shade furniture, sun screen, sun shelter, awning, sun cover, sun marquee, brolly and other similar names, which may all be utilized interchangeably in this application. The shading objects described herein include many novel and non-obvious features. The shading objects each comprise a plurality of shading elements. The shading elements may be addressed and/or moved as a group and may be addressed and/or moved separately. Other prior art shade screens or umbrellas are normally comprised of one shading element.

[0043] FIG. 1A illustrates a shading object according to an embodiment. The shading object 100 includes a base unit 105, a central support frame 110, and a plurality of shading elements 120, 121, 122, 123, and 124. In an example embodiment, a base unit 105 may comprise a power cord for connection to an external power source 241 (shown in FIG. 2), for example, an alternating current (AC) power outlet.

[0044] FIG. 2 illustrates a schematic diagram of a shading object according to an embodiment. In an example embodiment, a base unit 205 comprises a motor 225, a controller 222, a power supply 240 and a rotation apparatus 150. In an example embodiment, an external power source 241 may provide power to the power supply 240 through a power cord. In another example embodiment, a power source may be a battery, which may provide backup power for a power supply 240. Continuing with an illustrative embodiment, a power supply 240 may provide power, at different voltage and/or current levels, to a motor 225, a controller 222 and/or a rotation apparatus 226.

[0045] In an illustrative embodiment, a base unit 105 may comprise weight compartments 155. In an embodiment, weight compartments 155 may include weights to provide stability for a shading object. For example, one or more weights may be placed into weight compartments 155 to stabilize the shading object 100. By having removable weights, moving the shading object is easier, which increases a shading object's portability. In an example embodiment, weights may be easily removed from the weight compartments and retrieved once weights are needed again to stabilize a shading object. In an example embodiment illustrated in FIG. 1A, one weight compartment 155 is illustrated, but a plurality of weight compartments (and removable weights) may be present in a base unit 105.

[0046] In an example embodiment, a support frame 110 may be curved, as is illustrated in FIG. 1A. As is illustrated in FIG. 1A, in an embodiment, a support frame 110 may be connected to a top surface of the base unit 105 via a connection element. In an embodiment, a connection element may be an adhesive (glue, other adhesive materials) or a fastener (including but not limited to screws, nails, nuts and bolts, hinges). In an embodiment, a support frame 110 may comprise a second actuator/motor 160, a second controller 165, shading element storage space 170 and a deployment/retraction apparatus 175. In an example embodiment, a second actuator may be a motor that is responsible for moving or controlling a mechanism or system. An actuator or motor (e.g., second motor 160 or motor 225) may be operated by a source of energy, such as electric current, fluid or pneumatic pressure, that is converted into mechanical energy. A linear actuator may be a ballscrew actuator, a rack and pinion actuator, a belt driven actuator, or a linear motor driver actuator. In an example embodiment, a process may be automated, and a controller may be connected to an actuator, where a controller receives input and provides an output to an actuator to adjust a mechanical aspect of the shading object. In an example embodiment, a motor may be any type of motor, including but not limited to: combustion, AC, DC, brushless, servo, stepper or gear motor. In an embodiment, motors 160 or 225 may also be connected to one or more controllers 222 or 165 that can actuate movement of the shading object. In an example embodiment, a controller 222 may be connected to a linear actuator or motor 160 or 225 wirelessly as long as a control signal may be received by a shading object.
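As a non-limiting illustration of a controller that receives an input and provides an output to an actuator, the following sketch computes a bounded step toward a target angle on each control cycle. The function name `actuator_command`, the use of degrees, and the 5-degree step limit are assumptions made for this example and do not appear in the specification.

```python
def actuator_command(current_deg: float, target_deg: float,
                     max_step_deg: float = 5.0) -> float:
    """Return the signed step (degrees) to send to the actuator this cycle."""
    error = target_deg - current_deg
    # Limit the step so the mechanism never moves more than max_step_deg at once.
    return max(-max_step_deg, min(max_step_deg, error))


position = 0.0   # hypothetical current angle of the support frame
target = 12.0    # hypothetical requested angle (the controller's input)
steps = []
for _ in range(10):  # bounded number of control cycles
    if position == target:
        break
    step = actuator_command(position, target)
    position += step  # stands in for the actuator acting on the command
    steps.append(step)

print(steps)  # [5.0, 5.0, 2.0]
```

The step limit is one simple way to keep each adjustment gradual; an actual embodiment may use any suitable control approach.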

[0047] In an embodiment, there are other methods or devices for providing linear and/or rotational movement in the support frame. The support frame 110, and its position relative to the base unit 105, may be adjusted by use of a rack and pinion, worm gear, barrel cam, or any other form of general motion, for example.

[0048] As illustrated in FIG. 1A, the shading object may comprise a storage space 170 that may be located inside the support frame 110. In an example embodiment, the support frame 110 may include an opening at a top surface of the support frame 110. In an example embodiment, for example, when the shading elements are not deployed, the plurality of shading elements 120 121 122 123 and 124 may be resident within a storage space 170. Continuing with an illustrative embodiment, a storage space 170 may comprise channels to provide a structure in an interior portion into which one or more shading elements 120 121 122 123 and/or 124 may be received and/or stored. In alternative embodiments, other storage mechanisms may be utilized to provide a structure to house non-deployed shading elements 120 121 122 123 and/or 124.

[0049] In an example embodiment, a controller 165 may generate commands, instructions, and/or signals to deploy one or more of a plurality of shading elements 120 121 122 123 and 124. In an embodiment, a motor or actuator 160 may receive a command, instruction, and/or signal, and may generate signals to cause a deployment mechanism 175 to deploy one or more shading elements 120 121 122 123 and 124. In an embodiment, the deployment mechanism 175 deploys one or more of the shading elements 120 121 122 123 124 to a deployed or "providing shade" position from the storage space 170. In an example embodiment, a deployment mechanism 175 may comprise a motor 160 to project or deploy one or more of the shading elements. As illustrated in FIG. 1B, a deployment mechanism or apparatus 175 may deploy one or more of the plurality of shading elements 120 121 122 123 and/or 124 in an outward direction, as is illustrated by reference number 126 in FIG. 1B. FIG. 1B illustrates a shading object comprising deployed shading elements according to an embodiment.

[0050] In an example embodiment, a deployment mechanism 175 may select and/or deploy only one shading element, multiple shading elements, or most of the shading elements 120 121 122 123 and 124. In other words, any of a plurality of shading elements may be independently selectable. Continuing with an illustrative embodiment, because the shading elements 120 121 122 123 and 124 are independently selectable, single shading elements may be deployed at a different time, in a different direction, and/or may be deployed partially or fully. In an example embodiment, a deployment mechanism 175 may only deploy the first, third and fifth shading elements. In an example embodiment, a deployment mechanism 175 may deploy one or more of the shading elements a certain distance and not have the shading element extended to a full deployment. This is illustrated in FIG. 1B where shading elements 121 and 123 are deployed out more than shading elements 120 122 and 124. In another example embodiment, a deployment mechanism 175 may not deploy the shading elements in a uniform fashion, for example, where there is one surface that is providing shade to the user (like there is for an umbrella). In the example embodiment illustrated in FIG. 1A, for example, a deployment mechanism may deploy one of the shading elements at a 15-45 degree range horizontally from the center frame (shading element 124) and may deploy one or more of the shading elements at a different height vertically from one or more of the other shading elements (e.g., shading element 120 versus shading element 123).
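As a non-limiting illustration, the independent selection and partial deployment described above may be sketched in Python as follows. The class name `DeploymentMechanism`, the `extension` mapping, and the use of a fraction between 0.0 (stored) and 1.0 (fully deployed) are assumptions made for illustration only and are not part of the described embodiments.

```python
from dataclasses import dataclass, field

# Reference numerals of the shading elements shown in FIG. 1A.
ELEMENT_IDS = (120, 121, 122, 123, 124)


@dataclass
class DeploymentMechanism:
    """Hypothetical model of deployment mechanism 175."""

    # Assumed representation: 0.0 = stored in storage space, 1.0 = fully deployed.
    extension: dict = field(
        default_factory=lambda: {i: 0.0 for i in ELEMENT_IDS}
    )

    def deploy(self, element_id: int, fraction: float = 1.0) -> None:
        """Deploy one element to a fraction of full extension.

        Other elements are left unchanged, so each is independently selectable.
        """
        if element_id not in self.extension:
            raise KeyError(f"unknown shading element {element_id}")
        # Clamp the request to the valid range.
        self.extension[element_id] = min(1.0, max(0.0, fraction))

    def retract(self, element_id: int) -> None:
        """Return one element to the stored position."""
        self.deploy(element_id, 0.0)

    def deployed(self) -> list:
        """List the elements that are at least partially deployed."""
        return [i for i, f in self.extension.items() if f > 0.0]


mechanism = DeploymentMechanism()
# Deploy only the first, third and fifth elements; the third only partially.
mechanism.deploy(120)
mechanism.deploy(122, 0.5)
mechanism.deploy(124)
```

In this sketch, each call affects a single element, which mirrors the statement that shading elements may be deployed at different times and to different extents.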

[0051] Further, in an example embodiment, one or more of a plurality of shading elements may have a different length and/or width as compared to other shading elements 120 121 122 123 and 124. In another example embodiment, one or more of the plurality of shading elements may have a different geometric shape as compared to other shading elements. By having a variety of shading element widths, lengths and/or shapes, an intelligent shading system may be able to provide cover, shade, and/or protection from the elements to many different areas that have unique dimensions and/or spacing. For example, a variety of shading element width, length and/or shapes may allow for the shading element to provide shade to a corner, irregularly shaped area, and/or non-uniform shaped area that a less flexible shading system is not equipped to address.

[0052] In an embodiment, a plurality of shading elements may be composed of materials such as plastics, plastic composites, fabric, metals, woods, composites, or any combination thereof. In an example embodiment, the plurality of shading elements 120 121 122 123 and 124 may be made of a flexible material. In an alternative example embodiment, the plurality of shading elements 120 121 122 123 and 124 may be made of a stiffer material.

[0053] In an example embodiment, each or some of the plurality of shading elements 120 121 122 123 and 124 may also have an array of photocells 180 disposed on its surface. In the example embodiment illustrated in FIG. 1A, a photocell array 180 may be disposed on or attached to a top surface of one or more of the plurality of shading elements 120 121 122 123 and 124. In an embodiment, solar photovoltaic cells (photocells) 180 may be exposed to sunlight and photon particles in the sunlight may cause a photocell to generate electrical energy, which then is transferred to a power collection unit 260 for storage and later utilization. In embodiments of the invention, the solar energy collection unit 260 may generate enough power to provide voltage and current to other components within the intelligent shading object. In an embodiment, a solar energy collection unit 260 may be coupled to a power unit or supply 270, which may include a battery. In an embodiment, a power unit 270 may be the power source for the entire shading object and in an example embodiment, no external power source may be needed for the intelligent shading object. In an alternative embodiment, an external power supply, such as power source 241 may also or solely supply power to an intelligent shading object.

[0054] As is illustrated in the example embodiment of FIG. 1A, a deployment mechanism 175 may deploy a plurality of shading elements 120 121 122 123 124 in a tree-branch like manner. Illustratively, as is shown in FIG. 1A, a deployment mechanism 175 may deploy shading element 120 at a first level which is the highest vertical level, shading element 121 at a second level slightly offset and to a right orientation of shading element 120. Continuing with an embodiment, shading element 122 may be deployed at a lower vertical level compared to shading element 120, but at a higher vertical level and not overlapping with shading element 121. Similarly, in this embodiment, a deployment mechanism may deploy shading elements 123 and 124 at lower vertical levels as compared to shading element 120. Portions of shading elements 120 121 and 122 may overlap different portions of shading elements 123 and 124.

[0055] FIG. 1C illustrates a deployment of shading elements according to an embodiment. In this embodiment, as compared to FIG. 1B, shading elements 120 121 122 123 and 124 are not deployed as far in an outward direction as in FIG. 1B. In an illustrative embodiment, a shading element may thus provide shade and/or protection to an area closer to a central support unit 110 and shading object than when deployed in a more outward fashion. In an embodiment, element rods 127 may connect or couple deployment mechanism 175 to the plurality of shading elements 120 121 122 123 and 124.

[0056] In an example embodiment, the central support unit 110 of the shading object 100 may also include a light sensor 185. In an embodiment, a light sensor 185 may be integrated into a central support unit 110 or may be disposed on a surface of a central support unit 110. In an embodiment, a light sensor 185 may detect a direction having the highest light energy and may determine that the solar light source is coming from a specific direction. In an embodiment, a light sensor may be implemented as a single light sensor or may comprise multiple light sensors arranged in a fashion to collect light from different directions. In an example embodiment, a light sensor 185 may identify that a sun (or a light source) is directly overhead or that a sun is located at an angle of 45 degrees from directly overhead. In this example embodiment, a light sensor 185 may transmit this information (via an electrical signal) to a first controller 222. In an embodiment, a first controller 222 may receive a transmitted signal and generate instructions, signals and/or commands to, for example, a motor 225 and then to a rotation unit 150 to cause a base unit 205 to rotate in a direction to adjust shading elements to provide maximum shade for a user. In an example embodiment, if a light source (e.g., a sun) is at a 30 degree angle to the left of the center, then a rotation unit 150 may rotate an interior section of the base unit 205 counterclockwise to cause the support unit 110, and thus the plurality of shading elements 120 121 122 123 and 124, to move to a location to provide shade or protection from the sun or other weather elements. In an embodiment, a first rotation unit 150 may be located in an interior portion of a base unit 105. In this example embodiment, an interior portion of a base unit 105 may rotate, whereas an exterior portion of the base unit 105 may be fixed and not rotate. As illustrated in FIG. 1A, an interior portion 196 may rotate in either a clockwise or counterclockwise direction, whereas exterior portion 197 is stationary. The interior portion 196 of the base unit may be coupled to one end of support unit 110.
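By way of a hedged example, the flow from light sensor to rotation unit may be sketched in Python as follows. The function names `brightest_direction` and `rotation_command`, the dictionary of readings keyed by angular offset, and the sign convention (negative offsets lie to the left of center) are assumptions for illustration; the embodiments do not specify a data format.

```python
def brightest_direction(readings: dict) -> float:
    """Return the offset (degrees from center) of the sensor with the most light.

    `readings` maps a sensor's angular offset to its measured light energy.
    Assumed convention: negative offsets are to the left of center.
    """
    return max(readings, key=readings.get)


def rotation_command(offset_deg: float) -> tuple:
    """Map a signed offset to a (direction, degrees) command for a rotation unit.

    A light source to the left of center yields a counterclockwise rotation,
    matching the 30 degree example in the text.
    """
    if offset_deg < 0:
        return ("counterclockwise", -offset_deg)
    if offset_deg > 0:
        return ("clockwise", offset_deg)
    return ("none", 0.0)


# Hypothetical readings from four sensors arranged around the support unit.
readings = {-60: 0.2, -30: 0.9, 0: 0.6, 30: 0.3}
offset = brightest_direction(readings)
command = rotation_command(offset)
```

Here the controller role is reduced to two pure functions; an actual controller 222 may instead issue signals to a motor 225, which the sketch does not model.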

[0057] In an example embodiment, the support unit 110 may comprise a light and/or image projector 190 (reference number 290 in FIG. 2). Light and/or image projector may project light and/or images onto a surface of one or more of a plurality of shading elements 120 121 122 123 and 124. Illustratively, in an embodiment, a surface may be a top surface or a bottom surface of a shading element.

[0058] In an example embodiment, a support unit 110 (e.g., 210 in FIG. 2) may comprise an audio transceiver 292 and/or speakers 294. An audio device, such as an iPhone, a digital music player, or the like, may be electronically coupled to the audio transceiver 292, and the audio transceiver 292 may transmit audio signals to and/or receive audio signals from the audio device. In an embodiment, an audio transceiver 292 may receive audio signals and transfer audio signals to the speakers 294 so that speakers may reproduce and play sound for shading object users to hear. In an embodiment, audio signals may be transmitted wirelessly between the audio device and the audio transceiver 292, and/or the audio transceiver 292 and the speaker 294.

[0059] FIG. 3 illustrates a method of deploying a shading object according to an embodiment. In an example embodiment, a base system 205 and a support unit 210 may be connected or positioned together and placed 305 in an upright position. In an example embodiment, weights may be added 310 to weight compartments in a base unit 205 in order to provide stability for the shading object 200. In an embodiment, a plurality of shading elements may be stored 315, when the one or more shading elements are not deployed (or are in a retracted position), in a storage area of the support unit 210. Upon receiving instructions from a controller 235, a deployment mechanism may deploy 320 one or more of the plurality of shading elements into a shade or cover position. As noted previously, shading elements do not have to be deployed as a group and may be deployed individually. In an embodiment, after shading object and associated shading elements are no longer needed, a controller may receive a command and a deployment mechanism may retract 325 any of the plurality of shading elements that were previously deployed.

[0060] FIG. 3 also illustrates additional features of an intelligent shading object. In embodiments, a shading object may include a sunlight sensor. A sunlight sensor may detect 330 an intensity and/or direction of light from a light source (e.g., sun) and generate a signal that is transmitted to a controller 222 in a base unit 205. The signal may identify that sunlight has been detected at a specific intensity and/or at an angle. In an embodiment, a base unit controller 222 may receive a signal and provide instructions, commands, and/or signals to a base unit rotation unit to rotate 335 an inner portion of a base unit (which is connected to a support unit) in order to change an orientation or direction of a support unit 205 (and thus a plurality of shading elements).

[0061] In embodiments, a shading object may also include an illumination source that can project light and/or videos onto surfaces of a shading object. In an example embodiment, an illumination source 290 may project 340 light onto a surface of one or more of the shading elements. Alternatively, or in addition, in an embodiment, an illumination source may project 345 an image and/or video onto surfaces of one or more of a plurality of shading elements.

[0062] In an embodiment, a shading object 200 may include an audio system including a wireless audio receiver 292 and speakers 294. In an embodiment, an audio system may receive 350 audio signals from an electronic device that can wirelessly transmit audio signals. The audio system may cause the received audio signals to be played on speakers for listening enjoyment of the shade object user.

[0063] In an example embodiment, the shading object may also include a wind sensor. In an embodiment, a wind sensor may detect 355 that the wind velocity is greater than an acceptable value and send a signal to a controller 222 in the support unit 205, which, in response to the signal, may generate instructions, commands, and/or signals to transmit to a deployment mechanism to cause the deployment mechanism to retract 230 one or more of the deployed shading elements. In an embodiment, retraction may be necessary so that the shading object is not damaged in high wind conditions and/or does not injure an individual residing under the shading object.
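The wind-triggered retraction described above may, purely as an illustrative sketch, be expressed in Python as follows. The threshold value `MAX_WIND_SPEED`, its units, and the function name `elements_to_retract` are hypothetical; the text refers only to "an acceptable value".

```python
# Hypothetical threshold; the text does not give a value or units.
MAX_WIND_SPEED = 30.0


def elements_to_retract(
    wind_speed: float,
    deployed_elements: set,
    threshold: float = MAX_WIND_SPEED,
) -> set:
    """Return the deployed elements a controller would ask to be retracted.

    If the measured wind speed is greater than the threshold, every deployed
    element is selected for retraction; otherwise nothing is retracted.
    """
    if wind_speed > threshold:
        return set(deployed_elements)
    return set()


# Example: two elements are deployed and a strong gust is measured.
to_retract = elements_to_retract(45.0, {120, 122})
```

The sketch retracts all deployed elements at once; an embodiment may instead retract only some of them, since the text recites "one or more" elements.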

[0064] FIG. 4A illustrates a second shading object according to an example embodiment. In an embodiment, a shading object 400 illustrated in FIG. 4A may comprise a base unit 410, a support unit 415, a rotation hub assembly 420, a control housing 425, a pivot assembly 430, a shading element frame 435, and/or a shading element 440 or shading elements.

[0065] In an example embodiment, a base unit 410 may be rectangular in shape. In alternative example embodiments, a base unit 410 may be circular, square, trapezoidal or any other shape that provides necessary stability for a shading object. In an example embodiment, a base unit 410 may include weight compartments 411 into which removable weights can be placed and/or removed. As illustrated in FIG. 4A, for example, the weight compartments 411 may be rectangular in shape and there may be four, where the four weight compartments may be positioned around a centralized support unit 415. In an example embodiment, a base unit 410 may be connected or coupled to an external power source, such as an AC power source.

[0066] In an example embodiment, a support unit 415 may be comprised of a support post 416 and/or a support rod/stem 417. In an embodiment, a support post 416 may be rigid and connected to a central section of a base unit 410. Continuing with an embodiment, an end of a support post 416 may be connected to a support rod/stem 417. In an embodiment, for example, a support rod/stem 417 may be comprised of a plurality of pieces. Continuing with an embodiment, a support rod/stem 417 may also be collapsible. In an embodiment where a support rod/stem 417 is collapsible, a height of an intelligent shading object may be adjustable. In addition, a collapsibility of a support rod/stem 417 provides for easily dismantling and/or storage of the shading object 400.

[0067] In an embodiment, a rotational hub 420 may be connected to a support rod/stem 417. In an embodiment illustrated in FIG. 4A, a rotational hub 420 may be connected to a top portion of the support rod/stem 417. In an example embodiment, a rotational hub 420 may be comprised of a housing, bearings, and a controller/motor. Continuing with an example embodiment, a rotational hub 420 may be comprised of any assembly allowing circular movement in a horizontal plane. In an example embodiment, a rotational hub 420 may be connected to a control housing 425. Continuing with an example embodiment, a control housing 425 may be connected to a pivot assembly 430, which in turn may be connected to a shading element support frame 435, to which a shading element (or shading elements) 440 may be attached. In an embodiment, a pivot assembly 430 may be connected to a plurality of shading element frames to which shading elements may be attached. In an embodiment, a control housing 425 may rotate 360 degrees, so a shading element 440 (or shading elements) may also rotate 360 degrees and thus may be able to track a light source (e.g., a sun). In an example embodiment, if a rotational hub 420 rotates in a first direction, a shading element frame 435 and/or a shading element 440 may rotate in the corresponding first direction because of a connection and/or coupling of a rotational hub 420 to a control housing 425 and/or a pivot assembly 430.

[0068] In the example embodiment illustrated in FIG. 4A and FIG. 5, a rotational hub 420 and/or a control housing 425 may comprise a tracking sensor (or sunlight sensors) 421, a first controller 422, and a first motor 423. In an example embodiment, a tracking sensor (or sensors) 421 may capture light intensity from a light source, which causes tracking sensors 421 to generate a signal indicative of a direction and/or intensity of light. In an embodiment, a tracking sensor 421 may be coupled to a first controller or processor 422 and generated signals may be transferred to a controller or processor 422. In an example embodiment, the controller or processor 422 may receive the generated signal, process the signal to identify an intensity and/or direction of sunlight, and transmit a signal and/or a command directly or indirectly to a first motor 423 to cause the rotational hub 420 to rotate in a direction that tracks a light source, such as a sun. This may result in a shading element 440 rotating in a direction to track a sun. Illustratively, rotation of the rotational hub 420 causes a control housing 425, a pivot unit 430, a shading element frame 435 and/or a shading element 440 to move in the corresponding clockwise or counterclockwise direction about a central axis.
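Because the hub may rotate through a full 360 degrees, a controller may need to choose between a clockwise and a counterclockwise rotation to reach the detected light direction. A minimal Python sketch of one such choice follows. The function name `hub_rotation`, the use of compass-style headings in degrees, and the convention that positive values mean clockwise are all assumptions; the text does not state how the direction is selected.

```python
def hub_rotation(current_deg: float, target_deg: float) -> float:
    """Return the signed shortest rotation, in degrees, from current to target.

    Assumed convention: positive = clockwise, negative = counterclockwise.
    The result lies in the range (-180, 180].
    """
    delta = (target_deg - current_deg) % 360.0
    if delta > 180.0:
        delta -= 360.0
    return delta


# Example: the hub faces 10 degrees and the light source is detected at 350.
rotation = hub_rotation(10.0, 350.0)  # a short counterclockwise turn
```

Choosing the shortest rotation is a design assumption of this sketch; an embodiment may rotate in a fixed direction instead.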

[0069] In an example embodiment, a housing unit 425 may comprise a control panel 426, speaker(s) 427, a power source 428, a second controller or processor 429 and a second motor 432. In an embodiment, a control panel 426 may allow a user to control operation of a shading object 400. In an example embodiment, a wireless transceiver 433 may receive transmitted audio signals from a computing device. Continuing with an embodiment, a wireless transceiver may be coupled to speaker(s) 427 and may transmit the audio signals to the speakers 427 to cause sound to be produced or played. In an example embodiment, a pivot assembly 430 may include a wireless transceiver 433 and speaker(s) 427, and a wireless transceiver 433 and speakers 427 may not be installed in the housing unit 425.

[0070] FIG. 5 illustrates a block diagram of a second shading object according to an embodiment. In an example embodiment, a housing unit 420 may also include a wind sensor 434. As noted previously, a wind sensor 434 may monitor wind conditions and transmit a signal to the controller or processor 429 indicative of wind conditions in the area or environment in which the shading object is installed and/or located. The controller or processor 429 may process a signal from the wind sensor and, if a signal identifies wind conditions higher than a set threshold, a controller or processor 429 may generate a command to, directly or indirectly, instruct a pivot assembly 430 (with or without the motor 432) to lower a shading element support frame 435 (and thus the shading element 440) to protect a shading element from being damaged in a threatening wind condition. In another embodiment, a pivot assembly 430 may include the controller (or processor) and/or a wind sensor 434, rather than a housing unit 425.

[0071] In embodiments, a housing unit 425 may include a light projector 436. In an alternative embodiment, a pivot assembly 430 may include a light projector 436 rather than a housing unit. As discussed previously, in an example embodiment, a projector 436 may transmit light and/or images to be displayed and/or projected onto shading elements 440. In an embodiment, a controller or processor 429 may generate a signal and/or instructions which are transmitted, directly or indirectly, to a projector 436 to cause the light and/or images to be displayed on a shading element and/or a section of a shading object.

[0072] In an embodiment, an intelligent shading object may have a shading element frame 435 and/or a shading element 440 (or elements) moved to a number of positions. In an example embodiment, a control panel 426 may control movement of a shading element frame 435. In an example embodiment, a controller or processor 429 may receive a signal and/or commands from control panel 426 (or another external source) identifying an intended movement of a shading element support frame 435 (and thus a shading element (or shading elements)). In an embodiment, a controller or processor 429 may generate and then transmit a signal and/or command, directly or indirectly, to a second motor 432. In an embodiment, a second motor 432 may receive the signal from a controller and may generate a signal to control and/or direct movement of a pivot assembly 430. In an example embodiment, a pivot assembly 430 may move in a clockwise or counterclockwise direction and cause a shading element frame 435, and thus a shading element 440, to move in an up and down, or vertical, direction.

[0073] In an example embodiment, a pivot assembly 430 may be coupled to a control housing 425 and a shading element frame 435. In embodiments, a gearing system may couple a pivot assembly 430 to a control housing 425. In an illustrative embodiment, a shading element 440 may move from a position that is parallel to a support unit 415 (e.g., a rest position) to a position where a shading element 440 (or shading elements) is perpendicular to a support unit 415, which may be referred to as an engaged or "shade" position.
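The movement between the rest position and the "shade" position may be illustrated, under stated assumptions, by the following Python sketch. Modeling the rest position as 0 degrees and the shade position as 90 degrees relative to the support unit, limiting requests to that range, and the names `pivot_target` and `pivot_action` are all assumptions made for illustration.

```python
REST_ANGLE = 0.0    # assumed: frame parallel to support unit 415 (rest position)
SHADE_ANGLE = 90.0  # assumed: frame perpendicular to support unit 415 (shade position)


def pivot_target(requested_deg: float) -> float:
    """Limit a requested frame angle to the range between rest and shade."""
    return max(REST_ANGLE, min(SHADE_ANGLE, requested_deg))


def pivot_action(current_deg: float, requested_deg: float) -> str:
    """Describe what a pivot assembly would do to reach the requested angle."""
    target = pivot_target(requested_deg)
    if target > current_deg:
        return "raise"
    if target < current_deg:
        return "lower"
    return "hold"


# Example: a user asks for more than the shade position while at rest.
target = pivot_target(120.0)
action = pivot_action(REST_ANGLE, 120.0)
```

Whether raising the frame corresponds to clockwise or counterclockwise motion of the pivot assembly is not specified in the text, so the sketch reports only "raise", "lower", or "hold".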

[0074] In an example embodiment, a shading element frame 435 may comprise a counterweight assembly 445. For example, a counterweight assembly 445 may offset the weight of a shading element and provide stability to a shading object 400.

[0075] In an example embodiment, the shading element may be of many different shapes and sizes. Illustratively, as shown in FIG. 4A, a shading element 440 may cover a portion and not the entirety of the shading element frame 435. As illustrated in FIG. 4A, a shading element 440 includes an opening 446 where there is no shading element portion. Thus, in an example embodiment, a shading element 440 may cover a specific area. For example, in an embodiment, a shading element frame 435 may have a length and a width and the shading element 440 may cover the width and a portion of the length of the shading element frame 435.

[0076] In an example embodiment, a shading element 440 may comprise photocells 413 on a top surface of a shading element 440. In an embodiment, photocells 413 may be exposed to sunlight and the photon particles may cause the photocells to generate electric energy. Electric energy is stored in an energy collection unit 414, which may comprise a memory. In an embodiment, energy in energy collection unit 414 may transfer power to a power unit or supply 428.

[0077] FIG. 6 illustrates a method of operating a second shading object according to an embodiment. In an example embodiment, a base unit 410 may be deployed 605. Weights may be added 610 into a base unit 410 for stability of the shading object. In an embodiment, a support unit may be built 615 to a desired height. In an example embodiment, a rotation unit may be placed or inserted 620 into a support unit. In an embodiment, a control unit or assembly may be placed into or connected into a control unit. Continuing with an embodiment, a pivot unit may be placed into, connected into, or integrated into a control unit. In an embodiment, a shading element may be fastened, connected or coupled to a pivot unit. In an embodiment, a controller or processor may receive a command and send instructions to move a shading element frame to a shading position 625 and accordingly move a shading element. In an embodiment, a controller or processor may move 630 the shading element frame to a rest position in response to the controller receiving a command.

[0078] In embodiments, the shading object may include a sunlight sensor. In an embodiment, a sunlight sensor may detect 631 an intensity and/or direction of light from a sun and generate a signal that is transmitted to a controller or processor 422. In an embodiment, a signal may identify that sunlight has been detected at a specific angle and/or intensity. In an embodiment, a controller or processor 422 may receive a signal and provide instructions, directly or indirectly, to a rotation unit 420 to rotate 635 a control unit 425 (which is connected to the pivot assembly 430 and shading element frame 435) in order to change an orientation or direction of the shading element frame 435 (and thus a shading element 440 or shading elements).

[0079] In embodiments of the invention, a shading object may also include an illumination source that can project light and/or videos onto surfaces. In this example embodiment, the light projector 436 may project 640 light onto a surface of one or more of a plurality of shading elements 440. Alternatively, or in addition to, an illumination source 436 may project 645 an image and/or video onto surfaces of one or more of the plurality of shading elements 440.

[0080] In embodiments of the invention, a shading object 400 may include an audio system including a wireless transceiver 433 and/or speakers 427. In embodiments, an audio system may receive 650 audio signals from an electronic device that can wirelessly transmit audio signals. In an embodiment, an audio system may cause received audio signals to be played on speakers for listening enjoyment of an intelligent shading object user.

[0081] In an example embodiment, a shading object may also include a wind sensor. In an embodiment, a wind sensor may detect 655 that a wind velocity is greater than an acceptable value and send a signal to a controller or processor 429, which in response to the signal may instruct, directly or indirectly, a motor 432 and/or pivot assembly 430 to change an orientation of a shading element(s) 440. The change in orientation may be necessary so that an intelligent shading object 400 is not damaged in high wind conditions and/or does not injure an individual residing under a shading object. FIG. 4B is a side view of a shading object with a deployed shading element according to an embodiment.

[0082] FIG. 7A illustrates another shading object according to an embodiment. In an embodiment, a shading object 700 may include a base unit 710, one or more support units 720 and 722, a louver system 760, and a plurality of shading elements 732, 733 and 734. In an embodiment, one or more support units 720 and 722 may be connected or coupled to a base unit 710. In embodiments, one or more support units 720 and 722 may be next to each other in a closed position. In a closed position, a plurality of shading elements 732 733 and 734 may be folded or bunched together and may not provide coverage to individuals within a shading area. In an embodiment, if one or more support units 720 and 722 are moved apart to an open and/or deployed position, a plurality of shading elements 732 733 and 734 may expand to a deployed position and provide coverage to the shading area. In an embodiment, a louver system 760 may allow an orientation of the shading element 732 733 or 734 to be modified (e.g., change a shading element's vertical and/or horizontal orientation).

[0083] FIG. 7A illustrates a third shading object according to an embodiment. In an embodiment, a base unit 710 may include one or more weight compartments, a first motor 711, a controller or processor 719, a first rotation unit 712, a second motor 713, and a support deployment unit 714. In an embodiment, a support deployment unit 714 may also be referred to as a support structure deployment mechanism. Similarly, in an embodiment, a support or support unit may be referred to as a support structure. In an embodiment, a base unit 710 may also include a light source 717. In embodiments, a base unit 710 may include a first section 716 that does not rotate. Continuing with an embodiment, a first section 716 may be circular in shape and may include an outer circumference. In an embodiment, a second section 718 may rotate in response to commands. Illustratively, in embodiments, a second section 718 may be located inside a first section 716 and a second section 718 may be circular in shape. In an embodiment, a first motor 711 may receive signals and/or commands, directly or indirectly, from a controller or processor 719. In an embodiment, a first motor 711 may cause a first rotation unit 712 to rotate in a clockwise or a counterclockwise direction. Continuing with an embodiment, the rotation causes one or more support units 720 and 722 to move in a clockwise and/or counterclockwise direction. For example, in embodiments, a controller or processor may receive a signal from a sunlight sensor 761 identifying a direction of sunlight and/or intensity of sunlight with respect to an orientation of shading elements. In an embodiment, a controller or processor 719 may send a signal and/or command, directly or indirectly, to a first motor 711 to cause a rotation unit 712 to move a second section 718 and track a direction of sunlight.

[0084] In an embodiment, a support deployment unit 714 may cause one or more support units 720 and 722 to move from a rest position (where one or more support units 720 and 722 are in close proximity) to an "in use," open or deployed position. In an embodiment, a second motor 713 may be connected to a support deployment unit 714 and may drive a support deployment unit 714 from the rest position to the open position or vice versa. In an embodiment, a controller or processor 719 may provide a signal and/or command, directly or indirectly, to a second motor 713 to instruct the motor to cause the support deployment unit 714 to move from a rest position to an open position. Alternatively, in an embodiment, a mechanical assembly, instead of a motor, may be utilized to move a support deployment unit 714 from a rest position to an open position. In the illustrative embodiment of FIG. 7A, support deployment unit 714 is shown in an open position.

[0085] In embodiments, one or more support units 720 and 722 may be permanently connected to a base unit 710. Alternatively, in an embodiment, one or more support units 720 and 722 may be detachable from a base unit 710 and may be inserted into support holders in a base unit 710. In embodiments, support units 720 and 722 may include a louver system 760, or be connected and/or coupled to a louver system.

[0086] In an embodiment, a louver system 760 may be activated either mechanically or electrically. If activated mechanically, rope or strings or similar material may allow manual repositioning of shading elements 732, 733 and 734. In an embodiment, a louver system 760 may include pivot hinges 765 located in pairs on opposite sides of shading element support frames 720 and 722. As illustrated in FIG. 7A, one or more support units 720 and 722 may have three pivot hinges 765 located at a same height on one or more support units 720 and 722. In an embodiment, pivot hinges 765 may be connected to a driving/deployment/movement unit 766. In an embodiment, a driving unit 766 may be electrical or mechanical. Continuing with an embodiment, a driving unit 766 may be located or integrated into one or more support units 720 and 722. Alternatively, a driving unit 766 may be located or integrated into a base unit 710. If a driving unit 766 is mechanical, a string or rope may connect a driving unit 766 to pivot hinges 765. If a driving unit 766 is electrical, a cable may connect a driving unit 766 to pivot hinges 765. In an embodiment, a shading element may be connected between a pair of pivot hinges 765. In an embodiment, a driving unit 766 may cause a shading element to rotate in a clockwise or counterclockwise direction about an axis. Continuing with an embodiment, a driving unit 766 may be able to cause individual shading elements to rotate rather than having a number or most of shading elements 732, 733 and 734 rotate. Alternatively, in an embodiment, a driving unit 766 may cause a plurality of shading elements to move in unison. Illustratively, in FIG. 7A, in an embodiment, a plurality of the shading elements 732, 733 and 734 may have moved in unison to a position that is between 90 degrees and 135 degrees counterclockwise from an axis 768. In an embodiment, a front of shading elements 732, 733 and 734 is higher than the back of the shading elements. In an embodiment, a light source, e.g., a sun, may be directly overhead or behind a center of the shading object 800 and thus more of a shading element is presented to provide cover for the shading area.
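
By way of non-limiting illustration, the two louver modes described above (rotating an individual shading element, or moving a plurality of shading elements in unison) may be sketched as follows. The class `LouverSystem`, its methods, and the convention that positive angles are counterclockwise from the reference axis are assumptions made for this sketch only.

```python
class LouverSystem:
    """Tracks the rotation of each shading element, in degrees.

    Hypothetical model: positive angles are counterclockwise from the
    reference axis, and every element starts at 0 degrees.
    """

    def __init__(self, element_ids):
        self.angles = {eid: 0.0 for eid in element_ids}

    def rotate_one(self, element_id, delta_deg):
        # Rotate a single shading element, leaving the others untouched.
        self.angles[element_id] = (self.angles[element_id] + delta_deg) % 360.0

    def rotate_all(self, delta_deg):
        # Rotate every shading element by the same amount (in unison).
        for element_id in self.angles:
            self.rotate_one(element_id, delta_deg)


louvers = LouverSystem([732, 733, 734])
louvers.rotate_all(120.0)        # unison move into the 90 to 135 degree range
louvers.rotate_one(733, -30.0)   # then adjust one element individually
print(louvers.angles)
```

The dictionary keys reuse the reference numerals of the shading elements purely as labels; they carry no meaning to the code.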

[0087] In an embodiment, a top surface of shading elements 732, 733 and 734 may have photocells 741 disposed thereon. In an embodiment, photocells 741 may capture sunlight and may store energy in a solar energy connection unit 742. In an embodiment, a solar energy connection unit 742 may provide power to any of the power sources or electronic components of a shading object 800. In an embodiment, only a top shading element, e.g., 732, may have photocells 741 disposed thereon. In an embodiment, a shading object 800 may also include a light sensor 761. In embodiments, a light sensor 761 may detect a direction and/or intensity of the sunlight. Continuing with an embodiment, a light sensor 761 may be connected to a controller or processor 719 in a base unit 710. In an embodiment, a light sensor 761 may send a signal, directly or indirectly, to a controller in a base unit 710 instructing a controller or processor 719. In an embodiment, a controller or processor 770 may receive the signal and directly or indirectly cause a first motor 711 to drive a rotation unit 712 and cause one or more support units 720 and 722 (and thus the shading elements 732, 733 and 734) to move in a desired clockwise or counterclockwise direction.

[0088] In an embodiment, at least one of shading elements 732, 733 or 734 may comprise a wind sensor 755. Alternatively, in an embodiment, one of a plurality of support units 720 or 722 may comprise a wind sensor 755. In an embodiment, a wind sensor 755 may capture a direction and/or velocity of wind in the environment where a shading object is installed. In embodiments, a wind sensor 755 may be coupled to a controller or processor 719. In an embodiment, a wind sensor 755 may transmit a signal to a controller or processor 719. If a captured velocity is over a threshold value, e.g., 10 miles per hour, a controller or processor 719 may cause shading elements 731, 732 or 733 to move to a position that is not impacted by the wind. In embodiments, a controller or processor 719 may transmit a command to a support unit deployment apparatus 714 to cause one or more support units 720 and 722 to move to a rest position where the shading elements 731, 732 and 733 are folded and not impacted by the wind.
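
By way of non-limiting illustration, the wind-threshold decision described above may be sketched as follows. The 10 miles per hour value is the example given in the text; the function name `position_for_wind` and the position labels "rest" and "deployed" are hypothetical.

```python
WIND_THRESHOLD_MPH = 10.0  # example threshold value given in the text


def position_for_wind(velocity_mph, current_position,
                      threshold_mph=WIND_THRESHOLD_MPH):
    """Return the position the support units should take for a wind reading.

    Illustrative assumption: positions are the strings "rest" and
    "deployed". A velocity strictly over the threshold forces "rest";
    otherwise the current position is kept.
    """
    if velocity_mph > threshold_mph:
        return "rest"
    return current_position


# A 12 mph reading exceeds the threshold, so deployed supports fold to rest.
print(position_for_wind(12.0, "deployed"))
```

The sketch does not redeploy automatically once the wind drops, because the text describes only the move to the rest position.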

[0089] FIG. 8 illustrates a block diagram of a shading object including a louver system according to an embodiment. In an embodiment, a shading object may also comprise a transceiver 780, a light projector 785, and/or a speaker 790. In an embodiment, a transceiver 780 may receive signals representing video information and/or signals representing audio information. Continuing with an embodiment, a transceiver 780 may receive these signals via a wired or wireless connection. In an embodiment, a transceiver 780 may receive the video information and may transmit the information to a light projector 785, which may project representative video information onto one or more of shading elements 732, 733 or 734. In an embodiment, a light projector 785 may transmit light and/or video onto surfaces of one or more shading elements 732, 733 or 734. In an embodiment, a transceiver 780 may receive audio information and may transmit received audio information to speakers 790 for playback.
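
By way of non-limiting illustration, the routing performed by the transceiver (video information to the light projector, audio information to the speakers) may be sketched as follows. The function name `route_signal` and the string labels are hypothetical and stand in for whatever interfaces an embodiment provides.

```python
def route_signal(kind):
    """Return the destination for a received signal of the given kind.

    Illustrative assumption: signals are tagged "video" or "audio", and
    destinations are named after the reference numerals in the text.
    """
    routes = {
        "video": "light_projector_785",  # projected onto shading elements
        "audio": "speaker_790",          # played back through the speakers
    }
    if kind not in routes:
        raise ValueError(f"unsupported signal kind: {kind!r}")
    return routes[kind]


print(route_signal("video"))
print(route_signal("audio"))
```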

[0090] FIG. 9 discloses a method of operation for a third embodiment of a shading object according to an embodiment. In step 905, a base unit is deployed. In step 910, in embodiments, a base unit may have weights added into compartments of a base unit. In step 915, in an embodiment, detachable support units are placed into holders in the base unit. In step 920, in an embodiment, a controller or processor may receive a command and one or more support units may move from a rest position to a shading or deployed position. In step 925, in embodiments, a controller or processor may receive a command and move support units from a shading position to a rest position.

[0091] In embodiments, in step 930, a sunlight sensor may detect an intensity and/or direction of sunlight. In step 935, a controller or processor may receive the signal from a sunlight sensor and may send a signal and/or commands directly, or indirectly, to rotate a base unit (and thus support units and shading elements) in a clockwise (or counterclockwise) direction to provide shade from a light source (e.g., the sun). In embodiments, in step 940, a light projector may project light onto a surface of one or more shading elements. In embodiments, in step 945, a projector may project an image and/or video onto one or more shading elements. In embodiments, in step 950, an audio system may receive, via wireless communications, an audio signal from an electronic device and transmit an audio signal to speakers for playing in and around the shading object. In embodiments, in step 955, a wind sensor detects wind conditions and if the conditions are greater than a wind threshold, then shading elements (and support units) may be moved to a rest position from a shading position. FIG. 7B illustrates a side view of a third shading object according to an embodiment.

[0092] FIG. 10A illustrates a fourth embodiment of an intelligent shading object. In an embodiment, shading object 1000 may comprise a base unit 1010, a support unit 1017, a telescoping support housing 1025, a plurality of telescoping rods 1030, 1031 and 1032, and/or a shading element 1040. In an embodiment, an intelligent shading object may also include at least one photocell 1060 and/or a light sensor 1050.

[0093] In an embodiment, a base unit 1010 may include weight compartments 1011 for housing weights to provide additional support to a base unit 1010, when a shading element 1040 is deployed. In an embodiment, weights may be removable and may fit into weight compartments. In an embodiment, a base unit 1010 may also include a rotation unit 1015. In an embodiment, a rotation unit 1015 may be circular in shape and may be located in an interior surface of the base unit 1010, as illustrated in FIG. 10A.

[0094] In an embodiment, a support unit 1017 may be connected or coupled to a base unit 1010. In embodiments, a support unit 1017 may be connected to a rotation unit 1015 of a base unit. In an embodiment, a rotation unit 1015 may be configured to allow a support unit 1017 to rotate in a clockwise or counterclockwise direction to, for example, follow a light source, e.g., the sun, or to respond to a user's voice or digital command. In embodiments, a support unit 1017 may be coupled to a first pivot hub (not shown) and a second pivot hub 1019. In an embodiment, a first pivot hub may be configured to allow a support unit 1017 to move in a vertical direction and, illustratively, fold against a top surface of a base unit 1010. This allows for easier storage and/or transport of a shading object 1000. In an embodiment, a telescoping support housing 1025 may be coupled or connected to a support unit 1017. In embodiments, a telescoping support housing 1025 may be connected to a support unit 1017 via a second pivot hub 1019. Illustratively, in an embodiment, a second pivot hub 1019 may be configured such that a telescoping support housing 1025 may rotate in a clockwise or counterclockwise direction in order to move from a rest or non-use position to a deployed or "in use" position, as is illustrated by reference arrow 1023 in FIG. 10A. In embodiments, a telescoping support housing 1025 may rotate about the second pivot hub 1019 to lie flat against a side of a support unit 1017 or inside a compartment of a support unit 1017. This may allow a support unit 1017 and telescoping support housing 1025 to have a smaller footprint for easier storage and/or portability.

[0095] In embodiments, a telescoping support housing 1025 comprises a deployment mechanism 1027, a rod storage area 1028, and/or a plurality of telescoping rods 1030, 1031 and 1032. In embodiments, in a rest position, a plurality of telescoping rods 1030, 1031 and 1032 are stored in the rod storage area 1028. Illustratively, in an embodiment, after a controller or processor in telescoping support housing 1025 receives a command to deploy a plurality of telescoping rods 1030, 1031 and/or 1032 (and thus the shading element 1040), a controller or processor may provide commands and/or signals, directly or indirectly, to a deployment mechanism 1027. In an embodiment, a deployment mechanism 1027 may deploy, or push to an extended position, a plurality of telescoping rods 1030, 1031 and/or 1032. In an embodiment, a plurality of telescoping rods 1030, 1031 and/or 1032 may exit the telescoping support housing 1025 via a top surface. In embodiments, telescoping rods 1030, 1031 and/or 1032 may support a shading element 1040 in its deployment. After receiving another command, telescoping support housing 1025 may retract a plurality of telescoping rods 1030, 1031 and/or 1032, which causes a shading element 1040 to move to a folded position. In embodiments, a telescoping support housing 1025 may also include a storage area 1029 for a shading element 1040. In embodiments, after telescoping rods 1030, 1031 and/or 1032 have been retracted, a shading element 1040 may be removed from ends of the plurality of telescoping rods 1030, 1031 and/or 1032 and placed in a storage area 1028 or another storage area. In other embodiments, a storage area 1028 may be located in a base unit 1010, a support unit 1017 and/or a telescoping support housing 1025.
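
By way of non-limiting illustration, the deploy and retract sequence described above may be sketched as follows: a command extends or retracts the telescoping rods, and the shading element is deployed only while the rods are extended. The class `TelescopingHousing`, the command strings "deploy" and "retract", and the state labels are assumptions made for this sketch.

```python
class TelescopingHousing:
    """Minimal model of rods that extend or retract on command.

    Hypothetical names throughout; rod identifiers reuse the reference
    numerals from the text only as labels.
    """

    def __init__(self, rod_ids=(1030, 1031, 1032)):
        # In the rest position, all rods are retracted (stored).
        self.rods_extended = {rod_id: False for rod_id in rod_ids}

    @property
    def shading_element_state(self):
        # The shading element is deployed only when every rod is extended.
        return "deployed" if all(self.rods_extended.values()) else "folded"

    def handle(self, command):
        if command == "deploy":
            extend = True
        elif command == "retract":
            extend = False
        else:
            raise ValueError(f"unknown command: {command!r}")
        for rod_id in self.rods_extended:
            self.rods_extended[rod_id] = extend
        return self.shading_element_state


housing = TelescopingHousing()
print(housing.handle("deploy"))
print(housing.handle("retract"))
```

Deriving the shading element state from the rod states mirrors the statement that retracting the rods causes the shading element to move to a folded position.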