Presentation Assessment And Valuation System

Scholz; Brian A. ; et al.

U.S. patent application number 16/151226 was filed with the patent office on 2018-10-03 and published on 2020-04-09 for a presentation assessment and valuation system. This patent application is currently assigned to eduPresent LLC. The applicant listed for this patent is eduPresent LLC. Invention is credited to Bruce E. Fischer, Brian A. Scholz.

| Application Number | 16/151226 (Publication No. 20200111386) |


| Family ID | 70051775 |

| Filed Date | 2018-10-03 |


| United States Patent Application | 20200111386 |

| Kind Code | A1 |

| Scholz; Brian A. ; et al. | April 9, 2020 |

Presentation Assessment And Valuation System

Abstract

A computer implemented interactive presentation assessment and valuation system which provides a server computer that allows one or more computing devices to access a presentation assessment and valuation system which provides a presentation analyzer which applies standardized scoring algorithms to the video data or audio data associated with a presentation and correspondingly generates standardized word rate, word clarity, filler words, tone, or eye contact scores, and calculates a presentation score based upon an average or weighted average of the scores.

| Inventors: | Scholz; Brian A. (Fort Collins, CO); Fischer; Bruce E. (Windsor, CO) |

| Applicant: | eduPresent LLC |

| Assignee: | eduPresent LLC, Loveland, CO |

| Family ID: | 70051775 |

| Appl. No.: | 16/151226 |

| Filed: | October 3, 2018 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G10L 15/22 20130101; G09B 19/04 20130101; G10L 25/48 20130101; G06F 3/013 20130101; G10L 15/26 20130101; G10L 25/63 20130101 |

| International Class: | G09B 19/04 20060101 G09B019/04; G10L 15/26 20060101 G10L015/26; G10L 15/22 20060101 G10L015/22; G10L 25/63 20060101 G10L025/63; G06F 3/01 20060101 G06F003/01 |

Claims

1-40. (canceled)

41. A system, comprising: a server which serves a presentation assessment and valuation program through a network to coordinate processing of presentation data between at least two computing devices, said presentation assessment and valuation program served to a first of said at least two computing devices, including: a transcription module executable to analyze speech data included in a recorded presentation including one or more of: count words in said speech data; count filler words in said speech data; and assign a word recognition confidence metric to each of said words in said speech data; and a presentation scoring module executable to calculate two or more of: a word rate score; a clarity score; a filler word score; and calculate a presentation score as an average of the sum of two or more of said word rate score, said clarity score and said filler word score.

42. The system of claim 41, wherein said presentation assessment and valuation program further includes a submission module executable to: associate said word rate score, said clarity score, said filler word score, and said presentation score with said recorded presentation; and retrievably save said recorded presentation associated with said word rate score, said clarity score, said filler word score, and said presentation score in a presentation data base.

43. The system of claim 42, wherein said presentation assessment and valuation program further executable to: serve said recorded presentation associated with said word rate score, said clarity score, said filler word score, and said presentation score from said presentation data base to either said first computing device or a second of said computing devices; playback said recorded presentation on said computing devices, wherein playback includes: depiction of a video in a video display area on a display surface associated with said computing device; generate audio from a speaker associated with said computing device; and depiction of one or more of said word rate score, said clarity score, said filler word score, and said presentation score in a presentation score display area on said display surface associated with said computing device.

44. The system of claim 43, wherein said presentation assessment and valuation program further executable to: associate a grade with said recorded presentation, said grade variably based on one or more of associated said word rate score, said clarity score, said filler word score, and said presentation score entered by a user of said second computing device; retrievably save said recorded presentation associated with said grade in said presentation database accessible by said first computing device or said second computing device.

45. The system of claim 44, further comprising a tone analyzer executable to analyze tone of said speech data within said presentation.

46. The system of claim 45, wherein said tone analyzer is further executable to: identify a central tone tendency in a fundamental frequency contour of said speech data; and calculate a tone variance from said central tone tendency identified in said fundamental frequency contour.

47. The system of claim 46, wherein said tone analyzer is further executable to compare said tone variance to a tone variance threshold.

48. The system of claim 47, wherein said tone analyzer further executable to calculate a tone variance rate based on a count of tone variance exceeding said tone variance threshold over time.

49. The system of claim 48, wherein said presentation scoring module is further executable to calculate a tone score, wherein if said tone variance rate is between zero and 10, then said tone score equals zero, and wherein if said tone variance rate is greater than 10, then said tone score equals 100 minus said tone variance rate.

50. The system of claim 49, wherein said presentation assessment and valuation program further comprising an eye contact analyzer executable to analyze occurrence of eye contact in speaker data within said presentation.

51. The system of claim 50, wherein said eye contact analyzer further executable to: retrieve pixel data in said speaker data corresponding to head, eye, or iris position or combinations thereof; and compare relative pixel intensity level of said pixel data to an eye contact threshold.

52. The system of claim 51, wherein said eye contact analyzer is further executable to calculate an eye contact rate based upon an amount of time units said pixel level intensity exceeds said eye contact threshold divided by total time units encompassed by said speaker data.

53. The system of claim 52, wherein said presentation scoring module further executable to calculate an eye contact score, wherein if said eye contact rate exceeds a pre-selected eye contact rate threshold, then said eye contact score equals 100, and wherein if said eye contact rate is less than the eye contact rate threshold, then the eye contact score equals the eye contact rate.

54. The system of claim 53, further comprising a formatter executable to depict formatted text of said speech data including all of said words and said filler words on a display surface of said first computing device or said second computing device.

55. The system of claim 54, wherein said formatted text comprises spatially fixed paragraphs.

56. The system of claim 55, wherein said formatted text comprises scrolled text.

57. The system of claim 56, wherein said formatter is further executable to depict a line chart including a word rate baseline and a word rate line which compares said word rate of said speech to said word rate baseline.

58. The system of claim 57, wherein said word rate baseline corresponds to a pre-selected word rate which matches a word rate score of 100, and wherein each integer deviation above or below said pre-selected word rate results in a corresponding integer reduction in said word rate score.

59. The system of claim 58, wherein said formatter is further executable to coordinate scrolling speed of said line chart to match scrolling speed of said scrolled text of said speech.

60. The system of claim 59, wherein said formatter is further executable to concurrently depict said scrolled text in a scrolled text field and said line chart in a line chart field on said display surface of said computing device, said scrolled text field in spatial relation to said line chart field to visually align said words with corresponding time points in said word rate line.

61. The system of claim 60, wherein said formatter is further executable to concurrently depict a filler indicator in a filler indicator field, said filler indicator field in spatial relation to said scrolled text field to visually align said filler indicator corresponding to said filler in said scrolled text.

62. The system of claim 61, wherein said presentation scoring module further executable to highlight said words in said formatted text having a word recognition confidence metric of less than said word recognition confidence metric threshold.

63. The system of claim 62, wherein said presentation scoring module further executable to: associate a trigger area with each word in said formatted text having a word recognition confidence metric of less than said word recognition confidence metric threshold; activate said trigger area by user command; and depict a clarity score image in said formatted text, said clarity score image including said word recognition confidence metric of said word associated with said trigger area.

64. The system of claim 63, wherein said presentation scoring module further executable to highlight said filler words in said formatted text.

65. The presentation analyzer system of claim 64, wherein said presentation scoring module further executable to: associate a trigger area with each filler word in said formatted text; activate said trigger area by user command; and depict a filler score image in said formatted text, said filler score image including a filler word usage metric, said filler word usage metric indicates numerical use of said filler word in said formatted text.

66-105. (canceled)
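As an illustration only, the scoring rules recited in claims 41, 49, 53 and 58 can be sketched in Python as follows. The function names, the default target rate of 150 words per minute, the floor of zero on each score, and the treatment of the eye contact rate as a percentage are assumptions made for this sketch and are not taken from the claims. The tone score follows the wording of claim 49 literally.

```python
from statistics import mean


def word_rate_score(words_per_minute: float, target_rate: int = 150) -> int:
    """Claim 58: the pre-selected rate scores 100 and each integer
    deviation above or below it removes one point.
    target_rate=150 and the floor at 0 are assumptions."""
    deviation = abs(round(words_per_minute) - target_rate)
    return max(0, 100 - deviation)


def tone_score(tone_variance_rate: float) -> float:
    """Claim 49 as worded: a rate from zero to 10 scores zero,
    otherwise the score is 100 minus the rate (floored at 0 here,
    which is an assumption)."""
    if tone_variance_rate <= 10:
        return 0
    return max(0, 100 - tone_variance_rate)


def eye_contact_score(eye_contact_rate: float, rate_threshold: float) -> float:
    """Claim 53: a rate above the threshold scores 100, otherwise the
    score equals the rate. Both values are assumed to be percentages."""
    if eye_contact_rate > rate_threshold:
        return 100
    return eye_contact_rate


def presentation_score(scores: list) -> float:
    """Claim 41: average of two or more component scores."""
    if len(scores) < 2:
        raise ValueError("at least two component scores are required")
    return mean(scores)
```

For example, `presentation_score([word_rate_score(138), tone_score(25), eye_contact_score(60, 90)])` averages the three component scores 88, 75 and 60.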

Description

I. FIELD OF THE INVENTION

[0001] Generally, a computer implemented interactive presentation assessment and valuation system which provides a server computer that allows one or more computing devices to access a presentation assessment and valuation program which provides a presentation analyzer which applies standardized scoring algorithms to the video data or audio data associated with a presentation and correspondingly generates standardized word rate, word clarity, filler word, tone, or eye contact scores, and calculates a presentation score based upon an average or weighted average of the scores.

II. BACKGROUND OF THE INVENTION

[0002] Currently, in the context of distance learning, there does not exist a computer implemented system which coordinates use of a presentation analyzer between a student user for analysis of video data or audio data associated with preparation of a presentation and an instructor user for analysis of video data or audio data associated with a presentation submitted by the student user which presentation analyzer applies standardized scoring algorithms to the video data or audio data associated with a presentation and correspondingly generates standardized word rate, word clarity, filler word, tone, or eye contact scores, and calculates a presentation score based upon an average or weighted average of the scores.

III. SUMMARY OF THE INVENTION

[0003] Accordingly, a broad object of embodiments of the invention can be to provide a presentation assessment and valuation system for distance learning distributed on one or more servers operably coupled by a network to one or more computing devices to coordinate use of a presentation analyzer between a student user for analysis of video data or audio data associated with preparation of a presentation and an instructor user for analysis of video data or audio data associated with a presentation submitted by the student user which presentation analyzer applies standardized scoring algorithms to the video data or audio data associated with a presentation and correspondingly generates standardized word rate, word clarity, filler word, tone, or eye contact scores, and calculates a presentation score based upon an average or weighted average of the scores.

[0004] Another broad object of embodiments of the invention can be to provide a method in a presentation assessment and valuation system for coordinating use of a presentation analyzer between a student user for analyzing video data or audio data associated with preparing a presentation and an instructor user for analyzing video data or audio data associated with a presentation submitted by the student user, which method further includes executing a presentation analyzer to: apply standardized scoring algorithms to the video data or audio data associated with a presentation; generate standardized word rate scores, word clarity scores, filler word scores, tone scores, or eye contact scores; and calculate a presentation score based upon averaging or weighted averaging of the scores.

[0005] Another broad object of embodiments of the invention can be to provide a method in a presentation assessment and valuation system which includes serving a presentation assessment and valuation program to a plurality of computing devices to coordinate operation of a student user interface and an instructor user interface on the plurality of computing devices within the system, and by user command in the student user interface:

[0006] decode video data or audio data, or combined data, in presentation data to display a video in the video display area on the display surface or generate audio via an audio player associated with the student user computing device;

[0007] concurrently depict in the student user interface indicators of one or more of word rate, word clarity, filler words, tone variance, or eye contact synchronized in timed relation with the video or audio of the presentation;

[0008] depict in the student user interface one or more of a word rate score, word clarity score, filler word score, tone variance score or eye contact score by applying algorithms to the video data or audio data associated with the presentation data;

[0009] depict a presentation score based upon averaging or weighted averaging of one or more of the word rate scores, word clarity scores, filler word scores, tone scores, or eye contact scores; and

[0010] submit the presentation data to a database within the system.
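The weighted averaging of scores referred to in the steps above can be sketched as follows. This is a minimal illustration: the score names and the weights are hypothetical, since this part of the specification does not state how weights are chosen.

```python
def weighted_presentation_score(scores: dict, weights: dict) -> float:
    """Weighted average of the component scores that are present.

    `scores` maps a score name to a value from 0 to 100 and `weights`
    maps the same names to non-negative weights. Both the names and
    the weights are illustrative assumptions.
    """
    total_weight = sum(weights[name] for name in scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    weighted_sum = sum(scores[name] * weights[name] for name in scores)
    return weighted_sum / total_weight


# Hypothetical component scores and weights.
example_scores = {"word_rate": 90, "clarity": 80, "filler_word": 70}
example_weights = {"word_rate": 2, "clarity": 1, "filler_word": 1}
example_result = weighted_presentation_score(example_scores, example_weights)
```

With equal weights the function reduces to a plain average, so the same routine covers both the averaging and the weighted averaging cases.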

[0011] Naturally, further objects of the invention are disclosed throughout other areas of the specification, drawings, photographs, and claims.

IV. BRIEF DESCRIPTION OF THE DRAWINGS

[0012] FIG. 1A is a block diagram of a particular embodiment of the inventive computer implemented interactive presentation assessment and valuation system.

[0013] FIG. 1B is a block diagram of a server including a processor communicatively coupled to a non-transitory computer readable media containing an embodiment of a presentation assessment and valuation program.

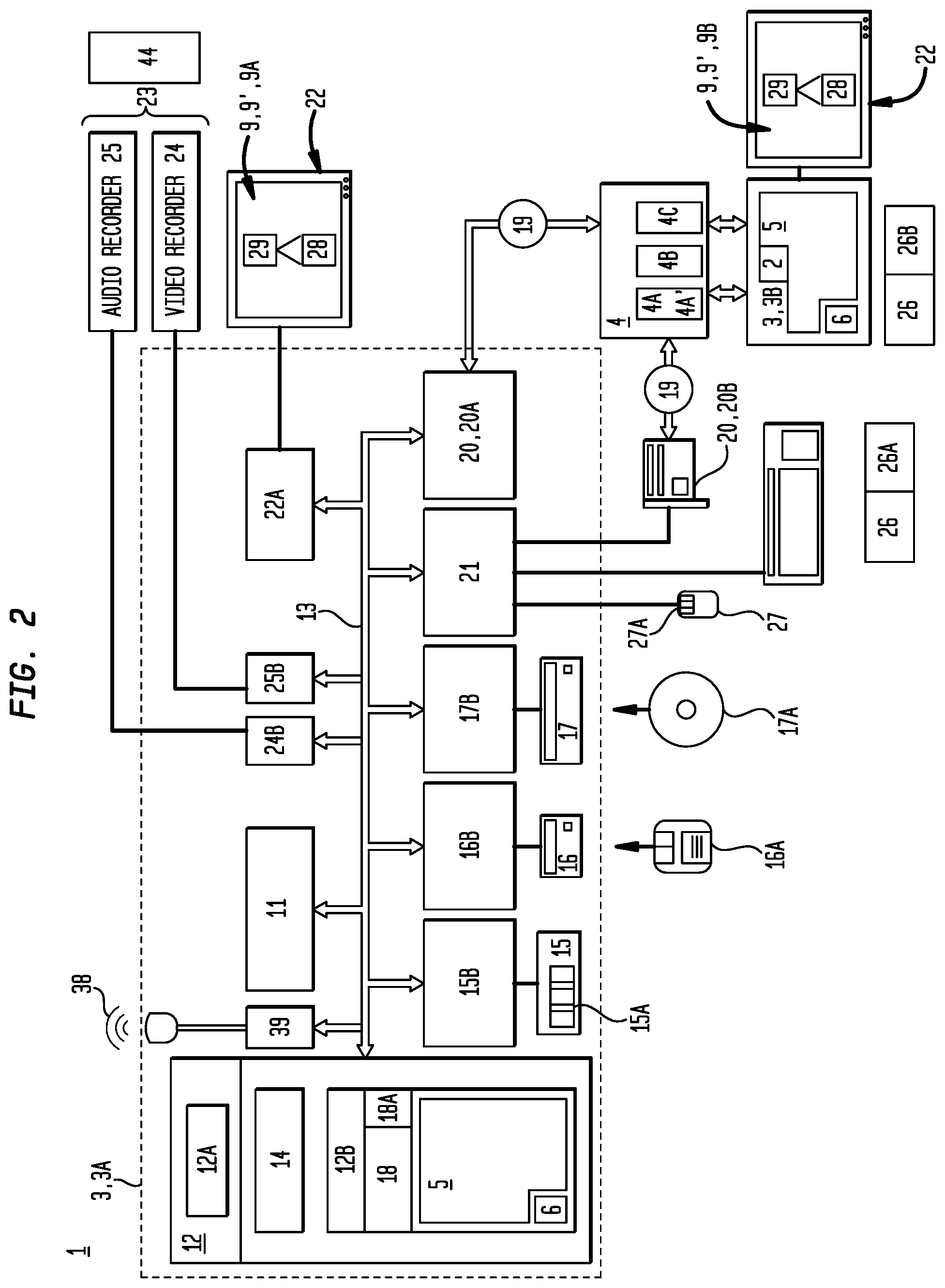

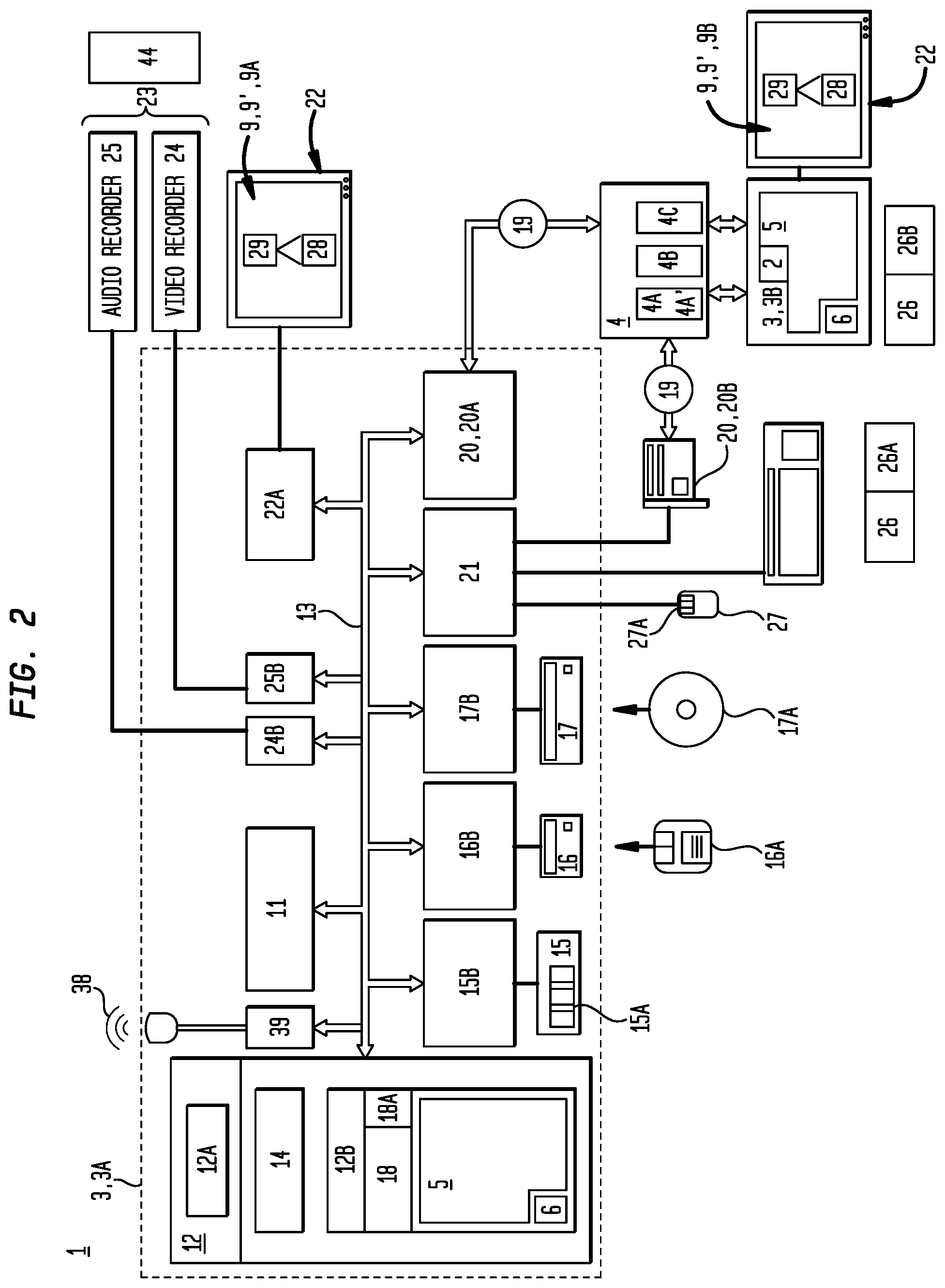

[0014] FIG. 2 is a block diagram of an illustrative computer means, network means and non-transitory computer readable medium which provides computer-executable instructions to implement an embodiment of the interactive presentation assessment and valuation system.

[0015] FIG. 3 depicts an illustrative embodiment of a graphical user interface implemented by operation of an embodiment of the interactive presentation assessment and valuation system.

[0016] FIG. 4 depicts an illustrative embodiment of a graphical user interface implemented by operation of an embodiment of the interactive presentation assessment and valuation system.

[0017] FIG. 5 depicts an illustrative embodiment of an assignment events interface implemented by operation of an embodiment of the interactive presentation assessment and valuation system.

[0018] FIG. 6 is a first working example in an embodiment of the presentation assessment and valuation system.

[0019] FIG. 7 is a second working example in an embodiment of the presentation assessment and valuation system.

[0020] FIG. 8 is a third working example in an embodiment of the presentation assessment and valuation system.

V. DETAILED DESCRIPTION OF THE INVENTION

[0021] Generally, referring to FIGS. 1A, 1B and 2, a presentation assessment and valuation system (1) (also referred to as the "system") can be distributed on one or more servers (2) operably coupled to one or more computing devices (3) by a network (4), including as examples, a wide area network (4A) such as, the Internet (4A'), a local area network (4B), or cellular-based wireless network(s) (4C) (individually or collectively the "network"). The one or more computing devices (3) can include as illustrative examples: desktop computer devices, and mobile computer devices such as personal computers, slate computers, tablet or pad computers, cellular telephones, personal digital assistants, smartphones, programmable consumer electronics, or combinations thereof.

[0022] The network (4) supports a presentation assessment and valuation program (5) (also referred to as the "program") which can be accessed by or downloaded from one or more servers (2) to the one or more computing devices (3) to confer all of the functions of the program (5) and the system (1) to each of the one or more computing devices (3).

[0023] In particular embodiments, the program (5) can be served by the server (2) over the network (4) to coordinate operation of one or more student computing devices (3A) with operation of one or more instructor computing devices (3B). However, this is not intended to preclude embodiments in which the program (5) may be contained on or loaded to a computing device (3), or parts thereof contained on or downloaded to one or more student computing devices (3A) or one or more instructor computing devices (3B) from one or more of: a computer disk, universal serial bus flash drive, or other non-transitory computer readable media.

[0024] While embodiments of the program (5) may be described in the general context of computer-executable instructions such as program modules which utilize routines, programs, objects, components, data structures, or the like, to perform particular functions or tasks or implement particular abstract data types, it is not intended that any embodiments be limited to a particular set of computer-executable instructions or protocols. Additionally, in particular embodiments, while particular functionalities of the program (5) may be attributable to one of the student computing device (3A) or the instructor computing device (3B); it is to be understood that embodiments may allow implementation of a function by more than one device, or the function may be coordinated by the system (1) between two or more computing devices (3).

[0025] Now, referring primarily to FIGS. 1A and 2, each of the one or more computing devices (3)(3A)(3B) can include an Internet browser (6) (also referred to as a "browser"), as illustrative examples: Microsoft's INTERNET EXPLORER®, GOOGLE CHROME®, MOZILLA®, FIREFOX®, which functions to download and render computing device content (7) formatted in "hypertext markup language" (HTML). In this environment, the one or more servers (2) can contain the program (5) including a user interface module (8) which implements the most significant portions of one or more user interface(s)(9) which can further depict a combination of text and symbols in a graphical user interface (9') to represent options selectable by user command (10) to activate functions of the program (5). As to these embodiments, the one or more computing devices (3)(3A)(3B) can use the browser (6) to depict the graphical user interface (9) including computing device content (7) and to relay selected user commands (10) back to the one or more servers (2). The one or more servers (2) can respond by formatting additional computing device content (7) for the respective user interfaces (9) including graphical user interfaces (9').

[0026] Again, referring primarily to FIG. 1A, in particular embodiments, the one or more servers (2) can be used primarily as sources of computing device content (7), with primary responsibility for implementing the user interface (9)(9') being placed upon each of the one or more computing devices (3). As to these embodiments, each of the one or more computing devices (3) can download and run the appropriate portions of the program (5) implementing the corresponding functions attributable to each computing device (3)(3A)(3B).

[0027] Now referring primarily to FIG. 2, as an illustrative example, a computing device (3)(3A) (encompassed by broken line) can include a processing unit (11), one or more memory elements (12), and a bus (13) which operably couples components of the client device (3)(3A), including without limitation the memory elements (12), to the processing unit (11). The processing unit (11) can comprise one central-processing unit (CPU), or a plurality of processing units which operate in parallel to process digital information. The bus (13) may be any of several types of bus configurations including a memory bus or memory controller, a peripheral bus, and a local bus using any of a variety of bus architectures. The memory element (12) can without limitation be a read only memory (ROM) (12A) or a random access memory (RAM) (12B), or both. A basic input/output system (BIOS) (14), containing routines that assist transfer of data between the components of the computing device (3), such as during start-up, can be stored in ROM (12A). The client device (3) can further include one or more of a hard disk drive (15) for reading from and writing to a hard disk (15A), a magnetic disk drive (16) for reading from or writing to a removable magnetic disk (16A), and an optical disk drive (17) for reading from or writing to a removable optical disk (17A) such as a CD ROM or other optical media. The hard disk drive (15), magnetic disk drive (16), and optical disk drive (17) can be connected to the bus (13) by a hard disk drive interface (15B), a magnetic disk drive interface (16B), and an optical disk drive interface (17B), respectively. The drives and their associated non-transitory computer-readable media provide nonvolatile storage of computer-readable instructions, data structures, program modules and other data for the computing device (3)(3A)(3B).

[0028] It can be appreciated by those skilled in the art that any type of non-transitory computer-readable media that can store data that is accessible by the computing device (3), such as magnetic cassettes, flash memory cards, digital video disks, Bernoulli cartridges, random access memories (RAMs), read only memories (ROMs), and the like, may be used in a variety of operating environments. A number of program modules may be stored on one or more servers (2) accessible by the computing device (3), or on the hard disk drive (15), magnetic disk (16), optical disk (17), ROM (12A), or RAM (12B), including an operating system (18), one or a plurality of application programs (18A) and in particular embodiments the entirety or portions of the interactive presentation assessment and valuation program (5) which implements the user interfaces (9)(9'), including the one or more student user interface(s) (9A) and the one or more administrator user interface(s) (9B), or other program interfaces.

[0029] The one or more computing devices (3)(3A)(3B) can operate in the network (4) using one or more logical connections (19) to connect to one or more server computers (2) and transfer computing device content (7). These logical connections (19) can be achieved by one or more communication devices (20) coupled to or a part of the one or more computing devices (3); however, the invention is not limited to any particular type of communication device (20). The logical connections (19) depicted in FIG. 2 can include a wide-area network (WAN) (4A), a local-area network (LAN) (4B), or cellular-based network (4C).

[0030] When used in a LAN-networking environment, the computing device (3) can be connected to the local area network (4B) through a network interface (20A), which is one type of communications device (20). When used in a WAN-networking environment, the computing device (3) typically includes a modem (20B), a type of communications device, for establishing communications over the wide area network (4A). The modem (20B), which may be internal or external, can be connected to the bus (13) via a serial port interface (21). It is appreciated that the network connections shown are illustrative and other means and communications devices can be used for establishing a communications link between the computing devices (3)(3A)(3B) and the server computers (2).

[0031] A display surface (22), such as a graphical display surface, provided by a monitor screen or other type of display device can also be connected to the computing devices (3)(3A)(3B). In addition, each of the one or more computing devices (3)(3A)(3B) can further include peripheral input devices (23) such as a video recorder (24), for example a camera, video camera, web camera, mobile phone camera, video phone, or the like, and an audio recorder (25) such as microphones, speaker phones, computer microphones, or the like. The audio recorder (25) can be provided separately from or integral with the video recorder (24). The video recorder (24) and the audio recorder (25) can be respectively connected to the computing device (3) by a video recorder interface (24A) and an audio recorder interface (25A).

[0032] A "user command" occurs when a computing device user (26), whether a student computing device user (26A) or instructor computing device user (26B), operates a program (5) function through the use of a user command (10). An illustrative example is pressing or releasing a left mouse button (27A) of a mouse (27) while a pointer (28) is located over an interactive control element (29) depicted in a graphical user interface (9') displayed on the display surface (22) associated with a computing device (3). However, it is not intended that the term "user command" be limited to the press and release of the left mouse button (27A) on a mouse (27) while a pointer (28) is located over an interactive control element (29); rather, a "user command" is intended to broadly encompass any command by the user (26)(26A)(26B) through which a function of the program (5) (or other program, application, module or the like) which implements a user interface (9) can be activated or performed, whether through selection of one or a plurality of interactive control elements (29) in a user interface (9) including but not limited to a graphical user interface (9'), or by one or more of user (26) voice command, keyboard stroke, screen touch, mouse button, or otherwise.
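The breadth of the term "user command" can be illustrated with a small dispatcher in which the input modality does not affect which program function is activated. This is a hypothetical sketch: the class name, the control identifiers and the modality labels are assumptions made for illustration and do not appear in the specification.

```python
from typing import Callable, Dict


class CommandDispatcher:
    """Maps an interactive control element to a program function,
    regardless of how the user issued the command."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[[], str]] = {}

    def register(self, control_id: str, handler: Callable[[], str]) -> None:
        # Associate a control element with the function it activates.
        self._handlers[control_id] = handler

    def dispatch(self, control_id: str, modality: str = "mouse") -> str:
        # `modality` (mouse, voice, keyboard, touch, ...) is accepted
        # but deliberately ignored when selecting the handler.
        if control_id not in self._handlers:
            raise KeyError(f"no function registered for {control_id!r}")
        return self._handlers[control_id]()


dispatcher = CommandDispatcher()
dispatcher.register("play_presentation", lambda: "playback started")
```

Calling `dispatcher.dispatch("play_presentation", modality="voice")` activates the same function as a mouse click on the same control element.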

[0033] Now, referring primarily to FIGS. 1A, 1B, and 2, the program (5) can be accessed by or downloaded from one or more servers (2) to the one or more computing devices (3) to confer all of the functions of the program (5) and the system (1) to one or more computing devices (3). In particular embodiments, the program (5) can be executed to communicate with the server (2) over the network (4) to coordinate operation of one or more student computing devices (3A) with operation of one or more instructor computing devices (3B). However, this is not intended to preclude embodiments in which the program (5) may be contained on and loaded to the student computing device(s) (3A) or the instructor computing device(s) (3B) from one or more of: a computer disk, universal serial bus flash drive, or other computer readable media.

[0034] Now referring primarily to FIGS. 1A and 1B, the program (5) in part includes a user interface module (8) accessible by browser based on-line processing or downloadable in whole or in part to provide a user interface (9) including, but not necessarily limited to, a graphical user interface (9') which can be depicted on the display surface (22) associated with the computing device(s) (3)(3A)(3B) and which correspondingly allows a user (26), whether a student user (26A) or an instructor user (26B), to execute by user command (10) one or more functions of the program (5).

[0035] Again, referring primarily to FIGS. 1A and 1B, which provide an illustrative example of a user interface (9) in accordance with the invention, the user interface (9) can be implemented using various technologies and different devices, depending on the preferences of the designer and the particular efficiencies desired for a given circumstance. By user command (10) a user (26) can activate a graphic user interface module (8) of the program (5) which functions to depict a video display area (30) in the graphical user interface (9') on the display surface (22) associated with the computing device (3). Embodiments of the graphic user interface module (8) can further function to depict further display areas in the graphical user interface (9'). As shown in the illustrative example of FIG. 1A, the graphic user interface module (8) can further function to concurrently depict a media display area (31) on the display surface (22) associated with the computing device (3).

[0036] In particular embodiments, the user (26) can utilize a video recorder (24) or an audio recorder (25) to respectively generate a video stream (24B) or an audio stream (25B).

[0037] The term "video recorder (24)" for the purposes of this invention, means any device capable of recording one or more video streams (24B). Examples of a video recorder (24) include, but are not necessarily limited to, a video camera, a video surveillance recorder, a computer containing a video recording card, mobile phones having video recording capabilities, or the like.

[0038] The term "audio recorder (25)" for the purposes of this invention, means any device capable of recording one or more audio streams (25B). Examples of an audio recorder (25) include, but are not necessarily limited to, a video camera having audio recording capabilities, mobile phones, a device containing a microphone input, a device having a line-in input, a computer containing an audio recording card, or the like.

[0039] The term "video stream (24B)" for the purposes of this invention, means one or more channels of video signal being transmitted, whether streaming or not streaming, analog or digital.

[0040] The term "audio stream (25B)" for the purposes of this invention, means one or more channels of audio signal being transmitted, whether streaming or not streaming, analog or digital.

[0041] The program (5) can further include an encoder module (32) which upon execution encodes the video stream (24B) as video stream data (24C), and the audio stream (25B) as audio stream data (25C). The encoder module (32) can further function upon execution to generate a combined stream data (24C/25C) containing video stream data (24C) and audio stream data (25C). The encoded video stream data (24C) or audio stream data (25C) can be assembled in a container bit stream (33) such as MP4, FLV, WebM, ASF, ISMA, MOV, AVI, or the like.

[0042] The program (5) can further include a codec module (34) which functions to compress the discrete video stream data (24C) or audio stream data (25C) or the combined stream data (24C/25C). The audio stream data (25C) can be compressed using an audio codec (34B) such as MP3, Vorbis, AAC, or the like. The video stream data (24C) can be compressed using a video codec (34A) such as H.264, VP8, or the like. The compressed discrete or combined stream data (24C/25C) can be retrievably stored in a database (35) whether internal to the recorder (24)(25), the computing device (3), or in a network server (2) or other network node accessible by a computing device (3).
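One way to picture the pairing of a video codec, an audio codec and a container is the command-line sketch below. It only builds an argument list for the FFmpeg tool, which is an assumption of this example and is not named in the specification. The function name and the codec table are hypothetical, and the encoder names (`libx264`, `aac`, `libvpx`, `libvorbis`) are available only in FFmpeg builds that include them.

```python
# Hypothetical mapping from container to (video encoder, audio encoder),
# mirroring the H.264/AAC and VP8/Vorbis examples in the text.
CONTAINER_CODECS = {
    "mp4": ("libx264", "aac"),
    "webm": ("libvpx", "libvorbis"),
}


def build_encode_command(source: str, output_stem: str, container: str = "mp4") -> list:
    """Return an ffmpeg argument list that compresses the video and
    audio streams of `source` and muxes them into one container file.
    The command is only constructed here, not executed."""
    if container not in CONTAINER_CODECS:
        raise ValueError(f"unsupported container: {container}")
    video_codec, audio_codec = CONTAINER_CODECS[container]
    return [
        "ffmpeg",
        "-i", source,          # recorded input
        "-c:v", video_codec,   # video codec
        "-c:a", audio_codec,   # audio codec
        f"{output_stem}.{container}",
    ]
```

The returned list could be passed to `subprocess.run` on a machine where FFmpeg is installed. The resulting file corresponds to the combined stream data held in a single container.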

[0043] Again referring primarily to FIGS. 1A, 1B and 2, the program (5) can further include a media input module (36) which during acquisition of the video stream (24B) or the audio stream (25B) by the respective recorder (24)(25), as above described, decodes the discrete or combined video and audio data streams (24B)(25B), in either the LAN (4B) or the WAN (4A) environment, to display a video (37) in the video display area (30) on the display surface (22) associated with the computing device (3) or to generate audio (38) via an audio player (39) associated with the computing device (3).

[0044] Each of the video stream data (24C) or audio stream data (25C) or combined stream data (24C/25C) can be stored as media files (40) in the database (35), the server computer (2) or other network node or in the computing device (3). The media input module (36) can further function to retrieve from a server computer (2) or a computing device (3) a media file (40) containing the compressed video or audio stream data (24C)(25C).

[0045] The term "media file (40)" for the purposes of this invention means any type of file, or a pointer to video or audio stream data (24C)(25C), and without limiting the breadth of the foregoing can be a video file, an audio file, an extensible markup language file, a keyhole markup language file, or the like.

[0046] Whether the media input module (36) functions during acquisition of the video stream (24B) or the audio stream (25B) or functions to retrieve media files (40), the media input module (36) can utilize a plurality of different parsers (41) to read video stream data (24C), audio stream data (25C), or the combined stream data (24C/25C) from any file format or media type. Once the media input module (36) receives the video stream data (24C) or the audio stream data (25C) or combined stream data (24C/25C) and opens the media file (40), the media input module (36) uses a video and audio stream decoder (42) to decode the video stream data (24C) or the audio stream data (25C) or the combined stream data (24C/25C).

[0047] The media input module (36) further functions to activate a media presentation module (43) which functions to display the viewable content of the video stream data (24C) or combined stream data (24C/25C) or the media file (40) in the video display area (30) on the display surface (22) associated with the computing device (3) or operates the audio player (39) associated with the computing device (3) to generate audio (38). As an illustrative example, a user (26) can by user command (10) in the user interface (9) select one of a plurality of video recorders (24) or one of a plurality of audio recorders (25), or combinations thereof, to correspondingly capture a video stream (24B) or an audio stream (25B), or combinations thereof, which in particular embodiments can include recording a user (26) giving a live presentation (44). By operation of the program (5), as above described, the live video stream (24B) or the live audio stream (25B), or combinations thereof, can be processed and the corresponding live video (37) or live audio (38), or combinations thereof, can be displayed in the video image area (30) in the graphical user interface (9') or generated by the audio player (39) associated with the computing device (3). As a second illustrative example, a user (26) by user command (10) in the user interface (9) can select a media file (40) including video stream data (24C) or audio stream data (25C), or a combination thereof, which can be processed by operation of the program (5) as above described, and the corresponding video (37) or audio (38), or combinations thereof, can be displayed in the video image area (30) in the graphical user interface (9') or generated by the audio player (39) associated with the computing device (3). As a third illustrative example, a user (26) by user command (10) in the user interface (9) can select a first media file (40A), such as a video MP4 file, and can further select a second media file (40B), such as an audio MP3 file, and generate a combined stream data (24C/25C) which can be processed by operation of the program (5) as above described, and the video (37) can be displayed in the video image area (30) in the graphical user interface (9') and the audio (38) can be generated by the audio player (39) associated with the computing device (3).

[0048] The user interface (9) can further include a video controller (45) which includes a start control (46) which by user command (10) commences presentation of the video (37) in the video display area (30), a rewind control (47) which by click event allows re-presentation of a portion of the video (37), a fast forward control (48) which by click event increases the rate at which the video (37) is presented in the video display area (30), and a pause control (49) which by user command (10) pauses presentation of video (37) in the video display area (30).

[0049] Again, referring primarily to FIGS. 1A, 1B and 2, the program (5) can further include a presentation analyzer (50) executable to analyze a presentation (44) (whether live or retrieved as a media file (40)). For the purposes of this invention the term "presentation" means any data stream whether live, pointed to, or retrieved as a file from a memory element, and without limitation to the breadth of the foregoing can include video stream data (24C) representing a speaker (51) (also referred to as "speaker data (51A)") or audio stream data (25C) of a speech (52) (also referred to as "speech data (52A)") or a combination thereof.

[0050] In particular embodiments, the presentation analyzer (50) includes a transcription module (53) executable to analyze speech data (52A) in a presentation (44). For the purpose of this invention the term "speech" means vocalized words (54) or vocalized filler words (55), or combinations thereof. The term "words" means a sound or combination of sounds that has meaning. The term "filler word" means a sound or combination of sounds that marks a pause or hesitation and that does not have a meaning, and without limitation to the breadth of the foregoing, examples of filler words (55) can include: aa, um, uh, er, shh, like, right, you know.

[0051] In particular embodiments, the transcription module (53) can be discretely served by a server (2) and activated by the program (5) to analyze speech data (52A) included in a presentation (44). The transcription module (53) can be executed to recognize and count word data (54A) in the speech data (52A). A date and time stamp (56) can be coupled to each identified word (54).

[0052] The transcription module (53) can further be executed to identify and count filler word data (55A) in the speech data (52A). A date and time stamp (56) can, but need not necessarily, be coupled to each identified filler word (55).
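As a minimal sketch of the counting described above, the following Python assumes the transcription output is already available as a list of time-stamped tokens. The `TimedToken` type, the `count_words_and_fillers` function, the treatment of "you know" as a single token, and the choice to include filler words in the total count are all illustrative assumptions, not part of the described system. Matching by text alone also cannot tell a filler use of "like" or "right" from a meaningful one.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Filler words taken from the examples in the description. Treating
# "you know" as one token is an assumption about the transcriber output.
FILLER_WORDS = {"aa", "um", "uh", "er", "shh", "like", "right", "you know"}


@dataclass
class TimedToken:
    """Hypothetical transcription unit: recognized text plus a time offset."""
    text: str
    seconds: float  # offset from the start of the speech data


def count_words_and_fillers(tokens: List[TimedToken]) -> Tuple[int, int]:
    """Return (total token count, filler token count).

    Assumption: the total count includes filler tokens.
    """
    total = len(tokens)
    fillers = sum(1 for t in tokens if t.text.strip().lower() in FILLER_WORDS)
    return total, fillers


if __name__ == "__main__":
    sample = [
        TimedToken("um", 0.0),
        TimedToken("Hello", 0.5),
        TimedToken("you know", 1.0),
        TimedToken("world", 1.5),
    ]
    print(count_words_and_fillers(sample))
```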

[0053] The transcription module (53) can further function to derive and associate a word recognition confidence metric (57) with each word (54). In particular embodiments, the word recognition confidence metric (57) can be expressed as a percentile confidence metric (57A) produced by extracting word confidence features (58) and processing these word confidence features (58) against one or more word confidence feature recognition thresholds (58A) for the word (54). Each word (54) can be assigned a word recognition confidence metric (57) (such as a percentile confidence metric (57A)) by a confidence level scorer (59).

[0054] Again, referring primarily to FIGS. 1A, 1B and 2, embodiments can further include a presentation scoring module (60). The presentation scoring module (60) can be executed to calculate a word rate score (61) based on matching a word rate (62) to a word rate score (61) in a word rate scoring matrix (63).

[0055] In particular embodiments, the presentation scoring module (60) can be executed to calculate a Word Rate in accordance with:

$$\text{Word Rate (62)} = \frac{\text{Total Words (64)}}{\text{Minutes ((56B) less (56A))}}$$

[0056] Based on the date and time stamp (56) associated with each word (54) recognized by the transcription module (53), the presentation scoring module (60) can calculate the word count (64) and divide the word count (64) by the elapsed time (65) between a first counted word date and time (56A) and a last counted word date and time (56B) to obtain the word rate (62).
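The word rate calculation can be sketched as follows, assuming each recognized word carries a Python `datetime` stamp. The function name `word_rate` and the error handling for degenerate inputs are illustrative assumptions.

```python
from datetime import datetime, timedelta
from typing import List


def word_rate(word_times: List[datetime]) -> float:
    """Words per minute: word count divided by the elapsed minutes
    between the first and the last counted word."""
    if len(word_times) < 2:
        raise ValueError("at least two time-stamped words are required")
    first, last = min(word_times), max(word_times)
    minutes = (last - first).total_seconds() / 60.0
    if minutes <= 0:
        raise ValueError("elapsed time must be positive")
    return len(word_times) / minutes


if __name__ == "__main__":
    start = datetime(2018, 10, 3, 9, 0, 0)
    # 241 words spaced half a second apart span exactly two minutes.
    stamps = [start + timedelta(seconds=0.5 * i) for i in range(241)]
    print(word_rate(stamps))
```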

[0057] In particular embodiments, the presentation scoring module (60) can retrieve the word rate score (61) from a look up table which matches pre-selected word rates (62A) to corresponding word rate scores (61). Depending on the application, the word rate scoring matrix (63) can be made more or less granular by applying each integer reduction in the word rate score (61) to a lesser or greater range in the pre-selected word rate (62A).

[0058] As an example, the look up table can include a word rate scoring matrix (63) in which one pre-selected word rate (62A) matches a word rate score (61) of 100 and each integer deviation in the pre-selected word rate (62A) results in a corresponding integer reduction in the word rate score (61). Therefore, if a pre-selected word rate (62A) of 160 matches a word rate score (61) of 100, then a word rate of 150 or 170 matches a word rate score of 90, a word rate of 140 or 180 matches a word rate score of 80, and so forth. In a second example, a range in the pre-selected word rate (62A) of 150 to 170 can correspond to a word rate score of 100 and each integer deviation in the pre-selected word rate (62A) outside of the range of 150 to 170 words per minute results in a corresponding integer reduction in the word rate score (61). In a particular embodiment, the look up table or word rate scoring matrix (63) can take the form illustrated in Table 1.
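Both examples above can be expressed with one rule instead of a stored table. The sketch below is an illustration under stated assumptions: the function name `word_rate_score`, the rounding of fractional word rates, and the floor of 0 on the score are not taken from the description, and the sketch does not attempt to reproduce Table 1.

```python
def word_rate_score(rate: float, low: float = 150.0, high: float = 170.0) -> int:
    """Score 100 inside [low, high]; lose one point per word per minute
    of deviation outside that range.

    Setting low == high gives the single-target variant (for example 160).
    Assumptions: deviations are rounded to integers and the score is
    floored at 0.
    """
    if low > high:
        raise ValueError("low must not exceed high")
    if rate < low:
        deviation = low - rate
    elif rate > high:
        deviation = rate - high
    else:
        deviation = 0.0
    return max(0, 100 - round(deviation))


if __name__ == "__main__":
    # Single-target variant: 160 words per minute scores 100.
    print(word_rate_score(150, low=160, high=160))
    # Range variant: anything from 150 to 170 scores 100.
    print(word_rate_score(175))
```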

[0059] Again, referring primarily to FIGS. 1A, 1B, and 2, in particular embodiments, the presentation scoring module (60) can be further executed to calculate a clarity score (66) based on the total words (54) having a word recognition confidence metric (57) greater than a pre-selected word recognition confidence metric (57A) divided by the total word count (64). In particular embodiments the clarity score (66) can be calculated as follows:

$$\text{Clarity Score (66)} = \frac{(\text{Total Words} > 80\%\ \text{Confidence (57A)}) \times 100}{\text{Total Word Count (64)}}$$

[0060] Depending upon the application, the pre-selected percentile confidence metric (57A) can be of greater or lesser percentile to correspondingly increase or decrease the resulting clarity score (66).
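A minimal sketch of the clarity score follows, assuming per-word confidences are available as fractions between 0 and 1. The function name `clarity_score` and the default threshold of 0.80 (mirroring the 80% figure in the formula) are illustrative.

```python
from typing import Sequence


def clarity_score(confidences: Sequence[float], threshold: float = 0.80) -> float:
    """Percentage of words whose recognition confidence is strictly
    greater than the pre-selected threshold."""
    if not confidences:
        raise ValueError("at least one word confidence is required")
    clear_words = sum(1 for c in confidences if c > threshold)
    return clear_words * 100 / len(confidences)


if __name__ == "__main__":
    print(clarity_score([0.95, 0.90, 0.50, 0.81]))
    # Raising the threshold can only lower or preserve the score.
    print(clarity_score([0.95, 0.90, 0.50, 0.81], threshold=0.92))
```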

[0061] Again, referring primarily to FIGS. 1A, 1B and 2, in particular embodiments, the presentation scoring module (60) can be further executed to calculate a filler word score (67) based on a subtrahend, equal to the total filler words (55) divided by the total word count (64) times 100, subtracted from a pre-selected minuend (67A). When the minuend equals 100, a subtrahend equal to one percent correspondingly reduces the score by one percentage point. In particular embodiments, a subtrahend of less than one percent yields a filler word score (67) of 100. In particular embodiments, the minuend can be increased over 100, to allow for a score of 100 when the subtrahend equals a percentage less than the integer amount of the minuend over 100. For example, if the minuend equals 102 and the subtrahend equals 1.5, then the filler word score (67) would be 100. If the minuend equals 101 and the subtrahend equals 1.5, then the filler word score (67) would be 99.5.

[0062] Accordingly, in particular embodiments, the filler word score (67) can be calculated as follows:

$$\text{Filler Word Score (67)} = 101\ \text{(67A)} - \frac{\text{Filler Words (55)} \times 100}{\text{Total Word Count (64)}}$$
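The filler word score can be sketched as below. The function name `filler_word_score` is illustrative. The cap at 100 follows from the examples given for minuends above 100, while the floor at 0 is an added assumption.

```python
def filler_word_score(filler_words: int, total_words: int, minuend: float = 101.0) -> float:
    """Minuend minus the filler-word percentage, capped at 100.

    Assumption: the result is also floored at 0.
    """
    if total_words <= 0:
        raise ValueError("total word count must be positive")
    subtrahend = filler_words * 100 / total_words
    return max(0.0, min(100.0, minuend - subtrahend))


if __name__ == "__main__":
    # 3 filler words in 200 words is a subtrahend of 1.5 percent.
    print(filler_word_score(3, 200, minuend=102))
    print(filler_word_score(3, 200, minuend=101))
```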

[0063] Again, referring primarily to FIGS. 1A, 1B and 2, in particular embodiments, the presentation scoring module (60) can be further executed to calculate a presentation score (68) by calculating an average of a sum of the word rate score (61), the clarity score (66), and the filler score (67). In particular embodiments, the presentation score (68) can be calculated as follows:

$$\text{Presentation Score (68)} = \frac{\text{Word Rate Score (61)} + \text{Clarity Score (66)} + \text{Filler Score (67)}}{3}$$

[0064] In particular embodiments, the presentation score (68) can comprise a weighted average based on coefficients (69) applied to each of the word rate score (61), the clarity score (66) and the filler score (67) prior to calculating the average to generate the presentation score (68).
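The plain and weighted averages can be sketched with a single function. The name `presentation_score`, the dictionary keys, and the normalization by the sum of the coefficients are assumptions made for illustration. The description does not specify how the coefficients (69) are normalized.

```python
from typing import Dict, Optional


def presentation_score(scores: Dict[str, float],
                       weights: Optional[Dict[str, float]] = None) -> float:
    """Average of the component scores; weighted when coefficients are given.

    Assumption: weighted scores are divided by the sum of the weights so
    the result stays on the same 0 to 100 scale as the inputs.
    """
    if not scores:
        raise ValueError("at least one component score is required")
    if weights is None:
        weights = {name: 1.0 for name in scores}
    total_weight = sum(weights[name] for name in scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(scores[name] * weights[name] for name in scores) / total_weight


if __name__ == "__main__":
    parts = {"word_rate": 100, "clarity": 80, "filler": 60}
    print(presentation_score(parts))
    print(presentation_score(parts, {"word_rate": 2, "clarity": 1, "filler": 1}))
```

The same function accepts additional component scores, since it averages over whichever entries are supplied.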

[0065] Again, referring primarily to FIGS. 1A, 1B and 2, in particular embodiments, the presentation analyzer (50) can further include an eye contact analyzer (70) executable to calculate occurrence of eye contact (71) of a speaker (51) with an audience (72) during delivery of a speech (52). The eye contact analyzer (70) determines eye contact (71) with the audience (72) by analysis of speaker data (51A), whether live video stream data (24B), or speaker data (51A) retrieved from a media file (40). As an illustrative example, the eye contact analyzer (70) can retrieve eye contact pixel data (73) representative of human head position (74), eye position (75) or iris position (76), or combinations thereof. The eye contact analyzer (70) can then compare pixel intensity level (77) representative of human head position (74), eye position (75), or iris position (76) to one or a plurality of eye contact thresholds (78) to further calculate an eye contact rate (79) by calculating the cumulative time that the pixel intensity level (77) exceeds the one or the plurality of eye contact thresholds (78) (time looking at audience) over the duration of the speaker data (51A), as follows:

$$\text{Eye Contact Rate (79)} = \frac{\text{Time Looking at Audience}}{\text{Minutes}} \times 100$$

[0066] As an illustrative example, the speaker data (51A) can include eye contact pixel data (73) that corresponds to the iris position (76) of each eye (79) of the speaker (51). In particular embodiments, the eye contact analyzer (70) can analyze speaker data (51A) to record the iris position (76) based on relative pixel intensity level (77). A pixel intensity level (77) exceeding one or more pre-selected eye contact threshold levels (78) can be counted as an eye contact (71) with the audience (72).

[0067] The presentation scoring module (60) can further generate an eye contact score (80) by applying the following rules:

[0068] If Eye Contact Rate (79) > 90, then the eye contact score = 100

[0069] If Eye Contact Rate (79) < or = 90, then the eye contact score = the Eye Contact Rate (79)
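The eye contact rate and the scoring rules can be sketched as below. The sketch assumes one pixel intensity level per video frame, uniformly sampled, so that frame counts stand in for time. The function names, the single threshold, and the sample values are illustrative assumptions. Extracting intensity levels from actual video is outside the scope of this sketch.

```python
from typing import Sequence


def eye_contact_rate(levels: Sequence[float], threshold: float) -> float:
    """Percentage of frames whose intensity level exceeds the threshold.

    Assumption: frames are evenly spaced in time, so the frame ratio
    equals the ratio of time looking at the audience to total time.
    """
    if not levels:
        raise ValueError("at least one frame is required")
    looking = sum(1 for level in levels if level > threshold)
    return looking * 100 / len(levels)


def eye_contact_score(rate: float) -> float:
    """Rates above 90 score 100; otherwise the score equals the rate."""
    return 100.0 if rate > 90 else rate


if __name__ == "__main__":
    frames = [0.9] * 19 + [0.1]  # 19 of 20 frames exceed the threshold
    rate = eye_contact_rate(frames, threshold=0.5)
    print(rate, eye_contact_score(rate))
```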

[0070] Again, referring primarily to FIGS. 1A, 1B, and 2, embodiments of the presentation analyzer (50) can further include a tone analyzer (81) executable to analyze tone (82) of a speech (52) represented by the speech data (52A). The tone analyzer (81) receives speech data (52A) and further functions to analyze tone variation (83) over speech data time (89). The tone (82) of a speech (52) represented by speech data (52A) can be characterized by the fundamental frequency ("Fx") contours (84) associated with Fx (85) within the speech data (52A) (having the environmental or mechanical background noise filtered or subtracted out of the speech data (52A)). In particular embodiments, the tone analyzer (81) can analyze the Fx contours (84) of the speech data (52A) for Fx (85). The Fx contour (84) analysis can compare certain characteristics of the speech data (52A): (i) changes in Fx (85) that are associated with pitch accents; (ii) the range of the Fx (85) used by the speaker (51); (iii) voiced and voiceless regions; and (iv) regular and irregular phonation.

[0071] From the Fx contour (84) the tone analyzer (81) can establish the durations of each individual vocal fold cycle (86) for a phrase or passage ("fundamental period data"). From the fundamental period data (87), the tone analyzer (81) can calculate the instantaneous Fx value (88) for each fundamental period data (87). A plurality of Fx values (88) from an utterance or speech data (52A) plotted against the speech data time (89) at which they occur gives an Fx contour (84).

[0072] The Fx values (88) from speech data (52A) can be used to calculate an Fx distribution (90). From the Fx distribution (90), the tone analyzer (81) can calculate the central tone tendency (median or mode) (91) and tone variance value (92) from the central tone tendency (91) of the Fx contour (84).
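As a rough sketch of these two steps, the following Python converts vocal fold cycle durations to instantaneous Fx values (frequency is the reciprocal of the period) and then summarizes their distribution. The function names are illustrative, the median is used as the central tendency, and measuring spread as the mean squared deviation from the median is an assumption about how a variance "from the central tone tendency" might be computed.

```python
from statistics import median
from typing import List, Sequence, Tuple


def fx_values(periods_seconds: Sequence[float]) -> List[float]:
    """Instantaneous fundamental frequency in Hz for each cycle duration."""
    if any(p <= 0 for p in periods_seconds):
        raise ValueError("cycle durations must be positive")
    return [1.0 / p for p in periods_seconds]


def fx_summary(values: Sequence[float]) -> Tuple[float, float]:
    """Return (median Fx, mean squared deviation from the median).

    Assumption: spread is measured about the median, not the mean.
    """
    if not values:
        raise ValueError("at least one Fx value is required")
    center = median(values)
    spread = sum((v - center) ** 2 for v in values) / len(values)
    return center, spread


if __name__ == "__main__":
    # Cycle durations of 10 ms, 8 ms, and 12.5 ms.
    values = fx_values([0.010, 0.008, 0.0125])
    print(values)
    print(fx_summary(values))
```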

[0073] In regard to particular embodiments, the speech data (52A) can be segmented into word data (54A) or syllables. The fundamental frequency contour (84) for the word data (54A) or syllables within the duration of the speech data (52A) can be compared to generate a tone variation value (92) which can be further compared to one or more tone variance thresholds (93), where exceeding the tone variance thresholds (93) results in a tone variance (94). The tone analyzer (81) can be further executed to calculate the rate at which a tone variance (94) exceeds the one or more tone variance thresholds (93) to generate a tone rate (95) by the following formula:

$$\text{Tone Rate (95)} = \frac{\text{Tone Variance (94)}}{\text{Minutes}} \times 100$$

[0074] In particular embodiments, the presentation scoring module (60) can further generate a tone score (96) by applying the following rules:

[0075] If Tone Rate (95) is between 0-10, then the Tone Score (96) = 0 (monotone)

[0076] If Tone Rate (95) is > 10, then the Tone Score (96) = 100 - Tone Rate (95)
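The two tone score rules can be written directly as a small function. This sketch takes the tone rate as an input instead of deriving it, and the function name `tone_score` is illustrative. The rules as stated do not bound the result for tone rates above 100, so no clamping is applied here.

```python
def tone_score(tone_rate: float) -> float:
    """Apply the stated rules: a tone rate from 0 to 10 scores 0
    (monotone); a tone rate above 10 scores 100 minus the tone rate."""
    if tone_rate < 0:
        raise ValueError("tone rate must not be negative")
    if tone_rate <= 10:
        return 0.0
    return 100.0 - tone_rate


if __name__ == "__main__":
    for rate in (5, 10, 25):
        print(rate, tone_score(rate))
```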

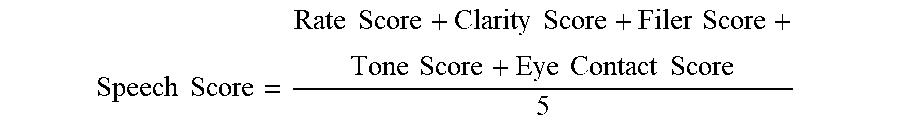

[0077] Again, referring primarily to FIGS. 1A, 1B and 2, in particular embodiments further including an eye contact score (80) or a tone score (96), the presentation scoring module (60) can be further executed to calculate the presentation score (68) by calculating an average of a sum of the word rate score (61), the clarity score (66), the filler score (67), and optionally the eye contact score (80), and optionally the tone score (96). In particular embodiments, the presentation score (68) can be calculated as follows:

$$\text{Speech Score} = \frac{\text{Rate Score} + \text{Clarity Score} + \text{Filler Score} + \text{Tone Score} + \text{Eye Contact Score}}{5}$$

[0078] Now, referring primarily to FIG. 3, which provides an illustrative example of a user interface (9)(9')(9A) in accordance with the invention, the user interface (9) can be implemented using various technologies and different devices, depending on the preferences of the designer and the particular efficiencies desired for a given circumstance. By user command (10) the user (26) can activate the user interface module (8) of the program (5) which functions to depict a video display area (30) in a graphical user interface (9') on the display surface (22) associated with the computing device (3). Embodiments of the user interface module (8) can further function to depict further display areas in the graphical user interface (9'). As shown in the illustrative example of FIG. 1, the user interface module (8) can further function to concurrently depict a media display area (31) on the display surface (22) associated with the computing device (3).

[0079] Again, referring primarily to FIG. 3, in particular embodiments, the program (5) can further include a formatter (97) executable to depict formatted text (98) of the speech (52) including all of the words (54) and the filler words (55) in a formatted text display area (99) on a display surface (22) of a computing device (3). In particular embodiments the formatted text (98) can be depicted as fixed paragraphs (100) including the words (54) of the speech (52) within the formatted text display area (99). In particular embodiments, the formatted text (98) can be depicted as scrolled text (101) of the speech (52) within a formatted text display area (99).

[0080] Again, referring primarily to FIG. 3, in particular embodiments, the formatter (97) can further depict a word rate line chart (102) in a word rate line chart display area (103). The particular embodiment of the word rate line chart (102) shown in the example includes a word rate baseline (104) corresponding to the pre-selected word rate (62A) corresponding to a word rate score (61) of 100 superimposed by a word rate line (105) which varies in correspondence to the calculated word rate (62) and affords visual comparison of the word rate (62) of the speech (52) to the pre-selected word rate (62A). In particular embodiments, the formatter (97) coordinates scrolling speed of the word rate line chart (102) in the word rate line chart display area (103) to match scrolling speed of the scrolled text (101) of the speech (52) depicted within the formatted text display area (99) to align word data (54A) representing words (54) and filler words (55) in the scrolled text (101) with the corresponding points in the word rate line (105).

[0081] As shown in the illustrative example of FIG. 3, the formatter (97) can concurrently depict the scrolled text (101) in formatted text display area (99) and depict the scrolled word rate line chart (102) in word rate line chart display area (103) in spatial relation to visually align the scrolled text (101) with corresponding time points in the scrolled word rate line chart (102).

[0082] Again, referring primarily to FIG. 3, the formatter (97) can be further executed to concurrently depict a filler word indicator (106) in a filler word indicator display area (107) in spatial relation to the scrolled text display area (99) to visually align the filler word indicator (106) to the filler word (55) in the scrolled text (101).

[0083] Again, referring primarily to FIG. 3, the user interface module (8) can further depict a speech score display area (108) on the display surface (22) associated with the computing device (3). The speech scoring module (60) can be further executed to depict one or more of the word rate score (61), the clarity score (66), filler word score (67) and the presentation score (68) in the corresponding fields within the speech score display area (108).

[0084] Now referring to the example of FIG. 1, the user interface module (8) can be executed to depict one or more of: the video display area (30), a media display area (31), formatted text display area (99) (paragraphs (100) or scrolling text (101)), a word rate line chart display area (103), a filler word indicator display area (107) (as shown in the example of FIG. 3) and presentation score display area (108). In the example of FIG. 1, the user interface module (8) can further function to depict a video recorder selector (109) which can as an illustrative example be in the form of a video recorder drop down list (110) which by user command (10) selects a video recorder (24). However, this illustrative example is not intended to preclude other types of selection or activation elements which by user command (10) select or activate the video recorder (24). Similarly, the user interface module (8) can further provide an audio recorder selector (111) which as shown in the illustrative example can be in the form of an audio recorder drop list (112) which by user command (10) selects an audio recorder (25). A user (26)(26A) can activate the video recorder (24) and the audio recorder (25) by user command (10) to generate a live video stream (24A) and a live audio stream (25A) of a speech (52) which the corresponding encoder module (32) and media input module (36) can process to display the video (37) in the video display area (30) and generate audio (38) from the audio player (39).

[0085] In particular embodiments, operation of the video recorder (24) or the audio recorder (25) can further activate the codec module (34) to compress the audio stream (25A) or video stream (24A) or the combined stream and retrievably store each in a database (35) whether internal to the recorder (24)(25), the computing device (3), or in a network server (2) or other network node accessible by the computing device (3).

[0086] In the illustrative examples of FIGS. 1 and 3, the user interface module (8) can be further executable to depict a presentation selector (113) on said display surface (22) of the computing device (3), which in the illustrative examples can be in the form of a presentation drop list (114) which by user command (10) selects and retrieves a media file (40) stored in the database (35). Selection of the media file (40) can activate the media input module (36) to display the video (37) in the video display area (30) and generate audio (38) from the audio player (39). In the particular embodiment illustrated by FIG. 3, the user (26)(26A) can select a first media file (40A) (or a plurality of media files which can be combined), such as a video MP4 file, and can further select a second media file (40B) (or a plurality of media files which can be combined), such as an audio MP3 file, and generate a combined data stream (24C/25C) which can be processed by operation of the program (5), as above described, to display the video (37) in the video image area (30) in the graphical user interface (9)(9') and generate the audio (38) by operation of the audio player (39) associated with the computing device (3).

[0087] Now referring primarily to FIG. 3, in particular embodiments, the user interface module (8) can be further executed to depict a presentation analyzer selector (115)(depicted as "Auto Assess" in the example of FIG. 3) which by user command (10) activates the presentation analyzer (50) to analyze the speech data (52A) (whether live video or live audio data streams or video or audio data streams associated with a selected media file(s)), as above described, to calculate one or more of the word rate score (61), the clarity score (66), filler word score (67), the eye contact score (80) or the tone score (96) and further calculate and depict the presentation score (68). In particular embodiments, the calculated scores can be appended to the recorded presentation (44), and the presentation (44) including the calculated scores can be retrievably stored as a media file (40) in a database (35) whether internal to the computing device (3), or in a network server (2) or other network node accessible by the computing device (3). Upon retrieval of the media file (40) from the database (35), the presentation (44) can be depicted in the user interface (9)(9') along with the calculated scores, as above described.

[0088] Again, referring primarily to FIG. 3, in particular embodiments, the user interface module (8) can be further executed to depict a preview selector (116) which by user command (10) activates a preview module (117) which allows the user (26)(26A) to preview the presentation (44) on the display surface (22) associated with the computing device (3) prior to activating the presentation analyzer (50).

[0089] In particular embodiments, the user interface module (8) can further depict an editor selector (118) which by user command (10) activates an editor module (119) which functions to allow a user (26)(26A) to modify portions of or replace the speech data (52A) (whether the video stream data (24C) or the audio stream data (25C)) and subsequently process or re-process a plurality of iterations of the edited presentation (44) and analyze and re-analyze the iterations of the presentation (44) by operation of the presentation analyzer (50) to improve one or more of the word rate score (61), the clarity score (66), the filler word score (67), the eye contact score (80), or the tone score (96) to further improve the overall presentation score (68).

[0090] Again, referring primarily to FIG. 3, in particular embodiments, the user interface (9)(9')(for example, a student user interface (9A)) can further depict a submission element (120) which by user command (10) by a first user (26)(student user (26A)) in a first computing device (3)(as an example, a student computing device (3A)) in the system (1) transmits the presentation (44) (whether or not processed by the presentation analyzer (50)) to the server (2) to be stored in the database (35).

[0091] Now referring primarily to FIGS. 1 and 4, on a second computing device (3) (as an example, an instructor computing device (3B)) in the system (1) having access to the program (5) through the network (4) and having the corresponding user interface (9)(9') (for example, an instructor user interface (9B)) depicted on the display surface (22) associated with the second computing device (3B), a second user (26) (for example, an instructor user (26B)) can by user command (10) in the presentation selector (113)(114) retrieve a submitted presentation (44) of the first user (26) (for example, a student user (26A)), and operation of the program (5), as above described, allows the video (37) to be depicted in the video display area (30) and any associated media (31A) to be depicted in a media display area (31) on the display surface (22) of the second computing device (3B) (as shown in the example of FIG. 1) along with the audio (38) generated by the audio player (39).

[0092] In particular embodiments, in which the submitted presentation (44) has not been processed by the presentation analyzer (50), the second user (26B) can by user command (10) in the presentation analyzer selector (115) activate the presentation analyzer (50) to process the submitted presentation (44) and depict one or more of the formatted text (98) (fixed or scrolling), the word rate line chart (102), and filler word indicators (106), and further depict the word rate score (61), clarity score (66), filler word score (67), tone score (96), eye contact score (80) and presentation score (68). The instructor user (26B) may then post a grade (129) which may in part be based upon the presentation score (68) derived by the presentation analyzer (50).

[0093] Now referring primarily to FIG. 5, in particular embodiments, the system (1) and the program (5) can be incorporated into the context of distance education or correspondence education in which an instructor user (26B) can post one or more assignments (121) in an assignment events interface (122) which can be depicted in the graphical user interface (9')(9B) and further depicted in the graphical user interface (9')(9A) for retrieval of assignment events (124) by one or a plurality of student users (26A). For the purposes of this invention, the term "assignment" means any task or work required of the student user (26A) which includes the production of speech data (52A), and without limitation to the breadth of the foregoing, includes presentations (44) for any purpose which include recording of only an audio stream (25A) or recording only a video stream (24A), or combinations thereof (whether live or stored as a media file).

[0094] Again, referring primarily to FIGS. 4 and 5, in particular embodiments, the user interface module (8) can be further executed to depict in the instructor user interface (9B) an assignment events interface (122) which by operation of an assignment events module (123) allows entry by the instructor user (26B) of assignment events (124) and corresponding assignment event descriptions (125) in corresponding assignment events areas (126) and assignment event description areas (127). The assignment events interface (122) can further allow the instructor user (26B) to link assignment resources (128) to each assignment event (124). The assignment events interface (122) further allows the instructor user (26B) to indicate whether or not submitted presentations (44) will be processed by the presentation analyzer (50) to score the presentation (44) which score may be used in part to apply a grade (129) to the presentation (44).

[0095] In particular embodiments, the instructor user (26B) by user command (10) in an annotation selector (136) can further activate an annotation module (137) to cause depiction of an annotation display area (138) in which annotations (139) can be entered by the instructor user (26B) (as shown in the example of FIG. 1).

[0096] Again, referring primarily to FIGS. 3 and 5, the graphical user interface module (8) can further function with the assignment events module (123) to depict the assignment events interface (122) in the student user interface (9A). The student user (26A) by user command (10) can retrieve the assignment resources (128) linked to each of the assignment events (124). The student user (26A) can undertake each assignment event (124) which in part or in whole includes preparation of a presentation (44) including speech data (52A) and can apply the presentation analyzer (50) to the speech data (52A), as above described, to obtain one or more of the word rate score (61), the clarity score (66), the filler word score (67), the tone score (96), or the eye contact score (80), and a presentation score (68).

Working Example 1

[0097] Now referring primarily to FIG. 6, in a particular embodiment, a user (26) accesses the server (2) through a WAN (4A) and by browser based on-line processing depicts a graphical user interface (9') on the display surface (22) associated with the user (26) computing device(s)(3). The instant embodiment of the graphical user interface (9') depicted includes a video display area (30), a presentation score display area (108), a formatted text display area (99) (for both fixed paragraphs (100) and scrolling text (101)), a word rate line chart display area (103), and a filler word indicator display area (107). The user (26) by user command (10) in the video recorder selector (109) selects a video recorder (24) and in the audio recorder selector (111) selects an audio recorder (25) (as shown in the example of FIG. 1A). By selection of the video recorder (24) and selection of the audio recorder (25), the program (5) by operation of the encoder module (32) encodes the live video stream (24A) and the live audio stream (25A) generated by the video recorder (24) and the audio recorder (25). Concurrently, the codec module (34) compresses the video stream data (24B) or audio stream data (25B) or the combined data stream (24C/25C) and retrievably stores the video stream data (24B) and the audio stream data (25B) in a database (35) and the media input module (36) activates the media presentation module (43) which functions to display the viewable content (37) of the video stream data (24C) in the video display area (30) on the display surface (22) associated with the user (26) computing device (3) and operates the audio player (39) associated with the user (26) computing device (3) to generate audio (38). In the instant example, the viewable content and the audio content represent the user (26) giving an oral presentation (44).

[0098] The user (26) by user command (10) in a presentation analyzer selector (115) (as shown in the example of FIG. 1) activates the presentation analyzer (50) to analyze the speech data (52A) (whether during live streaming of the speech data (52A) or by retrieval of the corresponding media file (40)). Analysis of the speech data (52A), as above described, causes further depiction of the formatted text (98) by operation of one or more of the transcription module (53), the formatter (97) and the media input module (36), as both fixed paragraphs (100) and scrolling text (101) in the respective formatted text display areas (99), a word rate line chart (102) in the word rate line chart display area (103), and filler word indicators (106) in the filler word indicator display area (107).

[0099] Upon analysis of the speech data (52A) representing the presentation (44), the presentation scoring module (60) operates to calculate the presentation score (69) by calculating an average of a sum of the word rate score (61), the clarity score (66), the filler score (67), and optionally the eye contact score (80), and optionally the tone score (96). The media input module further functions to depict the presentation score (69), the word rate score (61), the clarity score (66), and the filler score (67) in the presentation score display area (108).
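By way of illustration only, the averaging described above can be sketched in Python. The function name `presentation_score`, the dictionary keys, the 0 to 100 score scale, and the optional `weights` argument are assumptions made for this sketch and are not taken from the disclosure; the sketch simply averages whichever component scores are supplied, so the optional eye contact and tone scores are included only when present.

```python
from typing import Mapping, Optional


def presentation_score(
    scores: Mapping[str, float],
    weights: Optional[Mapping[str, float]] = None,
) -> float:
    """Return the average (or weighted average) of the supplied component scores."""
    if not scores:
        raise ValueError("at least one component score is required")
    if weights is None:
        # Plain average: every supplied score counts equally.
        weights = {name: 1.0 for name in scores}
    total_weight = sum(weights[name] for name in scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(scores[name] * weights[name] for name in scores) / total_weight


# Hypothetical component scores on an assumed 0-100 scale.
required = {"word_rate": 80.0, "clarity": 90.0, "filler": 70.0}

plain = presentation_score(required)
with_eye = presentation_score({**required, "eye_contact": 60.0})
weighted = presentation_score(
    required, {"word_rate": 1.0, "clarity": 2.0, "filler": 1.0}
)
```

Under these assumed inputs, `plain` is the mean of the three required scores, `with_eye` additionally averages in the optional eye contact score, and `weighted` doubles the influence of the clarity score.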

Working Example 2

[0100] Now referring primarily to FIG. 7, in a particular embodiment, a user (26) accesses the functionalities of the system (1) by user command (10) in the graphical user interface (9') (as above described in Example 1) resulting in a depiction of the presentation score (69), the word rate score (61), the clarity score (66), and the filler score (67) in the presentation score display area (108) and the formatted text (98) in the respective formatted text display areas (99), a word rate line chart (102) in the word rate line chart display area (103), and filler word indicators (106) in the filler word indicator display area (107).

[0101] In the instant working example, the presentation scoring module (60) can further function to associate or link words or phrases having a word recognition confidence metric (57) of less than a pre-selected word confidence recognition threshold (58A) (referred to as "unclear words (130)") with a clarity score image (131). In particular embodiments, the scoring module (60) can further function to identify and highlight (132) unclear words (130) in the formatted text (98) having a word recognition confidence metric (57) of less than a pre-selected word confidence recognition threshold (58A) of about 80%. The highlight (132) can be depicted by underlining of the unclear words (130); however, this example does not preclude any manner of visually viewable highlight of unclear words (130), such as shading, colored shading, encircling, dots, bold lines, or the like. Additionally, while examples include a pre-selected word confidence recognition threshold (58A) of 80% or 90%, this is not intended to preclude the use of a greater or lesser pre-selected word confidence recognition threshold (58A), which will typically fall in the range of about 70% to about 90% and which can be selectable in 1% increments, or other incremental percentile subdivisions.

[0102] The presentation scoring module (60) can further function to associate a trigger area (133) with each unclear word (130). The trigger area (133) comprises a graphical control element activated when the user (26) moves a pointer (28) over the trigger area (133). In the instant example, the user (26) by user command (10) in the form of a mouse over (134) activates the trigger area (133); however, the user command (10) could take the form of mouse roll over, touch over, hover, digital pen touch or drag, or other manner of disposing a pointer (28) over the trigger area (133) associated with unclear words (130) in the formatted text (98).

[0103] When the user (26) moves the pointer (28) over the trigger area (133) associated with the unclear words (130) in the formatted text (98), the presentation scoring module (60) further operates to depict the clarity score image (131). In the instant example, the clarity score image (131) indicates that "this word was unclear to the transcriber" and provides the word recognition confidence metric (57) "48% confidence." However, this illustrative working example is not intended to preclude within the clarity score image (131) other text information, graphical information, instructions, or links to additional files, data, or information.
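As a non-limiting sketch of Working Example 2, the following Python fragment flags words whose recognition confidence falls below a threshold and builds the text that a clarity score image might carry. The class `TranscribedWord`, the function `annotate_unclear`, the representation of confidence as a fraction between 0 and 1, and the sample words are all assumptions made for illustration; the rendering of the highlight and of the trigger area is left out.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class TranscribedWord:
    text: str
    confidence: float  # assumed recognition confidence in the range 0.0 to 1.0


def annotate_unclear(
    words: List[TranscribedWord], threshold: float = 0.80
) -> List[Tuple[str, Optional[str]]]:
    """Pair each word with tooltip text if it is unclear, otherwise with None."""
    annotated = []
    for word in words:
        if word.confidence < threshold:
            # Strictly below the threshold counts as an unclear word.
            tip = (
                "this word was unclear to the transcriber "
                f"({word.confidence:.0%} confidence)"
            )
        else:
            tip = None
        annotated.append((word.text, tip))
    return annotated


# Hypothetical transcript fragment with per-word confidence values.
sample = [
    TranscribedWord("today", 0.97),
    TranscribedWord("we", 0.80),
    TranscribedWord("synergize", 0.48),
]
result = annotate_unclear(sample)
```

In this sketch a word whose confidence equals the threshold is not flagged, consistent with the "less than" wording above; passing a different `threshold` (for example 0.90) models the selectable threshold.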

Working Example 3

[0104] Now referring primarily to FIG. 8, in a particular embodiment, a user (26) accesses the functionalities of the system (1) by user command (10) in the graphical user interface (9') (as above described in Examples 1 or 2) resulting in a depiction of the presentation score (69), the word rate score (61), the clarity score (66), and the filler score (67) in the presentation score display area (108) and the formatted text (98) in the respective formatted text display areas (99), a word rate line chart (102) in the word rate line chart display area (103), and filler word indicators (106) in the filler word indicator display area (107).

[0105] In the instant working example, the presentation scoring module (60) can further function to associate or link words or phrases used as filler words (55) with a filler score image (135). In particular embodiments, the scoring module (60) can further function to identify and highlight (132) filler words (55) in the formatted text (98). The highlight (132) can be depicted by underlining of the filler words (55); however, this example does not preclude any manner of visually viewable highlight of filler words (55), such as shading, colored shading, encircling, dots, bold lines, or the like. The highlight (132) of filler words (55) can comprise the same graphical element used to identify unclear words (130), but this does not preclude the use of a different highlight (132) for unclear words (130) and filler words (55).

[0106] The presentation scoring module (60) can further function to associate a trigger area (133), as described in working example 2, with each filler word (55). When the user (26) moves the pointer (28) over the trigger area (133) associated with the filler words (55) in the formatted text (98), the presentation scoring module (60) further operates to depict the filler score image (135). In the instant example, the filler score image (135) indicates that "this word was identified as a filler word" and provides a usage metric "used 16 times." However, this illustrative working example is not intended to preclude within the filler score image (135) other text information, graphical information, instructions, or links to additional files, data, or information.
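As a non-limiting sketch of Working Example 3, the following Python fragment counts filler words in a transcript and builds the text that a filler score image might carry, including the usage metric. The filler list `FILLER_WORDS`, the function names, and the sample transcript are assumptions made for illustration; for brevity the sketch handles single-word fillers only and does not address multi-word filler phrases.

```python
import re
from collections import Counter
from typing import Dict, FrozenSet, Optional

# Hypothetical filler list; the disclosure does not fix a particular list.
FILLER_WORDS: FrozenSet[str] = frozenset({"um", "uh", "like", "so"})


def filler_usage(
    transcript: str, fillers: FrozenSet[str] = FILLER_WORDS
) -> Dict[str, int]:
    """Count how many times each filler word occurs in the transcript."""
    tokens = re.findall(r"[a-z']+", transcript.lower())
    return dict(Counter(token for token in tokens if token in fillers))


def filler_tooltip(word: str, usage: Dict[str, int]) -> Optional[str]:
    """Return tooltip text for a filler word, or None for any other word."""
    count = usage.get(word.lower(), 0)
    if count == 0:
        return None
    times = "time" if count == 1 else "times"
    return f"this word was identified as a filler word (used {count} {times})"


# Hypothetical transcript fragment.
usage = filler_usage("Um, so today, um, we will, uh, begin.")
um_tip = filler_tooltip("Um", usage)
today_tip = filler_tooltip("today", usage)
```

The per-word count in `usage` corresponds to the usage metric shown in the filler score image; the same dictionary could also drive the highlight of each filler word in the formatted text.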

[0107] As can be easily understood from the foregoing, the basic concepts of the present invention may be embodied in a variety of ways. The invention involves numerous and varied embodiments of an interactive presentation assessment and valuation system and methods for making and using such an interactive presentation assessment and valuation system, including the best mode.

[0108] As such, the particular embodiments or elements of the invention disclosed by the description or shown in the figures or tables accompanying this application are not intended to be limiting, but rather exemplary of the numerous and varied embodiments generically encompassed by the invention or equivalents encompassed with respect to any particular element thereof. In addition, the specific description of a single embodiment or element of the invention may not explicitly describe all embodiments or elements possible; many alternatives are implicitly disclosed by the description and figures.

[0109] It should be understood that each element of an apparatus or each step of a method may be described by an apparatus term or method term. Such terms can be substituted where desired to make explicit the implicitly broad coverage to which this invention is entitled. As but one example, it should be understood that all steps of a method may be disclosed as an action, a means for taking that action, or as an element which causes that action. Similarly, each element of an apparatus may be disclosed as the physical element or the action which that physical element facilitates. As but one example, the disclosure of an "analyzer" should be understood to encompass disclosure of the act of "analyzing"--whether explicitly discussed or not--and, conversely, were there effectively disclosure of the act of "analyzing", such a disclosure should be understood to encompass disclosure of an "analyzer" and even a "means for analyzing." Such alternative terms for each element or step are to be understood to be explicitly included in the description.

[0110] In addition, as to each term used it should be understood that unless its utilization in this application is inconsistent with such interpretation, common dictionary definitions should be understood to be included in the description for each term as contained in the Random House Webster's Unabridged Dictionary, second edition, each definition hereby incorporated by reference.

[0111] All numeric values herein are assumed to be modified by the term "about", whether or not explicitly indicated. For the purposes of the present invention, ranges may be expressed as from "about" one particular value to "about" another particular value. When such a range is expressed, another embodiment includes from the one particular value to the other particular value. The recitation of numerical ranges by endpoints includes all the numeric values subsumed within that range. A numerical range of one to five includes for example the numeric values 1, 1.5, 2, 2.75, 3, 3.80, 4, 5, and so forth. It will be further understood that the endpoints of each of the ranges are significant both in relation to the other endpoint, and independently of the other endpoint. When a value is expressed as an approximation by use of the antecedent "about," it will be understood that the particular value forms another embodiment. The term "about" generally refers to a range of numeric values that one of skill in the art would consider equivalent to the recited numeric value or having the same function or result. Similarly, the antecedent "substantially" means largely, but not wholly, the same form, manner or degree and the particular element will have a range of configurations as a person of ordinary skill in the art would consider as having the same function or result. When a particular element is expressed as an approximation by use of the antecedent "substantially," it will be understood that the particular element forms another embodiment.

[0112] Moreover, for the purposes of the present invention, the term "a" or "an" entity refers to one or more of that entity unless otherwise limited. As such, the terms "a" or "an", "one or more" and "at least one" can be used interchangeably herein.

[0113] Thus, the applicant(s) should be understood to claim at least: i) presentation assessment and valuation system or presentation analyzer herein disclosed and described, ii) the related methods disclosed and described, iii) similar, equivalent, and even implicit variations of each of these devices and methods, iv) those alternative embodiments which accomplish each of the functions shown, disclosed, or described, v) those alternative designs and methods which accomplish each of the functions shown as are implicit to accomplish that which is disclosed and described, vi) each feature, component, and step shown as separate and independent inventions, vii) the applications enhanced by the various systems or components disclosed, viii) the resulting products produced by such systems or components, ix) methods and apparatuses substantially as described hereinbefore and with reference to any of the accompanying examples, x) the various combinations and permutations of each of the previous elements disclosed.