Conversion Method, Device, Computer Equipment, and Storage Medium

Liu; Shaoli ; et al.

U.S. patent application number 16/667593 was published on 2020-04-02 for conversion method, device, computer equipment, and storage medium. The application was filed on October 29, 2019. The applicant listed for this patent is Cambricon Technologies Corporation Limited. Invention is credited to Qi Guo, Jun Liang, Shaoli Liu.

| Application Number | 20200104129 16/667593 |

| Document ID | / |

| Family ID | 65690403 |

| Publication Date | 2020-04-02 |

| United States Patent Application | 20200104129 |

| Kind Code | A1 |

| Liu; Shaoli ; et al. | April 2, 2020 |

Conversion Method, Device, Computer Equipment, and Storage Medium

Abstract

A model conversion method is disclosed. The model conversion method includes obtaining model attribute information of an initial offline model and hardware attribute information of a computer equipment, determining whether the model attribute information of the initial offline model matches the hardware attribute information of the computer equipment according to the initial offline model and the hardware attribute information of the computer equipment and in the case when the model attribute information of the initial offline model does not match the hardware attribute information of the computer equipment, converting the initial offline model to a target offline model that matches the hardware attribute information of the computer equipment according to the hardware attribute information of the computer equipment and a preset model conversion rule.

| Inventors: | Liu; Shaoli (Beijing, CN); Liang; Jun (Beijing, CN); Guo; Qi (Beijing, CN) |

| Applicant: | Cambricon Technologies Corporation Limited |

| Family ID: | 65690403 |

| Appl. No.: | 16/667593 |

| Filed: | October 29, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |

|---|---|---|

| PCT/CN2019/080510 | Mar 29, 2019 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 9/44505 20130101; G06F 9/3004 20130101; G06N 20/00 20190101; G06F 8/35 20130101; G06N 3/08 20130101; G06N 3/02 20130101 |

| International Class: | G06F 9/30 20060101 G06F009/30; G06F 9/445 20060101 G06F009/445; G06N 3/08 20060101 G06N003/08; G06N 20/00 20060101 G06N020/00 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| Aug 10, 2018 | CN | 201810913895.6 |

Claims

1. A model conversion method for converting an initial offline model to a target offline model matching a plurality of hardware attributes of a computer system, the method comprising: obtaining, by an I/O interface of the computer system, the initial offline model and storing the initial offline model to a local memory of the computer system; obtaining, by a processor of the computer system, the plurality of hardware attributes of the computer system; determining, by the processor of the computer system, whether model attributes of the initial offline model match the plurality of hardware attributes of the computer system according to the initial offline model and the plurality of hardware attributes of the computer system; and in the case when the model attributes of the initial offline model do not match the plurality of hardware attributes of the computer system, converting, by the processor, the initial offline model to a target offline model that matches the plurality of hardware attributes of the computer system according to the plurality of hardware attributes of the computer system and a preset model conversion rule; storing, by the I/O interface, the target offline model into the local memory of the computer system or an external memory connected to the computer system, wherein the computer system is capable of running the target offline model to implement a corresponding artificial intelligence application.

2. The model conversion method of claim 1, wherein the converting the initial offline model to the target offline model that matches the plurality of hardware attributes of the computer system according to the plurality of hardware attributes of the computer system and the preset model conversion rule further comprises: determining model attributes of the target offline model according to the plurality of hardware attributes of the computer system; selecting a model conversion rule as a target model conversion rule from a plurality of the preset model conversion rules according to the model attributes of the initial offline model and the model attributes of the target offline model; and converting the initial offline model to the target offline model according to the model attributes of the initial offline model and the target model conversion rule.

3. The model conversion method of claim 2, wherein the selecting a model conversion rule as the target model conversion rule from the plurality of the preset model conversion rules according to the model attributes of the initial offline model and the model attributes of the target offline model further comprises: selecting more than one applicable model conversion rules from the plurality of the preset model conversion rules according to the model attributes of the initial offline model and the model attributes of the target offline model; and prioritizing the more than one applicable model conversion rules, and serving an applicable model conversion rule with a highest priority as the target conversion rule.

4. The model conversion method of claim 3, wherein the prioritizing the more than one applicable model conversion rules further comprises: obtaining process parameters of using each of the applicable model conversion rules to convert the initial offline model to the target offline model, respectively, wherein the process parameters include one or more of conversion speed, power consumption, memory occupancy rate, and magnetic disk I/O occupancy rate; and prioritizing the more than one applicable model conversion rules according to the process parameters of each of the applicable model conversion rules.

5. The model conversion method of claim 3, wherein the model attributes of the initial offline model, the model attributes of the target offline model, and the applicable model conversion rules are stored in one-to-one correspondence.

6. The model conversion method of claim 1, wherein the determining whether the model attributes of the initial offline model match the hardware attributes of the computer equipment according to the initial offline model and the plurality of hardware attributes of the computer system further comprises: according to the model attributes of the initial offline model and the plurality of hardware attributes of the computer equipment, in the case when the computer system is determined as being able to support running of the initial offline model, determining the model attributes of the initial offline model match the hardware attributes of the computer system, and according to the model attributes of the initial offline model and the hardware attributes of the computer system, in the case when the computer system is determined as not being able to support the running of the initial offline model, determining the model attributes of the initial offline model do not match the hardware attributes of the computer equipment.

7. The model conversion method of claim 1, wherein the obtaining the initial offline model further comprises: obtaining the initial offline model and the plurality of hardware attributes of the computer system via an application in the computer system.

8. The model conversion method of claim 1, further comprises: storing the target offline model in a first memory or a second memory of the computer system.

9. The model conversion method of claim 8, further comprises: obtaining the target offline model; and running the target offline model to implement the corresponding artificial intelligence application, wherein the target offline model includes network weights and instructions corresponding to respective offline nodes in an original network, and interface data among the respective offline nodes and other compute nodes in the original network.

10. A non-transitory computer program product storing instructions which, when executed by at least one data processor forming part of at least one computing device, result in operations comprising: obtaining, by an I/O interface of the computer system, the initial offline model and storing the initial offline model to a local memory of the computer system; obtaining, by a processor of the computer system, the plurality of hardware attributes of the computer system; determining, by the processor of the computer system, whether model attributes of the initial offline model match the plurality of hardware attributes of the computer system according to the initial offline model and the plurality of hardware attributes of the computer system; and in the case when the model attributes of the initial offline model do not match the plurality of hardware attributes of the computer system, converting, by the processor, the initial offline model to a target offline model that matches the plurality of hardware attributes of the computer system according to the plurality of hardware attributes of the computer system and a preset model conversion rule; storing, by the I/O interface, the target offline model into the local memory of the computer system or an external memory connected to the computer system, wherein the computer system is capable of running the target offline model to implement a corresponding artificial intelligence application.

11. The non-transitory computer program product storing instructions of claim 10 which, when executed by at least one data processor forming part of at least one computing device, result in operations wherein the converting the initial offline model to the target offline model that matches the plurality of hardware attributes of the computer system according to the plurality of hardware attributes of the computer system and the preset model conversion rule further comprises: determining model attributes of the target offline model according to the plurality of hardware attributes of the computer system; selecting a model conversion rule as a target model conversion rule from a plurality of the preset model conversion rules according to the model attributes of the initial offline model and the model attributes of the target offline model; and converting the initial offline model to the target offline model according to the model attributes of the initial offline model and the target model conversion rule.

12. The non-transitory computer program product storing instructions of claim 10 which, when executed by at least one data processor forming part of at least one computing device, result in operations wherein the selecting a model conversion rule as the target model conversion rule from the plurality of the preset model conversion rules according to the model attributes of the initial offline model and the model attributes of the target offline model further comprises: selecting more than one applicable model conversion rules from the plurality of the preset model conversion rules according to the model attributes of the initial offline model and the model attributes of the target offline model; and prioritizing the more than one applicable model conversion rules, and serving an applicable model conversion rule with a highest priority as the target conversion rule.

13. The non-transitory computer program product storing instructions of claim 10 which, when executed by at least one data processor forming part of at least one computing device, result in operations wherein the prioritizing the more than one applicable model conversion rules further comprises: obtaining process parameters of using each of the applicable model conversion rules to convert the initial offline model to the target offline model, respectively, wherein the process parameters include one or more of conversion speed, power consumption, memory occupancy rate, and magnetic disk I/O occupancy rate; and prioritizing the more than one applicable model conversion rules according to the process parameters of each of the applicable model conversion rules.

14. The non-transitory computer program product storing instructions of claim 10 which, when executed by at least one data processor forming part of at least one computing device, result in operations wherein the model attributes of the initial offline model, the model attributes of the target offline model, and the applicable model conversion rules are stored in one-to-one correspondence.

15. The non-transitory computer program product storing instructions of claim 10 which, when executed by at least one data processor forming part of at least one computing device, result in operations wherein the determining whether the model attributes of the initial offline model match the hardware attributes of the computer equipment according to the initial offline model and the plurality of hardware attributes of the computer system further comprises: according to the model attributes of the initial offline model and the plurality of hardware attributes of the computer equipment, in the case when the computer system is determined as being able to support running of the initial offline model, determining the model attributes of the initial offline model match the hardware attributes of the computer system, and according to the model attributes of the initial offline model and the hardware attributes of the computer system, in the case when the computer system is determined as not being able to support the running of the initial offline model, determining the model attributes of the initial offline model do not match the hardware attributes of the computer equipment.

16. The non-transitory computer program product of claim 10, wherein, the processor further configured to execute following steps when obtaining the initial offline model further comprises: obtaining the initial offline model and the hardware attribute information of the computer equipment via an application in the computer equipment.

17. The non-transitory computer program product of claim 10, the processor further configured to execute following steps: storing the target offline model in a first memory or a second memory of the computer equipment.

18. The non-transitory computer program product of claim 17, the processor further configured to execute following steps: obtaining the target offline model; and running the target offline model to realize a corresponding artificial intelligence application, wherein the target offline model includes network weights and instructions corresponding to respective offline nodes in an original network, as well as interface data among the respective offline nodes and other compute nodes in the original network.

19. The non-transitory computer program product of claim 11, wherein, the processor comprises a computation unit and a controller unit, wherein the computation unit further comprises a primary processing circuit and a plurality of secondary processing circuits, wherein the controller unit is configured to obtain data, a machine learning model, and an operation instruction; wherein the controller unit is further configured to parse the operation instruction to obtain a plurality of computation instructions, and send the plurality of computation instructions and the data to the primary processing circuit; wherein the primary processing circuit is further configured to perform preprocessing on the data as well as data and computation instructions transmitted among the primary processing circuit and the plurality of secondary processing circuits; wherein the plurality of secondary processing circuits are further configured to perform intermediate computation in parallel according to data and computation instructions transmitted by the primary processing circuit to obtain a plurality of intermediate results, and transmit the plurality of intermediate results to the primary processing circuit, and wherein the primary processing circuit is further configured to perform post-processing on the plurality of intermediate results to obtain an operation result of the operation instruction.

20. A non-transitory computer program product, wherein, a computer program is stored in the computer readable storage medium, and when the computer program is executed by a processor, the following steps are implemented: obtaining, by the I/O interface, an initial offline model and storing the initial offline model to the local memory of the computer equipment; obtaining, by the processor, hardware attribute information of a computer equipment; determining, by the processor, whether the model attribute information of the initial offline model matches the hardware attribute information of the computer equipment according to the initial offline model and the hardware attribute information of the computer equipment; and in the case when the model attribute information of the initial offline model does not match the hardware attribute information of the computer equipment, converting, by the processor, the initial offline model to a target offline model that matches the hardware attribute information of the computer equipment according to the hardware attribute information of the computer equipment and a preset model conversion rule; storing, by the I/O interface, the target offline model into the local memory of the computer equipment or an external memory connected to the computer equipment, therefore the computer equipment is capable of running the target offline model directly to realize a corresponding artificial intelligence application.

Description

[0001] This application is a continuation of International Patent Application No. PCT/CN2019/080510 filed Mar. 29, 2019, which claims the benefit of Chinese Patent Application No. 201810913895.6 filed Aug. 10, 2018, the entire contents of each of which are incorporated herein by reference.

TECHNICAL FIELD

[0002] The present disclosure relates to the field of computer technology, and specifically to a model conversion method, a device, computer equipment, and a storage medium.

BACKGROUND ART

[0003] With the development of artificial intelligence technology, deep learning can be seen everywhere now and has become indispensable. Many extensible deep learning systems, such as TensorFlow, MXNet, Caffe and PyTorch, have emerged therefrom. The deep learning systems can be used for providing various neural network models or other machine learning models that can be run on a processor such as CPU or GPU. When neural network models and other machine learning models need to be run on different processors, they are often required to be converted.

[0004] A traditional method of conversion is as follows: developers configure multiple different conversion models for a neural network model, and the multiple conversion models can be applied to different processors. In practice, computer equipment needs to receive a neural network model and its multiple corresponding conversion models at the same time, and users are then required to select one model from the multiple models according to the type of the computer equipment so that the neural network model can be run on the computer equipment. However, this conversion method can involve a large amount of input data and data processing, which can easily exceed the memory capacity and the processing limit of the computer equipment, decrease the processing speed of the computer equipment, and cause the computer equipment to malfunction.

SUMMARY

[0005] In view of the above-mentioned technical problem, it is necessary to provide a model conversion method, a device, computer equipment, and a storage medium that can reduce data input and data processing of computer equipment, as well as improve the processing speed of the computer equipment.

[0006] The disclosure provides a model conversion method. The model conversion method includes: obtaining an initial offline model and hardware attribute information of computer equipment; determining whether model attribute information of the initial offline model matches the hardware attribute information of the computer equipment according to the initial offline model and the hardware attribute information of the computer equipment; and if the model attribute information of the initial offline model does not match the hardware attribute information of the computer equipment, converting the initial offline model to a target offline model that matches the hardware attribute information of the computer equipment according to the hardware attribute information of the computer equipment and a preset model conversion rule.

According to some embodiments, the converting the initial offline model to the target offline model that matches the hardware attribute information of the computer equipment according to the hardware attribute information of the computer equipment and the preset model conversion rule includes: determining model attribute information of the target offline model according to the hardware attribute information of the computer equipment; selecting a model conversion rule as a target model conversion rule from a plurality of the preset model conversion rules according to the model attribute information of the initial offline model and the model attribute information of the target offline model; and converting the initial offline model to the target offline model according to the model attribute information of the initial offline model and the target model conversion rule.
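The flow described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the disclosed implementation: the attribute fields (`data_type`, `data_structure`), the `ConversionRule` type, and the function names are hypothetical and chosen only to make the match check, rule selection, and conversion steps concrete.

```python
from dataclasses import dataclass, replace
from typing import Callable, List, Tuple

# Hypothetical attribute tuple: (data type, data structure).
Attributes = Tuple[str, str]


@dataclass(frozen=True)
class OfflineModel:
    data_type: str        # hypothetical model attribute
    data_structure: str   # hypothetical model attribute
    payload: bytes = b""


@dataclass(frozen=True)
class HardwareAttributes:
    data_type: str        # data type the processor is assumed to require
    data_structure: str   # data layout the processor is assumed to require


@dataclass(frozen=True)
class ConversionRule:
    source: Attributes
    target: Attributes
    apply: Callable[[OfflineModel], OfflineModel]


def model_attributes(model: OfflineModel) -> Attributes:
    return (model.data_type, model.data_structure)


def target_attributes(hw: HardwareAttributes) -> Attributes:
    # Model attributes of the target offline model, derived from the hardware.
    return (hw.data_type, hw.data_structure)


def convert_if_needed(model: OfflineModel,
                      hw: HardwareAttributes,
                      preset_rules: List[ConversionRule]) -> OfflineModel:
    source, target = model_attributes(model), target_attributes(hw)
    if source == target:
        return model  # attributes match: no conversion is needed
    for rule in preset_rules:
        if rule.source == source and rule.target == target:
            return rule.apply(model)
    raise LookupError(f"no preset rule converts {source} to {target}")


# Hypothetical rule: change only the data type of the model.
to_fp16 = ConversionRule(
    source=("float32", "NCHW"),
    target=("float16", "NCHW"),
    apply=lambda m: replace(m, data_type="float16"),
)
initial = OfflineModel("float32", "NCHW")
device = HardwareAttributes("float16", "NCHW")
converted = convert_if_needed(initial, device, [to_fp16])
```

In this sketch only the single initial offline model is supplied to the equipment; the target model is derived locally from the hardware attributes and the preset rules.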

[0007] According to some embodiments, the selecting a model conversion rule as the target model conversion rule from the plurality of the preset model conversion rules according to the model attribute information of the initial offline model and the model attribute information of the target offline model further includes: selecting more than one applicable model conversion rule from the plurality of the preset model conversion rules according to the model attribute information of the initial offline model and the model attribute information of the target offline model; and prioritizing the applicable model conversion rules, and using the applicable model conversion rule with the highest priority as the target model conversion rule.

[0008] According to some embodiments, the prioritizing the more than one applicable model conversion rule further includes: obtaining process parameters of using each of the applicable model conversion rules to convert the initial offline model to the target offline model, respectively, where the process parameters can include one or more of conversion speed, power consumption, memory occupancy rate, and magnetic disk I/O occupancy rate; and prioritizing the applicable model conversion rules according to the process parameters of the respective applicable model conversion rules.
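One way to prioritize applicable rules by their process parameters is sketched below. The disclosure names the parameters but not how they are combined; the weighted-sum cost, the field names, and the units here are assumptions made purely for illustration.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass(frozen=True)
class ProcessParameters:
    conversion_seconds: float  # lower means faster conversion
    power_watts: float
    memory_occupancy: float    # fraction in [0, 1]
    disk_io_occupancy: float   # fraction in [0, 1]


@dataclass(frozen=True)
class CandidateRule:
    name: str
    params: ProcessParameters


def cost(p: ProcessParameters,
         weights: Tuple[float, float, float, float] = (1.0, 1.0, 1.0, 1.0)) -> float:
    # Assumed scoring: a weighted sum in which a lower value means higher priority.
    w_time, w_power, w_mem, w_io = weights
    return (w_time * p.conversion_seconds + w_power * p.power_watts
            + w_mem * p.memory_occupancy + w_io * p.disk_io_occupancy)


def pick_target_rule(candidates: Sequence[CandidateRule]) -> CandidateRule:
    if not candidates:
        raise ValueError("no applicable model conversion rule")
    # The rule with the lowest cost is treated as having the highest priority.
    return min(candidates, key=lambda c: cost(c.params))


rule_a = CandidateRule("rule_a", ProcessParameters(2.0, 5.0, 0.5, 0.2))
rule_b = CandidateRule("rule_b", ProcessParameters(1.0, 4.0, 0.3, 0.1))
chosen = pick_target_rule([rule_a, rule_b])
```

A deployment that cares mainly about power consumption could pass different weights to `cost`; the selection step itself would not change.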

[0009] According to some embodiments, the model attribute information of the initial offline model, the model attribute information of the target offline model, and the applicable model conversion rules can be stored in one-to-one correspondence.
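A one-to-one correspondence of this kind could be held in a lookup table keyed by the pair of attribute descriptions. The sketch below is an assumption about one possible storage layout; the `RuleTable` class and the string-valued attributes and rule names are hypothetical.

```python
from typing import Dict, Optional, Tuple


class RuleTable:
    """Maps (initial model attributes, target model attributes) to one rule."""

    def __init__(self) -> None:
        self._rules: Dict[Tuple[str, str], str] = {}

    def register(self, initial_attrs: str, target_attrs: str, rule_name: str) -> None:
        key = (initial_attrs, target_attrs)
        if key in self._rules:
            # Enforce one-to-one storage: one rule per attribute pair.
            raise ValueError(f"a rule is already stored for {key}")
        self._rules[key] = rule_name

    def lookup(self, initial_attrs: str, target_attrs: str) -> Optional[str]:
        return self._rules.get((initial_attrs, target_attrs))


table = RuleTable()
table.register("float32/NCHW", "float16/NCHW", "cast_to_fp16")
table.register("float32/NCHW", "float32/NHWC", "transpose_layout")
```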

[0010] According to some embodiments, the determining whether the model attribute information of the initial offline model matches the hardware attribute information of the computer equipment according to the initial offline model and the hardware attribute information of the computer equipment includes: according to the model attribute information of the initial offline model and the hardware attribute information of the computer equipment, if the computer equipment is determined as being able to support the running of the initial offline model, determining that the model attribute information of the initial offline model matches the hardware attribute information of the computer equipment; and according to the model attribute information of the initial offline model and the hardware attribute information of the computer equipment, if the computer equipment is determined as not being able to support the running of the initial offline model, determining that the model attribute information of the initial offline model does not match the hardware attribute information of the computer equipment.
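The match determination can be read as a "can this equipment run the model" predicate. The following sketch assumes, for illustration only, that the hardware attribute information lists the data types and data structures the processor supports; the field names are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class ModelAttributeInfo:
    data_type: str       # hypothetical attribute
    data_structure: str  # hypothetical attribute


@dataclass(frozen=True)
class HardwareAttributeInfo:
    processor_type: str
    supported_data_types: FrozenSet[str]       # assumed capability list
    supported_data_structures: FrozenSet[str]  # assumed capability list


def supports_running(model: ModelAttributeInfo, hw: HardwareAttributeInfo) -> bool:
    return (model.data_type in hw.supported_data_types
            and model.data_structure in hw.supported_data_structures)


def attributes_match(model: ModelAttributeInfo, hw: HardwareAttributeInfo) -> bool:
    # The attributes match exactly when the equipment can run the model.
    return supports_running(model, hw)


ipu = HardwareAttributeInfo("IPU", frozenset({"float16"}), frozenset({"NHWC"}))
fp32_model = ModelAttributeInfo("float32", "NHWC")
fp16_model = ModelAttributeInfo("float16", "NHWC")
```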

[0011] According to some embodiments, the obtaining the initial offline model further includes: obtaining the initial offline model and the hardware attribute information of the computer equipment via an application in the computer equipment.

[0012] According to some embodiments, the method further includes: storing the target offline model in a first memory or a second memory of the computer equipment.

[0013] According to some embodiments, the method further includes: obtaining the target offline model, and running the target offline model; the target offline model can include network weights and instructions corresponding to respective offline nodes in an original network, as well as interface data among the respective offline nodes and other compute nodes in the original network.
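The contents of a target offline model listed above could be represented by a simple container such as the one sketched here. The class names, field types, and the example node names are assumptions for illustration; the disclosure does not prescribe a serialization format.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class OfflineNode:
    name: str
    weights: List[float]     # network weights of this offline node
    instructions: List[str]  # compiled instructions of this offline node


@dataclass
class InterfaceData:
    producer: str            # node that writes the data
    consumer: str            # node that reads the data
    shape: Tuple[int, ...]   # hypothetical description of the exchanged tensor


@dataclass
class TargetOfflineModel:
    nodes: Dict[str, OfflineNode] = field(default_factory=dict)
    interfaces: List[InterfaceData] = field(default_factory=list)

    def inputs_of(self, node_name: str) -> List[InterfaceData]:
        # Interface data consumed by the given node.
        return [i for i in self.interfaces if i.consumer == node_name]


model = TargetOfflineModel()
model.nodes["conv1"] = OfflineNode("conv1", [0.1, 0.2], ["LOAD", "CONV", "STORE"])
model.nodes["fc1"] = OfflineNode("fc1", [0.3], ["LOAD", "MATMUL", "STORE"])
model.interfaces.append(InterfaceData("conv1", "fc1", (1, 64)))
```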

[0014] According to some embodiments, a model conversion device is disclosed. The device includes: an obtaining unit configured to obtain an initial offline model and hardware attribute information of computer equipment; a determination unit configured to determine whether model attribute information of the initial offline model matches the hardware attribute information of the computer equipment according to the initial offline model and the hardware attribute information of the computer equipment; and a conversion unit configured to, if the model attribute information of the initial offline model does not match the hardware attribute information of the computer equipment, convert the initial offline model to a target offline model that matches the hardware attribute information of the computer equipment according to the hardware attribute information of the computer equipment and a preset model conversion rule.

The present disclosure provides computer equipment, where the computer equipment can include a memory and a processor. A computer program can be stored in the memory. The steps of the method in any example mentioned above can be implemented when the processor executes the computer program.

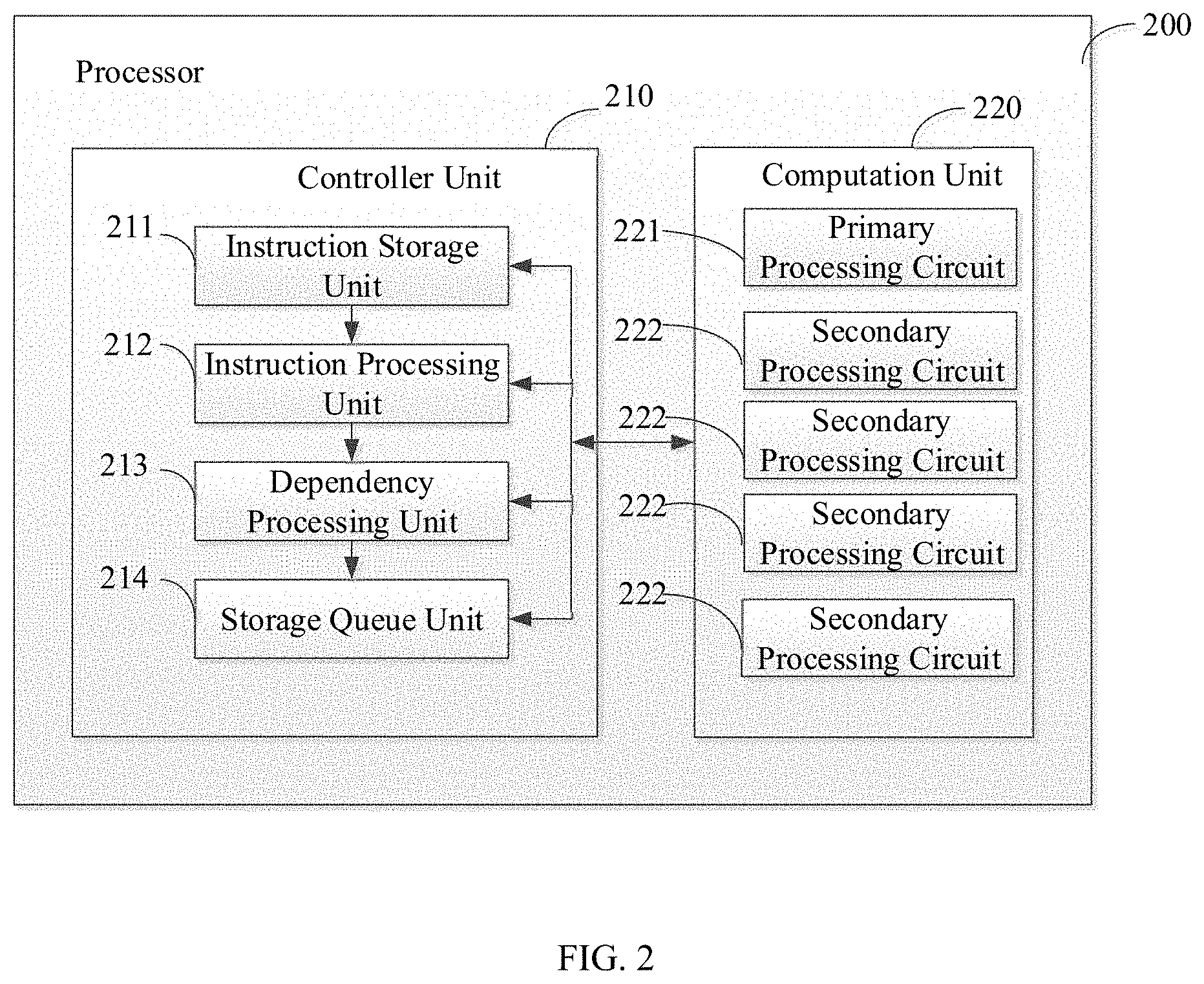

[0015] According to some embodiments, the processor can include a computation unit and a controller unit, where the computation unit can further include a primary processing circuit and a plurality of secondary processing circuits; the controller unit can be configured to obtain data, a machine learning model, and an operation instruction; the controller unit can further be configured to parse the operation instruction to obtain a plurality of computation instructions, and send the plurality of computation instructions and the data to the primary processing circuit; the primary processing circuit can be configured to perform preprocessing on the data as well as data and computation instructions transmitted among the primary processing circuit and the plurality of secondary processing circuits; the plurality of secondary processing circuits can be configured to perform intermediate computation in parallel according to data and computation instructions transmitted by the primary processing circuit to obtain a plurality of intermediate results, and transmit the plurality of intermediate results to the primary processing circuit; and the primary processing circuit can further be configured to perform post-processing on the plurality of intermediate results to obtain an operation result of the operation instruction.

[0016] The present disclosure further provides a computer readable storage medium. A computer program can be stored in the computer readable storage medium. The steps of the method in any example mentioned above can be implemented when a processor executes the computer program.

[0017] Regarding the model conversion method, the device, the computer equipment, and the storage medium, by merely receiving an initial offline model, the computer equipment can convert the initial offline model into a target offline model according to a preset model conversion rule and hardware attribute information of the computer equipment, without the need to obtain multiple different versions of the initial offline model matching different computer equipment. Data input of the computer equipment can thus be greatly reduced, problems such as excessive data input exceeding a memory capacity of the computer equipment can be avoided, and normal functioning of the computer equipment can be ensured. In addition, the model conversion method can reduce the amount of data processing of the computer equipment, and can thus improve the processing speed and processing efficiency of the computer equipment as well as reduce power consumption. Moreover, the model conversion method can have a simple process and can require no manual intervention. With a relatively high level of automation, the model conversion method can be easy to use.

BRIEF DESCRIPTION OF THE DRAWINGS

[0018] FIG. 1 is a block diagram of a computer equipment, according to some embodiments;

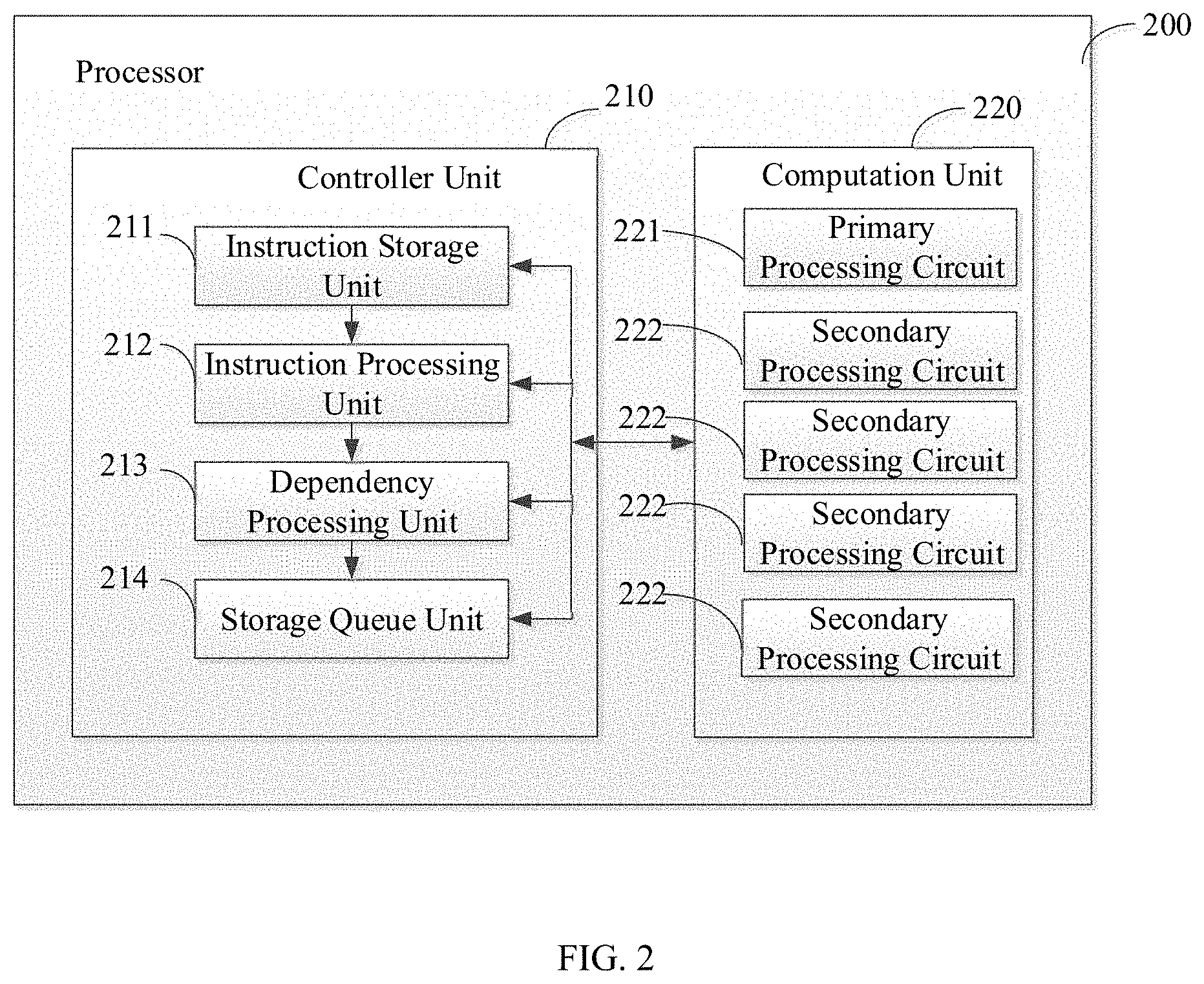

[0019] FIG. 2 is a block diagram of a processor according to FIG. 1, according to some embodiments;

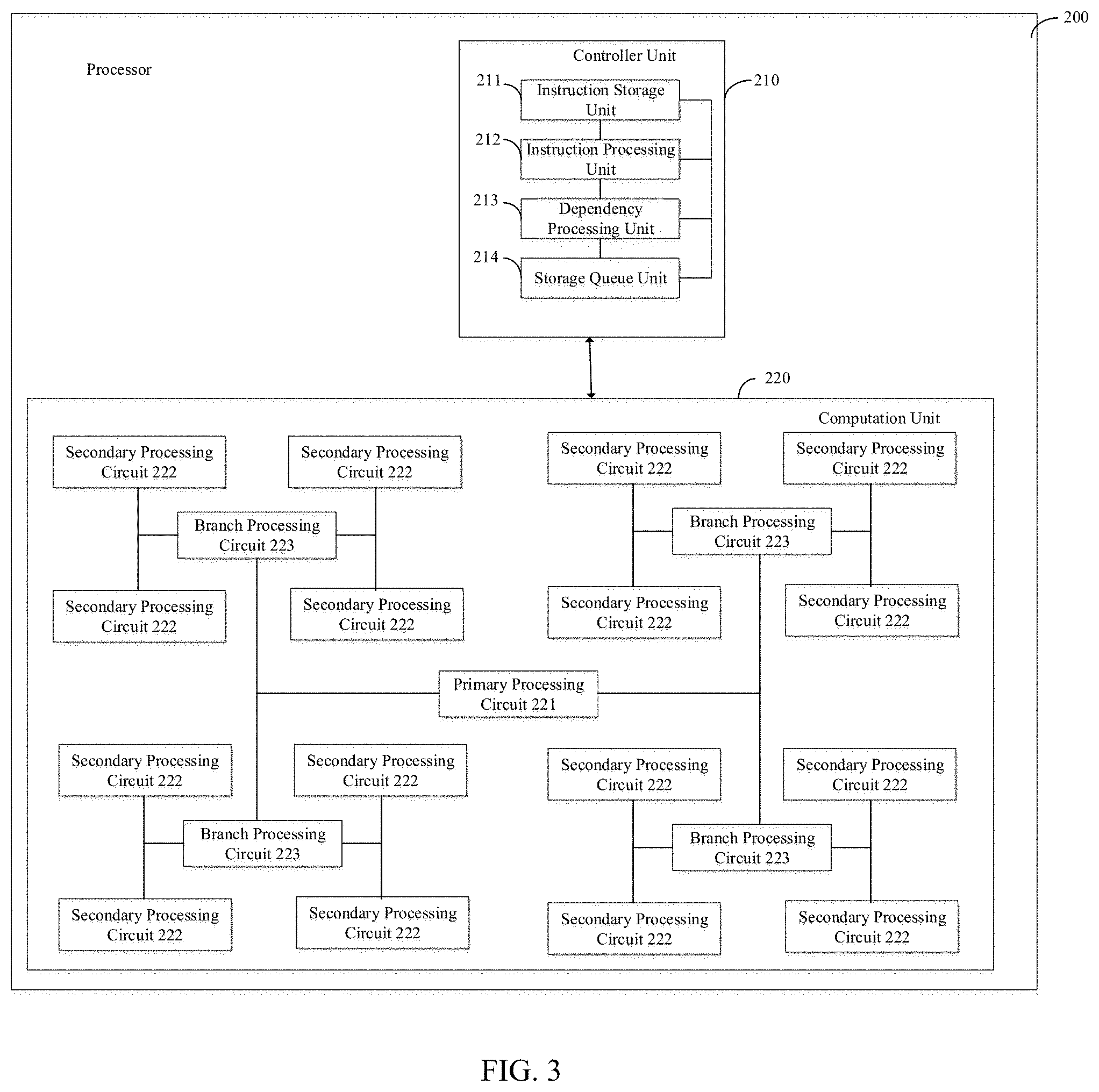

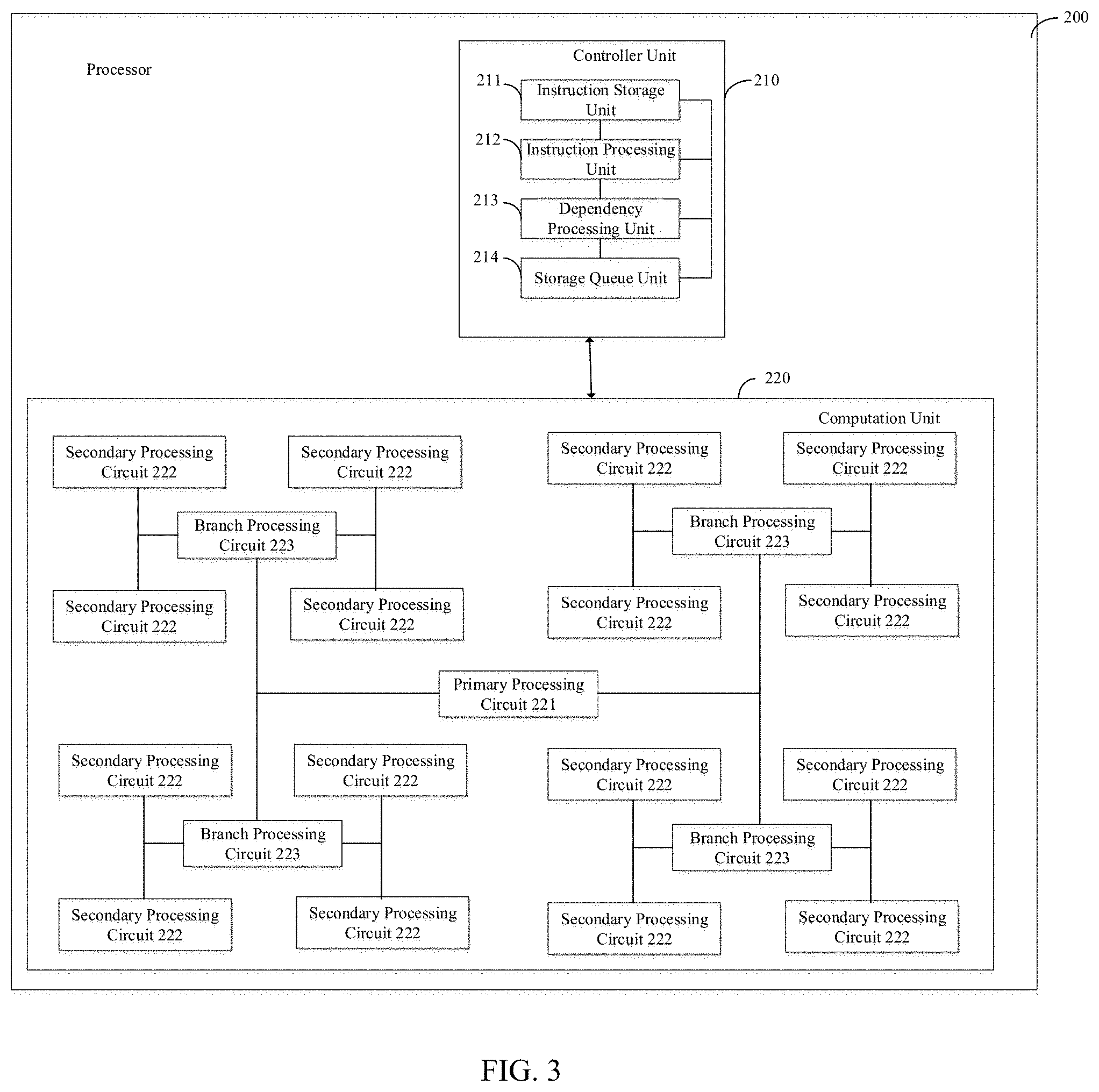

[0020] FIG. 3 is a block diagram of a processor according to FIG. 1, according to some embodiments;

[0021] FIG. 4 is a block diagram of a processor according to FIG. 1, according to some embodiments;

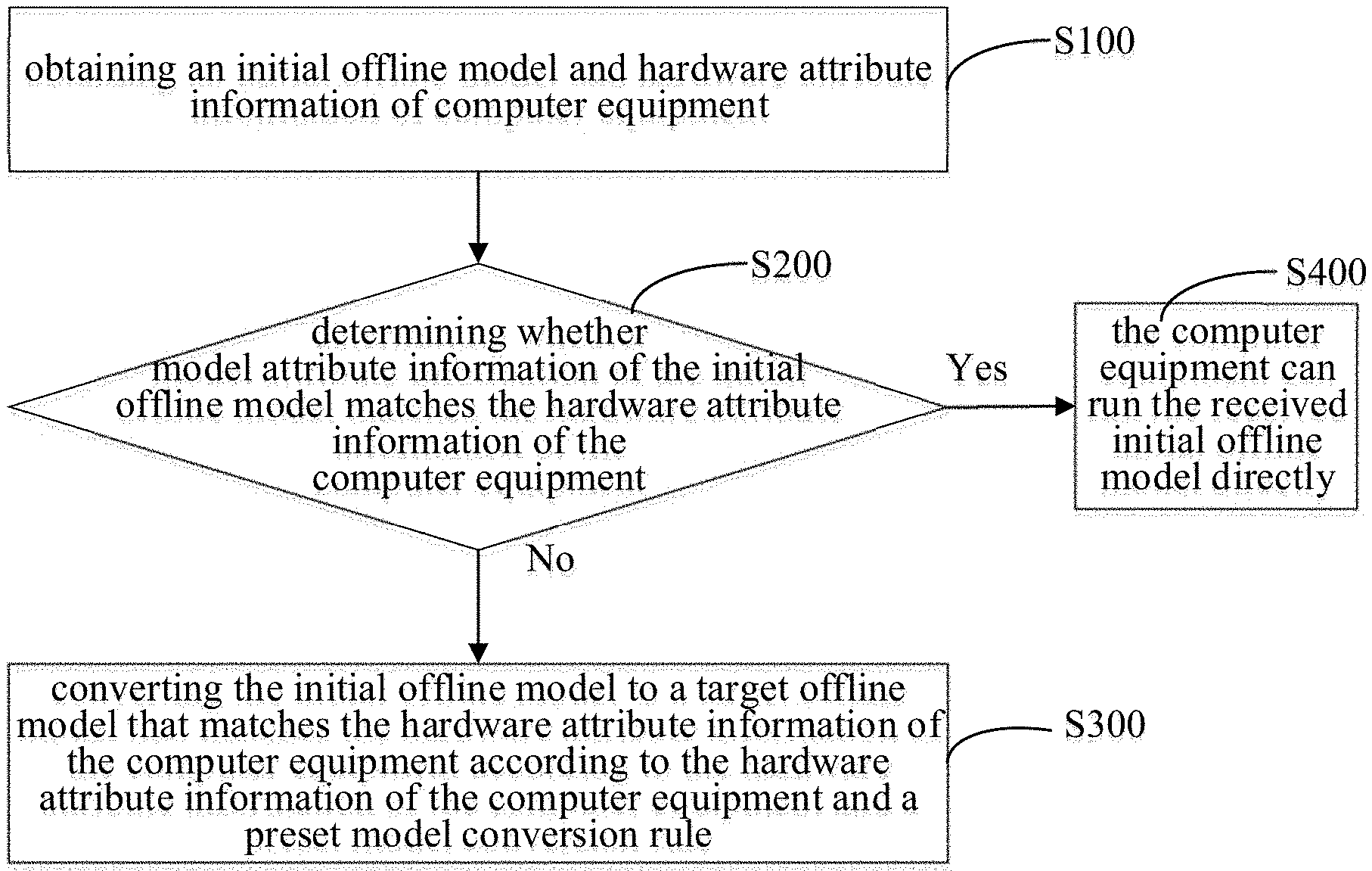

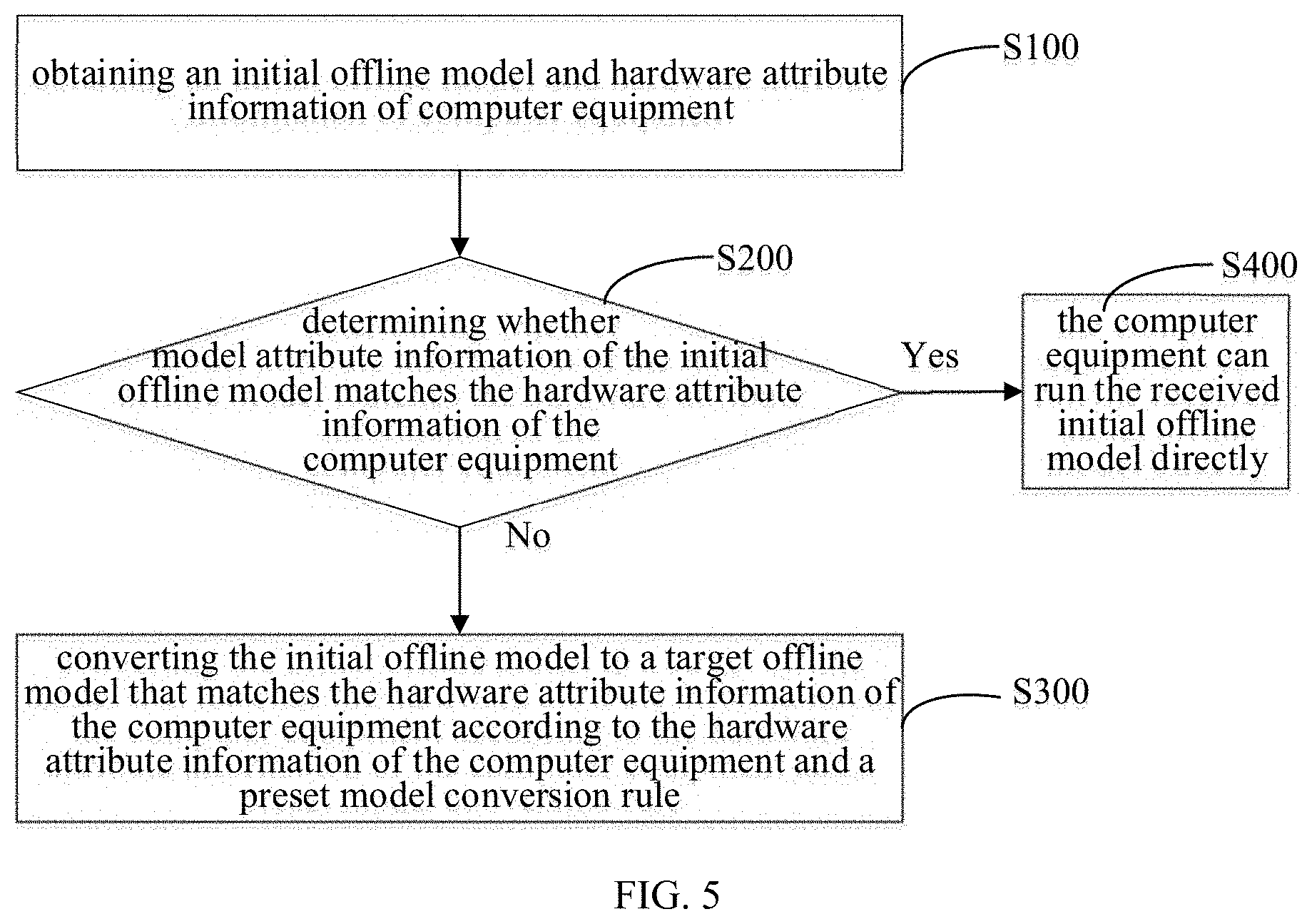

[0022] FIG. 5 is a flowchart of a model conversion method, according to some embodiments;

[0023] FIG. 6 is a flowchart of a model conversion method, according to some embodiments;

[0024] FIG. 7 is a flowchart of an offline model generation method, according to some embodiments;

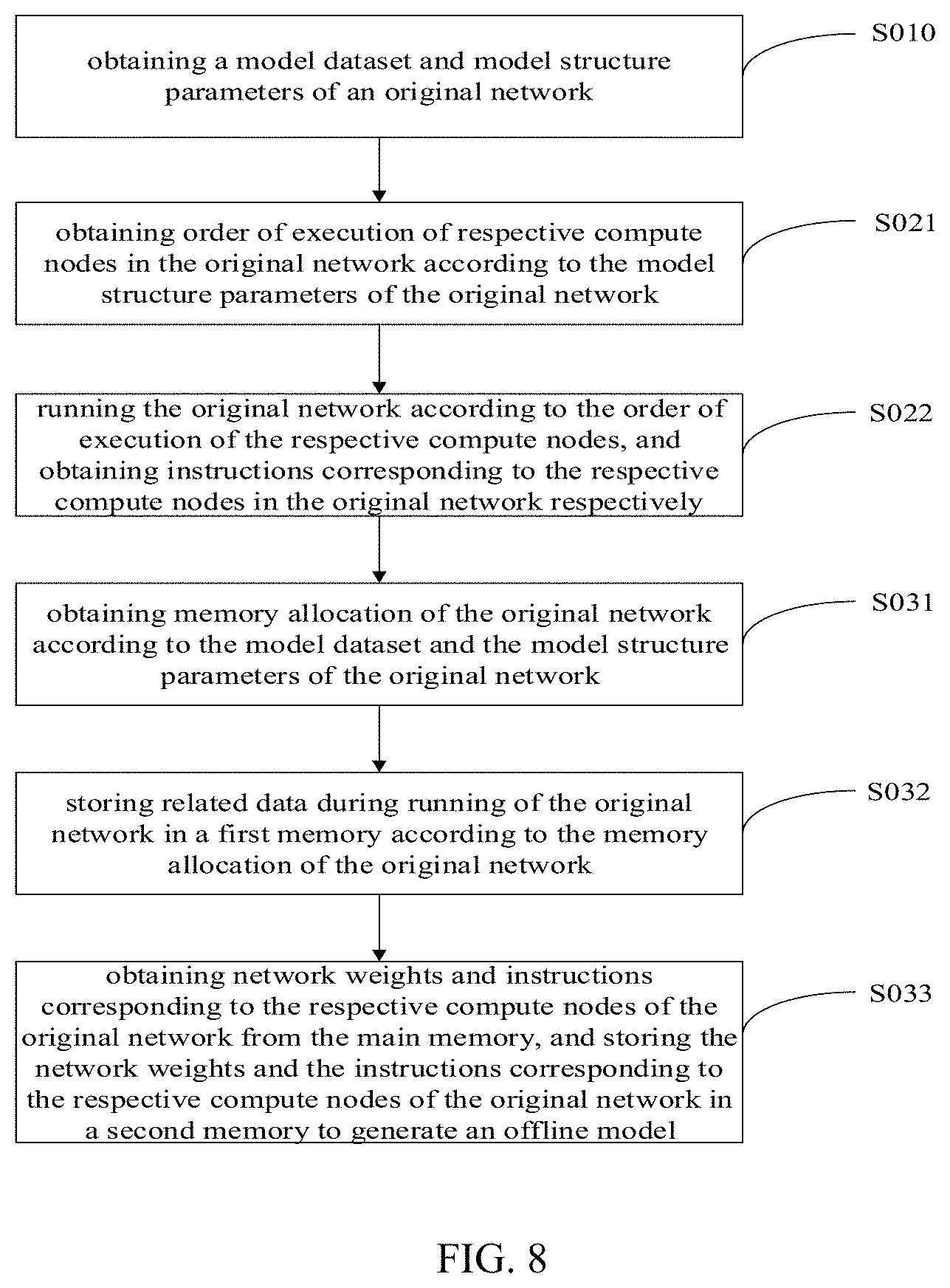

[0025] FIG. 8 is a flowchart of an offline model generation method, according to some embodiments;

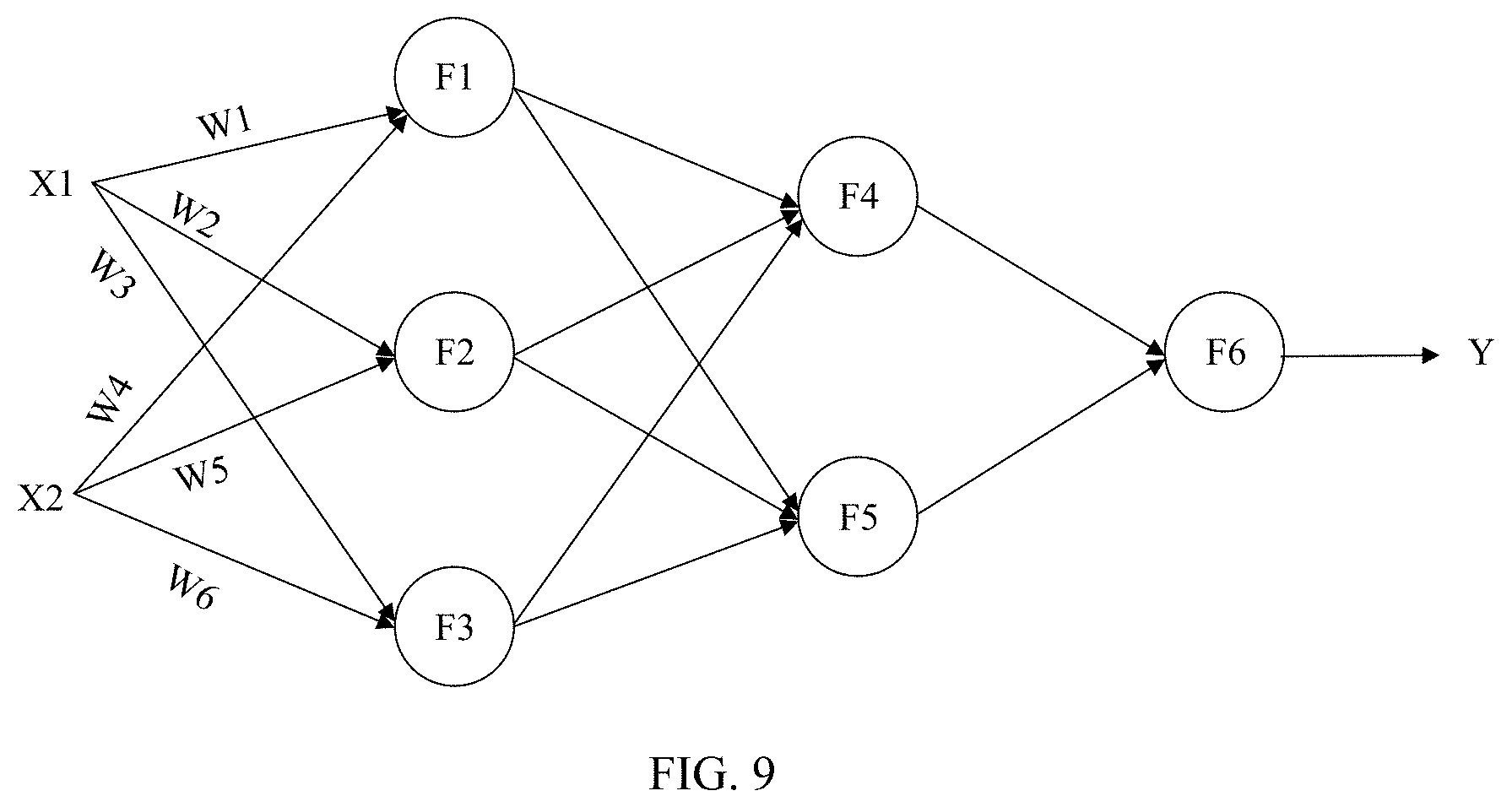

[0026] FIG. 9 is a diagram of a network structure of a network model, according to some embodiments;

[0027] FIG. 10 is a diagram showing a generation process of an offline model of the network model in FIG. 9, according to some embodiments.

DETAILED DESCRIPTION

[0028] In order to make the purposes, technical schemes, and technical effects of the present disclosure clearer, the present disclosure will be described hereinafter with reference to the accompanying drawings and examples. It should be understood that the examples described herein are merely used for explaining the present disclosure, rather than limiting the present disclosure.

[0029] FIG. 1 is a block diagram of a computer equipment, according to some embodiments. The computer equipment can be a mobile terminal such as a mobile phone or a tablet, or a terminal such as a desktop computer, a board, or a cloud server. The computer equipment can be applied to robots, printers, scanners, traffic recorders, navigators, cameras, video cameras, projectors, watches, portable storage devices, wearable devices, means of transportation, household appliances, and/or medical equipment. The means of transportation can include airplanes, ships, and/or vehicles. The household appliances can include televisions, air conditioners, microwave ovens, refrigerators, electric rice cookers, humidifiers, washing machines, electric lamps, gas cookers, and range hoods. The medical equipment can include nuclear magnetic resonance spectrometers, B-ultrasonic scanners, and/or electrocardiographs.

[0030] The computer equipment can include a processor 110, and a first memory 120 and a second memory 130 that can be connected to the processor 110. Optionally, the processor 110 can be a general processor, such as a CPU (Central Processing Unit), a GPU (Graphics Processing Unit), or a DSP (Digital Signal Processor). The processor 110 can also be a network model processor such as an IPU (Intelligence Processing Unit). The processor 110 can further be an instruction set processor, a related chipset, a dedicated microprocessor such as an ASIC (Application Specific Integrated Circuit), an onboard memory for caching, or the like.

[0031] FIG. 2 is a block diagram of a processor according to FIG. 1, according to some embodiments. As shown in FIG. 2, the processor 200 can include a controller unit 210 and a computation unit 220, where the controller unit 210 can be connected to the computation unit 220, and the computation unit 220 can include a primary processing circuit 221 and a plurality of secondary processing circuits 222. The controller unit 210 can be configured to obtain data, a machine learning model, and an operation instruction. The machine learning model can specifically include a network model, where the network model can be a neural network model and/or a non-neural network model. The controller unit 210 can be further configured to parse a received operation instruction to obtain a plurality of computation instructions, and send the plurality of computation instructions and data to the primary processing circuit. The primary processing circuit can be configured to perform preprocessing on the data and to transmit data and computation instructions between the primary processing circuit and the plurality of secondary processing circuits. The plurality of secondary processing circuits can be configured to perform intermediate computation in parallel according to the data and computation instructions transmitted by the primary processing circuit to obtain a plurality of intermediate results, and transmit the plurality of intermediate results to the primary processing circuit. The primary processing circuit can be further configured to perform post-processing on the plurality of intermediate results to obtain an operation result of the operation instruction.

[0032] According to some embodiments, the controller unit 210 can include an instruction storage unit 211, an instruction processing unit 212, and a storage queue unit 214. The instruction storage unit 211 can be configured to store an operation instruction associated with a machine learning model; the instruction processing unit 212 can be configured to parse an operation instruction to obtain a plurality of computation instructions; and the storage queue unit 214 can be configured to store an instruction queue, where the instruction queue can include a plurality of computation instructions or operation instructions that are to be performed and are sorted in sequential order. According to some embodiments, the controller unit 210 can further include a dependency processing unit 213. The dependency processing unit 213 can be configured to, when a plurality of computation instructions exist, determine whether a first computation instruction and a zero-th computation instruction preceding the first computation instruction are associated. For instance, if the first computation instruction and the zero-th computation instruction are associated, the first computation instruction can be cached in the instruction storage unit, and after the zero-th computation instruction is completed, the first computation instruction can be fetched from the instruction storage unit and transmitted to the computation unit. According to some embodiments, the dependency processing unit 213 can fetch a first memory address of required data (e.g., a matrix) of the first computation instruction according to the first computation instruction, and can fetch a zero-th memory address of a required matrix of the zero-th computation instruction according to the zero-th computation instruction. If there is overlap between the first memory address and the zero-th memory address, then it can be determined that the first computation instruction and the zero-th computation instruction are associated; if there is no overlap between the first memory address and the zero-th memory address, then it can be determined that the first computation instruction and the zero-th computation instruction are not associated.
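The address-overlap test described above can be illustrated with a minimal sketch. It assumes, purely for illustration, that the data required by each computation instruction occupies one contiguous half-open address range; the names `AddressRange` and `are_associated` are hypothetical and do not appear in the disclosure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AddressRange:
    """Half-open interval [start, end) of memory addresses (assumed representation)."""
    start: int
    end: int


def are_associated(first: AddressRange, zeroth: AddressRange) -> bool:
    # Two half-open intervals overlap when each one starts before the other ends.
    # Overlap is taken to mean that the two instructions are associated.
    return first.start < zeroth.end and zeroth.start < first.end


zeroth = AddressRange(0x1000, 0x1400)
first_dependent = AddressRange(0x1200, 0x1600)    # shares addresses with zeroth
first_independent = AddressRange(0x1400, 0x1800)  # starts where zeroth ends
```

In this sketch, an associated first instruction would be held back until the zero-th instruction completes, whereas a non-associated one could be issued without waiting.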

[0033] FIG. 3 is a block diagram of a processor according to FIG. 1, according to some embodiments. According to some embodiments, as shown in FIG. 3, the computation unit 220 can further include a branch processing circuit 223, where the primary processing circuit 221 can be connected to the branch processing circuit 223, and the branch processing circuit 223 can be connected to the plurality of secondary processing circuits 222; the branch processing circuit 223 can be configured to forward data or instructions between the primary processing circuit 221 and the secondary processing circuits 222. According to some embodiments, the primary processing circuit 221 can be configured to divide one input neuron into a plurality of data blocks, and send at least one data block of the plurality of data blocks, a weight, and at least one computation instruction of the plurality of computation instructions to the branch processing circuit 223; the branch processing circuit 223 can be configured to forward the data block, the weight, and the computation instruction between the primary processing circuit 221 and the plurality of secondary processing circuits 222; the plurality of secondary processing circuits 222 can be configured to perform computation on the received data block and weight according to the computation instruction to obtain an intermediate result, and transmit the intermediate result to the branch processing circuit 223; the primary processing circuit 221 can further be configured to perform post-processing on the intermediate result transmitted by the branch processing circuit 223 to obtain an operation result of the operation instruction, and transmit the operation result to the controller unit.

[0034] FIG. 4 is a block diagram of a processor according to FIG. 1, according to some embodiments. According to some embodiments, as shown in FIG. 4, the computation unit 220 can include one primary processing circuit 221 and a plurality of secondary processing circuits 222. The plurality of secondary processing circuits can be arranged in the form of an array. Each of the secondary processing circuits can be connected to other adjacent secondary processing circuits, and the primary processing circuit can be connected to k secondary processing circuits of the plurality of secondary processing circuits, where the k secondary processing circuits can be: n secondary processing circuits in a first line, n secondary processing circuits in an m-th line, and m secondary processing circuits in a first column. It should be noted that the k secondary processing circuits shown in FIG. 4 include only the n secondary processing circuits in the first line, the n secondary processing circuits in the m-th line, and the m secondary processing circuits in the first column. In other words, the k secondary processing circuits can be the secondary processing circuits that are connected to the primary processing circuit directly. The k secondary processing circuits can be configured to forward data and instructions between the primary processing circuit and the plurality of secondary processing circuits.
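As a rough illustration of which secondary processing circuits are wired to the primary processing circuit in such an array, the sketch below enumerates the positions in the first line, the m-th line, and the first column of an array with m lines and n columns. The function name, the 0-indexed coordinates, and the choice to count a corner circuit only once are assumptions made for this example, not details stated in the disclosure.

```python
from __future__ import annotations


def directly_connected(m: int, n: int) -> set[tuple[int, int]]:
    """Return 0-indexed (line, column) positions of the directly connected circuits."""
    first_line = {(0, col) for col in range(n)}
    last_line = {(m - 1, col) for col in range(n)}
    first_column = {(line, 0) for line in range(m)}
    # A set union keeps each corner position once (an assumption of this sketch).
    return first_line | last_line | first_column


k_positions = directly_connected(m=4, n=3)
```

Under these assumptions, every other circuit in the array would receive data and instructions only through one of the returned positions.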

[0035] According to some embodiments, the primary processing circuit 221 can include one or any combination of a conversion processing circuit, an activation processing circuit, and an addition processing circuit. The conversion processing circuit can be configured to perform an interconversion between a first data structure and a second data structure (e.g., an interconversion between continuous data and discrete data) on a received data block or intermediate result of the primary processing circuit, or the conversion processing circuit can be configured to perform an interconversion between a first data type and a second data type (e.g., an interconversion between a fixed point type and a floating point type) on a received data block or intermediate result of the primary processing circuit. The activation processing circuit can be configured to perform activation operation on data in the primary processing circuit. The addition processing circuit can be configured to perform addition operation or accumulation operation.
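The interconversion between a fixed point type and a floating point type can be pictured with the following minimal sketch. The format with 8 fractional bits, the rounding mode, and the function names are assumptions chosen for illustration; the disclosure does not specify a particular fixed point format.

```python
def float_to_fixed(value: float, frac_bits: int = 8) -> int:
    """Encode a float as a signed fixed point integer with `frac_bits` fractional bits."""
    # Scale by 2**frac_bits and round to the nearest integer (no saturation handling).
    return round(value * (1 << frac_bits))


def fixed_to_float(raw: int, frac_bits: int = 8) -> float:
    """Decode a fixed point integer back into a float."""
    return raw / (1 << frac_bits)


raw = float_to_fixed(1.5)       # 1.5 * 256 = 384
restored = fixed_to_float(raw)  # 384 / 256 = 1.5
```

Values that are not exact multiples of 2**-frac_bits would lose precision in the round trip, which is one reason a conversion circuit might be applied selectively.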

[0036] A computer program can be stored in the first memory 120 or the second memory 130 as shown in FIG. 1. The computer program can be used for implementing a model conversion method provided in an example of the disclosure. Specifically, the conversion method can be used for, if model attribute information of an initial offline model received by computer equipment does not match hardware attribute information of the computer equipment, converting the initial offline model to a target offline model that matches the hardware attribute information of the computer equipment, so that the computer equipment is able to run the model. When using the model conversion method, data input of a computer equipment can be reduced, and an initial offline model can be automatically converted to a target offline model without any manual intervention. The method can have a simple process and high conversion efficiency. Further, the computer program can also implement an offline model generation method.

[0037] According to some embodiments, the first memory 120 can be configured to store related data in running of a network model, such as network input data, network output data, network weights, instructions, and the like. The first memory 120 can be an internal memory, such as a volatile memory including a cache. The second memory 130 can be configured to store an offline model corresponding to a network model, and for instance, the second memory 130 can be a nonvolatile memory.

[0038] Working principles of the above-mentioned computer equipment can be consistent with an implementing process of each step of a model conversion method below. When the processor (110, 200) of the computer equipment executes the computer program stored in the first memory 120, each of the steps of the model conversion method can be implemented. For details, see the description below.

[0039] According to some embodiments, the computer equipment can include a processor and a memory that can be connected to the processor. The processor can be a processor shown in FIGS. 2-4; for a specific structure of the processor, see the description above about the processor 200. The memory can include a plurality of storage units. For instance, the memory can include a first storage unit, a second storage unit, and a third storage unit. The first storage unit can be configured to store a computer program, where the computer program can be used for implementing a model conversion method provided in an example of the disclosure. The second storage unit can be configured to store related data in running of an original network. The third storage unit can be configured to store an offline model corresponding to the original network, a target offline model corresponding to the offline model, a preset model conversion rule, and the like. Further, a count of storage units included by the memory can be greater than three, which is not limited here.

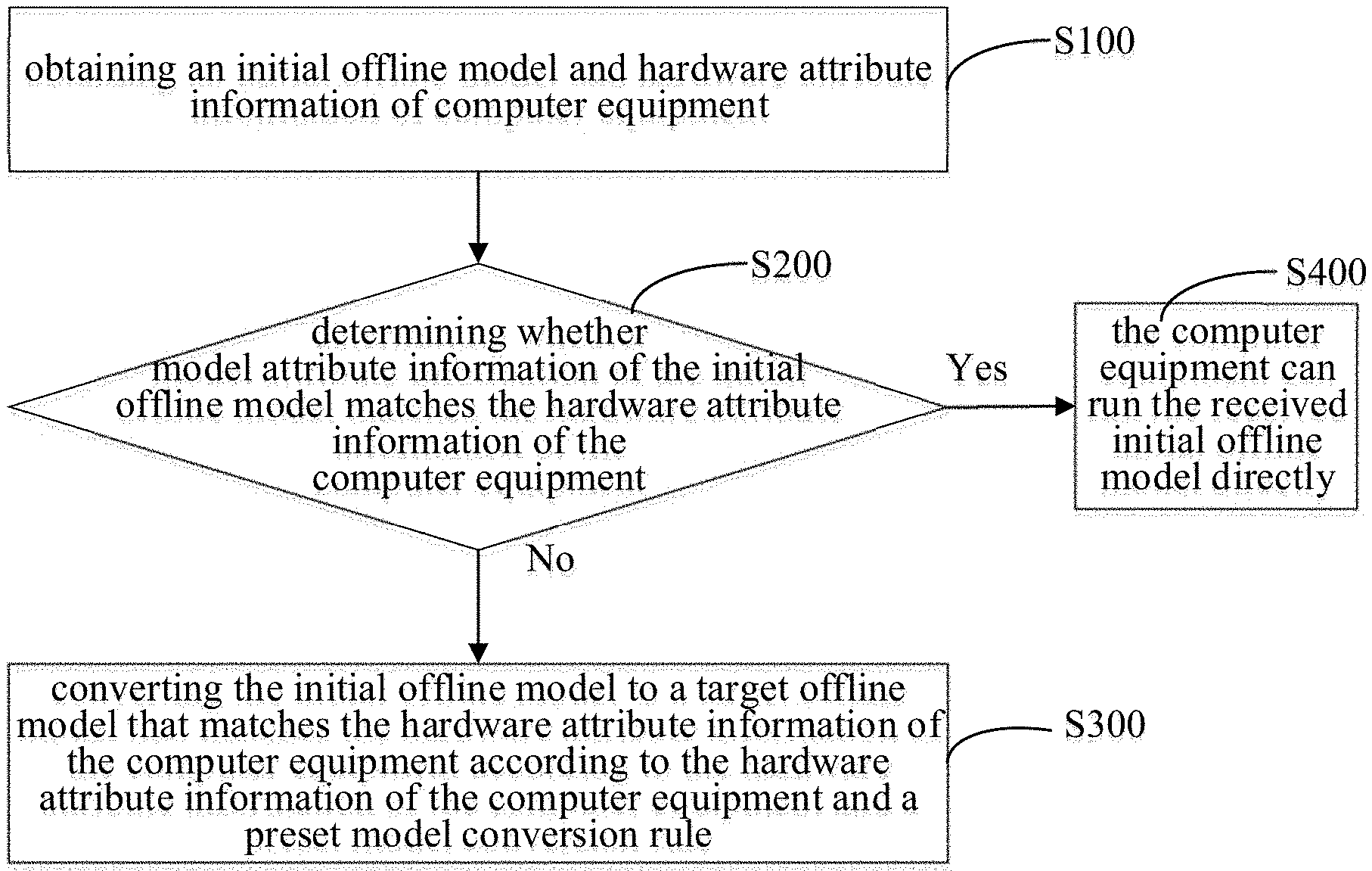

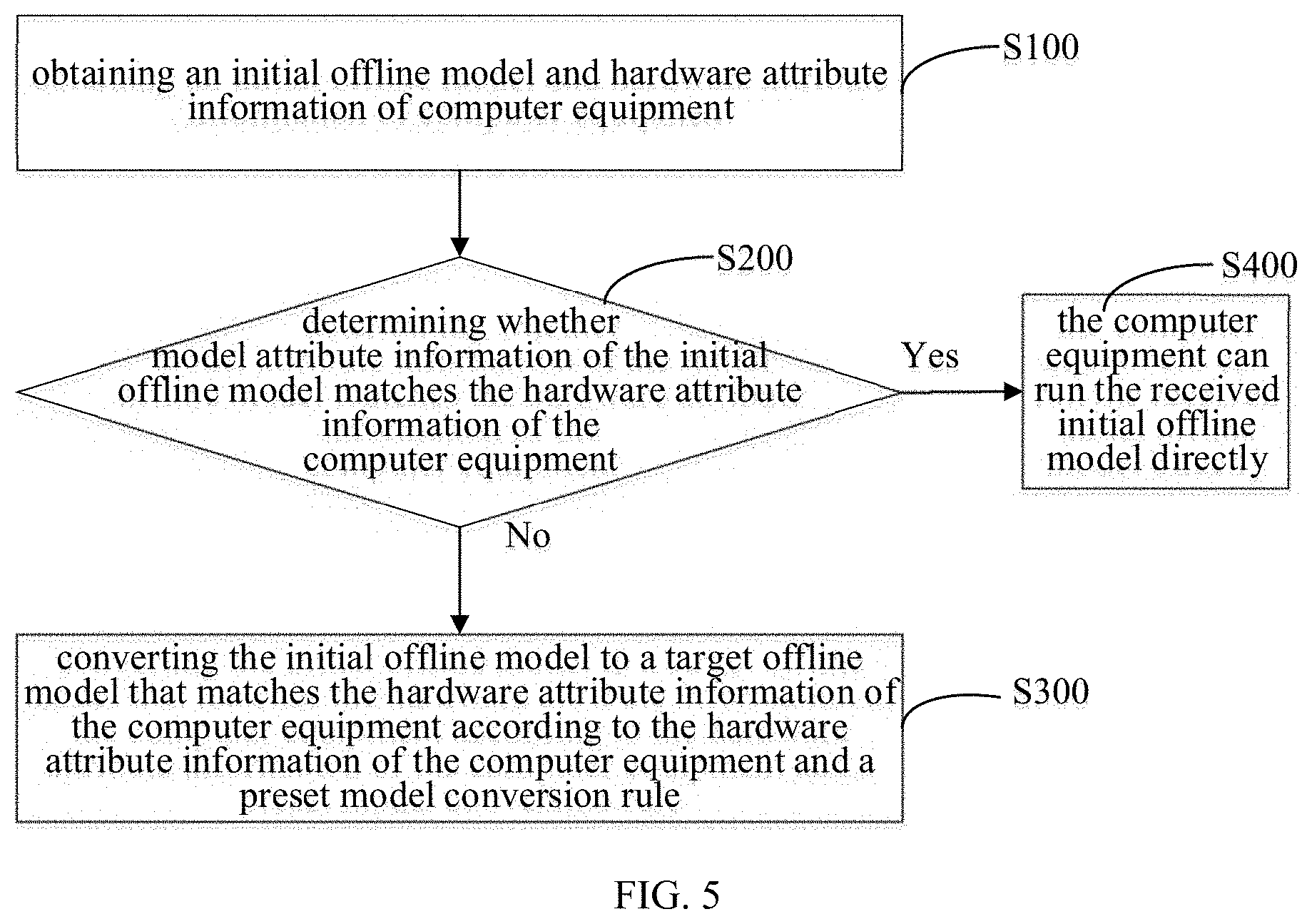

[0040] FIG. 5 is a flowchart of a model conversion method, according to some embodiments. According to some embodiments, as shown in FIG. 5, the present disclosure provides a model conversion method that can be used in the above-mentioned computer equipment. The model conversion method can be configured to, if model attribute information of an initial offline model received by computer equipment does not match hardware attribute information of the computer equipment, convert the initial offline model to a target offline model that matches the hardware attribute information of the computer equipment so that the computer equipment can be able to run the initial offline model. According to some embodiments, the method can include steps: S100, obtaining an initial offline model and hardware attribute information of computer equipment; S200, determining whether model attribute information of the initial offline model matches the hardware attribute information of the computer equipment according to the initial offline model and the hardware attribute information of the computer equipment; and S300, in the case when the model attribute information of the initial offline model does not match the hardware attribute information of the computer equipment, converting the initial offline model to a target offline model that matches the hardware attribute information of the computer equipment according to the hardware attribute information of the computer equipment and a preset model conversion rule.

[0041] According to some embodiments, the initial offline model refers to an offline model obtained directly by the computer equipment, and the initial offline model can be stored in the second memory 130. The offline model can include necessary network structure information, such as network weights and instructions of respective compute nodes in an original network, where the instructions can be configured to represent computational functions that the respective compute nodes can be configured to perform. According to some embodiments, the instructions can be executed by the processor directly. According to some embodiments, the instructions are machine code. The instructions can specifically include computational attributes of the respective compute nodes in the original network, connections among the respective compute nodes, and other information. The original network can be a network model, such as a neural network model; see FIG. 9 for reference. Thus, the computer equipment can realize a computational function of the original network by running the offline model corresponding to the original network without the need of repeatedly performing operations, such as compiling, on the same original network. Time for running the network by the processor can be reduced, and processing speed and efficiency of the processor can be improved. Optionally, the initial offline model of the example can be an offline model generated according to the original network directly, or can be an offline model obtained by converting an offline model having other model attribute information one or more times, which is not limited here.

[0042] S200, determining whether model attribute information of the initial offline model matches the hardware attribute information of the computer equipment according to the initial offline model and the hardware attribute information of the computer equipment.

[0043] According to some embodiments, the model attribute information of the initial offline model can include a data structure, a data type, and the like, of the initial offline model. For instance, the model attribute information can include an arrangement manner of each network weight and instruction, a type of each network weight, a type of each instruction, and the like, in the initial offline model. The hardware attribute information of the computer equipment can include an equipment model of the computer equipment, a data type (e.g., fixed point, floating point, and the like) and a data structure that the computer equipment can be able to process. The computer equipment can be configured to determine whether the computer equipment is able to support running of the initial offline model (in other words, whether the computer equipment is able to run the initial offline model) according to the model attribute information of the initial offline model and the hardware attribute information of the computer equipment, and then determine whether the model attribute information of the initial offline model matches the hardware attribute information of the computer equipment.

[0044] According to some embodiments, if the computer equipment is able to support the running of the initial offline model, it can be determined that the model attribute information of the initial offline model matches the hardware attribute information of the computer equipment. If the computer equipment is not able to support the running of the initial offline model, it can be determined that the model attribute information of the initial offline model does not match the hardware attribute information of the computer equipment.

[0045] According to some embodiments, the computer equipment can determine the data type and the data structure that the computer equipment can be able to process according to the hardware attribute information, and determine the data type and the data structure of the initial offline model according to the model attribute information of the initial offline model. If the computer equipment determines that the computer equipment is able to support the data type and the data structure of the initial offline model according to the hardware attribute information of the computer equipment and the model attribute information of the initial offline model, the computer equipment can determine that the model attribute information of the initial offline model matches the hardware attribute information of the computer equipment. If the computer equipment determines that the computer equipment is not able to support the data type and the data structure of the initial offline model according to the hardware attribute information of the computer equipment and the model attribute information of the initial offline model, the computer equipment can determine that the model attribute information of the initial offline model does not match the hardware attribute information of the computer equipment.
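The matching determination of S200 can be sketched as follows. This is a minimal illustration that assumes the model attribute information reduces to one data type and one data structure, and that the hardware attribute information lists the data types and data structures the equipment supports; all class names, field names, and example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelAttributes:
    data_type: str        # e.g. "float32" (assumed label)
    data_structure: str   # e.g. "layout_a" (assumed label)


@dataclass(frozen=True)
class HardwareAttributes:
    equipment_model: str
    supported_data_types: frozenset
    supported_data_structures: frozenset


def attributes_match(model: ModelAttributes, hardware: HardwareAttributes) -> bool:
    # The model matches only if the equipment supports both its data type
    # and its data structure.
    return (model.data_type in hardware.supported_data_types
            and model.data_structure in hardware.supported_data_structures)


hardware = HardwareAttributes("device_x", frozenset({"int8"}), frozenset({"layout_a"}))
initial = ModelAttributes("float32", "layout_a")
converted = ModelAttributes("int8", "layout_a")
```

Here `attributes_match(initial, hardware)` is false, which corresponds to the case in which conversion would be needed, while `attributes_match(converted, hardware)` is true.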

[0046] According to some embodiments, if the model attribute information of the initial offline model does not match the hardware attribute information of the computer equipment, S300 can be performed. In S300, the initial offline model can be converted to a target offline model that matches the hardware attribute information of the computer equipment according to the hardware attribute information of the computer equipment and a preset model conversion rule.

[0047] According to some embodiments, if the model attribute information of the initial offline model does not match the hardware attribute information of the computer equipment, which indicates that the computer equipment is not able to support the running of the initial offline model, the initial offline model needs to be converted so that the computer equipment is able to use the initial offline model to realize corresponding computation. In other words, if the model attribute information of the initial offline model does not match the hardware attribute information of the computer equipment, the initial offline model can be converted to the target offline model that matches the hardware attribute information of the computer equipment according to the hardware attribute information of the computer equipment and the preset model conversion rule, where model attribute information of the target offline model can match the hardware attribute information of the computer equipment, and the computer equipment is able to support running of the target offline model. As stated above, the target offline model can also include the necessary network structure information, such as the network weights and the instructions of the respective compute nodes in the original network.

[0048] According to some embodiments, the preset model conversion rule can be pre-stored in a first memory or a second memory in the computer equipment. If the model attribute information of the initial offline model does not match the hardware attribute information of the computer equipment, then the computer equipment can obtain a corresponding model conversion rule from the first memory or the second memory to convert the initial offline model to the target offline model. According to some embodiments, there can be more than one preset model conversion rule, and the model conversion rules can be stored with the initial offline model and the target offline model in one-to-one correspondence. According to some embodiments, the initial offline model, the target offline model, and the preset model conversion rules can be stored correspondingly via a mapping table. According to some embodiments, the model attribute information of the initial offline model, the model attribute information of the target offline model, and applicable model conversion rules can be stored in one-to-one correspondence.
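One way to picture such a mapping table is a dictionary keyed by the pair of initial and target model attribute information. The sketch below is only an illustration: the attribute encoding as a (data type, data structure) tuple, the layout labels, and the rule names are all hypothetical.

```python
from typing import Dict, Optional, Tuple

# (data type, data structure); a hypothetical encoding of model attribute information
Attributes = Tuple[str, str]

# Hypothetical mapping table: (initial attributes, target attributes) -> rule name
RULE_TABLE: Dict[Tuple[Attributes, Attributes], str] = {
    (("float32", "layout_a"), ("int8", "layout_a")): "rule_float32_to_int8",
    (("float32", "layout_a"), ("float32", "layout_b")): "rule_layout_a_to_b",
}


def lookup_rule(initial: Attributes, target: Attributes) -> Optional[str]:
    # Return the stored rule for this attribute pair, or None when no rule is preset.
    return RULE_TABLE.get((initial, target))


rule = lookup_rule(("float32", "layout_a"), ("int8", "layout_a"))
missing = lookup_rule(("int8", "layout_a"), ("float32", "layout_a"))
```

A lookup that returns `None` would correspond to a pair of attributes for which no conversion rule has been preset.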

[0049] If the model attribute information of the initial offline model matches the hardware attribute information of the computer equipment, then S400 can be performed, in which the computer equipment can run the received initial offline model directly. In other words, the computer equipment can perform computation according to the network weights and the instructions included in the initial offline model, so as to realize the computational function of the original network. According to some embodiments, if the model attribute information of the initial offline model matches the hardware attribute information of the computer equipment, which indicates that the computer equipment is able to support the running of the initial offline model, the initial offline model does not need to be converted, and the computer equipment is able to run the initial offline model. It should be understood that in the present example, running the initial offline model directly refers to using the initial offline model to run a corresponding machine learning algorithm (e.g., a neural network algorithm) of the original network, and to realize a target application (e.g., an artificial intelligence application such as speech recognition) of the algorithm by performing forward computation.
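The branching among S200, S300, and S400 can be summarized in a short sketch. The matching test and the conversion are passed in as callables because their details depend on the equipment; the function name `prepare_model` and the dictionary-based model are assumptions made for this illustration only.

```python
from typing import Callable


def prepare_model(initial_model: dict,
                  matches: Callable[[dict], bool],
                  convert: Callable[[dict], dict]) -> dict:
    """Return the model the equipment should run."""
    # S200: decide whether the model attributes match the hardware attributes.
    if matches(initial_model):
        # S400: the initial offline model can be run directly.
        return initial_model
    # S300: otherwise convert it to a target offline model.
    return convert(initial_model)


supported_types = {"int8"}  # hypothetical hardware attribute information


def matches(model: dict) -> bool:
    return model["data_type"] in supported_types


def convert(model: dict) -> dict:
    # Placeholder conversion: only relabels the data type for illustration.
    return {**model, "data_type": "int8"}


float_model = {"data_type": "float32", "weights": [0.5, -1.0]}
int_model = {"data_type": "int8", "weights": [1, 2]}
target = prepare_model(float_model, matches, convert)
unchanged = prepare_model(int_model, matches, convert)
```

In this sketch a matching model is returned as the same object, reflecting that no conversion work is done in that branch.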

[0050] Regarding the model conversion method according to the example of the disclosure, by merely receiving an initial offline model, the computer equipment can convert the initial offline model into the target offline model according to the preset model conversion rule and the hardware attribute information of the computer equipment without the need of obtaining multiple different versions of the initial offline models matching with different computer equipment. Data input of the computer equipment can thus be greatly reduced, problems such as excessive data input exceeding a memory capacity of the computer equipment can be avoided, and normal functioning of the computer equipment can be ensured. In addition, the model conversion method can reduce an amount of data processing of the computer equipment, and can thus improve the processing speed as well as reduce power consumption. According to some embodiments, the model conversion method can require no manual intervention. With a relatively high level of automation, the model conversion method can be easy to use.

[0051] FIG. 6 is a flowchart of a model conversion method, according to some embodiments. According to some embodiments, as shown in FIG. 6, S300 can include the steps of S310, determining the model attribute information of the target offline model according to the hardware attribute information of the computer equipment; S320, selecting a model conversion rule as a target model conversion rule from a plurality of preset model conversion rules according to the model attribute information of the initial offline model and the model attribute information of the target offline model; S330, converting the initial offline model to the target offline model according to the model attribute information of the initial offline model and the target model conversion rule.

[0052] Specifically, the computer equipment can determine the data type and the data structure that the computer equipment can be able to support according to the hardware attribute information of the computer equipment, and determine the model attribute information, including the data type and the data structure, of the target offline model. In other words, the computer equipment can determine the model attribute information of the target offline model according to the hardware attribute information of the computer equipment, where the model attribute information of the target offline model can include information, including the data structure and the data type, of the target offline model.

[0053] S320 may comprise selecting a model conversion rule as a target model conversion rule from a plurality of preset model conversion rules according to the model attribute information of the initial offline model and the model attribute information of the target offline model.

[0054] According to some embodiments, since the initial offline model, the target offline model, and the preset model conversion rules are mapped in one-to-one correspondence, the target model conversion rule can be determined according to the model attribute information of the initial offline model and the model attribute information of the target offline model, where the model conversion rules can include a data type conversion method, a data structure conversion method, and the like.

[0055] S330 may comprise converting the initial offline model to the target offline model according to the model attribute information of the initial offline model and the target model conversion rule.

[0056] According to some embodiments, the computer equipment can convert the initial offline model to the target offline model according to a conversion method provided by the target model conversion rule, so that the computer equipment can be able to run the target offline model and perform computation.

[0057] According to some embodiments, if there are a plurality of model conversion rules for the initial offline model and the target offline model, the computer equipment can select the target model conversion rule automatically according to a preset algorithm. Specifically, S320 can further include: selecting more than one applicable model conversion rule from the plurality of preset model conversion rules according to the model attribute information of the initial offline model and the model attribute information of the target offline model; and prioritizing the applicable model conversion rules, and using the applicable model conversion rule with the highest priority as the target model conversion rule.

[0058] According to some embodiments, the applicable model conversion rules refer to conversion rules that are able to convert the initial offline model to the target offline model. The case where more than one applicable model conversion rule exists indicates that there can be a plurality of different manners for converting the initial offline model into the target offline model. The manner of prioritizing can be preset, or can be defined by users.

[0059] According to some embodiments, the computer equipment can obtain process parameters of the respective applicable model conversion rules, and prioritize the more than one applicable model conversion rules according to the process parameters of the respective applicable model conversion rules. The process parameters can be performance parameters of the computer equipment that are involved when using the model conversion rules to convert the initial offline model to the target offline model. According to some embodiments, the process parameters can include one or more of conversion speed, power consumption, memory occupancy rate, and magnetic disk I/O occupancy rate.

[0060] According to some embodiments, the computer equipment can score the respective applicable model conversion rules (e.g., obtaining scores by performing weighted computation on respective reference factors) according to a combination of one or more of the process parameters, including conversion speed, conversion power consumption, memory occupancy rate, and magnetic disk I/O occupancy rate, during the conversion of the initial offline model to the target offline model, and use the applicable model conversion rule with the highest score as the applicable model conversion rule with the highest priority. In other words, the applicable model conversion rule with the highest score can be used as the target model conversion rule. According to some embodiments, S330 can then be performed, and the initial offline model can be converted to the target offline model according to the model attribute information of the initial offline model and the target model conversion rule. In this way, a better model conversion rule can be selected by considering performance factors of the equipment during a model conversion process, and processing speed and efficiency of the computer equipment can thus be improved.
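The weighted scoring of applicable model conversion rules can be sketched as below. The sketch assumes that each process parameter has been normalized to the range 0 to 1, that higher conversion speed raises the score while the other three parameters lower it, and that the weights shown are arbitrary; none of these choices is specified by the disclosure, and the rule names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class ProcessParameters:
    conversion_speed: float    # normalized, higher is better (assumption)
    power_consumption: float   # normalized, lower is better (assumption)
    memory_occupancy: float    # normalized, lower is better (assumption)
    disk_io_occupancy: float   # normalized, lower is better (assumption)


# Hypothetical weights of the reference factors.
WEIGHTS = {"speed": 0.4, "power": 0.3, "memory": 0.2, "disk_io": 0.1}


def score(p: ProcessParameters) -> float:
    # Weighted combination: speed counts for the rule, resource usage counts against it.
    return (WEIGHTS["speed"] * p.conversion_speed
            - WEIGHTS["power"] * p.power_consumption
            - WEIGHTS["memory"] * p.memory_occupancy
            - WEIGHTS["disk_io"] * p.disk_io_occupancy)


def select_target_rule(candidates: Dict[str, ProcessParameters]) -> str:
    # The applicable rule with the highest score is used as the target rule.
    return max(candidates, key=lambda name: score(candidates[name]))


candidates = {
    "rule_a": ProcessParameters(0.9, 0.8, 0.5, 0.5),  # fast but resource hungry
    "rule_b": ProcessParameters(0.6, 0.2, 0.3, 0.2),  # slower but frugal
}
target_rule = select_target_rule(candidates)
```

With these example numbers the slower but more frugal rule wins; changing the weights, which could be preset or user-defined, would shift that balance.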

[0061] According to some embodiments, as shown in FIG. 6, the method above can further include:

[0062] S500, storing the target offline model in the first memory or the second memory of the computer equipment.

[0063] According to some embodiments, the computer equipment can store the obtained target offline model in a local memory (e.g., the first memory) of the computer equipment. According to some embodiments, the computer equipment can further store the obtained target offline model in an external memory (e.g., the second memory) connected to the computer equipment, where the external memory can be a cloud memory or another memory. In this way, when the computer equipment needs to use the target offline model repeatedly, the computer equipment can realize the corresponding computation merely by reading the target offline model directly without the need of repeating the above-mentioned conversion operation.
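The benefit of storing the target offline model, namely that the conversion need not be repeated, can be illustrated with a minimal sketch. It assumes the target offline model can be serialized as JSON to a file path; the function name `load_or_convert` and the file name are hypothetical, and a real offline model would likely use a different storage format.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable


def load_or_convert(cache_path: Path, convert: Callable[[], dict]) -> dict:
    """Read a stored target offline model, converting and storing it on first use."""
    if cache_path.exists():
        # The stored target offline model is read directly; no conversion is repeated.
        return json.loads(cache_path.read_text())
    target_model = convert()
    cache_path.write_text(json.dumps(target_model))  # corresponds to S500
    return target_model


calls = []


def convert_once() -> dict:
    calls.append(1)  # record each time the conversion actually runs
    return {"data_type": "int8", "weights": [1, 2, 3]}


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "target_model.json"
    first = load_or_convert(path, convert_once)
    second = load_or_convert(path, convert_once)
```

In this sketch the second call returns the stored model, and the conversion function has run only once.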

[0064] According to some embodiments, a process in which the computer equipment runs the target offline model can include:

[0065] Obtaining the target offline model, and running the target offline model to execute the original network. The target offline model can include necessary network structure information, including network weights and instructions corresponding to respective offline nodes in the original network, and interface data among the respective offline nodes and other compute nodes in the original network.

[0066] According to some embodiments, when the computer equipment needs to use the target offline model to realize computation, the computer equipment can obtain the target offline model from the first memory or the second memory directly, and perform computation according to the network weights, the instructions, and the like, in the target offline model, so as to realize the computational function of the original network. In this way, when the computer equipment needs to use the target offline model repeatedly, the computer equipment can realize the corresponding computation by merely reading the target offline model without the need of repeating the above-mentioned conversion operation.

[0067] It should be understood that in the present example, running the target offline model directly refers to using the target offline model to run a corresponding machine learning algorithm (e.g., a neural network algorithm) of the original network, and to realize a target application (e.g., an artificial intelligence application such as speech recognition) of the algorithm by performing forward computation.

[0068] According to some embodiments, an application can be installed on the computer equipment. An initial offline model can be obtained via the application on the computer equipment. The application can provide an interface for obtaining the initial offline model from the first memory 120 or an external memory, so that the initial offline model can be obtained via the application. According to some embodiments, the application can further provide an interface for reading hardware attribute information of the computer equipment, so that the hardware attribute information of the computer equipment can be obtained via the application. According to some embodiments, the application can further provide an interface for reading a preset model conversion rule, so that the preset model conversion rule can be obtained via the application.

[0069] According to some embodiments, the computer equipment can provide an input/output interface (e.g., an I/O interface), and the like, where the input/output interface can be configured to obtain an initial offline model, or output a target offline model. Hardware attribute information of the computer equipment can be preset to be stored in the computer equipment.

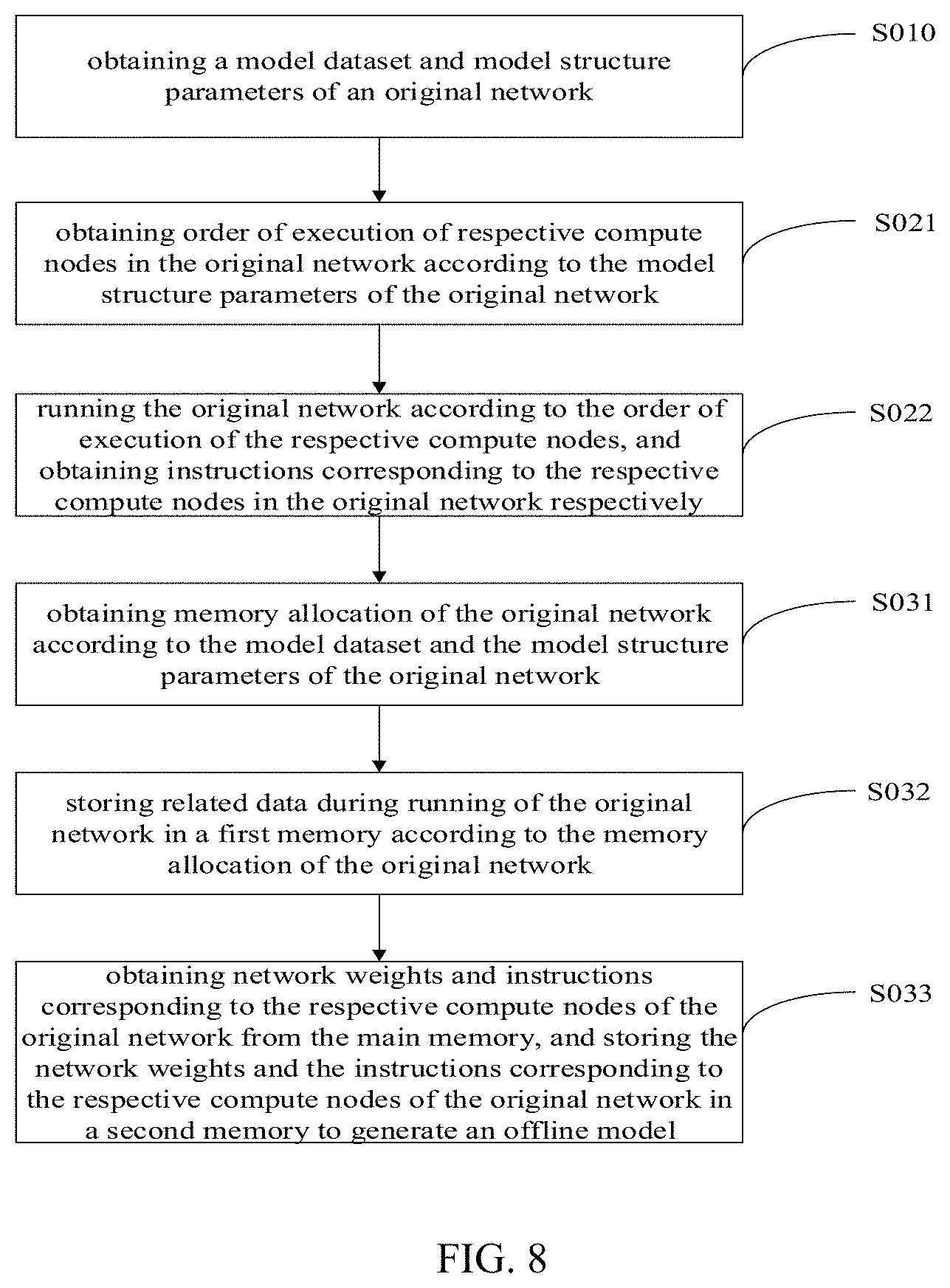

[0070] FIG. 7 is a flowchart of an offline model generation method, according to some embodiments. According to some embodiments, as shown in FIG. 7, an example of the present disclosure further provides a method of generating an offline model according to an original network model. The method can be configured to generate and store an offline model of an original network according to obtained related data of the original network, so that when a processor runs the original network again, the processor can run the offline model corresponding to the original network directly without repeating operations such as compiling on the same original network. The time for running the network by the processor can thus be reduced, and the processing speed and efficiency of the processor can be improved. The original network can be a network model such as a neural network or a non-neural network. For instance, the original network can be a network shown in FIG. 9. According to some embodiments, the method above can include: S010, obtaining a model dataset and model structure parameters of an original network; S020, running the original network according to the model dataset and the model structure parameters of the original network, and obtaining instructions corresponding to the respective compute nodes in the original network; S030, generating an offline model corresponding to the original network according to the network weights and the instructions corresponding to the respective compute nodes of the original network, and storing the offline model corresponding to the original network in a nonvolatile memory (or a database).

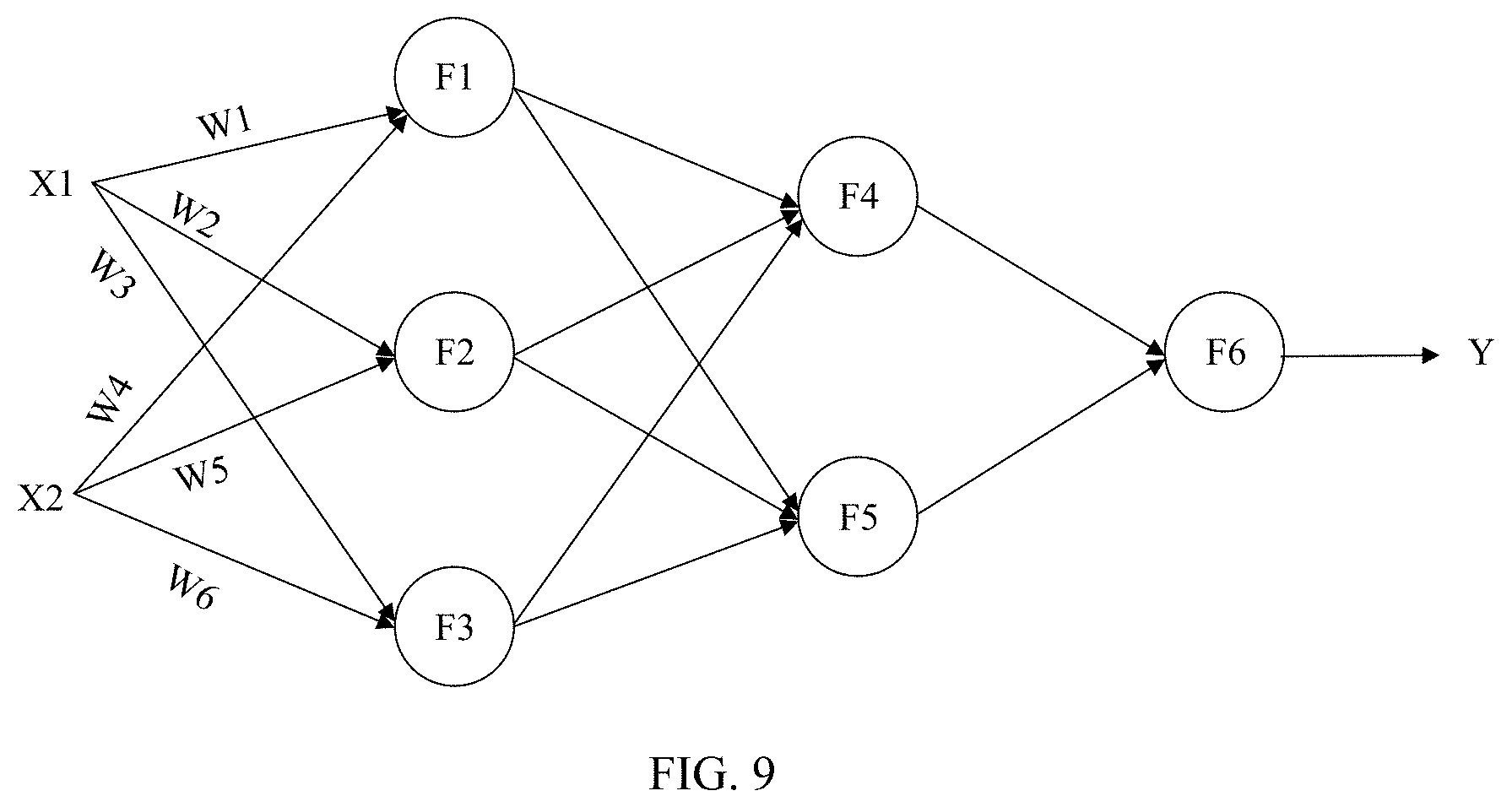

[0071] According to some embodiments, a processor of computer equipment can obtain the model dataset and the model structure parameters of the original network, and can obtain a network structure diagram of the original network according to the model dataset and the model structure parameters of the original network. The model dataset can include data such as network weights corresponding to respective compute nodes in the original network. According to some embodiments, W1~W6 in a neural network shown in FIG. 9 are used for representing network weights of compute nodes. The model structure parameters can include connections among a plurality of compute nodes in the original network, and computational attributes of the respective compute nodes, where the connections among the compute nodes can be used for indicating whether data is transmitted among the compute nodes. According to some embodiments, when a data stream is transmitted among a plurality of compute nodes, the plurality of compute nodes can have connections among them. Further, the connections among the compute nodes can include an input relation, an output relation, and the like. According to some embodiments, as shown in FIG. 9, if the output of compute node F1 serves as input of compute nodes F4 and F5, then the compute node F1 can have a connection with the compute node F4, and the compute node F1 can have a connection with the compute node F5. According to some embodiments, if no data is transmitted between the compute node F1 and compute node F2, then the compute node F1 can have no connection with the compute node F2.
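As a minimal sketch of how such connections might be recorded, each compute node can carry the names of the nodes whose output it consumes. The class name `ComputeNode` and the helper `is_connected` are hypothetical. The edges F1 to F4 and F1 to F5 come from the paragraph above; the other edges follow the description of FIG. 9 elsewhere in this disclosure, except F3 to F5, which is an assumption made only to complete the example.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ComputeNode:
    name: str
    inputs: List[str] = field(default_factory=list)  # producer node names


# F3 -> F5 is an assumption; it is not stated in the text.
NETWORK: Dict[str, ComputeNode] = {
    "F1": ComputeNode("F1"),
    "F2": ComputeNode("F2"),
    "F3": ComputeNode("F3"),
    "F4": ComputeNode("F4", ["F1", "F2"]),
    "F5": ComputeNode("F5", ["F1", "F3"]),
    "F6": ComputeNode("F6", ["F4", "F5"]),
}


def is_connected(network: Dict[str, ComputeNode], a: str, b: str) -> bool:
    """Two nodes are connected if data flows directly from one to the other."""
    return a in network[b].inputs or b in network[a].inputs
```

With this table, `is_connected(NETWORK, "F1", "F4")` is true, while F1 and F2 are not connected because no data is transmitted between them.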

[0072] The computational attributes of the respective compute nodes can include a computational type and computation parameters corresponding to a compute node. The computational type of the compute node refers to the kind of computation to be fulfilled by the compute node. According to some embodiments, the computational type of the compute node can include addition operation, subtraction operation, convolution operation, etc. Accordingly, the compute node can be a compute node configured to implement addition operation, a compute node configured to implement subtraction operation, a compute node configured to implement convolution operation, or the like. The computation parameters of the compute node can be the parameters necessary for completing the type of computation corresponding to the compute node. According to some embodiments, a computational type of a compute node can be addition operation, and accordingly, the computation parameters of the compute node can be an addend in the addition operation, while an augend in the addition operation can be obtained, as input data, via an obtaining unit, or the augend in the addition operation can be output data of a compute node prior to this compute node, etc.
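The split between computational type and computation parameters can be sketched as follows. The names `ComputeAttrs`, `OPS`, and `run_node`, and the parameter key `"operand"`, are hypothetical and chosen only for illustration; convolution is omitted to keep the sketch short.

```python
import operator
from dataclasses import dataclass, field
from typing import Callable, Dict

# Supported computational types, mapped to the function that implements them.
OPS: Dict[str, Callable[[float, float], float]] = {
    "add": operator.add,
    "sub": operator.sub,
}


@dataclass
class ComputeAttrs:
    op_type: str                                 # computational type
    params: Dict[str, float] = field(default_factory=dict)  # e.g. the addend


def run_node(attrs: ComputeAttrs, input_value: float) -> float:
    """Apply the node's operation: the input plays the role of the augend
    (or minuend) and the stored parameter is the second operand."""
    return OPS[attrs.op_type](input_value, attrs.params["operand"])
```

For an addition node, the stored parameter is the addend and the augend arrives as input data or as the output of a preceding node.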

[0073] S020 may comprise running the original network according to the model dataset and the model structure parameters of the original network, and obtaining instructions corresponding to the respective compute nodes in the original network.

[0074] According to some embodiments, the processor of the computer equipment can run the original network according to the model dataset and the model structure parameters of the original network, and obtain the instructions corresponding to the respective compute nodes in the original network. According to some embodiments, the processor can further obtain input data of the original network, run the original network according to the input data, the network model dataset and the model structure parameters of the original network, and obtain the instructions corresponding to the respective compute nodes in the original network. According to some embodiments, the above-mentioned process of running the original network to obtain the instructions of the respective compute nodes can in fact be a process of compiling. In other words, the instructions are obtained after compiling and can be executed by the processor; for instance, the instructions are machine code. The process of compiling can be realized by the processor or a virtual device of the computer equipment. In other words, the processor or the virtual device of the computer equipment can run the original network according to the model dataset and the model structure parameters of the original network. The virtual device refers to an amount of processor running space virtualized in the memory space of a memory.

[0075] It should be understood that, in the present example, running the original network means that the processor uses artificial neural network model data to run a machine learning algorithm (e.g., a neural network algorithm), and realizes a target application (e.g., an artificial intelligence application such as speech recognition) of the algorithm by performing forward operation.

[0076] S030 may comprise generating an offline model corresponding to the original network according to the network weights and the instructions corresponding to the respective compute nodes of the original network, and storing the offline model corresponding to the original network in a nonvolatile memory (or a database).

[0077] According to some embodiments, the processor can generate the offline model corresponding to the original network according to the network weights and the instructions corresponding to the respective compute nodes of the original network. According to some embodiments, the processor can store the network weights and the instructions corresponding to the respective compute nodes of the original network in the nonvolatile memory to realize the generating and storing of the offline model. For each compute node of the original network, the weight and the instruction of the compute node can be stored in one-to-one correspondence. In this way, when running the original network again, the offline model corresponding to the original network can be obtained from the nonvolatile memory directly, and the original network can be run according to the corresponding offline model without the need of compiling the respective compute nodes of the original network to obtain the instructions. Thus, the running speed and efficiency of the system can be improved.
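A minimal sketch of this generate-and-store step follows, assuming a JSON file as the nonvolatile storage. The function `compile_node` is a hypothetical stand-in that returns placeholder bytes rather than real machine code, and the file layout is an illustrative assumption, not the format used by the disclosure.

```python
import json
from pathlib import Path
from typing import Dict, List


def compile_node(name: str, op: str) -> bytes:
    """Hypothetical stand-in for compiling one compute node.
    Returns placeholder bytes, not real machine code."""
    return f"{op}:{name}".encode("ascii")


def generate_offline_model(nodes: Dict[str, str],
                           weights: Dict[str, List[float]]) -> dict:
    """Pair each node's weights with its instruction, one entry per node."""
    return {
        name: {
            "weights": weights.get(name, []),
            "instruction": compile_node(name, op).hex(),  # hex keeps JSON valid
        }
        for name, op in nodes.items()
    }


def save_offline_model(model: dict, path: str) -> None:
    """Persist the offline model to a file (the nonvolatile storage here)."""
    Path(path).write_text(json.dumps(model))


def load_offline_model(path: str) -> dict:
    """Read the offline model back; no compilation is needed at this point."""
    return json.loads(Path(path).read_text())
```

Loading the file later yields the same per-node weight and instruction pairs, so the compile step is skipped on subsequent runs.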

[0078] It should be understood that, in the present example, directly running the corresponding offline model of the original network means using the offline model to run the corresponding machine learning algorithm (e.g., a neural network algorithm) of the original network, and realizing a target application (e.g., an artificial intelligence application such as speech recognition) of the algorithm by performing forward operation.

[0079] According to some embodiments, as shown in FIG. 8, S020 can include: S021, obtaining an order of execution of the respective compute nodes in the original network according to the model structure parameters of the original network; S022, running the original network according to the order of execution of the respective compute nodes in the original network, and obtaining the instructions corresponding to the respective compute nodes in the original network respectively.

[0080] According to some embodiments, the processor can obtain the order of execution of the respective compute nodes in the original network according to the model structure parameters of the original network. According to some embodiments, the processor can obtain the order of execution of the respective compute nodes in the original network according to the connections among the respective compute nodes in the original network. According to some embodiments, as shown in FIG. 9, input data of the compute node F4 can be output data of the compute node F1 and output data of the compute node F2, and input data of compute node F6 can be output data of the compute node F4 and output data of the compute node F5. Thus, the order of execution of the respective compute nodes in the neural network shown in FIG. 9 can be F1-F2-F3-F4-F5-F6, F1-F3-F2-F5-F4-F6, and the like. According to some embodiments, the compute nodes F1, F2, and F3 can be performed in parallel, and the compute nodes F4 and F5 can also be performed in parallel. The instances are given here merely for the purpose of explanation, and are not to be considered limiting of the order of execution.
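Deriving an order of execution from the connections amounts to a topological sort of the compute nodes. The sketch below uses Python's standard `graphlib.TopologicalSorter` (Python 3.9 or later); it is one possible technique, not necessarily the one used by the disclosure. The function names are hypothetical, and the edge F3 to F5 is an assumption, since the text does not state which node consumes the output of F3.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Each node maps to the set of nodes whose output it consumes.
PREDECESSORS: Dict[str, Set[str]] = {
    "F1": set(),
    "F2": set(),
    "F3": set(),
    "F4": {"F1", "F2"},
    "F5": {"F1", "F3"},  # F3 -> F5 is an assumption
    "F6": {"F4", "F5"},
}


def execution_order(preds: Dict[str, Set[str]]) -> List[str]:
    """Return one valid sequential order of execution."""
    return list(TopologicalSorter(preds).static_order())


def parallel_stages(preds: Dict[str, Set[str]]) -> List[List[str]]:
    """Group nodes into stages; nodes within a stage can run in parallel."""
    sorter = TopologicalSorter(preds)
    sorter.prepare()
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # all nodes whose inputs are done
        stages.append(ready)
        sorter.done(*ready)
    return stages
```

Under these assumptions, `parallel_stages(PREDECESSORS)` groups F1, F2, and F3 into the first stage, F4 and F5 into the second, and F6 into the last, which matches the parallel execution described above.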

[0081] S022 may comprise running the original network according to the order of execution of the respective compute nodes in the original network, and obtaining the instructions corresponding to the respective compute nodes in the original network respectively.

[0082] According to some embodiments, the processor can run the original network according to the order of execution of the respective compute nodes in the original network, so as to obtain the corresponding instructions of the respective compute nodes in the original network. According to some embodiments, the processor can compile data such as the model dataset of the original network to obtain the corresponding instructions of the respective compute nodes. According to the corresponding instructions of the respective compute nodes, computational functions fulfilled by the compute nodes can be known. In other words, computational attributes such as computational types and computation parameters of the compute nodes can be known.

[0083] FIG. 8 is a flowchart of an offline model generation method, according to some embodiments. According to some embodiments, as shown in FIG. 8, S030 can further include S031, S032 and S033.

[0084] S031 may comprise obtaining memory allocation of the original network according to the model dataset and the model structure parameters of the original network.

[0085] According to some embodiments, the processor can obtain the memory allocation of the original network according to the model dataset and the model structure parameters of the original network, obtain the order of execution of the respective compute nodes in the original network according to the model structure parameters of the original network, and can obtain the memory allocation of the current network according to the order of execution of the respective compute nodes in the original network. According to some embodiments, related data during running of the respective compute nodes can be stored in a stack according to the order of execution of the respective compute nodes. The memory allocation refers to determining a position where the related data (including input data, output data, network weight data, and intermediate result data) of the respective compute nodes in the original network are stored in memory space (e.g., the first memory). According to some embodiments, a data table can be used for storing mappings between the related data (input data, output data, network weight data, intermediate result data, etc.) of the respective compute nodes and the memory space.
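The data table mentioned above can be sketched as a mapping from each piece of related data to an offset and size in memory space. The sketch assumes a simple stack-like scheme that hands out consecutive offsets in order of execution; the function name `allocate`, the data kinds, and the byte sizes are hypothetical.

```python
from typing import Dict, List, Tuple

DataKey = Tuple[str, str]  # (node name, kind of data)


def allocate(order: List[str],
             sizes: Dict[DataKey, int]) -> Tuple[Dict[DataKey, Tuple[int, int]], int]:
    """Assign each datum an (offset, size) slot, following execution order.

    Returns the mapping table and the total number of bytes used.
    """
    table: Dict[DataKey, Tuple[int, int]] = {}
    offset = 0
    for node in order:
        for kind in ("input", "weight", "output"):
            size = sizes.get((node, kind), 0)
            if size:
                table[(node, kind)] = (offset, size)
                offset += size  # next datum starts right after this one
    return table, offset
```

A real allocator would typically also reuse the space of intermediate results that are no longer needed; this sketch only shows the mapping itself.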

[0086] S032 may comprise storing the related data during the running of the original network in the first memory according to the memory allocation of the original network, where the related data during the running of the original network can include network weights, instructions, input data, intermediate computation results, output data, and the like, corresponding to the respective compute nodes of the original network.

[0087] According to some embodiments, as shown in FIG. 9, X1 and X2 represent input data of the neural network, and Y represents output data of the neural network. The processor can convert the output data of the neural network into control commands for controlling a robot or a different digital interface. According to some embodiments, W1~W6 are used for representing network weights corresponding to the compute nodes F1, F2, and F3, and output data of the compute nodes F1~F5 can be used as intermediate computation results. According to the confirmed memory allocation, the processor can store the related data during the running of the original network in the first memory, such as a volatile memory including an internal memory or a cache. See the memory space shown in the left part of FIG. 10 for detailed storage of the related data.