Virtual Reality Training Device

Schradin; Aaron ; et al.

U.S. patent application number 16/571835 was filed with the patent office on 2020-03-26 for virtual reality training device. The applicant listed for this patent is PRAVAEDI LLC. Invention is credited to Christopher A. Eusebi, Aaron Schradin.

| Application Number | 20200098185 16/571835 |

| Document ID | / |

| Family ID | 69884957 |

| Filed Date | 2020-03-26 |


| United States Patent Application | 20200098185 |

| Kind Code | A1 |

| Schradin; Aaron ; et al. | March 26, 2020 |

VIRTUAL REALITY TRAINING DEVICE

Abstract

According to the present teachings, a system is provided having a landscape engine configured to take digital input and convert it into a three-dimensional virtual reality dataset. The landscape engine is functionally coupled to a physics engine configured to apply physical rules to objects within the virtual reality dataset. A display engine is coupled to the physics engine to convert the dataset into first and second content streams.

| Inventors: | Schradin; Aaron; (West Olive, MI) ; Eusebi; Christopher A.; (Bloomfield Township, MI) | ||||||||||

| Applicant: |

|

||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| Family ID: | 69884957 | ||||||||||

| Appl. No.: | 16/571835 | ||||||||||

| Filed: | March 14, 2018 | ||||||||||

| PCT Filed: | March 14, 2018 | ||||||||||

| PCT NO: | PCT/US2018/022481 | ||||||||||

| 371 Date: | September 16, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | ||

|---|---|---|---|---|

| PCT/US18/14056 | Jan 17, 2018 | |||

| 16571835 | ||||

| 62471333 | Mar 14, 2017 | |||

| 62447329 | Jan 17, 2017 | |||

| 62524317 | Jun 23, 2017 | |||

| Current U.S. Class: | 1/1 |

| Current CPC Class: | A47C 15/004 20130101; A47C 7/72 20130101; G06T 17/05 20130101; A47C 1/00 20130101; A63F 2300/8082 20130101; A63F 13/211 20140902; A63F 13/24 20140902; G06F 3/011 20130101; G06T 19/006 20130101; A63F 2300/1043 20130101; A47C 3/18 20130101; A47C 3/20 20130101; A63F 13/285 20140902; A63F 2300/105 20130101; A63F 2300/302 20130101; G06T 2219/024 20130101; G09B 9/003 20130101; A63F 13/98 20140902 |

| International Class: | G06T 19/00 20060101 G06T019/00; G09B 9/00 20060101 G09B009/00; G06F 3/01 20060101 G06F003/01; G06T 17/05 20060101 G06T017/05; A47C 1/00 20060101 A47C001/00 |

Claims

1. A method of sharing a three-dimensional virtual reality space among a plurality of users, the method comprising the steps of: apply physical rules to objects within the virtual reality dataset, a display engine is coupled to the physics engine to convert the dataset into first and second content streams; and streaming a first content set from the three-dimensional graphics data at a first level of detail to a first VR headset and a second content set three-dimensional graphics data at a second level of detail to second VR headset.

2. The method according to claim 1 wherein streaming a first content set includes streaming training scenarios and wherein the content set further comprises a rendered topographic landscape as an .obj file having a plurality of .mtl files.

3. The method according to claim 1 further comprising a second content set that has an augmented reality content that is less than the content of the first content set.

4. The method according to claim 1 further including capturing a series of 2D images for a topographic landscape using a camera mounted on an aircraft and converting the series of images for the topographic landscape into a captured set of images and onto a wire frame of the topographic landscape using photogrammetry.

5. The method according to claim 4, including syncing the topographic landscape to a real-world location using a fixed coordinate system.

6. The method according to claim 4, including providing a set of polygons over the wire frame to form a virtual reality image dataset.

7. The method according to claim 1, including providing a second AR image dataset having less content than the first VR image dataset which is streamed to an AR display.

8. The method according to claim 1, wherein providing a first VR image dataset includes a selecting engageable virtual sandbox which gives an overhead view of the entire topographic landscape, and a VR data space topography.

9. A method of sharing a three-dimensional virtual reality space among a plurality of users, the method comprising the steps of: acquiring a plurality of 2-D images of a geographic region using a mobile platform; using photogrammetry to create a first set of three-dimensional graphics data associated with a geographic region to be used by the plurality of users in a shared manner, the three-dimensional graphics data containing a first .obj file and an associated plurality of .mtl files; functionally coupling the three-dimensional graphics data to a physics engine configured to apply physical rules to objects within a virtual reality dataset; coupling a display engine to a physics engine to convert the dataset into first and second content streams; and selectively streaming either one of the first content streams and second content streams from the three-dimensional graphics data to a VR headset.

10. The method according to claim 9 wherein streaming a first content stream includes streaming the first dataset which comprises a rendered topographic landscape.

11. The method according to claim 9 wherein streaming a second content stream includes streaming the second dataset having an augmented reality content that is less than the content of the first dataset.

12. The method according to claim 9 including capturing a series of images for a terrain using a camera mounted on an unmanned aircraft and converting the series of images for a terrain into a captured set of images and into a wire frame of the topographic landscape.

13. The method according to claim 12 including syncing the topographic landscape to a real-world location using a fixed coordinate system.

14. The method according to claim 12 including providing a set of polygons over the wire frame to form a virtual reality image dataset.

15. The method according to claim 9 including converting the coupling the three-dimensional graphics data to a second AR image dataset having less content than the first VR image dataset which is streamed to an AR display.

16. The method according to claim 9 wherein providing a first VR image dataset includes a first engageable virtual sandbox visual content which gives an overhead view of the entire topography, and in VR data space topography visual content.

17. A method for training a user comprising the steps of: capturing a series of images of a terrain using a ccd camera and a LIDAR providing pixel location information disposed on a mobile aircraft traveling over the terrain; converting the series of images for the terrain into a captured subset of images and into a wire frame of the topographic landscape to form a VR image dataset; syncing the wire frame of the topographic landscape to a real world location using a fixed coordinate system by associating the ccd camera and a LIDAR providing pixel location information; applying a physics engine to an object within the VR image dataset; streaming a first image dataset stream from the VR image dataset to a VR display; and streaming a second image dataset from the VR image dataset to the VR display.

18. The method according to claim 17 wherein capturing a series of images of a terrain using a ccd camera disposed on a mobile aircraft traveling over the terrain includes defining a flight path using CNC cutter-path system to define the path of a data collection drone to most efficiently calculate needed information for a variety of layers in a variety of spectrum.

19. The method according to claim 17 further includes providing a virtual tool that allows the placement of vehicles within the VR image dataset.

20. The method according to claim 17 further includes providing a virtual tool that allows visualization of the movement of people on the terrain and modifying the VR image set to include information related to the movement of people on the terrain.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application claims the benefit of PCT/US18/22481 and U.S. application Ser. No. 16/478,778, which claims the benefit of PCT/US18/14056, filed on Jan. 17, 2018, U.S. Provisional Application No. 62/447,329, filed on Jan. 17, 2017 and U.S. Provisional Application No. 62/524,317, filed on Jun. 23, 2017. The entire disclosures of the above applications are incorporated herein by reference.

FIELD OF THE INVENTION

[0002] The present invention relates to an augmented and virtual reality training system and more particularly to input and systems data structures intended to increase the performance of the augmented and virtual reality training system.

BACKGROUND OF THE INVENTION

[0003] For humans to interact and operate computers, external input devices are generally required. Signals from these external input devices are received by the computer and processed to act as a control signal for controlling an aspect of the computer's function and/or applications (programs) running on the computer.

[0004] Traditionally, input devices such as keyboards, mice, game controllers and the like have focused on receiving input movements from the hands and particularly the fingers of users. While these have proven effective, they are poorly suited for more immersive, intuitive control schemes. The development of immersive computer-generated environments such as those used for gaming, social interaction, computer-aided design and other similar functions have highlighted the need for new input devices. Of note is the rise of augmented reality ("AR") and virtual reality ("VR") technology that enables users to be fully immersed in computer generated environments. AR and VR technology platforms are poorly suited for traditional input methods as they can break immersion and detract from the user's experience.

[0005] Input devices associated with VR often have functions that are inherently unstable. Generally, the further a user moves from a center location, the easier it is to continue moving further, because the user's center of gravity goes outside the bounds of the device. To counteract this, devices can be modified with the addition of ballast. This, however, never truly corrects the problem, as it often increases the resistance force. For example, the further the pivot point for movement is from a user's hips, the further the user must move the user's body to create the angle the MPU needs while still having a decent sensitivity and a proper "dead-zone." Such devices are also somewhat susceptible to "signal drift."

[0006] Further, the further the user must move to create the movement, the longer it takes to adjust the user's movement or change movement directions, which makes the user overshoot the user's preferred movement position. Depending slightly on the radius of the bottom, going from full-speed forward to full-speed backward means the user must move the user's body around 22 inches.

[0007] The further the user's movement can put the user off-balance in VR, the more the user's body contemplates going on strike by way of VR-induced motion sickness.

[0008] Fundamental VR Problems:

[0009] Does not address cable management/tangle

[0010] Does not address uncoupled look/move

[0011] Leaves room for improvement for a more compact operation envelope

[0012] Rubs and walks on flooring due to off axis rotation and no turntable

[0013] Another problem associated with VR systems is sickness caused by the vestibular system which provides the leading contribution to the sense of balance and spatial orientation for the purpose of coordinating movement with balance. As movements consist of rotations and translations, the vestibular system comprises two components: a first which indicates rotational movements; and a second, which indicates linear accelerations. The vestibular system sends signals primarily to the neural structures that control eye movements, and to the muscles that keep an individual upright. Discoordination of these signals leads to motion sickness when using VR and AR systems.

[0014] These approaches were a bit more complex, but much more satisfying. Though the experience was less interesting for a crowd of VR-curious onlookers to observe, it was eroding away at the real problems that faced VR. Traditionally, VR systems couple head movement to torso movement. For example, a user in a VR environment can travel down a sidewalk, and wherever the user looks, the user travels in that direction.

SUMMARY OF THE INVENTION

[0015] As specified in the Background Section above, there is a need for improved devices and methods for providing user input for controlling and/or interacting with a computing device.

[0016] To overcome the aforementioned problems, the system according to the present teachings measures the angle of the user's torso and feeds it back to the application so that the player axis is defined by the torso angle. The head mounted display is then constrained to that player but "uncoupled," so that the view from the head mounted display is not affected by the torso angle, but only by the angle interpreted by the head mounted display. The torso angle information is presented as a part of the Human Interface Device packet.
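
The uncoupling of travel direction from view direction described above might be sketched as follows. This is a minimal illustration only; the `Pose` class, its field names, and the convention that a yaw of 0 degrees points along +y and 90 degrees along +x are assumptions made for the example and are not taken from the disclosure.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    torso_yaw_deg: float  # hypothetical: yaw reported by the torso/seat sensor
    head_yaw_deg: float   # hypothetical: yaw reported by the head mounted display


def movement_vector(pose, speed):
    """Travel direction depends only on the torso angle (the player axis)."""
    a = math.radians(pose.torso_yaw_deg)
    return (speed * math.sin(a), speed * math.cos(a))


def view_yaw(pose):
    """View direction depends only on the head angle, wrapped to [0, 360)."""
    return pose.head_yaw_deg % 360.0
```

With this split, a user can travel in the torso direction while looking elsewhere, because neither function reads the other sensor's angle.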

[0017] According to an alternate teaching, the system can include a rotary slip connector, and a quadrature rotary encoder that keeps track of the orientation of a user's body. Each degree moved is added or subtracted from the original calibration position and can be as accurate as one degree. Optionally, when a user of a system initiates movement, the natural response of the user is to "lean" in the direction they wish to head.
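
One way a quadrature rotary encoder could be decoded into an orientation relative to the calibration position is sketched below. The class name, the two-channel (A, B) sampling interface, and the resolution of 360 counts per revolution (one count per degree) are assumptions chosen for illustration.

```python
# Valid transitions of a 2-bit quadrature (Gray code) signal.
# Key: (previous_state, new_state) -> step of +1 or -1 count.
_STEPS = {
    (0b00, 0b01): +1, (0b01, 0b11): +1, (0b11, 0b10): +1, (0b10, 0b00): +1,
    (0b00, 0b10): -1, (0b10, 0b11): -1, (0b11, 0b01): -1, (0b01, 0b00): -1,
}


class QuadratureTracker:
    """Accumulates encoder counts relative to a calibration position."""

    def __init__(self, counts_per_rev=360):  # assumed: one count per degree
        self.counts_per_rev = counts_per_rev
        self.count = 0
        self._state = 0b00

    def calibrate(self, a, b):
        """Treat the current position as the zero reference."""
        self.count = 0
        self._state = (a << 1) | b

    def update(self, a, b):
        """Feed one sample of channels A and B (each 0 or 1)."""
        new = (a << 1) | b
        # Unchanged or invalid (skipped) transitions contribute no step.
        self.count += _STEPS.get((self._state, new), 0)
        self._state = new

    def angle_deg(self):
        """Orientation relative to calibration, wrapped to [0, 360)."""
        return (self.count * 360.0 / self.counts_per_rev) % 360.0
```

Each valid transition adds or subtracts one count from the calibrated zero, which mirrors the degree-by-degree accumulation described above.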

[0018] The design of the system allows for super-fast directional changes, because a properly trained user of the system does not have to translate their center of gravity to move in any direction; they simply use core muscle movement to redistribute their weight to create the movement in VR. Optionally, the system utilizes a solution in which the seating surface tilts at a point closer to the hips or seat of the user. This pivot location is critical, as this approach never puts the user in a position of instability or falling.

[0019] According to the present teachings, the system uses a user's lower body function to allow movement in a VR space. The system can incorporate mechanical binary or linear input switches and an analog representation through a multiple axis processing unit (MPU).

[0020] According to the present teachings, users who are less sensitive to the sensations of VR movement can optionally use just a raw analog input gradient to create user movements and use the switches for jump or some other function.
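
As an illustration of an analog input gradient with a "dead-zone," the sketch below maps a measured tilt angle to a normalized speed. The function name and the default values of 2 degrees for the dead zone and 15 degrees for full deflection are hypothetical and are not specified in the disclosure.

```python
def tilt_to_speed(tilt_deg, dead_zone_deg=2.0, max_tilt_deg=15.0):
    """Map a signed tilt angle to a speed in [-1.0, 1.0].

    Tilts inside the dead zone produce no movement; tilts beyond
    max_tilt_deg saturate at full speed. Both defaults are assumed values.
    """
    magnitude = abs(tilt_deg)
    if magnitude <= dead_zone_deg:
        return 0.0
    clipped = min(magnitude, max_tilt_deg)
    scaled = (clipped - dead_zone_deg) / (max_tilt_deg - dead_zone_deg)
    return scaled if tilt_deg > 0 else -scaled
```

A wider dead zone suppresses small unintended leans and sensor drift at the cost of sensitivity near the center position.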

[0021] According to the present teachings, the system includes a rotary slip connector design configured to reliably pass the following signals through the system to ensure that cables, and their signals, are heard loud and clear and never bind, tangle, or twist: HDMI; USB; Power; and 3 Line Developer. The rotary slip connector design provides "infinite" rotation with no tangle. According to the present teachings, the system includes a small stool or chair.

[0022] The present invention is not to be limited in scope by the specific embodiments described herein. Indeed, various modifications of the invention in addition to those described herein will become apparent to those skilled in the art from the foregoing description. Such modifications are intended to fall within the scope of the appended claims.

[0023] According to the present teachings, the AR/VR training and mapping system allows users or soldiers to gain situational comfort by training in a virtual training environment, optionally followed by an augmented training environment. This increases the speed at which the users absorb memories about a terrain. Information from the VR and AR environments can be used in a mixed reality (MR) situation or real mission, where the information provides a more memorable experience for retention of the knowledge of the terrain. According to the present teachings, a system is provided having a landscape engine configured to take digital input and convert it into a three-dimensional virtual reality dataset. The landscape engine is functionally coupled to a physics engine (such as, by way of non-limiting example, the Unreal Engine) configured to apply physical rules to objects within the virtual reality dataset. A display engine is coupled to the physics engine to convert the dataset into first and second content streams. According to the present teachings, the system includes a first VR headset configured to receive the first content and a first AR headset configured to present a second dataset onto an AR display.

[0024] According to the present teachings the system includes training scenarios wherein the first dataset comprises a rendered topographic landscape.

[0025] According to the present teachings the system includes a second dataset having an augmented reality content that is less than the content of the first dataset.

[0026] According to another teaching, a method for training a soldier is presented. The method includes capturing a series of images for a terrain using a drone. The image data series of the terrain is converted into a captured set of images and into a wire frame of the topographic landscape. The system syncs the wire frame of the topographic landscape to a real-world location using a fixed coordinate system such as GPS. The system then optionally provides a set of polygons over the wire frame to form a virtual reality image dataset.

[0027] The system applies a physics engine to objects within the VR image dataset. The VR dataset is coupled to a display engine which provides a first VR image dataset stream to a VR display. The display engine also provides a second AR image dataset, having less content than the first VR image dataset, which is streamed to an AR display. In the training system according to the aforementioned system, the first VR image dataset includes a selectively engageable virtual sandbox, which gives an overhead view of the entire topography, and a VR data space topography.

[0028] In the training system according to the aforementioned system, the second AR image dataset is polygons for texture and content selectively disposed onto a real-world optically viewable surface through an AR display, and a fourth set of data overlays a wire frame image over real-world surfaces in augmented reality goggles.

[0029] According to a new teaching, the landscape engine can selectively accept data from GIS, 3-D heat map data from an FLIR image, data from a Lidar imaging system, and combinations thereof and augment portions of the VR landscape by applying layers of the new information.

[0030] According to a new teaching, the aforementioned system can include a dataset having image data for items such as vehicles or red team personnel that are placeable by the first person onto the sandbox, these items also being simultaneously positioned within the VR space, where they are affected by rules defined by the physics engine.

[0031] The system according to the aforementioned systems further has a difference engine which compares a first image with a second image. The difference engine highlights changes between the first and second images within at least one of the sandbox, VR, and MR datasets.

[0032] According to another teaching, the difference engine calculates changes in elevation of ground of greater than 5 mm, placement of vehicles, and changes in thermal energy emitted from a portion of the image.

[0033] According to another teaching, a physics engine can selectively apply varying light conditions to the display data.
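
The elevation comparison performed by a difference engine might look like the sketch below. It assumes, purely for illustration, that the two captures have already been resampled onto identical grids of elevations in meters; the function name and the grid representation are hypothetical.

```python
def elevation_changes(before, after, threshold_m=0.005):
    """Return (row, col, delta) for cells whose elevation changed by more
    than threshold_m (default 5 mm) between two equally sized height grids."""
    changed = []
    for r, (row_before, row_after) in enumerate(zip(before, after)):
        for c, (z_before, z_after) in enumerate(zip(row_before, row_after)):
            delta = z_after - z_before
            if abs(delta) > threshold_m:
                changed.append((r, c, delta))
    return changed
```

The returned cells could then be highlighted in the sandbox, VR, or MR view; how the highlighting is rendered is outside the scope of this sketch.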

[0034] According to another teaching, the physics engine can selectively apply varying weather conditions to the display data.

[0035] According to another teaching, the aforementioned systems can further include a distance calculator which can calculate distance between two locations in one of the virtual dataset and the AR world.
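
A distance calculator of this kind could be sketched in two parts: straight-line distance between two points in the virtual dataset, and great-circle distance between two real-world coordinates. The function names and the use of the haversine formula with a spherical Earth of mean radius 6,371 km are assumptions for illustration; the disclosure does not specify a method.

```python
import math

EARTH_RADIUS_M = 6371000.0  # assumed mean Earth radius in meters


def virtual_distance(p, q):
    """Straight-line distance between two (x, y, z) points in the VR dataset."""
    return math.dist(p, q)


def gps_distance_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two latitude/longitude pairs
    (decimal degrees), using the haversine formula."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))
```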

[0036] According to another teaching, the system can further include an injury probability system which calculates a probability of injury for a given path in VR, AR, or MR space, highlights the paths with the least probability of injury in VR, AR, or MR space, and changes the probabilities based on changes in the VR, AR, or MR space model.

[0037] According to another teaching, the aforementioned systems can utilize intelligent CNC cutter-path mathematics to define the path of a data collection drone to most efficiently calculate needed information for a variety of layers in a variety of spectrum. This will allow the maintenance of constant data buffering rates to minimize data feed rate losses and to maximize program processing speed.

[0038] "Smart imaging" is a feature that aims to produce an intelligent, optimized drone path. Its functionality can include options for examining data between Z layers--including data feed connections, slope control flight, and geometry identification. To achieve near net shape when imaging, it is important for the control software to understand what changes in surface topology occur between the layers of down-steps. Knowledge-of-terrain-remaining algorithms must look ahead to determine where extra, closer imaging-steps are necessary. Smart imaging is how a flight control system images this "between layers" material. By rough imaging in this manner, often the semi-finish pass may be eliminated, saving on drone flight time and computation resources. Smart imaging may also include helical ramping functionality. This is used for pocket imaging. The helical ramping function determines helical movement based on entry angle and geometry. This function is most important when the imager reaches an obscured area of terrain. It can make the drone path shorter and safer by eliminating unneeded duplication, as a result of tailoring the tool path to the geometry of the obscured features.
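
One possible way to find a path with the least probability of injury is sketched below. It assumes a hypothetical grid in which each cell carries an independent injury probability strictly less than 1, and it uses Dijkstra's algorithm on costs of -log(1 - p), so that minimizing the summed cost maximizes the probability of crossing unharmed. The grid model, the independence assumption, and the choice of algorithm are all illustrative and are not stated in the disclosure.

```python
import heapq
import math


def safest_path(risk, start, goal):
    """Return (path, injury_probability) for the 4-connected grid path from
    start to goal that minimizes the overall probability of injury.

    risk[r][c] is an assumed per-cell injury probability in [0, 1).
    """
    rows, cols = len(risk), len(risk[0])

    def cost(cell):
        return -math.log(1.0 - risk[cell[0]][cell[1]])

    best = {start: cost(start)}
    prev = {}
    heap = [(best[start], start)]
    while heap:
        d, cell = heapq.heappop(heap)
        if cell == goal:
            break
        if d > best[cell]:
            continue  # stale queue entry
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nxt[0] < rows and 0 <= nxt[1] < cols:
                nd = d + cost(nxt)
                if nd < best.get(nxt, math.inf):
                    best[nxt] = nd
                    prev[nxt] = cell
                    heapq.heappush(heap, (nd, nxt))
    # Walk back from the goal to recover the path.
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    path.reverse()
    return path, 1.0 - math.exp(-best[goal])
```

When the space model changes, updating the affected entries of `risk` and rerunning the search yields revised paths and probabilities.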

[0039] According to the present teachings, the system can map available electromagnetic spectrum including EM radio signal dead zones and the location of transmitters within the VR, AR, MR dataset.

[0040] According to the present teachings, a method and system for sharing a three-dimensional virtual reality space among a plurality of users is provided. The method includes the step of acquiring, at first and second times, three-dimensional graphics data associated with a geographic region to be used by the plurality of users in a shared manner, using a mobile platform such as an autonomous vehicle. The method includes noting objects or surfaces within the geographic region whose state has changed between the first and second times during a predetermined time period. The method includes the step of functionally coupling the three-dimensional graphics data to a physics engine configured to apply physical rules to objects within the virtual reality dataset. Additionally, the method includes functionally coupling a display engine to the physics engine to convert the dataset into first and second content streams. The method includes streaming a first content set from the three-dimensional graphics data to a VR headset and a second content set from the three-dimensional graphics data to an AR headset.

[0041] The method and system according to a previous or following paragraphs wherein streaming a first content set includes streaming training scenarios and wherein the first dataset comprises a rendered topographic landscape.

[0042] The method or system according to a previous or following paragraphs wherein the second dataset has an augmented reality content that is less than the content of the first dataset.

[0043] The method or system according to a previous or following paragraphs including capturing a series of images for a terrain using a drone and converting into a captured set of images and into a wire frame of the topographic landscape.

[0044] The method or system according to a previous or following paragraphs including syncing the topographic landscape to a real-world location using a fixed coordinate system.

[0045] The method or system according to a previous or following paragraphs including providing a set of polygons over the wire frame to form a virtual reality image dataset.

[0046] The method or system according to a previous or following paragraphs including providing a second AR image dataset having less content than the first VR image dataset which is streamed to an AR display.

[0047] The method or system according to a previous or following paragraphs wherein providing a first VR image dataset includes a selectively engageable virtual sandbox, which gives an overhead view of the entire topography, and a VR data space topography.

[0048] According to another embodiment, a method or system for training a soldier is provided. The method or system includes the steps of capturing a series of images for a terrain using a drone or flying mobile platform. The method or system includes the steps of converting the image data series of the terrain into a captured set of images and into a wire frame of the topographic landscape. The method or system includes syncing the wire frame of the topographic landscape to a real-world location using a fixed coordinate system. The method or system includes applying a physics engine to objects within the VR image dataset. The method or system includes streaming a first image dataset stream to a VR display, and a second AR image dataset, having less content than the first VR image dataset, which is streamed to an AR display.

[0049] The method or system according to a previous or following paragraphs includes sharing a three-dimensional virtual reality space among a plurality of users. The method or system can include acquiring a plurality of images of a geographic region using a mobile platform.

[0050] The method according to one or more of the previous or following paragraphs includes using photogrammetry to create a first set of three-dimensional graphics data associated with a geographic region to be used by the plurality of users in a shared manner. The three-dimensional graphics data is functionally coupled to a physics engine configured to apply physical rules to objects within the virtual reality dataset. A display engine is coupled to the physics engine to convert the dataset into first and second content streams. One of the first content stream and the second content stream from the three-dimensional graphics data is selectively streamed to a VR headset.

[0051] The method or system according to one or more of the previous or following paragraphs wherein streaming a first content stream includes streaming the first dataset which comprises a rendered topographic landscape.

[0052] The method or system according to one or more of the previous or following paragraphs wherein streaming a second content stream includes streaming the second dataset, which has an augmented reality content that is less than the content of the first dataset.

[0053] The method or system according to one or more of the previous or following paragraphs includes capturing a series of images for a terrain using a drone and converting into a captured set of images and into a wire frame of the topographic landscape.

[0054] The method or system according to one or more of the previous or following paragraphs includes syncing the topographic landscape to a real-world location using a fixed coordinate system.

[0055] The method or system according to one or more of the previous or following paragraphs includes providing a set of polygons over the wire frame to form a virtual reality image dataset. The method or system according to one or more of the previous or following paragraphs includes providing a second AR image dataset having less content than the first VR image dataset which is streamed to an AR display.

[0056] The method or system according to one or more of the previous or following paragraphs wherein providing a first VR image dataset includes a selectively engageable virtual sandbox, which gives an overhead view of the entire topography, and a VR data space topography.

[0057] According to a further teaching, a method for training a soldier is disclosed. The method includes the steps of capturing a series of images for a terrain using a drone. The method includes converting the image data series of the terrain into a captured set of images and into a wire frame of the topographic landscape. The method includes syncing the wire frame of the topographic landscape to a real-world location using a fixed coordinate system and applying a physics engine to objects within the VR image dataset. The method or system further includes streaming a first image dataset stream to a VR display, and streaming a second image dataset to the VR display. All references cited herein, including all patents, published patent applications, and published scientific articles, are incorporated by reference in their entireties for all purposes. Therefore, an embodiment of the present invention is an input device comprising: a user-engaging portion; a plurality of positional sensors, the plurality of positional sensors further comprising at least one pitch sensor, at least one yaw sensor, and at least one roll sensor; and a coupling mechanism capable of coupling the input device to a computing device such that the sensing mechanisms can send data to the computing device. In use, a user will sit on or straddle the user-engaging portion of the device and lean forwards/backwards, lean side to side, and/or rotate the device. These motions by the user will be detected by the sensors and converted to control signal(s) which are transmitted to a computing device and used to interact with the computing device and/or an application (program) running on the computing device.

BRIEF DESCRIPTION OF THE DRAWINGS

[0058] FIG. 1 represents an electronics module having processors and sensors used for the input devices;

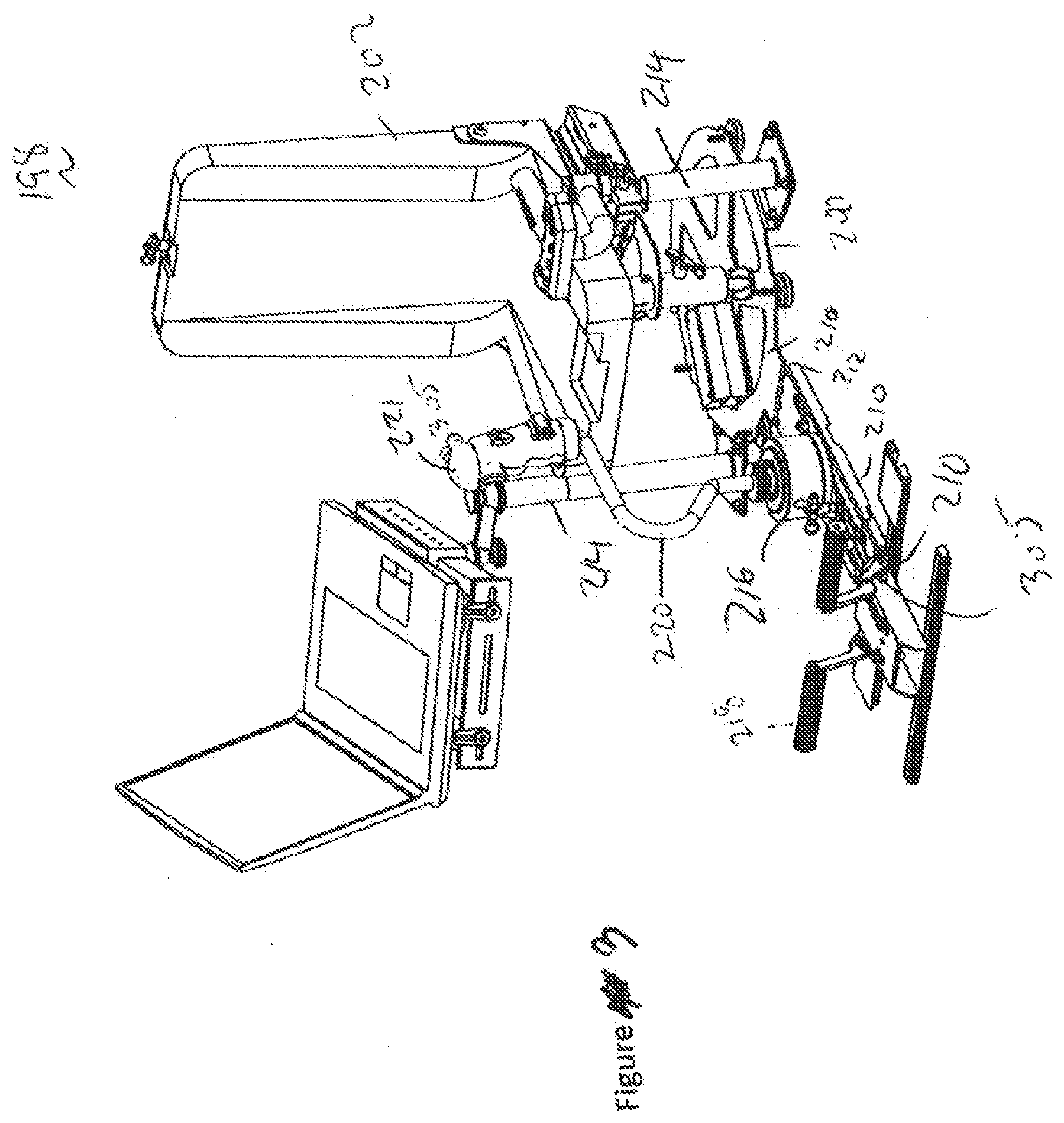

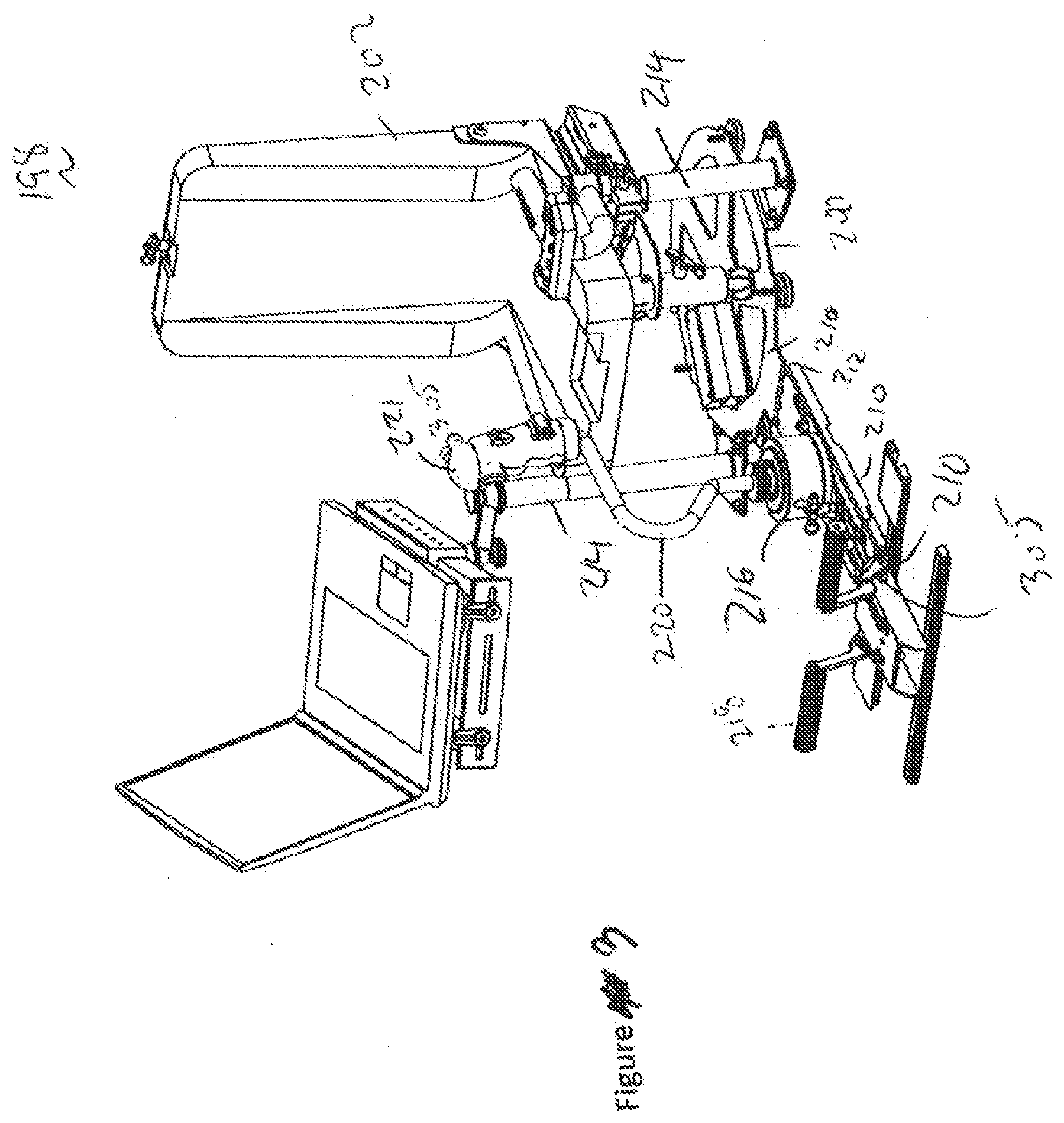

[0059] FIGS. 2-6 represent a virtual trainer and control device according to the present teachings;

[0060] FIGS. 7a and 7b represent the system shown in FIGS. 1-6 in a collapsed foldable configuration;

[0061] FIGS. 8 and 9 represent the system;

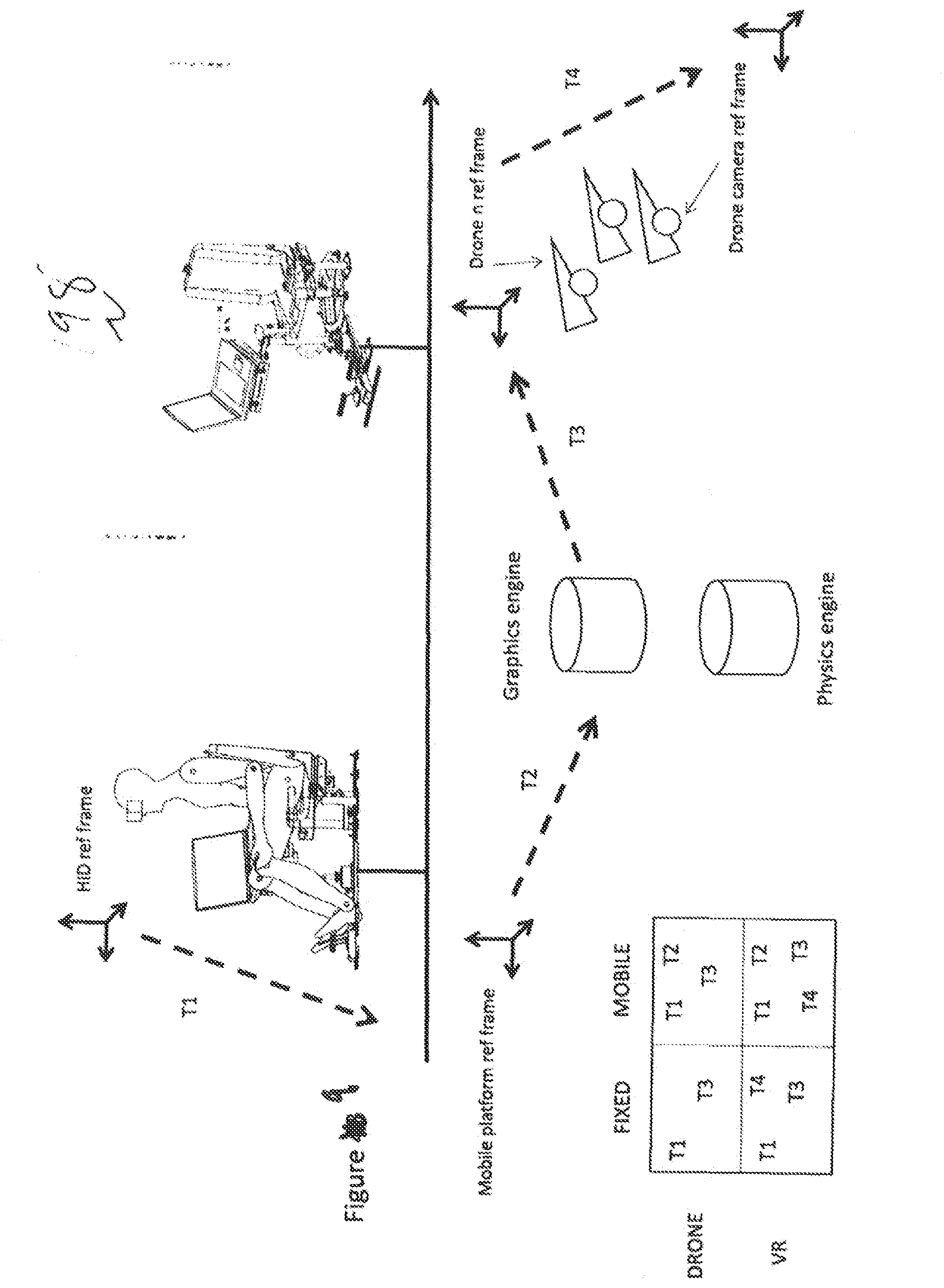

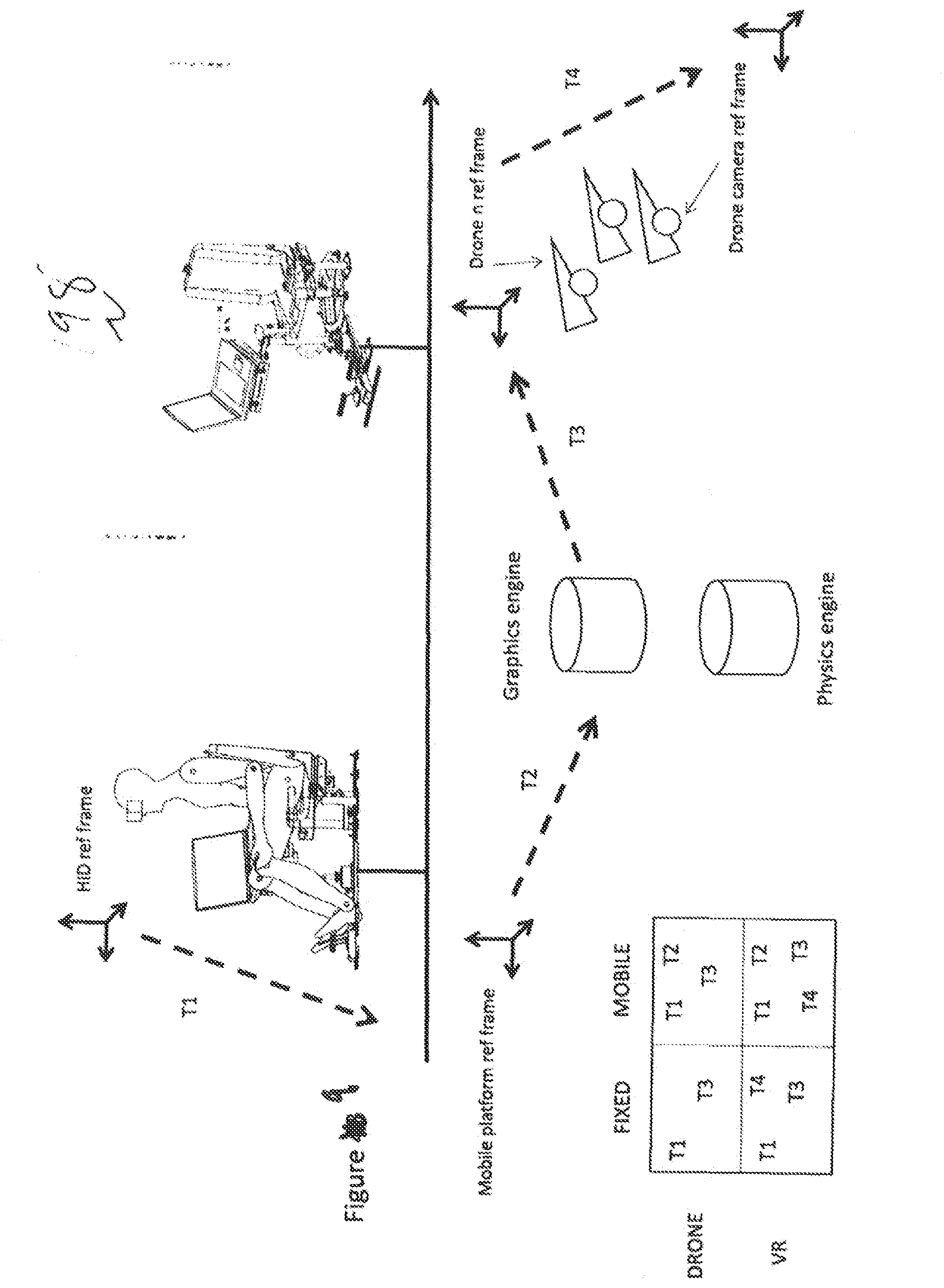

[0062] FIG. 10 represents a data structure for transferring VR maps.

DETAILED DESCRIPTION OF THE INVENTION

[0063] In the following, reference is made to embodiments of the disclosure. However, the disclosure is not limited to specific described embodiments. Instead, any combination of the following features and elements, whether related to different embodiments or not, is contemplated to implement and practice the disclosure. Furthermore, although embodiments of the disclosure may achieve advantages over other possible solutions and/or over the prior art, whether an advantage is achieved by a given embodiment is not limiting of the disclosure. Thus, the following aspects, features, embodiments and advantages are merely illustrative and are not considered elements or limitations of the appended claims except where explicitly recited in a claim(s). Likewise, reference to "the invention" shall not be construed as a generalization of any inventive subject matter disclosed herein and shall not be an element or limitation of the appended claims except where explicitly recited in a claim(s).

[0064] The system applies a physics engine to object within the VR image dataset. The VR data set is coupled to a display engine which provides a first VR image dataset stream to a VR display. The display engine also provides a second AR image dataset less than the first VR image dataset which is streamed to an AR display. The training system according to the aforementioned system where the first VR image dataset includes a selecting engageable virtual sandbox which gives an overhead view of the entire topography, and in VR data space topography. The second AR image dataset is polygons for texture and content selectively disposed onto a real world optically viewable surface through an AR display and fourth set of data which overlays a wire frame image over real word surfacing in an augmented reality goggles.

[0065] Input data can come from image or sensor data from man-based sensors, machine-based sensors, or combined man/machine sensors. The system can optimize the path of a data collection drone using intelligent CNC cutter-path mathematics, so that the drone most efficiently gathers the information needed for a variety of layers in a variety of spectra. This allows constant data buffering rates to be maintained, minimizing data feed rate losses and maximizing program processing speed.

[0066] The flight path for the drone can be set based upon the area being photographed. In this regard, when there are large differences between the heights of buildings, a spiral flight path is often best. Additionally, using image object differentiation and shadow lengths, the flight path can be prioritized to take more images, or images with a greater image angle, where there are features such as roads between buildings and/or buildings with large differences in height.
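As a minimal sketch of the spiral flight path mentioned above, the following generates waypoints that circle a center point while the radius and altitude change linearly. The function name `spiral_waypoints`, its parameters, and the linear interpolation are illustrative assumptions and are not taken from the disclosure.

```python
import math

def spiral_waypoints(center_x, center_y, start_radius, end_radius,
                     start_alt, end_alt, turns, points_per_turn=12):
    """Return (x, y, altitude) waypoints on a spiral around a center point.

    Hypothetical helper: radius and altitude are interpolated linearly
    from their start values to their end values over the whole path.
    """
    total = turns * points_per_turn
    waypoints = []
    for i in range(total + 1):
        t = i / total  # 0.0 at the first waypoint, 1.0 at the last
        angle = 2 * math.pi * turns * t
        radius = start_radius + (end_radius - start_radius) * t
        altitude = start_alt + (end_alt - start_alt) * t
        waypoints.append((center_x + radius * math.cos(angle),
                          center_y + radius * math.sin(angle),
                          altitude))
    return waypoints

# Three turns, tightening from 100 m to 20 m while descending from 120 m to 40 m.
path = spiral_waypoints(0.0, 0.0, 100.0, 20.0, 120.0, 40.0, turns=3)
```

A real planner would also account for camera overlap, obstacles, and airspace limits, none of which are modeled here.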

[0067] A "Smart Imaging" control for the drone aims to produce an intelligent, optimized drone path. Its functionality can include options for examining data between Z layers, including data feed connections, slope control flight, and geometry identification. To achieve near net shape when imaging, it is important for the control software to understand what changes in surface topology occur between the layers of down-steps. Knowledge-of-terrain-remaining algorithms must look ahead to determine where extra, closer imaging steps are necessary. Smart imaging is how a flight control system images this "between layers" material. By rough imaging in this manner, the semi-finish pass may often be eliminated, saving drone flight time and computation resources. Smart imaging may also include helical ramping functionality, which is used for pocket imaging.

[0068] The helical ramping function determines helical movement based on entry angle and geometry. This function is most important when the imager reaches an obscured area of terrain. It can make the drone path shorter and safer by eliminating unneeded duplication, as a result of tailoring the path to the geometry of the obscured features. The sensors can calculate color, distance, heat emission, reflectivity, emissivity, and density. The data can be collected from air, land, sea, and space (satellite) machines, manned or unmanned. Data layers are mathematically processed into proper formats for system integration and user integration. Layers are created to allow users to observe the machine data in the proper spectrum, allowing for enhanced decision-making and proper intuition prompts. Points are tessellated and properly approximated to create a mapping system that represents the area of activity. Optionally, when an earth-based frame of reference is not known, for instance within a building, simultaneous localization and mapping (SLAM) techniques can be used to create VR datasets for unknown areas in cave, mine, or indoor rescue scenarios.

[0069] With respect to the electromagnetic atmosphere, the VR and MR system can be used to display a scan of the cellular flow of cell phones for enhanced civil planning, for example to track how many cars go which way, as well as the location of various towers. Additionally, oil-ground scans provide the locations of minerals or oil, georeferenced in the ground, to augmented reality. When drilling for water, a user wearing AR goggles can reference a local aquifer map and see how close the pipe is to the aquifer.

[0070] Software and swarm identify differences in the 3D landscape reality to provide prompts on areas for further inspection. An imaging engine or processor has the ability to combine SLAM with photogrammetry and older 3D models. In this regard, once a sparse mesh is calculated in photogrammetry, a determination is made as to whether enough tie points are available. If there are not enough tie points available, the system can send out a second drone to take more images.

[0071] When there is incomplete or old data in high priority areas, the System AI can display prioritized drone flight patterns to focus surveillance detection on differences, based on outputs from a difference engine or algorithm. Optionally, heightened scrutiny will be available for differences in the 3D landscape and for indicators and anomalies of human activity. The head of an actor or drone can include a sensor package, either virtual or real, which provides data to the system physics engine. The physics engine indicates where hits are made with weapons, calculates a probability of lethality, and offers an indication as to whether to keep shooting. Facial and/or voice recognition indicates whether a bad actor is a high value target (HVT) or a pawn. Optionally, the intuition prompts show target-to-target head or leg.

[0072] Recordings of the movement inside the VR or AR simulator can be monitored by instructors, or our public version can be checked for high value target OTMs. This allows a team to go back in time, revisit old missions, and replay scenarios from raw data gathered before the creation of the model.

[0073] The physics engine initiates and references the area of activity. The area of activity is presented in several scales, one of which is miniature, for overview and observation, and another of which is full scale, for full immersion. The physics engine allows the first user or System AI to properly set time, sun/star position, and weather effects.

[0074] Recordings of users inside the VR simulator can be monitored by instructors, or our public version can be checked for HVT OTMs. This allows a team to go back in time, revisit old missions, and replay scenarios from raw data gathered before the creation of a VR sand table. Geo-referenced 3D data supports ballistic calculations of the probability of mortal impact for both the good and bad sides. Percentages of hitting and of not being hit are shown in augmented reality to provide confidence on the battlefield.

[0075] Live wind, altitude, and angle-of-shot calculations are done in augmented reality. For added confidence for shooters, indicia of wind speed, such as flags, can be identified and highlighted in VR, MR, and AR. Better wind data can be collected at multiple points in a valley by letting drones drift in the wind. Better-fit ballistic calculations can be suggested to a user using intuition prompts.

[0076] The display engine will track all users for easy teleportation within the VR space. The sandbox view represents a minimap that shows user positions and includes a point-to-point rangefinder, user identification, and a compass that can be a sky compass in VR and AR situations. The first person or commander can walk around the sandbox in the virtual space. The system contains a database of weather options that will affect, for example, travel speed and visibility. The system can include a back-move button to teleport back to the previous location.

[0077] Drawing within the system can be done with decals. Each time a ray trace hits a mesh, it adds a decal to the mesh surface. Performance can degrade quickly, so the number of decals that can be drawn is optionally limited, although more will need to be done here. Ideally, the system ray traces to a static mesh, gathers the UV coordinate of the hit, and then adds pixels to a "paint" texture at those coordinates. This means the only cost to system speed and refresh rate is the texture memory and a single draw call, instead of potentially thousands of draw calls, one per decal. Multiple users are displayed on the sandbox view of the VR and in the VR space. To better simulate real world environments, when a user moves through the VR space, the physics engine will selectively increase resistance (decrease user speed) when climbing hills and the like.
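The "paint" texture approach can be illustrated with a short sketch. It assumes the ray trace has already returned a UV coordinate in the range 0 to 1. The texture is modeled as a plain list of rows, and `make_texture` and `paint_at_uv` are hypothetical names rather than calls from any particular game engine.

```python
def make_texture(width, height, fill=(0, 0, 0, 0)):
    """Create a texture as rows of RGBA tuples (transparent by default)."""
    return [[fill for _ in range(width)] for _ in range(height)]

def paint_at_uv(texture, u, v, color, brush_radius=1):
    """Write a round brush stamp into the texture at a UV hit coordinate.

    Returns the number of pixels written. Only texture memory changes,
    so the mesh can still be drawn with a single draw call.
    """
    height = len(texture)
    width = len(texture[0])
    # Convert UV (0..1) to pixel indices, clamped to the texture bounds.
    px = min(width - 1, max(0, int(u * width)))
    py = min(height - 1, max(0, int(v * height)))
    painted = 0
    for y in range(max(0, py - brush_radius), min(height, py + brush_radius + 1)):
        for x in range(max(0, px - brush_radius), min(width, px + brush_radius + 1)):
            if (x - px) ** 2 + (y - py) ** 2 <= brush_radius ** 2:
                texture[y][x] = color
                painted += 1
    return painted

paint = make_texture(8, 8)
count = paint_at_uv(paint, 0.5, 0.5, (255, 0, 0, 255), brush_radius=1)
```

In an engine, the same idea would typically write into a render target that the mesh material samples.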

[0078] With a full understanding of the area of activity and environment variables, a proper assessment can be made for the collection of assets, or the "ingredients" in the recipe for a successful batch of missions. The revision of these recipes will occur by way of intuition prompts that can arise from various features, actions, and layers, including walkthrough, collaboration, visualization, and preparation. Assets can be queued in the staging area. Assets can include, but are not limited to: boats; tanks; aircraft; ATVs; buggies; motorcycles; beasts of burden; and wildlife. After assets and method are decided, areas can be populated with expected good and bad actors and scenarios can be run. Actors can be artificial intelligence driven or networked participants, who can be in overview or immersed modes for a full-scale joint simulation.

[0079] For a training session in a mixed reality event, such as a shoot house, the AR goggles can have an input such as a QR code which allows the background of the walls to be changed, for example, to shipping containers, a mud hut, or a Williamston colonial with a picket fence. As described, data is fed to the AR goggles using the visualization engine. Biometric and reaction data is captured during the event for later analysis.

[0080] Playback of the scenario is possible from a variety of camera angles, where the operation can be assessed and streamlined to decrease cost in a variety of aspects: human, machine, animal, and monetary. During a training phase in VR or AR, the virtual simulation provides the ability to rewind and evaluate many scenarios, many times. During the execution of the mission, the first user or commander can view the goings-on of the mission in VR space. This VR space can be augmented in near real time using image data, which can come from satellite and drone imaging. This data can be used to provide AR prompts to the users during the mission. For example, data can be live streamed to the war fighter to help avoid collisions on the road while driving, or to provide better troop location and formation with live enemy trackers relative to live 3D mapping.

[0081] Importantly, intuition prompts in the form of information pushed into the AR goggles during a mission can visually alert a soldier to changes in the environment of which the soldier may not be aware. This can be, for example, the movement of a vehicle into an area, the movement of soil which may indicate the placement of an IED, or the visual acquisition of a potential sniper on a roof. Colored decals can be placed into the AR space to visually alert the soldier to the new information.

[0082] The AR headset, when used in the field, can have a provision which allows the soldier to increase or decrease the amount of information within the goggles or AR screen. The sandbox and augmented reality displays can include goggles, tablets, or cell phones, and can present mission planning of the battle space as well as military manuals. The system can utilize body part sensors in virtual reality and a tank turret. In mixed reality situations, the system can utilize a weapon-mounted sensor for weapon direction.

[0083] In mixed reality situations, a trainee can be evaluated before, during, and after conditioning using virtual reality, augmented reality, and/or mixed reality, so this can serve as a testing scenario to filter participants with respect to preferred traits for preferred objectives or outcomes. This can include measuring biological inputs such as heart rate, and reaction times obtained by comparing changes in muscle tension to the timing of visual cues.

[0084] The system is backpackable at the scout level and provides hardware connection to other users around the globe. Additionally, maps can be updated in the field by inputting optical and sensor data from hand-thrown drones. Enterprise and heavy level hardware and software connections can be provided to allow for cloud-based calculation of the graphics. Geo-referenced intelligence images and cross-platform capabilities can be used to provide multiuser support.

[0085] With respect to the electromagnetic atmosphere, the VR and MR system can be used to display a scan of the cellular flow of cell phones for enhanced civil planning, for example to track how many cars go which way, as well as the location of various towers.

[0086] Images of oil-ground scans provide the locations of minerals or oil, georeferenced in the ground, to augmented reality. When drilling for water, a user wearing AR goggles can reference a local aquifer map and see how close the pipe is to the aquifer.

[0087] Differences in the 3D landscape reality are used to provide prompts on areas for further inspection. As described, these differences are identified to users through intuition prompts in the form of VR, MR, and AR prompts. The prompts can take the form of displayed visual cues, text, and haptic output. For example, the system can tell a user "I see footprints there," "I see fresh car tracks," "the ground in that region appears to have been displaced," or "there is a hole here that wasn't there before but was filled in."

[0088] Optionally, these areas can be highlighted visually with a color such as a warning red. When there is incomplete or old data in high priority areas, the System AI can display prioritized drone flight patterns to focus surveillance detection on differences in the 3D landscape and on indicators and anomalies of human activity. When data is old or incomplete, these areas can be highlighted as suspect within the VR/AR environment. The System AI can use machine training and machine learning based on anomalies detected over time.
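One simple way to picture the difference detection described above is to compare two height grids of the same area captured at different times and flag the cells that changed by more than a threshold. This is only a sketch under that assumption. The grid representation, the `changed_cells` name, and the threshold are illustrative and do not describe the disclosed difference engine.

```python
def changed_cells(old_heights, new_heights, threshold):
    """Return (row, col, delta) for grid cells whose height changed.

    Cells with missing data (None) in either grid are skipped here.
    A fuller system might instead mark them as old or incomplete.
    """
    flagged = []
    for r, (old_row, new_row) in enumerate(zip(old_heights, new_heights)):
        for c, (old, new) in enumerate(zip(old_row, new_row)):
            if old is None or new is None:
                continue
            delta = new - old
            if abs(delta) > threshold:
                flagged.append((r, c, delta))
    return flagged

earlier = [[1.0, 1.0], [2.0, 2.0]]
latest = [[1.0, 1.5], [2.0, 1.0]]
# Cells to highlight, for example in warning red, in the VR/AR view.
suspect = changed_cells(earlier, latest, threshold=0.25)
```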

[0089] The prompts can direct an individual wearing augmented reality goggles which way to turn the head to provide more information on a newly found difference. During the mission in AR and MR, intuition prompts are not limited to humans. Intuition prompts can also be provided for animals, such as dogs. These intuition prompts can be made via vibration, tones (audible and inaudible to humans), and radio communication. Optionally, sensor platforms can be integrated onto the animal using harnesses or, for example, dog helmet cameras.

[0090] Different types of geo-referenced data can be combined in the fashion that is best understandable to humans in AR and VR. For example, it is better to show white-hot thermal images of people on top of 3D models textured with colored visible camera imagery, or to show movements noticed by LIDAR outlined in red. In another example, if there is a VR dataset that corresponds to a physical topography, each having a desk, and a first user places an object on the desk in the VR space, a second user viewing the desk through an AR display will see the object on the real desk. Similarly, the system's engines can provide data directly to the AR goggles in the real world to augment a soldier's situational awareness and provide intuition prompts.

[0091] The AR and VR screens can display where hits are made with weapons, and the system can calculate a probability of lethality and offer an indication as to whether to keep shooting. Facial and/or voice recognition indicates whether a bad actor is a high value target or a pawn. Optionally, the intuition prompts show target-to-target head or leg. Additionally, AR medics can be positioned with the AR goggles to interact with an injured soldier.

[0092] The display engine will track all users for easy teleportation within the VR space. The sandbox view represents a minimap that shows user positions and includes a point-to-point rangefinder, user identification, and a compass that can be a sky compass in VR and AR situations. The system contains a database of weather options that will affect, for example, travel speed and visibility.

[0093] Disclosed is a method of sharing a three-dimensional virtual reality space among a plurality of users. The method contains the steps of first acquiring a plurality of images of a geographic region using a mobile platform. Second, photogrammetry is used to create a first set of three-dimensional graphics data associated with the geographic region, to be used by the plurality of users in a shared manner. Next, the three-dimensional graphics data is functionally coupled to a physics engine configured to apply physical rules to objects within the virtual reality dataset. A display engine is coupled to the physics engine to convert the dataset into first and second content streams. Either one of the first and second content streams from the three-dimensional graphics data is then presented to one of a table, a computer, or a VR headset.

[0094] The first content stream can include a streamed rendering of the topographic landscape. Alternatively, streaming a second content stream can include streaming a second dataset that has augmented reality content less than the content of the first dataset.

[0095] As previously discussed, a series of images of a terrain is captured using a drone, and the captured set of images is converted into a wire frame of the topographic landscape. The topographic landscape can be synced to a real-world location using a fixed coordinate system. A set of polygons is provided over the wire frame to form a virtual reality image dataset.

[0096] The first VR image dataset can include a selectively engageable virtual sand table which gives an overhead or "god's view" of the entire topography in the VR data space. The second dataset can be engaged by selectively pointing to a spot on the sand table with a VR pointer to "move" the user into the second VR space represented by the 3D area in the map. This allows the user to traverse inside the second VR space at room scale.
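The step of pointing at the sand table and being moved into the full-scale space amounts to a coordinate mapping. The sketch below assumes a square table that shows a square patch of terrain with a uniform scale. The function `table_to_world` and its parameters are hypothetical and only illustrate the idea.

```python
def table_to_world(table_x, table_y, table_size, world_origin, world_size):
    """Map a point picked on a square sand table to full-scale coordinates.

    table_x, table_y: picked point, measured from the table's corner.
    table_size: edge length of the table in the overview space.
    world_origin: (x, y) of the terrain corner matching the table's corner.
    world_size: edge length of the terrain patch shown on the table.
    """
    scale = world_size / table_size
    return (world_origin[0] + table_x * scale,
            world_origin[1] + table_y * scale)

# A 2 m table showing a 4 km patch: a pick at (0.5, 1.0) on the table
# becomes the teleport destination in the full-scale space.
destination = table_to_world(0.5, 1.0, 2.0, (1000.0, 2000.0), 4000.0)
```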

[0097] A system for training a person according to the present teachings includes a camera or cameras used to capture a series of images of a terrain. These cameras are mounted on a mobile platform, which can be a drone or a terrestrial vehicle. The images are transferred to a computer using a cable or a memory card. The computer preferably has a GPU to efficiently handle the data. The images, stored in a file structure, are converted using photogrammetry into a 3D model of the terrain. This 3D model, formed from the series of image data of the terrain, is converted into a wire frame of the topographic landscape. The wire frame of the topographic landscape is synced to a real-world location using a fixed coordinate system within a VR system. A physics engine is used to apply structure to objects and topography found within the VR image dataset.

[0098] A first image dataset is streamed to a VR display which represents a tent or a hangar having a "table sized" map of the terrain disposed several feet off the floor of the virtual space. An overhead 2D map of the area is formed and positioned on the wall of the hangar or room. Additionally, streaming content from, for example, the internet or a software defined radio can also be placed in the first VR space.

[0099] Additionally, a pointer in VR can be used, for instance, to accurately determine things such as shot distance and angles between two points. These distances can be correlated with known distances in the real world for image correction. A user or users can surround the virtual sand table representation of the terrain and can use virtual tools such as paint brushes to paint infiltration and exfiltration routes, or to place vehicles such as helicopters or Humvees, or rally points. Images from drones can be directly superimposed onto the mesh as "image" feeds, which gives users in VR, either at room scale or in the "hangar," a view of what is happening "live." This is of course subject to the normal latency in the system.
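As a sketch of how a shot distance and angles between two picked points might be computed, the following assumes each point is an (x, y, z) tuple in meters with x pointing east, y pointing north, and z pointing up. That axis convention and the name `range_and_angles` are assumptions made for illustration.

```python
import math

def range_and_angles(shooter, target):
    """Return (distance, elevation_deg, bearing_deg) between two 3D points.

    Elevation is measured up from the horizontal plane. Bearing is measured
    clockwise from north (+y), following the assumed axis convention.
    """
    dx = target[0] - shooter[0]
    dy = target[1] - shooter[1]
    dz = target[2] - shooter[2]
    horizontal = math.hypot(dx, dy)
    distance = math.sqrt(dx * dx + dy * dy + dz * dz)
    elevation = math.degrees(math.atan2(dz, horizontal))
    bearing = math.degrees(math.atan2(dx, dy)) % 360.0
    return distance, elevation, bearing

distance, elevation, bearing = range_and_angles((0.0, 0.0, 0.0), (300.0, 400.0, 0.0))
```

Comparing such a computed distance with a known real-world distance is one way the correlation for image correction could be checked.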

[0100] The system can be used to convert video streams from drones into a 3D or 2D output. The video streams are formed of a series of images, which can be compressed, and an associated set of metadata. The metadata can hold, for example, information regarding the mobile platform, such as the drone type and the orientation, altitude, and speed of the drone. Additionally, the metadata can contain information related to the camera type, focusing measurements, and the angles and position of the camera with respect to the drone or ground. This metadata and thumbnail images for each frame are copied into separate data tables which are relationally bound using a film frame reference number. It is often the case that the metadata does not include information needed to assist in photogrammetry.
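A minimal sketch of two data tables bound by a frame reference number is shown below using an in-memory SQLite database. The table names, column names, and sample values are assumptions chosen for illustration, since the disclosure does not specify a schema.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE frame_metadata (
    frame_no         INTEGER PRIMARY KEY,  -- film frame reference number
    drone_type       TEXT,
    altitude_m       REAL,
    speed_mps        REAL,
    camera_pitch_deg REAL
);
CREATE TABLE frame_thumbnails (
    frame_no  INTEGER PRIMARY KEY REFERENCES frame_metadata(frame_no),
    thumbnail BLOB
);
""")

# Hypothetical sample frame: metadata and thumbnail stored separately.
conn.execute("INSERT INTO frame_metadata VALUES (?, ?, ?, ?, ?)",
             (1, "quadcopter", 120.0, 8.5, -45.0))
conn.execute("INSERT INTO frame_thumbnails VALUES (?, ?)", (1, b"\x89PNG"))

# The shared frame number joins the two tables back together.
row = conn.execute("""
    SELECT m.frame_no, m.altitude_m, t.thumbnail
    FROM frame_metadata AS m
    JOIN frame_thumbnails AS t ON t.frame_no = m.frame_no
""").fetchone()
```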

[0101] Furthermore, the metadata may not be in a format (such as EXIF) which is used by the photogrammetry transforming software. This metadata for each frame can be transformed and saved into a second data table which is relationally bound using a film frame reference number. This transformed table can be filled with data which is calculated from data stored in the first data table. For example, the focal length can be transformed using the following formula:

$$\text{Angular Field of View (degrees)} = 2 \tan^{-1}\!\left(\frac{h}{2f}\right),$$

where h is the height of the imaging sensor.
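The relation can be checked numerically, and inverted to recover a focal length when the metadata records only a field of view. The sketch below assumes h and f share the same unit (for example millimeters). The function names are illustrative.

```python
import math

def angular_field_of_view_deg(sensor_height, focal_length):
    """Angular field of view in degrees: 2 * atan(h / (2 f))."""
    return math.degrees(2 * math.atan(sensor_height / (2 * focal_length)))

def focal_length_from_fov(sensor_height, fov_deg):
    """Invert the relation: f = h / (2 * tan(AFOV / 2))."""
    return sensor_height / (2 * math.tan(math.radians(fov_deg) / 2))

# A 24 mm tall sensor behind a 12 mm lens sees a 90 degree vertical field.
fov = angular_field_of_view_deg(24.0, 12.0)
f = focal_length_from_fov(24.0, fov)
```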

[0102] In this formula, f is the focal length, which is usable by the photogrammetry processing. This data is used in the photogrammetry portion of the system to transform the images into a mesh as well as 2D and 3D images. Once the images are separated, the metadata is used to segregate the data into separate sets. These discrete sets will be run separately through the photogrammetry engine. In this regard, the images and metadata in the first and second data tables are separated into groups. The segregation occurs based on data stored in the metadata. The system will increment through the metadata looking for changes in data, such as a change in focus or a change of angle of the camera with respect to the drone or the earth. Once a "new set" has been started, the system will increment through the images until it comes across a new set of images, based on the aforementioned changes in the camera's relation to the drone body or the ground.

[0103] Each of these segmented sets of images can be separately evaluated. If a single segment has too many files for effective transformation into a 3D and 2D map, a subset of these images can be used to transform the data. In this regard, every 5th or 12th image can be used. Additionally, in situations where a pilot is changing the focus on an object or changing the field of view, the photogrammetry portion of the system can treat this as a separate and independent camera. Multiple subsets of photos can be used to form the mesh and the 3D image.
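The segmentation and subsampling described in the two preceding paragraphs can be sketched as follows. Frames are modeled as dictionaries. The keys `focus` and `camera_angle`, the function names, and the `max_frames` cutoff are assumptions for illustration only.

```python
def segment_frames(frames, keys=("focus", "camera_angle")):
    """Split an ordered frame list wherever any watched metadata key changes."""
    segments = []
    previous = None
    for frame in frames:
        signature = tuple(frame[k] for k in keys)
        if signature != previous:
            segments.append([])  # a change in the camera setup starts a new set
            previous = signature
        segments[-1].append(frame)
    return segments

def subsample(segment, step, max_frames):
    """Keep every step-th frame, but only when the segment is too large."""
    return segment[::step] if len(segment) > max_frames else list(segment)

frames = [
    {"frame_no": 1, "focus": 1.0, "camera_angle": 0},
    {"frame_no": 2, "focus": 1.0, "camera_angle": 0},
    {"frame_no": 3, "focus": 1.0, "camera_angle": 0},
    {"frame_no": 4, "focus": 1.0, "camera_angle": 30},
    {"frame_no": 5, "focus": 1.0, "camera_angle": 30},
]
segments = segment_frames(frames)
# Each resulting set would be passed to the photogrammetry engine separately.
reduced = [subsample(s, step=5, max_frames=1000) for s in segments]
```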

[0104] Also associated with each frame can be additional metadata, such as indicators from AI of what might be found in a particular frame. The AI can use machine vision techniques to label the things within a particular frame, such as a plane, a tank, or a rocket launcher.

[0105] To allow for the searching of images within the database, the system allows for the formation of a mathematical model which represents an image being investigated. The search image of interest is converted into a digital format for processing by a computer. This image is input into the computer as an image file or can be scanned using known techniques. Optionally, key words are associated with the image file. These key words can be associated with the goods or services related to a product.

[0106] A series of master transformations and analyses is conducted on the image file. In this regard, if necessary, the image is transformed into a grey scale or black and white image. As the master image file is expected to be of high quality, it is envisioned that the needed image transformations will be at a minimum.

[0107] A series of analyses is run on the master or transformed image to characterize an object or objects depicted in the image. Properties such as the centroid of the object, the object's aspect ratio, and spline equations which describe part or all of the object's edge can be computed, and character recognition can be run.
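Two of the listed properties, the centroid and the aspect ratio, can be computed directly from a binary mask of the object. The following is a small sketch under that assumption. The mask format and the name `object_properties` are illustrative.

```python
def object_properties(mask):
    """Return the centroid (row, col) and bounding-box aspect ratio of a mask.

    mask is a list of rows; any truthy value marks an object pixel.
    Aspect ratio is bounding-box width divided by bounding-box height.
    """
    pixels = [(r, c) for r, row in enumerate(mask)
              for c, value in enumerate(row) if value]
    if not pixels:
        return None
    rows = [p[0] for p in pixels]
    cols = [p[1] for p in pixels]
    centroid = (sum(rows) / len(pixels), sum(cols) / len(pixels))
    height = max(rows) - min(rows) + 1
    width = max(cols) - min(cols) + 1
    return {"centroid": centroid, "aspect_ratio": width / height}

mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 1],
    [0, 1, 1, 1, 1],
]
props = object_properties(mask)
```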

[0108] A correlation matrix is formed from the results of the analysis. The system evaluates which features or analyses best distinguish the object being detected. This analysis can be, for example, a convolution solution such as a convolution integral. In this regard, a convolution solution is a mathematical operation that builds the output response of a system from individual responses. A solution or solutions is conceptually a fold, shift, multiply, and summation of individual discrete, scaled, time-shifted impulse responses. Analogously, this maps to a multiplication of the functions in the frequency domain.
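The correspondence between convolution and multiplication in the frequency domain can be demonstrated on short discrete sequences. The sketch below uses a direct discrete Fourier transform written in plain Python for clarity rather than speed. It illustrates the general mathematical identity and is not the disclosed analysis itself.

```python
import cmath

def convolve(a, b):
    """Direct discrete convolution: shift, multiply, and sum."""
    out = [0.0] * (len(a) + len(b) - 1)
    for i, x in enumerate(a):
        for j, y in enumerate(b):
            out[i + j] += x * y
    return out

def dft(x, inverse=False):
    """Naive O(n^2) discrete Fourier transform (or its inverse)."""
    n = len(x)
    sign = 1 if inverse else -1
    result = [sum(x[k] * cmath.exp(sign * 2j * cmath.pi * k * m / n)
                  for k in range(n)) for m in range(n)]
    return [v / n for v in result] if inverse else result

def convolve_via_dft(a, b):
    """Zero-pad, multiply the spectra pointwise, and transform back."""
    n = len(a) + len(b) - 1
    fa = dft(list(a) + [0.0] * (n - len(a)))
    fb = dft(list(b) + [0.0] * (n - len(b)))
    return [v.real for v in dft([p * q for p, q in zip(fa, fb)], inverse=True)]

signal = [1.0, 2.0, 3.0]
kernel = [0.0, 1.0, 0.5]
direct = convolve(signal, kernel)
spectral = convolve_via_dft(signal, kernel)
```

Swapping the two arguments gives the same result, which is the commutative property of convolution.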

[0109] Efficiency and applicability of the methodology arise from the commutative property. Included (see end of document for the Pattern Classification Decision algorithm) is an adaptive learning algorithm that extends, in addition to the convolution solution, a heuristic method that abstracts and generalizes to the degree that it interprets the overall results. To reduce computational load in the future, those features having the highest likelihood of being able to distinguish the object are used to search for images as described below. These features represent a subset of the correlation matrix, or a test function set.

[0110] Optionally, the system will ask whether the detection of rotated objects is required. In this case, the system will optionally rotate the master image about a predetermined axis, at a predetermined angle, a predetermined number of times. For example, the image can be rotated at plus or minus 5 degrees for 0 to 45 degrees. These rotations can occur about a centroid of the image to accommodate for scanning errors or can be located at a centroid away from the image to represent a 3d rotation in space. When the axis of rotation is located away from the image, it can be perpendicular to or within the surface of the image. All of these rotated images can then be run through the analysis system as described above.
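The rotation schedule in the example (5 degree steps out to 45 degrees in both directions) and an in-plane rotation about a chosen center can be sketched as below. Only rotation about an axis perpendicular to the image is shown. Reading the example as the range from -45 to +45 degrees is an assumption, as are the function names.

```python
import math

def rotation_angles(step_deg=5, max_deg=45):
    """Angles from -max_deg to +max_deg inclusive, in step_deg increments."""
    return list(range(-max_deg, max_deg + step_deg, step_deg))

def rotate_points(points, angle_deg, center):
    """Rotate 2D points counterclockwise about a center point."""
    theta = math.radians(angle_deg)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    cx, cy = center
    return [(cx + (x - cx) * cos_t - (y - cy) * sin_t,
             cy + (x - cx) * sin_t + (y - cy) * cos_t)
            for x, y in points]

outline = [(1.0, 0.0), (2.0, 0.0), (2.0, 1.0)]
angles = rotation_angles()
# One rotated copy of the object outline per angle, each ready for analysis.
rotated_sets = {a: rotate_points(outline, a, center=(0.0, 0.0)) for a in angles}
```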

[0111] It should be understood that the order of processes can be altered as desired. It is envisioned that those analysis techniques which have been deemed proper for one image may or may not be deemed proper for its rotated analogues.

[0112] The system allows for searching images within a distributed network or database. A search of the distributed network is run based on key words. This will significantly reduce the number of HTML pages to be evaluated. A determination is made as to whether files on the target list have associated image files. Should there be associated image files, the images are downloaded for further analysis.

[0113] An initial evaluation of the images is conducted. In this regard, simple evaluations are conducted, such as determining whether the image is larger than a predetermined size. By way of non-limiting examples, further preliminary analysis can be conducted to determine if images contain too many colors or if the color content is correct. For those images deemed worthy of analysis, the system can convert the images into grey scale.

[0114] A series of analyses is run on the images to determine how close each image is to the master image. In this regard, it is envisioned that the image can be processed to segregate individual areas of a downloaded image for analysis. This segregation is performed using edge detection techniques. It is envisioned that the system will conduct edge detection using techniques such as, but not limited to, convolution or converging square systems. To improve the success of the edge detection, the image can be preprocessed prior to analysis using edge enhancement and field flattening techniques.

[0115] Once portions of the images are segregated, each of the test functions can be run on the individual segregated image portions. Each image portion having its own correlation matrix can be then ranked for review after being compared to the correlation matrix of the master image.

[0116] The correlation matrix can be formed using the analysis. It should be noted that, to improve computational efficiency, a subset of the analyses can be used to analyze the images in the distributed network or database. This subset can be, for example, those analysis techniques determined to best correlate with the master image. To increase accuracy or robustness, the number of analysis techniques can be increased. The correlation matrix results for the components of the image can then be compared to the master image correlation matrix. The correlation matrices for the varied components of the varied images can then be ranked for human evaluation. It should be noted that the images being evaluated can also be compared with the correlation matrices of the rotated images, should this be desired.
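The ranking step can be pictured by reducing each image portion to a vector of test function results and scoring it against the master vector. The sketch below uses the Pearson correlation coefficient as the score. That choice, the feature vectors, and the function names are assumptions made for illustration and are not stated in the disclosure.

```python
import math

def pearson(a, b):
    """Pearson correlation coefficient of two equal-length vectors."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    dev_a = math.sqrt(sum((x - mean_a) ** 2 for x in a))
    dev_b = math.sqrt(sum((y - mean_b) ** 2 for y in b))
    return cov / (dev_a * dev_b) if dev_a and dev_b else 0.0

def rank_candidates(master, candidates):
    """Return (name, score) pairs sorted from best to worst match."""
    scored = [(name, pearson(master, features))
              for name, features in candidates.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)

master_features = [1.0, 2.0, 3.0, 4.0]
portions = {
    "portion_a": [2.0, 4.0, 6.0, 8.0],
    "portion_b": [4.0, 3.0, 2.0, 1.0],
    "portion_c": [1.0, 3.0, 2.0, 4.0],
}
ranking = rank_candidates(master_features, portions)
```

The ranked list is what would be handed to a human reviewer.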

[0117] An example implementation of a system according to the present disclosure is depicted. Clients send image file access requests to a load balancer. The load balancer assigns the image file requests to one of the proxy servers. Although more or fewer proxy servers can be used, three are shown for purposes of illustration only.

[0118] The proxy servers retrieve and store large files from and to a storage system. The large files are aggregates of image files. In various implementations, the proxy servers provide an interface between image file accesses and large file accesses. In other words, the proxy servers interact with the clients in terms of image files and interact with the storage system in terms of large files, such as video files.

[0119] The clients are graphically depicted as a personal computer and a server, respectively. However, in various implementations, clients can take many other forms, such as mobile devices which run image presentation software such as ATAK. Further, although the term client is used, the client may not be the end consumer of the information or data in the image files. For example only, the client may be a web server that retrieves small files and presents them as part of a web page or web application to end users. This can be run on, for instance, a Linux LAMP server.

[0120] A simplified block diagram of selected components of the client is illustrated for example only. Although depicted with respect to one client, similar or identical components can also be included in other clients. The client, in this example, includes a processor that executes instructions stored in memory and/or nonvolatile storage. The processor communicates with a network interface. When the processor decides to access a small file, such as when a user requests new content, the processor 402 transmits a small file access request to the load balancer 430 via the network interface 408.

[0121] In various implementations, the load balancer 430 may appear as a single server to the clients. Alternatively, the load balancer may be bypassed or omitted, and the clients may communicate with one or more of the proxy servers. For purposes of illustration only, selected components of the proxy server are depicted. In this example, an access module receives an image file access request via a network interface.

[0122] The access module determines the large files corresponding to the specified small file according to a selection function from a selection module. The selection module may configure the selection function so that files expected to be accessed contemporaneously are grouped into a large file. For example only, files related to a web page may be grouped into a large file. Similarly, files that may often be accessed in succession as a user navigates a website may be grouped into a single large file. The access module 446 then accesses the corresponding large file from a storage cache 452. When the storage cache 452 does not include the large file, the large file is read from the storage system 460.
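The lookup flow in this paragraph (selection function, then cache, then storage system) can be sketched in a few lines. The class below is a simplified illustration. The dictionary-backed storage, the path-prefix selection function, and all names are assumptions and do not describe the actual proxy server.

```python
class AccessModule:
    """Resolve small-file requests through large files held in a cache."""

    def __init__(self, selection_function, storage_system):
        self.select = selection_function  # small file name -> large file name
        self.storage = storage_system     # large file name -> {small name: bytes}
        self.cache = {}
        self.storage_reads = 0

    def read_small_file(self, name):
        large_name = self.select(name)
        if large_name not in self.cache:
            # Cache miss: read the whole large file from the storage system.
            self.cache[large_name] = self.storage[large_name]
            self.storage_reads += 1
        return self.cache[large_name][name]

def group_by_page(name):
    """Hypothetical selection function: files of one web page share a large file."""
    return name.split("/")[0]

storage = {"page1": {"page1/a.png": b"A", "page1/b.png": b"B"}}
module = AccessModule(group_by_page, storage)
first = module.read_small_file("page1/a.png")
second = module.read_small_file("page1/b.png")  # served from the cache
```

Because both files belong to the same page, the second request does not touch the storage system, which is the benefit of grouping files that are accessed together.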

[0123] For purposes of illustration only, the storage system is shown as including a network interface and a file module. The file module may implement a variety of file systems, such as FAT, NTFS, and/or ext2. The file module may include a variety of nonvolatile storage media, such as tape, optical disk, and magnetic storage. The file module may implement a variety of storage techniques, such as RAID (redundant array of independent disks). The file module may be unaware of the content of any of the large files and simply treat them as standard files.

[0124] Image data processing or generation can be specially adapted for particular applications. This can include indexing schemes for image analysis or image enhancement; special algorithmic details; image segmentation details; and active shape models. The system can be specially adapted for particular applications and can include geometric image transformation in the plane of the image, including bit-mapped to bit-mapped transformation creating a different image.

[0125] Image data processing for the VR and AR goggles can include generation specially adapted for particular applications, including image analysis, bit-mapped to non-bit-mapped conversion, depth or shape recovery from multiple images or from multiple light sources, and photometric stereo.

[0126] Real-time bi-directional transmission of motion video data can be accomplished using network structures or processes which are specifically adapted to use a video distribution server and remote clients. Data switching networks and wireless communication networks can be used for control signaling. Base layers and enhancement layers can be transmitted using different transmission paths, including setting up peer-to-peer communication over the internet between remote STBs. Communication protocols, including addressing, signaling, and a control architecture for real-time multimedia, are used to maximize throughput.

[0127] The system can use peer-to-peer communications with control signaling between network components, servers, and clients. Network processes for video distribution between the server and clients can include controlling the quality of the video stream, dropping packets, protecting content from unauthorized alteration within the network, monitoring network load, and bridging between different networks, such as IP and wireless networks. The control architecture allows for near real-time multimedia communications.

[0128] The system can use a broadcast or conference packet-switching network including real-time bi-directional transmission of motion video data. Servers are specifically adapted for the distribution of content, such as VOD servers. Management operations performed by the server can include facilitating content distribution, administrating data related to end-users or client devices, end-user or client device authentication, and learning user preferences for recommending movies. The system provides integrated maintenance and administration of data networks.

[0129] Broadcast communication is managed using circuits or systems for communication control or processing protocols. Broadcast or conference packet-switching networks are used for real-time bi-directional transmission of motion video data and are specifically adapted to a video distribution server and remote clients. The data switching networks and wireless communication networks can include a video encoder and decoder, management of transmission data between the server and client, and the sending of server or client commands for recording an incoming content stream. The client-server protocol architecture is specially adapted for the downstream path of the transmission network.

[0130] As previously mentioned, the system provides selective content distribution arrangements, apparatus, circuits, and systems with communication control or processing protocols. Broadcast or conference packet-switching networks are used for the real-time bi-directional transmission of motion video data and for the generation or processing of content or additional data by a content creator independently of the distribution process. Arrangements are provided for generating broadcast information, assembling content, and generating multimedia applications.

[0131] Electrical digital data processing is performed by computers in which the computation is effected with self-contained input and output peripheral equipment. Optionally, impedance networks using digital techniques, error detection, error correction, monitoring, and methods for verifying the correctness of marking on a record carrier can be used. Error detection and correction of data is included, using redundancy in hardware or using active fault masking. The system will automatically switch off faulty elements and switch to spare elements. Interconnections or communication control functionality can be redundant, with flexible arrangements of bus networks involving redundancy. Defective hardware can be detected and located by testing during standby operation, idle time, or start-up.

[0132] Database structures are provided containing file structures for data processing systems, with methods specially adapted for administrative, commercial, financial, managerial, supervisory, or forecasting purposes. The system uses structured data stores, including storage and indexing structures and their management. The engine uses computers in which computation is effected with input and output peripheral equipment, using an indexing scheme relating to accessing, addressing, or allocation within memory systems or architectures, including details of cache-specific multiprocessor cache arrangements.

[0133] The AR system allows for the management of power consumption, including a standby mode, power saving in a data processing device in general, and wake-up procedures. Recognition of data can include methods or arrangements for reading or recognizing printed or written characters or for recognizing patterns such as fingerprints, and for the processing or analysis of tracks of nuclear particles, chemical constituents, molecular sequences, and radio frequency signals.

[0134] The visualization engine allows the system to utilize recognition of field perception and scene-specific objects. Image and video retrieval, image analysis, and image segmentation are included, as are pixel labelling, alarm systems, traffic control, pictorial communication, and the recognition of scenes from the perceived perspective of the user. The system allows for the recognition of patterns in remote scenes, such as aerial images and vegetation versus urban areas; radar and similar technologies; segmentation and general image processing; and the use of hyperspectral data, i.e., more or other wavelengths than RGB.

[0135] Example embodiments are provided so that this disclosure will be thorough and will fully convey the scope to those who are skilled in the art. Numerous specific details are set forth, such as examples of specific components, devices, and methods, to provide a thorough understanding of embodiments of the present disclosure. It will be apparent to those skilled in the art that specific details need not be employed, that example embodiments may be embodied in many different forms, and that neither should be construed to limit the scope of the disclosure. In some example embodiments, well-known processes, well-known device structures, and well-known technologies are not described in detail.

[0136] The system can also address the ability to suggest or even force movement of the user. In some embodiments, vibrational stimulators provide feedback to the user. This allows for force feedback which, in VR, could be implemented as a director's nudges or encouragement to ensure that the participant does not miss key cinematic or other important events.

[0137] The system is configured for optimizing a flight plan for an aerial and/or mobile observation platform. In this regard, the flight plan can be optimized to maximize one or more of several different results. The first can be the shortest flight path above a specific topography which allows the minimum number of 2d or 3d (lidar) images to be used to form a three-dimensional dataset of a surface or topography at a desired image quality. The second can be the flight path or paths which maximize the time a mobile platform can observe the most area or surfaces at any given time at a desired image quality. Another optimal path can be one that avoids regions in which the platform or camera cannot operate optimally, for example because of acoustical requirements or danger.

[0138] The system utilizes a low-resolution 3d wireframe or sparse map of a topography of interest, covered with faceted polygons to define surfaces. This resolution can be, for example, 1 pixel per meter. The low-resolution map is placed into a 3d physics engine such as, by way of non-limiting example, the Unreal Engine. An air space of 3d locations is defined above the map of the topography of interest. Included in this airspace is a floor limit, which can be set based on safety or noise requirements of the mobile platform. Also included is an upper limit, which can be set based on the camera requirements or operational parameters of the mobile platform to allow the desired image quality (for example, less than 1 pixel per 2 cm of ground, and more particularly less than 1 pixel per 1 cm of ground).
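By way of non-limiting example, the bounded air space described above can be sketched as a grid of candidate locations between the floor limit and the upper limit. The sketch assumes a simple pinhole camera model, flat terrain, and a target ground sample distance of roughly 2 cm per pixel as one reading of the image quality example; the function names, camera parameters, and grid spacing are hypothetical and are not taken from the disclosure.

```python
import math


def max_altitude_m(gsd_m_per_px, focal_length_mm, pixel_pitch_um):
    """Upper limit at which a nadir pinhole camera still meets the target
    ground sample distance: altitude = GSD * focal_length / pixel_pitch."""
    return gsd_m_per_px * (focal_length_mm * 1e-3) / (pixel_pitch_um * 1e-6)


def candidate_locations(x_extent_m, y_extent_m, floor_m, ceiling_m, step_m):
    """Regular grid of 3d locations above flat terrain (an assumption),
    bounded below by the floor limit and above by the upper limit."""
    def axis(lo, hi):
        n = int(math.floor((hi - lo) / step_m + 1e-9))
        return [lo + i * step_m for i in range(n + 1)]

    return [(x, y, z)
            for x in axis(0.0, x_extent_m)
            for y in axis(0.0, y_extent_m)
            for z in axis(floor_m, ceiling_m)]


# Hypothetical camera: 10 mm lens, 2 micrometer pixels, 2 cm per pixel target.
ceiling = max_altitude_m(0.02, 10.0, 2.0)
# Hypothetical 30 m floor limit, e.g. set by a noise or safety requirement.
grid = candidate_locations(20.0, 10.0, 30.0, ceiling, 10.0)
```

Over real terrain the floor and upper limits would be measured from the local surface height rather than from a flat datum.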

[0139] As described below, the system uses ray tracing to determine which locations in the space of 3d locations are optimum for capturing images of the terrain. Once the system determines which locations are optimum, a 3d traveling salesman solution is used to determine the optimum (shortest) flight path needed to capture the minimum number of 2d and 3d images needed to form the high-resolution 3d image. Alternatively, if an optimum flight zone is determined, the central location of this zone can be used to reduce error. By way of non-limiting example, the system can have a computer which implements one of the following solutions to the traveling salesman problem: various branch-and-bound algorithms; progressive improvement algorithms; implementations of branch-and-bound with problem-specific cut generation, which is the method of choice for solving large instances; a cutting-plane method based on linear programming; or the Concorde TSP Solver.
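By way of non-limiting example, a progressive improvement approach to the 3d path problem can be sketched as a nearest-neighbor tour refined by 2-opt segment reversal. The choice of these two heuristics, the open (non-returning) path, the fixed start at the first location, and all function names are assumptions made for illustration; the result is a heuristic path and is not guaranteed to be the shortest.

```python
import math


def path_length(points, order):
    """Total straight-line length of an open path through 3d points."""
    return sum(math.dist(points[a], points[b])
               for a, b in zip(order, order[1:]))


def nearest_neighbor_order(points, start=0):
    """Greedy initial path: always fly to the closest unvisited location."""
    unvisited = set(range(len(points))) - {start}
    order = [start]
    while unvisited:
        last = points[order[-1]]
        nxt = min(unvisited, key=lambda i: math.dist(last, points[i]))
        unvisited.remove(nxt)
        order.append(nxt)
    return order


def two_opt(points, order):
    """Progressive improvement: reverse segments while the path gets shorter.
    The first location (launch point) is kept fixed."""
    best = list(order)
    improved = True
    while improved:
        improved = False
        for i in range(1, len(best) - 1):
            for j in range(i + 1, len(best)):
                candidate = best[:i] + best[i:j + 1][::-1] + best[j + 1:]
                if path_length(points, candidate) < path_length(points, best) - 1e-12:
                    best = candidate
                    improved = True
    return best


# Hypothetical imaging locations (x, y, altitude) in meters.
locations = [(0, 0, 10), (30, 0, 10), (10, 0, 10), (20, 0, 10)]
initial = nearest_neighbor_order(locations)
route = two_opt(locations, initial)
```

For large numbers of locations, an exact solver of the kind listed above would replace this heuristic.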

[0140] For each location in the air space of 3d locations, a projection is recursively made onto the low-resolution surface. The system determines which triangles are visible from each location in space. The locations in the space of 3d locations are then sorted to determine the location from which the largest number or surface area of triangles is visible and the smallest number of triangles is hidden by obscuration. A cluster of these locations is then stored in a separate memory location or tagged as primary imaging points. These observable n-gons will be labeled "unobscured." The system will then determine which locations within the air space of 3d locations have an unobscured path to the largest number of "obscured" triangles. A cluster of these locations is then stored in a separate memory location or tagged as secondary imaging points. These now observable triangles will be labeled "unobscured." The system will continue to recursively evaluate locations in the air space of 3d locations until the number of obscured triangles is below a predetermined threshold.
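By way of non-limiting example, the repeated selection of imaging points can be sketched as a greedy covering loop. The sketch assumes that the visibility of each triangle from each location has already been computed (for example by ray tracing) and is supplied as a table; it selects single locations rather than clusters and counts triangles rather than surface area. The function name, the table layout, and the sample data are hypothetical.

```python
def select_imaging_points(visibility, triangles, threshold):
    """Greedy selection of imaging locations.

    visibility: dict mapping a location label to the set of triangle ids
                visible from it (assumed precomputed).
    triangles:  set of all triangle ids on the low-resolution surface.
    threshold:  stop once fewer than this many triangles remain obscured.
    Returns (chosen locations in order, triangles still obscured).
    """
    obscured = set(triangles)
    chosen = []
    while len(obscured) >= threshold:
        # Location with an unobscured path to the most still-obscured triangles.
        best = max(visibility, key=lambda loc: len(visibility[loc] & obscured))
        gained = visibility[best] & obscured
        if not gained:
            break  # no remaining location can see any obscured triangle
        chosen.append(best)   # first pick = primary, later picks = secondary
        obscured -= gained    # these triangles are now labeled "unobscured"
    return chosen, obscured


# Hypothetical visibility table for seven triangles and four locations.
visibility = {"A": {1, 2, 3, 4}, "B": {3, 4, 5}, "C": {5, 6}, "D": {1}}
all_triangles = {1, 2, 3, 4, 5, 6, 7}
points, still_obscured = select_imaging_points(visibility, all_triangles, threshold=2)
```

In this sample, location A is chosen first because it sees the most triangles, and location C is chosen second because it uncovers more of the remaining obscured triangles than location B.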

[0141] Optionally, the reverse process is possible, where observations are made "from the triangles" to determine which locations in the air space of 3d locations are available. Once a series of locations in the air space of 3d locations is found for which the number of obscured triangles is below a threshold, the system uses a 3d traveling salesman solution to determine the shortest path through the space of 3d locations.
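By way of non-limiting example, an observation made "from a triangle" can be sketched as a line-of-sight test from the triangle's centroid to each candidate location, with every other triangle treated as a possible obstruction. The sketch uses the well-known Möller-Trumbore ray-triangle intersection test, which is a choice made for illustration and is not stated in the disclosure; testing only the centroid, and all function names and sample geometry, are likewise assumptions.

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_hits_triangle(origin, direction, tri, eps=1e-9):
    """Moller-Trumbore test. Returns the ray parameter t of the hit, or None."""
    v0, v1, v2 = tri
    e1, e2 = sub(v1, v0), sub(v2, v0)
    p = cross(direction, e2)
    det = dot(e1, p)
    if abs(det) < eps:
        return None  # ray is parallel to the triangle plane
    inv = 1.0 / det
    s = sub(origin, v0)
    u = dot(s, p) * inv
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, e1)
    v = dot(direction, q) * inv
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(e2, q) * inv
    return t if t > eps else None


def centroid(tri):
    return tuple(sum(vertex[k] for vertex in tri) / 3.0 for k in range(3))


def visible_locations(tri_index, triangles, locations):
    """Indices of locations with an unobstructed line of sight to the
    centroid of triangles[tri_index]."""
    c = centroid(triangles[tri_index])
    result = []
    for li, loc in enumerate(locations):
        d = sub(loc, c)  # unnormalized, so t in (0, 1) spans the segment
        blocked = False
        for k, other in enumerate(triangles):
            if k == tri_index:
                continue
            t = ray_hits_triangle(c, d, other)
            if t is not None and t < 1.0:
                blocked = True
                break
        if not blocked:
            result.append(li)
    return result


# Hypothetical scene: a ground triangle under an overhanging "roof" triangle.
ground = ((0, 0, 0), (4, 0, 0), (0, 4, 0))
roof = ((-10, -10, 5), (20, -10, 5), (-10, 20, 5))
spots = [(4 / 3, 4 / 3, 10), (1, 1, 3)]  # one above the roof, one below it
seen_from = visible_locations(0, [ground, roof], spots)
```

In this sample scene only the location below the roof has a clear view of the ground triangle.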

[0142] It should be noted that the solution is fairly straightforward when utilizing a rotary-lift aircraft such as a quadcopter. When using a fixed-wing aircraft, the relative distance between points in the airspace changes depending on the angle of approach to the location in space. For example, kinematics such as the speed and inertia of an aircraft may limit the angle of approach in a manner such that the aircraft will need to turn around in order to approach a near-neighbor observation location.

[0143] Once the optimum path is determined, the flight plan and imaging plan are loaded into the aircraft. A breadcrumb trail of images can be taken at a predetermined frequency to ensure proper image overlap to assist in stitching together the images, as can be done in photogrammetry and lidar map construction.
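By way of non-limiting example, the predetermined image frequency can be related to the desired overlap with a short calculation. The sketch assumes straight, level flight at constant ground speed and a known along-track image footprint; the function name and the sample values are hypothetical.

```python
def capture_plan(footprint_m, overlap, speed_mps):
    """Spacing and timing of a breadcrumb trail of images.

    footprint_m: along-track ground length covered by one image.
    overlap:     desired fraction of each image shared with the next (0 to <1).
    speed_mps:   ground speed of the platform.
    Returns (spacing in meters, interval in seconds, frequency in hertz).
    """
    if footprint_m <= 0 or speed_mps <= 0:
        raise ValueError("footprint and speed must be positive")
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    spacing_m = footprint_m * (1.0 - overlap)  # new ground per image
    interval_s = spacing_m / speed_mps
    return spacing_m, interval_s, 1.0 / interval_s


# Hypothetical values: 40 m footprint, 75% overlap, 5 m/s ground speed.
spacing, interval, frequency = capture_plan(40.0, 0.75, 5.0)
```

With these sample values an image is taken every 10 m of travel, that is, every 2 seconds.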

[0144] Additionally, it is envisioned that the above system can be used to determine the best locations from legacy video streams to construct 3d maps, such as the images discussed above with respect to FIGS. 7 and 8. In this situation, the 3d airspace locations will be limited to those locations where images have been taken from the legacy map. This reduced subset of locations will be used to determine the minimum number of locations, and thus images from the legacy data, which will be needed to transform the legacy data into 3d maps.

[0145] Throughout this specification, plural instances may implement components, operations, or structures described as a single instance. Although individual operations of one or more methods are illustrated and described as separate operations, one or more of the individual operations may be performed concurrently, and nothing requires that the operations be performed in the order illustrated. Structures and functionality presented as separate components in example configurations may be implemented as a combined structure or component. Similarly, structures and functionality presented as a single component may be implemented as separate components. These and other variations, modifications, additions, and improvements fall within the scope of the subject matter herein.

[0146] The control system may be deployed on a variety of different devices. For illustration purposes, a VR trainer system 198 will be featured in this example. The VR trainer system 198 is positioned on a surface, which can be a mobile platform such as an aircraft 199. Once engaged, the VR trainer system 198 simulates the movement of a vehicle on a trajectory dictated by the human interface devices 204. In the case of the VR trainer system 198, the vehicle can follow its own guidance system and operate under its own propulsion. The control system disclosed here provides a deployable VR training system which can be used to rehearse flight training for numerous vehicles.

[0147] To allow folding and transportation of the trainer 198, the base 200 is formed of more than one generally planar member 210, which are coupled together by at least one hinge 212. As shown in FIGS. 3 through 7b, the base 200 has a display support member 214 and a plurality of human interface device coupling interfaces 216.