Intelligent Shooting Training Management System

LI; Danyang ; et al.

U.S. patent application number 16/694180 was filed with the patent office on 2019-11-25 and published on 2020-03-19 for intelligent shooting training management system. The applicant listed for this patent is Huntercraft Limited. Invention is credited to Ming CHEN, Yayun GONG, Danyang LI.

| Application Number | 20200090370 16/694180 |

| Document ID | / |

| Family ID | 66632563 |

| Filed Date | 2019-11-25 |


| United States Patent Application | 20200090370 |

| Kind Code | A1 |

| LI; Danyang ; et al. | March 19, 2020 |

INTELLIGENT SHOOTING TRAINING MANAGEMENT SYSTEM

Abstract

A data information integrated management system, particularly to an intelligent shooting training management system. The system includes a data acquisition apparatus, an operation terminal and a server. The operation terminal is connected with the data acquisition apparatus and the server. The data acquisition apparatus acquires a target paper image to obtain a photograph and a video record, and meanwhile, the data acquisition apparatus includes an automatic analysis module that analyzes a point of impact in the target paper image to obtain a shooting accuracy. The server manages the photograph, the video record, and the shooting accuracy. The operation terminal controls data exchange with the data acquisition apparatus and the server, and the operation terminal invokes and displays the photograph, the video record and the shooting accuracy.

| Inventors: | LI; Danyang (Albany, NY); CHEN; Ming (Albany, NY); GONG; Yayun (Albany, NY) |
|---|---|
| Applicant: | Huntercraft Limited |
| Family ID: | 66632563 |
| Appl. No.: | 16/694180 |
| Filed: | November 25, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15822698 | Nov 27, 2017 | 10489932 |
| 16694180 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06K 9/3233 20130101; G06T 7/74 20170101; G06K 2209/21 20130101; G09B 9/003 20130101; H04W 4/80 20180201; G06K 9/48 20130101; G06K 9/38 20130101; H04N 5/23229 20130101; G06T 7/194 20170101; G06F 16/27 20190101; F41J 5/02 20130101; G06T 7/13 20170101; G06T 5/006 20130101; H04W 84/12 20130101; G06K 9/6267 20130101; G06T 7/181 20170101; G09B 5/02 20130101; G06T 7/11 20170101; G06T 7/136 20170101; G09B 5/00 20130101; G06T 7/12 20170101; H04N 5/23296 20130101; G06K 9/00 20130101; G06T 2207/30212 20130101; G06K 9/78 20130101; G06T 7/168 20170101; G06T 2207/20061 20130101; F41J 1/00 20130101 |

| International Class: | G06T 7/73 20060101 G06T007/73; F41J 5/02 20060101 F41J005/02; G06K 9/62 20060101 G06K009/62; G06K 9/78 20060101 G06K009/78; G06T 7/13 20060101 G06T007/13; G06T 7/136 20060101 G06T007/136; G09B 5/00 20060101 G09B005/00; G06T 7/194 20060101 G06T007/194; G06F 16/27 20060101 G06F016/27 |

Claims

1. An intelligent shooting training management system, comprising: a data acquisition apparatus, an operation terminal and a server, wherein the operation terminal is connected with the data acquisition apparatus and the server; the data acquisition apparatus is configured to acquire a target paper image on a target to obtain photographs and video records, while the data acquisition apparatus has an automatic analysis module, and the automatic analysis module is configured to analyze points of impact from the target paper image to obtain shooting accuracies; the server is configured to manage the photographs, the video records and the shooting accuracies; and the operation terminal controls data exchange with the data acquisition apparatus and the server, and invokes and displays the photographs, the video records and the shooting accuracies.

2. The intelligent shooting training management system according to claim 1, wherein the automatic analysis module is configured to convert an optical image captured by the data acquisition apparatus into an electronic image, extract a target paper area from the electronic image, perform pixel-level subtraction on the target paper area and an electronic reference target paper to detect points of impact, calculate a center point of each of the points of impact, and determine the shooting accuracies according to a deviation between the center point of each of the points of impact and a center point of the target paper area; and the electronic reference target paper is an electronic image of a blank target paper or a target paper area extracted in historical analysis; and the deviation has a longitudinal deviation and a lateral deviation.

3. The intelligent shooting training management system according to claim 1, wherein the operation terminal is connected with the data acquisition apparatus as follows: the data acquisition apparatus serves as a wireless WiFi hotspot and the operation terminal serves as a client to access a WiFi hotspot network, so that a connection between the operation terminal and the data acquisition apparatus is achieved, and the operation terminal obtains the photographs acquired by the data acquisition apparatus, raw data of the video records and the shooting accuracies obtained by the data acquisition apparatus; the operation terminal displays information of the target paper image acquired by the data acquisition apparatus in real time, controls startup and shutdown of acquisition of the data acquisition apparatus, controls startup of the automatic analysis module of the data acquisition apparatus, and controls startup and shutdown of the WiFi hotspot.

4. The intelligent shooting training management system according to claim 1, wherein the operation terminal is connected with the server as follows: the operation terminal and the server are in the same wireless network to implement the connection between the operation terminal and the server; and after the operation terminal has been verified, the shooting accuracies, the photographs and the video records local to the operation terminal can be transmitted to the server.

5. The intelligent shooting training management system according to claim 1, wherein the operation terminal, the data acquisition apparatus and the server are interconnected as follows: 1) the operation terminal notifies the data acquisition apparatus of information of a network to be accessed via Bluetooth or WiFi; 2) after receiving instruction data, the data acquisition apparatus analyzes the instruction data to obtain a name, a user name and a password of the network to be accessed; 3) the data acquisition apparatus performs a network access function and feeds a network connection result back to the operation terminal via Bluetooth or WiFi; and 4) the operation terminal analyzes and determines whether the data acquisition apparatus is successfully accessed or not, and if the data acquisition apparatus is successfully accessed, the operation terminal, the data acquisition apparatus and the server are interconnected.

6. The intelligent shooting training management system according to claim 1, wherein the target is an intelligent target, and the server remotely controls the intelligent target to replace a target paper through a network; the intelligent target has an exterior structure, wherein the exterior structure internally has a target paper recovery compartment, a target paper rotary shaft, drive shafts, a target paper area, a new target paper compartment, a motor servo mechanism, a CPU processing unit and a wireless WiFi unit; and the CPU processing unit receives an instruction of the server through the wireless WiFi unit, the CPU processing unit processes information of the instruction of the server and controls an execution action of the motor servo mechanism, and the motor servo mechanism is connected with the target paper rotary shaft through the drive shafts, the motor servo mechanism drives the drive shafts and the target paper rotary shaft to rotate to realize replacement of the target paper among the new target paper compartment, the target paper area and the target paper recovery compartment.

7. The intelligent shooting training management system according to claim 5, wherein the server manages the shooting accuracies, the photographs and the video records, respectively; the server classifies and manages the photographs and the video records in accordance with the uploaded users as a basic unit; and the server performs data query statistics on the shooting accuracies in accordance with time, user and group conditions, and calculates a trend curve diagram under such conditions.

8. The intelligent shooting training management system according to claim 1, wherein the management system further has an image projection display screen, a score publishing display screen, a PC terminal, a data printer, and a network device; the network device has a wired router, a wireless router, a switch and a repeater; the video projection display screen is directly interconnected with an acquisition host through an HDMI and an AV interface, and the screen only displays projection information; the interface of the score publishing display screen is a network or the HDMI or the AV interface, the score publishing display screen is directly connected with the server through a network interface or with a PC terminal through the HDMI or the AV interface, the server publishes and displays current real-time shooting accuracy ranking data on the score publishing display screen; the data printer is connected with the server by employing a network, a parallel port and a USB interface for data printing; and the PC terminal is connected with the data printer and the score publishing display screen to control the printing of the data printer and the displaying of the score publishing display screen.

9. The intelligent shooting training management system according to claim 1, wherein the data acquisition apparatus has an exterior structure, wherein the exterior structure is a detachable structure as a whole, and the exterior structure internally has a field of view acquisition unit, an electric zooming assembly, an electro-optical conversion circuit, a CPU processing unit and an automatic analysis module; the field of view acquisition unit has an objective lens combination or other optical visual device; the objective lens combination or other optical visual device is mounted on the front end of the field of view acquisition unit to obtain field of view information; the electro-optical conversion circuit is configured to convert the field of view information into electronic information that can be displayed by the electronic unit; the CPU processing unit is connected with the electro-optical conversion circuit and configured to process the electronic information; the automatic analysis module is configured to analyze the electronic information to obtain shooting accuracies; the electric zooming assembly is configured to change a focal length of the objective lens combination or other optical visual device; and the CPU processing unit is connected with the electric zooming assembly, and the CPU processing unit sends a control instruction to the electric zooming assembly for controlling the zooming.

10. The intelligent shooting training management system according to claim 2, wherein performing perspective correction on the target paper area after the target paper area is extracted corrects an outer contour of the target paper area to a circular contour, and point of impact detection is performed by using the target paper area subjected to perspective correction.

11. The intelligent shooting training management system according to claim 2, wherein extracting a target paper area from the electronic image particularly includes: performing large-scale mean filtering on the electronic image to eliminate grid interference on the target paper; segmenting the electronic image into a background and a foreground by using an adaptive Otsu threshold segmentation method according to a gray property of the electronic image; and determining a minimum contour by adopting a vector tracing method and a geometric feature of a Freeman link code according to the image segmented into the foreground and background to obtain the target paper area.

12. The intelligent shooting training management system according to claim 2, wherein performing pixel-level subtraction on the target paper area and an electronic reference target paper to detect points of impact particularly has: performing pixel-level subtraction on the target paper area and an electronic reference target paper to obtain a pixel difference image of the target paper area and the electronic reference target paper; wherein a pixel difference threshold of images of a previous frame and a following frame is set in the pixel difference image, and a setting result is 255 when a pixel difference exceeds the threshold, and the setting result is 0 when the pixel difference is lower than the threshold; and the pixel difference image is subjected to contour tracing to obtain a point of impact contour and a center of the contour is calculated to obtain a center point of each of the points of impact.

13. The intelligent shooting training management system according to claim 10, wherein the perspective correction includes: obtaining an edge of the target paper area by using a Canny operator, performing maximum elliptical contour fitting on the edge by using Hough transform to obtain a maximum elliptical equation, and performing straight line fitting of cross lines on the edge by using the Hough transform to obtain points of intersection with an uppermost point, a lowermost point, a rightmost point and a leftmost point of a largest circular contour, and combining the uppermost point, the lowermost point, the rightmost point and the leftmost point of the largest circular contour with four points at the same positions in a perspective transformation template to obtain a perspective transformation matrix by calculation, and performing perspective transformation on the target paper area by using the perspective transformation matrix.

Description

TECHNICAL FIELD

[0001] The present invention mainly belongs to the technical field of a data information integrated management system, and particularly relates to an intelligent shooting training management system.

BACKGROUND

[0002] The management process of a traditional shooting training site proceeds in sequence through user shooting, target paper retrieval, target paper replacement, and score registration. Shooting accuracies are tallied by manual registration, with each score recorded on paper; such records take a long time to write, are slow to retrieve, and are not conducive to statistical analysis. With the popularization of computers, shooting training sites began to record and manage scores by computer. This is considerably more efficient than paper records, but the data must still be entered into the computer manually. The management workflow also remains manual: real-time statistical analysis is not possible, and the target paper cannot be replaced in time for the next round of shooting training. As a result, the user's time is not saved, the workload of operation and management personnel increases, and the shooting experience remains inconvenient.

[0003] At the shooting site, the shooting location and the target are separated by a certain distance, so the shooting results cannot be seen directly with the human eye after shooting is completed. A data acquisition apparatus capable of remotely acquiring and analyzing the shooting results makes it possible to observe the results and quickly compile result statistics, and thus solves the above-mentioned problems.

SUMMARY

[0004] In view of the above-mentioned problems, the present invention provides an intelligent shooting training management system which acquires information of a target paper in real time and automatically analyzes shooting accuracies while managing user data.

[0005] The present invention is achieved by the following technical solution:

[0006] An intelligent shooting training management system, comprising a data acquisition apparatus, an operation terminal and a server, wherein the operation terminal is connected with the data acquisition apparatus and/or the server;

[0007] the data acquisition apparatus is configured to acquire a target paper image on a target to obtain photographs and video records, while the data acquisition apparatus comprises an automatic analysis module, and the automatic analysis module is configured to analyze points of impact from the target paper image to obtain shooting accuracies;

[0008] the server is configured to manage the photographs, the video records and the shooting accuracies; and

[0009] the operation terminal controls data exchange with the data acquisition apparatus and/or the server, and invokes and displays the photographs, the video records and the shooting accuracies.

[0010] Further, wherein the automatic analysis module is configured to convert an optical image captured by the data acquisition apparatus into an electronic image, extract a target paper area from the electronic image, perform pixel-level subtraction on the target paper area and an electronic reference target paper to detect points of impact, calculate a center point of each of the points of impact, and determine the shooting accuracies according to a deviation between the center point of each of the points of impact and a center point of the target paper area; and

[0011] the electronic reference target paper is an electronic image of a blank target paper or a target paper area extracted in historical analysis; and

[0012] the deviation comprises a longitudinal deviation and a lateral deviation.
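The deviation described above can be illustrated with a minimal sketch. It assumes image coordinates with x to the right and y downward, and it assumes that the radial distance is a useful summary of the two components; the source does not state how the deviation is converted into a score, so `radial_distance` and all names here are illustrative only.

```python
import math
from dataclasses import dataclass


@dataclass
class Deviation:
    lateral: float       # x offset of the impact center from the target center
    longitudinal: float  # y offset of the impact center from the target center


def deviation(impact_center, target_center):
    """Return the lateral and longitudinal deviation of one point of impact."""
    ix, iy = impact_center
    tx, ty = target_center
    return Deviation(lateral=ix - tx, longitudinal=iy - ty)


def radial_distance(dev):
    """Assumed summary value: straight-line distance from the target center."""
    return math.hypot(dev.lateral, dev.longitudinal)


dev = deviation((13.0, 6.0), (10.0, 10.0))
print(dev, radial_distance(dev))
```

With this sign convention a negative longitudinal value means the impact lies above the target center in the image.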

[0013] Further, wherein the operation terminal is connected with the data acquisition apparatus as follows: the data acquisition apparatus serves as a wireless WiFi hotspot, and the operation terminal serves as a client to access a WiFi hotspot network, so that a connection between the operation terminal and the data acquisition apparatus is achieved, and the operation terminal obtains the photographs acquired by the data acquisition apparatus, raw data of the video records and the shooting accuracies obtained by the data acquisition apparatus;

[0014] the operation terminal displays information of the target paper image acquired by the data acquisition apparatus in real time, controls startup and shutdown of acquisition of the data acquisition apparatus, controls startup of the automatic analysis module of the data acquisition apparatus, and controls startup and shutdown of the WiFi hotspot.

[0015] Further, wherein the operation terminal is connected with the server as follows: the operation terminal and the server are in the same wireless network to implement the connection between the operation terminal and the server; and

[0016] after the operation terminal has been verified, the shooting accuracies, the photographs and the video records local to the operation terminal can be transmitted to the server.

[0017] Further, wherein the operation terminal, the data acquisition apparatus and the server are interconnected particularly as follows:

[0018] 1) the operation terminal notifies the data acquisition apparatus of information of a network to be accessed via Bluetooth or WiFi;

[0019] 2) after receiving instruction data, the data acquisition apparatus analyzes the instruction data to obtain a name, a user name and a password of the network to be accessed;

[0020] 3) the data acquisition apparatus performs a network access function and feeds a network connection result back to the operation terminal via Bluetooth or WiFi; and

[0021] 4) the operation terminal analyzes and determines whether the data acquisition apparatus is successfully accessed or not, and if the data acquisition apparatus is successfully accessed, the operation terminal, the data acquisition apparatus and the server are interconnected.
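Steps 1) to 4) amount to a small request and response exchange, which might be sketched as follows. The source does not define a message format, so the JSON encoding, the field names `ssid`, `user`, `password` and `connected`, and all function names are assumptions made for illustration; the Bluetooth or WiFi transport itself is not modeled.

```python
import json


def build_network_notice(ssid, user, password):
    """Step 1: the operation terminal packs the network information."""
    msg = {"ssid": ssid, "user": user, "password": password}
    return json.dumps(msg).encode("utf-8")


def parse_network_notice(payload):
    """Step 2: the apparatus extracts the name, user name and password."""
    msg = json.loads(payload.decode("utf-8"))
    missing = [k for k in ("ssid", "user", "password") if k not in msg]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return msg["ssid"], msg["user"], msg["password"]


def build_access_result(connected, detail=""):
    """Step 3: the apparatus reports the network connection result."""
    return json.dumps({"connected": bool(connected), "detail": detail}).encode("utf-8")


def access_succeeded(payload):
    """Step 4: the operation terminal decides whether access succeeded."""
    return json.loads(payload.decode("utf-8")).get("connected") is True


notice = build_network_notice("range-net", "trainer", "secret")
print(parse_network_notice(notice))
print(access_succeeded(build_access_result(True)))
```

A real deployment would also need to protect the password in transit, which this sketch does not attempt.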

[0022] Further, wherein the target is an intelligent target, and the server remotely controls the intelligent target to replace a target paper through a network;

[0023] the intelligent target comprises an exterior structure, wherein the exterior structure internally comprises a target paper recovery compartment, a target paper rotary shaft, drive shafts, a target paper area, a new target paper compartment, a motor servo mechanism, a CPU processing unit and a wireless WiFi unit; and

[0024] the CPU processing unit receives an instruction of the server through the wireless WiFi unit, the CPU processing unit processes information of the instruction of the server and controls an execution action of the motor servo mechanism, and the motor servo mechanism is connected with the target paper rotary shaft through the drive shafts, the motor servo mechanism drives the drive shafts and the target paper rotary shaft to rotate to realize replacement of the target paper among the new target paper compartment, the target paper area and the target paper recovery compartment.

[0025] Further, wherein the server manages the shooting accuracies, the photographs and the video records, respectively;

[0026] the server classifies and manages the photographs and the video records in accordance with the uploaded users as a basic unit; and

[0027] the server performs data query statistics on the shooting accuracies in accordance with time, user and group conditions, and calculates a trend curve diagram under such conditions.
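The query and trend calculation described above can be sketched as a filter followed by a smoothing pass. The record layout, the function names, and the choice of a moving average as the "trend curve" are assumptions; the source does not specify how the curve is computed.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ScoreRecord:
    user: str
    group: str
    day: date
    score: float


def query(records, user=None, group=None, start=None, end=None):
    """Filter by user, group and time conditions; return records in date order."""
    out = [r for r in records
           if (user is None or r.user == user)
           and (group is None or r.group == group)
           and (start is None or r.day >= start)
           and (end is None or r.day <= end)]
    return sorted(out, key=lambda r: r.day)


def trend_points(records, window=3):
    """Assumed trend curve: moving average over the last `window` records."""
    points = []
    for i, r in enumerate(records):
        recent = records[max(0, i - window + 1): i + 1]
        points.append((r.day, sum(x.score for x in recent) / len(recent)))
    return points


data = [
    ScoreRecord("alice", "team-a", date(2019, 11, 3), 10.0),
    ScoreRecord("alice", "team-a", date(2019, 11, 1), 8.0),
    ScoreRecord("bob", "team-b", date(2019, 11, 2), 7.0),
    ScoreRecord("alice", "team-a", date(2019, 11, 2), 9.0),
]
print(trend_points(query(data, user="alice"), window=2))
```

The resulting list of (date, value) pairs is what a charting component would plot.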

[0028] Further, wherein the management system further comprises an image projection display screen, a score publishing display screen, a PC terminal, a data printer, and a network device;

[0029] the network device comprises a wired router, a wireless router, a switch and a repeater;

[0030] the image projection display screen is directly interconnected with an acquisition host through an HDMI and an AV interface, and the screen only displays projection information;

[0031] the interface of the score publishing display screen is a network or the HDMI or the AV interface, the score publishing display screen is directly connected with the server through a network interface or with a PC terminal through the HDMI or the AV interface, the server publishes and displays current real-time shooting accuracy ranking data on the score publishing display screen;

[0032] the data printer is connected with the server by employing a network, a parallel port and a USB interface for data printing; and

[0033] the PC terminal is connected with the data printer and the score publishing display screen to control the printing of the data printer and the displaying of the score publishing display screen.

[0034] Further, wherein the data acquisition apparatus comprises an exterior structure, wherein the exterior structure is a detachable structure as a whole, and the exterior structure internally comprises a field of view acquisition unit, an electric zooming assembly, an electro-optical conversion circuit, a CPU processing unit and an automatic analysis module;

[0035] the field of view acquisition unit comprises an objective lens combination or other optical visual device; the objective lens combination or other optical visual device is mounted on the front end of the field of view acquisition unit to obtain field of view information;

[0036] the electro-optical conversion circuit is configured to convert the field of view information into electronic information that can be displayed by the electronic unit;

[0037] the CPU processing unit is connected with the electro-optical conversion circuit and configured to process the electronic information;

[0038] the automatic analysis module is configured to analyze the electronic information to obtain shooting accuracies;

[0039] the electric zooming assembly is configured to change a focal length of the objective lens combination or other optical visual device; and

[0040] the CPU processing unit is connected with the electric zooming assembly, and the CPU processing unit sends a control instruction to the electric zooming assembly for controlling the zooming.

[0041] Further, wherein performing perspective correction on the target paper area after the target paper area is extracted corrects an outer contour of the target paper area to a circular contour, and point of impact detection is performed by using the target paper area subjected to perspective correction.

[0042] Further, wherein extracting a target paper area from the electronic image particularly comprises: performing large-scale mean filtering on the electronic image to eliminate grid interference on the target paper; segmenting the electronic image into a background and a foreground by using an adaptive Otsu threshold segmentation method according to a gray property of the electronic image; and determining a minimum contour by adopting a vector tracing method and a geometric feature of a Freeman link code according to the image segmented into the foreground and background to obtain the target paper area.
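The first two extraction steps, mean filtering and Otsu segmentation, can be sketched in plain Python on a grayscale image stored as a list of rows. This is a simplified illustration: it uses a single global Otsu threshold rather than the adaptive variant named above, it assumes the target paper is brighter than the background, and it omits the vector tracing and Freeman chain code contour step. The function names are illustrative.

```python
def mean_filter(gray, radius):
    """Box filter; windows are clipped at the image border."""
    h, w = len(gray), len(gray[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            ys = range(max(0, y - radius), min(h, y + radius + 1))
            xs = range(max(0, x - radius), min(w, x + radius + 1))
            total = sum(gray[j][i] for j in ys for i in xs)
            out[y][x] = total // (len(ys) * len(xs))
    return out


def otsu_threshold(gray):
    """Return the gray level that maximizes the between-class variance."""
    hist = [0] * 256
    for row in gray:
        for v in row:
            hist[v] += 1
    total = sum(hist)
    sum_all = sum(i * n for i, n in enumerate(hist))
    best_t, best_var = 0, -1.0
    w0, sum0 = 0, 0
    for t in range(256):
        w0 += hist[t]          # pixels with value <= t
        sum0 += t * hist[t]
        w1 = total - w0        # pixels with value > t
        if w0 == 0:
            continue
        if w1 == 0:
            break
        m0, m1 = sum0 / w0, (sum_all - sum0) / w1
        var = w0 * w1 * (m0 - m1) ** 2
        if var > best_var:
            best_var, best_t = var, t
    return best_t


def segment(gray, threshold):
    """1 marks foreground (assumed brighter target paper), 0 marks background."""
    return [[1 if v > threshold else 0 for v in row] for row in gray]


image = [[200 if 2 <= y <= 5 and 2 <= x <= 5 else 20 for x in range(8)]
         for y in range(8)]
mask = segment(image, otsu_threshold(mean_filter(image, 0)))
print(sum(map(sum, mask)))
```

In practice the filter radius would be chosen larger than the grid line spacing on the target paper so that the grid is averaged away before thresholding.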

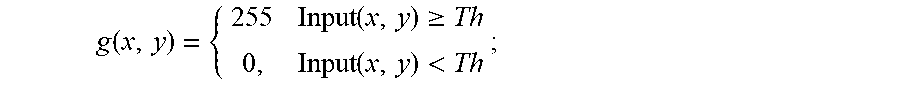

[0043] Further, wherein performing pixel-level subtraction on the target paper area and an electronic reference target paper to detect points of impact particularly comprises: performing pixel-level subtraction on the target paper area and an electronic reference target paper to obtain a pixel difference image of the target paper area and the electronic reference target paper; wherein

[0044] a pixel difference threshold of images of a previous frame and a following frame is set in the pixel difference image, and a setting result is 255 when a pixel difference exceeds the threshold, and the setting result is 0 when the pixel difference is lower than the threshold; and

[0045] the pixel difference image is subjected to contour tracing to obtain a point of impact contour and a center of the contour is calculated to obtain a center point of each of the points of impact.
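The subtraction, thresholding and center calculation described above might look like the following sketch. It substitutes a connected-region flood fill for contour tracing and takes the mean pixel position of each region as its center, which approximates but is not necessarily identical to the contour center in the source. The threshold value and function names are assumptions.

```python
def difference_mask(current, reference, threshold):
    """Pixel-level subtraction: 255 where the difference exceeds the threshold, else 0."""
    return [[255 if abs(c - r) > threshold else 0 for c, r in zip(crow, rrow)]
            for crow, rrow in zip(current, reference)]


def impact_centers(mask):
    """Group 255-valued pixels into 8-connected regions and return their centers."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    centers = []
    for y in range(h):
        for x in range(w):
            if mask[y][x] != 255 or seen[y][x]:
                continue
            stack, pixels = [(x, y)], []
            seen[y][x] = True
            while stack:
                px, py = stack.pop()
                pixels.append((px, py))
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        nx, ny = px + dx, py + dy
                        if (0 <= nx < w and 0 <= ny < h
                                and mask[ny][nx] == 255 and not seen[ny][nx]):
                            seen[ny][nx] = True
                            stack.append((nx, ny))
            cx = sum(p[0] for p in pixels) / len(pixels)
            cy = sum(p[1] for p in pixels) / len(pixels)
            centers.append((cx, cy))
    return centers


reference = [[200] * 6 for _ in range(6)]   # blank reference target paper
current = [row[:] for row in reference]
for yy, xx in ((2, 3), (2, 4), (3, 3), (3, 4)):
    current[yy][xx] = 30                    # simulated bullet hole
print(impact_centers(difference_mask(current, reference, 50)))
```

Using the previous analysis result as the reference, as the source allows, means that only newly added holes appear in the difference image.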

[0046] Further, wherein the perspective correction particularly comprises: obtaining an edge of the target paper area by using a Canny operator, performing maximum elliptical contour fitting on the edge by using Hough transform to obtain a maximum elliptical equation, and performing straight line fitting of cross lines on the edge by using the Hough transform to obtain points of intersection with an uppermost point, a lowermost point, a rightmost point and a leftmost point of a largest circular contour, and combining the uppermost point, the lowermost point, the rightmost point and the leftmost point of the largest circular contour with four points at the same positions in a perspective transformation template to obtain a perspective transformation matrix by calculation, and performing perspective transformation on the target paper area by using the perspective transformation matrix.
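The final step, computing a perspective transformation matrix from four point pairs, can be sketched without any image library. The sketch assumes the four extreme points have already been found by the Canny and Hough steps, which are not reproduced here; the sample coordinates and function names are hypothetical. The matrix is normalized so that its bottom-right entry is 1, leaving eight unknowns for the eight equations.

```python
def solve_linear(a, b):
    """Gauss-Jordan elimination with partial pivoting for a square system."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= f * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def perspective_matrix(src, dst):
    """3x3 matrix mapping four source points onto four template points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def apply_perspective(h, point):
    """Map one point through the matrix, including the projective division."""
    x, y = point
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)


# Uppermost, lowermost, rightmost and leftmost points (hypothetical values).
ellipse_points = [(110.0, 20.0), (95.0, 180.0), (170.0, 105.0), (30.0, 90.0)]
template_points = [(100.0, 0.0), (100.0, 200.0), (200.0, 100.0), (0.0, 100.0)]
matrix = perspective_matrix(ellipse_points, template_points)
print([apply_perspective(matrix, p) for p in ellipse_points])
```

Applying the same matrix to every pixel position of the target paper area would yield the corrected image in which the outer contour is circular.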

[0047] The present invention has advantageous effects as follows:

[0048] the intelligent shooting training management system of the present invention may realize the following functions:

[0049] (1) points of impact of shooting are automatically recognized and scores are counted;

[0050] (2) the shooting accuracies are automatically matched with shooters, and the scores may be queried;

[0051] (3) individual single-gun scores and single-score ranking are achieved, and a single score is based on data submitted after each shooting;

[0052] (4) score ranking information is published by a large screen in real time;

[0053] (5) a live shooting process image may be connected to a large screen for being displayed;

[0054] (6) statistical analysis by team (for example, by group) is achieved, including comparison of total scores between teams;

[0055] (7) score trend analysis for a single person and for a team is achieved, and the scores are displayed as charts;

[0056] (8) data printing is achieved, and the data includes text data and trend data;

[0057] (9) remote control of replacing the target paper is achieved, without manual site replacement.

BRIEF DESCRIPTION OF THE DRAWINGS

[0058] FIG. 1 is a block diagram of a flow of an analysis method according to the present invention;

[0059] FIG. 2 is an 8-connected chain code in an embodiment 1 according to the present invention;

[0060] FIG. 3 is a bitmap in an embodiment 1 according to the present invention;

[0061] FIG. 4 is a block diagram of a process for extracting a target paper area according to the present invention;

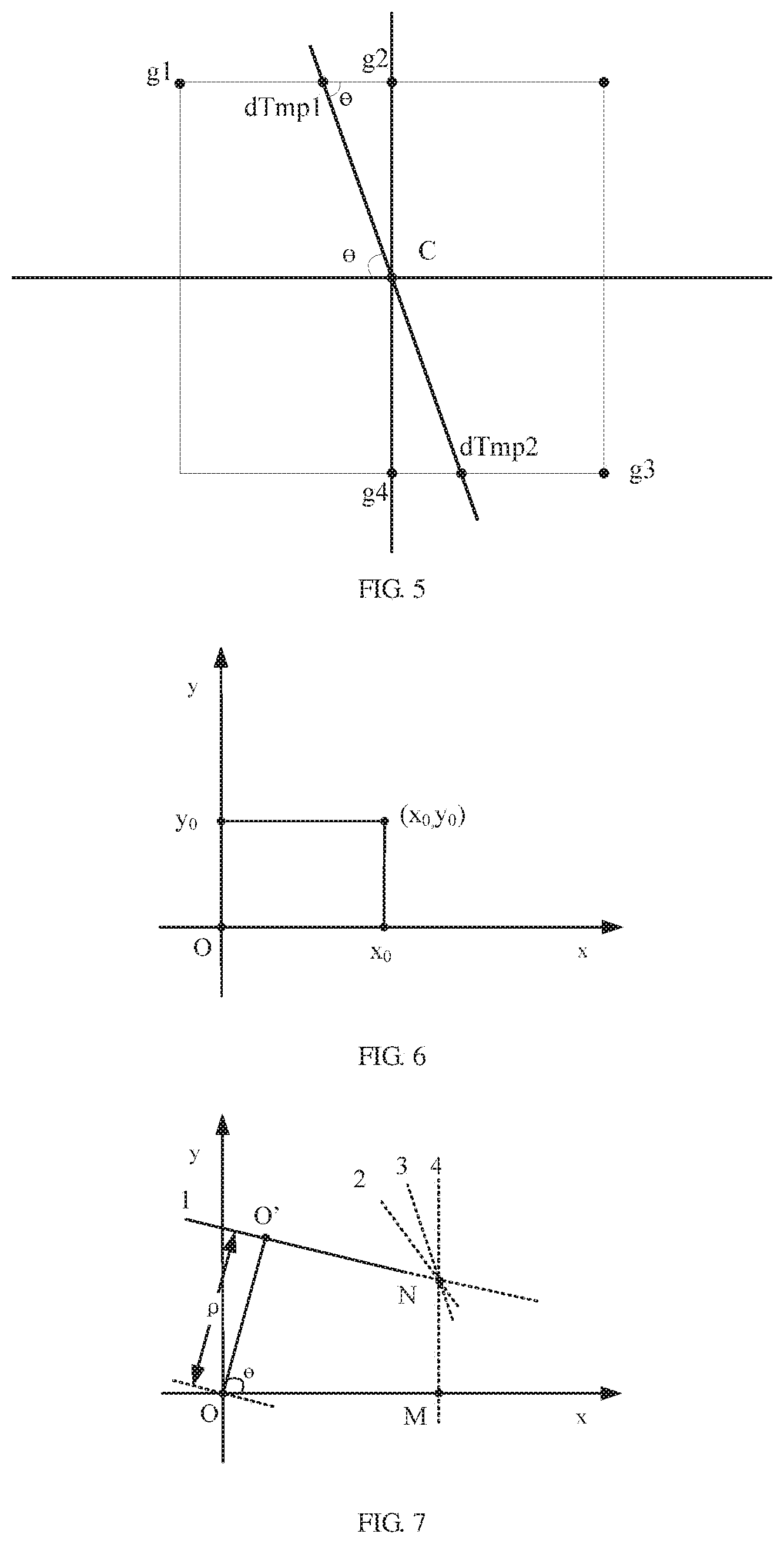

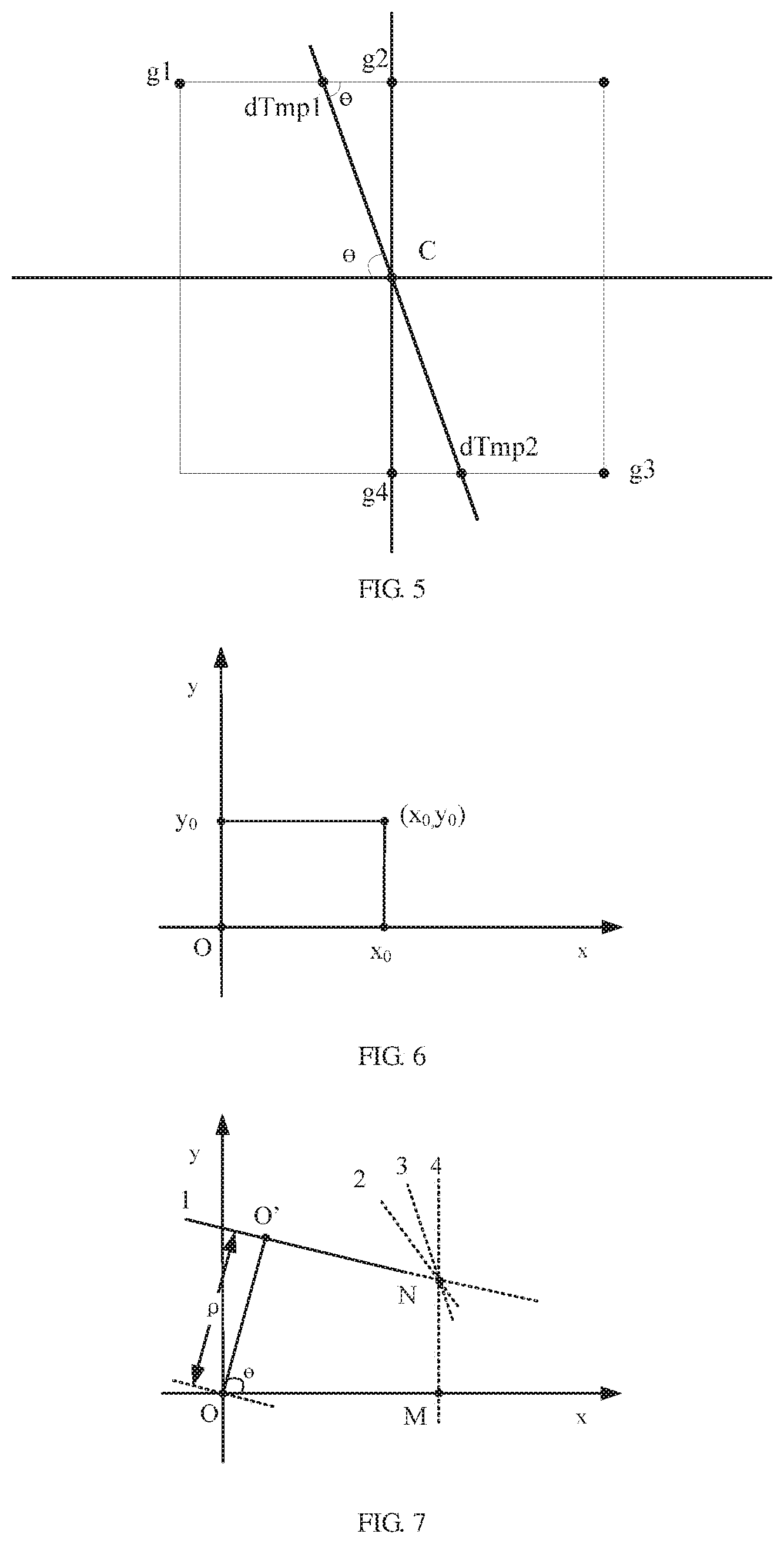

[0062] FIG. 5 is a schematic diagram of non-maximum suppression in an embodiment 2 according to the present invention;

[0063] FIG. 6 is a schematic diagram of transformation of an original point under a Cartesian coordinate system in an embodiment 2 according to the present invention;

[0064] FIG. 7 is a schematic diagram showing any four straight lines passing through an original point under a Cartesian coordinate system in an embodiment 2 according to the present invention;

[0065] FIG. 8 is a schematic diagram of expression of any four straight lines passing through an original point under a polar coordinate system in a Cartesian coordinate system in an embodiment 2 according to the present invention;

[0066] FIG. 9 is a schematic diagram of determining points of intersection of cross lines L1 and L2 with an ellipse in an embodiment 2 according to the present invention;

[0067] FIG. 10 is a schematic diagram of a perspective transformation diagram in an embodiment 2 according to the present invention;

[0068] FIG. 11 is a block diagram of a process for performing target paper area correction according to the present invention;

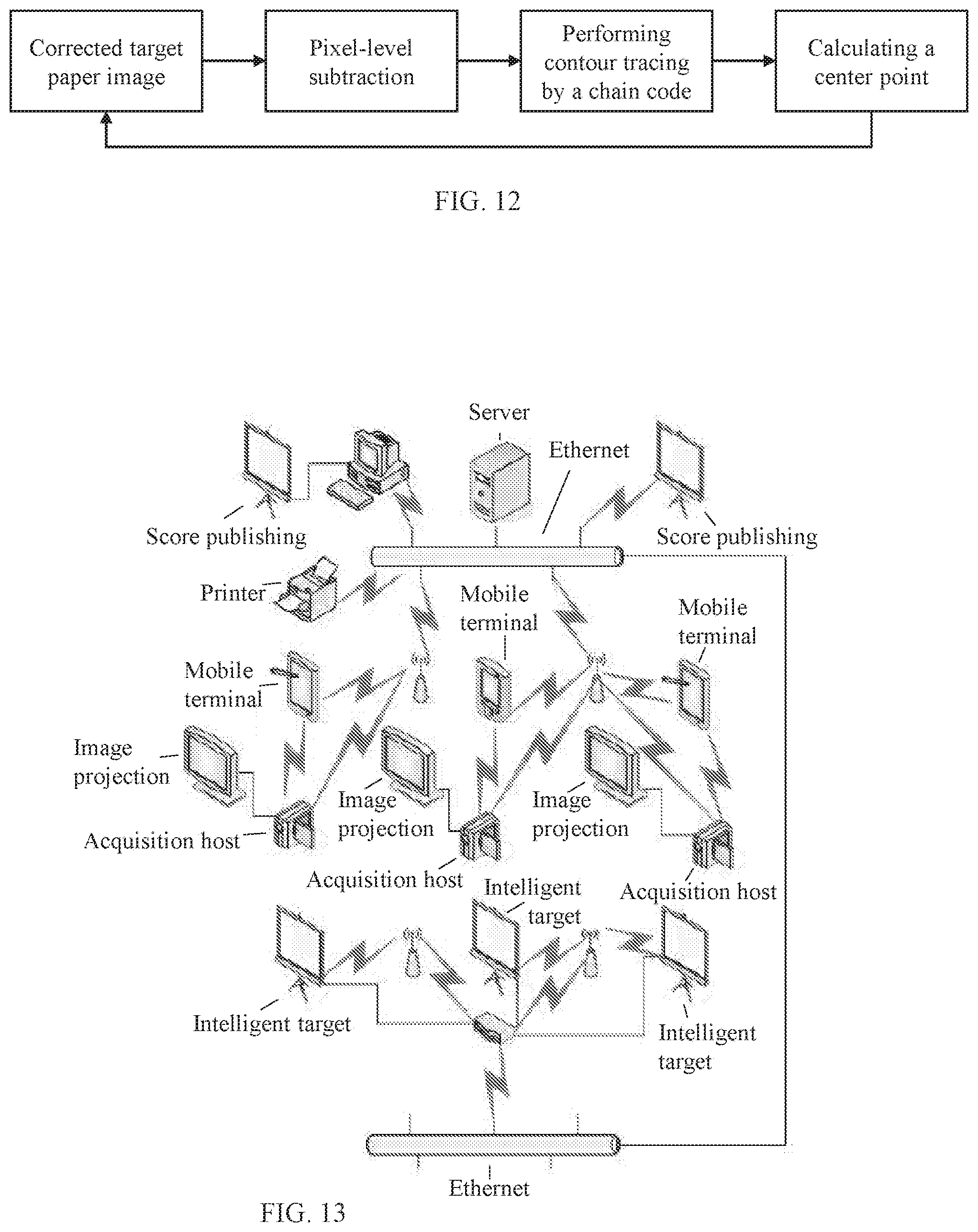

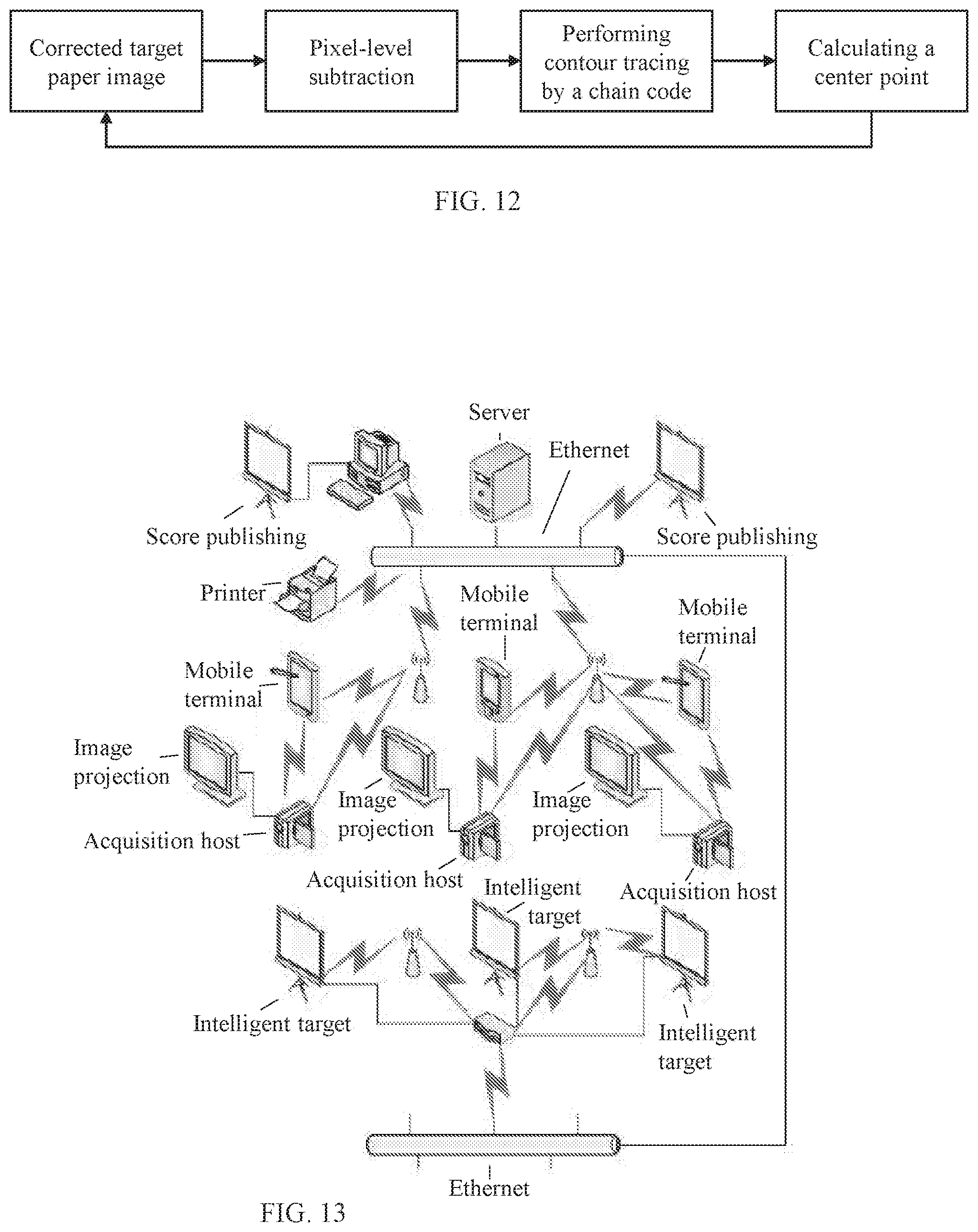

[0069] FIG. 12 is a block diagram of a process for performing a point of impact detection method according to the present invention;

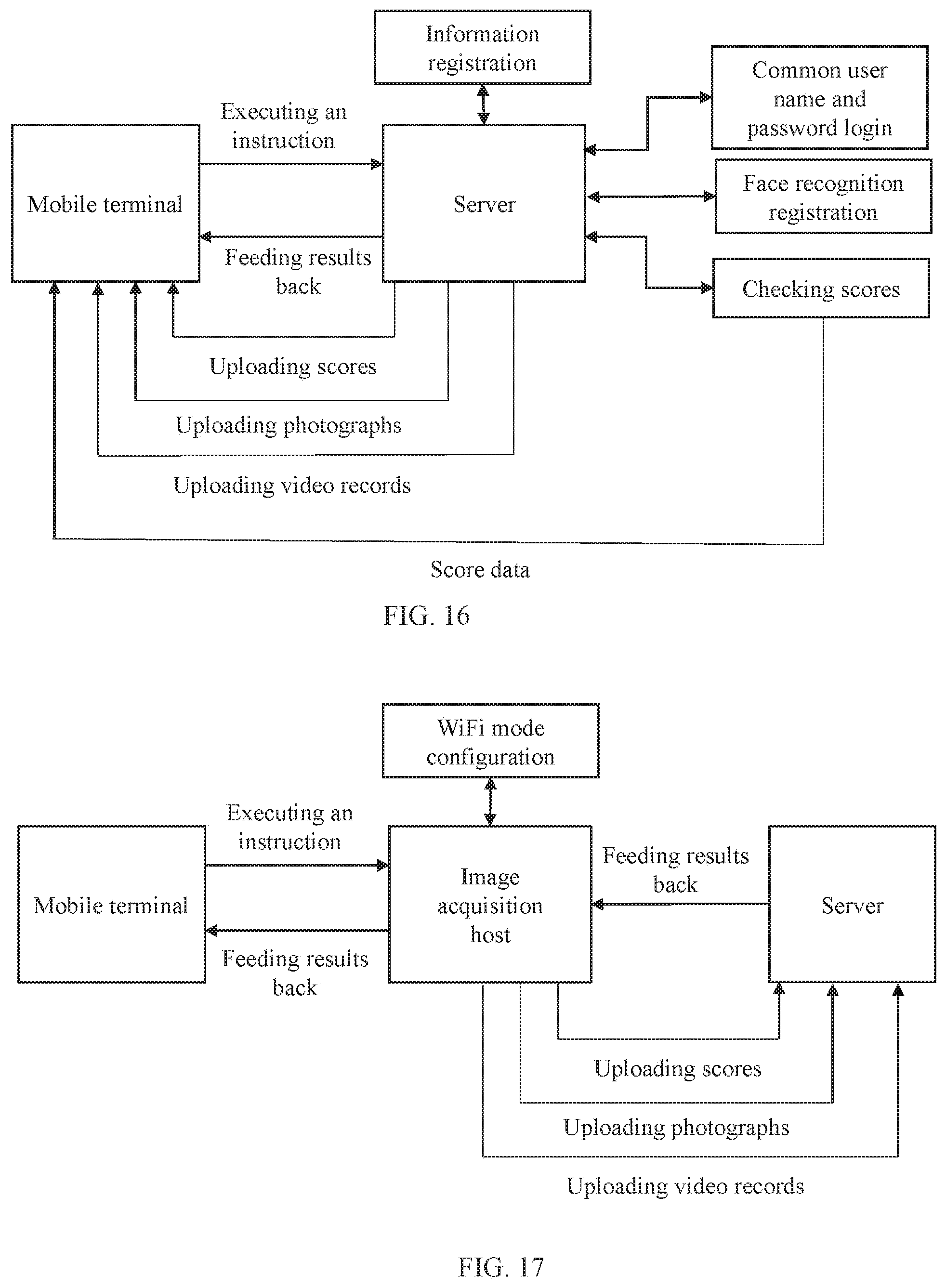

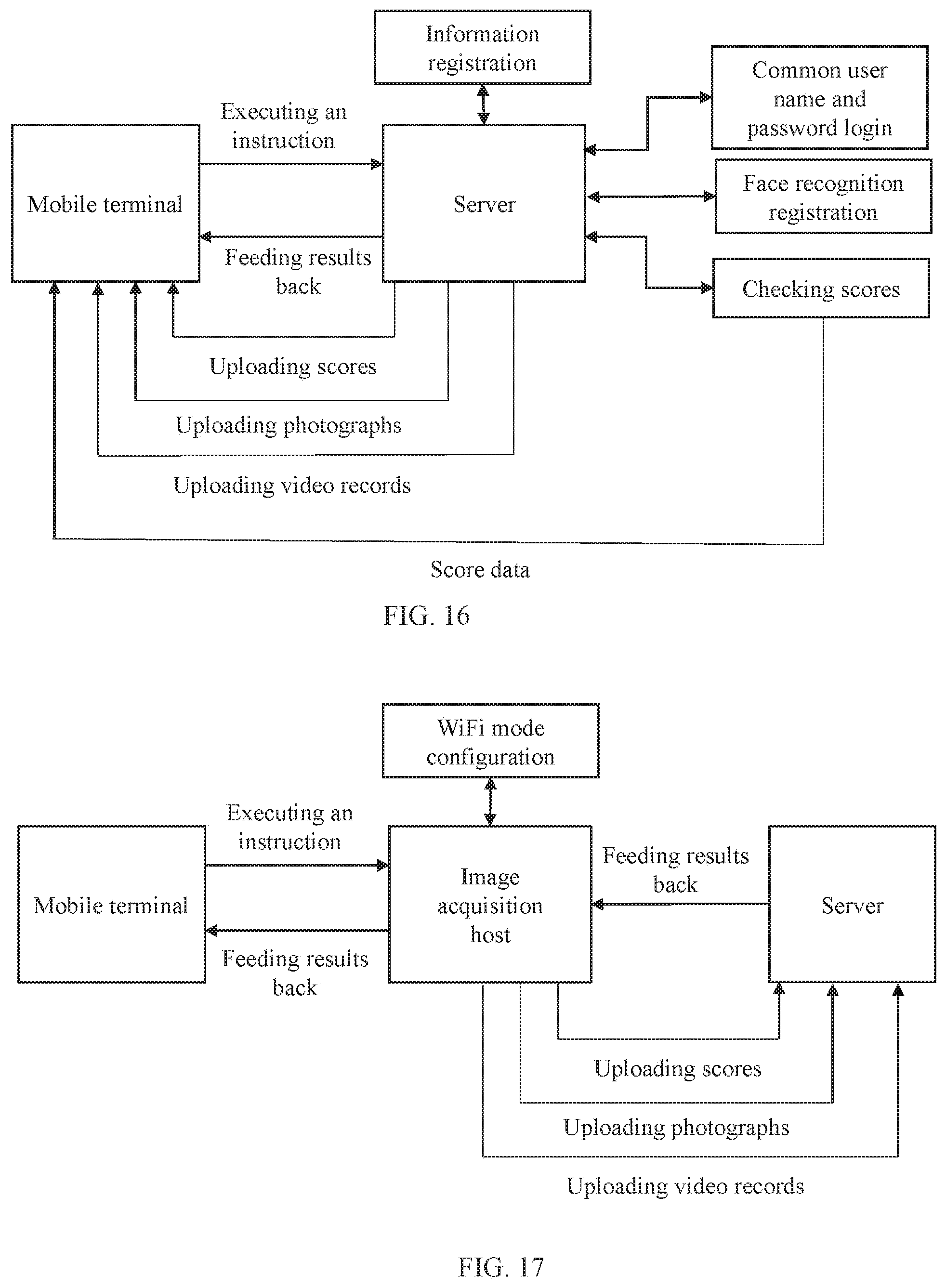

[0070] FIG. 13 is a schematic diagram showing functions of the data acquisition apparatus in an embodiment 1 according to the present invention;

[0071] FIG. 14 is a schematic diagram showing a structure of the data acquisition apparatus in an embodiment 1 according to the present invention;

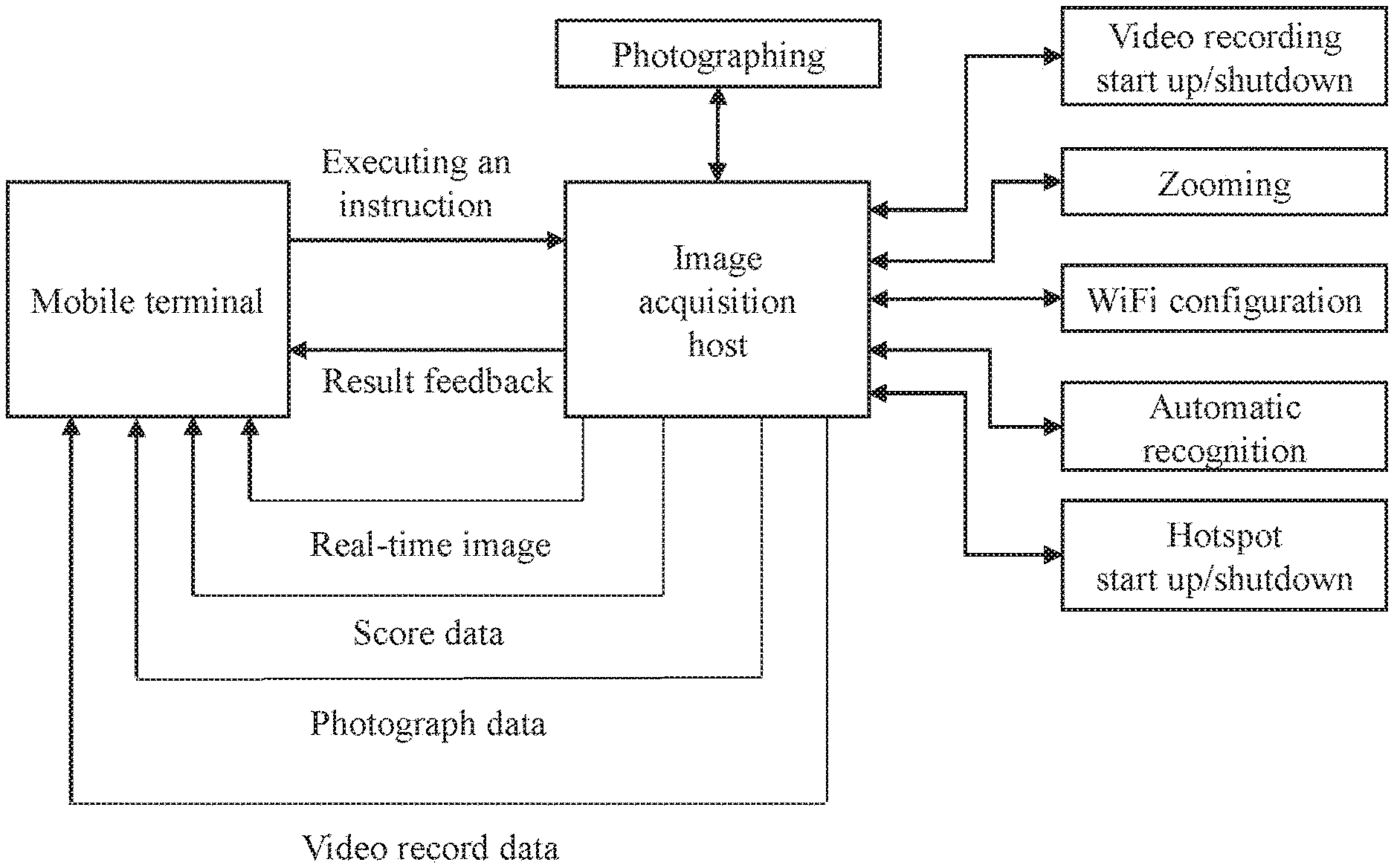

[0072] FIG. 15 is a data flow diagram of a direct connection mode between a terminal and an acquisition host;

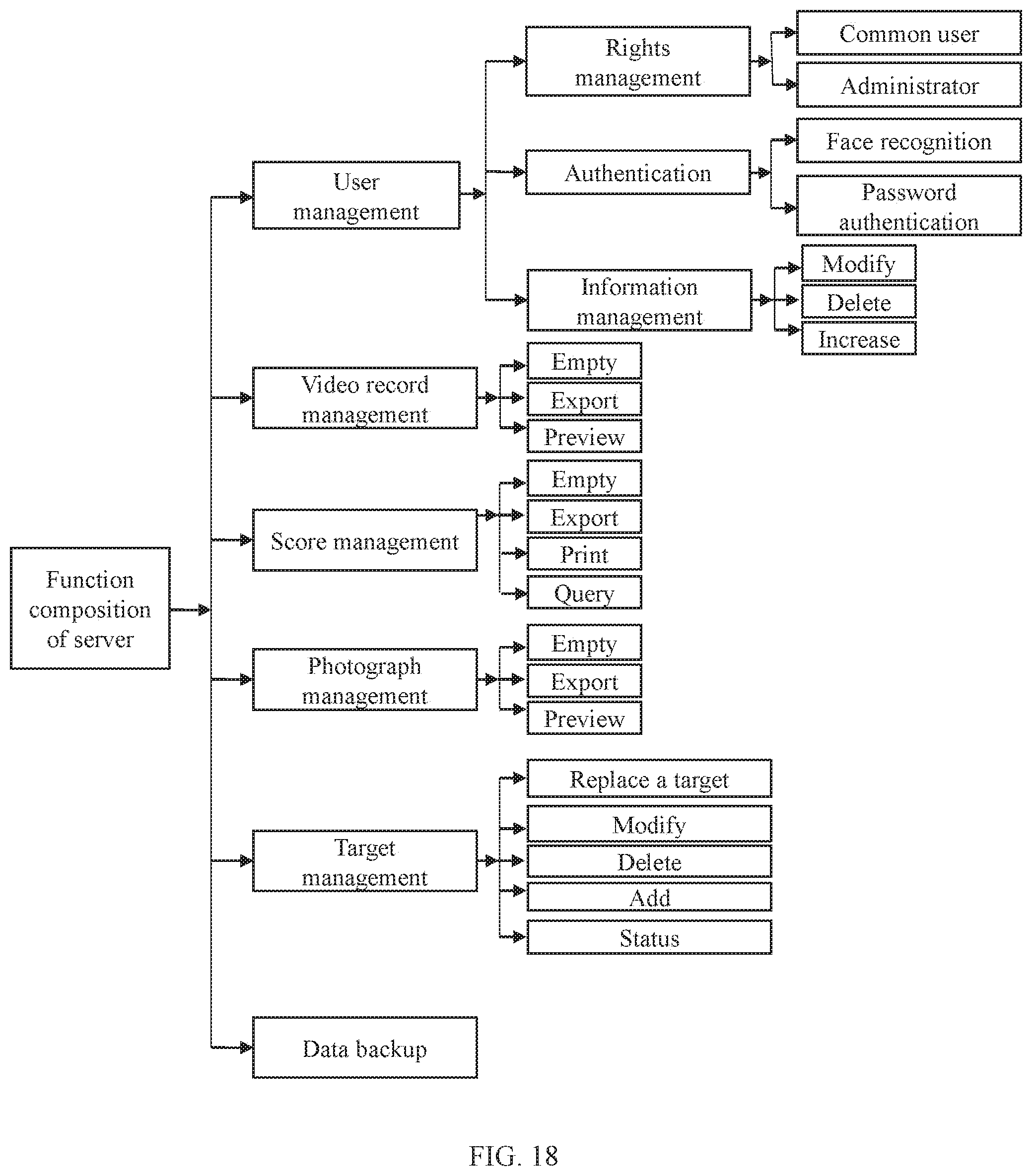

[0073] FIG. 16 is a data flow diagram of an interconnection mode between a terminal and a server;

[0074] FIG. 17 is a data flow diagram of a mobile terminal in an interconnection mode between an acquisition host and a server;

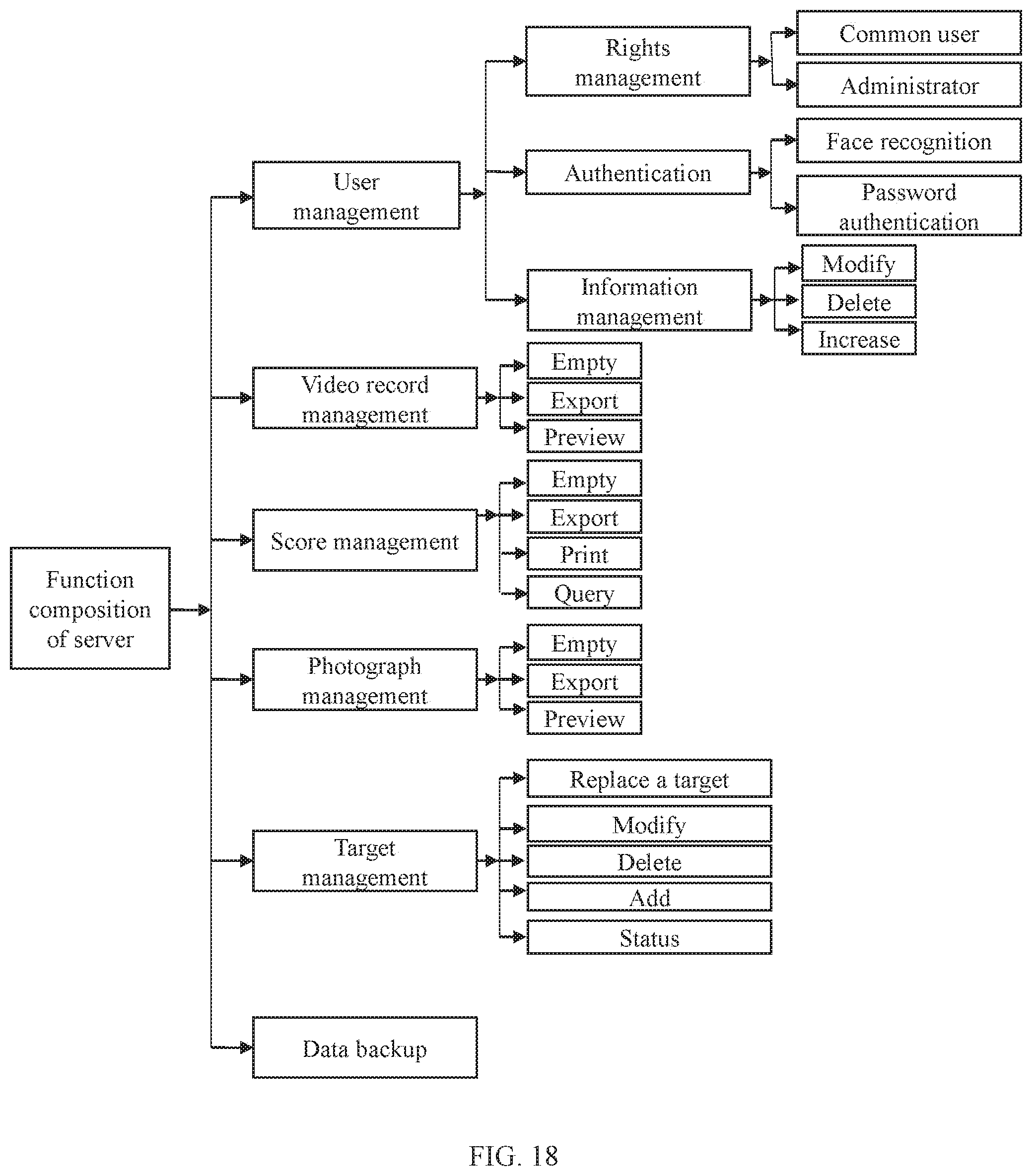

[0075] FIG. 18 is a schematic diagram showing a function composition of a server system;

[0076] wherein 1. field of view acquisition unit; 2. external leather track; 3. external key; 4. line transmission interface antenna; 5. Bluetooth interface antenna; 6. tripod interface; 7. battery compartment; 8. electro-optical conversion board; 9. CPU core board; 10. interface board; 11. function operation board; 12. electric zooming assembly; 13. battery pack; 14. rotary encoder; and 15. focusing knob; 01. target paper recovery compartment; 02. target paper rotary shaft; 03. currently-used target paper area; 04. first drive shaft; 05. second drive shaft; 06. new target paper compartment; 07. motor servo mechanism; 08. CPU processing unit; 09. wireless WiFi unit; 010. battery compartment; 011. power management unit; 012. external power interface; and 013. transmission antenna.

DETAILED DESCRIPTION

[0077] Objectives, technical solutions and advantages of the present invention will become more apparent from the following detailed description of the present invention when taken in conjunction with accompanying drawings. It should be understood that specific embodiments described herein are merely illustrative of the present invention and are not intended to limit the present invention.

[0078] Rather, the present invention encompasses any alternatives, modifications, equivalents, and solutions made within the spirit and scope of the present invention as defined by the claims. Further, in order to give the public a better understanding of the present invention, some specific details are described below in detail in the following detailed description of the present invention. It will be appreciated by those skilled in the art that the present invention may be understood without reference to the details.

Example 1

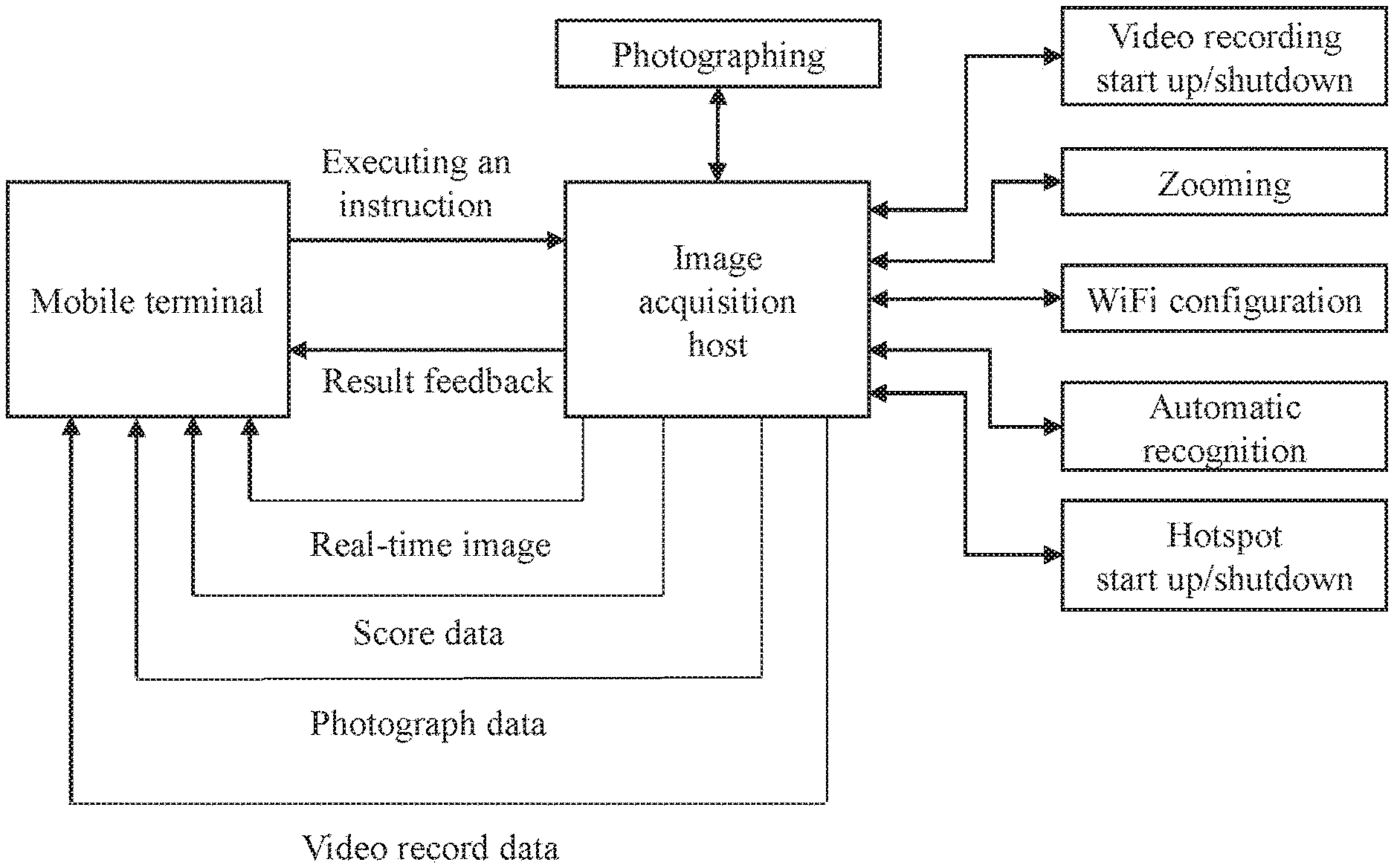

[0079] As shown in FIG. 13, an intelligent shooting training management system includes a data acquisition apparatus, an operation terminal and a server, wherein the operation terminal is connected with the data acquisition apparatus and/or the server; the data acquisition apparatus acquires a target paper image to obtain photographs and video records; meanwhile the data acquisition apparatus includes an automatic analysis module, which analyzes points of impact in the target paper image to obtain shooting accuracies; the server manages the photographs, the video records and the shooting accuracies; the operation terminal controls data exchange with the data acquisition apparatus and/or the server, and the operation terminal invokes and displays the photographs, the video records and the shooting accuracies.

[0080] The data acquisition apparatus performs projection imaging on a target (target paper) by means of an optical imaging principle, optical data is converted into calculable electronic data by a built-in electro-optical conversion unit, shooting results are calculated by means of analysis, the data acquisition apparatus and the operating terminal as well as a high-definition display screen are linked, so that image data and an analysis result are displayed in real time; and meanwhile the data acquisition apparatus and the data server are linked, so that data about the shooting results is uploaded to the server for storage and further analysis and processing.

[0081] Further, the shooting training management system includes an intelligent target, wherein the server remotely controls the intelligent target to replace the target paper through a network. The server and the intelligent target are linked through the network, after the shooting is completed, the shooting target paper is remotely replaced by means of an operation of the server, without waiting for intensively replacing the target paper, and the next round of shooting training is performed conveniently and rapidly, so that the time is saved.

[0082] In particular, in the intelligent shooting training management system, the data acquisition apparatus has no display and no function operation input. Instead, a mobile terminal serves as the input interface device and the output display device for functional operations of the data acquisition apparatus. The hardware platform of the mobile terminal is a mature and stable smart phone, intelligent terminal or tablet, and a dedicated APP provides the human-computer interaction. The mobile terminal includes the following three operation modes:

[0083] (1) Direct Connection with the Data Acquisition Apparatus

[0084] Under such a mode, the data acquisition apparatus serves as a wireless WiFi hotspot, and the mobile terminal serves as a client accessed into a WiFi hotspot network, so that the direct connection mode of the mobile terminal with the data acquisition apparatus is achieved. Under the direct connection mode, a transmission distance between the data acquisition apparatus and the mobile terminal is controlled within 100 meters. The mobile terminal is directly connected with an acquisition host, and the mobile terminal displays information of the target paper image in real time, and performs data and instruction interaction with the acquisition host. Its advantages are as follows:

[0085] 1) the terminal acquires an image and displays it in real time;

[0086] 2) the terminal controls startup and shutdown of photographing and video recording;

[0087] 3) the terminal controls the acquisition host to perform zooming, and the acquisition host receives an instruction to control a stepping motor to perform zooming;

[0088] 4) the terminal configures a channel for WiFi;

[0089] 5) the terminal controls startup and shutdown of a hotspot function;

[0090] 6) the terminal controls the acquisition host to start an automatic recognition function; and

[0091] 7) score data, photographs and video records are downloaded locally.

[0092] A data flow diagram of a direct connection mode between the terminal and the acquisition host is shown in FIG. 15.

[0093] (2) Interconnection with the Server

[0094] Under this mode, the mobile terminal and the server are in the same wireless network. When the mobile terminal and the server are interconnected, identity registration, login authentication and personal score information query may be performed. Necessary information such as a user name, a password and an avatar photo must be recorded during the identity registration. Login may be performed in the traditional manner with the user name and the password, or quickly by face recognition. After the login is successful, the score data, photographs and video records local to the mobile terminal may be transmitted to the server. Its advantages are as follows:

[0095] 1) information registration;

[0096] 2) login, namely, the user name and password login manner or the face recognition manner;

[0097] 3) uploading of the score data, photographs and video records;

[0098] 4) checking of personal scores and a score trend within a period of time; and

[0099] 5) checking of scores of a team to which an individual belongs and a score trend of the team within a period of time.

[0100] A data flow diagram of an interconnection mode between the terminal and the server is as shown in FIG. 16.

[0101] (3) Control of the Interconnection Mode Between the Acquisition Host and the Server

[0102] As the data acquisition apparatus does not have a human-computer interaction during operation, its function is executed depending on an instruction of the mobile terminal. Specific steps for implementing this mode are as follows:

[0103] 1) the mobile terminal notifies the data acquisition apparatus of information of a network to be accessed via Bluetooth or WiFi;

[0104] 2) the data acquisition apparatus analyzes instruction data after receiving it, so as to obtain necessary information such as a name of the network to be accessed, a user name and a password;

[0105] 3) the data acquisition apparatus performs a network access function and feeds a network connection result back to the mobile terminal via Bluetooth or WiFi; and

[0106] 4) the mobile terminal analyzes and determines whether the data acquisition apparatus is successfully accessed or not; and after the data acquisition apparatus is successfully accessed, the mobile terminal may operate to upload the score data, photographs and video records in the acquisition host to the server.

[0107] A data flow diagram of a mobile terminal in an interconnection between an acquisition host and a server is as shown in FIG. 17.
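The four steps of this mode can be sketched as a small message exchange. This is a minimal illustration only: the JSON encoding, the field names `ssid`, `username`, `password` and `connected`, and the `join_network` callback are assumptions made for the sketch, since the specification does not define a message format or a network access routine.

```python
import json
from typing import Callable


def build_access_instruction(ssid: str, username: str, password: str) -> bytes:
    """Step 1: the mobile terminal encodes the network to be accessed."""
    payload = {"ssid": ssid, "username": username, "password": password}
    return json.dumps(payload).encode("utf-8")


def handle_access_instruction(
    raw: bytes, join_network: Callable[[str, str, str], bool]
) -> bytes:
    """Steps 2-3: the apparatus parses the instruction, tries to join, and replies.

    `join_network` is a hypothetical hook standing in for the real network
    access function of the data acquisition apparatus.
    """
    try:
        msg = json.loads(raw.decode("utf-8"))
        ssid, username, password = msg["ssid"], msg["username"], msg["password"]
    except (ValueError, KeyError, TypeError):
        reply = {"connected": False, "reason": "malformed instruction"}
        return json.dumps(reply).encode("utf-8")
    ok = join_network(ssid, username, password)
    reply = {"connected": ok, "reason": "" if ok else "join failed"}
    return json.dumps(reply).encode("utf-8")


def access_succeeded(reply: bytes) -> bool:
    """Step 4: the mobile terminal decides whether uploading may proceed."""
    return bool(json.loads(reply.decode("utf-8")).get("connected", False))
```

In this sketch the mobile terminal would only offer the upload operation once `access_succeeded` returns true for the reply received via Bluetooth or WiFi.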

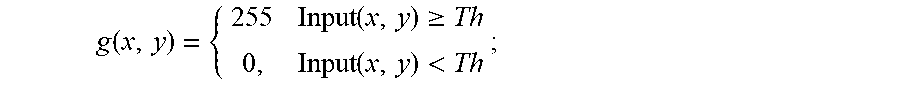

[0108] In the intelligent shooting training management system, the server serves as a final place for data processing, interaction and storage, and is an important part for achieving shooting training score analysis and management, which includes the following functions:

[0109] 1) shooting accuracy management

[0110] data query statistics may be performed according to time, users, teams and other conditions, and a trend curve diagram under such conditions is calculated, and meanwhile, data exporting and emptying operations may be performed;

[0111] 2) photograph management

[0112] photographs uploaded by the users are classified, data is managed with the users as a basic unit, batch exporting and deleting operations may be performed, and meanwhile, local previewing may be performed;

[0113] 3) video record management

[0114] video record files uploaded by the users are classified, video record data is managed with the users as a basic unit, batch exporting and deleting operations may be performed, and meanwhile, local previewing may be performed;

[0115] 4) intelligent target management

[0116] a wireless WiFi module is built in an intelligent target and accessed into a network environment where the server is located through a wireless router; the server monitors the status of the intelligent target online in real time, and detects whether the target is online or offline by regularly sending a heartbeat packet; after the intelligent target receives a status detection instruction of the server, it sends online status information to the server; and after the server receives the online status information, the status of the intelligent target is marked as online. If the server detects that an instruction cannot be sent to the target, or that the status of the target cannot be fed back to the server, due to a network failure or target failure, the server determines that the target is in an offline status after a time interval. Clicking a target area on the server displays basic information of the target. When the target is in an online state, the server may remotely control its operation of replacing the target paper; and the server may add, delete and modify the configuration of the target;

[0117] 5) data printing

[0118] conditionally queried results are printed and output, wherein the data includes text and charts;

[0119] 6) score ranking publishing

[0120] the users meeting the conditions in the system are automatically ranked and displayed in real time, according to the ranking conditions and the number of ranking lists configured under the administrator rights;

[0121] 7) user management

[0122] it includes rights management, user information management and identity authentication, wherein the rights management includes ordinary rights management and administrator rights management; on login with different rights, the operable tasks are automatically matched; the user information management includes user registration, information modification and user deletion; and the identity authentication includes common user name and password authentication and dynamic face recognition authentication; and

[0123] 8) database backup

[0124] the database backup function is operated under the administrator rights, and the database backup may reduce the server capacity burden while ensuring that the data is safe and restorable.

[0125] A function composition of a server system is as shown in FIG. 18.
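The heartbeat-based status detection described under intelligent target management can be sketched as follows. This is an illustrative sketch under stated assumptions: the class name `TargetStatusMonitor`, the 10-second default interval, and the string target identifiers are hypothetical, as the specification does not give an interval length or an identifier scheme.

```python
import time
from typing import Dict, Optional


class TargetStatusMonitor:
    """Tracks online/offline status from replies to heartbeat packets."""

    def __init__(self, offline_after_s: float = 10.0) -> None:
        # Assumed time interval after which a silent target counts as offline.
        self.offline_after_s = offline_after_s
        self._last_reply: Dict[str, float] = {}

    def record_reply(self, target_id: str, now: Optional[float] = None) -> None:
        """Called when online status information arrives from a target."""
        self._last_reply[target_id] = time.monotonic() if now is None else now

    def is_online(self, target_id: str, now: Optional[float] = None) -> bool:
        """A target is online if it replied within the configured interval."""
        now = time.monotonic() if now is None else now
        last = self._last_reply.get(target_id)
        return last is not None and (now - last) <= self.offline_after_s

    def can_replace_paper(self, target_id: str, now: Optional[float] = None) -> bool:
        """Remote target paper replacement is only allowed for online targets."""
        return self.is_online(target_id, now)
```

The optional `now` argument exists only so that the timing logic can be exercised without waiting; a server loop would call `record_reply` on each reply and `is_online` when refreshing the target area.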

[0126] Intelligent Target

[0127] In the shooting training management system, the server remotely controls the intelligent target to replace the target paper through the network, without manual site replacement. The present invention provides an intelligent target for remotely controlling automatic replacement of a target paper. A function of an intelligent target system is as shown in FIG. 13, and its structure is as shown in FIG. 19.

[0128] The intelligent target is mounted on a flat ground, the intelligent target includes an exterior structure which is a detachable structure as a whole, and an internal portion of the exterior structure is an accommodating space with a fixing component, the accommodating space with the fixing component includes a target paper recovery compartment, a target paper rotary shaft, drive shafts, a target paper area, a new target paper compartment, a motor servo mechanism, a CPU processing unit, a wireless WiFi unit, a transmission antenna, a battery compartment, a power manager and an external power interface.

[0129] The target paper recovery compartment 01 is a space area in which the motor servo mechanism 07 controls the recovery and the storage of the used target paper.

[0130] The target paper rotary shaft 02 is a rotary shaft built in the target paper recovery compartment 01 for storing a recovered target paper roll.

[0131] The currently-used target paper area 03 is a new target paper hanging area, and the CPU processing unit 08 controls the motor servo mechanism 07 to suspend a new target paper in this area for shooting.

[0132] The first drive shaft 04 and the second drive shaft 05 are used for connecting the motor servo mechanism 07 and the target paper rotary shaft 02 and are action drive components between the motor servo mechanism 07 and the target paper rotary shaft 02 for driving the target paper rotary shaft 02 to rotate.

[0133] The new target paper compartment 06 stores unused new target papers.

[0134] The motor servo mechanism 07 is used for controlling the replacement of the target paper. The motor servo mechanism 07 is connected with the CPU processing unit 08 through an interface. The CPU processing unit 08 controls an execution of the motor servo mechanism 07 to drive the drive shafts 04 and 05 and the target paper rotary shaft 02 to rotate, so that the replacement of the target paper is achieved.

[0135] The CPU processing unit 08 is configured to process information of the instruction of the server, and control an execution action of the motor servo mechanism 07. The CPU processing unit receives the instruction of the server through the wireless WiFi unit 09, performs a control action, and feeds results back to the server.

[0136] The wireless WiFi unit 09 is connected with the CPU processing unit 08 and is responsible for receiving information from the server and sending data to the server. The wireless WiFi unit 09 is connected with the transmission antenna 013, so as to achieve signal amplification and increase a transmission distance.

[0137] The battery compartment 010 is internally provided with a lithium battery pack as a standby power source for the intelligent target. The battery compartment 010 is connected with the power management unit 011, which is responsible for supplying power to the system.

[0138] The power management unit 011 is connected with the battery compartment 010, the CPU processing unit 08, the wireless WiFi unit 09, and the external power interface 012 to supply power to the CPU processing unit 08 and the wireless WiFi unit 09. When an external power supply is used, the power management unit charges the battery pack mounted in the battery compartment 010. When the external power supply is disconnected, the power management unit automatically switches to the battery pack in the battery compartment 010 to supply power to the system.

[0139] The external power interface 012 is a mains output interface.

[0140] Further, for better display management, the management system of the present invention may further include an image projection display screen, a score publishing display screen, a PC terminal, a data printer, a network device, and the like. The network device includes a wired router, a wireless router, a switch, a repeater and the like.

[0141] Image Projection Display Screen

[0142] The image projection screen may be directly interconnected with the acquisition host through an HDMI, an AV interface and other interfaces by employing a mature and stable large display screen, and the screen only shows projection information.

[0143] Score Publishing Display Screen

[0144] The score publishing display screen may use a network interface or an HDMI or AV interface. A screen with a network interface may be directly connected with the server through the network; a screen without a network interface is connected with the PC terminal through the HDMI or AV interface. The server publishes and displays current real-time score ranking data on the screen.

[0145] PC Terminal

[0146] In order to facilitate a printing operation of the user and the displaying of a non-network large screen, there is a need for a PC terminal to be connected with the printer and a wired large screen for controlling the displaying.

[0147] Data Printer

[0148] The printer is connected with the PC terminal or the server by employing a network, a parallel port, a USB interface, and the like for data printing.

[0149] The data acquisition apparatus of the present invention has an automatic analysis module, which uses an automatic image analysis method to analyze the shooting accuracies.

[0150] The function of the data acquisition apparatus in the intelligent shooting training management system is as shown in FIG. 13, and its structure is as shown in FIG. 14.

[0151] The data acquisition apparatus may be conveniently mounted on a fixed tripod. The data acquisition apparatus includes an exterior structure, wherein the exterior structure is a detachable structure body as a whole, an internal portion of the exterior structure is an accommodating space with a fixed component, and the accommodating space with the fixed component includes a field of view unit, electro-optical conversion, a CPU processing unit, an electric zooming assembly, a power supply and a wireless transmission unit.

[0152] The field of view acquisition unit 1 includes an objective lens combination or other optical visual device, and the objective lens combination or the optical visual device is mounted on the front end of the field of view acquisition unit 1 to acquire field of view information.

[0153] The data acquisition apparatus is a digitizer as a whole, which may communicate with a smart phone, an intelligent terminal, a sighting apparatus or a circuit and sends video information acquired by the field of view acquisition unit 1 to the smart phone, the intelligent terminal, the sighting apparatus or the circuit, and the information of the field of view acquisition unit 1 is displayed by the smart phone, the intelligent terminal or the like. The field of view information in the field of view acquisition unit 1 is converted by the electro-optical conversion circuit to obtain video information available for electronic display. The circuit includes an electro-optical conversion board 8, which is located at the rear end in the field of view acquisition unit 1. The electro-optical conversion board 8 converts the field of view optical signal into an electrical signal, while performing automatic exposure, automatic white balance, noise reduction and sharpening operations on the signal, so that the signal quality is improved and high-quality data is provided for imaging.

[0154] The rear end of the electro-optical conversion circuit is connected with a CPU core board 9, and the rear end of the CPU core board 9 is connected with an interface board 10; particularly, the CPU core board 9 is connected with a serial port of the interface board 10 through a serial port. The CPU core board 9 is disposed between the interface board 10 and the electro-optical conversion board 8, the three components are placed in parallel, and their board surfaces are all perpendicular to the field of view acquisition unit 1. The electro-optical conversion board 8 transmits the converted video signal to the CPU core board 9 for further processing through a parallel data interface, and the interface board 10 communicates with the CPU core board 9 through a serial port to transmit peripheral operation information such as battery power, time, WiFi signal strength, key operation and knob operation to the CPU core board 9 for further processing.

[0155] The CPU core board 9 may be connected with a memory card through the interface board 10. In the embodiment of the present invention, with the field of view acquisition unit 1 as an observation entrance direction, a memory card slot is disposed at the left side of the CPU core board 9, the memory card is inserted in the memory card slot, information may be stored in the memory card, and the memory card may automatically upgrade a software program built in the system.

[0156] With the field of view acquisition unit 1 as the observation entrance direction, a USB interface is disposed on a side of the memory card slot on the left side of the CPU core board 9, and by means of the USB interface, the system may be powered by an external power supply or information of the CPU core board 9 is output.

[0157] With the field of view acquisition unit 1 as the observation entrance direction, an HDMI interface is disposed on a side of the USB interface at the side of the memory card slot on the left side of the CPU core board 9, and real-time video information may be transmitted to a high-definition display device of the HDMI interface through the HDMI interface for display.

[0158] A housing is internally provided with a battery compartment 7, a battery pack 13 is disposed within the battery compartment, an elastic sheet is disposed within the battery compartment 7 for fastening the battery pack 13, the battery compartment 7 is disposed in the middle in the housing, and a cover of the battery compartment may be opened by the side of the housing to realize replacement of the battery pack 13.

[0159] A line welding contact is disposed at the bottom side of the battery compartment 7, the contact is connected with the elastic sheet inside the battery compartment, the contact of the battery compartment 7 is welded with a wire with a wiring terminal, and is connected with the interface board 10 for powering the interface board 10, the CPU core board 9, the electro-optical conversion board 8, the function operation board 11, the electric zooming assembly 12.

[0160] The electric zooming assembly 12 is a stepping motor control unit, wherein the stepping motor control unit is connected with an interface board 10, thereby communicating with a CPU core board 9; and the CPU core board sends a control instruction to the zooming assembly 12 for controlling the zooming.

[0161] An external key 3 is disposed at the top of the housing, and connected onto the interface board 10 through the function operation board 11 on the inner side of the housing, and functions of turning the device on or off, photographing and video-recording may be realized by touching and pressing the external key.

[0162] A rotary encoder 14 with a key function is disposed on one side, which is close to the external key 3, on the top of the housing, and the rotary encoder 14 is connected with the function operation board 11 inside the housing. The rotary encoder controls functions such as function switching, magnification data adjustment, information setting, operation derivation and transmission.

[0163] A wireless transmission interface antenna 4 is disposed at a position, which is close to the rotary encoder 14, on the top of the housing, the interface antenna is connected with the function operation board 11 inside the housing, and the function operation board has a wireless transmission processing circuit which is responsible for transmitting an instruction and data transmitted by the CPU core board as well as receiving instructions transmitted by networking devices such as an external mobile terminal.

[0164] With the field of view acquisition unit 1 as the observation entrance direction, a focusing knob 15 is disposed at one side, which is close to the field of view acquisition unit 1, on the right side of the housing, and the focusing knob 15 adjusts focusing of the field of view acquisition unit 1 by a spring mechanism, so as to achieve the purpose of clearly observing an object under different distances and different magnifications.

[0165] A tripod interface 6 is disposed at the bottom of the housing for being fixed on the tripod.

[0166] An external leather track 2 is disposed at the top of the field of view acquisition unit 1 of the housing, and the external leather track 2 and the field of view acquisition unit 1 are designed with the same optical axis and fastened by screws. The external leather track 2 is designed in a standard size and may be provided with an object fixedly provided with a standard Picatinny connector, and the object includes a laser range finder, a fill light, a laser pen, and the like.

[0167] By applying the above data acquisition apparatus, an observer does not need to observe by a monocular eyepiece. Front target surface information is displayed directly in a high-definition liquid crystal display of the data acquisition apparatus in an image video form through the electro-optical conversion circuit. By means of an optical magnification and electronic magnification combination manner, a distant object is displayed in a magnified manner, and the target surface information may be clearly and completely seen through the screen.

[0168] By applying the above data acquisition apparatus, without manual data interpretation, through related technologies of image recognition and pattern recognition, old points of impact are automatically filtered, information of newly-added points of impact is reserved, and a specific deviation value and a specific deviation direction of each bullet from a blank at the time of this shooting are automatically calculated; shooting accuracy information may be stored in a database, data in the database may be locally browsed, and shooting within a period of time may be self-evaluated according to data time, the spotting scope system may automatically generate a shooting accuracy trend within a period of time, and provide an intuitive accuracy expression for training in a graph form; and the above text data and the above graph data may be derived locally for being printed so as to be further analyzed and used.

[0169] By applying the above data acquisition apparatus, the entire process may be completely recorded as video. The video record may be shared between enthusiasts by uploading it to a video sharing platform via the Internet, and it may be played back locally so that a user can review the entire shooting and accuracy analyzing process.

[0170] By applying the above data acquisition apparatus, it may be linked with a mobile terminal through the network. A linkage mode includes: with the spotting scope as a hotspot, the mobile device is connected with it; and further includes: the spotting scope and the mobile device are connected to the same wireless network for connection.

[0171] By applying the above data acquisition apparatus, it is possible to output real-time image data to a high-definition large-size liquid crystal display television or a television wall by wired transmission, so that all people in a certain area can watch on-site at the same time.

[0172] The present embodiment further provides an analysis method for automatically analyzing a shooting accuracy. The analysis method includes the following steps.

[0173] (1) Electro-optical conversion, namely, converting an optical image obtained by the data acquisition apparatus into an electronic image.

[0174] (2) Target paper area extraction, namely, extracting a target paper area from the electronic image.

[0175] A target paper area of interest is extracted from a global image, and the interference of complex background environment information is eliminated. The target paper area extraction method is a target detection method based on adaptive threshold segmentation. The detection method determines the threshold quickly, performs well under a variety of complex conditions, and guarantees the segmentation quality. The detection method sets $t$ as the segmentation threshold between the foreground and the background by maximizing the interclass variance, wherein the ratio of the number of foreground points to the image is $w_0$, with an average gray value $u_0$; the ratio of the number of background points to the image is $w_1$, with an average gray value $u_1$; and $u$ is the total average gray value of the image, then:

$$u = w_0 u_0 + w_1 u_1$$

[0176] $t$ is traversed from the minimum gray level value to the maximum gray level value; the value of $t$ that maximizes $g$ is the optimal segmentation threshold, where

$$g = w_0 (u_0 - u)^2 + w_1 (u_1 - u)^2$$

[0177] A process for executing the target paper extraction method is as shown in FIG. 4. The target paper extraction method includes four steps, namely, image mean filtering, determination of the segmentation threshold by using an Otsu threshold method, determination of a candidate area by threshold segmentation, determination and truncation of the minimum contour by using a contour tracing algorithm.

[0178] (21) Image Mean Filtering.

[0179] The image is subjected to large-scale mean filtering to eliminate grid interference on a target paper, highlighting a circular target paper area. By taking a sample with a size 41*41 as an example, a calculation method is as follows:

$$g(x, y) = \frac{1}{41 \times 41} \sum_{i=-20}^{20} \sum_{j=-20}^{20} \mathrm{origin}(x + i,\; y + j)$$

wherein g(x,y) represents a filtered image, x represents a horizontal coordinate of a center point of a sample on a corresponding point on the image, y represents a longitudinal coordinate of the center point of the sample on the corresponding point on the image, i represents a pixel point horizontal coordinate index value between -20 and 20 relative to x, and j represents a pixel point longitudinal coordinate index value between -20 and 20 relative to y.
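The mean filtering step can be illustrated with a short sketch. It uses a summed-area table so that the window size does not affect the per-pixel cost. The function name `mean_filter`, the list-of-lists image representation, and the border handling (averaging only the window pixels that lie inside the image) are assumptions of the sketch; the specification does not define the behavior at the image border.

```python
from typing import List

Image = List[List[float]]


def mean_filter(origin: Image, radius: int = 20) -> Image:
    """Mean filter with a (2*radius+1) x (2*radius+1) window (41*41 by default).

    Assumption: near the border, the mean is taken over the part of the
    window that lies inside the image.
    """
    h, w = len(origin), len(origin[0])
    # Summed-area table with an extra leading row and column of zeros.
    sat = [[0.0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0.0
        for x in range(w):
            row_sum += origin[y][x]
            sat[y + 1][x + 1] = sat[y][x + 1] + row_sum
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        y0, y1 = max(0, y - radius), min(h, y + radius + 1)
        for x in range(w):
            x0, x1 = max(0, x - radius), min(w, x + radius + 1)
            total = sat[y1][x1] - sat[y0][x1] - sat[y1][x0] + sat[y0][x0]
            out[y][x] = total / ((y1 - y0) * (x1 - x0))
    return out
```

For interior pixels this reproduces the formula above exactly; only the pixels within `radius` of the border depend on the assumed border rule.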

[0180] (22) Determination of the Segmentation Threshold by Using an Otsu Threshold Method.

[0181] Threshold segmentation segments the image into the background and the foreground by using the adaptive Otsu threshold segmentation (OTSU) method according to the gray property of the image. The greater the variance between the background and the foreground is, the greater the difference between the two parts of the image is. Therefore, for the image $I(x, y)$, the segmentation threshold of the foreground and the background is set as $Th$; the ratio of pixel points belonging to the foreground to the whole image is $w_2$, and its average gray level is $G_1$; the ratio of pixel points belonging to the background to the whole image is $w_3$, and its average gray level is $G_2$; the total average gray level of the image is $G_{\mathrm{Ave}}$; the interclass variance is $g$; the size of the image is $M \times N$; the number of pixels in the image with gray level values smaller than the threshold is denoted as $N_1$, and the number of pixels with gray level values greater than the threshold is denoted as $N_2$, then

$$w_2 = \frac{N_1}{M \times N}; \qquad w_3 = \frac{N_2}{M \times N}; \qquad M \times N = N_1 + N_2; \qquad w_2 + w_3 = 1;$$

$$G_{\mathrm{Ave}} = w_2 G_1 + w_3 G_2;$$

$$g = w_2 (G_{\mathrm{Ave}} - G_1)^2 + w_3 (G_{\mathrm{Ave}} - G_2)^2;$$

[0182] the resultant equivalence formula is as follows:

$$g = w_2 w_3 (G_1 - G_2)^2;$$
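For reference, the equivalence can be checked by substituting the total average gray level into the definition of the interclass variance and using the fact that the two ratios sum to 1. The worked steps below use only the quantities already defined:

```latex
\begin{aligned}
G_{\mathrm{Ave}} - G_1 &= w_2 G_1 + w_3 G_2 - G_1 = w_3 (G_2 - G_1), \\
G_{\mathrm{Ave}} - G_2 &= w_2 G_1 + w_3 G_2 - G_2 = w_2 (G_1 - G_2), \\
g &= w_2 w_3^2 (G_1 - G_2)^2 + w_3 w_2^2 (G_1 - G_2)^2 \\
  &= w_2 w_3 (w_2 + w_3)(G_1 - G_2)^2 = w_2 w_3 (G_1 - G_2)^2 .
\end{aligned}
```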

[0183] the segmentation threshold Th at which the interclass variance g is maximum may be obtained by employing a traversing method.
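The traversing method can be sketched as below. This is a minimal illustration, not the implementation of the embodiment: the function name `otsu_threshold`, the flat sequence of integer gray levels as input, and the convention that pixels equal to the threshold are counted with the upper class (chosen to match a segmentation rule of the form "gray level greater than or equal to Th") are assumptions.

```python
from typing import Sequence


def otsu_threshold(pixels: Sequence[int], levels: int = 256) -> int:
    """Return the threshold that maximizes g = w2 * w3 * (G1 - G2)^2."""
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1
    total = len(pixels)
    sum_all = sum(level * count for level, count in enumerate(hist))

    best_th, best_g = 0, -1.0
    n1 = 0    # N1: number of pixels with gray level below the threshold
    sum1 = 0  # sum of the gray levels of those pixels
    for th in range(1, levels):
        n1 += hist[th - 1]
        sum1 += (th - 1) * hist[th - 1]
        n2 = total - n1  # N2: remaining pixels
        if n1 == 0 or n2 == 0:
            continue  # one class is empty, the variance is undefined
        w2, w3 = n1 / total, n2 / total
        g1, g2 = sum1 / n1, (sum_all - sum1) / n2
        g = w2 * w3 * (g1 - g2) ** 2
        if g > best_g:
            best_g, best_th = g, th
    return best_th
```

Accumulating `n1` and `sum1` while the threshold advances keeps the traversal linear in the number of gray levels, instead of rescanning the image for every candidate threshold.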

[0184] (23) Segmentation of the Filtered Image in Combination with the Determined Segmentation Threshold Th.

$$g(x, y) = \begin{cases} 255, & \mathrm{Input}(x, y) \geq Th \\ 0, & \mathrm{Input}(x, y) < Th \end{cases}$$

[0185] a binary image segmented into the foreground and the background is obtained.

[0186] (24) Determination and Truncation of the Minimum Contour by Employing a Contour Tracing Algorithm.

[0187] Contour tracing employs a vector tracing method of a Freeman chain code, which is a method for describing a curve or boundary by using coordinates of a starting point of the curve and direction codes of boundary points. The method is a coded representation method of a boundary, which uses a direction of the boundary as a coding basis. In order to simplify the description of the boundary, a method for describing a boundary point set is employed.

[0188] Commonly used chain codes are divided into a 4-connected chain code and an 8-connected chain code according to the number of adjacent directions of a center pixel point. The 4-connected chain code has four adjacent points, located on the upper side, the lower side, the left side and the right side of the center point. The 8-connected chain code adds four diagonal (45°) directions to those of the 4-connected chain code. Because any one pixel has eight adjacent points, the 8-connected chain code coincides with the actual arrangement of the pixel points, and the information of the center pixel point and its adjacent points may be accurately described. Accordingly, this algorithm employs the 8-connected chain code, as shown in FIG. 2.

[0189] The 8-connected chain code distribution table is shown in Table 1:

TABLE 1: 8-connected chain code distribution table

| 3 | 2 | 1 |
|---|---|---|
| 4 | P | 0 |
| 5 | 6 | 7 |

[0190] As shown in FIG. 3, a 9×9 bitmap is given, wherein a line segment with a starting point S and an end point E may be represented as L=43322100000066.

[0191] A custom FreemanList structure is defined as follows:

    struct FreemanList {
        int x;
        int y;
        int type;
        FreemanList* next;
    };

[0192] Whether the contour is complete is determined by checking whether the head and the tail of the chain code structure coincide.
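The chain code of Table 1 and the head/tail check of [0192] can be illustrated with a short sketch. It assumes image coordinates with x growing to the right and y growing downward, so that code 2 ("up" in Table 1) decreases y. The names `DIRECTIONS`, `decode_chain` and `is_closed` are hypothetical and do not appear in the source.

```python
# Offsets (dx, dy) for the direction codes laid out in Table 1, assuming
# x grows to the right and y grows downward (image coordinates).
DIRECTIONS = {
    0: (1, 0), 1: (1, -1), 2: (0, -1), 3: (-1, -1),
    4: (-1, 0), 5: (-1, 1), 6: (0, 1), 7: (1, 1),
}


def decode_chain(start, codes):
    """Return every point visited from `start` when following `codes`."""
    x, y = start
    points = [(x, y)]
    for code in codes:
        dx, dy = DIRECTIONS[int(code)]
        x, y = x + dx, y + dy
        points.append((x, y))
    return points


def is_closed(points):
    """Treat a contour as complete when its head and tail coincide."""
    return len(points) > 1 and points[0] == points[-1]


# The open segment L of [0190], placed at an arbitrary origin, and a
# small closed square for comparison.
segment = decode_chain((0, 0), "43322100000066")
square = decode_chain((0, 0), "0642")
```

With these assumptions the segment L ends 4 pixels to the right of and 3 pixels above its starting point, and only the square passes the completeness check.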

[0193] An image of the target paper area is obtained and then stored.

[0194] (3) Detecting Points of Impact.

[0195] The point of impact detection method is based on background subtraction. The method includes: detecting points of impact from the image of the target paper area, and determining a position of a center point of each of the points of impact. The method stores the previous target surface pattern and then performs pixel-level subtraction between the current target surface pattern and the previous target surface pattern. Since the images of the two frames may be offset by a pixel after the perspective correction calculation, the difference image is downsampled with a step length of 2 pixels: each 2*2 pixel area is replaced by the minimum gray level value within that area, and the areas of the downsampled gray level map whose gray level is greater than 0 are counted. These areas are subjected to contour detection to obtain the information of the newly generated points of impact.

[0196] Because the comparison is a pixel-level subtraction between the image of the previous frame and that of the following frame, the point of impact detection method has a high processing speed and ensures that the positions of the newly generated points of impact are returned.

[0197] The point of impact detection method is performed as follows.

[0198] (31) Storing an Original Target Paper Image

[0199] Data of the original target image is stored and read in a cache to enable the original target image to serve as a reference target paper image. If a target subjected to accuracy calculation is shot again during shooting, the target paper area stored at the time of the last accuracy calculation is used as a reference target paper image.

[0200] (32) Performing Pixel-Level Subtraction on the Image Subjected to the Processing of the Steps (1) to (2) and the Original Target Paper Image to Obtain a Difference Position.

[0201] A pixel difference threshold between the images of the previous frame and the following frame is set. The result is set to 255 when the pixel difference is greater than or equal to the threshold, and to 0 when the pixel difference is lower than the threshold.

$$
\mathrm{result}(x, y) =
\begin{cases}
255, & \mathrm{grayPre}(x, y) - \mathrm{grayCur}(x, y) \geq \mathrm{threshold} \\
0, & \mathrm{grayPre}(x, y) - \mathrm{grayCur}(x, y) < \mathrm{threshold}
\end{cases}
$$

[0202] The specific threshold may be obtained through tuning and is generally set between 100 and 160.
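The thresholded subtraction of step (32), together with the 2*2 minimum downsampling described in [0195], can be sketched as follows. This is an illustration under assumptions: images are lists of rows of gray levels with even dimensions, the difference is taken as grayPre minus grayCur as the formula is read here (the source does not state whether an absolute difference is intended), and the default threshold of 128 is simply a value inside the stated 100 to 160 range. The function names are hypothetical.

```python
def difference_mask(gray_pre, gray_cur, threshold=128):
    """Return 255 where grayPre - grayCur >= threshold, otherwise 0."""
    return [
        [255 if pre - cur >= threshold else 0
         for pre, cur in zip(row_pre, row_cur)]
        for row_pre, row_cur in zip(gray_pre, gray_cur)
    ]


def downsample_min_2x2(mask):
    """Halve the resolution, keeping the minimum of each 2*2 pixel area.

    A block survives (stays above 0) only if all four of its pixels
    differ, which suppresses one-pixel misalignment between frames.
    """
    rows, cols = len(mask) // 2, len(mask[0]) // 2
    return [
        [
            min(mask[2 * r][2 * c], mask[2 * r][2 * c + 1],
                mask[2 * r + 1][2 * c], mask[2 * r + 1][2 * c + 1])
            for c in range(cols)
        ]
        for r in range(rows)
    ]
```

In this sketch an isolated differing pixel is removed by the downsampling, while a fully changed 2*2 area is kept for the subsequent contour detection.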

[0203] (33) Performing Contour Tracing on the Image Generated in the Step (32) to Obtain a Point of Impact Contour and Calculating a Center Point of Each of the Points of Impact.

[0204] Contour tracing is performed with the Freeman chain code, and the coordinates of the contour points are averaged to obtain the center point of each of the points of impact. The calculation formula is as follows:

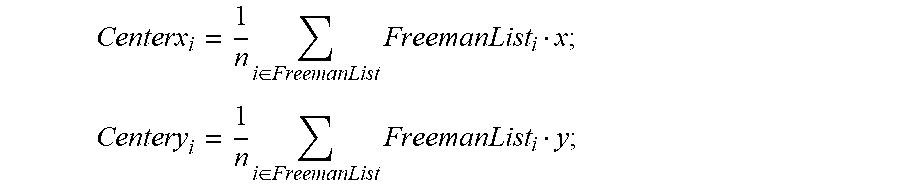

$$
\mathrm{Centerx}_i = \frac{1}{n} \sum_{i \in \mathrm{FreemanList}} \mathrm{FreemanList}_i.x; \qquad
\mathrm{Centery}_i = \frac{1}{n} \sum_{i \in \mathrm{FreemanList}} \mathrm{FreemanList}_i.y;
$$

[0205] $\mathrm{Centerx}_i$ represents a center x-axis coordinate of an i-th point of impact, $\mathrm{Centery}_i$ represents a center y-axis coordinate of the i-th point of impact, $\mathrm{FreemanList}_i$ represents a contour of the i-th point of impact; and n is a positive integer.
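As a minimal sketch of this averaging, assuming a contour is available as a list of (x, y) points and taking n to be the number of points in that contour (the function name `contour_center` is hypothetical):

```python
def contour_center(contour):
    """Average the x and y coordinates of the contour points."""
    n = len(contour)
    center_x = sum(x for x, _ in contour) / n
    center_y = sum(y for _, y in contour) / n
    return center_x, center_y
```

For example, a square contour with corners (2, 2), (4, 2), (4, 4) and (2, 4) has its center at (3.0, 3.0).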

[0206] A process for performing the point of impact detection method is as shown in FIG. 12.

[0207] (4) Calculating a Deviation.

[0208] A horizontal deviation and a longitudinal deviation between each of the points of impact and a center of the target paper are detected to obtain a deviation set.

[0209] Pixel-level subtraction is performed on the target paper area and the electronic reference target paper to detect the points of impact, and the center point of each of the points of impact is calculated, and the shooting accuracy is determined according to the deviation between the center point of each of the points of impact and the center point of the target paper area.
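The deviation set can be sketched as a list of (horizontal, longitudinal) offsets. The sign convention used here (impact center minus target center, in pixels) and the function name `deviation_set` are assumptions; the source does not specify either.

```python
def deviation_set(impact_centers, target_center):
    """Return (horizontal, longitudinal) deviations for each impact center."""
    target_x, target_y = target_center
    return [(cx - target_x, cy - target_y) for cx, cy in impact_centers]
```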

Embodiment 2

[0210] This embodiment is substantially the same as Embodiment 1, the difference being that it includes a target paper area correction step after the target paper area is extracted.

[0211] Target Paper Area Correction.

[0212] Because of the way the target paper is pasted, and because of the angular deviation between the spotting scope and the target paper when the image is acquired, the effective area of the extracted target paper may be tilted, so that the target in the acquired image is non-circular. To make the calculated deviation value of each of the points of impact more accurate, perspective correction is performed on the target paper image to correct the outer contour of the target paper into a regular circular contour. The target paper area correction method is a target paper image correction method based on elliptical end points. The method obtains the edges of the image by using a Canny operator. Since the target paper almost occupies the whole image, the parameter range is small, and maximum elliptical contour fitting is performed by using the Hough transform to obtain the equation of the largest ellipse. The target paper image contains cross lines, which intersect the ellipse at a number of points; these points of intersection correspond to the uppermost point, the lowermost point, the rightmost point and the leftmost point of the largest circular contour in a standard graph, respectively. Straight line fitting of the cross lines is performed by using the Hough transform. In an input sub-image, the set of points of intersection of the cross lines and the ellipse is obtained, and a perspective transformation matrix is calculated from this set together with the set of points at the same positions in the template.

[0213] The target paper area correction method may quickly obtain an outermost ellipse contour parameter by using the Hough transform. Meanwhile, a Hough transform straight line detection algorithm under polar coordinates can quickly obtain a straight line parameter as well, so that the method can quickly correct the target paper area.

[0214] The target paper area correction method is performed as follows.

[0215] (51) Performing Edge Detection by Using a Canny Operator.

[0216] The method includes five parts: conversion of RGB into a gray level map, Gaussian filtering to suppress noise, calculation of the gradient from first-order derivatives, non-maximum suppression, and detection and connection of the edge by a double-threshold method.

[0217] Conversion of RGB into a Gray Level Map

[0218] An RGB image is converted into a gray level map by weighting its three primary colors R, G and B with fixed conversion ratios, as follows:

Gray=0.299R+0.587G+0.114B

[0219] Gaussian Filtering of the Image.

[0220] Gaussian filtering is performed on the converted gray level map to suppress noise in the converted image. Let σ be the standard deviation; the size of the template is then set as (3σ+1)×(3σ+1) according to a Gaussian loss minimization principle. Let x be the horizontal coordinate offset from the center point of the template, y the longitudinal coordinate offset from the center point of the template, and K the weight value of the Gaussian filtering template. The weight is calculated as follows:

$$K = \frac{1}{2\pi\sigma * \sigma}\, e^{-\frac{x * x + y * y}{2\sigma * \sigma}}$$
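The template weights can be generated directly from this formula. The sketch below assumes a value of σ for which 3σ+1 is an odd integer (so that the template has a center pixel), and it additionally normalizes the weights to sum to 1, which is common practice but is not stated in the source. The function name `gaussian_template` is hypothetical.

```python
import math


def gaussian_template(sigma):
    """Build a (3*sigma+1) x (3*sigma+1) template of Gaussian weights K."""
    size = int(3 * sigma + 1)
    center = (size - 1) / 2
    kernel = []
    for row in range(size):
        y = row - center  # longitudinal offset from the template center
        weights = []
        for col in range(size):
            x = col - center  # horizontal offset from the template center
            k = math.exp(-(x * x + y * y) / (2 * sigma * sigma))
            weights.append(k / (2 * math.pi * sigma * sigma))
        kernel.append(weights)
    # Normalization is an added assumption, not part of the formula above.
    total = sum(sum(row) for row in kernel)
    return [[k / total for k in row] for row in kernel]
```

With σ = 2 this yields a 7×7 template whose largest weight sits at the center.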

[0221] Calculation of a gradient magnitude and a gradient direction by using a finite difference of first-order partial derivative.

[0222] The convolution operators are:

$$
S_x = \begin{bmatrix} -1 & 1 \\ -1 & 1 \end{bmatrix}; \qquad
S_y = \begin{bmatrix} 1 & 1 \\ -1 & -1 \end{bmatrix};
$$

[0223] The gradient is calculated as follows:

$$
\begin{aligned}
P[i,j] &= (f[i,j+1] - f[i,j] + f[i+1,j+1] - f[i+1,j]) / 2; \\
Q[i,j] &= (f[i,j] - f[i+1,j] + f[i,j+1] - f[i+1,j+1]) / 2; \\
M[i,j] &= \sqrt{P[i,j]^2 + Q[i,j]^2}; \\
\theta[i,j] &= \tan^{-1}(Q[i,j] / P[i,j]);
\end{aligned}
$$
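A minimal sketch of these finite differences for a single pixel follows. The image `f` is assumed to be a list of rows, and `math.atan2` is substituted for the plain arctangent of Q/P so that P = 0 does not cause a division by zero; that substitution and the function name `gradient` are choices made for this sketch.

```python
import math


def gradient(f, i, j):
    """Return (magnitude, direction) at f[i][j] from 2x2 finite differences."""
    p = (f[i][j + 1] - f[i][j] + f[i + 1][j + 1] - f[i + 1][j]) / 2
    q = (f[i][j] - f[i + 1][j] + f[i][j + 1] - f[i + 1][j + 1]) / 2
    magnitude = math.hypot(p, q)
    theta = math.atan2(q, p)  # same angle as atan(q / p) when p > 0
    return magnitude, theta
```

For a vertical step edge such as `[[0, 10], [0, 10]]`, this gives a magnitude of 10 and a direction of 0.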

[0224] Non-Maximum Suppression.

[0225] The method finds the local maximum among the pixel points and sets the gray level value of every non-maximum point to 0, so that most of the non-edge points are eliminated.

[0226] As can be seen from FIG. 5, non-maximum suppression requires determining whether the gray level value of the pixel point C is the maximum within its 8-neighborhood. In FIG. 5, the direction of the line dTmp1dTmp2 is the gradient direction of the point C, so the local maximum must lie on this line; that is, besides the point C, the two points of intersection dTmp1 and dTmp2 in the gradient direction may also be local maximums. Comparing the gray level value of the point C with the gray level values of these two points therefore determines whether the point C is the local maximum gray point within its neighborhood. If the gray level value of the point C is less than that of either of these two points, then the point C is not the local maximum and can be excluded as an edge point.

[0227] Detection and Connection of the Edge by Adopting a Double-Threshold Algorithm.

[0228] A double-threshold method is used to further reduce the number of non-edges. A low threshold parameter Lthreshold and a high threshold parameter Hthreshold are set, and together they constitute the comparison condition: values at or above the high threshold are converted into 255 for storage, values between the low threshold and the high threshold are uniformly converted into 128 for storage, and all other values are considered non-edge data and replaced by 0.

$$
g(x, y) =
\begin{cases}
0, & g(x, y) \leq \mathrm{Lthreshold} \\
255, & g(x, y) \geq \mathrm{Hthreshold} \\
128, & \mathrm{Lthreshold} < g(x, y) < \mathrm{Hthreshold}
\end{cases}
$$

[0229] Edge tracing is then performed again with the Freeman chain code to filter out edges of small length.
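The three-way classification of the double-threshold step can be sketched as follows, assuming the gradient magnitudes are given as a list of rows; the function name `double_threshold` is hypothetical, and the subsequent chain code filtering is not shown.

```python
def double_threshold(magnitudes, low, high):
    """Map each value to 255 (strong edge), 128 (weak edge) or 0 (non-edge)."""
    def classify(g):
        if g >= high:
            return 255
        if g > low:
            return 128
        return 0

    return [[classify(g) for g in row] for row in magnitudes]
```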

[0230] (52) Fitting the Cross Lines by Using the Hough Transform Under the Polar Coordinates to Obtain a Linear Equation.

[0231] The Hough transform is a method for detecting simple geometric shapes, such as straight lines and circles, in image processing. A straight line may be represented as y=kx+b in a Cartesian coordinate system, so that every point (x, y) on the straight line maps to the same point in a k-b space; in other words, all non-zero pixels on the straight line in the image space are converted into one point in the k-b parameter space. Accordingly, one local peak point in the parameter space may correspond to one straight line in the original image space. Since the slope may take an infinite or an infinitesimal value, the straight line is detected by using a polar coordinate space instead. In a polar coordinate system, the straight line can be represented as follows:

$$\rho = x * \cos\theta + y * \sin\theta$$

[0232] As can be seen from the above formula in combination with FIG. 7, the parameter ρ represents the distance from the origin of coordinates to the straight line, and each pair of parameters ρ and θ uniquely determines one straight line. By using the local maximum as the search condition in the parameter space, the straight line parameter set corresponding to the local maximum may be acquired.

[0233] After the corresponding straight line parameter set is obtained, non-maximum suppression is used to retain only the parameters of the maximum.
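The voting procedure behind the polar-coordinate Hough transform can be sketched in a few lines. This is a simplified illustration, not the method of the embodiment: it assumes the edge pixels are given as a list of (x, y) points, quantizes θ in steps of 1 degree and ρ to whole pixels, and returns only the single strongest peak rather than applying non-maximum suppression over the accumulator. The function names are hypothetical.

```python
import math


def hough_accumulator(points, theta_steps=180):
    """Vote in (rho, theta) space using rho = x*cos(theta) + y*sin(theta)."""
    acc = {}
    for x, y in points:
        for t in range(theta_steps):
            theta = math.pi * t / theta_steps
            rho = round(x * math.cos(theta) + y * math.sin(theta))
            acc[(rho, t)] = acc.get((rho, t), 0) + 1
    return acc


def strongest_line(points, theta_steps=180):
    """Return (rho, theta, votes) of the accumulator cell with most votes."""
    acc = hough_accumulator(points, theta_steps)
    (rho, t), votes = max(acc.items(), key=lambda item: item[1])
    return rho, math.pi * t / theta_steps, votes
```

A vertical line of 100 pixels at x = 5 produces a peak at ρ = 5 and θ = 0, which is the case that the slope-intercept form y=kx+b cannot represent.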

[0234] (53) Calculating Four Points of Intersection of the Cross Lines with the Ellipse.

[0235] With the linear equations of L1 and L2 known, it suffices to search along each straight line for its points of intersection with the outer contour of the ellipse, which yields four intersection point coordinates (a, b), (c, d), (e, f), (g, h), as shown in FIG. 9.

[0236] (54) Calculating a Perspective Transformation Matrix Parameter for Image Correction.

[0237] The four points of intersection are used to form four point pairs with coordinates of four points defined by the template, and the target paper area is subjected to perspective correction.

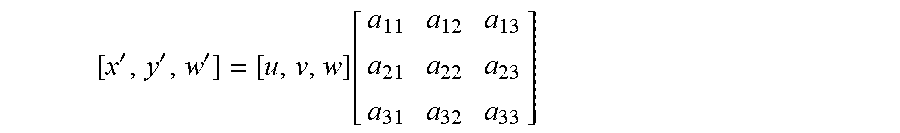

[0238] The perspective transformation is to project the image to a new visual plane, and a general transformation formula is as follows:

$$
[x', y', w'] = [u, v, w]
\begin{bmatrix}
a_{11} & a_{12} & a_{13} \\
a_{21} & a_{22} & a_{23} \\
a_{31} & a_{32} & a_{33}
\end{bmatrix}
$$

[0239] u and v are coordinates of an original image, corresponding to coordinates x' and y' of the transformed image. In order to construct a three-dimensional matrix, auxiliary factors w, w' are added, w is taken as 1, and w' is a value of the transformed w, wherein

x'=x/w;

y'=y/w;

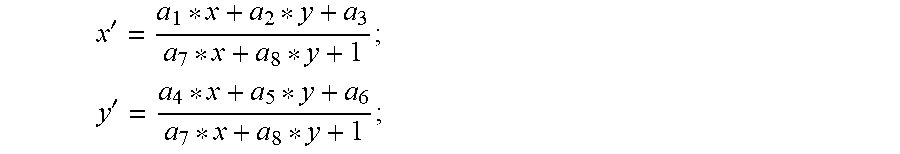

the above formulas may be equivalent to:

$$
x' = \frac{x}{w} = \frac{a_{11} * u + a_{21} * v + a_{31}}{a_{13} * u + a_{23} * v + a_{33}}; \qquad
y' = \frac{y}{w} = \frac{a_{12} * u + a_{22} * v + a_{32}}{a_{13} * u + a_{23} * v + a_{33}};
$$
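Applying a known perspective matrix to a single point can be sketched as below. The sketch expands the row-vector product [u, v, 1] times the 3×3 matrix from the general transformation formula and then divides by the third component; the matrix is assumed to be a list of three rows, and the function name `apply_perspective` is hypothetical. Estimating the matrix from the four point pairs is not shown.

```python
def apply_perspective(a, u, v):
    """Map (u, v) through the 3x3 matrix `a` (row-vector convention, w = 1)."""
    x = a[0][0] * u + a[1][0] * v + a[2][0]
    y = a[0][1] * u + a[1][1] * v + a[2][1]
    w = a[0][2] * u + a[1][2] * v + a[2][2]
    return x / w, y / w
```

With the identity matrix the point is unchanged, and a matrix whose third row is [3, 4, 1] with an identity upper-left block shifts the point by (3, 4).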