Systems And Methods For Efficient Distribution Of Stored Data Objects

Moorthi; Jay ; et al.

U.S. patent application number 16/681032 was filed with the patent office on 2020-03-12 for systems and methods for efficient distribution of stored data objects. The applicant listed for this patent is Solano Labs, Inc. Invention is credited to William Josephson, Jay Moorthi, Christopher A. Thorpe, Thomas E. Westberg, Steven R. Willis.

| Application Number | 20200084274 16/681032 |

| Document ID | / |

| Family ID | 60677171 |

| Filed Date | 2020-03-12 |


| United States Patent Application | 20200084274 |

| Kind Code | A1 |

| Moorthi; Jay ; et al. | March 12, 2020 |

SYSTEMS AND METHODS FOR EFFICIENT DISTRIBUTION OF STORED DATA OBJECTS

Abstract

A distributed data storage system is provided for offering shared data to one or more clients. In various embodiments, client systems operate on shared data while having a unique writeable copy of the shared data. According to one embodiment, the data storage system can be optimized for various use cases (e.g., read-mostly, where writes to shared data are rare or infrequent, although writes to private data may be frequent). Some implementations of the storage system are configured to provide fault tolerance and scalability for the shared storage. For example, read-only data can be stored in (relatively) high latency, low cost, reliable storage (e.g., cloud), with multiple layers of cache supporting faster retrieval. In addition, some implementations of the data storage system offer a low-latency approach to data caching. Other embodiments improve efficiency with access modeling and conditional execution cache hints that can be distributed across the data storage system.

| Inventors: | Moorthi; Jay (San Francisco, CA); Josephson; William (Greenwich, CT); Willis; Steven R. (Cambridge, MA); Westberg; Thomas E. (Stow, MA); Thorpe; Christopher A. (Lincoln, MA) |

| Applicant: | Solano Labs, Inc. |

| Family ID: | 60677171 |

| Appl. No.: | 16/681032 |

| Filed: | November 12, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15636490 | Jun 28, 2017 | 10484473 |
| 16681032 | | |
| 62452248 | Jan 30, 2017 | |
| 62355590 | Jun 28, 2016 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 67/42 20130101; H04L 67/2847 20130101; H04L 67/1097 20130101 |

| International Class: | H04L 29/08 20060101 H04L029/08; H04L 29/06 20060101 H04L029/06 |

Claims

1. A proxy unit, comprising: a copy-on-write layer; a read cache; a read overlay; a hardware processor device that executes programmed instructions that when executed: provide an electronic client device external to the proxy unit access to common data stored in an electronic storage unit, the common data being accessed by the hardware processor device through a first server, wherein the copy-on-write layer, the read cache, and the read overlay form a layered architecture; execute remote requests received from an application on the common data and data in the copy-on-write layer; wherein the application modifies the data in a file system that is external to the proxy unit, and wherein after the application has modified data in the file system, the modifications are stored in the copy-on-write layer and the application subsequently disconnects from the proxy unit; wherein after the application disconnects from the proxy unit, the copy-on-write layer is saved in the read-overlay; wherein the read-overlay including the modifications is uploaded to a first server and a second server to be made available to any other proxy unit in any other client device.

2. The proxy unit of claim 1, wherein the proxy unit is included in an electronic client device, and the electronic client device includes the computer application and the file system.

3. The proxy unit of claim 2, wherein the proxy unit executes on the client device.

4. The proxy unit of claim 1, wherein the hardware processor device of the proxy unit is further configured to manage a local cache for pre-fetching data responsive to cache hints.

5. The proxy unit of claim 1, wherein the hardware processor device of the proxy unit is configured to retrieve and store data from the first server or the storage unit in the read cache responsive to data access patterns for respective client devices.

6. The proxy unit of claim 1, wherein the hardware processor device of the proxy unit is configured to: interact with the copy-on-write layer; and request data from the copy-on-write layer for respective clients.

7. The proxy unit of claim 1, wherein the hardware processor device of the proxy unit is configured to: host at least a portion of data managed in a copy-on-write layer; and store any data written by a respective client associated with at least the portion within the copy-on-write layer.

8. The proxy unit of claim 1, wherein the common data is configured to be available only in read-only form.

9. The proxy unit of claim 1, wherein the hardware processor device of the proxy unit is configured to load the data read from the first server or the storage unit into the read cache based on at least one predicted request.

10. A computer implemented method, comprising: providing a copy-on-write layer, a read cache, and a read overlay; by a hardware processor device that executes programmed instructions, providing an electronic client device external to the proxy unit access to common data stored in an electronic storage unit, the common data being accessed by the hardware processor device through a first server, wherein the copy-on-write layer, the read cache, and the read overlay form a layered architecture; by the hardware processor device, executing remote requests received from an application on the common data and data in the copy-on-write layer; wherein the application modifies the data in a file system that is external to the proxy unit, and wherein after the application has modified data in the file system, the modifications are stored in the copy-on-write layer and the application subsequently disconnects from the proxy unit; wherein after the application disconnects from the proxy unit, the copy-on-write layer is saved in the read-overlay; wherein the read-overlay including the modifications is uploaded to a first server and a second server to be made available to any other proxy unit in any other client device.

11. The method of claim 10, wherein the method is executed at an electronic client device, and the electronic client device includes the computer application and the file system.

12. The method of claim 10, wherein the hardware processor device is further configured to manage a local cache for pre-fetching data responsive to cache hints.

13. The method of claim 10, wherein the hardware processor device is configured to retrieve and store data from the first server or the storage unit in the read cache responsive to data access patterns for respective client devices.

14. The method of claim 10, wherein the hardware processor device is configured to: interact with the copy-on-write layer; and request data from the copy-on-write layer for respective clients.

15. The method of claim 10, wherein the hardware processor device is configured to: host at least a portion of data managed in a copy-on-write layer; and store any data written by a respective client associated with at least the portion within the copy-on-write layer.

16. The method of claim 10, wherein the common data is configured to be available only in read-only form.

17. The method of claim 10, wherein the hardware processor device is configured to load the data read from the first server or the storage unit into the read cache based on at least one predicted request.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. application Ser. No. 15/636,490, filed on Jun. 28, 2017, which claims the benefit of U.S. Provisional Application No. 62/452,248, filed Jan. 30, 2017, and U.S. Provisional Application No. 62/355,590, filed Jun. 28, 2016, all of which are herein incorporated by reference in their entireties.

BACKGROUND

[0002] Increasing usage of cloud computing has provided increasing compute capacity for addressing compute intensive tasks. In some conventional approaches, the ready availability of scalable cloud services has led to overuse of such resources. In some examples, the increased utilization of resources also results in a performance at cost problem, and for some architectures an upper scale limit beyond which additional resources are limited by the capability to distribute data across the resources on which compute tasks are to be executed.

SUMMARY

[0003] It is realized that fundamental to scaling architectures to handle compute intensive tasks is the need to efficiently distribute the data and the tasks themselves to the compute resources that are to execute the tasks. Accordingly, a distributed data storage system is provided that manages some of the issues and problems with conventional implementations. Various aspects provide for a distributed data storage system offering shared data to one or more clients. In various embodiments, client systems operate on shared data while having a unique writeable copy of the shared data. According to one embodiment, the data storage system can be optimized for various use cases (e.g., a read-mostly use case, where clients' writes to the shared data are rare or infrequent, although writes to the client's private data may be frequent or, in some alternatives, performed on a separate, independent storage system). Some implementations of the storage system are configured to provide fault tolerance and scalability for the shared storage. For example, read-only data can be stored in (relatively) high latency, low cost, reliable storage (e.g., cloud based storage, which may even be supported by SSD), with multiple layers of cache supporting faster retrieval. In addition, some implementations of the data storage system offer a low-latency approach to data caching. Various embodiments of the distributed data storage system are described with reference to a BX system (the name derived from "Block eXchanger"). The BX system embodiments provide details of some operations and functions of the data storage system that can be used independently, in conjunction with other embodiments, and/or in various combinations of functions, features, and optimizations discussed below.

[0004] According to another aspect, a BX proxy has a cache for blocks requested from a server. Various embodiments of the BX proxy cache are configured to improve data access performance in several ways. The proxy can, within its local system, provide an image into the remote server to several local filesystem clients each with its own Copy on Write layer. An entity creating proxy cache hints can send those cache hints to pre-fetch blocks of data from the server. Because storage servers introduce latency through network request/response time, and server disk access time, reducing this on average can be a significant advantage over various conventional approaches.

[0005] According to another aspect, various embodiments are directed to improving utilization and efficiency of conventional computer systems based on improving integration of network-attached storage. In some examples, improvements in network-attached storage are provided via embodiments of the "BX" system. In some embodiments, network-attached storage can be used to provide advantages in flexibility and administration over local disks. In cloud computing systems, network-attached storage provides a persistent storage medium that can be increased on demand and even moved to new locations on the fly. Such a storage medium may be accessed at a block level or at the file layer of abstraction, for example as part of a layered storage architecture.

[0006] According to one aspect, a distributed data storage system is provided. The distributed data system comprises: a storage unit configured to host common data, a first server, configured to access the storage unit and access at least a portion of the common data, a proxy unit, configured to grant a client access to the storage unit through the first server and manage the common data as a layered architecture including at least a first write layer, a second server, configured to coordinate authentication of a client device, wherein the storage unit is located external to the client device and wherein the proxy unit is further configured to: execute remote requests on the common data and any write layer data, and present the execution of the remote request to the client device as a local execution.

[0007] According to one embodiment, the proxy unit includes at least an executable component configured to execute on a client device. According to one embodiment, the proxy unit is further configured to manage a local cache for pre-fetching data responsive to cache hints. According to one embodiment, the proxy unit is configured to retrieve and store data from the first server or the storage unit in the local cache responsive to data access patterns for respective client devices. According to one embodiment, the first server is configured to: manage a data architecture including at least a portion of common data and a copy on write layer, and store any data written by the client within the copy on write layer and associated with a respective client.

[0008] According to one embodiment, the proxy unit is configured to: interact with the copy on write layer and request data from the copy on write layer for respective clients. According to one embodiment, the proxy unit is configured to: host at least a portion of data managed in a copy on write layer, and store any data written by a respective client associated with at least the portion within the copy on write layer. According to one embodiment, the second server is configured to access the copy on write layer of the first server. According to one embodiment, at least one of the first server and the second server is configured to access the copy on write layer of the proxy unit.

[0009] According to one embodiment, the common data is configured to be available only in read-only form and the first server is configured to access the storage unit without checking a status of the common data. According to one embodiment, the proxy unit is configured to load the data read from the first server or the storage unit into the cache based on at least one predicted request. According to one aspect, a computer implemented method for managing a distributed data storage is provided. The method comprises: obtaining, by at least one processor, a remote request from a client device, the remote request requesting access to common data hosted on a storage unit, connecting to a proxy unit configured to provide access to at least a portion of the common data, managing, by the proxy unit, access to the common data as a layered architecture including at least a first write layer, executing remote requests on the common data and any write layer data, and presenting the execution of the remote request to the client device as a local execution. According to one embodiment, the method further comprises authenticating the client device through a server.

[0010] According to one embodiment, the storage unit is located external to the client device. According to one embodiment, the method further comprises executing an executable component on the proxy device. According to one embodiment, the write layer is configured to store any data written to the storage unit by the client device. According to one embodiment, the method further comprises: managing a data architecture including at least a portion of common data and a copy on write layer, and storing any data written by the client within the copy on write layer and associated with a respective client. According to one embodiment, a first server is configured to access the first write layer of the proxy unit.

[0011] According to one embodiment, the common data is configured to be available only in read-only form and a first server is configured to access the storage unit without checking a status of the common data. According to one embodiment, the method further comprises pre-fetching data from the storage unit into a cache of the proxy unit in response to a cache hint. According to one embodiment, the method further comprises: hosting at least a portion of data managed in a copy on write layer, and storing any data written by a respective client associated with at least the portion within the copy on write layer. According to one embodiment, the method further comprises accessing a predicted request and accessing at least a portion of the common data based on the predicted request.

[0012] According to one aspect, a distributed data storage system is provided. The distributed data storage system comprises: a proxy component configured to manage a connection between a client device and a storage unit containing at least common data, a modelling component configured to: track historic access data of accesses of the common data, and generate one or more profiles corresponding to the historic access data, wherein the one or more profiles are associated with a cache execution hint, wherein the proxy component is further configured to: match a request from the client device to a profile of the one or more profiles, and trigger caching of data specified by the profile.

[0013] According to one embodiment, the cache execution hint specifies a data access pattern, and data to pre-fetch responsive to the data access pattern. According to one embodiment, the storage server comprises the proxy component and the modelling component and the storage server is configured to access the common data on the storage unit. According to one embodiment, the modelling component is configured to generate a cache execution hint including data to pre-fetch responsive to a pattern. According to one embodiment, the modelling component is further configured to generate data eviction parameters for the pre-fetched data responsive to modelling historic access data. According to one embodiment, the modelling component is further configured to monitor a caching performance parameter.
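As a purely illustrative sketch of the profile matching summarized above (not the implementation of any embodiment), historic accesses can be modelled as ordered block identifiers, with each profile carrying a cache execution hint made of a leading access pattern and the blocks to pre-fetch once that pattern is seen. All names here (`CacheHint`, `Profile`, `ModellingComponent`, `match_and_prefetch`, `fetch_block`) and the choice of a fixed-length prefix as the pattern are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class CacheHint:
    """Hypothetical cache execution hint: an access pattern plus blocks to pre-fetch."""
    pattern: tuple   # leading sequence of block ids that identifies the pattern
    prefetch: list   # block ids to load once the pattern is observed


@dataclass
class Profile:
    name: str
    hint: CacheHint


class ModellingComponent:
    """Builds profiles from historic access data (illustrative only)."""

    def __init__(self):
        self.history = {}  # workload name -> block ids in access order

    def track(self, workload, block_id):
        self.history.setdefault(workload, []).append(block_id)

    def generate_profiles(self, prefix_len=2):
        # Assumption: the first `prefix_len` accesses identify the workload,
        # and the remaining accesses are what should be pre-fetched.
        profiles = []
        for workload, blocks in self.history.items():
            if len(blocks) > prefix_len:
                hint = CacheHint(tuple(blocks[:prefix_len]), blocks[prefix_len:])
                profiles.append(Profile(workload, hint))
        return profiles


def match_and_prefetch(recent_requests, profiles, cache, fetch_block):
    """Match recent requests to a profile and cache the blocks it names."""
    for profile in profiles:
        n = len(profile.hint.pattern)
        if tuple(recent_requests[-n:]) == profile.hint.pattern:
            for block_id in profile.hint.prefetch:
                if block_id not in cache:
                    cache[block_id] = fetch_block(block_id)
            return profile
    return None
```

In this sketch a request stream ending in the recorded prefix triggers caching of the remaining blocks of that profile; a production system would presumably use a richer pattern model than exact prefix equality.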

[0014] According to one embodiment, monitoring the caching performance parameter further comprises comparing the caching performance parameter to a threshold value and increasing a size of a cache if the caching performance parameter falls below the threshold value. According to one embodiment, monitoring the caching performance parameter further comprises comparing the caching performance parameter to a threshold value and ignoring the cache execution hint if the caching performance parameter falls below the threshold value.
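The two threshold responses described above can be sketched as follows. This is a minimal illustration that assumes the caching performance parameter is a hit ratio; the class name `CacheMonitor`, the default threshold of 0.5, the default size, and the doubling growth step are all hypothetical choices, not values taken from the disclosure.

```python
from dataclasses import dataclass


@dataclass
class CacheMonitor:
    """Tracks a hit ratio and applies one of two threshold policies."""
    threshold: float = 0.5     # assumed minimum acceptable hit ratio
    cache_size: int = 1024     # assumed cache capacity, in blocks
    hints_enabled: bool = True
    hits: int = 0
    misses: int = 0

    def record(self, hit):
        if hit:
            self.hits += 1
        else:
            self.misses += 1

    def hit_ratio(self):
        total = self.hits + self.misses
        # With no samples yet, report a perfect ratio so nothing is triggered.
        return self.hits / total if total else 1.0

    def evaluate(self, policy="grow"):
        """Compare the hit ratio to the threshold and react."""
        if self.hit_ratio() >= self.threshold:
            return "ok"
        if policy == "grow":
            self.cache_size *= 2       # doubling is an arbitrary choice
            return "grew"
        self.hints_enabled = False     # stop acting on cache execution hints
        return "hints-ignored"
```

Which policy applies is left to the caller here; the text above presents the two responses as separate embodiments rather than as alternatives chosen at run time.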

[0015] According to one aspect, a computer implemented method for managing distributed data is provided. The method comprises: tracking, by at least one processor, historic access data of access of common data, generating, by the at least one processor, one or more profiles corresponding to the historic access data, wherein generating includes associating the one or more profiles with a cache execution hint, analyzing, by the at least one processor, a request from a client device to access at least a portion of the common data, matching, by the at least one processor, the request from the client device to a profile of the one or more profiles, and triggering, by the at least one processor, caching of data specified by the profile.

[0016] According to one embodiment, generating one or more profiles further comprises generating a first profile and a second profile, wherein the second profile branches from the first profile. According to one embodiment, the method further comprises retrieving data from a process table of the client device. According to one embodiment, matching the request further comprises selecting a profile of the one or more profiles based at least in part on the retrieved data from the process table and the request. According to one embodiment, the method further comprises tracking a caching performance and increasing a size of a cache if the caching performance falls below a threshold value.

[0017] Still other aspects, examples, and advantages of these exemplary aspects and examples, are discussed in detail below. Moreover, it is to be understood that both the foregoing information and the following detailed description are merely illustrative examples of various aspects and examples, and are intended to provide an overview or framework for understanding the nature and character of the claimed aspects and examples. Any example disclosed herein may be combined with any other example in any manner consistent with at least one of the objects, aims, and needs disclosed herein, and references to "an example," "some examples," "an alternate example," "various examples," "one example," "at least one example," "this and other examples" or the like are not necessarily mutually exclusive and are intended to indicate that a particular feature, structure, or characteristic described in connection with the example may be included in at least one example. The appearances of such terms herein are not necessarily all referring to the same example.

BRIEF DESCRIPTION OF DRAWINGS

[0018] Various aspects of at least one embodiment are discussed below with reference to the accompanying figures, which are not intended to be drawn to scale. The figures are included to provide an illustration and a further understanding of the various aspects and embodiments, and are incorporated in and constitute a part of this specification, but are not intended as a definition of the limits of any particular embodiment. The drawings, together with the remainder of the specification, serve to explain principles and operations of the described and claimed aspects and embodiments. In the figures, each identical or nearly identical component that is illustrated in various figures is represented by a like numeral. For purposes of clarity, not every component may be labeled in every figure. In the figures:

[0019] FIG. 1 illustrates an exemplary distributed data storage system, in accordance with some embodiments.

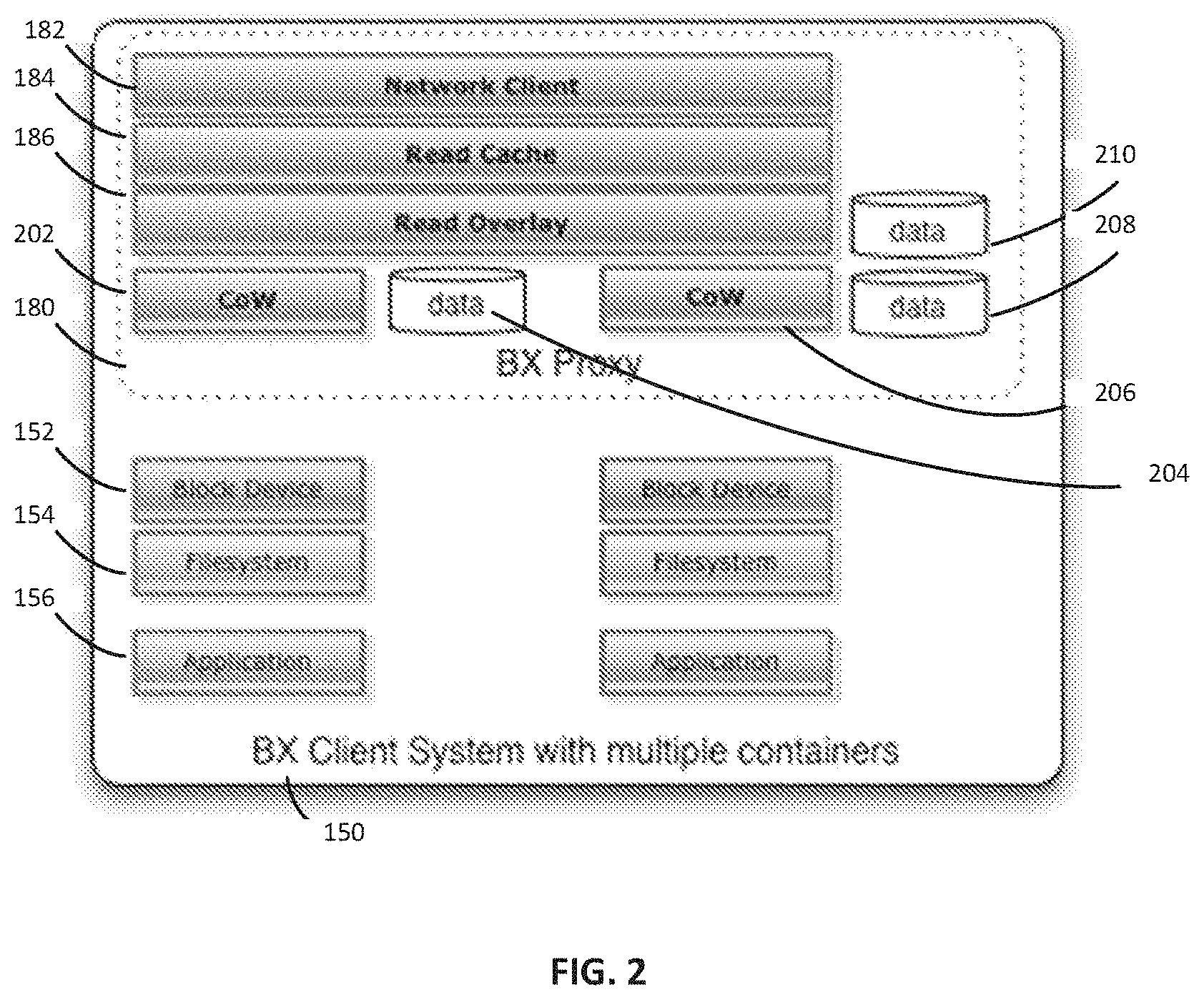

[0020] FIG. 2 illustrates an exemplary client device containing a proxy unit, in accordance with some embodiments.

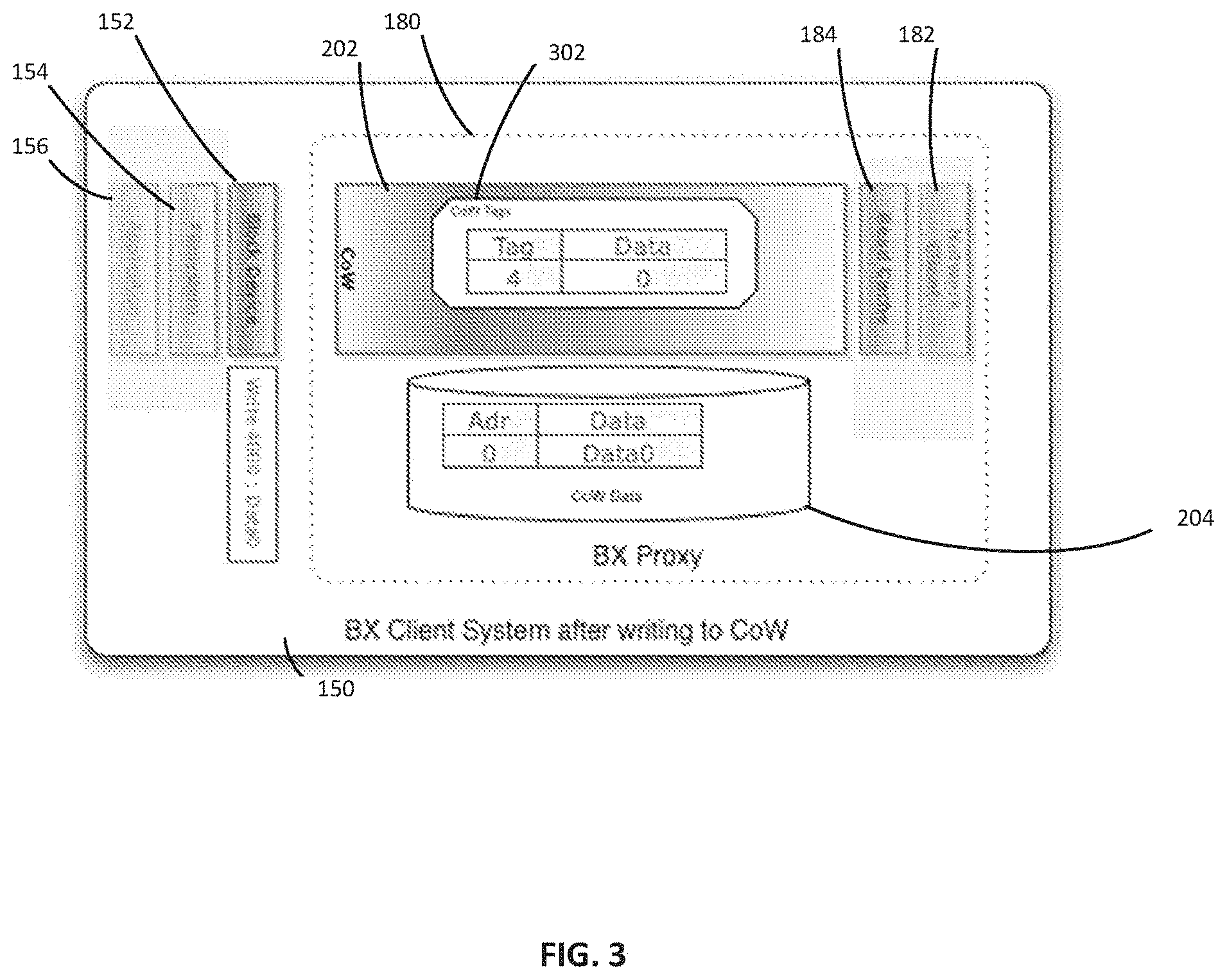

[0021] FIG. 3 illustrates an exemplary client device containing a copy on write layer, in accordance with some embodiments.

[0022] FIG. 4 illustrates an exemplary server device containing server lookup functionality, in accordance with some embodiments.

[0023] FIG. 5 is an example process flow for managing a distributed data storage, according to one embodiment.

[0024] FIG. 6 is an example process flow for managing caching within a distributed data store, according to one embodiment.

[0025] FIG. 7 is an example process flow for managing caching within a distributed data storage architecture, according to one embodiment.

[0026] FIGS. 8A-8D illustrate parallelizing a job across multiple virtual machines, in accordance with some embodiments.

[0027] FIG. 9 illustrates an exemplary read overlay sharing system, in accordance with some embodiments.

[0028] FIG. 10 is a block diagram of a computer system that can be specially configured to execute the functions described herein.

DETAILED DESCRIPTION

[0029] Stated broadly, various aspects of the disclosure describe shared data architectures with respective clients having unique writable copies of the shared data. In various embodiments, client systems operate on shared data while having a unique writeable copy of the shared data. In some embodiments, the data storage system can be optimized for the read-mostly use case, where clients' writes to the shared data are rare or infrequent (although writes to the client's private data may be frequent or, in some embodiments, performed on a separate, independent storage system). Some implementations of the storage system are configured to provide fault tolerance and scalability for the shared storage.

[0030] According to another aspect, a BX proxy has a cache for blocks requested from a server. According to some embodiments, the BX proxy cache can be configured to emulate RAM caching done by the filesystem layer, and provide performance improvements in several ways. The proxy can, within its local system, provide an image into the remote server to several local filesystem clients, each with its own Copy on Write layer. An entity creating proxy cache hints can send those cache hints to pre-fetch blocks of data from the server. In one embodiment, a BX server can recognize that a file has been opened for reading and do an early read of some or all of the file's contents into its local cache in anticipation that opening the file means that the data will likely be needed soon by the client. In another embodiment, a BX proxy could request this same data from the BX server once a file is opened, causing the BX server to fetch the applicable segments from the BX store. Because storage servers introduce latency through network request/response time and server disk access time, reducing this latency on average can be a significant advantage. The cache hinting system can generate cache eviction hints, detect changed access patterns, and even reorder the filesystem that the BX is serving as an image.
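The early read on file open described above might be sketched as below. This is a simplified illustration under stated assumptions: the mapping from a file path to its block identifiers (`file_blocks`) is assumed to be available to the cache, `fetch_block` stands in for a round trip to the server, and the class name `PrefetchingProxyCache` is hypothetical.

```python
class PrefetchingProxyCache:
    """Block cache that pre-fetches a file's blocks when the file is opened."""

    def __init__(self, fetch_block, file_blocks):
        self.fetch_block = fetch_block   # callable: block id -> bytes (server round trip)
        self.file_blocks = file_blocks   # mapping: path -> list of block ids (assumed known)
        self.cache = {}
        self.server_reads = 0            # counts round trips, for illustration

    def _load(self, block_id):
        if block_id not in self.cache:
            self.cache[block_id] = self.fetch_block(block_id)
            self.server_reads += 1

    def on_open(self, path, limit=None):
        """Early read of some (first `limit`) or all of the file's blocks."""
        for block_id in self.file_blocks.get(path, [])[:limit]:
            self._load(block_id)

    def read(self, block_id):
        # Served from the cache when the block was pre-fetched; otherwise fetched now.
        self._load(block_id)
        return self.cache[block_id]
```

After `on_open` runs, later reads of the same file's blocks are served locally, which is where the average latency reduction would come from in this sketch.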

[0031] Various embodiments described herein relate to a distributed data storage system comprising a storage unit configured to host common data, a first server, configured to access the storage unit and access at least a portion of the common data, a proxy unit, configured to grant a client access to the storage unit through the first server and manage the common data as a layered architecture including at least a first write layer, and a second server, configured to coordinate authentication of a client device. The storage unit can be located externally to the client device. The proxy unit can be further configured to execute remote requests on the common data and any write layer data, and present the execution of the remote request to the client device as a local execution. The distributed data storage system can enable multiple client devices to access the common data through the proxy units, while maintaining the modifications as write layer data, which in turn reduces the need for data storage and redundant repositories found in conventional data storage systems. Conventional data storage systems require maintenance of updated records for all the clients that request the data, leading to a large increase in data storage and redundancy in the storage systems.

[0032] Examples of the methods, devices, and systems discussed herein are not limited in application to the details of construction and the arrangement of components set forth in the following description or illustrated in the accompanying drawings. The methods and systems are capable of implementation in other embodiments and of being practiced or of being carried out in various ways. Examples of specific implementations are provided herein for illustrative purposes only and are not intended to be limiting. In particular, acts, components, elements and features discussed in connection with any one or more examples are not intended to be excluded from a similar role in any other examples.

[0033] Also, the phraseology and terminology used herein is for the purpose of description and should not be regarded as limiting. Any references to examples, embodiments, components, elements or acts of the systems and methods herein referred to in the singular may also embrace embodiments including a plurality, and any references in plural to any embodiment, component, element or act herein may also embrace embodiments including only a singularity. References in the singular or plural form are not intended to limit the presently disclosed systems or methods, their components, acts, or elements. The use herein of "including," "comprising," "having," "containing," "involving," and variations thereof is meant to encompass the items listed thereafter and equivalents thereof as well as additional items. References to "or" may be construed as inclusive so that any terms described using "or" may indicate any of a single, more than one, and all of the described terms.

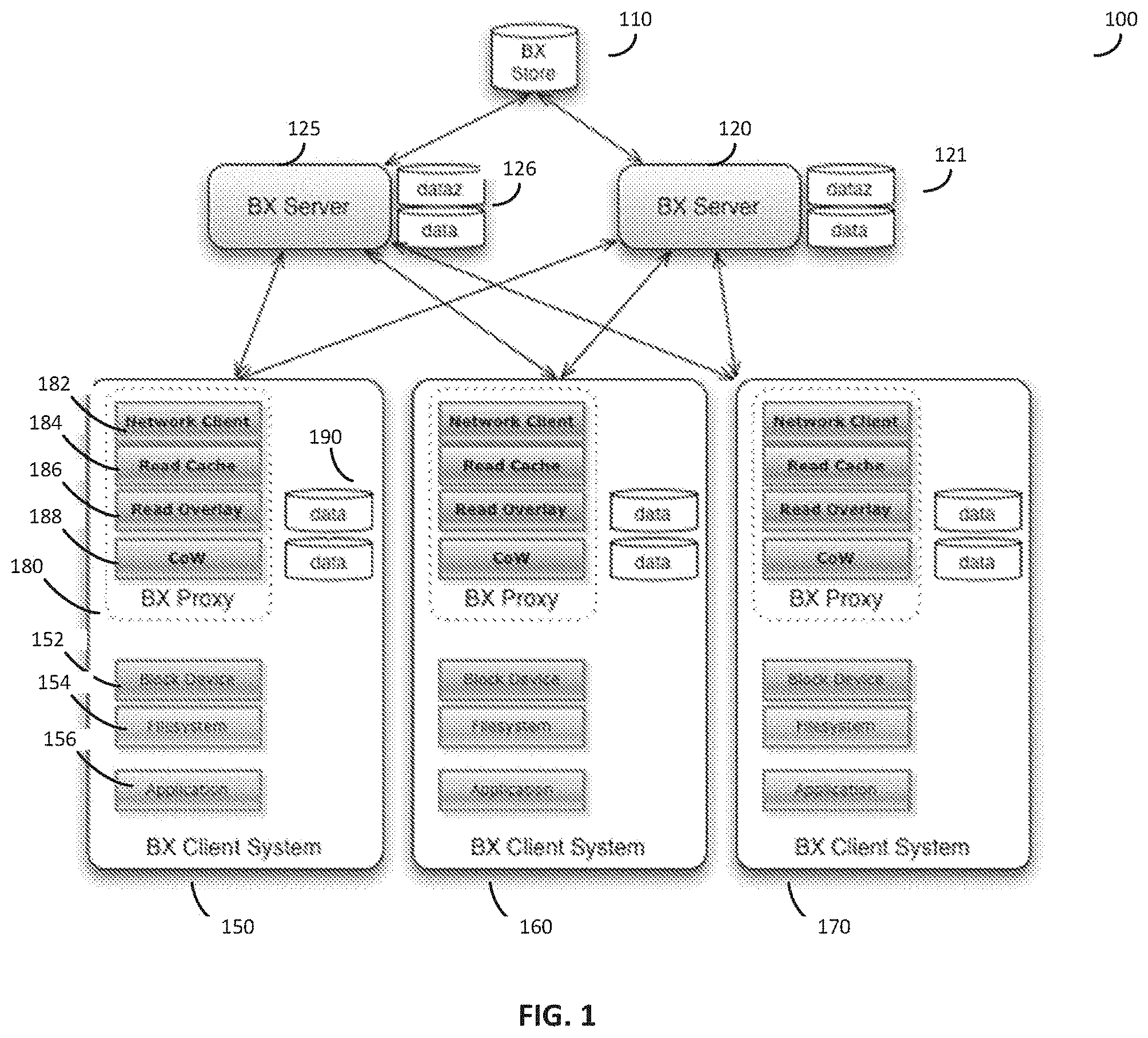

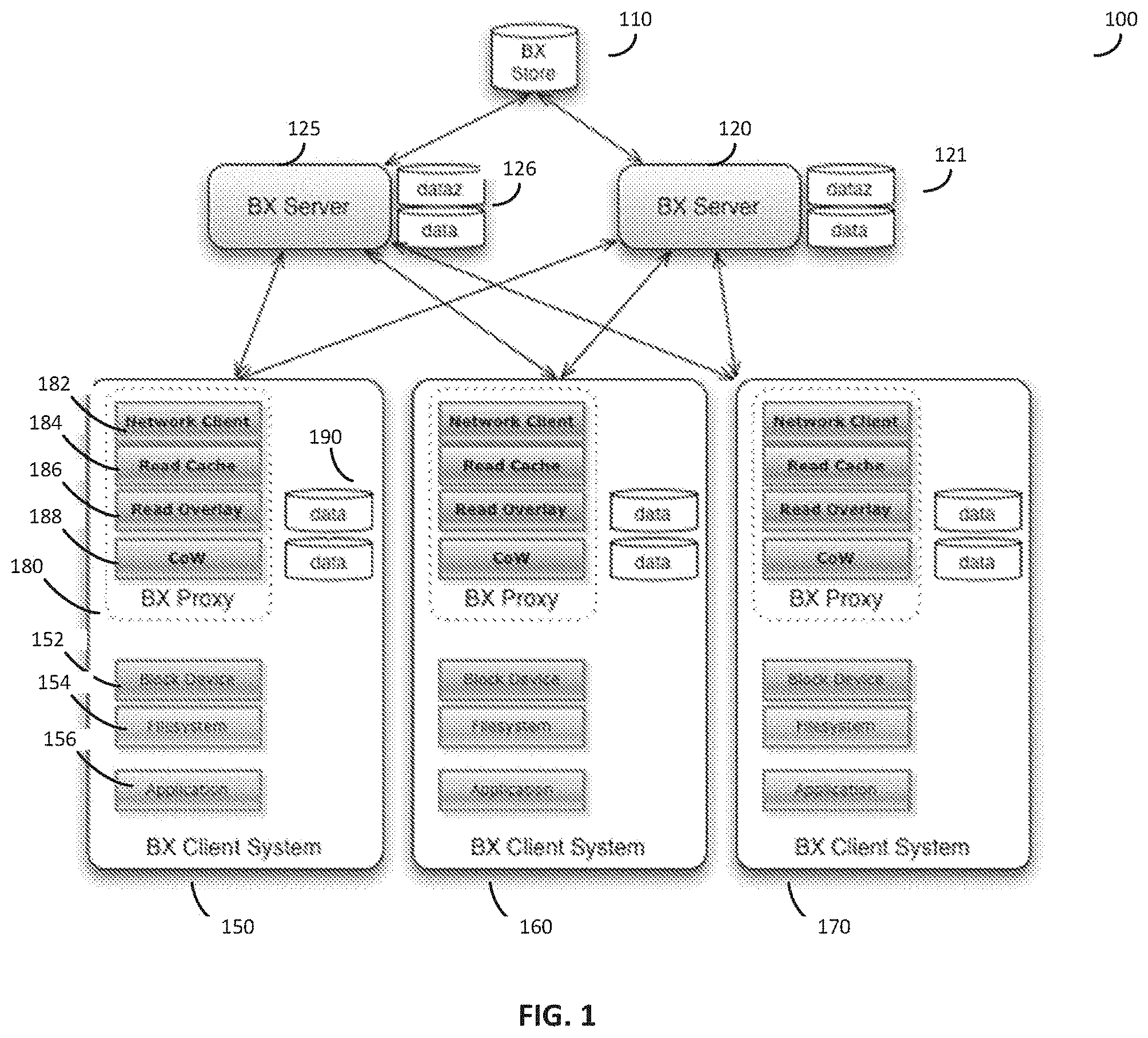

[0034] According to various embodiments, the block exchanger (BX) architecture is comprised of several distinct components, each of which corresponds to a layer of storage. FIG. 1 shows a distributed storage system 100 which can comprise one or more storage units 110, one or more servers 120, and one or more client devices 150. As an example, FIG. 1 shows a distributed storage system 100 with a single storage unit 110, a first server 120, a second server 125, a first client device 150, a second client device 160, and a third client device 170, but it should be appreciated that any number of storage units, servers, and client devices is possible. In some embodiments, the storage unit 110 includes a highly reliable, network-accessible storage system that is the most authoritative but often most distant from the clients 150, 160, and 170. In some embodiments, the storage unit 110 can be implemented by a cloud storage service like Amazon Web Services' S3. The servers 120 and 125 can be configured to intermediate requests from the clients 150, 160, and 170 to the storage unit 110. The clients 150, 160, and 170 may comprise client devices using data from the storage unit 110. The client device 150 can include a proxy 180, which may enable a client to view the storage unit 110 as a native device rather than employ custom software to interact with the storage unit 110. According to various embodiments, storage unit 110 is configured as the authoritative source of all images and can be optimized to store image data with low cost, high reliability, and high availability. The servers 120 and 125 can be optimized to deliver higher performance to the client devices 150, 160, and 170 than a direct connection from the client devices 150, 160, and 170 to the storage unit 110 would provide.

[0035] The client devices 150, 160, and 170 can be any system reading from the storage unit 110 or the servers 120 and 125. Some embodiments may support one or more client devices. In one embodiment, the client 150 connects to a storage unit 110, such as a database, in which objects or records can be stored, and the objects or records can be transmitted to the client device 150 via the server 120 or the server 125 and/or the proxy 180.

[0036] The client device 150 can be one of several supported connectors to the servers 120 and/or 125. In some embodiments, the client device 150 can be a Network Block Device (NBD) client that may attach to (a) a proxy process 180 running locally on the same client device 150 (a "proxy" embodiment), (b) a specialized coprocessor or similar device exposing a network interface to the same client device 150, or (c) another device connected to the client device 150, to which the client connects via a high-speed network interface. In another embodiment, a local hardware "bridge" on the client device 150 can be configured to expose a block storage device to the client, while connecting to the storage unit 110 over the bridge's network interface (the "bridge" embodiment). In some embodiments, the client device 150 can be configured to connect or interact with a simple block device of some kind, whether the block storage device appears networked, local, or virtual; the system 100 can be configured to manage the input/output (I/O) from the block device seen by the client device 150 to the servers 120 and 125.

[0037] Various embodiments of the system 100 can include a proxy 180, with a relationship to the client device 150 as described above. The proxy 180 can include a Copy-on-Write (CoW) layer 188 that is not directly seen by the client device 150. According to one embodiment, written data ("writes") that would be communicated to the client device 150 are intercepted in the CoW layer 188, and stored on separate storage 190 from the primary, read-only data. In some embodiments the separate storage 190 can be maintained by the proxy 180 and stored locally on the client device 150. The proxy 180 can then verify that subsequent reads to any blocks written to in this way return the updated data, not the original read-only data. For example, in some embodiments the proxy 180 can be configured to check local storage 190 or metadata associated with local storage 190 to check if updated data is available.

[0038] In some embodiments, the proxy 180 can also implement a local block cache 184, and attach to a server 120 or 125. Since writes can be intercepted locally by the CoW layer 188, the connection to the server 120 or 125 can be configured as Read-Only (and in some examples always read-only). According to some embodiments, the configuration enables any server (e.g., 120 or 125) to offer its storage to many clients 150, 160, and/or 170, while needing fewer resources for updating its data, for example, due to any clients 150, 160, and/or 170 writing back to one of its blocks (or objects). According to one embodiment, this architecture and configuration enables a proxy 180 to connect to many servers, such as servers 120 and 125, for identical data. In one example, enabling the proxy 180 to connect to many servers offers performance and reliability advantages--the scalability of some implementations provides more resources and greater efficiency than conventional approaches. As an example, there can be one server and one or more clients connected to it, but in other embodiments a larger deployment is configured to support multiple servers and multiple proxies, improving scalability and fault tolerance.

[0039] According to one embodiment, the server 120 or 125 can serve one or more "images." An image may represent a collection of sequential data objects, which may be referred to generically as "blocks." In some embodiments, an image can include a sequence of blocks, as may be found on a block storage device such as a disk drive or disk partition. For example, a "block" can be referred to as a physical record, and is a sequence of bytes or bits, usually containing some whole number of records, having a maximum length, a "block size." In one example, the blocks for a particular image are stored on a server 120 or 125. In some embodiments, an image can also include storage blocks and may also include references to storage blocks of other images.

[0040] According to various embodiments, each server 120 and 125 serving an image can be configured to present the same image to all clients 150, 160, and 170, for example, in read-only form. Images can be created and maintained by an administrator. The administrator may establish an exclusive, read-write connection to the storage unit 110, and may create or update an image in the storage unit 110 for all clients 150, 160, and/or 170 simultaneously. In some embodiments, the administrator can be a person using the system. As an example, the image can include a system upgrade or addition of a new data set and index to a database. In some embodiments, "exclusive" is a system configuration that when executed prevents other clients from accessing the data store while, for example, an upgrade or addition operation is ongoing.

[0041] When the proxy 180 establishes a new connection with a server 120 or 125, the proxy 180 can specify an identifier of the image it wishes to use. In one embodiment, if that server 120 or 125 does not have the specified image available, the server is configured to search a database of images, or other known servers, to pull the specified image from the storage unit 110 as needed. If no image can be found with that identifier, the server 120 or 125 can return an error.

[0042] In various embodiments, the servers 120 and 125 may have local storages 121 and 126 respectively, which the servers can use to cache blocks needed by the clients 150, 160, and/or 170. In some embodiments, the local storages 121 and 126 can be partitioned into multiple types, which can be configured to include "fast" (lowest latency, highest number of I/O operations per second--IOPS), "small" (compressed data segments that permit storing more data with lower performance due to the cost of decompressing the data), "encrypted", or other types. In one embodiment, the server 120 or 125 can request compressed data from the storage unit 110, decompress the data, store it on its local storage 121 or 126, and send the decompressed data to the proxy 180. Subsequent accesses to the same data can be routed to the decompressed, local cache within the local storage 121 or 126. This can reduce client compute demand (for decompression) at the cost of network bandwidth, and can reduce latency by making recently used data immediately accessible from the server 120 or 125 instead of the more distant storage unit 110--either option can represent execution improvement over some conventional approaches.

[0043] Caches can be inherently nonlinear, as caches are commonly designed to leverage locality of reference, keeping important accesses in local fast storage. A BX server cache for a particular application can be configured to respond to wildly different request patterns, for example, the second time the application is run, not because the BX data accesses are different, but because in some cases the BX cache can see very few accesses at all. For example, one test can have about 10,000 accesses the first run, and a few dozen during the second run. This can be because there is also an operating-system controlled file system cache between the BX (cached) client driver and the application itself. For example, in some embodiments, the BX proxy 180 may not know when the operating system returned data from an internal cache without requesting it from the BX proxy 180, though some embodiments (for example an implementation on Solaris running ZFS) may permit a greater level of transparency with respect to the OS cache of a filesystem: the BX proxy 180 could still be able to discover when particular data was requested and returned from the OS cache, even if the data was not requested from the proxy 180. That transparency could be used in other embodiments to provide cache hint data to the BX system, even if the relevant data were cached and provided by the operating system.

[0044] In some embodiments, clients 150, 160, and 170 do not directly create, update, or delete images. Instead, an administrator (not shown) can connect directly to the storage unit 110 and create an image. Administrators can also perform operations such as cloning images, applying Read-overlays to cloned images, or deleting images.

[0045] If an image is deleted, any other cloned or overlaid images referring to its segments may not be deleted, and the segments referred to by other images can be maintained until no image refers to those segments. This can be implemented with a simple reference count or garbage collection mechanism.
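The reference-count mechanism mentioned above can be sketched as follows. This is a minimal illustration only, assuming that images are simply lists of segment identifiers; the `SegmentStore` class and its method names are hypothetical and do not appear in the specification.

```python
from collections import Counter


class SegmentStore:
    """Hypothetical store that keeps a segment until no image refers to it."""

    def __init__(self):
        self.images = {}            # image name -> list of segment ids
        self.refcount = Counter()   # segment id -> number of referring images

    def add_image(self, name, segment_ids):
        self.images[name] = list(segment_ids)
        self.refcount.update(segment_ids)

    def delete_image(self, name):
        """Delete an image and return the segments that became unreferenced."""
        freed = []
        for seg in self.images.pop(name):
            self.refcount[seg] -= 1
            if self.refcount[seg] == 0:
                del self.refcount[seg]
                freed.append(seg)
        return freed


store = SegmentStore()
store.add_image("base", ["s0", "s1", "s2"])
store.add_image("clone", ["s0", "s1", "s2-modified"])  # clone shares s0 and s1
freed_by_base = store.delete_image("base")    # only s2 is no longer referenced
freed_by_clone = store.delete_image("clone")  # now the shared segments go too
```

In this sketch, deleting the base image frees only the segment that the clone had replaced; the shared segments survive until the clone is deleted as well.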

[0046] In some embodiments, the original disk image to be served may originate in a server outside the location hosting the storage unit 110 or servers 120 or 125. To facilitate this, the administrator can use an upload tool to directly create an image in the storage unit 110 from a local device. The upload can be done as a block copy, using for example the Unix "dd" command. In one embodiment, this feature can read blocks into a buffer for a segment, compress the segment, and store the segment in the storage unit 110. Because this can be a relatively slow process (for example, if the image is many terabytes and the network link does not accommodate that) the upload can be interruptible. The upload tool can be configured to keep track of which segments it had successfully uploaded and on restart uploads the remainder. In addition, the upload tool can be statically or dynamically throttled to keep from saturating the local network link.
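As one possible illustration of the interruptible upload described above, the sketch below compresses an image segment by segment and records each successful upload so that a restart uploads only the remainder. The function name `upload_image`, the in-memory `store` dictionary standing in for the storage unit 110, the `fail_at` parameter used to simulate an interruption, and the tiny segment size are all assumptions made for the example; throttling is omitted.

```python
import zlib

SEGMENT_SIZE = 4  # bytes; deliberately tiny, for illustration only


def upload_image(device: bytes, store: dict, done: set, fail_at=None):
    """Compress and upload each segment not already recorded in `done`."""
    count = (len(device) + SEGMENT_SIZE - 1) // SEGMENT_SIZE
    for n in range(count):
        if n in done:
            continue  # uploaded before the interruption
        if n == fail_at:
            raise ConnectionError("simulated network interruption")
        segment = device[n * SEGMENT_SIZE:(n + 1) * SEGMENT_SIZE]
        store[n] = zlib.compress(segment)
        done.add(n)  # record progress only after the upload succeeded


device = b"abcdefghij"  # 10 bytes -> segments 0, 1, and 2
store, done = {}, set()
try:
    upload_image(device, store, done, fail_at=1)
except ConnectionError:
    pass
progress_after_failure = sorted(done)
upload_image(device, store, done)  # restart uploads only the remainder
restored = b"".join(zlib.decompress(store[n]) for n in sorted(store))
```

A real tool would presumably persist the `done` set to disk so that progress survives a process restart.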

[0047] Various embodiments can incorporate one or more of the system elements described above with respect to FIG. 1, and can include any combination of the data storage system architecture elements. According to some implementations the architecture elements include a BX store configured to provide a reliable, low cost, high latency network storage system (for example, a BX storage system can be implemented on an AWS S3, as a cloud data warehouse, or as a file server in a private data center, among other options); and a BX server configured to provide network interfaces for connectivity to BX store(s) and to BX client(s) (for example, including any associated proxies). According to one embodiment, a BX server translates client read requests into accesses to the BX store. In another embodiment, a BX server can be configured with local storage to cache BX data. Further, the BX server can be configured to decompress data stored on the BX store and transmit from the BX store in compressed form or decompress from the BX store as part of transmission.

[0048] Because each BX network client 150 can connect to many BX servers 120 or 125 offering identical read-only images, the system can benefit from load balancing to the least loaded server. In one embodiment, the client 150 can choose the least loaded server. In another, if any given read transaction is too slow, an identical read request can be made to another BX server, and the first received response is used, evening out the load among servers. In still another implementation, a system with excess bandwidth may have the client 150 make two or more identical read requests to different servers 120 or 125, and use the first response that comes back. In some implementations, a BX proxy 180 also has a local read cache 184 with a Cache Hinting system to lower latency in many cases, as discussed in greater detail herein.
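The "first response wins" behavior described above can be sketched with a thread pool. This is an assumption-laden illustration: the helper names `make_server` and `hedged_read` are hypothetical, and each server is simulated by a function with a fixed delay rather than by a network connection.

```python
import time
from concurrent.futures import ThreadPoolExecutor, as_completed


def make_server(name, delay):
    """Return a hypothetical read function for one server with fixed latency."""
    def read_block(block):
        time.sleep(delay)
        return name, f"data-{block}"
    return read_block


def hedged_read(servers, block):
    """Send the same read to every server and return the first response."""
    with ThreadPoolExecutor(max_workers=len(servers)) as pool:
        futures = [pool.submit(read, block) for read in servers]
        for future in as_completed(futures):
            # The executor still waits for the slower request on exit; a real
            # client would likely cancel it or let it finish in the background.
            return future.result()


servers = [make_server("server-120", 0.3), make_server("server-125", 0.0)]
winner, data = hedged_read(servers, 783)
```

Because the servers hold identical read-only images, either response is equally valid, which is what makes this kind of duplicated request safe.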

[0049] In one embodiment, if a read request from a server 120 or 125 is relatively slow, or if the server 120 or 125 is unavailable, future and/or retried read requests can be routed to other servers 120 or 125 in the pool, providing fault tolerance. This embodiment can prevent a central BX server 120 or 125 from being a single point of failure.

[0050] In one embodiment, a separate system monitors load on the BX system and adds or deletes BX servers 120 or 125 to or from the pool based on client demand. In another embodiment, a similar process can be applied to the BX storage unit 110, where high load or latency to the BX storage unit 110 may lead to duplication of the BX storage unit 110. The BX storage unit 110 can be duplicated within a data center or geographic area for redundancy or load balancing, or across regions to reduce latency.

[0051] If a BX proxy loses its network connection to its server, it may use other available connections, but also periodically retry connections back to the original server. This allows server upgrades to proceed in a rolling manner, which minimizes impact on clients.

[0052] In one embodiment, the BX server 120 or 125 keeps basic information (for example, image length, applicable compression or encryption algorithms, cache hint data, etc.) about an image named, for example, "myImage" in one or more files and the data for the image in a series of one or more segment data files. In one example, the basic information can be stored in "myImage.img", uncompressed data can be stored in "myImage.data.N", compressed data can be stored in "myImage.dataz.N", encrypted data can be stored in "myImage.datax.N", and compressed and encrypted data can be stored in "myImage.datazx.N". The N refers to the N-th segment file of the image. In some embodiments, each segment size can be identical, except for the last segment, and an offset into the image data of N × segmentSize would be stored at the beginning of the data file with sequence number N. For example, with a 16 MB segment size, a 32 GB image would be comprised of 2048 segments numbered 0 to 2047, and a 34 MB offset into the image would begin 2 MB into the data file with sequence number 2. It should be appreciated that the image file names and sizes are given simply as an example, and should not be considered limiting.
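The segment arithmetic in the example above can be checked with a short sketch. The function names are hypothetical, and the sketch assumes binary units (1 MB = 1024 × 1024 bytes) and the example file naming scheme given above.

```python
MB = 1024 * 1024
GB = 1024 * MB
SEGMENT_SIZE = 16 * MB


def segment_count(image_length, segment_size=SEGMENT_SIZE):
    """Number of segment files needed; the last segment may be shorter."""
    return (image_length + segment_size - 1) // segment_size


def locate(offset, segment_size=SEGMENT_SIZE):
    """Map an image offset to (segment number N, offset within that segment)."""
    return divmod(offset, segment_size)


def segment_file_name(image, n, compressed=False, encrypted=False):
    """Build a name such as myImage.data.N, .dataz.N, .datax.N, or .datazx.N."""
    suffix = "data" + ("z" if compressed else "") + ("x" if encrypted else "")
    return f"{image}.{suffix}.{n}"


segments = segment_count(32 * GB)   # 2048 segments, numbered 0 to 2047
n, within = locate(34 * MB)         # segment 2, 2 MB into that segment
name = segment_file_name("myImage", n, compressed=True)
```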

[0053] According to one embodiment, a sequence may include either or both compressed or uncompressed data for any sequence number. Compressed and/or encrypted image data may include header information specifying the applied compression or encryption algorithms.

[0054] In one embodiment, the .dataz file is a compressed form of the N-th segment file. As discussed above, BX data is stored in one or more locations in a hierarchy of caches, and even on the BX server, the disk image is stored in lower-latency .data files (on higher performance local storage) and slightly higher-latency .dataz files (which are decompressed to .data files when needed, potentially deleting an older .data file for a different segment to make room). Once the BX server's local capacity is exhausted, even local .dataz files can be deleted, knowing that the .dataz files are also stored in the BX store.

[0055] Some embodiments also include a BX proxy configured to provide a hardware "bridge" or software "bridge" residing on a BX client. According to some embodiments, the BX proxy is configured to expose the BX system to the client as a standard storage or network device. In one example, the BX client interacts with the BX system as a standard storage device (e.g., issuing commands, writes, reads, etc. to the standard storage device as if it were a hard disk on any computer). The BX proxy provides a transparent interface to the BX client, obscuring the interactions between the BX server and the BX store from the client's perspective, so that the BX client accepts responses to standard commands on the standard storage device. In further examples, a BX proxy may also implement additional features such as caching BX data or storing local writes to BX data. Some embodiments also include a BX API server or a central server (or, for high availability, two or more servers) that can be configured to coordinate: client authentication and authorization; automatic scaling; deduplication of image data; and replication and dissemination of BX system configuration (e.g., through configuration profiles or discovery). A configuration profile can be obtained by the client, giving the client a static view of the BX system; this configuration can be periodically updated by BX proxy software polling the BX server for the current configuration. A discovery protocol (such as SLP, used by the SMI-S Storage Management Initiative--Specification) can be used to automatically allow BX proxies to discover and use available BX servers, and/or BX servers to discover and use available BX stores, including any changes to the BX system topology from time to time. According to some implementations, for example in systems without a BX API server, administrative personnel handle some of the BX system tasks. For example, administrative personnel can be responsible for ensuring correct client configuration in embodiments where a BX API server is not configured or present.

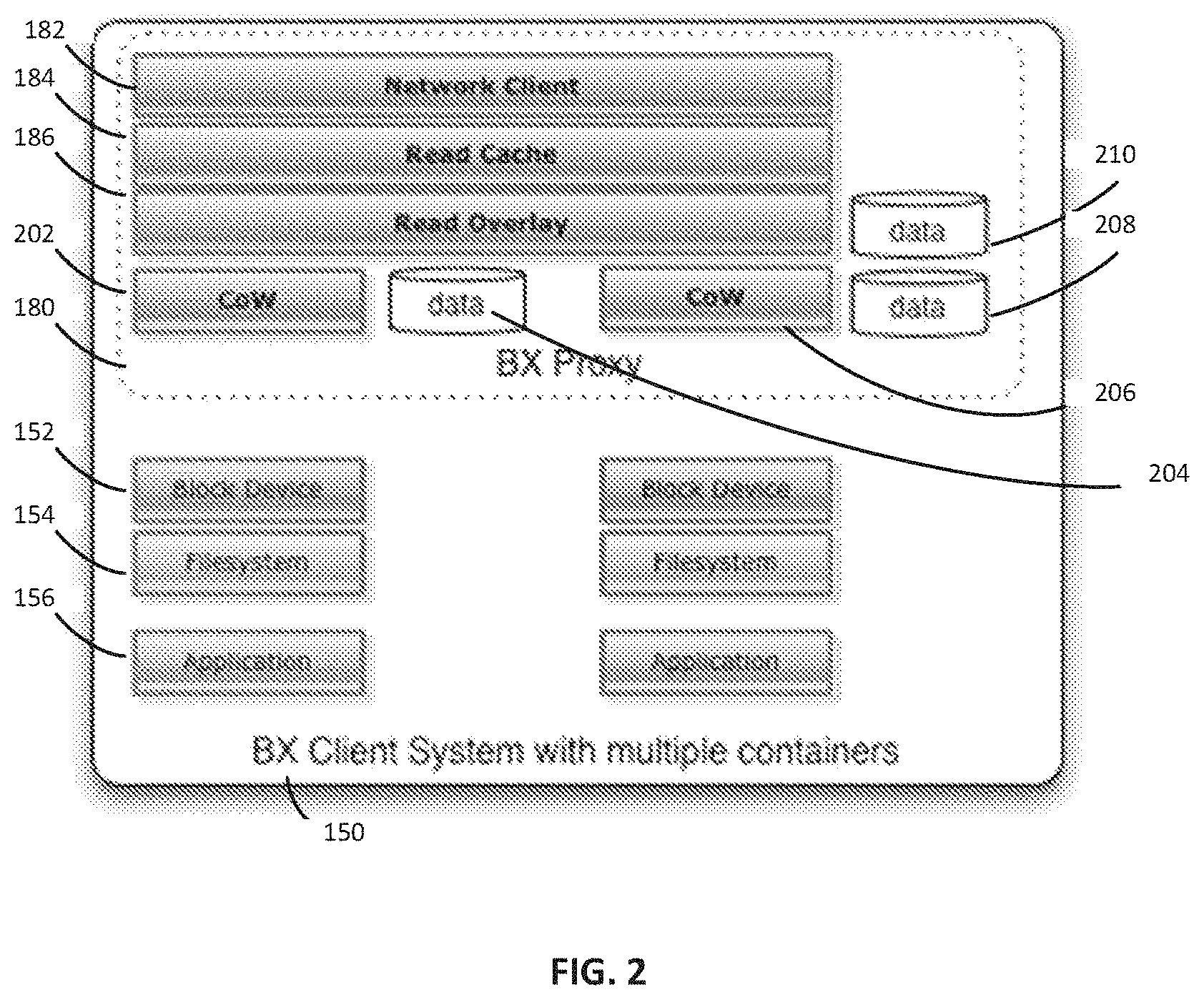

[0056] FIG. 2 shows an example of a client system 150, containing a proxy 180. The client system 150 can include a block device 152, a filesystem 154, and an application 156. The proxy 180 can include a network client 182, a read cache 184, and a read overlay 186. The network client 182 can connect to any of the servers 120 and 125. The read cache 184 can be used by the proxy 180 to generate cache hints to lower latency. The read cache 184 can be RAM, or any other suitable type of memory.

[0057] Referring back to FIG. 1, in some embodiments, the system 100 can operate at the block, or "sequential data" level of abstraction. In embodiments where storage unit 110 is used to act as a "block device" for a computing system, it can operate at the "disk drive" level of abstraction, rather than at the file level (or higher). Because the filesystem layer can place portions of files anywhere on its disk, it can be difficult to infer future access patterns without the higher level filesystem information. In one embodiment, a server 120 or 125 can be configured to use the fact that a file has been opened for reading and do an early read of some or all of the file's contents into its local cache 121 or 126 in anticipation that opening the file means that the data will likely be needed soon by the client 150, 160, or 170. In another embodiment, a proxy 180 could request this same data from the server 120 or 125 once a file is opened, causing the server 120 or 125 to fetch the applicable segments from the storage unit 110. Because storage servers can introduce latency in two ways, through network request and response time and through server disk access time, reducing the average latency from either source can be a significant advantage.

[0058] Similar improvements can be accomplished at the block level by looking for access patterns. The storage unit 110 can be read-only in various embodiments; a file or object does not move from one location to another. The CoW 188 can hide changes to a file from a server 120 or 125 based on writes. If, for example, a large file which is usually consumed whole and linearly occupies a contiguous sequence of blocks (or even non-contiguous; just repeatable) in the storage unit 110, the server 120 or 125 may know that when a first block is read, for example block 783, there is a very high probability block 787 will be requested next, followed by 842 and so on. In this case, the server 120 or 125, upon obtaining a read request for block 783, can include a Cache Hint along with this frequently seen pattern, which tells the proxy 180 that it should pre-fetch the others in the list (787 and 842) even though the filesystem 154 may not yet have made that request. The proxy 180 can launch one or more of those read requests, filling its cache 184 in anticipation of the potential read requests to come. If the client application 156 soon requests those blocks, the system is configured to have those blocks quickly accessible from the proxy's 180 read cache 184 or the local cache 121 or 126 of the servers 120 or 125 respectively, instead of waiting for the potentially longer round trip to the storage unit 110 through a server 120 or 125.

[0059] In another embodiment, the proxy's 180 speculative requests can be sent to an alternate server 125 in the background. In the event the next request of the filesystem 154 is not the predicted block, the client 150 may still (e.g., in parallel) launch a request to its main server 120. In this way incorrectly predicted (or merely prematurely predicted) requests do not block the most recent real request from the filesystem 154. It should be appreciated that either server of servers 120 and 125 can be the main server and either server can be the alternate server. In some embodiments, the system is configured to ensure that speculative data requests are communicated to servers not handling data requests triggered from the client.

[0060] Referring back to FIG. 2, in one embodiment, a client system 150 can run multiple proxies 180 each with their own CoW layer. In this embodiment, each proxy 180 can have greater ability to predict which filesystem 154 operations will be executed next because there may not be competition between multiple processes on any particular proxy 180. In some embodiments, a single proxy 180 can have multiple CoW layers 202 and 206. In some embodiments, each CoW layer 202 and 206 can have its own memory 204 and 208, respectively.

[0061] In another embodiment, multiple proxies 180 may coordinate with each other to share data in their local cache 184 using a peer-to-peer protocol such as IPFS, or a custom protocol where proxies 180 can query nearby other proxies before asking the server 120 or 125. In one such embodiment, a proxy 180 may act as both a proxy and a lightweight server, answering nearby proxies' requests for data and referring them to the servers 120 or 125 if the requested data is not found. These proxies 180 may also act as overlay servers, where a cluster of clients wishing to share an overlay could all connect first to a particular proxy 180.

[0062] Similarly, in another embodiment, multiple servers 120 or 125 or proxies 180 can coordinate to share data from their local caches, avoiding duplication of data in caches. In yet another embodiment, multiple clients 150, 160, and 170 sharing a view of one or more images on the storage unit 110 can connect to the same proxy 180, which could return any data in the image overwritten by one of the clients 150, 160, or 170 to every other connected client 150, 160, or 170 requesting access to the overwritten data. In some embodiments, there can be any number of BX clients 150, 160, or 170, proxies 180, servers 120 or 125, and storage units 110.

[0063] In embodiments using cache hints, some hints can be the result of a complex process of pattern recognition, extracted from access patterns: typically logs of reads from the servers 120 or 125. In one embodiment, the hint generation process is not real time, but instead is done in the background, potentially over relatively long periods of time, with large sets of log data. In this embodiment, the server 120 or 125 does not generate hints in real time, but instead operates from data built by this process. The hint generator in this embodiment, for example, can be configured to determine that reading block 430 followed by 783 means there is a high probability that 784 and 785 will be needed soon. It can create a data set which controls state machines (430, then 783, then send hint) one or more of which can be operating simultaneously. It should be appreciated that the terms "block" and "segment" can be considered to be interchangeable, depending on the embodiment implemented.

[0064] In one embodiment, the hint data sent to the proxy 180 can be a list of blocks to read. In some embodiments, the hint data can also include a probability (chances it will be read based on the data analysis), and/or a timeout (a timestamp after which it is highly unlikely the pre-fetched block will be used), or it may push some of the state machine operation to the client 150 if the hint generator recognizes a branch of the hints. For example after hinting through block 785, perhaps reading block 786 greatly increases the probability of 787, 788, and so on, while reading block 666 indicates a different access pattern is happening. While that state machine can run at the server 120 or 125, in some embodiments the branching may also be executed within the proxy 180. In various examples, a cache hint can include a probability variable that can be used to limit execution of the cache hint (e.g., a low probability hint is not executed, a threshold probability can be met for the system to execute the cache hint and retrieve the associated data into cache). In other examples, the cache hint can include a timeout (e.g., based on usage pattern the system determines an outer time limit by which the cache data will be used (if at all)). The system can use the timeout to evict cached data associated with a stale hint, which may override normal caching/eviction ordering.
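A hint carrying a probability and a timeout, as described above, might be handled as in the following sketch. The `CacheHint` and `HintedCache` names, the 0.5 probability threshold, and the use of explicit `now` values in place of a real clock are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class CacheHint:
    blocks: list        # block numbers to pre-fetch
    probability: float  # estimated chance the blocks will be read
    expires_at: float   # time after which the blocks are unlikely to be used


class HintedCache:
    """Hypothetical proxy cache that executes or ignores hints."""

    def __init__(self, fetch, min_probability=0.5):
        self.fetch = fetch
        self.min_probability = min_probability
        self.blocks = {}  # block number -> (data, expires_at)

    def apply_hint(self, hint, now):
        """Pre-fetch the hinted blocks unless the hint is weak or expired."""
        if hint.probability < self.min_probability or now >= hint.expires_at:
            return False
        for block in hint.blocks:
            self.blocks[block] = (self.fetch(block), hint.expires_at)
        return True

    def evict_stale(self, now):
        """Evict pre-fetched blocks whose hint timeout has passed."""
        stale = [b for b, (_, exp) in self.blocks.items() if now >= exp]
        for block in stale:
            del self.blocks[block]
        return stale


cache = HintedCache(fetch=lambda block: f"data-{block}")
accepted = cache.apply_hint(CacheHint([784, 785], 0.9, expires_at=10.0), now=0.0)
rejected = cache.apply_hint(CacheHint([900], 0.1, expires_at=10.0), now=0.0)
cached_before = sorted(cache.blocks)
evicted = cache.evict_stale(now=11.0)
```

In this sketch the timeout-based eviction runs independently of any normal eviction order, which mirrors the statement that a stale hint may override ordinary caching and eviction ordering.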

[0065] In some embodiments, the proxy 180 can receive an encoding of a state machine with each state corresponding to a set of blocks to pre-fetch (and/or evict), optional timeouts after which the pre-fetched blocks are unlikely to be useful, and transitions according to future block numbers read. The block number of each new block read can be checked against the transition table out of the current state, and the new state can dictate which blocks should be pre-fetched or evicted based on the new information from the new block read.
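One way to picture the encoded state machine is as a pair of tables: transitions keyed by the block number read, and a set of blocks to pre-fetch on entering each state. The sketch below is a hypothetical encoding (the `HintStateMachine` class, the state names, and the restart rule for unmatched reads are assumptions); it reuses the "430, then 783, then send hint" pattern from the earlier example and omits timeouts and eviction.

```python
class HintStateMachine:
    """Hypothetical proxy-side interpreter for an encoded hint state machine."""

    def __init__(self, transitions, prefetch, start="start"):
        self.transitions = transitions  # state -> {block read: next state}
        self.prefetch = prefetch        # state -> blocks to pre-fetch on entry
        self.start = start
        self.state = start

    def on_read(self, block):
        """Advance on a block read and return the blocks to pre-fetch."""
        table = self.transitions.get(self.state, {})
        if block in table:
            self.state = table[block]
        else:
            # Unmatched read: restart, letting this block begin a new match.
            restart = self.transitions.get(self.start, {})
            self.state = restart.get(block, self.start)
        return self.prefetch.get(self.state, [])


def make_machine():
    return HintStateMachine(
        transitions={"start": {430: "saw_430"}, "saw_430": {783: "hint"}},
        prefetch={"hint": [784, 785]},
    )


matched = [make_machine_result for make_machine_result in
           map(make_machine().on_read, (430, 783))]
machine = make_machine()
interrupted = [machine.on_read(b) for b in (430, 999, 430, 783)]
```

Here a read that does not match the current transition table resets the machine, so the pattern must be seen again from its first block before a hint is issued.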

[0066] Hint generation may use knowledge of higher-level structures in the data in addition to logs of accesses. For example, the hint generator can read the filesystem structure written in its block store and use that additional information to improve hint quality. In the example above, if blocks 786 through 788 are commonly read sequentially, that can be used as input. If the blocks are all sequential blocks of the same higher level file saved in the image, the hint generator may follow the blocks allocated to that same file (even if the blocks are not sequential) and add them to the hint stream.

[0067] In another embodiment, hints can include "cache eviction" hints that can allow for branching logic. In this embodiment, the hint generator can provide the client 150 multiple blocks to fetch. Based on the future access patterns, the client 150 can be able to tell that some blocks may not be likely to be used if a particular "branch" block is fetched. For example, the data may frequently include the following two patterns: 430, 783, 784, 785 and 430, 786, 787, 788. In this example, the first block in the two patterns can be the same, but the subsequent blocks can be different. The hint generator can be configured to send the client a hint that if block 430 is loaded, to pre-fetch 783, 784, 785, 786, 787, and 788--but include conditions for execution to handle the multiple patterns. For example, the moment 783 is used then 786, 787 and 788 can be safely evicted, or if on the other hand 786 is used then 783, 784 and 785 can be safely evicted (e.g., as specified by execution conditions in a cache hint).
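The branching example above (430 followed by either 783, 784, 785 or 786, 787, 788) can be sketched as follows. The `BranchingHint` class and `apply_read` function are hypothetical names, and the sketch assumes that a branch is identified by a read of its first block.

```python
class BranchingHint:
    """Hypothetical hint: pre-fetch several branches, evict those not taken."""

    def __init__(self, trigger, branches):
        self.trigger = trigger    # block whose read triggers the pre-fetch
        self.branches = branches  # one list of blocks per known pattern


def apply_read(cache, hint, block, fetch):
    """Update `cache` (a dict) for one block read under a branching hint."""
    if block == hint.trigger:
        for branch in hint.branches:
            for b in branch:
                cache[b] = fetch(b)
        return
    for taken in hint.branches:
        if block == taken[0]:
            # The first block of one branch was used, so the blocks of every
            # other branch can be evicted.
            for other in hint.branches:
                if other is not taken:
                    for b in other:
                        cache.pop(b, None)


def fetch(block):
    return f"data-{block}"


hint = BranchingHint(430, [[783, 784, 785], [786, 787, 788]])
cache = {}
apply_read(cache, hint, 430, fetch)
after_trigger = sorted(cache)
apply_read(cache, hint, 783, fetch)
after_branch = sorted(cache)
```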

[0068] In some embodiments, the system can be configured to analyze hints having common elements and consolidate various hints into a matching pattern portion and subsequent execution conditions that enable eviction or further specification of an optimal or efficient pattern matching sequence. In other embodiments, the cache hint can trigger caching of data for multiple patterns and trigger ejection of the cache data that represents an unmatched pattern as the client's usage continues and narrows a pattern match from many options to fewer and (likely) to one match, although in some scenarios a final match may not resolve into a single pattern or execution.

[0069] It should be appreciated that the patterns listed here are simply for example, and patterns of any length and/or similarity can be used. It should also be appreciated that this sort of cache hint generation is not restricted to networked storage caches; it can be used for any other suitable caches such as a CPU cache.

[0070] In some embodiments, the hint generation may not occur on the server 120 or 125. In some embodiments, for example those using large databases, a running application can know the high-level structure of data within its files, or it can keep its data in a raw block device to remove filesystem overhead. In these cases, the application may have an API to create hints for itself, and execute speculative read requests to fill its cache. In other embodiments, a Hadoop engine, data warehouse software, or SQL Query planner, or any other suitable system can be configured as discussed herein to analyze current data requests and predict row reads and generate cache hints for the proxy 180 and server 120 or 125, for example, if there is a long-running query that enables such analysis. In some embodiments, a cache hint API can be implemented with a database and configured to reduce latency by prefetching the data used later in the query.

[0071] In some embodiments managing execution of data requests with a high degree of randomization, pre-loading blocks can be wasteful. To help performance in these situations, the client 150 can also be configured with a simpler cache to account for some jitter in accesses or to account for a full breakdown in which the hint stream is out of date. In one embodiment, the client 150 is configured to monitor its caching performance (for example its hit rate) for every algorithm or hint stream, and if cache performance falls below a certain threshold, the monitoring process can inform the server 120 or 125 that the hints are no longer helping. In some embodiments, the server 120 or 125 can be configured to increase the size of the client cache in response. In some embodiments, the number of jobs running on the server 120 or 125 can be adjusted. In some embodiments, the server 120 or 125 may stop or ignore cache hinting if the cache performance falls below a certain threshold. For example, if cache hint performance falls below a threshold level for a period of time, the system can be configured to disable cache hints for a period of time, client data request session, etc. Disabling hinting when performance falls below the threshold can increase system bandwidth.
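The hit-rate monitoring described above could take a form like the following sketch, which tracks a sliding window of hit/miss outcomes for one hint stream and disables hinting once the rate drops below a threshold. The `HintMonitor` name, the window size, and the threshold value are illustrative assumptions; re-enabling hints after a period of time is omitted.

```python
from collections import deque


class HintMonitor:
    """Hypothetical per-hint-stream monitor of pre-fetch hit rate."""

    def __init__(self, threshold=0.5, window=4):
        self.threshold = threshold
        self.outcomes = deque(maxlen=window)  # most recent hit/miss outcomes
        self.hints_enabled = True

    def hit_rate(self):
        if not self.outcomes:
            return 1.0  # no evidence yet; assume hints are helping
        return sum(self.outcomes) / len(self.outcomes)

    def record(self, hit):
        """Record one pre-fetch outcome and disable hints if the rate is low."""
        self.outcomes.append(bool(hit))
        window_full = len(self.outcomes) == self.outcomes.maxlen
        if window_full and self.hit_rate() < self.threshold:
            self.hints_enabled = False


monitor = HintMonitor(threshold=0.5, window=4)
for hit in (True, True, False, True):
    monitor.record(hit)
enabled_after_good = monitor.hints_enabled
for hit in (False, False, False):
    monitor.record(hit)
enabled_after_bad = monitor.hints_enabled
```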

[0072] In one embodiment, the hint stream (e.g., system analysis of access patterns) can reveal that certain portions of the served image tend to be read together. The system is configured to reorder the filesystem the server 120 or 125 is serving as an image to keep frequently-used data within the same segment of the storage unit 110, packing them into likely streams of data. In various embodiments, the system is configured to re-order the served image responsive to analysis of the hint stream that indicates common access elements. For example, re-ordering is configured to reduce the number of independent segments fetched from the storage unit 110, which can also reduce latency and increase cache performance on the server 120 or 125. Various conventional systems fail to provide this analysis, thus various embodiments can optimize data retrieval execution over such conventional systems, for example, as is described herein.

[0073] As shown in FIG. 2, the proxy 180 may comprise a read overlay 186. In some embodiments, a client 150 can see its own unique filesystem 154 due to the read overlay layer 186. If the client 150 creates a new file in its filesystem 154, the disk blocks which were changed as a result of the writes can be recorded in the proxy's 180 CoW layer 202 or 206, operating much like a block-level cache, with an extensible data store 204 or 208, respectively. In some embodiments, the CoW layer 202 or 206 can use a write-allocate write policy, under which any data written to the CoW layer 202 or 206 at an address which has not yet been seen by the CoW tags causes a new block to be allocated in its tags and data. In some embodiments, the CoW layer 202 or 206 may never allow writes to propagate back to the caches or connections to servers beyond it. The CoW layer 202 or 206 can intercept any or all of the read and write requests. According to one embodiment, the write requests received by the proxy can be written to the CoW data store 204 or 208, with the number of the overlaid block and the location in the data store kept in the CoW's cache tags lookup. This cache tag store can be a hash table, or a more complex data structure, depending on the size of the table. The cache tag store may also utilize a probabilistic data structure, such as a Bloom filter. Read requests can consult the cache tag store first before passing through to check the Read Cache 184 and then (if the request missed both the proxy's 180 write and read caches 186) on to the server 120 or 125.
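The read and write paths described above can be sketched as a small class. This is a simplified illustration, assuming whole-block writes, a plain dictionary as the cache tag store, and a callable standing in for the connection to the server 120 or 125; the `CowProxy` name and its attributes are hypothetical.

```python
class CowProxy:
    """Hypothetical proxy with a write-allocate CoW layer and a read cache."""

    def __init__(self, server_read):
        self.server_read = server_read  # read-only connection to a server
        self.cow_tags = {}   # block number -> location in the CoW data store
        self.cow_data = []   # extensible CoW data store
        self.read_cache = {}

    def write(self, block, data):
        """Intercept a write; it never propagates to the server."""
        if block in self.cow_tags:
            self.cow_data[self.cow_tags[block]] = data
        else:
            # Write-allocate: first write to this block allocates a new entry.
            self.cow_tags[block] = len(self.cow_data)
            self.cow_data.append(data)

    def read(self, block):
        """Check the CoW tags first, then the read cache, then the server."""
        if block in self.cow_tags:
            return self.cow_data[self.cow_tags[block]]
        if block not in self.read_cache:
            self.read_cache[block] = self.server_read(block)
        return self.read_cache[block]


server_reads = []


def server_read(block):
    server_reads.append(block)
    return f"base-{block}"


proxy = CowProxy(server_read)
original = proxy.read(7)
proxy.write(7, "local-7")
updated = proxy.read(7)   # served from the CoW data store
proxy.read(8)
proxy.read(8)             # second read is served from the read cache
```

Because writes stop at the CoW layer, the server sees only reads, and in this sketch it sees each block at most once.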

[0074] In some embodiments, a user can have a large (e.g. several terabyte) data storage unit 110, and may wish to update a small portion of it during a large operation across the storage unit 110 and to make that update permanently available for the future. Saving the CoW 202 or 206 and using it later can save significant disk storage. Instead of duplicating the entire data store, the system 100 can create a new image with references to the original data segments where nothing has changed, and references to separate data segments where the CoW 202 or 206 recorded modified segments. This can allow multiple versions of a large block device to take up a small amount of space, because only the changed segments are duplicated.
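The space saving described above comes from the new image referring to unchanged segments rather than copying them. A minimal sketch, assuming an image is represented as a list of segment identifiers and using hypothetical names (`derive_image`, `base.data.N`, `v2.data.N`):

```python
def derive_image(base_segments, modified):
    """Build a segment list that reuses unchanged segments of the base image.

    base_segments: list of segment ids for the base image.
    modified: dict mapping segment number -> id of a newly stored segment.
    """
    return [modified.get(n, seg) for n, seg in enumerate(base_segments)]


base = [f"base.data.{n}" for n in range(2048)]
derived = derive_image(base, {5: "v2.data.5"})
shared = sum(1 for b, d in zip(base, derived) if b == d)
changed = [d for b, d in zip(base, derived) if b != d]
```

With one modified segment out of 2048, the derived image in this sketch adds a single new segment to storage and shares the other 2047 with the base image.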

[0075] In one embodiment, after a client 150 has made its changes to its filesystem 154 and the changes can be stored in the CoW 202 or 206, it may disconnect from the proxy 180 and save the CoW 202 or 206 to be used in the future as a Read-overlay 186. A future proxy 180 may load in a Read-overlay 186 when mounting a BX image; this can make it appear to be the same as when the Read-overlay 186 was last stored by a proxy's 180 CoW layer 202 or 206. This can allow a modified view of the base image without copying all of the base image data, and persisting changes between sessions of the proxy 180.

[0076] In some embodiments, one or more Read-overlay 186 files can be stored separately and made available to proxies 180 when mounting an image. This can provide for easy access to various similar versions of the same large data set.

[0077] In some embodiments, a Read-overlay 186, the tags and/or data files can be uploaded to the server 120 or 125 to be made available to any proxy 180 as a derivative image. In some embodiments, the Read-overlay 186, the tags and/or data files can be uploaded to the storage unit 110.

[0078] In some embodiments, one client can do a set of setup work and share its output with a set of one or more other client machines. For example, an administrator might upload a year's worth of financial trading data in an image. Each day, a client (e.g., system or device) can mount that image via a proxy 180, then load, clean, and index the previous day's financial trading data. After that, the client can share that Read-overlay 186 so that a large number of other clients could use proxies to perform analysis on the updated data set.

[0079] The system 100 stores images as a base file (which contains possible overlay Tags if this image is a server-side overlay, as well as the name of the image the overlay was created on top of) and a sequence of data files, called segments. In some embodiments, these can be large compared to normal disk accesses, but small compared to the disk image as a whole.

[0080] Clones can be created by cloning image A to a new image A-prime by creating a new A-prime image file which has no overlay Tags, but a base image name of "A". All reads to image A-prime, segment 3 would look for the file A-prime.data.3, then A-prime.dataz.3, and then A.data.3 and A.dataz.3. When connecting to the A-prime image in Read-Write mode, all writes can allocate a data segment file (copying from the base image first) as needed. Clones, then, essentially use the server's filesystem as their overlay tags. It should be appreciated that this is given as an example of a method for creating clones, and any other suitable method can be used.
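
As a non-limiting illustration, the lookup order for a clone's segment files can be sketched as follows. The function names and the use of a set of file names in place of the server's filesystem are assumptions for this sketch; only a single level of base image is handled.

```python
from typing import List, Optional, Set


def segment_candidates(image: str, base: Optional[str], segment: int) -> List[str]:
    """Return segment file names in the order a read would try them."""
    names = []
    for name in (image, base):
        if name is None:
            continue
        names.append(f"{name}.data.{segment}")   # uncompressed form first
        names.append(f"{name}.dataz.{segment}")  # then the compressed form
    return names


def resolve_segment(image: str, base: Optional[str], segment: int,
                    existing_files: Set[str]) -> Optional[str]:
    """Pick the first candidate present in a stand-in file listing."""
    for candidate in segment_candidates(image, base, segment):
        if candidate in existing_files:
            return candidate
    return None
```

For image A-prime with base image A and segment 3, `segment_candidates` yields A-prime.data.3, A-prime.dataz.3, A.data.3, and A.dataz.3, in that order.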

[0081] An example use case for a server-side clone is to apply a permanent update to a very large image. For example, a client can be configured to operate hundreds of virtual machines (VMs) with the same operating system and analysis software. The administrator can install a service pack on the base image then store the updated operating system and software on a clone. This can enable clients to continue to use either the base image or the updated service pack, which can help with rolling upgrades or easy rollbacks in the event of problems with the upgrade. In addition, this example can limit the amount of extra storage required by using additional storage for the changed segments when needed.

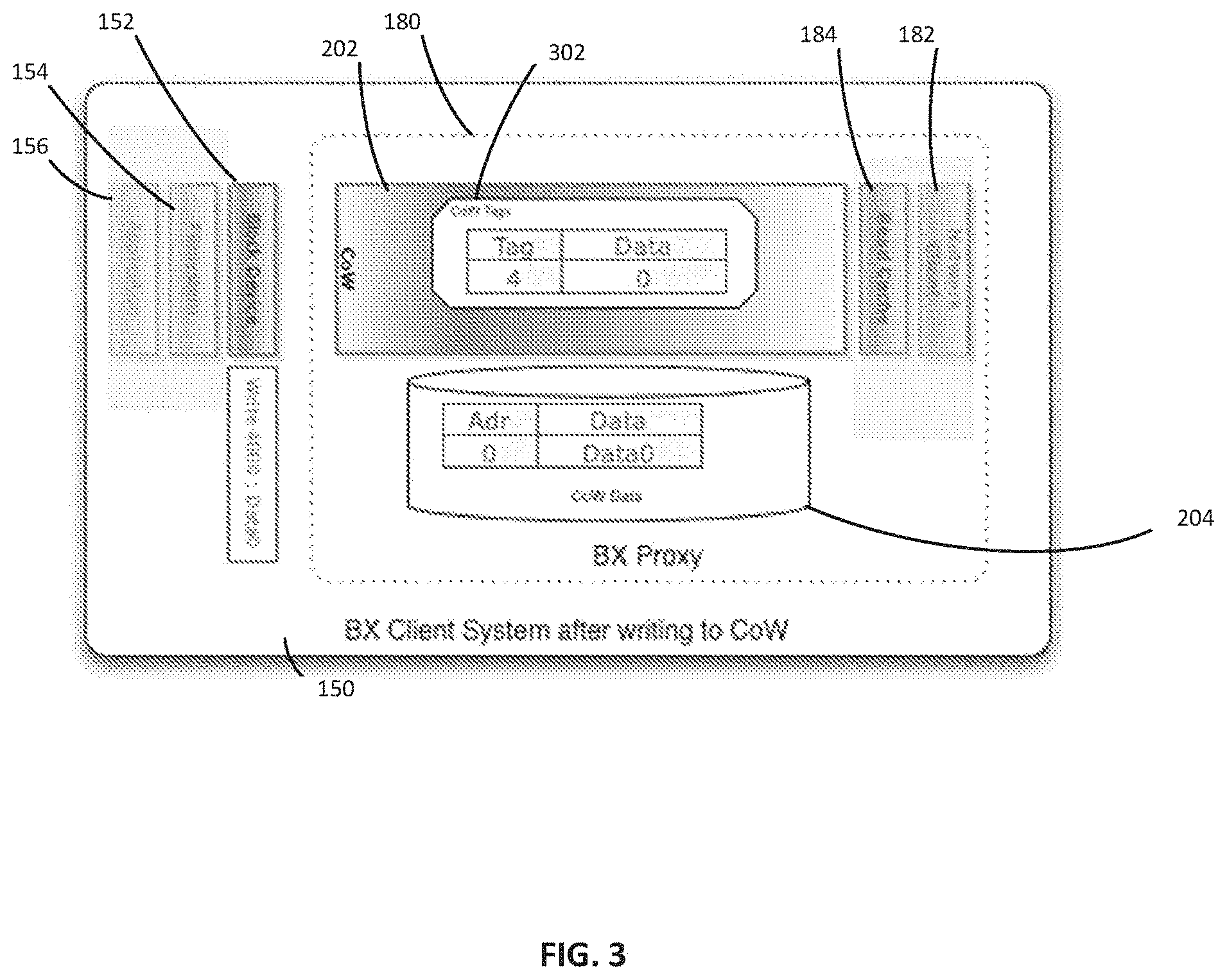

[0082] FIG. 3 shows a client system 150 with a copy on write layer 202 and copy on write data 204. In some embodiments, the proxy 180 can accept client 150 write requests to its device with given data, length, and offset. It should be appreciated that these parameters are given as an example, and any suitable parameters can be used. The write can be caught at the proxy's 180 CoW layer 202. For each target block falling within the region defined by the request's offset/length pair, the proxy 180 can check to see if that block already exists in the tag structure 302 of the CoW data 204. If that target block is present, the new block of data can be written to the CoW Data 204. Data can be written by overwriting the old block or by writing a new block and modifying a tag to point to the new block and marking the old block as free, for example. If the target block is not present, the CoW Data 204 can be extended, and the new location in the CoW data can be written as a new tag. The tag can be the original offset block number, or any suitable location reference, such as a hashed location.

[0083] As an example, a method for writing to an empty CoW layer is provided. It should be appreciated that the method described is given only as an example, and should not be considered as limiting. For the purpose of this example, the block size is 4 KB and addresses accessed are on block boundaries, in hexadecimal. For simplicity, single-block accesses are shown, but when longer accesses are done, the system can break them up into individual block accesses as needed. For a new CoW layer 202, no writes have yet been done. If the client provides a write request to Write 0x4000 Data0, the CoW layer 202 can look up block 4 in its associated CoW tag structure 302. If the value is not found, a new location for this data is allocated in the next available block of the CoW data 204. Future reads within block 4 (address range 0x4000-0x4FFF) return the "Data0" values from within the CoW data 204 rather than reading further up the stack. Reads which are not found in the CoW tags 302 lookup can check the read cache 184 and, if not found there, can be sent through the network client 182 to a connected server.
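
As a non-limiting illustration, the write example above can be sketched with a dictionary standing in for the CoW tag structure 302 and a byte array standing in for the CoW data 204. The class and method names are assumptions for this sketch, and only block-aligned, single-block writes are handled.

```python
from typing import Dict, Optional

BLOCK_SIZE = 0x1000  # 4 KB blocks, as in the example above


class CowLayer:
    """Minimal copy-on-write layer: tags map block numbers to data slots."""

    def __init__(self) -> None:
        self.tags: Dict[int, int] = {}  # original block number -> slot index
        self.data = bytearray()         # extensible CoW data store

    def write_block(self, address: int, payload: bytes) -> int:
        if address % BLOCK_SIZE != 0 or len(payload) != BLOCK_SIZE:
            raise ValueError("sketch handles aligned single-block writes only")
        block = address // BLOCK_SIZE   # 0x4000 // 0x1000 == 4
        slot = self.tags.get(block)
        if slot is None:
            # Tag not found: allocate the next available block (write-allocate).
            slot = len(self.data) // BLOCK_SIZE
            self.data.extend(bytes(BLOCK_SIZE))
            self.tags[block] = slot
        start = slot * BLOCK_SIZE
        self.data[start:start + BLOCK_SIZE] = payload  # overwrite in place
        return slot

    def read_block(self, address: int) -> Optional[bytes]:
        """Return the overlaid block containing address, or None on a miss."""
        slot = self.tags.get(address // BLOCK_SIZE)
        if slot is None:
            return None  # caller would continue to the read cache or server
        start = slot * BLOCK_SIZE
        return bytes(self.data[start:start + BLOCK_SIZE])
```

This sketch overwrites an existing block in place; writing a new block and retargeting the tag, as also described above, would be an equally valid choice.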

[0084] In some embodiments, the proxy 180 can accept client 150 read requests with a buffer to fill, length, and offset. It can be the proxy's 180 responsibility to decompress any data. As an example, for each possible block within the request's offset/length pair, the client 150 can check to see if the corresponding block number is in the proxy's 180 CoW layer 202 using the tag structure 302. For any block present in the CoW layer 202, the data from the corresponding block can be returned. If not, the request passes up to the proxy 180 to search the Read-overlay, if any, and then the read cache 184. If the request misses in both of those layers, the proxy 180 can generate a network read request to be sent to the server. The proxy 180 can also allocate new space in the read cache 184 for future reads to the same data, if appropriate. Additionally, the proxy read request may trigger a local cache hint state machine to queue up additional server read requests to load the read cache speculatively. In some embodiments, the data stored in the buffer can be encrypted. Any of the proxy 180, client 150, or server can be configured to decrypt the data, based on the embodiment.
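
As a non-limiting illustration, the layered read path described above can be sketched with dictionaries standing in for the CoW layer, the Read-overlay, and the read cache, and a callable standing in for the network read request. All names are assumptions for this sketch; decompression, decryption, and cache hinting are omitted.

```python
from typing import Callable, Dict, Optional


def read_block(block: int,
               cow: Dict[int, bytes],
               read_overlay: Optional[Dict[int, bytes]],
               read_cache: Dict[int, bytes],
               fetch_from_server: Callable[[int], bytes]) -> bytes:
    """Resolve one block by checking each layer in order."""
    # 1. CoW layer, then 2. Read-overlay (if one was loaded).
    for layer in (cow, read_overlay):
        if layer is not None and block in layer:
            return layer[block]
    # 3. Read cache.
    if block in read_cache:
        return read_cache[block]
    # 4. Miss in every local layer: ask the server, then keep a copy
    #    in the read cache for future reads of the same block.
    data = fetch_from_server(block)
    read_cache[block] = data
    return data
```

A second read of the same block is then served from the read cache without another server request.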

[0085] FIG. 4 shows an example of a server 120 with a server lookup layer 402. If server 120 has a data segment requested by a read request through the network server connection 404 in its local storage, server 120 can first check to see whether a server-side Read-overlay applies by checking the tag structure for the requested block or blocks. If so, server 120 can return the data from the Read-overlay. In some embodiments, one read overlay can be searched, while in other embodiments multiple read overlays can be searched. If server 120 has the data file in local uncompressed storage 408 but no Read-overlay applies, server 120 can return the data to the proxy in a specified format. Server 120 may store the uncompressed/decrypted data locally for future reads of the same segment. In some embodiments, server 120 can also decompress or decrypt the appropriate segment as needed. If the server 120 does not have the data segment, server 120 can generate a network read request to the storage unit through the storage unit client 406 to fetch the segment or segments containing the data in the read request. Compressed data can be stored in the compressed storage unit 410. It should be appreciated that in some embodiments, a server 120 may have one of uncompressed storage 408 and compressed storage 410, or both.
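
As a non-limiting illustration, the server-side lookup described above can be sketched as follows. The class name, the dictionaries standing in for the storage unit and for local storage, the keying of the overlay by segment rather than by block, and the use of zlib as the compression format are all assumptions for this sketch; decryption is omitted.

```python
import zlib
from typing import Dict


class SegmentServer:
    """Sketch of a server lookup: overlay, local storage, then storage unit."""

    def __init__(self, storage_unit: Dict[int, bytes]) -> None:
        self.storage_unit = storage_unit        # segment -> compressed bytes
        self.overlay: Dict[int, bytes] = {}     # server-side Read-overlay
        self.uncompressed: Dict[int, bytes] = {}
        self.compressed: Dict[int, bytes] = {}
        self.storage_fetches = 0                # counts storage unit reads

    def read_segment(self, segment: int) -> bytes:
        if segment in self.overlay:
            return self.overlay[segment]
        if segment in self.uncompressed:
            return self.uncompressed[segment]
        if segment not in self.compressed:
            # Not held locally: fetch the segment from the storage unit.
            self.compressed[segment] = self.storage_unit[segment]
            self.storage_fetches += 1
        data = zlib.decompress(self.compressed[segment])
        self.uncompressed[segment] = data       # keep for future reads
        return data
```

In this sketch, repeated reads of a segment reach the storage unit only once, and an overlay entry takes precedence over locally stored data.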

[0086] While in some embodiments, the server 120 uses a pre-generated hint stream to send hints to the proxy suggesting blocks to be pre-fetched, server 120 may internally maintain a set of hint state machines to prefetch .dataz segments from the storage unit, or to decompress .dataz segments to their .data form within the server 120.

[0087] In some embodiments the server 120 may contain compressed data blocks. Compressed data blocks in the server 120 can be created once, when the image is created. In some embodiments, if the image is modified, data segments which are marked can be recompressed. In some embodiments, the administrator can compress and store blocks to the storage unit. Blocks fetched from the storage unit on behalf of authorized clients can be decrypted by the server 120, and then stored and sent to the clients encrypted with a session key particular to that client and/or session. Clients thereby do not have access to decrypt any data other than that provided by an authorized BX session. In some embodiments, the administrator and clients exclusively possess the encryption and/or decryption keys, and the data stored on the storage unit can be received in encrypted form by the server 120, and transmitted to the clients in encrypted form.

[0088] FIG. 5 shows an example process flow for managing a distributed data storage. The process begins with step 502, where a remote request from a client device is obtained. The remote request can comprise a request for access to common data hosted on a storage unit, for example. In one embodiment, the remote request is generated by a client device and received by a proxy unit. At step 504, the proxy unit manages the client data request; for example, the proxy unit is configured to provide access to the common data for the client device. According to one embodiment, step 504 can be executed by any proxy unit as described herein, or any suitable combination of the proxy units described herein. In some embodiments, the proxy unit provides access to a portion of the common data for the client device. For example, the client device may be authorized to access a portion of the common data, or the client device may request a portion of the common data in the remote request.

[0089] In step 506, access to the common data is provided based on a layered architecture including a first write layer. For example, a client device can access a portion of common data via a first proxy, or in some alternatives the first proxy can manage access to a complete data set for the client device. In further examples, access to the first write layer can be executed by the system as described herein. For example, the proxy unit may contain a write layer which, in some embodiments, can be stored, distributed, and/or reused by other servers or proxy units. In some embodiments, the server may contain a write layer which can be stored, distributed, and/or reused by other servers. The server and the proxy may manage the access to their respective write layers.

[0090] In some embodiments, the write layer is configured to manage write requests on the common data issued by the client device. For example, the write layer enables the client device to utilize a common data core that is modified by a write layer reflecting any changes to the common data made by a respective client device.

[0091] In step 508, the remote request is executed on the common data and any write layer data as necessary. For example, the proxy unit can manage execution and retrieval of data from the common data and/or any write layer (e.g., locally, through a BX server, respective caches, and/or BX store). In some examples, the proxy layer can be configured with a local cache of data to be retrieved, and source data requests through the available cache. Accesses through other systems can also be enhanced through respective caches (e.g., at a BX server).

[0092] As discussed, executing the remote request on the common data can also include accessing data stored on a server between the proxy unit and the storage unit, or sending an indication of the remote request to the server. In step 510, the remote request is executed transparently to the client device as a local execution. For example, a proxy unit appears to the client device as a local disk or file system. The client executes data retrieval or write requests through the proxy unit, which handles the complexity of cache management, work load distribution, and connections with data servers and/or data stores. In some embodiments, this can comprise making the proxy unit appear to the client device as a local storage unit. It should be appreciated that any of the various components of FIGS. 1-4 can execute the steps described herein in any suitable order.

[0093] FIG. 6 shows an example process flow 600 for managing caching within a distributed data store. The process begins with step 602, where a remote request from a client device is obtained and analyzed. The client device can send a request for access to data from a remote storage unit, for example, and the request can be received by a proxy unit or by a server. At step 604, the proxy unit or server may match the client data request to a cache profile and respective cache hint. The cache profile can be a profile that is substantially similar to the client data request in terms of the locations in memory of the storage unit or other memory space that the client data request is attempting to access. For example, if the client data request is attempting to access blocks 430 and 783, the cache profile may correspond to blocks 430 and 783. The respective cache hint can be associated with the cache profile, and contains at least one suggested memory block to access next. For example, if clients typically request access to block 784 after requesting blocks 430 and 783, the cache hint may suggest block 784. In some embodiments, cache hints may comprise cache eviction hints, with branched options, as described herein.
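
As a non-limiting illustration, matching a client data request to a cache profile and its cache hint can be sketched as a table lookup. The names `CacheProfiles` and `match_hint` are assumptions for this sketch, and the exact-match comparison is a simplification of the "substantially similar" matching described above; eviction hints and branched options are omitted.

```python
from typing import Dict, FrozenSet, Iterable, List

# A cache profile is modeled as the set of blocks a request touches;
# its hint is the list of blocks suggested for access next.
CacheProfiles = Dict[FrozenSet[int], List[int]]


def match_hint(requested_blocks: Iterable[int],
               profiles: CacheProfiles) -> List[int]:
    """Return the hinted blocks for a request, or an empty list if none match."""
    return list(profiles.get(frozenset(requested_blocks), []))


# Clients that request blocks 430 and 783 typically request block 784 next.
example_profiles: CacheProfiles = {frozenset({430, 783}): [784]}
```

With `example_profiles`, a request for blocks 430 and 783 yields a hint for block 784, regardless of the order in which the two blocks are listed.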

[0094] In step 606, the proxy or server can evaluate cache conditions associated with the cache hint. It should be appreciated that not all embodiments may utilize step 606. If cache performance falls below a certain threshold, the monitoring process can inform the server or proxy that the hints are no longer helping. In some embodiments, the server or proxy may increase the size of the client cache in response. In some embodiments, the number of jobs running on the server or proxy can be adjusted. In some embodiments, the server or proxy may stop or ignore cache hinting if the cache performance falls below a certain threshold.
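
As a non-limiting illustration, the threshold check described above can be sketched as a monitor that tracks a hit rate and reports whether hinting should continue. Measuring cache performance as a hit rate, the class name `HintMonitor`, and the default threshold and sample count are assumptions for this sketch.

```python
class HintMonitor:
    """Track cache hits and decide whether cache hinting should stay enabled."""

    def __init__(self, threshold: float = 0.5, min_samples: int = 100) -> None:
        self.threshold = threshold      # assumed minimum acceptable hit rate
        self.min_samples = min_samples  # avoid deciding on too little data
        self.hits = 0
        self.total = 0

    def record(self, hit: bool) -> None:
        self.total += 1
        if hit:
            self.hits += 1

    def hit_rate(self) -> float:
        return self.hits / self.total if self.total else 1.0

    def hints_enabled(self) -> bool:
        # Keep hinting until enough accesses have been observed, then
        # stop once the hit rate falls below the threshold.
        if self.total < self.min_samples:
            return True
        return self.hit_rate() >= self.threshold
```

A server or proxy could equally respond to a low hit rate by enlarging the client cache or adjusting the number of running jobs, as noted above; this sketch shows only the option of stopping or ignoring the hints.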