Augmented Reality Service Software As A Service Based Augmented Reality Operating System

HA; Tae Jin ; et al.

U.S. patent application number 16/531742 was filed with the patent office on August 5, 2019, and published on 2020-02-27, for augmented reality service software as a service based augmented reality operating system. The applicant listed for this patent is VIRNECT INC. Invention is credited to Chang Suu HA, Tae Jin HA, Kyung Won KIL, Back Sun KIM, Jea In KIM, Noh Young PARK.

| Application Number | 16/531742 |
|---|---|
| Publication Number | 20200066050 |
| Family ID | 66811137 |
| Publication Date | 2020-02-27 |

| United States Patent Application | 20200066050 |
|---|---|
| Kind Code | A1 |
| HA; Tae Jin ; et al. | February 27, 2020 |

AUGMENTED REALITY SERVICE SOFTWARE AS A SERVICE BASED AUGMENTED REALITY OPERATING SYSTEM

Abstract

An augmented reality operating system based on augmented reality software as a service (SaaS) comprises an augmented reality content management system that, in a distribution mode, provides a pre-assigned 3D virtual image to a web browser that has transmitted a URL address and that, in an authoring mode, supports creation of augmented reality content based on augmented reality software as a service by providing a template for creating the augmented reality content on a web browser authorized as a manager and billing a payment according to the type of template used; a user terminal that, in the distribution mode, receives the 3D virtual image from the augmented reality content management system by transmitting the URL address through an installed web browser and displays each physical object of actual image information displayed on the web browser by augmenting the physical object with a pre-assigned virtual object of the 3D virtual image; and a manager terminal that, in the authoring mode, accesses the augmented reality software as a service of the augmented reality content management system via an installed web browser and creates the augmented reality content by determining an augmentation position on a map, a physical object of actual image information located at the augmentation position, and a virtual object assigned to the physical object.

| Inventors: | HA; Tae Jin; (Naju-si, KR) ; KIM; Jea In; (Naju-si, KR) ; PARK; Noh Young; (Naju-si, KR) ; KIM; Back Sun; (Naju-si, KR) ; HA; Chang Suu; (Naju-si, KR) ; KIL; Kyung Won; (Naju-si, KR) |
|---|---|
| Applicant: | VIRNECT INC. |
| Family ID: | 66811137 |
| Appl. No.: | 16/531742 |
| Filed: | August 5, 2019 |
| Current U.S. Class: | 1/1 |
| Current CPC Class: | G06K 9/4676 20130101; G06Q 30/04 20130101; G06T 19/006 20130101; G06K 9/00979 20130101; H04L 67/10 20130101; G06K 9/6215 20130101; G06K 9/00671 20130101; H04L 67/02 20130101; G06K 9/6217 20130101 |
| International Class: | G06T 19/00 20060101 G06T019/00; G06K 9/00 20060101 G06K009/00; G06K 9/62 20060101 G06K009/62; G06Q 30/04 20060101 G06Q030/04 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Aug 24, 2018 | KR | 10-2018-0099262 |

Claims

1. An augmented reality operating system comprising: a manager terminal accessing augmented reality software as a service of an augmented reality content management system via an installed web browser and creating augmented reality content by determining an augmentation position on a map, a physical object of actual image information located at the augmentation position, and a virtual object assigned to the physical object respectively; and in supporting creation of the augmented reality content based on the augmented reality software as a service, the augmented reality content management system providing a template for creating the augmented reality content on the web browser authorized as a manager and billing a payment according to the type of template used, wherein in learning a plurality of images in the form of tree-type data structure suitable for extraction and matching of image features and performing real-time recognition and detection of input images based on the learning result, the augmented reality content management system supports search tree generation for large-scale image learning through dynamic generation of Bag-of-visual Words (BoW) based hierarchical tree-type data structure.

2. The system of claim 1, wherein the augmented reality content management system provides current position information transmitted from the manager terminal and a 3D virtual image corresponding to the actual image information to the manager terminal in real-time; and identifies a physical object of the actual image information and updates a virtual object of the 3D image information, assigned to the identified physical object, in real-time according to the control of the manager terminal.

3. An augmented reality operating system comprising: an augmented reality content management system providing a pre-assigned 3D virtual image to a web browser which has transmitted a URL address in a distribution mode and in supporting creation of augmented reality content based on augmented reality software as a service in an authoring mode, providing a template for creating the augmented reality content on a web browser authorized as a manager and billing a payment according to the type of template used; a user terminal receiving the 3D virtual image from the augmented reality content management system by transmitting the URL address through an installed web browser and displaying each physical object of actual image information displayed on the web browser by augmenting the physical object with a pre-assigned virtual object of the 3D virtual image in a distribution mode; and a manager terminal accessing augmented reality software as a service of the augmented reality content management system via an installed web browser, creating the augmented reality content by determining an augmentation position on a map, a physical object of actual image information located at the augmentation position, and a virtual object assigned to the physical object respectively in an authoring mode, wherein in learning a plurality of images in the form of tree-type data structure suitable for extraction and matching of image features and performing real-time recognition and detection of input images based on the learning result, the augmented reality content management system supports search tree generation for large-scale image learning through dynamic generation of Bag-of-visual Words (BoW) based hierarchical tree-type data structure.

4. The system of claim 3, wherein the augmented reality content management system provides current position information transmitted from the user terminal and the 3D virtual image corresponding to actual image information to the user terminal in real-time, identifies a physical object of the actual image information and provides a virtual object of the 3D virtual image, assigned to the identified physical object, to the user terminal in the distribution mode; and provides current position information transmitted from the manager terminal and a 3D virtual image corresponding to the actual image information to the manager terminal in real-time, identifies a physical object of the actual image information and updates a virtual object of the 3D image information, assigned to the identified physical object, in real-time according to the control of the manager terminal in the authoring mode.

Description

CROSS REFERENCE TO RELATED APPLICATION(S)

[0001] This application claims priority to Korean Patent Application No. 10-2018-0099262, filed on Aug. 24, 2018, which is hereby incorporated by reference in its entirety.

BACKGROUND

Technical Field

[0002] The present invention relates to an augmented reality operating system and, more particularly, to an augmented reality software as a service based augmented reality operating system.

Related Art

[0003] Recently, research has been actively conducted on providing interactive content based on augmented reality techniques, which show the physical world overlaid with various pieces of information when a camera module captures a scene of the physical world.

[0004] Augmented Reality (AR) belongs to the field of Virtual Reality (VR) technology; it is a computer technique that interweaves a virtual environment with the real-world environment perceived by the user, so that the user feels as if the virtual world actually exists in the original physical environment.

[0005] Unlike conventional virtual reality, which deals only with virtual spaces and objects, AR superimposes virtual objects on the physical world, thereby augmenting it with additional information that is hard to obtain from the physical world alone.

[0006] In other words, augmented reality may be defined as the reality created by blending real images as seen by the user with a virtual environment created by computer graphics, for example, a 3D virtual environment. Here, the 3D virtual environment may supply information relevant to the real images perceived by the user, and the 3D virtual images blended with the real images may enhance the user's immersive experience.

[0007] Compared with pure virtual reality techniques, augmented reality provides real images along with a 3D virtual environment and makes the physical world interwoven with virtual worlds seamlessly, thereby providing a better feeling of reality.

[0008] To exploit the advantages of augmented reality, research and development on techniques employing augmented reality is being actively conducted around the world. For example, augmented reality is being commercialized in various fields, including broadcasting, advertising, exhibitions, games, theme parks, the military, education, and promotion.

[0009] Owing to improvements in the computing power of mobile devices such as mobile phones, Personal Digital Assistants (PDAs), and Ultra Mobile Personal Computers (UMPCs), and to advances in wireless network devices, today's mobile terminals are capable of implementing a handheld augmented reality system.

[0010] As such a system has become available, a number of augmented reality applications based on mobile devices have been developed. Moreover, as mobile devices spread rapidly, an environment in which users may experience augmented reality applications is being established accordingly.

[0011] In addition, user demand for various additional augmented reality services on mobile terminals is increasing, and so are attempts to provide various augmented reality content to users of mobile terminals.

[0012] Korean Patent Publication No. 10-2016-0092292 relates to a "system and method for providing augmented reality service of materials for promotional objects" and proposes a system that advertises a target object effectively by applying augmented reality to advertisement content, allowing people exposed to the advertisement to easily obtain information on the advertised object and to learn its details while immersed with interest.

[0013] Meanwhile, conventional augmented reality systems require construction of not only a dedicated augmented reality server but also applications that access the server and operate the augmented reality. In other words, conventional methods require the "server-app" components to be constructed for each individual augmented reality service; as a result, more cost goes into constructing the system than into creating content.

PRIOR ART REFERENCES

Patent Reference

[0014] (Patent reference 1) KR10-2016-0092292 A

SUMMARY OF THE INVENTION

[0015] The present invention has been made in an effort to solve the technical problem above and provides an augmented reality operating system capable of creating and distributing augmented reality content easily and quickly in the form of Uniform Resource Locator (URL) addresses by utilizing augmented reality Software as a Service (SaaS).

[0016] According to one embodiment of the present invention to solve the technical problem above, an augmented reality operating system comprises an augmented reality content management system providing a pre-assigned 3D virtual image to a web browser that has transmitted a URL address; and a user terminal transmitting the URL address through an installed web browser, receiving the 3D virtual image from the augmented reality content management system, and displaying each physical object of actual image information displayed on the web browser by augmenting the physical object with a pre-assigned virtual object of the 3D virtual image.

[0017] Also, the augmented reality content management system provides the user terminal, in real time, with the 3D virtual image corresponding to the current position information and actual image information transmitted from the user terminal; and identifies a physical object of the actual image information and provides the virtual object of the 3D virtual image assigned to the identified physical object to the user terminal.

[0018] Also, according to another embodiment of the present invention, an augmented reality operating system comprises a manager terminal accessing augmented reality software as a service of an augmented reality content management system via an installed web browser and creating augmented reality content by determining an augmentation position on a map, a physical object of actual image information located at the augmentation position, and a virtual object assigned to the physical object; and the augmented reality content management system, which, in supporting creation of the augmented reality content based on the augmented reality software as a service, provides a template for creating the augmented reality content on the web browser authorized as a manager and bills a payment according to the type of template used.

[0019] Also, the augmented reality content management system according to the present invention provides the manager terminal, in real time, with a 3D virtual image corresponding to the current position information and actual image information transmitted from the manager terminal; and identifies a physical object of the actual image information and updates the virtual object of the 3D virtual image assigned to the identified physical object in real time under the control of the manager terminal.

[0020] Also, an augmented reality operating system according to yet another embodiment of the present invention comprises an augmented reality content management system that, in a distribution mode, provides a pre-assigned 3D virtual image to a web browser that has transmitted a URL address and that, in an authoring mode, supports creation of augmented reality content based on augmented reality software as a service by providing a template for creating the augmented reality content on a web browser authorized as a manager and billing a payment according to the type of template used; a user terminal that, in the distribution mode, receives the 3D virtual image from the augmented reality content management system by transmitting the URL address through an installed web browser and displays each physical object of actual image information displayed on the web browser by augmenting the physical object with a pre-assigned virtual object of the 3D virtual image; and a manager terminal that, in the authoring mode, accesses the augmented reality software as a service of the augmented reality content management system via an installed web browser and creates the augmented reality content by determining an augmentation position on a map, a physical object of actual image information located at the augmentation position, and a virtual object assigned to the physical object.

[0021] Also, in the distribution mode, the augmented reality content management system according to the present invention provides the user terminal, in real time, with the 3D virtual image corresponding to the current position information and actual image information transmitted from the user terminal, identifies a physical object of the actual image information, and provides the virtual object of the 3D virtual image assigned to the identified physical object to the user terminal; and, in the authoring mode, it provides the manager terminal, in real time, with a 3D virtual image corresponding to the current position information and actual image information transmitted from the manager terminal, identifies a physical object of the actual image information, and updates the virtual object of the 3D virtual image assigned to the identified physical object in real time under the control of the manager terminal.

BRIEF DESCRIPTION OF THE DRAWINGS

[0022] FIG. 1 illustrates a structure of an augmented reality operating system 1 according to an embodiment of the present invention.

[0023] FIG. 2 illustrates an operation example of the augmented reality operating system 1 in a distribution mode.

[0024] FIGS. 3 to 5 illustrate operation examples of the augmented reality operating system 1 in an authoring mode.

[0025] FIG. 6 illustrates a structure of an augmented reality content management system 100 of the augmented reality operating system 1.

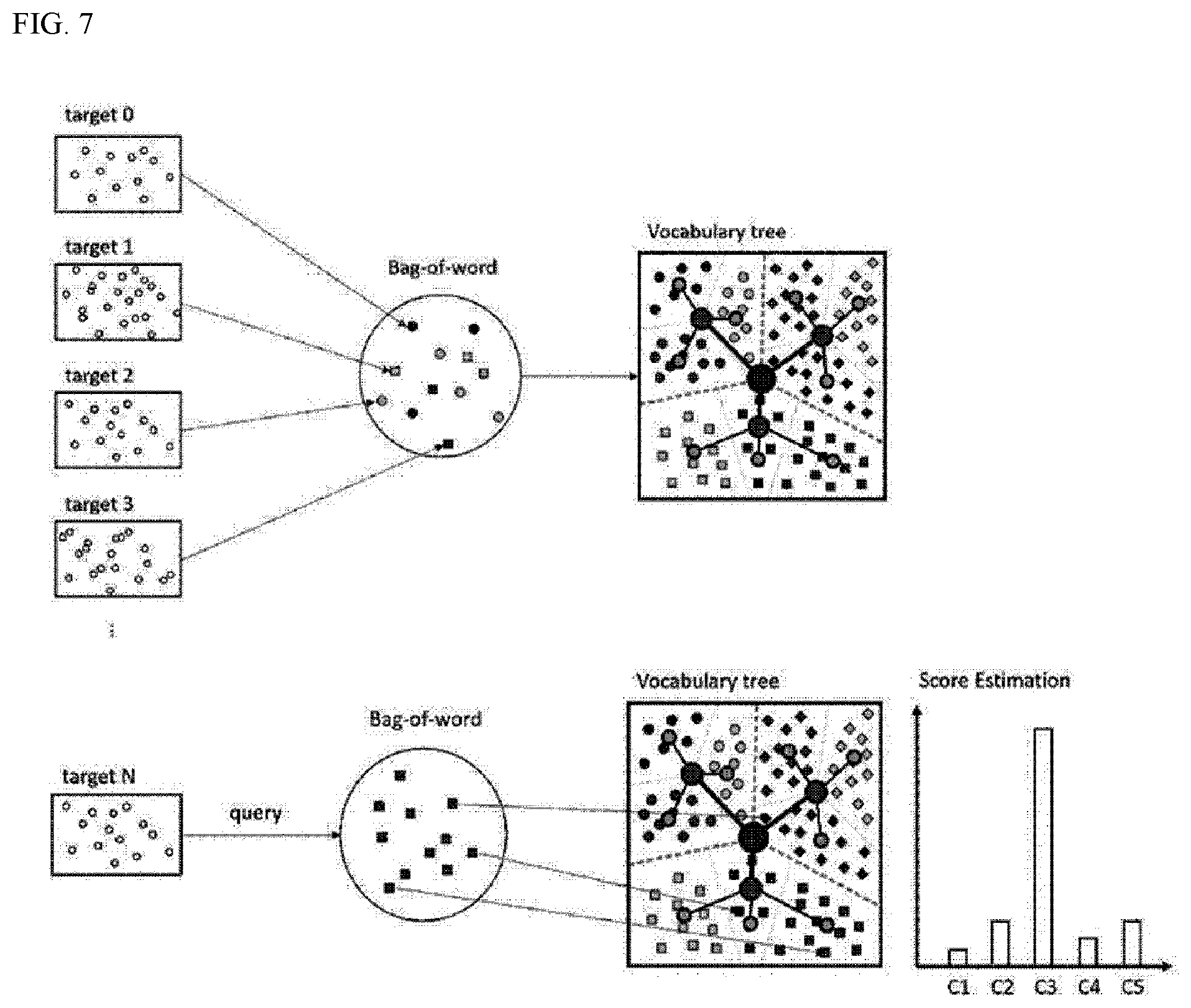

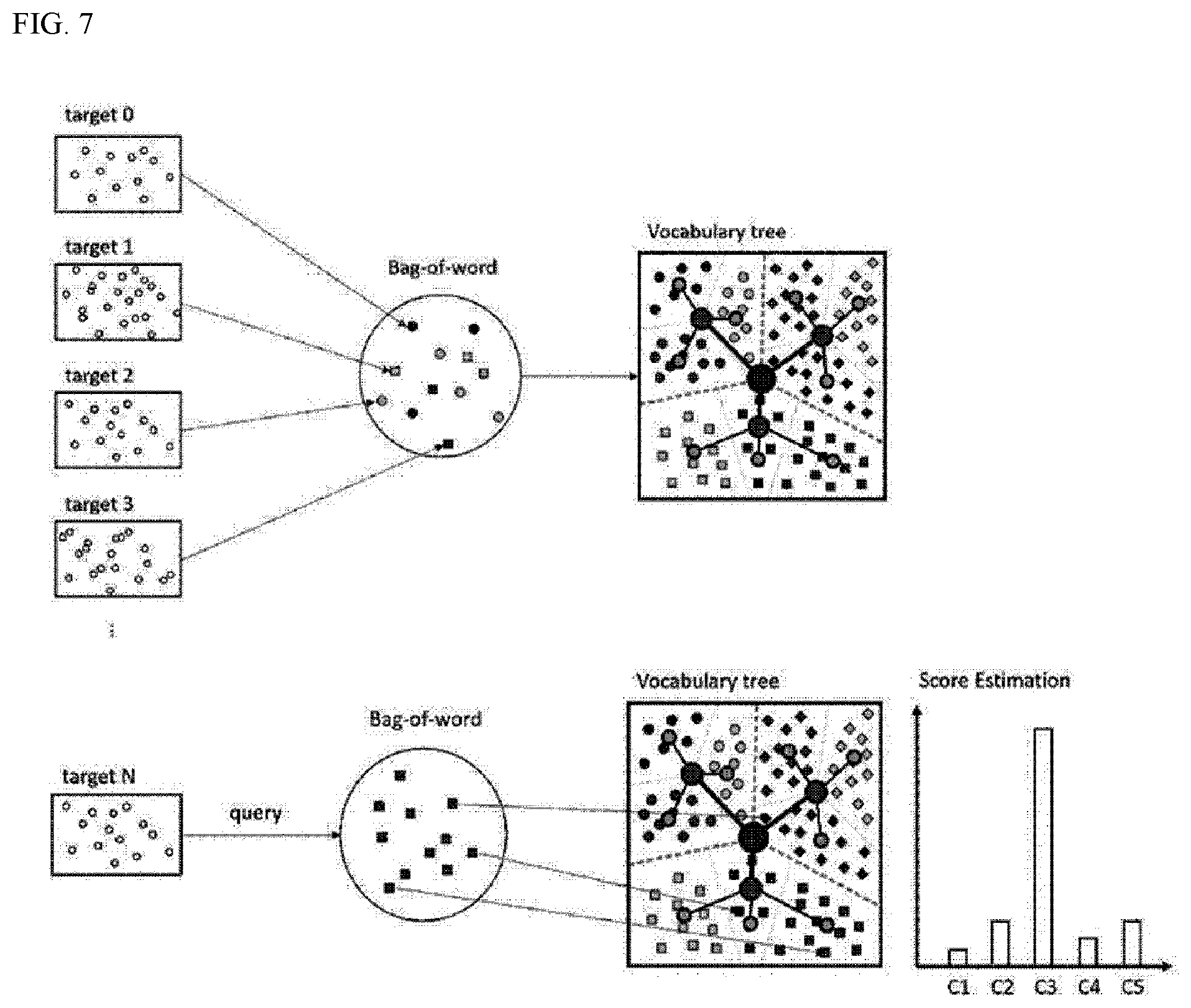

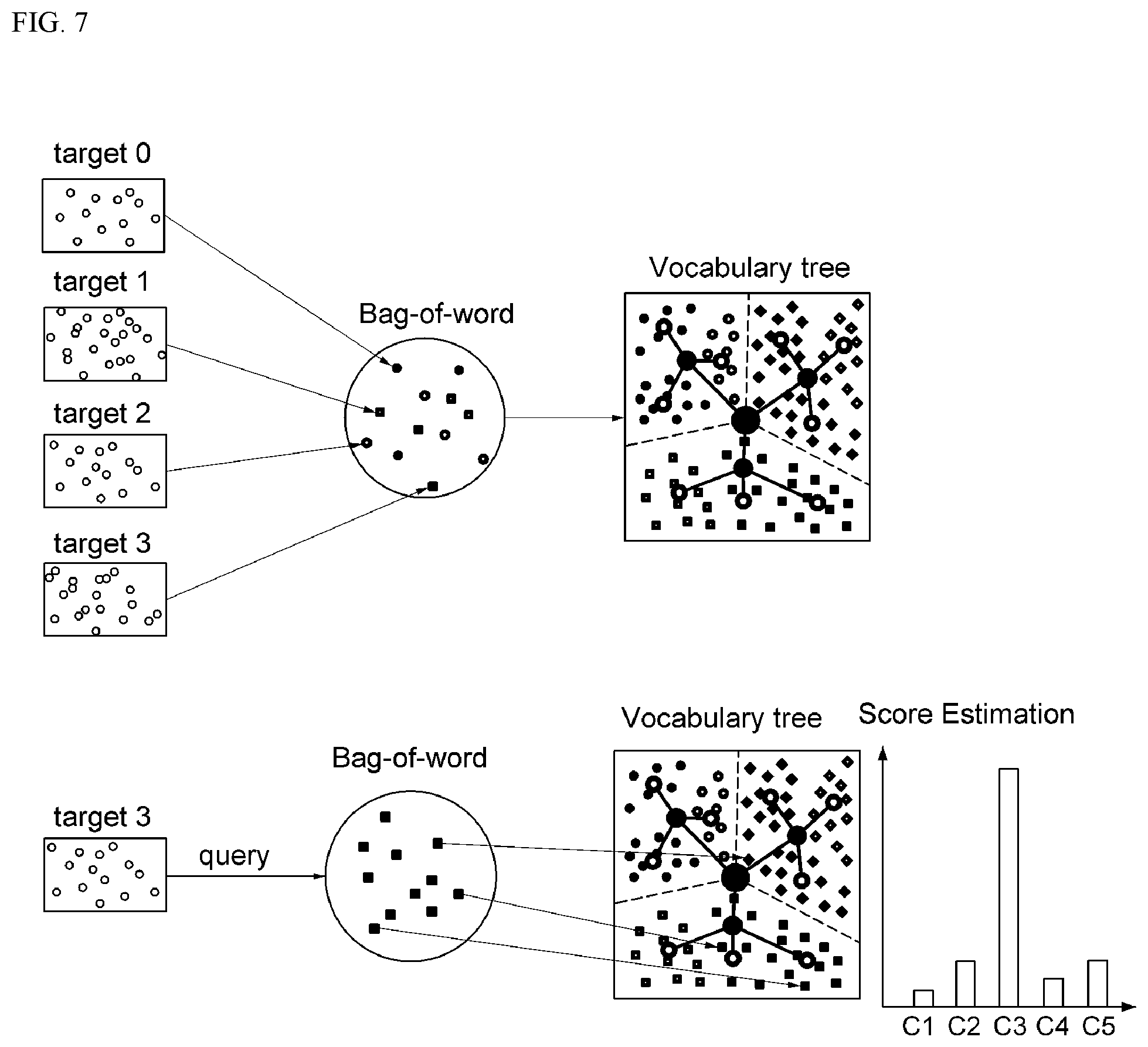

[0026] FIG. 7 illustrates a real-time image search method based on a hierarchical tree-type data structure.

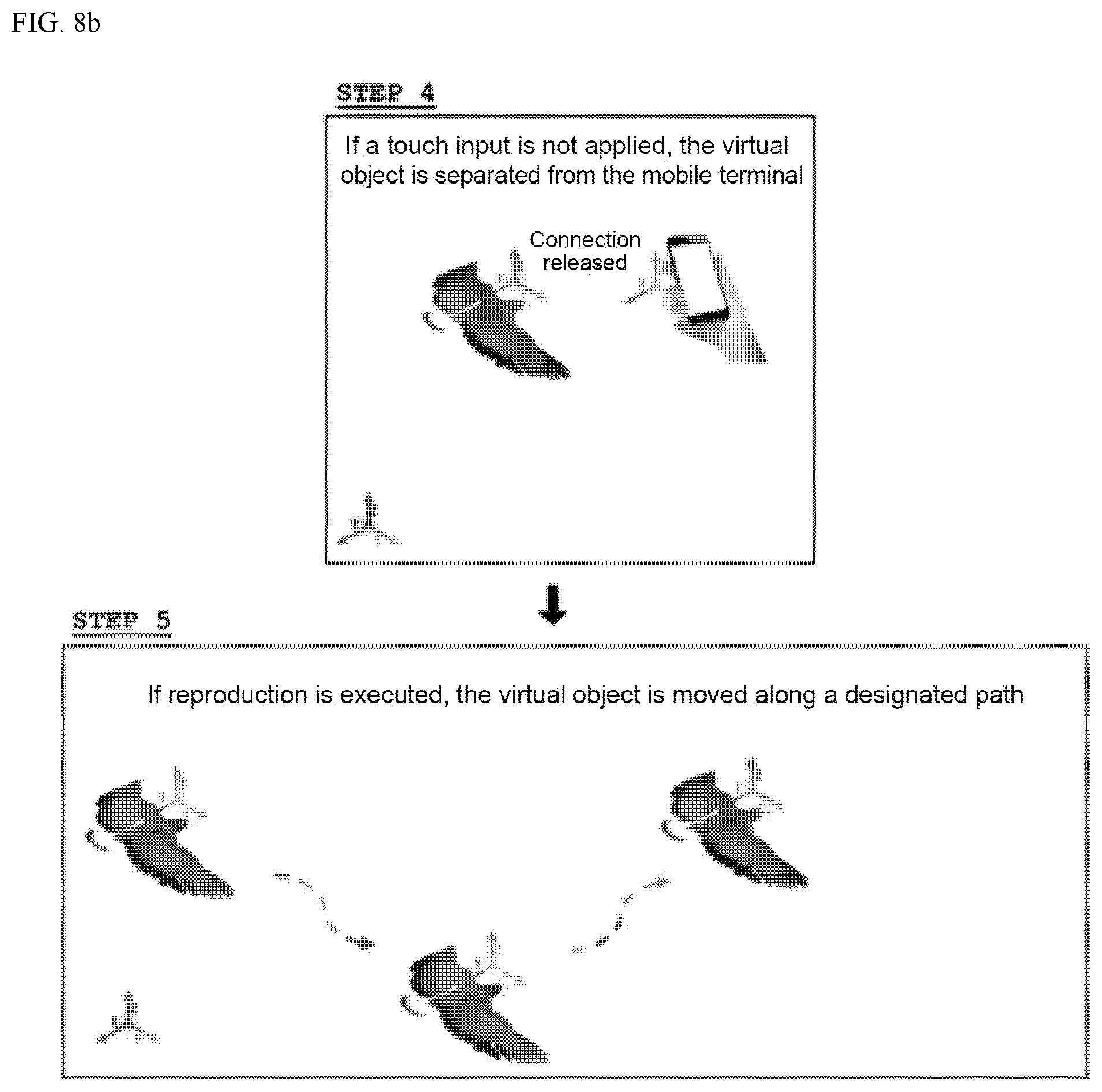

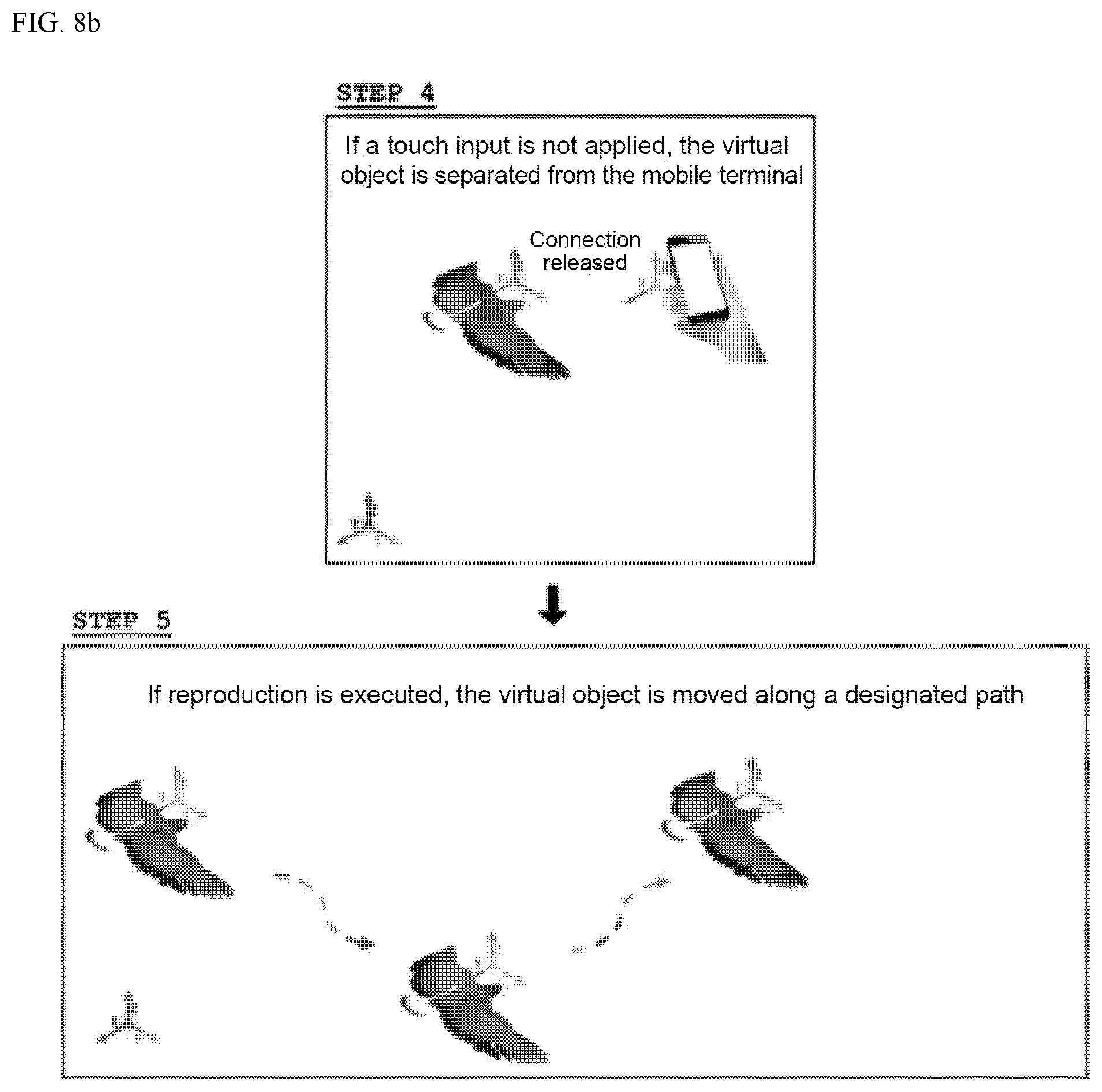

[0027] FIGS. 8a and 8b illustrate an example where a virtual object is moved in the augmented reality operating system 1.

[0028] FIG. 9 illustrates another example where a virtual object is moved in the augmented reality operating system 1.

[0029] FIG. 10 illustrates a condition for selecting a virtual object in the augmented reality operating system 1.

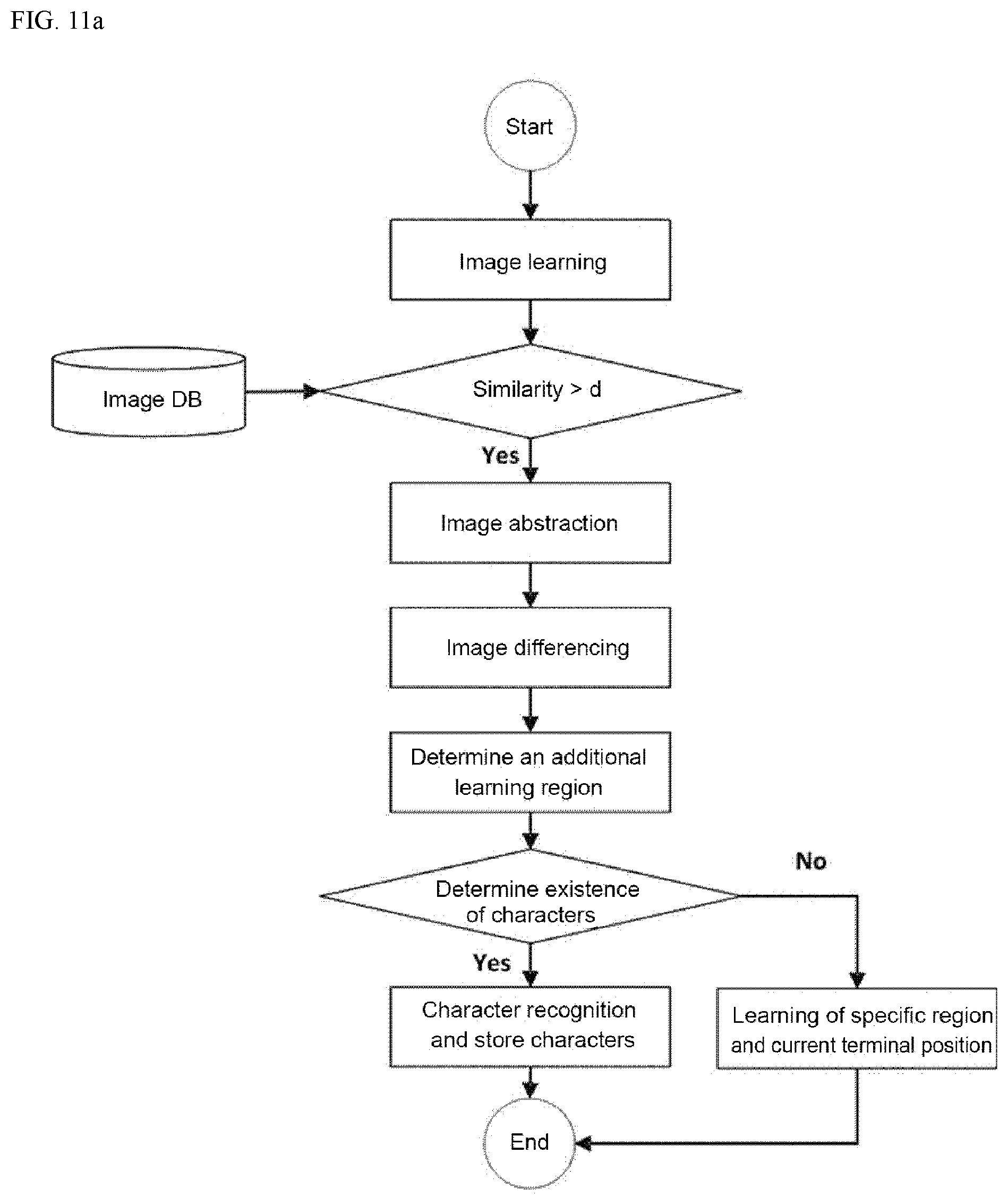

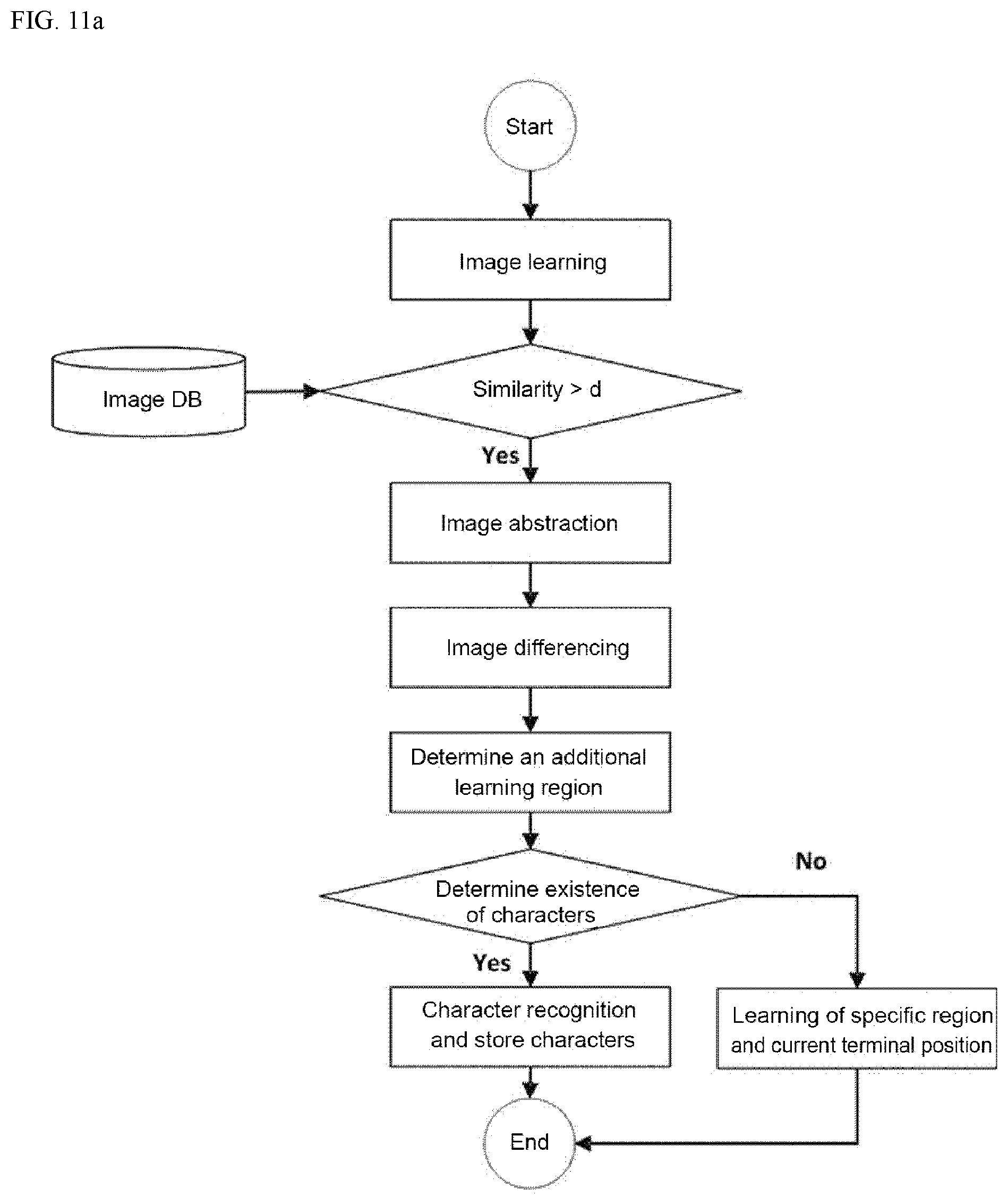

[0030] FIG. 11a is a flow diagram illustrating a learning process for identifying similar objects in the augmented reality operating system 1, and FIG. 11b illustrates a process for determining an additional recognition area for identifying similar objects in the augmented reality operating system 1.

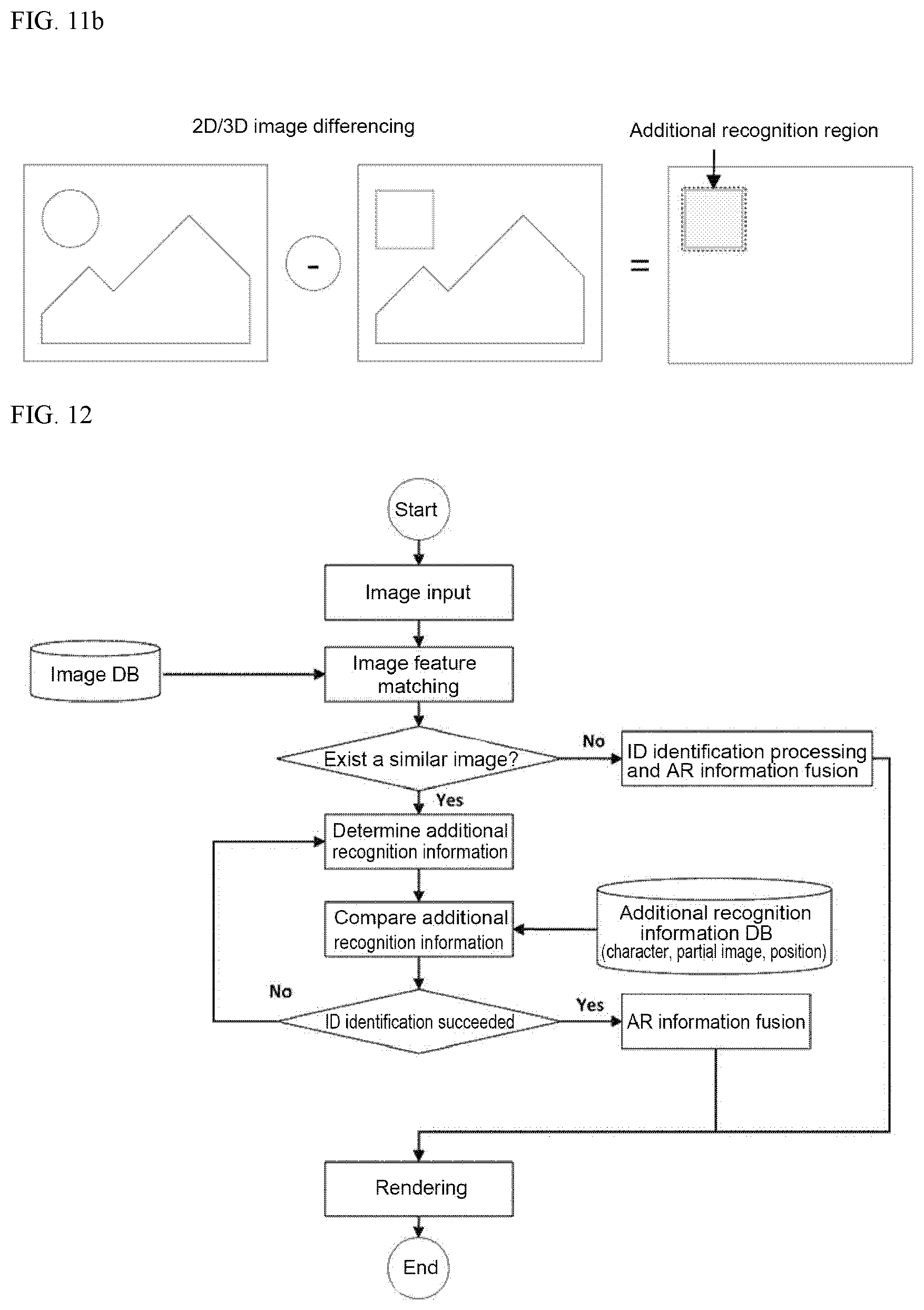

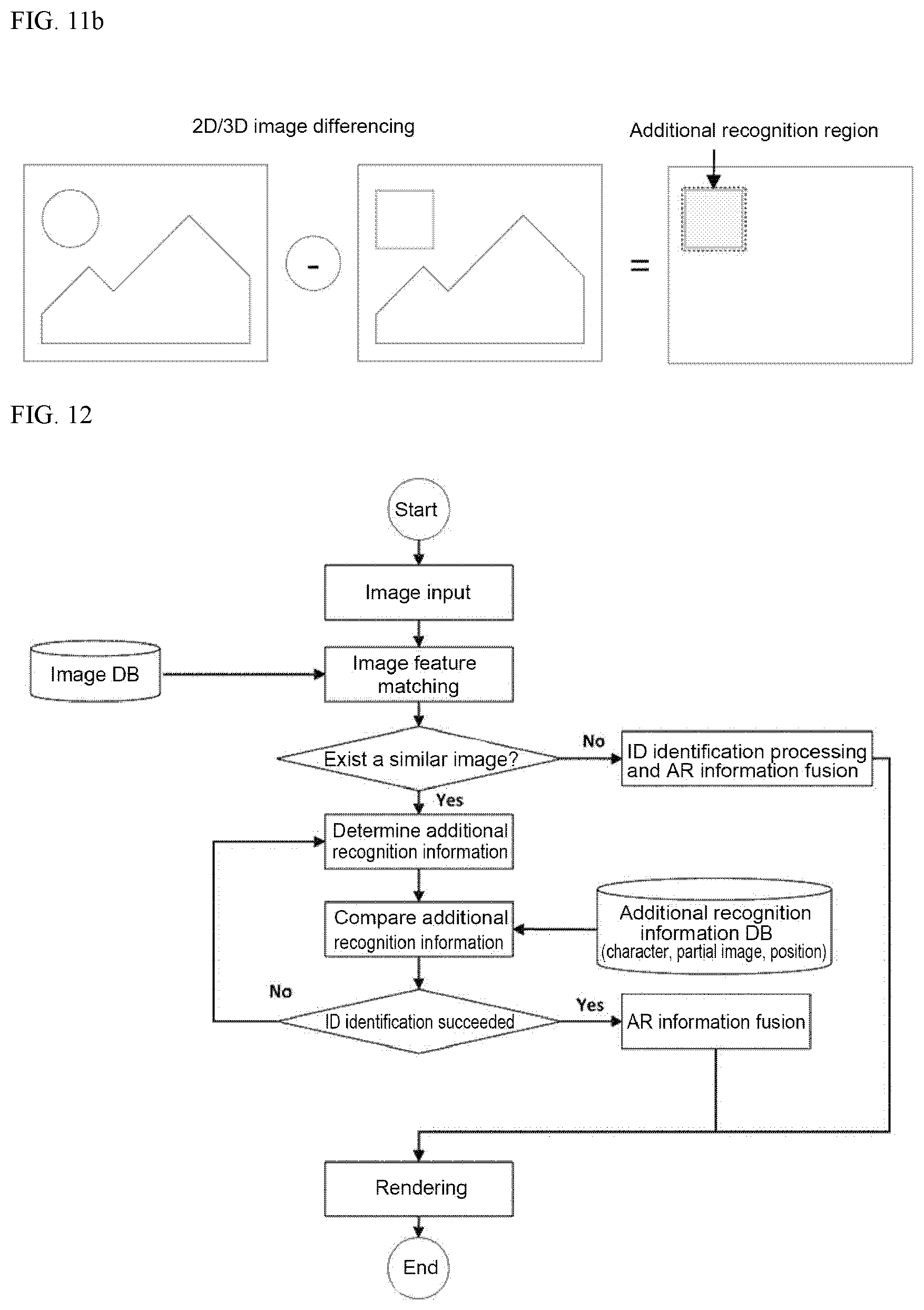

[0031] FIG. 12 is a flow diagram illustrating a process for identifying similar objects in the augmented reality operating system 1.

[0032] FIG. 13 is a first example illustrating a state for identifying similar objects in the augmented reality operating system 1.

[0033] FIG. 14 is a second example illustrating a state for identifying similar objects in the augmented reality operating system 1.

[0034] FIG. 15 illustrates another operating principle of the augmented reality operating system 1.

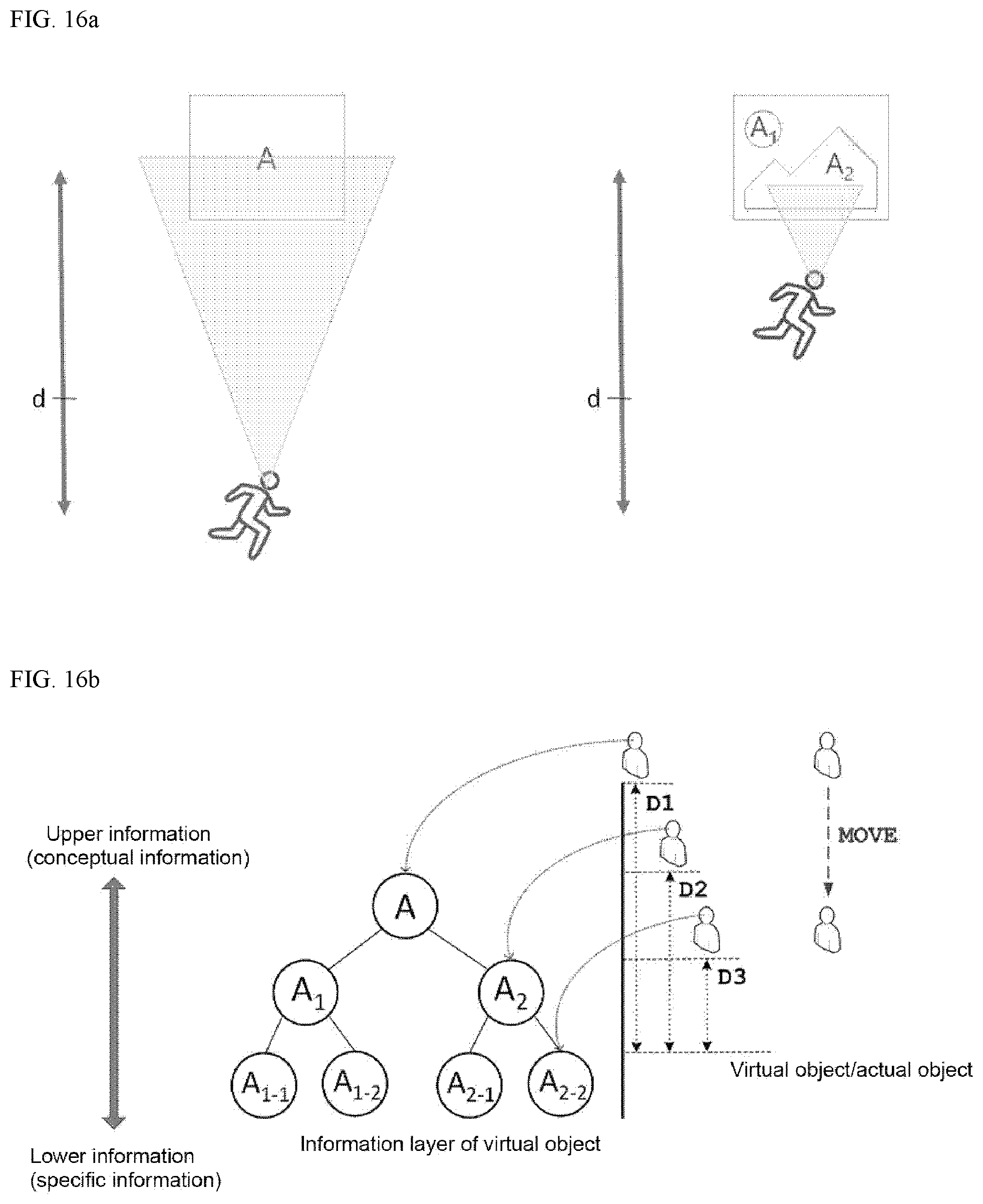

[0035] FIGS. 16a and 16b illustrate yet another operating principle of the augmented reality operating system 1.

DESCRIPTION OF EXEMPLARY EMBODIMENTS

[0036] In what follows, embodiments of the present invention will be described in detail with reference to the appended drawings so that those skilled in the art to which the present invention belongs may readily apply the technical principles of the present invention.

[0037] FIG. 1 illustrates a structure of an augmented reality operating system 1 according to an embodiment of the present invention.

[0038] FIG. 1 shows only a simplified structure of the augmented reality operating system 1 according to the present embodiment, in order to describe the technical principles of the proposed invention clearly.

[0039] Referring to FIG. 1, the augmented reality operating system 1 comprises an augmented reality content management system 100, a user terminal 200, and a manager terminal 300.

[0040] In what follows, the structure and main operations of the augmented reality operating system 1 described above will be explained.

[0041] FIG. 2 illustrates an operation example of the augmented reality operating system 1 in a distribution mode.

[0042] Referring to FIG. 2, in the distribution mode, the augmented reality content management system 100 provides a pre-assigned 3D virtual image to a web browser that has transmitted a URL address.

[0043] The user terminal 200 transmits a URL address via an installed web browser and receives a 3D virtual image from the augmented reality content management system 100.

[0044] In other words, the user terminal 200 displays each physical object of actual image information displayed on a web browser by augmenting the physical object with a pre-assigned virtual object of the 3D virtual image. FIG. 2 illustrates an experience screen showing augmented reality content on the web browser when a user clicks a button linked to a URL address on a web page displayed through the web browser of the user terminal 200.

[0045] Without executing a separate augmented reality application, the user terminal 200 may receive augmented reality content through the URL address via the installed web browser and display the received augmented reality content.
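As a minimal sketch of the distribution-mode lookup, the server could resolve a URL to its pre-assigned virtual objects as shown below. The URL scheme `https://ar.example.com/c/<content_id>`, the `ARContent` type, and the in-memory `CONTENT_DB` table standing in for the content server database are all hypothetical; the text does not specify any of them.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class ARContent:
    content_id: str
    # Physical-object ID -> ID of the virtual object pre-assigned to it.
    assignments: dict[str, str] = field(default_factory=dict)


# Hypothetical in-memory stand-in for the content server database.
CONTENT_DB = {
    "museum-01": ARContent("museum-01", {"statue": "statue_info.glb",
                                         "poster": "poster_video.mp4"}),
}


def resolve_url(url: str) -> ARContent | None:
    """Return the content pre-assigned to a URL of the assumed form /c/<content_id>."""
    parts = urlparse(url).path.strip("/").split("/")
    if len(parts) == 2 and parts[0] == "c":
        return CONTENT_DB.get(parts[1])
    return None
```

Because the browser needs only the URL, the user terminal 200 does not have to install a dedicated augmented reality application to obtain the content.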

[0046] FIGS. 3 to 5 illustrate operation examples of the augmented reality operating system 1 in an authoring mode.

[0047] Referring to FIGS. 3 to 5, in the authoring mode, the manager terminal 300 accesses augmented reality Software as a Service (SaaS) of the augmented reality content management system 100 via an installed web browser and creates augmented reality content by determining an augmentation position on a map, a physical object of actual image information located at the augmentation position, and a virtual object assigned to the physical object respectively.

[0048] At this time, the augmented reality content management system 100 supports creation of augmented reality content based on augmented reality Software as a Service (SaaS). In other words, the augmented reality content management system 100 provides a template for creating the augmented reality content on the web browser authorized as a manager and bills a payment according to the type of template used.
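The text states only that billing depends on the type of template used. A minimal pay-per-use sketch follows; the template types and the prices in `TEMPLATE_PRICES` are invented for illustration and are not taken from the source.

```python
from __future__ import annotations

from collections import Counter

# Hypothetical per-use prices by template type (illustrative values only).
TEMPLATE_PRICES = {"text": 1.0, "image": 2.0, "video": 5.0, "3d_model": 10.0}


def bill(template_uses: list[str]) -> float:
    """Sum the charge for every template instance a manager has used."""
    counts = Counter(template_uses)
    unknown = set(counts) - set(TEMPLATE_PRICES)
    if unknown:
        raise ValueError(f"unknown template types: {sorted(unknown)}")
    # Charge only for what was actually used: price x number of uses per type.
    return sum(TEMPLATE_PRICES[t] * n for t, n in counts.items())
```

For example, `bill(["text", "text", "video"])` returns `7.0`.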

[0049] Therefore, a non-expert who is not a programmer can easily select a template to create augmented reality content and thereby concentrate on creating the content itself. Another advantage is that, since a user pays only for the functions actually used, the user may produce content conveniently within an allowed budget.

[0050] Also, from a management page in the authoring mode, a manager may check the location, images, and total number of inquiries of augmented reality content per user, together with averages, time series, cross analysis, and pattern analysis. The management page provides an infographics function for intuitive visualization of statistical analysis results.
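The per-user totals, averages, and time series listed above are simple aggregations over an inquiry log. A sketch, assuming a hypothetical log of `(user_id, content_id, day)` records (the actual log format is not described):

```python
from __future__ import annotations

from collections import Counter
from datetime import date

# Assumed record layout: (user_id, content_id, day of inquiry).
Inquiry = tuple[str, str, date]


def inquiry_stats(log: list[Inquiry]) -> dict:
    per_user = Counter(user for user, _, _ in log)
    per_day = Counter(day for _, _, day in log)
    return {
        "total_per_user": dict(per_user),
        # Average number of inquiries per distinct user.
        "average_per_user": len(log) / len(per_user) if per_user else 0.0,
        # Time series: inquiries per day, in chronological order.
        "daily_series": sorted(per_day.items()),
    }
```

Cross analysis and pattern analysis would build on the same log, for example by grouping on both `user_id` and `content_id`.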

[0051] Meanwhile, in the distribution mode, the augmented reality content management system 100 provides the user terminal 200, in real time, with the 3D virtual image corresponding to the current position information and actual image information transmitted from the user terminal 200; that is, it identifies a physical object of the actual image information and provides the virtual object of the 3D virtual image assigned to the identified physical object to the user terminal 200.

[0052] In other words, when the user terminal 200 transmits its current position information and the actual image information captured by its camera to the augmented reality content management system 100, the augmented reality content management system 100 identifies a physical object of the actual image information and transmits the virtual object of the 3D virtual image assigned to the identified physical object to the user terminal 200.
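The request flow above can be sketched as follows. Position-to-site lookup and image recognition are passed in as callables because the text does not fix how either is done; `FrameRequest`, `ASSIGNMENTS`, and the site and object IDs are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class FrameRequest:
    lat: float
    lon: float
    image: bytes  # camera frame captured by the user terminal


# Hypothetical assignment table: site ID -> {physical-object ID -> virtual-object ID}.
ASSIGNMENTS = {"site-a": {"statue": "statue_info.glb"}}


def handle_frame(
    req: FrameRequest,
    locate_site: Callable[[float, float], Optional[str]],
    recognize: Callable[[bytes], Optional[str]],
) -> Optional[str]:
    """Return the virtual object assigned to the physical object seen in the frame."""
    site = locate_site(req.lat, req.lon)  # which AR site is the user at?
    if site is None:
        return None
    obj = recognize(req.image)  # which physical object is in view?
    if obj is None:
        return None
    return ASSIGNMENTS.get(site, {}).get(obj)
```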

[0053] Also, in the authoring mode, the augmented reality content management system 100 provides the manager terminal 300, in real time, with a 3D virtual image corresponding to the current position information and actual image information transmitted from the manager terminal 300; it identifies a physical object of the actual image information and updates the virtual object of the 3D virtual image assigned to the identified physical object in real time under the control of the manager terminal 300.

[0054] In other words, while checking an augmented virtual object on the manager terminal 300, the manager may edit the virtual object on-site in real time, for example by replacing it or changing its position.

[0055] FIG. 6 illustrates a structure of an augmented reality content management system 100 of the augmented reality operating system 1.

[0056] Referring to FIG. 6, the augmented reality content management system 100 comprises a content server, a web-based augmented reality viewer module, an image recognition server, and a web-based augmented reality content management module.

[0057] The content server is equipped with a database containing position information of objects, physical objects, and virtual objects. Also, the web-based augmented reality viewer module receives a physical object and position information from a web browser and transmits a virtual object assigned to the physical object. Also, the image recognition server performs the role of identifying image features and distinguishing individual images. The web-based augmented reality content management module provides a map view and provides functions related to the authoring mode and functions related to the manager.
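Claims 1 and 3 recite that the image recognition side learns many images in a Bag-of-visual-Words (BoW) hierarchical tree for real-time recognition. The sketch below illustrates one standard way to build such a structure: a vocabulary tree built by hierarchical k-means, with TF-IDF weighting and cosine scoring. This technique is swapped in from the image-retrieval literature as an illustration; the patent does not specify the clustering, weighting, or scoring. Descriptors are plain tuples of floats standing in for real feature descriptors, all names are hypothetical, and images are scored by a linear scan rather than the inverted file a large-scale (about 100,000 image) index would need.

```python
from __future__ import annotations

import itertools
import math
from collections import Counter

Vec = tuple  # one local feature descriptor, e.g. a tuple of floats


def _d2(a: Vec, b: Vec) -> float:
    """Squared Euclidean distance between two descriptors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def _nearest(p: Vec, centroids: list) -> int:
    return min(range(len(centroids)), key=lambda i: _d2(p, centroids[i]))


def _assign(points: list, centroids: list) -> list:
    groups = [[] for _ in centroids]
    for p in points:
        groups[_nearest(p, centroids)].append(p)
    return groups


def _kmeans(points: list, k: int, iters: int = 10):
    # Farthest-point initialisation keeps the sketch deterministic.
    centroids = [points[0]]
    while len(centroids) < k:
        far = max(points, key=lambda p: min(_d2(p, c) for c in centroids))
        if min(_d2(far, c) for c in centroids) == 0:
            break  # fewer than k distinct descriptors
        centroids.append(far)
    for _ in range(iters):
        groups = _assign(points, centroids)
        centroids = [tuple(sum(col) / len(g) for col in zip(*g)) if g else c
                     for g, c in zip(groups, centroids)]
    return centroids, _assign(points, centroids)


class Node:
    def __init__(self):
        self.centroids, self.children, self.leaf_id = [], [], None


def build_tree(points: list, k: int, depth: int, ids=None) -> Node:
    """Hierarchical k-means: each level splits its points into at most k clusters."""
    ids = ids if ids is not None else itertools.count()
    node = Node()
    if depth == 0 or len(points) <= k:
        node.leaf_id = next(ids)  # each leaf is one visual word
        return node
    node.centroids, groups = _kmeans(points, k)
    node.children = [build_tree(g, k, depth - 1, ids) for g in groups]
    return node


def quantize(tree: Node, p: Vec) -> int:
    """Descend the tree, following the nearest centroid, to a visual word."""
    node = tree
    while node.leaf_id is None:
        node = node.children[_nearest(p, node.centroids)]
    return node.leaf_id


class BoWIndex:
    def __init__(self, tree: Node):
        self.tree, self.images = tree, {}

    def add(self, image_id, descriptors) -> None:
        self.images[image_id] = Counter(quantize(self.tree, d) for d in descriptors)

    def search(self, descriptors):
        """Return (best image ID, cosine score) using TF-IDF weighted word vectors."""
        n = len(self.images)
        df = Counter(w for words in self.images.values() for w in words)
        idf = {w: math.log(n / c) for w, c in df.items()}

        def weigh(words):
            return {w: tf * idf.get(w, 0.0) for w, tf in words.items()}

        q = weigh(Counter(quantize(self.tree, d) for d in descriptors))
        q_norm = math.sqrt(sum(x * x for x in q.values()))
        best, best_score = None, 0.0
        for image_id, words in self.images.items():
            v = weigh(words)
            norm = q_norm * math.sqrt(sum(x * x for x in v.values()))
            score = sum(q[w] * v.get(w, 0.0) for w in q) / norm if norm else 0.0
            if score > best_score:
                best, best_score = image_id, score
        return best, best_score
```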

[0058] The augmented reality operating system 1 is defined as a system by which anyone may create and distribute augmented reality content easily at a very low cost.

[0059] In other words, the present invention proposes the web-based augmented reality content management system 100 for creating and managing augmented reality content, thereby allowing a non-expert to easily create augmented reality content.

[0060] Also, the present invention proposes the first web-based augmented reality content management system built on Software as a Service (SaaS), by which a non-expert may create and distribute augmented reality content easily and quickly at a very low cost. Via a web browser, a user may experience augmented reality content without installing a separate application. The user may also visit an augmented reality site to experience augmented virtual objects via the web browser; at this time, the web browser may be equipped with a navigation function that guides the user to the augmented reality site.

[0061] The augmented reality operating system 1 is capable of creating and managing augmented reality content at a very low cost by using augmented reality Software as a Service (SaaS)-based rental software.

[0062] Also, when content is updated through the augmented reality content management system based on augmented reality Software as a Service (SaaS), the updates are automatically reflected in the web-based augmented reality viewer module, which makes maintenance easy. Therefore, customers may concentrate on creating the content itself rather than on constructing a system. Also, since the types of content users want can be determined by analyzing usage information of augmented reality content, the proposed invention may help determine the direction of content production.

[0063] Public organizations, companies, and individuals increasingly want to create and distribute augmented reality content themselves; however, technology barriers are hard to overcome and the initial system construction and maintenance are costly, so augmented reality content has not yet come into widespread use.

[0064] To solve the problem above, the augmented reality operating system 1 provides a means to easily create, manage, and analyze augmented reality content without coding; and, to improve accessibility to the content, it provides a method for accessing augmented reality content conveniently through a URL address without installing a separate application.

[0065] Also, an augmented reality content management system (CMS) has been developed in the form of a web-based augmented reality Software as a Service (SaaS), which adopts a flexible billing policy that charges only for the functions actually used. Also, a management page is provided, by which content production/advertisement policy may be developed through content big data collection and data mining.

[0066] For large-scale image recognition (about 100,000 images) and control of network traffic of the augmented reality content management system, the augmented reality operating system 1 employs a traffic manager to which a CMS server and an augmented reality recognition server are added.

[0067] Also, the augmented reality operating system 1 employs a database for position and image recognition and an augmented reality learning server. In other words, cloud-based object (space) image feature learning techniques and position information-based matched coordinate system registration techniques are applied. Also, the augmented reality operating system 1 is equipped with an augmented reality content database (DB) and server that manages content augmented after image recognition.

[0068] Also, the augmented reality operating system 1 is equipped with a database (DB) and server for managing accounts/rights and user groups. Also, the augmented reality operating system 1 is equipped with a database (DB) and server that manages resources of the augmented reality content management system for each user. Also, the augmented reality operating system 1 may be equipped with a database (DB) and server for information analysis.

[0069] Also, the augmented reality operating system 1 employs image recognition, load balancing for managing traffic of content and resources, and traffic manager techniques; in response to an incoming DNS request, it returns a healthy (normally operating) endpoint selected according to a routing policy.
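A sketch of the DNS answer step, assuming a priority routing policy (one possible policy; the text does not say which is used). The `Endpoint` type, endpoint names, and addresses from the documentation range are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Endpoint:
    name: str
    address: str
    priority: int  # lower value = preferred
    healthy: bool = True  # result of the latest health probe


def answer_dns_query(endpoints: list[Endpoint]) -> str | None:
    """Answer with the address of the highest-priority healthy endpoint."""
    healthy = [e for e in endpoints if e.healthy]
    if not healthy:
        return None  # no normally operating endpoint to return
    return min(healthy, key=lambda e: e.priority).address
```

Skipping unhealthy endpoints is what lets traffic fail over automatically when a CMS or recognition server goes down.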

[0070] Also, through its layer 7 load balancing function, the augmented reality operating system 1 may support round-robin distribution of traffic, cookie-based session affinity, and URL path-based routing. The augmented reality operating system 1 also provides high-performance, low-latency layer 4 load balancing for both UDP and TCP and is capable of managing inbound and outbound connections.
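The three layer 7 behaviors named above can be illustrated with a toy router. This is a sketch only: the `L7Router` class, the pool layout, and the cookie name `affinity` are hypothetical, and a real deployment would rely on the load balancer product rather than application code.

```python
from __future__ import annotations

import itertools


class L7Router:
    """Toy layer 7 balancer: path-based pools, round robin, cookie-based affinity."""

    def __init__(self, pools: dict[str, list[str]]):
        # Maps a URL path prefix (e.g. "/recognize") to its backend servers.
        self.pools = pools
        self._cycles = {prefix: itertools.cycle(servers) for prefix, servers in pools.items()}

    def route(self, path: str, cookies: dict[str, str]) -> tuple[str, dict[str, str]]:
        # URL path-based routing: the longest matching prefix wins.
        prefix = max((p for p in self.pools if path.startswith(p)), key=len, default=None)
        if prefix is None:
            raise LookupError(f"no pool for {path}")
        # Session affinity: reuse the server named in the cookie if it serves this pool.
        sticky = cookies.get("affinity")
        if sticky in self.pools[prefix]:
            return sticky, cookies
        # Otherwise pick the next server round-robin and pin the session to it.
        server = next(self._cycles[prefix])
        return server, {**cookies, "affinity": server}
```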

[0071] Also, the database is provisioned in database transaction units (DTUs) and elastic DTUs (eDTUs), which allow it to respond flexibly to database (DB) load. DTUs give a relative measure of the resources available to different SQL databases, and eDTUs are used to construct an elastic pool of databases, by which dynamically changing, hard-to-predict performance demands may be managed. Also, augmented reality authoring information may be transmitted and received through RESTful APIs.
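A sketch of a JSON body that such a RESTful API might exchange for authoring information. The field names, the `AuthoringInfo` and `VirtualObject` types, and the idea of sending the body to an endpoint such as `PUT /contents/{content_id}` are assumptions, not taken from the text; only the serialization round trip is shown.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field


@dataclass
class VirtualObject:
    kind: str  # e.g. "text", "image", "3d_model"
    position: tuple[float, float, float]
    rotation: tuple[float, float, float] = (0.0, 0.0, 0.0)
    scale: float = 1.0


@dataclass
class AuthoringInfo:
    content_id: str
    lat: float
    lon: float
    experience_radius_m: float
    objects: list[VirtualObject] = field(default_factory=list)


def to_request_body(info: AuthoringInfo) -> str:
    # asdict() recurses into nested dataclasses; JSON encodes tuples as arrays.
    return json.dumps(asdict(info))


def from_request_body(body: str) -> AuthoringInfo:
    raw = json.loads(body)
    raw["objects"] = [
        VirtualObject(o["kind"], tuple(o["position"]), tuple(o["rotation"]), o["scale"])
        for o in raw["objects"]
    ]
    return AuthoringInfo(**raw)
```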

[0072] The augmented reality operating system 1 is designed to deliver baseline performance in which large-scale image recognition and processing (about 100,000 images) completes within 2 seconds and the response time of the augmented reality content management system for resource loading stays within 3 seconds.

[0073] As described above, the augmented reality operating system 1 supports production of augmented reality content based on the augmented reality Software as a Service (SaaS).

[0074] In other words, the augmented reality operating system 1 allows the user to configure an experience position by using map-based GPS coordinate setting and content clustering functions, and to configure the radius of augmentation by designating the experience radius on the map.
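Checking whether a user is inside the experience radius needs the distance between two GPS coordinates. A sketch using the haversine great-circle formula on a spherical Earth; this distance model is an approximation chosen here for illustration, since the text does not name one, and the function names are hypothetical.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres (spherical approximation)


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def within_experience_radius(user: tuple, site: tuple, radius_m: float) -> bool:
    """True if the user's (lat, lon) lies within radius_m of the site's (lat, lon)."""
    return haversine_m(*user, *site) <= radius_m
```

One degree of latitude comes out at roughly 111.2 km, so a user 0.0005 degrees north of a site is about 56 m away: inside a 100 m experience radius but outside a 50 m one.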

[0075] Also, the augmented reality operating system 1 supports registration of images for recognition through file upload with a display of the image recognition rate, and supports authoring of text content by providing color, position, rotation, size, and editing functions.

[0076] Also, the augmented reality operating system 1 supports authoring of memo/drawing content by providing color, position, rotation, size, and Undo/Redo functions; authoring of image and GIF content by providing position, rotation, and size settings; authoring of screen skins by providing skin settings referenced to the screen coordinate system, image insertion, size adjustment, and so on; authoring of audio content by providing a play-button insertion function and so on; and authoring of video and 360-degree video content by providing video portal (YouTube) link input, position, rotation, and size functions.

[0077] Also, the augmented reality operating system 1 supports authoring of web page links by adding a web page link input so that a linked web page appears when the link is clicked.

[0078] Also, the augmented reality operating system 1 supports authoring of 3D model content by providing a web-based model loader, an animation control function, position and size setting functions, and so on.

[0079] Also, the augmented reality operating system 1 may be configured to determine the period during which augmented reality content is exposed and the maximum number of inquiries, thereby supporting management of the exposure period and the number of inquiries.

[0080] Also, the augmented reality operating system 1 may be configured to determine a reward (image or text content) to be provided when a user experiences augmented reality content, together with the reward period and the maximum number of reward issues, thereby supporting reward registration and management functions.
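A minimal sketch of the exposure and reward limits of [0079] and [0080], assuming both are modelled as a time window with a usage cap. The class `LimitedWindow` and the function `experience` are hypothetical names, and the rule that a reward is issued only when the content itself was shown is an assumption for illustration.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class LimitedWindow:
    """A period plus a cap on how many times it may be used.

    Models either an exposure period with a maximum number of inquiries,
    or a reward period with a maximum number of reward issues.
    """
    start: datetime
    end: datetime
    max_count: int
    count: int = 0

    def try_consume(self, now: datetime) -> bool:
        # Refuse outside the configured period or once the cap is reached.
        if not (self.start <= now <= self.end) or self.count >= self.max_count:
            return False
        self.count += 1
        return True


def experience(exposure: LimitedWindow, reward: LimitedWindow, now: datetime) -> tuple[bool, bool]:
    """Return (content_shown, reward_issued) for one user experience."""
    shown = exposure.try_consume(now)
    # Hypothetical rule: a reward is only considered when the content was shown.
    rewarded = shown and reward.try_consume(now)
    return shown, rewarded
```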

[0081] Also, the augmented reality operating system 1 supports account management, rights, and group setting functions through rights management of individual authoring functions, an augmented reality content experience group setting function, a user group setting function, and an invitation function. Also, after authoring is completed, the augmented reality operating system 1 stores the content in the server database (DB).

[0082] FIG. 7 illustrates a real-time image search method based on a hierarchical tree-type data structure.

[0083] Referring to FIG. 7, the augmented reality operating system 1 learns a plurality of images in the form of a tree-type data structure suited to the extraction and matching of image features, and performs real-time recognition and detection of input images based on the learning result. In other words, image detection and camera pose acquisition techniques are combined with 3D spatial tracking techniques.

[0084] By dynamically generating a hierarchical tree-type data structure based on a bag of visual words (BoW), the augmented reality operating system 1 supports dynamic learning and search-tree generation for large-scale image learning.

[0085] Also, the augmented reality operating system 1 improves recognition speed by building a bag of words from binary descriptors and applying image query generation and per-class histogram scoring techniques.
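The sketch below illustrates bag-of-words scoring with binary descriptors under stated simplifications: descriptors are Python integers compared by Hamming distance, the vocabulary is flat rather than the hierarchical tree of [0084], and histogram intersection is assumed as the per-class score. None of these choices is specified in the text.

```python
from collections import Counter


def hamming(a: int, b: int) -> int:
    # Binary descriptors (e.g. ORB/BRIEF bit strings) are stored as ints.
    return bin(a ^ b).count("1")


def quantize(descriptors: list[int], vocabulary: list[int]) -> list[int]:
    """Map each descriptor to the index of its nearest visual word."""
    return [min(range(len(vocabulary)), key=lambda i: hamming(d, vocabulary[i]))
            for d in descriptors]


def bow_histogram(descriptors: list[int], vocabulary: list[int]) -> dict[int, float]:
    """Normalized visual-word histogram of one image."""
    counts = Counter(quantize(descriptors, vocabulary))
    total = sum(counts.values())
    return {word: n / total for word, n in counts.items()}


def score_classes(query_hist: dict[int, float],
                  class_hists: dict[str, dict[int, float]]) -> list[tuple[str, float]]:
    """Rank registered image classes by histogram intersection with the query."""
    scores = {
        cls: sum(min(w, hist.get(word, 0.0)) for word, w in query_hist.items())
        for cls, hist in class_hists.items()
    }
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
```

Because the query histogram is compared only against stored per-class histograms, scoring cost grows with the number of visual words rather than with the number of raw descriptors.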

[0086] Also, the augmented reality operating system 1 improves recognition speed through small-scale clustering of image features per position and per user ID, together with a dynamic data structure generation technique.

[0087] Also, the augmented reality operating system 1 supports generating image queries based on position information and acquiring the target image ID and matching data in real time.

[0088] Also, the augmented reality operating system 1 supports detection of a 2D homography matrix and real-time acquisition of the camera rotation matrix and translation vector by matching image feature information obtained from the database (DB) against the input image.
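Once a homography H between a registered planar target and the input image is available, the camera rotation and translation can be recovered in closed form. The sketch assumes a zero-skew pinhole camera with intrinsics fx, fy, cx, cy and that H maps target-plane points (X, Y, 1) to pixels, so that H is proportional to K[r1 r2 t]. Estimating H itself (for example by RANSAC over feature matches) is not shown, and with noisy data the recovered R would normally be re-orthonormalized (for example via SVD).

```python
import math


def pose_from_homography(H, fx, fy, cx, cy):
    """Recover camera rotation R (3x3) and translation t from a planar homography H.

    Assumes H ~ K [r1 r2 t] with K = [[fx, 0, cx], [0, fy, cy], [0, 0, 1]].
    """
    # Inverse of K for a zero-skew pinhole camera, written out explicitly.
    k_inv = [[1.0 / fx, 0.0, -cx / fx],
             [0.0, 1.0 / fy, -cy / fy],
             [0.0, 0.0, 1.0]]
    # M = K^-1 H is proportional to [r1 r2 t].
    M = [[sum(k_inv[i][k] * H[k][j] for k in range(3)) for j in range(3)]
         for i in range(3)]
    m1, m2, m3 = ([M[i][j] for i in range(3)] for j in range(3))
    scale = 1.0 / math.sqrt(sum(v * v for v in m1))  # r1 must be a unit vector
    if m3[2] * scale < 0:  # pick the sign that puts the target in front (t_z > 0)
        scale = -scale
    r1 = [scale * v for v in m1]
    r2 = [scale * v for v in m2]
    r3 = [r1[1] * r2[2] - r1[2] * r2[1],  # r3 = r1 x r2 completes the rotation
          r1[2] * r2[0] - r1[0] * r2[2],
          r1[0] * r2[1] - r1[1] * r2[0]]
    t = [scale * v for v in m3]
    R = [[r1[i], r2[i], r3[i]] for i in range(3)]  # r1, r2, r3 are columns of R
    return R, t
```

Fixing the sign so that t_z is positive resolves the scale ambiguity of H, which is only defined up to a nonzero factor.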

[0089] Also, the augmented reality operating system 1 supports associating the camera pose determined from images with Simultaneous Localization And Mapping (SLAM) or Visual Inertial Odometry (VIO), spatial tracking techniques that operate with reference to an arbitrary 3D coordinate system.

[0090] Also, the augmented reality operating system 1 employs a multi-image target recognition technique designed to return a result within 2 seconds after a query is issued, with a recognition rate of 95% or more. When the image target detection technique operates in conjunction with a spatial tracking technique, a recognition speed of 30 fps or more may be obtained.

[0091] Also, the augmented reality operating system 1 supports recording and analyzing big data such as user log information, content view information, and advertisement effectiveness, and analyzing patterns in that data. The analysis results may be used to improve usability, find content preferred by users, and develop strategies for increasing profits.

[0092] Also, the augmented reality operating system 1 supports user group analysis (cross analysis); advertisement tracking (by advertisement channel); setting of country, date, OS version, and cross-analysis variables; advertisement analysis and tracking links; convenient, simple issuance and management of tracking links; integrated reporting across advertisement channels (ROI, LTV, retention); a filter function allowing conditional search (by channel, landing URL, attribute, and manager) and Excel download; authorization and management of partner companies; and postback interworking with various media companies.

[0093] As an example, the augmented reality operating system 1 supports setting the position, image, content, number of reward registrations, and exposure period; setting the augmentation radius; setting the content storage space; setting the monthly traffic limit; and setting the download speed, public/private visibility, and groups.

[0094] Also, the augmented reality operating system 1 supports interoperation of augmented reality content among the server, the mobile augmented reality content management system, and the augmented reality viewer app; a technique for compensating the matched coordinate system based on the internal sensor information of a mobile device; and a sensor fusion-based mobile camera tracking technique.

[0095] Meanwhile, other functions of the augmented reality operating system 1 are described below.

[0096] The user terminal 200 is equipped with a video camera that captures the scene around the user and provides actual image information; when displaying a 3D virtual image, it displays, via a web browser, the 3D virtual image corresponding to the current position information and the actual image information.

[0097] The augmented reality content management system 100 provides the user terminal 200, in real time, with the 3D virtual image corresponding to the current position information and actual image information transmitted from the user terminal 200.

[0098] By default, the user terminal 200 provides satellite position information to the augmented reality content management system 100 as the current position information. When the user terminal 200 includes a communication module, the positions of nearby Wi-Fi repeaters, base stations, and so on may be provided to the augmented reality content management system 100 as current position information in addition to the satellite position information.

[0099] In particular, since satellite position information is often unavailable indoors, the user terminal 200 may additionally measure the signal strength of one or more detected Wi-Fi repeaters and transmit it to the augmented reality content management system 100. Because the absolute positions of indoor Wi-Fi repeaters are pre-stored in the augmented reality content management system 100, if the user terminal 200 also provides the unique number and signal strength of each detected Wi-Fi repeater, the augmented reality content management system 100 may determine the relative travel path of the user terminal 200.

[0100] In other words, a relative distance between the user terminal 200 and Wi-Fi repeater may be determined from signal strength, and a travel direction may be calculated based on the change of signal strength with respect to a nearby Wi-Fi repeater. Additional methods for obtaining the current position information in an indoor environment will be described later.
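The distance and direction estimates of [0100] can be sketched with the log-distance path-loss model, which is an assumption here; the text does not name a propagation model. The reference power of -40 dBm at 1 m, the path-loss exponent of 2, and the 3 dB trend threshold are hypothetical defaults that would need calibration for each site.

```python
def rssi_to_distance_m(rssi_dbm: float,
                       tx_power_at_1m_dbm: float = -40.0,  # hypothetical calibration value
                       path_loss_exponent: float = 2.0) -> float:  # ~2 in free space
    """Invert the log-distance model RSSI = P0 - 10 * n * log10(d / 1 m)."""
    return 10 ** ((tx_power_at_1m_dbm - rssi_dbm) / (10 * path_loss_exponent))


def travel_trend(rssi_history: list[float], threshold_db: float = 3.0) -> str:
    """Classify motion relative to one Wi-Fi repeater from successive RSSI samples."""
    if len(rssi_history) < 2:
        return "unknown"
    delta = rssi_history[-1] - rssi_history[0]
    if delta > threshold_db:
        return "approaching"  # signal getting stronger
    if delta < -threshold_db:
        return "receding"     # signal getting weaker
    return "steady"
```

Combining the trends for several repeaters whose absolute positions are known gives a coarse travel direction; the threshold suppresses the small RSSI fluctuations that occur even when the terminal is stationary.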

[0101] Therefore, the augmented reality content management system 100 determines the virtual object assigned to each physical object from the current position information of the user carrying the user terminal 200 and the actual image information captured by the video camera of the user terminal 200, and transmits information about the virtual object to the user terminal 200 in real time.

[0102] The augmented reality content management system 100 identifies a physical object of actual image information and provides the user terminal 200 with the virtual object of a 3D virtual image assigned to the identified physical object. The user terminal 200 is equipped with a 9-axis sensor to obtain its own 3D pose information, and the poses of one or more virtual objects selected on the user terminal 200 may be changed in synchronization with that 3D pose information.

[0103] At this time, the coordinates of one or more virtual objects selected on the user terminal 200 are transformed from the spatial coordinate system of the actual image information to the mobile coordinate system of the user terminal 200, and the coordinates of a virtual object whose selection is released are transformed from the mobile coordinate system of the user terminal 200 back to the spatial coordinate system of the actual image information. The coordinate transformation is described in detail later.
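The selection and release transformations can be sketched with rigid transforms. This is a minimal sketch assuming the terminal's pose in the spatial (world) coordinate system is supplied by tracking; `Pose`, `VirtualObject`, `select`, and `release` are hypothetical names. Selecting an object re-expresses its pose in the terminal frame so that it follows the terminal, and releasing it converts the pose back so that it stays fixed in space.

```python
from dataclasses import dataclass

Mat3 = list[list[float]]
Vec3 = list[float]


@dataclass
class Pose:
    """Rigid transform (R, t) mapping points from a child frame into a parent frame."""
    R: Mat3
    t: Vec3

    def __matmul__(self, other: "Pose") -> "Pose":
        # Composition: apply `other` first, then `self`.
        R = [[sum(self.R[i][k] * other.R[k][j] for k in range(3)) for j in range(3)]
             for i in range(3)]
        t = [sum(self.R[i][k] * other.t[k] for k in range(3)) + self.t[i] for i in range(3)]
        return Pose(R, t)

    def inverse(self) -> "Pose":
        Rt = [[self.R[j][i] for j in range(3)] for i in range(3)]  # R^T = R^-1
        return Pose(Rt, [-sum(Rt[i][k] * self.t[k] for k in range(3)) for i in range(3)])


class VirtualObject:
    def __init__(self, world_pose: Pose):
        self.pose = world_pose  # expressed in the spatial coordinate system
        self.attached = False   # True while expressed in the terminal frame

    def select(self, terminal_in_world: Pose) -> None:
        # Spatial -> mobile: T_terminal<-object = (T_world<-terminal)^-1 T_world<-object
        self.pose = terminal_in_world.inverse() @ self.pose
        self.attached = True

    def release(self, terminal_in_world: Pose) -> None:
        # Mobile -> spatial: T_world<-object = T_world<-terminal T_terminal<-object
        self.pose = terminal_in_world @ self.pose
        self.attached = False

    def world_pose(self, terminal_in_world: Pose) -> Pose:
        """Pose used for rendering, whichever frame the object is stored in."""
        return terminal_in_world @ self.pose if self.attached else self.pose
```

For example, an object at (1, 0, 2) selected while the terminal is at the origin ends up at (0, 2, 2) in world coordinates after the terminal rotates 90 degrees about z and moves by (0, 1, 0); once released there, further terminal motion no longer moves it.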

[0104] When the user moves the user terminal 200 in 3D space, a selected virtual object is synchronized with the 3D motion and its pose changes automatically. The 9-axis sensor is so called because it measures along a total of nine axes: 3-axis acceleration, 3-axis angular rate (gyroscope), and 3-axis geomagnetic outputs; temperature sensors may be added for temperature compensation. The 9-axis sensor may detect not only the 3D movement of the mobile terminal but also the user's facing direction, movement direction, and inclination.

[0105] FIGS. 8a and 8b illustrate an example where a virtual object is moved in the augmented reality operating system 1.

[0106] Referring to FIGS. 8a and 8b, in step 1, virtual objects are augmented in space with respect to a spatial coordinate system and displayed on the display of the user terminal 200.

[0107] In step 2, the user selects a virtual object. If the user touches one or more virtual objects displayed on the display (touchscreen) of the user terminal 200, the selected virtual object is connected to the user terminal 200. Here, connection means that the reference coordinate system of the virtual object has been changed from the spatial coordinate system to the coordinate system of the user terminal 200.

[0108] In step 3, if the user terminal 200 is moved or rotated while the virtual object is being touched, the translation and rotation of the virtual object connected to the user terminal 200 change in 3D in synchronization with the 3D motion of the user terminal 200.

[0109] In step 4, when the user stops touching the virtual object, the virtual object is separated from the user terminal 200. Here, separation means that the reference coordinate system of the virtual object has been changed from the coordinate system of the user terminal 200 back to the spatial coordinate system.

[0110] In step 5, a video showing the attitude change of the virtual object in synchronization with the 3D pose information of the user terminal 200 is stored for later playback. Once stored, the recorded video may be retrieved and played back by pressing a play button.

[0111] In steps 2 to 4 of the example above, the reference coordinate system of a virtual object is changed from the spatial coordinate system to the coordinate system of the user terminal 200, and the virtual object moves in conjunction with the 3D motion of the user terminal 200, only while the virtual object is being touched.

[0112] Depending on the embodiment, if the user touches a virtual object for more than a predetermined time period, the virtual object may be selected, and if it is touched again for more than the predetermined time period, its selection may be released.

[0113] FIG. 9 illustrates another example where a virtual object is moved in the augmented reality operating system 1.

[0114] Referring to FIG. 9, when the user terminal 200 loads augmented reality content and displays a virtual object, the user selects the virtual object, which changes the coordinate system of that virtual object to the mobile coordinate system. Afterwards, the selected virtual object moves in conjunction with the 3D motion of the user terminal 200.

[0115] FIG. 10 illustrates a condition for selecting a virtual object in the augmented reality operating system 1.

[0116] Referring to FIG. 10, the method described above may be applied for the user to select a virtual object displayed on the display of the user terminal 200: if the user touches the virtual object for more than a predetermined time period t0, the virtual object is selected, and if the user touches it again for more than the predetermined time period t0, the selection is released.

[0117] At this time, a touch input on a virtual object is preferably considered valid only when the display is pressed with more than a predetermined pressure k1.

[0118] In other words, if the user touches a virtual object for more than a predetermined time period t0, the virtual object is selected, the reference coordinate system of the virtual object is changed from the spatial coordinate system to the coordinate system of the user terminal 200, and the virtual object is moved in conjunction with a 3D motion of the user terminal 200.

[0119] At this time, the period for storing a video showing the attitude change of the virtual object may be configured automatically according to how long the touch lasts after it has exceeded the predetermined time period t0.

[0120] As shown in FIG. 10, when the user keeps touching the virtual object until a first time t1 after the touch has exceeded the predetermined time t0, a storage time period P1 corresponding to the interval from t0 to t1 is configured automatically. Thus, the longer the touch on the virtual object lasts, the longer the storage time period becomes; the storage time period is set as a multiple of the touch duration and is counted from the first movement of the virtual object.

[0121] The automatically configured storage time is displayed on the display as a time bar, and the estimated time to completion is shown in real time. If the user drags the time bar to the left or right, the estimated time is increased or decreased accordingly; in other words, the automatically configured storage time may be adjusted by the user's dragging motion.
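The timing rules of [0116] to [0121] can be sketched as below. The pressure threshold k1 and hold time t0 come from the text; the multiplier of 2.0, the drag sensitivity of 0.05 s per pixel, and the assumption that dragging to the right lengthens the storage period are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class TouchPolicy:
    t0: float              # minimum hold time (s) to select or deselect an object
    k1: float              # minimum pressure for a touch to count as valid
    multiple: float = 2.0  # hypothetical factor: storage period = multiple x (t1 - t0)

    def is_valid(self, pressure: float) -> bool:
        return pressure > self.k1

    def storage_period(self, hold_time: float, pressure: float) -> float:
        """Recording length derived from how long the touch lasted beyond t0.

        Returns 0.0 if the touch is too light or too short to select the object.
        """
        if not self.is_valid(pressure) or hold_time <= self.t0:
            return 0.0
        return self.multiple * (hold_time - self.t0)  # P1 spans t0..t1, then scaled


def adjust_by_drag(period: float, drag_px: float, seconds_per_px: float = 0.05) -> float:
    # Hypothetical mapping: drag right lengthens, drag left shortens, never below zero.
    return max(0.0, period + drag_px * seconds_per_px)
```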

[0122] Meanwhile, the user terminal 200 may additionally be provided with a camera facing the same direction as the display so that it can capture the user's face. This camera can capture 3D images and recognize the user's body, in particular a 3D image (depth image) of the face.

[0123] Therefore, if a body association model is set, the camera may detect one or more of the 3D motion of the user's head, fingers, and pupils, and a virtual object may be configured to move in synchronization with the detected motion. Head, finger, and pupil motion may each be selected individually, or several of them may be selected together for motion detection.

[0124] Meanwhile, the augmented reality operating system 1 according to an embodiment of the present invention may determine an additional recognition area and identify similar objects by assigning unique identifiers to the respective physical objects based on an image difference of the additional recognition area.

[0125] Also, the augmented reality operating system 1 may identify physical objects by taking into account all of the unique identifiers assigned to the respective physical objects based on the image difference of the additional recognition area and current position information of each physical object.

[0126] Therefore, even if physical objects with a high similarity are arranged, the physical objects may be identified, virtual objects assigned to the respective physical objects may be displayed, and thereby unambiguous information may be delivered to the user.

[0127] Visual recognition may be divided into four phases: Detection, Classification, Recognition, and Identification (DCRI).

[0128] First, detection refers to a phase where only the existence of an object may be known.

[0129] Next, classification refers to a phase where the type of the detected object is known; for example, whether a detected object is a human or an animal may be determined.

[0130] Next, recognition refers to a phase where overall characteristics of the classified object are figured out; for example, brief information about clothes worn by a human is obtained.

[0131] Lastly, identification refers to a phase where detailed properties of the recognized object are figured out; for example, the face of a particular person may be distinguished, or the numbers on a car license plate may be read.

[0132] The augmented reality operating system 1 of the present invention implements the identification phase and thereby distinguishes detailed properties of similar physical objects from each other.

[0133] For example, the augmented reality operating system 1 may recognize characters attached to particular pieces of equipment (physical objects) having similar shapes and assign a unique identification number to each. Alternatively, it may identify the part of a physical object that differs from the others, designate that part as an additional recognition region, and distinguish highly similar physical objects from one another by using the differences in 2D/3D characteristic information of the additional recognition region together with satellite position information and current position information measured through Wi-Fi signals.

[0134] FIG. 11a is a flow diagram illustrating a learning process for identifying similar objects in the augmented reality operating system 1, and FIG. 11b illustrates a process for determining an additional recognition area for identifying similar objects in the augmented reality operating system 1.

[0135] Referring to FIGS. 11a and 11b, the augmented reality content management system 100 operates to identify a physical object of actual image information and provide the user terminal 200 with a virtual object of a 3D virtual image assigned to the identified physical object.

[0136] In other words, among a plurality of physical objects present in the actual image information, the augmented reality content management system 100 determines additional recognition regions by subtracting the physical objects showing a predetermined degree of similarity d from the corresponding actual image information and assigns unique identifiers to the respective physical objects based on the visual differences of the additional recognition regions.

[0137] For example, if additional recognition regions contain different characters or numbers, the augmented reality content management system 100 may assign unique identifiers to the respective physical objects based on the differences among the additional recognition regions, store the assigned unique identifiers in the form of a database, and transmit virtual objects assigned to the respective unique identifiers to the user terminal 200.

[0138] In other words, if a plurality of physical objects have a visual similarity larger than a predetermined value d, the image is abstracted and subtracted to determine additional recognition regions (additional learning regions), and then unique identifiers are assigned to the respective physical objects by identifying the differences of the additional recognition regions.
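A minimal sketch of determining an additional recognition region by subtraction, assuming two same-sized, already aligned 8-bit grayscale images of similar physical objects. The similarity measure (one minus the normalized mean absolute difference), the default d = 0.9, and the per-pixel tolerance are assumptions for illustration; the text does not define how similarity is computed.

```python
def similarity(a: list[list[int]], b: list[list[int]]) -> float:
    """1.0 for identical images, lower as the mean absolute pixel difference grows."""
    n = len(a) * len(a[0])
    diff = sum(abs(pa - pb) for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))
    return 1.0 - diff / (255.0 * n)


def additional_recognition_region(a, b, d=0.9, tol=30):
    """Bounding box (top, left, bottom, right) where two similar images differ.

    Computed only when similarity exceeds d; returns None otherwise, or when
    no pixel differs by more than tol.
    """
    if similarity(a, b) <= d:
        return None  # dissimilar objects are told apart by ordinary recognition
    rows = [r for r, (ra, rb) in enumerate(zip(a, b))
            if any(abs(pa - pb) > tol for pa, pb in zip(ra, rb))]
    cols = [c for c in range(len(a[0]))
            if any(abs(a[r][c] - b[r][c]) > tol for r in range(len(a)))]
    if not rows:
        return None
    return rows[0], cols[0], rows[-1], cols[-1]
```

The returned box marks where the two objects differ (for example a printed label), which is the region whose content would then be compared to assign unique identifiers.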

[0139] FIG. 12 is a flow diagram illustrating a process for identifying similar objects in the augmented reality operating system 1, and FIG. 13 is a first example illustrating a state for identifying similar objects in the augmented reality operating system 1.

[0140] Referring to FIGS. 12 and 13, if actual image information is received from the user terminal 200, the augmented reality content management system 100 distinguishes a physical object of the actual image information, and if similar images are not found (if physical objects having a similar shape are not found), a virtual object corresponding to the identified image is assigned.

[0141] At this time, in the presence of similar objects (in the presence of physical objects with a similar shape), the augmented reality content management system 100 compares the information of the additional recognition regions and identifies unique identifiers and then allocates a virtual object corresponding to each unique identifier.

[0142] In other words, as shown in FIG. 13, if particular equipment (physical object) having a similar shape is disposed in the vicinity, the augmented reality content management system 100 may recognize a plurality of physical objects from actual image information transmitted from the user terminal 200, identify unique identifiers by comparing information of additional recognition regions of the respective physical objects, and assign a virtual object corresponding to each unique identifier identified from the corresponding additional recognition region.

[0143] Meanwhile, when a different identifying marker is printed on the additional recognition region of each piece of equipment, the identifying marker may be structured as follows.

[0144] An identifying marker may be composed of a first identifying marker region, second identifying marker region, third identifying marker region, and fourth identifying marker region.

[0145] That is, the first, second, third, and fourth identifying markers are recognized together as one identifier. By default, the user terminal 200 captures all of the first to fourth identifying markers and transmits them to the augmented reality content management system 100, which then treats the recognized identifying markers as a single unique identifier.

[0146] The first identifying marker reflects visible light; that is, the first identifying marker region is printed with ordinary paint so that a human can see the marker.

[0147] The second identifying marker reflects light in a first infrared region; it is printed with a paint that reflects light in the first infrared region and is invisible to the human eye.

[0148] The third identifying marker reflects light in a second infrared region, whose wavelength is longer than that of the first infrared region. It is printed with a paint that reflects light in the second infrared region and is invisible to the human eye.

[0149] The fourth identifying marker reflects light in both the first and second infrared regions; it is printed with a paint that reflects light in both regions and is invisible to the human eye.

[0150] At this time, the camera of the user terminal 200 that captures the identifying markers is equipped with a spectral filter that adjusts infrared transmission wavelength and is configured to recognize the identifying markers by capturing the infrared wavelength region.

[0151] Therefore, among the identifying markers printed on the equipment, only the first identifying marker may be checked visually by a human while the second, third, and fourth identifying marker regions may not be visually checked by the human but may be captured only through the camera of the user terminal 200.

[0152] The relative print positions (left, right, up, and down) of the first, second, third, and fourth identifying markers may themselves be used as identifiers. Various characters such as numbers, symbols, or codes may be printed in the identifying marker regions, and identifying markers may also be printed as a QR code or barcode.

[0153] FIG. 14 is a second example illustrating a state for identifying similar objects in the augmented reality operating system 1.

[0154] Referring to FIG. 14, the augmented reality content management system 100 may identify each physical object by considering both the unique identifier assigned to it based on the image difference of its additional recognition region and its current position information. In other words, a physical object may be identified by additionally taking the current position information of the user (user terminal 200) into account.

[0155] For example, if a plurality of physical objects maintain a predetermined separation distance from each other, even a physical object showing a high similarity may be recognized by using the current position information of the user. Here, it is assumed that the current position information includes all of the absolute position information, relative position information, travel direction, acceleration, and gaze direction of the user.

[0156] At this time, to further identify physical objects not identifiable from current position information, the augmented reality content management system 100 may determine an additional recognition region and distinguish the differences among physical objects by recognizing the additional recognition region.

[0157] Also, the augmented reality content management system 100 may determine a candidate position of the additional recognition region based on the current position information of each physical object.

[0158] In other words, referring to FIG. 14, if the user is located at the first position P1 and looks at a physical object in front, the augmented reality content management system 100 determines the separation distance between the physical object and the user based on the actual image information and detects the 3D coordinates (x1, y1, z1) of the physical object.

[0159] Since a plurality of additional recognition regions are already assigned to the physical object located at the 3D position (x1, y1, z1), the augmented reality content management system 100 may determine a candidate position of the additional recognition region based on the 3D coordinates (x1, y1, z1) of the physical object, namely based on the current position information of the physical object.

[0160] Since the augmented reality content management system 100 already knows which physical object exists at the 3D coordinates (x1, y1, z1) and which part of it has been designated as the additional recognition region, it can identify the object simply by examining the candidate position of the additional recognition region. This reduces the amount of computation needed to identify additional recognition regions.

[0161] It should be noted that if an indoor environment is assumed and lighting directions are all the same, even physical objects with a considerable similarity may have shadows with different positions and sizes due to the lighting. Therefore, the augmented reality content management system 100 may identify the individual physical objects by using the differences among positions and sizes of shadows of the respective physical objects as additional information with reference to the current position of the user.

[0162] Meanwhile, the position of the virtual object of a 3D virtual image assigned to a physical object of actual image information is adjusted automatically so that a separation distance from the physical object is maintained, and the virtual object is displayed on the user terminal 200 at the adjusted position.

[0163] Also, the positions of individual virtual objects are adjusted automatically so that a predetermined separation distance is maintained among them before they are displayed on the user terminal 200.

[0164] Therefore, since the positions of objects are adjusted automatically, taking into account the relative positions of physical and virtual objects so that they do not overlap, the user can conveniently check the information of a desired virtual object. In other words, the time during which the user concentrates on the virtual object is lengthened, which may increase the advertising effect.

[0165] FIG. 15 illustrates another operating principle of the augmented reality operating system 1.

[0166] Referring to FIG. 15, the operating principles of the augmented reality operating system 1 will be described in more detail.

[0167] The separation distance D2 between a first virtual object (virtual object 1) and a physical object is automatically configured to be longer than a sum of the distance R1 between the center of the first virtual object (virtual object 1) and the outermost region of the first virtual object (virtual object 1) and the distance R3 between the center of the physical object and the outermost region of the physical object.

[0168] Also, the separation distance D3 between a second virtual object (virtual object 2) and a physical object is automatically configured to be longer than a sum of the distance R2 between the center of the second virtual object (virtual object 2) and the outermost region of the second virtual object (virtual object 2) and the distance R3 between the center of the physical object and the outermost region of the physical object.

[0169] Also, the separation distance D1 between the first virtual object (virtual object 1) and the second virtual object (virtual object 2) is automatically configured to be longer than a sum of the distance R1 between the center of the first virtual object (virtual object 1) and the outermost region of the first virtual object (virtual object 1) and the distance R2 between the center of the second virtual object (virtual object 2) and the outermost region of the second virtual object (virtual object 2).

[0170] The first virtual object (virtual object 1) and the second virtual object (virtual object 2) are moved horizontally and vertically, and their positions in 3D space are automatically adjusted along the x, y, and z axes so that neither overlaps other objects and disappears from the user's field of view.
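The separation rules of [0167] to [0170], in which each centre distance D must exceed the sum of the two radii R, can be sketched as a simple iterative relaxation. Treating every object as a sphere with a centre and radius, the extra margin, and the pairwise push-apart strategy are assumptions; the text does not specify the adjustment algorithm. The physical object is kept fixed and only virtual objects are moved.

```python
import math


def too_close(p, rp, q, rq) -> bool:
    """True when the centre distance D does not exceed the sum of the radii."""
    return math.dist(p, q) <= rp + rq


def separate(objects, fixed, margin=0.05, max_iter=100):
    """Push objects apart until every pair satisfies D > R_a + R_b.

    `objects` maps name -> ([x, y, z], radius); names in `fixed`
    (e.g. the physical object) are never moved.
    """
    names = list(objects)
    for _ in range(max_iter):
        moved = False
        for i, a in enumerate(names):
            for b in names[i + 1:]:
                (pa, ra), (pb, rb) = objects[a], objects[b]
                dist = math.dist(pa, pb)
                overlap = ra + rb + margin - dist
                movers = [n for n in (a, b) if n not in fixed]
                if overlap <= 0 or not movers:
                    continue
                # Unit vector from a towards b (arbitrary x axis if centres coincide).
                if dist > 0:
                    u = [(pb[k] - pa[k]) / dist for k in range(3)]
                else:
                    u = [1.0, 0.0, 0.0]
                share = overlap / len(movers)  # split the correction between movers
                for n in movers:
                    sign = 1.0 if n == b else -1.0  # a moves back, b moves forward
                    centre, radius = objects[n]
                    objects[n] = ([centre[k] + sign * share * u[k] for k in range(3)], radius)
                moved = True
        if not moved:
            break
    return objects
```

A virtual object of radius 0.5 placed 1.2 units from a fixed physical object of radius 1.0 is, for instance, pushed out to 1.55 units, just beyond the required 1.5.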

[0171] Meanwhile, virtual lines L1, L2 are dynamically generated and displayed between the physical object and the first virtual object (virtual object 1) and between the physical object and the second virtual object (virtual object 2).

[0172] The virtual lines L1, L2 indicate which virtual objects are associated with the physical object, which helps when a large number of virtual objects are present on the screen; the thickness, transparency, and color of the virtual lines L1, L2 may be changed automatically according to the user's gaze.

[0173] For example, if the user gazes at the first virtual object (virtual object 1) for longer than a predetermined time period, the augmented reality glasses 100 may detect that the first virtual object (virtual object 1) is being gazed at and then automatically make the virtual line L1 between the physical object and the first virtual object (virtual object 1) thicker than the other virtual line L2, lower its transparency, and change its color to one that emphasizes the line, such as red.
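The dwell-time emphasis of paragraph [0173] reduces to a per-line style choice, sketched below. The style values, the one-second `threshold_s` default, and the helper names are hypothetical; the text only says "thicker", "lower transparency", and a color "such as red".

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class LineStyle:
    thickness: float  # hypothetical rendering units
    opacity: float    # 1.0 = fully opaque, so lower transparency means higher opacity
    color: str


# Hypothetical default and emphasis styles.
NORMAL = LineStyle(thickness=1.0, opacity=0.5, color="white")
EMPHASIZED = LineStyle(thickness=3.0, opacity=1.0, color="red")


def style_lines(lines: Dict[str, str], gazed: Optional[str], dwell_s: float,
                threshold_s: float = 1.0) -> Dict[str, LineStyle]:
    """lines maps a virtual-line id (e.g. "L1") to the virtual object it connects.
    A line is emphasized only if its virtual object has been gazed at for
    longer than threshold_s seconds; all other lines keep the normal style."""
    return {
        line_id: EMPHASIZED if (obj == gazed and dwell_s > threshold_s) else NORMAL
        for line_id, obj in lines.items()
    }


lines = {"L1": "virtual object 1", "L2": "virtual object 2"}
styles = style_lines(lines, gazed="virtual object 1", dwell_s=1.5)
print(styles["L1"].color, styles["L2"].color)
```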

[0174] Here, assuming that both the first virtual object (virtual object 1) and the second virtual object (virtual object 2) are assigned to the same physical object, each of the virtual objects assigned to the physical object is kept at a predetermined separation distance (D2, D3), but the virtual object carrying more specific information is placed relatively closer to the physical object.

[0175] For example, suppose the first virtual object (virtual object 1) carries more specific information, while the second virtual object (virtual object 2) carries relatively conceptual information.

[0176] Then the separation distance D3 between the second virtual object (virtual object 2) and the physical object is automatically set to be longer than the distance D2 between the first virtual object (virtual object 1) and the physical object, and thereby the user may quickly recognize the specific information.
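The ordering rule of paragraphs [0174] to [0176] amounts to a sort: the virtual object with the most specific information receives the shortest separation distance. The numeric specificity scores and the helper name below are hypothetical, since the text does not say how specificity is measured.

```python
from typing import Dict, List


def assign_separation(specificity: Dict[str, float], distances: List[float]) -> Dict[str, float]:
    """Give the shortest available separation distance to the most specific
    virtual object, the next shortest to the next most specific, and so on."""
    if len(distances) < len(specificity):
        raise ValueError("need at least one separation distance per virtual object")
    most_specific_first = sorted(specificity, key=specificity.get, reverse=True)
    return dict(zip(most_specific_first, sorted(distances)))


# Virtual object 1 is more specific, so it gets D2 (shorter) and virtual object 2 gets D3.
placement = assign_separation({"virtual object 1": 0.9, "virtual object 2": 0.3}, [1.8, 1.2])
print(placement)  # {'virtual object 1': 1.2, 'virtual object 2': 1.8}
```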

[0177] Also, when a plurality of virtual objects are assigned to the physical object, any two of them may be automatically placed closer together the more strongly they are associated with each other, and farther apart the more weakly they are associated.
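One way to realize paragraph [0177] is to map an association score to a spacing, as in the sketch below. The association score in [0, 1], the linear mapping, and the `near_gap`/`far_gap` defaults are all assumptions; adding the two radii keeps the result longer than R1 + R2, consistent with paragraph [0169].

```python
def pair_spacing(association: float, r_a: float, r_b: float,
                 near_gap: float = 0.2, far_gap: float = 1.0) -> float:
    """Center-to-center spacing between two virtual objects.

    association is a hypothetical score in [0, 1]; the surface-to-surface gap
    shrinks linearly from far_gap (unrelated) to near_gap (strongly related).
    Adding r_a + r_b keeps the spacing longer than the sum of the radii."""
    if not 0.0 <= association <= 1.0:
        raise ValueError("association must be in [0, 1]")
    gap = far_gap - (far_gap - near_gap) * association
    return r_a + r_b + gap


print(pair_spacing(0.9, 0.5, 0.5), pair_spacing(0.1, 0.5, 0.5))
```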

[0178] FIGS. 16a and 16b illustrate yet another operating principle of the augmented reality operating system 1.

[0179] Referring to FIGS. 16a and 16b, the amount of information in a virtual object of a 3D virtual image, which is displayed in association with the corresponding physical object of actual image information, may be adjusted automatically and dynamically according to the distance between the physical object and the user, and displayed on the user terminal 200.

[0180] Since the virtual object displays more specific information as the user approaches the physical object, the user may conveniently check the information of a desired virtual object. In addition, the time during which the user concentrates on the corresponding virtual object is lengthened, which may increase its advertising effect.

[0181] In general, users tend to move closer to an object of interest to see its information in more detail; accordingly, information is displayed in an abstract manner when the user is far from the object of interest, and more detailed information is displayed as the user approaches it.

[0182] A virtual object assigned to a physical object begins to be displayed once the distance between the user and the physical object or virtual object falls to a predetermined separation distance D1; as that distance decreases further, virtual objects with more specific information are displayed.

[0183] In other words, the information of the virtual objects assigned to one physical object is organized in a layered structure. As shown in FIG. 11b, the most abstract virtual object A is displayed when the user comes within the predetermined separation distance D1; when the user moves closer to the physical object or virtual object, within D2, virtual objects A1 and A2 having more specific information are displayed. When the user comes closest, within D3, virtual objects A1-1, A1-2, A2-1, and A2-2 having the most specific information are displayed.
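The layered structure of paragraph [0183] can be sketched as a tree whose visible depth depends on the user's distance. The `InfoNode` type, the helper name, and the threshold values in the usage lines are hypothetical; the sketch only assumes D1 > D2 > D3 and treats "within" as less than or equal to the threshold.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class InfoNode:
    """One virtual object in the layered information structure."""
    label: str
    children: List["InfoNode"] = field(default_factory=list)


def visible_objects(root: InfoNode, distance: float, d1: float, d2: float, d3: float) -> List[str]:
    """Labels of the virtual objects to show at the given user distance.

    Assumes d1 > d2 > d3: nothing is shown beyond D1, the root within D1,
    its children within D2, and its grandchildren within D3."""
    if not d1 > d2 > d3:
        raise ValueError("expected d1 > d2 > d3")
    if distance > d1:
        return []
    depth = 0 if distance > d2 else 1 if distance > d3 else 2
    layer = [root]
    for _ in range(depth):
        layer = [child for node in layer for child in node.children]
    return [node.label for node in layer]


tree = InfoNode("A", [
    InfoNode("A1", [InfoNode("A1-1"), InfoNode("A1-2")]),
    InfoNode("A2", [InfoNode("A2-1"), InfoNode("A2-2")]),
])
for dist in (12.0, 8.0, 4.0, 1.0):  # hypothetical thresholds: D1=10, D2=5, D3=2
    print(dist, visible_objects(tree, dist, d1=10.0, d2=5.0, d3=2.0))
```

In the vending machine example, A would be the machine icon, A1 and A2 the beverage icons, and the leaves the calorie and ingredient details.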

[0184] For example, suppose an automatic vending machine is disposed in front of the user as a physical object.

[0185] If the user comes within a predetermined separation distance D1, a virtual object assigned to the automatic vending machine is displayed. Here, the virtual object is assumed to be an icon of the automatic vending machine.

[0186] Next, if the user moves closer to the automatic vending machine, within D2, icons of the beverage products sold by the machine are displayed as virtual objects with more specific information.

[0187] Lastly, if the user comes closest to the automatic vending machine, within D3, the calories, ingredients, and so on of the beverage products may be displayed as virtual objects with the most specific information.

[0188] In another example, suppose a car dealership exists as a physical object in front of the user.

[0189] If the user approaches within a predetermined separation distance D1, a virtual object assigned to the car dealership is displayed. Here, the virtual object is assumed to be the icon of a car company.

[0190] Next, if the user moves closer to the car dealership, within D2, icons of various types of cars may be displayed as virtual objects providing more specific information. If a car is currently on display, that is, if a physical object is present, a virtual object may be displayed near the physical object; a virtual object may also be displayed near the user even if no car is currently on display, that is, even if no physical object is present.

[0191] Finally, if the user comes closest to the car dealership, within D3, the technical specifications, price, and estimated delivery date of a car being sold may be displayed as virtual objects with the most specific information.

[0192] Meanwhile, if vibration occurs while the user is gazing at a virtual or physical object of interest, the user terminal 200 may calculate a movement distance from the change rate of the vibration, reconfigure the amount of information of the virtual object based on the gaze direction and the calculated movement distance, and display the virtual object with the reconfigured information.

[0193] In other words, the vibration may be treated as if the user had effectively moved even without physical movement, or a weight based on the vibration may be applied to the movement distance, and the amount of information of the virtual object may then be reconfigured according to this virtual movement distance and displayed.

[0194] A large change rate of vibration indicates that the user is running or moving fast, while a small change rate indicates that the user is moving slowly; therefore, the movement distance may be calculated from the change rate of the vibration. Accordingly, the user may generate vibration by moving his or her head up and down, without actually moving around, so that a virtual movement distance is reflected.
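A minimal sketch of paragraph [0194], assuming vibration magnitudes sampled at a fixed interval `dt` and a speed proportional to the change rate. The text does not give the mapping from change rate to distance, so the proportional model and the `gain` calibration constant are hypothetical.

```python
from typing import Sequence


def change_rate(samples: Sequence[float], dt: float) -> float:
    """Mean absolute change in vibration magnitude per second."""
    if len(samples) < 2:
        return 0.0
    diffs = [abs(b - a) for a, b in zip(samples, samples[1:])]
    return sum(diffs) / (len(diffs) * dt)


def virtual_movement(samples: Sequence[float], dt: float, gain: float = 0.05) -> float:
    """Virtual movement distance = estimated speed * elapsed time, where the
    speed is assumed proportional to the change rate (gain is hypothetical)."""
    if len(samples) < 2:
        return 0.0
    elapsed = (len(samples) - 1) * dt
    return gain * change_rate(samples, dt) * elapsed


fast = [0.0, 2.0, 0.0, 2.0, 0.0]  # strong head bobbing, like running
slow = [0.0, 0.5, 0.0, 0.5, 0.0]  # gentle head bobbing, like slow walking
print(virtual_movement(fast, dt=0.1), virtual_movement(slow, dt=0.1))
```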

[0195] When vibration is generated continuously while the user is gazing in the direction he or she wants to check, for example, while the user turns his or her head and gazes to the right, the user terminal 200 calculates the movement direction and movement distance based on the user's gaze direction and the vibration.

[0196] In other words, the user terminal 200 detects rotation of the user's head through an embedded sensor and, once vibration is detected, calculates the virtual current position, where the movement distance attributable to walking or running is determined from the change rate of the vibration.

[0197] When the user's gaze direction is detected, the user terminal 200 may be configured to detect the gaze direction based on the rotational direction of the head or configured to detect the gaze direction by detecting the movement direction of the eyes of the user.

[0198] Also, the gaze direction may be calculated more accurately by detecting the rotational direction of the head and the movement direction of the eyes simultaneously and assigning different weights to the two detection results. Specifically, the gaze direction may be calculated by assigning a weight of 50% to 100% to the rotation angle detected from head rotation and a weight of 0% to 60% to the rotation angle detected from eye movement.
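The weighted gaze calculation of paragraph [0198] can be sketched as a weighted average of the two angle estimates. The weight ranges come from the text; dividing by the sum of the weights is an assumption (the stated ranges need not add to 100%), as are the default weights and the restriction to small angles with no 360-degree wraparound.

```python
def fused_gaze_angle(head_deg: float, eye_deg: float,
                     head_weight: float = 0.7, eye_weight: float = 0.3) -> float:
    """Weighted combination of the head-rotation and eye-movement angle estimates.

    Weight ranges follow the text (head 50-100%, eyes 0-60%); normalizing by the
    weight sum is an assumption. Angles are treated as small offsets from a
    common reference, so 360-degree wraparound is not handled."""
    if not 0.5 <= head_weight <= 1.0:
        raise ValueError("head weight must be within 50%-100%")
    if not 0.0 <= eye_weight <= 0.6:
        raise ValueError("eye weight must be within 0%-60%")
    return (head_weight * head_deg + eye_weight * eye_deg) / (head_weight + eye_weight)


print(fused_gaze_angle(30.0, 40.0))  # about 33 degrees
```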

[0199] Also, the user may set the user terminal 200 to a movement distance extension configuration mode, in which a distance weight of 2 to 100 times is applied to the calculated virtual movement distance.

[0200] Also, when calculating the virtual movement distance from the change rate of vibration, the user terminal 200 may exclude noise by computing the change rate only from the vibration values that remain after discarding the top 10% and the bottom 20% by vibration magnitude.
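Paragraphs [0199] and [0200] can be sketched as two small steps: trim the vibration samples by magnitude, then optionally scale the resulting distance. The helper names are hypothetical, and keeping the surviving samples in their original time order is an assumption; the trimmed values would then feed whatever change-rate computation the terminal uses.

```python
import math
from typing import List


def trim_by_magnitude(samples: List[float], low_frac: float = 0.2, high_frac: float = 0.1) -> List[float]:
    """Discard the bottom low_frac and top high_frac of samples by magnitude,
    keeping the surviving samples in their original time order."""
    n = len(samples)
    by_magnitude = sorted(range(n), key=lambda i: abs(samples[i]))
    keep = set(by_magnitude[math.floor(n * low_frac): n - math.floor(n * high_frac)])
    return [samples[i] for i in range(n) if i in keep]


def extended_distance(distance: float, extension: float = 1.0) -> float:
    """Apply the optional movement distance extension weight (2x to 100x);
    extension=1.0 means the extension mode is off."""
    if extension != 1.0 and not 2.0 <= extension <= 100.0:
        raise ValueError("extension weight must be 1 (off) or between 2 and 100")
    return distance * extension


raw = [0.0, 0.1, 1.0, 1.2, 0.9, 1.1, 1.0, 0.8, 1.3, 9.0]
print(trim_by_magnitude(raw))        # [1.0, 1.2, 0.9, 1.1, 1.0, 0.8, 1.3]
print(extended_distance(0.5, 10.0))  # 5.0
```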

[0201] As a result, even if the user does not actually approach a physical or virtual object, or approaches it only slightly, the user may still view the virtual object with the same amount of information as when standing directly in front of the physical or virtual object.

[0202] Also, the augmented reality operating system 1 is equipped with a Wi-Fi communication module and may further comprise a plurality of sensing units (not shown in the figure) disposed at regular intervals in the indoor environment.

[0203] A plurality of sensing units may be disposed selectively so as to detect the position of the user terminal 200 in the indoor environment.

[0204] Each time a Wi-Fi hotspot signal periodically output from the user terminal 200 is detected, a plurality of sensing units 300 may transmit the detected information to the augmented reality content management system 100, and then the augmented reality content management system 100 may determine a relative position of the user terminal 200 with reference to the absolute position of the plurality of sensing units.
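The text does not say how the augmented reality content management system 100 turns the sensing-unit reports into a position. One simple possibility, shown here purely as an assumption, is a signal-strength-weighted centroid of the reporting units' known absolute positions; the report format `(x, y, rssi_dbm)` and the helper name are hypothetical.

```python
from typing import List, Tuple


def weighted_centroid(detections: List[Tuple[float, float, float]]) -> Tuple[float, float]:
    """detections holds (x, y, rssi_dbm) for each sensing unit that heard the
    terminal's Wi-Fi hotspot signal; (x, y) is that unit's known absolute position.
    Stronger signals (converted from dBm to linear milliwatts) pull harder."""
    if not detections:
        raise ValueError("no sensing unit detected the terminal")
    weights = [10 ** (rssi / 10.0) for _, _, rssi in detections]
    total = sum(weights)
    x = sum(w * d[0] for w, d in zip(weights, detections)) / total
    y = sum(w * d[1] for w, d in zip(weights, detections)) / total
    return x, y


print(weighted_centroid([(0.0, 0.0, -50.0), (10.0, 0.0, -50.0)]))  # about (5.0, 0.0)
```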

[0205] As described above, the proposed system obtains the current position information based on the satellite position information in the outdoor environment while obtaining the current position information by using Wi-Fi signals in the indoor environment.

[0206] Meanwhile, an additional method for obtaining current position information in the indoor and outdoor environments may be described as follows.

[0207] A method for obtaining current position information from Wi-Fi signals may be largely divided into triangulation and fingerprinting methods.

[0208] First, triangulation measures Received Signal Strengths (RSSs) from three or more Access Points (APs), converts the RSS measurements into distances, and calculates the position through a positioning equation.
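A minimal sketch of the triangulation described in paragraph [0208]. RSS is converted to distance with the standard log-distance path-loss model; the reference power at 1 m and the path-loss exponent are hypothetical values that would need site calibration. The positioning equation is solved by linearized least squares, subtracting the first AP's circle equation from the others.

```python
from typing import List, Tuple


def rss_to_distance(rss_dbm: float, rss_at_1m: float = -40.0, path_loss_exp: float = 2.0) -> float:
    """Log-distance path-loss model: rss = rss_at_1m - 10 * n * log10(d)."""
    return 10 ** ((rss_at_1m - rss_dbm) / (10.0 * path_loss_exp))


def trilaterate(aps: List[Tuple[float, float, float]]) -> Tuple[float, float]:
    """Least-squares position from (x, y, distance) of three or more APs.

    Subtracting the first circle equation from the others gives linear equations
    2(xi - x1) x + 2(yi - y1) y = d1^2 - di^2 + xi^2 - x1^2 + yi^2 - y1^2,
    solved here through the 2x2 normal equations."""
    if len(aps) < 3:
        raise ValueError("need at least three access points")
    x1, y1, d1 = aps[0]
    s_xx = s_xy = s_yy = b_x = b_y = 0.0
    for xi, yi, di in aps[1:]:
        a_x, a_y = 2.0 * (xi - x1), 2.0 * (yi - y1)
        rhs = d1 ** 2 - di ** 2 + xi ** 2 - x1 ** 2 + yi ** 2 - y1 ** 2
        s_xx += a_x * a_x
        s_xy += a_x * a_y
        s_yy += a_y * a_y
        b_x += a_x * rhs
        b_y += a_y * rhs
    det = s_xx * s_yy - s_xy ** 2
    if abs(det) < 1e-12:
        raise ValueError("access points are collinear")
    return (b_x * s_yy - s_xy * b_y) / det, (s_xx * b_y - s_xy * b_x) / det


# True position (3, 4); distances to APs at (0, 0), (10, 0), (0, 10).
print(trilaterate([(0.0, 0.0, 5.0), (10.0, 0.0, 65 ** 0.5), (0.0, 10.0, 45 ** 0.5)]))
```

With the default parameters, an RSS of -60 dBm maps to 10 m; real RSS readings are noisy, which is why more than three APs and a least-squares fit are usually preferred.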

[0209] Next, fingerprinting partitions an indoor space into small cells, collects a signal strength value directly from each cell, and constructs a database of signal strengths to form a radio map, after which a signal strength value received from the user's position is compared with the database to return the cell that exhibits the most similar signal pattern as the user's position.
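The matching step of fingerprinting in paragraph [0209] can be sketched as a nearest-neighbor search over the radio map. Euclidean distance in dBm and the -100 dBm stand-in for unheard access points are assumptions; the cell names and readings are hypothetical.

```python
import math
from typing import Dict

Fingerprint = Dict[str, float]  # access-point id -> RSS in dBm


def locate_by_fingerprint(radio_map: Dict[str, Fingerprint], observed: Fingerprint,
                          missing_dbm: float = -100.0) -> str:
    """Return the cell whose stored fingerprint is closest (Euclidean distance in
    dBm) to the observed one; APs absent from a fingerprint count as missing_dbm."""
    def dissimilarity(stored: Fingerprint) -> float:
        aps = set(stored) | set(observed)
        return math.sqrt(sum((stored.get(ap, missing_dbm) - observed.get(ap, missing_dbm)) ** 2
                             for ap in aps))

    return min(radio_map, key=lambda cell: dissimilarity(radio_map[cell]))


radio_map = {
    "cell_A": {"ap1": -40.0, "ap2": -70.0},
    "cell_B": {"ap1": -70.0, "ap2": -40.0},
}
print(locate_by_fingerprint(radio_map, {"ap1": -45.0, "ap2": -68.0}))  # cell_A
```

The same matching approach carries over to the magnetic field map described later, with magnetic readings in place of RSS values.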

[0210] Another method collects position data of individual smartphones by exchanging Wi-Fi signals, directly and indirectly, with a plurality of nearby smartphone users.

[0211] Also, since the communication module of the user terminal 200 includes a Bluetooth communication module, current position information may be determined by using Bluetooth communication.

[0212] Also, according to another method, a plurality of beacons are first disposed in the indoor environment, and when communication is performed with one of the beacons, the user's position is estimated to be in the vicinity of the beacon.
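The beacon method of paragraph [0212] is a proximity estimate: the user is placed at the beacon the terminal communicates with. When several beacons are heard, choosing the strongest one is an assumption added here, as are the -90 dBm cutoff and the helper name.

```python
from typing import Dict, Optional, Tuple


def nearest_beacon_position(readings: Dict[str, float],
                            beacon_positions: Dict[str, Tuple[float, float]],
                            min_rssi: float = -90.0) -> Optional[Tuple[float, float]]:
    """Estimate the user's position as the position of the beacon heard most strongly.
    Returns None if no known beacon is heard at or above min_rssi (dBm)."""
    heard = {b: rssi for b, rssi in readings.items() if b in beacon_positions and rssi >= min_rssi}
    if not heard:
        return None
    return beacon_positions[max(heard, key=heard.get)]


positions = {"b1": (0.0, 0.0), "b2": (5.0, 5.0)}
print(nearest_beacon_position({"b1": -80.0, "b2": -60.0}, positions))  # (5.0, 5.0)
```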

[0213] Next, according to yet another method, a receiver having a plurality of directional antennas arranged on the surface of a hemisphere is disposed in the indoor environment, and the user's position is estimated by identifying the specific directional antenna that receives a signal transmitted by the user terminal 200. In this case, when two or more receivers are disposed, a 3D position of the user may also be identified.

[0214] Also, according to still another method, the user's current position is normally determined from satellite position information, and when the user terminal 200 enters a satellite-denied area, the user's speed and direction of travel are estimated from 9-axis sensor information. In this case, the user's position in the indoor environment may be estimated through step counting, stride length estimation, and heading estimation. To improve estimation accuracy, information on the user's physical characteristics (such as height, weight, and stride) may be input to the position estimation computation. When 9-axis sensor information is used, accuracy may be further improved by fusing the dead-reckoning result from the 9-axis sensor with the Wi-Fi-based position estimate.
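The step counting, stride length estimation, and heading estimation of paragraph [0214] can be sketched as pedestrian dead reckoning. The threshold-crossing step detector, the 11 m/s² threshold, and the 0.415 × height stride factor are rule-of-thumb assumptions rather than values from the text; heading is taken as degrees clockwise from north (+y).

```python
import math
from typing import Sequence, Tuple


def count_steps(accel_magnitudes: Sequence[float], threshold: float = 11.0) -> int:
    """Count steps as upward crossings of an acceleration-magnitude threshold (m/s^2)."""
    steps, above = 0, False
    for a in accel_magnitudes:
        if a > threshold and not above:
            steps += 1
            above = True
        elif a <= threshold:
            above = False
    return steps


def stride_from_height(height_m: float, factor: float = 0.415) -> float:
    """Rough stride-length estimate from the user's height (factor is a rule-of-thumb assumption)."""
    return factor * height_m


def dead_reckon(x: float, y: float, steps: int, stride_m: float,
                heading_deg: float) -> Tuple[float, float]:
    """Advance (x, y) by steps * stride along a heading measured clockwise from north (+y)."""
    d = steps * stride_m
    rad = math.radians(heading_deg)
    return x + d * math.sin(rad), y + d * math.cos(rad)


steps = count_steps([9.8, 12.0, 9.8, 12.5, 9.0, 12.1])
print(steps, dead_reckon(0.0, 0.0, steps, stride_from_height(1.8), heading_deg=90.0))
```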

[0215] In other words, a global absolute position is computed with the Wi-Fi technique, even though its accuracy may be somewhat low, and a relative position with high local accuracy obtained from the 9-axis sensor information is combined with it to improve the overall position accuracy. Additionally applying Bluetooth communication may further improve the accuracy of position estimation.
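The text does not specify how the Wi-Fi absolute position and the 9-axis relative position are combined. A common choice is a Kalman-style measurement update, sketched below under the assumption that both estimates have independent errors with known variances (the variance values are hypothetical). In practice the dead-reckoning variance would grow with each step, so the Wi-Fi fix gains influence over time.

```python
from typing import Tuple


def fuse(pdr_pos: Tuple[float, float], pdr_var: float,
         wifi_pos: Tuple[float, float], wifi_var: float) -> Tuple[Tuple[float, float], float]:
    """One Kalman-style measurement update per axis: the dead-reckoned position
    is pulled toward the Wi-Fi fix in proportion to their relative uncertainty.
    Returns the fused position and its variance."""
    gain = pdr_var / (pdr_var + wifi_var)
    fused = (pdr_pos[0] + gain * (wifi_pos[0] - pdr_pos[0]),
             pdr_pos[1] + gain * (wifi_pos[1] - pdr_pos[1]))
    return fused, (1.0 - gain) * pdr_var


print(fuse((0.0, 0.0), 1.0, (10.0, 0.0), 9.0))  # pulled about 10% toward the Wi-Fi fix
```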

[0216] Also, according to yet still another method, since each indoor space has a unique magnetic field, a magnetic field map of each space is constructed for positioning, and the current position is estimated in a manner similar to the Wi-Fi-based fingerprinting technique. In this case, the pattern of magnetic field change produced as the user moves through the indoor environment may also be used as additional information.

[0217] Also, according to a further method, lights installed in the indoor environment may be used. Specifically, LED lights blink at a rate too fast for a human to discern the on/off switching while outputting a specific position identifier, and the camera of the user terminal 200 recognizes that identifier for position estimation.