System And Method For Location Aware Processing

DANILAK; Radoslav

U.S. patent application number 16/540328 was filed with the patent office on August 14, 2019 and published on 2020-02-20 for system and method for location aware processing. This patent application is currently assigned to TACHYUM LTD. The applicant listed for this patent is TACHYUM LTD. Invention is credited to Radoslav DANILAK.

| Application Number | 20200057645 16/540328 |


| Family ID | 69524071 |

| Publication Date | 2020-02-20 |


| United States Patent Application | 20200057645 |

| Kind Code | A1 |

| DANILAK; Radoslav | February 20, 2020 |

SYSTEM AND METHOD FOR LOCATION AWARE PROCESSING

Abstract

A methodology for preparing a series of instruction operations for execution by a plurality of arithmetic logic units (ALU) is provided. The methodology includes first assigning a first instruction operation to the first ALU; first determining, for a second instruction operation having an input that depends directly on an output of a first instruction operation, whether all inputs for the second instruction operation are available within a locally predefined range from the first ALU; second assigning, in response to at least a positive result of the first determining, the second instruction operation to the second ALU; in response to a negative result of the first determining: ensuring a pause of at least one clock cycle will occur between execution of the first instruction operation and the second instruction operation; and third assigning the second instruction operation to an ALU of the plurality of ALUs.

| Inventors: | DANILAK; Radoslav (Cupertino, CA) |
|---|---|
| Applicant: | TACHYUM LTD. |
| Assignee: | TACHYUM LTD. (San Jose, CA) |
| Family ID: | 69524071 |
| Appl. No.: | 16/540328 |
| Filed: | August 14, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62764767 | Aug 16, 2018 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 9/3836 20130101; G06F 8/451 20130101; G06F 7/57 20130101; G06F 9/30145 20130101; G06F 9/3828 20130101; G06F 9/3869 20130101; G06F 9/3853 20130101; G06F 9/3885 20130101; G06F 9/30007 20130101; G06F 16/9024 20190101; G06F 9/3826 20130101; G06F 15/78 20130101; G06F 9/3889 20130101; G06F 9/3893 20130101; G06F 9/3838 20130101; G06F 9/4881 20130101 |

| International Class: | G06F 9/38 20060101 G06F009/38; G06F 9/30 20060101 G06F009/30; G06F 7/57 20060101 G06F007/57 |

Claims

1. A computer hardware device having a clock speed and a clock cycle, the device comprising: a plurality of arithmetic logic units (ALU) within a data path including a first, second and third ALUs, the second ALU being within a locally predefined range of the first ALU and the third ALU being outside of the locally predefined range of the first ALU, wherein the locally predefined range is smaller than the data path; the device being programmed to execute a series of instructions stored in a non-transitory memory to perform operations, the operations comprising: first assigning a first instruction operation to the first ALU; first determining, for a second instruction operation having an input that depends directly on an output of a first instruction operation, whether all inputs for the second instruction operation are available within a locally predefined range from the first ALU; second assigning, in response to at least a positive result of the first determining, the second instruction operation to the second ALU; in response to a negative result of the first determining: ensuring a pause of at least one clock cycle will occur between execution of the first instruction operation and the second instruction operation; and third assigning the second instruction operation to an ALU of the plurality of ALUs.

2. The device of claim 1, wherein a clock speed of the device is defined in part by a worst case time of transmission between a consumer ALU and producer ALU of the plurality of ALUs within the locally predefined range.

3. The device of claim 1, wherein the locally predefined range is a distance between two adjacent ALUs.

4. The device of claim 3, wherein the locally predefined range is further defined by inputs and outputs of the two adjacent ALUs facing each other.

5. The device of claim 1, wherein the first and second ALU are the same, and the locally predefined range is an ALU to itself.

6. The device of claim 1, wherein: the plurality of ALUs in the data path includes a third ALU that is outside the locally predefined range from the first ALU; the clock cycle of the device is shorter than an amount of time needed to guarantee that the third ALU (a) receives and selects an input produced from the first ALU and (b) executes the second instruction operation.

7. The device of claim 1, wherein the ensuring further comprises: second determining whether the first and second instruction operations are already separated in time of execution by at least one clock cycle of the device; and in response to a negative outcome of the second determining, inserting a delay of at least one clock cycle of the device between execution of the first and second instruction operations.

8. The device of claim 1, wherein the first and second assigning further comprises setting the first and second instruction operations to be executed during a same clock cycle of the device.

9. A method for preparing a series of instruction operations for execution on a device having a plurality of arithmetic logic units (ALU) within a data path including at least first, second and third ALUs, the second ALU being within a locally predefined range of the first ALU and the third ALU being outside of the locally predefined range of the first ALU, the locally predefined range being smaller than the data path, the method comprising: first assigning a first instruction operation to the first ALU; first determining, for a second instruction operation having an input that depends directly on an output of a first instruction operation, whether all inputs for the second instruction operation are available within a locally predefined range from the first ALU; second assigning, in response to at least a positive result of the first determining, the second instruction operation to the second ALU; in response to a negative result of the first determining: ensuring a pause of at least one clock cycle will occur between execution of the first instruction operation and the second instruction operation; and third assigning the second instruction operation to an ALU of the plurality of ALUs.

10. The method of claim 9, wherein a clock speed of the device is defined in part on a worst case time of transmission between a consumer ALU and producer ALU of the plurality of ALUs within the locally predefined range.

11. The method of claim 9, wherein the locally predefined range is a distance between two adjacent ALUs.

12. The method of claim 11, wherein the locally predefined range is further defined by inputs and outputs of the two adjacent ALUs facing each other.

13. The method of claim 9, wherein the first and second ALUs are the same, and the locally predefined range is an ALU to itself.

14. The method of claim 9, wherein: the clock cycle of the device is shorter than an amount of time needed to guarantee that the third ALU (a) receives and selects an input produced from the first ALU and (b) executes the second instruction operation.

15. The method of claim 9, wherein the ensuring comprises: second determining whether the first and second instruction operations are already separated in time of execution by at least one clock cycle of the device; and in response to a negative outcome of the second determining, inserting a delay of at least one clock cycle of the device between execution of the first and second instruction operations.

16. The method of claim 9, wherein the first and second assigning further comprises setting the first and second instruction operations to be executed during a same clock cycle of the device.

17. A non-transitory computer readable medium storing instructions to perform operations to assign instruction operations to a device, the device having a clock speed and a clock cycle and comprising a plurality of arithmetic logic units (ALU) within a data path including at least three ALUs, the operations comprising: first assigning a first instruction operation to the first ALU; first determining, for a second instruction operation having an input that depends directly on an output of a first instruction operation, whether all inputs for the second instruction operation are available within a locally predefined range from the first ALU; second assigning, in response to at least a positive result of the first determining, the second instruction operation to the second ALU; in response to a negative result of the first determining: ensuring a pause of at least one clock cycle will occur between execution of the first instruction operation and the second instruction operation; and third assigning the second instruction operation to an ALU of the plurality of ALUs.


18. The device of claim 17, wherein a clock speed of the device is defined in part on a worst case time of transmission between a consumer ALU and producer ALU of the plurality of ALUs within the locally predefined range.

19. The device of claim 17, wherein the locally predefined range is a distance between two adjacent ALUs.

20. The device of claim 19, wherein the locally predefined range is further defined by inputs and outputs of the two adjacent ALUs facing each other.

21. The device of claim 17, wherein the first and second ALU are the same, and the locally predefined range is an ALU to itself.

22. The device of claim 17, wherein: the plurality of ALUs in the data path includes a third ALU that is outside the locally predefined range from the first ALU; the clock cycle of the device is shorter than an amount of time needed to guarantee that the third ALU (a) receives and selects an input produced from the first ALU and (b) executes the second instruction operation.

23. The device of claim 17, wherein the ensuring further comprises: second determining whether the first and second instruction operations are already separated in time of execution by at least one clock cycle of the device; and in response to a negative outcome of the second determining, inserting a delay of at least one clock cycle of the device between execution of the first and second instruction operations.

24. The device of claim 17, wherein the first and second assigning further comprises setting the first and second instruction operations to be executed during a same clock cycle of the device.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] The instant application claims priority to U.S. Provisional Patent Application No. 62/764,767 entitled SYSTEM AND METHOD FOR LOCATION AWARE PROCESSING filed Aug. 16, 2018, the contents of which are expressly incorporated herein by reference in their entirety.

FIELD OF THE INVENTION

[0002] The various embodiments described herein relate generally to processors with high clock speed. More specifically, embodiments of the application relate to a processing architecture and related methodology that utilizes location-aware processing that assigns Arithmetic Logic Units (ALU) in a processor to instruction operations based on prior allocations of ALUs to prior instruction operations. Such embodiments minimize the influence of internal transmission delay on wires between ALUs in a processor, with a corresponding significant increase in clock speed, reduction in power consumption and reduction in size.

BACKGROUND

[0003] In recent years, clock speed of processors in modern computing has substantially plateaued. Referring now to FIG. 1, a logarithmic graph 100 shows the evolution of transistor size, clock speed and performance from 1975-2015 (data adapted from 40 Years of Microprocessor Trend Data, Karl Rupp, Jun. 25, 2015). Transistors have continued to reduce in size along a fairly linear path 102; since smaller transistors are faster than larger transistors, the decrease in transistor size has produced a corresponding increase in transistor switching speed by a factor of eight, roughly every nine years, although this rate has slowed further in more recent years. Transistors make up the ALUs inside processors that perform operations.

[0004] Prior to 2000, the predominant factor in CPU clock speed and performance was the transistor speed. Thus, from 1975-2000, as transistor size decreased along path 102, clock speed curve 104 improved along a nearly corresponding linear path and performance curve 106 similarly remained along a fairly linear path.

[0005] In the early 2000s, clock speed began to peak and performance improvements with each product generation flattened. Despite continuing improvement in transistor size and speed, clock speed showed minimal improvement from 2010-2015. Processor technology was simply no longer able to fully leverage reduction of transistor size into an improvement in clock speed. Performance continued with slight improvement over that time period, although it was relatively modest and largely attributable to other improvements in processor technology.

[0006] A reason for the peaking of clock speed is an increased influence of factors other than transistor speed. Prior to 2000 the influence of those factors was so small compared to the influence of transistor speed that these other factors were effectively inconsequential; thus clock speed and performance improvements tracked improvements in transistor speed. However, eventually the transistors became so small and fast that the influence of these other factors was no longer negligible and over time evolved into significant limiting factors. One of these factors is the time of transmission, which is set in part by the amount of time it takes for a signal to complete its travel over a wire from whatever source produced the signal to the ALU that would consume it (which includes technical considerations in such time, including but not limited to charging and inductive effects, leakage, etc.).

[0007] The factors of transistor speed and wire delays (time of transmission) both contribute to the clock speed of the supporting system. Modern computing is based on a guarantee of two events occurring within a single clock cycle. First, an Arithmetic Logic Unit ("ALU") will receive and select inputs from whatever source within the core generated those inputs; this implicates the time of transmission for the inputs to travel from the source to the ALU. Second, the ALU will perform a processing step on the received/selected input(s); this implicates the time of processing.

[0008] In this context, the reductions in the size of the transistors and of the wires have offsetting effects--the reduction in transistor size decreases the time for processing, while the reduction in wire size increases the time for transmission because thinner wires (in height and width) are more resistant to signal flow.

[0009] While the effects are offsetting, historically they have not been comparable. Prior to 2000, the time of processing was so much larger than the time of transmission (e.g., on the order of about 100 times greater) that the time of transmission (even as it was increasing) was negligible to the establishment of clock speed. This is reflected in the pre-2000 time frame of graph 100, where improvements in clock speed and performance tracked improvements in transistor size.

[0010] As the size of the transistors and wires continued to reduce, the time of processing continued to decrease, but the time of transmission continued to increase, at a much higher pace. Eventually the gap between the two narrowed to where the time of transmission was no longer negligible compared to the time of processing, and as such, the increasing transmission time became an increasingly consequential offsetting factor against the time of processing.

[0011] This is understood in context by the layout of ALUs within a core. Referring now to FIGS. 2A and 2B, as is known in the art, a core 205 of a processor 250 includes a data path 207 with 4-8 ALUs 202 that execute the program instructions. Data path 207 may include at least one simple ALU (performing functions including, e.g., integer arithmetic (add, subtract), boolean logic (and, or, complement) and shifts (left, right, rotate)) and at least one complex ALU (performing functions including, e.g., integer operations (multiply and divide), floating point operations (add, subtract, multiply, divide) and even mathematical functions (square root, sine, cosine, log, etc.)).

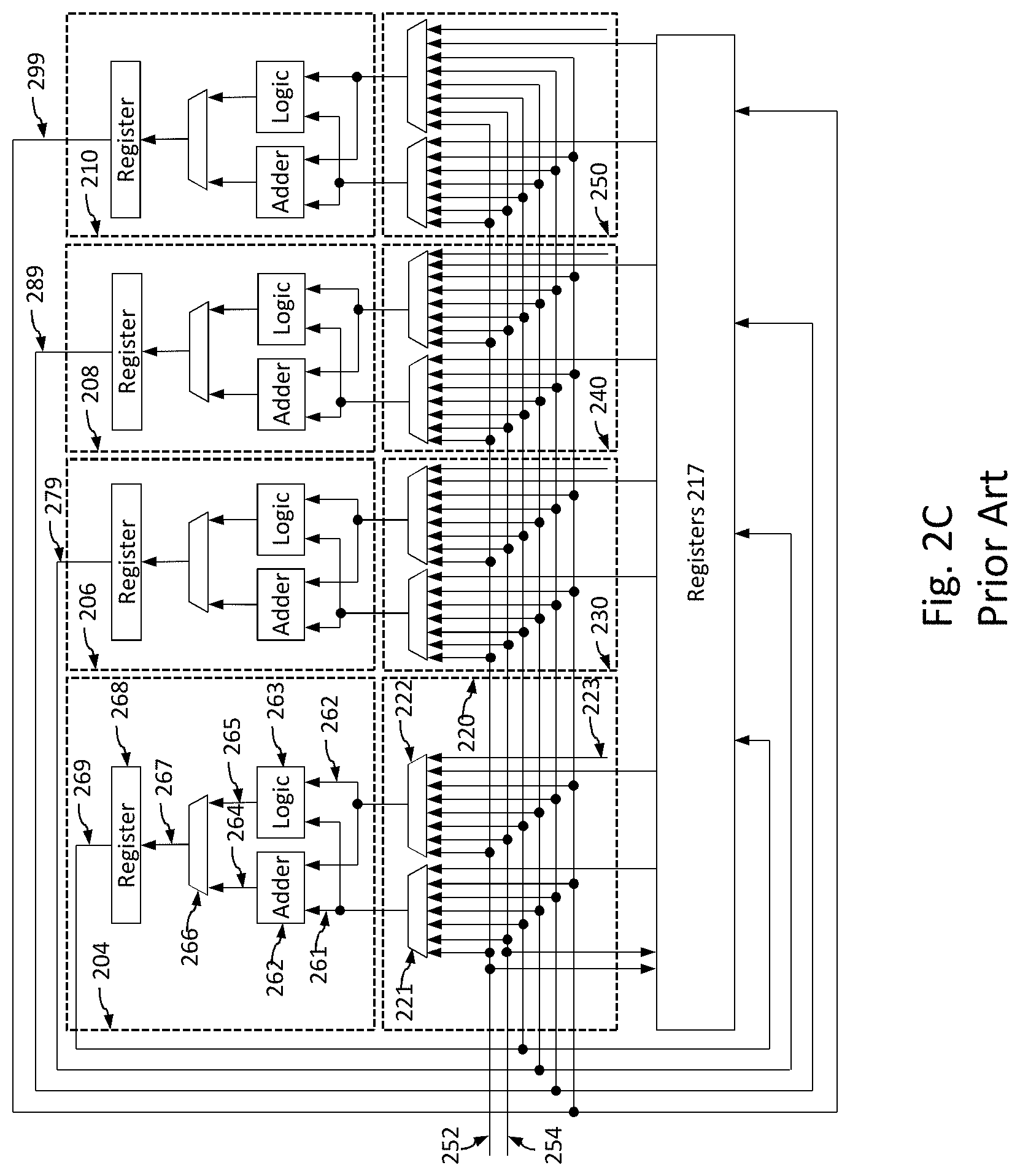

[0012] FIG. 2B shows a prior art data path with six ALUs 202 (204, 206, 208, 210, 212 and 214) along a data path 216, of which four (208, 210, 212, 214) are simple ALUs and two (204, 206) are complex ALUs. The data path 216 includes an operand selection unit 215 for each ALU; these units collectively define an operand selection network, and each selects the specific inputs available on data path 216 that are input to its corresponding ALU. Registers 217 present values for use by the ALUs 202, and such values may be provided earlier by prior activity of an ALU.

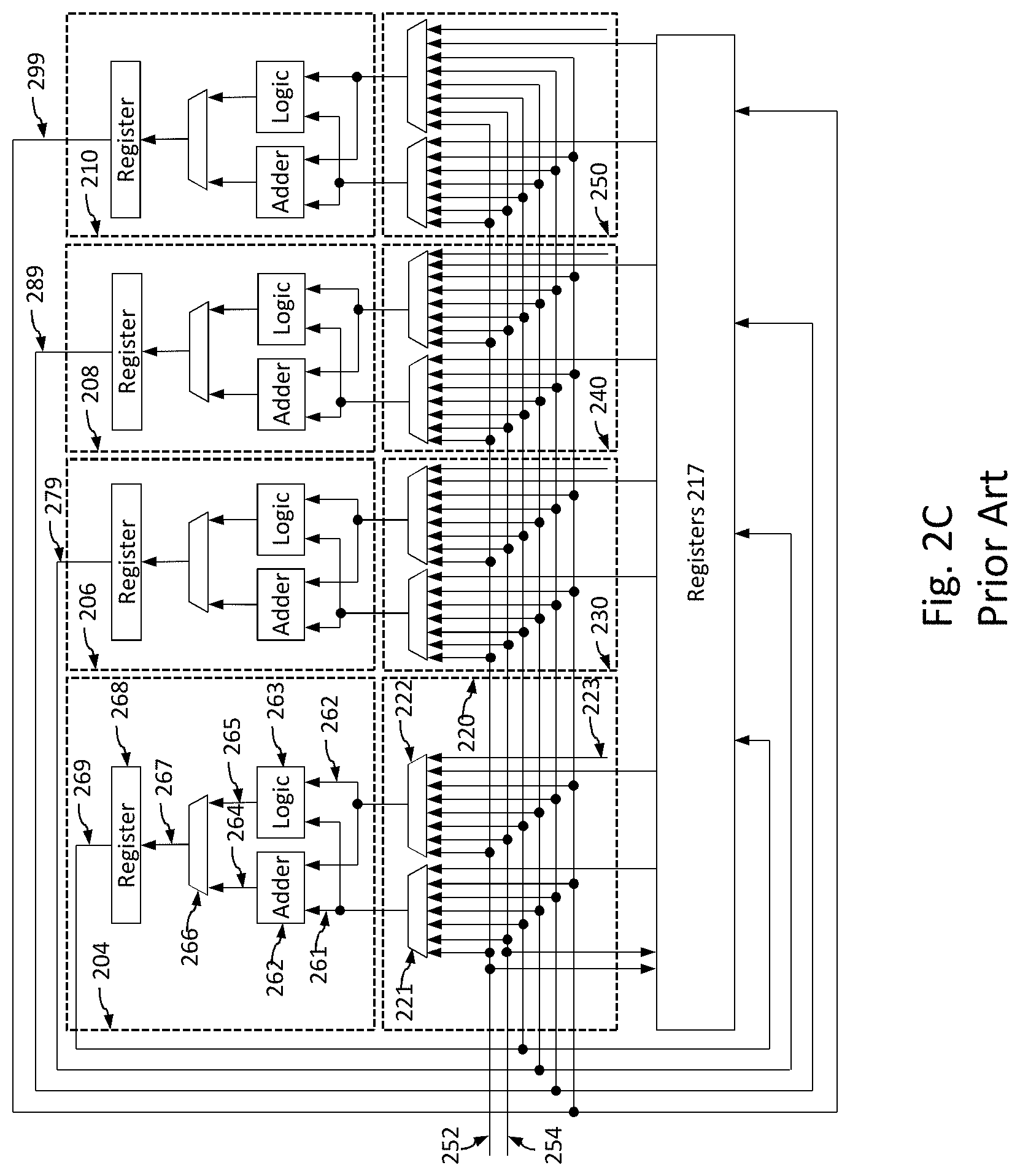

[0013] FIG. 2C provides a more detailed view of the layout of FIG. 2B for ALUs 202 including ALUs 204, 206, 208 and 210. Each ALU execution unit 202 is composed of an adder 262 and a logic unit 263. The adder 262 may be used to calculate the address for a memory read described by a load instruction and for a memory write described by a store instruction. In addition, adder 262 may be used for executing conditional branches. Depending on whether the ALU is simple or complex, the logic unit may include a bitwise logic unit, a shifter, sign extension, logic, and many other operations. Each ALU 202 receives operands through inputs 261 and 262. The inputs 261 and 262 are coupled to adder 262 and logic unit 263 to provide operands for operation execution. The result from adder 262 is coupled to multiplexor 266 via coupling 264, and the result from logic unit 263 is coupled to multiplexor 266 via coupling 265. The multiplexor 266 selects the result from adder 262 or logic unit 263 depending on what operation is requested. The result of multiplexor 266 is transferred to output register 268 via coupling 267.

[0014] The plurality of operand selection units 215, shown as 220, 230, 240 and 245, are used to select operands for ALUs 204, 206, 208 and 210. Each operand selection unit 215 selects operand 261 for ALU 260 by multiplexor 221 coupled to adder 262 and logic unit 263 via coupling 261. The multiplexor 221 selects operands from memory ports 252 or 254, from register 256 using coupling 258, from ALU 204 via coupling 269, from ALU 206 via coupling 279, from ALU 208 via coupling 289, or from ALU 210 via coupling 299. Similarly, operand selection unit 220 selects operand 262 for ALU 260 by multiplexor 222 coupled to the adder and logic unit via coupling 262. The multiplexor 222 can similarly select from such available inputs, or from immediate operand constant 223.

[0015] Data provided by memory ports 252 and 254, as well as the results of ALUs 220, can be written to registers 217 and made available for subsequent computations.
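The prior art ALU of FIG. 2C can be pictured with a small behavioral model. The following Python sketch is illustrative only and is not taken from the application: the 32-bit word width, the operation names and the names `SimpleALU` and `select_operand` are assumptions. It models an adder and a logic unit feeding a result multiplexor and an output register, plus an operand selection multiplexor that picks one value among those visible on the data path.

```python
from dataclasses import dataclass
from typing import Callable, Dict

MASK = 0xFFFFFFFF  # assumed 32-bit word width (not stated in the text)

# Hypothetical operation tables: which unit handles which operation.
ADDER_OPS: Dict[str, Callable[[int, int], int]] = {
    "add": lambda a, b: (a + b) & MASK,
    "sub": lambda a, b: (a - b) & MASK,
}
LOGIC_OPS: Dict[str, Callable[[int, int], int]] = {
    "and": lambda a, b: a & b,
    "or": lambda a, b: a | b,
    "shl": lambda a, b: (a << (b & 31)) & MASK,
}


@dataclass
class SimpleALU:
    """Adder plus logic unit, a result multiplexor and an output register."""
    output_register: int = 0

    def execute(self, op: str, a: int, b: int) -> int:
        # Result multiplexor: take the adder or the logic-unit result,
        # depending on the requested operation, then latch it.
        if op in ADDER_OPS:
            result = ADDER_OPS[op](a, b)
        elif op in LOGIC_OPS:
            result = LOGIC_OPS[op](a, b)
        else:
            raise ValueError(f"unsupported operation: {op}")
        self.output_register = result
        return result


def select_operand(source: str, visible: Dict[str, int]) -> int:
    """Operand selection multiplexor: pick one value visible on the data path."""
    return visible[source]


# Usage: one ALU consumes the output of another through operand selection.
visible = {"reg": 5, "imm": 3}
alu_a, alu_b = SimpleALU(), SimpleALU()
visible["alu_a"] = alu_a.execute(
    "add", select_operand("reg", visible), select_operand("imm", visible))
visible["alu_b"] = alu_b.execute(
    "shl", select_operand("alu_a", visible), select_operand("imm", visible))
print(visible["alu_a"], visible["alu_b"])
```

The model deliberately ignores timing; the point of the surrounding discussion is that the physical distance between the producing and consuming ALUs determines how long the operand selection step takes.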

[0016] The transmission time of the wires that connect the ALUs 202 has a specific impact on the processor methodology of the prior art. To maintain the guarantee of completing the signal receipt and processing within a single clock cycle, the clock speed must be set to allow for a combination of the worst case scenarios for both the communication and the processing events. On the processing side, the worst case scenario is the most time consuming operation that an ALU would process within a single clock cycle (not including processes that are not expected to complete within one clock cycle). On the transmission side, the worst case scenario is the furthest distance that the input signal would have to travel to reach the ALU that performs the operation. The overall worst case scenario is when an ALU is tasked with the most time consuming processing operation that requires inputs from the furthest distance away.

[0017] By way of example in FIG. 2B, for purposes of explanation the most complicated operation that can be completed by an ALU in a single clock cycle is "shift" by one of the complex ALUs 204 or 206. The longest transmission distance is for ALU 204 on one end of data path 216 to select inputs from the farthest ALU 214 on the opposite side of the data path. The worst case scenario timewise is when the ALU 204 needs to perform a shift based on input from the ALU 214. The clock cycle of the chip for ALUs 202 must be longer than that worst case scenario to guarantee the operation can be performed.

[0018] Whatever the worst case scenario is for a particular chip, when the clock cycle is set to allow for the worst case scenario to occur within a single clock cycle, then the clock cycle will be long enough to guarantee that any other (single clock cycle) operation can be performed with that clock cycle, as that other operation will be less taxing in total transmission and processing, and can be performed within the single clock cycle.

[0019] In contrast, setting the clock cycle shorter than the worst case scenario would violate that guarantee, as the correct results may not be produced within the clock cycle and other ALUs that are instructed to use the results of that process would receive incorrect information and produce incorrect results themselves. For example in FIG. 2B, if the clock cycle was not long enough for ALU 204 to receive input from ALU 214, then ALU 204 would produce an incorrect output that would propagate to other ALUs that rely upon the output of ALU 204.

[0020] The prior art clock cycle may thus be defined by the following equation:

Minimum clock cycle time=tmaxglobalcomm+tmaxop+toh; and

Maximum clock speed=(1/minimum clock cycle time)

[0021] where:

[0022] tmaxglobalcomm is the maximum amount of time that would be taken for one ALU to receive and select content from another ALU within the data path;

[0023] tmaxop is the maximum amount of time that one ALU would take to complete a processing step within a single clock cycle; and

[0024] toh is the time allocated to "overhead" parameters as are known in the art, including but not limited to one or more of the following: clock skew and clock jitter, variation of process, temperature or voltage, ground bounce, simultaneous switching, signal integrity, Miller effect, guard band and/or aging.

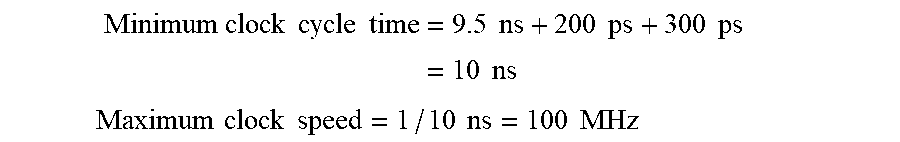

[0025] Referring back to FIG. 1, in the 1990's the time of communication (i.e. wire delay) was negligible compared to time of processing (i.e. transistor switching speed). A typical example of approximate values for that time period may be tmaxop=9.5 nanoseconds (ns), tmaxglobalcomm=200 picoseconds (ps) and toh=300 ps. Thus in this circa 1990's example:

Minimum clock cycle time=9.5 ns+200 ps+300 ps=10 ns

Maximum clock speed=(1/10 ns)=100 MHz

[0026] This was a common speed for home computers circa 1997. The resulting equation was so dominated (in this example 95%) by the time of processing that the influence of the other factors was minimal (in this example, 5%).

[0027] The flattening of the clock speed curve 104 after 2005 in FIG. 1 reflects the narrowing of the gap of the influence of the time factors. Given the size of components circa year 2018, the time of processing for the worst case operation is similar to the time of communications for worst case transmission from one corner of the core to another. By way of example, for a current processor (with massively faster transistor switching speed than 20 years ago, and with significantly more wire delay/time of transmission) approximate values may be tmaxop=120 ps, tmaxglobalcomm=120 ps, and toh=20 ps. Thus in this year 2018 example:

Minimum clock cycle time=120 ps+120 ps+20 ps=260 ps

Maximum clock speed=(1/260 ps)=3.85 GHz

[0028] This was a common speed for home computers circa 2018. Whereas for circa 1997 technology the equation was 95% dominated by the time of processing, for circa 2018 technology the influence of time of processing is similar to that of time of transmission.
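The two worked examples above can be checked with a few lines of Python. This is a minimal sketch of the prior art equation only; the function names `min_cycle_ps` and `max_clock_ghz` are illustrative and do not appear in the application, and all times are expressed in picoseconds for convenience.

```python
def min_cycle_ps(t_maxop_ps: float, t_maxglobalcomm_ps: float, t_oh_ps: float) -> float:
    """Minimum clock cycle time = tmaxglobalcomm + tmaxop + toh (all in ps)."""
    return t_maxglobalcomm_ps + t_maxop_ps + t_oh_ps


def max_clock_ghz(cycle_ps: float) -> float:
    """Maximum clock speed = 1 / cycle time; 1000 / (time in ps) gives GHz."""
    return 1000.0 / cycle_ps


# Approximate values quoted in the text for the two eras.
examples = {
    "circa 1997": dict(t_maxop_ps=9500.0, t_maxglobalcomm_ps=200.0, t_oh_ps=300.0),
    "circa 2018": dict(t_maxop_ps=120.0, t_maxglobalcomm_ps=120.0, t_oh_ps=20.0),
}

results = {}
for label, params in examples.items():
    cycle = min_cycle_ps(**params)
    processing_share = params["t_maxop_ps"] / cycle
    results[label] = (cycle, max_clock_ghz(cycle), processing_share)
    print(f"{label}: cycle = {cycle:.0f} ps, "
          f"clock = {max_clock_ghz(cycle):.2f} GHz, "
          f"processing share = {processing_share:.0%}")
```

The processing share makes the shift explicit: about 95% of the cycle in the circa 1997 example, and a little under half of it in the circa 2018 example.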

[0029] The above examples can show practical limits of further reductions in transistor size, and corresponding time of processing. If hypothetically the transistors were improved in speed to reduce the processing time by 85% over the circa 2018 numbers above, then the tmaxop could drop to about 20 ps. This would cause a corresponding reduction in wire size, with a corresponding increase in the worst case time of transmission. Presuming a conservative 20% increase in time of transmission for a one millimeter long wire due to wire size (for a corresponding tmaxglobalcomm of approximately 140 ps) and keeping other factors the same for purpose of illustration, the parameters for this hypothetical transistor are:

Minimum clock cycle time=20 ps+140 ps+20 ps=180 ps

Maximum clock speed=5.55 GHz

[0030] An 85% reduction in processing time (120 ps to 20 ps) relative to current levels represents an enormous investment of time and expense. Yet it would only improve clock speed by about 44% (3.85 GHz to 5.55 GHz), which is a poor recovery relative to the underlying investment. The reason for the lack of comparable improvement between the transistor size and clock cycle time is that the influence of time of processing is becoming negligible, while the influence of time of transmission--once itself a negligible factor--has now become dominant.
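The arithmetic behind the hypothetical can be written out as follows. The subscripted symbols are a typeset rendering of tmaxglobalcomm, tmaxop and toh as defined above; the symbols for the cycle time, the clock speed and the final two ratios are added here for illustration and use only the values quoted in the text.

```latex
\begin{align*}
t_{\text{cycle}} &= t_{\text{maxglobalcomm}} + t_{\text{maxop}} + t_{\text{oh}}
                  = 140\,\text{ps} + 20\,\text{ps} + 20\,\text{ps} = 180\,\text{ps} \\
f_{\max} &= \frac{1}{180\,\text{ps}} \approx 5.56\,\text{GHz} \\
\frac{f_{\max}^{\text{hyp}}}{f_{\max}^{2018}} &= \frac{260\,\text{ps}}{180\,\text{ps}} \approx 1.44 \\
\frac{t_{\text{maxglobalcomm}}}{t_{\text{cycle}}} &= \frac{140}{180} \approx 78\%
\quad\text{versus}\quad \frac{120}{260} \approx 46\% \text{ in the circa 2018 example}
\end{align*}
```

The third line is the roughly 44% gain cited above, and the last line shows the time of transmission growing from under half of the cycle to more than three quarters of it.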

[0031] Efforts have been made to improve clock speed by reducing the time of transmission. One type of effort was to change the composition of the wires to something with lower resistance, such as from an aluminum based compound to a copper based compound. Another type of effort was to optimize the layout of chip ALUs to minimize the distances that signals had to traverse. Another type of effort was to cool the chips, as cooler wires will have lower resistance with respect to signal transmission.

[0032] 2. Instruction Execution

[0033] Any particular processor is designed with an instruction set architecture (ISA). A common type of ISA is Complex Instruction Set Computing (CISC) where single instructions can execute several low-level machine operations (such as a load from memory, an arithmetic operation, and a memory store) or are capable of multi-step operations or addressing modes within single instructions. Another common ISA is Reduced Instruction Set Computer (RISC), which uses a small set of simple and general instructions, rather than a large set of complex and specialized instructions as in CISC.

[0034] CISC and RISC allow programs to specify instructions to execute in sequence only. Another ISA that does not is Very Long Instruction Word (VLIW), in which several instructions can be grouped into an instruction word, and the multiple instructions in the instruction word can be executed at the same time (i.e., during the same clock cycle), concurrently, in parallel. A limitation of VLIW is that the instructions within any instruction word may not have any direct interdependencies: when a first instruction operation's result is used as a second instruction operation's input, the two cannot execute at the same time, and the second instruction cannot execute until the first instruction has completed.
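The VLIW limitation described above can be illustrated with a short Python sketch. It is a simplified illustration rather than a description of any particular VLIW compiler: the `Op` record, the register names and the greedy in-order packing policy are assumptions, and only direct read-after-write dependencies are considered.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Op:
    dest: str               # register written by the operation
    srcs: Tuple[str, ...]   # registers read by the operation


def reads_result_of(op: Op, word: List[Op]) -> bool:
    """True if `op` directly consumes the result of any operation in `word`."""
    return any(producer.dest in op.srcs for producer in word)


def pack_words(ops: List[Op], width: int) -> List[List[Op]]:
    """Greedy in-order packing into instruction words of at most `width` ops.

    A new word is started when the current word is full or when the next
    operation depends directly on an operation already in the current word.
    """
    words: List[List[Op]] = []
    for op in ops:
        if words and len(words[-1]) < width and not reads_result_of(op, words[-1]):
            words[-1].append(op)
        else:
            words.append([op])
    return words


program = [
    Op("r1", ("r0", "r0")),
    Op("r2", ("r3", "r4")),   # independent of r1: can share a word with it
    Op("r5", ("r1", "r2")),   # needs r1 and r2: must wait for the next word
    Op("r6", ("r5", "r0")),   # needs r5: must wait again
]
words = pack_words(program, width=4)
print([len(w) for w in words])
```

Although the word is four operations wide, the dependency chain forces this program into three words, which is the cost of the restriction described above.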

BRIEF DESCRIPTION OF THE DRAWINGS

[0035] Various embodiments in accordance with the present disclosure will be described with reference to the drawings, in which:

[0036] FIG. 1 illustrates a graph of processor parameters from the early 1970s through 2015.

[0037] FIG. 2A is a block diagram of a prior art processor chip.

[0038] FIGS. 2B and 2C are block diagrams of a prior art ALU layout within a data path of a prior art chip.

[0039] FIGS. 3A and 3B show non-limiting examples of ALUs in producer-consumer relationships.

[0040] FIG. 4 shows a processor chip according to an embodiment of the invention.

[0041] FIG. 5A is a prior art arrangement of a pair of ALUs.

[0042] FIG. 5B is an embodiment of an arrangement of a pair of ALUs.

[0043] FIG. 6 is an embodiment of an arrangement of ALUs in a data path.

[0044] FIGS. 7A-7E are dependency graphs.

[0045] FIG. 8 is a flowchart of an embodiment of the invention for assigning instruction operations to ALUs.

[0046] FIG. 9 is a flowchart of an embodiment of node selection block from FIG. 8.

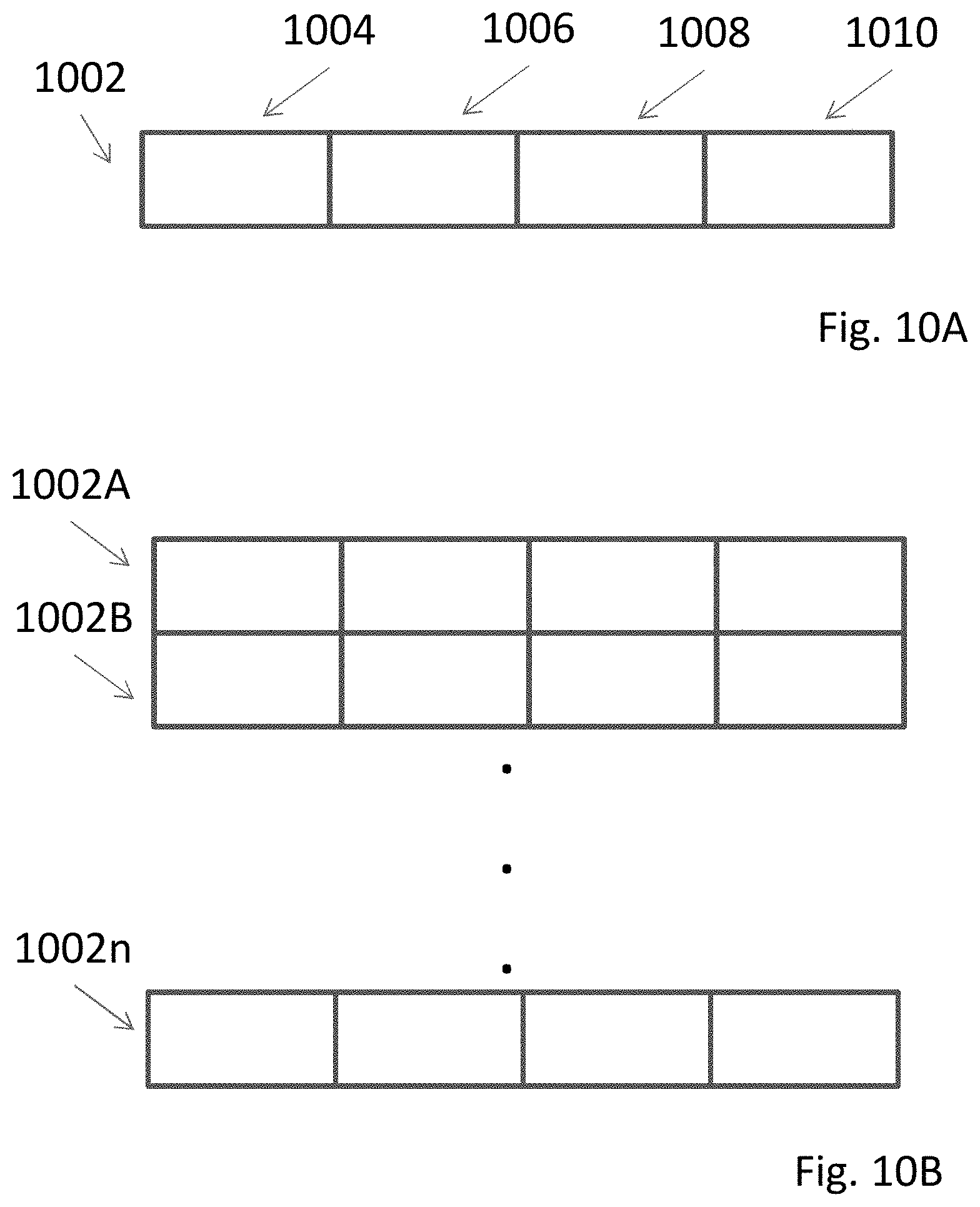

[0047] FIGS. 10A and 10B are block diagrams of an embodiment of instruction words.

[0048] FIGS. 11A-11E are block diagrams of instruction words populated according to an embodiment of the invention.

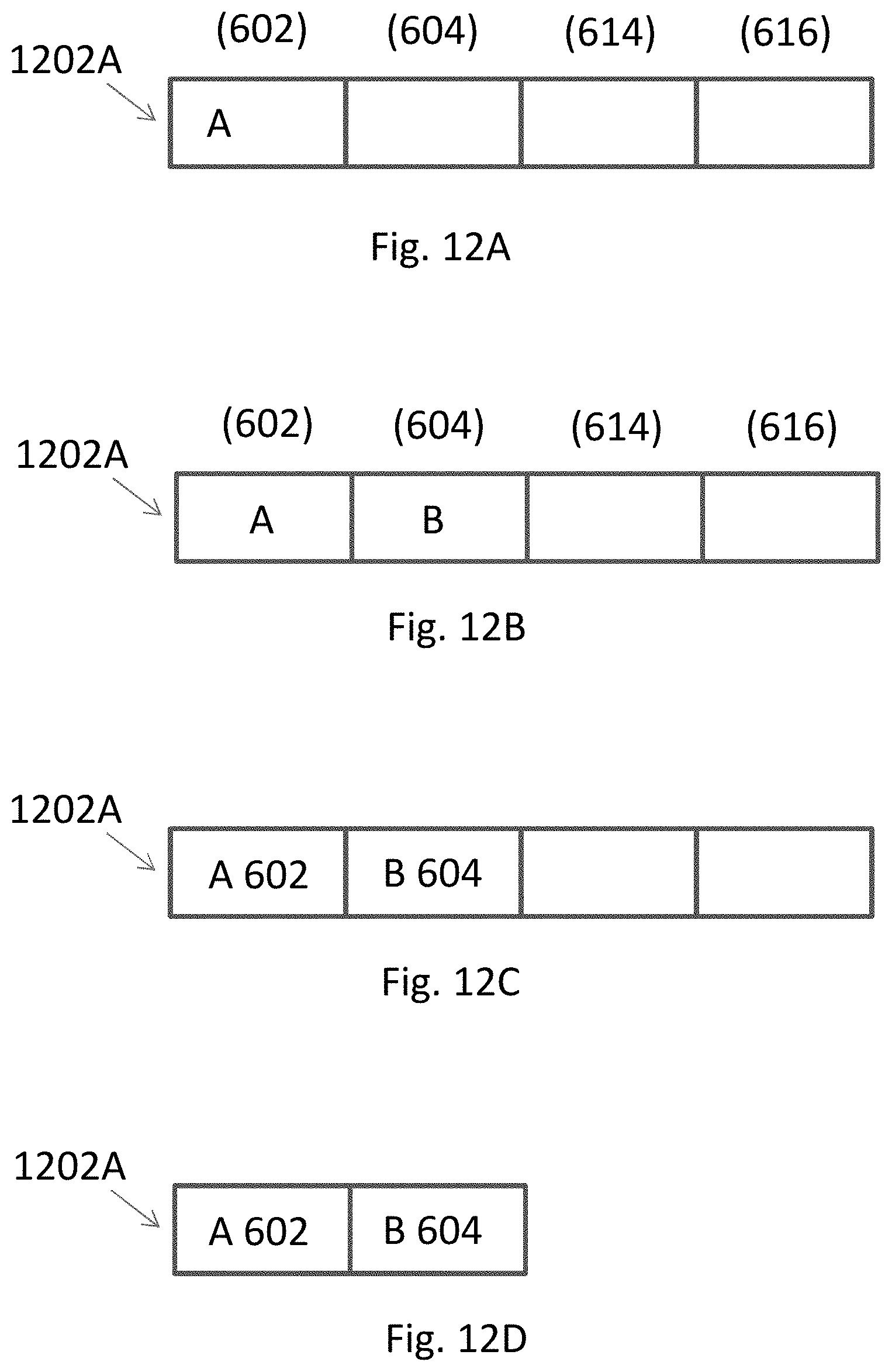

[0049] FIGS. 12A-12D are block diagrams of instruction words populated according to an embodiment of the invention.

[0050] FIGS. 13A-13B are block diagrams of instruction words populated according to an embodiment of the invention.

[0051] FIGS. 14A-14C are block diagrams of instruction words populated according to an embodiment of the invention.

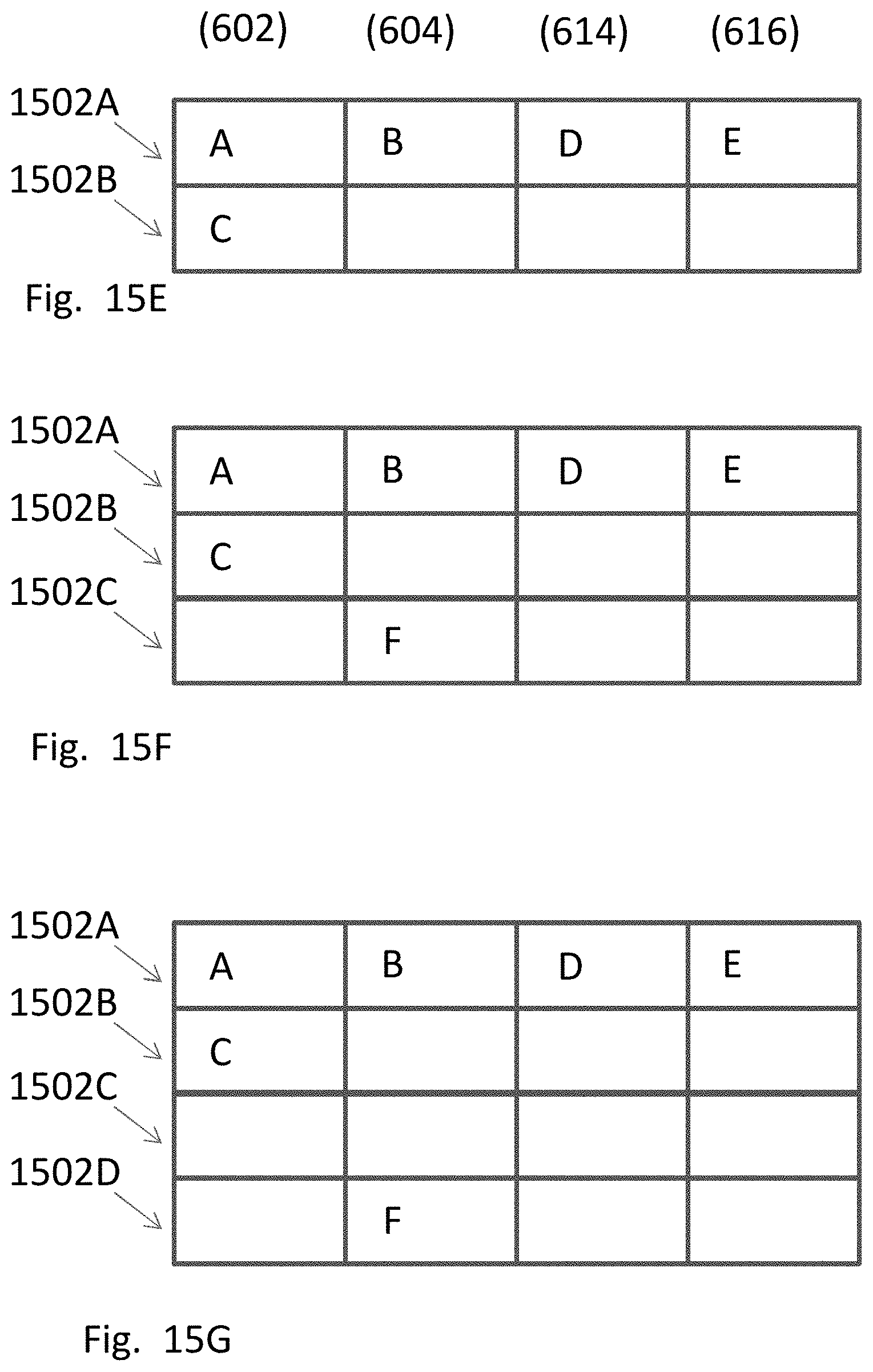

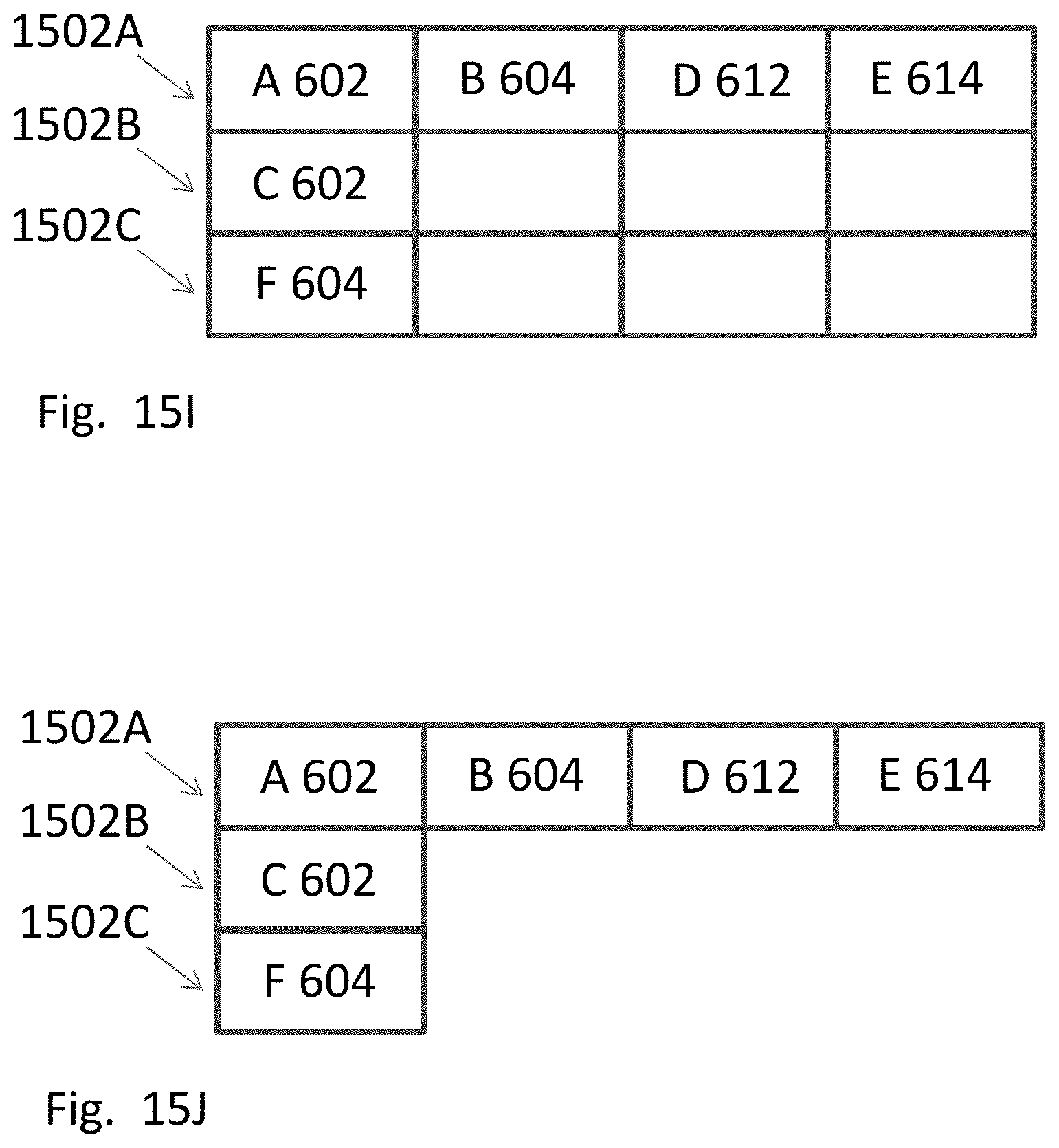

[0052] FIGS. 15A-15J are block diagrams of instruction words populated according to an embodiment of the invention.

[0053] FIG. 16 is a flowchart of an embodiment of the invention for executing instruction words.

[0054] FIGS. 17-21 are embodiments of different data paths.

[0055] FIG. 22 shows a layout of a mirrored ALU pair such as shown in FIG. 5.

[0056] FIG. 23 shows a layout of a mirrored ALU pair in combination with a complex ALU such as shown in FIG. 21.

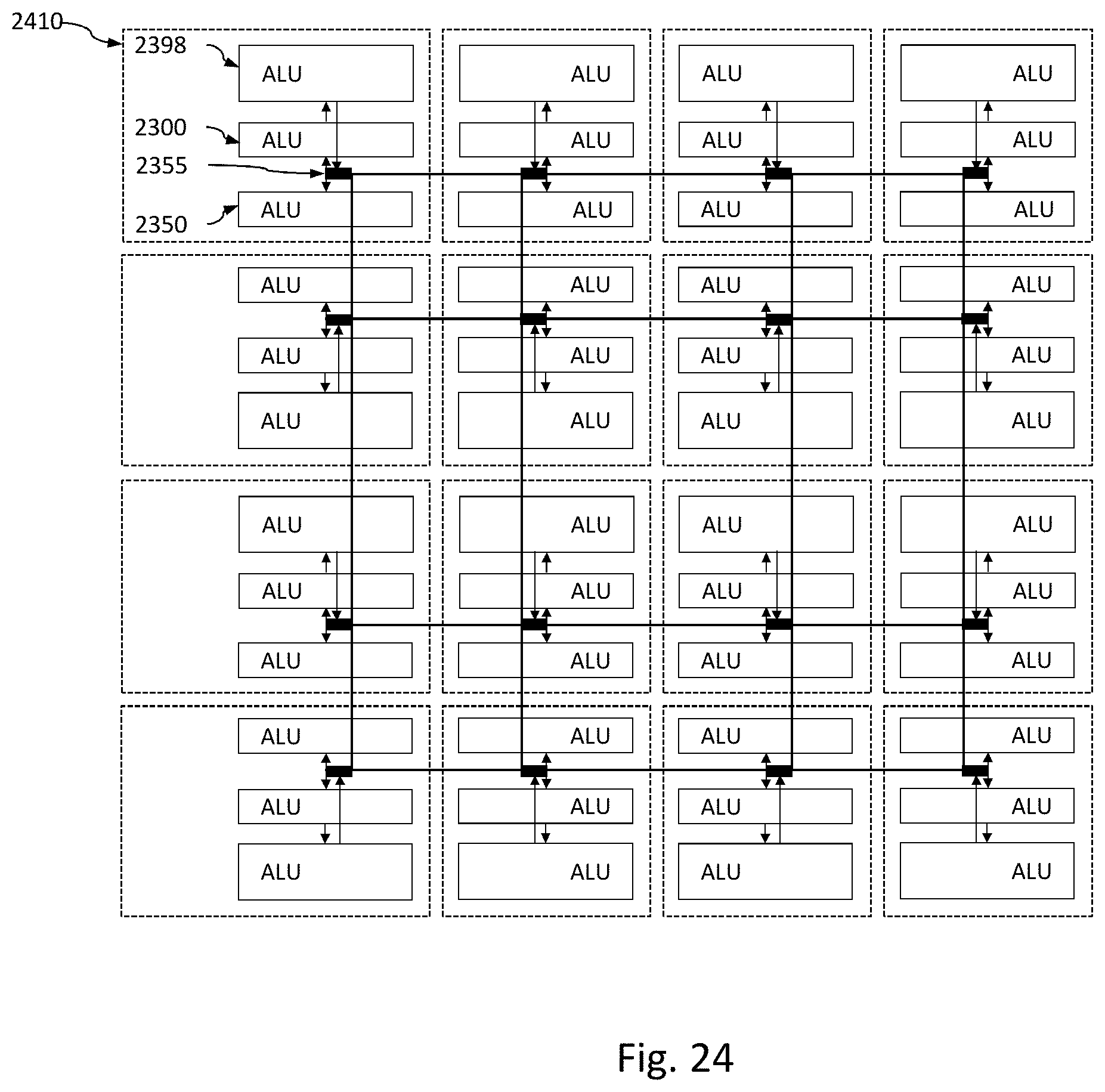

[0057] FIG. 24 shows groups of ALUs of FIG. 24.

OVERVIEW

[0058] According to an embodiment of the invention, a method for preparing a series of instruction operations for execution on a device is provided. The device has a plurality of arithmetic logic units (ALU) within a data path including at least first, second and third ALUs, the second ALU being within a locally predefined range of the first ALU and the third ALU being outside of the locally predefined range of the first ALU, the locally predefined range being smaller than the data path. The method includes: first assigning a first instruction operation to the first ALU; first determining, for a second instruction operation having an input that depends directly on an output of a first instruction operation, whether all inputs for the second instruction operation are available within a locally predefined range from the first ALU; second assigning, in response to at least a positive result of the first determining, the second instruction operation to the second ALU; in response to a negative result of the first determining: ensuring a pause of at least one clock cycle will occur between execution of the first instruction operation and the second instruction operation; and third assigning the second instruction operation to an ALU of the plurality of ALUs.

[0059] The operations of the above method embodiment may also be provided in an embodiment as a computer hardware device having a clock speed and a clock cycle, where the device is programmed to execute a series of instructions stored in a non-transitory memory to perform the recited operations. The operations of the above method embodiment may also be provided in an embodiment as a non-transitory computer readable medium storing instructions to perform the operations to assign instruction operations to a device.

[0060] The above embodiments may have various optional features. A clock speed of the device can be defined in part on a worst case time of transmission between a consumer ALU and producer ALU of the plurality of ALUs within the locally predefined range. The locally predefined range can be a distance between two adjacent ALUs. The locally predefined range can be further defined by inputs and outputs of the two adjacent ALUs facing each other. The first and second ALUs can be the same, and the locally predefined range can be an ALU to itself. The clock cycle of the device can be shorter than an amount of time needed to guarantee that the third ALU (a) receives and selects an input produced from the first ALU and (b) executes the second instruction operation. The ensuring can include second determining whether the first and second instruction operations are already separated in time of execution by at least one clock cycle of the device, and in response to a negative outcome of the second determining, inserting a delay of at least one clock cycle of the device between execution of the first and second instruction operations. The first and second assigning may further comprise setting the first and second instruction operations to be executed during a same clock cycle of the device.

[0061] According to an embodiment of the invention, a method for creating and executing instruction words for simultaneous execution of instruction operations by a plurality of Arithmetic Logic Units (ALUs) in a data path operating on a clock cycle is provided. The method includes creating a dependency graph of nodes with instruction operations, the graph including at least a first node having a first instruction operation and a second node having a second instruction operation, the second instruction operation being directly dependent upon the outcome of the first instruction operation; first assigning the first instruction operation to a first instruction word; second assigning a second instruction operation: to the first instruction word upon satisfaction of a first at least one predetermined criteria; and to a second instruction word, that is scheduled to be executed during a later clock cycle than the first instruction word, upon satisfaction of a second at least one predetermined criteria; and executing, in parallel by the plurality of ALUs and during a common clock cycle, any instruction operations within the first instruction word.

[0062] The operations of the above method embodiment may also be provided as an embodiment of a system for creating and executing instruction words for simultaneous execution of instruction operations, where the system includes a plurality of Arithmetic Logic Units (ALUs) in a data path operating on a clock cycle and a non-transitory computer readable memory storing instructions, where the system is programmed to implement the instructions to perform the above operations. The operations of the above method embodiment may also be provided as an embodiment of a non-transitory computer readable medium storing instructions programmed to cooperate with a system to cause the system to perform the above operations to create and execute instruction words for simultaneous execution of instruction operations by a plurality of Arithmetic Logic Units (ALUs) in a data path operating on a clock cycle.

[0063] The above embodiments may have various optional features. The second at least one predetermined criteria can be a failure to satisfy the first at least one predetermined criteria. The first at least one predetermined criteria may include the first instruction word has sufficient capacity to hold the second instruction operation. The first at least one predetermined criteria may include both the first and second instruction operations are guaranteed under normal operation to be completed within the same clock cycle. The first at least one predetermined criteria may include the first and second instruction operations are not prohibited by any predefined rule from being in the first instruction word. The first assigning may include assigning the first instruction operation of the first instruction word to a first ALU of the plurality of ALUs. The first at least one predetermined criteria may include that a second ALU of the plurality of ALUs is available within a locally predefined range from a first ALU, the locally predefined range being smaller than the data path. The operations may include ensuring that at least one clock cycle separates execution of the first instruction word and the second instruction word.

[0064] According to an embodiment of the invention, a processor has a first group of ALUs including first, second and third ALUs. The first ALU has on a first side an input and an output. The second ALU has a first side facing the first side of the first ALU, an input and an output on the first side of the second ALU and being in a rotated orientation relative to the input and the output of the first side of the first ALU, and an output on a second side of the second ALU. The third ALU has a first side facing the second side of the second ALU, and an input and an output on the first side of the third ALU. The input of the first side of the first ALU is logically directly connected to the output of the first side of the second ALU. The input of the first side of the second ALU is logically directly connected to the output of the first side of the first ALU. The output on the second side of the second ALU is logically directly connected to the input on the first side of the third ALU.

[0065] The above embodiment may have various optional features. A pathway may extend between the first and second ALUs, the pathway being a conduit by which data is provided to and received from the first group of ALUs. The pathway may include an operand selection network between the first and second ALUs that controls data flow between the first and second ALUs. The output on the first side of the third ALU may be logically directly connected to the operand selection network. The operand selection network may be logically directly connected to the input and the output on the first side of the first ALU. The operand selection network may be logically directly connected to the input and the output on the first side of the second ALU. The processor may have a clock speed based upon a worst case combination of: (a) a most time consuming process that could be performed in one clock cycle by any of the first, second and/or third ALUs, and (b) a most amount of time it would take for any of the first, second and/or third ALUs performing (a) to receive any needed inputs from the pathway and remaining ALUs of the first, second and third ALUs. The first and second ALUs may be limited to a first set of types of operations, and the third ALU may be limited to a second set of types of operations, where the second set of types of operations includes more complicated operations than the first set of types of operations. The first side of the first ALU, the first and second sides of the second ALU, and the first side of the third ALU may be in parallel. The second side of the second ALU may lack an input. The processor may include a plurality of groups of ALUs, each of the groups of ALUs having a layout identical to the first group of ALUs.

[0066] According to another embodiment of the invention, a processor has a plurality of groups of ALUs, each group including first, second and third ALUs. A common pathway extends into each group between the first and second ALUs, the common pathway being a conduit by which data is provided to and received from each group of ALUs and exchanged between the groups of ALUs. The first ALU has on a first side an input and an output. The second ALU has a first side facing the first side of the first ALU, an input and an output on the first side of the second ALU and being in a rotated orientation relative to the input and the output of the first side of the first ALU, and an output on a second side of the second ALU. The third ALU has a first side facing the second side of the second ALU, and an input and an output on the first side of the third ALU. The input of the first side of the first ALU is logically directly connected to the output of the first side of the second ALU. The input of the first side of the second ALU is logically directly connected to the output of the first side of the first ALU. The output on the second side of the second ALU is logically directly connected to the input on the first side of the third ALU.

[0067] The above embodiment may have various optional features. The processor may have a clock speed based upon a worst case combination of: (a) a most time consuming process that could be performed in one clock cycle by any of the first, second and/or third ALUs, and (b) a most amount of time it would take for any of the first, second and/or third ALUs performing (a) to receive any needed inputs from the pathway and remaining ALUs of the first, second and third ALUs. The processor may have a clock speed that is too fast to accommodate a combination of: (a) a most time consuming process that could be performed in one clock cycle by any of the first, second and/or third ALUs within a particular one of the groups of ALUs, and (b) receipt of any needed inputs from outside the particular one of the groups of ALUs. The pathway may include an operand selection network between the first and second ALUs that controls data flow between the first and second ALUs. The output on the first side of the third ALU may be logically directly connected to the operand selection network. The operand selection network may be logically directly connected to the input and the output on the first side of the first ALU. The operand selection network may be logically directly connected to the input and the output on the first side of the second ALU. Within each of the groups of ALUs the first and second ALUs may be limited to a first set of types of operations, and the third ALU may be limited to a second set of types of operations, where the second set of types of operations includes more complicated operations than the first set of types of operations. Within each of the groups of ALUs the first, second and third ALUs may be aligned in a linear path. Within each of the groups of ALUs the first side of the first ALU, the first and second sides of the second ALU, and the first side of the third ALU may be in parallel. Within each of the groups of ALUs the second side of the second ALU may lack an input.

[0068] According to an embodiment of the invention, a method for populating an instruction word for simultaneous execution of instruction operations by a plurality of ALUs in a data path is provided. The method includes: creating a dependency graph of instruction nodes, each instruction node including at least one instruction operation; first selecting a first available instruction node from the dependency graph; first assigning the selected first available instruction node to the instruction word; second selecting any available dependent instruction nodes that are dependent upon a result of the selected first available instruction node and do not violate any predetermined rule; second assigning to the instruction word the selected any available dependent instruction nodes; and updating the dependency graph to remove any instruction nodes assigned during the first and second assigning from further consideration for assignment.

[0069] The operations of the above method embodiment may also be provided as an embodiment of a system that includes a plurality of Arithmetic Logic Units (ALUs) in a data path operating on a clock cycle and a non-transitory computer readable memory storing instructions, where the system is programmed to implement the instructions to perform the above operations. The operations of the above embodiment may also be provided as an embodiment of a non-transitory computer readable medium storing instructions for populating instruction words for simultaneous execution of instruction operations by a plurality of ALUs in a data path, which when executed by a system, cause the system to perform the disclosed operations.

[0070] The above embodiment may have various optional features. The operations may include after the second assigning: determining whether the instruction word is complete or incomplete; returning to the first selecting in response to the instruction word being incomplete per the determining; and closing the instruction word in response to the instruction word being complete per the determining. The operations may include creating a new instruction word in response to (a) the closing and (b) the dependency graph still having unassigned instruction nodes. The updating may include (a) removing from the dependency graph any instruction nodes assigned during the first and second assigning, or (b) marking any instruction nodes assigned during the first and second assigning as unavailable for further assignment to the instruction word. The first assigning may assign the selected first available instruction node to a first ALU slot of the instruction word, the first ALU slot corresponding to a first ALU of the plurality of ALUs. The second assigning may assign one of the any available dependent nodes to a second ALU slot of the instruction word, the second ALU slot corresponding to a second ALU of the plurality of ALUs where the first and second ALUs are within a locally predefined range of each other. The operations may include in response to absence of an available node during the first selecting, bypassing the first assigning, second selecting and second assigning. The operations may include initially designating, after the creating, any of the instruction nodes in the dependency graph as global, wherein a global designation represents that the instruction node requires inputs that are outside of a predefined physical range of ALUs, the range being smaller than the full extent of the data path, and the any predetermined rule includes that the instruction word may not include an available dependent instruction node that is dependent upon a result of the selected first available instruction node when the available dependent instruction node is designated as global. The initially designating, in response to being unable to determine whether a particular instruction node is global, may designate the particular instruction node as non-global.
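The selection loop of paragraphs [0068] and [0070] can be sketched as follows. This is a minimal illustration under stated assumptions, not the claimed implementation: the function name `populate_words`, the dictionary representation of the dependency graph, and the alphabetical tie-breaking are all hypothetical. The only "predetermined rules" modeled are slot capacity and the ban on placing a global dependent in the same word as its producer; ALU locality within the word and any pause before a global node are not modeled.

```python
from typing import Dict, List, Set


def populate_words(deps: Dict[str, Set[str]],
                   global_nodes: Set[str],
                   slots: int) -> List[List[str]]:
    """Greedily pack instruction nodes into instruction words.

    deps maps each node to the set of nodes it directly depends on
    (every node must appear as a key). global_nodes are nodes designated
    global. slots is the capacity of one instruction word.
    """
    remaining = set(deps)      # nodes still in the dependency graph
    done: Set[str] = set()     # nodes placed in already-closed words
    words: List[List[str]] = []
    while remaining:
        word: List[str] = []
        while len(word) < slots:
            # First selecting: a node whose inputs all come from closed words.
            available = sorted(n for n in remaining if deps[n] <= done)
            if not available:
                break          # no available node: bypass the assigning steps
            first = available[0]
            word.append(first)             # first assigning
            remaining.discard(first)       # updating the graph
            # Second selecting: direct dependents of `first` that break no rule.
            for node in sorted(remaining):
                if len(word) >= slots:
                    break
                other_inputs = deps[node] - {first}
                if (first in deps[node] and other_inputs <= done
                        and node not in global_nodes):
                    word.append(node)      # second assigning
                    remaining.discard(node)
        if not word:
            raise ValueError("dependency graph contains a cycle")
        words.append(word)     # closing the instruction word
        done.update(word)
    return words


graph = {"A": set(), "B": {"A"}, "C": {"A"}, "D": {"B", "C"}}
print(populate_words(graph, global_nodes={"C"}, slots=4))
```

In this example node C is designated global, so it is kept out of the word that holds its producer A and is placed in the following word instead.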

[0071] According to an embodiment of the invention, a method for populating multiple instruction words for execution of instruction operations by a plurality of ALUs in a data path is provided. The method includes: creating a dependency graph of instruction nodes, each instruction node including at least one instruction operation; first assigning a first instruction node to a first instruction word; identifying a dependent instruction node that is directly dependent upon a result of the first instruction node; first determining whether the dependent instruction node requires any input from two or more sources that are outside of a predefined physical range of each other, the range being smaller than the full extent of the data path; second assigning, in response to satisfaction of at least one predetermined criteria including a negative result of the first determining, the dependent instruction node to the first instruction word; and third assigning, in response to a negative result of the first determining and violation of any of the at least one predetermined criteria, the dependent instruction node to a second instruction word; wherein execution of the first and second instruction words occurs at different clock cycles.

[0072] The operations of the above method embodiment may also be provided as an embodiment of a system for populating multiple instruction words for instruction operations, where the system includes a plurality of Arithmetic Logic Units (ALUs) in a data path operating on a clock cycle, a non-transitory computer readable memory storing instructions, and the system is programmed to implement the instructions to perform the operations. The operations of the above method embodiment may also be provided as an embodiment of a non-transitory computer readable medium storing instructions for populating multiple instruction words of instruction operations by a plurality of ALUs in a data path, which when executed by a system cause the system to perform the operations.

[0073] The above embodiment may have various optional features. The operations may further include fourth assigning, in response to at least a positive result of the first determining, the dependent instruction node to a third instruction word, where execution of the first and third instruction words is separated by at least one clock cycle. The operations may further include optimizing, after the first and second assigning, assignments of instruction nodes of the dependency graph to the first and second instruction words; and executing, after the optimizing, the first and second instruction words. The operations may further include: first executing the first instruction word during a first clock cycle; second determining whether executing the second instruction word in a second clock cycle immediately after the first clock cycle would result in at least one violation of at least one predetermined rule; second executing, in response to a negative result of the second determining, the second instruction word during the second clock cycle; delaying execution of the second instruction word, in response to a positive result of the second determining, by at least one clock cycle until the at least one violation resolves; and third executing, in response to resolution of the at least one violation, the second instruction word. The delaying may include generating an instruction word that either lacks instruction operations or contains instruction operations for the ALUs to take no action during a corresponding clock cycle. The at least one predetermined rule may include a guarantee that the ALUs will receive all inputs needed for all the instruction operations of the second instruction word and will execute all the instruction operations of the second instruction word within the second clock cycle.
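The delaying step, in which an instruction word lacking instruction operations is generated to occupy a clock cycle, might be sketched as below. The names `insert_bubbles` and `violates` are hypothetical, the rule check is passed in as a caller-supplied predicate because the disclosure leaves the predetermined rules open, and the sketch assumes that a single empty word is enough for the violation to resolve.

```python
from typing import Callable, List, Optional

Word = List[Optional[str]]  # one slot per ALU; None means the slot is unpopulated


def insert_bubbles(words: List[Word],
                   violates: Callable[[Word, Word], bool],
                   num_slots: int) -> List[Word]:
    """Return the execution sequence with an empty word inserted wherever
    running a word immediately after its predecessor would break a rule."""
    sequence: List[Word] = []
    for word in words:
        if sequence and violates(sequence[-1], word):
            # Delay by one clock cycle using a word with no instruction operations.
            sequence.append([None] * num_slots)
        sequence.append(word)
    return sequence


# Hypothetical rule for illustration: operation "B" needs a non-local input
# and therefore cannot run in the cycle right after the preceding word.
def needs_gap(prev: Word, nxt: Word) -> bool:
    return "B" in nxt


program = [["A", None], ["B", None], ["C", None]]
for row in insert_bubbles(program, needs_gap, num_slots=2):
    print(row)
```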

DETAILED DESCRIPTION

[0074] In the following description, various embodiments will be illustrated by way of example and not by way of limitation in the figures of the accompanying drawings. References to various embodiments in this disclosure are not necessarily to the same embodiment, and such references mean at least one embodiment. While specific implementations and other details are discussed, it is to be understood that this is done for illustrative purposes only. An individual skilled in the relevant art will recognize that other components and configurations may be used without departing from the scope and spirit of the claimed subject matter.

[0075] Several definitions that apply throughout this disclosure will now be presented. The term "substantially" is defined to be essentially conforming to the particular dimension, shape, or other feature that the term modifies, such that the component need not be exact. For example, "substantially cylindrical" means that the object resembles a cylinder, but can have one or more deviations from a true cylinder. The term "comprising" when utilized, means "including, but not necessarily limited to"; it specifically indicates open-ended inclusion or membership in the so-described combination, group, series and the like. The term "a" means "one or more" absent express indication that it is limited to the singular. "First," "second," etc., are labels to differentiate like terms from each other, and do not imply any order or numerical limitation.

[0076] "Core" is a term of art and refers to an independent processing unit found on a processor chip. A processor chip may have multiple cores.

[0077] "Arithmetic logic unit" (ALU) is a term of art, and refers to a combinational digital electronic circuit that performs arithmetic and bitwise operations on integer binary numbers.

[0078] Two ALUs may have a relationship in which one ALU generates a result as an output and a second ALU receives that output result as an input that the second ALU will use/consume in a further processing step. To distinguish between the two components in that relationship, "producer" refers to the component that generates an output result, and "consumer" refers to the component that consumes the previously generated output result. ALUs can be in multiple producer/consumer relationships, as a single ALU can both receive a result that it consumes (and is thus a consumer ALU) and produce a result from that consumption (and is thus a producer ALU). Components other than ALUs can also be consumers or producers, e.g., a register that provides a value to an ALU is a producer.

[0079] By way of non-limiting example in FIG. 3A, ALU 208 provides an output to ALU 210, and ALU 210 provides its output to ALU 212. ALU 208 and ALU 210 are thus in a producer-consumer relationship 302 where ALU 208 is a producer and ALU 210 a consumer. ALU 210 and ALU 212 are also in a producer-consumer relationship 304 where ALU 210 is a producer and ALU 212 a consumer; ALU 210 is thus both a consumer ALU in one relationship and a producer ALU in another relationship.

[0080] Referring now to FIG. 3B, a specific example of a producer-consumer relationship is when an ALU receives as input feedback from its prior output. In this example, the ALU 214 is both a producer and a consumer in that it produces the output that it consumes as input.

[0081] "Data path" refers to the group of ALUs on a core that are specifically dedicated to executing program instructions.

[0082] "Local communication," "local," "locally," "locally predefined range" or the like means structures, communications, processes or the like that are contained within a predefined limited physical range of ALUs, which range is smaller than the full extent of the data path. A non-limiting example of local communication is a defined range of communication from an ALU to itself as is shown in FIG. 3B. Another non-limiting example is a defined range of any immediately adjacent ALU, i.e., one ALU distance (e.g., for the layout in FIG. 2B, from ALU 204-206, ALU 206-208, ALU 208-210, etc.). Another non-limiting example is an ALU within a two ALU distance. Another non-limiting example is ALUs in certain directions, such as an adjacent ALU to the left but not the right. Another example is a particular type of ALU relationship (e.g., a mirrored ALU as described below). The invention is not limited to any particular arrangement as local, other than as predefined.

[0083] "Global communication," "global," "globally," "non-local communication," "non-local" or the like means structures, communications, processes or the like that require receipt of signals from outside the defined scope of local (e.g., in distance, orientation, and/or relationship). By way of a non-limiting example, if the local communication range is defined by an ALU to itself, then communications from an ALU to any other ALU is a "global communication" (e.g., for the layout in FIG. 2B, ALU 208 to ALU 210 is global because ALU 210 is not ALU 208). In another non-limiting example, if the local communication range is defined by one adjacent ALU, then communications from an ALU to another ALU that is not adjacent is a "global communication" (e.g., for the layout in FIG. 2B, ALU 204 to ALU 206 is local because the two are adjacent, whereas ALU 204 to ALU 208/210/212/214/216 is global because ALU 204 is not adjacent to these others but rather more than one ALU distance away).
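The two distance-based examples above can be expressed as a small classification routine. This is a sketch only: it assumes the ALUs of FIG. 2B lie in a single row in the order 204 through 216 (suggested by the examples but not stated as a rule), it measures range purely as a count of ALU positions, and it treats an ALU-to-itself communication as local whenever the range is one or more. The names `FIG_2B_ROW`, `alu_distance` and `classify` are hypothetical.

```python
# Assumed left-to-right order of the ALUs in FIG. 2B (illustrative only).
FIG_2B_ROW = [204, 206, 208, 210, 212, 214, 216]


def alu_distance(producer: int, consumer: int) -> int:
    """Number of ALU positions separating producer and consumer."""
    return abs(FIG_2B_ROW.index(producer) - FIG_2B_ROW.index(consumer))


def classify(producer: int, consumer: int, local_range: int) -> str:
    """Return "local" if the consumer lies within local_range ALU positions
    of the producer, otherwise "global".

    local_range = 0 models "an ALU to itself";
    local_range = 1 models "one adjacent ALU".
    """
    if alu_distance(producer, consumer) <= local_range:
        return "local"
    return "global"


print(classify(208, 210, local_range=0))  # range is an ALU to itself
print(classify(204, 206, local_range=1))  # range is one adjacent ALU
print(classify(204, 208, local_range=1))
```

Other definitions of local mentioned in the disclosure, such as direction-specific adjacency or mirrored ALU pairs, would need a different predicate than a symmetric distance.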

[0084] Some signals may be global or local by their nature. For example, the values provided by registers may be available at the input of the ALUs within the data path and may thus always be local. Non-limiting examples of local may include constants (also called immediate operands), data read from memory over multiple cycles, or data read from a special register, special storage, or special execution unit. Non-limiting examples of global may include an ALU bypass network, the result from a complex or specialized execution unit such as a multiplier, or data from a load execution unit. The invention is not limited to what signals are local or global, other than as may satisfy the predetermined rules for the same.

[0085] "Defined," "definition" or the like in the context of local refers to the various applicable rules that determine whether a particular situation is local or global. It is to be understood that "defined" or the like is used as an open set (e.g., consistent with "comprising") in that a particular definition as discussed herein may have other rules that are not specified. By way of non-limiting example, a "definition" of local for an "immediately adjacent ALU" requires the noted adjacent orientation, but does not exclude other (non-conflicting) rules such as the presence of operand selector switches and/or registers proximate to the ALUs that provide various inputs within the local range.

[0086] A "compiler" is computer software operating in combination with hardware that transforms computer code written in one programming language (the source language, usually person-readable) into another computer language (the target language, typically non-human readable). A compiler will typically take program steps of a source program for execution and convert the steps into a series of machine-level final instructions for ALUs to execute. In the compiling process, the various program steps may be reorganized and/or transformed into different related groups as the groups are refined into instructions.

[0087] "Instruction operation" or "instruction operations" refers to any collection of operations (including a collection of one) from a program in any state as it transitions from the initial program steps to the final program instructions to be executed by the ALUs.

[0088] "Instruction word" refers to a bundle of instruction operations to be executed by at least some of the ALUs in the data path at the same time, concurrently, in parallel, during a common clock cycle. As discussed below, each instruction operation of the instruction word is associated with a particular ALU that will perform that particular instruction operation. An instruction word may be unpopulated (no instructions have yet been associated), referred to as a "bubble." Instruction words are described herein in the singular, although it is to be understood that an instruction word may be plural (e.g., two smaller instruction words can collectively be considered a single instruction word). If the chip has different cores, there may be different instruction words for each core executed in parallel during the same clock cycle.

[0089] "Mapped instruction word" refers to a type of instruction word with multiple slots in which each of the slots of the instruction word corresponds to a particular ALU. For multiple mapped instruction words, the slots collectively form columns, each column corresponding to a particular ALU. Multiple instruction words thus define a map of instruction operations to ALUs, in that each row represents the instruction operations to be executed in parallel during a clock cycle while the columns represent the assigned ALUs for those instructions that will be executed in sequential fashion; the opposite could also be used (where each column represents the instruction operations to be executed in parallel during a clock cycle while the rows represent the assigned ALUs for those instructions), although for ease of discussion the following description only further discusses the arrangement in which columns correspond to ALUs. Instruction words may have any desirable number of slots, although preferably the number of slots is the same as the number of ALUs in the data path.

[0090] When created, a mapped instruction word may be unpopulated, and thereafter some of the slots may be filled. As discussed below, the processor may assign instruction operations to particular ALUs for execution by associating (e.g., inserting, linking) each instruction operation to a particular slot/column of the instruction word. When the instruction word is due to be executed, the entire row is read and the individual instruction operations of the row are executed by the corresponding ALUs. If any slot of an instruction word is unpopulated, then the ALU corresponding to that instruction slot does not receive any new instruction for that clock cycle and will either remain idle or continue with a prior multiple-clock cycle process that it is engaged in. In the alternative, slots that do not receive an instruction operation from the program could be assigned a dummy instruction operation for the ALU to either remain idle or continue with a prior multiple-clock cycle process that it is engaged in.
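A mapped instruction word as just described, with one slot per ALU and rows executed one per clock cycle, could be represented along the following lines. This is a hypothetical data-structure sketch: the width `NUM_ALUS`, the helper names, and the use of strings for instruction operations are illustrative assumptions, and unpopulated slots are modeled as `None` rather than as dummy instruction operations.

```python
from typing import Dict, List, Optional

NUM_ALUS = 4  # hypothetical data-path width, chosen only for illustration

Word = List[Optional[str]]  # index = ALU column, value = instruction operation


def new_word(num_slots: int = NUM_ALUS) -> Word:
    """Create an unpopulated mapped instruction word (a "bubble")."""
    return [None] * num_slots


def assign(word: Word, alu_slot: int, op: str) -> None:
    """Associate an instruction operation with the slot of a particular ALU."""
    if word[alu_slot] is not None:
        raise ValueError(f"slot {alu_slot} is already populated")
    word[alu_slot] = op


def is_bubble(word: Word) -> bool:
    """True when no slot of the word has been populated."""
    return all(slot is None for slot in word)


def dispatch(word: Word) -> Dict[int, str]:
    """Read the whole row; ALUs whose slot is empty get no new instruction."""
    return {alu: op for alu, op in enumerate(word) if op is not None}


row0 = new_word()
assign(row0, 1, "A=R1+R2")
row1 = new_word()
assign(row1, 2, "B=A+R3")
program = [row0, row1]  # rows = clock cycles, columns = ALUs
for cycle, row in enumerate(program):
    print(cycle, dispatch(row))
```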

[0091] "Logically direct connection," "logically directly connected" and the like refers to a connection over a pathway that lacks intervening logic that would modify a signal passing over the connection. A non-limiting example is a wire connecting two different points. Another example is wires in combination with traffic direction components, such as a multiplexer or an operand selection switch. A buffer may also be part of a logically direct connection when it does not change the signal. A logically direct connection may pass through another component (e.g., an ALU) provided that the component does not exert a logic based modification on the signal.

[0092] "Normal operation" refers to operations that occur while the support structure and software are operating in an undamaged state under ambient conditions. Operations that occur while the structure and/or software is physically or electrically damaged (e.g., a virus) or under external duress conditions (e.g., excessive heat, cold, pressure, water exposure) are to be considered abnormal.

[0093] The embodiments herein are discussed with respect to components at room temperatures, and without any specific effort to reduce the temperature. However the invention is not so limited, and the embodiments could be implemented in other temperature conditions.

[0094] Some embodiments herein are discussed with respect to processes and/or flowcharts. It should be understood that, for any process or flowchart discussed herein, there can be additional, fewer, or alternative steps, performed in similar or different orders, or in parallel, within the scope of the various embodiments, unless otherwise stated.

[0095] As discussed above, a paradigm of the prior art is to guarantee two events in a single clock cycle. First, an ALU will receive and select its inputs from whatever source within the data path provides those inputs. Second, the ALU will perform a processing step on the received/selected input(s) where the processing step is known to take one cycle or less to complete.

[0096] According to an embodiment of the invention, a new paradigm is provided in which the guarantee is limited to completing an act of local communication to a consumer ALU, and the consumer ALU performing its assigned processing step. Thus there are two requirements to maintain the guarantee. First, like the prior art, the ALU will perform a processing step on the received/selected input(s) where the processing step is known to take one cycle or less to complete. Second, an ALU will receive and select its inputs from whatever local producer within the data path provides them; unlike the prior art, this paradigm does not require, and thus does not guarantee, that the ALU will receive and select as inputs content that is non-local.

[0097] This paradigm differs from the prior art in that the prior art requires that the clock speed allow the time needed for global communication across the entire data path, while some embodiments herein only require that the clock speed allow for the time needed for local communication; since the time for local communication is smaller than the time for global communication, the clock cycle can be shorter and the overall clock speed is faster. When a particular instruction operation requires a global communication, then the corresponding instruction operation is delayed by one or more clock cycles to allow for the global communication to be completed. This paradigm may be achieved in part by taking into account the location of ALUs assigned to specific steps, and performing related subsequent steps in ALUs that are local.
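The timing trade-off described in this paragraph can be worked through numerically. The sketch below is illustrative only: every delay value is a made-up placeholder rather than a figure from the disclosure, the clock period is modeled simply as communication time plus operation time, and the chain is assumed to issue one dependent instruction operation per clock cycle. The function names are hypothetical.

```python
def min_period_ps(comm_ps: int, op_ps: int) -> int:
    """Shortest clock period that covers input delivery plus one operation."""
    return comm_ps + op_ps


def chain_time_ps(n_ops: int, n_global: int, period_ps: int,
                  pause_cycles: int = 1) -> int:
    """Time to run n_ops dependent operations, one per cycle, where n_global
    of them each wait pause_cycles extra cycles for a global input."""
    return (n_ops + n_global * pause_cycles) * period_ps


# Hypothetical delays in picoseconds, for illustration only.
LOCAL_PS, GLOBAL_PS, OP_PS = 50, 150, 100

prior_period = min_period_ps(GLOBAL_PS, OP_PS)  # clock must cover global reach
local_period = min_period_ps(LOCAL_PS, OP_PS)   # clock covers local reach only

# Ten dependent operations, two of which need a global input.
prior_total = chain_time_ps(10, 0, prior_period)  # prior art never pauses
local_total = chain_time_ps(10, 2, local_period)  # two one-cycle pauses

print(prior_period, local_period)
print(prior_total, local_total)
```

Under this simple model the benefit depends on how often a global communication is needed: each global input costs a pause, so the shorter clock period pays off when most dependent operations can be kept local.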

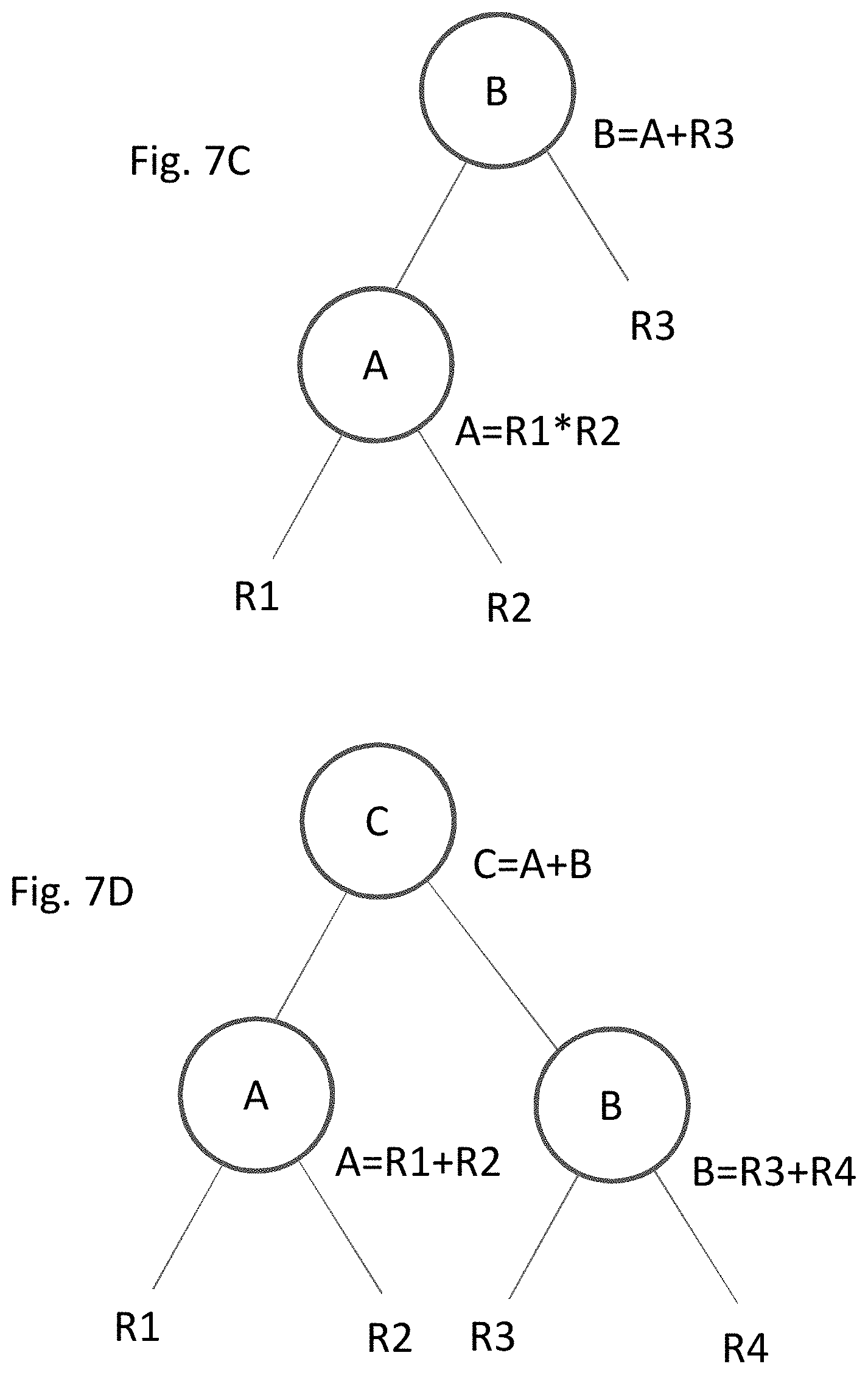

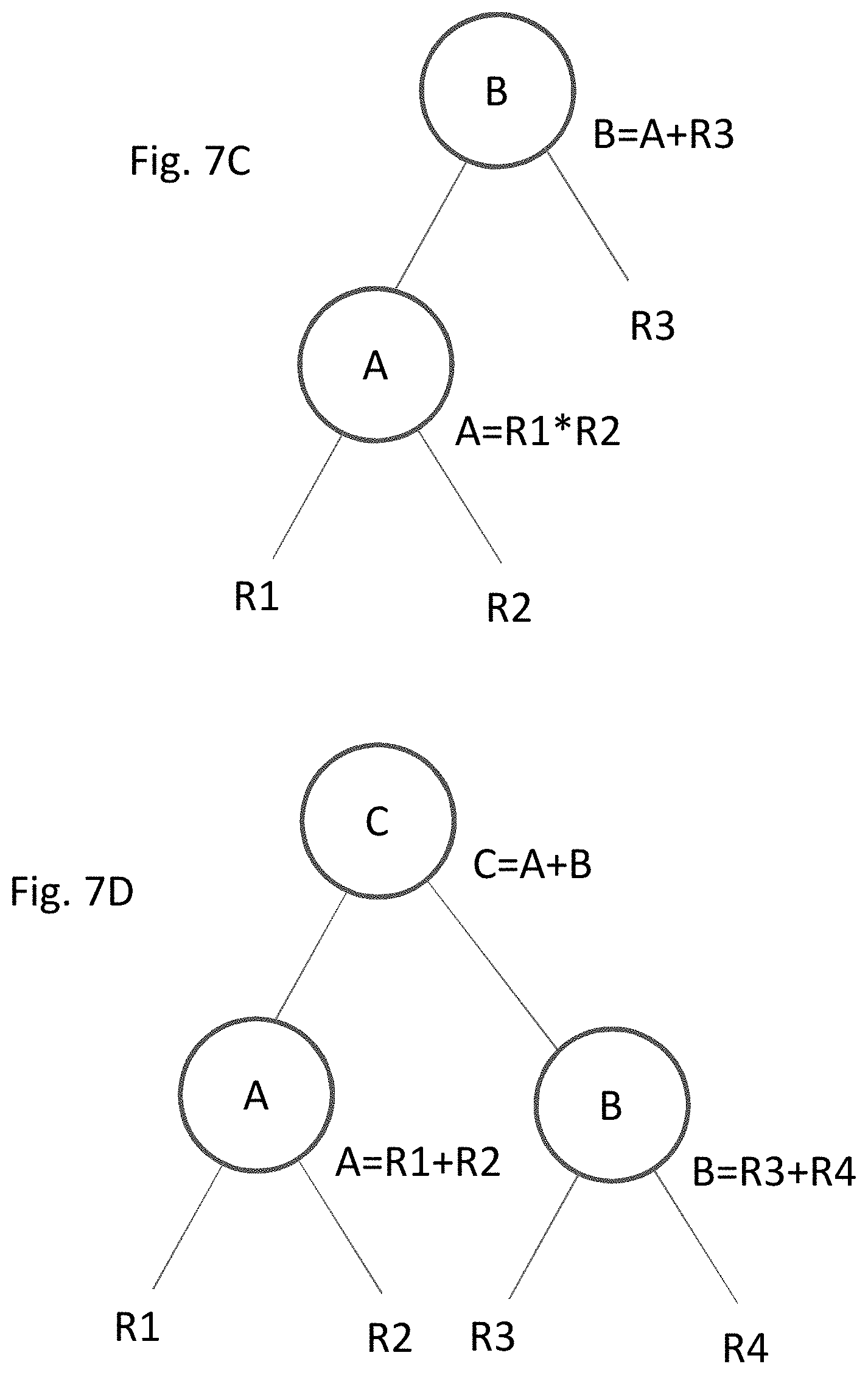

[0098] The above may be demonstrated in a basic example of two instruction operations for a processor 250 of FIGS. 2A and 2B to implement, hereinafter referred to as Instruction Set 1:

[0099] 1: A=R1+R2

[0100] 2: B=A+R3

[0101] where

[0102] R1, R2 and R3 are registers that provide a particular value as available within the data path.

[0103] To execute Instruction Set 1 under the methodology of the prior art, processor 250 would select any ALU in FIG. 2B for the first instruction operation A, such as for example ALU 208. The prior art processor must then also select a second ALU to perform the second instruction operation B. In selecting the second ALU, the prior art processor does not take into account the location of ALU 208 where the prior instruction operation A was executed. This is in part because the prior art paradigm is based on the clock speed allowing for the selection of any ALU within the data path to perform the second step; the distance between the ALUs is irrelevant, and the ALU locations of prior instruction operations are thus not considered in selection of the second ALU. The prior art processor could thus just as easily pick ALU 210 (close to ALU 208) as ALU 214 (several ALUs distant). Similarly, the prior art processor does not leverage the dependent relationship between instruction operations A and B, in that instruction operation B depends on the outcome of instruction operation A.

[0104] Per an embodiment of the invention, a processor will likewise select a first ALU such as ALU 210 for instruction operation A. To assign the second ALU, the processor can take into account both the nature of the dependent relationship between instruction operations A and B and the location of the ALU where A is performed. Here, the processor recognizes that (a) ALU 210 performed the first instruction operation A and thus already has access to the outcome, and (b) that all of the information needed to execute instruction operation B is available locally to ALU 210. That is, instruction operation B needs the result of instruction operation A, which will be present on ALU 210 by virtue of completion of the first instruction operation A, and the value from register R3 (B=A+R3), which is locally accessible from the registers 217. The processor can thus assign one of the ALUs local to ALU 210 to perform the second instruction operation B.

[0105] As noted above, what is considered local is predefined. A non-limiting example of local communication is an ALU to itself, such as shown in FIG. 3B. In the architecture of FIG. 2B the "most" proximate consumer ALU to a producer ALU is itself, in that the shortest transmission path from one ALU to the next is between the input and output of a single ALU. For example, if local is defined as an ALU to itself, then with instruction operation A having been assigned to ALU 210, then instruction operation B (as both dependent to instruction operation A and executable locally), can also be assigned to ALU 210. Since the distance between the input and output of ALU 210 is relatively short, the corresponding delay from time of transmission is minimal (compared to global communication across the entire data path).

[0106] Another non-limiting example of a definition of local communication is any adjacent ALUs; for example, under such a definition the ALU 210 executing instruction operation A is within local range of adjacent ALUs 208 and 212. Communication by ALU 210 to ALU 208, 210 (i.e., to itself) or 212 would be local. Communication beyond one adjacent ALU (e.g., 204, 214) is considered global. If instruction operation A is assigned to ALU 210, then instruction operation B could thus be assigned to ALU 208, 210 or 212 under this definition of local. Instruction operation B would thus not be assigned to ALU 204, 206 or 214 under this definition of local, as those ALUs are too far away from ALU 210 to guarantee that they would receive the output of ALU 210 and perform instruction operation B within a single clock cycle.
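The two definitions of local discussed above (an ALU to itself, and any adjacent ALU) can be illustrated with a minimal sketch. It assumes, for illustration only, that the ALUs of FIG. 2B are arranged in a single row in the order of their reference numerals; the names `ALU_ORDER`, `local_candidates` and `radius` are hypothetical and do not appear in the disclosure.

```python
# Assumption: ALUs sit in one row, ordered by reference numeral.
ALU_ORDER = [204, 206, 208, 210, 212, 214]


def local_candidates(producer, radius=1):
    """Return the ALUs within `radius` positions of the producer ALU.

    radius=0 models "local means the ALU itself";
    radius=1 models "local means any adjacent ALU".
    """
    p = ALU_ORDER.index(producer)
    return [alu for i, alu in enumerate(ALU_ORDER) if abs(i - p) <= radius]


# Instruction operation A was assigned to ALU 210; where may B go?
print(local_candidates(210, radius=0))  # the ALU itself
print(local_candidates(210, radius=1))  # the ALU and its neighbors
```

Under these assumptions, any ALU not returned by `local_candidates` would require non-local communication to consume the result of instruction operation A.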

[0107] The definition of local also preferably includes the range to the operand selection units and registers that provide the input to the ALUs, such as 2255 and 2355 in FIGS. 22 and 23, described below.

[0108] In the above examples, the processor and corresponding methodology applied "location awareness" in the selection of the ALU for instruction operation B, in that the processor knew the location of the producer ALU that would execute the prior instruction operation A and used that location as a factor in deciding the location of the consumer ALU that would perform the second instruction operation B. As discussed in more detail below, the processor may actively seek to assign instructions to ALUs to avoid non-local communication.

[0109] The above paradigm allows for a CPU to operate with significantly faster clock speed than the prior art because the "worst case" time of transmission is established by local communication rather than global communication. The distance for local communication (e.g., between a range of two adjacent ALUs) is considerably smaller than the distance for global communication from the extreme edges of ALUs in the data path. Since the corresponding distance is so small, the corresponding time of transmission for local communication is also quite small compared to the time of transmission for global communication.

[0110] By way of comparison, as discussed above in the prior art the clock cycle may be defined by the following equation:

Minimum clock cycle time=tmaxglobalcomm+tmaxop+toh

[0111] In an embodiment of the invention, the clock cycle would be defined by:

Minimum clock cycle time=tmaxlocal+tmaxop+toh

[0112] where

[0113] tmaxlocal is the maximum amount of time for an ALU to receive and select inputs from a local producer;

[0114] tmaxop (as discussed above) is the maximum amount of time that one ALU would take to complete a step within a single clock cycle; and

[0115] toh (as discussed above) is the time allocated to "overhead" parameters as are known in the art, including but not limited to one or more of clock skew and clock jitter, variation of process, temperature or voltage, ground bounce, simultaneous switching, signal integrity, Miller effect, guard band and/or aging.

[0116] As discussed above, non-limiting examples of tmaxglobalcomm, tmaxop and toh are 120 ps, 120 ps, and 20 ps, with a corresponding minimum clock cycle time of 260 ps and a maximum clock speed of 3.85 GHz. For that same example and circuit conditions, an approximate value of local communication (where local is defined by an adjacent ALU) may be tmaxlocal=20 ps. The corresponding comparison of clock cycle time and clock speed between the prior art and the instant embodiment is thus:

TABLE 1

| | Prior Art | Instant embodiment |
|---|---|---|
| Minimum clock cycle time | tmaxglobalcomm + tmaxop + toh; 260 ps = 120 + 120 + 20 | tmaxlocal + tmaxop + toh; 160 ps = 20 + 120 + 20 |
| Maximum clock speed | 1/260 ps = 3.85 GHz | 1/160 ps = 6.25 GHz |

[0117] The clock speed of the instant embodiment through the application of local communication is thus some 60% faster than the prior art. This is because the prior art speed is based on tmaxglobalcomm whereas in the above embodiment speed is based on tmaxlocal where tmaxlocal<<tmaxglobalcomm.
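The arithmetic behind this comparison can be reproduced with a short sketch. The function names are hypothetical; the picosecond values are the non-limiting example values given above.

```python
def min_cycle_ps(t_comm_ps, t_op_ps, t_oh_ps):
    """Minimum clock cycle time = communication + operation + overhead."""
    return t_comm_ps + t_op_ps + t_oh_ps


def max_clock_ghz(cycle_ps):
    """Maximum clock speed in GHz for a cycle time given in picoseconds."""
    return 1000.0 / cycle_ps


T_OP, T_OH = 120, 20                      # example values from the text
prior = min_cycle_ps(120, T_OP, T_OH)     # tmaxglobalcomm = 120 ps
local = min_cycle_ps(20, T_OP, T_OH)      # tmaxlocal = 20 ps

for label, cycle in (("prior art", prior), ("instant embodiment", local)):
    print(f"{label}: {cycle} ps, {max_clock_ghz(cycle):.2f} GHz")

speedup_pct = (max_clock_ghz(local) / max_clock_ghz(prior) - 1) * 100
print(f"speedup: {speedup_pct:.1f}%")
```

Only the communication term differs between the two calls, which is why the whole difference in clock speed is attributable to replacing tmaxglobalcomm with tmaxlocal.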

[0118] Even greater improvements are potentially available, because clock speed scales more strongly with faster transistors. As discussed above, in the prior art, further improvements in transistor speed yield little improvement in clock speed because time of processing became a negligible factor compared to tmaxglobalcomm, but this is not the case with some embodiments herein due to the reliance on the much smaller tmaxlocal. For example, applying the above equations with a potential future doubling of transistor speed (tmaxop=60 ps) and keeping other factors stable for sake of example, the clock speeds are as follows:

TABLE 2

| | Prior Art | Instant embodiment |
|---|---|---|
| Minimum clock cycle time | tmaxglobalcomm + tmaxop + toh; 200 ps = 120 + 60 + 20 | tmaxlocal + tmaxop + toh; 100 ps = 20 + 60 + 20 |
| Maximum clock speed | 5 GHz | 10 GHz |

[0119] In the above example, the prior art paradigm only saw about 1.15 GHz (+30%) improvement in clock speed from Table 1 to Table 2 in response to the doubling of the transistor speed. In contrast, the paradigm of the instant embodiment set forth herein saw a 3.75 GHz improvement (+60%) in clock speed from Table 1 to Table 2. Overall, the clock speed of the instant embodiment in this example would be twice that of the prior art paradigm.
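The effect of faster transistors under each paradigm can be checked with a small sketch that holds the communication and overhead terms fixed and varies only tmaxop. The helper names are hypothetical, and the 20 ps overhead default is the example value used above.

```python
def max_clock_ghz(t_comm_ps, t_op_ps, t_oh_ps=20):
    """Maximum clock speed in GHz for the given timing terms (ps)."""
    return 1000.0 / (t_comm_ps + t_op_ps + t_oh_ps)


def gain_from_faster_transistors(t_comm_ps, t_op_before_ps, t_op_after_ps):
    """Return (absolute gain in GHz, relative gain in percent)."""
    before = max_clock_ghz(t_comm_ps, t_op_before_ps)
    after = max_clock_ghz(t_comm_ps, t_op_after_ps)
    return after - before, (after / before - 1) * 100


# Doubling transistor speed: tmaxop goes from 120 ps to 60 ps.
prior_ghz, prior_pct = gain_from_faster_transistors(120, 120, 60)
local_ghz, local_pct = gain_from_faster_transistors(20, 120, 60)

print(f"prior art:          +{prior_ghz:.2f} GHz ({prior_pct:.0f}%)")
print(f"instant embodiment: +{local_ghz:.2f} GHz ({local_pct:.0f}%)")
```

Because the fixed communication term is a smaller share of the cycle in the local paradigm, the same reduction in tmaxop removes a larger fraction of the total cycle time.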

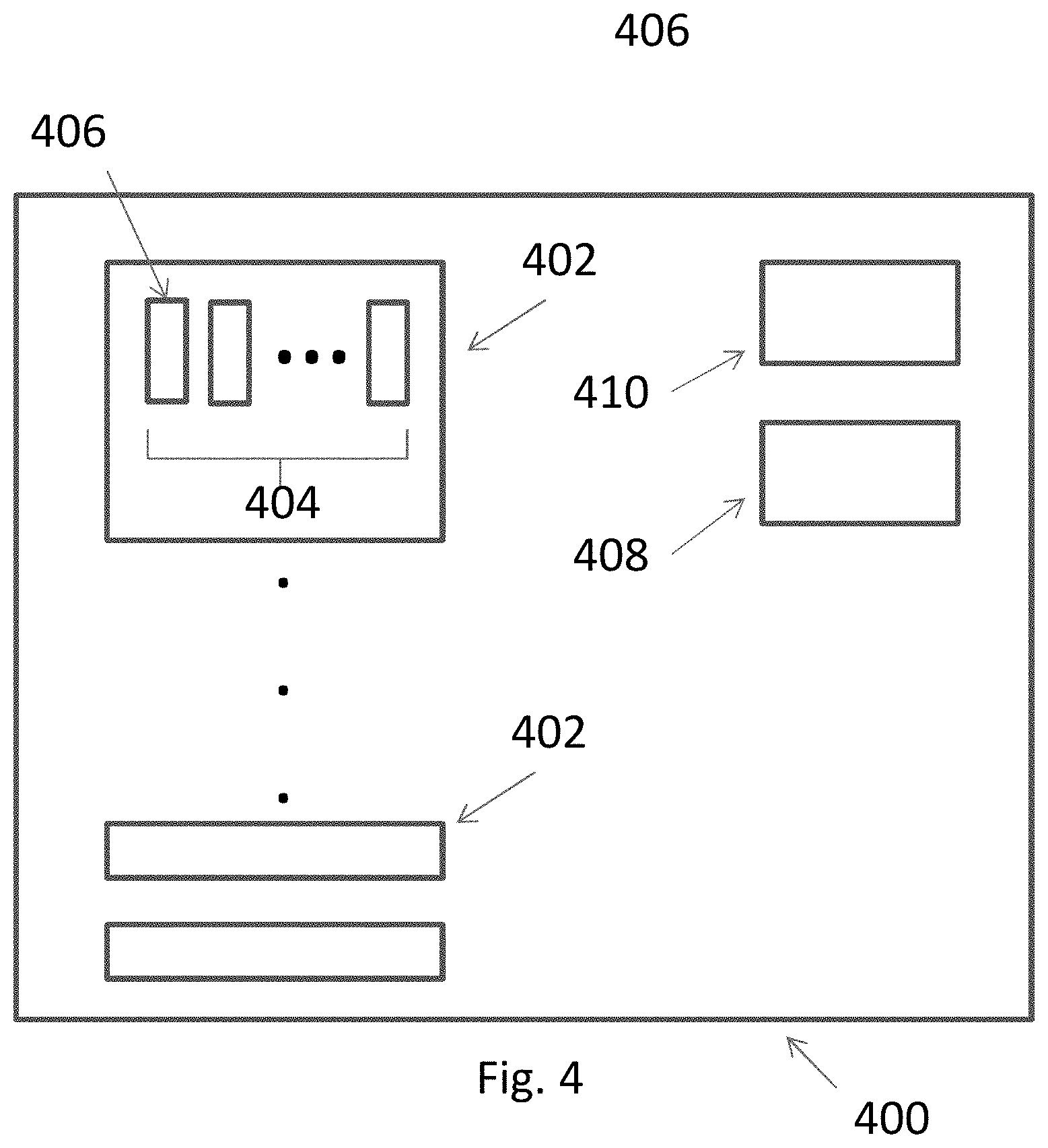

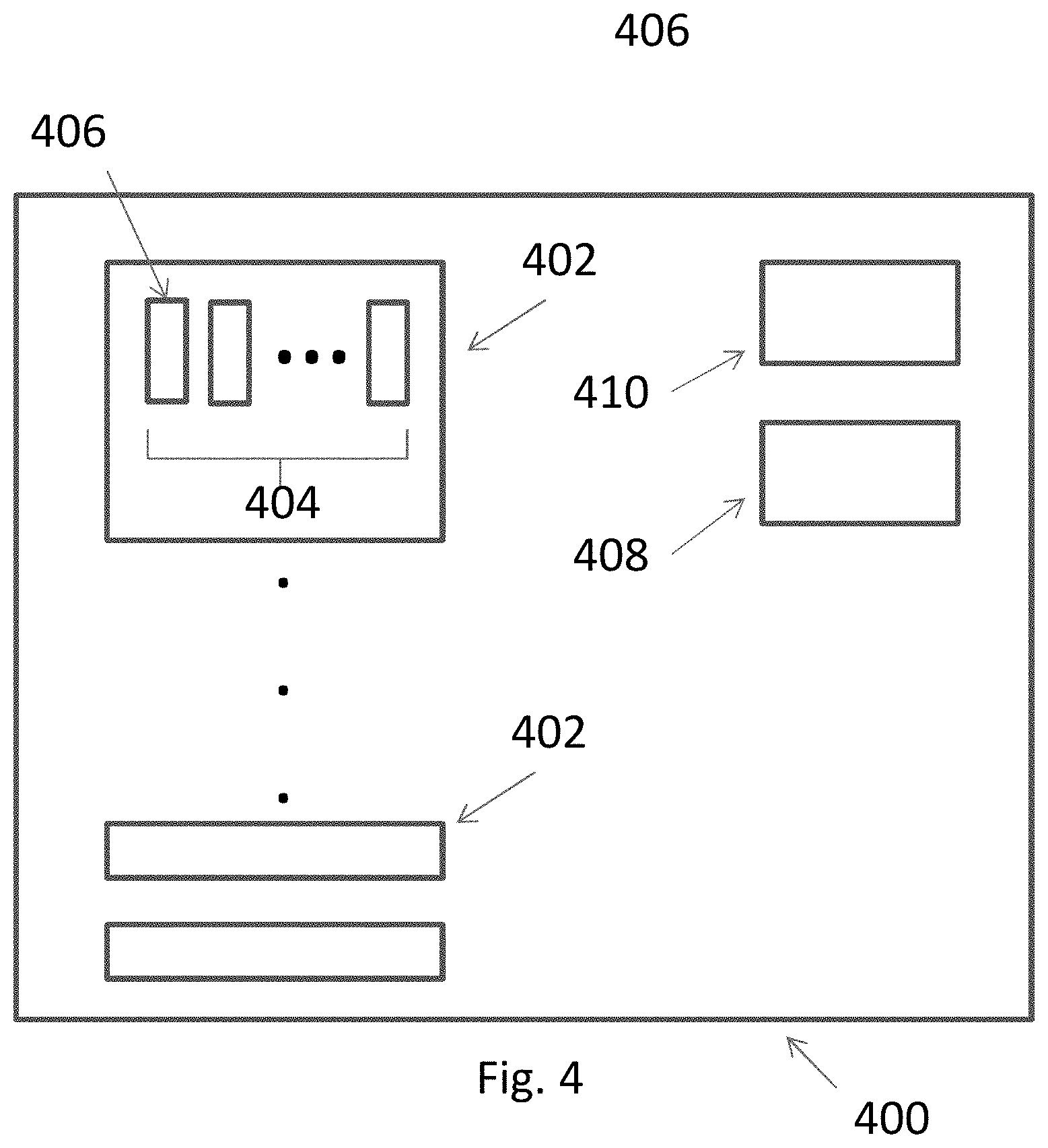

[0120] A representation of a processor chip 400 which can practice the paradigms discussed herein is shown in FIG. 4. Processor chip 400 includes one or more cores 402, each core having a data path 404 with multiple ALUs 406. Compiler functionality is provided by a compiler as software resident on appropriate hardware as is known in the art, and represented in FIG. 4 as compiler 408 running on processor chip 400. A scheduler 410 will implement the compiled instructions on the ALUs. Processor chip 400 has a clock cycle and corresponding clock speed consistent with that described above, in that it guarantees local communication but not global communication. The invention is not limited to this particular chip layout, and other layouts could be used, and the various operations distributed as appropriate within those layouts.

[0121] As discussed above, the improved clock speeds are based on the guarantee that an ALU will receive and select as inputs from whatever local producer provided them. However, inevitably not every instruction can be performed locally, in that for whatever reason a particular consumer ALU needs a result from a non-local producer. A single clock cycle as set for local communication does not provide enough time to guarantee that the input from a non-local producer will arrive at the consumer ALU in time to allow for processing.

[0122] Some embodiments of the instant invention may compensate for this by delaying processing by the consumer ALU for as many clock cycles as needed until the input has enough time to reach the consumer ALU. Thus, where the prior art processor used a single clock cycle for the global communication and the processing, a processor of the instant embodiment uses multiple separate clock cycles: at least one for the non-local communication and at least one for the processing once the inputs are received.

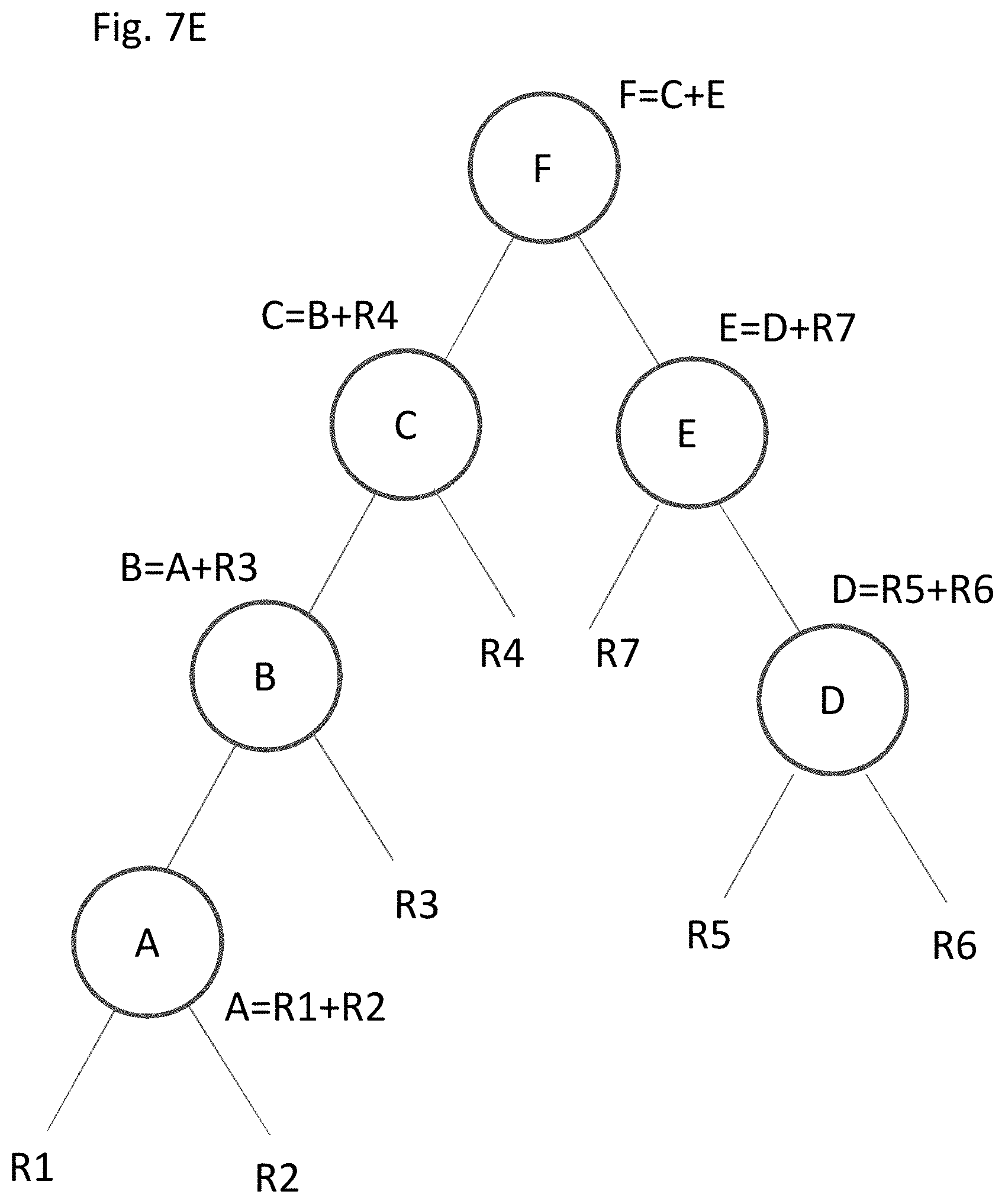

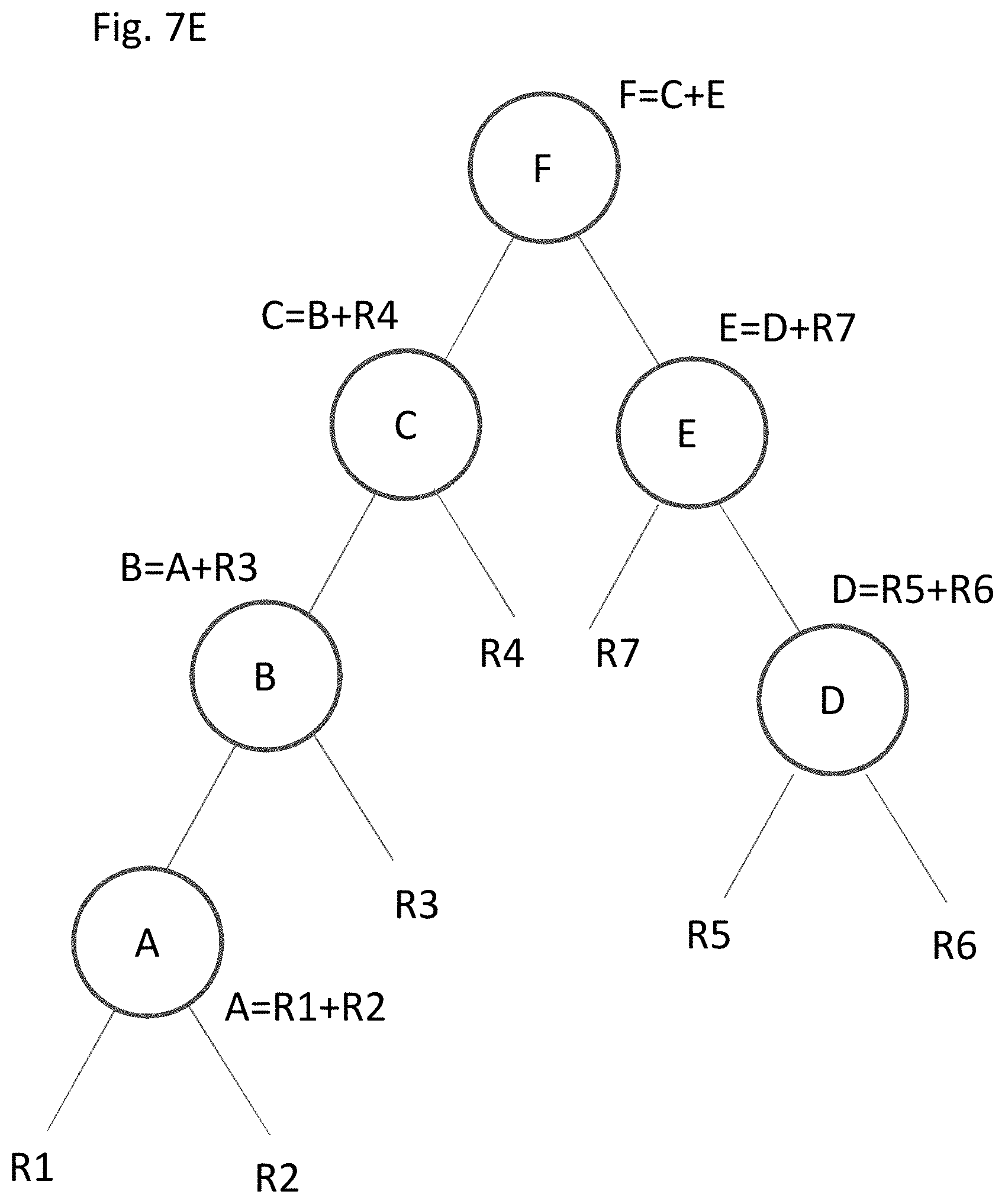

[0123] This can be illustrated with respect to Instruction Set 2, which includes five instruction operations:

[0124] 1: A=R1+R2

[0125] 2: B=A+R3

[0126] 3: D=R5+R6

[0127] 4: E=D+R7

[0128] 5: F=B+E

[0129] In the foregoing example, instruction operations A and B are in a dependent relationship, and each can be performed at a local level (e.g., by ALU 208 alone if that was the definition of local), within a single clock cycle. Instruction operations D and E are also in a dependent relationship, and each can be performed locally at a different ALU, (e.g. ALU 214). Thus four of the five instructions of Instruction Set 2 can be performed within local ALUs, all within a range of local communication.

[0130] This is not the case for instruction operation F. Since instruction operation F is dependent upon the outcomes of instruction operations B and E, in theory instruction operation F could be processed locally where A-B occurs, locally where D-E occurs, or at a new ALU entirely (e.g., ALU 212). However, execution of instruction operations B and E occurs at ALUs too far apart to guarantee that the results of both instruction operations B and E could reach the ALU assigned to instruction operation F in time to process instruction operation F within the same clock cycle.

[0131] For example, if instruction operation F were performed in the same locality as instruction operations A-B (ALU 208), then the result of instruction operation B is locally available, but the result of instruction operation E (from ALU 214) is not. Execution of instruction operation F thus requires a non-local communication. As discussed above, the clock cycle is not long enough to guarantee non-local communication, in that the clock cycle is not long enough to guarantee that the result of instruction operation E will reach ALU 208 from ALU 214 in time to be processed.

[0132] To account for this need for an instance of non-local communication, processor 400 may insert an additional instruction into Instruction Set 2, which is a delay instruction in which the execution of instruction operation F is delayed by one clock cycle. The processor will thus assign the ALUs as a schedule of six instruction operations, rather than five:

[0133] 1: A=R1+R2

[0134] 2: B=A+R3

[0135] 3: D=R5+R6

[0136] 4: E=D+R7

[0137] 5: WAIT (bubble)

[0138] 6: F=B+E

[0139] The addition of the inserted instruction operation provides an additional clock cycle of time for the result of instruction operations E and/or B to travel globally to reach a destination ALU. For example, using the metrics as discussed above with respect to Table 1, the maximum time needed to get from one ALU to another in the data path is about 120 ps. A single clock cycle of the processor is 160 ps. Since the clock cycle of 160 ps is more than the maximum travel time of 120 ps, delaying execution of instruction operation F by one clock cycle will guarantee that the results of instruction operations E and B will have enough time to reach the consuming ALU to process instruction operation F. If for some reason it was not enough time (e.g., the maximum travel time was longer than the clock cycle), then one or more additional delay steps could be inserted until enough time was provided.
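The delay insertion described above can be sketched as a simple pass over a serial list of instruction operations. This is an illustrative sketch only, not the scheduler of the disclosure: it assumes one instruction operation per step, a single row of ALUs ordered by reference numeral, register inputs that are always locally available, and a delay count equal to the maximum global travel time divided by the clock cycle, rounded up. All names (`ALU_ORDER`, `is_local`, `insert_bubbles`) are hypothetical.

```python
import math

# Assumption: ALUs sit in one row, ordered by reference numeral.
ALU_ORDER = [204, 206, 208, 210, 212, 214]


def is_local(producer, consumer, radius=1):
    """True if the consumer ALU is within `radius` positions of the producer."""
    return abs(ALU_ORDER.index(producer) - ALU_ORDER.index(consumer)) <= radius


def insert_bubbles(ops, radius=1, t_global_ps=120, cycle_ps=160):
    """ops: list of (name, assigned ALU, names of producer operations).

    Returns the schedule with WAIT steps placed before any operation
    that needs a result from a non-local producer.
    """
    placed, schedule = {}, []
    for name, alu, producers in ops:
        if any(not is_local(placed[p], alu, radius) for p in producers):
            # Enough whole cycles to cover the worst-case global travel time.
            schedule += ["WAIT"] * math.ceil(t_global_ps / cycle_ps)
        placed[name] = alu
        schedule.append(name)
    return schedule


# Instruction Set 2 with A-B on ALU 208, D-E on ALU 214, and F on ALU 208.
instruction_set_2 = [
    ("A", 208, []), ("B", 208, ["A"]),
    ("D", 214, []), ("E", 214, ["D"]),
    ("F", 208, ["B", "E"]),
]
print(insert_bubbles(instruction_set_2))
```

With a 160 ps clock cycle a single WAIT suffices; if the clock cycle were shorter than the 120 ps travel time (for example `cycle_ps=100`), the same sketch would insert two.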

[0140] Despite the additional clock cycle, the entire process occurs faster than the prior art. In the prior art processor using the metrics discussed above in Table 1, the above five steps 1-5 would be executed on a 3.85 GHz processor at 260 ps per step, for a total of 1300 ps to execute the five instruction operations. In the processor of the above embodiment, the six instruction operations (five of the original plus one delay step) would be executed on a 6.25 GHz processor at 160 ps per step, for a total of 960 ps. The processor of the instant embodiment executes the overall instruction operation set A-F at a higher clock speed (+62%) and in less time (-26%) than the prior art paradigm.
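The timing comparison above, and how many delay steps the shorter clock cycle could absorb before the advantage is lost, can be checked with a brief sketch. It assumes the serial one-operation-per-step model of this example and the 260 ps and 160 ps cycle times of Table 1; the function names are hypothetical.

```python
def total_time_ps(n_steps, cycle_ps):
    """Total time for a serial schedule of n_steps at the given cycle time."""
    return n_steps * cycle_ps


def max_bubbles_still_faster(n_ops, prior_cycle_ps=260, local_cycle_ps=160):
    """Largest number of delay steps for which the local-communication
    schedule remains strictly faster than the prior art schedule."""
    budget = total_time_ps(n_ops, prior_cycle_ps)
    bubbles = 0
    while total_time_ps(n_ops + bubbles + 1, local_cycle_ps) < budget:
        bubbles += 1
    return bubbles


prior_total = total_time_ps(5, 260)   # five operations, no delay step
local_total = total_time_ps(6, 160)   # five operations plus one delay step
saving_pct = (1 - local_total / prior_total) * 100

print(prior_total, local_total, f"{saving_pct:.0f}% less time")
print(max_bubbles_still_faster(5))
```

Under these assumptions the benefit depends on how often non-local communication is needed, which is consistent with the processor seeking to assign dependent instruction operations to local ALUs where possible.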