Electronic Device For Performing Task Including Call In Response To User Utterance And Operation Method Thereof

KIM; Kwangyoun; et al.

U.S. patent application number 16/534950 was filed with the patent office on August 7, 2019, and published on 2020-02-13, for an electronic device for performing a task including a call in response to a user utterance and an operation method thereof. The applicant listed for this patent is Samsung Electronics Co., Ltd. The invention is credited to Eunsu JEONG, Jihyun JUNG, Juyeoung KIM, Kwangyoun KIM, Woochan KIM, Yusic KIM, and Jaeeun SUH.

| Field | Value |
|---|---|
| Publication Number | 20200053219 |
| Application Number | 16/534950 |
| Family ID | 69406700 |
| Publication Date | 2020-02-13 |


| United States Patent Application | 20200053219 |
|---|---|
| Kind Code | A1 |
| KIM; Kwangyoun; et al. | February 13, 2020 |

ELECTRONIC DEVICE FOR PERFORMING TASK INCLUDING CALL IN RESPONSE TO USER UTTERANCE AND OPERATION METHOD THEREOF

Abstract

An electronic device includes: a microphone; a speaker; a touchscreen display; a communication circuit; at least one processor; and a memory storing instructions that, when executed, cause the at least one processor to: receive a first user input; identify a service provider and a detailed service; select a first menu corresponding to the detailed service from menu information; attempt to connect a call to the service provider; when the call to the service provider is connected, transmit one or more responses until reaching a step corresponding to the first menu; in response to reaching the first menu, determine whether an attendant is connected; in response to completion of connection to the attendant, output a notification indicating that the connection to the attendant has been completed; and in response to reception of a second user input for the output notification, display a screen for a call with the service provider.

| Inventors: | KIM; Kwangyoun; (Suwon-si, KR); KIM; Woochan; (Suwon-si, KR); KIM; Yusic; (Suwon-si, KR); KIM; Juyeoung; (Suwon-si, KR); SUH; Jaeeun; (Suwon-si, KR); JEONG; Eunsu; (Suwon-si, KR); JUNG; Jihyun; (Suwon-si, KR) |
|---|---|
| Applicant: | Samsung Electronics Co., Ltd. |
| Family ID: | 69406700 |
| Appl. No.: | 16/534950 |
| Filed: | August 7, 2019 |
| Current U.S. Class: | 1/1 |
| Current CPC Class: | G10L 15/22 20130101; G06F 3/0482 20130101; G10L 17/08 20130101; G06F 3/04883 20130101; H04M 3/5166 20130101; G10L 17/18 20130101; G10L 2015/223 20130101; G06F 3/0488 20130101; G10L 17/00 20130101 |
| International Class: | H04M 3/51 20060101 H04M003/51; G10L 17/00 20060101 G10L017/00; G06F 3/0482 20060101 G06F003/0482; G06F 3/0488 20060101 G06F003/0488 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Aug 7, 2018 | KR | 10-2018-0091964 |

Claims

1. An electronic device comprising: a microphone; a speaker; a touchscreen display; a communication circuit; at least one processor operatively connected to the microphone, the speaker, the touchscreen display, and the communication circuit; and a memory operatively connected to the processor and storing instructions that, when executed by the at least one processor, cause the at least one processor to: receive a first user input through the touchscreen display or the microphone; identify a service provider and a detailed service based on at least a part of the first user input; select a first menu corresponding to the detailed service from menu information comprising one or more detailed services provided by the service provider; attempt to connect a call to the service provider using the communication circuit; based on the call to the service provider being connected, control the communication circuit to transmit one or more responses until reaching a step corresponding to the first menu in response to one or more voice prompts provided by the service provider; in response to reaching the first menu, determine whether an attendant is connected based on at least one voice transmitted by the service provider; in response to completion of connection to the attendant, output a notification indicating that the connection to the attendant has been completed, using the speaker or the touchscreen display; and in response to reception of a second user input for the output notification, display a screen for a call with the service provider.

2. The electronic device of claim 1, wherein, to cause the at least one processor to select the first menu corresponding to the detailed service from the menu information comprising one or more detailed services provided by the service provider, the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to: request the menu information from an external server using the communication circuit; and receive the menu information from the external server using the communication circuit.

3. The electronic device of claim 2, wherein: the menu information comprises information of one or more services provided by the service provider in a tree structure, and the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to select, as the first menu, one of one or more services located in a leaf node in the tree structure in response to the detailed service.

4. The electronic device of claim 3, wherein the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to: in response to the detailed service being matched to a second menu located in an intermediate node in the tree structure, display, via the touchscreen display, information of a parent node or a child node of the second menu; and based on a third user input received in response to the displayed information, select the first menu located in the leaf node in the tree structure in response to the detailed service.

5. The electronic device of claim 1, wherein, to cause the at least one processor to determine whether the attendant is connected based on the at least one voice transmitted by the service provider, the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to: by using a determination model obtained by learning an attendant voice and a first voice among the at least one voice, perform comparison to determine whether the first voice is similar to the determination model by a threshold value or greater.

6. The electronic device of claim 1, wherein, to cause the at least one processor to determine whether the attendant is connected based on the at least one voice transmitted by the service provider, the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to: extract at least one audio characteristic from a first voice, and perform comparison to determine, using a determination model obtained by learning the at least one audio characteristic extracted from voice of the attendant, whether the first voice is similar to the determination model by a threshold value or greater.

7. The electronic device of claim 1, wherein, to cause the at least one processor to determine whether the attendant is connected based on the at least one voice transmitted by the service provider, the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to: convert a first voice of the at least one voice into text; determine a correlation between the converted text and a determination model obtained by learning corpus for designated greetings; and based on the correlation including a value equal to or greater than a threshold value, determine that the first voice corresponds to voice of the attendant.

8. The electronic device of claim 1, wherein, to cause the at least one processor to control the communication circuit to transmit one or more responses until reaching a step corresponding to the first menu in response to one or more voice prompts provided by the service provider, the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to: control the communication circuit to receive, from the service provider, a voice prompt requesting user information of the electronic device; and control the communication circuit to transmit, to the service provider, a response generated based on the user information of the electronic device.

9. The electronic device of claim 8, wherein the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to: in response to selection of the first menu, check whether the service provider requests the user information of the electronic device in order to provide the first menu; and in response to the service provider requesting the user information of the electronic device, acquire the user information before attempting call connection to the service provider.

10. The electronic device of claim 1, wherein the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to execute the call connection in a background until the second user input is received.

11. The electronic device of claim 1, wherein the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to: extract at least one keyword related to a service provider and a detailed service from the first user input; select a service provider corresponding to the at least one keyword from among multiple service providers, wherein each of the multiple service providers provides one or more services via call connection; and acquire an identification number for call connection to the service provider.

12. The electronic device of claim 1, wherein the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to, after outputting a notification indicating that the connection to the attendant has been completed, before receiving the second user input for the output notification, transmit, to the service provider, a message requesting maintenance of connection to the attendant of the service provider.

13. The electronic device of claim 1, wherein the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to: estimate a time required for connection to the attendant of the service provider; and provide information of the estimated time by using the touchscreen display.

14. An electronic device comprising: a speaker; a touchscreen display; a communication circuit; at least one processor operatively connected to the speaker, the display, and the communication circuit; and a memory operatively connected to the at least one processor and storing instructions that, when executed by the at least one processor, cause the processor to: execute a calling application; attempt to connect a call to a service provider using the communication circuit; during call connection to the service provider, receive a first user input requesting a standby mode for connection to an attendant of the service provider, wherein the calling application is executed in a background in the standby mode; in response to the first user input, execute the calling application in the standby mode; while the calling application is being executed in the standby mode, determine whether the attendant is connected based on a voice transmitted by the service provider; in response to completion of connection to the attendant, output a notification indicating that the connection to the attendant has been completed using the speaker or the touchscreen display; and in response to reception of a second user input for the output notification, terminate the standby mode.

15. The electronic device of claim 14, wherein the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to: display an icon indicating a function to provide the standby mode via the touchscreen display; and in response to an input of selecting the icon, switch a mode of the calling application to the standby mode.

16. The electronic device of claim 14, wherein, to cause the at least one processor to determine whether the attendant is connected based on at least one voice transmitted by the service provider, the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to: by using a determination model obtained by learning an attendant voice and a first voice from among the at least one voice, perform comparison to determine whether the first voice is similar to the determination model by a threshold value or greater.

17. The electronic device of claim 14, wherein, to cause the at least one processor to determine whether the attendant is connected based on at least one voice transmitted by the service provider, the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to: extract at least one audio characteristic from a first voice, and perform comparison to determine, using a determination model obtained by learning the at least one audio characteristic extracted from voice of the attendant, whether the first voice is similar to the determination model by a threshold value or greater.

18. The electronic device of claim 14, wherein, to cause the at least one processor to determine whether the attendant is connected based on at least one voice signal transmitted by the service provider, the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to: convert a first voice of the at least one voice into text; determine a correlation between the converted text and a determination model obtained by learning corpus for designated greetings; and based on the correlation including a value equal to or greater than a threshold value, determine that the first voice corresponds to voice of the attendant.

19. The electronic device of claim 14, wherein the memory stores instructions that, when executed by the at least one processor, cause the at least one processor to, while the calling application is being executed in a standby mode: refrain from displaying an execution screen of the calling application on the touchscreen display, and restrict a function of the speaker or microphone.

20. An electronic device comprising: a communication circuit; at least one processor operatively connected to the communication circuit; and a memory operatively connected to the at least one processor and storing instructions that, when executed by the at least one processor, cause the processor to: control the communication circuit to receive a request for a call connection to a service provider from an external electronic device, wherein the request comprises user information of the external electronic device and at least one piece of keyword information related to a detailed service and the service provider; in response to receiving the request, acquire an identification number for the call connection to the service provider; select a first menu corresponding to the detailed service included in the request from menu information comprising one or more detailed services provided by the service provider; control the communication circuit to attempt to connect a call between the service provider and the external electronic device; based on the call to the service provider being connected, control the communication circuit to transmit one or more responses until reaching a step corresponding to the first menu in response to one or more voice prompts provided by the service provider; in response to reaching the first menu, determine whether an attendant is connected based on at least one voice transmitted by the service provider; in response to completion of connection to the attendant, provide the external electronic device with information indicating that the connection to the attendant of the service provider has been completed using the communication circuit; and in response to reception of a message indicating that the call between the external electronic device and the service provider has been connected, terminate the call connection to the service provider.
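Claims 2 through 4 describe menu information arranged as a tree, with the first menu taken from a leaf node and the user asked to disambiguate when the detailed service matches only an intermediate node. The following is a minimal Python sketch of that selection under stated assumptions: the `MenuNode` structure, the per-node DTMF `digit`, the substring matching, and the sample tree are hypothetical illustrations, not the disclosed data format.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class MenuNode:
    """Hypothetical IVR menu node; `digit` is the key pressed to enter it from its parent."""
    label: str
    digit: str = ""
    children: List["MenuNode"] = field(default_factory=list)

    def is_leaf(self) -> bool:
        return not self.children


def find_path(root: MenuNode, service: str) -> Optional[List[MenuNode]]:
    """Depth-first search for the first node whose label contains the detailed service."""
    stack = [(child, [child]) for child in reversed(root.children)]
    while stack:
        node, path = stack.pop()
        if service.lower() in node.label.lower():
            return path
        for child in reversed(node.children):
            stack.append((child, path + [child]))
    return None


def select_first_menu(root: MenuNode, service: str) -> Tuple[Optional[str], List[str]]:
    """Return (digit sequence to a leaf menu, []) or (None, child labels to show the user)."""
    path = find_path(root, service)
    if path is None:
        return None, []                      # no menu matches the detailed service
    target = path[-1]
    if target.is_leaf():
        return "".join(node.digit for node in path), []
    # Intermediate node: offer its children so a further user input can pick a leaf.
    return None, [child.label for child in target.children]


# Hypothetical menu tree for an imaginary card company.
SAMPLE_TREE = MenuNode("root", children=[
    MenuNode("card services", "1", [
        MenuNode("card loss report", "1"),
        MenuNode("card issuance", "2"),
    ]),
    MenuNode("loan services", "2", [MenuNode("loan inquiry", "1")]),
])
```

For example, `select_first_menu(SAMPLE_TREE, "card loss report")` yields the key sequence `"11"`, whereas `"card services"` matches only an intermediate node, so the sketch returns its two child labels for the user to choose from.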

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is based on and claims priority under 35 U.S.C. § 119 of Korean Patent Application No. 10-2018-0091964, filed on Aug. 7, 2018, in the Korean Intellectual Property Office, the disclosure of which is incorporated herein by reference in its entirety.

BACKGROUND

1. Field

[0002] Various embodiments relate to an electronic device that performs a task including a call in response to a user utterance, and an operation method thereof.

2. Description of the Related Art

[0003] As technology has developed, technologies have emerged that receive an utterance from a user and, via a voice recognition service and a voice recognition interface, provide various content services based on the user's intent or perform specific functions within an electronic device. Linguistic understanding is a technology for recognizing, applying, and processing human language and characters, and includes natural language processing, machine translation, dialogue systems, query response, speech recognition/synthesis, and the like.

[0004] Automatic Speech Recognition (ASR) may receive an input user voice, extract an acoustic feature vector from it, and generate text corresponding to the input voice. Via ASR, an electronic device may receive natural language directly input by a user. Natural language is the language commonly used by humans, and a machine cannot directly understand it without additional analysis. In general, Natural Language Understanding (NLU) methods in a speech recognition system may be classified into two types. The first understands spoken language via a passive semantic-level grammar, and the second understands a word string in relation to a semantic structure defined on the basis of a language model generated by a statistical method.
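To make the feature-extraction step of ASR concrete, the following Python sketch splits a signal into short overlapping frames and computes a two-element feature vector (log energy and zero-crossing rate) per frame. It is a deliberately simplified stand-in: practical recognizers more commonly use log-mel filterbank or MFCC features, and the 16 kHz sample rate and 25 ms/10 ms framing are assumptions rather than values from this disclosure.

```python
import math
from typing import List


def frame_features(samples: List[float], frame_len: int = 400,
                   hop: int = 160) -> List[List[float]]:
    """Return one [log_energy, zero_crossing_rate] vector per frame.

    With an assumed 16 kHz sample rate, 400 and 160 samples correspond to
    25 ms frames advanced by 10 ms.
    """
    features: List[List[float]] = []
    for start in range(0, len(samples) - frame_len + 1, hop):
        frame = samples[start:start + frame_len]
        energy = sum(x * x for x in frame) / frame_len
        log_energy = math.log(energy + 1e-10)   # floor avoids log(0) on silence
        # Count sign changes between consecutive samples.
        crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0))
        features.append([log_energy, crossings / (frame_len - 1)])
    return features
```

With these defaults, a one-second signal at the assumed 16 kHz rate yields 98 feature vectors; a recognizer would then map such a sequence of vectors to text.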

[0005] An electronic device may provide various forms of voice-based services to a user via the described voice recognition and natural language processing.

SUMMARY

[0006] A service provider that provides various services through a call with an attendant may, after a customer calls to use a service, ask the customer to press buttons to select a desired service or may request user authentication. This procedure may take an excessive amount of time. In addition, waiting for connection to the attendant may take from several minutes to several tens of minutes, which is an excessive wait for a customer who simply wants to use the service.

[0007] An electronic device according to the disclosure may reduce a user's waiting time when using a service provided by a service provider and may allow the user to use the service smoothly. The electronic device may process an utterance of the user so as to, on behalf of the user, make a call to the service provider, press a button for selecting a desired service, and determine whether an attendant is connected, and may then provide a notification to the user. The electronic device may also enable the user to use other functions of the electronic device until the service provider's attendant is connected.
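Pressing buttons on the user's behalf can be pictured as answering each voice prompt with a DTMF string. The Python sketch below is hypothetical: prompts are assumed to be already transcribed, `menu_digits` is assumed to be the key sequence leading to the selected menu, and a prompt is treated as a request for user information whenever it mentions a field name in `user_info` (compare claim 8).

```python
from typing import Dict, Iterable, List


def respond_to_prompts(prompts: Iterable[str], menu_digits: str,
                       user_info: Dict[str, str]) -> List[str]:
    """Return the DTMF strings sent, one per prompt, until the target menu is reached.

    Hypothetical rules: a prompt mentioning a user_info key (keys in lowercase) is
    answered with that value plus '#'; any other prompt is answered with the next
    digit of menu_digits. Once all digits are sent, the first menu has been reached.
    """
    sent: List[str] = []
    remaining = list(menu_digits)
    for prompt in prompts:
        if not remaining:
            break                                    # step for the first menu reached
        text = prompt.lower()
        field_name = next((key for key in user_info if key in text), None)
        if field_name is not None:
            sent.append(user_info[field_name] + "#")  # e.g. authentication data
        else:
            sent.append(remaining.pop(0))            # next menu-selection key
    return sent
```

For prompts asking for card services, then a date of birth, then the lost-card option, the sketch with `menu_digits="11"` sends `"1"`, the stored date followed by `#`, and `"1"`, and ignores any later hold message.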

[0008] An electronic device according to various embodiments may include: a microphone; a speaker; a touchscreen display; a communication circuit; at least one processor operatively connected to the microphone, the speaker, the touchscreen display, and the communication circuit; and a memory operatively connected to the processor, wherein the memory stores instructions configured to, when executed, cause the at least one processor to: receive a first user input through the touchscreen display or the microphone; identify a service provider and a detailed service based on at least a part of the first user input; select a first menu corresponding to the detailed service from menu information including one or more detailed services provided by the service provider; attempt to connect a call to the service provider by using the communication circuit; when the call to the service provider is connected, transmit one or more responses until reaching a step corresponding to the first menu, in response to one or more voice prompts provided by the service provider; in response to reaching the first menu, determine whether an attendant is connected, based on at least one voice transmitted by the service provider; in response to completion of connection to the attendant, output a notification indicating that connection to the attendant has been completed, using the speaker or the touchscreen display; and in response to reception of a second user input for the output notification, display a screen for a call with the service provider.
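Claim 7 describes deciding that an attendant has answered when the transcribed voice correlates with a model learned from a corpus of designated greetings by at least a threshold. As a simplified stand-in for that learned model, the Python sketch below scores the transcript against each greeting with cosine similarity over word counts; the greeting list and the 0.5 threshold are illustrative assumptions.

```python
import math
import re
from collections import Counter
from typing import Iterable


def bag_of_words(text: str) -> Counter:
    """Lowercase word counts; punctuation is dropped."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity of two word-count vectors (0.0 if either is empty)."""
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(c * c for c in a.values())) * math.sqrt(sum(c * c for c in b.values()))
    return dot / norm if norm else 0.0


def is_attendant(transcript: str, greetings: Iterable[str], threshold: float = 0.5) -> bool:
    """True if the transcript is at least `threshold` similar to any designated greeting."""
    words = bag_of_words(transcript)
    best = max((cosine(words, bag_of_words(g)) for g in greetings), default=0.0)
    return best >= threshold


# Illustrative greeting corpus (assumed, not taken from the disclosure).
GREETINGS = [
    "Hello, thank you for calling. How may I help you?",
    "Good morning, this is customer service speaking.",
]
```

A live greeting such as "Hello, thank you for calling. My name is Kim, how may I help you?" scores about 0.87 against the first entry and passes, while a recorded "All of our agents are busy" announcement shares no words with either greeting and is rejected.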

[0009] An electronic device according to various embodiments may include: a speaker; a touchscreen display; a communication circuit; at least one processor operatively connected to the speaker, the display, and the communication circuit; and a memory operatively connected to the processor, wherein the memory stores instructions configured to, when executed, cause the at least one processor to: execute a calling application; attempt to connect a call to a service provider using the communication circuit; during call connection to the service provider, receive a first user input to request a standby mode for connection to an attendant of the service provider, the calling application being executed in the background in the standby mode; in response to the first user input, execute the calling application in the standby mode; while the calling application is being executed in the standby mode, determine whether the attendant is connected, based on a voice transmitted by the service provider; in response to completion of connection to the attendant, output a notification indicating that connection to the attendant has been completed, using the speaker or the touchscreen display; and in response to reception of a second user input for the output notification, terminate the standby mode.
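The standby-mode behavior described above amounts to a small state machine. This Python sketch is an illustration under assumptions: the `Mode` names, the notification text, and the boolean `is_attendant` input (standing in for whatever voice classifier is used) are hypothetical.

```python
from enum import Enum, auto
from typing import List


class Mode(Enum):
    FOREGROUND = auto()       # call screen visible
    STANDBY = auto()          # calling application running in the background
    ATTENDANT_READY = auto()  # attendant detected, notification output


class CallSession:
    """Hypothetical model of the calling application's standby-mode transitions."""

    def __init__(self) -> None:
        self.mode = Mode.FOREGROUND
        self.notifications: List[str] = []

    def request_standby(self) -> None:
        """First user input: move the calling application to the background."""
        if self.mode is Mode.FOREGROUND:
            self.mode = Mode.STANDBY

    def on_provider_voice(self, is_attendant: bool) -> None:
        """Called per voice segment; `is_attendant` comes from an external classifier."""
        if self.mode is Mode.STANDBY and is_attendant:
            self.mode = Mode.ATTENDANT_READY
            self.notifications.append("Attendant connected")

    def on_notification_selected(self) -> None:
        """Second user input: terminate the standby mode and show the call screen."""
        if self.mode is Mode.ATTENDANT_READY:
            self.mode = Mode.FOREGROUND
```

Guarding each transition on the current mode keeps stray events harmless; for example, hold music classified as non-attendant leaves the session in standby.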

[0010] An electronic device according to various embodiments may include: a communication circuit; at least one processor operatively connected to the communication circuit; and a memory operatively connected to the processor, wherein the memory stores instructions configured to, when executed, cause the at least one processor to: receive a request for a call connection to a service provider from an external electronic device, the request including user information of the external electronic device and at least one piece of keyword information related to a detailed service and the service provider; in response to the request, acquire an identification number for the call connection to the service provider; select a first menu corresponding to the detailed service included in the request from menu information including one or more detailed services provided by the service provider; attempt to connect a call between the external electronic device and the service provider; when the call to the service provider is connected, transmit one or more responses until reaching a step corresponding to the first menu, in response to one or more voice prompts provided by the service provider; in response to reaching the first menu, determine whether an attendant is connected, based on at least one voice transmitted by the service provider; in response to completion of connection to the attendant, provide the external electronic device with information indicating that connection to the attendant of the service provider has been completed, using the communication circuit; and in response to reception of a message indicating that the call between the external electronic device and the service provider has been connected, terminate the call connection to the service provider.
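On the server side, the keyword information in the request must be mapped to a service provider and its identification number before a call can be attempted. A minimal Python sketch, assuming a hypothetical in-memory directory with placeholder numbers and a simple keyword-overlap score (a deployed system would presumably use a richer matcher):

```python
from typing import Dict, Iterable, List, Optional, Tuple

# Hypothetical directory: provider -> (placeholder identification number, lowercase keywords).
DIRECTORY: Dict[str, Tuple[str, List[str]]] = {
    "Example Card Co.": ("000-0000-0001", ["card", "credit card", "loss"]),
    "Example Telecom": ("000-0000-0002", ["phone bill", "mobile", "internet"]),
}


def resolve_provider(keywords: Iterable[str]) -> Optional[Tuple[str, str]]:
    """Return (provider, identification number) with the most keyword matches, or None."""
    wanted = [k.lower() for k in keywords]   # materialize so generators can be reused
    best: Optional[Tuple[str, str]] = None
    best_score = 0
    for name, (number, provider_keywords) in DIRECTORY.items():
        score = sum(1 for k in wanted if k in provider_keywords)
        if score > best_score:               # ties keep the earlier entry
            best, best_score = (name, number), score
    return best
```

Requiring a strictly positive score means a request whose keywords match no provider returns `None` instead of dialing an arbitrary number.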

[0011] According to various embodiments, an electronic device capable of attempting to connect a call to an external electronic device in response to a user utterance and an operation method thereof may be provided.

[0012] An electronic device according to various embodiments can perform at least one action on behalf of a user during a call to a connected external electronic device, in response to a user utterance.

[0013] An electronic device according to various embodiments can improve a user experience by connecting a call to a call center on behalf of a user of the electronic device and executing a calling application in the background until an attendant of the call center is connected.

[0014] Before undertaking the DETAILED DESCRIPTION below, it may be advantageous to set forth definitions of certain words and phrases used throughout this patent document: the terms "include" and "comprise," as well as derivatives thereof, mean inclusion without limitation; the term "or," is inclusive, meaning and/or; the phrases "associated with" and "associated therewith," as well as derivatives thereof, may mean to include, be included within, interconnect with, contain, be contained within, connect to or with, couple to or with, be communicable with, cooperate with, interleave, juxtapose, be proximate to, be bound to or with, have, have a property of, or the like; and the term "controller" means any device, system or part thereof that controls at least one operation, such a device may be implemented in hardware, firmware or software, or some combination of at least two of the same. It should be noted that the functionality associated with any particular controller may be centralized or distributed, whether locally or remotely.

[0015] Moreover, various functions described below can be implemented or supported by one or more computer programs, each of which is formed from computer readable program code and embodied in a computer readable medium. The terms "application" and "program" refer to one or more computer programs, software components, sets of instructions, procedures, functions, objects, classes, instances, related data, or a portion thereof adapted for implementation in a suitable computer readable program code. The phrase "computer readable program code" includes any type of computer code, including source code, object code, and executable code. The phrase "computer readable medium" includes any type of medium capable of being accessed by a computer, such as read only memory (ROM), random access memory (RAM), a hard disk drive, a compact disc (CD), a digital video disc (DVD), or any other type of memory. A "non-transitory" computer readable medium excludes wired, wireless, optical, or other communication links that transport transitory electrical or other signals. A non-transitory computer readable medium includes media where data can be permanently stored and media where data can be stored and later overwritten, such as a rewritable optical disc or an erasable memory device.

[0016] Definitions for certain words and phrases are provided throughout this patent document. Those of ordinary skill in the art should understand that in many, if not most instances, such definitions apply to prior, as well as future uses of such defined words and phrases.

BRIEF DESCRIPTION OF THE DRAWINGS

[0017] The above and other aspects, features, and advantages of the disclosure will be more apparent from the following detailed description taken in conjunction with the accompanying drawings, in which:

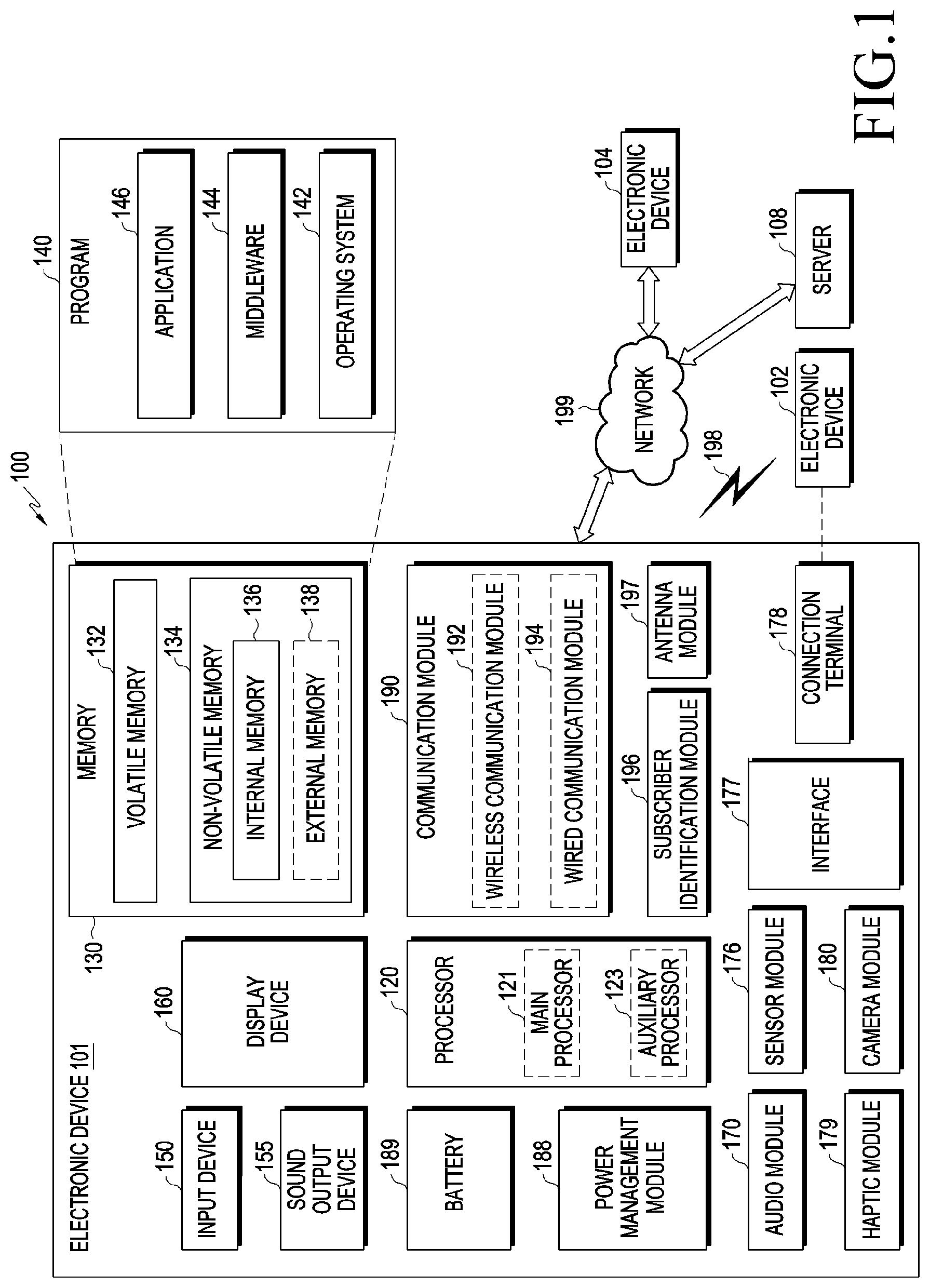

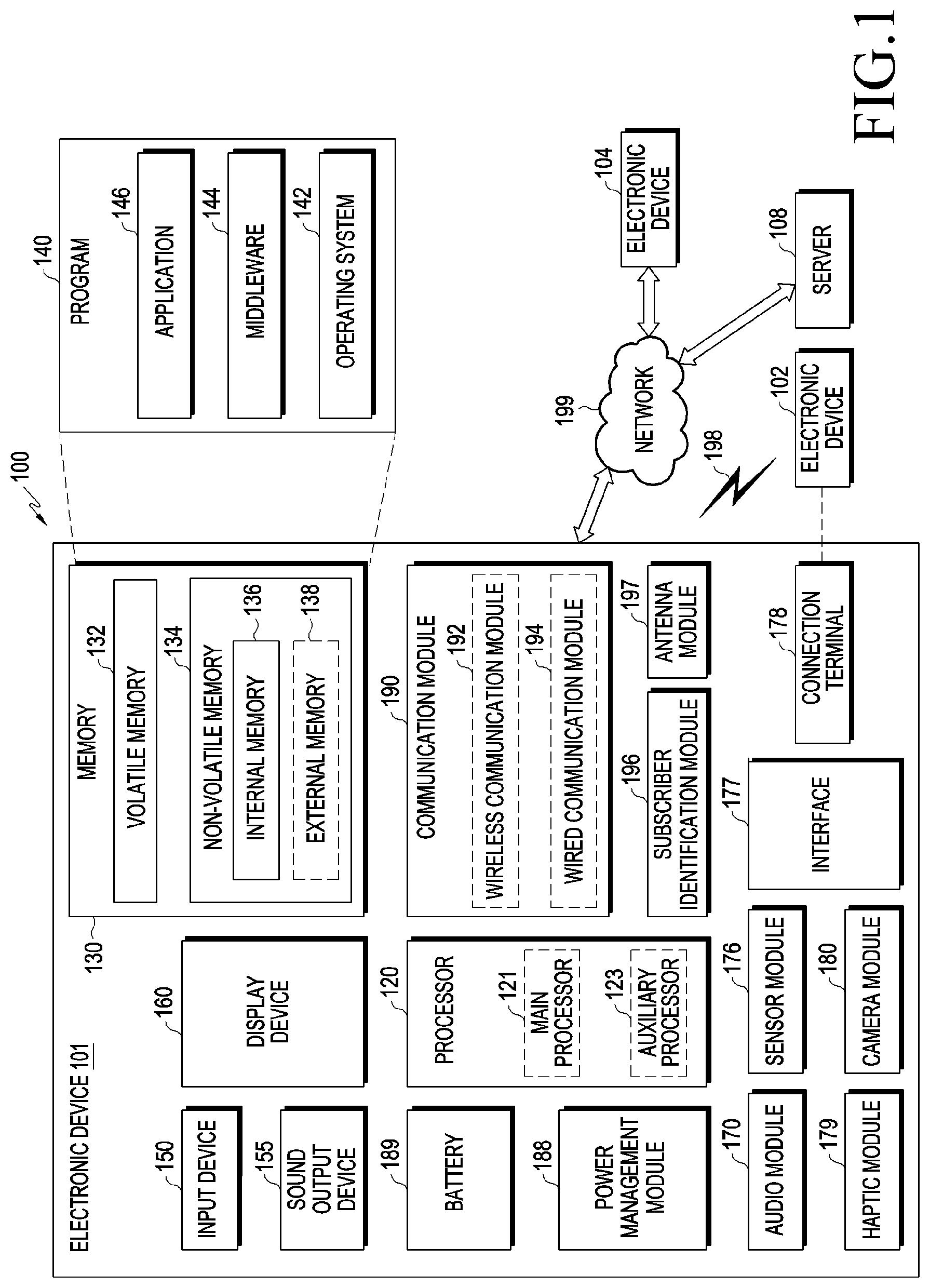

[0018] FIG. 1 illustrates a block diagram of an electronic device in a network environment according to various embodiments;

[0019] FIG. 2 illustrates a flowchart for explaining an operation method of the electronic device according to various embodiments;

[0020] FIG. 3 illustrates conceptual diagrams for explaining a procedure of connecting a call to an external electronic device on the basis of a user utterance according to various embodiments;

[0021] FIG. 4 illustrates a flowchart for explaining the operation of the electronic device and a service provider according to various embodiments;

[0022] FIG. 5 illustrates a tree structure for menu information provided by the service provider according to various embodiments;

[0023] FIG. 6 illustrates a flowchart for explaining an operation of selecting, by the electronic device, a menu corresponding to a detailed service from menu information provided by the service provider according to various embodiments;

[0024] FIG. 7 illustrates a conceptual diagram for explaining an operation of transmitting, by the electronic device, a response in response to one or more voice prompts provided by the service provider according to various embodiments;

[0025] FIG. 8 illustrates a flowchart for explaining an operation of determining, by the electronic device, whether an attendant is connected according to various embodiments;

[0026] FIG. 9 illustrates a flowchart for explaining an operation of determining, by the electronic device, whether an attendant is connected according to various embodiments;

[0027] FIG. 10A illustrates various conceptual diagrams for providing a notification, which indicates connection to the attendant, by the electronic device according to various embodiments;

[0028] FIG. 10B illustrates various conceptual diagrams for providing a notification, which indicates connection to the attendant, by the electronic device according to various embodiments;

[0029] FIG. 11 illustrates a flowchart for explaining an operation of checking user information of the electronic device according to various embodiments;

[0030] FIG. 12 illustrates a flowchart for explaining an operation method of the electronic device according to various embodiments;

[0031] FIG. 13 illustrates conceptual diagrams for explaining a standby mode of the electronic device to be connected to an attendant according to various embodiments;

[0032] FIG. 14 illustrates a flowchart for explaining an operation method of the electronic device according to various embodiments;

[0033] FIG. 15A illustrates a flowchart for explaining the operation of a server, the electronic device, and the service provider according to various embodiments;

[0034] FIG. 15B illustrates a flowchart for explaining the operation of the server, the electronic device, and the service provider according to various embodiments;

[0035] FIG. 16 illustrates conceptual diagrams for explaining information provision according to a user utterance by the electronic device according to various embodiments;

[0036] FIG. 17 is a diagram illustrating an integrated intelligence system according to various embodiments;

[0037] FIG. 18 is a block diagram illustrating a user terminal of the integrated intelligence system according to an embodiment;

[0038] FIG. 19 is a diagram illustrating execution of an intelligent app by the user terminal according to an embodiment;

[0039] FIG. 20 is a block diagram illustrating a server of the integrated intelligence system according to an embodiment;

[0040] FIG. 21 is a diagram illustrating a method of generating a path rule by a path Natural Language Understanding (NLU) module according to an embodiment;

[0041] FIG. 22 is a diagram illustrating collecting of a current state by a context module of a processor according to an embodiment;

[0042] FIG. 23 is a diagram illustrating management of user information by a persona module according to an embodiment; and

[0043] FIG. 24 is a block diagram illustrating a suggestion module according to an embodiment.

DETAILED DESCRIPTION

[0044] FIGS. 1 through 24, discussed below, and the various embodiments used to describe the principles of the present disclosure in this patent document are by way of illustration only and should not be construed in any way to limit the scope of the disclosure. Those skilled in the art will understand that the principles of the present disclosure may be implemented in any suitably arranged system or device.

[0045] FIG. 1 is a block diagram illustrating an electronic device 101 in a network environment 100 according to various embodiments. Referring to FIG. 1, the electronic device 101 in the network environment 100 may communicate with an electronic device 102 via a first network 198 (e.g., a short-range wireless communication network), or an electronic device 104 or a server 108 via a second network 199 (e.g., a long-range wireless communication network). According to an embodiment, the electronic device 101 may communicate with the electronic device 104 via the server 108. According to an embodiment, the electronic device 101 may include a processor 120, memory 130, an input device 150, a sound output device 155, a display device 160, an audio module 170, a sensor module 176, an interface 177, a haptic module 179, a camera module 180, a power management module 188, a battery 189, a communication module 190, a subscriber identification module (SIM) 196, or an antenna module 197. In some embodiments, at least one (e.g., the display device 160 or the camera module 180) of the components may be omitted from the electronic device 101, or one or more other components may be added in the electronic device 101. In some embodiments, some of the components may be implemented as single integrated circuitry. For example, the sensor module 176 (e.g., a fingerprint sensor, an iris sensor, or an illuminance sensor) may be implemented as embedded in the display device 160 (e.g., a display).

[0046] The processor 120 may execute, for example, software (e.g., a program 140) to control at least one other component (e.g., a hardware or software component) of the electronic device 101 coupled with the processor 120, and may perform various data processing or computation. According to one embodiment, as at least part of the data processing or computation, the processor 120 may load a command or data received from another component (e.g., the sensor module 176 or the communication module 190) in volatile memory 132, process the command or the data stored in the volatile memory 132, and store resulting data in non-volatile memory 134. According to an embodiment, the processor 120 may include a main processor 121 (e.g., a central processing unit (CPU) or an application processor (AP)), and an auxiliary processor 123 (e.g., a graphics processing unit (GPU), an image signal processor (ISP), a sensor hub processor, or a communication processor (CP)) that is operable independently from, or in conjunction with, the main processor 121. Additionally or alternatively, the auxiliary processor 123 may be adapted to consume less power than the main processor 121, or to be specific to a specified function. The auxiliary processor 123 may be implemented as separate from, or as part of the main processor 121.

[0047] The auxiliary processor 123 may control at least some of functions or states related to at least one component (e.g., the display device 160, the sensor module 176, or the communication module 190) among the components of the electronic device 101, instead of the main processor 121 while the main processor 121 is in an inactive (e.g., sleep) state, or together with the main processor 121 while the main processor 121 is in an active state (e.g., executing an application). According to an embodiment, the auxiliary processor 123 (e.g., an image signal processor or a communication processor) may be implemented as part of another component (e.g., the camera module 180 or the communication module 190) functionally related to the auxiliary processor 123.

[0048] The memory 130 may store various data used by at least one component (e.g., the processor 120 or the sensor module 176) of the electronic device 101. The various data may include, for example, software (e.g., the program 140) and input data or output data for a command related thereto. The memory 130 may include the volatile memory 132 or the non-volatile memory 134.

[0049] The program 140 may be stored in the memory 130 as software, and may include, for example, an operating system (OS) 142, middleware 144, or an application 146.

[0050] The input device 150 may receive a command or data to be used by another component (e.g., the processor 120) of the electronic device 101, from the outside (e.g., a user) of the electronic device 101. The input device 150 may include, for example, a microphone, a mouse, a keyboard, or a digital pen (e.g., a stylus pen).

[0051] The sound output device 155 may output sound signals to the outside of the electronic device 101. The sound output device 155 may include, for example, a speaker or a receiver. The speaker may be used for general purposes, such as playing multimedia or playing a recording, and the receiver may be used for incoming calls. According to an embodiment, the receiver may be implemented as separate from, or as part of, the speaker.

[0052] The display device 160 may visually provide information to the outside (e.g., a user) of the electronic device 101. The display device 160 may include, for example, a display, a hologram device, or a projector and control circuitry to control a corresponding one of the display, hologram device, and projector. According to an embodiment, the display device 160 may include touch circuitry adapted to detect a touch, or sensor circuitry (e.g., a pressure sensor) adapted to measure the intensity of force incurred by the touch.

[0053] The audio module 170 may convert a sound into an electrical signal and vice versa. According to an embodiment, the audio module 170 may obtain the sound via the input device 150, or output the sound via the sound output device 155 or a headphone of an external electronic device (e.g., an electronic device 102) directly (e.g., wiredly) or wirelessly coupled with the electronic device 101.

[0054] The sensor module 176 may detect an operational state (e.g., power or temperature) of the electronic device 101 or an environmental state (e.g., a state of a user) external to the electronic device 101, and then generate an electrical signal or data value corresponding to the detected state. According to an embodiment, the sensor module 176 may include, for example, a gesture sensor, a gyro sensor, an atmospheric pressure sensor, a magnetic sensor, an acceleration sensor, a grip sensor, a proximity sensor, a color sensor, an infrared (IR) sensor, a biometric sensor, a temperature sensor, a humidity sensor, or an illuminance sensor.

[0055] The interface 177 may support one or more specified protocols to be used for the electronic device 101 to be coupled with the external electronic device (e.g., the electronic device 102) directly (e.g., wiredly) or wirelessly. According to an embodiment, the interface 177 may include, for example, a high definition multimedia interface (HDMI), a universal serial bus (USB) interface, a secure digital (SD) card interface, or an audio interface.

[0056] A connecting terminal 178 may include a connector via which the electronic device 101 may be physically connected with the external electronic device (e.g., the electronic device 102). According to an embodiment, the connecting terminal 178 may include, for example, an HDMI connector, a USB connector, an SD card connector, or an audio connector (e.g., a headphone connector).

[0057] The haptic module 179 may convert an electrical signal into a mechanical stimulus (e.g., a vibration or a movement) or electrical stimulus which may be recognized by a user via his or her tactile sensation or kinesthetic sensation. According to an embodiment, the haptic module 179 may include, for example, a motor, a piezoelectric element, or an electric stimulator.

[0058] The camera module 180 may capture a still image or moving images. According to an embodiment, the camera module 180 may include one or more lenses, image sensors, image signal processors, or flashes.

[0059] The power management module 188 may manage power supplied to the electronic device 101. According to one embodiment, the power management module 188 may be implemented as at least part of, for example, a power management integrated circuit (PMIC).

[0060] The battery 189 may supply power to at least one component of the electronic device 101. According to an embodiment, the battery 189 may include, for example, a primary cell which is not rechargeable, a secondary cell which is rechargeable, or a fuel cell.

[0061] The communication module 190 may support establishing a direct (e.g., wired) communication channel or a wireless communication channel between the electronic device 101 and the external electronic device (e.g., the electronic device 102, the electronic device 104, or the server 108) and performing communication via the established communication channel. The communication module 190 may include one or more communication processors that are operable independently from the processor 120 (e.g., the application processor (AP)) and support a direct (e.g., wired) communication or a wireless communication. According to an embodiment, the communication module 190 may include a wireless communication module 192 (e.g., a cellular communication module, a short-range wireless communication module, or a global navigation satellite system (GNSS) communication module) or a wired communication module 194 (e.g., a local area network (LAN) communication module or a power line communication (PLC) module). A corresponding one of these communication modules may communicate with the external electronic device via the first network 198 (e.g., a short-range communication network, such as Bluetooth™, wireless-fidelity (Wi-Fi) direct, or infrared data association (IrDA)) or the second network 199 (e.g., a long-range communication network, such as a cellular network, the Internet, or a computer network (e.g., LAN or wide area network (WAN))). These various types of communication modules may be implemented as a single component (e.g., a single chip), or may be implemented as multiple components (e.g., multiple chips) separate from each other. The wireless communication module 192 may identify and authenticate the electronic device 101 in a communication network, such as the first network 198 or the second network 199, using subscriber information (e.g., international mobile subscriber identity (IMSI)) stored in the subscriber identification module 196.

[0062] The antenna module 197 may transmit or receive a signal or power to or from the outside (e.g., the external electronic device) of the electronic device 101. According to an embodiment, the antenna module 197 may include an antenna including a radiating element composed of a conductive material or a conductive pattern formed in or on a substrate (e.g., PCB). According to an embodiment, the antenna module 197 may include a plurality of antennas. In such a case, at least one antenna appropriate for a communication scheme used in the communication network, such as the first network 198 or the second network 199, may be selected, for example, by the communication module 190 (e.g., the wireless communication module 192) from the plurality of antennas. The signal or the power may then be transmitted or received between the communication module 190 and the external electronic device via the selected at least one antenna. According to an embodiment, another component (e.g., a radio frequency integrated circuit (RFIC)) other than the radiating element may be additionally formed as part of the antenna module 197.

[0063] At least some of the above-described components may be coupled mutually and communicate signals (e.g., commands or data) therebetween via an inter-peripheral communication scheme (e.g., a bus, general purpose input and output (GPIO), serial peripheral interface (SPI), or mobile industry processor interface (MIPI)).

[0064] According to an embodiment, commands or data may be transmitted or received between the electronic device 101 and the external electronic device 104 via the server 108 coupled with the second network 199. Each of the electronic devices 102 and 104 may be a device of the same type as, or a different type from, the electronic device 101. According to an embodiment, all or some of operations to be executed at the electronic device 101 may be executed at one or more of the external electronic devices 102, 104, or 108. For example, if the electronic device 101 should perform a function or a service automatically, or in response to a request from a user or another device, the electronic device 101, instead of, or in addition to, executing the function or the service, may request the one or more external electronic devices to perform at least part of the function or the service. The one or more external electronic devices receiving the request may perform the at least part of the function or the service requested, or an additional function or an additional service related to the request, and transfer an outcome of the performing to the electronic device 101. The electronic device 101 may provide the outcome, with or without further processing of the outcome, as at least part of a reply to the request. To that end, a cloud computing, distributed computing, or client-server computing technology may be used, for example.

[0065] FIG. 2 illustrates a flowchart 200 for explaining an operation method of an electronic device according to various embodiments. The embodiment of FIG. 2 will be described in more detail with reference to FIG. 3. FIG. 3 illustrates conceptual diagrams 300 for explaining a procedure of connecting a call to an external electronic device on the basis of a user utterance according to various embodiments.

[0066] In operation 201, an electronic device 101 (e.g., a processor 120) may receive a first user input through a touchscreen display (e.g., an input device 150 or a display device 160) or a microphone (e.g., the input device 150). The first user input may include a request to make a call to an external electronic device by using the electronic device 101. For example, as shown in FIG. 3, the electronic device 101 may receive a user utterance 301 by using the microphone 150. The electronic device 101 may display one or more screens on a touchscreen display 310. The electronic device 101 may display an execution screen 320 for reception of the user utterance 301 on at least a part of the touchscreen display 310. The electronic device 101 may process the user utterance by a calling application (a native calling application) or an enhanced calling application, and the execution screen 320 for reception of the user utterance 301 may be displayed by the native calling application or the enhanced calling application. The execution screen 320 may include an indicator 321 indicating that listening is in progress, an OK icon 322, and a text display window 323. The electronic device 101 may activate the microphone 150 and may display, for example, the indicator 321 indicating that listening is in progress. The electronic device 101 may display, in the text display window 323, text or a command acquired as a result of processing the user utterance 301. When the OK icon 322 is selected, the electronic device 101 may perform a task corresponding to the text or command in the text display window 323. For example, the electronic device 101 may receive the user utterance 301 "How much is the Samsung Card payment this month?" through the microphone 150. The electronic device 101 may display the text "How much is the Samsung Card payment this month?" in the text display window 323, and when the OK icon 322 is selected, the electronic device 101 may perform the task included in "How much is the Samsung Card payment this month?". According to a voice recognition analysis result, the electronic device 101 may confirm multiple tasks, each including at least one operation, for executing the calling application, inputting a phone number corresponding to Samsung Card or connecting a call to Samsung Card, and confirming a payment amount after the call is connected. For example, the electronic device 101 may confirm the multiple tasks directly, or may receive the multiple tasks from a server 108 for voice recognition analysis. Hereinafter, confirmation of specific information by the electronic device 101 may be understood as confirmation of the specific information on the basis of information received from the server 108. The electronic device 101 may stop displaying the execution screen 320 in response to selection of the OK icon 322. Referring to FIG. 3, when the execution screen 320 is no longer displayed, at least one screen that was displayed prior to the execution screen 320 may be displayed on the touchscreen display 310. For example, an execution screen 330 of a launcher application may be displayed. The execution screen 330 of the launcher application may include at least one icon for execution of at least one application.

[0067] In operation 203, the electronic device 101 may identify a service provider and a detailed service on the basis of the received first user input. The service provider may provide one or more services by using a call connection. For example, a Samsung Card customer center may provide services, such as card application, payment information confirmation, and lost card report, via the call connection. The detailed service may be one of the one or more services provided by the service provider. For example, on the basis of the user input "How much is the Samsung Card payment this month?", the electronic device 101 may identify the "Samsung Card customer center" as the service provider and may identify "payment amount inquiry" as the detailed service. The electronic device 101 may search information on multiple service providers for one or more keywords, extracted from the first user input, that relate to the service provider or the detailed service. For example, the electronic device 101 may extract, as keywords, "Samsung", "Samsung Card", "this month", "payment", "payment amount", or "how much" from "How much is the Samsung Card payment this month?". The electronic device 101 may identify a Samsung Card customer center as the service provider by using the keyword "Samsung Card". The electronic device 101 may store information on multiple service providers that provide one or more services, or may receive information on the multiple service providers from the external server 108. The electronic device 101 may identify the service provider by selecting "Samsung Card customer center", which matches the keyword "Samsung Card", from among the multiple service providers. The electronic device 101 may identify "payment amount inquiry" as the detailed service by using the keyword "this month", "payment amount", or "how much".
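The keyword matching in operation 203 can be sketched as below. This is a minimal illustration, not the disclosed implementation: the provider directory `PROVIDERS`, the service vocabulary `DETAILED_SERVICES`, and the functions `normalize` and `identify` are hypothetical, and plain substring matching stands in for whatever natural-language analysis the electronic device or the server 108 actually performs.

```python
import re

# Hypothetical provider directory: provider name -> keywords that identify it.
PROVIDERS = {
    "Samsung Card customer center": ["samsung card"],
    "Example Telecom customer center": ["example telecom"],  # illustrative entry only
}

# Hypothetical detailed-service vocabulary for the provider above.
DETAILED_SERVICES = {
    "payment amount inquiry": ["this month", "payment amount", "how much"],
    "lost card report": ["lost", "stolen"],
    "card application": ["new card", "apply"],
}

def normalize(text: str) -> str:
    """Lower-case the utterance and turn punctuation into spaces."""
    return re.sub(r"[^a-z0-9 ]+", " ", text.lower())

def identify(utterance: str):
    """Return (service provider, detailed service); either may be None."""
    text = normalize(utterance)
    # First provider whose keyword occurs in the utterance.
    provider = next((name for name, kws in PROVIDERS.items()
                     if any(kw in text for kw in kws)), None)
    # Score each detailed service by how many of its keywords occur.
    scores = {svc: sum(kw in text for kw in kws)
              for svc, kws in DETAILED_SERVICES.items()}
    best = max(scores, key=scores.get)
    service = best if scores[best] > 0 else None
    return provider, service

print(identify("How much is the Samsung Card payment this month?"))
```

Counting keyword hits lets several cues in one utterance ("this month", "how much") reinforce the same detailed service; a deployed system would need a far richer vocabulary and an explicit tie-breaking rule.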

[0068] In operation 205, the electronic device 101 may acquire menu information including one or more detailed services provided by the identified service provider. The electronic device 101 may select a first menu corresponding to the identified detailed service from the menu information of the identified service provider. For example, the electronic device 101 may select a payment amount item from "individual member information inquiry" in response to "payment amount inquiry" in the menu information of the Samsung Card customer center.

[0069] In operation 207, the electronic device 101 may attempt to connect a call to the identified service provider. The electronic device 101 may acquire a phone number of the identified service provider, and may make a call to the service provider by using the phone number. The electronic device 101 may connect the call in the background via the calling application or the enhanced calling application. The enhanced calling application may connect the call directly, or may connect the call by executing the calling application. For example, the electronic device 101 may attempt to connect the call, without separate user input, by pressing the buttons provided by the calling application that correspond to the digits of the phone number. The electronic device 101 may not display an execution screen related to the call connection. The electronic device 101 may limit the functions of the microphone 150 and a speaker 155 while the call is being connected in the background.

[0070] In operation 209, when the call to the service provider is connected, the electronic device 101 may transmit one or more responses to one or more voice prompts provided by the service provider until reaching a step corresponding to the first menu. The electronic device 101 may receive a voice prompt from the service provider, and may transmit a response corresponding to the received voice prompt on the basis of information on the first menu. For example, the electronic device 101 may receive a voice prompt, such as "Press 1 for individual members, or press 2 for corporate members", from the service provider, and may transmit a response of pressing button 1 because the first menu is the payment amount item in "individual member information inquiry". The electronic device 101 may sequentially receive multiple voice prompts, and may determine and transmit responses corresponding to the respective voice prompts. For example, the electronic device 101 may transmit the responses by selecting at least some numbers on a keypad provided by the calling application or the enhanced calling application.
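Choosing a key in response to a voice prompt could look like the following sketch. It assumes the prompt has already been transcribed to English text of the form "press N for X" and that the next node on the path to the first menu is known by its label; `OPTION_PATTERN`, `parse_options`, and `choose_key` are hypothetical names, and real service providers phrase their prompts in many other ways.

```python
import re
from typing import Dict, Optional

# Matches phrases such as "press 1 for individual members" (assumed prompt wording).
OPTION_PATTERN = re.compile(r"press\s+([0-9*#])\s+for\s+([^,.;]+)", re.IGNORECASE)

def parse_options(prompt: str) -> Dict[str, str]:
    """Map each announced option (lower-cased) to the key that selects it."""
    return {label.strip().lower(): key for key, label in OPTION_PATTERN.findall(prompt)}

def choose_key(prompt: str, next_menu_label: str) -> Optional[str]:
    """Return the key whose option mentions the next menu on the path, or None."""
    target = next_menu_label.lower()
    for label, key in parse_options(prompt).items():
        if target in label:
            return key
    return None

prompt = "Press 1 for individual members, or press 2 for corporate members"
print(choose_key(prompt, "individual"))
```

Returning `None` when no option matches leaves room for a fallback, such as listening to the next prompt or asking the user, which the sketch does not model.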

[0071] In operation 211, in response to reaching the first menu, the electronic device 101 may determine whether an attendant is connected on the basis of at least one voice transmitted by the service provider. The service provider may provide the service corresponding to the first menu through connection to an attendant, and when the corresponding menu is selected after the call is connected, the service provider may proceed with connection to an attendant. When an attendant cannot be connected immediately after the corresponding menu is selected, the service provider may ask the caller to wait until the attendant is connected. The service provider may transmit an announcement relating to a standby state while connecting to an attendant. When connection to an attendant is completed, the service provider may transmit an utterance of the attendant. The electronic device 101 may determine, using a determination model, whether the voice transmitted by the service provider is an utterance of the attendant or a pre-recorded Automatic Response Service (ARS) announcement.

[0072] In operation 213, in response to completion of connection to the attendant, the electronic device 101 may output a notification, indicating that the attendant has been connected, by means of the touchscreen display 310 or the speaker 155. For example, as shown in FIG. 3, the electronic device 101 may display a pop-up window 340 on at least a part of the touchscreen display 310. The pop-up window 340 may include text indicating that an attendant has been connected. The pop-up window 340 may include an OK icon for connection to the attendant and a cancel icon for cancellation of connection to the attendant. The electronic device 101 may output a designated notification sound using the speaker 155. The electronic device 101 may simultaneously display a notification message on the pop-up window 340 and output the notification sound.

[0073] In operation 215, the electronic device 101 may display a call screen 350 for the call with the service provider in response to reception of a second user input for the output notification. For example, in response to selection of the OK icon in the pop-up window 340 of FIG. 3, the electronic device 101 may display the call screen 350 for the call with the service provider on the touchscreen display 310. The electronic device 101 may release the function limitation of the microphone 150 and the speaker 155 in response to reception of the second user input for the output notification. The electronic device 101 may display the call screen 350, may receive a user utterance through the microphone 150, and may output, through the speaker 155, a voice transmitted from the service provider.
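Operations 207 through 215 amount to a small state machine around the background call. The sketch below is illustrative only: the state names, the `BackgroundCall` class, and its event methods are hypothetical, and the `mic_and_speaker_enabled` flag simply models the limitation on the microphone 150 and speaker 155 being released when the second user input is received.

```python
from enum import Enum, auto

class CallState(Enum):
    DIALING = auto()          # call attempted in the background (operation 207)
    NAVIGATING = auto()       # answering voice prompts (operation 209)
    WAITING = auto()          # first menu reached, waiting for an attendant (operation 211)
    ATTENDANT_READY = auto()  # notification output to the user (operation 213)
    IN_CALL = auto()          # call screen displayed (operation 215)
    ENDED = auto()            # user chose the cancel icon instead

class BackgroundCall:
    """Hypothetical controller for a call placed in the background."""

    def __init__(self):
        self.state = CallState.DIALING
        self.mic_and_speaker_enabled = False  # limited while in the background

    def _expect(self, state):
        # Reject events that arrive in the wrong order.
        if self.state is not state:
            raise RuntimeError(f"unexpected event in state {self.state.name}")

    def on_connected(self):
        self._expect(CallState.DIALING)
        self.state = CallState.NAVIGATING

    def on_first_menu_reached(self):
        self._expect(CallState.NAVIGATING)
        self.state = CallState.WAITING

    def on_attendant_detected(self) -> str:
        self._expect(CallState.WAITING)
        self.state = CallState.ATTENDANT_READY
        return "An attendant has been connected."  # text for a pop-up window

    def on_user_accepts(self):
        self._expect(CallState.ATTENDANT_READY)
        self.mic_and_speaker_enabled = True  # release the function limitation
        self.state = CallState.IN_CALL

    def on_user_cancels(self):
        self._expect(CallState.ATTENDANT_READY)
        self.state = CallState.ENDED
```

Keeping the microphone and speaker disabled until `on_user_accepts` mirrors the idea that the user only hears the call once an attendant is actually on the line.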

[0074] FIG. 4 illustrates a flowchart 400 for explaining the operation of the electronic device 101 and a service provider 450 according to various embodiments. In various embodiments, the electronic device 101 may connect a call to the service provider 450 in response to a request for a call connection according to a user utterance.

[0075] In operation 401, the electronic device 101 (e.g., the processor 120) may display an execution screen for reception of a user input. The execution screen may display an icon for reception of a user utterance or may display an input window for reception of a text input.

[0076] In operation 403, the electronic device 101 may receive a first user input through the touchscreen display 310 or the microphone 150.

[0077] In operation 405, the electronic device 101 may identify a service provider and a detailed service on the basis of the received first user input. The electronic device 101 may identify a service provider and a detailed service by extracting one or more keywords from the first user input. For example, the electronic device 101 may identify the service provider 450 from a list of multiple service providers by selecting a service provider matching one or more keywords extracted from the first user input. The electronic device 101 may select one from among the one or more keywords and identify the selected keyword as the detailed service.

[0078] In operation 407, the electronic device 101 may acquire menu information for the service provider 450. The menu information for the service provider 450 may be acquired from the memory 130 of the electronic device 101 or may be acquired from the external server 108.

[0079] In operation 409, the electronic device 101 may select a first menu corresponding to the detailed service from the menu information for the service provider 450.

[0080] In operation 411, the electronic device 101 may request call connection from the identified service provider 450.

[0081] In operation 413, the service provider 450 may approve a call connection with the electronic device 101 in response to the call connection request from the electronic device 101.

[0082] In operation 415, the call connection may be established between the electronic device 101 and the service provider 450.

[0083] In operation 417, the service provider 450 may sequentially transmit voice prompts determined on the basis of the menu information. For example, after establishment of the call connection, the service provider 450 may transmit a voice prompt of "Please, enter your mobile phone number", which is configured to have the highest priority in the menu information. The service provider 450 may identify the user of the electronic device 101 that initiated the call.

[0084] In operation 419, the electronic device 101 may receive a voice prompt and may transmit a response corresponding to the received voice prompt. For example, the electronic device 101 may perform an action of sequentially pressing buttons corresponding to a phone number of the electronic device 101 in response to the voice prompt, such as "Please, enter your mobile phone number". The electronic device 101 may determine a response corresponding to the received voice prompt on the basis of the information of the first menu, and may transmit the determined response.
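The disclosure does not say how key presses are signalled to the service provider. Assuming standard dual-tone multi-frequency (DTMF) signalling, in which each key is sent as one low-group tone plus one high-group tone, entering a phone number can be sketched as follows. The table covers the usual 4 by 3 keypad (the A to D column is omitted); `digits_to_tone_pairs`, `synthesize`, the 8 kHz sample rate, and the 100 ms tone length are illustrative choices.

```python
import math

ROWS = (697, 770, 852, 941)     # DTMF low-group frequencies in Hz
COLS = (1209, 1336, 1477)       # DTMF high-group frequencies in Hz (A-D column omitted)
KEYPAD = ("123", "456", "789", "*0#")

# Each key maps to the (low, high) frequency pair of its row and column.
DTMF = {key: (ROWS[r], COLS[c])
        for r, row in enumerate(KEYPAD)
        for c, key in enumerate(row)}

def digits_to_tone_pairs(number: str):
    """Keep only dialable characters and map each one to its frequency pair."""
    return [DTMF[ch] for ch in number if ch in DTMF]

def synthesize(key: str, duration_s: float = 0.1, rate_hz: int = 8000):
    """Return PCM samples in [-1, 1] for one key press (two equal-amplitude sines)."""
    low, high = DTMF[key]
    n = round(duration_s * rate_hz)
    return [0.5 * (math.sin(2 * math.pi * low * t / rate_hz)
                   + math.sin(2 * math.pi * high * t / rate_hz))
            for t in range(n)]

print(digits_to_tone_pairs("010-1234"))
```

Separators such as "-" are skipped, so a number stored in a formatted style can be fed in directly.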

[0085] In operation 421, the service provider 450 may determine whether the first menu has been reached on the basis of the received response. The first menu may provide a service including connection to an attendant. In response to reaching the first menu, the service provider 450 may attempt to connect to an attendant. Due to limited resources, it may take time to complete connection to an attendant. The service provider 450 may transmit, to the electronic device 101, a voice prompt according to the received response until reaching the first menu. For example, the service provider 450 may repeat operation 417 until reaching the first menu.
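The exchange in operations 417 to 421, where the service provider keeps prompting until the first menu is reached, can be simulated with a toy interactive voice response loop. This is an illustration under several assumptions rather than the disclosed protocol: each menu level offers its children as keys 1, 2, and so on; the walk ends at a leaf that stands in for the first menu; and `MenuNode`, `ivr_session`, and `device_for` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable, List

@dataclass
class MenuNode:
    label: str
    children: List["MenuNode"] = field(default_factory=list)

def ivr_session(root: MenuNode, answer: Callable[[List[str]], str]) -> List[str]:
    """Service-provider side: prompt, read a key, descend, until a leaf is reached.

    `answer` stands in for the electronic device: it receives the offered labels
    and returns a 1-based key as a string. Returns the labels visited below the root.
    """
    node, visited = root, []
    while node.children:
        offered = [child.label for child in node.children]
        key = answer(offered)
        node = node.children[int(key) - 1]
        visited.append(node.label)
    return visited

def device_for(path: List[str]) -> Callable[[List[str]], str]:
    """Device side: answer each prompt with the key of the next label on `path`."""
    remaining = list(path)
    def answer(offered: List[str]) -> str:
        return str(offered.index(remaining.pop(0)) + 1)
    return answer

root = MenuNode("Samsung Card customer center", [
    MenuNode("individual", [
        MenuNode("lost card report"),
        MenuNode("information inquiry", [MenuNode("payment amount")]),
    ]),
    MenuNode("corporation"),
])
print(ivr_session(root, device_for(["individual", "information inquiry", "payment amount"])))
```

Separating the provider loop from the device's `answer` callback keeps the two sides of operations 417 to 421 independent, so either side can be swapped out in a test.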

[0086] In operation 423, the service provider 450 may transmit a voice. While attempting to connect to an attendant, the service provider 450 may transmit a voice prompt indicating a standby state for connecting to the attendant. Here, the voice prompt may be an announcement previously stored as the ARS. The announcement may be generated by a machine. The service provider 450 may transmit a voice of an attendant in response to completion of connection to the attendant. The attendant may be a person, and the voice of the attendant may be different from that generated by the machine.

[0087] In operation 425, the electronic device 101 may determine whether an attendant is connected on the basis of the received voice. The electronic device 101 may compute a correlation between the received voice and a determination model for an attendant voice, and may determine that the received voice signal is the attendant voice when the correlation is higher than a threshold value. For example, the electronic device 101 may use, as the determination model, a deep-learning model generated by performing deep learning on multiple pre-stored audio signals labeled as attendant voices. The electronic device 101 may compare the received voice with the deep-learning model in order to determine whether an attendant is connected.
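A simplified version of the threshold test in operation 425 is shown below. The disclosure describes a deep-learning determination model; this sketch substitutes a much simpler technique, cosine similarity against the centroid of labelled attendant-voice embeddings, and assumes the embeddings are produced elsewhere by some audio model. The names `cosine`, `centroid`, and `is_attendant`, and the threshold of 0.8, are hypothetical.

```python
import math
from typing import List, Sequence

def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def centroid(vectors: List[Sequence[float]]) -> List[float]:
    """Average of labelled attendant-voice embeddings (the 'model' in this sketch)."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def is_attendant(embedding: Sequence[float], template: Sequence[float],
                 threshold: float = 0.8) -> bool:
    """Report an attendant when similarity to the template exceeds the threshold."""
    return cosine(embedding, template) > threshold

template = centroid([[1.0, 0.0, 0.2], [0.9, 0.1, 0.0]])
print(is_attendant([1.0, 0.05, 0.1], template))
```

The threshold trades missed attendants against false alarms triggered by pre-recorded announcements; in practice it would be tuned on held-out recordings.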

[0088] In operation 427, if an attendant is connected, the electronic device 101 may output a notification indicating that the connection to the attendant has been established. For example, the electronic device 101 may output text, indicating that the connection to the attendant has been established, through the touchscreen display 310, or may output a notification sound specified for indicating connection to the attendant.

[0089] In operation 429, the electronic device 101 may receive a second user input in response to the output notification. For example, the electronic device 101 may receive, through the touchscreen display 310, the second user input for selecting an OK icon additionally displayed in the output text window.

[0090] In operation 431, the electronic device 101 may display a call screen of the call with the service provider 450. The electronic device 101 may display a call screen, may output a voice signal transmitted from the service provider 450 through the speaker 155, and may receive a user utterance through the microphone 150.

[0091] FIG. 5 illustrates a tree structure 500 for menu information provided by the service provider according to various embodiments. The electronic device 101 (e.g., the processor 120) may store, in a tree structure, menu information provided by a service provider (e.g., the service provider 450 in FIG. 4), or may receive the menu information from the external server 108. For example, assume that the service provider 450 is a Samsung Card customer center 501. FIG. 5 shows a tree structure indicating menu information of multiple services provided via a call connection to the Samsung Card customer center 501. A root node in the tree structure for the menu information may indicate the service provider 450. Referring to FIG. 5, the Samsung Card customer center 501 may be the root node. The Samsung Card customer center 501 may provide services for individual members and services for corporate members, so an individual 502 and a corporation 503 may be included as child nodes of the root node in the tree structure for the menu information. The Samsung Card customer center 501 may provide an individual member with services for lost card report, information inquiry, information change, card cancellation, card application, and attendant connection. Each of these services is a child node of the individual 502, which indicates an individual member, and may be included in the tree structure for the menu information as lost card report 504, information inquiry 505, information change 506, card cancellation 507, card application 508, or attendant connection 509.
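The FIG. 5 tree can be held in an ordinary recursive structure. The sketch below builds the part of the tree described above and finds the root-to-node path that the electronic device would have to traverse; the `MenuNode` class, its `add` helper, and `path_to` are hypothetical, and the labels are taken from the text rather than from any real menu data.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class MenuNode:
    label: str
    children: List["MenuNode"] = field(default_factory=list)

    def add(self, *labels: str) -> List["MenuNode"]:
        """Append one child per label and return the new children."""
        nodes = [MenuNode(label) for label in labels]
        self.children.extend(nodes)
        return nodes

def path_to(node: MenuNode, label: str) -> Optional[List[str]]:
    """Depth-first search returning root-to-node labels, or None if absent."""
    if node.label == label:
        return [node.label]
    for child in node.children:
        sub = path_to(child, label)
        if sub:
            return [node.label] + sub
    return None

# Part of the FIG. 5 tree (reference numerals in comments).
root = MenuNode("Samsung Card customer center")                  # 501
individual, corporation = root.add("individual", "corporation")  # 502, 503
individual.add("lost card report", "information inquiry",        # 504, 505
               "information change", "card cancellation",        # 506, 507
               "card application", "attendant connection")       # 508, 509

print(path_to(root, "card cancellation"))
```

A depth-first search suffices here because customer-center menus are shallow; the returned path is what later steps turn into key presses.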

[0092] FIG. 6 illustrates a flowchart 600 for explaining an operation of selecting, by the electronic device, a menu corresponding to a detailed service from among menu information provided by a service provider according to various embodiments. The electronic device 101 (e.g., the processor 120) may identify a service provider (e.g., the service provider 450) and a detailed service by using one or more keywords included in a user utterance. For example, when a user utterance of "Apply to Samsung Card for a new card" is received, the electronic device 101 may extract keywords such as "Samsung Card", "new card", and "apply for card". The electronic device 101 may identify a Samsung Card customer center (e.g., the Samsung Card customer center 501 in FIG. 5) as the service provider 450 on the basis of the keyword "Samsung Card", and may identify issuance of a new card as the detailed service on the basis of the keywords "new card" and "apply for card". The electronic device 101 may then match the detailed service against the tree-structured menu information of the service provider 450. For example, the electronic device 101 may select a node corresponding to the detailed service in the tree structure for the menu information of the Samsung Card customer center 501. The operations of FIG. 6 will be described with reference to the tree structure 500 for the menu information of Samsung Card in FIG. 5.

[0093] In operation 601, the electronic device 101 may compare the detailed service with the information of each node in the menu information tree structure 500. For example, referring to FIG. 5, the electronic device 101 may compare new card issuance with the information of each node included in FIG. 5. When new card issuance is compared with the lost card report node 504, the electronic device 101 may determine that there is no match. When new card issuance is compared with the card application node 508 and its child, the new node 513, the electronic device 101 may determine that the new node 513 matches. The electronic device 101 may determine that one or more matching leaf nodes exist.
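A minimal sketch of the comparison in operation 601 follows, assuming each node carries a hypothetical set of service labels (`aliases`) against which the detailed service is compared by exact string equality. The real matching criterion is not specified in the text; a deployed system would likely use fuzzier keyword or semantic matching. The sketch splits the hits into leaf and intermediate nodes, which is the input the later branches of FIG. 6 work from.

```python
from typing import List, NamedTuple, Tuple


class MenuNode(NamedTuple):
    name: str
    aliases: Tuple[str, ...] = ()           # hypothetical service labels this node serves
    children: Tuple["MenuNode", ...] = ()


def match_service(root: MenuNode, service: str) -> Tuple[List[str], List[str]]:
    """Compare the detailed service with every node; split hits into leaves and intermediates."""
    leaves: List[str] = []
    intermediates: List[str] = []
    stack = [root]
    while stack:
        node = stack.pop()
        if service == node.name or service in node.aliases:
            (intermediates if node.children else leaves).append(node.name)
        stack.extend(reversed(node.children))  # reversed so siblings are visited left to right
    return leaves, intermediates


# Hypothetical excerpt of the FIG. 5 tree with made-up aliases.
TREE = MenuNode("Samsung Card customer center", children=(
    MenuNode("individual", children=(
        MenuNode("lost card report", ("lost card report",)),
        MenuNode("card application", ("card application", "apply for card"), children=(
            MenuNode("new", ("new card issuance",)),
            MenuNode("re-issuance", ("card re-issuance",)),
        )),
    )),
    MenuNode("corporation"),
))
```

With this tree, `match_service(TREE, "new card issuance")` yields one leaf hit, `"new"`, which corresponds to the single-leaf case of operation 603.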

[0094] In operation 603, the electronic device 101 may determine whether the matching result contains exactly one matching leaf node. For example, the electronic device 101 may determine that the new node 513 matches new card issuance and that the new node 513 is a leaf node.

[0095] In operation 605, the electronic device 101 may determine the single matching leaf node as the menu for the detailed service. For example, the electronic device 101 may determine the new node 513 as the first menu for the call to the Samsung Card customer center 501. FIG. 5 is merely an example, and the menu information may vary depending on the service provider 450.

[0096] In operation 607, the electronic device 101 may determine whether the matching result contains two or more matching leaf nodes. For example, when the detailed service is payment date confirmation, the electronic device 101 may find two matching leaf nodes: a payment date node 510 and a payment date node 511.

[0097] In operation 609, in response to determining that two or more matching leaf nodes have been found, the electronic device 101 may select one of the intermediate nodes from which the leaf nodes branch. The electronic device 101 may identify the ancestor nodes of the two or more leaf nodes, select, from among the ancestor nodes, the intermediate nodes at which the paths to the two or more leaf nodes branch, and select one of those intermediate nodes. The selection may be based on user input, and the electronic device 101 may display information about the intermediate nodes in order to request the user input. For example, when an account inquiry item matches two leaf nodes, and the intermediate nodes from which the two leaf nodes branch are a personal information change item and a payment information confirmation item, the electronic device 101 may display the text "Please select whether you want the account inquiry under personal information change or the account inquiry under payment information confirmation" to prompt a user input.
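One way to find the intermediate nodes described in operations 609 and 611 is to compare the root-to-leaf paths of the matching leaves: the nodes immediately below their deepest shared ancestor are the branching points offered to the user. The Python sketch below assumes paths are tuples of node labels, that the paths are distinct, and that `ask` is a hypothetical UI callback that displays the options and returns the user's choice. Placing the two "account inquiry" leaves under "individual" is likewise an assumption made only for the example.

```python
from typing import Callable, List, Sequence, Tuple

Path = Tuple[str, ...]


def branch_depth(paths: Sequence[Path]) -> int:
    """Length of the ancestor chain shared by all paths."""
    k = 0
    while all(len(p) > k and p[k] == paths[0][k] for p in paths):
        k += 1
    return k


def resolve_leaf(paths: Sequence[Path], ask: Callable[[List[str]], str]) -> Path:
    """Narrow several matching leaf paths to one by asking at each branching point."""
    remaining = list(paths)
    while len(remaining) > 1:
        k = branch_depth(remaining)
        options = list(dict.fromkeys(p[k] for p in remaining))  # branching nodes, deduplicated
        choice = ask(options)
        if choice not in options:
            raise ValueError(f"unexpected choice: {choice!r}")
        remaining = [p for p in remaining if p[k] == choice]
    return remaining[0]


# Hypothetical paths for the account inquiry example of operation 609.
ACCOUNT_INQUIRY_PATHS = [
    ("Samsung Card customer center", "individual", "personal information change", "account inquiry"),
    ("Samsung Card customer center", "individual", "payment information confirmation", "account inquiry"),
]
```

Looping rather than asking once covers the case of three or more matching leaves, where two of them may share a deeper branching point that needs a second question.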

[0098] In operation 611, the electronic device 101 may determine the leaf node below the selected intermediate node as the menu for the detailed service. For example, in response to the user selecting personal information change, the electronic device 101 may determine, as the first menu, the leaf node matching the account inquiry item under personal information change.

[0099] In operation 613, the electronic device 101 may determine whether the matching result contains exactly one matching intermediate node.

[0100] In operation 615, the electronic device 101 may select one of the child nodes of the matching intermediate node, based on user input.

[0101] In operation 617, the electronic device 101 may determine whether the selected child node is a leaf node. If it is not, the electronic device 101 may repeat operation 615, selecting a child node at each level, until a leaf node is reached.

[0102] In operation 619, when the selected child node is a leaf node, the electronic device 101 may determine that leaf node as the first menu.

[0103] In operation 621, the electronic device 101 may determine whether the matching result contains two or more matching intermediate nodes.

[0104] In operation 623, the electronic device 101 may select one of the two or more intermediate nodes. The electronic device 101 may then select a child node of the selected intermediate node in operation 615.

[0105] In operation 625, when the matching result contains neither a matching leaf node nor a matching intermediate node, the electronic device 101 may terminate the operation without determining the first menu. The electronic device 101 may display information indicating that the first menu could not be determined.
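The branches of FIG. 6 (operations 603 through 625) can be gathered into a single dispatch routine. The following is a minimal Python sketch under stated assumptions, not the disclosed implementation. `Node.aliases` holds hypothetical service labels used for exact-match comparison, `ask` is a hypothetical UI hook that shows a list of nodes and returns the one the user picks, and the example tree (including where the two "account inquiry" leaves sit) is made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterator, List, Optional


@dataclass(eq=False)
class Node:
    name: str
    aliases: frozenset = frozenset()             # hypothetical service labels for matching
    children: List["Node"] = field(default_factory=list)
    parent: Optional["Node"] = field(default=None, repr=False)

    def __post_init__(self) -> None:
        for child in self.children:              # wire parent links for path lookups
            child.parent = self

    def path(self) -> List["Node"]:
        node, out = self, []
        while node is not None:
            out.append(node)
            node = node.parent
        return out[::-1]                         # root first


def walk(node: Node) -> Iterator[Node]:
    yield node
    for child in node.children:
        yield from walk(child)


Ask = Callable[[List[Node]], Node]               # hypothetical UI hook


def descend_to_leaf(node: Node, ask: Ask) -> Node:
    """Operations 615-619: keep asking for a child until a leaf is reached."""
    while node.children:
        node = ask(node.children)
    return node


def pick_among_leaves(leaves: List[Node], ask: Ask) -> Node:
    """Operations 609-611: ask at the nodes where the leaf paths branch."""
    paths = [leaf.path() for leaf in leaves]
    while len(paths) > 1:
        k = 0
        while all(len(p) > k and p[k] is paths[0][k] for p in paths):
            k += 1
        options = list(dict.fromkeys(p[k] for p in paths))
        chosen = ask(options)
        if chosen not in options:
            raise ValueError("choice is not one of the offered nodes")
        paths = [p for p in paths if p[k] is chosen]
    return paths[0][-1]


def select_first_menu(root: Node, service: str, ask: Ask) -> Optional[Node]:
    matches = [n for n in walk(root) if service == n.name or service in n.aliases]
    leaves = [n for n in matches if not n.children]
    inner = [n for n in matches if n.children]
    if len(leaves) == 1:                         # 603 -> 605
        return leaves[0]
    if len(leaves) >= 2:                         # 607 -> 609 -> 611
        return pick_among_leaves(leaves, ask)
    if len(inner) == 1:                          # 613 -> 615..619
        return descend_to_leaf(inner[0], ask)
    if len(inner) >= 2:                          # 621 -> 623 -> 615..619
        return descend_to_leaf(ask(inner), ask)
    return None                                  # 625: first menu not determined


def example_tree() -> Node:
    """Hypothetical tree loosely following FIG. 5 plus the account inquiry example."""
    new = Node("new", frozenset({"new card issuance"}))
    application = Node("card application", frozenset({"card application"}), [new, Node("re-issuance")])
    personal = Node("personal information change", children=[Node("account inquiry")])
    payment = Node("payment information confirmation", children=[Node("account inquiry")])
    individual = Node("individual", children=[Node("lost card report"), application, personal, payment])
    return Node("Samsung Card customer center", children=[individual, Node("corporation")])
```

Passing the user interaction in as `ask` keeps the menu logic independent of how the choice is presented (text window, voice prompt, or a test stub).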

[0106] FIG. 7 illustrates a conceptual diagram 700 for explaining an operation of transmitting, by the electronic device, a response to one or more voice prompts provided by a service provider according to various embodiments. After a call to a service provider (e.g., the service provider 450 in FIG. 4) is connected, the electronic device 101 may transmit a response to each of one or more voice prompts transmitted by the service provider 450. The service provider 450 may select an item of the menu information according to each received response and determine the subsequent voice prompt. In FIG. 7, it is assumed that the detailed service is a new card application, and the description refers to the menu information in FIG. 5. Once the call is connected, the service provider 450 may repeatedly request a selection among the child nodes of the current node until a leaf node in the menu information tree of FIG. 5 is reached. For example, referring to FIG. 7, the electronic device 101 may display a call screen of the call with the Samsung Card customer center 501 on a touchscreen display 710 (e.g., the touchscreen display 310 in FIG. 3).

[0107] In response to a voice prompt of "Please press 1 if you are a personal client, and press 2 if you are a corporate client" transmitted by the Samsung Card customer center 501, the electronic device 101 may transmit a response of pressing button 1 711. The Samsung Card customer center 501 may receive the response of pressing button 1 711, identify that the user of the electronic device 101 is a personal client, and request selection of a child node of the individual 502 in FIG. 5. For example, the Samsung Card customer center 501 may transmit a voice prompt corresponding to the nodes in FIG. 5, such as "Please press 1 to report a lost card 504, press 2 for information inquiry 505, press 3 for information change 506, press 4 for card cancellation 507, press 5 for card application 508, and press 6 for attendant connection 509". Referring to FIG. 7, the electronic device 101 may transmit a response of pressing button 5 721 on the basis of the new card application. Subsequently, the electronic device 101 may receive a voice prompt of "Please press 1 to apply for a new card, and press 2 for re-issuance of a card" transmitted from the Samsung Card customer center 501, and may transmit a response of pressing button 1 731. In response to reaching the new node 513 corresponding to the detailed service, i.e., the new card application, the Samsung Card customer center 501 may ask the customer to wait for connection to an attendant. The electronic device 101 may receive one or more voice prompts from the Samsung Card customer center 501 until connection to the attendant is completed, and may determine whether connection to the attendant has been completed on the basis of the received voice prompts. Meanwhile, the electronic device 101 may display the call screen on the touchscreen display 710 and wait while the attendant is being connected.
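The button sequence in FIG. 7 (1, then 5, then 1) can be derived from the menu tree if one assumes that button n selects the n-th child in the order the ARS announces the options, which is consistent with the prompts quoted above. The Python sketch below rests on that assumption; `dtmf_digits`, `navigate`, and the `press` hook are hypothetical names, and a real device would read the digit assignments from the received prompts rather than rely on menu order.

```python
from typing import Callable, Dict, List, Sequence

Menu = Dict[str, "Menu"]  # label -> children; an empty dict marks a leaf

# Hypothetical encoding of the FIG. 5 menu, children in the order the ARS announces them.
SAMSUNG_CARD_MENU: Menu = {
    "individual": {
        "lost card report": {},
        "information inquiry": {},
        "information change": {},
        "card cancellation": {},
        "card application": {"new": {}, "re-issuance": {}},
        "attendant connection": {},
    },
    "corporation": {},
}


def dtmf_digits(menu: Menu, target: Sequence[str]) -> List[str]:
    """Digit to press at each prompt on the way to `target` (labels below the root)."""
    digits, level = [], menu
    for label in target:
        options = list(level)                          # dicts keep insertion order
        digits.append(str(options.index(label) + 1))   # assumes button n = n-th option
        level = level[label]
    return digits


def navigate(digits: Sequence[str], press: Callable[[str], None]) -> None:
    """Send the digits in order; `press` is a hypothetical hook that waits for the
    next voice prompt and then sends one DTMF tone."""
    for digit in digits:
        press(digit)
```

For the new card application, `dtmf_digits(SAMSUNG_CARD_MENU, ["individual", "card application", "new"])` gives `["1", "5", "1"]`, matching buttons 711, 721, and 731 in FIG. 7.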

[0108] Depending on the implementation, the screens shown in FIG. 7 may be executed in the background without being displayed on the touchscreen display 710, or may be displayed on the touchscreen display 710 of the electronic device 101. Even when the screens are displayed, as shown in FIG. 7, the electronic device 101 may sequentially press button 1 711, button 5 721, and button 1 731 and wait until connection to the attendant is completed, without any user input on the displayed screen.

[0109] FIG. 8 illustrates a flowchart 800 for explaining an operation of determining, by the electronic device, whether an attendant is connected according to various embodiments. The electronic device 101 (e.g., the processor 120) may determine whether an attendant is connected on the basis of one or more voices transmitted by a service provider (e.g., the service provider 450 in FIG. 4). To distinguish an attendant voice, the electronic device 101 may use a determination model related to attendant voice.

[0110] In operation 801, the electronic device 101 may receive an audio signal from the service provider 450. For example, the electronic device 101 may receive, from the service provider 450, an audio signal generated by a machine (or pre-stored and output thereby) or an audio signal generated by an attendant. For example, an audio signal generated by a machine may be an audio signal provided by an ARS.

[0111] In operation 803, the electronic device 101 may determine a correlation between the received audio signal and the determination model related to the attendant voice. The electronic device 101 may use, as the determination model, a deep-learning model trained on multiple audio signals of attendant voices. Alternatively, the electronic device 101 may extract characteristics from the multiple audio signals of attendant voices and use, as the determination model, a machine-learning model trained on the extracted characteristics. For example, the electronic device 101 may extract and use, as audio signal characteristics, a zero crossing rate, energy, entropy of energy, spectral centroid, spread, entropy, flux, and rolloff, Mel Frequency Cepstral Coefficients (MFCCs), or a chroma vector and its deviation.
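Several of the listed characteristics can be computed per audio frame with plain NumPy. The sketch below is illustrative only. It covers zero crossing rate, energy, entropy of energy, spectral centroid, spread, and rolloff, and leaves out MFCCs, chroma, spectral entropy, and flux, which would typically come from an audio library. The sub-frame count `n_sub` and the 85% rolloff fraction are assumed parameter values, not values from the disclosure.

```python
import numpy as np


def frame_features(frame, sample_rate, n_sub=10, rolloff_pct=0.85):
    """Return [ZCR, energy, entropy of energy, spectral centroid, spread, rolloff] for one frame."""
    x = np.asarray(frame, dtype=np.float64)
    n = len(x)
    eps = 1e-12  # guards against division by zero / log(0) on silent frames

    # Zero crossing rate: share of adjacent sample pairs whose np.sign values differ.
    zcr = float(np.mean(np.abs(np.diff(np.sign(x))) > 0))

    # Short-time energy, normalized by frame length.
    energy = float(np.sum(x ** 2) / n)

    # Entropy of energy: energy shares of n_sub sub-frames treated as a distribution.
    sub_energy = np.array([np.sum(s ** 2) for s in np.array_split(x, n_sub)])
    p = sub_energy / (np.sum(sub_energy) + eps)
    energy_entropy = float(-np.sum(p * np.log2(p + eps)))

    # Magnitude spectrum and its frequency axis in Hz.
    mag = np.abs(np.fft.rfft(x))
    freqs = np.fft.rfftfreq(n, d=1.0 / sample_rate)
    total = np.sum(mag) + eps

    # Spectral centroid (magnitude-weighted mean frequency) and spread around it.
    centroid = float(np.sum(freqs * mag) / total)
    spread = float(np.sqrt(np.sum((freqs - centroid) ** 2 * mag) / total))

    # Spectral rolloff: lowest frequency below which rolloff_pct of the magnitude lies.
    cumulative = np.cumsum(mag)
    rolloff = float(freqs[np.searchsorted(cumulative, rolloff_pct * cumulative[-1])])

    return np.array([zcr, energy, energy_entropy, centroid, spread, rolloff])
```

For a 1 kHz test tone sampled at 16 kHz over 1024 samples, the centroid and rolloff both land at 1000 Hz and the energy at 0.5. A vector like this, collected over many attendant-voice clips, is the kind of input a feature-based determination model would be trained on.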

[0112] In operation 805, the electronic device 101 may determine whether an attendant is connected on the basis of the determined correlation. For example, when the correlation between the audio signal and the determination model is equal to or greater than a threshold value, indicating that the audio signal is similar to an attendant voice, the electronic device 101 may determine that an attendant is connected.
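The threshold decision of operation 805 can be illustrated with a deliberately simple stand-in for the learned determination model. The Python sketch below swaps in a per-feature Gaussian template rather than a trained neural network. It stores the mean and standard deviation of feature vectors taken from attendant-voice clips and maps a new vector to a similarity in (0, 1]. The class name and the 0.5 threshold are hypothetical.

```python
import numpy as np


class AttendantVoiceModel:
    """Stand-in for the determination model: per-feature mean/std of attendant-voice clips."""

    def __init__(self, attendant_features, threshold=0.5):
        feats = np.asarray(attendant_features, dtype=np.float64)
        self.mean = feats.mean(axis=0)
        self.std = feats.std(axis=0) + 1e-9  # avoid division by zero for constant features
        self.threshold = threshold           # hypothetical value; would be tuned on real calls

    def similarity(self, features) -> float:
        """1.0 at the template, decaying toward 0 as features move away (in std units)."""
        z = (np.asarray(features, dtype=np.float64) - self.mean) / self.std
        return float(np.exp(-0.5 * np.mean(z ** 2)))

    def is_attendant(self, features) -> bool:
        """Attendant connected if the similarity reaches the threshold."""
        return self.similarity(features) >= self.threshold
```

Standardizing each feature first keeps large-valued features such as the spectral centroid in Hz from dominating small ones such as the zero crossing rate.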

[0113] FIG. 9 illustrates a flowchart 900 for explaining an operation of determining, by the electronic device, whether an attendant is connected according to various embodiments. The electronic device 101 (e.g., the processor 120 or 210) may determine whether an attendant is connected on the basis of one or more voices transmitted by a service provider (e.g., the service provider 450 in FIG. 4). To distinguish an attendant voice, the electronic device 101 may convert a received voice into text and determine the similarity between the converted text and greetings that an attendant may utter upon being connected.

[0114] In operation 901, the electronic device 101 may receive an audio signal from the service provider 450. The service provider 450 may transmit an announcement indicating a waiting state for connection to an attendant until connection to the attendant is completed. The service provider 450 may transmit an attendant voice when connection to the attendant is completed. For example, after connection to an attendant, the attendant may utter a message indicating that connection to the attendant has been completed, such as "Hello, this is Samsung Card customer center attendant OOO".

[0115] In operation 903, the electronic device 101 may convert the received audio signal into text using voice recognition technology.

[0116] In operation 905, the electronic device 101 may determine a correlation between the converted text and a determination model for a corpus of greetings. The electronic device 101 may store the corpus of greetings provided by the service provider 450 and may use a learning model trained on the corpus.

[0117] In operation 907, the electronic device 101 may determine whether an attendant is connected on the basis of the determined correlation. For example, when the correlation between the converted text and the determination model for the greeting corpus is higher than a threshold value, the electronic device 101 may determine that an attendant is connected.
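As a rough illustration of operations 905 and 907, the Python sketch below substitutes a simple word-overlap (Jaccard) similarity for the learned corpus model. It scores the transcript against each stored greeting and reports a connection when the best score is higher than the threshold. The example corpus, the tokenization, and the 0.5 threshold are assumptions made for the example.

```python
import re
from typing import Iterable, Set


def tokens(text: str) -> Set[str]:
    """Lower-cased word set; punctuation is dropped."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def greeting_similarity(transcript: str, corpus: Iterable[str]) -> float:
    """Best Jaccard overlap between the transcript and any greeting in the corpus."""
    t = tokens(transcript)
    best = 0.0
    for greeting in corpus:
        g = tokens(greeting)
        union = t | g
        if union:
            best = max(best, len(t & g) / len(union))
    return best


def attendant_connected(transcript: str, corpus: Iterable[str], threshold: float = 0.5) -> bool:
    """Operation 907: connected when the similarity is higher than the threshold."""
    return greeting_similarity(transcript, corpus) > threshold


# Hypothetical greeting corpus for the service provider.
GREETINGS = [
    "Hello, this is Samsung Card customer center attendant",
    "Thank you for waiting, how may I help you",
]
```

With this corpus, the attendant greeting "Hello, this is Samsung Card customer center attendant OOO" shares 8 of 9 distinct words with the first greeting (about 0.89), while a hold announcement such as "Please wait, we are connecting you to an attendant" scores about 0.06.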

[0118] According to various embodiments, the electronic device 101 may determine whether an attendant is connected using at least one of the method of FIG. 8, which uses the determination model related to the attendant voice, and the method of FIG. 9, which uses the determination model related to the corpus of greetings. The electronic device 101 may assign a weight to each method used and may make the final determination of whether an attendant is connected on the basis of the value calculated with the weights applied.
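A minimal sketch of the weighted combination follows, assuming each method yields a score between 0 and 1 and that a method not used for a given call reports `None`. The weights (0.4 for the voice model, 0.6 for the greeting text) and the 0.5 threshold are hypothetical values chosen only for illustration.

```python
from typing import Optional


def attendant_connected(voice_score: Optional[float],
                        text_score: Optional[float],
                        w_voice: float = 0.4,
                        w_text: float = 0.6,
                        threshold: float = 0.5) -> bool:
    """Weighted combination of the FIG. 8 (voice) and FIG. 9 (greeting text) scores.

    A score of None means that method was not used; the remaining weights are
    renormalized so the combined value stays on the same 0..1 scale.
    """
    used = [(s, w) for s, w in ((voice_score, w_voice), (text_score, w_text)) if s is not None]
    if not used:
        return False
    combined = sum(s * w for s, w in used) / sum(w for _, w in used)
    return combined >= threshold
```

For example, a voice score of 0.9 with a text score of 0.8 combines to 0.84 and counts as connected. A voice score of 0.9 with a text score of 0.1 combines to 0.42 and does not, so one confident method cannot override a strongly disagreeing one unless its weight is raised.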