Method And System For Selective Access Of Stored Or Transmitted Bioinformatics Data

Baluch; Mohamed Khoso ; et al.

U.S. patent application number 16/341426 was filed with the patent office on 2020-02-06 for method and system for selective access of stored or transmitted bioinformatics data. This patent application is currently assigned to GENOMSYS SA. The applicant listed for this patent is GENOMSYS SA. Invention is credited to Mohamed Khoso Baluch, Daniele Renzi, Giorgio Zoia.

| Application Number | 20200042735 16/341426 |

| Document ID | / |

| Family ID | 61905752 |

| Filed Date | 2020-02-06 |

View All Diagrams

| United States Patent Application | 20200042735 |

| Kind Code | A1 |

| Baluch; Mohamed Khoso ; et al. | February 6, 2020 |

METHOD AND SYSTEM FOR SELECTIVE ACCESS OF STORED OR TRANSMITTED BIOINFORMATICS DATA

Abstract

The storage or transmission of genomic data is realized by employing a structured compressed genomic dataset in a file or in a stream of genomic data. Selective access to the data, or subsets of the data, corresponding to specific genomic regions is achieved by employing user-defined labels based on data classification and a specific indexing mechanism.

| Inventors: | Baluch; Mohamed Khoso; (Chantilly, VA) ; Zoia; Giorgio; (Lausanne, CH) ; Renzi; Daniele; (Lausanne, CH) | ||||||||||

| Applicant: |

|

||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| Assignee: | GENOMSYS SA Lausanne CH |

||||||||||

| Family ID: | 61905752 | ||||||||||

| Appl. No.: | 16/341426 | ||||||||||

| Filed: | February 14, 2017 | ||||||||||

| PCT Filed: | February 14, 2017 | ||||||||||

| PCT NO: | PCT/US2017/017841 | ||||||||||

| 371 Date: | April 11, 2019 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G16B 30/20 20190201; G16B 45/00 20190201; H03M 7/3086 20130101; G16B 20/10 20190201; G16B 50/00 20190201; G16B 30/10 20190201; G06F 21/602 20130101; G06F 16/285 20190101; G16B 50/30 20190201; G06F 16/2282 20190101; G06F 16/2365 20190101; G16B 30/00 20190201; G06F 7/00 20130101; G16B 50/40 20190201; G16B 40/10 20190201; G16B 99/00 20190201; G06F 3/048 20130101; G16B 20/20 20190201; G16B 50/50 20190201; H03M 7/70 20130101; G06F 21/6245 20130101; G06F 21/6218 20130101; G16B 40/00 20190201; G16B 50/10 20190201 |

| International Class: | G06F 21/62 20060101 G06F021/62; G16B 30/10 20060101 G16B030/10; G16B 50/40 20060101 G16B050/40; G16B 50/30 20060101 G16B050/30; G16B 20/20 20060101 G16B020/20; G06F 16/28 20060101 G06F016/28; G06F 16/22 20060101 G06F016/22; G06F 21/60 20060101 G06F021/60 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| Oct 11, 2016 | EP | PCT/EP2016/074297 |

| Oct 11, 2016 | EP | PCT/EP2016/074301 |

| Oct 11, 2016 | EP | PCT/EP2016/074307 |

| Oct 11, 2016 | EP | PCT/EP2016/074311 |

Claims

1. A method for selective access of regions of genomic data by employing labels, said labels comprising: an identifier of a reference genomic sequence, an identifier of said genomic regions, and an identifier of the data class of said genomic data, wherein said genomic data are sequences of genomic reads, and wherein said data classes can be of the following type or a subset of them: "Class P" comprising genomic reads which do not present any mismatch with respect to a reference sequence, "Class N" comprising genomic reads including only mismatches in positions where the sequencing machine was not able to call any "base" and the number of said mismatches does not exceed a given threshold, "Class M" comprising genomic reads in which mismatches are constituted by positions where the sequencing machine was not able to call any base, named "n type" mismatches, and/or it called a different base than the reference sequence, named "s type" mismatches, and said numbers of mismatches do not exceed given thresholds for the number of mismatches of "n type", of "s type" and a threshold obtained from a given function (f(n,s)), "Class I" when the genomic reads can possibly have the same type of mismatches of "Class M", and in addition at least one mismatch of type: "insertion" ("i type"), "deletion" ("d type"), soft clips ("c type"), and wherein the numbers of mismatches for each type does not exceed the corresponding given thresholds and a threshold provided by a given function (w(n,s,i,d,c)), "Class U" comprising all reads that do not find any classification in the classes P, N, M, I.

2. (canceled)

3. (canceled)

4. The method of claim 1, further comprising the case of said genomic data being paired sequences of genomic reads.

5. The method of claim 4 wherein said data class of paired reads can be of the following types or a subset of them: "Class P" comprising genomic read pairs which do not present any mismatch with respect to a reference sequence, "Class N" comprising genomic reads pairs including only mismatches in positions where the sequencing machine was not able to call any "base" and said numbers of mismatches for each read do not exceed a given threshold, "Class M" comprising genomic read pairs including only mismatches in positions where the sequencing machine was not able to call any "base" and said numbers of mismatches for each read do not exceed a given threshold, named "n type" mismatches, and/or it called a different base than the reference sequence, named "s type" mismatches, and said numbers of mismatches does not exceed a given thresholds for the number of mismatches of "n type", of "s type" and a threshold obtained from a given function (f(n,s)), "Class I" comprising read pairs which can possibly have the same type of mismatches of "Class M" pairs, and in addition at least one mismatch of type: "insertion" ("i type") "deletion" ("d type") soft clips ("c type"), and wherein the number of mismatches for each type does not exceed the corresponding given threshold and a threshold provided by a given function (w(n,s,i,d,c)), "Class HM" comprising read pairs for which only one read mate does not satisfy the matching rules for being classified in any of the classes P, N, M, I, Class "U" comprising all reads pairs for which both reads do not satisfy the matching rules for being classified in the classes P, N, M, I.

6. The method of claim 1, wherein said identifier of said genomic regions is comprised in a master index table.

7. The method of claim 6 wherein said genomic data and said labels are entropy coded.

8. The method of claim 7 wherein said master index table is comprised in a genomic dataset header.

9. The method of claim 8, wherein said regions of genomic data are dispersed among separate Access Units.

10. The method of claim 9 wherein the location of said regions of genomic data, in a file, is indicated in a local index table.

11. The method of claim 1, wherein said labels are user specified.

12. The method of claim 1, wherein said regions are protected and/or encrypted in a separate manner, without encrypting the whole genomic file.

13. The method of claim 1, wherein said labels are stored in a genomic label list (GLL).

14. A method for encoding genomic data with selective access to regions of genomic data as claimed in claim 1.

15. The method of claim 13, wherein said genomic label list is periodically retransmitted or updated in order to enable multiple synchronization points.

16. A method for decoding a stream or a file of genomic data with selective access to regions of genomic data as claimed in claim 1.

17. An apparatus for encoding genomic data as claimed in claim 14.

18. An apparatus for decoding genomic data as claimed in claim 16.

19. Storing means for storing genomic data encoded according to claim 14.

20. A computer-readable medium comprising instructions that when executed cause at least one processor to perform the encoding method of claim 14.

21. A computer-readable medium comprising instructions that when executed cause at least one processor to perform the decoding method of claim 16.

Description

TECHNICAL FIELD

[0001] The present application provides new methods for the efficient storage, transmission and multiplexing of bioinformatics data, and in particular genomic sequencing data, in compressed form that enable efficient selective access and selective protection of the different data categories composing the genomic datasets.

BACKGROUND

[0002] An appropriate representation of genome sequencing data is fundamental to enable efficient processing, storage and transmission of genomic data to make possible and facilitate analysis applications such as genome variants calling and all analysis performed, with various purposes, by processing the sequencing data and metadata. Today, genome sequencing information is generated by High Throughput Sequencing (HTS) machines in the form of sequences of nucleotides (a. k. a. bases) represented by strings of letters from a defined vocabulary.

[0003] These sequencing machines do not read out an entire genomes or genes, but they produce short random fragments of nucleotide sequences known as sequence reads. A quality score is associated to each nucleotide in a sequence read. Such number represents the confidence level given by the machine to the read of a specific nucleotide at a specific location in the nucleotide sequence. This raw sequencing data generated by NGS machines are commonly stored in FASTQ files (see also FIG. 1).

[0004] The smallest vocabulary to represent sequences of nucleotides obtained by a sequencing process is composed by five symbols: {A, C, G, T, N} representing the four types of nucleotides present in DNA namely Adenine, Cytosine, Guanine, and Thymine plus the symbol N to indicate that the sequencing machine was not able to call any base with a sufficient level of confidence, so the type of base in such position remains undetermined in the reading process. In RNA Thymine is replaced by Uracil (U). The nucleotides sequences produced by sequencing machines are called "reads". In case of paired reads the term "template" is used to designate the original sequence from which the read pair has been extracted. Sequence reads can be composed by a number of nucleotides in a range from a few dozen up to several thousand. Some technologies produce sequence reads in pairs where each read can be originated from one of the two DNA strands.

[0005] In the genome sequencing field the term "coverage" is used to express the level of redundancy of the sequence data with respect to a reference genome. For example, to reach a coverage of 30.times. on a human genome (3.2 billion bases long) a sequencing machine shall produce a total of about 30.times.3.2 billion bases so that in average each position in the reference is "covered" 30 times.

State of the Art Solutions

[0006] The most used genome information representations of sequencing data are based on FASTQ and SAM file formats which are commonly made available in zipped form in the attempt of reducing the original size. The traditional file formats, respectively FASTQ and SAM for non-aligned and aligned sequencing data, are constituted by plain text characters and are thus compressed by using general purpose approaches such as LZ (from Lempel and Ziv) schemes (the well-known zip, gzip etc). When general purpose compressors such as gzip are used, the result of the compression is usually a single blob of binary data. The information in such monolithic form results quite difficult to archive, transfer and elaborate particularly in the case of high throughput sequencing when the volumes of data are extremely large.

[0007] After sequencing, each stage of a genomic information processing pipeline produces data represented by a completely new data structure (file format) despite the fact that in reality only a small fraction of the generated data is new with respect to the previous stage.

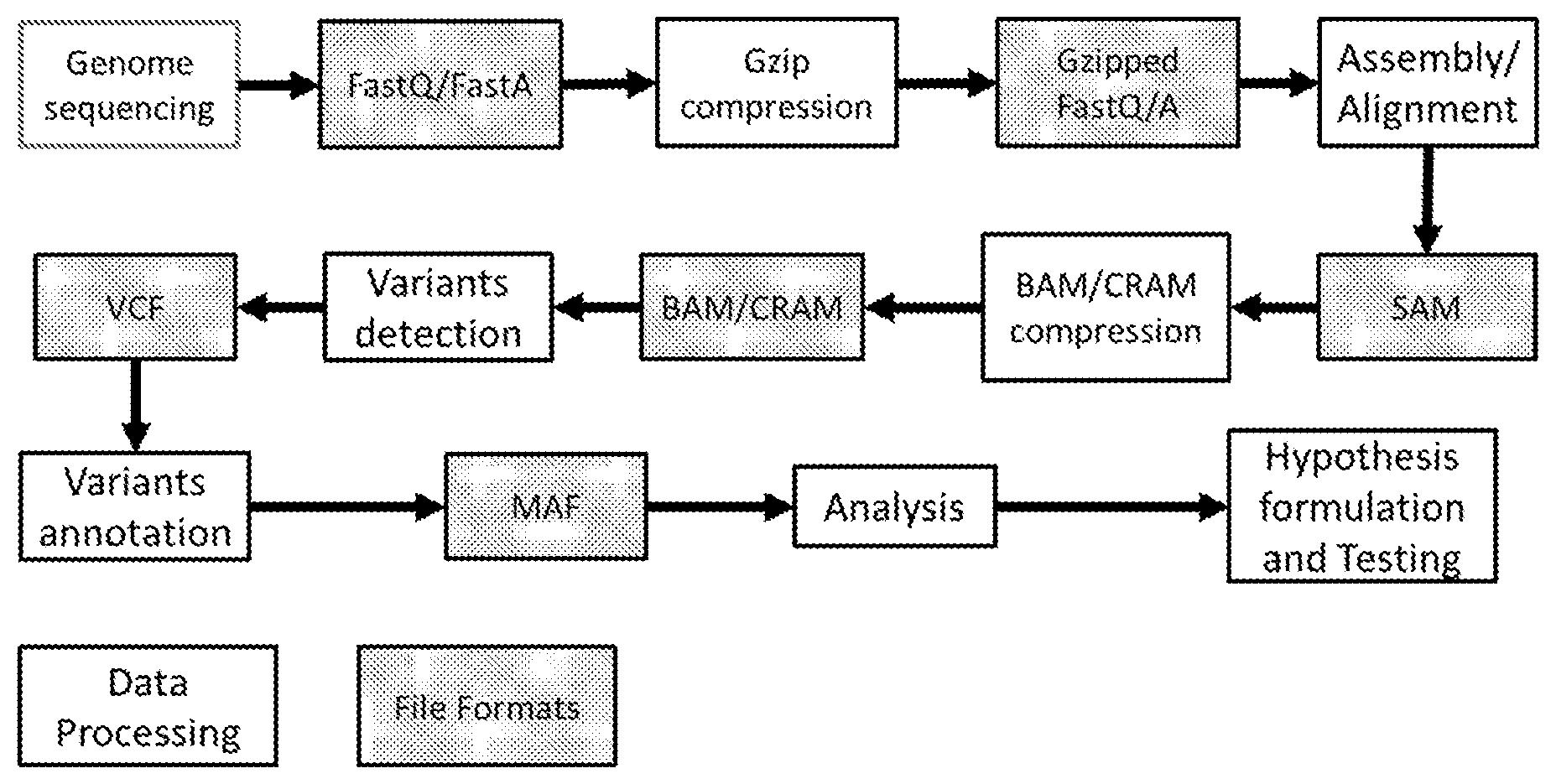

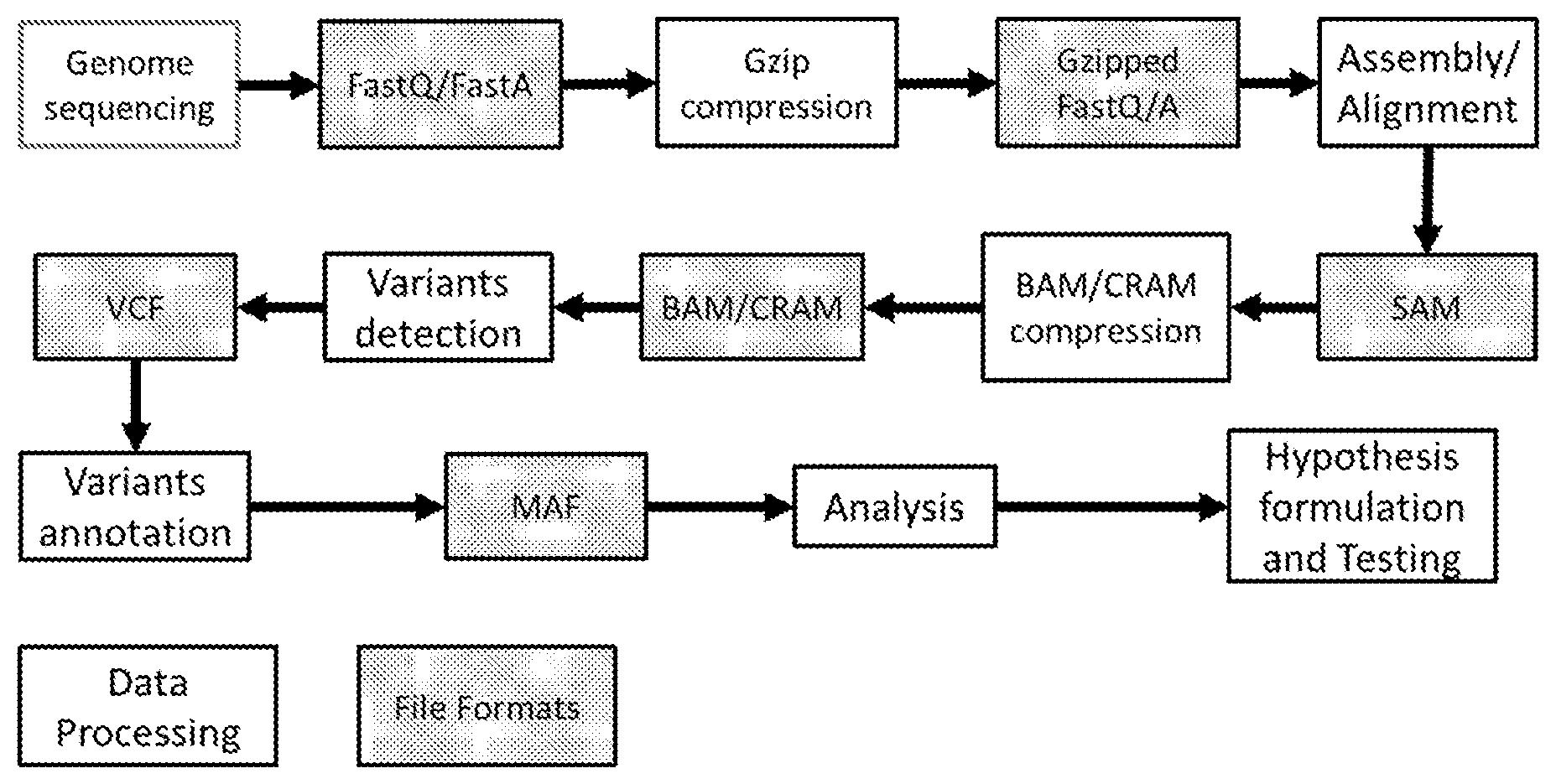

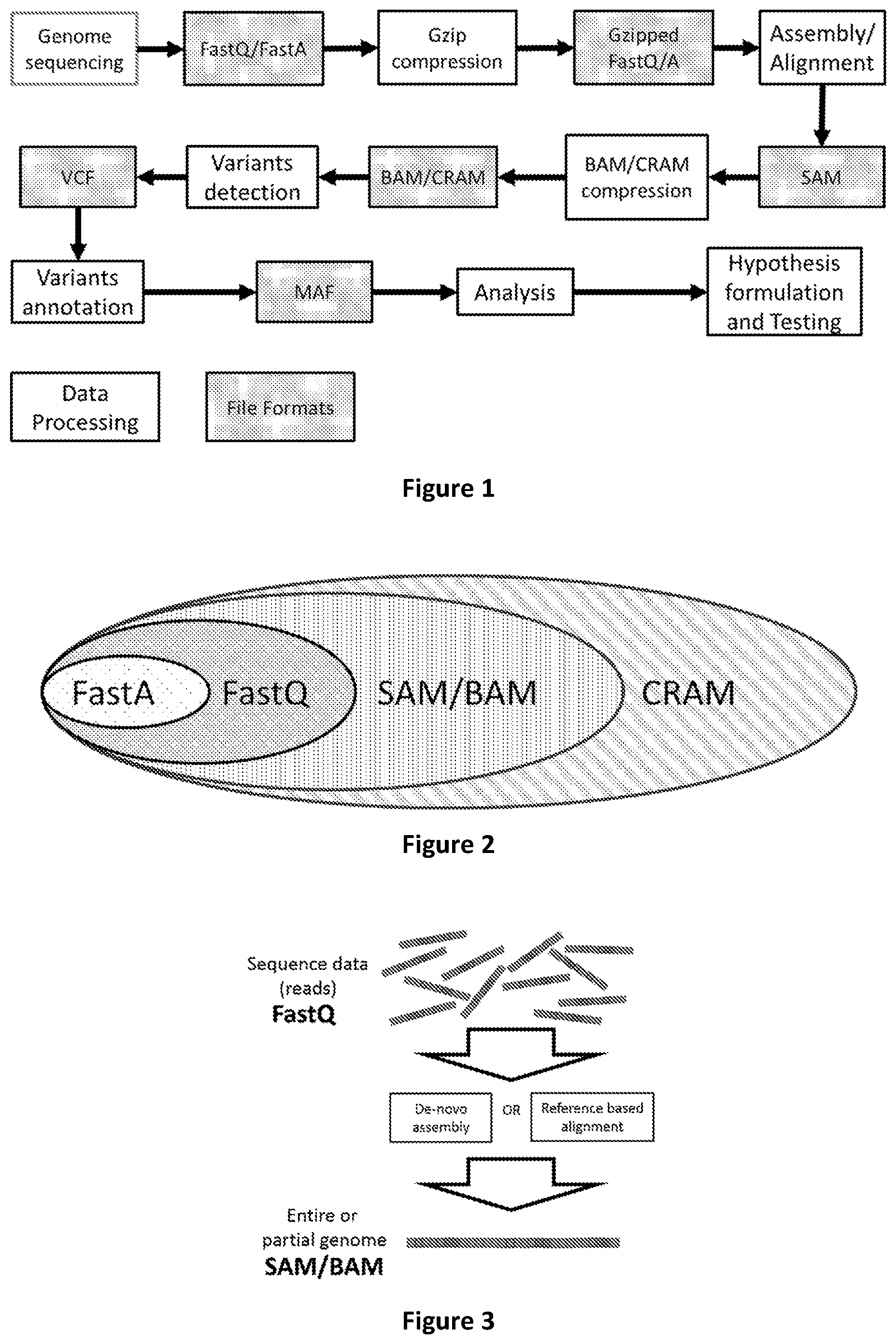

[0008] FIG. 1 shows the main stages of a typical genomic information processing pipeline with the indication of the associated file format representation.

[0009] Commonly used solutions presents several drawbacks: data archival is inefficient for the fact that a different file format is used at each stage of the genomic information processing pipelines which implies the multiple replication of data, with the consequent rapid increase of the required storage space. This is inefficient and unnecessary and it is also becoming not sustainable for the increase of the data volume generated by HTS machines. This has in fact consequences in terms of available storage space and generated costs, and it is also hindering the benefits of genomic analysis in healthcare from reaching a larger portion of the population. The impact of the IT costs generated by the exponential growth of sequence data to be stored and analysed is currently one of the main challenges the scientific community and that the healthcare industry have to face (see Scott D. Kahn "On the future of genomic data"--Science 331, 728 (2011) and Pavlichin, D. S., Weissman, T., and G. Yona. 2013. "The human genome contracts again" Bioinformatics 29(17): 2199-2202). At the same time several are the initiatives attempting to scale genome sequencing from a few selected individuals to large populations (see Josh P. Roberts "Million Veterans Sequenced"--Nature Biotechnology 31, 470 (2013))

[0010] The transfer of genomic data is slow and inefficient because the currently used data formats are organized into monolithic files of up to several hundred Gigabytes of size which need to be entirely transferred at the receiving end in order to be processed. This implies that the analysis of a small segment of the data requires the transfer of the entire file with significant costs in terms of consumed bandwidth and waiting time. Often online transfer is prohibitive for the large volumes of the data to be transferred, and the transport of the data is performed by physically moving storage media such as hard disk drives or storage servers from one location to another.

[0011] These limitations occurring when employing state of the art approaches are overcome by the present invention.

[0012] Processing the data is slow and inefficient for to the fact that the information is not structured in such a way that the portions of the different classes of data and metadata required by commonly used analysis applications cannot be retrieved without the need of accessing the data in its totality. This fact implies that common analysis pipelines can require to run for days or weeks wasting precious and costly processing resources because of the need, at each stage of accessing, of parsing and filtering large volumes of data even if the portions of data relevant for the specific analysis purpose is much smaller.

[0013] These limitations are preventing health care professionals from timely obtaining genomic analysis reports and promptly reacting to diseases outbreaks. The present invention provides a solution to this need.

[0014] There is another technical limitation that is overcome by the present invention.

[0015] In fact the invention aims at providing an appropriate genomic sequencing data and metadata representation by organizing and partitioning the data so that the compression of data and metadata is maximized and several functionality such as selective access and support for incremental updates are efficiently enabled.

[0016] A key aspect of the invention is a specific definition of classes of data and metadata to be represented by an appropriate source model, coded (i.e. compressed) separately by being structured in specific layers. The most important achievements of this invention with respect to existing state of the art methods consist in: [0017] the increase of compression performance due to the reduction of the information source entropy constituted by providing an efficient model for each class of data or metadata; [0018] the possibility of performing selective accesses to portions of the compressed data and metadata for any further processing purpose directly in the compressed domain; [0019] the possibility of defining user specified "labels" identifying genomic regions or sub-regions or aggregations of regions or sub-regions to enable efficient selective access to the compressed data by means of parsing a "labels list" contained in the genomic file header; [0020] the possibility of implementing access control and protection to the different genomic regions or sub-regions identified by a label; [0021] the possibility of incrementally (without the need of re-encoding) updating and adding encoded data and metadata with new sequencing data and/or metadata and/or new analysis results; [0022] the possibility of efficiently processing data as soon as they are produced by the sequencing machine or alignment tools without the need of waiting the end of the sequencing or alignment process.

[0023] The present application discloses a method and system addressing the problem of efficient manipulation, storage and transmission of very large amounts of genomic sequencing data, by employing a structured access units approach combined with multiplexing techniques.

[0024] The present application overcomes all the limitations of the prior art approaches related to the functionality of genomic data accessibility, selective data protection, efficient processing of data subsets, transmission and streaming functionality combined with an efficient compression.

[0025] Today the most used representation format for genomic data is the Sequence Alignment Mapping (SAM) textual format and its binary correspondent BAM. SAM files are human readable ASCII text files whereas BAM adopts a block based variant of gzip. BAM files can be indexed to enable a limited modality of random access. This is supported by the creation of a separate index file.

[0026] The BAM format is characterized by poor compression performance for the following reasons: [0027] 1. It focuses on compressing the inefficient and redundant SAM file format rather than on extracting the actual genomic information conveyed by SAM files and using appropriate models for compressing it. [0028] 2. It employs a general purpose text compression algorithm such as gzip rather than exploiting the specific nature of each data source (the genomic information itself). [0029] 3. It lacks any concept and does not support any functionality related to data classification that would enable the implementation of mechanisms providing selective access to specific classes of genomic data.

[0030] A more sophisticated approach to genomic data compression that is less commonly used, but more efficient than BAM is CRAM (CRAM specification: https://samtools.github.io/hts-specs/CRAMv3.pdf). CRAM provides a more efficient compression for the adoption of differential encoding with respect to an existing reference (it partially exploits the data source redundancy), but it still lacks features such as incremental updates, support for streaming and selective access to specific classes of compressed data.

[0031] CRAM relies on the concept of the CRAM record. Each CRAM record encodes a single mapped or unmapped reads by encoding all the elements necessary to reconstruct it.

[0032] CRAM presents the following drawbacks and limitations that are solved and removed by the invention described in this document: [0033] 1. CRAM does not support data indexing and random access to data subsets sharing specific features. Data indexing is out of the scope of the specification (see section 12 of CRAM specification v 3.0) and it is implemented as a separate file. Conversely the approach of the invention described in this document employs a data indexing method that is integrated with the encoding process and indexes are embedded in the encoded (i.e. compressed) bit stream. [0034] 2. CRAM does not support the aggregation of the data related to several sequencing runs so that selective access is efficient and segregation of runs (i.e. the process of extracting the genomic information from the actual organic sample) is preserved. CRAM does provide the possibility to label reads as belonging to different groups, but this is provided on a read by read base and reads from different groups are then mixed in the file structure. In the present invention a method is described to structure the data so as to keep segregation among different sequencing runs so that efficient selective access is available. [0035] 3. CRAM is built by core data blocks that can contain any type of mapped reads (perfectly matching reads, reads with substitutions only, reads with insertions or deletions (also referred to as "indels")). There is no notion of data classification and grouping of reads in classes according to the result of mapping with respect to a reference sequence. This means that all data need to be inspected even if only reads with specific features are searched. Such limitation is solved by the invention by classifying and partitioning data in classes before coding. [0036] 4. CRAM is based on the concept of encapsulating each read into a "CRAM record". This implies the need to inspect each complete "record" when reads characterized by specific biological features (e.g. reads with substitutions, but without "indels", or perfectly mapped reads) are searched. Conversely, in the present invention there is the notion of data classes coded separately in separate information layers and there is no notion of record encapsulating each read. This enables more efficient access to set of reads with specific biological characteristics (e.g. reads with substitutions, but without "indels", or perfectly mapped reads) without the need of decoding each (block of) read(s) to inspect its features. [0037] 5. In a CRAM record each field in a record is associated to a specific flag and each flag must always have the same meaning as there is no notion of context since each CRAM record can contain any different type of data. This coding mechanism introduces redundant information and prevents the usage of efficient context based entropy coding. [0038] Conversely in the present invention there is no notion of flag denoting data because this is intrinsically defined by the information "layer" the data belongs to. This implies a largely reduced number of symbols to be used and a consequent reduction of the information source entropy which results into a more efficient compression. Such improvement is possible because the use of different "layers" enables the encoder to reuse the same symbol across each layer with different meanings according to the context. In CRAM each flag must always have the same meaning as there is no notion of contexts and each CRAM record can contain any type of data. [0039] 6. In CRAM, substitutions, insertions and deletions are represented by using different syntax elements, option that increases the size of the information source alphabet and yields a higher source entropy. Conversely the approach of the disclosed invention uses a single alphabet and encoding for substitutions, insertions and deletions. This makes the encoding and decoding process simpler and produces a lower entropy source model which coding yields bitstreams characterized by high compression performance. [0040] 7. CRAM does not provide any mechanism to uniquely identify specific regions or sub regions of the genomic data or aggregations thereof. Apart from the definition of loci in terms of start and end positions on the reference sequence, according to the CRAM specification there is no way to: [0041] label a region and access it using the defined label instead of the genomic start and end position. Start and end positions of the same genomic region may change if a new reference sequence is published, while a defined label would hide such change to any end user. The encoding and decoding system would take care of adapting the actual region identified by the label to the newly published reference sequence [0042] aggregate several regions or sub-regions under the same label so that any end user would be able to select the required data via a single query not involving complex nested queries. The entire aggregation mechanism would be embedded in the encoding and decoding system as described in this document. [0043] 8. CRAM does not provide or support any mechanism to implement selective protection and access control relative to specific regions or sub regions of the genomic data or aggregations thereof, neither when such regions are pre-defined nor when they are specified by the user inserting appropriate "Labels".

[0044] Beside CRAM also the other approaches to genomic data compression and processing present strong limitations to most of the desired functionality and do not support features that are provided by this invention disclosure as described and specified in the following of the document.

[0045] Genomic compression algorithms used in the state of the art can be classified into these categories: [0046] Transform-based [0047] LZ-based [0048] Read reordering [0049] Assembly-based [0050] Statistical modeling

[0051] The first two categories share the disadvantage of not exploiting the specific characteristics of the data source (genomic sequence reads) and process the genomic data as string of text to be compressed without taking into account the specific properties of such kind of information (e.g. redundancy among reads, reference to an existing sample). Two of the most advanced toolkits for genomic data compression, namely CRAM and Goby ("Compression of structured high-throughput sequencing data", F. Campagne, K. C. Dorff, N. Chambwe, J. T. Robinson, J. P. Mesirov, T. D. Wu), make a poor use of arithmetic coding as they implicitly model data as independent and identically distributed by a Geometric distribution. Goby is slightly more sophisticated since it converts all the fields to a list of integers and each list is encoded independently using arithmetic coding without using any context. In the most efficient mode of operation, Goby is able to perform some inter-list modeling over the integer lists to improve compression. These prior art solutions yield poor compression ratios and data structures that are difficult if not impossible to selectively access and manipulate once compressed. Downstream analysis stages can result to be inefficient and very slow due to the necessity of handling large and rigid data structures even to perform simple operation or to access selected regions of the genomic dataset.

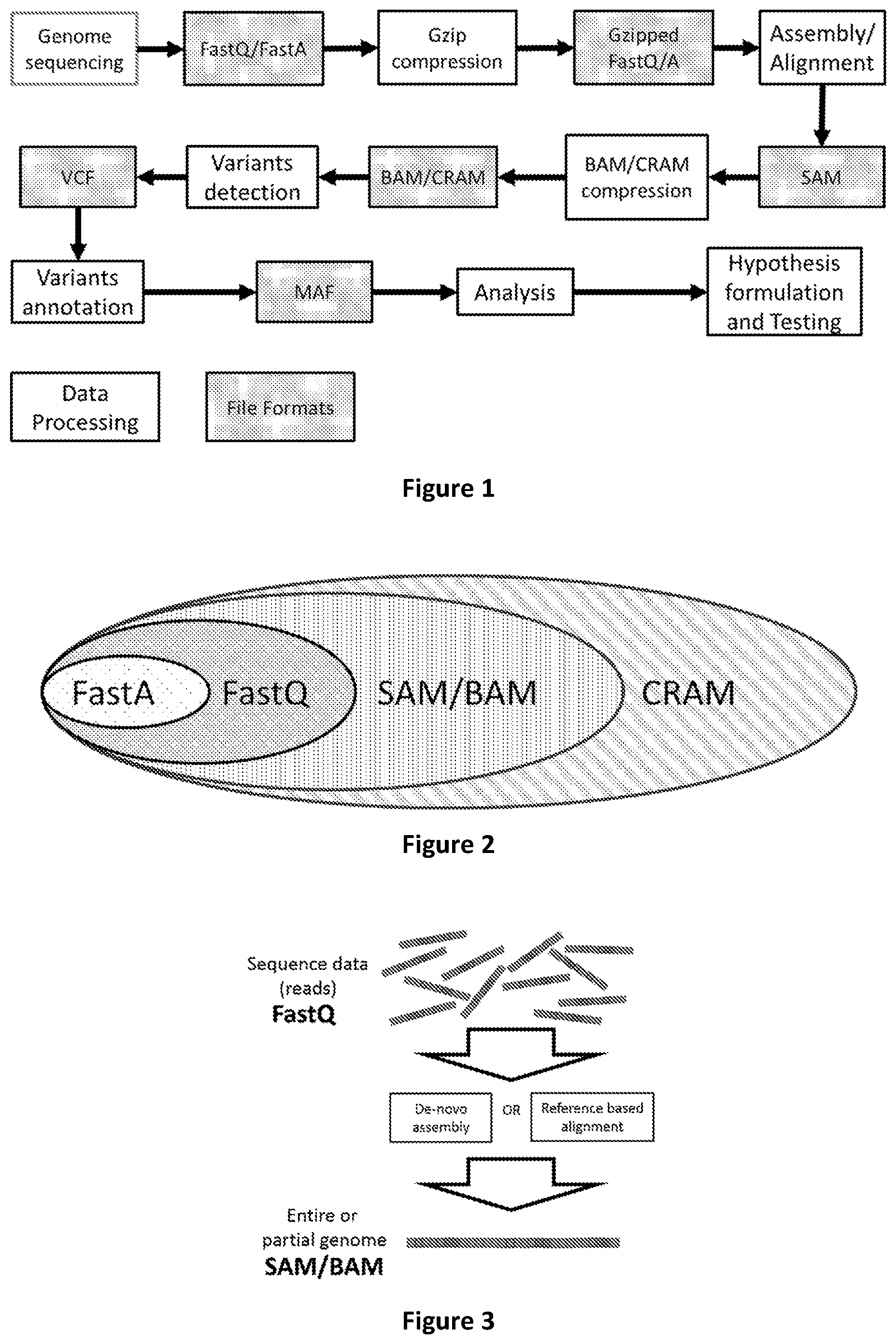

[0052] A simplified vision of the relation among the file formats used in genome processing pipelines is depicted in FIG. 1. In this diagram file inclusion does not imply the existence of a nested file structure, but it only represents the type and amount of information that can be encoded for each format (i.e. SAM contains all information in FASTQ, but organized in a different file structure). CRAM contains the same genomic information as SAM/BAM, but it has more flexibility in the type of compression that can be used, therefore it is represented as a superset of SAM/BAM.

[0053] The use of multiple file formats for the storage of genomic information is highly inefficient and costly. Having different file formats at different stages of the genomic information life cycle implies a linear growth of utilized storage space even if the incremental information is minimal. Further disadvantages of prior art solutions are listed below. [0054] 1. Accessing, analysing or adding annotations (metadata) to raw data stored in compressed FastQ files or any combination thereof requires the decompression and recompression of the entire file with extensive usage of computational resources and time. [0055] 2. Retrieving specific subsets of information such as read mapping position, read variant position and type, indels position and types, or any other metadata and annotation contained in aligned data stored in BAM files requires to access the whole data volume associated to each read. Selective access to a single class of metadata is not possible with prior art solutions. [0056] 3. Prior art file formats require that the whole file is received at the end user before processing can start. For example the alignment of reads could start before the sequencing process has been completed relying on an appropriate data representation. Sequencing, alignment and analysis could proceed and run in parallel. [0057] 4. Prior art solution do not support structuring and are not able of distinguishing genomic data obtained by different sequencing processes according to their specific generation semantic (e.g. sequencing obtained at different time of the life of the same individual). The same limitation occurs for sequencing obtained by different types of biological samples of the same individual. [0058] 5. The protection by means of access control mechanisms (e.g. encryption, watermarking, digital signature, hashing) of entire or selected portions of the data is not supported by prior art solutions. For example the protection of: [0059] a. selected DNA regions [0060] b. only those sequences containing variants [0061] c. chimeric sequences only [0062] d. unmapped sequences only [0063] e. regions or sub-regions or aggregations of regions or sub-regions identified by user defined Labels [0064] f. specific metadata (e.g. origin of the sequenced sample, identity of sequenced individual, type of sample) [0065] is not supported in files and data formats of prior art solutions. [0066] 6. The transcoding from sequencing data aligned to a given reference (i.e. a SAM/BAM file) to a new reference requires to process the entire data volume even if the new reference differs only by a single nucleotide position from the previous reference.

[0067] Therefore there is the clear need of an appropriate Genomic Information Storage Format (Genomic File Format) and Transport Mechanism that enable efficient compression, support selective access and protection functionality in the compressed domain, of local and remotely stored data and support the incremental addition of heterogeneous metadata in the compressed domain at all levels of the different stages of the genomic data processing.

[0068] The present invention provides a solution to the limitations of the state of the art by employing the method, devices and computer programs as claimed in the accompanying set of claims.

LIST OF FIGURES

[0069] FIG. 1 shows the main steps of a typical genomic pipeline and the related file formats.

[0070] FIG. 2 shows the mutual relationship among the most used genomic file formats

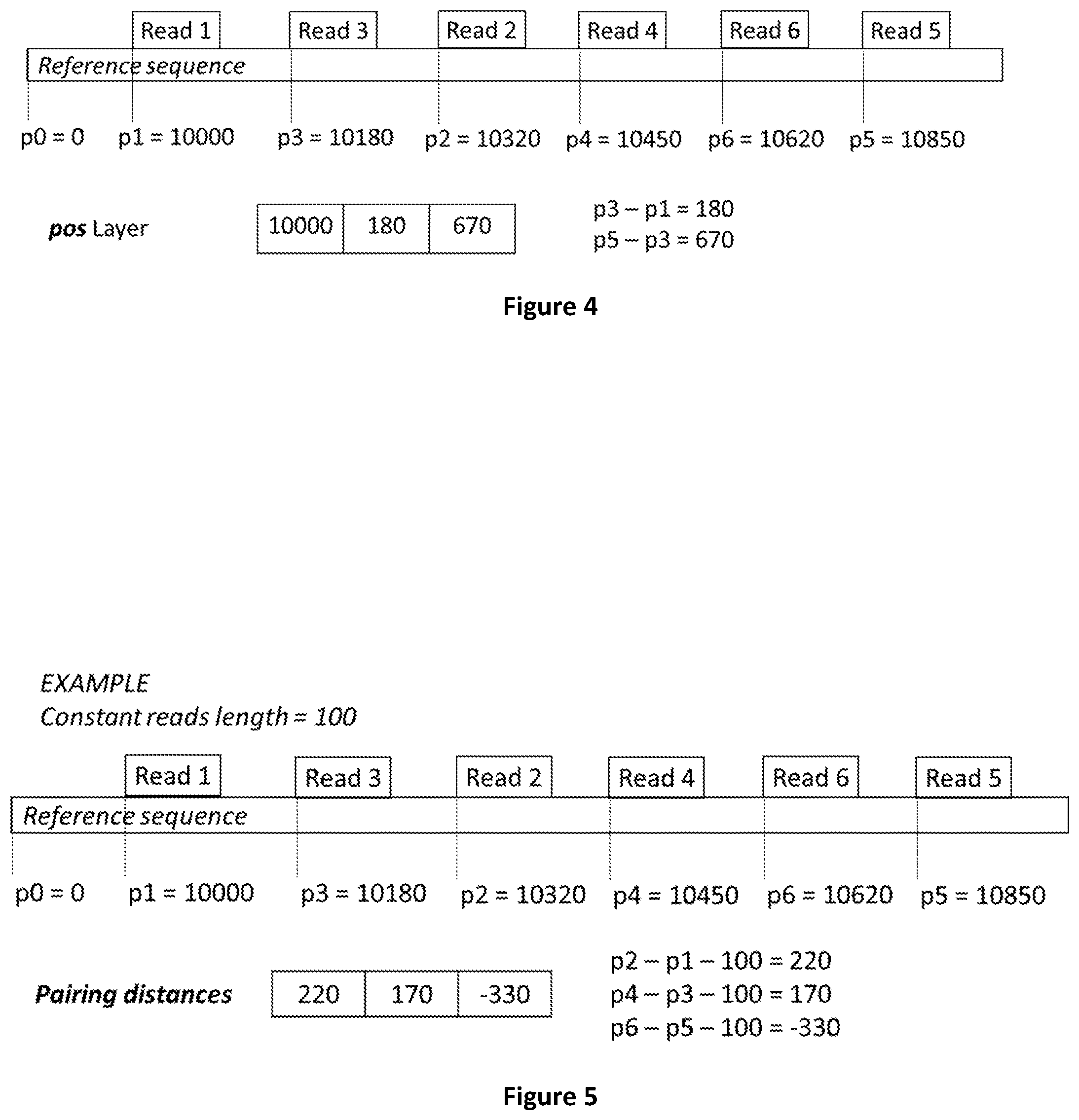

[0071] FIG. 3 shows how genomic sequence reads are assembled in an entire or partial genome via de-novo assembly or reference based alignment.

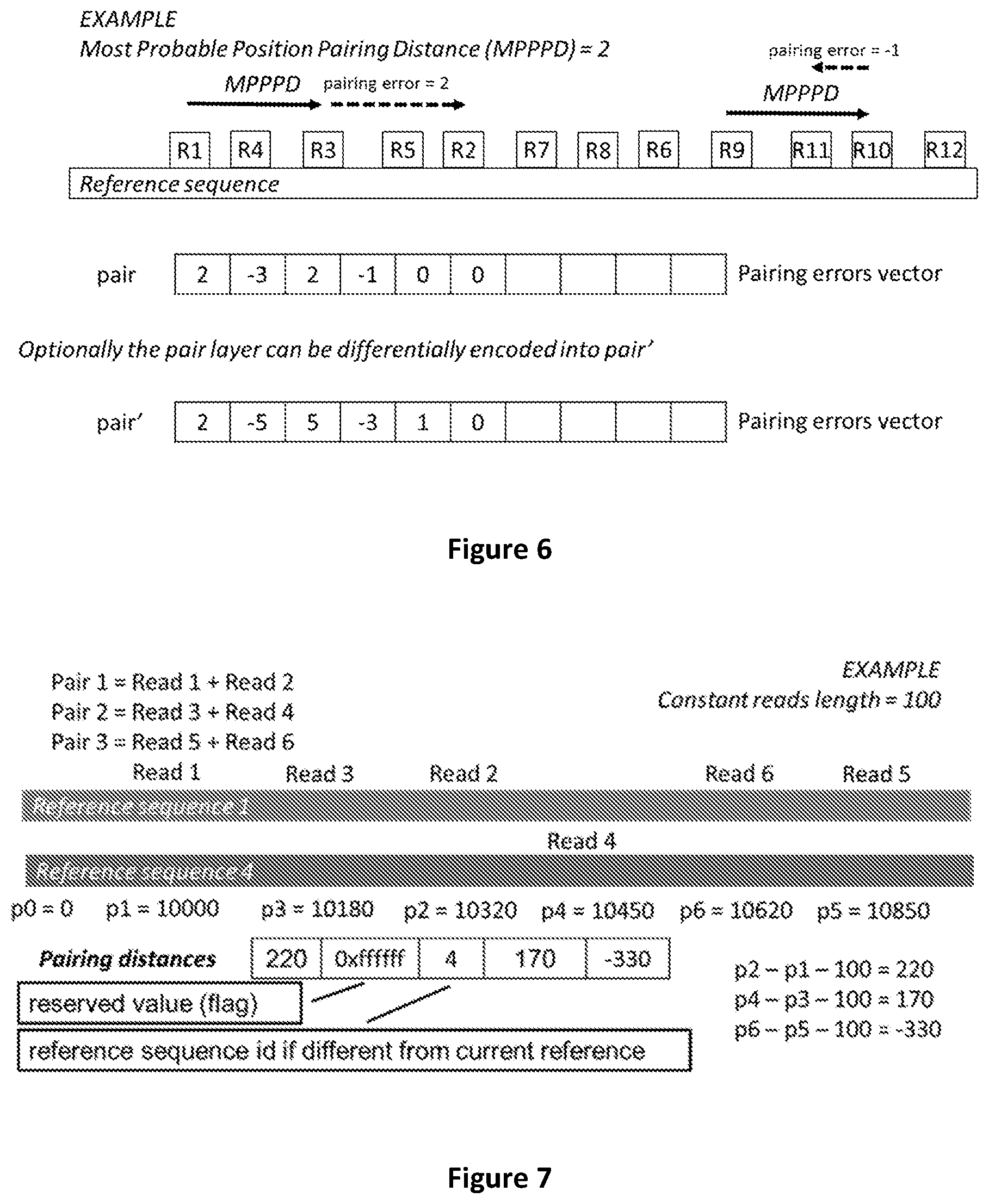

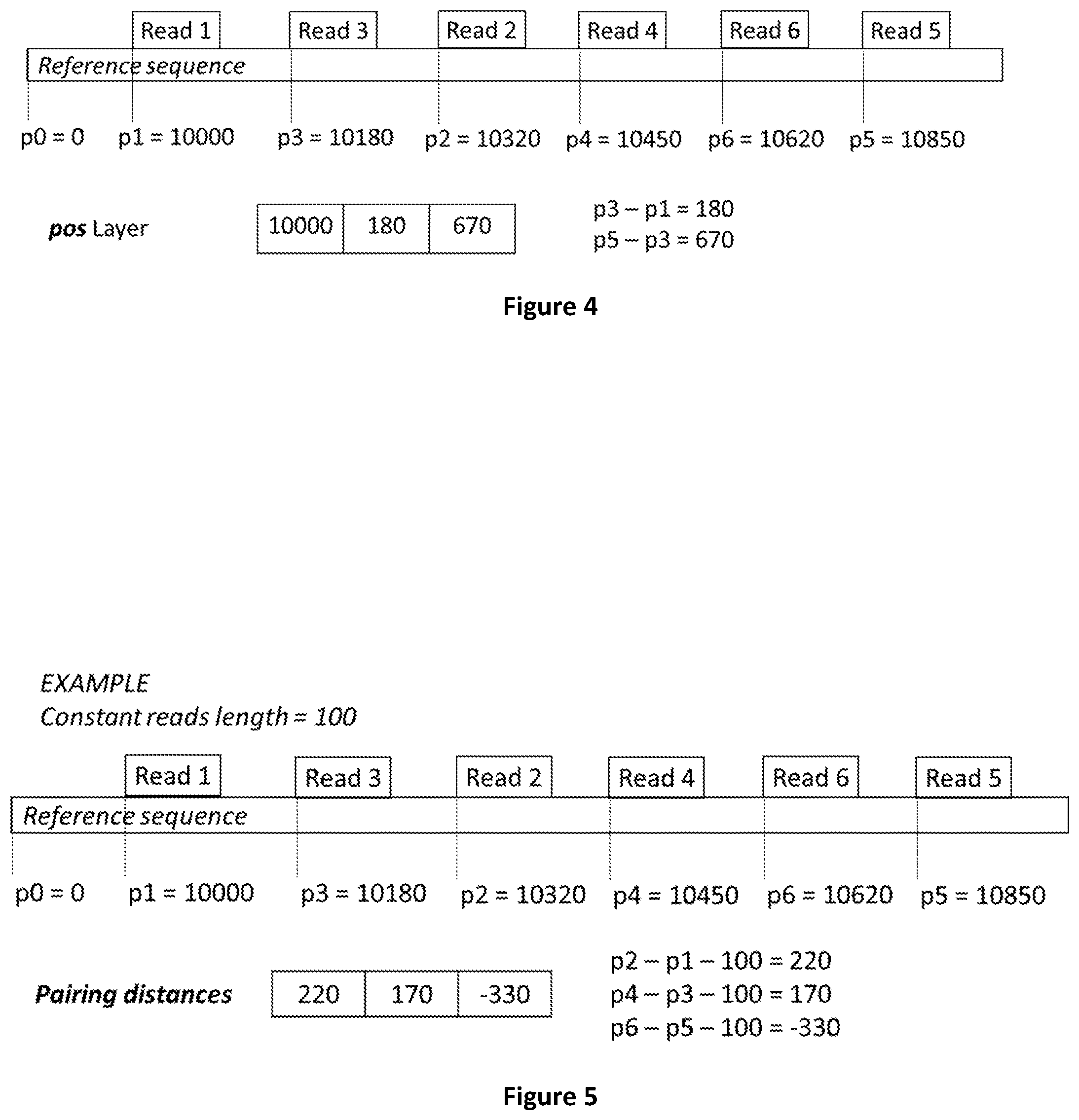

[0072] FIG. 4 shows how reads mapping positions on the reference sequence are calculated.

[0073] FIG. 5 shows how reads pairing distances are calculated.

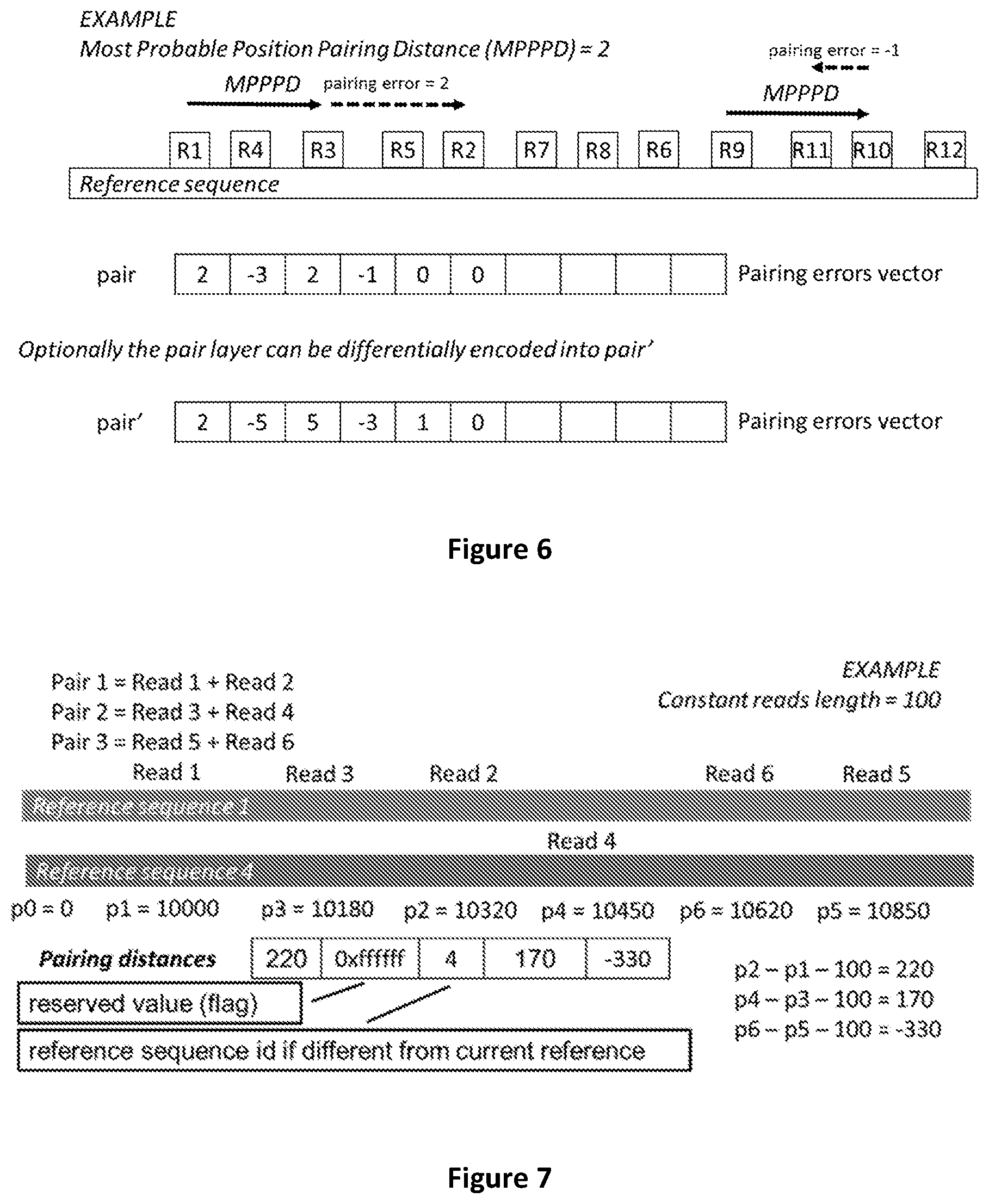

[0074] FIG. 6 shows how pairing errors are calculated.

[0075] FIG. 7 shows how the pairing distance is encoded when a read mate pair is mapped on a different chromosome.

[0076] FIG. 8 shows how sequence reads can be generated from the first or second DNA strand of a genome.

[0077] FIG. 9 shows how a read mapped on strand 2 has a corresponding reverse complemented read on strand 1.

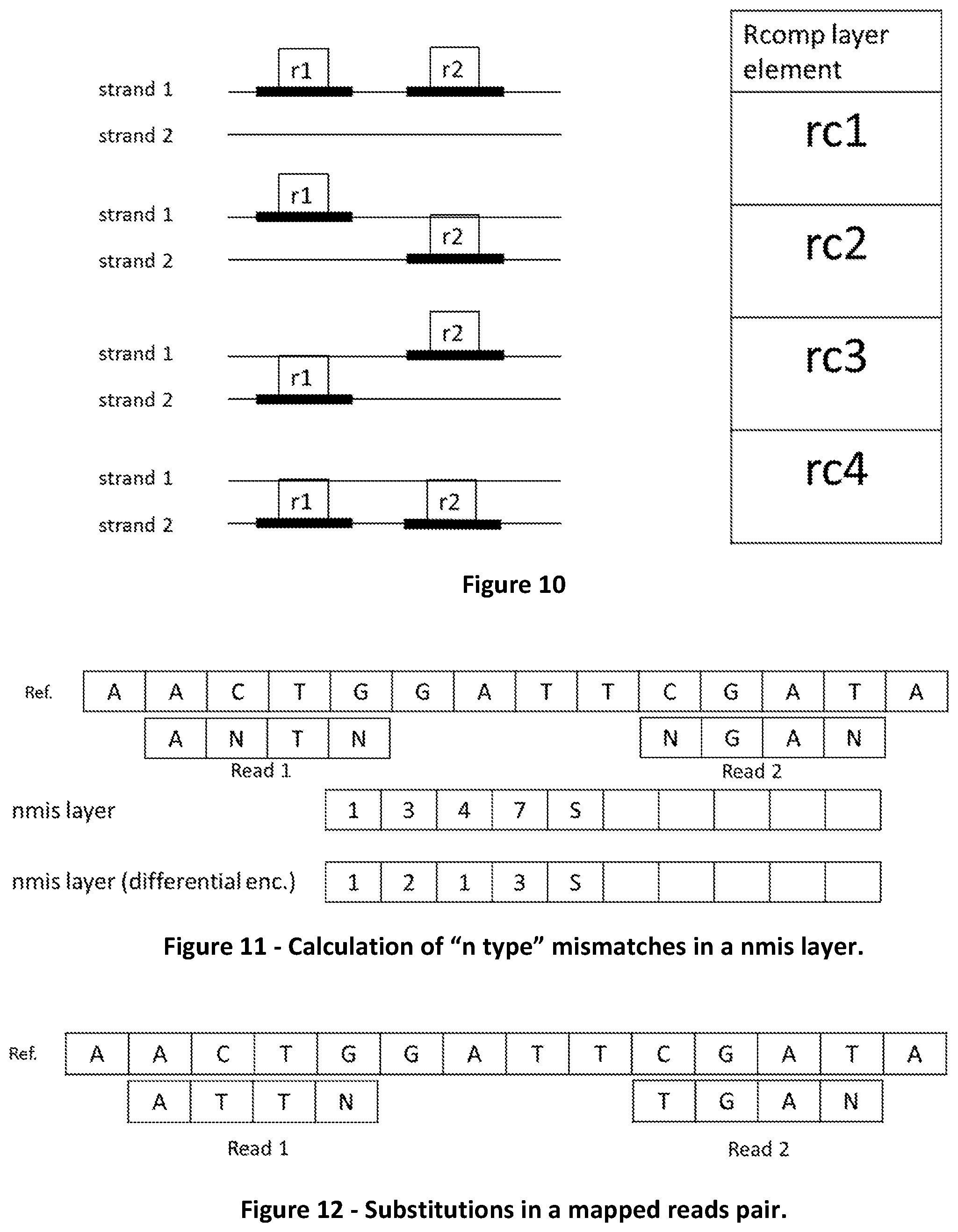

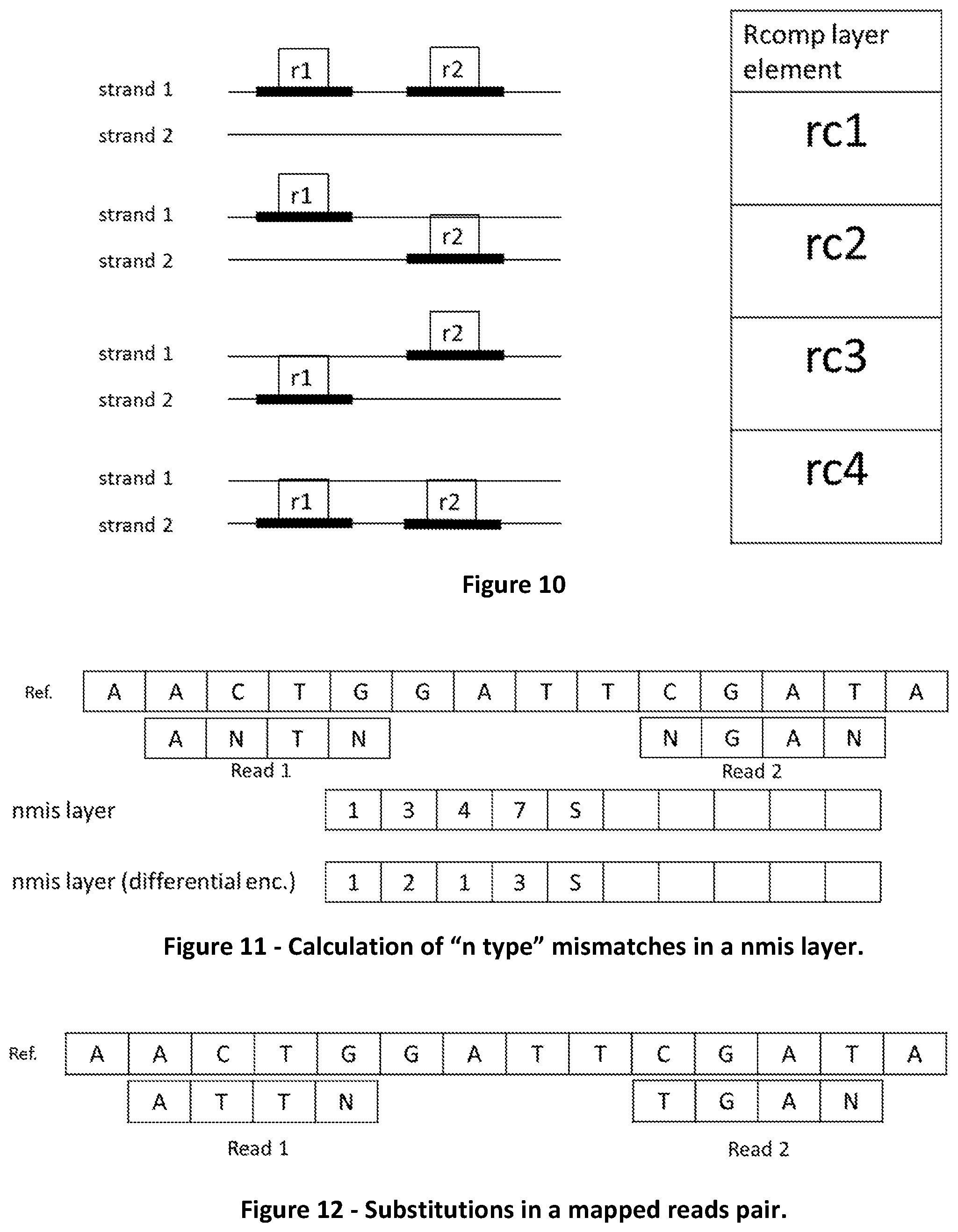

[0078] FIG. 10 shows the four possible combinations of reads composing a reads pair and the respective encoding in the rcomp layer.

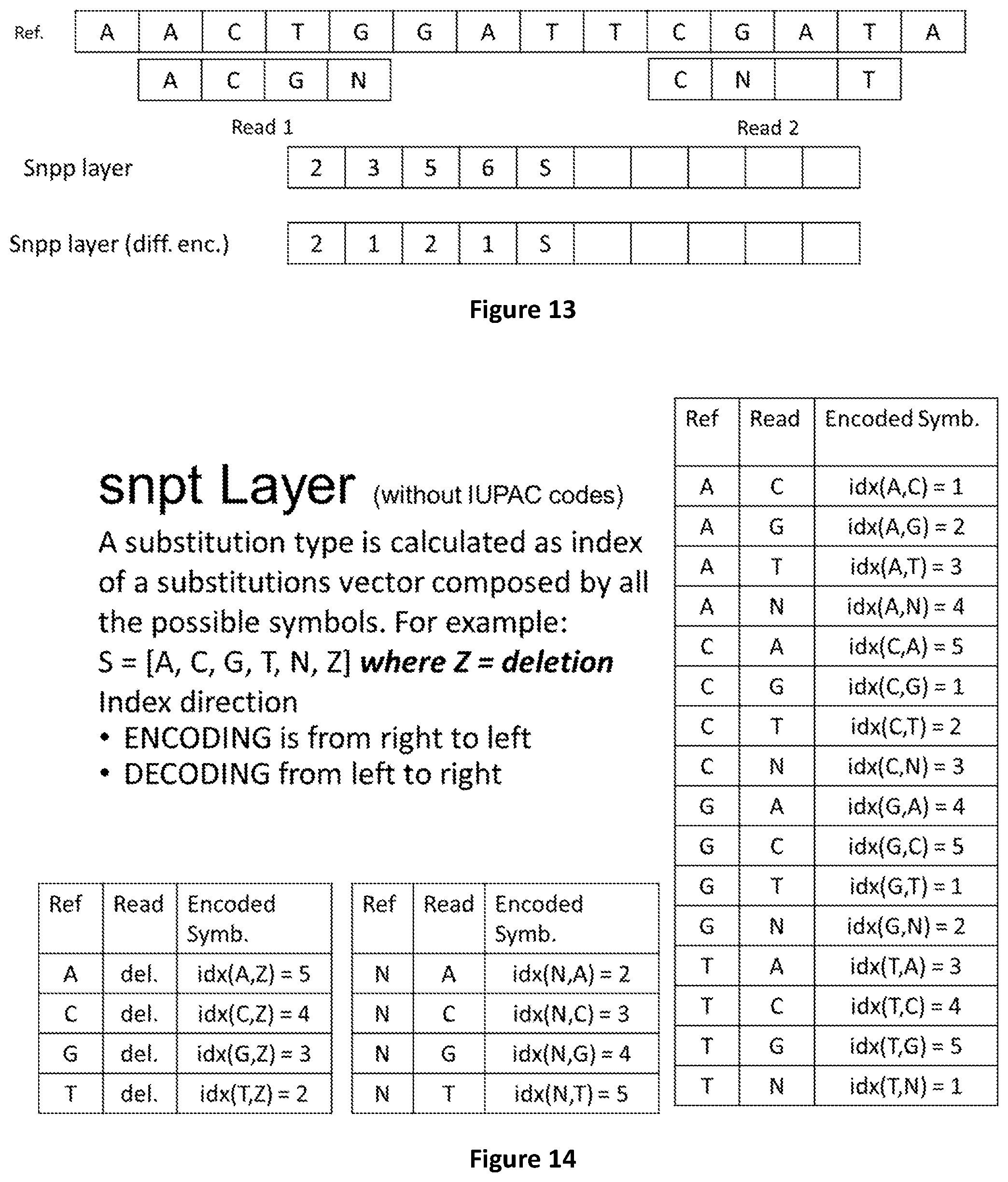

[0079] FIG. 11 shows how "n type" mismatches are encoded in a nmis layer.

[0080] FIG. 12 shows an example of substitutions in a mapped read pair.

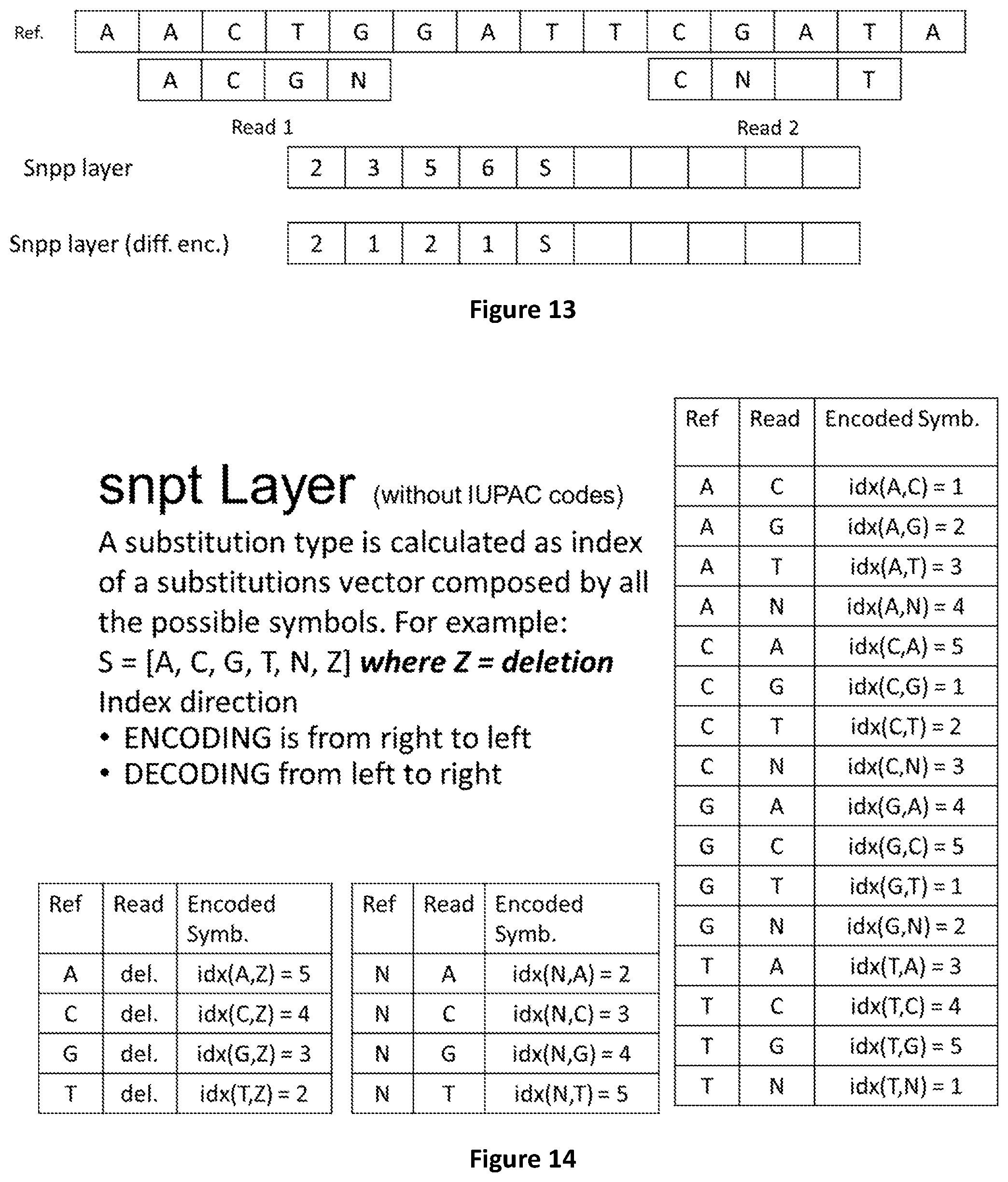

[0081] FIG. 13 shows how substitutions positions can be calculated either as absolute or differential values.

[0082] FIG. 14 shows how symbols encoding substitutions without IUPAC codes are calculated.

[0083] FIG. 15 shows how substitution types are encoded in the snpt layer.

[0084] FIG. 16 shows how symbols encoding substitutions with IUPAC codes are calculated.

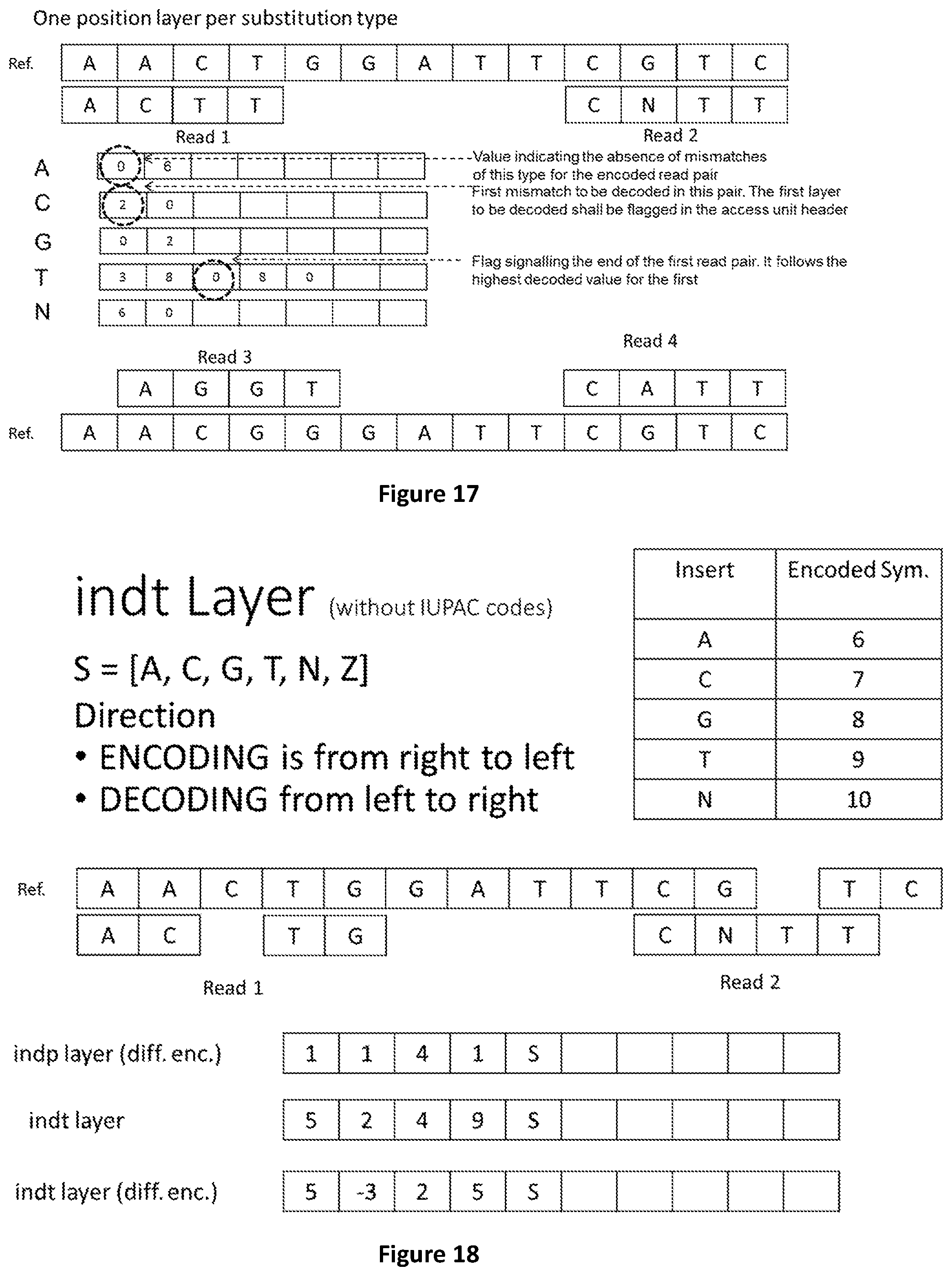

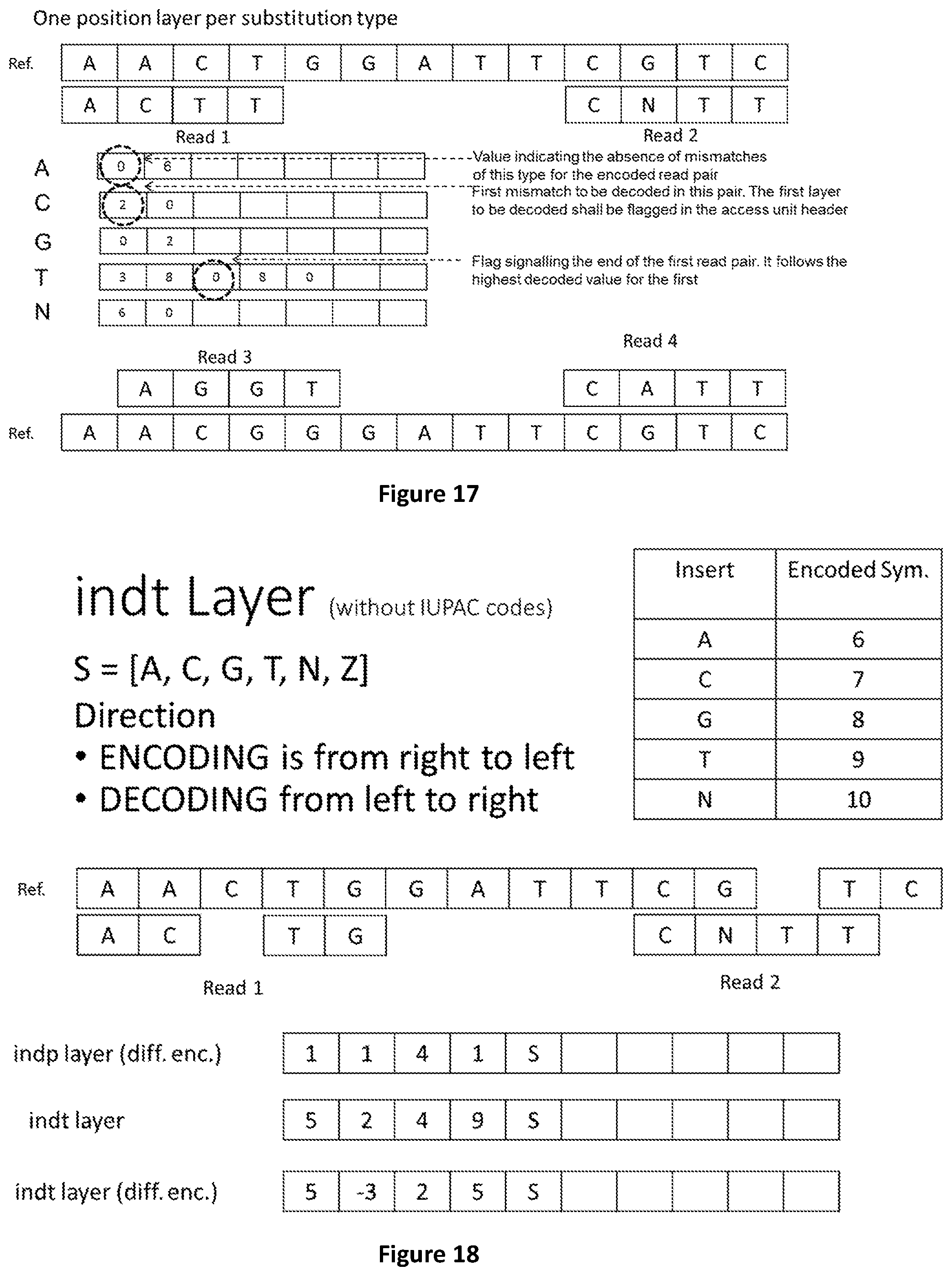

[0085] FIG. 17 shows an alternative source model for substitution where only positions are encoded, but one layer per substitution type is used.

[0086] FIG. 18 shows how to encode substitutions, insertions and deletions in a reads pair of class I when IUPAC codes are not used.

[0087] FIG. 19 shows how to encode substitutions, insertions and deletions in a reads pair of class I when IUPAC codes are used.

[0088] FIG. 20 shows the structure of the Genomic Dataset Header of the genomic information data structure disclosed by this invention.

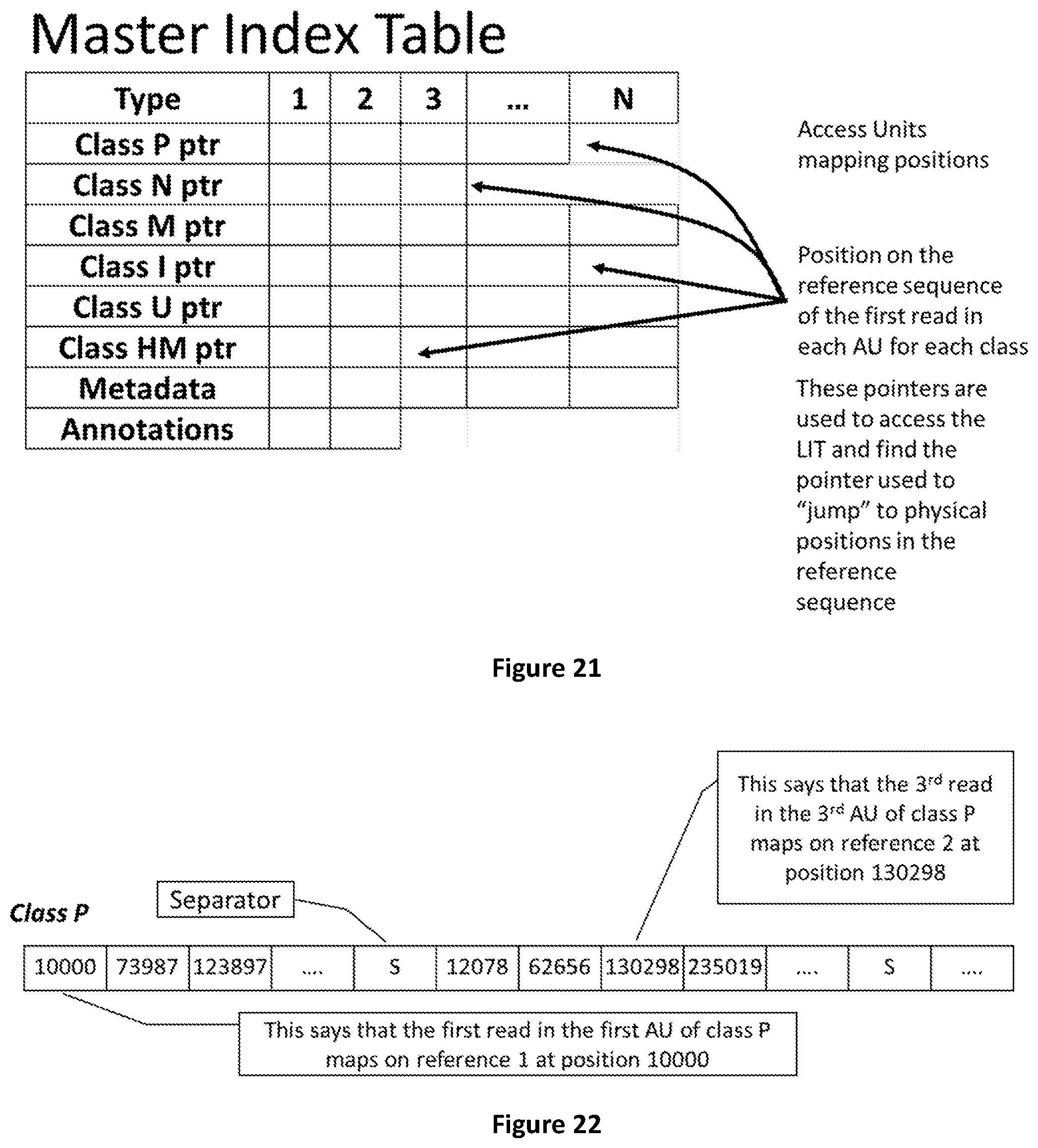

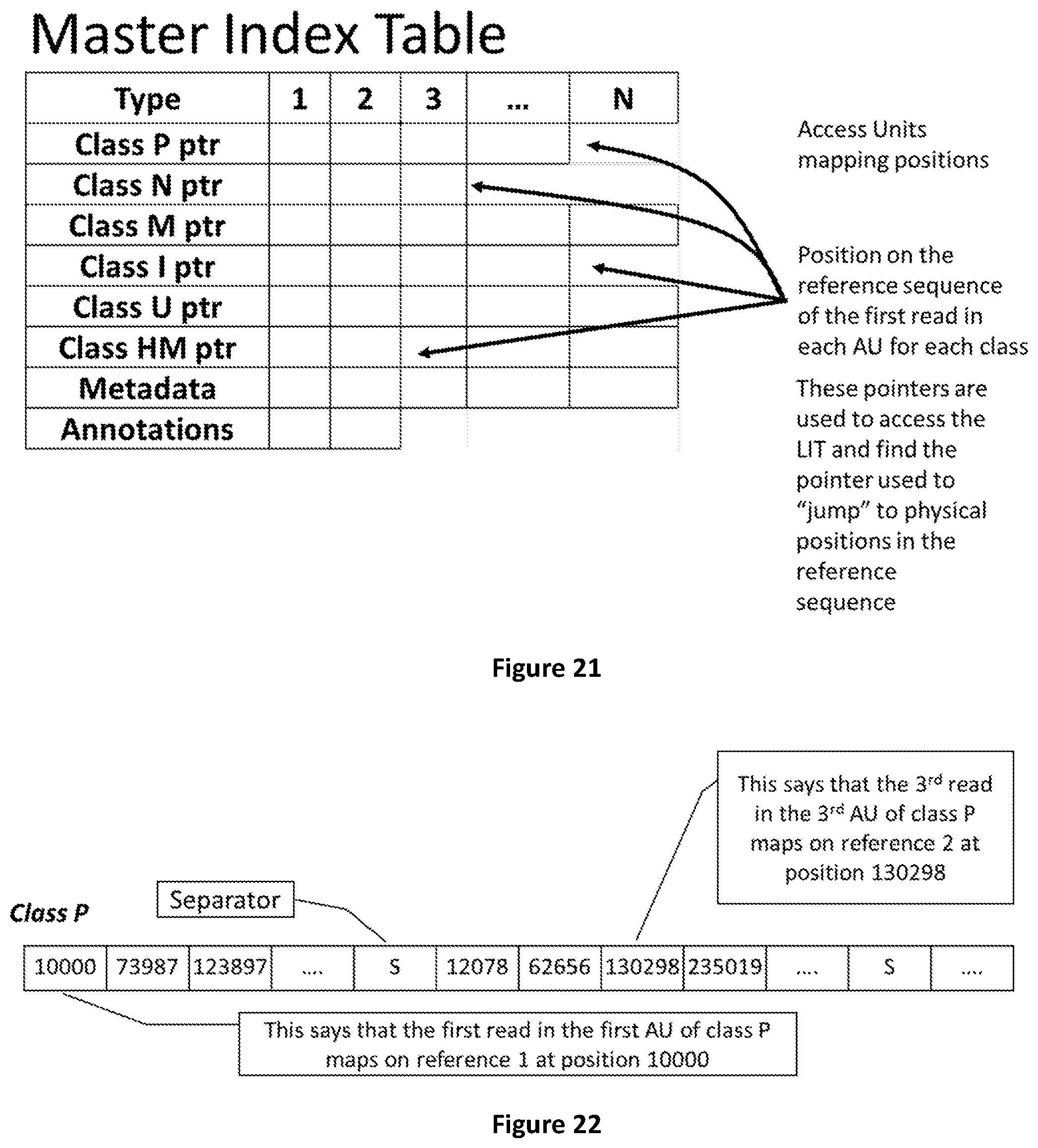

[0089] FIG. 21 shows how the Master Index Table contains the positions on the reference sequences of the first read in each Access Unit.

[0090] FIG. 22 shows an example of partial MIT showing the mapping positions of the first read in each pos AU of class P.

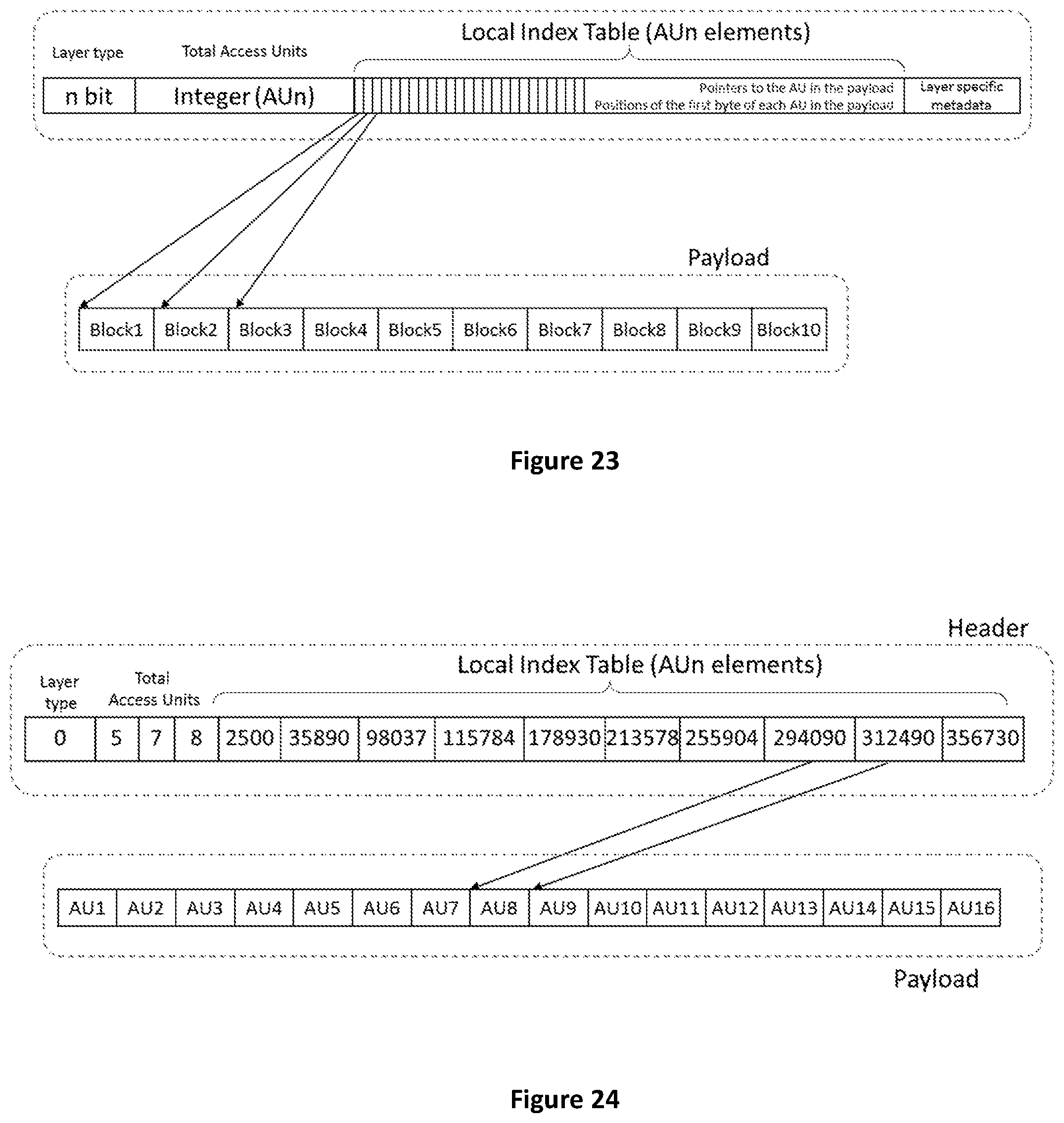

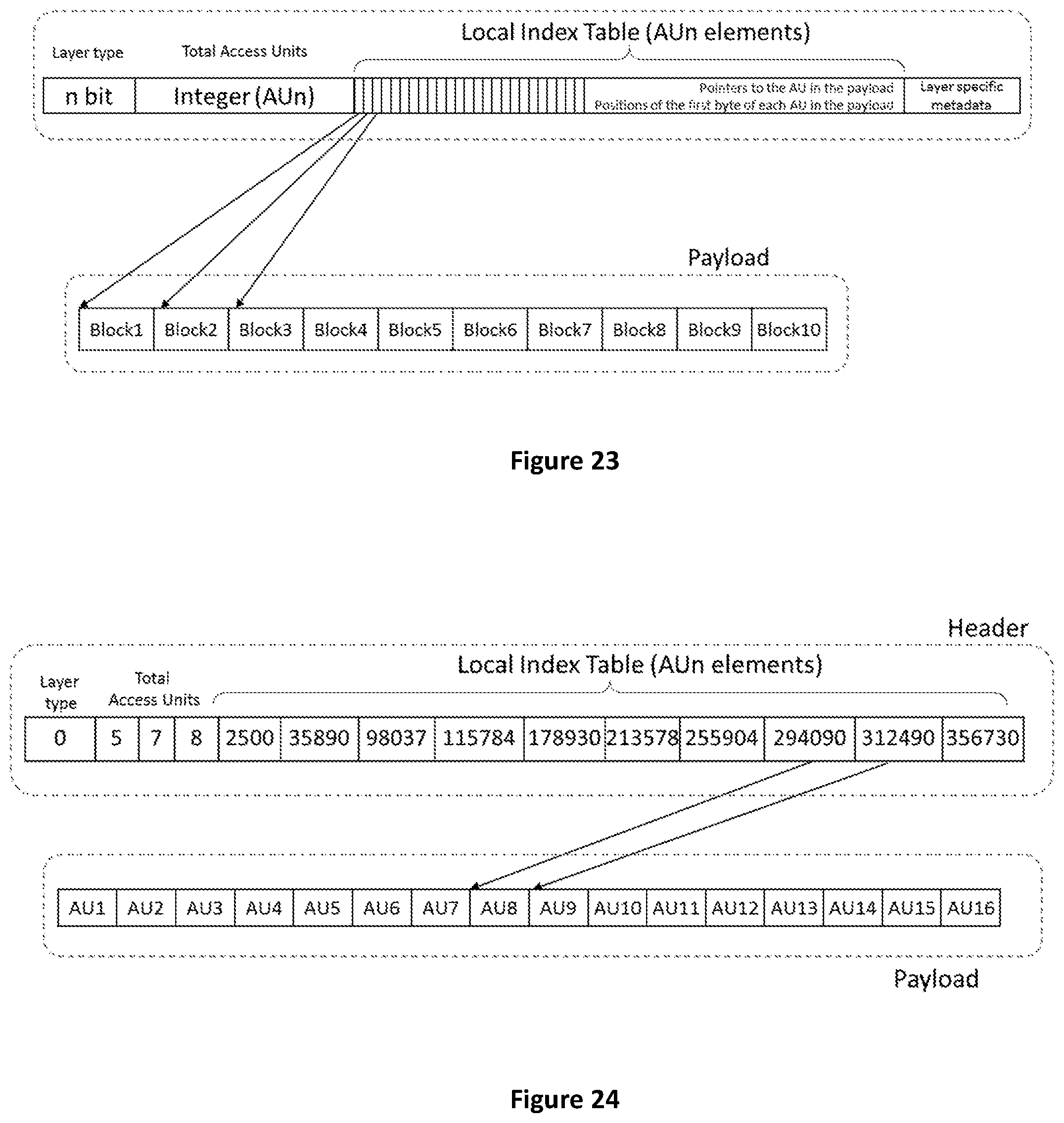

[0091] FIG. 23 shows how the Local Index Table in the layer header is a vector of pointers to the AUs in the payload.

[0092] FIG. 24 shows an example of Local Index Table.

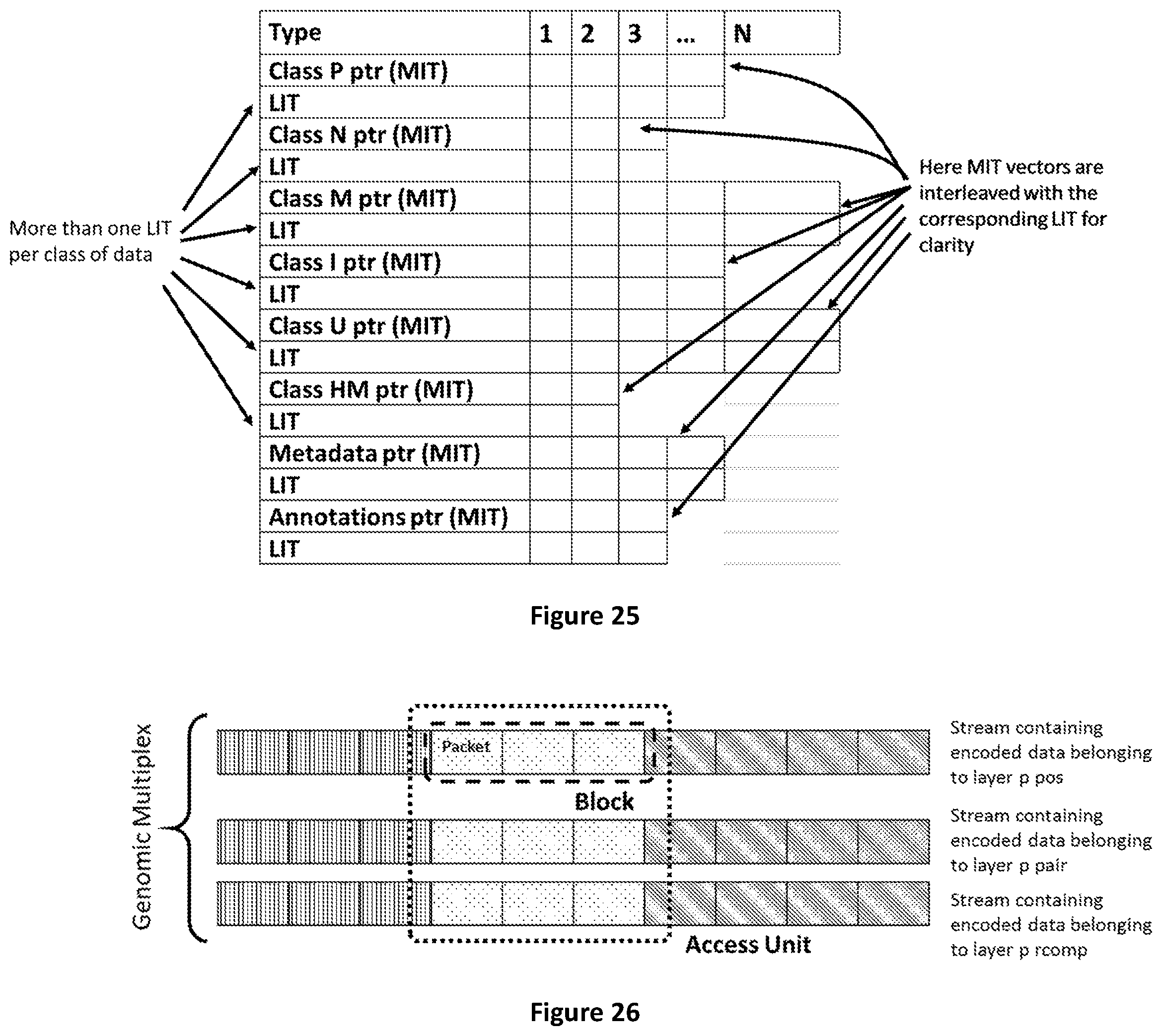

[0093] FIG. 25 shows the functional relation between Master Index Table and Local Index Tables

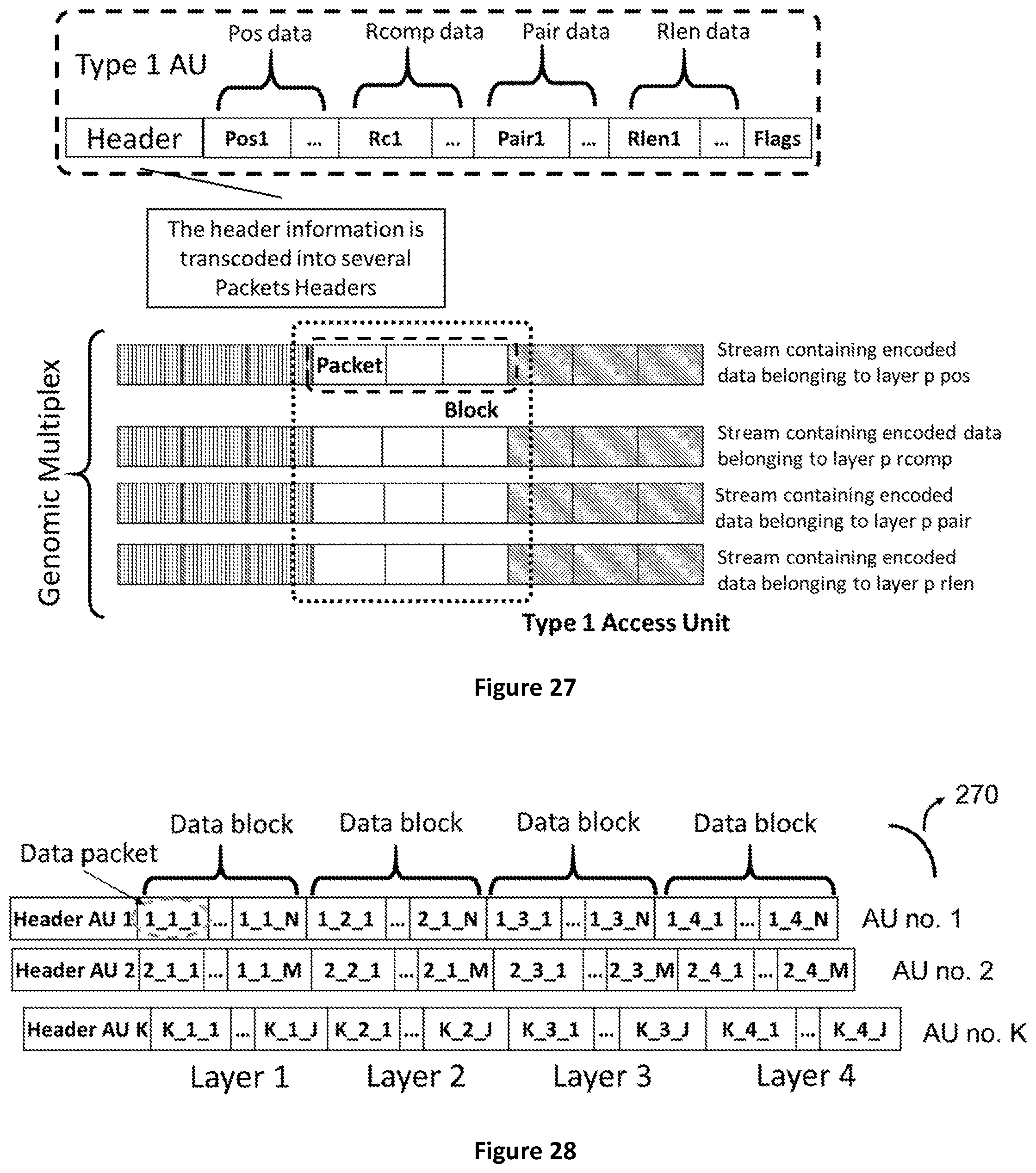

[0094] FIG. 26 shows how Access Units are composed by blocks of data belonging to several layers. Layers are composed by Blocks subdivided in Packets.

[0095] FIG. 27 shows how a Genomic Access Unit of type 1 (containing positional, pairing, reverse complement and read length information) is packetized and encapsulated in a Genomic Data Multiplex.

[0096] FIG. 28 shows how Access Units are composed by a header and multiplexed blocks belonging to one or more layers of homogeneous data. Each block can be composed by one or more packets containing the actual descriptors of the genomic information.

[0097] FIG. 29 shows the structure of Access Units of type 0 which do not need to refer to any information coming from other access units to be accessed or decoded and accessed.

[0098] FIG. 30 shows the structure of Access Units of type 1.

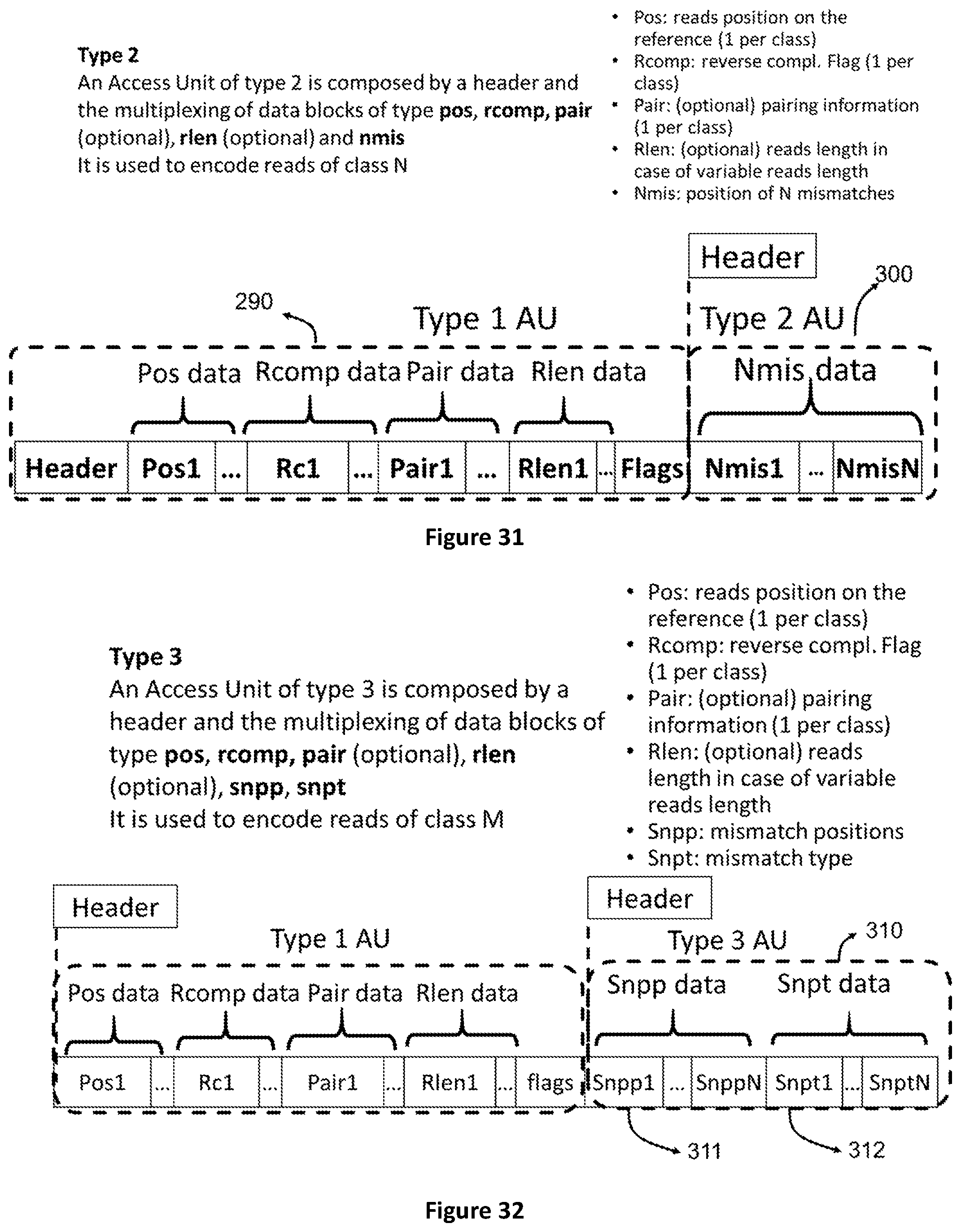

[0099] FIG. 31 shows the structure of Access Units of type 2 which contain data that refer to an access unit of type 1. These are the positions of N bases in the encoded reads.

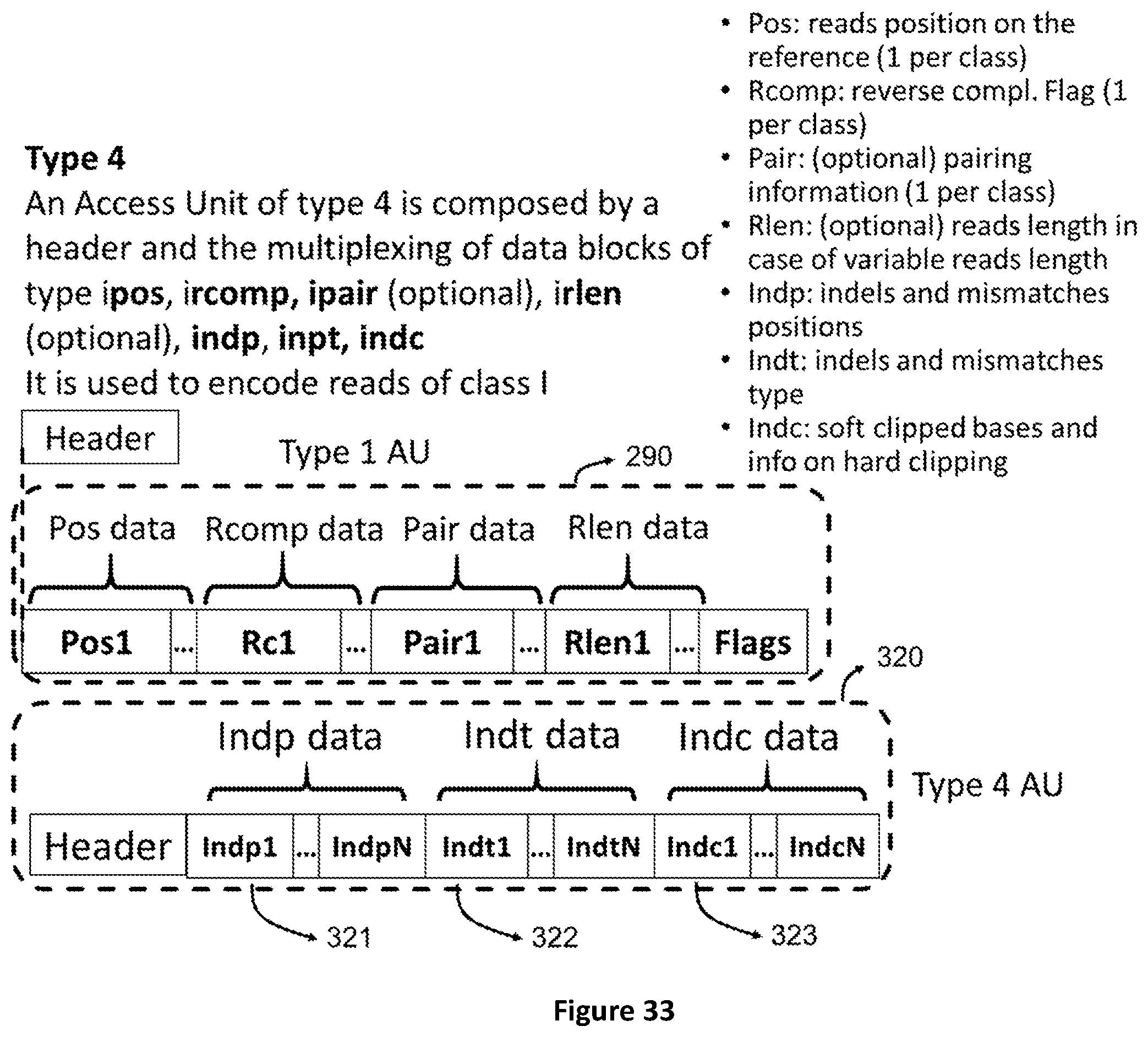

[0100] FIG. 32 shows the structure of Access Units of type 3 which contain data that refer to an access unit of type 1. These are the positions and types of mismatches in the encoded reads.

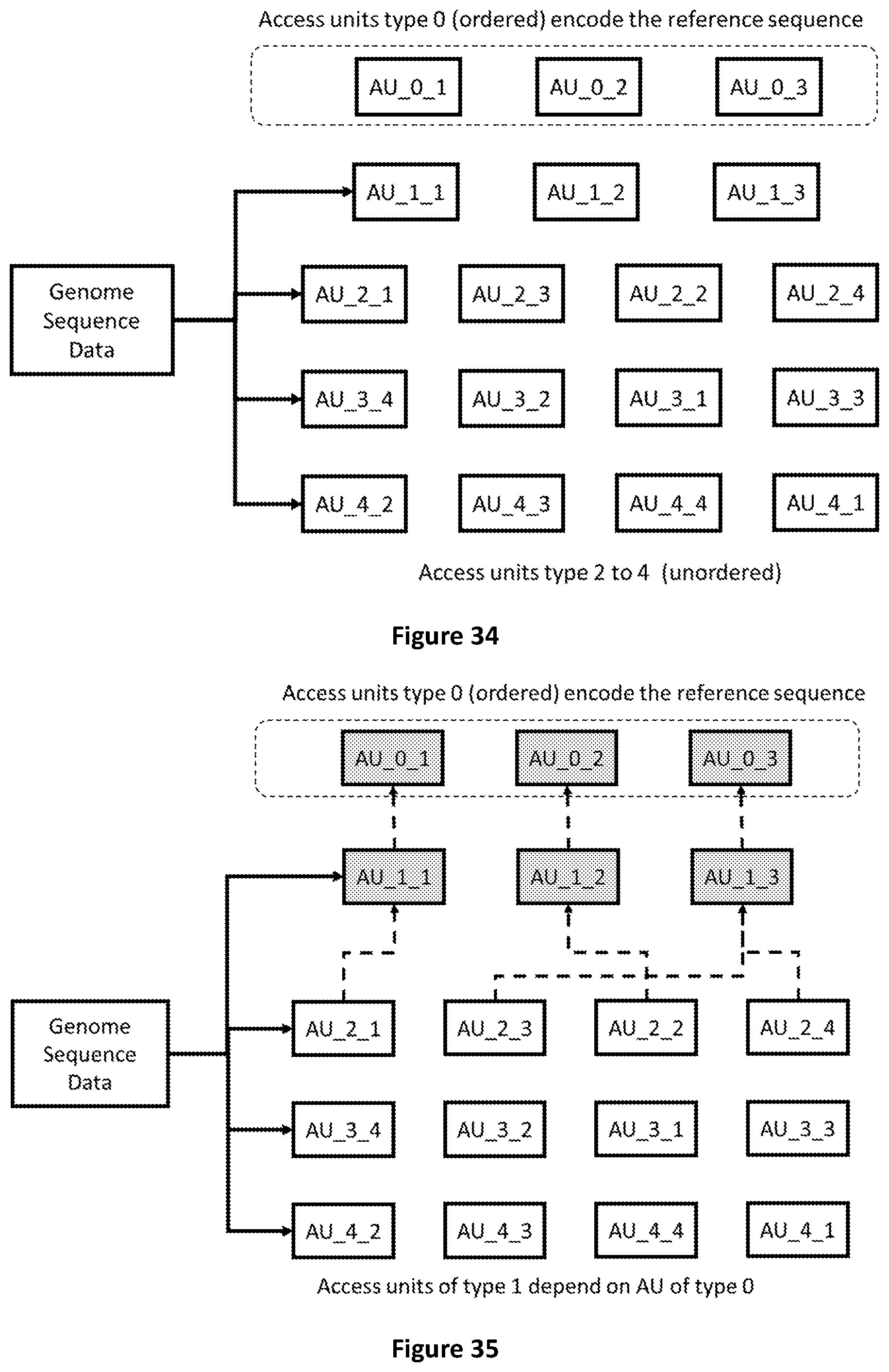

[0101] FIG. 33 shows the structure of Access Units of type 4 which contain data that refer to an access unit of type 1. These are the positions and types of mismatches in the encoded reads.

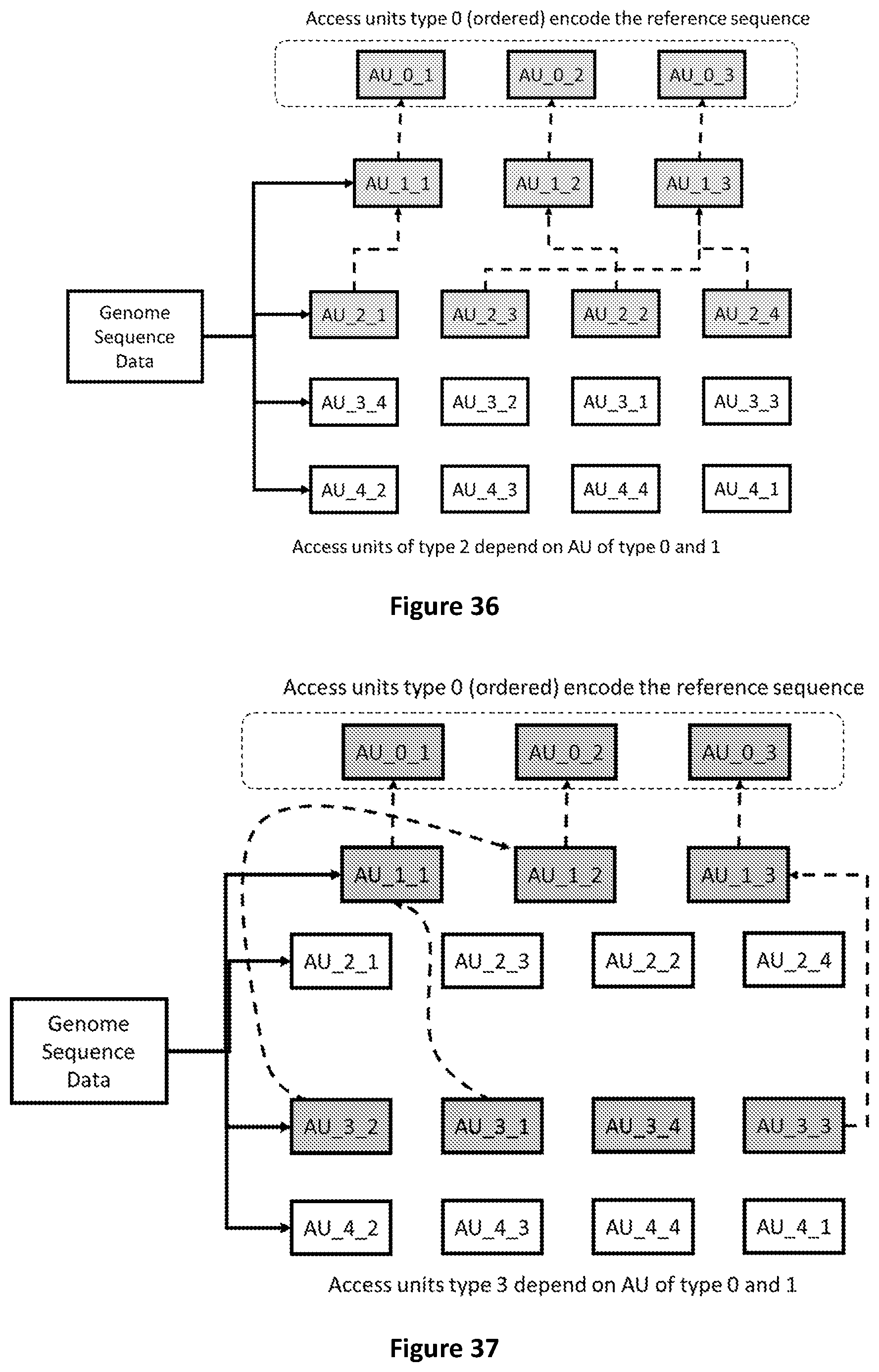

[0102] FIG. 34 shows the first five type of Access Units.

[0103] FIG. 35 shows that Access Units of type 1 refer to Access Units of type 0 to be decoded.

[0104] FIG. 36 shows that Access Units of type 2 refer to Access Units of type 0 and 1 to be decoded.

[0105] FIG. 37 shows that Access Units of type 3 refer to Access Units of type 0 and 1 to be decoded.

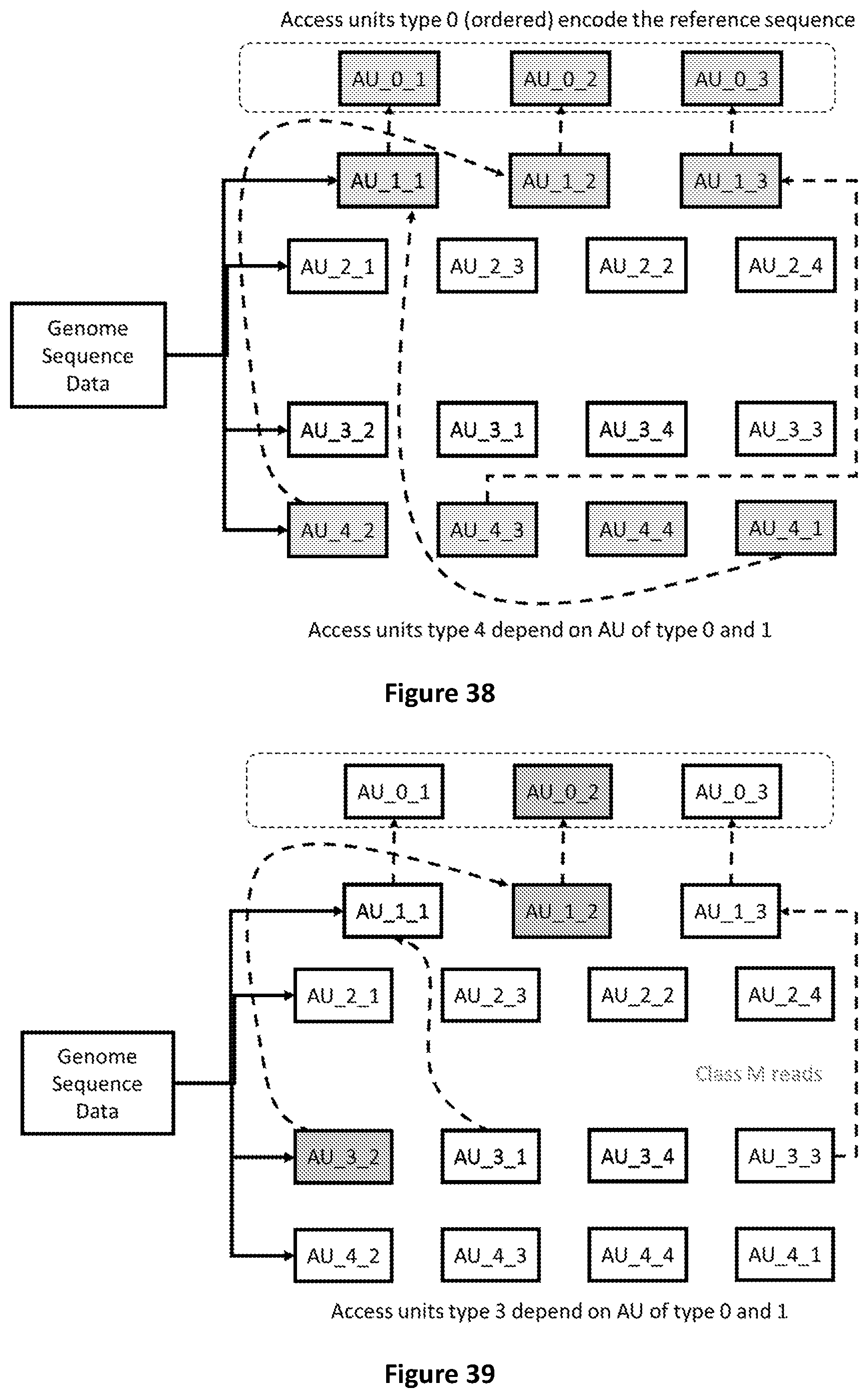

[0106] FIG. 38 shows that Access Units of type 4 refer to Access Units of type 0 and 1 to be decoded.

[0107] FIG. 39 shows the Access Units required to decode sequence reads with mismatches mapped on the second segment of the reference sequence (AU 0-2).

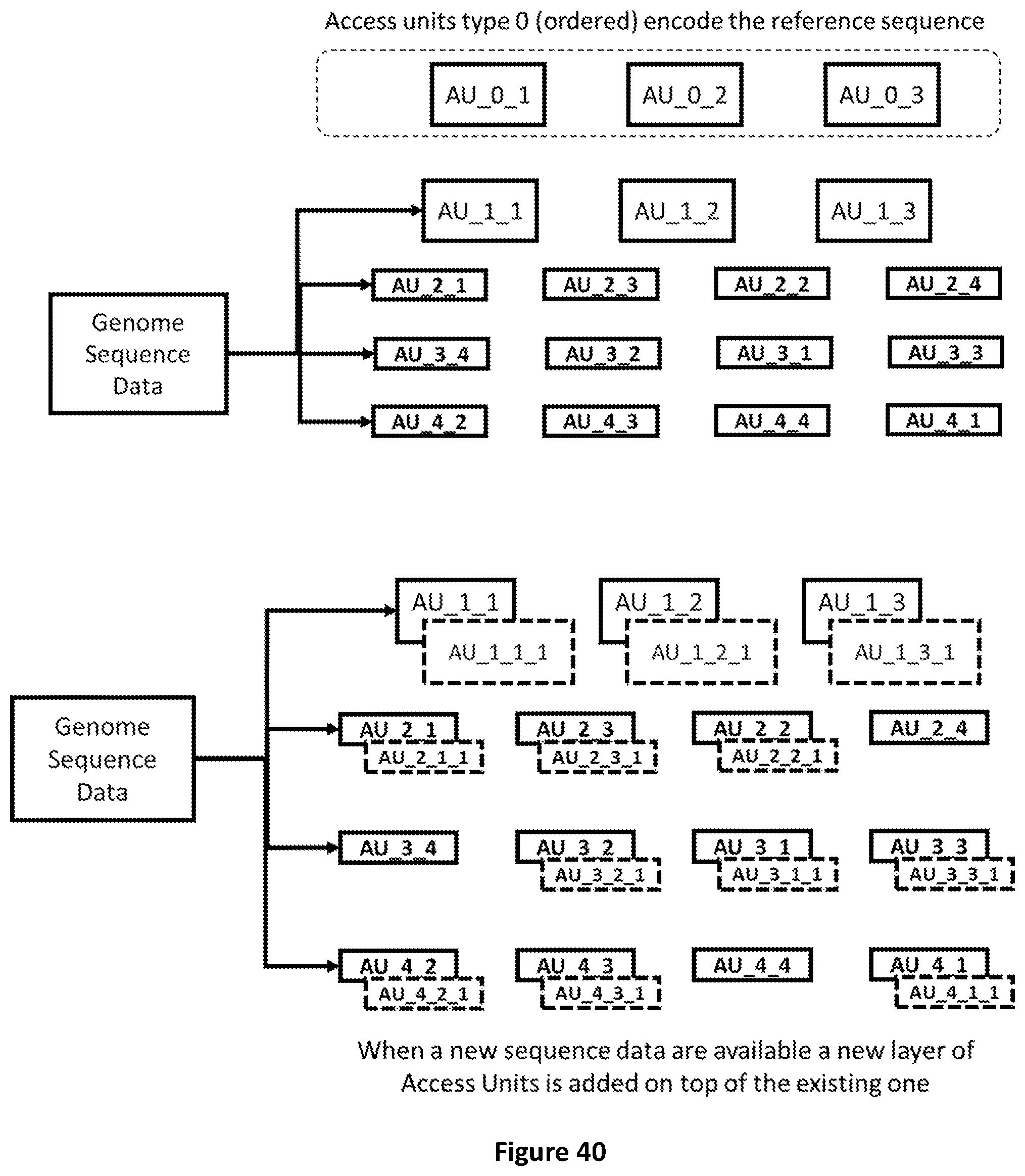

[0108] FIG. 40 shows how raw genomic sequence data that becomes available can be incrementally added to pre-encoded genomic data.

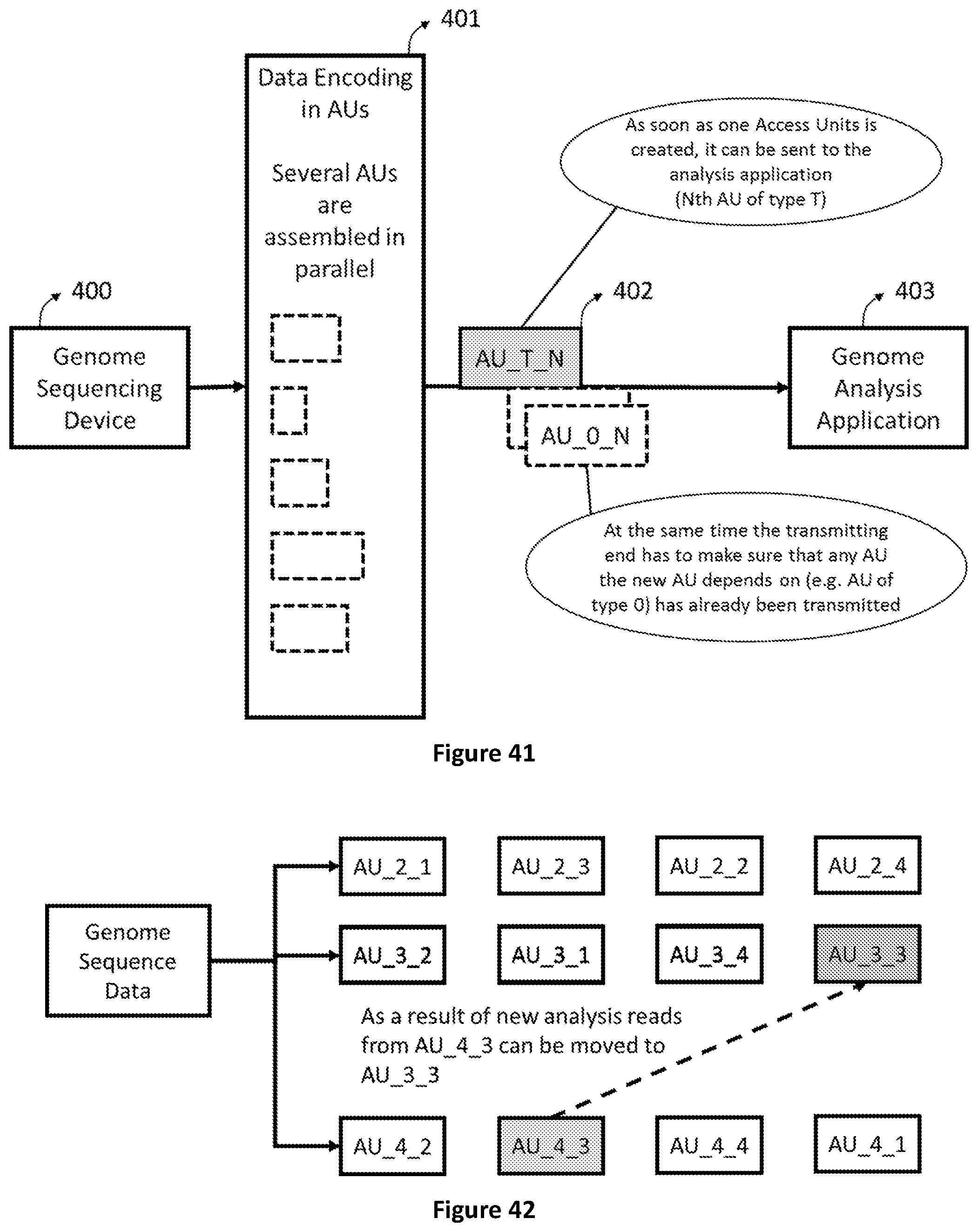

[0109] FIG. 41 shows how a data structure based on Access Units enables genomic data analysis to start before the sequencing process is completed.

[0110] FIG. 42 shows how new analysis performed on existing data can imply that reads are moved from AUs of type 4 to one of type 3.

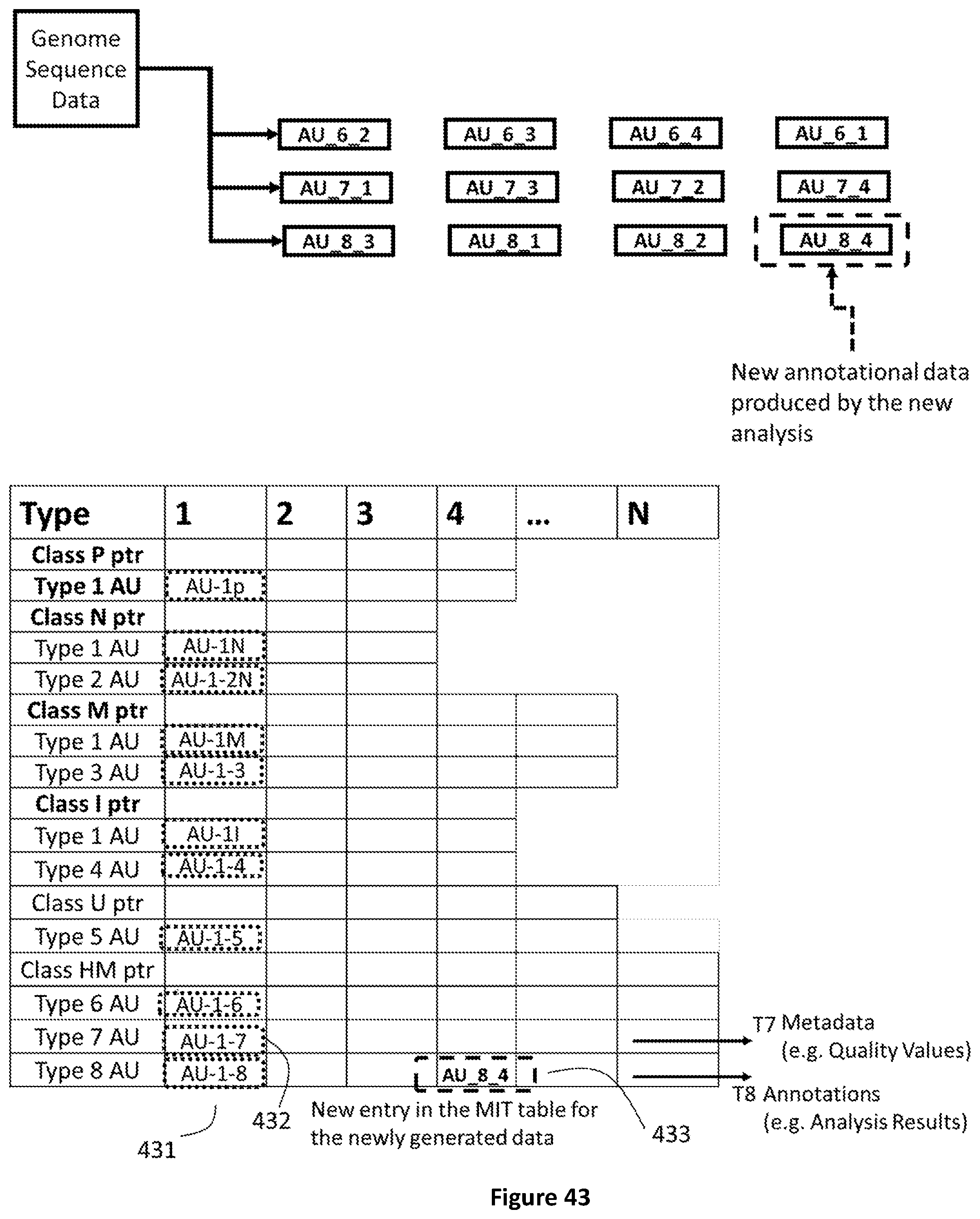

[0111] FIG. 43 shows how newly generated analysis data are encapsulated in a new AU of type 8 and a corresponding index is created in the MIT.

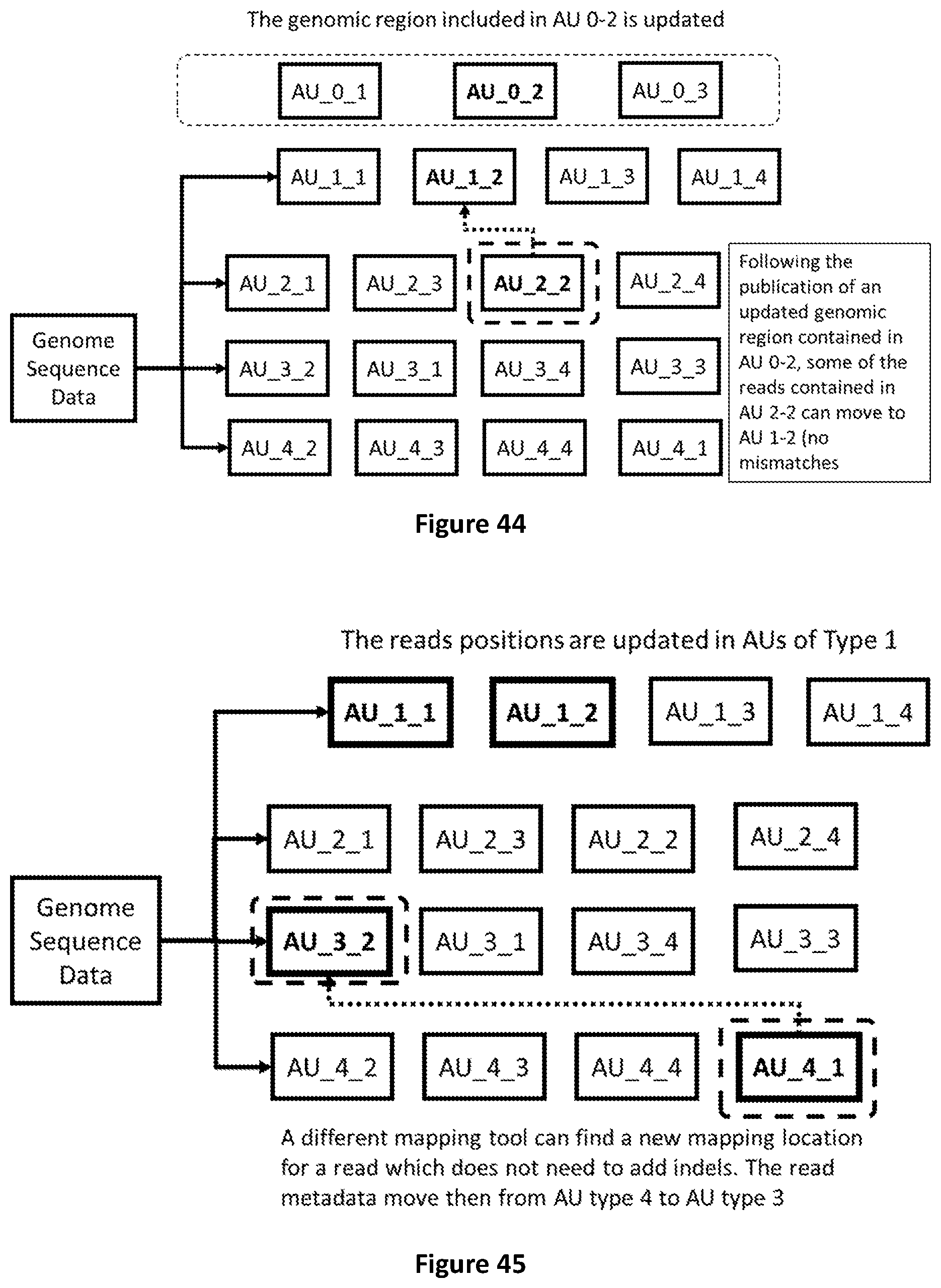

[0112] FIG. 44 shows how to transcode data due to the publication of a new reference sequence (genome).

[0113] FIG. 45 shows how reads mapped to a new genomic region with better quality (e.g. no indels) are moved from AU of type 4 to AU of type 3

[0114] FIG. 46 shows how, in case new mapping location is found, (e.g. with less mismatches) the related reads can be moved from one AU to another of the same type.

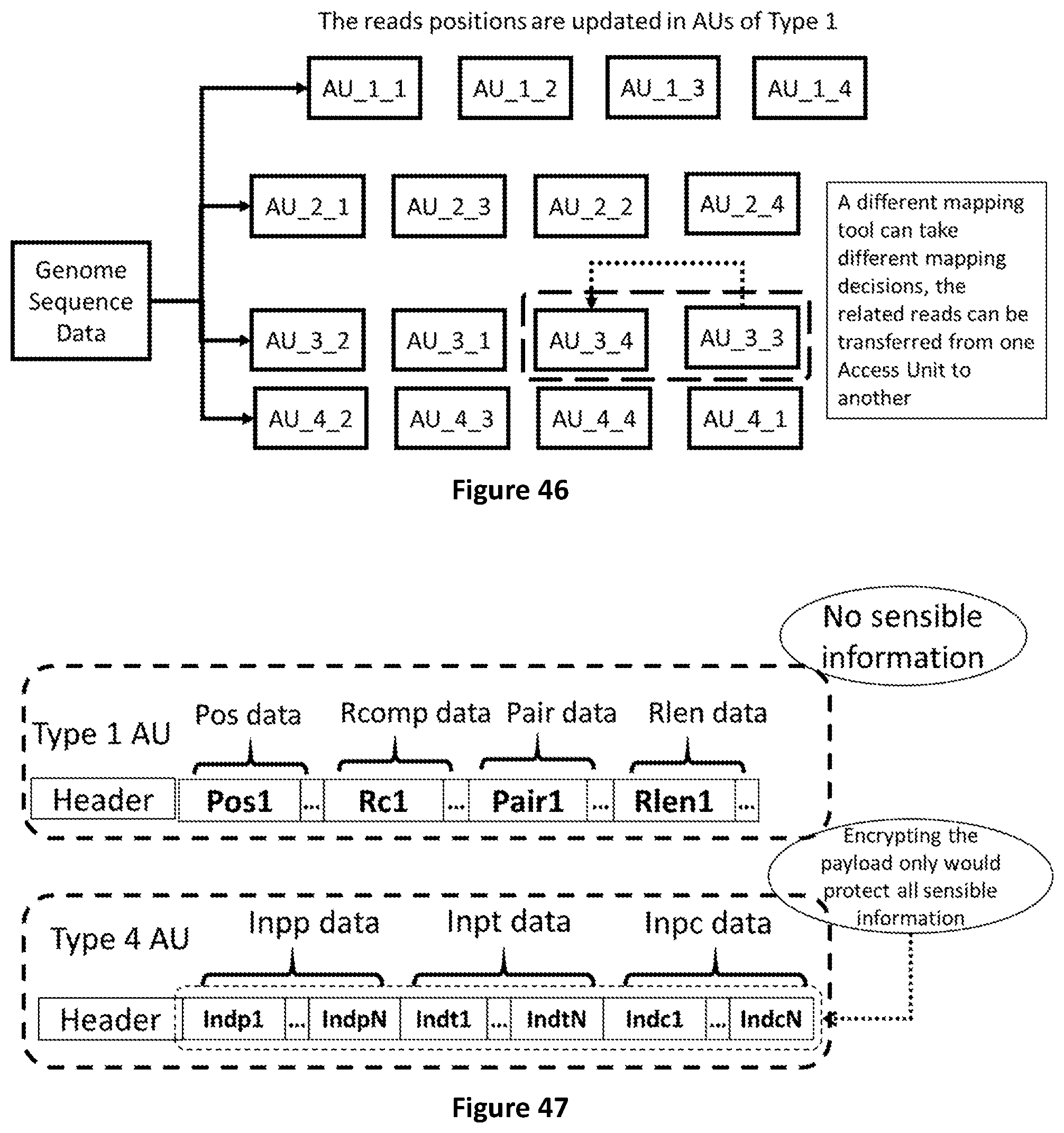

[0115] FIG. 47 shows how selective encryption can be applied on Access Units of Type 4 only as they contain the sensible information to be protected.

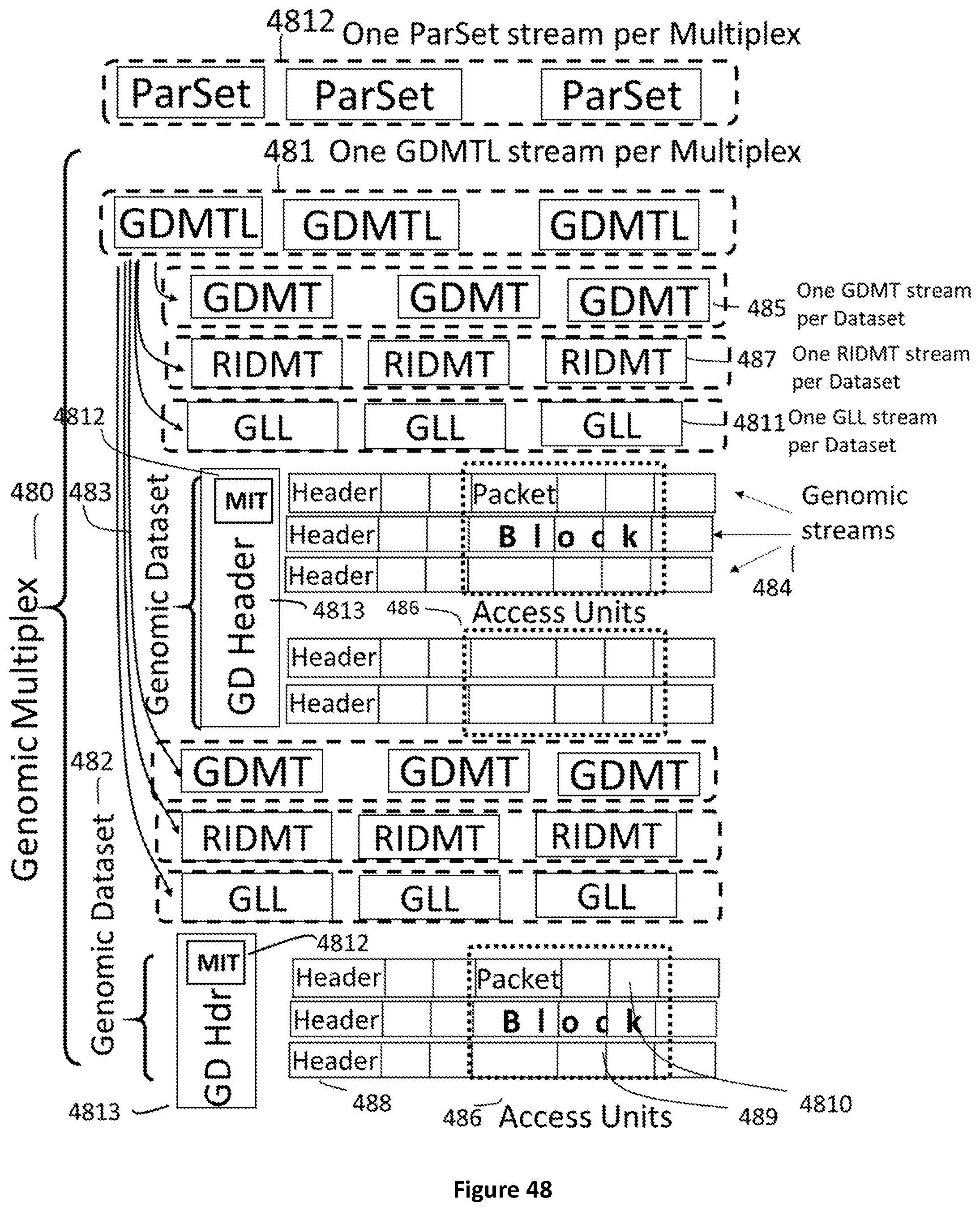

[0116] FIG. 48 shows the data encapsulation in a genomic multiplex where one or more genomic datasets 482-483 contain Genomic streams 484 and streams of Genomic Datasets Mapping Table Lists 481, Genomic Dataset Mapping Tables 485, and Reference Identifiers Mapping Tables 487. Each genomic stream is composed by a Header 488 and Access Units 486. Access Units encapsulate Blocks 489 which are composed by Packets 4810.

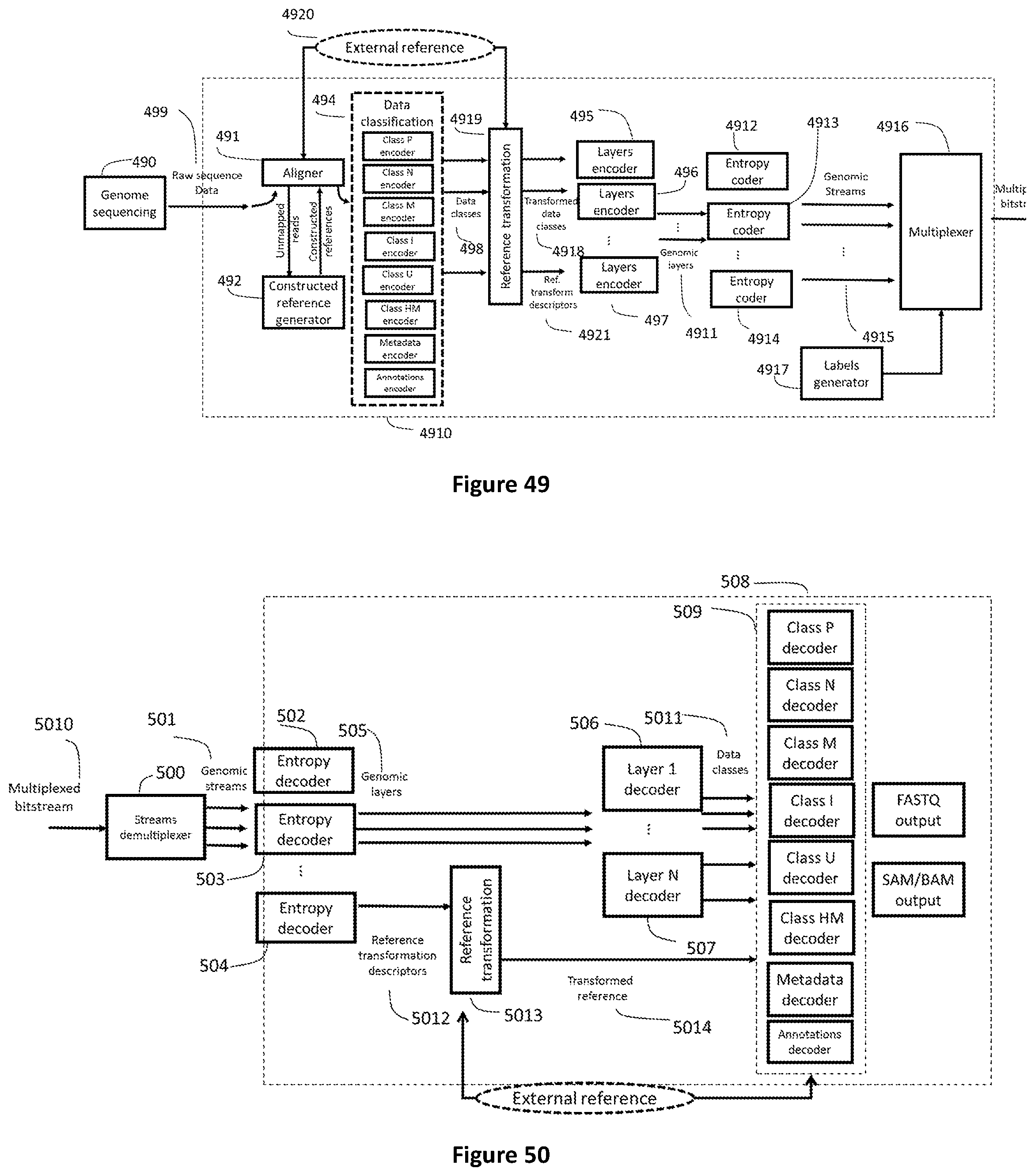

[0117] FIG. 49 shows how raw genomic sequence data (499) or aligned genomic data (produced by element 491) are processed to be encapsulated in a Genomic Multiplex. The alignment (491) and reference genome construction (492) stages can be necessary to prepare the data for encoding. Data classes (498) generated by a data classification unit (494) can be further classified with respect to one or more transformed reference generated by a reference transformation unit (4919). The transformed classes (4918) are then sent to layers encoders (495-497). The generated layers (4911) are encoded by entropy coders (4912-4914) which generate Genomic Streams of Access Units (4915) fed to the Genomic Multiplexer (4916).

[0118] FIG. 50 shows how a genomic demultiplexer (500) extracts Genomic Streams (501) from the Genomic Multiplex (5010), one decoder per AU type (502-504) extracts the genomic layers which are then decoded (506-507) into various data classes (5011) which are used by class decoders (509) to reconstruct genomic formats such as for example FASTQ and SAM/BAM. When present in the multiplexed bitstream (5010) a genomic stream containing one or more reference transformations is decoded by an entropy decoder (504) to produce reference transformation descriptors (5012). Reference transformation descriptors are processed by a reference transformation unit (5013) to transform one or more "external" references to generate one or more transformed references (5014) to be used by the class decoders (509).

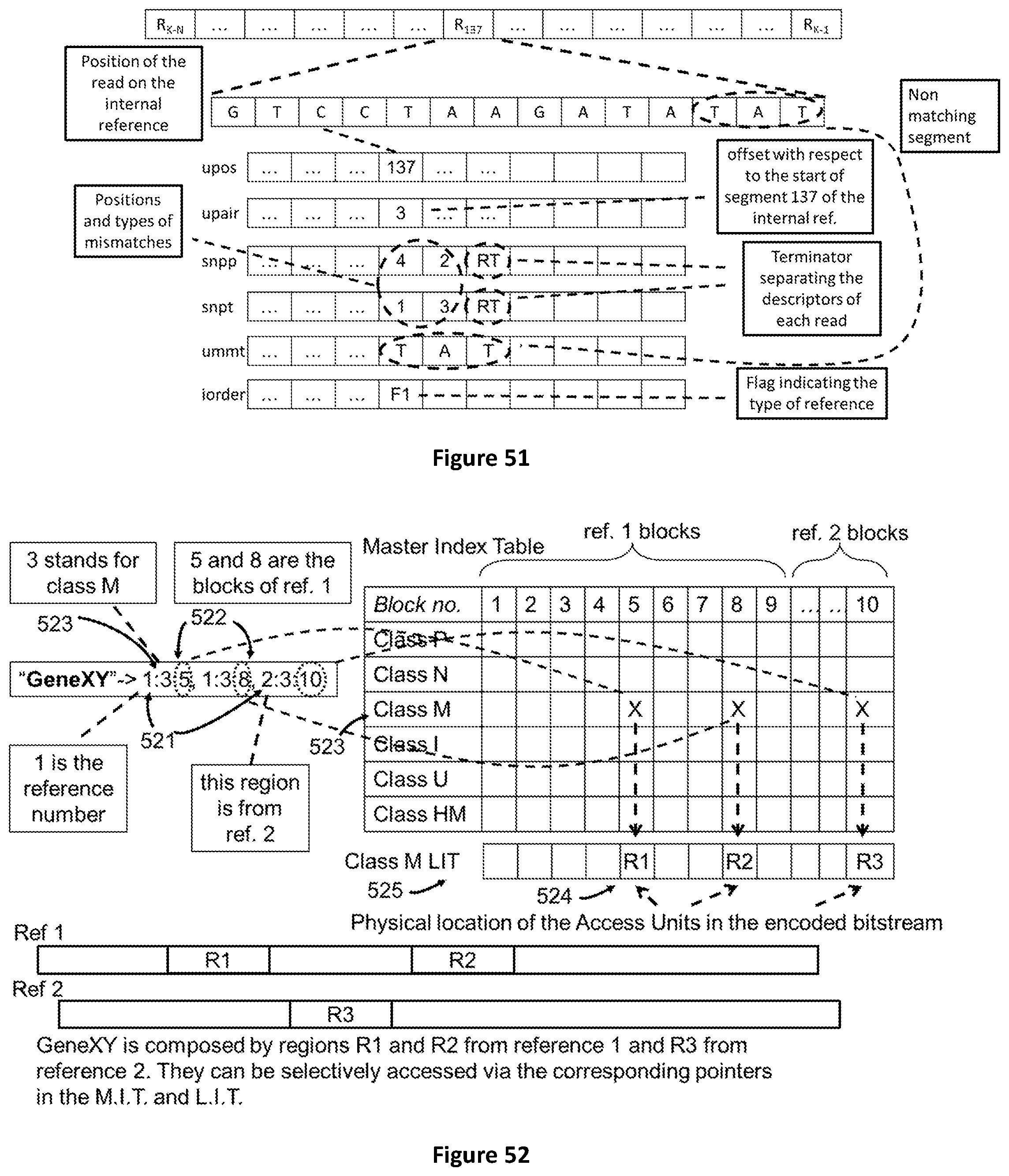

[0119] FIG. 51 shows the process of encoding sequence reads belonging to class U using a self-generated reference sequence using six layers of descriptors. Four layers are the same used for other classes P, N, M, I while two layers are specific to class U reads.

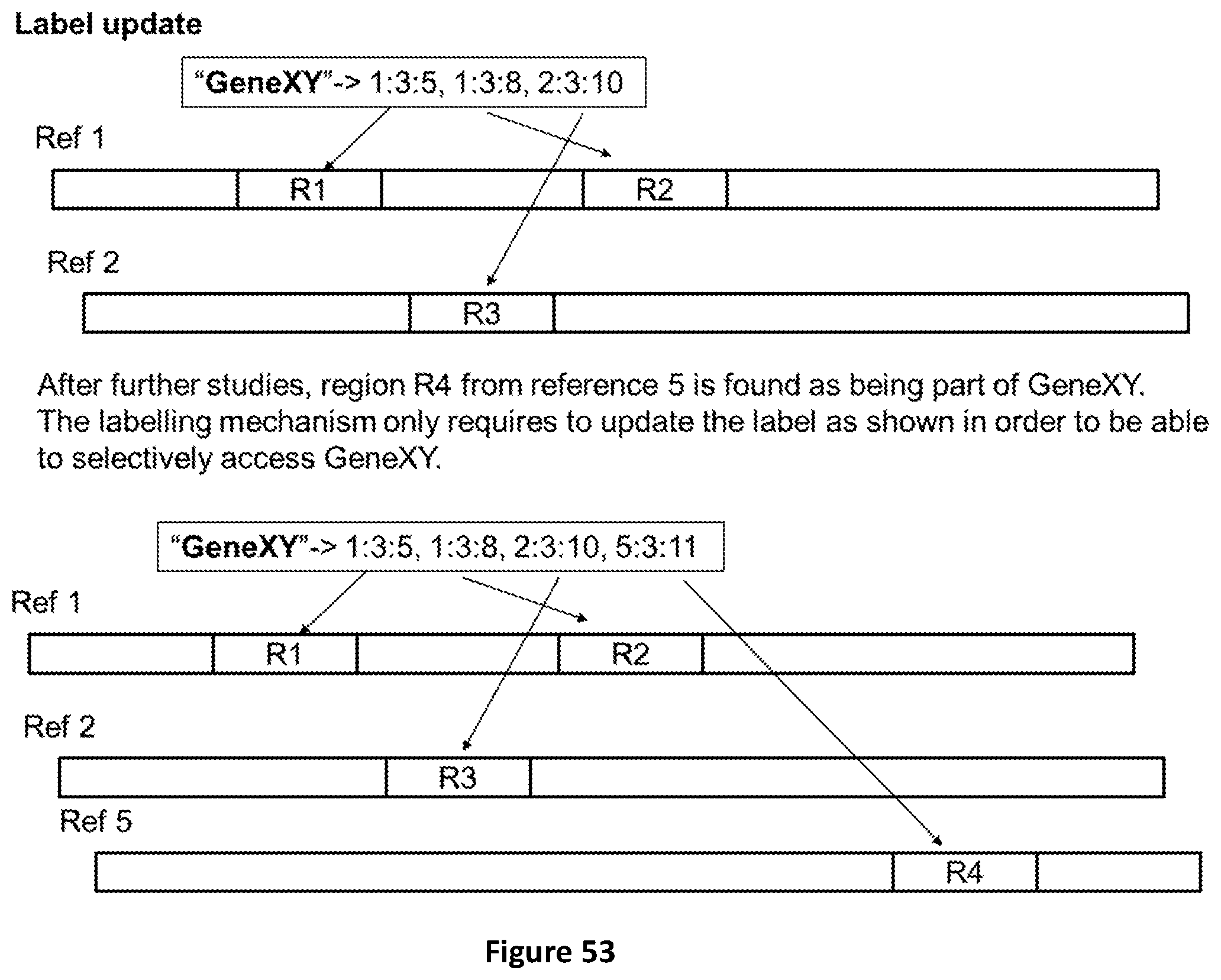

[0120] FIG. 52 shows how a label is built to aggregate genomic regions belonging to two different references.

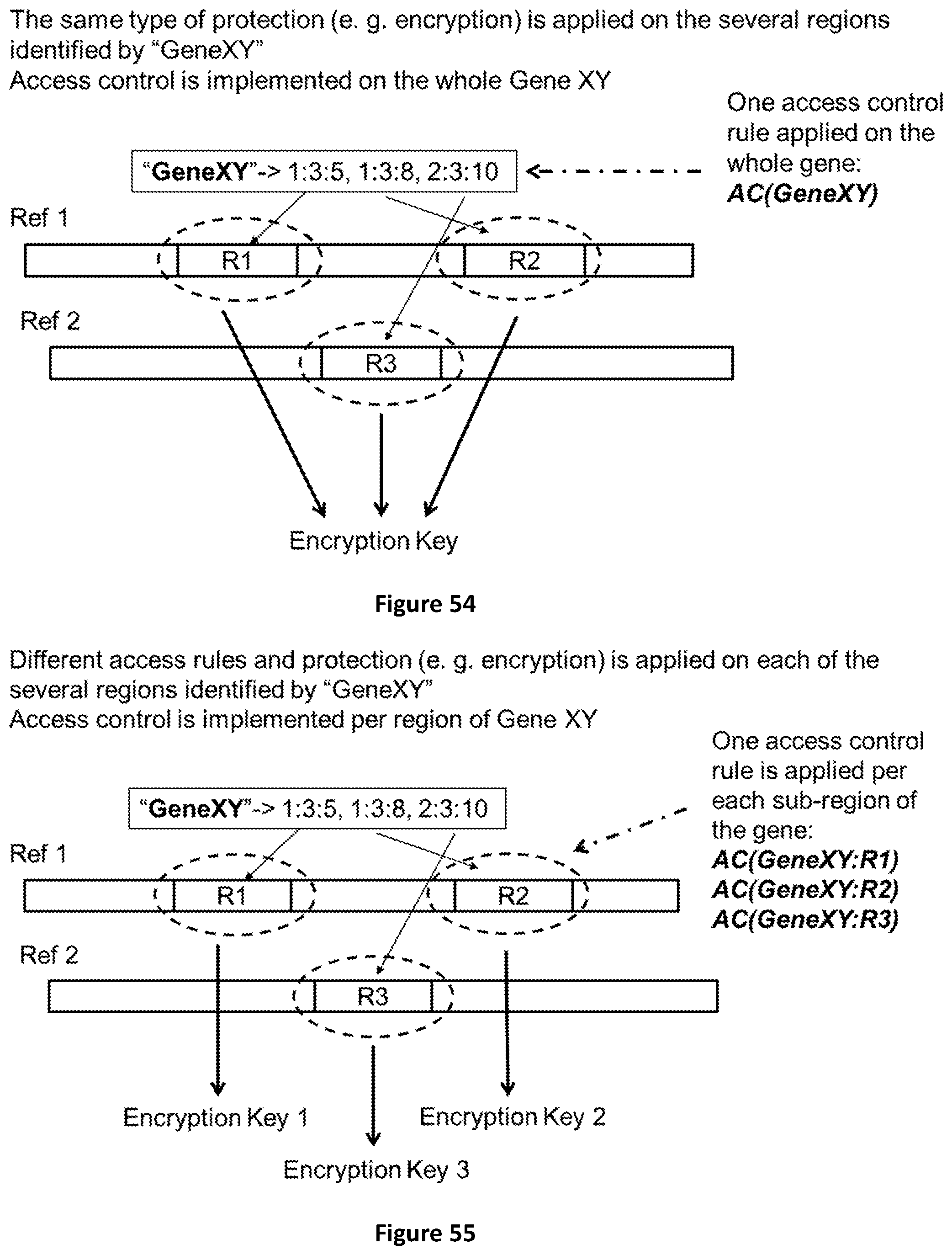

[0121] FIG. 53 shows how an existing label can be updated in case new results of analysis require to add an additional region R4 to the existing ones (R1, R2 and R3).

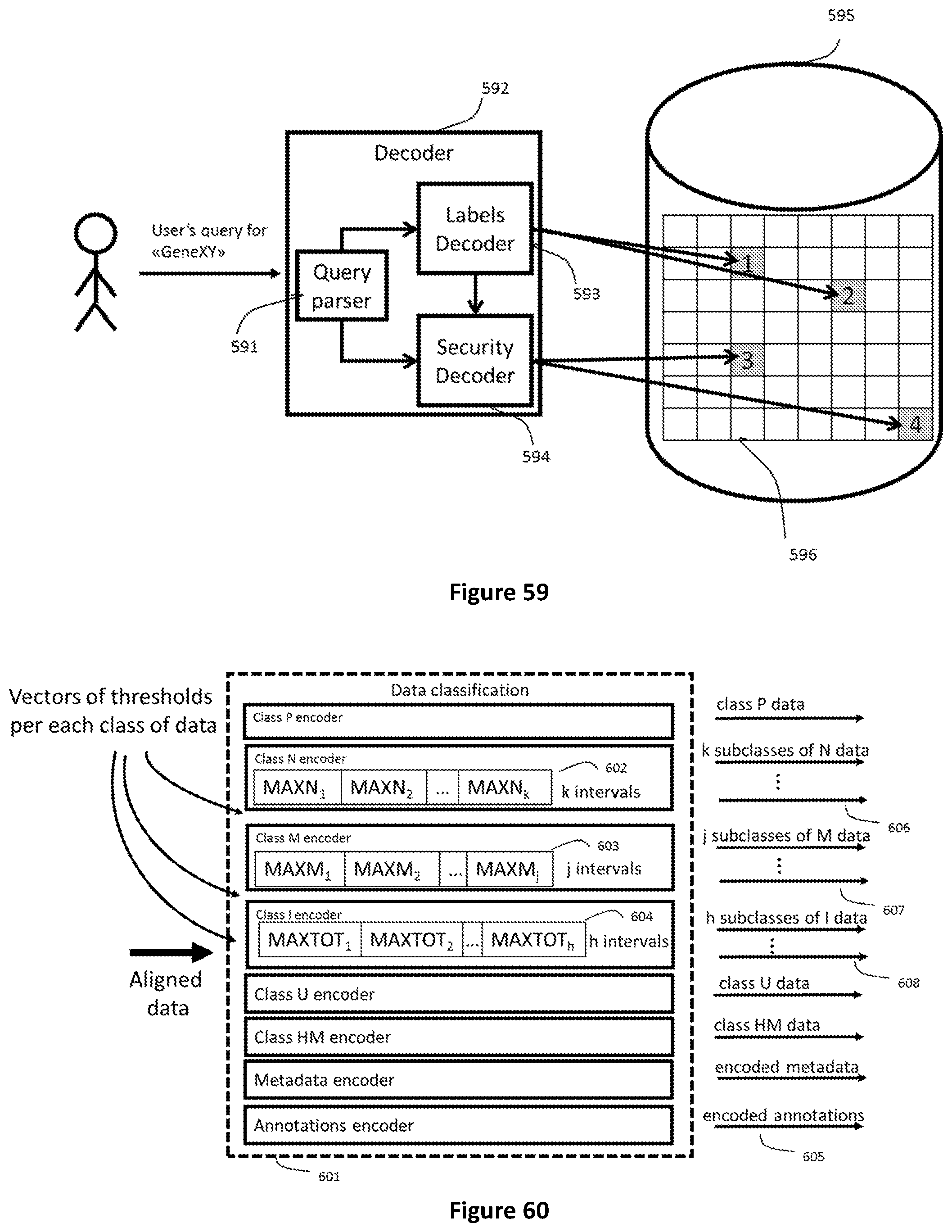

[0122] FIG. 54 shows how the labeling mechanism can be used to implement access control and data protection on specific genomic regions or sub regions. The simple case uses one access control rule (AC) and one protection mechanism (e. g. encryption) for all genomic regions identified by one label.

[0123] FIG. 55 shows how the different genomic regions identified by the same label can be protected by several different access control rules (AC) and several different encryption keys.

[0124] FIG. 56 shows how an alternative encoding of reads of class U where a signed POS descriptor is used to encode the mapping position of a read on the computed reference FIG. 57 shows how half mapped read pairs can help in filling unknown regions of the reference sequence by assembling longer contigs with unmapped reads.

[0125] FIG. 58 shows the hierarchical structure of headers for genomic data stored following the structure described in this invention.

[0126] FIG. 59 shows how a device implementing the labeling mechanism described by this invention enables concurrent access to data related to several genomic regions when they are stored in different records of a database. This can happen either in presence of controlled access or not.

[0127] FIG. 60 shows how vectors of thresholds are used in encoders of classes N, M and I to generate separated subclasses of data

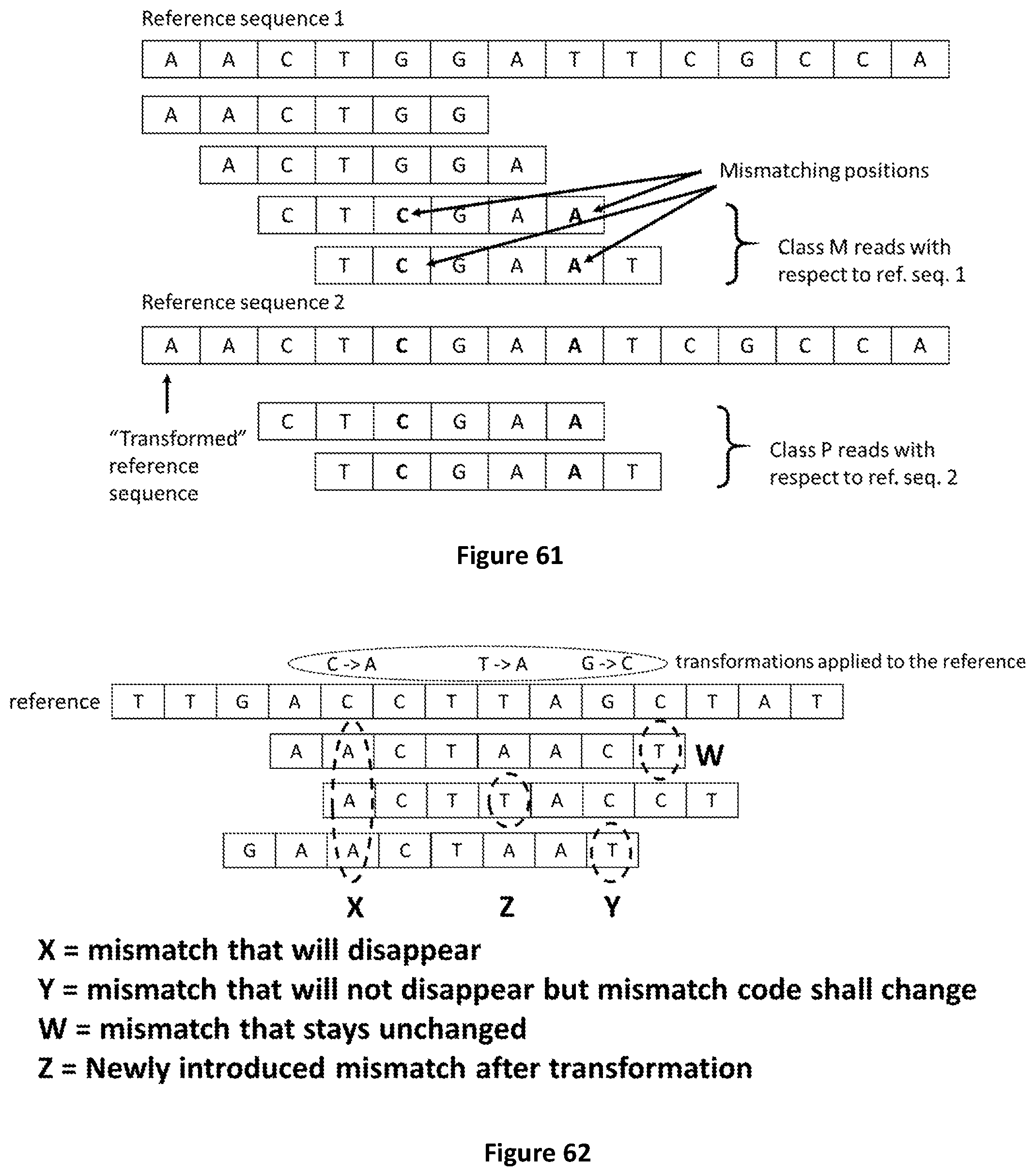

[0128] FIG. 61 provides an example of how reference transformations can change the class reads belong to when all or a subset of mismatches are removed (i.e. the read belonging to class M before transformation is assigned to class P after the transformation of the reference has been applied).

[0129] FIG. 62 shows how reference transformations can be applied to remove mismatches (MMs) from reads. In some cases reference transformations may generate new mismatches or change the type of mismatches found when referring to the reference before the transformation has been applied.

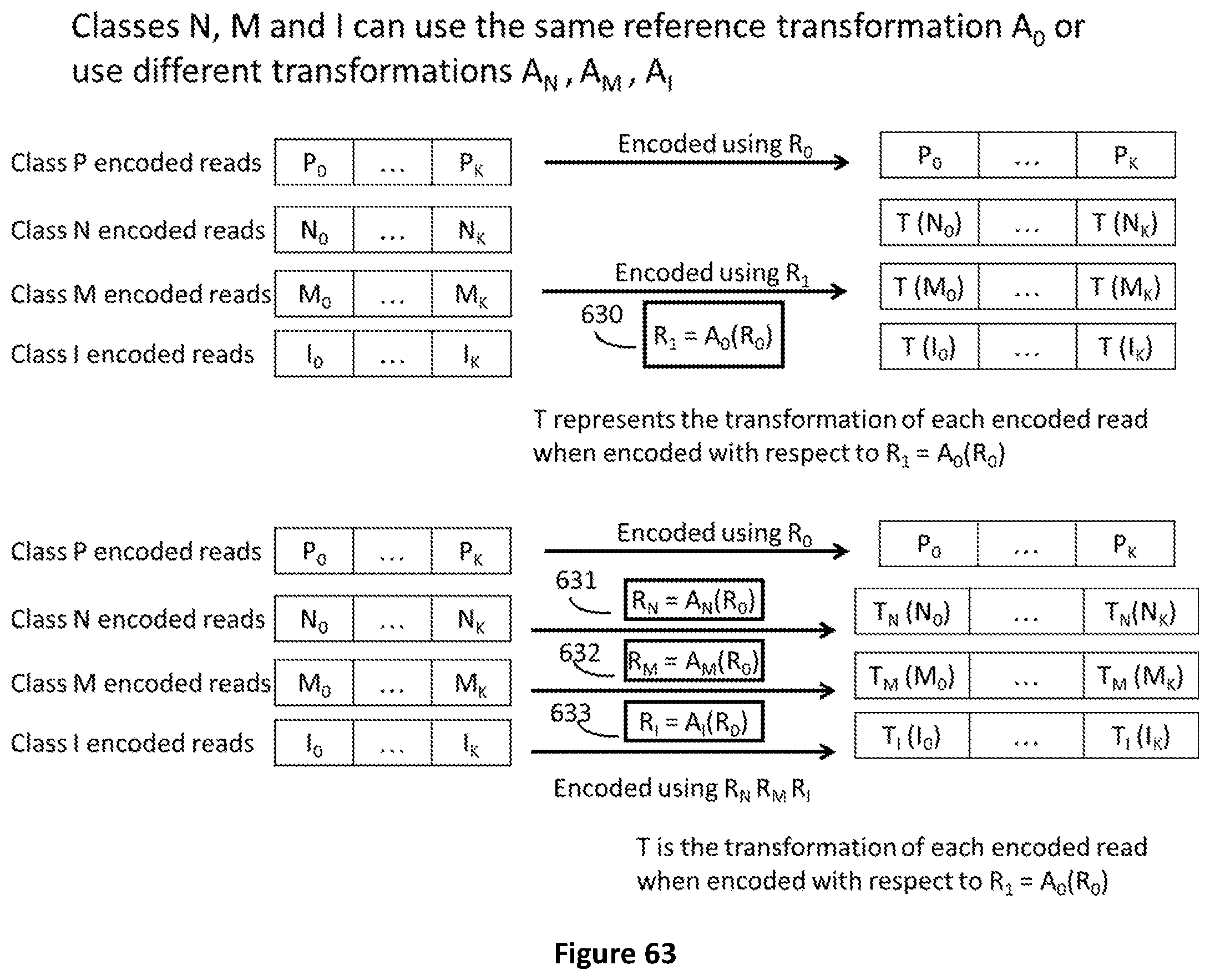

[0130] FIG. 63 The same reference transformation A0 can be used for all classes of data or different transformations AN, A.sub.M, A.sub.I are used for each class N, M, I

SUMMARY

[0131] The features of the claims below solve the problem of existing prior art solutions by providing

a method for selective access of regions of genomic data by employing labels, said labels comprising: an identifier of a reference genomic sequence (521), an identifier of said genomic regions (522), and an identifier of the data class (523) of said genomic data

[0132] In another aspect of the method said genomic data are sequences of genomic reads.

[0133] In another aspect of the method data classes can be of the following type or a subset of them: [0134] "Class P" comprising genomic reads which do not present any mismatch with respect to a reference sequence [0135] "Class N" comprising genomic reads including only mismatches in positions where the sequencing machine was not able to call any "base" and the number of said mismatches does not exceed a given threshold [0136] "Class M" comprising genomic reads in which mismatches are constituted by positions where the sequencing machine was not able to call any base, named "n type" mismatches, and/or it called a different base than the reference sequence, named "s type" mismatches, and said numbers of mismatches do not exceed given thresholds for the number of mismatches of "n type", of "s type" and a threshold obtained from a given function (f(n,s)) [0137] "Class I" when the genomic reads can possibly have the same type of mismatches of "Class M", and in addition at least one mismatch of type: "insertion" ("i type"), "deletion" ("d type"), soft clips ("c type"), and wherein the numbers of mismatches for each type does not exceed the corresponding given thresholds and a threshold provided by a given function (w(n,s,i,d,c)) [0138] "Class U" comprising all reads that do not find any classification in the classes P, N, M, I

[0139] In another aspect of the method said genomic data are paired sequences of genomic reads.

[0140] In another aspect of the method said data class of paired reads can be of the following types or a subset of them: [0141] "Class P" comprising genomic read pairs which do not present any mismatch with respect to a reference sequence [0142] "Class N" comprising genomic reads pairs including only mismatches in positions where the sequencing machine was not able to call any "base" and said numbers of mismatches for each read do not exceed a given threshold [0143] "Class M" comprising genomic read pairs including only mismatches in positions where the sequencing machine was not able to call any "base" and said numbers of mismatches for each read do not exceed a given threshold, named "n type" mismatches, and/or it called a different base than the reference sequence, named "s type" mismatches, and said numbers of mismatches does not exceed a given thresholds for the number of mismatches of "n type", of "s type" and a threshold obtained from a given function (f(n,s)) [0144] "Class I" comprising read pairs which can possibly have the same type of mismatches of "Class M" pairs, and in addition at least one mismatch of type: "insertion" ("i type") "deletion" ("d type") soft clips ("c type"), and wherein the number of mismatches for each type does not exceed the corresponding given threshold and a threshold provided by a given function (w(n,s,i,d,c)) [0145] "Class HM" comprising read pairs for which only one read mate does not satisfy the matching rules for being classified in any of the classes P, N, M, I [0146] Class "U" comprising all reads pairs for which both reads do not satisfy the matching rules for being classified in the classes P, N, M, I

[0147] In another aspect of the method said identifier of said genomic regions is comprised in a master index table.

[0148] In another aspect of the method said genomic data and said labels are entropy coded.

[0149] In another aspect of the method said master index table (4812) is comprised in a genomic dataset header (4813).

[0150] In another aspect of the method said regions of genomic data are dispersed among separate Access Units (524, 486).

[0151] In another aspect of the method the location of said regions of genomic data, in a file, is indicated in a local index table (525).

[0152] In another aspect of the method said labels are user specified.

[0153] In another aspect of the method said regions are protected and/or encrypted in a separate manner, without encrypting the whole genomic file.

[0154] In another aspect of the method said labels are stored in a genomic label list (GLL)

[0155] In another aspect the method further comprises encoding genomic data with selective access to regions of genomic data as previously defined.

[0156] In another aspect of the method said genomic label list is periodically retransmitted or updated in order to enable multiple synchronization points

[0157] In another aspect the method further comprises decoding a stream or a file of genomic data with selective access to regions of genomic data as previously defined.

[0158] The present invention further provides an apparatus for encoding genomic data as previously defined.

[0159] The present invention further provides an apparatus for decoding genomic data as previously defined.

[0160] The present invention further provides a storing mean for storing genomic data encoded as previously defined.

[0161] The present invention further provides a computer-readable medium comprising instructions that when executed cause at least one processor to perform the encoding method previously defined.

[0162] The present invention further provides a computer-readable medium comprising instructions that when executed cause at least one processor to perform the decoding method previously defined.

DETAILED DESCRIPTION

[0163] The present invention describes a labelling mechanism providing selective access and selective access control to genomic regions or sub-regions or aggregations of regions or sub-regions of compressed genomic data stored in a file format and/or the relevant access units to be used to store, transport, access and process genomic or proteomic information in the form of sequences of symbols representing molecules.

[0164] These molecules include, for example, nucleotides, amino acids and proteins. One of the most important pieces of information represented as sequence of symbols are the data generated by high-throughput genome sequencing devices.

[0165] The genome of any living organism is usually represented as a string of symbols expressing the chain of nucleic acids (bases) characterizing that organism. Current state of the art genome sequencing technology is able to produce only a fragmented representation of the genome in the form of several (up to billions) strings of nucleic acids associated to metadata (identifiers, level of accuracy etc.). Such strings are usually called "sequence reads" or "reads".

[0166] The typical steps of the genomic information life cycle comprise Sequence reads extraction, Mapping and Alignment, Variant detection, Variant annotation and Functional and Structural Analysis (see FIG. 1).

[0167] Sequence reads extraction is the process --performed by either a human operator or a machine--of representation of fragments of genetic information in the form of sequences of symbols representing the molecules composing a biological sample. In the case of nucleic acids such molecules are called "nucleotides". The sequences of symbols produced by the extraction are commonly referred to as "reads". This information is usually encoded in prior art as FASTA files including a textual header and a sequence of symbols representing the sequenced molecules.

[0168] When the biological sample is sequenced to extract DNA of a living organism the alphabet is composed by the symbols (A,C,G,T,N).

[0169] When the biological sample is sequenced to extract RNA of a living organism the alphabet is composed by the symbols (A,C,G,U,N).

[0170] In case the IUPAC extended set of symbols, so called "ambiguity codes" are also generated by the sequencing machine, the alphabet used for the symbols composing the reads are (A, C, G, T, U, W, S, M, K, R, Y, B, D, H, V, N or -).

[0171] When the IUPAC ambiguity codes are not used, a sequence of quality score can be associated to each sequence read. In such case prior art solutions encode the resulting information as a FASTQ file. Sequencing devices can introduce errors in the sequence reads such as: [0172] 1. identification of a wrong symbol (i.e. representing a different nucleic acid) to represent the nucleic acid actually present in the sequenced sample; this is usually called "substitution error" (mismatch); [0173] 2. insertion in one sequence read of additional symbols that do not refer to any actually present nucleic acid; this is usually called "insertion error"; [0174] 3. deletion from one sequence read of symbols that represent nucleic acids that are actually present in the sequenced sample; this is usually called "deletion error"; [0175] 4. recombination of one or more fragments into a single fragment which does not reflect the reality of the originating sequence.

[0176] The term "coverage" is used in literature to quantify the extent to which a reference genome or part thereof can be covered by the available sequence reads. Coverage is said to be: [0177] partial (less than 1.times.) when some parts of the reference genome are not mapped by any available sequence read [0178] single (1.times.) when all nucleotides of the reference genome are mapped by one and only one symbol present in the sequence reads [0179] multiple (2.times., 3.times., N.times.) when each nucleotide of the reference genome is mapped multiple times.

[0180] Sequence alignment refers to the process of arranging sequence reads by finding regions of similarity that may be a consequence of functional, structural, or evolutionary relationships among the sequences. When the alignment is performed with reference to a pre-existing nucleotides sequence referred to as "reference genome", the process is called "mapping". Sequence alignment can also be performed without a pre-existing sequence (i.e. reference genome) in such cases the process is known in prior art as "de novo" alignment. Prior art solutions store this information in SAM, BAM or CRAM files. The concept of aligning sequences to reconstruct a partial or complete genome is depicted in FIG. 3.

[0181] Variant detection (a.k.a. variant calling) is the process of translating the aligned output of genome sequencing machines, (sequence reads generated by NGS devices and aligned), to a summary of the unique characteristics of the organism being sequenced that cannot be found in other pre-existing sequence or can be found in a few pre-existing sequences only. These characteristics are called "variants" because they are expressed as differences between the genome of the organism under study and a reference genome. Prior art solutions store this information in a specific file format called VCF file.

[0182] Variant annotation is the process of assigning functional information to the genomic variants identified by the process of variant calling. This implies the classification of variants according to their relationship to coding sequences in the genome and according to their impact on the coding sequence and the gene product. This is in prior art usually stored in a MAF file.

[0183] The process of analysis of DNA (variant, CNV=copy number variation, methylation etc) strands to define their relationship with genes (and proteins) functions and structure is called functional or structural analysis. Several different solutions exist in the prior art for the storage of this data.

[0184] Genomic File Format

[0185] The invention disclosed in this document consists in the definition of a selective and controlled data access applied to a compressed data structure for representing, processing manipulating and transmitting genome sequencing data that differs from prior art solutions for at least the following aspects: [0186] It does not rely on any prior art representation formats of genomic information (i.e. FASTQ, SAM). [0187] It supports efficient handling and selective random access to data produced by multiple sequencing runs structured as multiple genomic datasets. Partitioning data from different sequencing runs into the same data structure enables analysts to simultaneously perform queries on them with great advantage for population genetics studies. [0188] It implements a new original classification of the genomic data and metadata according to their specific characteristics. Sequence reads are mapped to a reference sequence and grouped in distinct classes according to the results of the alignment process. This results in data classes with lower information entropy that can be more efficiently encoded applying different specific compression algorithms such as Huffman coding, arithmetic coding (CABAC, CAVLAC), Asymmetric Numerical Systems, Lempel-Ziv and its derivations. [0189] It implements a new method to associate data classes or subsets of data classes to specific genomic regions, or sub-regions or aggregations of regions or sub-regions, by means of user defined Labels that enable the selective access and protection of said compressed data classes corresponding to specific genomic regions or sub-regions or aggregations of regions or sub-regions. [0190] It defines syntax elements and the related encoding/decoding process conveying the sequence reads and the alignment information into a representation which is more efficient to be processed for downstream analysis applications.

[0191] Classifying the reads according to the result of mapping and coding them using descriptors to be stored in layers (position layer, mate distance layer, mismatch type layer etc, etc, . . . ) present the following advantages: [0192] A reduction of the information entropy when the different syntax elements are modelled by a specific source model which yields higher compression performance. [0193] A more efficient access to data that are already organized in groups/layers that have a specific meaning for the downstream analysis stages and that can be accesses separately and independently directly in the compressed domain. [0194] The presence of a modular data structure that can be updated incrementally by accessing only the required information without the need of decoding (i.e. decompressing) the whole data content. [0195] The genomic information produced by sequencing machines is intrinsically highly redundant due to the nature of the information itself and to the need of mitigating the errors intrinsic in the sequencing process. This implies that the relevant genetic information which needs to be identified and analyzed (the variations with respect to a reference) is only a small fraction of the produced data. Prior art genomic data representation formats are not conceived to "isolate" the meaningful information at a given analysis stage from the rest of the information so as to make it promptly available and understandable by the analysis applications. [0196] The solution brought by the disclosed invention is to represent genomic data in such a way that any relevant portion of data is readily available to the analysis applications without the need of accessing and decompressing the entirety of data and the redundancy of the data is efficiently reduced by efficient compression to minimize the required storage space and transmission bandwidth.

[0197] The key elements of the invention are: [0198] 1. The specification of a file format that "contains" structured and user-defined selectively accessible data elements called Access Units (AU) in compressed form. Such approach can be seen as the opposite of prior art approaches, SAM and BAM for instance, in which data are structured in non-compressed form and then the entire file is compressed. A first clear advantage of the approach is to be able to efficiently and naturally provide various forms of user-defined structured selective access to the data elements in the compressed domain which is impossible or extremely awkward in prior art approaches. [0199] 2. The structuring of the genomic information into specific "layers" of homogeneous data and metadata presents the considerable advantage of enabling the definition of different models of the information sources characterized by low entropy. Such models not only can differ from layer to layer, but can also differ inside each layer when the compressed data within layers are partitioned into Data Blocks included into Access Units. This structuring enables the use of the most appropriate compression for each class of data or metadata and portion of them with significant gains in coding efficiency versus prior art approaches. [0200] 3. The information is structured in Access Units (AU) so that any relevant subset of data used by genomic analysis applications is efficiently and selectively accessible by means of appropriate interfaces. These features enable faster access to data and yield a more efficient processing. [0201] 4. The definition of a Master Index Table and Local Index Tables enabling selective access to the information carried by the layers of encoded (i.e. compressed) data without the need to decode the entire volume of compressed data. [0202] 5. The possibility of accessing only the AUs that correspond to specific user defined genomic regions or sub-regions or aggregations of regions or sub-regions and data classes of interest by parsing a "Label List" present in the file header. [0203] 6. The possibility of providing different types of access control to different AUs and portions of data contained into the AU according to the user defined "Labels" identifying associated genomic regions. [0204] 7. The possibility of performing realignment of already aligned and compressed genomic data sets when they need to be re-aligned versus newly published reference genomes by performing an efficient transcoding of selected data portions in the compressed domain. The frequent release of new reference genomes currently requires resource consuming and time for the transcoding processes to re-align already compressed and stored genomic data with respect to the newly published references because all data volume need to be processed.

[0205] The method described in this document aims at exploiting the available a-priori knowledge on genomic data to define an alphabet of syntax elements with reduced entropy. In genomics the available knowledge is represented by an existing genomic sequence usually --but not necessarily --of the same species as the one to be processed. As an example, human genomes of different individuals differ only of a fraction of 1%. However, such small amount of data contain enough information to enable early diagnosis, personalized medicine, customized drugs synthesis etc. This invention aims at defining a genomic information representation format where the relevant information is efficiently accessible, access can be selectively controlled and data protected, the information is efficiently transportable and all such processing is performed handling compressed data structures.

[0206] The technical features used in the present invention are: [0207] 1. Partitioning genomic information generated by different sequencing runs into different genomic datasets in order to enable efficient data retrieval and processing when querying one or more of the available datasets. [0208] 2. Partition of the genome sequence data and metadata in "classes" sharing common features; [0209] 3. Definition of the structure of the genomic information carried by each data classes in which the genomic data is partitioned, into a sets of "layers" of descriptors in order to reduce the information entropy as much as possible; [0210] 4. Definition of a Master Index Table and Local Index Tables to enable selective access to the data classes and associated information by accessing only the desired layers of coded information (i.e. compressed) without the need to decode the entire coded genomic information; [0211] 5. Usage of different source models and entropy coders to code the syntax elements belonging to different layers of the data classes defined as specified in point 2; [0212] 6. Definition of specific mechanisms establishing a correspondence among dependent layers to enable selective access to the data without the need to decode all the layers if not necessary or desired; [0213] 7. Definition of a mechanism for labelling compressed data corresponding to specific genomic regions or sub-regions or aggregations of regions or sub-regions and corresponding data "classes" or subsets of data classes by "Labels" enabling efficient selective access; [0214] 8. Definition of mechanisms for the selective protection of specific genomic regions or sub-regions or aggregations of regions or sub-regions and corresponding data "classes" or subsets of data classes and any combination thereof. [0215] 9. Coding of the datasets or data "classes" with respect to one or more pre-existing or constructed reference sequences that can be further transformed to reduce the entropy of the sequence data representation.

[0216] In order to solve all the mentioned problems of the prior art in terms of efficient selective access and selective access control to specific data "classes", specific genomic regions or sub-regions or aggregations of regions or sub-regions, while preserving efficient transmission and storing by means of an efficient compressed representation, the present invention application provides a specific data structure specification that implements appropriate data reordering into accessible units of homogeneous and/or semantically significant data enabling seamless access and processing required by state of the art genome data analysis applications.

[0217] In particular the present invention adopts a data structure based on the concept of Access Unit, "Labels" and the multiplexing of the relevant data, concepts which are absent from all state of the art genomic data formats.

[0218] Genomic data are structured and encoded into different Access Units. Hereafter follows a description of the genomic data that are contained into different Access Units and can be identified by "Labels" associating genomic data to specific genomic regions or sub-regions or aggregations of regions or sub-regions versus reference genomes.

[0219] Genomic Data Classification According to Matching Rules

[0220] The sequence reads generated by sequencing machines are classified by the disclosed invention into five different "classes" according to the matching results of the alignment with respect to one or more pre-existing reference sequences.

[0221] When aligning a DNA sequence of nucleotides with respect to a reference sequence the following cases can be identified: [0222] 1. A region in the reference sequence is found to match the sequence read without any error (i.e. perfect mapping). Such sequence of nucleotides is referenced to as "perfectly matching read" or denoted as "Class P". [0223] 2. A region in the reference sequence is found to match the sequence read with a type and a number of mismatches determined only by the number of positions in which the sequencing machine generating the read was not able to call any base (or nucleotide). Such type of mismatches are denoted by an "N" the letter used to indicate an undefined nucleotide base. In this document this type of mismatch referred to as "n type" mismatch. Such sequences is referenced to as "N mismatching reads" or "Class N". Once the read is classified to belong to "Class N" it is useful to limit the degree of matching inaccuracy to a given upper bound and set a boundary between what is considered a valid matching and what it is not. Therefore, the reads assigned to Class N are also constrained by setting a threshold (MAXN) that defines the maximum number of undefined bases (i.e. bases called as "N") that a read can contain. Such classification implicitly defines the required minimum matching accuracy (or maximum degree of mismatch) that all reads belonging to Class N shares when referred to the corresponding reference sequence, which constitute an useful criterion for applying selective data searches to the compressed data. [0224] 3. A region in the reference sequence is found to match the sequence read with types and number of mismatches determined by the number of positions in which the sequencing machine generating the read was not able to call any nucleotide base, if present (i.e. "n type" mismatches), plus the number of mismatches in which a different base, than the one present in the reference, has been called. Such type of mismatch denoted as "substitution" is also called Single Nucleotide Variation (SNV) or Single Nucleotide Polymorphism (SNP). In this document this type of mismatch is also referred to as "s type" mismatch. The sequence read is then referenced to as "M mismatching reads" and assigned to "Class M". Like in the case of "Class N", also for all reads belonging to "Class M" it is useful to limit the degree of matching inaccuracy to a given upper bound, and set a boundary between what is considered a valid matching and what it is not. Therefore, the reads assigned to Class M are also constrained by defining a set of thresholds, one for the number "n" of mismatches of "n type" (MAXN) if present, and another for the number of substitutions "s" (MAXS). A third constraint is a threshold defined by any function of both numbers "n" and "s", f(n,s). Such third constraint enable to generate classes with an upper bound of matching inaccuracy according to any meaningful selective access criterion. For instance, and not as a limitation, f(n,s) can be (n+s)1/2 or (n+s) or any linear or non-linear expression that sets a boundary to the maximum matching inaccuracy level that is admitted for a read belonging to "Class M". Such boundary constitutes a very useful criterion for applying the desired selective data searches to the compressed data when analyzing sequence reads for various purposes because it makes possible to set a further boundary to any possible combination of the numbers "n" of "n type" mismatches and "s" of "s type" mismatches (substitutions) beyond the simple threshold applied to the one type or to the other. [0225] 4. A fourth class is constituted by sequencing reads presenting at least one mismatch of any type among "insertion", "deletion" (a.k.a. indels) and "clipped", plus, if present, any mismatches type belonging to class N or M. Such sequence is referenced to as "I mismatching reads" and assigned to "Class I". Insertions are constituted by an additional sequence of one or more nucleotides not present in the reference, but present in the read sequence. In this document this type of mismatch is referred to as "i type" mismatch. In literature when the inserted sequence is at the edges of the sequence it is also referred to as "soft clipped" (i.e. the nucleotides are not matching the reference but are kept in the aligned reads contrarily to "hard clipped" nucleotides which are discarded). In this document this type of mismatch is referred to as "c type" mismatch. Keeping or discarding nucleotides is a decisions taken by the aligner stage and not by the classifier of reads disclosed in this invention which receives and processes the reads as they are determined by the sequencing machine or by the following alignment stage. Deletion are "holes" (missing nucleotides) in the read with respect to the reference. In this document this type of mismatch is referred to as "d type" mismatch. Like in the case of classes "N" and "M" it is possible and appropriate to define a limit to the matching inaccuracy. The definition of the set of constraints for "Class I" is based on the same principles used for "Class M" and is reported in Table 1 in the last table lines. Beside a threshold for each type of mismatch admissible for class I data, a further constraint is defined by a threshold determined by any function of the number of the mismatches "n", "s", "d", "i" and "c", w(n,s,d,i,c). Such additional constraint make possible to generate classes with an upper bound of matching inaccuracy according to any meaningful user defined selective access criterion. For instance, and not as a limitation, w(n,s,d,i,c) can be (n+s+d+i+c)1/5 or (n+s+d+i+c) or any linear or non-linear expression that sets a boundary to the maximum matching inaccuracy level that is admitted for a read belonging to "Class I". Such boundary constitutes a very useful criterion for applying the desired selective data searches to the compressed data when analyzing sequence reads for various purposes because it enables to set a further boundary to any possible combination of the number of mismatches admissible in "Class I" reads beyond the simple threshold applied to each type of admissible mismatch. [0226] 5. A fifth class includes all reads that do now find any matching considered valid (i.e not satisfying the set of matching rules defining an upper bound to the maximum matching inaccuracy as specified in Table 1) for each data class when referring to the reference sequence. Such sequences are said to be "Unmapped" when referring to the reference sequences and are classified as belonging to the "Class U".

[0227] Classification of Read Pairs According to Matching Rules

[0228] The classification specified in the previous section concerns single sequence reads. In the case of sequencing technologies that generates read in pairs (i.e. Illumina Inc.) in which two reads are known to be separated by an unknown sequence of variable length, it is appropriate to consider the classification of the entire pair to a single data class. A read that is coupled with another is said to be its "mate".

[0229] If both paired reads belong to the same class the assignment to a class of the entire pair is obvious, the entire pair is assigned to the same class for any class (i.e. P, N, M, I, U). In the case the two reads belong to a different class, but none of them belongs to the "Class U", then the entire pair is assigned to the class with the highest priority defined according to the following expression:

P<N<M<I

in which "Class P" has the lowest priority and "Class I" has the highest priority.

[0230] In case only one of the reads belongs to "Class U" and its mate to any of the Classes P, N, M, I a sixth class is defined as "Class HM" which stands for "Half Mapped".

[0231] The definition of such specific class of reads is motivated by the fact that it is used for attempting to determine gaps or unknown regions existing in reference genomes (a.k.a. little known or unknown regions). Such regions are reconstructed by mapping pairs at the edges using the pair read that can be mapped on the known regions. The unmapped mate is then used to build the so called "contigs" of the unknown region as it is shown in FIG. 57. Therefore providing a selective access to only such type of read pairs greatly reduces the associated computation burden enabling much efficient processing of such data originated by large amounts of data sets that using the state of the art solutions would require to be entirely inspected.

[0232] The table below summarizes the matching rules applied to reads in order to define the class of data each read belongs to. The rules are defined in the first five columns of the table in terms of presence or absence of type of mismatches (n, s, d, i and c type mismatches). The sixth column provides rules in terms of maximum threshold for each mismatch type and any function f(n,s) and w(n,s,d,i,c) of the possible mismatch types.

TABLE-US-00001 TABLE 1 Type of mismatches and set of constrains that each sequence reads must satisfy to be classified in the data classes defined in this invention disclosure. Number and types of mismatches found when matching a read with a reference sequence Number of Number of unknown Number of Number of Number of clipped Set of matching Assignement bases ("N") substitutions deletions Insertions bases accuracy constraints Class 0 0 0 0 0 0 P n > 0 0 0 0 0 n .ltoreq. MAXN N n > MAXN U n .gtoreq. 0 s > 0 0 0 0 n .ltoreq. MAXN and M s .ltoreq. MAXS and f(n, s) .ltoreq. MAXM n > MAXN or U s > MAXS or f(n, s) > MAXM n .gtoreq. 0 s .gtoreq. 0 d .gtoreq. 0* i .gtoreq. 0* c .gtoreq. 0* n .ltoreq. MAXN and I *At least one mismatch s .ltoreq. MAXS and of type d, i, c must be resent d .ltoreq. MAXD and (i.e. d > 0 or i > 0 or > 0) i .ltoreq. MAXI and c .ltoreq. MAXC w(n, s, d, i, c) .ltoreq. MAXTOT d .gtoreq. 0 i .gtoreq. 0 c .gtoreq. 0 n > MAXN or U s > MAXS or d > MAXD or i > MAXI or c > MAXC w(n, s, d, i, c) > MAXTOT

[0233] Matching Rules Partition of Sequence Read Data Classes N, M and I into Subclasses with Different Degrees of Matching Accuracy

[0234] The data classes of type N, M and I as defined in the previous sections can be further decomposed into an arbitrary number of distinct sub-classes with different degrees of matching accuracy. Such option is an important technical advantage in providing a finer granularity and as consequence a much more efficient selective access to each data class. As an example and not as a limitation, to partition the Class N into a number k of subclasses (Sub-Class N.sub.1, . . . , Sub-Class N.sub.k) it is necessary to define a vector with the corresponding components MAXN.sub.1, MAXN.sub.2, MAXN.sub.(k-1), MAXN.sub.(k), with the condition that MAXN.sub.1<MAXN.sub.2< . . . <MAXN.sub.(k-1)<MAXN and assign each read to the lowest ranked sub-class that satisfy the constrains specified in Table 1 when evaluated for each element of the vector. This is shown in FIG. 60 where a data classification unit 601 contains Class P, N, M, I U, HM encoder and encoders for annotations and metadata. Class N encoder is configured with a vector of thresholds, MAXN.sub.1 to MAXN.sub.k 602 which generates k subclasses of N data (606).

[0235] In the case of the classes of type M and I the same principle is applied by defining a vector with the same properties for MAXM and MAXTOT respectively and use each vector components as threshold for checking if the functions f(n,s) and w(n,s,d,i,c) satisfy the constraint. Like in the case of sub-classes of type N, the assignment is given to the lowest sub-class for which the constraint is satisfied. The number of sub-classes for each class type is independent and any combination of subdivisions is admissible. This is shown in FIG. 60 where a Class M encoder and a Class I encoder are configured respectively with a vector of thresholds MAXM.sub.1 to MAXM.sub.j (603) and MAXTOT.sub.1 to MAXTOT.sub.h (604). The two encoders generate respectively j subclasses of M data (607) and h subclasses of I data (608). When two reads in a pair are classified in the same sub-class, then the pair belongs to the same sub-class.

[0236] When two reads in a pair are classified into sub-classes of different classes, then the pair belongs to the sub-class of the class of higher priority according to the following expression:

N<M<I

where N has the lowest priority and I has the highest priority.

[0237] When two reads belong to different sub-classes of one of classes N or M or I, then the pair belongs to the sub-class with the highest priority according to the following expressions:

N.sub.1<N.sub.2< . . . <N.sub.k

M.sub.1<M.sub.2< . . . <M.sub.j

I.sub.1<I.sub.2< . . . <I.sub.h

where the highest index has the highest priority.

[0238] Transformations of the "External" Reference Sequences

[0239] The mismatches found for the reads classified in the classes N, M and I can be used to create "transformed references" to be used to compress more efficiently the read representation. Reads classified as belonging to the Classes N, M or I (with respect to the pre-existing (i.e. "external") reference sequence denoted as RS.sub.0) can be coded with respect to the "transformed" reference sequence RS.sub.1 according to the occurrence of the actual mismatches with the transformed reference. For example if read.sup.M.sub.in belonging to Class M (denoted as the i.sup.th read of class M) containing mismatches with respect to the reference sequence RS.sub.n, then after "transformation" read.sup.M.sub.in=read.sup.P.sub.i(n+1) can be obtained with A(Ref.sub.n)=Ref.sub.n+1 where A is the transformation from reference sequence RS.sub.n to reference sequence RS.sub.n+1.

[0240] FIG. 61 shows an example on how reads containing mismatches (belonging to Class M) with respect to reference sequence 1 (RS.sub.1) can be transformed into perfectly matching reads with respect to the reference sequence 2 (RS.sub.2) obtained from RS.sub.1 by modifying the bases corresponding to the mismatch positions. They remain classified and they are coded together the other reads in the same data class access unit, but the coding is done using only the descriptors and descriptor values needed for a Class P read. This transformation can be denoted as:

RS.sub.2=A(RS.sub.1)

[0241] When the representation of the transformation A which generates RS.sub.2 when applied to RS.sub.1 plus the representation of the reads versus RS.sub.2 corresponds to a lower entropy than the representation of the reads of class M versus RS.sub.1, it is advantageous to transmit the representation of the transformation A and the corresponding representation of the read versus RS.sub.2 because an higher compression of the data representation is achieved.

[0242] The coding of the transformation A for transmission in the compressed bitstream requires the definition of two additional syntax elements as defined in the table below.

TABLE-US-00002 Syntax elements Semantic Comments rftp Reference position of difference between transformation reference and contig position used for prediction rftt Reference type of difference between reference and transformation contig used for prediction. Same syntax type described for the snpt descriptor defined below.