Robotic Camera Control Via Motion Capture

Atherton; Evan Patrick ; et al.

U.S. patent application number 16/588972 was filed with the patent office on 2019-09-30 and published on 2020-01-30 for robotic camera control via motion capture. The applicant listed for this patent is Autodesk, Inc. Invention is credited to Evan Patrick Atherton, Maurice Ugo Conti, Heather Kerrick, David Thomasson.

| Field | Value |
|---|---|
| Application Number | 16/588972 |
| Family ID | 59521648 |
| Filed Date | 2019-09-30 |
| United States Patent Application | 20200030986 |
| Kind Code | A1 |
| Atherton; Evan Patrick ; et al. | January 30, 2020 |

ROBOTIC CAMERA CONTROL VIA MOTION CAPTURE

Abstract

A motion capture setup records the movements of an operator, and a control engine then translates those movements into control signals for controlling a robot. The control engine may directly translate the operator movements into analogous movements to be performed by the robot, or the control engine may compute robot dynamics that cause a portion of the robot to mimic a corresponding portion of the operator.

| Field | Value |
|---|---|
| Inventors: | Atherton; Evan Patrick (San Carlos, CA); Thomasson; David (Fairfax, CA); Conti; Maurice Ugo (Muir Beach, CA); Kerrick; Heather (Oakland, CA) |
| Applicant: | Autodesk, Inc. |
| Family ID: | 59521648 |
| Appl. No.: | 16/588972 |
| Filed: | September 30, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15216583 | Jul 21, 2016 | 10427305 |
| 16588972 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06T 7/251 20170101; H04N 5/247 20130101; G06T 2207/30196 20130101; G06K 9/00342 20130101; G06F 3/017 20130101; G06F 3/0304 20130101; B25J 9/1679 20130101; G05D 1/0016 20130101; H04N 5/23203 20130101; B25J 9/1697 20130101; B25J 9/1689 20130101; B25J 19/023 20130101; B25J 9/16 20130101; B25J 9/1664 20130101; G05B 2219/35444 20130101 |

| International Class: | B25J 9/16 20060101 B25J009/16; G06T 7/246 20060101 G06T007/246; G05D 1/00 20060101 G05D001/00; G06F 3/03 20060101 G06F003/03; B25J 19/02 20060101 B25J019/02; G06K 9/00 20060101 G06K009/00; H04N 5/232 20060101 H04N005/232 |

Claims

1. A computer-implemented method for controlling a robot, the method comprising: generating motion capture data based on one or more movements of an operator; processing the motion capture data to generate control signals for controlling the robot; and transmitting the control signals to the robot to cause the robot to mimic the one or more movements of the operator.

2. The computer-implemented method of claim 1, wherein generating the motion capture data comprises tracking at least one of head, body, and limb movements associated with the operator via a motion capture setup.

3. The computer-implemented method of claim 1, wherein processing the motion capture data to generate the control signals comprises: modeling a first set of dynamics of the operator that corresponds to the one or more movements; determining a second set of dynamics of the robot that corresponds to the one or more movements; and generating the control signals based on the second set of dynamics.

4. The computer-implemented method of claim 1, wherein causing the robot to mimic the one or more movements of the operator comprises actuating a set of joints included in the robot to perform an articulation sequence associated with the one or more movements.

5. The computer-implemented method of claim 4, wherein the robot comprises a robotic arm that includes the set of joints.

6. The computer-implemented method of claim 1, wherein causing the robot to mimic the one or more movements comprises adjusting a speed of at least one motor coupled to a propeller.

7. The computer-implemented method of claim 6, wherein the robot comprises a flying drone.

8. The computer-implemented method of claim 1, wherein causing the robot to mimic the one or more movements comprises causing only a first portion of the robot to mimic only a subset of the one or more movements.

9. The computer-implemented method of claim 1, further comprising capturing sensor data while the robot mimics the one or more movements.

10. The computer-implemented method of claim 1, wherein the one or more movements comprise a target trajectory for a sensor array coupled to the robot.

11. A non-transitory computer-readable medium that, when executed by a processor, causes the processor to control a robot by performing the steps of: generating motion capture data based on one or more movements of an operator; processing the motion capture data to generate control signals for controlling the robot; and transmitting the control signals to the robot to cause the robot to mimic the one or more movements of the operator.

12. The non-transitory computer-readable medium of claim 11, wherein generating the motion capture data comprises tracking at least one of head, body, and limb movements associated with the operator via a motion capture setup.

13. The non-transitory computer-readable medium of claim 11, wherein processing the motion capture data to generate the control signals comprises: modeling a first set of dynamics of the operator that corresponds to the one or more movements; determining a second set of dynamics of the robot that corresponds to the one or more movements; and generating the control signals based on the second set of dynamics.

14. The non-transitory computer-readable medium of claim 11, wherein causing the robot to mimic the one or more movements of the operator comprises actuating a set of joints included in the robot to perform an articulation sequence associated with the one or more movements.

15. The non-transitory computer-readable medium of claim 11, wherein causing the robot to mimic the one or more movements comprises adjusting a speed of at least one motor coupled to a propeller.

16. The non-transitory computer-readable medium of claim 11, wherein causing the robot to mimic the one or more movements comprises causing only a first portion of the robot to mimic only a subset of the one or more movements.

17. The non-transitory computer-readable medium of claim 11, further comprising capturing sensor data while the robot mimics the one or more movements.

18. The non-transitory computer-readable medium of claim 11, wherein the one or more movements comprise a target trajectory for a sensor array coupled to the robot.

19. A system for controlling a robot, comprising: a memory storing a control application; and a processor coupled to the memory and configured to: generate motion capture data based on one or more movements of an operator, process the motion capture data to generate control signals for controlling the robot, and transmit the control signals to the robot to cause the robot to mimic the one or more movements of the operator.

20. The system of claim 19, wherein the processor is configured to execute the control application to: generate the motion capture data; process the motion capture data to generate the control signals; and transmit the control signals to the robot.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of the co-pending U.S. patent application titled "ROBOTIC CAMERA CONTROL VIA MOTION CAPTURE," filed on July 21, 2016 and having Ser. No. 15/216,583. The subject matter of this related application is hereby incorporated herein by reference.

BACKGROUND OF THE INVENTION

Field of the Invention

[0002] Embodiments of the present invention relate generally to robotics and, more specifically, to robotic camera control via motion capture.

Description of the Related Art

[0003] When filming a movie, a camera operator controls the position, orientation, and motion of a camera in order to frame shots, capture sequences, and transition between camera angles, among other cinematographic procedures. However, operating a camera can be difficult and/or dangerous in certain situations. For example, when filming a close-up sequence, the camera operator may be required to assume an awkward position for an extended period of time while physically supporting heavy camera equipment. Or, when filming an action sequence, the camera operator may be required to risk bodily injury in order to attain the requisite proximity to the action being filmed. In any case, camera operators usually must perform very complex camera movements and oftentimes must repeat these motions across multiple takes.

[0004] As the foregoing illustrates, what is needed in the art are more effective approaches to filming movie sequences.

SUMMARY OF THE INVENTION

[0005] Various embodiments of the present invention set forth a computer-implemented method for controlling a robot, including generating motion capture data based on one or more movements of an operator, processing the motion capture data to generate control signals for controlling the robot, and transmitting the control signals to the robot to cause the robot to mimic the one or more movements of the operator.

[0006] At least one advantage of the approach discussed herein is that a human camera operator need not be subjected to discomfort or bodily injury when filming movies.

BRIEF DESCRIPTION OF THE DRAWINGS

[0007] So that the manner in which the above recited features of the present invention can be understood in detail, a more particular description of the invention, briefly summarized above, may be had by reference to embodiments, some of which are illustrated in the appended drawings. It is to be noted, however, that the appended drawings illustrate only typical embodiments of this invention and are therefore not to be considered limiting of its scope, for the invention may admit to other equally effective embodiments.

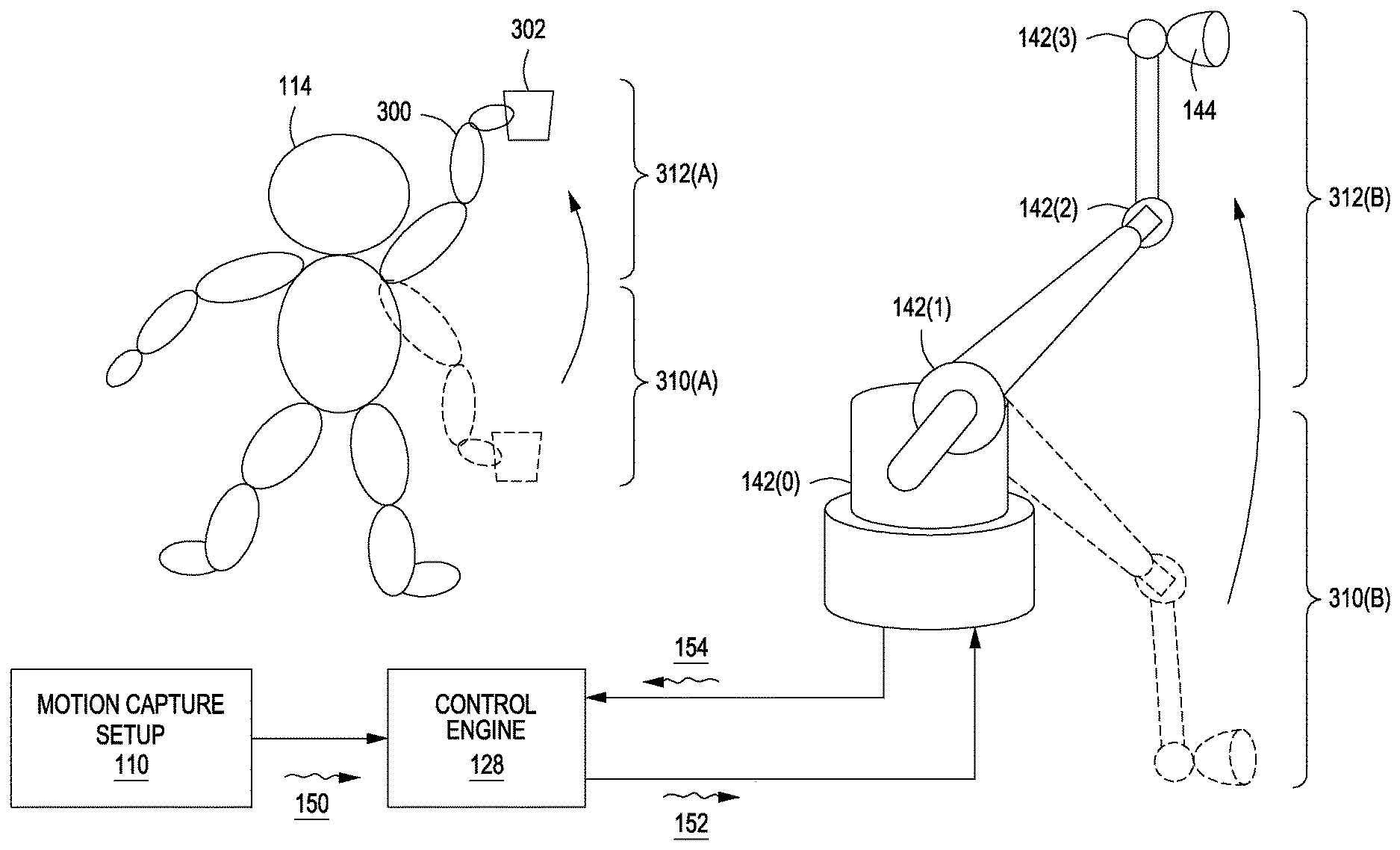

[0008] FIG. 1 illustrates a system configured to implement one or more aspects of the present invention;

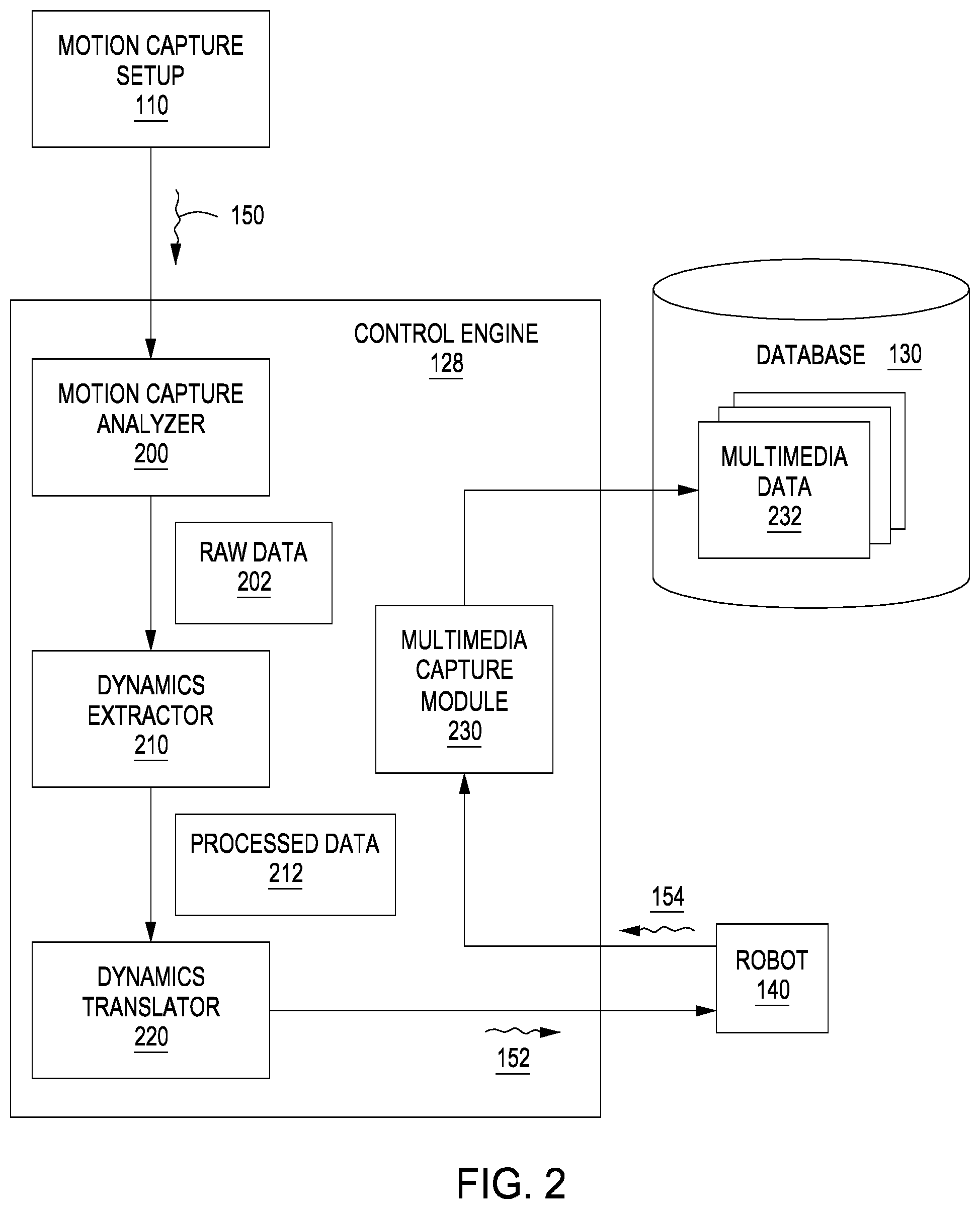

[0009] FIG. 2 is a more detailed illustration of the control engine of FIG. 1, according to various embodiments of the present invention;

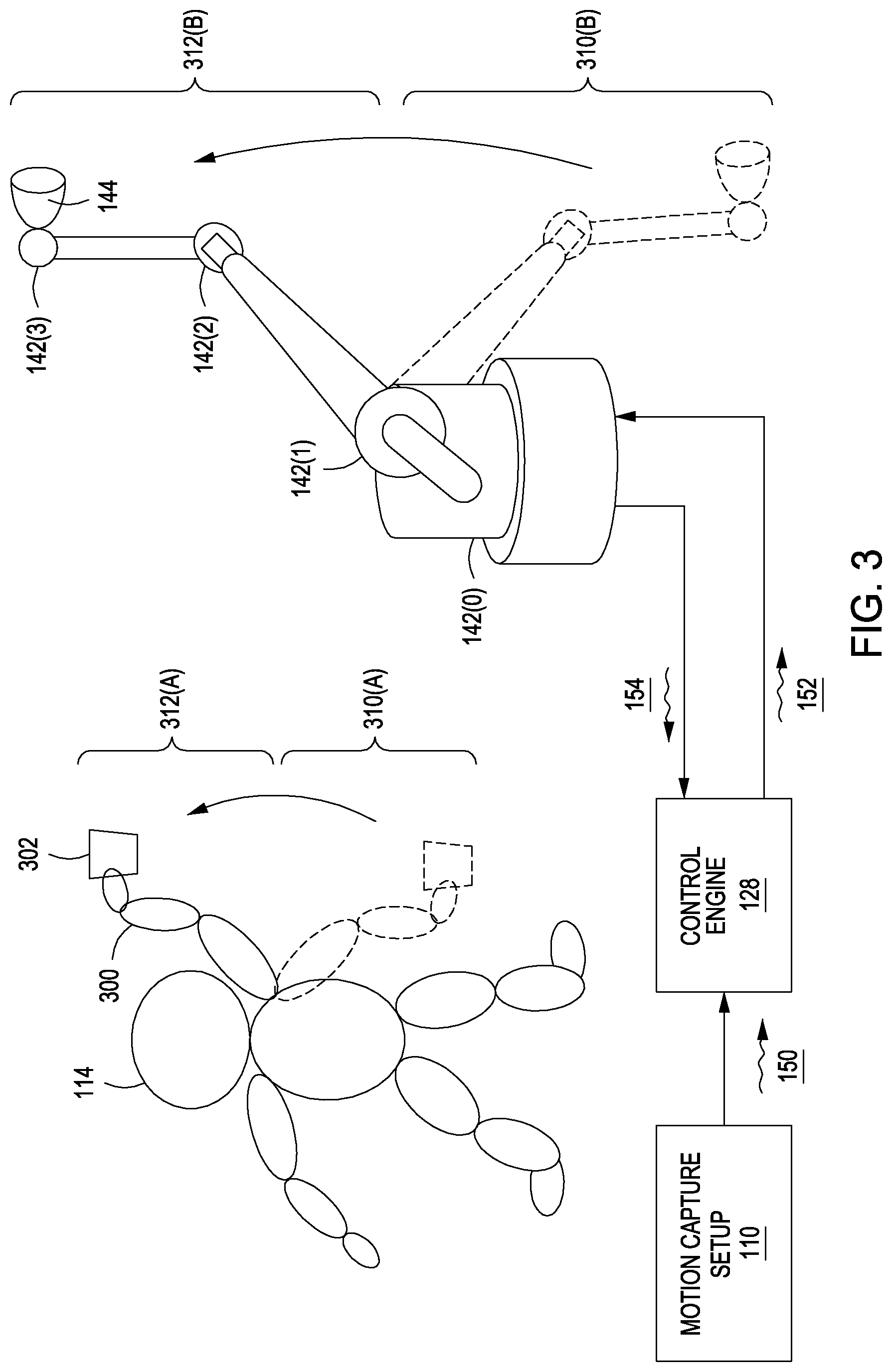

[0010] FIG. 3 illustrates how the articulation of a robotic arm can be controlled via a motion capture setup, according to various embodiments of the present invention;

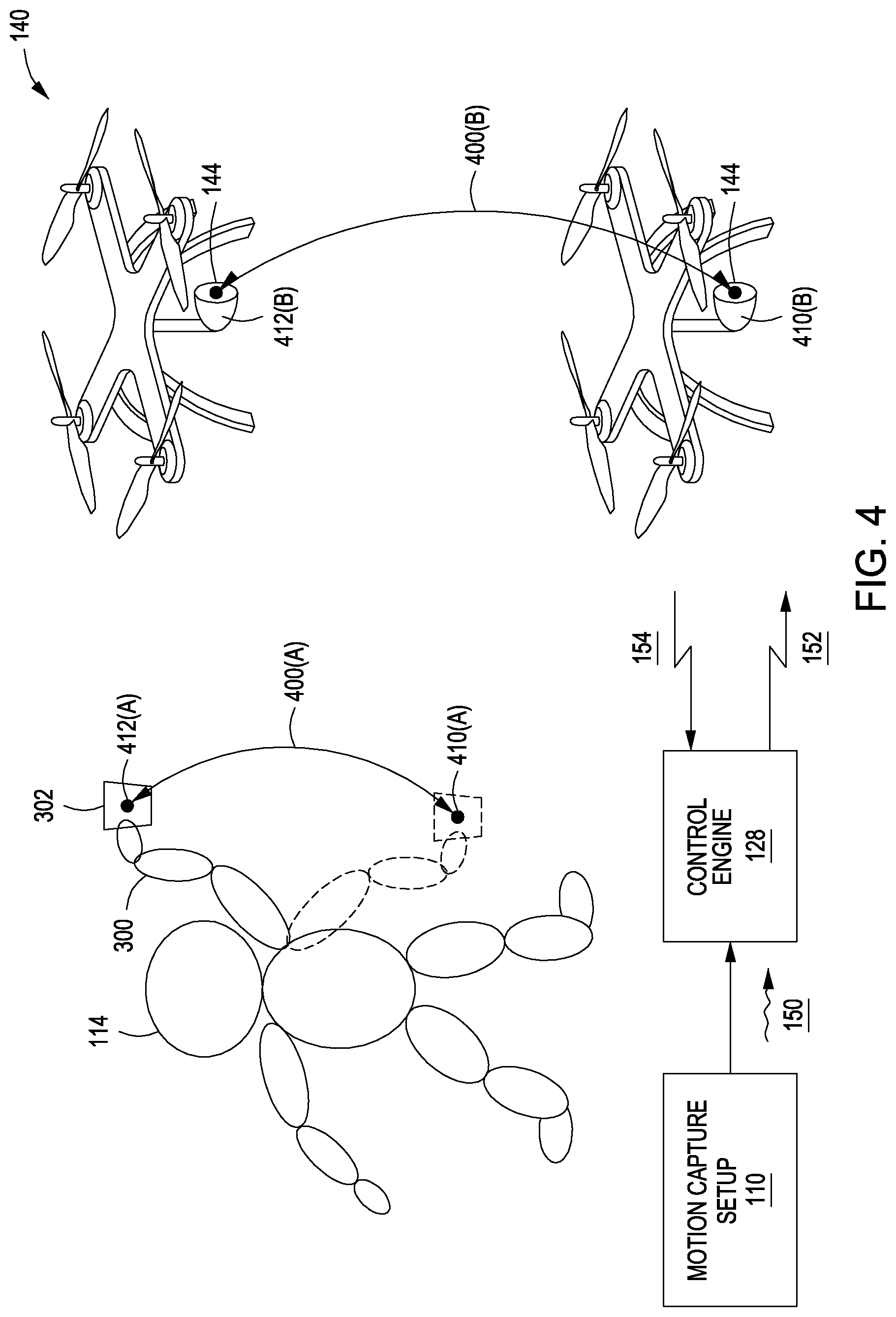

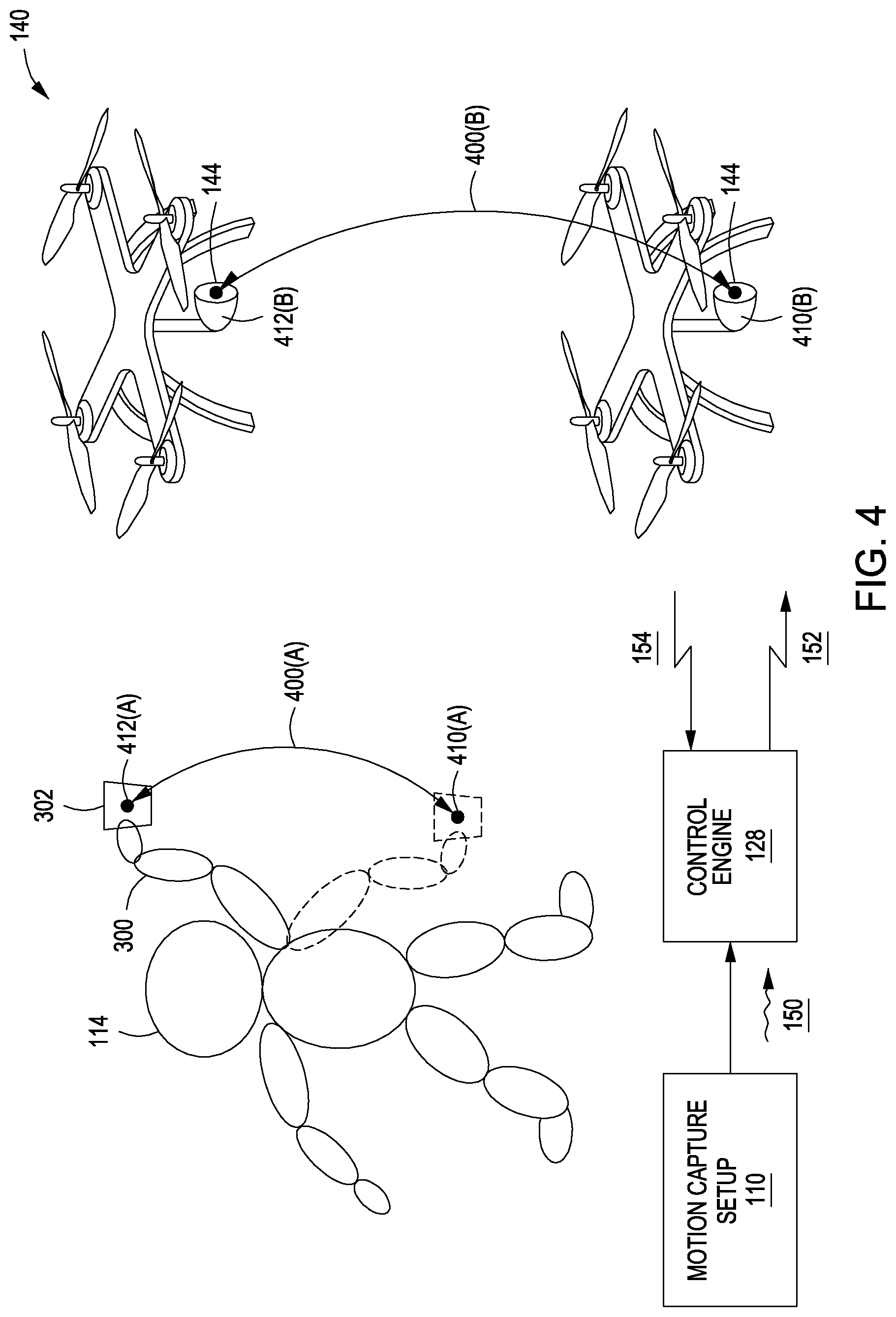

[0011] FIG. 4 illustrates how the position and orientation of a robotic drone can be controlled via a motion capture setup, according to various embodiments of the present invention; and

[0012] FIG. 5 is a flow diagram of method steps for translating motion capture data into control signals for controlling a robot, according to various embodiments of the present invention.

DETAILED DESCRIPTION

[0013] In the following description, numerous specific details are set forth to provide a more thorough understanding of the present invention. However, it will be apparent to one of skill in the art that the present invention may be practiced without one or more of these specific details.

System Overview

[0014] FIG. 1 illustrates a system configured to implement one or more aspects of the present invention. As shown, system 100 includes a motion capture setup 110, a computer 120, and a robot 140. Motion capture setup 110 is coupled to computer 120, and computer 120 is coupled to robot 140.

[0015] Motion capture setup 110 includes sensors 112(0) and 112(1), configured to capture motion associated with an operator 114. Motion capture setup 110 may include any number of different sensors, although generally motion capture setup 110 includes at least two sensors 112 in order to capture binocular data. Motion capture setup 110 outputs motion capture data 150 to computer 120 for processing.

[0016] Computer 120 includes a processor 122, input/output (I/O) utilities 124, and a memory 126, coupled together. Processor 122 may be any technically feasible form of processing device configured to process data and execute program code. Processor 122 could be, for example, a central processing unit (CPU), a graphics processing unit (GPU), an application-specific integrated circuit (ASIC), a field-programmable gate array (FPGA), any technically feasible combination of such units, and so forth. I/O utilities 124 may include devices configured to receive input, including, for example, a keyboard, a mouse, and so forth. I/O utilities 124 may also include devices configured to provide output, including, for example, a display device, a speaker, and so forth. I/O utilities 124 may further include devices configured to both receive input and provide output, including, for example, a touchscreen, a universal serial bus (USB) port, and so forth.

[0017] Memory 126 may be any technically feasible storage medium configured to store data and software applications. Memory 126 could be, for example, a hard disk, a random access memory (RAM) module, a read-only memory (ROM), and so forth. Memory 126 includes a control engine 128 and database 130. Control engine 128 is a software application that, when executed by processor 122, causes processor 122 to interact with robot 140.

[0018] Robot 140 includes actuators 142 coupled to a sensor array 144. Actuators 142 may be any technically feasible type of mechanism configured to induce physical motion of any kind, including linear or rotational motors, hydraulic or pneumatic pumps, and so forth. In one embodiment (described by way of example in conjunction with FIG. 3), actuators 142 include rotational motors configured to articulate a robotic arm of robot 140. In another embodiment (described by way of example in conjunction with FIG. 4), actuators 142 include rotational motors configured to drive a set of propellers that propel robot 140 through the air.

[0019] Sensor array 144 may include any technically feasible collection of sensors. For example, sensor array 144 could include an optical sensor, a sonic sensor, and/or other types of sensors configured to measure physical quantities. Generally, sensor array 144 is configured to record multimedia data. In practice, sensor array 144 includes a video camera configured to capture a frame 146. By capturing a sequence of such frames, sensor array 144 may record a movie.

[0020] In operation, motion capture setup 110 captures motion associated with operator 114 and then transmits motion capture signals 150 to control engine 128. Control engine 128 processes motion capture signals 150 to generate control signals 152. Control engine 128 transmits control signals 152 to robot 140 to control the motion of robot 140 via actuators 142. In general, the resultant motion of robot 140 mimics and/or is derived from the motion of operator 114. Robot 140 receives sensor data 154 via sensor array 144 and transmits that sensor data to control engine 128. Control engine 128 may process the received data for storage in database 130.

[0021] In this manner, operator 114 may control robot 140 to capture video sequences without being required to physically and/or directly operate any camera equipment. One advantage of this approach is that operator 114 need not be subjected to a difficult and/or dangerous working environment when filming. Control engine 128 is described in greater detail below in conjunction with FIG. 2.

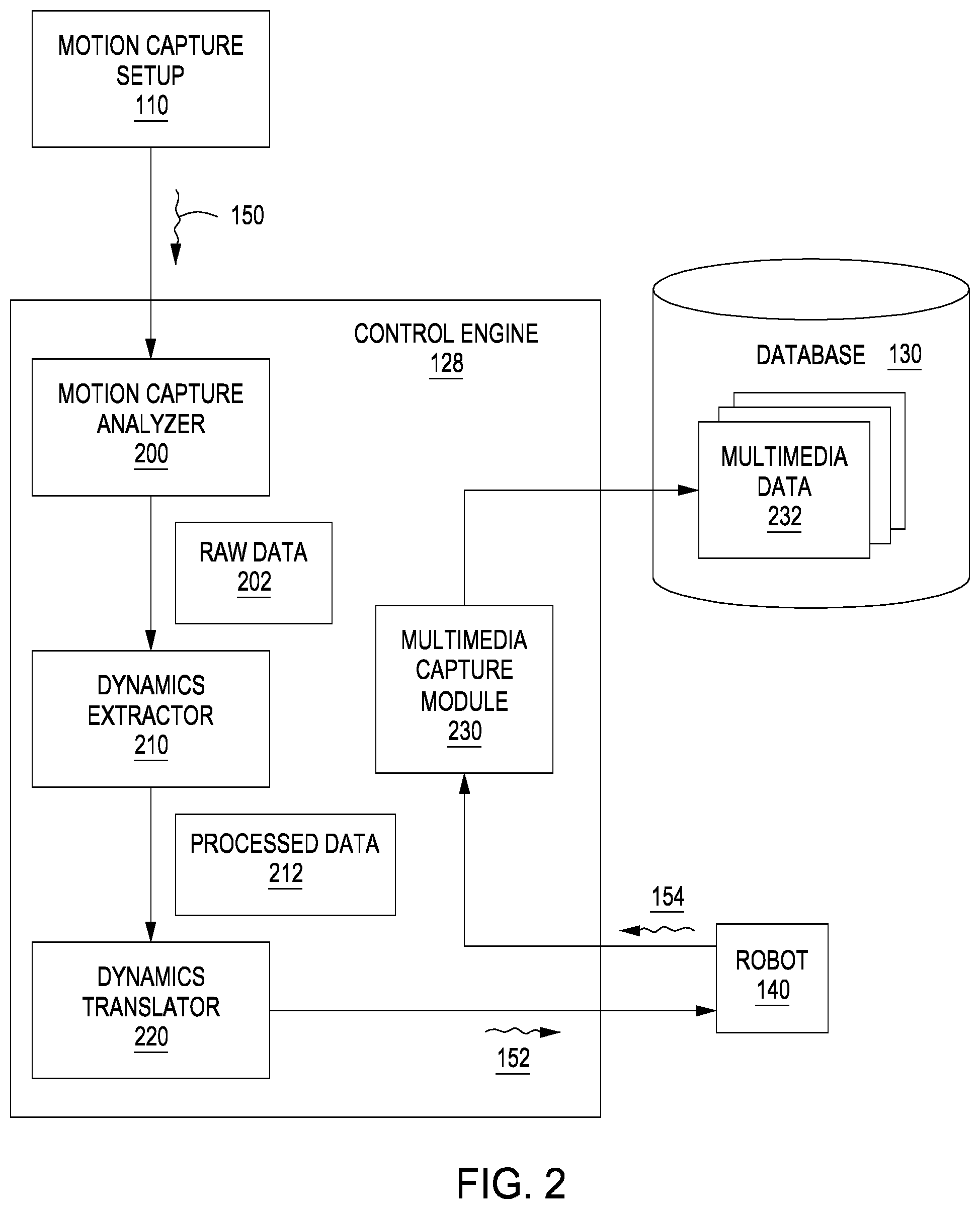

[0022] FIG. 2 is a more detailed illustration of the control engine of FIG. 1, according to various embodiments of the present invention. As shown, control engine 128 includes motion capture analyzer 200, dynamics extractor 210, dynamics translator 220, and multimedia capture module 230.

[0023] Motion capture analyzer 200 is configured to receive motion capture signals 150 from motion capture setup 110 and to process those signals to generate raw data 202. Motion capture signals 150 generally include video data captured by sensors 112. Motion capture analyzer 200 processes this video data to identify the position and orientation of some or all of operator 114 over a time period. In one embodiment, operator 114 wears a suit bearing reflective markers that can be tracked by motion capture analyzer 200. Motion capture analyzer 200 may be included in motion capture setup 110 in certain embodiments. Motion capture analyzer 200 generates raw data 202, which includes a set of quantities describing the position and orientation of any tracked portions of operator 114 over the time period.

[0024] Dynamics extractor 210 receives raw data 202 and then processes this data to generate processed data 212. In doing so, dynamics extractor 210 models the dynamics of operator 114 over the time period based on raw data 202. Dynamics extractor 210 may determine various rotations and/or translations of portions of operator 114, including the articulation of the limbs of operator 114, as well as the trajectory of any portion of operator 114 and/or any object associated with operator 114. Dynamics extractor 210 provides processed data 212 to dynamics translator 220.
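
One way to picture the articulation modeling described above is to compute a joint angle from three tracked marker positions. The following is a minimal sketch only, not the implementation of dynamics extractor 210: the function names `joint_angle` and `elbow_angles`, the marker labels `shoulder`, `elbow`, and `wrist`, and the per-frame dictionary layout are all assumptions made for illustration.

```python
import math


def joint_angle(a, b, c):
    """Return the angle at point b, in radians, between segments b->a and b->c.

    a, b, c are (x, y, z) marker positions (hypothetical raw-data layout).
    """
    ba = [a[i] - b[i] for i in range(3)]
    bc = [c[i] - b[i] for i in range(3)]
    dot = sum(u * v for u, v in zip(ba, bc))
    norm_ba = math.sqrt(sum(u * u for u in ba))
    norm_bc = math.sqrt(sum(v * v for v in bc))
    if norm_ba == 0.0 or norm_bc == 0.0:
        raise ValueError("markers coincide; joint angle is undefined")
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_theta = max(-1.0, min(1.0, dot / (norm_ba * norm_bc)))
    return math.acos(cos_theta)


def elbow_angles(frames):
    """Compute the elbow angle for each frame of tracked marker positions."""
    return [
        joint_angle(f["shoulder"], f["elbow"], f["wrist"])
        for f in frames
    ]
```

A sequence of such angles over the time period is one possible form the processed data could take for a single joint; a fuller model would track every joint of interest and, where needed, full 3D rotations rather than a single scalar.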

[0025] Dynamics translator 220 is configured to translate the modeled dynamics included in processed data 212 into control signals 152 for controlling robot 140 to have dynamics derived from the modeled dynamics. Dynamics translator 220 may generate some control signals 152 that cause robot 140 to directly mimic the dynamics and motion of operator 114, or may generate other control signals 152 to cause only a portion of robot 140 to mimic the dynamics and motion of operator 114.

[0026] For example, dynamics translator 220 could generate control signals 152 that cause actuators 142 within a robotic arm of robot 140 to copy the articulation of joints within an arm of operator 114. This particular example is described in greater detail below in conjunction with FIG. 3. Alternatively, dynamics translator 220 could generate control signals 152 that cause actuators 142 to perform any technically feasible set of actuations in order to cause sensor array 144 to trace a similar path as an object held by operator 114. This example is described in greater detail below in conjunction with FIG. 4. In one embodiment, dynamics translator 220 may also amplify the movements of operator 114 when generating control signals 152, so that the dynamics of robot 140 represent an exaggerated version of the dynamics of operator 114.

[0027] In response to control signals 152, actuators 142 within robot 140 actuate and move sensor array 144 (and potentially robot 140 as a whole). Sensor array 144 captures sensor data 154 and transmits this data to multimedia capture module 230 within control engine 128. Multimedia capture module 230 generally manages the operation of sensor array 144 and processes incoming sensor signals such as sensor data 154. Based on these signals, multimedia capture module 230 generates multimedia data 232 for storage in database 130. Multimedia data 232 may include any technically feasible type of data, although in practice multimedia data 232 includes frames of video captured by sensor array 144, and possibly frames of audio data as well.

[0028] As a general matter, control engine 128 is configured to translate movements performed by operator 114 into movements performed by robot 140. In this manner, operator 114 can control robot 140 by performing a set of desired movements. This approach may be specifically applicable to filming a movie, where a camera operator may wish to control robot 140 to film movie sequences in a particular manner. However, persons skilled in the art will understand that the techniques disclosed herein for controlling a robot may be applicable to other fields beyond cinema.

Exemplary Robot Control via Motion Capture

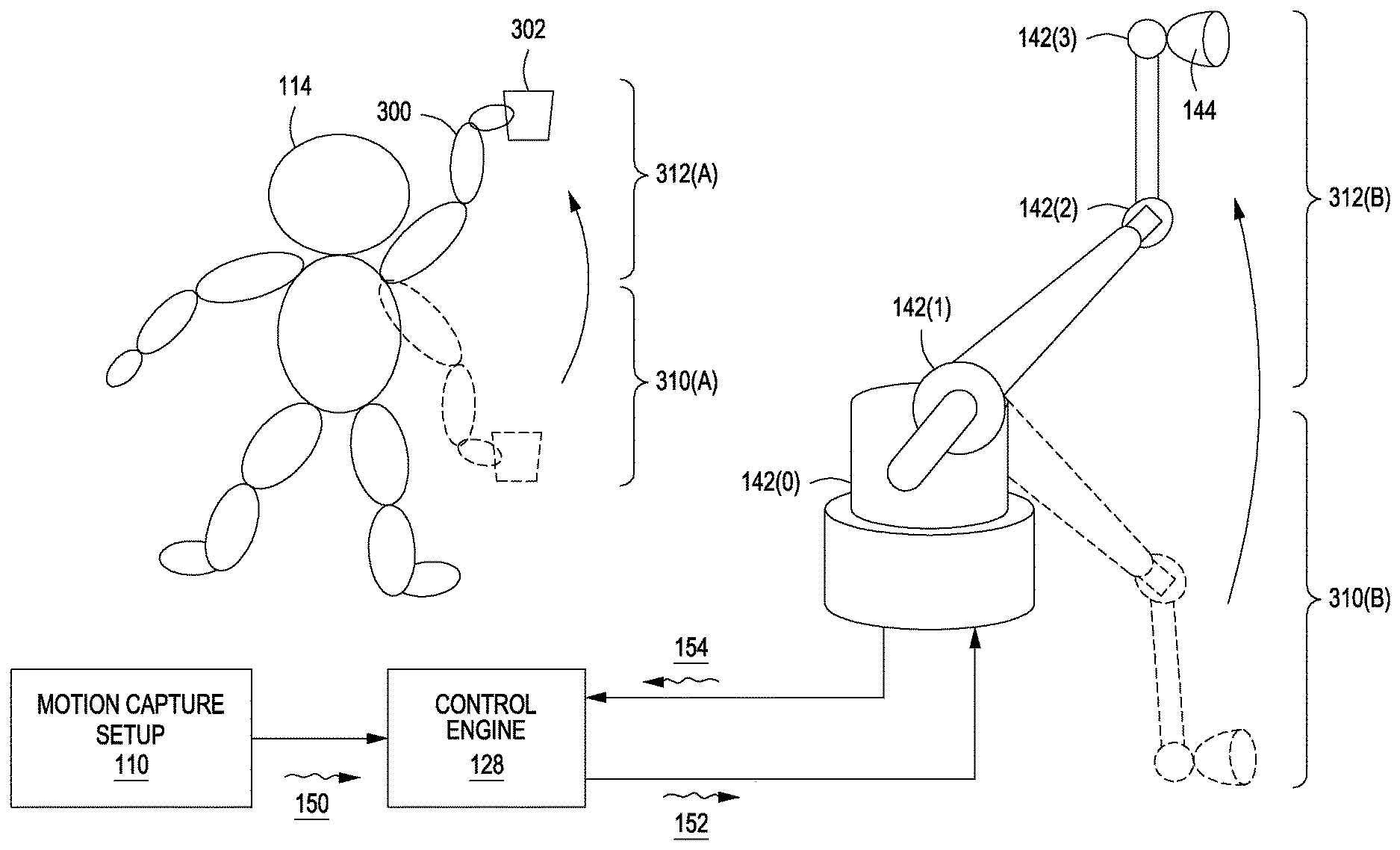

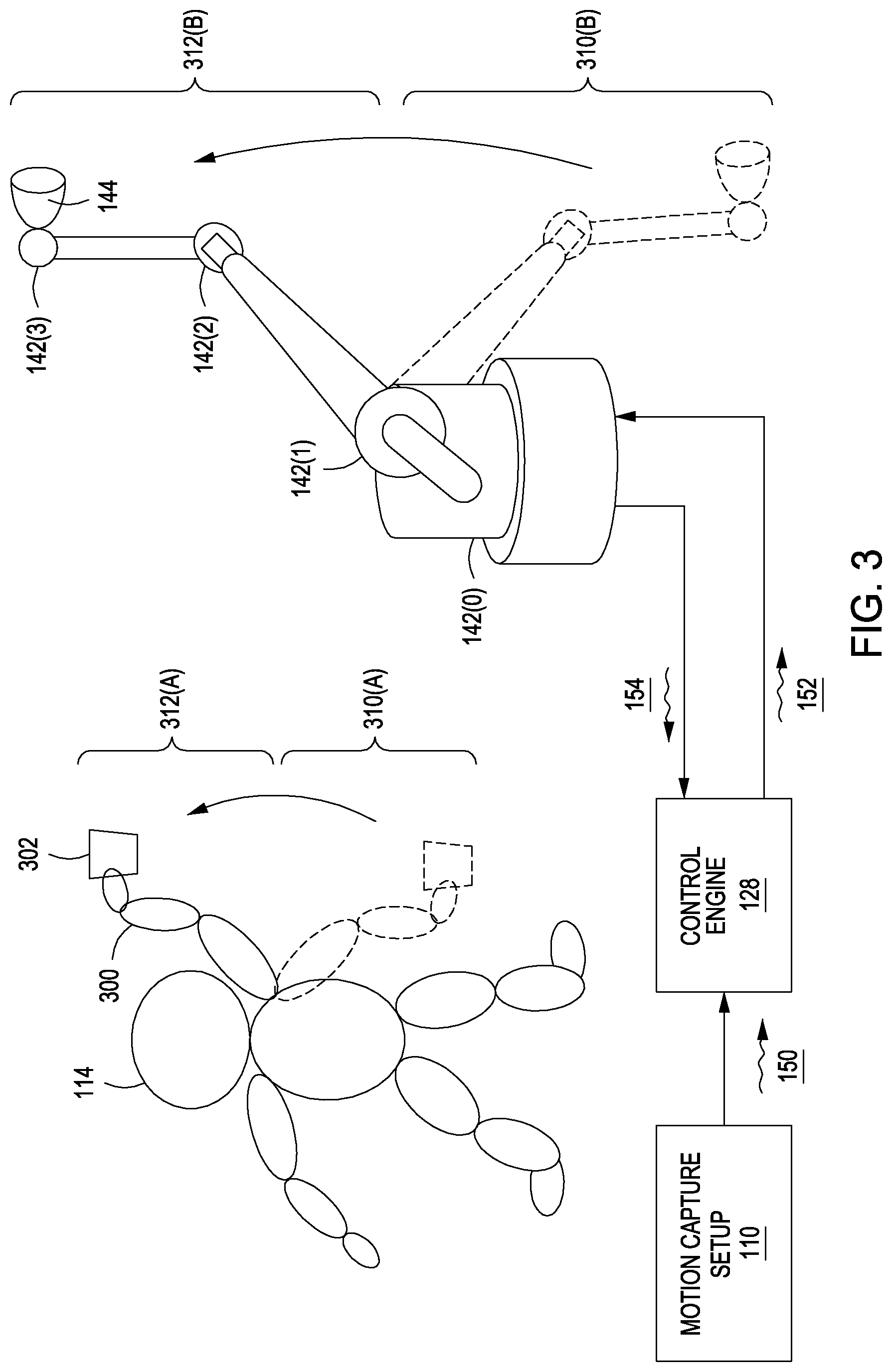

[0029] FIG. 3 illustrates how the articulation of a robotic arm can be controlled via a motion capture setup, according to various embodiments of the present invention. The scenario depicted in FIG. 3 is provided for exemplary purposes only to illustrate one possible robot and one possible set of movements. The techniques described herein are applicable to any technically feasible robot and any technically feasible set of movements.

[0030] As shown, operator 114 moves arm 300 from a position 310(A) upwards to assume a position 312(A). Operator 114 may optionally hold a motion capture object 302. Motion capture setup 110 is generally configured to track the motion of operator 114, and, specifically, to track the articulation of joints in arm 300 of operator 114. In one embodiment, motion capture setup 110 relies on markers indicating the positions of joints of arm 300. Motion capture setup 110 may also track the position and location of motion capture object 302. Motion capture setup 110 transmits motion capture signals 150 to control engine 128 that represent any and all captured data.

[0031] Control engine 128 then generates control signals 152 to cause robot 140 to move from position 310(B) to position 312(B). Positions 310(A) and 310(B) are generally analogous and have a similar articulation of joints. Likewise, positions 312(A) and 312(B) are analogous and have a similar articulation of joints. Additionally, any intermediate positions are generally analogous. Robot 140 may effect these analogous articulations by actuating actuators 142(0), 142(1), 142(2), and 142(3) to perform similar joint rotations as those performed by operator 114 with arm 300. In this manner, robot 140 performs a movement that is substantially the same as the movement performed by operator 114. In some embodiments, the motion of robot 140 represents an exaggerated version of the motion of operator 114. For example, control engine 128 could scale the joint rotations associated with arm 300 by a preset amount when generating control signals 152 for actuators 142 or perform a smoothing operation to attenuate disturbances and/or other perturbations in the mimicked movements.
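
The scaling and smoothing operations mentioned above can be sketched as follows. This is an illustrative assumption rather than the method used by control engine 128: the function name `scale_and_smooth`, the `gain` and `alpha` parameters, and the choice of an exponential moving average as the smoothing operation are all hypothetical.

```python
def scale_and_smooth(joint_angles, gain=1.5, alpha=0.5):
    """Scale a sequence of joint angles by a preset gain, then smooth it.

    joint_angles: per-frame angles (radians) for one operator joint.
    gain: preset amplification factor (assumed parameter).
    alpha: exponential-moving-average weight in (0, 1]; smaller values
        attenuate frame-to-frame disturbances more strongly.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    commands = []
    smoothed = None
    for angle in joint_angles:
        scaled = gain * angle
        if smoothed is None:
            smoothed = scaled  # first frame seeds the filter
        else:
            smoothed = alpha * scaled + (1.0 - alpha) * smoothed
        commands.append(smoothed)
    return commands
```

With `alpha=1.0` the filter passes the scaled angles through unchanged, so the same function covers both the exaggerated-motion case and the smoothed case.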

[0032] During motion, sensor array 144 captures data that is transmitted to control engine 128 as sensor data 154. This sensor data is gathered from a sequence of locations that operator 114 indicates by performing movements corresponding to those locations, as described. In some cases, though, robot 140 need not implement analogous dynamics as operator 114. In particular, when operator 114 moves motion capture object 302 along a certain path, robot 140 may perform any technically feasible combination of movements in order to cause sensor array 144 to trace a similar path. This approach is described in greater detail below in conjunction with FIG. 4.

[0033] FIG. 4 illustrates how the position of a robotic drone can be controlled via a motion capture setup, according to various embodiments of the present invention. Similar to FIG. 3, the scenario depicted in FIG. 4 is provided for exemplary purposes only to illustrate one possible robot and one possible set of movements. The techniques described herein are applicable to any technically feasible robot and any technically feasible set of movements.

[0034] In FIG. 4, operator 114 moves motion capture object 302 along trajectory 400(A) from initial position 410(A) to final position 412(A). In doing so, operator 114 articulates arm 300, in like fashion as described above in conjunction with FIG. 3. However, in the exemplary scenario shown in FIG. 4, motion capture setup 110 need not capture the specific articulation of arm 300.

[0035] Instead, motion capture setup 110 tracks the trajectory of motion capture object 302 to generate motion capture signals 150. Based on motion capture signals 150, control engine 128 determines specific dynamics for robot 140 that cause sensor array 144 to traverse a trajectory that is analogous to trajectory 400(A). Control engine 128 generates control signals 152 that represent these dynamics and then transmits control signals 152 to robot 140 for execution. In response, robot 140 moves sensor array 144 along trajectory 400(B) from position 410(B) to position 412(B).
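
To illustrate how an analogous trajectory might be derived from the tracked object path, the sketch below re-anchors the operator's waypoints at the robot's starting position and optionally scales the displacements. The function name `retarget_trajectory`, its parameters, and the simple translate-and-scale mapping are assumptions for illustration; the source does not specify how the analogous trajectory is computed.

```python
def retarget_trajectory(operator_path, robot_start, scale=1.0):
    """Map operator waypoints onto an analogous robot trajectory.

    operator_path: list of (x, y, z) positions of the tracked object.
    robot_start: (x, y, z) position where the sensor array begins.
    scale: factor applied to displacements from the first waypoint
        (assumed parameter; 1.0 preserves the original size).
    """
    if not operator_path:
        return []
    origin = operator_path[0]
    return [
        tuple(r + scale * (p - o) for p, o, r in zip(point, origin, robot_start))
        for point in operator_path
    ]
```

For example, an operator path of `[(0, 0, 1), (1, 0, 1), (1, 2, 1)]` retargeted to a robot start of `(10, 10, 5)` with `scale=2.0` keeps the same shape but doubles each displacement, starting at the robot's own position.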

[0036] Depending on the type of robot implemented, control engine 128 may generate different control signals 152. In the example shown, robot 140 is a quadcopter drone. Accordingly, control engine 128 generates control signals 152 that modulate the thrust of one or more propellers to cause the drone to move sensor array 144 along trajectory 400(B). In another example, robot 140 could include an arm with a number of joints, similar to robot 140 shown in FIG. 3. In this case, control engine 128 could determine a set of joint articulations that would cause robot 140 to move sensor array 144 along trajectory 400(B). Again, those articulations need not be similar to the articulations of arm 300.
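
For the jointed-arm case, determining joint articulations that place the sensor array on a trajectory point is an inverse kinematics problem. The sketch below solves it for a simplified two-link planar arm, which is an assumption made for illustration and not the robot described in the source; the function names `two_link_ik` and `two_link_fk` and the link lengths `l1` and `l2` are hypothetical.

```python
import math


def two_link_ik(x, y, l1, l2):
    """Return joint angles (q1, q2) placing a planar two-link arm's tip at (x, y).

    Uses the closed-form law-of-cosines solution and returns the branch
    with q2 >= 0. Raises ValueError if the target is out of reach.
    """
    d2 = x * x + y * y
    cos_q2 = (d2 - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(cos_q2) > 1.0 + 1e-9:
        raise ValueError("target is outside the reachable workspace")
    cos_q2 = max(-1.0, min(1.0, cos_q2))  # absorb rounding at the boundary
    q2 = math.acos(cos_q2)
    q1 = math.atan2(y, x) - math.atan2(l2 * math.sin(q2), l1 + l2 * math.cos(q2))
    return q1, q2


def two_link_fk(q1, q2, l1, l2):
    """Forward kinematics: tip position for the given joint angles."""
    x = l1 * math.cos(q1) + l2 * math.cos(q1 + q2)
    y = l1 * math.sin(q1) + l2 * math.sin(q1 + q2)
    return x, y
```

Applying `two_link_ik` to each waypoint of a trajectory yields a joint-angle sequence that need not resemble the articulation of the operator's arm, which matches the point made above. A real arm with more joints would typically need a numerical solver instead of this closed form.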

[0037] With the approach described herein, operator 114 indicates, via motion capture object 302, a trajectory along which sensor array 144 should travel. Control engine 128 then causes robot 140 to trace a substantially similar trajectory with sensor array 144. During motion, sensor array 144 captures sensor data 154 for transmission to control engine 128.

[0038] Referring generally to FIGS. 3-4, persons skilled in the art will understand that the techniques described above represent just two exemplary approaches for generating robot control signals based on motion capture data. Other approaches also fall within the scope of the invention. For example, the techniques described in conjunction with FIGS. 3 and 4 could be combined. According to this combined technique, control engine 128 could instruct robot 140 to copy the motions of operator 114 under certain circumstances, and to copy the trajectory of motion capture object 302 under other circumstances. Alternatively, control engine 128 could be trained to interpret the motions of operator 114 according to a gesture-based language. For example, operator 114 could perform specific hand motions to indicate camera operations such as "zoom in," "pan left," and so forth. With this approach, operator 114 may exercise fine-grained control over both the motions of robot 140 and more specific cinematographic operations. In one embodiment, control engine 128 records any and all movements performed by operator 114 during real-time control of robot 140 for later playback to robot 140. In this manner, robot 140 can be made to repeat a set of movements across multiple takes without requiring operator 114 to repeat the associated movements.
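
The record-and-playback embodiment can be sketched as a small recorder that stores timestamped movement samples and replays them with their original spacing. The class name `MotionRecorder`, its methods, and the idea of yielding inter-sample delays are assumptions for illustration; the source does not describe how recorded movements are stored or replayed.

```python
class MotionRecorder:
    """Store timestamped movement samples for later playback (illustrative only)."""

    def __init__(self):
        self._samples = []  # list of (timestamp_seconds, sample) pairs

    def record(self, timestamp, sample):
        """Append one sample; timestamps must not go backwards."""
        if self._samples and timestamp < self._samples[-1][0]:
            raise ValueError("timestamps must be non-decreasing")
        self._samples.append((timestamp, sample))

    def playback(self):
        """Yield (delay_seconds, sample) pairs in recorded order.

        The delay is the time elapsed since the previous sample, so a
        caller can wait that long before sending each sample to the robot.
        """
        previous = None
        for timestamp, sample in self._samples:
            delay = 0.0 if previous is None else timestamp - previous
            previous = timestamp
            yield delay, sample
```

Because `playback` does not consume the stored samples, it can be called once per take, which reflects the stated goal of repeating a movement across multiple takes.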

Procedure for Robot Control via Motion Capture

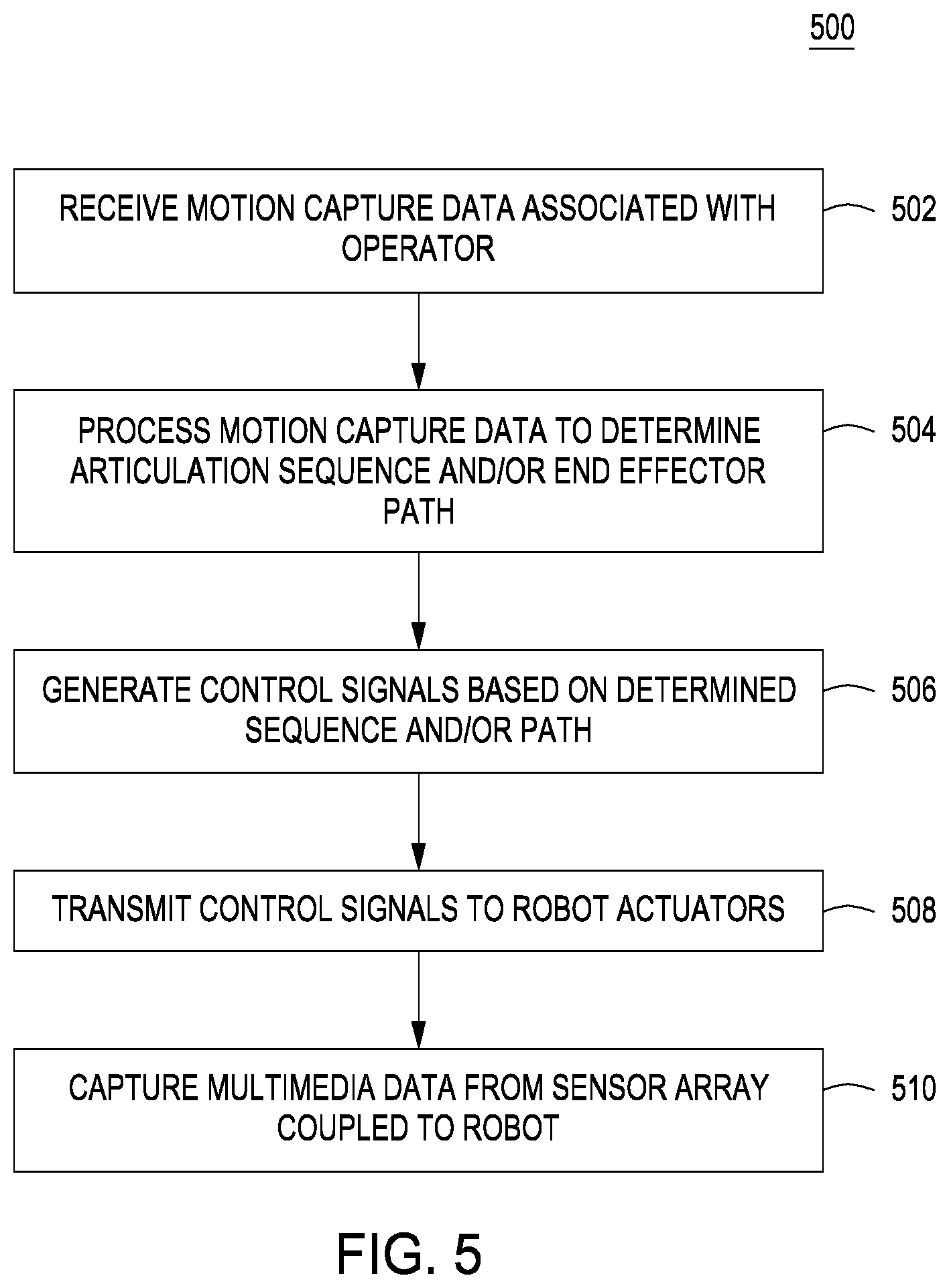

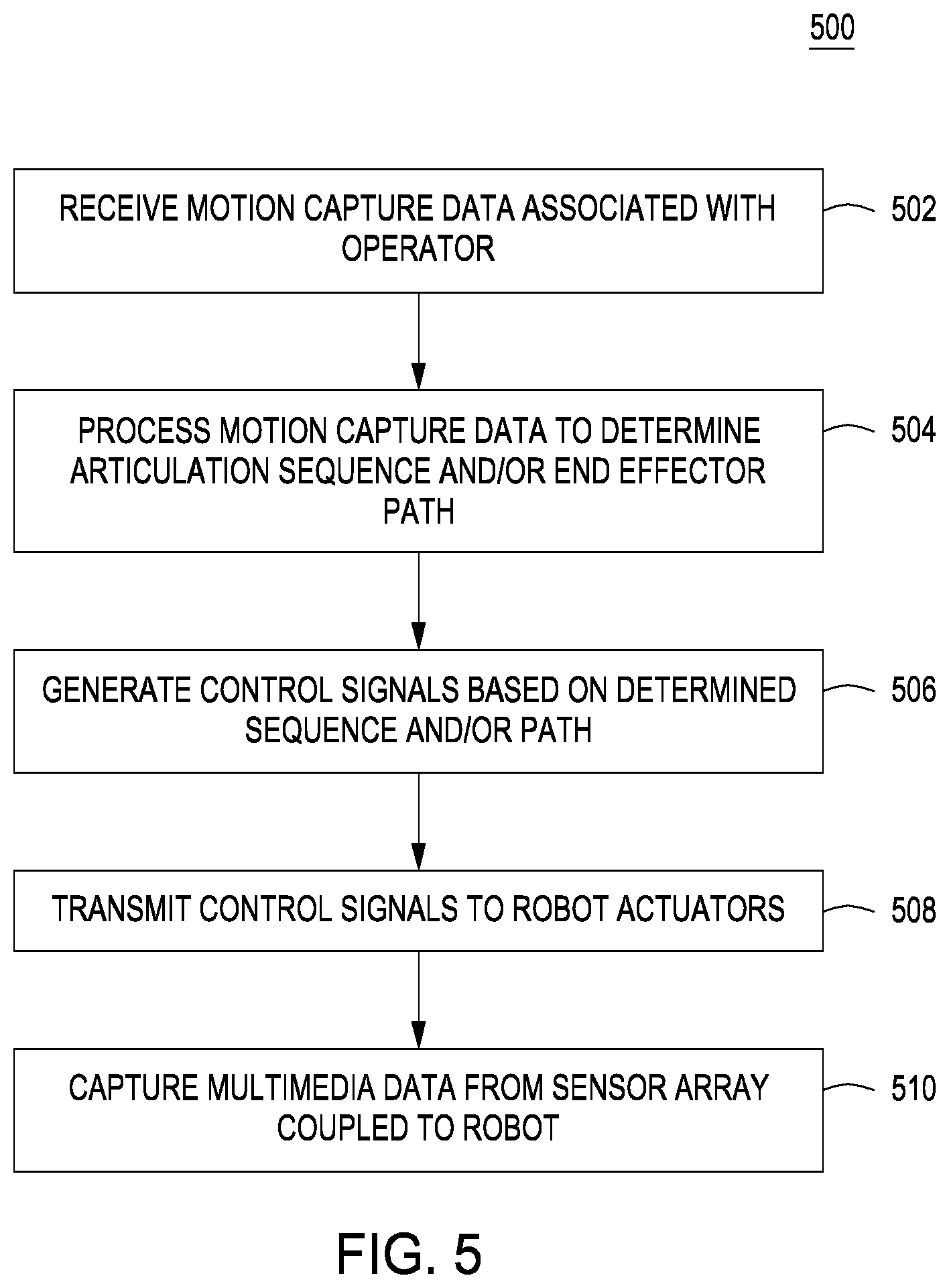

[0039] FIG. 5 is a flow diagram of method steps for translating motion capture data into control signals for controlling a robot, according to various embodiments of the present invention. Although the method steps are described in conjunction with the systems of FIGS. 1-4, persons skilled in the art will understand that any system configured to perform the method steps, in any order, is within the scope of the present invention.

[0040] As shown, a method 500 begins at step 502, where motion capture setup 110 receives motion capture data associated with operator 114. Motion capture setup 110 may implement computer vision techniques to track head, body, and limb movements of operator 114, or rely on marker tracking techniques to track the movements of operator 114. Motion capture setup 110 transmits motion capture signals 150 to control engine 128 for processing.

[0041] At step 504, control engine 128 processes motion capture signals 150 to determine an articulation sequence to be performed by robot 140 and/or an end effector path for robot 140 to follow. An example of an articulation sequence is discussed above in conjunction with FIG. 3. An example of an end effector path is described above in conjunction with FIG. 4.

[0042] At step 506, control engine 128 generates control signals 152 based on the determined articulation sequence and/or end effector path. Control signals 152 may vary depending on the type of robot 140 implemented. For example, to control a robotic arm of a robot, control signals 152 could include joint position commands. Alternatively, to control a robotic drone, control signals 152 could include motor speed commands for modulating thrust produced by a propeller.
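
As an illustration of motor speed commands for a drone, the sketch below converts desired per-propeller thrust into motor speed using the common quadratic approximation T = k * omega^2. That thrust model, the coefficient `thrust_coeff`, the `max_speed` limit, and both function names are assumptions introduced here; the source does not state how thrust relates to motor speed.

```python
import math


def thrust_to_motor_speed(thrust, thrust_coeff):
    """Return motor speed omega for a desired thrust, assuming T = k * omega**2."""
    if thrust_coeff <= 0.0:
        raise ValueError("thrust_coeff must be positive")
    if thrust < 0.0:
        raise ValueError("thrust must be non-negative")
    return math.sqrt(thrust / thrust_coeff)


def motor_speed_commands(thrusts, thrust_coeff, max_speed):
    """Convert per-propeller thrusts into speed commands, clipped to max_speed."""
    return [
        min(max_speed, thrust_to_motor_speed(t, thrust_coeff))
        for t in thrusts
    ]
```

For a robotic arm, the analogous output would be a list of joint position commands, so a control engine supporting both robot types would select the command format based on the robot in use.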

[0043] At step 508, control engine 128 transmits control signals 152 to actuators 142 of robot 140. Control engine 128 may transmit control signals 152 via one or more physical cables coupled to robot 140 or transmit control signals 152 wirelessly.

[0044] At step 510, control engine 128 captures multimedia data from sensor array 144 coupled to robot 140. Sensor array 144 may include any technically feasible type of sensor configured to measure physical quantities, including an optical sensor, sonic sensor, vibration sensor, and so forth. In practice, sensor array 144 generally includes a video camera and potentially an audio capture device as well. Multimedia capture module 230 processes the sensor data from sensor array 144 to generate the multimedia data.

[0045] Persons skilled in the art will understand that although the techniques described herein have been described relative to various cinematographic operations, the present techniques also apply to robot control in general. For example, motion capture setup 110 and control engine 128 may operate in conjunction with one another to control robot 140 for any technically feasible purpose, beyond filming movies.

[0046] In sum, a motion capture setup records the movements of an operator, and a control engine then translates those movements into control signals for controlling a robot. The control engine may directly translate the operator movements into analogous movements to be performed by the robot, or the control engine may compute robot dynamics that cause a portion of the robot to mimic a corresponding portion of the operator.

[0047] At least one advantage of the techniques described herein is that a human camera operator need not be subjected to discomfort or bodily injury when filming movies. Instead of personally holding camera equipment and being physically present for filming, the camera operator can simply operate a robot, potentially from a remote location, that, in turn, operates camera equipment.

[0048] The descriptions of the various embodiments have been presented for purposes of illustration, but are not intended to be exhaustive or limited to the embodiments disclosed. Many modifications and variations will be apparent to those of ordinary skill in the art without departing from the scope and spirit of the described embodiments.

[0049] Aspects of the present embodiments may be embodied as a system, method or computer program product. Accordingly, aspects of the present disclosure may take the form of an entirely hardware embodiment, an entirely software embodiment (including firmware, resident software, micro-code, etc.) or an embodiment combining software and hardware aspects that may all generally be referred to herein as a "module" or "system." Furthermore, aspects of the present disclosure may take the form of a computer program product embodied in one or more computer readable medium(s) having computer readable program code embodied thereon.

[0050] Any combination of one or more computer readable medium(s) may be utilized. The computer readable medium may be a computer readable signal medium or a computer readable storage medium. A computer readable storage medium may be, for example, but not limited to, an electronic, magnetic, optical, electromagnetic, infrared, or semiconductor system, apparatus, or device, or any suitable combination of the foregoing. More specific examples (a non-exhaustive list) of the computer readable storage medium would include the following: an electrical connection having one or more wires, a portable computer diskette, a hard disk, a random access memory (RAM), a read-only memory (ROM), an erasable programmable read-only memory (EPROM or Flash memory), an optical fiber, a portable compact disc read-only memory (CD-ROM), an optical storage device, a magnetic storage device, or any suitable combination of the foregoing. In the context of this document, a computer readable storage medium may be any tangible medium that can contain, or store a program for use by or in connection with an instruction execution system, apparatus, or device.

[0051] Aspects of the present disclosure are described above with reference to flowchart illustrations and/or block diagrams of methods, apparatus (systems) and computer program products according to embodiments of the disclosure. It will be understood that each block of the flowchart illustrations and/or block diagrams, and combinations of blocks in the flowchart illustrations and/or block diagrams, can be implemented by computer program instructions. These computer program instructions may be provided to a processor of a general purpose computer, special purpose computer, or other programmable data processing apparatus to produce a machine, such that the instructions, which execute via the processor of the computer or other programmable data processing apparatus, enable the implementation of the functions/acts specified in the flowchart and/or block diagram block or blocks. Such processors may be, without limitation, general purpose processors, special-purpose processors, application-specific processors, or field-programmable processors or gate arrays.

[0052] The flowchart and block diagrams in the figures illustrate the architecture, functionality, and operation of possible implementations of systems, methods and computer program products according to various embodiments of the present disclosure. In this regard, each block in the flowchart or block diagrams may represent a module, segment, or portion of code, which comprises one or more executable instructions for implementing the specified logical function(s). It should also be noted that, in some alternative implementations, the functions noted in the block may occur out of the order noted in the figures. For example, two blocks shown in succession may, in fact, be executed substantially concurrently, or the blocks may sometimes be executed in the reverse order, depending upon the functionality involved. It will also be noted that each block of the block diagrams and/or flowchart illustration, and combinations of blocks in the block diagrams and/or flowchart illustration, can be implemented by special purpose hardware-based systems that perform the specified functions or acts, or combinations of special purpose hardware and computer instructions.

[0053] While the preceding is directed to embodiments of the present disclosure, other and further embodiments of the disclosure may be devised without departing from the basic scope thereof, and the scope thereof is determined by the claims that follow.

* * * * *
