Robot And Operation Method Thereof

KIM; Jung Sik

U.S. patent application number 16/589340 was filed with the patent office on 2019-10-01 and published on 2020-01-30 for robot and operation method thereof. The applicant listed for this patent is LG ELECTRONICS INC. Invention is credited to Jung Sik KIM.

| United States Patent Application | 20200030972 |
|---|---|
| Application Number | 16/589340 |
| Kind Code | A1 |
| Family ID | 68070476 |
| Inventor | KIM; Jung Sik |
| Publication Date | January 30, 2020 |

ROBOT AND OPERATION METHOD THEREOF

Abstract

A robot and an operation method thereof are disclosed. A robot may include a loading box provided to load goods, and to be movable at a certain distance with respect to the robot when closed and opened, a drive wheel configured to drive the robot, an auxiliary wheel provided at a position spaced apart from the drive wheel, and a variable supporter configured to change the position of the auxiliary wheel, and supporting the loading box, and the variable supporter may move the auxiliary wheel so as to correspond to the movement direction of the center of gravity of the robot. The robot may transmit and receive a wireless signal on a mobile communication network constructed according to 5th generation (5G) communication.

| Inventors: | KIM; Jung Sik (Gyeonggi-do, KR) |
|---|---|
| Applicant: | LG ELECTRONICS INC. |
| Family ID: | 68070476 |
| Appl. No.: | 16/589340 |
| Filed: | October 1, 2019 |
| Current U.S. Class: | 1/1 |
| Current CPC Class: | G06N 3/084 20130101; B25J 9/1666 20130101; B25J 13/081 20130101; B25J 9/1638 20130101; G05B 2219/39194 20130101; G06N 20/00 20190101; B25J 9/163 20130101; G05B 2219/39176 20130101 |
| International Class: | B25J 9/16 20060101 B25J009/16; G06N 20/00 20060101 G06N020/00; B25J 13/08 20060101 B25J013/08 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Aug 29, 2019 | KR | 10-2019-0106780 |

Claims

1. A robot capable of autonomous driving, the robot comprising: a loading box provided to load goods, and to be movable at a certain distance with respect to the robot when closed and opened; a drive wheel configured to drive the robot; an auxiliary wheel provided at a position spaced apart from the drive wheel; and a variable supporter configured to change the position of the auxiliary wheel, and supporting the loading box, wherein the variable supporter moves the auxiliary wheel so as to correspond to the movement direction of the center of gravity of the robot.

2. The robot of claim 1, wherein the variable supporter comprises: a motor; a linear mover mounted with the auxiliary wheel, and configured to move linearly; a guider having one side fixed to the motor, and having the other side coupled to the linear mover that is movable; and a screw having one side coupled to a rotary shaft of the motor to rotate together according to the rotation of the motor and having the other side coupled to the linear mover that is movable to convert the rotational motion of the motor into the linear motion of the linear mover.

3. The robot of claim 1, wherein the variable supporter moves the auxiliary wheel in a first direction so that a first length measured from the drive wheel to the auxiliary wheel in the first direction is equal to or longer than a second length measured from the drive wheel to the center of gravity of the robot in the first direction.

4. The robot of claim 1, wherein the loading box is provided in a plurality of floors, and wherein each floor is provided with a pressure sensor configured to measure the weight distribution of the goods loaded in the loading box.

5. The robot of claim 4, wherein the robot estimates a position of the center of gravity of the robot based on the weight distribution of the goods measured from the pressure sensor and a structure of the robot.

6. The robot of claim 5, wherein the robot holds the center of gravity data, which is information on the position of the center of gravity of the robot, according to the weight distribution of the goods and the movement of the loading box, and adjusts a first length of the variable supporter measured from the drive wheel to the auxiliary wheel in a first direction based on the center of gravity data.

7. The robot of claim 6, wherein the center of gravity data holds information on the position of the center of gravity of the robot according to the weight distribution of the goods loaded in the loading box, the floor number loaded with the goods in the loading box, the movement distance of the loading box when the loading box is open, and the number of floors of the loading box.

8. The robot of claim 6, wherein the center of gravity data is derived by machine learning.

9. The robot of claim 8, wherein the center of gravity data is derived by supervised learning.

10. The robot of claim 9, wherein the supervised learning is performed so that training data infers labeling data, which is a pre-derived result value of the center of gravity data, and derives the functional relationship between the position of the center of gravity of the robot, which is an output value, and an input value specifying the position of the center of gravity.

11. The robot of claim 8, wherein the center of gravity data holds information on the position of the center of gravity of the robot in each condition, in which at least one of a plurality of input values has been learned in different conditions, during the machine learning for deriving the center of gravity data of the robot.

12. The robot of claim 11, wherein the input value comprises the weight distribution of the goods loaded in the loading box, the floor number loaded with the goods in the loading box, the movement distance of the loading box when the loading box is open, and the number of floors of the loading box.

13. The robot of claim 1, wherein the robot further comprises a support wheel located to be spaced apart from each of the drive wheel and the auxiliary wheel, and configured to support the robot.

14. The robot of claim 1, wherein the variable supporter adjusts the movement speed of the auxiliary wheel so as to correspond to the movement speed of the center of gravity of the robot.

15. The robot of claim 1, wherein the robot further comprises a sensing sensor configured to sense an external obstacle, and wherein the variable supporter adjusts the movement distance of the auxiliary wheel so that the obstacle sensed by the sensing sensor and the auxiliary wheel are spaced a certain distance apart from each other.

16. An operation method of a robot, comprising: selecting a floor loaded with goods to be withdrawn from a loading box provided in a plurality of floors; measuring the weight distribution of the goods loaded on the selected floor; estimating the position of the center of gravity of the robot based on the weight distribution of the goods and a structure of the robot when the selected floor is open; setting the movement distance of an auxiliary wheel according to the estimated center of gravity of the robot; moving, by a variable supporter, the auxiliary wheel by the set movement distance; and opening the selected floor, wherein the variable supporter moves the auxiliary wheel so as to correspond to the movement direction of the center of gravity of the robot, and moves the auxiliary wheel in a first direction so that a first length measured from a drive wheel of the robot to the auxiliary wheel in the first direction is equal to or longer than a second length measured from the drive wheel to the center of gravity of the robot in the first direction.

17. The operation method of the robot of claim 16, further comprising loading the goods in the loading box, wherein in the loading the goods, the variable supporter moves the auxiliary wheel to the maximum distance at which the auxiliary wheel is movable.

18. The operation method of the robot of claim 17, wherein the loading the goods comprises selecting a floor on which the goods are loaded in the loading box; moving, by the variable supporter, the auxiliary wheel to the maximum position at which the auxiliary wheel is movable; opening the selected floor; loading the goods on the selected floor; and closing the floor loaded with the goods.

Description

CROSS-REFERENCE TO APPLICATION

[0001] The present application claims benefit of priority to Korean Patent Application No. 10-2019-0106780, entitled "ROBOT AND OPERATION METHOD THEREOF," filed on Aug. 29, 2019, in the Korean Intellectual Property Office, the entire disclosure of which is incorporated herein by reference.

BACKGROUND

1. Technical Field

[0002] An embodiment relates to a robot and an operation method thereof, and more particularly, to a robot and an operation method thereof capable of loading goods and enabling autonomous driving.

2. Description of Related Art

[0003] The description in this section only provides background information on the embodiments and does not constitute related art.

[0004] The development and dissemination of an artificial intelligence robot is a trend that is rapidly spreading. Accordingly, when a client has made a purchase of goods, rather than having the client directly receive the shipped goods, it may be possible to improve the convenience of the client by having a home robot, which is stationed at the client's residential address to assist in the daily life of the client, receive the delivered goods instead.

[0005] In addition, the delivery of goods is being increasingly performed by artificial intelligence delivery robots instead of conventional human operators, and such delivery of goods using artificial intelligence robots is becoming increasingly popular and commercialized.

[0006] Korean Patent Laid-Open Publication No. 10-2018-0123298 discloses a delivery robot device. This document proposes a delivery robot device, an operation method thereof, and a service server, which may deliver the delivery goods collected by a delivery person in each delivery area to an original destination through a delivery robot device located for each delivery area (for example, building, apartment, mall), thereby overall improving security and convenience in the delivery service.

[0007] Meanwhile, a robot may be provided to move for the delivery of goods. In addition, for efficient delivery of goods, the robot may be provided to enable autonomous driving.

[0008] Korean Patent Laid-Open Publication No. 10-2019-0089794 discloses a mobile robot and an operation method thereof, which may communicate with peripheral devices through a 5G communication environment, and efficiently perform cleaning through machine learning based on such communication.

[0009] The robot loads the goods, moves to the delivery destination, and then withdraws the goods at the delivery destination. That is, the robot loads or withdraws the goods in the course of delivery.

[0010] In the process of various operations of the robot for the delivery of such goods, the center of gravity of the robot loaded with the goods may move, and if the robot does not correctly cope with such movement of the center of gravity, the robot may overturn. There is a need for improvement of this problem.

SUMMARY OF THE DISCLOSURE

[0011] An embodiment of the present disclosure is to provide a robot having a structure capable of preventing overturning during a movement process.

[0012] Another embodiment is to provide an operation method of the robot capable of effectively coping with the movement of the center of gravity in the movement process, thereby preventing the overturning caused by the movement of the center of gravity.

[0013] Still another embodiment is to provide a method for estimating the position of the center of gravity of the robot through machine learning.

[0014] The objects to implement in embodiments are not limited to the technical problems described above and other objects that are not stated herein will be clearly understood by those skilled in the art from the following specifications.

[0015] For achieving the objects, a robot may include a loading box provided to load goods, and to be movable at a certain distance with respect to the robot when closed and opened, a drive wheel configured to drive the robot, an auxiliary wheel provided at a position spaced apart from the drive wheel, and a variable supporter configured to change the position of the auxiliary wheel, and supporting the loading box, and the variable supporter may move the auxiliary wheel so as to correspond to the movement direction of the center of gravity of the robot.

[0016] The robot may be provided to enable autonomous driving.

[0017] The variable supporter may include a motor, a linear mover mounted with the auxiliary wheel, and for moving linearly, a guider having one side fixed to the motor, and having the other side coupled to the linear mover so that the linear mover is movable, and a screw having one side coupled to a rotary shaft of the motor to rotate together according to the rotation of the motor and having the other side coupled to the linear mover so that the linear mover is movable, and converting the rotational motion of the motor into the linear motion of the linear mover.
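
As a rough illustration of the screw mechanism described above, the linear travel of the linear mover can be related to the rotation of the motor through the screw lead, that is, the axial advance per revolution. The lead value, the millimeter units, and the function names below are assumptions introduced for illustration; the disclosure does not specify them.

```python
def linear_travel_mm(revolutions: float, lead_mm: float) -> float:
    """Linear travel of the linear mover for a number of screw revolutions.

    lead_mm is the axial advance of the screw per revolution (an assumed
    parameter; the disclosure does not give a value).
    """
    if lead_mm <= 0:
        raise ValueError("lead_mm must be positive")
    return revolutions * lead_mm


def revolutions_for_travel(travel_mm: float, lead_mm: float) -> float:
    """Motor revolutions needed to move the linear mover by travel_mm."""
    if lead_mm <= 0:
        raise ValueError("lead_mm must be positive")
    return travel_mm / lead_mm
```

Under these assumptions, a screw with a 5 mm lead would need 30 revolutions to extend the auxiliary wheel by 150 mm.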

[0018] The variable supporter may move the auxiliary wheel in a first direction so that a first length measured from the drive wheel to the auxiliary wheel in the first direction is equal to or longer than a second length measured from the drive wheel to the center of gravity of the robot in the first direction.
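
The length condition above can be sketched as a small helper that picks a target first length. This is only a sketch under stated assumptions: the travel limits, the optional safety margin, the millimeter units, and all names are hypothetical and do not come from the disclosure.

```python
def target_first_length(second_length_mm: float,
                        min_length_mm: float,
                        max_length_mm: float,
                        margin_mm: float = 0.0) -> float:
    """Pick a first length (drive wheel to auxiliary wheel) that is at least
    the second length (drive wheel to center of gravity) plus a margin,
    limited to the assumed travel range of the variable supporter."""
    if min_length_mm > max_length_mm:
        raise ValueError("min_length_mm must not exceed max_length_mm")
    needed = second_length_mm + margin_mm
    return min(max(needed, min_length_mm), max_length_mm)


def is_condition_met(first_length_mm: float, second_length_mm: float) -> bool:
    """True when the first length is equal to or longer than the second."""
    return first_length_mm >= second_length_mm
```

Because the result is limited to the travel range, a caller would still need to check `is_condition_met` when the center of gravity lies beyond the maximum reach of the auxiliary wheel.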

[0019] The loading box may be provided in a plurality of floors, and each floor may be provided with a pressure sensor configured to measure the weight distribution of the goods loaded in the loading box.

[0020] The robot may estimate a position of the center of gravity of the robot based on the weight distribution of the goods measured from the pressure sensor and a structure of the robot.
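
One simple way to picture such an estimate is a mass-weighted average of positions along the first direction. The sketch below uses this plain analytic calculation, not the machine learning approach described elsewhere in this disclosure, and it ignores the mass of the drawer itself. The body mass, the sensor cell layout, the meter units, and all names are assumptions for illustration.

```python
from typing import Iterable, List, Tuple


def center_of_gravity_y(points: Iterable[Tuple[float, float]]) -> float:
    """Mass-weighted mean position; each item is (mass_kg, y_m)."""
    total_mass = 0.0
    moment = 0.0
    for mass, y in points:
        total_mass += mass
        moment += mass * y
    if total_mass <= 0:
        raise ValueError("total mass must be positive")
    return moment / total_mass


def robot_cog_y(body_mass_kg: float,
                body_y_m: float,
                cell_readings: List[Tuple[float, float]],
                floor_offset_m: float) -> float:
    """Estimate the robot's center of gravity along the first direction.

    cell_readings holds (mass_kg, y_m) per pressure-sensor cell, with y_m
    measured while the floor is closed; floor_offset_m is how far the floor
    has slid out along the first direction.
    """
    points = [(body_mass_kg, body_y_m)]
    points += [(m, y + floor_offset_m) for m, y in cell_readings]
    return center_of_gravity_y(points)
```

For example, with a 30 kg body centered at 0 m and 10 kg of goods sensed at 0.1 m on a floor slid out by 0.3 m, the estimate moves to about 0.1 m in the first direction.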

[0021] The robot may hold the center of gravity data, which is information on the position of the center of gravity of the robot, according to the weight distribution of the goods and the movement of the loading box, and adjust a first length of the variable supporter measured from the drive wheel to the auxiliary wheel in a first direction based on the center of gravity data.

[0022] The center of gravity data may hold information on the position of the center of gravity of the robot according to the weight distribution of the goods loaded in the loading box, the floor number loaded with the goods in the loading box, the movement distance of the loading box when the loading box is open, and the number of floors of the loading box.

[0023] The center of gravity data may be derived by machine learning.

[0024] The center of gravity data may be derived by supervised learning.

[0025] The supervised learning may be performed so that training data infers labeling data, which is a pre-derived result value of the center of gravity data, and derives the functional relationship between the position of the center of gravity of the robot, which is an output value, and an input value specifying the position of the center of gravity.

[0026] The center of gravity data may hold information on the position of the center of gravity of the robot in each condition, in which at least one of a plurality of input values has been learned in different conditions, during the machine learning for deriving the center of gravity data of the robot.

[0027] The input value may include the weight distribution of the goods loaded in the loading box, the floor number loaded with the goods in the loading box, the movement distance of the loading box when the loading box is open, and the number of floors of the loading box.

[0028] The robot may further include a support wheel located to be spaced apart from each of the drive wheel and the auxiliary wheel, and for supporting the robot.

[0029] The variable supporter may adjust the movement speed of the auxiliary wheel so as to correspond to the movement speed of the center of gravity of the robot.

[0030] The robot may further include a sensing sensor configured to sense an external obstacle, and the variable supporter may adjust the movement distance of the auxiliary wheel so that the obstacle sensed by the sensing sensor and the auxiliary wheel are spaced a certain distance apart from each other.
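
The obstacle-aware adjustment described above might be sketched as a limit on the wheel travel. The clearance value, the way the obstacle distance is measured, the millimeter units, and the function name are assumptions for illustration only.

```python
from typing import Optional


def limit_wheel_travel(desired_travel_mm: float,
                       obstacle_distance_mm: Optional[float],
                       clearance_mm: float) -> float:
    """Limit the auxiliary wheel travel so the wheel stops at least
    clearance_mm short of a sensed obstacle.

    obstacle_distance_mm is the distance from the wheel's current position
    to the obstacle along the first direction, or None if nothing is sensed.
    """
    if obstacle_distance_mm is None:
        return desired_travel_mm
    allowed = max(0.0, obstacle_distance_mm - clearance_mm)
    return min(desired_travel_mm, allowed)
```

If the limited travel is shorter than the travel needed for stable support, a controller following this sketch would have to resolve the conflict in some other way, for example by repositioning the robot before opening the loading box.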

[0031] An operation method of a robot may include selecting a floor loaded with goods to be withdrawn from a loading box provided in a plurality of floors, measuring the weight distribution of the goods loaded on the selected floor, estimating the position of the center of gravity of the robot based on the weight distribution of the goods and a structure of the robot when the selected floor is open, setting the movement distance of an auxiliary wheel according to the estimated center of gravity of the robot, moving, by a variable supporter, the auxiliary wheel by the set movement distance, and opening the selected floor, and the variable supporter may move the auxiliary wheel so as to correspond to the movement direction of the center of gravity of the robot, and move the auxiliary wheel in a first direction so that a first length measured from a drive wheel of the robot to the auxiliary wheel in the first direction is equal to or longer than a second length measured from the drive wheel to the center of gravity of the robot in the first direction.
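
The order of the steps in the operation method above can be sketched as follows; the point to note is that the auxiliary wheel is moved before the selected floor is opened. The callback signatures, the millimeter units, and all names are hypothetical, and the sketch only records the sequence rather than driving any hardware.

```python
from typing import Callable, List, Tuple

Reading = Tuple[float, float]  # assumed (mass_kg, position_mm) per sensor cell


def withdraw_goods(floor: int,
                   measure: Callable[[int], List[Reading]],
                   estimate_open_cog_mm: Callable[[int, List[Reading]], float],
                   current_first_length_mm: float,
                   max_first_length_mm: float) -> List[str]:
    """Return the ordered actions for withdrawing goods from a floor."""
    actions = [f"select floor {floor}"]
    distribution = measure(floor)
    actions.append("measure weight distribution")
    # Second length: drive wheel to center of gravity with the floor open.
    second_length = estimate_open_cog_mm(floor, distribution)
    actions.append("estimate center of gravity with floor open")
    # Extend only as far as needed, within the assumed maximum reach.
    target = min(max(current_first_length_mm, second_length),
                 max_first_length_mm)
    travel = target - current_first_length_mm
    actions.append(f"set auxiliary wheel travel to {travel:.1f} mm")
    actions.append("move auxiliary wheel")
    actions.append(f"open floor {floor}")
    return actions
```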

[0032] The operation method of the robot may further include loading the goods in the loading box, and in the loading the goods, the variable supporter may move the auxiliary wheel to the maximum distance at which the auxiliary wheel is movable.

[0033] The loading the goods may include selecting a floor on which the goods are loaded in the loading box, moving, by the variable supporter, the auxiliary wheel to the maximum position at which the auxiliary wheel is movable, opening the selected floor, loading the goods on the selected floor, and closing the floor loaded with the goods.

[0034] In an embodiment, it is possible to move the auxiliary wheel of the robot in the outside direction of the robot to stably support the robot, thereby effectively suppressing the overturning of the robot due to the movement of the center of gravity even if the loading box of the robot is open and the center of gravity of the robot loaded with the goods moves.

[0035] In an embodiment, it is possible to effectively derive the position of the center of gravity of the robot, which changes due to the weight and shape of the goods loaded on the robot, the overall shape of the robot loaded with the goods, the position movement of the loading box, and the like through machine learning.

[0036] It is possible to move the auxiliary wheel in the first direction corresponding to the derived position of the center of gravity to stably support the robot, thereby effectively suppressing the overturning of the robot due to the position change of the center of gravity of the robot.

BRIEF DESCRIPTION OF THE DRAWINGS

[0037] FIG. 1 is a diagram illustrating a robot according to an embodiment.

[0038] FIG. 2 is a diagram for explaining the weight distribution of the goods loaded in a loading box according to an embodiment.

[0039] FIG. 3 is a bottom diagram of a robot according to an embodiment.

[0040] FIG. 4 is a diagram illustrating a state where a variable supporter has been unfolded in FIG. 3.

[0041] FIGS. 5 and 6 are diagrams for explaining an operation of a moment according to the position of an auxiliary wheel.

[0042] FIG. 7 is a diagram illustrating an AI apparatus according to an embodiment.

[0043] FIG. 8 is a diagram illustrating an AI server according to an embodiment.

[0044] FIG. 9 is a diagram illustrating an AI system according to an embodiment.

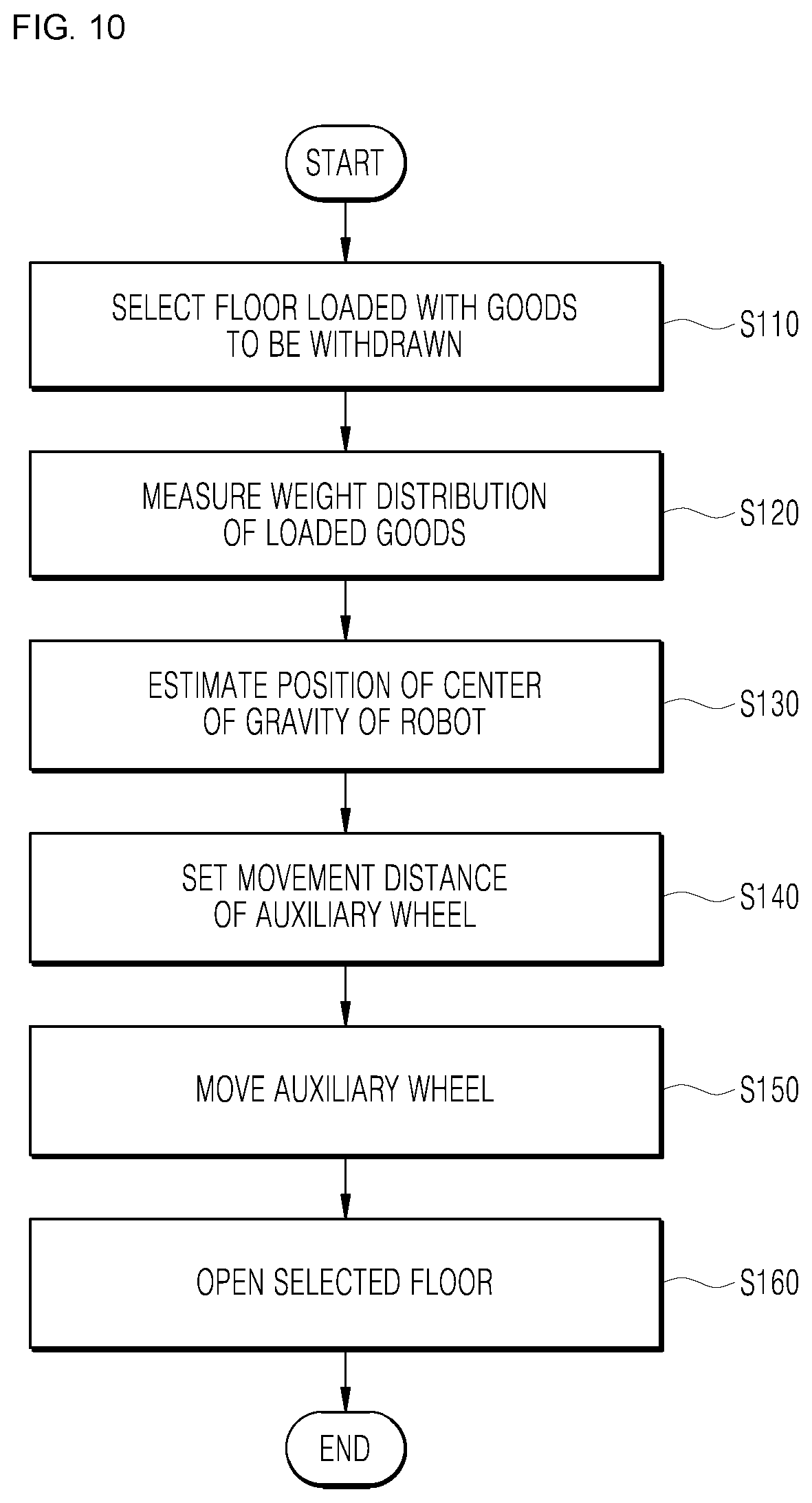

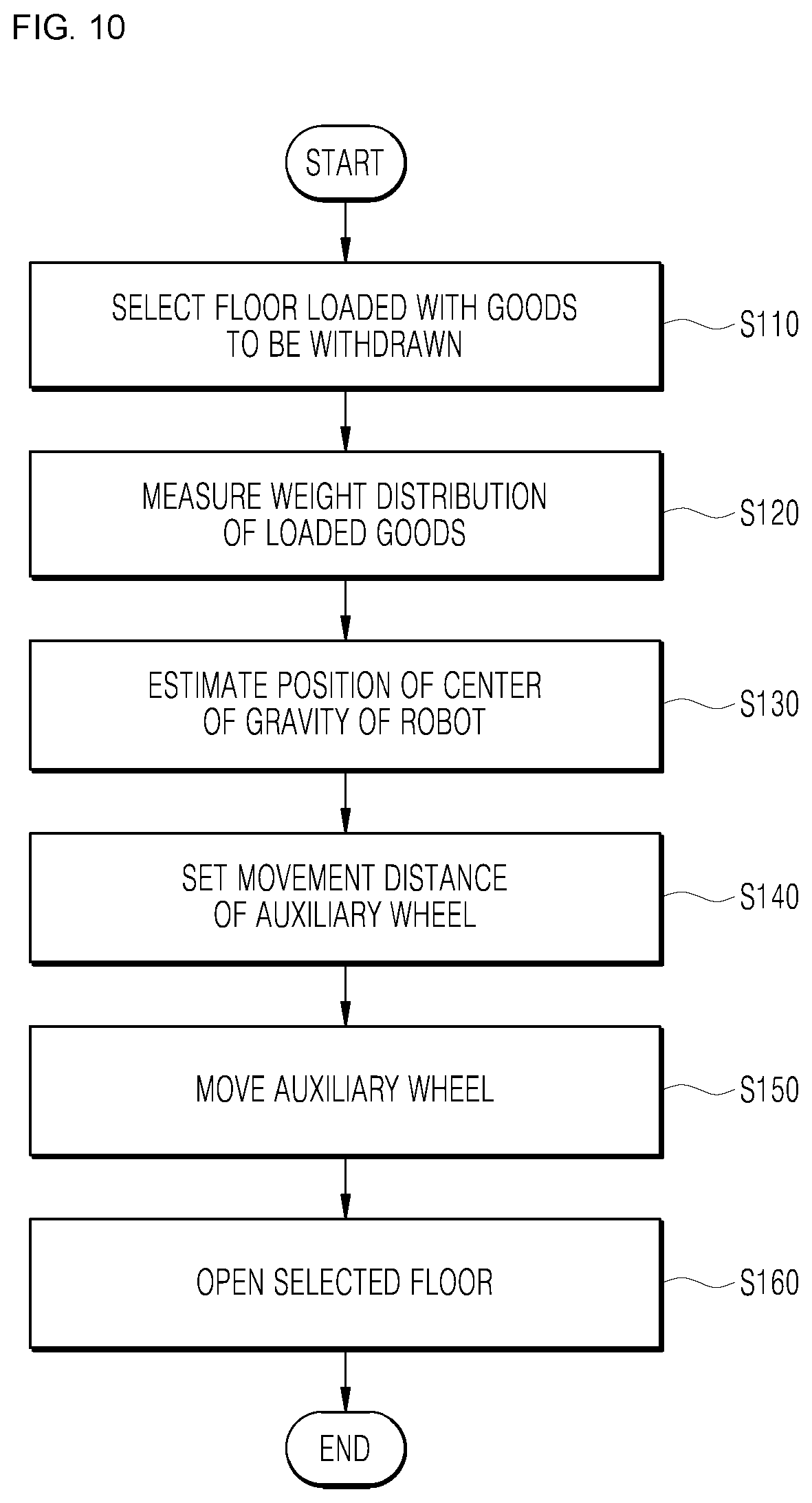

[0045] FIG. 10 is a flowchart illustrating an operation method of the robot according to an embodiment.

[0046] FIG. 11 is a flowchart illustrating a method for loading goods in the robot in the operation method of the robot according to an embodiment.

DETAILED DESCRIPTION

[0047] Hereinbelow, embodiments will be described in greater detail with reference to the accompanying drawings. The embodiments may be modified in various ways and may have various forms, and specific embodiments will be illustrated in the drawings and will be described in detail herein. However, this is not intended to limit the embodiments to the specific embodiments, and the embodiment should be understood as including all modifications, equivalents, and replacements that fall within the spirit and technical scope of the embodiments.

[0048] Terms such as "first," "second," and other numerical terms when used herein do not imply a sequence or order unless clearly indicated by the context. These terms are generally only used to distinguish one element from another. In addition, terms, which are specially defined in consideration of the configurations and operations of the embodiments, are given only to explain the embodiments, and do not limit the scope of the embodiments.

[0049] In the description of the embodiments, when an element is described as being formed "on" or "under" another element, "on" or "under" includes both the two elements directly contacting each other and one or more other elements being indirectly formed between the two elements. In addition, when expressed as "on" or "under", it may include not only the upward direction but also the downward direction with respect to one element.

[0050] In addition, relational terms to be described below such as "on/over/up" and "beneath/under/down" may be used to distinguish any one subject or element from another subject or element without necessarily requiring or implying a physical or logical relationship or sequence between the subjects or elements.

[0051] In addition, in the drawing, a rectangular coordinate system (x, y, z) may be used. In the drawings and the following description, a direction substantially horizontal with the ground surface (GROUND) has been defined as a first direction, and the first direction has been expressed as the y-axis direction in the drawing.

[0052] FIG. 1 is a diagram illustrating a robot according to an embodiment. A robot may refer to a machine which automatically handles a given task by its own ability, or which operates autonomously. In particular, a robot having a function of recognizing an environment and performing an operation according to its own judgment may be referred to as an intelligent robot.

[0053] Robots may be classified into industrial, medical, household, and military robots, according to the purpose or field of use.

[0054] A robot may include a driving unit in order to perform various physical operations, such as moving joints of the robot. Moreover, a movable robot may include, for example, a wheel, a brake, and a propeller in the driving unit thereof, and through the driving unit may thus be capable of traveling on the ground or flying in the air.

[0055] The robot of an embodiment may, for example, serve to load goods and deliver the loaded goods, and be provided to enable autonomous driving.

[0056] Autonomous driving refers to a technology in which driving is performed autonomously, and an autonomous vehicle refers to a vehicle capable of driving without manipulation of a user or with minimal manipulation of a user.

[0057] For example, autonomous driving may include a technology in which a driving lane is maintained, a technology such as adaptive cruise control in which a speed is automatically adjusted, a technology in which a vehicle automatically drives along a defined route, and a technology in which a route is automatically set when a destination is set.

[0058] A vehicle includes a vehicle having only an internal combustion engine, a hybrid vehicle having both an internal combustion engine and an electric driving apparatus, and an electric vehicle having only an electric driving apparatus, and may include not only an automobile but also a train and a motorcycle. At this time, the autonomous vehicle may be regarded as a robot having an autonomous driving function.

[0059] The robot of an embodiment may be connected to communicate with the server and other communication devices. The robot may be configured to include at least one of a mobile communication module and a wireless internet module configured to communicate with a server, and the like. In addition, the robot may further include a short-range communication module.

[0060] The mobile communication module may transmit and receive wireless signals to and from at least one of a base station, an external terminal, and a server on a mobile communication network established according to technical standards or communication methods for mobile communications, for example, global system for mobile communication (GSM), code division multiple access (CDMA), code division multiple access 2000 (CDMA2000), evolution-data optimized or evolution-data only (EV-DO), wideband CDMA (WCDMA), high speed downlink packet access (HSDPA), high speed uplink packet access (HSUPA), long term evolution (LTE), long term evolution-advanced (LTE-A), 5th generation (5G) communication, and the like.

[0061] The wireless Internet module refers to a module for wireless Internet access. The wireless Internet module may be provided in the robot. The wireless internet module may transmit and receive wireless signals via a communication network according to wireless internet technologies.

[0062] The robot may transmit/receive data to/from a server and various terminals that may perform communication through a 5G network. In particular, the robot may perform data communication with a server and a terminal using at least one service of eMBB (enhanced mobile broadband), URLLC (ultra-reliable and low latency communications), and mMTC (massive machine-type communications) through a 5G network.

[0063] The enhanced mobile broadband (eMBB), which is a mobile broadband service, provides multimedia content, wireless data access, and so forth. In addition, improved mobile services such as hotspots and broadband coverage for accommodating the tremendously increasing mobile traffic may be provided through eMBB. Through a hotspot, large-volume traffic may be accommodated in an area where user mobility is low and user density is high. Through broadband coverage, a wide-range and stable wireless environment and user mobility may be guaranteed.

[0064] The URLLC service defines the requirements that are far more stringent than existing LTE in terms of reliability and transmission delay of data transmission and reception, and corresponds to a 5G service for production process automation in the industrial field, telemedicine, remote surgery, transportation, safety, and the like.

[0065] mMTC is a transmission delay-insensitive service that requires a relatively small amount of data transmission. A much larger number of terminals, such as sensors, than a general portable phone may be connected to a wireless access network by mMTC at the same time. In this case, the price of the communication module of a terminal should be low and a technology improved to increase power efficiency and save power is required to enable operation for several years without replacing or recharging a battery.

[0066] An artificial intelligence technology may be applied to the robot. Artificial intelligence refers to a field of studying artificial intelligence or a methodology for creating the same. Moreover, machine learning refers to a field of defining various problems dealt with in the artificial intelligence field and studying methodologies for solving the same. In addition, machine learning may be defined as an algorithm for improving performance with respect to a task through repeated experience with respect to the task.

[0067] An artificial neural network (ANN) is a model used in machine learning, and may refer in general to a model with problem-solving abilities, composed of artificial neurons (nodes) forming a network by a connection of synapses. The ANN may be defined by a connection pattern between neurons on different layers, a learning process for updating a model parameter, and an activation function for generating an output value.

[0068] The ANN may include an input layer and an output layer, and may selectively include one or more hidden layers. Each layer includes one or more neurons, and the artificial neural network may include synapses that connect the neurons to one another. In an ANN, each neuron may output a function value of an activation function with respect to the input signals received through the synapses, the weights, and the bias.
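
The output of a single neuron as described above can be sketched as an activation function applied to the weighted sum of the inputs plus the bias. The choice of a sigmoid activation and the function names are assumptions for illustration; the disclosure does not name a specific activation function.

```python
import math
from typing import Callable, Sequence


def sigmoid(z: float) -> float:
    """Logistic activation, used here only as an example."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(inputs: Sequence[float],
                  weights: Sequence[float],
                  bias: float,
                  activation: Callable[[float], float] = sigmoid) -> float:
    """Apply the activation to the weighted sum of inputs plus the bias."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)
```

With inputs `[1.0, 2.0]`, weights `[0.5, -0.25]`, and a bias of `0.0`, the weighted sum is zero, so the sigmoid output is 0.5.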

[0069] A model parameter refers to a parameter determined through learning, and may include weight of synapse connection, bias of a neuron, and the like. Moreover, a hyperparameter refers to a parameter which is set before learning in a machine learning algorithm, and includes a learning rate, a number of repetitions, a mini batch size, an initialization function, and the like.

[0070] The objective of training an ANN is to determine a model parameter that minimizes a loss function. The loss function may be used as an indicator for determining an optimal model parameter in a learning process of an artificial neural network.
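
To make the role of the loss function concrete, the following toy sketch fits a one-input linear model by gradient descent on a mean squared error loss. It is not the network of this disclosure: the linear model, the loss choice, the data, and the default values of the learning rate and number of repetitions are all assumptions chosen for illustration.

```python
from typing import Sequence, Tuple


def mse(w: float, b: float,
        xs: Sequence[float], ys: Sequence[float]) -> float:
    """Mean squared error of the model y = w * x + b on the data."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def train_linear(xs: Sequence[float], ys: Sequence[float],
                 learning_rate: float = 0.05,
                 repetitions: int = 2000) -> Tuple[float, float]:
    """Fit the model parameters (w, b) by gradient descent on the MSE loss.

    learning_rate and repetitions are hyperparameters set before training.
    """
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(repetitions):
        # Gradients of the MSE loss with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b
```

Here `w` and `b` play the role of model parameters determined through learning, while `learning_rate` and `repetitions` are hyperparameters fixed beforehand.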

[0071] The machine learning may be classified into supervised learning, unsupervised learning, and reinforcement learning depending on the learning method.

[0072] Supervised learning may refer to a method for training an artificial neural network with training data that has been given a label. In addition, the label may refer to a target answer (or a result value) to be guessed by the artificial neural network when the training data is inputted to the artificial neural network.

[0073] Supervised learning is a machine learning method for inferring a function from the training data.

[0074] Among the functions that may be thus derived, a function that outputs a continuous range of values may be referred to as a regressor, and a function that predicts and outputs the class of an input vector may be referred to as a classifier.

[0075] In supervised learning, the artificial neural network is trained in a state where a label for the training data has been given.

[0076] Here, the label may refer to a target answer (or a result value) to be guessed by the artificial neural network when the training data is inputted to the artificial neural network.

[0077] Throughout the present specification, the target answer (or a result value) to be guessed by the artificial neural network when the training data is inputted may be referred to as a label or labeling data.

[0078] Throughout the present specification, assigning one or more labels to training data in order to train an artificial neural network may be referred to as labeling the training data with labeling data.

[0079] Training data and labels corresponding to the training data together may form a single training set, and as such, they may be inputted to an artificial neural network as a training set.

[0080] In the meantime, the training data may exhibit a number of features, and the training data being labeled with the labels may be interpreted as the features exhibited by the training data being labeled with the labels. In this case, the training data may represent a feature of an input object as a vector.

[0081] Using training data and labeling data together, the artificial neural network may derive a correlation function between the training data and the labeling data. Then, through evaluation of the function derived from the artificial neural network, a parameter of the artificial neural network may be determined (optimized).

[0082] Unsupervised learning may refer to a method for training an artificial neural network using training data that has not been given a label. Reinforcement learning may refer to a learning method for training an agent defined within an environment to select an action or an action order for maximizing cumulative rewards in each state.

[0083] Machine learning of an artificial neural network implemented as a deep neural network (DNN) including a plurality of hidden layers may be referred to as deep learning, and deep learning is one machine learning technique.

[0084] FIG. 1A shows a state where a loading box 100 has been closed, and FIG. 1B shows a state where a third floor 100-3 of the loading box 100 has been opened to withdraw or load goods. In FIG. 1B, the arrows indicate that the third floor 100-3 of the loading box 100, the center of gravity (COG) of the robot, and an auxiliary wheel 300 have each moved in the first direction, in the direction away from the robot.

[0085] The robot may include the loading box 100, a drive wheel 200, the auxiliary wheel 300, a variable supporter 400, and a support wheel 500.

[0086] The loading box 100 may be provided to load the goods, and to be movable at a certain distance with respect to the robot when closed and opened. That is, the loading box 100 may be closed or opened to load the goods or to withdraw the loaded goods, as illustrated in FIGS. 1A and 1B, and when closed or opened, the loading box 100 may move a certain distance in the first direction, that is, the y-axis direction.

[0087] The loading box 100 may be provided in a single floor, or may be provided in a plurality of floors. For example, the loading box 100 may be provided as a first floor 100-1, a second floor 100-2, and a third floor 100-3. However, the loading box 100 may also be provided as a single floor, two floors, or four or more floors. Each floor of the loading box 100 may move in the first direction independently of each other, and each floor may load the goods independently.

[0088] Each floor of the loading box 100 is provided as a sliding type that may move a certain distance in the first direction. When a floor is moved in the direction closer to the robot, the floor is closed, and when a floor is moved away from the robot, the floor is opened.

[0089] The movement of each floor in the first direction may be controlled by a controller provided in the robot. For example, FIG. 1A illustrates a state where the third floor 100-3 has been closed with the goods loaded in the third floor 100-3, and FIG. 1B illustrates a state where, starting from the state of FIG. 1A, the third floor 100-3 has moved in the direction away from the robot along the first direction and has been opened in order to withdraw the goods.

[0090] The drive wheel 200 may be located at a position where the robot and the ground surface contact each other, and may drive the robot. The controller may drive the robot by controlling the operation of the drive wheel 200. The drive wheel 200 may be provided in a pair, and the controller may control the rotational speed of each drive wheel 200 to perform the operations necessary for driving the robot, such as rotating, traveling straight, or changing the driving direction.
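The relationship between the rotational speed of each drive wheel and the resulting motion may be illustrated by the following minimal sketch, which assumes the standard differential-drive model. The function name, the wheel radius, and the track width (the spacing between the pair of drive wheels) are hypothetical and do not appear in the specification.

```python
def body_velocity(omega_left, omega_right, wheel_radius, track_width):
    """Return (forward speed in m/s, yaw rate in rad/s) of a two-wheel drive.

    omega_left / omega_right: wheel rotational speeds in rad/s.
    wheel_radius, track_width: assumed geometry in meters (hypothetical values).
    """
    v_left = omega_left * wheel_radius
    v_right = omega_right * wheel_radius
    forward = (v_left + v_right) / 2.0          # equal speeds -> straight travel
    yaw_rate = (v_right - v_left) / track_width  # unequal speeds -> turning
    return forward, yaw_rate


# Equal wheel speeds give straight travel; opposite speeds rotate in place.
straight = body_velocity(2.0, 2.0, wheel_radius=0.1, track_width=0.4)
spin = body_velocity(-1.0, 1.0, wheel_radius=0.1, track_width=0.4)
```

In this model, a change in driving direction corresponds to any pair of unequal wheel speeds.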

[0091] The auxiliary wheel 300 may be provided at a position spaced apart from the drive wheel 200 at a position where the robot and the ground surface contact each other, and may support the robot so that the robot does not overturn.

[0092] The support wheel 500 may be located so as to be spaced apart from the drive wheel 200 and the auxiliary wheel 300 at a position where the robot and the ground surface contact each other, and may support the robot so that the robot does not overturn, together with the auxiliary wheel 300.

[0093] The auxiliary wheel 300 and the support wheel 500 may be provided, for example, in the form of a caster capable of rotating 360° on the x-y plane substantially parallel to the ground surface.

[0094] The variable supporter 400 may change the position of the auxiliary wheel 300. That is, the variable supporter 400 may change the position of the first direction of the auxiliary wheel 300 in the robot by moving the auxiliary wheel 300 in the first direction within a certain range.

[0095] At this time, the auxiliary wheel 300 may be coupled to the variable supporter 400, and accordingly, the variable supporter 400 may support the loading box 100 together with the auxiliary wheel 300.

[0096] The variable supporter 400 may move the auxiliary wheel 300 so as to correspond to the movement direction of the center of gravity (COG) of the robot. Here, the center of gravity (COG) of the robot may mean the overall center of gravity (COG) in the state where the goods have been loaded on the robot.

[0097] Referring to FIG. 1, the position of the center of gravity (COG) in the state where the goods have been loaded in the third floor 100-3 of the loading box 100 and the third floor 100-3 has been closed is as illustrated in FIG. 1A. Meanwhile, the position of the center of gravity (COG) in the state where the goods have been loaded in the third floor 100-3 of the loading box 100, and the third floor 100-3 has been moved in the first direction in order to withdraw the goods is as illustrated in FIG. 1B.

[0098] Referring to FIG. 1B, when the third floor 100-3 moves in the direction away from the robot along the first direction, that is, in the outside direction of the robot, the center of gravity of the robot may likewise move toward the outside of the robot.

[0099] Hereinafter, for clarity, the direction away from the robot along the first direction may be expressed as the outside direction of the robot, and the side away from the robot along the first direction may be expressed as the outside.

[0100] When the center of gravity (COG) of the robot moves in the outside direction of the robot and is located outside the robot more than the contact point (CP, see FIGS. 5 and 6) of the auxiliary wheel 300 and the ground surface, a moment due to the weight and structure of the robot acts, and the robot is likely to overturn due to this moment. The action of the moment will be described in detail with reference to the drawings below.

[0101] Accordingly, in order to suppress the overturning of the robot, as illustrated in FIG. 1B, the auxiliary wheel 300 moves in the outside direction of the robot, such that the contact point (CP) may be located at the same position as the center of gravity (COG) of the robot in the z-axis direction, or outside the center of gravity (COG) of the robot.

[0102] When the contact point (CP) is located at the same position as the center of gravity of the robot or outside the center of gravity of the robot, the moment may not act or the action direction of the moment may change, such that the risk of the robot overturning due to the moment may be eliminated. The action of the moment will be described in detail with reference to the drawings below.

[0103] Each floor of the loading box 100 may be provided with a pressure sensor configured to measure the weight distribution of the goods loaded in the loading box 100. The pressure sensor may measure the weight distribution according to the shape of the goods loaded on each floor, the position placed on each floor, and the like.

[0104] FIG. 2 is a diagram for explaining the weight distribution of the goods loaded in the loading box 100 according to an embodiment. For example, the goods have been loaded on the third floor 100-3, and the loaded goods may be a rugby ball shaped first goods 10-1 and a donut shaped second goods 10-2.

[0105] The pressure sensor may measure a position where the first goods 10-1 and the second goods 10-2 are placed on the x-y plane, and the controller of the robot may obtain their numerical value. In addition, the pressure sensor may measure the weight distribution of each of the first goods 10-1 and the second goods 10-2, and the controller may obtain its numerical value.

[0106] Accordingly, the controller of the robot may receive the measured value from the pressure sensor to obtain information on the weight distribution of the entire goods of the third floor 100-3 of a graph as illustrated in FIG. 2.
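As a non-limiting illustration of how a controller might reduce such pressure-sensor readings to numerical values, the following sketch computes the total weight and the weighted center of the load on one floor. It assumes that the sensor reports a rectangular grid of per-cell weights, which the specification does not state; the function name and grid layout are hypothetical.

```python
def load_summary(pressure_grid, cell_size):
    """Return (total_weight, x_center, y_center) for one floor.

    pressure_grid[row][col] is the weight measured by one sensor cell
    (assumed layout: rows along y, columns along x).
    cell_size is the assumed edge length of a square cell in meters.
    """
    total = 0.0
    moment_x = 0.0
    moment_y = 0.0
    for row_index, row in enumerate(pressure_grid):
        for col_index, weight in enumerate(row):
            # Use the center of each cell as the point where its weight acts.
            x = (col_index + 0.5) * cell_size
            y = (row_index + 0.5) * cell_size
            total += weight
            moment_x += weight * x
            moment_y += weight * y
    if total == 0.0:
        return 0.0, None, None  # empty floor: no meaningful center
    return total, moment_x / total, moment_y / total
```

The returned center is the point on the x-y plane at which the entire load of the floor may be treated as acting.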

[0107] FIG. 3 is a bottom diagram of a robot according to an embodiment. FIG. 4 is a diagram illustrating a state where the variable supporter 400 has been unfolded in FIG. 3. The variable supporter 400 may include a motor 410, a linear mover 420, a guider 430, and a screw 440.

[0108] The motor 410 may rotate to linearly move, that is, linearly reciprocate the linear mover 420. Of course, the operation of the motor 410 may be controlled by the controller provided in the robot.

[0109] The linear mover 420 may be mounted with the auxiliary wheel 300, and may move linearly according to the rotation of the motor 410. The rotation direction of the motor 410 is changed, such that the linear mover 420 may move in the outside direction of the robot or the opposite direction thereof.

[0110] The guider 430 may have one side fixed to the motor 410, and have the other side coupled to the linear mover 420 so that the linear mover 420 is movable. The guider 430 may be located so that its longitudinal direction is parallel to the first direction to guide so that the linear mover 420 moves linearly in the first direction.

[0111] The screw 440 may have one side coupled to the rotary shaft of the motor 410 to rotate according to the rotation of the motor 410 and have the other side coupled to the linear mover 420 so that the linear mover 420 is movable.

[0112] The screw 440 is located so that its longitudinal direction is parallel to the first direction, and accordingly, when the motor 410 rotates, the linear mover 420 may linearly move in the longitudinal direction of the screw 440. Due to this structure, the screw 440 may convert the rotational motion of the motor 410 into the linear motion of the linear mover 420.

[0113] According to the above-described structure, the controller of the robot may control the operation time and the rotation direction of the motor 410 to control the movement direction and the movement distance in the first direction of the variable supporter 400 and the auxiliary wheel 300 mounted thereto.
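The relationship between the operation time, the rotation direction, and the resulting movement distance may be sketched as follows. The sketch assumes an ideal lead screw in which the linear mover advances by a fixed lead per revolution; the motor speed and the screw lead are hypothetical parameters that are not given in the specification.

```python
def wheel_travel(motor_rpm, screw_lead_m, run_time_s, direction):
    """Signed travel of the linear mover in meters.

    direction: +1 for the outside direction of the robot, -1 for the opposite.
    screw_lead_m: assumed linear advance per screw revolution.
    """
    if direction not in (1, -1):
        raise ValueError("direction must be +1 or -1")
    revolutions = motor_rpm / 60.0 * run_time_s
    return direction * revolutions * screw_lead_m


def run_time_for(distance_m, motor_rpm, screw_lead_m):
    """Operation time in seconds needed to travel |distance_m| at constant speed."""
    return abs(distance_m) / (motor_rpm / 60.0 * screw_lead_m)
```

Under these assumptions, a motor turning at 120 rpm on a screw with a 5 mm lead moves the auxiliary wheel 10 mm per second.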

[0114] FIGS. 5 and 6 are diagrams for explaining the action of the moment according to the position of the auxiliary wheel 300. In FIGS. 5 and 6, the position of the center of gravity (COG) is illustrated for the state where the third floor 100-3 is open. When the third floor 100-3 is open, the center of gravity (COG) may be located further in the outside direction of the robot than in the state where the third floor 100-3 is closed.

[0115] The variable supporter 400 may move the auxiliary wheel 300 in the first direction so that a first length (L1) measured from the drive wheel 200 to the auxiliary wheel 300 in the first direction is equal to or longer than a second length (L2) measured from the drive wheel 200 to the center of gravity (COG) of the robot in the first direction.

[0116] FIG. 5 is a diagram illustrating a case where the first length (L1) is shorter than the second length (L2). Hereinafter, the action of the moment due to the weight of the robot in the state illustrated in FIG. 5 will be described.

[0117] The center of gravity (COG) of the robot is in the position illustrated, and the weight of the robot is gravity (Fg). The gravity (Fg) acts in the direction perpendicular to the ground surface at the center of gravity (COG). At the contact point (CP) where the auxiliary wheel 300 of the robot contacts the ground surface, a reaction force (Fs) is generated in the direction opposite to the gravity (Fg). At this time, a straight line connecting the contact point (CP) and the center of gravity (COG) is referred to as a moment arm.

[0118] The magnitude (M1) of the moment acting on the robot around the contact point (CP) is the product of a distance (LM1) of the moment arm and the component force of the gravity (Fgv) acting in the direction perpendicular to the moment arm.

M1 = LM1 × Fgv

[0119] Meanwhile, the action direction of the moment acts clockwise as viewed from the paper around the contact point (CP) in FIG. 5. That is, the moment acts in the direction indicated by the semi-circular arrow in FIG. 5.

[0120] As the moment acts clockwise as viewed from the paper, the robot may overturn due to the action of the moment. This is because, given the position of the center of gravity (COG) of the robot, the moment tends to turn the robot clockwise as viewed from the paper, and there is no means configured to support the robot against this rotation.

[0121] As may be seen in the above description with reference to FIG. 5, when the first length (L1) is shorter than the second length (L2), the robot may overturn due to the action of the moment.

[0122] FIG. 6 is a diagram illustrating a case where the first length (L1) is longer than the second length (L2) according to an embodiment. FIG. 6 is a state where the auxiliary wheel 300 has moved in the outside direction of the robot along the first direction as compared with FIG. 5. Hereinafter, the action of the moment due to the weight of the robot in the state illustrated in FIG. 6 will be described.

[0123] The center of gravity (COG) of the robot is in the position illustrated, and the weight of the robot is gravity (Fg). The gravity (Fg) acts in the direction perpendicular to the ground surface at the center of gravity (COG). The reaction force (Fs) is generated in the opposite direction to the gravity (Fg) at the contact point (CP) where the auxiliary wheel 300 of the robot contacts the ground surface (GROUND).

[0124] The magnitude (M2) of the moment acting on the robot around the contact point (CP) is the product of a distance (LM2) of the moment arm and the component force of the gravity (Fgv) acting in the direction perpendicular to the moment arm.

M2 = LM2 × Fgv

[0125] Meanwhile, the action direction of the moment acts counterclockwise as viewed from the paper around the contact point (CP) in FIG. 6. That is, the moment acts in the direction indicated by the semi-circular arrow in FIG. 6.

[0126] As the moment acts counterclockwise as viewed from the paper, the risk of the robot overturning due to the action of the moment may be eliminated. This is because, even if the moment acts counterclockwise as viewed from the paper due to the position of the center of gravity (COG) of the robot, the drive wheel 200 and the auxiliary wheel 300 may support the robot so that it does not overturn.

[0127] As may be seen from the above description with reference to FIG. 6, when the first length (L1) is longer than the second length (L2), the robot does not overturn due to the action of the moment.

[0128] Meanwhile, when the first length (L1) and the second length (L2) are the same, the moment due to the gravity (Fg) does not occur around the contact point (CP), and accordingly, the risk of overturning the robot due to the action of the moment does not occur.
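The three cases above (L1 shorter than, longer than, or equal to L2) may be checked numerically with the following sketch. It assumes a planar model in which gravity acts vertically at the center of gravity (COG); the mass, the height of the COG above the contact point (CP), and the function names are hypothetical. The second function computes the magnitude as the product of the moment arm and the perpendicular component of gravity, as in the text, and yields the same magnitude as the simpler horizontal-offset form.

```python
import math


def tipping_moment(mass_kg, l1_m, l2_m, g=9.81):
    """Signed moment of gravity about the contact point (CP), in N*m.

    Positive: COG lies outside CP (L1 < L2), tending to overturn the robot.
    Negative: COG lies inside CP (L1 > L2), pressing the robot onto its wheels.
    Zero: L1 == L2, no moment about CP.
    """
    return mass_kg * g * (l2_m - l1_m)


def moment_via_arm(mass_kg, l1_m, l2_m, cog_height_m, g=9.81):
    """Magnitude computed as (moment arm length) x (gravity component
    perpendicular to the moment arm)."""
    dx = l2_m - l1_m
    arm = math.hypot(dx, cog_height_m)       # length of the line CP -> COG
    if arm == 0.0:
        return 0.0
    fgv = mass_kg * g * abs(dx) / arm        # perpendicular component of gravity
    return arm * fgv
```

The agreement of the two forms follows because the perpendicular component of gravity scales with the horizontal offset divided by the arm length.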

[0129] Accordingly, in order to prevent the robot from overturning due to the moment, an embodiment may move the auxiliary wheel 300 in the first direction so that the first length (L1) is equal to or longer than the second length (L2).

[0130] For stable support of the robot, it may be more appropriate to move the auxiliary wheel 300 so that the first length (L1) is longer than the second length (L2). The difference between the first length (L1) and the second length (L2) may be appropriately set considering the stable support of the robot.
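One possible way of choosing the first length (L1) with such a difference is sketched below. The safety margin and the travel limits of the variable supporter are hypothetical parameters; the specification leaves the difference between L1 and L2 to be set as appropriate.

```python
def target_first_length(l2_m, margin_m, l1_min_m, l1_max_m):
    """Return (target L1, stable) for a given COG distance L2.

    margin_m: assumed extra distance by which L1 should exceed L2.
    l1_min_m / l1_max_m: assumed mechanical travel limits of the auxiliary wheel.
    stable is True when the achievable L1 is equal to or longer than L2.
    """
    desired = l2_m + margin_m
    clamped = min(max(desired, l1_min_m), l1_max_m)
    return clamped, clamped >= l2_m
```

When the travel limit prevents L1 from reaching L2, the second return value is False, which a controller could use, for example, to restrict how far the loading box is opened.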

[0131] In an embodiment, the auxiliary wheel 300 of the robot is moved in the outside direction of the robot to stably support the robot, and accordingly, it is possible to effectively suppress the overturning of the robot due to the movement of the center of gravity (COG) even if the loading box 100 of the robot is opened and the center of gravity (COG) of the robot loaded with the goods moves.

[0132] Hereinafter, a method for estimating the position of the center of gravity (COG) of the robot will be described in detail. The robot may estimate the position of the center of gravity (COG) of the robot based on the weight distribution of the goods measured from the pressure sensor and the structure of the robot.

[0133] The robot may hold the center of gravity (COG) data, which is information on the position of the center of gravity (COG) of the robot according to the weight distribution of the goods and the movement of the loading box 100. The information on the position of the center of gravity (COG) of the robot may be numerically coordinated (x, y, z), and at this time, the origin (0, 0, 0) of the coordinate may be appropriately set to a specific point of the robot.

[0134] The center of gravity (COG) data may hold an input value relating to the structure of the robot considering the weight distribution of the goods and the movement position of the loading box 100, and coordinates indicating the position of the center of gravity (COG) of the robot that is an output value corresponding to these input values.

[0135] At this time, as the input value is changed, the position of the center of gravity (COG) of the robot may vary. Accordingly, the input value may serve to specify the position of the center of gravity (COG) of the robot.

[0136] Specifically, the center of gravity (COG) data may hold information on the position of the center of gravity (COG) of the robot according to the weight distribution of the goods loaded in the loading box 100, the floor number loaded with the goods in the loading box 100, the movement distance of the loading box 100 when the loading box 100 is open, and the number of floors of the loading box 100.

[0137] At this time, the center of gravity (COG) data may hold, as an input value, the weight distribution of the goods loaded in the loading box 100, the floor number of the goods loaded in the loading box 100, the movement distance of the loading box when the loading box 100 is open, and a numerical value on the number of floors of the loading box 100. In addition, the center of gravity (COG) data may hold a coordinate value on the position of the center of gravity (COG) of the robot as an output value.
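For illustration only, one output coordinate of such data could be tabulated from its input values by a mass-weighted average, as in the following sketch. This is an assumed physical model rather than the method of the specification; the mass and center of gravity of the empty robot, the floor height, and the function names are hypothetical.

```python
def goods_position(local_xy, floor_height_m, drawer_offset_m):
    """Position of the goods in robot coordinates (x, y, z).

    local_xy: load center on the floor, e.g. from the pressure sensor.
    drawer_offset_m: movement distance of the floor in the first (y) direction.
    """
    x, y = local_xy
    return (x, y + drawer_offset_m, floor_height_m)


def combined_cog(bodies):
    """Mass-weighted center of gravity of a list of (mass_kg, (x, y, z))."""
    total = sum(mass for mass, _ in bodies)
    if total <= 0:
        raise ValueError("total mass must be positive")
    return tuple(
        sum(mass * pos[axis] for mass, pos in bodies) / total
        for axis in range(3)
    )


# Hypothetical numbers: 40 kg empty robot, 10 kg of goods on a floor 0.9 m high.
robot_body = (40.0, (0.0, 0.0, 0.3))
closed = combined_cog([robot_body, (10.0, goods_position((0.0, 0.1), 0.9, 0.0))])
opened = combined_cog([robot_body, (10.0, goods_position((0.0, 0.1), 0.9, 0.4))])
```

In this example, opening the floor by 0.4 m shifts the y-coordinate of the center of gravity outward, which is the movement the auxiliary wheel is intended to follow.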

[0138] Based on the center of gravity (COG) data, the robot may use the variable supporter 400 to adjust the first length (L1) measured from the drive wheel 200 to the auxiliary wheel 300 in the first direction. The method for adjusting the first length (L1) is as described above.

[0139] That is, when receiving the input value, the controller of the robot may find a coordinate value of the position of the center of gravity (COG) corresponding to the input value from the center of gravity (COG) data, and may adjust the first length (L1) according to the found coordinate value.

[0140] The center of gravity (COG) data may be derived by machine learning. The machine learning may be performed by using an AI apparatus 1000, an AI server 2000, and an AI system 1 that are connected to communicate with the robot.

[0141] The variable supporter 400 may adjust the movement speed of the auxiliary wheel 300 so as to correspond to the movement speed of the center of gravity (COG) of the robot. For example, the controller of the robot may confirm the position change of the center of gravity (COG) according to the change in time and derive the movement speed of the center of gravity (COG) of the robot therefrom.

[0142] The controller may control the operation of the variable supporter 400 so as to correspond to the movement speed of the center of gravity (COG) of the robot, such that the variable supporter 400 may adjust the movement speed of the auxiliary wheel 300.

[0143] The movement speed of the center of gravity (COG) may also vary in proportion to the movement speed of the loading box 100. As a result, the robot may adjust the movement speed of the auxiliary wheel 300 so as to correspond to the movement speed of the loading box 100.

[0144] By matching the speeds in this way, the auxiliary wheel 300 may be prevented from moving too fast, so that the auxiliary wheel 300 may stably support the robot, thereby suppressing the overturning of the robot.
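The speed matching described above may be sketched as follows, assuming that the controller samples the position of the center of gravity (COG) along the first direction at a fixed interval. The sampling interval, the speed limit, and the function names are hypothetical.

```python
def cog_speed(prev_y_m, curr_y_m, dt_s):
    """Movement speed of the COG along the first direction, from two samples."""
    if dt_s <= 0:
        raise ValueError("dt_s must be positive")
    return (curr_y_m - prev_y_m) / dt_s


def wheel_speed_command(cog_speed_mps, max_speed_mps):
    """Speed command for the auxiliary wheel: follow the COG speed,
    limited to an assumed maximum so the wheel does not move too fast."""
    return max(-max_speed_mps, min(max_speed_mps, cog_speed_mps))
```

A positive command moves the auxiliary wheel in the outside direction of the robot, and a negative command moves it back toward the robot.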

[0145] The robot may further include a sensor configured to detect an external obstacle. The sensor may be provided as a lidar, a radar, a 3D camera, an RGB-D camera, an ultrasonic sensor, an infrared sensor, a laser sensor, or the like.

[0146] The variable supporter 400 may adjust the movement distance of the auxiliary wheel 300 so that the obstacle sensed by the sensor is spaced at a certain distance apart from the auxiliary wheel 300.

[0147] When an obstacle adjacent to the outside of the robot is detected through the sensor, the controller of the robot may control the operation of the variable supporter 400 so that the auxiliary wheel 300 and the obstacle are spaced a certain distance apart from each other.

[0148] This is to prevent breakage of the auxiliary wheel 300 due to the contact between the obstacle and the auxiliary wheel 300 and the overturning of the robot.
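The limiting of the movement distance may be sketched as follows. The sketch assumes that the sensor reports the distance from the retracted position of the auxiliary wheel to the obstacle along the first direction; the clearance value and the function name are hypothetical.

```python
def limited_extension(desired_m, obstacle_distance_m, clearance_m):
    """Movement distance of the auxiliary wheel, kept clear of an obstacle.

    desired_m: extension requested for stability.
    obstacle_distance_m: measured distance to the obstacle, or None if no
        obstacle was detected.
    clearance_m: assumed minimum gap to keep between wheel and obstacle.
    """
    if obstacle_distance_m is None:
        return max(0.0, desired_m)
    allowed = max(0.0, obstacle_distance_m - clearance_m)
    return max(0.0, min(desired_m, allowed))
```

When the allowed extension is shorter than the requested one, the controller would need another measure to keep the robot stable, such as limiting how far the loading box is opened; that choice is outside this sketch.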

[0149] Hereinafter, the AI apparatus 1000, the AI server 2000, and the AI system 1 related to an embodiment will be described.

[0150] FIG. 7 is a diagram illustrating the AI apparatus 1000 according to an embodiment.

[0151] The AI apparatus 1000 may be implemented as a TV, a projector, a mobile phone, a smartphone, a desktop computer, a notebook, a digital broadcasting terminal, a personal digital assistant (PDA), a portable multimedia player (PMP), a navigation device, a tablet PC, a wearable device, a set-top box (STB), a DMB receiver, a radio, a washing machine, a refrigerator, a digital signage, a robot, a vehicle, or the like.

[0152] Referring to FIG. 7, the AI apparatus 1000 may include a communicator 1100, an inputter 1200, a learning processor 1300, a sensor 1400, an outputter 1500, a memory 1700, a processor 1800, and the like.

[0153] The communicator 1100 may transmit and receive data to and from external devices such as the other AI apparatuses 1000a to 1000e or the AI server 2000 by using a wired or wireless communication technology. For example, the communicator 1100 may transmit and receive sensor information, a user input, a learning model, a control signal, and the like with external devices.

[0154] In this case, the communications technology used by the communicator 1100 may be technology such as global system for mobile communication (GSM), code division multiple access (CDMA), long term evolution (LTE), 5G, wireless LAN (WLAN), Wireless-Fidelity (Wi-Fi), Bluetooth™, radio frequency identification (RFID), infrared data association (IrDA), ZigBee, and near field communication (NFC).

[0155] The inputter 1200 may obtain various types of data.

[0156] The inputter 1200 may include a camera for inputting an image signal, a microphone for receiving an audio signal, and a user inputter for receiving information inputted from a user. Here, the signal obtained from the camera or the microphone may also be referred to as sensing data or sensor information by regarding the camera or the microphone as a sensor.

[0157] The inputter 1200 may obtain, for example, learning data for model learning and input data used when output is obtained using a learning model. The inputter 1200 may obtain raw input data. In this case, the processor 1800 or the learning processor 1300 may extract an input feature by preprocessing the input data.

[0158] The learning processor 1300 may allow a model composed of an artificial neural network to be trained using learning data. Here, the trained artificial neural network may be referred to as a trained model. The trained model may be used to infer a result value with respect to new input data rather than learning data, and the inferred value may be used as a basis for a determination to perform an operation of classifying the detected hand motion.

[0159] The learning processor 1300 may perform AI processing together with a learning processor 2400 of the AI server 2000.

[0160] The learning processor 1300 may include a memory which is combined or implemented in the AI apparatus 1000. Alternatively, the learning processor 1300 may be implemented using the memory 1700, an external memory directly coupled to the AI apparatus 1000, or a memory maintained in an external device.

[0161] The sensor 1400 may obtain at least one of internal information of the AI apparatus 1000, surrounding environment information of the AI apparatus 1000, or user information by using various sensors.

[0162] The sensor 1400 may include a proximity sensor, an illumination sensor, an acceleration sensor, a magnetic sensor, a gyroscope sensor, an inertial sensor, an RGB sensor, an infrared (IR) sensor, a finger scan sensor, an ultrasonic sensor, an optical sensor, a microphone, a light detection and ranging (LiDAR) sensor, radar, or a combination thereof.

[0163] The outputter 1500 may generate a visual, auditory, or tactile related output.

[0164] The outputter 1500 may include a display unit outputting visual information, a speaker outputting auditory information, and a haptic module outputting tactile information.

[0165] The memory 1700 may store data supporting various functions of the AI apparatus 1000. For example, the memory 1700 may store input data obtained from the inputter 1200, training data, a training model, training history, and the like.

[0166] The processor 1800 may determine at least one executable operation of the AI apparatus 1000 based on information determined or generated by using a data analysis algorithm or a machine learning algorithm. In addition, the processor 1800 may control components of the AI apparatus 1000 to perform the determined operation.

[0167] To this end, the processor 1800 may request, retrieve, receive, or use data of the learning processor 1300 or the memory 1700, and may control components of the AI apparatus 1000 to execute a predicted operation, or an operation determined to be preferable, among the at least one executable operation.

[0168] At this time, when the external device needs to be linked to perform the determined operation, the processor 1800 may generate a control signal for controlling the corresponding external device, and transmit the generated control signal to the corresponding external device.

[0169] The processor 1800 obtains intent information about user input, and may determine a requirement of a user based on the obtained intent information.

[0170] The processor 1800 may obtain intent information corresponding to user input by using at least one of a speech-to-text (STT) engine for converting voice input into a character string or a natural language processing (NLP) engine for obtaining intent information of a natural language.

[0171] In an embodiment, the at least one of the STT engine or the NLP engine may be composed of artificial neural networks, some of which are trained according to a machine learning algorithm. In addition, the at least one of the STT engine or the NLP engine may be trained by the learning processor 1300, trained by a learning processor 2400 of an AI server 2000, or trained by distributed processing thereof.

[0172] The processor 1800 collects history information including, for example, operation contents and user feedback on an operation of the AI apparatus 1000, and stores the history information in the memory 1700 or the learning processor 1300, or transmits the history information to an external device such as an AI server 2000. The collected history information may be used to update a learning model.

[0173] The processor 1800 may control at least some of components of the AI apparatus 1000 to drive an application stored in the memory 1700. Furthermore, the processor 1800 may operate two or more components included in the AI apparatus 1000 in combination with each other to drive the application.

[0174] FIG. 8 is a diagram illustrating the AI server 2000 according to an embodiment.

[0175] Referring to FIG. 8, the AI server 2000 may refer to a device for training an artificial neural network using a machine learning algorithm or using a trained artificial neural network. Here, the AI server 2000 may include a plurality of servers to perform distributed processing, and may be defined as a 5G network. In this case, the AI server 2000 may be included as a configuration of a portion of the AI apparatus 1000, and may thus perform at least a portion of the AI processing together.

[0176] The AI apparatus 1000 means an apparatus that may perform machine learning.

[0177] The AI apparatus 1000 may be implemented as a TV, a projector, a mobile phone, a smartphone, a desktop computer, a notebook, a digital broadcasting terminal, a personal digital assistant (PDA), a portable multimedia player (PMP), a navigation device, a tablet PC, a wearable device, a set-top box (STB), a DMB receiver, a radio, a washing machine, a refrigerator, a digital signage, a robot, a vehicle, or the like.

[0178] The AI server 2000 may include a communications unit 2100, a memory 2300, a learning processor 2400, and a processor 2600.

[0179] The communications unit 2100 may transmit and receive data with an external device such as the AI apparatus 1000.

[0180] The memory 2300 may include a model storage 2310. The model storage 2310 may store a model (or an artificial neural network 2310a) that is being trained or has been trained via the learning processor 2400.

[0181] The learning processor 2400 may train the artificial neural network 2310a by using learning data. The learning model of the artificial neural network may be used while mounted in the AI server 2000, or may be used while mounted in an external device such as the AI apparatus 1000.

[0182] The learning model may be implemented as hardware, software, or a combination of hardware and software. When a portion or the entirety of the learning model is implemented as software, one or more instructions, which constitute the learning model, may be stored in the memory 2300.

[0183] The processor 2600 may infer a result value with respect to new input data by using the learning model, and generate a response or control command based on the inferred result value.

[0184] FIG. 9 is a diagram illustrating the AI system 1 according to an embodiment.

[0185] Referring to FIG. 9, in the AI system 1, at least one of the AI server 2000, a robot 1000a, an autonomous vehicle 1000b, an XR apparatus 1000c, a smartphone 1000d, or a home appliance 1000e is connected to a cloud network 10. Here, the robot 1000a, the autonomous vehicle 1000b, the XR apparatus 1000c, the smartphone 1000d, or the home appliance 1000e, to which AI technology is applied, may be referred to as AI apparatuses 1000a to 1000e.

[0186] The cloud network 10 may include part of the cloud computing infrastructure or refer to a network existing in the cloud computing infrastructure. Here, the cloud network 10 may be constructed by using the 3G network, 4G or Long Term Evolution (LTE) network, or a 5G network.

[0187] In other words, the individual devices (1000a to 1000e, 2000) constituting the AI system 1 may be connected to each other through the cloud network 10. In particular, the individual devices (1000a to 1000e, 2000) may communicate with each other through a base station, or may communicate directly with each other without relying on the base station.

[0188] The AI server 2000 may include a server performing AI processing and a server performing computations on big data.

[0189] The AI server 2000 may be connected to at least one or more of the robot 1000a, autonomous vehicle 1000b, XR apparatus 1000c, smartphone 1000d, or home appliance 1000e, which are AI apparatuses constituting the AI system, through the cloud network 10 and may help at least part of AI processing conducted in the connected AI apparatuses (1000a to 1000e).

[0190] At this time, the AI server 2000 may train the artificial neural network according to the machine learning algorithm instead of the AI apparatuses 1000a to 1000e, and may directly store the learning model or transmit the learning model to the AI apparatuses 1000a to 1000e.

[0191] At this time, the AI server 2000 may receive input data from the AI apparatuses 1000a to 1000e, infer a result value from the received input data by using the learning model, generate a response or control command based on the inferred result value, and transmit the generated response or control command to the AI apparatuses 1000a to 1000e.

[0192] Alternatively, the AI apparatuses 1000a to 1000e may infer a result value from the input data by employing the learning model directly and generate a response or control command based on the inferred result value.

[0193] The center of gravity (COG) data may be derived, for example, by supervised learning, which is one method of machine learning.

[0194] The supervised learning may be performed, for example, in such a manner that the labeling data, which is a pre-derived result value of the center of gravity (COG) data, is inferred from the training data.

[0195] The center of gravity (COG) data has an input value and an output value corresponding thereto. The output value may be a position coordinate of the center of gravity (COG) of the robot. The input value is a variable specifying the output value, and there may be a plurality of input values.

[0196] The input values are, for example, the weight distribution of the goods loaded in the loading box 100, the floor number on which the goods have been loaded in the loading box 100, the movement distance of the loading box 100 when the loading box 100 is open, and the number of floors of the loading box 100.

[0197] The training data may have arbitrary input values. The labeling data is the derived result value of the center of gravity (COG) data, which is the correct answer that the artificial neural network should infer in the supervised learning.

[0198] The supervised learning may derive the functional relationship between the position of the center of gravity (COG) of the robot, that is, the position coordinate of the center of gravity (COG) of the robot, and the input values specifying the position of the center of gravity (COG).

[0199] The artificial neural network may select the position coordinate of the center of gravity (COG) of the robot as an output value with respect to any input value, and select a function connecting the input value and the output value.

[0200] The artificial neural network may compare the selected output value with the output value of the labeling data having the same input value. When the selected output value and the output value of the labeling data differ from each other, the artificial neural network may modify the function so that the two output values become the same.

[0201] The artificial neural network may repeat the above task to derive a function of connecting any input value and the output value. Accordingly, the center of gravity (COG) data may hold a function derived from the supervised learning, and the position coordinate of the center of gravity (COG) of the robot for any input value derived by using the function.
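
The compare-and-modify loop of paragraphs [0199] to [0201] can be sketched as follows. This is a minimal illustration rather than the disclosed network: the linear model, the squared-error gradient update, the learning rate, and the function names are all assumptions, because the text does not specify an architecture or an update rule.

```python
from typing import List, Sequence, Tuple

# One labeled sample: (input values, labeled COG coordinate x/y/z).
Sample = Tuple[Sequence[float], Sequence[float]]


def predict(weights: List[List[float]], inputs: Sequence[float]) -> List[float]:
    """Apply the current function: one weighted sum (plus bias) per coordinate."""
    features = list(inputs) + [1.0]  # trailing 1.0 carries the bias term
    return [sum(w * f for w, f in zip(row, features)) for row in weights]


def mean_squared_error(weights: List[List[float]], samples: Sequence[Sample]) -> float:
    """Average squared gap between selected outputs and labeled outputs."""
    total = 0.0
    for inputs, label in samples:
        output = predict(weights, inputs)
        total += sum((o - t) ** 2 for o, t in zip(output, label))
    return total / len(samples)


def train(samples: Sequence[Sample], epochs: int = 2000, lr: float = 0.05) -> List[List[float]]:
    """Repeat the compare-and-modify task until the function fits the labels."""
    n_in = len(samples[0][0])
    n_out = len(samples[0][1])
    weights = [[0.0] * (n_in + 1) for _ in range(n_out)]
    for _ in range(epochs):
        for inputs, label in samples:
            features = list(inputs) + [1.0]
            output = predict(weights, inputs)          # select an output value
            for k in range(n_out):
                error = output[k] - label[k]           # compare with the label
                for j in range(n_in + 1):
                    weights[k][j] -= lr * error * features[j]  # modify the function
    return weights
```

Calling `train(samples)` on labeled samples and then `predict(weights, inputs)` on an input that was not in the training set corresponds to finding the position coordinate for any input value by using the derived function.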

[0202] The following relationship is established between the position coordinate (x, y, z) of the center of gravity (COG) of the robot, which is the output value derived from supervised learning, and the function (f) derived by the artificial neural network.

$$\mathrm{COG}(x, y, z) = f(l, p_{\mathrm{dist}}, m, n)$$

[0203] Where,

[0204] $l$: floor number on which the goods have been loaded in the loading box 100

[0205] $p_{\mathrm{dist}}$: weight distribution of the goods loaded in the loading box 100

[0206] m: movement distance of the loading box 100 when the loading box 100 is open

[0207] n: number of floors of the loading box 100

[0208] However, the input values are not limited thereto, and a wider variety of variables may be used as input values.
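
As an illustration, the four input values listed above could be bundled into one record before being handed to the learned function. The class name `CogInput`, the representation of the weight distribution as a flat sequence of per-cell weights, and floor numbering that starts at 1 are assumptions made for this sketch and are not stated in the text.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class CogInput:
    """Hypothetical container for the input values l, p_dist, m and n."""

    floor_number: int                     # l: floor on which the goods are loaded
    weight_distribution: Sequence[float]  # p_dist: assumed per-cell weights
    movement_distance: float              # m: travel of the loading box when open
    floor_count: int                      # n: number of floors of the loading box

    def as_features(self) -> List[float]:
        """Flatten the record into the ordered argument list (l, p_dist, m, n)."""
        if not 1 <= self.floor_number <= self.floor_count:
            raise ValueError("floor_number must lie between 1 and floor_count")
        return [
            float(self.floor_number),
            *(float(w) for w in self.weight_distribution),
            float(self.movement_distance),
            float(self.floor_count),
        ]
```

Additional variables, which the text allows for, would become further fields of the same record.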

[0209] Meanwhile, the values of m and n may be constants that do not change within the same robot. Accordingly, m and n may be replaced with a parameter function ($\theta$) whose value differs from robot to robot. That is, the parameter function ($\theta$) may be expressed as a function (g) of m and n as follows.

$$\theta = g(m, n)$$

[0210] Of course, the function (g) may also be derived by the supervised learning.

[0211] Accordingly, the position coordinate of the center of gravity (COG) of the robot may be expressed as follows.

$$\mathrm{COG}(x, y, z) = h(l, p_{\mathrm{dist}}, \theta)$$

[0212] Here,

$$f(l, p_{\mathrm{dist}}, m, n) = h(l, p_{\mathrm{dist}}, \theta)$$
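
The substitution of the per-robot constants m and n by a single parameter can be sketched as a function that evaluates g once per robot and then reuses the result. The helper `bind_robot` and the placeholder functions `toy_g` and `toy_h` are hypothetical; in the text both g and h would come from supervised learning, and their real form is not given.

```python
from typing import Callable, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Theta = Tuple[float, float]


def bind_robot(
    h: Callable[[int, Sequence[float], Theta], Vec3],
    g: Callable[[float, int], Theta],
    m: float,
    n: int,
) -> Callable[[int, Sequence[float]], Vec3]:
    """Fix theta = g(m, n) for one robot and return COG as a function of (l, p_dist)."""
    theta = g(m, n)  # constant for a given robot, so it is computed only once

    def cog(l: int, p_dist: Sequence[float]) -> Vec3:
        return h(l, p_dist, theta)

    return cog


def toy_g(m: float, n: int) -> Theta:
    """Placeholder for the learned g; here it simply packs m and n together."""
    return (float(m), float(n))


def toy_h(l: int, p_dist: Sequence[float], theta: Theta) -> Vec3:
    """Placeholder for the learned h; the arithmetic carries no physical meaning."""
    m, n = theta
    return (m * sum(p_dist), 0.0, l / n)
```

With these placeholders, `bind_robot(toy_h, toy_g, m, n)(l, p_dist)` returns the same value as `toy_h(l, p_dist, toy_g(m, n))`, which mirrors the identity between f and h stated above.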

[0213] The center of gravity (COG) data derived from the supervised learning may have the above-described function relating to the input value and the output value. Accordingly, the position coordinate of the center of gravity (COG) of the robot, which is an output value, may be found from the center of gravity (COG) data for any input value.

[0214] The labeling data may be derived, for example, by using a program that finds the center of gravity (COG) from the shape of the object. That is, such a program may output the center of gravity (COG) of the object when the shape of the object is input.

[0215] Accordingly, when the specific shape of the robot and the shape of the goods loaded on the robot are input to the program, the center of gravity (COG) of the robot loaded with the goods may be found. Of course, the center of gravity (COG) of the entire robot at each position in the x-direction of the loading box 100 may also be found when the loading box 100 loaded with the goods has moved in the x direction due to the opening or closing.
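
For reference, the quantity such a program outputs can be illustrated with the standard mass-weighted average of part positions. The sketch below treats the robot body and the loaded loading box as two point masses, which is a simplifying assumption; a shape-based program would integrate over the geometry instead. The function names are hypothetical.

```python
from typing import Iterable, Tuple

Vec3 = Tuple[float, float, float]
Part = Tuple[float, Vec3]  # (mass in kg, COG position of that part in metres)


def combined_cog(parts: Iterable[Part]) -> Vec3:
    """Mass-weighted average of the part positions."""
    parts = list(parts)
    total_mass = sum(mass for mass, _ in parts)
    if total_mass <= 0.0:
        raise ValueError("total mass must be positive")
    x = sum(mass * pos[0] for mass, pos in parts) / total_mass
    y = sum(mass * pos[1] for mass, pos in parts) / total_mass
    z = sum(mass * pos[2] for mass, pos in parts) / total_mass
    return (x, y, z)


def shift_x(part: Part, dx: float) -> Part:
    """Move a part along the x direction, as when the loading box opens or closes."""
    mass, (x, y, z) = part
    return (mass, (x + dx, y, z))
```

Evaluating `combined_cog([body, shift_x(box, dx)])` for a range of `dx` values gives the COG of the entire robot at each x position of the loading box.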

[0216] The labeling data derived by using the program may have some limitations in finding the position of the center of gravity (COG) corresponding to all situations. This is because inputting the shapes is a very time-consuming task.

[0217] Accordingly, an embodiment may derive the function by performing the supervised learning with labeling data covering a relatively small number of cases, and may estimate the center of gravity (COG) of the robot in any situation that can occur in practice by using the center of gravity (COG) data having the derived function.

[0218] The above-described program configured to find the center of gravity (COG) may be a commercial program or may be produced through programming. The commercial program may be, for example, the function of AutoCAD that derives the center of gravity.

[0219] Meanwhile, during the machine learning for deriving the center of gravity (COG) data of the robot, learning may be performed under different conditions in which at least one of the plurality of input values differs, so that the center of gravity (COG) data holds information on the position of the center of gravity (COG) of the robot under each condition.

[0220] Accordingly, when at least one of the plurality of input values, for example, the weight distribution of the goods loaded in the loading box 100, the floor number on which the goods have been loaded in the loading box 100, the movement distance of the loading box 100 when the loading box 100 is open, and the number of floors of the loading box 100 is different, the position coordinate of the center of gravity (COG), which is the output value, may also vary.

[0221] In an embodiment, the position of the center of gravity (COG) of the robot, which changes due to the weight and shape of the goods loaded in the robot, the overall shape of the robot loaded with the goods, the position movement of the loading box 100, and the like, may be effectively derived through machine learning.

[0222] It is possible to move the auxiliary wheel 300 in the first direction corresponding to the derived position of the center of gravity (COG) to stably support the robot, thereby effectively suppressing the overturning of the robot due to the change in position of the center of gravity (COG) of the robot.

[0223] Hereinafter, an operation method of the robot according to an embodiment will be described. FIG. 10 is a flowchart illustrating an operation method of a robot according to an embodiment. FIG. 10 shows an operation method when the robot withdraws the loaded goods.

[0224] The robot may select, from the loading box 100 provided with a plurality of floors, the floor loaded with the goods to be withdrawn (operation S110). That is, the robot arriving at the delivery destination may select the floor of the loading box 100 loaded with the goods whose delivery is to be completed.

[0225] The robot may measure the weight distribution of the goods loaded in the selected floor by using a pressure sensor provided in each floor of the loading box 100 (operation S120).
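
One way the measurement of operation S120 could be turned into a weight distribution is sketched below. The text only states that a pressure sensor is provided on each floor, so the rectangular grid of cells, the uniform cell pitch, the mapping of columns to x and rows to y, and the function names are all assumptions.

```python
from typing import List, Sequence, Tuple


def normalized_distribution(readings: Sequence[Sequence[float]]) -> List[List[float]]:
    """Scale per-cell weight readings so that they sum to 1."""
    total = sum(sum(row) for row in readings)
    if total <= 0.0:
        raise ValueError("no load detected on this floor")
    return [[cell / total for cell in row] for row in readings]


def center_of_pressure(
    readings: Sequence[Sequence[float]], cell_pitch: float
) -> Tuple[float, float, float]:
    """Return (total weight, x, y) with x along columns and y along rows.

    Each cell is treated as a point load at its center, measured from the
    corner of the grid.
    """
    total = sum(sum(row) for row in readings)
    if total <= 0.0:
        raise ValueError("no load detected on this floor")
    x = sum(
        w * (col + 0.5) * cell_pitch for row in readings for col, w in enumerate(row)
    ) / total
    y = sum(
        w * (r + 0.5) * cell_pitch for r, row in enumerate(readings) for w in row
    ) / total
    return (total, x, y)
```

Either the normalized grid or the total weight together with the center of pressure could serve as the weight-distribution input when estimating the COG in operation S130.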

[0226] The robot may estimate the position of the center of gravity (COG) of the robot based on the weight distribution of the goods and the structure of the robot when the selected floor is open (operation S130). The estimation of the position of the center of gravity (COG) of the robot, as described above, may proceed by using the center of gravity (COG) data held by the robot.

[0227] The robot may set the movement distance of the auxiliary wheel 300 according to the estimated center of gravity (COG) of the robot (operation S140). As described above, the robot may set the movement distance by which the auxiliary wheel 300 moves toward the outside of the robot from the position illustrated in FIG. 5, so that the robot does not overturn due to the action of the moment when the selected floor of the loading box 100 is open.

[0228] That is, the robot may set the movement distance of the auxiliary wheel 300 so that the first length (L1) is equal to or longer than the second length (L2). Of course, the movement distance of the auxiliary wheel 300 may vary according to the position of the center of gravity (COG) of the robot.

[0229] Referring to FIG. 6, the length of the difference (L1-L2) between the first length (L1) and the second length (L2) may be appropriately set.

[0230] The length of (L1-L2) may be zero or a positive value. The length of (L1-L2) may be appropriately selected considering the spatial size of the place where the goods are withdrawn, the safety of the user receiving the service of the robot, and the stable support of the robot.
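
The rule that L1 is equal to or longer than L2 can be sketched as a small calculation of the required travel of the auxiliary wheel 300. The sketch assumes that L1 and L2 are measured along the first direction from a common reference point, to the auxiliary wheel and to the estimated COG respectively; their precise definitions are given with FIG. 6 and are not restated here. The function name, the `margin` parameter (the chosen value of L1-L2), and the `max_travel` limit are hypothetical.

```python
def auxiliary_wheel_travel(
    l1_at_rest: float,
    l2: float,
    margin: float = 0.0,
    max_travel: float = float("inf"),
) -> float:
    """Return how far the auxiliary wheel must move so that L1 - L2 >= margin.

    l1_at_rest: L1 before the wheel moves (assumed to be the FIG. 5 position).
    l2:         L2 for the estimated COG with the selected floor open.
    margin:     desired value of (L1 - L2); must be zero or positive.
    max_travel: mechanical limit of the variable supporter (assumed).
    """
    if margin < 0.0:
        raise ValueError("margin (L1 - L2) must be zero or positive")
    required = max(0.0, l2 + margin - l1_at_rest)
    if required > max_travel:
        raise ValueError("required travel exceeds the reach of the variable supporter")
    return required
```

A larger margin favors stable support, while a smaller one reduces the footprint of the robot at a cramped delivery location, which is the trade-off described above.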

[0231] The variable supporter 400 may move the auxiliary wheel 300 by the set movement distance (operation S150). The variable supporter 400 may move the auxiliary wheel 300 so as to correspond to the movement direction of the center of gravity (COG) of the robot.

[0232] The variable supporter 400 may move the auxiliary wheel 300 so that the first length (L1) is equal to or longer than the second length (L2). The controller provided in the robot may control the movement of the variable supporter 400 and the movement distance of the auxiliary wheel 300.

[0233] After the auxiliary wheel 300 moves by the set movement distance, the robot may open the selected floor (operation S160). After the selected floor has been opened, the goods loaded thereon may be withdrawn.
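
The order of operations S110 to S160 in FIG. 10 can be summarized in a short sketch. The method names and the `StubRobot` class are hypothetical stand-ins that only record which operation was called; the returned numbers are placeholders and not values from the text.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


class StubRobot:
    """Hypothetical stand-in that logs each operation instead of driving hardware."""

    def __init__(self) -> None:
        self.log: List[str] = []

    def select_floor(self) -> int:
        self.log.append("S110")
        return 2  # placeholder floor number

    def measure_weight_distribution(self, floor: int) -> Sequence[float]:
        self.log.append("S120")
        return [0.7, 0.3]  # placeholder readings

    def estimate_cog(self, floor: int, distribution: Sequence[float]) -> Vec3:
        self.log.append("S130")
        return (0.12, 0.0, 0.55)  # placeholder coordinate

    def set_wheel_distance(self, cog: Vec3) -> float:
        self.log.append("S140")
        return 0.2  # placeholder travel

    def move_auxiliary_wheel(self, distance: float) -> None:
        self.log.append("S150")

    def open_floor(self, floor: int) -> None:
        self.log.append("S160")


def withdraw_goods(robot: StubRobot) -> int:
    """Run the withdrawal sequence; the floor is opened only after the wheel has moved."""
    floor = robot.select_floor()                             # S110
    distribution = robot.measure_weight_distribution(floor)  # S120
    cog = robot.estimate_cog(floor, distribution)            # S130
    travel = robot.set_wheel_distance(cog)                   # S140
    robot.move_auxiliary_wheel(travel)                       # S150
    robot.open_floor(floor)                                  # S160
    return floor
```

The point of the ordering is that the support is widened before the loading box moves, so the COG shift caused by opening the floor is already accounted for.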

[0234] The operation method of the robot in an embodiment may further include loading the goods in the loading box 100. The loading of the goods may be performed prior to all the operations illustrated in FIG. 10. That is, the robot may load the goods on the selected floor of the loading box 100, drive to the delivery destination, and withdraw the goods at the delivery destination.

[0235] In loading the goods, the variable supporter 400 may move the auxiliary wheel 300 to the maximum distance that the auxiliary wheel 300 can be moved. At this time, the length of (L1-L2) may be maximized.

[0236] Since the loading of the goods is performed in a warehouse, which is a relatively safe and large space with no user present, the user's safety and the spatial constraint may not be significant concerns.

[0237] Accordingly, in loading the goods, it may be appropriate to move the auxiliary wheel 300 so that the length of (L1-L2) is maximized, in order to support the robot more stably and keep it from overturning.

[0238] FIG. 11 is a flowchart illustrating a method for loading the goods in the operation method of the robot according to an embodiment.

[0239] The robot may select a floor of the loading box 100 on which the goods are to be loaded (operation S210).

[0240] The variable supporter 400 may move the auxiliary wheel 300 to the maximum position to which the auxiliary wheel 300 is movable (operation S220). As described above, the length of (L1-L2) may be maximized so that it is possible to stably support the robot and to keep the robot from overturning due to the action of the moment during the loading of the goods.

[0241] After the auxiliary wheel 300 moves in the outside direction of the robot, the robot may open the selected floor (operation S230). The robot may load the goods on the open floor of the loading box 100 (operation S240). The robot may close the floor loaded with the goods (operation S250).
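
The loading sequence of FIG. 11 can be sketched in the same spirit. The `LoadingStub` class, its method names, and the guard that refuses to open a floor before the auxiliary wheel is fully extended are illustrative assumptions; the text states only the order of operations S210 to S250.

```python
from typing import List, Optional, Set


class LoadingStub:
    """Hypothetical stand-in that tracks wheel travel and floor state."""

    def __init__(self, max_travel: float) -> None:
        self.max_travel = max_travel
        self.wheel_travel = 0.0
        self.open_floor_number: Optional[int] = None
        self.loaded: Set[int] = set()
        self.log: List[str] = []

    def move_auxiliary_wheel_to_max(self) -> None:
        self.wheel_travel = self.max_travel
        self.log.append("S220")

    def open_floor(self, floor: int) -> None:
        if self.wheel_travel < self.max_travel:
            raise RuntimeError("extend the auxiliary wheel before opening a floor")
        self.open_floor_number = floor
        self.log.append("S230")

    def load(self, floor: int) -> None:
        if self.open_floor_number != floor:
            raise RuntimeError("the selected floor is not open")
        self.loaded.add(floor)
        self.log.append("S240")

    def close_floor(self, floor: int) -> None:
        self.open_floor_number = None
        self.log.append("S250")


def load_goods(robot: LoadingStub, floor: int) -> None:
    """Load one floor; `floor` is the selection made in operation S210."""
    robot.move_auxiliary_wheel_to_max()  # S220: maximize (L1 - L2)
    robot.open_floor(floor)              # S230
    robot.load(floor)                    # S240
    robot.close_floor(floor)             # S250
```

Unlike the withdrawal sequence, no COG estimate is needed here, because the wheel is always moved to its maximum position before the floor is opened.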

[0242] After loading the goods, the robot may drive to the delivery destination, and after arriving at the delivery destination, the robot may sequentially perform the operations from operation S110 onward in order to withdraw the goods.

[0243] Although only some cases have been described above in association with the embodiments, various other embodiments are possible. The technical contents of the embodiments described above may be combined in various ways unless they are incompatible with each other, and new embodiments may thereby be implemented.

* * * * *
