Feature Tracking Using Ultrasound

Lachaine; Martin Emile ; et al.

U.S. patent application number 16/557006 was filed with the patent office on 2019-08-30 and published on 2020-01-23 for feature tracking using ultrasound. The applicant listed for this patent is Elekta LTD. Invention is credited to Tony Falco, Martin Emile Lachaine, Fabienne Lathuiliere, Sebastien Tremblay.

| Application Number | 16/557006 |


| Family ID | 44303751 |

| Filed Date | 2019-08-30 |

| United States Patent Application | 20200023203 |

| Kind Code | A1 |

| Lachaine; Martin Emile ; et al. | January 23, 2020 |

FEATURE TRACKING USING ULTRASOUND

Abstract

Various implementations of the invention provide techniques and supporting systems that facilitate real-time or near-real-time ultrasound tracking for the purpose of calculating changes in anatomical features during a medical procedure. More specifically, anatomical features within a patient undergoing a medical procedure are tracked by obtaining temporally-distinct three dimensional ultrasound images that include the feature of interest and obtaining a targeted subset of ultrasound images focused on the feature. Based on the targeted subset of ultrasound images, a displacement of the feature is determined and image parameters used to obtain the targeted subset of ultrasound images are adjusted based on the displacement. This results in a time-based sequence of three dimensional images and targeted ultrasound images of the feature that identify changes in the position, size, location, and/or shape of the feature.

| Inventors: | Lachaine; Martin Emile (Montreal, CA); Tremblay; Sebastien (St.Jean-Sur-Richelieu, CA); Lathuiliere; Fabienne (Outremont, CA); Falco; Tony (La Prairie, CA) |
|---|---|
| Applicant: | Elekta LTD. |
| Family ID: | 44303751 |
| Appl. No.: | 16/557006 |
| Filed: | August 30, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 14702037 | May 1, 2015 | 10449390 |
| 16557006 | | |
| 12956991 | Nov 30, 2010 | |
| 14702037 | | |
| 61323064 | Apr 12, 2010 | |
| 61294294 | Jan 12, 2010 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | A61B 8/587 20130101; G06T 7/0012 20130101; A61N 5/1049 20130101; A61B 2090/378 20160201; G06T 2207/10132 20130101; A61B 8/4263 20130101; A61B 8/4461 20130101; A61B 2034/2063 20160201; A61N 2005/1058 20130101; A61B 8/4245 20130101; A61B 8/483 20130101; A61B 34/20 20160201; G06T 2207/30004 20130101; A61B 8/54 20130101; A61N 5/1067 20130101; A61B 8/085 20130101; A61B 2034/2065 20160201; G06T 7/251 20170101; A61N 2005/1074 20130101; A61B 8/58 20130101; A61B 8/4472 20130101; A61B 2034/2055 20160201; G06T 2207/10136 20130101 |

| International Class: | A61N 5/10 20060101 A61N005/10; G06T 7/00 20060101 G06T007/00; A61B 8/08 20060101 A61B008/08; A61B 8/00 20060101 A61B008/00 |

Claims

1. A method of using a processor circuit executing a plurality of computer-executable instructions for monitoring one or more anatomical features of a patient, comprising: controlling an ultrasound imaging probe to acquire first three-dimensional image data including a first three-dimensional image of a volume of interest including an anatomical feature; reducing a size of the volume of interest from a first size to a second size to encompass only the anatomical feature plus a specified amount of surrounding voxels; reconstructing a second three-dimensional image of the reduced size volume of interest having the second size; determining whether the anatomical feature is present in the second three-dimensional image; in response to determining that the anatomical feature is not present in the second three-dimensional image or is close to a boundary in the second three-dimensional image, controlling the ultrasound imaging probe to acquire second three-dimensional image data including a third three-dimensional image of the volume of interest having the first size; and in response to determining that the anatomical feature is present in the second three-dimensional image, modifying a radiotherapy treatment plan to compensate for a location of the anatomical feature.

2. The method of claim 1, wherein reducing the size of the volume of interest comprises limiting a sector size of two-dimensional ultrasound frames through an ultrasound sweep.

3. The method of claim 2, wherein the sector size is limited asymmetrically or based on a depth of penetration.

4. The method of claim 1, wherein reducing the size of the volume of interest increases a frame-rate and sweeping motion speed while maintaining a sufficient number of slices for reconstructing the three-dimensional image.

5. The method of claim 1, wherein the second three-dimensional image data is acquired at specified time intervals.

6. A system for controlling an ultrasound imaging probe to monitor an anatomical feature of a patient, comprising: a memory circuit for storing instructions and image data; and a processor circuit for executing the instructions to perform operations comprising: controlling an ultrasound imaging probe to acquire first three-dimensional image data including a first three-dimensional image of a volume of interest including an anatomical feature; reducing a size of the volume of interest from a first size to a second size to encompass only the anatomical feature plus a specified amount of surrounding voxels; reconstructing a second three-dimensional image of the reduced size volume of interest having the second size; determining whether the anatomical feature is present in the second three-dimensional image; in response to determining that the anatomical feature is not present in the second three-dimensional image or is close to a boundary in the second three-dimensional image, controlling the ultrasound imaging probe to acquire second three-dimensional image data including a third three-dimensional image of the volume of interest having the first size; and in response to determining that the anatomical feature is present in the second three-dimensional image, modifying a radiotherapy treatment plan to compensate for a location of the anatomical feature.

7. The system of claim 6, wherein reducing the size of the volume of interest comprises limiting a sector size of two-dimensional ultrasound frames through an ultrasound sweep.

8. The system of claim 7, wherein the sector size is limited asymmetrically or based on a depth of penetration.

9. The system of claim 6, wherein reducing the size of the volume of interest increases a frame-rate and sweeping motion speed while maintaining a sufficient number of slices for reconstructing the three-dimensional image.

10. The system of claim 6, wherein the second three-dimensional image data is acquired at specified time intervals.

11. A method of using a processor circuit executing a plurality of computer-executable instructions for monitoring one or more anatomical features of a patient, comprising: controlling an ultrasound imaging probe to acquire first three-dimensional image data including a first three-dimensional image of a volume of interest including an anatomical feature; reconstructing image data in two or more tracking planes based on the first three-dimensional image of the volume of interest; locating a three-dimensional feature, corresponding to the anatomical feature, within the reconstructed image data in the two or more tracking planes; determining whether the three-dimensional feature intersects at least one of the two or more tracking planes to determine displacement of the three-dimensional feature relative to a previous point in time; in response to determining that the three-dimensional feature fails to intersect at least one of the two or more tracking planes, controlling the ultrasound imaging probe to acquire second three-dimensional image data including a second three-dimensional image of the volume of interest; and in response to determining that the three-dimensional feature intersects at least one of the two or more tracking planes, modifying a radiotherapy treatment plan to compensate for a location of the three-dimensional feature.

12. The method of claim 11, wherein the two or more tracking planes are orthogonal to each other.

13. The method of claim 11, further comprising controlling the ultrasound imaging probe to acquire a two-dimensional image along a third tracking plane position that intersects a region of the three-dimensional feature.

14. The method of claim 11, further comprising obtaining pixels from multiple two-dimensional images obtained from different plane positions of the ultrasound imaging probe instead of reconstructing the image data based on the first three-dimensional image.

15. The method of claim 14, wherein the different plane positions include three plane positions, a first of the three plane positions selected to pass through a center of the three-dimensional feature, a second of the three plane positions selected to pass through a first side left of the center of the three-dimensional feature, and a third of the three plane positions selected to pass through a second side right of the center of the three-dimensional feature.

16. The method of claim 11, further comprising producing a two-dimensional curve based on an intersection of first and second planes of the two or more tracking planes and the three-dimensional feature.

17. The method of claim 11, wherein locating the three-dimensional feature comprises determining that the three-dimensional feature is visible in the two or more tracking planes.

18. The method of claim 11, further comprising re-centering a position of the two or more tracking planes based on determining displacement of the three-dimensional feature relative to a previous point in time.

19. A system for controlling an ultrasound imaging probe to monitor an anatomical feature of a patient, comprising: a memory circuit for storing instructions and image data; and a processor circuit for executing the instructions to perform operations comprising: controlling an ultrasound imaging probe to acquire first three-dimensional image data including a first three-dimensional image of a volume of interest including an anatomical feature; reconstructing image data in two or more tracking planes based on the first three-dimensional image of the volume of interest; locating a three-dimensional feature, corresponding to the anatomical feature, within the reconstructed image data in the two or more tracking planes; determining whether the three-dimensional feature intersects at least one of the two or more tracking planes to determine displacement of the three-dimensional feature relative to a previous point in time; in response to determining that the three-dimensional feature fails to intersect at least one of the two or more tracking planes, controlling the ultrasound imaging probe to acquire second three-dimensional image data including a second three-dimensional image of the volume of interest; and in response to determining that the three-dimensional feature intersects at least one of the two or more tracking planes, modifying a radiotherapy treatment plan to compensate for a location of the three-dimensional feature.

20. The system of claim 19, wherein the two or more tracking planes are orthogonal to each other.

21. The system of claim 19, further comprising controlling the ultrasound imaging probe to acquire a two-dimensional image along a third tracking plane position that intersects a region of the three-dimensional feature.

22. The system of claim 19, further comprising obtaining pixels from multiple two-dimensional images obtained from different plane positions of the ultrasound imaging probe instead of reconstructing the image data based on the first three-dimensional image.

23. The system of claim 22, wherein the different plane positions include three plane positions, a first of the three plane positions selected to pass through a center of the three-dimensional feature, a second of the three plane positions selected to pass through a first side left of the center of the three-dimensional feature, and a third of the three plane positions selected to pass through a second side right of the center of the three-dimensional feature.

Description

CROSS-REFERENCES TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. Ser. No. 14/702,037, filed May 1, 2015, which is a continuation of U.S. Ser. No. 12/956,991, filed Nov. 30, 2010, which claims priority to and the benefit of U.S. provisional patent application Ser. No. 61/294,294, filed Jan. 12, 2010, and U.S. provisional patent application Ser. No. 61/323,064, filed Apr. 12, 2010, each entitled "Feature Tracking Using Ultrasound."

TECHNICAL FIELD

[0002] This invention relates to methods for tracking features during a medical procedure.

BACKGROUND INFORMATION

[0003] One purpose of radiotherapy is to target a specified anatomical region suspected of having either gross or suspected microscopic disease (sometimes referred to as the clinical treatment volume, or "CTV") with radiation while sparing surrounding healthy tissues and at-risk organs. Typically, a physician outlines the CTV on one or more planning images, such as a computed tomography (CT) image, magnetic resonance (MRI) image, three-dimensional ultrasound (3DUS) image, or a positron emission tomography (PET) scan. A treatment plan is then developed which optimizes the radiation dose distribution on the planning images to best accomplish the prescribed goals. The plan may be based on certain treatment parameters such as beam directions, beam apertures, dose levels, energy and/or type of radiation. The treatment is generally given in a finite number of fractions, typically delivered once a day. During treatment, the patient is positioned relative to the radiation beam prior to each fraction according to the treatment plan.

[0004] In practice, a margin is included around the CTV to account for anatomical changes in the CTV and surrounding areas. These changes can result from either interfractional motion, i.e., anatomical differences that develop immediately prior to the current fraction (often due to an inaccurate set-up or actual organ motion such as a different state of bladder fill), or from intrafractional motion, i.e., anatomical motion which occurs during the actual treatment delivery. In some instances, both types of motion may be present. In some instances, intrafractional motion may be cyclical, as caused by breathing, or random, as caused by gas or a steadily increasing bladder volume.

[0005] Some conventional image-guided radiotherapy (IGRT) applications may be used to track interfractional motion. Various imaging modalities may be used to implement IGRT, including three-dimensional ultrasound (3DUS) and x-ray imaging of fiducial "seeds" implanted in a patient's organ. Image capture is typically performed once prior to the radiation delivery, and the treatment couch is then adjusted to compensate for any changes in anatomy relative to the treatment plan. The use of IGRT to account for intrafractional motion, on the other hand, is in its infancy and requires continuous imaging throughout the treatment. As trends in radiotherapy begin to move towards fewer fractions and longer treatment times, correcting for intrafractional motion is growing in importance.

[0006] One method of tracking intrafractional motion uses x-rays to image fiducials at discrete points in time throughout treatment. However, continuous monitoring is not achievable with this methodology because the x-ray imaging exposure would be unacceptably high, with one image every 30 seconds being the currently acceptable limit. Such procedures still require undesirable extra radiation as well as an invasive fiducial implantation procedure. Further, various surface monitoring technologies have been developed for cyclical intrafractional motion, but these do not provide internal information and are not sufficient in many applications, particularly when random motion occurs. Yet another technology uses beacons which are implanted in the feature of interest and tracked in real-time using electromagnetic methods. As with fiducials, this approach also requires an invasive implantation procedure.

[0007] Two-dimensional ultrasound (2DUS) can conceivably be proposed for intrafractional motion detection as it is real-time in nature, does not add radiation exposure to the patient during the monitoring process, and does not require implantation of fiducials. Temporally-spaced 2DUS images, as well as three-dimensional ultrasound (3DUS) images, have been proposed to track intrafractional motion during radiotherapy. See, for example, Xu et al, Med. Phys. 33 (2006), Hsu et al, Med. Phys. 32 (2005), Whitmore et al, US 2006/0241143 A1, Fu et al, US 2007/0015991 A1, and Bova et al, U.S. Pat. No. 6,390,982 B1. Some of these disclosures discuss the use of 3DUS probes to obtain a "four-dimensional" image series; however, there remain many obstacles in obtaining and using these images which are not addressed in the current literature.

[0008] One conventional three-dimensional (3D) probe utilizes a motorized two-dimensional (2D) probe placed inside a housing that sweeps mechanically within the housing, thus collecting a series of two-dimensional slices to cover the three-dimensional volume. For example, when imaging a 10 cm × 10 cm area at a given depth using a resolution of 0.5 mm, each sweep requires 200 slices. At a framerate of 20 Hz, one sweep takes approximately 10 seconds to complete, which precludes effective "real-time" four-dimensional imaging (three physical dimensions changing over time). Moreover, reconstruction of the entire three-dimensional volume takes at least two seconds, which further lengthens the theoretical three-dimensional refresh period to 12 seconds, although multi-thread processing may help. Anatomical feature extraction based on the three-dimensional images is also time consuming and requires at least an additional five seconds. Aspects of this invention allow for real-time feature tracking ultrasound imaging during a medical procedure.
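The timing figures in the example above follow from simple arithmetic. The sketch below is offered only as an illustration of that arithmetic; the function name `sweep_timing` and its parameter names are hypothetical and do not appear in the disclosure.

```python
def sweep_timing(extent_mm: float, slice_spacing_mm: float,
                 frame_rate_hz: float, reconstruction_s: float = 0.0) -> dict:
    """Slice count, sweep time, and refresh period for one mechanical sweep."""
    # Number of 2D slices needed to cover the swept extent at the given spacing.
    n_slices = round(extent_mm / slice_spacing_mm)
    # One slice is acquired per frame, so the sweep lasts n_slices / frame rate.
    sweep_s = n_slices / frame_rate_hz
    return {
        "slices": n_slices,
        "sweep_s": sweep_s,
        "refresh_period_s": sweep_s + reconstruction_s,
    }


# Values taken from the example: 10 cm extent, 0.5 mm resolution, 20 Hz,
# and at least two seconds of reconstruction time.
full = sweep_timing(extent_mm=100.0, slice_spacing_mm=0.5,
                    frame_rate_hz=20.0, reconstruction_s=2.0)
print(full)
```

Under the same assumptions, halving the swept extent halves both the slice count and the sweep time.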

SUMMARY OF THE INVENTION

[0009] Various implementations of the invention provide techniques and supporting systems that facilitate real-time or near-real-time ultrasound tracking for the purpose of calculating changes in anatomical features during a medical procedure. While the methods are primarily described in terms of a radiotherapy fraction, other applications are contemplated, such as cryotherapy, brachytherapy, high-intensity focused ultrasound (HIFU), as well as imaging procedures such as computed tomography (CT), four-dimensional CT, planar x-ray, PET, MRI, and SPECT, or any other medical procedure where it is important to monitor anatomical features throughout the treatment.

[0010] Although primarily concerned with intrafractional motion tracking, in some cases correction for interfractional motion may also be implemented prior to the tracking process. In some cases, a hybrid technique of acquiring a temporally-spaced combination of three-dimensional ultrasound images and targeted subsets of two-dimensional ultrasound images may be used. The two-dimensional ultrasound images are used to increase the frequency of feature tracking to render the process as close to real-time as possible.

[0011] In a first aspect, a computer-implemented method for tracking an anatomical feature or features (e.g., an organ, tumor, tumor bed, gland, critical anatomical structure, or other lesion) within a patient undergoing a medical procedure such as radiotherapy, radiotherapy planning, image-guided surgery, or other treatment includes obtaining a three dimensional image of a region that includes the feature being treated and determining the location of the feature within the region. The three dimensional image is obtained at a first periodicity (e.g., every 30 seconds) so as to reduce the processing and storage burdens as compared to higher frequencies. In between each three dimensional image, a series of temporally-displaced targeted subsets of ultrasound images focused on the region are obtained at a greater periodicity (e.g., every 0.1-3 seconds), and each is compared with the three dimensional image to determine whether there have been any changes to the feature (e.g., movement, morphing). To reduce processing and memory requirements, the targeted subsets are typically of lower quality, resolution and/or represent a smaller area of the region than that of the three dimensional images, thereby allowing for more frequent imaging and comparisons. In some preferred embodiments the targeted subsets are planes of ultrasound data rather than a full reconstructed 3D volume.

[0012] In some cases, a determination is made as to whether the displacement exceeds a displacement threshold (such as an upper limit of spatial displacement of the feature of interest) and if so, an updated three dimensional image of the region of interest is obtained sooner than would be obtained according to the first periodicity. The updated three dimensional image may be used for subsequent comparisons with the targeted set of ultrasound images. In addition (or alternatively) a determination is made as to whether the displacement exceeds a safety threshold and if so, the medical procedure is halted to allow for one or more adjustments to the patient's orientation with respect to a treatment device. In certain implementations, one or more treatment apparatus (e.g., a treatment couch on which the patient is supported and/or a multi-leaf collimator for administering radiation therapy) may be continuously adjusted while treatment is being delivered to compensate for the displacement.
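The two threshold tests described above can be sketched as a small decision function. This is a minimal illustration under stated assumptions, not the claimed method: the names `Action` and `decide`, the millimetre units, and the example threshold values are hypothetical, and the sketch assumes the safety threshold is checked first and is the larger of the two.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue targeted imaging"
    REACQUIRE_3D = "acquire updated 3D image early"
    HALT = "halt procedure for adjustment"


def decide(displacement_mm: float,
           displacement_threshold_mm: float,
           safety_threshold_mm: float) -> Action:
    """Map a measured feature displacement to one of the described responses."""
    # Assumption: exceeding the safety threshold takes priority over re-imaging.
    if displacement_mm > safety_threshold_mm:
        return Action.HALT
    # Exceeding the displacement threshold triggers an early full 3D acquisition.
    if displacement_mm > displacement_threshold_mm:
        return Action.REACQUIRE_3D
    return Action.CONTINUE


# Hypothetical thresholds of 2 mm (displacement) and 5 mm (safety).
for measured in (1.0, 3.0, 6.0):
    print(measured, decide(measured, 2.0, 5.0).value)
```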

[0013] In some embodiments, image parameters used in obtaining the targeted subset of ultrasound images are adjusted based on the displacement. The displacement threshold may be an upper limit on spatial displacement of the feature or exceeding some predefined change in size. The comparison may, in some cases, include comparing grey-scale values of subsequent images to determine the displacement or shift of the feature.
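One way a grey-scale comparison could yield a shift is an exhaustive search for the integer offset that minimizes the mean squared intensity difference between two images. The sketch below illustrates that general technique on a toy two-dimensional example; it is an assumption made for illustration rather than the comparison actually used, and the names `estimate_shift` and `blob_image` are hypothetical.

```python
def estimate_shift(reference, current, max_shift=2):
    """Return (dy, dx) such that current[y + dy][x + dx] best matches reference[y][x]."""
    rows, cols = len(reference), len(reference[0])
    best = None
    for dy in range(-max_shift, max_shift + 1):
        for dx in range(-max_shift, max_shift + 1):
            total, count = 0.0, 0
            for y in range(rows):
                for x in range(cols):
                    yy, xx = y + dy, x + dx
                    # Only compare pixels where the shifted image overlaps.
                    if 0 <= yy < rows and 0 <= xx < cols:
                        diff = reference[y][x] - current[yy][xx]
                        total += diff * diff
                        count += 1
            score = total / count  # mean squared grey-scale difference
            if best is None or score < best[0]:
                best = (score, dy, dx)
    return best[1], best[2]


def blob_image(top, left, size=6, value=200):
    """Toy image: a bright 2x2 'feature' on a dark background."""
    img = [[0] * size for _ in range(size)]
    for y in range(top, top + 2):
        for x in range(left, left + 2):
            img[y][x] = value
    return img


reference = blob_image(1, 1)
current = blob_image(2, 3)  # feature moved down 1 pixel and right 2 pixels
shift = estimate_shift(reference, current)
print(shift)  # (dy, dx) in pixels
```

A practical implementation would likely restrict the search to a region around the feature and work at sub-pixel precision; both refinements are omitted here for brevity.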

[0014] The targeted subset may be a series of two dimensional image slices of the feature, a combination of two or more tracking planes (such as two orthogonal planes), which may, in some cases, be reconstructed as a set of voxels intersecting the planes. The images may be used as obtained, or, in some cases segmented. The images may be obtained from various angles and directions aimed at the feature, including, for example transperineally in the case of a prostate gland. In certain implementations, the targeted subset may be three dimensional ultrasound datasets related to a limited region of interest, which may be determined on an adjusted sector size, an adjusted image depth and/or an adjusted ultrasound sector angle and in some cases have a reduced resolution.

[0015] The three dimensional ultrasound images may be obtained using a motorized probe, a bi-planar probe or a matrix probe, any of which may be internal or external to the patient. In some instances, the probe may have traceable markers attached to it and be calibrated to pixels within the images to facilitate spatial tracking over time with respect to a particular coordinate system.

[0016] The feature to be tracked can be the target lesion being treated, a subset of the lesion, another feature which is proximal to the lesion, a fiducial, or any other feature deemed to be of importance during the medical procedure. Features may be extracted from both full three-dimensional ultrasound images as well as the targeted subset of ultrasound images to obtain a representation of the feature's motion in time, using either segmentation or pattern recognition algorithms.

[0017] In another aspect, a system for tracking an anatomical feature within a patient undergoing a medical procedure includes a processor and a memory register. The processor is configured to locate the feature of interest within a series of three dimensional images and iteratively compare temporally displaced targeted subsets of ultrasound images obtained at a periodicity greater than the first periodicity with the three dimensional image. The processor then determines, based on each comparison, a displacement of the feature of interest. The register receives and stores the images.

[0018] In some versions, the processor determines if the displacement exceeds a displacement threshold (an upper limit of spatial displacement of the feature of interest, for example) and if so, provides instructions to obtain an updated three dimensional image of the region of interest sooner than would be obtained based on the first periodicity. The processor may also determine if the displacement exceeds a safety threshold. If so, the processor can provide instructions to halt the medical procedure, thereby allowing for adjustments to be made to the patient's orientation with respect to a treatment device and/or to the orientation of the treatment device itself prior to reinstating the procedure.

[0019] In some cases, the system also includes an ultrasound probe for providing the images to the register. The probe may be a two dimensional ultrasound probe rotatably mounted into a housing such that the probe can move according to at least one degree of freedom, either longitudinally, in a sweeping motion about an axis or rotating about an axis. A motor may provide movement to the probe, based, for example, on instructions from a controller to alter the position of the probe relative to the patient, the housing or both. The controller may also provide additional adjustments to one or more imaging parameters. Some embodiments may also provide a display and/or input devices, thus allowing an operator to view the images and interact with the system.

[0020] Changes identified in the feature may trigger a warning message (either visual, textual, audio or some combination thereof), warning the operator that the medical procedure should be modified. In other implementations, the changes may cause continuous or semi-continuous modifications to the treatment as it progresses.

BRIEF DESCRIPTION OF FIGURES

[0021] In the drawings, like reference characters generally refer to the same parts throughout the different views. Also, the drawings are not necessarily to scale, emphasis instead generally being placed upon illustrating the principles of the invention.

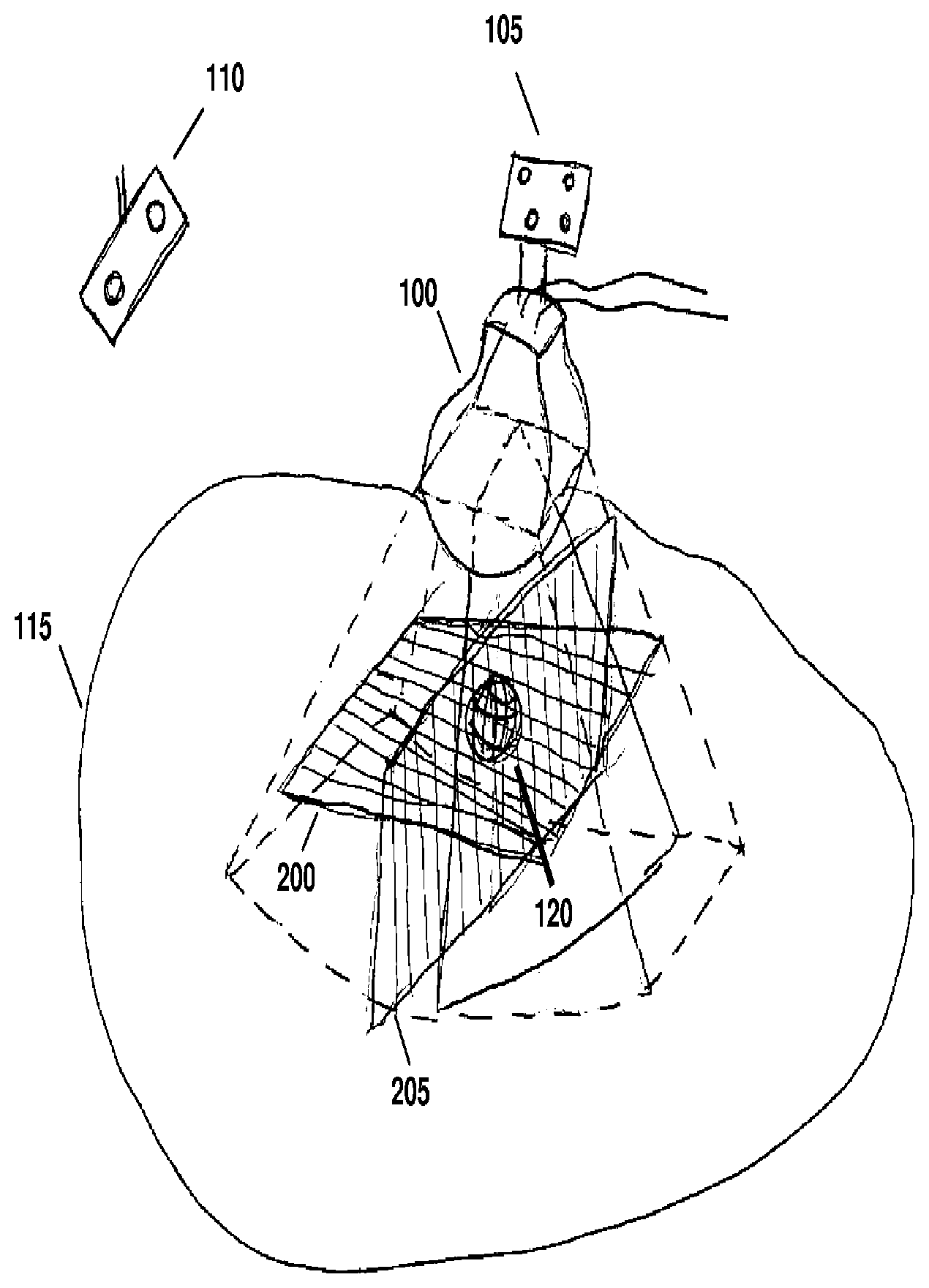

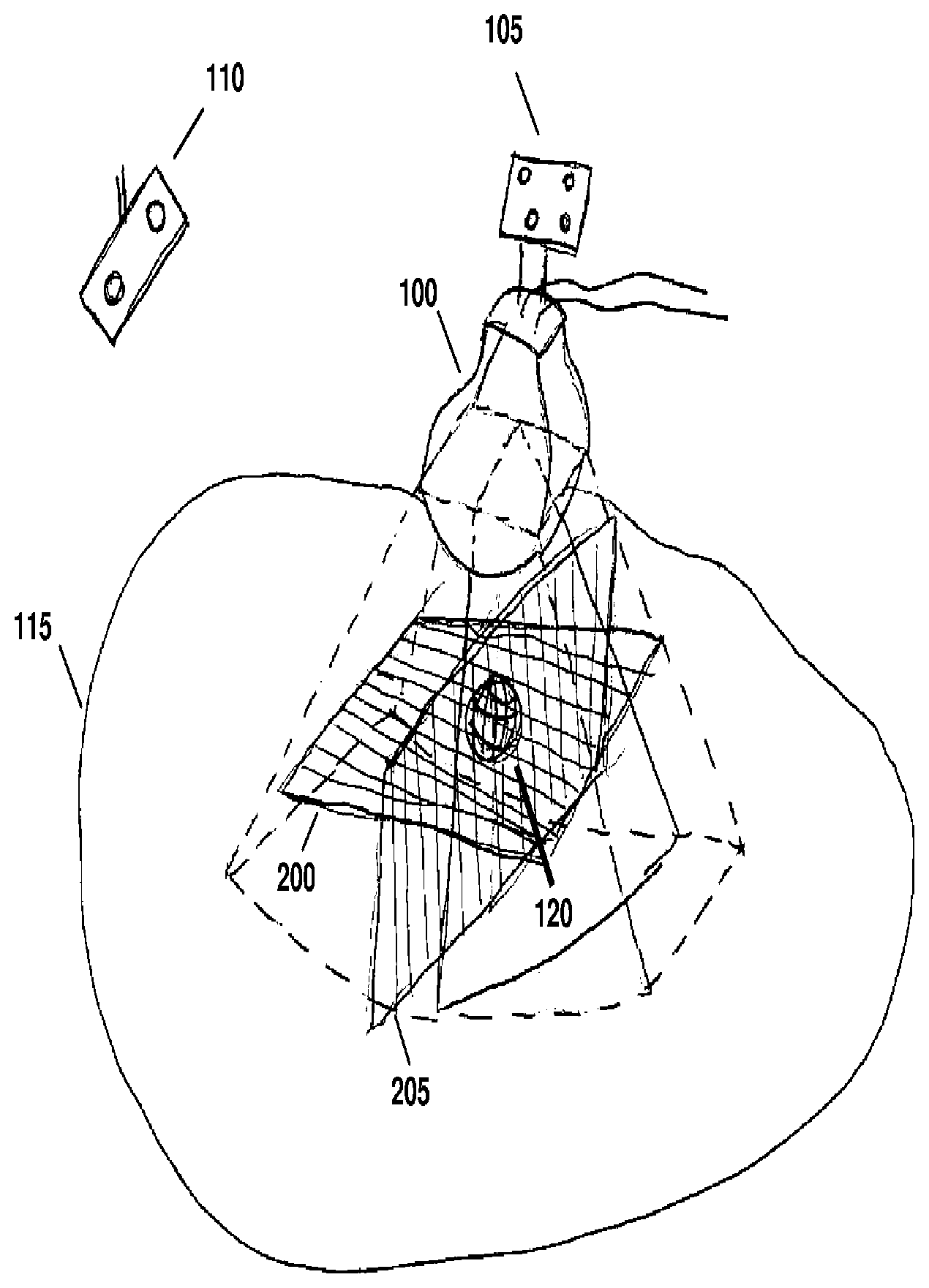

[0022] FIG. 1 is a schematic diagram illustrating the use of a mechanical three-dimensional probe, referenced to a room coordinate system, for imaging a feature within a patient according to various embodiments of the invention.

[0023] FIG. 2 is a flow-chart illustrating a method for implementing a hybrid three-dimensional and two-dimensional temporal tracking strategy according to various embodiments of the invention.

[0024] FIG. 3 is a flow-chart illustrating a particular implementation of a hybrid three-dimensional and multiple two-dimensional plane temporal tracking strategy according to various embodiments of the invention.

[0025] FIG. 3A illustrates a particular implementation of a hybrid three-dimensional and multiple two-dimensional plane temporal tracking technique according to various embodiments of the invention.

[0026] FIG. 4 illustrates the use of tracking planes in the method of FIGS. 2 and 3.

[0027] FIG. 5 is a flow-chart illustrating a particular implementation of a hybrid three-dimensional and limited ROI three-dimensional temporal tracking strategy according to various embodiments of the invention.

[0028] FIG. 6 illustrates a particular implementation of a hybrid three-dimensional and multiple two-dimensional plane temporal tracking in which the image extent encompassing the feature being treated is reduced according to various embodiments of the invention.

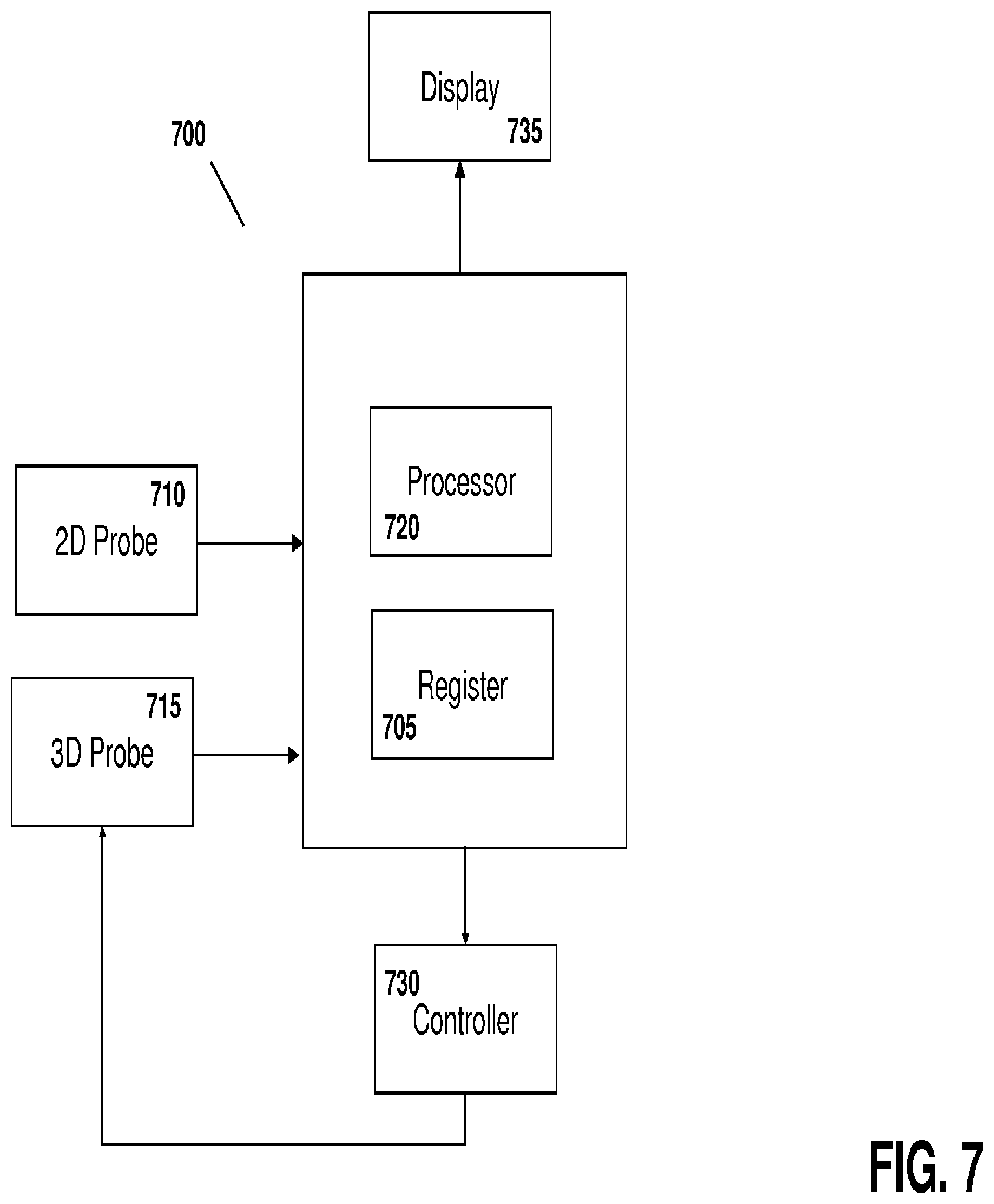

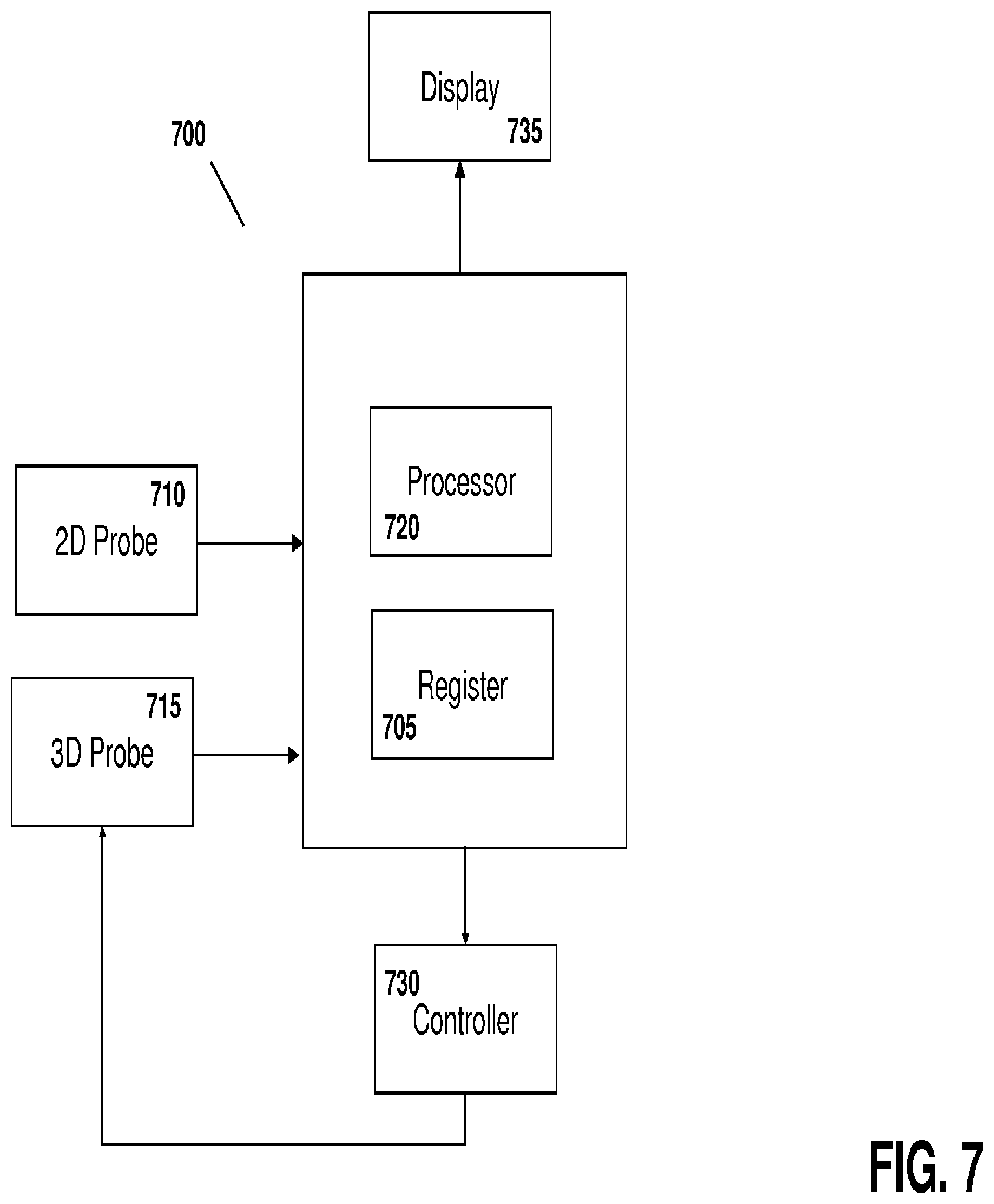

[0029] FIG. 7 illustrates a system for tracking intrafractional motion during the course of radiotherapy according to various embodiments of the invention.

DETAILED DESCRIPTION

[0030] Throughout the following descriptions and examples, aspects and embodiments of the invention are described in the context of tracking intrafractional motion during the delivery of radiotherapy. However, it is to be understood that the present invention may be applied to tracking attributes of virtually any feature within or on a patient during any form of medical procedure requiring anatomical tracking, such as external beam and brachytherapy, cryotherapy, hyperthermia, high intensity focused ultrasound (HIFU) treatments, and/or various forms of imaging (e.g., CT, 4DCT, PET, US, SPECT, and MRI).

[0031] Referring to FIG. 1, a motorized, mechanically sweeping three-dimensional ultrasound probe 100, which is of particular use in this application, contains a two-dimensional probe inside of a housing, the two-dimensional probe being able to sweep at different angles within the housing, controlled by a motor. In certain applications, tracking markers 105 are affixed to the probe handle such that the position of the probe can be detected by a tracking system 110. One such tracking device utilizes an infrared optical camera, which tracks infrared signals emitted from or reflected by the markers. The position and orientation of the probe housing can therefore be determined at all times, based on a relative coordinate system. In certain applications, the individual ultrasound pixels are referenced to a coordinate system useful for the medical procedure, which can for example be tied to a room, a treatment device, an imaging device, or a patient.

[0032] Because the motorized sweeping probe is essentially a two-dimensional probe that moves according to a particular degree of freedom inside the housing, its position within the housing can be quantified in terms of a parameter X. The parameter X can be measured as an angle in the case of rotational sweep inside the housing, or as a distance in the case of a linear sweep. The parameter X can be controlled by a controller through an interface to the motor. For example, the controller may instruct the motor to move the two-dimensional probe to a particular location within the housing such that a two-dimensional frame can be acquired at a fixed position X. In other cases, the controller may instruct the motor to continuously move the probe within the housing, facilitating the acquisition of a three-dimensional sweep by acquiring a series of temporally-displaced image frames while continuously changing X.
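As an illustration of the continuous-sweep case, the following sketch computes the X value at which each frame would be acquired and the constant motor speed that would produce them. It assumes evenly spaced frames and a constant-speed sweep, neither of which is stated in the text; the function names and the example sweep range are hypothetical.

```python
def sweep_positions(x_start, x_end, n_frames):
    """Evenly spaced X values (angle or distance) for the frames of one sweep."""
    if n_frames < 2:
        return [x_start]
    step = (x_end - x_start) / (n_frames - 1)
    return [x_start + k * step for k in range(n_frames)]


def motor_speed(x_start, x_end, n_frames, frame_rate_hz):
    """Constant speed (X units per second) that spreads n_frames over the sweep."""
    # n_frames frames are separated by n_frames - 1 frame intervals.
    sweep_time_s = (n_frames - 1) / frame_rate_hz
    return abs(x_end - x_start) / sweep_time_s


# Hypothetical rotational sweep from -30 to +30 degrees, 61 frames at 20 Hz.
positions = sweep_positions(-30.0, 30.0, 61)
speed = motor_speed(-30.0, 30.0, 61, 20.0)
print(positions[0], positions[-1], speed)
```

Acquiring a single frame at a fixed position corresponds to calling `sweep_positions` with `n_frames=1`.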

[0033] In some applications, pixels in a given two-dimensional frame at position X are known relative to a fixed room coordinate system. One method of attributing coordinates to the pixels is to use a calibration algorithm similar to those developed for freehand 3DUS imaging, but using a fixed $X = X_{\text{cal}}$, which relates all pixels in a "calibration slice" to the probe markers and hence to the room coordinate system. Known geometry of the three-dimensional probe can then be used to relate this calibration to the slices with other X values.

[0034] Calibration may also be achieved by temporarily affixing the three-dimensional probe to a phantom having embedded geometrical features. In such cases, a CT scan of the probe and phantom assembly is acquired, and then a three-dimensional sweep is acquired with the probe still fixed relative to the phantom. The 3DUS images are aligned relative to the CT scan using software that allows rotations and translations of the images such that the geometrical features visible in the 3DUS images match those as seen on CT. In some cases, segmented features extracted from the CT may be used instead of the CT pixel values themselves. The markers affixed to the probe handle are also visible on CT, and thus a relationship between the 3DUS pixels and the markers can be quantified, thus allowing each 3DUS pixel to be known relative to the markers. The pixels can then be referred back to the room coordinate system using known techniques used in the art for freehand 3DUS imaging.
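The chain from an ultrasound pixel to room coordinates can be sketched as two successive transforms: slice to probe, then probe to room. The geometry below is assumed purely for illustration (a rotational sweep about the probe's lateral axis, isotropic pixel spacing, and a probe pose supplied as a rotation matrix and translation by the tracking system); the function names are hypothetical.

```python
import math


def pixel_to_probe(u, v, spacing_mm, sweep_angle_deg):
    """Map pixel (u, v) of the slice at sweep angle X into probe coordinates (mm)."""
    lateral = u * spacing_mm   # along the assumed sweep axis (probe x)
    depth = v * spacing_mm     # distance from the transducer face
    a = math.radians(sweep_angle_deg)
    # Assumed geometry: the slice plane is rotated about the probe x-axis by X.
    return (lateral, -depth * math.sin(a), depth * math.cos(a))


def probe_to_room(point, rotation, translation):
    """Apply the tracked probe pose (3x3 rotation, translation) to a point."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )


identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
p0 = pixel_to_probe(10, 100, 0.5, 0.0)          # slice at X = 0
room = probe_to_room(p0, identity, (100.0, 200.0, 300.0))
p90 = pixel_to_probe(10, 100, 0.5, 90.0)        # same pixel, slice at X = 90 degrees
print(room, p90)
```

In practice the pose would come from the marker tracking described above, and the slice-to-probe geometry from the calibration rather than from an idealized rotation.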

[0035] For intrafractional tracking of a structure or anatomical feature, the probe is placed on the patient 115 prior to treatment such that the target 120 is within the field of view of the probe. The technique may be used, for example, for transperineal imaging of the prostate, or imaging of a breast tumor. A full three-dimensional image of the target structure 120 and its surrounding anatomy is acquired by continuously varying X, during which the ultrasound images are acquired at a given frame-rate f. The frame-rate is primarily limited by ultrasound physics such as the time needed to send and receive a sound wave, but also may be limited by hardware and computer processing constraints. A typical frame-rate is on the order of 20 Hz. As described above, the pixels in each frame at a known X can be attributed to certain coordinates in the room coordinate system, and therefore the two-dimensional slices can be used to form a "reconstructed" 3DUS volume in reference to the room coordinate system.

[0036] Prior to radiotherapy, the patient is typically placed on the treatment table according to skin markings. Correction for interfractional motion can then be performed by imaging of the target or a proximal feature and adjusting the patient's position relative to the room coordinate system either by moving the patient, the couch, or both. This corrects for daily setup errors as well as changes in the anatomy since the treatment planning phase, and can be done with any number of known IGRT techniques. In some cases, this process may be accomplished by acquiring a first three-dimensional sweep of the target structure with the mechanized probe. Typically, the patient couch is moved to correct for initial target misalignments, although other strategies can be used such as modifying the treatment plan. However, this initial interfractional correction does not account for motion during the treatment itself (intrafractional motion), as addressed below.

[0037] After initial patient setup, successive temporally-displaced three-dimensional sweeps of the target structure, or more generally of anatomical features related to or near the target structure or other area of interest, can be acquired using the mechanized probe. Displacement of the feature or features in each successive image relative to previous images can then be determined. In one method, a difference in the grayscale between the images is quantified, or, in other cases, a segmentation algorithm is used to recontour the features in each image and the displacement between successive segmentations is determined. One or more treatment parameters may then be modified as the feature changes location or form. These modifications can be, but are not limited to: warning the operator that the feature has moved outside a given tolerance and instructing her to halt treatment and reposition the patient; automatically halting the treatment beam by synchronizing with the linear accelerator if the feature moves past a given tolerance; correcting for the displacement by automatically adjusting the couch, and then turning on the beam again; iteratively adjusting the beam (for example, by moving the couch, the beam, or both) as the linear accelerator is turned off and on; and/or continuously changing the beam shapes or alignment in synchrony with newly updated feature positions. In some cases, no modification is instituted if the feature has not changed or the changes are within allowable tolerances.
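
The segmentation-based variant described above can be sketched as follows. This is a minimal illustration that assumes each segmentation is available as a list of voxel indices and that displacement is measured between centroids; the function names, the voxel spacing, and the tolerance value are assumptions, not details from the application.

```python
import math

def centroid(voxels):
    """Mean voxel index of a segmented feature given as (i, j, k) tuples."""
    n = len(voxels)
    return tuple(sum(v[axis] for v in voxels) / n for axis in range(3))

def segmentation_displacement_mm(prev_voxels, curr_voxels, spacing_mm):
    """Centroid shift between two successive segmentations, in millimetres."""
    c_prev, c_curr = centroid(prev_voxels), centroid(curr_voxels)
    return tuple((b - a) * s for a, b, s in zip(c_prev, c_curr, spacing_mm))

def out_of_tolerance(displacement_mm, tolerance_mm):
    """True when the displacement magnitude exceeds the allowed tolerance."""
    return math.hypot(*displacement_mm) > tolerance_mm

# Toy example with two voxels per segmentation and 0.5 x 0.5 x 1.0 mm voxels.
shift = segmentation_displacement_mm(
    [(0, 0, 0), (2, 0, 0)], [(1, 1, 0), (3, 1, 0)], (0.5, 0.5, 1.0)
)
halt_needed = out_of_tolerance(shift, tolerance_mm=2.0)
```

Whichever treatment modification is chosen (warning the operator, halting the beam, moving the couch) would be driven by a check of this kind.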

[0038] Although successive acquisition of three-dimensional images may be useful, the images are not truly real-time because of the time delay inherent in the "sweep" process. More specifically, the sweeping technique includes varying X during the sweep to acquire enough frames for reconstruction without gaps between the frames, which is limited by the frame-rate of the ultrasound (which itself is limited by ultrasound physics), creating a full three-dimensional reconstruction of the two-dimensional slices into a full three-dimensional ultrasound volume, and calculation of a representation of the feature from the images.
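
To give a sense of the delay inherent in a sweep, the following sketch estimates the acquisition time from the frame-rate alone. The 20 Hz frame-rate is the typical value mentioned above; the sweep range and slice spacing are hypothetical values chosen only for illustration, and reconstruction and feature-calculation time are not included.

```python
import math

def sweep_time_s(sweep_range, slice_spacing, frame_rate_hz):
    """Return (number of slices, acquisition time in seconds) for one sweep.

    sweep_range and slice_spacing share the unit of X (degrees or mm).
    """
    # One slice at each end of the range plus one per spacing interval.
    n_slices = math.ceil(sweep_range / slice_spacing) + 1
    return n_slices, n_slices / frame_rate_hz

# Hypothetical 60-degree sweep with a slice every 0.5 degree at 20 Hz.
n_slices, seconds = sweep_time_s(60.0, 0.5, 20.0)
```

Under these assumed values a single gap-free sweep takes roughly six seconds of acquisition, which is why the strategies below avoid a full sweep on every update.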

[0039] Strategy 1: Hybrid three-dimensional and two-dimensional temporal tracking.

[0040] One approach to using ultrasound for real-time treatment monitoring uses targeted subsets of three-dimensional ultrasound images ("TUS"), and is illustrated in FIG. 2. In step 200, a full three-dimensional sweep of the patient's anatomy, including the feature to be tracked, is acquired by continuously (or in many small discrete steps) varying X to acquire a full set of two-dimensional slices. The two-dimensional slices are then reconstructed in the room coordinate system, using each tagged X-position of the slices as well as the tracking camera information and calibration information, to form a 3DUS image.

[0041] In step 205, the three-dimensional feature is located in the 3DUS image. This feature is referred to herein as the three-dimensional feature, as it is determined from a three-dimensional image, as opposed to a feature in a two-dimensional slice image, which is referred to as a two-dimensional feature. The location can be determined manually, semi-automatically, or fully automatically. For example, a three-dimensional pattern recognition algorithm may be used, or in the case of imaging a prostate, the user can place one or more "hint points" (e.g., one point in the center or 4-8 points on the prostate edges) to initiate a segmentation algorithm which then determines the full prostate surface in three dimensions. Alternatively, a contour from a planning session can be superimposed onto the three-dimensional image as an initial guess, and potentially warped to better fit the edges in the current image.

[0042] In step 210, the treatment is modified to account for the current position of the feature as found in step 205. This can be accomplished, for example, by moving the couch to align the feature (either manually or automatically) if the feature does not significantly change volume or shape. The beam may be temporarily stopped in some cases to allow for the couch motion. Other strategies may include completely recalculating the treatment plan, or re-shaping the beam apertures to better target the feature.

[0043] In step 215, the X-position of the motorized probe is moved to a fixed position such that the two-dimensional ultrasound slice is optimally aimed at the feature. For example, if the feature is an organ such as the prostate or a breast lumpectomy cavity, which has been segmented, the beam can be aimed at the center of the structure. The optimal slice can alternatively be selected based on feature discernibility statistics extracted from the three-dimensional image at step 205. In step 220, a two-dimensional ultrasound slice is acquired at this fixed X-position, which is targeted at the feature, and in step 225 the two-dimensional feature is located in this ultrasound slice. In step 230, if size, shape and/or locational characteristics of the feature have not changed since step 205, another two-dimensional acquisition and feature location is executed (step 235). The process is then repeated until changes in the two-dimensional feature are identified.

[0044] A change may include, for example, that the feature has moved outside of the two-dimensional plane, which would result in a significant change in the grayscale values in the region of interest surrounding the feature. The change may also be due to movement of the feature within the two-dimensional plane by an amount greater than a pre-determined threshold, or that the feature has changed shape by more than a predetermined threshold. For prostate imaging, the two-dimensional plane is typically aligned with the sagittal plane, which can detect anterior/posterior and superior/inferior motions, which are the most common, with left-to-right motions being much less common. An acceptable threshold may be 2 mm, meaning so long as the prostate center moves by less than 2 mm, step 235 is continued. If the displacement is greater than 2 mm (or some other threshold), the process moves to step 240. Another reason to transition to step 240 is if the two-dimensional prostate area changes significantly from one frame to the next, which implies that the prostate has moved out-of-plane, either to the right or left. In some applications, the location, alignment and/or orientation of the probe may be altered by a robotic arm into which the probe is placed.

[0045] At step 240, a new full 3DUS sweep is initiated, and the process is repeated. The entire flowchart loop is continued until the treatment is completed. Using this methodology, three-dimensional acquisition is triggered if motion is detected based on two-dimensional image acquisitions, which, due to the lower processing demands, allows for real-time monitoring. As such, a full three-dimensional adaptation of the treatment is triggered only if it appears that the feature has moved out of tolerance. In some embodiments, step 240 is initiated not only if the feature has likely moved out of tolerance, but also at regular temporal intervals (e.g., every fifteen seconds) as an extra check.
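
The decision to leave the two-dimensional loop and trigger a new full sweep (step 240) can be sketched as a single predicate. The 2 mm displacement threshold and the fifteen-second interval are the example values given above; the 20% area-change threshold, the class, and the function name are hypothetical.

```python
import math
from dataclasses import dataclass

@dataclass
class Feature2D:
    center_mm: tuple   # in-plane position of the feature center
    area_mm2: float    # area of the two-dimensional feature

def needs_full_sweep(reference, current, elapsed_s,
                     shift_tol_mm=2.0, area_tol=0.2, recheck_s=15.0):
    """Return True when a new full 3DUS sweep should be initiated."""
    in_plane_shift = math.dist(reference.center_mm, current.center_mm)
    # A large area change is taken as a sign of out-of-plane motion.
    area_change = abs(current.area_mm2 - reference.area_mm2) / reference.area_mm2
    return (in_plane_shift > shift_tol_mm
            or area_change > area_tol
            or elapsed_s >= recheck_s)

reference = Feature2D(center_mm=(0.0, 0.0), area_mm2=1000.0)
small_move = Feature2D(center_mm=(1.0, 1.0), area_mm2=1000.0)
trigger = needs_full_sweep(reference, small_move, elapsed_s=3.0)
```

As long as the predicate is False, only the fixed-position two-dimensional acquisition (step 235) is repeated, which keeps the per-update cost low.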

[0046] This approach may be used in applications when movement has a high likelihood to be in a particular two-dimensional plane chosen by the orientation of the motorized probe. In some variations, when this likelihood is high, modification of the treatment can be added as a step between 225 and 230 such that the two-dimensional tracking info is used to identify treatment modifications in real-time.

[0047] Strategy 2: Hybrid three-dimensional and multiple two-dimensional plane temporal tracking.

[0048] In some applications in which the motion is not likely to be primarily constrained to a particular two-dimensional plane, a hybrid of three-dimensional and multiple two-dimensional plane temporal tracking techniques may be used. Referring to FIG. 3, steps 300, 310 and 315 are the same as 200, 210 and 215 of FIG. 2, respectively. In step 320, a full sweep is acquired by the motorized probe. In step 325, instead of reconstructing the entire three-dimensional image set, only the pixels in two or more tracking planes, preferably being orthogonal or close to orthogonal to each other, are reconstructed. An example is shown in FIG. 4, showing tracking planes 200 and 205 being used for reconstruction.

[0049] The planes are selected so as to intersect with the feature 120. In the case of an organ such as the prostate, the planes preferably intersect through the center of the organ, which can be found from computing the centroid of the segmented structure. As used herein, "reconstructed ultrasound plane" refers to a reconstruction of a voxel set attached to a single plane, as opposed to a complete three-dimensional reconstruction that reconstructs the entire 3D voxel set. While limiting the information available to only certain planes, the computational requirements to produce only the reconstructed ultrasound plane(s) are significantly lower. As such, step 325 saves time and memory space, since it is much quicker and more efficient to reconstruct pixels in planes than an entire voxel space, as well as locate changes in features, thus reducing temporal intervals between successive localizations. In some cases, one of the tracking planes is not a reconstructed plane, but consists of the pixels from an actual two-dimensional ultrasound image from a fixed position (at one particular X location) of the motorized probe, as described above in reference to FIG. 2.
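
The saving from reconstructing only tracking planes can be illustrated with simple counting. The grid size below is a hypothetical value, and the sketch assumes two orthogonal planes that each span the full extent of the voxel grid.

```python
def reconstruction_load(nx, ny, nz):
    """Compare element counts: full voxel grid versus two orthogonal planes."""
    full_volume = nx * ny * nz
    # One plane spanning (ny, nz) and an orthogonal one spanning (nx, nz).
    two_planes = ny * nz + nx * nz
    return full_volume, two_planes, full_volume / two_planes

# Hypothetical 256 x 256 x 256 reconstruction grid.
full_volume, two_planes, ratio = reconstruction_load(256, 256, 256)
```

For this assumed grid the two planes contain 131,072 pixels against 16,777,216 voxels for the full volume, a factor of 128 fewer elements to reconstruct.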

[0050] In other applications, none of the tracking planes are reconstructed, but consist of pixels from multiple two-dimensional ultrasound images obtained from different positions of the motorized probe along the X plane. For example, as shown in FIG. 3A, three plane positions can be selected, at positions X_1 (in the center of the feature), X_2 (to the left of center but still imaging part of the feature) and X_3 (to the right of center but still imaging part of the feature). The probe can then obtain images at each of these positions in rapid succession in any convenient order without need for reconstruction. The X positions relative to the center of the feature can be strategically determined based, for example, on knowledge of the three-dimensional surface of the feature.
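
One simple way to pick three such positions from the known extent of the feature along X is sketched below. The function name and the choice of placing the off-center planes halfway between the center and each edge are assumptions made for illustration; the sketch also assumes that "left" corresponds to smaller X.

```python
def tracking_positions(x_min, x_max, offset_fraction=0.5):
    """Return (center, left, right) probe positions within the feature's X extent.

    x_min and x_max bound the feature along X, for example taken from its
    three-dimensional surface. An offset_fraction below 1 keeps both
    off-center planes inside the feature.
    """
    center = (x_min + x_max) / 2.0
    half_width = (x_max - x_min) / 2.0
    return (center,
            center - offset_fraction * half_width,
            center + offset_fraction * half_width)

# Feature spanning X from -10 to +10 (degrees or mm, depending on the sweep type).
x_center, x_left, x_right = tracking_positions(-10.0, 10.0)
```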

[0051] Referring back to FIG. 3, in step 330, the three-dimensional feature is located in the tracking planes, creating a three-dimensional surface that, when intersected by a plane, produces a two-dimensional curve. In one method, the shape and volume of the three-dimensional feature, as found in the first iteration of step 310, is assumed to remain constant. Determining where the two-dimensional curves generated by cutting through the tracking planes best fit the grayscale values yields the desired three-dimensional location of the surface, and thus displacement of the feature relative to its position at the previous point in time. "Best fit" can mean, for example, maximization of the sum of image gradients along the curves.
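
The "best fit" criterion can be illustrated in a reduced form: a single plane, a rigid contour, and an exhaustive search over integer pixel shifts that maximizes the sum of gradient magnitudes along the contour. This is only a sketch under those simplifying assumptions; the function names, the central-difference gradient, and the synthetic image are hypothetical, and the method described above fits a three-dimensional surface across several planes rather than one curve.

```python
import math

def gradient_magnitude(img, r, c):
    """Central-difference gradient magnitude, clamped at the image border."""
    rows, cols = len(img), len(img[0])
    gy = (img[min(r + 1, rows - 1)][c] - img[max(r - 1, 0)][c]) / 2.0
    gx = (img[r][min(c + 1, cols - 1)] - img[r][max(c - 1, 0)]) / 2.0
    return math.hypot(gx, gy)

def best_fit_shift(img, contour, max_shift):
    """Find the (row, col) shift maximizing the gradient sum along the contour."""
    rows, cols = len(img), len(img[0])
    best, best_score = None, -1.0
    for dr in range(-max_shift, max_shift + 1):
        for dc in range(-max_shift, max_shift + 1):
            pts = [(r + dr, c + dc) for r, c in contour]
            # Skip shifts that push any contour point outside the image.
            if any(not (0 <= r < rows and 0 <= c < cols) for r, c in pts):
                continue
            score = sum(gradient_magnitude(img, r, c) for r, c in pts)
            if score > best_score:
                best, best_score = (dr, dc), score
    return best, best_score

def square_outline(top, left, size):
    """Hypothetical helper: pixel coordinates on the outline of a square."""
    last = size - 1
    return [(top + r, left + c) for r in range(size) for c in range(size)
            if r in (0, last) or c in (0, last)]

# Synthetic plane: a bright 4x4 block whose top-left corner is at (4, 5).
image = [[100 if 4 <= r <= 7 and 5 <= c <= 8 else 0 for c in range(12)]
         for r in range(12)]
reference_contour = square_outline(top=2, left=2, size=4)
shift, score = best_fit_shift(image, reference_contour, max_shift=3)
# shift == (2, 3): the contour moves from (2, 2) onto the block edge at (4, 5)
```

The shift found in each plane would then be combined across the tracking planes to estimate the three-dimensional displacement of the feature.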

[0052] Finding the location of the three-dimensional feature from the tracking planes assumes that at least part of the feature is visible in at least two planes, and increasing the number of planes (e.g., from two to three, or even higher) increases the likelihood that the feature is visible. In some cases, the feature may move to a position where it is no longer visible, as determined at step 335. This determination can be made based on a failure of the process at step 330, for example. If, however, the feature remains visible in one or more of the planes, the treatment is modified to account for the new position (step 340) and acquisition of tracking plane data continues (step 345) to make further adjustments. The position of the tracking planes in 325 may be re-centered to account for the displaced feature found in 330. In the case where the feature is no longer in the planes, the full 3DUS volume is reconstructed (step 350). This allows for re-centering of the tracking planes for further iterations, and to ensure that the tracking planes intersect the feature being tracked. The process illustrated in FIG. 3 ends once the treatment is complete (step 355). In some variations, path 350 will be taken even if the feature is still intersected by the tracking planes, at fixed time intervals in order to gather full three-dimensional data at various points in time.

[0053] Using this approach, the full three-dimensional displacement can be calculated as long as the tracking planes intersect with the feature, thus reducing the number of times the full three-dimensional image needs to be reconstructed. In contrast to the hybrid three-dimensional and two-dimensional temporal tracking approach, the use of two-dimensional planes allows much faster monitoring of the feature because it does not necessitate full sweeps on the structure, even if a full three-dimensional image is reconstructed any time there is a significant change in the feature.

[0054] Strategy 3: Hybrid three-dimensional and low-resolution three-dimensional temporal tracking.

[0055] In another approach, a series of alternating high-resolution (full three-dimensional) and low-resolution ("targeted") ultrasound sweeps are used to track the volume, followed by full volume reconstruction. Reducing the resolution allows for faster sweeps, but due to the limited frame-rate of the ultrasound, fewer two-dimensional slices are acquired for the reconstruction. For example, the high-resolution three-dimensional images may be acquired at a periodicity of every thirty seconds, whereas the lower-resolution images are obtained every 0.1-3 seconds. A new high-resolution image is captured for every period, unless the comparison between the high-resolution and low-resolution images indicates the violation of a displacement threshold, in which case a new high-resolution image is obtained sooner than it would have been otherwise. In some cases, the displacement may be sufficient to halt treatment altogether and adjust the patient, the treatment device or both.
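
The alternation between low-resolution and high-resolution sweeps can be sketched as a small scheduling rule. The thirty-second period is the example value given above; the 2 mm displacement threshold and the function name are assumptions made for illustration.

```python
def next_acquisition(time_since_high_res_s, displacement_mm,
                     high_res_period_s=30.0, displacement_tol_mm=2.0):
    """Choose the resolution of the next sweep."""
    if displacement_mm > displacement_tol_mm:
        # Threshold violated: take the high-resolution image early.
        return "high_resolution"
    if time_since_high_res_s >= high_res_period_s:
        # Regular period has elapsed.
        return "high_resolution"
    return "low_resolution"

# Ten seconds after the last high-resolution sweep, feature moved 0.5 mm.
choice = next_acquisition(time_since_high_res_s=10.0, displacement_mm=0.5)
```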

[0056] Strategy 4: Hybrid three-dimensional and limited ROI three-dimensional temporal tracking.

[0057] FIG. 5 illustrates an alternative approach. Steps 500-515 are the same as steps 200-215 of FIG. 2, respectively. In step 520, the region of interest (ROI) of the three-dimensional volume is reduced such that it encompasses only the feature plus a limited amount of surrounding voxels. This is accomplished by limiting the sector size of the two-dimensional ultrasound frames throughout the sweep, in some cases asymmetrically, as well as the depth of penetration. Referring to FIG. 6 as an example, the full sector size and depth, leading to image extent 600, are reduced to form the image extent 605 which encompasses the feature 610 with a small amount of padding. Reducing sector size and/or depth increases the frame-rate, which allows for faster sweeping motion while still acquiring sufficient slices for high resolution three-dimensional image reconstruction. The range of X values for the sweeping motion can also be limited, which increases the three-dimensional image acquisition rate as well. Many more temporal three-dimensional images can be acquired, but due to the smaller region, the risk that the feature moves outside of the limited ROI increases.

[0058] Returning to FIG. 5, the limited three-dimensional ROI is reconstructed (step 525), and due to the smaller number of voxels, the speed of the reconstruction process is increased and the memory requirements are reduced as compared to a full three-dimensional reconstruction. In step 530, the location of the three-dimensional feature within the limited ROI is determined. In step 535, if the feature has remained in the limited ROI, step 545 is executed, continuing the tracking of the feature within the limited ROI. The ROI can be re-modified in step 520 to account for any new positioning of the feature. If the feature is no longer within the limited ROI, or getting too close to a boundary, then step 550 allows for a full ROI reconstruction prior to limiting the ROI again for further tracking. In some cases, full ROI sweeps are also acquired at various time intervals. The loop ends when treatment is complete, as represented by step 555.
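
The limited-ROI bookkeeping of steps 520 and 535 can be sketched in voxel-index terms. This is a simplified illustration that treats the ROI as an axis-aligned box around the segmented feature; the function names, the padding, and the boundary margin are hypothetical, and an actual system would express the ROI through sector size, depth, and X range.

```python
def padded_roi(voxels, padding, volume_shape):
    """Bounding box of the feature plus padding, clamped to the full volume."""
    lo = tuple(max(min(v[a] for v in voxels) - padding, 0) for a in range(3))
    hi = tuple(min(max(v[a] for v in voxels) + padding, volume_shape[a] - 1)
               for a in range(3))
    return lo, hi

def needs_full_reconstruction(voxels, roi, margin):
    """True if any feature voxel is outside the ROI or within margin of its edge."""
    lo, hi = roi
    return any(v[a] - lo[a] < margin or hi[a] - v[a] < margin
               for v in voxels for a in range(3))

feature = [(10, 10, 10), (12, 14, 11)]
roi = padded_roi(feature, padding=3, volume_shape=(64, 64, 64))

# The same feature after moving 3 voxels along the first axis.
moved = [(13, 10, 10), (15, 14, 11)]
fallback = needs_full_reconstruction(moved, roi, margin=2)
```

When the check returns False, the ROI can simply be re-centered on the new feature position for the next limited sweep.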

[0059] Strategy 5: Hybrid three-dimensional and multiple two-dimensional plane temporal tracking with reduced sector size.

[0060] In another approach, two tracking planes are used: the first plane is a pure two-dimensional ultrasound at a fixed X position of the motorized probe as described above (the X position can be adjusted to include the tracked feature as its position is updated), and the second plane is a reconstructed plane which is orthogonal or near-orthogonal to the first plane. The ultrasound data in the second plane is acquired with a very small sector size, ideally approaching zero, so that the sweep can be performed quickly. In some variations, the sector size is very small during most of the sweep, is rapidly increased as the sweep crosses through X of the pure ultrasound plane, then reduced quickly again to complete the acquisition of the reconstructed plane.

[0061] Locating an anatomical feature according to one or more of the methods described above can be performed by drawing a structure (either manually, semi-automatically, or automatically) in a first image. This first image can, for example, be an image from a previous planning session, a previous treatment session, or an image obtained for a first interfractional motion correction prior to tracking. In most applications of interest, the structure being tracked does not change shape while the patient is on the table. Thus, the original structure can be moved from image to image, keeping its shape intact, so that it best-fits each image. The amount the structure is moved within an image provides a distance the feature has travelled between each successive image. If image acquisition is fast enough, motion between successive images is small and easier to track. This applies to both two-dimensional contours in planes as well as three-dimensional contours.

[0062] Although the specific applications above utilize a mechanized three-dimensional probe, other types of three-dimensional probes can be used as well. For example, matrix probes, which consist of a two-dimensional surface of piezoelectric elements, can acquire full three-dimensional ultrasound datasets. Bi-planar probes, which can simultaneously acquire two perpendicular slices of two-dimensional ultrasound data, can also be used in some embodiments.

[0063] Referring to FIG. 7, a system 700 for performing the techniques described above includes a register 705 or other volatile or non-volatile storage device that receives image data from the ultrasound imaging probe(s) 710 and/or 715 via a cord or wire, or in some embodiments via wireless communications. The system also includes a processor 720 that, based on the image data, uses the techniques described above to create three-dimensional, time-based images of the region of interest and determine if the feature being treated has moved and/or morphed such that the displacement or changes in shape or size require adjustments to image parameters used to capture subsequent images. The processor calculates any necessary adjustments and, in some cases, provides updated imaging parameters to a controller 730. The controller 730 directs the probe(s) 710 and/or 715 to implement the adjustments either mechanically (e.g., by changing the physical location of the probe within its housing or implementing positional adjustments directly or using a brace, arm or other support device) or electronically (e.g., by altering the power delivered to the probes and/or frequency of the ultrasound energy). As such, the feature remains in the region being imaged throughout the entire imaging and treatment process.

[0064] In some embodiments, a display 735 and an associated user interface may also be included, thus allowing a user to view and manipulate the images and/or treatment parameters. The display 735 and user interface can be provided as one integral unit (as shown) or separate units and may also include one or more user input devices such as a keyboard and/or mouse. The display can be passive (e.g., a "dumb" CRT or LCD screen) or in some cases interactive, facilitating direct user interaction with the images and models through touch-screens (using, for example, the physician's finger as an input device) and/or various other input devices such as a stylus, light pen, or pointer. The display 735 and input devices may be in a location different from that of the register 705 and/or processor 720, thus allowing users to receive, view, and manipulate images in remote locations using, for example, wireless devices, handheld personal data assistants, and notebook computers, among others.

[0065] In various embodiments, the register and/or processor may be provided as either software, hardware, or some combination thereof. For example, the system may be implemented on one or more server-class computers, such as a PC having a CPU board containing one or more processors such as the Pentium or Celeron family of processors manufactured by Intel Corporation of Santa Clara, Calif., the 680x0 and POWER PC family of processors manufactured by Motorola Corporation of Schaumburg, Ill., and/or the ATHLON line of processors manufactured by Advanced Micro Devices, Inc., of Sunnyvale, Calif. The processor may also include a main memory unit for storing programs and/or data relating to the methods described above. The memory may include random access memory (RAM), read only memory (ROM), and/or FLASH memory residing on commonly available hardware such as one or more application specific integrated circuits (ASIC), field programmable gate arrays (FPGA), electrically erasable programmable read-only memories (EEPROM), programmable read-only memories (PROM), programmable logic devices (PLD), or read-only memory devices (ROM). In some embodiments, the programs may be provided using external RAM and/or ROM such as optical disks, magnetic disks, as well as other commonly used storage devices.

[0066] For embodiments in which the invention is provided as a software program, the program may be written in any one of a number of high level languages such as FORTRAN, PASCAL, JAVA, C, C++, C#, LISP, PERL, BASIC or any suitable programming language. Additionally, the software can be implemented in an assembly language and/or machine language directed to the microprocessor resident on a target device.

[0067] It will therefore be seen that the foregoing represents an improved method and supporting system for tracking features over the course of a medical procedure. The terms and expressions employed herein are used as terms of description and not of limitation, and there is no intention, in the use of such terms and expressions, of excluding any equivalents of the features shown and described or portions thereof, but it is recognized that various modifications are possible within the scope of the invention claimed. Moreover, although the above-listed text and drawings contain titles and headings, it is to be understood that these titles and headings do not, and are not intended to, limit the present invention, but rather, they serve merely as titles and headings of convenience.

* * * * *
