Object Coding In A Host-side Processing Device

Sorenson, III; James Christopher

U.S. patent application number 16/578017 was filed with the patent office on 2019-09-20 and published on 2020-01-09 for object coding in a host-side processing device. This patent application is currently assigned to Amazon Technologies, Inc. The applicant listed for this patent is Amazon Technologies, Inc. Invention is credited to James Christopher Sorenson, III.

| Application Number | 20200012684 16/578017 |

| Document ID | / |

| Family ID | 67988797 |

| Filed Date | 2019-09-20 |


| United States Patent Application | 20200012684 |

| Kind Code | A1 |

| Sorenson, III; James Christopher | January 9, 2020 |

OBJECT CODING IN A HOST-SIDE PROCESSING DEVICE

Abstract

A host-side network processing device coupled between a storage system and a host machine to reconstruct a data object that has been encoded according to a sharding technique. Reconstructing the data object includes receiving, at the network processing device from the host machine, a request for the data object. The network processing device provides the request for the data object and an in-line network object reconstruction indicator to the storage system. The network processing device receives a location list from the storage system, where the location list includes location identifiers corresponding to respective shards of the requested data object. The network processing device requests at least a subset of the shards of the location list via a plurality of shard requests and reconstructs the data object from the subset. The network processing device provides the reconstructed data object to the host machine.

| Inventors: | Sorenson, III; James Christopher (Seattle, WA) |
|---|---|
| Applicant: | Amazon Technologies, Inc. |
| Assignee: | Amazon Technologies, Inc. (Seattle, WA) |
| Family ID: | 67988797 |
| Appl. No.: | 16/578017 |
| Filed: | September 20, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 14589916 | Jan 5, 2015 | 10423670 |
| 16578017 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 3/061 20130101; G06F 16/907 20190101; G06F 3/065 20130101; G06F 3/067 20130101; G06F 16/13 20190101; G06F 3/0617 20130101; G06F 16/196 20190101 |

| International Class: | G06F 16/907 20060101 G06F016/907; G06F 16/13 20060101 G06F016/13; G06F 16/188 20060101 G06F016/188 |

Claims

1.-23. (canceled)

24. A system, comprising: a host-side network interface configured to: receive a data object from a host machine; an object coding device configured to: encode the data object into a plurality of shards according to a sharding technique; determine a plurality of storage locations to store the plurality of shards; and a network-side network interface configured to: send respective ones of the plurality of shards to respective ones of the plurality of storage locations.

25. The system of claim 24, further comprising: a storage request processing device configured to: determine that the data object includes a request to store the data object; and request, from a storage system, a storage location list; and receive, from the storage system, the storage location list indicating the plurality of storage locations.

26. The system of claim 25, further comprising a credential management device configured to: in response to a determination that the data object includes the request, retrieve one or more credentials associated with the storage system; and encrypt the data object according to the one or more credentials.

27. The system of claim 24, wherein the host-side network interface is further configured to: receive a request to retrieve the data object, and wherein the object coding device is further configured to: receive at least a subset of the plurality of shards from the plurality of storage locations; and reconstruct the data object from the at least a subset of the plurality of shards.

28. The system of claim 27, wherein the object coding device is further configured to: receive, from a particular storage location of the plurality of storage locations, object reconstruction information, wherein the data object is reconstructed according to the object reconstruction information.

29. The system of claim 24, wherein to encode the data object into the plurality of shards, the object coding device is further configured to: determine an encoding matrix for the data object; apply the data object to the encoding matrix to generate an encoded data object; and divide the encoded data object into the plurality of shards.

30. The system of claim 24, wherein the object coding device is further configured to: generate a plurality of storage requests for the plurality of shards, wherein each of the plurality of storage requests indicates a different storage location of the plurality of storage locations and a different shard of the plurality of shards, wherein the respective ones of the plurality of shards are sent to the respective ones of the plurality of storage locations according to the plurality of storage requests.

31. A method, comprising: receiving, at an object coding device, a data object from a host machine; encoding, by the object coding device, the data object into a plurality of shards according to a sharding technique; determining, at the object coding device, a plurality of storage locations to store the plurality of shards; and sending, from the object coding device, respective ones of the plurality of shards to respective ones of the plurality of storage locations.

32. The method of claim 31, further comprising: determining that the data object includes a request to store the data object; and requesting, from a storage system, a storage location list; and receiving, from the storage system, the storage location list indicating the plurality of storage locations.

33. The method of claim 32, further comprising: in response to a determination that the data object includes the request, retrieving one or more credentials associated with the storage system; and encrypting the data object according to the one or more credentials.

34. The method of claim 31, further comprising: receiving a request to retrieve the data object; receiving at least a subset of the plurality of shards from the plurality of storage locations; and reconstructing the data object from the at least a subset of the plurality of shards.

35. The method of claim 34, further comprising: receiving, from a particular storage location of the plurality of storage locations, object reconstruction information, wherein the data object is reconstructed according to the object reconstruction information.

36. The method of claim 31, wherein encoding the data object into the plurality of shards comprises: determining an encoding matrix for the data object; applying the data object to the encoding matrix to generate an encoded data object; and dividing the encoded data object into the plurality of shards.

37. The method of claim 31, further comprising: generating a plurality of storage requests for the plurality of shards, wherein each of the plurality of storage requests indicates a different storage location of the plurality of storage locations and a different shard of the plurality of shards, wherein the respective ones of the plurality of shards are sent to the respective ones of the plurality of storage locations according to the plurality of storage requests.

38. One or more non-transitory, computer-readable storage media storing instructions that, when executed on or across one or more processors, cause the one or more processors to: encode a data object, received from a host machine, into a plurality of shards according to a sharding technique; determine a plurality of storage locations to store the plurality of shards; and send respective ones of the plurality of shards to respective ones of the plurality of storage locations.

39. The one or more non-transitory, computer-readable storage media of claim 38, further comprising instructions that cause the one or more processors to: determine that the data object includes a request to store the data object; and request, from a storage system, a storage location list; and receive, from the storage system, the storage location list indicating the plurality of storage locations.

40. The one or more non-transitory, computer-readable storage media of claim 39, further comprising instructions that cause the one or more processors to: in response to a determination that the data object includes the request, retrieve one or more credentials associated with the storage system; and encrypt the data object according to the one or more credentials.

41. The one or more non-transitory, computer-readable storage media of claim 38, wherein the data object is reconstructed according to object reconstruction information received from a particular storage location of the plurality of storage locations.

42. The one or more non-transitory, computer-readable storage media of claim 38, further comprising instructions that cause the one or more processors to: determine an encoding matrix for the data object; apply the data object to the encoding matrix to generate an encoded data object; and divide the encoded data object into the plurality of shards.

43. The one or more non-transitory, computer-readable storage media of claim 38, further comprising instructions that cause the one or more processors to: generate a plurality of storage requests for the plurality of shards, wherein each of the plurality of storage requests indicates a different storage location of the plurality of storage locations and a different shard of the plurality of shards, wherein the respective ones of the plurality of shards are sent to the respective ones of the plurality of storage locations according to the plurality of storage requests.

Description

[0001] This application is a continuation of U.S. patent application Ser. No. 14/589,916, filed Jan. 5, 2015, which is hereby incorporated by reference herein in its entirety.

BACKGROUND

[0002] Modern computing applications require storage of large amounts of data. Over time, the need to store and retrieve large amounts of data continues to increase. Other common concerns are the ability to store data durably and efficiently. Durability typically refers to the ability to recover data in the event of a failure. Data durability typically requires some form of redundancy in how the data is stored. For example, data may be mirrored to two different storage devices such that an exact replica copy of the data is stored on both storage devices. If one of the storage devices fails, the data can still be retrieved from the other storage device. Some systems desire even stronger levels of durability that involve more complex redundancy encoding schemes that allow the data to still be retrieved even after multiple device failures. Techniques to provide data durability typically incur some amount of overhead, both in terms of the amount of additional storage required and computational overhead, and thus are at odds with the desire to be able to store and retrieve large amounts of data efficiently.

[0003] Web-based applications, cloud computing, and other network and Internet based technologies are areas frequently requiring storage of large amounts of data with needs for durability and efficiency. The conventional Web model allows clients to access Web resources (e.g., applications, services and data) via an HTTP client program, such as a Web browser. A technology referred to as Web services has been developed to provide programmatic access to Web resources. Web services may be used to provide programmatic access to Web resources including technology platforms (e.g., applications and services) and data (e.g., product catalogs and other databases) hosted on Web-connected computers such as Web server systems via a Web service interface. Generally speaking, a Web service interface may be configured to provide a standard, cross-platform API (Application Programming Interface) for communication between a client requesting some service to be performed and the service provider. In some implementations, a Web service interface may be configured to support the exchange of documents or messages including information describing the service request and response to that request. Such documents, or messages, may be exchanged using standardized Web protocols, such as the Hypertext Transfer Protocol (HTTP), for example, and may be formatted in a platform-independent data format, such as eXtensible Markup Language (XML), for example.

[0004] One example of a service that is provided to clients via a Web service interface is a virtual computing service. The advent of virtualization technologies for commodity hardware has provided benefits with respect to managing large-scale computing resources for many clients with diverse needs, allowing various computing resources to be efficiently and securely shared by multiple clients. For example, a virtual computing service may allow a single physical computing machine to be shared among multiple users by providing each user with one or more virtual machines hosted by the single physical computing machine, with each such virtual machine being a software simulation acting as a distinct logical computing system that provides users with the illusion that they are the sole operators and administrators of a given hardware computing resource, while also providing application isolation and security among the various virtual machines. Furthermore, some virtualization technologies are capable of providing virtual resources that span two or more physical resources, such as a single virtual machine with multiple virtual processors that spans multiple distinct physical computing systems. With virtualization, the single physical computing device can create, maintain or delete virtual machines in a dynamic manner. In turn, users can request computer resources from a data center and be provided with varying numbers of virtual machine resources on an "as needed" basis or at least on an "as requested" basis. In some systems, virtualized computing resources can be used to implement virtual desktops.

[0005] Another example of a service that is provided to clients via a Web service interface is a data storage service. A typical data storage service (which may be referred to herein as an "object-redundant storage system") may receive requests to store data objects on behalf of storage service clients, and may store those data objects using redundancy in order to provide a high level of durability for the stored data. For example, such a data storage service may replicate the objects it stores across different storage nodes to increase the likelihood that object data will survive the failure of any given storage node. In such systems, until a certain minimum number of replicas (e.g., two or three) of an object have been successfully written, the write operation may not be considered to be completed. However, for a given object, the actual number of valid replicas (or instances) of that object might at some points in time be less than the target number, for a variety of reasons, and a replacement process may be invoked to correct the situation. For example, if a previously valid replica becomes inaccessible due to a failure of the device on which it was stored, the failed device may be replaced in the system, and another instance of the replica may be written to the replacement device. In some systems, each replica need not correspond to an exact copy of the object data. For example, in some object-redundant storage systems, an object may be divided into a number of portions or "shards" according to a redundant encoding scheme (such as a parity, error correction code, or other scheme), such that the object data may be recreated from fewer than all of the generated portions. Typically, object-redundant storage systems may also seek to improve performance characteristics, such as latency, throughput or availability.

BRIEF DESCRIPTION OF THE DRAWINGS

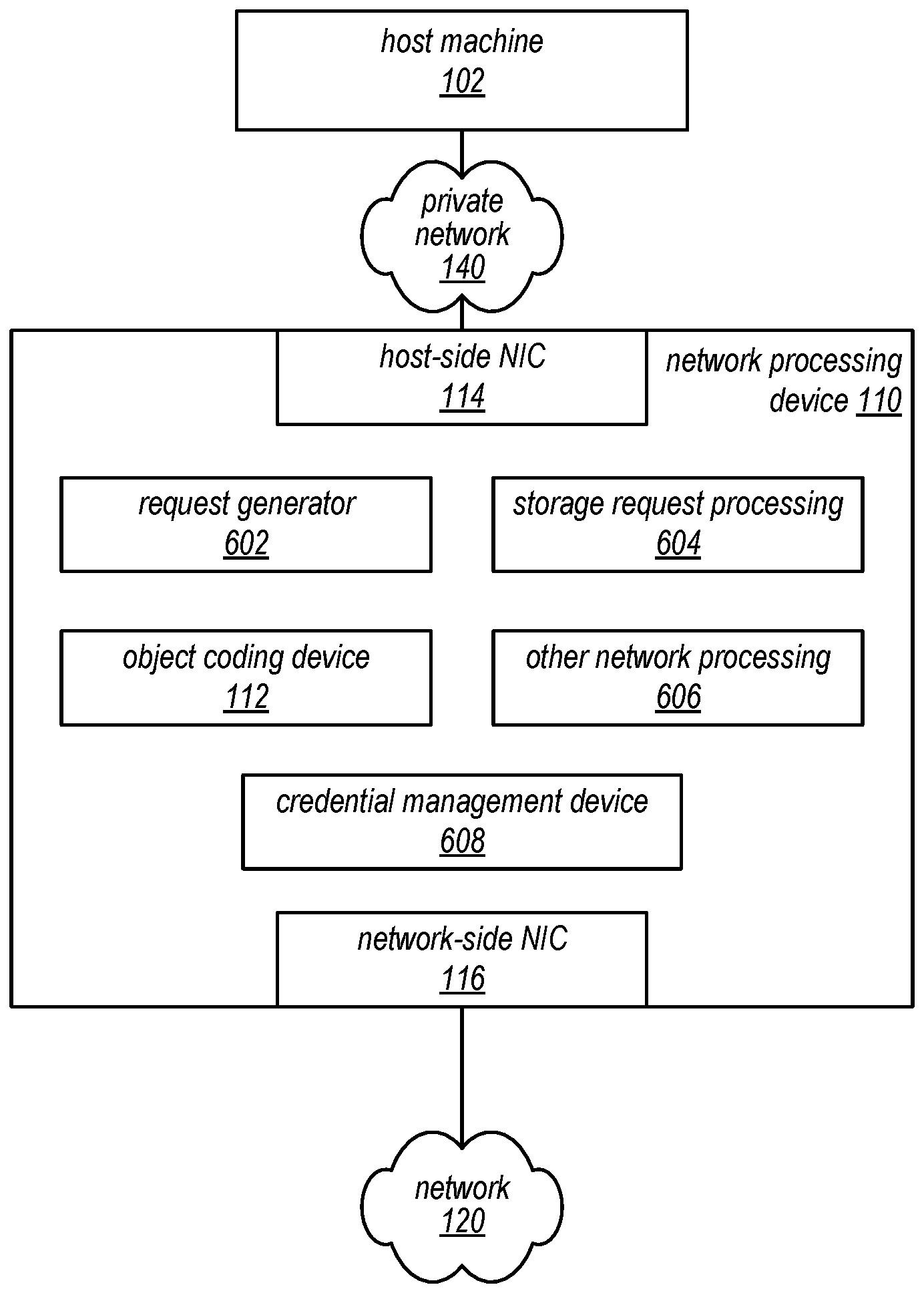

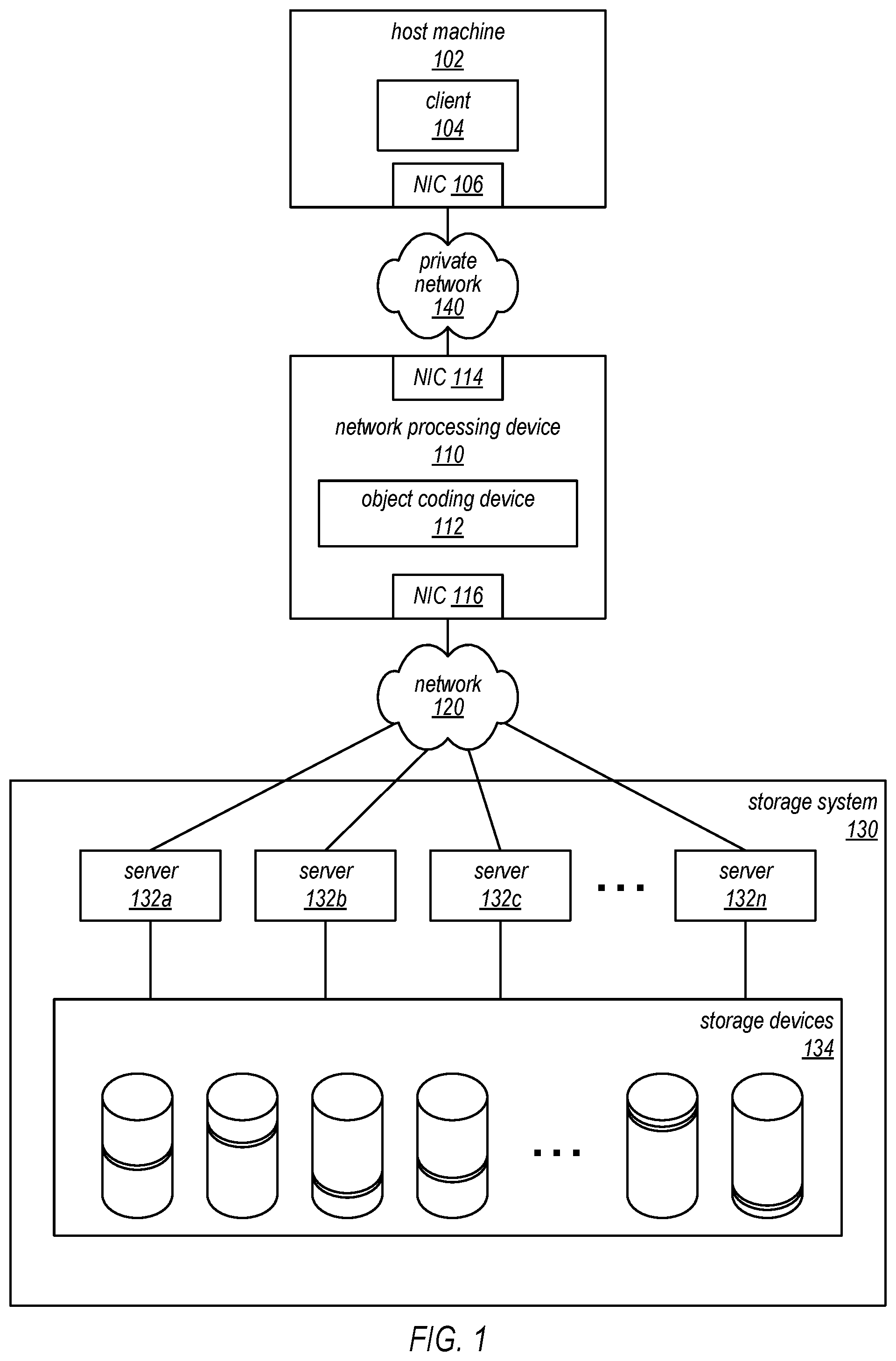

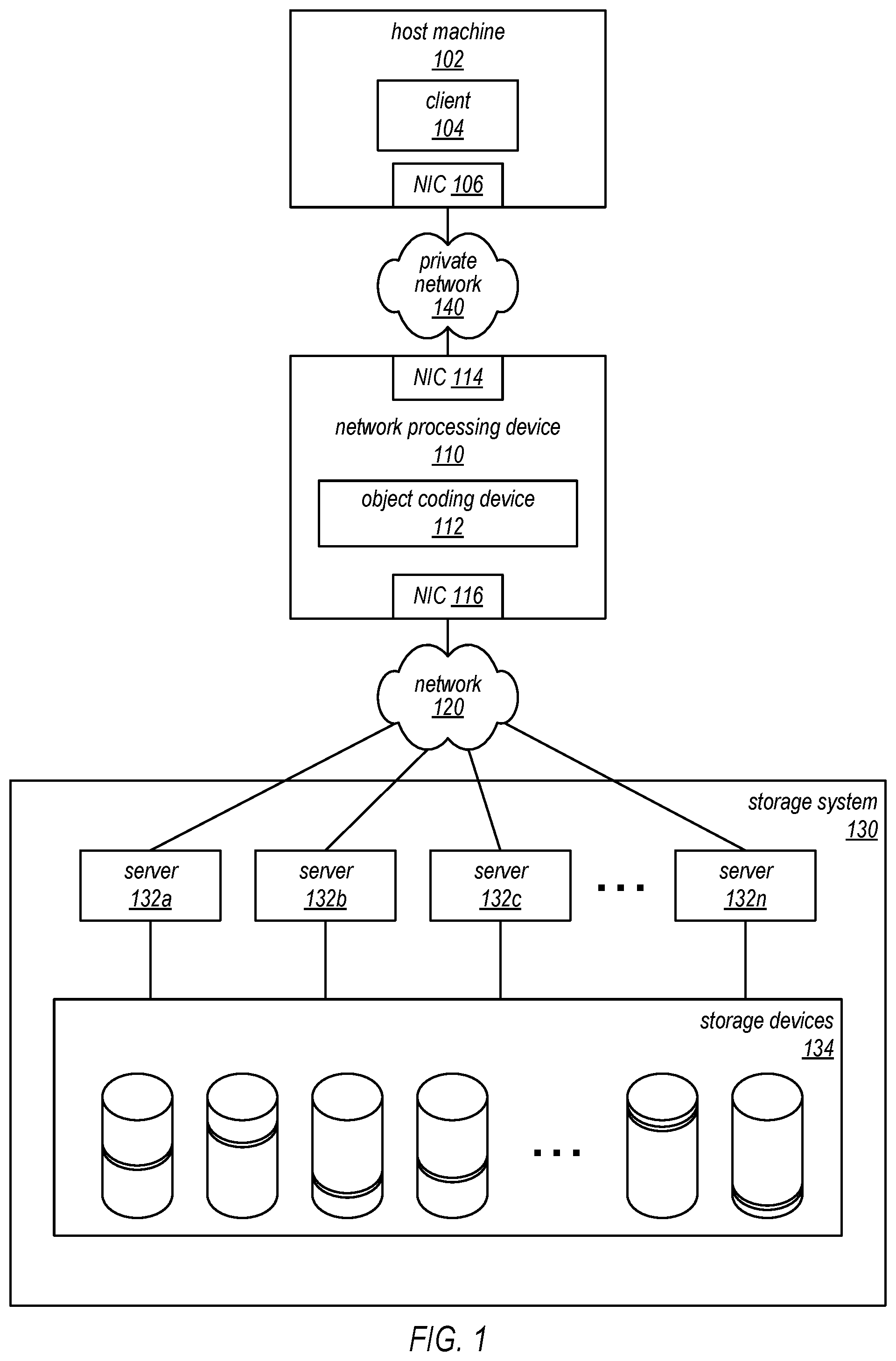

[0006] FIG. 1 is a high-level block diagram illustrating operations of an example system where a host-side network processing device can reconstruct a data object.

[0007] FIG. 2 is a block diagram illustrating an example of a reconstruction operation implemented by a host-side network processing device, according to at least some embodiments.

[0008] FIG. 3A is a block diagram illustrating an example data object request, according to some embodiments.

[0009] FIG. 3B is a block diagram illustrating an example data object response, according to some embodiments.

[0010] FIG. 4 is a block diagram illustrating a service system architecture that may be configured to perform a reconstruction operation, according to some embodiments.

[0011] FIG. 5 is a block diagram illustrating an example service system architecture, according to some embodiments, including a plurality of network localities.

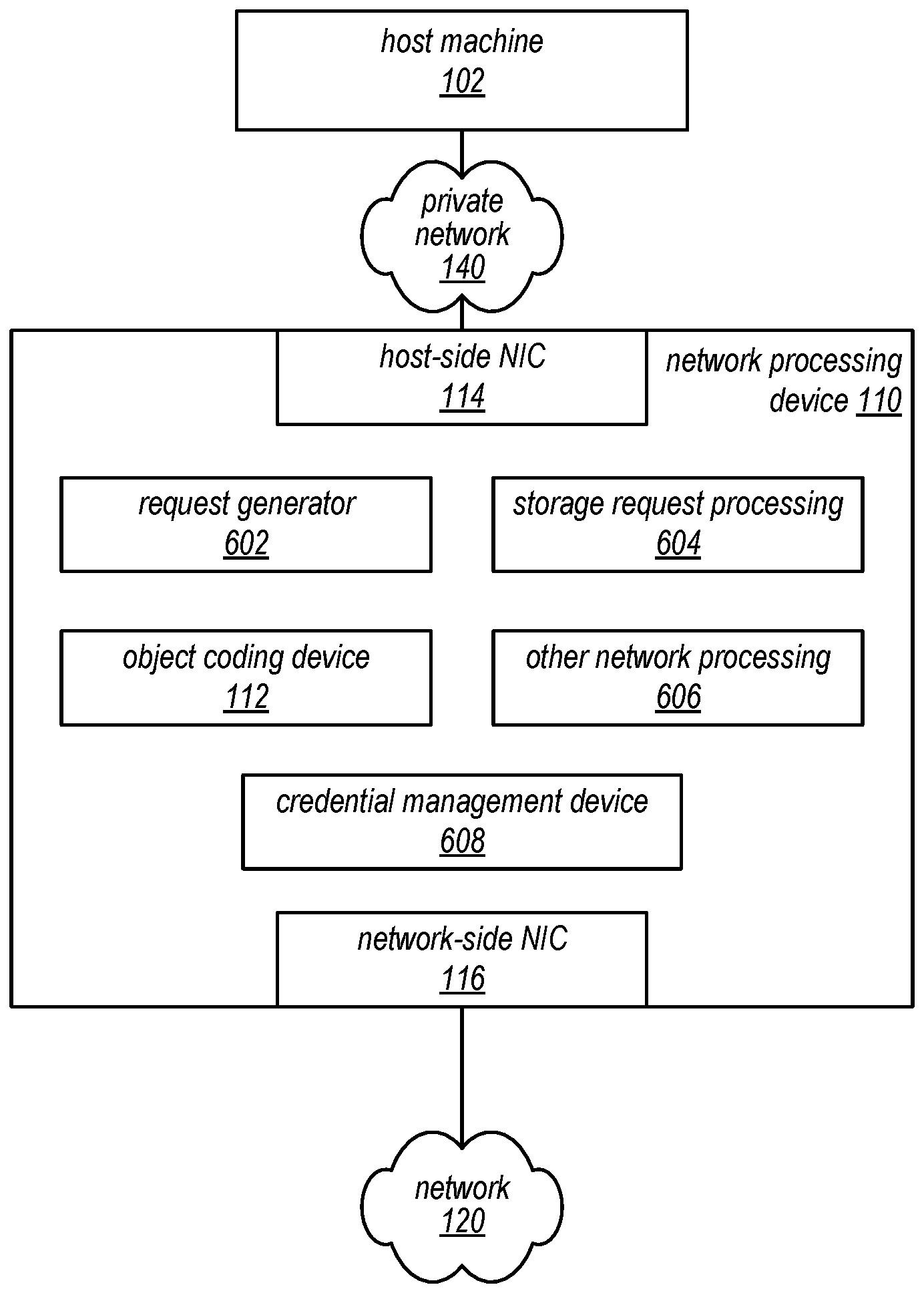

[0012] FIG. 6 is a block diagram illustrating an example network processing device, according to some embodiments.

[0013] FIG. 7 is a flow diagram illustrating one embodiment of a method for providing a data object that has been stored by a storage service.

[0014] FIG. 8 is a flow diagram illustrating one embodiment of a method for retrieving and reconstructing a data object that has been stored by a storage service.

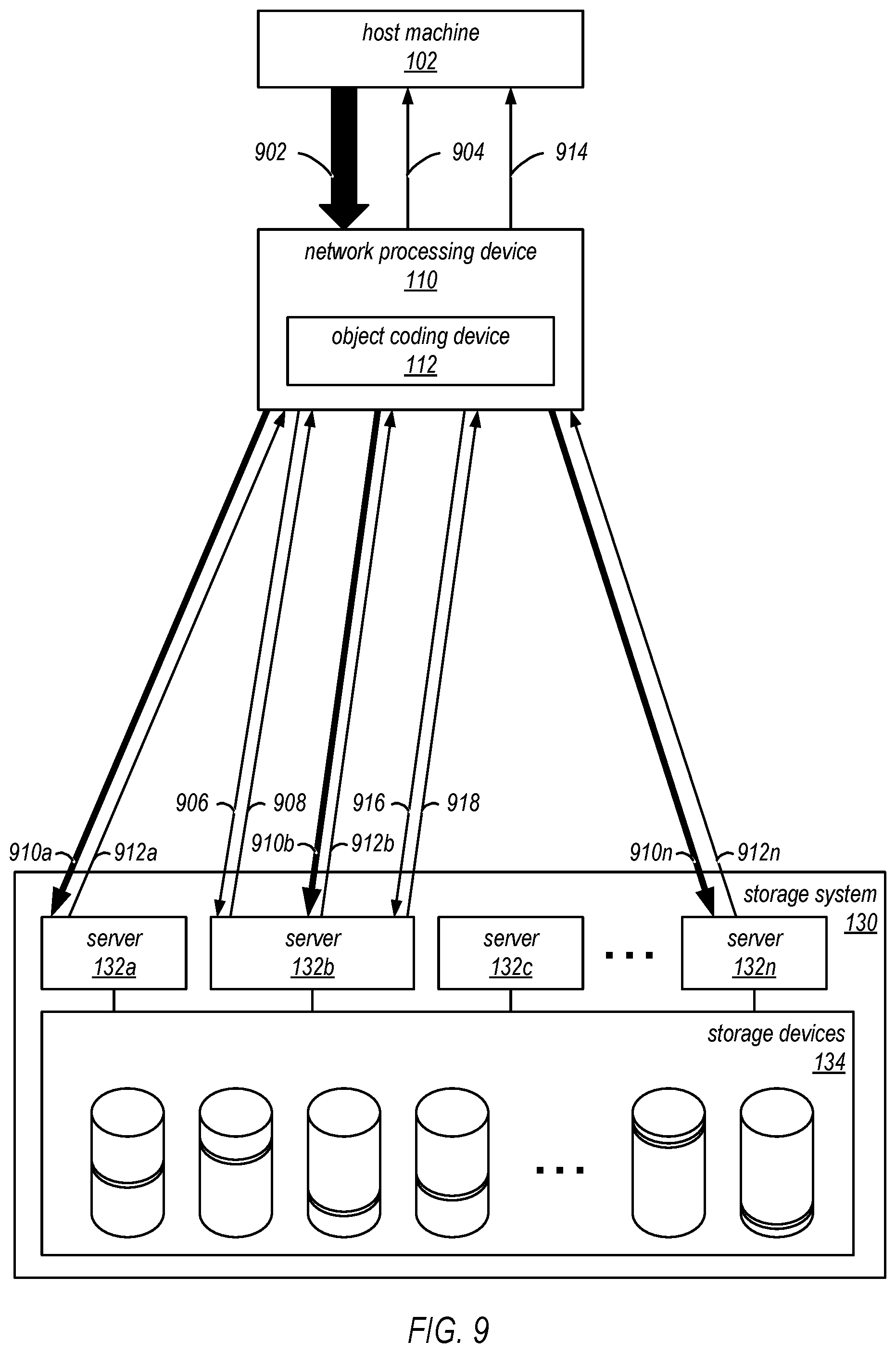

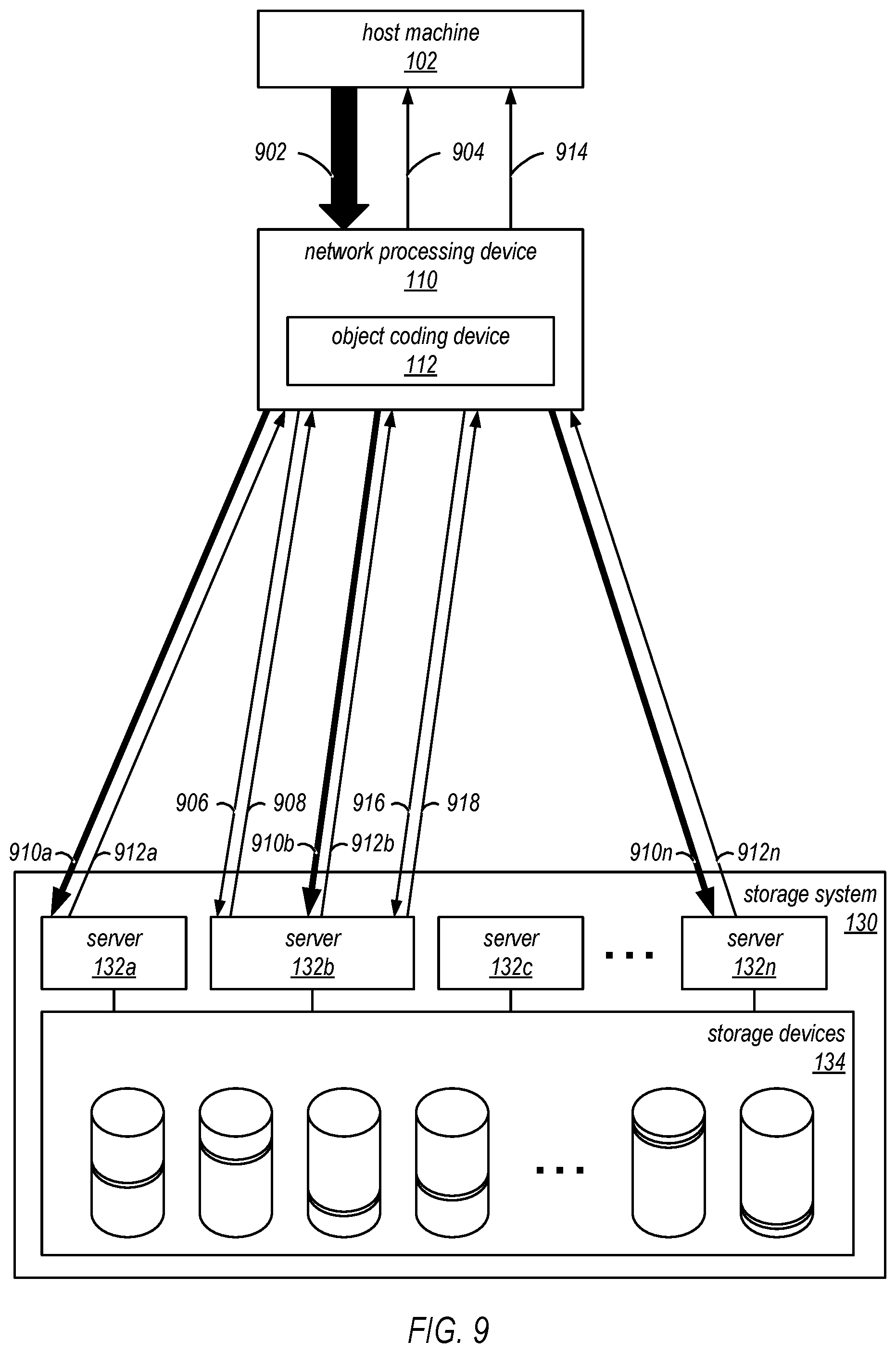

[0015] FIG. 9 is a block diagram illustrating an example of a storage operation, including an encoding operation implemented by a host-side network processing device, according to at least some embodiments.

[0016] FIG. 10A is a block diagram illustrating an example data object storage request, according to some embodiments.

[0017] FIG. 10B is a block diagram illustrating an example data object storage response, according to some embodiments.

[0018] FIG. 11 is a flow diagram illustrating one embodiment of a method for encoding a data object for storage in a storage service.

[0019] FIG. 12 is a block diagram illustrating one embodiment of a computer system configured to implement at least a portion of a network processing device, as described herein.

[0020] While embodiments are described herein by way of example for several embodiments and illustrative drawings, those skilled in the art will recognize that embodiments are not limited to the embodiments or drawings described. It should be understood that the drawings and detailed description thereto are not intended to limit embodiments to the particular form disclosed, but on the contrary, the intention is to cover all modifications, equivalents and alternatives falling within the spirit and scope as defined by the appended claims. The headings used herein are for organizational purposes only and are not meant to be used to limit the scope of the description or the claims. As used throughout this application, the word "may" is used in a permissive sense (i.e., meaning having the potential to), rather than the mandatory sense (i.e., meaning must). Similarly, the words "include", "including", and "includes" mean including, but not limited to.

DETAILED DESCRIPTION

[0021] Various embodiments of systems and methods for providing low latency connections (or communication channels) to workspaces (e.g., virtual desktop instances) in a system that provides virtualized computing resources to clients are described herein. A computing system providing virtual computing services may generate and manage remote computing sessions between client computing devices and virtual desktop instances (workspaces) hosted on the service provider's network. In some embodiments, a service provider's networked environment may include multiple virtual desktop instances, each of which is hosted on a respective one of multiple computing nodes that collectively implement a virtual desktop (workspaces) service. These computing nodes may be located within data centers in multiple network localities (e.g., in different buildings, cities, countries, or regions). For example, each network locality may have network functionalities, cooling capabilities, and power systems which are failure-isolated from each other network locality. In some embodiments, this networked environment may include a virtual computing system configured to perform computing operations for one or more clients and/or a storage service configured to store data for one or more clients. As described in more detail herein, this storage service and a management component of the virtual desktop service may interoperate with each other within a virtual private cloud of the virtual desktop service, and may communicate with each other over a virtual private network.

[0022] In some embodiments, the client of the virtual computing services may desire to store data generated using the virtual computing services in a storage service. In some cases, the client may be more concerned about data storage costs than they are about performance-related parameters such as latency, throughput or availability. For example, the client may be willing to trade away some or all of these performance constraints to achieve a more cost-effective storage solution for archival storage, where the data should be stored with some durability, and will be stored for a long time, but is not going to be accessed very often (if at all). The systems and methods described herein may provide a storage service that is well suited for such storage models, and for other storage models in which the storage service does not store the only instances of the stored data. The storage services described herein may in some embodiments provide very low cost storage with granular failure detection, and may be offered to storage service clients as a web-based service. These services may in some embodiments be used to build highly durable storage solutions on top of lower cost infrastructures (e.g., the storage systems described herein). Note that in the descriptions that follow, the terms "storage service" and "storage system" may be used somewhat interchangeably to refer to the functionality provided by a storage service or to an underlying computing system (and/or various components thereof) that is configured to implement that functionality.

[0023] In some embodiments, the systems described herein may encode or encrypt a data object into a plurality of "shards" using an encoding matrix according to a sharding technique. Each shard may be stored on a different data storage device (e.g., to provide additional failure protection) of the storage service. At a future time, some or all of the shards may be used to reconstruct the original data object using a decoding matrix according to the sharding technique. In some embodiments, the encoding and decoding operations are performed at a host-side in-line network processing device, as opposed to performing the encoding and decoding operations at a server of the storage system. In other embodiments, the encoding and decoding operations are performed at another device, such as a server of the storage system.
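The encoding and decoding matrices described above can be illustrated with a minimal sketch. The source does not specify a particular code, so everything here is an assumption made for illustration: a Vandermonde encoding matrix over the prime field GF(257), the function names `encoding_matrix`, `encode`, `invert`, and `reconstruct`, and the parameters `n` (total shards) and `k` (shards needed). Any `k` rows of the encoding matrix form an invertible matrix, and that inverse plays the role of the decoding matrix.

```python
P = 257  # prime modulus chosen for this sketch (assumption, not from the source)


def encoding_matrix(n, k):
    """n x k Vandermonde matrix; any k of its rows are invertible mod P."""
    return [[pow(i + 1, j, P) for j in range(k)] for i in range(n)]


def encode(data, n, k):
    """Encode bytes into n shards (lists of symbols in 0..256), k bytes per stripe."""
    padded = data + bytes(-len(data) % k)  # zero-pad to a multiple of k
    E = encoding_matrix(n, k)
    shards = [[] for _ in range(n)]
    for start in range(0, len(padded), k):
        stripe = padded[start:start + k]
        for i in range(n):
            shards[i].append(sum(E[i][j] * stripe[j] for j in range(k)) % P)
    return shards


def invert(m):
    """Invert a k x k matrix mod P with Gauss-Jordan elimination."""
    k = len(m)
    a = [row[:] + [int(r == c) for c in range(k)] for r, row in enumerate(m)]
    for col in range(k):
        pivot = next(r for r in range(col, k) if a[r][col])
        a[col], a[pivot] = a[pivot], a[col]
        inv = pow(a[col][col], -1, P)  # modular inverse (Python 3.8+)
        a[col] = [x * inv % P for x in a[col]]
        for r in range(k):
            if r != col and a[r][col]:
                f = a[r][col]
                a[r] = [(x - f * y) % P for x, y in zip(a[r], a[col])]
    return [row[k:] for row in a]


def reconstruct(available, n, k, length):
    """Rebuild the original bytes from any k shards, given as {shard_index: symbols}."""
    if len(available) < k:
        raise ValueError("not enough shards to reconstruct the object")
    ids = sorted(available)[:k]
    E = encoding_matrix(n, k)
    D = invert([E[i] for i in ids])  # decoding matrix for this subset of shards
    out = bytearray()
    for s in range(len(available[ids[0]])):
        column = [available[i][s] for i in ids]
        out.extend(sum(D[r][c] * column[c] for c in range(k)) % P for r in range(k))
    return bytes(out[:length])
```

With `n = 5` and `k = 3`, for example, the object survives the loss of any two shards, at the cost of storing roughly 5/3 of the original size.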

[0024] FIG. 1 is a high-level block diagram illustrating operations of an example system where a host-side network processing device can encode or reconstruct a data object. In this example, the system includes a host machine 102 configured to request a data object and to request storage of a host data object, a network processing device 110 (e.g., a host-side network processing device) configured to reconstruct the data object from a coded data object and to encode the host data object according to a sharding technique, a storage system 130 configured to store a plurality of coded data objects, a private network 140 that links the network processing device 110 to the host machine 102, and a network 120 that links the network processing device 110 to the storage system 130. In particular, FIG. 1 illustrates a data decoding system of the network processing device 110. In some embodiments, the host machine 102 includes a client computing instance 104 and a network interface controller (NIC) 106. The network processing device 110 may include a host-side NIC 114, an object coding device 112, and a network-side NIC 116. In some embodiments, the network processing device 110 is part of the host machine 102 and the private network 140 is an internal connection (e.g., one or more wires) of the host machine 102. For example, the network processing device 110 may be connected to a Peripheral Component Interconnect (PCI) express connection of the host machine 102. In another embodiment, the network processing device 110 may be located within a different expansion slot of the host machine 102 or may be coupled to a rack switch of the host machine 102. The storage system 130 may include one or more servers 132a-n and a plurality of storage devices 134. In some embodiments, the storage system 130 is a distributed system, where multiple storage devices of the storage devices 134 are configured to store different portions of a particular coded data object.

[0025] In some embodiments, the host machine 102 is a computing device that implements at least a portion of a virtual computing service. The host machine 102 may include a client computing instance 104 that performs computing processes at the host machine 102 based on requests from a customer. In a particular embodiment, the client computing instance 104 may request (e.g., based on a customer request or based on a process requirement) a data object stored at the storage system 130. The client computing instance 104 may cause the host machine 102 to send, via the private network 140, a data packet including a request for the data object using the NIC 106. As further discussed below with reference to FIG. 6, in some embodiments, the request is encrypted according to an encryption protocol (e.g., a transmission control protocol (TCP) or a secure sockets layer protocol (SSL)). As further discussed below with reference to FIG. 6, in other embodiments, the request is unencrypted.

[0026] In some embodiments, the network processing device 110 (e.g., a host-side network processing device) is configured to receive, via the private network 140, the data packet including the request for the data object from the host machine 102 using the host-side NIC 114. Accordingly, the host-side NIC 114 may be coupled to the private network 140. The network processing device 110 may be configured to recognize the request for the data object. In some embodiments, in response to recognizing the request for the data object, the network processing device 110 is configured to insert an in-line object reconstruction indicator into the request for the data object. The in-line object reconstruction indicator may indicate to the storage system 130 that the network processing device 110 can reconstruct the data object from a subset of a plurality of shards corresponding to the data object. The network processing device 110 may be configured to transmit, via the network 120, using the network-side NIC 116, the request for the data object, including the in-line object reconstruction indicator, to the storage system 130. Accordingly, the network-side NIC 116 may be coupled to the network 120. In other embodiments, the network processing device 110 is configured to transmit the in-line object reconstruction indicator to the storage system 130 separately from the request for the data object and to indicate that the in-line object reconstruction indicator is associated with the request for the data object.
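The indicator insertion described above might be sketched as follows. The source does not define a request format, so the dictionary-based request, the `op` field, the `GET_OBJECT` value, and the field name `x-inline-object-reconstruction` are all hypothetical names chosen for illustration.

```python
# Hypothetical field name; the source does not specify how the indicator is carried.
INDICATOR = "x-inline-object-reconstruction"


def is_object_request(packet):
    """Recognize a request for a data object (the 'op' field is an assumed format)."""
    return packet.get("op") == "GET_OBJECT"


def forward_to_storage(packet):
    """Return the packet to send on the network side, tagged if it requests an object."""
    if not is_object_request(packet):
        return packet  # other traffic passes through unchanged
    tagged = dict(packet)  # copy so the host's original packet is not mutated
    tagged[INDICATOR] = True
    return tagged
```

Because the device sits in-line between the host and the storage system, the host's request needs no changes in this sketch; only the forwarded copy carries the indicator.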

[0027] In some embodiments, as further described with respect to FIGS. 2, 3A, 3B, and 8, the network processing device 110 receives, in response to the request for the data object, object reconstruction information and a location list (e.g., a storage location list) including a plurality of location identifiers. Each location identifier of the location list may correspond to an address of a shard of the requested data object. In a particular embodiment, the location list includes addresses for each of the shards of the requested data object. In another embodiment, the location list includes identifiers that are used by the storage system 130 to determine addresses for each of the shards of the requested data object. In a different embodiment, the location list includes location identifiers for only a subset of the shards of the requested data object. In some embodiments, the network processing device 110 requests, from the storage system 130, the shards corresponding to all of the locations of the location list. In other embodiments, the network processing device 110 requests, from the storage system 130, a subset of the shards corresponding to the locations of the location list. If the network processing device 110 receives more shards than needed to reconstruct the data object, the network processing device 110 may be configured to discard excess shards. In another embodiment, the network processing device 110 receives the shards from the storage system 130 (without receiving the location list). When the network processing device 110 has received a sufficient number of shards to reconstruct the requested data object, the object coding device 112 may be configured to reconstruct the data object from the received subset of shards. In other embodiments, the network processing device 110 receives the requested data object (e.g., a reconstructed data object) from the storage system 130 (as opposed to receiving the location list or the shards). In some embodiments, the network processing device 110, via the host-side NIC 114, is configured to provide the reconstructed data object to the host machine 102.
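The shard-gathering behavior described in this paragraph can be sketched as below, for the embodiment in which the device requests every location on the list and discards any excess. The function name `collect_shards`, the `fetch` callable (assumed to return `None` when a shard is unavailable), and the `needed` parameter are assumptions for illustration and do not come from the source.

```python
def collect_shards(location_list, fetch, needed):
    """Request shards for every listed location, keep the first `needed`, drop the rest.

    Returns (kept, discarded_count), where kept maps location identifier -> shard.
    """
    kept = {}
    discarded = 0
    for location in location_list:
        shard = fetch(location)  # one shard request per location identifier
        if shard is None:
            continue  # assumed convention: None means the shard was unavailable
        if len(kept) < needed:
            kept[location] = shard
        else:
            discarded += 1  # more shards than needed: discard the excess
    if len(kept) < needed:
        raise LookupError("too few shards received to reconstruct the data object")
    return kept, discarded
```

A device that requests only a subset of the list could stop issuing requests once `needed` shards have arrived; this sketch instead models the request-all case so that the discard step is visible.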

[0028] In some embodiments, the private network 140 and/or the network 120 are internal networks of a service provider. In various embodiments, the private network 140 and/or the network 120 may encompass any suitable combination of networking hardware and protocols necessary to establish communications between the network processing device 110 and the host machine 102 and/or the network processing device 110 and the storage system 130, respectively. For example, the private network 140 and/or the network 120 may generally encompass the various telecommunications networks and service providers that collectively implement the Internet. The private network 140 and/or the network 120 may also include private networks such as local area networks (LANs) or wide area networks (WANs) as well as public or private wireless networks. In some embodiments, the private network 140 and/or the network 120 may be wires that directly connect between the network processing device 110 and the host machine 102 and/or the network processing device 110 and the storage system 130, respectively. In a particular embodiment, the network 120 includes a plurality of paths between the network-side NIC 116 and each of the plurality of servers 132a-n of the storage system 130. In some embodiments, portions of one or more paths between the network-side NIC 116 and different servers (e.g., the server 132a and the server 132b) overlap. In a particular embodiment, the network 120 further connects the network-side NIC 116 and each of the plurality of servers 132a-n to other devices which may be associated with the virtual computing service, the storage service, or another service of the service provider. In some embodiments, data packets transmitted between the network processing device 110 and the storage system 130 are transmitted according to a TCP protocol or an SSL protocol. In other embodiments, the data packets are transmitted according to a user datagram protocol (UDP).

[0029] As explained above, the storage system 130 may include a plurality of storage devices 134 configured to store different portions of a coded data object. The coded data object may be coded according to a sharding technique such as an erasure coding technique or according to another coding technique. In some embodiments, each respective data object is stored as a respective plurality of shards. Each respective data object can be reconstructed from a particular number of shards. In some embodiments, the particular number of shards is fewer than a total number of shards for that data object. Each shard for a respective data object may be stored on a different one of the plurality of storage devices 134 than any other shard of the plurality of shards for the respective data object. In some embodiments, as discussed further with reference to FIGS. 9-11, the shards may be received, based upon a request from the host machine 102, at the storage system 130 from the network processing device 110 via the plurality of servers 132a-n. Accordingly, the plurality of servers 132a-n of the storage system 130 may be coupled to the network 120. In other embodiments, the shards may be received at the storage system 130 from one or more other devices.
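The property that a data object can be rebuilt from fewer than all of its shards can be illustrated with single-parity XOR coding, one of the simplest erasure codes. The sketch below is an assumption made for illustration only: the 2-of-3 layout and the names `encode` and `reconstruct` do not come from the specification, which does not prescribe a particular code.

```python
def _xor(p: bytes, q: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(p, q))


def encode(obj: bytes) -> list[bytes]:
    """Split obj into two data shards plus one XOR parity shard."""
    half = (len(obj) + 1) // 2
    a = obj[:half]
    b = obj[half:].ljust(half, b"\x00")  # pad second half to equal length
    return [a, b, _xor(a, b)]


def reconstruct(shards: dict[int, bytes], length: int) -> bytes:
    """Rebuild the object from any two shards, keyed by shard index 0..2."""
    if len(shards) < 2:
        raise ValueError("need at least two of the three shards")
    if 0 in shards and 1 in shards:
        a, b = shards[0], shards[1]
    elif 0 in shards:
        a = shards[0]
        b = _xor(a, shards[2])   # recover missing shard 1 from parity
    else:
        b = shards[1]
        a = _xor(b, shards[2])   # recover missing shard 0 from parity
    return (a + b)[:length]      # drop any padding added by encode
```

Here three shards would be stored on three different storage devices, and the loss or slowness of any one of them does not prevent reconstruction.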

[0030] In some embodiments, a given server (e.g., the server 132a) of the plurality of servers 132a-n, responsive to detecting an in-line network object reconstruction indicator associated with a request for a data object, determines whether a corresponding network processing device (e.g., the network processing device 110) should reconstruct the data object. In a particular embodiment, the determination is based on, for example, whether a size of the data object exceeds a size threshold. In a particular embodiment, when the given server determines that the network processing device 110 should reconstruct the data object, the given server generates a location list including a plurality of location identifiers (e.g., addresses) corresponding to the plurality of storage devices 134. In some embodiments, the given server does not include (e.g., by not adding or by removing) location identifiers corresponding to some shards of the plurality of shards, where the location list includes location identifiers corresponding to a sufficient number of shards to reconstruct the data object. In some embodiments, the location identifiers are added to the list based on a determination of approximate network transmission times, as further described with respect to FIG. 5. In some embodiments, the given server returns the location list to the corresponding network processing device in response to the data request without providing the data object (reconstructed or encoded) or the shards. The given server may also return the object reconstruction information (e.g., a decode matrix) to the corresponding network processing device. 
In some embodiments, any data (e.g., the location list, the object reconstruction information, or other data) provided from the storage system 130 to the corresponding network processing device is formatted such that the corresponding network processing device is unaware of which server of the plurality of servers 132a-n handled the request for the data object.
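The server-side decision described above can be sketched as follows. This is a minimal illustration under stated assumptions: the `ShardLocation` type, the `build_location_list` function, and the policy of keeping the shards with the shortest estimated transmission time are hypothetical choices, since the specification only says that the list is built based on approximate network transmission times.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ShardLocation:
    identifier: str         # location identifier placed in the list
    est_transfer_ms: float  # approximate network transmission time (assumed metric)


def build_location_list(
    indicator_present: bool,
    object_size: int,
    locations: list[ShardLocation],
    shards_needed: int,
    size_threshold: int,
) -> Optional[list[str]]:
    """Return a location list, or None when the storage system should
    reconstruct the data object itself."""
    if not indicator_present or object_size <= size_threshold:
        return None
    if len(locations) < shards_needed:
        raise ValueError("not enough shards to reconstruct the data object")
    # Assumed policy: keep only as many shards as reconstruction needs,
    # preferring those expected to arrive soonest.
    fastest = sorted(locations, key=lambda loc: loc.est_transfer_ms)
    return [loc.identifier for loc in fastest[:shards_needed]]
```

Returning only identifiers, without the shards or the data object, mirrors the embodiment in which the given server answers the data request with the location list alone.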

[0031] In some embodiments, the storage system 130 is configured to, responsive to a plurality of requests (e.g., corresponding to the plurality of location identifiers of the location list) for a subset of the plurality of shards from the network processing device 110, for each requested shard, provide the requested shard to the network processing device 110 via the plurality of servers 132a-n. In a particular embodiment, at least two shards of the subset of the plurality of shards are provided to the network processing device 110 via different servers of the plurality of servers 132a-n. For example, a particular shard may be transmitted via the server 132a and a different shard may be transmitted via the server 132b. In another embodiment, the storage system 130 is configured to, responsive to a request for the data object, provide the plurality of shards to the network processing device 110 via a particular server (e.g., the server 132a) of the plurality of servers 132a-n. In some embodiments, the data (e.g., the data object, the shards, or other data) provided from the storage system 130 to the network processing device 110 is formatted such that the network processing device 110 is unaware of which server of the plurality of servers 132a-n handled the request for the data object. Although FIG. 1 illustrates the network processing device 110 transmitting signals to the plurality of servers 132a-n directly, in some embodiments, the network processing device 110 sends signals to one or more load balancers, which forward the signals to the plurality of servers 132a-n. Similarly, although FIG. 1 illustrates the network processing device 110 receiving signals from the plurality of servers 132a-n directly, in some embodiments, the plurality of servers 132a-n send signals to one or more load balancers, which forward the signals to the network processing device 110.
Although the examples provided herein may be particularly applicable to a network-based distributed storage system, in other embodiments, any form of distributed storage system may be used.

[0032] FIG. 2 illustrates an example of a reconstruction operation implemented by a host-side network processing device, according to at least some embodiments. With reference to FIG. 2, an illustrative response to a data object request by a host machine will be described. In a particular embodiment, an object reconstruction operation is performed by the network processing device 110 of FIG. 1. In this example, for clarity purposes, portions of the system of FIG. 1 are not illustrated, though they may be present and may operate as described previously. For example, messages may be passed from the host machine 102 using the NIC 106. Similarly, signals between the network processing device 110 and the storage system 130 may be transmitted via the network 120.

[0033] In some embodiments, the host machine 102 may transmit a data packet 202 to the network processing device 110. The data packet 202 may include a request for a data object. In a particular embodiment, the data packet 202 is encrypted (e.g., because the host machine 102 believes it is sending the data packet over the network 120 to the storage system 130). In the particular embodiment, the network processing device 110 may decrypt the data packet and detect the request for the data object. In the particular embodiment, the network processing device 110 may send a response packet 204 (e.g., a handshake response) to the host machine 102, acknowledging the request for the data object. In some embodiments, the response packet 204 may be formatted such that the host machine 102 determines that the response packet 204 is received from the storage system 130. In a different particular embodiment, the data packet 202 is not encrypted (e.g., the data packet 202 is transmitted in a "clear-text" format). In such embodiments, the host machine 102 may send the data packet 202 to the network processing device 110 unencrypted because the host machine 102 expects the network processing device 110 to encrypt a request for the data object (e.g., the host machine 102 is aware of the network processing device 110). Accordingly, the network processing device 110 may be configured to encrypt a message sent to the storage system 130.

[0034] As described above with reference to FIG. 1, in some embodiments, the network processing device 110 forms a data object request 206 using contents of the data packet 202 (e.g., a data object identifier and/or credentials associated with the host machine 102) and an in-line network object reconstruction indicator. The network processing device 110 may send the data object request 206 to the storage system 130. In some embodiments, the network processing device 110 does not address the data object request 206 to a particular server of the plurality of servers 132a-n. For example, the storage system 130 may be addressable via a main address (e.g., a main uniform resource locator (URL) or a main uniform resource indicator (URI)) for the storage system 130. In the example, the storage system 130 may determine which server of the plurality of servers 132a-n receives the data object request 206 (e.g., based on a load balancing scheme). In other embodiments, the network processing device 110 addresses a specific server (e.g., the server 132b).

[0035] In the illustrated embodiment, the data object request 206 is received by the server 132b. In the illustrated embodiment, the server 132b determines that the host machine 102 is authorized to receive the requested data object. In other embodiments, in response to a particular server determining that a particular host machine is not authorized to receive a particular data object, the server may provide an error message to the particular host machine, ignore the request for the particular data object, or perform another action according to an error protocol.

[0036] In the illustrated embodiment, after authorizing the data object request 206, the server 132b detects the in-line object reconstruction indicator, which informs the server 132b that the network processing device 110 is capable of reconstructing the data object, where the data object is coded according to a sharding technique and stored at the plurality of storage devices 134 as a plurality of shards. The server 132b may determine whether the network processing device 110 should reconstruct the data object (e.g., based on whether a size of the data object exceeds a size threshold or based on a network load associated with the storage system 130). In the illustrated embodiment, the server 132b determines that the network processing device 110 should reconstruct the data object and generates a location list corresponding to the data object. The location list may include a plurality of location identifiers (e.g., storage locations or identifiers which can be recognized as storage locations by the storage system 130), where each location identifier corresponds to a different respective shard of a plurality of shards. Each location identifier may point to a particular memory location of a respective storage device of the plurality of storage devices 134. In some embodiments, each location identifier is encoded such that the network processing device 110 is unable to decode the plurality of location identifiers. Generating the location list may include looking up the shard locations of the requested data object from a database or another memory location. The server 132b may also retrieve or generate object reconstruction information corresponding to the data object (e.g., a decode matrix). In the illustrated embodiment, the server 132b generates a data object response 208 that includes the location list and the object reconstruction information and returns the data object response 208 to the network processing device 110.

[0037] In the illustrated embodiment, in response to receiving the data object response 208, the network processing device 110 sends a plurality of shard requests 210a-n corresponding to a subset of the plurality of shards. The subset of the plurality of shards may include all shards of the plurality of shards or less than all shards of the plurality of shards. The subset of the plurality of shards may include a sufficient number of shards to reconstruct the data object. Each shard request of the plurality of shard requests 210a-n may correspond to a different respective location identifier from the location list.

[0038] As shown in the illustrated embodiment, the plurality of shard requests 210a-n may be received at the storage system 130 at different servers of the plurality of servers 132a-n (e.g., the server 132a and the server 132b). In some embodiments, a particular server may receive more than one shard request. In some embodiments, not every server of the plurality of servers 132a-n receives a shard request of the plurality of shard requests 210a-n. For example, in the illustrated embodiment, the server 132c does not receive a shard request. In the illustrated embodiment, the server 132b, which provided the data object response 208 to the network processing device 110, receives the shard request 210b. However, in other embodiments, a given server which provides a data object response may not receive a shard request. In some embodiments, each server that received a shard request may decode a corresponding location identifier included in the shard request to determine a storage location of the requested shard. Each server of the plurality of servers 132a-n that received a shard request may retrieve the requested shard from a requested storage location (e.g., from a corresponding storage device of the plurality of storage devices 134) independently of the other servers of the plurality of servers 132a-n. Further, each server of the plurality of servers 132a-n that received a shard request may transmit the retrieved shard to the network processing device 110 as one of a plurality of shard responses 212a-n. In some embodiments, each server of the plurality of servers 132a-n that received a shard request retrieves object reconstruction information corresponding to the requested shard in response to the shard request. In such embodiments, the object reconstruction information is transmitted to the network processing device 110 as part of the corresponding shard response of the plurality of shard responses 212a-n or as a separate transmission.
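Because each shard request is served independently, the network processing device can issue the requests concurrently and stop collecting once it holds enough shards. The following sketch assumes a caller-supplied `fetch` callable standing in for the actual network request; that callable, the `fetch_shards` name, and the use of threads are illustrative assumptions rather than details from the specification.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable


def fetch_shards(
    location_ids: list[str],
    fetch: Callable[[str], bytes],
    shards_needed: int,
) -> dict[str, bytes]:
    """Issue one shard request per location identifier and return the first
    shards_needed successful responses, keyed by location identifier."""
    received: dict[str, bytes] = {}
    with ThreadPoolExecutor(max_workers=max(1, len(location_ids))) as pool:
        futures = {pool.submit(fetch, loc): loc for loc in location_ids}
        for future in as_completed(futures):
            try:
                received[futures[future]] = future.result()
            except Exception:
                continue  # tolerate a failed request if enough others succeed
            if len(received) >= shards_needed:
                break
        for future in futures:
            future.cancel()  # no effect on requests that already started
    if len(received) < shards_needed:
        raise RuntimeError("too few shard responses to reconstruct the object")
    return received
```

Requesting more shards than strictly needed, as allowed when the subset includes extra shards, lets a slow or failed response be ignored without a retry.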

[0039] As shown in the illustrated embodiment, the plurality of shard responses 212a-n may be received by the network processing device 110. In some embodiments, the plurality of shard responses 212a-n are received at the network processing device 110 via different network paths. The plurality of shard responses 212a-n may include a sufficient number of shards to reconstruct the requested data object. The object coding device 112, using the received shards and the object reconstruction information, may reconstruct the data object 214. The network processing device 110 may transmit the data object 214 to the host machine 102. In some embodiments, the network processing device 110 may encode the data object 214 according to an encryption protocol prior to providing the data object 214 to the host machine 102.
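How a decode matrix turns received shards back into a data object can be shown with a small worked example. The sketch below is an assumption-laden illustration, not the coding scheme of the specification: it uses a Vandermonde encode matrix over the prime field GF(257) for brevity (production erasure codes typically work in GF(2^8)), and all function names are hypothetical. Because a coded symbol may equal 256, shards are held as lists of integers rather than bytes.

```python
P = 257  # smallest prime above 255, so every byte value is a field element


def encode_matrix(n: int, k: int) -> list[list[int]]:
    """n-by-k Vandermonde matrix; any k of its rows are invertible mod P."""
    return [[pow(x, j, P) for j in range(k)] for x in range(1, n + 1)]


def mat_vec(m: list[list[int]], v: list[int]) -> list[int]:
    return [sum(a * b for a, b in zip(row, v)) % P for row in m]


def invert(m: list[list[int]]) -> list[list[int]]:
    """Gauss-Jordan inversion modulo P."""
    k = len(m)
    aug = [list(row) + [int(i == j) for j in range(k)] for i, row in enumerate(m)]
    for col in range(k):
        pivot = next(r for r in range(col, k) if aug[r][col])
        aug[col], aug[pivot] = aug[pivot], aug[col]
        inv = pow(aug[col][col], -1, P)  # modular inverse (Python 3.8+)
        aug[col] = [v * inv % P for v in aug[col]]
        for r in range(k):
            if r != col and aug[r][col]:
                f = aug[r][col]
                aug[r] = [(a - f * b) % P for a, b in zip(aug[r], aug[col])]
    return [row[k:] for row in aug]


def decode_matrix(enc: list[list[int]], shard_indices: list[int]) -> list[list[int]]:
    """Convert an encode matrix into the decode matrix for the given shards."""
    return invert([enc[i] for i in shard_indices])


def encode_object(data: bytes, enc: list[list[int]], k: int) -> list[list[int]]:
    """Return one list of coded symbols per shard."""
    padded = data + bytes(-len(data) % k)
    stripes = [padded[i:i + k] for i in range(0, len(padded), k)]
    columns = [mat_vec(enc, list(s)) for s in stripes]
    return [[col[i] for col in columns] for i in range(len(enc))]


def reconstruct(dec: list[list[int]], shards: list[list[int]], length: int) -> bytes:
    """Apply the decode matrix stripe by stripe; shards must be in the same
    order as the indices used to build dec."""
    out = bytearray()
    for symbols in zip(*shards):
        out.extend(mat_vec(dec, list(symbols)))
    return bytes(out[:length])
```

With `enc = encode_matrix(5, 3)`, any three of the five shards produced by `encode_object` suffice: `decode_matrix(enc, [4, 0, 2])` applied to shards 4, 0, and 2 in that order yields the original bytes.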

[0040] Although FIG. 2 illustrates the network processing device 110 transmitting signals (e.g., the data object request 206) to the plurality of servers 132a-n directly, in some embodiments, the network processing device 110 sends signals to one or more load balancers, which forward the signals to the plurality of servers 132a-n. Similarly, although FIG. 2 illustrates the network processing device 110 receiving signals (e.g., the data object response 208) from the plurality of servers 132a-n directly, in some embodiments, the plurality of servers 132a-n send signals to one or more load balancers, which forward the signals to the network processing device 110.

[0041] FIG. 3A illustrates an example of the data object request 206 described by FIG. 2. In some embodiments, the data object request 206 may include an object identifier 302, credentials 304, and an in-line object reconstruction indicator 306. In other embodiments, some or all of the illustrated components of the data object request 206 may be excluded from the data object request 206. For example, the in-line object reconstruction indicator 306 may be provided to the storage system 130 separately from the object identifier 302. Similarly, the credentials 304 may not be required for the requested data object, and thus, the credentials 304 may be omitted. The object identifier 302 may identify the data object requested by the host machine 102. Accordingly, the object identifier 302 may be used by the storage system 130 to determine which data object to provide to the host machine 102 (e.g., via the network processing device 110). The credentials 304 may include security credentials of the host machine 102, the network processing device 110, or both. The credentials 304 may be used by the storage system 130 to determine whether the host machine 102 is authorized to receive the requested data object. The in-line object reconstruction indicator 306 may indicate to the storage system 130 that the network processing device 110 is capable of reconstructing the data object from a plurality of shards.
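One way to picture the data object request 206 is as a small record with an optional credentials field and a flag for the indicator. The field names, the `form_request` helper, and the dictionary layout of the incoming packet below are hypothetical; the specification does not define a wire format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DataObjectRequest:
    object_identifier: str                # object identifier 302
    credentials: Optional[str] = None     # credentials 304, may be omitted
    inline_reconstruction: bool = False   # in-line object reconstruction indicator 306


def form_request(packet: dict, device_can_reconstruct: bool) -> DataObjectRequest:
    """Build the request from the host's packet contents and add the indicator.
    The packet keys used here are assumed for illustration."""
    return DataObjectRequest(
        object_identifier=packet["object_id"],
        credentials=packet.get("credentials"),
        inline_reconstruction=device_can_reconstruct,
    )
```

Making the credentials optional and the indicator default to false reflects the embodiments in which either component is omitted from the request.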

[0042] FIG. 3B illustrates an example of the data object response 208 described by FIG. 2. In some embodiments, the data object response 208 may include a location list 310 and object reconstruction information 314. In other embodiments, some or all of the illustrated components of the data object response 208 may be excluded from the data object response 208. For example, the object reconstruction information 314 may be provided to the network processing device 110 separately from the location list 310. In the illustrated embodiment, the location list 310 includes a plurality of location identifiers 312a-n, where each location identifier corresponds to a shard of the requested data object. For example, the location list 310 may include a list of URIs or URLs, where each URI or URL addresses a particular shard and specifies a particular server of the plurality of servers 132a-n to provide the shard. Additionally, each location identifier 312a-n of the location list 310 may be encrypted such that the network processing device 110 cannot decrypt the location identifiers 312a-n (e.g., the plurality of servers 132a-n will decrypt the location identifiers 312a-n to determine the designated storage locations). In a particular embodiment, each location identifier of the plurality of location identifiers 312a-n specifies a different memory location corresponding to a different storage device of the plurality of storage devices 134. The object reconstruction information 314 may be used by the network processing device 110 to reconstruct the data object from a plurality of shards. For example, the object reconstruction information 314 may be a decode matrix or an encode matrix that can be converted into a decode matrix.
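The effect of location identifiers that only the storage system can interpret can be approximated without cryptography. The sketch below deliberately swaps in a different technique from the encryption described above: the storage side hands out random tokens and keeps the mapping to storage locations in its own table. The `LocationRegistry` class and its methods are hypothetical.

```python
import secrets


class LocationRegistry:
    """Storage-side table mapping opaque tokens to storage locations.
    This stands in for encrypted location identifiers; it is not encryption."""

    def __init__(self) -> None:
        self._by_token: dict[str, tuple[str, int]] = {}

    def issue(self, device: str, offset: int) -> str:
        """Create an opaque identifier for a shard stored at (device, offset)."""
        token = secrets.token_urlsafe(16)
        self._by_token[token] = (device, offset)
        return token

    def resolve(self, token: str) -> tuple[str, int]:
        """Server-side lookup performed when a shard request arrives."""
        return self._by_token[token]
```

The network processing device would only ever see and echo back the tokens, while a server receiving a shard request calls `resolve` to find the designated storage location.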

[0043] One embodiment of a service system architecture, including the system described by FIGS. 1-3B, that may be configured to perform a reconstruction operation, is shown in FIG. 4. In the illustrated embodiment, a number of clients (shown as clients 402a-n) may be configured to interact with a provider network 406 via a network 404. The provider network 406 may include a plurality of systems including a virtual computation system 408 and the storage system 130 of FIG. 1. In the illustrated embodiment, the virtual computation system includes the host machine 102 coupled to the network processing device 110 and a host machine 412. In the illustrated embodiment, the host machine 102 includes a plurality of client virtual machine (VM) instances 410. In a particular embodiment, a particular client VM instance of the plurality of client VM instances 410 corresponds to the client computing instance 104 of FIG. 1. In the illustrated embodiment, the host machine 412 includes a plurality of client VM instances 414. Each client VM instance of the plurality of client VM instances 410 and the plurality of client VM instances 414 may respectively correspond to different clients of the clients 402a-n (e.g., one client VM instance may correspond to the client 402a and another client VM instance may correspond to the client 402b). Alternatively, as an example, at least some of the client VM instances of the plurality of client VM instances 410 and the plurality of client VM instances 414 may correspond to the client 402a. As described above with reference to FIG. 1, the storage system 130 includes a plurality of servers 132a-n and a plurality of storage devices 134. It is noted that where one or more instances of a given component may exist, reference to that component herein may be made in either the singular or the plural. However, usage of either form is not intended to preclude the other.

[0044] In some embodiments, the host machine 102, the network processing device 110, and the storage system 130 are configured to perform the reconstruction operation described above with reference to FIGS. 1 and 2. Accordingly, in response to a plurality of shard requests (e.g., the plurality of shard requests 210a-n described above with reference to FIG. 2) corresponding to a data object request from a particular client VM instance of the plurality of client VM instances 410, the storage system 130 may provide to the network processing device 110 a plurality of shard responses 416a-n. In some embodiments, the plurality of shard responses 416a-n correspond to the plurality of shard responses 212a-n. In the illustrated embodiment, the server 132b provides more than one shard response of the plurality of shard responses 416a-n (i.e., the shard response 416a and the shard response 416b). In the illustrated embodiment, the server 132a and the server 132c do not provide a shard response of the plurality of shard responses 416a-n to the network processing device 110.

[0045] In some embodiments, the host machine 412 and the storage system 130 are configured to perform the reconstruction operation described above with reference to FIGS. 1 and 2. In the illustrated embodiment, the storage system 130 does not receive an in-line object reconstruction indicator with a data object request from a particular client VM instance of the plurality of client VM instances 414 on the host machine 412. Accordingly, the storage system 130 may determine that there is no network processing device (e.g., the network processing device 110) between the host machine 412 and the storage system 130 that can reconstruct a data object according to a sharding technique. In some embodiments, the storage system 130 reconstructs a requested data object from a corresponding plurality of shards using one or more of the plurality of servers 132a-n. In the illustrated embodiment, the server 132c is used to reconstruct the data object and transmits the reconstructed data object to the host machine 412 as part of a data object transmission 418.

[0046] In some embodiments, the particular server of the plurality of servers 132a-n used to provide a particular shard response is determined based upon a locality of the particular server to a particular storage device of the plurality of storage devices 134 that stores a shard to be transmitted in the particular shard response, as further described with reference to FIG. 5. Similarly, in some embodiments, the particular server of the plurality of servers 132a-n used to provide a particular data object transmission is determined based upon a locality of the particular server to one or more particular storage devices of the plurality of storage devices 134 that store shards to be used to reconstruct the data object, as further described with reference to FIG. 5. In some embodiments, the particular server of the plurality of servers 132a-n used to provide a particular shard response or a particular data object transmission is determined based upon a work load or a network load corresponding to the particular server. For example, the server 132c may not be selected to provide one of the shard responses because the server 132c has been selected to provide the data object transmission 418, which may consume more computation and/or network resources as compared to a single shard response of the plurality of shard responses 416a-n.
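A selection rule that weighs locality against load might look like the sketch below. The scoring formula, the `hops_to_device` and `load` fields, and the default weight are all assumptions introduced for illustration; the specification names the factors but not how they are combined.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerState:
    name: str
    hops_to_device: int  # assumed locality measure to the storage device
    load: float          # assumed utilization between 0.0 and 1.0


def pick_server(servers: list[ServerState], load_weight: float = 10.0) -> str:
    """Return the name of the server with the lowest combined
    locality-plus-load score (lower is better)."""
    best = min(servers, key=lambda s: s.hops_to_device + load_weight * s.load)
    return best.name
```

Under this rule a nearby server that is already busy, for example one reconstructing a full data object for another host, can lose out to a slightly more distant idle server.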

[0047] In various embodiments, the components illustrated in FIG. 4 may be implemented directly within computer hardware, as instructions directly or indirectly executable by computer hardware (e.g., a microprocessor, a computer system, or one or more hardware processors), or using a combination of these techniques. For example, the components of FIG. 4 may be implemented by a distributed system including a number of computing nodes (or simply, nodes), such as the computer system embodiment shown in FIG. 12 and discussed below. In various embodiments, the functionality of a given service system component (e.g., a service system architecture component) may be implemented by a particular node or distributed across several nodes. In some embodiments, a given node may implement the functionality of more than one service system component (e.g., more than one service system architecture component).

[0048] Generally speaking, the clients 402a-n may encompass any type of client configurable to submit web services requests to the provider network 406 via the network 404, including requests for storage services and requests for virtual computing services. For example, a given client 402a may include a suitable version of a web browser, or a plugin module or other type of code module configured to execute as an extension to or within an execution environment provided by a web browser. Alternatively, a client 402b may encompass an application such as a database application, media application, office application or any other application that may make use of persistent storage resources. In some embodiments, such an application may include sufficient protocol support (e.g., for a suitable version of Hypertext Transfer Protocol (HTTP)) for generating and processing web services requests without necessarily implementing full browser support for all types of web-based data. That is, the client 402b may be an application configured to interact directly with the systems of the provider network 406 (e.g., the virtual computation system 408, the storage system 130, another system (not shown), or any combination thereof). As described herein, the client 402b may be configured to generate web services requests according to a Representational State Transfer (REST)-style web services architecture, a document- or message-based web services architecture, or another suitable web services architecture.

[0049] In other embodiments, a client 402n may be configured to provide access to web services-based storage to other applications in a manner that is transparent to those applications. For example, the client 402n may be configured to integrate with an operating system or file system to provide storage in accordance with a suitable variant of the storage model described herein. However, the operating system or file system may present a different storage interface to applications, such as a conventional file system hierarchy of files, directories and/or folders. In such an embodiment, applications may not need to be modified to make use of the network processing service model of FIG. 1. Instead, the details of interfacing to the provider network 406 may be coordinated by the client 402n and the operating system or file system on behalf of applications executing within the operating system environment.

[0050] The clients 402a-n may convey web services requests to and receive responses from the provider network 406 via the network 404. In various embodiments, the network 404 may encompass any suitable combination of networking hardware and protocols necessary to establish web-based communications between the clients 402a-n and the systems of the provider network 406. For example, the network 404 may generally encompass the various telecommunications networks and service providers that collectively implement the Internet. The network 404 may also include private networks such as local area networks (LANs) or wide area networks (WANs) as well as public or private wireless networks. For example, both a given client 402a and the provider network 406 may be respectively provisioned within enterprises having their own internal networks. In such an embodiment, the network 404 may include the hardware (e.g., modems, routers, switches, load balancers, proxy servers, etc.) and software (e.g., protocol stacks, accounting software, firewall/security software, etc.) necessary to establish a networking link between a given client 402a and the Internet as well as between the Internet and the provider network 406. It is noted that in some embodiments, the clients 402a-n may communicate with the systems of the provider network 406 using a private network rather than the public Internet. For example, the clients 402a-n may be provisioned within the same enterprise as the provider network 406. In such a case, the clients 402a-n may communicate with the systems of the provider network 406 entirely through a private network (e.g., a LAN or WAN that may use Internet-based communication protocols but which is not publicly accessible).

[0051] Generally speaking, the provider network 406 may be configured to implement one or more service endpoints configured to receive and process web services requests, such as requests to decode data objects or requests for virtual computing services. For example, the provider network 406 may include hardware and/or software configured to implement a particular endpoint, such that an HTTP-based web services request directed to that endpoint is properly received and processed. In one embodiment, the provider network 406 may be implemented as a server system configured to receive web services requests from the clients 402a-n and to forward them to components of a host machine 102 to provide a virtual computation service. Alternatively, the web services requests may be forwarded to the storage system 130 to provide an object-redundant storage service. In other embodiments, the provider network 406 may be configured as a number of distinct systems (e.g., in a cluster topology) implementing load balancing and other request management features configured to dynamically manage large-scale web services request processing loads. In various embodiments, the provider network 406 may be configured to support REST-style or document-based (e.g., SOAP-based) types of web services requests.

[0052] In addition to functioning as an addressable endpoint for clients' web services requests, in some embodiments, the provider network 406 may implement various client management features. For example, the provider network 406 may coordinate the metering and accounting of client usage of web services, including storage resources, such as by tracking the identities of requesting clients 402a-n, the number and/or frequency of client requests, the size of objects stored or retrieved on behalf of the clients 402a-n, overall storage bandwidth used by the clients 402a-n, class of storage requested by the clients 402a-n, or any other measurable client usage parameter. The provider network 406 may also implement financial accounting and billing systems, or may maintain a database of usage data that may be queried and processed by external systems for reporting and billing of client usage activity. In certain embodiments, the provider network 406 may be configured to collect, monitor and/or aggregate a variety of storage service system operational metrics, such as metrics reflecting the rates and types of requests received from the clients 402a-n, bandwidth utilized by such requests, system processing latency for such requests, system component utilization (e.g., network bandwidth and/or storage utilization within the storage service system), rates and types of errors resulting from requests, characteristics of requested objects (e.g., size, data type, etc.), or any other suitable metrics. In some embodiments such metrics may be used by system administrators to tune and maintain system components, while in other embodiments such metrics (or relevant portions of such metrics) may be exposed to the clients 402a-n to enable such clients to monitor their usage of services provided by the provider network 406 (or the underlying systems that implement those services).

[0053] In some embodiments, the provider network 406 may also implement user authentication and access control procedures. For example, for a given web services request to access a particular data object (e.g., an encoded/encrypted data object) stored at the storage system 130, the provider network 406 may be configured to ascertain whether the client 402 associated with the request is authorized to access the particular data object. The provider network 406 may determine such authorization by, for example, evaluating an identity, password or other credential against credentials associated with the particular data object, or evaluating the requested access to the particular data object against an access control list for the particular data object. For example, if a client 402 does not have sufficient credentials to retrieve the particular object, the provider network 406 may reject the corresponding web services request, for example by returning a response to the requesting client 402 indicating an error condition. Various access control policies may be stored as records or lists of access control information by the host machine 102, the host machine 412, the network processing device 110, the storage system 130, and/or other virtual computing services (not shown).
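As an illustration only, the access control list evaluation described above can be sketched as follows. The record layout, the function names, and the numeric status codes are assumptions made for this sketch and are not specified by the description.

```python
from dataclasses import dataclass, field


@dataclass
class DataObjectPolicy:
    # Hypothetical access control record: client id -> permitted operations.
    acl: dict[str, set[str]] = field(default_factory=dict)


def authorize(client_id: str, operation: str, policy: DataObjectPolicy) -> bool:
    """Return True if the ACL grants `operation` to `client_id`."""
    return operation in policy.acl.get(client_id, set())


def handle_request(client_id: str, operation: str, object_key: str,
                   policies: dict[str, DataObjectPolicy]) -> dict:
    """Reject the request with an error response unless the ACL allows it."""
    policy = policies.get(object_key)
    if policy is None or not authorize(client_id, operation, policy):
        # Assumed error shape; the description only says an error
        # condition is indicated to the requesting client.
        return {"status": 403, "error": "AccessDenied"}
    return {"status": 200, "object": object_key}


policies = {"obj-1": DataObjectPolicy(acl={"client-a": {"read"}})}
allowed = handle_request("client-a", "read", "obj-1", policies)
denied = handle_request("client-b", "read", "obj-1", policies)
```

In this sketch a missing policy is treated the same as a denied request, which is one possible design choice among several.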

[0054] It is also noted that while provider network 406 may represent the primary interface through which clients 402a-n may access the features of a storage system that implements the virtual computation system 408 and the storage system 130, the provider network 406 need not represent the sole interface to such features. For example, an alternate API that may be distinct from a web services interface may be used to allow clients internal to the enterprise providing the storage service system to bypass the provider network 406. In some cases, the accounting and/or credentialing services of the provider network 406 may be unnecessary for internal clients such as administrative clients or between service components within the same enterprise.

[0055] Note that while several examples included herein describe a virtual computation system and an object-redundant storage service as Web-based services exposed to clients, in other embodiments, the virtual computation service, the object-redundant storage service, or both, may be internal to a computing system or an enterprise system and may not be exposed to external clients (e.g., users or client applications). For example, a client may store objects to a primary storage service (a storage service other than an object-redundant storage service), and the primary storage service or an operating system (or another system component) may cause the object (or a replica or shard thereof) to be stored to an object-redundant storage service instead of or in addition to storing the object to the primary storage service. In another example, the operating system, a backup process, or another system component may back up a primary storage system (e.g., periodically, automatically or in response to a request to do so) to an object-redundant storage system. In these examples, the "client" of the storage system 130 may be another application internal to a web services platform (such as the virtual computation system 408).

[0056] FIG. 5 is a block diagram illustrating an example service system architecture, according to some embodiments, including a plurality of network localities. In the illustrated embodiment, the host machine 102, the network processing device 110, the plurality of servers 132a-n, and the plurality of storage devices 134 (e.g., the plurality of storage devices 134a-c illustrated in FIG. 5) of FIG. 1 and the host machine 412 of FIG. 4 are divided among a plurality of network localities 502a-c. In the illustrated embodiment, the plurality of network localities 502a-c are connected by a network 510. In some embodiments, the plurality of network localities 502a-c may correspond to a portion of or to all of the provider network 406 of FIG. 4. In some embodiments, the network 510 may correspond to a portion of or to all of the network 120 of FIG. 1, the private network 140 of FIG. 1, the network 404 of FIG. 4, or any combination thereof.

[0057] In some embodiments, a network locality is a set of resources for storing and/or providing access to at least some of the shards, where the set of resources shares a common infrastructure that is isolated from other network localities. For example, each network locality of the plurality of network localities 502a-c may correspond to a different building (e.g., data center), city, country, or geographic region. In some embodiments, each network locality of the plurality of network localities 502a-c has network functionalities, cooling capabilities, power systems, or a combination thereof which are failure-isolated from each other network locality of the plurality of network localities 502a-c. In some embodiments, network transmission times within a particular network locality (e.g., the network locality 502a) may be shorter than network transmission times using the network 510. At least some network localities may include machines that host virtual compute instances such as a client instance that requests a shard. Additionally, the storage system 130 of FIG. 1 may operate based on a division of requested shards among the plurality of network localities. To illustrate, when fewer than all of the stored shards of a data object are needed to reconstruct the data object, the storage system 130 (e.g., using one or more servers of the plurality of servers 132a-n) may select, for the location list 310 of FIG. 3, a subset of the shards to send to the network processing device 110 based on a plurality of approximate network transmission times, where each respective approximate network transmission time represents a transmission time between a respective storage device of the plurality of storage devices 134 storing the shards and the network processing device 110.

[0058] For example, in the illustrated embodiment, three requested shards are stored by the storage devices 134a in the network locality 502a, two requested shards are stored by the storage devices 134b in the network locality 502b, and two requested shards are stored by the storage devices 134c in the network locality 502c. Further, in the illustrated embodiment, the host machine 102 and the network processing device 110 are located in the network locality 502a. As an example, in the illustrated embodiment, the network locality 502b may have a shorter approximate network transmission time to the network locality 502a, as compared to an approximate network transmission time between the network locality 502c and the network locality 502a. Accordingly, in the example, if five shards are needed to reconstruct the data object, the storage system 130 may select the three shards stored at the storage devices 134a and the two shards stored at the storage devices 134b based on the approximate network transmission times. Thus, in some embodiments, shards corresponding to location identifiers included in the location list 310 have a shorter network transmission time than at least some shards corresponding to storage locations not included in the location list 310. Although the example is specific to approximate network transmission time, other criteria, such as latency, network load, or resource availability, may additionally or alternatively be used to select the shards.
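The selection in this example can be sketched in a few lines. This is a minimal illustration, assuming each stored shard is known by a location identifier and a network locality, and that an approximate transmission time to the network processing device is available per locality. The function name, the identifier format, and the millisecond values are hypothetical.

```python
def build_location_list(shard_locations, transmission_ms, shards_needed):
    """Pick the `shards_needed` shards with the shortest approximate
    transmission time to the network processing device.

    shard_locations: list of (location_id, locality) pairs.
    transmission_ms: mapping of locality -> approximate time (assumed values).
    """
    if len(shard_locations) < shards_needed:
        raise ValueError("not enough stored shards to reconstruct the object")
    # sorted() is stable, so shards within one locality keep their order.
    ranked = sorted(shard_locations, key=lambda loc: transmission_ms[loc[1]])
    return [location_id for location_id, _ in ranked[:shards_needed]]


# Three shards in locality 502a, two in 502b, two in 502c, as in the example.
shards = [
    ("134a/0", "502a"), ("134a/1", "502a"), ("134a/2", "502a"),
    ("134b/0", "502b"), ("134b/1", "502b"),
    ("134c/0", "502c"), ("134c/1", "502c"),
]
# Hypothetical approximate times measured from locality 502a.
times = {"502a": 1.0, "502b": 8.0, "502c": 20.0}

location_list = build_location_list(shards, times, shards_needed=5)
```

With these assumed times, the list contains the three shards at the storage devices 134a and the two at the storage devices 134b, and omits the shards at the storage devices 134c. A different ranking key (for example, load or availability) could be substituted without changing the structure.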

[0059] Although FIG. 5 illustrates the network processing device 110 communicating with the plurality of servers 132a-n directly, in some embodiments, the communications are performed via one or more load balancers (not shown). Similarly, other communications (e.g., between the server 132a of the network locality 502a and the server 132b of the network locality 502b) may be performed via one or more load balancers.

[0060] FIG. 6 is a block diagram illustrating an example network processing device, according to some embodiments. In the illustrated embodiment, the network processing device 110 includes the object coding device 112, the host-side network interface controller (NIC) 114, and the network-side NIC 116 described above with reference to FIG. 1. The network processing device 110 may further include a request generator 602, a storage request processing device 604, other network processing devices 606, and a credential management device 608. In this example, the network processing device illustrated in FIG. 6 is described as being the network processing device 110 of FIG. 1. However, the network processing device 110 may include other components not described herein or may not include some components described herein.

[0061] As explained above, the object coding device 112 may receive shards of a requested data object and may reconstruct the data object from at least a subset of the received shards. As further described below, the object coding device 112 may also encode a host data object received from the host machine 102 into a plurality of shards (host shards) according to a sharding technique. As explained above, the host-side NIC 114 may be coupled to the private network 140 which is also coupled to the host machine 102. Accordingly, in some embodiments, the host-side NIC 114 may receive data packets from the host machine 102 and may transmit data packets to the host machine 102. As explained above, the network-side NIC 116 may be coupled to the network 120. Accordingly, in some embodiments, the network-side NIC 116 may transmit data packets to the storage system 130 and may receive data packets from the storage system 130. The other network processing devices 606 may enable the network processing device 110 to perform additional networking duties.
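To make the encode and reconstruct roles of the object coding device 112 concrete, the following sketch uses single-parity XOR coding, in which k data shards plus one parity shard tolerate the loss of any one shard. This particular code is an assumption chosen for brevity; the description does not limit the sharding technique to it, and all function names are hypothetical.

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data: bytes, k: int) -> list[bytes]:
    """Split `data` into k zero-padded data shards plus one parity shard."""
    shard_len = -(-len(data) // k)  # ceiling division
    padded = data.ljust(shard_len * k, b"\0")
    shards = [padded[i * shard_len:(i + 1) * shard_len] for i in range(k)]
    return shards + [reduce(xor_bytes, shards)]  # parity is shard index k


def reconstruct(shards: dict[int, bytes], k: int, length: int) -> bytes:
    """Rebuild the object from any k of the k + 1 shards.

    shards: mapping of shard index -> shard bytes actually received.
    length: original object length, used to strip the padding.
    """
    missing = [i for i in range(k) if i not in shards]
    if len(missing) > 1 or (missing and k not in shards):
        raise ValueError("not enough shards to reconstruct the object")
    data = dict(shards)
    if missing:
        # XOR of the parity shard and the surviving data shards
        # yields the single missing data shard.
        data[missing[0]] = reduce(xor_bytes, shards.values())
    return b"".join(data[i] for i in range(k))[:length]


obj = b"host data object"
all_shards = encode(obj, k=4)
# Simulate shard 2 never arriving from the storage system.
received = {i: s for i, s in enumerate(all_shards) if i != 2}
rebuilt = reconstruct(received, k=4, length=len(obj))
```

A production sharding technique would more likely tolerate several missing shards; the sketch only shows that a subset of the shards can suffice.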

[0062] In some embodiments, the storage request processing device 604 analyzes data packets received from the host machine 102 to determine whether the network processing device 110 should perform a coding operation on the received data packets. For example, the storage request processing device 604 may receive the data packet 202 of FIG. 2 and determine that the data packet 202 includes a request for a data object. As another example, as further described below with reference to FIGS. 9-11, the storage request processing device 604 may be configured to identify a request to store a data object according to a sharding technique.

[0063] In some embodiments, the request generator 602, in response to the storage request processing device 604 determining that the network processing device 110 should perform a coding operation on received data packets, generates requests to the storage system 130. For example, in response to the storage request processing device 604 determining that a received packet includes a request for a data object, the request generator 602 may generate a data object request (e.g., the data object request 206 of FIG. 2) that requests the data object and indicates that the network processing device 110 is capable of reconstructing the data object (e.g., by including the in-line object reconstruction indicator 306 of FIG. 3A). Further, in response to receiving the location list 310, the request generator 602 may generate a plurality of shard requests (e.g., the plurality of shard requests 210a-n of FIG. 2) requesting shards corresponding to location identifiers listed in the location list. As further described below with reference to FIGS. 9-11, the request generator 602 may also generate an object storage request that requests a plurality of storage locations of the storage system 130 to store a plurality of host shards. Further, the request generator 602 may generate a plurality of storage requests that each include a location identifier (e.g., corresponding to a particular memory location of a particular storage device of the plurality of storage devices 134) for storage of a corresponding shard. In some embodiments, the request generator 602 addresses at least two of the plurality of storage requests to different servers of the plurality of servers 132a-n (e.g., addresses one request to the server 132a and addresses another request to the server 132b). In some embodiments, the request generator 602 sends all of the plurality of storage requests to one or more load balancers which send the plurality of storage requests to the plurality of servers 132a-n.
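The read path of the request generator 602 can be sketched as two steps: build a data object request carrying the in-line reconstruction indicator, then expand a returned location list into one shard request per location identifier. The message classes, field names, and the "server/slot" identifier format below are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataObjectRequest:
    object_key: str
    # Signals that the requester can reconstruct the object itself.
    inline_reconstruction: bool


@dataclass(frozen=True)
class ShardRequest:
    server: str        # which server the request is addressed to
    location_id: str   # identifies where the shard is stored


def make_object_request(object_key: str) -> DataObjectRequest:
    """Request the object and advertise in-line reconstruction capability."""
    return DataObjectRequest(object_key, inline_reconstruction=True)


def make_shard_requests(location_list: list[str]) -> list[ShardRequest]:
    """Generate one shard request per location identifier.

    Assumes a hypothetical "server/slot" identifier format, so requests
    for shards on different servers are addressed to different servers.
    """
    return [
        ShardRequest(server=loc.split("/", 1)[0], location_id=loc)
        for loc in location_list
    ]


object_request = make_object_request("obj-1")
shard_requests = make_shard_requests(["132a/7", "132a/9", "132b/3"])
```

When load balancers are used, the `server` field in this sketch would instead name the load balancer, which then forwards each request.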

[0064] In some embodiments, the credential management device 608 manages credentials such that the network processing device 110 is able to correctly and securely manage communications between the host machine 102 and the storage system 130. In some embodiments, data packets transmitted between the host machine 102 and the network processing device 110 and data packets transmitted between the network processing device 110 and the storage system 130 are transmitted according to a TCP protocol or an SSL protocol. In other embodiments, the data packets are transmitted according to a user datagram protocol (UDP).

[0065] For example, in some embodiments, when a data packet from the host machine 102 is unencrypted, in response to the storage request processing device 604 determining that the data packet includes a request for a data object, the credential management device 608 is configured to retrieve one or more credentials associated with the storage system 130 (e.g., credentials that identify an encryption scheme the storage system 130 is able to decrypt) and to encrypt the associated data object request (e.g., generated by the request generator 602) according to the retrieved credentials.

[0066] As a different example, in some embodiments, when a data packet from the host machine 102 is encrypted, prior to the storage request processing device 604 determining that the data packet includes a request for a data object, the credential management device 608 is configured to decrypt the data packet (e.g., using one or more credentials associated with the host machine 102). In this example, after the data object request is generated, the credential management device 608 may encrypt the data object request according to retrieved credentials. The credential management device 608 may also initiate sending signals (e.g., the response packet 204) to the host machine 102 according to a handshake protocol in response to the storage request processing device 604 determining that the data packet includes the request for the data object.
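The ordering of operations in the two examples above (decrypt if needed, inspect, generate the request, then encrypt for the storage system) can be sketched as follows. The XOR routine is a stand-in that only marks where a real cipher would run and provides no security. The "GET " packet prefix, the indicator string, and the key values are likewise hypothetical.

```python
def xor_cipher(data: bytes, key: bytes) -> bytes:
    """Placeholder for a real cipher (NOT secure); applying it twice
    with the same key returns the original bytes."""
    return bytes(b ^ key[i % len(key)] for i, b in enumerate(data))


def forward_request(packet: bytes, encrypted: bool,
                    host_key: bytes, storage_key: bytes):
    """Return the encrypted data object request, or None when the packet
    is not a request for a data object."""
    # Step 1: an encrypted host packet is decrypted with host credentials
    # before it can be inspected.
    plaintext = xor_cipher(packet, host_key) if encrypted else packet
    # Step 2: decide whether a coding operation applies (assumed format).
    if not plaintext.startswith(b"GET "):
        return None
    # Step 3: generate the data object request with the assumed indicator.
    object_request = plaintext + b" inline-reconstruction=1"
    # Step 4: encrypt according to credentials for the storage system.
    return xor_cipher(object_request, storage_key)


host_key, storage_key = b"host-secret", b"storage-secret"
from_plain = forward_request(b"GET obj-1", False, host_key, storage_key)
from_encrypted = forward_request(
    xor_cipher(b"GET obj-1", host_key), True, host_key, storage_key
)
```

Both paths produce the same outbound request in this sketch, since they differ only in whether the inbound packet needs decrypting first.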

[0067] As described above, in one example of a storage service, a client may request a data object from storage in a storage system that includes a plurality of data storage devices. The data object may be stored as a plurality of shards. One or more servers may retrieve the shards of the data object from storage. The data object may be decoded prior to being provided to the client. One embodiment of a method for providing a data object that has been stored by a storage service is illustrated by the flow diagram of FIG. 7. Although FIG. 7 illustrates operations being performed in a particular order, in other embodiments, some operations may be performed in other orders.