Discovery And Sharing Of Photos Between Devices

Duggal; Sachin Dev; et al.

U.S. patent application number 16/564731, for discovery and sharing of photos between devices, was filed with the patent office on September 9, 2019 and published on December 26, 2019. This patent application is currently assigned to SHOTO, INC. The applicant listed for this patent is SHOTO, INC. The invention is credited to Sachin Dev Duggal and Mark Rolston.

| Application Number | 16/564731 |
|---|---|
| Publication Number | 20190391997 |
| Family ID | 52428638 |
| Publication Date | 2019-12-26 |


| United States Patent Application | 20190391997 |
|---|---|
| Kind Code | A1 |
| Inventors | Duggal; Sachin Dev; et al. |
| Publication Date | December 26, 2019 |

DISCOVERY AND SHARING OF PHOTOS BETWEEN DEVICES

Abstract

Described are systems, media, and methods for discovery of media relevant to a user and sharing the media with the user.

| Inventors: | Duggal; Sachin Dev; (San Francisco, CA); Rolston; Mark; (San Francisco, CA) |
|---|---|
| Applicant: | SHOTO, INC. |
| Assignee: | SHOTO, INC., San Francisco, CA |
| Family ID: | 52428638 |
| Appl. No.: | 16/564731 |
| Filed: | September 9, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Child Application |
|---|---|---|---|
| 15364159 | Nov 29, 2016 | 10409858 | 16564731 |
| 14451331 | Aug 4, 2014 | 9542422 | 15364159 |
| 61861922 | Aug 2, 2013 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 16/5866 20190101; G06F 16/51 20190101; G06F 16/2455 20190101 |

| International Class: | G06F 16/58 20060101 G06F016/58; G06F 16/51 20060101 G06F016/51; G06F 16/2455 20060101 G06F016/2455 |

Claims

1. A method for grouping a plurality of images from a plurality of users into an event, the method comprising: a) receiving, at a computing device, a first set of metadata from a first device associated with a first user and a second set of metadata from a second device associated with a second user, wherein: the first set of metadata is associated with a first image stored on the first device, and includes location information and time information for the first image; the second set of metadata is associated with a second image stored on the second device, and includes location information and time information for the second image; b) identifying, by the computing device, a first event based on the first set of metadata and the second set of metadata, wherein the first event has a defined beginning time and a defined end time; c) associating, by the computing device, the first image and the second image with the first event based on the location information or the time information associated with the first image and the second image; d) identifying, by the computing device, a relationship between the first user and the second user; and e) sending, by the computing device, a notification to the first user that the second user has an image associated with the first event.

2. The method of claim 1, further comprising: receiving a first set of contacts information from the first device and a second set of contacts information from the second device; and comparing the first set of contacts information with the second set of contacts information to identify a match, wherein the relationship between the first user and the second user is identified based on the match.

3. The method of claim 1, wherein the first device stores a plurality of images, the method further comprising: associating a portion of the plurality of images with the first event on the first device.

4. The method of claim 1, further comprising: creating the first event using at least one of the first set of metadata and the second set of metadata.

5. The method of claim 1, further comprising: analyzing the first set of metadata and the second set of metadata; and inferring information about the first user and the second user based on the analyzing.

6. The method of claim 5, further comprising: generating a recommendation for the first user or the second user based on the analyzing.

7. The method of claim 1, further comprising: analyzing location information included in the first set of metadata and the second set of metadata; and determining a radius of proximity for the first event based on the analyzing.

8. The method of claim 1, further comprising: receiving additional location-related information from an external source; and defining properties of the first event using the additional information.

9. The method of claim 1, further comprising: a) receiving additional sets of metadata from additional devices associated with additional users; b) receiving sets of contacts information from the additional devices; c) receiving a first set of contacts information from the first device; d) analyzing the first set of contacts information, the additional sets of contacts information and the additional sets of metadata associated with each of the additional users; e) determining a plurality of users among the additional users who have at least one image associated with the first event; f) discovering a subset of users among the plurality of users whose contact information is included in the first set of contacts information; and g) informing the first user about the determined subset of users.

10. The method of claim 1, further comprising: a) receiving a third set of metadata from the first device, wherein the third set of metadata is associated with a third image stored on the first device, and includes location information and time information for the third image; b) comparing the location information and time information for the third image to the location and time information of the first image or the second image; c) determining that the location information and time information for the third image is different from the location and time information of the first image or the second image; d) creating, by the computing device, a second event based on the third set of metadata, wherein the second event has a defined beginning time and a defined end time that is different than the first event; and e) associating the third image with the second event instead of the first event based on the location information or the time information associated with the third image.

11. The method of claim 10, further comprising: closing the first event.

12. The method of claim 10, further comprising: merging the first event and the second event into a merged event, wherein: a beginning time of the merged event is equal to or before the beginning times of both the first event and the second event, and an end time of the merged event is equal to or after the end times of both the first event and the second event.

13. The method of claim 1, further comprising: receiving an instruction from the first user to share the first image with the second user; and making the first image available to the second user.

14. The method of claim 1, wherein the first device stores a plurality of images, the method further comprising: a) receiving a query from the first user to identify one or more images on the first device that belong to a given event; b) analyzing sets of metadata associated with the plurality of images; c) identifying zero or more images based on the analyzing; and d) providing the identified zero or more images to the first user in response to the query.

15. A computer-implemented system comprising: a) a digital processing device comprising an operating system configured to perform executable instructions and a memory; b) a computer program including instructions executable by the digital processing device to create a media sharing application comprising: i. a software module configured to receive a first set of metadata from a first device associated with a first user and a second set of metadata from a second device associated with a second user, wherein: the first set of metadata is associated with a first image stored on the first device, and includes location information and time information for the first image; and the second set of metadata is associated with a second image stored on the second device, and includes location information and time information for the second image; ii. a software module configured to identify a first event based on the first set of metadata and the second set of metadata, wherein the first event has a defined beginning time and a defined end time; iii. a software module configured to associate the first image and the second image with the first event based on the location information or the time information associated with the first image and the second image; iv. a software module configured to identify a relationship between the first user and the second user; and v. a software module configured to send a notification to the first user that the second user has an image associated with the first event.

16. The system of claim 15, further comprising: a software module configured to receive a first set of contacts information from the first device and a second set of contacts information from the second device; and a software module configured to compare the first set of contacts information with the second set of contacts information to identify a match, wherein the relationship between the first user and the second user is identified based on the match.

17. The system of claim 15, wherein the first device stores a plurality of images and the system further comprises: a software module configured to associate a portion of the plurality of images with the first event on the first device.

18. The system of claim 15, further comprising: a software module configured to create the first event using at least one of the first set of metadata and the second set of metadata.

19. The system of claim 15, further comprising: a software module configured to analyze the first set of metadata and the second set of metadata; and a software module configured to infer information about the first user and the second user based on the analyzing.

20. The system of claim 19, further comprising: a software module configured to generate a recommendation for the first user or the second user based on the analysis.

21. The system of claim 15, further comprising: a software module configured to analyze location information included in the first set of metadata and the second set of metadata; and a software module configured to determine a radius of proximity for the first event based on the analysis.

22. The system of claim 15, further comprising: a software module configured to receive additional location-related information from an external source; and a software module configured to define properties of the first event using the additional information.

23. The system of claim 15, further comprising: a) a software module configured to receive additional sets of metadata from additional devices associated with additional users; b) a software module configured to receive sets of contacts information from the additional devices; c) a software module configured to receive a first set of contacts information from the first device; d) a software module configured to analyze the first set of contacts information, the additional sets of contacts information and the additional sets of metadata associated with each of the additional users; e) a software module configured to determine a plurality of users among the additional users who have at least one image associated with the first event; f) a software module configured to discover a subset of users among the plurality of users whose contact information is included in the first set of contacts information; and g) a software module configured to inform the first user about the determined subset of users.

24. The system of claim 15, further comprising: a) a software module configured to receive a third set of metadata from the first device, wherein the third set of metadata is associated with a third image stored on the first device, including location information and time information for the third image; b) a software module configured to compare the location information and time information for the third image to the location and time information of the first image or the second image; c) a software module configured to determine that the location information and time information for the third image is different from the location and time information of the first image or the second image; d) a software module configured to create a second event based on the third set of metadata, wherein the second event has a defined beginning time and a defined end time that is different than the first event; and e) a software module configured to associate the third image with the second event instead of the first event based on the location information or the time information associated with the third image.

25. The system of claim 24, further comprising a software module configured to close the first event.

26. The system of claim 24, further comprising: a software module configured to merge the first event and the second event into a merged event, wherein: a beginning time of the merged event is equal to or before the beginning times of both the first event and the second event, and an end time of the merged event is equal to or after the end times of both the first event and the second event.

27. The system of claim 15, further comprising: a software module configured to receive an instruction from the first user to share the first image with the second user; and a software module configured to make the first image available to the second user.

28. The system of claim 15, wherein the first device stores a plurality of images and the system further comprises: a) a software module configured to receive a query from the first user to identify one or more images on the first device that belong to a given event; b) a software module configured to analyze sets of metadata associated with the plurality of images; c) a software module configured to identify zero or more images based on the analyzing; and d) a software module configured to provide the identified zero or more images to the first user in response to the query.
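The event-association steps of claims 1 and 7 can be illustrated with a minimal Python sketch. The sketch makes three assumptions:

- Each image's metadata carries a latitude and longitude in degrees and a capture timestamp.
- An event's beginning and end times are the earliest and latest capture times.
- The radius of proximity is the distance from the images' mean location to the farthest image, plus a margin.

The names `ImageMeta`, `Event`, `event_from_metadata` and `belongs_to`, and the 100 m `margin_m` default, are hypothetical. The sketch also requires both time and location to match, whereas the claims permit association based on either.

```python
import math
from dataclasses import dataclass
from datetime import datetime
from typing import List

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


@dataclass
class ImageMeta:
    """Hypothetical per-image metadata uploaded by a device."""
    image_id: str
    lat: float  # degrees
    lon: float  # degrees
    taken_at: datetime


@dataclass
class Event:
    start: datetime  # defined beginning time
    end: datetime    # defined end time
    center_lat: float
    center_lon: float
    radius_m: float  # radius of proximity


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def event_from_metadata(images: List[ImageMeta], margin_m: float = 100.0) -> Event:
    """Create an event from metadata sent by one or more devices."""
    # A plain mean of coordinates is adequate for images taken close together
    # (it is not valid across the antimeridian).
    lat = sum(m.lat for m in images) / len(images)
    lon = sum(m.lon for m in images) / len(images)
    radius = max(haversine_m(lat, lon, m.lat, m.lon) for m in images) + margin_m
    return Event(
        start=min(m.taken_at for m in images),
        end=max(m.taken_at for m in images),
        center_lat=lat,
        center_lon=lon,
        radius_m=radius,
    )


def belongs_to(event: Event, image: ImageMeta) -> bool:
    """True if the image falls inside the event's time window and radius."""
    in_time = event.start <= image.taken_at <= event.end
    distance = haversine_m(event.center_lat, event.center_lon, image.lat, image.lon)
    return in_time and distance <= event.radius_m
```

A server could build the event from the first and second users' metadata and then test further images from either device with `belongs_to`.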

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] The present application is a continuation of U.S. Ser. No. 15/364,159, filed Nov. 29, 2016, which is a continuation of U.S. Ser. No. 14/452,331, filed Aug. 4, 2014, which claims priority from and is a non-provisional application of U.S. Provisional Application No. 61/861,922, entitled "System and Methods of Processing Data Associated with Captured Content Including Aspects of Curation/Consumption, Processing Information Derived from the Captured Content and/or Other Features," filed Aug. 2, 2013, the entire contents of which are herein incorporated by reference for all purposes.

BACKGROUND OF THE INVENTION

[0002] More photos are being snapped today than at any other point in history. The explosion in popularity of phones equipped with cameras, paired with steady development in photo-sharing software, has led to staggering statistics: 33% of the photos taken today are taken on a smartphone; over 1 billion photos are taken on smartphones daily; and 250 million photos are uploaded to Facebook every day. Despite the great opportunity for consumption of all these photographs, today's simplistic tools for sharing and viewing them leave much to be desired.

SUMMARY OF THE INVENTION

[0003] Consider one data point that highlights the shortcomings of today's photo sharing tools: more than 90% of the pictures taken today are "lost" because they are never shared or uploaded (and lost with them, aside from their sentimental value, is a great deal of potentially valuable data). There are many reasons for this. For instance, it is cumbersome to share photos from a certain event or general circumstance with others who also took part in those moments; current solutions require pre-inviting others to join group albums or creating static lists and/or circles. There is no way to do this automatically, in the background, and specifically for each situation. Asking others to share photos after the event is often an irritating request for all parties, and the multitude of work-around solutions means that most photos are not shared before they are superseded by new photos. Current sharing is also primarily one-to-one, and photos are difficult to organize, so there is currently no easy way to see a complete album of an event or moment in time that has been shared with others. This also makes it difficult for people to see photos of themselves; 70% of pictures taken are of someone else. Further, current systems do not use phonebook connections to determine the strength of relationships.

[0004] Today's online storage, social, and messaging systems do not focus on reliving moments. Rather, they focus on workflow automation and content distribution. But people generally do not want to have to actively share content; they simply want to create and consume it. The present disclosure teaches novel ways to meet these desires and to ensure that far fewer photographs are "lost" due to the shortcomings of today's photo management and sharing solutions.

[0005] The present disclosure describes systems and methods for curating and consuming photographs and information derived from photographs. Embodiments of the disclosed system may employ contact mapping, phonebook hashing, harvesting of photo metadata, collection of ambient contextual information (including, but not limited to, recordings and high-frequency sounds), and other techniques.
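Phonebook hashing could, for example, let a server detect a relationship between two users without receiving raw phone numbers. The following minimal Python sketch works as follows:

- Each device normalizes its phonebook entries to a digits-only international form.
- The device uploads SHA-256 digests of those entries instead of the raw numbers.
- The server infers a mutual relationship when each user's own number appears in the other user's hashed phonebook.

The helper names and the ten-digit normalization rule (defaulting to country code 1) are hypothetical.

```python
import hashlib
import re
from typing import Iterable, Set


def normalize_phone(raw: str, default_country_code: str = "1") -> str:
    """Reduce a phonebook entry to '+<digits>' (hypothetical normalization rule)."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 10:  # assume a national number missing its country code
        digits = default_country_code + digits
    return "+" + digits


def hash_number(raw: str) -> str:
    """SHA-256 digest of a normalized phone number, as a hex string."""
    return hashlib.sha256(normalize_phone(raw).encode("utf-8")).hexdigest()


def hash_phonebook(entries: Iterable[str]) -> Set[str]:
    """What a device would upload instead of its raw contacts."""
    return {hash_number(e) for e in entries}


def is_mutual_contact(number_a: str, hashed_book_a: Set[str],
                      number_b: str, hashed_book_b: Set[str]) -> bool:
    """True when each user's own number is in the other user's hashed phonebook."""
    return hash_number(number_b) in hashed_book_a and hash_number(number_a) in hashed_book_b
```

Unsalted hashes of phone numbers offer limited privacy, because the space of valid numbers is small enough to enumerate. A deployed system would likely need additional protection.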

[0006] In one aspect, disclosed herein are methods for grouping a plurality of images from a plurality of users into an event, the method comprising: receiving, at a computing device, a first set of metadata from a first device associated with a first user and a second set of metadata from a second device associated with a second user, wherein: the first set of metadata is associated with a first image stored on the first device, and includes location information and time information for the first image; and the second set of metadata is associated with a second image stored on the second device, and includes location information and time information for the second image; identifying, by the computing device, a first event based on the first set of metadata and the second set of metadata, wherein the first event has a defined beginning time and a defined end time; associating, by the computing device, the first image and the second image with the first event based on the location information or the time information associated with the first image and the second image; identifying, by the computing device, a relationship between the first user and the second user; and sending, by the computing device, a notification to the first user that the second user has an image associated with the first event. In some embodiments, the method further comprises: receiving a first set of contacts information from the first device and a second set of contacts information from the second device; and comparing the first set of contacts information with the second set of contacts information to identify a match, wherein the relationship between the first user and the second user is identified based on the match. In some embodiments, the first device stores a plurality of images and the method further comprises: associating a portion of the plurality of images with the first event on the first device.
In some embodiments, the method further comprises: creating the first event using at least one of the first set of metadata and the second set of metadata. In some embodiments, the method further comprises: analyzing the first set of metadata and the second set of metadata; and inferring information about the first user and the second user based on the analyzing. In some embodiments, the method further comprises: generating a recommendation for the first user or the second user based on the analyzing. In some embodiments, the method further comprises: analyzing location information included in the first set of metadata and the second set of metadata; and determining a radius of proximity for the first event based on the analyzing. In some embodiments, the method further comprises: receiving additional location-related information from an external source; and defining properties of the first event using the additional information. In some embodiments, the method further comprises: receiving additional sets of metadata from additional devices associated with additional users; receiving sets of contacts information from the additional devices; receiving a first set of contacts information from the first device; analyzing the first set of contacts information, the additional sets of contacts information and the additional sets of metadata associated with each of the additional users; determining a plurality of users among the additional users who have at least one image associated with the first event; discovering a subset of users among the plurality of users whose contact information is included in the first set of contacts information; and informing the first user about the determined subset of users.
In some embodiments, the method further comprises: receiving a third set of metadata from the first device, wherein the third set of metadata is associated with a third image stored on the first device, and includes location information and time information for the third image; comparing the location information and time information for the third image to the location and time information of the first image or the second image; determining that the location information and time information for the third image is different from the location and time information of the first image or the second image; creating, by the computing device, a second event based on the third set of metadata, wherein the second event has a defined beginning time and a defined end time that is different than the first event; and associating the third image with the second event instead of the first event based on the location information or the time information associated with the third image. In further embodiments, the method further comprises: closing the first event. In some embodiments, the method further comprises: merging the first event and the second event into a merged event, wherein: a beginning time of the merged event is equal to or before the beginning times of both the first event and the second event, and an end time of the merged event is equal to or after the end times of both the first event and the second event. In some embodiments, the method further comprises: receiving an instruction from the first user to share the first image with the second user; and making the first image available to the second user.
In some embodiments, the first device stores a plurality of images and the method further comprises: receiving a query from the first user to identify one or more images on the first device that belong to a given event; analyzing sets of metadata associated with the plurality of images; identifying zero or more images based on the analyzing; and providing the identified zero or more images to the first user in response to the query.
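The event-splitting, closing and merging steps summarized above can be sketched in Python as follows. For brevity, the sketch compares only capture times. A location test could be applied alongside the time test.

- **Joining:** an image joins an open event if it falls within a hypothetical two-hour gap of that event.
- **Splitting and closing:** otherwise, the open events are closed and a new event is created.
- **Merging:** the merged event takes the earlier beginning time and the later end time. This satisfies the stated condition that the merged event begins no later and ends no earlier than both source events.

All names and the `max_gap` default are assumptions for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import List


@dataclass
class Event:
    event_id: int
    start: datetime
    end: datetime
    image_ids: List[str] = field(default_factory=list)
    closed: bool = False


def assign_image(events: List[Event], image_id: str, taken_at: datetime,
                 max_gap: timedelta = timedelta(hours=2)) -> Event:
    """Attach an image to a matching open event, or close open events and start a new one."""
    for ev in events:
        if not ev.closed and ev.start - max_gap <= taken_at <= ev.end + max_gap:
            # Extend the event's defined beginning/end times to cover the image.
            ev.start = min(ev.start, taken_at)
            ev.end = max(ev.end, taken_at)
            ev.image_ids.append(image_id)
            return ev
    # The image's time differs from every open event: close them and create a new event.
    for ev in events:
        ev.closed = True
    new_event = Event(len(events) + 1, taken_at, taken_at, [image_id])
    events.append(new_event)
    return new_event


def merge_events(a: Event, b: Event, new_id: int) -> Event:
    """Merged event begins at the earlier start and ends at the later end."""
    return Event(
        event_id=new_id,
        start=min(a.start, b.start),
        end=max(a.end, b.end),
        image_ids=a.image_ids + b.image_ids,
        closed=a.closed and b.closed,
    )
```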

[0007] In another aspect, disclosed herein are computer-implemented systems comprising: a digital processing device comprising an operating system configured to perform executable instructions and a memory; a computer program including instructions executable by the digital processing device to create a media sharing application comprising: a software module configured to receive a first set of metadata from a first device associated with a first user and a second set of metadata from a second device associated with a second user, wherein: the first set of metadata is associated with a first image stored on the first device, and includes location information and time information for the first image; and the second set of metadata is associated with a second image stored on the second device, and includes location information and time information for the second image; a software module configured to identify a first event based on the first set of metadata and the second set of metadata, wherein the first event has a defined beginning time and a defined end time; a software module configured to associate the first image and the second image with the first event based on the location information or the time information associated with the first image and the second image; a software module configured to identify a relationship between the first user and the second user; and a software module configured to send a notification to the first user that the second user has an image associated with the first event. In some embodiments, the system further comprises: a software module configured to receive a first set of contacts information from the first device and a second set of contacts information from the second device; and a software module configured to compare the first set of contacts information with the second set of contacts information to identify a match, wherein the relationship between the first user and the second user is identified based on the match.
In some embodiments, the first device stores a plurality of images and the system further comprises: a software module configured to associate a portion of the plurality of images with the first event on the first device. In some embodiments, the system further comprises: a software module configured to create the first event using at least one of the first set of metadata and the second set of metadata. In some embodiments, the system further comprises: a software module configured to analyze the first set of metadata and the second set of metadata; and a software module configured to infer information about the first user and the second user based on the analyzing. In some embodiments, the system further comprises: a software module configured to generate a recommendation for the first user or the second user based on the analysis. In some embodiments, the system further comprises: a software module configured to analyze location information included in the first set of metadata and the second set of metadata; and a software module configured to determine a radius of proximity for the first event based on the analysis. In some embodiments, the system further comprises: a software module configured to receive additional location-related information from an external source; and a software module configured to define properties of the first event using the additional information.
In some embodiments, the system further comprises: a software module configured to receive additional sets of metadata from additional devices associated with additional users; a software module configured to receive sets of contacts information from the additional devices; a software module configured to receive a first set of contacts information from the first device; a software module configured to analyze the first set of contacts information, the additional sets of contacts information and the additional sets of metadata associated with each of the additional users; a software module configured to determine a plurality of users among the additional users who have at least one image associated with the first event; a software module configured to discover a subset of users among the plurality of users whose contact information is included in the first set of contacts information; and a software module configured to inform the first user about the determined subset of users. In some embodiments, the system further comprises: a software module configured to receive a third set of metadata from the first device, wherein the third set of metadata is associated with a third image stored on the first device, and includes location information and time information for the third image; a software module configured to compare the location information and time information for the third image to the location and time information of the first image or the second image; a software module configured to determine that the location information and time information for the third image is different from the location and time information of the first image or the second image; a software module configured to create a second event based on the third set of metadata, wherein the second event has a defined beginning time and a defined end time that is different than the first event; and a software module configured to associate the third image with the second event instead of the first event based on the location information or the time information associated with the third image.
In further embodiments, the system further comprises a software module configured to close the first event. In some embodiments, the system further comprises: a software module configured to merge the first event and the second event into a merged event, wherein: a beginning time of the merged event is equal to or before the beginning times of both the first event and the second event, and an end time of the merged event is equal to or after the end times of both the first event and the second event. In some embodiments, the system further comprises: a software module configured to receive an instruction from the first user to share the first image with the second user; and a software module configured to make the first image available to the second user. In some embodiments, the first device stores a plurality of images and the system further comprises: a software module configured to receive a query from the first user to identify one or more images on the first device that belong to a given event; a software module configured to analyze sets of metadata associated with the plurality of images; a software module configured to identify zero or more images based on the analyzing; and a software module configured to provide the identified zero or more images to the first user in response to the query.
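The discovery modules described above do two things: they determine which other users have at least one image associated with the first event, and which of those users appear in the first user's contacts. Once contact entries have been mapped to comparable keys, this reduces to simple set operations. The minimal Python sketch below assumes such a mapping already exists (for example, hashed phone numbers). For each discovered contact, it returns the number of event images that contact holds, which could feed the notification sent to the first user. The function and parameter names, and the notification wording, are hypothetical.

```python
from typing import Dict, List, Set, Tuple


def discover_contacts_at_event(
    first_user: str,
    first_user_contacts: Set[str],
    event_images_by_user: Dict[str, List[str]],
    contact_key_of_user: Dict[str, str],
) -> List[Tuple[str, int]]:
    """Return (user, image count) for contacts of `first_user` who have images in the event."""
    discovered = []
    for user, image_ids in sorted(event_images_by_user.items()):
        if user == first_user or not image_ids:
            continue  # skip the requesting user and users with no images in the event
        if contact_key_of_user.get(user) in first_user_contacts:
            discovered.append((user, len(image_ids)))
    return discovered


def notification_text(discovered: List[Tuple[str, int]]) -> List[str]:
    """Hypothetical wording for the notification sent to the first user."""
    return [f"{user} has {count} photo(s) from this event" for user, count in discovered]
```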

[0008] In another aspect, disclosed herein are computer-implemented systems comprising: a digital processing device comprising an operating system configured to perform executable instructions and a memory; a computer program including instructions executable by the digital processing device to create a media sharing application comprising: a software module configured to generate an event by analyzing metadata associated with a plurality of media stored on a mobile device of a first user, the metadata comprising date, time, and location of the creation of each media item; a software module configured to generate a collection by identifying a second user having stored on their mobile device at least one media item associated with the event; a software module configured to suggest sharing, by the first user, media associated with the collection, to the second user, based on a symmetrical relationship between the first user and the second user; and a software module configured to present an album to each user, each album comprising media associated with the collection and either shared with the user or created by the user. In some embodiments, the media is one or more photos or one or more videos. In some embodiments, the event is classified as an intraday event or an interday event, the classification based on distance of the location of the creation of each media item to a home location. In some embodiments, the symmetrical relationship is identified from mutual inclusion in contacts, two-way exchange of email, two-way exchange of text or instant message, or a combination thereof. In some embodiments, the application further comprises a software module configured to present a notification stream to each user, the notification stream providing updates on albums presented to the user.
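The intraday/interday classification in the preceding paragraph is described only as being based on the distance of each creation location from a home location. One possible reading is sketched below in Python. An event whose media were all created near home is treated as intraday. An event with any media created farther than a threshold is treated as interday, for example a trip. The 50 km threshold and the function names are hypothetical; the source does not specify a value.

```python
import math
from typing import Iterable, Tuple

LatLon = Tuple[float, float]  # (latitude, longitude) in degrees
EARTH_RADIUS_KM = 6371.0
AWAY_FROM_HOME_KM = 50.0  # hypothetical threshold, not given in the source


def distance_km(p: LatLon, q: LatLon) -> float:
    """Haversine great-circle distance in kilometres."""
    phi1, phi2 = math.radians(p[0]), math.radians(q[0])
    dphi = phi2 - phi1
    dlmb = math.radians(q[1] - p[1])
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def classify_event(creation_locations: Iterable[LatLon], home: LatLon,
                   threshold_km: float = AWAY_FROM_HOME_KM) -> str:
    """Label an event 'intraday' or 'interday' from creation locations relative to home."""
    farthest = max(distance_km(home, loc) for loc in creation_locations)
    return "interday" if farthest > threshold_km else "intraday"
```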

[0009] In another aspect, disclosed herein are non-transitory computer-readable storage media encoded with a computer program including instructions executable by a processor to create a media sharing application comprising: a software module configured to generate an event by analyzing metadata associated with a plurality of media stored on a mobile device of a first user, the metadata comprising date, time, and location of the creation of each media; a software module configured to generate a collection by identifying a second user having stored on their mobile device at least one media associated with the event; a software module configured to suggest sharing, by the first user, media associated with the collection, to the second user, based on a symmetrical relationship between the first user and the second user; and a software module configured to present an album to each user, each album comprising media associated with the collection and either shared with the user or created by the user. In some embodiments, the media is one or more photos or one or more videos. In some embodiments, the event is classified as an intraday event or an interday event, the classification based on distance of the location of the creation of each media to a home location. In some embodiments, the symmetrical relationship is identified from mutual inclusion in contacts, two-way exchange of email, two-way exchange of text or instant message, or a combination thereof. In some embodiments, the application further comprises a software module configured to present a notification stream to each user, the notification stream providing updates on albums presented to the user.

BRIEF DESCRIPTION OF THE DRAWINGS

[0010] FIG. 1 illustrates a block diagram of an exemplary system to perform the steps discussed in connection with various embodiments of the present invention.

[0011] FIG. 2 illustrates a flowchart of exemplary steps for associating two images with an event, according to an exemplary embodiment of the present invention.

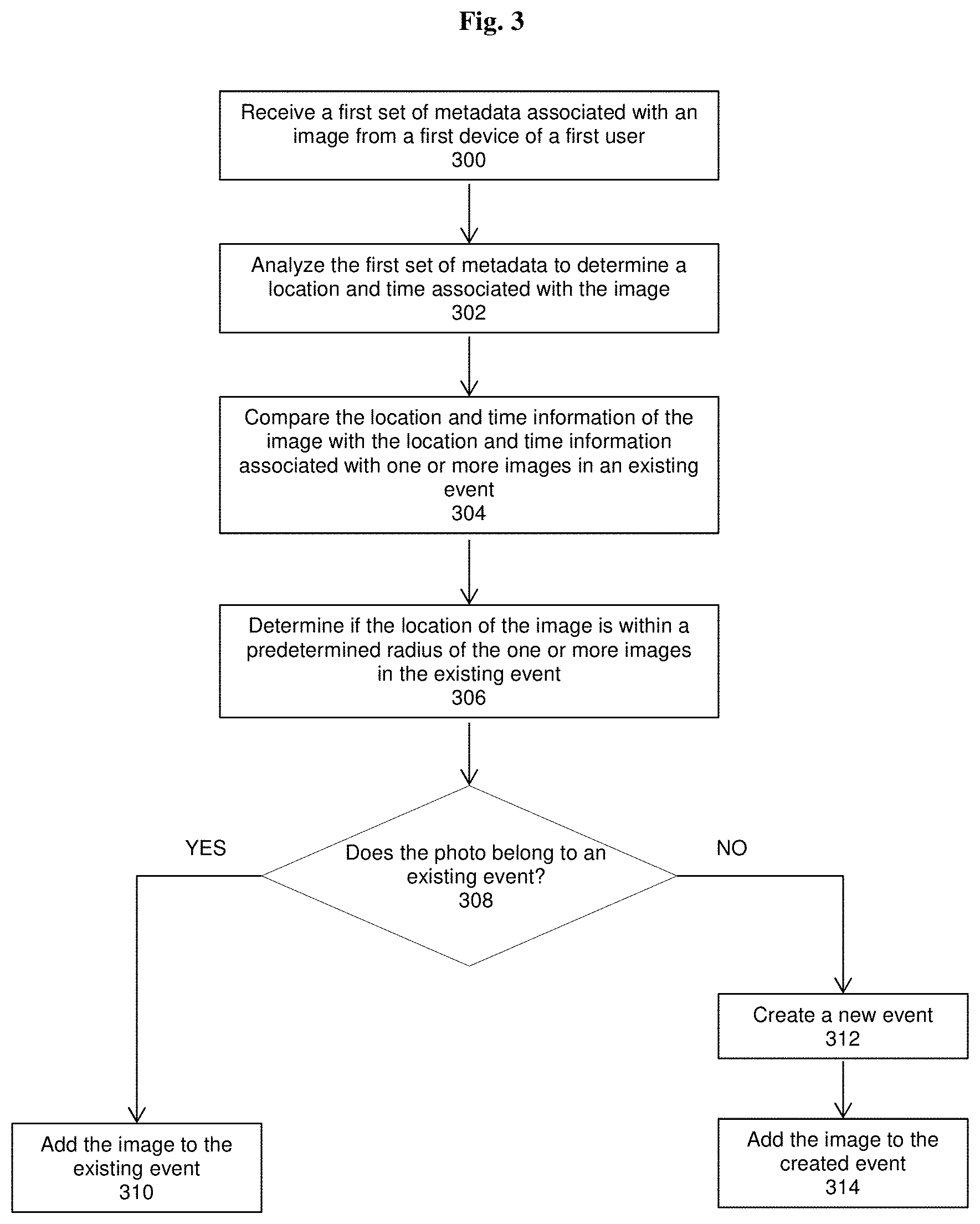

[0012] FIG. 3 illustrates a flowchart of exemplary steps for associating an image with an existing or new event, according to an exemplary embodiment of the present invention.

[0013] FIG. 4 illustrates an exemplary event, a plurality of exemplary collections within the event and an exemplary album within one of the collections in connection with various embodiments of the present invention.

[0014] FIG. 5 illustrates a flowchart of exemplary steps for identifying a plurality of users having at least an image associated with an event, according to an exemplary embodiment of the present invention.

[0015] FIG. 6 illustrates a flowchart of exemplary steps for sharing an image of a first user with other users, according to an exemplary embodiment of the present invention.

[0016] FIG. 7 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for inputting a phone number to which an activation code can be sent. When the user enters a phone number and then presses the button that says "Send Activation Code", a verification code is sent via SMS to the phone number the user entered.

[0017] FIG. 8 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for entering the verification code that the user was sent via SMS. When the user enters the correct verification code and presses the button that says "Complete Verification," they are taken to the next screen in onboarding.

[0018] FIG. 9 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for presenting a modal the user will see in the event that the user does not enter a verification code within 30 seconds of it being sent. When the user presses the button "Try Again" another verification code will be sent to the user via SMS. When the user presses the button "Call Me" the user will be taken to a screen with the verification code.

[0019] FIG. 10 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for presenting a modal the user will see in the event that the user does not have any friends on Shoto. When the user presses the button labeled "Invite" they will be taken to a list of contacts from their address book on Shoto. The user can then invite contacts from this list to download Shoto.

[0020] FIG. 11 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for inviting contacts from the user's address book to download Shoto. When the user presses the placeholder avatar labeled "Invite a friend to Shoto", they will be taken to a list of contacts from their address book on Shoto. The user can then invite contacts from this list to download Shoto.

[0021] FIG. 12 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for inviting contacts from the user's address book to download Shoto. When the user presses the button that says "Send Invite" beside a contact's name, an SMS containing a link to download Shoto will be composed. When the user sends the SMS, the contact will be sent the link.

[0022] FIG. 13 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for presenting the slide-out menu the user will see when they press the three-bar icon in the upper left-hand corner of the timeline screen. The user will be able to change their avatar, create an event, view their friends list, merge albums, and access app settings from the slide-out menu.

[0023] FIG. 14 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for creating an event. The user must input a name for the event; the event location; the time the event started and ended; their email; and a message to recipients of the event invite, in order to create an event. When the user fills in all the fields and presses on the button labeled "Create Event" the event will be created.

[0024] FIG. 15 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for sharing a created event. The user can share the event by sharing a URL that serves as a link to the event web page. When the user presses the button that says "Share Invite URL", they see a variety of external applications through which the user can share an invite URL.

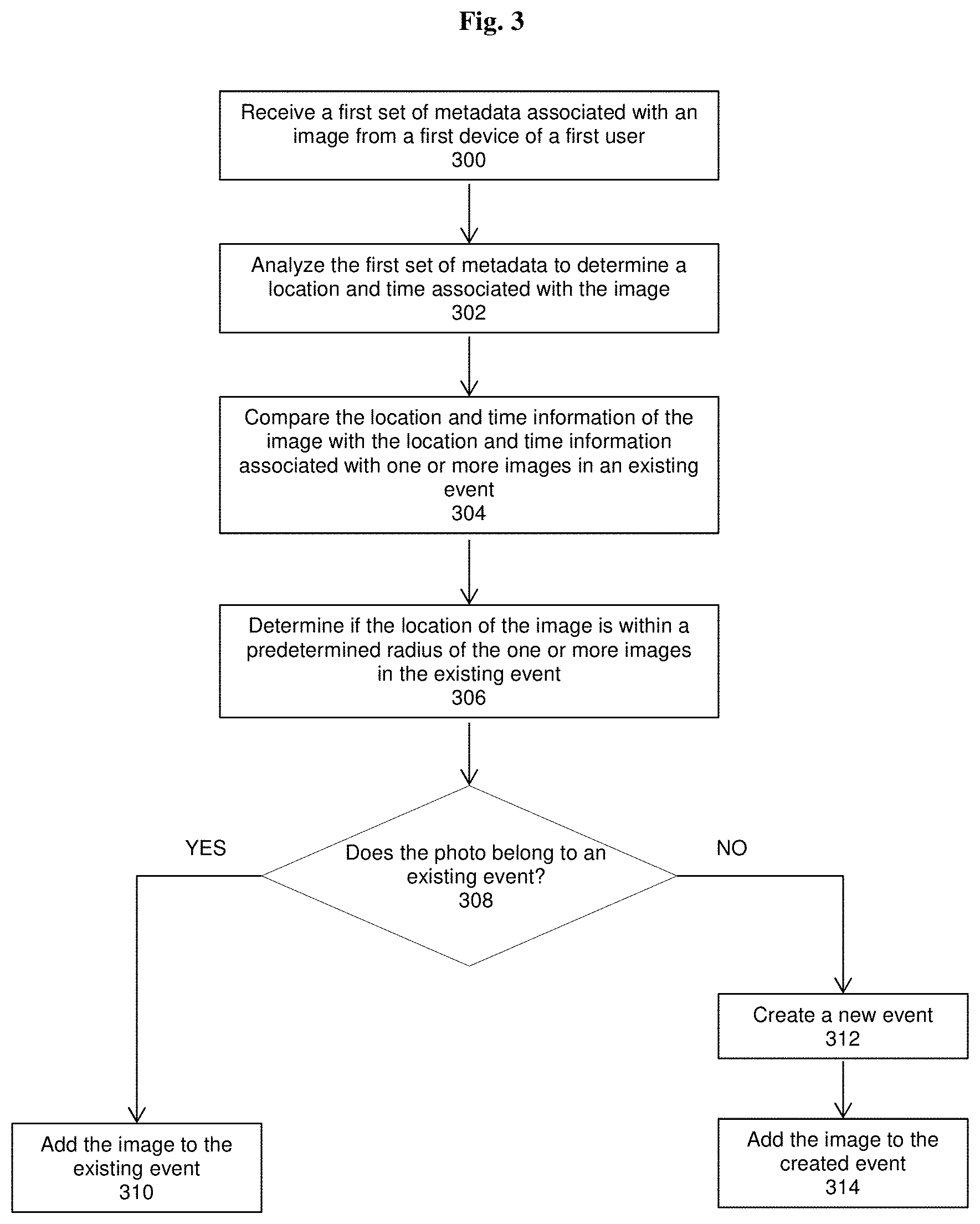

[0025] FIG. 16 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for adding photos to an event that the user attended. The user can toggle the selected state of the photo by tapping on it. When the user presses on the button labeled "Send Photos & Continue", the selected photos will be contributed to the event.

[0026] FIG. 17 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for uploading photos to Facebook. The user can upload an entire album by pressing the Facebook icon in the album view. When the user presses the Facebook icon, they will be taken to the Facebook upload screen, from which the user can edit the title and description of the album. The user can also select which photos they want to upload by tapping on them. When the user presses the button labeled "Share", the selected photos will be uploaded to Facebook.

[0027] FIG. 18 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for viewing albums that have been removed from the shared tab on the timeline. When the user presses on the preview for an album they will be able to view the photos in the album (and other information, too).

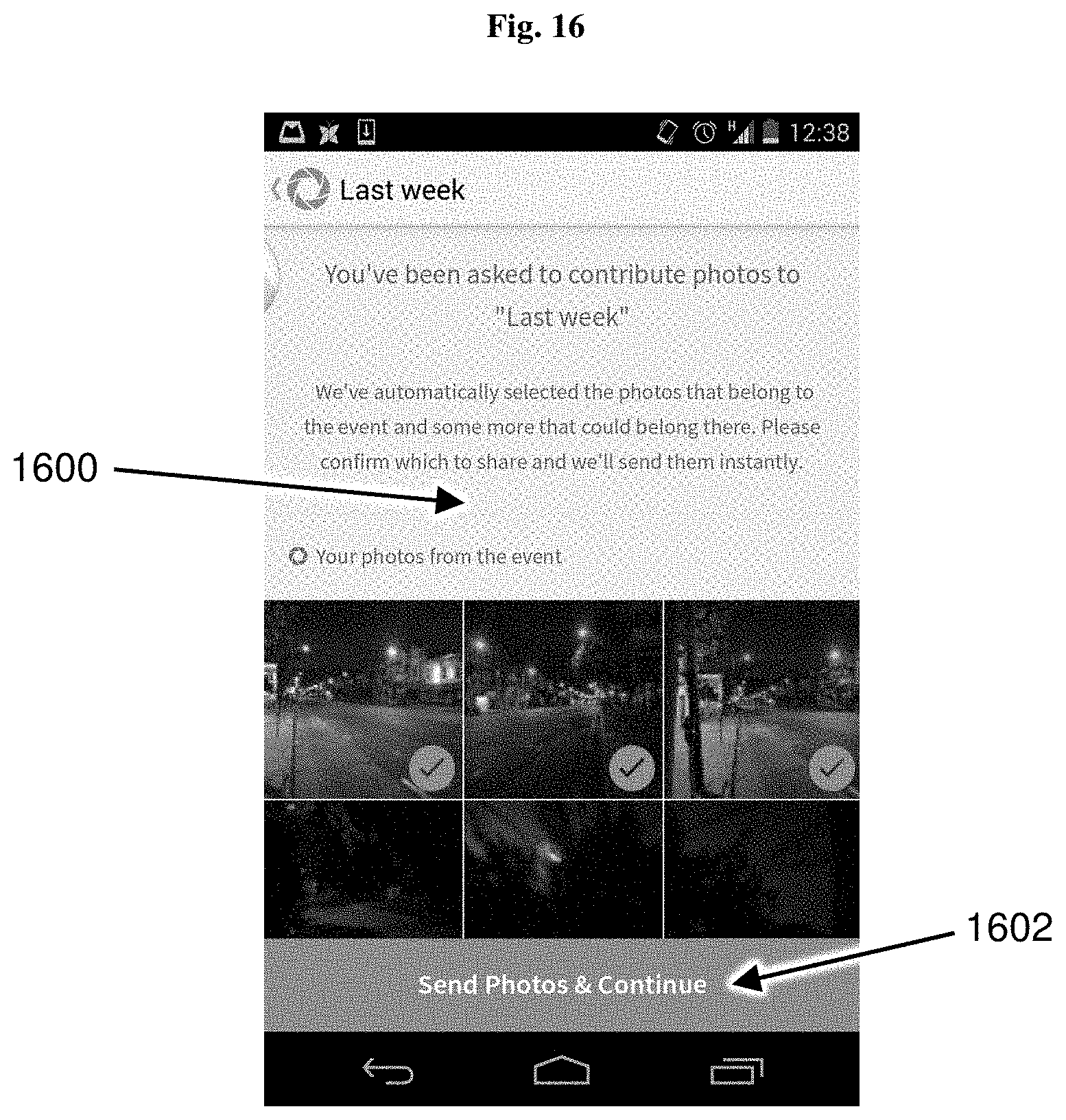

[0028] FIG. 19 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for viewing albums that have been removed from the unshared tab on the timeline. When the user presses on the preview for an album, they will be able to view the photos in the album.

[0029] FIG. 20 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for presenting the screen the user will see when they press "Sounds Good!" on an onboarding screen. The screen shows the user all the people in their address book who are already on Shoto. The screen also shows a list of all the people in the user's address book who are not on Shoto and gives the user an opportunity to invite them to Shoto. When the user presses the button that says "Invite" beside a contact's name, the button will change to say "Remove" and that contact will be added as a recipient of a group SMS that contains a link to download Shoto. When the user presses the button that says "Send Invite," an SMS with a link to download Shoto will be sent to the selected contacts, and the button will change to say "Complete Onboarding." When the user presses the button that says "Complete Onboarding," they will be taken to a screen that suggests an album to share.

[0030] FIG. 21 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for presenting a screen the user will only see if they have taken photos in the proximity of friends who are also on Shoto, and no one has started sharing their photos from the event. When the user presses the button that says "Start Sharing Photos" they will be taken to a screen from which they can select people with whom to share the photos.

[0031] FIG. 22 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for presenting a screen the user will see if the user and the user's friends all have photos from a single memory, and the user chooses to start sharing their photos from the memory. The user will see "Friends who were there" ordered at the top of the list. When the user presses on the empty circle beside each friend's name, the circle will fill with a check mark and the friend will be considered selected. The user can toggle the selected state of the friend by tapping on the circle. When the user presses on the magnifying glass at the top of the screen, they will activate a search function. As the user types in the name of the contacts they are looking for, they will be provided with suggestions of names. When the user clicks on the button that says "Next", they will be taken to a screen as seen in FIG. 23.

[0032] FIG. 23 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for presenting a screen the user will see after they are done selecting friends they want to share photos with. When the user taps on the preview of a photo, the check mark on the photo will disappear and the photo will be considered unselected. The user can toggle the selected state of the photo by tapping on it. When the user presses on the button that says "Send," the selected photos will be shared with the selected users and the user will be taken to the timeline.

[0033] FIG. 24 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for presenting a screen the user will only see if they have taken photos in the proximity of friends who are also on Shoto, and other people who were at the event have already started sharing their photos. When the user presses the button that says "Start Sharing Photos" they will be taken to a screen from which they can select people with whom to share the photos.

[0034] FIG. 25 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for presenting a screen the user will only see if they have not taken photos in the proximity of friends who are also on Shoto. This screen shows the user the album on their phone that has the most photos and is therefore labeled their most significant album.

[0035] FIG. 26 depicts a non-limiting, exemplary mobile user interface; in this case, a user interface for presenting a screen the user will only see if they have taken photos in the proximity of friends who are also on Shoto. The screen shows the user their photos from a particular time when they were with other Shoto users. By pressing the button labeled "Start Sharing", the user can share photos with other users who were there when the photos were taken, in addition to contacts and users who were not there.

[0036] FIG. 27 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for presenting a screen the user will only see if they have taken photos while they were away from other Shoto users. When the user presses the button labeled "Start Sharing", they will be able to share those photos with friends on Shoto and contacts from their phone book.

[0037] FIG. 28 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for presenting a screen the user will only see if they attempt to share photos that were not taken in the vicinity of other Shoto users. The screen shows a list of friends who are also on Shoto, in addition to contacts from the user's phone book who are not on Shoto.

[0038] FIG. 29 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for presenting a screen the user will only see if they have shared photos with, or received photos from, other Shoto users. The screen shows a list of albums that have been shared with other Shoto users, ordered by the date the photos in that album were found by the server.

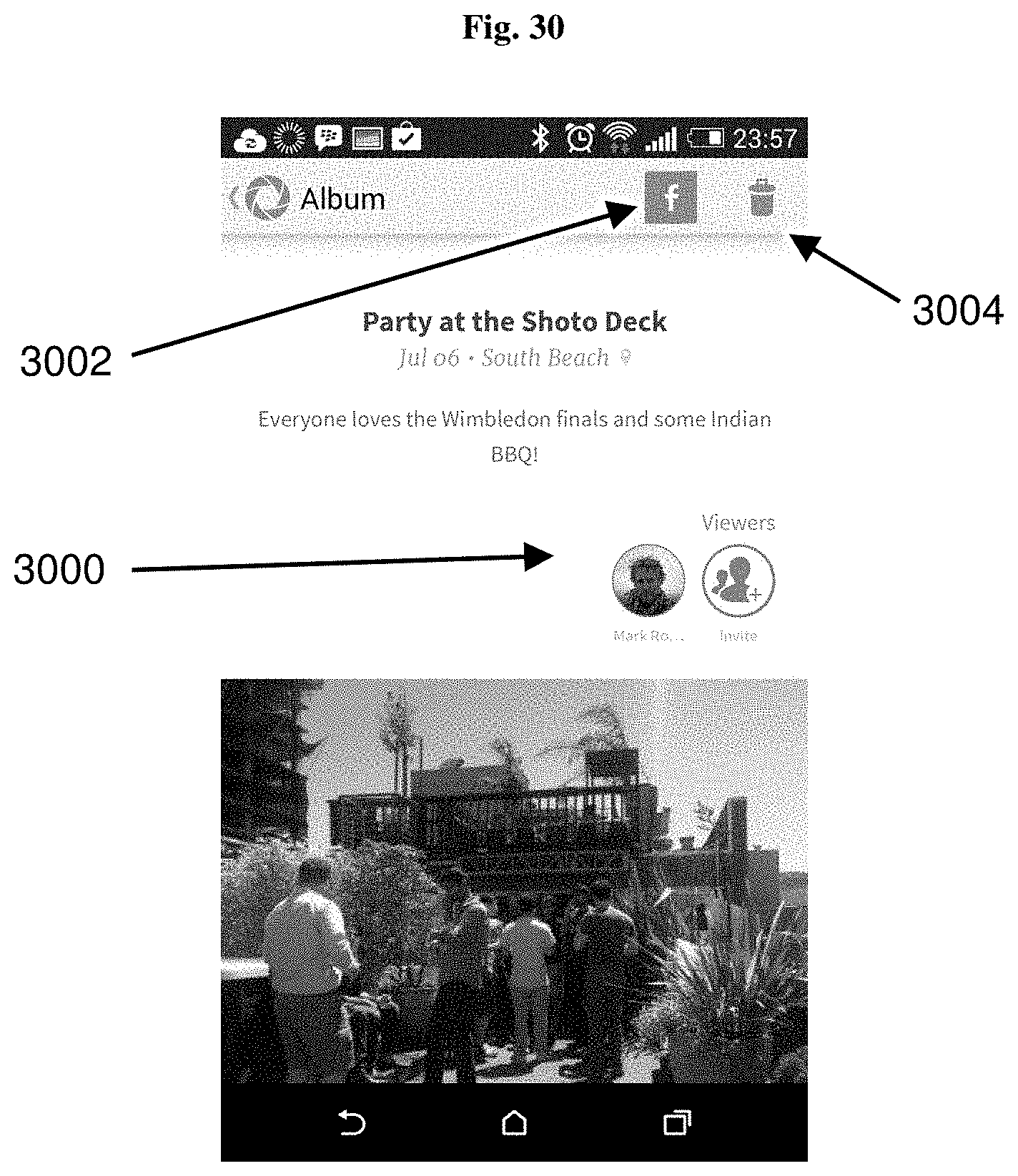

[0039] FIG. 30 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for presenting a screen the user will see if they press on the preview of an album they have shared with other Shoto users. The screen shows the photos that belong to that album, in addition to the avatars of those users who can view the album.

[0040] FIG. 31 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for presenting a screen the user will see if they have been invited to view photos by another Shoto user. The screen shows the photos they have been invited to view, in addition to the avatars of users who have the ability to contribute photos to the album.

[0041] FIG. 32 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for presenting a screen the user will see when they press on the preview of an album that consists of unshared photos the user took when they were away from other Shoto users. The screen shows the photos in the album, in addition to a button labeled "Start Sharing". When the user presses the button labeled "Start Sharing", they can share the photos in the album with other Shoto users and with contacts from their phone book who are not on Shoto.

[0042] FIG. 33 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for presenting a screen the user will see when they press on the preview of an album that consists of unshared photos the user took when they were in the vicinity of other Shoto users. The screen shows the user's photos from the collection, in addition to a button labeled "Start Sharing". When the user presses the button labeled "Start Sharing", they can share the photos they took with other users who were in the vicinity of the user when the photos were taken. The user can also share the photos with other Shoto users and with contacts from their phonebook who are not on Shoto.

[0043] FIG. 34 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for presenting a screen the user will see when they press on the preview of an album that consists of unshared photos the user took when they were in the vicinity of other Shoto users, and those other users have already started sharing their photos from the event. The screen shows the photos that belong to that album and who has already started contributing to that album. The screen will also show a button labeled "Share Back". When the user presses on the button labeled "Share Back", they will be able to share their photos with other Shoto users and with contacts from their phonebook who are not on Shoto.

[0044] FIG. 35 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for notifying the user of various activities that take place within the app.

[0045] FIG. 36 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for presenting a screen the user will see when they press on the activity tab in another user's profile. The screen will show the various actions that user has performed within the app including commenting and sharing.

[0046] FIG. 37 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for presenting a screen the user will see when they press on the shared tab in another user's profile. The screen will show previews of any albums that the user has shared with the other user since joining Shoto. When the user presses on the preview, they will see the photos that belong to the shared album, in addition to who else can contribute to and view the album.

[0047] FIG. 38 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for presenting a screen the user will see when they press on the unshared tab in another user's profile. The screen will show previews of any albums that contain photos that were taken when the user was with the other user. The screen will also show a button labeled "Start Sharing" beside the preview of the album. When the user presses the button, they will be able to share their photos from the album with the other Shoto user, as well as with contacts from their phone book who are not on Shoto.

[0048] FIG. 39 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for adjusting and accessing various settings. The screen will show toggles to back up the user's photos, to back up the user's photos only when connected to Wi-Fi, and to automatically check the user in with other Shoto users when they are with each other. Additionally, the screen will show buttons to access albums removed from the timeline, a feedback system, and the company website.

[0049] FIG. 40 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for viewing a particular image within an album. The screen will show the image in full-screen view and also the options to like, comment on, and edit the photo. The screen will also show a button with three dots that can be used to access image settings.

[0050] FIG. 41 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for adjusting the image settings. The screen will show a toggle function to control the shared state of the photo. The screen will also show buttons labeled "Remove Photo" and "Report Photo". When the user presses on the button labeled "Remove Photo", the photo will be removed from the album. When the user presses on the button labeled "Report Photo", the photo will be reported to Shoto.

[0051] FIG. 42 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for a help screen with a verification code for communication with Shoto staff.

[0052] FIG. 43 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for viewing shared albums by other users and sharing albums with other users based on a location and a date.

[0053] FIG. 44 depicts a non-limiting, exemplary mobile user interface; in this case a user interface for viewing and adding comments to a shared album.

DETAILED DESCRIPTION OF THE INVENTION

[0054] Consider one data point that highlights the shortcomings of today's photo sharing tools: more than 90% of the pictures taken today are "lost" because they are never shared or uploaded (and lost with them, aside from sentimental value, is a great amount of potentially valuable data). There are many reasons for this. For instance, it is cumbersome to share photos from a certain event or general circumstance with others who also took part in those moments; current solutions require pre-inviting others to join group albums or creating static lists and/or circles. There is no way to do this automatically, specifically for each situation, and in the background. Asking others to share photos after the event is often irritating for all parties, and the multitude of work-around solutions means most photos are not shared before they are superseded by new photos. Current sharing is also primarily one-to-one, and photos are difficult to organize, so there is currently no easy way to see a complete album of an event or moment in time that has been shared with others. This also makes it difficult for someone to see photos of themselves; 70% of pictures taken are of someone else. Further, current systems do not use phonebook connections to determine the strength of relationships.

[0055] Today's online storage, social, and messaging systems do not focus on reliving moments. Rather, they focus on workflow automation and content distribution. But people generally do not want to have to actively share content--they want to simply create and consume it. The present disclosure teaches novel ways to meet these desires and to ensure that far fewer photographs are "lost" due to the shortcomings of today's photo management and sharing solutions.

[0056] Described herein, in certain embodiments, are methods for grouping a plurality of images from a plurality of users into an event, the method comprising: receiving, at a computing system, a first set of metadata from a first device associated with a first user and a second set of metadata from a second device associated with a second user, wherein: the first set of metadata is associated with a first image stored on the first device, and includes location information and time information for the first image, the second set of metadata is associated with a second image stored on the second device, and includes location information and time information for the second image; identifying, by the computing device, a first event based on the first set of metadata and the second set of metadata, wherein the first event has a defined beginning time and a defined end time; associating, by the computing device, the first image and the second image with the first event based on the location information or the time information associated with the first image and the second image; identifying, by the computing device, a relationship between the first user and the second user; and sending, by the computing device, a notification to the first user that the second user has an image associated with the first event.

[0057] Also described herein, in certain embodiments, are computer-implemented systems comprising: a digital processing device comprising an operating system configured to perform executable instructions and a memory; a computer program including instructions executable by the digital processing device to create a media sharing application comprising: a software module configured to receive a first set of metadata from a first device associated with a first user and a second set of metadata from a second device associated with a second user, wherein: the first set of metadata is associated with a first image stored on the first device, and includes location information and time information for the first image, wherein the second set of metadata is associated with a second image stored on the second device, and includes location information and time information for the second image; a software module configured to identify a first event based on the first set of metadata and the second set of metadata, wherein the first event has a defined beginning time and a defined end time; a software module configured to associate the first image and the second image with the first event based on the location information or the time information associated with the first image and the second image; a software module configured to identify a relationship between the first user and the second user; and a software module configured to send a notification to the first user that the second user has an image associated with the first event.

[0058] Also described herein, in certain embodiments, are computer-implemented systems comprising: a digital processing device comprising an operating system configured to perform executable instructions and a memory; a computer program including instructions executable by the digital processing device to create a media sharing application comprising: a software module configured to generate an event by analyzing metadata associated with a plurality of media stored on a mobile device of a first user, the metadata comprising date, time, and location of the creation of each media; a software module configured to generate a collection by identifying a second user having stored on their mobile device at least one media associated with the event; a software module configured to suggest sharing, by the first user, media associated with the collection, to the second user, based on a symmetrical relationship between the first user and the second user; and a software module configured to present an album to each user, each album comprising media associated with the collection and either shared with the user or created by the user.

[0059] Also described herein, in certain embodiments, are non-transitory computer-readable storage media encoded with a computer program including instructions executable by a processor to create a media sharing application comprising: a software module configured to generate an event by analyzing metadata associated with a plurality of media stored on a mobile device of a first user, the metadata comprising date, time, and location of the creation of each media; a software module configured to generate a collection by identifying a second user having stored on their mobile device at least one media associated with the event; a software module configured to suggest sharing, by the first user, media associated with the collection, to the second user, based on a symmetrical relationship between the first user and the second user; and a software module configured to present an album to each user, each album comprising media associated with the collection and either shared with the user or created by the user.

Certain Definitions

[0060] Unless otherwise defined, all technical terms used herein have the same meaning as commonly understood by one of ordinary skill in the art to which this invention belongs. As used in this specification and the appended claims, the singular forms "a," "an," and "the" include plural references unless the context clearly dictates otherwise. Any reference to "or" herein is intended to encompass "and/or" unless otherwise stated.

[0061] As used herein, "event" refers to photos that were grouped because they were taken within a particular distance (e.g., one hundred meters) of one another at a similar time. The time and distance thresholds used to determine an event may be changed based on the location of the user and the history of user activities.
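The definition above can be approximated by a single greedy pass over time-ordered photos. In this Python sketch a photo joins the current event when it was taken within a time gap and within 100 meters of the event's most recent photo; otherwise it starts a new event. The two-hour gap is a hypothetical value, and comparing against only the most recent photo is a simplification (single-linkage chaining), not a method the disclosure prescribes. In the described system both thresholds may also vary with the user's location and activity history.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timedelta

MAX_DISTANCE_M = 100.0           # example distance from the definition above
MAX_GAP = timedelta(hours=2)     # hypothetical "similar time" threshold


@dataclass(frozen=True)
class Photo:
    photo_id: str
    taken_at: datetime
    lat: float
    lon: float


def distance_m(a: Photo, b: Photo) -> float:
    """Haversine great-circle distance between two photos in meters."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlmb = math.radians(b.lon - a.lon)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * 6_371_000 * math.asin(math.sqrt(h))


def group_into_events(photos: list) -> list:
    """Greedy pass over time-ordered photos; a new event starts whenever a photo
    is too far in time or space from the previous photo."""
    events = []
    for photo in sorted(photos, key=lambda p: p.taken_at):
        if events:
            prev = events[-1][-1]
            close_in_time = photo.taken_at - prev.taken_at <= MAX_GAP
            if close_in_time and distance_m(prev, photo) <= MAX_DISTANCE_M:
                events[-1].append(photo)
                continue
        events.append([photo])
    return events
```

Because photos from every user go through the same pass, images taken by different people at the same place and time land in one event.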

[0062] As used herein, "collection" refers to a subset of photos that belong to an event that were grouped because they were taken within a particular distance (e.g., one hundred meters) of one another at a similar time and the users who took the photos had a reciprocal relationship (e.g., each user had the other's phone number stored in their phone book). In some embodiments, the collection is a virtual construct and not visible to users.
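Because FIG. 4 shows several collections within one event, one plausible reading is that an event's photos are split into groups of users connected by reciprocal phone-book relationships. The Python sketch below builds that graph and returns each connected group's photos as a collection. The data shapes (`phonebooks`, `phone_of`) are hypothetical, and a real system would likely compare hashed identifiers rather than raw phone numbers.

```python
from collections import defaultdict


def is_reciprocal(a: str, b: str, phonebooks: dict, phone_of: dict) -> bool:
    """True when each user has the other's phone number in their phone book."""
    return (phone_of[b] in phonebooks.get(a, set())
            and phone_of[a] in phonebooks.get(b, set()))


def build_collections(event_photos: list, phonebooks: dict, phone_of: dict) -> list:
    """Split an event's (owner, photo_id) pairs into collections.

    A collection holds the photos of one connected group of owners linked by
    reciprocal phone-book relationships; owners with no reciprocal link to
    anyone else in the event are left out.
    """
    owners = sorted({owner for owner, _ in event_photos})
    neighbors = defaultdict(set)
    for i, a in enumerate(owners):
        for b in owners[i + 1:]:
            if is_reciprocal(a, b, phonebooks, phone_of):
                neighbors[a].add(b)
                neighbors[b].add(a)

    seen, collections = set(), []
    for start in owners:
        if start in seen or not neighbors[start]:
            continue
        # Depth-first walk over reciprocal links to find the whole group.
        group, stack = set(), [start]
        while stack:
            user = stack.pop()
            if user not in group:
                group.add(user)
                stack.extend(neighbors[user] - group)
        seen |= group
        collections.append(sorted(pid for owner, pid in event_photos if owner in group))
    return collections
```

A one-way entry (one user has the other's number but not vice versa) creates no link, matching the reciprocal requirement in the definition.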

[0063] As used herein, "album" refers to a subset of photos that belong to a collection, are grouped by location and time, and are solely owned by the user who took those photos or had those photos shared with them.

Overview

[0064] In some embodiments, disclosed herein is a way of using photo metadata to group photos, to suggest what is relevant to an event, and to suggest relevant invitees based on their photos and/or geolocation tracked in the app.

[0065] The present disclosure describes systems that work together to allow users to discover unseen photos taken by their friends and family. Through discovery, the systems then allow users to see photos in a single album from a particular moment in time (historically from the first photo). Albums of these moments are grouped into Days or Trips. Albums can also be shared with users who were not present so that they can also see the photos and participate in the social communication (chat, comment, and like). To encourage more sharing, the systems generate memories for users to remind them of moments that are worth sharing. The systems also organize albums into days or trips depending on whether the photos were taken in the user's home location. The systems will also provide interaction with events such as music festivals: when a user enters the geofence for the event, the systems ask whether the user would like to submit photos for prizes. The photos could be uploaded automatically or when the user leaves; on leaving, the systems would present only the photos taken at the event. The systems also let users who have enabled auto check-in see photos from events after they install the application, even if they have not taken photos themselves.
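The festival interaction implies two checks: whether the user's current position is inside the event's geofence, and, on leaving, which photos were taken at the event. This Python sketch assumes a circular geofence with an opening and closing time; the `Geofence` type, the circular shape, and the tuple layout used for photos are all assumptions, not details from the disclosure.

```python
import math
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Geofence:
    """Hypothetical circular geofence around an event, open for a time window."""
    lat: float
    lon: float
    radius_m: float
    opens: datetime
    closes: datetime


def distance_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Haversine great-circle distance in meters."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi, dlmb = phi2 - phi1, math.radians(lon2 - lon1)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * 6_371_000 * math.asin(math.sqrt(h))


def inside(fence: Geofence, lat: float, lon: float) -> bool:
    """Entry check: is a position within the fence radius?"""
    return distance_m(fence.lat, fence.lon, lat, lon) <= fence.radius_m


def photos_taken_at_event(fence: Geofence, photos) -> list:
    """Exit step: keep only (photo_id, taken_at, lat, lon) records taken
    inside the fence while the event was open."""
    return [
        photo_id
        for photo_id, taken_at, lat, lon in photos
        if fence.opens <= taken_at <= fence.closes and inside(fence, lat, lon)
    ]
```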

[0066] The present disclosure describes systems and methods for curating and consuming photographs and information derived from photographs (hereinafter, for convenience, referred to as "Shoto"). Shoto gives users new ways to discover, organize and share photos taken privately with their friends, family, and others. Embodiments may comprise a system or method of contact mapping, phonebook hashing, harvesting of photo metadata and other techniques.

[0067] Shoto maps a user's contacts stored in the user's mobile device (the identifying information for these contacts may be phone numbers, Facebook user IDs, or other identifying information). Shoto performs a one-way encryption of the identifying information for the contacts stored in the user's mobile device. The result is hereinafter referred to as the user Identifiable Encryption Key (UIKY). The UIKY is sent to a server, where it is then compared to the UIKY received from other users' mobile devices.
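The one-way transformation described here behaves like a cryptographic hash. The Python sketch below uses SHA-256 from the standard library `hashlib` module. The digit-only phone normalization, the `kind:value` input format, and the assumption that each user also uploads hashes of their own verified identifiers (so the server has something to match against) are illustrative choices, not details from the disclosure.

```python
import hashlib


def normalize_phone(raw: str) -> str:
    """Hypothetical normalization: keep digits only, so formatting differences match."""
    return "".join(ch for ch in raw if ch.isdigit())


def hash_identifier(kind: str, value: str) -> str:
    """One-way SHA-256 digest of a typed identifier such as ('phone', '14155550100')."""
    return hashlib.sha256(f"{kind}:{value}".encode("utf-8")).hexdigest()


def build_uiky(contacts) -> set:
    """Hash every (kind, value) contact identifier read from the device."""
    digests = set()
    for kind, value in contacts:
        if kind == "phone":
            value = normalize_phone(value)
        digests.add(hash_identifier(kind, value))
    return digests


def matching_identifiers(uiky_a: set, own_hashes_b: set) -> set:
    """Server side: which of user B's own hashed identifiers appear in user A's UIKY."""
    return uiky_a & own_hashes_b
```

Because phone numbers come from a small, enumerable space, an unsalted hash like this one can be reversed by brute force, so a deployed system would likely need further protection.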

[0068] Shoto identifies relationships between users when there are matches, and can categorize the relationships depending on the type of identifying information that is matched. For instance, Shoto identifies that User A's mobile device contains User B's phone number, and vice versa. Shoto may also identify that User A's mobile device contains User C's Facebook ID, and vice versa. Certain embodiments may weigh these identified relationships differently in determining the nature and proximity of the relationship between the users, e.g., a phone number match means the users are close, while only a Facebook ID match means the users are just acquaintances.
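The weighting described above can be sketched as a lookup over the identifier kinds matched in both directions. In this Python sketch the weight values and the labels are hypothetical; the disclosure only states that a phone-number match indicates a closer relationship than a Facebook-ID-only match.

```python
# Hypothetical weights: a phone-number match counts more than a Facebook-ID match.
WEIGHTS = {"phone": 1.0, "facebook": 0.4}


def classify_relationship(a_has_b: set, b_has_a: set):
    """Classify a pair of users from the identifier kinds each stores for the other.

    a_has_b: kinds of B's identifiers found in A's contacts, e.g. {"phone"}
    b_has_a: kinds of A's identifiers found in B's contacts
    Returns (label, score).
    """
    mutual = a_has_b & b_has_a  # only count matches found in both directions
    score = sum(WEIGHTS.get(kind, 0.0) for kind in mutual)
    if "phone" in mutual:
        label = "close"
    elif mutual:
        label = "acquaintance"
    else:
        label = "none"
    return label, score
```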

[0069] Certain embodiments allow users to upload their photos to a server, where they may be stored. Uploading photos can be as easy, for instance, as tapping a plus symbol on any photo or tapping "Start Sharing". A "timeline" view shows all photos (whether taken with Shoto's native in-app camera or otherwise collected through Shoto). Photo uploading can easily be stopped or photos can be removed from Shoto (and thus only stored locally), for instance by tapping a symbol while the photos are uploading or briefly after they have been uploaded. In some embodiments, photos successfully uploaded are confirmed by a symbol displayed on the screen. Even uploaded photos can be removed from everyone else's phone by marking them as unshared.

[0070] In addition to contact mapping and phonebook hashing, certain embodiments also harvest metadata (EXIF) from the user's photos (hereinafter referred to as "Photo Metadata"). Photos do not necessarily need to be uploaded in order for Photo Metadata to be harvested from them. Photo Metadata may include, but is not necessarily limited to, latitude, longitude, time, rotation of photo and date of the photo. In some embodiments, ambient noise captured before, during or after the photo being taken is also included as Photo Metadata. Photo Metadata can be harvested from photos in the user's mobile device storage, cloud storage (e.g., DropBox, etc.), social networks (e.g., Facebook albums, etc.), and other locations. Photo Metadata is sent along with the UIKY to a server, where the Photo Metadata and UIKY are compared to the Photo Metadata and UIKY received from other users' mobile devices. The comparison of this data yields not only relationships between users, but also events or moments that the users likely shared (given similar photo metadata), as well as other useful information.
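EXIF records GPS latitude and longitude as three rationals (degrees, minutes, seconds) plus a hemisphere reference such as `N` or `W`, and records capture time in `DateTimeOriginal` as `YYYY:MM:DD HH:MM:SS`. The Python sketch below turns an already-decoded tag dictionary into a Photo Metadata record; reading the tags from the image file is assumed and not shown, and the record layout is hypothetical.

```python
from datetime import datetime
from fractions import Fraction


def dms_to_decimal(dms, ref: str) -> float:
    """Convert EXIF (degrees, minutes, seconds) rationals, each given as a
    (numerator, denominator) pair, plus a hemisphere reference to signed degrees."""
    degrees, minutes, seconds = (Fraction(num, den) for num, den in dms)
    value = degrees + minutes / 60 + seconds / 3600
    return float(-value if ref in ("S", "W") else value)


def photo_metadata(exif: dict) -> dict:
    """Build a hypothetical Photo Metadata record from decoded EXIF tags."""
    return {
        "lat": dms_to_decimal(exif["GPSLatitude"], exif["GPSLatitudeRef"]),
        "lon": dms_to_decimal(exif["GPSLongitude"], exif["GPSLongitudeRef"]),
        "taken_at": datetime.strptime(exif["DateTimeOriginal"], "%Y:%m:%d %H:%M:%S"),
        "orientation": exif.get("Orientation", 1),  # EXIF rotation flag; 1 = upright
    }
```

Only this small record, not the image itself, needs to be sent to the server for comparison.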

[0071] Certain embodiments allow the user to adjust overall app settings, for instance auto-syncing photos, local photo storage, and connectivity with third-party applications or services.

[0072] Referring to FIG. 1, when images are taken on two devices (100, 102), the metadata from each device is sent to the server 120. When the metadata reaches the server 120, the system checks whether the photo belongs to an existing event. In this case, the photos do not belong to an existing event, so the metadata is passed to an event generation module 122. The system creates a new event to accommodate the photos and places them in that event.

Once the event has been created and the photos are found to belong to it, a collection generation module 128 identifies a relationship between the two devices. If each user has the other in their phone book, a relationship is established and a collection is created. If each user takes multiple photos on their respective device, the album generation module 130 can group those photos into albums on the user's device.

After an album is created, the system uses the metadata to identify photos in the album that might belong to an established collection. When photos in an album are identified as also belonging to a collection, the discovery module 132 is triggered. The discovery module builds a relationship between the photos in an album on one user's device and the photos in an album on another user's device. The users can then share the photos identified as part of a common collection through the sharing module 134.

Onboarding

[0073] Certain implementations of the present invention allow the user to verify their phone number with a verification code. On screen 700, illustrated in FIG. 7, the user selects a country in field 702 and enters a valid phone number in field 704. The user then clicks "Send Activation Code" 706. An SMS containing the verification code is sent to the user. At the same time, the user is taken to a third onboarding screen 800, shown in FIG. 8, on which they can enter the activation code. When the user enters the correct activation code in field 802 and clicks "Finish Signing Up" 804, the user is verified.

[0074] Certain implementations of the present invention call the user with a verification code if the activation code is not received via SMS. If the user does not enter a verification code in field 802 within 30 seconds of the SMS being sent, the user sees modal 900. When the user clicks "Call Me" 902, the user is taken to a help screen where they see a verification code, such as screen 4200 depicted in FIG. 42. At the same time, the user receives a phone call from Shoto thanking them for downloading the app. When the user clicks the back button, they are taken back to onboarding screen 800. When the user enters the correct verification code in field 802 and clicks "Finish Signing Up" 804, the user is verified.

[0075] Certain implementations prompt the user to allow Shoto access to their Contacts. In FIG. 10, onboarding screen 1000 provides a route for the user to allow Shoto to access their contacts. When the user clicks "Allow Access to Contacts" 1004, Shoto is granted access to the user's Contacts. If the user has no friends on Shoto, the user sees modal 1000. When the user clicks "Invite" 1002, the user sees a list of their contacts who are not on Shoto, as shown on screen 1200. When the user clicks "Send Invite" 1202, an SMS with a link to download Shoto is sent to the selected contact. When more than one contact is selected, the SMS with the link is sent as a group SMS.

[0076] Some implementations of the present invention allow the user to invite contacts from their address book to Shoto. In FIG. 11, onboarding screen 1100 shows the contacts in the user's address book who have downloaded Shoto, along with a placeholder contact. When the user clicks "Invite a friend to Shoto" 1102, the user sees a list of their contacts who are not on Shoto, as shown on screen 1200.

Determining an Event

[0077] The server analyzes the first photo(s) based on a number of factors. These include, but are not limited to:

- the type of place;
- the day of the week;
- the density of the location;
- the history of merges made at that particular latitude and longitude (a merge being a manual merge performed by a user);
- any public data about an event happening at that place.

Among the attributes generated are a geofence, a radius, the average distance between clusters of photos, and the average time between clusters.
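One way to compute two of the attributes listed above, the average distance and average time between clusters, is sketched below. The clustering itself is assumed to have been done already. `Photo`, `cluster_attributes`, and the choice to measure time between clusters from the end of one cluster to the start of the next are illustrative assumptions, not part of the description.

```python
import math
from dataclasses import dataclass
from datetime import datetime
from statistics import mean

EARTH_RADIUS_M = 6_371_000.0


@dataclass(frozen=True)
class Photo:
    lat: float
    lon: float
    taken_at: datetime


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def cluster_attributes(clusters: list[list[Photo]]) -> dict[str, float]:
    """Average distance (m) and time gap (s) between consecutive clusters."""
    # Summarize each non-empty cluster as (centroid lat, centroid lon, start, end).
    # Averaging lat/lon is only a reasonable centroid over small areas.
    summaries = sorted(
        (
            (
                mean(p.lat for p in c),
                mean(p.lon for p in c),
                min(p.taken_at for p in c),
                max(p.taken_at for p in c),
            )
            for c in clusters
            if c
        ),
        key=lambda s: s[2],  # order clusters by start time
    )
    pairs = list(zip(summaries, summaries[1:]))
    if not pairs:
        return {"avg_distance_m": 0.0, "avg_gap_s": 0.0}
    return {
        "avg_distance_m": mean(haversine_m(a[0], a[1], b[0], b[1]) for a, b in pairs),
        # Gap = start of the next cluster minus end of the previous cluster.
        "avg_gap_s": mean((b[2] - a[3]).total_seconds() for a, b in pairs),
    }
```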

[0078] Any of the methods described herein may be totally or partially performed with a computer system including one or more processors, which can be configured to perform the steps. Thus, embodiments are directed to computer systems configured to perform the steps of any of the methods described herein, potentially with different components performing a respective step or a respective group of steps. Although presented as numbered steps, steps of methods herein can be performed at a same time or in a different order. Additionally, portions of these steps may be used with portions of other steps from other methods. Also, all or portions of a step may be optional. Any of the steps of any of the methods can be performed with modules, circuits, or other means for performing these steps.

[0079] Referring to FIG. 2, certain embodiments of the present invention allow for one user to be notified when another user has an image associated with a common event. When the first user takes a picture, the metadata associated with that photo is sent to the server from the first user's device 202. When the second user takes a picture the metadata associated with that photo is sent to the server from the second user's device 204. Using the combined metadata from the first and second users' devices, an event is identified 206. After an event has been identified, the metadata is then used to associate the images with the event. Specifically, the time and location the photo was taken are used to associate the images with the event 208. After it is established that the photos are associated with the event, the system looks to identify a relationship between the first and second user 210. If each user has the other as a friend on Shoto, or a contact in each other's phone books, a relationship will be established, and the first user will be notified that the second user has an image associated with the event 212.
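The FIG. 2 flow can be sketched end to end for a single pair of images. This is a minimal illustration, not the claimed implementation. The thresholds `EVENT_RADIUS_M` and `EVENT_WINDOW`, the data types, and the equirectangular distance approximation are assumptions. A real system would compare each image against many others rather than one pair.

```python
import math
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical thresholds for treating two images as part of the same event.
EVENT_RADIUS_M = 200.0
EVENT_WINDOW = timedelta(hours=3)


@dataclass
class User:
    user_id: str
    phone: str
    contacts: set[str] = field(default_factory=set)  # phone numbers in the phone book
    friends: set[str] = field(default_factory=set)  # user ids of Shoto friends


@dataclass(frozen=True)
class ImageMetadata:
    owner_id: str
    lat: float
    lon: float
    taken_at: datetime


@dataclass
class Event:
    start: datetime  # defined beginning time
    end: datetime  # defined end time
    images: list[ImageMetadata]


def distance_m(a: ImageMetadata, b: ImageMetadata) -> float:
    """Equirectangular approximation; adequate over event-sized distances."""
    x = math.radians(b.lon - a.lon) * math.cos(math.radians((a.lat + b.lat) / 2))
    y = math.radians(b.lat - a.lat)
    return 6_371_000.0 * math.hypot(x, y)


def identify_event(m1: ImageMetadata, m2: ImageMetadata) -> Optional[Event]:
    """Steps 206/208: group both images into one event if close in place and time."""
    if distance_m(m1, m2) > EVENT_RADIUS_M or abs(m1.taken_at - m2.taken_at) > EVENT_WINDOW:
        return None
    return Event(min(m1.taken_at, m2.taken_at), max(m1.taken_at, m2.taken_at), [m1, m2])


def related(u1: User, u2: User) -> bool:
    """Step 210: mutual Shoto friends, or each in the other's phone book."""
    mutual_friends = u2.user_id in u1.friends and u1.user_id in u2.friends
    mutual_contacts = u2.phone in u1.contacts and u1.phone in u2.contacts
    return mutual_friends or mutual_contacts


def notify_first_user(u1: User, u2: User, m1: ImageMetadata, m2: ImageMetadata) -> Optional[str]:
    """Step 212: tell the first user that the second user has an image of the event."""
    event = identify_event(m1, m2)
    if event is None or not related(u1, u2):
        return None
    return f"{u2.user_id} has a photo from your event starting {event.start:%H:%M}"
```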

[0080] Adding a Photo to an Existing Event or Creating a New Event

[0081] An algorithm, which can include machine learning elements, applies these attributes to existing event labels (for example, concerts, brunch, a walk in the park, or a road trip) and then groups the photos into the event. Depending on the algorithm, photos that fall outside one or more of these attributes may be moved into a new event.

[0082] Referring to FIG. 3, metadata can be used to determine whether an event already exists or needs to be created so that a relationship between users can be identified. When a first user takes a photo, the metadata associated with that photo is sent from the device to the server 300. When the metadata reaches the server, it is analyzed to determine a location and time that can be associated with the image 302. Using the identified location and time of the image, the system looks for other images with similar locations and times. It does this to determine whether the photo belongs to an existing event 304. Part of this determination is checking whether the photo falls within a predetermined radius of one or more images in an existing event 306. If the photo belongs to an event, it is added to the existing event 310. If it does not, an event is created to accommodate the photo 312, and the photo is added to the newly created event 314.
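The FIG. 3 decision can be sketched as follows, assuming events are held in memory as lists of photos. `EVENT_RADIUS_M` and `EVENT_TIME_WINDOW` are hypothetical placeholders for the "predetermined radius" and for what counts as a similar time. The `Photo`/`Event` types and the `add_photo` helper are illustrative only.

```python
import math
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from itertools import count
from typing import Iterator

# Hypothetical values for the predetermined radius and a similar-time window.
EVENT_RADIUS_M = 150.0
EVENT_TIME_WINDOW = timedelta(hours=2)


@dataclass(frozen=True)
class Photo:
    photo_id: str
    lat: float
    lon: float
    taken_at: datetime


@dataclass
class Event:
    event_id: int
    photos: list[Photo] = field(default_factory=list)


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    a = (math.sin((phi2 - phi1) / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(math.radians(lon2 - lon1) / 2) ** 2)
    return 2 * 6_371_000.0 * math.asin(math.sqrt(a))


def belongs_to(photo: Photo, event: Event) -> bool:
    """Step 306: within the radius (and time window) of at least one photo in the event."""
    return any(
        haversine_m(photo.lat, photo.lon, p.lat, p.lon) <= EVENT_RADIUS_M
        and abs(photo.taken_at - p.taken_at) <= EVENT_TIME_WINDOW
        for p in event.photos
    )


def add_photo(photo: Photo, events: list[Event], ids: Iterator[int]) -> Event:
    """Add the photo to a matching event (310) or create a new one (312, 314)."""
    for event in events:  # step 304: search existing events
        if belongs_to(photo, event):
            event.photos.append(photo)
            return event
    event = Event(event_id=next(ids))  # step 312: create an event
    event.photos.append(photo)  # step 314: add the photo to it
    events.append(event)
    return event
```

If a photo matches several existing events, this sketch simply takes the first one. The algorithm described in [0081] could instead score candidate events.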

Manually Creating an Event

[0083] Certain implementations allow the user to create an event. In FIG. 13, screen 1300 exposes a slide-out menu 1302. When the user clicks "Create Event" 1304, the user can fill in the empty fields 1402 on screen 1400, illustrated in FIG. 14. When the user has filled in the fields and clicks "Create Envelope" 1404, the event is created.

[0084] The manually created album generates a link that can be sent to friends. When friends visit the link, they are asked to enter their phone number, or to open the link in the app if they are on a mobile phone that has the app installed. When they enter their number, Shoto sends them a download link. Once they sign up from that download link, Shoto presents photos relevant to the event that they can submit during onboarding. Once the user agrees, the user is taken to the timeline and the event host receives the photos.

Determining a Collection

[0085] Collections are supersets of photographs that have been grouped together based on location, time, and type of location. They include photos belonging to anyone at the event who knows anyone else at the event. For example, consider a day trip. Everyone on it who knows at least one other person on the trip who uses Shoto and has taken photos would have their photos' metadata belong to a collection.
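The membership rule above can be sketched for a single event. It assumes relationships between users have already been established (for example, from mutual phone-book entries) and are given as unordered pairs of user ids. The function name and data shapes are hypothetical.

```python
def build_collection(
    event_photos: dict[str, list[str]],
    relationships: set[frozenset[str]],
) -> dict[str, list[str]]:
    """Keep the photos of event attendees who know at least one other attendee.

    event_photos maps an owner's user id to that owner's photo ids in the event;
    relationships holds unordered pairs of user ids that know each other.
    """
    owners = set(event_photos)
    members = {
        owner
        for owner in owners
        if any(frozenset((owner, other)) in relationships for other in owners - {owner})
    }
    return {owner: photos for owner, photos in event_photos.items() if owner in members}
```

Attendees with no relationship to anyone else at the event are left out. Their photos stay in the event without joining a collection, like images 420 and 422 in FIG. 4.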

[0086] Referring to FIG. 4, in a particular non-limiting embodiment, images (408, 410) can be grouped into album 424. Only the user's own photos can end up in the user's album. Other users' photos do not appear in the user's album but can appear in the user's collection 402. Images that appear in the user's collection but not in the user's album (404, 406) are single images that belong to other users. They appear in the user's collection because those users are in the user's phone book. A collection does not necessarily have to include an album, as with collection 412: images can exist in a collection without belonging to an album (414, 416, 418). Images can also belong to an event but not to a collection (420, 422). The metadata associated with images 420 and 422 tells the system that the event contains no other Shoto users with whom a relationship can be built for the user who uploaded photo 420 or 422.

Albums

[0087] Albums are a particular user's view of a collection based on the symmetrical relationships a user has with those who are in the collection and any additional one-way relationships where the originator has sent the album to the user.

[0088] By way of example, a user is in Hyde Park with five friends and three of their friends. When the user and the five friends take photos, those photos belong to the same album, collection, and event. Photos from the three others do not appear in the user's album until one of them sends the album to the user. They are not added automatically because the user does not have their phone numbers in his contacts, even though they may have the user's number in theirs.
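The album definition in [0087] and the Hyde Park example can be sketched as a filter over a collection. It assumes a symmetrical relationship means each user has the other's phone number. It also assumes "sent the album" is recorded as a set of sender ids. The `User` type and `album_view` function are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class User:
    user_id: str
    phone: str
    contacts: set[str] = field(default_factory=set)  # phone numbers stored on the device


def symmetric(a: User, b: User) -> bool:
    """Each user has the other's phone number."""
    return b.phone in a.contacts and a.phone in b.contacts


def album_view(
    viewer: User,
    collection: dict[str, list[str]],
    users: dict[str, User],
    sent_to_viewer: set[str],
) -> dict[str, list[str]]:
    """The viewer's album: own photos, symmetric relationships, plus one-way senders."""
    return {
        owner: photos
        for owner, photos in collection.items()
        if owner == viewer.user_id
        or symmetric(viewer, users[owner])
        or owner in sent_to_viewer
    }
```

In the Hyde Park example, each of the three non-contacts behaves like `s1` in the test below. They have the user's number, but the user lacks theirs, so their photos appear only after they send the album.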

Discoverable Albums

[0089] Shoto can organize and build dynamic photo albums based on Photo Metadata. It also uses the UIKY to populate additional photos into the SAME view and the same album.

[0090] In other words, Shoto infers context from Photo Metadata. For instance, an embodiment of Shoto could infer that a certain set of photographs taken by a user belongs in a vacation album. It would do so because the latitude and longitude of those photographs are concentrated in an area far from the user's home location, and their dates show that they were taken over the course of a week.
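The vacation example can be expressed as three checks: the photos are concentrated, far from home, and spread over roughly a week. This is a sketch under assumed thresholds. The distance limits and the 4-to-14-day span are hypothetical, as is representing photos as `(latitude, longitude, taken_at)` tuples.

```python
import math
from datetime import datetime, timedelta
from statistics import mean

# Hypothetical thresholds for "far from home", "concentrated area", and "about a week".
MIN_DISTANCE_FROM_HOME_KM = 100.0
MAX_SPREAD_KM = 50.0
MIN_SPAN = timedelta(days=4)
MAX_SPAN = timedelta(days=14)

Photo = tuple[float, float, datetime]  # (latitude, longitude, taken_at)


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    a = (math.sin((phi2 - phi1) / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(math.radians(lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(a))


def looks_like_vacation(photos: list[Photo], home_lat: float, home_lon: float) -> bool:
    """True if the photos are concentrated, far from home, and spread over about a week."""
    if not photos:
        return False
    c_lat = mean(p[0] for p in photos)
    c_lon = mean(p[1] for p in photos)
    concentrated = all(haversine_km(c_lat, c_lon, p[0], p[1]) <= MAX_SPREAD_KM for p in photos)
    far_from_home = haversine_km(home_lat, home_lon, c_lat, c_lon) >= MIN_DISTANCE_FROM_HOME_KM
    times = [p[2] for p in photos]
    span = max(times) - min(times)
    return concentrated and far_from_home and MIN_SPAN <= span <= MAX_SPAN
```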

[0091] Embodiments take into account certain radii of proximity (in both time and location) when inferring context from Photo Metadata. For example, Shoto may recognize from the latitude and longitude in the Photo Metadata that a group of photographs appears to have been taken in a certain restaurant. Shoto can then infer that all pictures taken within a small radius of these coordinates were taken at the restaurant. Shoto can use a larger radius in other situations, for instance where photos are taken in a sprawling national park. Additionally, Shoto analyzes factors such as the distance and time between clusters and, where available, historical photo-taking behavior. It then uses machine learning to estimate the probability that a particular grouping matches an existing modeled event, as described herein.
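The place-dependent radius can be sketched as a lookup keyed by place type. It assumes the place's coordinates and type are already known, for example from the initial group of photos or a location service. The radius values and the fallback for unknown place types are hypothetical.

```python
import math

# Hypothetical radii: small for a restaurant, large for a sprawling national park.
PROXIMITY_RADIUS_M = {"restaurant": 50.0, "national_park": 5_000.0}
DEFAULT_RADIUS_M = 250.0


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    a = (math.sin((phi2 - phi1) / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(math.radians(lon2 - lon1) / 2) ** 2)
    return 2 * 6_371_000.0 * math.asin(math.sqrt(a))


def photos_at_place(
    place_lat: float,
    place_lon: float,
    place_type: str,
    photos: list[tuple[str, float, float]],  # (photo_id, latitude, longitude)
) -> list[str]:
    """Return ids of photos within the proximity radius chosen for this place type."""
    radius = PROXIMITY_RADIUS_M.get(place_type, DEFAULT_RADIUS_M)
    return [pid for pid, lat, lon in photos if haversine_m(place_lat, place_lon, lat, lon) <= radius]
```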

[0092] Shoto also uses public location sources such as (but not restricted to) FourSquare, Yahoo, Google, and WAYN to determine the type of location.

[0093] Embodiments can make similar inferences based on other types of collected data. For instance, Shoto can infer that a group of photographs belong together because of when they were taken (e.g., all within a 3-hour window, or each within 30 minutes of the previous one). Some embodiments can also capture ambient noise when a photograph is taken. That noise can then be compared with the ambient noise captured during other photographs for grouping and sharing purposes. Certain embodiments can also perform a graphical analysis of the photographs (e.g., measuring the amount of light) to group and share them. Similarly, Shoto can use the distance from Wi-Fi hotspots, compass position, or height of the mobile device, among other things.
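The two time rules in the example above can be sketched as two small functions. `group_by_gap` chains photos taken within 30 minutes of the previous one. `within_window` checks whether all photos fit in a single 3-hour window. The function names are hypothetical, and the defaults are the example values from the text.

```python
from datetime import datetime, timedelta


def group_by_gap(
    times: list[datetime], max_gap: timedelta = timedelta(minutes=30)
) -> list[list[datetime]]:
    """Chain photos: each joins the current group if within max_gap of the previous one."""
    groups: list[list[datetime]] = []
    for t in sorted(times):
        if groups and t - groups[-1][-1] <= max_gap:
            groups[-1].append(t)
        else:
            groups.append([t])
    return groups


def within_window(times: list[datetime], window: timedelta = timedelta(hours=3)) -> bool:
    """True if every photo falls inside a single window of the given length."""
    return bool(times) and max(times) - min(times) <= window
```

The two rules are not equivalent. A chain of photos each within 30 minutes of the last can stretch well past 3 hours, so an embodiment would choose one rule or combine them.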

[0094] Shoto adjusts three parameters based on the location type: the time between pictures, the distance between pictures, and the maximum timeout before an album is considered closed. The location type is sourced from services that include, but are not limited to, its own input, FourSquare, WAYN, and Yahoo.
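The per-location-type adjustment can be sketched as a small parameter table. The numbers below are hypothetical, and looking up the location type from an external service is not shown. The sketch also assumes the album timeout is measured from the album's most recent photo, which the description does not specify.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class GroupingParams:
    max_gap: timedelta  # maximum time between consecutive pictures
    max_distance_m: float  # maximum distance between consecutive pictures
    album_timeout: timedelta  # idle time after which the album is considered closed


# Hypothetical per-location-type values.
PARAMS_BY_LOCATION_TYPE = {
    "restaurant": GroupingParams(timedelta(minutes=30), 100.0, timedelta(hours=3)),
    "park": GroupingParams(timedelta(hours=1), 2_000.0, timedelta(hours=8)),
}
DEFAULT_PARAMS = GroupingParams(timedelta(minutes=45), 500.0, timedelta(hours=6))


def params_for(location_type: str) -> GroupingParams:
    return PARAMS_BY_LOCATION_TYPE.get(location_type, DEFAULT_PARAMS)


def is_album_closed(location_type: str, last_photo_at: datetime, now: datetime) -> bool:
    """Closed once the idle time since the latest photo exceeds the type's timeout."""
    return now - last_photo_at > params_for(location_type).album_timeout


def accepts_photo(location_type: str, gap: timedelta, distance_m: float) -> bool:
    """Whether a new picture is close enough in time and space to join the album."""
    p = params_for(location_type)
    return gap <= p.max_gap and distance_m <= p.max_distance_m
```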

[0095] Certain embodiments use facial recognition to make certain inferences, e.g., a certain user is present in a photograph. This technology makes it possible for Shoto to better organize user photos, as well as to give users more powerful discovery tools.