Methods And Apparatuses For Providing Input For Head-worn Image Display Devices

PAZMINO; Lorena ; et al.

U.S. patent application number 16/448452 was filed with the patent office on 2019-06-21 and published on 2019-12-26 for methods and apparatuses for providing input for head-worn image display devices. This patent application is currently assigned to MAGIC LEAP, INC. The applicant listed for this patent is MAGIC LEAP, INC. Invention is credited to Mario Antonio BRAGG, Parag GOEL, David Charles LUNDMARK, Andrea Isabel MONTOYA, Savannah NILES, Lorena PAZMINO, Alexander ROCHA, Jeffrey Scott SOMMERS.

| Application Number | 16/448452 (Publication No. 20190391391) |


| Family ID | 68981024 |

| Filed Date | 2019-06-21 |


| United States Patent Application | 20190391391 |

| Kind Code | A1 |

| PAZMINO; Lorena ; et al. | December 26, 2019 |

METHODS AND APPARATUSES FOR PROVIDING INPUT FOR HEAD-WORN IMAGE DISPLAY DEVICES

Abstract

An apparatus for use with an image display device configured for head-worn by a user, includes: a screen; and a processing unit configured to assign a first area of the screen to sense finger-action of the user; wherein the processing unit is configured to generate an electronic signal to cause a change in a content displayed by the display device based on the finger-action of the user sensed by the assigned first area of the screen of the apparatus.

| Inventors: | Lorena PAZMINO (Wilton Manors, FL); Andrea Isabel MONTOYA (Plantation, FL); Savannah NILES (Fort Lauderdale, FL); Alexander ROCHA (Boca Raton, FL); Mario Antonio BRAGG (Lake Worth, FL); Parag GOEL (Coral Springs, FL); Jeffrey Scott SOMMERS (Mountain View, CA); David Charles LUNDMARK (Los Altos, CA) |
|---|---|
| Applicant: | MAGIC LEAP, INC. |
| Assignee: | MAGIC LEAP, INC., Plantation, FL |
| Family ID: | 68981024 |
| Appl. No.: | 16/448452 |
| Filed: | June 21, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62688108 | Jun 21, 2018 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 3/012 20130101; G02B 27/017 20130101; G06F 3/017 20130101; G06T 19/006 20130101; G06F 3/04812 20130101; G06F 1/163 20130101; G06T 19/20 20130101; G06F 3/04886 20130101; G06F 3/04883 20130101; G06F 2203/04808 20130101; G02B 2027/014 20130101; G02B 2027/0138 20130101; G06F 3/0346 20130101; G06F 3/011 20130101; G02B 2027/0178 20130101; G06F 1/1626 20130101; G06F 1/1643 20130101; G06F 3/0485 20130101; G06F 3/013 20130101; G06F 3/016 20130101; G06T 2219/2016 20130101 |

| International Class: | G02B 27/01 20060101 G02B027/01; G06T 19/00 20060101 G06T019/00; G06F 3/0481 20060101 G06F003/0481; G06F 3/01 20060101 G06F003/01 |

Claims

1. An apparatus for use with an image display device configured for head-worn by a user, the apparatus comprising: a screen; and a processing unit configured to assign a first area of the screen to sense finger-action of the user; wherein the processing unit is configured to generate an electronic signal to cause a change in a content displayed by the image display device based on the finger-action of the user sensed by the assigned first area of the screen of the apparatus.

2. The apparatus of claim 1, wherein the screen has a touch-sensitive region, and wherein the assigned first area is less than a total area of the touch-sensitive region.

3. The apparatus of claim 1, wherein the processing unit is also configured to operate a feedback component in response to the finger-action of the user.

4. The apparatus of claim 3, wherein the first area has a boundary, and wherein the finger-action of the user comprises a finger of the user crossing, reaching, or moving to a location that is within a prescribed distance from, the boundary.

5. The apparatus of claim 3, wherein the processing unit is configured to operate the feedback component to generate different types of feedback based on different respective spatial relationships between one or more finger(s) of the user with respect to the first area.

6. The apparatus of claim 5, wherein the different respective spatial relationships comprise different distances between (1) one of the one or more finger(s), or a point that is between two fingers, of the user and (2) a reference location within the assigned first area.

7. The apparatus of claim 5, wherein the different respective spatial relationships comprise one of the one or more finger(s), or a point that is between two fingers, reaching different respective distances from a boundary of the assigned first area.

8. The apparatus of claim 5, wherein the assigned first area has a first boundary and a second boundary; wherein the different types of the feedback comprise at least a first type of feedback and a second type of feedback; wherein the processing unit is configured to operate the feedback component to generate the first type of feedback when one or more finger(s) of the user crosses, reaches, or moves to a location that is within a prescribed distance from, the first boundary; and wherein the processing unit is configured to operate the feedback component to generate the second type of feedback when one or more finger(s) of the user crosses, reaches, or moves to a location that is within a prescribed distance from, the second boundary.

9. The apparatus of claim 1, wherein the processing unit is configured to obtain an input signal associated with a pinching or un-pinching action performed on the assigned first area of the screen; and wherein the processing unit is configured to generate the electronic signal to cause a size of the content displayed by the image display device to change based on the input signal that is associated with the pinching or un-pinching action.

10. The apparatus of claim 9, further comprising an orientation sensor for sensing an orientation of the apparatus, wherein the processing unit is also configured to generate the electronic signal to cause the content displayed by the image display device to change based on the input signal associated with the pinching or un-pinching action and the sensed orientation of the apparatus.

11. The apparatus of claim 10, wherein the processing unit is configured to generate the electronic signal to cause the content to contract or expand in a first plane if the pinching or un-pinching action is sensed by the apparatus while the apparatus is at a first orientation; and wherein the processing unit is configured to generate the electronic signal to cause the content to contract or expand in a second plane if the pinching or un-pinching action is sensed by the apparatus while the apparatus is at a second orientation different from the first orientation, the second plane being different from the first plane.

12. The apparatus of claim 11, wherein the apparatus has the first orientation when a major axis of the apparatus forms an angle with a horizontal plane that is less than 45°; and wherein the apparatus has the second orientation when a major axis of the apparatus forms an angle with a vertical plane that is less than 45°.

13. The apparatus of claim 1, wherein the processing unit is configured to obtain an input signal associated with a swiping action performed on the assigned first area of the screen.

14. The apparatus of claim 13, wherein the processing unit is configured to generate the electronic signal to cause the content to change by moving the content in response to the input signal associated with the swiping action.

15. The apparatus of claim 13, further comprising an orientation sensor for sensing an orientation of the apparatus, wherein the processing unit is configured to generate the electronic signal to cause the content displayed by the image display device to change based on the input signal associated with the swiping action and the sensed orientation of the apparatus.

16. The apparatus of claim 15, wherein the processing unit is configured to generate the electronic signal to cause the content to move in a first plane if the swiping action is sensed by the apparatus while the apparatus is at a first orientation; and wherein the processing unit is configured to generate the electronic signal to cause the content to move in a second plane if the swiping action is sensed by the apparatus while the apparatus is at a second orientation different from the first orientation, the second plane being different from the first plane.

17. The apparatus of claim 16, wherein the apparatus has the first orientation when a major axis of the apparatus forms an angle with a horizontal plane that is less than 45°; and wherein the apparatus has the second orientation when a major axis of the apparatus forms an angle with a vertical plane that is less than 45°.

18. The apparatus of claim 15, wherein the content is in a virtual three-dimensional environment, and wherein the processing unit is configured to generate the electronic signal to cause the content displayed by the image display device to change by moving the content closer to or further from the user when the swiping action is sensed by the apparatus while the orientation of the apparatus is approximately parallel to a horizontal plane.

19. The apparatus of claim 15, wherein the content is in a virtual three-dimensional environment, and wherein the processing unit is configured to generate the electronic signal to cause the content displayed by the image display device to move in a vertical plane in the three-dimensional environment when the swiping action is sensed by the apparatus while the orientation of the apparatus is approximately perpendicular to a horizontal plane.

20. The apparatus of claim 1, further comprising an orientation sensor for sensing an orientation of the apparatus, wherein the processing unit is configured to generate the electronic signal (1) to cause the content to expand in one or more directions, (2) to cause the content to rotate, or (3) to cause the content to move, based on the sensed orientation of the apparatus.

21. The apparatus of claim 1, wherein the apparatus is a handheld apparatus.

22. The apparatus of claim 1, wherein the processing unit is also configured to assign a second area of the screen as a first button.

23. The apparatus of claim 1, wherein the processing unit is also configured to assign a second area of the screen as a keyboard activation button, and wherein the processing unit is configured to operate the screen to display a keyboard in response to the user touching the assigned second area of the screen.

24. The apparatus of claim 1, wherein the change in the content comprises a change in a size of the content, a change in a position of the content, a change in a shape of the content, a change in a color of the content, a replacement of information in the content, an increase or decrease in a quantity of information in the content, or any combination of the foregoing.

25. A method comprising: assigning a first area of a screen of an apparatus to sense finger-action of a user of an image display device, wherein the image display device is configured for head-worn by the user, and wherein the apparatus is different from the image display device; generating an electronic signal to cause a change in a content displayed by the image display device based on the finger-action of the user sensed by the assigned first area of the screen of the apparatus.

26. A product comprising a non-transitory medium storing a set of instructions, an execution of which will cause a method to be performed, the method comprising: assigning a first area of a screen of an apparatus to sense finger-action of a user of an image display device, wherein the image display device is configured for head-worn by the user, and wherein the apparatus is different from the image display device; generating an electronic signal to cause a change in a content displayed by the image display device based on the finger-action of the user sensed by the assigned first area of the screen of the apparatus.

Description

RELATED APPLICATION DATA

[0001] The present application claims benefit under 35 U.S.C. § 119 to U.S. Provisional Patent Application Ser. No. 62/688,108 filed on Jun. 21, 2018, entitled "METHODS AND APPARATUSES FOR PROVIDING INPUT FOR HEAD-WORN IMAGE DISPLAY DEVICES," which is hereby incorporated by reference into the present application in its entirety.

INCORPORATION BY REFERENCE

[0002] The following applications are expressly incorporated by reference in their entireties:

U.S. patent application Ser. No. 15/968,673 filed on May 1, 2018, published on Nov. 1, 2018 as U.S. Patent Application Publication No. 2018/0315248, U.S. patent application Ser. No. 15/965,702 filed on Apr. 27, 2018, published on Nov. 1, 2018 as U.S. Patent Application Publication No. 2018/0314406, U.S. Provisional Patent Application No. 62/610,101 filed on Dec. 22, 2017, U.S. patent application Ser. No. 16/224,719 filed on Dec. 18, 2018, "DISPLAY PANEL OR PORTION THEREOF WITH A TRANSITIONAL GRAPHICAL USER INTERFACE" having attorney-docket No. ML-0678USDES1, filed concurrently, and "DISPLAY PANEL OR PORTION THEREOF WITH A GRAPHICAL USER INTERFACE" having attorney-docket No. ML-0678USDES2, filed concurrently.

FIELD

[0003] The present disclosure relates to head-worn image display devices, and methods and apparatus for providing input for such image display devices.

BACKGROUND

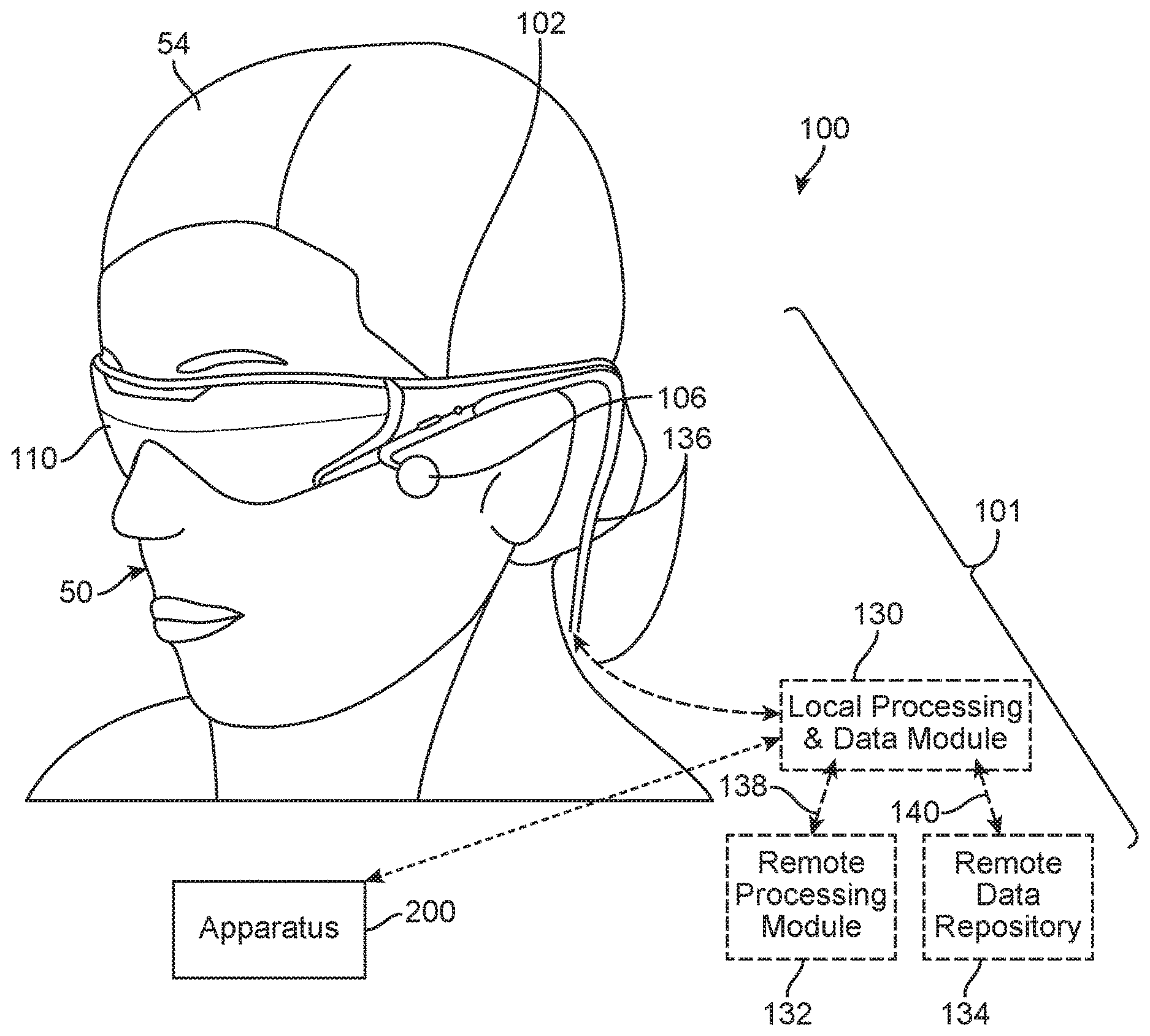

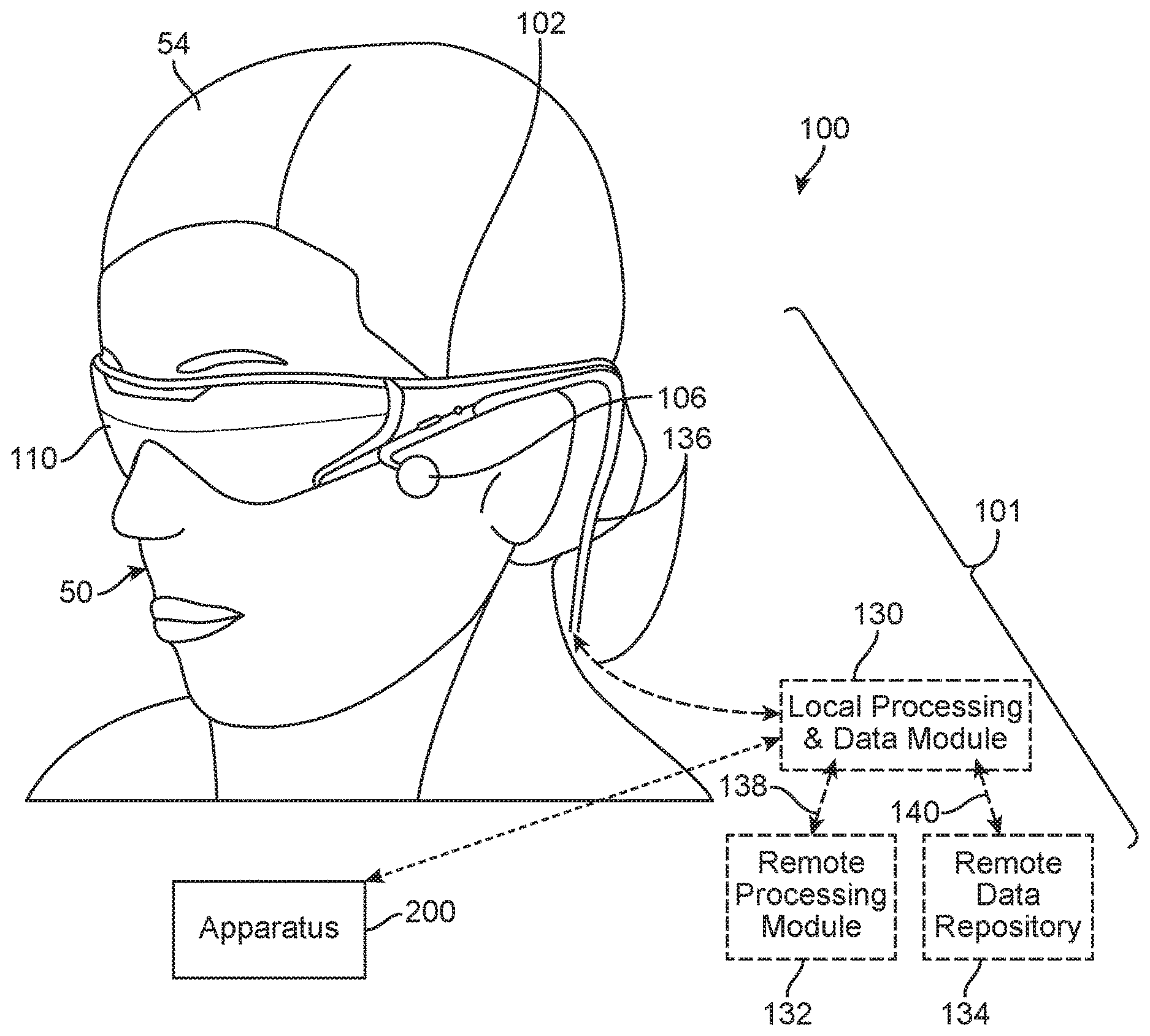

[0004] Modern computing and display technologies have facilitated the development of "mixed reality" (MR) systems for so-called "virtual reality" (VR) or "augmented reality" (AR) experiences, wherein digitally reproduced images or portions thereof are presented to a user in a manner wherein they seem to be, or may be perceived as, real. A VR scenario typically involves presentation of digital or virtual image information without transparency to actual real-world visual input. An AR scenario typically involves presentation of digital or virtual image information as an augmentation to visualization of the real world around the user (i.e., with transparency to real-world visual input).

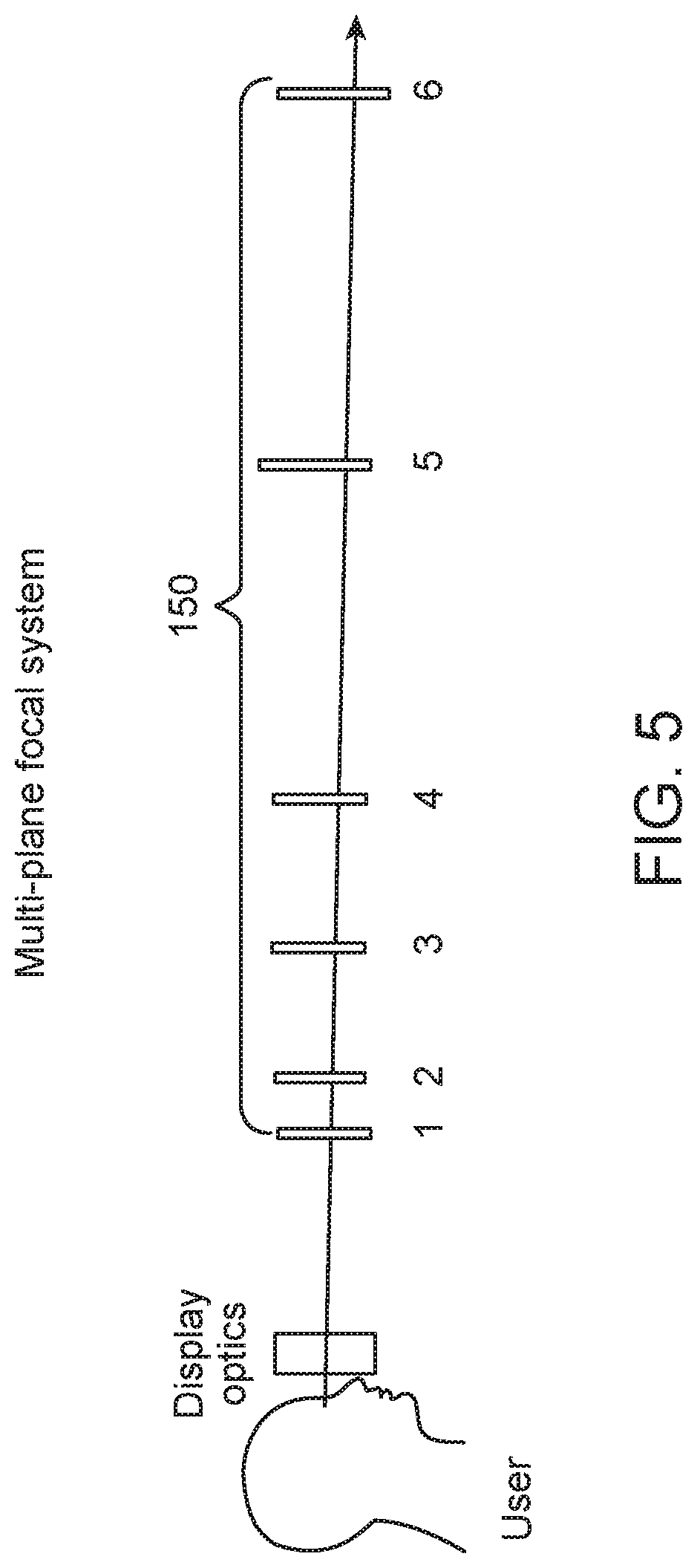

[0005] MR systems may generate and display color data, which increases the realism of MR scenarios. Many of these MR systems display color data by sequentially projecting sub-images in different (e.g., primary) colors or "fields" (e.g., Red, Green, and Blue) corresponding to a color image in rapid succession. Projecting color sub-images at sufficiently high rates (e.g., 60 Hz, 120 Hz, etc.) may deliver a smooth color MR scenario in a user's mind.

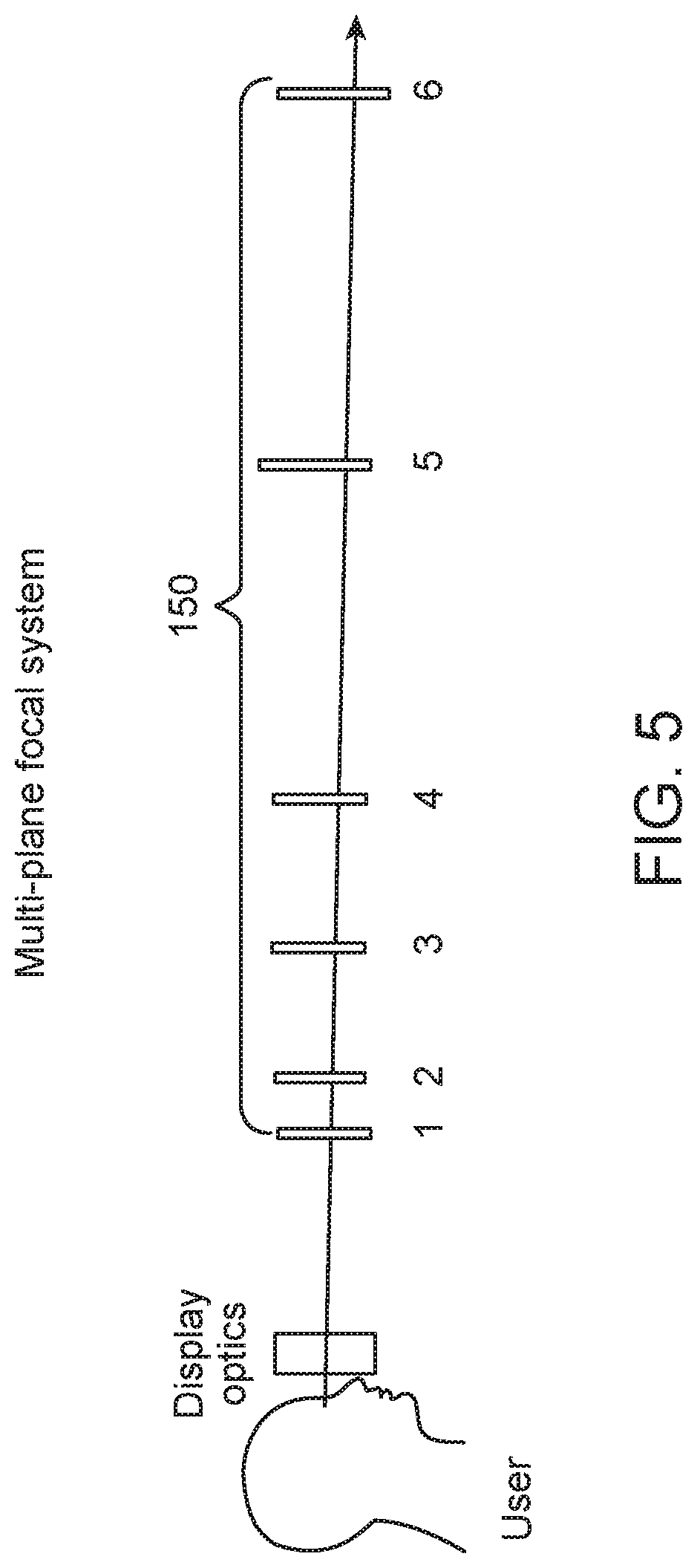

[0006] Various optical systems generate images, including color images, at various depths for displaying MR (VR and AR) scenarios. Some such optical systems are described in U.S. Utility patent application Ser. No. 14/555,585 filed on Nov. 27, 2014 (attorney docket number ML.20011.00), the contents of which are hereby expressly and fully incorporated by reference in their entirety, as though set forth in full.

[0007] MR systems may employ wearable display devices (e.g., head-worn displays, helmet-mounted displays, or smart glasses) that are at least loosely coupled to a user's head, and thus move when the user's head moves. If the user's head motions are detected by the display device, the data being displayed can be updated (e.g., "warped") to take the change in head pose (i.e., the orientation and/or location of user's head) into account.

[0008] As an example, if a user wearing a head-worn display device views a virtual representation of a virtual object on the display and walks around an area where the virtual object appears, the virtual object can be rendered for each viewpoint, giving the user the perception that they are walking around an object that occupies real space. If the head-worn display device is used to present multiple virtual objects, measurements of head pose can be used to render the scene to match the user's dynamically changing head pose and provide an increased sense of immersion.

[0009] Head-worn display devices that enable AR provide concurrent viewing of both real and virtual objects. With an "optical see-through" display, a user can see through transparent (or semi-transparent) elements in a display system to view directly the light from real objects in an environment. The transparent element, often referred to as a "combiner," superimposes light from the display over the user's view of the real world, where light from the display projects an image of virtual content over the see-through view of the real objects in the environment. A camera may be mounted onto the head-worn display device to capture images or videos of the scene being viewed by the user.

[0010] Current optical systems, such as those in MR systems, optically render virtual content. Content is "virtual" in that it does not correspond to real physical objects located in respective positions in space. Instead, virtual content only exists in the brain (e.g., the optical centers) of a user of the head-worn display device when stimulated by light beams directed to the eyes of the user.

[0011] Methods and apparatuses for providing input for head-worn image display devices (e.g., MR devices, AR devices, VR devices, etc.) are disclosed herein.

SUMMARY

[0012] An apparatus for use with an image display device configured for head-worn by a user, includes: a screen; and a processing unit configured to assign a first area of the screen to sense finger-action of the user; wherein the processing unit is configured to generate an electronic signal to cause a change in a content displayed by the image display device based on the finger-action of the user sensed by the assigned first area of the screen of the apparatus. As used in this specification, the term "finger-action" may include action performed by one or more fingers, and may include actions such as swiping, pinching, un-pinching, tapping, pressing, holding, twisting, turning, etc.

[0013] Optionally, the screen has a touch-sensitive region, and wherein the assigned first area is less than a total area of the touch-sensitive region.

[0014] Optionally, the assigned first area has a shape that corresponds with a shape of a screen of the image display device.

[0015] Optionally, the assigned first area has a dimension based on a brand and/or a model of the apparatus.

[0016] Optionally, the assigned first area has a dimension that is based on a feature of the apparatus and a feature of the image display device.

[0017] Optionally, the processing unit is configured to ignore input generated by the user using a portion of the touch-sensitive region that is not a part of the assigned first area, and that is not a part of an assigned button.
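By way of non-limiting illustration, the assignment of the first area and the ignoring of input outside it may be sketched as follows. This is a minimal sketch under stated assumptions, not the disclosed implementation: the `Rect` type, the function names `assign_first_area` and `route_touch`, and the rule of sizing the first area to a display aspect ratio are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Rect:
    """Axis-aligned rectangle in screen pixels (hypothetical helper type)."""
    x: float
    y: float
    width: float
    height: float

    def contains(self, px: float, py: float) -> bool:
        return (self.x <= px < self.x + self.width
                and self.y <= py < self.y + self.height)


def assign_first_area(touch_region: Rect, display_aspect: float) -> Rect:
    """Return the largest centred rectangle inside touch_region whose
    width/height ratio equals display_aspect (an assumed sizing rule)."""
    width = min(touch_region.width, touch_region.height * display_aspect)
    height = width / display_aspect
    return Rect(touch_region.x + (touch_region.width - width) / 2,
                touch_region.y + (touch_region.height - height) / 2,
                width, height)


def route_touch(px: float, py: float, first_area: Rect,
                buttons: Dict[str, Rect]) -> Optional[str]:
    """Name the region that should handle a touch, or None when the touch
    falls outside the first area and every assigned button (ignored)."""
    if first_area.contains(px, py):
        return "first_area"
    for name, rect in buttons.items():
        if rect.contains(px, py):
            return name
    return None
```

In this sketch, a touch that returns `None` is simply dropped, which corresponds to ignoring input from the part of the touch-sensitive region that is neither the assigned first area nor an assigned button.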

[0018] Optionally, the processing unit is also configured to operate a feedback component in response to the finger-action of the user.

[0019] Optionally, the first area has a boundary, and wherein the finger-action of the user comprises a finger of the user crossing, reaching, or moving to a location that is within a prescribed distance from, the boundary.

[0020] Optionally, the first area has one or more boundaries that at least partially surround a reference location, and wherein the processing unit is configured to operate the feedback component in response to a finger of the user reaching a prescribed distance from the reference location.

[0021] Optionally, the processing unit is configured to operate the feedback component to generate different types of feedback based on different respective spatial relationships between one or more finger(s) of the user with respect to the first area.

[0022] Optionally, the different types of feedback comprise a first haptic impulse with a first amplitude, and a second haptic impulse with a second amplitude that is different from the first amplitude.

[0023] Optionally, the different types of feedback comprise a first number of haptic impulse(s), and a second number of haptic impulse(s) that is different from the first number.

[0024] Optionally, the different types of feedback comprise a first series of haptic impulses with a first frequency, and a second series of haptic impulses with a second frequency that is different from the first frequency.

[0025] Optionally, the different respective spatial relationships comprise different distances between (1) one of the one or more finger(s), or a point that is between two fingers, of the user and (2) a reference location within the assigned first area.

[0026] Optionally, the reference location comprises a center of the assigned first area.

[0027] Optionally, the different distances exceed a threshold.
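By way of non-limiting illustration, selecting a haptic amplitude from the distance between the finger (or the point between two fingers) and the reference location may be sketched as follows. The function names, the threshold table, and the amplitude values are hypothetical and are used only for this example.

```python
import math
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float]


def contact_point(fingers: Sequence[Point]) -> Point:
    """One finger: its own position. Two fingers: the point midway between."""
    if len(fingers) == 1:
        return fingers[0]
    if len(fingers) == 2:
        (x0, y0), (x1, y1) = fingers
        return ((x0 + x1) / 2, (y0 + y1) / 2)
    raise ValueError("expected one or two fingers")


def haptic_amplitude(fingers: Sequence[Point], reference: Point,
                     thresholds: List[Tuple[float, float]]) -> Optional[float]:
    """Return the amplitude paired with the largest distance threshold that
    the contact point exceeds, or None when no threshold is exceeded.

    thresholds holds (distance, amplitude) pairs; both are assumed values.
    """
    px, py = contact_point(fingers)
    distance = math.hypot(px - reference[0], py - reference[1])
    amplitude = None
    for limit, value in sorted(thresholds):
        if distance > limit:
            amplitude = value
    return amplitude
```

Here the reference location would typically be the center of the assigned first area, and a larger distance maps to a different amplitude, which is one way of producing different types of feedback for different spatial relationships.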

[0028] Optionally, the different respective spatial relationships comprise one of the one or more finger(s), or a point that is between two fingers, reaching different respective distances from a boundary of the assigned first area.

[0029] Optionally, the assigned first area has a first boundary and a second boundary; wherein the different types of the feedback comprise at least a first type of feedback and a second type of feedback; wherein the processing unit is configured to operate the feedback component to generate the first type of feedback when one or more finger(s) of the user crosses, reaches, or moves to a location that is within a prescribed distance from, the first boundary; and wherein the processing unit is configured to operate the feedback component to generate the second type of feedback when one or more finger(s) of the user crosses, reaches, or moves to a location that is within a prescribed distance from, the second boundary.

[0030] Optionally, the first boundary comprises a left or right boundary, and the second boundary comprises a top or bottom boundary, of the assigned first area.
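By way of non-limiting illustration, the choice between the first and second types of feedback may be sketched as follows, assuming the first boundary is the left or right edge and the second boundary is the top or bottom edge. The `Area` type, the function name, and the labels `"first"` and `"second"` are hypothetical.

```python
from typing import List, NamedTuple


class Area(NamedTuple):
    """Edges of the assigned first area in screen pixels (hypothetical type)."""
    left: float
    top: float
    right: float
    bottom: float


def boundary_feedback(x: float, y: float, area: Area,
                      prescribed_distance: float) -> List[str]:
    """List the feedback types to generate for a finger at (x, y).

    A finger that crosses, reaches, or comes within prescribed_distance of a
    left/right edge yields "first"; a top/bottom edge yields "second".
    """
    feedback = []
    near_left = x - area.left <= prescribed_distance
    near_right = area.right - x <= prescribed_distance
    if near_left or near_right:
        feedback.append("first")
    near_top = y - area.top <= prescribed_distance
    near_bottom = area.bottom - y <= prescribed_distance
    if near_top or near_bottom:
        feedback.append("second")
    return feedback
```

A finger that has already crossed an edge produces a negative edge distance in this sketch, so crossing is treated the same way as reaching the edge.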

[0031] Optionally, the processing unit is configured to operate the feedback component based on a swiping direction.

[0032] Optionally, the processing unit is configured to obtain an input signal associated with a pinching or un-pinching action performed on the assigned first area of the screen.

[0033] Optionally, the processing unit is configured to generate the electronic signal to cause a size of the content displayed by the image display device to change based on the input signal that is associated with the pinching or un-pinching action.

[0034] Optionally, the apparatus further includes an orientation sensor for sensing an orientation of the apparatus, wherein the processing unit is also configured to generate the electronic signal to cause the content displayed by the image display device to change based on the input signal associated with the pinching or un-pinching action and the sensed orientation of the apparatus.

[0035] Optionally, the processing unit is configured to generate the electronic signal to cause the content to contract or expand in a first plane if the pinching or un-pinching action is sensed by the apparatus while the apparatus is at a first orientation; and wherein the processing unit is configured to generate the electronic signal to cause the content to contract or expand in a second plane if the pinching or un-pinching action is sensed by the apparatus while the apparatus is at a second orientation different from the first orientation, the second plane being different from the first plane.

[0036] Optionally, the apparatus has the first orientation when a major axis of the apparatus forms an angle with a horizontal plane that is less than 45°.

[0037] Optionally, the apparatus has the second orientation when a major axis of the apparatus forms an angle with a vertical plane that is less than 45°.

[0038] Optionally, the first plane comprises a Y-Z plane in a virtual three-dimensional environment, and the second plane comprises an X-Y plane in the virtual three-dimensional environment.

[0039] Optionally, the first plane and the second plane are with respect to a virtual three-dimensional environment.

[0040] Optionally, the first plane is perpendicular to the second plane.
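By way of non-limiting illustration, combining a pinching or un-pinching action with the sensed orientation may be sketched as follows. Several simplifying assumptions are made here and are not taken from the disclosure: the world frame has Y pointing up, the angle to a vertical plane is treated as the complement of the angle to the horizontal plane, the scale factor is the ratio of the finger gaps, and all function names are hypothetical.

```python
import math
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def classify_orientation(major_axis: Vec3) -> Optional[str]:
    """Return "first" when the major axis is within 45 degrees of the
    horizontal plane, "second" when it is within 45 degrees of vertical,
    and None at exactly 45 degrees. Assumes world Y points up."""
    x, y, z = major_axis
    norm = math.sqrt(x * x + y * y + z * z)
    if norm == 0:
        raise ValueError("major axis must be non-zero")
    angle = math.degrees(math.asin(min(1.0, abs(y) / norm)))
    if angle < 45.0:
        return "first"
    if angle > 45.0:
        return "second"
    return None


def scale_for_pinch(start_gap: float, end_gap: float,
                    major_axis: Vec3) -> Vec3:
    """Per-axis (X, Y, Z) scale factors for the displayed content.

    The factor is end_gap / start_gap (assumed mapping): below 1 for a
    pinch, above 1 for an un-pinch. It is applied in the Y-Z plane for the
    first orientation and in the X-Y plane for the second orientation.
    """
    if start_gap <= 0:
        raise ValueError("start_gap must be positive")
    s = end_gap / start_gap
    orientation = classify_orientation(major_axis)
    if orientation == "first":
        return (1.0, s, s)
    if orientation == "second":
        return (s, s, 1.0)
    return (1.0, 1.0, 1.0)
```

Leaving the content unchanged at exactly 45 degrees is a design choice of this sketch; an implementation could instead keep the previously selected plane.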

[0041] Optionally, the processing unit is configured to obtain an input signal associated with a swiping action performed on the assigned first area of the screen.

[0042] Optionally, the processing unit is configured to generate the electronic signal to cause the content to change by moving the content in response to the input signal associated with the swiping action.

[0043] Optionally, the apparatus further includes an orientation sensor for sensing an orientation of the apparatus, wherein the processing unit is configured to generate the electronic signal to cause the content displayed by the image display device to change based on the input signal associated with the swiping action and the sensed orientation of the apparatus.

[0044] Optionally, the processing unit is configured to generate the electronic signal to cause the content to move in a first plane if the swiping action is sensed by the apparatus while the apparatus is at a first orientation; and wherein the processing unit is configured to generate the electronic signal to cause the content to move in a second plane if the swiping action is sensed by the apparatus while the apparatus is at a second orientation different from the first orientation, the second plane being different from the first plane.

[0045] Optionally, the apparatus has the first orientation when a major axis of the apparatus forms an angle with a horizontal plane that is less than 45°.

[0046] Optionally, the apparatus has the second orientation when a major axis of the apparatus forms an angle with a vertical plane that is less than 45°.

[0047] Optionally, the first plane comprises a Y-Z plane in a virtual three-dimensional environment, and the second plane comprises an X-Y plane in the virtual three-dimensional environment.

[0048] Optionally, the first plane and the second plane are with respect to a virtual three-dimensional environment.

[0049] Optionally, the first plane is perpendicular to the second plane.

[0050] Optionally, the content is in a virtual three-dimensional environment, and wherein the processing unit is configured to generate the electronic signal to cause the content displayed by the image display device to change by moving the content closer to or further from the user when the swiping action is sensed by the apparatus while the orientation of the apparatus is approximately parallel to a horizontal plane.

[0051] Optionally, the content is in a virtual three-dimensional environment, and wherein the processing unit is configured to generate the electronic signal to cause the content displayed by the image display device to move in a vertical plane in the three-dimensional environment when the swiping action is sensed by the apparatus while the orientation of the apparatus is approximately perpendicular to a horizontal plane.
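By way of non-limiting illustration, mapping a swiping action to a movement of the content based on the sensed orientation may be sketched as follows. The assumptions are hypothetical and chosen only for the example: `pitch_deg` is the angle between the major axis of the apparatus and the horizontal plane, positive Z points away from the user, `tolerance_deg` stands in for "approximately," and `gain` converts swipe distance to virtual distance.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def swipe_to_displacement(dx: float, dy: float, pitch_deg: float,
                          gain: float = 1.0,
                          tolerance_deg: float = 30.0) -> Vec3:
    """Return an (X, Y, Z) displacement for the displayed content.

    Apparatus approximately parallel to the horizontal plane: the swipe
    component along the device's long axis (dy) moves the content closer
    to or further from the user (Z). Apparatus approximately perpendicular
    to the horizontal plane: the swipe moves the content in the vertical
    X-Y plane. Any other pitch leaves the content where it is.
    """
    pitch = abs(pitch_deg)
    if pitch <= tolerance_deg:
        return (0.0, 0.0, gain * dy)
    if abs(pitch - 90.0) <= tolerance_deg:
        return (gain * dx, gain * dy, 0.0)
    return (0.0, 0.0, 0.0)
```

With the default tolerance, a swipe made while the apparatus is held at 45 degrees is not mapped to either behavior, which avoids ambiguous transitions in this sketch.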

[0052] Optionally, the apparatus further includes an orientation sensor for sensing an orientation of the apparatus, wherein the processing unit is configured to generate the electronic signal based on the sensed orientation of the apparatus.

[0053] Optionally, the processing unit is configured to generate the electronic signal to cause the content to expand in one or more directions based on the sensed orientation of the apparatus.

[0054] Optionally, the processing unit is configured to generate the electronic signal to cause the content to rotate based on the sensed orientation of the apparatus.

[0055] Optionally, the processing unit is configured to generate the electronic signal to cause the content to move based on the sensed orientation of the apparatus.

[0056] Optionally, the apparatus further includes a movement sensor for sensing a movement of the apparatus, wherein the processing unit is configured to generate the electronic signal to cause the content displayed by the image display device to change based on the sensed movement of the apparatus.

[0057] Optionally, the processing unit is configured to generate the electronic signal to cause the content to change by moving the content based on the sensed movement of the apparatus.

[0058] Optionally, the apparatus is a handheld apparatus.

[0059] Optionally, the handheld apparatus comprises a cell phone, a smart phone, a personal-digital-assistant (PDA), or a tablet.

[0060] Optionally, the assigned first area of the screen has no displayed object while the assigned first area of the screen is sensing the finger-action of the user.

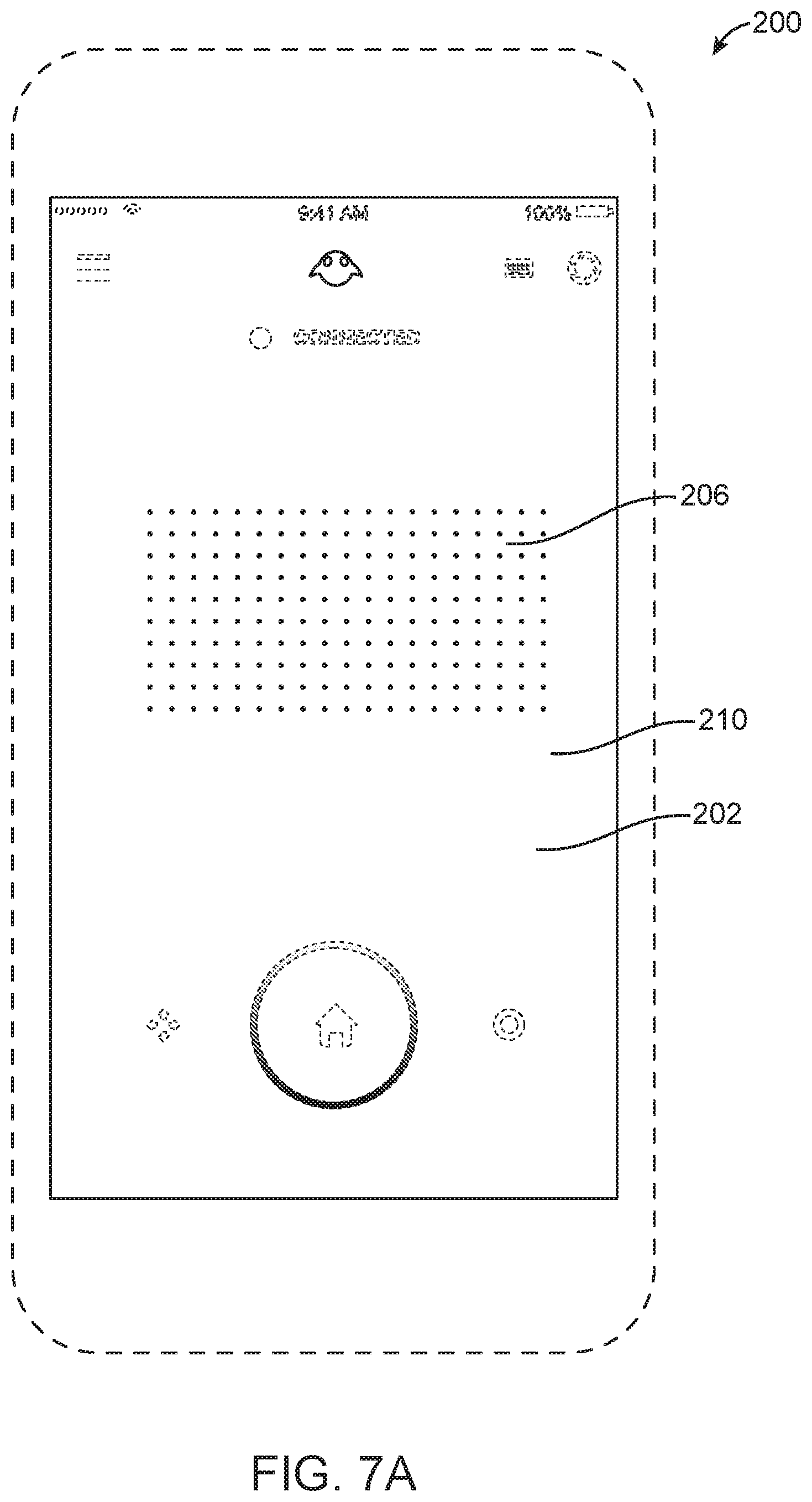

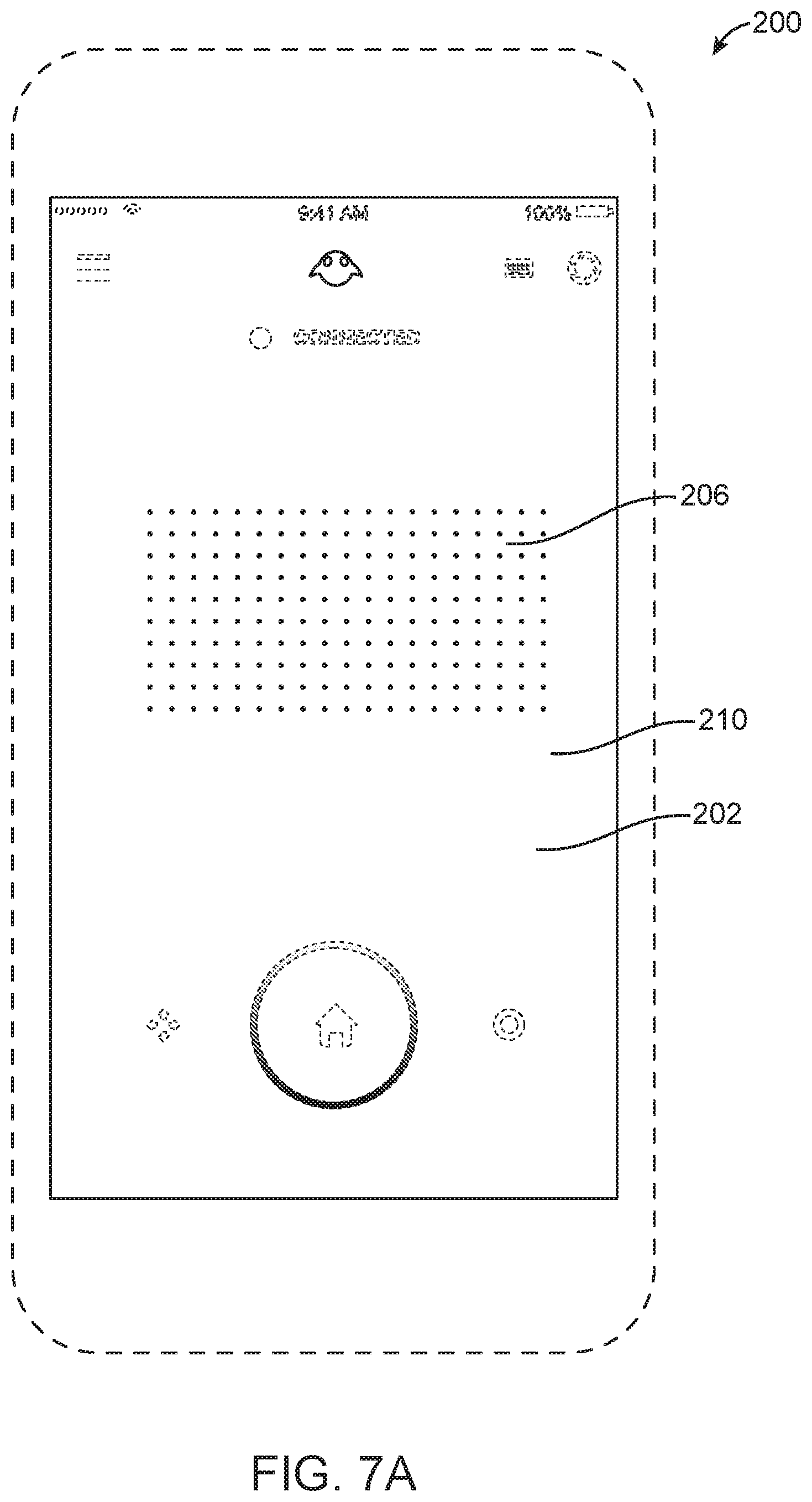

[0061] Optionally, the processing unit is configured to operate the screen to display a grid of dots in the assigned first area of the screen.

[0062] Optionally, the processing unit is also configured to change a feature of one or more of the dots in response to the user touching a part of the assigned first area of the screen where the one or more of the dots are displayed.
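By way of non-limiting illustration, the grid of dots and the change of a dot feature near a touch may be sketched as follows. The changed feature is assumed here to be the dot size, and the function names, sizes, and touch radius are hypothetical.

```python
import math
from typing import List, Optional, Tuple

Point = Tuple[float, float]


def make_dot_grid(area_width: float, area_height: float,
                  rows: int, cols: int) -> List[Point]:
    """Evenly spaced dot centers, row by row, inside the first area."""
    return [((c + 0.5) * area_width / cols, (r + 0.5) * area_height / rows)
            for r in range(rows) for c in range(cols)]


def dot_sizes(dots: List[Point], touch: Optional[Point],
              base_size: float = 2.0, touched_size: float = 6.0,
              radius: float = 40.0) -> List[float]:
    """Size of each dot: touched_size for dots within radius of the touch,
    base_size otherwise (and for every dot when there is no touch)."""
    if touch is None:
        return [base_size] * len(dots)
    return [touched_size
            if math.hypot(x - touch[0], y - touch[1]) <= radius
            else base_size
            for x, y in dots]
```

Other features, such as color or brightness, could be changed in the same manner by returning a different per-dot attribute.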

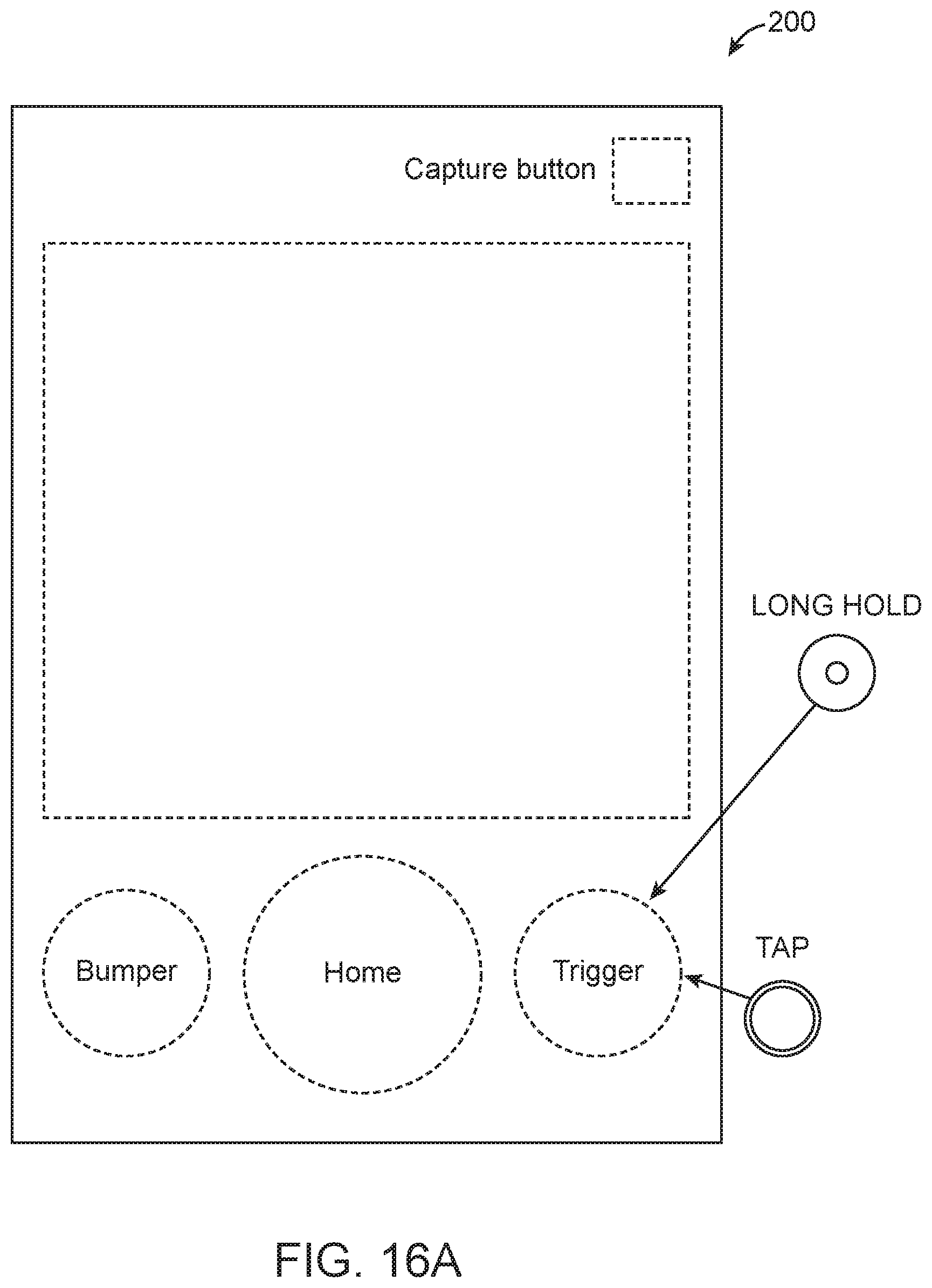

[0063] Optionally, the processing unit is also configured to assign a second area of the screen as a first button.

[0064] Optionally, the first button is a "HOME" button.

[0065] Optionally, the processing unit is also configured to assign a third area of the screen as a second button.

[0066] Optionally, the second button is a "TOGGLE" button.

[0067] Optionally, the processing unit is also configured to assign a fourth area of the screen as a third button.

[0068] Optionally, the third button is a "BUMPER" button.

[0069] Optionally, the processing unit is also configured to assign a second area of the screen as a keyboard activation button, and wherein the processing unit is configured to operate the screen to display a keyboard in response to the user touching the assigned second area of the screen.
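By way of non-limiting illustration, assigning areas of the screen as buttons, including a keyboard activation button, may be sketched as follows. The `ControllerScreen` class, the button name `"KEYBOARD"`, and the rectangle layout are hypothetical, and showing several buttons together on one screen is an assumption of this example.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

# (left, top, width, height) in screen pixels; a hypothetical convention.
Rect = Tuple[float, float, float, float]


def inside(rect: Rect, x: float, y: float) -> bool:
    left, top, width, height = rect
    return left <= x < left + width and top <= y < top + height


@dataclass
class ControllerScreen:
    """Tracks which screen areas act as buttons and whether the keyboard
    is currently displayed."""
    buttons: Dict[str, Rect]
    keyboard_visible: bool = False

    def on_touch(self, x: float, y: float) -> Optional[str]:
        """Return the name of the touched button, or None if no button
        was hit. Touching the keyboard activation button shows the keyboard."""
        for name, rect in self.buttons.items():
            if inside(rect, x, y):
                if name == "KEYBOARD":
                    self.keyboard_visible = True
                return name
        return None
```

A caller would forward the returned name (for example `"HOME"`, `"TOGGLE"`, or `"BUMPER"`) to the image display device, and would draw the keyboard whenever `keyboard_visible` is set.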

[0070] Optionally, the apparatus further includes a wireless receiver for communication with the image display device.

[0071] Optionally, the apparatus further includes a connector for communication with the image display device via a cable.

[0072] Optionally, the apparatus further includes a non-transitory medium storing a set of instructions, an execution of which will cause the processing unit to assign the first area of the screen for sensing finger-action of the user.

[0073] Optionally, the change in the content comprises a change in a size of the content, a change in a position of the content, a change in a shape of the content, a change in a color of the content, a replacement of information in the content, an increase or decrease in a quantity of information in the content, or any combination of the foregoing.

[0074] A method includes: assigning a first area of a screen of an apparatus to sense finger-action of a user of an image display device, wherein the image display device is configured for head-worn by the user, and wherein the apparatus is different from the image display device; generating an electronic signal to cause a change in a content displayed by the image display device based on the finger-action of the user sensed by the assigned first area of the screen of the apparatus.

[0075] Optionally, the screen has a touch-sensitive region, and wherein the assigned first area is less than a total area of the touch-sensitive region.

[0076] Optionally, the assigned first area has a shape that corresponds with a shape of a screen of the image display device.

[0077] Optionally, the assigned first area has a dimension based on a brand and/or a model of the apparatus.

[0078] Optionally, the assigned first area has a dimension that is based on a feature of the apparatus and a feature of the image display device.

[0079] Optionally, the method further includes ignoring input generated by the user using a portion of the touch-sensitive region that is not a part of the assigned first area, and that is not a part of an assigned button.
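
The act of ignoring input outside the assigned first area and outside any assigned button may be sketched, purely as an example, with a simple hit test. Rectangles are assumed to be `(left, top, width, height)` tuples, and the function names are hypothetical.

```python
def contains(rect, x, y):
    """True if the point (x, y) lies inside rect = (left, top, width, height)."""
    left, top, width, height = rect
    return left <= x < left + width and top <= y < top + height


def route_touch(x, y, first_area, buttons):
    """Return "first_area", the name of the touched button, or None.

    A result of None means the touch fell on a portion of the
    touch-sensitive region that is neither the assigned first area nor an
    assigned button, so the caller ignores it.
    """
    if contains(first_area, x, y):
        return "first_area"
    for name, rect in buttons.items():
        if contains(rect, x, y):
            return name
    return None
```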

[0080] Optionally, the method further includes generating a control signal to operate a feedback component in response to the finger-action of the user.

[0081] Optionally, the first area has a boundary, and wherein the finger-action of the user comprises a finger of the user crossing, reaching, or moving to a location that is within a prescribed distance from, the boundary.

[0082] Optionally, the first area has one or more boundaries that at least partially surround a reference location, and wherein the control signal is for operating the feedback component in response to a finger of the user reaching a prescribed distance from the reference location.

[0083] Optionally, the method further includes generating different control signals to operate a feedback component to generate different respective types of feedback based on different respective spatial relationships between one or more finger(s) of the user with respect to the first area.

[0084] Optionally, the different types of feedback comprise a first haptic impulse with a first amplitude, and a second haptic impulse with a second amplitude that is different from the first amplitude.

[0085] Optionally, the different types of feedback comprise a first number of haptic impulse(s), and a second number of haptic impulse(s) that is different from the first number.

[0086] Optionally, the different types of feedback comprise a first series of haptic impulses with a first frequency, and a second series of haptic impulses with a second frequency that is different from the first frequency.

[0087] Optionally, the different respective spatial relationships comprise different distances between (1) one of the one or more finger(s), or a point that is between two fingers, of the user and (2) a reference location within the assigned first area.

[0088] Optionally, the reference location comprises a center of the assigned first area.

[0089] Optionally, the different distances exceed a threshold.
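
As one possible sketch of distance-dependent feedback, the following selects a haptic pattern from the distance between the touch point and a reference location. The band thresholds, amplitudes, and impulse counts are hypothetical values chosen for illustration, and the helper names are not taken from this disclosure.

```python
import math


def touch_point(fingers):
    """One finger: that finger's position. Two fingers: the point midway between them."""
    if len(fingers) == 1:
        return fingers[0]
    if len(fingers) == 2:
        (x1, y1), (x2, y2) = fingers
        return ((x1 + x2) / 2, (y1 + y2) / 2)
    raise ValueError("this sketch handles one or two fingers only")


def feedback_for(fingers, reference, bands):
    """Pick a haptic pattern from the distance to the reference location.

    `bands` is a list of (threshold, amplitude, impulse_count) tuples sorted
    by ascending threshold. The pattern of the largest threshold that the
    distance exceeds is returned; None means no feedback is generated.
    """
    px, py = touch_point(fingers)
    distance = math.hypot(px - reference[0], py - reference[1])
    chosen = None
    for threshold, amplitude, impulse_count in bands:
        if distance > threshold:
            chosen = (amplitude, impulse_count)
    return chosen
```

For example, with `bands = [(100, 0.3, 1), (200, 0.8, 2)]` and the reference location at the center of the assigned first area, a finger 150 units from the center would yield one weak impulse, and a finger 250 units away would yield two stronger impulses.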

[0090] Optionally, the different respective spatial relationships comprise one of the one or more finger(s), or a point that is between two fingers, reaching different respective distances from a boundary of the assigned first area.

[0091] Optionally, the assigned first area has a first boundary and a second boundary; wherein the different types of the feedback comprise at least a first type of feedback and a second type of feedback; wherein the feedback component is operated to generate the first type of feedback when one or more finger(s) of the user crosses, reaches, or moves to a location that is within a prescribed distance from, the first boundary; and wherein the feedback component is operated to generate the second type of feedback when one or more finger(s) of the user crosses, reaches, or moves to a location that is within a prescribed distance from, the second boundary.

[0092] Optionally, the first boundary comprises a left or right boundary, and the second boundary comprises a top or bottom boundary, of the assigned first area.
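
The boundary-specific feedback described above may be illustrated with the following sketch, which assumes a rectangular first area given as `(left, top, width, height)`. The labels "first" and "second" stand in for whatever feedback types an implementation provides, and the function name is hypothetical.

```python
def boundary_feedback(x, y, area, prescribed_distance):
    """Return the feedback types triggered by a finger at (x, y).

    "first" is returned when the finger crosses, reaches, or comes within
    `prescribed_distance` of the left or right boundary; "second" is
    returned likewise for the top or bottom boundary.
    """
    left, top, width, height = area
    right, bottom = left + width, top + height
    types = []
    # A negative difference means the finger has already crossed the boundary.
    if (x - left) <= prescribed_distance or (right - x) <= prescribed_distance:
        types.append("first")
    if (y - top) <= prescribed_distance or (bottom - y) <= prescribed_distance:
        types.append("second")
    return types
```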

[0093] Optionally, the control signal for operating the feedback component is based on a swiping direction.

[0094] Optionally, the method further includes receiving an input signal associated with a pinching or un-pinching action performed by the user on the assigned first area of the screen.

[0095] Optionally, the electronic signal is for changing a size of the content displayed by the image display device in response to the input signal that is associated with the pinching or un-pinching action.

[0096] Optionally, the method further includes obtaining an orientation of the apparatus from an orientation sensor, wherein the electronic signal is for changing the content displayed by the image display device based on the input signal that is associated with the pinching or un-pinching action and the orientation of the apparatus.

[0097] Optionally, the content is changed by contracting or expanding the content in a first plane if the pinching or un-pinching action is sensed by the assigned first area while the apparatus is at a first orientation; and wherein the content is changed by contracting or expanding the content in a second plane if the pinching or un-pinching action is sensed by the assigned first area while the apparatus is at a second orientation different from the first orientation, the second plane being different from the first plane.

[0098] Optionally, the apparatus has the first orientation when a major axis of the apparatus forms an angle with a horizontal plane that is less than 45°.

[0099] Optionally, the apparatus has the second orientation when a major axis of the apparatus forms an angle with a vertical plane that is less than 45°.

[0100] Optionally, the first plane comprises a Y-Z plane in a virtual three-dimensional environment, and the second plane comprises an X-Y plane in the virtual three-dimensional environment.
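
One way to combine the optional features above is sketched below. The sketch assumes that the orientation sensor reports the elevation of the major axis of the apparatus above the horizontal plane in degrees, and that the angle to the vertical can be taken as 90° minus that elevation; both are simplifying assumptions, and the function names and the dictionary representation of content size are hypothetical.

```python
def classify_orientation(elevation_deg):
    """Classify the apparatus orientation from the elevation of its major axis.

    Returns "first" when the angle to the horizontal plane is below 45 degrees,
    "second" when the angle to the vertical is below 45 degrees, and None at
    exactly 45 degrees, where neither condition holds.
    """
    angle_to_horizontal = abs(elevation_deg)
    if angle_to_horizontal > 90:
        raise ValueError("elevation must be within [-90, 90] degrees")
    if angle_to_horizontal < 45:
        return "first"
    if 90 - angle_to_horizontal < 45:
        return "second"
    return None


# First orientation -> Y-Z plane, second orientation -> X-Y plane.
PLANE_AXES = {"first": ("y", "z"), "second": ("x", "y")}


def pinch_scale(size, factor, elevation_deg):
    """Scale content (a dict with keys "x", "y", "z") in the selected plane.

    A factor above 1 expands (un-pinching); a factor below 1 contracts (pinching).
    """
    orientation = classify_orientation(elevation_deg)
    scaled = dict(size)
    for axis in PLANE_AXES.get(orientation, ()):
        scaled[axis] *= factor
    return scaled
```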

[0101] Optionally, the first plane and the second plane are with respect to a virtual three-dimensional environment.

[0102] Optionally, the first plane is perpendicular to the second plane.

[0103] Optionally, the method further includes receiving an input signal associated with a swiping action performed by the user on the assigned first area of the screen.

[0104] Optionally, the electronic signal is for moving the content displayed by the image display device in response to the sensed swiping action.

[0105] Optionally, the method further includes obtaining an orientation of the apparatus from an orientation sensor, wherein the electronic signal is for changing the content displayed by the image display device based on the input signal that is associated with the swiping action and the orientation of the apparatus.

[0106] Optionally, the content is changed by moving the content in a first plane if the swiping action is sensed by the assigned first area while the apparatus is at a first orientation; and wherein the content is changed by moving the content in a second plane if the swiping action is sensed by the assigned first area while the apparatus is at a second orientation different from the first orientation, the second plane being different from the first plane.

[0107] Optionally, the apparatus has the first orientation when a major axis of the apparatus forms an angle with a horizontal plane that is less than 45°.

[0108] Optionally, the apparatus has the second orientation when a major axis of the apparatus forms an angle with a vertical plane that is less than 45°.

[0109] Optionally, the first plane comprises a Y-Z plane in a virtual three-dimensional environment, and the second plane comprises an X-Y plane in the virtual three-dimensional environment.

[0110] Optionally, the first plane and the second plane are with respect to a virtual three-dimensional environment.

[0111] Optionally, the first plane is perpendicular to the second plane.

[0112] Optionally, the content is in a virtual three-dimensional environment, and wherein the electronic signal is for causing the content displayed by the image display device to move closer to or further from the user when the swiping action is sensed by the assigned first area while the orientation of the apparatus is approximately parallel to a horizontal plane.

[0113] Optionally, the content is in a virtual three-dimensional environment, and wherein the electronic signal is for causing the content displayed by the image display device to move in a vertical plane in the three-dimensional environment when the swiping action is sensed by the assigned first area while the orientation of the apparatus is approximately perpendicular to a horizontal plane.
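
The two swiping behaviors just described may be sketched as a mapping from a swipe to a translation of the content. The sketch assumes a virtual coordinate system in which X points right, Y points up, and positive Z points away from the user; it also assumes a 20° tolerance for "approximately" and ignores the lateral swipe component when the apparatus is held flat. These choices, and the function name, are illustrative only.

```python
def swipe_to_translation(dx, dy_up, tilt_deg, gain=1.0, tolerance_deg=20.0):
    """Map a swipe on the assigned first area to a content translation (x, y, z).

    dx       : swipe distance toward the right edge of the screen
    dy_up    : swipe distance toward the top edge of the screen
    tilt_deg : angle between the screen plane and the horizontal plane (0..90)
    """
    if not 0 <= tilt_deg <= 90:
        raise ValueError("tilt must be within [0, 90] degrees")
    if tilt_deg <= tolerance_deg:
        # Apparatus approximately parallel to the horizontal plane:
        # move the content further from (or closer to) the user.
        return (0.0, 0.0, dy_up * gain)
    if tilt_deg >= 90 - tolerance_deg:
        # Apparatus approximately perpendicular to the horizontal plane:
        # move the content within a vertical plane.
        return (dx * gain, dy_up * gain, 0.0)
    return None  # intermediate tilt: left unspecified in this sketch
```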

[0114] Optionally, the method further includes obtaining a sensor input indicating a sensed orientation of the apparatus, wherein the electronic signal is for changing the content displayed by the image display device based on the sensor input indicating the sensed orientation of the apparatus.

[0115] Optionally, the electronic signal is for changing the content by expanding the content in one or more directions based on the sensor input indicating the sensed orientation of the apparatus.

[0116] Optionally, the electronic signal is for changing the content by rotating the content based on the sensor input indicating the sensed orientation of the apparatus.

[0117] Optionally, the electronic signal is for changing the content by moving the content based on the sensor input indicating the sensed orientation of the apparatus.

[0118] Optionally, the method further includes obtaining a sensor input indicating a sensed movement of the apparatus, wherein the electronic signal is for changing the content displayed by the image display device based on the sensor input indicating the sensed movement of the apparatus.

[0119] Optionally, the electronic signal is for changing the content by moving the content based on the sensor input indicating the sensed movement of the apparatus.

[0120] Optionally, the apparatus is a handheld apparatus.

[0121] Optionally, the handheld apparatus comprises a cell phone, a smart phone, a personal-digital-assistant (PDA), or a tablet.

[0122] Optionally, the assigned first area of the screen has no displayed object while the assigned first area of the screen is sensing the finger-action of the user.

[0123] Optionally, the method further includes operating the screen to display a grid of dots in the assigned first area of the screen.

[0124] Optionally, the method further includes changing a feature of one or more of the dots in response to the user touching a part of the assigned first area of the screen where the one or more of the dots are displayed.
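
As an example of selecting which dots change in response to a touch, the following sketch assumes a regular grid whose dots sit at multiples of `spacing` from the origin of the assigned first area, and treats every dot within `radius` of the touch as touched. The grid layout, the radius test, and the function name are assumptions of the sketch.

```python
import math


def dots_to_highlight(touch, spacing, cols, rows, radius):
    """Return the (col, row) indices of grid dots within `radius` of the touch.

    The caller would then change a feature of those dots, such as their
    color, size, or brightness.
    """
    tx, ty = touch
    hits = []
    for row in range(rows):
        for col in range(cols):
            if math.hypot(col * spacing - tx, row * spacing - ty) <= radius:
                hits.append((col, row))
    return hits
```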

[0125] Optionally, the method further includes assigning a second area of the screen as a first button.

[0126] Optionally, the first button is a "HOME" button.

[0127] Optionally, the method further includes assigning a third area of the screen as a second button.

[0128] Optionally, the second button is a "TOGGLE" button.

[0129] Optionally, the method further includes assigning a fourth area of the screen as a third button.

[0130] Optionally, the third button is a "BUMPER" button.

[0131] Optionally, the method further includes: assigning a second area of the screen as a keyboard activation button, and operating the screen to display a keyboard in response to the user touching the assigned second area of the screen.

[0132] Optionally, the method further includes wirelessly communicating with the image display device.

[0133] Optionally, the method further includes communicating with the image display device via a cable.

[0134] Optionally, the apparatus comprises a non-transitory medium storing an instruction, and wherein the act of assigning the first area of the screen for sensing finger-action of the user is performed based on the instruction.

[0135] Optionally, the change in the content comprises a change in a size of the content, a change in a position of the content, a change in a shape of the content, a change in a color of the content, a replacement of information in the content, an increase or decrease in a quantity of information in the content, or any combination of the foregoing.

[0136] A product includes a non-transitory medium storing a set of instructions, an execution of which will cause a method to be performed, the method comprising: assigning a first area of a screen of an apparatus to sense finger-action of a user of an image display device, wherein the image display device is configured to be head-worn by the user, and wherein the apparatus is different from the image display device; and generating an electronic signal to cause a change in a content displayed by the image display device based on the finger-action of the user sensed by the assigned first area of the screen of the apparatus.

[0137] Optionally, the screen has a touch-sensitive region, and wherein the assigned first area is less than a total area of the touch-sensitive region.

[0138] Optionally, the assigned first area has a shape that corresponds with a shape of a screen of the image display device.

[0139] Optionally, the assigned first area has a dimension based on a brand and/or a model of the apparatus.

[0140] Optionally, the assigned first area has a dimension that is based on a feature of the apparatus and a feature of the image display device.

[0141] Optionally, the method further comprises ignoring input generated by the user using a portion of the touch-sensitive region that is not a part of the assigned first area, and that is not a part of an assigned button.

[0142] Optionally, the method further comprises generating a control signal to operate a feedback component in response to the finger-action of the user.

[0143] Optionally, the first area has a boundary, and wherein the finger-action of the user comprises a finger of the user crossing, reaching, or moving to a location that is within a prescribed distance from, the boundary.

[0144] Optionally, the first area has one or more boundaries that at least partially surround a reference location, and wherein the control signal is for operating the feedback component in response to a finger of the user reaching a prescribed distance from the reference location.

[0145] Optionally, the method further comprises generating different control signals to operate a feedback component to generate different respective types of feedback based on different respective spatial relationships between one or more finger(s) of the user with respect to the first area.

[0146] Optionally, the different types of feedback comprise a first haptic impulse with a first amplitude, and a second haptic impulse with a second amplitude that is different from the first amplitude.

[0147] Optionally, the different types of feedback comprise a first number of haptic impulse(s), and a second number of haptic impulse(s) that is different from the first number.

[0148] Optionally, the different types of feedback comprise a first series of haptic impulses with a first frequency, and a second series of haptic impulses with a second frequency that is different from the first frequency.

[0149] Optionally, the different respective spatial relationships comprise different distances between (1) one of the one or more finger(s), or a point that is between two fingers, of the user and (2) a reference location within the assigned first area.

[0150] Optionally, the reference location comprises a center of the assigned first area.

[0151] Optionally, the different distances exceed a threshold.

[0152] Optionally, the different respective spatial relationships comprise one of the one or more finger(s), or a point that is between two fingers, reaching different respective distances from a boundary of the assigned first area.

[0153] Optionally, the assigned first area has a first boundary and a second boundary; wherein the different types of the feedback comprise at least a first type of feedback and a second type of feedback; wherein the feedback component is operated to generate the first type of feedback when one or more finger(s) of the user crosses, reaches, or moves to a location that is within a prescribed distance from, the first boundary; and wherein the feedback component is operated to generate the second type of feedback when one or more finger(s) of the user crosses, reaches, or moves to a location that is within a prescribed distance from, the second boundary.

[0154] Optionally, the first boundary comprises a left or right boundary, and the second boundary comprises a top or bottom boundary, of the assigned first area.

[0155] Optionally, the control signal for operating the feedback component is based on a swiping direction.

[0156] Optionally, the method further comprises receiving an input signal associated with a pinching or un-pinching action performed by the user on the assigned first area of the screen.

[0157] Optionally, the electronic signal is for changing a size of the content displayed by the image display device in response to the input signal that is associated with the pinching or un-pinching action.

[0158] Optionally, the method further comprises obtaining an orientation of the apparatus from an orientation sensor, wherein the electronic signal is for changing the content displayed by the image display device based on the input signal that is associated with the pinching or un-pinching action and the orientation of the apparatus.

[0159] Optionally, the content is changed by contracting or expanding the content in a first plane if the pinching or un-pinching action is sensed by the assigned first area while the apparatus is at a first orientation; and wherein the content is changed by contracting or expanding the content in a second plane if the pinching or un-pinching action is sensed by the assigned first area while the apparatus is at a second orientation different from the first orientation, the second plane being different from the first plane.

[0160] Optionally, the apparatus has the first orientation when a major axis of the apparatus forms an angle with a horizontal plane that is less than 45°.

[0161] Optionally, the apparatus has the second orientation when a major axis of the apparatus forms an angle with a vertical plane that is less than 45°.

[0162] Optionally, the first plane comprises a Y-Z plane in a virtual three-dimensional environment, and the second plane comprises an X-Y plane in the virtual three-dimensional environment.

[0163] Optionally, the first plane and the second plane are with respect to a virtual three-dimensional environment.

[0164] Optionally, the first plane is perpendicular to the second plane.

[0165] Optionally, the method further comprises receiving an input signal associated with a swiping action performed by the user on the assigned first area of the screen.

[0166] Optionally, the electronic signal is for moving the content displayed by the image display device in response to the sensed swiping action.

[0167] Optionally, the method further comprises obtaining an orientation of the apparatus from an orientation sensor, wherein the electronic signal is for changing the content displayed by the image display device based on the input signal that is associated with the swiping action and the orientation of the apparatus.

[0168] Optionally, the content is changed by moving the content in a first plane if the swiping action is sensed by the assigned first area while the apparatus is at a first orientation; and wherein the content is changed by moving the content in a second plane if the swiping action is sensed by the assigned first area while the apparatus is at a second orientation different from the first orientation, the second plane being different from the first plane.

[0169] Optionally, the apparatus has the first orientation when a major axis of the apparatus forms an angle with a horizontal plane that is less than 45°.

[0170] Optionally, the apparatus has the second orientation when a major axis of the apparatus forms an angle with a vertical plane that is less than 45°.

[0171] Optionally, the first plane comprises a Y-Z plane in a virtual three-dimensional environment, and the second plane comprises an X-Y plane in the virtual three-dimensional environment.

[0172] Optionally, the first plane and the second plane are with respect to a virtual three-dimensional environment.

[0173] Optionally, the first plane is perpendicular to the second plane.

[0174] Optionally, the content is in a virtual three-dimensional environment, and wherein the electronic signal is for causing the content displayed by the image display device to move closer to or further from the user when the swiping action is sensed by the assigned first area while the orientation of the apparatus is approximately parallel to a horizontal plane.

[0175] Optionally, the content is in a virtual three-dimensional environment, and wherein the electronic signal is for causing the content displayed by the image display device to move in a vertical plane in the three-dimensional environment when the swiping action is sensed by the assigned first area while the orientation of the apparatus is approximately perpendicular to a horizontal plane.

[0176] Optionally, the method further comprises obtaining a sensor input indicating a sensed orientation of the apparatus, wherein the electronic signal is for changing the content displayed by the image display device based on the sensor input indicating the sensed orientation of the apparatus.

[0177] Optionally, the electronic signal is for changing the content by expanding the content in one or more directions based on the sensor input indicating the sensed orientation of the apparatus.

[0178] Optionally, the electronic signal is for changing the content by rotating the content based on the sensor input indicating the sensed orientation of the apparatus.

[0179] Optionally, the electronic signal is for changing the content by moving the content based on the sensor input indicating the sensed orientation of the apparatus.

[0180] Optionally, the method further comprises obtaining a sensor input indicating a sensed movement of the apparatus, wherein the electronic signal is for changing the content displayed by the image display device based on the sensor input indicating the sensed movement of the apparatus.

[0181] Optionally, the electronic signal is for changing the content by moving the content based on the sensor input indicating the sensed movement of the apparatus.

[0182] Optionally, the apparatus is a handheld apparatus.

[0183] Optionally, the handheld apparatus comprises a cell phone, a smart phone, a personal-digital-assistant (PDA), or a tablet.

[0184] Optionally, the assigned first area of the screen has no displayed object while the assigned first area of the screen is sensing the finger-action of the user.

[0185] Optionally, the method further comprises operating the screen to display a grid of dots in the assigned first area of the screen.

[0186] Optionally, the method further comprises changing a feature of one or more of the dots in response to the user touching a part of the assigned first area of the screen where the one or more of the dots are displayed.

[0187] Optionally, the method further comprises assigning a second area of the screen as a first button.

[0188] Optionally, the first button is a "HOME" button.

[0189] Optionally, the method further comprises assigning a third area of the screen as a second button.

[0190] Optionally, the second button is a "TOGGLE" button.

[0191] Optionally, the method further comprises assigning a fourth area of the screen as a third button.

[0192] Optionally, the third button is a "BUMPER" button.

[0193] Optionally, the method further comprises: assigning a second area of the screen as a keyboard activation button, and operating the screen to display a keyboard in response to the user touching the assigned second area of the screen.

[0194] Optionally, the method further comprises wirelessly communicating with the image display device.

[0195] Optionally, the method further comprises communicating with the image display device via a cable.

[0196] Optionally, the product includes an instruction for assigning the first area of the screen for sensing finger-action of the user.

[0197] Optionally, the change in the content comprises a change in a size of the content, a change in a position of the content, a change in a shape of the content, a change in a color of the content, a replacement of information in the content, an increase or decrease in a quantity of information in the content, or any combination of the foregoing.

[0198] A computing device includes: a proximity-sensitive display; and one or more processors that are operatively coupled to the proximity-sensitive display and are communicatively coupled to a wearable computing system, the one or more processors configured to: receive, from the wearable computing system, data indicating whether the proximity-sensitive display is visible to a user of the wearable computing system; monitor the data that is received from the wearable computing system for changes in the user's visibility of the proximity-sensitive display; in response to a detection of a change in the user's visibility of the proximity-sensitive display, switch between: (i) a first presentation mode in which the one or more processors are configured to operate the proximity-sensitive display in a manner such that a first load is placed on a power supply of the computing device, and (ii) a second presentation mode in which the one or more processors are configured to operate the proximity-sensitive display in a manner such that a second load is placed on the power supply of the computing device, the second load being less than the first load.

[0199] Optionally, the first presentation mode is one in which the one or more processors are configured to present a graphical user interface on the proximity-sensitive display.

[0200] Optionally, the second presentation mode is one in which the one or more processors are configured to present a limited version of the graphical user interface on the proximity-sensitive display.

[0201] Optionally, the first presentation mode is one in which the one or more processors are configured to operate the proximity-sensitive display such that content is presented at a first level of brightness, and the second presentation mode is one in which the one or more processors are configured to operate the proximity-sensitive display such that content is presented at a second level of brightness that is lower than the first level of brightness.

[0202] Optionally, the computing device is configured to operate in a same or similar manner as one or more of the computing devices and/or apparatuses described herein.

[0203] Optionally, the computing device is configured to perform one or more of the operations described in one or both of the methods described immediately below.

[0204] A computer-implemented method includes: receiving, from a wearable computing system, a first set of data indicating whether a proximity-sensitive display is visible to a user of the wearable computing system at a first point in time; receiving, from the wearable computing system, a second set of data indicating whether the proximity-sensitive display is visible to the user of the wearable computing system at a second, later point in time; determining, based on receiving the first and second sets of data from the wearable computing system, that a change in the user's visibility of the proximity-sensitive display has occurred; and in response to determining that the change in the user's visibility of the proximity-sensitive display has occurred, switching between (i) a first presentation mode in which a graphical user interface is presented on the proximity-sensitive display, and (ii) a second presentation mode in which the proximity-sensitive display consumes less power than it does in the first presentation mode.

[0205] Optionally, determining, based on receiving the first and second sets of data from the wearable computing system, that a change in the user's visibility of the proximity-sensitive display has occurred comprises: determining, based on receiving the first and second sets of data from the wearable computing system, that the user has lost visibility of the proximity-sensitive display.

[0206] Optionally, in response to determining that the user has lost visibility of the proximity-sensitive display, the method comprises switching from the first presentation mode to the second presentation mode.

[0207] Optionally, determining, based on receiving the first and second sets of data from the wearable computing system, that a change in the user's visibility of the proximity-sensitive display has occurred comprises: determining, based on receiving the first and second sets of data from the wearable computing system, that the user has regained visibility of the proximity-sensitive display.

[0208] Optionally, in response to determining that the user has regained visibility of the proximity-sensitive display, the method comprises switching from the second presentation mode to the first presentation mode.
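
The switching between presentation modes may be sketched as a small state transition driven by two successive visibility reports from the wearable computing system. The sketch assumes that each set of data reduces to a Boolean visibility flag; the `Mode` enumeration and the function name are hypothetical.

```python
from enum import Enum


class Mode(Enum):
    FIRST = "graphical user interface presented"
    SECOND = "reduced power consumption"


def next_mode(current, was_visible, is_visible):
    """Choose the presentation mode from two successive visibility reports.

    was_visible : visibility indicated by the first set of data
    is_visible  : visibility indicated by the second, later set of data
    """
    if was_visible and not is_visible:
        return Mode.SECOND  # the user has lost visibility of the display
    if not was_visible and is_visible:
        return Mode.FIRST   # the user has regained visibility of the display
    return current          # no change in visibility, so no switch
```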

[0209] Optionally, the method may be performed by the computing device described immediately above and/or the computing device described immediately below.

[0210] A computing device includes: a proximity-sensitive display; a feedback component; and one or more processors that are operatively coupled to the proximity-sensitive display and the feedback component and are communicatively coupled to a wearable computing system, the one or more processors configured to: receive, from the wearable computing system, data indicating whether the proximity-sensitive display is visible to a user of the wearable computing system; monitor the data that is received from the wearable computing system for changes in the user's visibility of the proximity-sensitive display; in response to a detection of a change in the user's visibility of the proximity-sensitive display, switch between: (i) a first mode in which the one or more processors are configured to present a user interface on the proximity-sensitive display, and (ii) a second mode in which the one or more processors are configured to convey one or more portions of the user interface to the user through use of the feedback component.

[0211] Optionally, the feedback component is a haptic actuator.

[0212] Optionally, the feedback component is a speaker.

[0213] Optionally, the user interface is a graphical user interface for controlling one or more functions of the wearable computing system.

[0214] Optionally, the graphical user interface comprises a plurality of graphical control elements.

[0215] Optionally, the second mode is one in which the one or more processors are configured to refrain from presenting one or more of the plurality of graphical control elements on the proximity-sensitive display.

[0216] Optionally, the second mode is one in which the one or more processors are configured to present a limited version of the graphical user interface on the proximity-sensitive display.

[0217] Optionally, the limited version of the graphical user interface on the proximity-sensitive display is one in which one or more of the plurality of graphical control elements are not shown, one in which brightness levels of one or more of the plurality of graphical control elements are reduced, or a combination thereof.

[0218] Optionally, the second mode is one in which the one or more processors are configured to convey one or more locations on the proximity-sensitive display at which one or more of the plurality of graphical control elements are presented in the first mode.

[0219] Optionally, the one or more locations on the proximity-sensitive display that are conveyed in the second mode correspond to one or more outer boundaries of the plurality of graphical control elements as presented in the first mode.
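
One conceivable way to convey the outer boundaries of the graphical control elements in the second mode is to operate the feedback component whenever the finger moves across such a boundary. The following sketch detects those crossings between two successive touch samples; it assumes rectangular control elements given as `(left, top, width, height)`, and the function names are hypothetical.

```python
def inside(rect, point):
    """True if point = (x, y) lies inside rect = (left, top, width, height)."""
    left, top, width, height = rect
    x, y = point
    return left <= x < left + width and top <= y < top + height


def crossed_controls(prev, curr, controls):
    """Return names of control elements whose outer boundary was crossed.

    prev, curr : successive touch positions on the proximity-sensitive display
    controls   : dict mapping a control name to its rectangle as laid out
                 in the first mode

    The caller would operate the feedback component (for example, a haptic
    actuator) once for each returned name.
    """
    return [name for name, rect in controls.items()
            if inside(rect, prev) != inside(rect, curr)]
```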

[0220] Optionally, the plurality of graphical control elements correspond to a plurality of physical user input components of a dedicated input controller that is associated with the wearable computing system.

[0221] Optionally, the computing device is configured to operate in a same or similar manner as one or more of the computing devices and/or apparatuses described herein.

[0222] Optionally, the computing device is configured to perform one or more of the operations described in the method described immediately above and/or the method described immediately below.

[0223] A computer-implemented method includes: receiving, from a wearable computing system, a first set of data indicating whether a proximity-sensitive display is visible to a user of the wearable computing system at a first point in time; receiving, from the wearable computing system, a second set of data indicating whether the proximity-sensitive display is visible to the user of the wearable computing system at a second, later point in time; determining, based on receiving the first and second sets of data from the wearable computing system, that a change in the user's visibility of the proximity-sensitive display has occurred; and in response to determining that the change in the user's visibility of the proximity-sensitive display has occurred, switching between (i) a first mode in which a user interface is presented on the proximity-sensitive display, and (ii) a second mode in which one or more portions of the user interface are conveyed to the user of the wearable computing system through use of a feedback component.

[0224] Optionally, determining, based on receiving the first and second sets of data from the wearable computing system, that a change in the user's visibility of the proximity-sensitive display has occurred comprises: determining, based on receiving the first and second sets of data from the wearable computing system, that the user has lost visibility of the proximity-sensitive display.

[0225] Optionally, in response to determining that the user has lost visibility of the proximity-sensitive display, the method comprises switching from the first mode to the second mode.

[0226] Optionally, determining, based on receiving the first and second sets of data from the wearable computing system, that a change in the user's visibility of the proximity-sensitive display has occurred comprises: determining, based on receiving the first and second sets of data from the wearable computing system, that the user has regained visibility of the proximity-sensitive display.

[0227] Optionally, in response to determining that the user has regained visibility of the proximity-sensitive display, the method comprises switching from the second mode to the first mode.

[0228] Optionally, the method may be performed by one or both of the computing devices described immediately above.
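
The mode-switching logic of the method above can be sketched in a few lines. This is a minimal illustration only, not the applicant's implementation: the names `Mode` and `next_mode` are hypothetical, and each set of data from the wearable computing system is assumed to reduce to a single boolean indicating whether the proximity-sensitive display is visible.

```python
from enum import Enum


class Mode(Enum):
    DISPLAY = "first mode"    # user interface presented on the display
    FEEDBACK = "second mode"  # user interface conveyed through a feedback component


def next_mode(current: Mode, visible_first: bool, visible_second: bool) -> Mode:
    """Return the mode to use after comparing two visibility reports.

    visible_first and visible_second stand in for the first and second sets
    of data received from the wearable computing system (assumed encoding).
    """
    if visible_first == visible_second:
        return current  # no change in visibility, so no switch
    if visible_second:
        return Mode.DISPLAY  # visibility regained: second mode -> first mode
    return Mode.FEEDBACK  # visibility lost: first mode -> second mode
```

For example, `next_mode(Mode.DISPLAY, True, False)` yields `Mode.FEEDBACK`, which corresponds to the case in which the user has lost visibility of the display.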

[0229] A wearable computing system includes: a head-mounted display configured to be worn on a head of a user; one or more sensing devices configured to monitor an environment of the head-mounted display; and one or more processors that are operatively coupled to the head-mounted display and the one or more sensing devices and are communicatively coupled to a computing device, the one or more processors configured to: use data obtained from the one or more sensing devices to determine whether a display of the computing device is visible to the user of the wearable computing system; generate one or more messages based at least in part on whether the display of the computing device is determined to be visible to the user of the wearable computing system; and transmit the one or more messages to the computing device.

[0230] Optionally, the one or more sensing devices include one or more cameras.

[0231] Optionally, the one or more cameras include one or more forward-facing cameras that are configured to capture images of an environment of the user of the wearable computing system.

[0232] Optionally, the one or more cameras include one or more inward-facing cameras that are configured to capture images of one or both of the user's eyes, one or more portions of the user's head or face, or a combination thereof.

[0233] Optionally, the one or more sensing devices include one or more proximity sensors.

[0234] Optionally, the wearable computing system is configured to operate in a same or similar manner as one or more of the wearable computing systems, the wearable display systems, and/or image display devices described herein.

[0235] Optionally, the wearable computing system is configured to perform one or more of the operations in the method described immediately below.

[0236] A computer-implemented method includes: obtaining data from one or more sensing devices of a wearable computing system; determining, based on receiving the data from the one or more sensing devices of the wearable computing system, whether a display of a computing device is visible to the user of the wearable computing system; generating one or more messages based on whether the display of the computing device is determined to be visible to the user of the wearable computing system; and transmitting the one or more messages to the computing device.

[0237] Optionally, obtaining data from one or more sensing devices of a wearable computing system comprises: obtaining data from one or more forward-facing cameras that are configured to capture images of an environment of the user of the wearable computing system.

[0238] Optionally, determining, based on receiving the data from the one or more sensing devices of the wearable computing system, whether a display of a computing device is visible to the user of the wearable computing system comprises: determining whether the display of the computing device is shown in one or more images captured by the one or more forward-facing cameras.

[0239] Optionally, obtaining data from one or more sensing devices of a wearable computing system comprises: obtaining data from one or more inward-facing cameras that are configured to capture images of one or both of the user's eyes, one or more portions of the user's head or face, or a combination thereof.

[0240] Optionally, determining, based on receiving the data from the one or more sensing devices of the wearable computing system, whether a display of a computing device is visible to the user of the wearable computing system comprises: determining, based on one or more images captured by the one or more inward-facing cameras, whether the wearable computing system is being worn by the user on the user's head.

[0241] Optionally, determining, based on one or more images captured by the one or more inward-facing cameras, whether the wearable computing system is being worn by the user on the user's head comprises: determining whether the user is shown in one or more images captured by the one or more inward-facing cameras.

[0242] Optionally, obtaining data from one or more sensing devices of a wearable computing system comprises: obtaining data from one or more proximity sensors.

[0243] Optionally, determining, based on receiving the data from the one or more sensing devices of the wearable computing system, whether a display of a computing device is visible to the user of the wearable computing system comprises: determining whether the data received from the one or more proximity sensors indicate that the wearable computing system is being worn by the user on the user's head.

[0244] Optionally, the method may be performed by the wearable computing system described immediately above.
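
As a rough illustration of the wearable-side method above, the following sketch derives a visibility decision from sensing-device data, generates a message, and hands it to a transmit function. The names `SensorData`, `is_display_visible`, and `report_visibility`, the JSON message layout, and in particular the rule for combining the camera and proximity cues are all assumptions made for this example; the text above lists the cues as separate options and does not specify how they are combined.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass
class SensorData:
    display_in_forward_image: bool  # display shown in a forward-facing camera image
    user_in_inward_image: bool      # user shown in an inward-facing camera image
    proximity_indicates_worn: bool  # proximity sensor data indicates the system is worn


def is_display_visible(data: SensorData) -> bool:
    # Assumption: the system counts as worn if either cue says so, and the
    # display counts as visible only when the system is worn and the display
    # appears in a forward-facing image. The source does not fix this rule.
    worn = data.user_in_inward_image or data.proximity_indicates_worn
    return worn and data.display_in_forward_image


def report_visibility(data: SensorData, transmit: Callable[[str], None]) -> None:
    # Generate one message based on the visibility decision and transmit it.
    message = json.dumps(
        {"type": "display_visibility", "visible": is_display_visible(data)}
    )
    transmit(message)
```

Here `transmit` is a placeholder for whatever link couples the wearable computing system to the computing device; passing `list.append` is enough to exercise the logic in isolation.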

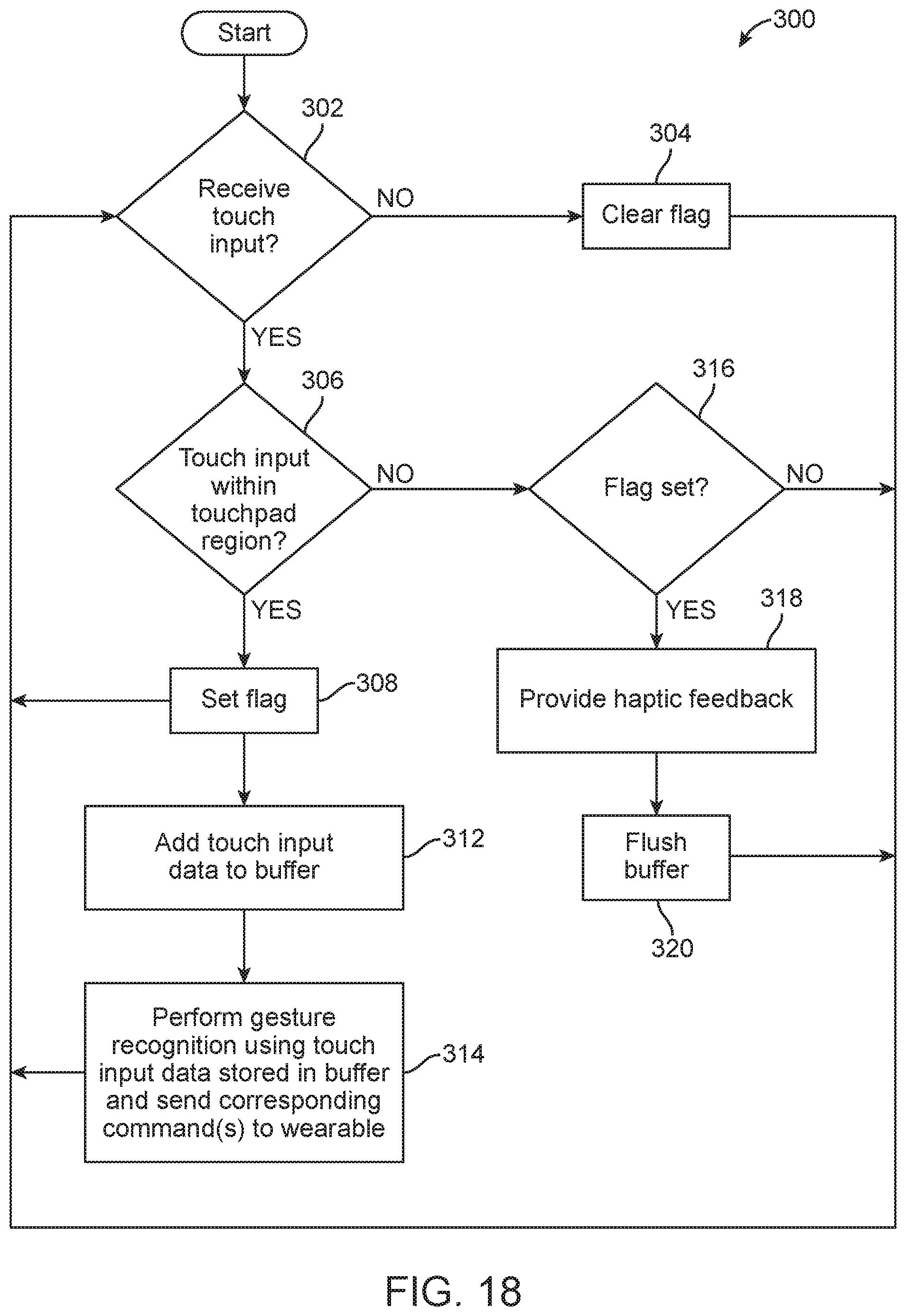

[0245] A computing device includes: a proximity-sensitive display; a feedback component; and one or more processors that are operatively coupled to the proximity-sensitive display and the feedback component and are communicatively coupled to a wearable computing system, the one or more processors configured to: receive data indicating a first touch input received at a first location on the proximity-sensitive display; receive data indicating a second touch input received at a second location on the proximity-sensitive display immediately subsequent to the first touch input; determine whether each of the first and second locations falls within a particular region of the proximity-sensitive display; in response to a determination that one or both of the first and second locations fall within the particular region of the proximity-sensitive display: generate one or more messages based at least in part on one or both of the first and second touch inputs; and transmit the one or more messages generated based at least in part on one or both of the first and second touch inputs to the wearable computing system; and in response to (i) a determination that the first location falls within the particular region of the proximity-sensitive display, and (ii) a determination that the second location falls outside of the particular region of the proximity-sensitive display: cause the feedback component to generate feedback.

[0246] Optionally, the particular region of the proximity-sensitive display is associated with a particular control element.

[0247] Optionally, the particular control element is a graphical control element, and the one or more processors are further configured to display the particular control element at a location on the proximity-sensitive display coinciding with the particular region.

[0248] Optionally, the feedback component is a haptic actuator.

[0249] Optionally, the feedback component is a speaker.

[0250] Optionally, the computing device is configured to operate in a same or similar manner as one or more of the computing devices and/or apparatuses described herein.

[0251] Optionally, the computing device is configured to perform one or more of the operations in the method described immediately below.

[0252] A computer-implemented method includes: receiving data indicating a first touch input received at a first location on a proximity-sensitive display; receiving data indicating a second touch input received at a second location on the proximity-sensitive display immediately subsequent to the first touch input; determining whether each of the first and second locations falls within a particular region of the proximity-sensitive display; in response to determining that one or both of the first and second locations fall within the particular region of the proximity-sensitive display: generating one or more messages based at least in part on one or both of the first and second touch inputs; and transmitting the one or more messages generated based at least in part on one or both of the first and second touch inputs to a wearable computing system; and in response to (i) determining that the first location falls within the particular region of the proximity-sensitive display, and (ii) determining that the second location falls outside of the particular region of the proximity-sensitive display: providing feedback for output through a feedback component.

[0253] Optionally, the method may be performed by the computing device described immediately above.
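
The two conditions in the method above can be made concrete with a short sketch. It assumes, purely for illustration, that the particular region is an axis-aligned rectangle and that a message simply lists the touch locations that fell within it; `Region`, `handle_touch_pair`, `transmit`, and `give_feedback` are hypothetical names, with the last two standing in for the link to the wearable computing system and for a feedback component such as a haptic actuator or speaker.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class Region:
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, point: Point) -> bool:
        x, y = point
        return self.left <= x <= self.right and self.top <= y <= self.bottom


def handle_touch_pair(
    region: Region,
    first: Point,
    second: Point,
    transmit: Callable[[Dict[str, List[Point]]], None],
    give_feedback: Callable[[], None],
) -> None:
    first_inside = region.contains(first)
    second_inside = region.contains(second)

    # One or both locations fall within the region: generate and transmit a message.
    if first_inside or second_inside:
        inside = [p for p, ok in ((first, first_inside), (second, second_inside)) if ok]
        transmit({"touches": inside})

    # The first location is inside and the second is outside: generate feedback.
    if first_inside and not second_inside:
        give_feedback()
```

Note that the two conditions are not mutually exclusive: a touch sequence that starts inside the region and leaves it both produces a message and triggers feedback, which is consistent with the wording above.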

[0254] A computing device includes: a proximity-sensitive display; an orientation sensor configured to sense an orientation of the computing device; and one or more processors that are operatively coupled to the proximity-sensitive display and the orientation sensor and are communicatively coupled to a wearable computing system, the one or more processors configured to: monitor for a sequence of touch inputs received through the proximity-sensitive display corresponding to any one of a plurality of different predefined gestures; in response to a detection of any one of the plurality of different predefined gestures: select, from among a plurality of different types of transformations that are associated with the plurality of different predefined gestures, respectively, a particular type of transformation that is associated with the detected gesture; select, from among multiple different axes, a particular axis based on data obtained from the orientation sensor; generate a command to apply the particular type of transformation to a virtual object relative to the particular axis; and transmit the command to the wearable computing system. Optionally, the computing device is configured to operate in a same or similar manner as one or more of the computing devices and/or apparatuses described herein.

[0255] Optionally, the computing device is configured to perform one or more of the operations in the method described immediately below.

[0256] A computer-implemented method includes: obtaining data indicating an orientation of a computing device; receiving data indicating a sequence of touch inputs received through a proximity-sensitive display of the computing device; determining that the sequence of touch inputs received through the proximity-sensitive display corresponds to a particular gesture; and in response to determining that the sequence of touch inputs received through the proximity-sensitive display corresponds to the particular gesture: selecting, from among a plurality of different types of transformations that are associated with a plurality of different predefined gestures, respectively, a particular type of transformation that is associated with the particular gesture; selecting, from among multiple different axes, a particular axis based on the orientation of the device; and generating a command to apply the particular type of transformation to a virtual object relative to the particular axis.

[0257] Optionally, the plurality of different types of transformations includes one or more of: rotation, translation, and resizing.

[0258] Optionally, the method may be performed by the computing device described immediately above.
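
The selection steps of the method above can be sketched as a lookup followed by an axis choice. Everything specific in this example is an assumption made for illustration: the gesture names, the pairing of each gesture with a transformation type, the use of a gravity vector as the orientation data, and the rule of choosing the device axis most closely aligned with gravity. The text above states only that the transformation type is selected by gesture and the axis by orientation.

```python
from typing import Dict, Optional, Tuple

# Hypothetical pairing of predefined gestures with transformation types.
GESTURE_TO_TRANSFORMATION: Dict[str, str] = {
    "two_finger_twist": "rotation",
    "one_finger_drag": "translation",
    "pinch": "resizing",
}


def select_axis(gravity: Tuple[float, float, float]) -> str:
    # Assumption: pick the device axis most closely aligned with gravity,
    # where gravity is an (x, y, z) reading from the orientation sensor.
    components = dict(zip(("x", "y", "z"), gravity))
    return max(components, key=lambda name: abs(components[name]))


def make_command(
    gesture: str, gravity: Tuple[float, float, float]
) -> Optional[Dict[str, str]]:
    transformation = GESTURE_TO_TRANSFORMATION.get(gesture)
    if transformation is None:
        return None  # not one of the predefined gestures, so no command
    return {"transformation": transformation, "axis": select_axis(gravity)}
```

With the device held upright so that gravity lies mostly along its y axis, `make_command("pinch", (0.1, 9.7, 0.3))` produces a resizing command relative to the y axis; the same gesture with the device lying flat would select the z axis instead.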

[0259] A computing device includes: a proximity-sensitive display; and one or more processors that are operatively coupled to the proximity-sensitive display and are communicatively coupled to a wearable display system, the one or more processors configured to: present a particular piece of content on the proximity-sensitive display; monitor for a sequence of touch inputs received through the proximity-sensitive display corresponding to any one of a plurality of different predefined gestures during the presentation of the particular piece of content on the proximity-sensitive display; in response to a detection of any one of the plurality of different predefined gestures during the presentation of the particular piece of content on the proximity-sensitive display: generate one or more messages indicating that presentation of the particular piece of content is to be handed off to the wearable display system; transmit the one or more messages to the wearable display system; and discontinue presentation of the particular piece of content on the proximity-sensitive display.

[0260] Optionally, the computing device is configured to operate in a same or similar manner as one or more of the computing devices and/or apparatuses described herein.

[0261] Optionally, the computing device is configured to perform one or more of the operations in the method described immediately below.