Stand-alone Self-driving Material-transport Vehicle

Gariepy; Ryan Christopher ; et al.

U.S. patent application number 16/539249 was filed with the patent office on 2019-08-13 and published on 2019-11-28 for stand-alone self-driving material-transport vehicle. The applicant listed for this patent is Andrew Blakey, Simon Drexler, Ryan Christopher Gariepy, Jason Scharlach, James Dustin Servos. Invention is credited to Andrew Blakey, Simon Drexler, Ryan Christopher Gariepy, Jason Scharlach, James Dustin Servos.

| Application Number | 16/539249 |


| Family ID | 63855431 |

| Filed Date | 2019-08-13 |

| United States Patent Application | 20190360835 |

| Kind Code | A1 |

| Gariepy; Ryan Christopher ; et al. | November 28, 2019 |

STAND-ALONE SELF-DRIVING MATERIAL-TRANSPORT VEHICLE

Abstract

Systems and methods for a stand-alone self-driving material-transport vehicle are provided. A method includes: displaying a graphical map on a graphical user-interface device based on a map stored in a storage medium of the vehicle, receiving a navigation instruction based on the graphical map, and navigating the vehicle based on the navigation instruction. As the vehicle navigates, it senses features of an industrial facility using its sensor system, and locates the features relative to the map. Subsequently, the vehicle stores the updated map including the feature on the vehicle's storage medium. The map can then be shared with other vehicles or a fleet-management system.

| Inventors: | Gariepy; Ryan Christopher; (Kitchener, CA) ; Scharlach; Jason; (Kitchener, CA) ; Blakey; Andrew; (Kitchener, CA) ; Drexler; Simon; (Kitchener, CA) ; Servos; James Dustin; (Kitchener, CA) |
|---|---|
| Family ID: | 63855431 |
| Appl. No.: | 16/539249 |
| Filed: | August 13, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| PCT/CA2018/050464 | Apr 18, 2018 | |
| 16539249 | | |
| 62486936 | Apr 18, 2017 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G01C 21/3614 20130101; G01C 21/3461 20130101; G05D 1/0027 20130101; G05D 1/0274 20130101; G05D 2201/0216 20130101; G01C 21/32 20130101; G01C 21/3626 20130101; G05D 1/0297 20130101; G05D 1/0088 20130101; G05D 2201/0207 20130101; G05D 1/02 20130101; G06Q 10/08 20130101 |

| International Class: | G01C 21/36 20060101 G01C021/36; G05D 1/02 20060101 G05D001/02; G05D 1/00 20060101 G05D001/00; G06Q 10/08 20060101 G06Q010/08 |

Claims

1. A method of mapping an industrial facility with a self-driving vehicle, comprising: displaying a graphical map on a graphical user-interface device based on a map stored in a non-transient computer-readable medium of the vehicle; receiving at least one navigation instruction based on the graphical map; navigating the vehicle based on the at least one navigation instruction; sensing a feature of the facility using a sensor system of the vehicle; locating the feature relative to the map; and storing an updated map based on the map and the feature on the medium of the vehicle.

2. The method of claim 1, wherein the at least one navigation instruction is based on a location relative to the map, and navigating the vehicle comprises autonomously navigating the vehicle to the location.

3. The method of claim 1, wherein the at least one navigation instruction comprises a direction, and navigating the vehicle comprises semi-autonomously navigating the vehicle based on the direction.

4. The method of claim 1, further comprising updating the displayed graphical map based on the feature.

5. The method of claim 1, further comprising transmitting the updated map to a second self-driving vehicle.

6. The method of claim 5, wherein transmitting the updated map to the second self-driving vehicle comprises transmitting the updated map to a fleet-management system and subsequently transmitting the updated map from the fleet-management system to the second vehicle.

7. The method of claim 1, comprising the preliminary step of determining a map of the facility relative to the vehicle.

8. A method of commanding a self-driving vehicle in an industrial facility, comprising: determining a map of the facility relative to the vehicle; displaying a graphical map based on the map; navigating the vehicle in a semi-autonomous mode; and recording a recipe based on a plurality of semi-autonomous navigation steps associated with the navigating the vehicle in the semi-autonomous mode.

9. The method of claim 8, wherein recording the recipe comprises deriving a plurality of tasks associated with the plurality of semi-autonomous navigation steps, and wherein the recipe comprises the plurality of tasks.

10. The method of claim 8, further comprising: sensing a feature of the facility using a sensor system of the vehicle; and generating a substitute navigation step based on at least one semi-autonomous navigation step and the feature; wherein the recipe is based on the substitute navigation step.

11. The method of claim 10, wherein the recipe does not comprise the at least one semi-autonomous navigation step.

12. The method of claim 8, further comprising transmitting the recipe to a second self-driving vehicle, wherein the second vehicle autonomously navigates based on the recipe.

13. The method of claim 12, wherein transmitting the recipe to the second self-driving vehicle comprises transmitting the recipe to a fleet-management system and subsequently transmitting the recipe from the fleet-management system to the second vehicle.

14. A self-driving transport system comprising: a self-driving vehicle having a drive system for moving the vehicle and a control system for controlling the drive system; and a graphical user-interface device in communication with the vehicle for receiving a navigation instruction from a user and transmitting a corresponding navigation command to the control system; wherein, the control system receives the navigation command and controls the drive system to autonomously move the vehicle according to the navigation instruction.

15. The system of claim 14, wherein: the control system has a non-transitory computer-readable medium for storing a map; the self-driving vehicle has a server in communication with the control system for generating a graphical map based on the map; and the graphical user-interface device in communication with the vehicle comprises the graphical user-interface device in wireless communication with the server.

16. The system of claim 15, wherein the graphical user-interface device comprises: a coordinate system having a plurality of coordinates associated with the graphical map; and a user-input device for selecting a coordinate; wherein the selected coordinate is transmitted from the graphical user-interface device to the server, and the coordinate corresponds to a location in the map.

17. The system of claim 14, wherein: the control system has a non-transitory computer-readable medium for storing a map; the graphical user-interface device in communication with the vehicle comprises the graphical user-interface device in wireless communication with the control system; and the graphical user-interface device is configured to receive the map from the control system and generate a graphical map based on the map.

18. The system of claim 17, wherein the graphical user-interface device comprises: a coordinate system having a plurality of coordinates associated with the graphical map; and a user-input device for selecting a coordinate; wherein the graphical user-input device transmits a location on the map corresponding to the selected coordinate to the control system.

19. The system of claim 14, further comprising a fleet-management system, wherein: the vehicle comprises a sensor system for sensing objects within a peripheral environment of the vehicle; and the control system comprises a non-transitory computer-readable medium for storing a map based on the sensed objects and a transceiver in communication with the fleet-management system; wherein the control system transmits the map to the fleet-management system using the transceiver.

20. The system of claim 14, further comprising a fleet-management system, wherein: the control system comprises a non-transitory computer-readable medium for storing a vehicle status based on the performance of the vehicle and a transceiver in communication with the fleet-management system; and the control system transmits the vehicle status to the fleet-management system using the transceiver.

21. The system of claim 14, further comprising a fleet-management system, wherein: the control system comprises a non-transitory computer-readable medium for storing a vehicle configuration and a transceiver in communication with the fleet-management system; and the control system transmits the vehicle status to the fleet-management system using the transceiver.

22. The system of claim 21, where the control system receives a recovery configuration from the fleet-management system using the transceiver, stores the recovery configuration in the medium, and controls the vehicle according to the recovery configuration.

Description

CROSS-REFERENCE TO RELATED PATENT APPLICATIONS

[0001] The application is a continuation of International Application No. PCT/CA2018/050464, filed on Apr. 18, 2018, which claims the benefit of U.S. Provisional Application No. 62/486,936, filed on Apr. 18, 2017. The complete disclosures of International Application No. PCT/CA2018/050464 and U.S. Provisional Application No. 62/486,936 are incorporated herein by reference.

FIELD

[0002] The specification relates generally to self-driving vehicles, and specifically to a stand-alone self-driving material-transport vehicle.

BACKGROUND

[0003] Automated vehicles are presently being considered for conducting material-transport tasks within industrial facilities such as manufacturing plants and warehouses. Typical systems include one or more automated vehicles, and a centralized system for assigning tasks and generally managing the automated vehicles. These centralized systems interface with enterprise resource planning systems and other informational infrastructure of the facility in order to coordinate the automated vehicles with other processes and resources within the facility.

[0004] However, such an approach requires a significant amount of infrastructure integration and installation before a vehicle can be used for any tasks. For example, automated vehicles may be dependent on the centralized system in order to obtain location information, maps, navigation instructions, and tasks, rendering the automated vehicles ineffective without the associated use of the centralized system.

SUMMARY

[0005] In a first aspect, there is a method of mapping an industrial facility with a self-driving vehicle. The method comprises displaying a graphical map on a graphical user-interface device based on a map stored in a non-transient computer-readable medium of the vehicle. A navigation instruction is received based on the graphical map, and the vehicle is navigated based on the navigation instruction. A feature of the facility is sensed using a sensor system of the vehicle, and the feature is located relative to the map. An updated map is stored on the medium based on the map and the feature.

[0006] According to some embodiments, the navigation instruction is based on a location relative to the map, and navigating the vehicle comprises autonomously navigating the vehicle to the location.

[0007] According to some embodiments, the navigation instruction comprises a direction, and navigating the vehicle comprises semi-autonomously navigating the vehicle based on the direction.

[0008] According to some embodiments, the method further comprises updating the displayed graphical map based on the feature.

[0009] According to some embodiments, the method further comprises transmitting the updated map to a second self-driving vehicle.

[0010] According to some embodiments, transmitting the updated map to the second self-driving vehicle comprises transmitting the updated map to a fleet-management system and subsequently transmitting the map from the fleet-management system to the second vehicle.

[0011] According to some embodiments, the method comprises the preliminary step of determining a map of the facility relative to the vehicle.

[0012] In a second aspect, there is a method of commanding a self-driving vehicle in an industrial facility. The method comprises determining a map of the facility relative to the vehicle, displaying a graphical map based on the map, and navigating the vehicle in a semi-autonomous mode. A recipe is recorded based on a plurality of semi-autonomous navigation steps associated with navigating the vehicle in the semi-autonomous mode.

[0013] According to some embodiments, the recording the recipe comprises deriving a plurality of tasks associated with the plurality of semi-autonomous navigation steps, and the recipe comprises the plurality of tasks.

[0014] According to some embodiments, the method further comprises sensing a feature of the facility using a sensor system of the vehicle, and generating a substitute navigation step based on at least one semi-autonomous navigation step and the feature. The recipe is based on the substitute navigation step.

[0015] According to some embodiments, the recipe does not include the semi-autonomous navigation step based on which the substitute navigation step was generated.

[0016] According to some embodiments, the method comprises transmitting the recipe to a second self-driving vehicle so that the second vehicle can autonomously navigate based on the recipe.

[0017] According to some embodiments, transmitting the recipe to the second self-driving vehicle comprises transmitting the recipe to a fleet-management system and subsequently transmitting the recipe from the fleet-management system to the second vehicle.

[0018] In a third aspect, there is a self-driving transport system. The system comprises a self-driving vehicle and a graphical user-interface device. The vehicle has a drive system for moving the vehicle and a control system for controlling the drive system. The graphical user-interface device is in communication with the control system for receiving navigation instructions from a user, and transmitting a corresponding navigation command to the control system. The control system receives the navigation command and controls the drive system to autonomously move the vehicle according to the navigation instruction.

[0019] According to some embodiments, the control system has a non-transitory computer-readable medium for storing a map. The self-driving vehicle has a server in communication with the control system for generating a graphical map based on the map, and the graphical user-interface device in communication with the vehicle comprises the graphical user-interface device in wireless communication with the server.

[0020] According to some embodiments, the graphical user-interface device comprises a coordinate system having a plurality of coordinates associated with the graphical map, and a user-input device for selecting a coordinate. The selected coordinate is transmitted from the graphical user-interface device to the server, and the coordinate corresponds to a location in the map.

[0021] According to some embodiments, the control system has a non-transitory computer-readable medium for storing a map. The graphical user-interface device in communication with the vehicle comprises the graphical user-interface device in wireless communication with the control system. The graphical user-interface device is configured to receive the map from the control system and generate a graphical map based on the map.

[0022] According to some embodiments, the graphical user-interface device comprises a coordinate system having a plurality of coordinates associated with a graphical map, and a user-input device for selecting a coordinate. The graphical user-input device transmits a location on the map corresponding to the selected coordinate to the control system.

[0023] According to some embodiments, the system comprises a fleet-management system. The vehicle comprises a sensor system for sensing objects within a peripheral environment of the vehicle, and the control system comprises a non-transitory computer-readable medium for storing a map based on the sensed objects and a transceiver in communication with the fleet-management system. The control system transmits the map to the fleet-management system using the transceiver.

[0024] According to some embodiments, the system comprises a fleet-management system. The control system comprises a non-transitory computer-readable medium for storing a vehicle status based on the performance of the vehicle and a transceiver in communication with the fleet-management system. The control system transmits the vehicle status to the fleet-management system using the transceiver.

[0025] According to some embodiments, the system further comprises a fleet-management system. The control system comprises a non-transitory computer-readable medium for storing a vehicle configuration and a transceiver in communication with the fleet-management system, and the control system transmits the vehicle status to the fleet-management system using the transceiver.

[0026] According to some embodiments, the control system receives a recovery configuration from the fleet-management system using the transceiver, stores the recovery configuration in the medium, and controls the vehicle according to the recovery configuration.

BRIEF DESCRIPTIONS OF THE DRAWINGS

[0027] Embodiments are described with reference to the following figures, in which:

[0028] FIG. 1 depicts a stand-alone self-driving material-transport vehicle according to a non-limiting embodiment;

[0029] FIG. 2 depicts a system diagram of a stand-alone self-driving material-transport vehicle according to a non-limiting embodiment;

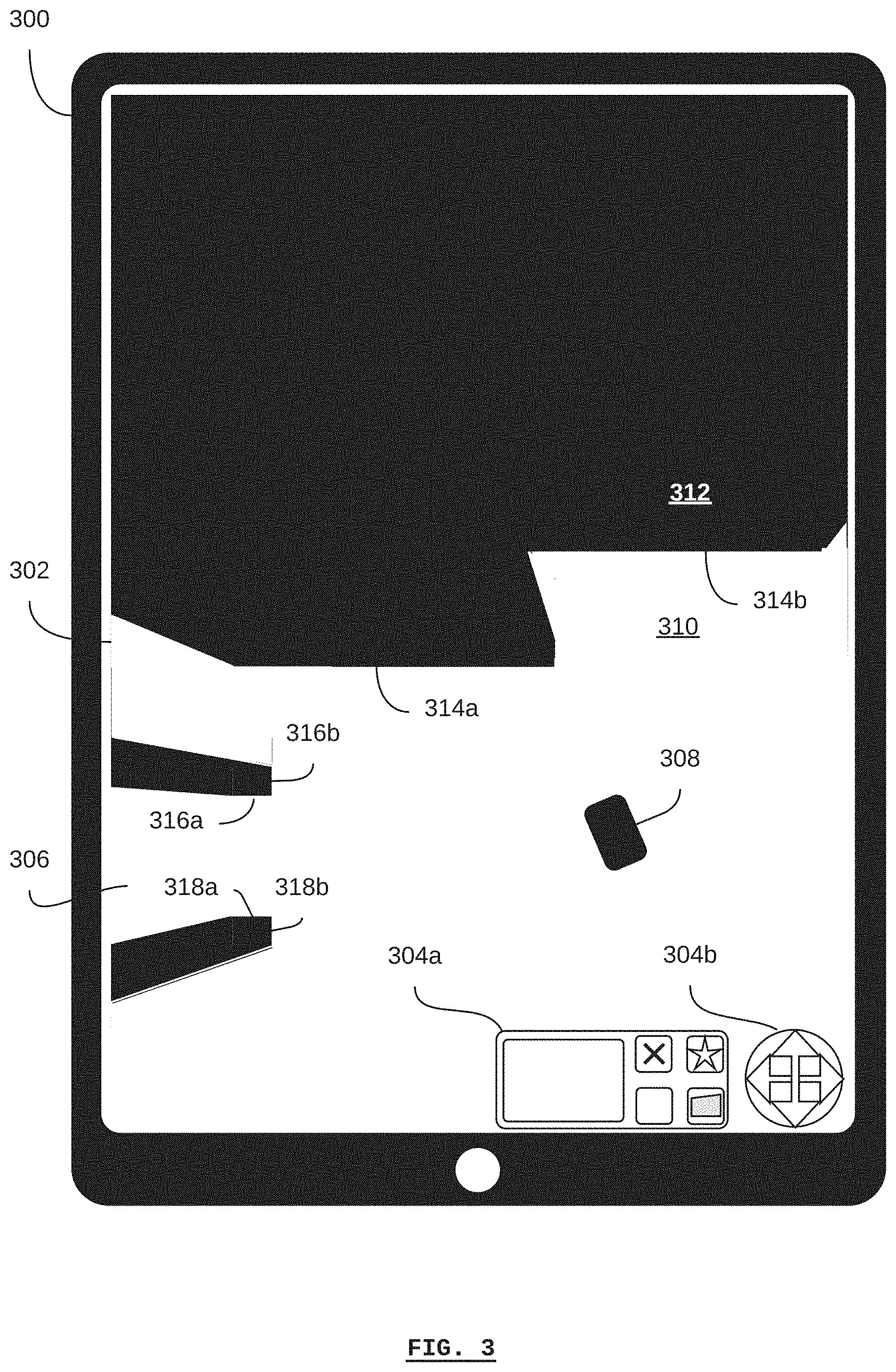

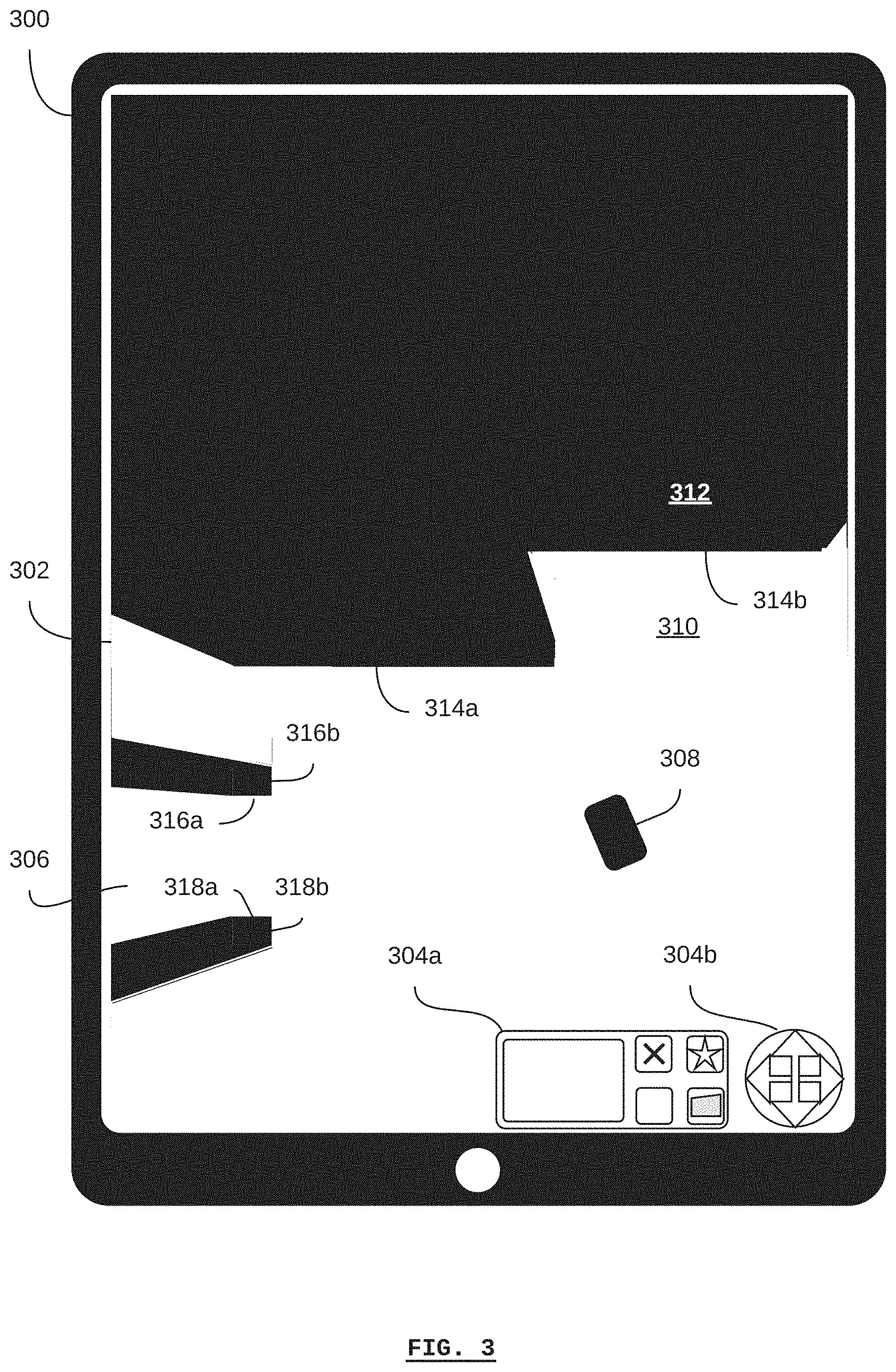

[0030] FIG. 3 depicts a graphical user-interface device for a stand-alone self-driving material-transport vehicle displaying a graphical map at a first time according to a non-limiting embodiment;

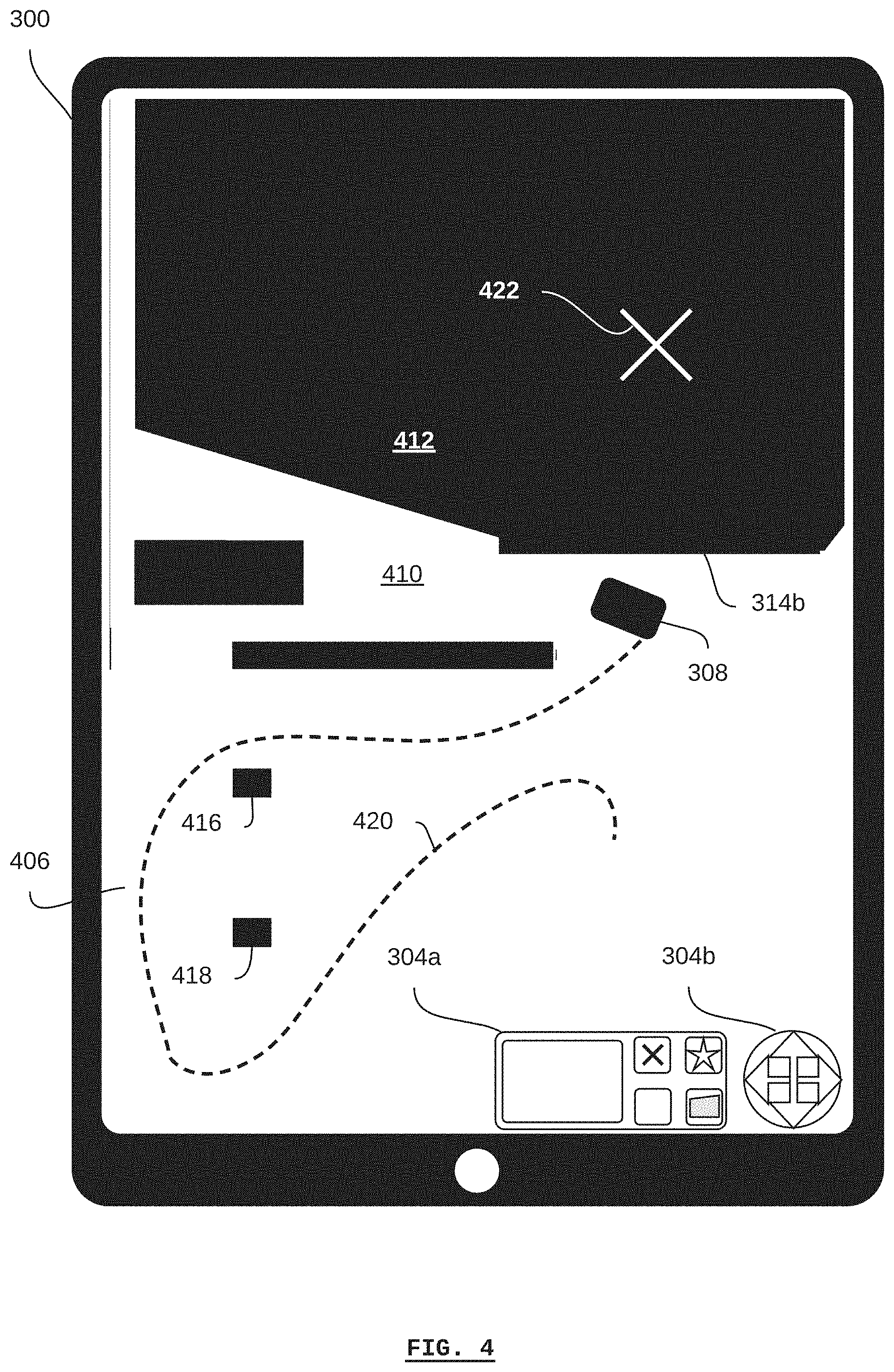

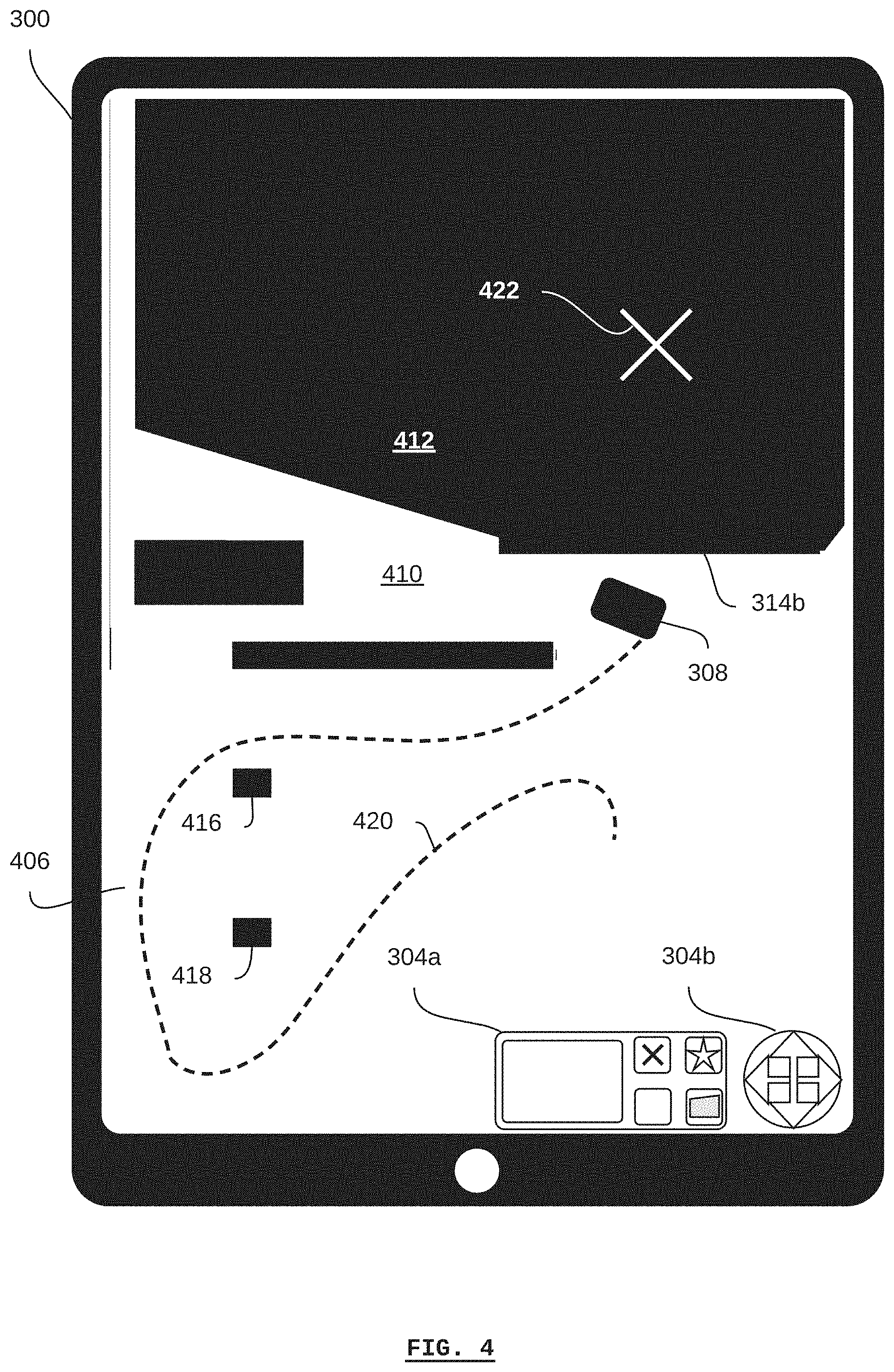

[0031] FIG. 4 depicts the graphical user-interface device of FIG. 3 displaying the graphical map at a second time according to a non-limiting embodiment;

[0032] FIG. 5 depicts the graphical user-interface device of FIG. 3 displaying the graphical map at a third time according to a non-limiting embodiment;

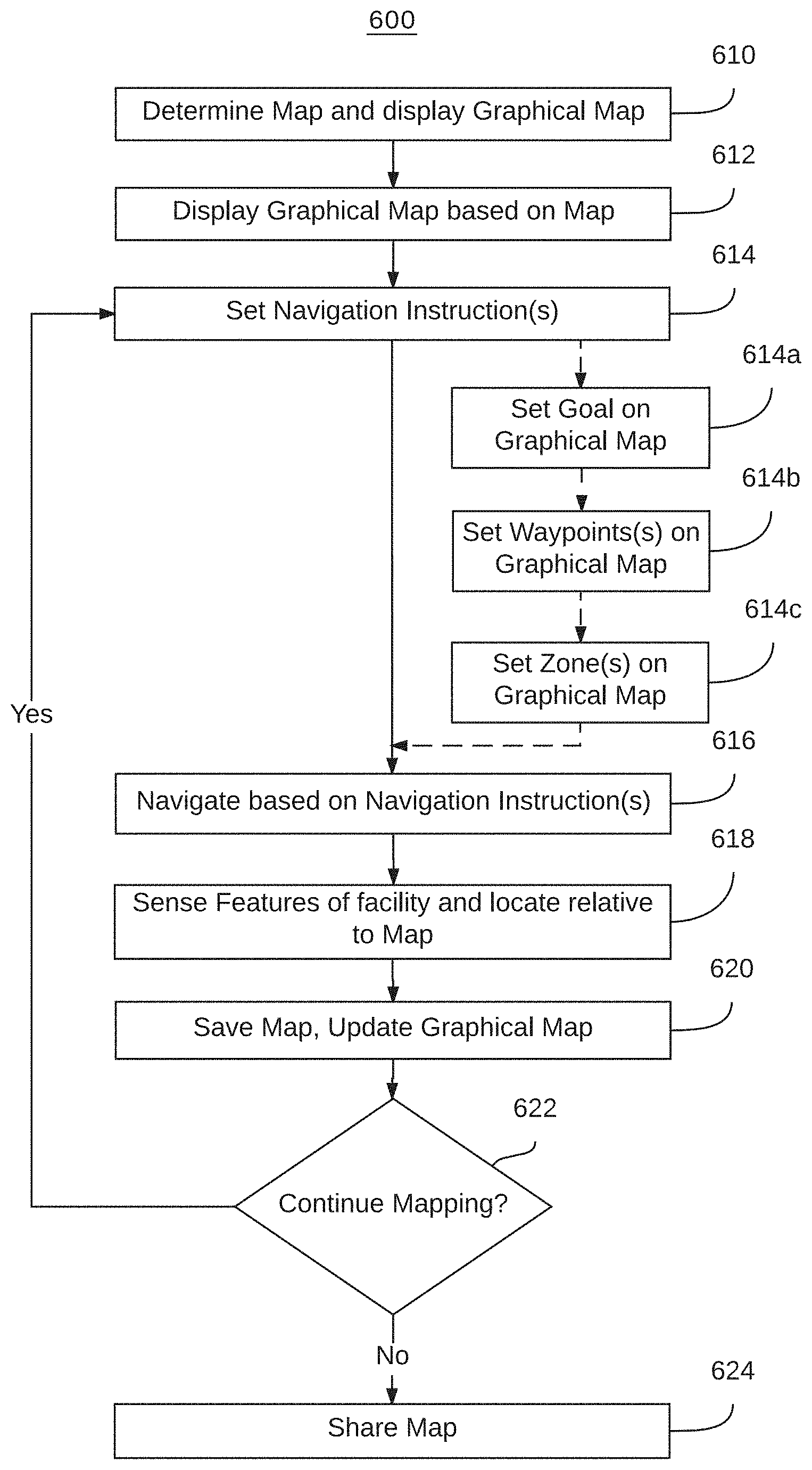

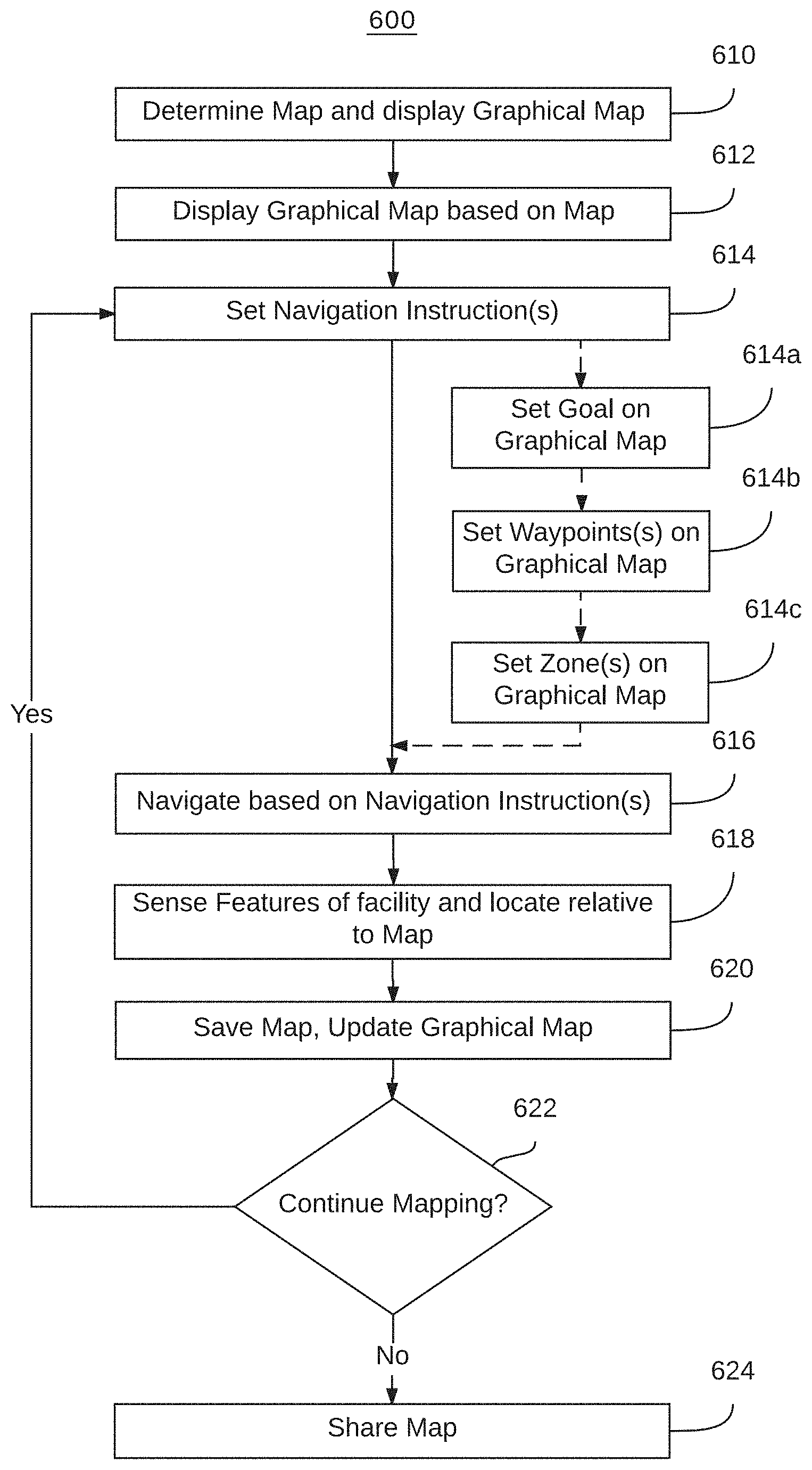

[0033] FIG. 6 depicts a flow diagram of a method of mapping an industrial facility with a self-driving vehicle according to a non-limiting embodiment;

[0034] FIG. 7 depicts a method of commanding a self-driving vehicle in an industrial facility according to a non-limiting embodiment; and

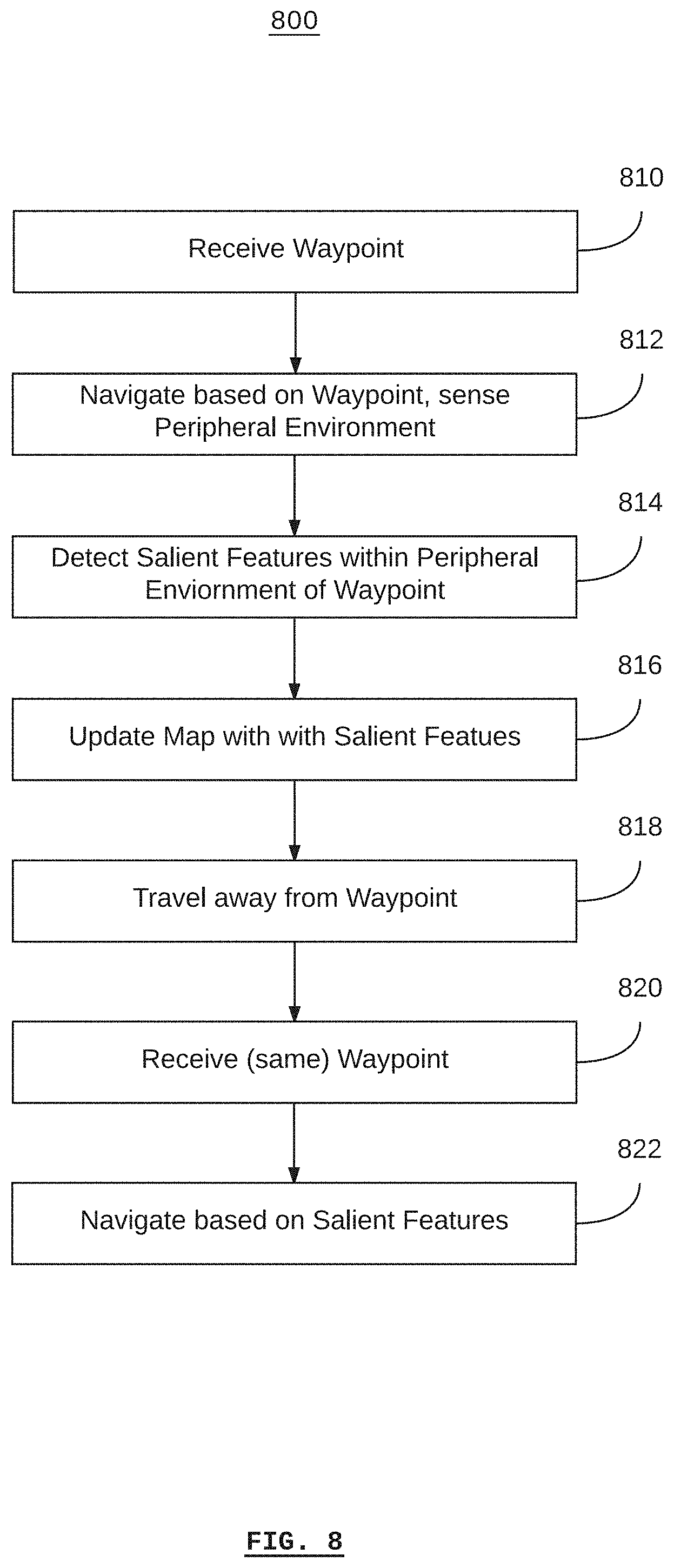

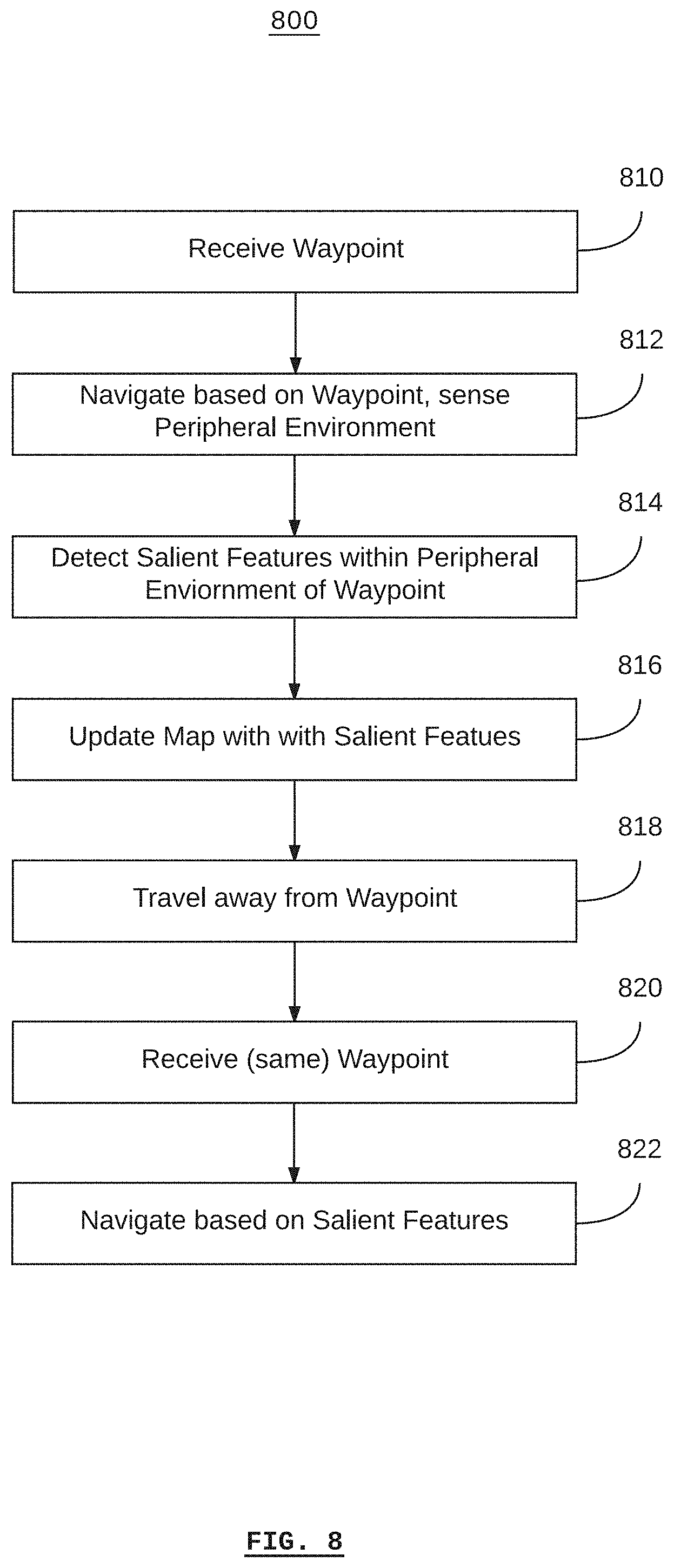

[0035] FIG. 8 depicts a method of instructing a self-driving vehicle in an industrial facility according to a non-limiting embodiment.

DETAILED DESCRIPTION OF THE EMBODIMENTS

[0036] Referring to FIG. 1, there is shown a stand-alone self-driving material-transport vehicle 100 according to a non-limiting embodiment. The vehicle comprises a vehicle body 126, sensors 106, 108a, and 108b for sensing the peripheral environment of the vehicle 100, and wheels 110a, 112a, and 112b for moving and directing the vehicle 100.

[0037] A graphical user-interface device 122 is mounted on the vehicle body 126. The graphical user-interface device 122 includes a wireless transceiver (not shown) for communicating with another wireless transceiver (not shown) inside the vehicle body 126 via a communication link 124. According to some embodiments, a cable 128 connects from within the vehicle body 126 to the graphical user-interface device 122 in order to provide power to the graphical user-interface device 122 and/or a communications link. According to some embodiments, the graphical user-interface device 122 may operate with its own power supply (e.g. batteries) and may be removably mounted on the vehicle body 126.

[0038] Referring to FIG. 2, there is shown a self-driving material-transport vehicle 200, according to some embodiments, for deployment in a facility, such as a manufacturing facility, warehouse or the like. The facility can be any one of, or any suitable combination of, a single building, a combination of buildings, an outdoor area, and the like. A plurality of vehicles 200 may be deployed in any particular facility.

[0039] The vehicle comprises a drive system 202, a control system 204, and one or more sensors 206, 208a, and 208b.

[0040] The drive system 202 includes a motor and/or brakes connected to drive wheels 210a and 210b for driving the vehicle 200. According to some embodiments, the motor may be an electric motor, combustion engine, or a combination/hybrid thereof. Depending on the particular embodiment, the drive system 202 may also include control interfaces that can be used for controlling the drive system 202. For example, the drive system 202 may be controlled to drive the drive wheel 210a at a different speed than the drive wheel 210b in order to turn the vehicle 200. Different embodiments may use different numbers of drive wheels, such as two, three, four, etc.
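As an illustration only, turning by driving two wheels at different speeds can be sketched with standard differential-drive kinematics. The function names, the `track_width` parameter (distance between the two drive wheels), and the units are assumptions made for this sketch and are not taken from the specification.

```python
import math


def wheel_speeds(linear, angular, track_width):
    """Return (left, right) wheel speeds in m/s for a differential drive.

    linear: desired forward speed of the vehicle centre (m/s)
    angular: desired turn rate (rad/s, positive is counter-clockwise)
    track_width: assumed distance between the two drive wheels (m)
    """
    left = linear - angular * track_width / 2.0
    right = linear + angular * track_width / 2.0
    return left, right


def integrate_pose(x, y, heading, left, right, track_width, dt):
    """Advance the pose by one time step using the two wheel speeds."""
    linear = (left + right) / 2.0
    angular = (right - left) / track_width
    x += linear * math.cos(heading) * dt
    y += linear * math.sin(heading) * dt
    heading += angular * dt
    return x, y, heading
```

Equal wheel speeds give straight-line motion, while unequal speeds give a non-zero turn rate, which is the behaviour described for the drive wheels 210a and 210b.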

[0041] According to some embodiments, additional wheels 212 may be included (as shown in FIG. 2, the wheels 212a, 212b, 212c, and 212d may be collectively referred to as the wheels 212). Any or all of the additional wheels 212 may be wheels capable of allowing the vehicle 200 to turn, such as castors, omni-directional wheels, and Mecanum wheels.

[0042] The control system 204 comprises a processor 214, a memory 216, and a non-transitory computer-readable medium 218. According to some embodiments, the control system 204 may also include a communications transceiver (not shown in FIG. 2), such as a wireless transceiver for communicating with a wireless communications network (e.g. using an IEEE 802.11 protocol or similar).

[0043] One or more sensors 206, 208a, and 208b may be included in the vehicle 200. For example, according to some embodiments, the sensor 206 may be a LiDAR device (or other optical/laser, sonar, or radar range-finding sensor). The sensors 208a and 208b may be optical sensors, such as video cameras. According to some embodiments, the sensors 208a and 208b may be optical sensors arranged as a pair in order to provide three-dimensional (e.g. binocular or RGB-D) imaging.

[0044] The control system 204 uses the medium 218 to store computer programs that are executable by the processor 214 (e.g. using the memory 216) so that the control system 204 can provide automated or autonomous operation to the vehicle 200. Furthermore, the control system 204 may also store an electronic map that represents the known environment of the vehicle 200, such as an industrial facility, in the medium 218.

[0045] For example, the control system 204 may plan a path for the vehicle 200 based on a known destination location and the known location of the vehicle. Based on the planned path, the control system 204 may control the drive system 202 in order to drive the vehicle 200 along the planned path. As the vehicle 200 is driven along the planned path, the sensors 206, and/or 208a and 208b may update the control system 204 with new images of the vehicle's environment, thereby tracking the vehicle's progress along the planned path and updating the vehicle's location.

[0046] Since the control system 204 receives updated images of the vehicle's environment, and since the control system 204 is able to autonomously plan the vehicle's path and control the drive system 202, the control system 204 is able to determine when there is an obstacle in the vehicle's path, plan a new path around the obstacle, and then drive the vehicle 200 around the obstacle according to the new path.
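The plan, drive, sense, and re-plan behaviour described above can be sketched as follows. This is a minimal illustration, assuming a grid map (0 for free, 1 for obstacle), a breadth-first planner, and a hypothetical `sense` callback that returns newly observed obstacle cells; the specification does not state which planning algorithm or map representation the control system 204 uses.

```python
from collections import deque


def plan_path(grid, start, goal):
    """Breadth-first search over free cells; returns a list of (row, col) or None."""
    rows, cols = len(grid), len(grid[0])
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in parents:
                parents[(nr, nc)] = cell
                queue.append((nr, nc))
    return None


def drive(grid, start, goal, sense):
    """Follow the plan one cell at a time, re-planning when the path is blocked.

    sense(position) is a hypothetical sensor callback returning obstacle cells
    observed from that position. Returns the cells travelled, or None if the
    goal cannot be reached.
    """
    position = start
    travelled = [position]
    path = plan_path(grid, position, goal)
    while path is not None and position != goal:
        for r, c in sense(position):
            grid[r][c] = 1  # record the newly sensed obstacle in the map
        if any(grid[r][c] == 1 for r, c in path[1:]):
            path = plan_path(grid, position, goal)  # plan around the obstacle
            continue
        position = path[1]
        path = path[1:]
        travelled.append(position)
    return travelled if position == goal else None
```

The grid passed to `drive` is modified in place, so the stored map keeps every obstacle that was sensed along the way.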

[0047] The vehicle 200 can use the control system 204 to autonomously navigate to a waypoint or destination location provided to the vehicle 200, without receiving any other instructions from an external system. For example, the control system 204, along with the sensors 206, and/or 208a, and 208b, enable the vehicle 200 to navigate without any additional navigation aids such as navigation targets, magnetic strips, or paint/tape traces installed in the environment in order to guide the vehicle 200.

[0048] The vehicle 200 is equipped with a graphical user-interface device 220.

[0049] The graphical user-interface device 220 includes a graphical display and input buttons. According to some embodiments, the graphical user-interface device comprises a touchscreen display. In some cases, the graphical user-interface device may have its own processor, memory, and/or computer-readable media. In some cases, the graphical user-interface device may use the processor 214, the memory 216, and/or the medium 218 of the control system 204.

[0050] The graphical user-interface device 220 is generally capable of displaying a graphical map based on a map stored in the control system 204. As used here, the term "map" refers to an electronic representation of the physical layout and features of a facility, which may include objects within the facility, based on a common location reference or coordinate system. The term "graphical map" refers to a graphical representation of the map, which is displayed on the graphical user-interface device 220. The graphical map is based on the map such that locations identified on the graphical map correspond to locations on the map, and vice-versa.
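The correspondence between locations on the graphical map and locations on the map can be sketched as a pair of inverse coordinate conversions. The `MapView` class, its fields, and the metres-per-pixel scaling are assumptions made for illustration; the specification does not define a particular coordinate system or resolution.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MapView:
    """Hypothetical description of how the map is drawn on the display."""
    origin_x: float    # map x (metres) at the left edge of the display
    origin_y: float    # map y (metres) at the bottom edge of the display
    resolution: float  # metres per pixel
    height_px: int     # display height in pixels

    def to_pixel(self, x, y):
        """Map location (metres) to display pixel (col, row), row 0 at the top."""
        col = round((x - self.origin_x) / self.resolution)
        row = self.height_px - 1 - round((y - self.origin_y) / self.resolution)
        return col, row

    def to_map(self, col, row):
        """Display pixel (col, row) back to a map location (metres)."""
        x = self.origin_x + col * self.resolution
        y = self.origin_y + (self.height_px - 1 - row) * self.resolution
        return x, y
```

With such a pair of conversions, a location selected on the display can be sent to the control system as a map location, and a map location can be drawn at the matching pixel.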

[0051] According to some embodiments, the vehicle 200 may also be equipped with a server 222. According to some embodiments, the server may include computer hardware such as a processor, memory, and non-transitory computer-readable media. According to some embodiments, the server may comprise software applications that are executed using the control system 204. According to some embodiments, the server 222 may be a web server for serving a web page (e.g. including a graphical map) to the graphical user-interface device 220.

[0052] According to some embodiments, the graphical user-interface device 220 may comprise software applications for rendering a graphical map based on data received from the server 222.

[0053] In the case that the vehicle 200 is equipped with the server 222, the graphical user-interface device 220 communicates with the server 222 via a communications link 224. The communications link 224 may be implemented using any suitable technology, for example, a wireless (WiFi) protocol such as the IEEE 802.11 family, cellular telephone technology (e.g. LTE or GSM), etc., along with corresponding transceivers in the graphical user-interface device 220 and the server 222 or control system 204.

[0054] Generally speaking, the graphical user-interface device 220 is in communication with the control system 204. According to some embodiments, this may be via a server 222, or the graphical user-interface device 220 may be in direct communication with the control system 204. As such, the graphical user-interface device 220 can receive a map from the control system 204 in order to display a rendered graphical map based on the map. Similarly, the graphical user-interface device 220 can send navigation instructions to the control system 204, such as goals, waypoints, zones, and guided navigation instructions. The control system 204 can then command the drive system 202 in accordance with the navigation instructions.
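One way to picture the navigation instructions sent from the graphical user-interface device 220 is as a small set of message types that are converted into commands. The class names, the command dictionary, and in particular the `rule` field of a zone are hypothetical and chosen only for this sketch; the specification does not define a message format.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float]  # a location on the map


@dataclass
class Goal:
    location: Point


@dataclass
class Waypoints:
    locations: List[Point]


@dataclass
class Zone:
    corners: List[Point]
    rule: str  # assumed label, e.g. "keep_out"; not defined in the source


@dataclass
class Guided:
    linear: float   # requested forward speed from the joystick-type input
    angular: float  # requested turn rate from the joystick-type input


def to_command(instruction):
    """Convert a navigation instruction into a hypothetical command dictionary."""
    if isinstance(instruction, Goal):
        return {"mode": "autonomous", "targets": [instruction.location]}
    if isinstance(instruction, Waypoints):
        return {"mode": "autonomous", "targets": list(instruction.locations)}
    if isinstance(instruction, Zone):
        return {"mode": "map_update", "zone": list(instruction.corners),
                "rule": instruction.rule}
    if isinstance(instruction, Guided):
        return {"mode": "semi_autonomous",
                "velocity": (instruction.linear, instruction.angular)}
    raise TypeError(f"unsupported instruction: {instruction!r}")
```

In this sketch, goals and waypoints lead to autonomous navigation to a location, and a guided instruction leads to semi-autonomous navigation based on a direction.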

[0055] According to some embodiments, the graphical user-interface device 220 may display a graphical map in association with a coordinate system that corresponds to locations on the map. In this case, the graphical user-interface device 220 includes a user-input device (e.g. touchscreen, buttons, keyboard, etc.) for selecting a coordinate or multiple coordinates.

[0056] According to some embodiments, the graphical user-interface device 220 may be a laptop or desktop computer remotely located from the rest of the vehicle 200, in which case the communications link 224 may represent a combination of various wired and wireless network communications technologies.

[0057] Referring to FIG. 3, there is shown a graphical user-interface device 300 according to a non-limiting embodiment. The graphical user-interface device 300 is shown in the form of a tablet computer in the example of FIG. 3. The graphical user-interface device may be any type of computing device that is capable of communicating with a self-driving vehicle. For example, the graphical user-interface device may be a mobile phone (e.g. with WiFi or cellular communications enabled), a laptop computer, a desktop computer, etc. Generally, if the graphical user-interface device is not physically mounted on the self-driving vehicle, then it requires communications with the self-driving vehicle via a local-area network, wide-area network, intranet, over the Internet, etc.

[0058] According to some embodiments, the graphical user-interface device may be mounted directly on and physically integrated with the self-driving vehicle. For example, a touch-screen panel or graphical display with other inputs may be included on the self-driving vehicle in order to provide a user-interface device.

[0059] The graphical user-interface device 300 includes a graphical display 302. According to some embodiments, the graphical display 302 is a touch-screen display for receiving input from a human operator. According to some embodiments, the graphical user-interface device 300 may also include input buttons (e.g. a keypad) that are not a part of the graphical display 302.

[0060] As shown in FIG. 3, the graphical display 302 displays a set of input buttons 304a and 304b (collectively referred to as buttons 304) and a graphical map 306.

[0061] The graphical map 306 includes a self-driving material-transport vehicle 308, and can be generally described as including known areas 310 of the graphical map 306, and unknown areas 312 of the graphical map 306. In the example shown, unknown areas of the graphical map 306 are shown as black and known areas of the map are shown as white. Any color or pattern may be used to graphically indicate the distinction between unknown areas and known areas.

[0062] As previously described, the graphical map 306 is associated with a map stored in the control system of the self-driving vehicle with which the graphical user-interface device 300 is communicating. This is the same self-driving vehicle as is represented graphically by the self-driving vehicle 308 on the graphical map 306. As such, the unknown areas 312 of the graphical map correspond to unknown areas of the map, and known areas 310 of the graphical map correspond to known areas of the map.

[0063] According to some embodiments, the control system may not store unknown areas of the map. For example, the map stored in the vehicle's control system may comprise only the known areas of the map, such that the process of mapping comprises starting with the known areas of a facility, adding them to the map, discovering new areas of the facility such that they also become known, and then adding the new areas to the map as well, relative to the previously-known areas. In this case, the generation of the graphical map may comprise the additional step of interpolating the unknown areas of the map so that a contiguous graphical map can be generated that represents both the known and unknown areas of the facility.
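A map that stores only known areas, with unknown areas filled in when the graphical map is generated, can be sketched as below. The dictionary-of-cells representation and the text glyphs (`.` for known free space, `+` for a known edge, `#` for unknown) are assumptions made for illustration and stand in for the white and black areas of the figures.

```python
FREE, EDGE = 0, 1


def add_observation(known, cells):
    """Add newly discovered cells to the stored map of known areas."""
    known.update(cells)


def render(known, width, height):
    """Generate a contiguous text map; cells absent from `known` are drawn as unknown."""
    glyph = {FREE: ".", EDGE: "+"}
    rows = []
    for r in range(height):
        row = "".join(
            glyph[known[(c, r)]] if (c, r) in known else "#"
            for c in range(width)
        )
        rows.append(row)
    return rows
```

The stored map never holds an entry for an unknown cell; the unknown areas exist only in the rendered output.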

[0064] If, as an example, it is assumed that the vehicle 308 shown in the graphical map 306 is in its initial position (e.g. has not moved since being turned on), then the known area 310 of the graphical map 306 represents the peripheral environment of the vehicle 308. For example, according to the graphical map 306, the vehicle 308 can see an edge 314a and edge 314b at the boundary of the unknown area 312. Similarly, the vehicle 308 can see edges 316a and 316b of what might be an object, and edges 318a and 318b of what might be a second object. Generally, the unknown areas of the map lie in the shadows of these edges, and it cannot be determined whether there are other objects included behind these edges.
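The way unknown areas remain in the shadows of sensed edges can be illustrated with a simple ray-casting scan over a grid. The text-grid world, the half-cell step size, and the function name `scan` are assumptions made for this sketch; it is not a description of the vehicle's actual sensor processing.

```python
import math


def scan(world, origin, max_range, rays=360):
    """Cast rays from `origin` (col, row) and return the cells that were observed.

    world: list of equal-length strings, '#' marks an occupied cell.
    Returns a dict mapping (col, row) to "free" or "edge". Cells behind an
    edge are never reached by a ray and therefore stay unknown.
    """
    rows, cols = len(world), len(world[0])
    seen = {origin: "free"}
    for i in range(rays):
        angle = 2.0 * math.pi * i / rays
        dx, dy = math.cos(angle), math.sin(angle)
        dist = 0.0
        while dist <= max_range:
            c = round(origin[0] + dx * dist)
            r = round(origin[1] + dy * dist)
            if not (0 <= c < cols and 0 <= r < rows):
                break  # the ray left the area covered by the grid
            if world[r][c] == "#":
                seen[(c, r)] = "edge"  # the ray stops at the first edge it meets
                break
            seen[(c, r)] = "free"
            dist += 0.5
    return seen
```

Cells that no ray reaches are simply missing from the returned dictionary, which matches the idea that it cannot be determined whether other objects lie behind the observed edges.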

[0065] The buttons 304 may generally be used to command the vehicle (as represented by the vehicle 308) and to provide navigation instructions. For example, the buttons 304b are shown as a joystick-type interface that allows for the direction of the vehicle to be selected, such as to provide guided navigation instructions to the vehicle. The buttons 304a allow for the selection of different types of navigation instructions, such as goals, waypoints, and zones. For example, a human operator may select the goal button, and then select a location on the graphical map corresponding to the intended location of the goal.

[0066] Referring to FIG. 4, there is shown the graphical user-interface device 300 of FIG. 3 displaying a graphical map 406 that was generated based on the graphical map 306 at a time after the graphical map 306 was generated, according to a non-limiting embodiment. The dashed line 420 indicates the travel path that the vehicle 308 has travelled from its position shown in FIG. 3. For example, the travel path 420 may represent the result of a human operator providing guided navigation instructions using the joystick-type interface buttons 304b, and/or a series of goals or waypoints set using the buttons 304a, to which the vehicle may have navigated autonomously.

[0067] As can be seen in the graphical map 406, the known area 410 is substantially larger than the known area 310 of the graphical map 306, and accordingly, the unknown area 412 is smaller than the unknown area 312. Furthermore, due to the travel path 420, it can be seen that two objects 416 and 418 exist in the facility. In the example shown, the objects 416 and 418 are shown as black, indicating that the objects themselves are unknown (i.e. only their edges are known). According to some embodiments, individual colors or patterns can be used to indicate objects or specific types of objects on the graphical map. For example, images of the objects may be captured by the vehicle's vision sensor systems and analysed to determine the type of object. In some cases, a human operator may designate a type of object, for example, using the buttons 304.

[0068] As an example, once the vehicle 308 is at the position shown in FIG. 4, a human operator may use the buttons 304a to set a goal 422 for the vehicle. In this case, the human operator may have desired to send the vehicle further into the unknown area 412 in order to map the unknown area (in other words, reveal what is behind the edge 314b).

[0069] Referring to FIG. 5, there is shown the graphical user-interface device 300 of FIG. 3 displaying a graphical map 506 that was generated based on the graphical map 406 at a time after the graphical map 406 was generated, according to a non-limiting embodiment. The dashed line 524 indicates the travel path that the vehicle 308 has travelled from its position shown in FIG. 4 according to the autonomous navigation of the vehicle. As the vehicle 308 travelled along the travel path 524, it continued to map the facility, and was therefore able to detect and navigate around the object 514 on the way to its destination, according to the goal previously set.

[0070] The graphical map 506 indicates all of the features and objects of the facility known to the vehicle 308. Based on the known area of the map, and according to an example depicted in FIG. 5, a human operator may identify a narrow corridor in a potentially high-traffic area in proximity to the object 514. As such, the human operator may use the buttons 304a to establish a zone 528. According to some embodiments, the human operator may associate a "slow" attribute with the zone 528 such as to limit the speed of the vehicle 308 (or other vehicles, if the map is shared) as it travels through the zone 528.

[0071] Furthermore, since the machine 526 is represented on the graphical map 506, the human operator may use the buttons 304a to set a waypoint 530 adjacent to the machine 526.

[0072] Referring to FIG. 6, there is shown a method 600 of mapping an industrial facility with a self-driving vehicle according to a non-limiting embodiment. According to some embodiments, computer instructions may be stored on non-transient computer-readable media on the vehicle and/or on a remote graphical user-interface device, which, when executed by at least one processor on the vehicle and/or graphical user-interface device, cause the at least one processor to be configured to execute at least some steps of the method.

[0073] The method 600 begins at step 610 when a map of a facility is determined by a self-driving vehicle. According to some embodiments, the map is an electronic map of the facility that can be stored on a non-transient computer-readable medium on the vehicle. In some cases, the map may be incomplete, meaning that the map does not represent the entire physical area of the facility. Generally, the process of mapping can be described as a process of bringing the map closer to a state of completion. Even when the entire physical area of the facility is represented in the map, further mapping may be done to update the map based on changes to the facility, such as the relocation of static objects (e.g. shelves, stationary machines, etc.) and dynamic objects (e.g. other vehicles, human beings, inventory items and manufacturing workpieces within the facility, etc.). To this end, in some cases, it can be said that all maps are "incomplete", either in space (the entire physical area of the facility is not yet represented in the map) and/or in time (the relocation of static and dynamic objects is not yet represented in the map).

[0074] According to some embodiments, determining the map may comprise using the vehicle to generate a new map for itself. For example, if a vehicle is placed in a facility for the first time, and the vehicle has no other map of the facility available, the vehicle may generate its own map of the facility. According to some embodiments, generating a new map may comprise assuming an origin location for the map, such as the initial location of the vehicle.

[0075] According to some embodiments, determining the map may comprise receiving a map from another vehicle or from a fleet-management system. For example, an incomplete map may be received directly from another vehicle that has partially mapped a different section (in space and/or time) of the same facility, or from another vehicle via the fleet-management system. According to some embodiments, a map may be received that is based on a drawing of the facility, which, according to some embodiments, may represent infrastructure features of the facility, but not necessarily all of the static and/or dynamic objects in the facility.

[0076] According to some embodiments, determining the map may comprise retrieving a previously-stored map from the vehicle's control system.

[0077] At step 612, a graphical map is displayed on a graphical user-interface device. The graphical map is based on the map that was determined during the step 610. According to some embodiments, the graphical map may include both un-mapped and mapped areas of the facility, for example, as described with respect to FIG. 3, FIG. 4, and FIG. 5.

[0078] At step 614, navigation instructions are set. The navigation instructions are generally received from the graphical user-interface device based on the input of a human operator. For the sake of illustration, as shown in FIG. 6, navigation instructions may be related to setting goals for the vehicle at step 614a, setting waypoints in the facility at step 614b, and/or setting zones in the facility at step 614c. Each of steps 614a, 614b, and 614c is merely an example of the general navigation instructions at step 614, and any or all of these steps, as well as other navigation instructions, may be received from the graphical user-interface device. As used here, any of the steps 614a, 614b, and 614c may be individually or collectively referred to as the set navigation instruction step 614.

[0079] At any given time, the vehicle may operate according to any or all of an autonomous navigation mode, a guided navigation mode, and a semi-autonomous navigation mode.

[0080] The navigation instructions may also include guided navigation instructions determined by a human operator. For example, a human operator may use a directional indicator (e.g. a joystick-type interface) in order to provide the guided navigation instructions. These instructions generally comprise a real-time direction in which the vehicle is to travel. For example, if a human operator indicates an "up" direction in relation to the graphical map, then the vehicle will travel in the direction of the top of the graphical map. If the human operator then indicates a "left" direction in relation to the graphical map, the vehicle will turn to the left (e.g. drive to the left direction) in relation to the graphical map. According to some embodiments, when the human operator ceases to indicate a direction, the vehicle will stop moving. According to some embodiments, when the human operator ceases to indicate a direction, the vehicle will continue to move in the most-recently indicated direction until a future event occurs, such as the detection of an obstacle, and the subsequent autonomous obstacle avoidance by the vehicle. According to some embodiments, when the human operator ceases to indicate a direction, the vehicle will continue to autonomously navigate according to other navigation instructions, for example, towards a previously-determined waypoint or goal. As such, the indication of a direction for the vehicle to travel, such as determined relative to the graphical map, is a navigation instruction. Thus, some navigation instructions, such as guided navigation instructions, may be in a form that is relative to the current location, position, and/or speed of the vehicle, and some navigation instructions, such as waypoints, may be in a form that is relative to the facility or map.
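The three behaviours described above for the case where the operator ceases to indicate a direction can be sketched as follows. This is a minimal sketch under stated assumptions: the `ReleasePolicy` names, the `commanded_velocity` function, and the convention that +y points to the top of the graphical map are all hypothetical and are not drawn from the described embodiments.

```python
from enum import Enum
from typing import Optional, Tuple

Vector = Tuple[float, float]  # (x, y) in the map frame; +y is the top of the map

# Directions are interpreted relative to the graphical map, not the vehicle.
DIRECTIONS = {
    "up": (0.0, 1.0),
    "down": (0.0, -1.0),
    "left": (-1.0, 0.0),
    "right": (1.0, 0.0),
}


class ReleasePolicy(Enum):
    """What the vehicle does when the operator stops indicating a direction."""
    STOP = "stop"
    CONTINUE = "continue"            # keep the most recently indicated direction
    RESUME_AUTONOMOUS = "resume"     # return to a previously set goal or waypoint


def commanded_velocity(
    indicated: Optional[str],
    last_indicated: Optional[str],
    policy: ReleasePolicy,
    autonomous_velocity: Vector = (0.0, 0.0),
    speed: float = 1.0,
) -> Vector:
    """Translate a joystick-type input into a velocity in the map frame."""
    if indicated is not None:
        dx, dy = DIRECTIONS[indicated]
        return (dx * speed, dy * speed)
    if policy is ReleasePolicy.CONTINUE and last_indicated is not None:
        dx, dy = DIRECTIONS[last_indicated]
        return (dx * speed, dy * speed)
    if policy is ReleasePolicy.RESUME_AUTONOMOUS:
        return autonomous_velocity
    return (0.0, 0.0)
```

In this sketch the autonomous planner's output is passed in as `autonomous_velocity`, so the guided input simply takes precedence while it is present.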

[0081] If the navigation instructions correspond to a goal in the facility, then, at step 614a, the goal is set on the graphical map. A goal may be a particular location or coordinate indicated by a human operator relative to the graphical map, which is associated with a location or coordinate on the map. For example, if a human operator desires that the vehicle map an unknown or incomplete part of the map, then the human operator may set a goal within the corresponding unknown or incomplete part of the graphical map. Subsequently, the vehicle may autonomously navigate towards the goal location.

[0082] If the navigation instructions correspond to a waypoint in the facility, then, at step 614b, the waypoint is set on the graphical map. A waypoint may be a particular location or coordinate indicated by a human operator relative to the graphical map, which is associated with a location or coordinate on the map. For example, if a human operator desires that the vehicle navigate to a particular location in the facility, then the human operator may set a waypoint at the corresponding location on the graphical map. Subsequently, the vehicle may autonomously navigate towards the waypoint location. According to some embodiments, a waypoint may differ from a goal in that a waypoint can be labelled, saved, and identified for future use by the vehicle or other vehicles (e.g. via a fleet-management system). According to some embodiments, a waypoint may only be available in known parts of the map.

[0083] If the navigation instructions correspond to a zone in the facility, then, at step 614c, the zone is set on the graphical map. A zone may be an area indicated by a human operator relative to the graphical map, which is associated with an area on the map. For example, if a human operator desires that vehicles navigate through a particular area in a particular manner (or that they not navigate through a particular area at all), then the human operator may set a zone with corresponding attributes on the graphical map. Subsequently, the vehicle may consider the attributes of the zone when navigating through the facility.
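One way a zone and its attributes might be represented is sketched below. The rectangular shape, the `max_speed` and `forbidden` attributes, and the `allowed_speed` helper are assumptions made for illustration; the description leaves the zone geometry and the attribute set open.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class Zone:
    """An axis-aligned rectangular zone with hypothetical attributes."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float
    max_speed: Optional[float] = None  # None means the zone imposes no limit
    forbidden: bool = False            # True means vehicles should not enter

    def contains(self, point: Point) -> bool:
        x, y = point
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def allowed_speed(point: Point, zones: Iterable[Zone], default_speed: float) -> float:
    """Return the speed permitted at `point`, considering every zone covering it."""
    speed = default_speed
    for zone in zones:
        if zone.contains(point):
            if zone.forbidden:
                return 0.0
            if zone.max_speed is not None:
                speed = min(speed, zone.max_speed)
    return speed
```

For example, a "slow" zone could be expressed as `Zone(0, 0, 2, 2, max_speed=0.3)`, which caps the speed of any vehicle located inside it.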

[0084] At step 616, the vehicle navigates based on the navigation instructions set during the step 614. The vehicle may navigate in an autonomous mode, a guided-autonomous mode, and/or a semi-autonomous mode, depending, for example, on the type of navigation instruction received.

[0085] For example, if the navigation instructions comprise a goal or waypoint, then the vehicle may autonomously navigate towards the goal or waypoint. In other words, the vehicle may plan a path to the goal or waypoint based on the map, travel along the path, and, while travelling along the path, continue to sense the environment in order to plan an updated path according to any obstacles detected. Autonomous navigation is also relevant with respect to navigation instructions that comprise zones, since the autonomous navigation process takes the attributes of the zones into consideration.
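The plan, travel, sense, and re-plan cycle described above can be sketched on a small grid. This is an illustrative assumption rather than the planner of the described embodiments: breadth-first search stands in for whatever path planner the vehicle uses, and `hidden_obstacles` is a hypothetical stand-in for obstacles that the sensor system only detects while travelling.

```python
from collections import deque
from typing import Dict, List, Optional, Set, Tuple

Cell = Tuple[int, int]  # (x, y) grid index


def plan_path(start: Cell, goal: Cell, obstacles: Set[Cell],
              width: int, height: int) -> Optional[List[Cell]]:
    """Shortest 4-connected path by breadth-first search, or None if unreachable."""
    if start in obstacles or goal in obstacles:
        return None
    parents: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            in_bounds = 0 <= nxt[0] < width and 0 <= nxt[1] < height
            if in_bounds and nxt not in obstacles and nxt not in parents:
                parents[nxt] = cell
                queue.append(nxt)
    return None


def navigate(start: Cell, goal: Cell, known_obstacles: Set[Cell],
             hidden_obstacles: Set[Cell], width: int, height: int) -> Optional[List[Cell]]:
    """Travel toward the goal, updating the map and re-planning when blocked."""
    known = set(known_obstacles)
    position = start
    travelled = [start]
    while position != goal:
        path = plan_path(position, goal, known, width, height)
        if path is None:
            return None
        nxt = path[1]
        if nxt in hidden_obstacles:
            known.add(nxt)  # obstacle sensed: add it to the map, then re-plan
            continue
        position = nxt
        travelled.append(position)
    return travelled
```

Re-planning at every step is wasteful but keeps the sketch short; a practical planner would typically re-plan only when the current path becomes blocked.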

[0086] For example, if the navigation instructions comprise a waypoint, and, during the course of the vehicle travelling to the waypoint, guided navigation instructions are also received, then the vehicle may semi-autonomously navigate towards the waypoint. This may occur if a human operator determines that the vehicle should alter course from its planned path on its way to the waypoint. The human operator may guide the vehicle on the altered course accordingly, for example, via guided navigation instructions. In this case, if the guided navigation instructions are complete (i.e. the human user stops guiding the vehicle off of the vehicle's autonomously planned path), the vehicle can continue to autonomously navigate to the waypoint.

[0087] The semi-autonomous navigation mode may be used, for example, if there is a preferred arbitrary path to the waypoint that the vehicle would be unaware of when autonomously planning its path. For example, if a waypoint corresponds to a particular stationary machine, or shelf, and, due to the constraints of the stationary machine, shelf, or the facility itself, it is preferred that the vehicle approach the stationary machine or shelf from a particular direction, then, as the vehicle approaches the stationary machine or shelf, the human operator can guide the vehicle so that it approaches from the preferred direction.

[0088] In another example of the vehicle navigating based on the navigation instructions set during the step 614, if the navigation instructions comprise a guided navigation instruction, then the vehicle may navigate according to the guided navigation instruction in a guided-autonomous navigation mode. For example, a human operator may guide the vehicle by indicating the direction (and, in some embodiments, the speed) of the vehicle, as previously described.

[0089] Operating the vehicle according to a guided-autonomous navigation instruction is distinct from a mere "remote-controlled" vehicle, since, even though the direction (and in some embodiments, the speed) of the vehicle is being controlled by a human operator, the vehicle still maintains some autonomous capabilities. For example, the vehicle may autonomously detect and avoid obstacles within its peripheral environment, which may include the vehicle's control system overriding the guided instructions. Thus, in some cases, a human operator may not be able to drive the vehicle into an obstacle, such as a wall, since the vehicle's control system may autonomously navigate in order to prevent a collision with the obstacle regardless of the guided navigation instructions indicated by the human operator.
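The override of guided instructions by the control system can be sketched as a simple safety filter. The function name `safe_velocity`, the `stop_distance` and `lookahead_s` parameters, and the point-obstacle model are hypothetical; the description does not specify how the override is implemented.

```python
import math
from typing import Iterable, Tuple

Vector = Tuple[float, float]


def safe_velocity(position: Vector, commanded: Vector, obstacles: Iterable[Vector],
                  stop_distance: float = 0.5, lookahead_s: float = 1.0) -> Vector:
    """Pass the operator's velocity through unless it leads toward an obstacle.

    The position the vehicle would reach after `lookahead_s` seconds is
    projected, and the command is overridden with a stop if that position
    is closer than `stop_distance` to any sensed obstacle point.
    """
    px = position[0] + commanded[0] * lookahead_s
    py = position[1] + commanded[1] * lookahead_s
    for ox, oy in obstacles:
        if math.hypot(ox - px, oy - py) < stop_distance:
            return (0.0, 0.0)  # control system overrides the guided instruction
    return commanded
```

This sketch only checks the projected end position rather than the whole swept path, and it stops rather than steering around the obstacle, so it is a simplification of the avoidance behaviour described.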

[0090] Furthermore, operating the vehicle according to a guided-autonomous navigation instruction is distinct from a human-operated vehicle operating with an autonomous collision-avoidance system, since the guided-autonomous navigation mode includes the vehicle continuously mapping its peripheral environment and the facility.

[0091] According to some embodiments, any or all of the steps 616, 618, or 620 may be run concurrently or in any other order. At step 618, the vehicle senses features of the facility within the vehicle's peripheral environment. For example, this may be done at the same time that the vehicle is navigating according to the step 616. The features may be sensed by the vehicle's sensors, and, using a processor on the vehicle, located relative to the map stored in the vehicle's memory and/or media. In some cases, the features may include the infrastructure of the facility such as walls, doors, structural elements such as posts, etc. In some cases, the features may include static features, such as stationary machines and shelves. In some cases, the features may include dynamic features, such as other vehicles and human pedestrians.

[0092] At step 620, the vehicle stores the map (e.g. an updated map that includes the features sensed during the step 618) on a storage medium of the vehicle. Based on the updated map, the graphical map on the graphical user-interface device is updated accordingly.

[0093] At step 622, a decision is made as to whether more mapping is desired. For example, a human operator may inspect the graphical map displayed on the graphical user-interface device, and determine that there is an unknown area of the facility that should be mapped, or that a known area of the facility should be updated in the map. If it is determined that more mapping is required, then the method returns to step 614. At step 614, new or subsequent navigation instructions may be set. For example, a new goal may be set for an unknown area of the map so that the unknown area can be mapped. If at step 622 it is determined that more mapping is not required, then the method proceeds to step 624.

[0094] At step 624, the vehicle may share the map. For example, the vehicle may retrieve the map from its media, and transmit the map to a fleet-management system, and/or directly to another vehicle. According to some embodiments, it may be necessary to install other equipment in order to share the map. For example, in some cases, the vehicle may be used as a stand-alone self-driving vehicle operating independently of a fleet-management system in order to map a facility. Subsequently, a fleet of vehicles and a fleet-management system may be installed in the facility, and the map may be transmitted to the fleet-management system from the vehicle. In other cases, the map may be shared with the fleet-management system or another vehicle using removable non-transient computer-readable media. In this way, a stand-alone self-driving vehicle can be used to map a facility prior to other vehicles or a fleet-management system being installed in the facility.

[0095] According to some embodiments, the vehicle may share additional information with the fleet-management system for the purposes of vehicle monitoring, diagnostics, and troubleshooting. For example, the vehicle may transmit vehicle status information pertaining to operating states such as: offline, unavailable, lost, manual mode (autonomous navigation disabled), task failed, blocked, waiting, operating according to mission, operating without mission, etc.
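The operating states listed above lend themselves to an enumeration. The following is a minimal sketch, assuming a JSON message format; the `VehicleStatus` and `StatusReport` names, the string values, and the use of JSON are hypothetical, as the description does not specify a wire format.

```python
import json
from dataclasses import dataclass
from enum import Enum


class VehicleStatus(Enum):
    """Operating states named in the description; string values are assumed."""
    OFFLINE = "offline"
    UNAVAILABLE = "unavailable"
    LOST = "lost"
    MANUAL_MODE = "manual_mode"  # autonomous navigation disabled
    TASK_FAILED = "task_failed"
    BLOCKED = "blocked"
    WAITING = "waiting"
    ON_MISSION = "operating_according_to_mission"
    NO_MISSION = "operating_without_mission"


@dataclass(frozen=True)
class StatusReport:
    vehicle_id: str
    status: VehicleStatus

    def to_json(self) -> str:
        """Serialize the report for transmission to a fleet-management system."""
        payload = {"vehicle_id": self.vehicle_id, "status": self.status.value}
        return json.dumps(payload, sort_keys=True)


if __name__ == "__main__":
    print(StatusReport("vehicle-1", VehicleStatus.BLOCKED).to_json())
```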

[0096] The additional information may also include vehicle configuration information. For example, the vehicle may transmit vehicle configuration information pertaining to parameters of the vehicle's operating system or parameters of the vehicle's control system, sensor system, drive system, etc.

[0097] The fleet-management system may be configured to monitor the vehicle's status and/or configuration, create records of the vehicle's status, and store back-up configurations. Furthermore, the fleet-management system may be configured to provide recovery configurations to the vehicle in the event that the vehicle's configuration is not operating as expected.

[0098] According to some embodiments, the vehicle status information and/or vehicle configuration information may be shared with a graphical user-interface device. For example, if the graphical user-interface device is a tablet, laptop, or desktop computer, then the graphical user-interface device can receive status and configuration information, and record and back up the information. Furthermore, the graphical user-interface device may provide a recovery configuration to the vehicle in the event that the vehicle's configuration is not operating as expected.

[0099] According to some embodiments, the vehicle may communicate with the graphical user-interface device independently of communicating with a fleet-management system (if one is present). For example, the vehicle may use a WiFi connection to connect with a fleet-management system, while simultaneously using a USB connection or another WiFi connection to share maps, status information, and configuration information with the graphical user-interface device.

[0100] Referring to FIG. 7, there is shown a method 700 of commanding a self-driving vehicle in an industrial facility according to a non-limiting embodiment. According to some embodiments, the method 700 may be executed using a stand-alone self-driving vehicle with a graphical user-interface device.

[0101] The method begins at step 710 when a self-driving vehicle is driven in a semi-autonomous navigation mode or a guided-autonomous navigation mode, as previously described. Generally, both the semi-autonomous and the guided-autonomous navigation modes are associated with navigation steps. These navigation steps may comprise navigation instructions indicated by a human operator using the graphical user-interface device, as well as autonomous navigation steps derived by the control system of the vehicle as a part of autonomous navigation.

[0102] At step 712, the navigation steps associated with the semi-autonomous and/or guided-autonomous navigation are recorded. According to some embodiments, these may be recorded using the memory and/or media of the vehicle's control system. According to some embodiments, these may be recorded using the memory and/or media of the graphical user-interface device.

[0103] At step 714, tasks associated with the navigation steps are derived. The tasks may be derived using one or both of the vehicle's control system and the graphical user-interface device. According to some embodiments, deriving the tasks may comprise translating the navigation steps into tasks that are known to the vehicle, and which can be repeated by the vehicle.

[0104] For example, a human operator may use guided navigation instructions to move the vehicle from a starting point to a first location associated with a shelf. The operator may guide the vehicle to approach the shelf from a particular direction or to be in a particular position relative to the shelf. A payload may be loaded onto the vehicle from the shelf. Subsequently, the human operator may use guided navigation instructions to move the vehicle from the first location to a second location associated with a machine. The operator may guide the vehicle to approach the machine from a particular direction or to be in a particular position relative to the machine. The payload may be unloaded from the vehicle for use with the machine.

[0105] In the above example, the navigation steps may include all of the navigation instructions as indicated by the human operator as well as any autonomous navigation steps implemented by the vehicle's control system. However, some of the navigation steps may be redundant, irrelevant, or sub-optimal with regard to the purpose of having the vehicle pick up a payload at the shelf and deliver it to the machine. For example, the starting point of the vehicle and the navigation steps leading to the first location may not be relevant. However, the first location, its association with the shelf, the direction of approach, the vehicle's position, and the fact that the payload is being loaded on the vehicle may be relevant. Thus, the derived tasks may include: go to the first location; approach from a particular direction; orient to the shelf with a particular position; and wait for a pre-determined time or until a signal is received to indicate that the payload has been loaded before continuing. Similarly, the particular guided navigation steps to the second location may not be particularly relevant, whereas the second location itself (and associated details) may be.
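The derivation of tasks from recorded navigation steps can be sketched as a filter over the recording. The `NavigationStep` structure, its `kind` labels ("move", "arrive", "payload"), and the textual task format are hypothetical and serve only to illustrate discarding the irrelevant steps while keeping the locations, approach directions, and payload events.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class NavigationStep:
    """One recorded step; the field names and labels are assumptions."""
    kind: str                            # "move", "arrive", or "payload"
    location: Optional[str] = None       # named location reached, if any
    heading_deg: Optional[float] = None  # direction of approach, if relevant
    action: Optional[str] = None         # "load" or "unload" for payload steps


def derive_tasks(steps: List[NavigationStep]) -> List[str]:
    """Reduce a recording to repeatable tasks, dropping individual guided moves."""
    tasks: List[str] = []
    for step in steps:
        if step.kind == "arrive":
            tasks.append(f"go to {step.location}")
            if step.heading_deg is not None:
                tasks.append(f"approach at heading {step.heading_deg:g} deg")
        elif step.kind == "payload":
            tasks.append(f"wait until payload {step.action}ed")
        # "move" steps (e.g. individual joystick inputs) are not repeated
    return tasks
```

Applied to the shelf-to-machine example, the guided moves between the starting point and the shelf disappear, and only the two locations, the approach heading, and the load and unload waits remain.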

[0106] At step 716, a recipe is generated based on the navigation steps and/or the derived tasks. The recipe may be generated by one or both of the vehicle's control system and the graphical user-interface device. According to some embodiments, the recipe may be generated as a result of input received by a human operator with the graphical user-interface device. According to some embodiments, the recipe may be presented to the human operator in a human-readable form so that the human operator can edit or supplement the recipe, for example, by defining waypoints for use in the recipe.

[0107] At step 718, the recipe is stored. The recipe may be stored in a computer-readable medium on one or both of the vehicle's control system and the graphical user-interface device. As such, the recipe can be made available for future use by the vehicle.

[0108] At step 720, the recipe may be shared by the vehicle. For example, the vehicle and/or the graphical user-interface device may retrieve the recipe from the medium, and transmit the recipe to a fleet-management system, and/or directly to another vehicle. According to some embodiments, it may be necessary to install other equipment in order to share the recipe. For example, in some cases, the vehicle may be used as a stand-alone self-driving vehicle operating independently of a fleet-management system in order to generate a recipe. Subsequently, a fleet of vehicles and a fleet-management system may be installed in the facility, and the recipe may be transmitted to the fleet-management system from the vehicle. In other cases, the recipe may be shared with the fleet-management system or another vehicle using removable non-transient computer-readable media. In this way, a stand-alone self-driving vehicle can be used to generate a recipe prior to other vehicles or a fleet-management system being installed in the facility.

[0109] Referring to FIG. 8, there is shown a method 800 of instructing a self-driving vehicle in an industrial facility according to a non-limiting embodiment. According to some embodiments, the method 800 may be executed using a stand-alone self-driving vehicle with a graphical user-interface device.

[0110] The method 800 begins at step 810 when a waypoint is received. The waypoint may be received by the vehicle's control system from the graphical user-interface device as indicated by a human operator relative to a graphical map. According to some embodiments, the waypoint may be received by the vehicle's control system from the graphical user-interface device as selected by a human operator from a list of available waypoints. According to other embodiments, a graphical user-interface device may not be available or present, and the waypoint may be received from a fleet-management system.

[0111] At step 812, the vehicle navigates based on the location of the waypoint relative to the map stored in the vehicle's control system. Any navigation mode may be used, such as autonomous, semi-autonomous, and guided-autonomous navigation modes. Generally, the vehicle may sense its peripheral environment for features, using the vehicle's sensor system, and update the map accordingly, as previously described.

[0112] At step 814, when the waypoint location is within the peripheral environment of the vehicle, the vehicle senses salient features within the peripheral environment of the waypoint. For example, if the waypoint is associated with a particular target such as a vehicle docking station, payload pick-up and drop-off stand, shelf, machine, etc., then the salient features may be particular features associated with the target. In this case, the salient feature may be a feature of the target itself (e.g. based on the target's geometry), or the salient feature may be a feature of another object in the periphery of the target.

[0113] According to some embodiments, an image of the salient feature may be stored on the vehicle's control system as a signature or template image for future use.

[0114] At step 816, the map stored on the vehicle's control system is updated to include the salient feature or identifying information pertaining to the salient feature. The salient feature may be associated with the waypoint.

[0115] Subsequent to step 816, the vehicle may leave the area of the waypoint and conduct any number of other tasks and missions. Generally, this involves the vehicle travelling away from the waypoint at step 818.

[0116] At some future time, the vehicle may be directed back to the waypoint (i.e. the same waypoint as in 810). However, in this case, since there is now a salient feature associated with the waypoint, rather than navigating specifically based on the waypoint, the vehicle's control system autonomously navigates in part or in whole based on the salient features associated with the waypoint.
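One possible reading of navigating based on a salient feature is sketched below: on the first visit the offset between the salient feature and the waypoint is recorded, and on later visits the target is computed from where the feature is currently observed. This interpretation, along with the `Waypoint` structure and the `record_salient_feature` and `target_position` helpers, is an assumption and is not stated in the description.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vector = Tuple[float, float]


@dataclass
class Waypoint:
    name: str
    position: Vector                         # location relative to the map
    salient_offset: Optional[Vector] = None  # waypoint minus feature position


def record_salient_feature(waypoint: Waypoint, feature_position: Vector) -> None:
    """Associate a salient feature with the waypoint (first visit)."""
    waypoint.salient_offset = (
        waypoint.position[0] - feature_position[0],
        waypoint.position[1] - feature_position[1],
    )


def target_position(waypoint: Waypoint, observed_feature: Optional[Vector]) -> Vector:
    """Choose where to drive on a later visit to the same waypoint.

    If a salient feature is associated and currently observed, the target
    is placed relative to the feature; otherwise the stored map position
    of the waypoint is used.
    """
    if waypoint.salient_offset is not None and observed_feature is not None:
        return (
            observed_feature[0] + waypoint.salient_offset[0],
            observed_feature[1] + waypoint.salient_offset[1],
        )
    return waypoint.position
```

Under this reading, if the target (for example, a shelf) has been shifted slightly since the first visit, the vehicle would still arrive in the same position relative to it.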

[0117] The scope of the claims should not be limited by the embodiments set forth in the above examples, but should be given the broadest interpretation consistent with the description as a whole.

* * * * *
