Method Of Video Transmission And Display

MALLETT; Richard Peter Disney

U.S. patent application number 16/530826, for a method of video transmission and display, was filed with the patent office on 2019-08-02 and published on 2019-11-21. The applicant listed for this patent is TV ONE Limited. The invention is credited to Richard Peter Disney MALLETT.

| Publication Number | 20190356940 |
|---|---|
| Application Number | 16/530826 |
| Family ID | 61617042 |
| Publication Date | 2019-11-21 |


| United States Patent Application | 20190356940 |
|---|---|
| Kind Code | A1 |
| MALLETT; Richard Peter Disney | November 21, 2019 |

METHOD OF VIDEO TRANSMISSION AND DISPLAY

Abstract

Aspects of the present disclosure relate to a method, in a video output device, for acquiring video data for outputting to a display. The method comprises subscribing to a multicast stream of a plurality of multicast streams. Each multicast stream is streamed from a video source and comprises video frame data corresponding to a portion of a video frame. The multicast stream to which the video output device subscribes comprises video frame data that is for display on a display associated with the video output device. The method then comprises receiving the video frame data that is for display on the display.

| Inventors: | MALLETT; Richard Peter Disney; (Margate, GB) |
|---|---|
| Applicant: | TV ONE Limited |
| Family ID: | 61617042 |
| Appl. No.: | 16/530826 |
| Filed: | August 2, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| PCT/GB2018/050318 | Feb 2, 2018 | |
| 16530826 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G09G 5/026 20130101; G09G 2340/0464 20130101; G09G 2350/00 20130101; G09G 2370/20 20130101; G09G 2340/12 20130101; G06F 3/1446 20130101; H04N 21/41415 20130101; H04N 21/431 20130101; G09G 2370/02 20130101; G09G 5/003 20130101; H04N 21/2393 20130101; H04N 21/437 20130101; H04N 21/4622 20130101; G09G 2300/026 20130101; G09G 5/14 20130101; H04N 21/4122 20130101; G09G 2360/121 20130101 |

| International Class: | H04N 21/41 20060101 H04N021/41; G06F 3/14 20060101 G06F003/14; H04N 21/239 20060101 H04N021/239; H04N 21/437 20060101 H04N021/437; H04N 21/462 20060101 H04N021/462; H04N 21/414 20060101 H04N021/414 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Feb 3, 2017 | GB | 1701859.9 |
| Feb 3, 2017 | GB | 1701860.7 |

Claims

1. A method, in a video client, for acquiring video data for output to a display of a video wall, the method comprising: transmitting, to a video server, a request for video frame data for a portion of a video frame, the portion of the video frame being associated with the display of the video wall; receiving video frame data from the video server, the received video frame data comprising data for a portion of the video frame corresponding to the request; transmitting, to the video server, one or more further requests for video frame data for different portions of the video frame, the different portions of the video frame being associated with the display of the video wall; and receiving further video frame data from the video server, the received further video frame data comprising data for further portions of the video frame corresponding to the one or more further requests.

2. A method according to claim 1, wherein: the request defines the portion of the video frame based on a location of the portion of the video frame within the video frame; and the further request defines the different portion of the video frame based on a different location of the different portion of the video frame within the video frame.

3. A method according to claim 2, the method comprising outputting display data, based on the received video frame data, to the display.

4. A method according to claim 3, wherein the request or further request for video frame data comprises a request for a block of pixels.

5. A method according to claim 4, wherein the request for a block of pixels comprises a location for the block of pixels.

6. A method according to claim 4, wherein the request for a block of pixels comprises a location of a pixel in the block of pixels.

7. A method according to claim 4, wherein the request for a block of pixels comprises an identifier of the block of pixels.

8. A method according to claim 4, wherein the method comprises determining a mapping of at least one pixel of the display to a location in the block of pixels.

9. A method according to claim 8, wherein the or each block of pixels has predefined spatial dimensions.

10. A method according to claim 8, wherein the mapping is such that a requested block of pixels is for display on a block of pixels of the display, the block of pixels of the display having the same dimensions as the requested block of pixels.

11. A method according to claim 8, wherein: the mapping is such that a requested block of pixels is for display on a block of pixels of the display; and the method comprises determining pixel values of the block of pixels of the display by interpolating between pixel values of the requested block of pixels.

12. A method according to claim 1 wherein: the video server is one of a plurality of video servers, each video server of the plurality being configured to provide corresponding video frame data for display on the video wall; and the method comprises selecting the server to which a request is sent based on which video frame data is to be displayed on the display.

13. A method according to claim 1, the method comprising: transmitting, to a second video server, a second request for video frame data for a portion of a second video frame, the portion of the second video frame being associated with the display of the video wall; and receiving second video frame data from the second video server, the received second video frame data comprising data for a portion of the second video frame corresponding to the second request.

14. A method according to claim 1, comprising receiving the video frame data as streamed video data.

15. A method according to claim 1, wherein: the or each request comprises an indication of a desired video quality; and the received video frame data comprises video frame data at the desired video quality.

16. A method according to claim 15, wherein the desired video quality comprises a desired video frame resolution.

17. A method according to claim 15, wherein the desired video quality comprises a desired degree of video compression.

18. A non-transitory computer-readable storage medium comprising a set of computer-readable instructions stored thereon which, when executed by at least one processor, cause the at least one processor to perform a method according to claim 1.

19. A video client associated with a display of a video wall, the video client comprising: a network interface; and a processor coupled to the network interface and configured to: transmit, to a video server via the network interface, a request for video frame data for a portion of a video frame, the portion of the video frame being associated with the display; receive video frame data, via the network interface, from the video server, the received video frame data comprising data for a portion of the video frame corresponding to the request; transmit, to the video server, one or more further requests for video frame data for different portions of the video frame, the different portions of the video frame being associated with the display of the video wall; and receive further video frame data from the video server, the received further video frame data comprising data for further portions of the video frame corresponding to the one or more further requests.

20. A video wall system comprising: a video wall comprising a plurality of display devices; at least one video server, the or each video server being configured to provide video frame data; and a plurality of video clients, each video client being associated with a corresponding display device of the video wall and each video client being configured to: transmit to a video server of the at least one video server, a request for video frame data for a portion of a video frame, the portion of the video frame being associated with the display of the video wall; and transmit to the video server of the at least one video server, one or more further requests for video frame data for different portions of the video frame, the different portions of the video frame being associated with the display of the video wall; the one or more video servers each being configured to store video frame data and, in response to: receiving a request from one of said plurality of video clients, transmit video frame data to the corresponding video client, the video frame data comprising data for a portion of the video frame corresponding to the request; and receiving one or more further requests from one of said plurality of video clients, transmit further video frame data to the corresponding video client, the further video frame data comprising data for further portions of the video frame corresponding to the one or more further requests.

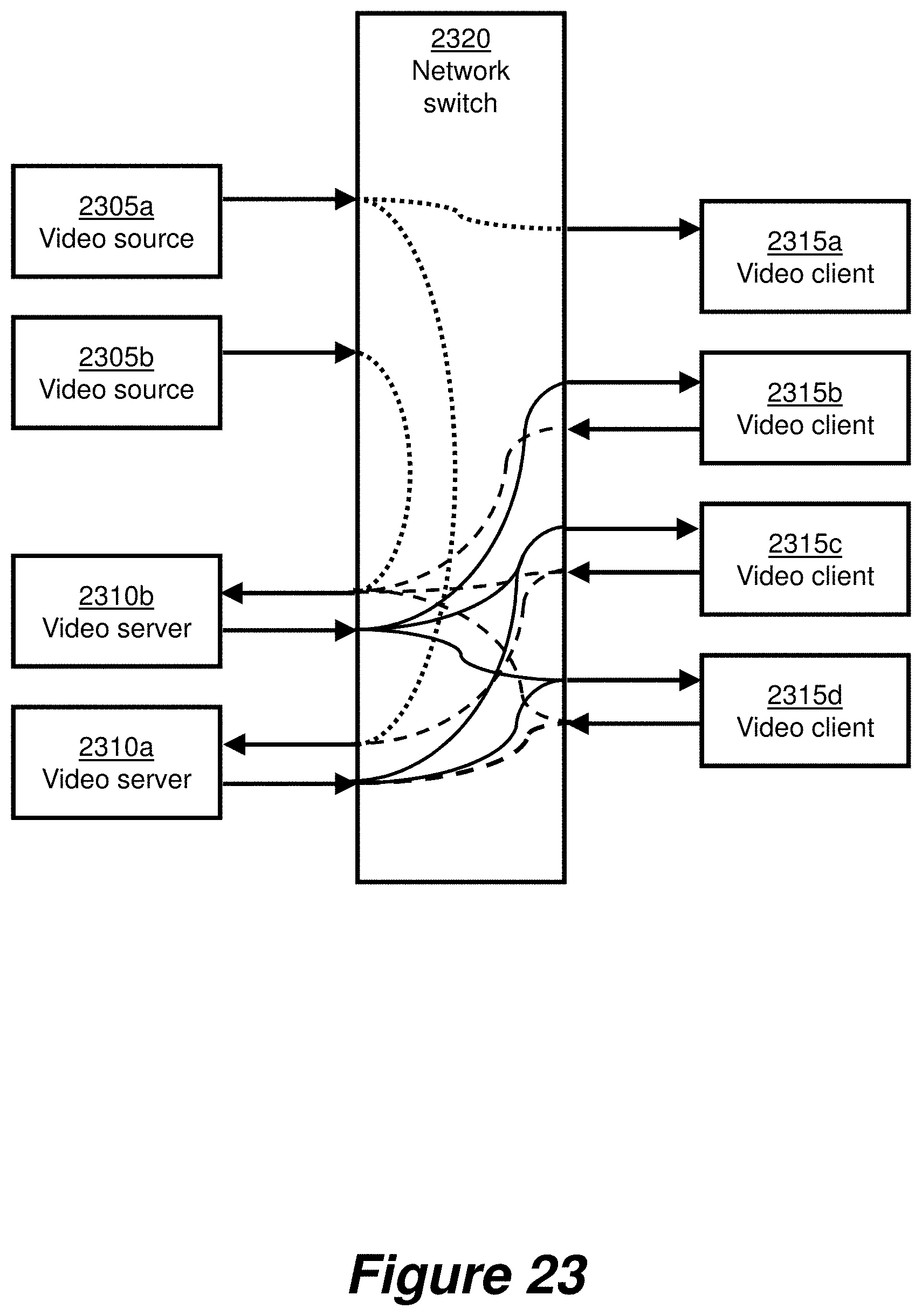

21. A video wall system according to claim 20, wherein: the plurality of video clients and the at least one video server comprise nodes of a network; the network comprises a network switch which couples each video client to each video server; each video client is configured to transmit requests for video data via the network switch; and the or each video server is configured to transmit video frame data via the network switch.

22. A method of video frame transmission in a video wall system, the system comprising a plurality of video clients wherein each video client is associated with a corresponding display of the video wall, the method comprising: transmitting, from a video client of the plurality of video clients and to a video server, a request for video frame data for a portion of a video frame, the portion of the video frame being associated with the corresponding display of the video wall; responsive to receiving a said request from a video client, transmitting, from the video server and to the video client, video frame data comprising data for a portion of video frame corresponding to the request; transmitting, from the video client of the plurality of video clients and to the video server, one or more further requests for video frame data for different portions of the video frame, the different portions of the video frame being associated with the corresponding display of the video wall; and responsive to receiving the one or more further requests from the video client, transmitting, from the video server and to the video client, further video frame data comprising data for further portions of the video frame corresponding to the one or more further requests.

23. A method, in a video server, for providing video data for display on a display of a video wall, the method comprising: receiving, from a video client, a request for video frame data for a portion of a video frame; transmitting video frame data to the video client, the transmitted video frame data comprising data for a portion of the video frame corresponding to the request; receiving, from the video client, one or more further requests for video frame data for different portions of the video frame; and transmitting further video frame data to the video client, the transmitted further video frame data comprising data for further portions of the video frame corresponding to the one or more further requests.

24. A video server comprising: a network interface; and a processor coupled to the network interface and configured to: receive, via the network interface and from a video client, a request for video frame data for a portion of a video frame, the portion of the video frame being associated with the display; transmit video frame data, via the network interface, to the video client, wherein the transmitted video frame data comprises data for a portion of the video frame corresponding to the request; receive, via the network interface and from the video client, one or more further requests for video frame data for different portions of the video frame, the different portions of the video frame being associated with the display; and transmit further video frame data, via the network interface, to the video client, wherein the transmitted further video frame data comprises data for further portions of the video frame corresponding to the one or more further requests.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of International Application No. PCT/GB2018/050318, filed Feb. 2, 2018, which claims priority to UK Application No. GB1701860.7, filed Feb. 3, 2017, and UK Application No. GB1701859.9, filed Feb. 3, 2017, under 35 U.S.C. § 119(a). Each of the above-referenced patent applications is incorporated by reference in its entirety.

BACKGROUND OF THE INVENTION

Technical Field

[0002] The present invention relates to methods, apparatus and systems for requesting, transmitting and/or receiving video data for a display.

Background

[0003] A video wall is a video system in which a number of video monitors or displays are arranged in an array formation to create a relatively larger overall display screen. A typical video wall system includes a video processing unit which comprises a number of video input modules for providing a variety of video inputs, a central processing module for processing video data from the input modules, and a number of video output modules for providing video outputs for connection via standard video cables to the video monitors. The number of video outputs can be increased by adding further output modules to the video processing unit. However, the number of video outputs, and hence the number of monitors supported by the system, is limited by the bandwidth capability of the central processing module. Increasing the resolution of the video inputs places further load on the central processing module, which can further limit the number of supported video outputs.

SUMMARY

[0004] According to a first aspect of the present invention, there is provided a method, in a video output device, for acquiring video data for output to a display, the method comprising: subscribing to a multicast stream from a plurality of multicast streams, wherein each multicast stream of the plurality of multicast streams is streamed from a video source and comprises video frame data corresponding to a portion of a video frame; and receiving video frame data from the subscribed multicast stream for display on the display.

[0005] A method in accordance with the first aspect of the invention may enable a video output device to subscribe to multicast streams corresponding to less than a full frame of video data from a video source. For example, where the display is a display of a video wall, the method enables the video output device to subscribe to streams corresponding to just the video frame data that is needed for a particular display of the video wall. Therefore, in comparison to a video output device that receives a full frame of video data, a video output device operating in accordance with the first aspect of the invention can receive relatively less video data but still provide a full set of video frame data to an associated display. This can have the benefit of enabling a reduction in the bandwidth requirements for the reception of video frame data by a video output device.

[0006] In accordance with the invention, the method may further comprise: subscribing to one or more further multicast streams of the plurality of multicast streams, and receiving video frame data from the further subscribed multicast streams for display on the display.

[0007] A video output device operating in accordance with the method can subscribe to a set of multicast streams. The set of multicast streams may contain video data which when output to a display may fill the entire frame of the display.

[0008] Suitably, subscribing to a particular multicast stream comprises transmitting a subscription request to a network switch, the network switch being coupled to the video output device and to the video source, wherein the subscription request identifies the particular multicast stream.
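One plausible realization of the subscription request in [0008] is an IGMP membership report: a host joins an IPv4 multicast group, and a switch with IGMP snooping then forwards that group only to the ports that have joined. The application does not name a protocol, so IGMP, the 239.x.y.z group-per-portion addressing scheme and the helper names below are assumptions for illustration. The socket calls are Python's standard `socket.setsockopt` with the `IP_ADD_MEMBERSHIP` and `IP_DROP_MEMBERSHIP` options.

```python
import socket
import struct


def tile_group(source_id: int, col: int, row: int) -> str:
    """Hypothetical addressing scheme: one administratively scoped IPv4
    multicast group (239.0.0.0/8) per (video source, portion) pair."""
    for value in (source_id, col, row):
        if not 0 <= value <= 255:
            raise ValueError("index does not fit this addressing scheme")
    return f"239.{source_id}.{row}.{col}"


def membership_request(group: str, interface: str = "0.0.0.0") -> bytes:
    """Pack a struct ip_mreq: multicast group address, then local interface."""
    return struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton(interface))


def subscribe(sock: socket.socket, group: str) -> None:
    """Join a group. The OS sends an IGMP membership report, which a switch
    with IGMP snooping uses to start forwarding that group to this port."""
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP,
                    membership_request(group))


def unsubscribe(sock: socket.socket, group: str) -> None:
    """Leave a group so the switch can stop forwarding it to this port."""
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_DROP_MEMBERSHIP,
                    membership_request(group))
```

In use, a video output device would create a UDP socket with `socket.socket(socket.AF_INET, socket.SOCK_DGRAM)`, bind it to the stream port (deployment-specific, not given in the application), and call `subscribe` once for each portion it needs.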

[0009] In some embodiments, each of the plurality of multicast streams comprises video frame data at a given video quality. In this case, the subscription request may comprise an indication of a desired video quality, and the received video frame data may comprise video frame data at the desired video quality.

[0010] This allows a reduction in network bandwidth, as video is not transmitted to the video output device at a higher quality than required. The desired video quality may comprise one or more of a desired video frame resolution, a desired degree of video compression, a desired video frame bit depth and a desired chroma subsampling scheme.
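The arithmetic below illustrates why requesting a lower quality, in any of the terms listed in [0010], reduces bandwidth. It is a rough sketch that counts active pixels only (no blanking, packet headers or error correction); the function name and the example figures are assumptions.

```python
# Samples per pixel (one luma plus two chroma components) for common schemes.
SAMPLES_PER_PIXEL = {"4:4:4": 3.0, "4:2:2": 2.0, "4:2:0": 1.5}


def stream_bitrate_mbps(width: int, height: int, fps: float, bit_depth: int,
                        chroma: str = "4:2:2",
                        compression_ratio: float = 1.0) -> float:
    """Approximate payload bit rate of one portion stream, in Mbit/s.

    Active pixels only; blanking, headers and error correction are ignored.
    """
    bits_per_pixel = bit_depth * SAMPLES_PER_PIXEL[chroma]
    return width * height * fps * bits_per_pixel / compression_ratio / 1e6
```

For a 480×270 portion at 60 frames per second, 10-bit 4:2:2 without compression gives 155.52 Mbit/s, whereas 8-bit 4:2:0 with 10:1 compression gives about 9.33 Mbit/s. A 1920×1080 frame contains sixteen such portions, so a device that needs only four of them receives a quarter of the full-frame rate at any given quality.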

[0011] In embodiments, the method comprises determining the or each multicast stream to request based on a position of the video frame on the display.

[0012] In some embodiments, determining the position of the video frame on the display comprises determining a mapping of at least one pixel of the display to a location in the video frame.
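The determination in [0011] and [0012] can be sketched as follows. This is a minimal illustration, not the method of the application: it assumes the frame is divided into a regular grid of fixed-size portions, that the frame's top-left corner sits at the wall origin with uniform scaling, and the names `Rect`, `display_viewport` and `tiles_for_viewport` are hypothetical.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    x: int  # left edge, frame pixels
    y: int  # top edge, frame pixels
    w: int
    h: int


def display_viewport(disp_x: int, disp_y: int, disp_w: int, disp_h: int,
                     scale: float) -> Rect:
    """Map a display's area on the wall (wall pixels) back into frame pixels.

    Assumes the frame's top-left corner is at the wall origin and the frame
    is scaled by `scale` wall pixels per frame pixel.
    """
    x0 = math.floor(disp_x / scale)
    y0 = math.floor(disp_y / scale)
    x1 = math.ceil((disp_x + disp_w) / scale)
    y1 = math.ceil((disp_y + disp_h) / scale)
    return Rect(x0, y0, x1 - x0, y1 - y0)


def tiles_for_viewport(view: Rect, tile_w: int, tile_h: int,
                       frame_w: int, frame_h: int) -> list[tuple[int, int]]:
    """Return (col, row) of every fixed-size portion that overlaps the view."""
    # Clip to the frame so parts of the display outside the video need nothing.
    x0, y0 = max(view.x, 0), max(view.y, 0)
    x1 = min(view.x + view.w, frame_w)  # exclusive bounds
    y1 = min(view.y + view.h, frame_h)
    if x0 >= x1 or y0 >= y1:
        return []
    cols = range(x0 // tile_w, (x1 - 1) // tile_w + 1)
    rows = range(y0 // tile_h, (y1 - 1) // tile_h + 1)
    return [(c, r) for r in rows for c in cols]
```

For a 1920×1080 frame cut into 480×270 portions and shown across a 2×2 wall of 1920×1080 displays, the top-right display maps to frame pixels (960, 0) to (1920, 540) and so needs only four of the sixteen streams.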

[0013] In embodiments, the method may comprise: determining a change of the position of the video frame on the display; based on the determined change in position, determining a new multicast stream to subscribe to; and transmitting a further subscription request to the network switch, the further subscription request identifying the new multicast stream.

[0014] The video output device can thus update the streams to which it subscribes on the fly, based on changes in the location at which a given video frame is displayed.
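[0013] and [0014] describe re-subscribing when the frame moves on the display. A minimal sketch, assuming subscriptions are tracked as a set of portion identifiers, compares the portions needed at the new position with those currently subscribed. Joining new streams before leaving old ones is an assumed policy, chosen so the display is not briefly missing data; the function names are hypothetical.

```python
from __future__ import annotations

from typing import Callable


def plan_resubscription(current: set, wanted: set) -> tuple[list, list]:
    """Return (portions to join, portions to leave), sorted for determinism."""
    to_join = sorted(wanted - current)
    to_leave = sorted(current - wanted)
    return to_join, to_leave


def apply_plan(to_join: list, to_leave: list,
               join: Callable, leave: Callable) -> None:
    """Issue every join before any leave (assumed policy, see above)."""
    for portion in to_join:
        join(portion)
    for portion in to_leave:
        leave(portion)
```

Portions that remain in view are neither joined nor left, so their streams continue uninterrupted while the frame moves.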

[0015] The or each portion may have predefined spatial dimensions.

[0016] In some examples, the method comprises outputting display data, based on the received video frame data, to the display.

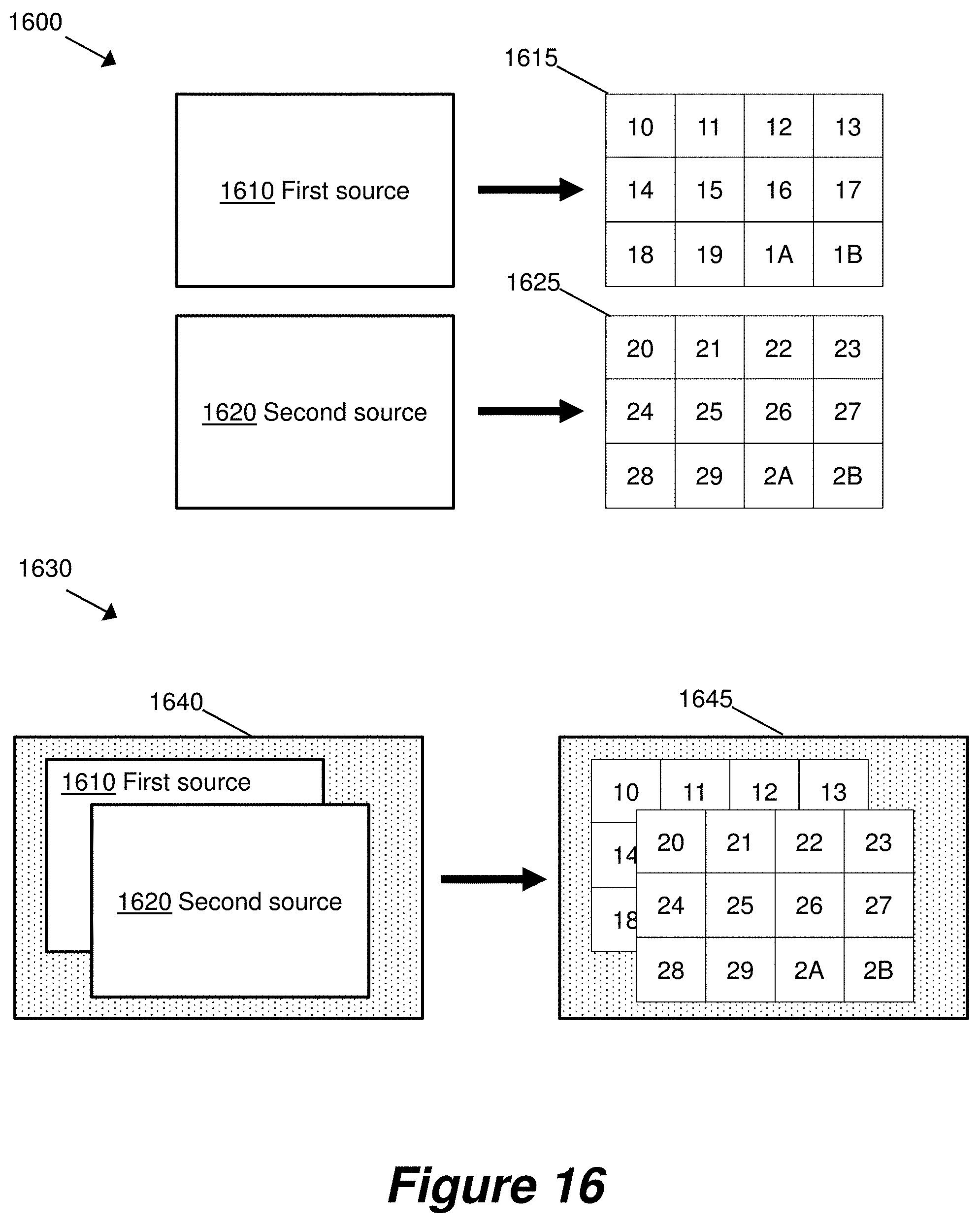

[0017] In an embodiment, the method comprises: subscribing to a second multicast stream from a second plurality of multicast streams, wherein each multicast stream of the second plurality of multicast streams is streamed from a second video source and comprises video frame data corresponding to a portion of a second video frame; and receiving video frame data from the subscribed second multicast stream for display on the display.

[0018] A given video output device can thus subscribe to streams originating from multiple video sources. Because a video output device only subscribes to streams corresponding to video frame portions that are for display on its corresponding display, a video output device may receive video data from multiple video sources without a significant increase in required bandwidth. Additionally, this allows a single video to be divided across multiple sources, which allows higher resolution video to be supported since each source only needs to provide a lower resolution video.

[0019] The display may be a display of a video wall.

[0020] According to an alternative first aspect of the present invention, there is provided a non-transitory computer-readable storage medium comprising a set of computer-readable instructions stored thereon which, when executed by at least one processor, cause the at least one processor to perform a method as described above.

[0021] According to another alternative first aspect of the present invention, there is provided a video output device comprising: a network interface; a processor coupled to the network interface and configured to: subscribe, via the network interface, to a multicast stream from a plurality of multicast streams, wherein each multicast stream of the plurality of multicast streams is streamed from a video source and comprises video frame data corresponding to a portion of a video frame; and receive, via the network interface, video frame data from the subscribed multicast stream.

[0022] According to a further embodiment, there is provided a video system comprising: a plurality of displays; a network switch; at least one video source coupled to the network switch and configured to provide a plurality of multicast streams via the network switch, each multicast stream of the plurality of multicast streams comprising video frame data corresponding to a portion of a corresponding video frame; a plurality of video output devices coupled to the network switch, each video output device being coupled to a corresponding display and being configured to: transmit a subscription request to the network switch, the subscription request being for at least one of the plurality of multicast streams; the network switch being configured to, in response to receiving a request from one of the plurality of video output devices, transmit each requested multicast stream to the corresponding video output device.
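The switch behaviour in [0022] resembles IGMP snooping, in which a switch forwards each multicast group only to ports with subscribed receivers. The toy model below illustrates such a forwarding table under that assumption; it does not describe any particular switch, and `SnoopingSwitch` and its methods are hypothetical.

```python
from collections import defaultdict


class SnoopingSwitch:
    """Toy model: forward each multicast group only to subscribed ports."""

    def __init__(self) -> None:
        self.members = defaultdict(set)  # group -> set of output ports

    def join(self, port: int, group: str) -> None:
        """Record that the device on `port` subscribed to `group`."""
        self.members[group].add(port)

    def leave(self, port: int, group: str) -> None:
        """Remove a subscription; forget the group once nobody wants it."""
        self.members[group].discard(port)
        if not self.members[group]:
            del self.members[group]

    def forward(self, group: str, payload: bytes) -> list:
        """Return (port, payload) for every port subscribed to the group."""
        return [(port, payload) for port in sorted(self.members.get(group, ()))]
```

Traffic for a group with no subscribers is not forwarded to any video output device, which is what keeps per-port bandwidth proportional to the portions each display actually needs.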

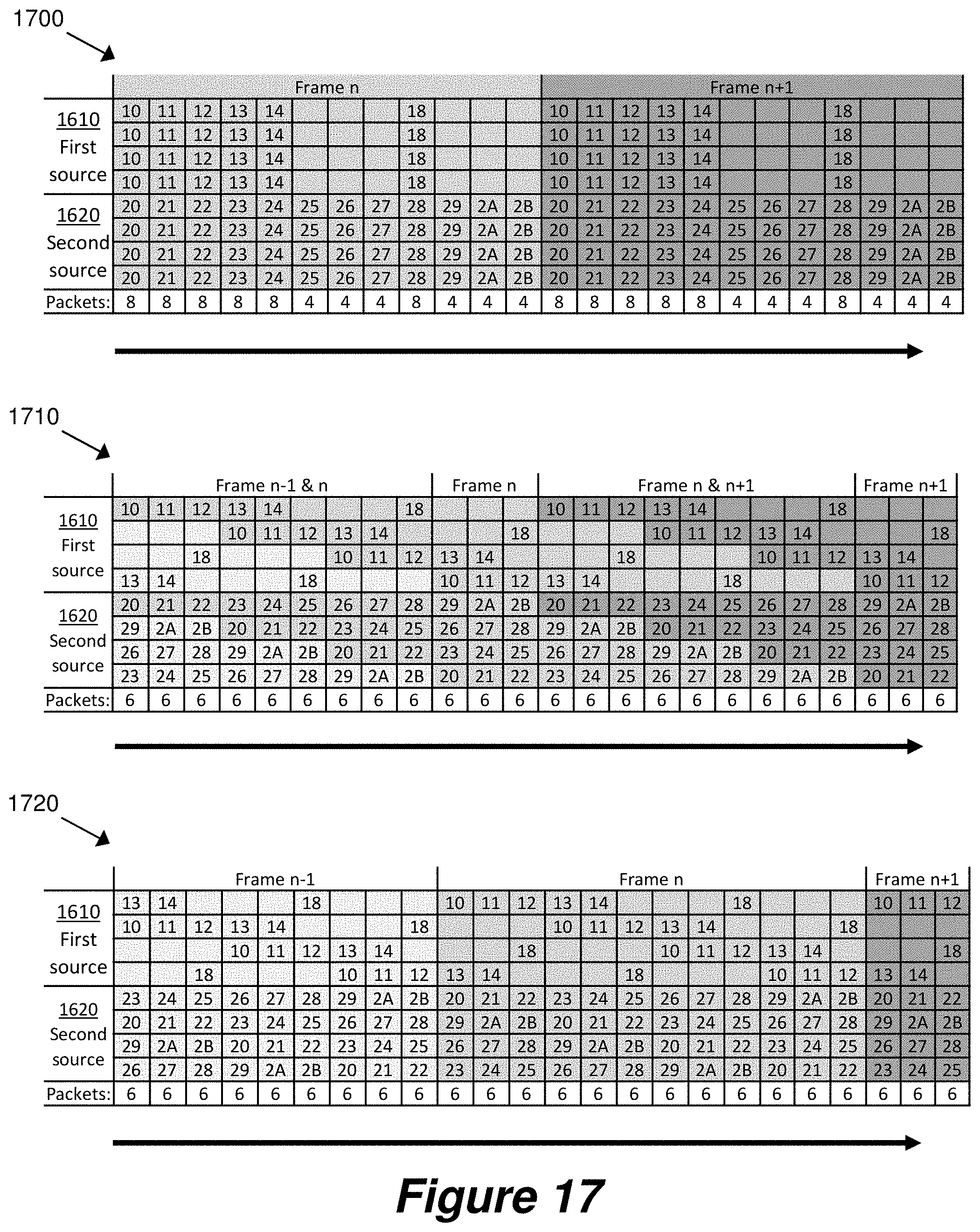

[0023] In some examples, each video source of the at least one video source provides its corresponding plurality of multicast streams such that, for a single video frame, a video source transmits video frame data of each multicast stream in series to the network switch; and

[0024] the series is such that video frame data corresponding to adjacent portions of a single video frame is transmitted nonconsecutively.

[0025] A consequence of the non-consecutive transmission of adjacent portions is a reduction in the incidence of spikes in required bandwidth, for example where a given video output device subscribes to streams corresponding to equivalent portions of multiple videos.

[0026] The series may be such that multicast streams corresponding to adjacent portions of a single video frame are transmitted in a pseudorandom order.
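[0023] to [0026] call for transmitting the portions of a frame so that spatially adjacent portions are not sent back to back, for example in a pseudorandom order. One simple way to obtain such an order, assumed here for illustration, is to shuffle the row-major portion indices with a seeded generator and reject any shuffle in which two consecutive entries share an edge. The function names and the seed parameter are hypothetical.

```python
import random


def adjacent(a: int, b: int, cols: int) -> bool:
    """True if portions a and b (row-major indices) share an edge."""
    ra, ca = divmod(a, cols)
    rb, cb = divmod(b, cols)
    return abs(ra - rb) + abs(ca - cb) == 1


def transmission_order(cols: int, rows: int, seed: int = 0,
                       max_tries: int = 10_000) -> list:
    """Pseudorandom order in which no two consecutive portions are adjacent."""
    rng = random.Random(seed)  # seeded so every frame can reuse the same order
    order = list(range(cols * rows))
    for _ in range(max_tries):
        rng.shuffle(order)
        if not any(adjacent(x, y, cols) for x, y in zip(order, order[1:])):
            return order
    raise RuntimeError("no non-adjacent order found")
```

Rejection sampling keeps the sketch short; a constructive method would be preferable for large grids. For very small grids such as 2×2, no valid order exists, so the function raises.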

[0027] In some examples, each video source of the at least one video source provides its corresponding plurality of multicast streams such that, for a single video frame, a video source transmits video frame data of each multicast stream in series to the network switch; and the series is such that video frame data corresponding to a portion of a single video frame is split into multiple data packets; and the multiple data packets are transmitted spread out in time over the entirety of the single video frame.

[0028] In some examples, the network switch is configured to receive a multicast stream comprising video frame data corresponding to a portion of a corresponding video frame, and to provide the video frame data as a sequence of data packets at an output of the switch. Preferably, the sequence of data packets is provided at an output of the switch spread out in time over one or more video frames.

[0029] A consequence of the spreading out in time of the transmission of the data packets is a reduction in the incidence of spikes in required bandwidth, for example where a given video output device subscribes to streams corresponding to equivalent portions of multiple videos.

[0030] The series may be such that multicast streams corresponding to adjacent portions of a single video frame are transmitted in data packets that are spread out in time over a single video frame.
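[0027] to [0030] describe splitting each portion into several packets and spreading them over the frame period. A minimal pacing sketch, assuming the packet count per portion is known in advance and the link can be paced at a constant interval, interleaves portions round-robin and assigns evenly spaced send offsets. `paced_schedule` is a hypothetical name.

```python
from itertools import zip_longest


def paced_schedule(packets_per_tile: dict, fps: float) -> list:
    """Spread every packet of one frame evenly across the frame period.

    Packets from different portions are interleaved round-robin, so each
    portion's packets are distributed over the whole frame rather than sent
    as one burst. Returns (send offset in seconds, portion, packet index).
    """
    queues = [[(tile, i) for i in range(count)]
              for tile, count in packets_per_tile.items()]
    interleaved = [p for batch in zip_longest(*queues)
                   for p in batch if p is not None]
    if not interleaved:
        return []
    gap = (1.0 / fps) / len(interleaved)
    return [(k * gap, tile, idx) for k, (tile, idx) in enumerate(interleaved)]
```

A device subscribed to several portions therefore sees a steady packet rate rather than a burst each time one of its portions is sent.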

[0031] The plurality of displays may be displays of a video wall.

[0032] According to a further embodiment, there is provided a method of video frame transmission in a video system, the method comprising: transmitting, from a video source and to a network switch, a plurality of multicast streams, each multicast stream of the plurality of multicast streams comprising video frame data corresponding to a portion of a corresponding video frame; transmitting, from a video output device and to the network switch, a subscription request for at least one of the plurality of multicast streams; and in response to receiving a said subscription request, transmitting each requested multicast stream from the network switch to the video output device.

[0033] According to another alternative first aspect of the present invention, there is provided a method, in a video source, for providing streamed video data. The method comprises: retrieving video frame data from a video input; and providing to a network a plurality of multicast streams, wherein each multicast stream of the plurality of multicast streams comprises video frame data corresponding to a portion of a video frame.

[0034] According to another alternative first aspect of the present invention, there is provided a video source comprising: a video input; a network interface; and a processor, the processor being configured to: retrieve video frame data from the video input; and provide, via the network interface, a plurality of multicast streams, wherein each multicast stream of the plurality of multicast streams comprises video frame data corresponding to a portion of a video frame.
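On the source side ([0033], [0034]), the processor divides each retrieved frame into portions, each of which would then be carried by its own multicast stream. The sketch below assumes a frame held as a list of pixel rows and fixed-size rectangular portions; edge portions are simply smaller when the frame size is not a multiple of the portion size. The function name is hypothetical.

```python
def split_into_tiles(frame: list, tile_w: int, tile_h: int) -> dict:
    """Split a frame (list of pixel rows) into portions keyed by (col, row)."""
    height = len(frame)
    width = len(frame[0]) if height else 0
    tiles = {}
    for top in range(0, height, tile_h):
        for left in range(0, width, tile_w):
            # Slicing clamps at the frame edge, so edge portions may be smaller.
            tiles[(left // tile_w, top // tile_h)] = [
                row[left:left + tile_w] for row in frame[top:top + tile_h]
            ]
    return tiles
```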

[0035] According to a second aspect of the present invention, there is provided a method, in a video client, for acquiring video data for output to a display of a video wall. The method comprises: transmitting, to a video server, a request for video frame data for a portion of a video frame, the portion of the video frame being associated with the display of the video wall; and receiving video frame data from the video server, the received video frame data comprising data for a portion of the video frame corresponding to the request.

[0036] A method in accordance with the second aspect of the invention may enable a video client to request and receive less than a full frame of video data from a video server. This in turn enables the video client to request just the video frame data that is needed for a particular display of the video wall. Therefore, in comparison to a video client that receives a full frame of video data, a video client operating in accordance with the second aspect of the invention can receive relatively less video data but still provide a full set of video frame data to an associated display. This can have the benefit of enabling a reduction in the bandwidth requirements for the reception of video frame data in a video system, and has beneficial application in video wall systems comprising multiple displays.

[0037] In an embodiment, the method comprises: transmitting, to the video server, one or more further requests for video frame data for different portions of the video frame, the different portions of the video frame being associated with the display of the video wall; and receiving further video frame data from the video server, the received further video frame data comprising data for further portions of the video frame corresponding to the one or more further requests.

[0038] The video client thus receives a series of portions of video frame data, which allows network traffic to be smoother than if a whole frame were transferred at once.

[0039] In some examples, the method comprises outputting display data, based on the received video frame data, to the display.

[0040] In some examples, the request or further request for video frame data comprises a request for a block of pixels. The request for a block of pixels may comprise a location for the block of pixels, a location of a pixel in the block of pixels, or an identifier of the block of pixels. The video client can thus efficiently request particular blocks of pixels.
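[0040] notes that a block request may carry the block's location, the location of a pixel within it, or a block identifier. With fixed-size blocks in a row-major grid, the three forms are interchangeable. The grid layout, the names and the 7-byte wire format below are assumptions for illustration.

```python
import struct


def block_id_from_pixel(x: int, y: int, block_w: int, block_h: int,
                        blocks_per_row: int) -> int:
    """Identifier (row-major index) of the block containing frame pixel (x, y)."""
    return (y // block_h) * blocks_per_row + (x // block_w)


def block_origin(block_id: int, block_w: int, block_h: int,
                 blocks_per_row: int) -> tuple:
    """Location of a block, expressed as its top-left frame pixel."""
    row, col = divmod(block_id, blocks_per_row)
    return col * block_w, row * block_h


# Hypothetical wire format: frame number (uint32), block id (uint16) and
# requested quality level (uint8), in network byte order, 7 bytes in total.
REQUEST = struct.Struct("!IHB")


def encode_request(frame_no: int, block_id: int, quality: int) -> bytes:
    return REQUEST.pack(frame_no, block_id, quality)


def decode_request(data: bytes) -> tuple:
    return REQUEST.unpack(data)
```

With 480×270 blocks, four to a row, pixel (1000, 300) lies in block 6, whose location is (960, 270).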

[0041] In an embodiment, the method comprises determining a mapping of at least one pixel of the display to a location in the block of pixels.

[0042] According to one embodiment, the or each block of pixels has predefined spatial dimensions. Video data may thus be stored, for example at the video server, in a block-based format. This allows efficient requesting and receiving of video frame data.

[0043] In some examples, the mapping is such that a requested block of pixels is for display on a block of pixels of the display, the block of pixels of the display having the same dimensions as the requested block of pixels.

[0044] In other examples, the mapping is such that: a requested block of pixels is for display on a block of pixels of the display; and the method comprises determining pixel values of the block of pixels of the display by interpolating between pixel values of the requested block of pixels.
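For the interpolating mapping in [0044], bilinear interpolation is one common choice; the application does not specify the interpolation method, so the sketch below is illustrative only. It resamples a single-channel block to the size of the display block, aligning the corner pixels of source and destination. `resample_bilinear` is a hypothetical name.

```python
def resample_bilinear(block: list, out_w: int, out_h: int) -> list:
    """Resample a 2-D block of pixel values to out_w x out_h (bilinear)."""
    in_h, in_w = len(block), len(block[0])

    def coord(i: int, n_out: int, n_in: int) -> float:
        # Align corners: output 0 -> input 0, output n_out-1 -> input n_in-1.
        return 0.0 if n_out == 1 else i * (n_in - 1) / (n_out - 1)

    out = []
    for y in range(out_h):
        fy = coord(y, out_h, in_h)
        y0 = int(fy)
        y1 = min(y0 + 1, in_h - 1)
        ty = fy - y0
        row = []
        for x in range(out_w):
            fx = coord(x, out_w, in_w)
            x0 = int(fx)
            x1 = min(x0 + 1, in_w - 1)
            tx = fx - x0
            # Interpolate horizontally on the two source rows, then vertically.
            top = block[y0][x0] * (1 - tx) + block[y0][x1] * tx
            bottom = block[y1][x0] * (1 - tx) + block[y1][x1] * tx
            row.append(top * (1 - ty) + bottom * ty)
        out.append(row)
    return out
```

Resampling `[[0, 10], [20, 30]]` to 3×3 yields the values `[[0, 5, 10], [10, 15, 20], [20, 25, 30]]`; when the sizes match, as in [0043], the block passes through unchanged.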

[0045] In an embodiment the video server is one of a plurality of video servers, each video server of the plurality being configured to provide corresponding video frame data for display on the video wall; and the method comprises selecting the server to which a request is sent based on which video frame data is to be displayed on the display.

[0046] Video from various video servers may thus be provided to a single video client.

[0047] In some embodiments, the method comprises: transmitting, to a second video server, a second request for video frame data for a portion of a second video frame, the portion of the second video frame being associated with the display of the video wall; and receiving second video frame data from the second video server, the received second video frame data comprising data for a portion of the second video frame corresponding to the second request.

[0048] A video client can thus receive and output video frame data from multiple video servers. This allows a single video to be divided across multiple servers, which allows higher resolution video to be supported since each server only needs to support a lower resolution video. Additionally, because a video client only requests portions that are for display on its corresponding display, a video client may receive video data from multiple video servers without a significant increase in required bandwidth.
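[0045] to [0048] describe a client choosing servers according to which part of the picture its display shows, for example when one high-resolution video is divided across several servers. Assuming each server is responsible for a known rectangle of a composite frame, the client can intersect its own view with each rectangle and request the overlap from the matching server, in that server's local coordinates. `ServerRegion` and `servers_for` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerRegion:
    name: str  # hypothetical server identifier
    x: int     # region of the composite frame served, in composite pixels
    y: int
    w: int
    h: int


def servers_for(view_x: int, view_y: int, view_w: int, view_h: int,
                servers: list) -> list:
    """Return (server name, overlap rectangle in that server's coordinates)
    for every server whose region overlaps the client's view."""
    result = []
    for s in servers:
        x0, y0 = max(view_x, s.x), max(view_y, s.y)
        x1 = min(view_x + view_w, s.x + s.w)
        y1 = min(view_y + view_h, s.y + s.h)
        if x0 < x1 and y0 < y1:
            result.append((s.name, (x0 - s.x, y0 - s.y, x1 - x0, y1 - y0)))
    return result
```

With a 7680×2160 composite split into left and right halves, a view starting at x = 3000 and 1920 pixels wide needs 840 columns from the left server and 1080 from the right.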

[0049] In some examples, the method comprises receiving the video frame data as streamed video data.

[0050] In an embodiment, the or each request comprises an indication of a desired video quality; and the received video frame data comprises video frame data at the desired video quality.

[0051] This allows a reduction in network bandwidth, as video is not transmitted at a higher quality than required. The desired video quality may comprise a desired video frame resolution and/or a desired degree of video compression.

[0052] According to an alternative second aspect of the present invention, there is provided a non-transitory computer-readable storage medium comprising a set of computer-readable instructions stored thereon which, when executed by at least one processor, cause the at least one processor to perform a method as described above.

[0053] According to another alternative second aspect of the invention, there is provided a method, in a video client, for acquiring video data for output to a display, the method comprising transmitting a series of video frame data requests to a video server, wherein each request is for a different portion of a video frame; and receiving, in a series of responses to the series of video frame data requests, video frame data from the video server for output to the display.

[0054] The different portions of the video frame may be different areas or regions within the video frame. Furthermore, the video frame data may be a subset of the video frame data for the complete video frame.
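[0053] and [0054] describe a client issuing a series of requests, each for a different portion, and receiving a series of responses. The loop below sketches that exchange against an in-memory stand-in for the server. The request fields and the class and function names are assumptions; a real client would send requests over the network rather than call a method directly.

```python
class InMemoryServer:
    """Stand-in for a video server holding one stored frame as blocks."""

    def __init__(self, blocks: dict):
        self.blocks = blocks  # block id -> pixel data for that block
        self.log = []         # block ids in the order they were requested

    def handle(self, request: dict):
        """Return the stored data for the requested portion."""
        self.log.append(request["block_id"])
        return self.blocks[request["block_id"]]


def fetch_portions(server, block_ids: list, frame_no: int) -> dict:
    """Send one request per portion, in series, and collect each response."""
    received = {}
    for block_id in block_ids:
        request = {"frame": frame_no, "block_id": block_id}
        received[block_id] = server.handle(request)
    return received
```

Only the requested portions cross the interface, so the data received is the subset of the frame needed for the client's display, as [0054] describes.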

[0055] According to an embodiment, there is provided a video client associated with a display of a video wall, the video client comprising: a network interface; and a processor coupled to the network interface and configured to: transmit, to a video server via the network interface, a request for video frame data for a portion of a video frame, the portion of the video frame being associated with the display; receive video frame data, via the network interface, from the video server, the received video frame data comprising data for a portion of the video frame corresponding to the request.

[0056] According to a further embodiment, there is provided a video wall system comprising: a video wall comprising a plurality of display devices; at least one video server, the or each video server being configured to provide video frame data; and a plurality of video clients, each video client being associated with a corresponding display device of the video wall and each video client being configured to: transmit to a video server of the at least one video server, a request for video frame data for a portion of a video frame, the portion of the video frame being associated with the display of the video wall; the one or more video servers each being configured to store video frame data and, in response to receiving a request from one of said plurality of video clients, transmit video frame data to the corresponding video client, the video frame data comprising data for a portion of the video frame corresponding to the request.

[0057] In some examples, the plurality of video clients and the at least one video server comprise nodes of a network; the network comprises a network switch which couples each video client to each video server; each video client is configured to transmit requests for video data via the network switch; and the or each video server is configured to transmit video frame data via the network switch.

[0058] According to an alternative second aspect of the present disclosure, there is provided a method of video frame transmission in a video wall system, the system comprising a plurality of video clients wherein each video client is associated with a corresponding display of the video wall, the method comprising: transmitting, from a given video client of the plurality of video clients and to a video server, a request for video frame data for a portion of a video frame, the portion of the video frame being associated with the corresponding display of the video wall; and responsive to receiving a said request from a given video client, transmitting, from the video server and to the given video client, video frame data comprising data for a portion of the video frame corresponding to the request.

[0059] According to an embodiment, there is provided a method, in a video server, for providing video data for display on a display of a video wall, the method comprising: receiving, from a video client, a request for video frame data for a portion of a video frame; and transmitting video frame data to the video client, the transmitted video frame data comprising data for a portion of the video frame corresponding to the request.

[0060] In a further embodiment, there is provided a video server comprising: a network interface; and a processor coupled to the network interface and configured to: receive, via the network interface and from a video client, a request for video frame data for a portion of a video frame, the portion of the video frame being associated with the display; and transmit video frame data, via the network interface, to the video client, wherein the transmitted video frame data comprises data for a portion of the video frame corresponding to the request.
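By way of illustration only, the request and response exchange of [0053] to [0060] can be sketched in Python. The sketch assumes that a frame is held in server memory as a list of pixel rows and that a request names a rectangle in frame coordinates. `RegionRequest` and `serve_request` are hypothetical names that do not appear in the disclosure, and a real request could also carry the desired video quality of [0050].

```python
from dataclasses import dataclass
from typing import List

Frame = List[List[int]]  # rows of pixel values (illustrative representation)


@dataclass(frozen=True)
class RegionRequest:
    """Hypothetical request from a video client: a rectangle in frame coordinates."""
    x: int
    y: int
    width: int
    height: int


def serve_request(frame: Frame, req: RegionRequest) -> Frame:
    """Video server side: return only the pixels inside the requested portion."""
    frame_h = len(frame)
    frame_w = len(frame[0]) if frame_h else 0
    if req.x < 0 or req.y < 0 or req.x + req.width > frame_w or req.y + req.height > frame_h:
        raise ValueError("requested portion lies outside the frame")
    return [row[req.x:req.x + req.width] for row in frame[req.y:req.y + req.height]]


# Example: a 4x4 frame whose pixel value encodes its position (10 * row + column).
frame = [[10 * r + c for c in range(4)] for r in range(4)]
# A client driving the bottom-right display of a 2x2 wall requests that quarter.
print(serve_request(frame, RegionRequest(x=2, y=2, width=2, height=2)))  # [[22, 23], [32, 33]]
```

Only the requested quarter crosses the network, which is the bandwidth saving the second aspect relies on.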

[0061] Further features and advantages of the invention will become apparent from the following description of preferred embodiments of the first and second aspects of the invention, given by way of example only, which is made with reference to the accompanying drawings.

BRIEF DESCRIPTION OF THE DRAWINGS

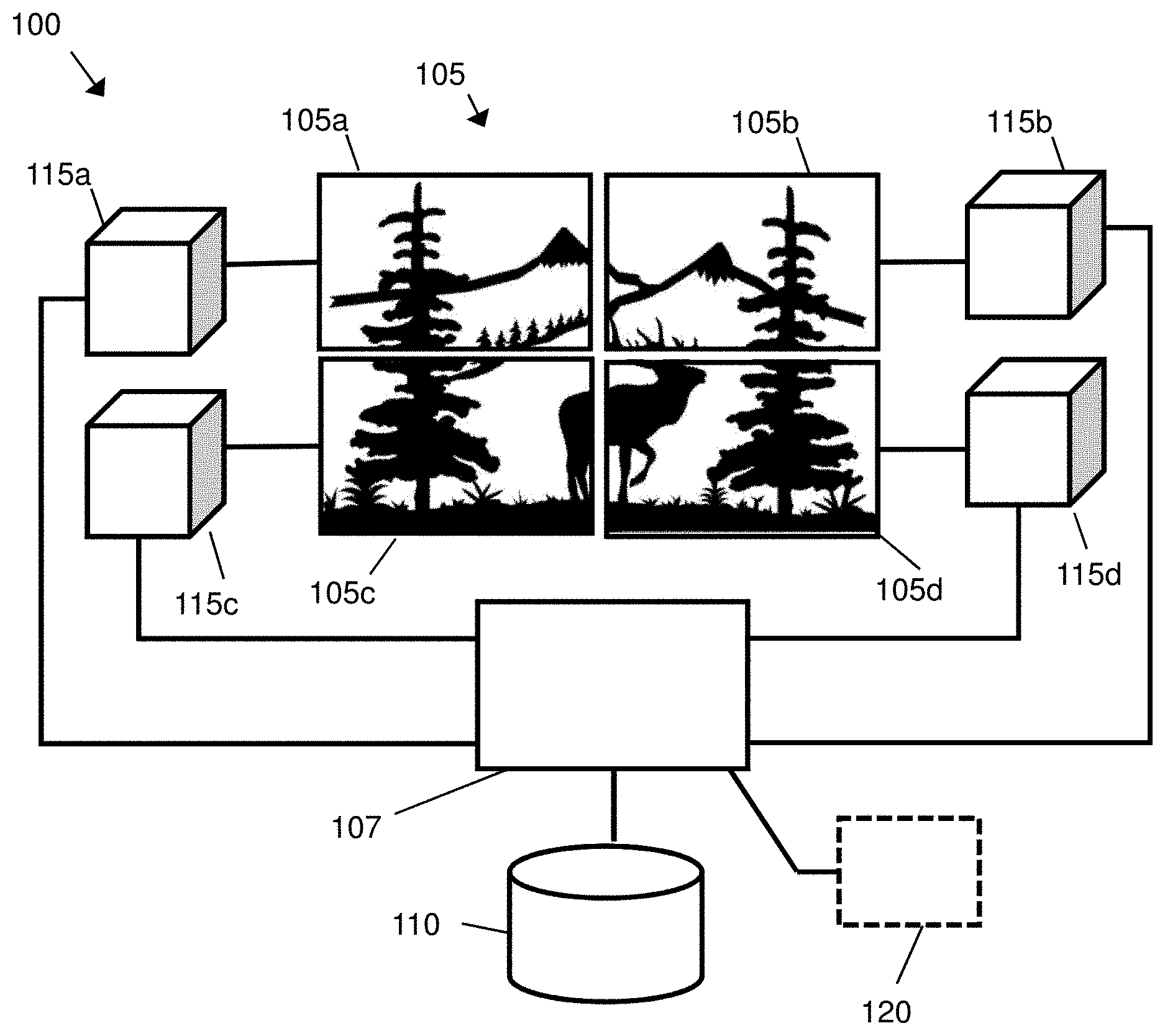

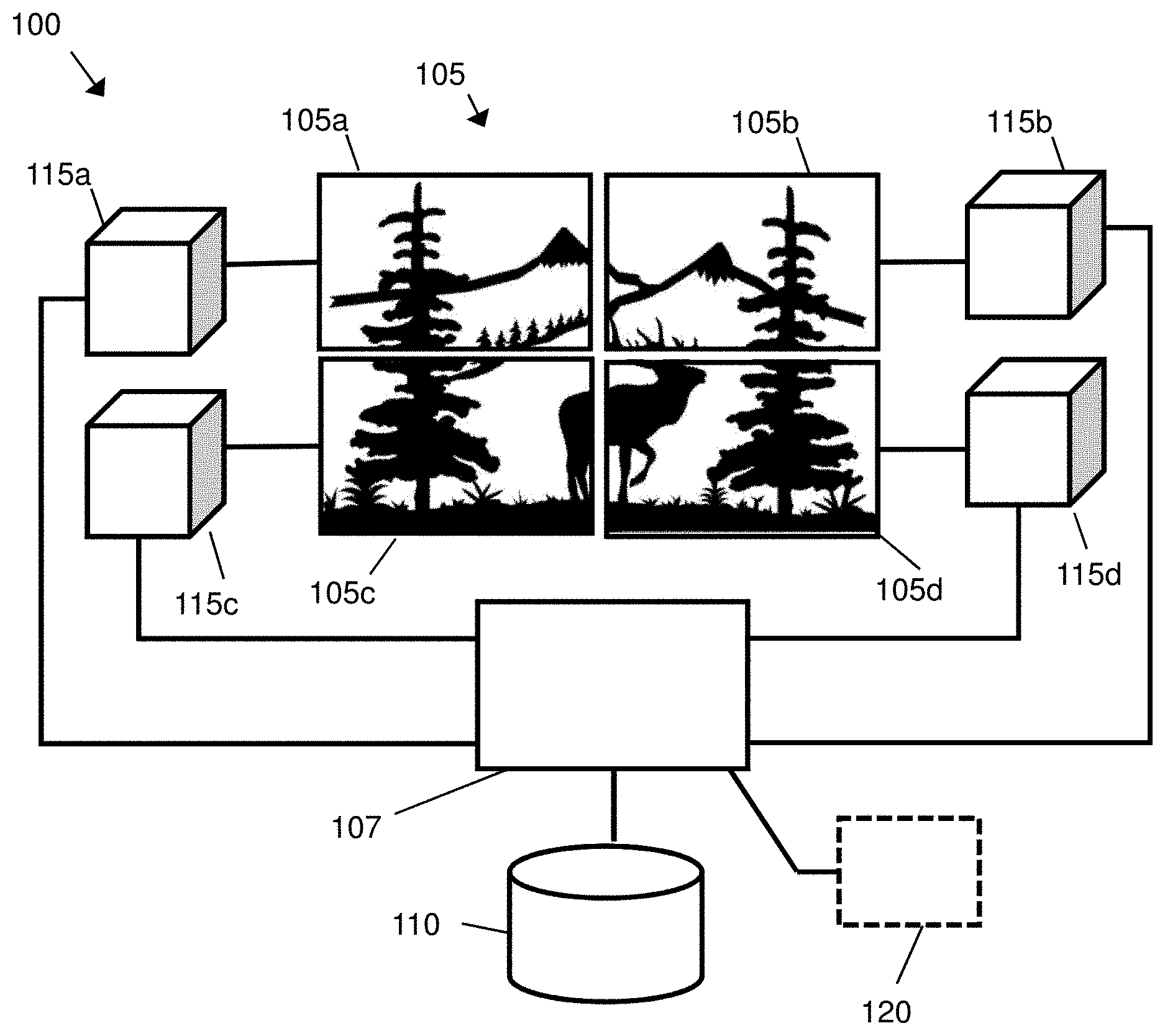

[0062] FIG. 1 shows a video wall system according to an embodiment of the first aspect of the invention.

[0063] FIG. 2 shows a schematic representation of a method according to an embodiment of the first aspect of the invention.

[0064] FIG. 3 shows a schematic representation of a method according to an embodiment of the first aspect of the invention.

[0065] FIG. 4 shows an example of data flow in a video system according to an embodiment of the first aspect of the invention.

[0066] FIG. 5 shows a schematic representation of a subscription request data packet according to an embodiment of the first aspect of the invention.

[0067] FIG. 6 shows a schematic representation of a video source according to embodiments of the first and second aspects of the invention.

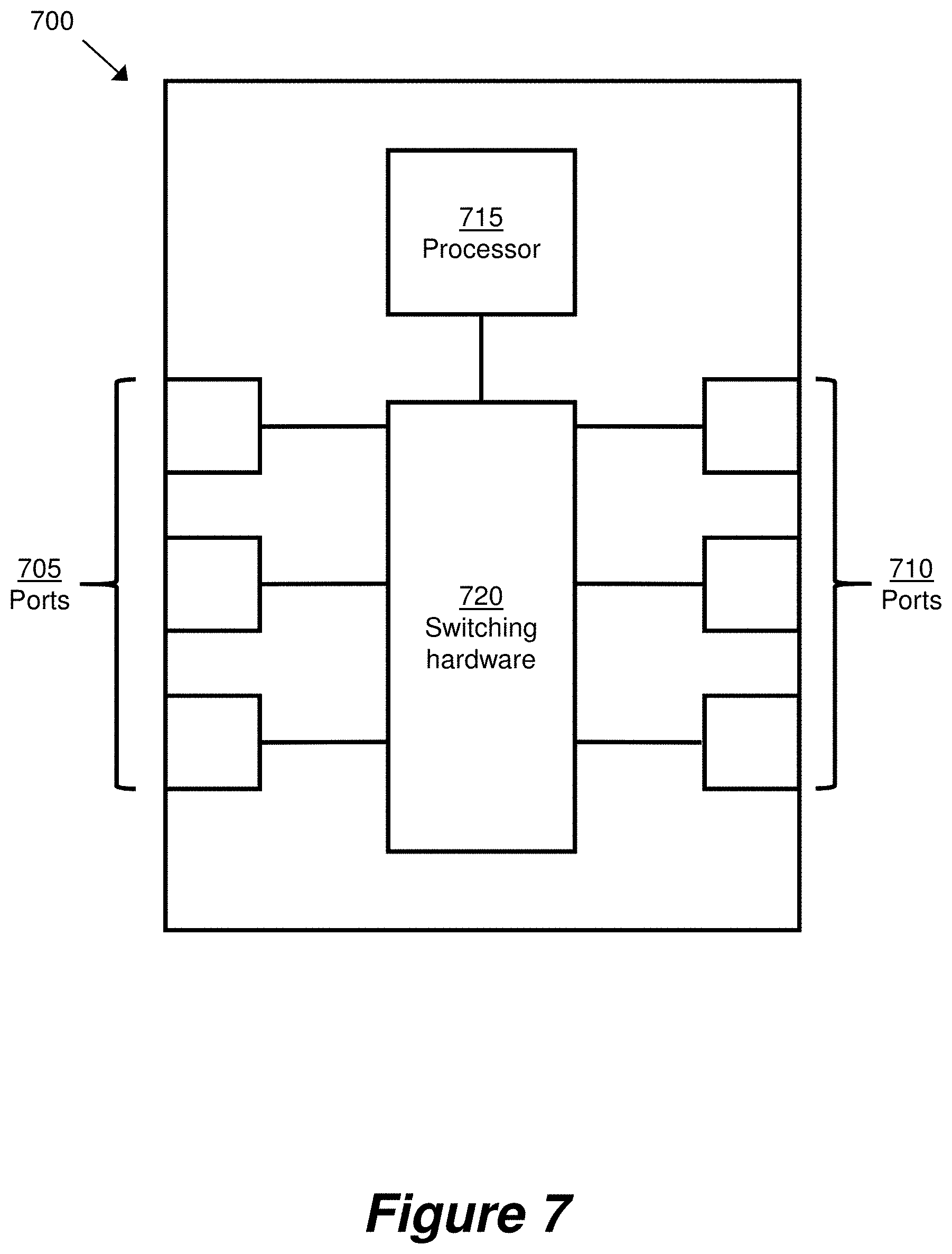

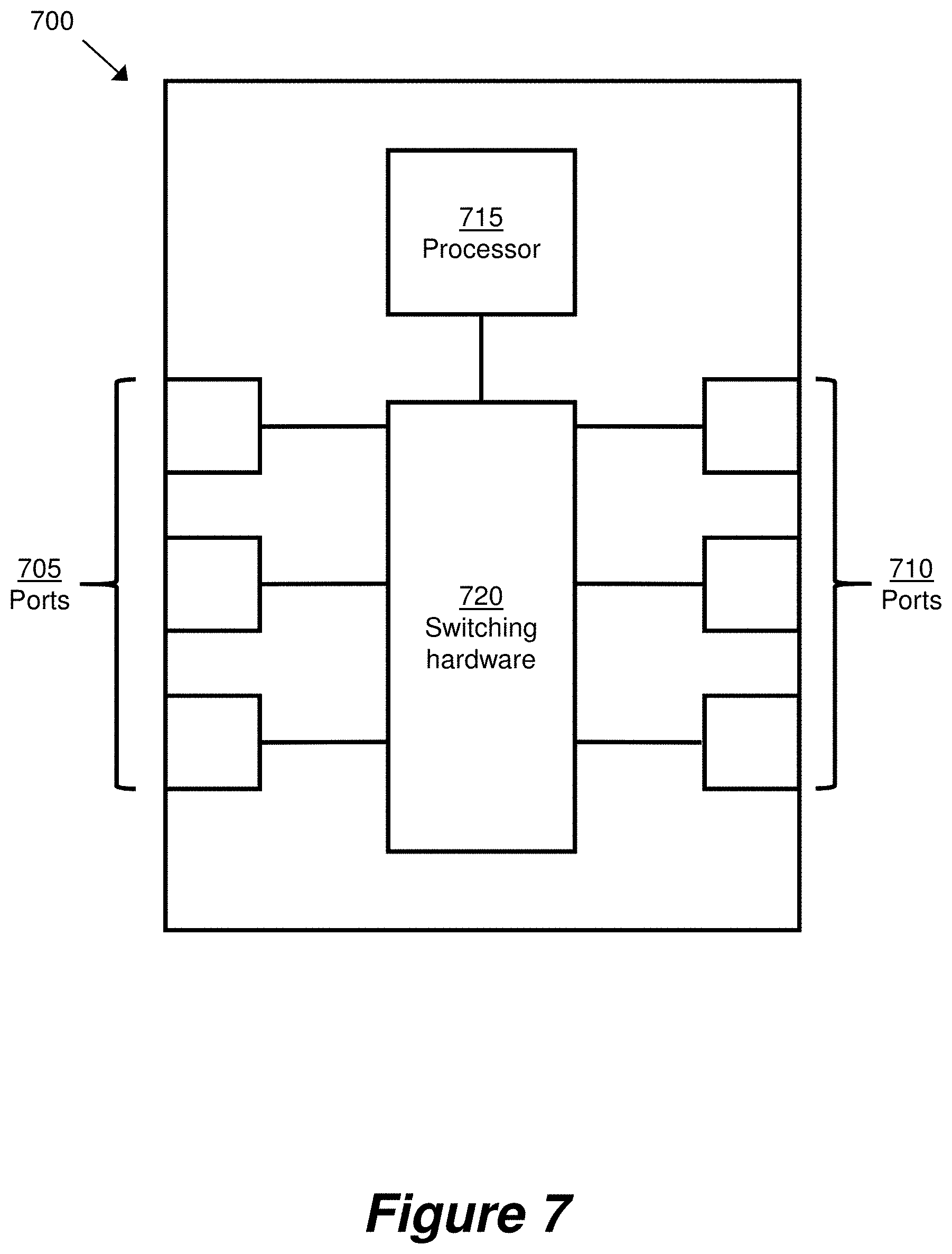

[0068] FIG. 7 shows a schematic representation of a network switch according to an embodiment of the first aspect of the invention.

[0069] FIG. 8 shows a schematic representation of a video output device according to embodiments of the first and second aspects of the invention.

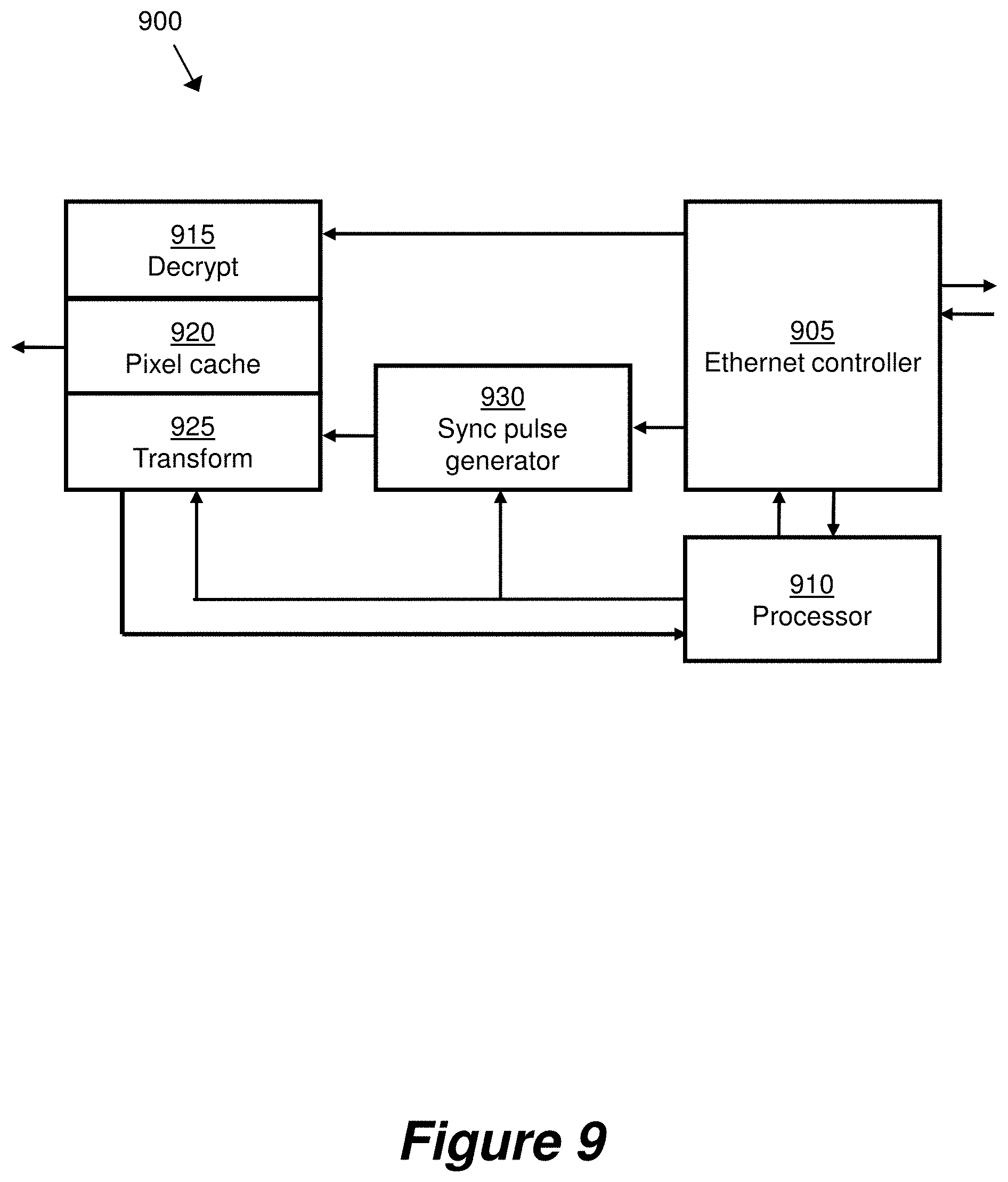

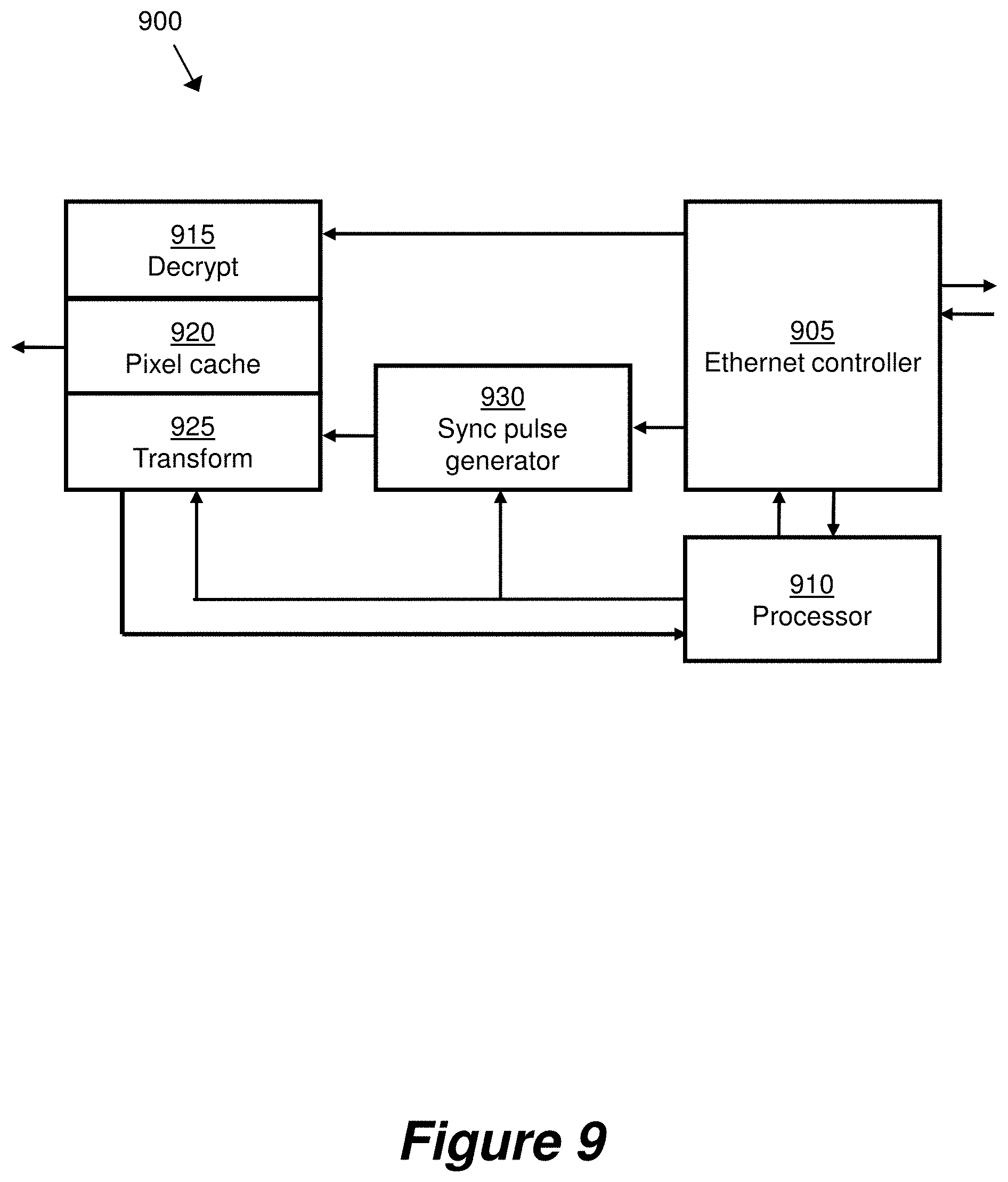

[0070] FIG. 9 shows a schematic representation of a video output device according to embodiments of the first and second aspects of the invention.

[0071] FIG. 10 shows a schematic representation of a video wall according to an embodiment of the first aspect of the invention.

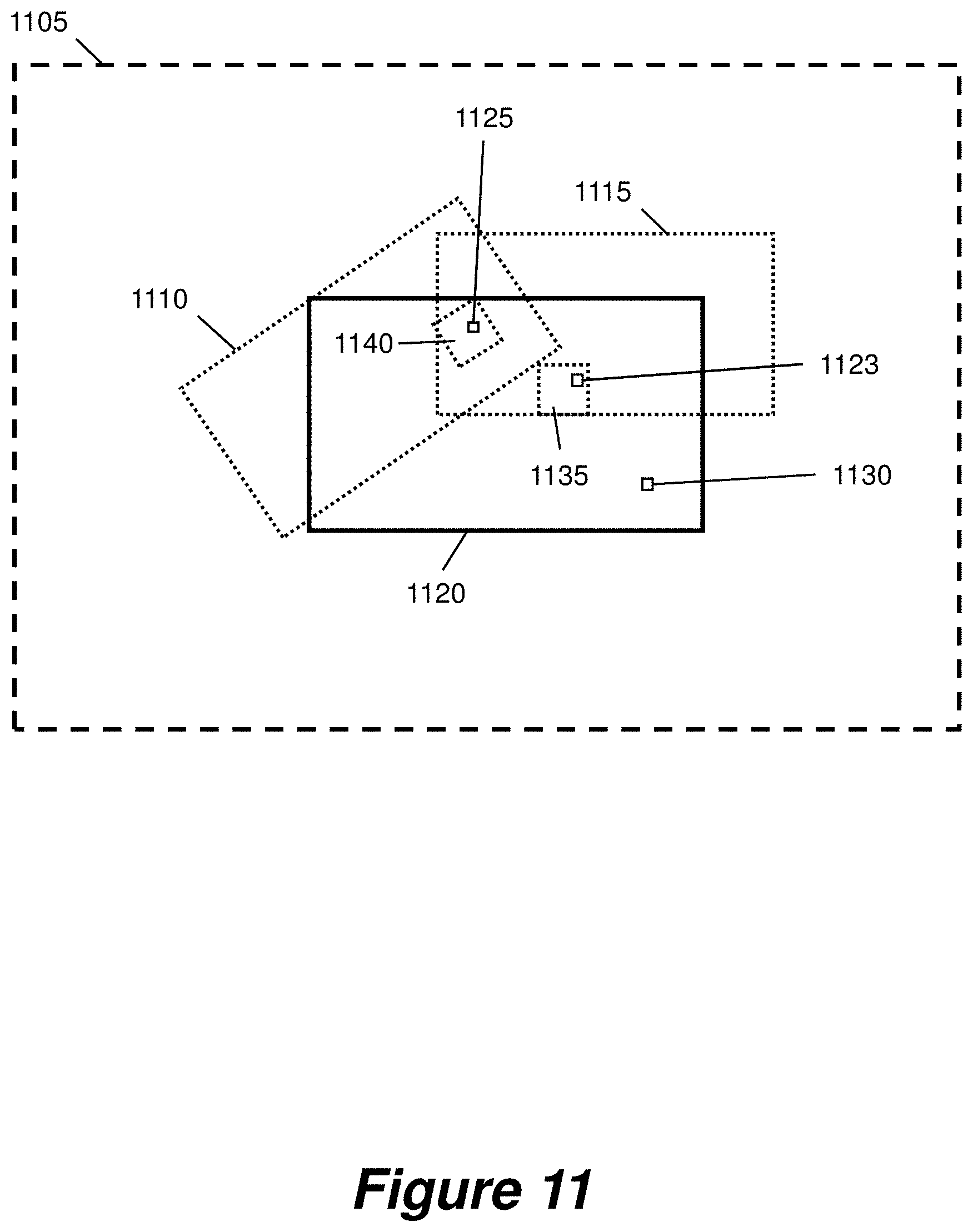

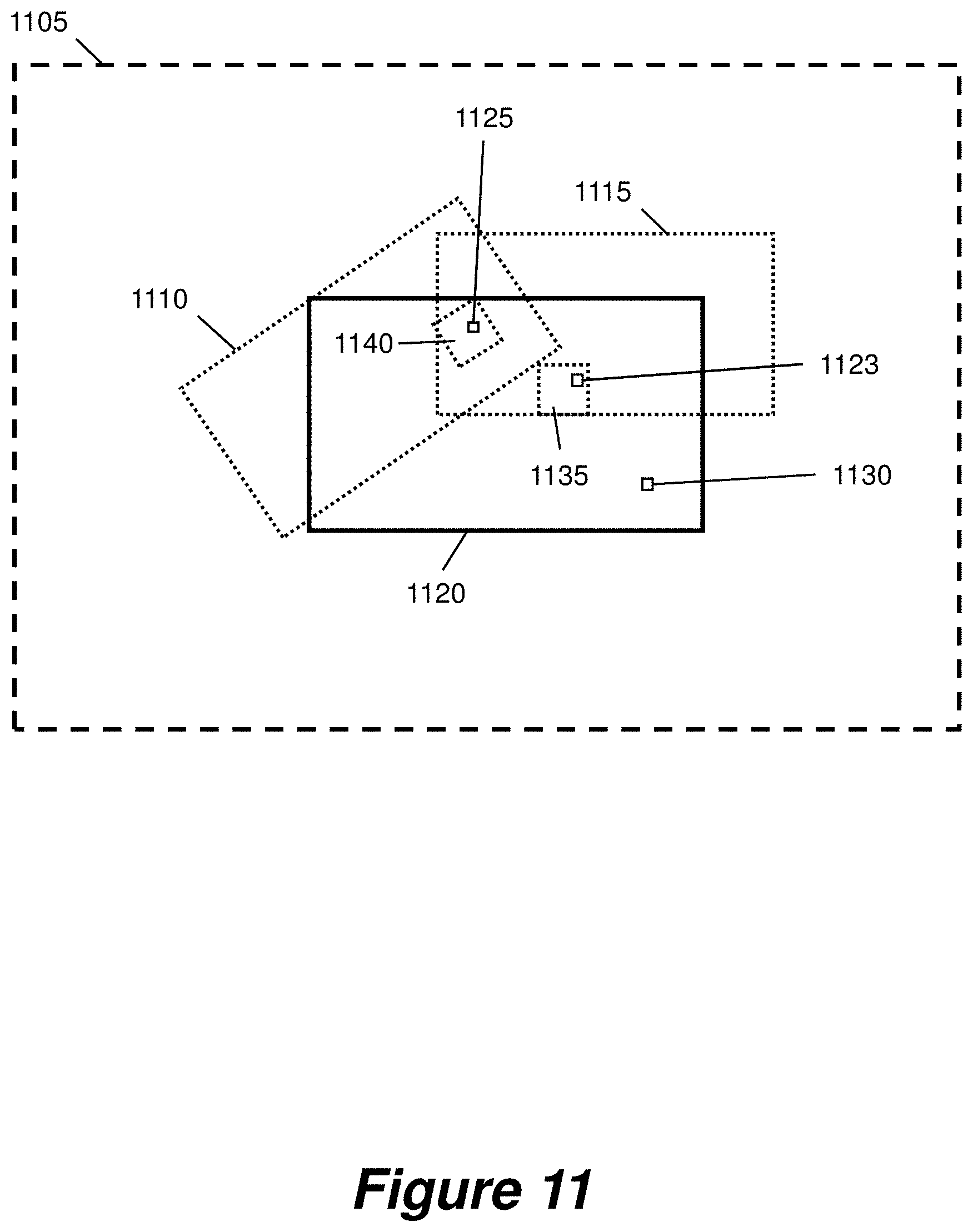

[0072] FIG. 11 shows a schematic representation of a layout of a display, a canvas and two video windows according to embodiments of the first and second aspects of the invention.

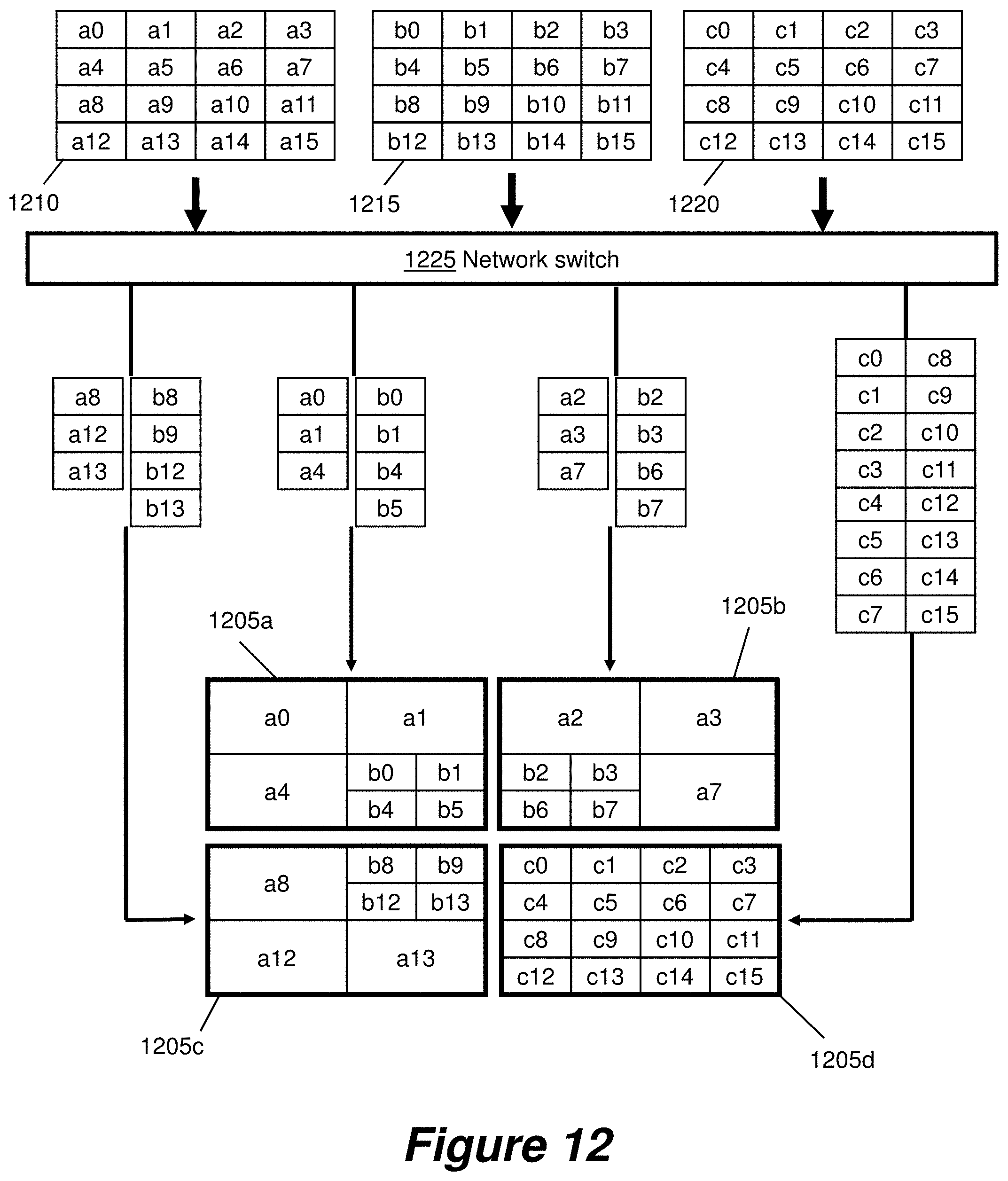

[0073] FIG. 12 shows an example of transmission, receipt and display of streamed video data on displays of a video wall according to an embodiment of the first aspect of the invention.

[0074] FIG. 13 shows a table of example bit rates required to transmit video data at various resolutions and chroma subsampling schemes according to some embodiments of the first and second aspects of the invention.

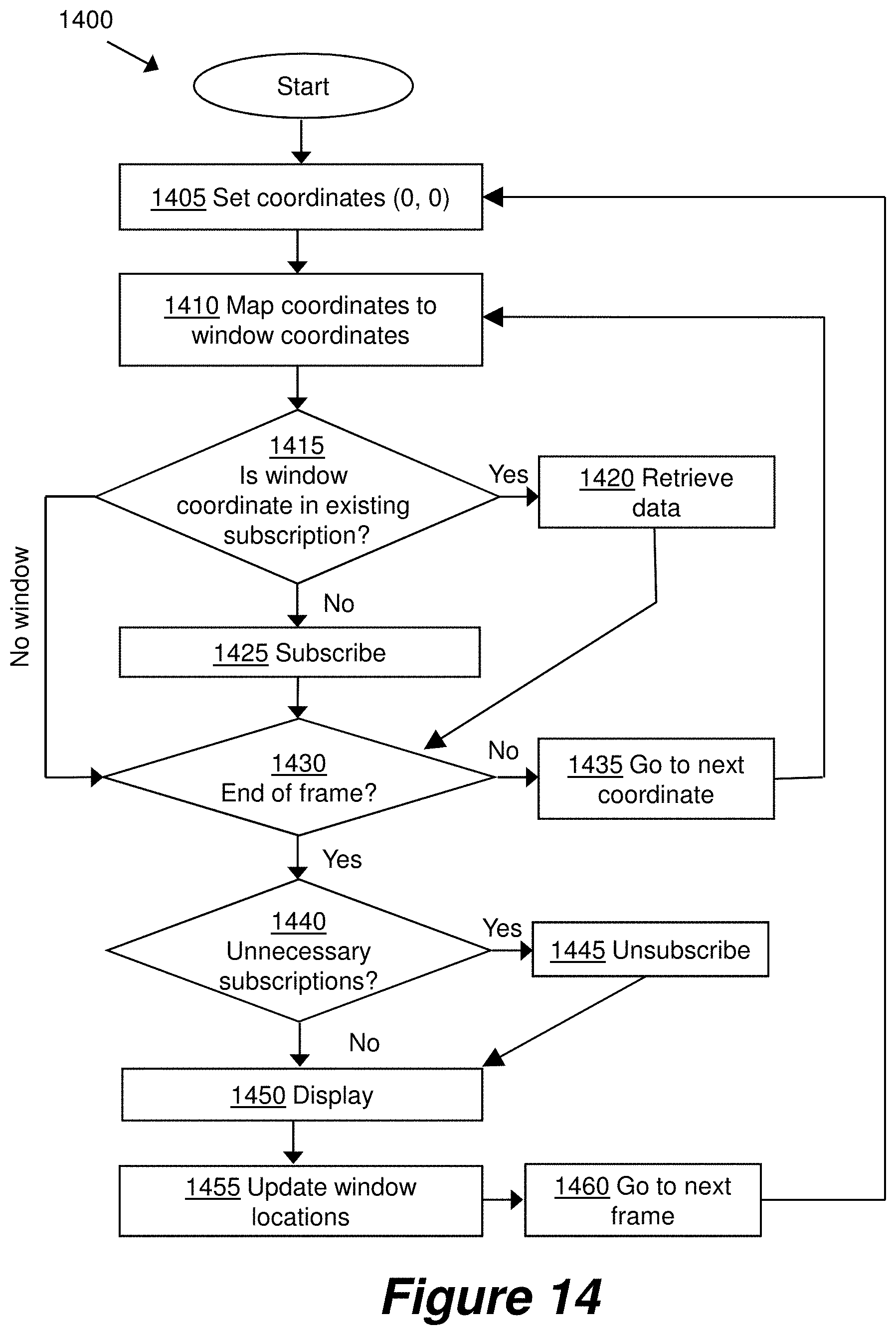

[0075] FIG. 14 shows an example procedure for a video output device to subscribe to streams according to an embodiment of the first aspect of the invention.

[0076] FIG. 15 shows an ordering of blocks of video frame data for transmission according to an embodiment of the first aspect of the invention.

[0077] FIG. 16 shows a division of video frame data for transmission and an example of a display layout according to an embodiment of the first aspect of the invention.

[0078] FIG. 17 shows a schematic representation of transmission of packets of video frame data according to an embodiment of the first aspect of the invention.

[0079] FIG. 18 shows a video wall system according to an embodiment of the second aspect of the invention.

[0080] FIG. 19 shows a video wall system according to an embodiment of the second aspect of the invention.

[0081] FIG. 20 shows a schematic representation of a method in a video client according to an embodiment of the second aspect of the invention.

[0082] FIG. 21 shows a schematic representation of a method of video frame data transmission in a video wall system according to an embodiment of the second aspect of the invention.

[0083] FIG. 22 shows a schematic representation of a request data packet according to an embodiment of the second aspect of the invention.

[0084] FIG. 23 shows an example of data flow in a video wall system according to an embodiment of the second aspect of the invention.

[0085] FIG. 24 shows an example of data flow in a video wall system according to an embodiment of the second aspect of the invention.

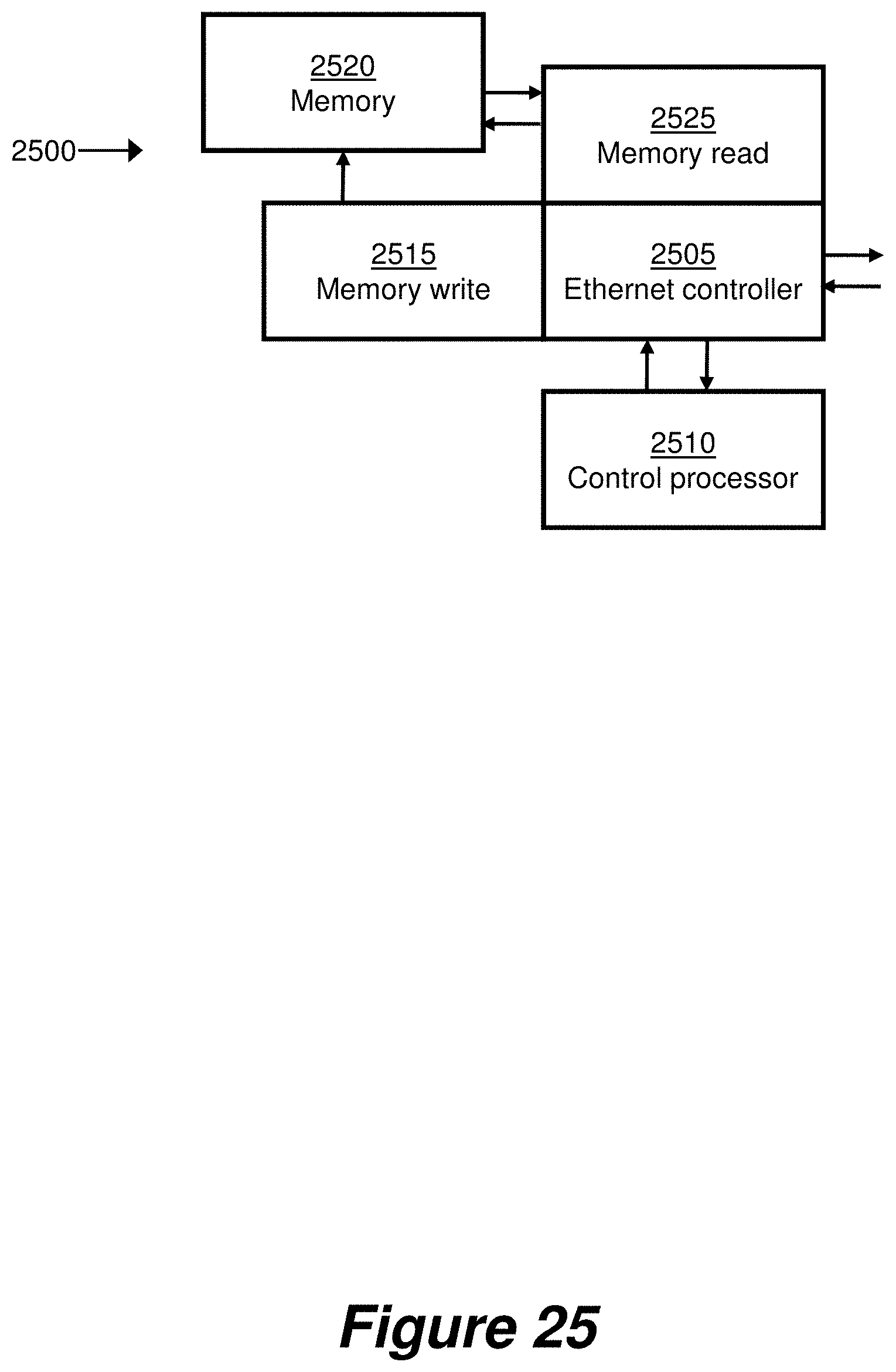

[0086] FIG. 25 shows a schematic representation of a video server according to an embodiment of the second aspect of the invention.

[0087] FIG. 26 shows an example process for mapping between a display and a video window according to an embodiment of the second aspect of the invention.

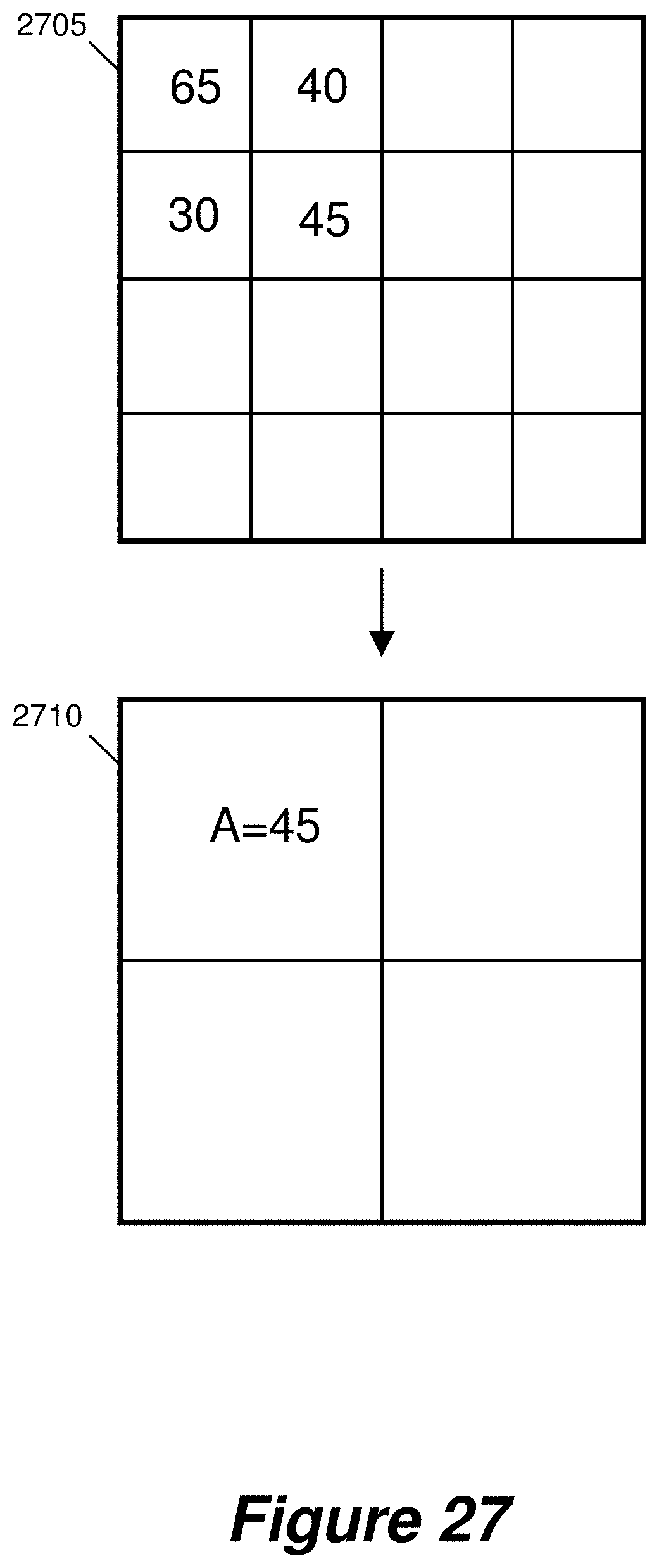

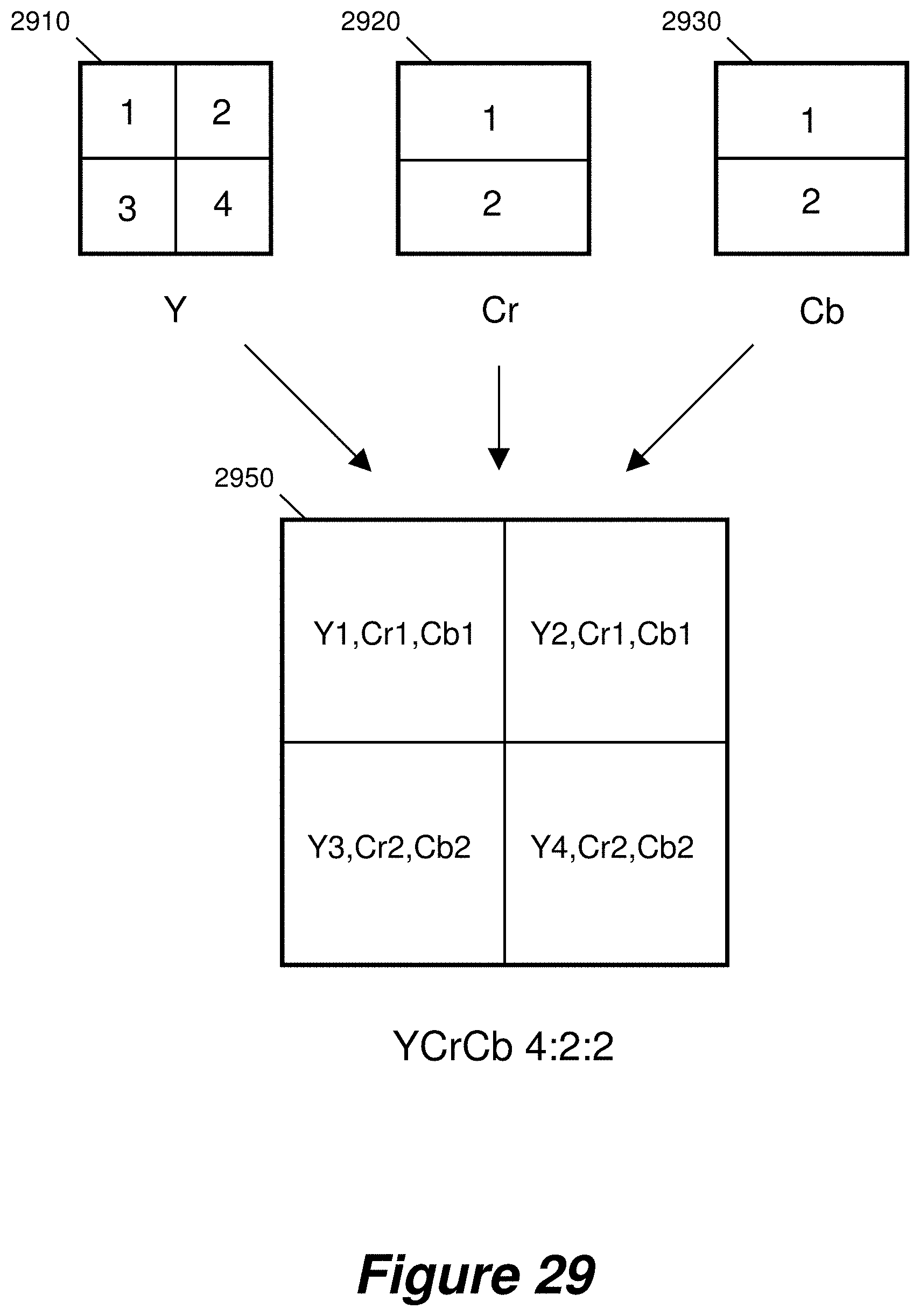

[0088] FIGS. 27 to 30 show example methods of downscaling video data.

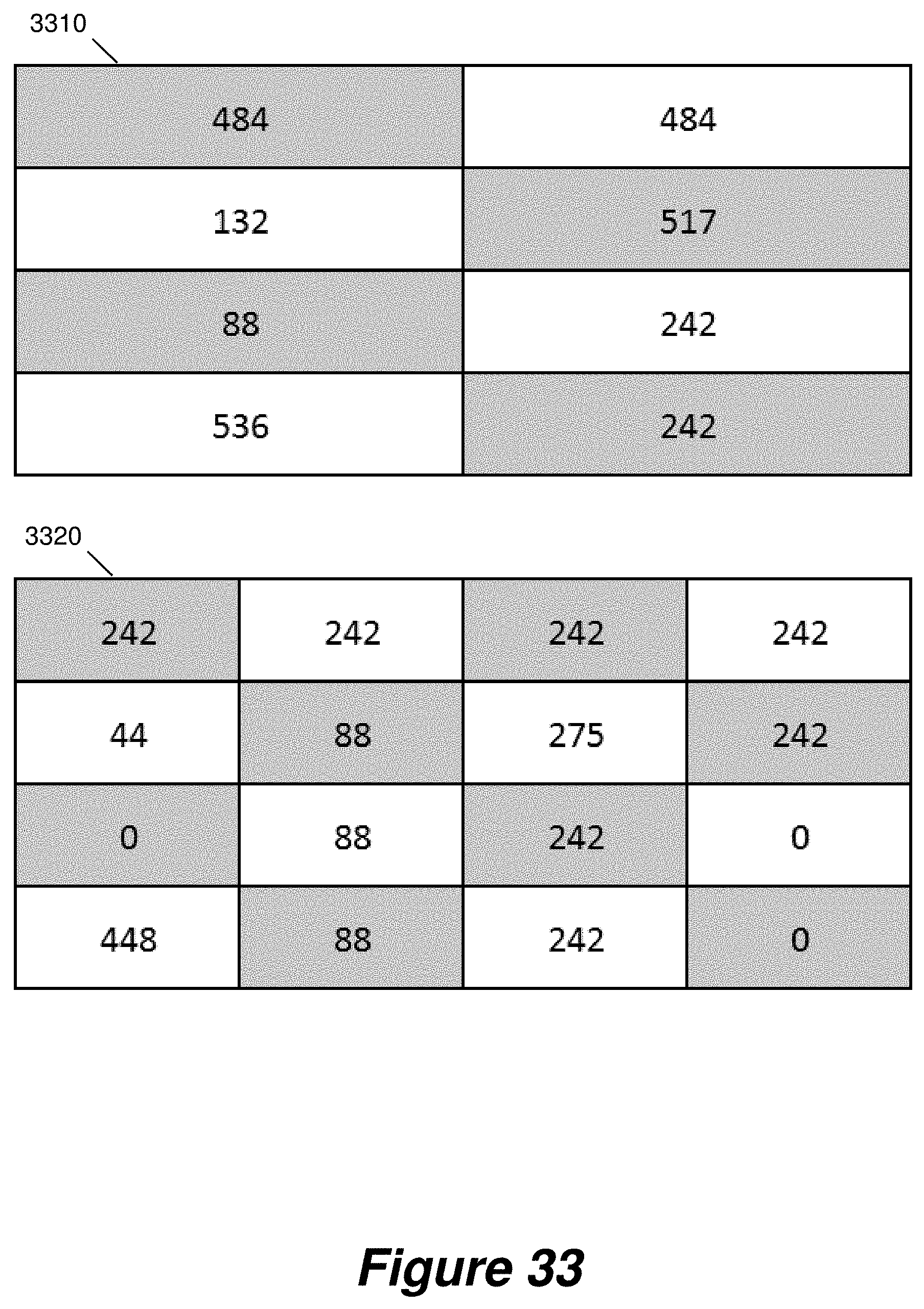

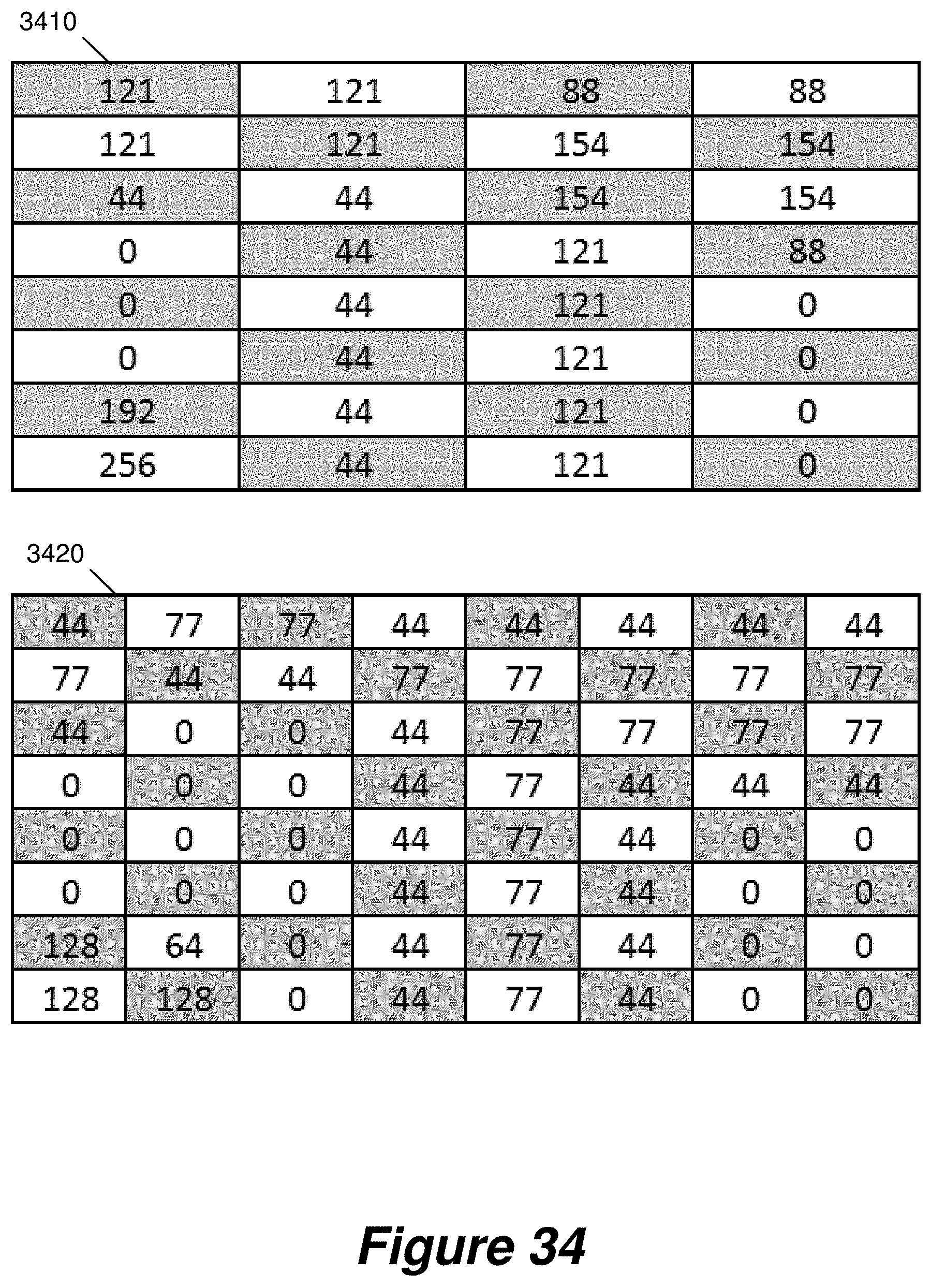

[0089] FIGS. 31 to 34 show a method of transmitting downscaled video data, according to an embodiment.

[0090] FIG. 35 shows a table of example efficiencies for transmitting downscaled video data.

DETAILED DESCRIPTION OF CERTAIN INVENTIVE EMBODIMENTS

[0091] In the following description, reference is made to videos and video data. In general, videos that are processed by computers and video processing devices can be thought of as a sequence of individual still images which are often referred to as video frames. Each image or video frame consists of a number of pixels which are typically arranged in a grid of horizontal rows and vertical columns. The number of horizontal rows (or lines) and vertical columns (or lines) determines a resolution characteristic of the image or frame as well as the corresponding video.

Multicast Video Wall

[0092] FIGS. 1 to 17 show examples and representations relating variously to a multicast aspect of the present invention. Referring to FIG. 1, there is shown an example video system 100. Links between entities of the system 100 may for example comprise 1G, 10G or 25G copper or fibre links.

[0093] The system 100 comprises a video wall 105 comprising an array of four display devices 105a-d arranged in a two-by-two layout. Although the video system 100 is shown as a video wall system, it will be appreciated that embodiments of the present disclosure are also applicable to other video systems including, for example, systems where a plurality of displays do not necessarily form a video wall. Example video systems include systems used in courtrooms, government assemblies, boardrooms, command & control centers, simulators, operating theatres, television studios, live stage events, casinos, and sports stadiums.

[0094] Each display device may, for example, be a computer monitor, a video projector or a television screen. The displays may be general purpose display devices or, alternatively, they may be display devices designed specifically for use in a video wall 105.

[0095] The system 100 comprises a network switch 107, such as a non-blocking network switch. A network switch includes a number of bidirectional input/output ports. A non-blocking network switch is one in which each port can typically pass data to every other port, and in which the internal bandwidth of the switch can typically handle the bandwidth of all ports simultaneously at full capacity. The switch 107 is configured to handle multicast subscription requests. The switch 107 may also be used to provide a master Dynamic Host Configuration Protocol (DHCP) server and/or time server for the system 100.

[0096] The video system 100 comprises one or more video sources 110. The video source 110 is coupled to the network switch 107 and together they are configured to provide a plurality of multicast streams via the network switch 107. Each multicast stream comprises video frame data corresponding to a portion of a video frame. For example, a video frame with a particular resolution may be divided into fixed-size lower-resolution portions as described in more detail below. Preferably, the video frame is divided in both the horizontal and vertical directions so that each portion of the video frame data corresponds to a block of pixels. Each block may be a rectangular block having the same aspect ratio as the original video frame. Alternatively, the blocks may be square blocks of pixels. Each portion of the video frame is then transmitted in a respective multicast stream.
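A minimal Python sketch of the division into blocks follows. It assumes equal-sized blocks listed in raster order with one multicast stream per block. The function name, and the use of a block's list position as its stream index, are illustrative assumptions. With the 8×8 grid used in the later example of a 1920×1080 frame, each 240×135 block keeps the 16:9 aspect ratio of the frame.

```python
from typing import List, Tuple

Block = Tuple[int, int, int, int]  # (x, y, width, height) in pixels


def partition_frame(frame_w: int, frame_h: int, cols: int, rows: int) -> List[Block]:
    """Split a frame into a cols x rows grid of equal blocks, in raster order.

    Each block would be carried by its own multicast stream; here the stream
    index is simply the block's position in the returned list (an assumption
    made for illustration).
    """
    if frame_w % cols or frame_h % rows:
        raise ValueError("frame does not divide evenly into the requested grid")
    bw, bh = frame_w // cols, frame_h // rows
    return [(c * bw, r * bh, bw, bh) for r in range(rows) for c in range(cols)]


blocks = partition_frame(1920, 1080, 8, 8)
print(len(blocks), blocks[0], blocks[9])  # 64 (0, 0, 240, 135) (240, 135, 240, 135)
```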

[0097] The portion of the video frame may be a region within the video frame, or an area within the video frame. If the video frame is available in different resolutions, the portion of a video frame may be a portion of a video frame at a particular resolution. If the video frame at a particular resolution has a particular number of pixels, then the portion of the video frame may be a portion, region or area of the video frame covering a subset of the full set of pixels. If the video frame at a particular resolution is represented by a particular set of video frame data, then the portion of the video frame may be a portion, region or area of the video frame incorporating a subset of that video frame data.

[0098] In some embodiments, a single video source 110 provides a single video for display on the video wall 105. In a further embodiment, the system 100 comprises a plurality of video sources 110, each of which is configured to provide respective video frame data for display on the video wall 105. Some or all of the plurality of sources may comprise separate hardware. Alternatively or additionally, some or all of the plurality of sources may comprise logically separate sources within the same source hardware. In this manner, a single physical video source 110 can provide multiple videos for simultaneous display on the video wall 105. In some embodiments wherein sources comprise separate hardware, multiple video sources 110 provide the same video frame data. Such an arrangement provides redundancy in the system so that a loss of video from one video source 110 can be compensated for by the provision of the same video from another video source.

[0099] In some embodiments, for example where provision of video frames would require particularly high bandwidth or processing power, a single source video can be divided, for example spatially, into separate videos, each corresponding to a region of the single source video. For example, video frames may be divided into a number of evenly spaced vertical strips. The separate videos can then be provided by physically separate video sources. This approach is useful for relatively high resolution source videos.

[0100] In the example shown in FIG. 1, the video source 110 provides a single video for display across the entire video wall 105 such that the top-left quarter of a given video frame is displayed on display device 105a, the top-right quarter is displayed on display device 105b, the bottom-left quarter is displayed on display device 105c and the bottom-right quarter is displayed on display device 105d.

[0101] The video source 110 may include a storage medium such as a hard disk or flash memory for storing a video file, which can be retrieved when a video needs to be output by the video source. The video could also originate from a live source such as a video from a video camera. Such a storage medium or live source of video can be local to the video source 110 hardware. For example, a video may be provided from a flash memory in the same cabinet as the video source 110. Alternatively, the storage medium or live source of video can be remote from the video source 110 hardware. For example, a live source may comprise a video camera coupled via a wireless link to the video source 110.

[0102] The video system 100 further comprises a plurality of video output devices 115a-d. Each video output device 115a-d is coupled to the network switch 107 and is also coupled to a corresponding display device 105a-d of the video wall 105. In some embodiments, a given video output device 115a-d is incorporated within the functionality of its corresponding display device 105a-d. For example, a display device 105a-d may comprise dedicated video output device hardware. Alternatively, a general purpose processor of a display device 105a-d may implement the functionality of a video output device 115a-d. Each video output device 115a-d is configured to transmit a subscription request to the network switch 107. The subscription request is for at least one of the plurality of multicast streams provided by the video source 110. The requested stream comprises video frame data that is for display on the display device 105a-d to which that video output device 115a-d is coupled. As an example, video output device 115a, associated with the top-left display 105a, would transmit a subscription request for at least one multicast stream corresponding to the top-left quarter of the video frame.
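One way a video output device might decide which streams to request is sketched below in Python. It assumes fixed-size blocks numbered in raster order (a hypothetical numbering) and a display that shows a known rectangle of the frame. Using the FIG. 1 layout with a 1920×1080 frame cut into 240×135 blocks, the top-left display 105a needs sixteen streams.

```python
from typing import List


def streams_for_display(x0: int, y0: int, x1: int, y1: int,
                        block_w: int, block_h: int, cols: int) -> List[int]:
    """Return raster-order indices of the blocks overlapping a display region.

    The region [x0, x1) x [y0, y1) is the part of the video frame shown on one
    display; block (bx, by) has stream index by * cols + bx (hypothetical numbering).
    """
    first_bx, last_bx = x0 // block_w, (x1 - 1) // block_w
    first_by, last_by = y0 // block_h, (y1 - 1) // block_h
    return [by * cols + bx
            for by in range(first_by, last_by + 1)
            for bx in range(first_bx, last_bx + 1)]


# Top-left display 105a of a 2x2 wall showing a 1920x1080 frame cut into 240x135 blocks.
top_left = streams_for_display(0, 0, 960, 540, 240, 135, cols=8)
print(len(top_left), top_left[:5])  # 16 [0, 1, 2, 3, 8]
```

When a display edge does not fall on a block boundary, the partially covered blocks are included as well, which is why the output device may later discard part of what it receives.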

[0103] In an embodiment, a video output device 115a-d is configured to subscribe to at least one further stream of the plurality. Each further stream comprises video frame data that is for display on the corresponding display 105a-d, such that the requested streams together provide the entirety of the video frame data that is for display on the display device 105a-d. In an example, a video output device 115a-d may submit separate subscription requests for each such stream. Alternatively, a video output device 115a-d may submit a single subscription request for multiple streams.

[0104] In some embodiments, the multicast streams are managed by a stream controller 120. Each video source 110 transmits to the stream controller 120 a description of the streams that it provides. The description may for example include a unique identifier of each stream and details of the specific portion of the video frame to which each stream relates. The stream controller 120 then transmits such a description to each video output device 115a-d. Each video output device 115a-d can thus determine which streams it should subscribe to, as described in more detail below.

[0105] In other embodiments, the system 100 does not comprise a stream controller 120. In such embodiments, each video source 110 broadcasts a description of the streams that it provides to each video output device 115a-d. As above, the description may include a unique identifier of each stream and details of the specific portion of the video frame to which each stream relates. Each video output device 115a-d can thus receive the broadcast stream descriptions and hence determine which streams it should subscribe to.

[0106] The network switch 107 is configured to receive subscription requests. In response to receiving a subscription request from a video output device 115a-d, the network switch transmits each requested stream to that video output device 115a-d. A given video output device 115a-d thus only receives video frame data based on the portion or portions to which it subscribes. Hence, the system 100 avoids the need to transmit the entirety of the video frame data to each video output device. As such, the overall bandwidth requirements of the system 100 can be reduced.

[0107] By using a network switch to distribute video streams to different video output devices, as well as to manage streaming requests, the number of components of the system 100 can be kept low. For example, the system 100 may be configured with the network switch, the video source(s) 110 and the video output devices, but without any separate video servers to receive video frame data from the video source 110 and provide the data to the video output devices 115a-d.

[0108] It has been observed that, in systems in which the entire video frame is sent to each video output device 115a-d, the required network bandwidth increases with the number of displays. Similarly, the required processing power of a video source 110 increases with the number of displays. This can increase to the point that no further displays can be added to the video wall. The present system 100 enables pixel data of a video frame, provided by a video source 110, to be transmitted only to those video output devices 115a-d that require that pixel data for their respective displays 105a-d. The system 100 also allows a multicast stream for a portion of the video frame that is displayed partly on one display and partly on one or more further displays to be subscribed to by the video output devices of all of those displays. Furthermore, in the case of edge blending, where two or more projectors acting as display devices provide partially overlapping video output, the system 100 enables the output of overlapping video by providing pixel data for the overlapping portion to the two or more projectors.

[0109] As such, the system 100 enables the bandwidth of video frame data transmitted from the video source 110 to each video output device 115a-d, via the network switch 107, to be significantly lower than the bandwidth required for transmitting a complete version of the video frame data from the video source 110 to each video output device 115a-d, regardless of how many displays 105a-d the video frame is spread across. Additional displays, with corresponding video output devices, can thus be added to the system without requiring additional or upgraded video source hardware. This remains true if such an additional display is physically remote from the rest of the network hardware, although it may then be necessary to connect the additional display via upgraded connection hardware capable of transmission over an extended distance.
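The saving can be illustrated with simple arithmetic. The Python sketch below assumes uncompressed 1920×1080 video at 60 frames per second with 24 bits per pixel (8-bit 4:4:4) and ignores blanking and packet overhead; these figures are assumptions for illustration and are not taken from the table of FIG. 13.

```python
def uncompressed_bps(width: int, height: int, bits_per_pixel: int, fps: float) -> float:
    """Raw video bit rate in bits per second, ignoring blanking and packet overhead."""
    return width * height * bits_per_pixel * fps


frame_rate = uncompressed_bps(1920, 1080, 24, 60)  # assumed 8-bit 4:4:4, 60 fps
displays = 4

# Unicast of the complete frame to every output device grows with the display count.
full_frame_to_each = frame_rate * displays
# Multicast of one stream per portion: the source sends each portion once,
# and each output device of a 2x2 wall receives only its quarter.
source_total = frame_rate
per_device = frame_rate / displays

print(f"{full_frame_to_each / 1e9:.2f} Gb/s vs {source_total / 1e9:.2f} Gb/s "
      f"({per_device / 1e9:.2f} Gb/s per device)")
# 11.94 Gb/s vs 2.99 Gb/s (0.75 Gb/s per device)
```

Adding a fifth display raises the unicast figure by another full frame, while the source's multicast output stays at one frame.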

[0110] Suitably, the number of videos and/or the resolution of video available in the system 100 can be increased by upgrading individual video source 110 devices so that they are capable of handling increased bandwidth. Additionally or alternatively, the number of videos and/or the resolution of video available in the system 100 can be increased by adding additional video source 110 devices.

[0111] Typically, a video output device 115a-d will be capable of outputting video frame data at the maximum resolution of its corresponding display 105a-d. Therefore, even if the number of videos and/or the resolution of video available in the system 100 is increased, this would typically not require an upgrade of the video output devices 115a-d.

[0112] After receiving the video frame data from the network switch 107, each video output device 115a-d then outputs display data, based on the received video frame data, to its corresponding display 105a-d. For example, the display data may comprise data in accordance with the HDMI standard, the DVI standard, or the VGA standard. In some examples, a video output device 115a-d may produce the display data by identifying a part of the received video frame data as not being for display, and producing the display data so as to exclude the identified part of the received video frame data. For example, where each multicast stream corresponds to a fixed-size block of a video frame, a given video output device 115a-d subscribes to streams such that the blocks corresponding to each requested stream together include the portion of the video frame that is for display on its corresponding display 105a-d. The received blocks may also cover further portions of the video frame, adjoining the portion that is for display. The video output device 115a-d may thus identify such further portions as not for display, and produce the display data omitting such further portions.
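The step of excluding received data that is not for display can be sketched in Python as below. The sketch assumes that received blocks are keyed by their grid position and that the display shows a known rectangle of the frame; `display_data` is a hypothetical name, and a per-pixel loop is used for clarity rather than speed.

```python
from typing import Dict, List, Tuple

Pixels = List[List[int]]


def display_data(blocks: Dict[Tuple[int, int], Pixels], block_w: int, block_h: int,
                 x0: int, y0: int, width: int, height: int) -> Pixels:
    """Build one display's pixels from received blocks, dropping parts not for display.

    blocks maps (bx, by) grid positions to block pixel rows; the display shows
    the frame region starting at (x0, y0) with the given width and height.
    """
    out = []
    for y in range(y0, y0 + height):
        row = []
        for x in range(x0, x0 + width):
            block = blocks[(x // block_w, y // block_h)]
            row.append(block[y % block_h][x % block_w])
        out.append(row)
    return out


# A 4x4 frame (pixel value = 10 * row + column) received as four 2x2 blocks.
frame = [[10 * y + x for x in range(4)] for y in range(4)]
blocks = {(bx, by): [row[2 * bx:2 * bx + 2] for row in frame[2 * by:2 * by + 2]]
          for bx in range(2) for by in range(2)}
# The display covers the centre 2x2 region, which straddles all four blocks.
print(display_data(blocks, 2, 2, 1, 1, 2, 2))  # [[11, 12], [21, 22]]
```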

[0113] In some embodiments, when a video output device 115a-d does not receive a given block, for example due to network errors, the video output device 115a-d can interpolate the missing data from surrounding pixel blocks. Alternatively, the video output device 115a-d may replace the missing block with a corresponding block from the previous frame, for example held in a buffer. Provided that only a small number of blocks are missing, such errors are typically not very noticeable on a display.
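The previous-frame substitution can be sketched in Python as follows. The sketch assumes blocks keyed by grid position, with `None` marking a block that did not arrive; `conceal_missing` is a hypothetical name, and interpolation from neighbouring blocks is not shown.

```python
from typing import Dict, List, Optional, Tuple

Pixels = List[List[int]]
BlockKey = Tuple[int, int]


def conceal_missing(received: Dict[BlockKey, Optional[Pixels]],
                    previous: Dict[BlockKey, Pixels]) -> Dict[BlockKey, Pixels]:
    """Replace blocks that did not arrive (None) with the same block of the previous frame."""
    out = {}
    for key, block in received.items():
        if block is not None:
            out[key] = block
        elif key in previous:
            out[key] = previous[key]  # reuse the buffered block from the last frame
        else:
            raise KeyError(f"block {key} missing and no previous frame available")
    return out


prev = {(0, 0): [[1]], (1, 0): [[2]]}
cur = {(0, 0): [[5]], (1, 0): None}
print(conceal_missing(cur, prev))  # {(0, 0): [[5]], (1, 0): [[2]]}
```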

[0114] In some embodiments, multiple switches 107 can be used. In an example video wall 105 in which the total required bandwidth, for all displayed videos, does not exceed 10 Gb/s, the one or more video sources 110 may be linked to a single 10 Gb/s switch. This is then linked via a single 10 Gb/s link to a similar switch, to which all video output devices 115a-d are connected. For large video walls 105, multiple switches could be configured, with each switch being connected to a subset of the video output devices 115a-d. In some embodiments in which multiple switches are used, each switch is located physically near the video output devices 115a-d and/or video sources 110 to which it is connected. The switches may then be connected to each other via a fast interconnection, such as a fibre interconnection. In such examples, it is preferable for the interconnection to be capable of supporting non-blocking usage over all connected ports. The interconnection should preferably be capable of handling the greater of the total video output device 115a-d bandwidth and the total video source 110 bandwidth.

[0115] As a further example of network hardware, two or more connections could be used to send video frame data from the network switch 107 to a given video output device 115a-d. Such a configuration is particularly useful in embodiments such as control rooms, wherein multiple lower resolution sources are displayed on a higher resolution display, as this configuration allows a higher resolution display to be used since more video frame data can be transmitted from the network switch 107 to a given video output device 115a-d. Alternatively, a single high-bandwidth connection could be used instead of two or more lower-bandwidth connections.

[0116] Alternatively or additionally, two or more connections could be used to transmit multicast streams from a video source 110 to the network switch 107. This configuration is particularly advantageous in embodiments where the resolution of the video from the video source 110 is higher than the display resolution, as it allows a higher resolution video frame to be displayed on the video wall 105. Alternatively, a single high-bandwidth connection could be used instead of two or more lower-bandwidth connections.

[0117] A further advantage of the use of two connections to video output devices 115a-d and/or video sources 110 is that such a system could, for example in response to a network issue, use only one of the two connections. Such a system thus provides redundancy in case of network faults. As an example, when two links from a video source 110 are in use, video frame data could be streamed at a lower compression ratio and when a single link is in use, video frame data could be streamed at a higher compression ratio. Switching between these modes of operation may be triggered by automated sensing of a network issue, for example by further network hardware such as a network controller.

[0118] FIG. 2 shows a schematic representation of a method 200, in a video output device, for acquiring video data for outputting to a display according to an aspect of the present disclosure.

[0119] The method 200 comprises a subscribing step 205 of subscribing to a multicast stream of a plurality of multicast streams. Each multicast stream is streamed from a video source and comprises video frame data corresponding to a portion of a video frame. The multicast stream to which the video output device subscribes comprises video frame data that is for display on a display associated with the video output device.

[0120] The method then comprises a receiving step 210 of receiving the video frame data that is for display on the display.
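On an IP network, steps 205 and 210 could be realised with the standard sockets API, assuming that the network switch implements IGMP snooping (one common way for a switch to handle multicast subscription requests). In the Python sketch below, joining a group causes the host's IGMP stack to send the membership report, and reading from the socket receives the stream. The group address and port in the usage note are hypothetical values; in the system described they would come from the stream descriptions of [0104] or [0105].

```python
import socket
import struct


def membership_request(group: str, interface: str = "0.0.0.0") -> bytes:
    """Pack a struct ip_mreq: multicast group address, then local interface address."""
    return struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton(interface))


def subscribe(group: str, port: int, interface: str = "0.0.0.0") -> socket.socket:
    """Step 205 (sketch): open a UDP socket and join one multicast stream.

    The operating system then emits the IGMP membership report that a
    snooping switch uses to start forwarding the stream to this port.
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP,
                    membership_request(group, interface))
    return sock
```

For example, `sock = subscribe("239.1.1.1", 5004)` followed by `data, _ = sock.recvfrom(65535)` would correspond to step 210; one such socket (or one join per group on a shared socket) would be used for each block stream the device needs.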

[0121] FIG. 3 shows a schematic representation of a similar method 300 of video frame transmission in a video system. The system, as described above in connection with FIG. 1, comprises at least one video source 305, a network switch 310 and at least one video output device 315. The method comprises data flow between a given video source 305 and a given video output device 315 via the network switch 310.

[0122] The video source 305 transmits to the network switch 310 a plurality of multicast streams 325. As set out above, each multicast stream comprises video frame data corresponding to a portion of a corresponding video frame.

[0123] The video output device 315 transmits to the network switch 310 a subscription request 330 for at least one of the plurality of streams. Each requested stream comprises video frame data that is for display on a display device associated with the video output device.

[0124] In response to receiving a said subscription request, the network switch 310 transmits each requested stream 335 to the video output device 315.

[0125] FIG. 4 shows an example of data flow in a video system. The system comprises video sources 405, 410 and video output devices 415, 420, connected to each other via a network switch 425. Video source 405 provides multicast streams 405a-c to the network switch 425, each of which relates to a portion of a first video. Video source 410 provides multicast streams 410a-c to the network switch 425, each of which relates to a portion of a second video. Multicast streams 405a and 405b correspond to portions of the first video that are for display on the display associated with video output device 415. Multicast streams 405b and 405c correspond to portions of the first video that are for display on the display associated with video output device 420. Multicast streams 410a and 410c correspond to portions of the second video that are for display on the display associated with video output device 420. Multicast stream 410b corresponds to a portion of the second video that is not for display on the display of either output device 415, 420.

[0126] Video output device 415 transmits to the network switch 425 one or more subscription requests 430 identifying streams 405a and 405b. A single subscription request 430 may be transmitted, identifying both streams 405a and 405b. Alternatively, video output device 415 may transmit separate subscription requests 430 for each stream 405a, 405b.

[0127] Similarly, video output device 420 transmits to the network switch 425 one or more subscription requests 435 identifying streams 405b, 405c, 410a, 410c. A single subscription request 435 may be transmitted, identifying all four streams 405b, 405c, 410a, 410c. Alternatively, video output device 420 may transmit one request 435 for streams 405b, 405c from video source 405, and a second request for streams 410a, 410c from video source 410. As a further example, separate subscription requests 435 may be transmitted for each stream 405b, 405c, 410a, 410c.

[0128] Dashed lines within the switch 425 indicate data flow from video sources 405, 410 to video output devices 415, 420 via the switch 425. In response to receiving the above-described subscription requests, the switch 425 transmits each requested stream to the corresponding video output devices 415, 420. Specifically, the switch 425 transmits streams 405a, 405b to video output device 415, and transmits streams 405b, 405c, 410a, 410c to video output device 420.

[0129] FIG. 5 shows a schematic representation of a subscription request data packet 500 that is transmitted from a video output device to a network switch according to an embodiment. In some embodiments, the packet may be structured according to a standard multicast protocol, for example the Internet Group Management Protocol (IGMP).

[0130] The packet 500 comprises a network address 515, for example a multicast group IP address, of the source of the video data to which the subscription request relates. In some embodiments, a stream controller receives details of each available stream, including the corresponding multicast group addresses, from each video source. The stream controller transmits these details to each video output device. In other embodiments, video sources broadcast details of available streams to each video output device. Each video output device can thus maintain a record of the available streams, including the multicast group address of each stream.

[0131] The packet 500 comprises a network address 520 of the video output device from which the subscription request originates.

[0132] In some embodiments, the packet 500 further comprises a field 525 identifying the packet as a subscription request. In some examples, the network address 515 is sufficient to identify the packet 500 as a subscription request. In such examples, the packet 500 does not include the identification field 525.

[0133] The packet 500 then comprises a checksum 530 to enable the network switch to detect errors in the packet 500. For example, the checksum may comprise the 16-bit one's complement of the one's complement sum of the entire packet 500.
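The checksum described is the Internet checksum also used by IGMP. A minimal Python sketch follows; the example message is an 8-byte IGMPv2 membership report for a hypothetical group 239.1.1.1, with the checksum field set to zero while the checksum is computed.

```python
def internet_checksum(packet: bytes) -> int:
    """16-bit one's complement of the one's complement sum of 16-bit words.

    The checksum field itself must be zero while the checksum is computed.
    """
    if len(packet) % 2:
        packet += b"\x00"  # pad odd-length data with a zero byte
    total = 0
    for i in range(0, len(packet), 2):
        total += (packet[i] << 8) | packet[i + 1]  # big-endian 16-bit words
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)  # fold carries back in (end-around carry)
    return ~total & 0xFFFF


# IGMPv2 membership report: type 0x16, max response time 0, checksum 0, group 239.1.1.1.
report = bytes([0x16, 0x00, 0x00, 0x00, 239, 1, 1, 1])
print(hex(internet_checksum(report)))  # 0xf9fc
```

On receipt, the switch can recompute the checksum over the packet with the transmitted checksum in place; a result of zero indicates that no error was detected.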

[0134] A network switch is configured to identify the packet 500 as a subscription request, for example by identifying the network address 515 as a multicast group address or by way of the identification field 525 identifying the packet as a subscription request. The network switch is configured to maintain a record of subscriptions. The network switch receives each multicast stream from each video source and, based on the record of subscriptions, forwards each stream to the video output devices that have subscribed to that stream.
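The switch's record of subscriptions can be modelled as a map from each stream to the set of subscribed output devices. The Python sketch below is a toy model rather than a real switch API: `SubscriptionTable` is a hypothetical name, and stream and device labels reuse the reference numerals of FIG. 4.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set


class SubscriptionTable:
    """Toy model of a switch's record of subscriptions (not a real switch API)."""

    def __init__(self) -> None:
        self._members: Dict[str, Set[str]] = defaultdict(set)

    def subscribe(self, device: str, streams: Iterable[str]) -> None:
        """Handle one subscription request naming one or more streams."""
        for stream in streams:
            self._members[stream].add(device)

    def forward(self, stream: str) -> List[str]:
        """Return the output devices that a packet of this stream is copied to."""
        return sorted(self._members.get(stream, set()))


# The subscriptions of FIG. 4.
table = SubscriptionTable()
table.subscribe("415", ["405a", "405b"])
table.subscribe("420", ["405b", "405c"])
table.subscribe("420", ["410a", "410c"])
print(table.forward("405b"))  # ['415', '420']
print(table.forward("410b"))  # []
```

Stream 405b is copied to both devices, while stream 410b, to which nobody has subscribed, is not forwarded at all.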

[0135] In some examples, the packet 500 comprises an identification of multiple streams to which the subscription request relates. For example, the packet 500 may identify multiple multicast group addresses. In one such example, the packet 500 comprises a field identifying the bit length of the packet and/or the number of streams to which the request relates.
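The fields of packet 500 described in [0130] to [0135] can be illustrated with a byte layout. The layout below is purely hypothetical (it is not the IGMP wire format): a type byte standing in for identification field 525, a reserved byte, the 16-bit checksum 530, a group count (the optional count field of [0135]), the reporter address 520 and one or more group addresses 515. The checksum is the 16-bit one's complement of the one's complement sum described in [0133], computed with the checksum field set to zero.

```python
import ipaddress
import struct

# Hypothetical layout (not IGMP, not taken from the source):
#   type (1 byte) | reserved (1) | checksum (2) | group count (2) | reporter IPv4 (4) | groups (4 each)
SUBSCRIBE_TYPE = 0x01  # hypothetical value for identification field 525
HEADER = struct.Struct("!BBHH4s")


def internet_checksum(data: bytes) -> int:
    """16-bit one's complement of the one's complement sum of `data`."""
    if len(data) % 2:
        data += b"\x00"  # pad to a whole number of 16-bit words
    total = sum(word for (word,) in struct.iter_unpack("!H", data))
    while total >> 16:  # fold carries back into the low 16 bits
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def build_subscription_request(reporter: str, groups: list) -> bytes:
    """Build packet 500 for one or more multicast group addresses."""
    packed_groups = []
    for group in groups:
        addr = ipaddress.IPv4Address(group)
        if not addr.is_multicast:
            raise ValueError(f"{group} is not a multicast group address")
        packed_groups.append(addr.packed)
    reporter_addr = ipaddress.IPv4Address(reporter).packed
    # Sum with the checksum field zeroed, then patch the result into bytes 2..3.
    unsummed = HEADER.pack(SUBSCRIBE_TYPE, 0, 0, len(groups), reporter_addr) + b"".join(packed_groups)
    checksum = internet_checksum(unsummed)
    return unsummed[:2] + struct.pack("!H", checksum) + unsummed[4:]
```

A receiver such as the network switch can validate the packet by recomputing the checksum over the whole packet, checksum included: an error-free packet gives 0.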

[0136] FIG. 6 shows a schematic representation of a video source 600 according to one embodiment. The source 600 comprises various modules. Any or all of these modules could, either alone or in any combination, be implemented by dedicated circuitry, for example one or more field-programmable gate arrays, or be implemented by routines in one or more general processors.

[0137] The source 600 comprises an input 605 to receive video data, for example from a storage medium such as a hard disk or solid-state memory, or from a live video feed.

[0138] In some examples, video frame data is stored as block-by-block video data. The video frame data is then received at the input as block-by-block data, with each block of a given frame to be sent in a separate multicast stream. Alternatively, video frame data may be stored as line-by-line video frame data and converted by a module of the video source 600 to block-by-block video frame data, with each block corresponding to a separate multicast stream. As an example of such a conversion, 135 lines of video frame data may be cached and, from this, blocks of 240×135 pixels may be determined. A 1920×1080 pixel frame may thus be converted into an 8×8 grid of blocks. This allows the block size to be modified on the fly, for example in response to a command from a stream controller.
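The line-to-block conversion in [0138] can be sketched as a generator that caches one band of lines at a time. This is a minimal sketch, assuming pixels are plain integers and that the function name `lines_to_blocks` is hypothetical; block width and height are parameters, which is what allows the block size to be changed on the fly.

```python
from typing import Iterable, Iterator


def lines_to_blocks(lines: Iterable, width: int,
                    block_w: int = 240, block_h: int = 135) -> Iterator:
    """Convert line-by-line frame data into (bx, by, block) tuples.

    Only `block_h` lines are cached at a time; each completed band is cut into
    width // block_w blocks, one per multicast stream.
    """
    if width % block_w:
        raise ValueError("frame width must be a multiple of the block width")
    cache = []
    by = 0
    for line in lines:
        if len(line) != width:
            raise ValueError("unexpected line length")
        cache.append(line)
        if len(cache) == block_h:
            for bx in range(width // block_w):
                yield bx, by, [row[bx * block_w:(bx + 1) * block_w] for row in cache]
            cache = []
            by += 1
    if cache:
        raise ValueError("frame height must be a multiple of the block height")
```

Fed a 1920×1080 frame, the generator yields 64 blocks of 240×135 pixels, indexed bx = 0..7 and by = 0..7, since 1920 / 240 = 8 and 1080 / 135 = 8.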

[0139] In some examples, block-by-block video frame data is stored such that a given block is stored in successive memory locations of a bank of a memory, with adjacent blocks being stored in different banks of the memory. A given block can thus be rapidly read out from a bank of the memory. In embodiments wherein adjacent blocks are streamed in succession, this arrangement facilitates rapid access to adjacent blocks as one bank of the memory can be read while another is being opened or closed. An example of such block-based storage is described in more detail in European patent EP2446413B1, the contents of which are incorporated herein by reference.
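One way to satisfy the storage rule of [0139] is a skewed modulo assignment of blocks to banks. The sketch below assumes a hypothetical four-bank memory and the formula bank = (bx + 2·by) mod 4; it is an illustration only and is not necessarily the scheme of EP2446413B1. With four banks, horizontally, vertically and diagonally adjacent blocks always land in different banks, and each block occupies successive locations inside its bank.

```python
N_BANKS = 4  # hypothetical bank count


def block_location(bx: int, by: int, blocks_per_row: int, block_bytes: int) -> tuple:
    """Return (bank, byte offset within bank) for block (bx, by).

    bank = (bx + 2*by) mod 4 separates all adjacent blocks; within a bank,
    blocks are packed contiguously, `block_bytes` each.
    """
    if blocks_per_row % N_BANKS:
        raise ValueError("blocks_per_row must be a multiple of N_BANKS")
    bank = (bx + 2 * by) % N_BANKS
    # For a fixed bank and row, the bx values share one residue mod 4, so bx // 4 is unique.
    slot = by * (blocks_per_row // N_BANKS) + bx // N_BANKS
    return bank, slot * block_bytes
```

For the 8×8 grid of 240×135 blocks, each bank holds 16 blocks, and a reader streaming blocks in raster order alternates banks on every step.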

[0140] The source 600 comprises a sampling rate and colour conversion module 610, configured to convert the received video to a data rate and/or colour space that is suitable for providing via the network switch to video output devices.
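As one concrete example of the colour space conversion in [0140], module 610 could convert R'G'B' to Y'CbCr. The choice of ITU-R BT.709 coefficients and 8-bit limited-range quantisation below is an assumption made for illustration; the source does not name a colour space.

```python
def rgb_to_ycbcr709_8bit(r: float, g: float, b: float) -> tuple:
    """Convert normalised R'G'B' (0..1) to 8-bit limited-range BT.709 Y'CbCr."""
    y = 0.2126 * r + 0.7152 * g + 0.0722 * b
    cb = (b - y) / 1.8556  # scaled so that Cb spans -0.5..0.5
    cr = (r - y) / 1.5748  # scaled so that Cr spans -0.5..0.5
    # Limited ("video") range: Y' in 16..235, Cb/Cr in 16..240 centred on 128.
    return round(16 + 219 * y), round(128 + 224 * cb), round(128 + 224 * cr)
```

Under this mapping white becomes (235, 128, 128), black (16, 128, 128) and pure red (63, 102, 240).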

[0141] The source 600 comprises a scaling module 615 for downscaling the video data. The scaling module 615 may for example perform block-based downscaling, downscaling each block of video frame data to provide multiple streams for each block, each having a different overall downscaling factor and/or chroma subsampling scheme. The downscaled block data is stored in a block memory 620.
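The block-based downscaling of [0141] can be sketched with a simple box filter, producing one downscaled copy per factor so that each copy could feed its own multicast stream. The box filter and the factor set (1, 3, 5, 15) are assumptions: those factors are chosen only because they divide both 240 and 135. Chroma subsampling is not shown.

```python
def box_downscale(block: list, factor: int) -> list:
    """Average each factor x factor cell of `block` into one output pixel."""
    h, w = len(block), len(block[0])
    if h % factor or w % factor:
        raise ValueError("block dimensions must be divisible by the factor")
    return [
        [
            sum(block[yy][xx] for yy in range(y, y + factor) for xx in range(x, x + factor)) / factor ** 2
            for x in range(0, w, factor)
        ]
        for y in range(0, h, factor)
    ]


def downscaled_streams(block: list, factors=(1, 3, 5, 15)) -> dict:
    """One downscaled copy of the block per factor (hypothetical stream set)."""
    return {f: box_downscale(block, f) for f in factors}
```

A 240×135 block thus yields copies of 240×135, 80×45, 48×27 and 16×9 pixels, all of which would be held in block memory 620.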

[0142] The source 600 comprises an encryption module 625, configured to encrypt the downscaled video frame data into a format suitable for transmission via the network switch to the video output devices.

[0143] The source 600 comprises a control processor 630 configured to control an ethernet controller 635 to transmit the encrypted video frame data to the network switch.

[0144] FIG. 7 shows a schematic representation of a network switch 700 according to an embodiment. The switch 700 comprises various modules. Any or all of these modules could, either alone or in any combination, be implemented by dedicated circuitry, for example one or more field-programmable gate arrays, or be implemented by routines in one or more general processors.

[0145] The switch 700 comprises ports 705 for receiving multicast streams from one or more video sources. For example, if the switch 700 is an ethernet switch, the ports 705 are ethernet ports.

[0146] The switch 700 further comprises ports 710 for receiving subscription requests from a plurality of video display devices. Although, for ease of explanation, the ports 705 and the ports 710 are shown as distinct in FIG. 7, the ports 705 are not specifically optimised for connection to video sources and the ports 710 are not specifically optimised for connection to display devices. A video source could be connected to a port 710 and/or a video display device could be connected to a port 705.

[0147] One or more of the ports 705 and the ports 710 may include buffers for buffering data going to the port (an input buffer) or going from the port (an output buffer). The buffers may be provided by dedicated memory for each port or by logical allocations from a common memory in the switch.

[0148] In one embodiment of the present invention, the video frame data is split into multiple data packets, each of which may be in the form of a wavelet. A wavelet results from a wavelet transformation of the data. In wavelet compression, a type of image data compression, each successive wavelet transformation provides a successively lower resolution video data wavelet, which can be sent in a different multicast group. Thus, the video frame data comprises multiple wavelets of decreasing resolution. Combining all wavelets that constitute the video frame data reconstructs the original resolution, whereas combining fewer (lower) wavelet levels reconstructs a lower resolution version. Therefore, the resolution of the final received and reconstructed image can be varied by subscribing to different wavelet multicast groups. An example of such data compression is described in more detail in U.S. Pat. No. 5,682,441A.
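The multi-resolution idea in [0148] can be illustrated with the simplest wavelet, an unnormalised (averaging) 2-D Haar transform. Haar is swapped in here for illustration and is not necessarily the transform of U.S. Pat. No. 5,682,441A. Each detail group returned by `decompose` is the kind of data that could be sent in its own multicast group; a receiver that subscribes to the coarse approximation plus the first n detail groups reconstructs the image at the corresponding resolution. Image dimensions are assumed divisible by 2 at every level.

```python
def haar_step(img):
    """One level of an averaging 2-D Haar transform: approximation + 3 detail bands."""
    ll, lh, hl, hh = [], [], [], []
    for i in range(0, len(img), 2):
        rows = ([], [], [], [])
        for j in range(0, len(img[0]), 2):
            a, b = img[i][j], img[i][j + 1]
            c, d = img[i + 1][j], img[i + 1][j + 1]
            rows[0].append((a + b + c + d) / 4)  # average (half-resolution pixel)
            rows[1].append((a - b + c - d) / 4)  # horizontal detail
            rows[2].append((a + b - c - d) / 4)  # vertical detail
            rows[3].append((a - b - c + d) / 4)  # diagonal detail
        for band, row in zip((ll, lh, hl, hh), rows):
            band.append(row)
    return ll, (lh, hl, hh)


def haar_inverse(ll, details):
    """Exactly undo haar_step, doubling the resolution."""
    lh, hl, hh = details
    out = [[0.0] * (2 * len(ll[0])) for _ in range(2 * len(ll))]
    for i, row in enumerate(ll):
        for j, avg in enumerate(row):
            h, v, dg = lh[i][j], hl[i][j], hh[i][j]
            out[2 * i][2 * j] = avg + h + v + dg
            out[2 * i][2 * j + 1] = avg - h + v - dg
            out[2 * i + 1][2 * j] = avg + h - v - dg
            out[2 * i + 1][2 * j + 1] = avg - h - v + dg
    return out


def decompose(img, levels):
    """Return (coarsest approximation, detail groups ordered coarsest first)."""
    approx, details = img, []
    for _ in range(levels):
        approx, bands = haar_step(approx)
        details.append(bands)
    return approx, details[::-1]


def reconstruct(approx, details, n_levels):
    """Rebuild from the approximation and the first `n_levels` detail groups."""
    img = approx
    for bands in details[:n_levels]:
        img = haar_inverse(img, bands)
    return img
```

For a 4×4 image decomposed over two levels, using no detail groups gives the 1×1 mean, one group gives the 2×2 block averages, and both groups restore the original exactly.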

[0149] The switch comprises a processor 715 and switching hardware 720. The processor 715 is configured to process subscription requests received via ports 710. In response to receiving such a request, the processor 715 controls the switching hardware 720 to transmit each requested stream to the video display device from which the request was received.

[0150] The switch 700 may be configured to have `Quality of Service` capabilities, whereby transmission of certain data packets is prioritized. For example, a data packet containing lower resolution video frame data may be prioritized ahead of other data packets waiting to be transmitted from the network switch. In another example, the switch 700 may prioritize the handling of subscription requests, received from a plurality of video display devices, for data packets containing lower resolution video frame data.

[0151] Alternatively, or in addition, the `Quality of Service` capabilities may prevent the loss of a data packet containing lower resolution video data should an output buffer overrun occur. In a comparable example, the `Quality of Service` capabilities may actively discard a data packet containing higher resolution video data, particularly when the output buffer is overloaded or about to overload. Therefore, if data packet loss occurs, a lower resolution set of pixels will still be available in the buffer and can be transmitted for viewing on the video display device.

[0152] In one embodiment of the present invention, the video frame data packet may be in the form of a wavelet. The switch 700 then gives a higher priority to the lowest resolution wavelet and a lower priority to higher resolution wavelets.
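The prioritisation of [0150] to [0152] can be sketched as a bounded output buffer for one port. The sketch assumes each packet carries a hypothetical resolution level (0 = lowest-resolution wavelet); lower levels are transmitted first, and on overflow the highest-resolution packet (newest first among equals) is discarded, so low-resolution data survives an overrun. A real switch would more likely map levels onto a small number of hardware queues; this strict-priority heap is a simplification.

```python
import heapq
import itertools


class PriorityOutputBuffer:
    """Bounded output buffer that favours low-resolution packets."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self._heap = []                     # entries: (level, arrival order, packet)
        self._arrivals = itertools.count()  # unique tie-breaker, so packets are never compared
        self.dropped = []

    def enqueue(self, level: int, packet: bytes) -> None:
        heapq.heappush(self._heap, (level, next(self._arrivals), packet))
        if len(self._heap) > self.capacity:
            victim = max(self._heap)        # highest level, then most recent arrival
            self._heap.remove(victim)
            heapq.heapify(self._heap)
            self.dropped.append(victim[2])

    def dequeue(self):
        """Next packet to transmit (lowest level first), or None if empty."""
        return heapq.heappop(self._heap)[2] if self._heap else None
```

With a capacity of two, enqueuing a fine, a coarse and a mid-resolution packet drops the fine one and then transmits coarse before mid.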

[0153] FIG. 8 shows a schematic representation of a video output device 800 according to some embodiments.

[0154] The video output device 800 comprises a network interface 805 and a processor 810 coupled to the network interface. The processor 810 is configured to subscribe, via the network interface 805, to a multicast stream of a plurality of multicast streams. Each multicast stream is streamed from a video source and comprises video frame data corresponding to a portion of a video frame. The multicast stream to which the processor 810 subscribes comprises video frame data that is for display on a display associated with the video output device 800.

[0155] The processor 810 is further configured to receive, via the network interface 805, the video frame data that is for display.
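On a general-purpose host, the subscribe-and-receive behaviour of [0154] and [0155] can be approximated with standard IPv4 multicast sockets: joining a group with `IP_ADD_MEMBERSHIP` typically causes the operating system's IP stack to send IGMP membership reports, which act as the subscription request. This is a sketch under assumptions; the group address and UDP port in the usage note are hypothetical, and the source does not specify a transport.

```python
import socket
import struct


def membership_request(group: str, interface: str = "0.0.0.0") -> bytes:
    """ip_mreq structure: multicast group address followed by local interface address."""
    return struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton(interface))


def subscribe(group: str, port: int, interface: str = "0.0.0.0") -> socket.socket:
    """Open a UDP socket bound to `port` and join `group` on `interface`."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP, membership_request(group, interface))
    return sock


def unsubscribe(sock: socket.socket, group: str, interface: str = "0.0.0.0") -> None:
    """Leave `group` (the stack may then send an IGMP leave) and close the socket."""
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_DROP_MEMBERSHIP, membership_request(group, interface))
    sock.close()
```

A video output device could then call `sock = subscribe("239.1.0.1", 5004)` and read frame data with `payload, sender = sock.recvfrom(65535)`, opening one socket (or one membership) per block stream it needs.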

[0156] FIG. 9 shows a schematic representation of a video output device 900 according to an embodiment. The video output device 900 comprises various modules. Any or all of these modules could, either alone or in any combination, be implemented by dedicated circuitry, for example one or more field-programmable gate arrays, or be implemented by routines in one or more general processors.

[0157] The video output device 900 comprises an ethernet controller 905 controlled by a processor 910. The processor 910 is configured to transmit a subscription request for a multicast stream, via the ethernet controller 905 to a network switch, for example as described above.

[0158] The ethernet controller 905 is further configured to receive the video frame data of the requested stream from the network switch.

[0159] The video output device 900 comprises a decryption module 915, which is configured to decrypt the received video frame data into a format suitable for further processing and display.

[0160] The video output device 900 comprises a pixel cache 920 in which decrypted video frame data is stored and from which video frame data is output to a display of the video wall.

[0161] The video output device 900 comprises a transform module 925, configured to apply transforms to the stored video frame data to produce video frame data suitable for display. For example, the transforms may comprise warping, rotating, up-scaling, down-scaling, de-interlacing and/or edge blending, depending on the desired display configuration of the video frame. Additionally, the transform module 925 may determine the particular multicast streams for subscription, as described in more detail below, and transmit an identification of those streams to the processor 910.

[0162] The video output device 900 comprises a sync pulse generator 930 configured to provide a synchronisation signal for synchronising the outputting of video frame data with the refresh rate of the display.

[0163] FIG. 10 shows a schematic representation of a video wall 1000. The video wall comprises an array of four displays 1005a-d in a two-by-two layout. The video wall 1000 is configured to display three windows 1010, 1015, 1020. Each window 1010, 1015, 1020 represents a video served by a video server, and by analogy the window also represents a video frame of the video. Each window 1010, 1015, 1020 has a corresponding layer number, with window 1015 having a higher number than window 1010 and window 1020 having a higher number than window 1015. In a region where two or more of the windows 1010, 1015, 1020 overlap, the window with the highest layer number is displayed.

[0164] Window 1010 (shown in horizontally hatched shading) is displayed at a size equal to the combined area of all four displays 1005a-d.

[0165] Window 1015 (shown in diamond shading) is displayed at a size equal to a single display, and located in the geometric centre of the video wall. As such, the top-left quarter of window 1015 is displayed on the top-left display 1005a, the top-right quarter of window 1015 is displayed on the top-right display 1005b and the bottom-left quarter of window 1015 is displayed on bottom-left display 1005c.

[0166] Window 1020 (shown in dotted shading) is displayed at a size equal to a single display, filling the bottom-right display 1005d. Because window 1020 has the highest layer number, no portion of windows 1010 and 1015 is displayed on the bottom-right display 1005d.
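The layer rule of [0163] to [0166] amounts to picking, at each canvas point, the covering window with the highest layer number. The sketch below reproduces the FIG. 10 layout under two hypothetical assumptions: each display is 1920×1080 pixels (so the wall is 3840×2160), and the layer numbers are 1, 2 and 3 (the source gives only their order).

```python
from typing import NamedTuple, Optional


class PlacedWindow(NamedTuple):
    ref: int    # reference numeral from FIG. 10
    layer: int  # higher layer numbers are drawn on top
    x: int      # canvas position and size, in display pixels
    y: int
    w: int
    h: int


def visible_window(windows: list, x: int, y: int) -> Optional[int]:
    """Return the window shown at canvas point (x, y), or None if no window covers it."""
    covering = [win for win in windows if win.x <= x < win.x + win.w and win.y <= y < win.y + win.h]
    return max(covering, key=lambda win: win.layer).ref if covering else None


# FIG. 10, assuming (hypothetically) 1920x1080 displays.
FIG10 = [
    PlacedWindow(1010, layer=1, x=0, y=0, w=3840, h=2160),
    PlacedWindow(1015, layer=2, x=960, y=540, w=1920, h=1080),
    PlacedWindow(1020, layer=3, x=1920, y=1080, w=1920, h=1080),
]
```

A point just inside the bottom-right display, such as (2020, 1180), lies in both window 1015 and window 1020, but resolves to 1020, which is why no part of 1010 or 1015 appears on display 1005d.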

[0167] As set out above, each multicast stream corresponds to a portion of a video. Each such portion comprises a block of pixels in a video frame. A video output device determines the relevant streams to which it should subscribe by determining a position of the video frame relative to the associated display. In some embodiments, determining this position comprises determining a mapping of at least one pixel of the display to a location in the video frame. For example, pixels in the bottom-right quarter of the top-left display 1005a map to positions in the top-left quarter of the video frame in window 1015. Based on the mapping, it is thus determined that the video output device should subscribe to streams corresponding to the top-left quarter of the video of window 1015.

[0168] Furthermore, pixels in the remaining three quarters of the top-left display 1005a map to positions in the top-left quarter of the video frame in window 1010, excluding the bottom-right part of that quarter, which is hidden behind window 1015. Based on the mapping, it is thus determined that the video output device should subscribe to streams corresponding to the top-left quarter of the video of window 1010. The "positions" referred to above may be defined by horizontal and vertical co-ordinates on the display, the canvas and/or the video frame.
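The stream selection of [0167] and [0168] can be sketched for the axis-aligned case: intersect the display's rectangle with the window's rectangle on the canvas, map the overlap into video frame coordinates, and list the blocks it touches. The sketch assumes the FIG. 10 geometry with hypothetical 1920×1080 displays and 1920×1080 source frames cut into 240×135 blocks. It ignores occlusion, so for window 1010 it also returns the blocks hidden behind window 1015; a refinement could drop blocks that are entirely covered.

```python
def blocks_to_subscribe(display, window, frame_w, frame_h, block_w=240, block_h=135):
    """Set of (bx, by) blocks of the window's video frame that fall on the display.

    `display` and `window` are (x, y, w, h) rectangles on the canvas; the window
    is assumed to show the whole frame, scaled and axis-aligned.
    """
    dx, dy, dw, dh = display
    wx, wy, ww, wh = window
    # Overlap of display and window on the canvas (half-open intervals).
    x0, x1 = max(dx, wx), min(dx + dw, wx + ww)
    y0, y1 = max(dy, wy), min(dy + dh, wy + wh)
    if x0 >= x1 or y0 >= y1:
        return set()
    # Canvas -> frame co-ordinates -> block indices (floor for start, ceiling for end).
    bx0 = (x0 - wx) * frame_w // (ww * block_w)
    bx1 = -(-(x1 - wx) * frame_w // (ww * block_w))
    by0 = (y0 - wy) * frame_h // (wh * block_h)
    by1 = -(-(y1 - wy) * frame_h // (wh * block_h))
    return {(bx, by) for bx in range(bx0, bx1) for by in range(by0, by1)}
```

For display 1005a at (0, 0, 1920, 1080), window 1015 at (960, 540, 1920, 1080) yields blocks bx, by = 0..3, the top-left quarter of its 8×8 grid, while window 1020 yields no blocks at all.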

[0169] In some examples, the mapping is such that a block of pixels received from a given stream is displayed on a block of pixels of the display, the block of pixels of the display having the same dimensions as the received block of pixels. In other examples, the mapping is such that a received block of pixels from a given stream is displayed on a block of pixels of the display, with pixel values of the block of pixels of the display being determined by interpolating between pixel values of the received block of pixels. For example, the video frame data may be displayed with a rotation angle with respect to the display such that a single pixel of the display does not correspond to a single pixel of received video frame data. In such a case, the pixel values of the display are determined by interpolating between pixel values of the received video frame data.
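The interpolating case of [0169] can be sketched with bilinear interpolation, one common choice (the source does not name a method). Each display pixel is inverse-mapped into the received frame by a rotation about the centre, and its value is interpolated from the four surrounding frame pixels. The function names, the rotation-about-centre convention and edge clamping are assumptions for illustration.

```python
import math


def bilinear_sample(frame, fx, fy):
    """Interpolate `frame` (list of rows) at fractional position (fx, fy), clamped to the edges."""
    h, w = len(frame), len(frame[0])
    fx = min(max(fx, 0.0), w - 1.0)
    fy = min(max(fy, 0.0), h - 1.0)
    x0, y0 = int(math.floor(fx)), int(math.floor(fy))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    tx, ty = fx - x0, fy - y0
    top = frame[y0][x0] * (1 - tx) + frame[y0][x1] * tx
    bottom = frame[y1][x0] * (1 - tx) + frame[y1][x1] * tx
    return top * (1 - ty) + bottom * ty


def render_rotated(frame, out_w, out_h, angle_deg):
    """Fill an out_w x out_h region by inverse-rotating each display pixel into the frame."""
    h, w = len(frame), len(frame[0])
    cx, cy = (w - 1) / 2, (h - 1) / 2          # frame centre
    ox, oy = (out_w - 1) / 2, (out_h - 1) / 2  # display-region centre
    a = math.radians(angle_deg)
    cos_a, sin_a = math.cos(a), math.sin(a)
    out = []
    for y in range(out_h):
        row = []
        for x in range(out_w):
            dx, dy = x - ox, y - oy
            # Inverse rotation (display -> frame), so every display pixel gets a value.
            fx = cx + dx * cos_a + dy * sin_a
            fy = cy - dx * sin_a + dy * cos_a
            row.append(bilinear_sample(frame, fx, fy))
        out.append(row)
    return out
```

Mapping from display to frame, rather than the reverse, guarantees that no display pixel is left unfilled when the rotation angle makes pixel grids disagree.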

[0170] FIG. 11 shows a schematic representation of a mapping as described above, according to an example. A canvas 1105 is defined. The canvas 1105 is a virtual area in which windows 1110, 1115 are positioned. Each window 1110, 1115 displays video frame data. An area 1120 of the canvas 1105 corresponds to a display, such that portions of windows 1110, 1115 that fall within the display area 1120 are displayed at pixels in corresponding portions of the display.

[0171] The video output device maps each pixel of the display 1120 to a corresponding position on the canvas 1105. From this, the video output device maps each such position on the canvas to a position in each relevant window. For example, a pixel 1123 of the display maps onto a corresponding position in window 1115, and a pixel 1125 of the display maps onto corresponding positions in both window 1110 and window 1115. A pixel 1130 does not map onto a corresponding position in any window.
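The two-stage mapping of [0170] and [0171] (display pixel to canvas position, then canvas position to a position in each relevant window) can be sketched as follows. The placements below are hypothetical stand-ins for FIG. 11, which gives no coordinates; display pixels are assumed to map 1:1 onto canvas units, and windows are assumed axis-aligned. A pixel may hit several windows (like pixel 1125) or none (like pixel 1130).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Window:
    name: str
    x: float      # placement on the canvas
    y: float
    w: float
    h: float
    frame_w: int  # size of the source video frame shown in the window
    frame_h: int


def map_pixel(px, py, display_origin, windows):
    """Map display pixel (px, py) to {window name: (u, v) position in that window's frame}."""
    cx, cy = display_origin[0] + px, display_origin[1] + py  # display -> canvas
    hits = {}
    for win in windows:
        if win.x <= cx < win.x + win.w and win.y <= cy < win.y + win.h:
            # canvas -> window frame co-ordinates, scaled by frame size / window size
            hits[win.name] = ((cx - win.x) * win.frame_w / win.w,
                              (cy - win.y) * win.frame_h / win.h)
    return hits


# Hypothetical FIG. 11-like layout: display area 1120 has its top-left corner at canvas (100, 50).
WINDOWS = [Window("1110", 0, 0, 400, 300, 800, 600), Window("1115", 200, 100, 200, 200, 200, 200)]
```

With this layout, display pixel (150, 100) lands at canvas (250, 150) and maps to (500, 300) in window 1110 and (50, 50) in window 1115, whereas pixel (400, 400) maps to no window.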