System for organizing and fast searching of massive amounts of data

Bhave; Ajit ; et al.

U.S. patent application number 16/501631 was filed with the patent office on 2019-11-21 for system for organizing and fast searching of massive amounts of data. This patent application is currently assigned to CUMULUS SYSTEMS INC.. The applicant listed for this patent is SANDEEP BELE, AJIT BHAVE, CUMULUS SYSTEMS INC., SAI KRISHNAM NADIMPALLI, ARUN RAMACHANDRAN. Invention is credited to Sandeep Bele, Ajit Bhave, Sai Krishnam Raju Nadimpalli, Arun Ramachandran.

| Application Number | 20190354547 16/501631 |

| Document ID | / |

| Family ID | 48042844 |

| Filed Date | 2019-11-21 |

View All Diagrams

| United States Patent Application | 20190354547 |

| Kind Code | A1 |

| Bhave; Ajit ; et al. | November 21, 2019 |

System for organizing and fast searching of massive amounts of data

Abstract

A system to collect and store in a special data structure arranged for rapid searching massive amounts of data. Performance metric data is one example. The performance metric data is recorded in time-series measurements, converted into unicode, and arranged into a special data structure having one directory for every day which stores all the metric data collected that day. The performance metric data is collected by one or more probes running on machines about which data is being collected. The performance metric data is compressed prior to transmission to a server over any data path. The data structure at the server where analysis is done has a subdirectory for every resource type. Each subdirectory contains text files of performance metric data values measured for attributes in a group of attributes to which said text file is dedicated. Each attribute has its own section and the performance metric data values are recorded in time series as unicode hex numbers as a comma delimited list. Analysis of the performance metric data is done using regular expressions. For speed, cache memory is used. Performance metric data outside the start time and end time named by the user on a query screen is eliminated before the regular expression is applied.

| Inventors: | Bhave; Ajit; (Palo Alto, CA) ; Ramachandran; Arun; (Cupertino, CA) ; Nadimpalli; Sai Krishnam Raju; (Bangalore, IN) ; Bele; Sandeep; (Chinchwadgaon, IN) | ||||||||||

| Applicant: |

|

||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| Assignee: | CUMULUS SYSTEMS INC. CAMPBELL CA |

||||||||||

| Family ID: | 48042844 | ||||||||||

| Appl. No.: | 16/501631 | ||||||||||

| Filed: | May 13, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | ||

|---|---|---|---|---|

| 15183717 | Jun 15, 2016 | 10387475 | ||

| 16501631 | ||||

| 13853925 | Mar 29, 2013 | 9396287 | ||

| 15183717 | ||||

| 13200996 | Oct 5, 2011 | |||

| 13853925 | ||||

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 11/3409 20130101; H04L 43/12 20130101; G06F 40/126 20200101; G06F 40/146 20200101; G06F 16/2455 20190101; G06F 11/3072 20130101; G06F 16/90335 20190101; H04L 29/06047 20130101; H04L 29/08099 20130101; G06F 16/148 20190101; G06F 21/552 20130101; G06F 16/258 20190101; G06F 16/2468 20190101; G06F 16/334 20190101; G06F 11/3006 20130101; H04L 29/08072 20130101; G06F 16/2452 20190101; G06F 16/31 20190101; H04L 29/06 20130101; H04L 67/42 20130101; G06F 16/185 20190101; G06F 16/3331 20190101; G06F 11/3476 20130101; G06F 16/9024 20190101; G06F 16/335 20190101; H04L 43/04 20130101; G06F 40/106 20200101; G06F 2216/03 20130101; G06F 16/2453 20190101; H04L 43/14 20130101; G06F 16/283 20190101; G06F 16/24522 20190101; G06F 16/245 20190101; H04L 43/022 20130101; G06F 16/36 20190101; H04L 67/025 20130101; G06F 16/2423 20190101; G06F 16/2477 20190101; G06F 16/951 20190101; G06F 16/9535 20190101; G06F 16/86 20190101; G06F 16/90344 20190101; G06F 16/383 20190101; G06F 40/205 20200101; H04L 69/329 20130101; G06F 11/3082 20130101; G06F 16/248 20190101; G06F 16/3329 20190101; G06F 16/284 20190101; G06F 16/338 20190101; G06F 16/9032 20190101; H04L 12/4625 20130101; G06F 16/43 20190101; G05B 23/0272 20130101; G06F 16/3337 20190101 |

| International Class: | G06F 16/36 20060101 G06F016/36; G06F 16/903 20060101 G06F016/903; G06F 11/30 20060101 G06F011/30; G06F 11/34 20060101 G06F011/34; H04L 12/26 20060101 H04L012/26; G06F 16/2452 20060101 G06F016/2452; G06F 16/9535 20060101 G06F016/9535; G06F 16/9032 20060101 G06F016/9032; G06F 16/901 20060101 G06F016/901; G06F 16/33 20060101 G06F016/33; G06F 16/2458 20060101 G06F016/2458; G06F 16/2455 20060101 G06F016/2455; G06F 16/2453 20060101 G06F016/2453; G06F 16/242 20060101 G06F016/242; G06F 16/951 20060101 G06F016/951; G06F 16/335 20060101 G06F016/335; G06F 16/28 20060101 G06F016/28; G06F 16/25 20060101 G06F016/25; G06F 16/245 20060101 G06F016/245; G06F 16/185 20060101 G06F016/185; G06F 16/14 20060101 G06F016/14; G06F 16/84 20060101 G06F016/84; G06F 16/43 20060101 G06F016/43; G06F 17/27 20060101 G06F017/27; G06F 21/55 20060101 G06F021/55; H04L 29/08 20060101 H04L029/08; H04L 29/06 20060101 H04L029/06; G05B 23/02 20060101 G05B023/02; G06F 16/31 20060101 G06F016/31; G06F 16/332 20060101 G06F016/332; G06F 16/338 20060101 G06F016/338; G06F 16/383 20060101 G06F016/383; H04L 12/46 20060101 H04L012/46 |

Claims

1. An apparatus comprising: a memory storing performance metric data of one of more resource types, each resource type having one or more attributes, said performance metric data having been converted to Unicode prior to storage; a processor programmed with at least an operating system and a search program for searching said memory; said search program having the capability to receive one or more partial syntaxes, each comprised of a resource type, an attribute and a regular expression giving search conditions; and said search program capable of receiving two or more user-defined partial syntax expressions, one or more of said partial syntax expression for resource types which are related to the resource type of a first partial syntax, and capable of searching the first partial syntax for results which qualify, and applying said results as the starting point for evaluating said one or more related resource type partial syntaxes for results which qualify, and merging the evaluation results of all the partial syntaxes.

2. [user defined start time and stop time] The apparatus of claim 2 wherein said search program is capable of reading one or more time range boxes which contain a user supplied start time and stop time for said search and restricting data accessed from said memory to data gathered between and including said start time and said stop time.

3. [reverse mapping] The apparatus of claim 2 wherein said search program is capable of recognizing performance metric data in the form of numbers in said regular expression expressing filter conditions in each partial syntax and converting each said performance metric number to Unicode before carrying out said search.

4. [template search] The apparatus of claim 1 where said search program is capable of recognizing identification codes in said regular expression representing prearranged searches which are stored in advance and is capable of generating the proper syntax for an identification code to cause said search program to implement the appropriate prearranged search.

5. [user defined variables in template search] The apparatus of claim 4 wherein said search program is capable of recognizing user defined variables for one or more variables in a template search identified by an identification code and substituting said user defined variable(s) into said template search before running said search.

6. [plain language search] The apparatus of claim 1 wherein said search program is capable of accepting plain language searches and converting them to the proper syntax for the partial syntaxes before executing said search.

7. [data in said specially defined file system] The apparatus of claim 1 wherein said memory stores said Unicode in a file system organized with one top level directory per unit of time where a unit of time is the time over which performance metric data is gathered for one attribute, one performance metric number gathered per one subunit of time, said top level directory storing one or more subdirectories, one per resource type, each subdirectory storing one or more files of Unicode data gathered from said one or more attributes of said resource type to which said subdirectory is devoted, each said file having a one or more sections, each for storing the data from one of the related attributes of said resource in a plurality of time slots, each for storing one Unicode letter and each of which corresponds to the timeslot during which the underlying performance number was gathered.

8. [metadata for each performance number stored in the file system itself] The apparatus of claim 7 wherein each item of performance metric data has metadata comprising at least the time of day during which it was gathered, the attribute from which it was gathered, the date on which it was gathered, and wherein the metadata of each performance metric number is stored in the file system itself.

9. An apparatus comprising: a memory storing performance metric data of one of more resource types, each resource type having one or more attributes, said performance metric data having been converted to Unicode prior to storage; a processor programmed with at least an operating system and a search program for searching said memory; said search program having the capability to receive one or more partial syntaxes, each comprised of a resource type, an attribute and a regular expression giving search conditions; and said search program capable of receiving first, second, third, fourth and fifth or more user-defined partial syntax expressions, said second and fourth partial syntax expression for resource types which are related to the resource type of said first partial syntax, and third and fifth partial syntaxes being for resource types which are related to said second and fourth partial syntax, respectively, said search program being capable of searching the first partial syntax for results which qualify, and applying said results as the starting point for evaluating whether said second and fourth partial syntaxes have results which qualify, said search program being capable of using the results which qualify by using said second and fourth partial syntaxes and applying said results as the starting point for evaluating whether said third and fifth partial syntaxes have results which qualify, and merging the qualifying results of all the partial syntaxes.

10. [user defined start time and stop time] The apparatus of claim 9 wherein said search program is capable of reading one or more time range boxes which contain a user supplied start time and stop time for said search and restricting data accessed from said memory to data gathered between and including said start time and said stop time.

11. [reverse mapping] The apparatus of claim 9 wherein said search program is capable of recognizing performance metric data in the form of numbers in said regular expression expressing filter conditions in each partial syntax and converting each said performance metric number to Unicode before carrying out said search.

12. [template search] The apparatus of claim 9 where said search program is capable of recognizing identification codes in said regular expression representing prearranged searches which are stored in advance and is capable of generating the proper syntax for an identification code to cause said search program to implement the appropriate prearranged search.

13. [user defined variables in template search] The apparatus of claim 12 wherein said search program is capable of recognizing user defined variables for one or more variables in a template search identified by an identification code and substituting said user defined variable(s) into said template search before running said search.

14. [plain language search] The apparatus of claim 9 wherein said search program is capable of accepting plain language searches and converting them to the proper syntax for the partial syntaxes before executing said search.

15. [data in said specially defined file system] The apparatus of claim 9 wherein said memory stores said Unicode in a file system organized with one top level directory per unit of time where a unit of time is the time over which performance metric data is gathered for one attribute, one performance metric number gathered per one subunit of time, said top level directory storing one or more subdirectories, one per resource type, each subdirectory storing one or more files of Unicode data gathered from said one or more attributes of said resource type to which said subdirectory is devoted, each said file having a one or more sections, each for storing the data from one of the related attributes of said resource in a plurality of time slots, each for storing one Unicode letter and each of which corresponds to the timeslot during which the underlying performance number was gathered.

16. [metadata for each performance number stored in the file system itself] The apparatus of claim 15 wherein each item of performance metric data has metadata comprising at least the time of day during which it was gathered, the attribute from which it was gathered, the date on which it was gathered, and wherein the metadata of each performance metric number is stored in the file system itself.

17. An apparatus comprising: a memory storing performance metric data of one of more resource types, each resource type having one or more attributes, said performance metric data having been converted to Unicode prior to storage; a processor programmed with at least an operating system and a search program for searching said memory; said search program having the capability to receive one or more partial syntaxes, each comprised of a resource type, an attribute and a regular expression giving search conditions; and said search program capable of receiving first, second, third, fourth, fifth, sixth and seventh or more user-defined partial syntax expressions, said second partial syntax expression for a resource type which is related to the resource type of said first partial syntax, and third partial syntax being for a resource type which is related to said resource type of second partial syntax, said fourth partial syntax expression being for a resource type which is related to the resource type of said third partial syntax, said fifth partial syntax expression being for a resource which is related to the resource type of said second partial syntax, said sixth partial syntax expression being for a resource type which is related to the resource type of said first partial syntax, said seventh partial syntax expression being for a resource type which is related to the resource type of said sixth partial syntax, said search program being capable of searching the first partial syntax for results which qualify, and applying said results as the starting point for evaluating whether said second and sixth partial syntaxes have results which qualify, said search program being capable of using the results which qualify by using said sixth partial syntaxes and applying said results as the starting point for evaluating whether said seventh partial syntax have results which qualify, said search program being capable of using the results which qualify when using said second partial syntax as the starting point for evaluating whether said third and fifth partial syntaxes have results which qualify, said search program being capable of using the results which qualify when using said third partial syntax as the starting point for evaluating whether said fourth partial syntax has results which qualify, and merging the qualifying results of evaluation of said: first, second, third and fourth partial syntaxes, and first, second and fifth partial syntaxes, and first, sixth and seventh partial syntaxes.

18. [user defined start time and stop time] The apparatus of claim 17 wherein said search program is capable of reading one or more time range boxes which contain a user supplied start time and stop time for said search and restricting data accessed from said memory to data gathered between and including said start time and said stop time.

19. [reverse mapping] The apparatus of claim 17 wherein said search program is capable of recognizing performance metric data in the form of numbers in said regular expression expressing filter conditions in each partial syntax and converting each said performance metric number to Unicode before carrying out said search.

20. [template search] The apparatus of claim 17 where said search program is capable of recognizing identification codes in said regular expression representing prearranged searches which are stored in advance and is capable of generating the proper syntax for an identification code to cause said search program to implement the appropriate prearranged search.

21. [user defined variables in template search] The apparatus of claim 17 wherein said search program is capable of recognizing user defined variables for one or more variables in a template search identified by an identification code and substituting said user defined variable(s) into said template search before running said search.

22. [data in said specially defined file system] The apparatus of claim 17 wherein said memory stores said Unicode in a file system organized with one top level directory per unit of time where a unit of time is the time over which performance metric data is gathered for one attribute, one performance metric number gathered per one subunit of time, said top level directory storing one or more subdirectories, one per resource type, each subdirectory storing one or more files of Unicode data gathered from said one or more attributes of said resource type to which said subdirectory is devoted, each said file having a one or more sections, each for storing the data from one of the related attributes of said resource in a plurality of time slots, each for storing one Unicode letter and each of which corresponds to the timeslot during which the underlying performance number was gathered.

23. [metadata for each performance number stored in the file system itself] The apparatus of claim 22 wherein each item of performance metric data has metadata comprising at least the time of day during which it was gathered, the attribute from which it was gathered, the date on which it was gathered, and wherein the metadata of each performance metric number is stored in the file system itself.

Description

BACKGROUND OF THE INVENTION

[0001] In the management of IT systems and other systems where large amounts of performance data is generated, there is a need to be able to gather, organize and store large amounts of performance data and rapidly search it to evaluate management issues. For example, server virtualization systems have many virtual servers running simultaneously. Management of these virtual servers is challenging since tools to gather, organize, store and analyze data about them are not well adapted to the task.

[0002] One prior art method for remote monitoring of servers, be they virtual servers or otherwise, is to establish a virtual private network between the remote machine and the server to be monitored. The remote machine to be used for monitoring can then connect to the monitored server and observe performance data. The advantage to this method is that no change to the monitored server hardware or software is necessary. The disadvantage of this method is the need for a reliable high bandwidth connection over which the virtual private network sends its data. If the monitored server runs software which generates rich graphics, the bandwidth requirements go up. This can be a problem and expensive especially where the monitored server is overseas in a data center in, for example, India or China, and the monitoring computer is in the U.S. or elsewhere far away from the server being monitored.

[0003] Another method of monitoring a remote server's performance is to put an agent program on it which gathers performance data and forwards the gathered data to the remote monitoring server. This method also suffers from the need for a high bandwidth data link between the monitored and monitoring servers. This high bandwidth requirement means that the number of remote servers that can be supported and monitored is a smaller number. Scalability is also an issue.

[0004] Other non IT systems generate large amount of data that needs to be gathered, organized, stored and searched in order to evaluate various issues. For example, a bridge may have thousands of stress and strain sensors attached to it which are generating stress and strain readings constantly. Evaluation of these readings by engineers is important to managing safety issues and in designing new bridges or retrofitting existing bridges.

[0005] Once performance data has been gathered, if there is a huge volume of it, analyzing it for patterns is a problem. Prior art systems such as performance tools and event log tools use relational databases (tables to store data that is matched by common characteristics found in the dataset) to store the gathered data. These are data warehousing techniques. SQL queries are used to search the tables of time-series performance data in the relational database.

[0006] Several limitations result from using relational databases and SQL queries. First, there is a ripple that affects all the other rows of existing data as new indexes are computed. Another disadvantage is the amount of storage that is required to store performance metric data gathered by the minute regarding multiple attributes of one or more servers or other resources. Storing performance data in a relational database engenders an overhead cost not only in time but also money in both storing it and storing it in an indexed way so that it can be searched since large commercial databases can be required if the amount of data to be stored is large.

[0007] Furthermore, SQL queries are efficient when joining rows across tables using key columns from the tables. But SQL queries are not good when the need is to check for patterns in values of columns in a series of adjacent rows. This requires custom programming in the form of "stored procedures" which extract the desired information programmatically. This is burdensome, time consuming and expensive to have to write a custom program each time a search for a pattern is needed. As the pattern being searched for becomes more complex, the complexity of the stored procedure program also becomes more complex.

[0008] The other way of searching for a pattern requires joining the table with itself M-1 number of times and using a complex join clause. This becomes impractical as the number of joins exceeds 2 or 3.

[0009] As noted earlier, the problems compound as the amount of performance data becomes large. This can happen when, for example, receiving performance data every minute from a high number of sensors or from a large number of agents monitoring different performance characteristics of numerous monitored servers. The dataset can also become very large when, for example, there is a need to store several years of data. Large amounts of data require expensive, complex, powerful commercial databases such as Oracle.

[0010] There is at least one prior art method for doing analysis of performance metric data that does not use databases. It is popularized by the technology called Hadoop. In this prior art method, the data is stored in file systems and manipulated. The primary goal of Hadoop based algorithms is to partition the data set so that the data values can be processed independent of each other potentially on different machines thereby bring scalability to the approach. Hadoop technique references are ambiguous about the actual processes that are used to process the data.

[0011] Therefore, a need has arisen for an apparatus and method to reduce the amount of performance data that is gathered so that more sensors or servers can be remotely monitored with a data link of a given bandwidth. There is also a need to organize and store the data without using a relational database and to be able to search the data for patterns without having to write stored procedure programs, or do table joins and write complex join clauses.

BRIEF DESCRIPTION OF THE DRAWINGS

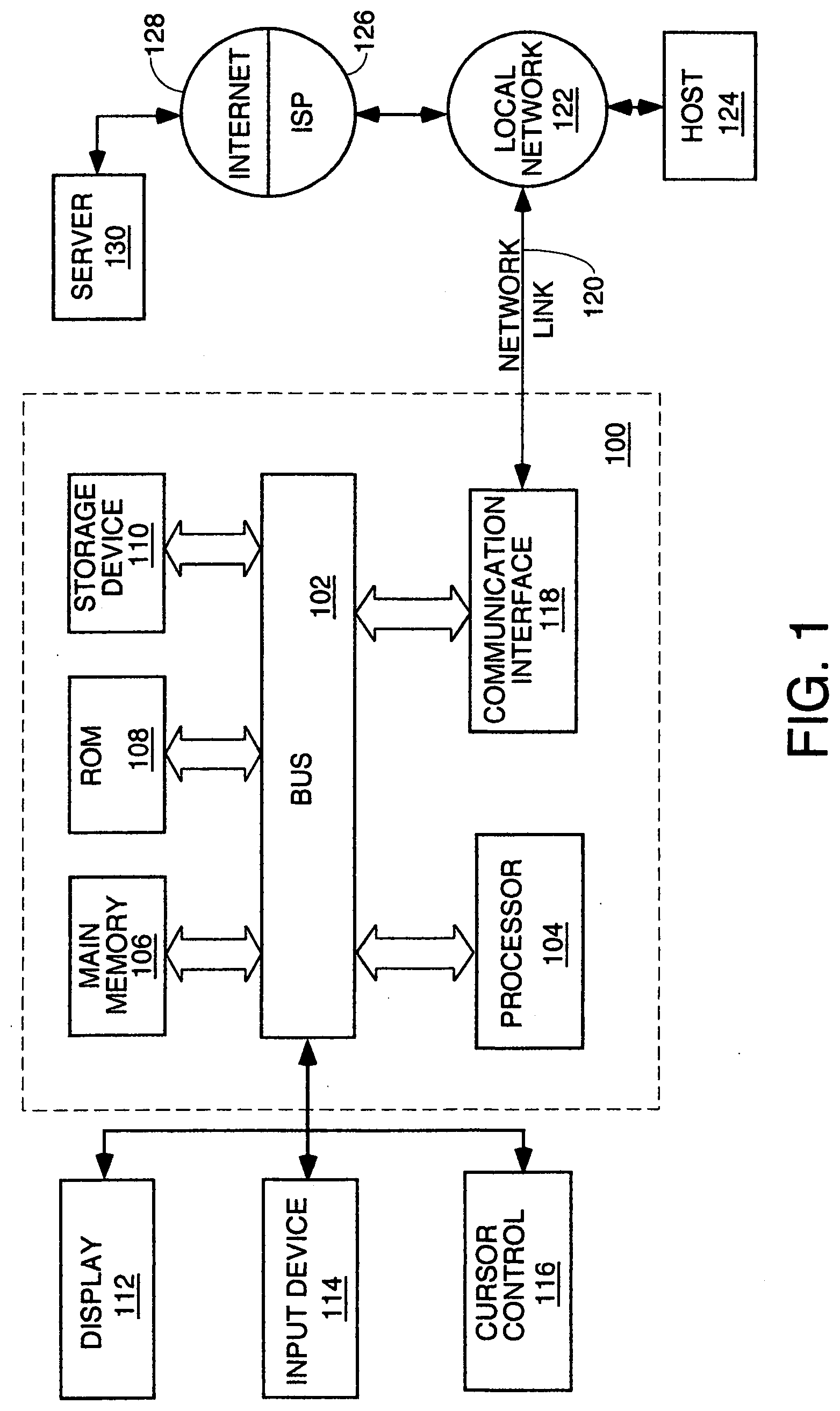

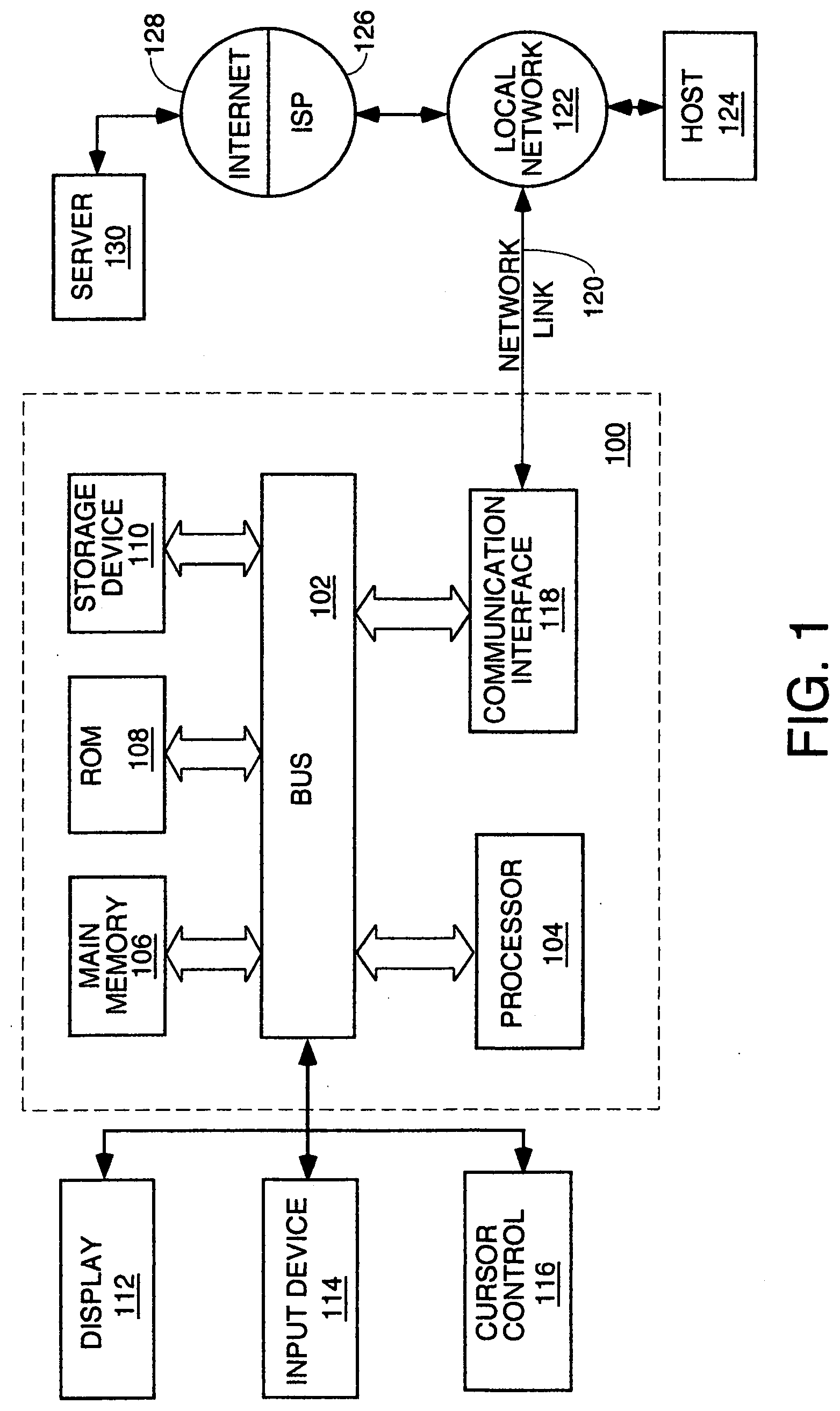

[0012] FIG. 1 is a block diagram of a typical server on which the processes described herein for organizing, storing and searching performance data can run.

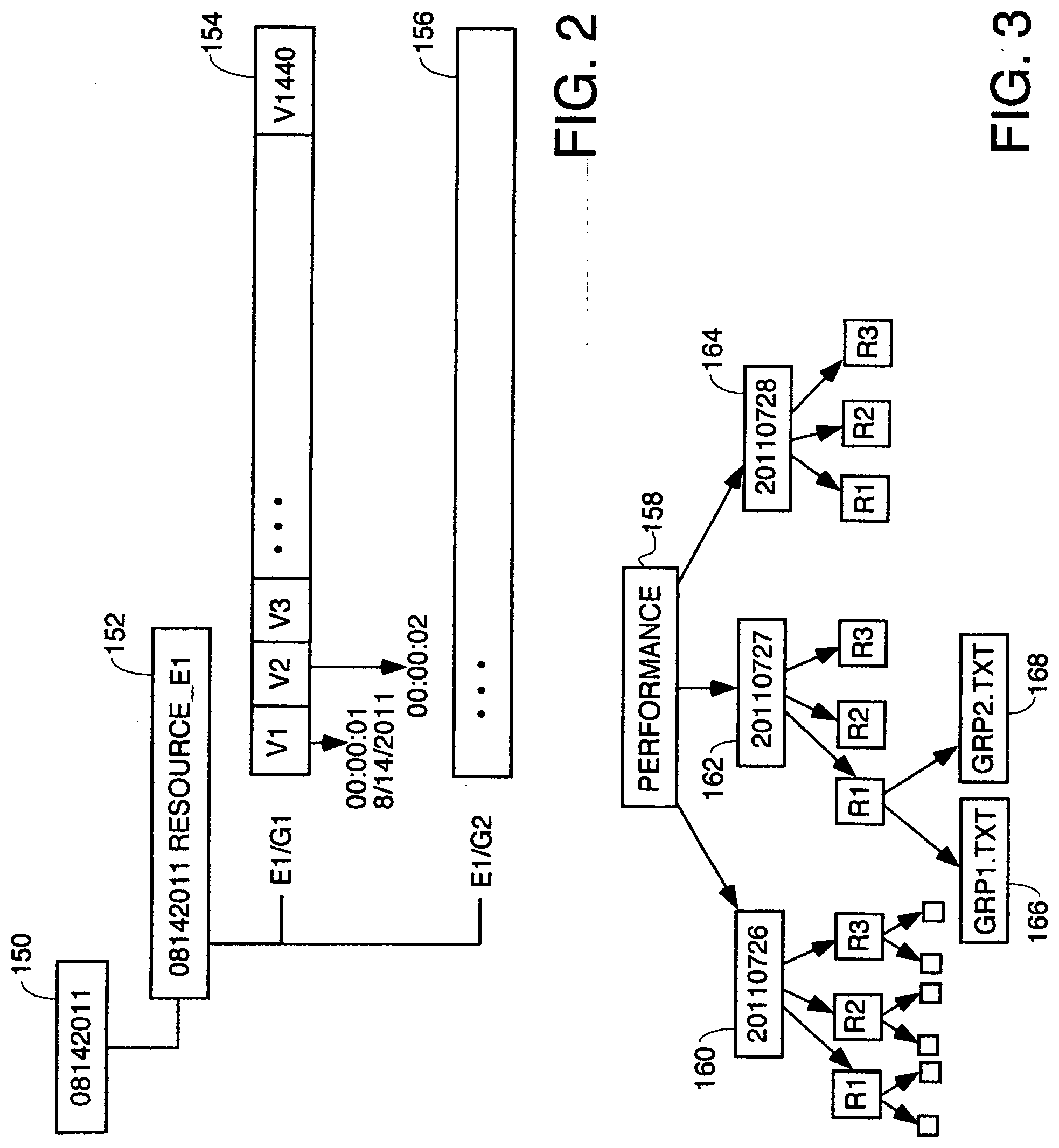

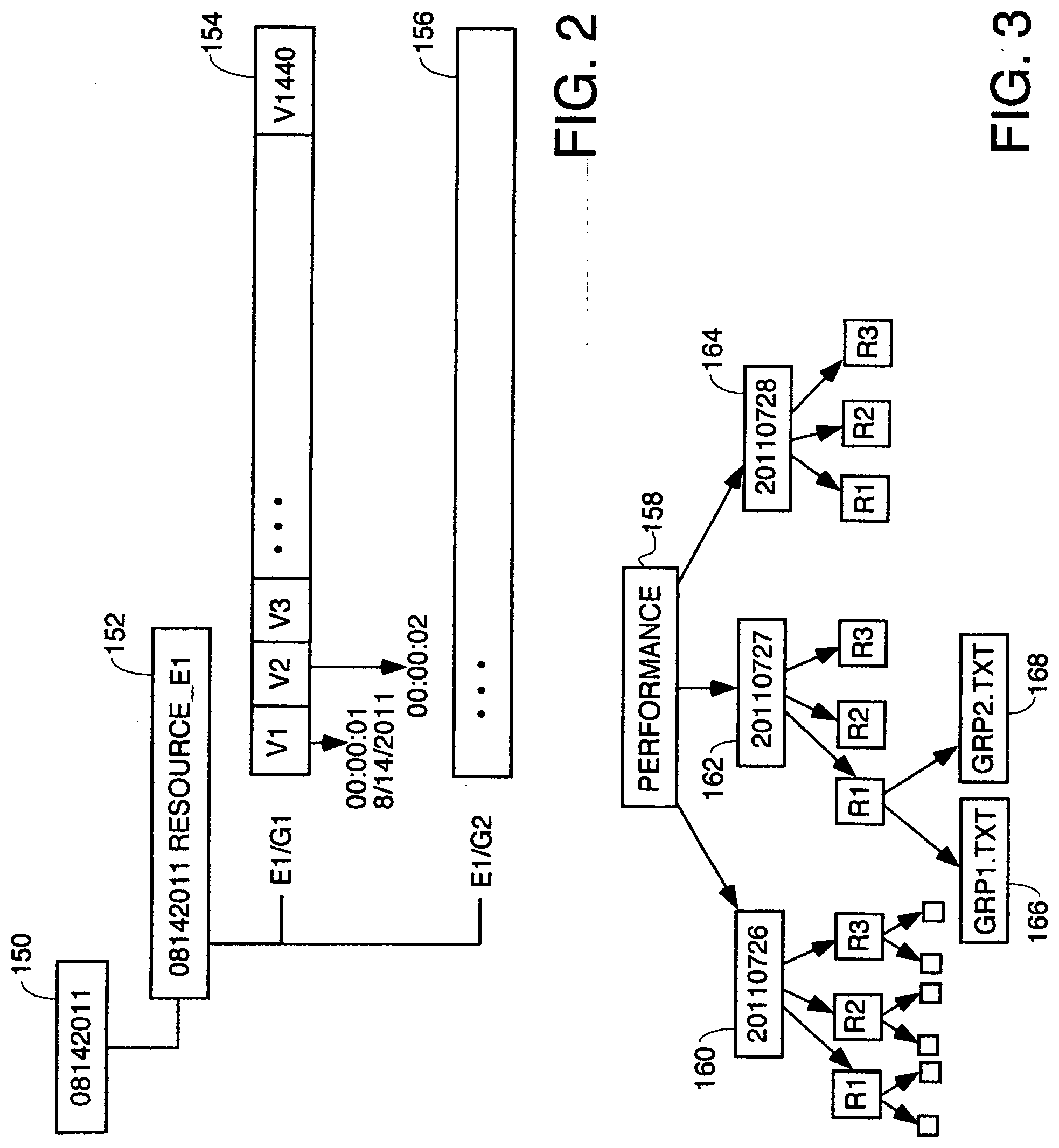

[0013] FIG. 2 is an example of a directory structure storing one day's performance data on a resource the performance of which is being monitored remotely.

[0014] FIG. 3 is another example of a file system containing a separate directory for storing performance metric data for three different days for three different resources, each resource having two groups of attributes.

[0015] FIG. 4 is a diagram of the directory structure of an example of data collected by a probe.

[0016] FIG. 5 is a flowchart of the high level process the monitoring server performs to receive probe data and stored it in the directory structure for search and analysis.

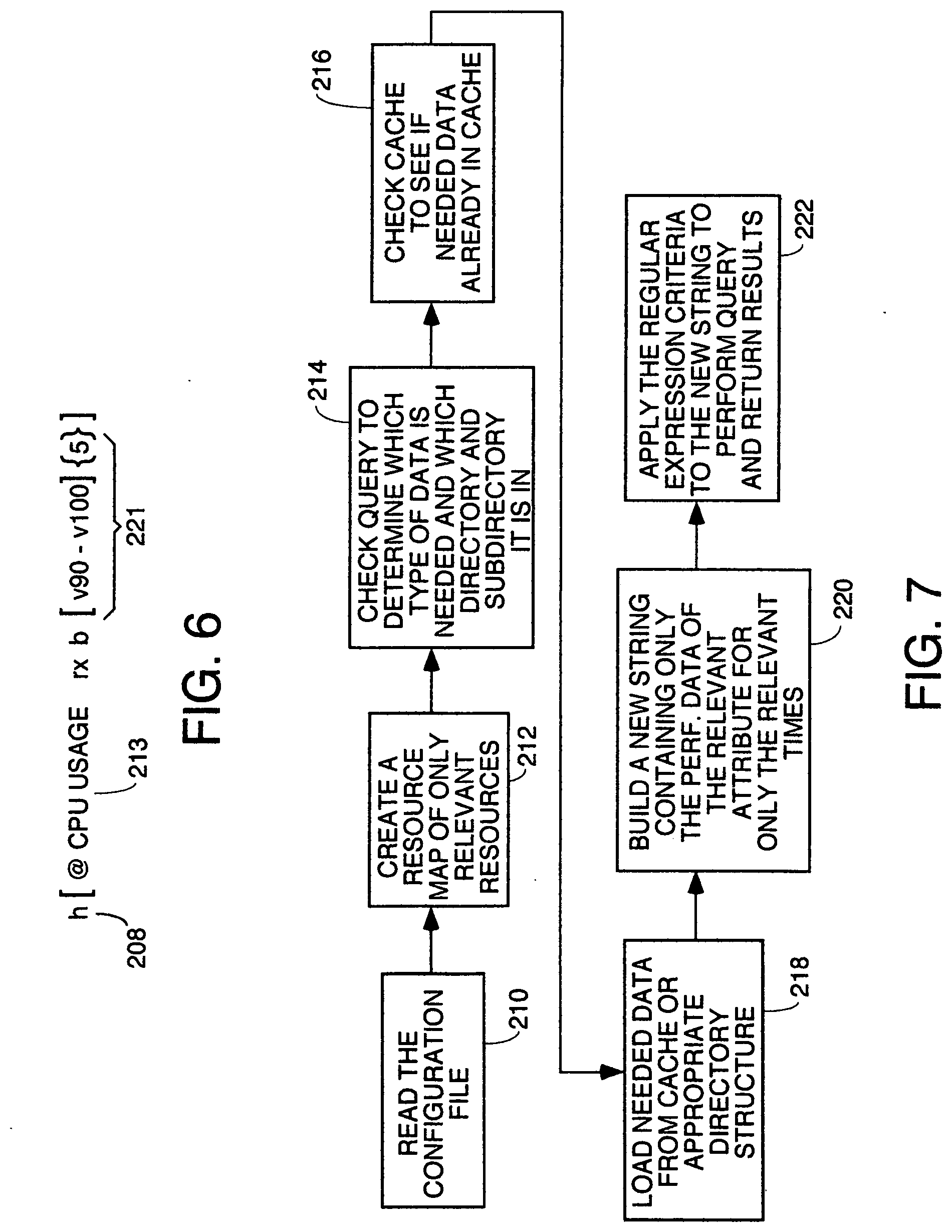

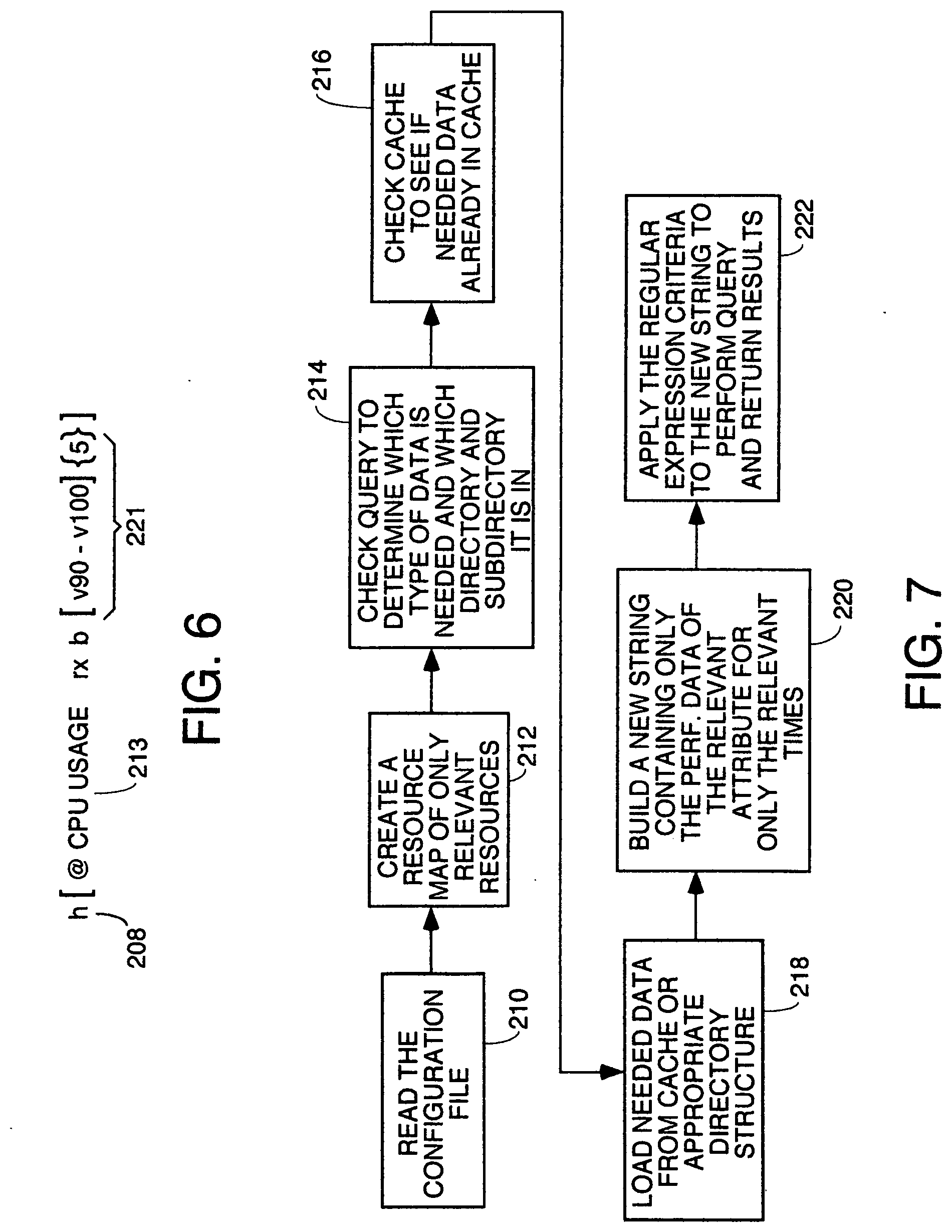

[0017] FIG. 6 is a template for a regular expression used to explain the syntax of a typical regular expression query.

[0018] FIG. 7 is a flowchart of one embodiment of the Query Request Handler module.

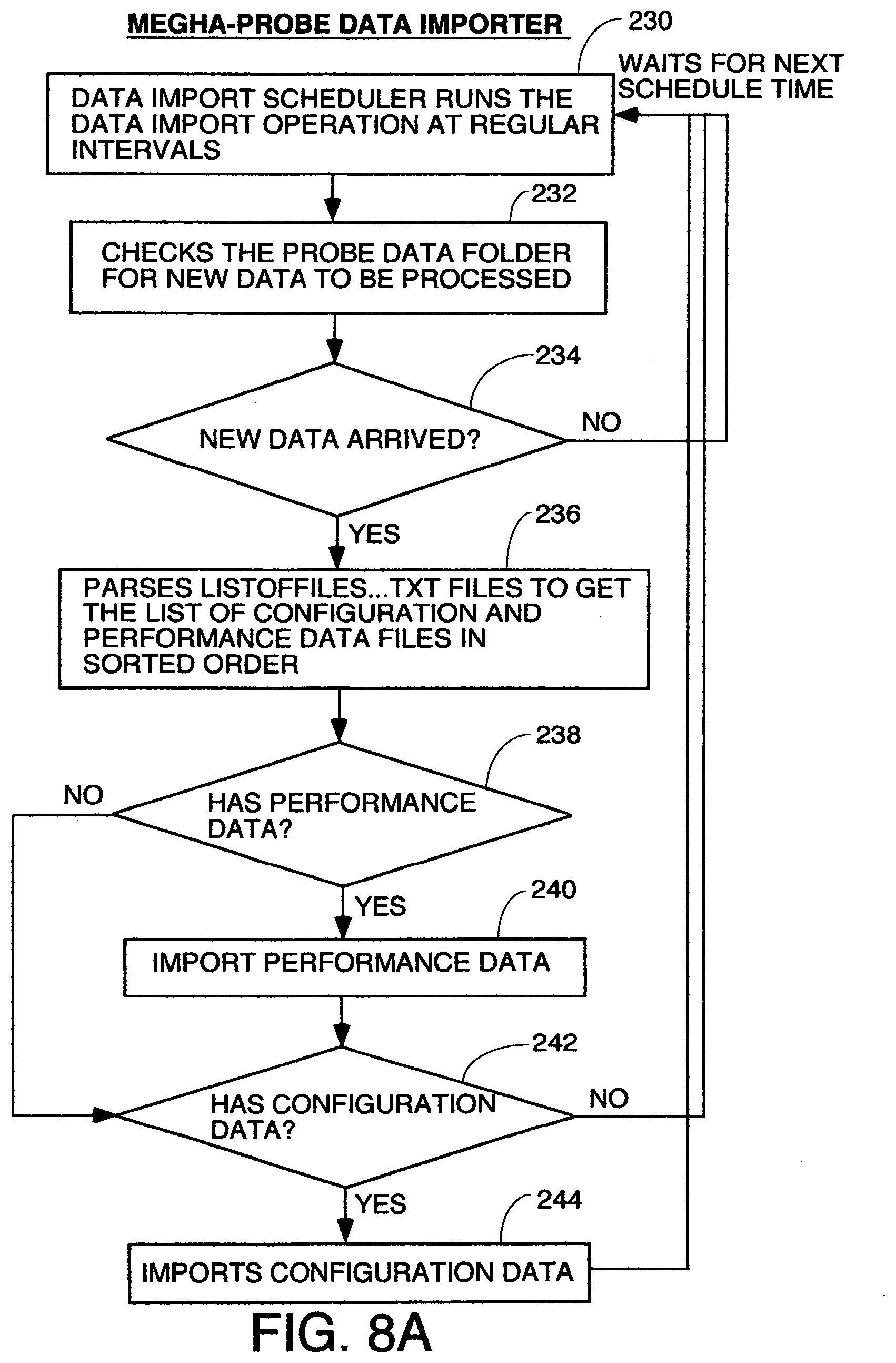

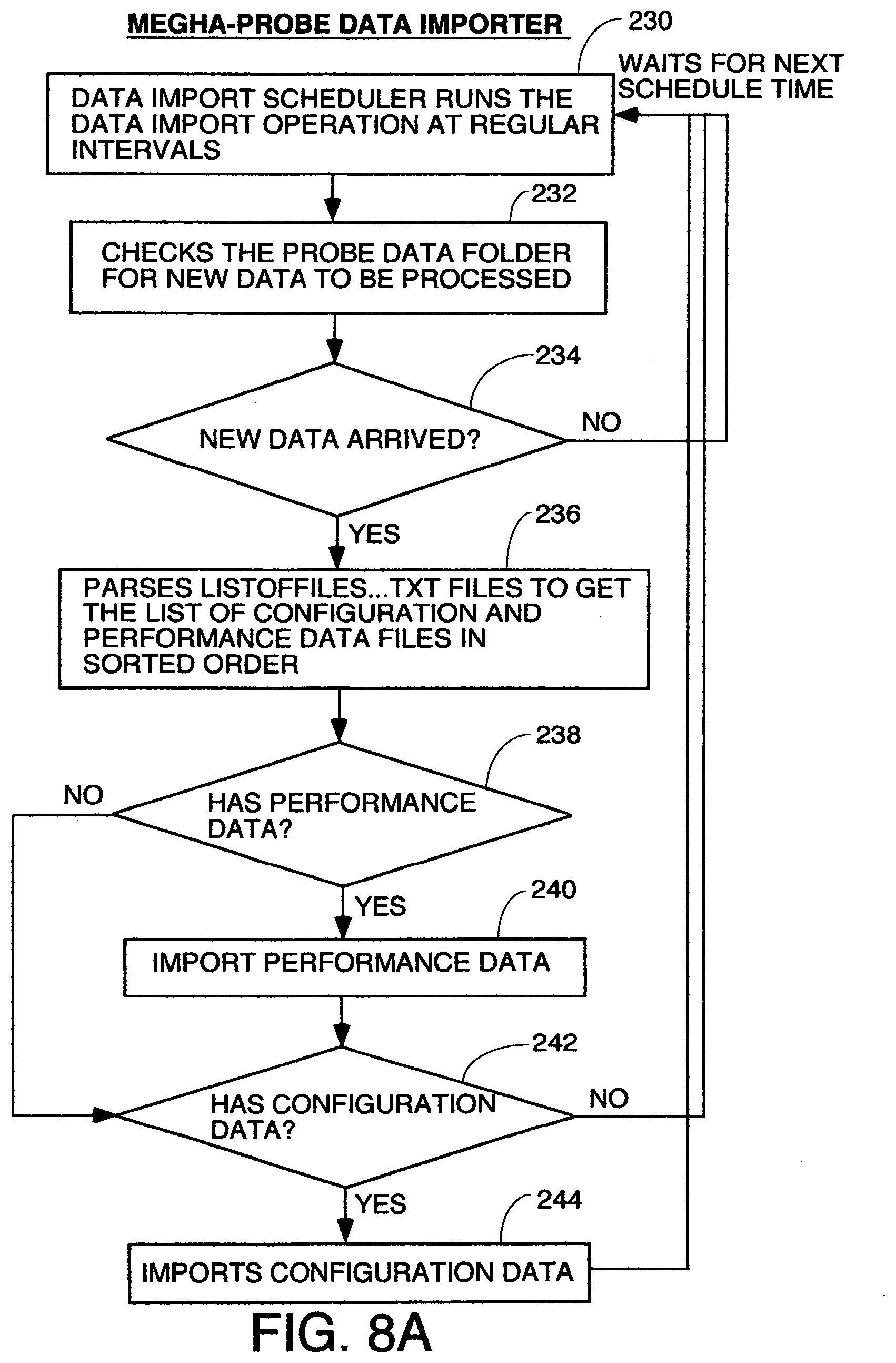

[0019] FIG. 8, comprised of FIGS. 8A through 8C, is a flowchart of the processing of the probe data importer.

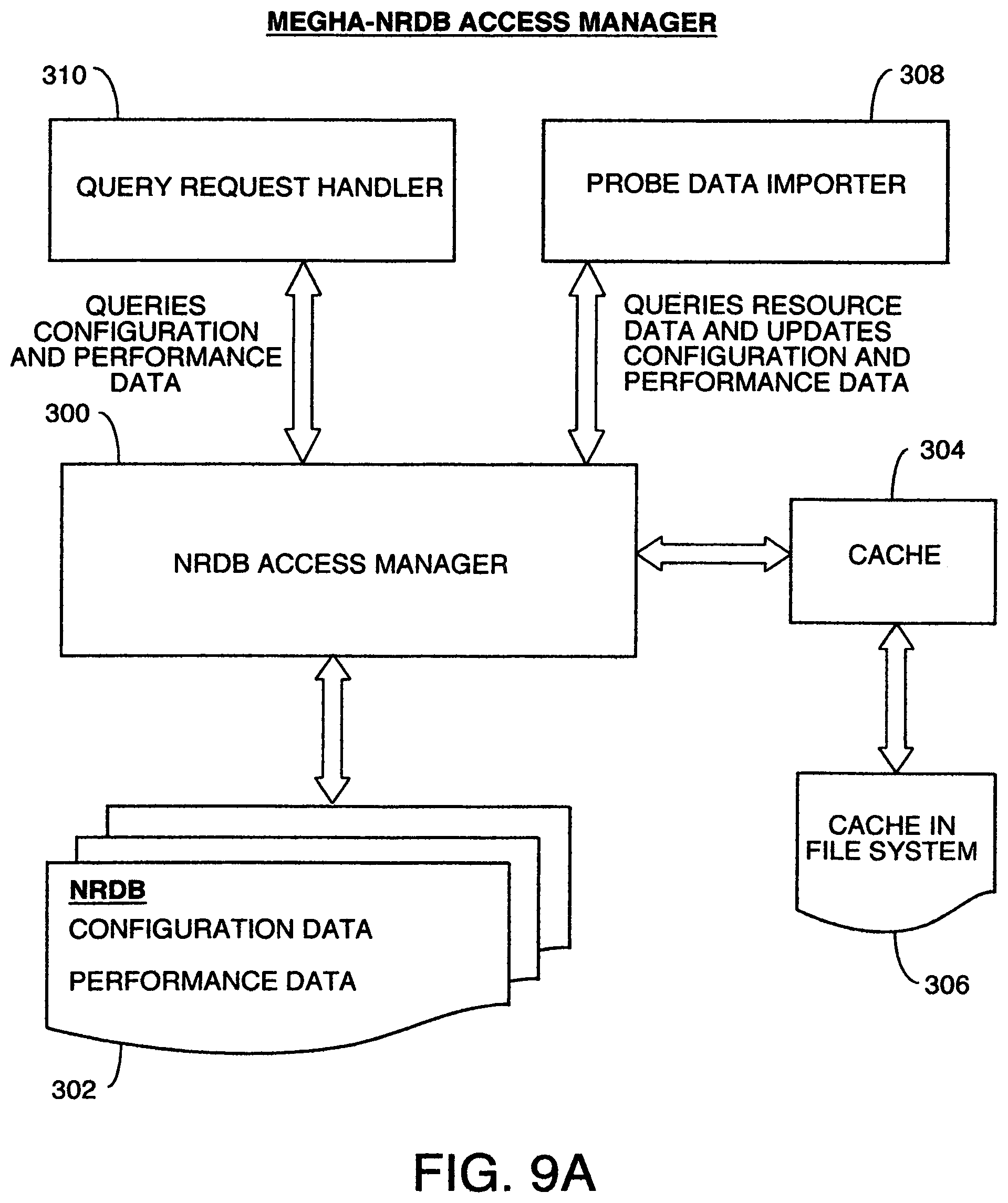

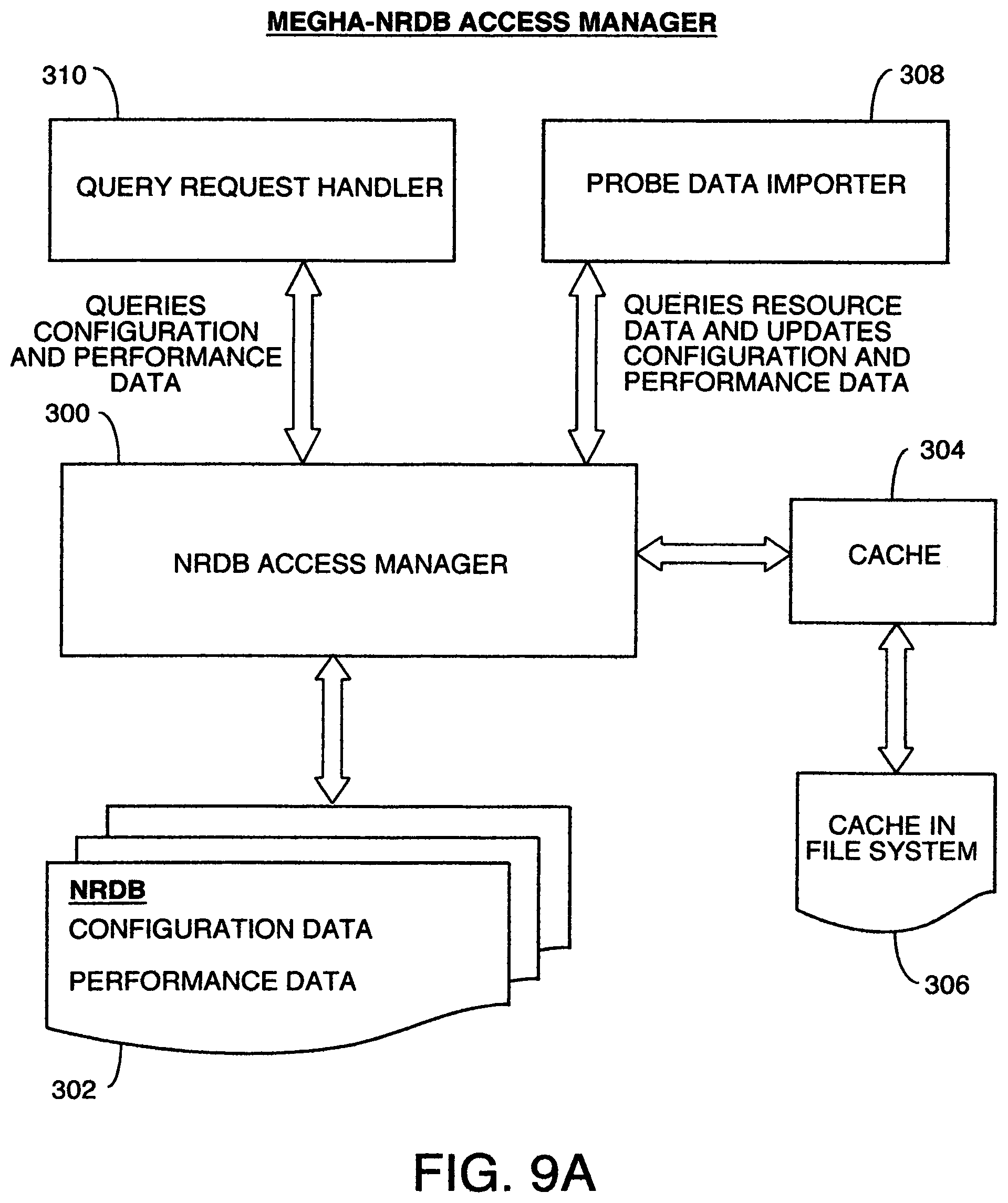

[0020] FIG. 9, comprised of FIGS. 9A and 9B, is a diagram of the modules in the system and a flowchart of the processing of the NRDB Access manager module.

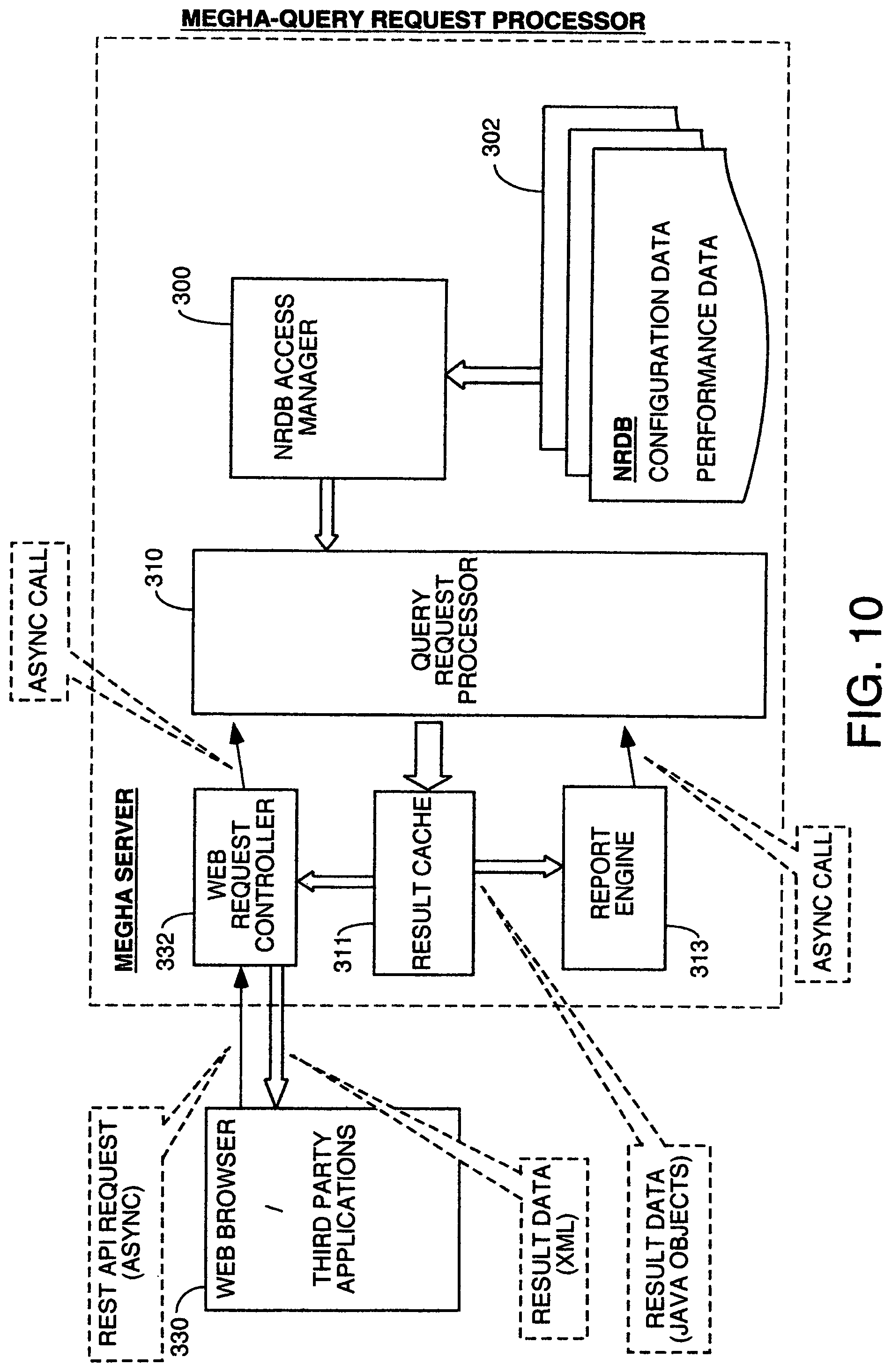

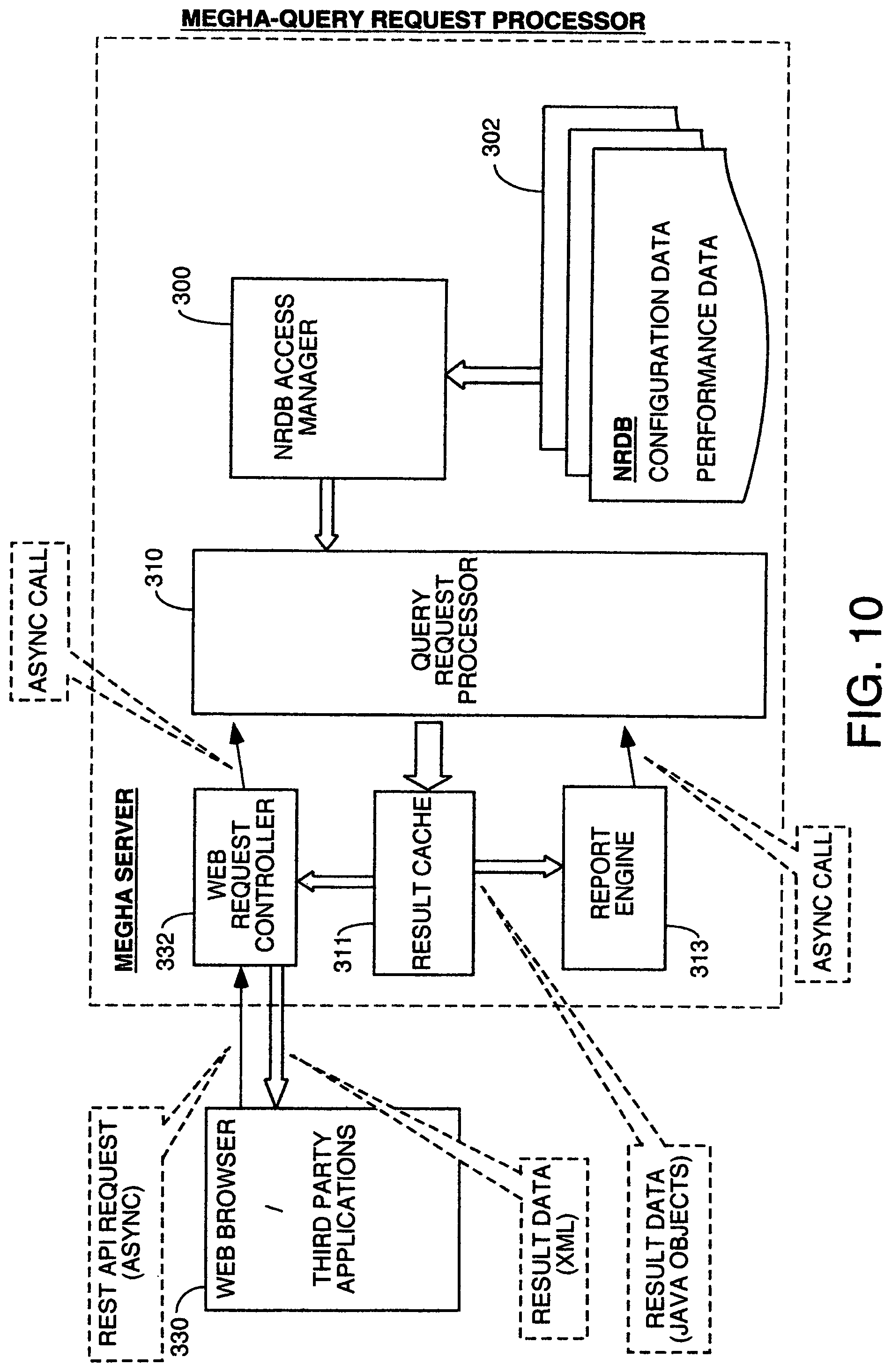

[0021] FIG. 10 is a block diagram of one embodiment of the overall system including the major functional modules in the central server called Megha, where the query request processing for analysis of performance metric data occurs and where the NRDB stores the performance metric data and configuration data.

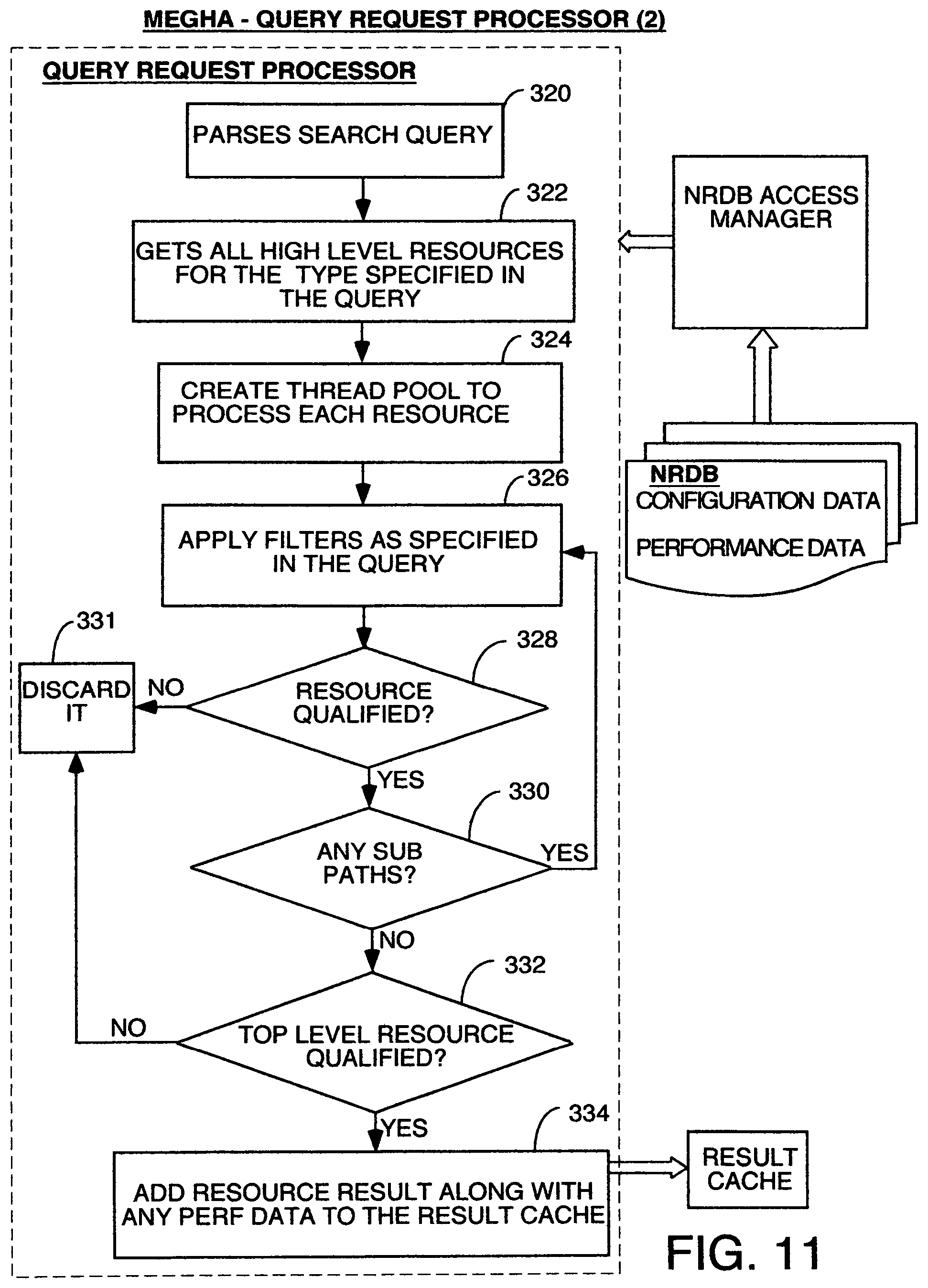

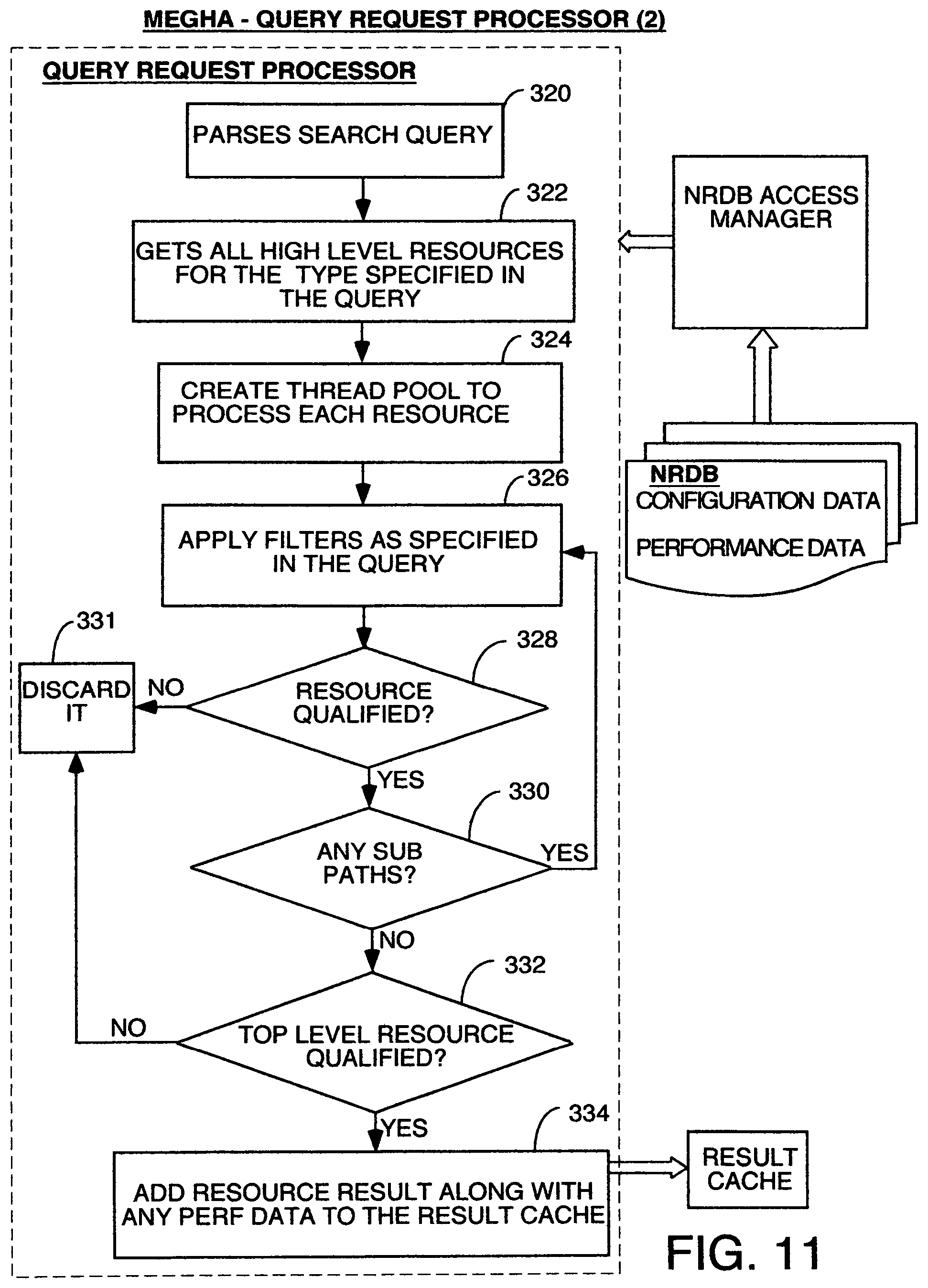

[0022] FIG. 11 is a flowchart of the processing by one embodiment of the Query Request Processor.

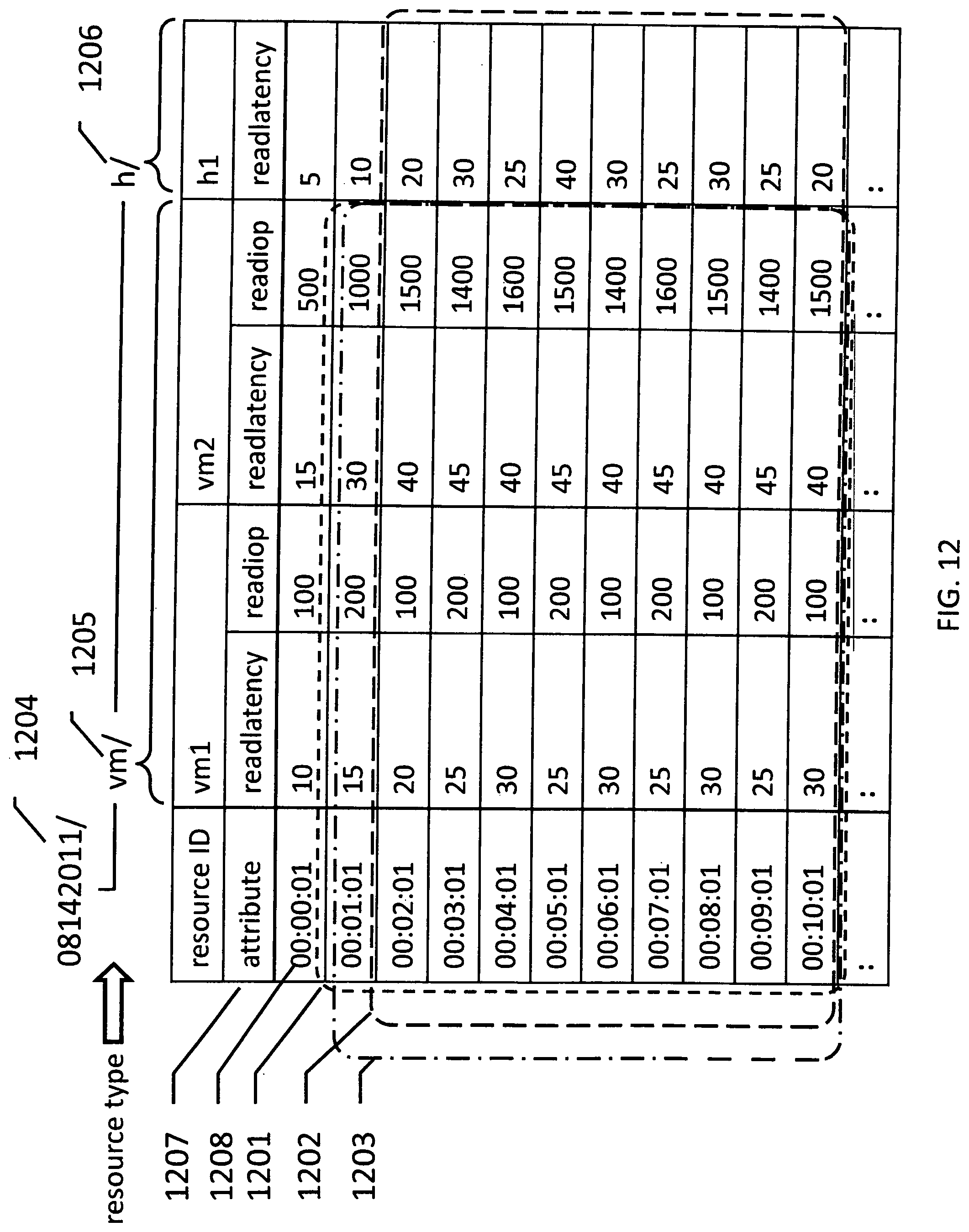

[0023] FIG. 12 is an example of time-series data.

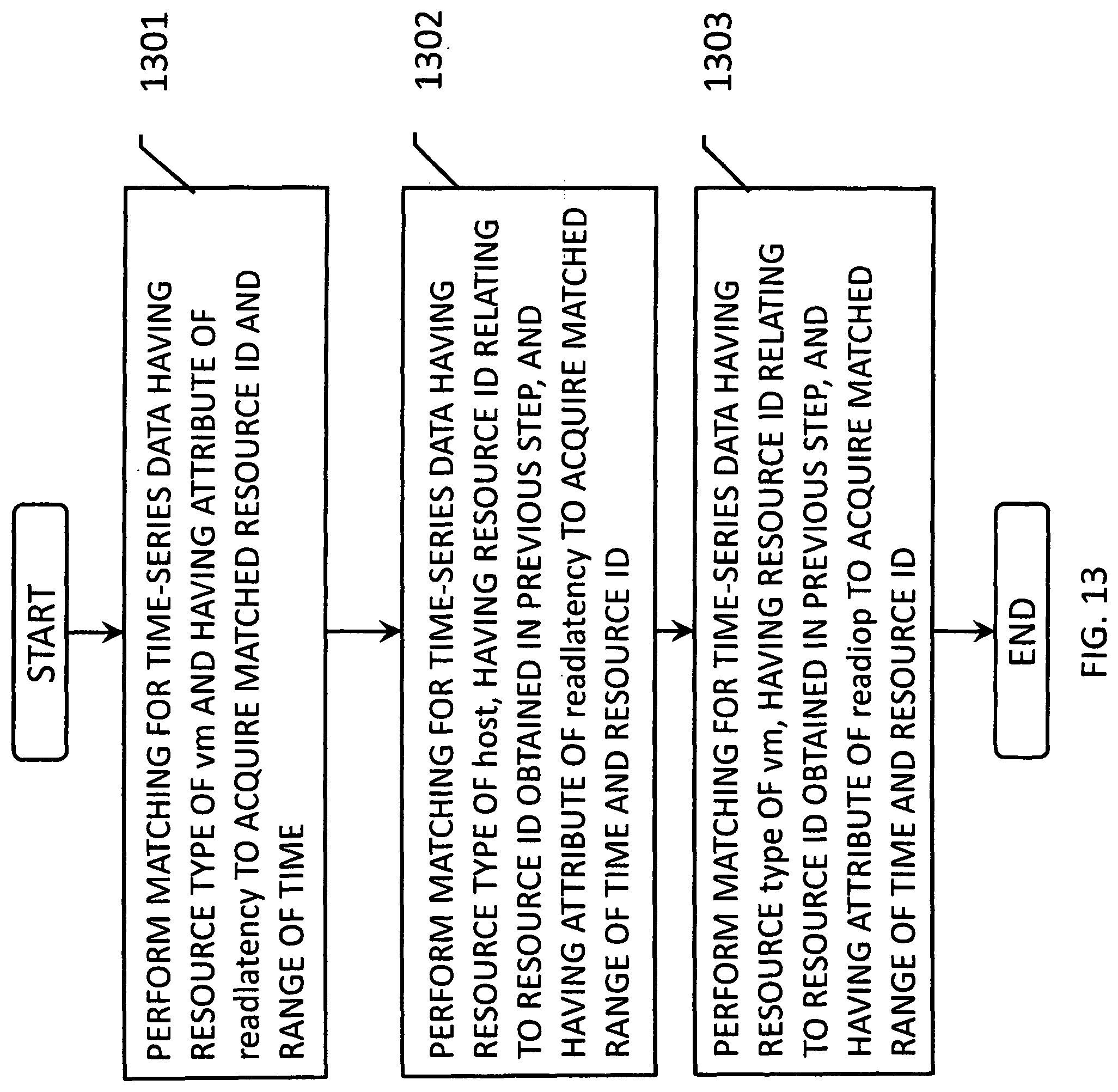

[0024] FIG. 13 is a flowchart of the processing of evaluation.

[0025] FIG. 14 is an example of syntax of searching query.

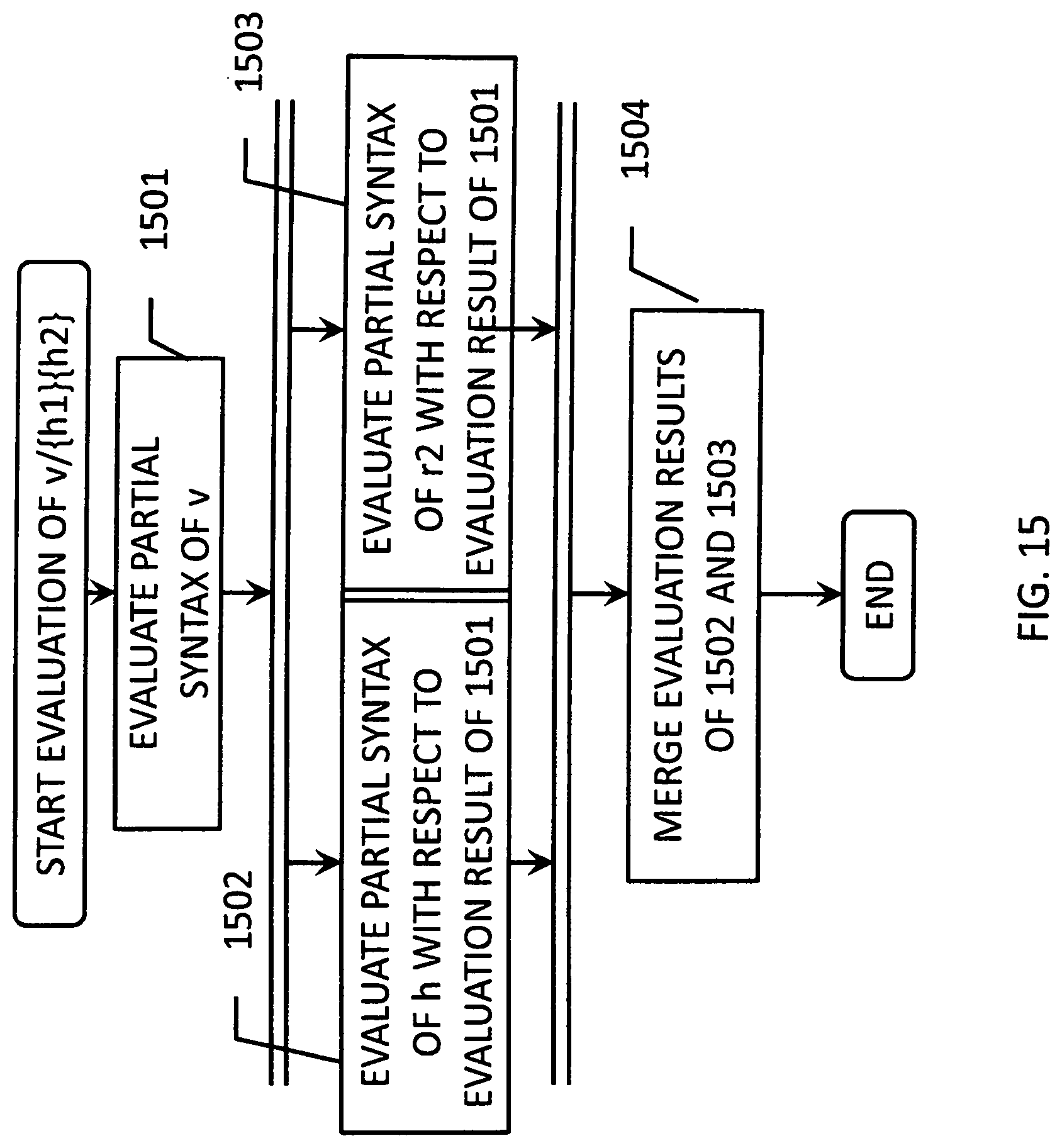

[0026] FIG. 15 is a flowchart of the processing.

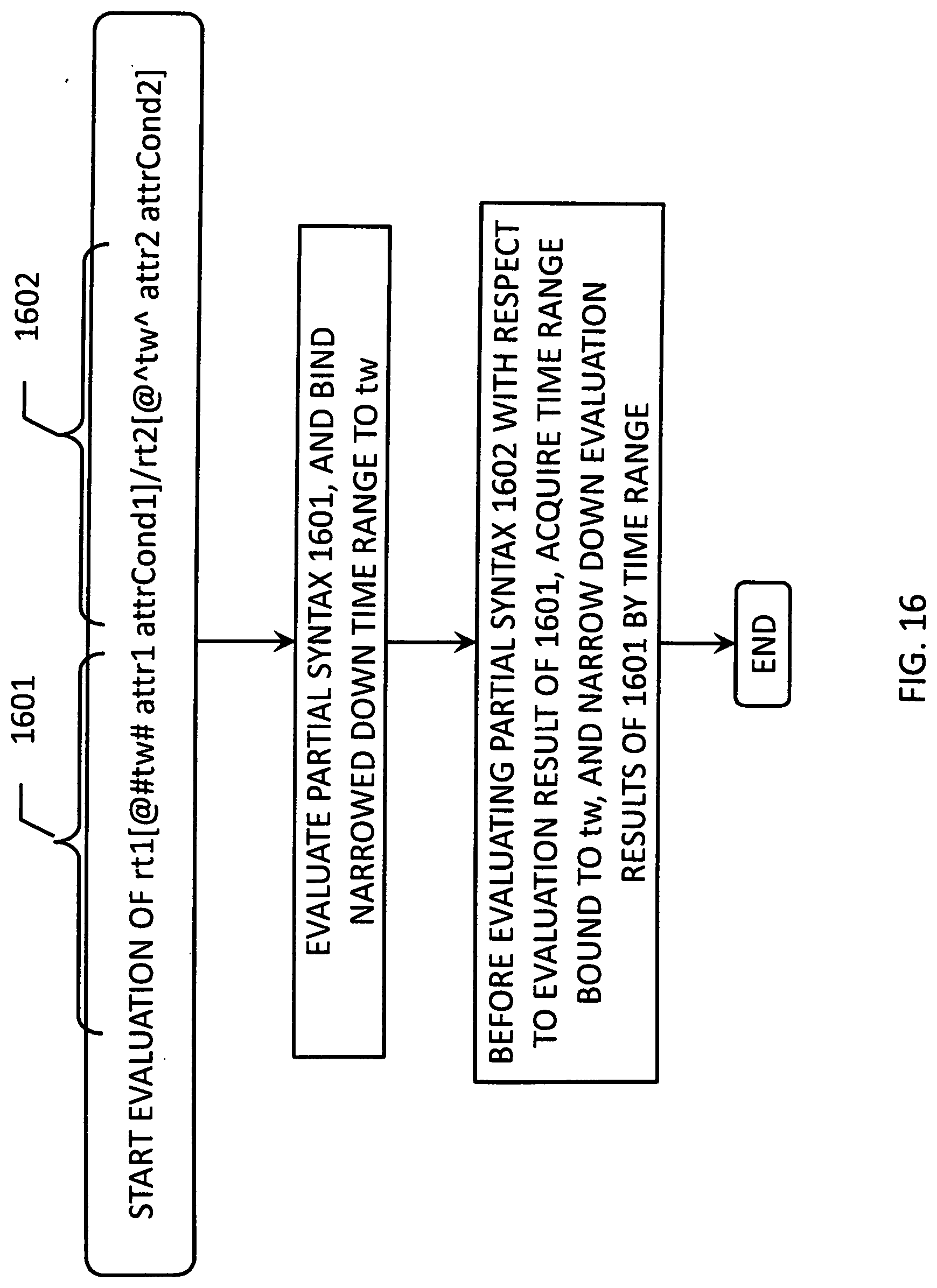

[0027] FIG. 16 is a flowchart of the processing.

[0028] FIG. 17 is a flowchart of the processing.

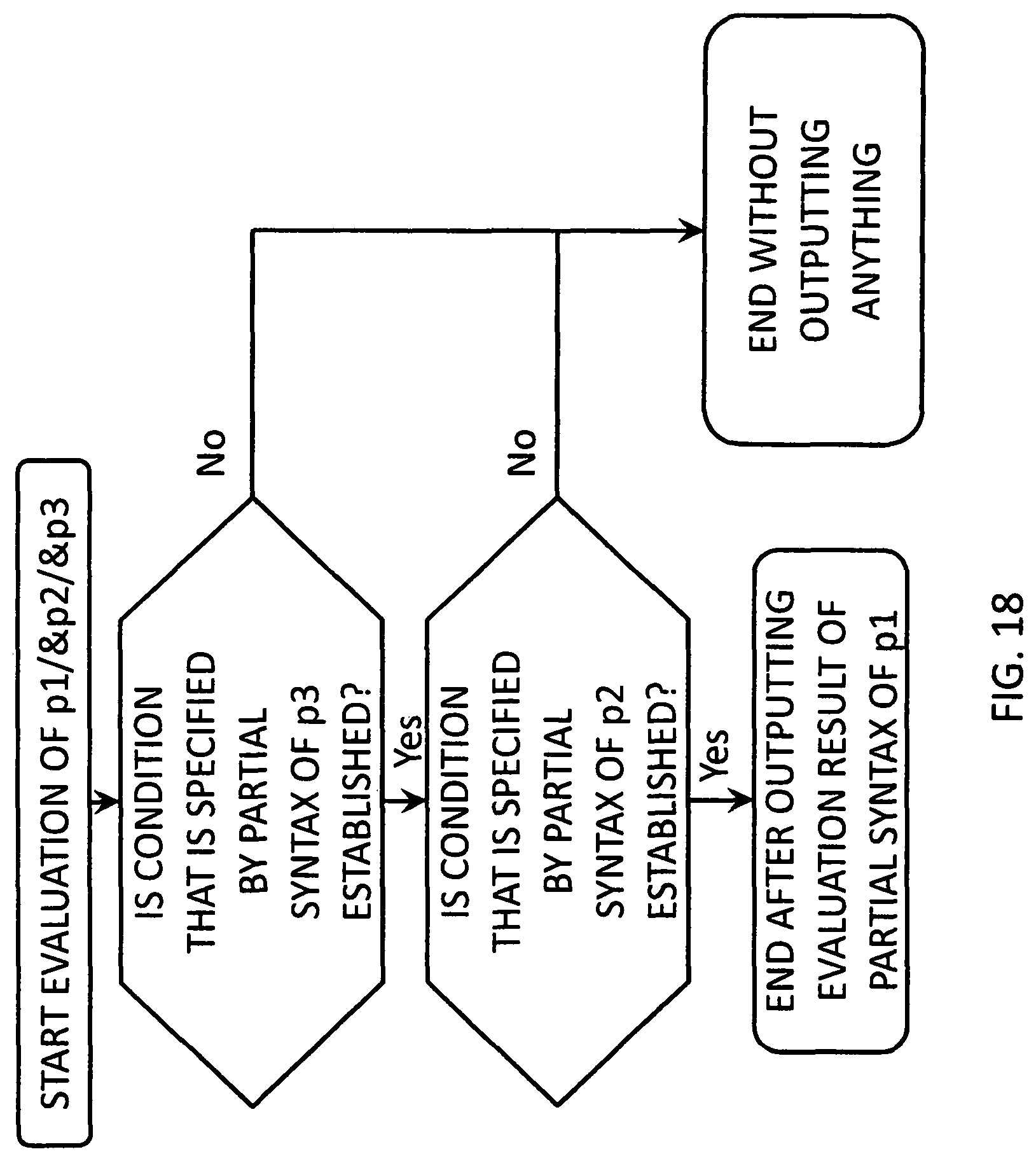

[0029] FIG. 18 is a flowchart of the processing.

[0030] FIG. 19 is a flowchart of the processing.

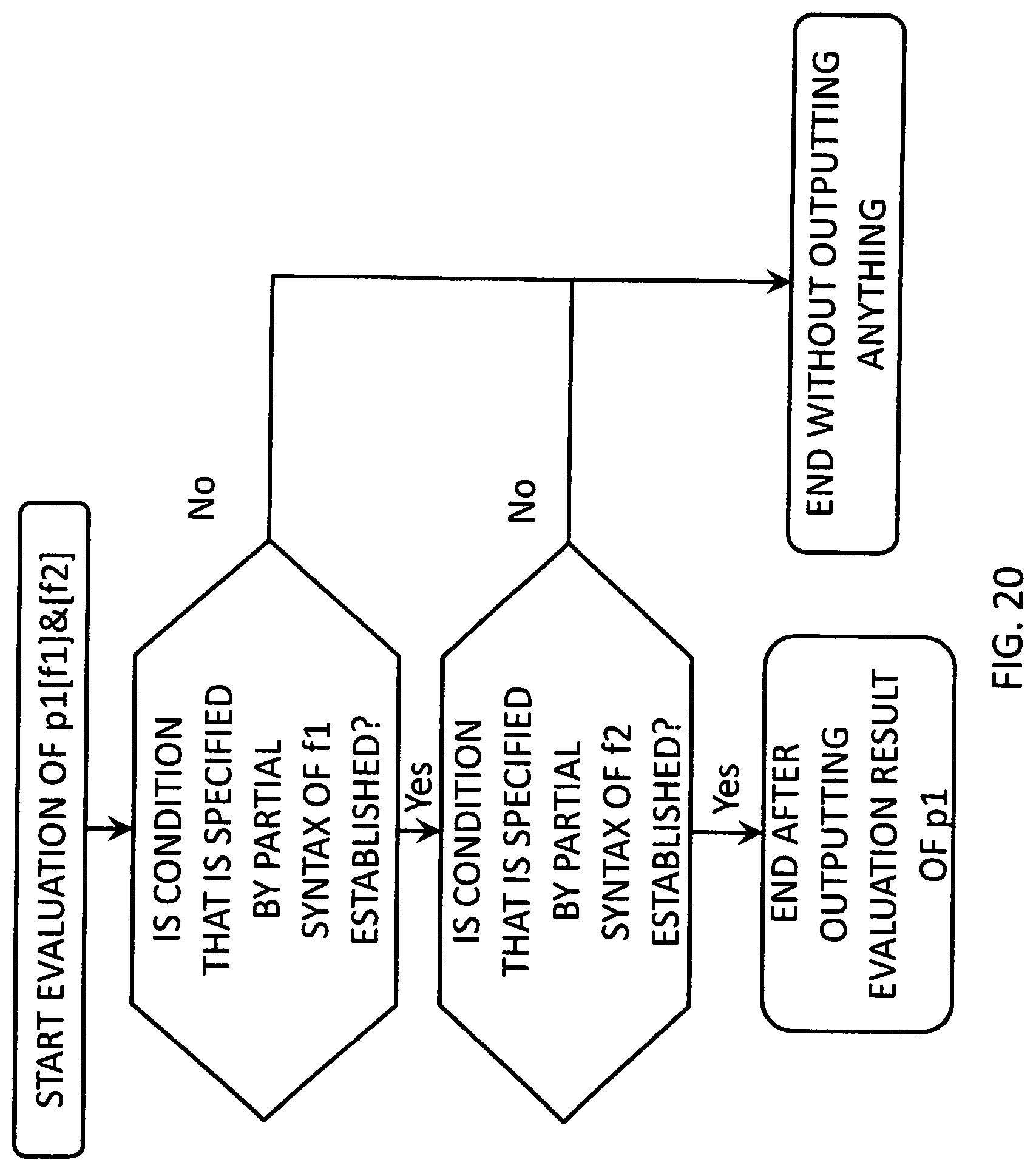

[0031] FIG. 20 is a flowchart of the processing.

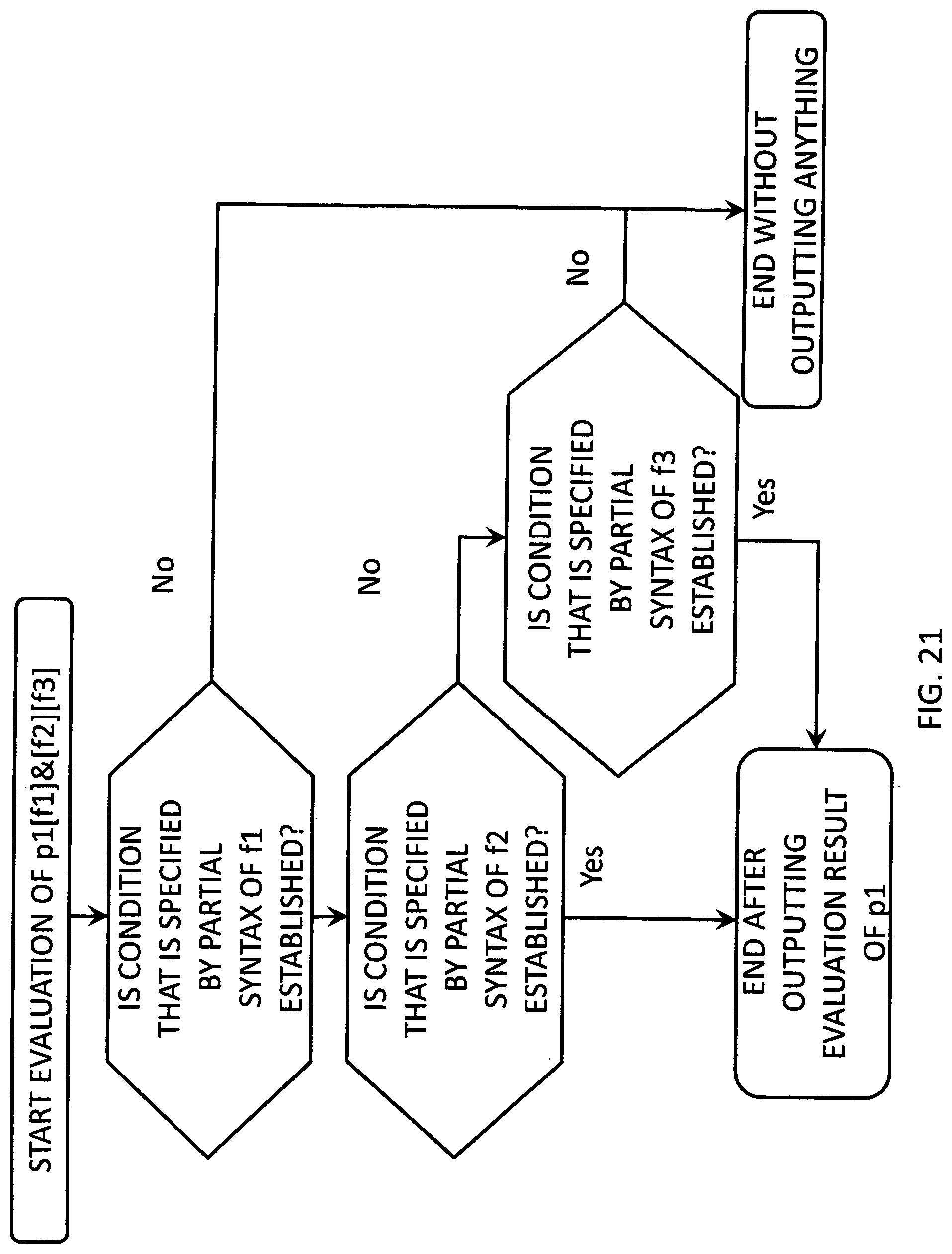

[0032] FIG. 21 is a flowchart of the processing.

[0033] FIG. 22 is an example of pattern matching.

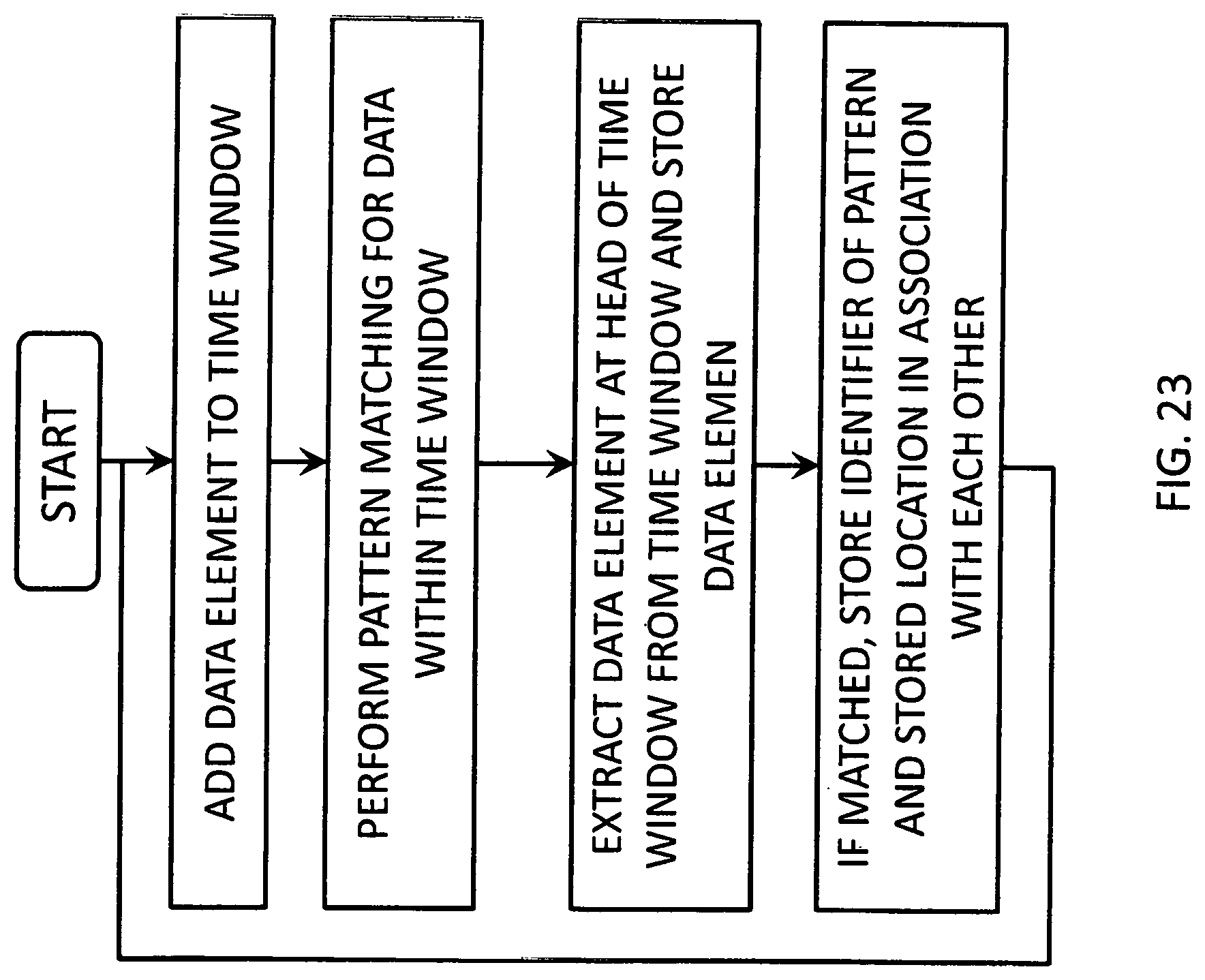

[0034] FIG. 23 is a flowchart of the processing.

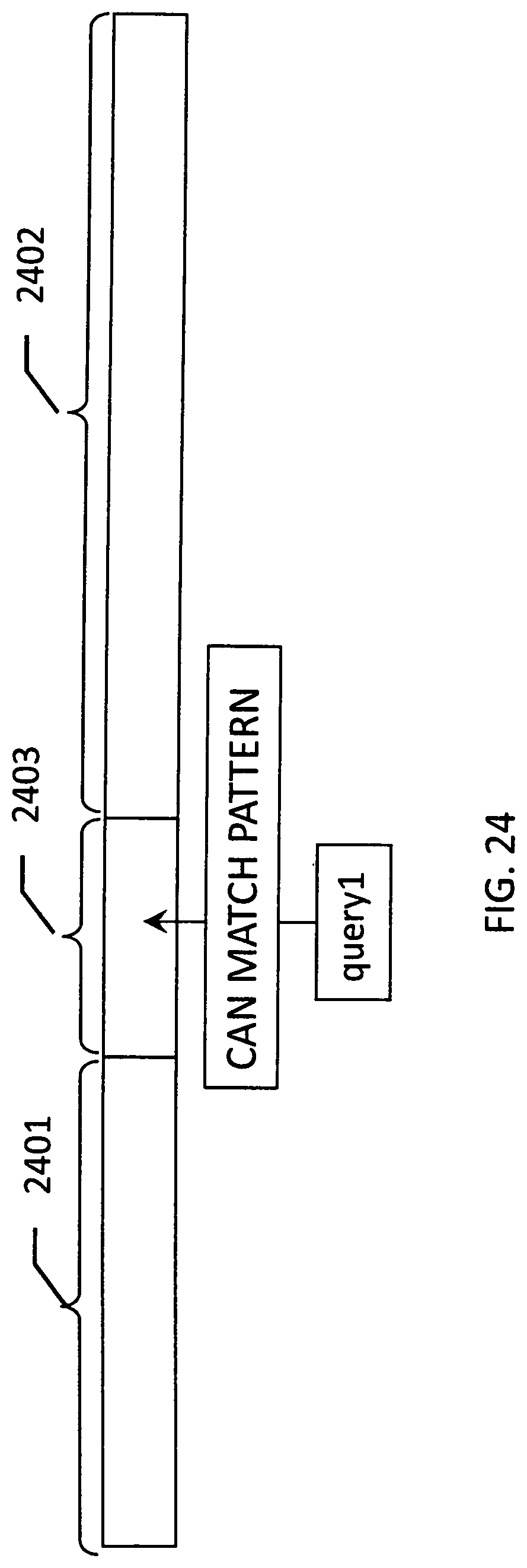

[0035] FIG. 24 is an example of pattern matching.

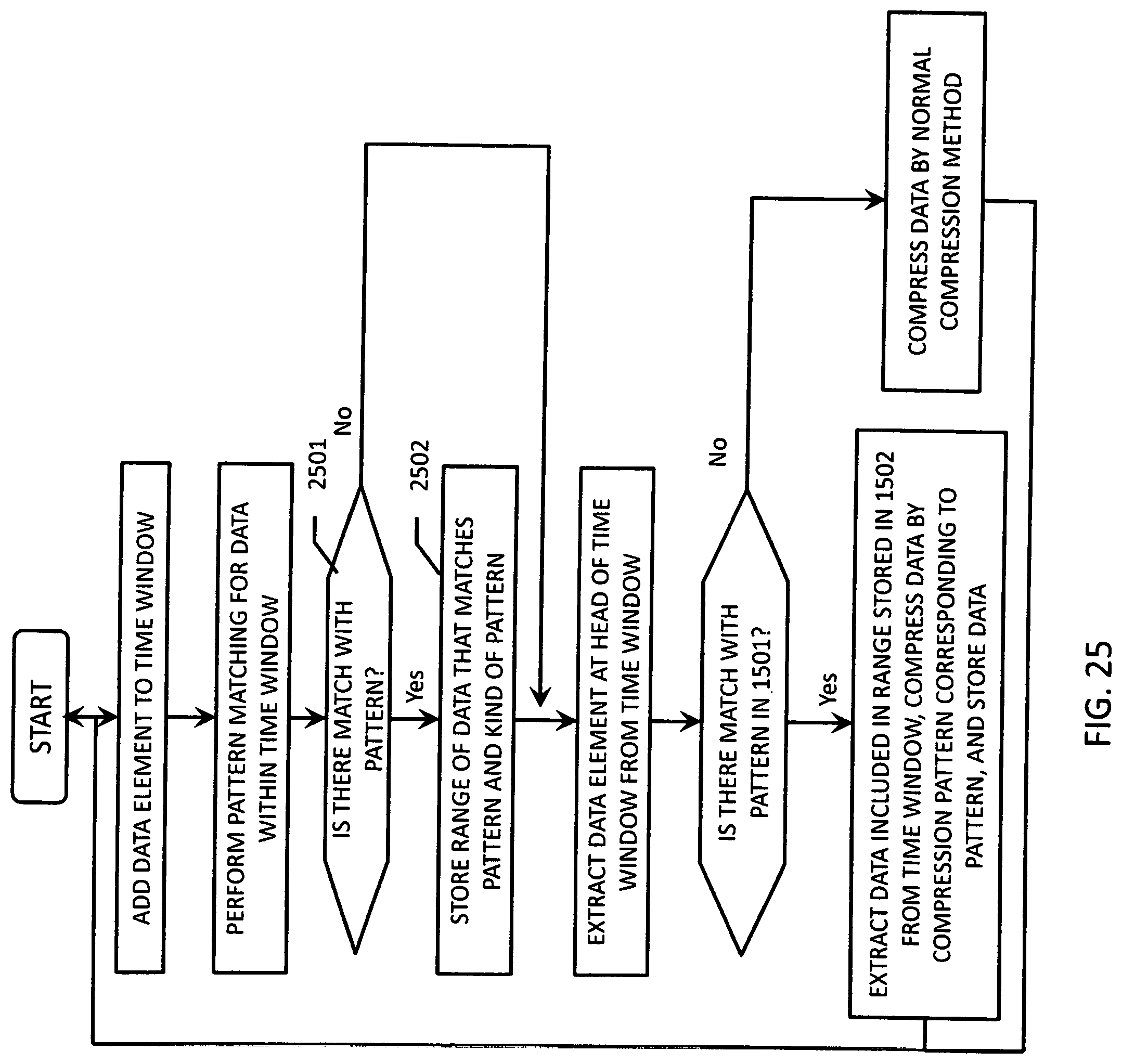

[0036] FIG. 25 is a flowchart of the processing.

[0037] FIG. 26 is example of time slicing.

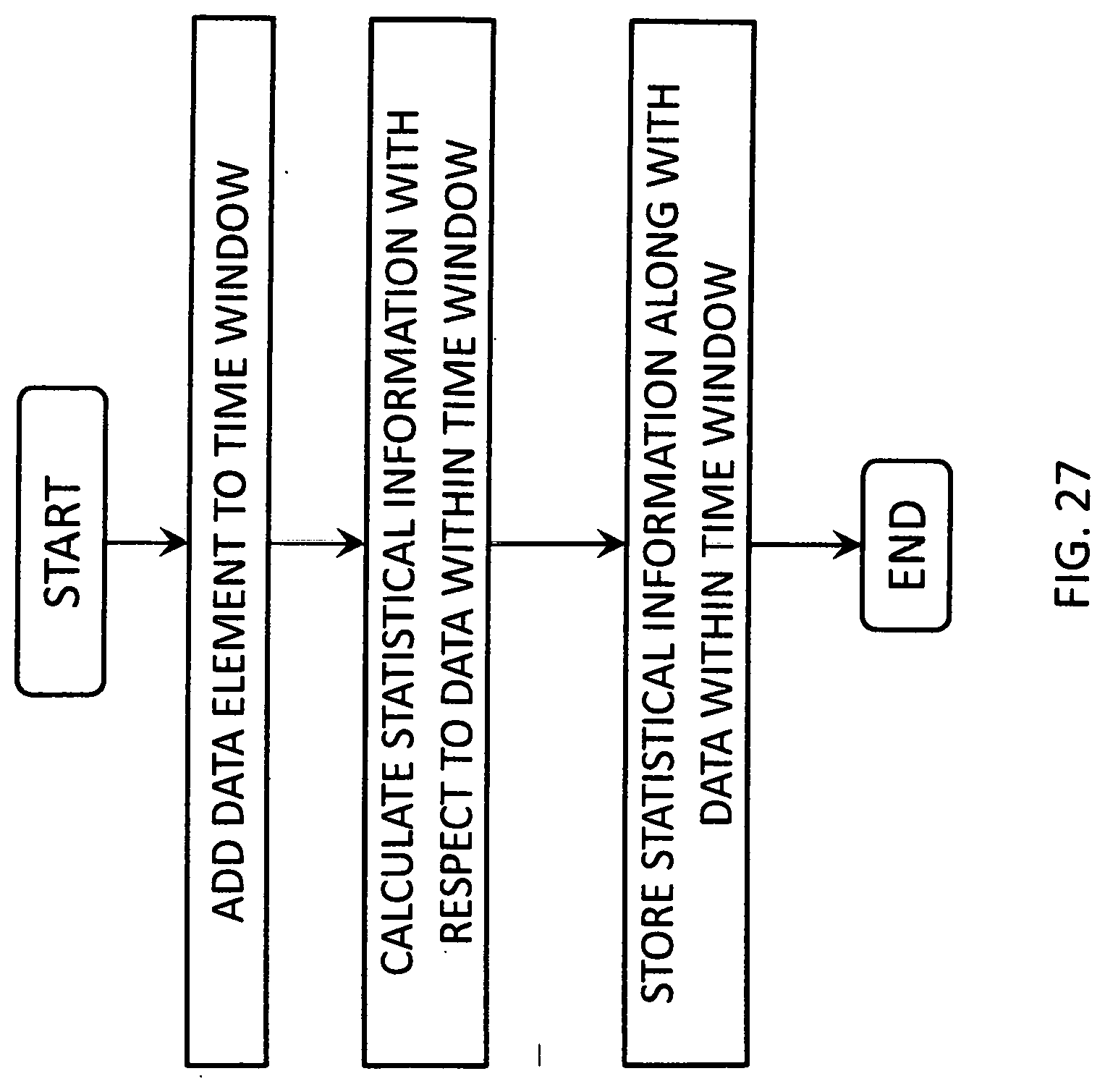

[0038] FIG. 27 is a flowchart of the processing.

DETAILED DESCRIPTION OF THE VARIOUS EMBODIMENTS

[0039] There is disclosed herein apparatus and processes for infrastructure performance data analysis (and analysis of other large amounts of performance data) which uses search techniques instead of relational databases to store and organize data. Data is stored in a special folder and directory structure with one directory for every day's worth of performance data. This allows data to be collected, processed and stored at a faster rate. All the performance data collected from one or more resources in an IT environment or one or more sensors in some other environment on the day corresponding to the directory is stored in files within the directory. There is a subdirectory for each resource where the directory name is the signature for that resource. There is one file for a group of attributes. Each attribute file has N sections, one for each attribute defined to be in the group. Each section has M values, where M values comprise the entire times series of values for that attribute for the entire day corresponding to the resource.

[0040] The result is that all the collected performance data is stored as patterns; the patterns being data from many sources which are sorted and stored in a time series in the special directory structure described above; so all data from all sources for a particular day is stored in one directory structure. This data structure allows the data set to be searched with time as one axis and each data element as the other axis.

[0041] Attribute values are stored either as band values or delta values. Each value for an attribute for a particular reading on a particular day is stored as Java UTF-8 encoded string with each value encoded as a single Unicode character. In other words, the numbers of each performance metric value are converted to letters of a Java UTF-8 encoded string. This allows searching using standard regular expressions the syntax of which is known and comprises a form of formal language. The various elements of syntax can be used to construct search queries which search through the performance data for patterns. Regular expressions can only search text and not numbers and that is why the performance metric readings or values have their numbers converted to text before storage.

[0042] The syntax of regular expression is rich with tools that allow complex searches and pattern analysis simply by writing an expression of the proper syntax thereby eliminating the time consuming need to write a custom program or "stored procedure" in SQL to do the same thing in searching the data of a relational database.

[0043] Unicode is a computing industry standard for the consistent encoding, representation and handling of text expressed in most of the world's writing systems. It is a set of approximately 1 million characters that span from hex 0 to hex 10FFFF. There are enough unicode characters to devote a single one to every symbol in the Japanese and Chinese languages and all the alphabets in the world and all the numbers in which performance metrics are expressed. Each performance metric value received from an agent is converted to one of these unicode characters.

[0044] Searching the performance data with regular expressions defining particular patterns of data from certain resources which satisfy certain conditions expressed in the regular expressions is analogous to searching large amounts of text for keywords and reporting only those portions of the text which fit a certain semantic usage or search string. This means the data pattern can be identified by use of regular expression to define the search criteria or a nondeterministic automaton as an equivalent thereto by encoding in advance the measured data to a describable code, i.e., a code searchable by use of a regular expression.

[0045] Therefore, the system according to the claimed technology encodes the performance metric data using an encoding method such as the encoding method of Unicode which results in the performance metric data being converted into characters that can be searched using regular expressions. Specific examples of the code that can be used to encode the performance data include Unicode. The Unicode standard defines 110,000 codes, which is an amount enough to encode the numerical values of the performance metric data. The following description is made on the assumption that the encoding is performed by Unicode, but in a system according to the technology claimed herein, any encoding method other than Unicode can be carried out as long as the encoded performance data can be searched using regular expressions.

[0046] The use of regular expressions allows complex patterns of performance data to be searched without having to write complex, custom programs called "stored procedures" which would be necessary if a relational database was used to store the data and SQL was used to search the database.

[0047] The system claimed herein allows users to draft their search queries as regular expressions. The user must know the syntax of regular expressions in order to do this unless the user wishes to only use predefined searches which some embodiments of the claimed technology provide for selection and execution by a user. A regular expression provides a concise and flexible means for matching strings of text, such as particular characters, words, or patterns of characters.

[0048] A regular expression is written in a formal language that can be interpreted by a regular expression processor, a program that either serves as a parser generator or examines text and identifies parts that match the provided specification. In the preferred embodiment, the MARS query language detailed in Appendix A attached here is the formal language from which searches implemented using regular expression are expressed.

[0049] Storing the Unicode characters encoding the performance metric data in the special directory structure described herein eliminates the need for use of an expensive database system such as Oracle even where very large amounts of data are collected and stored.

[0050] The performance data is collected by agent programs which are coupled to the sensors or are programmed on the IT resources being monitored. These agent programs collect, compress and send the performance data over the data link to the remote monitoring server which collects it, converts it to Unicode and stores it in the directory structure defined above. The remote monitoring server also provides an interface for a user to compose regular expression search queries and also provided "canned" searches which can be run by a user, each canned search being a predefined regular expression which the user may modify slightly to suit his or her purposes.

[0051] The process and apparatus for collecting, storing and processing performance metric data differs from SQL Database technology in at least two ways. First, the partition algorithm stores performance data based upon time slices. By recording data based on the time slice, it is possible to reduce the cost for creation of Index relating to the time axis when the data is added, which can suppress influences on the performance of the database. This is important in order to speed up the data search in a time-axis direction and to maintain the performance of data addition.

[0052] Further, by recording data by creating a slice for each data element in addition to the time slice, it is possible to perform the data search in the time axis and the axis of each data element. FIG. 2 and FIG. 3 are diagrams of a case where this method is realized for the directory structure and the file on the file system. The slice is realized by and implemented as the directory structure, and the data is recorded as a file on the file system. However, in addition thereto, by creating a key based on the date and the identifier of the data element and associating the key with the data, the same can be realized. The following description is made on the assumption that the above-mentioned method is realized by the directory structure and the file on the file system. In addition, combining the data by previously slicing the data by the time and the data element is important from the viewpoint of the reduction in the capacity at the time of compressing and storing the data. It is known that the data included in the same data element tends to assume a similar value and is therefore high in compressibility, i.e., the file size can be reduced by compaction programs.

[0053] Storing the data in time slices in the special directory structure, examples of which are shown in FIGS. 2 and 3, allows the data to be searched with time as one axis and each data element as the other axis. This is analogous to searching a large amount of text for keywords and then reporting only those portions of text that fit a certain semantic usage, such as matching the keywords.

[0054] Further, by using this method, it is also possible to speed up access to the stored data without affecting the entire database. In a case where the pattern that is often used for a search is known, such as a case where the data pattern that is often used for the search is registered in advance or a case where there is a tendency discernible from past searches or search results, it is possible to speed up the search using such a data pattern by performing the pattern matching before the storing. The processing performed at that time is described with reference to FIG. 22. FIG. 22 illustrates time-series data 2202 and a set 2203 of query data patterns that are often used for search. A time window 2201 represents a range of data that can be referred to when consecutive processing is performed for data that flows without interruptions such as the time-series data. In such a situation, it is possible to speed up the referring to the data stored by performing the processing as illustrated in FIG. 23.

[0055] First, after data is newly added to the time window, the pattern matching is performed for data included in the time window 2201 by using the known query data pattern 2203 that is often used for the search. After that, the data element at the head of the time window is extracted and stored, and if matched in the above-mentioned pattern matching at this time, it is predicted that the data is often referred to, and information relating to the stored location is stored together at the time of the storing.

[0056] The time required for this processing depends on the amount of data included in the time window and the number of data patterns used to perform the pattern matching. Further, the amount of data included in the time window normally has a size enough to be loaded in the memory. Therefore, there is a limitation on the referring to the data, which does not affect the entire database.

[0057] In addition, in the above-mentioned example, the stored location of the data that matches the data pattern that is often used for the search is stored together, but in the case where the data is compressed and stored or other such case, it is conceivable that target data cannot be extracted without decompressing the whole time series of data containing the string which matched even when the stored place is known. In that case, this problem can be avoided by, as illustrated in FIG. 24, compressing and storing only the data matching the query data pattern 2203 that is often used for the search in the manner of being ready to be extracted. In FIG. 24, the partial syntaxes 2401 and 2402 are data stored by a normal compression method, and the partial syntax 2403 is obtained by separately compressing the data matching the query data pattern 2203 that is often used for the search. Further, FIG. 25 illustrates the flow of the processing performed at this time. The matching processing is performed for the data included in the time window in the same manner as in FIG. 23, but the different point is that, when the data matches the pattern (Step 2501:YES), the data is encoded by another encoding method and stored (Step 2502).

[0058] Note that, the size of the time slice used in this method is assumed to be specified in a range that allows the data search to be performed at a satisfactorily high speed, but there is a fear that the amount of data within the time slice may become too large due to a change in the sampling rate or the like. In such a case, aside from changing the size of the time slice, by calculating statistical values of the data included in a given time range and then storing the statistical values together, it is possible to speed up the referring to the data. FIG. 26 illustrates an example thereof. In this case, it is assumed that such data is stored in two time slices 2601 and 2602 which include data of a, b, c, d, and e and data of d, e, f, and g, respectively, as the statistical values. At this time, assuming that a search is performed for the data string of fg, by referring to the statistical values in advance, it turns out that there is no need to search the time slice 2601, which can reduce the time required for the search.

[0059] FIG. 27 illustrates this flow. The statistical values are calculated with respect to the data included in the time window, and the results are stored along with the data. In this case, the range of data used to store the statistical values can be freely set in accordance with the degree of detail of the statistical values necessary for the data search, the response time obtained at the time of the data search, or the like, and the ranges of data may overlap with each other or may include data that does not include in any of the ranges.

[0060] The second difference between the claimed technology and the prior art is that the method of analysis and search of the performance data is based upon regular expressions which are used to search Unicode encoded text where the performance metric numbers have been converted to Unicode text characters. Regular expressions have a fixed, predefined syntax and semantics (together considered a grammar) and a variety of expressions can be formed using this syntax and semantics to search the performance data for patterns that meet criteria expressed in the regular expressions composed for the custom search. Regular expressions can be derived for all different kinds of search to limit the search to particular resources, particular attributes of those resources, particular days or particular time intervals during particular days, etc. Great flexibility is provided without the complexity and labor of having to write custom programs in the form of stored procedures to find the right data and analyze it.

[0061] The processes described here to search and analyze performance metric data are inspired by and somewhat similar to XPATH technology. XPATH is a technique used to traverse XML document data. XPATH-like techniques are used here to analyze infrastructure performance metric data and changes to that data over time. The processes described herein extend the XPATH notions to the search and analysis of data organized and stored by time slice which makes the search and analysis techniques taught herein efficient and fast. Search and analysis of the performance data is done using path-based techniques. A graph is created that represents the data. The graph G is a representation of vertex and edges (V,E). An edge connects two vertices and vertex has the ability to evaluate an expression and then, based on the expression, allow for a traversal through an appropriate edge.

[0062] FIG. 1 is a block diagram of a typical server on which the processes described herein for organizing, storing and searching performance data can run. Computer system 100 includes a bus 102 or other communication mechanism for communicating information, and a processor 104 coupled with bus 102 for processing information. Computer system 100 also includes a main memory 106, such as a random access memory (RAM) or other dynamic storage device, coupled to bus 102 for storing information and instructions to be executed by processor 104. Main memory 106 also may be used for storing temporary variables or other intermediate information during execution of instructions to be executed by processor 104. Computer system 100 further usually includes a read only memory (ROM) 108 or other static storage device coupled to bus 102 for storing static information and instructions for processor 104. A storage device 110, such as a magnetic disk or optical disk, is provided and coupled to bus 102 for storing information and instructions. Usually the performance data is stored in special directory structures on storage device 110.

[0063] Computer system 100 may be coupled via bus 102 to a display 112, such as a cathode ray tube (CRT) of flat screen, for displaying information to a computer user who is analyzing the performance data. An input device 114, including alphanumeric and other keys, is coupled to bus 102 for communicating information and command selections to processor 104. Another type of user input device is cursor control 116, such as a mouse, a trackball, a touchpad or cursor direction keys for communicating direction information and command selections to processor 104 and for controlling cursor movement on display 112. This input device typically has two degrees of freedom in two axes, a first axis (e.g., x) and a second axis (e.g., y), that allows the device to specify positions in a plane.

[0064] The processes described herein to organize, store and search performance data uses computer system 100 as its hardware platform, but other computer configurations may also be used such as distributed processing. According to one embodiment, the process to receive, organize, store and search performance data is provided by computer system 100 in response to processor 104 executing one or more sequences of one or more instructions contained in main memory 106. Such instructions may be read into main memory 106 from another computer-readable medium, such as storage device 110. Execution of the sequences of instructions contained in main memory 106 causes processor 104 to perform the process steps described herein. One or more processors in a multi-processing arrangement may also be employed to execute the sequences of instructions contained in main memory 106. In alternative embodiments, hard-wired circuitry may be used in place of or in combination with software instructions to implement the invention. Thus, embodiments of the claimed technology are not limited to any specific combination of hardware circuitry and software.

[0065] The term "computer-readable medium" as used herein refers to any medium that participates in providing instructions to processor 104 for execution. Such a medium may take many forms, including but not limited to, non-volatile media, volatile media, and transmission media. Non-volatile media include, for example, optical or magnetic disks, such as storage device 110.

[0066] Volatile media include dynamic memory, such as main memory 106. Transmission media include coaxial cables, copper wire and fiber optics, including the wires that comprise bus 102. Transmission media can also take the form of acoustic or light waves, such as those generated during radio frequency (RF) and infrared (IR) data communications. Common forms of computer-readable media include, for example, a floppy disk, a flexible disk, hard disk, magnetic tape, any other magnetic medium, a CD-ROM, DVD, any other optical medium, punch cards, paper tape, any other physical medium with patterns of holes, a RAM, a PROM, and EPROM, a FLASH-EPROM, any other memory chip or cartridge, a carrier wave as described hereinafter, or any other medium from which a computer can read.

[0067] Various forms of computer readable media may be involved in supplying one or more sequences of one or more instructions to processor 104 for execution. For example, the instructions may initially be borne on a magnetic disk of a remote computer. The remote computer can load the instructions into its dynamic memory and send the instructions over a telephone line using a modem. A modem local to computer system 100 can receive the data on a telephone line or broadband link and use an infrared transmitter to convert the data to an infrared signal. An infrared detector coupled to bus 102 can receive the data carried in the infrared signal and place the data on bus 102. Bus 102 carries the data to main memory 106, from which processor 104 retrieves and executes the instructions. The instructions received by main memory 106 may optionally be stored on storage device 110 either before or after execution by processor 104.

[0068] Computer system 100 also includes a communication interface 118 coupled to bus 102. Communication interface 118 provides a two-way data communication coupling to a network link 120 that is connected to a local network 122. For example, communication interface 118 may be an integrated services digital network (ISDN) card or a modem to provide a data communication connection to a corresponding type of broadbank link to the internet. As another example, communication interface 118 may be a local area network (LAN) card to provide a data communication connection to a compatible LAN. Wireless links may also be implemented. In any such implementation, communication interface 118 sends and receives electrical, electromagnetic or optical signals that carry digital data streams representing various types of information.

[0069] Network link 120 typically provides data communication through one or more networks to other data devices. For example, network link 120 may provide a connection through local network 122 to a host computer 124 or to data equipment operated by an Internet Service Provider (ISP) 126. ISP 126 in turn provides data communication services through the worldwide packet data communication network, now commonly referred to as the "Internet" 128. Local network 122 and Internet 128 both use electrical, electromagnetic or optical signals that carry digital data streams. The signals through the various networks and the signals on network link 120 and through communication interface 118, which carry the digital data to and from computer system 100, are exemplary forms of carrier waves transporting the information.

[0070] Computer system 100 can send messages and receive data, including program code, through the network(s), network link 120, and communication interface 118. In the Internet example, a server 130 which is having its performance data monitored might transmit performance data via an agent program that collects it through Internet 128, ISP 126, local network 122 and communication interface 118 to computer system 100. The received performance data is stored and can be searched by the processes described later herein.

[0071] The system according to the claimed technology has on the software and data side the following components which are executed and stored on the hardware platform described above or similar.

Data Store Manager;

Query Request Handler;

Data Access Manager;

Probe Interface; and

[0072] Proprietary non-relational database referred to as the NRDB and detailed in the Directory Structure heading below and illustrated in FIGS. 2 and 3

Data Store Manager

[0073] This component receives data from probes in a well defined format, and stores the received data in the NRDB. A probe is an external software program which collects data on a periodic basis from an external data source and writes data into a format which can be processed by Data Store Manager. The Data Store Manager can have any program structure so long as it can receive data in the probe data format described elsewhere herein, decompress it and store it in the NRDB in the directory structure and data format described herein for the NRDB. In the preferred embodiment, it will have a program structure which can perform the processing of the flowchart of FIG. 5. It can run on any off the shelf computer having sufficient speed, memory capacity and disk capacity to store the performance data being collected.

Query Request Handler

[0074] This component accepts search queries from external applications or users, and sends back the results. The query language is a proprietary syntax for regular expressions which is given below under the Query Definition Language Heading, and which provides constructs for specifying search patterns to analyze data. The Query Request Handler can have any program structure which can receive query requests with regular expressions embedded therein having the syntax described below, and parse those queries and perform the processing of the flowchart of FIG. 7. It can run on any off the shelf computer having sufficient speed, memory capacity and disk capacity to store the performance data being collected.

Data Access Manager

[0075] This component provides access to the data stored in the claimed technology's proprietary non-relational database (NRDB). This component internally employs standard caching techniques to provide results faster. The Data Access Manager can have any program structure which can access directory structures like those of the NRDB of which FIGS. 3 and 4 are examples, and which supports the Query Request Handler requests for data from the NRDB to perform the processing of the flowchart of FIG. 7. It can run on any off the shelf computer having sufficient speed, memory capacity and disk capacity to store the performance data being collected.

Probe Interface

NRDB

[0076] All the data in the claimed technology is stored in NRDB. NRDB uses a normal file system consisting of files and folders. It uses a special folder structure and special encoding of data files to optimize the storage and access of data.

[0077] The entire software that implements the Data Store Manager, the Search Handler, the Data Access Manager and the Probe Interface, in the preferred embodiment is designed to run on commodity hardware inside a Java virtual machine. Commodity hardware is defined as regularly available Intel x86/64 architecture based computers. Standard Linux distribution such as CentOS is used as the base operating system.

[0078] As an example of how the system works to collect performance metric data and analyze it, suppose server 130 is a server which has a couple of virtual machines running on it the performance of which is to be monitored. The performance metric data for each virtual machine is collected by an agent or probe process (not shown) or, in some embodiments, a separate probe process for every virtual machine. The performance data is gathered on a per day basis to measure various performance metrics on server 130. Performance data of the server 130 itself such as CPU cycle utilization, hard disk access time, hard disk capacity, etc. may also be gathered. There are usually several metrics that are measured simultaneously, often on a per minute basis.

[0079] This performance metric data gathered by the agent process is compressed and packetized and the packets are sent over the internet 128 to ISP 126 to which a local area network 122 is connected. The local area network is coupled via a network line 120 to the communications interface 118 of the monitoring server system 100.

Probe Data Format

[0080] The performance metric data for every element is collected by a probe. A probe is a program running on the computer having the element or attribute being monitored. The probe for each element periodically or sporadically (usually a call is made every minute) makes application programmatic interface calls to the operating system of the computer or other machine to gather the performance data on the element it is monitoring. The probes can be any agent hardware and/or software combination that can collect the desired performance metric data and put it into the data format described below for probe data.

[0081] Probes don't have to be just for IT attributes. They can also gather data for mechanical structures or automotive systems. For example, engineers designing bridges may attach temperature and strain sensors at various positions on the structures, each of which is read by a probe program running on a computer which periodically interrogates each sensor from time to time, takes its reading and sends it elsewhere for storage and analysis. The probe gathers all the sensor data, formats the data into the data structure format described below, compresses the data structure and packetizes the compressed data for transmission over any data path to a system elsewhere for analysis. Likewise for cars, engines, etc. The probe system is more or less like the modern day equivalent of telemetry systems used on satellites and missiles that feed performance data back to an earth station by a radio telemetry link.

[0082] The performance metric data values gathered by the probes are typically packetized for transmission over the internet. The primary objective of the probe data format is to reduce the amount of data which probe will produce so as to reduce bandwidth requirements on the data link over which the probe data is sent. This reduces the amount of storage required to store the data and also makes the transmission to another location faster. The probe programs do not do the conversion of the performance metric data to unicode in the preferred embodiment, but in some alternative embodiments, they could.

[0083] The probe collects all the attribute data for one day on all the elements it is monitoring and creates a directory structure such as the one shown in FIG. 4. The directory structure contains files which store the time series of attribute values (performance metric data) for every attribute for which the probe collected data. The attribute values are numbers and are not converted by the probe to unicode values. That happens at the monitoring server end.

[0084] In FIG. 4, block 180 represents the top level directory, block 182 represents a folder for all host type elements, block 184 represents a folder for all disk type elements being monitored. Each of the folders 182 and 184 preferably contains a text file which contains the attribute values obtained by the probe for every element being monitored of the type symbolized by the subdirectory. Each text file preferably contains all the performance metric values for all the monitored elements in the same group with one row containing the performance metric values measured for one of the elements being monitored in that group. For example, the host folder 182 may have a single text file A1.txt, but that file preferably contains multiple rows, one for each host element being monitored. For example, blocks 186 and 188 contain the performance metric values for two particular hosts being monitored in the group within A1.txt called H1 and H2. H1 and H2 in blocks 186 and 188 represent unique strings uniquely identifying the hosts for which the performance metric data was collected. H1 has 1440 performance metric measurements stored in the row symbolized by the V1, V2 . . . V1440 values in a comma delimited list. For host H1, a performance value was measured every minute. The same is true for host H2. Blocks 190 and 192 preferably contain performance metric values collected by the probe for two disks D1 and D2 in the group of monitored elements "disk" represented by folder 184. These performance metric values for disks D1 and D2 are preferably stored in different sections or rows of a text file named A2.txt.

[0085] The whole collection of data files and subdirectories is preferably zipped by the probe into one zip file which is a compressed version of the data structure. By sending a compressed version of the data, the bandwidth requirement on the data path between the probe and the monitoring server(s) is greatly reduced. When the zip file is unzipped, the data structure like that in FIG. 4 (or whatever the data structure is the number of elements and attributes being monitored) results.

[0086] Any payload produced by the probe must conform to the following structure:

The first file named ListOfFiles<YYYYMMDD_HHmmSS>_<base64 encoded text of encrypted value of (SiteName+"_"+ServerName+"_"

ArraySerialNumber)>_<ProbeType>.txt

[0087] Each line inside this file preferably has the name of a file which is part of this payload [0088] If the file has configuration or events data, the file must be named Conf<YYYYMMDD_HHmmSS>_<base64 encoded text of encrypted value of (SiteName+"_"+ServerName +"_"+ArraySerialNumber)>_<ProbeType>.zip.enc [0089] If the file has performance data, the file must be named Perf<YYYYMMDD_HHmmSS>_<base64 encoded text of encrypted value of (SiteName+"_"+ServerName+"_"+ArraySerialNumber)>_<ProbeType>.zip- .enc

Where:

[0089] [0090] SiteName--name of the site assigned for the probe [0091] ServerName--name of the entity from which data is being collected, it is the text filled in by the user during probe configuration. [0092] ArraySerialNumber--Optional additional information to further identify the entity. [0093] ProbeType--Type of entity from which data is being collected--VMWare, SMIS, NetApp, Amazon ECS, Bridge Sensors One or more .zip file are identified in the list of files The configuration zip file preferably contains one or more files which can be of two types:

[0094] Snapshot

[0095] Mini-snapshot

Snapshot

[0096] The snapshot type file preferably contains the entire configuration about the data source to which the probe is connected. The name of this file is: <Site Name>_<DataSource>_snapshot_<YYYYMMDD>_<HHMMSS>_<- Version>.txt, where: [0097] 2. <Site Name>: Identifier for location (actual physical site) where the probe is situated [0098] 3. <Data Source>: Identifier for the data source (resource, i.e., host, disk array, printer, etc.) from which the data is being collected [0099] 4. <YYYYMMDD>_<HHMMSS>: The date and time when the snapshot was made [0100] 5. <Version>: Version of the file. The file format of snapshot is preferably as follows:

TABLE-US-00001 [0100] %meta probe_id:<Identifier> probe_type:<Probe Type> probe_site:<Site Name> probe_server:<Server Name> probe_version:<Probe Version> %meta { t:<YYYYMMDD_HHMMSS> { R:<ResourceType>#<Resource Id> O:{<ResourceType>#<Another_Resource_id>,}+? b: <Begin Time YYYYMMDD_HHMMSS >? e:<End Time YYYYMMDD_HHMMSS >? a:{<Attribute Id>=<Attribute Value>}+ r:{<Resource Type>#<Resource Id>,}+ $:{<Event Id> <space><Event String>}+ }+ }+

Example

TABLE-US-00002 [0101] %meta probe_id:Cust_192.168.0.63 probe_type:VMWare probe_site:Cust1 probe_server:192.168.0.63 probe_version:10 %meta t:20110624_062248 R:dc#Cust_192.168.0.63_datacenter-2 a:name=MTNVIEW R:ds#Cust_192.168.0.63_datastore-205 a:name=FAS960_home a:capacity=51322806272 a:freeSpace=42685091840 a:uncommitted=17323200512 a:provisionedSpace=25960914944 a:type=NFS a:URL=netfs://192.168.0.50//vol/vol0/home/ a:sioc=disabled r:h#Cust1_192.168.0.63_host-171, R:ds#Cust1 _192.168.0.63_datastore-10 a:name=Storage1 $:AlarmSnmpCompleted Alarm `Host error`- an SNMP trap for entity 192.168.0.48 was sent

Updates

[0102] As configuration changes and configuration related events occur, they preferably will be written to a mini snapshot file. The name of this file will be:

<Site name>_<Data

Source>_minisnapshot_<YYYYMMDD>_<HHMMSS>_<version>.tx- t

<YYYYMMDD>_<HHMMSS>:

[0103] The format of this file is preferably exactly same as the snapshot file. The primary difference is that it will only have a subset of the data of the snapshot type of file. The subset captures the changes which have occurred in configuration data since the last time a snapshot file was made.

Performance Data

[0104] The performance data is a zip file which preferably has the following directory structure: [0105] 2. <YYYYMMDD_HHMMSS>--This directory name is the start time of the time series specified in this data set [0106] 3. <Resource Type>--One directory for each resource type [0107] 4. <Attribute Id>.txt--One file for each performance metric Each <Attribute Id>.txt has one or more lines where each line has the following format:

<Resource Signature>`,` {Value} `,` {`,`<Value>}+

[0108] The value list is a time ordered series of values for that performance metric for the resource specified at the beginning of the time. If the metric value does not exist for a particular point in time, then a blank or empty value is allowed.

NRDB File System Structure

[0109] The performance metric data is preferably stored in a file system structure as defined below. One directory is preferably created for each day in the format YYYYMMDD. All performance data for all the resources in the data model for a particular day are preferably stored in this directory. Under this directory, there is preferably a directory for each resource where the directory name is preferably the signature of that resource. Under this directory, there is preferably one file for a group of attributes. The directory will preferably look something like this:

TABLE-US-00003 <YYYYMMDD> - One Folder for each day <Resource Type> <AttributeGroupId>.perf

[0110] 5. <YYYYMMDD_HHMMSS>--This directory name preferably contains the start time of the time series specified in this data set [0111] 6. <Resource Type>--preferably one directory for each resource type [0112] <Attribute Id>.txt--preferably one file for each performance metric <AttributeGroupld>.perf file preferably stores processed values for each sample in a compressed format. This format is now described in detail. The file is preferably divided into "n" number of sections. Where "n` is the attributes which are defined to be in the same group. Each section preferably will hold "m" number of values--the entire time series values of that day for that resource's attribute. So, for example, if the probe sampling interval is 1 minute then there will be 1440 (1440 minutes in a day) values. Each <Attribute Id>.txt preferably has one or more lines where each line has the following format:

<Resource Signature>`,` {Value} `,` {`,`<Value>}+

[0113] The value list is preferably a time ordered series of values for that performance metric for the resource specified at the beginning of the time. If the metric value does not exist for a particular point in time, then a blank or empty value is allowed.

[0114] Currently, corresponding to each raw value of a performance metric attribute received from the probe, preferably two types of processed value are stored:

[0115] Band Value [0116] An attribute can define the "fidelity" with which it will store the raw value. This is called in Band Factor. Band factor is preferably an integer with a minimum value preferably of 1 and maximum of preferably any positive integer value. With a band factor of 1, there is no loss of fidelity. The processed value is same as raw value. With a band factor 10, the processed value will preferably be 1/10.sup.th of the raw value rounded to the nearest integer.

[0117] Delta Value [0118] It is preferably the change in percentage from band value at time t-1 and band value at time t.

[0119] Each set of 1440 values of a performance metric attribute (assuming one value is measured every minute) are stored preferably as a Java UTF-8 encoded String. Each performance metric attribute value is preferably encoded as a single Unicode character in the String.

[0120] FIG. 2 is an example of a directory structure storing one day's performance data on a resource the performance of which is being monitored remotely. The processor 104 in FIG. 1 is programmed by instructions stored in main memory 106, according to one embodiment of the invention, to create a special directory structure with preferably one directory for each day's worth of data, and preferably one subdirectory for each resource for which performance metric data is being received. In FIG. 2, block 150 represents the directory created for storing the performance metric data collected on Aug. 14, 2011. The subdirectory represented by block 152 represents the subdirectory where performance data for the resource E1 is to be stored. Suppose in this example, that resource E1 is the server 130 in FIG. 1.

[0121] Each subdirectory preferably has the directory name in its signature. In this case, subdirectory 152 has 20110814 in its directory name which is preferably the name of the directory of which it is a part.

[0122] Each subdirectory preferably contains one attribute file for each group of attributes that are being measured by the performance metric data that stores performance metric values. Each attribute file preferably has N sections, one section for each attribute defined to be in the group for which the file was created. Each section preferably holds M performance metric values for the particular attribute whose values are recorded in that section. That section's data preferably comprises the entire time series of values for the attribute to which the section is devoted.

[0123] In the example of FIG. 2, there are only two groups of attributes in subdirectory 152 so there are only two files 154 and 156. Suppose each of these files represents one of the virtual machines running on server 130. Each file is a time slice of performance metric data values that records the entire day's worth of a metric in the section of that file devoted to storing values for that performance metric. Typically, if a metric has a measured value every minute, the section of the file devoted to that metric will have 140 comma delimited values for that metric encoded as a Java UTF-8 encoded string. UTF-8 is a multibyte character encoding for unicode. UTF-8 can represent every character in the unicode character set. Each of the 1,112,064 code points in the unicode character set is encoded in a UTF-8 string comprised of one to four 8-bit bytes termed octets. The earlier characters in the unicode character set are encoded using fewer bytes leading to greater efficiency. The first 128 unicode character set coincide with the 128 ASCII characters.

[0124] The system of the claimed technology has a mapping table that maps performance metric values into unicode characters and then encodes them with UTF-8. Since unicode only supports positive values, the unicode range is split and a first range of unicode values is mapped to positive performance metric values and a second range of unicode values is mapped to negative performance metric values.

[0125] However, this mapping method is merely an example. By examining the occurrence frequency of the values and assigning the code having a small size to the value having a high occurrence frequency, it is possible to reduce the storage size necessary to store the data. Further, if the distribution of the values differs depending on a kind of performance metric value and a time slot (time slice) for the measurement of data, by changing the mapping depending on the kind of performance metric value and the time slot for the measurement of data, it is possible to realize further reduction in the storage size necessary to store the data. In addition, a mapping method which assigns (quantizes) a plurality of values to one code at the time of the mapping is conceivable, but by adjusting a range of quantization in that case in accordance with a degree of detail (resolution) obtained when the data is used, it is possible to reduce the number of necessary codes. As a result, it is possible to use codes having a short code length, which can realize a reduction in the necessary storage size.

[0126] Each performance metric value from a measurement is preferably encoded as a single unicode character in the hexadecimal number system (hex).

[0127] Each new day's worth of data from all resources and all probes is preferably stored in a new directory structure. The names of the directories, subdirectories and files preferably include information about the day during which the data was gathered, the resources from which it was gathered and the particular group of attributes whose performance metric data is stored in the various sections of the file.

[0128] In the example of FIG. 2, the directory structure 150 has files 154 and 156 for one day of metric data gathered every minute for two different metrics from the same resource, represented by subdirectory 152. In other words, there is only one resource being monitored. Also, for the example of FIG. 2, there is only one attribute in each group of attributes and only two attributes in total have performance metric data gathered. The performance metric data is gathered on Aug. 14, 2011 so the directory 150 created to store that day's metric data is named 20110814. There is only one resource being monitored called E1 so there is created a subdirectory 152 called 20110814_E1. That subdirectory contains two files. The first file 154 is named E1/G1, and it stores the metric values for metric M1 in group 1 (which has only one section because there is only one metric M1 in the group E1/G1). The values of metric M1 are gathered every minute and are symbolized as values V1 through V1440 which are stored as a comma delimited list. The value V1 is the value of metric M1 taken at time 00:01:01 on 8/14/2011, i.e., the first minute of 8/14/2011. The value V2 is the value of metric M1 taken at time 00:02:01 on 8/14/2011, the second minute of 8/14/2011. The value V1440 is the value of metric M1 taken at time 23:59:01 which is the last minute of 8/14/2011. Therefore, the position of any particular value on the comma delimited list denotes the time at which the value was captured on 8/14/2011.

[0129] The second file 156 in the resource E1 subdirectory is named E1/G2 and it stores values for a metric M2 in group 2 (which also only has one metric in the group so there is only one section in the file). It has not been shown in detail since it has the same structure as the file E1/G1.

[0130] The values stored in each position of the file are preferably Unicode encoded meaning the numeric value of the metric's value has been mapped to a text character or string of characters in the encoding process. Other encoding processes which convert numeric values to text values could be used in other embodiments.

[0131] This allows these values to be searched using regular expressions which are a form of formal language (used in the sense computer scientists use the term "formal language") which has predefined rules of syntax and semantics (together called its grammar). The elements from which regular expressions can be formed are known and each element has its own known syntax for how it is structured and has its own unique and known semantics defining what it means. Persons wishing to analyze the performance metric data in any way, can compose a regular expression using the available elements for composing a regular expression and their syntax and semantics. Any regular expression syntax can be used to carry out the teachings of the invention, but the claimed technology uses a proprietary syntax which is optimized for the application and is disclosed elsewhere herein.

[0132] FIG. 3 is another example of a file system containing a separate directory for storing performance metric data for three different days for three different resources, each resource having two groups of attributes. The file system storing metric data is represented by block 158. Three days of performance data are stored in directories 160, 162 and 164, respectively. Each of these directories has three subdirectories named R1, R2 and R3, each of which is a folder which contains actual files of text data encoding performance metric values that have been measured and transmitted by the agents. Blocks 166 and 168 represent comma delimited text files named GRP1.TXT and GRP2.TXT storing the performance metric data gathered on Jul. 27, 2011 for resource 1 for group 1 and group 2 attributes, respectively.

[0133] The reason for grouping different attributes performance values in the same file is for speed of loading and analysis. Typically, an analysis of a resource will involve looking at patterns or values or value changes of several different attributes over a particular interval. If the attributes involved in the analysis are all grouped in the same group, they will preferably be stored in the same file. In this way, all the data needed to do the analysis can preferably be loaded into memory for analysis simply by reading the appropriate file containing the attribute group for the resource under analysis from the directory structure corresponding to the day of interest. That file is loaded into memory by a standard file access call to the operating system, and the regular expression search or searches can be performed on the data. This is faster than having to load several different files or having to do SQL queries to a database which would require a larger number of reads.

[0134] FIG. 5 is a high level flowchart of the process the monitoring server preferably performs to receive the zip files of performance metric data from a probe, recover the data and store it. Block 200 represents the process of receiving the zip file of performance metric data from the probe. Block 202 represents the process of decompressing the zip file to recover the data structure such as that shown in FIG. 4. Block 204 represents the process of converting the numerical performance metric values stored in the text files preferably to unicode characters using a mapping table the server uses for such purposes. Block 206 represents the process of storing the unicode data structure derived in step 204 preferably in the appropriate parts of the NRDB data structure. Usually this just entails storing the entire directory and all its files on disk since the data structure is already structured as one directory for the particular day on which the data was collected preferably with individual text files of metric data for each element being monitored in subdirectories for the type of element each text file represents.

Example of how a Regular Expression can be Used to Analyze the Metric Performance Data

[0135] Suppose an analyst wanted to know if CPU utilization was between 90% and 100% for at least 5 minutes or more. The regular expression syntax to make a search and analysis of the performance metric data for CPU utilization would be in generic syntax:

[U90-U100]{5,} -100 -200

[0136] To convert this regular syntax to take into account the unicode encoding of the CPU utilization metric values, suppose a CPU utilization metric value representing 90% utilization is mapped to unicode hex character a, 92.5% CPU utilization is mapped to unicode hex character b, 95% to hex character c, 97.5% to hex character d, and 100% to hex character e. If CPU utilization metric values are measured every minute, then a regular expression to determine if the CPU utilization was between 90% and 100% for at least 5 minutes would be:

[a-e]{5}[g] which means if five consecutive values in the file storing CPU utilization values for the CPU in question on the day in question were any combination of hex characters a through e, then the expression evaluates to true. This means that every time on that particular day the CPU utilization metric values had five consecutive values which were any combination of hex a through hex e, then for each of those intervals, the CPU utilization was between 90% and 100%. This may mean the CPU is maxing out and another CPU should be added.