Generating And Displaying Blur In Images

SUSZEK; Austin

U.S. patent application number 15/976818, for generating and displaying blur in images, was filed with the patent office on 2018-05-10 and published on 2019-11-14. This patent application is currently assigned to Google LLC. The applicant listed for this patent is Google LLC. Invention is credited to Austin SUSZEK.

| Field | Value |
|---|---|
| Application Number | 15/976818 |
| Publication Number | 20190347771 |
| Family ID | 66049716 |
| Publication Date | 2019-11-14 |


| United States Patent Application | 20190347771 |
|---|---|
| Kind Code | A1 |
| Inventor | SUSZEK; Austin |
| Publication Date | November 14, 2019 |

GENERATING AND DISPLAYING BLUR IN IMAGES

Abstract

Implementations relate to generating and displaying blur in images. In some implementations, a method includes generating a plurality of mipmap images based on an input image, including applying a blur to a respective plurality of pixels derived from the input image for each mipmap image. In some examples, the blur is at least partially based on depth data for the image. Parameter data is obtained that indicates an output focal plane depth for an output focal plane of an output image and an output focal range in front of the output focal plane. Output pixel values of the output image are generated, including determining blurred pixel values based on one or more of the mipmap images selected based on the output focal plane depth and the output focal range. The blurred pixel values are based on particular pixels associated with a depth outside the output focal range.

| Field | Value |
|---|---|
| Inventors | SUSZEK; Austin; (San Francisco, CA) |
| Applicant | Google LLC |
| Assignee | Google LLC, Mountain View, CA |
| Family ID | 66049716 |
| Appl. No. | 15/976818 |
| Filed | May 10, 2018 |
| Current U.S. Class | 1/1 |
| Current CPC Class | G06T 5/50 20130101; G06T 2207/20016 20130101; G06T 5/002 20130101; G06T 7/50 20170101; G06T 7/194 20170101; G06T 2207/20092 20130101; G06T 2207/20212 20130101; G06T 2210/62 20130101; G06T 3/40 20130101; G06T 5/20 20130101 |
| International Class | G06T 5/00 20060101 G06T005/00; G06T 7/50 20060101 G06T007/50; G06T 5/50 20060101 G06T005/50; G06T 7/194 20060101 G06T007/194; G06T 3/40 20060101 G06T003/40 |

Claims

1. A computer-implemented method to provide blurring in an image, the method comprising: obtaining an input image; generating a plurality of mipmap images based on the input image, wherein generating each mipmap image of the plurality of mipmap images includes applying a blur to a respective plurality of pixels derived from the input image; obtaining parameter data that indicates an output focal plane depth for an output focal plane of an output image and that indicates an output focal range in front of the output focal plane; and generating output pixel values of the output image that include output blur, wherein generating the output pixel values includes determining blurred pixel values based on one or more selected mipmap images selected from the plurality of mipmap images based on the output focal plane depth and the output focal range, and wherein the blurred pixel values are based on particular pixels associated with a depth outside the output focal range.

2. The computer-implemented method of claim 1 wherein generating the plurality of mipmap images includes: generating a plurality of background mipmap images based on the input image, wherein generating each of the background mipmap images includes applying a respective background blur to each of a plurality of background pixels derived from the input image, wherein each background pixel has a respective depth behind a respective focal plane associated with the background pixel; and generating a plurality of foreground mipmap images based on the input image, wherein generating each of the foreground mipmap images includes applying a respective foreground blur to each of a plurality of foreground pixels derived from the input image, wherein each foreground pixel has a respective depth in front of a respective focal plane associated with the foreground pixel, wherein the one or more selected mipmap images include one or more of the background mipmap images and one or more of the foreground mipmap images.

3. The computer-implemented method of claim 2 wherein generating output pixel values includes: determining opaque pixel values based on at least one background mipmap image selected based on the output focal plane depth; determining overlay pixel values based on at least one foreground mipmap image selected based on the output focal range; and combining the opaque pixel values with corresponding values of the overlay pixel values to determine the output pixel values of the output image.

4. The computer-implemented method of claim 3 wherein determining the opaque pixel values based on the at least one background mipmap image includes, for each background pixel derived from the input image: selecting one or more background mipmap images from the plurality of background mipmap images based on the depth of the background pixel and based on the output focal plane depth; and using the one or more background mipmap images to determine a particular opaque pixel value corresponding to the background pixel.

5. The computer-implemented method of claim 3 wherein applying the respective foreground blur to each of the plurality of foreground pixels includes: applying the respective foreground blur to each foreground pixel based on surrounding pixels of the input image that surround the foreground pixel, and based on a foreground focal plane depth of a foreground focal plane associated with the foreground mipmap image.

6. The computer-implemented method of claim 5 wherein determining the overlay pixel values based on at least one foreground mipmap image includes, for each foreground pixel derived from the input image: selecting one or more foreground mipmap images of the plurality of foreground mipmap images based on the output focal plane depth and based on the output focal range; and using the one or more foreground mipmap images to determine a particular overlay pixel value corresponding to the foreground pixel.

7. The computer-implemented method of claim 3 wherein the combining includes using transparency values associated with the overlay pixel values, the transparency values indicating a respective transparency of the overlay pixel values.

8. The computer-implemented method of claim 1 wherein generating the plurality of mipmap images includes downscaling the input image to a plurality of downscaled images, and wherein applying the blur to the respective plurality of pixels includes applying the blur to a respective plurality of downscaled pixels of the downscaled images.

9. The computer-implemented method of claim 2 wherein generating the background mipmap images includes downscaling the input image to a plurality of background downscaled images and applying the respective background blur to each of the background pixels of the background downscaled images, wherein generating the foreground mipmap images includes downscaling the input image to a plurality of foreground downscaled images and applying the respective foreground blur to each of the foreground pixels of the foreground downscaled images, and wherein at least two of the background downscaled images are downscaled by different scale levels, and wherein at least two of the foreground downscaled images are downscaled by different scale levels.

10. The computer-implemented method of claim 2 wherein applying the respective background blur to each of the plurality of background pixels includes: applying the respective background blur to each background pixel based on surrounding pixels derived from the input image that surround the background pixel, based on a depth of the background pixel into a scene depicted by the input image, and based on a focal plane associated with the background pixel, wherein the focal plane is determined based on the depth of the background pixel and a background mipmap blur level assigned to the background mipmap image.

11. The computer-implemented method of claim 1 wherein at least one of the output focal plane depth or the output focal range is based on user input.

12. A computer-implemented method to provide blurring in an image, the method comprising: obtaining an input image; generating a plurality of mipmap images based on the input image, wherein generating the plurality of mipmap images includes, for each mipmap image: applying a blur to each particular pixel of a plurality of pixels derived from the input image for the mipmap image, wherein the blur is applied to each particular pixel based on a depth of the particular pixel into a scene depicted by the input image and based on a respective focal plane associated with the particular pixel; obtaining data indicating an output focal plane depth for an output focal plane of an output image; determining blurred output pixel values of the output image using at least one selected mipmap image of the plurality of mipmap images, including, for each designated pixel of a set of pixels of the input image, selecting the at least one selected mipmap image based on the depth of the designated pixel and based on the output focal plane depth; and causing the output image to be displayed on a display device.

13. The computer-implemented method of claim 12 wherein generating the plurality of mipmap images includes, for each mipmap image, downscaling the input image to a downscaled image, wherein applying the blur to each particular pixel of the plurality of pixels of the mipmap image includes applying the blur to each particular pixel of a plurality of pixels of the downscaled image.

14. The computer-implemented method of claim 12 wherein generating the plurality of mipmap images includes, for each mipmap image, assigning a mipmap blur level to the mipmap image, wherein the respective focal plane associated with the particular pixel is determined based on the depth of the particular pixel and based on the mipmap blur level assigned to the mipmap image such that respective focal planes associated with the pixels of the mipmap image vary among different pixels of the mipmap image.

15. The computer-implemented method of claim 14 wherein determining blurred output pixel values of the output image using the at least one selected mipmap image includes: determining a difference between the depth of the designated pixel and the output focal plane depth; and selecting one or more particular mipmap images of the plurality of mipmap images which have a respective mipmap blur level that is closest among the plurality of mipmap images to a lookup value determined based on the depth of the designated pixel and the output focal plane depth.

16. The computer-implemented method of claim 12 further comprising: obtaining a blur strength parameter that indicates a level of blur for the output image, wherein the blur strength parameter is based on user input, wherein determining the blurred output pixel values includes, for each designated pixel of the input image, interpolating between a corresponding mipmap pixel value and the pixel value of the input image using the blur strength parameter.

17. The computer-implemented method of claim 14 wherein the plurality of mipmap images are background mipmap images, wherein applying the blur includes applying a background blur based on pixels associated with a depth behind the respective focal plane associated with the particular pixel, and further comprising: generating a plurality of foreground mipmap images based on the input image, wherein generating each of the foreground mipmap images includes applying a foreground blur to each particular foreground pixel of a second plurality of pixels of the foreground mipmap image, wherein determining the blurred output pixel values includes: determining, based on the output focal plane depth, whether each pixel of the input image is one of a background pixel associated with a depth value behind the focal plane of the output image, or a foreground pixel associated with a depth value in front of the focal plane of the output image; and using at least one of the background mipmap images in response to the pixel of the input image being a background pixel, and using at least one of the foreground mipmap images in response to the pixel of the input image being a foreground pixel.

18. The computer-implemented method of claim 12, wherein applying the blur is applying a background blur based on the particular pixel associated with a depth behind the respective focal plane associated with the particular pixel, and further comprising: obtaining a focal range parameter for the input image, wherein the focal range parameter indicates an output focal range in front of the focal plane of the output image, wherein pixels associated with a depth in the output focal range do not contribute to blur of surrounding pixels that surround the pixels associated with the depth in the output focal range; and adding a respective foreground blur to one or more output pixel values of the output image, wherein the respective foreground blur is based at least in part on the focal range parameter.

19. The computer-implemented method of claim 18, further comprising: generating a plurality of foreground mipmap images based on the input image, wherein adding the respective foreground blur to the one or more output pixel values of the output image includes determining overlay pixel values using at least one foreground mipmap image of the plurality of foreground mipmap images, wherein adding the respective foreground blur includes combining the overlay pixel values with corresponding output pixel values of the output image.

20. A system comprising: a memory; and at least one processor configured to access the memory and configured to perform operations comprising: obtaining an input image; generating a plurality of background mipmap images based on the input image, wherein generating each of the background mipmap images includes applying a background blur to a plurality of background pixels derived from the input image for a respective background mipmap image, wherein each background pixel is associated with a respective depth behind a respective focal plane associated with the background pixel; generating a plurality of foreground mipmap images based on the input image, wherein generating each of the foreground mipmap images includes applying a foreground blur to a plurality of foreground pixels derived from the input image for a respective foreground mipmap image, wherein each foreground pixel is associated with a respective depth in front of a respective focal plane associated with the foreground pixel; obtaining parameter data indicating an output focal plane depth for an output focal plane of an output image and indicating an output focal range in front of the output focal plane; and generating output pixel values of the output image that include an output blur, wherein the output blur is based on particular pixels associated with a depth outside the output focal range, and wherein generating output pixel values includes: determining opaque pixel values based on at least one background mipmap image selected based on the output focal plane depth; determining overlay pixel values based on at least one foreground mipmap image selected based on the output focal range; and combining the opaque pixel values with corresponding values of the overlay pixel values to determine the output pixel values of the output image.

Description

BACKGROUND

[0001] The popularity and convenience of digital camera devices have caused visual content such as digital photographs and videos to become ubiquitous. For example, large numbers of images of various types can be captured, stored, and displayed by user devices. Some devices and software can process images to add effects to those images, including effects that simulate use of a physical camera. For example, blur effects and other visual effects can be digitally added to images to simulate the use of lenses and other analog or mechanical components of cameras.

[0002] The background description provided herein is for the purpose of generally presenting the context of the disclosure. Work of the presently named inventors, to the extent it is described in this background section, as well as aspects of the description that may not otherwise qualify as prior art at the time of filing, are neither expressly nor impliedly admitted as prior art against the present disclosure.

SUMMARY

[0003] Implementations of this application relate to generating and displaying blur in images. In some implementations, a computer-implemented method to provide blurring in an image includes obtaining an input image; generating a plurality of mipmap images based on the input image, where generating each mipmap image of the plurality of mipmap images includes applying a blur to a respective plurality of pixels derived from the input image; obtaining parameter data that indicates an output focal plane depth for an output focal plane of an output image and that indicates an output focal range in front of the output focal plane; and generating output pixel values of the output image that include output blur. Generating the output pixel values includes determining blurred pixel values based on one or more selected mipmap images selected from the plurality of mipmap images based on the output focal plane depth and the output focal range, where the blurred pixel values are based on particular pixels associated with a depth outside the output focal range.

[0004] Various implementations and examples of the method are described. For example, in some implementations, generating the plurality of mipmap images includes generating a plurality of background mipmap images based on the input image, which includes applying a respective background blur to each of a plurality of background pixels derived from the input image, where each background pixel has a respective depth behind a respective focal plane associated with the background pixel; and generating a plurality of foreground mipmap images based on the input image, which includes applying a respective foreground blur to each of a plurality of foreground pixels derived from the input image, where each foreground pixel has a respective depth in front of a respective focal plane associated with the foreground pixel, and the one or more selected mipmap images include one or more of the background mipmap images and one or more of the foreground mipmap images.

[0005] In some implementations, generating the plurality of mipmap images includes downscaling the input image to a plurality of downscaled images, and applying the blur to the respective plurality of pixels includes applying the blur to a respective plurality of downscaled pixels of the downscaled images. For example, generating the mipmap images can include downscaling the input image to a plurality of background downscaled images and applying the respective background blur to each of the background pixels of the background downscaled images, and downscaling the input image to a plurality of foreground downscaled images and applying the respective foreground blur to each of the foreground pixels of the foreground downscaled images. In some examples, at least two of the background downscaled images are downscaled by different scale levels, and at least two of the foreground downscaled images are downscaled by different scale levels.
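The downscaling step can be illustrated with a short Python sketch. It assumes a grayscale image stored as nested lists and a 2x2 box filter that halves each dimension per level; the text does not specify the downscaling filter or the scale factors, and `downscale_2x` and `build_downscaled_levels` are hypothetical names. In the described method, a separate depth-dependent blur is then applied to the pixels of each downscaled image.

```python
from typing import List

Image = List[List[float]]  # grayscale image, row-major


def downscale_2x(img: Image) -> Image:
    """Halve width and height by averaging each 2x2 block (box filter).

    Odd trailing rows/columns are dropped for simplicity.
    """
    h, w = len(img) // 2, len(img[0]) // 2
    return [
        [
            (img[2 * y][2 * x] + img[2 * y][2 * x + 1]
             + img[2 * y + 1][2 * x] + img[2 * y + 1][2 * x + 1]) / 4.0
            for x in range(w)
        ]
        for y in range(h)
    ]


def build_downscaled_levels(img: Image, num_levels: int) -> List[Image]:
    """Return [img, img/2, img/4, ...] with up to num_levels entries."""
    levels = [img]
    for _ in range(num_levels - 1):
        prev = levels[-1]
        if len(prev) < 2 or len(prev[0]) < 2:
            break  # cannot downscale any further
        levels.append(downscale_2x(prev))
    return levels
```

For a 4x4 input, `build_downscaled_levels(img, 3)` returns images of 4x4, 2x2 and 1x1 pixels. One plausible reason to blur downscaled images rather than the full-resolution image is that a small kernel on a coarse level covers a large area of the original image.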

[0006] In some implementations, applying the respective background blur to each of the plurality of background pixels includes applying the respective background blur to each background pixel based on surrounding pixels derived from the input image that surround the background pixel, based on a depth of the background pixel into a scene depicted by the input image, and based on a focal plane associated with the background pixel, where the focal plane is determined based on the depth of the background pixel and a background mipmap blur level assigned to the background mipmap image. In some implementations, applying the respective foreground blur to each of the plurality of foreground pixels of the respective foreground mipmap image includes applying the respective foreground blur to each foreground pixel based on surrounding pixels of the input image that surround the foreground pixel, and based on a foreground focal plane depth of a foreground focal plane associated with the foreground mipmap image.
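A rough sketch of a depth-aware background blur for one background mipmap image, shown on a single row of pixels for brevity. It assumes a linear blur curve (blur radius in pixels = `k` * (depth - focal plane)) and a plain box average; the actual blur curves are not given in this section, and `focal_plane_for_pixel` and `background_blur_row` are hypothetical names. Each pixel gets its own focal plane, chosen so that its blur equals the mipmap's blur level, and only neighbors whose depth lies behind that focal plane contribute.

```python
import math
from typing import List, Sequence


def focal_plane_for_pixel(depth: float, blur_level: float, k: float) -> float:
    # Assumed linear blur curve: blur = k * (depth - focal_plane).
    # Solving blur == blur_level gives a per-pixel focal plane, so every
    # pixel of this mipmap image carries the same blur level.
    return depth - blur_level / k


def background_blur_row(colors: Sequence[float], depths: Sequence[float],
                        blur_level: float, k: float = 1.0) -> List[float]:
    """Box-blur one row for a background mipmap image of the given level."""
    radius = int(math.floor(blur_level))  # blur level taken as a pixel radius
    n = len(colors)
    out = []
    for i in range(n):
        focal = focal_plane_for_pixel(depths[i], blur_level, k)
        total, count = 0.0, 0
        for j in range(max(0, i - radius), min(n, i + radius + 1)):
            # Only pixels behind this pixel's focal plane contribute.
            if depths[j] >= focal:
                total += colors[j]
                count += 1
        out.append(total / count)  # pixel i always qualifies (blur_level >= 0)
    return out
```

With `colors=[100.0, 0.0, 0.0]`, `depths=[1.0, 5.0, 5.0]` and blur level 1, the near pixel at depth 1 lies in front of its neighbor's focal plane and does not bleed into it, so the result is `[50.0, 0.0, 0.0]`.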

[0007] In some implementations, generating output pixel values includes determining opaque pixel values based on at least one background mipmap image selected based on the output focal plane depth, determining overlay pixel values based on at least one foreground mipmap image selected based on the output focal range, and combining the opaque pixel values with corresponding values of the overlay pixel values to determine the output pixel values of the output image.

[0008] In some implementations, determining the opaque pixel values based on at least one background mipmap image includes, for each background pixel derived from the input image, selecting one or more background mipmap images from the plurality of background mipmap images based on the depth of the background pixel and based on the output focal plane depth; and using the one or more background mipmap images to determine a particular opaque pixel value corresponding to the background pixel. In some implementations, determining the overlay pixel values based on at least one foreground mipmap image includes, for each foreground pixel derived from the input image, selecting one or more foreground mipmap images of the plurality of foreground mipmap images based on the output focal plane depth and based on the output focal range; and using the one or more foreground mipmap images to determine a particular overlay pixel value corresponding to the foreground pixel. In some implementations, the combining includes using transparency values associated with the overlay pixel values, the transparency values indicating a respective transparency of the overlay pixel values.
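The combining step can be read as standard "over" alpha compositing. The sketch below assumes straight (non-premultiplied) overlay values and expresses each transparency value as an opacity, `alpha = 1 - transparency`; the section does not state the exact blend formula, and `composite_over` is a hypothetical name.

```python
from typing import List, Sequence


def composite_over(opaque: Sequence[float], overlay: Sequence[float],
                   alpha: Sequence[float]) -> List[float]:
    """Blend overlay (foreground) values onto opaque (background) values.

    alpha[i] is the overlay opacity in [0, 1], i.e. 1 - transparency.
    """
    if not (len(opaque) == len(overlay) == len(alpha)):
        raise ValueError("inputs must have the same length")
    return [a * fg + (1.0 - a) * bg
            for bg, fg, a in zip(opaque, overlay, alpha)]
```

A fully transparent overlay pixel leaves the opaque value unchanged, and a fully opaque one replaces it: `composite_over([10.0, 10.0, 10.0], [0.0, 100.0, 50.0], [0.0, 0.5, 1.0])` gives `[10.0, 55.0, 50.0]`.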

[0009] In some implementations, a computer-implemented method to provide blurring in an image includes obtaining an input image; generating a plurality of mipmap images based on the input image, where generating the plurality of mipmap images includes, for each mipmap image, applying a blur to each particular pixel of a plurality of pixels derived from the input image for the mipmap image, where the blur is applied to each particular pixel based on a depth of the particular pixel into a scene depicted by the input image and based on a focal plane associated with the particular pixel; obtaining data indicating an output focal plane depth for an output focal plane of an output image; determining blurred output pixel values of the output image using at least one selected mipmap image of the plurality of mipmap images, including, for each designated pixel of a set of pixels of the input image, selecting the at least one mipmap image based on the depth of the designated pixel and based on the output focal plane depth; and causing the output image to be displayed on a display device.

[0010] Various implementations and examples of the method are described. For example, in some implementations, generating the plurality of mipmap images includes, for each mipmap image, downscaling the input image to a downscaled image, where applying the blur to each particular pixel of the plurality of pixels of the mipmap image includes applying the blur to each particular pixel of a plurality of pixels of the downscaled image. In some implementations, generating the plurality of mipmap images includes, for each mipmap image, assigning a mipmap blur level to the mipmap image, where the focal plane associated with the particular pixel is determined based on the depth of the particular pixel and based on the mipmap blur level assigned to the mipmap image, such that respective focal planes associated with the pixels of the mipmap image vary among different pixels of the mipmap image.

[0011] In some implementations, using the at least one selected mipmap image includes determining a difference between the depth of the designated pixel and the output focal plane depth, and selecting one or more particular mipmap images of the plurality of mipmap images which have a respective mipmap blur level that is closest among the plurality of mipmap images to a lookup value determined based on the depth of the designated pixel and the output focal plane depth. In some examples, the two mipmap images closest to the lookup value are blended together (e.g., interpolated). In some implementations, the method can further include obtaining a blur strength parameter that indicates a level of blur for the output image, where the blur strength parameter is based on user input, where determining the blurred output pixel values includes, for each designated pixel of the input image, interpolating between a corresponding mipmap pixel value and the pixel value of the input image using the blur strength parameter.
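The following sketch shows one way to select and blend mipmap images for a pixel and then apply the blur strength parameter. It assumes the lookup value is `k * (depth - focal_depth)` clamped at zero (a linear blur curve for background pixels) and linear interpolation between the two mipmaps whose blur levels bracket that value; `lookup_value`, `sample_blurred`, and `apply_blur_strength` are hypothetical names.

```python
import bisect
from typing import Sequence


def lookup_value(depth: float, focal_depth: float, k: float = 1.0) -> float:
    # Assumed linear blur curve; pixels in front of the plane get zero here.
    return max(0.0, k * (depth - focal_depth))


def sample_blurred(levels: Sequence[float], mip_values: Sequence[float],
                   lookup: float) -> float:
    """Interpolate between the two mipmaps whose blur levels bracket lookup.

    levels: ascending mipmap blur levels; mip_values: this pixel's value
    in each mipmap image, in the same order.
    """
    if lookup <= levels[0]:
        return mip_values[0]
    if lookup >= levels[-1]:
        return mip_values[-1]
    hi = bisect.bisect_right(levels, lookup)  # first level > lookup
    lo = hi - 1
    t = (lookup - levels[lo]) / (levels[hi] - levels[lo])
    return (1.0 - t) * mip_values[lo] + t * mip_values[hi]


def apply_blur_strength(original: float, blurred: float,
                        strength: float) -> float:
    """strength in [0, 1]: 0 keeps the input pixel, 1 uses the mipmap value."""
    return (1.0 - strength) * original + strength * blurred
```

For blur levels `[0, 2, 4, 8]`, a pixel at depth 7 with the output focal plane at depth 4 gets lookup value 3.0, so it is blended halfway between the level-2 and level-4 mipmaps.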

[0012] In some implementations, the plurality of mipmap images are background mipmap images, applying the blur includes applying a background blur based on pixels associated with a depth behind the focal plane associated with the particular pixel, and the method further includes generating a plurality of foreground mipmap images based on the input image, where generating each of the foreground mipmap images includes applying a respective foreground blur to each particular foreground pixel of a second plurality of pixels of the foreground mipmap image; and determining the blurred output pixel values includes determining, based on the output focal plane depth, whether each pixel of the input image is one of a background pixel associated with a depth value behind the focal plane of the output image, or a foreground pixel associated with a depth value in front of the focal plane of the output image, using at least one of the background mipmap images in response to the pixel of the input image being a background pixel, and using at least one of the foreground mipmap images in response to the pixel of the input image being a foreground pixel.
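Routing each pixel to the background or foreground mipmap set can be sketched as a depth comparison against the output focal plane. This assumes depth increases away from the camera and assigns pixels exactly on the focal plane to the background set, where their blur is zero; both conventions are assumptions, and `Layer`, `classify_pixel`, and `route_pixels` are hypothetical names.

```python
from enum import Enum
from typing import List, Sequence, Tuple


class Layer(Enum):
    BACKGROUND = "background"
    FOREGROUND = "foreground"


def classify_pixel(depth: float, output_focal_depth: float) -> Layer:
    # Assumed convention: larger depth = farther into the scene.
    # Pixels on or behind the output focal plane use background mipmaps.
    if depth >= output_focal_depth:
        return Layer.BACKGROUND
    return Layer.FOREGROUND


def route_pixels(depths: Sequence[float],
                 output_focal_depth: float) -> Tuple[List[int], List[int]]:
    """Split pixel indices into (background, foreground) lists."""
    background, foreground = [], []
    for i, d in enumerate(depths):
        if classify_pixel(d, output_focal_depth) is Layer.BACKGROUND:
            background.append(i)
        else:
            foreground.append(i)
    return background, foreground
```

For depths `[1.0, 3.0, 5.0, 2.5]` and an output focal plane at 3.0, pixels 1 and 2 would be rendered from background mipmaps and pixels 0 and 3 from foreground mipmaps.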

[0013] In some implementations, applying the blur is applying a background blur based on the particular pixel associated with a depth behind the respective focal plane associated with the particular pixel, where the method further includes obtaining a focal range parameter for the input image, the focal range parameter indicating an output focal range in front of the focal plane of the output image, where pixels associated with a depth in the output focal range do not contribute to the blur of their surrounding pixels; and adding a respective foreground blur to one or more output pixel values of the output image, where the respective foreground blur is based at least in part on the focal range parameter. For example, in some implementations, the method further includes generating a plurality of foreground mipmap images based on the input image, and adding the respective foreground blur to one or more output pixel values of the output image includes determining overlay pixel values using at least one foreground mipmap image, where adding the respective foreground blur includes combining the overlay pixel values with corresponding output pixel values of the output image.
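The effect of the output focal range on the foreground blur can be sketched as a gather blur in which only pixels nearer than the front edge of the focal range (`focal_depth - focal_range`) contribute. The sketch also returns a per-pixel opacity, assumed here to be the fraction of the blur window covered by contributing pixels, which could serve as the transparency for compositing the overlay. The box weighting, the opacity rule, and the name `foreground_overlay_row` are assumptions, not details given in the text.

```python
from typing import List, Sequence, Tuple


def foreground_overlay_row(colors: Sequence[float], depths: Sequence[float],
                           focal_depth: float, focal_range: float,
                           radius: int) -> Tuple[List[float], List[float]]:
    """Return (overlay values, opacities) for one row of pixels."""
    near_limit = focal_depth - focal_range  # front edge of the in-focus range
    n = len(colors)
    overlay, alpha = [], []
    for i in range(n):
        total, count, window = 0.0, 0, 0
        for j in range(max(0, i - radius), min(n, i + radius + 1)):
            window += 1
            # Pixels inside [near_limit, focal_depth] or behind the focal
            # plane do not contribute to the foreground blur.
            if depths[j] < near_limit:
                total += colors[j]
                count += 1
        overlay.append(total / count if count else 0.0)
        alpha.append(count / window)
    return overlay, alpha
```

With a focal range of 2 in front of a focal plane at depth 5, a pixel at depth 1 spreads into its neighbors; widening the range to 5 places it inside the in-focus range, and every opacity drops to zero.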

[0014] In some implementations, a system includes a memory and at least one processor configured to access the memory and configured to perform operations including obtaining an input image; generating a plurality of background mipmap images based on the input image, where generating each of the background mipmap images includes applying a background blur to a plurality of background pixels derived from the input image for a respective background mipmap image, and each background pixel is associated with a respective depth behind a respective focal plane associated with the background pixel; generating a plurality of foreground mipmap images based on the input image, where generating each of the foreground mipmap images includes applying a foreground blur to a plurality of foreground pixels derived from the input image for a respective foreground mipmap image, and each foreground pixel is associated with a respective depth in front of a respective focal plane associated with the foreground pixel; obtaining parameter data indicating an output focal plane depth for an output focal plane of an output image and indicating an output focal range in front of the output focal plane; and generating output pixel values of the output image that include an output blur. The output blur is based on particular pixels associated with a depth outside the output focal range. Generating the output pixel values includes: determining opaque pixel values based on at least one background mipmap image selected based on the output focal plane depth; determining overlay pixel values based on at least one foreground mipmap image selected based on the output focal range; and combining the opaque pixel values with corresponding values of the overlay pixel values to determine the output pixel values of the output image.

[0015] In some implementations, a non-transitory computer readable medium has stored thereon software instructions that, when executed by a processor, cause the processor to perform operations. The operations can include operations of the methods or system as described above.

BRIEF DESCRIPTION OF THE DRAWINGS

[0016] FIG. 1 is a block diagram of example systems and a network environment which may be used for one or more implementations described herein;

[0017] FIG. 2 is a flow diagram illustrating an example method to provide and display blur in images, according to some implementations;

[0018] FIG. 3 is a diagrammatic illustration of a graph showing example blur curves and blur parameters, according to some implementations;

[0019] FIG. 4 is a flow diagram illustrating an example method to generate mipmap images, according to some implementations;

[0020] FIG. 5 is a diagrammatic illustration of an example set of generated mipmap images, according to some implementations;

[0021] FIG. 6 is a flow diagram illustrating an example method to generate a blurred output image, according to some implementations;

[0022] FIG. 7 is a flow diagram illustrating another example method to generate mipmap images, according to some implementations;

[0023] FIG. 8 is a diagrammatic illustration of another example set of generated mipmap images based on the method of FIG. 7, according to some implementations;

[0024] FIG. 9 is a flow diagram illustrating another example method to generate a blurred output image, according to some implementations;

[0025] FIGS. 10-12 are graphic representations of example user interfaces that provide adjustable blur parameters and blur effects in a displayed image, according to some implementations; and

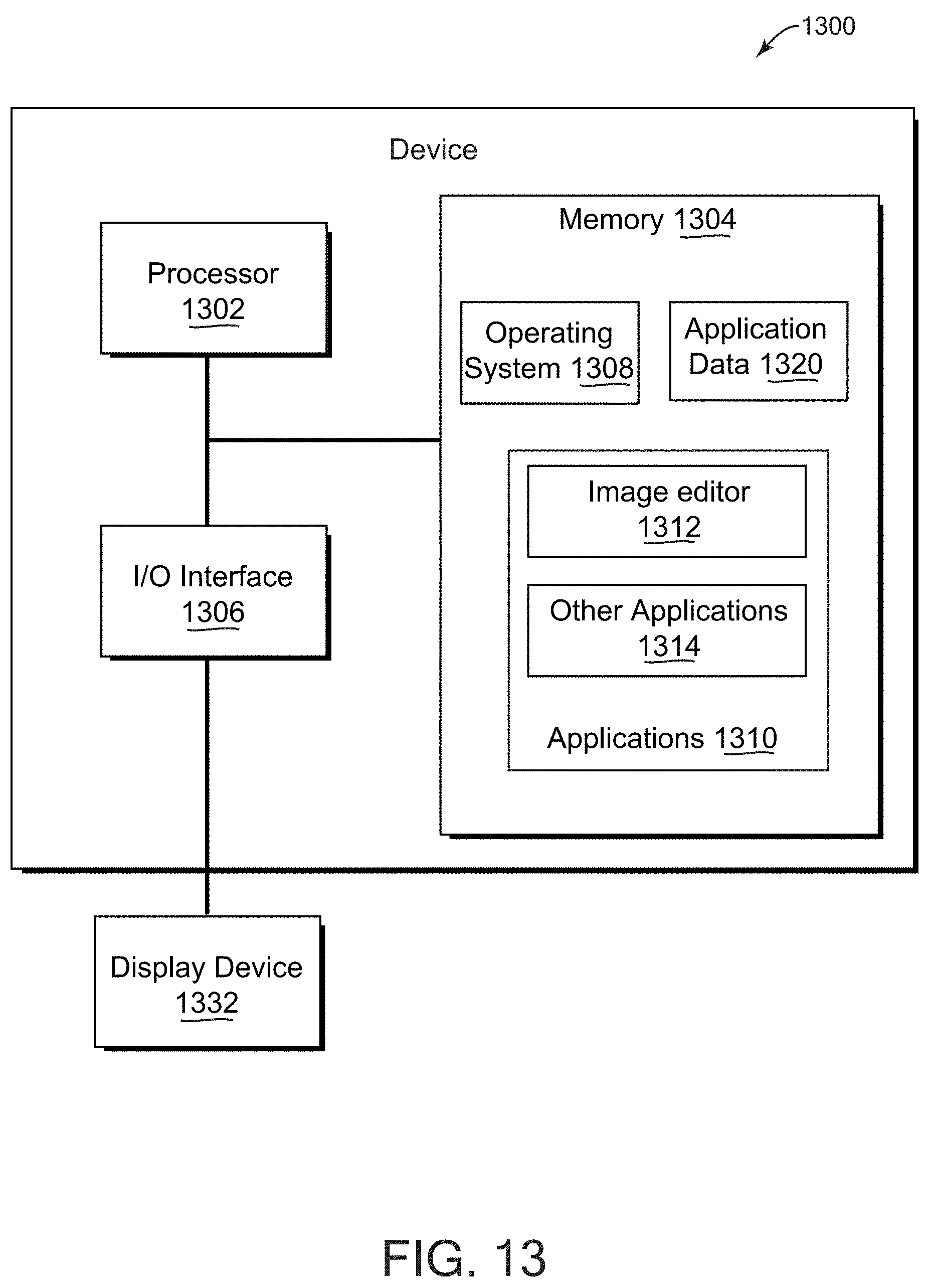

[0026] FIG. 13 is a block diagram of an example device which may be used for one or more implementations described herein.

DETAILED DESCRIPTION

[0027] One or more implementations described herein relate to generating and displaying blur effects in images, e.g., where the amount of blur depends on the distance of objects (e.g., described by pixels) into a depicted scene from a front image plane or camera. For example, implementations can provide a bokeh type of blur in images, e.g., images for which depth data has been obtained for the pixels of the image. In various implementations, a system generates multiple mipmap images that include blurred pixels based on the pixels of an input image. One or more of the mipmap images are selected, e.g., based on an output focal plane depth, an output focal range, and depth values of a plurality of pixels, and are used to generate blurred pixels for an output image that, for example, includes blur in the scene depicted in the input image. The blurred pixels can provide a blur effect such as a bokeh effect in which foreground and/or background pixels are blurred based on a depth of a focal plane. In various implementations, user input can adjust blur parameters such as the focal plane depth and/or a focal range, and the blur effect resulting from such adjusted parameters is generated and displayed quickly after the adjustment.

[0028] In some implementations, various mipmap images are generated by downscaling the input image. Different blur amounts are applied to different pixels of the downscaled image to generate the mipmap image. In some examples, a respective blur can be applied to each pixel using a blur equation that is dependent on a depth of the pixel into a scene depicted by the downscaled image and based on a focal plane associated with that pixel. The focal plane can be based on the depth of the particular pixel and based on an assigned mipmap blur level for the mipmap image. The respective focal planes associated with the pixels of a mipmap image vary among different pixels of the mipmap image, which allows all the pixels of the mipmap image to represent the particular blur level assigned to that mipmap image. In some implementations, background mipmap images and foreground mipmap images are generated based on the input image, where each background pixel has a respective depth behind a focal plane associated with the background pixel, and each foreground pixel has a respective depth in front of a focal plane associated with the foreground pixel.
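As an illustration of per-pixel focal planes, the following Python sketch assumes a linear blur curve, blur = slope * |depth - focal_plane| (the description allows other invertible curves). Solving that curve for the focal plane gives, for each pixel, the focal plane at which the pixel receives exactly the blur level assigned to its mipmap image. The names `pixel_focal_plane`, `linear_blur`, and `slope` are hypothetical.

```python
def pixel_focal_plane(depth: float, blur_level: float, slope: float,
                      background: bool = True) -> float:
    """Focal plane at which a pixel at `depth` gets exactly `blur_level` of blur.

    Assumes a hypothetical linear curve: blur = slope * |depth - focal_plane|.
    Background pixels lie behind their focal plane; foreground pixels in front.
    """
    if slope <= 0:
        raise ValueError("slope must be positive")
    offset = blur_level / slope
    # Background: depth = focal + offset. Foreground: depth = focal - offset.
    return depth - offset if background else depth + offset


def linear_blur(depth: float, focal_plane: float, slope: float) -> float:
    """Blur amount produced by the assumed linear curve."""
    return slope * abs(depth - focal_plane)


# Three pixels in a mipmap with blur level 4: same blur, different focal planes.
depths = [0.5, 0.7, 0.9]
planes = [pixel_focal_plane(d, blur_level=4.0, slope=20.0) for d in depths]
```

Computed planes can fall outside the normalized depth range [0, 1] for pixels near the front or back of the scene; how such pixels are handled is left open here.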

[0029] In some implementations, each of the generated mipmap images is associated with or assigned a parameter representing a blur level or a depth (e.g., a foreground depth for foreground mipmap images, in some implementations). In some implementations, for example, a focal plane associated with a particular pixel can be based on the depth of the particular pixel and based on the mipmap blur level (or other parameter) assigned to the mipmap image such that respective focal planes associated with the pixels of the mipmap image vary among different pixels of the mipmap image.

[0030] When rendering a blurred output image, an output focal plane depth is obtained for the output focal plane of the output image, e.g., based on user input. Output pixel values of the output image are determined by, for each such pixel, selecting at least one of the generated mipmap images based on the depth of that pixel and the output focal plane depth, and obtaining a blurred pixel value from the selected mipmap image(s).
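A lookup of this kind can be sketched as follows, assuming each mipmap image is tagged with a scalar blur level and that the blur needed for an output pixel has already been computed from its depth and the output focal plane depth. The two mipmaps whose levels bracket the needed blur are chosen and their sampled values blended linearly. The blending scheme and the names `select_mipmaps` and `blend` are assumptions, not taken from the description.

```python
from bisect import bisect_right
from typing import Sequence, Tuple


def select_mipmaps(blur: float, levels: Sequence[float]) -> Tuple[int, int, float]:
    """Pick the two mipmap indices whose blur levels bracket `blur`.

    `levels` must be sorted ascending. Returns (lower_index, upper_index, t),
    where t is the weight of the upper mipmap in a linear blend.
    """
    if blur <= levels[0]:
        return 0, 0, 0.0
    if blur >= levels[-1]:
        last = len(levels) - 1
        return last, last, 0.0
    upper = bisect_right(levels, blur)  # first level strictly greater than blur
    lower = upper - 1
    t = (blur - levels[lower]) / (levels[upper] - levels[lower])
    return lower, upper, t


def blend(lower_value: float, upper_value: float, t: float) -> float:
    """Linear interpolation between values sampled from the two mipmaps."""
    return (1.0 - t) * lower_value + t * upper_value
```

For example, with levels `[0, 1, 2, 4, 8]` a needed blur of 3 selects the mipmaps at indices 2 and 3 with equal weight.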

[0031] In some implementations, output pixel values of the output image are generated by obtaining opaque pixel values from at least one background mipmap image that is selected based on the output focal plane depth, obtaining overlay pixel values from at least one foreground mipmap image that is selected based on an output focal range, and combining the opaque pixel values with corresponding overlay pixel values to determine the output pixel values of the output image.
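Combining an opaque background value with a foreground overlay value can be modeled as standard "over" alpha compositing. The sketch below assumes the foreground mipmap stores straight (non-premultiplied) RGB plus an alpha coverage value per pixel; the storage format is not specified here, so this representation and the name `composite_over` are assumptions.

```python
from typing import Tuple

RGB = Tuple[float, float, float]


def composite_over(background: RGB, overlay: RGB, alpha: float) -> RGB:
    """Composite a straight-alpha overlay pixel over an opaque background pixel.

    alpha = 0 leaves the background unchanged; alpha = 1 shows only the overlay.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    r, g, b = (o * alpha + bg * (1.0 - alpha) for o, bg in zip(overlay, background))
    return (r, g, b)
```

Because the background value is opaque, the result is always opaque, which matches the role of the background mipmap as the base layer.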

[0032] In some implementations, an output focal range for the output image, which is a depth range in front of the focal plane of the output image, can be specified by user input. Pixels in front of the focal range cause foreground blur. Pixels associated with a depth within the output focal range do not cause or contribute to blurring of pixels of the output image, e.g., blurring of pixels of an output image is based on pixels associated with a depth outside the output focal range. Mipmaps can be selected, and blurred pixels used from them, based on the specified output focal range and the specified output focal plane depth.

[0033] One or more features described herein enable fast and efficient generation of realistic blur in an image, e.g., a bokeh effect. Disclosed features include pre-computation of mipmap images that have blurred pixel values based on an input image. In some examples, one or more of the mipmap images are selected and used to blur an output image, where the blurring of the output image at a certain point (e.g., pixel) is related to the depth at that point, thus simulating the effect of some physical cameras. The use of such mipmap images allows a lookup process to be used in response to input or change in blur parameters, providing a fast rendering of a blurred image. Such a process is much faster than computing blurred pixels in response to the input or change of blur parameters. For example, a common technique for applying bokeh to an image is to render a blurred disk for every pixel of the image, where the radius of the disk is 0 for pixels on the focal plane and grows for pixels farther away from the focal plane. Such a technique runs into performance issues when trying to render a bokeh effect quickly in response to user input.
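For comparison, the per-pixel disk technique mentioned above can be sketched as a naive "scatter" in Python. This illustrates the prior approach, not the described mipmap method; the function name, the linear mapping from depth distance to radius, and the normalization by accumulated weight are assumptions. Its cost grows with the number of pixels times the disk area, and all of that work is repeated whenever the focal plane changes.

```python
import math
from typing import List

Image = List[List[float]]


def scatter_bokeh(image: Image, depth: Image, focal_depth: float,
                  max_radius: float) -> Image:
    """Naive bokeh: each pixel spreads its value over a disk (grayscale)."""
    h, w = len(image), len(image[0])
    acc = [[0.0] * w for _ in range(h)]
    wsum = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Radius is 0 on the focal plane and grows with depth distance.
            r = abs(depth[y][x] - focal_depth) * max_radius
            ri = int(math.floor(r))
            covered = [
                (yy, xx)
                for yy in range(max(0, y - ri), min(h, y + ri + 1))
                for xx in range(max(0, x - ri), min(w, x + ri + 1))
                if (yy - y) ** 2 + (xx - x) ** 2 <= r * r
            ]
            share = 1.0 / len(covered)  # the disk always covers its own pixel
            for yy, xx in covered:
                acc[yy][xx] += image[y][x] * share
                wsum[yy][xx] += share
    # Normalize by the total weight each output pixel received.
    return [[acc[y][x] / wsum[y][x] for x in range(w)] for y in range(h)]
```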

[0034] Thus, using described features, blur parameters can be provided as user-controlled parameters that can be changed on the fly. The change in blur effects resulting from a change of some blur parameters can also be animated in an image, e.g., showing a transition effect from one blur effect to another. Such features require very fast rendering of blur effects in an image, as provided by the described features, to maintain an acceptable frame rate while the user is editing blur effects by changing blur parameters.
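An animated transition amounts to re-rendering the output image once per frame with interpolated parameters; for example, a 0.5 second transition at 30 frames per second requires 15 renders. The Python sketch below generates linearly interpolated values for one blur parameter. The linear timing and the name `animate_parameter` are assumptions; an implementation could use any easing curve.

```python
from typing import Iterator


def animate_parameter(start: float, end: float, frames: int) -> Iterator[float]:
    """Yield `frames` evenly spaced values from `start` to `end`, inclusive.

    Each yielded value would drive one re-render of the blurred output image.
    """
    if frames < 2:
        raise ValueError("need at least two frames")
    for i in range(frames):
        t = i / (frames - 1)
        yield start + (end - start) * t
```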

[0035] Described features include providing a different focal plane associated with each blurred pixel in a particular mipmap image. This allows all of the pixels in a single mipmap image to be blurred, where a particular blur level is associated with the mipmap image. Since all of the pixels of the mipmap are blurred, the mipmap image can be downscaled, thus saving storage space on storage devices. In contrast, if each mipmap represented blurring at a single focal plane depth, every mipmap could have blurred and unblurred pixels, such that all mipmaps would need to be kept at a relatively high resolution instead of downscaling the mipmap images to lower resolutions, thus resulting in using more storage space.
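The storage saving can be estimated with simple arithmetic. The sketch below assumes, for illustration only, that each successive blurred mipmap can be stored at half the width and height of the previous one; the actual resolutions are implementation-specific, and `mipmap_storage` is a hypothetical name.

```python
def mipmap_storage(width: int, height: int, num_mipmaps: int, downscale: bool) -> int:
    """Total pixel count for `num_mipmaps` blurred images of a width x height input.

    With `downscale`, each successive mipmap is assumed to halve both dimensions;
    without it, every mipmap stays at the full input resolution.
    """
    total = 0
    w, h = width, height
    for _ in range(num_mipmaps):
        total += w * h
        if downscale:
            w, h = max(1, w // 2), max(1, h // 2)
    return total
```

For a 1024 x 1024 input and four mipmaps, downscaled storage is 1,392,640 pixels compared with 4,194,304 pixels at full resolution, roughly a third.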

[0036] Described features include generating a blur effect (e.g., bokeh effect) that takes into account a variable focal range in the image. For example, the user can specify the focal range for a blurred output image. Described implementations provide background and foreground mipmap images that make different blur contributions to blurred pixels, where the blur contributions from the foreground mipmap images take into account a focal range parameter. Such a variable parameter allows greater options and flexibility in generating different bokeh effects in images.

[0037] The described features can enable faster and more efficient generation and display of blur effects in images, more efficient storage of pre-computed mipmap images providing blurred pixels, and greater options and flexibility in generating different blur effects, thus reducing consumption of device resources that would otherwise be needed to generate desired blur effects in images. Consequently, a technical effect of one or more described implementations is that generation and display of fast, realistic, and varied blur effects in images is provided with less computational time and fewer computational resources expended to obtain results. For example, a technical effect of described techniques and features is a reduction in the consumption of system processing resources utilized to generate blur effects in images as compared to prior systems that do not provide one or more of the described techniques or features.

[0038] In situations in which certain implementations discussed herein may collect or use personal information about users (e.g., user data, information about a user's social network, user's location and time at the location, user's biometric information, user's activities and demographic information), users are provided with one or more opportunities to control whether information is collected, whether the personal information is stored, whether the personal information is used, and how the information is collected about the user, stored and used. That is, the systems and methods discussed herein collect, store and/or use user personal information specifically upon receiving explicit authorization from the relevant users to do so. For example, a user is provided with control over whether programs or features collect user information about that particular user or other users relevant to the program or feature. Each user for which personal information is to be collected is presented with one or more options to allow control over the information collection relevant to that user, to provide permission or authorization as to whether the information is collected and as to which portions of the information are to be collected. For example, users can be provided with one or more such control options over a communication network. In addition, certain data may be treated in one or more ways before it is stored or used so that personally identifiable information is removed. As one example, a user's identity may be treated so that no personally identifiable information can be determined. As another example, a user device's geographic location may be generalized to a larger region so that the user's particular location cannot be determined.

[0039] An image, as referred to herein, is a digital image having pixels with one or more pixel values (e.g., color values, brightness values, etc.). An image includes image data that is a digital representation of an image, such as a pixel map or other representation of an image including numeric values (pixel values) stored in a file and usable to render an image in an electronic display. An image can be a still image or single image, or can be an image included in a series of images, e.g., a frame in a video sequence of video frames, or an image in a different type of sequence or animation of images. A video includes a sequence of multiple images. For example, implementations described herein can be used with content data items that are single images or static images (e.g., a photograph, an emoji, or other image), videos, or animated images (e.g., cinemagraphs or other animated image that includes motion, a sticker that includes animation and audio, etc.). Text, as referred to herein, can include alphanumeric characters, emojis, symbols, or other characters. An audio segment can include audio data that is provided in a standard audio format which can be processed to provide sound, e.g., from speakers.

[0040] FIG. 1 illustrates a block diagram of an example network environment 100, which may be used in some implementations described herein. In some implementations, network environment 100 includes one or more server systems, e.g., server system 102 in the example of FIG. 1. Server system 102 can communicate with a network 130, for example. Server system 102 can include a server device 104 and a database 106 or other storage device. Network environment 100 also can include one or more client devices, e.g., client devices 120, 122, 124, and 126, which may communicate with each other and/or with server system 102 via network 130. Network 130 can be any type of communication network, including one or more of the Internet, local area networks (LAN), wireless networks, switch or hub connections, etc. In some implementations, network 130 can include peer-to-peer communication 132 between devices, e.g., using peer-to-peer wireless protocols.

[0041] For ease of illustration, FIG. 1 shows one block for server system 102, server device 104, and database 106, and shows four blocks for client devices 120, 122, 124, and 126. Server blocks 102, 104, and 106 may represent multiple systems, server devices, and network databases, and the blocks can be provided in different configurations than shown. For example, server system 102 can represent multiple server systems that can communicate with other server systems via the network 130. In some examples, database 106 and/or other storage devices can be provided in server system block(s) that are separate from server device 104 and can communicate with server device 104 and other server systems via network 130. Also, there may be any number of client devices. Each client device can be any type of electronic device, e.g., desktop computer, laptop computer, portable or mobile device, camera, cell phone, smart phone, tablet computer, television, TV set top box or entertainment device, wearable devices (e.g., display glasses or goggles, head-mounted display (HMD), wristwatch, headset, armband, jewelry, etc.), virtual reality (VR) and/or augmented reality (AR) enabled devices, personal digital assistant (PDA), media player, game device, etc. Some client devices may also have a local database similar to database 106 or other storage. In other implementations, network environment 100 may not have all of the components shown and/or may have other elements including other types of elements instead of, or in addition to, those described herein.

[0042] In various implementations, end-users U1, U2, U3, and U4 may communicate with server system 102 and/or each other using respective client devices 120, 122, 124, and 126. In some examples, users U1, U2, U3, and U4 may interact with each other via applications running on respective client devices and/or server system 102, and/or via a network service, e.g., an image sharing service, a messaging service, a social network service, or other type of network service, implemented on server system 102. For example, respective client devices 120, 122, 124, and 126 may communicate data to and from one or more server systems (e.g., system 102). In some implementations, the server system 102 may provide appropriate data to the client devices such that each client device can receive communicated content or shared content uploaded to the server system 102 and/or network service. In some examples, the users can interact via audio or video conferencing, audio, video, or text chat, or other communication modes or applications, send content (images, text, audio data, etc.) to each other's devices, etc. In some implementations, a "user" can include one or more programs or virtual entities, as well as persons that interface with the system or network.

[0043] In some implementations, server system 102 and/or one or more client devices 120-126 can provide a display content program. The display content program may allow a system (e.g., client device or server device) to display content data such as one or more images in a particular layout (e.g., based on a grid).

[0044] A user interface can enable display of content data such as images, as well as enable communications, privacy settings, notifications, and other functions on client devices 120, 122, 124, and 126 (or alternatively on server system 102). Other applications can also be used with one or more features described herein, such as browsers, email applications, communication applications, etc. Such a user interface can be displayed using the display content program or other software on the client device, software on the server device, and/or a combination of client software and server software executing on server device 104, e.g., application software or client software in communication with server system 102. The user interface can be displayed by a display device of a client device or server device, e.g., a display screen(s), projector, etc. In some implementations, application programs running on a server system can communicate with a client device to receive user input at the client device and to output data such as visual data, audio data, etc. at the client device. For example, the user interface may provide various options to a user to cause the display of content data to view, select particular content data, etc.

[0045] In some examples, the network environment 100 can detect content characteristics of content data items and determine blur characteristics based on the content characteristics. For example, image features can include people (without determining identity of the people), animals, objects (e.g., articles, vehicles, etc.), particular monuments, landscape features (e.g., foliage, mountains, lakes, sky, clouds, sunrise or sunset, buildings, bridges, etc.), weather, etc. Various image recognition and detection techniques can be used (e.g., machine learning based on training images, comparison to reference features in reference images, etc.) to detect image content features. Some implementations can detect audio content features in audio segments, and determine blur characteristics based on the audio features. Audio content features can include recognized words from voice, etc. In some example implementations, server system 102 may include classifiers of particular types of content data items (e.g., images), and can determine whether any of particular classes are detected in the content data items (e.g., pixels of an image).

[0046] Various implementations of features described herein can use any type of system and/or service. For example, social networking services, image collection and sharing services or other networked services (e.g., connected to the Internet) can include one or more described features accessed by client and server devices. Any type of electronic device can make use of features described herein. Some implementations can provide one or more features described herein on client or server devices disconnected from or intermittently connected to computer networks. In some examples, a client device including or connected to a display device can examine and display images stored on storage devices local to the client device (e.g., not connected via a communication network) and can provide features and results as described herein that are viewable to a user.

[0047] FIG. 2 is a flow diagram illustrating an example method 200 to provide and display blur in images, according to some implementations. In some implementations, method 200 can be implemented, for example, on a server system, e.g., server system 102 as shown in FIG. 1. In some implementations, some or all of the method 200 can be implemented on a system such as one or more client devices 120-126 as shown in FIG. 1, and/or on both a server system and one or more client systems. In described examples, the implementing system includes one or more processors or processing circuitry, and one or more storage devices such as a database or other accessible storage. In some implementations, different components of one or more servers and/or clients can perform different blocks or other parts of the method 200.

[0048] Some implementations can initiate method 200 based on user input. A user may, for example, have selected the initiation of the method 200 from a displayed user interface. In some implementations, method 200 or portions thereof can be performed with guidance by the user via user input.

[0049] In some implementations, the method 200, or portions of the method, can be initiated automatically by a user device. For example, the method (or portions thereof) can be periodically initiated, or initiated based on the occurrence of one or more particular events or conditions. For example, such events or conditions can include a particular application being opened based on user input, obtaining one or more images or other content data items that have been newly captured/created by, uploaded to, or otherwise accessible by a user device, a predetermined time period having expired since the last performance of method 200, and/or one or more other events or conditions occurring which can be specified in settings of a device implementing method 200. In some implementations, such conditions can be previously specified by a user in stored custom preferences of the user (accessible by a device or method with user consent). In some examples, a device (server or client) can perform the method 200 with access to a collection of accessible content data items, e.g., a user's collection of images or other content data items (if user consent is received). In another example, a camera, cell phone, tablet computer, wearable device, or other client device can capture one or more content data items such as images, videos, etc., and can perform the method 200. In addition, or alternatively, a client device can send one or more content data items (e.g., captured content data items) to a server over a network, and the server can process the content data items using method 200.

[0050] In block 202, it is determined whether user consent (e.g., user permission) has been obtained to use user data in the implementation of method 200. For example, user data can include user preferences, user biometric information, images or other content data items in a content collection (e.g., images captured, uploaded, generated, received, accessed, or otherwise associated with a user), messages sent or received by a user, information about a user's social network and/or contacts, user characteristics (identity, name, age, gender, profession, etc.), social and other types of actions and activities, content, ratings, and opinions created or submitted by a user, a user's geographical location, historical user data, etc. One or more blocks of the methods described herein may use such user data in some implementations.

[0051] If user consent has been obtained from the relevant users for which user data may be used in the method 200, then in block 204, it is determined that the blocks of the methods herein can be implemented with possible use of user data as described for those blocks, and the method continues to block 208. If user consent has not been obtained, it is determined in block 206 that blocks are to be implemented without use of user data, and the method continues to block 208. In some implementations, if user consent has not been obtained, the remainder of method 200 is not performed, and/or particular blocks using the user data are not performed. In some implementations, if user consent has not been obtained, blocks of method 200 are to be implemented without use of user data and with generic or publicly-accessible and publicly-usable data.

[0052] In block 208 of method 200, an input image is obtained for processing. The input image can be a digital image composed of multiple pixels, for example, and can be stored on one or more storage devices of the system or otherwise accessible to the system, e.g., a connected storage device such as a local storage device, storage device connected to or in communication with a network accessible to the system, etc. For example, the input image can be a photo captured by a camera, an image frame extracted from a captured video stream or other video data, or an image derived from a different source.

[0053] In some implementations, a user can provide, select, or designate one or more input images to obtain for processing. In some implementations, the input image can be automatically obtained by the method, e.g., as an image from a stored collection of multiple images, e.g., from a user's album, a pool of stored images submitted by users, etc. Automatic obtaining of an input image from a user's collection is performed with the user's consent as indicated in block 204, e.g., via stored user preferences accessed in block 202. The collections can be locally stored and accessible by the system performing method 200, and/or can be remotely stored on a server or client device, e.g., as one or more albums provided in account(s) of user(s) of a network service. In some implementations, the system can determine which image to select based on evaluating one or more characteristics of accessible images, e.g., timestamps and other metadata of images, the color distributions of images, the recognized content or labels describing content in images, user data such as user preferences, etc. (accessed if user consent has been obtained).

[0054] For example, in some implementations, a system can automatically (e.g., without human intervention) select a particular input image for processing. For example, such selection may be determined (with user consent) based on user data, including stored user preferences, a user history of previous modifications made by the user to other images, social data indicating user preferences (e.g., previous comments, ratings, etc. made by the user), locations visited by the user's device (e.g., as detected by GPS sensors on the device), activities of the user (e.g., sensed or inferred by locations visited by the user), etc. The method continues to block 210.

[0055] In block 210, mipmap images are generated based on the input image. A mipmap image is an image included in a mipmap, which is a group of images representing an original image (e.g., the input image) at different pixel resolutions. In some examples, one or more mipmap images can be lower resolution than the original image, and can be provided in a sequence of reducing resolutions such that different mipmap images are at different scale levels (resolutions) of the mipmap.
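A conventional mipmap of this kind can be built by repeatedly halving the resolution. The Python sketch below uses 2x2 box averaging on a grayscale image stored as nested lists; the filter, the factor-of-two step, and the function names are assumptions for illustration, and the sketch omits the depth-dependent blurring that the described mipmap images additionally apply.

```python
from typing import List

Image = List[List[float]]


def downscale_2x(image: Image) -> Image:
    """Halve width and height by averaging each 2x2 block.

    Odd trailing rows or columns are dropped for simplicity.
    """
    h, w = len(image) // 2, len(image[0]) // 2
    return [
        [
            (image[2 * y][2 * x] + image[2 * y][2 * x + 1]
             + image[2 * y + 1][2 * x] + image[2 * y + 1][2 * x + 1]) / 4.0
            for x in range(w)
        ]
        for y in range(h)
    ]


def build_mipmap(image: Image, min_size: int = 1) -> List[Image]:
    """Return [full resolution, 1/2, 1/4, ...] until a side drops below min_size."""
    levels = [image]
    while len(levels[-1]) >= 2 * min_size and len(levels[-1][0]) >= 2 * min_size:
        levels.append(downscale_2x(levels[-1]))
    return levels
```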

[0056] The mipmap images can be generated at a number of different resolutions (scale levels), where the resolutions generated may have been determined prior to the performance of block 210. In some examples, the scale levels provided can be the same regardless of the characteristics of the input image, or can be different based on one or more characteristics of the input image. For example, the image characteristics can be a timestamp of the input image, a location of capture of the input image, one or more features depicted in the image (e.g., detected using one or more image recognition techniques, machine learning techniques, etc.), etc. In some examples, if particular types of features are detected in the input image (e.g., faces, objects of particular type, monuments, etc.), then particular resolutions can be provided for the mipmap images, or if only other types of features are detected, then a different set of resolutions can be provided for the mipmap images.

[0057] In some implementations, background mipmap images and foreground mipmap images are generated. In some implementations, the background mipmap images can be used for processing background pixels of the input image, and the foreground mipmap images can be used for processing foreground pixels of the input image, as described herein. In some implementations, background and foreground mipmap images can be used to process one or more pixels of the input image. Some example methods of generating background and foreground mipmap images are described with respect to FIGS. 4 and 7. Generated mipmap images can be stored in accessible storage, e.g., memory and/or other storage devices. The method continues to block 212.

[0058] In block 212, one or more blur parameters are obtained for an output image to be generated. The blur parameters specify one or more characteristics of the blurring to be performed to the pixels of the input image. In some examples, the blur parameters may include a focal plane depth, a focal range, and a blur strength.

[0059] Referring to FIG. 3, a graph 300 illustrates some example blur parameters and blur curves that can be used in blurring an image. In graph 300, the horizontal axis represents depths into the image from a front plane of the image (e.g., camera position), as indicated on the axis by depth values, which can be normalized to a range from 0 to 1. For example, the depth is provided along a dimension oriented perpendicular to the plane of the image, e.g., a z-axis extending into the image. The vertical axis represents an amount or magnitude of blur to be applied to the pixels of the image to provide a blur effect, and can have a value of 0 to N, where N can be a maximum blur radius.

[0060] A focal plane depth 301 indicates a depth value of the focal plane along the depth axis, e.g., the depth value which is "in focus" for an image. For pixels at this depth, no blur should be applied to those pixels. The amount of blur applied to pixels at other depths can depend at least in part on the depth distance of those pixels to the focal plane. Pixels having depth values behind the focal plane (further away from the front plane of the image or camera that captured the image) are considered background pixels, and pixels having depth values in front of the focal plane are considered foreground pixels.

[0061] A background blur curve 302 indicates the amount of blur that is applied based on the depth of a background pixel, e.g., based on the depth deviation of the pixel from the focal plane depth into the image (away from the front plane of the image). In this example, background blur curve 302 is linear, but can be a curve of any shape in various implementations, e.g., as long as the curve represents an invertible function.

[0062] In some examples, the blur parameters can include a focal range 304. Focal range 304 is an amount of depth distance in front of the focal plane (towards the front plane of the image or camera). No blurring is applied to foreground pixels having a depth value within the focal range 304. For example, if the focal range is shallow, most of the foreground pixels are blurred the same way a digital single-lens reflex (SLR) camera would blur them. If the focal range is large, most or all foreground pixels remain unblurred.

[0063] A foreground focal plane 306 is determined based on the focal range 304. The foreground focal plane 306 indicates the endpoint of the focal range opposite the focal plane depth 301.

[0064] A foreground blur curve 308 indicates the amount of blur that is applied based on the depth of a foreground pixel, e.g., based on the depth deviation of the pixel from the foreground focal plane depth toward the front plane of the image. In this example, foreground blur curve 308 is linear, but can be a curve of any shape in various implementations, e.g., a curve that represents an invertible function. In some implementations, foreground blur curve 308 is independent of the background blur curve 302.

[0065] In some examples, the blur parameters include a blur strength parameter, which can control an overall amount of blur to be applied to the input image to generate the output image. For example, the blur strength parameter can determine the value of N in the graph 300. In some examples, if this parameter is set to 0 ("none"), no blurring is applied to any pixels of the input image, and if this parameter is set to a maximum value or setting, pixels of the input image are blurred by the full amount determined in the blurring process. If this parameter is set to an intermediate value between maximum and none, then blur determined in the blurring process is reduced in accordance with the intermediate value (e.g., a 0.5 value can reduce the determined blur by half its strength).
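The parameters of graph 300 can be combined into a single function that maps a pixel depth to a blur amount. The Python sketch below assumes the linear background and foreground curves of the example, expresses each slope as blur radius per unit of normalized depth, caps the result at N (`max_blur`), and applies the blur strength as a multiplier. The function and parameter names are hypothetical.

```python
def blur_amount(depth: float, focal_plane: float, focal_range: float,
                max_blur: float, background_slope: float,
                foreground_slope: float, strength: float = 1.0) -> float:
    """Blur for a pixel at `depth` (0 = front plane, 1 = farthest).

    Behind the focal plane: linear background curve.
    Within the focal range in front of it: no blur.
    In front of the foreground focal plane: linear foreground curve.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be in [0, 1]")
    foreground_plane = max(0.0, focal_plane - focal_range)
    if depth >= focal_plane:
        raw = background_slope * (depth - focal_plane)
    elif depth >= foreground_plane:
        raw = 0.0
    else:
        raw = foreground_slope * (foreground_plane - depth)
    # Cap at N, then scale by the overall blur strength (0 = none, 1 = full).
    return min(raw, max_blur) * strength
```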

[0066] Referring back to FIG. 2, in some implementations, one or more of the blur parameters can be obtained based on user input from a user. For example, a user interface can be displayed by a user device, which presents interface input fields or controls allowing the user to set or adjust the blur parameters for the blurring of the input image. In some implementations, one or more of the blur parameters can be obtained from accessible storage, e.g., specified prior to block 212 via stored user preferences, as default parameters, from a different application program or storage device, or from another source. The method continues to block 214.

[0067] In block 214, output pixels of an output image are determined, where the output pixels include pixels having blur. The output image is an image including blur resulting from processing the pixels of the input image by method 200. The blur in output pixels is determined based on one or more of the generated mipmap images. For example, the one or more mipmap images can be selected based on particular characteristics of pixels of the input image, including depth values. Some example methods of determining output pixel values are described below with respect to FIGS. 6 and 9. The method continues to block 216.

[0068] In block 216, an output image is displayed, including the output pixels determined in block 214. For example, the output image can be displayed in a graphical user interface provided on a user device. In some implementations, the output image can be displayed while the input image is displayed, e.g., to allow a user to compare the input image and the output image. The method can continue to block 218.

[0069] In block 218, it is determined whether one or more of the blur parameters have changed. In various implementations, the displayed user interface can include controls that are receptive to user input (e.g., user touch on a touchscreen, user voice commands, user manipulation of an input control device such as a joystick, trackpad, etc.) and that allow the user to adjust one or more blur parameters in accordance with the user input. For example, one or more sliders or other controls can be displayed, examples of which are shown in FIGS. 10-12. Blur parameters can also or alternatively be changed without user input, e.g., based on other events, user preferences, time conditions, at different stages of a displayed animation of change in blurring, etc.

[0070] If blur parameters have not been changed, the method can return to block 216 to continue displaying the output image, and/or to determine other user input or events, etc.

[0071] If blur parameters have been changed, then the method continues to block 214, where output pixels are determined based on the new set of blur parameters. For example, the input image previously obtained in block 208 and the mipmap images previously generated in block 210 can be used to determine new output pixel values and generate a new output image, which is displayed in block 216, e.g., in place of the previously generated output image.

[0072] Because the mipmap images are generated before the blur parameters are applied and/or changed, blurred output images can be determined and displayed quickly after the blur parameters have been specified. This makes it easy to edit blur parameters and to view the effects of the edits. By contrast, computing blur effects from the pixels of the input image for each change in blur parameters would require much greater processing time.
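The edit loop of blocks 214-218 can be sketched as a small object that builds its mipmaps once and then reuses them for every parameter change. This is a minimal illustration, not the described implementation; `BlurEditor`, `generate_mipmaps`, and `render` are hypothetical names, and the two callables stand in for the mipmap generation of block 210 and the output pixel determination of block 214.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict


@dataclass
class BlurEditor:
    """Caches mipmaps once so that parameter edits only trigger a re-render."""
    input_image: Any
    generate_mipmaps: Callable[[Any], Dict[Any, Any]]
    render: Callable[[Dict[Any, Any], Dict[str, float]], Any]
    mipmaps: Dict[Any, Any] = field(default_factory=dict, init=False)
    mipmap_builds: int = field(default=0, init=False)

    def __post_init__(self) -> None:
        # Analogous to block 210: the expensive step runs once, before any edits.
        self.mipmaps = self.generate_mipmaps(self.input_image)
        self.mipmap_builds += 1

    def on_parameters_changed(self, params: Dict[str, float]) -> Any:
        # Analogous to blocks 218 -> 214 -> 216: reuse the cached mipmaps and
        # rerun only the comparatively cheap output pixel determination.
        return self.render(self.mipmaps, params)
```

However many times `on_parameters_changed` is called, `mipmap_builds` stays at 1, which is the source of the responsiveness described above.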

[0073] FIG. 4 is a flow diagram illustrating an example method 400 to generate mipmap images, according to some implementations. In some implementations, method 400 can be used in block 210 of FIG. 2. User consent is obtained for the blocks of method 400, similarly as described for FIG. 2.

[0074] In block 402, a set of mipmap parameter values and mipmap scale levels is determined for the mipmap images to be generated. In some implementations, the set includes a set of background mipmap parameter values and background mipmap scale levels, and a set of foreground mipmap parameter values and foreground mipmap scale levels. In various implementations, the background values and levels can be the same as the foreground values and levels, or the values and/or levels can be different in the background and foreground sets.

[0075] In some implementations, this block determines the set of mipmap images to be generated. In some implementations, a mipmap parameter value is associated with each mipmap image to be generated. The parameter value indicates a parameter that is looked up by the output pixel determination process to select mipmap images and determine blurred pixel values. In some implementations, e.g., for background mipmap images, the parameter value can be a blur level associated with the mipmap image, which indicates an amount of blur applied to pixels of the associated mipmap image (e.g., 50%, 25%, etc.). In some implementations, e.g., for foreground mipmap images, the parameter value can be a foreground focal depth value that indicates a depth of a foreground plane used in the blurring of pixels in the associated mipmap image (e.g., 0.15, 0.3, etc.). An example of the use of the parameter value in selecting mipmap images for rendering output pixels is described with reference to FIG. 6.

[0076] Each mipmap parameter value is also associated with a scale level that indicates the amount of downscaling to apply to the input image when generating the associated mipmap image. In some examples, a mipmap scale level can be designated as 0.5 to indicate that the associated mipmap image is half the width and half the height of the input image, 0.25 to indicate one-quarter the width and one-quarter the height, etc.
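The relationship between a scale level and the size of the resulting mipmap image can be sketched as follows. Truncating fractional sizes and clamping to at least one pixel are assumptions, and the function name and the 4032 x 3024 input size are hypothetical.

```python
from typing import Tuple


def mipmap_dimensions(width: int, height: int, scale_level: float) -> Tuple[int, int]:
    """Width and height of a mipmap image downscaled by scale_level on each axis."""
    if not 0.0 < scale_level <= 1.0:
        raise ValueError("scale_level must be in (0, 1]")
    # Assumption: truncate fractional sizes and never go below one pixel.
    return max(1, int(width * scale_level)), max(1, int(height * scale_level))
```

For a 4032 x 3024 input, scale levels 0.5, 0.25, and 0.125 give 2016 x 1512, 1008 x 756, and 504 x 378. Because both axes shrink, the pixel count falls with the square of the scale level, so a 0.5-level mipmap holds one quarter of the input's pixels.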

[0077] In some implementations, a mipmap parameter can be provided which corresponds to a blur level of 0%, e.g., no blurring. In addition, such a parameter can be associated with a scale level of 1, such that the associated generated mipmap image will have the same resolution as, and is not downscaled from, the input image. Such a 0-level mipmap parameter can provide a 0-level mipmap image that is stored similarly to any other mipmap image. For example, the 0-level mipmap image can be selected for use during rendering of a pixel on the focal plane and/or a pixel within the focal range, as described below in some implementations. A pixel having this focal plane depth value does not itself contribute blur to the corresponding output pixel value, but there may be foreground pixels near the pixel that are blurred and can contribute to that output pixel blur, and this foreground blur contribution can be included in the pixels of the 0-level mipmap image.

[0078] The set of mipmap parameter values and associated scale levels to be used in the blurring of the input image can be obtained by method 400, e.g., from storage, default or user preferences, etc., or can be determined based on one or more image characteristics (image features detected in the image, timestamp and/or location of capture of the image, etc.). In one example, e.g., as shown in FIG. 5, the set of mipmap levels can include 0.5, 0.25, and 0.125, with one or more mipmap images at each of the mipmap levels. Other parameter values and/or scale levels can be used for mipmap images in various implementations, e.g., 0.33, 0.20, etc. In some implementations, the number of mipmap images and/or the scale levels to be used can be based on the amount of memory or other storage available to store the mipmap images, and/or based on processing speed or other capabilities of a device.
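One hypothetical way to let available memory limit the set of mipmap images is a greedy pass over candidate (parameter value, scale level) pairs that keeps each candidate whose storage still fits a byte budget. The `MipmapSpec` type, the greedy policy, and the default of 4 bytes per pixel (e.g., 8-bit RGBA) are all assumptions for illustration, as are the blur levels paired with each scale level in the usage below.

```python
from typing import List, NamedTuple, Tuple


class MipmapSpec(NamedTuple):
    parameter_value: float  # e.g., a background blur level or foreground focal depth
    scale_level: float      # fraction of the input width and height


def select_within_budget(
    candidates: List[MipmapSpec],
    width: int,
    height: int,
    budget_bytes: int,
    bytes_per_pixel: int = 4,
) -> Tuple[List[MipmapSpec], int]:
    """Greedily keep candidate mipmaps, in order, while their storage fits the budget."""
    selected: List[MipmapSpec] = []
    used = 0
    for spec in candidates:
        w = max(1, int(width * spec.scale_level))
        h = max(1, int(height * spec.scale_level))
        cost = w * h * bytes_per_pixel
        if used + cost > budget_bytes:
            continue  # skip this one; a smaller mipmap later in the list may still fit
        selected.append(spec)
        used += cost
    return selected, used
```

Listing the 0-level spec (parameter value 0.0, scale level 1.0) first gives it priority, which suits its role in rendering pixels on the focal plane; a real policy might instead require it unconditionally.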

[0079] In block 404, a mipmap parameter value (and its associated mipmap scale level) is selected for which a mipmap image is to be generated. For example, the next mipmap parameter value for which a mipmap image has not yet been generated can be selected from the set of mipmap parameter values determined in block 402. In this example, the mipmap parameter value is a blur level.

[0080] In some implementations, multiple types of mipmap images are generated for a particular parameter value. In some examples, the types of mipmap images include a background mipmap image and a foreground mipmap image. For example, background mipmap images can be generated to include blurred pixel values for background pixels, e.g., pixels that are in the background of the input image (behind the focal plane of the input image). Foreground mipmap images can be generated to include blurred pixel values for foreground pixels, e.g., pixels that are in the foreground of the input image (e.g., in front of the focal plane of the input image and outside of a focal range of the input image).

[0081] In block 404, one type of mipmap image can be selected for generation. In some examples, a background type of mipmap image can be selected for the current iteration of method 400, or a foreground mipmap image can be selected.
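The selection in block 404 can be sketched as iterating over (type, parameter value, scale level) combinations from the background and foreground sets of block 402 and skipping those already generated. The `MipmapSpec` and `pending_jobs` names are hypothetical, and the pairing of each parameter value with a scale level in the usage below is illustrative only (0.15 is one of the example foreground focal depths given above).

```python
from typing import Iterable, Iterator, NamedTuple, Set, Tuple


class MipmapSpec(NamedTuple):
    parameter_value: float  # blur level (background) or foreground focal depth
    scale_level: float


Job = Tuple[str, MipmapSpec]


def pending_jobs(
    background: Iterable[MipmapSpec],
    foreground: Iterable[MipmapSpec],
    done: Set[Job],
) -> Iterator[Job]:
    """Yield (type, spec) pairs that still need a mipmap image, one per iteration."""
    for kind, specs in (("background", background), ("foreground", foreground)):
        for spec in specs:
            job = (kind, spec)
            if job not in done:
                yield job
```

Each yielded pair corresponds to one pass through blocks 406-410 for a single mipmap image.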

[0082] In block 406, the input image is downscaled to provide a downscaled image, e.g., by a factor corresponding to the selected mipmap scale level. For example, if the selected scale level is 0.5, then the input image is downscaled such that the downscaled image has half the number of pixels in its width and its height. In some implementations, a previously-downscaled image (at the same scale level) can be used instead of performing the downscaling. For example, the previously-downscaled image can be a result from a previous iteration of block 406 for a different mipmap image.
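A minimal sketch of block 406, assuming a grayscale image stored as nested lists and a box filter (averaging each block of pixels) as the downscaling technique. The source does not name a particular filter. A cache keyed by scale level stands in for reusing a previously downscaled image. The box filter only handles scale levels of the form 1/integer, so a level such as 0.33 would need a different resampler. All names are hypothetical.

```python
from typing import Dict, List

Image = List[List[float]]


def box_downscale(image: Image, factor: int) -> Image:
    """Average each factor x factor block of pixels into one output pixel.

    Partial blocks at the right and bottom edges are dropped (an assumption).
    """
    out_h, out_w = len(image) // factor, len(image[0]) // factor
    out: Image = []
    for oy in range(out_h):
        row = []
        for ox in range(out_w):
            block = [
                image[oy * factor + dy][ox * factor + dx]
                for dy in range(factor)
                for dx in range(factor)
            ]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def downscaled_for_level(image: Image, scale_level: float, cache: Dict[float, Image]) -> Image:
    """Return the image downscaled to scale_level, reusing a cached result if present."""
    if scale_level not in cache:
        factor = round(1.0 / scale_level)
        if factor < 1 or abs(factor * scale_level - 1.0) > 1e-9:
            raise ValueError("box filtering here needs scale_level = 1 / integer")
        cache[scale_level] = image if factor == 1 else box_downscale(image, factor)
    return cache[scale_level]
```

A fresh cache per input image ensures that a background and a foreground mipmap at the same scale level share one downscaled image.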

[0083] In block 408, a pixel of the downscaled image is selected for processing, e.g., a pixel that has not yet been processed. The selected pixel can be considered the origin pixel for the blur process.

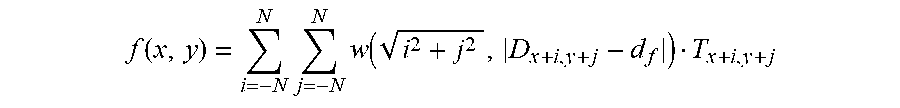

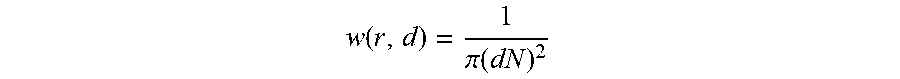

[0084] In block 410, blur is applied to the selected pixel based on depths of the selected and surrounding pixels of the downscaled image and based on a focal plane associated with the selected pixel (e.g., a focal plane calculated from the depth value of the selected pixel). A blur equation can be used to determine the blurred pixel color value. In some examples, the blur equation can sample pixels of the downscaled image that surround the selected pixel and are within a particular pixel distance to the selected pixel. In some examples, the particular pixel distance can be equal to the maximum blur radius, e.g., N in the graph 300 of FIG. 3. For example, the blur equation can multiply determined weights by sampled pixels, sum the weighted sampled pixels, and provide a weighted average pixel value as a blurred pixel value.
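The weighted-average form of the blur equation can be sketched as below. The uniform disc weight is an assumption chosen only to make the example concrete, since the actual weights are not given in this paragraph. The names `blur_pixel` and `disc_weight` are hypothetical, and samples that fall outside the image are skipped so that the remaining weights renormalize.

```python
import math
from typing import Callable, List

Image = List[List[float]]


def disc_weight(dx: int, dy: int, radius: float) -> float:
    """Uniform weight inside a disc of the given radius, zero outside (an assumption)."""
    return 1.0 if math.hypot(dx, dy) <= radius else 0.0


def blur_pixel(
    image: Image,
    x: int,
    y: int,
    radius: int,
    weight: Callable[[int, int, float], float] = disc_weight,
) -> float:
    """Weighted average of samples within `radius` pixels of the origin pixel (x, y)."""
    h, w = len(image), len(image[0])
    total = 0.0
    weight_sum = 0.0
    for sy in range(max(0, y - radius), min(h, y + radius + 1)):
        for sx in range(max(0, x - radius), min(w, x + radius + 1)):
            wgt = weight(sx - x, sy - y, radius)
            total += wgt * image[sy][sx]  # multiply each sample by its weight
            weight_sum += wgt             # and accumulate the weights
    return total / weight_sum if weight_sum > 0 else image[y][x]
```

With radius 1 around the center of a 3 x 3 image whose only nonzero value is 9.0 at the center, the disc covers the center and its four edge neighbors, giving 9.0 / 5 = 1.8.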

[0085] In some implementations, the blur equation can use the depths of sampled surrounding pixels (that surround the selected pixel in the downscaled image) to influence the amount of blur, e.g., influence the weight used in the blur equation. The depth value of a pixel indicates its position in the depth dimension extending into the downscaled image, e.g., perpendicular to the plane of the downscaled image. In some implementations, depth values can be obtained from a depth map associated with the input image, which indicates a depth value of each pixel of the input image. For example, in various implementations, the depth values may have originated from a capture device (e.g., depth camera) that senses and provides depth information for pixels of captured images. Depth values may have originated from an image processing technique that detects objects depicted in an image and estimates depths of the objects' pixels based on relative sizes of objects, types of objects, and other image characteristics. In some implementations, depth values may have originated from other sources. If a downscaled image pixel corresponds to multiple pixels in the input image that have different depths, any of a variety of techniques can be used to determine the depth of the downscaled image pixel, e.g., the depth map can be scaled to match the dimensions of the downscaled image using the same scaling technique used to downscale the input image, such that multiple pixel depths are averaged to downscale to one pixel depth.
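One way depth can influence the weights is a "gather" approximation: a surrounding sample contributes to the origin pixel only if its own blur disc, computed from its own depth, reaches the origin. This is a commonly used technique assumed here for illustration, not necessarily the blur equation of this application. The sketch also assumes depths normalized to [0, 1], a linear background blur curve clamped to [0, 1], and hypothetical function names.

```python
import math
from typing import List

Grid = List[List[float]]


def linear_background_blur(depth: float, focal_plane: float) -> float:
    """Assumed linear curve: normalized blur grows with depth behind the focal plane."""
    return min(max(depth - focal_plane, 0.0), 1.0)


def depth_aware_blur(
    image: Grid, depth: Grid, x: int, y: int, focal_plane: float, max_radius: int
) -> float:
    """Average the origin pixel with neighbors whose own blur disc reaches it."""
    h, w = len(image), len(image[0])
    total = 0.0
    weight_sum = 0.0
    for sy in range(max(0, y - max_radius), min(h, y + max_radius + 1)):
        for sx in range(max(0, x - max_radius), min(w, x + max_radius + 1)):
            # Per-sample blur radius in pixels, derived from the sample's own depth.
            radius = linear_background_blur(depth[sy][sx], focal_plane) * max_radius
            reaches = math.hypot(sx - x, sy - y) <= radius
            is_origin = sx == x and sy == y
            wgt = 1.0 if (reaches or is_origin) else 0.0
            total += wgt * image[sy][sx]
            weight_sum += wgt
    return total / weight_sum  # the origin always contributes, so this is nonzero
```

Here `max_radius` plays the role of the maximum blur radius N. A sample lying on the focal plane has a zero radius and never spreads into its neighbors, while a sample at the far end of the curve spreads over the full N pixels.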

[0086] In some implementations, the blur equation can use the depth of a focal plane that is associated with the selected pixel, when processing the selected pixel and surrounding pixels. In an example implementation, each pixel of a mipmap image is associated with a particular focal plane depth that would provide the blur amount of the mipmap blur level (parameter value) of the mipmap image at the depth of that pixel. Thus, per mipmap image, the amount of blur (blur level) is constant and the focal plane is changed per pixel to provide that blur level.

[0087] In some implementations, a difference between generating a background mipmap image and generating a foreground mipmap image is how the focal planes ("normal" focal planes) and the foreground focal planes associated with the pixels of the mipmap image are determined. In some implementations, if the selected type of the mipmap image being generated is a background mipmap image, the selected pixel can be treated as a background pixel for determining the focal plane and foreground focal plane. If the selected type of mipmap is a foreground mipmap image, the selected pixel can be treated as a foreground pixel for determining the focal plane and foreground focal plane.

[0088] For example, if the selected mipmap image is a background mipmap image, the associated normal focal plane of the selected pixel can be determined based on the depth of the selected pixel and based on the blur level assigned to the background mipmap image. The associated normal focal plane is determined from these parameters based on the particular background blur curve used to determine the blur equation to blur background pixels. For example, if the background blur curve is linear (as in the example of FIG. 3), then the difference between the depth of the selected pixel and the blur level of the selected background mipmap image can be used to determine the focal plane depth for the selected pixel. In some implementations, the background blur curve is based on a different relationship (e.g., exponential, or otherwise nonlinear), and the associated normal focal plane is determined based on the pixel depth and blur level using the background blur curve relationship.
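Because each background mipmap has a constant blur level, the per-pixel focal plane is found by inverting the blur curve. In the linear case this is the pixel depth minus the blur level. For a nonlinear but increasing curve, a bisection search is one generic way to perform the inversion. The sketch assumes depths normalized to [0, 1] and blur defined as `curve(depth - focal_plane)`; the function names are hypothetical.

```python
from typing import Callable


def linear_curve(offset: float) -> float:
    """Blur level as a function of depth behind the focal plane (linear, FIG. 3 style)."""
    return offset


def normal_focal_plane(
    depth: float,
    blur_level: float,
    curve: Callable[[float], float] = linear_curve,
    tol: float = 1e-9,
) -> float:
    """Focal plane depth at which curve(depth - focal_plane) equals blur_level.

    Assumes depths are normalized to [0, 1] and curve is increasing on [0, 1].
    """
    if curve is linear_curve:
        # Linear case: the focal plane is the pixel depth minus the blur level.
        return depth - blur_level
    lo, hi = 0.0, 1.0  # search over the offset depth - focal_plane
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if curve(mid) < blur_level:
            lo = mid
        else:
            hi = mid
    return depth - (lo + hi) / 2.0
```

For a pixel at depth 0.8 in a mipmap with blur level 0.5, the linear curve gives a focal plane of about 0.3. A quadratic curve `lambda o: o * o` with blur level 0.25 gives the same plane, because its offset is the square root of 0.25.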

[0089] For a background mipmap image, a foreground focal plane associated with the selected pixel is also determined, so that blur from qualifying foreground pixels that surround the selected pixel can contribute to the blur of the selected pixel. For example, in the linear blur curve example above, the foreground focal plane associated with a surrounding foreground pixel can be determined as the normal focal plane minus the focal range, where the normal focal plane is determined as described above for the background mipmap image. In some examples using the method of FIG. 6, the focal range is associated with the input image and can be a predetermined focal range used for all pixels of the generated mipmap images.
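Continuing the linear example, the pair of focal planes for each pixel of a background mipmap can be sketched as below, with the foreground plane placed the focal range in front of the normal plane. The test for whether a surrounding pixel qualifies as foreground assumes that smaller depth values are nearer the camera. The function names and sample values are hypothetical.

```python
from typing import List, Tuple


def background_mipmap_planes(
    depths: List[float], blur_level: float, focal_range: float
) -> List[Tuple[float, float]]:
    """Per-pixel (normal, foreground) focal plane depths for one background mipmap."""
    planes = []
    for depth in depths:
        normal = depth - blur_level        # linear curve: blur level = depth - focal plane
        foreground = normal - focal_range  # foreground plane lies focal_range in front
        planes.append((normal, foreground))
    return planes


def qualifies_as_foreground(sample_depth: float, foreground_plane: float) -> bool:
    """Assumed convention: smaller depth is nearer, so nearer than the plane qualifies."""
    return sample_depth < foreground_plane
```

For example, pixel depths 0.9 and 0.6 with blur level 0.25 and focal range 0.1 give plane pairs of about (0.65, 0.55) and (0.35, 0.25). A neighbor at depth 0.2 would then qualify as foreground for the first pixel.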