Three-dimensional Imager

Armstrong; Matthew ; et al.

U.S. patent application number 16/518135, for a three-dimensional imager, was filed with the patent office on 2019-07-22 and published as 20190346257 on 2019-11-14. The applicant listed for this patent is FARO Technologies, Inc. Invention is credited to Matthew Armstrong, Christopher S. Garcia, Theodore J. Hordeski, Jr., Michael Veksland, and Yevgeniy Vinshtok.

| Application Number | 16/518135 |

| Publication Number | 20190346257 |

| Family ID | 59087866 |

| Filed Date | 2019-07-22 |

| Publication Date | 2019-11-14 |


| United States Patent Application | 20190346257 |

| Kind Code | A1 |

| Armstrong; Matthew ; et al. | November 14, 2019 |

THREE-DIMENSIONAL IMAGER

Abstract

A three-dimensional (3D) measuring system and a method of determining a distance are provided. The system includes a master part having a first base part and a first part-under-test including a second base part. A photogrammetry camera images the master part to generate two-dimensional (2D) images. A first 3D imager determines 3D coordinates in a first imager frame of reference. A second 3D imager determines 3D coordinates in a second imager frame of reference. The system determines, in a system frame of reference, a first pose of the first imager and a second pose of the second imager. The system is further configured to determine 3D coordinates of the first part-under-test in the system frame of reference.

| Inventors: | Armstrong; Matthew (Glenmoore, PA); Garcia; Christopher S. (Malvern, PA); Hordeski, JR.; Theodore J. (Dresher, PA); Veksland; Michael (Mount Laurel, NJ); Vinshtok; Yevgeniy (Downingtown, PA) |
|---|---|
| Applicant: | FARO Technologies, Inc. |
| Family ID: | 59087866 |
| Appl. No.: | 16/518135 |
| Filed: | July 22, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15457045 | Mar 13, 2017 | |
| 16518135 | | |
| 15233415 | Aug 10, 2016 | |
| 15457045 | | |
| 62309024 | Mar 16, 2016 | |
| 62276325 | Jan 8, 2016 | |
| 62276319 | Jan 8, 2016 | |
| 62276329 | Jan 8, 2016 | |
| 62272461 | Dec 29, 2015 | |
| 62272451 | Dec 29, 2015 | |
| 62272442 | Dec 29, 2015 | |
| 62272469 | Dec 29, 2015 | |
| 62207047 | Aug 19, 2015 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G01C 3/10 20130101; G01B 11/25 20130101; H04N 13/246 20180501; G01B 11/14 20130101; G01C 3/08 20130101; G01S 17/48 20130101; H04N 13/254 20180501; G01B 21/042 20130101; G06T 7/60 20130101; G01C 11/00 20130101; G06T 2207/30204 20130101; H05K 7/20136 20130101; G06T 2207/30244 20130101; H04N 2013/0081 20130101; G01B 11/245 20130101; H04N 13/239 20180501 |

| International Class: | G01B 11/14 20060101 G01B011/14; G01C 11/00 20060101 G01C011/00; G01C 3/08 20060101 G01C003/08; G01C 3/10 20060101 G01C003/10; G01S 17/48 20060101 G01S017/48; H04N 13/254 20060101 H04N013/254; G06T 7/60 20060101 G06T007/60; G01B 11/25 20060101 G01B011/25; G01B 11/245 20060101 G01B011/245; G01B 21/04 20060101 G01B021/04 |

Claims

1. A three-dimensional (3D) measuring system comprising: a master part including a first base part selected from a plurality of base parts, there being at least three fiducial markers affixed to the first base part; a first part-under-test including a second base part selected from the plurality of base parts; a photogrammetry camera configured to image the master part, including the at least three fiducial markers, from a plurality of photogrammetry camera positions to obtain a corresponding plurality of photogrammetry two-dimensional (2D) images; a first 3D imager having a first projector and a first camera, the first 3D imager configured to determine 3D coordinates in a first imager frame of reference; a second 3D imager having a second projector and a second camera, the second 3D imager configured to determine 3D coordinates in a second imager frame of reference, wherein the system is configured to determine in a system frame of reference a first pose of the first 3D imager and a second pose of the second 3D imager based at least in part on the plurality of photogrammetry 2D images, determined 3D coordinates of at least three fiducial markers from among the at least three fiducial markers in the first imager frame of reference, and determined 3D coordinates of at least three fiducial markers from among the at least three fiducial markers in the second imager frame of reference, and wherein the system is further configured to determine 3D coordinates of the first part-under-test in the system frame of reference based at least in part on the determined first pose, the determined second pose, determined 3D coordinates of the first part-under-test by the first 3D imager in the first imager frame of reference, and determined 3D coordinates of the first part-under-test by the second 3D imager in the second imager frame of reference.

2. The system of claim 1, further comprising: a scale bar having a first target and a second target, a distance between the first target and the second target being a calibrated reference distance, the scale bar being configured to be fixedly positioned relative to the master part, the first target and the second target being visible in the plurality of photogrammetry 2D images, wherein the system is further configured to determine the first pose and the second pose based on the calibrated reference distance.

3. The system of claim 1, wherein the three fiducial markers include retroreflective targets and the photogrammetry camera further includes a flash unit configured to illuminate the retroreflector targets.

4. The system of claim 1, wherein the at least two of the plurality of photogrammetry 2D images are obtained with the photogrammetry camera rotated to different orientations.

5. The system of claim 1, wherein: the first 3D imager further includes a processor configured to determine the 3D coordinates in the first imager frame of reference, and is further configured to cooperate with an external computer to determine the 3D coordinates in the first imager frame of reference; and the second 3D imager further includes a processor configured to determine the 3D coordinates in the second imager frame of reference, and is further configured to cooperate with the external computer to determine the 3D coordinates in the second imager frame of reference, wherein the system is further configured to cooperate with an external computer to determine in the system frame of reference the first pose of the first 3D imager and the second pose of the second 3D imager.

6. The system of claim 1, wherein the system further comprises: a second part-under-test including a third base part selected from the plurality of base parts, wherein the system is further configured to determine 3D coordinates of the second part-under-test in the system frame of reference based at least in part on the determined first pose, the determined second pose, determined 3D coordinates of the second part-under-test by the first 3D imager in the first imager frame of reference, and determined 3D coordinates of the second part-under-test by the second 3D imager in the second imager frame of reference.

7. The system of claim 1, wherein: the at least three fiducial markers from among the at least three fiducial markers in the first imager frame of reference includes at least one fiducial marker not included in the at least three fiducial markers from among the at least three fiducial markers in the second imager frame of reference, and the at least three fiducial markers includes a first marker, a second marker, a third marker, a fourth marker, a fifth marker, and a sixth marker, the at least three fiducial markers from among the at least three fiducial markers in the first imager frame of reference including the first marker, the second marker, and the third marker but not the fourth marker, the fifth marker or the sixth marker, the at least three fiducial markers from among the at least three fiducial markers in the second imager frame of reference including the fourth marker, the fifth marker, and the sixth marker but not the first marker, the second marker, or the third marker.

8. A method comprising: providing a master part, a first part-under-test, a photogrammetry camera, a first three-dimensional (3D) imager, and a second 3D imager, the master part including a first base part selected from a plurality of base parts, there being at least three fiducial markers affixed to the first base part, the first part-under-test including a second base part selected from the plurality of base parts, the first 3D imager having a first projector, a first camera, and a first frame of reference, the second 3D imager having a second projector, a second camera, and a second frame of reference; imaging the master part, including the at least three fiducial markers, with the photogrammetry camera from a plurality of photogrammetry camera positions to obtain a corresponding plurality of photogrammetry two-dimensional (2D) images; determining with the first 3D imager 3D coordinates of the at least three fiducial markers in the first frame of reference; determining with the second 3D imager 3D coordinates of the at least three fiducial markers in the second frame of reference; determining in a system frame of reference a first pose of the first 3D imager and a second pose of the second 3D imager based at least in part on the plurality of photogrammetry 2D images, the determined 3D coordinates of at least three fiducial markers from among the at least three fiducial markers in the first frame of reference, and the determined 3D coordinates of at least three fiducial markers from among the at least three fiducial markers in the second frame of reference; determining with the first 3D imager first 3D coordinates of the first part-under-test in the first frame of reference; determining with the second 3D imager second 3D coordinates of the first part-under-test in the second frame of reference; determining 3D coordinates of the first part-under-test in the system frame of reference based at least in part on the determined first pose, the determined second pose, the determined first 3D coordinates of the first part-under-test in the first imager frame of reference, and the determined second 3D coordinates of the first part-under-test in the second frame of reference; and storing the 3D coordinates of the first part-under-test in the system frame of reference.

9. The method of claim 8, further comprising: providing a scale bar having a first target and a second target, a distance between the first target and the second target being a calibrated reference distance, the scale bar being configured to be fixedly positioned relative to the master part, the first target and the second target being visible in the plurality of photogrammetry 2D images, wherein in determining in a system frame of reference a first pose of the first 3D imager and a second pose of the second 3D imager, the first pose and the second pose are further based on the calibrated reference distance.

10. The method of claim 8, wherein: the at least three fiducial markers include retroreflective targets, the photogrammetry camera further includes a flash unit configured to illuminate the retroreflector targets, and the at least two of the plurality of photogrammetry 2D images are obtained with the photogrammetry camera rotated to different orientations.

11. The method of claim 8, further comprising: providing a second part-under-test, including a third base part selected from the plurality of base parts; and determining 3D coordinates of the second part-under-test in the system frame of reference based at least in part on the determined first pose, the determined second pose, determined 3D coordinates of the second part-under-test by the first 3D imager in the first imager frame of reference, and determined 3D coordinates of the second part-under-test by the second 3D imager in the second imager frame of reference.

12. The method of claim 8, wherein: in providing the master part, the part-under-test, the photogrammetry camera, the first 3D imager, and the second 3D imager, the first 3D imager further includes a first processor and the second 3D imager further includes a second processor, in determining with the first 3D imager first 3D coordinates of the part-under-test in the first frame of reference, the first processor determines the first 3D coordinates; and in determining with the second 3D imager second 3D coordinates of the part-under-test in the second frame of reference, the second processor determines the second 3D coordinates.

13. The method of claim 8, wherein: in determining with the first 3D imager first 3D coordinates of the part-under-test in the first frame of reference, the first 3D imager cooperates with an external computer to determine the 3D coordinates of the part-under-test in the first frame of reference; and in determining with the second 3D imager second 3D coordinates of the part-under-test in the second frame of reference, the second 3D imager cooperates with the external computer to determine the 3D coordinates of the part-under-test in the second frame of reference; and in determining in a system frame of reference a first pose of the first 3D imager and a second pose of the second 3D imager, an external computer assists in determining in the system frame of reference the first pose of the first 3D imager and the second pose of the second 3D imager.

14. A three-dimensional (3D) measuring system comprising: a master part including a first base part, there being at least three fiducial markers affixed to the first base part; a first part-under-test including a second base part; a photogrammetry camera configured to image the master part, including the at least three fiducial markers, and to obtain a plurality of photogrammetry 2D images; a first 3D imager having a first projector and a first camera, the first 3D imager configured to determine 3D coordinates in a first imager frame of reference; a second 3D imager having a second projector and a second camera, the second 3D imager configured to determine 3D coordinates in a second imager frame of reference; and one or more processors responsive to executable computer instructions, to perform a method comprising: determine first 3D coordinates of at least three fiducial markers from among the at least three fiducial markers in the first imager frame of reference; determine second 3D coordinates of at least three fiducial markers from among the at least three fiducial markers in the second imager frame of reference; determine in a system frame of reference a first pose of the first 3D imager and a second pose of the second 3D imager based at least in part on the plurality of photogrammetry 2D images, the first 3D coordinates and the second 3D coordinates; determine in a system frame of reference a first pose of the first 3D imager and a second pose of the second 3D imager based at least in part on the plurality of photogrammetry 2D images, the first 3D coordinates, and the second; determine a third 3D coordinates of the first part-under-test by the first 3D imager in the first imager frame of reference; determine a fourth 3D coordinates of the first part-under-test by the second 3D imager in the second imager frame of reference; and determine a third 3D coordinates of the first part-under-test in the system frame of reference based at least in part on the determined first pose, the determined second pose, the third 3D coordinates and the fourth 3D coordinates.

15. The system of claim 14, further comprising: a scale bar having a first target and a second target, a distance between the first target and the second target being a calibrated reference distance, the scale bar being configured to be fixedly positioned relative to the master part, the first target and the second target being visible in the plurality of photogrammetry 2D images, wherein the method further comprises determining the first pose and the second pose based on the calibrated reference distance.

16. The system of claim 14, wherein the three fiducial markers include retroreflective targets and the photogrammetry camera further includes a flash unit configured to illuminate the retroreflector targets.

17. The system of claim 14, wherein the at least two of the plurality of photogrammetry 2D images are obtained with the photogrammetry camera rotated to different orientations.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] The present application is a continuation application of U.S. application Ser. No. 15/457,045 filed on Mar. 13, 2017, which is a continuation-in-part application of U.S. nonprovisional application Ser. No. 15/233,415 filed on Aug. 10, 2016. Application Ser. No. 15/233,415 is a nonprovisional application of each of the following U.S. provisional applications: Ser. No. 62/207,047 filed on Aug. 19, 2015; Ser. No. 62/272,442 filed on Dec. 29, 2015; Ser. No. 62/272,451 filed on Dec. 29, 2015; Ser. No. 62/272,461 filed on Dec. 29, 2015; Ser. No. 62/272,469 filed on Dec. 29, 2015; Ser. No. 62/276,319 filed on Jan. 8, 2016; Ser. No. 62/276,325 filed on Jan. 8, 2016; Ser. No. 62/276,329 filed on Jan. 8, 2016; and Ser. No. 62/309,024 filed on Mar. 16, 2016. The contents of all of these applications are incorporated herein by reference in their entirety.

FIELD OF THE INVENTION

[0002] The subject matter disclosed herein relates in general to a triangulation-type, three-dimensional (3D) imager device, also known as a triangulation scanner.

BACKGROUND OF THE INVENTION

[0003] A 3D imager uses a triangulation method to measure the 3D coordinates of points on an object. The 3D imager usually includes a projector that projects onto a surface of the object either a pattern of light in a line or a pattern of light covering an area. A camera is coupled to the projector in a fixed relationship, for example, by attaching a camera and the projector to a common frame. The light emitted from the projector is reflected off of the object surface and detected by the camera. Since the camera and projector are arranged in a fixed relationship, the distance to the object may be determined using trigonometric principles. Compared to coordinate measurement devices that use tactile probes, triangulation systems provide advantages in quickly acquiring coordinate data over a large area. As used herein, the resulting collection of 3D coordinate values or data points of the object being measured by the triangulation system is referred to as point cloud data or simply a point cloud.
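As an illustration of the trigonometric principle described in paragraph [0003], the following minimal sketch computes the distance to an illuminated point from the projector-camera baseline and the two ray angles, using the law of sines. The function name `triangulate_depth`, its parameters, and the planar two-angle geometry are assumptions made for this example; they are not taken from the application, which does not specify a particular formula here.

```python
import math


def triangulate_depth(baseline, projector_angle, camera_angle):
    """Perpendicular distance from the baseline to the illuminated point.

    Hypothetical helper for illustration only.
    baseline        -- projector-to-camera separation (any length unit)
    projector_angle -- interior angle (radians) between the baseline and the projected ray
    camera_angle    -- interior angle (radians) between the baseline and the received ray
    """
    if projector_angle <= 0.0 or camera_angle <= 0.0:
        raise ValueError("angles must be positive")
    # The three interior angles of the projector-camera-point triangle sum to pi.
    apex_angle = math.pi - projector_angle - camera_angle
    if apex_angle <= 0.0:
        raise ValueError("rays do not converge in front of the baseline")
    # Law of sines: the camera-to-point side is opposite the projector angle,
    # and the baseline is opposite the apex angle.
    camera_range = baseline * math.sin(projector_angle) / math.sin(apex_angle)
    # Project the camera-to-point side onto the normal of the baseline.
    return camera_range * math.sin(camera_angle)


if __name__ == "__main__":
    depth = triangulate_depth(0.2, math.radians(45.0), math.radians(45.0))
    print(f"depth = {depth:.4f}")
```

Because the baseline is fixed by the common frame, only the two angles vary from point to point, which is why a rigid projector-camera mounting matters for accuracy.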

[0004] There are a number of areas in which existing triangulation scanners may be improved, including improved thermal stability and cooling, improved geometries for detecting problems or automatically correcting scanner compensation parameters, improved rejection of background lighting, reduced effect of cooling fan vibration, optimized illumination projection levels, improved ways to measure relatively large objects with relatively high accuracy and high resolution in a relatively short time, improved methods of registering an array of 3D imagers, and a structure configured to simplify proper alignment of 3D imagers to a part-under-test.

[0005] Accordingly, while existing triangulation-based 3D imager devices that use photogrammetry methods are suitable for their intended purpose, the need for improvement remains.

BRIEF DESCRIPTION OF THE INVENTION

[0006] According to an embodiment of the present invention, a three-dimensional (3D) measuring system is provided. The system comprising: a master part including a first base part selected from a plurality of base parts, there being at least three fiducial markers affixed to the first base part; a first part-under-test including a second base part selected from the plurality of base parts; a photogrammetry camera configured to image the master part, including the at least three fiducial markers, from a plurality of photogrammetry camera positions to obtain a corresponding plurality of photogrammetry two-dimensional (2D) images; a first 3D imager having a first projector and a first camera, the first 3D imager configured to determine 3D coordinates in a first imager frame of reference; a second 3D imager having a second projector and a second camera, the second 3D imager configured to determine 3D coordinates in a second imager frame of reference, wherein the system is configured to determine in a system frame of reference a first pose of the first 3D imager and a second pose of the second 3D imager based at least in part on the plurality of photogrammetry 2D images, determined 3D coordinates of at least three fiducial markers from among the at least three fiducial markers in the first imager frame of reference, and determined 3D coordinates of at least three fiducial markers from among the at least three fiducial markers in the second imager frame of reference, and wherein the system is further configured to determine 3D coordinates of the first part-under-test in the system frame of reference based at least in part on the determined first pose, the determined second pose, determined 3D coordinates of the first part-under-test by the first 3D imager in the first imager frame of reference, and determined 3D coordinates of the first part-under-test by the second 3D imager in the second imager frame of reference.
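One way to picture how a pose might be recovered from three fiducial markers is sketched below. It assumes the marker coordinates in the system frame of reference are already available (for example, from the photogrammetry images) and that the same three markers have been measured by one 3D imager in its own frame. The sketch builds an orthonormal triad from the three points in each frame and derives the rotation and translation that map one triad onto the other. This triad construction is an assumption chosen for simplicity; the application does not state which fitting method is used, and a practical system with more than three noisy markers would more likely use a least-squares fit. All function names are hypothetical.

```python
import math


def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _unit(v):
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("markers must be distinct and non-collinear")
    return tuple(x / norm for x in v)


def _triad(p0, p1, p2):
    """Right-handed orthonormal axes built from three non-collinear points."""
    x_axis = _unit(_sub(p1, p0))
    z_axis = _unit(_cross(x_axis, _sub(p2, p0)))
    y_axis = _cross(z_axis, x_axis)
    return (x_axis, y_axis, z_axis)


def transform(pose, point):
    """Apply pose (rotation, translation) to a point: R * p + t."""
    rotation, translation = pose
    return tuple(sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
                 for i in range(3))


def pose_from_markers(markers_imager, markers_system):
    """Return (R, t) such that p_system = R * p_imager + t.

    Both arguments hold the same three markers, in the same order,
    expressed in the imager frame and in the system frame respectively.
    """
    a = _triad(*markers_imager)  # triad axes in imager coordinates
    b = _triad(*markers_system)  # the same axes in system coordinates
    # R maps each imager-frame axis a[k] onto the system-frame axis b[k].
    rotation = tuple(tuple(sum(b[k][i] * a[k][j] for k in range(3))
                           for j in range(3)) for i in range(3))
    rotated_first = transform((rotation, (0.0, 0.0, 0.0)), markers_imager[0])
    translation = _sub(markers_system[0], rotated_first)
    return rotation, translation
```

Running the same routine once per imager yields the first pose and the second pose, each expressed in the common system frame of reference.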

[0007] According to an embodiment of the present invention, a method is provided. The method comprising: providing a master part, a first part-under-test, a photogrammetry camera, a first three-dimensional (3D) imager, and a second 3D imager, the master part including a first base part selected from a plurality of base parts, there being at least three fiducial markers affixed to the first base part, the first part-under-test including a second base part selected from the plurality of base parts, the first 3D imager having a first projector, a first camera, and a first frame of reference, the second 3D imager having a second projector, a second camera, and a second frame of reference; imaging the master part, including the at least three fiducial markers, with the photogrammetry camera from a plurality of photogrammetry camera positions to obtain a corresponding plurality of photogrammetry two-dimensional (2D) images; determining with the first 3D imager 3D coordinates of the at least three fiducial markers in the first frame of reference; determining with the second 3D imager 3D coordinates of the at least three fiducial markers in the second frame of reference; determining in a system frame of reference a first pose of the first 3D imager and a second pose of the second 3D imager based at least in part on the plurality of photogrammetry 2D images, the determined 3D coordinates of at least three fiducial markers from among the at least three fiducial markers in the first frame of reference, and the determined 3D coordinates of at least three fiducial markers from among the at least three fiducial markers in the second frame of reference; determining with the first 3D imager first 3D coordinates of the first part-under-test in the first frame of reference; determining with the second 3D imager second 3D coordinates of the first part-under-test in the second frame of reference; determining 3D coordinates of the first part-under-test in the system frame of reference based at least in part on the determined first pose, the determined second pose, the determined first 3D coordinates of the first part-under-test in the first imager frame of reference, and the determined second 3D coordinates of the first part-under-test in the second frame of reference; and storing the 3D coordinates of the first part-under-test in the system frame of reference.
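The final steps of the method above, in which coordinates measured by each imager are expressed in the system frame of reference and stored, can be sketched as follows. The sketch assumes each pose is represented as a 3x3 rotation matrix plus a translation vector that maps imager coordinates to system coordinates; that representation, the function names, and the use of a plain list as the stored result are assumptions for illustration rather than details from the application.

```python
def to_system_frame(pose, points):
    """Map points from an imager frame to the system frame using pose = (R, t)."""
    rotation, translation = pose
    return [
        tuple(sum(rotation[i][j] * p[j] for j in range(3)) + translation[i]
              for i in range(3))
        for p in points
    ]


def merge_scans(first_pose, first_points, second_pose, second_points):
    """Combine both imagers' measurements into one list in the system frame."""
    return (to_system_frame(first_pose, first_points)
            + to_system_frame(second_pose, second_points))


if __name__ == "__main__":
    # Hypothetical poses: imager 1 coincides with the system frame; imager 2
    # is rotated 180 degrees about z and offset 2 units along x.
    first_pose = (((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)),
                  (0.0, 0.0, 0.0))
    second_pose = (((-1.0, 0.0, 0.0), (0.0, -1.0, 0.0), (0.0, 0.0, 1.0)),
                   (2.0, 0.0, 0.0))
    stored = merge_scans(first_pose, [(1.0, 0.0, 0.0)],
                         second_pose, [(1.0, 0.0, 0.0)])
    print(stored)
```

In this example both imagers observe the same physical point, so the two transformed coordinates coincide once they share a frame; in general the merged list covers regions that only one imager can see.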

[0008] According to an embodiment of the present invention, a three-dimensional (3D) measuring system is provided. The system comprising: a master part including a first base part, there being at least three fiducial markers affixed to the first base part; a first part-under-test including a second base part; a photogrammetry camera configured to image the master part, including the at least three fiducial markers, and to obtain a plurality of photogrammetry 2D images; a first 3D imager having a first projector and a first camera, the first 3D imager configured to determine 3D coordinates in a first imager frame of reference; a second 3D imager having a second projector and a second camera, the second 3D imager configured to determine 3D coordinates in a second imager frame of reference; and one or more processors responsive to executable computer instructions, to perform a method comprising: determine first 3D coordinates of at least three fiducial markers from among the at least three fiducial markers in the first imager frame of reference; determine second 3D coordinates of at least three fiducial markers from among the at least three fiducial markers in the second imager frame of reference; determine in a system frame of reference a first pose of the first 3D imager and a second pose of the second 3D imager based at least in part on the plurality of photogrammetry 2D images, the first 3D coordinates and the second 3D coordinates; determine in a system frame of reference a first pose of the first 3D imager and a second pose of the second 3D imager based at least in part on the plurality of photogrammetry 2D images, the first 3D coordinates, and the second; determine a third 3D coordinates of the first part-under-test by the first 3D imager in the first imager frame of reference; determine a fourth 3D coordinates of the first part-under-test by the second 3D imager in the second imager frame of reference; and determine a third 3D coordinates of the first part-under-test in the system frame of reference based at least in part on the determined first pose, the determined second pose, the third 3D coordinates and the fourth 3D coordinates.

[0009] These and other advantages and features will become more apparent from the following description taken in conjunction with the drawings.

BRIEF DESCRIPTION OF THE DRAWINGS

[0010] The subject matter, which is regarded as the invention, is particularly pointed out and distinctly claimed in the claims at the conclusion of the specification. The foregoing and other features and advantages of the invention are apparent from the following detailed description taken in conjunction with the accompanying drawings in which:

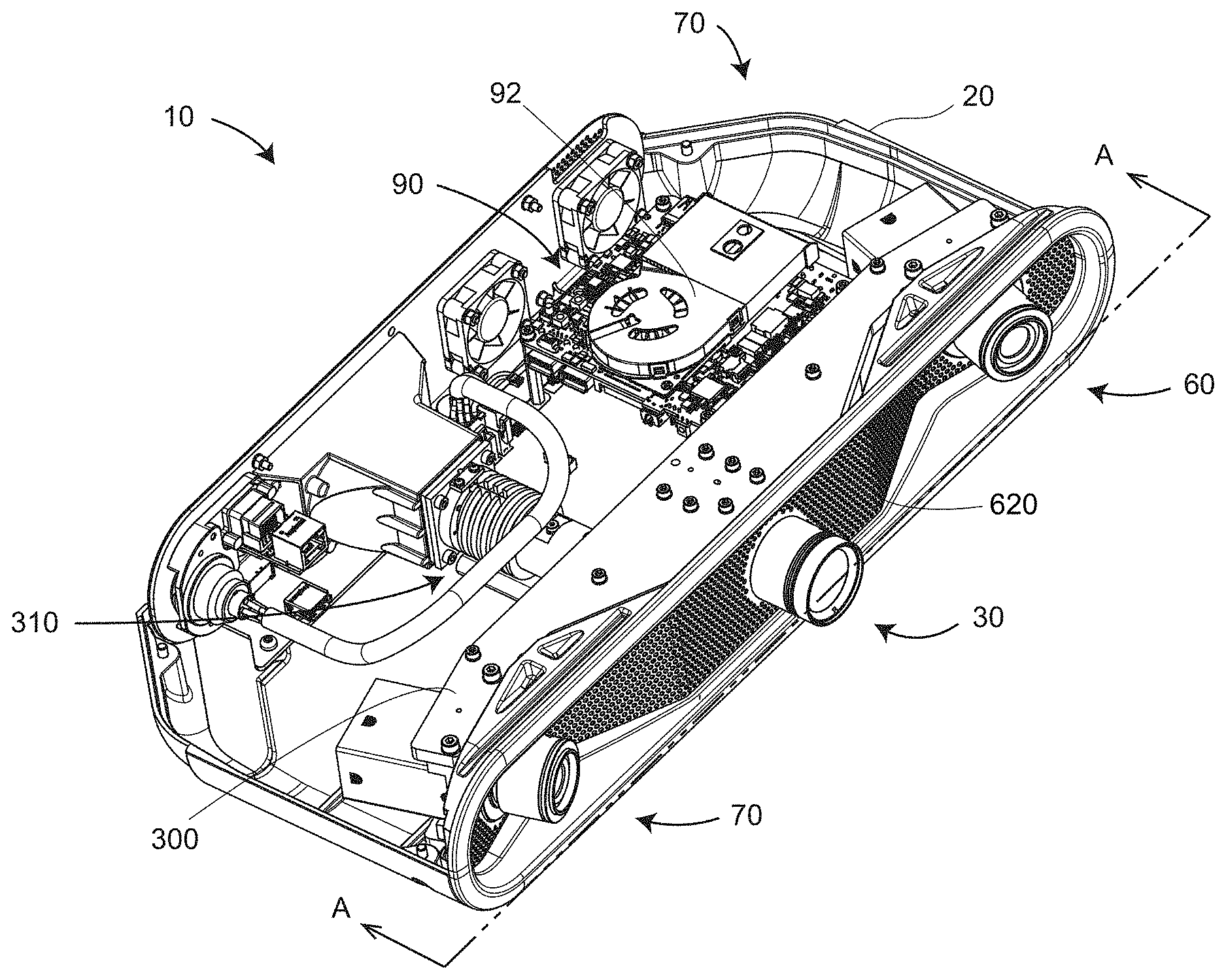

[0011] FIG. 1 is a perspective view of a 3D imager according to an embodiment;

[0012] FIG. 2 is a perspective view of internal elements of a 3D imager having its cover removed according to an embodiment;

[0013] FIG. 3 is a perspective view of a projector-camera assembly of a 3D imager according to an embodiment;

[0014] FIG. 4 is a top view of internal elements of a 3D imager having its cover removed according to an embodiment;

[0015] FIG. 5A is a cross sectional view of the projector-camera assembly according to an embodiment;

[0016] FIG. 5B is a perspective view of a light pipe according to an embodiment;

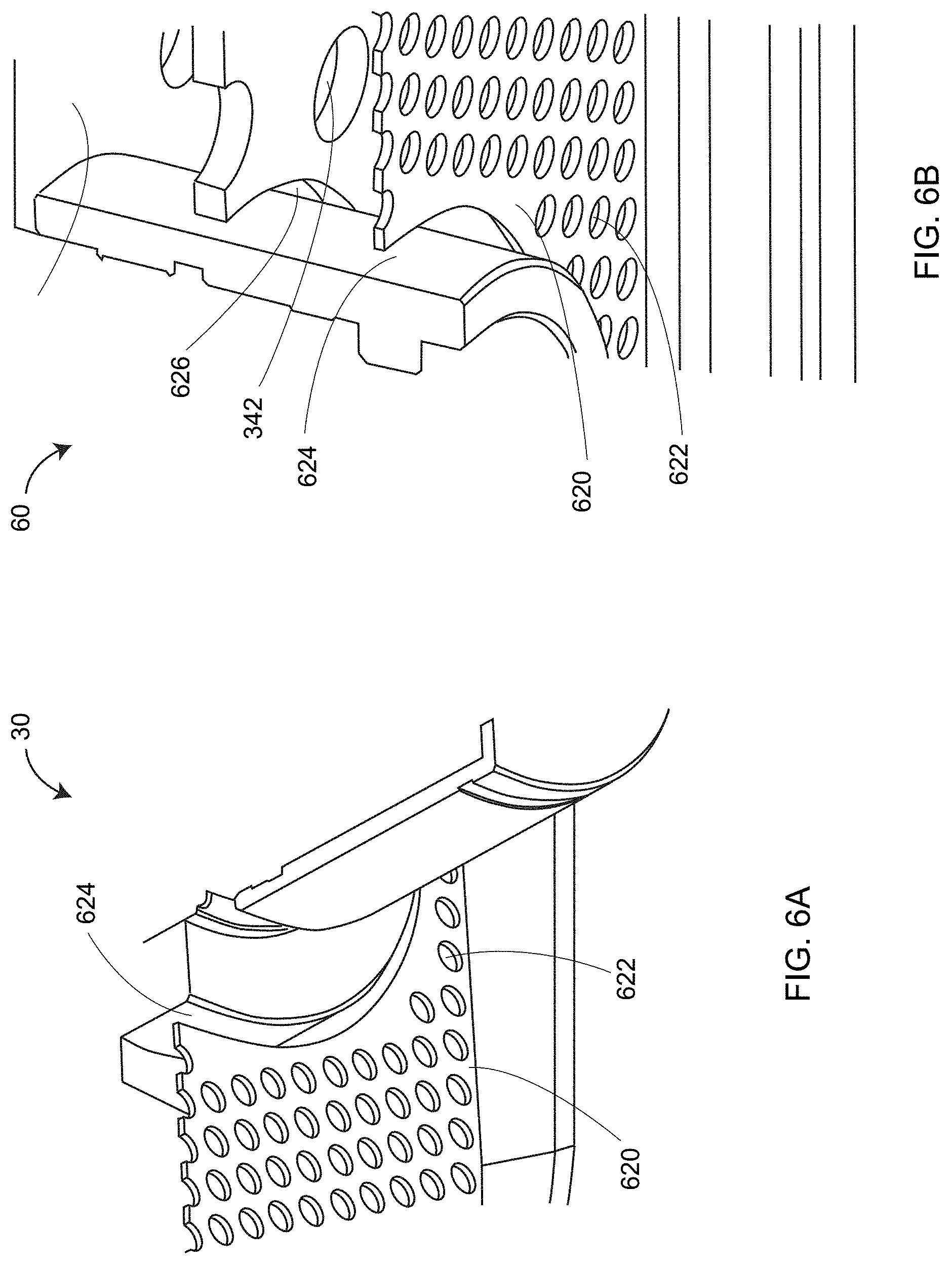

[0017] FIG. 6A is a partial perspective view of cooling vents surrounding a projector lens assembly according to an embodiment;

[0018] FIG. 6B is a partial perspective view of cooling vents surrounding a camera lens assembly according to an embodiment;

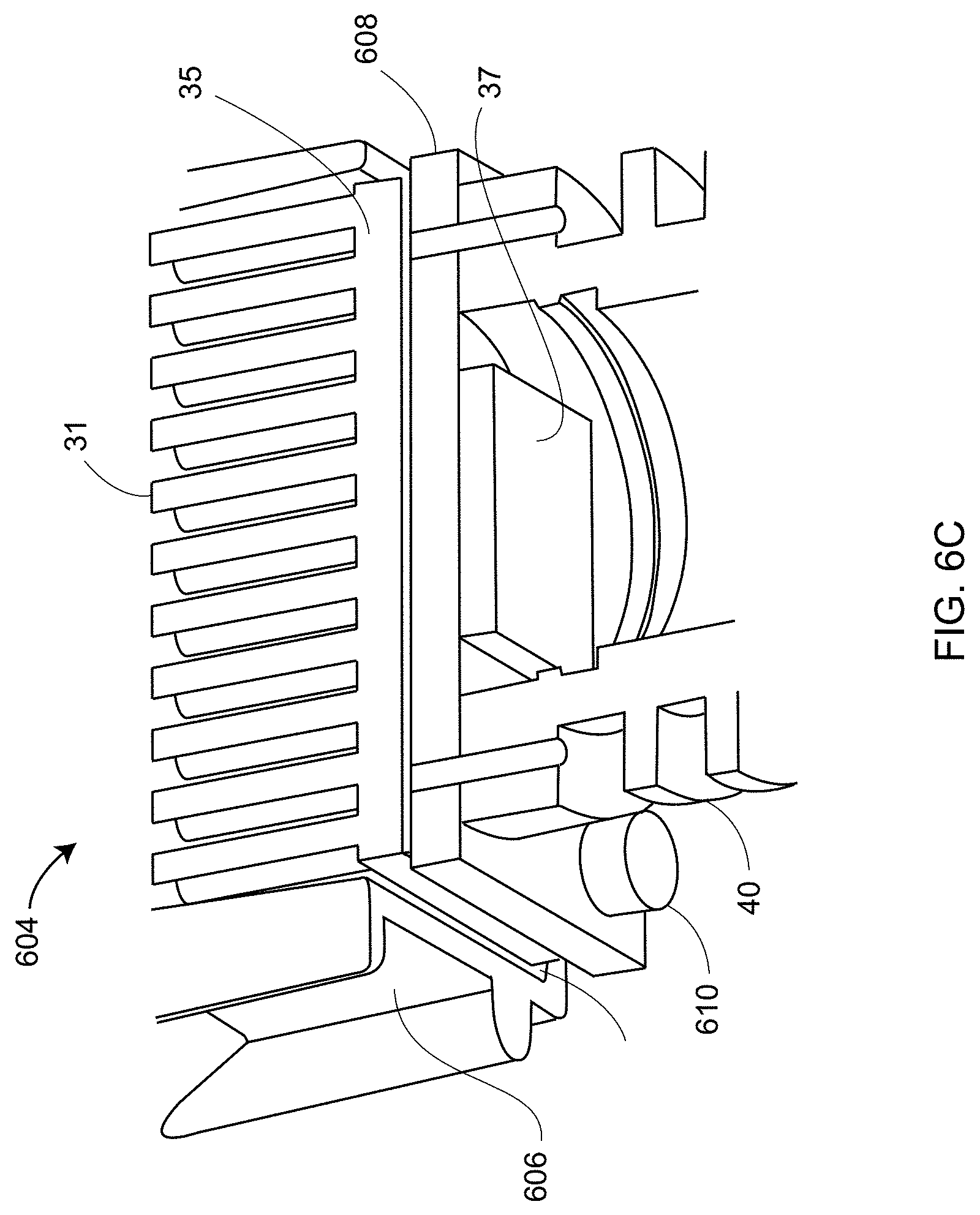

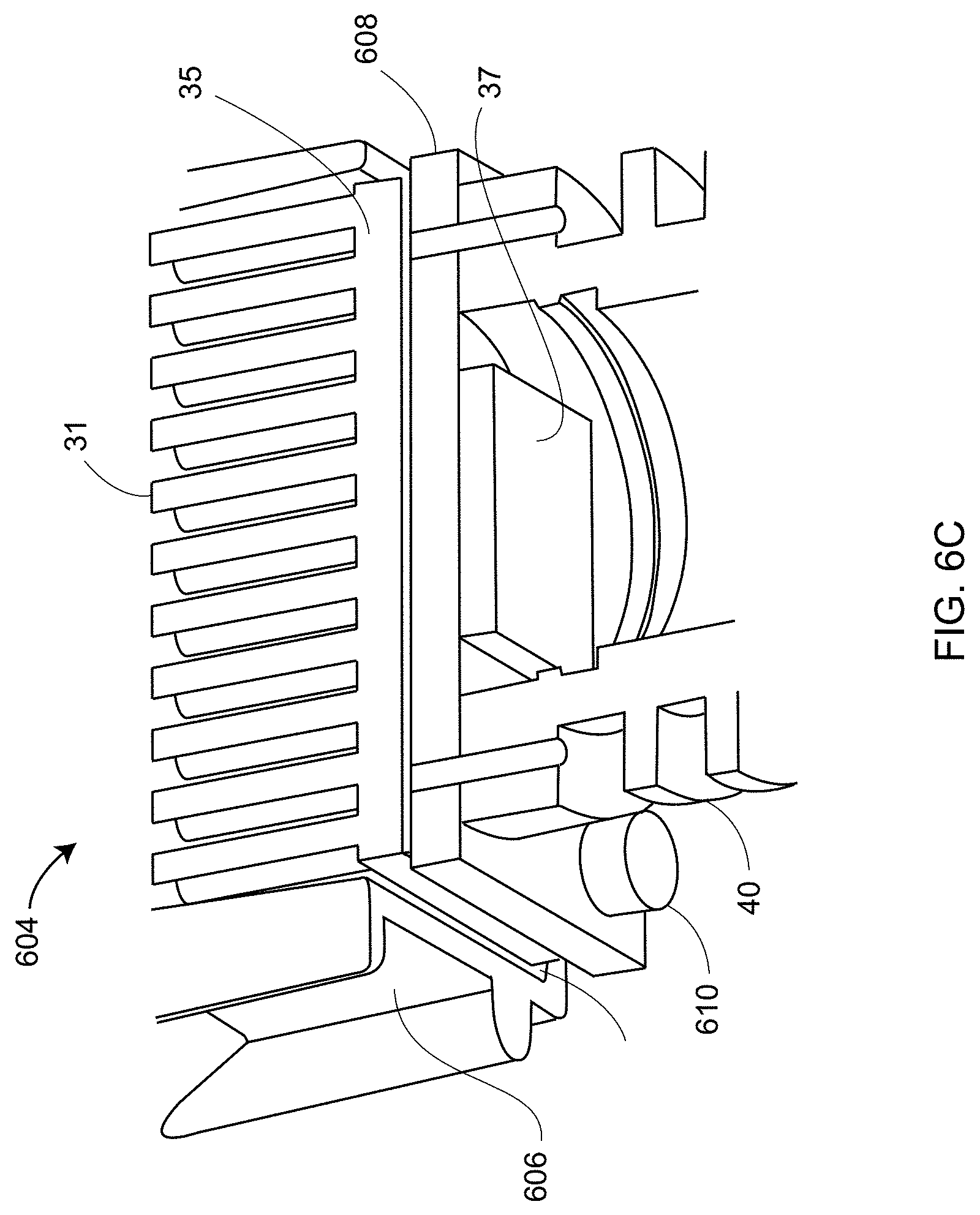

[0019] FIG. 6C is a partial perspective view of projector source cooling elements according to an embodiment;

[0020] FIG. 7 is a block diagram of electrical components of a 3D imager according to an embodiment;

[0021] FIG. 8 is a block diagram of a processor system according to an embodiment;

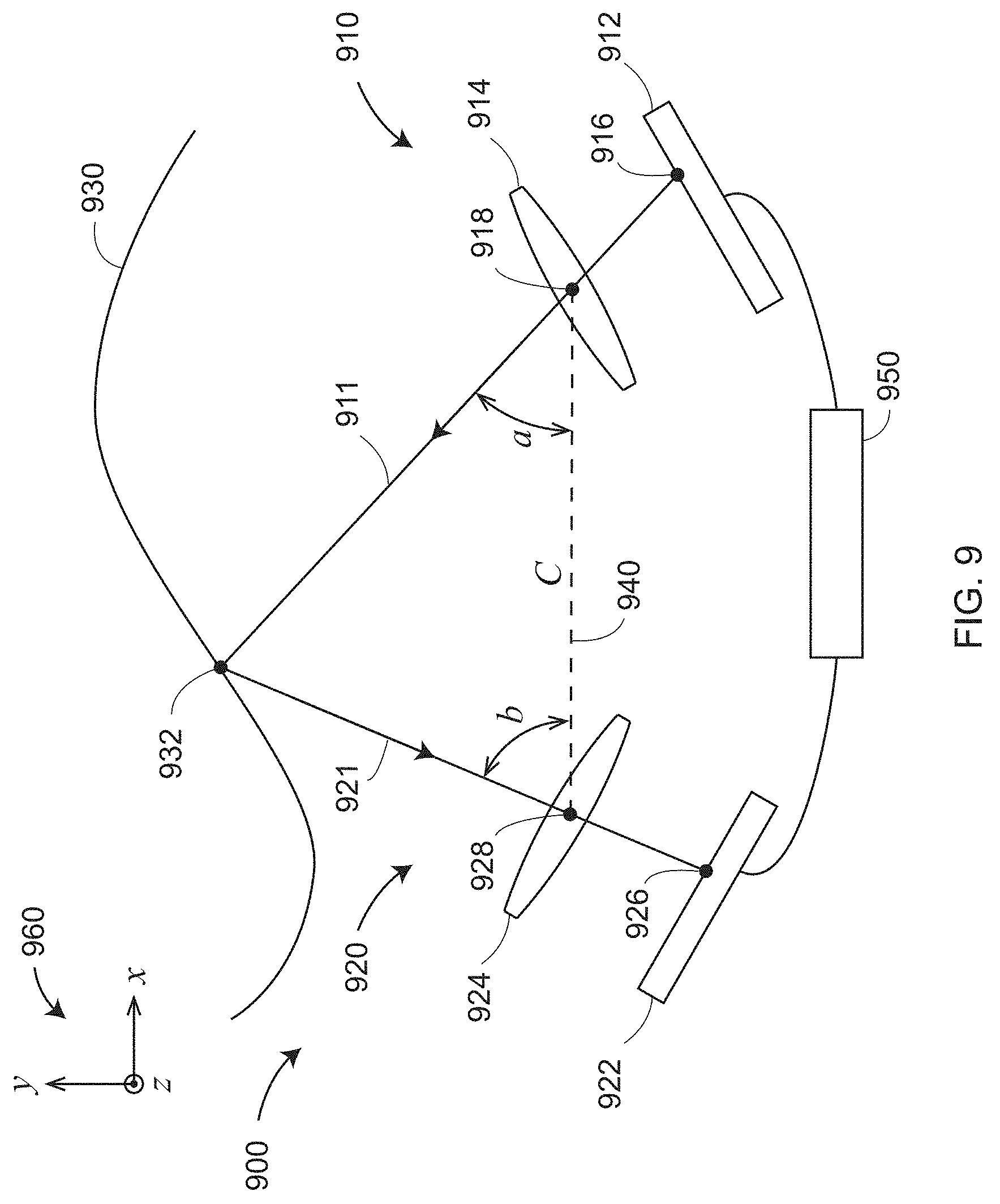

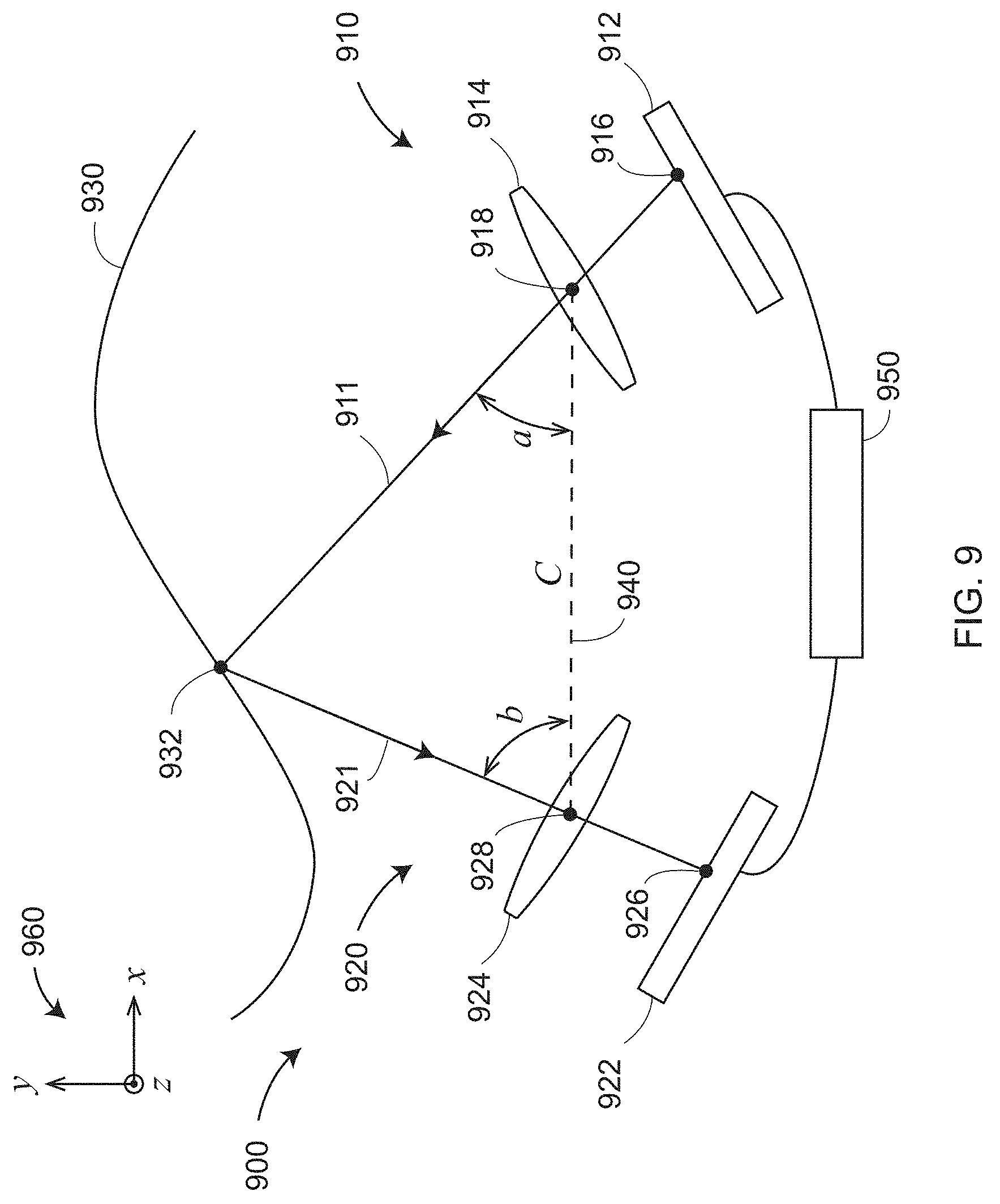

[0022] FIG. 9 is a schematic illustration of the principle of operation of a triangulation scanner having a camera and a projector according to an embodiment;

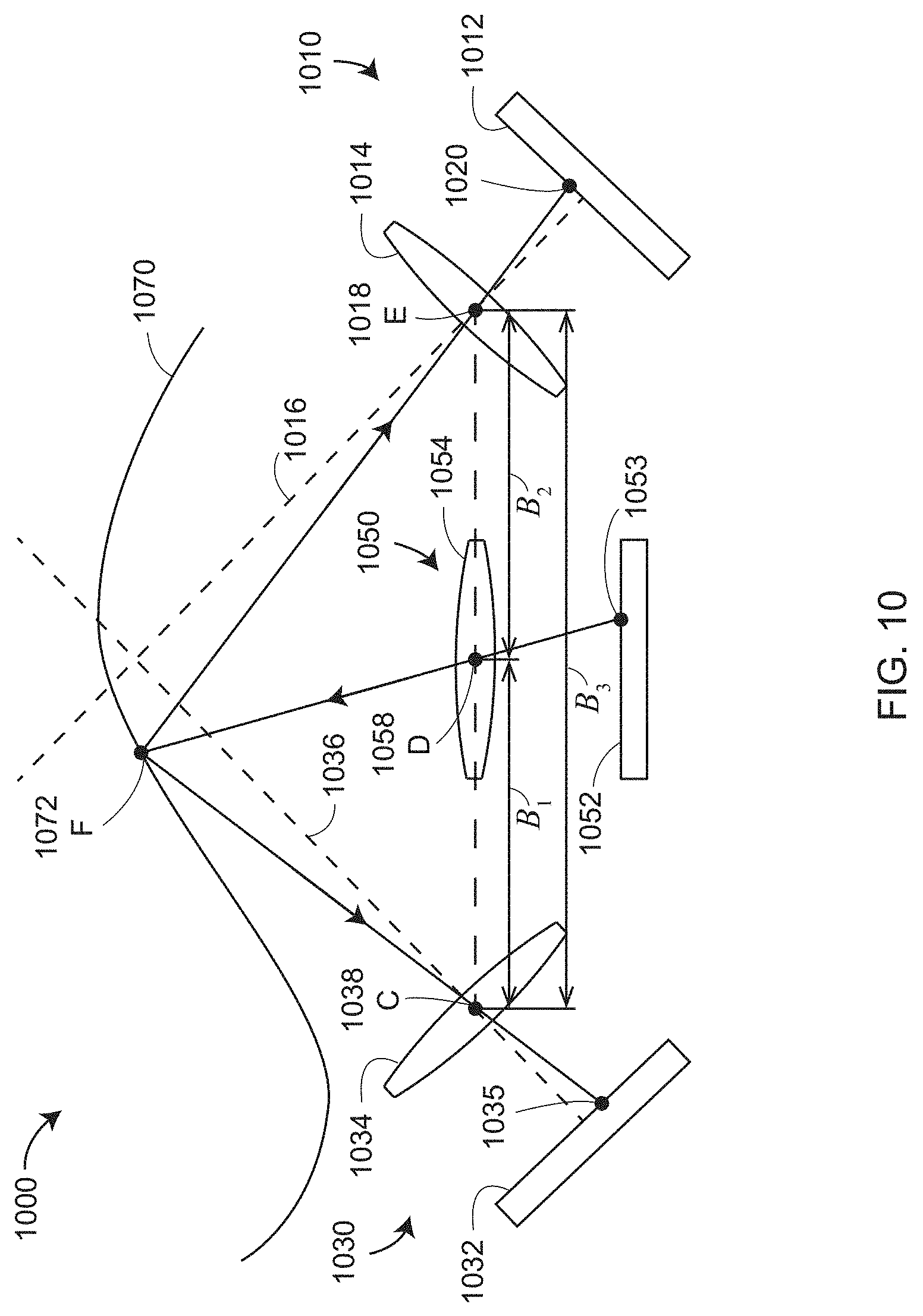

[0023] FIG. 10 is a schematic illustration of the principle of operation of a triangulation scanner having two cameras and one projector according to an embodiment;

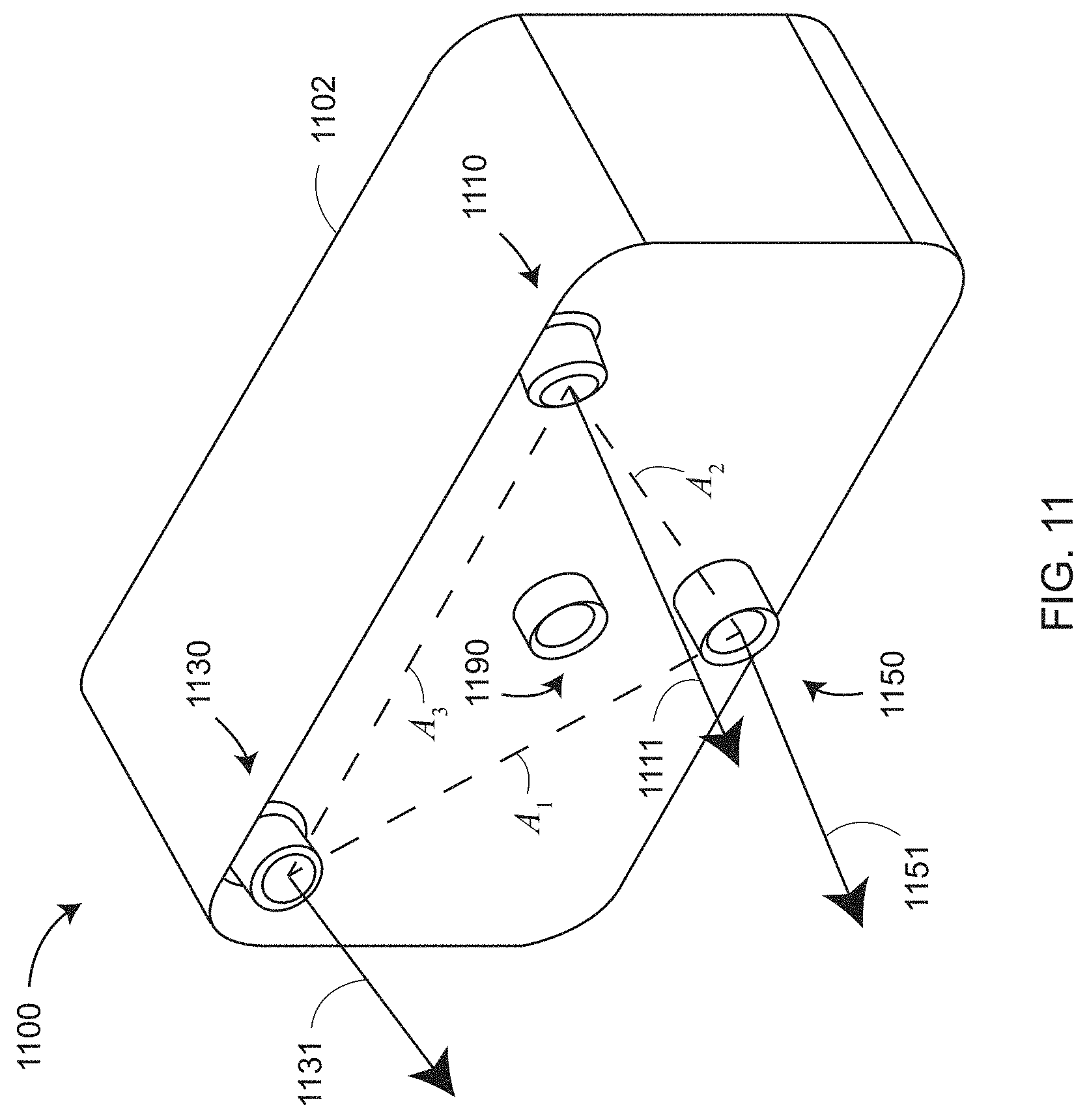

[0024] FIG. 11 is a perspective view of a scanner having two cameras and one projector arranged in a triangle for 3D measurement according to an embodiment;

[0025] FIGS. 12A and 12B are schematic illustrations of the principle of operation of the scanner of FIG. 11;

[0026] FIGS. 13A and 13B are schematic illustrations of 3D imagers having wide field-of-view (FOV) lenses and narrow FOV lenses, respectively, according to an embodiment;

[0027] FIG. 13C is a schematic representation of camera and projector lenses according to an embodiment;

[0028] FIGS. 13D and 13E are schematic representations of ray models used for the camera and projector lenses;

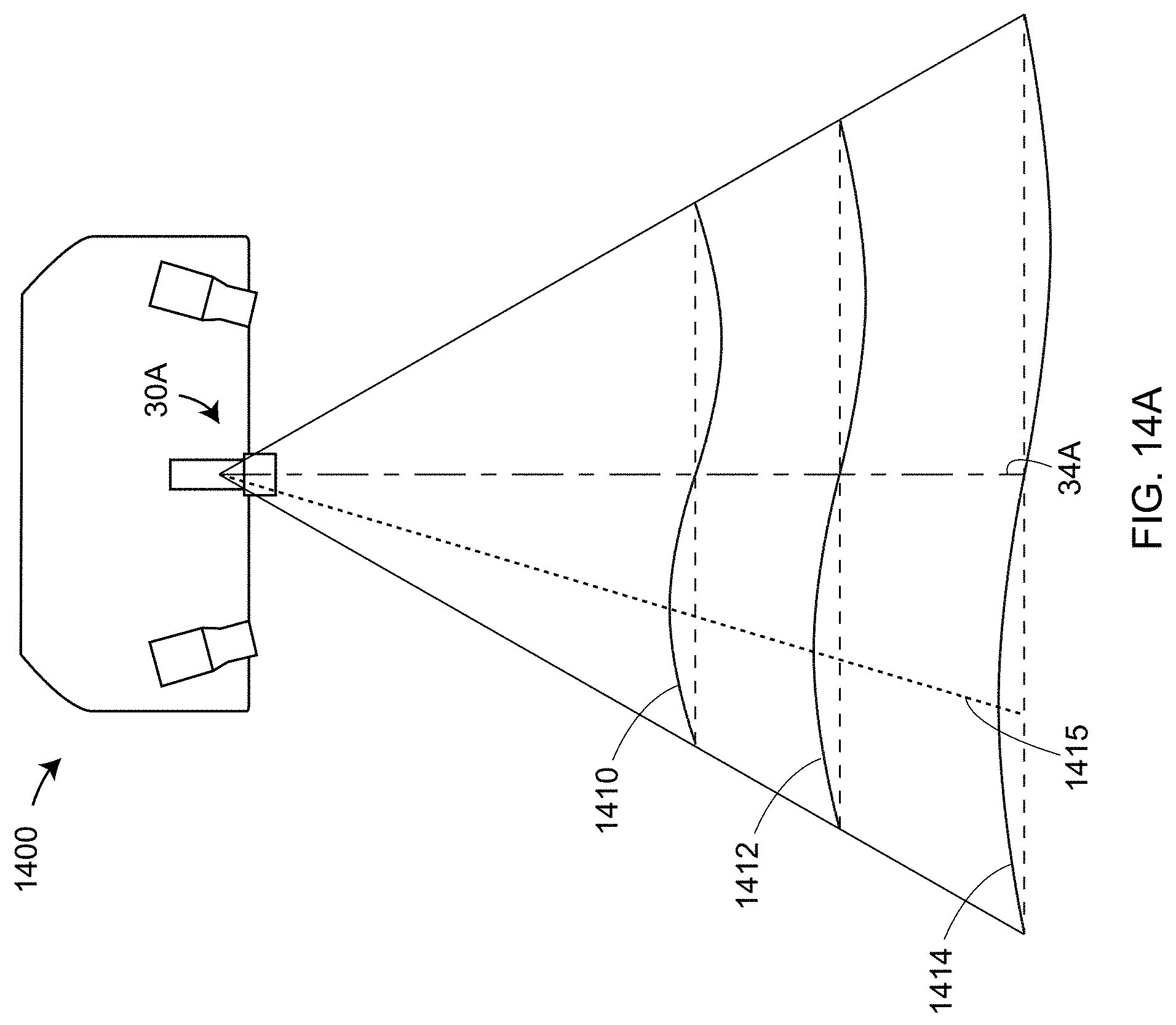

[0029] FIG. 14A illustrates projection of a coarse sine-wave pattern according to an embodiment;

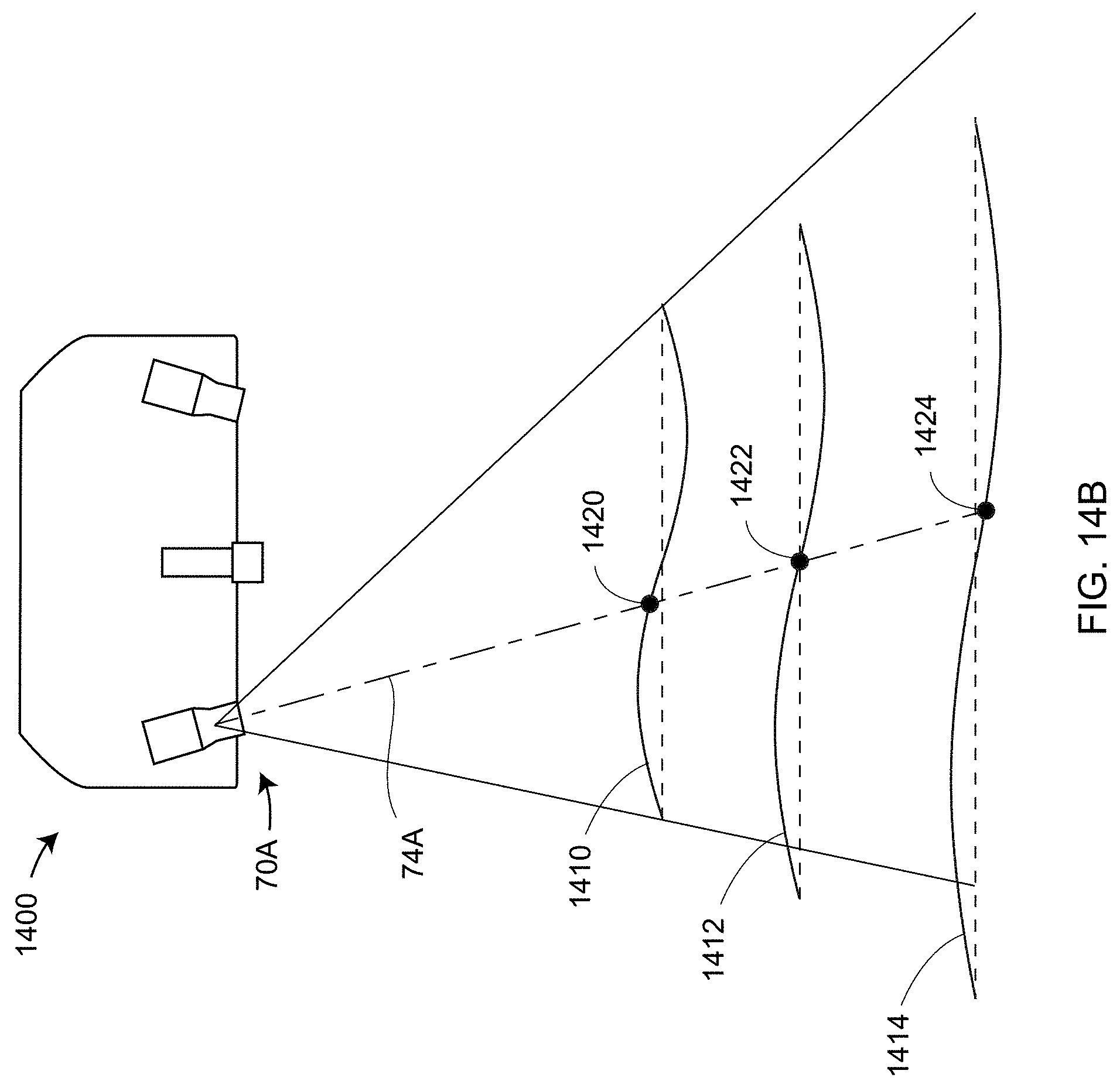

[0030] FIG. 14B illustrates reception of the coarse sine-wave pattern by a camera lens according to an embodiment;

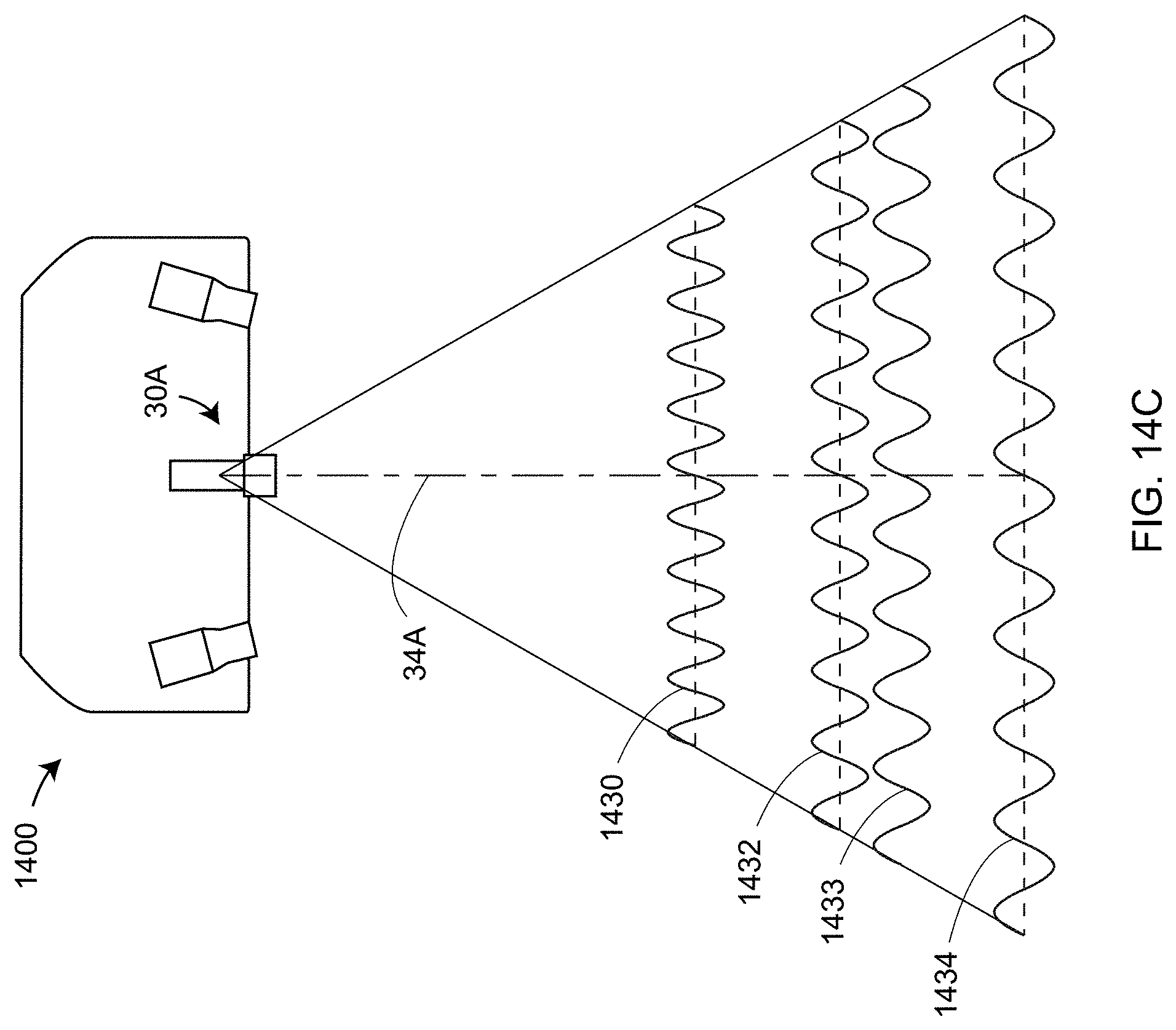

[0031] FIG. 14C illustrates projection of a finer sine-wave pattern according to an embodiment;

[0032] FIG. 14D illustrates reception of the finer sine-wave pattern according to an embodiment;

[0033] FIG. 15 illustrates how phase is determined from a set of shifted sine waves according to an embodiment;

[0034] FIG. 16 is a perspective view of a web support according to an embodiment;

[0035] FIG. 17 is a perspective view of a finite-element analysis (FEA) model of the web support when heated according to an embodiment;

[0036] FIG. 18 is a cross-sectional view of a projector lens assembly according to an embodiment;

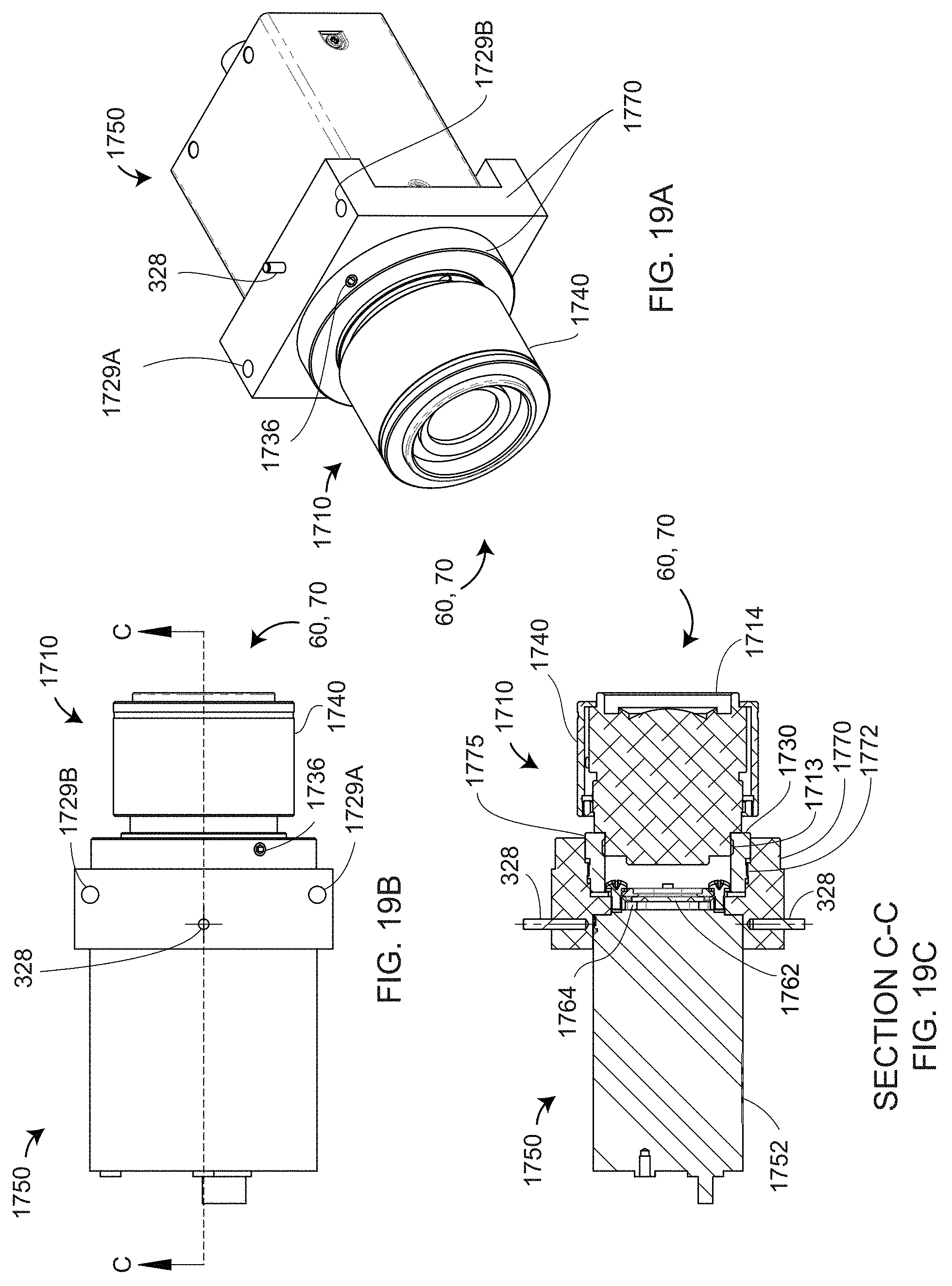

[0037] FIGS. 19A, 19B, and 19C are a perspective view, a top view, and a cross-sectional view of a camera assembly, respectively, according to an embodiment;

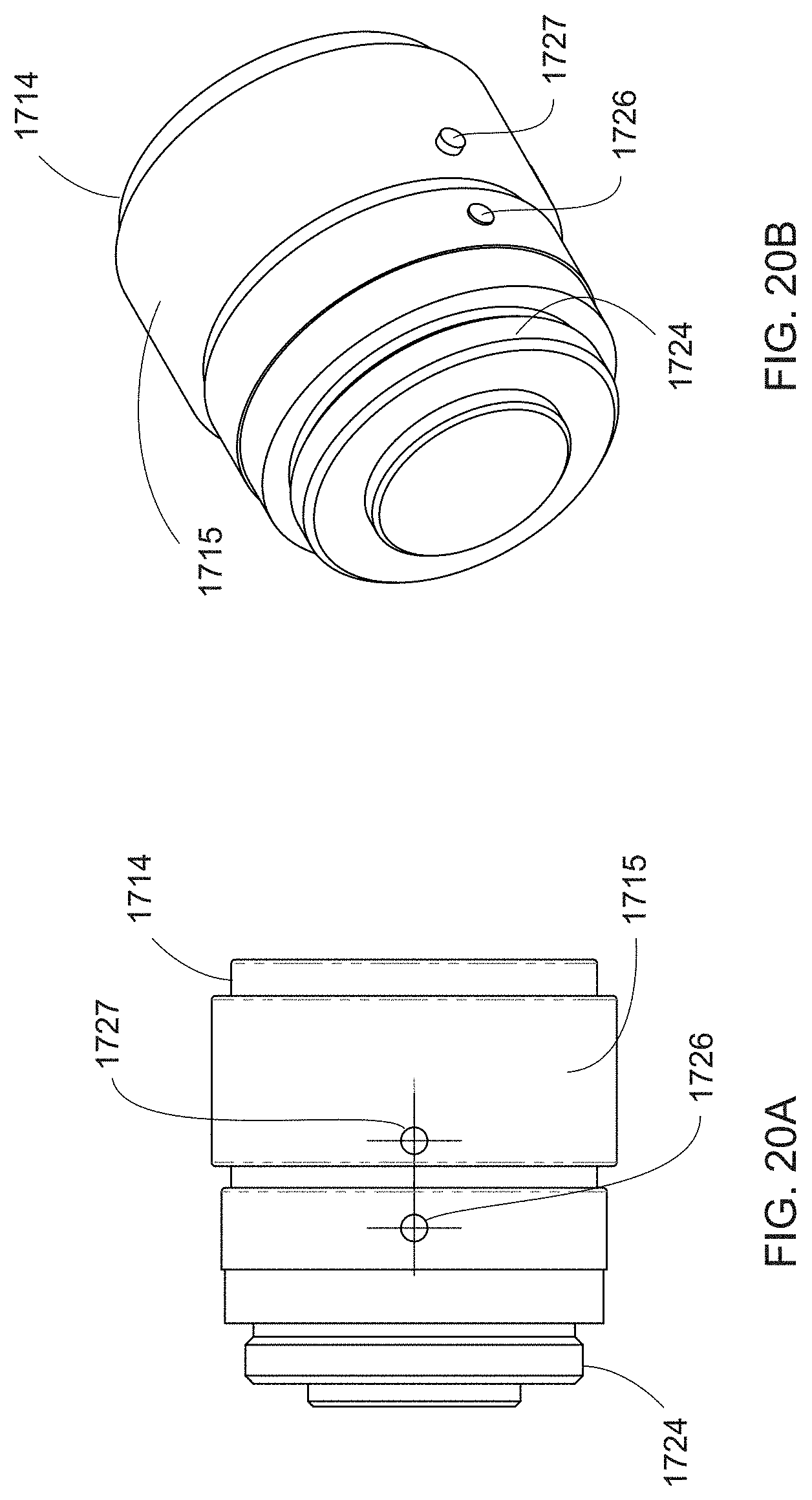

[0038] FIGS. 20A and 20B are top and perspective views of a first camera lens assembly according to an embodiment;

[0039] FIGS. 21A, 21B, and 21C are top, first perspective, and second perspective views of a second camera lens assembly according to an embodiment;

[0040] FIGS. 22A and 22B show an arrangement for obtaining consistent projector lens assemblies by using a golden projector lens assembly according to an embodiment;

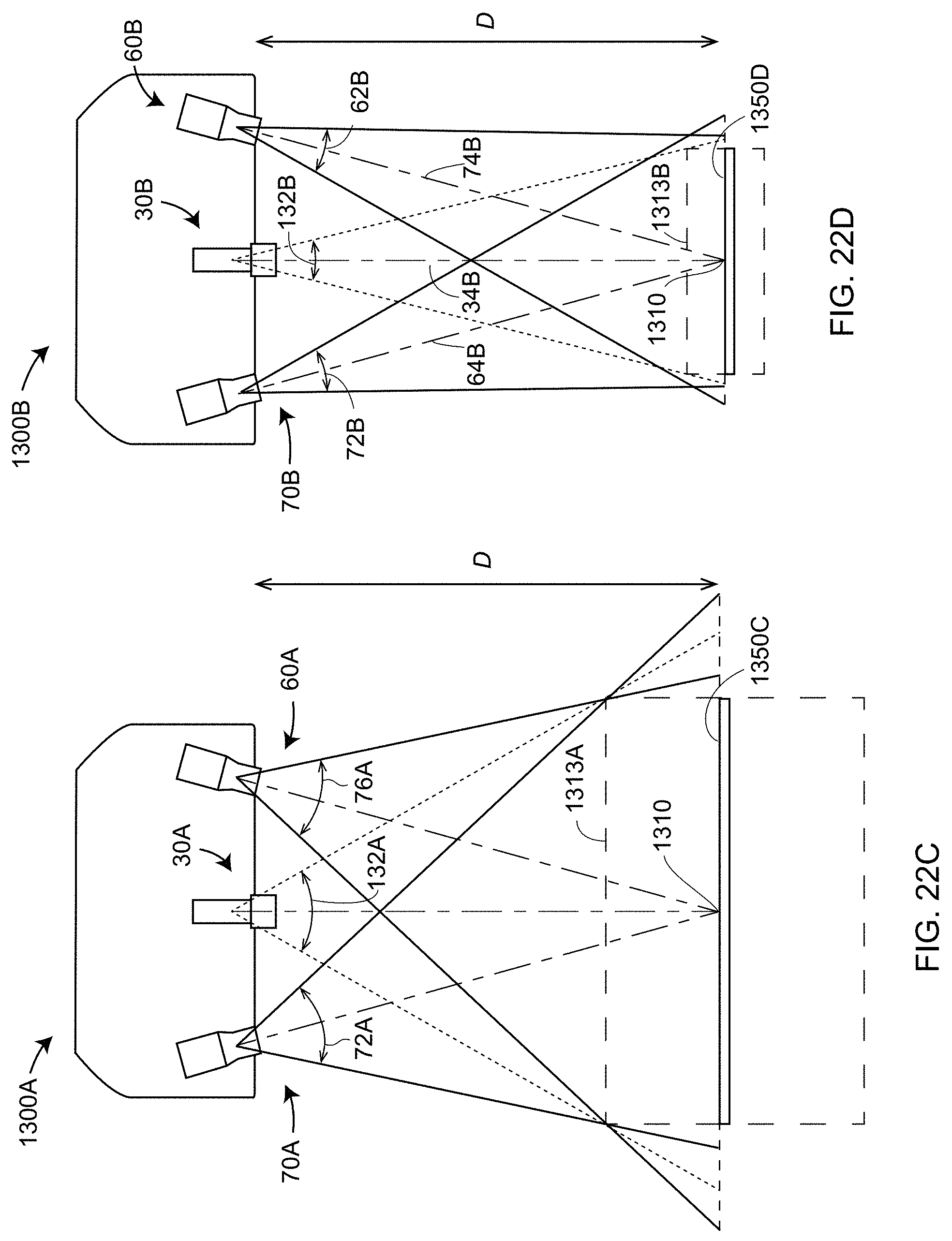

[0041] FIGS. 22C and 22D show an arrangement for obtaining consistent camera lens assemblies by using a golden camera lens assembly according to an embodiment;

[0042] FIGS. 23A and 23B show normalized light output of exemplary incandescent and compact fluorescent lights, respectively;

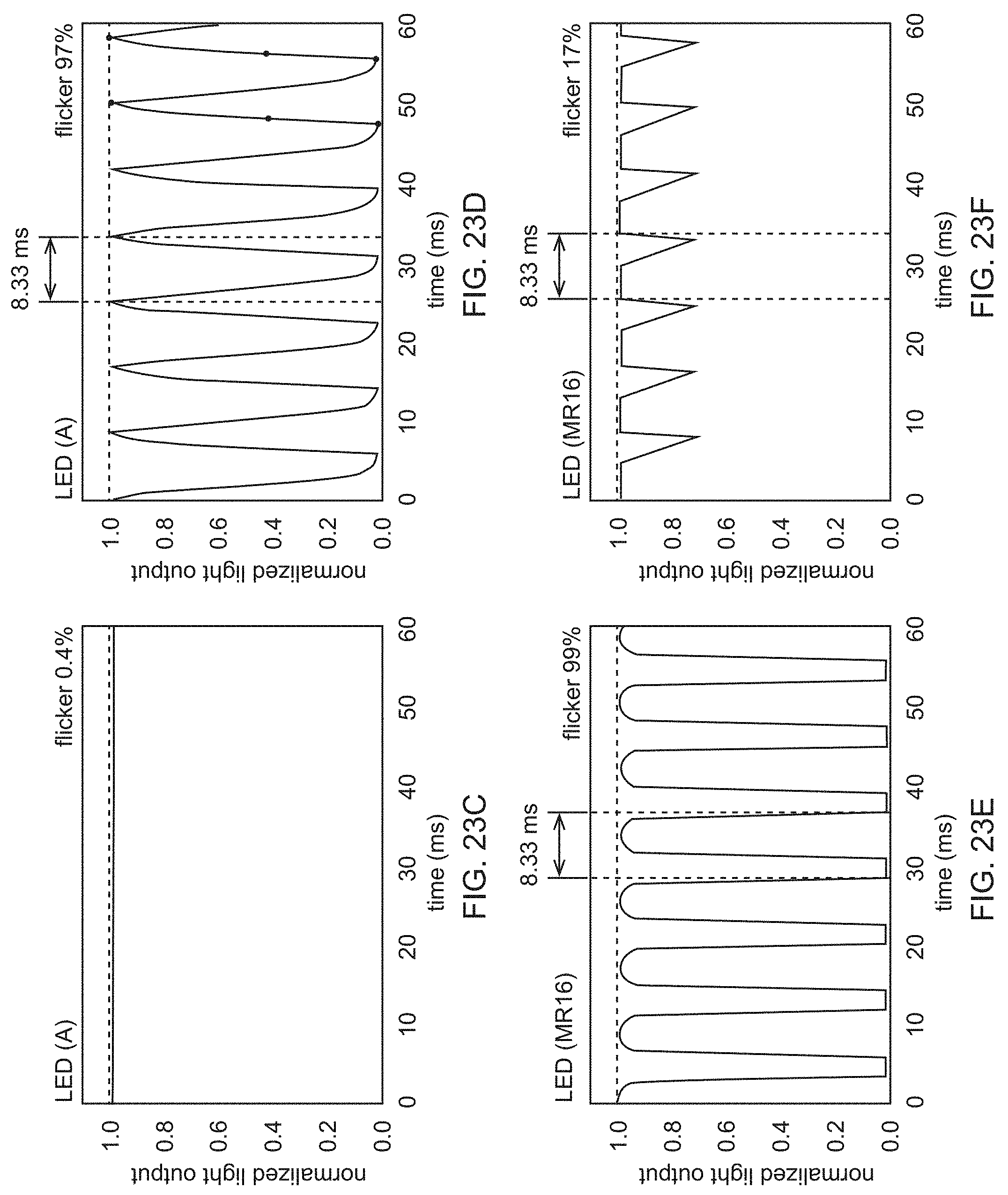

[0043] FIGS. 23C, 23D, 23E, and 23F show normalized light outputs of four exemplary types of light-emitting diode (LED) sources;

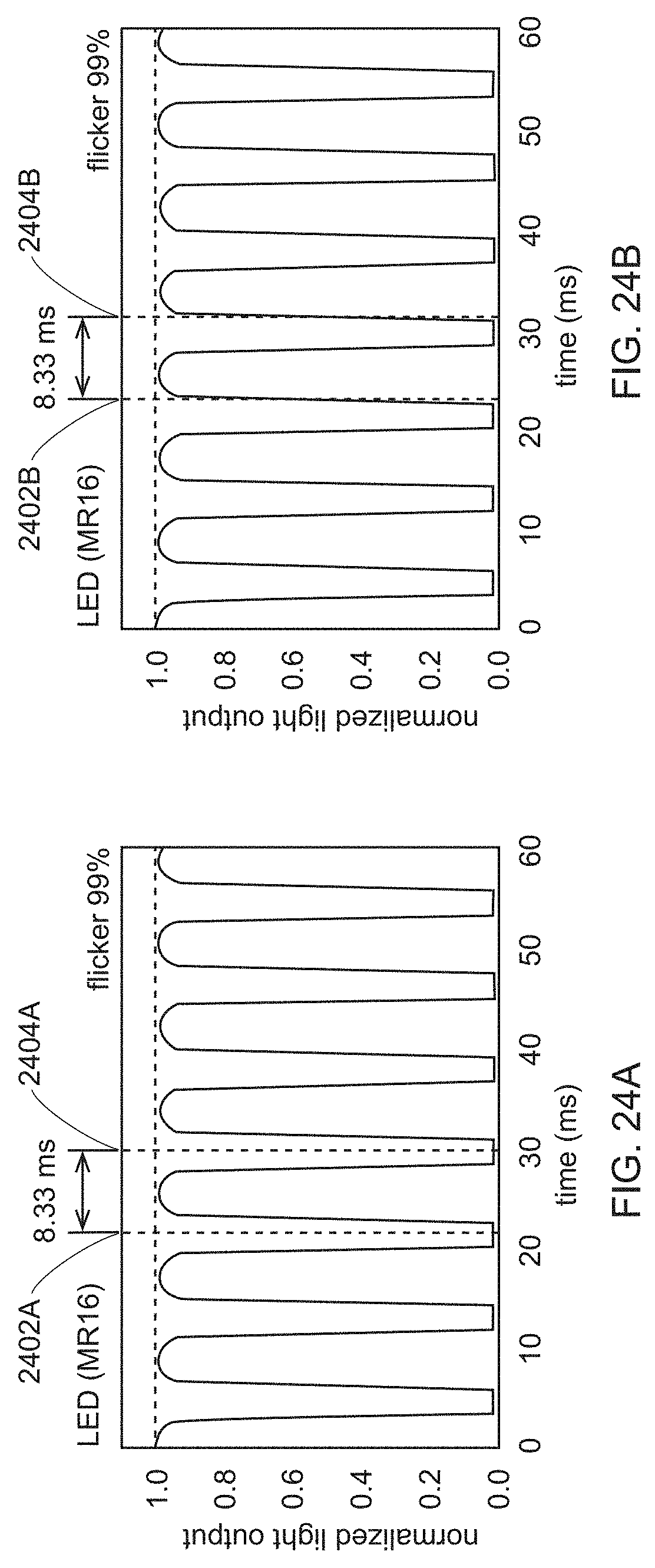

[0044] FIGS. 24A and 24B show how starting and stopping time of a measurement can change the influence of background lights;

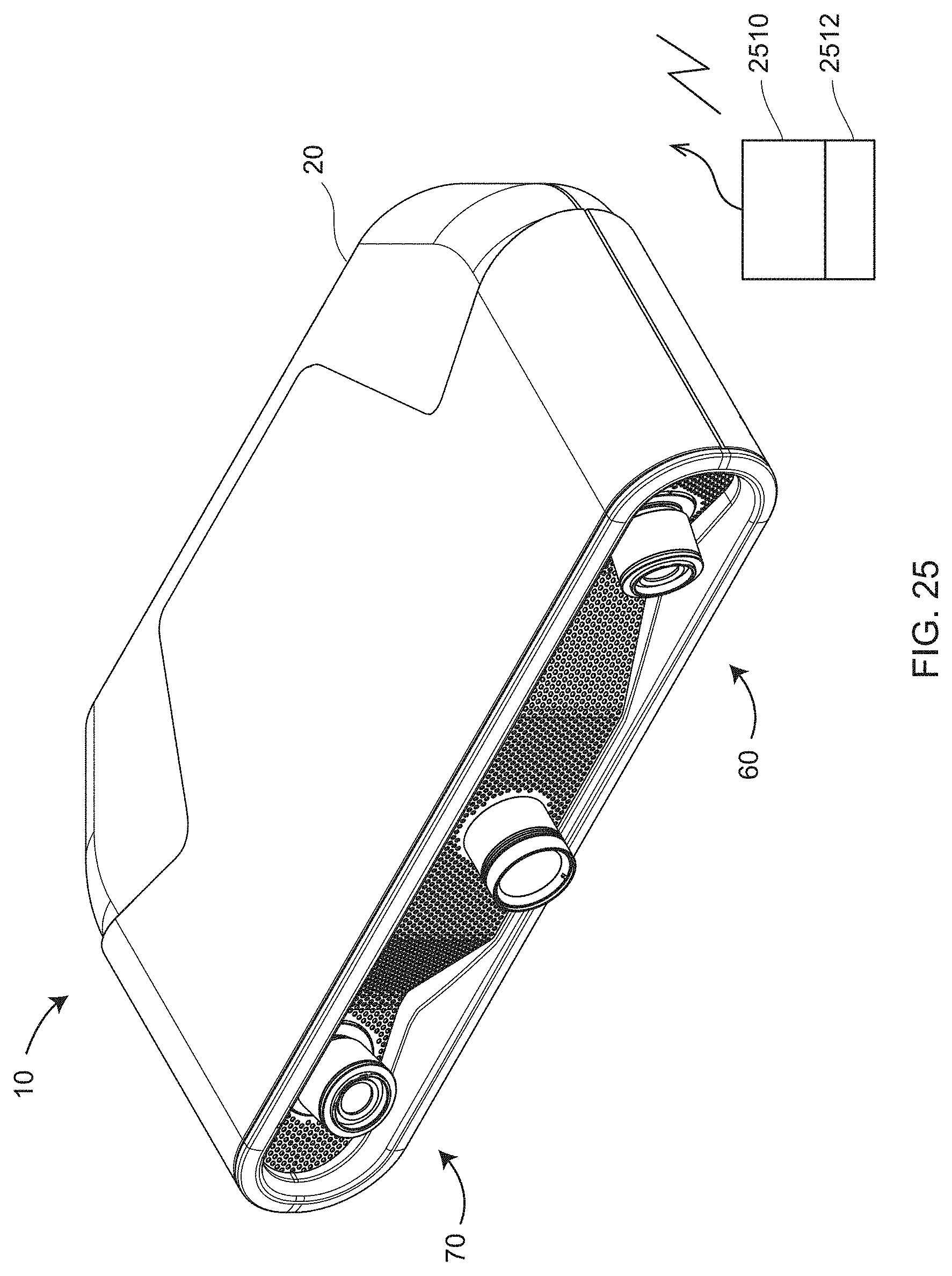

[0045] FIG. 25 shows elements that may be added to an exemplary scanner to determine background light as a function of time according to an embodiment;

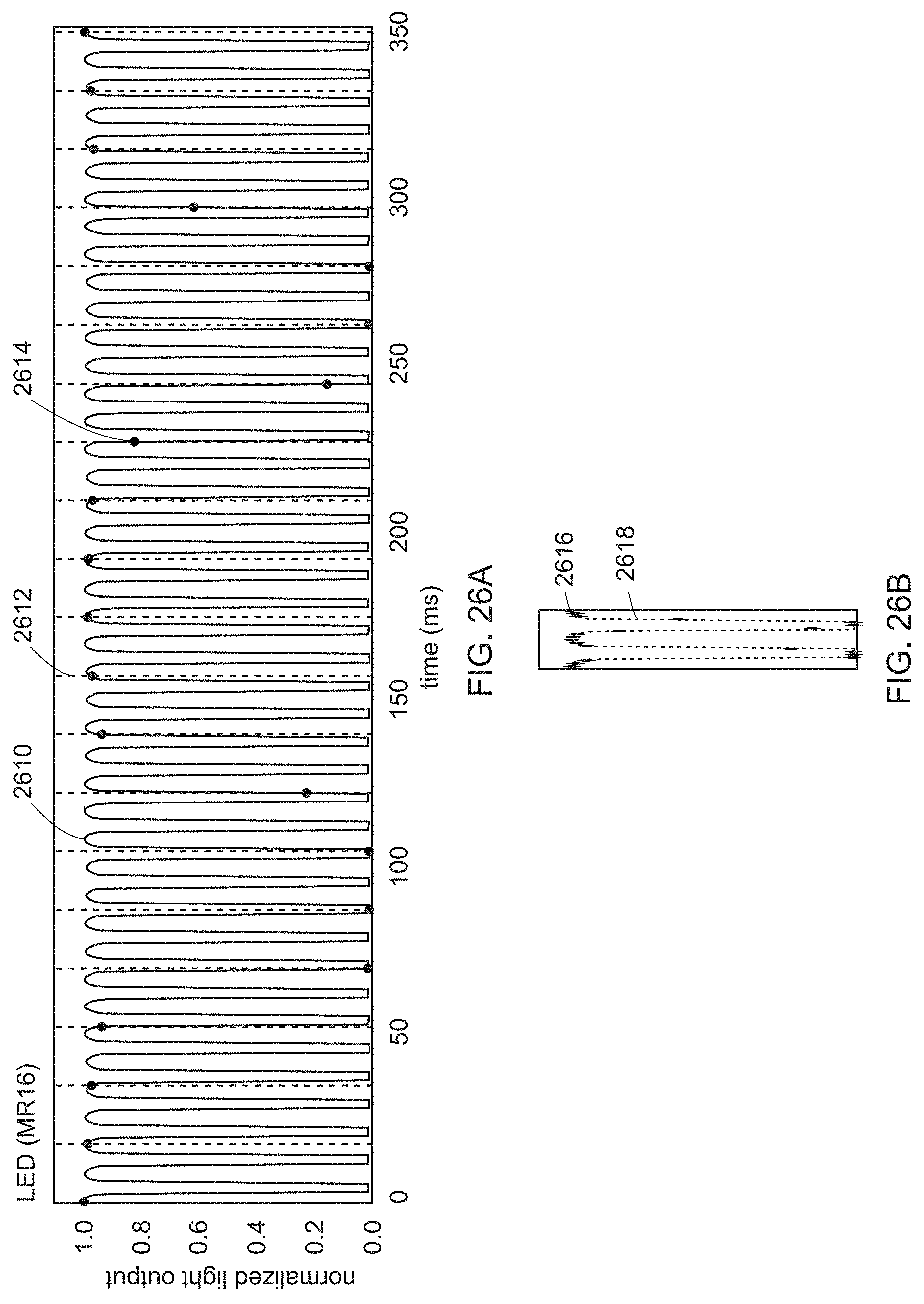

[0046] FIGS. 26A and 26B show how an oversampling method can be used to determine emitted background light as a function of time according to an embodiment;

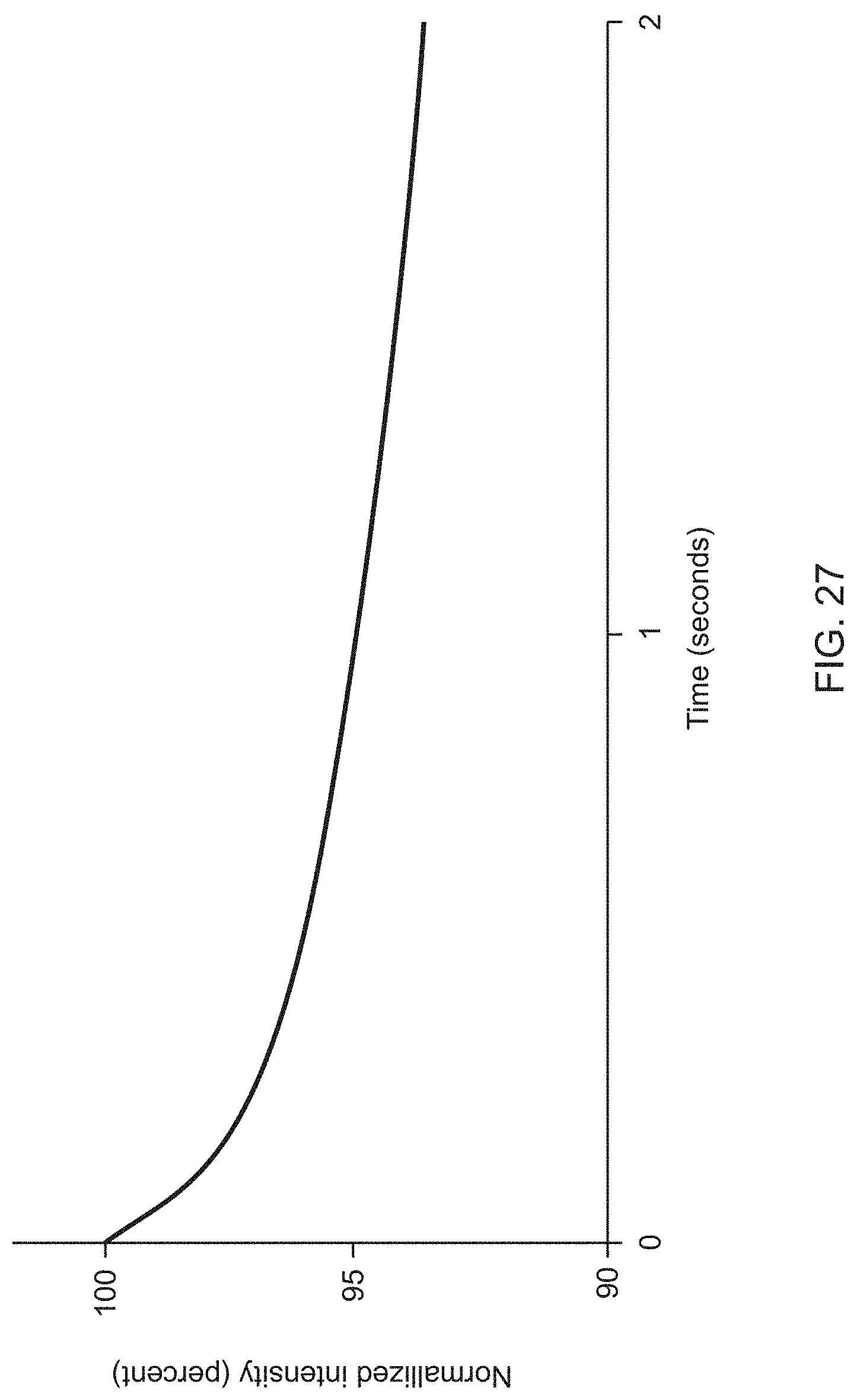

[0047] FIG. 27 illustrates the change in projected output power following shutting down of fans according to an embodiment;

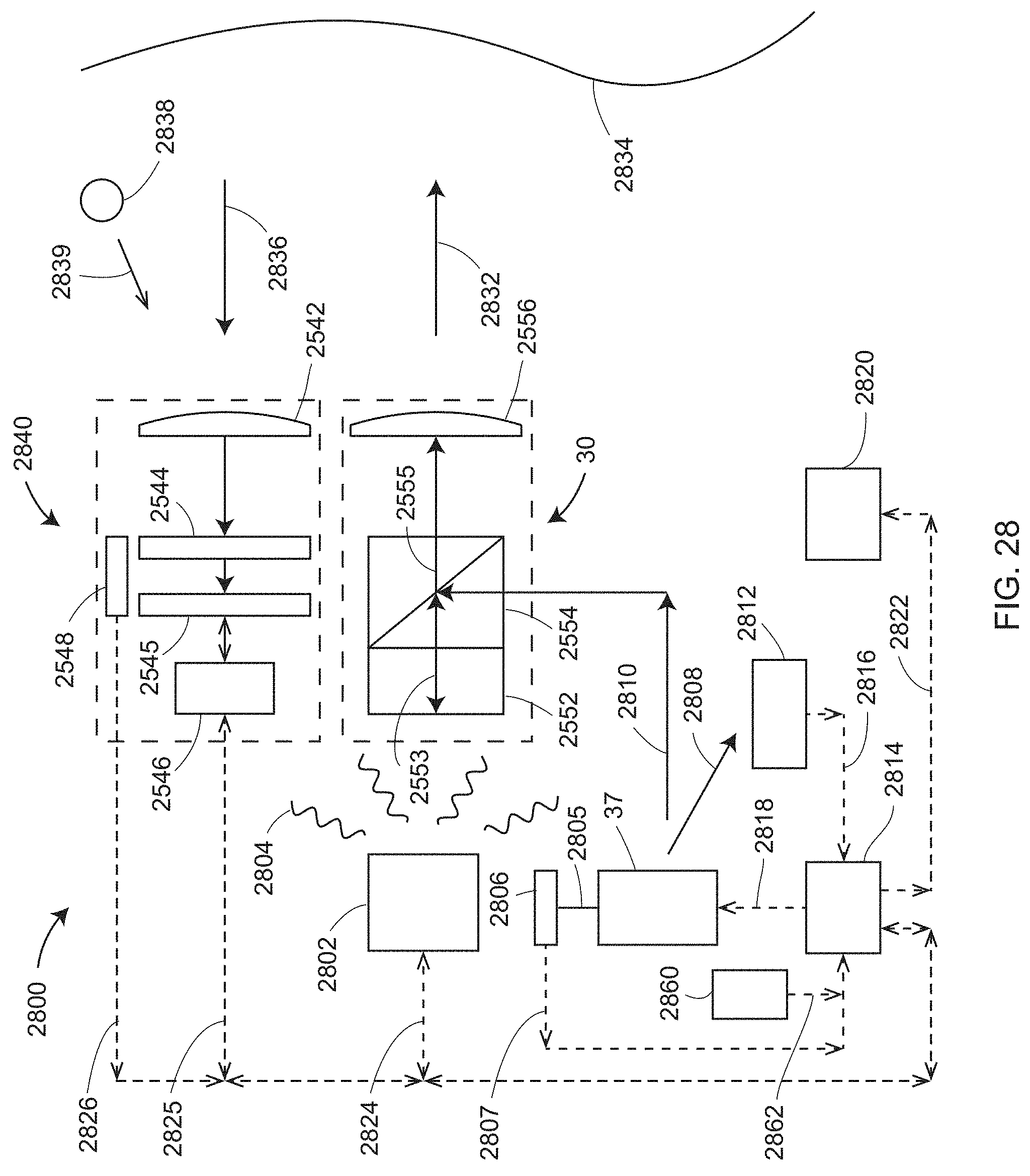

[0048] FIG. 28 shows structural elements of a feedback loop according to an embodiment;

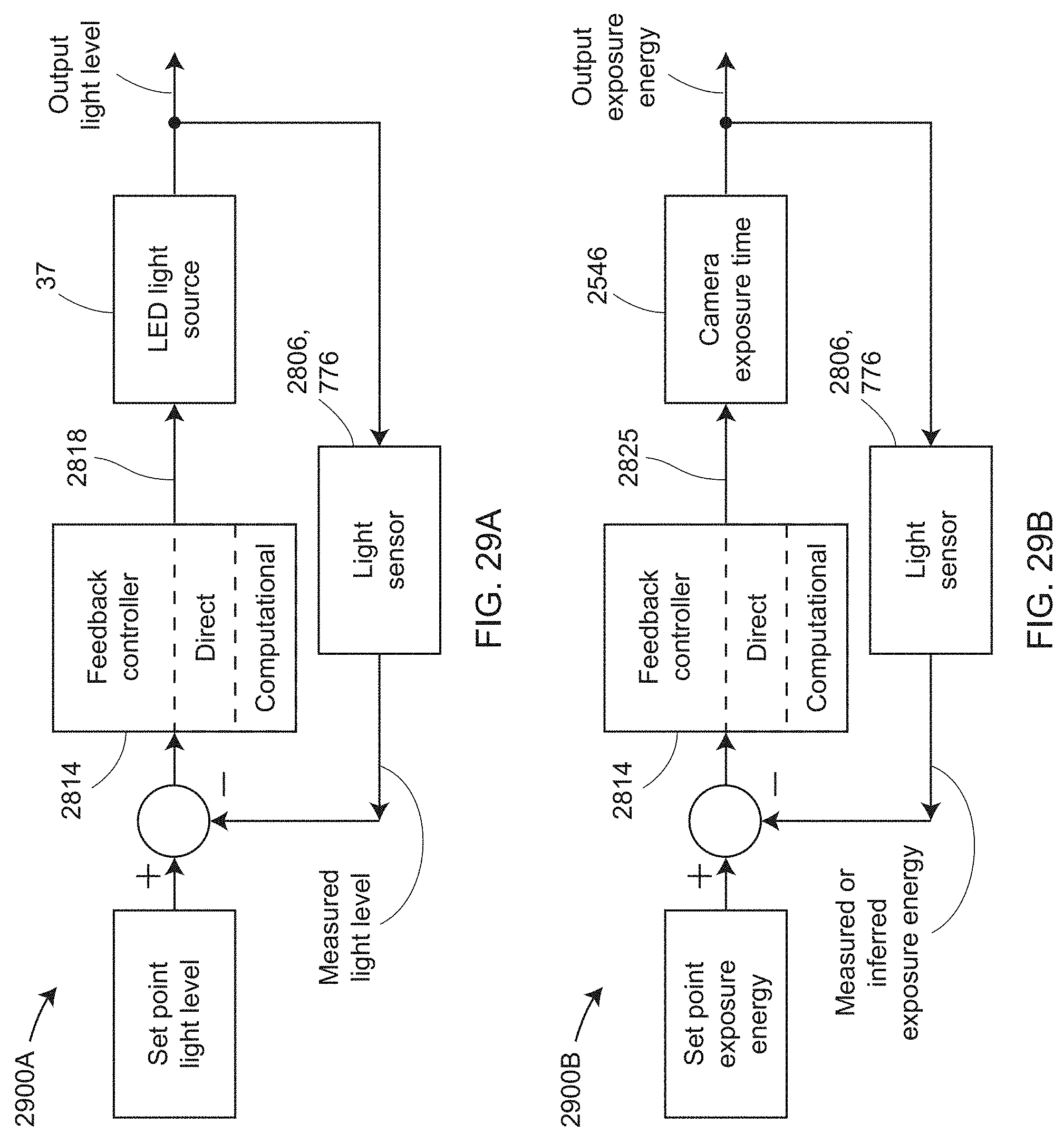

[0049] FIGS. 29A and 29B show two control systems according to an embodiment;

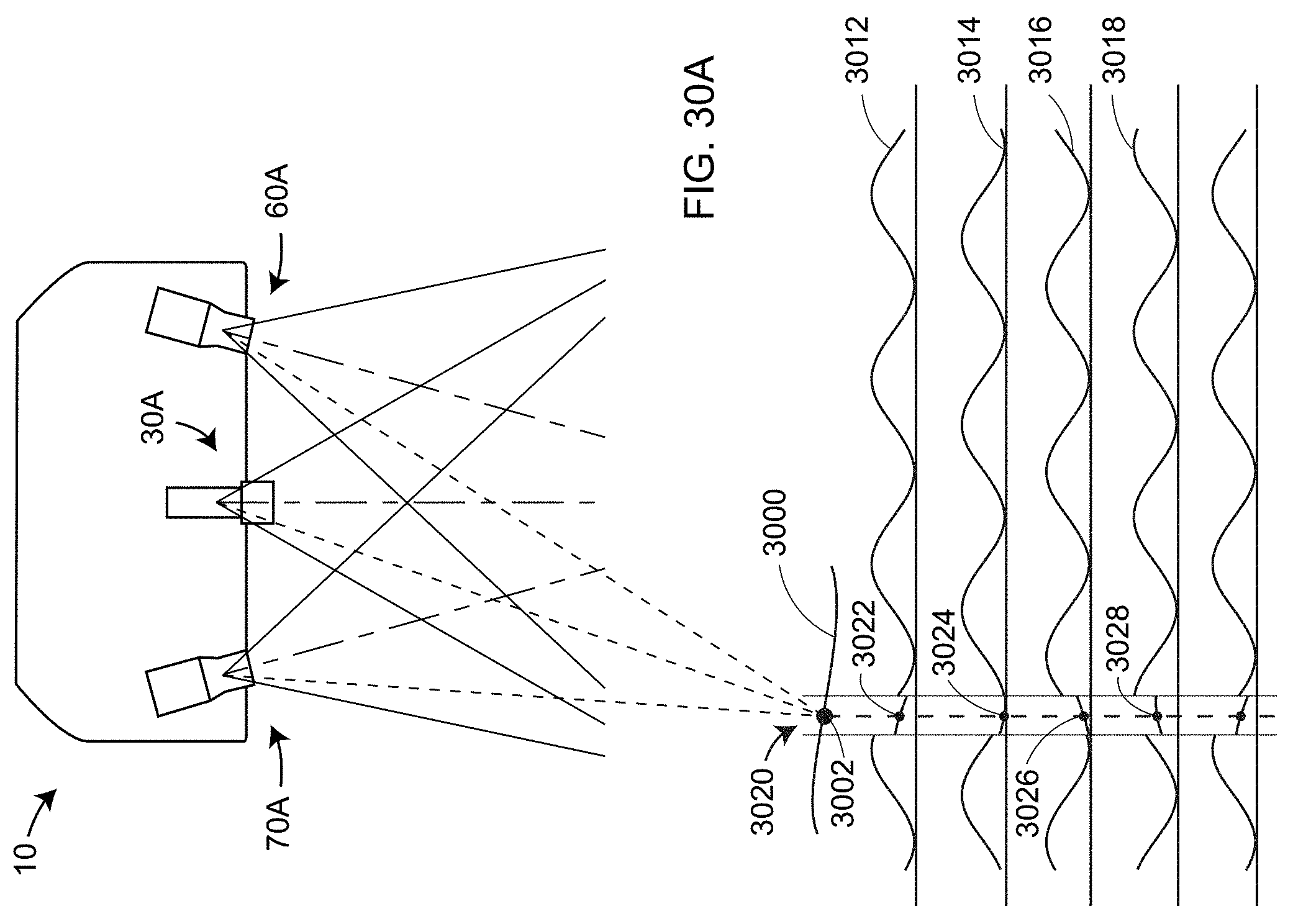

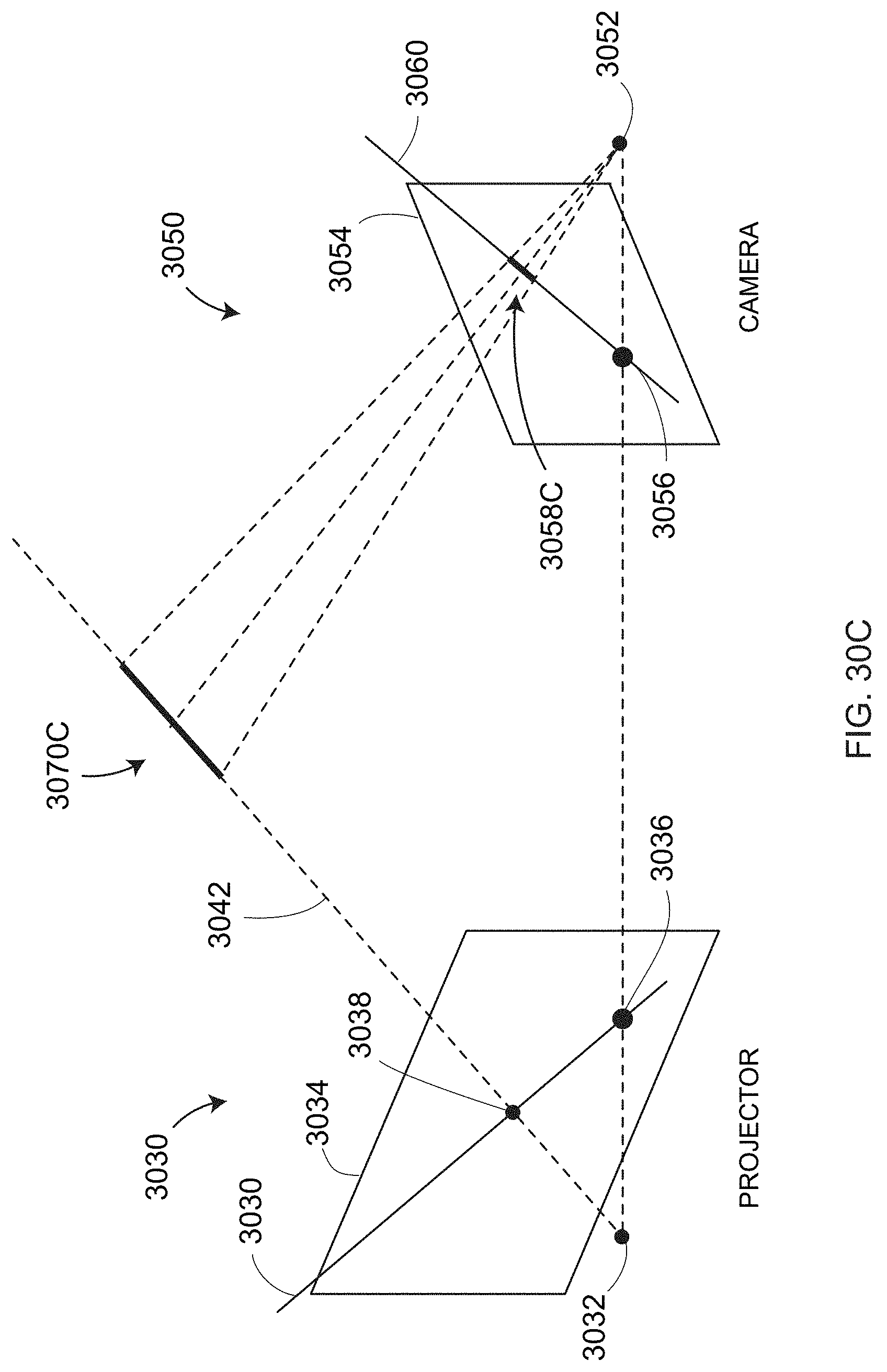

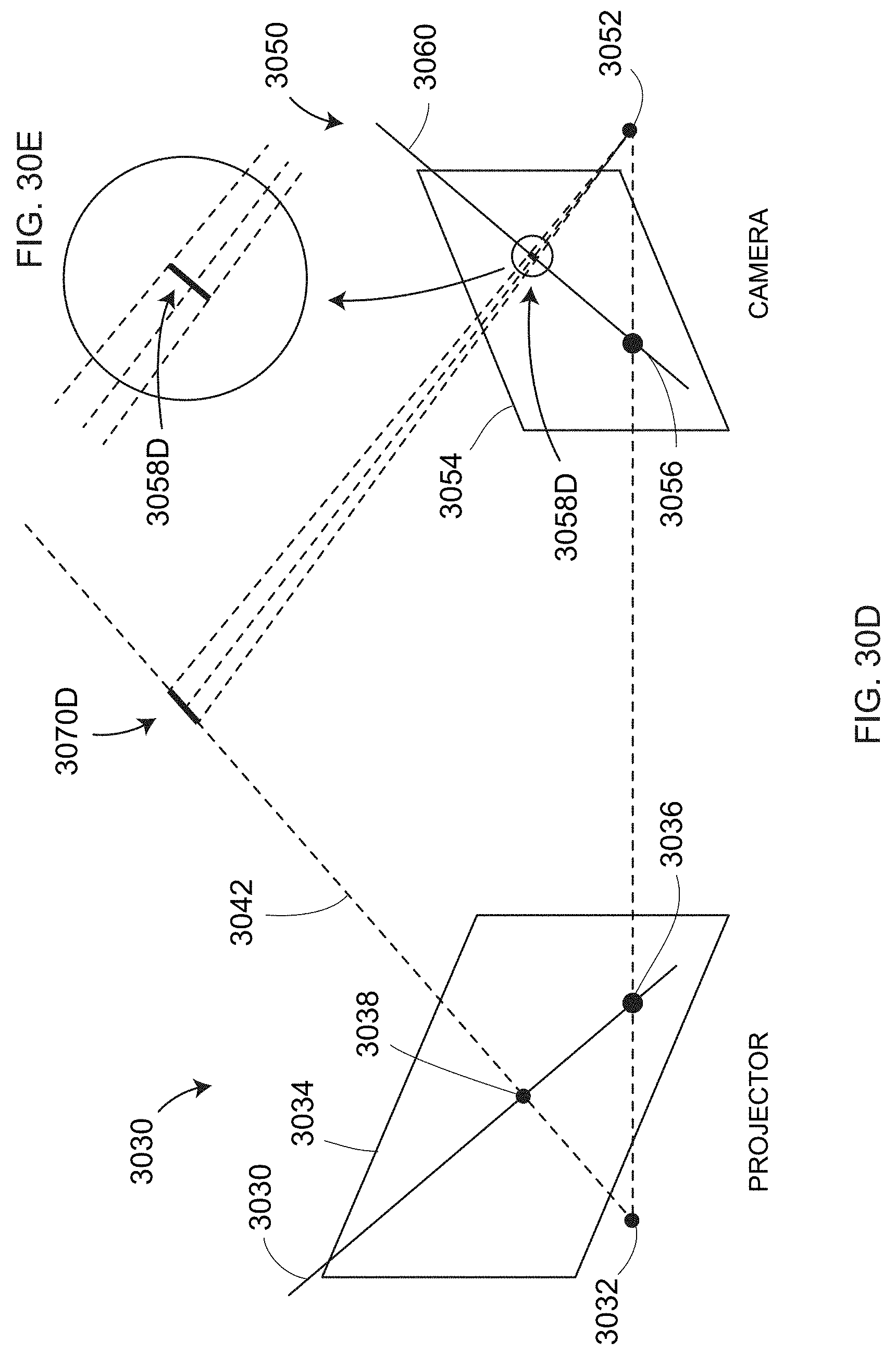

[0050] FIGS. 30A, 30B, 30C, and 30D illustrate a method of optimizing output power of projected light on a pixel-by-pixel basis according to an embodiment;

[0051] FIG. 31 illustrates measurement of a hole with a 3D imager according to an embodiment;

[0052] FIG. 32 illustrates how the image size of the imaged hole changes with distance from a scanner camera;

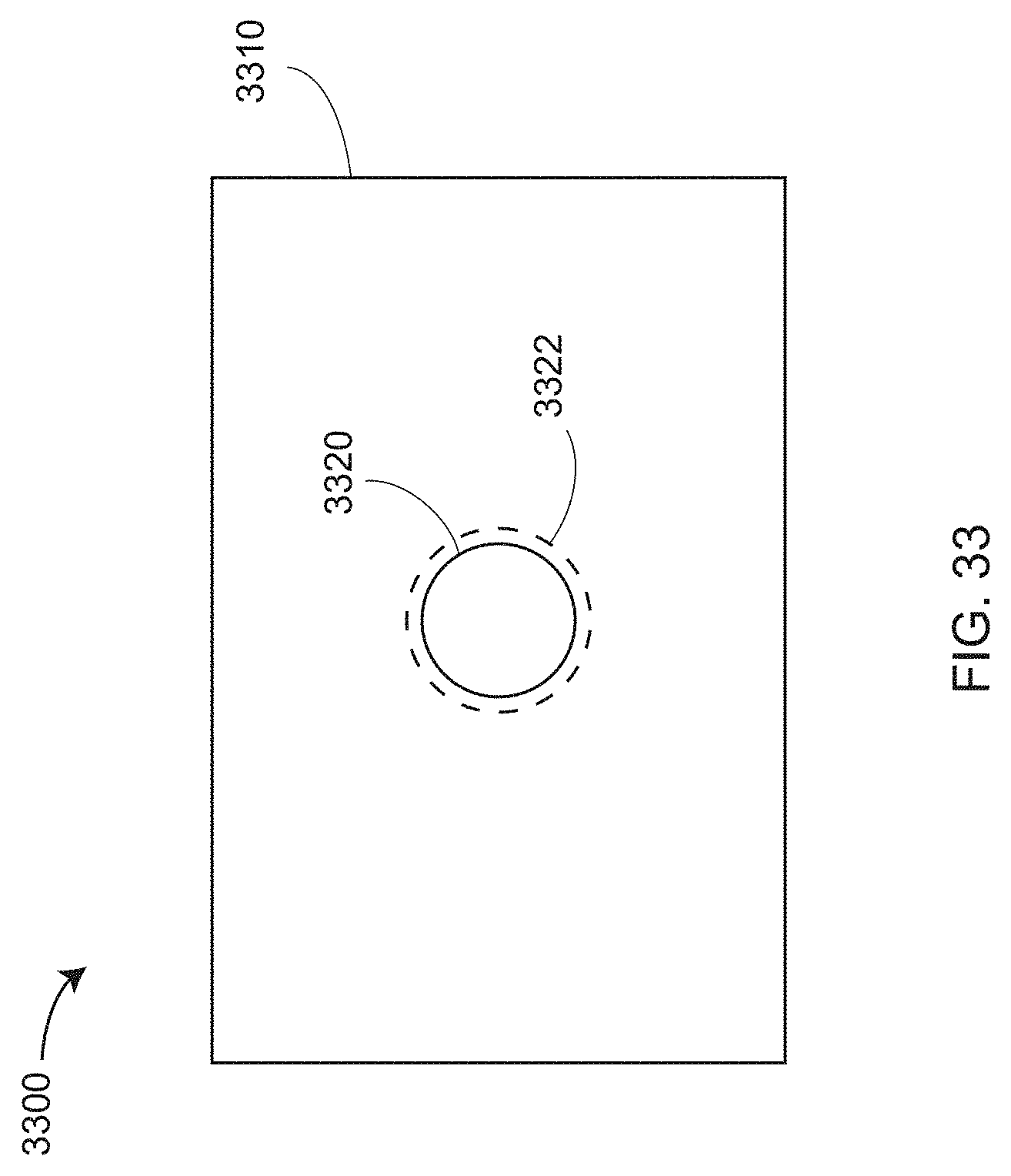

[0053] FIG. 33 illustrates how a measured 2D image may be combined with measured 3D points to improve the representation of hole edges according to an embodiment;

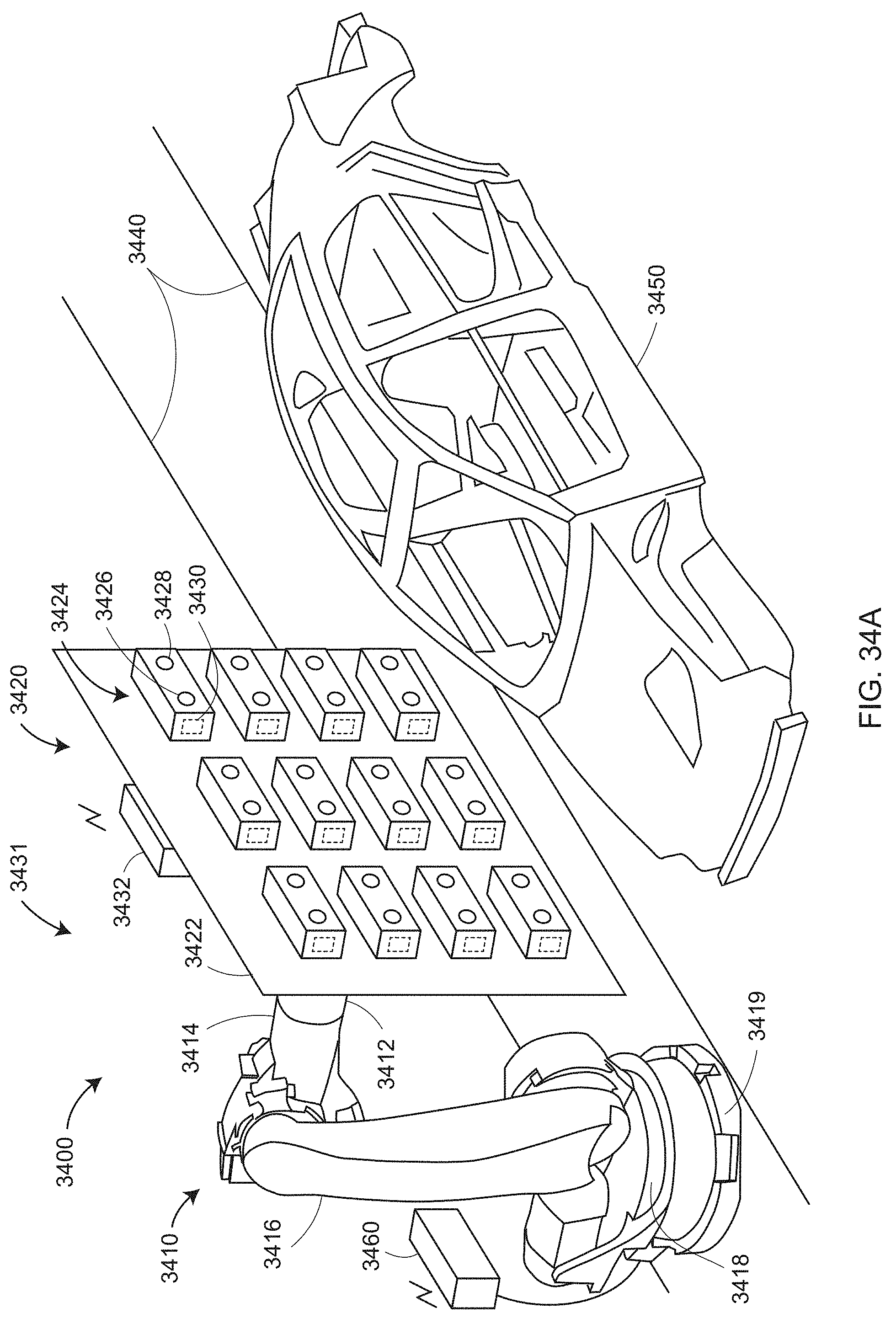

[0054] FIG. 34A is a perspective view of a bank of 3D imagers attached to a robot for measurement of an object according to an embodiment;

[0055] FIG. 34B is a perspective view of a first set of 3D imagers and a second set of 3D imagers measuring an object according to an embodiment;

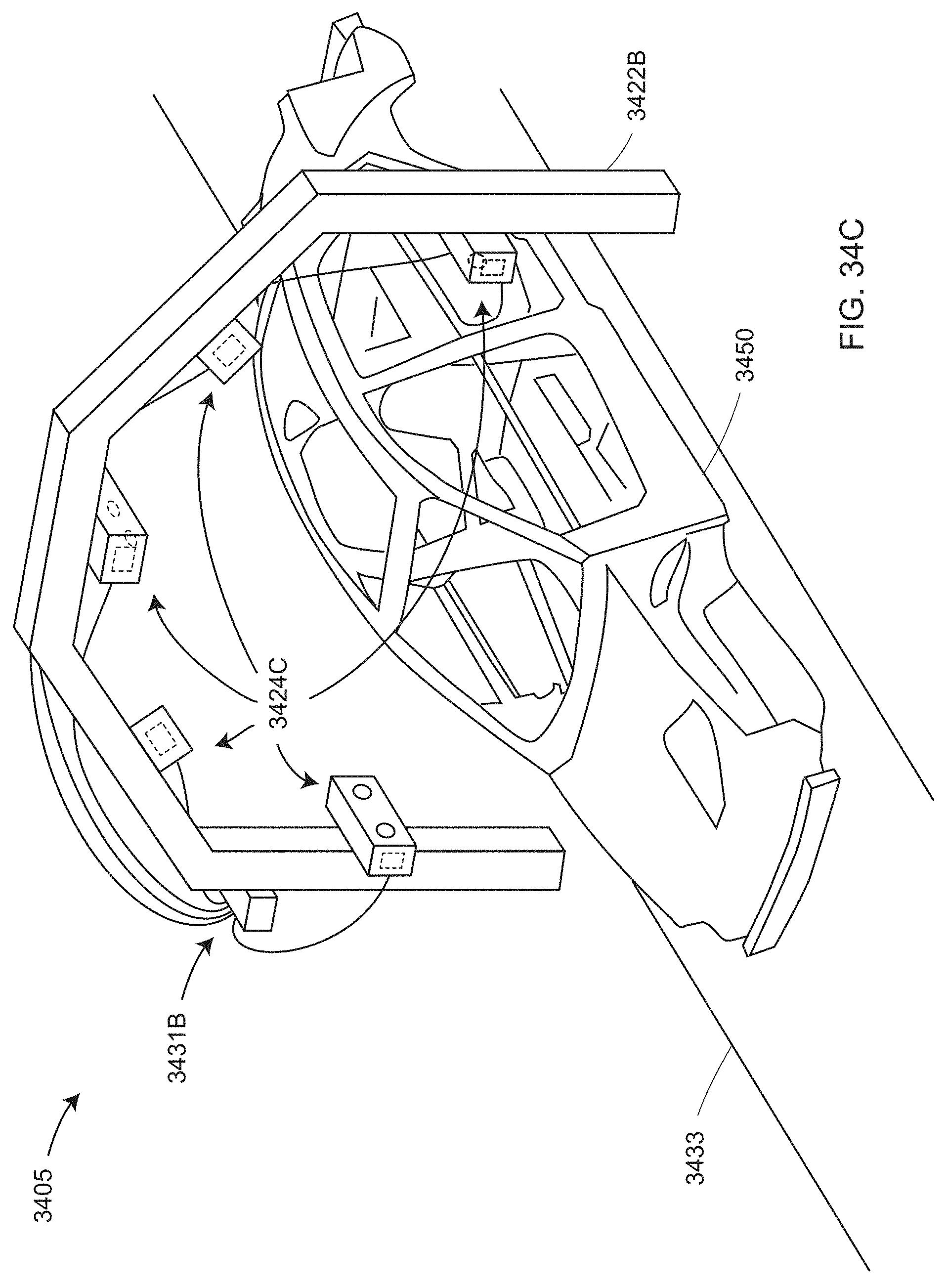

[0056] FIG. 34C is a perspective view of a bank of 3D imagers attached to a stationary mounting frame that measures an object on a conveyor according to an embodiment;

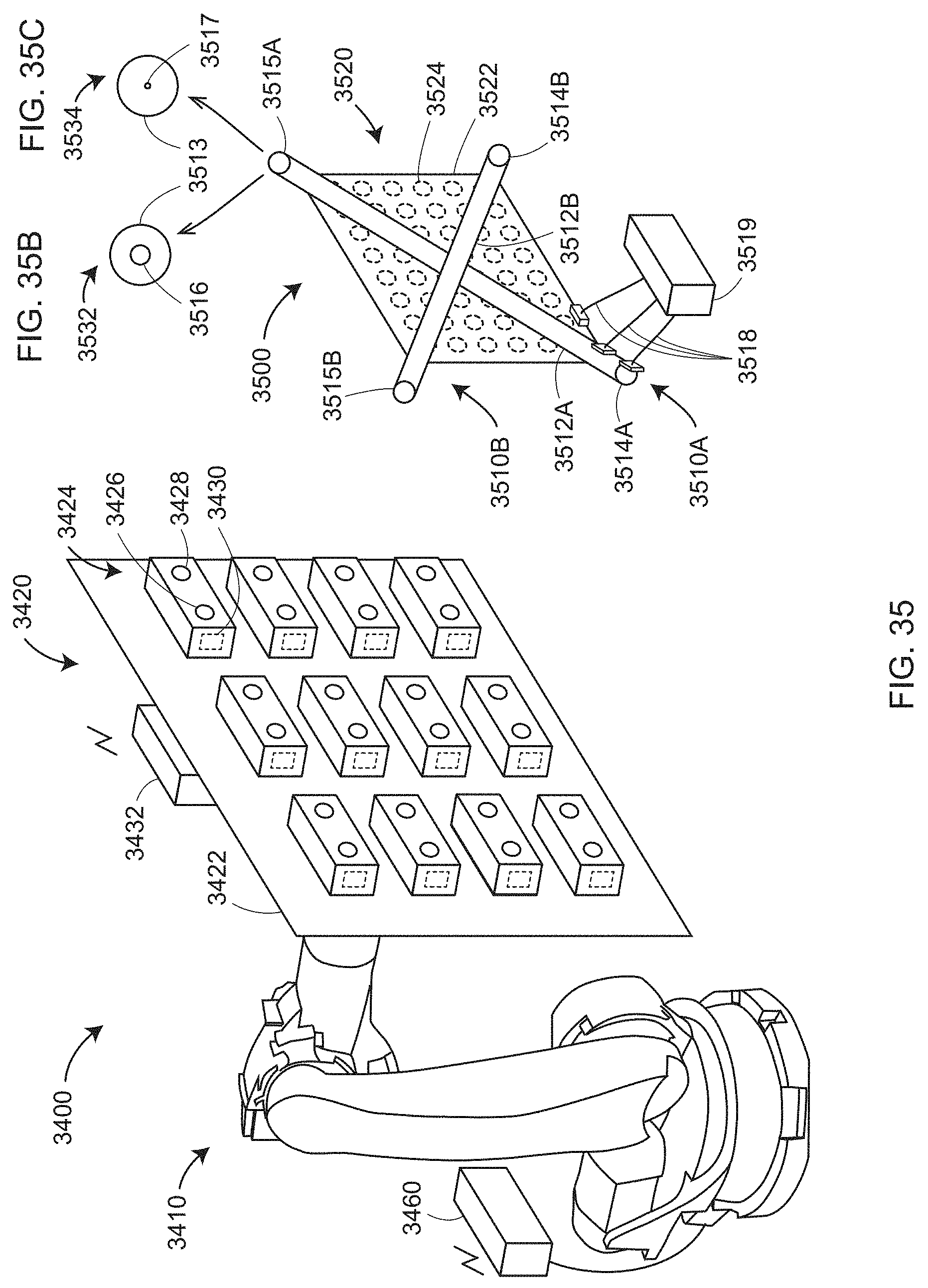

[0057] FIG. 35 is a perspective view of calibration/compensation of a 3D imager by measurement of a reference artifact according to an embodiment;

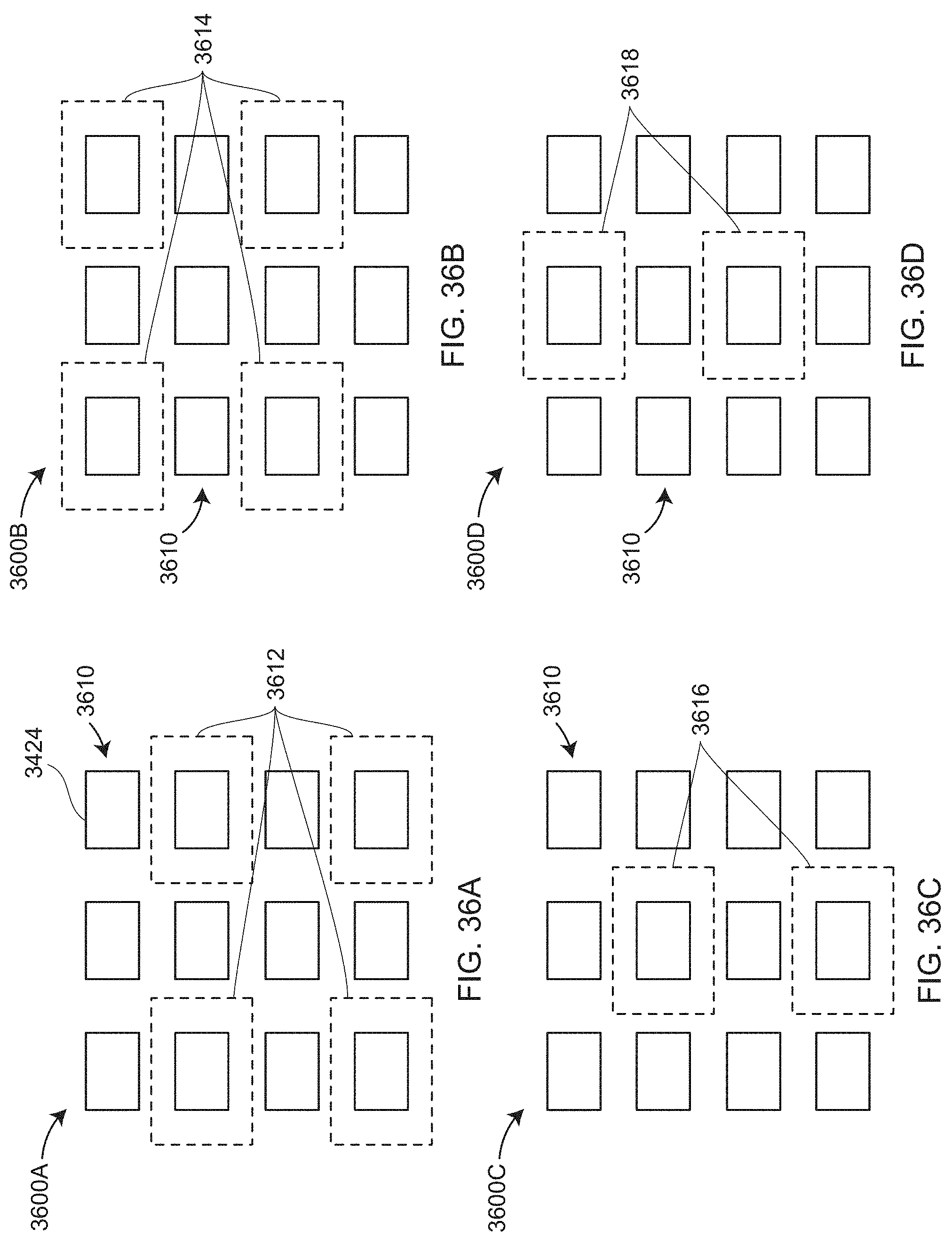

[0058] FIGS. 36A-36D illustrate a sequence of projections of light patterns by four sets of projectors so as to provide overlap of projected light according to an embodiment;

[0059] FIGS. 36E-36F illustrate a two-step sequence of patterned light projections for a one-dimensional array of 3D imagers according to an embodiment;

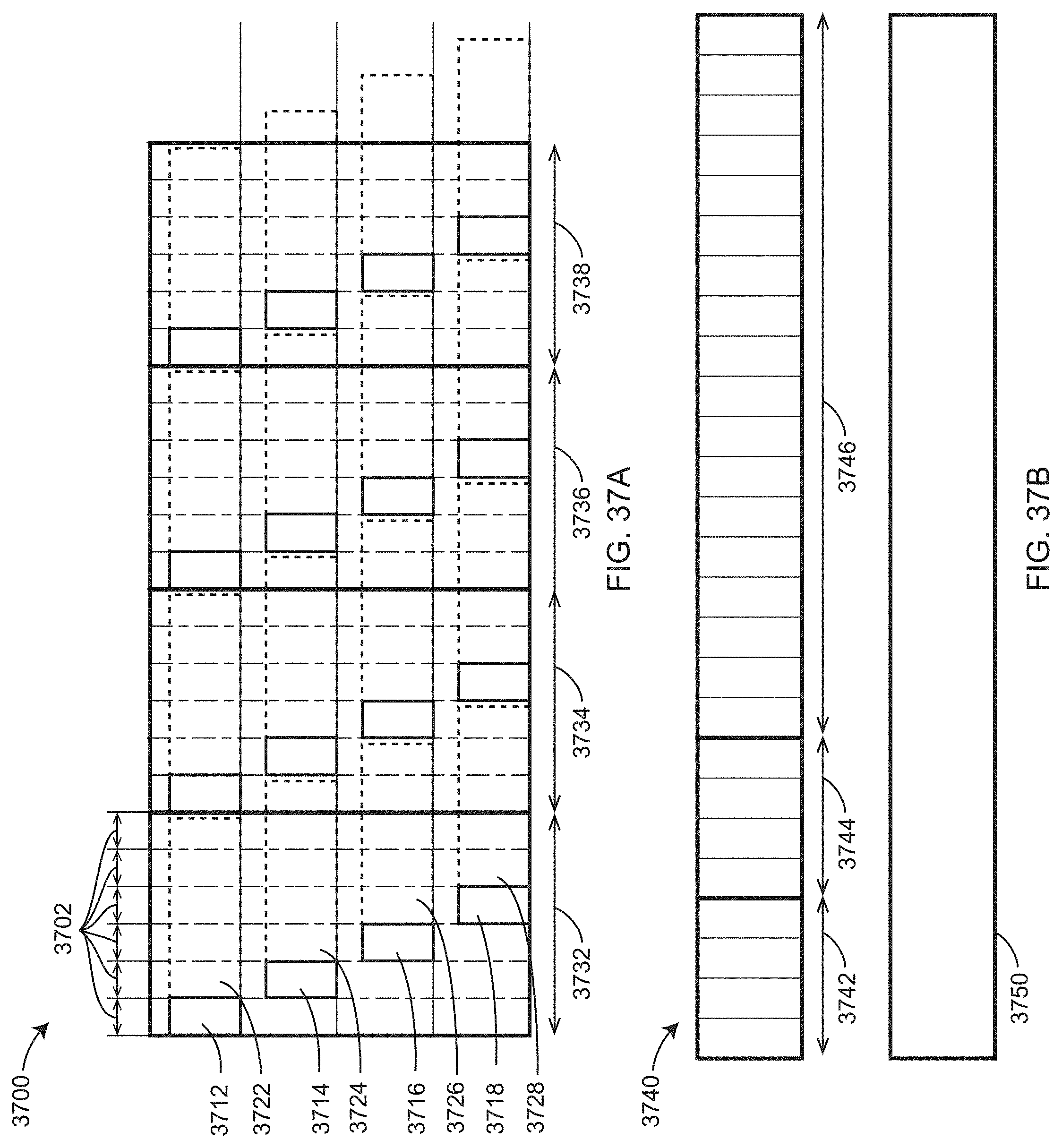

[0060] FIG. 37A and FIG. 37B illustrate timing of pattern projector/exposure and data processing according to an embodiment;

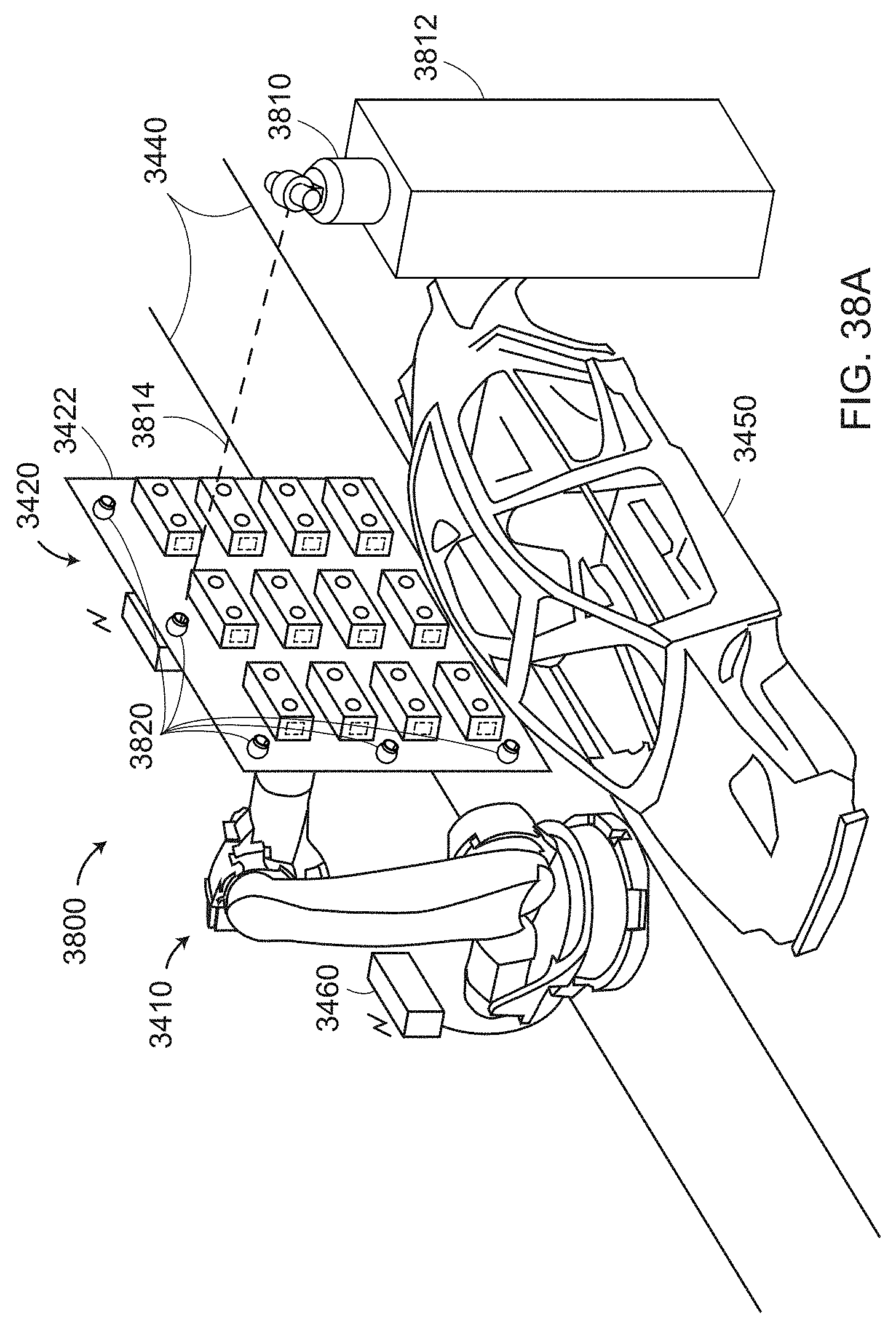

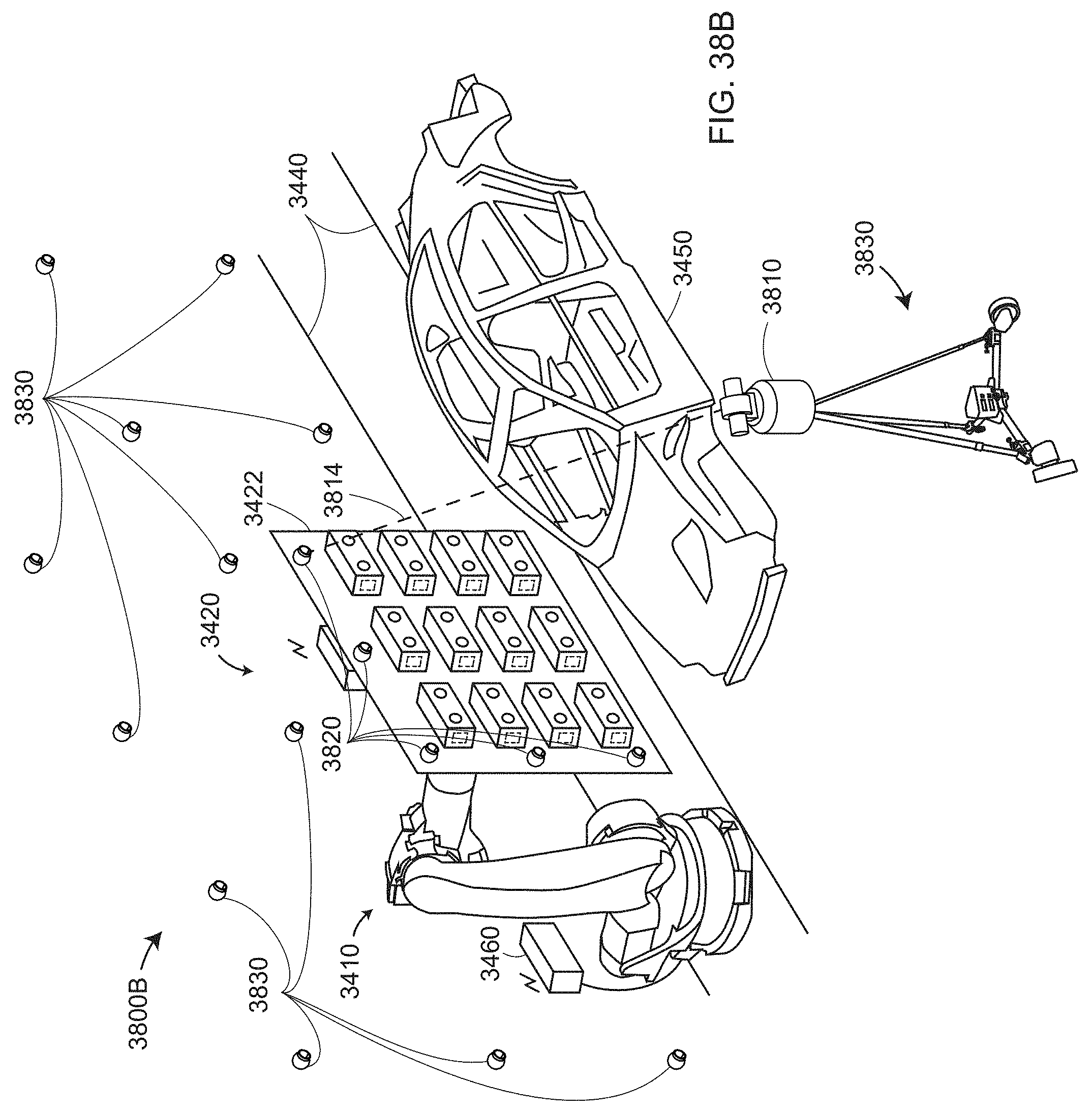

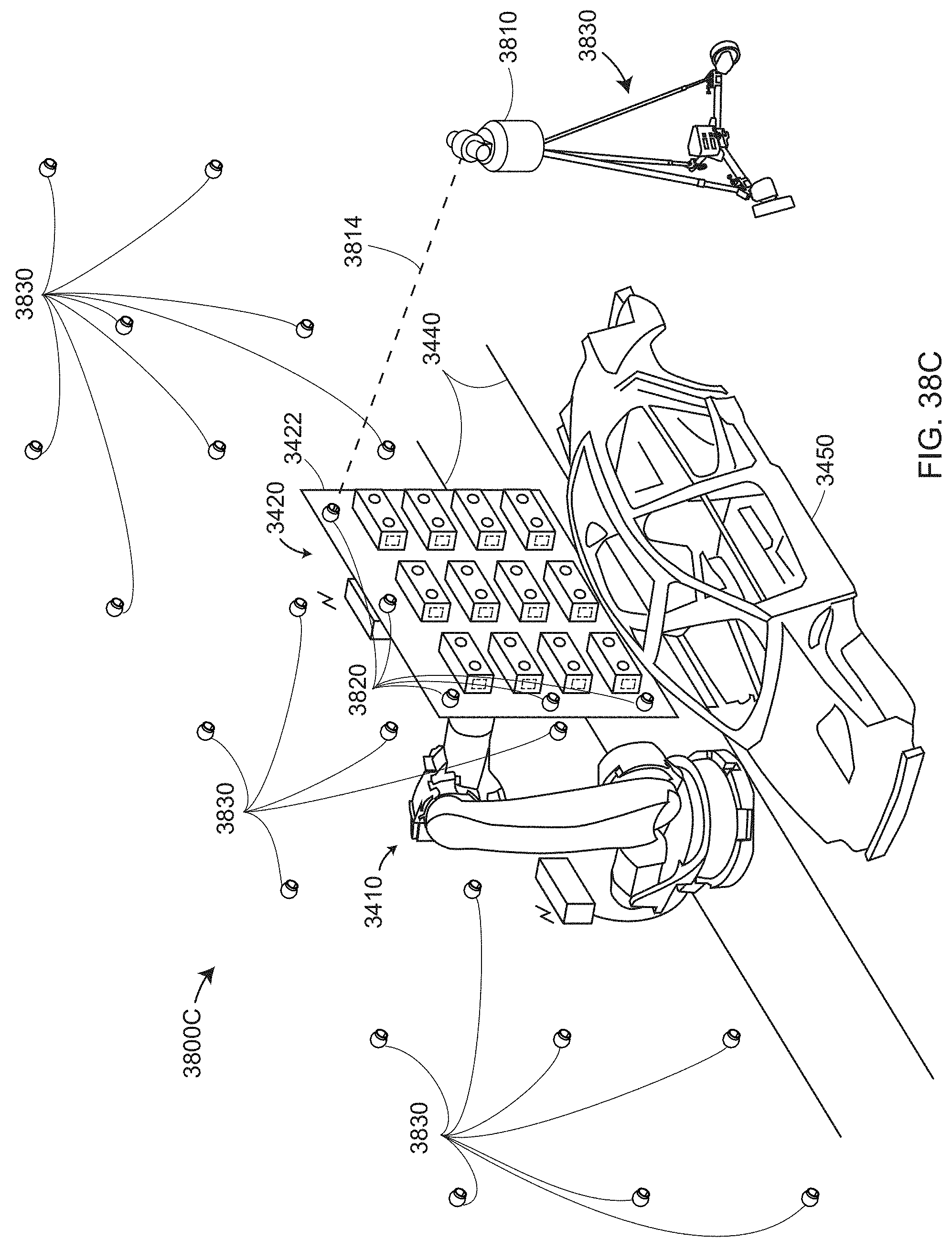

[0061] FIGS. 38A-C illustrate a 3D measuring device being used to register 3D coordinates obtained from a bank of 3D imagers that are moved by a mover according to an embodiment;

[0062] FIG. 39 illustrates a 3D measuring device being used to register coordinates obtained from a bank of 3D imagers that are moved by a mover according to an embodiment;

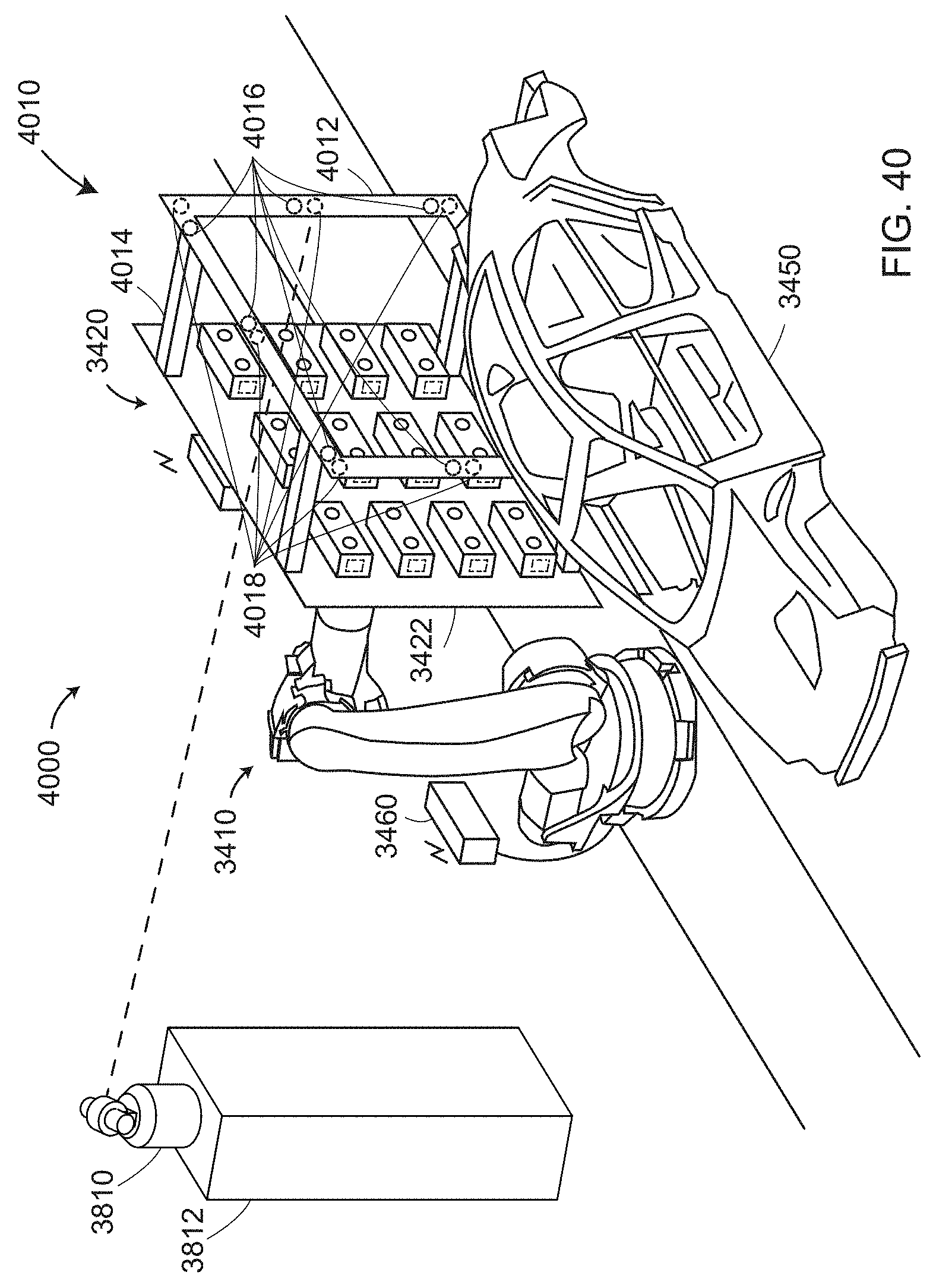

[0063] FIG. 40 illustrates a 3D measuring device further including an extension frame having targets according to an embodiment;

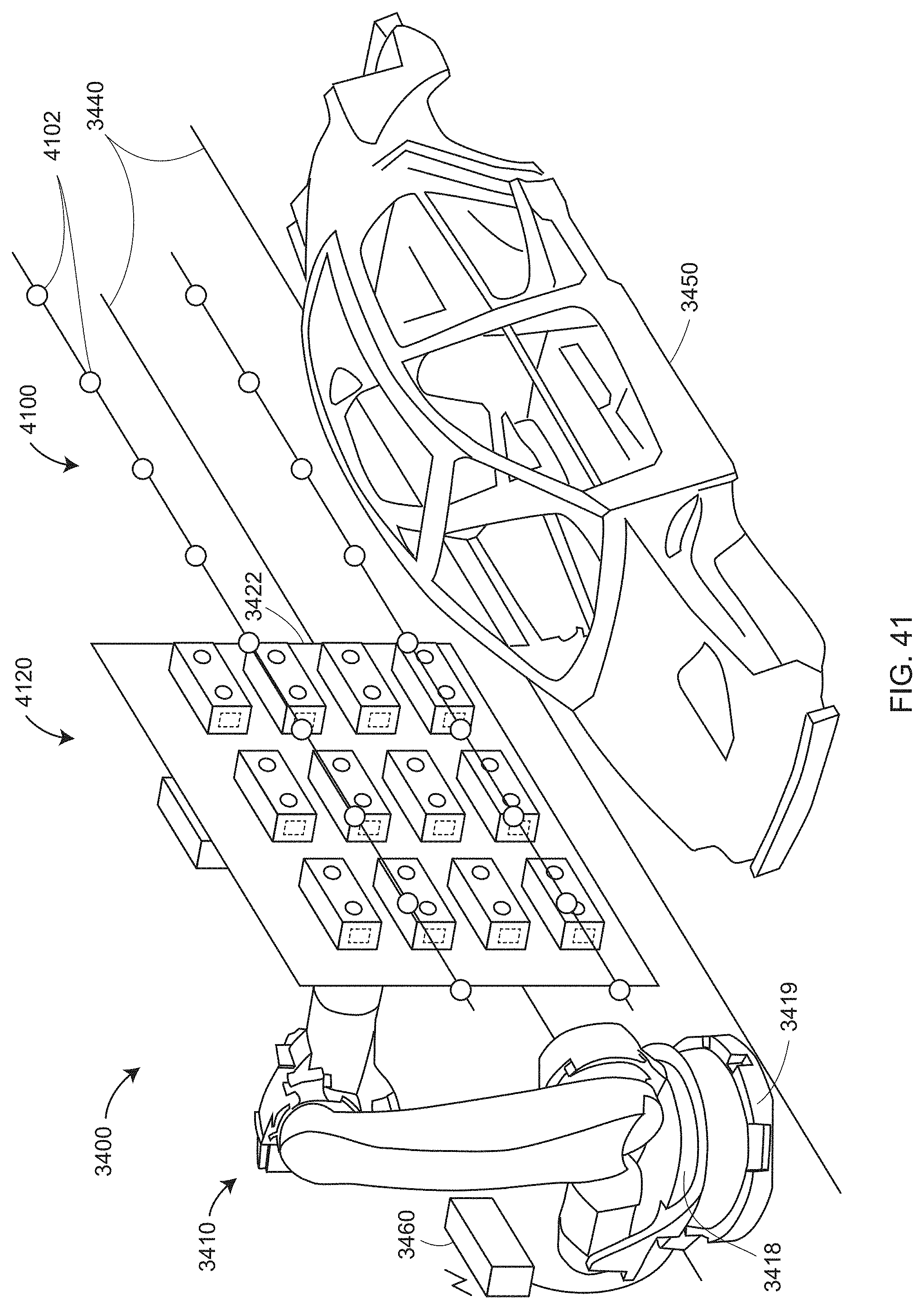

[0064] FIG. 41 illustrates inclusion of a collection of fixed reference targets in an environment;

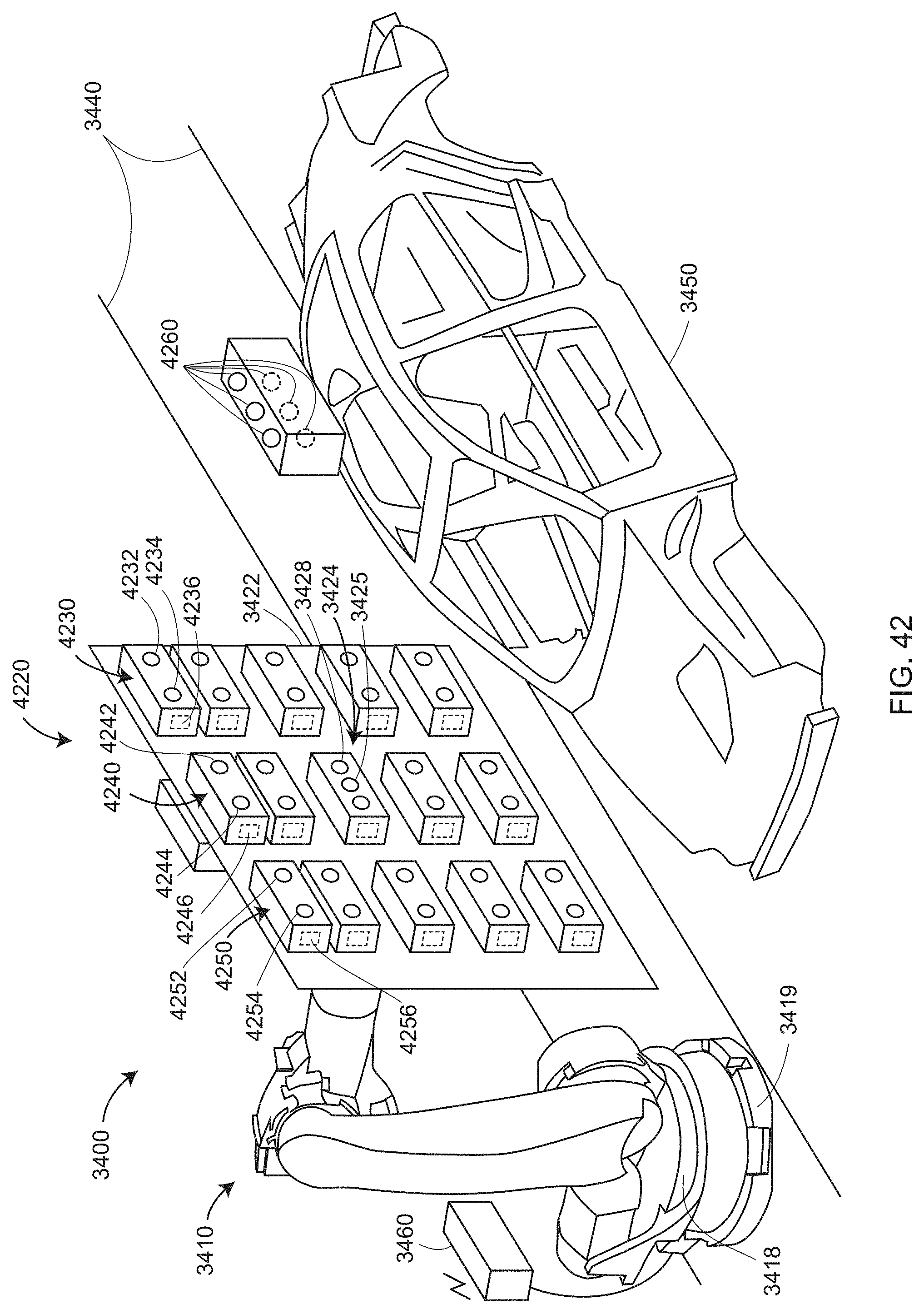

[0065] FIG. 42 illustrates inclusion of a wide-FOV registration camera, a color camera, and an information projector according to an embodiment;

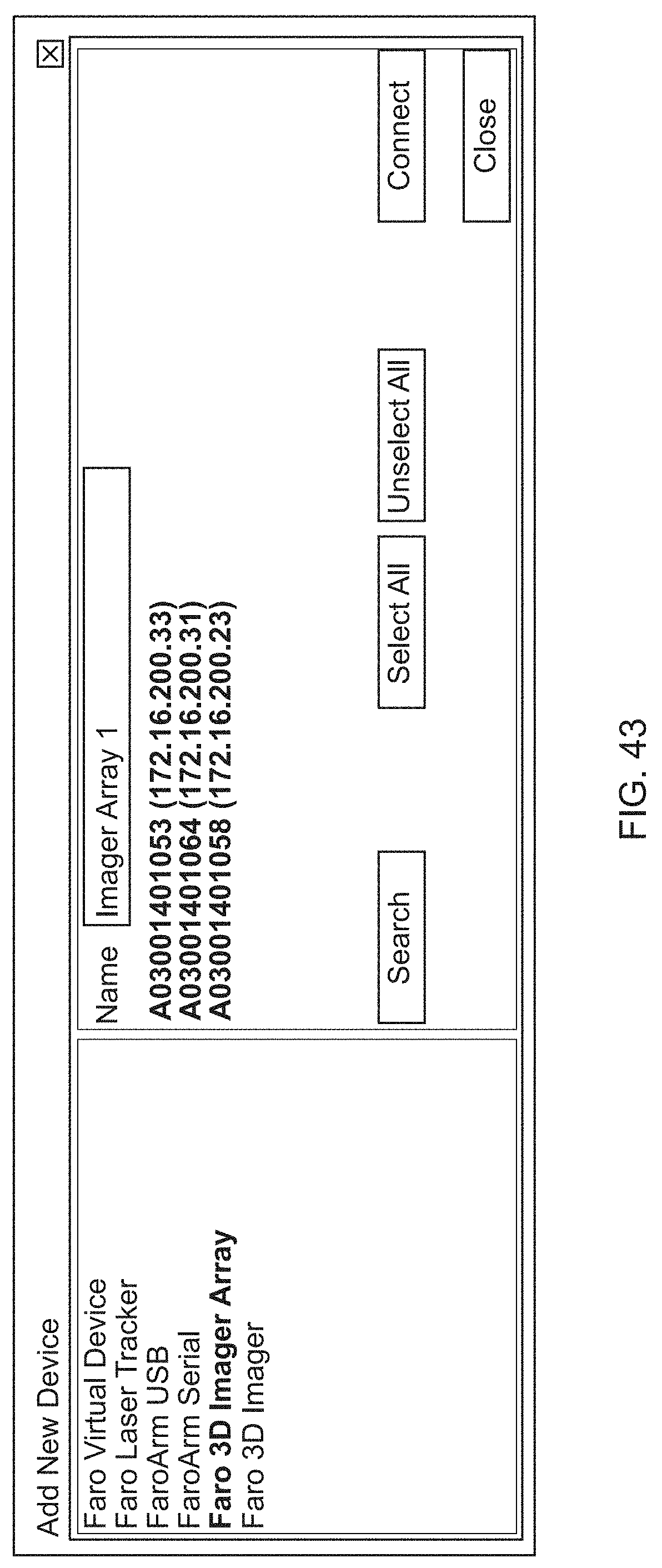

[0066] FIG. 43 shows a display screen from a user interface (UI) that enables configuration of a 3D assembly of 3D imagers according to an embodiment;

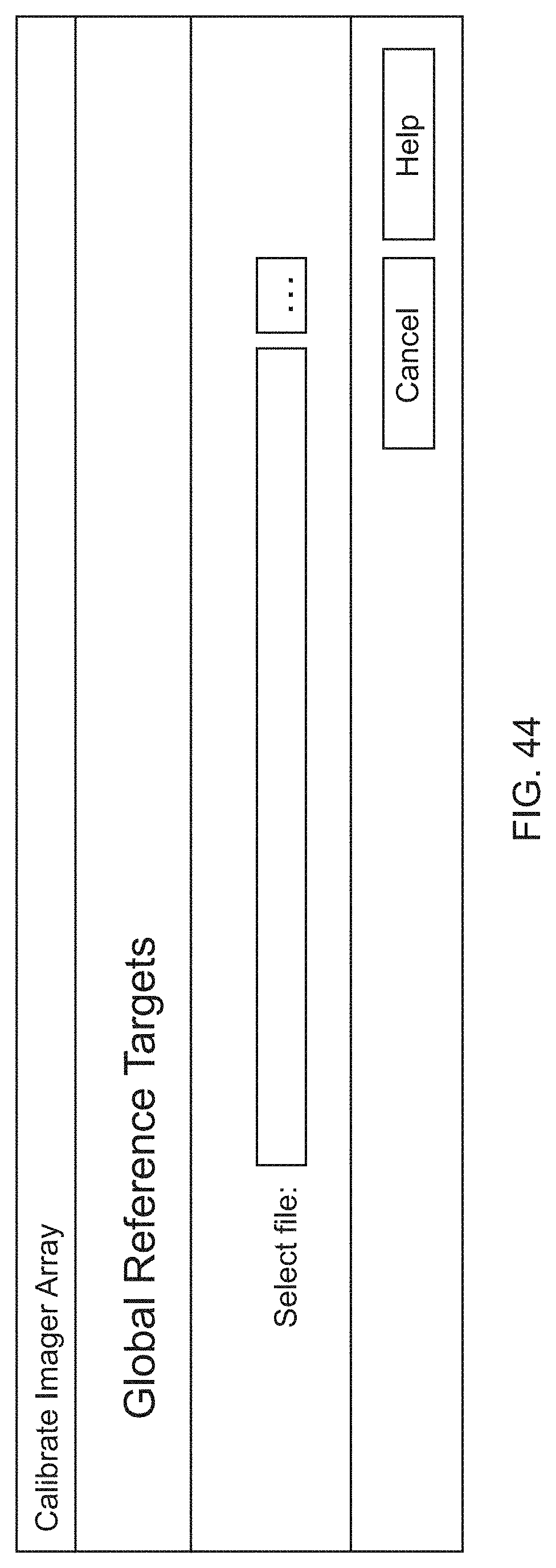

[0067] FIGS. 44-46 show UI display screens that enable a user to select fiducial targets, display 3D coordinates of the fiducial targets, and show results of an optimization calculation according to an embodiment;

[0068] FIG. 47 shows a UI display screen that provides further assistance in configuring a 3D assembly of 3D imagers;

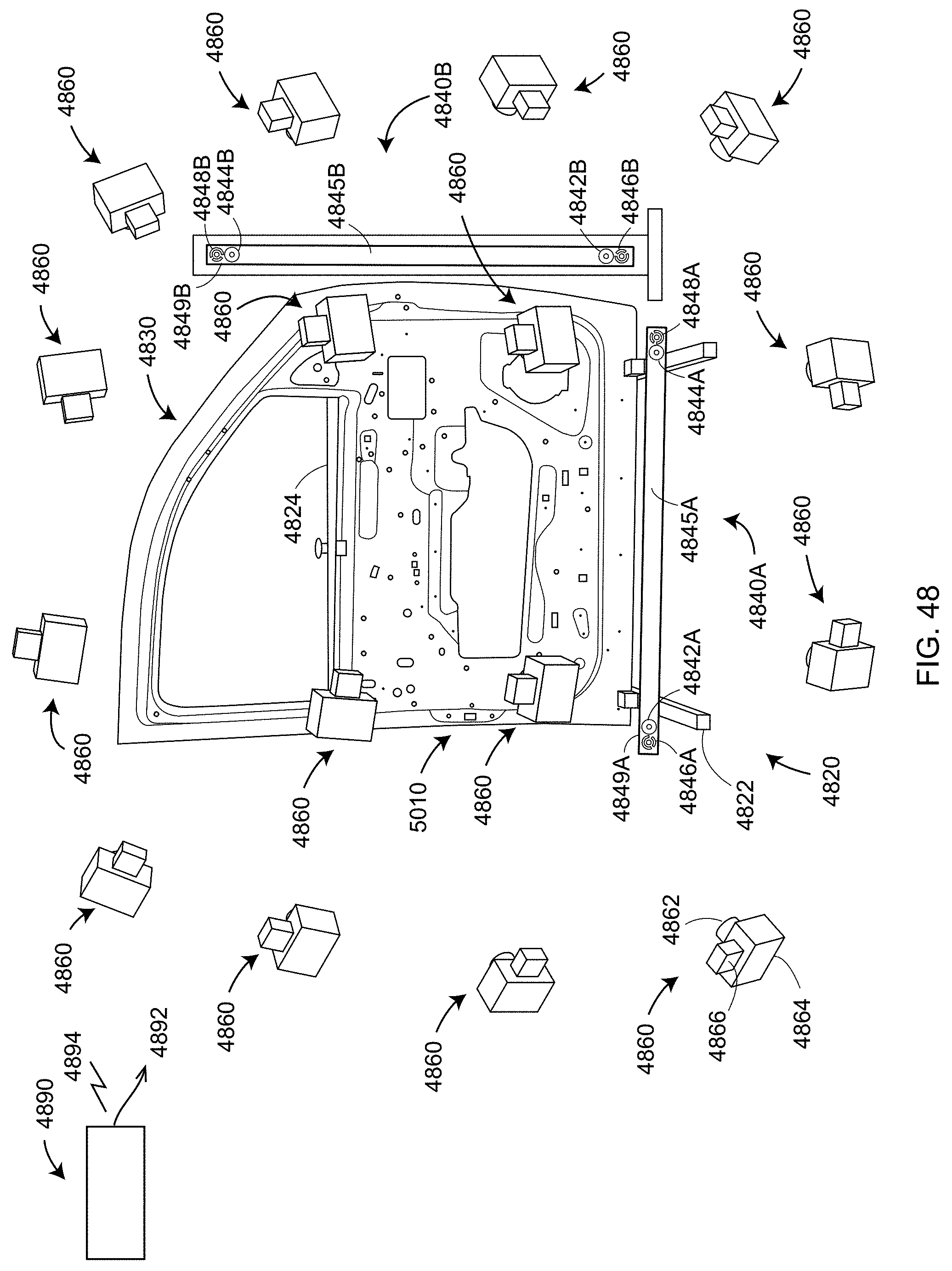

[0069] FIG. 48 is a perspective view of a master part having a collection of fiducial targets being imaged by a photogrammetry camera held in a plurality of positions according to an embodiment;

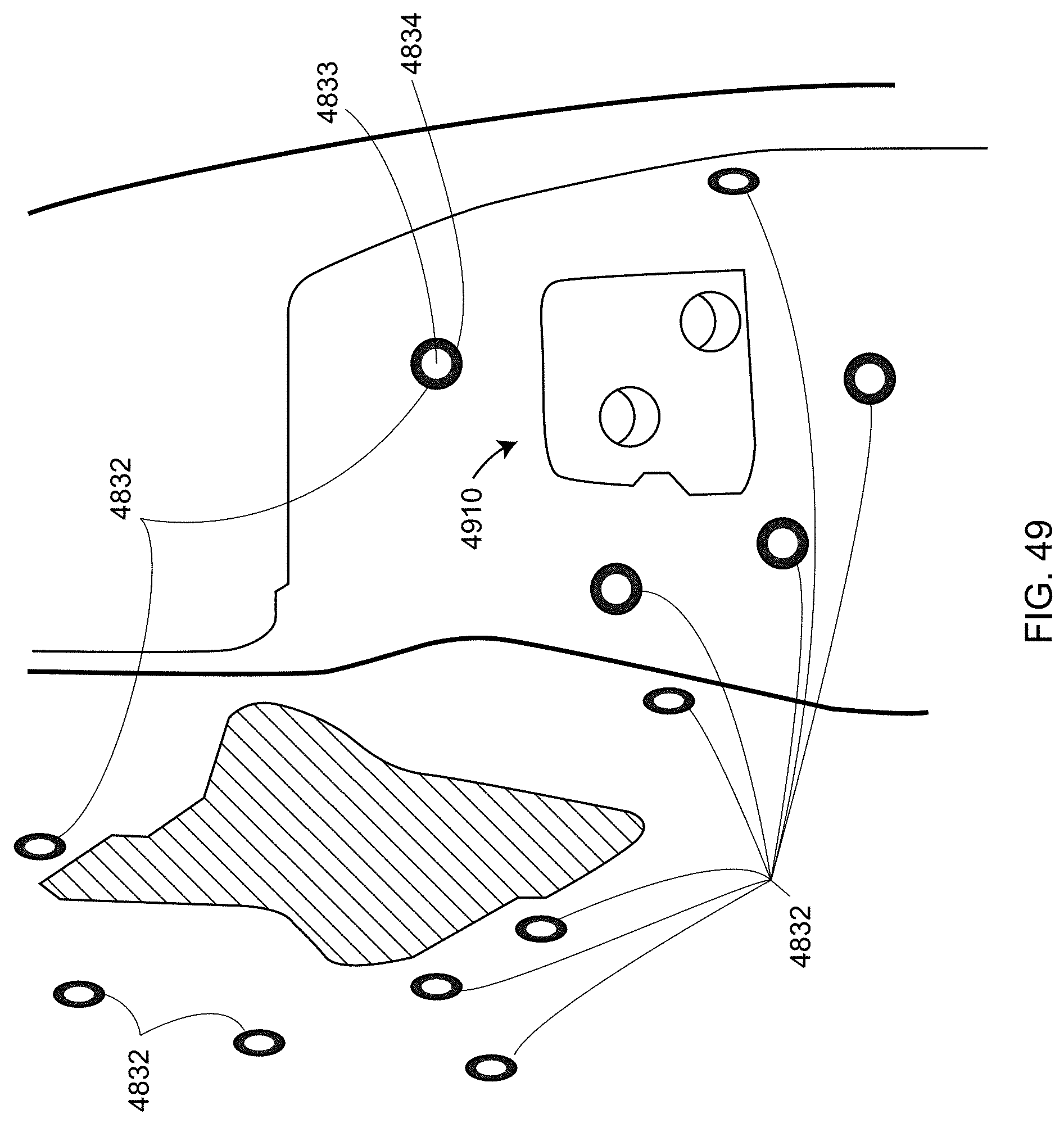

[0070] FIG. 49 is a perspective view of a right edge of the master part showing a hinge assembly and a collection of fiducial targets according to an embodiment;

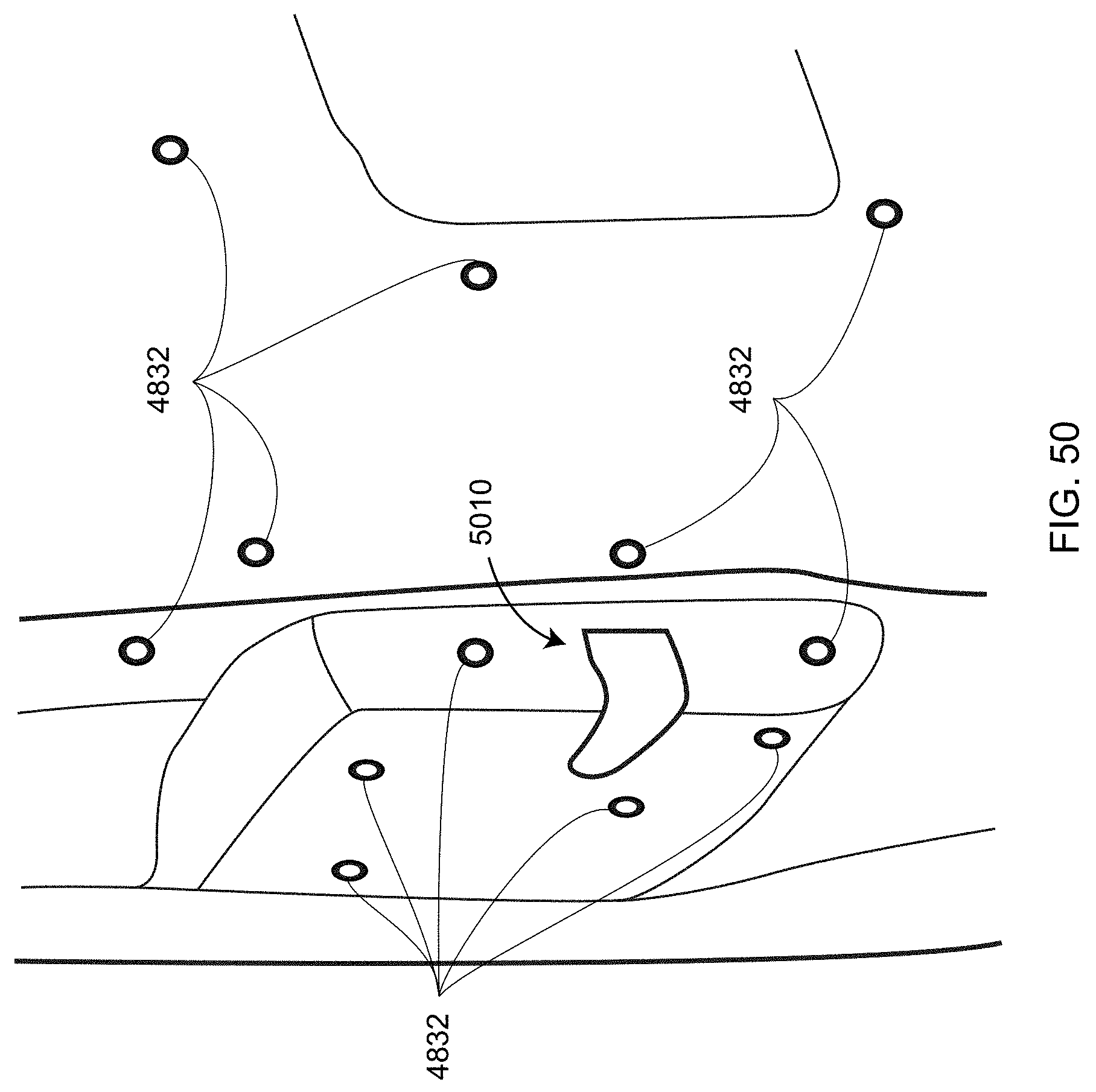

[0071] FIG. 50 is a perspective view of a left edge of the master part showing a door latch assembly and a collection of fiducial targets according to an embodiment;

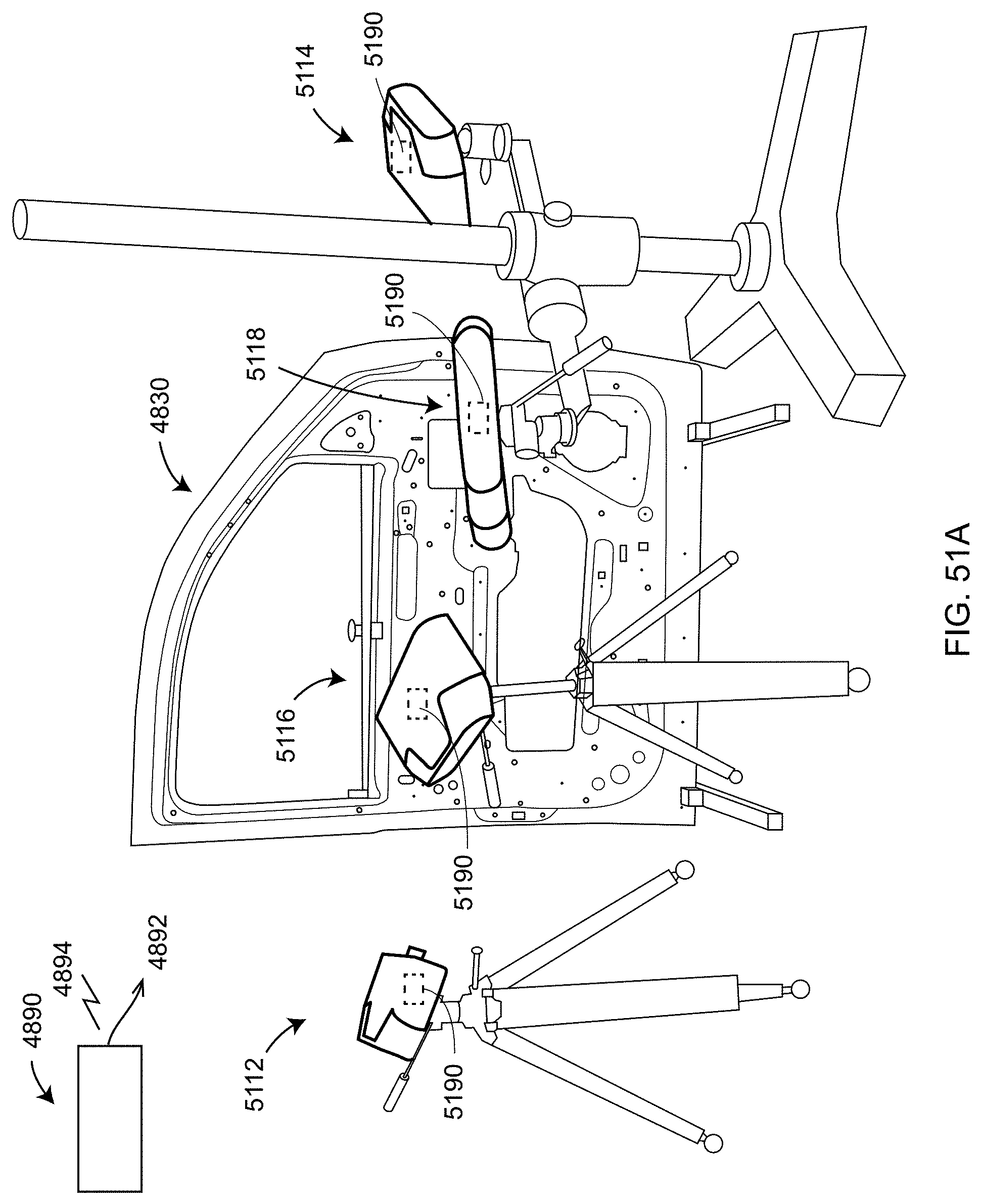

[0072] FIG. 51A shows a collection of 3D imagers imaging the master part to determine the relative pose of each within a common frame of reference according to an embodiment;

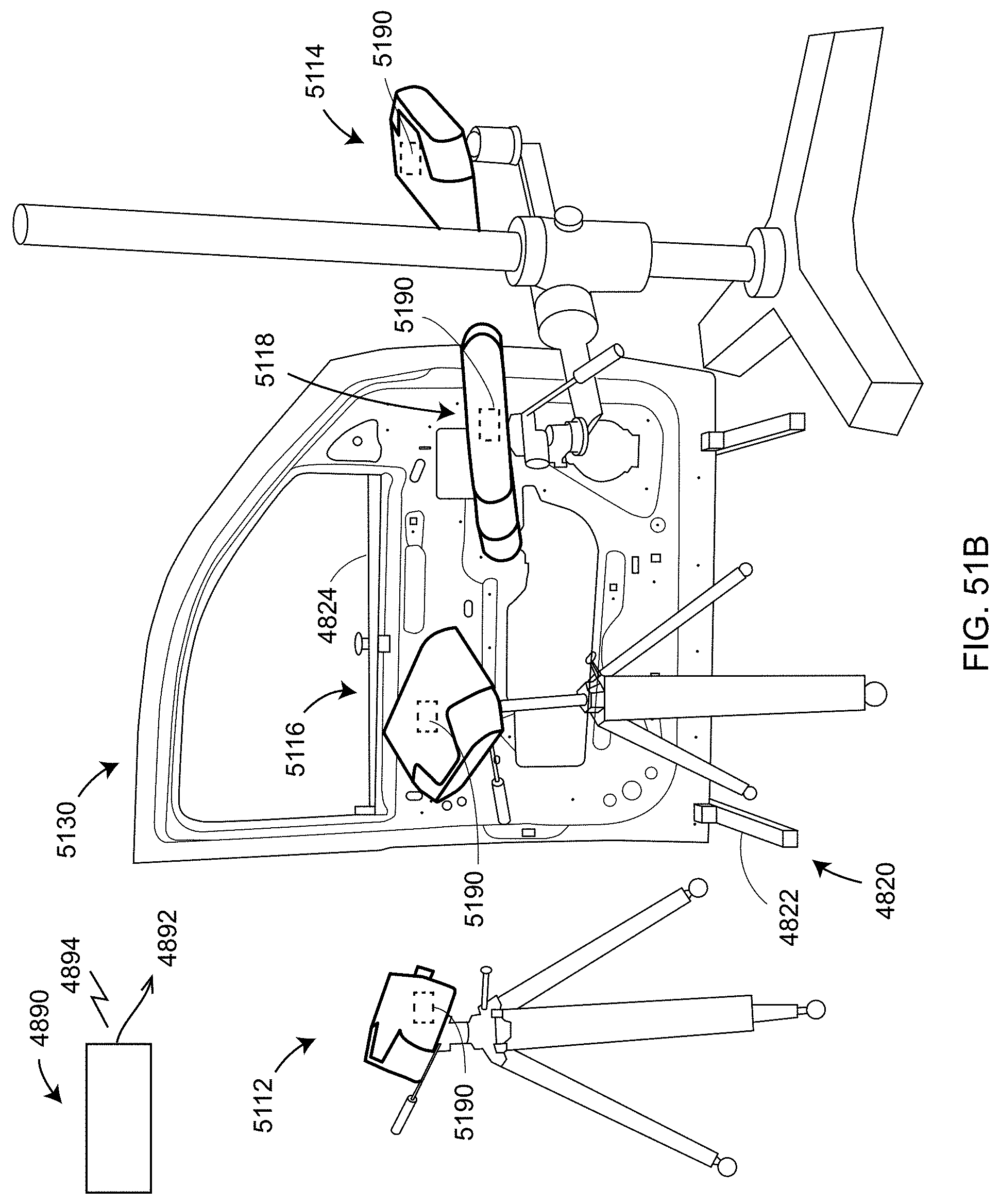

[0073] FIG. 51B shows the collection of 3D imagers determining 3D coordinates of surface points on a part-under-test according to an embodiment;

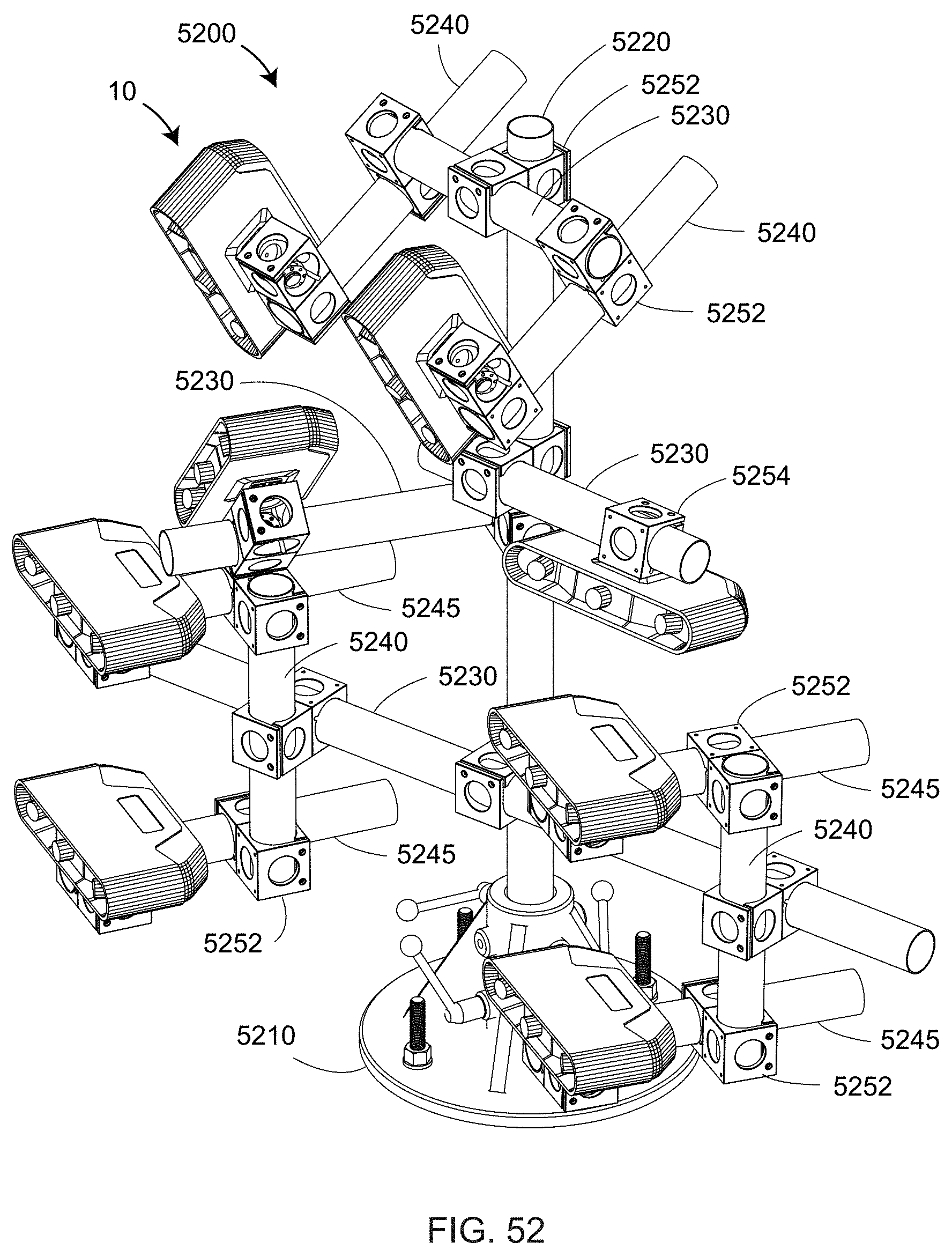

[0074] FIG. 52 shows a tree structure used to position 3D imagers according to an embodiment;

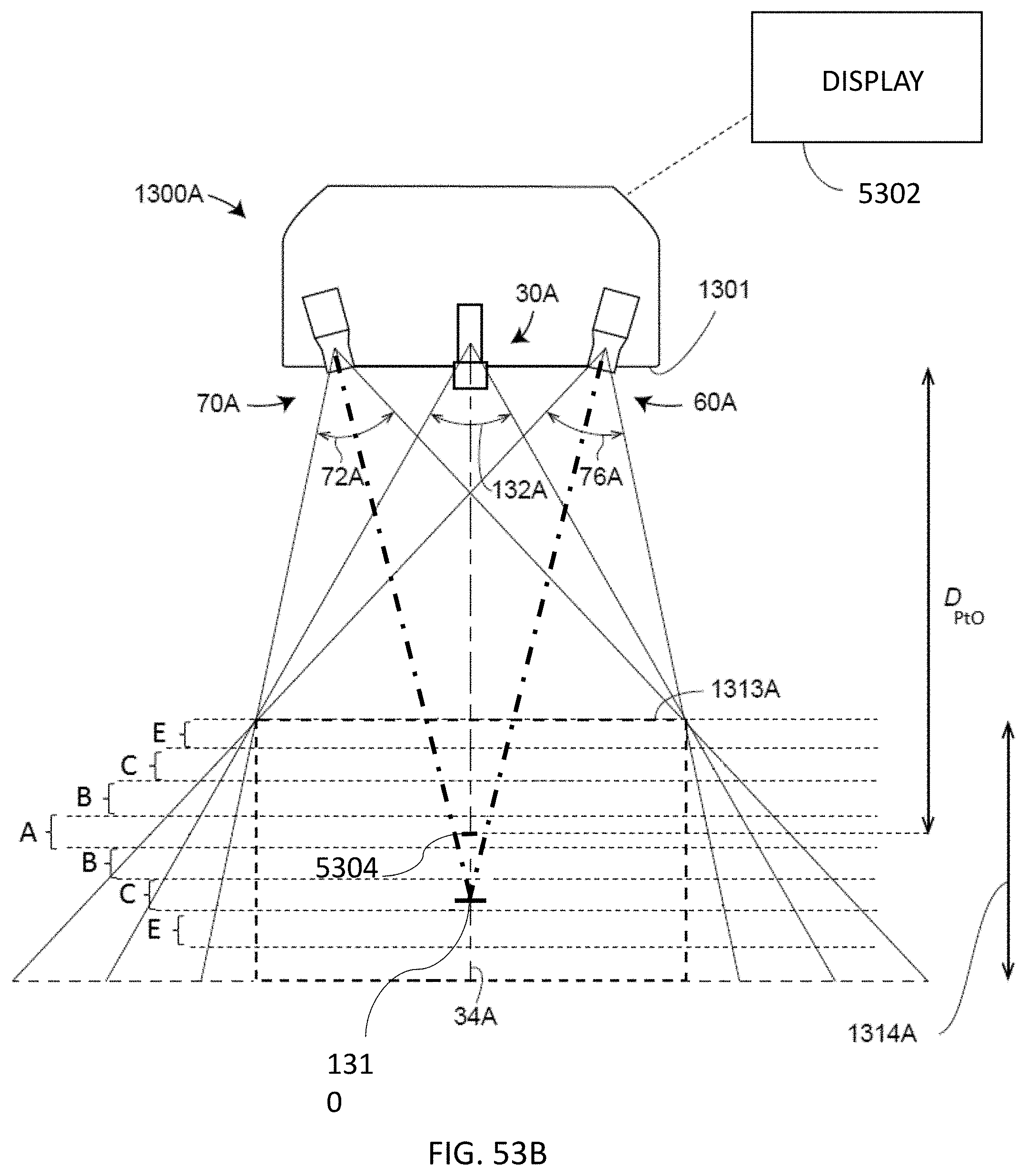

[0075] FIG. 53A and FIG. 53B are schematic representations of camera and projector lenses according to embodiments;

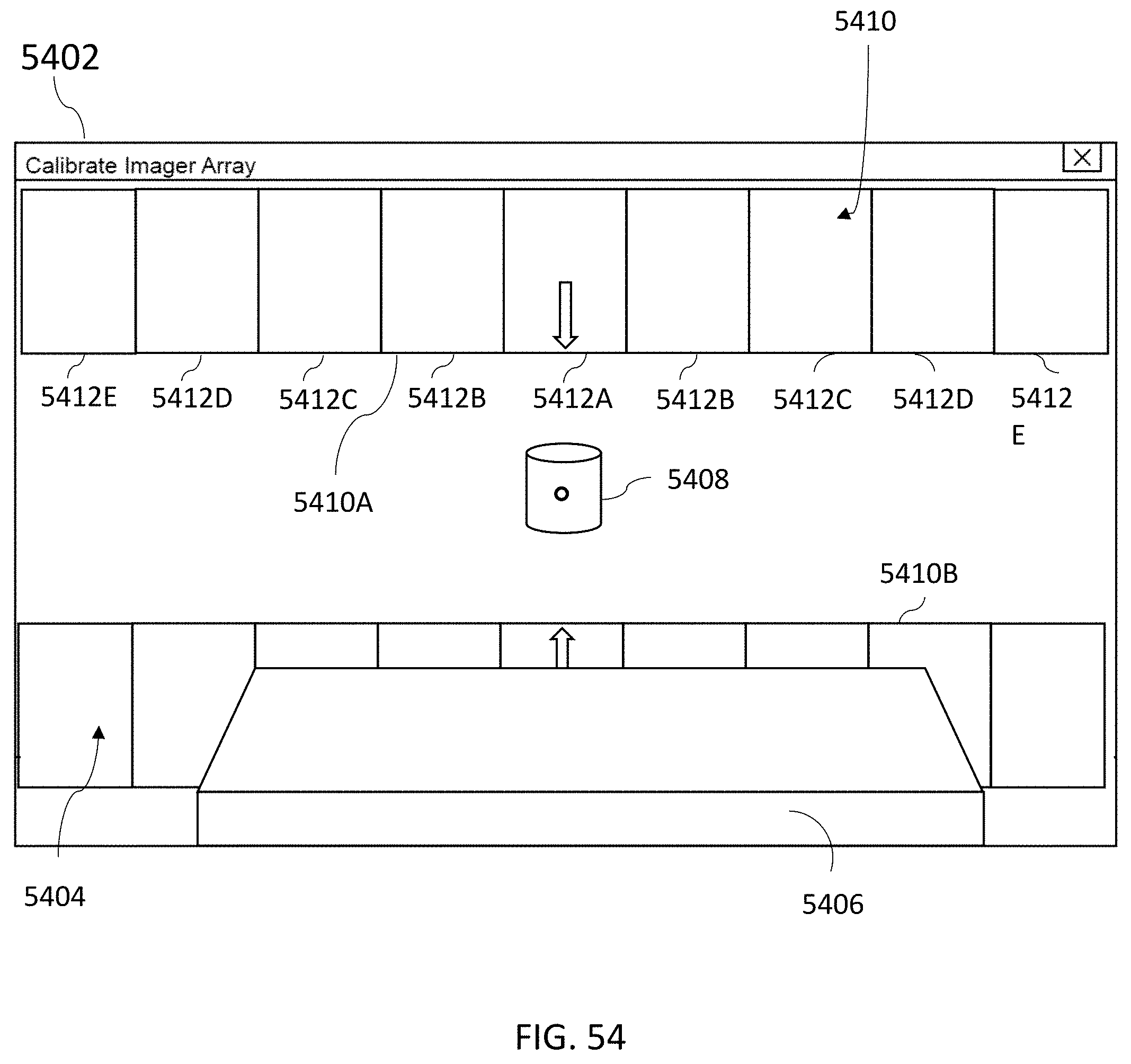

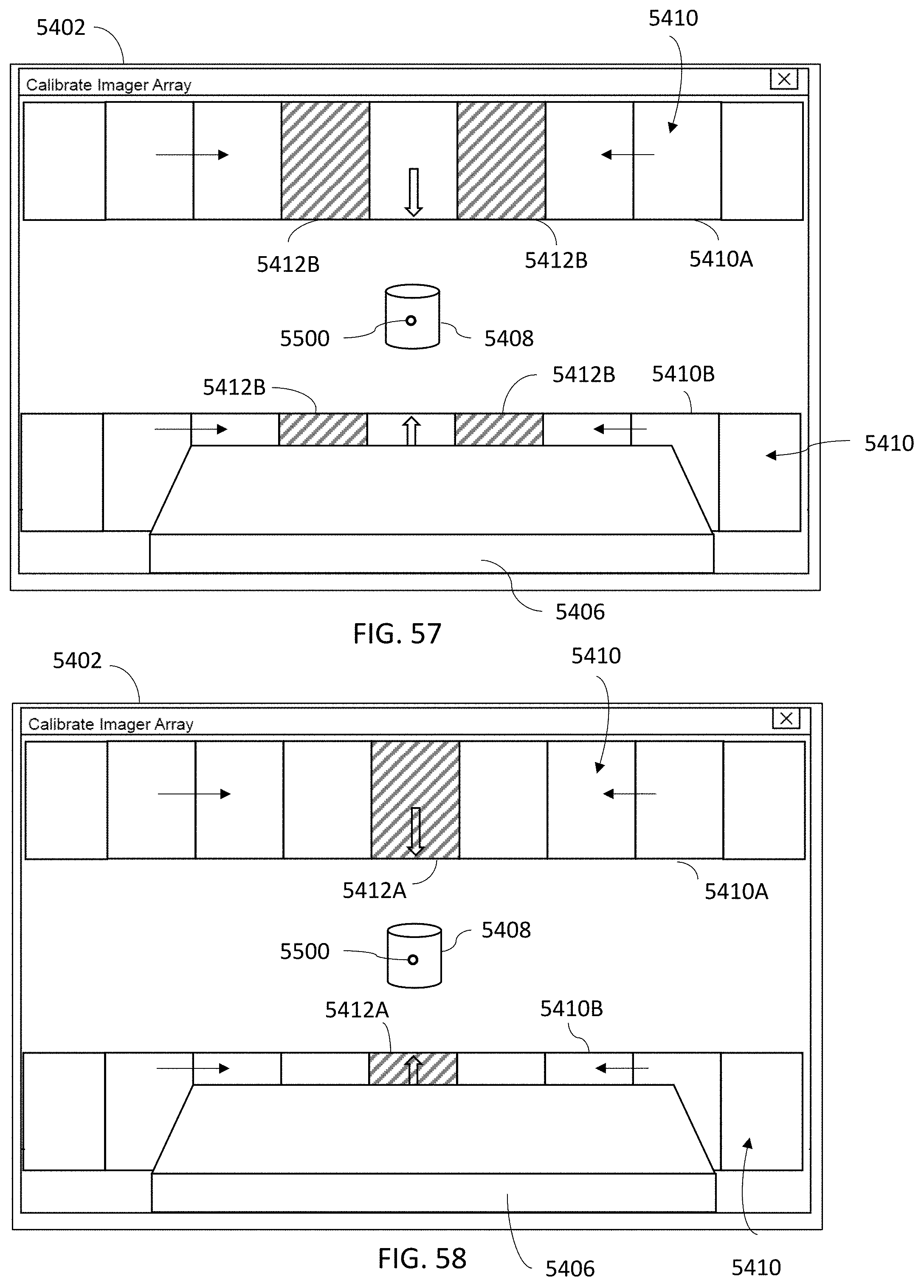

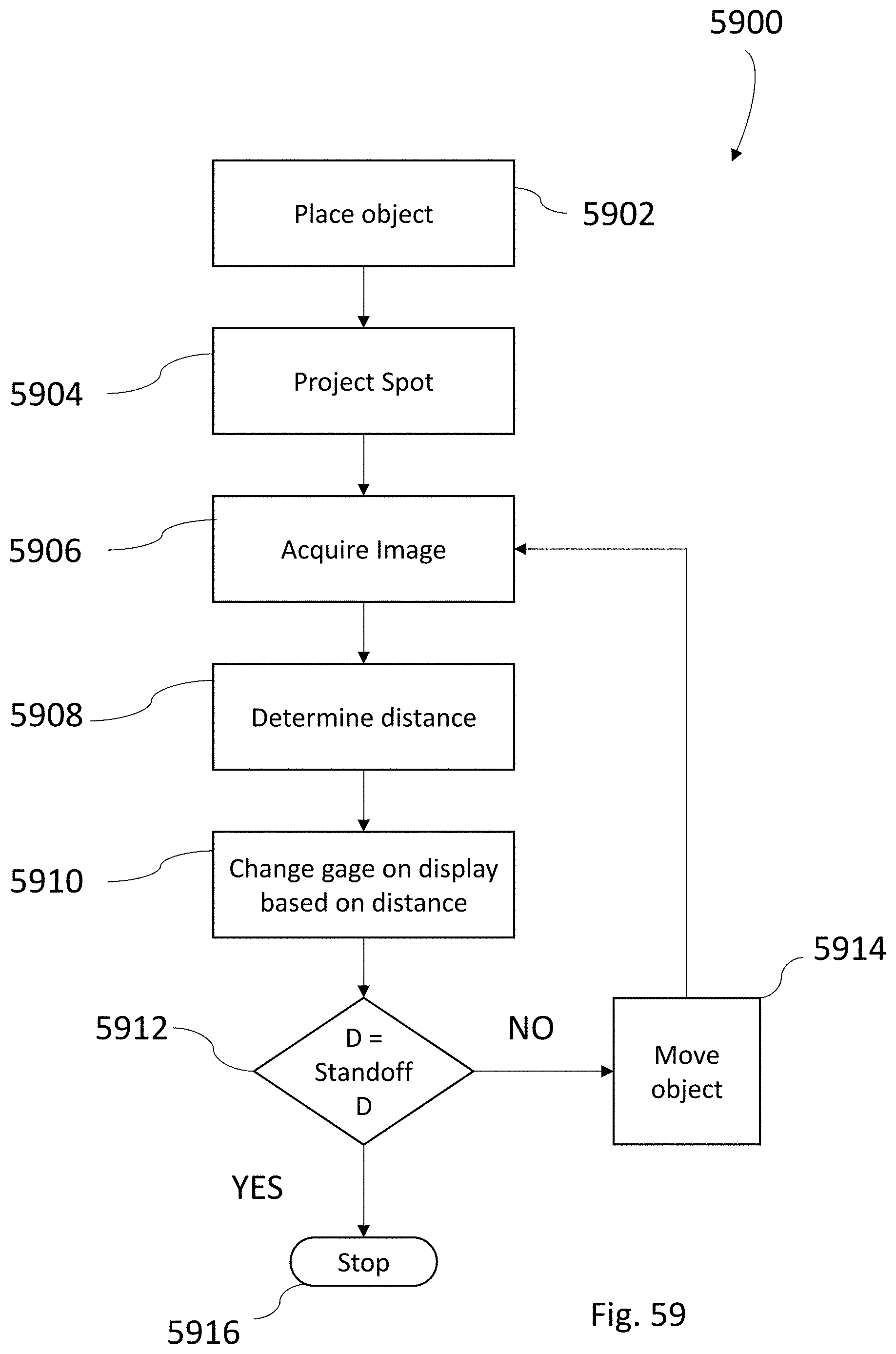

[0076] FIGS. 54-58 are illustrations of a graphical display with a standoff distance indicator that cooperates with a 3D imager; and

[0077] FIG. 59 is a flow diagram illustrating a method of positioning an object at a desired standoff distance.

[0078] The detailed description explains embodiments of the invention, together with advantages and features, by way of example with reference to the drawings.

DETAILED DESCRIPTION OF THE INVENTION

[0079] Embodiments of the present invention provide advantages in improving thermal stability and cooling and in enabling measurement of large objects with relatively high accuracy and high resolution at relatively high speeds.

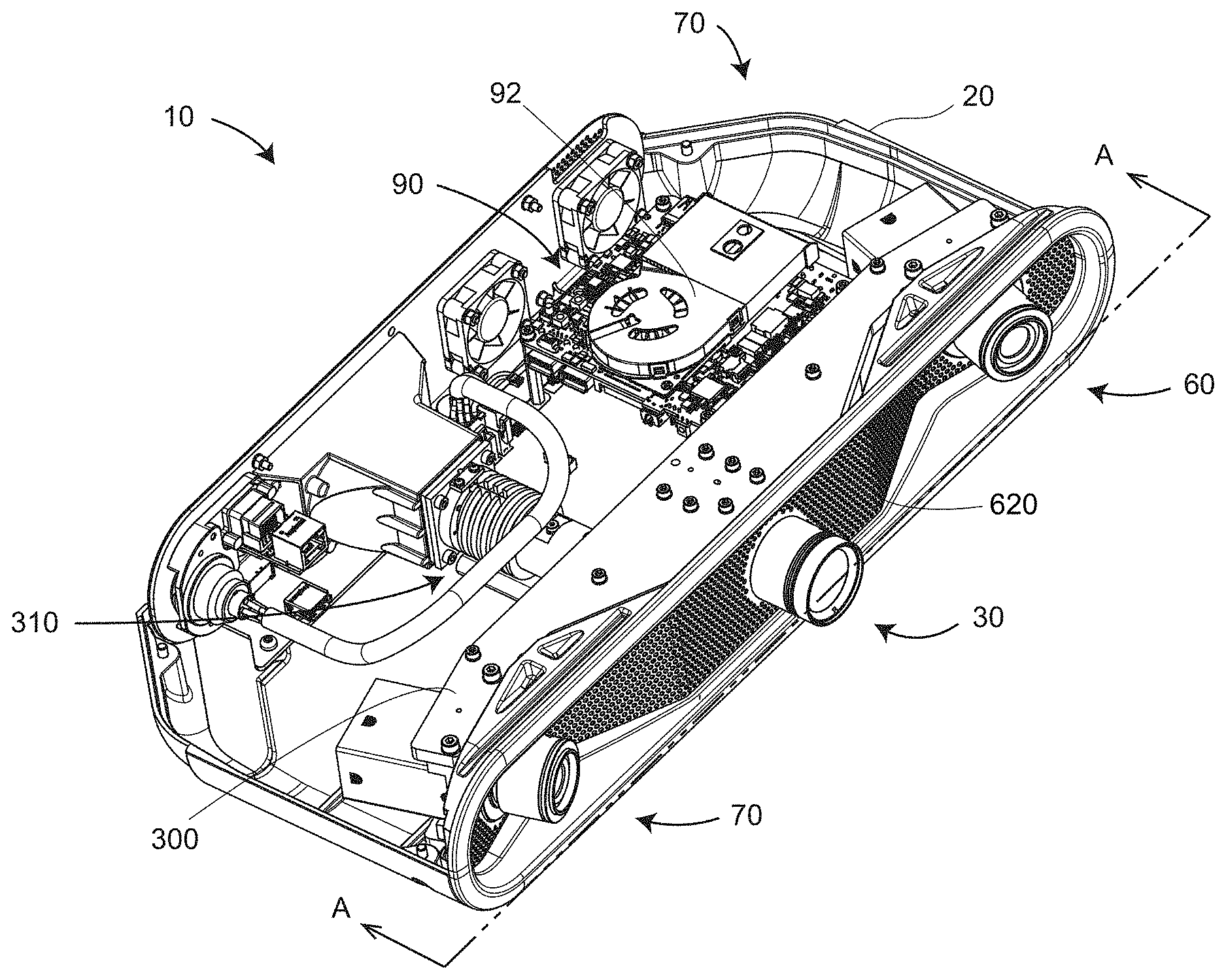

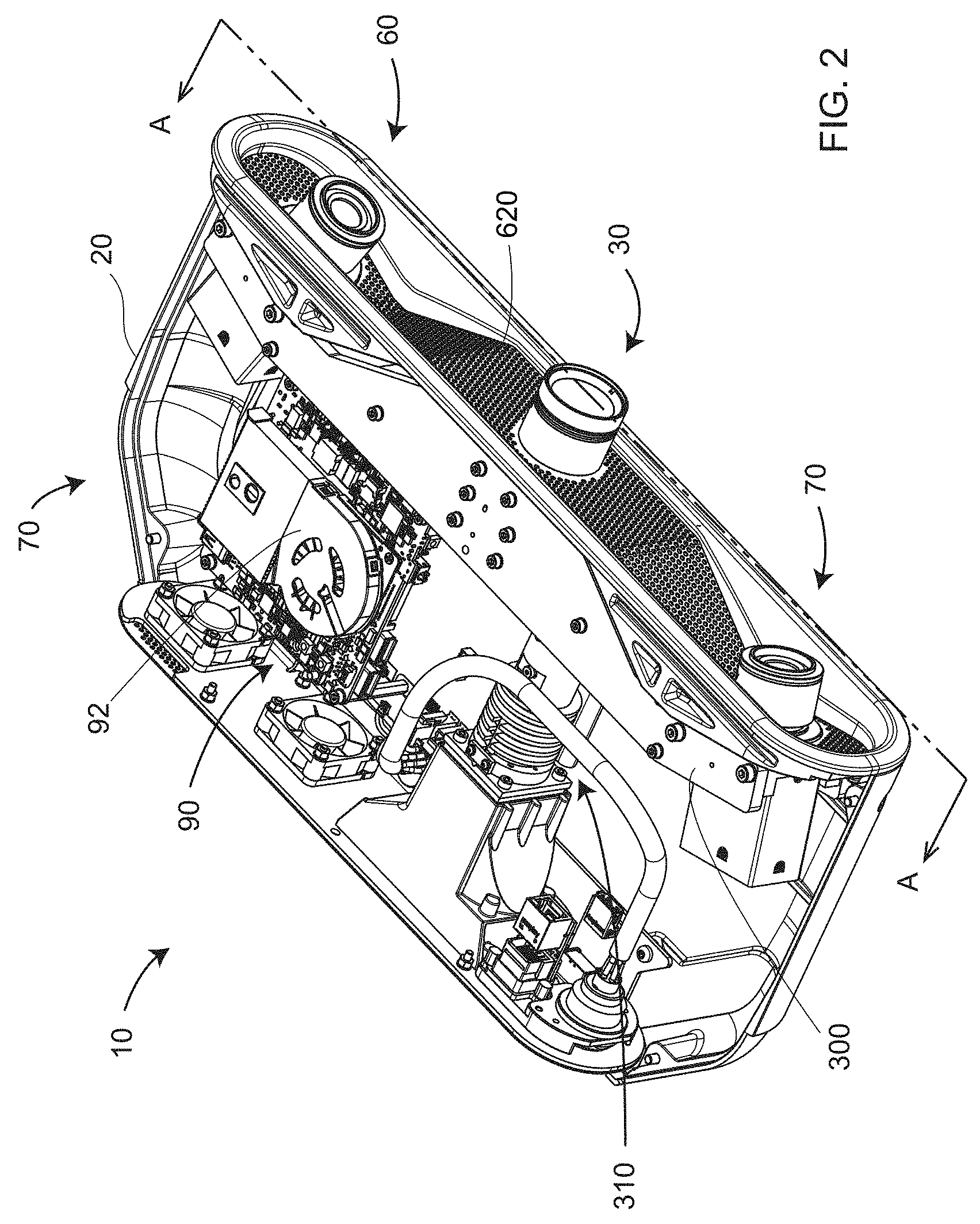

[0080] FIG. 1 is a perspective view of a 3D imager 10 according to an embodiment. It includes a frame 20, a projector 30, a first camera assembly 60, and a second camera assembly 70.

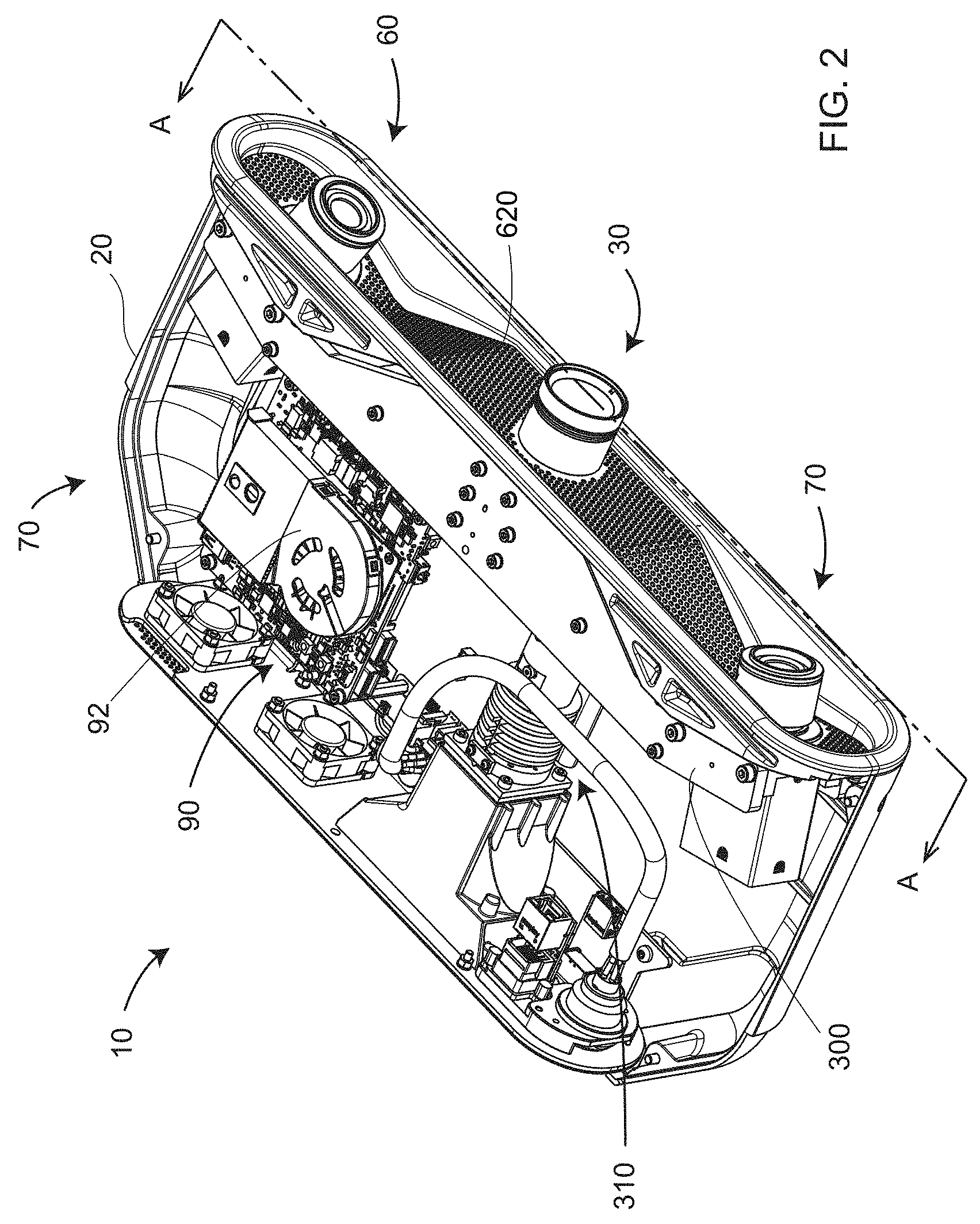

[0081] FIG. 2 and FIG. 3 show perspective views of internal elements 70 of the 3D imager 10. Internal elements are enclosed in a lower frame element 20. FIG. 3 shows elements of a projector-camera assembly 300 that includes projector-source assembly 310, projector 30, first camera-lens assembly 60, second camera-lens assembly 70, and support assembly 320. The support assembly 320 includes top structural support 322, bottom structural support 324, and web support 326. In addition, each camera includes mounting pins 328 and screws 329A, 329B.

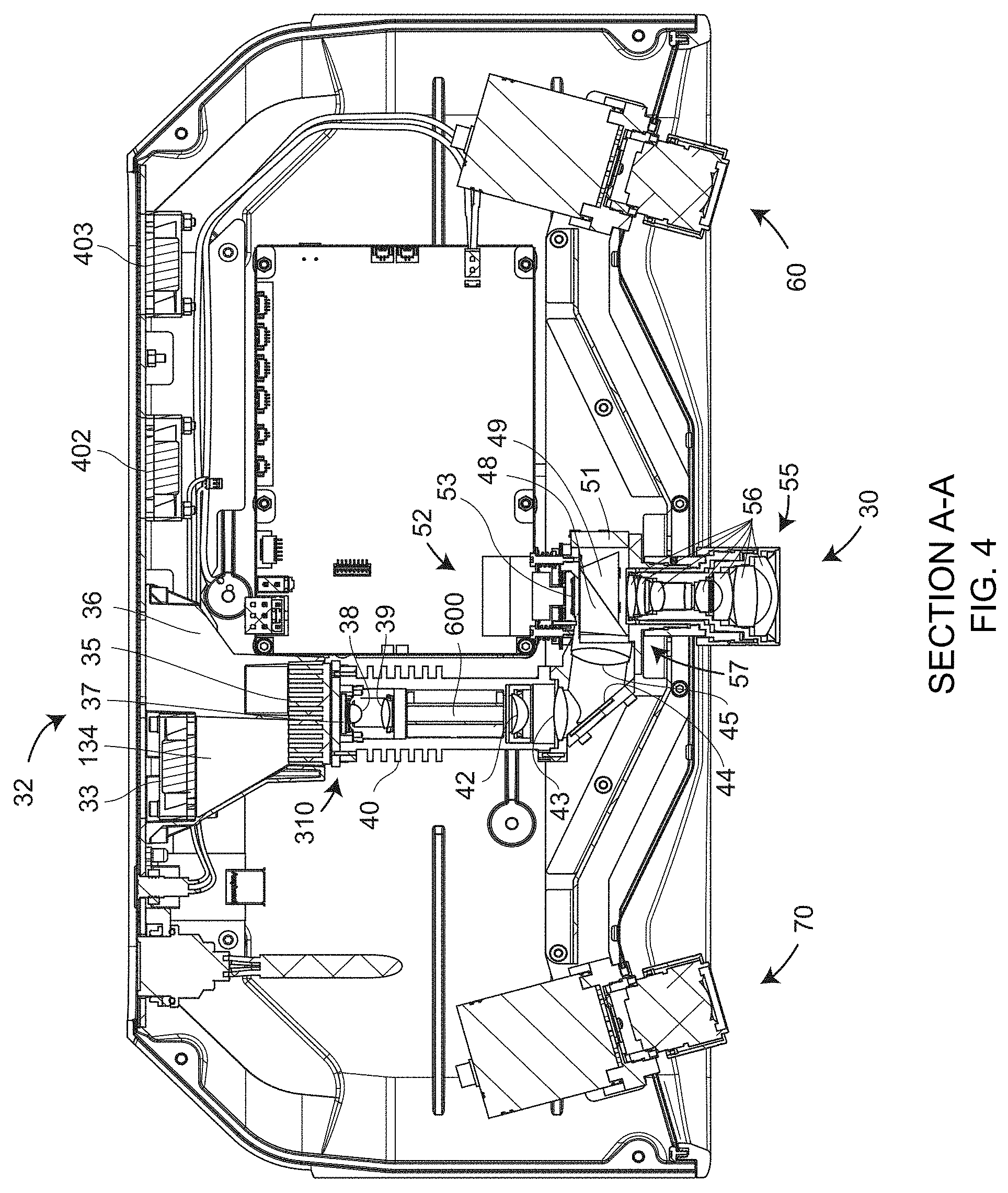

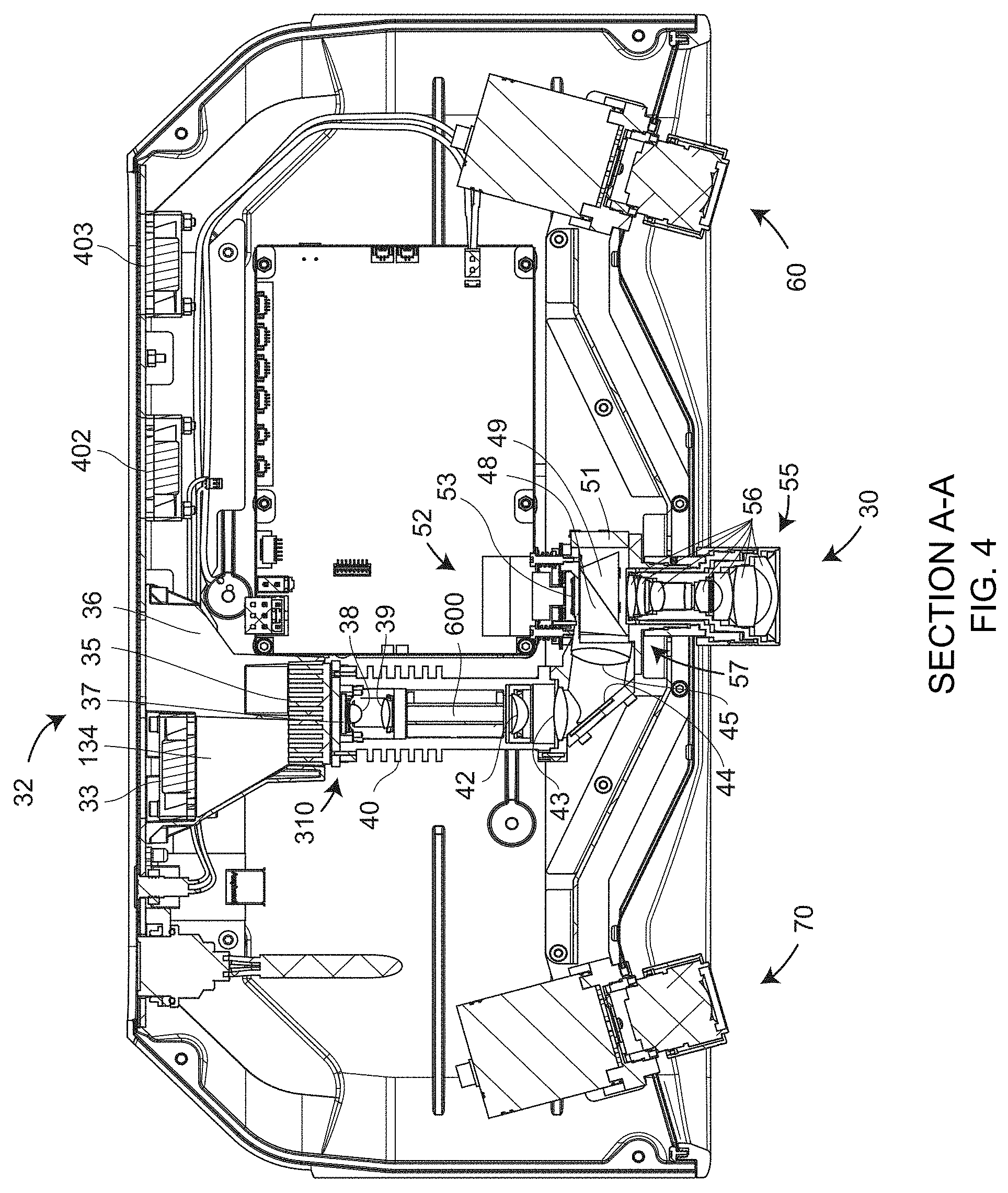

[0082] FIG. 4 is a top cross-sectional view of the 3D imager from FIG. 2. The projector lens assembly 30 includes a projector lens 55 and a projector lens mount 57. Projector lens 55 includes projector lens elements 56.

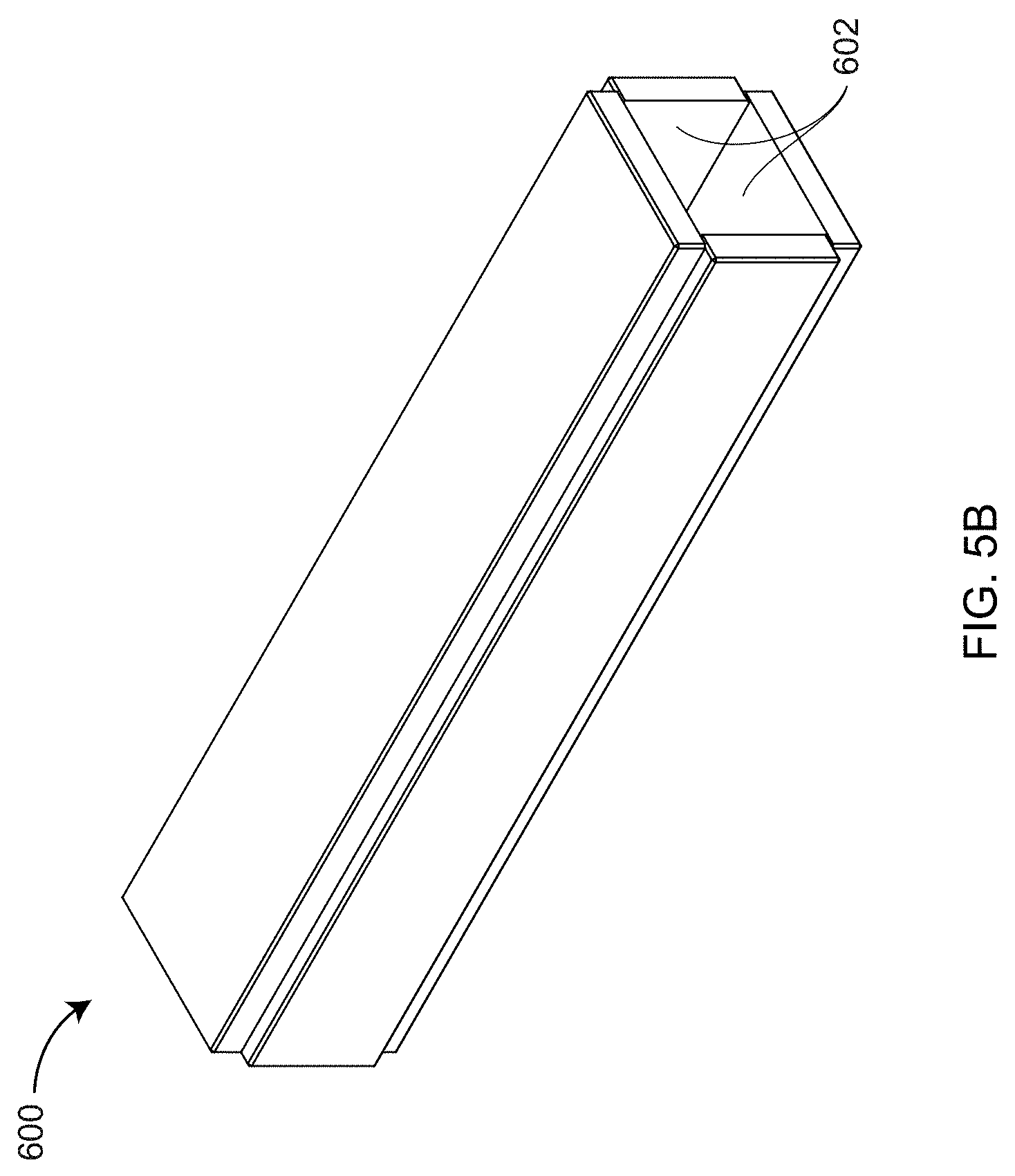

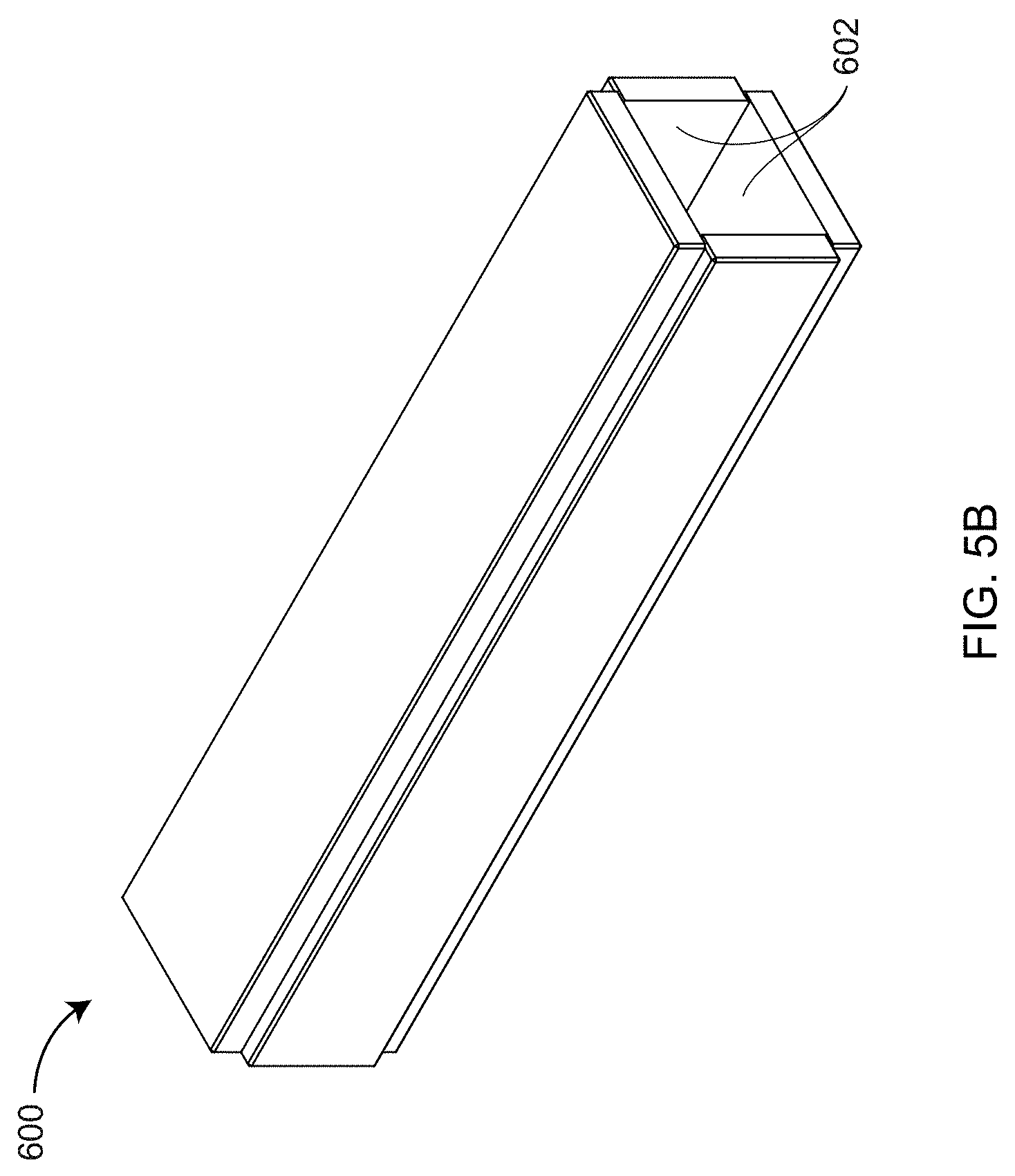

[0083] FIG. 5A, which is a cross-sectional view from FIG. 3, shows additional details of projector-source assembly 310 and pattern-projection assembly 52. In an embodiment, the projector-source assembly 310 includes light source 37, condensing lens elements 38, 39, light pipe 600, lenses 42, 43, 45, and mirror 44. In an embodiment, the light source 37 is an LED. The condensing lenses 38, 39 funnel light into the light pipe 600, which is shown in more detail in FIG. 5B. The light pipe reflects rays of light off reflective surfaces 602 in the light pipe 600. The purpose of the light pipe is to improve the homogeneity of the light from the condenser lenses 38, 39. Light passes through lenses 42 and 43 before reflecting off mirror 44 and passing through lens 45 into the pattern-projection assembly 52.

[0084] The pattern-projection assembly 52 includes a first prism 48, a second prism 49, and a digital micromirror device (DMD) 53. Together, the first prism 48 and second prism 49 comprise a total-internal-reflection (TIR) beam combiner. Light from lens 45 strikes an air interface between the first prism 48 and second prism 49. Because of the index of refraction of the glass in the first prism 48 and the angle of the first air interface relative to the light arriving from the lens 45, the light totally reflects toward the DMD 53. In the reverse direction, light reflected off the DMD 53 does not experience TIR and passes either out of the projector lens assembly 30 or onto a beam block 51. In an embodiment, the DMD 53 includes a large number of small micromechanical mirrors that rotate by a small angle of 10 to 12 degrees in either of two directions. In one direction, the light passes out of the projector 30. In the other direction, the light passes onto the beam block 51. Each mirror is toggled very quickly in such a way as to enable reflection of many shades of gray, from white to black. In an embodiment, the DMD chip produces 1024 shades of gray.
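By way of illustration only, the following sketch shows one way in which rapid toggling of a single mirror could yield 1024 gray levels. It assumes a 10-bit, binary-weighted bit-plane scheme; that scheme, the function names `bit_plane_schedule` and `duty_cycle`, and the unitless time slots are hypothetical and are not taken from the embodiment described above.

```python
def bit_plane_schedule(gray_level, bits=10):
    """Return (duration_units, mirror_on) pairs, most significant bit first.

    Assumption: each bit of the gray level controls the mirror for a time
    slot whose length is proportional to the weight of that bit.
    """
    if not 0 <= gray_level < 2 ** bits:
        raise ValueError("gray level out of range")
    schedule = []
    for bit in reversed(range(bits)):
        duration = 1 << bit                      # slot length in arbitrary units
        mirror_on = bool((gray_level >> bit) & 1)
        schedule.append((duration, mirror_on))
    return schedule


def duty_cycle(gray_level, bits=10):
    """Fraction of the frame during which light leaves the projector."""
    schedule = bit_plane_schedule(gray_level, bits)
    on_time = sum(duration for duration, on in schedule if on)
    total = sum(duration for duration, _ in schedule)
    return on_time / total
```

Under these assumptions a gray level of 0 sends all light to the beam block for the whole frame, and a gray level of 1023 sends all light out of the projector for the whole frame.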

[0085] The light source assembly 37 is cooled by projector cooling system 32 shown in FIG. 4. The projector cooling system 32 includes fan 33, chambers 134, 36, and heat sinks 35, 40. In an embodiment, the heat sink 35 includes projections 31 having intervening air spaces, as shown in FIGS. 5A and 6C. In an embodiment, the fan 33 pushes air through chamber 134, through the air spaces separating the projections 31, into the chamber 36, and out the 3D imager 10 through a filtered exit in the frame 20. In this way, relatively cool outside air is forced past the heat sink projections 31, thereby removing heat generated by the light source 37 and stabilizing the temperature of the light source 37. In an embodiment illustrated in partial perspective view 604 in FIG. 6C, the light source 37 is an LED chip mounted to a heat sink element 608 that is in contact with the heat sink 31 and heat sink 40. The heat sink 31 may be in contact with a surrounding heat sink 606. In an embodiment, a temperature sensor 610 is attached to the heat sink 608 to enable monitoring of the LED temperature.

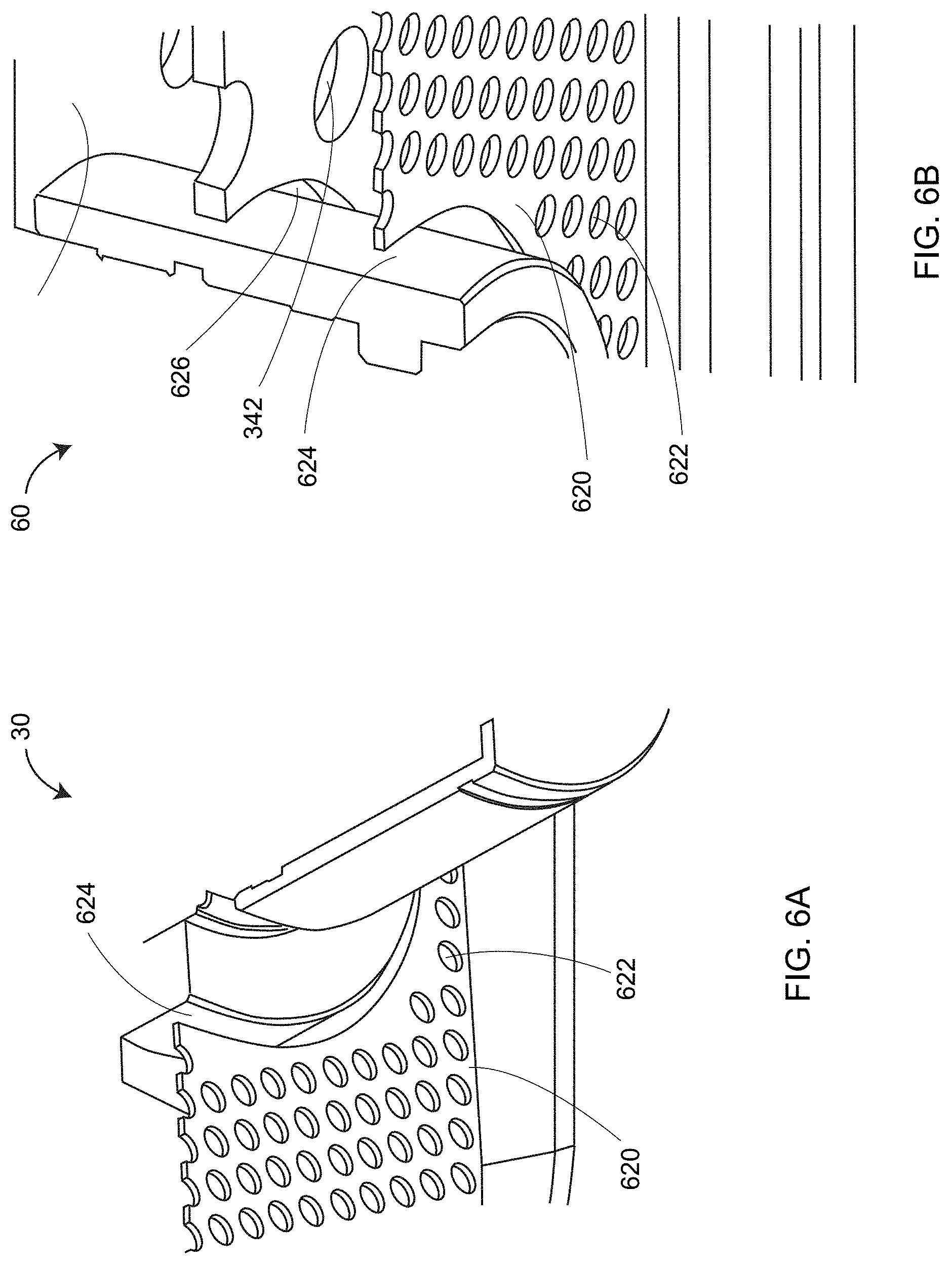

[0086] Elements within the frame 20 are cooled by fans 402 and 403 shown in FIG. 4. The fans 402 and 403 pull air out of the cavity, first through holes 622 and openings 624 in a grill vent 620 surrounding the projector 30, the first camera assembly 60, and the second camera assembly 70. The air is pulled through additional openings and holes in the projector-camera assembly 300 such as the opening 340 and the web holes 342 shown in FIG. 3 and the opening 626 shown in FIG. 6B. The air drawn out of the frame 20 by the fans 402 and 403 provides cooling for the projector 30 and the camera assemblies 60, 70, as well as the heat sink 40 and other elements internal to the frame 20. As shown in FIG. 2, in an embodiment further cooling is provided for a circuit board 90 by a fan 92 that pumps heat from the circuit board out of the frame 20 through a dedicated duct.

[0087] In an embodiment, the 3D imager includes internal electrical system 700 shown in FIG. 7. Internal electrical system 700 includes a Peripheral Component Interface (PCI) board 710, projector electronics 770, a processor board 750, and a collection of additional components discussed herein below. In an embodiment, the PCI board 710 includes a microcontroller integrated circuit 720, DMD controller chip 740, LED driver chip 734, an inertial measurement unit (IMU) chip 732, a Universal Serial Bus (USB) hub 736, and a power conversion component 714.

[0088] In an embodiment, the microcontroller integrated circuit 720 is a Programmable System-on-Chip (PSoC) by Cypress Semiconductor. The PSoC includes a central processing unit (CPU) core and mixed-signal arrays of configurable integrated analog and digital peripheral functions. In an embodiment, the microcontroller integrated circuit 720 is configured to serve as (1) a controller 724 for the fans 784A, 784B, and 784C, corresponding to fans 33, 402, and 403 in FIG. 4; (2) a controller for the LED driver chip 734; (3) an interface 726 for thermistor temperature sensors 782A, 782B, and 782C; (4) an inter-integrated circuit (I.sup.2C) interface 722; (5) an ARM microcontroller 727; and (6) a USB interface 728. The I.sup.2C interface 722 receives signals from the IMU chip 732 and I.sup.2C temperature sensors 786A, 786B, 786C, and 786D. It sends signals to the ARM microcontroller 727, which in turn sends signals to the fan controller 724. The DMD controller chip 740 sends high speed electrical pattern sequences to a DMD chip 772. It also sends output trigger signals to electronics 760A and 760B of the first camera assembly 60 and the second camera assembly 70, respectively. In an embodiment, the IMU includes a three-axis accelerometer and a three-axis gyroscope. In other embodiments, the IMU further includes an attitude sensor such as a magnetometer and an altitude sensor such as a barometer.

[0089] The projector electronics 770 includes fan electronics 777, projector photodiode 776, projector thermistor electronics 775, light source electronics 774, and DMD chip 772. In an embodiment, fan electronics 777 provides an electrical signal to influence the speed of the projector fan 33. The projector photodiode 776 measures an amount of optical power received by the DMD chip 772. The projector thermistor electronics 775 receives a signal from a thermistor temperature sensor such as the sensor 610 in FIG. 6C. The sensor 610 may provide a control signal in response. The light source electronics 774 may drive an LED chip 37. In an embodiment, the DMD is a DLP4500 device from Texas Instruments. This device includes 912×1140 micromirrors.

[0090] In an embodiment, the processor board 750 is a Next Unit of Computing (NUC) small form factor PC by Intel. In an embodiment, the processor board 750 is on the circuit board 90, which includes an integrated fan header 92, as shown in FIG. 1. In an embodiment, the processor board 750 communicates with camera assemblies 60 and 70 over electronics 760A, 760B via USB 3.0. The processor board 750 performs phase and triangulation calculations as discussed herein below and sends the results over USB 3.0 to the USB 2.0 hub 736, which shares signals with the DMD controller chip 740 and the USB interface 728. The processor board 750 may perform additional functions such as filtering of data or it may send partly processed data to additional computing elements, as explained herein below with reference to FIG. 8. In an embodiment, the processor board 750 further includes a USB 3.0 jack and an RJ45 jack.

[0091] In an embodiment, a DC adapter 704 attached to an AC mains plug 702 provides DC power through a connector pair 705, 706 and a socket 707 to the 3D imager 10. Power enters the frame 20 over the wires 708 and arrives at the power conversion component 714, which down-converts the DC voltages to desired levels and distributes the electrical power to components in the internal electrical system 700. One or more LEDs 715 may be provided to indicate status of the 3D imager 10.

[0092] FIG. 8 is a block diagram of a computing system that includes the internal electrical system 700, one or more computing elements 810, 820, and a network of computing elements 830, commonly referred to as the cloud. The cloud may represent any sort of network connection (e.g., the worldwide web or internet). Communication among the computing (processing and memory) components may be wired or wireless. Examples of wireless communication methods include IEEE 802.11 (Wi-Fi), IEEE 802.15.1 (Bluetooth), and cellular communication (e.g., 3G and 4G). Many other types of wireless communication are possible. A popular type of wired communication is IEEE 802.3 (Ethernet). In some cases, multiple external processors, especially processors on the cloud, may be used to process scanned data in parallel, thereby providing faster results, especially where relatively time-consuming registration and filtering may be required.

[0093] FIG. 9 shows a structured light triangulation scanner 900 that projects a pattern of light over an area on a surface 930. The scanner, which has a frame of reference 960, includes a projector 910 and a camera 920. The projector 910 includes an illuminated projector pattern generator 912, a projector lens 914, and a perspective center 918 through which a ray of light 911 emerges. The ray of light 911 emerges from a corrected point 916 having a corrected position on the pattern generator 912. In an embodiment, the point 916 has been corrected to account for aberrations of the projector, including aberrations of the lens 914, in order to cause the ray to pass through the perspective center, thereby simplifying triangulation calculations.

[0094] The ray of light 911 intersects the surface 930 in a point 932, which is reflected (scattered) off the surface and sent through the camera lens 924 to create a clear image of the pattern on the surface 930 on the surface of a photosensitive array 922. The light from the point 932 passes in a ray 921 through the camera perspective center 928 to form an image spot at the corrected point 926. The image spot is corrected in position to correct for aberrations in the camera lens. A correspondence is obtained between the point 926 on the photosensitive array 922 and the point 916 on the illuminated projector pattern generator 912. As explained herein below, the correspondence may be obtained by using a coded or an uncoded (sequentially projected) pattern. Once the correspondence is known, the angles a and b in FIG. 9 may be determined. The baseline 940, which is a line segment drawn between the perspective centers 918 and 928, has a length C. Knowing the angles a, b and the length C, all the angles and side lengths of the triangle 928-932-918 may be determined. Digital image information is transmitted to a processor 950, which determines 3D coordinates of the surface 930. The processor 950 may also instruct the illuminated pattern generator 912 to generate an appropriate pattern. The processor 950 may be located within the scanner assembly, or it may be an external computer, or a remote server.
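As a non-limiting illustration of the triangle solution described above, the following sketch applies the law of sines to the two baseline angles and the baseline length. The assignment of angle a to the perspective center 918 and angle b to the perspective center 928 is an assumption, as are the function name `solve_triangle` and the convention that both angles are interior angles measured from the baseline in radians.

```python
import math


def solve_triangle(angle_a, angle_b, baseline_c):
    """Solve the triangle formed by two perspective centers and an object point.

    angle_a, angle_b: interior angles (radians) at the two ends of the baseline.
    baseline_c: length C of the baseline.
    Returns (range_from_a, range_from_b, depth), where depth is the
    perpendicular distance of the object point from the baseline.
    """
    if angle_a <= 0 or angle_b <= 0:
        raise ValueError("angles must be positive")
    apex = math.pi - angle_a - angle_b           # angle at the object point
    if apex <= 0:
        raise ValueError("rays do not converge in front of the baseline")
    # Law of sines: each side is proportional to the sine of the opposite angle.
    range_from_a = baseline_c * math.sin(angle_b) / math.sin(apex)
    range_from_b = baseline_c * math.sin(angle_a) / math.sin(apex)
    depth = range_from_a * math.sin(angle_a)
    return range_from_a, range_from_b, depth
```

For example, with both angles equal to 60 degrees and a unit baseline, the triangle is equilateral, so both ranges equal the baseline length.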

[0095] As used herein, the term "pose" refers to a combination of a position and an orientation. In an embodiment, the position and the orientation are desired for the camera and the projector in a frame of reference of the 3D imager 900. Since a position is characterized by three translational degrees of freedom (such as x, y, z) and an orientation is composed of three orientational degrees of freedom (such as roll, pitch, and yaw angles), the term pose defines a total of six degrees of freedom. In a triangulation calculation, a relative pose of the camera and the projector is desired within the frame of reference of the 3D imager. As used herein, the term "relative pose" is used because the perspective center of the camera or the projector can be located on an (arbitrary) origin of the 3D imager system; one direction (say the x axis) can be selected along the baseline; and one direction can be selected perpendicular to the baseline and perpendicular to an optical axis. In most cases, a relative pose described by six degrees of freedom is sufficient to perform the triangulation calculation. For example, the origin of a 3D imager can be placed at the perspective center of the camera. The baseline (between the camera perspective center and the projector perspective center) may be selected to coincide with the x axis of the 3D imager. The y axis may be selected perpendicular to the baseline and the optical axis of the camera. Two additional angles of rotation are used to fully define the orientation of the camera system. Three additional angles of rotation are used to fully define the orientation of the projector. In this embodiment, six degrees of freedom define the state of the 3D imager: one baseline, two camera angles, and three projector angles. In other embodiments, other coordinate representations are possible.
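By way of example only, a six-degree-of-freedom pose may be represented in software as three translations and three rotation angles. The sketch below is an assumption-laden illustration: the class name `Pose`, the rotation order (yaw about z, then pitch about y, then roll about x, composed as Rz·Ry·Rx), and the use of radians are hypothetical choices and are not specified by the embodiment.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    """Position (x, y, z) plus orientation (roll, pitch, yaw) in radians."""
    x: float
    y: float
    z: float
    roll: float
    pitch: float
    yaw: float

    def rotation(self):
        """3x3 rotation matrix R = Rz(yaw) * Ry(pitch) * Rx(roll) (assumed order)."""
        cr, sr = math.cos(self.roll), math.sin(self.roll)
        cp, sp = math.cos(self.pitch), math.sin(self.pitch)
        cy, sy = math.cos(self.yaw), math.sin(self.yaw)
        return [
            [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
            [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
            [-sp, cp * sr, cp * cr],
        ]

    def transform(self, point):
        """Map a point from the device frame into the imager frame."""
        r = self.rotation()
        t = (self.x, self.y, self.z)
        return tuple(
            sum(r[i][j] * point[j] for j in range(3)) + t[i] for i in range(3)
        )
```

With this convention, a pose having a yaw of 90 degrees and a translation of (1, 2, 3) maps the device-frame point (1, 0, 0) to approximately (1, 3, 3) in the imager frame.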

[0096] FIG. 10 shows a structured light triangulation scanner 1000 having a projector 1050, a first camera 1010, and a second camera 1030. The projector creates a pattern of light on a pattern generator plane 1052, which it projects from a corrected point 1053 on the pattern through a perspective center 1058 (point D) of the lens 1054 onto an object surface 1070 at a point 1072 (point F). The point 1072 is imaged by the first camera 1010 by receiving a ray of light from the point 1072 through a perspective center 1018 (point E) of a lens 1014 onto the surface of a photosensitive array 1012 of the camera as a corrected point 1020. The point 1020 is corrected in the read-out data by applying a correction factor to remove the effects of lens aberrations. The point 1072 is likewise imaged by the second camera 1030 by receiving a ray of light from the point 1072 through a perspective center 1038 (point C) of the lens 1034 onto the surface of a photosensitive array 1032 of the second camera as a corrected point 1035.

[0097] The inclusion of two cameras 1010 and 1030 in the system 1000 provides advantages over the device of FIG. 9 that includes a single camera. One advantage is that each of the two cameras has a different view of the point 1072 (point F). Because of this difference in viewpoints, it is possible in some cases to see features that would otherwise be obscured--for example, seeing into a hole or behind a blockage. In addition, it is possible in the system 1000 of FIG. 10 to perform three triangulation calculations rather than a single triangulation calculation, thereby improving measurement accuracy. A first triangulation calculation can be made between corresponding points in the two cameras using the triangle CEF with the baseline B.sub.3. A second triangulation calculation can be made based on corresponding points of the first camera and the projector using the triangle DEF with the baseline B.sub.2. A third triangulation calculation can be made based on corresponding points of the second camera and the projector using the triangle CDF with the baseline B.sub.1. The optical axis of the first camera 1010 is 1016, and the optical axis of the second camera 1030 is 1036.

[0098] FIG. 11 shows 3D imager 1100 having two cameras 1110, 1130 and a projector 1150 arranged in a triangle A.sub.1-A.sub.2-A.sub.3. In an embodiment, the 3D imager 1100 of FIG. 11 further includes a camera 1190 that may be used to provide color (texture) information for incorporation into the 3D image. In addition, the camera 1190 may be used to register multiple 3D images through the use of videogrammetry.

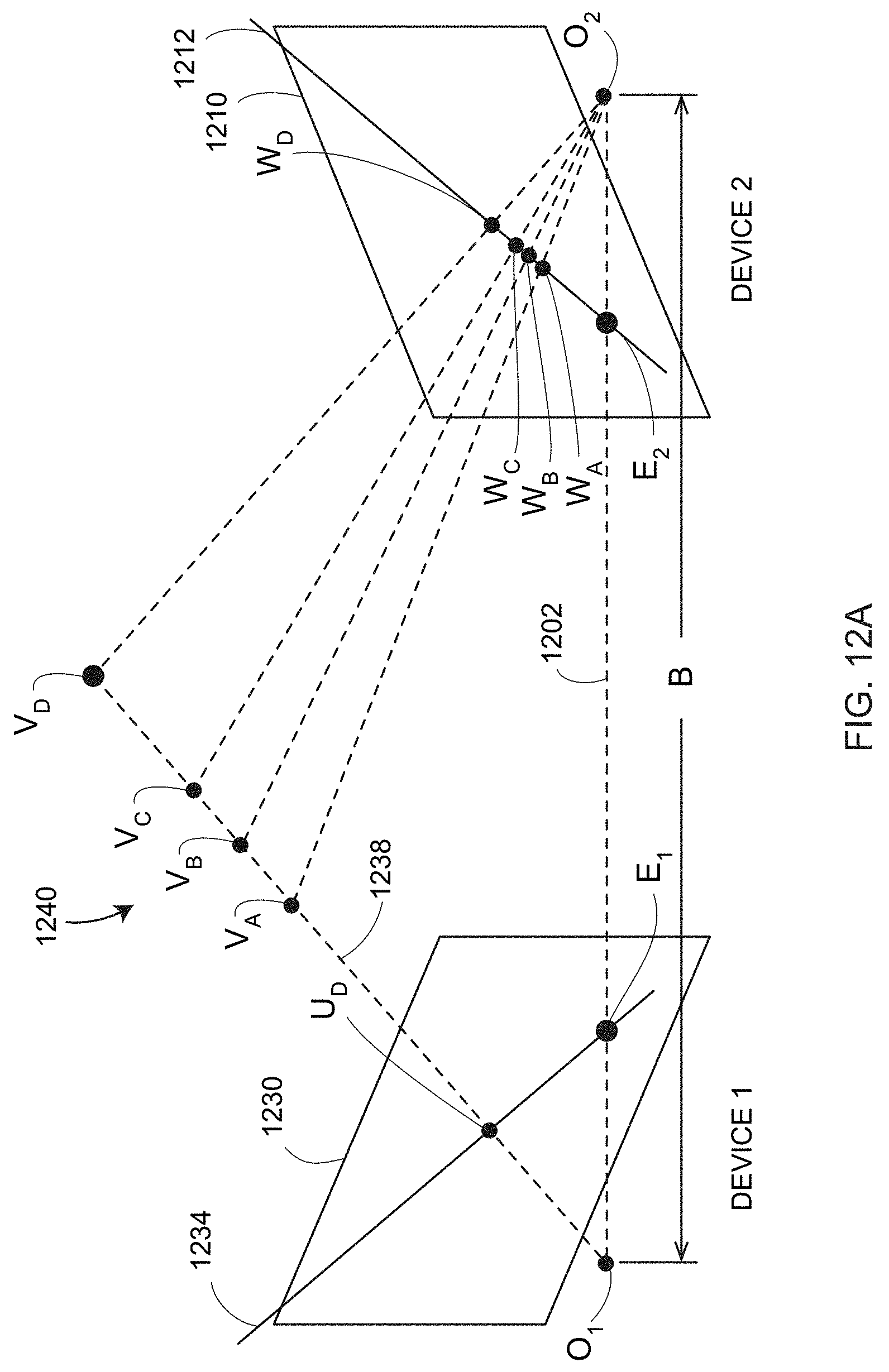

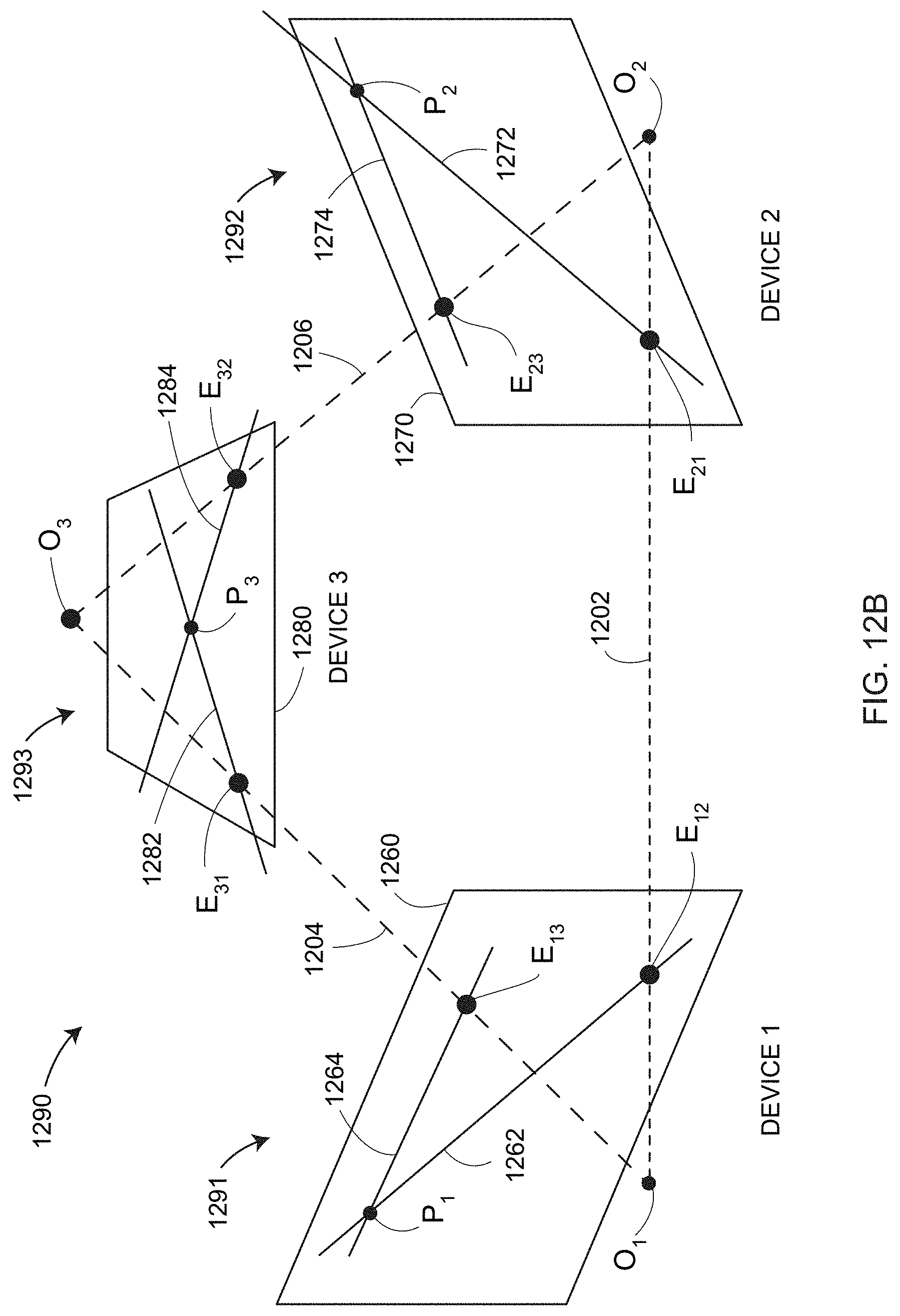

[0099] This triangular arrangement provides additional information beyond that available for two cameras and a projector arranged in a straight line as illustrated in FIGS. 1 and 10. The additional information may be understood in reference to FIG. 12A, which explains the concept of epipolar constraints, and FIG. 12B, which explains how epipolar constraints are advantageously applied to the triangular arrangement of the 3D imager 1100. In FIG. 12A, a 3D triangulation instrument 1240 includes a device 1 and a device 2 on the left and right sides of FIG. 12A, respectively. Device 1 and device 2 may be two cameras or device 1 and device 2 may be one camera and one projector. Each of the two devices, whether a camera or a projector, has a perspective center, O.sub.1 and O.sub.2, and a reference plane, 1230 or 1210. The perspective centers are separated by a baseline distance B, which is the length of the line 1202. The concept of perspective center is discussed in more detail in reference to FIGS. 13C, 13D, and 13E. Basically, the perspective centers O.sub.1, O.sub.2 are points through which rays of light may be considered to travel, either to or from a point on an object. These rays of light either emerge from an illuminated projector pattern, such as the pattern on illuminated projector pattern generator 912 of FIG. 9, or impinge on a photosensitive array, such as the photosensitive array 922 of FIG. 9. As can be seen in FIG. 9, the lens 914 lies between the illuminated object point 932 and the plane of the illuminated projector pattern generator 912. Likewise, the lens 924 lies between the illuminated object point 932 and the plane of the photosensitive array 922. However, the pattern of the front surface planes of devices 912 and 922 would be the same if they were moved to appropriate positions opposite the lenses 914 and 924, respectively. This placement of the reference planes 1230, 1210 is applied in FIG. 12A, which shows the reference planes 1230, 1210 between the object point and the perspective centers O.sub.1, O.sub.2.

[0100] In FIG. 12A, for the reference plane 1230 angled toward the perspective center O.sub.2 and the reference plane 1210 angled toward the perspective center O.sub.1, a line 1202 drawn between the perspective centers O.sub.1 and O.sub.2 crosses the planes 1230 and 1210 at the epipole points E.sub.1, E.sub.2, respectively. Consider a point U.sub.D on the plane 1230. If device 1 is a camera, it is known that an object point that produces the point U.sub.D on the image lies on the line 1238. The object point might be, for example, one of the points V.sub.A, V.sub.B, V.sub.C, or V.sub.D. These four object points correspond to the points W.sub.A, W.sub.B, W.sub.C, W.sub.D, respectively, on the reference plane 1210 of device 2. This is true whether device 2 is a camera or a projector. It is also true that the four points lie on a straight line 1212 in the plane 1210. This line, which is the line of intersection of the reference plane 1210 with the plane of O.sub.1-O.sub.2-U.sub.D, is referred to as the epipolar line 1212. It follows that any epipolar line on the reference plane 1210 passes through the epipole E.sub.2. Just as there is an epipolar line on the reference plane of device 2 for any point on the reference plane of device 1, there is also an epipolar line 1234 on the reference plane of device 1 for any point on the reference plane of device 2.
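As a non-limiting numerical illustration of the epipolar relationship described above, the following sketch projects several candidate object points lying along a single ray from device 1 onto the reference plane of device 2 and confirms that the projections are collinear with the epipole. All coordinates, the plane definition, and the helper names are hypothetical values chosen only for illustration; they do not correspond to dimensions of FIG. 12A.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def line_plane_intersection(p0, p1, plane_point, plane_normal):
    """Point where the line through p0 and p1 crosses the given plane."""
    direction = sub(p1, p0)
    denom = dot(plane_normal, direction)
    if abs(denom) < 1e-12:
        raise ValueError("line is parallel to the plane")
    t = dot(plane_normal, sub(plane_point, p0)) / denom
    return tuple(p + t * d for p, d in zip(p0, direction))


def collinear(points, tol=1e-9):
    """True if every point lies on the line through the first two points."""
    a, b = points[0], points[1]
    ab = sub(b, a)
    residuals = (cross(ab, sub(p, a)) for p in points[2:])
    return all(math.sqrt(dot(c, c)) < tol for c in residuals)


# Hypothetical geometry in arbitrary units.
O1 = (0.0, 0.0, 0.0)              # perspective center of device 1
O2 = (1.0, 0.0, 0.0)              # perspective center of device 2
PLANE2_POINT = (0.9, 0.0, 0.3)    # a point on the reference plane of device 2
PLANE2_NORMAL = (-0.5, 0.0, 1.0)  # plane tilted toward O1
RAY_DIRECTION = (0.2, 0.1, 1.0)   # ray from O1 through the point U_D

# Epipole: where the baseline O1-O2 crosses the reference plane of device 2.
E2 = line_plane_intersection(O1, O2, PLANE2_POINT, PLANE2_NORMAL)

# Candidate object points along the ray, each projected through O2 onto plane 2.
W_POINTS = []
for t in (2.0, 3.0, 4.0, 5.0):
    v = tuple(o + t * d for o, d in zip(O1, RAY_DIRECTION))
    W_POINTS.append(line_plane_intersection(O2, v, PLANE2_POINT, PLANE2_NORMAL))
```

Calling `collinear([E2] + W_POINTS)` on these values confirms that the four projected points and the epipole fall on one straight line, which is the epipolar line for the chosen ray.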

[0101] FIG. 12B illustrates the epipolar relationships for a 3D imager 1290 corresponding to 3D imager 1100 of FIG. 11 in which two cameras and one projector are arranged in a triangular pattern. In general, the device 1, device 2, and device 3 may be any combination of cameras and projectors as long as at least one of the devices is a camera. Each of the three devices 1291, 1292, 1293 has a perspective center O.sub.1, O.sub.2, O.sub.3, respectively, and a reference plane 1260, 1270, and 1280, respectively. Each pair of devices has a pair of epipoles. Device 1 and device 2 have epipoles E.sub.12, E.sub.21 on the planes 1260, 1270, respectively. Device 1 and device 3 have epipoles E.sub.13, E.sub.31 on the planes 1260, 1280, respectively. Device 2 and device 3 have epipoles E.sub.23, E.sub.32 on the planes 1270, 1280, respectively. In other words, each reference plane includes two epipoles. The reference plane for device 1 includes epipoles E.sub.12 and E.sub.13. The reference plane for device 2 includes epipoles E.sub.21 and E.sub.23. The reference plane for device 3 includes epipoles E.sub.31 and E.sub.32.

[0102] Consider the situation of FIG. 12B in which device 3 is a projector, device 1 is a first camera, and device 2 is a second camera. Suppose that a projection point P.sub.3, a first image point P.sub.1, and a second image point P.sub.2 are obtained in a measurement. These results can be checked for consistency in the following way.

[0103] To check the consistency of the image point P.sub.1, intersect the plane P.sub.3-E.sub.31-E.sub.13 with the reference plane 1260 to obtain the epipolar line 1264. Intersect the plane P.sub.2-E.sub.21-E.sub.12 with the reference plane 1260 to obtain the epipolar line 1262. If the image point P.sub.1 has been determined consistently, the observed image point P.sub.1 will lie on the intersection of the determined epipolar lines 1262 and 1264.

[0104] To check the consistency of the image point P.sub.2, intersect the plane P.sub.3-E.sub.32-E.sub.23 with the reference plane 1270 to obtain the epipolar line 1274. Intersect the plane P.sub.1-E.sub.12-E.sub.21 with the reference plane 1270 to obtain the epipolar line 1272. If the image point P.sub.2 has been determined consistently, the observed image point P.sub.2 will lie on the intersection of the determined epipolar lines 1272 and 1274.

[0105] To check the consistency of the projection point P.sub.3, intersect the plane P.sub.2-E.sub.23-E.sub.32 with the reference plane 1280 to obtain the epipolar line 1284. Intersect the plane P.sub.1-E.sub.13-E.sub.31 with the reference plane 1280 to obtain the epipolar line 1282. If the projection point P.sub.3 has been determined consistently, the projection point P.sub.3 will lie on the intersection of the determined epipolar lines 1282 and 1284.
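The consistency checks described above may be sketched, by way of example only, in two-dimensional coordinates on a single reference plane. The sketch assumes that each epipolar line has already been expressed by two points on that plane (for instance, the epipole and one further point derived from the calibrated geometry); those inputs, the pixel values, the tolerance, and the function names are hypothetical.

```python
import math


def line_through(p, q):
    """Homogeneous line (a, b, c), with a*x + b*y + c = 0, through points p and q."""
    (x1, y1), (x2, y2) = p, q
    return (y1 - y2, x2 - x1, x1 * y2 - x2 * y1)


def intersection(line_a, line_b):
    """Crossing point of two non-parallel homogeneous lines."""
    a1, b1, c1 = line_a
    a2, b2, c2 = line_b
    w = a1 * b2 - a2 * b1
    if abs(w) < 1e-12:
        raise ValueError("epipolar lines are parallel")
    return ((b1 * c2 - b2 * c1) / w, (a2 * c1 - a1 * c2) / w)


def is_consistent(observed, line_a, line_b, tol=0.5):
    """True if the observed point lies within tol of the crossing of the two lines."""
    x, y = intersection(line_a, line_b)
    return math.hypot(observed[0] - x, observed[1] - y) <= tol


# Hypothetical pixel coordinates on the reference plane of device 1.
LINE_1262 = line_through((-400.0, 300.0), (200.0, 300.0))  # through epipole E12
LINE_1264 = line_through((900.0, 250.0), (500.0, 350.0))   # through epipole E13
```

With these values the two lines cross at (700, 300), so an observed point at (700.2, 299.9) passes the check at a tolerance of 0.5 pixel, while an observed point at (705, 300) does not. The same test applies to the points on the other two reference planes with their respective pairs of epipolar lines.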

[0106] The redundancy of information provided by using a 3D imager 1100 having a triangular arrangement of projector and cameras may be used to reduce measurement time, to identify errors, and to automatically update compensation/calibration parameters.

[0107] An example is now given of a way to reduce measurement time. As explained herein below in reference to FIGS. 14A-D and FIG. 15, one method of determining 3D coordinates is by performing sequential measurements. An example of such a sequential measurement method described herein below is to project a sinusoidal measurement pattern three or more times, with the phase of the pattern shifted each time. In an embodiment, such projections may be performed first with a coarse sinusoidal pattern, followed by a medium-resolution sinusoidal pattern, followed by a fine sinusoidal pattern. In this instance, the coarse sinusoidal pattern is used to obtain an approximate position of an object point in space. The medium-resolution and fine patterns are used to obtain increasingly accurate estimates of the 3D coordinates of the object point in space. In an embodiment, redundant information provided by the triangular arrangement of the 3D imager 1100 eliminates the step of performing a coarse phase measurement. Instead, the information provided on the three reference planes 1260, 1270, and 1280 enables a coarse determination of object point position. One way to make this coarse determination is by iteratively solving for the position of object points based on an optimization procedure. For example, in one such procedure, a sum of squared residual errors is minimized to select the best-guess positions for the object points in space.
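As a non-limiting illustration of recovering phase from three or more shifted sinusoidal projections, the following sketch uses a commonly used N-step phase-shifting formula. It assumes that the intensity at a pixel follows I_k = offset + amplitude·cos(phase + 2πk/N) with equally spaced shifts; this model, the function names, and the default values are assumptions and are not asserted to be the method of FIG. 15.

```python
import math


def wrapped_phase(intensities):
    """Recover the phase in (-pi, pi] from N equally spaced phase-shifted samples."""
    n = len(intensities)
    if n < 3:
        raise ValueError("at least three phase-shifted samples are needed")
    s = sum(i_k * math.sin(2 * math.pi * k / n) for k, i_k in enumerate(intensities))
    c = sum(i_k * math.cos(2 * math.pi * k / n) for k, i_k in enumerate(intensities))
    # The constant offset cancels in both sums; the amplitude cancels in the ratio.
    return math.atan2(-s, c)


def simulate_pixel(phase, steps, offset=0.5, amplitude=0.4):
    """Hypothetical noise-free intensities seen at one pixel for each shift."""
    return [
        offset + amplitude * math.cos(phase + 2 * math.pi * k / steps)
        for k in range(steps)
    ]
```

Because the result is wrapped to a single period, a fine pattern alone leaves the period number ambiguous. In the sequence described above, that ambiguity is resolved by the coarser patterns or, in the triangular arrangement, by the coarse position obtained from the three reference planes.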

[0108] The triangular arrangement of 3D imager 1100 may also be used to help identify errors. For example, a projector 1293 in a 3D imager 1290 may project a coded pattern onto an object in a single shot with a first element of the pattern having a projection point P.sub.3. The first camera 1291 may associate a first image point P.sub.1 on the reference plane 1260 with the first element. The second camera 1292 may associate a second image point P.sub.2 on the reference plane 1270 with the first element. The six epipolar lines may be generated from the three points P.sub.1, P.sub.2, and P.sub.3 using the method described herein above. For the solution to be consistent, the intersections of the epipolar lines must lie on the corresponding points P.sub.1, P.sub.2, and P.sub.3. If the solution is not consistent, additional measurements or other actions may be advisable.

[0109] The triangular arrangement of the 3D imager 1100 may also be used to automatically update compensation/calibration parameters. Compensation parameters are numerical values stored in memory, for example, in the internal electrical system 700 or in another external computing unit. Such parameters may include the relative positions and orientations of the cameras and projector in the 3D imager.

[0110] The compensation parameters may relate to lens characteristics such as lens focal length and lens aberrations. They may also relate to changes in environmental conditions such as temperature. Sometimes the term calibration is used in place of the term compensation. Often compensation procedures are performed by the manufacturer to obtain compensation parameters for a 3D imager. In addition, compensation procedures are often performed by a user. User compensation procedures may be performed when there are changes in environmental conditions such as temperature. User compensation procedures may also be performed when projector or camera lenses are changed or after the instrument is subjected to a mechanical shock. Typically, user compensations may include imaging a collection of marks on a calibration plate. A further discussion of compensation procedures is given herein below in reference to FIG. 35.

[0111] Inconsistencies in results based on epipolar calculations for a 3D imager 1290 may indicate a problem in compensation parameters. In some cases, a pattern of inconsistencies may suggest an automatic correction that can be applied to the compensation parameters. In other cases, the inconsistencies may indicate that user compensation procedures should be performed.

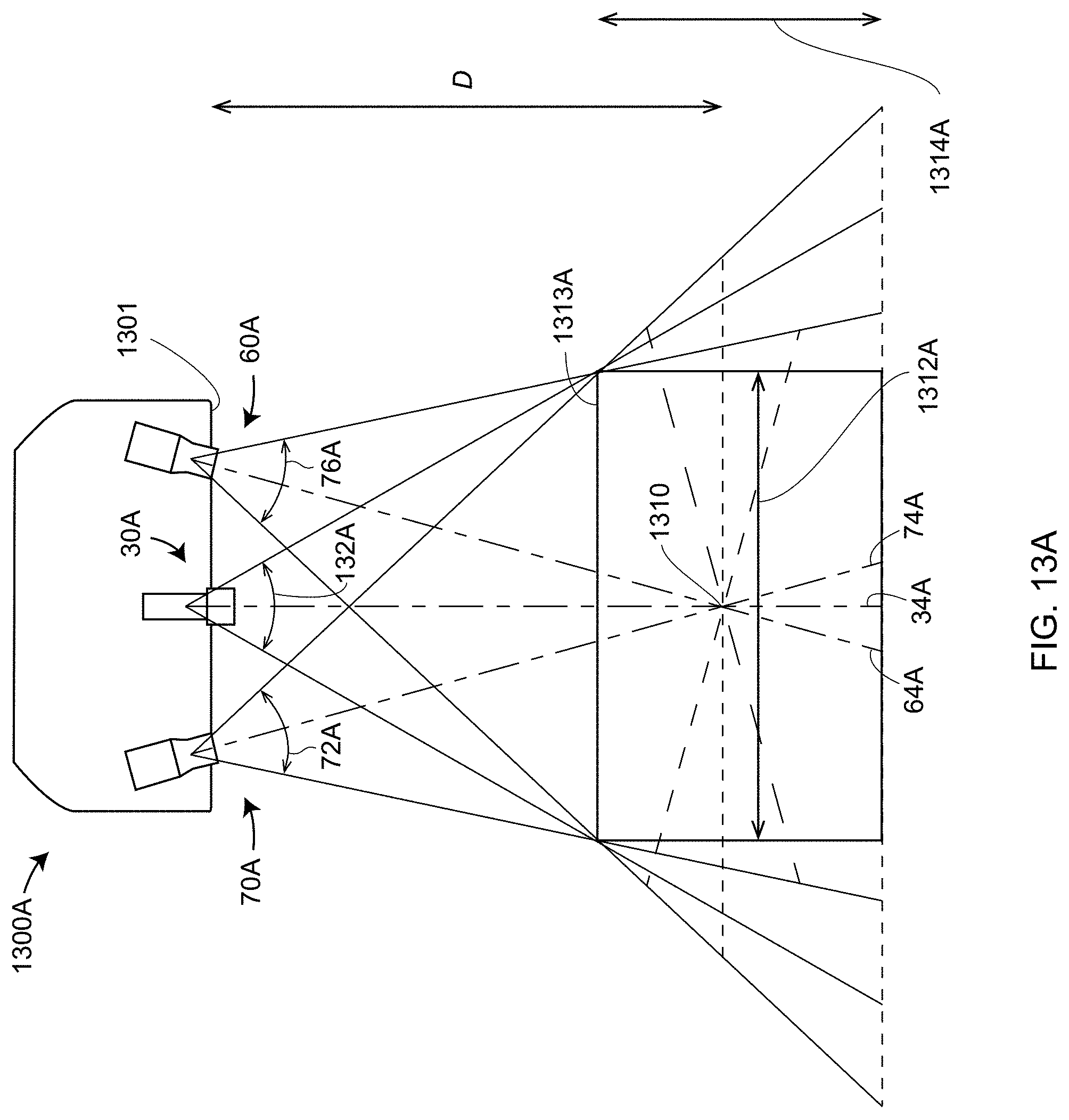

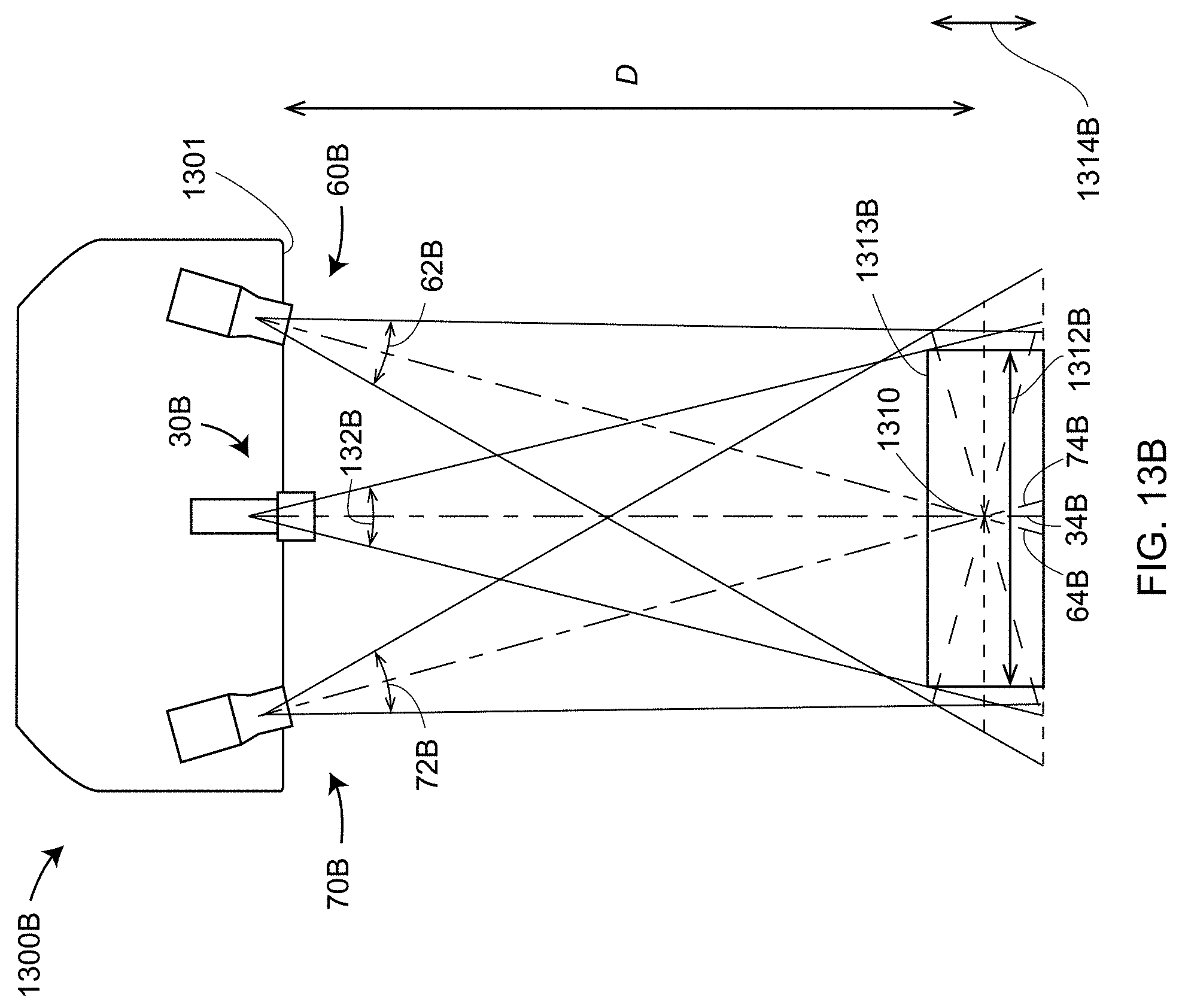

[0112] FIGS. 13A and 13B show two versions 1300A and 1300B, respectively, of the 3D imager 10. The 3D imager 1300A includes relatively wide FOV projector and camera lenses, while the 3D imager 1300B includes relatively narrow FOV projector and camera lenses. The FOVs of the wide-FOV cameras 70A, 60A and projector 30A of FIG. 13A are 72A, 62A, and 132A, respectively. The FOVs of the narrow-FOV cameras 70B, 60B and projector 30B of FIG. 13B are 72B, 62B, and 132B, respectively. The standoff distance D of the 3D imager 1300A is the distance from the front 1301 of the scanner body to the point of intersection 1310 of the optical axes 74A and 64A of the camera lens assemblies 70A and 60A, respectively, with the optical axis 34A of the projector 30A. In an embodiment, the standoff distance D of the 3D imager 1300B is the same as the standoff distance D of the 3D imager 1300A. This occurs when the optical axis 74B of the lens assembly 70B is the same as the optical axis 74A of the lens assembly 70A, which is to say that the assemblies 70A and 70B are pointed in the same direction. Similarly, the optical axes 34B and 34A have the same direction, and the optical axes 64A and 64B have the same direction. Because of this, the optical axes of the 3D imagers 1300A and 1300B intersect at the same point 1310. To achieve this result, lens assemblies 30A, 60A, and 70A are designed and constructed to be interchangeable without requiring fitting to each particular frame 10. This enables a user to purchase a lens off the shelf that is compatible with the configuration of imager 1300A, imager 1300B, or other compatible imagers. In addition, in an embodiment, such replacement lenses may be purchased without requiring adjustment of the lens to accommodate variations in the 3D imager. The method of achieving this compatibility is described in more detail herein below in reference to FIGS. 18, 19A-C, 20A-B, and 21A-C.

[0113] Because the nominal standoff distance D is the same for 3D imagers 1300A and 1300B, the narrow-FOV camera lenses 60B and 70B have longer focal lengths than the wide-FOV camera lenses 60A and 70A if the photosensitive array is the same size in each case. In addition, as shown in FIGS. 13A and 13B, the width 1312B of the measurement region 1313B is smaller than the width 1312A of the measurement region 1313A. In addition, if the diameters of lens apertures are the same in each case, the depth 1314B (the depth of field (DOF)) of the measurement region 1313B is smaller than the depth 1314A (DOF) of the measurement region 1313A. In an embodiment, 3D imagers 10 are available with different fields of view and different image sensor resolution and size.
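
The relationship between FOV, focal length, and measurement width can be illustrated numerically. The sketch below assumes an idealized pinhole (thin-lens, distant-object) model in which the full FOV angle, the array width, and the focal length are related by width = 2 f tan(FOV/2), and it measures the region width at the standoff distance as if D were taken from the perspective center. The array width, standoff distance, and FOV values are hypothetical and are not taken from any embodiment.

```python
import math

def focal_length(array_width_mm, fov_deg):
    """Focal length giving the stated full FOV for a given array width (pinhole model)."""
    return array_width_mm / (2.0 * math.tan(math.radians(fov_deg) / 2.0))

def region_width(standoff_mm, fov_deg):
    """Width of the field covered at the standoff distance for the stated full FOV."""
    return 2.0 * standoff_mm * math.tan(math.radians(fov_deg) / 2.0)

ARRAY_WIDTH_MM = 10.0     # hypothetical photosensitive array width
STANDOFF_MM = 500.0       # hypothetical standoff distance D
WIDE_FOV_DEG = 60.0       # hypothetical wide FOV
NARROW_FOV_DEG = 30.0     # hypothetical narrow FOV

f_wide = focal_length(ARRAY_WIDTH_MM, WIDE_FOV_DEG)
f_narrow = focal_length(ARRAY_WIDTH_MM, NARROW_FOV_DEG)
w_wide = region_width(STANDOFF_MM, WIDE_FOV_DEG)
w_narrow = region_width(STANDOFF_MM, NARROW_FOV_DEG)

print(f"focal length: wide {f_wide:.2f} mm, narrow {f_narrow:.2f} mm")
print(f"region width: wide {w_wide:.1f} mm, narrow {w_narrow:.1f} mm")
```

With the array size and standoff distance held fixed, the narrower FOV gives the longer focal length and the smaller measurement width, in agreement with the paragraph above.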

[0114] FIG. 13C shows a cross-sectional schematic representation 1300C of a camera assembly 70 and a projector 30 according to an embodiment. The camera lens assembly 70 includes a perspective center 1376, which is the center of the lens entrance pupil. The entrance pupil is defined as the optical image of the physical aperture stop as seen through the front of the lens system. The ray that passes through the center of the entrance pupil is referred to as the chief ray, and the angle of the chief ray indicates the angle of an object point as received by the camera. A chief ray may be drawn from each illuminated point on the object through the entrance pupil. For example, the ray 1381 is a chief ray that defines the angle of an object point (on the ray) with respect to the camera lens 1371. This angle is defined with respect to an optical axis 74 of the lens 1371.

[0115] The exit pupil is defined as the optical image of the physical aperture stop as seen through the back of the lens system. The point 1377 is the center of the exit pupil. The chief ray travels from the point 1377 to a point on the photosensitive array 1373. In general, the angle of the chief ray as it leaves the exit pupil is different from the angle of the chief ray as it enters the perspective center (the entrance pupil). To simplify analysis, the ray path following the entrance pupil is adjusted to enable the beam to travel in a straight line through the perspective center 1376 to the photosensitive array 1373 as shown in FIGS. 13D and 13E. Three mathematical adjustments are made to accomplish this. First, the position of each imaged point on the photosensitive array is corrected to account for lens aberrations and other systematic error conditions. This may be done by performing compensation measurements of the lenses in the cameras 70, 60 and the projector 30. Such compensation measurements may include, for example, measuring a calibration dot plate in a prescribed arrangement and sequence to obtain aberration coefficients or an aberration map for the lenses. Second, the angle of the ray 1382 is changed to equal the angle of the ray 1381 that passes through the perspective center 1376. The distance from the exit pupil 1377 to the photosensitive array 1373 is adjusted accordingly to place the image points at the aberration-corrected points on the photosensitive array 1373. Third, the point 1377 is collapsed onto the perspective center to remove the space 1384, enabling all rays of light 1381 emerging from the object to pass in a straight line through the point 1376 onto the photosensitive array 1373, as shown in FIG. 13E. By this means, the exact path of each beam of light passing through the optical system of the camera 70C may be simplified for rapid mathematical analysis by the electrical circuit and processor 1374 in a mount assembly 1372. In the discussion herein below, the term perspective center is taken to be the center of the entrance pupil with the lens model revised to enable rays to be drawn straight through the perspective center to a camera photosensitive array or straight through the perspective center to direct rays from a projector pattern generator device.

[0116] Referring again to FIG. 13C, the projector assembly 30 has a perspective center 1336, a center of an exit pupil 1337, an optical axis 34, and a projector pattern array 1333. As in the camera assembly 70, mathematical corrections are made to enable a ray of light 1341 to travel straight through the perspective center 1336 from the projector pattern array 1333 to an object. In an embodiment, the projector pattern array 1333 is the DMD 53 shown in FIG. 5A.

[0117] An explanation is now given for a known method of determining 3D coordinates on an object surface using a sinusoidal phase-shift method, as described with reference to FIGS. 14A-D and FIG. 15. FIG. 14A illustrates projection of a sinusoidal pattern by the projector 30A. In an embodiment, the sinusoidal pattern in FIG. 14A varies in optical power from completely dark to completely bright. A minimum position on the sine wave in FIG. 14A corresponds to a dark projection and a maximum position on the sine wave corresponds to a bright projection. The projector 30A projects light along rays that travel in straight lines emerging from the perspective center of the projector lens. Hence, a line along the optical axis 34A in FIG. 14A represents a point at neither a maximum nor a minimum of the sinusoidal pattern and therefore represents an intermediate brightness level. The relative brightness will be the same for all points lying on a ray projected through the perspective center of the projector lens. So, for example, all points along the ray 1415 are at the maximum brightness level of the sinusoidal pattern. A complete sinusoidal pattern occurs along the lines 1410, 1412, and 1414, even though the lines 1410, 1412, and 1414 have different lengths.

[0118] In FIG. 14B, a given pixel of a camera 70A may see any of a collection of points that lie along a line drawn from the pixel through the perspective center of the camera lens assembly. The actual point observed by the pixel will depend on the object point intersected by the line. For example, for a pixel aligned to the optical axis 74A of the lens assembly 70A, the pixel may see a point 1420, 1422, or 1424, depending on whether the object lies along the lines of the patterns 1410, 1412, or 1414, respectively. Notice that in this case the position on the sinusoidal pattern is different in each of these three cases. In this example, the point 1420 is brighter than the point 1422, which is brighter than the point 1424.

[0119] FIG. 14C illustrates projection of a sinusoidal pattern by the projector 30A, but with more cycles of the sinusoidal pattern projected into space. FIG. 14C illustrates the case in which ten sinusoidal cycles are projected rather than one cycle. The cycles 1430, 1432, and 1434 are projected at the same distances from the scanner 1400 as the lines 1410, 1412, and 1414, respectively, in FIG. 14A. In addition, FIG. 14C shows an additional sinusoidal pattern 1433.

[0120] In FIG. 14D, a pixel aligned to the optical axis 74A of the lens assembly 70A sees the optical brightness levels corresponding to the positions 1440, 1442, 1444, and 1446 for the four sinusoidal patterns illustrated in FIG. 14D. Notice that the brightness level at a point 1440 is the same as at the point 1444. As an object moves farther away from the scanner 1400, from the point 1440 to the point 1444, it first gets slightly brighter at the peak of the sine wave, and then drops to a lower brightness level at position 1442, before returning to the original relative brightness level at 1444.

[0121] In a phase-shift method of determining distance to an object, a sinusoidal pattern is shifted side-to-side in a sequence of at least three phase shifts. For example, consider the situation illustrated in FIG. 15. In this figure, a point 1502 on an object surface 1500 is illuminated by the projector 30A. This point is observed by the camera 70A and the camera 60A. Suppose that the sinusoidal brightness pattern is shifted side-to-side in four steps to obtain shifted patterns 1512, 1514, 1516, and 1518. At the point 1502, each of the cameras 70A and 60A measures the relative brightness level at each of the four shifted patterns. If, for example, the phases of the sinusoids for the four measured phases are $\theta = \{160^\circ, 250^\circ, 340^\circ, 70^\circ\}$ for the positions 1522, 1524, 1526, and 1528, respectively, the relative brightness levels measured by the cameras 70A and 60A at these positions are $(1 + \sin\theta)/2$, or 0.671, 0.030, 0.329, and 0.970, respectively. A relatively low brightness level is seen at the position 1524, and a relatively high brightness level is seen at the position 1528.
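
The arithmetic of this example can be checked directly. The short sketch below evaluates the relative brightness expression (1 + sin θ)/2 at the four phases given for the positions 1522, 1524, 1526, and 1528; the variable names are illustrative only.

```python
import math

# Phases of the four shifted patterns at the point 1502, in degrees.
phases_deg = [160.0, 250.0, 340.0, 70.0]

# Relative brightness (1 + sin(theta)) / 2, ranging from 0 (dark) to 1 (bright).
levels = [(1.0 + math.sin(math.radians(theta))) / 2.0 for theta in phases_deg]

for theta, level in zip(phases_deg, levels):
    print(f"theta = {theta:5.1f} deg  relative brightness = {level:.3f}")
```

The computed levels agree with the values stated above to within rounding in the third decimal place, with the lowest level at 250 degrees and the highest at 70 degrees.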

[0122] By measuring the amount of light received by the pixels in the cameras 70A and 60A, the initial phase shift of the light pattern 1512 can be determined. As suggested by FIG. 14D, such a phase shift enables determination of a distance from the scanner 1400, at least as long as the observed phases are known to be within a 360 degree phase range, for example, between the positions 1440 and 1444 in FIG. 14D. A quantitative method is known in the art for determining a phase shift by measuring relative brightness values at a point for at least three different phase shifts (side-to-side shifts in the projected sinusoidal pattern). For a collection of N phase shifts of sinusoidal signals resulting in measured relative brightness levels $x_j$, a general expression for the phase $\Phi$ is given by $\Phi = \tan^{-1}(-b/a)$, where $a = \sum x_j \cos(2\pi j/N)$ and $b = \sum x_j \sin(2\pi j/N)$, the summation being taken over integers from $j = 0$ to $N-1$. For some embodiments, simpler formulas may be used. For example, for the embodiment of four measured phases $x_1$ through $x_4$, each shifted successively by 90 degrees, the initial phase value is given by $\tan^{-1}((x_4 - x_2)/(x_1 - x_3))$.
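
The N-step calculation can be sketched as follows. This is an illustration under stated assumptions, not a definitive implementation: it takes the phase as the arctangent of -b/a, which is the form that reduces to the four-step expression above, and it assumes brightness samples of the form x_j = A + B cos(Φ + 2πj/N), a cosine convention that differs from a sine convention only by a fixed 90 degree offset. The function names and the simulated offset and amplitude are hypothetical. A two-argument arctangent is used in place of a plain inverse tangent so that the quadrant is resolved.

```python
import numpy as np

def phase_n_step(x):
    """Phase from N equally spaced shifts, assuming x[j] = A + B*cos(phi + 2*pi*j/N)."""
    x = np.asarray(x, dtype=float)
    n = len(x)
    j = np.arange(n)
    a = np.sum(x * np.cos(2.0 * np.pi * j / n))
    b = np.sum(x * np.sin(2.0 * np.pi * j / n))
    return np.arctan2(-b, a)

def phase_four_step(x1, x2, x3, x4):
    """Four measurements shifted successively by 90 degrees."""
    return np.arctan2(x4 - x2, x1 - x3)

def simulate(phi, n, offset=0.5, amplitude=0.5):
    """Hypothetical brightness samples for a true phase phi and n shifts."""
    j = np.arange(n)
    return offset + amplitude * np.cos(phi + 2.0 * np.pi * j / n)

phi_true = np.radians(70.0)
x4 = simulate(phi_true, 4)
x3_with_background = simulate(phi_true, 3) + 0.3   # constant background light added

print(np.degrees(phase_n_step(x4)))
print(np.degrees(phase_four_step(*x4)))
print(np.degrees(phase_n_step(x3_with_background)))
```

All three calls recover the same phase. The third shows that a constant background term drops out of both sums, because the cosine and sine weights each sum to zero over a full set of N shifts.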

[0123] The phase shift method of FIG. 15 may be used to determine the phase to within one sine wave period, or 360 degrees. For a case such as in FIG. 14D wherein more than one 360 degree interval is covered, the procedure may further include projection of a combination of relatively coarse and relatively fine phase periods. For example, in an embodiment, the relatively coarse pattern of FIG. 14A is first projected with at least three phase shifts to determine an approximate distance to the object point corresponding to a particular pixel on the camera 70A. Next the relatively fine pattern of FIG. 14C is projected onto the object with at least three phase shifts, and the phase is determined using the formulas given above. The results of the coarse phase-shift measurements and fine phase-shift measurements are combined to determine a composite phase shift to a point corresponding to a camera pixel. If the geometry of the scanner 1400 is known, this composite phase shift is sufficient to determine the three-dimensional coordinates of the point corresponding to a camera pixel using the methods of triangulation, as discussed herein above with respect to FIG. 9. The term "unwrapped phase" is sometimes used to indicate a total or composite phase shift.
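
This paragraph does not state how the coarse and fine results are combined. One common approach, shown here only as an assumed sketch, uses the coarse phase to select the integer number of fine periods and then adds the fine phase. The function name, the ratio of ten fine cycles per coarse cycle (suggested by FIG. 14C), and the simulated coarse-phase error are hypothetical.

```python
import math

TWO_PI = 2.0 * math.pi

def unwrap_with_coarse(phi_coarse, phi_fine, cycles):
    """Composite (unwrapped) fine phase from a coarse phase and a wrapped fine phase.

    phi_coarse: phase of the single-cycle pattern, in radians (0 to 2*pi).
    phi_fine:   wrapped phase of the fine pattern, in radians (0 to 2*pi).
    cycles:     number of fine cycles spanning one coarse cycle.
    """
    # The coarse phase predicts the total fine phase; round to the nearest fringe order.
    order = round((cycles * phi_coarse - phi_fine) / TWO_PI)
    return phi_fine + TWO_PI * order

# Hypothetical point located 63.7 percent of the way through the coarse cycle.
cycles = 10
true_total = TWO_PI * cycles * 0.637          # true unwrapped fine phase
phi_fine = true_total % TWO_PI                # what the fine measurement reports
phi_coarse = TWO_PI * 0.637 + 0.02            # coarse measurement with a small error

unwrapped = unwrap_with_coarse(phi_coarse, phi_fine, cycles)
print(unwrapped, true_total)
```

The coarse measurement needs only enough accuracy to pick the correct fringe order, that is, an error smaller than half of a fine period; the final precision comes from the fine phase.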

[0124] An alternative method of determining 3D coordinates using triangulation methods is by projecting coded patterns. If a coded pattern projected by the projector is recognized by the camera(s), then a correspondence between the projected and imaged points can be made. Because the baseline and two angles are known for this case, the 3D coordinates for the object point can be determined.
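
The triangulation step can be sketched in a plane containing the baseline. The example below assumes the projector perspective center is at the origin, the camera perspective center is at a distance equal to the baseline along the x axis, and the two angles are measured between the baseline and the respective rays; it then applies the law of sines. The function name and numerical values are hypothetical.

```python
import math

def triangulate(baseline, angle_projector, angle_camera):
    """Locate an object point from a baseline and the two angles adjacent to it.

    Angles are in radians, measured from the baseline to the projector ray
    and to the camera ray. Returns (x, z) with the projector at the origin
    and the camera at (baseline, 0).
    """
    # The third angle of the triangle is pi - (sum of the other two), and
    # sin(pi - s) = sin(s), so the law of sines gives the projector-to-point range.
    rng = baseline * math.sin(angle_camera) / math.sin(angle_projector + angle_camera)
    return rng * math.cos(angle_projector), rng * math.sin(angle_projector)

# Hypothetical check: place a point, derive the two angles, then recover the point.
baseline = 0.5
point_x, point_z = 0.3, 1.0
angle_p = math.atan2(point_z, point_x)               # angle at the projector
angle_c = math.atan2(point_z, baseline - point_x)    # angle at the camera

x, z = triangulate(baseline, angle_p, angle_c)
print(x, z)
```

In three dimensions the same calculation is carried out in the plane defined by the baseline and the object point, with the correspondence between projected and imaged pattern elements supplying the two angles.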

[0125] An advantage of projecting coded patterns is that 3D coordinates may be obtained from a single projected pattern, thereby enabling rapid measurement, which is desired for example in handheld scanners. One disadvantage of projecting coded patterns is that background light can contaminate measurements, reducing accuracy. The problem of background light is avoided in the sinusoidal phase-shift method since background light, if constant, cancels out in the calculation of phase.

[0126] One way to preserve accuracy using the phase-shift method while minimizing measurement time is to use a scanner having a triangular geometry, as in FIG. 11. The three combinations of projector-camera orientation provide redundant information that may be used to eliminate some of the ambiguous intervals. For example, the multiple simultaneous solutions possible for the geometry of FIG. 11 may eliminate the possibility that the object lies in the interval between the positions 1444 and 1446 in FIG. 14D. This knowledge may eliminate a step of performing a preliminary coarse measurement of phase, as illustrated for example in FIG. 14B. An alternative method that may eliminate some coarse phase-shift measurements is to project a coded pattern to get an approximate position of each point on the object surface.