Method And Apparatus For Executing Cleaning Operation

HAN; Seungbeom ; et al.

U.S. patent application number 16/391834, for a method and apparatus for executing a cleaning operation, was filed with the patent office on April 23, 2019 and published on November 14, 2019. The applicant listed for this patent is Samsung Electronics Co., Ltd. The invention is credited to Seungbeom HAN, Kyunghun JANG, Hyunsuk KIM, Jungkap KUK.

| Field | Value |
|---|---|
| United States Patent Application | 20190343355 |
| Kind Code | A1 |
| Application Number | 16/391834 |
| Family ID | 68465371 |
| Publication Date | November 14, 2019 |
| Inventors | HAN; Seungbeom; et al. |

METHOD AND APPARATUS FOR EXECUTING CLEANING OPERATION

Abstract

A robotic cleaning apparatus for performing a cleaning operation and a method of cleaning a cleaning space therefor are provided. The method includes acquiring contamination data indicating a contamination level of the cleaning space, acquiring contamination map data based on the contamination data, determining at least one cleaning target area in the cleaning space, based on a current time and the contamination map data, and cleaning the determined at least one cleaning target area. The method and apparatus may relate to artificial intelligence (AI) systems for mimicking functions of human brains, e.g., cognition and decision, by using a machine learning algorithm such as deep learning, and applications thereof.

| Field | Value |
|---|---|
| Inventors: | HAN; Seungbeom; (Suwon-si, KR); KUK; Jungkap; (Suwon-si, KR); KIM; Hyunsuk; (Suwon-si, KR); JANG; Kyunghun; (Suwon-si, KR) |
| Applicant: | Samsung Electronics Co., Ltd. |
| Family ID: | 68465371 |
| Appl. No.: | 16/391834 |
| Filed: | April 23, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62670149 | May 11, 2018 | |

| Classification | Codes |
|---|---|
| Current U.S. Class | 1/1 |
| Current CPC Class | A47L 2201/06 20130101; A47L 2201/04 20130101; A47L 9/2826 20130101; A47L 9/2847 20130101; A47L 9/2842 20130101; G05D 2201/0203 20130101; A47L 9/2815 20130101; G05D 1/0044 20130101; A47L 9/2894 20130101; A47L 9/2852 20130101 |
| International Class | A47L 9/28 20060101 A47L009/28 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Nov 20, 2018 | KR | 10-2018-0143896 |

Claims

1. A robotic cleaning apparatus for cleaning a cleaning space, the robotic cleaning apparatus comprising: a communication interface; a memory storing one or more instructions; and at least one processor configured to: execute the one or more instructions to control the robotic cleaning apparatus, execute the one or more instructions to acquire contamination data indicating a contamination level of the cleaning space, acquire contamination map data based on the contamination data, determine at least one cleaning target area in the cleaning space, based on a current time and the contamination map data, and clean the determined at least one cleaning target area, wherein the contamination map data comprises: information indicating locations of contaminated areas in the cleaning space, contamination levels of the contaminated areas, and locations of objects in the cleaning space, and is stored for a predetermined time period.

2. The robotic cleaning apparatus of claim 1, wherein the at least one cleaning target area is determined by applying the contamination data and the contamination map data to at least one learning model learned to determine a location and a priority of the at least one cleaning target area in the cleaning space.

3. The robotic cleaning apparatus of claim 2, wherein the at least one cleaning target area is determined by applying another contamination map data similar to the contamination map data of the cleaning space by a certain value or more, to the at least one learning model, and wherein the other contamination map data is data about another cleaning space similar to the cleaning space by a certain value or more.

4. The robotic cleaning apparatus of claim 1, wherein the contamination data is acquired, based on an image captured by a camera of the robotic cleaning apparatus and sensing data sensed by a sensor of the robotic cleaning apparatus.

5. The robotic cleaning apparatus of claim 1, wherein the contamination data is acquired, based on a captured image received from a camera installed in the cleaning space and sensing data received from a sensor installed in the cleaning space.

6. The robotic cleaning apparatus of claim 1, wherein the at least one processor is further configured to execute the one or more instructions to: determine a priority of the determined at least one cleaning target area; and determine a cleaning strength of the robotic cleaning apparatus in the determined at least one cleaning target area.

7. The robotic cleaning apparatus of claim 6, wherein the at least one processor is further configured to execute the one or more instructions to: determine one of a plurality of operation modes of the robotic cleaning apparatus; and determine the at least one cleaning target area, based on the determined operation mode.

8. The robotic cleaning apparatus of claim 7, wherein the cleaning strength of the robotic cleaning apparatus in the at least one cleaning target area is determined based on the determined operation mode.

9. The robotic cleaning apparatus of claim 7, wherein the plurality of operation modes comprise at least two of a quick mode, a low-noise mode, and a normal mode.

10. The robotic cleaning apparatus of claim 1, wherein the at least one processor is further configured to execute the one or more instructions to: acquire battery level information of the robotic cleaning apparatus; and determine the at least one cleaning target area, based on the battery level information.

11. A method, performed by a robotic cleaning apparatus, of cleaning a cleaning space, the method comprising: acquiring contamination data indicating a contamination level of the cleaning space; acquiring contamination map data based on the contamination data; determining at least one cleaning target area in the cleaning space, based on a current time and the contamination map data; and cleaning the determined at least one cleaning target area, wherein the contamination map data comprises information indicating locations of contaminated areas in the cleaning space, contamination levels of the contaminated areas, and locations of objects in the cleaning space, and wherein the contamination map data is stored for a predetermined time period.

12. The method of claim 11, wherein the at least one cleaning target area is determined by applying the contamination data and the contamination map data to a learning model learned to determine a location and a priority of the at least one cleaning target area in the cleaning space.

13. The method of claim 12, wherein the at least one cleaning target area is determined by applying another contamination map data similar to the contamination map data of the cleaning space by a certain value or more, to the learning model, and wherein the other contamination map data is data about another cleaning space similar to the cleaning space by a certain value or more in terms of a structure of the cleaning space and the locations of the objects in the cleaning space.

14. The method of claim 11, wherein the contamination data is acquired, based on an image captured by a camera of the robotic cleaning apparatus and sensing data sensed by a sensor of the robotic cleaning apparatus.

15. The method of claim 11, wherein the acquiring of the contamination data comprises acquiring the contamination data, based on a captured image received from a camera installed in the cleaning space and sensing data received from a sensor installed in the cleaning space.

16. The method of claim 11, wherein the determining of the at least one cleaning target area comprises: determining a priority of the determined at least one cleaning target area; and determining a cleaning strength of the robotic cleaning apparatus in the determined at least one cleaning target area.

17. The method of claim 16, further comprising: determining one of a plurality of operation modes of the robotic cleaning apparatus, wherein the determining of the at least one cleaning target area comprises determining the at least one cleaning target area, based on the determined operation mode.

18. The method of claim 17, wherein the cleaning strength of the robotic cleaning apparatus in the at least one cleaning target area is determined based on the determined operation mode.

19. The method of claim 11, further comprising: acquiring battery level information of the robotic cleaning apparatus, wherein the determining of the at least one cleaning target area comprises determining the at least one cleaning target area, based on the battery level information.

20. A computer program product comprising a non-transitory computer-readable recording medium having recorded thereon a plurality of instructions that instruct at least one processor to perform the method of claim 11 on a computer.

Description

CROSS-REFERENCE TO RELATED APPLICATION(S)

[0001] This application is based on and claims priority under 35 U.S.C. § 119(e) of U.S. Provisional Application Ser. No. 62/670,149, filed on May 11, 2018, in the U.S. Patent and Trademark Office, and under 35 U.S.C. § 119(a) of Korean Patent Application No. 10-2018-0143896, filed on Nov. 20, 2018, in the Korean Intellectual Property Office, the disclosure of each of which is incorporated by reference herein in its entirety.

BACKGROUND

1. Field

[0002] The disclosure relates to a method and apparatus for executing a cleaning operation, based on a contamination level of a cleaning space.

2. Description of Related Art

[0003] Artificial intelligence (AI) systems are computer systems that implement human-level intelligence. Unlike conventional rule-based smart systems, AI systems learn, make decisions, and become smarter autonomously. The more an AI system is used, the higher its recognition rate and the more accurately it understands user preferences. As a result, rule-based smart systems are increasingly being replaced by deep-learning-based AI systems. AI technology includes machine learning (or deep learning) and element technologies that use machine learning.

[0004] Machine learning is an algorithm technology for autonomously classifying and learning features of input data, and element technologies are technologies for mimicking functions of human brains, e.g., cognition and decision, by using a machine learning algorithm such as deep learning, and include technological fields such as linguistic understanding, visual understanding, inference/prediction, knowledge expression, and operation control.

[0005] Various fields to which AI technology is applicable are as follows. Linguistic understanding is a technology for recognizing and applying/processing human spoken or written language, and includes natural language processing, machine translation, dialogue systems, question answering, speech recognition/synthesis, etc. Visual understanding is a technology for recognizing and processing objects in the way human vision does, and includes object recognition, object tracking, image search, human recognition, scene understanding, space understanding, image enhancement, etc. Inference/prediction is a technology for judging information and logically inferring and predicting from it, and includes knowledge/probability-based inference, optimized prediction, preference-based planning, recommendation, etc. Knowledge expression is a technology for automatically converting human experience information into knowledge data, and includes knowledge construction (e.g., data generation/classification), knowledge management (e.g., data utilization), etc. Operation control is a technology for controlling autonomous driving of vehicles and motion of robots, and includes motion control (e.g., steering, collision, or driving control), manipulation control (e.g., behavior control), etc.

[0006] Robotic cleaning apparatuses need to efficiently clean a cleaning space in various operation modes and environments, and thus a technology for appropriately determining a cleaning target area under various conditions is required.

[0007] The above information is presented as background information only to assist with an understanding of the disclosure. No determination has been made, and no assertion is made, as to whether any of the above might be applicable as prior art with regard to the disclosure.

SUMMARY

[0008] Aspects of the disclosure are to address at least the above-mentioned problems and/or disadvantages and to provide at least the advantages described below. Accordingly, an aspect of the disclosure is to provide a robotic cleaning system and method capable of generating contamination map data for each time period, based on a contamination level of a cleaning space, and using the contamination map data.

[0009] Another aspect of the disclosure is to provide a robotic cleaning system and method capable of determining a cleaning target area and a priority of the cleaning target area by using a learning model.

[0010] Another aspect of the disclosure is to provide a robotic cleaning system and method capable of efficiently controlling a robotic cleaning apparatus in a plurality of operation modes and environments by using contamination map data for each time period.

[0011] Additional aspects will be set forth in part in the description which follows and, in part, will be apparent from the description, or may be learned by practice of the presented embodiments.

[0012] In accordance with an aspect of the disclosure, a robotic cleaning apparatus for cleaning a cleaning space is provided. The robotic cleaning apparatus includes a communication interface, a memory storing one or more instructions, and at least one processor configured to execute the one or more instructions to control the robotic cleaning apparatus, execute the one or more instructions to acquire contamination data indicating a contamination level of the cleaning space, acquire contamination map data based on the contamination data, determine at least one cleaning target area in the cleaning space, based on a current time and the contamination map data, and clean the determined at least one cleaning target area, wherein the contamination map data includes information indicating locations of contaminated areas in the cleaning space, contamination levels of the contaminated areas, and locations of objects in the cleaning space, and is stored for a predetermined time period.

[0013] In accordance with another aspect of the disclosure, a method, performed by a robotic cleaning apparatus, of cleaning a cleaning space is provided. The method includes acquiring contamination data indicating a contamination level of the cleaning space, acquiring contamination map data based on the contamination data, determining at least one cleaning target area in the cleaning space, based on a current time and the contamination map data, and cleaning the determined at least one cleaning target area, wherein the contamination map data includes information indicating locations of contaminated areas in the cleaning space, contamination levels of the contaminated areas, and locations of objects in the cleaning space, and wherein the contamination map data is stored for a predetermined time period.

[0014] In accordance with another aspect of the disclosure, a computer program product is provided. The computer program product includes a non-transitory computer-readable recording medium having recorded thereon a plurality of instructions that instruct at least one processor to perform the method above.

[0015] Other aspects, advantages, and salient features of the disclosure will become apparent to those skilled in the art from the following detailed description, which, taken in conjunction with the annexed drawings, discloses various embodiments of the disclosure.

BRIEF DESCRIPTION OF THE DRAWINGS

[0016] The above and other aspects, features, and advantages of certain embodiments of the disclosure will be more apparent from the following description taken in conjunction with the accompanying drawings, in which:

[0017] FIG. 1 is a schematic diagram of a cleaning system according to an embodiment of the disclosure;

[0018] FIG. 2 is a flowchart of a method, performed by a robotic cleaning apparatus, of determining a cleaning target area, based on contamination data, according to an embodiment of the disclosure;

[0019] FIG. 3 is a flowchart of a method, performed by the robotic cleaning apparatus, of acquiring contamination data, according to an embodiment of the disclosure;

[0020] FIG. 4 is a flowchart of a method, performed by the robotic cleaning apparatus, of determining a priority of and a cleaning strength for a cleaning target area considering an operation mode of the robotic cleaning apparatus, according to an embodiment of the disclosure;

[0021] FIG. 5 is a flowchart of a method, performed by the robotic cleaning apparatus, of determining an operation mode of the robotic cleaning apparatus, according to an embodiment of the disclosure;

[0022] FIG. 6 is a schematic diagram showing contamination map data according to an embodiment of the disclosure;

[0023] FIG. 7 is a flowchart of a method, performed by the robotic cleaning apparatus, of determining and changing a cleaning target area in association with a server, according to an embodiment of the disclosure;

[0024] FIG. 8 is a flowchart of a method, performed by the robotic cleaning apparatus, of determining and changing a cleaning target area in association with a server, according to another embodiment of the disclosure;

[0025] FIG. 9 is a schematic diagram showing an example in which a cleaning target area is determined using a learning model, according to an embodiment of the disclosure;

[0026] FIG. 10 is a schematic diagram showing an example in which a cleaning target area is determined using a learning model, according to another embodiment of the disclosure;

[0027] FIG. 11 is a schematic diagram showing an example in which a cleaning target area is determined using a learning model, according to another embodiment of the disclosure;

[0028] FIG. 12 is a schematic diagram showing an example in which a cleaning target area is determined using a learning model, according to another embodiment of the disclosure;

[0029] FIG. 13 is a table for describing operation modes of the robotic cleaning apparatus, according to an embodiment of the disclosure;

[0030] FIG. 14 is a schematic diagram of a cleaning system according to another embodiment of the disclosure;

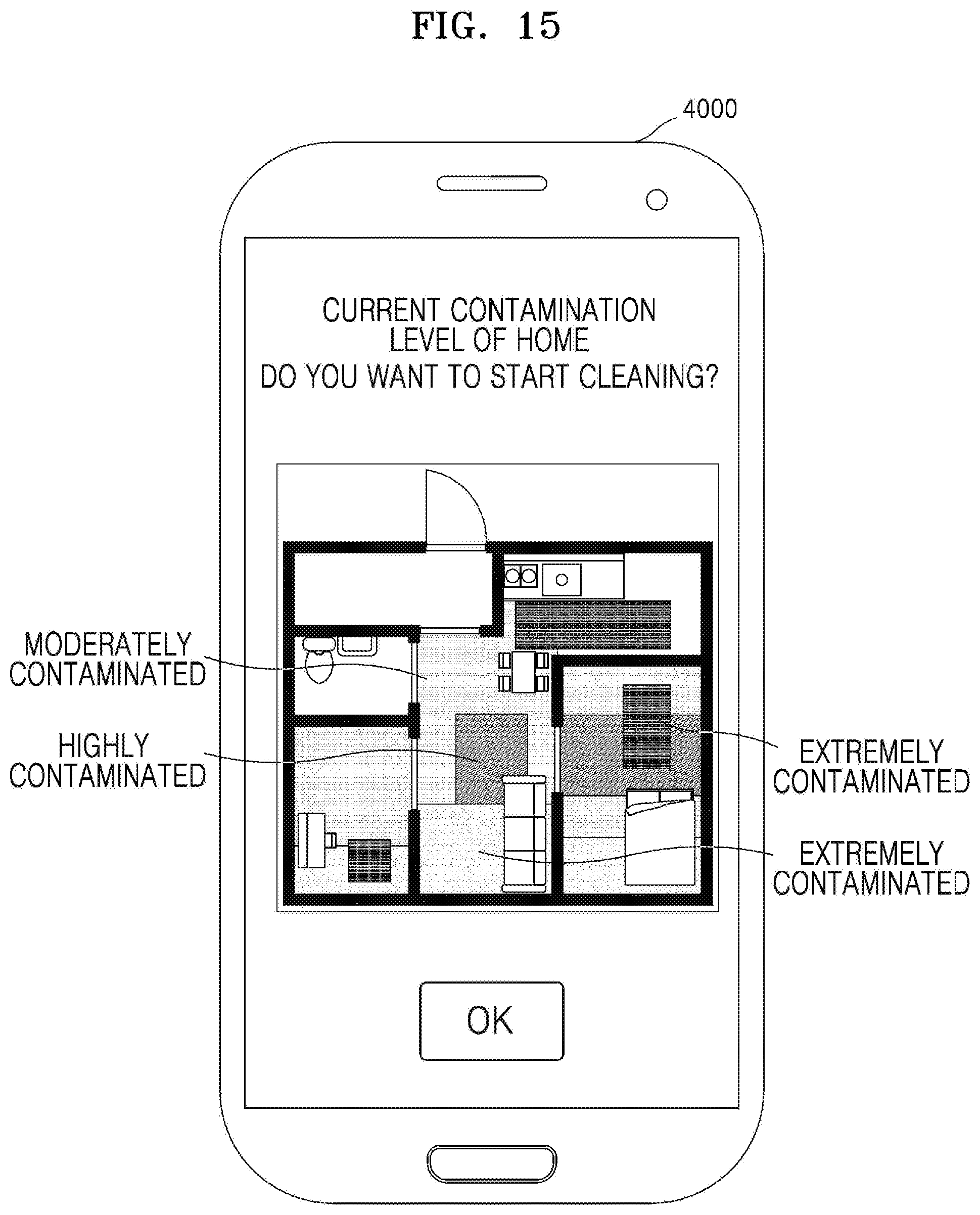

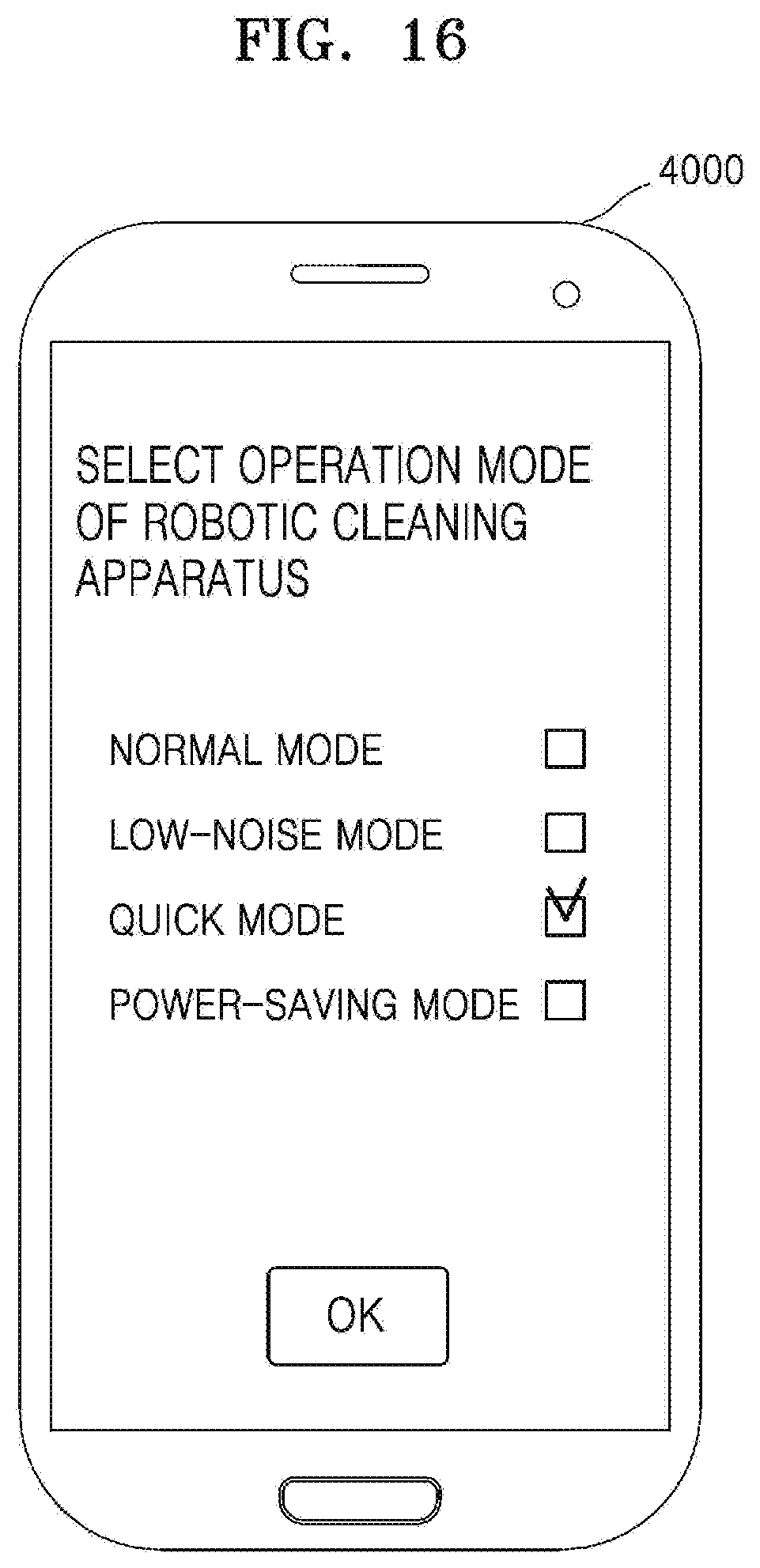

[0031] FIGS. 15, 16, and 17 are schematic diagrams showing an example in which a mobile device controls the robotic cleaning apparatus to operate in a certain operation mode, according to an embodiment of the disclosure;

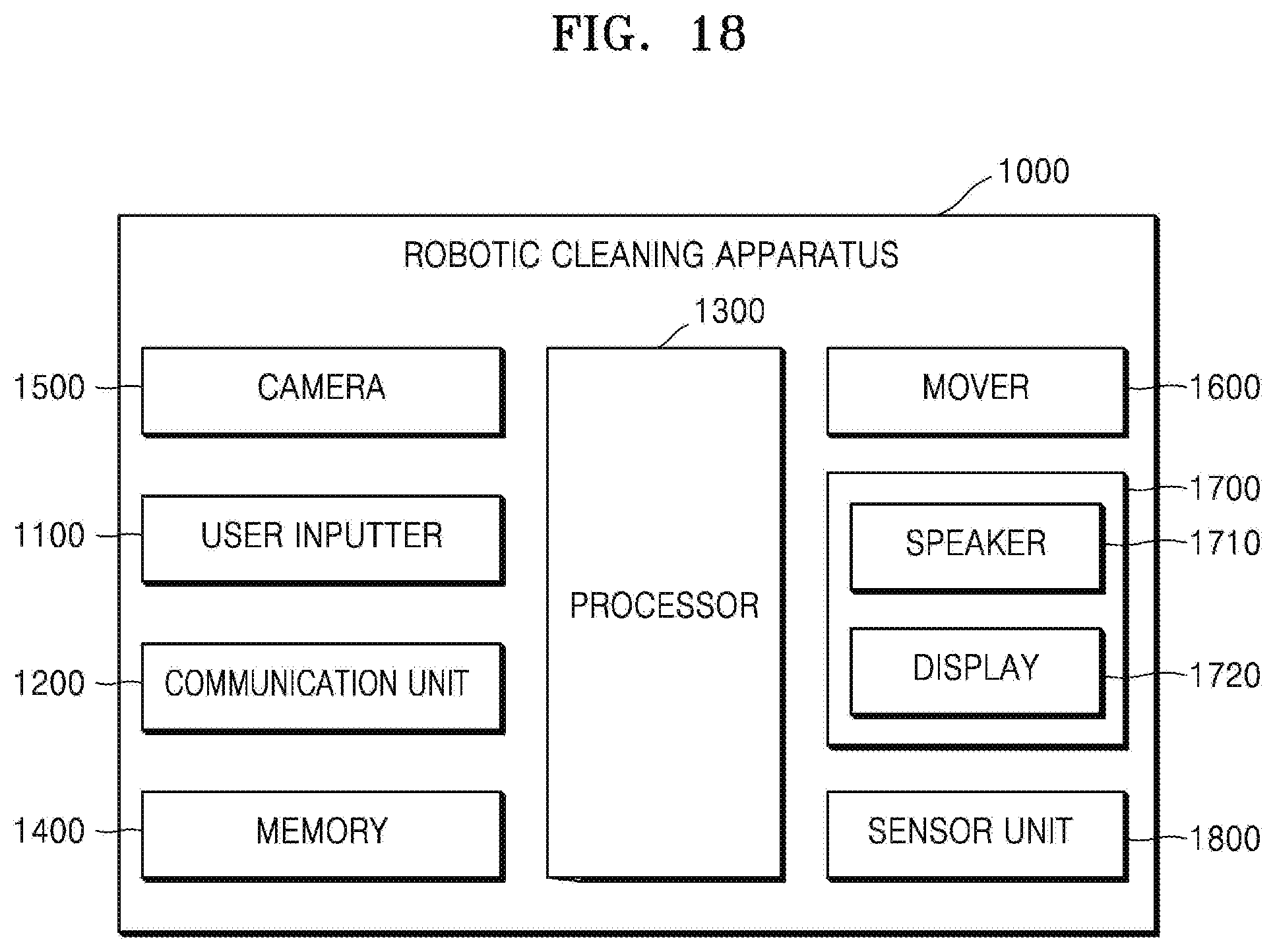

[0032] FIG. 18 is a block diagram of the robotic cleaning apparatus according to an embodiment of the disclosure;

[0033] FIG. 19 is a block diagram of the server according to an embodiment of the disclosure;

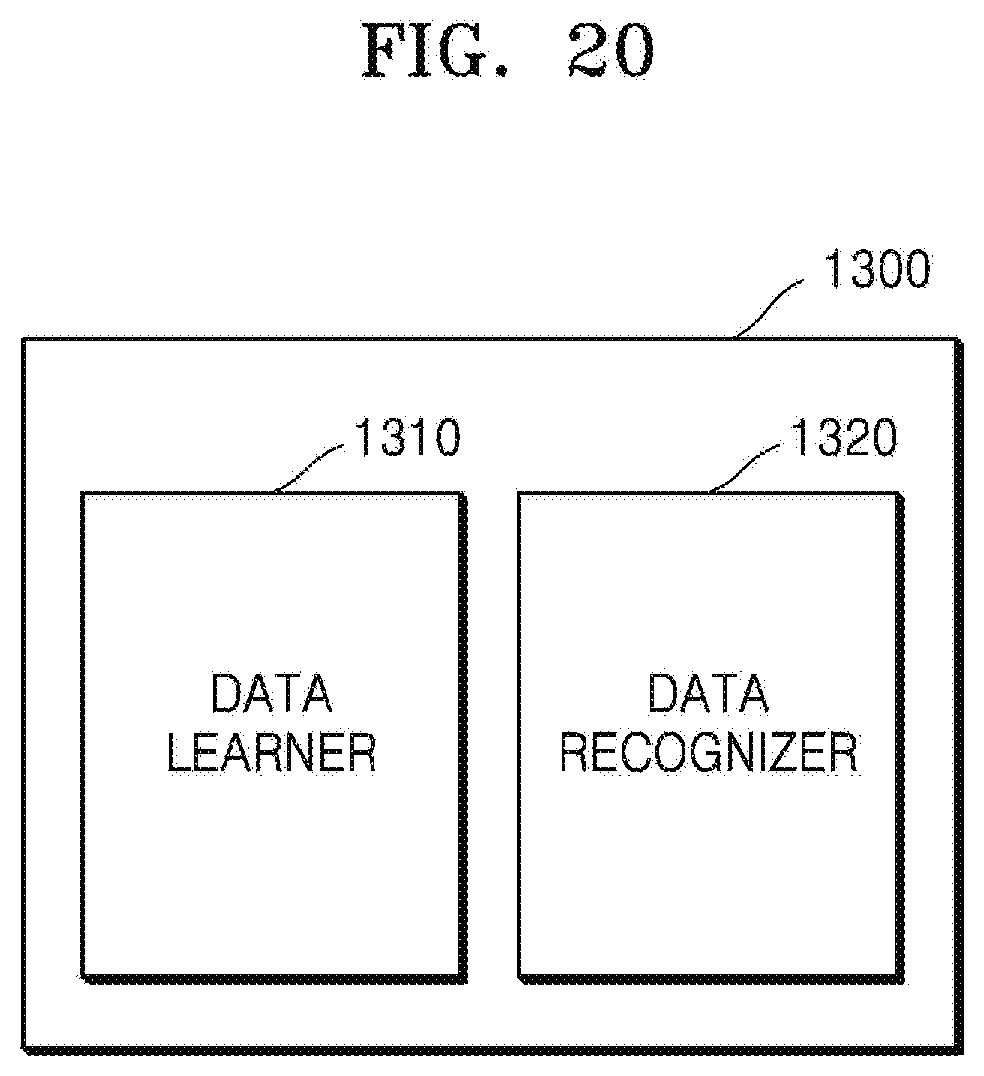

[0034] FIG. 20 is a block diagram of a processor according to an embodiment of the disclosure;

[0035] FIG. 21 is a block diagram of a data learner according to an embodiment of the disclosure;

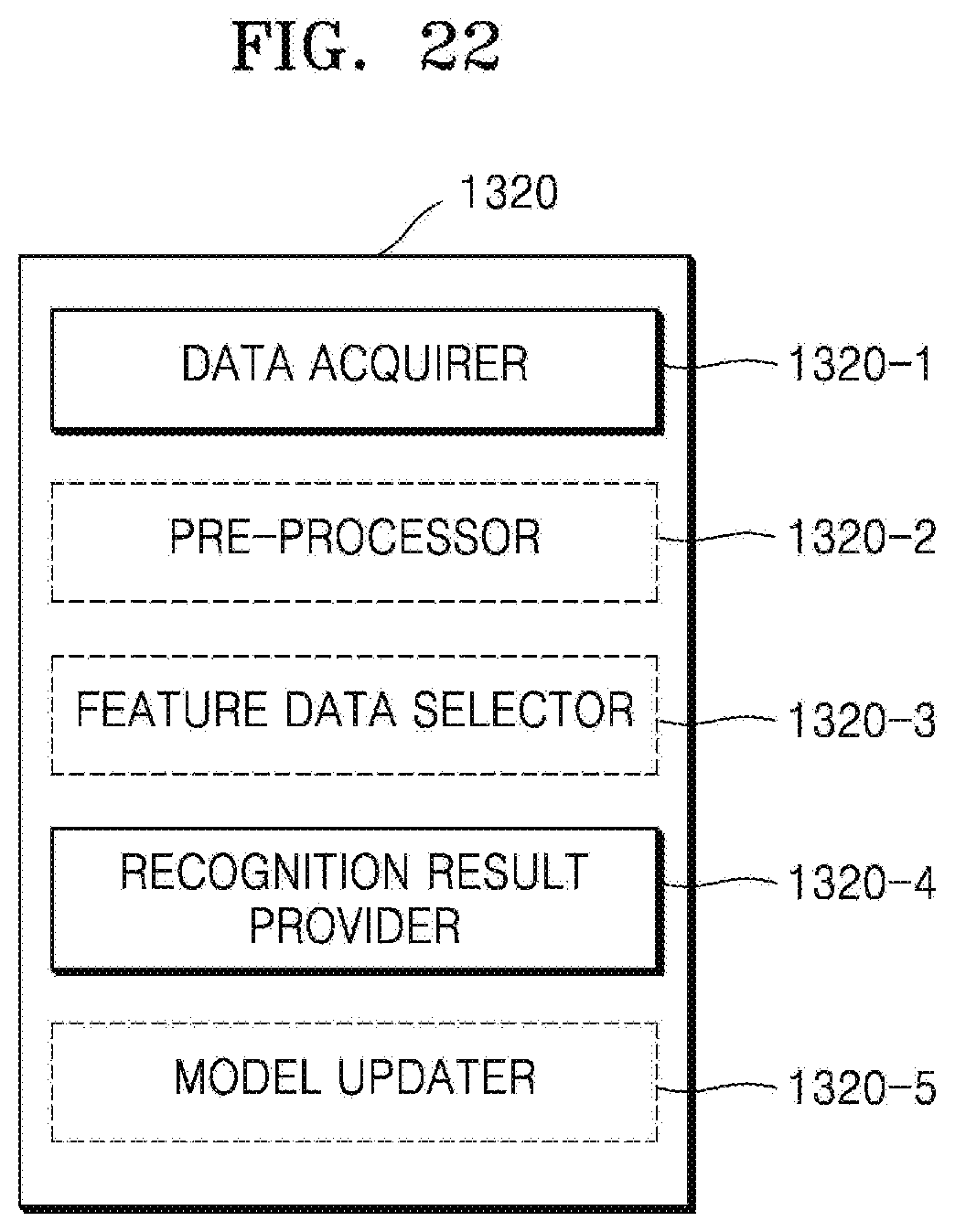

[0036] FIG. 22 is a block diagram of a data recognizer according to an embodiment of the disclosure;

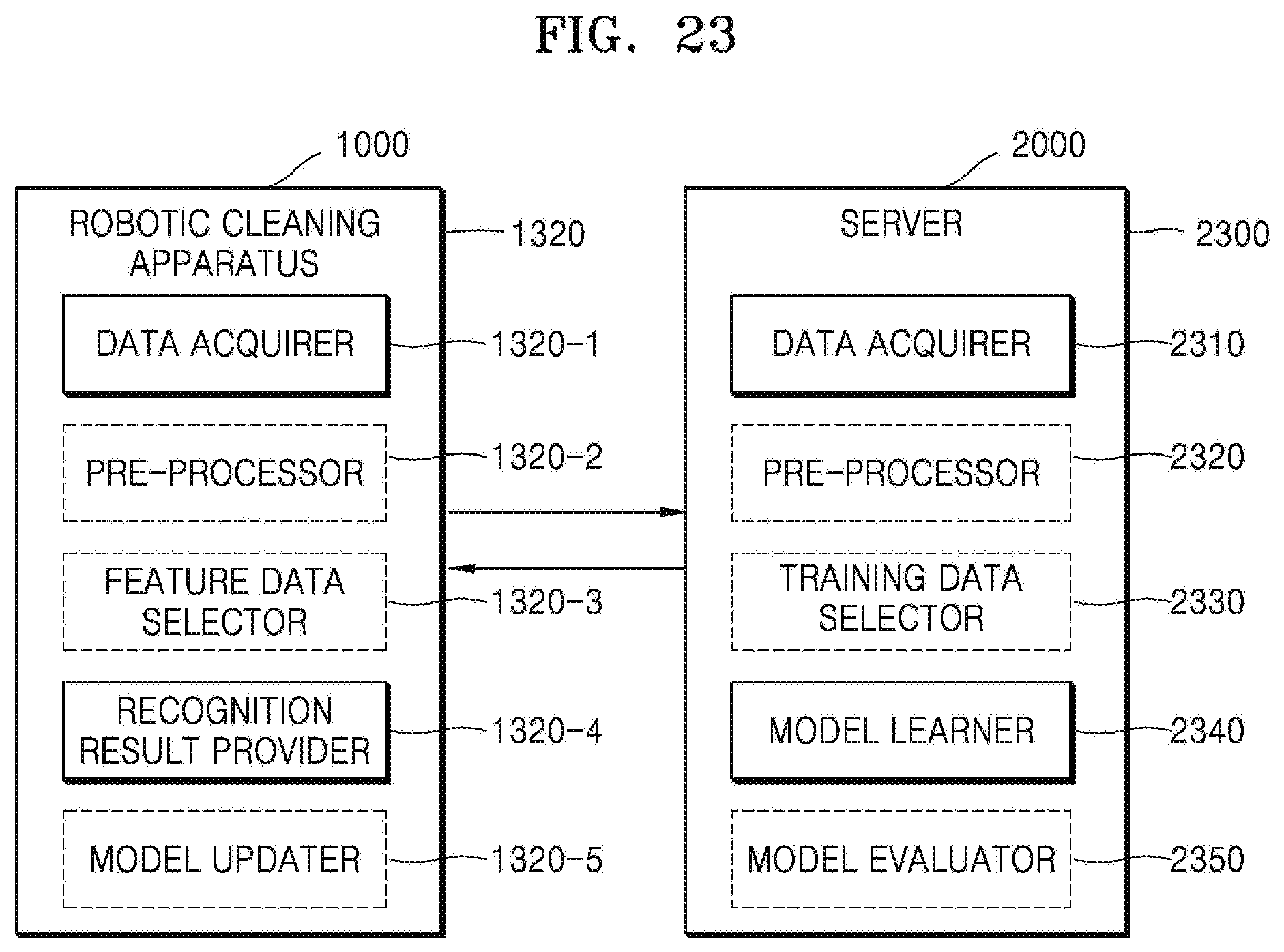

[0037] FIG. 23 is a block diagram showing an example in which the robotic cleaning apparatus and the server learn and recognize data in association with each other, according to an embodiment of the disclosure; and

[0038] FIG. 24 is a flowchart of a method, performed by the robotic cleaning apparatus, of moving an object in a cleaning space to a certain location, according to an embodiment of the disclosure.

[0039] Throughout the drawings, like reference numerals will be understood to refer to like parts, components, and structures.

DETAILED DESCRIPTION

[0040] The following description with reference to the accompanying drawings is provided to assist in a comprehensive understanding of various embodiments of the disclosure as defined by the claims and their equivalents. It includes various specific details to assist in that understanding but these are to be regarded as merely exemplary. Accordingly, those of ordinary skill in the art will recognize that various changes and modifications of the various embodiments described herein can be made without departing from the scope and spirit of the disclosure. In addition, descriptions of well-known functions and constructions may be omitted for clarity and conciseness.

[0041] The terms and words used in the following description and claims are not limited to their bibliographical meanings, but are merely used by the inventor to enable a clear and consistent understanding of the disclosure. Accordingly, it should be apparent to those skilled in the art that the following description of various embodiments of the disclosure is provided for illustration purposes only and not for the purpose of limiting the disclosure as defined by the appended claims and their equivalents.

[0042] It is to be understood that the singular forms "a," "an," and "the" include plural referents unless the context clearly dictates otherwise. Thus, for example, reference to "a component surface" includes reference to one or more of such surfaces.

[0043] Hereinafter, the disclosure will be described in detail by explaining embodiments of the disclosure with reference to the attached drawings. The disclosure may, however, be embodied in many different forms and should not be construed as being limited to the various embodiments of the disclosure set forth herein; rather, these embodiments of the disclosure are provided so that this disclosure will be thorough and complete, and will fully convey the concept of the disclosure to one of ordinary skill in the art. In the drawings, parts not related to the disclosure are not illustrated for clarity of explanation, and like reference numerals denote like elements.

[0044] It will be understood that when an element is referred to as being "connected to" another element, it may be "directly connected to" the other element or be "electrically connected to" the other element through an intervening element. It will be further understood that the terms "includes" and/or "including", when used herein, specify the presence of stated elements, but do not preclude the presence or addition of one or more other elements, unless the context clearly indicates otherwise. Throughout the disclosure, the expression "at least one of a, b or c" indicates only a, only b, only c, both a and b, both a and c, both b and c, all of a, b, and c, or variations thereof.

[0045] The disclosure will now be described in detail with reference to the attached drawings.

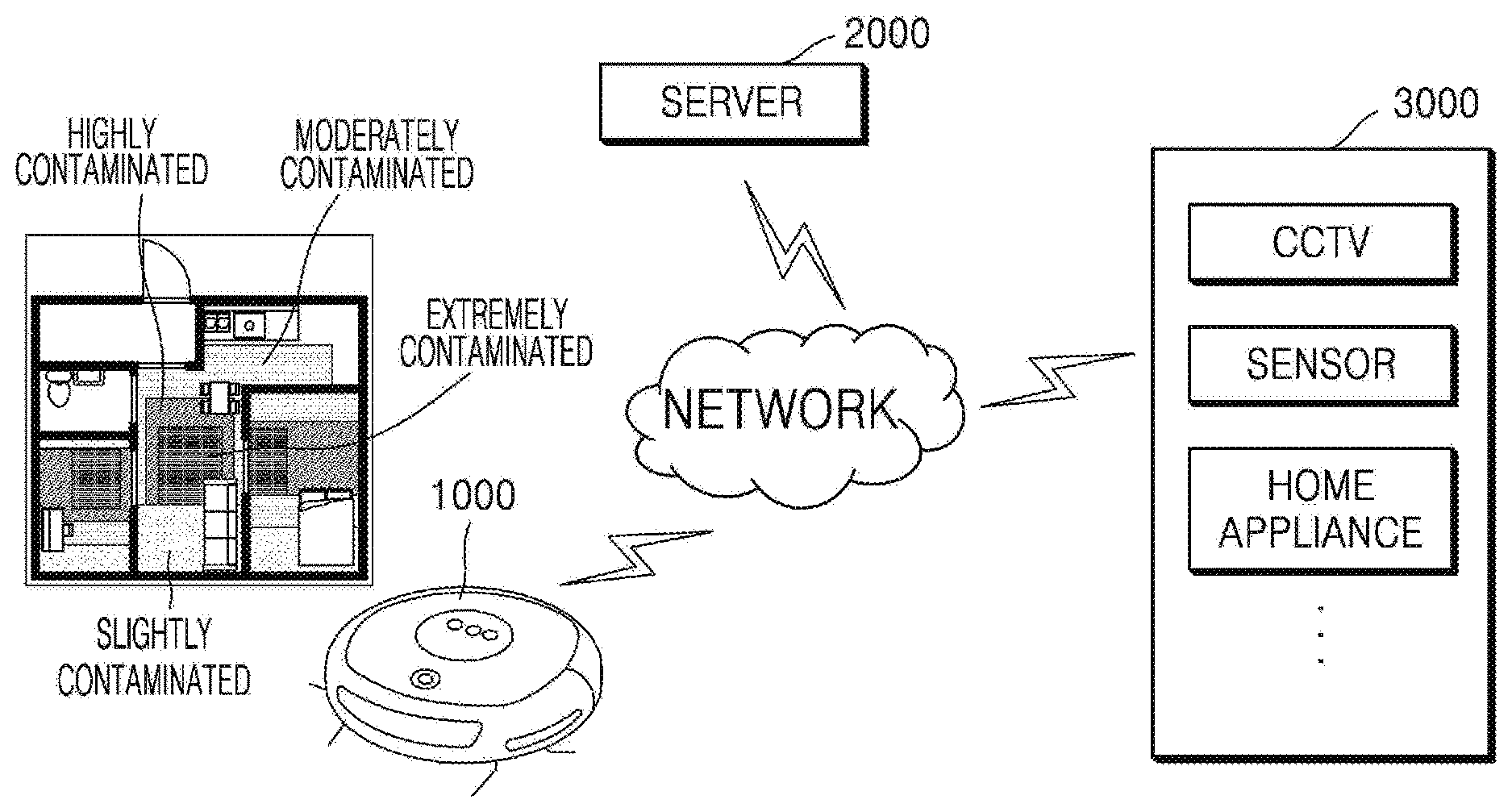

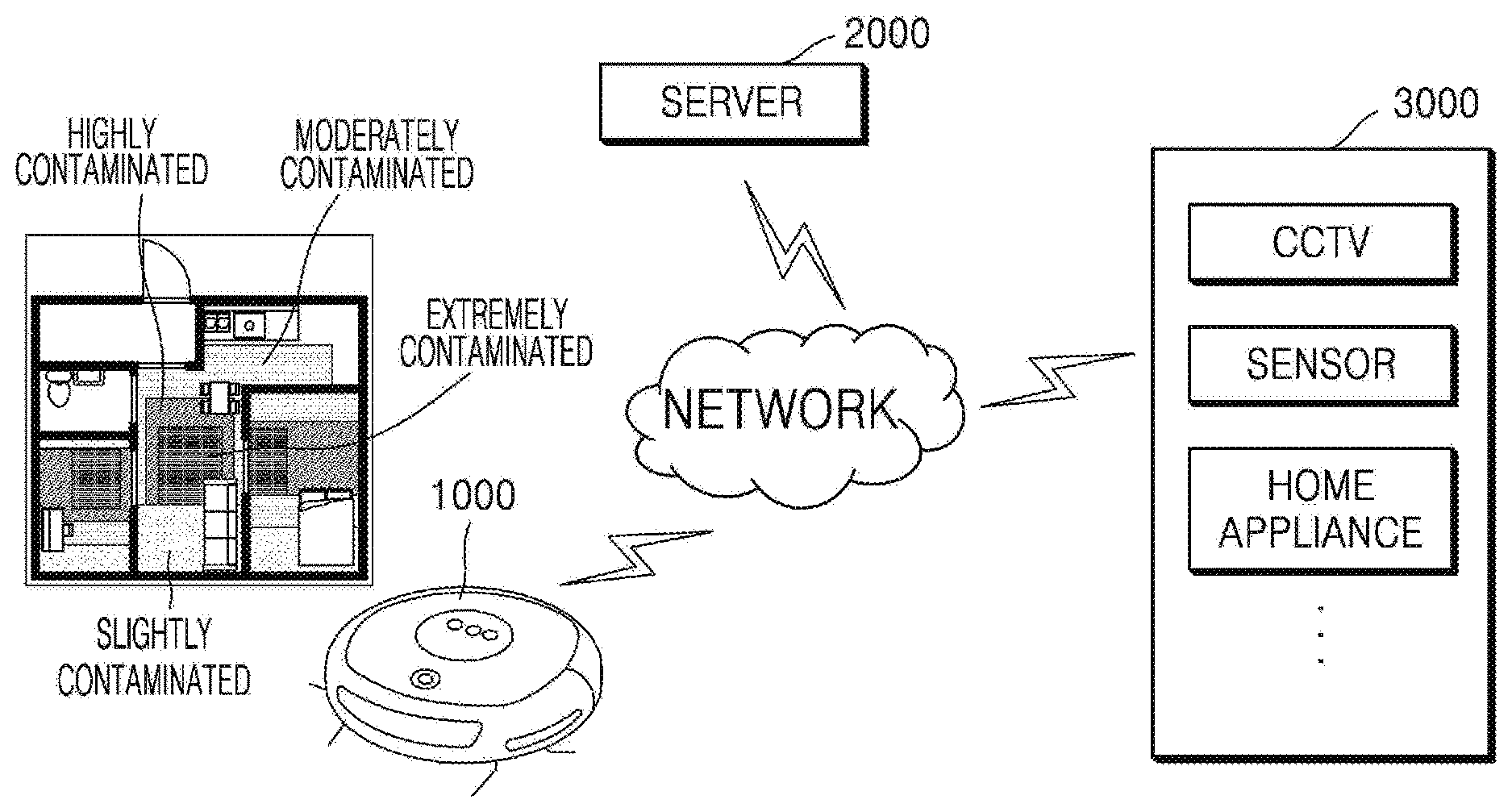

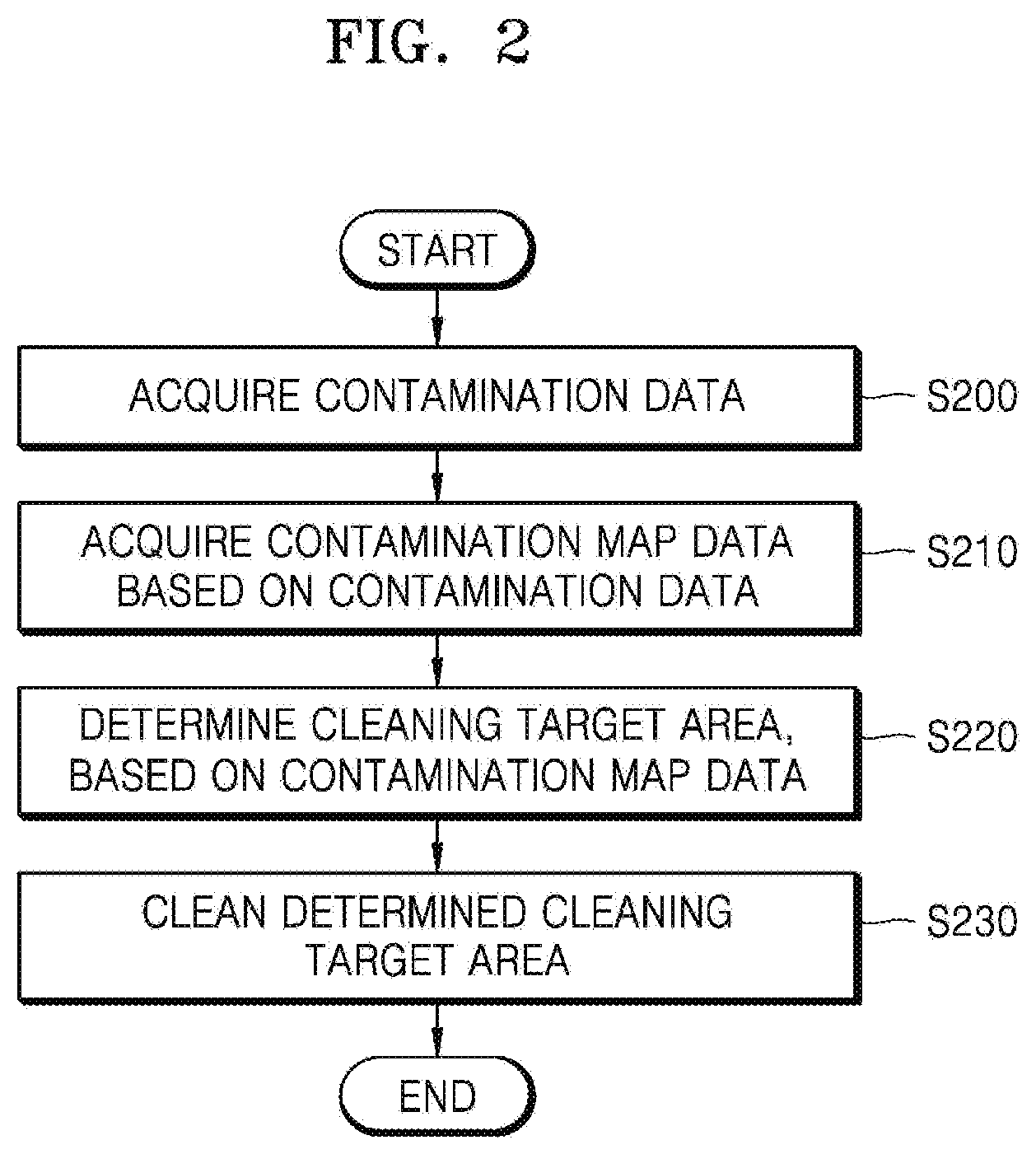

[0046] FIG. 1 is a schematic diagram of a cleaning system according to an embodiment of the disclosure.

[0047] Referring to FIG. 1, the cleaning system according to an embodiment of the disclosure may include a robotic cleaning apparatus 1000, a server 2000, and at least one external device 3000.

[0048] The robotic cleaning apparatus 1000 may clean a cleaning space while moving in the cleaning space. The cleaning space may be a space to be cleaned, e.g., a home or an office. The robotic cleaning apparatus 1000 is a robotic apparatus capable of autonomously moving by using wheels or the like, and may perform a cleaning function while moving in the cleaning space.

[0049] The robotic cleaning apparatus 1000 may collect contamination data about contamination in the cleaning space, generate contamination map data and determine a cleaning target area, based on the generated contamination map data.

[0050] The robotic cleaning apparatus 1000 may predict contaminated regions in the cleaning space by using the contamination map data and determine a priority of and a cleaning strength for the cleaning target area considering the predicted contaminated regions. The contamination map data may include map information of the cleaning space and information indicating contaminated areas, and the robotic cleaning apparatus 1000 may generate the contamination map data for each time period of a certain length (e.g., a predetermined time period).

[0051] The robotic cleaning apparatus 1000 may use at least one learning model to generate the contamination map data and determine the cleaning target area. The learning model may be operated by at least one of the robotic cleaning apparatus 1000 or the server 2000.

[0052] The server 2000 may generate the contamination map data and determine the cleaning target area in association with the robotic cleaning apparatus 1000. The server 2000 may comprehensively manage a plurality of robotic cleaning apparatuses and a plurality of external devices 3000. The server 2000 may use information collected from another robotic cleaning apparatus (not shown), contamination map data of another cleaning space, etc. to determine the contamination map data and the cleaning target area of the robotic cleaning apparatus 1000.
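Claims 3 and 13 describe using contamination map data of another cleaning space that is similar to the current one "by a certain value or more", but the disclosure does not specify the similarity measure. A minimal sketch, assuming maps are stored as hypothetical `{(row, col): level}` grids and using cosine similarity with a hypothetical threshold of 0.8, could look like this. Claim 13 also compares room structure and object locations, which this sketch does not model.

```python
import math

# Hypothetical representation: grid cell (row, col) -> contamination level in [0, 1].
ContaminationMap = dict[tuple[int, int], float]


def cosine_similarity(a: ContaminationMap, b: ContaminationMap) -> float:
    """Cosine similarity over the union of cells; a missing cell counts as 0."""
    cells = set(a) | set(b)
    dot = sum(a.get(c, 0.0) * b.get(c, 0.0) for c in cells)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def similar_maps(target: ContaminationMap,
                 candidates: dict[str, ContaminationMap],
                 threshold: float = 0.8) -> list[str]:
    """Names of candidate maps whose similarity to `target` is at least `threshold`."""
    return [name for name, other in candidates.items()
            if cosine_similarity(target, other) >= threshold]
```

For example, a map of another home with contamination concentrated in the same cells would pass the threshold, while an office map with contamination elsewhere would not.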

[0053] The external device 3000 may generate contamination data about contamination in the cleaning space and data required to determine a status of the cleaning space and provide the generated data to the robotic cleaning apparatus 1000 or the server 2000. The external device 3000 may be installed in the cleaning space. The external device 3000 may include, for example, a closed-circuit television (CCTV), a sensor, or a home appliance, but is not limited thereto.

[0054] A network is a comprehensive data communication network capable of enabling appropriate communication between network entities illustrated in FIG. 1, e.g., a local area network (LAN), a wide area network (WAN), a value added network (VAN), a mobile radio communication network, a satellite communication network, or a combination thereof, and may include the wired Internet, the wireless Internet, and a mobile communication network. Wireless communication may include, for example, wireless local area network (WLAN) (or Wi-Fi) communication, Bluetooth communication, Bluetooth low energy (BLE) communication, Zigbee communication, Wi-Fi direct (WFD) communication, ultra-wideband (UWB) communication, Infrared Data Association (IrDA) communication, and near field communication (NFC), but is not limited thereto.

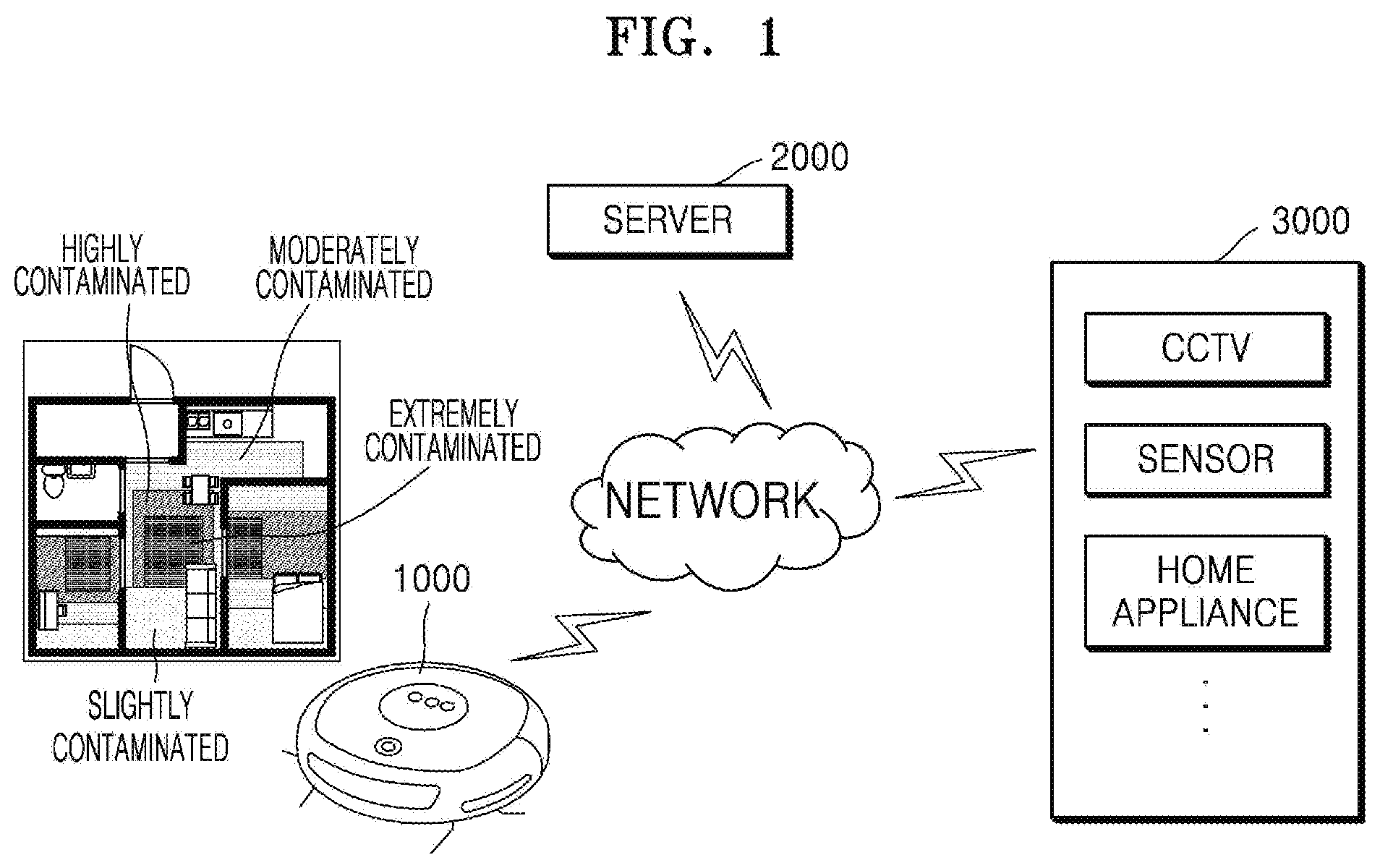

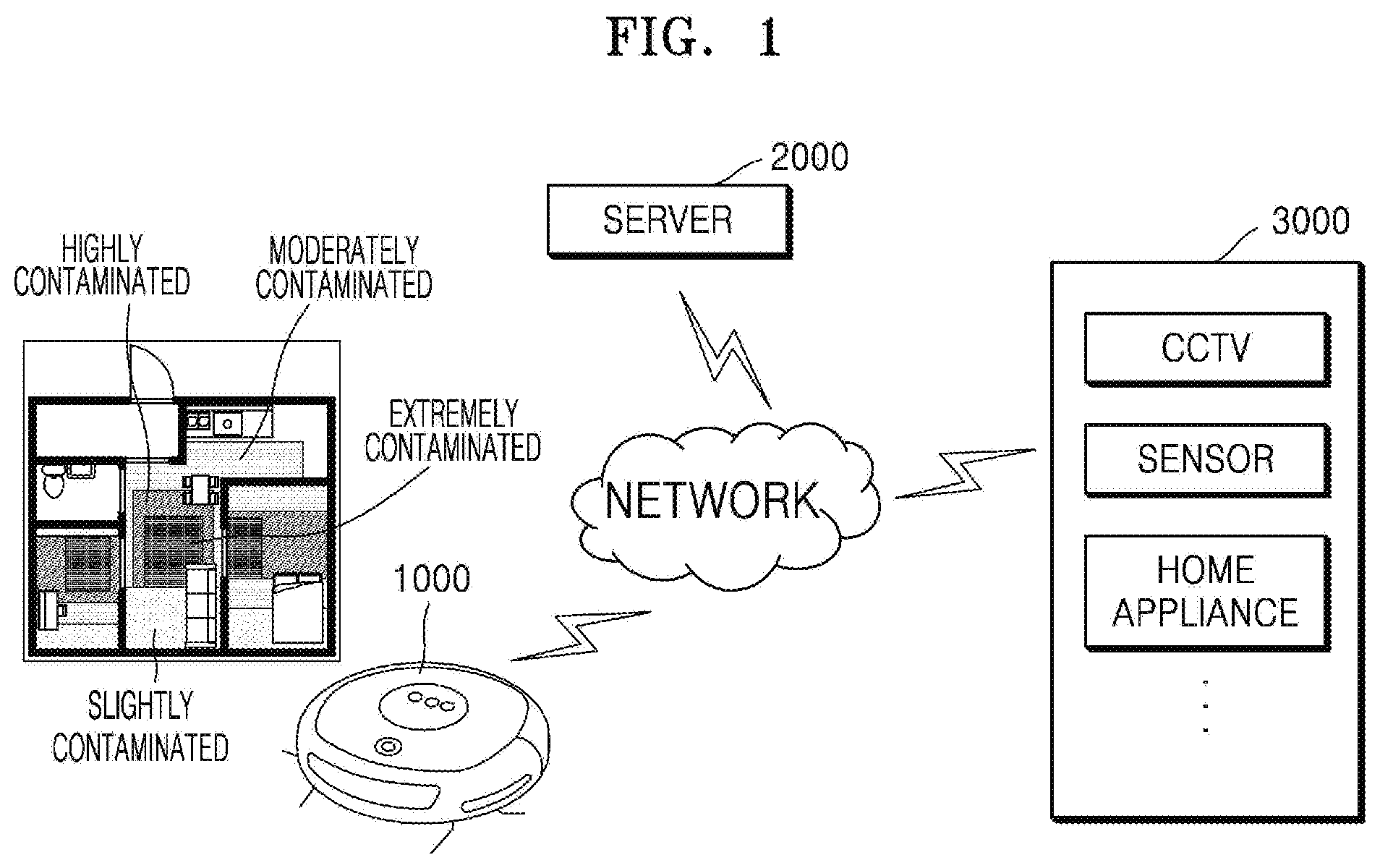

[0055] FIG. 2 is a flowchart of a method, performed by the robotic cleaning apparatus 1000, of determining a cleaning target area, based on contamination data, according to an embodiment of the disclosure.

[0056] In operation S200, the robotic cleaning apparatus 1000 may acquire contamination data. The robotic cleaning apparatus 1000 may generate contamination data of a cleaning space by using a camera and a sensor of the robotic cleaning apparatus 1000. The robotic cleaning apparatus 1000 may generate image data indicating a contamination level of the cleaning space, by photographing the cleaning space with the camera while cleaning the cleaning space. The robotic cleaning apparatus 1000 may generate sensing data indicating a dust quantity in the cleaning space, by sensing the cleaning space through a dust sensor while cleaning the cleaning space.

[0057] The robotic cleaning apparatus 1000 may also receive contamination data from the external device 3000. For example, a CCTV installed in the cleaning space may photograph the cleaning space and, when it detects a motion that contaminates the cleaning space, provide image data including the detected motion to the robotic cleaning apparatus 1000. As another example, a dust sensor installed in the cleaning space may provide sensing data about the dust quantity in the cleaning space to the robotic cleaning apparatus 1000.

[0058] The external device 3000 may provide the contamination data generated by the external device 3000, to the server 2000, and the robotic cleaning apparatus 1000 may receive the contamination data generated by the external device 3000, from the server 2000.

[0059] The contamination data may be used to determine a contaminated location and a contamination level of the cleaning space. For example, the contamination data may include information about a location where and a time when the contamination data is generated.
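A contamination observation therefore carries at least a location, a generation time, and a contamination level. The record type below is a hypothetical sketch of such data; the field names, the [0, 1] level scale, the source labels, and the dust-count normalization (`saturation`) are assumptions for illustration, not the format used by the disclosure.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum


class Source(Enum):
    """Where a contamination observation came from (hypothetical labels)."""
    ROBOT_CAMERA = "robot_camera"
    ROBOT_DUST_SENSOR = "robot_dust_sensor"
    EXTERNAL_CCTV = "external_cctv"
    EXTERNAL_DUST_SENSOR = "external_dust_sensor"


@dataclass(frozen=True)
class ContaminationRecord:
    x: float             # location in map coordinates, meters (hypothetical)
    y: float
    timestamp: datetime  # when the contamination data was generated
    level: float         # normalized contamination level in [0, 1] (hypothetical scale)
    source: Source

    def __post_init__(self) -> None:
        if not 0.0 <= self.level <= 1.0:
            raise ValueError(f"level must be in [0, 1], got {self.level}")


def level_from_dust_count(count: int, saturation: int = 500) -> float:
    """Normalize a raw dust-sensor count; `saturation` is a hypothetical cap."""
    return min(count / saturation, 1.0)
```

Keeping the source on each record lets later stages weight robot-side and external observations differently if desired.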

[0060] In operation S210, the robotic cleaning apparatus 1000 may acquire contamination map data based on the contamination data. The robotic cleaning apparatus 1000 may generate and update the contamination map data by using the acquired contamination data. The contamination map data may include a map of the cleaning space and information indicating locations and contamination levels of contaminated areas in the cleaning space. The contamination map data may be generated for each time period and indicate the locations and the contamination levels of the contaminated areas in the cleaning space during that time period.
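One simple way to maintain contamination map data for each time period is to bucket observations by time of day and by grid cell and average the levels in each bucket. The sketch below assumes hypothetical 0.5 m cells and four 6-hour periods per day, and takes observations as `(x, y, timestamp, level)` tuples; the disclosure does not fix any of these choices, and it also describes generating the map with a learning model instead.

```python
from collections import defaultdict
from collections.abc import Iterable
from datetime import datetime

Cell = tuple[int, int]
CELL_SIZE_M = 0.5  # hypothetical grid resolution in meters
PERIOD_HOURS = 6   # hypothetical period length (four periods per day)


def period_of(ts: datetime) -> int:
    """Index of the time-of-day period containing ts."""
    return ts.hour // PERIOD_HOURS


def cell_of(x: float, y: float) -> Cell:
    """Grid cell containing the point (x, y)."""
    return (int(x // CELL_SIZE_M), int(y // CELL_SIZE_M))


def build_period_maps(
    records: Iterable[tuple[float, float, datetime, float]],
) -> dict[int, dict[Cell, float]]:
    """Average contamination levels per (time period, grid cell)."""
    sums: dict[int, dict[Cell, float]] = defaultdict(lambda: defaultdict(float))
    counts: dict[int, dict[Cell, int]] = defaultdict(lambda: defaultdict(int))
    for x, y, ts, level in records:
        p, c = period_of(ts), cell_of(x, y)
        sums[p][c] += level
        counts[p][c] += 1
    return {p: {c: sums[p][c] / counts[p][c] for c in cells}
            for p, cells in sums.items()}
```

Averaging across days means that a cell that is repeatedly dirty at the same time of day accumulates a consistently high level for that period.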

[0061] The robotic cleaning apparatus 1000 may generate map data of the cleaning space by recognizing a location of the robotic cleaning apparatus 1000 while moving. The robotic cleaning apparatus 1000 may generate the contamination map data indicating the contaminated areas in the cleaning space, by using the contamination data and the map data.

[0062] The map data of the cleaning space may include, for example, data about at least one of a navigation map used to move while cleaning, a simultaneous localization and mapping (SLAM) map used for location recognition, or an obstacle recognition map including information about recognized obstacles.

[0063] The contamination map data may indicate, for example, locations of rooms and furniture in the cleaning space, and include information about the locations and the contamination levels of the contaminated areas in the cleaning space.

[0064] The robotic cleaning apparatus 1000 may generate the contamination map data by, for example, applying the map data and the contamination data of the cleaning space to a learning model for generating the contamination map data.

[0065] Alternatively, the robotic cleaning apparatus 1000 may request the contamination map data from the server 2000. In this case, the robotic cleaning apparatus 1000 may provide the map data and the contamination data of the cleaning space to the server 2000, and the server 2000 may generate the contamination map data by applying the map data and the contamination data of the cleaning space to the learning model for generating the contamination map data.

[0066] In operation S220, the robotic cleaning apparatus 1000 may determine a cleaning target area, based on the contamination map data. The robotic cleaning apparatus 1000 may predict the locations and the contamination levels of the contaminated areas in the cleaning space, based on the contamination map data and determine at least one cleaning target area, based on an operation mode and an apparatus state of the robotic cleaning apparatus 1000.
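Given contamination map data stored per time period, choosing targets "based on a current time" can be as simple as looking up the map for the period containing the current time and keeping cells above a threshold, ordered by level. This sketch assumes a hypothetical `{period index: {cell: level}}` layout with 6-hour periods and a threshold of 0.3; it ignores the operation mode and apparatus state, which the disclosure also takes into account.

```python
from datetime import datetime

Cell = tuple[int, int]
PERIOD_HOURS = 6  # hypothetical period length (four periods per day)


def select_targets(period_maps: dict[int, dict[Cell, float]], now: datetime,
                   threshold: float = 0.3) -> list[Cell]:
    """Return target cells for the period containing `now`, highest level first."""
    current = period_maps.get(now.hour // PERIOD_HOURS, {})
    targets = [cell for cell, level in current.items() if level >= threshold]
    # Priority: more heavily contaminated cells are cleaned first.
    return sorted(targets, key=lambda cell: current[cell], reverse=True)
```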

[0067] The robotic cleaning apparatus 1000 may determine a priority of and a cleaning strength for the cleaning target area in the cleaning space considering the operation mode and the apparatus state of the robotic cleaning apparatus 1000. The robotic cleaning apparatus 1000 may determine its cleaning strength by, for example, determining its speed and suction power. For example, at a high cleaning strength, the robotic cleaning apparatus 1000 may strongly suck up dust while moving slowly, and at a low cleaning strength, it may weakly suck up dust while moving fast. Other combinations are also possible: the robotic cleaning apparatus 1000 may strongly suck up dust while moving fast, or weakly suck up dust while moving slowly.
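The coupling between cleaning strength, speed, and suction power described above can be sketched as a linear mapping in which a higher strength means stronger suction and slower movement. The speed and suction limits below are hypothetical, and this mapping covers only the high-strength/slow and low-strength/fast examples, not the other combinations the paragraph also permits.

```python
from dataclasses import dataclass

# Hypothetical actuator limits; real values depend on the hardware.
MIN_SPEED, MAX_SPEED = 0.1, 0.3      # m/s
MIN_SUCTION, MAX_SUCTION = 0.2, 1.0  # fraction of maximum suction power


@dataclass
class CleaningSettings:
    speed: float    # travel speed in m/s
    suction: float  # suction power as a fraction of the maximum


def settings_for_strength(strength: float) -> CleaningSettings:
    """Map a cleaning strength in [0, 1] to speed and suction.

    Higher strength -> stronger suction and slower movement.
    Out-of-range inputs are clamped to [0, 1].
    """
    s = max(0.0, min(1.0, strength))
    return CleaningSettings(
        speed=MAX_SPEED - s * (MAX_SPEED - MIN_SPEED),
        suction=MIN_SUCTION + s * (MAX_SUCTION - MIN_SUCTION),
    )
```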

[0068] The operation mode of the robotic cleaning apparatus 1000 may include, for example, a normal mode, a quick mode, a power-saving mode, and a low-noise mode, but is not limited thereto. For example, when the operation mode of the robotic cleaning apparatus 1000 is the quick mode, the robotic cleaning apparatus 1000 may determine the cleaning target area and the priority of the cleaning target area considering an operating time of the robotic cleaning apparatus 1000, so as to preferentially clean a heavily contaminated area within the operating time. For example, when the operation mode of the robotic cleaning apparatus 1000 is the low-noise mode, the robotic cleaning apparatus 1000 may determine the cleaning target area and the cleaning strength so as to clean a heavily contaminated area with low noise. For example, the robotic cleaning apparatus 1000 may determine the cleaning target area and the priority of the cleaning target area considering a battery level of the robotic cleaning apparatus 1000.
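For the quick mode in [0068], one simple way to honor an operating time is a greedy plan that visits areas in descending order of contamination and skips any area that no longer fits in the remaining time. The sketch below assumes a per-area cleaning-time estimate (`est_minutes`), which the disclosure does not define; the greedy strategy is an illustrative substitute, not the claimed method, and the learning-model approach of [0069] could replace it.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Area:
    name: str
    contamination: float  # contamination level from the map (higher = dirtier)
    est_minutes: float    # hypothetical estimated time needed to clean this area


def plan_quick_mode(areas: List[Area], operating_minutes: float) -> List[str]:
    """Greedy sketch: take the most contaminated areas first, skipping any
    area that would not fit in the remaining operating time."""
    plan: List[str] = []
    remaining = operating_minutes
    for area in sorted(areas, key=lambda a: a.contamination, reverse=True):
        if area.est_minutes <= remaining:
            plan.append(area.name)
            remaining -= area.est_minutes
    return plan
```

With a 20-minute budget and areas `kitchen` (0.9, 10 min), `bedroom` (0.7, 8 min), `living` (0.5, 15 min), and `hall` (0.2, 3 min), the plan covers the kitchen and then the bedroom, leaving 2 minutes that fit neither remaining area.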

[0069] The robotic cleaning apparatus 1000 may determine the cleaning target area by applying information about the robotic cleaning apparatus 1000 and the cleaning space to a learning model for determining the cleaning target area. For example, the robotic cleaning apparatus 1000 may determine the cleaning target area, the priority of and the cleaning strength for the cleaning target area, etc. by inputting information about the operation mode of the robotic cleaning apparatus 1000, the apparatus state of the robotic cleaning apparatus 1000, and a motion of a user in the cleaning space, to the learning model for determining the cleaning target area.

[0070] In operation S230, the robotic cleaning apparatus 1000 may clean the determined cleaning target area. The robotic cleaning apparatus 1000 may clean the cleaning space according to the priority of and the cleaning strength for the cleaning target area. The robotic cleaning apparatus 1000 may collect contamination data about contamination of the cleaning space in real time while cleaning, and change the cleaning target area by reflecting the contamination data collected in real time.
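The clean, sense, and re-plan cycle of operation S230 can be sketched as a loop that always cleans the currently dirtiest target area and merges newly reported contamination levels before choosing the next one. The `sense` callback stands in for the robot's sensors and the external device 3000; the threshold, the assumption that cleaning resets an area to zero, and the `max_steps` guard are illustrative choices, not details from the disclosure.

```python
from typing import Callable, Dict, List


def clean_with_replanning(
    contamination: Dict[str, float],
    threshold: float,
    sense: Callable[[str], Dict[str, float]],
    max_steps: int = 100,
) -> List[str]:
    """Clean areas at or above `threshold`, dirtiest first, re-planning after
    each area using contamination levels reported by `sense`."""
    levels = dict(contamination)
    cleaned: List[str] = []
    for _ in range(max_steps):  # guard against endless re-contamination
        targets = [a for a, lvl in levels.items() if lvl >= threshold]
        if not targets:
            break
        area = max(targets, key=lambda a: levels[a])  # dirtiest area first
        cleaned.append(area)
        levels[area] = 0.0           # assume cleaning clears the area
        levels.update(sense(area))   # reflect data collected in real time
    return cleaned
```

If, for example, new dirt is reported in the hall while the kitchen is being cleaned, the hall enters the target list and is prioritized by its new level on the next iteration.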

[0071] FIG. 3 is a flowchart of a method, performed by the robotic cleaning apparatus 1000, of acquiring contamination data, according to an embodiment of the disclosure.

[0072] In operation S300, the robotic cleaning apparatus 1000 may detect contamination by using a sensor of the robotic cleaning apparatus 1000. The robotic cleaning apparatus 1000 may include, for example, an infrared sensor, an ultrasonic sensor, a radio frequency (RF) sensor, a geomagnetic sensor, a position sensitive device (PSD) sensor, and a dust sensor. The robotic cleaning apparatus 1000 may detect a location and motion of the robotic cleaning apparatus 1000 and determine locations and contamination levels of contaminated areas by using the sensors of the robotic cleaning apparatus 1000 while cleaning. The robotic cleaning apparatus 1000 may generate contamination data including information about the contamination levels measured in the contaminated areas and the locations of the contaminated areas. The robotic cleaning apparatus 1000 may include, in the contamination data, information about a time when the contamination data is generated.
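A contamination record as described in [0072] carries a location, a contamination level, and a generation time. The sketch below shows one possible shape for such a record and how a single dust-sensor reading might be turned into one; the field names, the `source` tag, and `DUST_THRESHOLD` are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional

# Hypothetical reading above which the dust sensor counts an area as contaminated.
DUST_THRESHOLD = 50.0


@dataclass(frozen=True)
class ContaminationRecord:
    x_m: float              # location of the contaminated area in the map frame (metres)
    y_m: float
    level: float            # measured contamination level
    source: str             # which sensor or device produced the record
    generated_at: datetime  # time the contamination data was generated


def record_from_dust_reading(
    x_m: float, y_m: float, dust_reading: float, now: datetime
) -> Optional[ContaminationRecord]:
    """Turn one dust-sensor reading, taken at the robot's current location,
    into a contamination record, or return None if the area reads as clean."""
    if dust_reading < DUST_THRESHOLD:
        return None
    return ContaminationRecord(x_m, y_m, dust_reading, "dust_sensor", now)
```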

[0073] In operation S310, the robotic cleaning apparatus 1000 may photograph the contaminated areas by using a camera of the robotic cleaning apparatus 1000. While cleaning, the robotic cleaning apparatus 1000 may generate image data of contaminants by photographing the areas determined to contain contaminants. The robotic cleaning apparatus 1000 may include, in the contamination data, information about a time when the contamination data is generated.

[0074] In operation S320, the robotic cleaning apparatus 1000 may receive contamination data from the external device 3000. At least one external device 3000 may be installed in the cleaning space to generate the contamination data. When contamination occurs in an area near the location where the external device 3000 is installed, the external device 3000 may generate the contamination data and provide it to the robotic cleaning apparatus 1000. In this case, the robotic cleaning apparatus 1000 may determine the location of the external device 3000 in the cleaning space by communicating with the external device 3000. The robotic cleaning apparatus 1000 may determine the location of the external device 3000 in the cleaning space by determining its own location in the cleaning space and the relative location between the robotic cleaning apparatus 1000 and the external device 3000. Alternatively, the external device 3000 may determine its own location in the cleaning space and provide information indicating that location to the robotic cleaning apparatus 1000.
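One way to realize the position calculation in [0074] is to rotate the device offset, measured relative to the robot, by the robot's heading and then add the robot's own position. This sketch assumes the relative location is available as a 2-D offset in the robot's frame (x forward, y to the left); the disclosure does not say how the relative location is measured, and the function name is hypothetical.

```python
import math
from typing import Tuple


def external_device_location(
    robot_x: float, robot_y: float, robot_heading_rad: float,
    rel_x: float, rel_y: float,
) -> Tuple[float, float]:
    """Convert a device offset measured in the robot's own frame into
    cleaning-space coordinates, given the robot's position and heading."""
    c, s = math.cos(robot_heading_rad), math.sin(robot_heading_rad)
    # 2-D rotation of the offset by the heading, then translation by the robot position.
    return (robot_x + rel_x * c - rel_y * s,
            robot_y + rel_x * s + rel_y * c)
```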

[0075] The external device 3000 may include, for example, a CCTV, an Internet protocol (IP) camera, an ultrasonic sensor, an infrared sensor, and a dust sensor. For example, when the external device 3000 is a CCTV installed in the cleaning space, the CCTV may continuously photograph the cleaning space and, when a motion that contaminates the cleaning space is detected, provide video data or captured image data including the detected motion to the robotic cleaning apparatus 1000. For example, when the external device 3000 is a dust sensor installed in the cleaning space, the dust sensor may provide sensing data about a dust quantity of the cleaning space to the robotic cleaning apparatus 1000 in real time or periodically. For example, when the external device 3000 is a home appliance, the home appliance may provide information about its operating state to the robotic cleaning apparatus 1000 in real time or periodically. The external device 3000 may include, in the contamination data, information about a time when the contamination data is generated.

[0076] In operation S330, the robotic cleaning apparatus 1000 may acquire contamination map data. The robotic cleaning apparatus 1000 may generate the contamination map data by analyzing the contamination data generated by the robotic cleaning apparatus 1000 and the contamination data generated by the external device 3000. The contamination data may include information about the locations and the contamination levels of the contaminated areas in the cleaning space.

[0077] The robotic cleaning apparatus 1000 may generate the contamination map data indicating a contaminated location and a contamination level in the cleaning space, by using map data of the cleaning space and the contamination data acquired in operations S300 to S320. The robotic cleaning apparatus 1000 may generate the contamination map data for each time period.
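Generating contamination map data for each time period, as in [0077], can be illustrated by binning timestamped records into a grid per period of the day. The grid resolution, the six-hour periods, and the choice to keep the highest level per cell are assumptions made for this sketch; the disclosure leaves these open and also allows a learning model to produce the map.

```python
from collections import defaultdict
from datetime import datetime
from typing import Dict, Iterable, Tuple

CELL_SIZE_M = 0.5   # hypothetical grid resolution in metres
PERIOD_HOURS = 6    # hypothetical period length, giving four periods per day

Cell = Tuple[int, int]


def build_time_period_maps(
    records: Iterable[Tuple[float, float, float, datetime]],
) -> Dict[int, Dict[Cell, float]]:
    """records: (x_m, y_m, level, generated_at) tuples.
    Returns {period index: {grid cell: highest level observed in that period}}."""
    maps: Dict[int, Dict[Cell, float]] = defaultdict(dict)
    for x_m, y_m, level, generated_at in records:
        period = generated_at.hour // PERIOD_HOURS  # 0 = 00:00-05:59, 1 = 06:00-11:59, ...
        cell = (int(x_m // CELL_SIZE_M), int(y_m // CELL_SIZE_M))
        grid = maps[period]
        grid[cell] = max(level, grid.get(cell, 0.0))
    return dict(maps)
```

Records from different days that fall in the same period and cell are merged, which is what lets a recurring pattern (for example, crumbs near the dining table every morning) show up in that period's map.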

[0078] The robotic cleaning apparatus 1000 may generate the contamination map data by applying data about motion of the robotic cleaning apparatus 1000 and the contamination data to a learning model for generating the contamination map data. In this case, the learning model for generating the contamination map data may be trained in advance by the robotic cleaning apparatus 1000 or the server 2000. Alternatively, for example, the robotic cleaning apparatus 1000 may provide the data about motion of the robotic cleaning apparatus 1000 and the contamination data to the server 2000, and the server 2000 may apply the data about motion of the robotic cleaning apparatus 1000 and the contamination data to the learning model for generating the contamination map data.

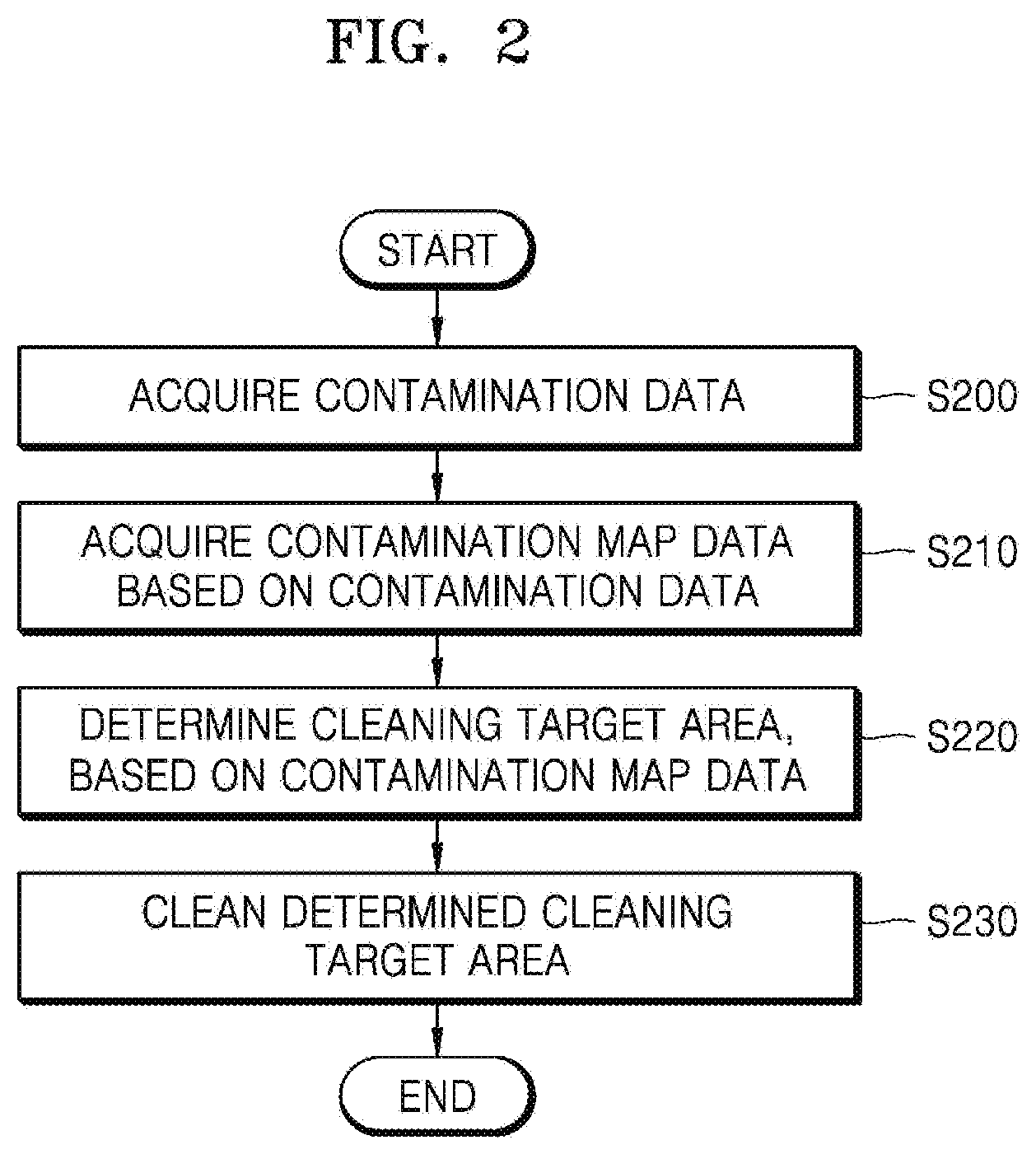

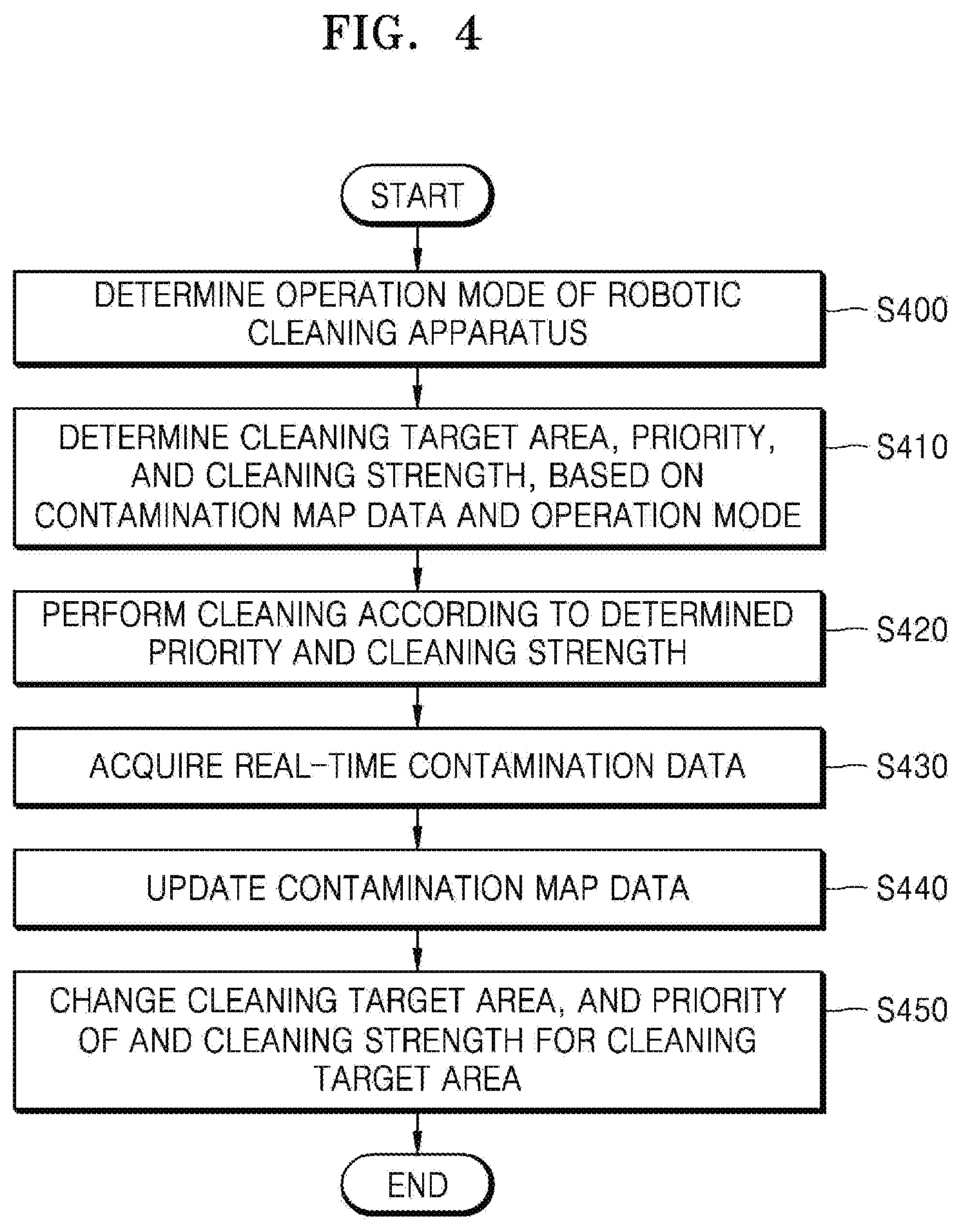

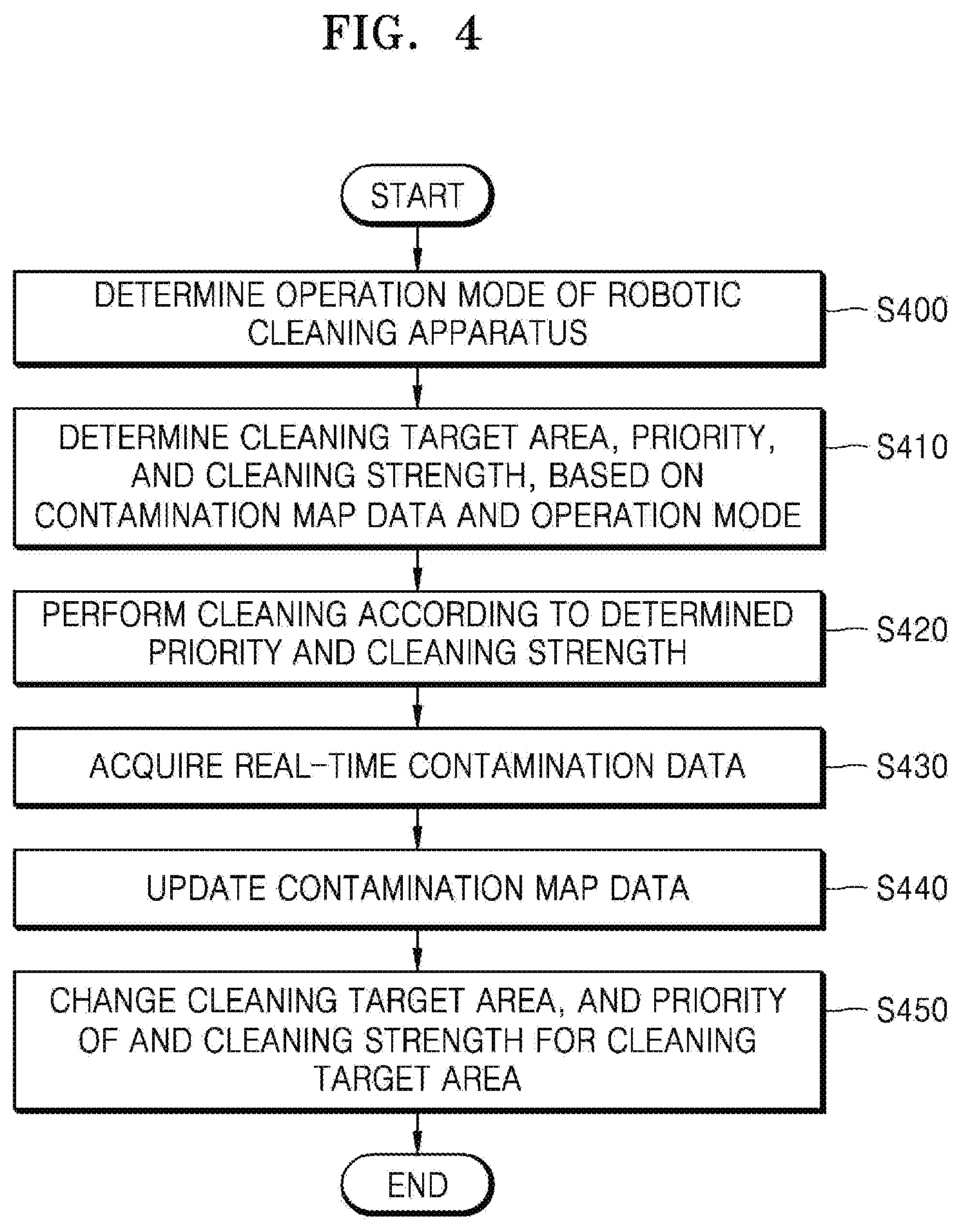

[0079] FIG. 4 is a flowchart of a method, performed by the robotic cleaning apparatus 1000, of determining a priority of and a cleaning strength for a cleaning target area considering an operation mode of the robotic cleaning apparatus 1000, according to an embodiment of the disclosure.

[0080] In operation S400, the robotic cleaning apparatus 1000 may determine an operation mode of the robotic cleaning apparatus 1000. The robotic cleaning apparatus 1000 may determine the operation mode of the robotic cleaning apparatus 1000 considering an environment of a cleaning space, a current time, and an apparatus state of the robotic cleaning apparatus 1000. For example, the robotic cleaning apparatus 1000 may determine the operation mode of the robotic cleaning apparatus 1000 considering information about whether a user is present in the cleaning space, information about a motion of the user, a current time, a battery level of the robotic cleaning apparatus 1000, etc. In this case, the robotic cleaning apparatus 1000 may determine the operation mode of the robotic cleaning apparatus 1000 by collecting the information about whether a user is present in the cleaning space, the information about a motion of the user, the current time, and the battery level of the robotic cleaning apparatus 1000 and analyzing the collected information.

[0081] The operation mode of the robotic cleaning apparatus 1000 may include, for example, a normal mode, a quick mode, a power-saving mode, and a low-noise mode, but is not limited thereto. The operation mode of the robotic cleaning apparatus 1000 will be described in detail below.

[0082] The robotic cleaning apparatus 1000 may determine the operation mode of the robotic cleaning apparatus 1000, based on a user input.

[0083] In operation S410, the robotic cleaning apparatus 1000 may determine a priority of and a cleaning strength for a cleaning target area, based on contamination map data and the operation mode. For example, when the operation mode is the quick mode, the robotic cleaning apparatus 1000 may determine the cleaning target area, and the priority of and the cleaning strength for the cleaning target area to clean only a cleaning target area having a contamination level equal to or greater than a certain value. For example, when the operation mode is the low-noise mode, the robotic cleaning apparatus 1000 may determine the cleaning target area, and the priority of and the cleaning strength for the cleaning target area to slowly clean a cleaning target area having a contamination level equal to or greater than a certain value, at a low cleaning strength.
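The mode-dependent selection in [0083] can be sketched as a threshold filter followed by a sort on contamination level. Here `MODE_STRENGTH`, the default threshold of 0.5, and the treatment of the normal and power-saving modes are hypothetical; the disclosure only states the threshold behavior for the quick and low-noise modes.

```python
from typing import Dict, List, Tuple

# Hypothetical strength label per mode, loosely following FIG. 13.
MODE_STRENGTH = {"quick": "high", "low_noise": "low", "normal": "normal", "power_saving": "low"}


def select_targets(
    contamination: Dict[str, float], mode: str, threshold: float = 0.5
) -> List[Tuple[str, str]]:
    """Return (area, strength label) pairs, dirtiest area first."""
    if mode in ("quick", "low_noise"):
        # [0083]: only areas at or above the threshold are cleaned in these modes.
        areas = [a for a, lvl in contamination.items() if lvl >= threshold]
    else:
        # Assumption: other modes consider every area with any contamination.
        areas = [a for a, lvl in contamination.items() if lvl > 0]
    areas.sort(key=lambda a: contamination[a], reverse=True)
    strength = MODE_STRENGTH[mode]
    return [(a, strength) for a in areas]
```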

[0084] Although the robotic cleaning apparatus 1000 determines the cleaning target area, the priority of the cleaning target area, and the cleaning strength for the cleaning target area according to the operation mode in the above description, the disclosure is not limited thereto. The robotic cleaning apparatus 1000 may also determine the cleaning target area, and the priority of and the cleaning strength for the cleaning target area, without determining an operation mode, by considering the environment of the cleaning space, the current time, and the apparatus state of the robotic cleaning apparatus 1000. The cleaning strength may be set differently for each cleaning target area.

[0085] The robotic cleaning apparatus 1000 may determine the cleaning target area and the priority of and the cleaning strength for the cleaning target area by inputting information about the environment of the cleaning space, the current time, and the apparatus state of the robotic cleaning apparatus 1000 to a learning model for determining the cleaning target area. In this case, the learning model for determining the cleaning target area may be trained in advance by the robotic cleaning apparatus 1000 or the server 2000. Alternatively, the robotic cleaning apparatus 1000 may provide the information about the environment of the cleaning space, the current time, and the apparatus state of the robotic cleaning apparatus 1000 to the server 2000, and the server 2000 may input this information to the learning model for determining the cleaning target area.

[0086] In operation S420, the robotic cleaning apparatus 1000 may perform cleaning according to the determined priority and cleaning strength. The robotic cleaning apparatus 1000 may clean the cleaning target areas in order of priority, at a different cleaning strength for each cleaning target area.

[0087] In operation S430, the robotic cleaning apparatus 1000 may acquire real-time contamination data while cleaning. The robotic cleaning apparatus 1000 may generate real-time contamination data by using a camera and a sensor of the robotic cleaning apparatus 1000. The robotic cleaning apparatus 1000 may receive real-time contamination data generated by the external device 3000, from the external device 3000.

[0088] In operation S440, the robotic cleaning apparatus 1000 may update the contamination map data. The robotic cleaning apparatus 1000 may update the contamination map data by reflecting the real-time contamination data acquired in operation S430. In this case, the robotic cleaning apparatus 1000 may reflect a time when the real-time contamination data is generated, to update the contamination map data.
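One simple reading of reflecting the generation time of real-time contamination data, as in [0088], is a last-writer-wins merge keyed on that time. The sketch below assumes a map stored as grid cells holding a (level, timestamp) pair; this storage layout, the function name, and the merge policy are illustrative assumptions rather than details from the disclosure.

```python
from datetime import datetime
from typing import Dict, Iterable, Tuple

Cell = Tuple[int, int]


def update_map(
    cells: Dict[Cell, Tuple[float, datetime]],
    updates: Iterable[Tuple[Cell, float, datetime]],
) -> Dict[Cell, Tuple[float, datetime]]:
    """Merge real-time readings into the map, keeping for each cell the
    reading with the latest generation time, so a delayed message cannot
    overwrite fresher data."""
    for cell, level, generated_at in updates:
        current = cells.get(cell)
        if current is None or generated_at > current[1]:
            cells[cell] = (level, generated_at)
    return cells
```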

[0089] In operation S450, the robotic cleaning apparatus 1000 may change the cleaning target area, and the priority of and the cleaning strength for the cleaning target area. The robotic cleaning apparatus 1000 may change the cleaning target area, and the priority of and the cleaning strength for the cleaning target area, which are determined in operation S410, considering the real-time contamination data acquired in operation S430.

[0090] Although the robotic cleaning apparatus 1000 uses separate learning models for generating the contamination map data and for determining the cleaning target area in FIGS. 3 and 4, respectively, the disclosure is not limited thereto. The robotic cleaning apparatus 1000 may acquire both the contamination map data and the cleaning target area by using a single learning model for generating the contamination map data and determining the cleaning target area.

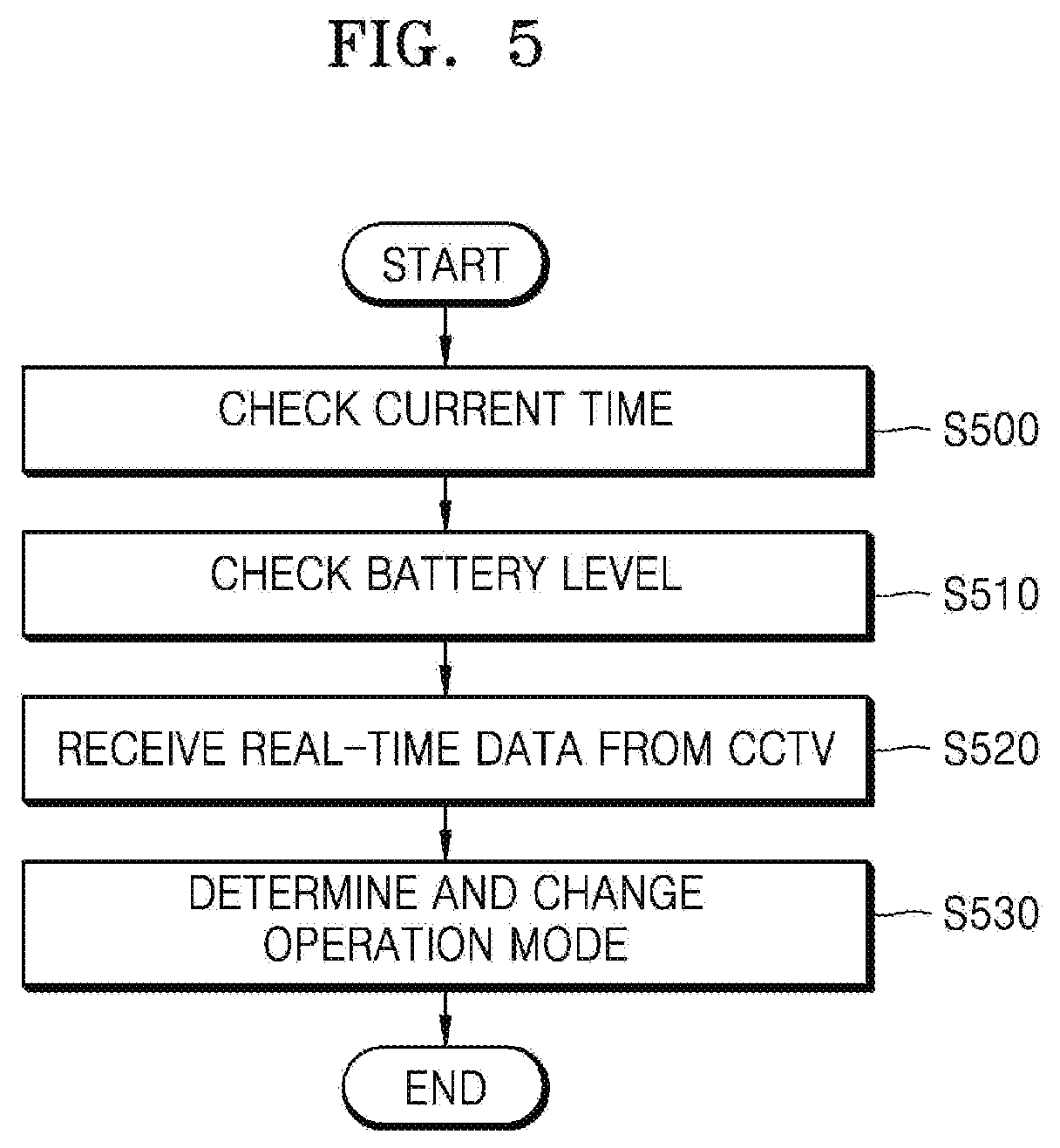

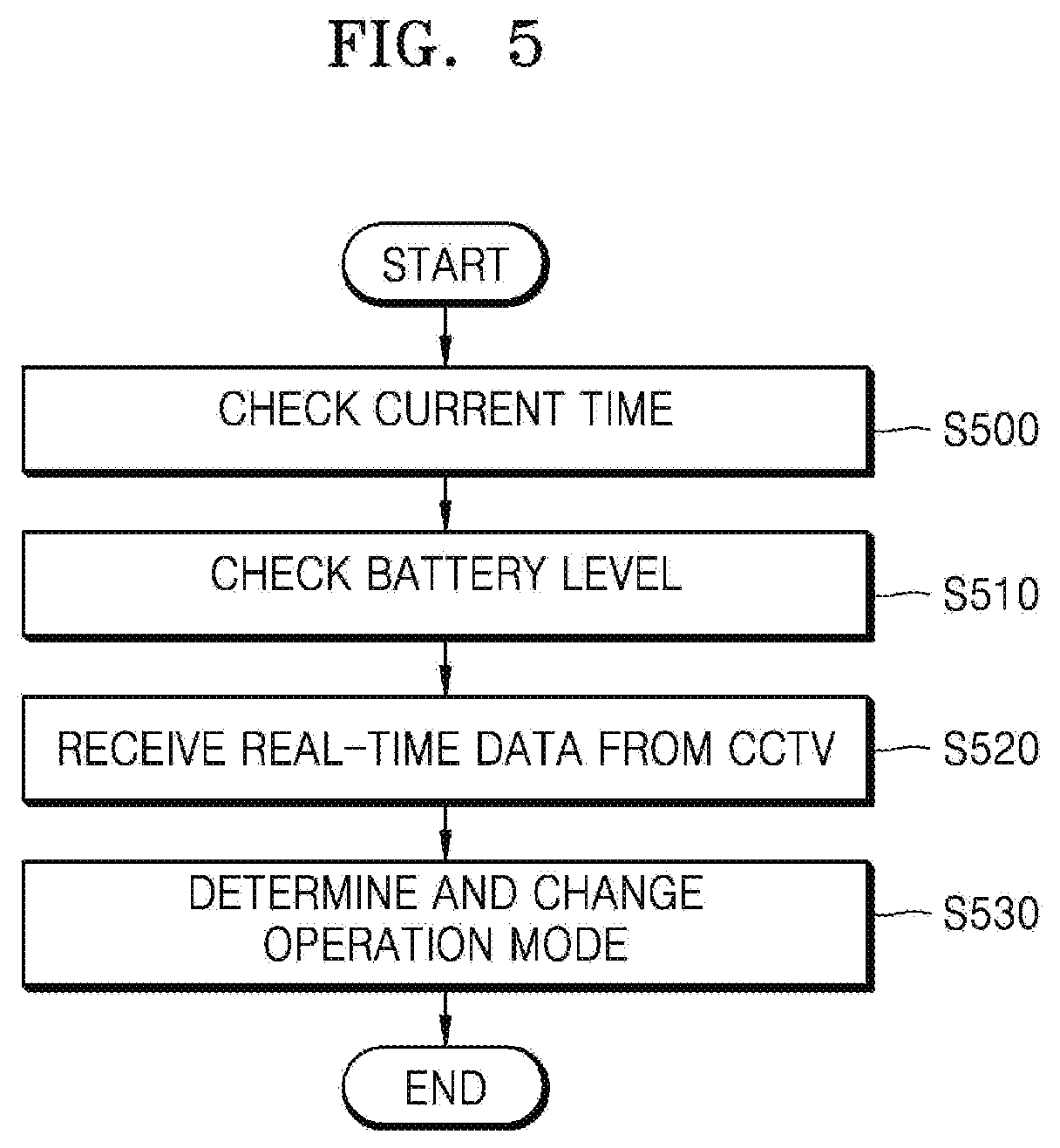

[0091] FIG. 5 is a flowchart of a method, performed by the robotic cleaning apparatus 1000, of determining an operation mode of the robotic cleaning apparatus 1000, according to an embodiment of the disclosure.

[0092] In operation S500, the robotic cleaning apparatus 1000 may check a current time. In operation S510, the robotic cleaning apparatus 1000 may check a battery level of the robotic cleaning apparatus 1000.

[0093] In operation S520, the robotic cleaning apparatus 1000 may receive real-time data from a CCTV in a cleaning space. When motion of a user in the cleaning space is detected, the CCTV may provide image data obtained by photographing the motion of the user, to the robotic cleaning apparatus 1000. In addition, when the motion of the user in the cleaning space is detected, the CCTV may provide information indicating that the motion of the user is detected, to the robotic cleaning apparatus 1000.

[0094] In operation S530, the robotic cleaning apparatus 1000 may determine and change an operation mode of the robotic cleaning apparatus 1000. The robotic cleaning apparatus 1000 may determine and change the operation mode of the robotic cleaning apparatus 1000 considering the current time, the battery level, and the real-time CCTV data.

[0095] For example, the robotic cleaning apparatus 1000 may determine and change the operation mode considering the current time in such a manner that the robotic cleaning apparatus 1000 operates in a low-noise mode at night. For example, the robotic cleaning apparatus 1000 may determine and change the operation mode considering the battery level in such a manner that the robotic cleaning apparatus 1000 operates in a power-saving mode when the battery level is equal to or less than a certain value. For example, when the user is present in the cleaning space, the robotic cleaning apparatus 1000 may determine and change the operation mode in such a manner that the robotic cleaning apparatus 1000 operates in a quick mode. For example, when the user is not present in the cleaning space, the robotic cleaning apparatus 1000 may determine and change the operation mode in such a manner that the robotic cleaning apparatus 1000 operates in a normal mode.
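The example rules in [0095] can be written as a small decision function. The disclosure lists the rules independently and does not say which one wins when several apply (for example, a user present at night with a low battery), so the precedence below (battery, then night, then user presence) is an assumption, as are the 20% battery threshold and the 22:00 to 06:00 night window. Note also that [0125] associates user presence with the low-noise mode instead; this sketch follows [0095].

```python
from datetime import time

LOW_BATTERY_PCT = 20.0                              # hypothetical "certain value"
NIGHT_START, NIGHT_END = time(22, 0), time(6, 0)    # hypothetical night window


def is_night(now: time) -> bool:
    # The night window wraps past midnight, so it is a union of two ranges.
    return now >= NIGHT_START or now < NIGHT_END


def choose_mode(now: time, battery_pct: float, user_present: bool) -> str:
    """Pick an operation mode from the current time, battery level, and
    whether the CCTV data indicates a user in the cleaning space."""
    if battery_pct <= LOW_BATTERY_PCT:
        return "power_saving"
    if is_night(now):
        return "low_noise"
    if user_present:
        return "quick"
    return "normal"
```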

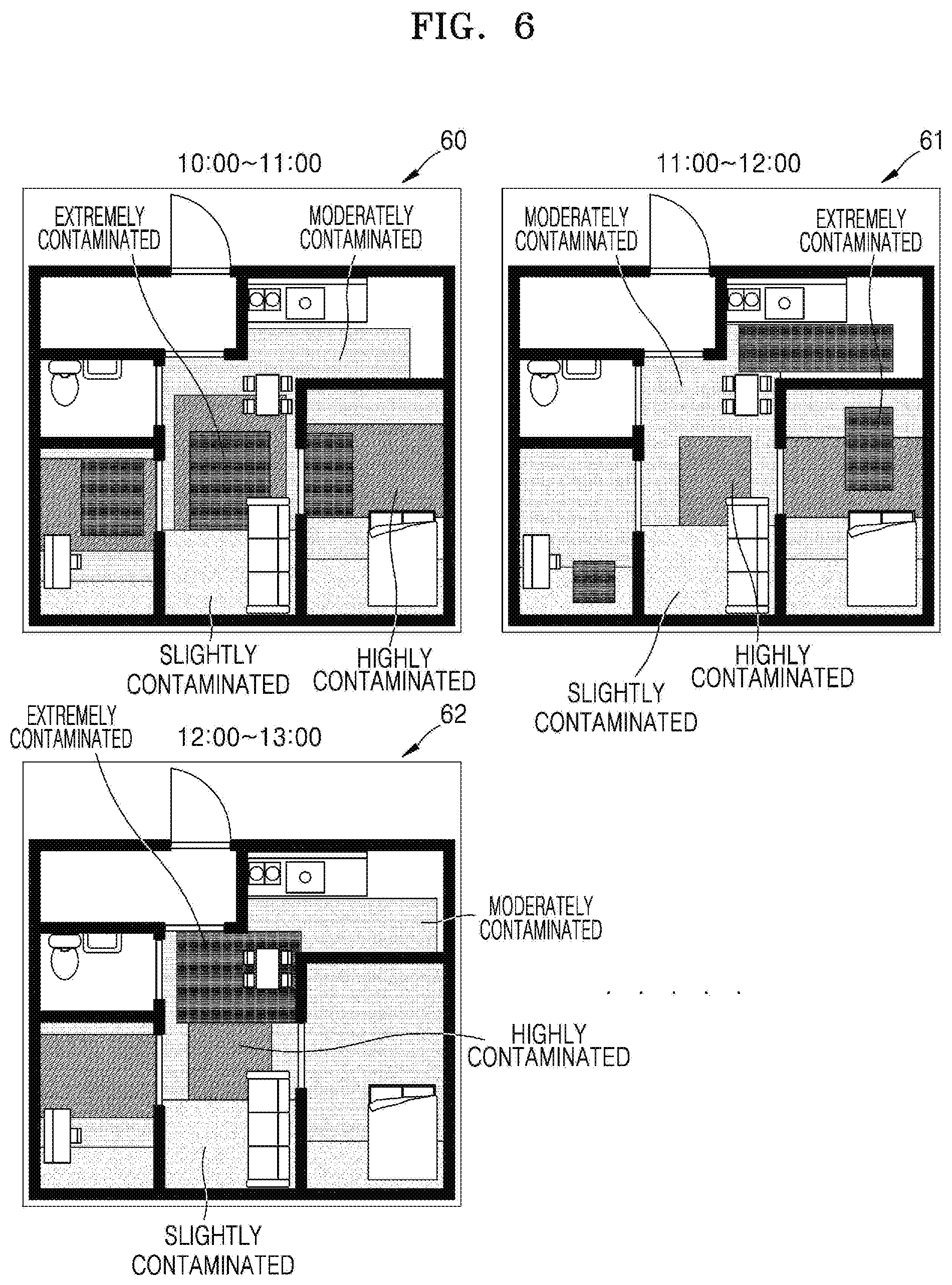

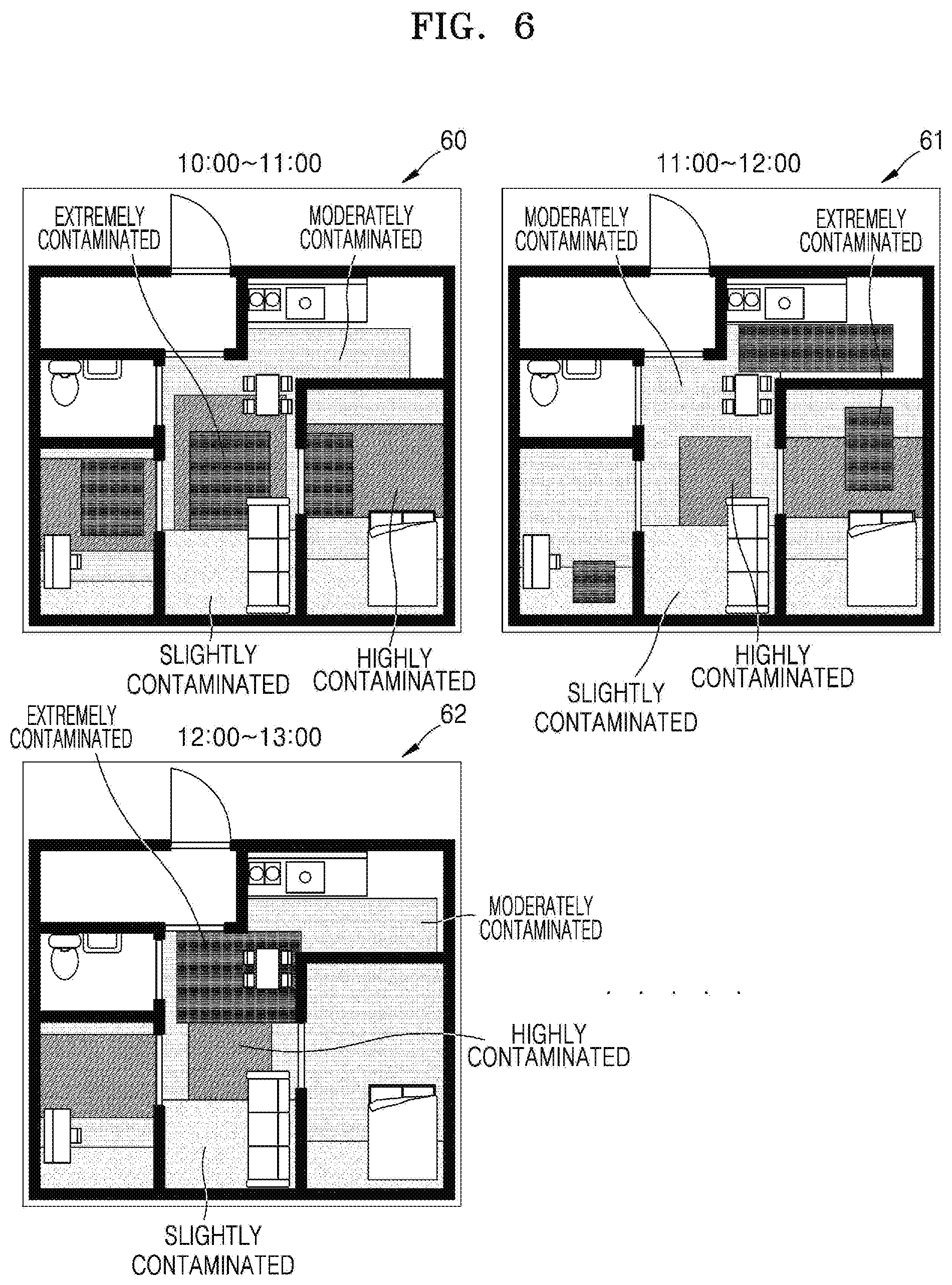

[0096] FIG. 6 is a schematic diagram showing contamination map data according to an embodiment of the disclosure.

[0097] Referring to FIG. 6, the contamination map data may include information about a plan view of a cleaning space. For example, the cleaning space may be divided into a plurality of rooms, and the contamination map data may include information about locations of the rooms in the cleaning space, information about locations of furniture, information about locations of contaminated areas, and information about contamination levels of the contaminated areas. As indicated by reference numerals 60, 61, and 62 in FIG. 6, separate contamination map data may be generated for each time period.

[0098] FIGS. 7 and 8 are flowcharts of methods, performed by the robotic cleaning apparatus 1000, of determining and changing a cleaning target area in association with the server 2000, according to various embodiments of the disclosure.

[0099] Referring to FIG. 7, the server 2000 may determine and change a cleaning target area by acquiring contamination data from the robotic cleaning apparatus 1000.

[0100] In operation S700, the robotic cleaning apparatus 1000 may generate contamination data. In operation S705, the robotic cleaning apparatus 1000 may receive contamination data from the external device 3000.

[0101] In operation S710, the robotic cleaning apparatus 1000 may provide the generated contamination data and the received contamination data to the server 2000. The robotic cleaning apparatus 1000 may provide the generated contamination data and the received contamination data to the server 2000 in real time or periodically.

[0102] In operation S715, the server 2000 may generate and update contamination map data. The server 2000 may receive map data generated by the robotic cleaning apparatus 1000, from the robotic cleaning apparatus 1000. The server 2000 may generate the contamination map data by analyzing the received map data and contamination data. For example, the server 2000 may generate the contamination map data by applying the map data and the contamination data to a learning model for generating the contamination map data, but is not limited thereto.

[0103] The server 2000 may also generate the contamination map data by using map data and contamination map data of another cleaning space, which are received from another robotic cleaning apparatus (not shown). In this case, the map data of the other cleaning space, which is used by the server 2000, may have a similarity to the map data of the cleaning space of the robotic cleaning apparatus 1000 that is equal to or greater than a certain value. For example, when the other cleaning space and the cleaning space have similar space structures and similar furniture arrangements, the server 2000 may use the contamination map data of the other cleaning space. To determine whether the other cleaning space is similar to the cleaning space, the server 2000 may, for example, determine whether users in the other cleaning space are similar to users in the cleaning space of the robotic cleaning apparatus 1000. In this case, information such as occupations, ages, and genders of the users may be used to determine the similarity between the users.
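The "similar by a certain value or more" test in [0103] can be illustrated with a Jaccard similarity between two layouts represented as sets of occupied grid cells. Both the set-of-cells representation and the 0.8 threshold are hypothetical; the disclosure also mentions furniture arrangements and user attributes, which this sketch does not model.

```python
from typing import Dict, List, Set, Tuple

Cell = Tuple[int, int]
SIMILARITY_THRESHOLD = 0.8  # hypothetical "certain value"


def jaccard(a: Set[Cell], b: Set[Cell]) -> float:
    """Size of the intersection divided by size of the union (1.0 for two empty sets)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def similar_spaces(own_layout: Set[Cell], others: Dict[str, Set[Cell]]) -> List[str]:
    """Return ids of other cleaning spaces whose layout similarity to
    `own_layout` meets the threshold, most similar first."""
    scored = [(space_id, jaccard(own_layout, layout)) for space_id, layout in others.items()]
    scored.sort(key=lambda t: t[1], reverse=True)
    return [space_id for space_id, score in scored if score >= SIMILARITY_THRESHOLD]
```

The server could then draw on the contamination map data of only the returned spaces when building a map for the robotic cleaning apparatus 1000.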

[0104] In operation S720, the robotic cleaning apparatus 1000 may provide information about an operation mode of the robotic cleaning apparatus 1000 to the server 2000. The robotic cleaning apparatus 1000 may determine the operation mode, based on a user input. Alternatively, the robotic cleaning apparatus 1000 may determine the operation mode considering an environment of the cleaning space and a state of the robotic cleaning apparatus 1000.

[0105] In operation S725, the robotic cleaning apparatus 1000 may provide information about an apparatus state of the robotic cleaning apparatus 1000 to the server 2000. The robotic cleaning apparatus 1000 may periodically transmit the information about the apparatus state of the robotic cleaning apparatus 1000 to the server 2000, but is not limited thereto.

[0106] In operation S730, the server 2000 may determine a cleaning target area. The server 2000 may determine a location of the cleaning target area, a priority of the cleaning target area, and a cleaning strength for the cleaning target area. The server 2000 may determine the location of the cleaning target area, the priority of the cleaning target area, and the cleaning strength for the cleaning target area considering the contamination map data, the operation mode of the robotic cleaning apparatus 1000, and the apparatus state of the robotic cleaning apparatus 1000. The cleaning strength may be determined by, for example, a speed and suction power of the robotic cleaning apparatus 1000.

[0107] The server 2000 may determine the location of the cleaning target area, the priority of the cleaning target area, and the cleaning strength for the cleaning target area by applying the contamination map data and information about the operation mode of the robotic cleaning apparatus 1000 and the apparatus state of the robotic cleaning apparatus 1000 to a learning model for determining the cleaning target area.

[0108] In operation S735, the server 2000 may provide information about the cleaning target area to the robotic cleaning apparatus 1000. In operation S740, the robotic cleaning apparatus 1000 may perform a cleaning operation. The robotic cleaning apparatus 1000 may perform cleaning, based on information about the location of the cleaning target area, the priority of the cleaning target area, and the cleaning strength for the cleaning target area, which is provided from the server 2000.

[0109] In operation S745, the robotic cleaning apparatus 1000 may generate real-time contamination data. In operation S750, the robotic cleaning apparatus 1000 may receive real-time contamination data from the external device 3000. In operation S755, the robotic cleaning apparatus 1000 may provide the generated real-time contamination data and the received real-time contamination data to the server 2000.

[0110] In operation S760, the server 2000 may change the cleaning target area, based on the real-time contamination data. The server 2000 may change the cleaning target area by applying the real-time contamination data to the learning model for determining the cleaning target area.

[0111] In operation S765, the server 2000 may provide information about the changed cleaning target area to the robotic cleaning apparatus 1000.

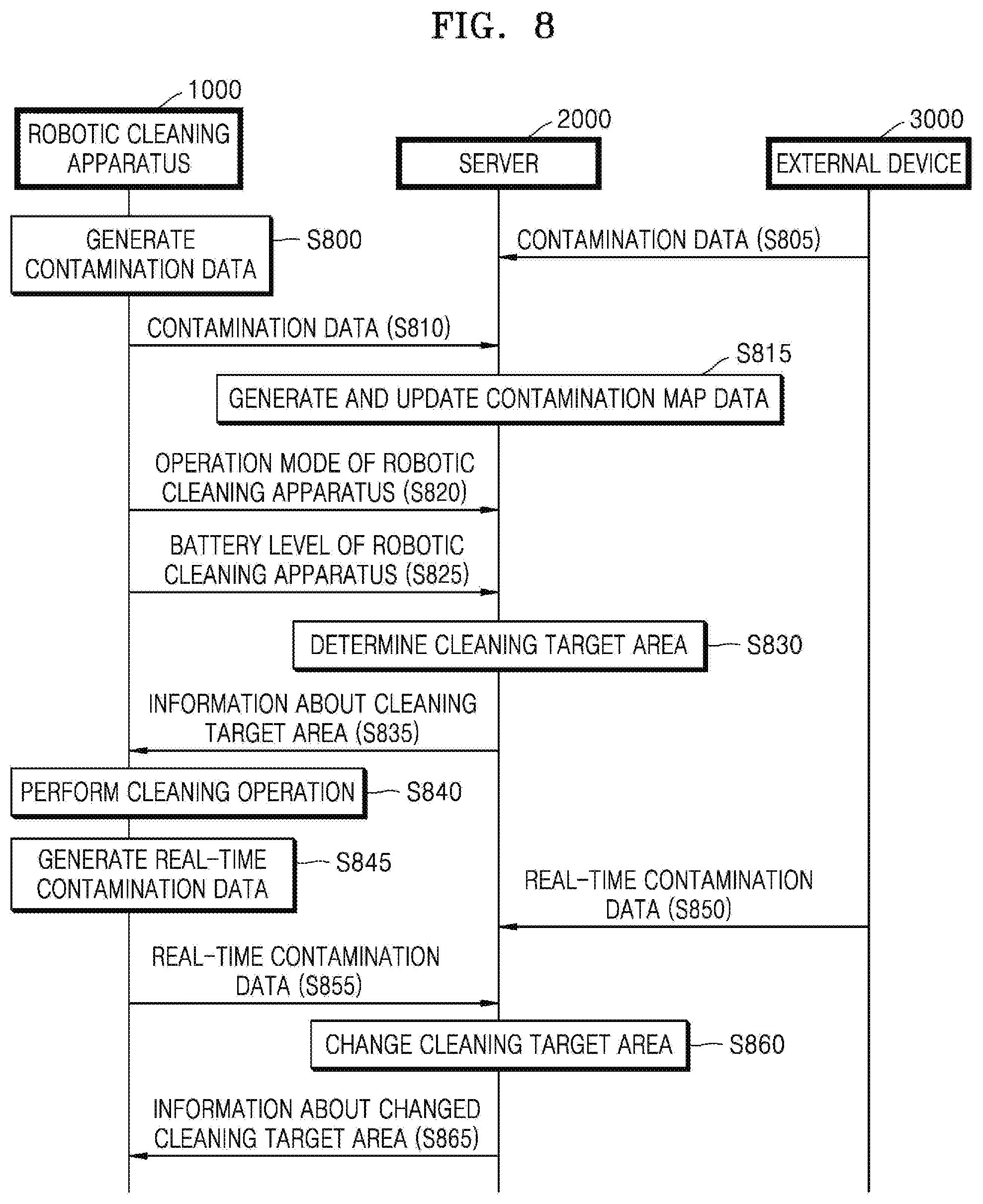

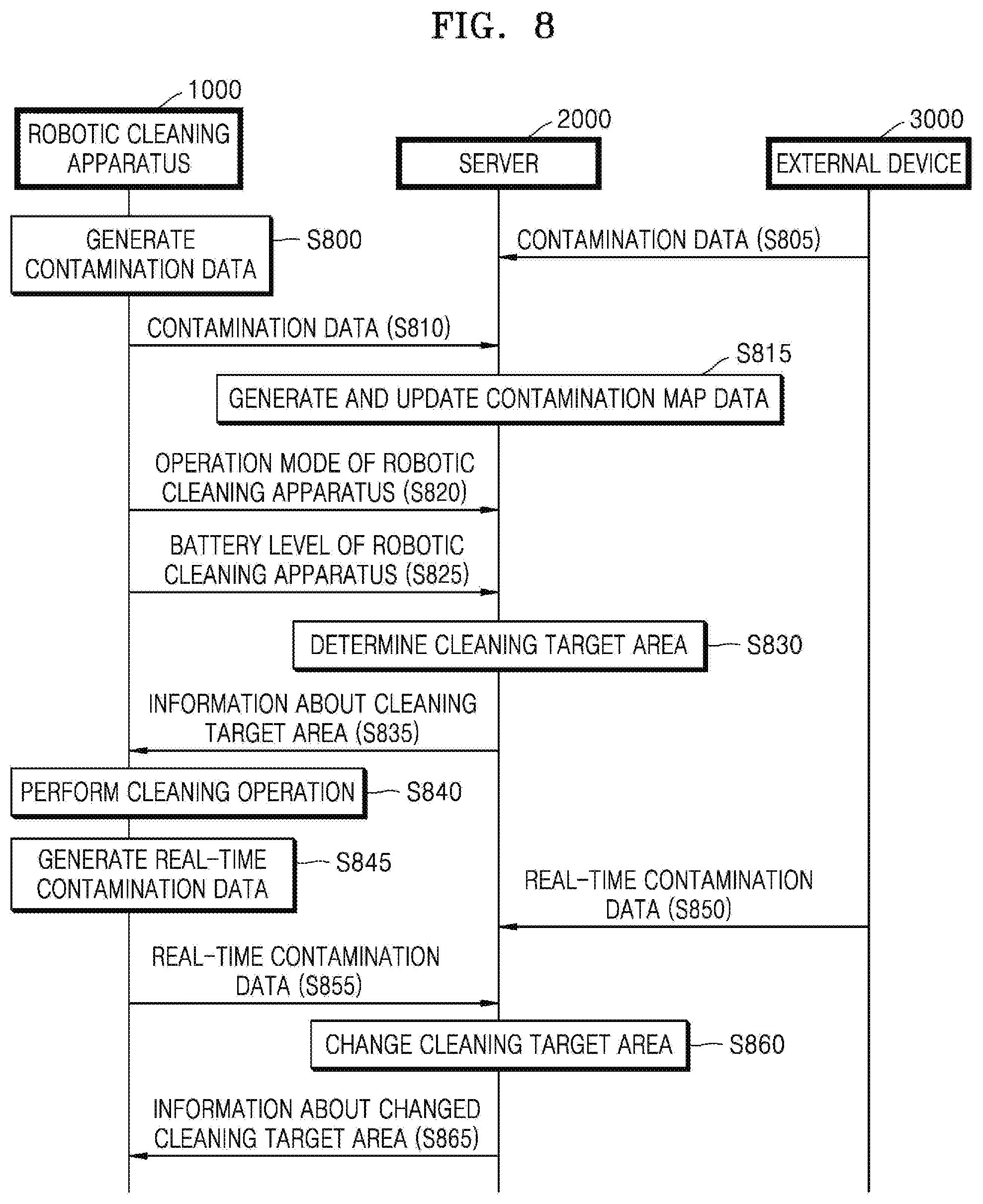

[0112] Referring to FIG. 8, unlike in FIG. 7, contamination data generated by the external device 3000 may be provided directly from the external device 3000 to the server 2000, rather than via the robotic cleaning apparatus 1000.

[0113] In operation S805, the external device 3000 may provide contamination data generated by the external device 3000 to the server 2000. In operation S850, the external device 3000 may provide real-time contamination data generated by the external device 3000 to the server 2000. In this case, the server 2000 may manage the robotic cleaning apparatus 1000 and the external device 3000 together by using an integrated ID of a user. Operations S815 to S845 and S855 to S865 are similar to the corresponding operations S715 to S745 and S755 to S765 shown in FIG. 7, and thus a detailed description thereof will be omitted. In brief: in operation S810, the robotic cleaning apparatus 1000 may provide contamination data to the server 2000; in operation S815, the server 2000 may generate and update the contamination map data; in operation S820, the robotic cleaning apparatus 1000 may provide information about its operation mode to the server 2000; in operation S825, the robotic cleaning apparatus 1000 may provide information related to its battery level to the server 2000; in operation S830, the server 2000 may determine the cleaning target area based on the received information; in operation S835, the server 2000 may provide information about the cleaning target area to the robotic cleaning apparatus 1000; in operation S840, the robotic cleaning apparatus 1000 may clean the cleaning target area; in operation S845, the robotic cleaning apparatus 1000 may generate real-time contamination data; in operation S855, the robotic cleaning apparatus 1000 may provide the real-time contamination data to the server 2000; in operation S860, the server 2000 may change the cleaning target area based on the received real-time contamination data; and in operation S865, the server 2000 may provide information about the changed cleaning target area to the robotic cleaning apparatus 1000.

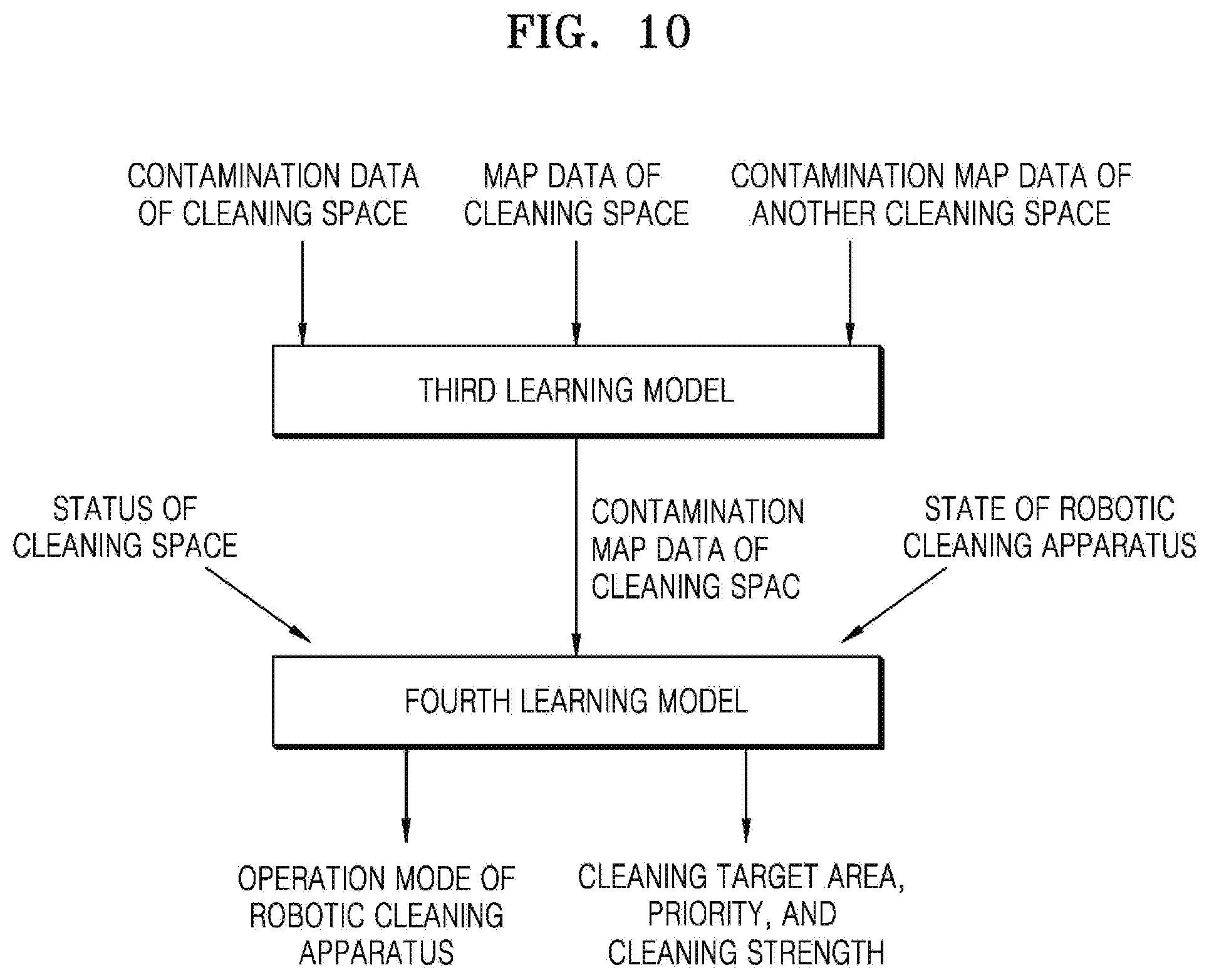

[0114] FIGS. 9, 10, 11, and 12 are schematic diagrams showing examples in which a cleaning target area is determined using learning models, according to various embodiments of the disclosure.

[0115] Referring to FIG. 9, for example, when contamination data of a cleaning space and map data of the cleaning space are applied to a first learning model, contamination map data of the cleaning space may be determined. In this case, the first learning model may be a learning model for generating the contamination map data of the cleaning space.

[0116] For example, when the contamination map data of the cleaning space, data about a status of the cleaning space, and data about an apparatus state of the robotic cleaning apparatus 1000 are applied to a second learning model, a cleaning target area, and a priority of and a cleaning strength for the cleaning target area may be determined. In this case, the second learning model may be a learning model for determining the cleaning target area.
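The two-stage arrangement of FIG. 9 amounts to composing two models, where the output of the first becomes an input of the second. The sketch below treats each model as a plain callable so that any trained model with a matching signature could be plugged in; the type aliases, `plan_cleaning`, and the two toy models are hypothetical stand-ins, not trained models from the disclosure.

```python
from typing import Callable, Dict, List, Set, Tuple

Cell = Tuple[int, int]
ContaminationMap = Dict[Cell, float]
Target = Tuple[Cell, int, str]  # (cell, priority rank, cleaning strength label)

# Any trained model with these call signatures could be plugged in.
FirstModel = Callable[[List[Tuple[Cell, float]], Set[Cell]], ContaminationMap]
SecondModel = Callable[[ContaminationMap, dict, dict], List[Target]]


def plan_cleaning(contamination_data, map_data, space_status, device_state,
                  first_model: FirstModel, second_model: SecondModel) -> List[Target]:
    """FIG. 9 pipeline: the first model yields the contamination map, which
    the second model turns into prioritized cleaning targets."""
    contamination_map = first_model(contamination_data, map_data)
    return second_model(contamination_map, space_status, device_state)


# Toy stand-ins for the trained models, for illustration only.
def toy_first_model(data, free_cells):
    # Keep only readings that fall on cells the map marks as floor.
    return {cell: level for cell, level in data if cell in free_cells}


def toy_second_model(cmap, space_status, device_state):
    # Rank cells dirtiest first; use low strength if a user is present.
    strength = "low" if space_status.get("user_present") else "high"
    ranked = sorted(cmap, key=lambda c: cmap[c], reverse=True)
    return [(cell, rank, strength) for rank, cell in enumerate(ranked, start=1)]
```

The same composition pattern covers FIG. 10 by swapping in different models; the merged models of FIG. 11 would collapse the two calls into one.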

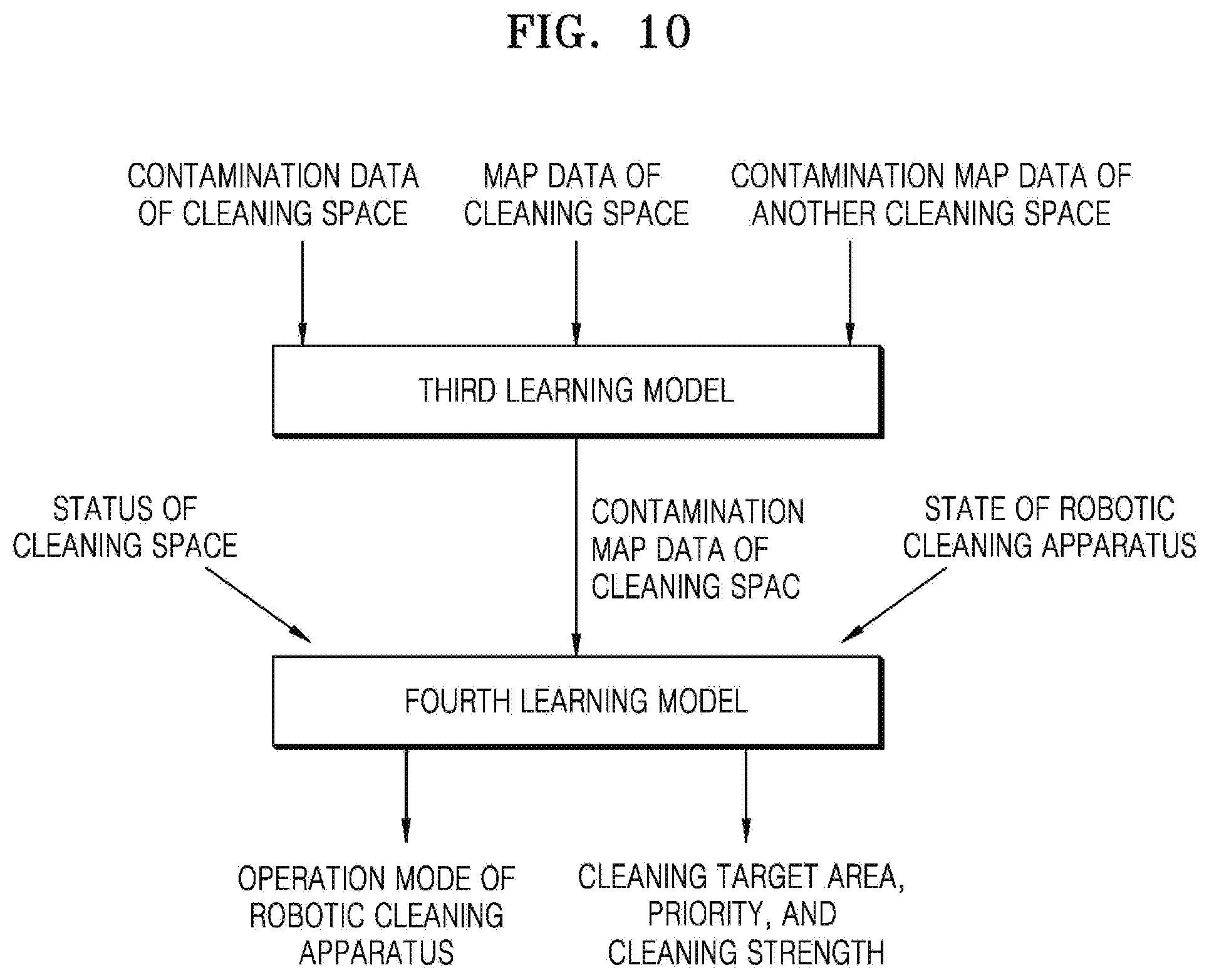

[0117] Referring to FIG. 10, for example, when contamination data of a cleaning space, map data of the cleaning space, and contamination map data of another cleaning space are applied to a third learning model, contamination map data of the cleaning space may be determined. In this case, the third learning model may be a learning model for generating the contamination map data of the cleaning space.

[0118] For example, when the contamination map data of the cleaning space, data about a status of the cleaning space, and data about an apparatus state of the robotic cleaning apparatus 1000 are applied to a fourth learning model, an operation mode of the robotic cleaning apparatus 1000, a cleaning target area, and a priority of and a cleaning strength for the cleaning target area may be determined. In this case, the fourth learning model may be a learning model for determining the operation mode of the robotic cleaning apparatus 1000 and the cleaning target area.

[0119] Referring to FIG. 11, for example, when contamination data of a cleaning space, map data of the cleaning space, contamination map data of another cleaning space, data about a status of the cleaning space, and data about an apparatus state of the robotic cleaning apparatus 1000 are applied to a fifth learning model, contamination map data of the cleaning space, an operation mode of the robotic cleaning apparatus 1000, a cleaning target area, and a priority of and a cleaning strength for the cleaning target area may be determined. In this case, the fifth learning model may be a learning model for determining the contamination map data of the cleaning space, the operation mode of the robotic cleaning apparatus 1000, and the cleaning target area.

[0120] Referring to FIG. 12, for example, when contamination data of a cleaning space, map data of the cleaning space, contamination map data of another cleaning space, data about a status of the cleaning space, data about an apparatus state of the robotic cleaning apparatus 1000, and schedule information of a user are applied to a sixth learning model, a cleaning target area, and a priority of and a cleaning strength for the cleaning target area may be determined. In this case, the sixth learning model may be a learning model for determining the cleaning target area.

[0121] Although the contamination map data of the cleaning space, the operation mode of the robotic cleaning apparatus 1000, and the cleaning target area are determined using various types of learning models in FIGS. 9 to 12, the disclosure is not limited thereto. Learning models different in types and numbers from those illustrated in FIGS. 9 to 12 may be used to determine the contamination map data of the cleaning space, the operation mode of the robotic cleaning apparatus 1000, and the cleaning target area. Different and various types of information may be input to the learning models.

[0122] FIG. 13 is a table for describing operation modes of the robotic cleaning apparatus 1000, according to an embodiment of the disclosure.

[0123] Referring to FIG. 13, the operation modes of the robotic cleaning apparatus 1000 may include, for example, a normal mode, a quick mode, a low-noise mode, and a power-saving mode. The normal mode may be an operation mode for normal cleaning.

[0124] The quick mode may be an operation mode for quick cleaning. In the quick mode, the robotic cleaning apparatus 1000 may clean only a contaminated area having a contamination level equal to or greater than a certain value, with high suction power while moving at a high speed. When an operating time is set by a user, the robotic cleaning apparatus 1000 may determine a cleaning target area to quickly complete cleaning within the set operating time.

[0125] The low-noise mode may be an operation mode for minimizing noise. When a user is present in a cleaning space or at night, the robotic cleaning apparatus 1000 may perform cleaning in the low-noise mode. In this case, the robotic cleaning apparatus 1000 may perform cleaning with low suction power while moving at a low speed.

[0126] The power-saving mode may be an operation mode for reducing battery consumption. When the robotic cleaning apparatus 1000 has a low battery level, the robotic cleaning apparatus 1000 may operate with low power consumption and determine a cleaning target area considering the battery level.

[0127] The above-described operation modes of the robotic cleaning apparatus 1000 are merely examples, and the operation modes of the robotic cleaning apparatus 1000 are not limited thereto. The robotic cleaning apparatus 1000 may operate in operation modes different from the above-described operation modes, and may perform cleaning by using a combination of two or more operation modes. The operation mode of the robotic cleaning apparatus 1000 need not be a single, discretely selected mode. For example, the robotic cleaning apparatus 1000 may operate by continuously setting and changing weights for quick cleaning, low-noise cleaning, and power-saving cleaning.
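The continuously weighted operation described in [0127] could be realized by blending per-mode speed and suction profiles with normalized weights. The profile values below, including the moderate settings assigned to power-saving ("save") cleaning, and the `blend` function itself are illustrative assumptions; the disclosure does not specify numeric settings.

```python
from typing import Dict, Tuple

# Hypothetical per-mode profiles: (speed, suction), each as a fraction of maximum.
PROFILES: Dict[str, Tuple[float, float]] = {
    "quick": (1.0, 1.0),      # fast with high suction, per [0124]
    "low_noise": (0.3, 0.3),  # slow with low suction, per [0125]
    "save": (0.6, 0.4),       # assumed moderate settings for power saving
}


def blend(weights: Dict[str, float]) -> Tuple[float, float]:
    """Return (speed, suction) as the weight-normalized average of the
    profiles of the modes named in `weights`."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("at least one weight must be positive")
    speed = sum(w * PROFILES[m][0] for m, w in weights.items()) / total
    suction = sum(w * PROFILES[m][1] for m, w in weights.items()) / total
    return speed, suction
```

Changing the weights over time, for example raising the `low_noise` weight as night approaches, gives the continuous adjustment described above without switching abruptly between discrete modes.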

[0128] FIG. 14 is a schematic diagram of a cleaning system according to another embodiment of the disclosure.

[0129] Referring to FIG. 14, the cleaning system according to another embodiment of the disclosure may include the robotic cleaning apparatus 1000, the server 2000, at least one external device 3000, and a mobile device 4000.

[0130] The mobile device 4000 may communicate with the robotic cleaning apparatus 1000, the server 2000, and the at least one external device 3000 through a network. The mobile device 4000 may perform some of the functions of the robotic cleaning apparatus 1000, which are described above in relation to FIGS. 1 to 13. For example, the mobile device 4000 may display a graphical user interface (GUI) for controlling the robotic cleaning apparatus 1000, and a map showing a contamination level of a cleaning space, on a screen of the mobile device 4000. For example, the mobile device 4000 may receive information about a state of the robotic cleaning apparatus 1000 and a status of the cleaning space from the robotic cleaning apparatus 1000, and use a learning model to determine how to clean the cleaning space. The mobile device 4000 may use at least one learning model to generate contamination map data and determine a cleaning target area. In this case, the mobile device 4000 may perform operations of the robotic cleaning apparatus 1000, as described below with reference to FIGS. 20 to 23.

[0131] The mobile device 4000 may include, for example, a display (not shown), a communication interface (not shown) used to communicate with an external device, a memory (not shown) storing one or more instructions, at least one sensor (not shown), and a processor (not shown) executing the instructions stored in the memory. The processor of the mobile device 4000 may control operations of the other elements of the mobile device 4000 by executing the instructions stored in the memory.

[0132] FIGS. 15 to 17 are schematic diagrams showing an example in which the mobile device 4000 controls the robotic cleaning apparatus 1000 to operate in a certain operation mode, according to an embodiment of the disclosure.

[0133] Referring to FIG. 15, the mobile device 4000 may receive contamination map data from the robotic cleaning apparatus 1000 and display the received contamination map data on a screen of the mobile device 4000. For example, the mobile device 4000 may display the contamination map data indicating a current contamination level of a home, and a GUI for determining whether to start cleaning by using the robotic cleaning apparatus 1000, on the screen.

[0134] Referring to FIG. 16, the mobile device 4000 may display a GUI for selecting an operation mode of the robotic cleaning apparatus 1000, on the screen. The mobile device 4000 may display a list of operation modes of the robotic cleaning apparatus 1000. For example, the mobile device 4000 may display an operation mode list including a normal mode, a low-noise mode, a quick mode, and a power-saving mode, and receive a user input for selecting the quick mode. The mobile device 4000 may request the robotic cleaning apparatus 1000 to perform cleaning in the quick mode.

[0135] Referring to FIG. 17, the mobile device 4000 may display a GUI showing a result of cleaning performed by the robotic cleaning apparatus 1000. For example, the robotic cleaning apparatus 1000 may clean a cleaning space in the quick mode at the request of the mobile device 4000. In the quick mode, the robotic cleaning apparatus 1000 may, for example, clean an extremely contaminated area and a highly contaminated area of the cleaning space, and provide contamination map data indicating the result of cleaning to the mobile device 4000. The mobile device 4000 may then display the contamination map data indicating the result of cleaning. The mobile device 4000 may also display a GUI for determining whether to schedule cleaning by the robotic cleaning apparatus 1000.
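The quick-mode behavior in FIG. 17, in which only the extremely and highly contaminated areas are cleaned, can be sketched as a simple filter over per-area contamination levels. The four-level scale and the area identifiers are hypothetical; the disclosure names only the "extremely" and "highly" contaminated categories.

```python
from enum import IntEnum


class Level(IntEnum):
    # Hypothetical four-level contamination scale.
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    EXTREME = 4


def quick_mode_targets(contamination: dict[str, Level]) -> list[str]:
    """Return area IDs at HIGH or EXTREME contamination, most contaminated first."""
    selected = [area for area, lvl in contamination.items() if lvl >= Level.HIGH]
    # sorted() is stable, so areas with equal levels keep their input order.
    return sorted(selected, key=lambda area: contamination[area], reverse=True)
```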

[0136] FIG. 18 is a block diagram of the robotic cleaning apparatus 1000 according to an embodiment of the disclosure.

[0137] Referring to FIG. 18, the robotic cleaning apparatus 1000 according to an embodiment of the disclosure may include a user inputter 1100, a communication unit 1200, a memory 1400, a camera 1500, a mover 1600, an outputter 1700, a sensor unit 1800, and a processor 1300, and the outputter 1700 may include a speaker 1710 and a display 1720.

[0138] The user inputter 1100 may receive user inputs for controlling operations of the robotic cleaning apparatus 1000. The user inputter 1100 may include, for example, a keypad, a dome switch, a touchpad (e.g., a capacitive overlay, resistive overlay, infrared beam, surface acoustic wave, integral strain gauge, or piezoelectric touchpad), a jog wheel, and a jog switch, but is not limited thereto.

[0139] The communication unit 1200 may include one or more communication modules for communicating with the server 2000 and the external device 3000. For example, the communication unit 1200 may include a short-range wireless communication unit and a mobile communication unit. The short-range wireless communication unit may include, for example, a Bluetooth communication unit, a Bluetooth Low Energy (BLE) communication unit, a near field communication (NFC) unit, a wireless local area network (WLAN or Wi-Fi) communication unit, a Zigbee communication unit, an Infrared Data Association (IrDA) communication unit, a Wi-Fi Direct (WFD) communication unit, an ultra-wideband (UWB) communication unit, or an Ant+ communication unit, but is not limited thereto. The mobile communication unit transmits and receives radio signals to and from at least one of a base station, an external terminal, or a server in a mobile communication network. Herein, the radio signals may include voice call signals, video call signals, or various types of data associated with the transmission and reception of text/multimedia messages.

[0140] The memory 1400 may store programs for controlling operations of the robotic cleaning apparatus 1000. The memory 1400 may include at least one instruction for controlling operations of the robotic cleaning apparatus 1000. The memory 1400 may store, for example, map data, contamination data, contamination map data, and data about a cleaning target area. The memory 1400 may store, for example, a learning model for generating the contamination map data, and a learning model for determining the cleaning target area. The programs stored in the memory 1400 may be classified into a plurality of modules according to functions thereof.

[0141] The memory 1400 may include at least one type of storage medium among flash memory, a hard disk, multimedia card micro type memory, card-type memory (e.g., secure digital (SD) or extreme digital (XD) memory), random access memory (RAM), static random access memory (SRAM), read-only memory (ROM), electrically erasable programmable ROM (EEPROM), programmable ROM (PROM), magnetic memory, a magnetic disc, and an optical disc.

[0142] The camera 1500 may photograph the ambient environment of the robotic cleaning apparatus 1000. For example, the camera 1500 may photograph the ambient environment or the floor while the robotic cleaning apparatus 1000 is performing cleaning.

[0143] The mover 1600 may include at least one driving wheel for moving the robotic cleaning apparatus 1000, and a driving motor connected to the driving wheel to rotate the driving wheel. The driving wheel may include a left wheel and a right wheel provided at the left side and the right side, respectively, of a main body of the robotic cleaning apparatus 1000. The left and right wheels may be driven by a single driving motor or, when needed, by a separate left wheel driving motor and right wheel driving motor. In the latter case, the robotic cleaning apparatus 1000 may be steered to the left or to the right by rotating the left and right wheels at different speeds.
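Steering by rotating the left and right wheels at different speeds corresponds to standard differential-drive kinematics. The sketch below converts wheel surface speeds into the body's linear and angular velocity; the `track_width` parameter (distance between the two wheels) is an assumed input, not a value given in the disclosure.

```python
def body_velocity(v_left: float, v_right: float, track_width: float) -> tuple[float, float]:
    """Differential-drive kinematics.

    v_left, v_right: wheel surface speeds in m/s.
    track_width: distance between the wheel contact points in m (assumed known).
    Returns (linear velocity in m/s, angular velocity in rad/s); a positive angular
    velocity is a counterclockwise (leftward) turn.
    """
    linear = (v_right + v_left) / 2.0
    angular = (v_right - v_left) / track_width
    return linear, angular
```

Equal wheel speeds give straight-line travel (zero angular velocity), while a faster right wheel turns the apparatus to the left and a faster left wheel turns it to the right.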

[0144] The outputter 1700 may output an audio signal or a video signal. The outputter 1700 may include the speaker 1710 and the display 1720. The speaker 1710 may output audio data received from the communication unit 1200 or stored in the memory 1400. The speaker 1710 may output a sound signal (e.g., call signal reception sound, message reception sound, or notification sound) related to a function performed by the robotic cleaning apparatus 1000.

[0145] The display 1720 displays information processed by the robotic cleaning apparatus 1000. For example, the display 1720 may display a user interface for controlling the robotic cleaning apparatus 1000 or a user interface indicating a state of the robotic cleaning apparatus 1000.

[0146] When the display 1720 and a touchpad are layered to configure a touchscreen, the display 1720 may be used not only as an output device but also as an input device.

[0147] The sensor unit 1800 may include at least one sensor for sensing data about an operation and a state of the robotic cleaning apparatus 1000, and data about contamination of a cleaning space. The sensor unit 1800 may include, for example, at least one of an infrared sensor, an ultrasonic sensor, an RF sensor, a geomagnetic sensor, or a PSD sensor.

[0148] The sensor unit 1800 may detect a contaminated area near the robotic cleaning apparatus 1000 and detect a contamination level. The sensor unit 1800 may detect an obstacle near the robotic cleaning apparatus 1000, or detect whether a cliff is present near the robotic cleaning apparatus 1000.

[0149] The sensor unit 1800 may further include an operation detection sensor for detecting operations of the robotic cleaning apparatus 1000. For example, the sensor unit 1800 may include a gyro sensor, a wheel sensor, and an acceleration sensor.

[0150] The gyro sensor may detect a rotation direction and a rotation angle when the robotic cleaning apparatus 1000 moves. The wheel sensor may be connected to the left and right wheels to detect the number of revolutions of each wheel. For example, the wheel sensor may be a rotary encoder but is not limited thereto.
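Wheel revolution counts from a rotary encoder are commonly turned into a position estimate by dead reckoning (wheel odometry). The following is a minimal sketch of that standard technique, not the disclosure's method; the encoder resolution, wheel radius, and track width are hypothetical parameters.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    x: float = 0.0      # metres
    y: float = 0.0      # metres
    theta: float = 0.0  # heading in radians


def update_pose(pose: Pose, left_ticks: int, right_ticks: int,
                ticks_per_rev: int, wheel_radius: float, track_width: float) -> Pose:
    """Dead-reckoning update from incremental encoder ticks of each wheel."""
    per_tick = 2.0 * math.pi * wheel_radius / ticks_per_rev  # metres per tick
    d_left = left_ticks * per_tick
    d_right = right_ticks * per_tick
    d_center = (d_left + d_right) / 2.0          # distance travelled by the body centre
    d_theta = (d_right - d_left) / track_width   # change in heading
    # Midpoint integration: advance along the average heading over the step.
    mid = pose.theta + d_theta / 2.0
    return Pose(pose.x + d_center * math.cos(mid),
                pose.y + d_center * math.sin(mid),
                pose.theta + d_theta)
```

In practice, the rotation angle reported by the gyro sensor could be used in place of, or fused with, the encoder-derived `d_theta`, since wheel slip tends to corrupt heading estimates more than distance estimates.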

[0151] The processor 1300 may control overall operations of the robotic cleaning apparatus 1000. For example, the processor 1300 may control the user inputter 1100, the communication unit 1200, the memory 1400, the camera 1500, the mover 1600, the outputter 1700, and the sensor unit 1800 by executing the programs stored in the memory 1400. The processor 1300 may perform the operations of the robotic cleaning apparatus 1000 described above in relation to FIGS. 1 to 13 by controlling the user inputter 1100, the communication unit 1200, the memory 1400, the camera 1500, the mover 1600, the outputter 1700, and the sensor unit 1800.

[0152] The processor 1300 may acquire contamination data. The processor 1300 may generate contamination data of the cleaning space by using a camera and a sensor of the robotic cleaning apparatus 1000. The processor 1300 may generate image data indicating a contamination level of the cleaning space, by photographing the cleaning space with the camera while cleaning the cleaning space. The processor 1300 may generate sensing data indicating a dust quantity in the cleaning space, by sensing the cleaning space through a dust sensor while cleaning the cleaning space.
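The disclosure states that the processor generates image data indicating a contamination level and dust-sensor data indicating a dust quantity, but does not say how the two are combined. One simple possibility, shown here purely as an assumption, is a weighted average of a camera-derived dirty-pixel ratio and a dust count normalized against a hypothetical saturation value.

```python
def fuse_contamination(dirty_pixel_ratio: float, dust_count: int,
                       dust_saturation: int = 500, image_weight: float = 0.5) -> float:
    """Combine camera- and dust-sensor-based estimates into one level in [0, 1].

    dirty_pixel_ratio: hypothetical fraction of floor pixels classified as dirty.
    dust_count: hypothetical dust-sensor count for the same location.
    dust_saturation and image_weight are illustrative tuning values.
    """
    if not 0.0 <= image_weight <= 1.0:
        raise ValueError("image_weight must be in [0, 1]")
    image_level = min(max(dirty_pixel_ratio, 0.0), 1.0)  # clamp to [0, 1]
    dust_level = min(dust_count / dust_saturation, 1.0)  # saturate at 1.0
    return image_weight * image_level + (1.0 - image_weight) * dust_level
```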

[0153] The processor 1300 may receive contamination data from the external device 3000. The external device 3000 may provide the contamination data generated by the external device 3000, to the server 2000, and the processor 1300 may receive the contamination data generated by the external device 3000, from the server 2000.

[0154] The processor 1300 may acquire contamination map data based on the contamination data. The processor 1300 may generate and update the contamination map data by using the acquired contamination data. The contamination map data may include a map of the cleaning space and information indicating locations and contamination levels of contaminated areas in the cleaning space. The contamination map data may be generated for each time period, and may indicate the locations and the contamination levels of the contaminated areas in the cleaning space for each time period.

[0155] The processor 1300 may generate map data of the cleaning space by recognizing a location of the robotic cleaning apparatus 1000 while moving. The processor 1300 may generate the contamination map data indicating the contaminated areas in the cleaning space, by using the contamination data and the map data.
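Contamination map data that indicates contamination per location and per time period can be represented, for illustration, as a grid of cells holding running averages for each time slot of the day. The class name, cell size, and number of daily periods below are hypothetical choices; the disclosure does not fix a data structure.

```python
from collections import defaultdict
from datetime import datetime

Cell = tuple[int, int]


class ContaminationMap:
    """Hypothetical per-time-period grid of contamination readings.

    Each day is split into `periods_per_day` equal slots; each slot keeps a
    running average contamination value for every grid cell.
    """

    def __init__(self, cell_size_m: float = 0.5, periods_per_day: int = 4):
        self.cell_size_m = cell_size_m
        self.periods_per_day = periods_per_day
        # period index -> cell -> [sum of readings, number of readings]
        self._stats: dict[int, dict[Cell, list]] = defaultdict(dict)

    def period_of(self, t: datetime) -> int:
        minutes = t.hour * 60 + t.minute
        return minutes * self.periods_per_day // (24 * 60)

    def cell_of(self, x_m: float, y_m: float) -> Cell:
        return (int(x_m // self.cell_size_m), int(y_m // self.cell_size_m))

    def add_reading(self, t: datetime, x_m: float, y_m: float, level: float) -> None:
        cells = self._stats[self.period_of(t)]
        stats = cells.setdefault(self.cell_of(x_m, y_m), [0.0, 0])
        stats[0] += level
        stats[1] += 1

    def levels(self, t: datetime) -> dict[Cell, float]:
        """Average contamination per cell for the time period containing t."""
        return {cell: s / n for cell, (s, n) in self._stats[self.period_of(t)].items()}
```

Looking up `levels(now)` returns the map for the current time period, which is one way the current time could feed into the selection of cleaning target areas.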

[0156] The processor 1300 may generate the contamination map data by, for example, applying the map data and the contamination data of the cleaning space to the learning model for generating the contamination map data.

[0157] Alternatively, the processor 1300 may request the contamination map data from the server 2000. In this case, the processor 1300 may provide the map data and the contamination data of the cleaning space to the server 2000, and the server 2000 may generate the contamination map data by applying the map data and the contamination data of the cleaning space to the learning model for generating the contamination map data.

[0158] The processor 1300 may determine a cleaning target area, based on the contamination map data. The processor 1300 may predict the locations and the contamination levels of the contaminated areas in the cleaning space, based on the contamination map data, and determine at least one cleaning target area, based on an operation mode and an apparatus state of the robotic cleaning apparatus 1000.

[0159] The processor 1300 may determine a priority and a cleaning strength for the cleaning target area in the cleaning space in consideration of the operation mode and the apparatus state of the robotic cleaning apparatus 1000. The processor 1300 may determine the cleaning strength of the robotic cleaning apparatus 1000 by, for example, determining a speed and a suction power of the robotic cleaning apparatus 1000.
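A rule-based sketch of assigning a priority and a cleaning strength (speed and suction power) to each target area is shown below. The disclosure may use a learning model for this step; here, hand-written rules stand in for it. The contamination threshold, the battery cut-off used as the "apparatus state", and the speed and suction formulas are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CleaningPlan:
    area_id: str
    priority: int         # 1 = clean first
    speed_mps: float
    suction_power: float  # fraction of maximum suction


def plan_cleaning(levels: dict[str, float], mode: str, battery: float,
                  threshold: float = 0.3) -> list[CleaningPlan]:
    """Rank areas by contamination and choose a strength for each (illustrative rules).

    levels: area ID -> contamination in [0, 1], e.g. from the map for the current period.
    battery: remaining charge in [0, 1], used here as the apparatus state.
    """
    candidates = sorted((a for a, lvl in levels.items() if lvl >= threshold),
                        key=lambda a: levels[a], reverse=True)
    if mode == "quick" or battery < 0.2:
        candidates = candidates[:2]  # assumed rule: only the dirtiest areas
    max_suction = 0.5 if mode in ("low_noise", "power_saving") else 1.0
    plans = []
    for rank, area in enumerate(candidates, start=1):
        lvl = levels[area]
        plans.append(CleaningPlan(
            area_id=area,
            priority=rank,
            speed_mps=0.35 - 0.15 * lvl,                # slower over dirtier areas
            suction_power=max_suction * max(0.3, lvl),  # stronger over dirtier areas
        ))
    return plans
```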

[0160] The processor 1300 may determine the cleaning target area by applying information about the robotic cleaning apparatus 1000 and the cleaning space to the learning model for determining the cleaning target area. For example, the processor 1300 may determine the cleaning target area, together with its priority and cleaning strength, by inputting information about the operation mode of the robotic cleaning apparatus 1000, the apparatus state of the robotic cleaning apparatus 1000, and a motion of a user in the cleaning space to the learning model for determining the cleaning target area.

[0161] The processor 1300 may clean the determined cleaning target area. The processor 1300 may clean the cleaning space according to the priority and the cleaning strength for the cleaning target area. While cleaning, the processor 1300 may collect contamination data about the cleaning space in real time, and change the cleaning target area based on the contamination data collected in real time.
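Changing the cleaning target area in response to real-time contamination data can be sketched with a priority queue that is updated as new readings arrive. This is an illustrative technique (a max-priority queue with lazy invalidation of stale entries), not the disclosure's stated method; the `live_readings` structure, the threshold, and the assumption that a visited area becomes clean are hypothetical.

```python
import heapq


def clean_in_priority_order(levels: dict[str, float],
                            live_readings: dict[str, dict[str, float]],
                            threshold: float = 0.3) -> list[str]:
    """Visit the dirtiest remaining area first, folding in readings gathered en route.

    live_readings[a] holds hypothetical contamination readings for other areas,
    sensed while area `a` was being cleaned. Returns the order of areas cleaned.
    """
    current = dict(levels)
    # Each batch of live readings is applied once, after its area has been cleaned.
    pending = {area: dict(batch) for area, batch in live_readings.items()}
    heap = [(-lvl, area) for area, lvl in current.items() if lvl >= threshold]
    heapq.heapify(heap)  # negated levels turn Python's min-heap into a max-heap
    order = []
    while heap:
        neg_lvl, area = heapq.heappop(heap)
        if current.get(area) != -neg_lvl:
            continue  # stale entry: the level changed after it was queued
        order.append(area)
        current[area] = 0.0  # assume the area is clean after being visited
        for other, lvl in pending.pop(area, {}).items():
            if current.get(other) == lvl:
                continue
            current[other] = lvl
            if lvl >= threshold:
                heapq.heappush(heap, (-lvl, other))
    return order
```

Lazy invalidation avoids searching the heap when a level changes: the new level is simply pushed, and any outdated entry is discarded when it is eventually popped.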

[0162] FIG. 19 is a block diagram of the server 2000 according to an embodiment of the disclosure.

[0163] Referring to FIG. 19, the server 2000 according to an embodiment of the disclosure may include a communication unit 2100, a storage 2200, and a processor 2300.