WebRTC API Redirection with Interception Techniques

VYSOTSKY; VLADIMIR ; et al.

U.S. patent application number 16/229445 was filed with the patent office on 2018-12-21 and published on 2019-11-07 for WebRTC API redirection with interception techniques. The applicant listed for this patent is CITRIX SYSTEMS, INC. Invention is credited to TODD GIEBLER, GEORGY MOMCHILOV, JAMES PAGE, VLADIMIR VYSOTSKY.

| Field | Value |
|---|---|
| Application Number | 16/229445 |
| Publication Number | 20190342378 |
| Family ID | 66542551 |
| Filed Date | 2018-12-21 |
| Publication Date | 2019-11-07 |


| United States Patent Application | 20190342378 |
|---|---|
| Kind Code | A1 |
| VYSOTSKY; VLADIMIR; et al. | November 7, 2019 |

WEBRTC API REDIRECTION WITH INTERCEPTION TECHNIQUES

Abstract

A computing system includes a virtual desktop server and a client computing device. The virtual desktop server includes an application framework comprising a real-time media application to provide real-time communications (RTC), a native RTC engine to execute a portion of the real-time media application when received by the native RTC engine, and an API code redirection module. The API code redirection module redirects intercepted APIs of the real-time media application intended for the native RTC engine based on redirection code injected into the real-time media application so that the portion of the real-time media application is redirected. The client computing device includes a client RTC API engine communicating with the API code redirection module through a virtual channel to execute the redirected portion of the real-time media application.

| Inventors: | VYSOTSKY; VLADIMIR (FREMONT, CA); GIEBLER; TODD (POMPANO BEACH, FL); MOMCHILOV; GEORGY (PARKLAND, FL); PAGE; JAMES (POMPANO BEACH, FL) |
|---|---|
| Applicant: | CITRIX SYSTEMS, INC. |
| Family ID: | 66542551 |
| Appl. No.: | 16/229445 |
| Filed: | December 21, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62667013 | May 4, 2018 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06T 7/90 20170101; H04L 67/1091 20130101; H04L 61/2514 20130101; H04L 65/4069 20130101; H04L 67/2804 20130101; H04L 61/2575 20130101; H04L 61/2589 20130101; H04L 67/2814 20130101; G06K 19/06028 20130101; H04L 61/256 20130101; G06F 9/452 20180201; G06F 9/45529 20130101; G06F 9/45533 20130101; H04L 67/42 20130101; G06F 9/547 20130101; H04L 67/2861 20130101; H04L 61/2564 20130101; H04L 65/608 20130101; H04L 65/4015 20130101; H04L 67/08 20130101; G06F 2009/45562 20130101; H04L 65/1069 20130101; G06F 9/45545 20130101; H04L 65/1063 20130101; H04L 65/403 20130101; H04L 67/02 20130101; H04L 43/12 20130101; G06F 2009/45595 20130101; H04N 5/272 20130101; G06F 9/45558 20130101; G06F 2203/04804 20130101; H04L 65/80 20130101; H04L 67/2819 20130101; H04L 67/34 20130101; H04L 65/1059 20130101; H04L 63/20 20130101; H04L 65/601 20130101; G06F 3/1454 20130101; G06F 9/455 20130101; H04L 65/60 20130101; H04N 7/147 20130101; H04L 67/104 20130101; H04L 65/605 20130101; G06F 2209/509 20130101; G06F 3/0481 20130101 |

| International Class: | H04L 29/08 20060101 H04L029/08; H04L 29/06 20060101 H04L029/06; G06F 9/451 20060101 G06F009/451; G06F 9/455 20060101 G06F009/455 |

Claims

1. A computing system comprising: a virtual desktop server comprising: a desktop and a desktop application framework associated therewith and a real-time media application to provide real-time communications (RTC), and a native RTC engine to execute a first portion of the real-time media application so as to integrate with other desktop applications and other operating system functionality, and an API code redirection module to redirect intercepted APIs of the real-time media application intended for the native RTC engine based on redirection code injected into the real-time media application so that a second portion of the real-time media application is redirected; and a client computing device comprising a client RTC API engine communicating with the API code redirection module through a virtual channel to execute the redirected second portion of the real-time media application, with the redirected second portion of the real-time media application corresponding to real-time media processing and networking being off-loaded to said client computing device.

2. The computing system according to claim 1 wherein the redirected APIs correspond to real-time media processing.

3. The computing system according to claim 1 wherein the redirected APIs correspond to peer-to-peer networking with another client computing device.

4. The computing system according to claim 1 wherein said API code redirection module further comprises a hooking module configured to perform the following: intercept the APIs of the real-time media application based on hooking; and inject the redirection code into the real-time media application based on the intercepted APIs.

5. The computing system according to claim 1 wherein said virtual desktop server comprises a browser including at least one of hooks and plug-ins configured to perform the following: intercept the APIs of the real-time media application based on at least one of the hooks and plug-ins; and inject the redirection code into the real-time media application based on the intercepted APIs.

6. The computing system according to claim 1 further comprising a proxy server configured to perform the following: intercept HTML content from a web server to be retrieved by the real-time media application; and re-write the intercepted HTML content so that execution of the re-written HTML content causes the APIs of the real-time media application to be intercepted, and the redirection code to be then injected into the real-time media application based on the intercepted APIs.

7. The computing system according to claim 1 further comprising a proxy server configured to perform the following: intercept HTML content from a web server to be retrieved by the real-time media application; and inject code into pages of the intercepted HTML content, with execution of the pages with the injected code causing the following: intercept the APIs of the real-time media application, and inject the redirection code into the real-time media application based on the intercepted APIs.

8. The computing system according to claim 1 wherein said virtual desktop server comprises a browser including at least one of a Browser Helper Object (BHO) and a Browser Extension configured to perform the following: intercept the APIs of the real-time media application based on at least one of the BHO and Browser Extension; and inject the redirection code into the real-time media application based on the intercepted APIs.

9. The computing system according to claim 1 further comprising a micro-virtual private network (VPN) plug-in configured to perform the following: intercept HTML content from a web server to be retrieved by the real-time media application; and re-write the intercepted HTML content so that execution of the re-written HTML content causes the APIs of the real-time media application to be intercepted, and the redirection code to be then injected into the real-time media application based on the intercepted APIs.

10. The computing system according to claim 1 further comprising a micro-virtual private network (VPN) plug-in configured to perform the following: intercept HTML content from a web server to be retrieved by the real-time media application; and inject code into pages of the intercepted HTML content, with execution of the pages with the injected code causing the following: intercept the APIs of the real-time media application, and inject the redirection code into the real-time media application based on the intercepted APIs.

11. The computing system according to claim 1 wherein the virtual desktop server further comprises a modified electron application, with the modification based on decomposing binaries of the electron application to access the APIs of the real-time media application, adding hooks to inject the redirection code into the real-time media application based on the intercepted APIs, repackaging the electron application binaries, and resigning the electron application, with the modified electron application configured to perform the following: intercept the APIs of the real-time media application based on hooking; and inject the redirection code into the real-time media application based on the intercepted APIs.

12. The computing system according to claim 1 wherein the real-time media application is active in a plurality of browser tabs, and wherein said client computing device suspends video playback in an inactive browser tab.

13. The computing system according to claim 1 wherein the real-time media application is active in a plurality of browser tabs, and wherein said client computing device stops rendering in a non-visible area.

14. The computing system according to claim 1 wherein said virtual desktop server includes at least one security policy, and execution by said client RTC API engine of at least part of the redirected portion of the real-time media application is based on the at least one security policy.

15. A method for operating a computing system comprising a virtual desktop server and a client computing device comprising a client RTC API engine, with the virtual desktop server comprising a desktop and a desktop application framework associated therewith and an API code redirection module, and with the application framework comprising a real-time media application and a native RTC engine, the method comprising: providing real-time communications (RTC) based on operation of the real-time media application, with a first portion of the real-time media application to be executed by the native RTC engine so as to integrate with other desktop applications and other operating system functionality; redirecting by the API code redirection module intercepted APIs of the real-time media application intended for the native RTC engine based on redirection code injected into the real-time media application so that a second portion of the real-time media application is redirected; and operating the client RTC API engine communicating with the API code redirection module through a virtual channel to execute the redirected second portion of the real-time media application, with the redirected second portion of the real-time media application corresponding to real-time media processing and networking being off-loaded to said client computing device.

16. The method according to claim 15 wherein the redirected APIs correspond to real-time media processing.

17. The method according to claim 15 wherein the redirected APIs correspond to peer-to-peer networking with another client computing device.

18. The method according to claim 15 wherein the API code redirection module further comprises a hooking module to perform the following: intercept the APIs of the real-time media application based on hooking; and inject the redirection code into the real-time media application based on the intercepted APIs.

19. The method according to claim 15 wherein the virtual desktop server comprises a browser including at least one of hooks and plug-ins configured to perform the following: intercept the APIs of the real-time media application based on at least one of the hooks and plug-ins; and inject the redirection code into the real-time media application based on the intercepted APIs.

20. A non-transitory computer readable medium for operating a virtual desktop server within a computing system comprising a client computing device comprising a client RTC API engine, with the virtual desktop server comprising a desktop and a desktop application framework associated therewith and an API code redirection module, with the application framework comprising a real-time media application and a native RTC engine, and with the non-transitory computer readable medium having a plurality of computer executable instructions for causing the virtual desktop server to perform steps comprising: providing real-time communications (RTC) based on operation of the real-time media application, with a first portion of the real-time media application to be executed by the native RTC engine so as to integrate with other desktop applications and other operating system functionality; and redirecting by the API code redirection module intercepted APIs of the real-time media application intended for the native RTC engine based on redirection code injected into the real-time media application so that a second portion of the real-time media application is redirected; and operating the client RTC API engine communicating with the API code redirection module through a virtual channel to execute the redirected second portion of the real-time media application, with the redirected second portion of the real-time media application corresponding to real-time media processing and networking being off-loaded to said client computing device.

Description

RELATED APPLICATIONS

[0001] This application claims the benefit of provisional application Ser. No. 62/667,013 filed May 4, 2018, which is hereby incorporated herein in its entirety by reference.

TECHNICAL FIELD

[0002] The present disclosure generally relates to an application virtualization platform allowing virtualized browser and desktop applications to deliver optimized real time communications using a standard Web Real-Time Communication (WebRTC) API.

BACKGROUND

[0003] Traditionally, personal computers include combinations of operating systems, applications, and user settings, which are each managed individually by owners or administrators on an ongoing basis. However, many organizations are now using desktop virtualization to provide a more flexible option to address the varying needs of their users. In desktop virtualization, a user's computing environment (e.g., operating system, applications, and/or user settings) may be separated from the user's physical computing device (e.g., smartphone, laptop, desktop computer). Using client-server technology, a "virtualized desktop" may be stored in and administered by a remote server, rather than in the local storage of the client computing device.

[0004] There are several different types of desktop virtualization systems. As an example, Virtual Desktop Infrastructure (VDI) refers to the process of running a user desktop inside a virtual machine that resides on a server. VDI and other server-based desktop virtualization systems may provide personalized desktops for each user, while allowing for centralized management and security. Servers in such systems may include storage for virtual desktop images and system configuration information, as well as software components to provide the virtual desktops and allow users to interconnect to them. For example, a VDI server may include one or more hypervisors (virtual machine managers) to create and maintain multiple virtual machines, software to manage the hypervisor(s), a connection broker, and software to provision and manage the virtual desktops.

[0005] Desktop virtualization systems may be implemented using a single virtualization server or a combination of servers interconnected as a server grid. For example, a cloud computing environment, or cloud system, may include a pool of computing resources (e.g., desktop virtualization servers), storage disks, networking hardware, and other physical resources that may be used to provision virtual desktops, along with additional computing devices to provide management and customer portals for the cloud system.

[0006] Cloud systems may dynamically create and manage virtual machines for customers over a network, providing remote customers with computational resources, data storage services, networking capabilities, and computer platform and application support. For example, a customer in a cloud system may request a new virtual machine having a specified processor speed and memory, and a specified amount of disk storage. Within the cloud system, a resource manager may select a set of available physical resources from the cloud resource pool (e.g., servers, storage disks) and may provision and create a new virtual machine in accordance with the customer's specified computing parameters. Cloud computing services may service multiple customers with private and/or public components, and may be configured to provide various specific services, including web servers, security systems, development environments, user interfaces, and the like.

SUMMARY

[0007] A computing system includes a virtual desktop server and a client computing device. The virtual desktop server includes an application framework comprising a real-time media application to provide real-time communications (RTC), a native RTC engine to execute a portion of the real-time media application when received by the native RTC engine, and an API code redirection module. The API code redirection module is to redirect intercepted APIs of the real-time media application intended for the native RTC engine based on redirection code injected into the real-time media application so that the portion of the real-time media application is redirected. The client computing device comprises a client RTC API engine communicating with the API code redirection module through a virtual channel to execute the redirected portion of the real-time media application.

[0008] Execution of real-time functionality by the client RTC API engine on the client computing device addresses high latency and low media quality issues typically introduced by virtualization of audio and video devices, which involves several rounds of media compression/decompression and extra network hops. Specific functions and interactions of the injected code, the API code redirection module, and the client RTC API engine are designed to optimize WebRTC.

[0009] It is still advantageous to run part of the real-time media application code on the virtual desktop server using a native RTC engine where it can integrate with other desktop applications and other desktop OS functionality. Redirection of WebRTC APIs provides a way for the real-time media application code to continue executing on the virtual desktop server while offloading real-time media processing and networking to the client computing device. The real-time media application code can be largely unaware of the fact that WebRTC APIs are being redirected, resulting in out of the box real-time media optimization for many if not all virtualized applications.
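The division of labor described above can be illustrated with a minimal sketch. It assumes a hypothetical message format (`RedirectedCall`, `RedirectedResult`) and models the virtual channel as an in-process function call; the disclosure does not specify a wire protocol, and all names below are illustrative rather than taken from any actual implementation.

```typescript
// Hypothetical message format; the source does not define a wire protocol.
interface RedirectedCall {
  id: number;
  method: string;
  args: unknown[];
}

interface RedirectedResult {
  id: number;
  value: unknown;
}

// Stand-in for the virtual channel: here simply an in-process function call.
type VirtualChannel = (call: RedirectedCall) => RedirectedResult;

// Client side: a toy "client RTC API engine" that executes redirected calls.
class ClientRtcEngine {
  private readonly handlers: Record<string, (...args: unknown[]) => unknown> = {
    createOffer: () => ({ type: "offer", sdp: "placeholder-sdp" }),
    addIceCandidate: (candidate) => `accepted:${String(candidate)}`,
  };

  handle(call: RedirectedCall): RedirectedResult {
    const handler = this.handlers[call.method];
    if (handler === undefined) {
      throw new Error(`unsupported redirected method: ${call.method}`);
    }
    return { id: call.id, value: handler(...call.args) };
  }
}

// Server side: the redirection module forwards intercepted calls over the
// channel instead of handing them to the native RTC engine.
class ApiRedirectionModule {
  private nextId = 1;
  private readonly channel: VirtualChannel;

  constructor(channel: VirtualChannel) {
    this.channel = channel;
  }

  redirect(method: string, ...args: unknown[]): unknown {
    const call: RedirectedCall = { id: this.nextId++, method, args };
    const result = this.channel(call);
    if (result.id !== call.id) {
      throw new Error("reply does not match the redirected call");
    }
    return result.value;
  }
}

const clientEngine = new ClientRtcEngine();
const redirection = new ApiRedirectionModule((call) => clientEngine.handle(call));
const redirectedOffer = redirection.redirect("createOffer") as { type: string; sdp: string };
```

In this sketch the application-facing code on the server only sees the return value of `redirect`, while the work itself runs in `ClientRtcEngine`. A real virtual channel would be asynchronous and would need serialization and error propagation, which are omitted here.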

[0010] There are a number of API interception techniques for WebRTC redirection. These techniques include: hooking; use of a custom browser; JavaScript injection via proxy; JavaScript injection via a browser helper object (BHO) or browser extension; JavaScript injection via micro-VPN plugin; and use of Electron app decomposition.
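As one illustration of the injection-based techniques listed above, the following sketch rewrites intercepted HTML so that a redirection script loads before any application script. The script URL and function name are hypothetical, and the regular-expression rewrite is a simplification: a production proxy would more likely use an HTML parser and would have to account for content security policies.

```typescript
// Hypothetical URL of the interception script; not taken from the source.
const HOOK_SCRIPT_TAG = '<script src="/webrtc-redirect-shim.js"></script>';

// Insert the shim right after the opening <head> tag so it runs before any
// application script can capture references to the original WebRTC APIs.
function injectRedirectionScript(html: string): string {
  if (html.indexOf(HOOK_SCRIPT_TAG) !== -1) {
    return html; // already rewritten; keep the operation idempotent
  }
  // Matches <head> or <head ...attributes>, but not <header>.
  const headOpen = /<head(\s[^>]*)?>/i;
  if (headOpen.test(html)) {
    // A replacer function avoids special "$" handling in the replacement.
    return html.replace(headOpen, (tag) => tag + HOOK_SCRIPT_TAG);
  }
  // No <head> element found: fall back to prepending the shim.
  return HOOK_SCRIPT_TAG + html;
}

const samplePage = "<html><head><title>call</title></head><body></body></html>";
const rewrittenPage = injectRedirectionScript(samplePage);
```

Placing the tag first in `<head>` reflects the ordering requirement implied by interception: the shim must run before the application obtains the APIs it is meant to intercept.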

[0011] Another aspect is directed to a method for operating a computing system as described above. The method comprises providing real-time communications (RTC) based on operation of the real-time media application, with a portion of the real-time media application to be executed by the native RTC engine when received by the native RTC engine. The API code redirection module redirects intercepted APIs of the real-time media application intended for the native RTC engine based on redirection code injected into the real-time media application so that the portion of the real-time media application is redirected. The client RTC API engine is operated to communicate with the API code redirection module through a virtual channel to execute the redirected portion of the real-time media application.

[0012] Yet another aspect is directed to a non-transitory computer readable medium for operating a virtual desktop server within a computing system as described above. The non-transitory computer readable medium has a plurality of computer executable instructions for causing the virtual desktop server to perform steps as also described above.

BRIEF DESCRIPTION OF THE DRAWINGS

[0013] FIG. 1 is a block diagram of a network environment of computing devices in which various aspects of the disclosure may be implemented.

[0014] FIG. 2 is a block diagram of a computing device useful for practicing an embodiment of the client machines or the remote machines illustrated in FIG. 1.

[0015] FIG. 3 is a block diagram of an architecture illustrating an application virtualization platform that provides WebRTC API on the virtual desktop server and executes the WebRTC API functionality on the client computing device in which various aspects of the disclosure may be implemented.

[0016] FIGS. 4-7 are various windows illustrating an example window monitoring/overlay detection scenario with the architecture illustrated in FIG. 3.

[0017] FIGS. 8-9 are updates to the window illustrated in FIG. 7 based on movement of the server-side window.

[0018] FIGS. 10-11 are updates to the window illustrated in FIG. 9 based on a rendered server-side application clipping a video stream.

[0019] FIG. 12 is a simplified block diagram of the architecture illustrated in FIG. 3 illustrating screen sharing with WebRTC API redirection.

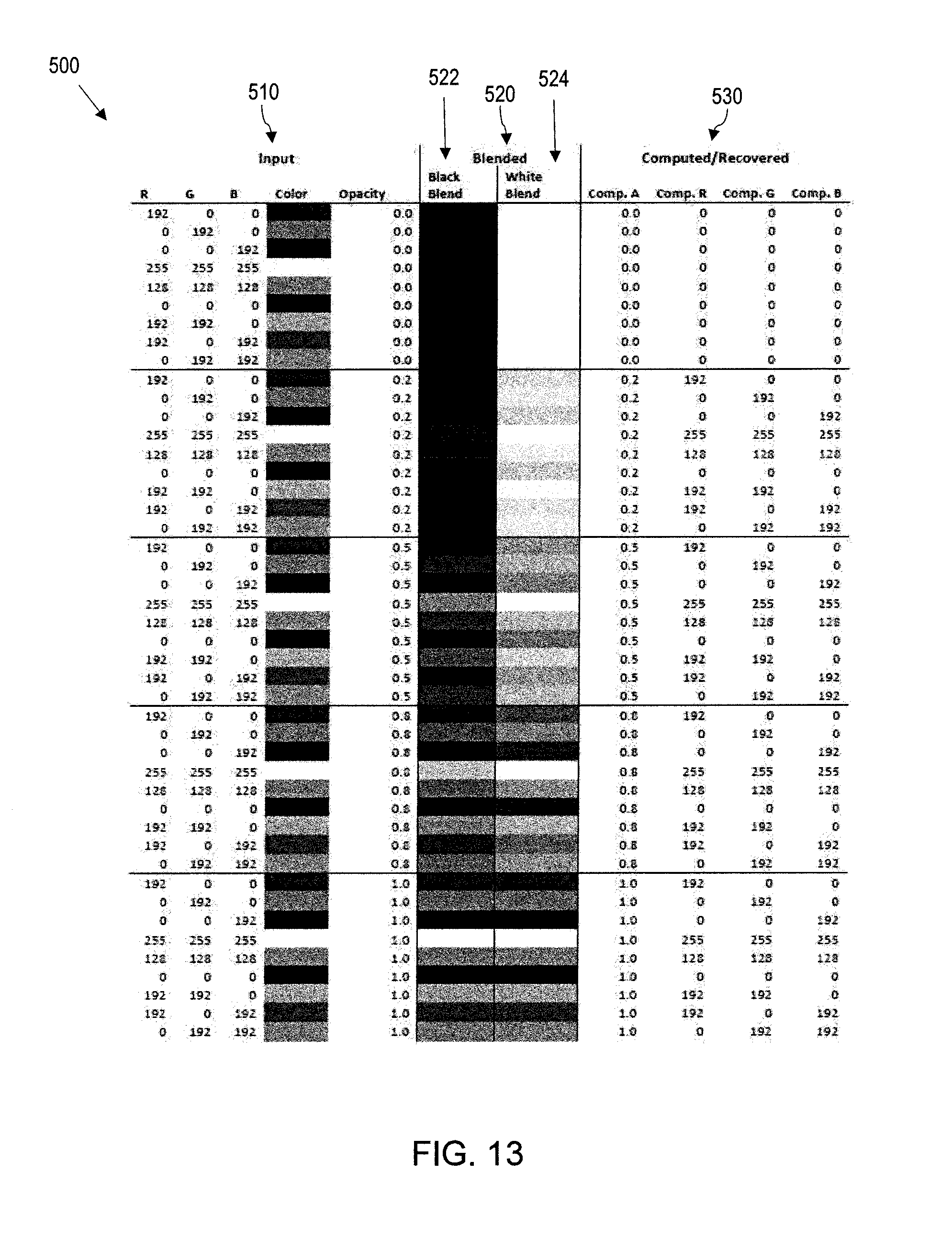

[0020] FIG. 13 is a table diagram illustrating a method of detecting semi-transparent overlays that can be used with the architecture illustrated in FIG. 3.

[0021] FIGS. 14-17 are sample images illustrating a test run of a method of detecting semi-transparent overlays that can be used with the architecture illustrated in FIG. 3.

[0022] FIG. 18 is a block diagram of the architecture illustrated in FIG. 3 modified to support any accelerated graphics media that includes a semi-transparent overlay.

DETAILED DESCRIPTION

[0023] The present description is made with reference to the accompanying drawings, in which exemplary embodiments are shown. However, many different embodiments may be used, and thus the description should not be construed as limited to the particular embodiments set forth herein. Rather, these embodiments are provided so that this disclosure will be thorough and complete. Like numbers refer to like elements throughout, and prime notations are used to indicate similar elements in alternative embodiments.

[0024] As will be appreciated by one of skill in the art upon reading the following disclosure, various aspects described herein may be embodied as a device, a method or a computer program product (e.g., a non-transitory computer-readable medium having computer executable instructions for performing the noted operations or steps). Accordingly, those aspects may take the form of an entirely hardware embodiment, an entirely software embodiment or an embodiment combining software and hardware aspects.

[0025] Furthermore, such aspects may take the form of a computer program product stored by one or more computer-readable storage media having computer-readable program code, or instructions, embodied in or on the storage media. Any suitable computer readable storage media may be utilized, including hard disks, CD-ROMs, optical storage devices, magnetic storage devices, and/or any combination thereof.

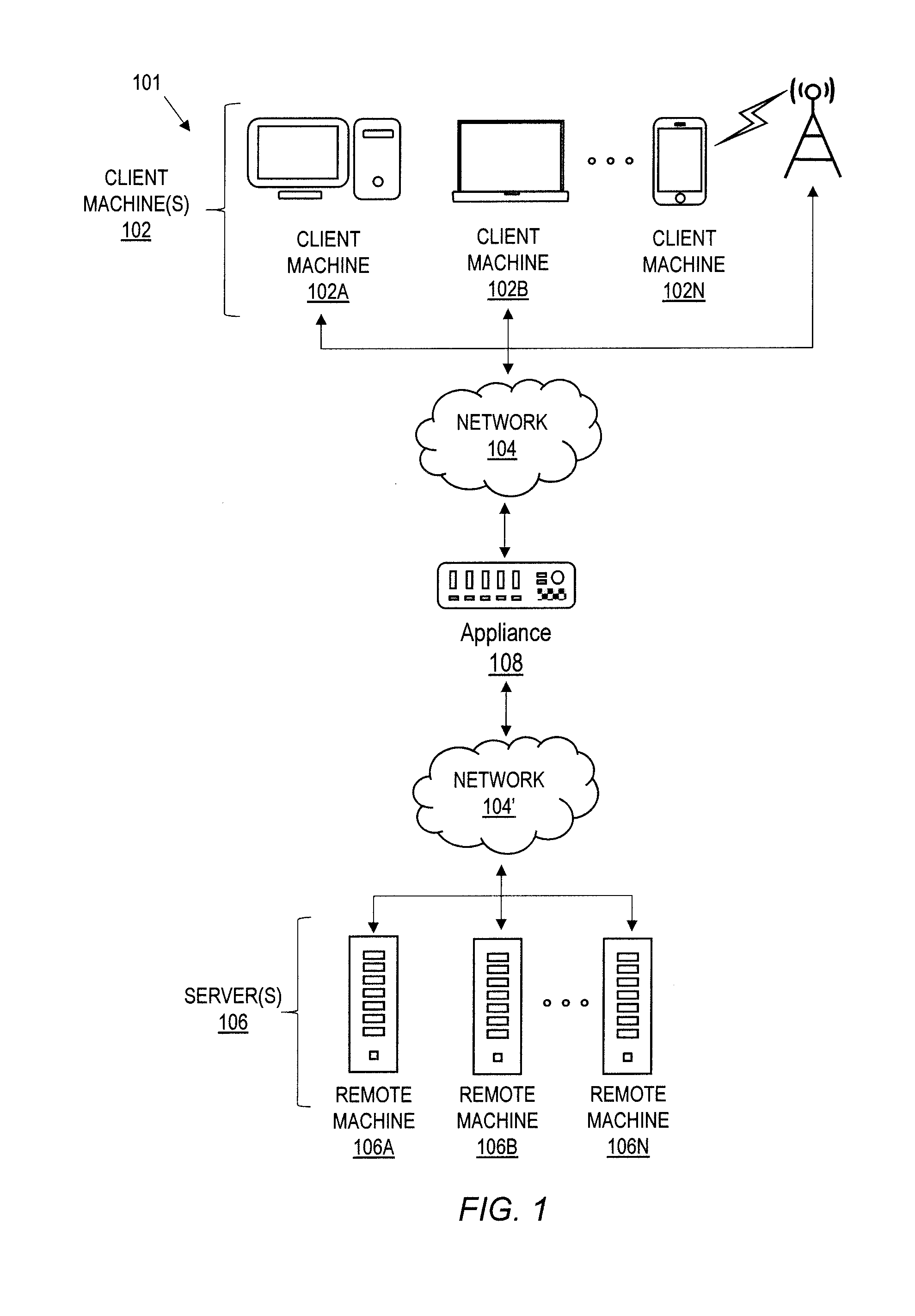

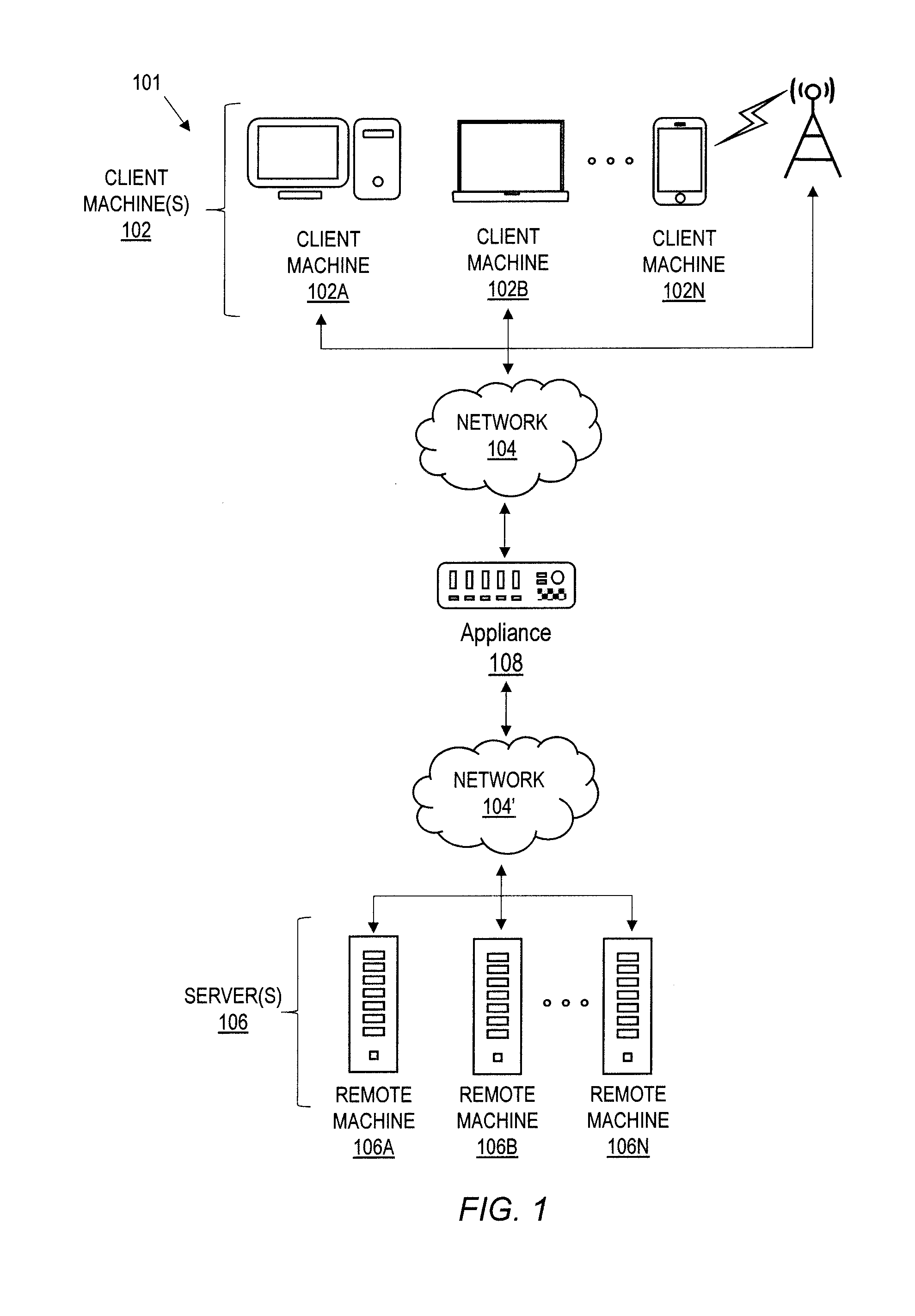

[0026] Referring initially to FIG. 1, a non-limiting network environment 101 in which various aspects of the disclosure may be implemented includes one or more client machines 102A-102N, one or more remote machines 106A-106N, one or more networks 104, 104', and one or more appliances 108 installed within the computing environment 101. The client machines 102A-102N communicate with the remote machines 106A-106N via the networks 104, 104'.

[0027] In some embodiments, the client machines 102A-102N communicate with the remote machines 106A-106N via an intermediary appliance 108. The illustrated appliance 108 is positioned between the networks 104, 104' and may be referred to as a network interface or gateway. In some embodiments, the appliance 108 may operate as an application delivery controller (ADC) to provide clients with access to business applications and other data deployed in a datacenter, the cloud, or delivered as Software as a Service (SaaS) across a range of client devices, and/or provide other functionality such as load balancing, etc. In some embodiments, multiple appliances 108 may be used, and the appliance(s) 108 may be deployed as part of the network 104 and/or 104'.

[0028] The client machines 102A-102N may be generally referred to as client machines 102, local machines 102, clients 102, client nodes 102, client computers 102, client devices 102, computing devices 102, endpoints 102, or endpoint nodes 102. The remote machines 106A-106N may be generally referred to as servers 106 or a server farm 106. In some embodiments, a client device 102 may have the capacity to function as both a client node seeking access to resources provided by a server 106 and as a server 106 providing access to hosted resources for other client devices 102A-102N. The networks 104, 104' may be generally referred to as a network 104. The networks 104 may be configured in any combination of wired and wireless networks.

[0029] A server 106 may be any server type such as, for example: a file server; an application server; a web server; a proxy server; an appliance; a network appliance; a gateway; an application gateway; a gateway server; a virtualization server; a deployment server; a Secure Sockets Layer Virtual Private Network (SSL VPN) server; a firewall; a server executing an active directory; or a server executing an application acceleration program that provides firewall functionality, application functionality, or load balancing functionality.

[0030] A server 106 may execute, operate or otherwise provide an application that may be any one of the following: software; a program; executable instructions; a virtual machine; a hypervisor; a web browser; a web-based client; a client-server application; a thin-client computing client; an ActiveX control; a Java applet; software related to voice over internet protocol (VoIP) communications like a soft IP telephone; an application for streaming video and/or audio; an application for facilitating real-time-data communications; an HTTP client; an FTP client; an Oscar client; a Telnet client; or any other set of executable instructions.

[0031] In some embodiments, a server 106 may execute a remote presentation services program or other program that uses a thin-client or a remote-display protocol to capture display output generated by an application executing on a server 106 and transmit the application display output to a client device 102.

[0032] In yet other embodiments, a server 106 may execute a virtual machine providing, to a user of a client device 102, access to a computing environment. The client device 102 may be a virtual machine. The virtual machine may be managed by, for example, a hypervisor, a virtual machine manager (VMM), or any other hardware virtualization technique within the server 106.

[0033] In some embodiments, the network 104 may be: a local-area network (LAN); a metropolitan area network (MAN); a wide area network (WAN); a primary public network 104; or a primary private network 104. Additional embodiments may include a network 104 of mobile telephone networks that use various protocols to communicate among mobile devices. For short range communications within a wireless local area network (WLAN), the protocols may include 802.11, Bluetooth, and Near Field Communication (NFC).

[0034] FIG. 2 depicts a block diagram of a computing device 100 useful for practicing an embodiment of client devices 102 or servers 106. The computing device 100 includes one or more processors 103, volatile memory 122 (e.g., random access memory (RAM)), non-volatile memory 128, user interface (UI) 123, one or more communications interfaces 118, and a communications bus 150.

[0035] The non-volatile memory 128 may include: one or more hard disk drives (HDDs) or other magnetic or optical storage media; one or more solid state drives (SSDs), such as a flash drive or other solid state storage media; one or more hybrid magnetic and solid state drives; and/or one or more virtual storage volumes, such as a cloud storage, or a combination of such physical storage volumes and virtual storage volumes or arrays thereof.

[0036] The user interface 123 may include a graphical user interface (GUI) 124 (e.g., a touchscreen, a display, etc.) and one or more input/output (I/O) devices 126 (e.g., a mouse, a keyboard, a microphone, one or more speakers, one or more cameras, one or more biometric scanners, one or more environmental sensors, and one or more accelerometers, etc.).

[0037] The non-volatile memory 128 stores an operating system 115, one or more applications 116, and data 117 such that, for example, computer instructions of the operating system 115 and/or the applications 116 are executed by processor(s) 103 out of the volatile memory 122. In some embodiments, the volatile memory 122 may include one or more types of RAM and/or a cache memory that may offer a faster response time than a main memory. Data may be entered using an input device of the GUI 124 or received from the I/O device(s) 126. Various elements of the computing device 100 may communicate via the communications bus 150.

[0038] The illustrated computing device 100 is shown merely as an example client device or server, and may be implemented by any computing or processing environment with any type of machine or set of machines that may have suitable hardware and/or software capable of operating as described herein.

[0039] The processor(s) 103 may be implemented by one or more programmable processors to execute one or more executable instructions, such as a computer program, to perform the functions of the system. As used herein, the term "processor" describes circuitry that performs a function, an operation, or a sequence of operations. The function, operation, or sequence of operations may be hard coded into the circuitry or soft coded by way of instructions held in a memory device and executed by the circuitry. A processor may perform the function, operation, or sequence of operations using digital values and/or using analog signals.

[0040] In some embodiments, the processor can be embodied in one or more application specific integrated circuits (ASICs), microprocessors, digital signal processors (DSPs), graphics processing units (GPUs), microcontrollers, field programmable gate arrays (FPGAs), programmable logic arrays (PLAs), multi-core processors, or general-purpose computers with associated memory.

[0041] The processor may be analog, digital or mixed-signal. In some embodiments, the processor may be one or more physical processors, or one or more virtual (e.g., remotely located or cloud) processors. A processor including multiple processor cores and/or multiple processors may provide functionality for parallel, simultaneous execution of instructions or for parallel, simultaneous execution of one instruction on more than one piece of data.

[0042] The communications interfaces 118 may include one or more interfaces to enable the computing device 100 to access a computer network such as a Local Area Network (LAN), a Wide Area Network (WAN), a Personal Area Network (PAN), or the Internet through a variety of wired and/or wireless connections, including cellular connections.

[0043] In described embodiments, the computing device 100 may execute an application on behalf of a user of a client device. For example, the computing device 100 may execute one or more virtual machines managed by a hypervisor. Each virtual machine may provide an execution session within which applications execute on behalf of a user or a client device, such as a hosted desktop session. The computing device 100 may also execute a terminal services session to provide a hosted desktop environment. The computing device 100 may provide access to a remote computing environment including one or more applications, one or more desktop applications, and one or more desktop sessions in which one or more applications may execute.

[0044] Additional descriptions of a computing device 100 configured as a client device 102 or as a server 106, or as an appliance intermediary to a client device 102 and a server 106, and operations thereof, may be found in U.S. Pat. Nos. 9,176,744 and 9,538,345, which are incorporated herein by reference in their entirety. The '744 and '345 patents are both assigned to the current assignee of the present disclosure.

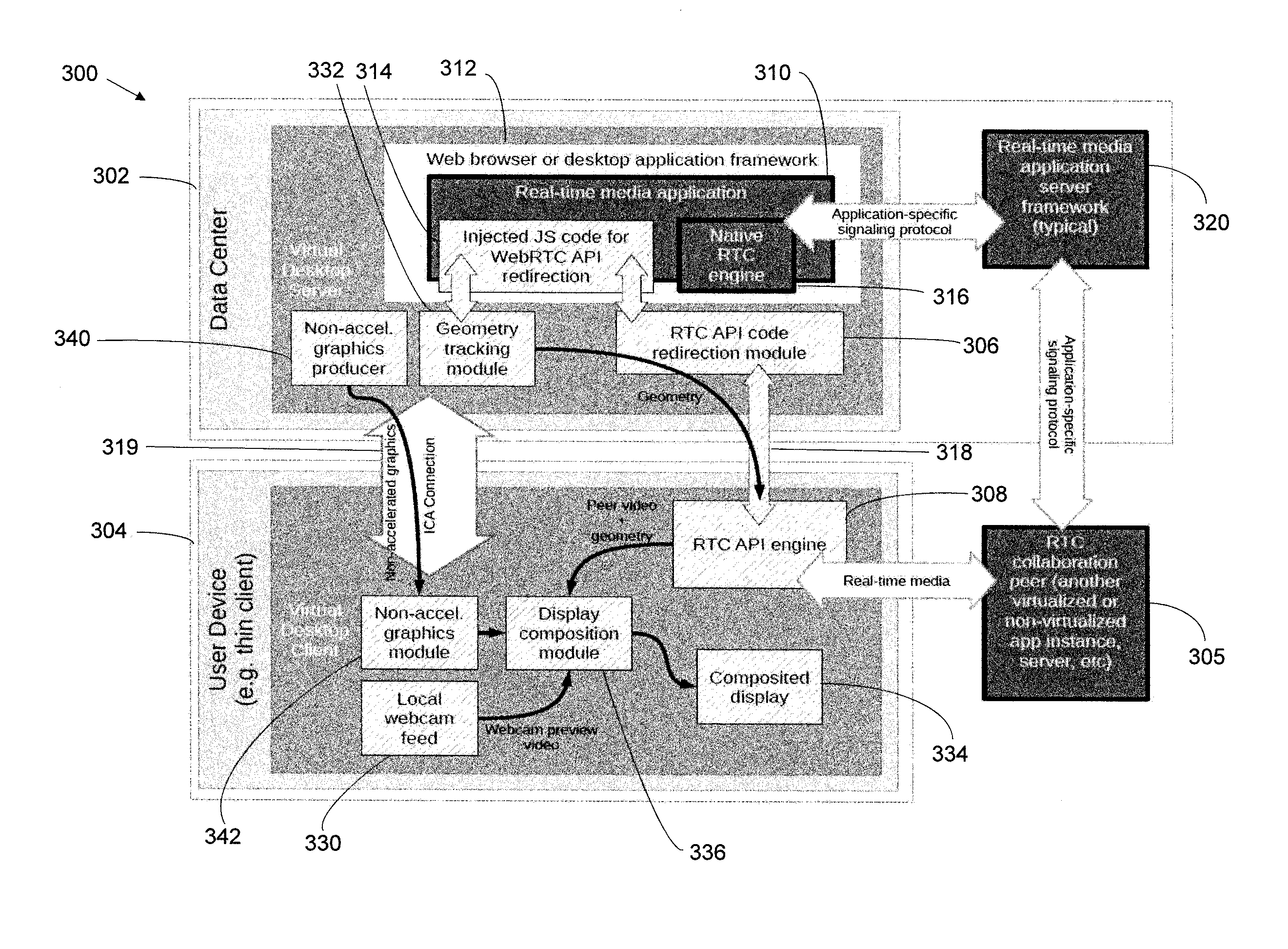

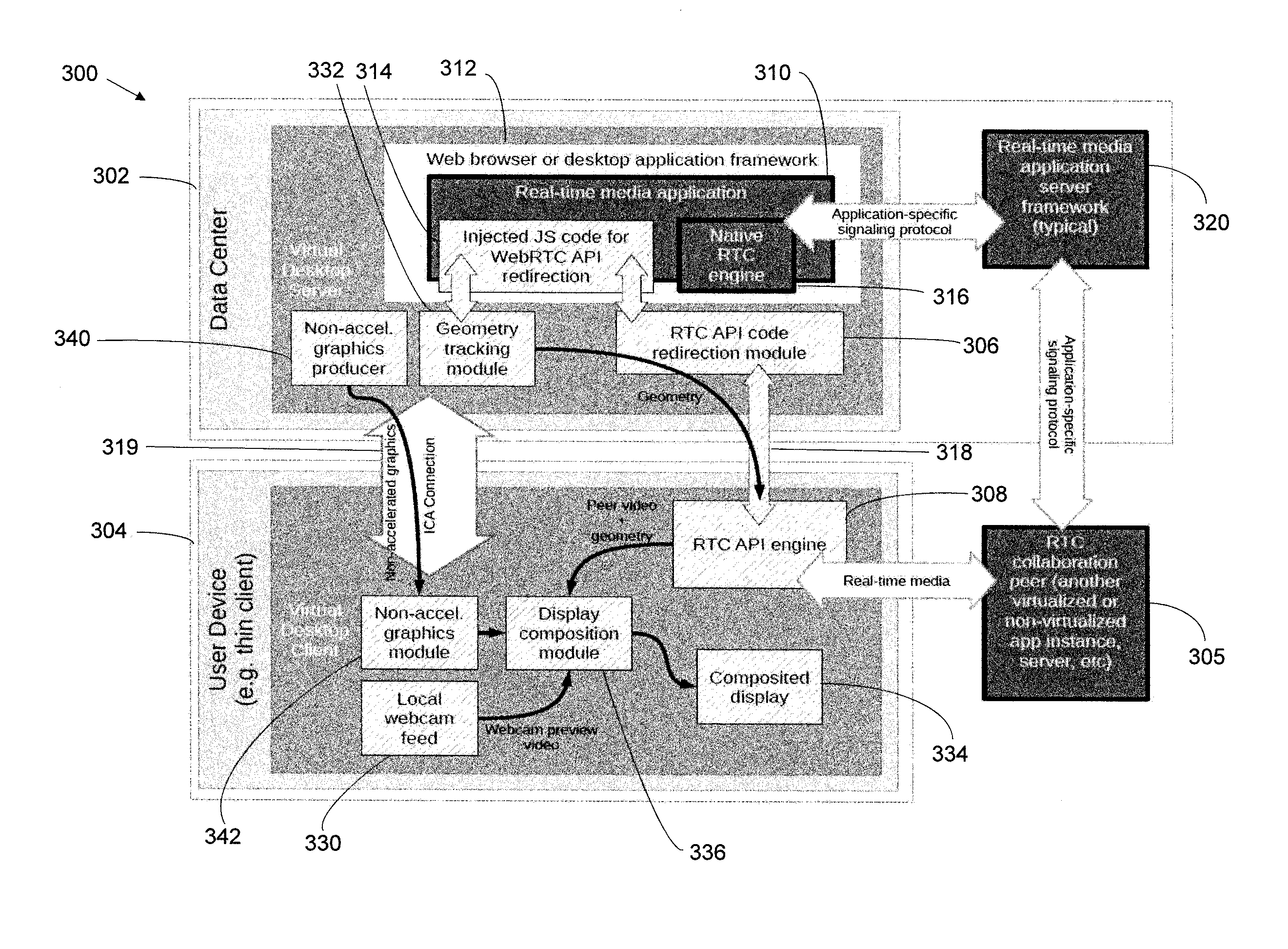

[0045] Turning now to FIG. 3, the illustrated architecture 300 will be discussed in terms of WebRTC redirection with interception techniques. The architecture 300 allows virtualized browser and desktop applications to deliver optimized real time communications (RTC) using a standard WebRTC API. Real time communications includes voice, video and data collaboration. An application framework 312 provides the WebRTC API on the application server 302, and provides execution of the WebRTC API functionality on the remote client 304. Various techniques are used to make this functionality feasible and transparent to the real-time media application 310, and achieve high quality user experience and other desirable features.

[0046] The application framework 312 includes a web browser or a desktop application to provide the real-time media application 310 that provides the real time communications. The real-time media application 310 is supported by a real-time media application server 320. The architecture 300 may be referred to as a computing system 300, the application server 302 may be referred to as a virtual desktop server 302, and the remote client 304 may be referred to as a client computing device 304 or as a virtual desktop client 304. The client computing device 304 is in peer-to-peer communications with at least one other client computing device 305.

[0047] WebRTC is a collection of APIs and an open source project enabling real-time communications (VoIP, video over IP, and other types of real-time collaboration) in browser and desktop applications. Since its introduction to the industry by Google in 2011, and adoption by several standards bodies (W3C and IETF), WebRTC has been included in most popular web browsers, as well as made available in desktop application platforms such as Electron. Application and service vendors as well as enterprise IT departments are increasingly making use of WebRTC for adding voice and video functionality to existing and new HTML5 applications.

[0048] Electron is an open source framework for building desktop applications (running outside of a browser) using technologies originally defined for the Web, including HTML5 and WebRTC. Electron is also seeing increased adoption in the industry by leading application vendors.

[0049] Under desktop virtualization, execution of WebRTC functionality on the virtual desktop server 302 has a number of well-known disadvantages. One disadvantage is the high latency and low media quality introduced by virtualization of audio and video devices, which involves several rounds of media compression/decompression and extra network hops. Another disadvantage is network and server scalability concerns caused by mandatory tunneling of peer-to-peer media through the data center or the cloud infrastructure, and running high CPU cost audio and video codecs on the virtual desktop server 302.

[0050] Execution of real-time functionality, including both the media processing pipelines and the networking code, by a client RTC API engine 308 on the client computing device 304 addresses these problems. It is still advantageous to run the rest of the real-time media application code on the virtual desktop server 302, using a native RTC engine 316 where it can integrate with other desktop applications and other desktop OS functionality. Redirection of WebRTC APIs provides a way for the real-time media application code to continue executing on the virtual desktop server 302, while offloading only real-time media processing and networking to the client computing device 304. Further, the real-time media application code can be largely unaware of the fact that WebRTC APIs are being redirected. This results in out of the box real-time media optimization for many if not all virtualized applications.

[0051] Redirection, or remoting, of parts of application functionality from the virtual desktop server 302 to the client computing device 304 is not new by itself, and has been implemented in a number of technologies (including such Citrix technologies as Remoting Audio and Video Extensions (RAVE), Flash Redirection, Browser Content Redirection, and Real-time Optimization Pack). However, what is new is using WebRTC redirection techniques to deliver real-time media optimization for HTML 5/JavaScript applications.

[0052] WebRTC redirection techniques include a number of unique features such as: new methods of API interception for browser and desktop applications; new techniques for providing fallback functionality; new techniques for video area identification and geometry tracking; new techniques for window monitoring/overlay detection; new techniques for selecting and capturing screen content for content sharing sessions; new types and applications of policies to control application access to endpoint media sources; and new techniques for managing network connectivity for real time media in virtualization application environments. These techniques are discussed in more detail below.

[0053] As a general overview, WebRTC comprises a set of API specifications maintained by the Web Real-Time Communications Working Group (part of W3C), as well as implementations of these APIs in web browsers and HTML5/JavaScript desktop application platforms such as Electron. The most relevant specifications for this architecture include WebRTC 1.0: Real-time Communication Between Browsers (further referenced as [webrtc]), Media Capture and Streams ([mediacapture-streams]), and Screen Capture ([screen-capture]). The following description references definitions and API elements from relevant W3C specifications without repeating them here.

[0054] The general idea of the illustrated architecture 300 in FIG. 3 is to redirect underlying functionality of WebRTC APIs from the virtual desktop server 302 to the client computing device 304 by intercepting calls to the API made by the real-time media application 310. The intercepted APIs are executed either remotely on the client computing device 304 via the client RTC API engine 308, or locally on the virtual desktop server 302 as appropriate via the native RTC engine 316. API callbacks are invoked or asynchronous API events are generated to the real-time media application 310.
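
The routing decision described in paragraph [0054] can be illustrated with a minimal sketch. It is not the disclosed implementation: the class name `InterceptingShim`, the `REDIRECTED_APIS` set, and the two engine callables are hypothetical stand-ins for the injected code 314, the client RTC API engine 308, and the native RTC engine 316.

```python
from typing import Any, Callable, List, Tuple

# Hypothetical set of API names whose work is offloaded to the client.
REDIRECTED_APIS = {"createOffer", "addTrack"}


class InterceptingShim:
    """Stands in for the API surface that the application calls."""

    def __init__(self, remote: Callable[[str, tuple], Any],
                 local: Callable[[str, tuple], Any]) -> None:
        self._remote = remote  # stand-in for the client RTC API engine
        self._local = local    # stand-in for the native RTC engine
        self.log: List[Tuple[str, str]] = []

    def __getattr__(self, api_name: str) -> Callable[..., Any]:
        # Reached only for names not defined on the shim itself,
        # so every such lookup is treated as an intercepted API call.
        def call(*args: Any) -> Any:
            target = "remote" if api_name in REDIRECTED_APIS else "local"
            self.log.append((api_name, target))
            engine = self._remote if target == "remote" else self._local
            return engine(api_name, args)
        return call
```

With two simple callables as engines, `shim.createOffer()` is handed to the remote callable and any other call, such as `shim.getStats()`, is handed to the local one, while `shim.log` records where each call went.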

[0055] Acronyms and definitions in this field include XenApp (XA), XenDesktop (XD), and Virtual Desktop Infrastructure (VDI). VDI is virtualization technology that hosts a desktop operating system on a centralized server in a data center, and provides remote access to virtual hosted applications and desktops. Citrix Receiver and Citrix Workspace App are virtual desktop clients providing remote access to virtual hosted applications and desktops.

[0056] The following major elements comprise the system architecture 300 for WebRTC redirection. A virtual desktop server 302 provides server-side virtual channel functionality and hosts virtual applications. For example, these may be implemented in Citrix XenApp/XenDesktop. A virtual desktop client 304 provides client-side virtual channel functionality. For example, this may be implemented in Citrix Receiver and Citrix Workspace App. A virtualized application (or virtual application) is a third party application implemented in HTML 5 and JavaScript and uses WebRTC to deliver real-time voice, video and data functionality. The virtualized application, such as the real-time media application 310, is hosted on the virtual desktop server 302.

[0057] A server-side API code redirection module 306 includes code libraries and applications running on the virtual desktop server 302, and interacts with the real-time media application 310 to achieve WebRTC API redirection. The API code redirection module 306 may be referred to as a WebRTC API connector 306. The API code redirection module 306 may be considered as a single system component, or may be split into two subcomponents: injected and non-injected code, for some of the techniques described below.

[0058] Injected server-side WebRTC connector code 314 (injected API code) includes portions of connector code that may be either implemented in JavaScript, or implemented in a different computer language but providing a JavaScript-compatible API. The injected API code 314 is injected into the real-time media application 310, and modifies the HTML5/JavaScript execution environment within the application process. If implemented in JavaScript, the injected code 314 would communicate with non-injected connector code libraries directly using WebSockets or another suitable inter-process communication mechanism, and indirectly through rendered screen contents as described below in the window tracking section.

[0059] Other server-side connector code includes code libraries implemented in any suitable programming language and running either within or outside of the application process. The API code redirection module 306 communicates with the injected code 314 through WebSockets or another suitable mechanism, and indirectly through screen capture, and with the client RTC API engine 308 through a virtual channel 318.

[0060] The client RTC API engine 308 includes code libraries running on the client computing device 304. The client RTC API engine 308 communicates with the API code redirection module 306 through a virtual channel 318 hosted by the virtual desktop server 302.

[0061] The illustrated architecture 300, and the general idea of optimizing application virtualization by offloading parts of the real-time media application RTC functionality from the virtual desktop server 302 to the client computing device 304, are fairly generic and widely used in systems implementing application or desktop virtualization. The distinction herein lies in specific functions and interactions of the injected code 314, the API code redirection module 306, and the client RTC API engine 308 that are designed to optimize real time media APIs. In particular, WebRTC is optimized.

[0062] As noted above, the computing system 300 includes the virtual desktop server 302 and the client computing device 304 in peer-to-peer communications with another endpoint device 305. The virtual desktop server 302 includes the application framework 312 that includes the real-time media application 310 to provide real-time communications (RTC), and the native RTC engine 316 to execute a portion of the real-time media application 310 when received by the native RTC engine 316. The API code redirection module 306 redirects intercepted APIs of the real-time media application 310 intended for the native RTC engine 316 based on redirection code 314 injected into the real-time media application 310 so that the portion of the real-time media application 310 is redirected. The client RTC API engine 308 in the client computing device 304 communicates with the API code redirection module 306 through a virtual channel 318 to execute the redirected portion of the real-time media application 310.

[0063] The application framework 312 may be a web browser or a desktop application framework. The redirected APIs correspond to real-time media processing and/or peer-to-peer networking with another client computing device 305.

[0064] Various API interception techniques will now be discussed. WebRTC APIs are defined as JavaScript APIs available to HTML5/JavaScript applications within a web browser or within an application development platform such as Electron. Electron combines an HTML5/JavaScript application engine with additional desktop-specific platform functionality. Interception of WebRTC APIs by a desktop virtualization platform requires additional novel techniques.

[0065] The following API interception techniques will be discussed below: hooking; use of a custom browser; JavaScript injection via proxy; JavaScript injection via a browser helper object (BHO) or browser extension; JavaScript injection via micro-VPN plugin; and use of Electron app decomposition.

[0066] For hooking, the API code redirection module 306 may include a hooking module configured to intercept the APIs of the real-time media application 310 based on hooking, and inject the redirection code 314 into the real-time media application 310 based on the intercepted APIs.

[0067] JavaScript rendering DLL APIs are hooked and custom JS is inserted. For IE, the JavaScript engine DLL is jscript.dll and is an (in process) COM object server. Hence, the idea would be to employ standard COM shimming techniques to operate as a "man in the middle" (MITM) in order to insert custom JavaScript. In the IE JavaScript engine, all JavaScript objects implement the IDispatchEx interface. Alternatively, one could hook OS socket APIs, e.g. WinSock APIs, parse HTTP traffic and HTML content and then insert JS.

[0068] For a custom browser, a custom Chromium-based browser engine may be used and a custom Secure Browser published, e.g., as part of the Citrix Secure Browser Cloud Service. Hooks or plugins are used within the Chromium engine to inject custom JS. Alternatively, a custom browser engine may implement some or all of the WebRTC redirection functionality in native code, limiting or removing the need to inject custom JavaScript code. The virtual desktop server 302 includes a browser that includes hooks or plug-ins configured to intercept the APIs of the real-time media application 310, and inject the redirection code 314 into the real-time media application 310 based on the intercepted APIs.

[0069] For JavaScript injection via proxy, a proxy is used to intercept HTML content. The proxy would add additional JS via content rewriting. The proxy could be a separate appliance on the network, or reside on the virtual desktop server 302. The proxy could be configured explicitly (using browser settings) or operate as a transparent proxy.

[0070] In one approach, the computing system 300 further includes a proxy server configured to intercept HTML content from a web server to be retrieved by the real-time media application 310, and re-write the intercepted HTML content so that execution of the re-written HTML content causes the APIs of the real-time media application 310 to be intercepted. The redirection code 314 is then injected into the real-time media application 310 based on the intercepted APIs.

[0071] In another approach, the proxy server may be configured to intercept HTML content from a web server to be retrieved by the real-time media application 310, and inject code into pages of the intercepted HTML content. Execution of the pages with the injected code causes the APIs of the real-time media application 310 to be intercepted. The redirection code 314 is then injected into the real-time media application 310 based on the intercepted APIs.
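
The content rewriting step of paragraphs [0069] to [0071] can be sketched as a function that a proxy might apply to each intercepted HTML response. This is an illustration under stated assumptions: the script URL `SHIM_URL` and the function name `inject_script` are hypothetical, and a production proxy would more likely use a real HTML parser than a regular expression.

```python
import re

# Hypothetical URL of the redirection script; not taken from the source.
SHIM_URL = "/webrtc-redirect-shim.js"

# Matches an opening <head> tag with optional attributes, but not <header>.
_HEAD_OPEN = re.compile(r"<head\b[^>]*>", re.IGNORECASE)


def inject_script(html: str, script_url: str = SHIM_URL) -> str:
    """Return html with a script tag placed ahead of the page's own scripts."""
    tag = f'<script src="{script_url}"></script>'
    match = _HEAD_OPEN.search(html)
    if match is None:
        # No <head> element found: prepend so the shim still comes first.
        return tag + html
    return html[:match.end()] + tag + html[match.end():]
```

Placing the tag immediately after the opening `<head>` is a design choice intended to let the injected code replace the WebRTC API objects before application scripts first reference them.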

[0072] For JavaScript injection via Browser Helper Object (BHO) or browser extension, JavaScript code to implement WebRTC API redirection could be injected into a browser-based application using the BHO or the Browser Extension for web browsers that implement BHO or browser extension mechanisms. The virtual desktop server 302 thus includes a browser including a BHO or a Browser Extension to intercept the APIs of the real-time media application 310, and inject the redirection code 314 into the real-time media application 310 based on the intercepted APIs.

[0073] For JavaScript injection via micro-VPN plugin, a universal windows platform (UWP) App is used to implement a virtual private network (VPN) app plugin. The UWP VPN plugin app will handle the declaration of the VPN client plug-in capability in the AppX manifest and provide reference to the VPN plug-in app handler. Running the plugin within a sandbox (app container) allows for greater security and reduced complexity of the implementation.

[0074] The VPN app plugin is able to control VPN connections in conjunction with the OS VPN platform. In addition, the VPN app plugin could be configured as a micro-VPN, i.e., it can be applied on a per-application basis. For example, the configuration could be achieved via mobile device management (MDM) or mobile application management (MAM) policies.

[0075] Alternatively, a custom profile may be created using PowerShell scripts. The configuration could specify apps whose traffic is to be managed by the micro-VPN plugin. In particular, the micro-VPN plugin could be configured for browser applications and Electron based apps. The micro-VPN plugin could be enabled to intercept network traffic for all or specific apps, decrypt TLS using local certificate store, parse HTTP traffic and HTML content and then insert custom JavaScript.

[0076] In one approach, the computing system further includes a micro-virtual private network (VPN) plug-in configured to intercept HTML content from a web server to be retrieved by the real-time media application 310, and re-write the intercepted HTML content so that execution of the re-written HTML content causes the APIs of the real-time media application 310 to be intercepted. Then the redirection code 314 is to be injected into the real-time media application 310 based on the intercepted APIs.

[0077] In another approach, the micro-virtual private network (VPN) plug-in may be configured to intercept HTML content from a web server to be retrieved by the real-time media application 310, and inject code into pages of the intercepted HTML content. Execution of the pages with the injected code causes the APIs of the real-time media application 310 to be intercepted. The redirection code 314 is then injected into the real-time media application 310 based on the intercepted APIs.

[0078] For Electron app decomposition, the Electron app is first run through a tool that modifies the Electron app. The modification is based on decomposing binaries of the Electron application to access the APIs of the real-time media application 310, adding hooks to inject the redirection code into the real-time media application 310 based on the intercepted APIs, repackaging the Electron application binaries, and re-signing the Electron application. The modified Electron application is then configured to intercept the APIs of the real-time media application 310 based on hooking, and inject the redirection code 314 into the real-time media application 310 based on the intercepted APIs. Stated another way, the Electron app is detected during virtual app publishing.

[0079] Electron app binaries are then decomposed. App content is usually located within an .asar archive located within a subfolder corresponding to the Electron application. It is possible to unpack this archive using asar.exe (available as a node.js package) to access the JavaScript code for the application. Hooks are then added, where the hooks comprise DLLs or other modules that are loaded at runtime to inject custom JS. The import address table of the main executable is modified to load the hooks when a process runs. The application binaries are repackaged, and the Electron application package is then re-signed.

[0080] Another aspect of delivering optimized real time communications (RTC) using a standard WebRTC API is directed to a method for operating a computing system 300 comprising a virtual desktop server 302 and a client computing device 304 comprising a client RTC API engine 308. The virtual desktop server 302 includes an application framework 312 and an API code redirection module 306. The application framework 312 includes a real-time media application 310 and a native RTC engine 316.

[0081] The method includes providing real-time communications based on operation of the real-time media application 310, with a portion of the real-time media application 310 to be executed by the native RTC engine 316 when received by the native RTC engine 316. The method further includes redirecting by the API code redirection module 306 intercepted APIs of the real-time media application 310 intended for the native RTC engine 316 based on redirection code 314 injected into the real-time media application 310 so that the portion of the real-time media application 310 is redirected. The client RTC API engine 308 communicates with the API code redirection module 306 through a virtual channel 318 to execute the redirected portion of the real-time media application 310.

[0082] Yet another aspect is directed to a non-transitory computer readable medium for operating a virtual desktop server 302 within a computing system 300 as described above. The non-transitory computer readable medium has a plurality of computer executable instructions for causing the virtual desktop server 302 to provide real-time communications (RTC) based on operation of the real-time media application 310, with a portion of the real-time media application 310 to be executed by the native RTC engine 316 when received by the native RTC engine 316. The instructions further cause the virtual desktop server 302 to redirect by the API code redirection module 306 intercepted APIs of the real-time media application 310 intended for the native RTC engine 316 based on redirection code 314 injected into the real-time media application 310 so that the client RTC API engine 308 communicates with the API code redirection module 306 through a virtual channel 318 to execute the redirected portion of the real-time media application 310.

[0083] Still referring to FIG. 3, the illustrated computing system 300 will now be discussed in terms of WebRTC redirection with fallback. In some cases the necessary functionality will be missing, so graceful fallback functionality is needed to handle real time media with less optimized mechanisms when full optimization is not available. General fallback techniques are discussed first.

[0084] The virtual desktop server 302 and the at least one client computing device 304 are as discussed above. The application framework 312 includes the real-time media application 310 to provide real-time communications (RTC), and the native RTC engine 316 executes a portion of the real-time media application 310 when received by the native RTC engine 316. The virtual desktop server 302 further includes an API code redirection module 306 to redirect original APIs of the real-time media application 310 intended for the native RTC engine 316 based on redirection code 314 injected into the real-time media application 310 so that the portion of the real-time media application 310 is to be redirected.

[0085] In particular, the client computing device 304 includes the client RTC API engine 308 reporting to the API code redirection module 306 through a virtual channel 318 on capabilities of the client computing device 304 to execute the redirected portion of the real-time media application 310. The API code redirection module 306 switches to a fallback mode if the client computing device 304 has limited capabilities. In the fallback mode at least part of the original APIs are used so that the native RTC engine 316 executes at least part of the portion of the real-time media application 310.

[0086] Real time media functionality enabled by WebRTC redirection described here will result in optimized user experience and system scalability when all system components include the necessary functionality. In particular, this is the case when virtual desktop clients and servers include compatible software and hardware modules that have the necessary capabilities and cooperate to deliver the functionality as designed.

[0087] If the client computing device 304 has full capabilities, fallback is not needed. In this case, the API code redirection module 306 does not switch to the fallback mode so that the client RTC API engine 308 executes all of the redirected portion of the real-time media application 310.

[0088] However, in real world deployments, it is inevitable that in some cases the necessary functionality will be missing. A WebRTC remoting solution must provide graceful fallback functionality to use other, less optimized mechanisms for handling real time media when full optimization is not available. Ideally, this fallback functionality (as well as the optimization itself) should be transparent to the application, i.e., handled by the application framework 312 without requiring application changes, and provide a friendly user experience.

[0089] To provide fallback functionality, a virtual application solution needs to include some or all of session state tracking and capability detection and negotiation. In session state tracking, application process lifetime may overlap with one or more user connections to a virtual access session, and the process of enabling optimized functionality or providing a fallback may happen multiple times as session state changes.

[0090] In capability detection and negotiation, client-server protocols used for providing optimized functionality need to support detection or negotiation of the virtual desktop server 302 and client computing device 304 capabilities, which enables the corresponding code to switch between optimized and fallback modes of operation.
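
The interplay of session state tracking and capability negotiation in paragraphs [0089] and [0090] can be sketched as follows. The capability names in `REQUIRED`, the `Mode` enum, and the `Session` class are assumptions made for illustration. The sketch treats the decision as binary, which simplifies the finer-grained fallback variants the description goes on to list.

```python
from enum import Enum
from typing import Optional, Set


class Mode(Enum):
    OPTIMIZED = "optimized"
    FALLBACK = "fallback"


# Hypothetical capability names needed for full redirection.
REQUIRED = frozenset({"audio", "video", "data"})


def negotiate(client_caps: Optional[Set[str]]) -> Mode:
    """Pick a mode from the capabilities reported by the client, if any."""
    if client_caps is None:  # no client connected, or no client engine present
        return Mode.FALLBACK
    return Mode.OPTIMIZED if REQUIRED <= client_caps else Mode.FALLBACK


class Session:
    """Re-runs negotiation whenever the session state changes."""

    def __init__(self) -> None:
        self.mode = negotiate(None)

    def on_connect(self, client_caps: Set[str]) -> None:
        self.mode = negotiate(client_caps)

    def on_disconnect(self) -> None:
        self.mode = negotiate(None)
```

Because the application process may outlive several user connections, the mode is recomputed on every connect and disconnect rather than fixed once at startup.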

[0091] The following techniques are specific to providing fallback functionality for WebRTC redirection when fallback is needed. These techniques include 1) fallback to the built-in native RTC engine 316; 2) per-modality fallback; 3) partial fallback; and 4) mapping of session connect/disconnect events to device availability events.

[0092] In fallback to the built-in native RTC engine 316, the client computing device 304 has no capabilities to execute the redirected portion of the real-time media application 310. In the fallback mode all of the original APIs are used so that the native RTC engine 316 executes all of the portion of the real-time media application 310 instead of the client RTC API engine 308.

[0093] When remote execution of WebRTC is not possible (e.g., when no client RTC API engine 308 is present), the injected code 314 can dynamically dispatch application API calls to the built-in native RTC engine 316 that is included as part of the application framework 312. The injected code 314 can act as a shim to monitor these calls and the resulting built-in WebRTC objects. This monitoring enables an eventual switch to remote (optimized) functionality as well as ongoing monitoring of application WebRTC usage and quality.

[0094] In the per-modality fallback, depending on client computing device 304 capabilities and policies, the application framework 312 may implement per-modality fallback functionality when a remaining part of the portion of the real-time media application 310 that is not executed by the native RTC engine 316 is redirected to the client RTC API engine 308 for execution.

[0095] For example, when a client computing device 304 can only handle the audio portion of a real-time media session, the application framework 312 may implement optimized functionality for audio and fallback functionality for video. To do this, injected code 314 can remote API calls for audio streams to the client computing device 304 and redirect API calls for video streams to the local built-in RTC engine 316, merging the results together. The at least part of the portion of the real-time media application 310 executed by the native RTC engine 316 corresponds to video, and the remaining part of the portion of the real-time media application 310 executed by the client RTC API engine 308 corresponds to audio.

[0096] Alternatively, the at least part of the portion of the real-time media application 310 executed by the native RTC engine 316 corresponds to audio, and the remaining part of the portion of the real-time media application 310 executed by the client RTC API engine 308 corresponds to video.
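
Per-modality fallback as described in paragraphs [0094] to [0096] amounts to a routing table keyed by modality. The following sketch assumes hypothetical modality names and the labels `"client"` and `"native"` for the client RTC API engine 308 and the native RTC engine 316. Merging of the resulting streams is not shown.

```python
from typing import Dict, Iterable, Set

MODALITIES = ("audio", "video", "data")  # assumed modality names


def route_modalities(client_caps: Set[str],
                     modalities: Iterable[str] = MODALITIES) -> Dict[str, str]:
    """Map each modality to 'client' (redirected) or 'native' (fallback)."""
    return {m: ("client" if m in client_caps else "native") for m in modalities}
```

For a client that reports only audio support, the function sends audio to the client and leaves video and data with the native engine, matching the audio-optimized example in the text.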

[0097] In the partial fallback, depending on the virtual desktop server 302 capabilities and policies, injected and server-side code can implement partial fallback. Partial fallback does not involve redirection to the client computing device 304. Instead, the capabilities of the virtual desktop server 302 are reduced.

[0098] In an optimized mode the WebRTC redirection framework may support audio, video, and data communications. In the partial fallback mode the framework may only provide audio and video if the server-side CPU utilization on a multi-user server does not exceed a certain value. If the certain value is exceeded, then video support is automatically turned off under high CPU load to avoid quality problems. Similar fallback restrictions can be implemented by monitoring media quality for fallback sessions, and disabling some functionality if quality stays or falls below a defined threshold.
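
The CPU and quality checks of paragraph [0098] can be sketched as a small policy function. The numeric thresholds are assumptions, since the text only refers to "a certain value" and "a defined threshold". Treating video as the functionality disabled on low quality is also an assumption.

```python
from typing import Set

# Assumed thresholds; the description does not specify numbers.
CPU_LIMIT_PERCENT = 80.0
MIN_QUALITY_SCORE = 3.0


def allowed_in_partial_fallback(cpu_percent: float,
                                quality_score: float) -> Set[str]:
    """Return the modalities the server still offers in partial fallback."""
    allowed = {"audio", "video"}
    # Drop video when the server is overloaded or media quality is poor.
    if cpu_percent > CPU_LIMIT_PERCENT or quality_score < MIN_QUALITY_SCORE:
        allowed.discard("video")
    return allowed
```

A real deployment would probably smooth both inputs over time so that video is not toggled on and off by short spikes.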

[0099] In other words, if the client computing device 304 has limited capabilities and the API code redirection module 306 determines that the virtual desktop server 302 also has limited capabilities, then the API code redirection module 306 does not switch to the fallback mode for at least a part of the portion of the real-time media application 310, and the at least part of the portion of the real-time media application 310 is executed by neither the client RTC API engine 308 nor the native RTC engine 316.

[0100] For example, the at least part of the portion of the real-time media application 310 that is executed by neither the client RTC API engine 308 nor the native RTC engine 316 may correspond to video, while the remaining part of the portion of the real-time media application 310 is executed by either the client RTC API engine 308 or the native RTC engine 316 in fallback mode and may correspond to at least one of audio and data communications.

[0101] As another example, the API code redirection module 306 may determine that the virtual desktop server 302 also has limited capabilities based on at least one of client computing device 304 policies, virtual desktop server 302 policies, virtual desktop server 302 CPU load, and media quality.

[0102] In this case, if the client computing device 304 has limited capabilities and the API code redirection module 306 determines that the virtual desktop server 302 also has limited capabilities, then the API code redirection module 306 does not switch to the fallback mode for at least a part of the portion of the real-time media application 310. The at least part of the portion of the real-time media application 310 is executed by neither the client RTC API engine 308 nor the native RTC engine 316.

[0103] The at least part of the portion of the real-time media application 310 that is executed by neither the client RTC API engine 308 nor the native RTC engine 316 corresponds to video, and the remaining part of the portion of the real-time media application 310 is executed by either the client RTC API engine 308 or the native RTC engine 316 in fallback mode and corresponds to at least one of audio and data communications.

[0104] The API code redirection module 306 determines that the virtual desktop server 302 also has limited capabilities based on at least one of client computing device policies, virtual desktop server policies, virtual desktop server CPU load, and media quality.

[0105] In the mapping of session connect/disconnect events to device availability events, WebRTC includes a subset of APIs (NavigatorUserMedia, getUserMedia) enabling applications to enumerate local media devices, request access to a local media device (such as a microphone or a camera), and use it for a real-time media session. Under WebRTC redirection, the corresponding APIs will respectively enumerate, obtain access to, and request usage of, devices physically attached to the client computer.

[0106] To handle fallback scenarios, upon session disconnection, injected code will update internal data structures and generate events indicating to the application that all devices have been physically disconnected. Upon session reconnection and after successful capability negotiation, this code will indicate to the real-time media application 310 that new devices are now available.

[0107] Upon fallback, injected code will defer to the built-in WebRTC engine 316 to enumerate local devices, which may in turn invoke other virtual channel functionality to enumerate client devices and perform non-optimized redirection. For example, this may be via generic audio redirection or graphics remoting virtual channels. With this approach, an application that is properly designed to handle local media device availability will not require any special logic to process session connect/disconnect and fallback events.

[0108] In one aspect, the virtual desktop server 302 enumerates devices physically connected to the client computing device 304 when the client computing device 304 is connected to the virtual desktop server 302. When the client computing device 304 is disconnected from the virtual desktop server 302, then the injected code 314 generates events indicating to the real-time media application 310 that all devices have been physically disconnected.

[0109] Upon reconnection of the client computing device 304 to the virtual desktop server 302, the injected code 314 indicates to the real-time media application 310 that new devices are now available. Upon fallback, the injected code 314 defers to the native RTC engine 316 to enumerate the devices physically connected to the client computing device 304.
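As a non-limiting illustration, the mapping of session events to device availability events may be sketched as follows. The class `DeviceAvailabilityShim`, its method names, the `notify` and `native_enumerate` callbacks, and the device labels are all hypothetical. They stand in for the internal data structures of the injected code 314 and for the native RTC engine 316, and are not part of any real WebRTC API.

```python
from typing import Callable, List


class DeviceAvailabilityShim:
    """Translates session connect/disconnect into device added/removed events."""

    def __init__(
        self,
        notify: Callable[[str, str], None],
        native_enumerate: Callable[[], List[str]],
    ) -> None:
        self._notify = notify                      # delivers events to the application
        self._native_enumerate = native_enumerate  # stands in for the native engine
        self._client_devices: List[str] = []
        self._redirecting = False

    def on_session_connected(self, client_devices: List[str]) -> None:
        # Call only after capability negotiation has succeeded.
        self._redirecting = True
        for device in client_devices:
            self._client_devices.append(device)
            self._notify("added", device)

    def on_session_disconnected(self) -> None:
        # Report every client device as physically disconnected.
        self._redirecting = False
        removed, self._client_devices = self._client_devices, []
        for device in removed:
            self._notify("removed", device)

    def enumerate_devices(self) -> List[str]:
        # While redirecting, list client devices; otherwise (fallback)
        # defer to the native engine's own enumeration.
        if self._redirecting:
            return list(self._client_devices)
        return self._native_enumerate()


events = []
shim = DeviceAvailabilityShim(
    notify=lambda kind, device: events.append((kind, device)),
    native_enumerate=lambda: ["generic-audio"],
)
shim.on_session_connected(["webcam", "microphone"])
shim.on_session_disconnected()
print(events)
```

Because the application only ever sees ordinary added and removed events, it needs no logic specific to session state, which is the property the paragraphs above rely on.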

[0110] Another aspect of providing fallback functionality to use less optimized mechanisms for handling real time media when full optimization is not available is directed to a method for operating a computing system 300 comprising a virtual desktop server 302 and a client computing device 304 that includes a client RTC API engine 308. The virtual desktop server 302 includes an application framework 312 and an API code redirection module 306. The application framework 312 includes a real-time media application 310 and a native RTC engine 316.

[0111] The method includes providing real-time communications (RTC) based on operation of the real-time media application 310, with a portion of the real-time media application to be executed by the native RTC engine when received by the native RTC engine, and redirecting by the API code redirection module 306 original APIs of the real-time media application 310 intended for the native RTC engine 316 based on redirection code 314 injected into the real-time media application 310 so that the portion of the real-time media application 310 is to be redirected.

[0112] The method further includes reporting by the client RTC API engine 308 to the API code redirection module 306 through a virtual channel 318 on capabilities of the client computing device 304 to execute the redirected portion of the real-time media application 310. The API code redirection module 306 is operated to switch to a fallback mode if the client computing device 304 has limited capabilities, where in the fallback mode at least part of the original APIs are used so that the native RTC engine 316 executes at least part of the portion of the real-time media application 310.

[0113] Yet another aspect is directed to a non-transitory computer readable medium for operating a virtual desktop server 302 within a computing system 300 as described above. The non-transitory computer readable medium has a plurality of computer executable instructions for causing the virtual desktop server 302 to provide real-time communications (RTC) based on operation of the real-time media application 310, with a portion of the real-time media application 310 to be executed by the native RTC engine 316 when received by the native RTC engine 316. The instructions further cause the virtual desktop server 302 to redirect by the API code redirection module 306 intercepted APIs of the real-time media application 310 intended for the native RTC engine 316 based on redirection code 314 so that the portion of the real-time media application 310 is to be redirected. The API code redirection module 306 receives from the client RTC API engine 308 through a virtual channel 318 capabilities of the client computing device to execute the redirected portion of the real-time media application 310. The API code redirection module 306 is operated to switch to a fallback mode if the client computing device 304 has limited capabilities, where in the fallback mode at least part of the original APIs are used so that the native RTC engine 316 executes at least part of the portion of the real-time media application 310.

[0114] Still referring to FIG. 3, the illustrated computing system 300 will now be discussed in terms of WebRTC redirection with window monitoring/overlay detection. As will be discussed in detail below, geometry tracking is used to seamlessly provide redirection of WebRTC functionality. Applications using WebRTC typically render received video streams by connecting a MediaStream object to an HTML5 video element. Visibility and geometry of this element are usually managed by the application directly or using CSS style information.

[0115] Applications often create and position other UI elements (e.g., labels or control buttons) so that they visually overlap with the video, and may use transparency to blend rendered video with application UI content. The UI elements may be referred to as non-accelerated graphics, and the video may be referred to as accelerated graphics. An application, such as the real-time media application 310, may have more than one active video element rendering real-time video at the same time (e.g., one or more remote video feeds from video conference participants and a self-view from a local camera 330).

[0116] To achieve seamless redirection of WebRTC functionality, the application virtualization framework on the virtual desktop server 302 needs to identify and keep track of visibility and geometry of several video elements, as well as any other elements that overlay live video. When this information is available, WebRTC redirection code on the client computing device 304 will use it to create, position and clip local video rendering windows, and possibly render opaque or transparent overlays on top of these windows to represent corresponding application UI elements.

[0117] Geometry tracking for HTML5 video elements may be via color or pattern detection. Positioning and rendering of video and other UI elements in WebRTC applications are handled by the HTML rendering engine embedded in the browser or application framework, and the resulting layout information is typically not readily available through an API or other means of introspection outside of the HTML renderer.

[0118] One possible way to overcome this limitation and identify multiple HTML5 video areas and track their geometry includes the following steps. One step is to use injected code 314 to assign each video element a unique ID at the point when the real-time media application 310 makes an API call to connect a redirected MediaStream with a particular video element. Also, the injected code 314 causes each video area to be painted with a color or a pattern derived from the video element ID. For example, this may include encoding bits of the element ID in bits of video area color for uniform painting, or in colors of adjacent pixels for pattern painting.

[0119] Another possibility is to paint the video area using a 1-D or 2-D bar code encoding the video element ID. In the graphics capture and remoting code (part of the application virtualization code), video overlay areas are detected by color, pattern, or bar code using any of the available techniques for pixel color or area pattern matching or bar code scanning.

[0120] Detected areas may be non-rectangular or may be partially clipped. To achieve correct geometry tracking, it will be necessary to combine the information retrieved from injected code (video element ID and size in pixels) with results of colored or patterned area detection to determine the size and clipping geometry of each individual video element. To improve performance, results of video area detection can be cached and only partially recalculated for subsequent frames.
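As a non-limiting illustration, combining the detected area with the element size reported by the injected code may be sketched as follows. The sketch assumes a captured frame represented as rows of RGB tuples and uses a simple bounding box, which only approximates non-rectangular areas. The function names `find_visible_rect` and `is_clipped` are hypothetical.

```python
from typing import List, Optional, Tuple

RGB = Tuple[int, int, int]
Rect = Tuple[int, int, int, int]  # (left, top, width, height) in pixels


def find_visible_rect(frame: List[List[RGB]], color: RGB) -> Optional[Rect]:
    """Return the bounding box of all pixels painted with `color`, if any."""
    xs: List[int] = []
    ys: List[int] = []
    for y, row in enumerate(frame):
        for x, pixel in enumerate(row):
            if pixel == color:
                xs.append(x)
                ys.append(y)
    if not xs:
        return None  # the element is fully hidden in this frame
    left, top = min(xs), min(ys)
    return (left, top, max(xs) - left + 1, max(ys) - top + 1)


def is_clipped(visible: Optional[Rect], element_size: Tuple[int, int]) -> bool:
    """Compare the detected area with the size reported by the injected code."""
    if visible is None:
        return True
    _, _, width, height = visible
    return width < element_size[0] or height < element_size[1]


background: RGB = (0, 0, 0)
marker: RGB = (0, 0, 7)  # color derived from a hypothetical element ID 7
frame = [[background] * 6 for _ in range(4)]
for y in range(1, 3):
    for x in range(2, 5):
        frame[y][x] = marker

rect = find_visible_rect(frame, marker)
print(rect)                      # prints (2, 1, 3, 2)
print(is_clipped(rect, (3, 2)))  # prints False
print(is_clipped(rect, (5, 2)))  # prints True
```

A caching layer of the kind mentioned above could store the last rectangle per element ID and rescan only the changed regions of subsequent frames; that optimization is omitted here.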

[0121] The illustrated computing system 300 includes at least one video source 330 to provide at least one video stream, the virtual desktop server 302 and the at least one client computing device 304 as discussed above. The virtual desktop server 302 includes the application framework 312 that includes the real-time media application 310 to provide real-time communications (RTC), and the native RTC engine 316 to execute a portion of the real-time media application 310 when received by the native RTC engine 316.

[0122] The API code redirection module 306 redirects intercepted APIs of the real-time media application 310 intended for the native RTC engine 316 based on redirection code 314 injected into the real-time media application 310 so that the portion of the real-time media application 310 is redirected. In particular, the injected redirection code 314 defines at least one placeholder to indicate positioning geometry of the at least one video stream within an RTC window. A geometry tracking module 332 detects the at least one placeholder within the injected redirection code 314, and provides the positioning geometry associated therewith.

[0123] The client computing device 304 includes a display 334 to display the RTC window. The client RTC API engine 308 communicates with the API code redirection module 306 through the virtual channel 318 to execute the redirected portion of the real-time media application 310. This virtual channel 318 provides accelerated graphics.

[0124] In particular, the client computing device 304 further includes a display composition module 336 to receive the at least one video stream and the positioning geometry of the at least one placeholder, and to overlay the at least one video stream over the at least one placeholder within the displayed RTC window based on the positioning geometry.
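As a non-limiting illustration, the overlay step may be sketched as a color-keyed composition. Video pixels replace only those window pixels that still show the placeholder color, so UI elements drawn over the placeholder remain visible. Keying on the placeholder color is an assumption of this sketch rather than a technique stated in the description, and the function name `composite` and the pixel values are hypothetical.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]
Image = List[List[RGB]]


def composite(window: Image, video: Image, origin: Tuple[int, int], key: RGB) -> Image:
    """Overlay `video` at `origin`, writing only over pixels equal to `key`."""
    origin_x, origin_y = origin
    result = [row[:] for row in window]  # leave the input window untouched
    for video_y, video_row in enumerate(video):
        for video_x, video_pixel in enumerate(video_row):
            x, y = origin_x + video_x, origin_y + video_y
            inside = 0 <= y < len(result) and 0 <= x < len(result[y])
            if inside and result[y][x] == key:
                result[y][x] = video_pixel
    return result


GREEN: RGB = (0, 255, 0)       # placeholder color for the peer video
UI: RGB = (200, 200, 200)      # a control drawn over the placeholder
BG: RGB = (30, 30, 30)         # window background
V: RGB = (1, 2, 3)             # stand-in for decoded video pixels

window = [
    [BG, BG, BG, BG],
    [BG, GREEN, UI, BG],
    [BG, GREEN, GREEN, BG],
]
video = [[V, V], [V, V]]

shown = composite(window, video, origin=(1, 1), key=GREEN)
print(shown[1])  # the control at x=2 stays on top of the video
```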

[0125] The positioning data from the geometry tracking module 332 may be provided to the display composition module 336 over two different paths. In a first path, the geometry tracking module 332 provides the positioning geometry to the API code redirection module 306 so as to be included in the redirected portion of the real-time media application 310 to the client RTC API engine 308. The client RTC API engine 308 then provides the positioning data to the display composition module 336. In a second path, the geometry tracking module 332 provides the positioning geometry directly to the display composition module 336 over a different virtual channel 319. This virtual channel 319 is for non-accelerated graphics.

[0126] The RTC window as provided by the display 334 is a composited display that includes non-accelerated graphics and accelerated graphics. Non-accelerated graphics include UI elements, labels and control buttons, for example. Accelerated graphics in the illustrated embodiment is defined by the video stream provided by the local webcam feed 330. The display composition module 336 may receive additional video streams, such as from another end point device, such as client computing device 305.

[0127] The injected redirection code 314 generates the non-accelerated graphics but does not generate the accelerated graphics. This means that the geometry tracking module 332 analyzes the non-accelerated graphics for the at least one placeholder. The virtual desktop server 302 also includes a non-accelerated graphics producer 340 that provides non-accelerated graphics over the virtual channel 319 to a non-accelerated graphics module 342 in the client computing device 304.

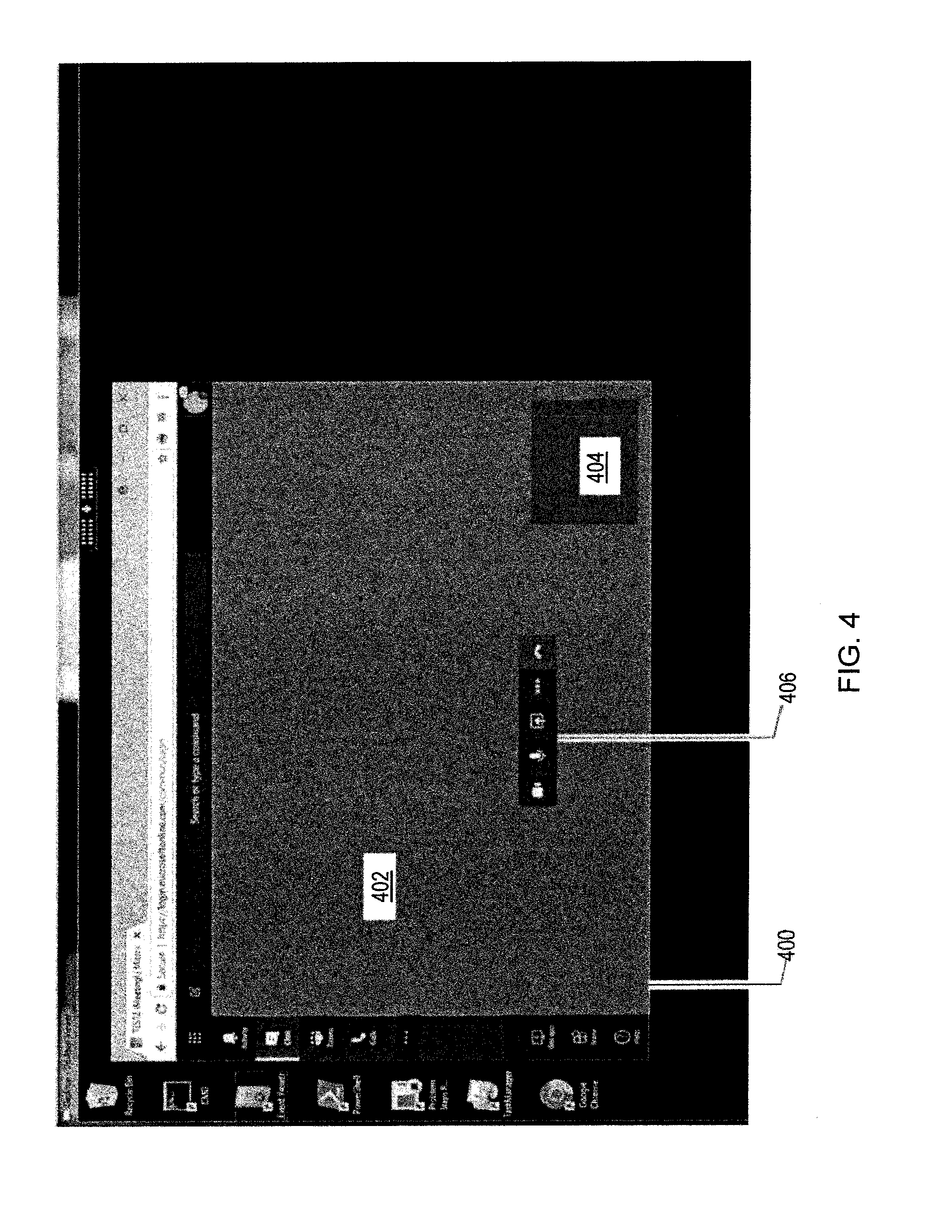

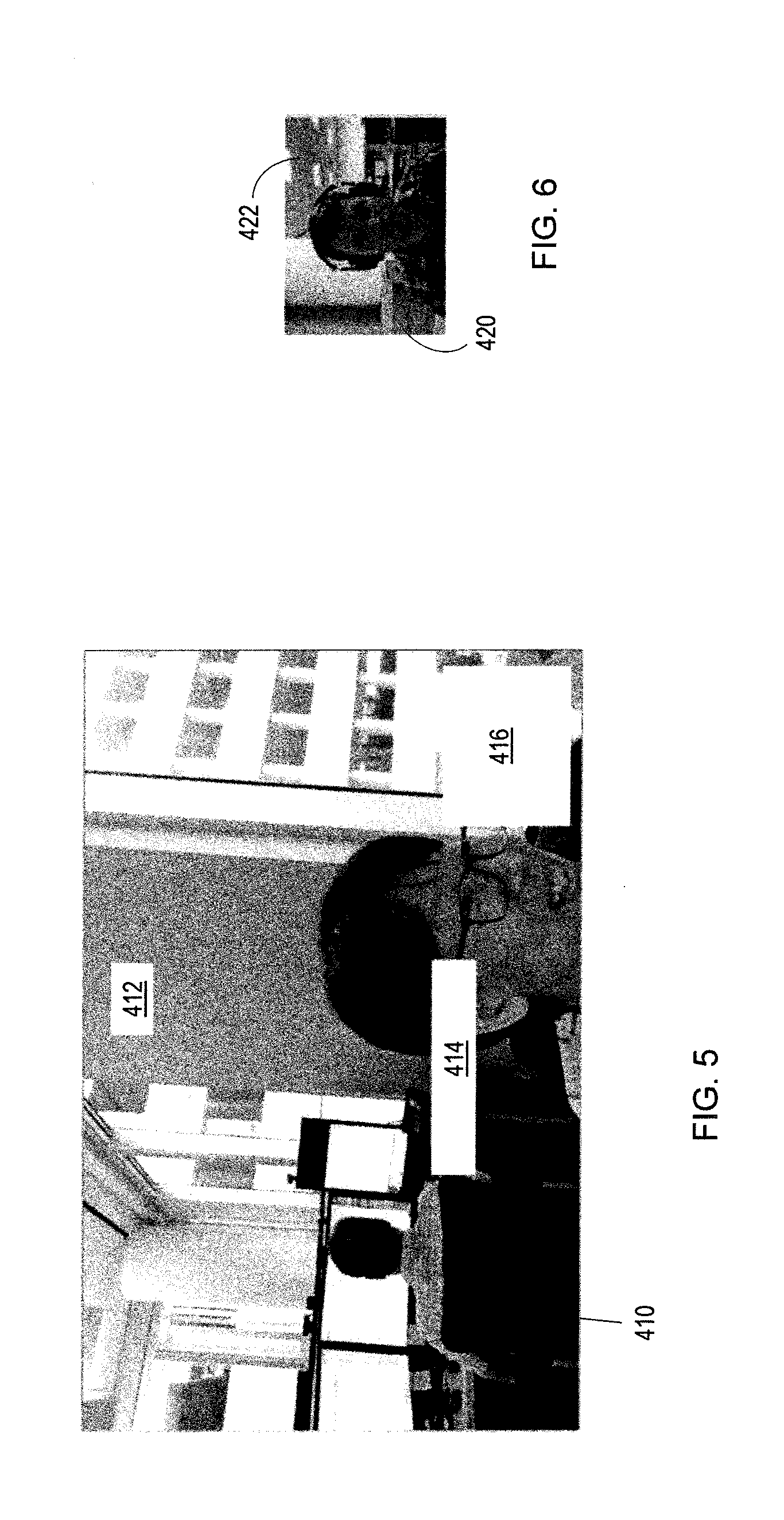

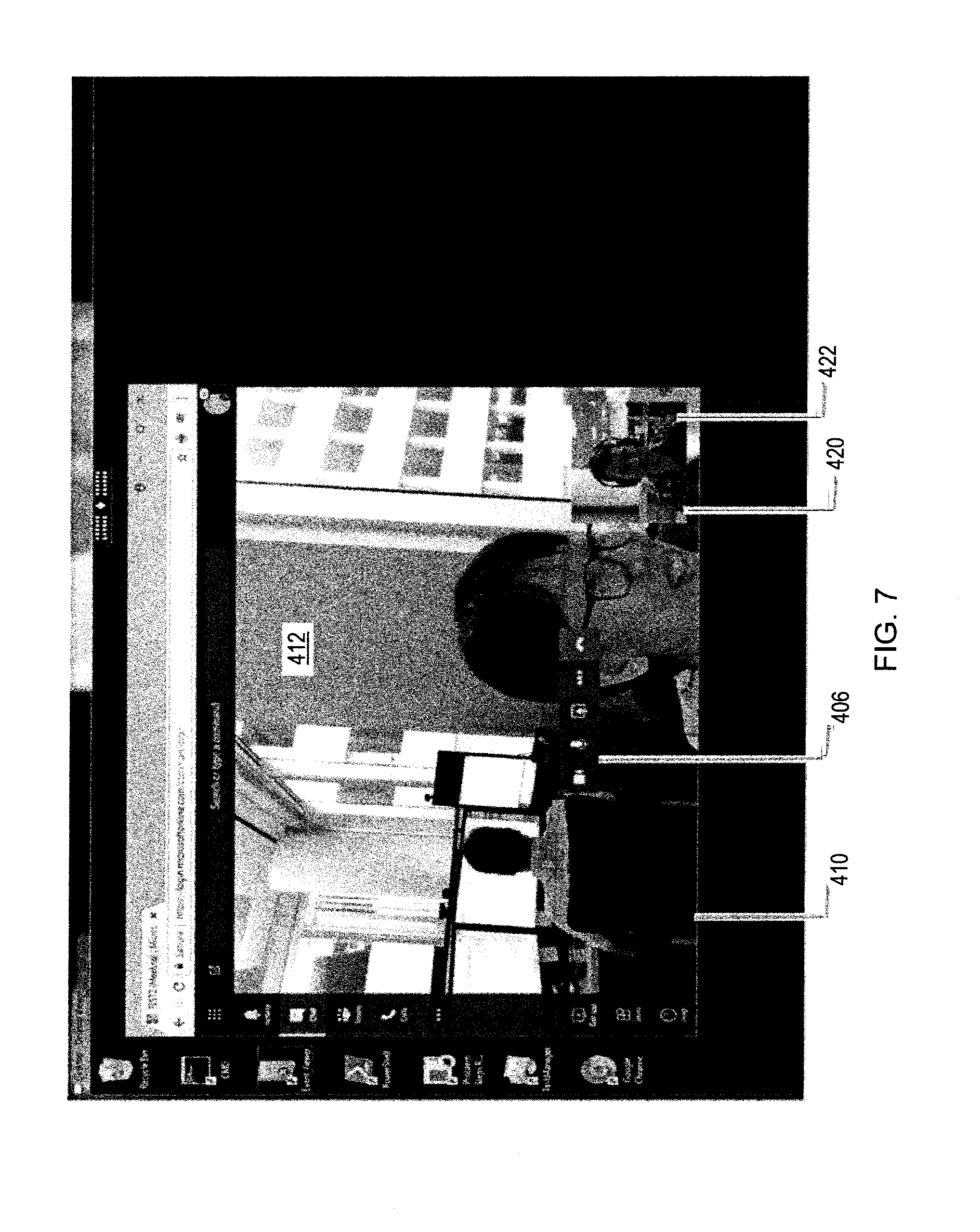

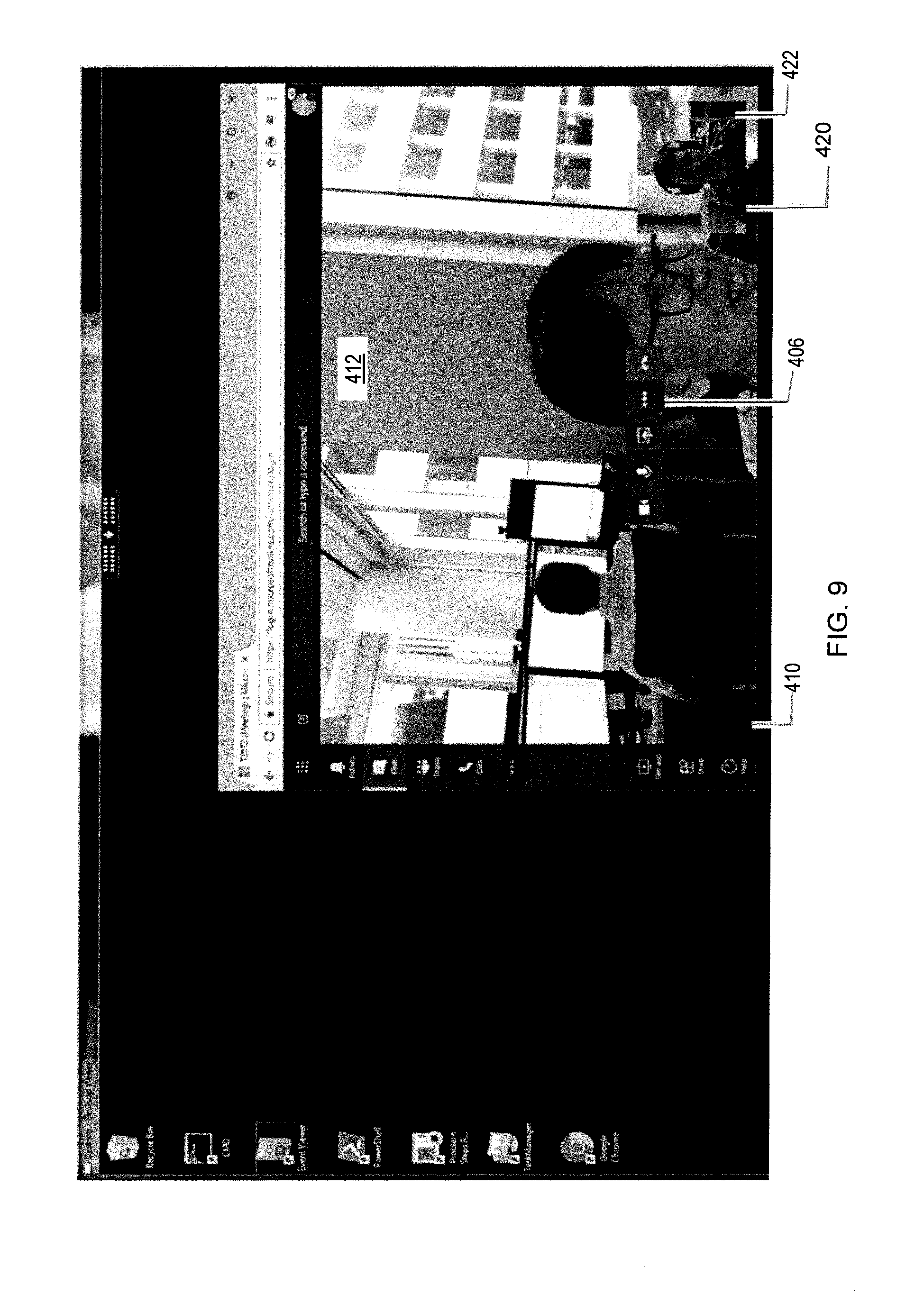

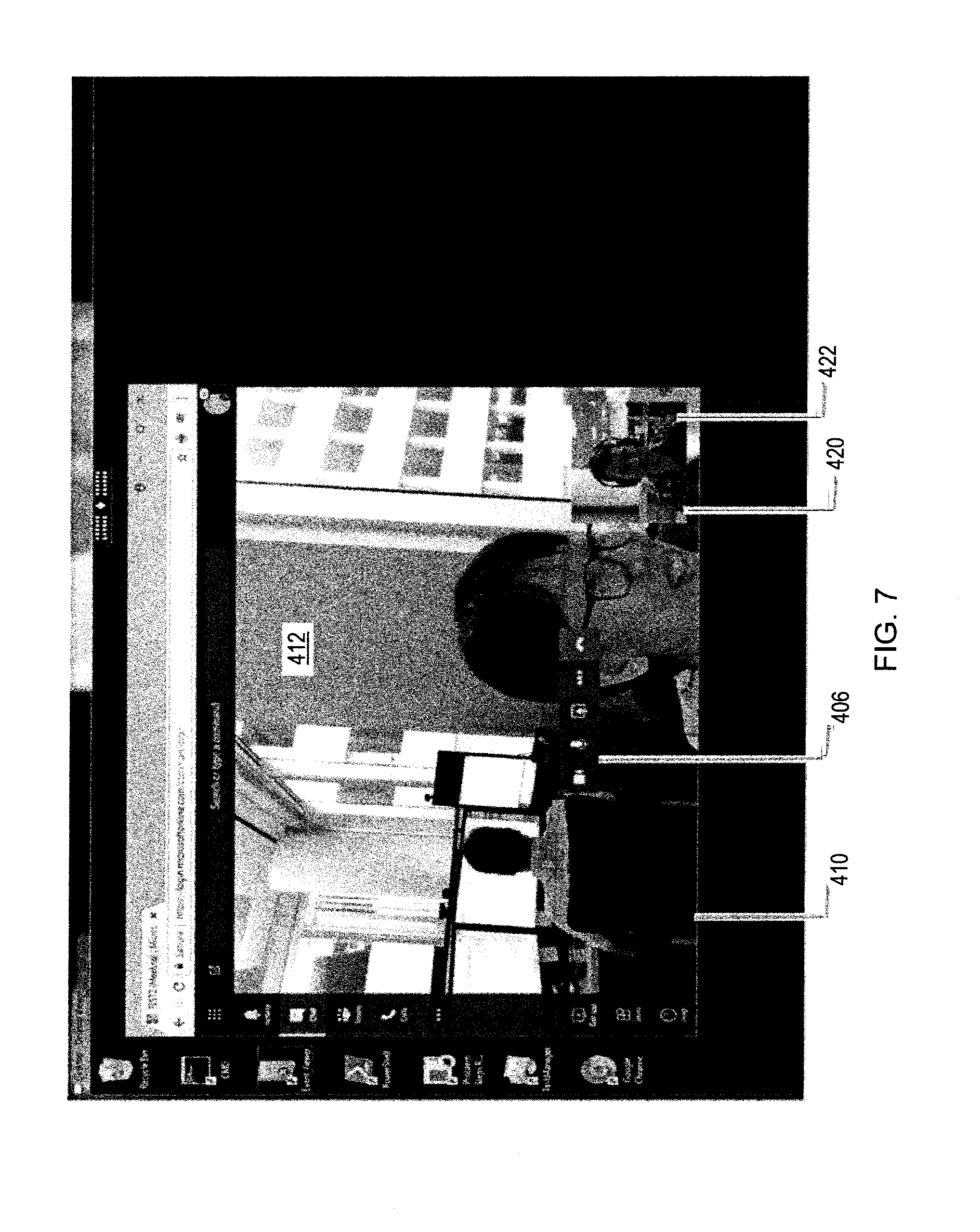

[0128] Referring now to FIGS. 4-7, an example window monitoring/overlay detection scenario will be discussed. In this scenario, there are two video stream sources. One video stream source is from the local webcam feed 330 on the first client computing device 304, and the other video stream source is from a second client computing device 305 in peer-to-peer communications with the first client computing device 304.

[0129] When the APIs are intercepted, the two video stream sources are enumerated by the virtual desktop server 302. When a call is started, the client computing device 304 reports to the real-time media application 310 that there is a first video stream from the local webcam feed 330. Similarly, the second client computing device 305 reports to the real-time media application 310 via the real-time media application server 320 that it has a camera providing the second video stream.

[0130] The real-time media application 310 gets notification that the two video streams have arrived, as reflected by events detected within the intercepted APIs. Since the real-time media application 310 does not receive the actual video streams, it creates a placeholder for each event. Each placeholder is drawn where the corresponding video stream is to be placed when displayed on the client computing device 304.

[0131] Referring now to FIGS. 4-7, various displays illustrating an example window monitoring/overlay detection scenario with the architecture illustrated in FIG. 3 will be discussed. As shown in window 400, the injected redirection code 314 in the virtual desktop server 302 renders a green rectangle 402 where the peer video from the second client computing device 305 is to be placed and a red rectangle 404 where the picture-in-picture preview video from the local webcam feed 330 is to be placed.

[0132] The injected redirection code 314 draws with different colors, with each color assigned to a respective video stream. An ID may also be associated with each color to identify the respective video streams. The controls 406 overlaid on the window 400 are rendered normally.

[0133] Since the window 400 is part of a WebRTC application framework, the real-time media application 310 needs to know where the video streams are to be rendered. The geometry tracking module 332 advantageously detects the different placeholders within the injected redirection code 314, and provides the positioning geometry associated with each placeholder to the display composition module 336. The positioning geometry provides x-y coordinates indicating where the video streams are to be placed, along with resolution.