Systems And Methods For Manipulating And/or Concatenating Videos

Joyce; Christopher ; et al.

U.S. patent application number 16/433598 was filed with the patent office on 2019-06-06 and published on 2019-11-07 for systems and methods for manipulating and/or concatenating videos. This patent application is currently assigned to Movy Co. The applicant listed for this patent is Movy Co. Invention is credited to Christopher Joyce, Max Martinez.

| Application Number | 20190342241 16/433598 |

| Document ID | / |

| Family ID | 55064715 |

| Filed Date | 2019-06-06 |


| United States Patent Application | 20190342241 |

| Kind Code | A1 |

| Joyce; Christopher ; et al. | November 7, 2019 |

SYSTEMS AND METHODS FOR MANIPULATING AND/OR CONCATENATING VIDEOS

Abstract

Exemplary embodiments of the present disclosure are directed to manipulating and/or concatenating videos, and more particularly to (i) compression/decompression of videos; (ii) search and supplemental data generation based on video content, (iii) concatenating videos to form coherent, multi-user video threads; (iv) ensuring proper playback across different devices; and (v) creating synopses of videos.

| Inventors: | Joyce; Christopher; (Garney Valley, PA) ; Martinez; Max; (Barranquilla, CO) |
|---|---|
| Applicant: | Movy Co. |
| Assignee: | Movy Co. Wilmington DE |
| Family ID: | 55064715 |
| Appl. No.: | 16/433598 |
| Filed: | June 6, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15324510 | Jan 6, 2017 | 10356022 |
| PCT/US2015/039021 | Jul 2, 2015 | |
| 16433598 | | |
| 62119160 | Feb 21, 2015 | |
| 62066322 | Oct 20, 2014 | |
| 62028299 | Jul 23, 2014 | |
| 62026635 | Jul 19, 2014 | |
| 62021163 | Jul 6, 2014 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 21/854 20130101; H04L 51/10 20130101; H04L 51/24 20130101; H04N 21/2743 20130101; H04L 51/16 20130101; H04N 21/632 20130101; H04N 21/8549 20130101; H04N 21/437 20130101; H04N 21/2353 20130101; H04L 67/42 20130101; H04L 51/14 20130101 |

| International Class: | H04L 12/58 20060101 H04L012/58; H04L 29/06 20060101 H04L029/06; H04N 21/2743 20060101 H04N021/2743; H04N 21/8549 20060101 H04N021/8549; H04N 21/63 20060101 H04N021/63; H04N 21/437 20060101 H04N021/437; H04N 21/235 20060101 H04N021/235; H04N 21/854 20060101 H04N021/854 |

Claims

1. A method of embedding supplemental data in a video file, the method comprising retrieving, by a server, a transcription of an audio component of a video file from a database; and generating, by the server, supplemental data to embed in the video file based on a transcription of the audio component of the video file and a comparison of words or phrases included in the transcription to a library of words.

2. The method of claim 1, further comprising: embedding the supplemental data in the video file programmatically upon determining that one of the words or phrases included in the transcription are also included in the library of words.

3. The method of claim 1, wherein embedding the supplemental data comprises embedding the supplemental data in the video file so that display of the supplemental data is aligned with an occurrence of the one of the words or phrases during playback.

4. The method of claim 1, wherein the supplemental data includes a selectable object that is selectable during playback of the video file and selection of the selectable object causes one or more actions to be performed.

5. A system for embedding supplemental data in a video file, the system comprising: a data storage device storing a video file and a transcription of an audio component of the video file; and a server having a processor operatively coupled to the data storage device, wherein the server is operatively coupled to a communication network and is programmed to: generate supplemental data to embed in the video file based on the transcription of the audio component of the video file and a comparison of words or phrases included in the transcription to a library of words.

6. The system of claim 5, wherein the server is further programmed to: embed the supplemental data in the video file upon determining that one of the words or phrases included in the transcription are also included in the library of words.

7. The system of claim 5, wherein the server is programmed to embed the supplemental data in the video file so that display of the supplemental data is aligned with an occurrence of the one of the words or phrases during playback.

8. The system of claim 5, wherein the supplemental data includes a selectable object that is selectable during playback of the video file and selection of the selectable object causes one or more actions to be performed.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] This application is a Continuation of U.S. Non-Provisional application Ser. No. 15/324,510, filed Jan. 6, 2017, which claims the benefit of a national stage application filed under 35 USC 371 of PCT/US2015/039021, filed Jul. 2, 2015, which claims priority to: (i) U.S. Provisional Application No. 62/021,163, filed on Jul. 6, 2014; (ii) U.S. Provisional Application No. 62/026,635, filed on Jul. 19, 2014; (iii) U.S. Provisional Application No. 62/028,299, filed on Jul. 23, 2014; (iv) U.S. Provisional Application No. 62/066,322, filed on Oct. 20, 2014; and (v) U.S. Provisional Application No. 62/119,160, filed on Feb. 21, 2015, the disclosures of which are incorporated by reference herein in their entirety.

TECHNICAL FIELD

[0002] Exemplary embodiments of the present disclosure are directed to manipulating and/or concatenating videos, and more particularly to (i) compression/decompression of videos; (ii) search and supplemental data generation based on video content, (iii) concatenating videos to form coherent, multi-user video threads; (iv) ensuring proper playback across different devices; and (v) creating synopses of videos.

BACKGROUND

[0003] Video can be effective for capturing and communicating with others and has become increasingly important, not only for unilateral broadcasting of information to a population, but also as a mechanism for facilitating bidirectional communication between individuals. Recent advances in compression schemes and communication protocols have made communicating using video over cellular and data networks more efficient and accessible. As a result, applications or "apps" that generate video content are fast becoming the preferred mode of sharing, educating or advertising products or services. This content is increasingly being designed for and viewed on mobile devices such as smartphones, tablets, wearable devices, etc. When video content is shared, there is a need for efficient data transfer through wired and/or wireless cellular and data networks. However, there remain challenges to the use and distribution of video over cellular and data networks.

[0004] One such challenge is the constraints associated with bandwidth for transmitting video over networks. Typically, the (memory) size of a video can be dependent on a length of the video. For example, raw digital video data captured at high resolution creates a large data file that is often too large to be efficiently transmitted. Under the transmission constraints of some networks, if a sender captures a video in high resolution and transmits it to a recipient, the recipient may have to wait for seconds or minutes before the video is received and rendered. This time lag is both inconvenient and unacceptable.

[0005] To minimize the strain on mobile networks, video content is most often transmitted in compressed form. Videos are compressed by hardware or software algorithms called codecs. These compression/decompression methods are based on removing the redundancy in video data (Wade, Graham (1994). Signal coding and processing (2 Ed.). Cambridge University Press. p. 34. ISBN 978-0-521-42336-6). Video data may be represented as a series of still image frames. When displayed to the viewer at frame rates greater than 24 frames per second, the viewer perceives that the image is in motion, i.e. a video. For example, the noted algorithms analyze each frame and compare them to adjacent frames to look for similarities and differences. Instead of transmitting each entire frame, the codec only sends the differences between a reference frame and subsequent frames. The video is then reconstructed frame by frame based on this difference data. Some of these methods are inherently lossy (i.e. they lose some of the original video quality) while others may preserve all relevant information from the original, uncompressed video.

[0006] Such frame-based compression can be done by either transmitting (i) the difference between the current frame and one or more of the adjacent (before or after) frames, referred to as "interframe"; or (ii) the difference between a pixel and adjacent pixels of each frame (i.e. image compression frame by frame) referred to as "intraframe". The interframe method is problematic for mobile transmission because if and when the data connection is momentarily lost, the reference frame is lost and has to be retransmitted with the difference data. The intraframe method solves this issue and is therefore more commonly used for digital video transmission.
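The interframe idea described above (send a reference frame, then only the differences for later frames) can be illustrated with a minimal Python sketch. This is not how MPEG-4 or H.264 actually encode video; it is a lossless toy under the assumption that each frame is a flat list of pixel intensities, and the names `encode_interframe` and `decode_interframe` are hypothetical.

```python
from typing import Dict, List, Tuple

Frame = List[int]  # assumption: a frame flattened to a list of pixel values


def encode_interframe(frames: List[Frame]) -> Tuple[Frame, List[Dict[int, int]]]:
    """Return the first frame as the reference plus one delta per later frame.

    Each delta maps a pixel index to its new value, and only pixels that
    changed relative to the previous frame are stored.
    """
    if not frames:
        return [], []
    reference = list(frames[0])
    deltas: List[Dict[int, int]] = []
    previous = reference
    for frame in frames[1:]:
        delta = {i: new for i, (old, new) in enumerate(zip(previous, frame)) if old != new}
        deltas.append(delta)
        previous = frame
    return reference, deltas


def decode_interframe(reference: Frame, deltas: List[Dict[int, int]]) -> List[Frame]:
    """Rebuild every frame by applying the deltas, in order, to the reference."""
    if not reference:
        return []
    frames = [list(reference)]
    current = list(reference)
    for delta in deltas:
        current = list(current)  # copy so earlier frames are not mutated
        for index, value in delta.items():
            current[index] = value
        frames.append(current)
    return frames


frames = [[10, 10, 10, 10], [10, 12, 10, 10], [10, 12, 10, 9]]
reference, deltas = encode_interframe(frames)
restored = decode_interframe(reference, deltas)
```

The sketch also makes the fragility noted above concrete: `decode_interframe` cannot rebuild any frame if `reference` is missing, which is why a lost reference frame has to be retransmitted.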

[0007] Examples of the most prevalent methods include MPEG-4 Part 2 or H.263 or MPEG-4 Part 10 (AVC/H.264) or the more recent H.265. Finally, these codecs may be further optimized for mobile phone network transmittal such as the 3GP or 3G2 standard.

[0008] The size of a file produced by these frame-based compression methods is still dependent on the initial size of the raw digital video file, because each frame is encoded and then decoded one by one. Therefore, the file size depends on the duration of the raw video. For example, a video that was recorded in 480p (i.e. 480×640 pixels) with a duration of 1 minute creates an MPEG-4 video file of 28.2 MB. This 1 minute video file, when uploaded with a 3G wireless network connection (data transmission rate of 5.76 Mbps or 0.72 MB/sec), takes approximately 39 seconds to upload. However, for the same 1 minute video at 1080p or HD resolution, the upload time balloons to 164 seconds or 2 minutes and 44 seconds. Although faster HSDPA and LTE data protocols are prevalent in North America, they currently serve only approximately 10-15% of the world's 7 billion mobile phone users.

[0009] Another challenge regarding the use and distribution of video as a means of communicating is the lack of a user-friendly, resource-efficient platform that allows users to create video messaging threads. For example, in recent years, a wide variety of text messaging applications or "apps" have been introduced for use on phones, smartphones and laptops. While many of these apps provide for the addition of images and videos into the text message thread, these conventional apps are not designed or optimized for video messaging, as they require multiple steps to create and send the video messages. These multiple steps are both cumbersome and, on most devices, not intuitive.

[0010] To illustrate this point, conventional text messaging applications, such as native text messaging applications on phones or smartphones (e.g. WhatsApp Messenger from WhatsApp Inc., Facebook Messenger from Facebook, Kik Messenger from Kik Interactive Inc., etc.), typically require iterative interactions between the user and the user's phone (e.g., tapping a button, adding text, and swipe or other gestures) before a video can be incorporated into the text messaging application. For example, on an Apple iPhone 5 (iOS version 6.1.4), creating a video message using the native "Messages" app requires a minimum of nine (9) distinct user steps or interactions. A similar number of user steps is required for a user to respond to a video message with another video message. This is not only time consuming and cumbersome, requiring the user to first identify the correct steps and then execute them quickly and without error, but also an inefficient use of computing resources.

[0011] Some conventional video sharing apps offer some improvement in both the number of steps and time required to create a video using a mobile device. Examples of such video sharing apps are Keek from Keek Inc., Vine from Vine Labs, Inc., Viddy from Viddy Inc., and Instagram video from Facebook. Creating a video message in these conventional video sharing applications, however, also requires multiple steps. For example, on a Samsung Galaxy Note 2 (OS version 4.1.2), creating and sending a video message using Facebook's Instagram video app requires six (6) distinct steps. Additionally, most of these conventional video sharing apps, with the exception of Keek, cannot be used for video messaging (e.g., an exchange of sequential videos between individuals including video messages and video responses) as there is no capability to respond to the initial video message with a video message. Furthermore, most of these apps upload the videos to application servers in the app foreground, therefore suspending the use of the device until the video uploads, resulting in an inefficient use of computing resources.
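The upload-time figures in the paragraph above follow from dividing file size by link throughput. The short Python sketch below reproduces the 480p figure and, as an inference only, back-computes the 1080p file size that a 164-second upload would imply at the same link rate; the text does not state that size, and the function names are hypothetical.

```python
def upload_seconds(file_size_mb: float, link_mbps: float) -> float:
    """Time to upload a file, converting megabits/s to megabytes/s (8 bits per byte)."""
    throughput_mb_per_s = link_mbps / 8.0
    return file_size_mb / throughput_mb_per_s


def implied_size_mb(seconds: float, link_mbps: float) -> float:
    """File size that would take `seconds` to upload at the given link rate."""
    return seconds * (link_mbps / 8.0)


# 480p example from the text: 28.2 MB over a 5.76 Mbps (0.72 MB/s) link.
t_480p = upload_seconds(28.2, 5.76)

# Inference, not a figure from the text: size implied by a 164 s upload.
size_1080p = implied_size_mb(164, 5.76)
```

Here `t_480p` comes out at roughly 39.2 seconds, matching the stated "approximately 39 seconds", and `size_1080p` is roughly 118 MB.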

[0012] Some conventional video messaging apps offer further improvement in both the number of steps and time as compared to text messaging and video sharing platforms. See U.S. Patent Application 20130093828. Examples of such conventional video messaging apps are Snapchat from Snapchat Inc., Eyejot from Eyejot, Inc., Ravid Video Messenger from Ravid, Inc., Kincast from Otter Media, Inc., Skype video messaging from Microsoft and Glide from Glide Talk, Ltd. These conventional video messaging apps, however, still maintain the format and structure of text based messaging. This message and response framework works well for text based messages, but is still slow, cumbersome and difficult to navigate with video messages and responses.

[0013] Furthermore, while some video platforms allow video to be delivered with additional features and functionalities, such as text transcripts and clickable hot spots that link to other content or information, the manner in which these additional features and functionalities are associated with or included in a video can also require additional steps or time, which introduces inefficiencies into providing supplemental information in or with videos that are distributed. For example, in the case of YOUTUBE, speech recognition is performed by a speech to text recognition engine or manually by the author after a video is uploaded to a remote server. This process of creating the text transcript can take several minutes to hours depending on several factors. U.S. Patent Publication No. 2012/0148034 describes a method for transcribing speech.

[0014] Some conventional video platforms can be used to embed supplemental data, such as hot spots, into a video after a video has already been created such that the hot spots can be added to or overlaid on the video. As one example, when a hot spot in a video is scrolled over, the video can pause and the hot spot becomes active, providing either information or links to additional information. As another example, U.S. Patent Publication No. 2012/0148034 provides for the ability of the author or a recipient of a video to pause the playback of the video at a particular time and record a response in context to the content of the original message included in the video. When the original message is viewed for playback, the author or a recipient will be able to hear or see the message and see the embedded hot spot or thumbnail showing a response. When this thumbnail is clicked, the recipient is taken to the response recorded earlier. As with the earlier cited prior art, these hot spots are added only after the initial video is complete and viewed or reviewed upon playback (i.e., the prior art requires that the speech recognition and transcription take place only after the completion or upload of the video).

[0015] The slow and tedious video creation process of conventional apps cannot or does not easily facilitate the (i.) creation of video messages and responses (herein "video thread"); (ii.) creation of a video thread by multiple users or respondents; (iii.) communication and collaboration between users where context and tonality are required; (iv.) creation of multi user or crowdsourced video content to be used to communicate information about an activity, product or service; and (v.) addition of supplemental content to videos.

[0016] Furthermore, the present disclosure relates to multimedia (e.g., picture and video) content delivery, preferably over a wireless network. Particularly, the present disclosure relates to dynamically optimizing the rendering of multimedia content on wireless mobile devices. Still more particularly, the present disclosure relates to the dynamic rendering of picture or video content regardless of device display orientation or dimensions or device operating system embellishments.

[0017] Another challenge is that devices on which videos are played back have different specifications and hardware configurations, which can result in the video being improperly displayed. For example, the use of mobile devices tethered to a wireless network is fast becoming the preferred mode of creating and viewing a wide variety of image content. Such content includes self-made or amateur pictures and videos, video messages, movies, etc. and is created on hand-held mobile devices. Furthermore, this content is delivered to a plurality of mobile devices and is rendered on the display of these devices. These mobile devices, such as mobile smartphones, are manufactured and distributed by a multitude of original equipment manufacturers (OEMs) and carriers. Each of these devices has potential hardware and software impediments that prevent the delivered content from being viewed "properly," e.g., rendering of the picture or video in the correct orientation (and not rotated 90° to the right or left or upside down) based on the orientation in which the playback device is held and in the same aspect ratio as when captured or recorded. These impediments include differing display hardware, resolutions, aspect ratios and sizes as well as customized software overlays that alter the native operating system's (OS) display. For example, often the created video is of a different resolution and/or aspect ratio than the device that it is being viewed on. This creates a mismatch and the video is not properly rendered on the recipient's screen.

[0018] Specifically, some faults that can negatively affect viewing or playback of an image include the image being rotated to an orientation other than the orientation of the playback device and the image not being rendered in the aspect ratio in which it was initially captured or recorded. For example, rendered videos on playback devices can be rotated 90° to the left or right, rotated 180° (upside down), or vertically or horizontally compressed or stretched; in some cases, only a portion of the video may be rendered. In the most severe case, the video may not render at all and the application terminates or crashes.

[0019] These faults can be caused by the inability of the playback device to read the encoded metadata that accompanies the video file. This metadata can contain information about the image such as its dimensions (resolution in width and height), orientation, bitrate, etc. This information is used by the playback device's OS and picture or video playback software (or app) to correctly render the image on its display. The inability to read the encoded metadata can stem from, among other causes, the use of an older OS, or from the picture or video being converted to an incompatible format or resized in a way that strips this metadata outright. Examples of this older operating system incompatibility may be found in the Android OS prior to its API Level 17 or Android 4.2 release. In devices operating with OS versions prior to this, the orientation metadata is not recognized and used. There are also situations when the OEM of the playback device (PBD) has modified the OS with overlays. Such modifications can prevent the PBD from properly reading some or all of the picture or video metadata, causing the image to be rendered incorrectly.

[0020] In a small, discrete universe of devices, these impediments can be addressed and overcome using a monolithic operating system such as the iOS operating system from Apple, Inc. In the case of a family of devices, the number of unique devices and display dimensions or resolutions is low, e.g. approximately 20 devices and approximately 5 unique versions of the iOS operating system. The corrections to the application delivering the image content are made on a case by case basis for each device and OS version.

[0021] However, for devices and operating systems, such as the Android OS from Google Inc., that are open source and allow for a large amount of hardware and OS variation, the number of unique device-OS combinations is in the thousands. Additionally, due to the nature of this industry, new devices are introduced on a daily basis. Therefore, corrections for image resolution mismatches, orientation errors and software issues quickly become impossible to address on a case-by-case basis.

[0022] The industry has addressed this issue by using detailed libraries in the code that provide the necessary information for each possible device and its respective display sizes, aspect ratios and software limitations. By using a detailed library, when a particular device calls for a playback, the image is delivered and the app compares the playback device specifications to the library and makes the suitable corrections. This methodology is inefficient, in part, because of the delay from using an additional application that contains an extensive device library. It is also prone to error because the library must be constantly updated. See U.S. Pat. Nos. 8,359,369; 8,649,659; and 8,719,373; and U.S. Patent Publications Nos. 20130103800; 20120240171; 20120087634; and 20110169976, each of which is incorporated by reference in its entirety.

[0023] The present disclosure relates to a system and method of rendering any image, e.g., playback of a video, regardless of resolution or initial orientation, on any playback device with display resolutions, orientations, OS's and modifications different from the capturing device, such that the image is rendered without anomalies or faults.

SUMMARY

[0024] Exemplary embodiments of the present disclosure are directed to manipulating and/or concatenating videos, and more particularly to (i) compression/decompression of videos; (ii) search and supplemental data generation based on video content, (iii) concatenating videos to form coherent, multi-user video threads; (iv) ensuring proper playback across different devices; and (v) creating synopses of videos.

[0025] Embodiments of the present disclosure relate to video messaging. For example, the present disclosure relates to a series of video messages and responses created on a mobile device or other camera enabled device. Still more particularly, the present disclosure is related to multi user generated video messages for the purpose of sharing, collaborating, communicating or promoting an activity, product or service.

[0026] Systems and methods of creating, organizing and sharing video messages are disclosed. Video messages and video responses (herein "video thread") created for the purpose of collaborating, communicating or promoting an activity, product or service are provided. To create a thread of videos, a program or application is used which can be run on a mobile device such as a smart phone. Unlike current text based messaging applications, this application can be completely video based and can capture a video message with, for example, only one or two screen taps. The video threads hereby created may be simple messages and responses, instructions, advertisements or opinions on a specific topic or product. A thread may be between two users or hundreds of users using the aforementioned application to create video responses appended to the original thread. As such, a simplified process of creating a video message (i.e., one requiring fewer steps) that is intuitive to the user is provided. This simplification includes minimizing the number of UI interaction steps required to create the video.

[0027] In accordance with embodiments of the present disclosure, systems and methods for forming a multi-user video message thread are disclosed. The systems can include a data storage device storing video messages and one or more servers having one or more processors operatively coupled to the data storage device. The server is operatively coupled to a communication network and is programmed to perform one or more processes. The processing and methods can include receiving, at the server(s) via the communication network, a video message captured by a first user device. The video message can be associated with a first user account and can be stored in a database by the server. The processing and methods can also include transmitting, by the server(s) via the communication network, a notification to a contact associated with the user account that the video message is viewable by the contact; and receiving, by the server(s) via the communication network, in response to the notification, a response video message captured by a second user device. The response video message can be associated with a second user account belonging to the contact and can be stored in the database by the server. The processing and methods can also include forming, by the server(s), a video thread that includes the video message and the response video message and streaming the video thread to a third user device to facilitate playback of the video message and the response video message in sequence by the third user device.

[0028] In accordance with embodiments of the present disclosure, a further response video message to the video thread can be received by the server(s) from the third user device, and can be added to the video thread by the server(s). The server(s) can stream the video thread to one of the first user device, the second user device, or a fourth user device to facilitate playback of the video message, the response video message, and the further response video message in sequence by the first user device, the second user device, or the fourth user device.
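The thread-forming flow in the two paragraphs above (an original message, a response, then a further response, all played back in sequence) can be sketched as a small in-memory data structure. This Python sketch is illustrative only: the class names, fields and URIs are hypothetical, and the disclosure's server, database, notification and streaming steps are deliberately left out.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class VideoMessage:
    account_id: str  # hypothetical: the user account the message is associated with
    video_uri: str   # hypothetical: where the stored video can be fetched from


@dataclass
class VideoThread:
    messages: List[VideoMessage] = field(default_factory=list)

    def add(self, message: VideoMessage) -> None:
        """Append a message or response to the end of the thread."""
        self.messages.append(message)

    def playback_order(self) -> List[str]:
        """URIs in the order a playback device would play them."""
        return [m.video_uri for m in self.messages]


thread = VideoThread()
thread.add(VideoMessage("first-user", "videos/original.mp4"))   # original message
thread.add(VideoMessage("second-user", "videos/response.mp4"))  # contact's response
thread.add(VideoMessage("third-user", "videos/further.mp4"))    # further response
```

Keeping the thread as an append-only ordered list is one simple way to guarantee that any device receiving the thread plays the message, the response and the further response in sequence.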

[0029] In accordance with embodiments of the present disclosure, an indication from the first user device indicating that the user associated with the first user account wishes to share the video message with the contact can be received by the server(s) via the communications network.

[0030] In accordance with embodiments of the present disclosure, the contact can be prevented from distributing the video message to others by the server(s).

[0031] In accordance with embodiments of the present disclosure, an indication from the first user device indicating that the user associated with the first user account wishes to share the video message with all contacts associated with the first user account can be received by the server via the communications network.

[0032] In accordance with embodiments of the present disclosure, supplemental data to embed in the video message or the response video message can be generated based on a transcription of an audio component of the video message or the response video message and a comparison of words or phrases included in the transcription to a library of words. The server(s) embed the supplemental data in the video message or the response video message upon determining that one of the words or phrases included in the transcription is also included in the library of words. The supplemental data can be embedded in the video message or response video message so that display of the supplemental data is aligned with an occurrence of the one of the words or phrases during playback. The supplemental data can include a selectable object that is selectable during playback of the video message or during playback of the response video message, and selection of the selectable object causes one or more actions to be performed.

[0033] Exemplary embodiments of the present disclosure can relate to using speech recognition to provide a text transcript of the audio portion of the video. For example, exemplary embodiments of the present disclosure can relate to the simultaneous recording and audio transcription of a video message such that the text transcript is available immediately after recording. With this same-time transcription during video recording, additional features can be incorporated into the video messages that are visible during playback.

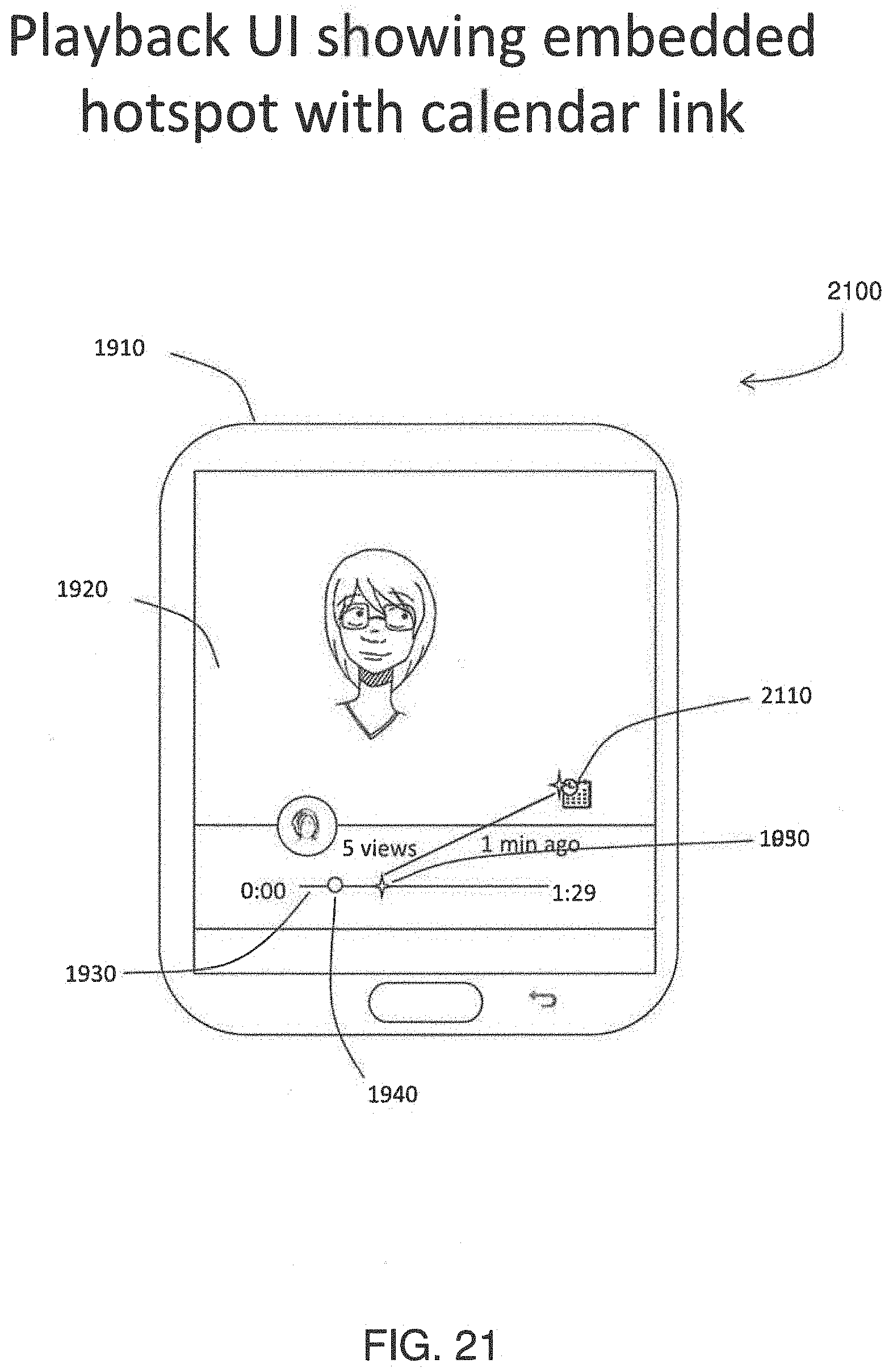

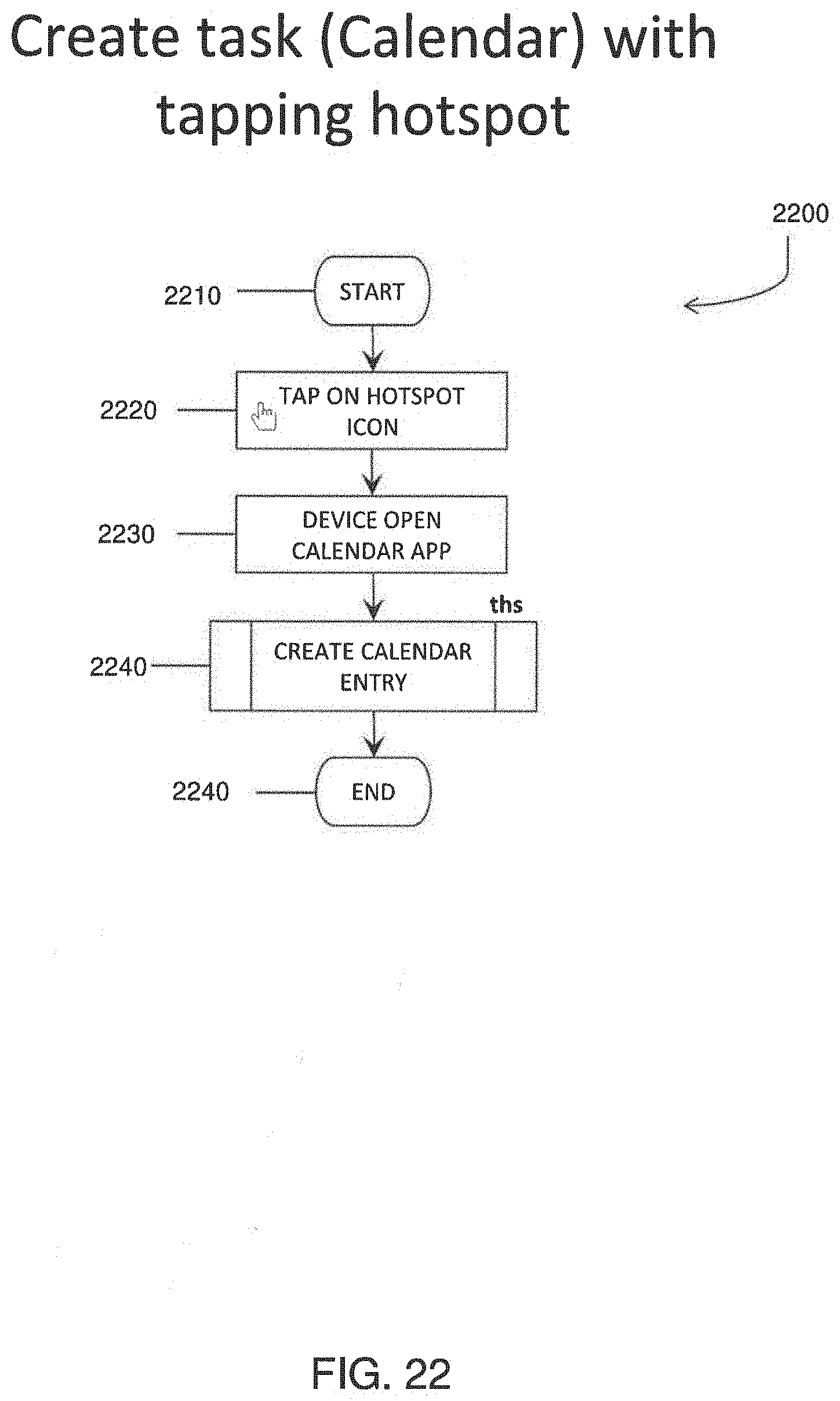

[0034] Systems and methods of adding synchronous speech recognition and embedded content to a video and video messages are disclosed. The systems and methods can utilize the user's device and built-in device speech recognition capabilities to create a text transcript of the video. This synchronous speech recognition allows for faster delivery of additional information and features with the video message to the recipient. These include the ability to search for videos based on content or topic. These also include the addition of embedded information and functionalities to the video message when it is delivered to the recipient. These embedded features are created automatically by the app recognizing certain key words or phrases contained in the video message (e.g., used by the author). For example if the author creates a video message wherein he uses the words meeting and a specific date, the app will display an embedded calendar icon to the recipient when viewed. If the recipient wishes to add this meeting to their calendar, he simply needs to click on the calendar icon during playback and a new meeting is created in his device's calendar application associated with the video message author's name and indicated date.

[0035] In accordance with embodiments of the present disclosure, systems and methods for embedding supplemental data in a video file are disclosed. The systems can include a data storage device and one or more servers having one or more processors. The data storage device can store a video file and a transcription of an audio component of the video file and the processor(s) of the server(s) can be operatively coupled to the data storage device. The server(s) can be programmed to perform one or more processes. The processes and methods can include generating supplemental data to embed in the video file based on the transcription of the audio component of the video file and a comparison of words or phrases included in the transcription to a library of words. The supplemental data can be embedded in the video file upon determining that one of the words or phrases included in the transcription are also included in the library of words. The supplemental data can be embedded in the video file so that display of the supplemental data is aligned with an occurrence of the one of the words or phrases during playback. The supplemental data can include a selectable object that is selectable during playback of the video file and selection of the selectable object causes one or more actions to be performed.
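A minimal Python sketch of the comparison step described above: each transcribed word is checked against a library of words, and every match yields a supplemental item stamped with the time at which the word occurs, so that its display can be aligned with playback. The timed-word transcript format, the action strings and all names here are assumptions made for illustration; only single words are matched, and phrase matching is omitted.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class SupplementalItem:
    phrase: str           # the library word that was found in the transcript
    start_seconds: float  # when the word is spoken, used to align the display
    action: str           # hypothetical action triggered when the object is selected


def generate_supplemental_data(
    transcript: List[Tuple[str, float]],  # assumption: (word, start time in seconds)
    library: Dict[str, str],              # assumption: library word -> action name
) -> List[SupplementalItem]:
    items: List[SupplementalItem] = []
    for word, start in transcript:
        key = word.lower().strip(".,!?")  # normalize case and trailing punctuation
        if key in library:
            items.append(SupplementalItem(key, start, library[key]))
    return items


transcript = [("Let's", 0.0), ("schedule", 0.4), ("a", 0.9), ("Meeting", 1.0), ("Friday.", 1.6)]
library = {"meeting": "open_calendar"}
items = generate_supplemental_data(transcript, library)
```

In this example the single match, "meeting" at 1.0 seconds, would correspond to the calendar icon case described earlier in this summary.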

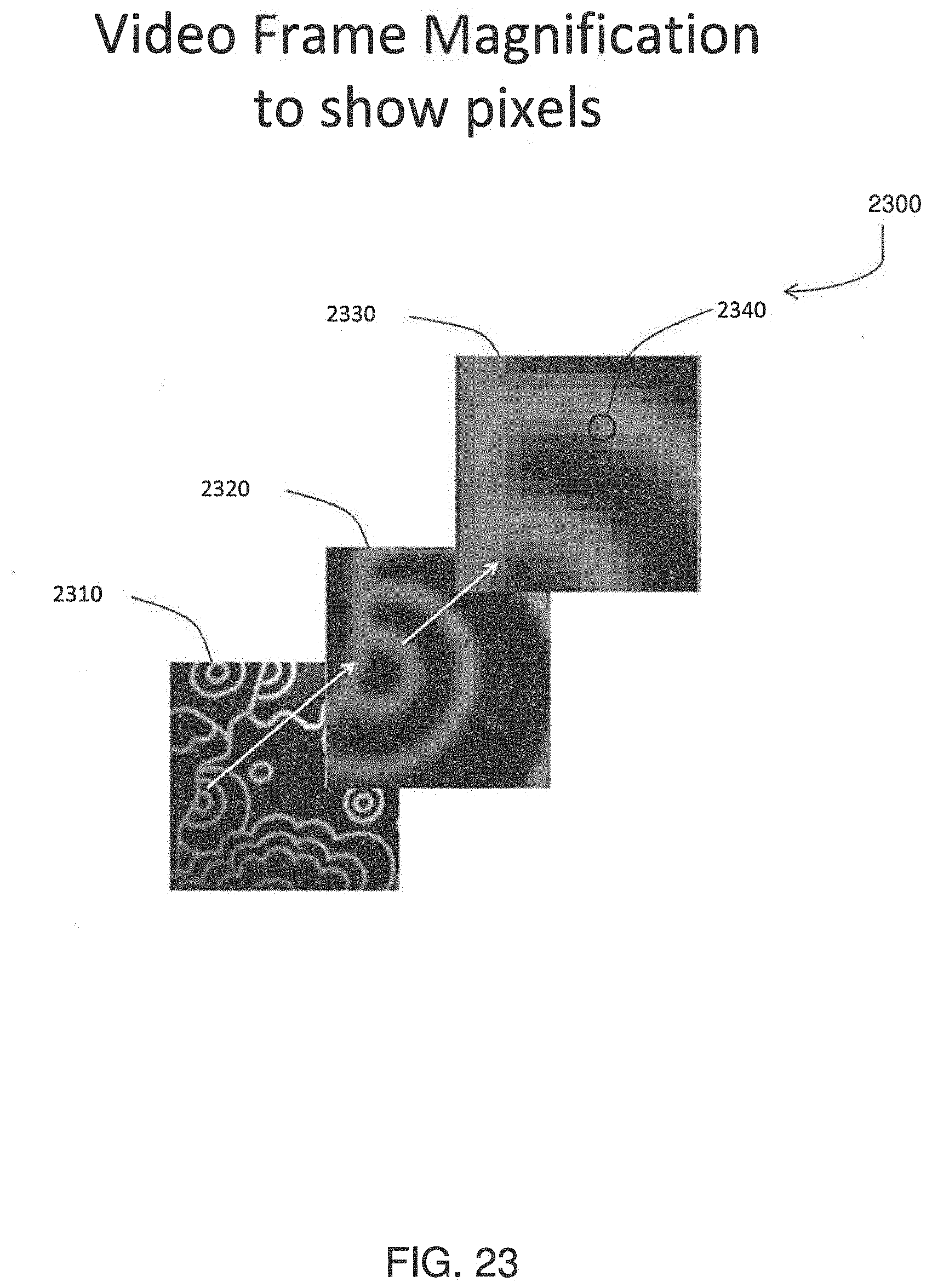

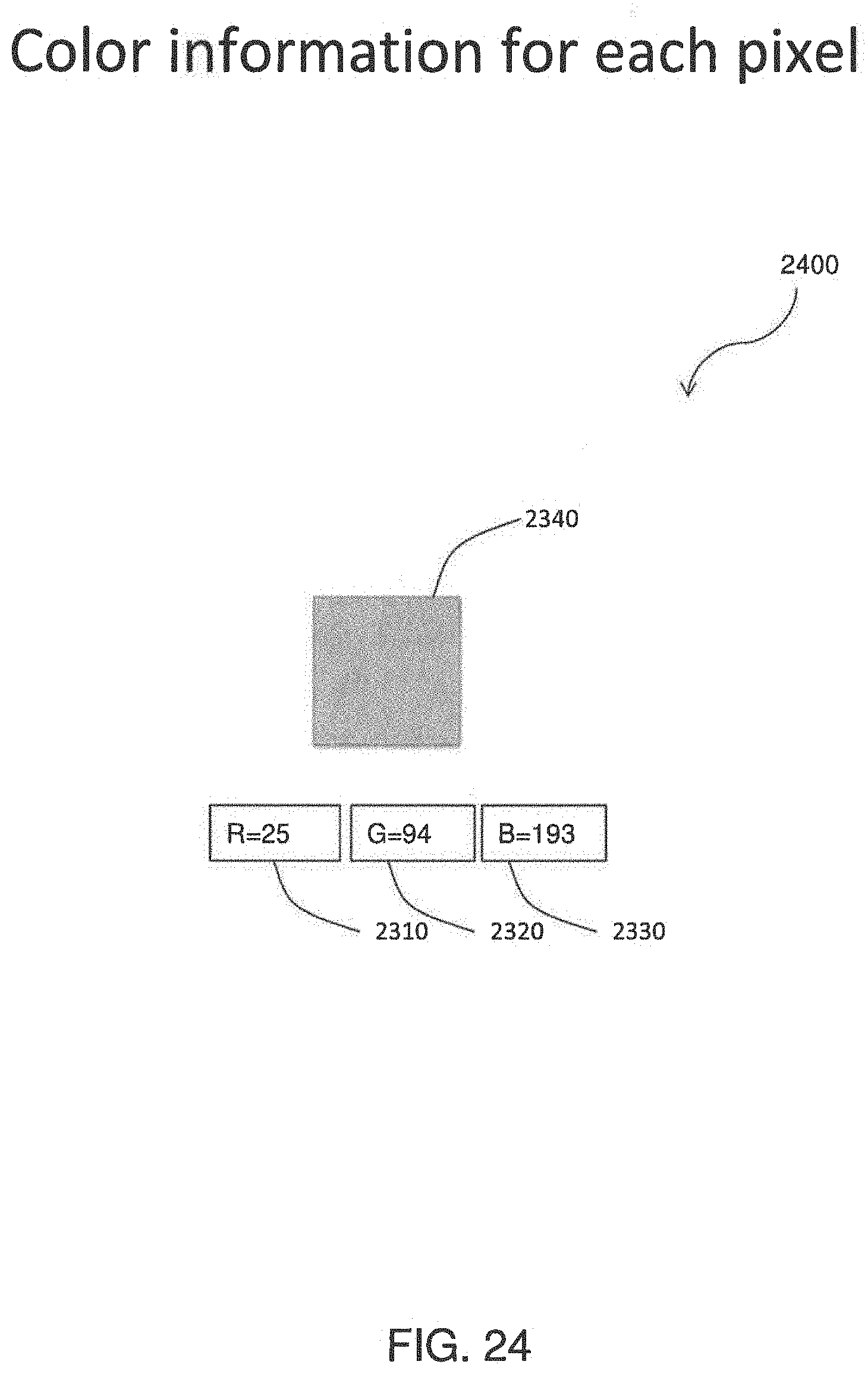

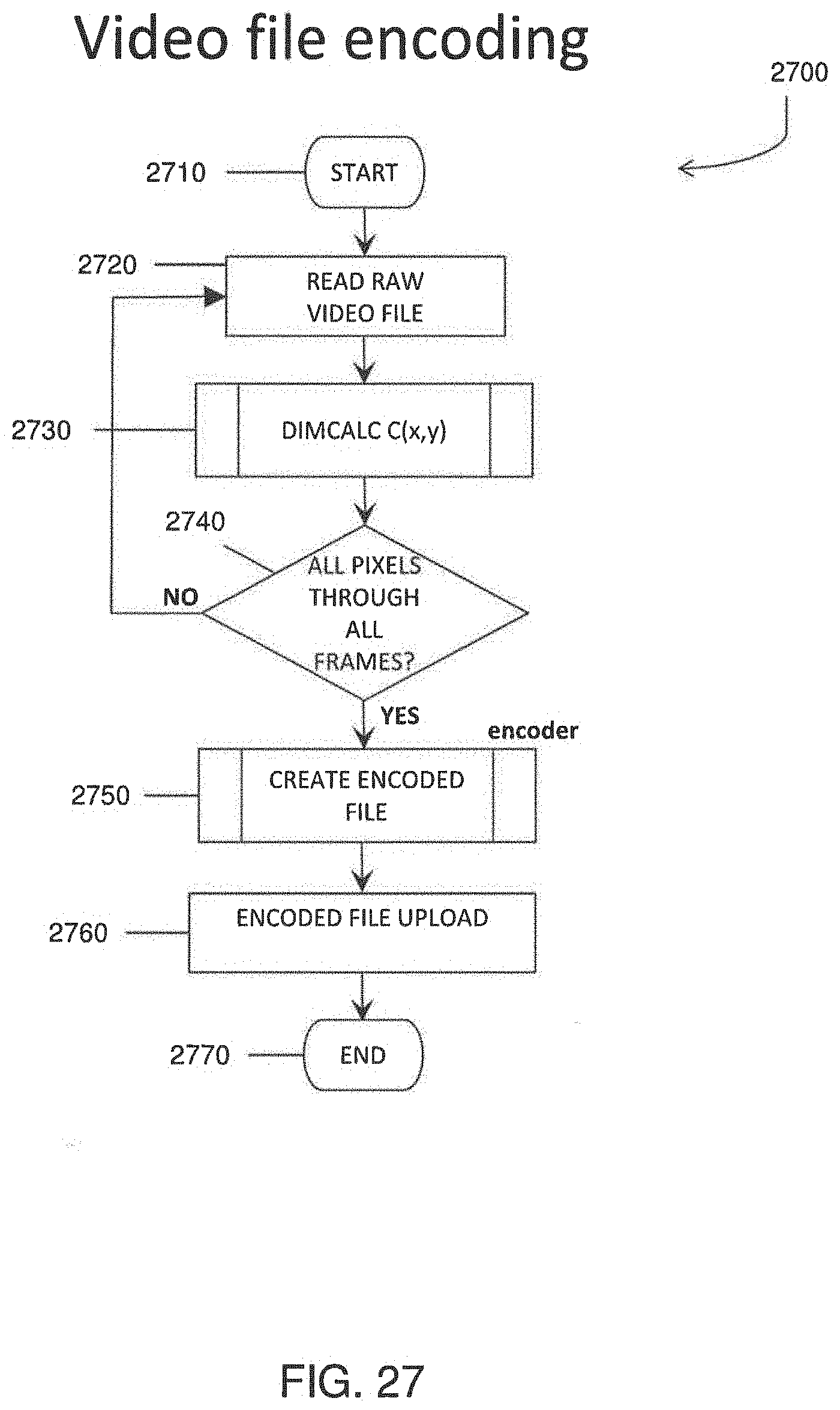

[0036] Exemplary embodiments of the present disclosure can relate to video content uploaded or downloaded from a mobile device or computer. For example, exemplary embodiments of the present disclosure can relate to systems and methods for compressing and decompressing videos and/or video messages to minimize their size during delivery through wireless phone networks and the internet. Given that the prior art requires that each frame be compressed based on difference information, there is a need for a more efficient compression method that is independent of the number of frames or pixels and therefore independent of the length or duration of the video. Specifically, there is (i) a need for more efficient transfer (i.e. smaller data files) through slower networks to facilitate video messaging with minimal delay and (ii) a need for a compression and decompression method that is not dependent on the length of a video. To efficiently compress the video data, exemplary embodiments of the present disclosure can characterize the color values for each pixel for every frame and generate a fingerprint based on the characterization. Therefore, instead of sending frame by frame color data, only the fingerprint is sent. In some embodiments, the fingerprint can consist of only two numbers for each color element (i.e. red, green and blue) for a total of six numbers per pixel.

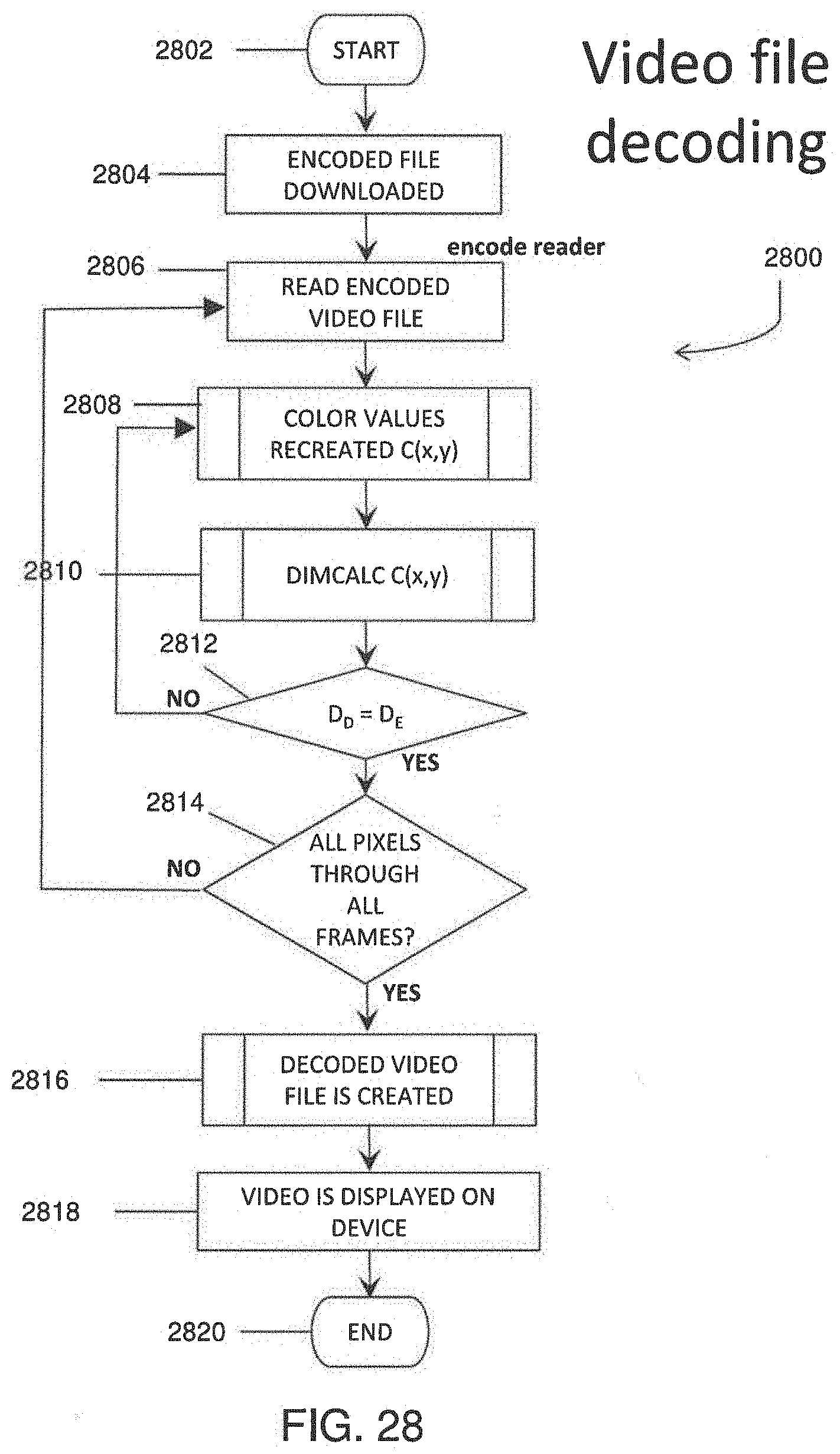

[0037] In accordance with embodiments of the present disclosure, systems and methods for compressing and/or decompressing a video file are disclosed. The systems can include a data storage device and a processing device operatively coupled to the data storage device. The data storage device can store a data file corresponding to a video file, where the data file represents a compressed version of the video file. The processor can be programmed to execute one or more processes. The processes and methods can include creating a numerical fingerprint for each pixel in a video file; and creating a data file containing the numerical fingerprint for each of the pixels of each of the video frames. The (memory) size of the data file is independent of a number of video frames included in the video file. The fingerprint can be created by creating a fingerprint of the color data for each pixel as a function of time. The fingerprint can be created by calculating a fractal dimension for each pixel, the fractal dimension representing the fingerprint. The data file can be created by including the fractal dimension for each pixel and a total duration of the video in the data file.
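As a rough illustration of why the size of such a data file does not depend on the number of frames, the following sketch counts stored values, assuming the embodiment with two numbers per color element (six numbers per pixel) plus a single duration value. The function names and the comparison against uncompressed RGB frames are illustrative assumptions and are not part of the disclosure.

```python
def fingerprint_value_count(width, height, numbers_per_color=2, colors=3):
    """Values in the fingerprint data file: per-pixel numbers plus one duration value."""
    return width * height * colors * numbers_per_color + 1


def raw_value_count(width, height, frames, colors=3):
    """Values needed to store every color element of every pixel of every frame."""
    return width * height * colors * frames


# A 640 x 480 recording at 30 frames per second.
short_clip = raw_value_count(640, 480, frames=300)    # 10 seconds of video
long_clip = raw_value_count(640, 480, frames=3000)    # 100 seconds of video
fingerprint = fingerprint_value_count(640, 480)       # same for both clips
```

Under these assumptions the raw value count grows tenfold between the two clips, while the fingerprint count is unchanged because it depends only on the frame dimensions.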

[0038] The processes and methods can also include obtaining a data file from a data storage device. The data file can represent a compressed version of a video file and can include a fractal dimension for each pixel and a total duration of the video included in the video file. The processes and methods can also include recreating the video file by creating a proxy plot for each color and adjusting color values for the proxy plot until a simple linear regression converges to the fractal dimensions included in the data file.

[0039] In accordance with exemplary embodiments, systems and methods of rendering images, e.g., pictures, videos, etc., on electronic devices, e.g., mobile device displays, irrespective of their display sizes, OS level or modifications and rendering or playback orientation are disclosed. The systems and methods can include using a cloud storage server to extract elements of the encoded image metadata in order to transmit the information directly to the rendering or playback device when called for. By extracting and sending separately, the system and method enables rendering or playback in the correct orientation and in the same aspect ratio as when captured or recorded regardless of the device's display size, OS version or OS modification.

[0040] In some embodiments, the present disclosure can relate to a method for multimedia content delivery comprising providing a multimedia file on an electronic device, wherein the file has metadata related to its display orientation and dimensions, such as display size, aspect ratio and orientation angle, reading some or all of the metadata, extracting some or all of the metadata, adding the extracted metadata to the image file, and transferring the metadata to a playback device, wherein the playback device is capable of rendering the image with the correct orientation and dimensions.
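One way a playback device might use such separately delivered metadata is sketched below. The function names, the restriction to rotation angles that are multiples of 90 degrees, and the scale-to-fit policy are assumptions made for illustration; the disclosure does not specify them.

```python
def display_size(width, height, rotation_deg):
    """Return (width, height) of the video after applying the rotation angle."""
    angle = rotation_deg % 360
    if angle not in (0, 90, 180, 270):
        raise ValueError("rotation must be a multiple of 90 degrees")
    # A quarter turn swaps width and height; a half turn does not.
    return (height, width) if angle in (90, 270) else (width, height)


def fit_to_screen(size, screen):
    """Scale size to fit inside screen while preserving the aspect ratio."""
    w, h = size
    screen_w, screen_h = screen
    scale = min(screen_w / w, screen_h / h)
    return (round(w * scale), round(h * scale))


# A 640 x 480 recording tagged with a 90 degree rotation, shown on a 1080 x 1920 display.
rotated = display_size(640, 480, 90)
on_screen = fit_to_screen(rotated, (1080, 1920))
```

Because the rotated dimensions come from the metadata rather than from the playback device, the same calculation applies regardless of display size.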

[0041] Any combination and/or permutation of embodiments is envisioned. Other objects and features will become apparent from the following detailed description considered in conjunction with the accompanying drawings. It is to be understood, however, that the drawings are designed as an illustration only and not as a definition of the limits of the present disclosure.

BRIEF DESCRIPTION OF THE DRAWINGS

[0042] The present disclosure is better understood from the following detailed description when read in connection with the accompanying drawings. It should be understood that these drawings, while indicating preferred embodiments of the disclosure, are given by way of illustration only.

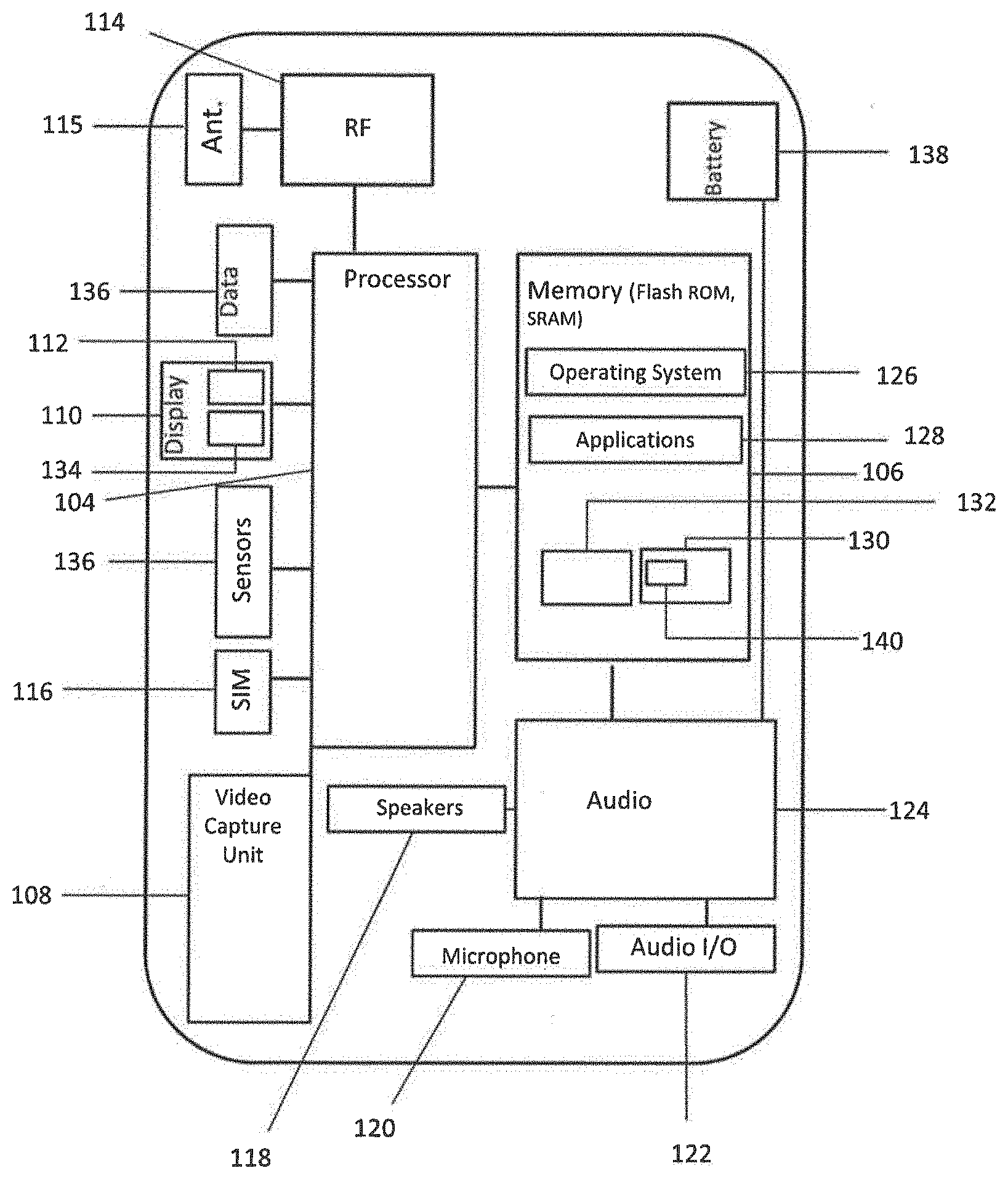

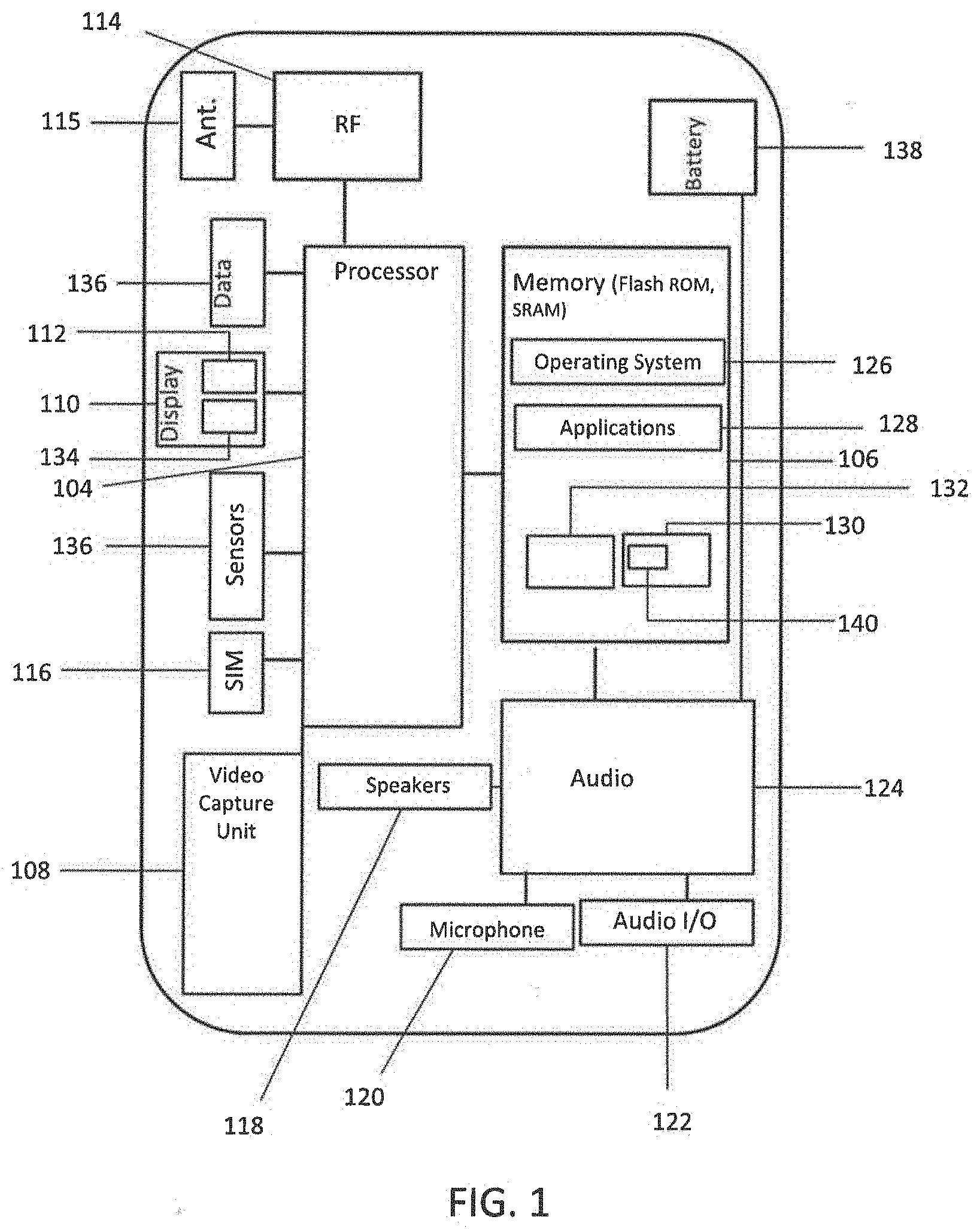

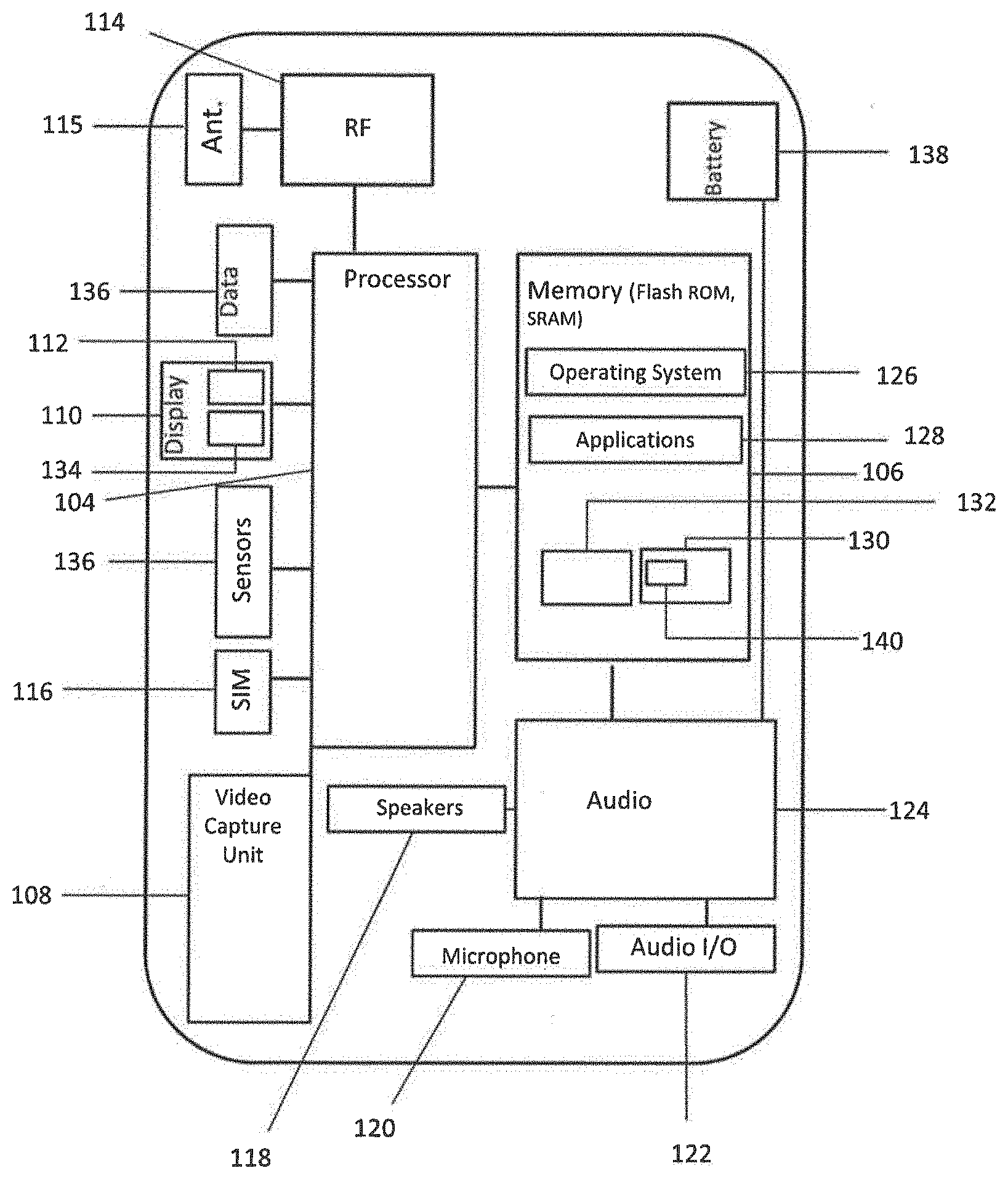

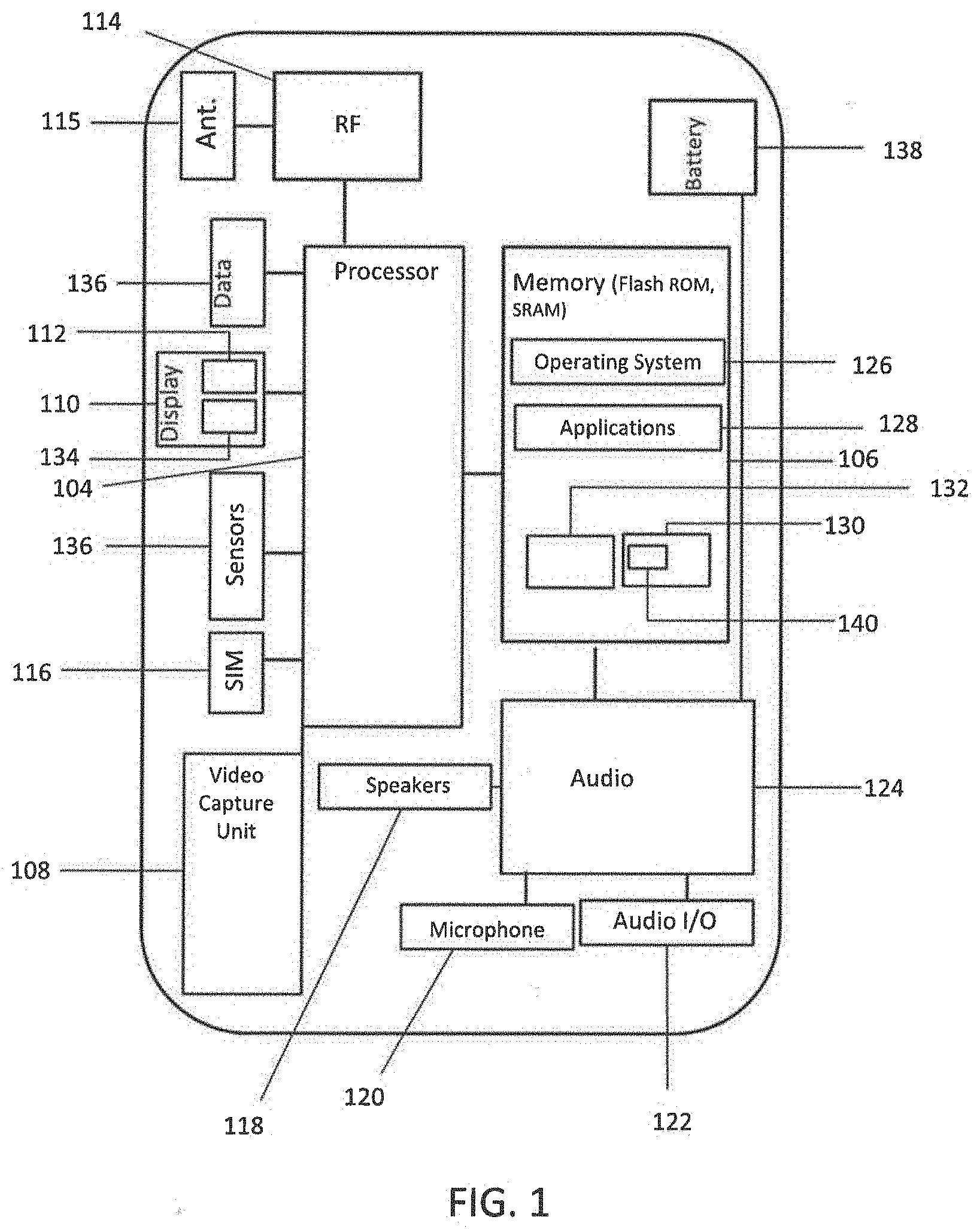

[0043] FIG. 1 is a block diagram of an exemplary user device for implementing exemplary embodiments of the present disclosure.

[0044] FIG. 2 is a block diagram of an exemplary server for implementing exemplary embodiments of the present disclosure.

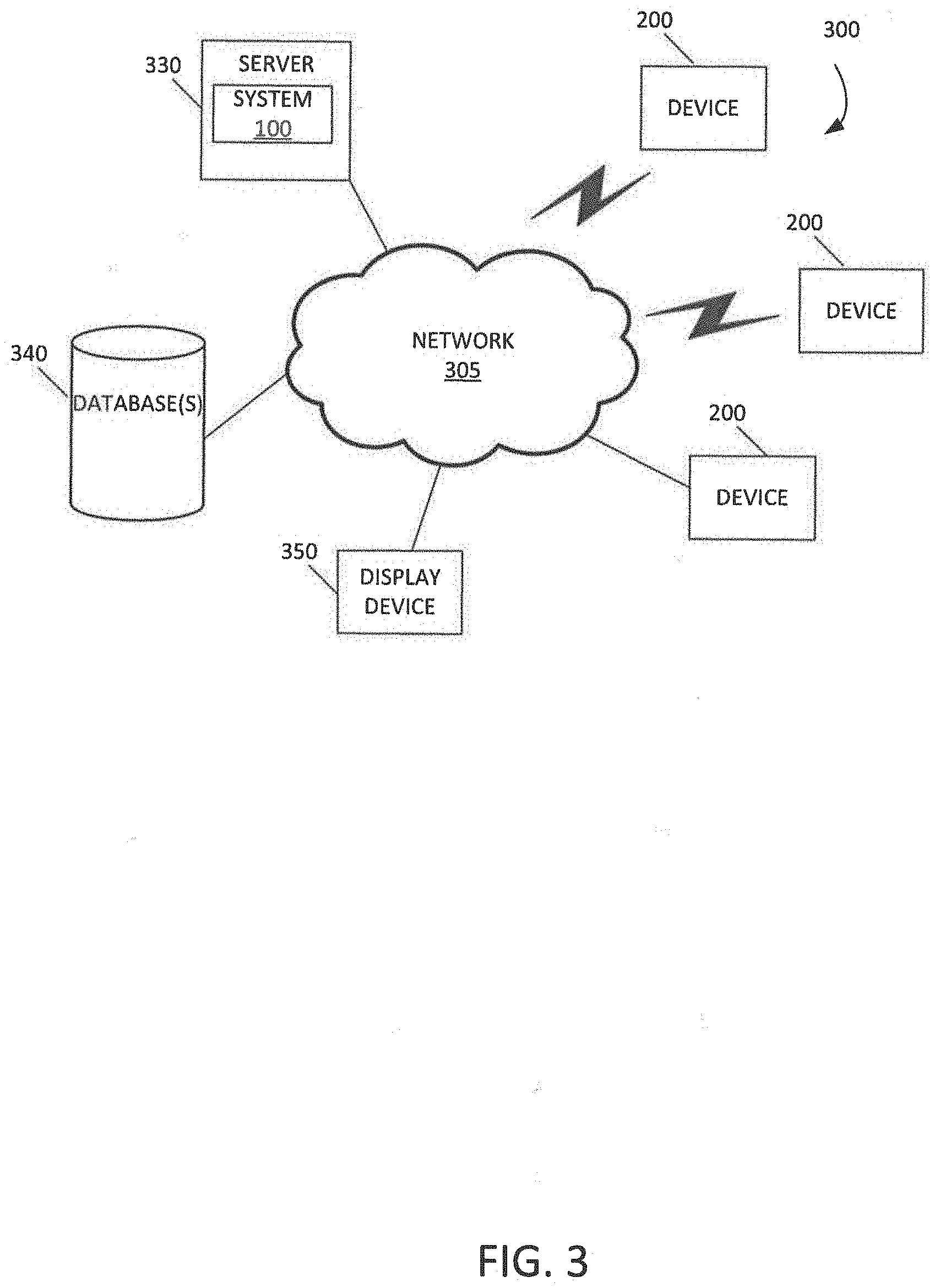

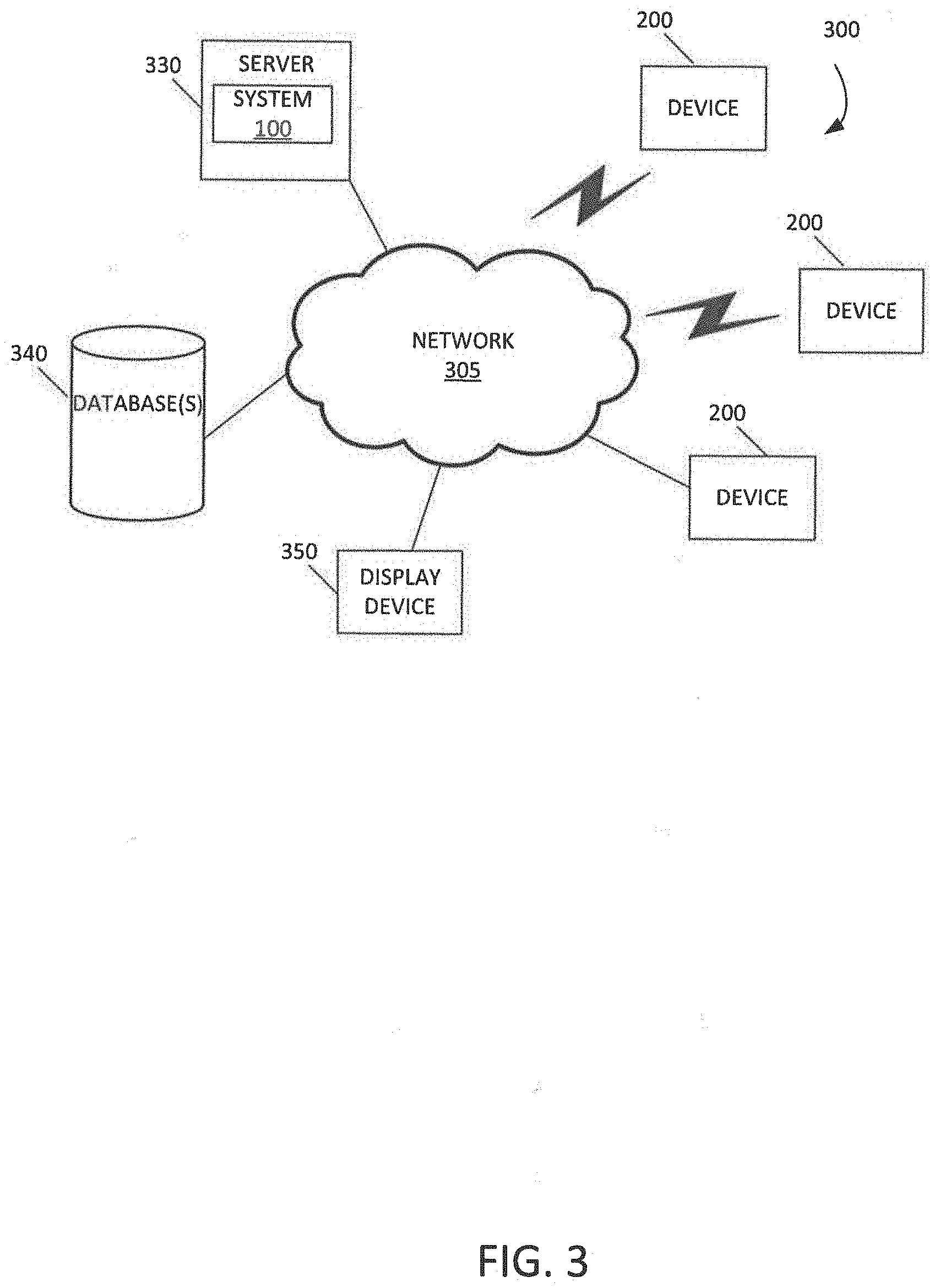

[0045] FIG. 3 is a block diagram of an exemplary network environment 300 for implementing exemplary embodiments of the present disclosure.

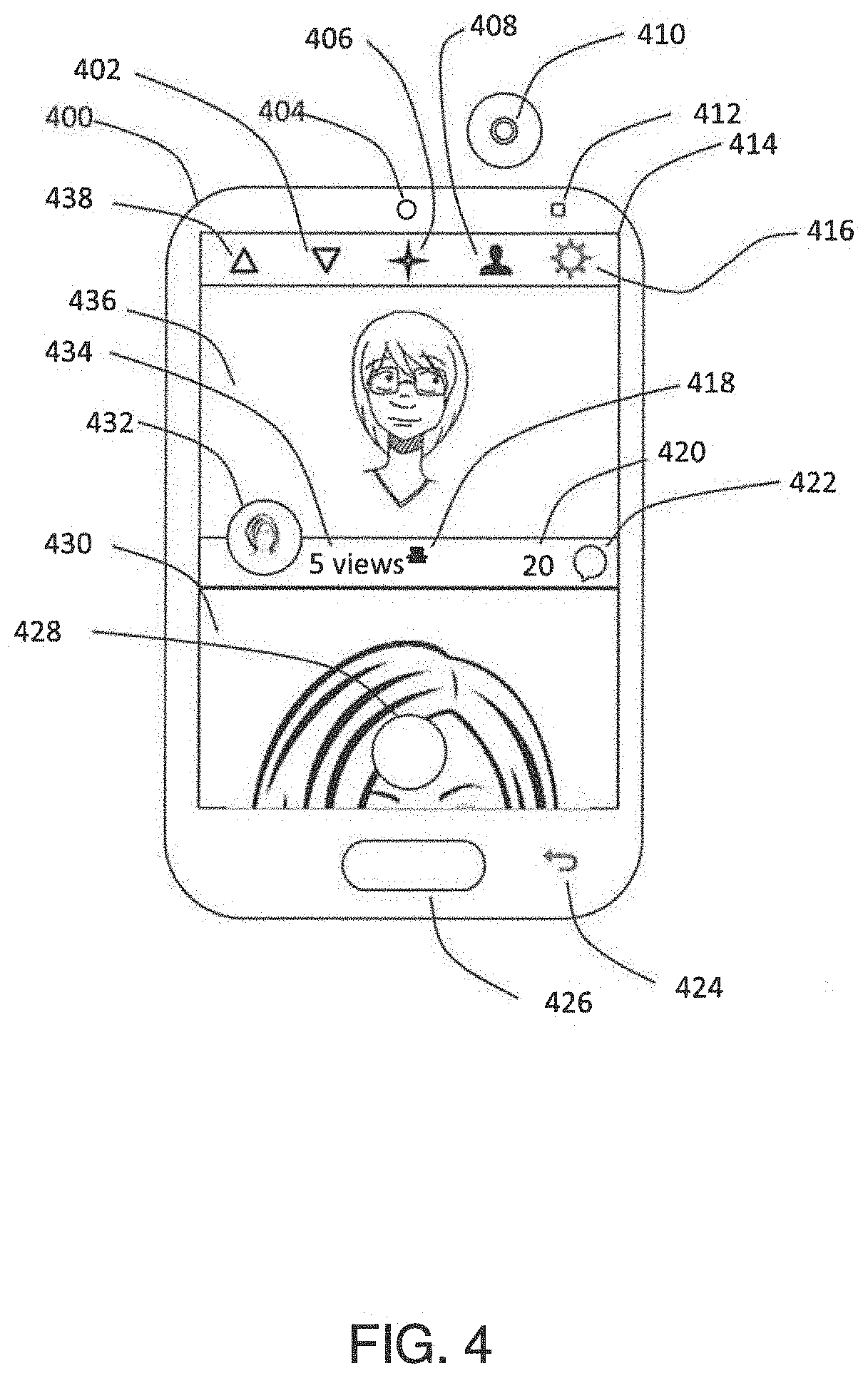

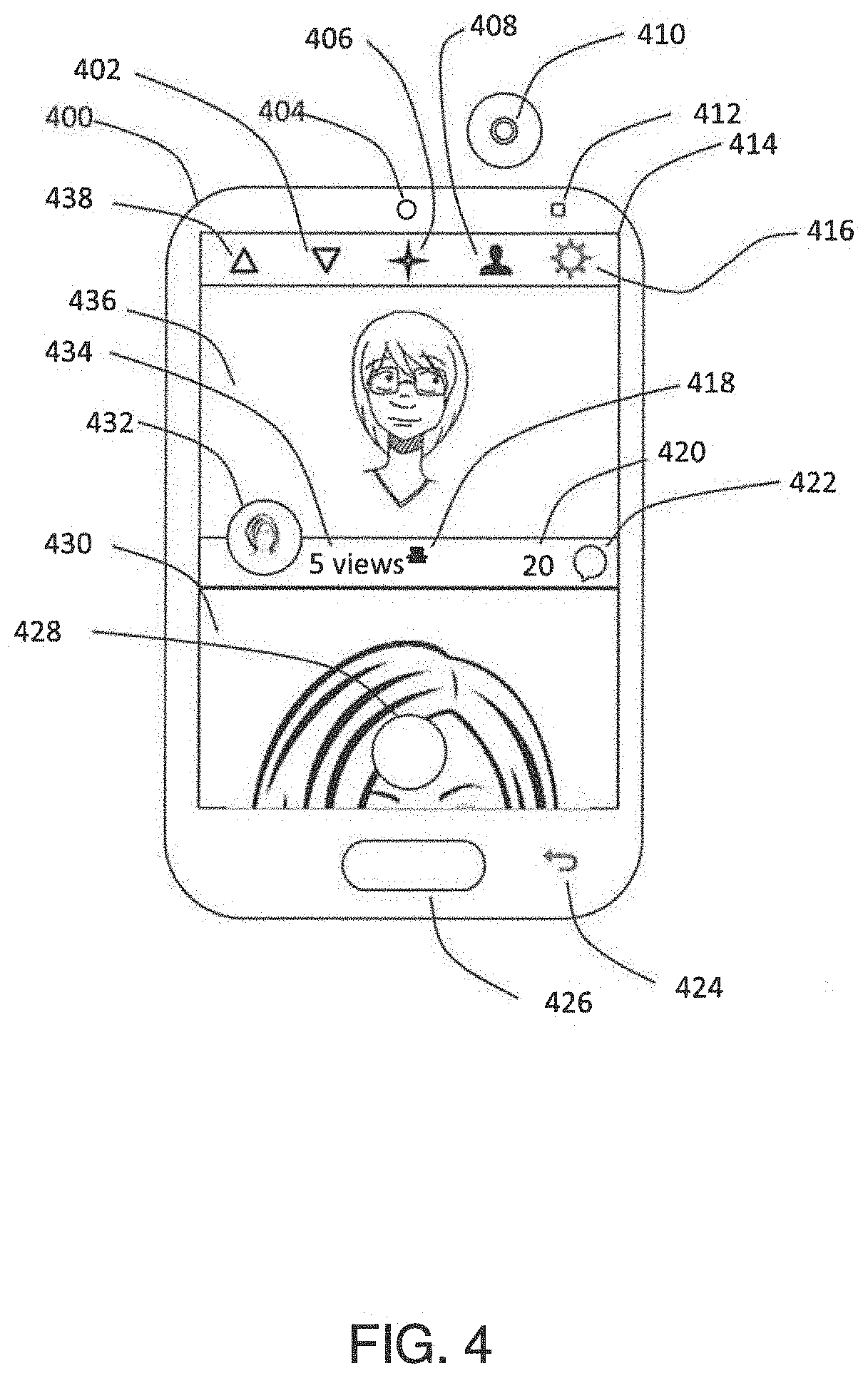

[0046] FIG. 4 shows a plan view of an exemplary user device having a display upon which a set of icons and informational elements are rendered to provide the user with navigation prompts and information about a video thread in accordance with exemplary embodiments of the present disclosure.

[0047] FIG. 5 shows symbol definitions used in conjunction with flowcharts provided herein.

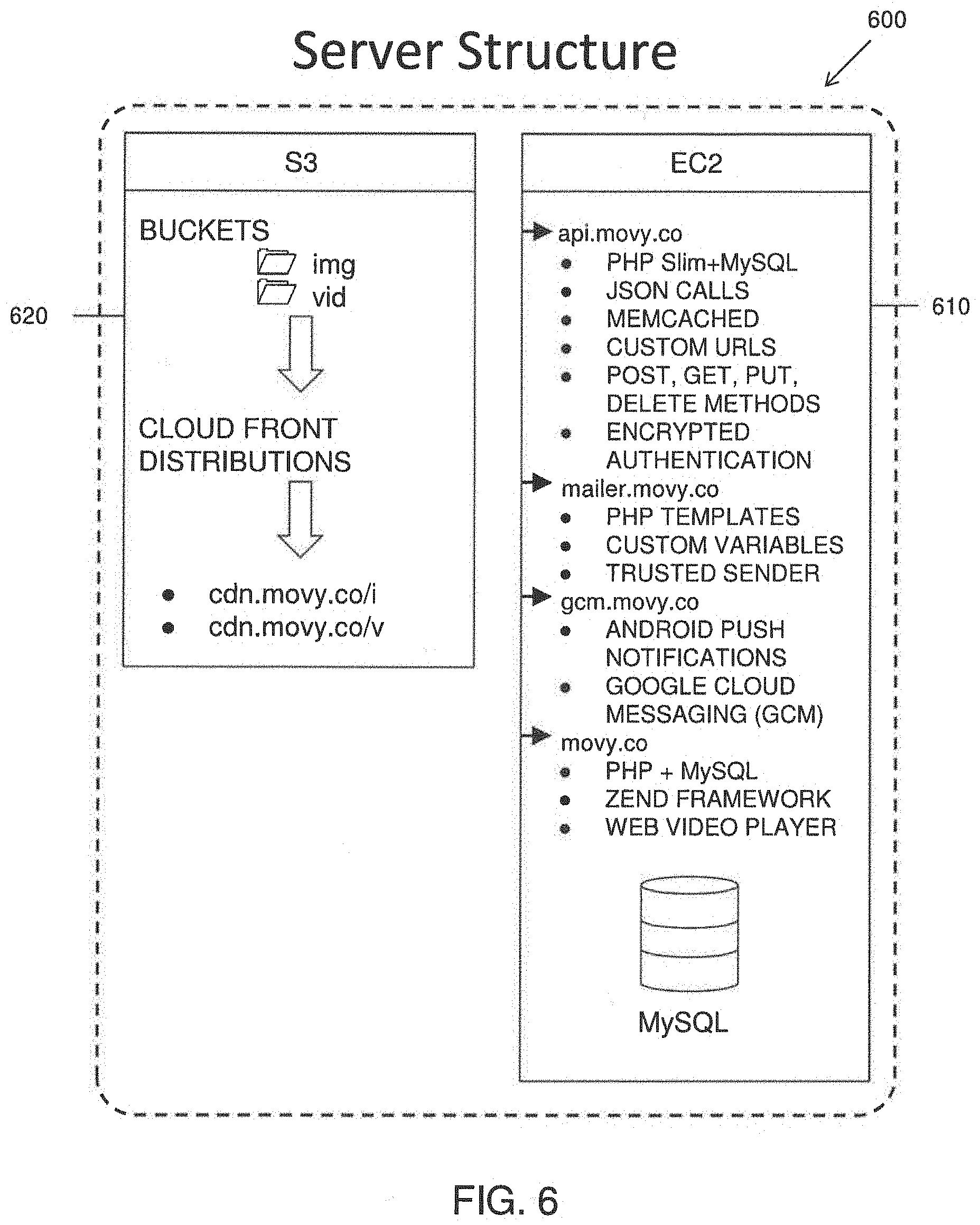

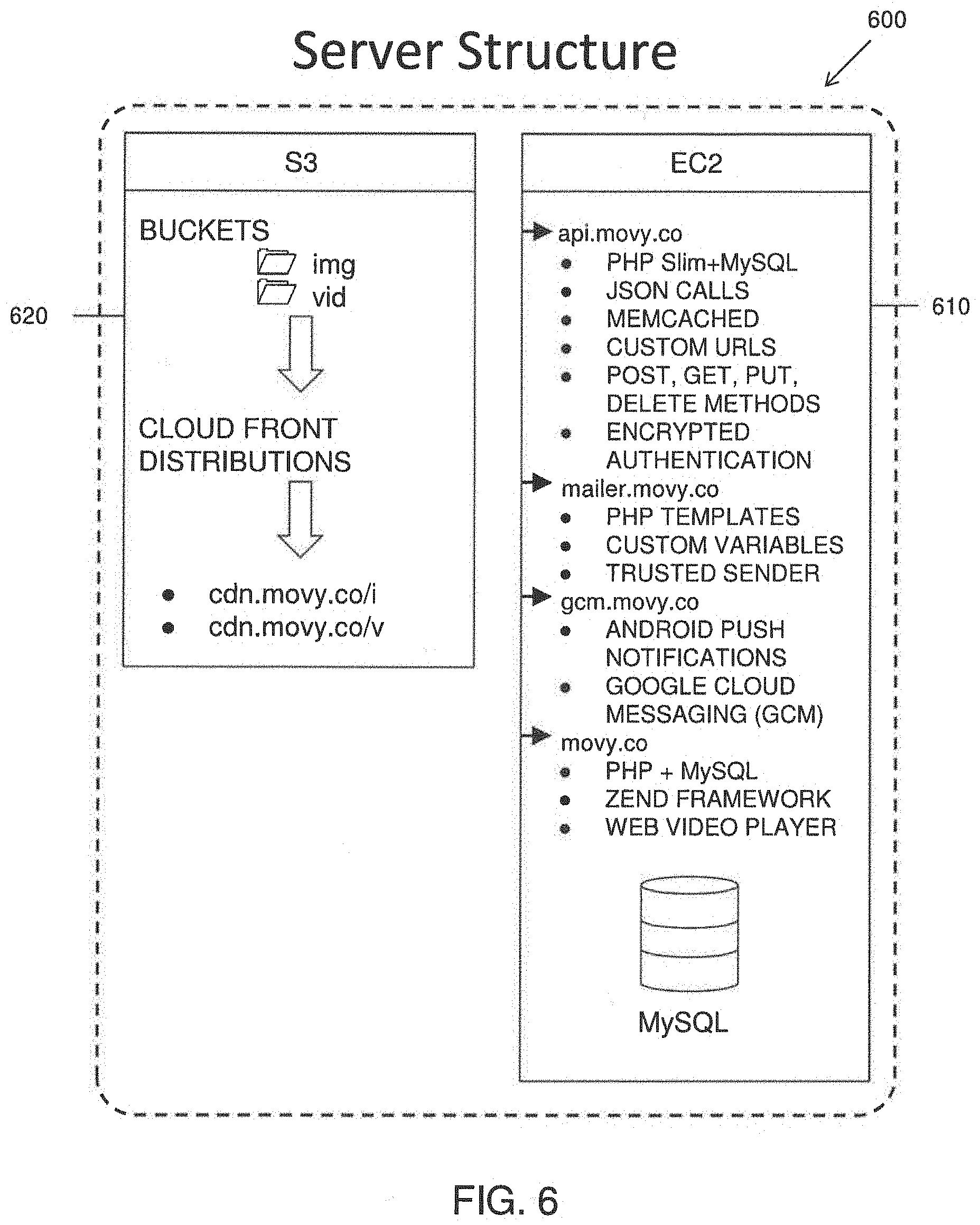

[0048] FIG. 6 shows a representation of cloud or server based elements for implementing exemplary embodiments of the video messaging system.

[0049] FIG. 7 shows a flowchart for recording a video message to a public stream using a video messaging application being executed on a user device in accordance with exemplary embodiments.

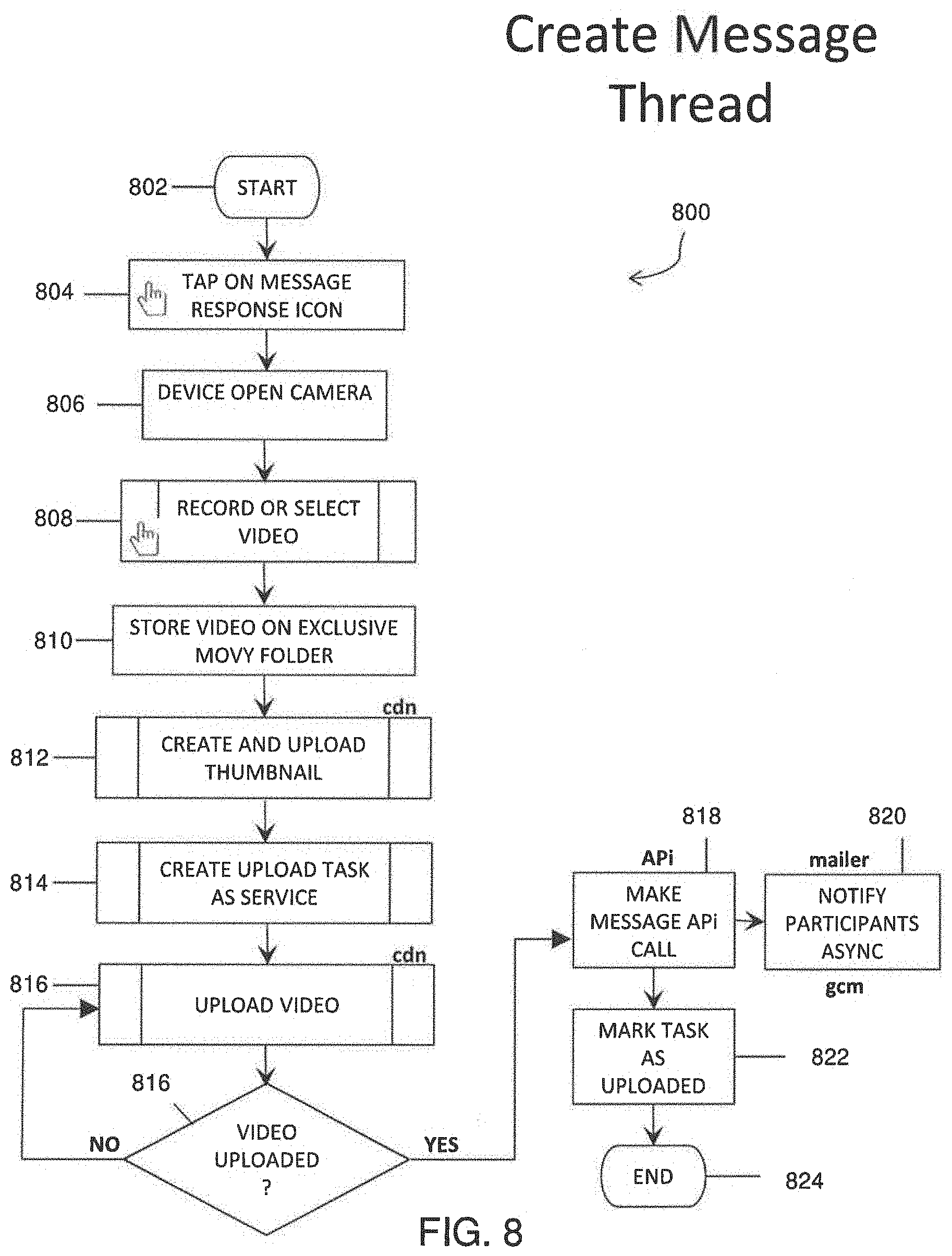

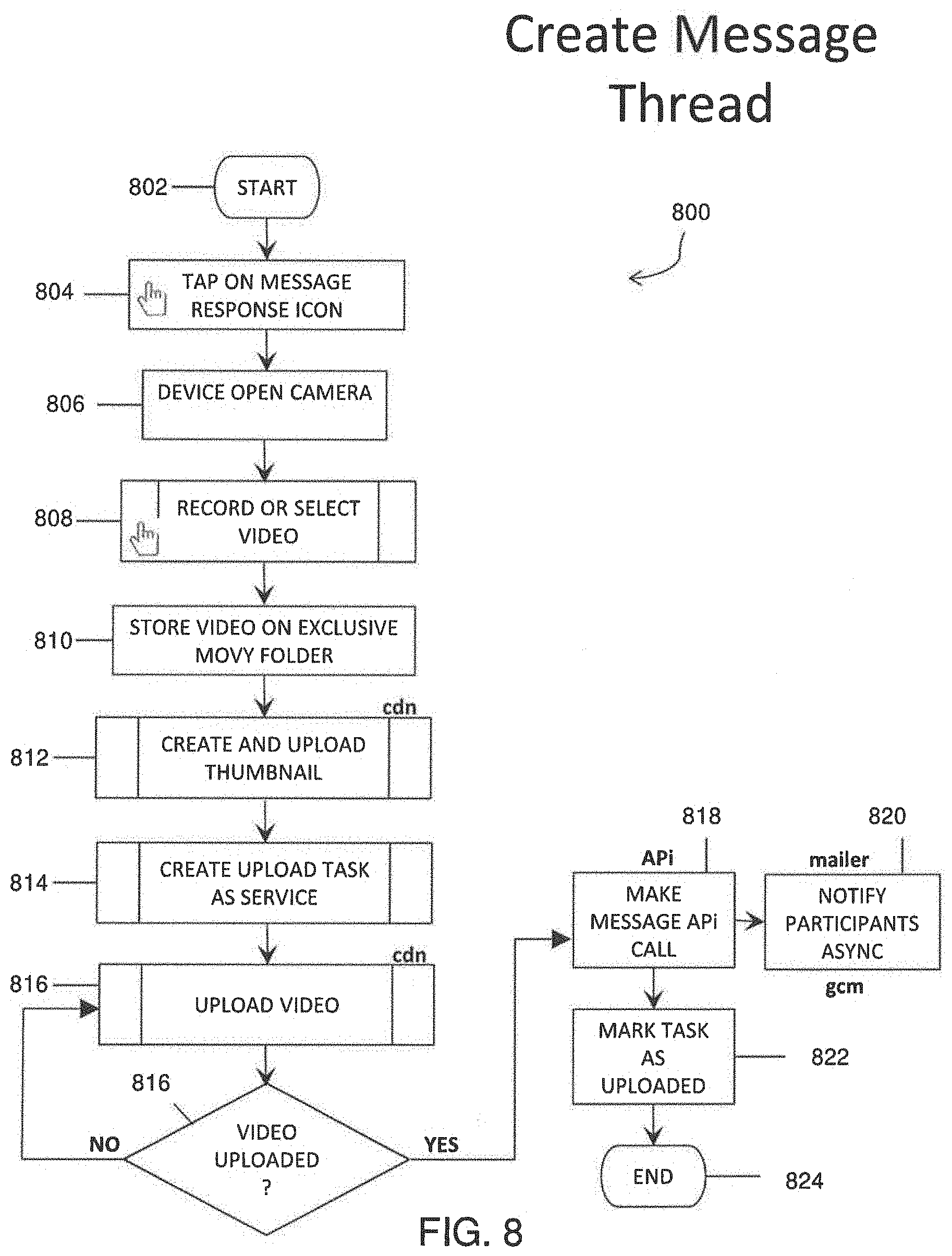

[0050] FIG. 8 shows a flowchart for creating a video message thread having responses to an initial video message using a video messaging application being executed on a user device in accordance with exemplary embodiments of the present disclosure.

[0051] FIG. 9 shows a flowchart for recording a direct video message to a specific recipient or recipients with a user device executing a video messaging application in accordance with exemplary embodiments of the present disclosure.

[0052] FIG. 10 is a flowchart illustrating steps and background actions for viewing or playing back a video message in accordance with exemplary embodiments of the present disclosure.

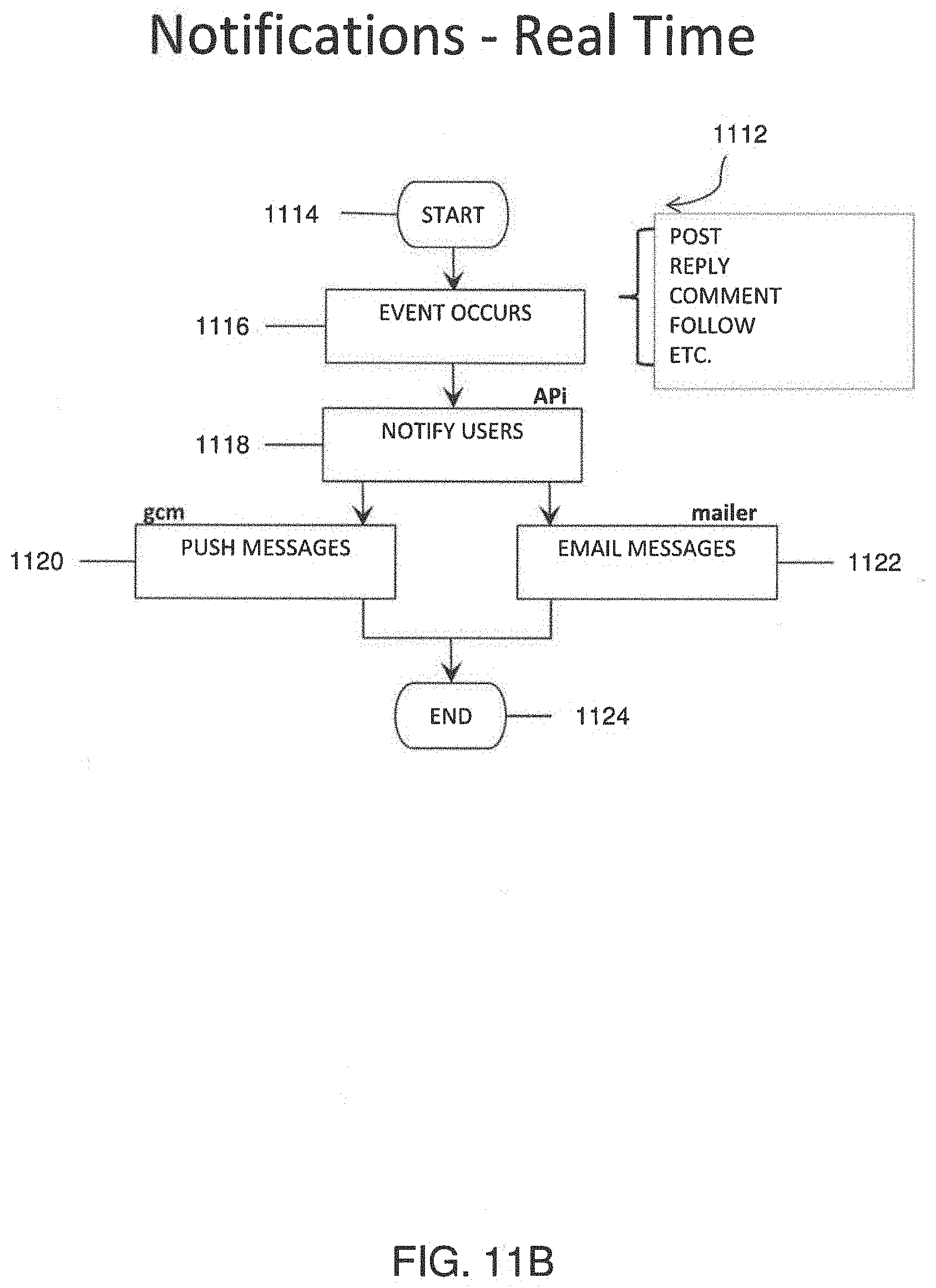

[0053] FIG. 11A shows a flowchart illustrating steps and background actions for on-demand notifications in accordance with exemplary embodiments of the present disclosure.

[0054] FIG. 11B shows a flowchart illustrating steps and background actions for real-time notifications.

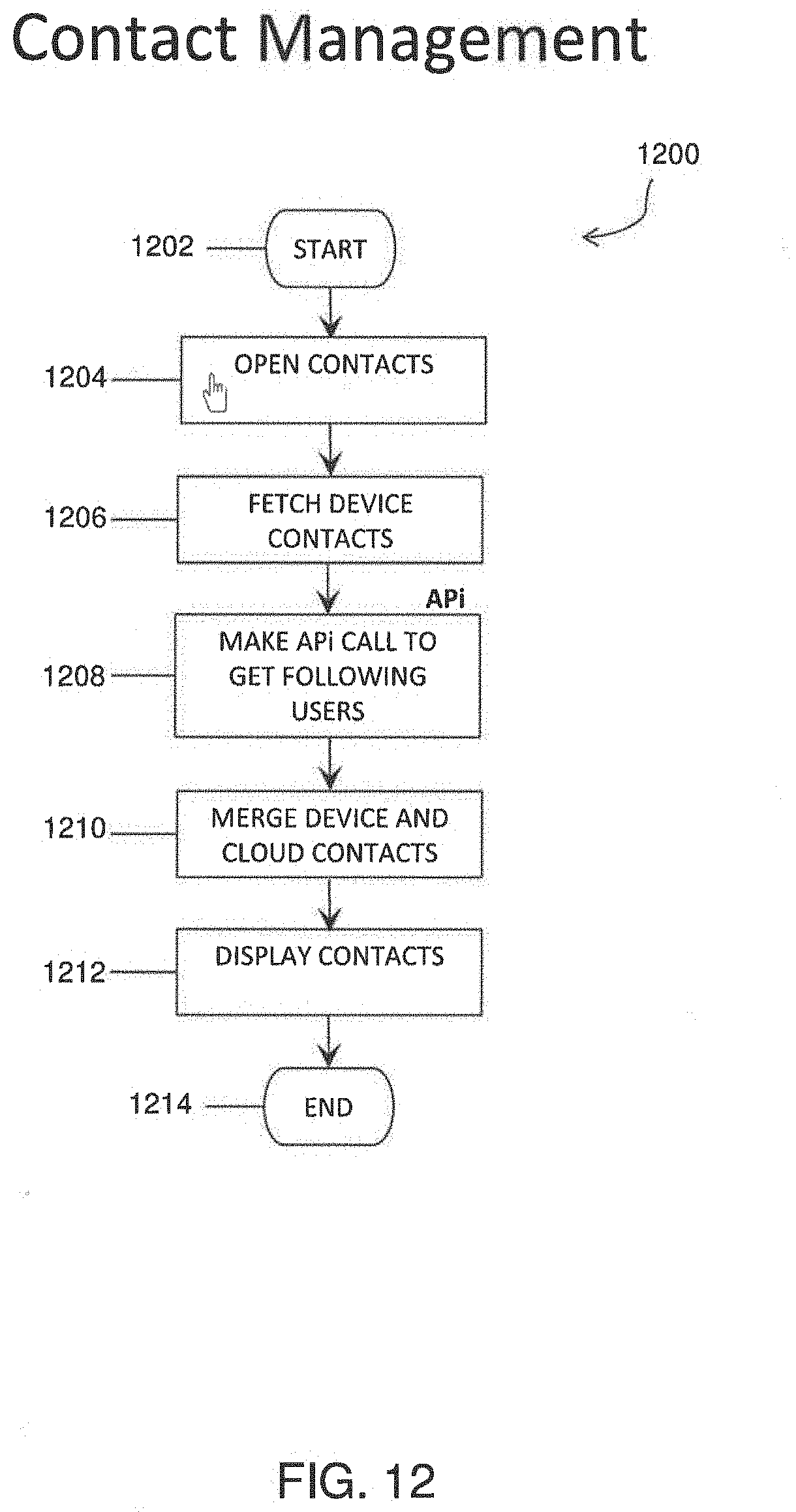

[0055] FIG. 12 shows a flowchart illustrating a contact management structure within a video messaging application being executed on a user device in accordance with exemplary embodiments of the present disclosure.

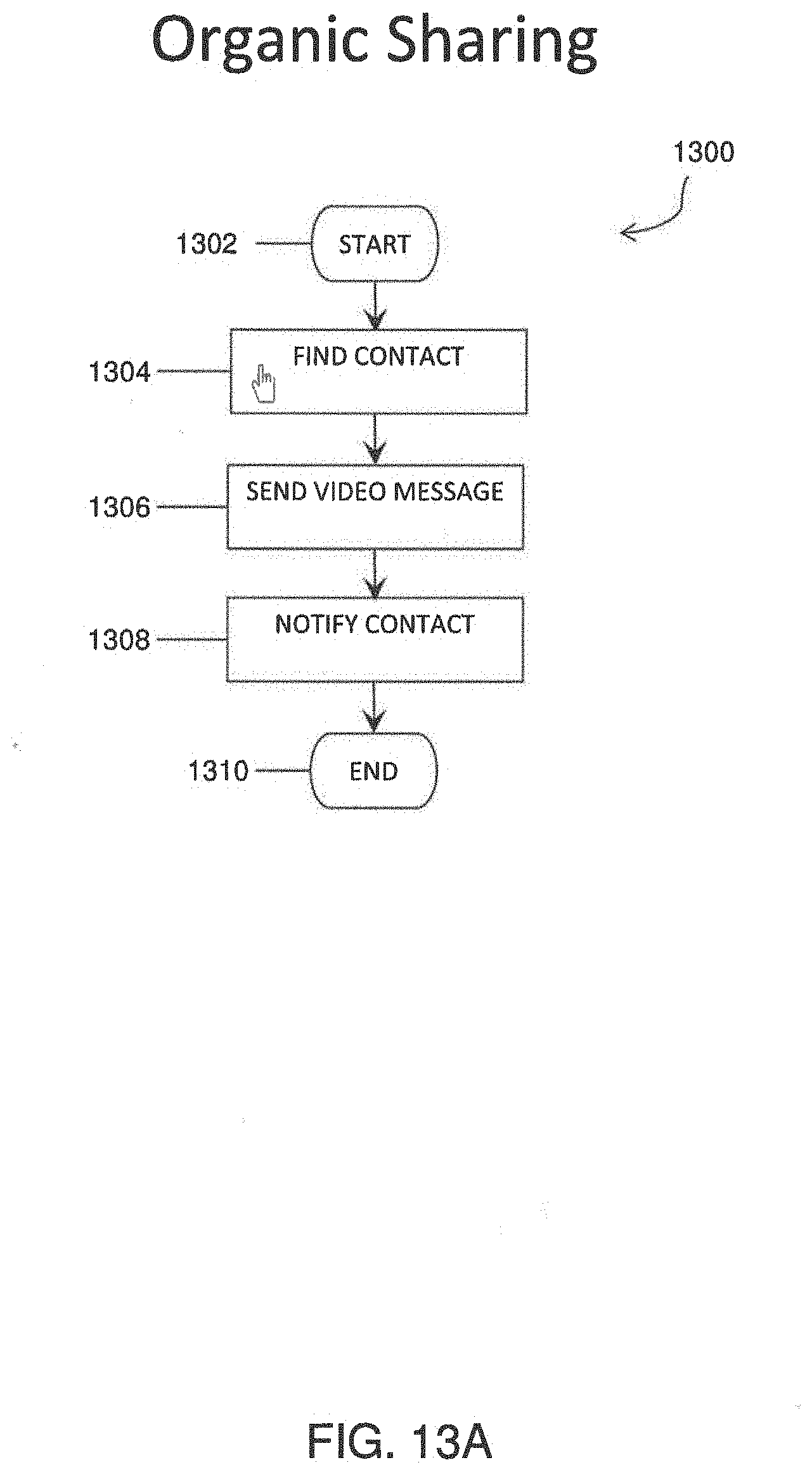

[0056] FIG. 13A shows a flowchart illustrating steps and background actions for sharing video threads with other users in accordance with exemplary embodiments of the present disclosure.

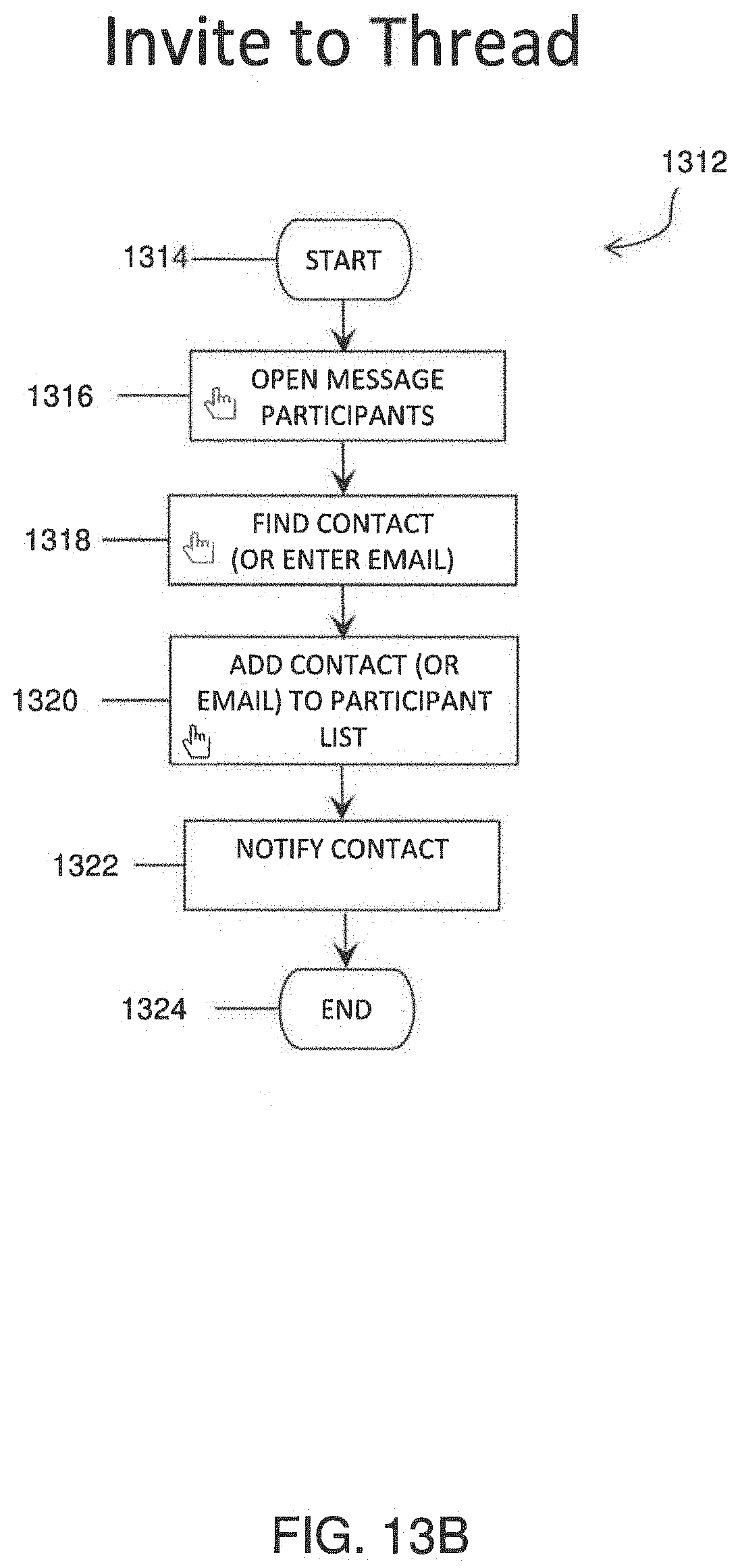

[0057] FIG. 13B shows a flowchart for inviting a user to an existing video thread in accordance with exemplary embodiments of the present disclosure.

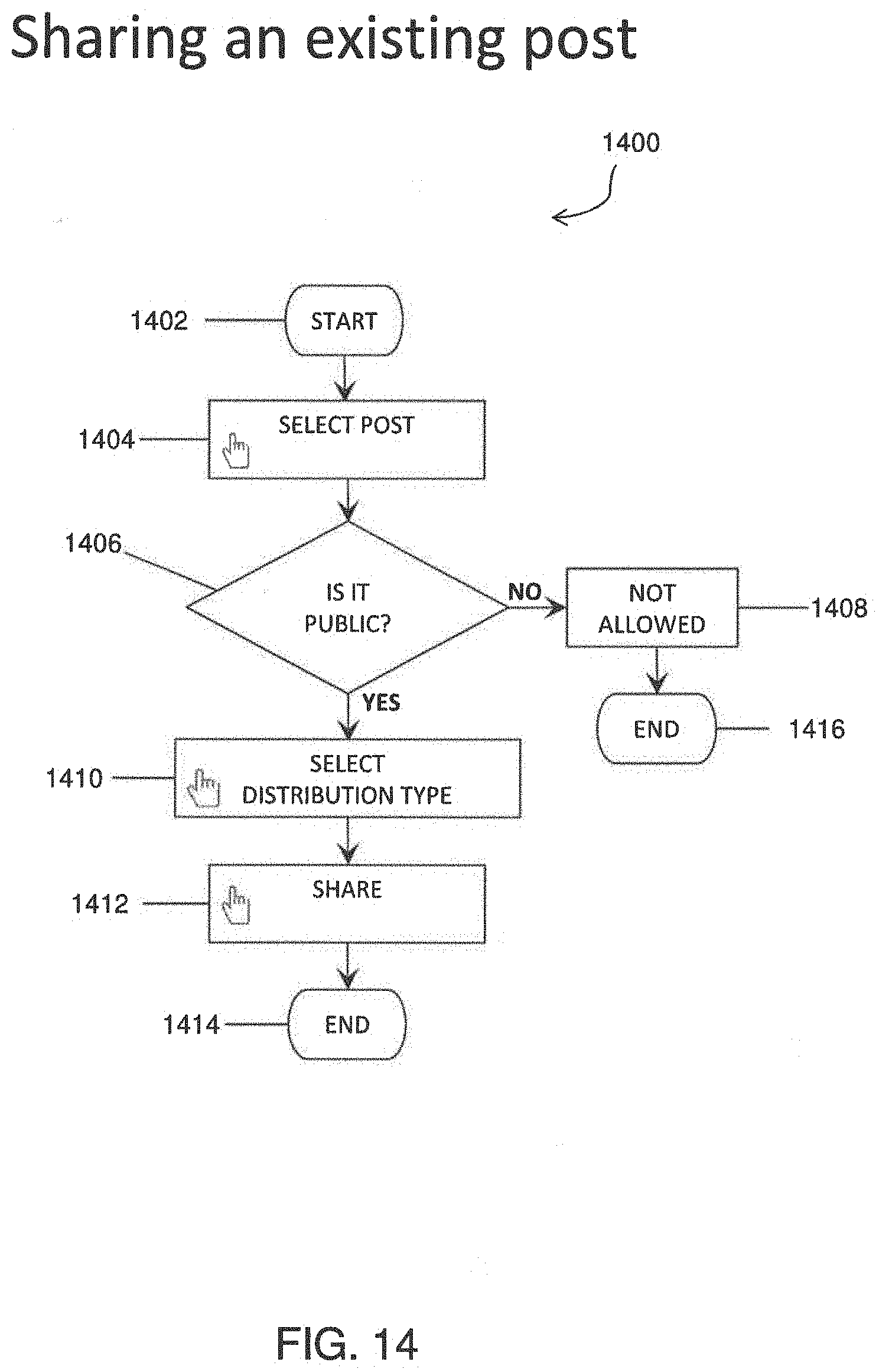

[0058] FIG. 14 shows a flowchart for sharing an existing video or thread in accordance with exemplary embodiments of the present disclosure.

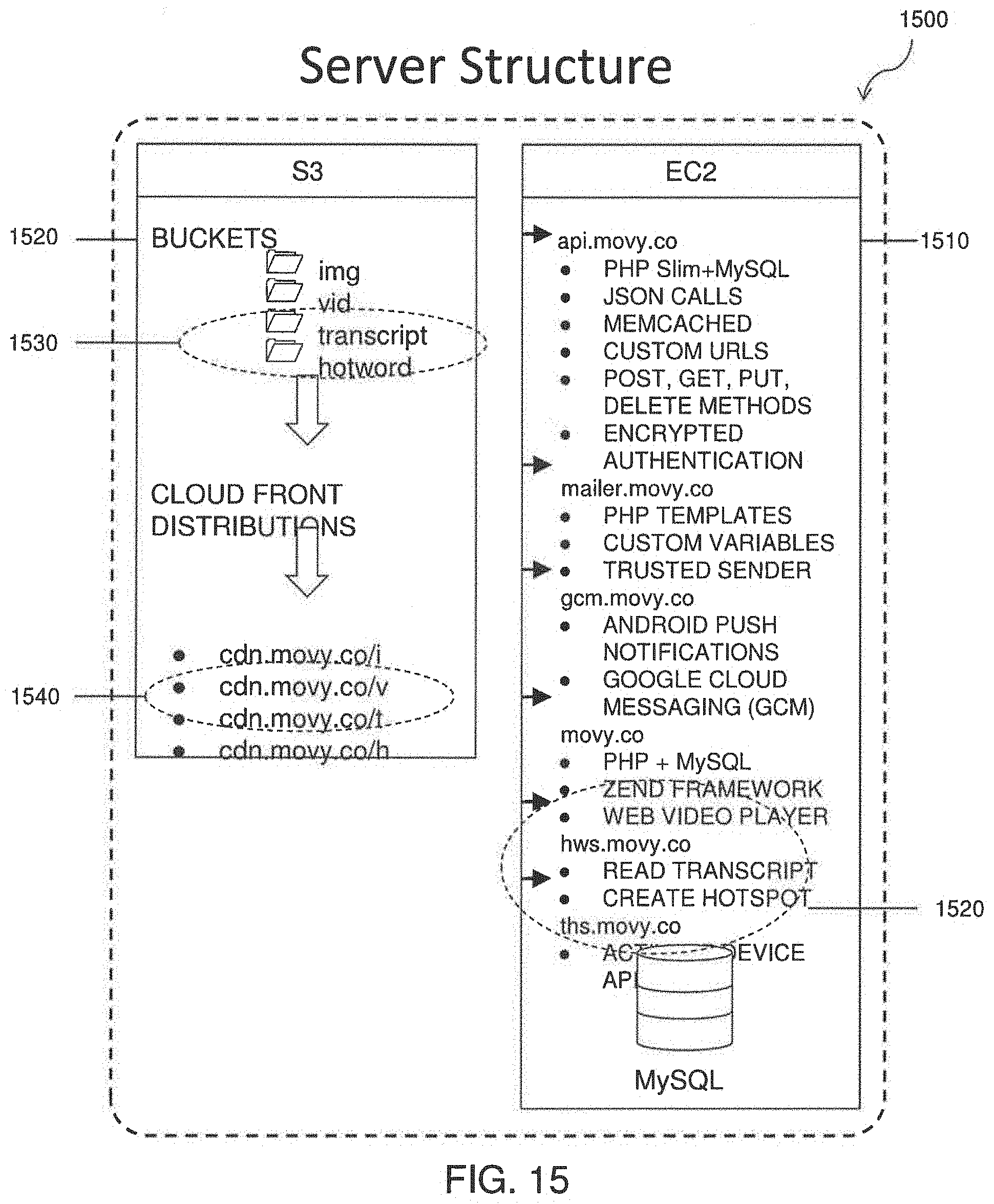

[0059] FIG. 15 shows an exemplary representation of cloud or server based elements used when providing search capabilities and/or supplemental data for videos in accordance with exemplary embodiments of the present disclosure.

[0060] FIG. 16 shows an exemplary representation of elements of a user device for implementing search capabilities and/or supplemental data for videos in accordance with exemplary embodiments of the present disclosure.

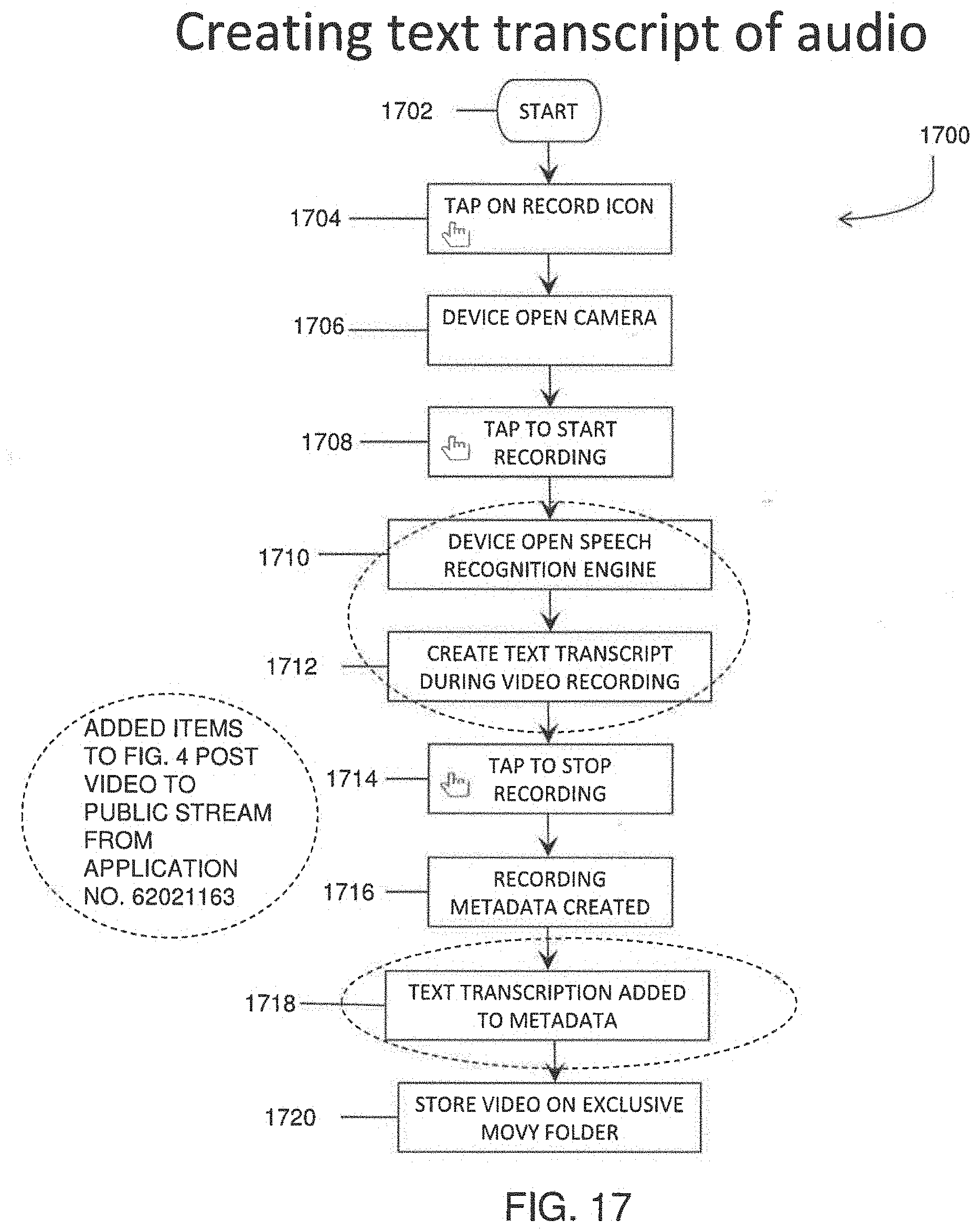

[0061] FIG. 17 is a flowchart illustrating a process for synchronous speech recognition and creation of a text transcript in accordance with exemplary embodiments of the present disclosure.

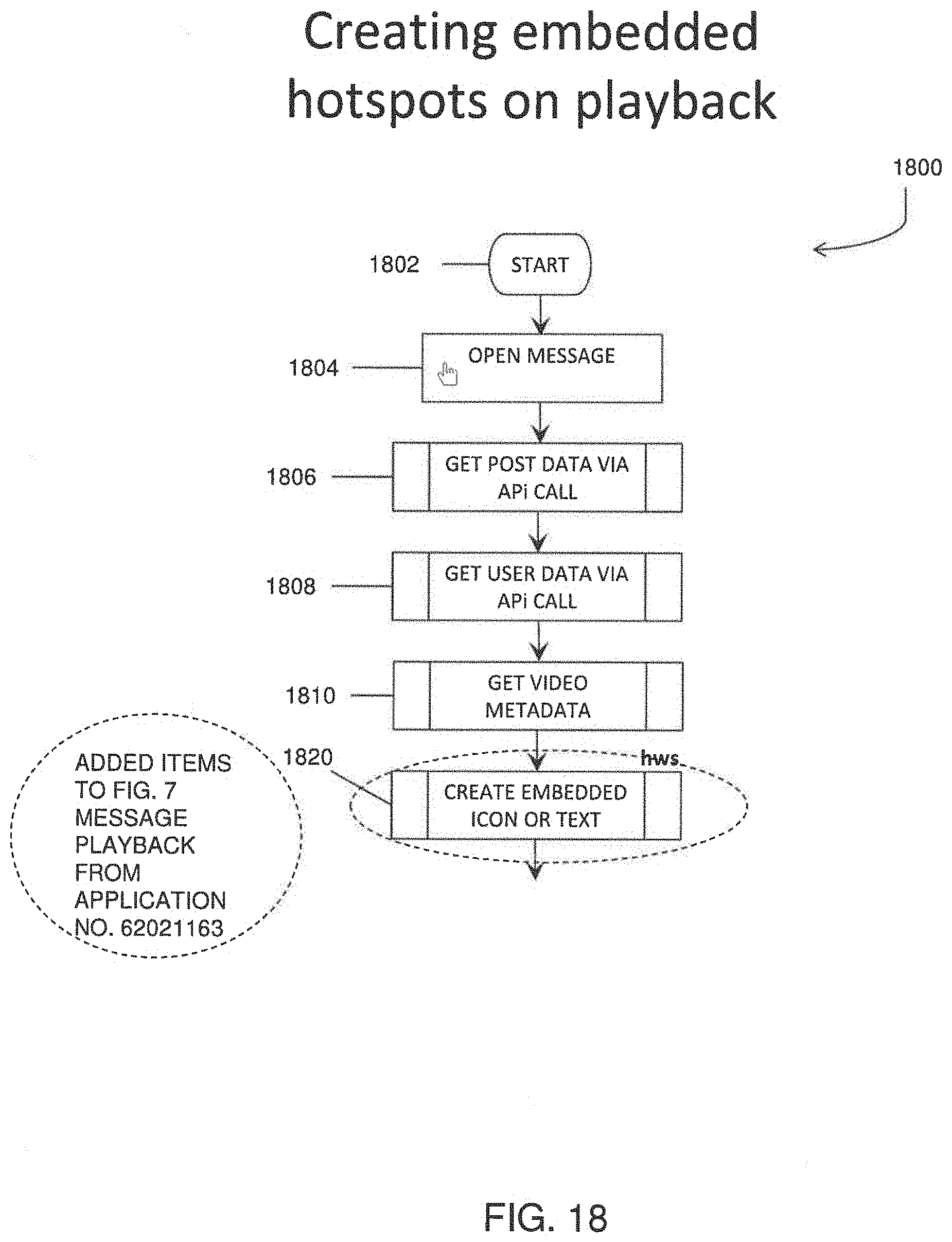

[0062] FIG. 18 shows a flowchart illustrating a process for adding embedded content to a video message in accordance with exemplary embodiments of the present disclosure.

[0063] FIG. 19 is a plan view of a user interface (UI) showing an embedded hot spot in a video containing information in accordance with exemplary embodiments of the present disclosure.

[0064] FIG. 20 is a plan view of a user interface (UI) showing an embedded hot spot containing a hyperlink in accordance with exemplary embodiments of the present disclosure.

[0065] FIG. 21 is a plan view of a user interface (UI) showing an embedded hot spot containing task addition in accordance with exemplary embodiments of the present disclosure.

[0066] FIG. 22 shows a flowchart illustrating a process for creating an actionable task as an embedded hot spot in a video in accordance with exemplary embodiments of the present disclosure.

[0067] FIG. 23 shows an exemplary structure of a single frame of video having an array of pixels in each frame in accordance with exemplary embodiments of the present disclosure.

[0068] FIG. 24 shows color information of each pixel, red, blue, green in an exemplary single frame of video in accordance with exemplary embodiments of the present disclosure.

[0069] FIG. 25 is a graph showing an exemplary change in a color number for a pixel over time in accordance with exemplary embodiments of the present disclosure.

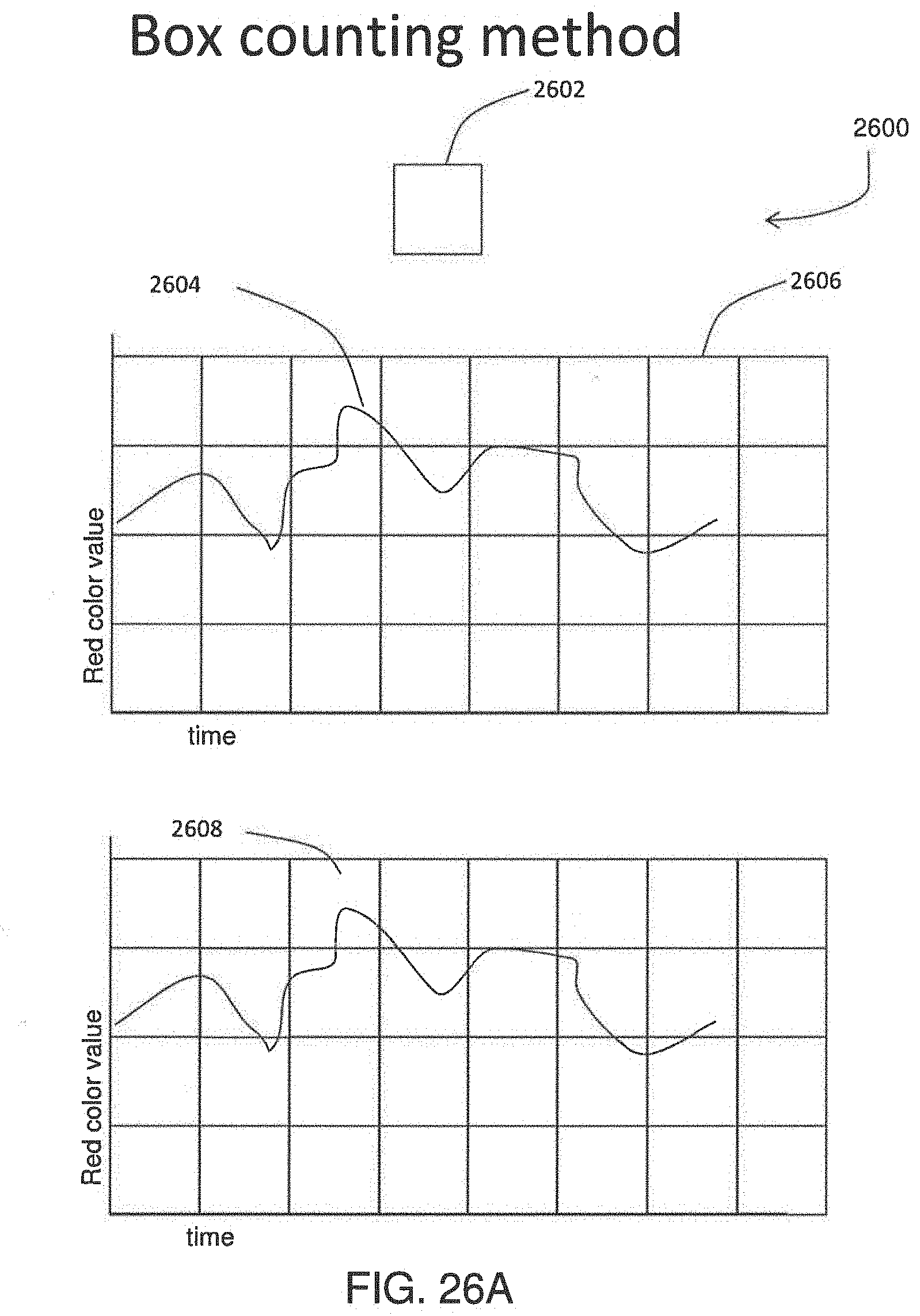

[0070] FIG. 26A illustrates a box counting method according to an exemplary embodiment.

[0071] FIG. 26B illustrates a box counting method according to an exemplary embodiment.

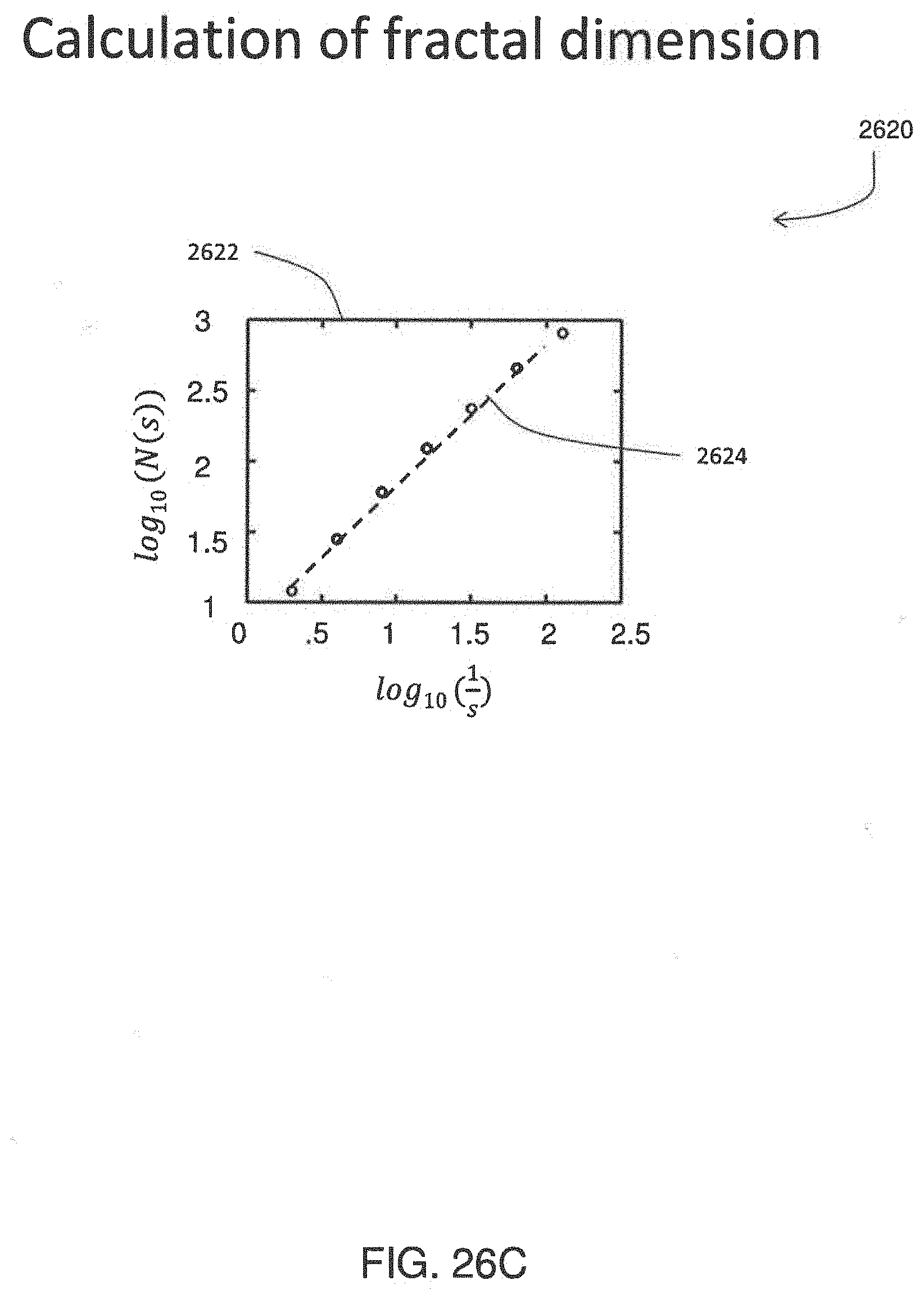

[0072] FIG. 26C is a graph showing a calculation of a fractal dimension, slope and y-intercept in accordance with exemplary embodiments of the present disclosure.

[0073] FIG. 27 is a flowchart illustrating a process of encoding a video file to compress the video file in accordance with exemplary embodiments of the present disclosure.

[0074] FIG. 28 is a flowchart illustrating a process of decoding an encoded video file to decompress the video file.

[0075] FIG. 29 shows a representation of cloud or server elements.

[0076] FIG. 30 shows a representation of mobile device elements.

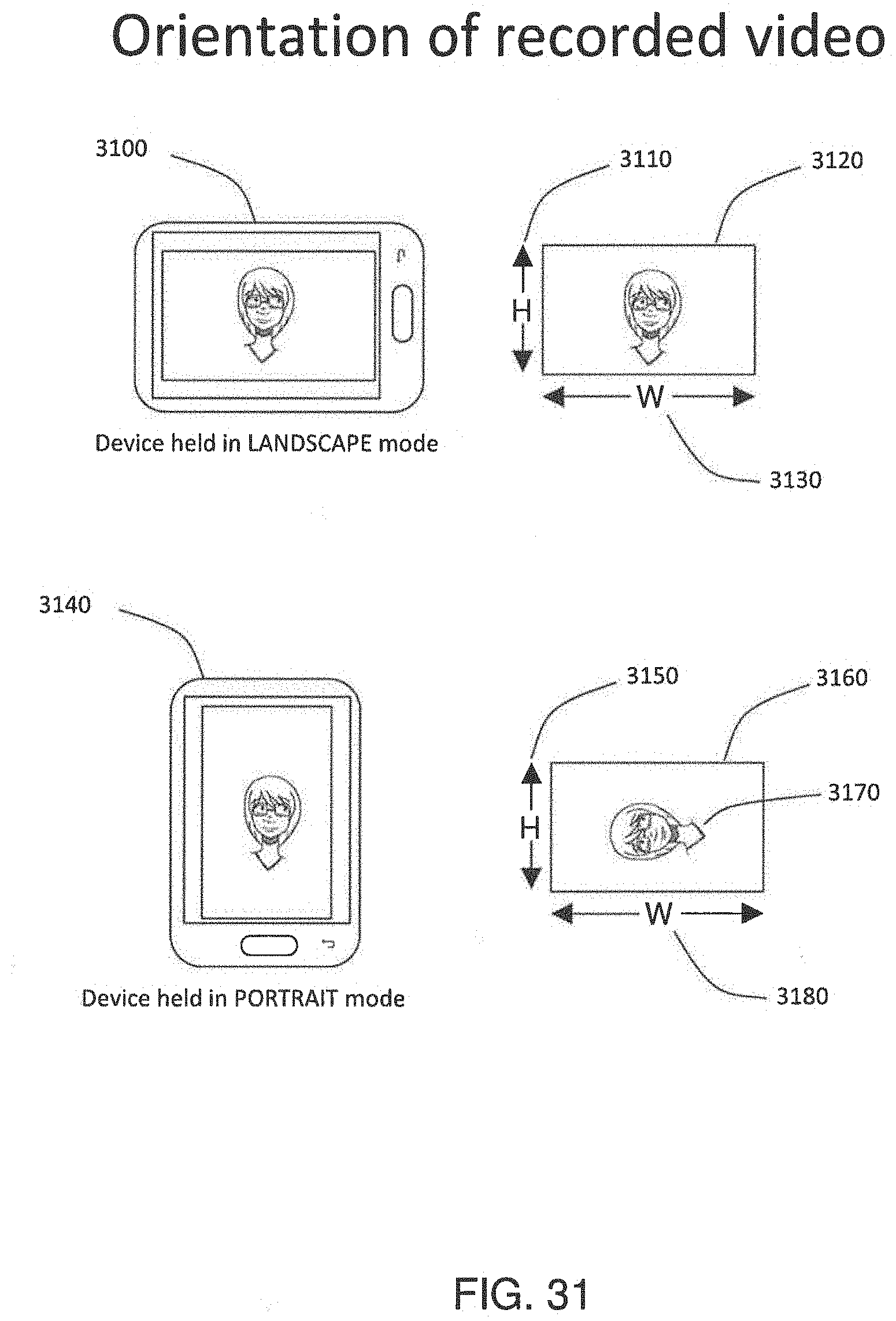

[0077] FIG. 31 shows the resultant orientation of a video recorded from a mobile device in landscape and portrait mode including the width (W) and height (H) dimensions.

[0078] FIG. 32 shows the angle required to rotate a resultant video given the orientation of the recording device for all four expected orientations.

[0079] FIG. 33 shows video metadata values encoded with a recorded video.

[0080] FIG. 34 shows an example of the angle required to rotate a resultant video given the orientation of the recording device for a video recorded in portrait mode.

[0081] FIG. 35 shows a flowchart representation of metadata added to a video created on a mobile device.

[0082] FIG. 36 shows a flowchart representation of orientation data extraction from an encoded video metadata and added to a MySQL database.

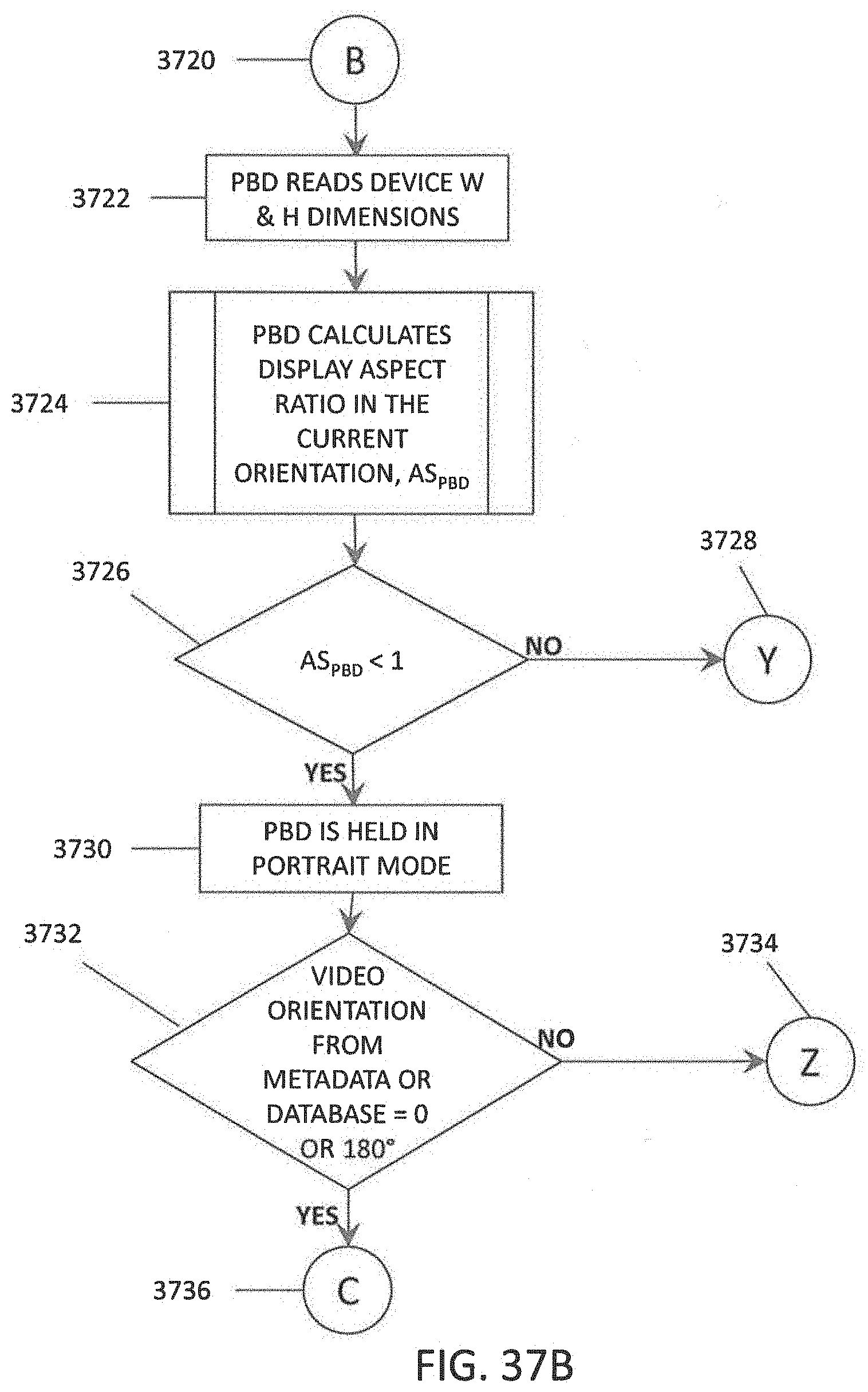

[0083] FIGS. 37A-F show a flowchart representation of steps required to play back a video on a playback device in the correct orientation.

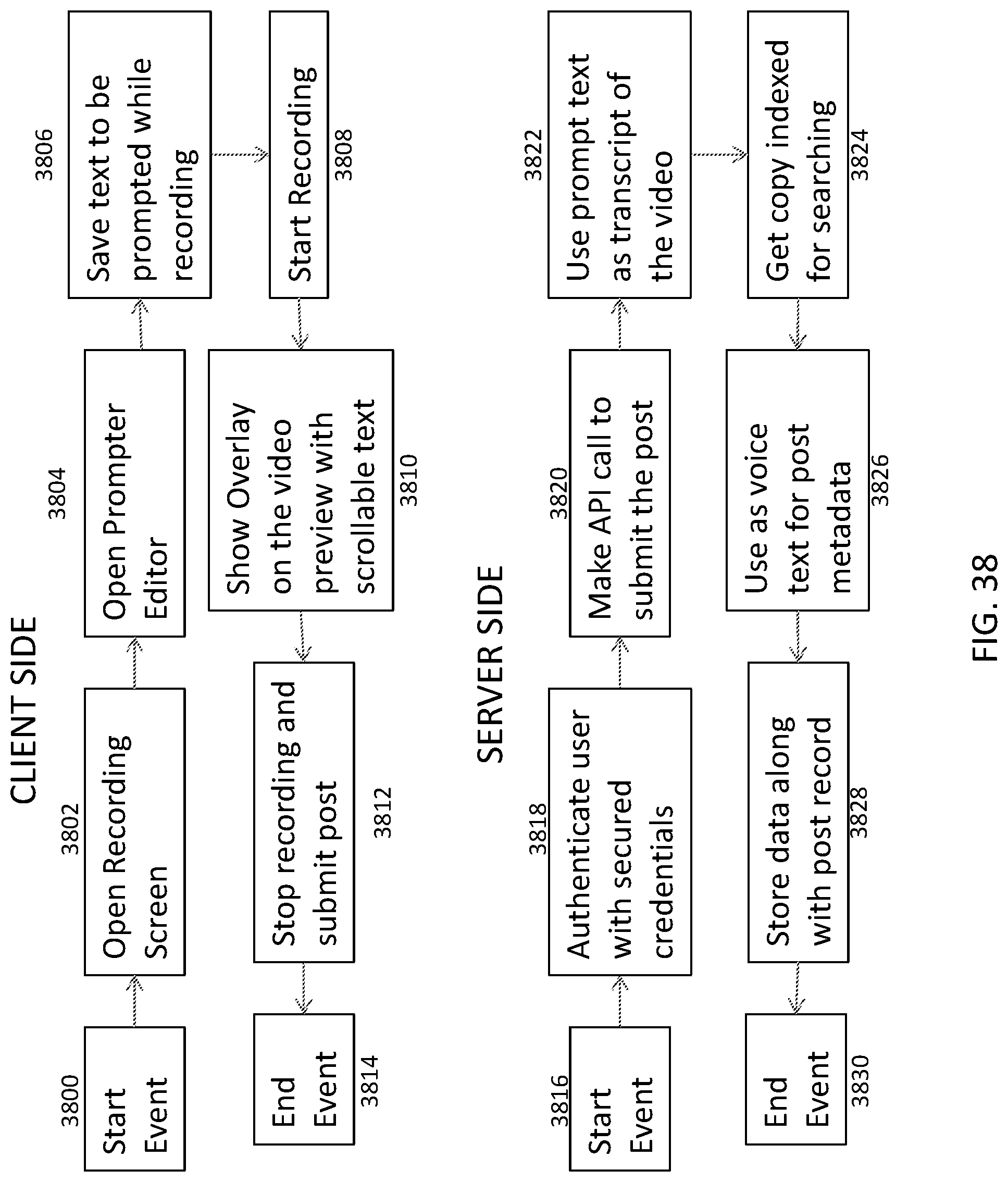

[0084] FIG. 38 shows a flow chart representation of an embodiment of the present disclosure having a prompter as experienced by the client (e.g., user) side, and by the server side.

[0085] FIG. 39 shows a flow chart representation of an embodiment of the present disclosure having a synopsis as experienced by the client (e.g., User) side, and by the server side.

[0086] FIG. 40 shows a flow chart representation of an embodiment of the present disclosure having Topics, Tags, Hubs, Groups or combinations thereof as experienced by the client (e.g., User) side, and by the server side.

[0087] FIG. 41 illustrates a list of exemplary Topics, Tags, Hubs, Groups.

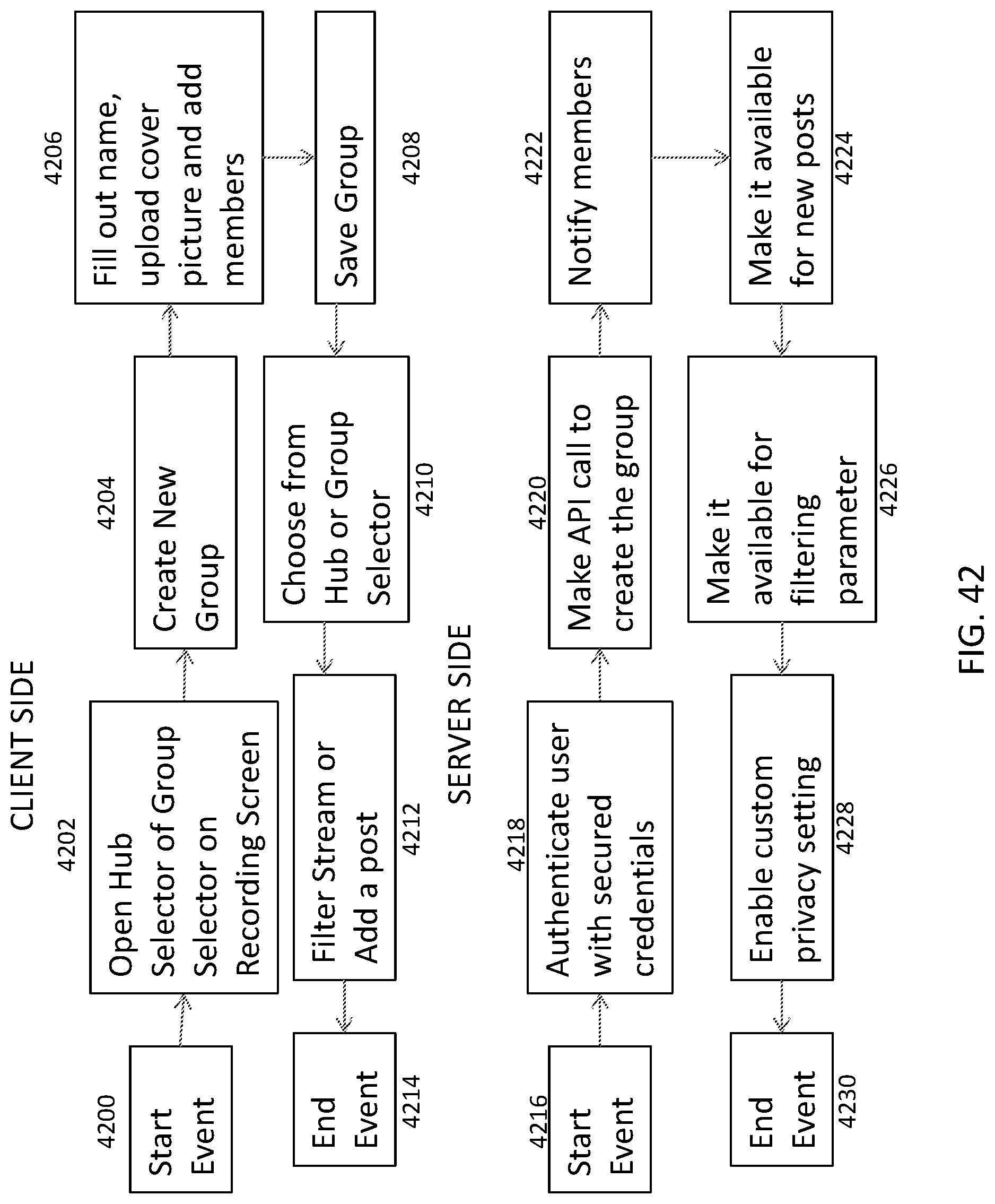

[0088] FIG. 42 shows a flow chart representation of an embodiment of the present disclosure having Hubs as experienced by the client (e.g., User) side, and by the server side.

DETAILED DESCRIPTION OF THE INVENTION

[0089] Exemplary embodiments of the present disclosure are related to the generation, manipulation, and/or concatenation of video. While exemplary embodiments may be described in relation to one or more non-limiting example applications (e.g., video messaging), exemplary embodiments of the present disclosure have broad applicability beyond such example applications. For example, the manipulation of video by data compression/decompression schemes and the introduction of supplemental data with or to a video are generally applicable to the transmission of video over networks and the conveyance of information that supplements a video, respectively.

[0090] The present disclosure provides for rapidly creating a series of video messages (a video thread) for the purpose of collaborating, communicating or promoting an activity, product or service. The videos can be organized with a thread starter created by a user and then followed by multiple video "responses" created by the user or other users. In this way the user can send a video message to another user or users detailing some activity, product or service. The message recipient can send a video response to the initial message, and this message can then be appended chronologically to the initial message. Further responses and responses to responses may be additionally appended to the initial two videos to make up a video message thread.
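The thread structure described above, a thread starter followed by chronologically appended responses, can be sketched with a simple data structure. The class names, fields, and example URLs are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class VideoMessage:
    author: str
    recorded_at: float  # assumed: recording time as seconds since some epoch
    url: str            # hypothetical location of the stored video


@dataclass
class VideoThread:
    starter: VideoMessage
    responses: list = field(default_factory=list)

    def add_response(self, message):
        """Append a response and keep responses in chronological order."""
        self.responses.append(message)
        self.responses.sort(key=lambda m: m.recorded_at)

    def playback_order(self):
        """Thread starter first, then responses in the order they were recorded."""
        return [self.starter] + self.responses


thread = VideoThread(VideoMessage("ann", 100.0, "https://example.com/v/1"))
thread.add_response(VideoMessage("cy", 300.0, "https://example.com/v/3"))
thread.add_response(VideoMessage("bob", 200.0, "https://example.com/v/2"))
authors = [m.author for m in thread.playback_order()]
```

Sorting on insertion keeps the thread ordered even when a response arrives at the server later than one recorded after it.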

[0091] This video thread can be created by an application or "app" that runs on a mobile or wearable device. Examples of such devices include but are not limited to cell phones, smart phones, wearable devices (smart watches, wrist bands, camera-enabled eye spectacles, etc.) and wireless-enabled cameras or other video capture devices. This "app" can be completely video based and in some embodiments does not require the text-based structure of current "apps" designed for text messaging. It can be designed such that a video message can be created and sent in as little as one or two screen taps.

[0092] Exemplary embodiments of the present disclosure can (i.) create and send video messages in as few user steps as possible to one or multiple recipients; (ii.) create and send video messages to any recipient, regardless of whether they have the app installed on their mobile device or not; (iii.) create video message responses from one or multiple recipients; (iv.) append the responses to the initial video message, thus creating a video thread; (v.) display these video message threads; (vi.) display information about the video messages such as the author, time and location of recording, number of responses, etc.; (vii.) notify the user about new video messages or information such as the delivery status notifications (return receipt), number of responses, number of views and rating; and (viii.) facilitate the sharing of an individual video message or video message thread to another user or third party as a continuous video with the video messages in the thread merged one after another.

[0093] The present disclosure also provides for rapid delivery of both search capabilities and additional functionality embedded in digital video data in general or in a video message. These additional functionalities enhance the nature of the conversation or collaboration as well as increase the productivity of the author and recipient. To enable these functionalities, exemplary embodiments of the present disclosure can transcribe the spoken content of a digital video, and the transcription can be processed to identify words or phrases, which trigger one or more operations for automatically generating and/or associating supplemental data with the digital video.

[0094] The present disclosure provides for compressing and decompressing digital video data in general or a video message. To efficiently compress the video data, exemplary embodiments of the present disclosure can characterize and generate fingerprints for color values for each pixel of every frame in the digital video data. As a result, instead of sending color data frame by frame, exemplary embodiments only send the fingerprint, which, in some embodiments, can include only two numbers for each color element (i.e., red, green and blue) for a total of six numbers per pixel.

[0095] Exemplary embodiments can utilize a user's device and/or cloud server to compress video content or video messages for efficient transmission over cellular and/or data networks (e.g., the Internet) by forming a fingerprint of the entire video content or video message having a fixed size regardless of the length of the recording, and compressing and decompressing the fingerprint.

[0096] The disclosures of all cited references including publications, patents, and patent applications are expressly incorporated herein by reference in their entirety.

[0097] The present disclosure is further defined in the following Examples. It should be understood that these Examples, while indicating preferred embodiments of the invention, are given by way of illustration only.

[0098] As used herein the term(s) applications or "apps" refers to a program running on a mobile device in native form or on a web browser using a hypertext markup language such as HTML5.

[0099] As used herein the term(s) text messaging with video refers to applications that are specifically for text messaging but have the capability of sending and receiving videos as well.

[0100] As used herein the term(s) video sharing refers to applications that are designed for sharing short video segments or messages to the user community.

[0101] As used herein the term(s) video messaging refers to applications that are specifically designed for messaging in video, but retain the structure of the text message applications.

[0102] As used herein the term(s) video message or post refers to an individual video created or uploaded into the application.

[0103] As used herein the term(s) video thread refers to a series of video messages or segments containing a thread starter video followed by responses by any user within the user community.

[0104] As used herein the term(s) stream refers to a series of video threads with the first or initial video message (thread starter video) previewed on the application's user interface.

[0105] As used herein the term(s) user refers to a person with the application and the credentials to create, send and receive video messages.

[0106] As used herein the term(s) original user refers to the user who created the thread starter video message.

[0107] As used herein the term(s) user community refers to the community of persons with the application and the credentials to create, send and receive video messages.

[0108] As used herein the term(s) third party refers to an individual who does not have the application and the credentials to create, send and receive video messages.

[0109] As used herein the term(s) crowdsourced thread refers to a video thread created by multiple users.

[0110] As used herein the term(s) home screen refers to the main screen of the application showing the individual video threads and user interface elements for navigation and creating a video message.

[0111] As used herein the term(s) gestures refer to any user interaction (e.g. hand or finger) with the user interface such as a swipe, tap, tap & hold used to create, respond to or navigate between video messages.

[0112] As used herein the term(s) public post or message refers to a message sent to all users in the user community.

[0113] As used herein the term(s) private post or direct message refers to a message sent to a specific user in the user community.

[0114] As used herein the term(s) stream playback refers to auto-play comments in the order they have been posted in order to follow the discussion flow.

[0115] As used herein the term(s) CDN refers to Content Delivery Network powered by Amazon S3 that hosts the video and thumbnail (previews) files to make them easily available on multiple servers and locations.

[0116] As used herein the term(s) GCM refers to Google Cloud Messaging service used for push notifications.

[0117] As used herein the term(s) ASYNC Notifications refers to parallel API calls for faster responses.
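The idea of issuing notification calls in parallel rather than one after another can be illustrated with Python's standard `asyncio` module. The `send_notification` coroutine below is a hypothetical stand-in for a real push-notification API call; the short sleep only simulates network latency.

```python
import asyncio


async def send_notification(user_id):
    """Hypothetical stand-in for one API call to a push-notification service."""
    await asyncio.sleep(0.01)  # simulated network round trip
    return f"notified:{user_id}"


async def notify_all(user_ids):
    """Run all calls concurrently; results come back in the order of user_ids."""
    return await asyncio.gather(*(send_notification(u) for u in user_ids))


results = asyncio.run(notify_all(["alice", "bob"]))
```

With concurrent calls, the total wait is roughly that of the slowest single call rather than the sum of all calls.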

[0118] As used herein the term(s) unclaimed profiles refers to recipients of video messages that are not part of the app user base.

[0119] As used herein the term(s) video message or post refers to an individual video created or uploaded into the application.

[0120] As used herein the term(s) video content refers to digital video content.

[0121] As used herein the term(s) pixel refers to the smallest unit of a video frame.

[0122] As used herein the term(s) compression refers to size reduction of a digital video data file.

[0123] As used herein the term(s) decompression refers to the reconstruction of the digital video file.

[0124] As used herein the term(s) codec refers to the software method or algorithm that compresses and decompresses the video file.

[0125] As used herein the term(s) fractal dimension D refers to the calculated dimension of a line created by the plot of the video color data. In this disclosure, the box counting method is used to calculate the fractal dimension.
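A generic box-counting estimate for the plot of one color value of one pixel over time is sketched below. It is an illustration under stated assumptions, not the disclosed implementation: the curve is scaled into a unit square, sampled by linear interpolation, and the grid resolutions in `levels` are arbitrary choices. The function returns the slope of log(box count) against log(boxes per side), which is the dimension estimate, together with the y-intercept of the fit.

```python
import math


def densify(values, per_segment=64):
    """Interpolate color-vs-time samples (0..255) into points in the unit square."""
    n = len(values)
    points = []
    for i in range(n - 1):
        for k in range(per_segment):
            t = k / per_segment
            x = (i + t) / (n - 1)
            y = (values[i] + t * (values[i + 1] - values[i])) / 255.0
            points.append((x, y))
    points.append((1.0, values[-1] / 255.0))
    return points


def box_count(points, m):
    """Count cells of an m x m grid that contain at least one point."""
    return len({(min(int(x * m), m - 1), min(int(y * m), m - 1)) for x, y in points})


def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def fractal_fingerprint(values, levels=(2, 4, 8, 16, 32)):
    """Estimate the box-counting dimension of one color channel of one pixel."""
    points = densify(values)
    xs = [math.log(m) for m in levels]
    ys = [math.log(box_count(points, m)) for m in levels]
    return fit_line(xs, ys)


flat_slope, flat_intercept = fractal_fingerprint([128] * 10)
busy_slope, _ = fractal_fingerprint([0, 255] * 20)
```

A pixel whose color never changes traces a straight line, so its estimate is close to 1, while a rapidly alternating color fills more of the plot and yields a larger estimate.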

[0126] As used herein the term(s) color number refers to the color information defining the color of each pixel. The color information consists of the constituent red, blue and green values each ranging between 0 and 255. They are represented in multiple forms including arithmetic, digital 8-bit or 16-bit data.

[0127] As used herein the term(s) upload refers to act of transmitting the compressed video file to application servers through the internet or mobile networks.

[0128] As used herein the term(s) download refers to act of transmitting the compressed video file from application servers to the recipient's device through the internet or mobile networks.

[0129] As used herein the term(s) bandwidth refers to the network speed in megabits per second or Mbps. One Mbps (one million bits per second) equals a data transmission rate of 0.125 megabytes per second (MB/sec).
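The conversion follows from there being eight bits in a byte, so a rate in megabits per second is divided by eight to obtain megabytes per second. The short sketch below applies that conversion to estimate an ideal transfer time; the function names and the example file size are illustrative, and protocol overhead is ignored.

```python
def mbps_to_megabytes_per_second(mbps):
    """Convert megabits per second to megabytes per second (8 bits per byte)."""
    return mbps / 8.0


def transfer_seconds(file_megabytes, mbps):
    """Ideal time to move a file of the given size at the given bandwidth."""
    return file_megabytes / mbps_to_megabytes_per_second(mbps)


rate = mbps_to_megabytes_per_second(1)   # 0.125 MB/sec
seconds = transfer_seconds(10, 2)        # a 10 MB file over a 2 Mbps link
```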

[0130] As used herein the term(s) network refers to mobile network bandwidths such as 2G, 3G, 4G, LTE, wifi, superwifi, bluetooth, near field communication (NFC).

[0131] As used herein the term(s) network refers to digital cellular technologies such as Global System for Mobile Communications (GSM), General Packet Radio Service (GPRS), CDMA2000, Evolution-Data Optimized (EV-DO), Enhanced Data Rates for GSM Evolution (EDGE), Universal Mobile Telecommunications System (UMTS), Digital Enhanced Cordless Telecommunications (DECT), Digital AMPS (IS-136/TDMA), and Integrated Digital Enhanced Network (iDEN).

[0132] As used herein the term(s) video resolution refers to the size in pixels of the video recording frame size. For example 480p refers to a frame that is 480 pixels tall and 640 pixels wide containing a total of 307,200 pixels. Other examples include 720p and 1080p.

[0133] As used herein the term(s) video frame speed or fps refers to the frame rate of the video capture or playback. In most cases this is between 24 and 30 frames per second.

I. Exemplary User Device

[0134] FIG. 1 depicts a block diagram of an exemplary user device 100 in accordance with exemplary embodiments of the present disclosure. The user device 100 can be a smartphone, tablet, subnotebook, laptop, personal computer, personal digital assistant (PDA), and/or any other suitable computing device that includes or can be operatively connected to a video capture device and can be programmed and/or configured to implement and/or interact with embodiments of a video messaging system. The user device 100 can include a processing device 104, such as a digital signal processor (DSP), microprocessor, and/or a microcontroller; memory/storage 106 in the form of a non-transitory computer-readable medium; a video capture unit 108, a display unit 110, a microphone 120, a speaker 118, a radio frequency transceiver 114, and a digital input/output interface 122. Some embodiments of the user device 100 can be implemented as a portable computing device and can include components, such as sensors 136, a subscriber identity module (SIM) card 116, and a power source 138.

[0135] The memory 106 can include any suitable, non-transitory computer-readable storage medium, e.g., read-only memory (ROM), erasable programmable ROM (EPROM), electrically-erasable programmable ROM (EEPROM), flash memory, and the like. In exemplary embodiments, an operating system 126 and applications 128 can be embodied as computer-readable/executable program code stored on the non-transitory computer-readable memory 106 and implemented using any suitable, high or low level computing language and/or platform, such as, e.g., Java, C, C++, C#, assembly code, machine readable language, and the like. In some embodiments, the applications 128 can include a video capture and processing engine 132 and/or a video messaging application 130 configured to interact with the video capture unit 108, the microphone, and/or the speaker to record video (including audio) or to play back video (including audio). While memory is depicted as a single component, those skilled in the art will recognize that the memory can be formed from multiple components and that separate non-volatile and volatile memory devices can be used.

[0136] The processing device 104 can include any suitable single- or multiple-core microprocessor of any suitable architecture that is capable of implementing and/or facilitating an operation of the user device 100. For example, the processing device 104 can be used to perform a video capture operation, transmit the captured video (e.g., via the RF transceiver 114), transmit/receive metadata associated with the video (e.g., via the RF transceiver 114), and display data/information including GUIs 112 of the user interface 134, captured or received videos, and the like. The processing device 104 can be programmed and/or configured to execute the operating system 126 and applications 128 (e.g., video capture and processing engine 132 and video messaging application 130) to implement one or more processes to perform an operation. The processing device 104 can retrieve information/data from and store information/data to the storage device 106. For example, the processing device 104 can retrieve and/or store captured or received videos, metadata associated with captured or received videos, and/or any other suitable information/data that can be utilized by the user device 100 and/or the user.

[0137] The RF transceiver 114 can be configured to transmit and/or receive wireless transmissions via an antenna 115. For example, the RF transceiver 114 can be configured to transmit data/information, such as one or more videos captured by the video capture unit and/or metadata associated with the captured video, directly or indirectly, to one or more servers and/or one or more other user devices, and/or to receive videos and/or metadata associated with the videos, directly or indirectly, from one or more servers and/or one or more user devices. The RF transceiver 114 can be configured to transmit and/or receive information at a specified frequency and/or according to a specified sequence and/or packet arrangement.

[0138] The display unit 110 can render user interfaces, such as graphical user interfaces 112 to a user and in some embodiments can provide a mechanism that allows the user to interact with the GUIs 112. For example, a user may interact with the user device 100 through display unit 110, which, in some embodiments, may be implemented as a liquid crystal touch-screen (or haptic) display, a light emitting diode touch-screen display, and/or any other suitable display device, which may display one or more user interfaces (e.g., GUIs 112) that may be provided in accordance with exemplary embodiments.

[0139] The power source 138 can be implemented as a battery or capacitive elements configured to store an electric charge and power the user device 100. In exemplary embodiments, the power source 138 can be a rechargeable power source, such as a battery or one or more capacitive elements configured to be recharged via a connection to an external power supply.

[0140] In exemplary embodiments, video messaging applications 130 can include a codec 140 for compressing and decompressing video files as described herein. While codec 140 is shown as separate and distinct, exemplary embodiments may be incorporated and integrated into one or more applications such as video messaging application 130 or video capture and processing engine 132.

[0141] In some embodiments, the user device can implement one or more processes described herein via an execution of the video capture and processing application 132 and/or an execution of one of the applications 128. For example, the user device 100 can be used for video messaging, can transcribe the audio of a video into machine-encoded data or text, can integrate supplemental data into the video based on the content of the audio (as transcribed), and/or can compress/decompress videos as described herein.

II. Exemplary Server

[0142] FIG. 2 depicts a block diagram of an exemplary server 200 in accordance with exemplary embodiments of the present disclosure. The server 200 includes one or more non-transitory computer-readable media for storing one or more computer-executable instructions or software for implementing exemplary embodiments. The non-transitory computer-readable media may include, but are not limited to, one or more types of hardware memory, non-transitory tangible media (for example, one or more magnetic storage disks, one or more optical disks, one or more flash drives, one or more solid state disks), and the like. For example, memory 206 included in the server 200 may store computer-readable and computer-executable instructions or software for implementing exemplary embodiments of a video messaging platform 220. The video messaging platform 220, in conjunction with video messaging applications 130 executed by user devices, can form a video messaging system.

[0143] The server 200 also includes configurable and/or programmable processor 202 and associated core(s) 204, and optionally, one or more additional configurable and/or programmable processor(s) 202' and associated core(s) 204' (for example, in the case of computer systems having multiple processors/cores), for executing computer-readable and computer-executable instructions or software stored in the memory 206 or storage 224, such as the video messaging platform 220 and/or other programs. Execution of the video messaging platform 220 by the processor 202 can allow users to generate accounts with user profile information, upload video messages to the server, and allow the server to transmit messages to user devices (e.g., of account holders). In some embodiments, the video messaging platform can provide speech recognition services to transcribe an audio component of a video, can generate and/or add supplemental data to videos, and/or can concatenate videos to form video message threads (e.g., by associating, linking, or integrating video messages associated with a thread together). Processor 202 and processor(s) 202' may each be a single core processor or multiple core (204 and 204') processor.

[0144] Virtualization may be employed in the server 200 so that infrastructure and resources in the server may be shared dynamically. A virtual machine 214 may be provided to handle a process running on multiple processors so that the process appears to be using only one computing resource rather than multiple computing resources. Multiple virtual machines may also be used with one processor.

[0145] Memory 206 may include a computer system memory or random access memory, such as DRAM, SRAM, EDO RAM, and the like. Memory 206 may include other types of memory as well, or combinations thereof.

[0146] The server 200 may also include one or more storage devices 216, such as a hard-drive, CD-ROM, or other computer readable media, for storing data and computer-readable instructions and/or software such as the video messaging platform 220. Exemplary storage device 216 may also store one or more databases for storing any suitable information required to implement exemplary embodiments. For example, exemplary storage device 216 can store one or more databases 218 for storing information, such as user accounts and profiles, videos, video message threads, metadata associated with videos, and/or any other information to be used by embodiments of the video messaging platform 220. The databases may be updated manually or automatically at any suitable time to add, delete, and/or update one or more data items in the databases.

[0147] The server 200 can include a network interface 208 configured to interface via one or more network devices 214 with one or more networks, for example, Local Area Network (LAN), Wide Area Network (WAN) or the Internet through a variety of connections including, but not limited to, standard telephone lines, LAN or WAN links (for example, 802.11, T1, T3, 56 kb, X.25), broadband connections (for example, ISDN, Frame Relay, ATM), wireless connections, controller area network (CAN), or some combination of any or all of the above. The network interface 208 may include a built-in network adapter, network interface card, PCMCIA network card, card bus network adapter, wireless network adapter, USB network adapter, modem or any other device suitable for interfacing the server 200 to any type of network capable of communication and performing the operations described herein. Moreover, the server 200 may be any computer system, such as a workstation, desktop computer, server, laptop, handheld computer, tablet computer (e.g., the iPad.TM. tablet computer), mobile computing or communication device (e.g., the iPhone.TM. communication device), internal corporate devices, or other form of computing or telecommunications device that is capable of communication and that has sufficient processor power and memory capacity to perform the operations described herein.

[0148] The server 200 may run any operating system 210, such as any of the versions of the Microsoft.RTM. Windows.RTM. operating systems, the different releases of the Unix and Linux operating systems, any version of the MacOS.RTM. for Macintosh computers, any embedded operating system, any real-time operating system, any open source operating system, any proprietary operating system, or any other operating system capable of running on the server and performing the operations described herein. In exemplary embodiments, the operating system 210 may be run in native mode or emulated mode. In an exemplary embodiment, the operating system 210 may be run on one or more cloud machine instances.

III. Exemplary Network Environment

[0149] FIG. 3 depicts an exemplary network environment 300 for implementing exemplary embodiments of the present disclosure. The system 300 can include a network 305, devices 200, a server 330, and database(s) 340. Each of the devices 200, server 330, and database(s) 340 is in communication with the network 305.

[0150] In an example embodiment, one or more portions of network 305 may be an ad hoc network, an intranet, an extranet, a virtual private network (VPN), a local area network (LAN), a wireless LAN (WLAN), a wide area network (WAN), a wireless wide area network (WWAN), a metropolitan area network (MAN), a portion of the Internet, a portion of the Public Switched Telephone Network (PSTN), a cellular telephone network, a wireless network, a WiFi network, a WiMax network, any other type of network, or a combination of two or more such networks.

[0151] The devices 200 may comprise, but are not limited to, work stations, computers, general purpose computers, Internet appliances, hand-held devices, wireless devices, portable devices, wearable computers, cellular or mobile phones, portable digital assistants (PDAs), smart phones, tablets, ultrabooks, netbooks, laptops, desktops, multi-processor systems, microprocessor-based or programmable consumer electronics, network PCs, mini-computers, and the like.

[0152] The devices 200 may also include various external or peripheral devices to aid in performing video messaging. Examples of peripheral devices include, but are not limited to, monitors, touch-screen monitors, clicking devices (e.g., mouse), input devices (e.g., keyboard), cameras, video cameras, and the like.

[0153] Each of the devices 200 may connect to network 305 via a wired or wireless connection. Each of the devices 200 may include one or more applications or systems such as, but not limited to, embodiments of the video capture and processing engine, embodiments of the video messaging application 130, and the like. In an example embodiment, the device 200 may perform all the functionalities described herein.

[0154] In other embodiments, the video concatenation system may be included on all devices 200, and the server 330 performs the functionalities described herein. In yet another embodiment, the devices 200 may perform some of the functionalities, and server 330 performs the other functionalities described herein. For example, devices 200 may generate the user interface 132 including a graphical representation 112 for viewing and editing video files. Furthermore, devices 200 may use the video capture device 108 to record videos and may also transmit the videos to the server 330.

[0155] The database(s) 340 may store data including video files, video message files, video message threads, video metadata, user account information, and supplemental data in connection with the video concatenation system.

[0156] Each of the devices 200, server 330, and database(s) 340 is connected to the network 305 via either a wired connection or a wireless connection. Server 330 comprises one or more computers or processors configured to communicate with the devices 200 and database(s) 340 via network 305. Server 330 hosts one or more applications or websites accessed by devices 200 and/or facilitates access to the content of database(s) 340. Server 330 also may include system 100 described herein. Database(s) 340 comprise one or more storage devices for storing data and/or instructions (or code) for use by server 330 and/or devices 200. Database(s) 340 and server 330 may be located at one or more geographically distributed locations from each other or from the devices 200. Alternatively, database(s) 340 may be included within server 330.

IV. Exemplary Video Messaging Environment

[0157] FIGS. 4-14 illustrate exemplary elements of a video messaging environment in which a user device in the form of a mobile device (e.g., a smartphone) executes an embodiment of the video messaging application 130 in accordance with exemplary embodiments of the present disclosure.

[0158] FIG. 4 shows mobile device 400 and user interface 405 of an embodiment of the video messaging application 130. The mobile device 400 includes a display 414, a front-facing camera 404 and a rear-facing camera 410 (e.g., video capturing units), a microphone 412, a device "home" button 426, and a device "undo" button 424. Rendered on the display 414 are elements of the user interface (UI) generated by the video messaging application 130. Specifically, the UI renders on the display 414 a screen selection header (containing 438 to 416), video previews of existing video messages 436 and 430, and a record icon 428. The header contains sub-elements including public posts 438, direct posts 402, notifications 406, contacts database 408, and settings 416. Additional information about each video message thread is also presented, such as an original user 432 (the user that originates the video message), a number of video responses 420, a respond or comment icon 422, a view counter 434 indicating a number of times a message has been viewed, and names of participants in a message thread 418.

[0159] Exemplary embodiments of the video messaging application can take advantage of the processing power of the mobile device 400 and interface with cloud based servers (e.g., server 330 shown in FIG. 3) to use the processing power of the servers. FIG. 6 shows one example embodiment of a basic structure of server based elements 600 that can be implemented or executed on a server to implement at least a portion of the video messaging platform. As a non-limiting example, the servers can be implemented as the Amazon S3 (Simple Storage Service) server 620 and the Amazon EC2 (Elastic Compute) server 610. In other embodiments of the present disclosure, other elements having similar capabilities can be used.

[0160] The S3 or Simple Storage Service server 620 can store thumbnail preview images of video messages in a specified file directory/structure (e.g., the "/img" file folder) and can store video messages in a specified file directory/structure (e.g., the "/vid" file folder). Once the preview images and videos are stored in the S3 server 620, an exemplary embodiment of the video messaging application 130 can utilize a distribution network, such as Amazon's CloudFront distribution network (CDN), to deliver the preview images and video messages to other servers located in the same or different geographic areas (e.g., copies of the preview images and video messages can be stored on multiple servers in multiple locations or data centers so that the previews and videos are available globally instantaneously). Therefore, once a video is posted (uploaded) to a server implementing embodiments of the video messaging platform by a user at one geographic location (e.g., in the United States), another user in another geographic location (e.g., China) may quickly access and view the video message without his or her device having to communicate with the server 620 in the United States to which the video message was originally uploaded. Instead, the user's device can request and get the thumbnail preview and video locally from a server located near the user (e.g., from the nearest server storing a copy of the video message), thus reducing the response time.
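
By way of non-limiting illustration only, the following sketch makes the storage layout and the "nearest server" idea concrete. The edge names, their coordinates, the file extensions, and the functions `object_keys`, `haversine_km`, and `nearest_edge` are all assumptions introduced for this example. In practice a distribution network typically performs this routing itself, so the explicit distance calculation below is only a stand-in for that behavior.

```python
import math
from typing import Dict, Tuple

# Hypothetical edge servers with approximate (latitude, longitude) in degrees.
EDGES: Dict[str, Tuple[float, float]] = {
    "us-east": (39.0, -77.5),
    "eu-west": (53.3, -6.3),
    "ap-east": (22.3, 114.2),
}


def object_keys(video_id: str) -> Dict[str, str]:
    """Build storage paths under the "/img" and "/vid" folders named in the text.

    The ".jpg" and ".mp4" extensions are assumptions.
    """
    return {
        "thumbnail": f"/img/{video_id}.jpg",
        "video": f"/vid/{video_id}.mp4",
    }


def haversine_km(a: Tuple[float, float], b: Tuple[float, float]) -> float:
    """Great-circle distance between two (lat, lon) points, in kilometres."""
    lat1, lon1, lat2, lon2 = map(math.radians, (a[0], a[1], b[0], b[1]))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def nearest_edge(user_location: Tuple[float, float]) -> str:
    """Return the name of the hypothetical edge server closest to the user."""
    return min(EDGES, key=lambda name: haversine_km(user_location, EDGES[name]))
```

For example, `nearest_edge((31.2, 121.5))` selects the hypothetical "ap-east" entry for a user near Shanghai, so the request for `/vid/<id>.mp4` would not need to travel to the server to which the video was originally uploaded.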

[0161] The EC2 or Elastic Compute server 610 can be responsible for directing data traffic between user devices and the S3 server 620 and executing video file operations such as posting, deleting, appending to a message thread, creating video message addresses (URLs), and encryption. There are four sections or domains to the EC2 server 610. Each of these sections is responsible for executing and hosting the functions therein.

[0162] The "api.movy.co" section or domain handles communications between user devices and the S3 server 620 and contacts database (e.g., MySQL). The api.movy.co section or domain also conducts posting, appending videos to the message thread (e.g., concatenating videos), and deleting videos. Furthermore, the api.movy.co section or domain can create and encrypt a unique URL for each uploaded video and user.
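
By way of non-limiting illustration only, the following sketch shows one way a unique, tamper-evident URL could be produced for each uploaded video and user. The disclosure does not specify the scheme; this example substitutes a random token plus an HMAC-SHA256 signature for the "encryption" mentioned above, which is an assumption. The `/v` path, the `u` and `sig` query parameters, the key, and the function names `make_video_url` and `verify_video_url` are likewise hypothetical.

```python
import hashlib
import hmac
import secrets
from urllib.parse import parse_qs, urlencode, urlparse

# Assumption: a secret known only to the server; a real deployment would load it securely.
SECRET_KEY = b"demo-key-not-for-production"


def _signature(user_id: str, video_id: str, token: str) -> str:
    payload = f"{user_id}:{video_id}:{token}".encode()
    return hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()


def make_video_url(user_id: str, video_id: str, base: str = "https://movy.co/v") -> str:
    """Create a URL that is unique per call and bound to one user and one video."""
    token = secrets.token_urlsafe(16)  # unguessable random component
    query = urlencode({"u": user_id, "sig": _signature(user_id, video_id, token)})
    return f"{base}/{video_id}/{token}?{query}"


def verify_video_url(url: str) -> bool:
    """Check that the user, video, and token in the URL match its signature."""
    parts = urlparse(url)
    query = parse_qs(parts.query)
    try:
        video_id, token = parts.path.rstrip("/").split("/")[-2:]
        user_id = query["u"][0]
        sig = query["sig"][0]
    except (KeyError, ValueError, IndexError):
        return False
    expected = _signature(user_id, video_id, token)
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(sig.encode(), expected.encode())
```

A signed URL of this kind can be checked by the server without a database lookup, but it does not hide the identifiers it carries; an embodiment that requires the URL contents to be confidential would need actual encryption.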

[0163] The "mailer.movy.co" section or domain handles notifications used by the video messaging system to notify recipients (e.g., via email) that a new video message has been sent to them for retrieval and/or viewing via embodiments of the video messaging application.

[0164] The "gcm.movy.co" section or domain handles notifications received by recipients within embodiments of the video messaging application itself.
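
By way of non-limiting illustration only, the following sketch shows how a new-message event could be fanned out to the two notification paths described above: e-mail via the mailer domain and in-application notification via the gcm domain. The `Recipient` fields, the message wording, and the function `plan_notifications` are assumptions introduced for this example; no actual e-mail or push service is called.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Recipient:
    """Hypothetical recipient record."""
    name: str
    email: Optional[str] = None         # used by the e-mail ("mailer") path
    device_token: Optional[str] = None  # used by the in-application ("gcm") path


def plan_notifications(sender: str,
                       recipients: List[Recipient]) -> List[Tuple[str, str, str]]:
    """Return (channel, address, text) tuples, one per available channel."""
    text = f"{sender} sent you a new video message"
    plan: List[Tuple[str, str, str]] = []
    for r in recipients:
        if r.device_token:
            plan.append(("gcm", r.device_token, text))
        if r.email:
            plan.append(("mailer", r.email, text))
    return plan
```

A recipient with only an e-mail address receives only the mailer notification, which matches the case of a recipient who does not have the application installed.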

[0165] The "movy.co" section or domain is a parent domain to the above three domains (e.g., api.movy.co, mailer.movy.co, gcm.movy.co) and also hosts a web viewer that allows recipients who do not have the video messaging application on their mobile device to use an embodiment of the video messaging system to view a video message.

[0166] In exemplary embodiments, eight main operations can provide functions of exemplary embodiments of the video messaging application. These operations can include device executed actions, server executed actions, user actions, and/or a combination thereof.

[0167] The first of these operations provides for creating and uploading a video message to the public stream. FIG. 7 is a flowchart illustrating the process to post a video to the public stream 700 and background steps that can be used to create this public video message. FIG. 5 shows symbol definitions used in flowcharts of the present disclosure. The operation 520 is defined as an action initiated and executed by the device. The operation 540 is defined as an action initiated by the user on the device. The operation 560 is defined as a subroutine of actions initiated and executed by the device. The operation 580 is defined as a subroutine of actions initiated and executed jointly by the device and a server element. The process begins at step 702. In operation 704, the user device receives an input in the form of the user tapping on the record icon, which activates a video capture unit of the user device in video mode in operation 706. In operation 708, the user device records a video message. In operation 710, the video message is stored locally on the user device. Alternatively, an existing video already stored on the user device can be uploaded to the server from the user device. In operation 712, the user device creates a preview thumbnail image. In some embodiments, the preview thumbnail can be created by one of the servers and/or can be created based on an interaction between the user device and the servers (e.g., with the help of the content delivery network, CDN). The video can be uploaded to the server (e.g., the Amazon S3 server 620). In operation 714, an upload task is created as a service. In operation 716, with the help of the CDN, the video message is uploaded to the S3 server 620. In operation 718, if the upload process is interrupted for any reason (e.g., the user device being turned off, an interruption in network or WiFi reception, etc.), the video messaging application will control the user device to reconnect with the server to check if the upload process has been completed. If it has not, the user device continues to upload the video to completion in operation 716. In operation 720, the video is added to a video message stream with the help of an API server service, and, in operation 722, the server notifies (e.g., with the help of the gcm and mailer server services) followers of the user that created the video message that a new post has been made. In operation 724, the task is marked as uploaded after the video is added into the video message stream.
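
By way of non-limiting illustration only, the following sketch models the check-and-resume behavior of operations 716 through 724 with an in-memory stand-in for the server. The class `FakeServer`, its methods, the chunk size, the retry limit, and the function `upload_with_resume` are assumptions introduced for this example; the follower notification of operation 722 is omitted.

```python
from typing import Dict, Iterable, List


class FakeServer:
    """In-memory stand-in for the storage and API services (hypothetical)."""

    def __init__(self, fail_on_calls: Iterable[int] = ()):
        self.received: Dict[str, bytearray] = {}
        self.stream: List[str] = []
        self._calls = 0
        self._fail_on = set(fail_on_calls)  # 1-based call numbers that fail

    def put_chunk(self, video_id: str, offset: int, chunk: bytes) -> None:
        self._calls += 1
        if self._calls in self._fail_on:
            raise ConnectionError("simulated network interruption")
        buf = self.received.setdefault(video_id, bytearray())
        if offset != len(buf):
            raise ValueError("unexpected offset")
        buf.extend(chunk)

    def bytes_received(self, video_id: str) -> int:
        return len(self.received.get(video_id, b""))

    def add_to_stream(self, video_id: str) -> None:
        self.stream.append(video_id)


def upload_with_resume(server: FakeServer, video_id: str, data: bytes,
                       chunk_size: int = 4, max_attempts: int = 5) -> str:
    """Upload in chunks; after an interruption, ask the server how far it got."""
    for _ in range(max_attempts):
        offset = server.bytes_received(video_id)   # operation 718: check progress
        try:
            while offset < len(data):              # operation 716: upload
                chunk = data[offset:offset + chunk_size]
                server.put_chunk(video_id, offset, chunk)
                offset += len(chunk)
        except ConnectionError:
            continue                               # reconnect and check again
        server.add_to_stream(video_id)             # operation 720: add to stream
        return "uploaded"                          # operation 724: mark the task
    return "pending"
```

Asking the server for the number of bytes already received lets the device resume from that offset instead of restarting the upload, and the task is marked as uploaded only after the video has been added to the stream.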