Augmented Reality Guidance For Medical Devices

Chiu; Chia-Hung; et al.

U.S. patent application number 16/403454, for augmented reality guidance for medical devices, was filed with the patent office on 2019-05-03 and published on 2019-11-07. The applicant listed for this patent is MEDTRONIC MINIMED, INC. The invention is credited to Chia-Hung Chiu, Rebecca K. Gottlieb, Carol A. Jerome, and Kenny J. Long.

| Publication Number | 20190341149 |

| Application Number | 16/403454 |


| Family ID | 68384517 |

| Publication Date | 2019-11-07 |


| United States Patent Application | 20190341149 |

| Kind Code | A1 |

| Chiu; Chia-Hung; et al. | November 7, 2019 |

AUGMENTED REALITY GUIDANCE FOR MEDICAL DEVICES

Abstract

Medical devices and related augmented reality systems and methods are provided. A method of facilitating operation of a medical device involves identifying a current state of a user interface of the medical device based at least in part on a portion of the medical device captured by an imaging device, generating guidance information pertaining to the current state of the user interface, and presenting a guidance overlay including the guidance information pertaining to the current state of the user interface using augmented reality.

| Inventors: | Chiu; Chia-Hung (Pasadena, CA); Gottlieb; Rebecca K. (Culver City, CA); Jerome; Carol A. (Mission Hills, CA); Long; Kenny J. (Simi Valley, CA) |
|---|---|
| Applicant: | MEDTRONIC MINIMED, INC. |
| Family ID: | 68384517 |
| Appl. No.: | 16/403454 |
| Filed: | May 3, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62791196 | Jan 11, 2019 | |
| 62668022 | May 7, 2018 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G16H 20/30 20180101; G06T 19/006 20130101; G16H 20/17 20180101; A61M 2205/50 20130101; G16H 20/60 20180101; A61M 5/14 20130101; A61M 5/1723 20130101; A61M 2205/502 20130101; G16H 10/60 20180101; G16H 40/63 20180101; G16H 40/67 20180101; A61M 2005/14208 20130101; A61M 2230/201 20130101; A61B 2090/365 20160201; A61B 90/36 20160201; A61M 2205/3553 20130101; G16H 30/40 20180101; G06K 9/00671 20130101 |

| International Class: | G16H 40/67 20060101 G16H040/67; G16H 40/63 20060101 G16H040/63; A61B 90/00 20060101 A61B090/00; A61M 5/172 20060101 A61M005/172 |

Claims

1. A method of facilitating operation of a medical device, the method comprising: identifying, by a computing device, a current state of a user interface of the medical device based at least in part on a portion of the medical device captured by an imaging device associated with the computing device; generating, by the computing device, guidance information pertaining to the current state of the user interface; and presenting, by the computing device, a guidance overlay including the guidance information pertaining to the current state of the user interface.

2. The method of claim 1, further comprising presenting a graphical representation of the portion of the medical device captured by the imaging device on a display associated with the computing device, wherein the guidance overlay visually overlies the portion of the medical device.

3. The method of claim 2, the portion of the medical device including the user interface, wherein presenting the guidance overlay comprises displaying the guidance overlay on the display adjacent to the graphical representation of the user interface.

4. The method of claim 1, further comprising obtaining, at the computing device, settings information corresponding to a current configuration of the medical device, wherein the guidance information is influenced by the current configuration.

5. The method of claim 1, further comprising identifying, at the computing device, a user objective to configure a feature of the medical device, wherein: generating the guidance information comprises determining a user action to facilitate configuring the feature based on the current state of the user interface; and presenting the guidance overlay comprises providing a graphical indication of the user action with respect to the user interface overlying the portion of the medical device.

6. The method of claim 5, the current state of the user interface comprising a current graphical user interface (GUI) display presented by the medical device, wherein determining the user action comprises determining the user action for advancing from the current GUI display to a different GUI display for configuring the feature of the medical device.

7. The method of claim 6, wherein identifying the current state of the user interface comprises recognizing the current GUI display from among a plurality of potential GUI displays associated with the medical device based at least in part on the portion of the medical device captured by the imaging device.

8. The method of claim 1, further comprising identifying, at the computing device, a user objective to understand the current state of the user interface, wherein: generating the guidance information comprises: obtaining user guide information associated with the medical device; and generating explanatory information pertaining to the current state of the user interface based at least in part on the user guide information; and presenting the guidance overlay comprises providing the explanatory information overlying the portion of the medical device.

9. The method of claim 8, the current state of the user interface comprising a current graphical user interface (GUI) display presented by the medical device, wherein: identifying the current state of the user interface comprises recognizing the current GUI display from among a plurality of potential GUI displays associated with the medical device based at least in part on the portion of the medical device captured by the imaging device; and generating the explanatory information comprises generating the explanatory information pertaining to the current GUI display based at least in part on the user guide information associated with the current GUI display.

10. The method of claim 1, further comprising identifying, at the computing device, a user objective to understand the current state of the user interface, wherein: the current state of the user interface comprises a graphical user interface (GUI) display depicting historical data associated with a patient; generating the guidance information comprises: obtaining, at the computing device, the historical data associated with the patient; and determining one or more metrics characterizing the historical data; and the guidance overlay includes the one or more metrics.

11. The method of claim 10, wherein identifying the current state of the user interface comprises recognizing the user interface as displaying the GUI display depicting historical data associated with the patient from among a plurality of potential GUI displays based at least in part on the portion of the medical device captured by the imaging device.

12. The method of claim 1, further comprising identifying, at the computing device, a user objective to understand the current state of the user interface, wherein: the current state of the user interface comprises a graphical user interface (GUI) display depicting historical data associated with a patient; generating the guidance information comprises: obtaining, at the computing device, the historical data associated with the patient; and determining a recommended action based at least in part on the historical data; and the guidance overlay includes a graphical indication of the recommended action.

13. The method of claim 12, the graphical indication comprising a GUI element selectable to configure the medical device to implement the recommended action, wherein the method further comprises the computing device configuring the medical device to autonomously perform the recommended action in response to selection of the GUI element.

14. The method of claim 12, the graphical indication comprising a GUI element selectable to configure at least one of the computing device and the medical device to automatically generate a user notification pertaining to the recommended action.

15. A method of providing guidance pertaining to a display associated with an infusion device, the method comprising: obtaining, from an imaging device, one or more images capturing at least a portion of the display; identifying, based on the one or more images, a current graphical user interface (GUI) display presented on the display from among a plurality of GUI displays associated with the infusion device; identifying a user objective; generating, by a computing device, guidance information pertaining to the current GUI display based on the user objective; and providing a guidance overlay including the guidance information pertaining to the current GUI display, wherein the guidance overlay visually overlies at least a portion of the infusion device.

16. The method of claim 15, wherein: the user objective comprises understanding the current GUI display; and generating the guidance information comprises: obtaining user guide information associated with the current GUI display; and generating explanatory information pertaining to the current GUI display using the user guide information; and the guidance information includes the explanatory information.

17. The method of claim 15, wherein: the user objective comprises adjusting a setting of the infusion device; and generating the guidance information comprises: obtaining user guide information associated with the infusion device; and determining a user action for navigating from the current GUI display to a different GUI display associated with the setting of the infusion device; and the guidance information indicates the user action.

18. The method of claim 17, wherein: the current GUI display includes a menu; and the guidance overlay is positioned adjacent to the menu.

19. The method of claim 15, further comprising displaying, at the computing device, a graphical representation of the portion of the display of the infusion device captured by the imaging device, wherein providing the guidance overlay comprises displaying the guidance overlay adjacent to the graphical representation of the portion of the display.

20. An electronic device comprising an imaging device and a display having displayed thereon an augmented reality graphical user interface (GUI) display including a guidance overlay, wherein: the guidance overlay visually overlies at least a portion of a medical device; the guidance overlay includes guidance information pertaining to a current state of a user interface of the medical device determined based at least in part on one or more images captured by the imaging device; and the one or more images include at least some of the user interface of the medical device.

Description

CROSS-REFERENCE TO RELATED APPLICATION(S)

[0001] This application claims the benefit of U.S. Provisional Patent Application Ser. No. 62/791,196, filed Jan. 11, 2019, and U.S. Provisional Patent Application Ser. No. 62/668,022, filed May 7, 2018, the entire contents of which are incorporated by reference herein. This application is also related to U.S. patent application Ser. No. ______ (Attorney Docket No. 009.5207US (C00019589.USU3)) and U.S. patent application Ser. No. ______ (Attorney Docket No. 009.5208US (C00019589US01)), both filed concurrently herewith.

TECHNICAL FIELD

[0002] Embodiments of the subject matter described herein relate generally to medical devices, and more particularly, embodiments of the subject matter relate to using augmented reality to improve patient experience or outcome.

BACKGROUND

[0003] The use of portable medical devices, such as infusion pump devices, continuous glucose monitors, and the like, has been increasing to improve the control or management of a patient's condition. Additionally, modern devices may incorporate or support any number of potential features and may utilize various user interfaces, which may be unique to a particular device. However, for some users, increased device complexity can be perceived as confusing, time-consuming, or inconvenient. Accordingly, it is desirable to provide methods and systems that facilitate maximizing device performance to achieve better patient outcomes while also improving user experience by reducing patient burdens. Furthermore, other desirable features and characteristics will become apparent from the subsequent detailed description and the appended claims, taken in conjunction with the accompanying drawings and this background.

BRIEF SUMMARY

[0004] Medical devices and related systems and operating methods are provided. An embodiment of a method of facilitating operation of a medical device involves identifying, by a computing device, a current state of a user interface of the medical device based at least in part on a portion of the medical device captured by an imaging device associated with the computing device, generating, by the computing device, guidance information pertaining to the current state of the user interface, and presenting, by the computing device, a guidance overlay including the guidance information pertaining to the current state of the user interface.

[0005] In another embodiment, a method of providing guidance pertaining to a display associated with a medical device, such as an infusion device, involves obtaining, from an imaging device, one or more images capturing at least a portion of the display, identifying, based on the one or more images, a current graphical user interface (GUI) display presented on the display from among a plurality of GUI displays associated with the infusion device, identifying a user objective, generating, by a computing device, guidance information pertaining to the current GUI display based on the user objective, and providing a guidance overlay including the guidance information pertaining to the current GUI display, wherein the guidance overlay visually overlies at least a portion of the infusion device.

[0006] In yet another embodiment, an apparatus for an electronic device is provided. The electronic device includes an imaging device and a display having displayed thereon an augmented reality graphical user interface (GUI) display including a guidance overlay, wherein the guidance overlay visually overlies at least a portion of a medical device, the guidance overlay includes guidance information pertaining to a current state of a user interface of the medical device determined based at least in part on one or more images captured by the imaging device, and the one or more images include at least some of the user interface of the medical device.

[0007] In another embodiment, a method of providing guidance to a patient using an electronic device having an imaging device associated therewith is provided. The method involves analyzing one or more images captured by the imaging device to identify image content indicative of a potential activity for the patient, determining, by a control system associated with the electronic device, one or more attributes for the potential activity, determining, by the control system, a predicted physiological response by the patient to the potential activity based at least in part on the one or more attributes, and providing, on a display associated with the electronic device, an augmented reality graphical user interface including a graphical indication influenced by the predicted physiological response.

[0008] In yet another embodiment, a method of providing guidance to a patient using an electronic device having an imaging device associated therewith involves obtaining an image of a meal using the imaging device, determining, by a control system associated with the electronic device, an estimated carbohydrate amount for the meal based at least in part on the image, determining, by the control system, a predicted physiological response by the patient to the meal based at least in part on the estimated carbohydrate amount, and providing, on a display associated with the electronic device, an augmented reality graphical user interface including a graphical indication influenced by the predicted physiological response.

[0009] In another embodiment, an apparatus for an electronic device having an imaging device and a display having displayed thereon an augmented reality graphical user interface (GUI) display is provided. The augmented reality GUI display includes a guidance overlay, wherein the guidance overlay visually overlies at least a portion of content captured by the imaging device, the content is indicative of a potential activity capable of influencing a physiological condition of a patient, the guidance overlay includes guidance information influenced by a predicted physiological response by the patient to the potential activity, and the predicted physiological response is determined based on recent data associated with the patient and one or more attributes for the potential activity.

[0010] In another embodiment, a method of operating an infusion device capable of delivering fluid to a patient is provided. The method involves analyzing one or more images captured by an imaging device to identify image content indicative of an activity capable of influencing the physiological condition of the patient and in response to identifying the activity based at least in part on the one or more images, automatically adjusting delivery of the fluid to the patient based at least in part on the activity.
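The following is a minimal sketch of the activity-triggered adjustment described above. It assumes an upstream image classifier (not specified here) that returns text labels for recognized objects. The label set, the target values, and the function name `target_for_activity` are all hypothetical. When any exercise-related label is detected, the glucose target used by the controller is temporarily raised. The normal target applies again once no such label is detected.

```python
# Hypothetical labels that an image classifier might emit for exercise-related content.
EXERCISE_INDICIA = {"treadmill", "gym", "bicycle", "dumbbell"}


def target_for_activity(detected_labels, normal_target, exercise_target):
    """Return the glucose target (mg/dL) to use given labels detected in the latest image.

    A higher target during exercise lowers insulin delivery by a target-based
    controller; the normal target is restored once no exercise indicia are seen.
    """
    if EXERCISE_INDICIA & set(detected_labels):
        return exercise_target
    return normal_target


print(target_for_activity(["person", "treadmill"], 120, 150))  # 150
print(target_for_activity(["plate", "fork"], 120, 150))        # 120
```

A set intersection keeps the check independent of label order. A practical system would likely also require the activity to persist across several images before switching targets.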

[0011] In one embodiment, a method of operating an infusion device capable of delivering fluid to a patient involves obtaining an image of a meal captured by an imaging device, determining, by a control system associated with the infusion device, an expected nutritional characteristic for the meal based at least in part on the image, determining, by the control system, a delivery adjustment for delivering the fluid based on the expected nutritional characteristic, and providing, on a display, an augmented reality graphical user interface including an overlay comprising a graphical indication of the delivery adjustment.

[0012] In yet another embodiment, an apparatus for an electronic device is provided. The electronic device includes an imaging device and a display having displayed thereon an augmented reality graphical user interface (GUI) display including a guidance overlay, wherein the guidance overlay visually overlies at least a portion of meal content captured by the imaging device, the guidance overlay includes guidance information influenced by a predicted physiological response by a patient to the meal content, and the guidance overlay includes a graphical indication of a delivery adjustment to an infusion device associated with the patient, wherein the delivery adjustment is determined based at least in part on the meal content captured by the imaging device and the delivery adjustment is influenced by the predicted physiological response by the patient to the meal content.

[0013] This summary is provided to introduce a selection of concepts in a simplified form that are further described below in the detailed description. This summary is not intended to identify key features or essential features of the claimed subject matter, nor is it intended to be used as an aid in determining the scope of the claimed subject matter.

BRIEF DESCRIPTION OF THE DRAWINGS

[0014] A more complete understanding of the subject matter may be derived by referring to the detailed description and claims when considered in conjunction with the following figures, wherein like reference numbers refer to similar elements throughout the figures, which may be illustrated for simplicity and clarity and are not necessarily drawn to scale.

[0015] FIG. 1 depicts an exemplary embodiment of an infusion system;

[0016] FIG. 2 depicts a plan view of an exemplary embodiment of a fluid infusion device suitable for use in the infusion system of FIG. 1 in one or more embodiments;

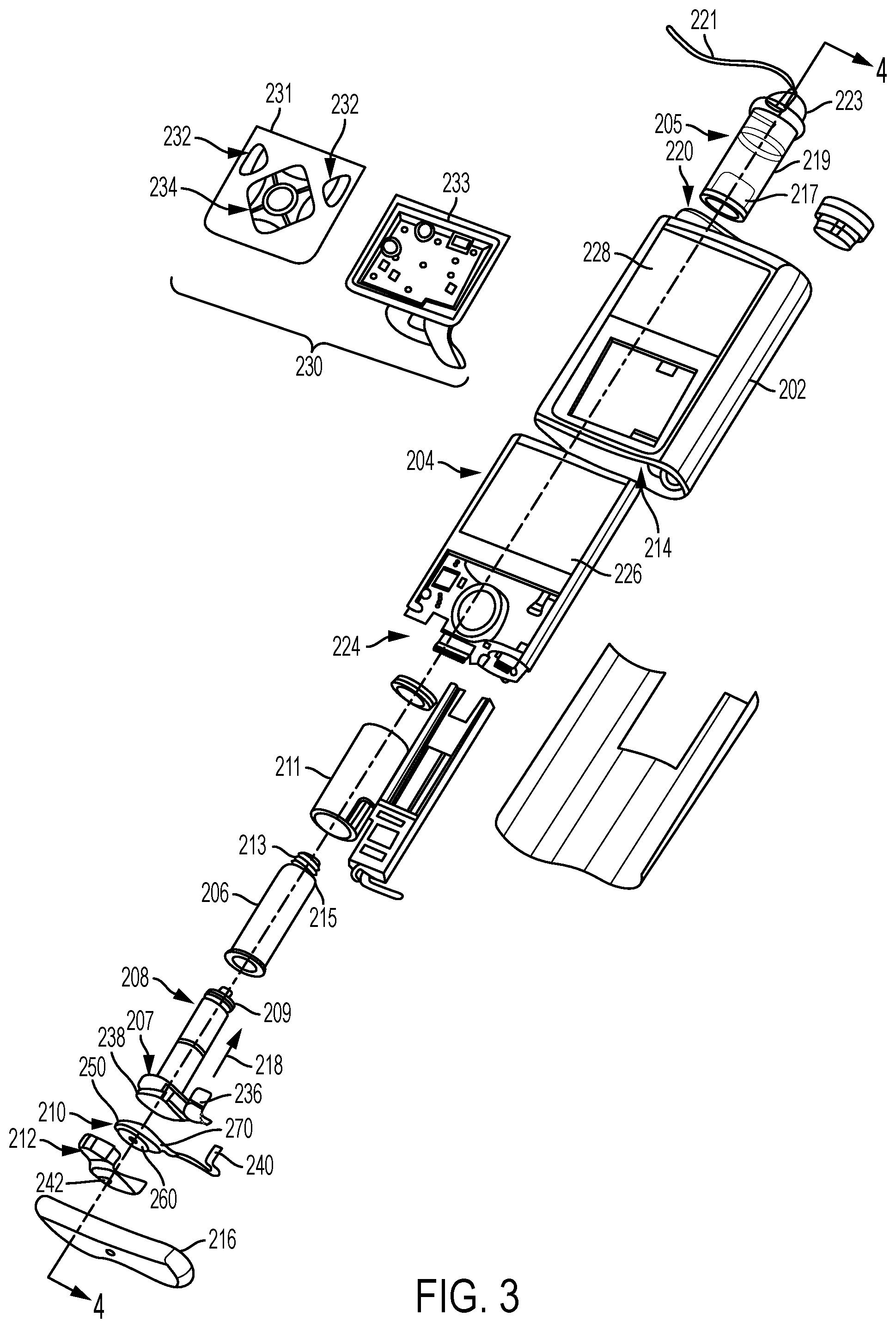

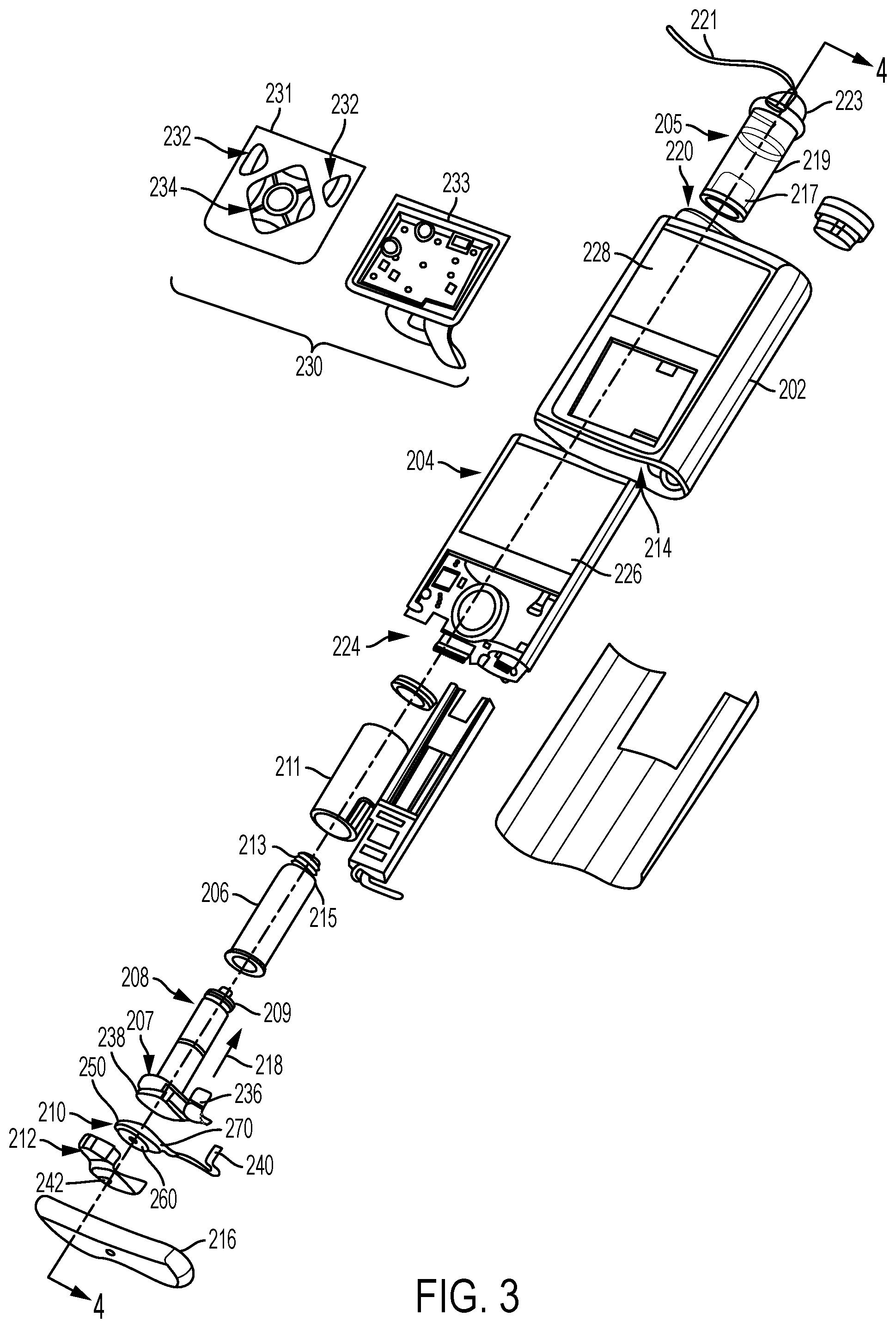

[0017] FIG. 3 is an exploded perspective view of the fluid infusion device of FIG. 2;

[0018] FIG. 4 is a block diagram of an exemplary infusion system suitable for use with a fluid infusion device in one or more embodiments;

[0019] FIG. 5 is a block diagram of an exemplary pump control system suitable for use in the infusion device in the infusion system of FIG. 4 in one or more embodiments;

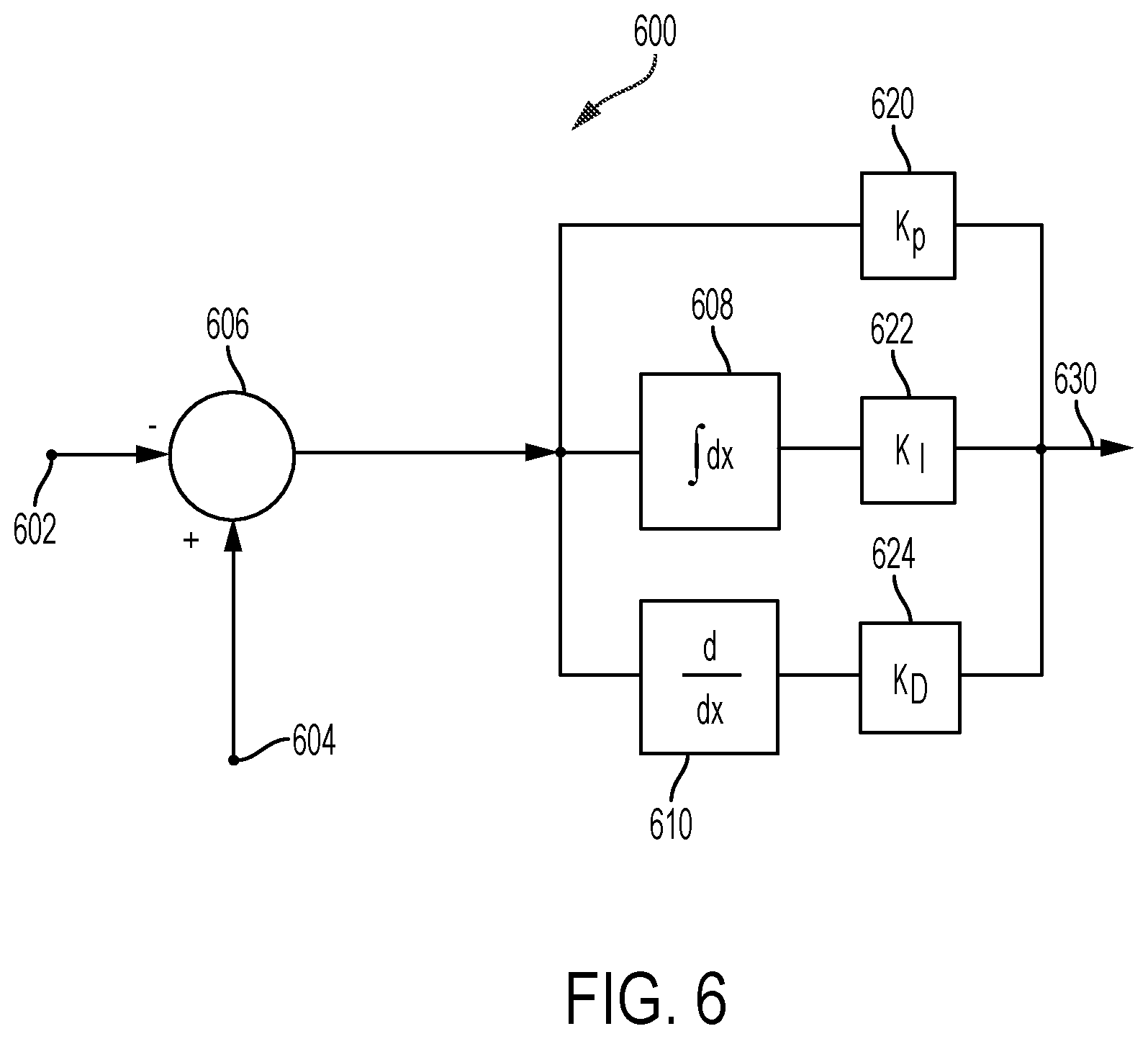

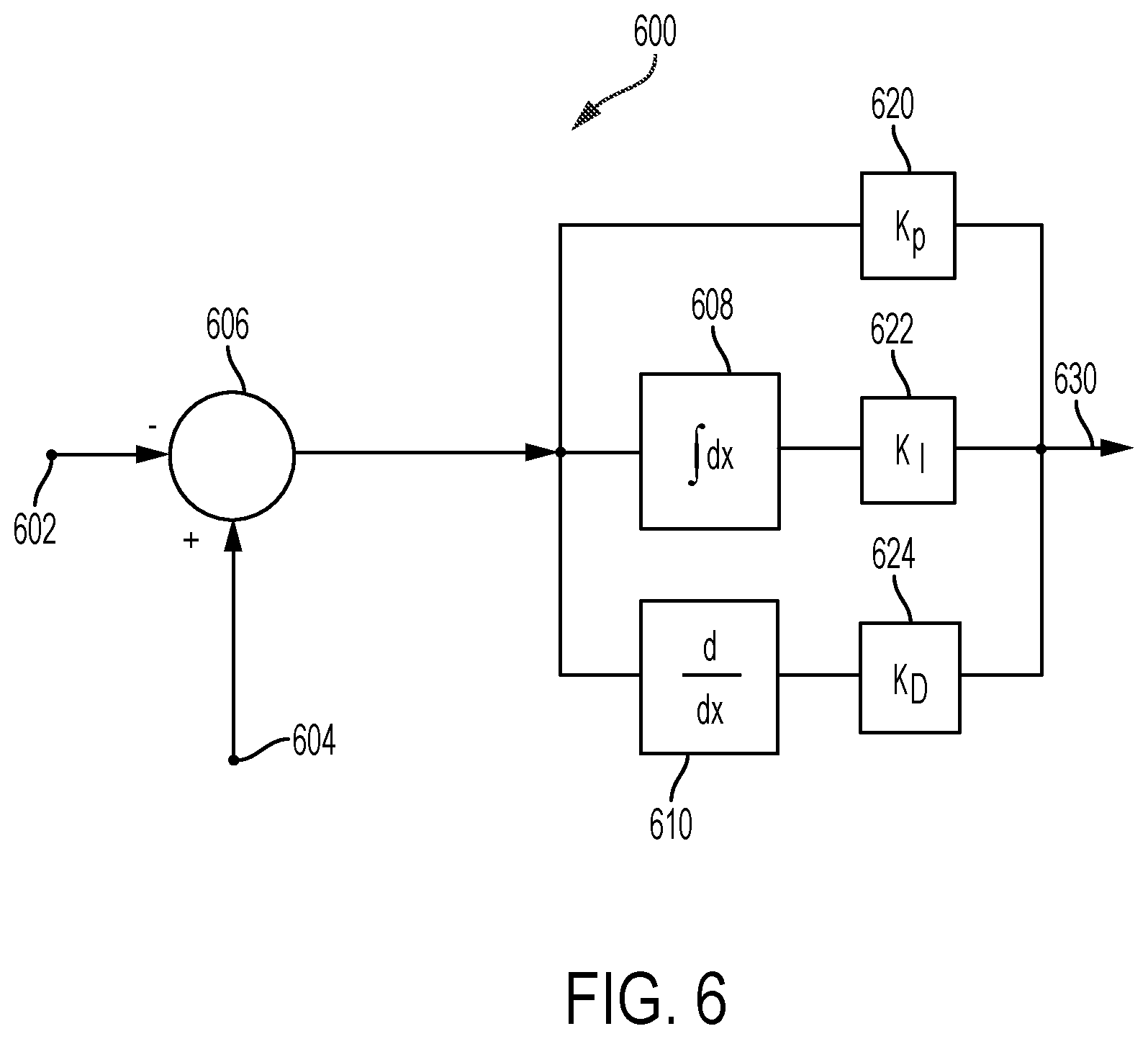

[0020] FIG. 6 is a block diagram of a closed-loop control system that may be implemented or otherwise supported by the pump control system in the fluid infusion device of FIGS. 4-5 in one or more exemplary embodiments;

[0021] FIG. 7 is a block diagram of an exemplary patient monitoring system;

[0022] FIG. 8 is a block diagram of an exemplary electronic device suitable for use in connection with a medical device in one or more exemplary embodiments;

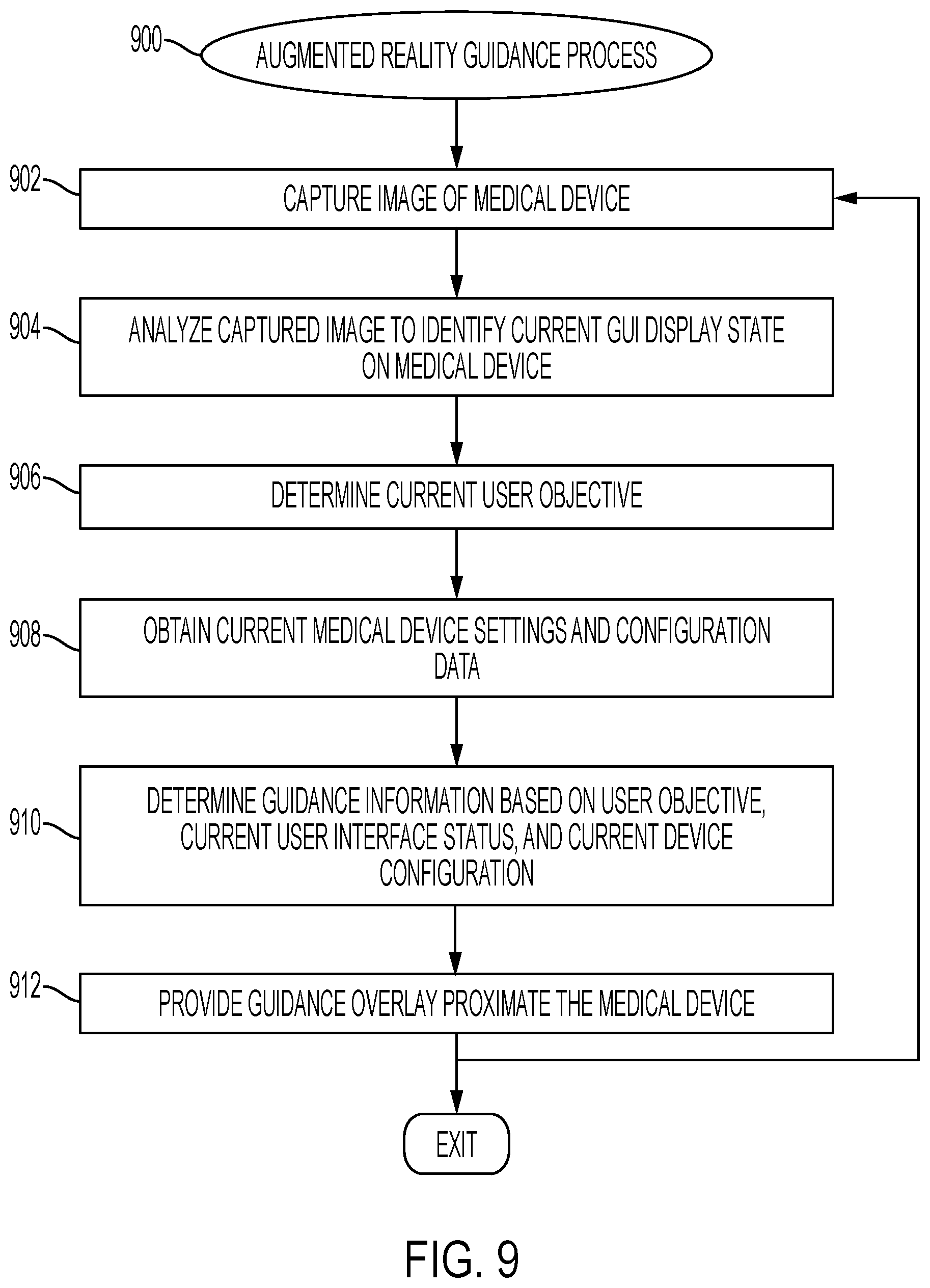

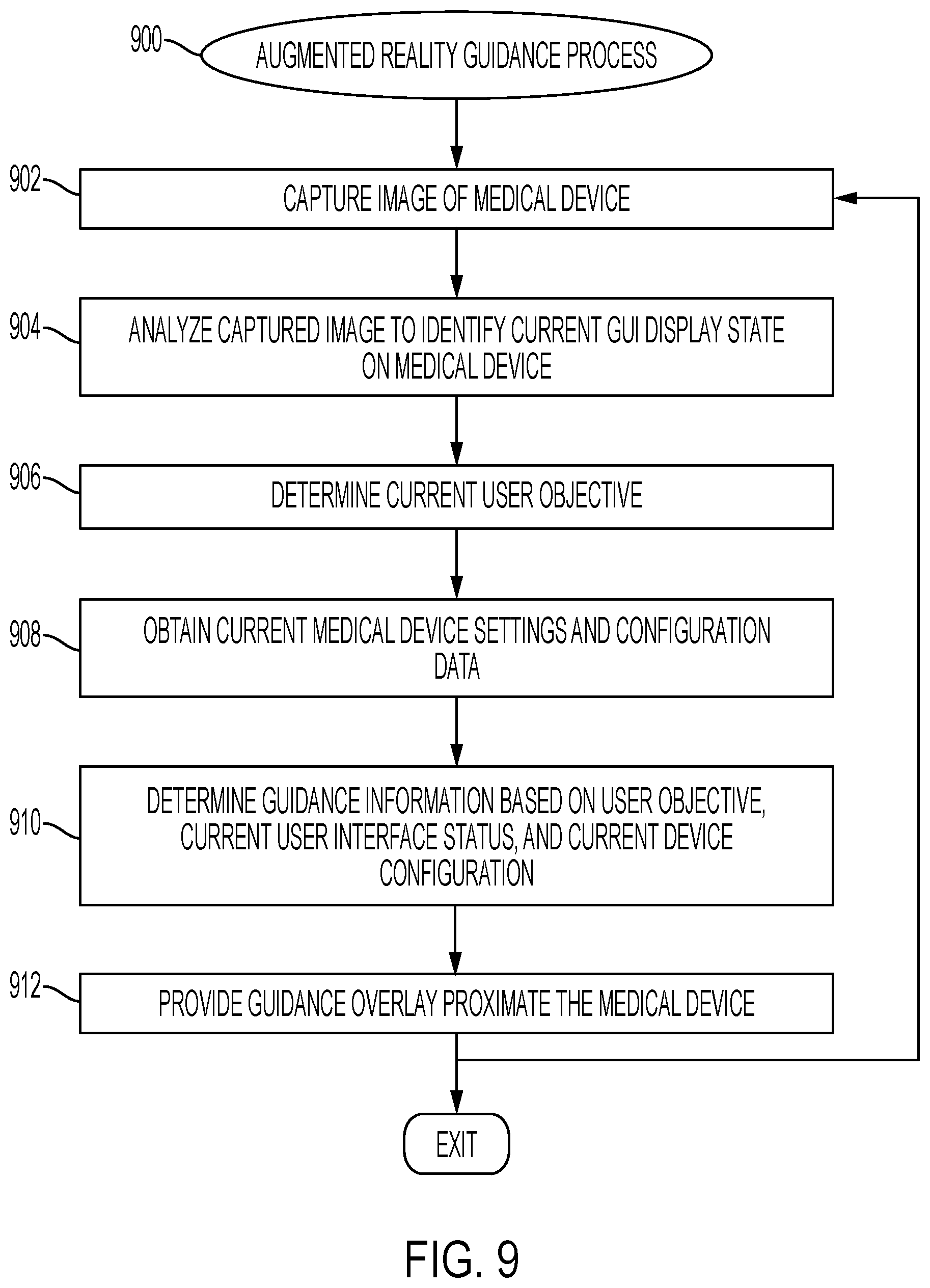

[0023] FIG. 9 is a flow diagram of an exemplary augmented reality guidance process suitable for use with a medical device in one or more exemplary embodiments;

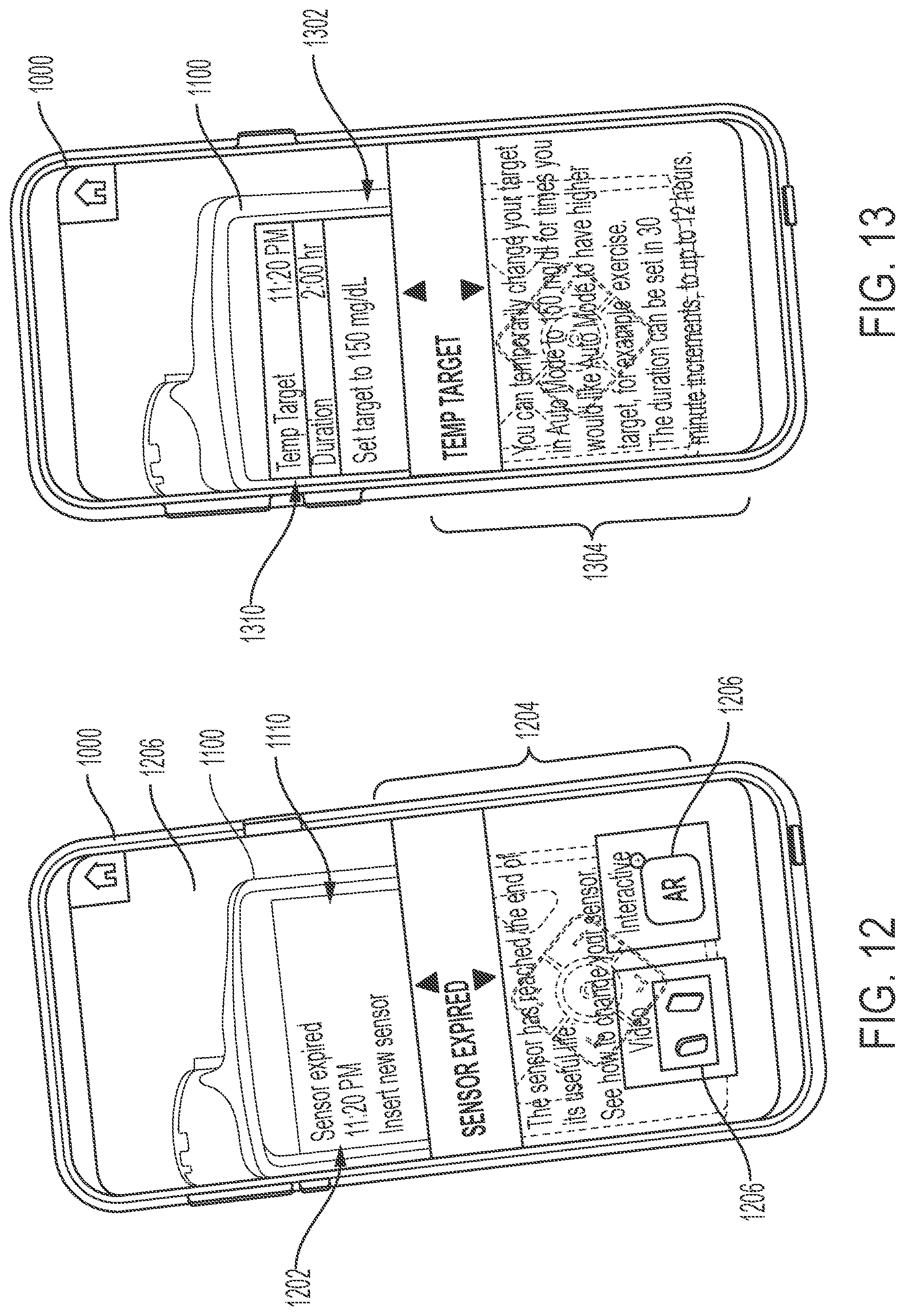

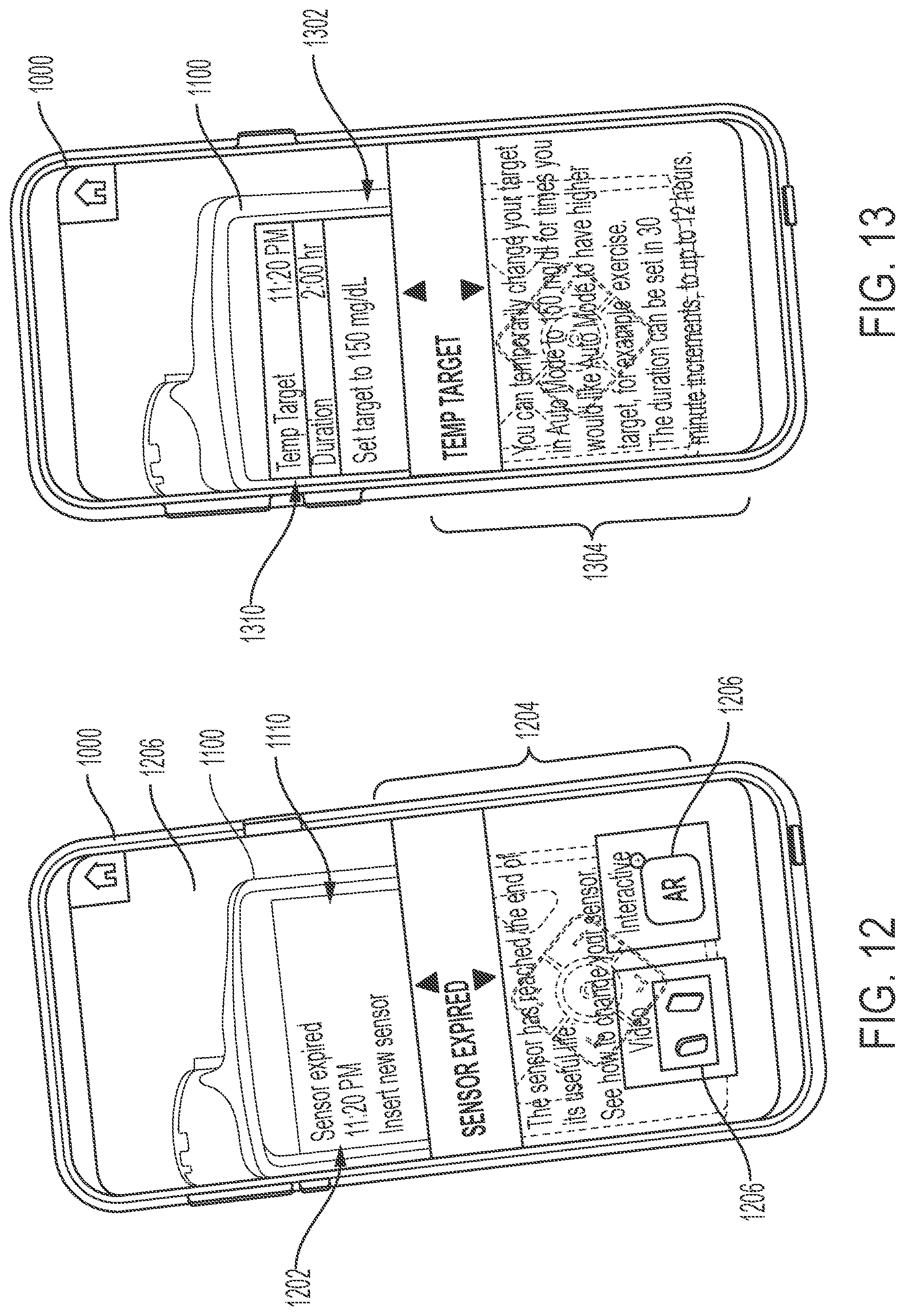

[0024] FIGS. 10-17 depict exemplary embodiments of augmented reality graphical user interface (GUI) displays suitable for presentation by an electronic device in connection with the augmented reality guidance process of FIG. 9;

[0025] FIG. 18 is a flow diagram of an exemplary proactive guidance process in one or more exemplary embodiments;

[0026] FIGS. 19-20 depict exemplary embodiments of augmented reality GUI displays suitable for presentation by an electronic device in connection with the proactive guidance process of FIG. 18;

[0027] FIG. 21 is a flow diagram of an exemplary proactive delivery adjustment process in one or more exemplary embodiments;

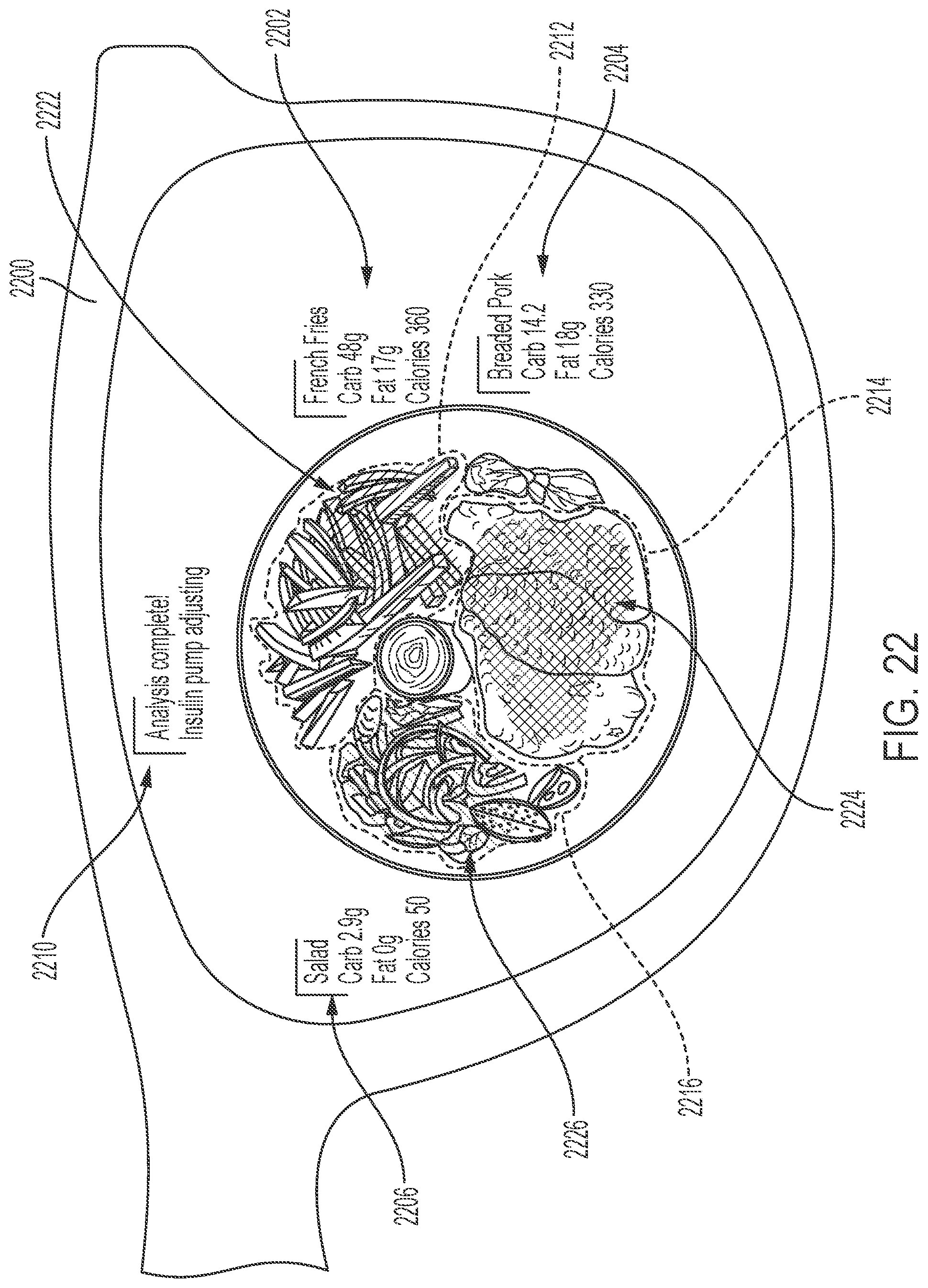

[0028] FIG. 22 is an exemplary augmented reality GUI display suitable for presentation by an electronic device in connection with the proactive delivery adjustment process of FIG. 21;

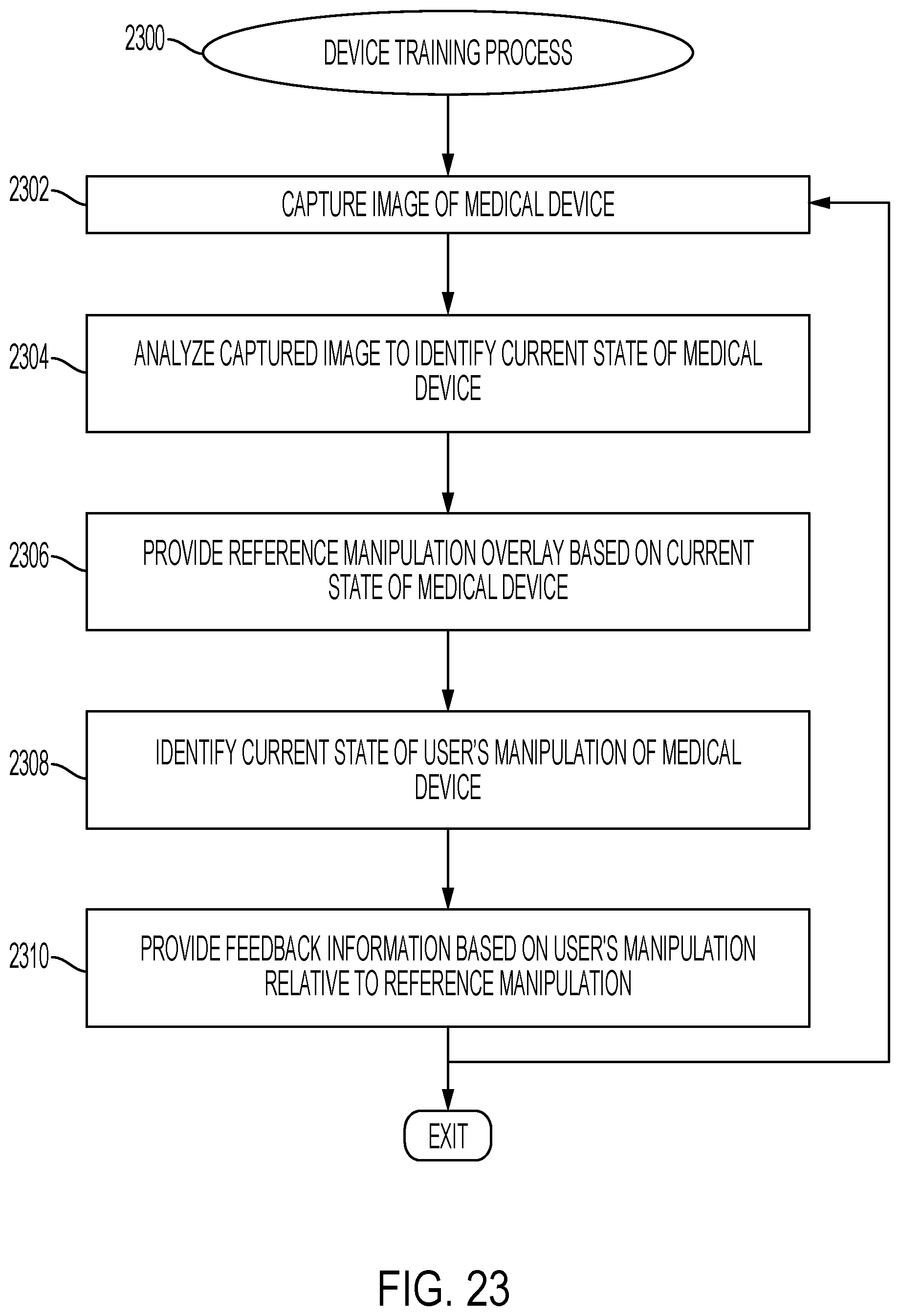

[0029] FIG. 23 is a flow diagram of an exemplary device training process in one or more exemplary embodiments;

[0030] FIG. 24 is an exemplary augmented reality GUI display suitable for presentation by an electronic device in connection with the device training process of FIG. 23; and

[0031] FIG. 25 is a flow diagram of an exemplary gamification process in one or more exemplary embodiments.

DETAILED DESCRIPTION

[0032] The following detailed description is merely illustrative in nature and is not intended to limit the embodiments of the subject matter or the application and uses of such embodiments. As used herein, the word "exemplary" means "serving as an example, instance, or illustration." Any implementation described herein as exemplary is not necessarily to be construed as preferred or advantageous over other implementations. Furthermore, there is no intention to be bound by any expressed or implied theory presented in the preceding technical field, background, brief summary or the following detailed description.

[0033] Exemplary embodiments of the subject matter described herein are implemented in conjunction with medical devices, such as portable electronic medical devices. Although many different applications are possible, the following description focuses on embodiments that incorporate a fluid infusion device (or infusion pump) as part of an infusion system deployment. That said, the subject matter described herein is not limited to infusion devices (or any particular configuration or realization thereof) and may be implemented in an equivalent manner in the context of other medical devices, such as continuous glucose monitoring (CGM) devices, injection pens (e.g., smart injection pens), and the like. For the sake of brevity, conventional techniques related to infusion system operation, insulin pump and/or infusion set operation, and other functional aspects of the systems (and the individual operating components of the systems) may not be described in detail here. Examples of infusion pumps may be of the type described in, but not limited to, U.S. Pat. Nos. 4,562,751; 4,685,903; 5,080,653; 5,505,709; 5,097,122; 6,485,465; 6,554,798; 6,558,320; 6,558,351; 6,641,533; 6,659,980; 6,752,787; 6,817,990; 6,932,584; and 7,621,893; each of which is herein incorporated by reference. More generally, the subject matter described herein can be utilized in the context of overall diabetes management or other physiological conditions independent of or without the use of an infusion device or other medical device (e.g., when oral medication is utilized), and the subject matter described herein is not limited to any particular type of medication.

[0034] Generally, a fluid infusion device includes a motor or other actuation arrangement that is operable to linearly displace a plunger (or stopper) of a reservoir provided within the fluid infusion device to deliver a dosage of fluid, such as insulin, to the body of a user. Dosage commands that govern operation of the motor may be generated in an automated manner in accordance with the delivery control scheme associated with a particular operating mode, and the dosage commands may be generated in a manner that is influenced by a current (or most recent) measurement of a physiological condition in the body of the user. For example, in a closed-loop operating mode, dosage commands may be generated based on a difference between a current (or most recent) measurement of the interstitial fluid glucose level in the body of the user and a target (or reference) glucose value. In this regard, the rate of infusion may vary as the difference between a current measurement value and the target measurement value fluctuates. For purposes of explanation, the subject matter is described herein in the context of the infused fluid being insulin for regulating a glucose level of a user (or patient); however, it should be appreciated that many other fluids may be administered through infusion, and the subject matter described herein is not necessarily limited to use with insulin.
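A simple proportional law can illustrate the closed-loop behavior described above, where the infusion rate varies with the difference between the current measurement and the target. This is a minimal sketch assuming a hypothetical fixed gain and basal rate. It is not necessarily the control scheme used by the closed-loop control system of FIG. 6.

```python
def dosage_rate(current_glucose, target_glucose, basal_rate, gain):
    """Return an insulin delivery rate (units/hour) for one control cycle.

    Hypothetical proportional law: rate = basal + gain * (current - target).
    The result is clamped at zero because a pump cannot remove insulin.
    """
    error = current_glucose - target_glucose  # mg/dL above (+) or below (-) target
    rate = basal_rate + gain * error
    return max(0.0, rate)


# 60 mg/dL above target with a gain of 0.01 U/h per mg/dL adds 0.6 U/h to basal.
print(dosage_rate(180, 120, 1.0, 0.01))
```

The clamp reflects the one-sided nature of insulin delivery. When glucose falls well below target, the sketch simply stops delivery rather than producing a negative rate.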

[0035] Exemplary embodiments of the subject matter described herein generally relate to utilizing augmented reality and other image processing to assist or improve operations of medical devices in a convenient manner that reduces patient burdens. For example, as described in greater detail below in the context of FIGS. 8-17, in one or more exemplary embodiments, augmented reality is utilized to interactively provide visually overlaid guidance information to help facilitate the configuration or operation of various features of a medical device. In this regard, a camera or other imaging device may be utilized to capture or otherwise obtain an image of the medical device, which, in turn, is analyzed to identify the current state of a user interface of the medical device, such as, for example, the graphical user interface (GUI) currently displayed on a display of the medical device, a current selection or position of a user input element on the display, and the like. Based on the current user interface status information, guidance information is determined and presented or otherwise provided on a display in a manner that overlies the medical device, thereby enabling review of the guidance information while concurrently viewing at least a portion of the medical device GUI. The patient or other user may then view the guidance information for accomplishing a particular objective in the foreground while interacting with the medical device in the background. This improves the user experience by allowing the user to cross-reference his or her inputs with the guidance information intuitively, without diverting his or her head or eyes. Additionally, some embodiments could employ text-to-speech functionality to provide guidance information or other feedback audibly. For example, text-to-speech could be employed to read back text depicted on the captured medical device GUI to support visually impaired patients or other users who prefer auditory feedback.
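Once the current GUI display has been recognized from a captured image, the guidance step of advancing from that display to the display associated with the user's objective can be framed as a shortest-path search. The following sketch uses a breadth-first search over a menu graph. It assumes the image-recognition step has already produced a display identifier. The menu names in `MENU_GRAPH` and the function `navigation_steps` are hypothetical and do not describe any actual device's menus.

```python
from collections import deque

# Hypothetical menu graph: each GUI display maps a user action to the display it opens.
MENU_GRAPH = {
    "home": {"MENU": "main_menu"},
    "main_menu": {"SELECT:Insulin Settings": "insulin_settings", "BACK": "home"},
    "insulin_settings": {"SELECT:Basal": "basal_menu", "BACK": "main_menu"},
    "basal_menu": {"SELECT:Basal Patterns": "basal_patterns", "BACK": "insulin_settings"},
    "basal_patterns": {"BACK": "basal_menu"},
}


def navigation_steps(graph, current, target):
    """Return the shortest list of user actions from `current` to `target`, or None."""
    queue = deque([current])
    came_from = {current: None}  # display -> (previous display, action taken)
    while queue:
        display = queue.popleft()
        if display == target:
            steps = []
            while came_from[display] is not None:  # walk back to the start display
                prev, action = came_from[display]
                steps.append(action)
                display = prev
            return list(reversed(steps))
        for action, nxt in graph.get(display, {}).items():
            if nxt not in came_from:
                came_from[nxt] = (display, action)
                queue.append(nxt)
    return None  # target display is unreachable from the current display


print(navigation_steps(MENU_GRAPH, "home", "basal_patterns"))
# ['MENU', 'SELECT:Insulin Settings', 'SELECT:Basal', 'SELECT:Basal Patterns']
```

In an overlay, only the first returned action would typically be highlighted. The search would then be re-run after the next captured image confirms which display the device is now showing.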

[0036] Additionally, as described in greater detail below in the context of FIGS. 18-20, in one or more exemplary embodiments, overlaid guidance information or other graphical indicia are provided based on a patient's predicted physiological response to a portion of the content in a captured image. For example, food, beverages, or other consumable items (or indicia thereof) may be identified within a captured image. Estimated carbohydrate amounts or other attributes (e.g., fiber, fat, protein, and/or the like) associated with the captured consumable(s) may be input or otherwise provided to one or more prediction models to calculate or otherwise determine a predicted physiological response by the patient to consumption of at least some of the consumable items captured by the imaging device. Based on the predicted physiological response, graphical overlays may be provided proximate to the consumable items that indicate, to the patient, his or her predicted physiological response to consuming those items, or provide guidance or other recommendations to the patient regarding consuming those items (e.g., a recommended portion size, recommendations to abstain from consumption, or the like). In this manner, the guidance overlays may encourage or motivate the patient towards behavior that improves the patient's physiological condition (or the management thereof) or otherwise achieves a better outcome. In a similar manner, content in a captured image may be analyzed to identify items or other indicia associated with exercise (e.g., a treadmill, a gym, or the like), which, in turn, may be utilized to estimate or otherwise determine a predicted physiological response to exercise and provide graphical overlays that provide suggestions, recommendations, or other guidance for exercise to improve the patient's physiological condition.
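To show how a predicted response could drive a recommended portion size, the sketch below substitutes a toy linear model for the prediction models mentioned above. The model assumes each gram of carbohydrate raises glucose by a fixed, hypothetical amount (`rise_per_gram`, mg/dL per gram). It ignores insulin action, fat, protein, and timing, and it is not the prediction model used by the described system.

```python
def predicted_peak(current_glucose, carbs_g, rise_per_gram):
    """Toy linear prediction of peak glucose (mg/dL) after eating carbs_g grams."""
    return current_glucose + carbs_g * rise_per_gram


def recommended_portion(current_glucose, carbs_per_serving, rise_per_gram, upper_limit):
    """Largest fraction of one serving (0.0 to 1.0) keeping the predicted peak <= upper_limit.

    Assumes rise_per_gram > 0. A return value of 0.0 corresponds to a
    recommendation to abstain from the item.
    """
    headroom = upper_limit - current_glucose
    if headroom <= 0:
        return 0.0  # already at or above the limit
    if carbs_per_serving <= 0:
        return 1.0  # carbohydrate-free item: no portion limit from this model
    return min(1.0, headroom / (carbs_per_serving * rise_per_gram))


# Hypothetical meal: 45 g carbs per serving, 4 mg/dL per gram, starting at 110 mg/dL.
print(predicted_peak(110, 45, 4.0))                # 290.0
print(recommended_portion(110, 45, 4.0, 180))      # about 0.39 of a serving
```

The overlay would then present the predicted peak or the recommended fraction next to the recognized food item.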

[0037] As described in the context of FIGS. 21-22, in one or more exemplary embodiments, captured images are also utilized to automatically and proactively adjust delivery control parameters or fluid delivery to account for the content of the captured images. For example, when a patient is about to begin consuming a meal, an image of the meal may be analyzed to identify the type of food being consumed, the nutritional characteristics or other content of the meal, the estimated portion size, and/or the like. The estimated portion size, nutritional characteristics or food type, and other attributes identified based on the captured image may be utilized to calculate or otherwise determine an estimated amount of carbohydrates expected to be consumed by the patient. Based on the estimated amount of carbohydrates, fat, protein, fiber, and/or other nutritional attributes of the current meal expected to be consumed by the patient, one or more control parameters of an infusion device may be automatically adjusted to proactively account for the probable metabolic or pharmacokinetic response to the meal. Additionally, or alternatively, one or more bolus amounts of insulin to be delivered may be calculated or otherwise determined based on the estimated carbohydrate amount. A confirmation graphical overlay may be provided that confirms or otherwise informs the patient of the proactive delivery adjustments that were automatically configured in response to a captured image. Additionally, overlaid information may provide graphical indicia that provide feedback to the patient regarding the results of the analysis of the captured image, such as, for example, indication of the estimated amounts of carbohydrates, fat, protein, fiber, and/or other nutritional attributes associated with the meal.
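As a rough illustration of determining a bolus amount from an image-based carbohydrate estimate, the sketch below uses the conventional bolus-calculator structure: a food component plus a correction component, minus active insulin. The formula and parameter names (`carb_ratio`, `sensitivity`, `insulin_on_board`) are assumptions for illustration. The patent text does not specify this calculation.

```python
def meal_bolus(carbs_g, carb_ratio, current_glucose, target_glucose,
               sensitivity, insulin_on_board=0.0):
    """Conventional bolus estimate in insulin units.

    carbs_g:          estimated carbohydrates from the captured meal image
    carb_ratio:       grams of carbohydrate covered by 1 unit (assumed patient setting)
    sensitivity:      expected mg/dL drop per unit of insulin (assumed patient setting)
    insulin_on_board: insulin still active from earlier doses, in units
    """
    food = carbs_g / carb_ratio
    # A negative correction (glucose below target) reduces the food component.
    correction = (current_glucose - target_glucose) / sensitivity
    return max(0.0, food + correction - insulin_on_board)


# 60 g meal, 1 U per 10 g, 30 mg/dL above target with 30 mg/dL per U, 0.5 U active.
print(meal_bolus(60, 10, 150, 120, 30, 0.5))  # 6.5
```

The confirmation overlay described above could display both the estimated carbohydrates and the resulting bolus, so the patient can review what was derived from the image.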

[0038] In one or more embodiments, the control parameter adjustments or other delivery adjustments may be performed dynamically in real time in response to changes in the captured imagery over time. For example, successive images may be analyzed to identify or otherwise determine the amount of the meal that has been consumed over a given duration of time, which, in turn, may be utilized to alter the delivery adjustments based on deviations between the patient's actual meal consumption relative to the initial prediction of the patient's expected consumption. Thus, when it appears that the patient did not or will not consume the entire meal after adjusting one or more control parameters to increase responsiveness of the fluid delivery to mitigate potential post-prandial hyperglycemia, the control parameter(s) may be adjusted to decrease the responsiveness of the fluid delivery and account for the reduced likelihood of hyperglycemia based on reduced consumption by the patient. In a similar manner, content in a captured image may be analyzed to identify items or other indicia associated with exercise, which, in turn, may be utilized to automatically adjust control parameters to proactively account for a predicted physiological response to exercise and dynamically revert the control parameters when the patient has ceased exercising.
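One way to scale a proactive adjustment back when the patient eats less than expected is sketched below. It assumes that the proactive change can be expressed as a single hypothetical "boost" value, that the meal was expected to be eaten at a constant rate, and that each captured image yields an estimate of the grams remaining. None of these specifics come from the application.

```python
from dataclasses import dataclass


@dataclass
class MealAdjustment:
    base_boost: float             # hypothetical extra responsiveness, e.g. 0.3 = +30 %
    initial_portion_g: float      # portion estimated from the first captured image
    expected_duration_min: float  # assumed time to finish the meal

    def expected_fraction(self, t_min: float) -> float:
        # Assumes the meal is expected to be eaten at a constant rate.
        return min(max(t_min / self.expected_duration_min, 0.0), 1.0)

    def observed_fraction(self, remaining_g: float) -> float:
        # Fraction eaten so far, from the latest image-based estimate.
        if self.initial_portion_g <= 0:
            return 0.0
        return min(max(1.0 - remaining_g / self.initial_portion_g, 0.0), 1.0)

    def current_boost(self, t_min: float, remaining_g: float) -> float:
        """Scale the boost down when actual eating lags the expected pace."""
        expected = self.expected_fraction(t_min)
        if expected == 0.0:
            return self.base_boost  # too early to judge; keep the proactive setting
        ratio = min(self.observed_fraction(remaining_g) / expected, 1.0)
        return self.base_boost * ratio


meal = MealAdjustment(base_boost=0.3, initial_portion_g=400, expected_duration_min=20)
print(meal.current_boost(t_min=10, remaining_g=300))  # 0.15
```

Capping the ratio at 1.0 means faster-than-expected eating never raises the boost above its initial proactive value; only under-consumption reduces it.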

[0039] Infusion System Overview

[0040] FIG. 1 depicts one exemplary embodiment of an infusion system 100 that includes, without limitation, a fluid infusion device (or infusion pump) 102, a sensing arrangement 104, a command control device (CCD) 106, and a computer 108. The components of an infusion system 100 may be realized using different platforms, designs, and configurations, and the embodiment shown in FIG. 1 is not exhaustive or limiting. In practice, the infusion device 102 and the sensing arrangement 104 are secured at desired locations on the body of a user (or patient), as illustrated in FIG. 1. In this regard, the locations at which the infusion device 102 and the sensing arrangement 104 are secured to the body of the user in FIG. 1 are provided only as a representative, non-limiting, example. The elements of the infusion system 100 may be similar to those described in U.S. Pat. No. 8,674,288, the subject matter of which is hereby incorporated by reference in its entirety.

[0041] In the illustrated embodiment of FIG. 1, the infusion device 102 is designed as a portable medical device suitable for infusing a fluid, a liquid, a gel, or other medicament into the body of a user. In exemplary embodiments, the infused fluid is insulin, although many other fluids may be administered through infusion such as, but not limited to, HIV drugs, drugs to treat pulmonary hypertension, iron chelation drugs, pain medications, anti-cancer treatments, medications, vitamins, hormones, or the like. In some embodiments, the fluid may include a nutritional supplement, a dye, a tracing medium, a saline medium, a hydration medium, or the like.

[0042] The sensing arrangement 104 generally represents the components of the infusion system 100 configured to sense, detect, measure or otherwise quantify a condition of the user, and may include a sensor, a monitor, or the like, for providing data indicative of the condition that is sensed, detected, measured or otherwise monitored by the sensing arrangement. In this regard, the sensing arrangement 104 may include electronics and enzymes reactive to a biological condition, such as a blood glucose level, or the like, of the user, and provide data indicative of the blood glucose level to the infusion device 102, the CCD 106 and/or the computer 108. For example, the infusion device 102, the CCD 106 and/or the computer 108 may include a display for presenting information or data to the user based on the sensor data received from the sensing arrangement 104, such as, for example, a current glucose level of the user, a graph or chart of the user's glucose level versus time, device status indicators, alert messages, or the like. In other embodiments, the infusion device 102, the CCD 106 and/or the computer 108 may include electronics and software that are configured to analyze sensor data and operate the infusion device 102 to deliver fluid to the body of the user based on the sensor data and/or preprogrammed delivery routines. Thus, in exemplary embodiments, one or more of the infusion device 102, the sensing arrangement 104, the CCD 106, and/or the computer 108 includes a transmitter, a receiver, and/or other transceiver electronics that allow for communication with other components of the infusion system 100, so that the sensing arrangement 104 may transmit sensor data or monitor data to one or more of the infusion device 102, the CCD 106 and/or the computer 108.

[0043] Still referring to FIG. 1, in various embodiments, the sensing arrangement 104 may be secured to the body of the user or embedded in the body of the user at a location that is remote from the location at which the infusion device 102 is secured to the body of the user. In various other embodiments, the sensing arrangement 104 may be incorporated within the infusion device 102. In other embodiments, the sensing arrangement 104 may be separate and apart from the infusion device 102, and may be, for example, part of the CCD 106. In such embodiments, the sensing arrangement 104 may be configured to receive a biological sample, analyte, or the like, to measure a condition of the user.

[0044] In some embodiments, the CCD 106 and/or the computer 108 may include electronics and other components configured to perform processing, store delivery routines, and control the infusion device 102 in a manner that is influenced by sensor data measured by and/or received from the sensing arrangement 104. By including control functions in the CCD 106 and/or the computer 108, the infusion device 102 may be made with simpler electronics. However, in other embodiments, the infusion device 102 may include all control functions, and may operate without the CCD 106 and/or the computer 108. In various embodiments, the CCD 106 may be a portable electronic device. In addition, in various embodiments, the infusion device 102 and/or the sensing arrangement 104 may be configured to transmit data to the CCD 106 and/or the computer 108 for display or processing of the data by the CCD 106 and/or the computer 108.

[0045] In some embodiments, the CCD 106 and/or the computer 108 may provide information to the user that facilitates the user's subsequent use of the infusion device 102. For example, the CCD 106 may provide information to the user to allow the user to determine the rate or dose of medication to be administered into the user's body. In other embodiments, the CCD 106 may provide information to the infusion device 102 to autonomously control the rate or dose of medication administered into the body of the user. In some embodiments, the sensing arrangement 104 may be integrated into the CCD 106. Such embodiments may allow the user to monitor a condition by providing, for example, a sample of his or her blood to the sensing arrangement 104 to assess his or her condition. In some embodiments, the sensing arrangement 104 and the CCD 106 may be used for determining glucose levels in the blood and/or body fluids of the user without the use of, or necessity of, a wire or cable connection between the infusion device 102 and the sensing arrangement 104 and/or the CCD 106.

[0046] In some embodiments, the sensing arrangement 104 and/or the infusion device 102 are cooperatively configured to utilize a closed-loop system for delivering fluid to the user. Examples of sensing devices and/or infusion pumps utilizing closed-loop systems include, but are not limited to, those described in U.S. Pat. Nos. 6,088,608, 6,119,028, 6,589,229, 6,740,072, 6,827,702, 7,323,142, and 7,402,153 and United States Patent Application Publication No. 2014/0066889, all of which are incorporated herein by reference in their entirety. In such embodiments, the sensing arrangement 104 is configured to sense or measure a condition of the user, such as blood glucose level or the like. The infusion device 102 is configured to deliver fluid in response to the condition sensed by the sensing arrangement 104. In turn, the sensing arrangement 104 continues to sense or otherwise quantify a current condition of the user, thereby allowing the infusion device 102 to deliver fluid continuously and indefinitely in response to the condition currently (or most recently) sensed by the sensing arrangement 104. In some embodiments, the sensing arrangement 104 and/or the infusion device 102 may be configured to utilize the closed-loop system only for a portion of the day, for example, only when the user is asleep or only when the user is awake.

[0047] FIGS. 2-3 depict one exemplary embodiment of a fluid infusion device 200 (or alternatively, infusion pump) suitable for use in an infusion system, such as, for example, as infusion device 102 in the infusion system 100 of FIG. 1. The fluid infusion device 200 is a portable medical device designed to be carried or worn by a patient (or user), and the fluid infusion device 200 may leverage any number of conventional features, components, elements, and characteristics of existing fluid infusion devices, such as, for example, some of the features, components, elements, and/or characteristics described in U.S. Pat. Nos. 6,485,465 and 7,621,893. It should be appreciated that FIGS. 2-3 depict some aspects of the infusion device 200 in a simplified manner; in practice, the infusion device 200 could include additional elements, features, or components that are not shown or described in detail herein.

[0048] As best illustrated in FIGS. 2-3, the illustrated embodiment of the fluid infusion device 200 includes a housing 202 adapted to receive a fluid-containing reservoir 205. An opening 220 in the housing 202 accommodates a fitting 223 (or cap) for the reservoir 205, with the fitting 223 being configured to mate or otherwise interface with tubing 221 of an infusion set 225 that provides a fluid path to/from the body of the user. In this manner, fluid communication from the interior of the reservoir 205 to the user is established via the tubing 221. The illustrated fluid infusion device 200 includes a human-machine interface (HMI) 230 (or user interface) that includes elements 232, 234 that can be manipulated by the user to administer a bolus of fluid (e.g., insulin), to change therapy settings, to change user preferences, to select display features, and the like. The infusion device also includes a display element 226, such as a liquid crystal display (LCD) or another suitable display element, that can be used to present various types of information or data to the user, such as, without limitation: the current glucose level of the patient; the time; a graph or chart of the patient's glucose level versus time; device status indicators; etc.

[0049] The housing 202 is formed from a substantially rigid material having a hollow interior 214 adapted to allow an electronics assembly 204, a sliding member (or slide) 206, a drive system 208, a sensor assembly 210, and a drive system capping member 212 to be disposed therein in addition to the reservoir 205, with the contents of the housing 202 being enclosed by a housing capping member 216. The opening 220, the slide 206, and the drive system 208 are coaxially aligned in an axial direction (indicated by arrow 218), whereby the drive system 208 facilitates linear displacement of the slide 206 in the axial direction 218 to dispense fluid from the reservoir 205 (after the reservoir 205 has been inserted into opening 220), with the sensor assembly 210 being configured to measure axial forces (e.g., forces aligned with the axial direction 218) exerted on the sensor assembly 210 responsive to operating the drive system 208 to displace the slide 206. In various embodiments, the sensor assembly 210 may be utilized to detect one or more of the following: an occlusion in a fluid path that slows, prevents, or otherwise degrades fluid delivery from the reservoir 205 to a user's body; when the reservoir 205 is empty; when the slide 206 is properly seated with the reservoir 205; when a fluid dose has been delivered; when the infusion pump 200 is subjected to shock or vibration; and when the infusion pump 200 requires maintenance.

[0050] Depending on the embodiment, the fluid-containing reservoir 205 may be realized as a syringe, a vial, a cartridge, a bag, or the like. In certain embodiments, the infused fluid is insulin, although many other fluids may be administered through infusion such as, but not limited to, HIV drugs, drugs to treat pulmonary hypertension, iron chelation drugs, pain medications, anti-cancer treatments, medications, vitamins, hormones, or the like. As best illustrated in FIG. 3, the reservoir 205 typically includes a reservoir barrel 219 that contains the fluid and is concentrically and/or coaxially aligned with the slide 206 (e.g., in the axial direction 218) when the reservoir 205 is inserted into the infusion pump 200. The end of the reservoir 205 proximate the opening 220 may include or otherwise mate with the fitting 223, which secures the reservoir 205 in the housing 202 and prevents displacement of the reservoir 205 in the axial direction 218 with respect to the housing 202 after the reservoir 205 is inserted into the housing 202. As described above, the fitting 223 extends from (or through) the opening 220 of the housing 202 and mates with tubing 221 to establish fluid communication from the interior of the reservoir 205 (e.g., reservoir barrel 219) to the user via the tubing 221 and infusion set 225. The opposing end of the reservoir 205 proximate the slide 206 includes a plunger 217 (or stopper) positioned to push fluid from inside the barrel 219 of the reservoir 205 along a fluid path through tubing 221 to a user. The slide 206 is configured to mechanically couple or otherwise engage with the plunger 217, thereby becoming seated with the plunger 217 and/or reservoir 205. Fluid is forced from the reservoir 205 via tubing 221 as the drive system 208 is operated to displace the slide 206 in the axial direction 218 toward the opening 220 in the housing 202.

[0051] In the illustrated embodiment of FIG. 3, the drive system 208 includes a motor assembly 207 and a drive screw 209. The motor assembly 207 includes a motor that is coupled to drive train components of the drive system 208 that are configured to convert rotational motor motion to a translational displacement of the slide 206 in the axial direction 218, thereby engaging and displacing the plunger 217 of the reservoir 205 in the axial direction 218. In some embodiments, the motor assembly 207 may also be powered to translate the slide 206 in the opposing direction (e.g., the direction opposite direction 218) to retract and/or detach from the reservoir 205 to allow the reservoir 205 to be replaced. In exemplary embodiments, the motor assembly 207 includes a brushless DC (BLDC) motor having one or more permanent magnets mounted, affixed, or otherwise disposed on its rotor. However, the subject matter described herein is not necessarily limited to use with BLDC motors, and in alternative embodiments, the motor may be realized as a solenoid motor, an AC motor, a stepper motor, a piezoelectric caterpillar drive, a shape memory actuator drive, an electrochemical gas cell, a thermally driven gas cell, a bimetallic actuator, or the like. The drive train components may comprise one or more lead screws, cams, ratchets, jacks, pulleys, pawls, clamps, gears, nuts, slides, bearings, levers, beams, stoppers, plungers, sliders, brackets, guides, supports, bellows, caps, diaphragms, bags, heaters, or the like. In this regard, although the illustrated embodiment of the infusion pump utilizes a coaxially aligned drive train, the motor could be arranged in an offset or otherwise non-coaxial manner, relative to the longitudinal axis of the reservoir 205.

[0052] In some embodiments, the drive screw 209 mates with threads internal to the slide 206. When the motor assembly 207 is powered and operated, the drive screw 209 rotates, and the slide 206 is forced to translate in the axial direction 218. In an exemplary embodiment, the infusion pump 200 includes a sleeve 211 to prevent the slide 206 from rotating when the drive screw 209 of the drive system 208 rotates. Thus, rotation of the drive screw 209 causes the slide 206 to extend or retract relative to the drive motor assembly 207. When the fluid infusion device is assembled and operational, the slide 206 contacts the plunger 217 to engage the reservoir 205 and control delivery of fluid from the infusion pump 200. In an exemplary embodiment, the shoulder portion 215 of the slide 206 contacts or otherwise engages the plunger 217 to displace the plunger 217 in the axial direction 218. In alternative embodiments, the slide 206 may include a threaded tip 213 capable of being detachably engaged with internal threads on the plunger 217 of the reservoir 205, as described in detail in U.S. Pat. Nos. 6,248,093 and 6,485,465, which are incorporated by reference herein.
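The drive-screw relationship lends itself to a short worked conversion from a dose to slide travel and screw rotation. The sketch assumes U-100 insulin (100 units per mL), a cylindrical reservoir barrel, and a direct-drive screw with no gearing; the barrel diameter and screw lead are hypothetical placeholders rather than dimensions of the infusion pump 200.

```python
import math
from typing import Tuple


def slide_travel_for_dose(dose_u: float,
                          concentration_u_per_ml: float = 100.0,  # U-100 insulin
                          barrel_diameter_mm: float = 14.0,       # hypothetical
                          screw_lead_mm: float = 0.5              # hypothetical
                          ) -> Tuple[float, float]:
    """Return (slide displacement in mm, drive-screw revolutions) for a dose."""
    volume_mm3 = dose_u / concentration_u_per_ml * 1000.0  # 1 mL = 1000 mm^3
    area_mm2 = math.pi * (barrel_diameter_mm / 2.0) ** 2    # plunger face area
    displacement_mm = volume_mm3 / area_mm2
    # The sleeve keeps the slide from rotating, so each screw revolution
    # advances the slide by one lead.
    revolutions = displacement_mm / screw_lead_mm
    return displacement_mm, revolutions


travel, revs = slide_travel_for_dose(1.0)
print(f"{travel:.4f} mm of slide travel, {revs:.4f} screw revolutions")
```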

[0053] The electronics assembly 204 includes control electronics 224 coupled to the display element 226, with the housing 202 including a transparent window portion 228 that is aligned with the display element 226 to allow the display 226 to be viewed by the user when the electronics assembly 204 is disposed within the interior 214 of the housing 202. The control electronics 224 generally represent the hardware, firmware, processing logic and/or software (or combinations thereof) configured to control operation of the motor assembly 207 and/or drive system 208, as described in greater detail below in the context of FIG. 4. Whether such functionality is implemented as hardware, firmware, a state machine, or software depends upon the particular application and design constraints imposed on the embodiment. Those familiar with the concepts described here may implement such functionality in a suitable manner for each particular application, but such implementation decisions should not be interpreted as being restrictive or limiting. In an exemplary embodiment, the control electronics 224 includes one or more programmable controllers that may be programmed to control operation of the infusion pump 200.

[0054] The motor assembly 207 includes one or more electrical leads 236 adapted to be electrically coupled to the electronics assembly 204 to establish communication between the control electronics 224 and the motor assembly 207. In response to command signals from the control electronics 224 that operate a motor driver (e.g., a power converter) to regulate the amount of power supplied to the motor from a power supply, the motor actuates the drive train components of the drive system 208 to displace the slide 206 in the axial direction 218 to force fluid from the reservoir 205 along a fluid path (including tubing 221 and an infusion set), thereby administering doses of the fluid contained in the reservoir 205 into the user's body. Preferably, the power supply is realized as one or more batteries contained within the housing 202. Alternatively, the power supply may be a solar panel, capacitor, AC or DC power supplied through a power cord, or the like. In some embodiments, the control electronics 224 may operate the motor of the motor assembly 207 and/or drive system 208 in a stepwise manner, typically on an intermittent basis, to administer discrete precise doses of the fluid to the user according to programmed delivery profiles.
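The stepwise, intermittent delivery described above can be illustrated by splitting a programmed basal rate into evenly spaced discrete pulses. This is a minimal sketch under assumptions: the 0.025 U default pulse size and the even spacing are hypothetical, and a practical scheduler would also carry any undelivered remainder forward between windows.

```python
import math
from typing import List


def pulse_schedule(rate_u_per_h: float, pulse_u: float = 0.025,
                   horizon_min: float = 60.0) -> List[float]:
    """Minute offsets at which one discrete pulse of pulse_u is delivered.

    Pulses are evenly spaced so their sum matches the programmed rate over
    the horizon; any remainder smaller than one pulse is not delivered here.
    """
    if rate_u_per_h <= 0 or pulse_u <= 0:
        return []
    total_u = rate_u_per_h * horizon_min / 60.0
    # The epsilon guards against floating-point error in the division.
    count = math.floor(total_u / pulse_u + 1e-9)
    interval_min = pulse_u / rate_u_per_h * 60.0
    return [round((k + 1) * interval_min, 3) for k in range(count)]


print(pulse_schedule(0.6, pulse_u=0.05))  # one pulse every 5 minutes
```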

[0055] Referring to FIGS. 2-3, as described above, the user interface 230 includes HMI elements, such as buttons 232 and a directional pad 234, that are formed on a graphic keypad overlay 231 that overlies a keypad assembly 233, which includes features corresponding to the buttons 232, directional pad 234 or other user interface items indicated by the graphic keypad overlay 231. When assembled, the keypad assembly 233 is coupled to the control electronics 224, thereby allowing the HMI elements 232, 234 to be manipulated by the user to interact with the control electronics 224 and control operation of the infusion pump 200, for example, to administer a bolus of insulin, to change therapy settings, to change user preferences, to select display features, to set or disable alarms and reminders, and the like. In this regard, the control electronics 224 maintains and/or provides information to the display 226 regarding program parameters, delivery profiles, pump operation, alarms, warnings, statuses, or the like, which may be adjusted using the HMI elements 232, 234. In various embodiments, the HMI elements 232, 234 may be realized as physical objects (e.g., buttons, knobs, joysticks, and the like) or virtual objects (e.g., using touch-sensing and/or proximity-sensing technologies). For example, in some embodiments, the display 226 may be realized as a touch screen or touch-sensitive display, and in such embodiments, the features and/or functionality of the HMI elements 232, 234 may be integrated into the display 226 and the HMI 230 may not be present. In some embodiments, the electronics assembly 204 may also include alert generating elements coupled to the control electronics 224 and suitably configured to generate one or more types of feedback, such as, without limitation: audible feedback; visual feedback; haptic (physical) feedback; or the like.

[0056] Referring to FIG. 3, in accordance with one or more embodiments, the sensor assembly 210 includes a back plate structure 250 and a loading element 260. The loading element 260 is disposed between the capping member 212 and a beam structure 270 that includes one or more beams having sensing elements disposed thereon that are influenced by compressive force applied to the sensor assembly 210 that deflects the one or more beams, as described in greater detail in U.S. Pat. No. 8,474,332, which is incorporated by reference herein. In exemplary embodiments, the back plate structure 250 is affixed, adhered, mounted, or otherwise mechanically coupled to the bottom surface 238 of the drive system 208 such that the back plate structure 250 resides between the bottom surface 238 of the drive system 208 and the housing cap 216. The drive system capping member 212 is contoured to accommodate and conform to the bottom of the sensor assembly 210 and the drive system 208. The drive system capping member 212 may be affixed to the interior of the housing 202 to prevent displacement of the sensor assembly 210 in the direction opposite the direction of force provided by the drive system 208 (e.g., the direction opposite direction 218). Thus, the sensor assembly 210 is positioned between the motor assembly 207 and the capping member 212, which secures the sensor assembly 210 against displacement in a downward direction opposite the direction of arrow 218, such that the sensor assembly 210 is subjected to a reactionary compressive force when the drive system 208 and/or motor assembly 207 is operated to displace the slide 206 in the axial direction 218 in opposition to the fluid pressure in the reservoir 205. Under normal operating conditions, the compressive force applied to the sensor assembly 210 is correlated with the fluid pressure in the reservoir 205. As shown, electrical leads 240 are adapted to electrically couple the sensing elements of the sensor assembly 210 to the electronics assembly 204 to establish communication to the control electronics 224, wherein the control electronics 224 are configured to measure, receive, or otherwise obtain electrical signals from the sensing elements of the sensor assembly 210 that are indicative of the force applied by the drive system 208 in the axial direction 218.
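Because the compressive force on the sensor assembly 210 tracks reservoir pressure under normal conditions, a sustained rise in measured force is a natural occlusion signal. The following sketch averages the most recent force readings and flags an occlusion when the average exceeds a threshold. The 5 N threshold, the window length, and the averaging rule itself are illustrative assumptions rather than the detection method of the incorporated patents.

```python
from collections import deque


class OcclusionDetector:
    """Flags a suspected occlusion from axial force readings.

    threshold_n and window are hypothetical placeholders, not device values.
    """

    def __init__(self, threshold_n: float = 5.0, window: int = 4) -> None:
        self.threshold_n = threshold_n
        self.readings = deque(maxlen=window)  # keeps only the latest readings

    def update(self, force_n: float) -> bool:
        """Add one force reading taken during a delivery stroke."""
        self.readings.append(force_n)
        if len(self.readings) < self.readings.maxlen:
            return False  # not enough data yet
        average = sum(self.readings) / len(self.readings)
        return average > self.threshold_n


detector = OcclusionDetector(threshold_n=5.0, window=3)
print([detector.update(f) for f in [1.0, 2.0, 1.0, 8.0, 9.0, 9.0]])
# [False, False, False, False, True, True]
```

Averaging over a short window trades a little detection latency for immunity to single-sample force spikes, such as those caused by shock or vibration.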

[0057] FIG. 4 depicts an exemplary embodiment of an infusion system 400 suitable for use with an infusion device 402, such as any one of the infusion devices 102, 200 described above. The infusion system 400 is capable of controlling or otherwise regulating a physiological condition in the body 401 of a patient to a desired (or target) value or otherwise maintaining the condition within a range of acceptable values in an automated or autonomous manner. In one or more exemplary embodiments, the condition being regulated is sensed, detected, measured or otherwise quantified by a sensing arrangement 404 (e.g., sensing arrangement 104) communicatively coupled to the infusion device 402. However, it should be noted that in alternative embodiments, the condition being regulated by the infusion system 400 may be correlative to the measured values obtained by the sensing arrangement 404. That said, for clarity and purposes of explanation, the subject matter may be described herein in the context of the sensing arrangement 404 being realized as a glucose sensing arrangement that senses, detects, measures or otherwise quantifies the patient's glucose level, which is being regulated in the body 401 of the patient by the infusion system 400.

[0058] In exemplary embodiments, the sensing arrangement 404 includes one or more interstitial glucose sensing elements that generate or otherwise output electrical signals (alternatively referred to herein as measurement signals) having a signal characteristic that is correlative to, influenced by, or otherwise indicative of the relative interstitial fluid glucose level in the body 401 of the patient. The output electrical signals are filtered or otherwise processed to obtain a measurement value indicative of the patient's interstitial fluid glucose level. In exemplary embodiments, a blood glucose meter 430, such as a finger stick device, is utilized to directly sense, detect, measure or otherwise quantify the blood glucose in the body 401 of the patient. In this regard, the blood glucose meter 430 outputs or otherwise provides a measured blood glucose value that may be utilized as a reference measurement for calibrating the sensing arrangement 404 and converting a measurement value indicative of the patient's interstitial fluid glucose level into a corresponding calibrated blood glucose value. For purposes of explanation, the calibrated blood glucose value calculated based on the electrical signals output by the sensing element(s) of the sensing arrangement 404 may alternatively be referred to herein as the sensor glucose value, the sensed glucose value, or variants thereof.
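The calibration step can be illustrated with a linear model relating the sensor's electrical signal to blood glucose meter readings. This sketch assumes a straight-line relationship fitted by ordinary least squares over paired (signal, meter) points, falling back to a zero-offset ratio when only one reference value is available. The actual calibration algorithm for the sensing arrangement 404 is not described in this section, and the signal units (nA) are an assumption.

```python
from typing import Sequence, Tuple


def fit_calibration(signal_na: Sequence[float],
                    meter_bg: Sequence[float]) -> Tuple[float, float]:
    """Return (slope, offset) so that bg ~= slope * signal + offset."""
    n = len(signal_na)
    if n == 0 or n != len(meter_bg):
        raise ValueError("need matching, non-empty signal and meter lists")
    if n == 1:
        return meter_bg[0] / signal_na[0], 0.0  # single-point ratio
    mean_x = sum(signal_na) / n
    mean_y = sum(meter_bg) / n
    sxx = sum((x - mean_x) ** 2 for x in signal_na)
    if sxx == 0:
        raise ValueError("signal values must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(signal_na, meter_bg))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def sensor_glucose(signal: float, slope: float, offset: float) -> float:
    """Convert a raw interstitial signal to a calibrated glucose value."""
    return slope * signal + offset


slope, offset = fit_calibration([10.0, 20.0, 30.0], [100.0, 180.0, 260.0])
print(slope, offset, sensor_glucose(25.0, slope, offset))  # 8.0 20.0 220.0
```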

[0059] In exemplary embodiments, the infusion system 400 also includes one or more additional sensing arrangements 406, 408 configured to sense, detect, measure or otherwise quantify a characteristic of the body 401 of the patient that is indicative of a condition in the body 401 of the patient. In this regard, in addition to the glucose sensing arrangement 404, one or more auxiliary sensing arrangements 406 may be worn, carried, or otherwise associated with the body 401 of the patient to measure characteristics or conditions of the patient (or the patient's activity) that may influence the patient's glucose levels or insulin sensitivity. For example, a heart rate sensing arrangement 406 could be worn on or otherwise associated with the patient's body 401 to sense, detect, measure or otherwise quantify the patient's heart rate, which, in turn, may be indicative of exercise (and the intensity thereof) that is likely to influence the patient's glucose levels or insulin response in the body 401. In yet another embodiment, another invasive, interstitial, or subcutaneous sensing arrangement 406 may be inserted into the body 401 of the patient to obtain measurements of another physiological condition that may be indicative of exercise (and the intensity thereof), such as, for example, a lactate sensor, a ketone sensor, or the like. Depending on the embodiment, the auxiliary sensing arrangement(s) 406 could be realized as a standalone component worn by the patient, or alternatively, the auxiliary sensing arrangement(s) 406 may be integrated with the infusion device 402 or the glucose sensing arrangement 404.

[0060] The illustrated infusion system 400 also includes an acceleration sensing arrangement 408 (or accelerometer) that may be worn on or otherwise associated with the patient's body 401 to sense, detect, measure or otherwise quantify an acceleration of the patient's body 401, which, in turn, may be indicative of exercise or some other condition in the body 401 that is likely to influence the patient's insulin response. While the acceleration sensing arrangement 408 is depicted as being integrated into the infusion device 402 in FIG. 4, in alternative embodiments, the acceleration sensing arrangement 408 may be integrated with another sensing arrangement 404, 406 on the body 401 of the patient, or the acceleration sensing arrangement 408 may be realized as a separate standalone component that is worn by the patient.
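A simple way to combine the heart rate sensing arrangement 406 and the acceleration sensing arrangement 408 into an exercise indication is to require both an elevated heart rate and sustained body motion. The margins below (30 bpm above resting, 0.2 g mean deviation from gravity) are hypothetical placeholders, and the rule itself is an illustrative assumption rather than the method of the application.

```python
import math
from typing import Sequence, Tuple


def accel_activity(samples_g: Sequence[Tuple[float, float, float]]) -> float:
    """Mean absolute deviation of acceleration magnitude from 1 g (gravity)."""
    if not samples_g:
        return 0.0
    mags = [math.sqrt(x * x + y * y + z * z) for x, y, z in samples_g]
    return sum(abs(m - 1.0) for m in mags) / len(mags)


def likely_exercising(heart_rate_bpm: float, resting_hr_bpm: float,
                      samples_g: Sequence[Tuple[float, float, float]],
                      hr_margin_bpm: float = 30.0,
                      activity_threshold_g: float = 0.2) -> bool:
    """True when heart rate and body motion are both elevated (hypothetical rule)."""
    return (heart_rate_bpm - resting_hr_bpm >= hr_margin_bpm
            and accel_activity(samples_g) >= activity_threshold_g)


still = [(0.0, 0.0, 1.0)] * 4
moving = [(0.0, 0.0, 1.5), (0.0, 0.0, 0.5)] * 2
print(likely_exercising(130, 65, moving), likely_exercising(130, 65, still))
```

Requiring both signals reduces false positives: an elevated heart rate alone may reflect stress or illness, and motion alone may reflect travel in a vehicle.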

[0061] In the illustrated embodiment, the pump control system 420 generally represents the electronics and other components of the infusion device 402 that control operation of the fluid infusion device 402 according to a desired infusion delivery program in a manner that is influenced by the sensed glucose value indicating the current glucose level in the body 401 of the patient. For example, to support a closed-loop operating mode, the pump control system 420 maintains, receives, or otherwise obtains a target or commanded glucose value, and automatically generates or otherwise determines dosage commands for operating an actuation arrangement, such as a motor 432, to displace the plunger 417 and deliver insulin to the body 401 of the patient based on the difference between the sensed glucose value and the target glucose value. In other operating modes, the pump control system 420 may generate or otherwise determine dosage commands configured to maintain the sensed glucose value below an upper glucose limit, above a lower glucose limit, or otherwise within a desired range of glucose values. In practice, the infusion device 402 may store or otherwise maintain the target value, upper and/or lower glucose limit(s), insulin delivery limit(s), and/or other glucose threshold value(s) in a data storage element accessible to the pump control system 420. As described in greater detail below, in one or more exemplary embodiments, the pump control system 420 automatically adjusts or adapts one or more parameters or other control information used to generate commands for operating the motor 432 in a manner that accounts for a likely change in the patient's glucose level or insulin response resulting from a meal, exercise, or other activity.
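The closed-loop behavior described here (a dose based on the difference between the sensed and target glucose values, subject to stored limits) can be sketched with a proportional rule. This is a deliberate simplification: the gain, the per-command cap, and the suspend-at-lower-limit behavior are hypothetical, and practical controllers may use richer schemes such as PID control.

```python
def dosage_command(sensed_bg: float, target_bg: float, gain_u_per_mg_dl: float,
                   max_dose_u: float, lower_limit_bg: float) -> float:
    """Insulin (units) to request from the motor control module for one cycle."""
    if sensed_bg <= lower_limit_bg:
        return 0.0                          # suspend delivery at or below the lower limit
    error = sensed_bg - target_bg           # positive when glucose is above target
    dose = gain_u_per_mg_dl * error
    return min(max(dose, 0.0), max_dose_u)  # no negative doses; respect the delivery limit


print(dosage_command(180, 120, gain_u_per_mg_dl=0.01, max_dose_u=0.5, lower_limit_bg=70))  # 0.5
```

The returned value corresponds to the dosage command that the pump control system 420 hands to the motor control module 412.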

[0062] Still referring to FIG. 4, the target glucose value and other threshold glucose values utilized by the pump control system 420 may be received from an external component (e.g., CCD 106 and/or computer 108) or be input by a patient via a user interface element 440 associated with the infusion device 402. In practice, the one or more user interface element(s) 440 associated with the infusion device 402 typically include at least one input user interface element, such as, for example, a button, a keypad, a keyboard, a knob, a joystick, a mouse, a touch panel, a touchscreen, a microphone or another audio input device, and/or the like. Additionally, the one or more user interface element(s) 440 include at least one output user interface element, such as, for example, a display element (e.g., a light-emitting diode or the like), a display device (e.g., a liquid crystal display or the like), a speaker or another audio output device, a haptic feedback device, or the like, for providing notifications or other information to the patient. It should be noted that although FIG. 4 depicts the user interface element(s) 440 as being separate from the infusion device 402, in practice, one or more of the user interface element(s) 440 may be integrated with the infusion device 402. Furthermore, in some embodiments, one or more user interface element(s) 440 are integrated with the sensing arrangement 404 in addition to and/or as an alternative to the user interface element(s) 440 integrated with the infusion device 402. The user interface element(s) 440 may be manipulated by the patient to operate the infusion device 402 to deliver correction boluses, adjust target and/or threshold values, modify the delivery control scheme or operating mode, and the like, as desired.

[0063] Still referring to FIG. 4, in the illustrated embodiment, the infusion device 402 includes a motor control module 412 coupled to a motor 432 (e.g., motor assembly 207) that is operable to displace a plunger 417 (e.g., plunger 217) in a reservoir (e.g., reservoir 205) and provide a desired amount of fluid to the body 401 of a patient. In this regard, displacement of the plunger 417 delivers a fluid capable of influencing the patient's physiological condition, such as insulin, to the body 401 of the patient via a fluid delivery path (e.g., via tubing 221 of an infusion set 225). A motor driver module 414 is coupled between an energy source 418 and the motor 432. The motor control module 412 is coupled to the motor driver module 414, and the motor control module 412 generates or otherwise provides command signals that operate the motor driver module 414 to provide current (or power) from the energy source 418 to the motor 432 to displace the plunger 417 in response to receiving, from a pump control system 420, a dosage command indicative of the desired amount of fluid to be delivered.

[0064] In exemplary embodiments, the energy source 418 is realized as a battery housed within the infusion device 402 (e.g., within housing 202) that provides direct current (DC) power. In this regard, the motor driver module 414 generally represents the combination of circuitry, hardware and/or other electrical components configured to convert or otherwise transfer DC power provided by the energy source 418 into alternating electrical signals applied to respective phases of the stator windings of the motor 432 that result in current flowing through the stator windings that generates a stator magnetic field and causes the rotor of the motor 432 to rotate. The motor control module 412 is configured to receive or otherwise obtain a commanded dosage from the pump control system 420, convert the commanded dosage to a commanded translational displacement of the plunger 417, and command, signal, or otherwise operate the motor driver module 414 to cause the rotor of the motor 432 to rotate by an amount that produces the commanded translational displacement of the plunger 417. For example, the motor control module 412 may determine an amount of rotation of the rotor required to produce translational displacement of the plunger 417 that achieves the commanded dosage received from the pump control system 420. Based on the current rotational position (or orientation) of the rotor with respect to the stator that is indicated by the output of the rotor sensing arrangement 416, the motor control module 412 determines the appropriate sequence of alternating electrical signals to be applied to the respective phases of the stator windings that should rotate the rotor by the determined amount of rotation from its current position (or orientation). In embodiments where the motor 432 is realized as a brushless DC (BLDC) motor, the alternating electrical signals commutate the respective phases of the stator windings at the appropriate orientation of the rotor magnetic poles with respect to the stator and in the appropriate order to provide a rotating stator magnetic field that rotates the rotor in the desired direction. Thereafter, the motor control module 412 operates the motor driver module 414 to apply the determined alternating electrical signals (e.g., the command signals) to the stator windings of the motor 432 to achieve the desired delivery of fluid to the patient.
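The chain from commanded dosage to plunger displacement to rotor rotation to a commutation sequence can be sketched as follows. Every mechanical constant here (bore area, travel per revolution, pole pairs) is a hypothetical value chosen for illustration; only the U-100 concentration (100 units per mL) is a standard insulin strength. The six-step table is a common BLDC commutation pattern, not one specified in the application, and which table entry corresponds to a given sector reported by the rotor sensing arrangement 416, as well as the rotation direction, depends on motor wiring and is assumed here.

```python
from typing import List, Tuple

# Hypothetical mechanical constants; none are taken from the application.
UNITS_PER_ML = 100.0           # U-100 insulin: 100 units per mL
PLUNGER_AREA_MM2 = 80.0        # reservoir bore cross-section (assumed)
TRAVEL_MM_PER_REV = 0.25       # plunger travel per rotor revolution, gearing included (assumed)
POLE_PAIRS = 2                 # rotor pole pairs (assumed)
STEPS_PER_ELECTRICAL_REV = 6   # six-step (trapezoidal) commutation

# Six-step commutation table: (phase driven high, phase driven low).
# Walking the table in order advances the stator field 60 electrical degrees per step.
COMMUTATION_TABLE: List[Tuple[str, str]] = [
    ("A", "B"), ("A", "C"), ("B", "C"),
    ("B", "A"), ("C", "A"), ("C", "B"),
]


def dose_to_displacement_mm(dose_units: float) -> float:
    """Commanded dosage -> plunger translational displacement."""
    volume_mm3 = dose_units * 1000.0 / UNITS_PER_ML  # 1 mL = 1000 mm^3
    return volume_mm3 / PLUNGER_AREA_MM2


def displacement_to_steps(displacement_mm: float) -> int:
    """Plunger displacement -> whole number of commutation steps of rotor rotation."""
    revolutions = displacement_mm / TRAVEL_MM_PER_REV
    steps_per_mech_rev = STEPS_PER_ELECTRICAL_REV * POLE_PAIRS
    return round(revolutions * steps_per_mech_rev)


def commutation_sequence(current_sector: int, steps: int) -> List[Tuple[str, str]]:
    """Phase pairs to energize in order, starting after the rotor's sensed sector (0-5)."""
    if not 0 <= current_sector < 6:
        raise ValueError("sector must be in 0..5")
    return [COMMUTATION_TABLE[(current_sector + i) % 6] for i in range(1, steps + 1)]


steps = displacement_to_steps(dose_to_displacement_mm(1.0))
print(steps)                              # 6
print(commutation_sequence(0, steps)[0])  # ('A', 'C')
```

With these assumed constants, 1 unit is 10 mm^3 of fluid, 0.125 mm of plunger travel, half a rotor revolution, and six commutation steps. Rounding to whole steps means a real controller would also need to track the fractional remainder between commands.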

[0065] When the motor control module 412 is operating the motor driver module 414, current flows from the energy source 418 through the stator windings of the motor 432 to produce a stator magnetic field that interacts with the rotor magnetic field. In some embodiments, after the motor control module 412 operates the motor driver module 414 and/or motor 432 to achieve the commanded dosage, the motor control module 412 ceases operating the motor driver module 414 and/or motor 432 until a subsequent dosage command is received. In this regard, the motor driver module 414 and the motor 432 enter an idle state during which the motor driver module 414 effectively disconnects or isolates the stator windings of the motor 432 from the energy source 418. In other words, current does not flow from the energy source 418 through the stator windings of the motor 432 when the motor 432 is idle, and thus, the motor 432 does not consume power from the energy source 418 in the idle state, thereby improving efficiency.
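The efficiency argument above (no current path while idle, so no battery drain between doses) can be modeled with a toy state machine. The constant drive current and the durations below are hypothetical, and the class is illustrative rather than a description of the motor driver module 414 hardware.

```python
from enum import Enum


class DriverState(Enum):
    IDLE = "idle"        # stator windings isolated from the energy source
    DRIVING = "driving"  # current flows from the source through the windings


class MotorDriver:
    """Hypothetical model of the driver's connect/isolate behavior."""

    def __init__(self, drive_current_a: float) -> None:
        self.state = DriverState.IDLE
        self.drive_current_a = drive_current_a  # assumed constant while driving
        self.charge_used_c = 0.0                # charge drawn from the battery (coulombs)

    def current_draw_a(self) -> float:
        # No current path exists while the windings are isolated.
        return self.drive_current_a if self.state is DriverState.DRIVING else 0.0

    def execute_dose(self, drive_time_s: float) -> None:
        """Energize the windings for one dosage command, then return to idle."""
        self.state = DriverState.DRIVING
        self.charge_used_c += self.current_draw_a() * drive_time_s
        self.state = DriverState.IDLE

    def wait(self, idle_time_s: float) -> None:
        """Time between dosage commands; the idle driver draws nothing."""
        self.charge_used_c += self.current_draw_a() * idle_time_s


driver = MotorDriver(drive_current_a=0.5)
driver.execute_dose(2.0)     # 0.5 A for 2 s
driver.wait(300.0)           # five idle minutes
print(driver.charge_used_c)  # 1.0
```

Only the 2 s of driving contributes to the charge total; the 300 s idle interval adds nothing, which is the behavior the paragraph credits with improving efficiency.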

[0066] Depending on the embodiment, the motor control module 412 may be implemented or realized with a general purpose processor, a microprocessor, a controller, a microcontroller, a state machine, a content addressable memory, an application specific integrated circuit, a field programmable gate array, any suitable programmable logic device, discrete gate or transistor logic, discrete hardware components, or any combination thereof, designed to perform the functions described herein. In exemplary embodiments, the motor control module 412 includes or otherwise accesses a data storage element or memory, including any sort of random access memory (RAM), read only memory (ROM), flash memory, registers, hard disks, removable disks, magnetic or optical mass storage, or any other short or long term storage media or other non-transitory computer-readable medium, which is capable of storing programming instructions for execution by the motor control module 412. The computer-executable programming instructions, when read and executed by the motor control module 412, cause the motor control module 412 to perform or otherwise support the tasks, operations, functions, and processes described herein.

[0067] It should be appreciated that FIG. 4 is a simplified representation of the infusion device 402 for purposes of explanation and is not intended to limit the subject matter described herein in any way. In this regard, depending on the embodiment, some features and/or functionality of the sensing arrangement 404 may be implemented by or otherwise integrated into the pump control system 420, or vice versa. Similarly, in practice, the features and/or functionality of the motor control module 412 may be implemented by or otherwise integrated into the pump control system 420, or vice versa. Furthermore, in some embodiments, the features and/or functionality of the pump control system 420 may be implemented by control electronics 224 located in the fluid infusion device 402, while in alternative embodiments, the pump control system 420 may be implemented by a remote computing device that is physically distinct and/or separate from the infusion device 402, such as, for example, the CCD 106 or the computing device 108.

[0068] FIG. 5 depicts an exemplary embodiment of a pump control system 500 suitable for use as the pump control system 420 in FIG. 4 in accordance with one or more embodiments. The illustrated pump control system 500 includes, without limitation, a pump control module 502, a communications interface 504, and a data storage element (or memory) 506. The pump control module 502 is coupled to the communications interface 504 and the memory 506, and the pump control module 502 is suitably configured to support the operations, tasks, and/or processes described herein. In various embodiments, the pump control module 502 is also coupled to one or more user interface elements (e.g., user interface 230, 440) for receiving user inputs (e.g., target glucose values or other glucose thresholds) and providing notifications, alerts, or other therapy information to the patient.

[0069] The communications interface 504 generally represents the hardware, circuitry, logic, firmware and/or other components of the pump control system 500 that are coupled to the pump control module 502 and configured to support communications between the pump control system 500 and the various sensing arrangements 404, 406, 408. In this regard, the communications interface 504 may include or otherwise be coupled to one or more transceiver modules capable of supporting wireless communications between the pump control system 420, 500 and the sensing arrangements 404, 406, 408. For example, the communications interface 504 may be utilized to receive sensor measurement values or other measurement data from each sensing arrangement 404, 406, 408 in an infusion system 400. In other embodiments, the communications interface 504 may be configured to support wired communications to/from the sensing arrangement(s) 404, 406, 408. In various embodiments, the communications interface 504 may also support communications with another electronic device (e.g., CCD 106 and/or computing device 108) in an infusion system (e.g., to upload sensor measurement values to a server or other computing device, receive control information from a server or other computing device, and the like).
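One way to picture the measurement data arriving through the communications interface 504 is a store that keeps the latest reading per sensing arrangement. This is a minimal sketch under assumptions: the reference numerals 404, 406, 408 are reused as sensor IDs purely for illustration, and the out-of-order handling and 600 s staleness threshold (`max_age_s`) are hypothetical choices not described in the application.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Measurement:
    sensor_id: int       # e.g., 404, 406, 408 (reference numerals reused as IDs)
    value: float
    timestamp_s: float


class MeasurementStore:
    """Keeps the most recent measurement received from each sensing arrangement."""

    def __init__(self, max_age_s: float = 600.0) -> None:
        self.max_age_s = max_age_s  # hypothetical staleness threshold
        self._latest: Dict[int, Measurement] = {}

    def receive(self, m: Measurement) -> None:
        current = self._latest.get(m.sensor_id)
        # Ignore late-arriving packets older than the reading already held.
        if current is None or m.timestamp_s >= current.timestamp_s:
            self._latest[m.sensor_id] = m

    def latest(self, sensor_id: int, now_s: float) -> Optional[Measurement]:
        """Most recent reading, or None if missing or older than max_age_s."""
        m = self._latest.get(sensor_id)
        if m is None or now_s - m.timestamp_s > self.max_age_s:
            return None
        return m


store = MeasurementStore()
store.receive(Measurement(404, 150.0, 0.0))
store.receive(Measurement(404, 160.0, 300.0))
store.receive(Measurement(404, 140.0, 100.0))  # arrives late; ignored
print(store.latest(404, now_s=400.0))
```

Returning `None` for a stale reading leaves the decision about what to do without fresh data to the consumer, which keeps the interface layer free of therapy logic.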

[0070] The pump control module 502 generally represents the hardware, circuitry, logic, firmware and/or other components of the pump control system 500 that are coupled to the communications interface 504 and configured to determine dosage commands for operating the motor 432 to deliver fluid to the body 401 based on measurement data received from the sensing arrangements 404, 406, 408 and perform various additional tasks, operations, functions, and/or processes described herein. For example, in exemplary embodiments, pump control module 502 implements or otherwise executes a command generation application 510 that supports one or more autonomous operating modes and calculates or otherwise determines dosage commands for operating the motor 432 of the infusion device 402 in an autonomous operating mode based at least in part on a current measurement value for a condition in the body 401 of the patient. For example, in a closed-loop operating mode, the command generation application 510 may determine a dosage command for operating the motor 432 to deliver insulin to the body 401 of the patient based at least in part on the current glucose measurement value most recently received from the sensing arrangement 404 to regulate the patient's blood glucose level to a target reference glucose value. Additionally, the command generation application 510 may generate dosage commands for boluses that are manually initiated or otherwise instructed by a patient via a user interface element.
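The two sources of dosage commands named above, an autonomous dose computed from the most recent glucose value and boluses the patient initiates manually, can be combined per control cycle as in the sketch below. `CommandGenerator`, its methods, and the choice to skip the autonomous dose when no measurement is available are hypothetical; the control law is passed in as a callable so the sketch makes no claim about the actual closed-loop algorithm.

```python
from typing import Callable, List, Optional


class CommandGenerator:
    """Hypothetical stand-in for the command generation application 510."""

    def __init__(self, control_law: Callable[[float], float]) -> None:
        # Maps the most recent glucose measurement (mg/dL) to an autonomous dose (units).
        self.control_law = control_law
        self._pending_boluses: List[float] = []

    def request_bolus(self, units: float) -> None:
        """Queue a bolus the patient initiated through a user interface element."""
        if units <= 0:
            raise ValueError("bolus amount must be positive")
        self._pending_boluses.append(units)

    def next_commands(self, latest_glucose_mg_dl: Optional[float]) -> List[float]:
        """Dosage commands for one control cycle: autonomous dose first, then boluses."""
        commands: List[float] = []
        # Without a current measurement, skip the autonomous dose (an assumption).
        if latest_glucose_mg_dl is not None:
            auto_dose = self.control_law(latest_glucose_mg_dl)
            if auto_dose > 0:
                commands.append(auto_dose)
        commands.extend(self._pending_boluses)
        self._pending_boluses.clear()
        return commands


generator = CommandGenerator(lambda g: max(0.0, 0.01 * (g - 120.0)))
generator.request_bolus(3.0)
print(generator.next_commands(220.0))  # [1.0, 3.0]
```

Injecting the control law keeps the bolus bookkeeping independent of how the autonomous dose is computed, so a different closed-loop rule can be swapped in without touching the manual-bolus path.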

[0071] In exemplary embodiments, the pump control module 502 also implements or otherwise executes a personalization application 508 that is cooperatively configured to interact with the command generation application 510 to support adjusting dosage commands or control information dictating the manner in which dosage commands are generated in a personalized, patient-specific manner. In this regard, in some embodiments, based on correlations between current or recent measurement data and the current operational context relative to historical data associated with the patient, the personalization application 508 may adjust or otherwise modify values for one or more parameters utilized by the command generation application 510 when determining dosage commands, for example, by modifying a parameter value at a register or location in memory 506 referenced by the command generation application 510. In yet other embodiments, the personalization application 508 may predict meals or other events or activities in which the patient is likely to engage and output or otherwise provide an indication of the predicted patient behavior, which, in turn, may then be utilized to adjust how dosage commands are generated so that glucose regulation accounts for the patient's predicted behavior in a personalized manner.
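The interaction described above, where the personalization application 508 changes a parameter value at a location in memory 506 that the command generation application 510 reads, can be sketched as two functions sharing one parameter store. `ParameterMemory`, the parameter names, the proportional dose rule, and the 1.5x meal-anticipation scale are all hypothetical and purely illustrative, not clinical values.

```python
from typing import Dict


class ParameterMemory:
    """Hypothetical stand-in for parameter locations in memory 506."""

    def __init__(self, initial: Dict[str, float]) -> None:
        self._values = dict(initial)

    def read(self, name: str) -> float:
        return self._values[name]

    def write(self, name: str, value: float) -> None:
        if name not in self._values:
            raise KeyError(f"unknown parameter: {name}")
        self._values[name] = value


def command_generation(memory: ParameterMemory, glucose_mg_dl: float) -> float:
    """Command generation side: reads its parameters from shared memory on every call."""
    gain = memory.read("gain")
    target = memory.read("target_mg_dl")
    return max(0.0, gain * (glucose_mg_dl - target))


def personalization(memory: ParameterMemory, meal_predicted: bool,
                    meal_scale: float = 1.5) -> None:
    """Personalization side: rewrites the gain from a stored baseline (illustrative scale)."""
    baseline = memory.read("baseline_gain")
    memory.write("gain", baseline * meal_scale if meal_predicted else baseline)


memory = ParameterMemory({"baseline_gain": 0.01, "gain": 0.01, "target_mg_dl": 120.0})
before = command_generation(memory, 220.0)
personalization(memory, meal_predicted=True)
after = command_generation(memory, 220.0)
print(before, after)
```

Recomputing the gain from a stored baseline, rather than scaling the current gain in place, keeps repeated predictions from compounding. Neither function calls the other; they interact only through the shared parameter location.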

[0072] Still referring to FIG. 5, depending on the embodiment, the pump control module 502 may be implemented or realized with a general purpose processor, a microprocessor, a controller, a microcontroller, a state machine, a content addressable memory, an application specific integrated circuit, a field programmable gate array, any suitable programmable logic device, discrete gate or transistor logic, discrete hardware components, or any combination thereof, designed to perform the functions described herein. In this regard, the steps of a method or algorithm described in connection with the embodiments disclosed herein may be embodied directly in hardware, in firmware, in a software module executed by the pump control module 502, or in any practical combination thereof. In exemplary embodiments, the pump control module 502 includes or otherwise accesses the data storage element or memory 506, which may be realized using any sort of non-transitory computer-readable medium capable of storing programming instructions for execution by the pump control module 502. The computer-executable programming instructions, when read and executed by the pump control module 502, cause the pump control module 502 to implement or otherwise generate the applications 508, 510 and perform tasks, operations, functions, and processes described herein.

[0073] It should be understood that FIG. 5 is a simplified representation of a pump control system 500 for purposes of explanation and is not intended to limit the subject matter described herein in any way. For example, in some embodiments, the features and/or functionality of the motor control module 412 may be implemented by or otherwise integrated into the pump control system 500 and/or the pump control module 502, for example, by the command generation application 510 converting the dosage command into a corresponding motor command, in which case, the separate motor control module 412 may be absent from an embodiment of the infusion device 402.

[0074] FIG. 6 depicts an exemplary closed-loop control system 600 that may be implemented by a pump control system 420, 500 to provide a closed-loop operating mode that autonomously regulates a condition in the body of a patient to a reference (or target) value. It should be appreciated that FIG. 6 is a simplified representation of the control system 600 for purposes of explanation and is not intended to limit the subject matter described herein in any way.