Providing A Fastlane For Disarming Malicious Content In Received Input Content

GRAFI; AVIV

U.S. patent application number 16/448270 was filed with the patent office on 2019-06-21 and published on 2019-10-10 for providing a fastlane for disarming malicious content in received input content. The applicant listed for this patent is VOTIRO CYBERSEC LTD. Invention is credited to AVIV GRAFI.

| Application Number | 16/448270 |
| Publication Number | 20190311118 |
| Family ID | 62979983 |
| Filed Date | 2019-06-21 |


| United States Patent Application | 20190311118 |

| Kind Code | A1 |

| GRAFI; AVIV | October 10, 2019 |

PROVIDING A FASTLANE FOR DISARMING MALICIOUS CONTENT IN RECEIVED INPUT CONTENT

Abstract

The disclosed embodiments include a method for disarming malicious content in a computer system. The method includes accessing input content intended for a recipient of a network, automatically modifying at least a portion of digital values of the input content to render inactive code that is included in the input content intended for malicious purpose, the modified input content being of the same type as the accessed input content, enabling access to the modified input content by the intended recipient, analyzing the input content according to at least one malware detection algorithm configured to detect malicious content, and enabling access to the input content by the intended recipient when no malicious content is detected according to the at least one malware detection algorithm.

| Inventors: | GRAFI; AVIV (Ramat Gan, IL) |
|---|---|
| Applicant: | VOTIRO CYBERSEC LTD. |
| Family ID: | 62979983 |
| Appl. No.: | 16/448270 |
| Filed: | June 21, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15926976 | Mar 20, 2018 | 10331889 |
| 16448270 | | |
| 15441904 | Feb 24, 2017 | 10015194 |
| 15926976 | | |
| 15441860 | Feb 24, 2017 | 10013557 |
| 15441904 | | |
| 15616577 | Jun 7, 2017 | 9858424 |
| 15441860 | | |
| 15672037 | Aug 8, 2017 | 9922191 |
| 15616577 | | |
| 15795021 | Oct 26, 2017 | 9923921 |
| 15672037 | | |
| 15926484 | Mar 20, 2018 | 10331890 |
| 15795021 | | |
| 62442452 | Jan 5, 2017 | |
| 62442452 | Jan 5, 2017 | |
| 62442452 | Jan 5, 2017 | |
| 62450605 | Jan 26, 2017 | |
| 62473902 | Mar 20, 2017 | |
| 62442452 | Jan 5, 2017 | |
| 62450605 | Jan 26, 2017 | |
| 62473902 | Mar 20, 2017 | |
| 62442452 | Jan 5, 2017 | |
| 62450605 | Jan 26, 2017 | |
| 62473902 | Mar 20, 2017 | |
| 62473902 | Mar 20, 2017 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06Q 10/107 20130101; G06F 21/568 20130101; G06F 21/554 20130101; G06F 21/564 20130101; G06F 21/565 20130101 |

| International Class: | G06F 21/56 20060101 G06F021/56; G06F 21/55 20060101 G06F021/55 |

Claims

1. A method for disarming malicious content in a computer system having a processor, the method comprising: accessing, by the computer system, input content intended for a recipient of a network; automatically modifying, by the processor, at least a portion of digital values of the input content to render inactive code that is included in the input content intended for malicious purpose, the modified input content being of the same type as the accessed input content; enabling access to the modified input content by the intended recipient; analyzing, by the processor, the input content according to at least one malware detection algorithm configured to detect malicious content; and enabling access to the input content by the intended recipient when no malicious content is detected according to the at least one malware detection algorithm.

2. The method of claim 1, further comprising applying a signature-based malware detection algorithm to the input content, and automatically modifying at least a portion of digital values only if the signature-based malware detection algorithm does not detect malicious code in the input content.

3. The method of claim 2, wherein the signature-based malware detection algorithm includes a first set of signatures of known malicious content, and the at least one malware detection algorithm is configured to evaluate the input content based on a second set of signatures of known malicious content.

4. The method of claim 3, wherein the second set of signatures includes at least one signature not included in the first set of signatures.

5. The method of claim 1, wherein the input content includes a plurality of data units having digital values representing media content, and wherein the at least a portion of digital values and an adjustment of the digital values are determined so as not to interfere with an intended use of the input content.

6. The method of claim 5, wherein the at least a portion of digital values are determined without knowing a location of data units in the input content including malicious code.

7. The method of claim 5, wherein the portion of digital values are determined randomly or pseudo-randomly based on a data value alteration model configured to disarm malicious code included in the input content.

8. The method of claim 7, wherein the data value alteration model is configured to determine the portion of digital values based on determining that at least one of the digital values of the portion is statistically likely to include any malicious code.

9. The method of claim 2, wherein the at least one malware detection algorithm includes a behavior-based malware detection algorithm.

10. The method of claim 1, wherein the automatically modifying at least a portion of digital values of the input content renders inactive code included in the input content intended for malicious purpose without regard to any structure used to encapsulate the input content.

11. The method of claim 1, wherein the automatically modifying at least a portion of digital values of the input content includes adjusting a bit depth of the portion of digital values.

12. The method of claim 1, wherein the input content includes an input file of a file type indicative of at least one media content type.

13. The method of claim 1, wherein enabling access to the input content includes replacing the modified input content with the input content.

14. The method of claim 13, wherein replacing the modified input content includes replacing a pointer to the modified input content in a file server with a pointer to corresponding input content.

15. The method of claim 13, comprising storing the modified input content at an electronic mail server in association with an electronic mail of the intended recipient, wherein replacing the modified input content includes replacing the modified input content stored in association with the electronic mail with the input content, such that the input content is accessible to the intended recipient via the electronic mail server.

16. The method of claim 1, wherein enabling access to the input content includes providing a notification to the intended recipient indicating that the input content is accessible to the intended recipient, the notification including an electronic link to the input content.

17. The method of claim 1, wherein enabling access to the input content includes forwarding the input content in an electronic mail to the intended recipient.

18. The method of claim 1, wherein the automatically modifying is performed based on a configurable parameter associated with the intended recipient, the parameter indicating a rule that the intended recipient is to access the modified input content.

19. The method of claim 18, wherein the parameter is configurable by the intended recipient, and further wherein, the automatically modifying and enabling access to the modified input content is not performed when the parameter indicates a rule that the intended recipient is to access input content.

20. A non-transitory computer-readable medium comprising instructions that when executed by a processor are configured for carrying out the method of claim 1.

21. A method for disarming malicious content in a computer system having a processor, the method comprising: accessing, by the computer system, input content intended for a recipient of a network; enabling the intended recipient to select to access the input content or modified input content; upon receipt of a request to access modified input content: modifying, by the processor, at least a portion of digital values of the input content to render inactive code that is included in the input content intended for malicious purpose, the modified input content being of the same type as the accessed input content; and enabling access to the modified input content by the intended recipient; upon receipt of a request to access the input content: analyzing, by the processor, the input content according to at least one malware detection algorithm configured to detect malicious content; and enabling access to the input content by the intended recipient when no malicious content is detected according to the at least one malware detection algorithm.

22. The method of claim 21, wherein enabling the intended recipient to select to access the input content or modified input content includes enabling selection to access both the input content and the modified input content, wherein upon receipt of a request to access both the input content and modified input content the method further comprises first performing the modifying to render inactive code that is included in the input content intended for malicious purpose and enabling access to the modified input content, then performing the analyzing and enabling access to the input content.

23. The method of claim 22, wherein upon receipt of a request to access both the input content and modified input content, the enabling access to the input content includes replacing the modified input content with the input content.

24. The method of claim 21, wherein the method comprises, before enabling the intended recipient to select to access the input content or modified input content, applying a signature-based malware detection algorithm to the input content, and enabling the intended recipient to select to access the input content only if the signature-based malware detection algorithm does not detect malicious code in the input content.

25. The method of claim 24, wherein the at least one malware detection algorithm includes a behavior-based malware detection algorithm.

26. A non-transitory computer-readable medium comprising instructions that when executed by a processor are configured for carrying out the method of claim 21.

27. A system for disarming malicious content, the system comprising: a memory device storing a set of instructions; and a processor configured to execute the set of instructions to: access input content intended for a recipient of a network; modify at least a portion of digital values of the input content to render inactive code that is included in the input content intended for malicious purpose, the modified input content being of the same type as the accessed input content; enable access to the modified input content by the intended recipient; analyze, by the processor, the input content according to at least one malware detection algorithm configured to detect malicious content; and enable access to the input content by the intended recipient when no malicious content is detected according to the at least one malware detection algorithm.

28. The system of claim 27, wherein the processor is configured to execute the set of instructions to modify the at least a portion of digital values of the input content based on a received request from the intended recipient to access modified input content.

29. The system of claim 27, wherein the processor is configured to execute the set of instructions to modify the at least a portion of digital values of the input content based on a configurable parameter associated with the intended recipient, the parameter indicating a rule that the intended recipient is to access the modified input content.

Description

PRIORITY CLAIM

[0001] This application is a continuation-in-part of, and claims the benefit of priority to, U.S. patent application Ser. No. 15/441,904, filed on Feb. 24, 2017, and U.S. patent application Ser. No. 15/441,860, filed on Feb. 24, 2017, each of which claims priority under 35 U.S.C. § 119 to U.S. provisional patent application No. 62/442,452, filed on Jan. 5, 2017. This application is also a continuation-in-part of, and claims the benefit of priority to, U.S. patent application Ser. No. 15/616,577 filed on Jun. 7, 2017, now U.S. Pat. No. 9,858,424, U.S. patent application Ser. No. 15/672,037, filed on Aug. 8, 2017, now U.S. Pat. No. 9,922,191, and U.S. patent application Ser. No. 15/795,021, filed on Oct. 26, 2017, now U.S. Pat. No. 9,923,921, each of which claims priority under 35 U.S.C. § 119 to U.S. provisional patent application No. 62/442,452, filed on Jan. 5, 2017, U.S. provisional patent application No. 62/450,605, filed on Jan. 26, 2017, and U.S. provisional patent application No. 62/473,902, filed on Mar. 20, 2017. This application is also a continuation-in-part of, and claims the benefit of priority to U.S. patent application Ser. No. 15/926,484, filed Mar. 20, 2018, which claims priority under 35 U.S.C. § 119 to U.S. provisional patent application No. 62/473,902, filed on Mar. 20, 2017. Each of the aforementioned applications is incorporated herein by reference in its entirety.

BACKGROUND

[0002] Attackers are known to use several file or document based techniques for attacking a victim's computer. Known file-based attacks may exploit a structure of a file or document and/or vulnerabilities in a platform or document specification. Some file-based attacks include the use of active content embedded in a document, file, or communication to cause an application to execute malicious code or enable other malicious activity on a victim's computer upon rendering the file. Active content may include any content embedded in an electronic file or document configured to carry out an action or trigger an action. Common forms of active content include word processing and spreadsheet macros, formulas, or scripts, JavaScript code within Portable Document Format (PDF) documents, web pages including plugins, applets or other executable content, browser or application toolbars and extensions, etc. Some malicious active content can be automatically invoked to perform the intended malicious functions when a computer runs a program or application to render (e.g., open or read) the received content, such as a file or document. One such example includes the use of a macro embedded in a spreadsheet, where the macro is configured to be automatically executed to take control of the victimized computer upon the user opening the spreadsheet, without any additional action by the user. Active content used by hackers may also be invoked responsive to some other action taken by a user or computer process.

[0003] Another file-based attack includes the use of embedded shellcode in a file to take control of a victim's computer when the computer runs a program to open or read the file. A shellcode is a small piece of program code that may be embedded in a file that hackers can use to exploit vulnerable computers. Hackers typically embed shellcode in a file to take control of a computer when the computer runs a program to open or read the file. It is called "shellcode" because it typically starts a "command shell" to take control of the computer, though any piece of program code or software that performs any malicious task, like taking control of a computer, can be called "shellcode."

[0004] Most shellcode is written in a low-level programming language called "machine code" because of the low level at which the vulnerability being exploited gives an attacker access to a process executing on the computer. Shellcode in an infected or malicious file is typically encoded or embedded in byte level data, a basic data unit of information for the file. At this data unit level of a file, actual data or information for the file (e.g., a pixel value of an image) and executable machine code are indistinguishable. In other words, whether a data unit (i.e., one or more bytes or bits) represents a pixel value for an image file or executable shellcode cannot typically be readily determined by examination of the byte level data.
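As a small illustration of this ambiguity, the following sketch reads the same three bytes in two ways. The byte values are chosen for illustration only; the mnemonic table is a tiny hand-written subset of the x86 instruction set (0x90 is NOP, 0xCC is INT3) and is not part of the disclosure.

```python
# The same three bytes, read two ways. Nothing in the bytes themselves
# says which reading is intended.
data = bytes([0x90, 0x90, 0xCC])

# Reading 1: three 8-bit grayscale pixel intensities.
pixels = list(data)

# Reading 2: x86 machine code. This table is a tiny illustrative subset:
# 0x90 is the NOP opcode and 0xCC is INT3.
X86_MNEMONICS = {0x90: "nop", 0xCC: "int3"}
instructions = [X86_MNEMONICS[b] for b in data]

print(pixels)        # [144, 144, 204]
print(instructions)  # ['nop', 'nop', 'int3']
```

Which reading applies depends entirely on how the consuming process treats the bytes, which is why inspection of the byte level data alone may not reveal embedded shellcode.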

[0005] Indeed, shellcode is typically crafted so that the infected or malicious file appears to be a legitimate file and in many cases functions as a legitimate file. Additionally, an infected or malicious file including embedded shellcode may not be executable at all by some software applications, and thus the infected file may appear as a legitimate file imposing no threat to a computer. That is, an infected or malicious image file, for example, may be processed by an application executed on a computer to display a valid image and/or to "execute" the byte level data as "machine code" to take control of a computer or to perform other functions dictated by the shellcode. Thus, whether a process executing on a computer interprets a byte or sequence of bytes of a file to represent information of the file, or instead to execute malicious machine code, depends on a vulnerability in a targeted application process executed on the computer.

[0006] Shellcode is therefore often created to target one specific combination of processor, operating system and service pack, called a platform. Additionally, shellcode is often created as the payload of an exploit directed to a particular vulnerability of targeted software on a computer, which in some cases may be specific to a particular version of the targeted software. Thus, for some exploits, due to the constraints put on the shellcode by the target process or target processor architecture, a very specific shellcode must be created. However, it is possible for one shellcode to work for multiple exploits, service packs, operating systems and even processors.

[0007] Attackers typically use shellcode as the payload of an exploit targeting a vulnerability in an endpoint or server application, triggering a bug that leads to "execution" of the byte level machine code. The actual malicious code may be contained within the byte level payload of the infected file, and to be executed, must be made available in the application process space, e.g., memory allocated to an application for performing a desired task. This may be achieved by loading the malicious code into the process space, which can be done by exploiting a vulnerability in an application known to the shellcode developer. A common technique includes performing a heap spray of the malicious byte level shellcode, which includes placing certain byte level data of the file (e.g., aspects of the embedded shellcode) at locations of allocated memory of an application process. This may exploit a vulnerability of the application process and lead the processor to execute the shellcode payload.

[0008] Other file-based attacks are known and are generally characterized by the ability to control a victim's computer or perform malicious activity on the victim's computer upon a user opening, executing, or rendering a malicious document or file on the user's computer. More commonly, the user receives the malicious document or file via electronic communication, such as downloading from a remote repository, via the internet or via an e-mail communication. Attackers are becoming increasingly sophisticated in disguising the nature of the attack, making such attacks increasingly difficult to prevent using conventional techniques.

[0009] Computer systems are known to implement various protective tools at end-user computer devices and/or gateways or access points to the computer system for screening or detecting malicious content before the malicious content is allowed to infect the computer system. Conventional tools commonly rely on the ability to identify or recognize a particular malicious threat or characteristics known to be associated with malicious content or activity. For example, conventional techniques include attempts to identify malicious files or malicious content by screening incoming files at a host computer or server based on a comparison of the possibly malicious code to a known malicious signature. These signature-based malware detection techniques, however, are incapable of identifying malicious files or malicious content for which a malicious signature has not yet been identified. Accordingly, it is generally not possible to identify new malicious exploits using signature-based detection methods, as the technique lags behind the crafty hacker. Furthermore, in most cases, malicious content is embedded in otherwise legitimate files having proper structure and characteristics, and the malicious content may also be disguised to hide the malicious nature of the content, so that the malicious content appears to be innocuous. Thus, even upon inspection of a document according to known malware scanning techniques, it may be difficult to identify malicious content.
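The limitation of signature matching described above can be sketched as follows. This is a minimal illustration that assumes a signature is simply the SHA-256 hash of a whole file; real scanners may use richer signatures, and the sample bytes and the names `KNOWN_SIGNATURES` and `signature_scan` are hypothetical.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


# Stand-in for a file that has already been identified as malicious.
known_sample = b"\x90\x90\xcc" + b"payload-v1"

# Assumption: the signature database holds whole-file hashes only.
KNOWN_SIGNATURES = {sha256_hex(known_sample)}


def signature_scan(data: bytes) -> bool:
    """Return True if the content matches a known malicious signature."""
    return sha256_hex(data) in KNOWN_SIGNATURES


# A variant that differs from the known sample in its final byte.
variant = known_sample[:-1] + b"2"

print(signature_scan(known_sample))  # True
print(signature_scan(variant))       # False
```

The variant passes the scan even though it is nearly identical to the known sample, which mirrors the lag between new exploits and available signatures.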

[0010] Another conventional technique is based on the use of behavior-based techniques or heuristics to identify characteristics of known malicious exploits or other suspicious activity or behavior, such as that based on a heap spray attack. One such technique implements a "sandbox" (e.g., a type of secured, monitored, or virtual operating system environment), which can be used to virtually execute untested or untrusted programs, files, or code without risking harm to the host machine or operating system. That is, conventional sandbox techniques may execute or detonate a file while monitoring the damage or operations post-detonation, such as writing to disk, network activity, spawning of new processes, etc., and monitor for suspicious behaviors. This technique, however, also suffers from the inability to identify new exploits for which a (software) vulnerability has not yet been identified, e.g., so-called zero-day exploits. Some sophisticated malware has also been developed to evade such "sandbox" techniques by halting or skipping if it detects that it is running in such a virtual execution or monitored environment. Furthermore, clever hackers consistently evolve their code to include delayed or staged attacks that may not be detected from evaluation of a single file, for example, or may lie in wait for a future unknown process to complete an attack. Thus, in some situations it may be too computationally intensive or impracticable to identify some shellcode exploits using conventional sandbox techniques.

[0011] Furthermore, because some malicious attacks are often designed to exploit a specific vulnerability of a particular version of an application program, it is very difficult to identify a malicious file if that vulnerable version of the application program is not executed at a screening host computer or server. This creates additional problems for networks of computers that may be operating different versions of application or operating system software. Thus, while a shellcode attack, for example, may be prevented or undetected at a first computer because its application software does not include the target vulnerability, the malicious file may then be shared within the network where it may be executed at a machine that is operating the targeted vulnerable version of application software.

[0012] The present disclosure includes embodiments directed to solving problems rooted in the use of embedded or referenced malicious content generally, without regard to a specific vulnerability or how the malicious content is configured to be invoked. The present disclosure includes embodiments directed to solving problems and risks posed by malicious content generally, whether such malicious content may be considered active content or shellcode or any other form of malicious content.

SUMMARY

[0013] In the following description certain aspects and embodiments of the present disclosure will become evident. It should be understood that the disclosure, in its broadest sense, could be practiced without having one or more features of these aspects and embodiments. It should also be understood that these aspects and embodiments are examples only.

[0014] An embodiment of the present disclosure includes a method for disarming malicious content in a computer system having a processor. The method includes accessing input content intended for a recipient of a network, automatically modifying at least a portion of digital values of the input content to render inactive code that is included in the input content intended for malicious purpose, the modified input content being of the same type as the accessed input content, enabling access to the modified input content by the intended recipient, analyzing the input content according to at least one malware detection algorithm configured to detect malicious content, and enabling access to the input content by the intended recipient when no malicious content is detected according to the at least one malware detection algorithm.
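The ordering of steps in this embodiment can be sketched as below. This is a simplified, synchronous illustration under stated assumptions: `Mailbox`, `fastlane`, `disarm`, and `is_malicious` are hypothetical names, and a deployed system would likely run the analysis step asynchronously rather than inline.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Mailbox:
    """Hypothetical store of what each recipient may currently access."""
    items: Dict[str, bytes] = field(default_factory=dict)


def fastlane(content: bytes, recipient: str, mailbox: Mailbox,
             disarm: Callable[[bytes], bytes],
             is_malicious: Callable[[bytes], bool]) -> bool:
    """Release a disarmed copy first, then the original if analysis is clean.

    Returns True if the original input content was released.
    """
    # Step 1: modify the input content and enable access to the modified copy.
    mailbox.items[recipient] = disarm(content)

    # Step 2: analyze the original input content with a detection algorithm.
    if is_malicious(content):
        return False  # recipient keeps only the modified copy

    # Step 3: no malicious content detected, so enable access to the original.
    mailbox.items[recipient] = content
    return True
```

The recipient is never left waiting on the slower analysis: the modified content is available after step 1, and the original replaces it only when step 2 finds nothing.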

[0015] The method may include applying a signature-based malware detection algorithm to the input content, and automatically modifying at least a portion of digital values only if the signature-based malware detection algorithm does not detect malicious code in the input content. In some embodiments, the signature-based malware detection algorithm includes a first set of signatures of known malicious content, and the at least one malware detection algorithm is configured to evaluate the input content based on a second set of signatures of known malicious content. The second set of signatures may include at least one signature not included in the first set of signatures. In some embodiments, the at least one malware detection algorithm includes a behavior-based malware detection algorithm.

[0016] In some embodiments, the input content includes a plurality of data units having digital values representing media content, and wherein the at least a portion of digital values and an adjustment of the digital values are determined so as not to interfere with an intended use of the input content. In some embodiments, the at least a portion of digital values are determined without knowing a location of data units in the input content including malicious code. In some embodiments, the portion of digital values are determined randomly or pseudo-randomly based on a data value alteration model configured to disarm malicious code included in the input content. In some embodiments, the data value alteration model is configured to determine the portion of digital values based on determining that at least one of the digital values of the portion is statistically likely to include any malicious code.
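One way to picture a pseudo-random data value alteration is the sketch below, which nudges a randomly chosen fraction of 8-bit values by one step without any knowledge of where malicious code might sit. The function name `disarm_values`, the `fraction` parameter, and the plus-or-minus-one step are assumptions made for illustration; the disclosure does not fix these details here.

```python
import random


def disarm_values(values: bytes, fraction: float = 0.5, seed=None) -> bytes:
    """Nudge a pseudo-randomly chosen fraction of 8-bit values by +/-1.

    The positions are chosen without inspecting the values, so no knowledge
    of the location of any malicious code is needed. Results are clamped to
    the 0..255 range, so a value at a boundary may stay unchanged.
    """
    rng = random.Random(seed)
    out = bytearray(values)
    for i in range(len(out)):
        if rng.random() < fraction:
            delta = rng.choice((-1, 1))
            out[i] = min(255, max(0, out[i] + delta))
    return bytes(out)


original = bytes([100] * 16)
modified = disarm_values(original, fraction=0.5, seed=42)

# Same length and every value within one step of the original, so an image
# built from these values would look essentially the same to a viewer.
print(len(modified) == len(original))
print(max(abs(a - b) for a, b in zip(original, modified)) <= 1)
```

Because a small change to a pixel intensity is visually negligible while a changed opcode or address byte can break machine code, a scheme of this kind may disarm a payload without interfering with the intended use of the content.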

[0017] In some embodiments, the automatically modifying at least a portion of digital values of the input content includes adjusting a bit depth of the portion of digital values. Additionally, in some embodiments, the input content includes an input file of a file type indicative of at least one media content type. In some embodiments, the automatically modifying is performed based on a configurable parameter associated with the intended recipient, the parameter indicating a rule that the intended recipient is to access the modified input content, wherein the parameter may be configurable by the intended recipient, and further wherein, the automatically modifying and enabling access to the modified input content is not performed when the parameter indicates a rule that the intended recipient is to access input content.

[0018] In some embodiments, enabling access to the input content includes replacing the modified input content with the input content, wherein replacing the modified input content may include replacing a pointer to the modified input content in a file server with a pointer to corresponding input content. In some embodiments, the method further comprises storing the modified input content at an electronic mail server in association with an electronic mail of the intended recipient, wherein replacing the modified input content includes replacing the modified input content stored in association with the electronic mail with the input content, such that the input content is accessible to the intended recipient via the electronic mail server. In some embodiments, enabling access to the input content includes providing a notification to the intended recipient indicating that the input content is accessible to the intended recipient, the notification including an electronic link to the input content. In some embodiments, enabling access to the input content includes forwarding the input content in an electronic mail to the intended recipient.
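The pointer replacement described above might look like the following sketch. The `FileServer` class, its methods, and the file and blob names are hypothetical and stand in for whatever indirection a real file server provides.

```python
class FileServer:
    """Hypothetical file server: visible names point to stored blobs."""

    def __init__(self):
        self.blobs = {}     # blob id -> bytes
        self.pointers = {}  # visible file name -> blob id

    def store(self, blob_id: str, data: bytes) -> None:
        self.blobs[blob_id] = data

    def publish(self, name: str, blob_id: str) -> None:
        # Point the visible name at a blob; re-publishing swaps the pointer.
        self.pointers[name] = blob_id

    def read(self, name: str) -> bytes:
        return self.blobs[self.pointers[name]]


server = FileServer()
server.store("mod", b"modified bytes")
server.store("orig", b"original bytes")

# The recipient first sees the modified input content.
server.publish("report.pdf", "mod")
first_view = server.read("report.pdf")

# After analysis detects nothing, the pointer is replaced with one to the
# original input content. The visible name does not change.
server.publish("report.pdf", "orig")
second_view = server.read("report.pdf")

print(first_view, second_view)
```

Swapping a pointer rather than copying data keeps the replacement cheap and leaves the recipient's path to the file unchanged.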

[0019] Another embodiment of the present disclosure includes a method for disarming malicious content in a computer system having a processor. The method includes accessing, by the computer system, input content intended for a recipient of a network and enabling the intended recipient to select to access the input content or modified input content. Upon receipt of a request to access modified input content, the method includes modifying, by the processor, at least a portion of digital values of the input content to render inactive code that is included in the input content intended for malicious purpose, the modified input content being of the same type as the accessed input content, and enabling access to the modified input content by the intended recipient. Upon receipt of a request to access the input content, the method includes analyzing, by the processor, the input content according to at least one malware detection algorithm configured to detect malicious content, and enabling access to the input content by the intended recipient when no malicious content is detected according to the at least one malware detection algorithm.

[0020] In some embodiments, enabling the intended recipient to select to access the input content or modified input content includes enabling selection to access both the input content and the modified input content, wherein upon receipt of a request to access both the input content and modified input content the method further comprises first performing the modifying to render inactive code that is included in the input content intended for malicious purpose and enabling access to the modified input content, then performing the analyzing and enabling access to the input content. In some embodiments, upon receipt of a request to access both the input content and modified input content, the enabling access to the input content includes replacing the modified input content with the input content. In some embodiments, the method includes, before enabling the intended recipient to select to access the input content or modified input content, applying a signature-based malware detection algorithm to the input content, and enabling the intended recipient to select to access the input content only if the signature-based malware detection algorithm does not detect malicious code in the input content. In some embodiments, the at least one malware detection algorithm includes a behavior-based malware detection algorithm.
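The selection-driven variant can be sketched as a small dispatcher. The `Choice` enum, the `handle_request` function, and the callables passed to it are hypothetical names used only to show the order of operations when the recipient asks for the modified content, the original, or both.

```python
from enum import Enum
from typing import Callable, List, Tuple


class Choice(Enum):
    MODIFIED = "modified"
    ORIGINAL = "original"
    BOTH = "both"


def handle_request(choice: Choice, content: bytes,
                   disarm: Callable[[bytes], bytes],
                   is_malicious: Callable[[bytes], bool]
                   ) -> List[Tuple[str, bytes]]:
    """Return the items released to the recipient, in release order."""
    released = []
    # Modified content is produced and released first when requested.
    if choice in (Choice.MODIFIED, Choice.BOTH):
        released.append(("modified", disarm(content)))
    # The original is released only after analysis finds nothing malicious.
    if choice in (Choice.ORIGINAL, Choice.BOTH):
        if not is_malicious(content):
            released.append(("original", content))
    return released
```

For a request for both, the modified copy always precedes the original in the returned list, matching the "first modify, then analyze" ordering of this embodiment.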

[0021] Another embodiment includes a system for disarming malicious content, the system comprising a memory device storing a set of instructions, and a processor configured to execute the set of instructions to access input content intended for a recipient of a network, modify at least a portion of digital values of the input content to render inactive code that is included in the input content intended for malicious purpose, the modified input content being of the same type as the accessed input content, enable access to the modified input content by the intended recipient, analyze, by the processor, the input content according to at least one malware detection algorithm configured to detect malicious content, and enable access to the input content by the intended recipient when no malicious content is detected according to the at least one malware detection algorithm. The processor of the system may also be configured to execute the instructions to modify the at least a portion of digital values of the input content based on a received request from the intended recipient to access modified input content. In some embodiments, the processor may also be configured to execute the instructions to modify the at least a portion of digital values of the input content based on a configurable parameter associated with the intended recipient, the parameter indicating a rule that the intended recipient is to access the modified input content.

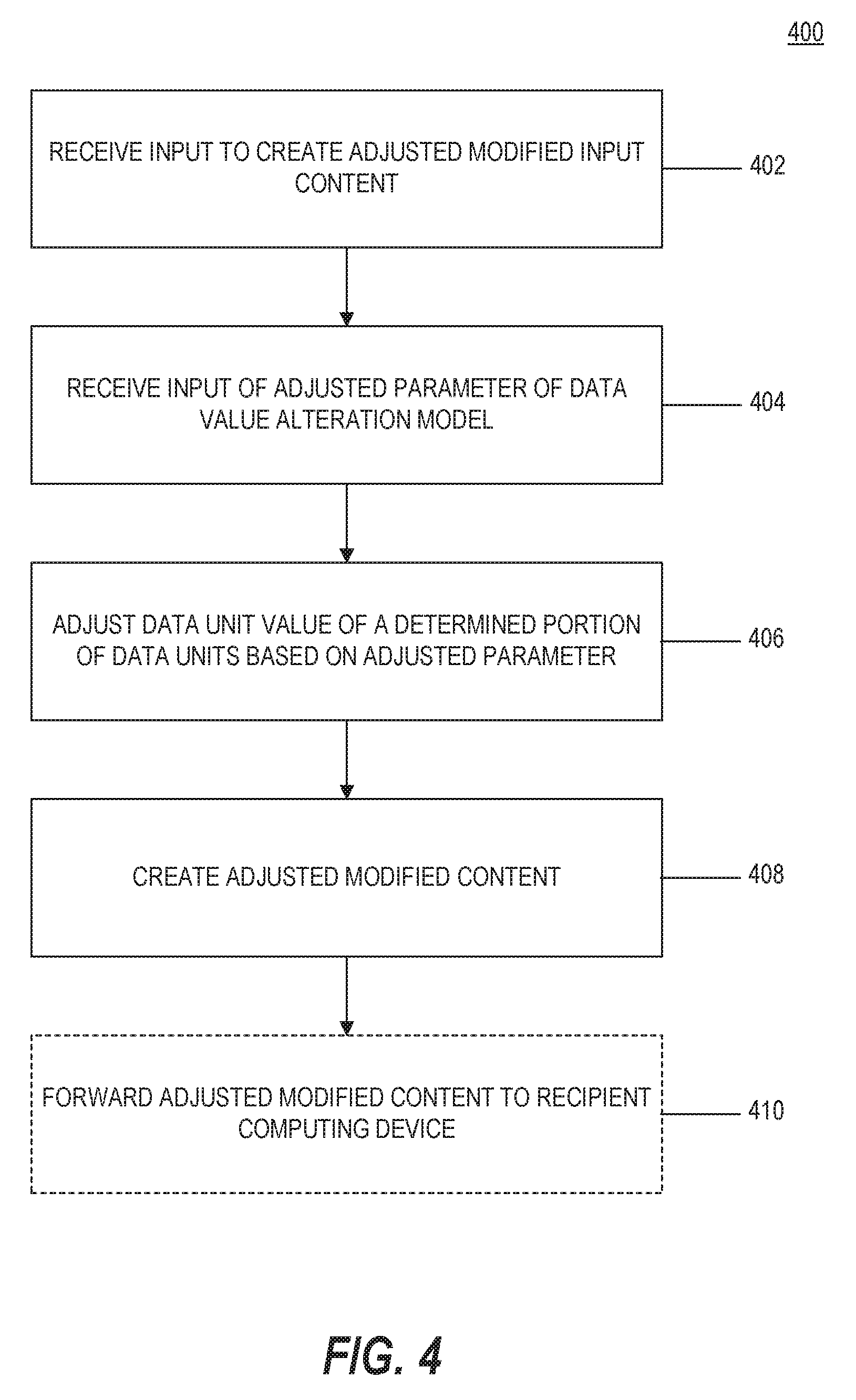

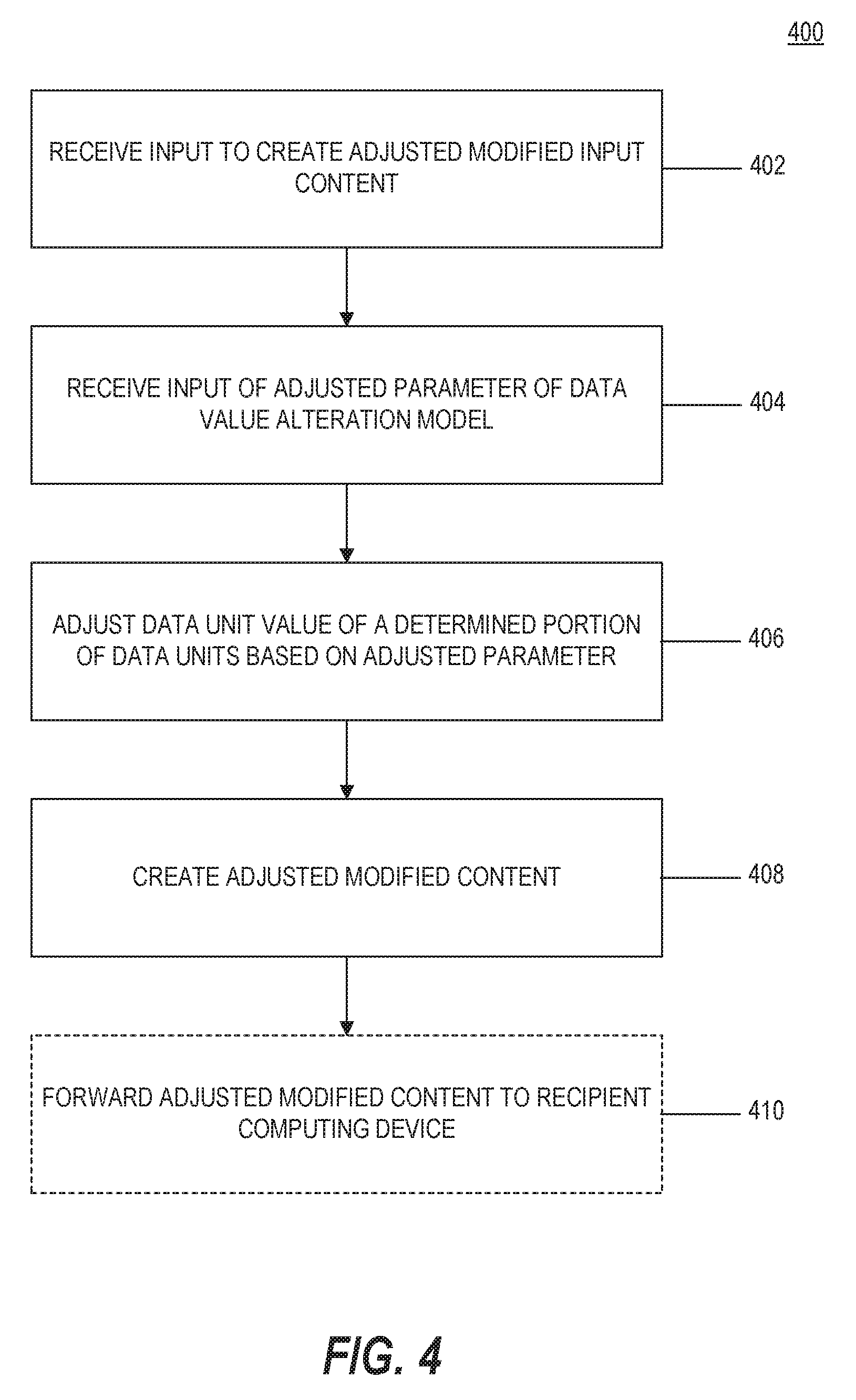

[0022] According to another embodiment, a method of disarming malicious code is included. The method includes receiving input content and modifying, according to a data value alteration model, at least a portion of digital values of the input content to render any malicious code in the input content inactive for its intended malicious purpose, which may result in modified input content. The method also includes receiving an instruction to create adjusted modified input content, and responsive to receiving the instruction, modifying, according to an adjusted data value alteration model, at least a portion of the digital values of the input content, which may result in adjusted modified input content that renders any malicious code in the input content inactive for its intended malicious purpose.

[0023] According to another embodiment, a method of disarming malicious code includes receiving input content and modifying, according to a data value alteration model, at least a portion of digital values of the input content to render any malicious code in the input content inactive for its intended malicious purpose, which may result in modified input content. The method also includes enabling modification of a parameter of the data value alteration model for an adjusted modification of at least a portion of the digital values of the input content to create adjusted modified input content that renders any malicious code in the input content inactive for its intended malicious purpose while not interfering with an intended use of the input content.
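A data value alteration model with an adjustable parameter might look like the sketch below. It assumes, purely for illustration, that the digital values are 8-bit samples and that the model adds a small nonzero random offset to a chosen fraction of them. The function name `alter_values` and the parameters `max_delta` and `fraction` are hypothetical and are not taken from the disclosure.

```python
import random
from typing import List, Optional, Sequence


def alter_values(
    values: Sequence[int],
    max_delta: int = 1,
    fraction: float = 1.0,
    seed: Optional[int] = None,
) -> List[int]:
    """Add a small nonzero offset to a fraction of 8-bit values (illustrative model)."""
    if max_delta < 1:
        raise ValueError("max_delta must be at least 1")
    rng = random.Random(seed)
    offsets = [d for d in range(-max_delta, max_delta + 1) if d != 0]
    altered: List[int] = []
    for value in values:
        if rng.random() < fraction:
            # Clamp to the 8-bit range; a value at 0 or 255 may stay unchanged.
            value = min(255, max(0, value + rng.choice(offsets)))
        altered.append(value)
    return altered
```

Creating adjusted modified input content then amounts to calling the same function on the original values with a different `max_delta` or `fraction`, for example a smaller offset if the first result interfered with the intended use of the content.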

[0024] According to another embodiment, a method of disarming malicious code in a computer system includes receiving input content that includes a plurality of data units having a bit value, automatically applying a bit depth alteration model to the input content for altering a depth of the bit value of at least a portion of the data units so as to render any malicious code included in the plurality of data units inactive for its intended malicious purpose, and creating new content reflecting the application of the bit depth alteration model to the input content. The bit depth alteration model may alter a depth of the bit value of a data unit without changing the bit value of the data unit.
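One way to picture altering the depth of a bit value without changing the value itself is widening each 8-bit data unit to 16 bits. The sketch below assumes unsigned 8-bit samples and a little-endian 16-bit target; the function name `widen_8_to_16` is hypothetical, and the disclosure does not state that this particular widening is the model used.

```python
import struct


def widen_8_to_16(samples: bytes) -> bytes:
    """Re-encode unsigned 8-bit samples as little-endian 16-bit samples.

    Each numeric value is preserved, but the stored byte sequence changes
    because a zero high byte now follows every original byte.
    """
    return struct.pack(f"<{len(samples)}H", *samples)
```

For example, the two samples `0x01` and `0xFF` become the four bytes `01 00 FF 00`: the values 1 and 255 are intact, while any byte sequence that depended on the original contiguous layout is no longer present in that form.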

[0025] According to another embodiment, a method for creating a reconstructed file in a computer system includes determining a file format associated with a received input file, parsing the input file into one or more objects based on the file format, determining a specification associated with the file format of the input file, determining a current version of the specification exists, wherein the current version of the specification is different from the specification associated with the file format of the input file, and reconfiguring a layout of the input file to create a reconstructed file, wherein the reconstructed file is configured according to the current version of the specification.

[0026] According to another embodiment, a method of disarming malicious code includes receiving an input file including input content, determining a file format of the input file, and rendering any malicious code included in the input content inactive for its intended malicious purpose according to a file-format specific content alteration model applied to the input content to create a modified input file.

[0027] According to another embodiment, a method of disarming malicious code in a received input file includes parsing the input file into one or more objects based on a format of the input file, wherein at least one object includes data indicative of a printer setting, and reconfiguring a layout of the input file including the one or more objects to create a reconstructed file, the reconstructed file preserving the data of the at least one object including data indicative of a printer setting.

[0028] According to another embodiment, a method of disarming malicious code includes parsing an input file into one or more objects based on a format specification associated with the input file, modifying at least a portion of digital values of at least one object of the one or more objects to create a corresponding modified object, and reconfiguring a layout of the input file, including the corresponding modified object(s), to create a reconstructed file.

[0029] According to another embodiment, a method of disarming malicious code includes receiving input content intended for a recipient in a network, determining one or more policies based on characteristics of the input content, an identity of a sender of the input content, and an identity of the intended recipient, and processing the input content to create modified input content according to the determined one or more policies, wherein the modified input content is configured to disarm or remove any malicious content included in the input content.
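Policy determination from the three inputs named above (a content characteristic, the sender identity, and the recipient identity) can be sketched as a simple rule table. Every policy name, file type grouping, and rule below is a hypothetical example chosen for illustration; the disclosure does not specify them.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class InputContent:
    file_type: str   # a characteristic of the input content
    sender: str      # identity of the sender
    recipient: str   # identity of the intended recipient


def determine_policies(
    content: InputContent,
    trusted_senders: FrozenSet[str],
    strict_recipients: FrozenSet[str],
) -> List[str]:
    """Return hypothetical policy names; every rule here is illustrative."""
    policies: List[str] = []
    if content.file_type in {"png", "jpg", "wav"}:
        policies.append("alter_data_values")
    if content.file_type in {"docx", "xlsx", "pdf"}:
        policies.append("reconstruct_layout")
    if content.sender not in trusted_senders:
        policies.append("disable_active_content")
    if content.recipient in strict_recipients:
        policies.append("withhold_original")
    return policies
```

The returned list would then drive which processing steps are applied to create the modified input content.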

[0030] According to another embodiment, a method for verifying any malicious code included in accessed input content is disarmed in modified input content includes determining that the input content includes malicious code, modifying at least a portion of digital values of the input content to create modified input content configured to disarm malicious code included in the accessed input content, analyzing the modified input content according to a behavior-based malware detection algorithm, and when no suspicious activity is detected, generating a report indicating at least one change in a digital value of the original input content that caused the malicious code to be disarmed.

[0031] In accordance with additional embodiments of the present disclosure, a computer-readable medium is disclosed that stores instructions that, when executed by a processor(s), causes the processor(s) to perform operations consistent with one or more disclosed methods.

[0032] In accordance with additional embodiments of the present disclosure, a system is disclosed including a memory device storing a set of instructions, and a processor configured to execute the set of instructions to perform operations consistent with one or more disclosed methods.

[0033] It is to be understood that both the foregoing general description and the following detailed description are exemplary and explanatory only, and are not restrictive of the disclosed embodiments, as claimed.

BRIEF DESCRIPTION OF THE DRAWINGS

[0034] The subject matter regarded as the invention is particularly pointed out and distinctly claimed in the concluding portion of the specification. The disclosed principles, however, both as to organization and method of operation, together with objects, features, and advantages thereof, may best be understood by reference to the following detailed description when read with the accompanying drawings in which:

[0035] FIG. 1 is a schematic block diagram of an example computing environment consistent with the disclosed embodiments;

[0036] FIG. 2 is a schematic block diagram of an example computing system adapted to perform aspects of the disclosed embodiments;

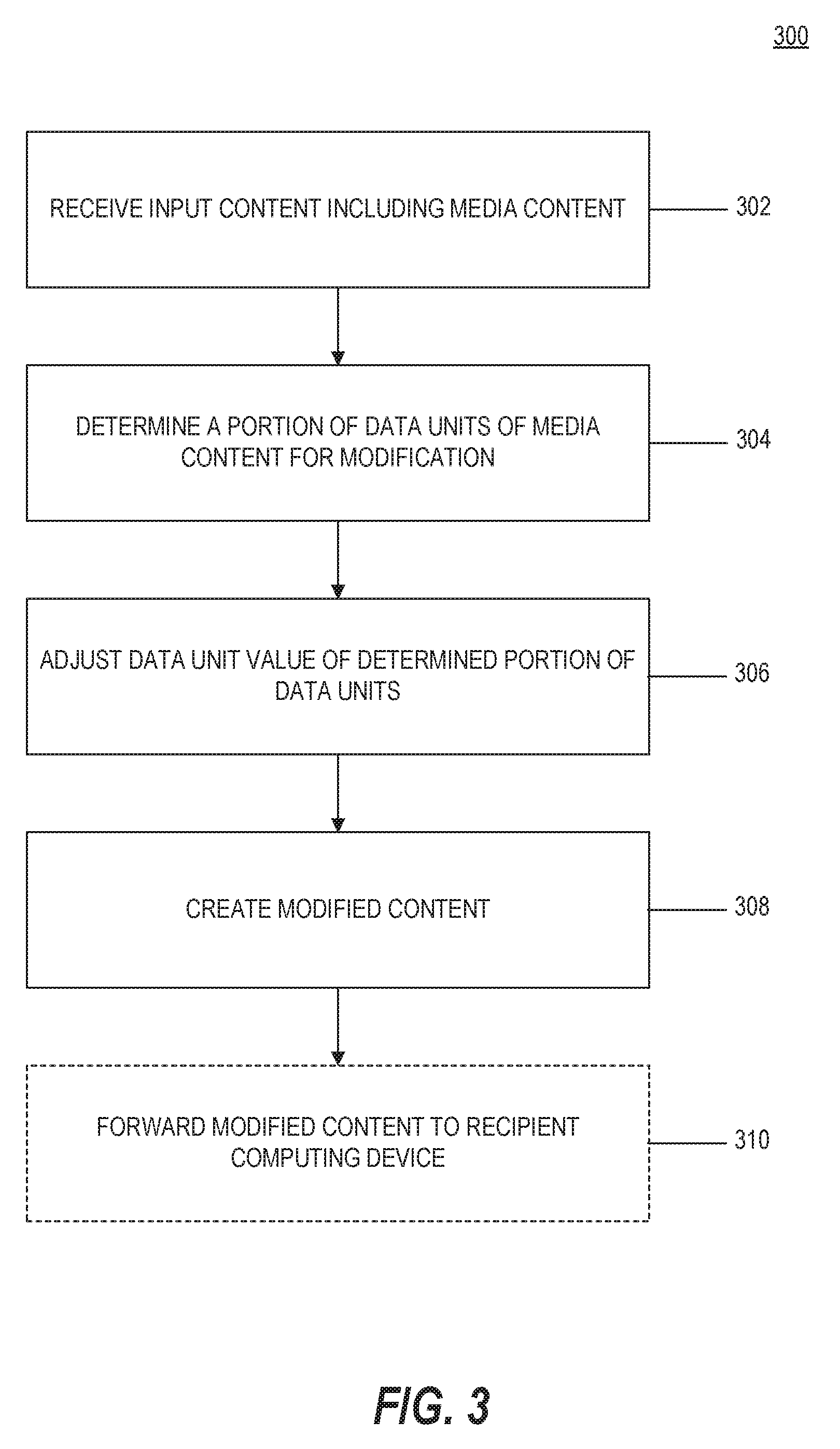

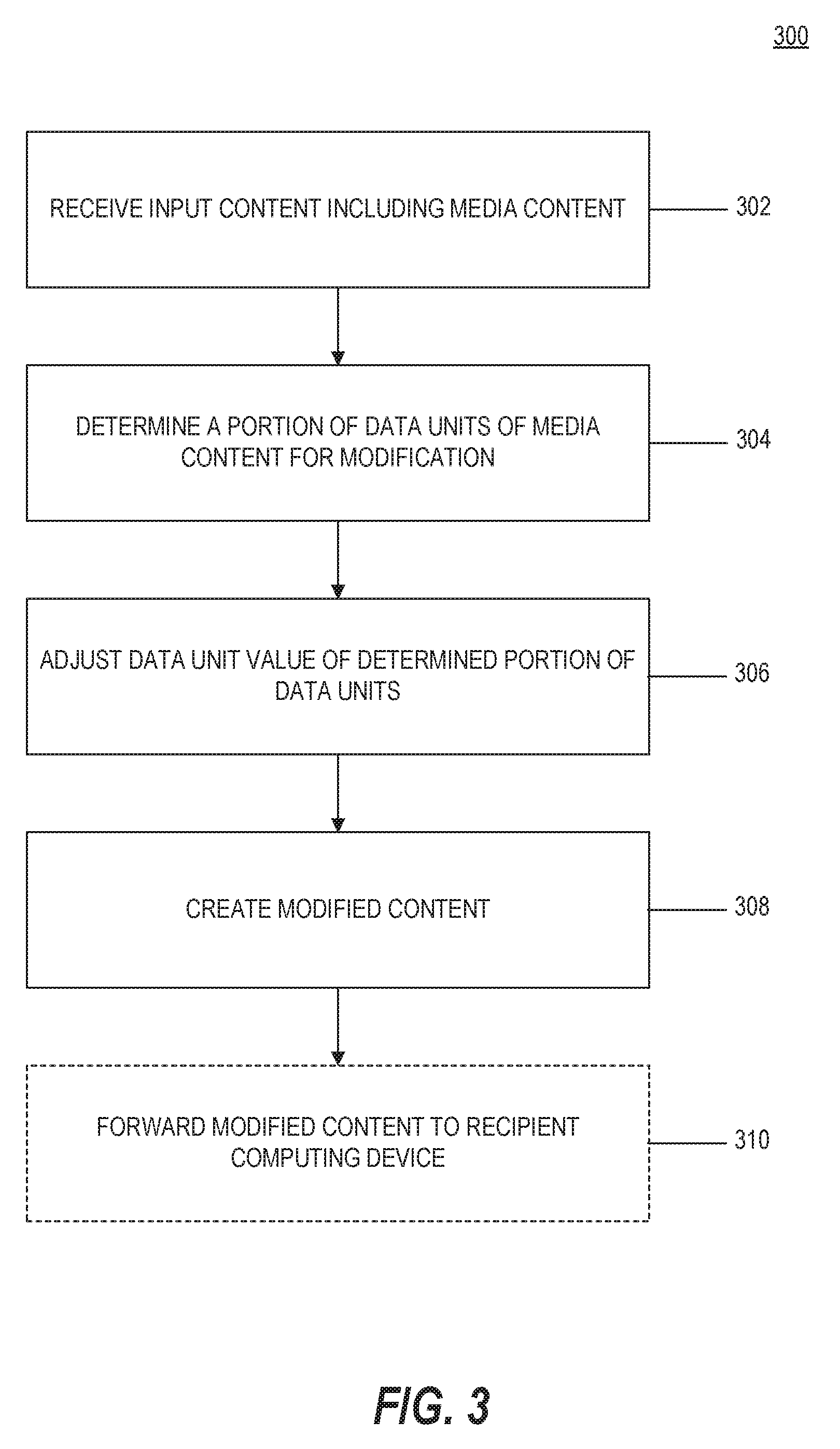

[0037] FIG. 3 is a flowchart of an example process for modifying input content to disarm malicious content according to a data value alteration model, consistent with the disclosed embodiments;

[0038] FIG. 4 is a flowchart of an example process for creating adjusted modified content, consistent with the disclosed embodiments;

[0039] FIG. 5 is a flowchart of an example process for modifying input content, according to a bit depth alteration model, consistent with the disclosed embodiments;

[0040] FIG. 6 is a flowchart of an example process for creating a reconstructed file according to a current version of a file format specification, consistent with the disclosed embodiments;

[0041] FIG. 7 is a flowchart of an example process for modifying input content to disarm malicious content according to a file-format specific content alteration model, consistent with the disclosed embodiments;

[0042] FIG. 8 is a flowchart of an example process for modifying content according to an XML format specific content alteration model, consistent with the disclosed embodiments;

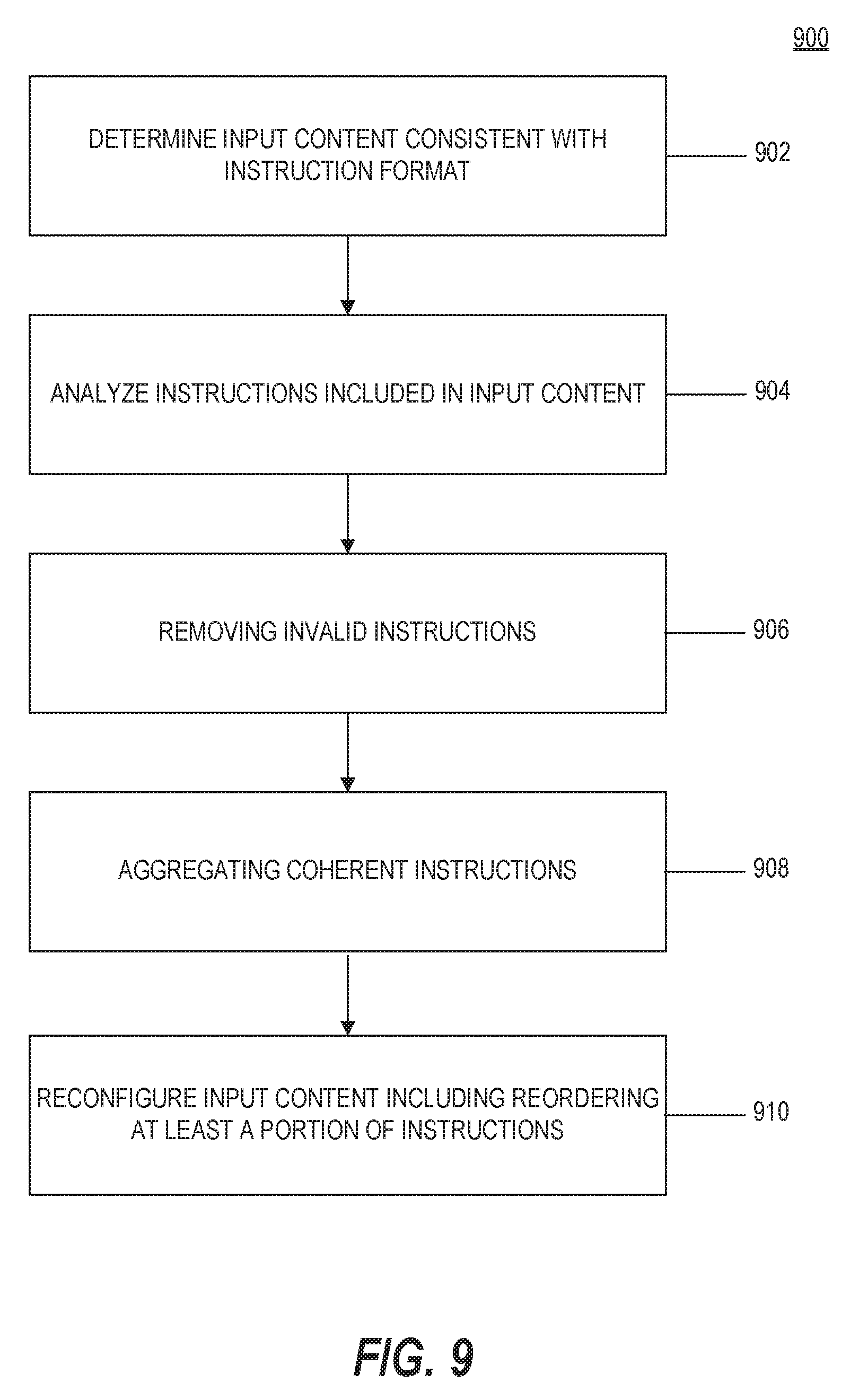

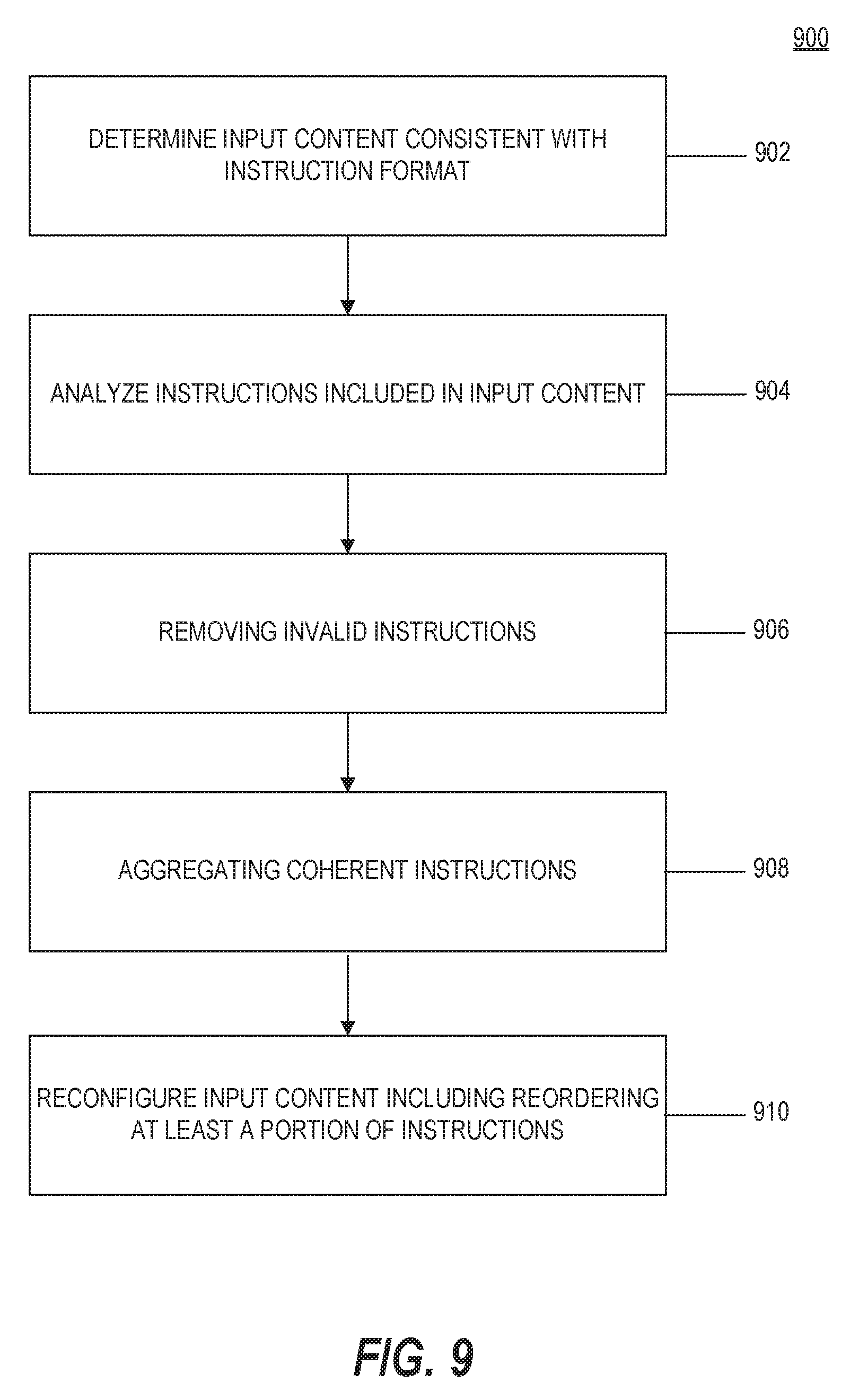

[0043] FIG. 9 is a flowchart of an example process for modifying input content, according to an instruction format specific content alteration model, consistent with the disclosed embodiments;

[0044] FIG. 10 is a flowchart of an example process for creating modified content to disarm malicious content while preserving valid print settings, consistent with the disclosed embodiments;

[0045] FIG. 11 is a flowchart of an example process for modifying input content to disarm malicious content, consistent with the disclosed embodiments;

[0046] FIG. 12 is a flowchart of an example process for creating modified content according to hierarchical network policies, consistent with the disclosed embodiments;

[0047] FIG. 13 is a flowchart of an example process for modifying input content to disarm malicious content, consistent with the disclosed embodiments;

[0048] FIG. 14 is a flowchart of an example process for modifying input content to disarm malicious content, consistent with the disclosed embodiments; and

[0049] FIG. 15 is a flowchart of an example process for verifying effectiveness of a malicious content disarming technique.

[0050] It will be appreciated that for simplicity and clarity of illustration, elements shown in the figures have not necessarily been drawn to scale. For example, the dimensions of some of the elements may be exaggerated relative to other elements for clarity. Further, where considered appropriate, reference numerals may be repeated among the figures to indicate corresponding or analogous elements.

DETAILED DESCRIPTION

[0051] In the following detailed description, numerous specific details are set forth in order to provide a thorough understanding of the disclosed example embodiments. However, it will be understood by those skilled in the art that the principles of the example embodiments may be practiced without every specific detail. Well-known methods, procedures, and components have not been described in detail so as not to obscure the principles of the example embodiments. Unless explicitly stated, the example methods and processes described herein are not constrained to a particular order or sequence. Additionally, some of the described embodiments or elements thereof can occur or be performed simultaneously, at the same point in time, or concurrently.

[0052] As explained above, one technique hackers use to obtain control of a victim computer or computing environment is through the execution of malicious code at the victim computer or computing environment. One tool used by hackers, to which some of the example embodiments are directed, is the embedding of malicious shellcode in media content or a file of media content file type, such as an image, audio, video, or multimedia file type. The example embodiments, however, are also applicable to other non-media content and non-media content file types that encode data in a binary data format or other format that allows a binary data block to be embedded in them such that they may include encoded malicious shellcode. Some example embodiments are also applicable generally to disarming malicious code (in any form) included in input content of any format or a particular format.

[0053] Another technique hackers use to obtain control of a victim computer or computing environment is through the execution of malicious active content. Active content, as this term is used throughout this disclosure, refers to any content embedded in a document that can be configured to carry out an action or trigger an action, and includes common forms such as word processing and spreadsheet macros, formulas, scripts, etc. An action can include any executable operation performed within or initiated by the rendering application. Active content is distinct from other "passive content" that is rendered by the application to form the document itself.

[0054] Malicious code or malicious content, as these terms are interchangeably used throughout this disclosure, refers to any content or code or instructions intended for a malicious purpose or configured to perform or intended to perform any surreptitious or malicious task, often unwanted and unknown to a user, including tasks, for example, to take control of a computer, obtain data from a computer etc. In some embodiments, suspicious content may also refer to malicious content or potentially malicious content. Examples of malicious code or malicious content include malware. Malware-based attacks pose significant risks to computer systems. Malware includes, for example, any malicious content, code, scripts, active content, or software designed or intended to damage, disable, or take control over a computer or computer system. Examples of malware include computer viruses, worms, trojan horses, ransomware, spyware, adware, shellcode, etc. Malware may be received into a computer system in various ways, commonly through electronic communications such as email (and its attachments) and downloads from websites.

[0055] Some hackers aim to exploit specific computer application or operating system vulnerabilities to enable successful execution of malicious code. One of ordinary skill in the art would understand that hackers implement many different and evolving techniques to execute malicious code, and that the disclosed embodiments include general principles aimed to disarm or prevent the intended execution of malicious code in input content or an input file regardless of the particular process or techniques a hacker has implemented in the design of the malicious code. In the example embodiments, disarming malicious content may generally refer to rendering inactive any code included in the input content that is intended for a malicious purpose.

[0056] The disclosed embodiments may implement techniques for disarming, sanitizing, or otherwise preventing malicious content from entering or affecting a computer system via received electronic content. In the disclosed embodiments, any (or all) input content received by a computer system may be modified or transformed to thereby generate modified input content in which any malicious code included in the input content is excluded, disarmed, rendered inactive or otherwise prevented from causing its intended malicious effects. The modified input content may then be sent to an intended recipient instead of the original input content or until the original input content may be deemed safe for releasing to the intended recipient. In some embodiments, the original input content may be stored in a protective storage area and thus may be considered to be quarantined in the computer system, such that any malicious content in the received input content is unable to attack the computer system.

[0057] Accordingly, the disclosed embodiments provide advantages over techniques for identifying or disarming malicious code, including zero-day exploits, which rely on detection of a known malware signature or detection of suspicious behavior. That is, the disclosed embodiments can disarm any malicious code included in input content without relying on signature-based or behavior-based malware detection techniques or any knowledge of a computer vulnerability or other hacking technique.

[0058] Although example embodiments need not first detect suspicious content or malicious content to disarm any malicious code included in input content, in some embodiments, upon identifying suspicious or malicious content, the disclosed embodiments may render any malicious code that may be included in the input content inactive for its intended malicious purpose. In some embodiments, suspicious content may also refer to potentially malicious content or content that is later determined to be malicious or have a malicious purpose. Additionally, in some embodiments it may be advantageous to quarantine or otherwise block or prevent an intended recipient from accessing any input content that has been determined to include suspicious or malicious code.

[0059] The disclosed embodiments also implement techniques for tracking received input content or other types of content received by the computer system, and associating the content (or copies or characteristics thereof) with any respective generated modified content that may be passed on to an intended recipient. The original content may be quarantined in the computer system or otherwise prevented from being received or accessed by an intended recipient, so that malicious content that may be included in the content is unable to infect the computer system. Because the disclosed embodiments may associate received or accessed input content with respective modified content, the disclosed techniques also enable a computer system to produce the original input content upon demand, if needed, such as with respect to a legal proceeding or for any other purpose for which the original input content is requested. The disclosed embodiments may also provide functionality for making the original content available based on one or more policies or upon determining that the original input content is unlikely to include malicious code.

[0060] The disclosed embodiments may be associated with or provided as part of a data sanitization or CDR process for sanitizing or modifying electronic content, including electronic mail or files or documents or web content received at a victim computer or a computer system, such as via e-mail or downloaded from the web, etc. The disclosed embodiments may implement any one or more of several CDR techniques applied to received content based on the type of content, for example, or other factors. Some example CDR techniques that may be implemented together with the disclosed embodiments include document reformatting or document layout reconstruction techniques, such as those disclosed in U.S. Pat. No. 9,047,293, for example, the content of which is expressly incorporated herein by reference, as well as the altering of digital content techniques of copending U.S. patent application Ser. Nos. 15/441,860 and 15/441,904, filed Feb. 24, 2017, the contents of which are also expressly incorporated herein by reference. Additional CDR techniques that may be implemented together with the disclosed embodiments include the particular techniques for protecting systems from active content such as those disclosed in U.S. Pat. No. 9,858,424, as well as the particular techniques for protecting systems from malicious content included in protected content, such as those disclosed in U.S. patent application Ser. No. 15/926,484, filed Mar. 20, 2018, as well as the particular techniques for protecting systems from malicious content included in digitally signed content, such as those disclosed in U.S. patent application Ser. No. 15/795,021, filed Oct. 26, 2017. The disclosed embodiments may also include aspects for determining the effectiveness of the disclosed CDR techniques, such as those disclosed in U.S. patent application Ser. No. 15/672,037, filed Aug. 8, 2017. Additional aspects of the embodiments disclosed in the aforementioned patents and applications may also be included in the example embodiments herein. The contents of each of the aforementioned patents and patent applications are expressly incorporated herein by reference in their entirety.

[0061] The disclosed embodiments may be implemented with respect to any malicious content (or suspicious content) included in or identified in a document, file, or other received or input content, without regard to whether the content or document itself is deemed suspicious in advance or before the sanitization is performed. Suspicious content may or may not include malicious content. Suspicious content refers, for example, to a situation where input content may potentially or more likely include malicious content, such as when the received content comes from or is associated with an untrusted source. Content may be deemed suspicious based on one or more characteristics of the received input content itself or the manner in which it is received as well as other factors that alone or together may cause suspicion. One example of a characteristic associated with the input content refers to an authorship property associated with the input content. For example, the property may identify an author of the input content and the system determines whether the author property matches the source from which the input content was received and if there is no match then the system marks the input content as suspicious.
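The authorship example above reduces to a comparison between the author property of the input content and the source from which it was received. The sketch below assumes both are available as plain strings and that a case-insensitive, whitespace-trimmed comparison is acceptable; the function name and the normalization are illustrative assumptions.

```python
def is_suspicious(author_property: str, received_from: str) -> bool:
    """Mark content as suspicious when the author property does not match the source.

    The normalization (trim and lowercase) is an assumption for illustration.
    """
    return author_property.strip().lower() != received_from.strip().lower()
```

A real deployment would likely need a richer notion of "match" (for example, mapping display names to addresses), which is outside the scope of this sketch.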

[0062] The disclosed embodiments may implement one or more CDR processes to generate the modified input content (for disarming any malicious content) without regard to whether malicious content is detected in the input content and without regard to whether the original input content is even analyzed by one or more malware detection techniques (i.e. without applying a malware detection algorithm to the input content). That is, it is not necessary to first detect any malicious or suspicious content in the input content to disarm the malicious content. The content disarming or sanitization techniques of the disclosed embodiments thus may prevent malware infection without malware detection. In some embodiments, however, one or more malware detection techniques may be implemented together with the exemplary embodiments in association with receiving input content and generating modified input content, but knowledge or awareness of suspected malicious or suspicious content is not required to disarm any malicious content that may be included in the input content.

[0063] Although example embodiments need not first detect suspicious or malicious received content or any suspicious or malicious content embedded in the received content, in some embodiments, upon identifying suspicious or malicious content, the disclosed processes are performed to disable any such malicious content included in input content. Additionally, in some embodiments, if malicious content is identified, the example embodiments may include functionality for removing or destroying such input content or embedded content that is known to be malicious, in lieu of the disclosed disarming processes. In some embodiments, any received content determined to include malicious content may be quarantined or blocked, so as not to be accessed by an intended recipient altogether. The example embodiments may be configurable based on one or more policies instructing how received content and any malicious content embedded therein is to be processed for suspicious or malicious content based on a set of known factors, some of which may be enterprise specific. Thus, the example embodiments for disarming malicious content are not limited to any enterprise computing environment or implementation, and can be implemented as a standalone solution or in combination as a suite of solutions, and can be customized according to preferences of a computing environment. In some embodiments, one or more malware detection techniques may be implemented without generating modified input content.

[0064] Received content or input content according to the disclosed embodiments may include any form of electronic content, including a file, document, an e-mail, etc., or other objects that may be run, processed, opened or executed by an application or operating system of the victim computer or computing device. Malicious content can be embedded among seemingly legitimate received content or input content. A file including embedded or encoded malicious content may be an input file or document that is accessed by a computing system by any number of means, such as by importing locally via an external storage device, downloading or otherwise receiving from a remote webserver, file server, or content server, for example, or from receiving as an e-mail or via e-mail or any other means for accessing or receiving a file or file-like input content. An input file may be a file received or requested by a user of a computing system or other files accessed by processes or other applications executed on a computing system that may not necessarily be received or requested by a user of the computing system. An input file according to the disclosed embodiments may include any file or file-like content, such as an embedded object or script, that is processed, run, opened or executed by an application or operating system of a computing system. Input content may include electronic mail, for example, or streamed content or other content. Thus, while some embodiments of the present disclosure refer to an input file or document, the disclosed techniques are also applicable to objects within or embedded in an input file or to input content generally, without consideration as to whether it can be characterized as a file, document, or object.

[0065] Reference is now made to FIG. 1, which is a block diagram of an example computing environment 100, consistent with example embodiments of the present disclosure. As shown, system 100 may include a plurality of computing systems interconnected via one or more networks 150. A first network 110 may be configured as a private network. The first network 110 may include a plurality of host computers 120, one or more proxy servers 130, one or more e-mail servers 132, one or more file servers 134, a content disarm server 136, and a firewall 140. In some embodiments, first network 110 may optionally include a database 170, which may be part of or collocated with other elements of network 110 or otherwise connected to network 110, such as via content disarm server 136, as shown for example. Any of proxy server 130, e-mail server 132, or firewall 140 may be considered an edge or gateway network device that interfaces with a second network, such as network 150. In some embodiments, content disarm server 136 may be configured as an edge or gateway device. When any of these elements is configured to implement one or more security operations for network 110, it may be referred to as a security gateway device. Host computers 120 and other computing devices of first network 110 may be capable of communicating with one or more web servers 160, cloud servers 165, and other host computers 122 via one or more additional networks 150.

[0066] Networks 110 and 150 may comprise any type of computer networking arrangement used to exchange data among a plurality of computing components and systems. Network 110 may include a single local area network or a plurality of distributed interconnected networks and may be associated with a firm or organization, or a cloud storage service. The interconnected computing systems of network 110 may be within a single building, for example, or distributed throughout the United States and globally. Network 110, thus, may include one or more private data networks, a virtual private network using a public network, one or more LANs or WANs, and/or any other suitable combination of one or more types of networks, secured or unsecured.

[0067] Network(s) 150 may comprise any type of computer networking arrangement for facilitating communication between devices of the first network 110 and other distributed computing components such as web servers 160, cloud servers 165, or other host computers 122. Web servers 160 and cloud servers 165 may include any configuration of one or more servers or server systems interconnected with network 150 for facilitating communications and transmission of content or other data to the plurality of computing systems interconnected via network 150. In some embodiments, cloud servers 165 may include any configuration of one or more servers or server systems providing content or other data specifically for the computing components of network 110. Network 150 may include the Internet, a private data network, a virtual private network using a public network, a Wi-Fi network, a LAN or WAN network, and/or other suitable connections that may enable information exchange among various components of system 100. Network 150 may also include a public switched telephone network ("PSTN") and/or a wireless cellular network.

[0068] Host computers 120 and 122 may include any type of computing system configured for communicating within network 110 and/or network 150. Host computers 120, 122 may include, for example, a desktop computer, laptop computer, tablet, smartphone and any other network connected device such as a server, server system, printer, as well as other networking components.

[0069] File server 134 may include one or more file servers, which may refer to any type of computing component or system for managing files and other data for network 110. In some embodiments, file server 134 may include a storage area network comprising one or more servers or databases, or other configurations known in the art.

[0070] Content disarm server 136 may include one or more dedicated servers or server systems or other computing components or systems for performing aspects of the example processes including disarming and modifying input content. Accordingly, content disarm server 136 may be configured to perform aspects of a CDR solution, as well as perform other known malware mitigation techniques. Content disarm server 136 may be provided as part of network 110, as shown, or may be accessible to other computing components of network 110 via network 150, for example. In some embodiments, some or all of the functionality attributed to content disarm server 136 may be performed in a host computer 120. Content disarm server 136 may be in communication with any of the computing components of first network 110, and may function as an intermediary system to receive input content, including input electronic files and web content, from proxy server 130, e-mail server 132, file server 134, host computer 120, or firewall 140 and return, forward, or store a modified input file or modified input content according to the example embodiments. In some embodiments, content disarm server 136 may be configured as a security gateway and/or an edge device to intercept electronic communications entering a network.

[0071] Content disarm server 136 may also be configured to perform one or more malware detection algorithms, such as a blacklist or signature-based malware detection algorithm, or other known behavior-based algorithms or techniques for detecting malicious activity in a monitored run environment, such as a "sandbox," for example. Accordingly, content disarm server 136 may include or may have access to one or more databases of malware signatures or behavioral characteristics, or one or more blacklists of known malicious URLs, or other similar lists of information (e.g., IP addresses, hostnames, domains, etc.) associated with malicious activity. Content disarm server 136 may also access one or more other service providers that perform one or more malware detection algorithms as a service. In some embodiments, one or more malware detection algorithms may be implemented together with the disclosed techniques to detect any malicious content included in input content. For example, one or more malware detection algorithms may be implemented to first screen input content for known malicious content, whereby the example embodiments are then implemented to disarm any malicious content that may have been included in the input content and that may not have been detected by the one or more malware detection algorithms. Likewise, content disarm server 136 may also be configured to perform one or more algorithms on received input content for identifying suspicious content.
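The screen-then-disarm example above can be sketched as follows. The sketch assumes, for illustration only, that the signature-based check is a lookup of the content's SHA-256 digest in a set of known-bad digests, and that disarming is supplied as a callable. The function name `screen_then_disarm` and both parameters are hypothetical.

```python
import hashlib
from typing import Callable, Optional, Set


def screen_then_disarm(
    content: bytes,
    known_bad_sha256: Set[str],
    disarm: Callable[[bytes], bytes],
) -> Optional[bytes]:
    """Block known-malicious content; disarm everything else.

    Returns None when the content matches a known-bad digest, otherwise the
    modified content produced by the (assumed) disarm routine.
    """
    digest = hashlib.sha256(content).hexdigest()
    if digest in known_bad_sha256:
        return None  # known malware: block rather than deliver
    # Not detected does not mean safe, so the content is still disarmed.
    return disarm(content)
```

The point of the second step is that content passing the screen is still modified, which covers malicious content the detection algorithm did not recognize.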

[0072] In some embodiments, content disarm server 136 and/or file server 134 may include a dedicated repository for storing original input content (and/or characteristics thereof) (protected or otherwise) received by content disarm server 136. The dedicated repository may be restricted from general access by users or computers of network 110. The dedicated repository may be a protected storage or storage area that may prevent any malicious content stored therein from attacking other computing devices of the computer system. In some embodiments, all or select original input content (protected or otherwise) may be stored in the dedicated repository for a predetermined period of time or according to a policy of a network administrator, for example. In some embodiments, characteristics associated with the original input content, such as a hash of an input content file, or a URL of requested web content, or other identifiers, etc., may be stored in addition to or instead of the original input content. In those embodiments where the original input content is protected, the protected original content may be stored in addition to or instead of any subsequently unprotected original input content.
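For illustration, a repository that stores a hash of the original input content in addition to or instead of the content itself, and that removes records after a predetermined period, might be sketched as follows. This is a non-authoritative sketch: the class and method names, the in-memory dictionary, and the choice of SHA-256 are assumptions made for the example and are not specified by the disclosure, and access restriction and protected storage are not modeled.

```python
import hashlib
import time
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class StoredOriginal:
    sha256: str                # characteristic of the original content
    stored_at: float           # storage time, in seconds
    content: Optional[bytes]   # None when only the characteristic is kept


class OriginalContentRepository:
    """Hypothetical in-memory stand-in for the dedicated repository."""

    def __init__(self, retention_seconds: float) -> None:
        self.retention_seconds = retention_seconds
        self._records: Dict[str, StoredOriginal] = {}

    def store(self, content: bytes, keep_content: bool = True,
              now: Optional[float] = None) -> str:
        """Store the content and/or its hash; return the hash as the key."""
        digest = hashlib.sha256(content).hexdigest()
        stored_at = time.time() if now is None else now
        self._records[digest] = StoredOriginal(
            digest, stored_at, content if keep_content else None)
        return digest

    def lookup(self, digest: str) -> Optional[StoredOriginal]:
        return self._records.get(digest)

    def purge_expired(self, now: Optional[float] = None) -> int:
        """Delete records older than the retention period; return the count."""
        current = time.time() if now is None else now
        expired = [d for d, r in self._records.items()
                   if current - r.stored_at > self.retention_seconds]
        for d in expired:
            del self._records[d]
        return len(expired)
```

The `now` parameter is included only so that the retention behavior can be exercised deterministically.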

[0073] Proxy server 130 may include one or more proxy servers, which may refer to any type of computing component or system for handling communication requests between one or more interconnected computing devices of network 110. In some embodiments, proxy server 130 may be configured as one or more edge servers positioned between a private network of first network 110, for example, and public network 150.

[0074] E-mail server 132 may include one or more e-mail servers, which may refer to any type of computing component or system for handling electronic mail communications between one or more interconnected computing devices of network 110 and other devices external to network 110. In some embodiments, e-mail server 132 may be configured as one or more edge servers positioned between a private network of first network 110, for example, and public network 150.

[0075] First network 110 may also include one or more firewalls 140, implemented according to any known firewall configuration for controlling communication traffic between first network 110 and network 150. In some embodiments, firewall 140 may include an edge firewall configured to filter communications entering and leaving first network 110. Firewall 140 may be positioned between network 150 and one or more of proxy server 130 and e-mail server 132. In the embodiment shown, proxy server 130, e-mail server 132 and firewall 140 are positioned within first network 110; however, other configurations of network 110 are contemplated by the present disclosure. For example, in another embodiment, one or more of the proxy server 130, e-mail server 132 and firewall 140 may be provided external to the first network 110. Any other suitable arrangement is also contemplated. Additionally, other networking components, not shown, may be implemented as part of first network 110 or external to network 110 for facilitating communications within the first network 110 and with other external networks, such as network 150.

[0076] In some embodiments, computing environment 100 may include a database 170. In some embodiments, database 170 may be part of network 110. In some embodiments, database 170 may be outside of network 110, but otherwise made accessible to network 110. Although not shown, database 170 may also be accessible via network 150. In the disclosed embodiments, database 170 may include any database configurations or technology and may be configured for storing any information described herein that may be accessed for performing the disclosed techniques. For example, in some embodiments, database 170 may be configured for storing one or more records associated with malware signatures or behavioral characteristics, or one or more blacklists of known malicious URLs, or other similar lists of information (e.g., IP addresses, hostnames, domains, etc.) associated with malicious activity. In some embodiments, database 170 may be configured for storing one or more specifications of a plurality of file formats. Database 170 may also be configured for storing one or more configuration files or other records used to enforce or implement one or more policies for received input content. Other uses of database 170 may be apparent from the disclosed example embodiments.

[0077] The processes of the example embodiments may be implemented at any one of the computing devices or systems shown in FIG. 1, including host computer 120, 122, proxy server 130, e-mail server 132, file server 134, content disarm server 136, firewall 140, or cloud server 165.

[0078] Reference is now made to FIG. 2, which is a schematic block diagram of an example computing system 200 adapted to perform aspects of the disclosed embodiments. According to the example embodiments, computing system 200 may be embodied in one or more computing components of computing environment 100. For example, computing system 200 may be provided as part of host computer 120, 122, proxy server 130, e-mail server 132, file server 134, content disarm server 136, or cloud server 165. In some embodiments, computing system 200 may not include each element or unit depicted in FIG. 2. Additionally, one of ordinary skill in the art would understand that the elements or units depicted in FIG. 2 are examples only and a computing system according to the example embodiments may include additional or alternative elements than those shown.

[0079] Computing system 200 may include a controller or processor 210, a user interface unit 202, communication unit 204, output unit 206, storage unit 212 and power supply 214. Controller/processor 210 may be, for example, a central processing unit (CPU), a chip or any suitable computing or computational device. Controller/processor 210 may be programmed or otherwise configured to carry out aspects of the disclosed embodiments.

[0080] Controller/processor 210 may include a memory unit 210A, which may be or may include, for example, a Random Access Memory (RAM), a read only memory (ROM), a Dynamic RAM (DRAM), a Synchronous DRAM (SD-RAM), a double data rate (DDR) memory chip, a Flash memory, a volatile memory, a non-volatile memory, a cache memory, a buffer, a short term memory unit, a long term memory unit, or other suitable computer-readable memory units or storage units. Memory unit 210A may be or may include a plurality of possibly different memory units.

[0081] Controller/processor 210 may further comprise executable code 210B which may be any executable code or instructions, e.g., an application, a program, a process, task or script. Executable code 210B may be executed by controller 210 possibly under control of operating system 210C. For example, executable code 210B may be an application that when operating performs one or more aspects of the example embodiments. Executable code 210B may also include one or more applications configured to render input content, so as to open, read, edit, and otherwise interact with the rendered content. Examples of a rendering application include any of the various applications of the Microsoft® Office® suite, a PDF reader application or any other conventional application for opening conventional electronic documents, as well as a web browser for accessing web content.

[0082] User interface unit 202 may be any interface enabling a user to control, tune and monitor the operation of computing system 200, including a keyboard, touch screen, pointing device, screen, and audio device such as loudspeaker or earphones.

[0083] Communication unit 204 may be any communication supporting unit for communicating across a network that enables transferring, i.e., transmitting and receiving, digital and/or analog data, including communicating over wired and/or wireless communication channels according to any known format. Communication unit 204 may include one or more interfaces known in the art for communicating via local (e.g., first network 110) or remote networks (e.g., network 150) and/or for transmitting or receiving data via an external, connectable storage element or storage medium.

[0084] Output unit 206 may be any visual and/or aural output device adapted to present user-perceptible content to a user, such as media content. Output unit 206 may be configured to display web content or, for example, to display images embodied in image files, to play audio embodied in audio files and present and play video embodied in video files. Output unit 206 may comprise a screen, projector, personal projector and the like, for presenting image and/or video content to a user. Output unit 206 may comprise a loudspeaker, earphone and other audio playing devices adapted to present audio content to a user.

[0085] Storage unit 212 may be or may include, for example, a hard disk drive, a floppy disk drive, a Compact Disk (CD) drive, a CD-Recordable (CD-R) drive, solid state drive (SSD), secure digital (SD) card, a Blu-ray disk (BD), a universal serial bus (USB) device or other suitable removable and/or fixed storage unit. Data or content, including user-perceptible content, may be stored in storage unit 212 and may be loaded from storage unit 212 into memory unit 210A where it may be processed by controller/processor 210. For example, memory 210A may be a non-volatile memory having the storage capacity of storage unit 212.

[0086] Power supply 214 may include one or more conventional elements for providing power to computing system 200 including an internal battery or unit for receiving power from an external power supply, as is understood by one of ordinary skill in the art.

Disarming Malicious Content Using a Data Value Alteration Model

[0087] Reference is now made to FIG. 3, which is a flowchart of an example process for modifying input content, which in some embodiments may include an input file, consistent with the disclosed embodiments. According to the example embodiments, process 300 includes use of a data value alteration model that may be implemented to disarm malicious content or aspects of malicious content encoded in one or more data units of input content. In some embodiments, process 300 may be directed to disarming malicious content in the form of shellcode.

[0088] According to an example embodiment, a processor of a computing system may automatically apply a data value alteration model to the input content for altering select data values within the input content and output new content reflecting an application of the data value alteration model to the input content. The data value alteration model renders any malicious code included in the input content inactive for its intended malicious purpose without regard to any structure or format used to encapsulate the input content. That is, the data value alteration model may be applied to input content without changing a structure, format or other specification for the input content. Additionally, the data value alteration model is determined such that a change to even a part of any malicious code included in the input content could render the malicious code inactive for its intended malicious purpose. In some embodiments, a malware detection algorithm may be applied to the new content reflecting an application of the data value alteration model to the input content to confirm the applied data value alteration model rendered any malicious code included in the input content inactive for its intended malicious purpose.

[0089] According to an example embodiment, malicious code, such as shellcode, in an input file or input content may be disarmed by applying intentional "noise" to the input file according to a data value alteration model, such as by changing the data unit values of at least some of the data units of the original input file to thereby create a modified input file. According to other embodiments for which a lossy compression is applicable for the specific format of the input file, the input file may be re-compressed to create a modified input file. The disclosed embodiments thereby change the bit or byte level representation of the content of the input file, such as an image, audio or video, but do so in a way intended to preserve a user's perceptibility of the content and not to prevent or interfere with an intended use of the content. As a result, at least some aspects of any malicious shellcode that may have been embedded in legitimate content data will have changed in the modified input file and will no longer be operational as intended, while a user's perception of the modified content, whether an image, an audio output or a video clip, will be largely unchanged. In some embodiments, the added "noise" may be added to randomly selected data units to eliminate any replay attack, to thwart crafty hackers, and so that any perceptible changes in the modified content to the user, whether visual and/or aural, may be minimal or negligible and at least will not prevent or interfere with an intended use of the content.
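As a non-limiting illustration, applying "noise" to randomly selected data units might be sketched as follows. The sketch assumes that the data units are already-extracted 8-bit values (for example, decoded pixel samples), that each selected value is shifted by one, and that half of the data units are selected by default; the function name `add_noise`, the `fraction` parameter and the shift of one are assumptions made for the example and are not taken from the disclosure. Parsing of a particular file format and re-encoding are outside the scope of the sketch.

```python
import random
from typing import Optional


def add_noise(data_units: bytes, fraction: float = 0.5,
              rng: Optional[random.Random] = None) -> bytes:
    """Shift a randomly selected fraction of 8-bit data units by one.

    Length and layout are unchanged; only selected values differ.
    """
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    # SystemRandom draws from the operating system's entropy source, so the
    # selection differs on every run; a seeded Random may be passed for tests.
    rng = rng if rng is not None else random.SystemRandom()
    out = bytearray(data_units)
    if not out:
        return bytes(out)
    count = max(1, int(len(out) * fraction))
    for i in rng.sample(range(len(out)), count):
        value = out[i]
        if value == 0:
            out[i] = 1            # cannot go below the 8-bit minimum
        elif value == 255:
            out[i] = 254          # cannot go above the 8-bit maximum
        else:
            out[i] = value + rng.choice((-1, 1))
    return bytes(out)
```

Because every selected value changes by exactly one, the perceptible effect on, for example, an 8-bit image channel would be expected to be small, while any code bytes that happen to occupy the selected positions no longer match their original values.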

[0090] Upon opening, loading, playing, or otherwise accessing the modified input file, the changed/disarmed shellcode in the modified input file will contain a non-valid processor instruction(s) and/or illogical execution flow. Attempts at running or executing the disarmed shellcode will result in a processor exception and process termination, which will prevent a successful attack. While aspects of the example embodiments are described herein below as applied to an image file format, the example embodiments may be applied, with the apparent changes, to other media content file formats, such as image files (in any known format), audio files (in any known format) and video files (in any known format).