Filtering Pixels And Uses Thereof

Gutierrez; Roman

U.S. patent application number 16/440908 was filed with the patent office on 2019-06-13 and published on 2019-09-26 for filtering pixels and uses thereof. This patent application is currently assigned to MEMS Start, LLC. The applicant listed for this patent is MEMS Start, LLC. Invention is credited to Roman Gutierrez.

| Application Number | 20190297289 16/440908 |


| Family ID | 61246297 |

| Filed Date | 2019-06-13 |


| United States Patent Application | 20190297289 |

| Kind Code | A1 |

| Gutierrez; Roman | September 26, 2019 |

FILTERING PIXELS AND USES THEREOF

Abstract

Embodiments of the technology disclosed herein provide filtering pixels for performing filtering of signals within the pixel domain. Filtering pixels described herein include a hybrid analog-digital filter within the pixel circuitry, reducing the need for computationally intensive and inefficient post-processing of images to filter out aspects of captured signals, and account for ineffectiveness of optical filters.

| Inventors: | Gutierrez; Roman; (Arcadia, CA) |
|---|---|
| Applicant: | MEMS Start, LLC |
| Assignee: | MEMS Start, LLC (Arcadia, CA) |
| Family ID: | 61246297 |
| Appl. No.: | 16/440908 |
| Filed: | June 13, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15678032 | Aug 15, 2017 | 10368021 |
| 16440908 | | |
| 62380212 | Aug 26, 2016 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 5/3535 20130101; H04N 5/3745 20130101; H04N 5/3696 20130101; H04N 5/378 20130101; H01L 27/146 20130101; H04N 5/3575 20130101 |

| International Class: | H04N 5/369 20060101 H04N005/369; H04N 5/3745 20060101 H04N005/3745; H01L 27/146 20060101 H01L027/146; H04N 5/353 20060101 H04N005/353; H04N 5/357 20060101 H04N005/357; H04N 5/378 20060101 H04N005/378 |

Claims

1. A system, comprising: an array of filtering pixels, each filtering pixel comprising: a photodiode; a filter circuit; a light source; and an imaging system, comprising: a lens; and an aperture stop; wherein the light source outputs a modulated light, and each filtering pixel of the array of filtering pixels is configured to detect a modulated light.

2. The system of claim 1, wherein the array of filtering pixels comprises one or more derivative pixels, the filter circuit comprising a first differentiator configured to output a signal proportional to a change in intensity of light falling on the photodiode.

3. The system of claim 2, the first differentiator comprising: a capacitor; a resistor; and a field-effect transistor.

4. The system of claim 2, each derivative pixel further comprising a reset FET configured to enable setting a voltage of the photodiode equivalent to a drain voltage of the system.

5. The system of claim 2, each derivative pixel further comprising a buffer electrically disposed between the photodiode and the derivative sensing circuit.

6. The system of claim 5, wherein the buffer comprises: a buffer FET; and a preliminary differentiator configured to output a signal proportional to an intensity of light falling on the photodiode.

7. The system of claim 5, each derivative pixel further comprising a second differentiator configured to output a signal proportional to a change in the change in intensity of light falling on the photodiode, the second differentiator electrically disposed after the first differentiator.

8. The system of claim 1, wherein the array of filtering pixels comprises one or more light source filtering pixels, the filter circuit comprising a sample-and-hold filter and a subtractor.

9. The system of claim 8, the sample-and-hold filter comprising a sample FET and a capacitor, wherein the sample FET is configured to sample an output from the photodiode in accordance with the detected modulated light, and the capacitor stores the sample of the output from the photodiode.

10. The system of claim 8, the filter circuit further comprising a current mirror.

11. The system of claim 10, wherein the current mirror outputs a current proportional to a magnitude of a difference between a voltage of the sample of the output from the photodiode and a voltage of the output of the photodiode.

12. The system of claim 8, wherein a high polarity of the filtering pixel is aligned with an ON state of the modulated light during a frame.

13. The system of claim 8, wherein a high polarity of the filtering pixel is aligned with an ON state of the modulated light for a first half of a frame, and aligned with an OFF state of the modulated light for a second half of the frame.

14. The system of claim 8, wherein the high polarity of the filtering pixel is modulated asynchronously with the modulated light of the light source.

15. The system of claim 8, wherein the filter circuit comprises two sample-and-hold filters, wherein a first sample and hold filter stores a first sample of the output of the photodiode in a first capacitor and a second sample and hold filter stores a second sample of the output of the photodiode in a second capacitor.

16. The system of claim 15, the filter circuit further comprises a current mirror.

17. The system of claim 16, wherein the current mirror outputs a current proportional to the magnitude of a difference between a voltage of the first sample and a voltage of the second sample.

18. The system of claim 8, wherein the filter circuit comprises a plurality of sample and hold filters.

19. The system of claim 2, further comprising: a coherent detection component, comprising a laser diode and a prism configured as a band pass filter corresponding to a wavelength of light emitted by the laser diode.

20. The system of claim 19, wherein the laser diode and the light source are the same.

21. The system of claim 1, further comprising an integrator circuit at an output of the filter circuit.

22. The system of claim 21, the integrator comprising a FET and a capacitor.

23. The system of claim 1, wherein the filtering circuit is modulated synchronously with a modulation scheme applied to the light source.

24. The system of claim 1, wherein the filtering circuit is modulated asynchronously with a modulation scheme applied to the light source.

25. A method of programming a filtering pixel, comprising: determining a type of filtering to be performed on a signal outputted from a photodiode of the filtering pixel; and setting one or more FETs to a particular state based on the determined type of filtering; wherein the type of filtering includes one of: no filtering; derivative filtering; or sample and hold filtering.

26. The method of claim 25, wherein the type of filtering further comprises: double derivative filtering; multiple derivative filtering; double sample and hold filtering; or multiple sample and hold filtering.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 15/678,032, filed Aug. 15, 2017, which claims the benefit of U.S. Provisional Application No. 62/380,212, filed Aug. 26, 2016, both of which are incorporated herein by reference in their entireties.

TECHNICAL FIELD

[0002] The disclosed technology relates generally to image sensing and sensors, and more particularly, some embodiments relate to image sensors including pixels with embedded filtering capabilities for extracting information from an image, such as, for example, capturing and identifying motion within the field of view of the image sensor.

DESCRIPTION OF THE RELATED ART

[0003] Conventional camera solutions for measuring movement rely on frame subtraction to obtain the measurement. Conventional cameras capture all the elements of a scene, which are captured in frames. For example, changes in the position of objects within a field of view (FOV) of a camera can be identified by comparing frames, e.g., subtracting subsequent frames from a current frame. This same technique is utilized for various types of motion analysis, such as gesture recognition, obstacle avoidance, target tracking, and autofocus.

[0004] Image sensors comprise a plurality of pixels that convert captured light within an image into a current. Traditional pixels comprise a photodetector and, with respect to active pixel sensors, an amplifier. The total light intensity falling on each photodetector is captured, without any differentiation between the wavelength or source of the light. External filters, like Bayer filters, may be added to an image sensor to filter out undesired light prior to reaching the photodetector of each pixel.

BRIEF SUMMARY OF EMBODIMENTS

[0005] In some embodiments, an apparatus may comprise an array of filtering pixels. Each filtering pixel may comprise: a photodiode; a filter circuit; and a read out field-effect transistor (FET). The apparatus may further comprise a read bus for reading an output of each filtering pixel of the array of filtering pixels.

[0006] In some embodiments, the array of filtering pixels comprises one or more derivative pixels, the filter circuit comprising a first differentiator configured to output a signal proportional to a change in intensity of light falling on the photodiode. The first differentiator may comprise: a capacitor; a resistor; and a field-effect transistor.

[0007] In some embodiments, each derivative pixel further comprises a reset FET configured to enable setting a voltage of the photodiode equivalent to a drain voltage of the system.

[0008] In some embodiments, each derivative pixel further comprises a buffer electrically disposed between the photodiode and the derivative sensing circuit.

[0009] In some embodiments, the buffer comprises: a buffer FET; and a preliminary differentiator configured to output a signal proportional to an intensity of light falling on the photodiode.

[0010] In some embodiments, each derivative pixel further comprises a second differentiator configured to output a signal proportional to a change in the change in intensity of light falling on the photodiode, the second differentiator electrically disposed after the first differentiator.

[0011] In some embodiments, the read bus is configured for reading out an output of the second differentiator, and each derivative pixel further comprises a second read out FET and a second read out bus for reading out an output of the first differentiator.

[0012] In some embodiments, each derivative pixel further comprises a second differentiator configured to output a signal proportional to a change in the change in intensity of light falling on the photodiode, the second differentiator electrically disposed after the first differentiator.

[0013] In some embodiments, the read bus is configured for reading out an output of the second differentiator, and each derivative pixel further comprises a second read out FET and second read out bus for reading out an output of the first differentiator.

[0014] In some embodiments, each filtering pixel may further comprise an integrator, wherein the integrator comprises a capacitor. In some embodiments, each filtering pixel may further comprise a third read out FET and a third read bus configured to output a signal proportional to the intensity of light falling on the photodiode.

[0015] In accordance with one embodiment, a method of derivative sensing may comprise sensing a change in intensity of light falling on a photodiode of a derivative pixel of an array of derivative pixels. The method may further comprise taking, by a differentiator, a derivative of a signal proportional to an intensity of light falling on the photodiode. Further still, the method may comprise integrating the derivative signal, and reading out the integrated signal on a read bus.

[0016] In some embodiments, the differentiator may comprise a capacitor, a resistor, and a field-effect transistor.

[0017] In some embodiments, the method may further comprise storing, by a buffer field effect transistor, a signal proportional to an integral of the intensity of light falling on the photodiode. In some embodiments, the method may further comprise taking a second derivative of the signal by a second differentiator disposed after the first differentiator.

[0018] In some embodiments, the second differentiator may comprise a second capacitor, a second resistor, and a second field-effect transistor. In some embodiments, the integrated signal comprises an output of the second differentiator integrated by an integrator, wherein the integrator comprises a capacitor.

[0019] In some embodiments, the method may further comprise integrating an output of the first differentiator, and reading out the integrated output of the first differentiator on a second read out bus.

[0020] In accordance with one embodiment, a system may comprise an array of filtering pixels. Each filtering pixel may comprise a photodiode, a filter circuit, a light source, and an imaging system. The imaging system may comprise a lens, and an aperture stop, wherein the light source outputs a modulated light, and each filtering pixel of the array of filtering pixels is configured to detect a modulated light.

[0021] In some embodiments, the array of filtering pixels comprises one or more derivative pixels, the filter circuit comprising a first differentiator configured to output a signal proportional to a change in intensity of light falling on the photodiode.

[0022] In some embodiments, the first differentiator may comprise a capacitor, a resistor, and a field-effect transistor. In some embodiments, each derivative pixel may further comprise a reset FET configured to enable setting a voltage of the photodiode equivalent to a drain voltage of the system. In some embodiments, each derivative pixel further comprises a buffer electrically disposed between the photodiode and the derivative sensing circuit.

[0023] In some embodiments, the buffer may comprise a buffer FET, and a preliminary differentiator configured to output a signal proportional to an intensity of light falling on the photodiode. In some embodiments, each derivative pixel further comprises a second differentiator configured to output a signal proportional to a change in the change in intensity of light falling on the photodiode, the second differentiator electrically disposed after the first differentiator.

[0024] In some embodiments, the array of filtering pixels comprises one or more light source filtering pixels, the filter circuit comprising a sample-and-hold filter and a subtractor. The sample-and-hold filter may comprise a sample FET and a capacitor, wherein the sample FET is configured to sample an output from the photodiode in accordance with the detected modulated light, and the capacitor stores the sample of the output from the photodiode. The filter circuit may further comprise a current mirror.

[0025] In some embodiments, the current mirror outputs a current proportional to a magnitude of a difference between a voltage of the sample of the output from the photodiode and a voltage of the output of the photodiode. In some embodiments, a high polarity of the filtering pixel is aligned with an ON state of the modulated light during a frame. In some embodiments, a high polarity of the filtering pixel is aligned with an ON state of the modulated light for a first half of a frame, and aligned with an OFF state of the modulated light for a second half of the frame. In some embodiments, the high polarity of the filtering pixel is modulated asynchronously with the modulated light of the light source.
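
By way of non-limiting illustration, the alignment of the filter polarity with the ON state of the modulated light can be sketched numerically. The sketch below assumes that samples are added while the polarity is high and subtracted while it is low; the function name `demodulate`, the sample values, and the eight-sample frame are hypothetical and are not taken from the disclosure.

```python
def demodulate(photodiode_samples, polarity):
    """Add each sample while the filter polarity is high, subtract it while low."""
    total = 0
    for sample, high in zip(photodiode_samples, polarity):
        total += sample if high else -sample
    return total


# Hypothetical frame: 8 samples, light source toggling ON/OFF every sample.
light_on = [True, False] * 4
ambient = 7   # assumed constant background level
signal = 3    # assumed contribution of the modulated light source

lit_scene = [ambient + (signal if on else 0) for on in light_on]
ambient_only = [ambient] * len(light_on)

print(demodulate(lit_scene, light_on))     # 12: only the modulated light survives
print(demodulate(ambient_only, light_on))  # 0: unmodulated light cancels
```

Under these assumptions, light that is not modulated with the light source contributes equally to the added and subtracted samples and therefore cancels over the frame.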

[0026] In some embodiments, the filter circuit comprises two sample-and-hold filters, wherein a first sample and hold filter stores a first sample of the output of the photodiode in a first capacitor and a second sample and hold filter stores a second sample of the output of the photodiode in a second capacitor. In some embodiments, the filter circuit further comprises a current mirror.

[0027] In some embodiments, the current mirror outputs a current proportional to the magnitude of a difference between a voltage of the first sample and a voltage of the second sample. In some embodiments, the filter circuit comprises a plurality of sample and hold filters.

[0028] In some embodiments, the system may further comprise a coherent detection component, comprising a laser diode and a prism configured as a band pass filter corresponding to a wavelength of light emitted by the laser diode.

[0029] In some embodiments, the laser diode and the light source are the same.

[0030] In some embodiments, the system may further comprise an integrator circuit at an output of the filter circuit. In some embodiments, the integrator comprises a FET and a capacitor.

[0031] In some embodiments, the filtering circuit is modulated synchronously with a modulation scheme applied to the light source. In some embodiments, the filtering circuit is modulated asynchronously with a modulation scheme applied to the light source.

[0032] In accordance with one embodiment, a method of programming a filtering pixel comprises determining a type of filtering to be performed on a signal outputted from a photodiode of the filtering pixel, and setting one or more FETs to a particular state based on the determined type of filtering. The type of filtering may include one of: no filtering; derivative filtering; or sample and hold filtering. In some embodiments, the type of filtering further comprises: double derivative filtering; multiple derivative filtering; double sample and hold filtering; or multiple sample and hold filtering.
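
By way of non-limiting illustration, the programming method may be sketched in software as a lookup from the determined type of filtering to a set of FET states. The FET names (`bypass_fet`, `differentiator_fet`, `sample_fet`) and their states are hypothetical placeholders chosen for this sketch; the disclosure does not name the FETs here.

```python
from enum import Enum


class FilterType(Enum):
    NONE = "no filtering"
    DERIVATIVE = "derivative filtering"
    SAMPLE_AND_HOLD = "sample and hold filtering"


# Hypothetical control FETs and states, for illustration only.
FET_STATES = {
    FilterType.NONE: {
        "bypass_fet": True, "differentiator_fet": False, "sample_fet": False,
    },
    FilterType.DERIVATIVE: {
        "bypass_fet": False, "differentiator_fet": True, "sample_fet": False,
    },
    FilterType.SAMPLE_AND_HOLD: {
        "bypass_fet": False, "differentiator_fet": False, "sample_fet": True,
    },
}


def program_filtering_pixel(filter_type):
    """Return the FET states to apply for the determined type of filtering."""
    return dict(FET_STATES[filter_type])


states = program_filtering_pixel(FilterType.DERIVATIVE)
print(states)
```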

BRIEF DESCRIPTION OF THE DRAWINGS

[0033] The technology disclosed herein, in accordance with one or more various embodiments, is described in detail with reference to the following figures. The drawings are provided for purposes of illustration only and merely depict typical or example embodiments of the disclosed technology. These drawings are provided to facilitate the reader's understanding of the disclosed technology and shall not be considered limiting of the breadth, scope, or applicability thereof. It should be noted that for clarity and ease of illustration these drawings are not necessarily made to scale.

[0034] FIG. 1 is a circuit diagram of an example derivative pixel in accordance with embodiments of the technology disclosed herein.

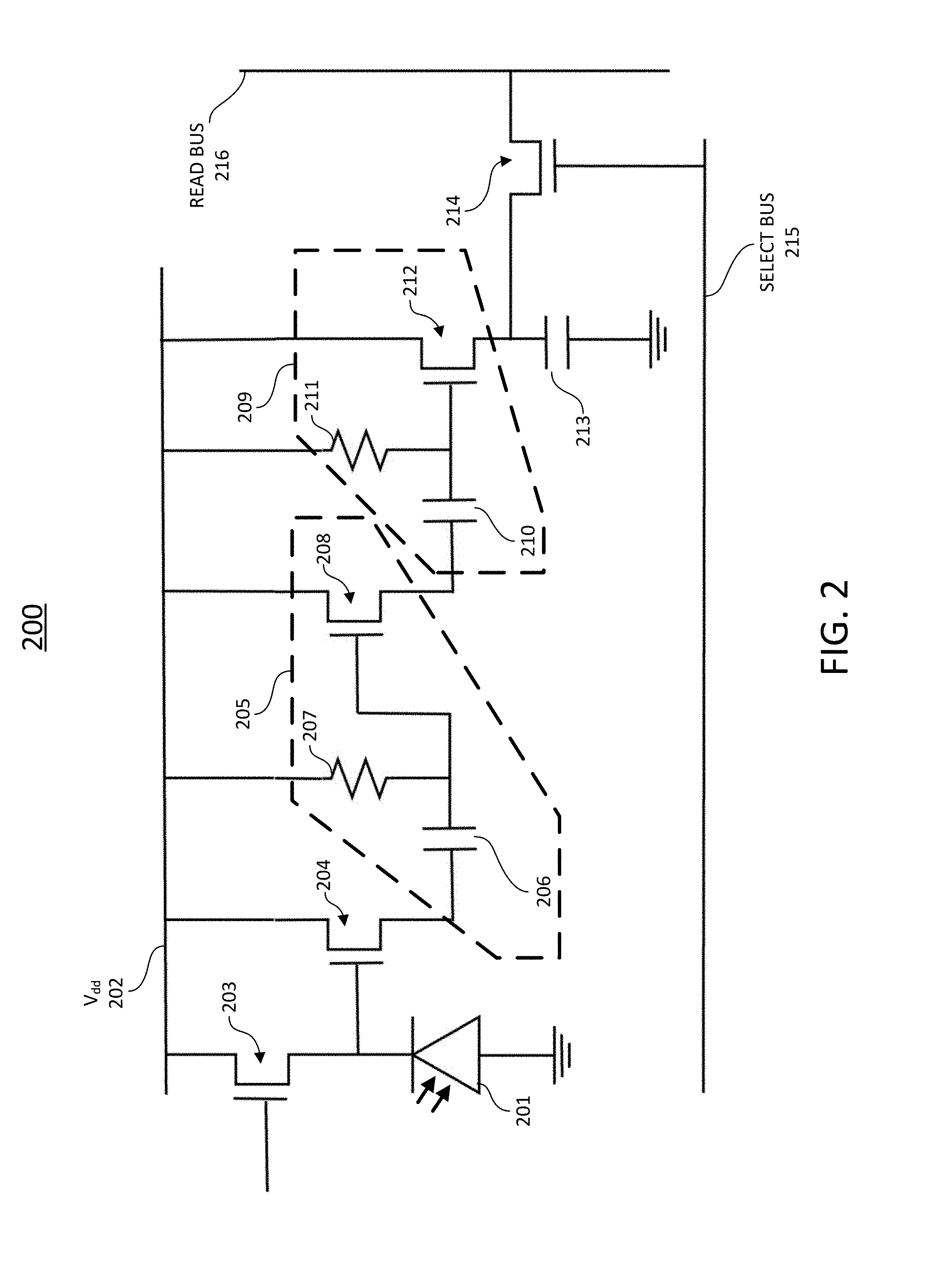

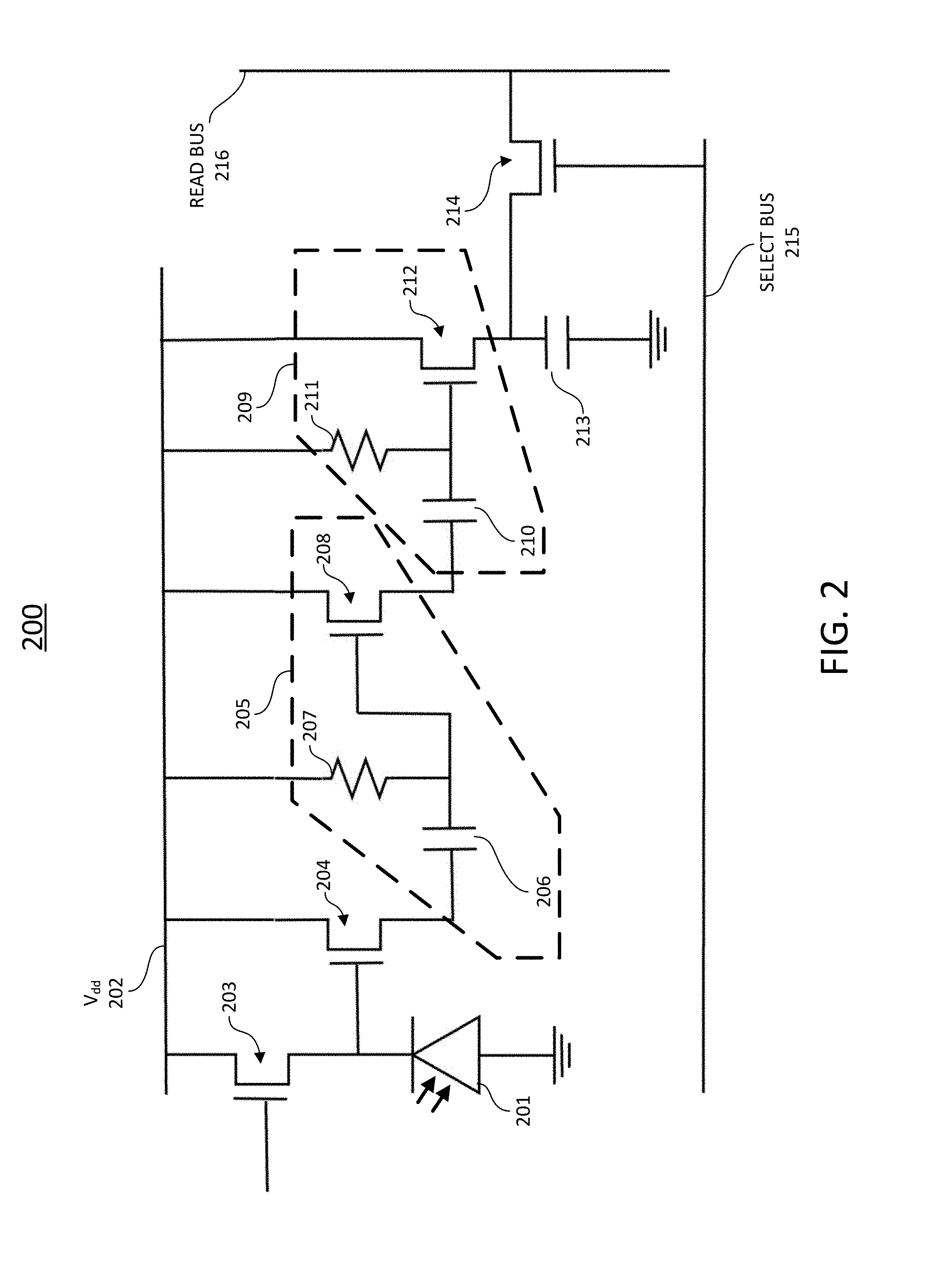

[0035] FIG. 2 illustrates another example derivative pixel for accumulating charge in accordance with embodiments of the technology disclosed herein.

[0036] FIG. 3 is a block diagram illustration of an example derivative pixel in accordance with embodiments of the technology disclosed herein.

[0037] FIG. 4 is a block diagram illustration of another example derivative pixel in accordance with embodiments of the technology disclosed herein.

[0038] FIGS. 5A, 5B, and 5C illustrate the outputs of different parts of an example derivative pixel in accordance with embodiments of the technology of the present disclosure.

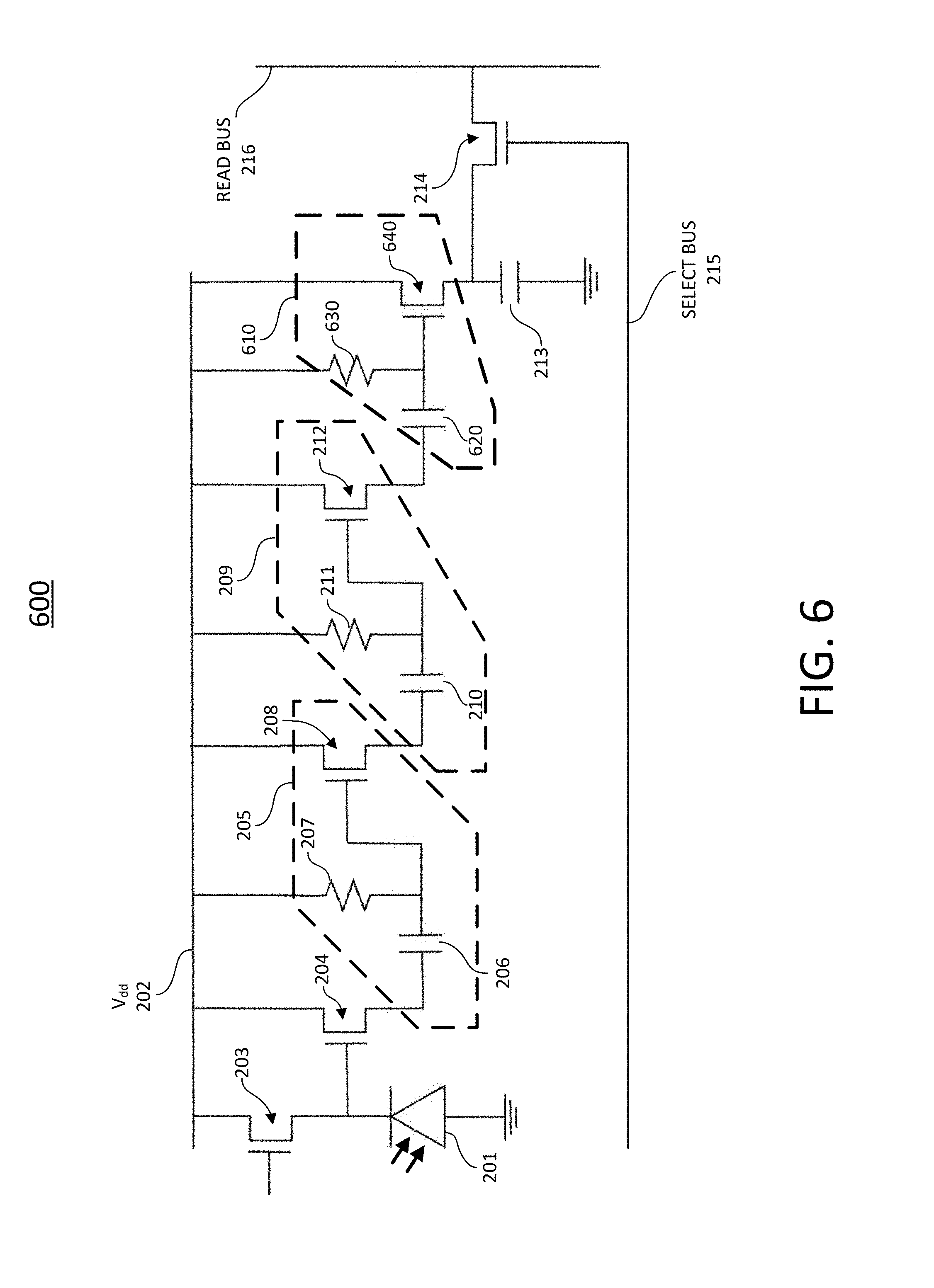

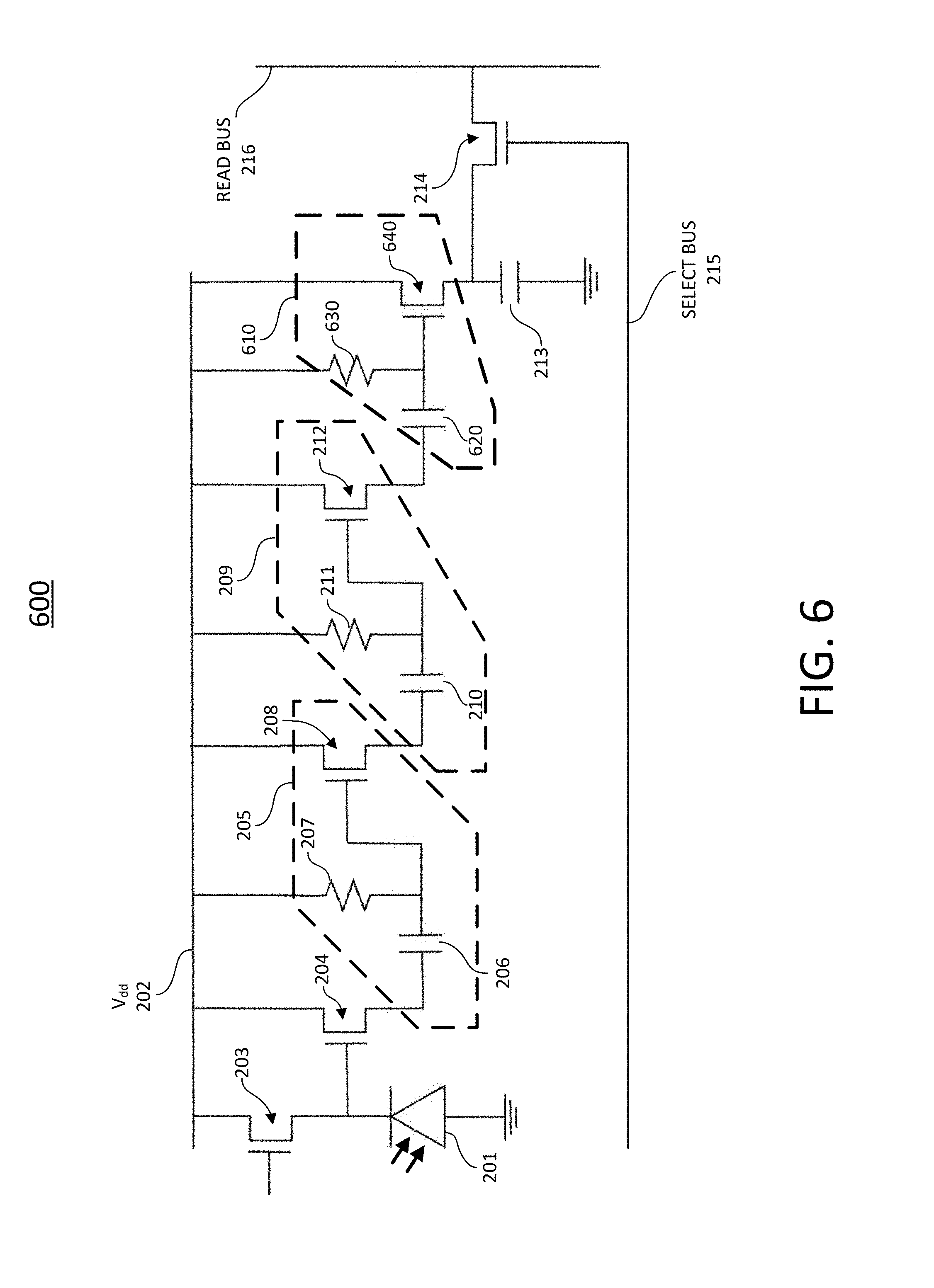

[0039] FIG. 6 illustrates an example double derivative pixel in accordance with embodiments of the technology disclosed herein.

[0040] FIG. 7 is a block diagram illustration of an example double derivative pixel in accordance with embodiments of the technology disclosed herein.

[0041] FIG. 8 is a block diagram illustration of another example double derivative pixel in accordance with embodiments of the technology disclosed herein.

[0042] FIG. 9 illustrates an example combined pixel imager in accordance with embodiments of the technology disclosed herein.

[0043] FIG. 10 is a block diagram illustration of an example combined pixel imager in accordance with embodiments of the technology disclosed herein.

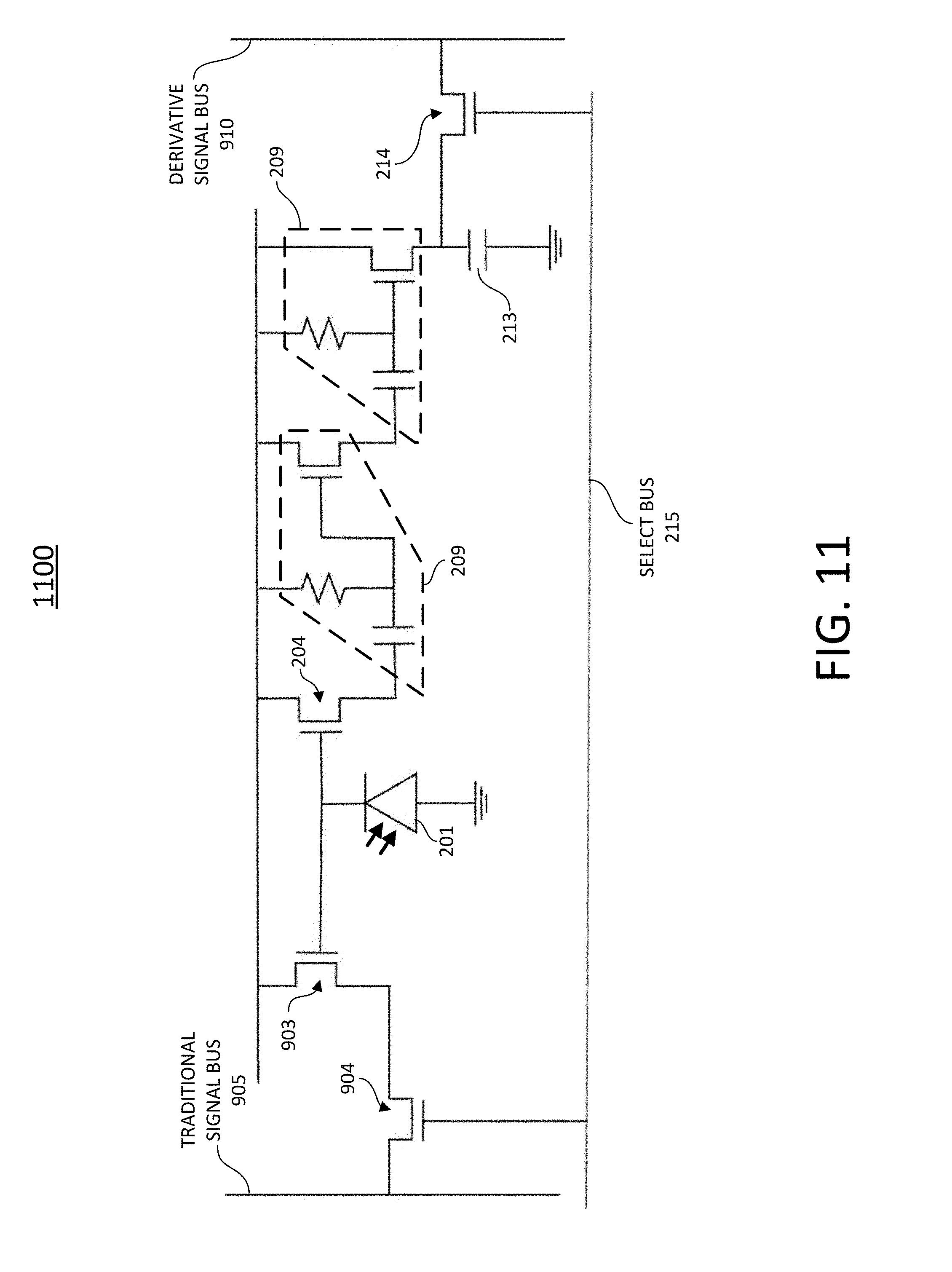

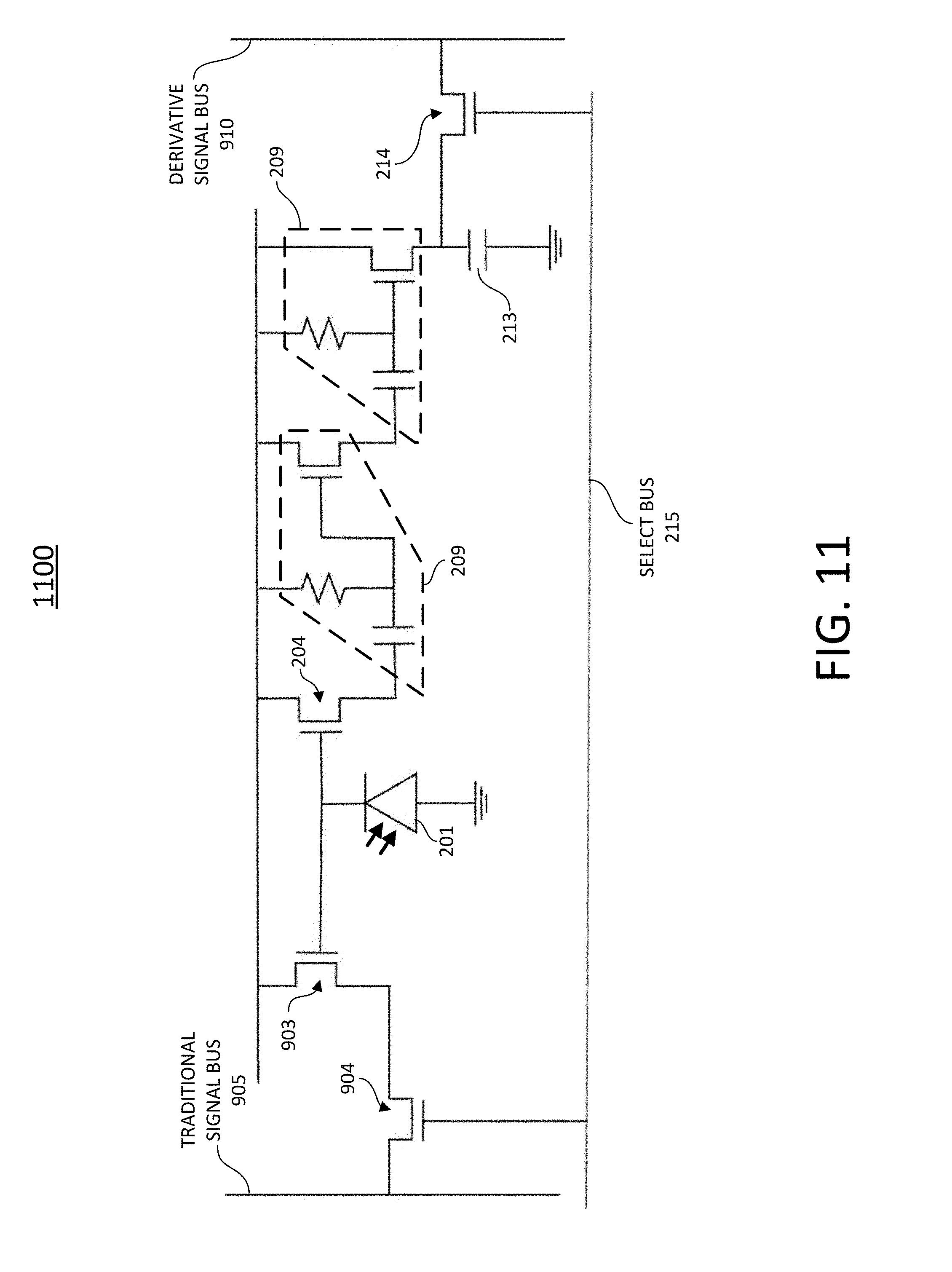

[0044] FIG. 11 illustrates another example combined pixel imager for accumulating charge in accordance with embodiments of the technology disclosed herein.

[0045] FIG. 12 illustrates another example simplified combined pixel imager for accumulating charge in accordance with embodiments of the technology disclosed herein.

[0046] FIG. 13 is a block diagram illustration of an example simplified combined pixel imager in accordance with embodiments of the technology disclosed herein.

[0047] FIG. 14 illustrates an example direct motion measurement camera in accordance with the technology of the present disclosure.

[0048] FIG. 15 illustrates an example direct motion measurement camera with dithering in accordance with embodiments of the technology disclosed herein.

[0049] FIG. 16A illustrates a first frame from a conventional camera in a security application.

[0050] FIG. 16B illustrates the first frame from a camera in accordance with embodiments of the technology described herein.

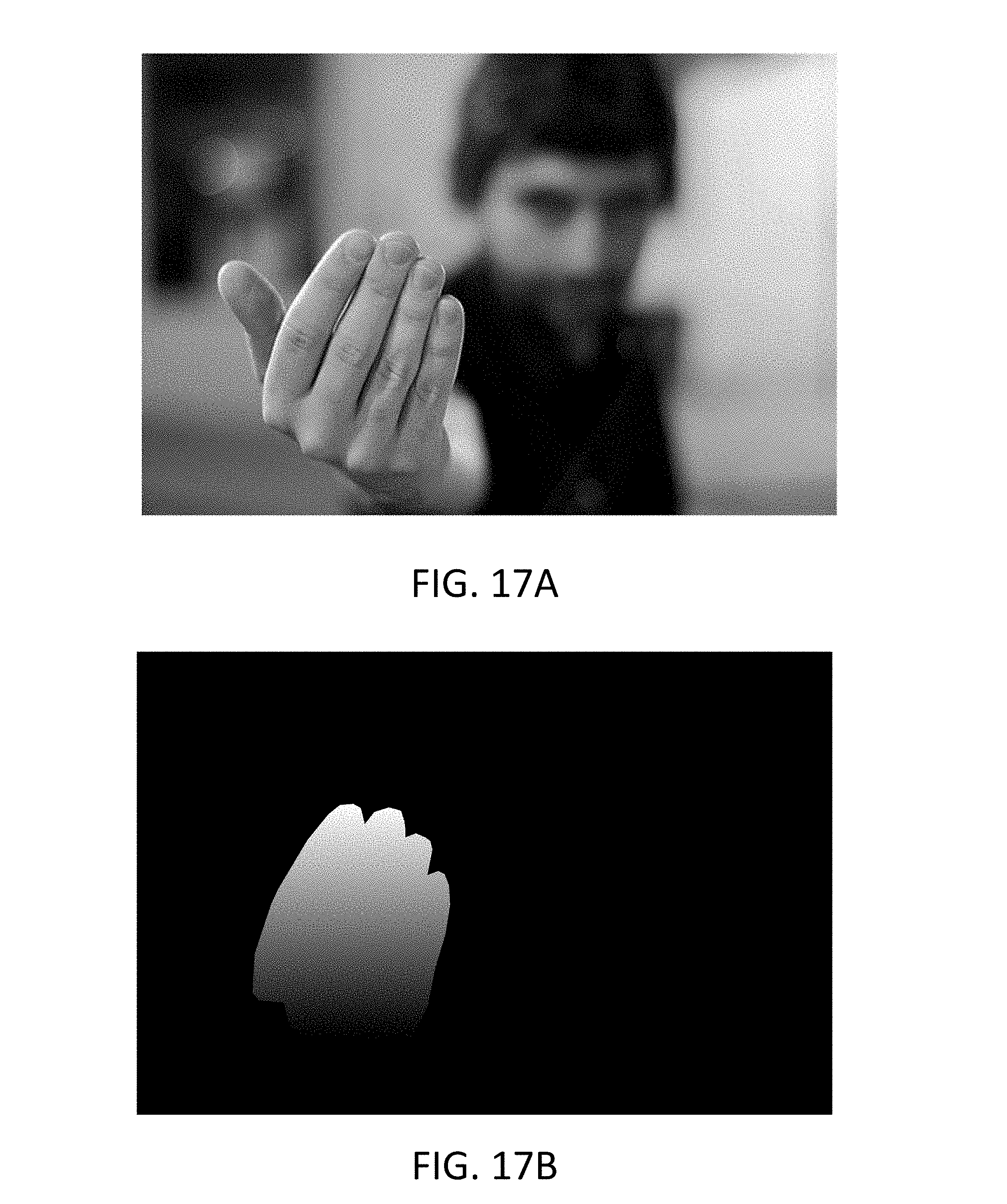

[0051] FIG. 17A illustrates a second frame from a conventional camera in a security application.

[0052] FIG. 17B illustrates the second frame as captured by a camera in accordance with embodiments of the technology disclosed herein.

[0053] FIG. 18 illustrates an example method of detecting motion within the field of view of a pixel in accordance with embodiments of the technology disclosed herein.

[0054] FIG. 19 illustrates an example computing component that may be used in implementing various features of embodiments of the disclosed technology.

[0055] FIG. 20 illustrates an example traditional pixel used in current image sensors.

[0056] FIG. 21 is a block diagram illustrating the general approach for a filtering pixel in accordance with various embodiments of the technology disclosed herein.

[0057] FIG. 22 illustrates an example filtering pixel including a pseudo-digital filter in accordance with various embodiments of the technology disclosed herein.

[0058] FIGS. 23A and 23B illustrate example implementations of the filtering pixel described with respect to FIG. 22.

[0059] FIG. 24 illustrates an example imaging system implementing an image sensor with filtering pixels configured with such filtering capability.

[0060] FIG. 25A illustrates when the filtering pixel is configured to determine when an object is illuminated and remove unwanted light sources.

[0061] FIG. 25B illustrates when the filtering pixel is configured to determine when an object is moving.

[0062] FIG. 25C illustrates an example scheme for switching the filter polarity compared to modulated illumination.

[0063] FIG. 25D illustrates another example scheme for switching the filter polarity compared to modulated illumination.

[0064] FIG. 26 illustrates an example imaging system in accordance with various embodiments of the technology disclosed herein.

[0065] FIG. 27 illustrates an example pixel array in accordance with embodiments of the technology disclosed herein.

[0066] FIG. 28 illustrates an example filtering pixel with multiple sample and hold filters in accordance with various embodiments of the technology disclosed herein.

[0067] The figures are not intended to be exhaustive or to limit the invention to the precise form disclosed. It should be understood that the invention can be practiced with modification and alteration, and that the disclosed technology be limited only by the claims and the equivalents thereof.

DETAILED DESCRIPTION OF THE EMBODIMENTS

[0068] A traditional pixel utilized in image sensors simply outputs the total intensity of all light falling on the photodetector. FIG. 20 is an illustration of a basic pixel 2000 implemented in image sensor products, such as digital cameras. The pixel 2000 includes a photodiode 2010 for converting absorbed light into an electric current. The photodiode 2010 may be a p-n junction, while other embodiments may utilize a PIN junction (a diode with an undoped intrinsic semiconductor region disposed between the p-type semiconductor region and the n-type semiconductor region). As light falls on the photodiode 2010, the photodiode 2010 bleeds charge to ground, pulling the output voltage of the photodiode 2010 closer to ground. The output voltage of the photodiode 2010 is therefore an integral of the light intensity on the photodiode 2010. Field-effect transistor (FET) 2030, controlled by the drain voltage Vdd 2015, serves as a buffer amplifier to isolate the photodiode 2010. The output from the FET 2030 is proportional to the integral of the intensity of light on the photodiode 2010. The select FET 2040, controlled by the select bus 2025, allows the output from the pixel to be outputted to the read bus 2035. A reset FET 2020 is placed between the photodiode 2010 and the drain voltage Vdd 2015. The reset FET 2020, when activated, serves to make the output voltage of the photodiode 2010 equivalent to Vdd 2015, thereby clearing any stored charge.
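
By way of non-limiting illustration, the integrating behavior of the traditional pixel can be sketched as a simple discrete-time model. The reset voltage of 3.3, the conversion `gain`, and the time step `dt` are assumed values chosen for the sketch and do not appear in the disclosure.

```python
def photodiode_voltage_trace(intensities, dt, v_dd=3.3, gain=1.0):
    """Model the photodiode output voltage during one exposure.

    intensities: light intensity at each time step (arbitrary units)
    dt: duration of each time step
    v_dd: assumed reset (drain) voltage
    gain: assumed conversion factor from integrated intensity to volts
    """
    voltage = v_dd  # the reset FET sets the photodiode voltage to Vdd
    trace = []
    for intensity in intensities:
        # Incident light bleeds charge to ground, pulling the voltage down.
        voltage = max(0.0, voltage - gain * intensity * dt)
        trace.append(voltage)
    return trace


trace = photodiode_voltage_trace([1.0, 1.0, 2.0, 0.0], dt=0.1)
drop = 3.3 - trace[-1]  # tracks the integral of the intensity, about 0.4 here
print(round(drop, 6))
```

In this model the voltage drop from Vdd depends only on the integral of the intensity over the exposure, and the voltage is clamped at ground once the pixel saturates.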

[0069] As arranged, each pixel outputs a signal proportional to the integral of the intensity of all the light falling on the photodiode 2010. That is, the total intensity of the light falling on the photodiode 2010 is outputted when the select FET 2040 is selected. That output can be modified through pre- or post-filtering applied to the image sensor. For example, in order to limit the type of light captured by the pixel (i.e., desired wavelengths), optical filters are generally added to the image sensor over the pixels. In CMOS applications, optical filters generally comprise layers of resist or other material placed above the photodiode of the pixel, designed to pass only one or more particular wavelengths of light. In some applications, a purpose-built lens or microlens array may also be used to filter the type or amount of light that reaches each pixel. Such optical filters, however, are not perfect, allowing some stray, undesired light to pass through. Moreover, optical filters may be overpowered by high-intensity light sources hindering the effectiveness of the filter. Accordingly, the output intensity of each pixel includes such undesired intensity.

[0070] Additionally, digital filtering may be applied to the output from each pixel to filter out certain aspects of the captured light. Traditional digital filtering is used to perform complex processing of the captured light, including sampling and application of complex algorithms to identify specific components of the captured light. Before any digital filtering may occur, however, the analog signal from the pixel must be converted into a digital signal, requiring the use of A/D converters. This need to convert the signal into the digital domain limits the speed at which processing may occur. As a result, information in the light that is faster than the frame rate of the sensor is lost. Moreover, the digitized signal includes all of the raw intensity captured by the pixel, meaning that if undesired information was captured it is embedded within the digital signal, limiting the effectiveness of filtering techniques meant to account for various problems in imaging (e.g., motion blur).
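
By way of non-limiting illustration, the loss of information that is faster than the frame rate can be shown with two hypothetical intensity signals. The signals, the eight samples per frame, and the function name `frame_values` are assumptions made for this sketch.

```python
def frame_values(signal, samples_per_frame):
    """Integrate a finely sampled intensity signal into per-frame values."""
    return [
        sum(signal[start:start + samples_per_frame])
        for start in range(0, len(signal), samples_per_frame)
    ]


# Two hypothetical scenes, 8 samples per frame, 3 frames each.
constant = [1] * 24    # steady light
flicker = [2, 0] * 12  # light toggling four times per frame

print(frame_values(constant, 8))  # [8, 8, 8]
print(frame_values(flicker, 8))   # [8, 8, 8]
```

Both signals produce identical frame values, so no digital filter applied after conversion can distinguish the flickering light from the steady light.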

[0071] Accordingly, image sensors are, in essence, broken into three different domains: the optical domain, the pixel (analog) domain, and the digital processing domain. Variations of filtering have been applied in the optical domain and the digital processing domain, designed to filter out or enhance particular aspects of the captured image signal. However, the pixel domain has generally been limited to traditional pixels comprising merely a photodetector, or a photodetector and an amplifier in the case of active pixel sensors. That is, there is no additional filtering conducted in the pixel domain that could assist in overcoming deficiencies in the optical filtering or to ensure that only desired image signals are captured and outputted.

[0072] To this end, embodiments of the technology disclosed herein provide filtering-pixels for use in image sensors. The filtering-pixels of the embodiments disclosed herein provide additional filtering capabilities to image sensors, as opposed to the non-filtering characteristics of traditional pixels. The capabilities of filtering-pixels in accordance with embodiments of the technology disclosed herein provide an analog filter within the pixel domain, providing for more efficient filtering and processing of captured light. By adding filtering functionality to the pixels themselves, various embodiments of the technology allow for greater differentiation between light sources, enabling more efficient and finer filtering of undesired light sources, reducing saturation caused by high-intensity light and providing clearer image resolution. Further, greater separation between objects within a scene is possible by filtering to pass relevant aspects of the captured light (e.g., derivatives of the intensity) at the pixel level. That is, filtering pixels in accordance with embodiments of the disclosure herein remove undesired aspects of a captured image such that only the desired information signals are outputted at greater frame rates.

[0073] Moreover, various embodiments disclosed herein provide image sensors having a plurality of filtering pixels, enabling different information within an image to be captured simultaneously, and the plurality of filtering pixels may be programmable. Embodiments of the technology disclosed herein also provide a hybrid analog-digital filter, enabling faster filtering of image signals by removing the need for an A/D converter. Further, various embodiments of the technology disclosed herein provide image sensors, cameras employing different embodiments of the image sensors, and uses thereof to provide cameras that are computationally less intensive and faster than traditional cameras through the use of filtering pixels.

[0074] FIG. 21 is a block diagram illustrating the general approach for a filtering pixel 2100 in accordance with various embodiments of the technology disclosed herein. As illustrated, the photodiode 2120 of the filtering pixel 2100 absorbs photons from light, outputting a current proportional to the intensity of the light (like a traditional pixel). A filter 2130 is embedded in the filtering pixel 2100. As will be explained in greater detail below, the filter 2130 may be configured to perform one of several different filtering functions. For example, the filter 2130 may filter to pass one or more derivatives of the output signal from the photodiode 2120. In other embodiments, the filter 2130 may perform a pseudo-digital sampling of the analog signal, adding and subtracting these samples to filter out particular aspects of the output signal from the photodiode 2120. The integrator 2140 outputs an integrated version of the signal output from the filter 2130. The output of the integrator 2140 would be digitized by an analog to digital converter.
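
By way of non-limiting illustration, the photodiode, filter, integrator, and converter stages of FIG. 21 can be sketched as a chain of functions. The two example filters, the full-scale value of 10.0, and the 256-level converter are assumptions made for this sketch; in particular, `sample_and_subtract` is only one simplified stand-in for the filter 2130.

```python
def pass_through(samples):
    """No filtering: behaves like a traditional pixel."""
    return list(samples)


def sample_and_subtract(samples):
    """Hold the first sample and output the magnitude of later differences."""
    held = samples[0]
    return [abs(sample - held) for sample in samples[1:]]


def filtering_pixel(intensity, filter_fn, full_scale=10.0, adc_levels=256):
    """Photodiode -> filter -> integrator -> analog to digital converter."""
    photocurrent = list(intensity)      # current proportional to intensity
    filtered = filter_fn(photocurrent)  # stand-in for filter 2130
    integrated = sum(filtered)          # stand-in for integrator 2140
    clipped = min(max(integrated, 0.0), full_scale)
    return round(clipped / full_scale * (adc_levels - 1))


steady = [1.0, 1.0, 1.0, 1.0]
stepped = [1.0, 1.0, 3.0, 3.0]

print(filtering_pixel(steady, pass_through))          # 102
print(filtering_pixel(steady, sample_and_subtract))   # 0
print(filtering_pixel(stepped, sample_and_subtract))  # 102
```

Swapping the function passed as `filter_fn` changes what the pixel reports without changing the stages that follow it, which mirrors the block structure of FIG. 21.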

[0075] Although described with respect to integrating within the current domain, a person of ordinary skill in the art would understand that the filtering approach described with respect to FIG. 21 may be done in the voltage domain. That is, instead of performing the filtering on a current signal, the output from the photodiode 2120 could be converted into a voltage and the filtering applied to the voltage. In other embodiments, portions of the filtering may be applied to a current signal while others are applied after the current signal is converted into a voltage signal. A person of ordinary skill in the art would understand how to modify the circuitry of the filtering pixel 2100 to enable filtering to occur in the current or voltage domains.

[0076] As alluded to above, filtering pixels in accordance with embodiments of the technology disclosed herein can be equipped with a variety of filtering capabilities. This enables a range of various signal aspects to be outputted by the filtering pixel, increasing the types of information about the scene that can be identified with higher resolution. For example, in various embodiments, the filtering pixels may filter to pass aspects of the signal indicative of motion within a captured scene. The conventional frame subtraction technique for measuring the displacement of objects, which can be interpreted as movement, within the FOV of a camera suffers from several disadvantages. One such disadvantage is a low update rate. For example, if two frames are subtracted to determine the motion of an object, displaying the resulting image requires the time needed to capture two frames and the computation time required to subtract the two frames. In addition, since each frame is an integral of the intensity for every pixel during the exposure time, changes that take place during each frame time are missed. Moreover, the frame subtraction technique is sensitive to blur effects on images, caused either by other objects within the camera's FOV or the motion of the camera itself. Changes in illumination also impact the ability to rely on frame subtraction, as it is more difficult to distinguish motion where the light reflecting off of or through objects varies independently of the motion of the objects. In summary, traditional cameras and image sensors are designed to capture non-moving images. As soon as there is motion or change in the scene, image quality is compromised and information is lost. Cameras and image sensors implementing embodiments of the technology disclosed herein provide advantages over traditional cameras and image sensors for a variety of different applications. Background separation is easier as only motion is captured through the use of derivative sensing. Conventional cameras capture all objects within a frame. This makes it more difficult to separate out moving objects from stationary objects in the background. For security applications, by limiting the amount of information captured it is easier to identify objects of interest within a scene.

[0077] Further, frame subtraction is computationally intensive. Conventional image sensors in cameras generally capture all objects within the FOV of the camera, whether or not the object is moving. This increases the amount of data for processing, makes background separation more difficult, and requires greater processing to identify and measure motion within the FOV. This renders motion detection through frame subtraction computationally intensive.

[0078] By utilizing a filtering pixel configured to filter one or more derivatives of the light intensity in accordance with embodiments of the technology disclosed herein, less computationally intensive and faster motion detection is provided compared with cameras employing traditional pixels. Traditional image sensors integrate the light that falls on each pixel and output how much light fell on that pixel during the frame. In contrast, image sensors in accordance with various embodiments described herein can detect changes in the light falling on pixels of the image sensor during the frame. In particular, the image sensors described herein detect the change in the intensity of light detected on the photodiodes of the pixels comprising image sensors, rather than detecting mere intensity on that photodiode (as in traditional image sensors). Where the intensity is constant (i.e., no movement), no voltage is accumulated. However, if the intensity of the light is varying (i.e., there is movement within the FOV), the output will be proportional to the number and size of changes during the frame. The more changes, or the larger the change, the larger the output voltage. In some embodiments, this type of image sensor employing derivative sensing may be implemented within a conventional camera to directly detect movement in addition to capturing the entire scene.
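
The contrast between integrating intensity and accumulating changes in intensity can be illustrated with a minimal numerical sketch. The function names, sample values, and time step below are hypothetical and chosen only for illustration, and summing the absolute sample-to-sample differences is a simplifying stand-in for the analog derivative sensing described herein.

```python
def traditional_pixel(samples, dt):
    """Integrate intensity over the frame, as a traditional pixel does."""
    return sum(s * dt for s in samples)


def derivative_pixel(samples):
    """Accumulate the size of every intensity change during the frame.

    Simplification (assumption): absolute sample-to-sample differences
    stand in for the analog differentiator and integrator.
    """
    return sum(abs(b - a) for a, b in zip(samples, samples[1:]))


dt = 0.125                       # hypothetical sample spacing within one frame
static_scene = [1.0] * 8         # constant intensity: no movement
moving_scene = [0.0, 2.0] * 4    # fluctuating intensity with the same integral

print(traditional_pixel(static_scene, dt), traditional_pixel(moving_scene, dt))
print(derivative_pixel(static_scene), derivative_pixel(moving_scene))
```

In this sketch both scenes integrate to the same value, so a traditional pixel cannot tell them apart, while only the fluctuating scene produces a nonzero accumulated change.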

[0079] For example, FIG. 16A illustrates a frame captured using a conventional camera in a security application. As illustrated, all objects within the FOV of the camera are captured, including the ground, the car, and an individual that is walking. By capturing each of these objects, a large amount of information is obtained and stored when all that is of interest may be the movements of the individual in the scene. In contrast, FIG. 16B illustrates a frame captured by a camera implementing embodiments of the technology described herein. As can be seen in FIG. 16B, only the man (who is walking to the bottom left corner of the frame) is captured. By not capturing all the elements in the background, identification of a moving object is made easier.

[0080] In some embodiments, the camera in FIG. 16B may capture not only motion within the frame, but also capture and provide quantitative information on the motion (e.g., the velocity of the moving object; acceleration of the object; direction of motion; etc.). This information may be conveyed in a number of different ways. Some non-limiting examples may include: different colors indicating different velocities or ranges of velocities; textual overlays; graphical indications; or a combination thereof. Post-processing may be utilized to augment the derivative sensed images captured by the camera. Because the information is directly sensed through the derivative sensing capability of embodiments of the technology disclosed, post-processing of the frame is less computationally intensive than is required to obtain the same information using traditional cameras. In some embodiments, the quantitative image for each derivative pixel is used as the input of a control system. For example, in some applications, faster moving objects may be more important. In some embodiments, the derivative pixel image may be further processed or filtered to eliminate noise or false images.

[0081] Implementations of embodiments of the technology disclosed herein may also provide benefits for other applications, such as gesture recognition, obstacle avoidance, and target tracking, to name a few. FIG. 17A shows a frame captured with a conventional camera, where an individual is making a "come here" gesture, as if calling a dog. Similar to the camera in FIG. 16A, all of the elements in the background of the scene including the object of interest (in this case, the gesturing individual) are captured by the camera of FIG. 17A, making background separation difficult. Moreover, the gesture being made is moving away from the camera, which makes identification of the gesture more difficult because the motion is perpendicular to the image sensor. This is especially true for slight motions away from the camera, which may not be visibly noticeable. FIG. 17B illustrates the same frame as captured by a camera implementing embodiments of the technology disclosed herein. As illustrated in FIG. 17B, only the gesture is captured by the camera. Moreover, the captured image illustrates a gradient representation, whereby the portion of the gesture with which greater motion is associated (the upper portions of the fingers) is represented by a more pronounced shade of white. This is because, as the fingertips move more than the knuckles, more charge is accumulated within the derivative sensing circuit (as will be discussed in greater detail below).

[0082] Such implementations also make it easier to identify and detect low energy motions. Conventional cameras and time of flight cameras that capture the entire scene require large motions that are easily distinguishable from the background in order to identify gestures or moving objects. By implementing embodiments of the technology disclosed herein, it is possible to capture low energy motion more easily, allowing for greater motion detection and identification. Even slight motions, like a tapping finger, can be picked up (which could otherwise be missed using traditional image sensors).

[0083] Various embodiments of the technology disclosed herein utilize derivative filtering to directly detect and capture motion within the scene. FIG. 1 is a circuit diagram of an example filtering pixel 100 configured to filter to pass the derivative of the intensity of light, in accordance with embodiments of the technology disclosed herein. In the illustrated example, the filtering pixel 100 is a complementary metal-oxide-semiconductor (CMOS) pixel, which captures images by scanning across rows of pixels, each with its own additional processing circuitry that enables high parallelism in image capture. The example filtering pixel 100 may be implemented in place of traditional pixels in an active-pixel sensor (APS) for use in a wide range of imaging applications, including cameras. As illustrated in FIG. 1, the filtering pixel 100 includes a photodiode 101. The photodiode 101 operates similarly to the photodiode 2010 discussed above with respect to FIG. 20.

[0084] Resistor 103 converts the current generated by the photodiode 101 into a voltage. In some embodiments, the resistor 103, and any other resistors illustrated throughout, may be implemented by using a switched capacitor. Capacitor 104 and resistor 105 act as a high-pass filter. Capacitor 104 serves to filter the alternating current (AC) components of the input voltage (when the intensity of the light on the photodiode 101 varies or changes), allowing an AC current to pass through. A derivative of the input voltage is taken by said capacitor 104 and resistor 105, which serves to connect to the supply and eliminate any DC voltage component. The derivative signal is integrated by the field-effect transistor (FET) 106 and capacitor 107 (i.e., an integrator), to generate the output voltage of the filtering pixel 100. The capacitor 104 and resistor 105 form the high-pass filter and may be referred to as a "differentiator," a type of circuit whose output is approximately directly proportional to the time derivative of the input. A second FET 108 reads out the output voltage when the Select Bus 109 voltage (i.e., gate voltage for second FET 108) is selected to "open" the second FET 108 (i.e., a "conductivity channel" is created or influenced by the voltage).

[0085] When the intensity of light falling on the photodiode 101 is constant, there is a constant amount of current flowing through the photodiode 101 and the resistor 103 from the voltage V.sub.dd 102 into ground. This creates a constant voltage at the input of the capacitor 104 (and no signal goes through capacitor 104). When the intensity of light falling on the photodiode 101 varies, an AC component of the signal is created that is coupled through the capacitor 104. This AC component of the signal is integrated by the FET 106 and capacitor 107, and the integrated signal is read out on the Read Bus 110 when the row (within an APS) containing the pixel is selected through a proper voltage being applied to the Select Bus 109.
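
The behavior of the signal chain of FIG. 1 can be approximated in discrete time as a first-order RC high-pass filter followed by an integrator. The following is a minimal sketch rather than a circuit-accurate model: the component values `rc` and `dt` are hypothetical, and the integrator is assumed to accumulate only the positive part of the derivative signal, as a unipolar circuit would.

```python
def high_pass(samples, rc, dt):
    """First-order RC high-pass, a discrete analogue of capacitor 104 and resistor 105."""
    alpha = rc / (rc + dt)
    out = [0.0]
    for prev, cur in zip(samples, samples[1:]):
        out.append(alpha * (out[-1] + cur - prev))
    return out


def rectify_and_integrate(signal, dt):
    """Accumulate only the positive part of the signal (assumed unipolar integrator)."""
    total = 0.0
    for v in signal:
        total += max(v, 0.0) * dt
    return total


def pixel_output(samples, rc=1e-3, dt=1e-4):
    """Output of the sketched pixel for one frame of input-voltage samples."""
    return rectify_and_integrate(high_pass(samples, rc, dt), dt)


constant = [0.5] * 100
one_step = [0.0] * 50 + [1.0] * 50
two_steps = [0.0] * 25 + [1.0] * 25 + [0.0] * 25 + [1.0] * 25

print(pixel_output(constant), pixel_output(one_step), pixel_output(two_steps))
```

Under these assumptions, a constant input produces no output, and the input with two rising edges accumulates more than the input with one.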

[0086] In some cases, the amount of light falling on the photodiode may be very small. For such low intensity changes, it may be beneficial to include a charge accumulator and/or buffer before the differentiator. FIG. 2 illustrates another example filtering pixel 200 configured to filter to pass the derivative of the intensity of light in accordance with embodiments of the technology disclosed herein. Similar to the example filtering pixel 100 of FIG. 1, the example filtering pixel 200 may be implemented in place of traditional pixel sensors in an array of an active-pixel sensor (APS) for use in a wide range of imaging applications, including cameras. In various embodiments, the photodiode 201 may use a p-n junction, while other embodiments may utilize a PIN junction (a diode with an undoped intrinsic semiconductor region disposed between the p-type semiconductor region and the n-type semiconductor region). As light falls on the photodiode 201, the photodiode 201 bleeds charge to ground, pulling the output voltage of the photodiode 201 closer to ground. The output voltage of the photodiode 201 is an integral of the light intensity on the photodiode 201. A reset FET 203 is placed between the photodiode 201 and the drain voltage V.sub.dd 202. The reset FET 203, when activated, serves to make the output voltage of the photodiode 201 equivalent to V.sub.dd 202, thereby clearing any stored charge. In various embodiments, a pixel reset voltage (not pictured) may be utilized as the gate voltage of the reset FET 203. This can be utilized in between frames, so that minimal residual charge carries over from frame to frame. In some embodiments, a controlled leakage path to Vdd may be used to counteract the charge accumulation, as was done by resistor 103 in the embodiment described in FIG. 1. In some embodiments, this controlled leakage is provided by pulsing or otherwise controlling the leakage through FET 203.

[0087] In various embodiments, a buffer FET 204 may be included. When the amount of light falling on the photodiode 201 varies, the buffer FET 204 enables reading out of the output voltage of the photodiode 201 (which will accumulate with changes in the amount of light) without taking away the accumulated charge. As illustrated, the gate voltage of the buffer FET 204 is the voltage from the photodiode 201, and both the buffer FET 204 and the photodiode 201 have a common drain (in this case, V.sub.dd 202). The output voltage of the buffer FET 204 is proportional to the integral of the intensity of light on the photodiode 201. The buffer FET 204 also serves to isolate the photodiode 201 from the rest of the electronic circuits in the pixel.

[0088] In various embodiments, a first differentiator 205 takes a derivative of the output voltage of the buffer FET 204. The first differentiator 205 includes capacitor 206, resistor 207, and FET 208. The output voltage of the first differentiator 205 is proportional to the instantaneous intensity on the photodiode 201.

[0089] To identify motion within the FOV of the filtering pixel 200 (and to reverse the integration of the accumulator (e.g., FET 204)) the change in intensity of the photodiode is determined. A second differentiator 209 takes the derivative of the output voltage of the first differentiator 205. The output voltage of the second differentiator 209 is proportional to the change in intensity on the photodiode 201. The capacitor 213 integrates the output voltage of the second differentiator 209. When the Select Bus 215 is set to open the FET 214, the integrated output voltage is read out on the Read Bus 216.
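
The sequence of operations described for FIG. 2 (accumulation at the photodiode, a first differentiator that recovers the intensity, a second differentiator that yields the change in intensity, and a final integration) can be traced numerically. This is a sketch under stated assumptions: finite differences stand in for the analog differentiators, the helper names and sample values are hypothetical, and the final stage sums the magnitude of the change.

```python
from itertools import accumulate


def differentiate(samples, dt):
    """Finite-difference stand-in for one analog differentiator stage."""
    return [(b - a) / dt for a, b in zip(samples, samples[1:])]


def fig2_chain(intensity, dt):
    # Photodiode node: the voltage tracks the running integral of intensity.
    integral = [0.0] + list(accumulate(i * dt for i in intensity))
    recovered = differentiate(integral, dt)      # first differentiator: intensity
    change = differentiate(recovered, dt)        # second differentiator: change in intensity
    stored = sum(abs(c) * dt for c in change)    # final integration (magnitude is an assumption)
    return recovered, change, stored


intensity = [1.0, 1.0, 2.0, 2.0, 0.5]
recovered, change, stored = fig2_chain(intensity, dt=0.5)
print(recovered, change, stored)
```

The first differentiator undoes the accumulation, so `recovered` matches the input intensity, and only the second differentiator responds to changes in that intensity.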

[0090] FIG. 3 is a block diagram illustrating an example filtering pixel 300 in accordance with embodiments of the technology disclosed herein. The filtering pixel 300 may be one embodiment of the filtering pixel 200 discussed with respect to FIG. 2. As illustrated in FIG. 3, the filtering pixel 300 includes a photodiode 301. The photodiode 301 is connected to a storage circuit 302 for storing the charge from the photodiode 301 and converting it to a voltage. As illustrated in FIG. 1, the storage circuit is optional in some embodiments and the signal from the photodiode may go directly to the derivative circuit. In some embodiments, the storage circuit 302 may include a buffer FET, similar to the buffer FET 204 discussed with respect to FIG. 2. Various embodiments may also include a reset FET for clearing any stored charge in between frames (utilizing the pixel reset voltage 304 to control the gate of the reset FET), similar to the reset FET 203 discussed with respect to FIG. 2.

[0091] The output of the storage circuit 302 goes through the derivative circuit 303. In various embodiments, the derivative circuit 303 may be similar to the first and second differentiators 205, 209 discussed with respect to FIG. 2. The derivative circuit 303 outputs an output voltage proportional to the change in intensity on the photodiode 301.

[0092] The output of the derivative circuit 303 is integrated and read out through the read out circuit 305 when the select bus 306 voltage is set to activate the read out circuit 305. The read out circuit 305 may be similar to the capacitor 213 and FET 214, discussed with respect to FIG. 2. The output of the read out circuit 305 is the number and size of changes in intensity on the photodiode 301 during the frame, and is read out on the read bus 307.

[0093] In some embodiments, the derivative circuit may be placed before the storage circuit, so that changes occurring during the frame may be stored and read out using standard select bus and read bus architecture. FIG. 4 illustrates an example of such a filtering pixel 400 in accordance with embodiments of the technology disclosed herein. The components of the filtering pixel 400 are similar to those discussed with respect to FIG. 3, except that the derivative circuit 402 is placed in front of the storage circuit 403.

[0094] FIGS. 5A, 5B, and 5C illustrate the output of a filtering pixel described according to FIG. 1. FIG. 5A illustrates the input voltage V.sub.in, which is proportional to the intensity of the light falling on the photodiode, as a function of time. FIG. 5B illustrates the output voltage of the derivative circuit V.sub.out (derivative). FIG. 5C illustrates the corresponding integrated output voltage V.sub.out (integrated) through the derivative pixel as a function of time. It can be appreciated that as V.sub.in fluctuates, the output of the derivative circuit (FIG. 5B) changes in steps corresponding to the rate of change in the input voltage. In bipolar circuits, the output voltage of the derivative can be negative in some cases (when the input voltage drops). In some embodiments, a unipolar CMOS circuit may be used, which would peg the output voltage of the derivative at zero (eliminating the negative voltage portion of FIG. 5B). As illustrated in FIG. 5C, the integrated output voltage accumulates as the input voltage changes. In some embodiments, the circuit is designed so that the positive derivative is eliminated and the negative derivative is stored. For example, the FET 106 may be replaced by a circuit that inverts the signal. In some embodiments, one portion of the circuit outputs the stored positive derivative and another portion of the circuit outputs the stored negative derivative.
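
The separate storage of the positive and negative derivatives described above can be sketched as follows. The function name and sample values are hypothetical, and sample-to-sample differences stand in for the output of the analog derivative circuit.

```python
def split_accumulate(samples):
    """Accumulate rising and falling changes separately.

    Returns (positive, negative), where each value is the total size of
    the rising or falling changes seen during the frame.
    """
    positive = 0.0
    negative = 0.0
    for prev, cur in zip(samples, samples[1:]):
        delta = cur - prev
        if delta > 0:
            positive += delta      # portion storing the positive derivative
        else:
            negative += -delta     # portion storing the negative derivative
    return positive, negative


v_in = [0.0, 1.0, 3.0, 2.0, 2.0, 0.0]
print(split_accumulate(v_in))
```

For an input that returns to its starting level, as in this example, the two stored values are equal; a net brightening or darkening during the frame would make them differ.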

[0095] APSs and other imaging sensors implementing filtering pixels in accordance with the embodiments discussed with respect to FIGS. 1-4 enable direct measurement of motion within the FOV of the imaging sensor, without the need for frame subtraction. The motion is measured directly, instead of deriving the motion through post processing. This provides a visual representation of movement within the frame. By adding another derivative circuit to the filtering pixel, not only can the change in intensity of the light be measured, but also the change in the change of intensity of light may be measured. That is, the single derivative circuit (as that described with respect to FIGS. 1-4) senses velocity in the image, while the double derivative circuit senses acceleration within the image. By including an additional derivative circuit to create a double derivative circuit in the filtering pixel, the quality of the motion within the frame may be measured directly, as well as the motion itself, without the need for frame subtraction or other techniques. In some embodiments, even higher order derivatives are taken to measure jerk, snap, etc.

[0096] FIG. 6 illustrates an example filtering pixel 600 with a double derivative circuit in accordance with embodiments of the technology disclosed herein. The filtering pixel 600 includes the same components as the filtering pixel 200 discussed with respect to FIG. 2. For ease of discussion, similar components in FIG. 6 are referenced using the same numerals as used in FIG. 2, and should be understood to function in a similar manner as discussed above. Referring to FIG. 6, the filtering pixel 600 adds an additional differentiator 610 to the circuit, which takes the derivative of the derivative resulting from the differentiator 209. The output voltage of the additional differentiator 610 is integrated by capacitor 213 and read out on the read bus 216 by the FET 214. In some embodiments, the output of the differentiator 209 may also be read out by connecting a second capacitor and FET after the FET 212, and connecting the new FET to the select bus 215 and a second read bus.

[0097] Although described with respect to single and double derivatives, nothing in this disclosure should be interpreted to limit the number of derivative circuits that may be utilized. Additional higher order derivatives (e.g., triple derivative) may be created by adding more differentiators to the filtering pixel. Accordingly, a person of ordinary skill would appreciate that this specification contemplates derivative pixels for higher order derivatives.
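
Cascading differentiators to obtain higher order derivatives can be illustrated with a short sketch. Finite differences stand in for the analog differentiator stages, and the function names and the quadratic test signal are hypothetical.

```python
def differentiate(samples, dt=1.0):
    """Finite-difference stand-in for one differentiator stage."""
    return [(b - a) / dt for a, b in zip(samples, samples[1:])]


def nth_derivative(samples, order, dt=1.0):
    """Apply `order` differentiator stages in cascade."""
    for _ in range(order):
        samples = differentiate(samples, dt)
    return samples


signal = [float(t * t) for t in range(6)]   # quadratic signal: 0, 1, 4, 9, 16, 25

print(nth_derivative(signal, 1))   # single derivative: grows linearly
print(nth_derivative(signal, 2))   # double derivative: constant
print(nth_derivative(signal, 3))   # triple derivative: zero
```

Each added stage shortens the sampled sequence by one element and extracts the next order of change, which mirrors adding one more differentiator to the filtering pixel.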

[0098] FIG. 7 is a block diagram illustration of an example filtering pixel 700 with a double derivative circuit in accordance with embodiments of the technology disclosed herein. The filtering pixel 700 may be similar to the filtering pixel 600 discussed with respect to FIG. 6. For ease of discussion, similar components in FIG. 7 are referenced using the same numerals as used in FIG. 3, and should be understood to function in a similar manner as discussed above. In place of the derivative circuit 303, the filtering pixel 700 includes a double derivative circuit 703. The double derivative circuit 703 may be similar to the first, second, and third differentiators 205, 209, 610 discussed with respect to FIG. 6. The double derivative circuit 703 outputs an output voltage proportional to the change in the change of intensity on the photodiode 301. In some embodiments, the read out circuit 305 may include additional circuitry to read out the output voltage from each differentiator stage in the double derivative circuit 703. In such embodiments, the filtering pixel 700 may include an additional read bus.

[0099] In some embodiments, the derivative circuit may be placed before the storage circuit, so that changes occurring during the frame may be stored and read out using standard select bus and read bus architecture. FIG. 8 illustrates an example of such a filtering pixel 800 in accordance with embodiments of the technology disclosed herein. The components of the filtering pixel 800 are similar to those discussed with respect to FIGS. 4 and 7, except that the double derivative circuit 802 is placed in front of the storage circuit 403. In some embodiments, a single derivative circuit may be placed before the storage circuit and a second derivative circuit placed after the storage circuit. Other variations of the order of derivative and storage circuits exist. There may be multiple derivative circuits and/or multiple storage circuits arranged in different orders.

[0100] Image sensors (or cameras) containing arrays of filtering pixels similar to the embodiments discussed with respect to FIGS. 1-8 allow for direct measurement of motion within a frame. In essence, such image sensors detect changes in the light falling on each pixel, and the read out of the pixels represents the motion occurring within the frame. This enables motion measurement without the need for intensive processing techniques like frame subtraction.

[0101] In various embodiments, the derivative sensing may be combined with traditional image capturing circuitry. FIG. 9 illustrates an example combined pixel imager 900 in accordance with embodiments of the technology disclosed herein. The example combined pixel imager 900 adds several components to the filtering pixel 100 discussed with respect to FIG. 1. For ease of discussion, similar components in FIG. 9 are referenced using the same numerals as used in FIG. 1, and should be understood to function in a similar manner as discussed above. Referring to FIG. 9, in addition to the filtering pixel components, resistor 901 and capacitor 902 serve as a low-pass filter of the current from the photodiode 101 to capture the total amount of light falling on the photodiode during the frame. In various embodiments, FET 903 serves as a buffer to enable reading the integrated signal from the capacitor 902 and resistor 901. When the signal is read out on the traditional signal bus 905 by activating the FET 904 with the select bus 109 voltage, the output signal (when combined with the read outs from the other pixels in the sensor) results in a traditional image being captured. At the same time, the derivative output may be read out on the derivative signal bus 910 (similar to the read bus 110 of FIG. 1). In this way, both a traditional image, as well as the derivative signal, may be obtained within the same combined pixel imager 900 package.
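
The dual read out of FIG. 9 can be approximated by running a low-pass path and a high-pass path on the same photodiode signal. The following is a minimal discrete-time sketch: the values of `rc` and `dt` are hypothetical, both paths are assumed to share one time constant for simplicity, and the derivative path is assumed to accumulate only positive excursions.

```python
def combined_pixel(samples, rc=1e-3, dt=1e-4):
    """Return (traditional_out, derivative_out) for one frame of samples."""
    alpha = rc / (rc + dt)     # high-pass coefficient (derivative path)
    beta = dt / (rc + dt)      # low-pass coefficient (traditional path)
    low = 0.0                  # low-pass state, analogue of resistor 901 / capacitor 902
    high = 0.0                 # high-pass state, analogue of capacitor 104 / resistor 105
    derivative_out = 0.0
    prev = samples[0]
    for cur in samples:
        low += beta * (cur - low)
        high = alpha * (high + cur - prev)
        derivative_out += max(high, 0.0) * dt   # assumed unipolar integration
        prev = cur
    return low, derivative_out


static_scene = [1.0] * 200
moving_scene = [0.0, 0.0, 2.0, 2.0] * 50

print(combined_pixel(static_scene))
print(combined_pixel(moving_scene))
```

In this sketch the static scene yields a traditional output close to its intensity and no derivative output, while the fluctuating scene yields both.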

[0102] FIG. 10 is a block diagram illustration of an example combined pixel imager 1000 in accordance with embodiments of the technology disclosed herein. The illustrated example combined pixel imager 1000 is a block diagram representation of the combined pixel imager 900 discussed with respect to FIG. 9. Referring to FIG. 10, the combined pixel imager 1000 includes common components to the filtering pixel 400 discussed with respect to FIG. 4. In this way, the derivative may be integrated (through 402, 403, 405) so that changes during a frame can be stored and read out using standard select bus and read bus (derivative signal bus 1010) architecture. At the same time, the integration circuit 1002 integrates the current of the photodiode 401 to generate a traditional read out signal (i.e., capturing the entire scene within the FOV of the combined pixel imager 1000). In various embodiments, the integration circuit 1002 contains the components comprising the integrator in FIG. 9 (i.e., resistor 901, capacitor 902). The storage circuit 1003 and read out circuit 1005 operate in a manner similar to the storage circuit 403 and read out circuit 405, with the read out circuit 1005 connected to the traditional signal bus 1020 instead of the derivative signal bus 1010.

[0103] In some cases, the amount of light falling on the photodiode may be very small. For such low intensity changes, it may be beneficial to include a charge accumulator and buffer before the differentiator, similar to the example filtering pixel 200 discussed with respect to FIG. 2. FIG. 11 illustrates another example combined pixel imager 1100 for accumulating charge in accordance with embodiments of the technology disclosed herein. For ease of discussion, similar components in FIG. 11 are referenced using the same numerals as used in FIGS. 2 and 9, and should be understood to function in a similar manner as discussed above. Inclusion of the buffer FET 204 isolates the photodiode 201, enabling the additional capacitor 902 and resistor 901 to be removed from the circuit illustrated in FIG. 9. This further renders the FET 903 redundant. In various embodiments, the FET 903 may be removed (an example simplified combined pixel imager 1200 is illustrated in FIG. 12). Additional differentiators may be included in some embodiments to create a higher order derivative circuit (e.g., double derivative; triple derivative), in a manner similar to the example double derivative pixel 600 discussed with respect to FIG. 6.

[0104] When the redundant FET is removed, the combined pixel imager can be simplified. FIG. 13 is a block diagram illustration of the example simplified combined pixel imager 1300 in accordance with embodiments of the technology disclosed herein. As illustrated in FIG. 13, the simplified combined pixel imager 1300 may include similar components as discussed with respect to FIG. 3. Accordingly, for ease of discussion, similar components in FIG. 13 are referenced using the same numerals as used in FIG. 3, and should be understood to function in a similar manner as discussed above. Unlike the combined pixel imager 1000 of FIG. 10, the simplified combined pixel imager 1300 can provide dual read out of the traditional signal as well as the derivative signal with only the addition of a second read out circuit 1305 for the traditional imaging function.

[0105] Traditional CMOS APSs contain an array of pixels, and have been implemented in many different devices, such as cell phone cameras, web cameras, DSLRs, and other digital cameras and imaging systems. The pixels within this array capture only the total amount of light falling on the photodiode (photodetector) of the pixel, necessitating computationally intensive frame subtraction and other techniques in order to identify motion within the images. By replacing the array of traditional pixels with an array of filtering pixels as those discussed with respect to FIGS. 1-13, it is possible to capture the motion occurring within a frame, in lieu of or in addition to capturing the entire frame (like a traditional pixel).

[0106] The embodiments discussed with respect to FIGS. 1-13 enable measurement of motion within the frame that is in-plane, i.e., parallel to the surface of the image sensor. To provide measurement of out-of-plane motion (i.e., motion towards and away from the image sensor), an illumination of the scene can be used to convert out-of-plane motion to variations in intensity, which the filtering image sensor previously described can detect. For example, a structured light illumination where some sections are illuminated and others are dark can create intensity fluctuations on the object, and these fluctuations in intensity can be detected with the filtering pixel technology. In such embodiments, a known pattern of light (e.g., grids) is projected onto the scene and, based on the deformation caused by striking the surface as an object in the scene moves towards or away from the camera, the rate of motion may be calculated. In various embodiments, imperceptible structured light (e.g., infrared) may be utilized so as not to impair the visual capture of the entire scene.

[0107] In some embodiments, a coherent laser radar component may be added to the implementation. FIG. 14 illustrates an example direct motion measurement camera 1400 in accordance with the technology of the present disclosure. The direct motion measurement camera 1400 includes an image sensor 1401. The image sensor 1401 includes an array of filtering pixels similar to the filtering pixels discussed above with respect to FIGS. 1-5 in some embodiments. In other embodiments, the image sensor 1401 includes an array of higher order filtering pixels similar to the higher order filtering pixels discussed with respect to FIGS. 6-8. The image sensor 1401 may include a combined pixel imager similar to the combined pixel imager discussed with respect to FIGS. 9-13, in various embodiments. The direct motion measurement camera 1400 further includes an imaging system, comprising a lens 1402 and an aperture stop 1403. The imaging system serves to ensure that light is directed correctly to fall on the image sensor 1401. In various embodiments, the direct motion measurement camera 1400 may include filtering pixels discussed with respect to FIGS. 21-23.

[0108] To provide the out-of-plane motion measurement, the direct motion measurement camera 1400 takes advantage of the Doppler shift. A laser diode 1404 is disposed on the surface of the aperture stop 1403. The laser diode 1404 emits a laser beam towards a prism 1405. The light emitted from the laser diode 1404 is divergent, and the divergence angle of the laser may be set by a lens (not shown) of the laser diode 1404. In various embodiments, the divergence angle may be set to match the diagonal FOV of the camera 1400. Polarization of the light emitted from the laser diode 1404 may be selected to avoid signal loss from polarization scattering on reflection from the moving object 1406. In some embodiments, the polarization may be circular; in other embodiments, linear polarization may be used. To eliminate speckle fading, the pixel size of the image sensor may be matched to the Airy disk diameter of the imaging system in various embodiments.

[0109] The prism 1405 has a first power splitting surface (the surface closest to the laser diode 1404), which reflects the light 1410 emitted by the laser diode 1404 outwards away from the camera. In addition, a portion of the light emitted from the laser diode 1404 passes through the first surface of the prism and reflects 1420 off of a second, interior surface of the prism 1405 towards the lens 1402 of the imaging system, through an opening 1407 in aperture stop 1403. The prism 1405 is designed as a band pass filter corresponding to the wavelength of the light emitted from the laser diode 1404. Accordingly, the prism 1405 is opaque (like the aperture stop 1403) to visible light.

[0110] As can be seen in FIG. 14, the light 1410 that is transmitted outwards away from the camera 1400 reflects off of a moving object 1406 back towards the camera 1400 (as illustrated at 1430). The lens 1402 captures the returning light 1430 through the aperture stop 1403, and focuses the returning light 1430 onto the pixel on the image sensor 1401 corresponding to the location of the moving object 1406.

[0111] The returning light 1430 off of the moving object 1406 is shifted in frequency by the Doppler shift. If the moving object 1406 were stationary instead, no shift in the frequency would occur. For the moving object 1406, however, the shift in frequency is proportional to the velocity of the moving object 1406. Accordingly, the reflected light 1420 and the returning light 1430 each have a different frequency. When the two lights combine on the image sensor 1401, a beat frequency is generated that is equal to the difference in the frequencies between the reflected light 1420 and the returning light 1430. By measuring this beat frequency, the direct motion measurement camera 1400 may measure motion towards or away from the camera without the need for additional post-processing. The filtering pixel sensor previously described can detect the beat frequency since the derivative of a sine wave is a cosine wave. Thus, the first derivative sensing circuit will sense the same as the double derivative circuit. The positive derivative and the negative derivative circuits will also detect the same signal. This is different than any other intensity variation that the pixel may detect, related to lateral motion of the object.
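
The proportionality between the beat frequency and the velocity of the object can be made concrete with the standard relation for light reflected back to its source from an object moving much slower than the speed of light, in which the beat frequency equals twice the radial speed divided by the wavelength. The sketch below applies that relation; the 850 nm wavelength and the 1 mm/s speed are assumed values for illustration only and are not taken from this disclosure.

```python
def doppler_beat_frequency(radial_velocity, wavelength):
    """Beat frequency in Hz between the reference light and the returning light.

    Uses the round-trip Doppler relation f = 2 * |v| / wavelength, valid for
    speeds far below the speed of light (velocity in m/s, wavelength in m).
    """
    return 2.0 * abs(radial_velocity) / wavelength


def radial_speed(beat_frequency, wavelength):
    """Invert the relation to recover the radial speed in m/s."""
    return beat_frequency * wavelength / 2.0


wavelength = 850e-9      # assumed near-infrared laser wavelength, in meters
velocity = 1e-3          # assumed object speed towards the camera, in m/s

beat = doppler_beat_frequency(velocity, wavelength)
print(beat, radial_speed(beat, wavelength))
```

Because the beat frequency depends only on the magnitude of the radial velocity, this relation alone does not reveal whether the object is approaching or receding.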

[0112] In some embodiments, not only can the velocity of motion in the out-of-plane direction be measured, but the direction of the out-of-plane motion may also be measured. By dithering the prism, the frequency of the outgoing light (1410 in FIG. 14) can be changed relative to the reflected light (1420 in FIG. 14). By doing this, the direction of motion of the moving object may be detected. Moreover, the beat frequency can be further distinguished from the object's in-plane motion. FIG. 15 illustrates an example direct motion measurement camera with dithering 1500 in accordance with embodiments of the technology disclosed herein. The direct motion measurement camera with dithering 1500 has a similar configuration to the direct motion measurement camera 1400 discussed with respect to FIG. 14. Referring to FIG. 15, the direct motion measurement camera with dithering 1500 includes a lens 1510, aperture stop 1520, laser diode 1530, prism 1540, an actuator 1550, and a housing 1560. Although not pictured, an image sensor is disposed behind the lens 1510 and within the housing 1560, and may be similar to the image sensor discussed with respect to FIG. 14. The prism 1540 is positioned over the opening in the aperture stop 1520 (the opening is shown by the cross-hatched rectangle in the prism 1540).

[0113] To provide the dither, the prism 1540 is connected to an actuator 1550. In various embodiments, the actuator 1550 may be a microelectromechanical system (MEMS) actuator, such as the actuator disclosed in U.S. patent application Ser. No. 15/133,142. The actuator 1550 may be configured such that the prism 1540 adds to Doppler shift during even frames, but subtracts from Doppler shift during odd frames. When Doppler shift is positive (i.e., objects are moving towards the camera), even numbered frames will have a larger Doppler shift than odd numbered frames. When Doppler shift is negative (i.e., objects are moving away from the camera), even numbered frames will have a smaller Doppler shift than odd numbered frames.
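
The even/odd comparison described above can be sketched in a few lines of Python. This is a minimal illustration, assuming the pixel reports only the magnitude of the beat frequency in each frame and that the dither contributes a fixed, positive frequency offset; the function names `observed_beats` and `motion_direction` and the `tolerance_hz` parameter are hypothetical and do not appear in this specification.

```python
def observed_beats(doppler_hz, dither_hz):
    """Hypothetical model of one (even, odd) frame pair.

    The dither is assumed to add to the Doppler shift in the even frame
    and subtract from it in the odd frame; only magnitudes are observed.
    """
    even = abs(doppler_hz + dither_hz)
    odd = abs(doppler_hz - dither_hz)
    return even, odd


def motion_direction(even_beat_hz, odd_beat_hz, tolerance_hz=0.0):
    """Infer out-of-plane direction from the even/odd beat magnitudes."""
    difference = even_beat_hz - odd_beat_hz
    if difference > tolerance_hz:
        return "towards"   # even frames show the larger shift
    if difference < -tolerance_hz:
        return "away"      # even frames show the smaller shift
    return "none"          # no measurable out-of-plane motion


if __name__ == "__main__":
    for doppler in (5000.0, -5000.0, 0.0):
        even, odd = observed_beats(doppler, dither_hz=1000.0)
        print(doppler, motion_direction(even, odd))
```

In practice a nonzero `tolerance_hz` would likely be needed to keep measurement noise from being reported as motion; the value would depend on the implementation.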

[0114] Although discussed with respect to embodiments employing coherent light, the dithering element may be utilized with other forms of illumination as well. For example, embodiments of the technology disclosed herein utilizing structured light may include the dithering effect to take advantage of Doppler shift. Nothing in this specification should be interpreted as limiting the use of the prism and dithering component described to a single illumination technique. A person of ordinary skill in the art would appreciate that the dithering technique is applicable wherever the Doppler shift could be utilized to identify out-of-plane motion of objects within the FOV of the derivative sensor.

[0115] As discussed above, cameras implementing the technology discussed herein provide advantages over traditional cameras. Frame subtraction is not needed in order to determine motion within the frame. The derivative circuits and image sensors discussed above with respect to FIGS. 1-15 allow for direct measurement of motion of objects within a frame. Only the number and size of the motion is captured through the derivative circuit, making it easier to separate a moving object from the background and to identify the moving object. By combining the derivative sensing technology with a traditional pixel circuit, it is possible to both collect visual images of the entire scene (like a conventional camera) and also capture only the motion within the scene, at the same time. The ability to capture the motion of objects through the scene is useful for many different types of applications, including the security, gesture recognition, obstacle avoidance, and target tracking applications discussed, among others. The implementations enable only the motion in the scene to be captured, making it easier to differentiate between the background elements and the moving object.

[0116] As an example, a camera in accordance with embodiments of the technology disclosed herein may be built for use in cellular devices. In one embodiment, an aperture size of 2.5 mm diameter and an F number of 2.8 (traditional size of a camera in a cellular device) can be assumed. A FOV can be 74.5 degrees. To avoid speckle fading, the filtering pixel size may be set to be smaller than 6.59 μm (dependent on the aperture size and F number). The camera resolution can be assumed to be 1.25 megapixels, or 0.94 mm lateral resolution at 1 m distance. Also, a laser diode power of 100 mW can be assumed. If there is an object moving at 1 m away from the camera, about 90 mW of the laser diode power may be used to illuminate the object at this distance, and an illumination equivalent to about 2 lux in the visible (1 lux is 1.46 mW/m^2 at 555 nm) can be assumed. Assuming 30% diffuse object reflectivity, the power received per pixel is 7e-15 W, or 354 photons per frame running at 100 fps. A high pass filter can be placed on each filtering pixel that only detects frequencies between 1 kHz and 100 MHz. Thus, object motion between 0.49 mm/sec (1.8 m/hr) and 49 m/sec (176 km/hr) can be detected. In this arrangement, there are over 16 bits of dynamic range.
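
The velocity range above can be checked with a short calculation. The sketch below assumes the two-way Doppler relation f = 2v/λ and a laser wavelength of 980 nm; the wavelength is not stated in this paragraph and is inferred here only because it reproduces the quoted pairing of 1 kHz with 0.49 mm/sec. Reading "over 16 bits" as the base-2 logarithm of the ratio of the band edges is likewise an interpretation. The names `ASSUMED_WAVELENGTH_M`, `velocity_from_beat`, and `dynamic_range_bits` are hypothetical.

```python
import math

# Assumption: 980 nm is inferred from the quoted numbers, not stated in the text.
ASSUMED_WAVELENGTH_M = 980e-9


def velocity_from_beat(beat_hz, wavelength_m=ASSUMED_WAVELENGTH_M):
    """Line-of-sight speed for a given beat frequency, using f = 2 v / wavelength."""
    return beat_hz * wavelength_m / 2.0


def dynamic_range_bits(f_min_hz, f_max_hz):
    """Base-2 logarithm of the ratio between the band edges."""
    return math.log2(f_max_hz / f_min_hz)


v_min = velocity_from_beat(1e3)      # lower band edge, 1 kHz
v_max = velocity_from_beat(100e6)    # upper band edge, 100 MHz
bits = dynamic_range_bits(1e3, 100e6)

if __name__ == "__main__":
    print(f"{v_min * 1e3:.2f} mm/s to {v_max:.0f} m/s, {bits:.1f} bits")
```

Under these assumptions the band edges map to roughly 0.49 mm/sec and 49 m/sec, and the ratio of 100,000 between them corresponds to a little over 16 bits.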

[0117] FIG. 18 illustrates an example method of detecting motion within the field of view of a pixel. At 1810, a change in intensity of light falling on a photodiode of a pixel is sensed. This sensing may be similar to the sensing discussed above with respect to the photodiode of FIGS. 1-15. As the intensity of light falling on the photodiode changes, the current flowing out of the photodiode to the drain voltage (V_dd) varies, varying the voltage of the signal. This change in intensity generates an AC component to the output voltage (signal). In some embodiments, this may be sensed through a resistor, such as resistor 103 discussed with respect to FIG. 1. In such embodiments, the signal may be proportional to the intensity of the light falling on the photodiode. In various embodiments, the change in intensity may be sensed through a source follower transistor, such as the buffer FET 204 discussed above with respect to FIG. 2. In such embodiments, the output of the source follower is proportional to the integral of the intensity of the light falling on the photodiode.

[0118] At 1820, a differentiator takes a derivative of the voltage. The derivative of the output signal from 1810 is the change in intensity of the light falling on the photodiode. In various embodiments, the differentiator may comprise a capacitor, resistor, and a FET. In some embodiments, additional derivatives of the signal may be taken to measure different aspects of the signal. In embodiments where a source follower is utilized (such as, for example, the buffer FET 204 discussed above with respect to FIG. 2), two differentiators may be used to obtain the first derivative of the signal. As discussed above with respect to FIG. 2, in such embodiments the output from the source follower (buffer FET) is proportional to the integral of the intensity of light falling on the photodiode. Accordingly, the signal from 1810 must first be processed to obtain a signal proportional to the intensity of the light falling on the photodiode, and then the first derivative may be taken.

[0119] When applicable, additional derivatives may be taken at 1830. Additional derivatives are possible by including an additional differentiator in the circuit prior to integration (represented by the nth derivative in FIG. 18). As additional higher order derivatives are an option, 1830 is illustrated as a dashed box. If no higher order derivatives are taken, the method would go from 1820 to 1840.

[0120] At 1840, the derivative signal is integrated. In various embodiments, a capacitor may be used to integrate the derivative signal. At 1850, the integrated signal is read out on a read bus. In various embodiments, a row select FET may be utilized to read out the integrated signal.
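
The sequence of 1810 through 1850 can be illustrated with a discrete-time sketch. This is a simplified numerical model, not the analog circuit itself: it assumes the resistor-sensed case in which the signal is proportional to intensity, approximates each differentiator with a finite difference, and approximates the integrating capacitor with a rectangular sum. The function names and the `order` parameter are hypothetical.

```python
def differentiate(samples, dt):
    """Finite-difference stand-in for one analog differentiator stage."""
    return [(b - a) / dt for a, b in zip(samples, samples[1:])]


def integrate(samples, dt):
    """Rectangular-sum stand-in for the integrating capacitor."""
    return sum(samples) * dt


def filtering_pixel_readout(intensity, dt, order=1):
    """Model one frame of the FIG. 18 method.

    intensity: samples of a signal proportional to light intensity (1810)
    order:     number of differentiator stages (1820, plus optional 1830)
    Returns the integrated value (1840) that would be read out (1850).
    """
    signal = list(intensity)
    for _ in range(order):
        signal = differentiate(signal, dt)
    return integrate(signal, dt)


if __name__ == "__main__":
    dt = 0.1
    constant = [3.0] * 11
    ramp = [0.2 * i for i in range(11)]          # slope of 2 per unit time
    print(filtering_pixel_readout(constant, dt))  # static scene
    print(filtering_pixel_readout(ramp, dt))      # steadily brightening scene
    print(filtering_pixel_readout(ramp, dt, order=2))
```

With a plain signed first derivative, the integral reduces to the net change in intensity over the frame, so a static scene reads out as zero. The positive and negative derivative circuits mentioned earlier would integrate only one polarity, which this sketch does not model.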

[0121] In addition to filtering out derivatives of the intensity of light, filtering pixels in accordance with embodiments of the technology disclosed herein may include a sampling filter. In signal processing, sampling filters are digital filters that operate on discrete samples of an output signal from an analog circuit (e.g., a pixel). As it is in the digital domain, sampling requires that the analog output signal from the circuit is converted into a digital signal through an A/D converter. A microprocessor applies one or more mathematical operations making up the sampling filter to the discrete time sample of the digitized signal. However, such processing is time- and resource-intensive. Moreover, the effectiveness of the process is limited by the quality of the analog signal outputted from the circuit.

[0122] By including a sampling filter within the pixel itself, the usefulness of sampling filters used in the digital domain can be realized in the analog domain of the pixel circuit. In other words, various embodiments of the technology disclosed herein provide filtering pixels providing a hybrid analog-digital filtering capability within the pixel itself. By including a pseudo-digital filter circuit, such filtering pixels can perform digital-like sampling of the analog signal, without the need for an A/D converter.

[0123] For example, filtering pixels including a pseudo-digital filter may filter out undesired light sources. This capability can be used, for example, to address light saturation caused by high-intensity light sources (e.g., the sun). In some embodiments, this capability can be used to improve visibility in certain conditions, e.g., foggy conditions. That is, filtering pixels can be used to better identify moving objects through the fog. In some embodiments, selective lighting filtering can be used to allow viewing only those objects illuminated by a particular code. As discussed above, traditional pixels used in image sensors integrate the total intensity of light falling on their photodiodes during a frame. That is, each pixel can be thought of as a well, accumulating captured light corresponding to an increase in the total intensity of light captured. As with physical wells, however, the amount of light that may be captured by each pixel is limited by its size. When a pixel approaches its saturation limit, it loses the ability to accommodate any additional charge (caused by light falling on the photodiode). In traditional cameras, this results in the excess charge from a saturated pixel spreading to neighboring pixels, either causing those pixels to saturate or causing errors in the intensity outputted by those neighboring pixels. Moreover, a saturated pixel contains less information about the scene being captured due to the maximum signal level being reached. After that point, additional information cannot be captured as the pixel cannot accommodate any more intensity.

[0124] FIG. 22 illustrates an example filtering pixel 2200 including a pseudo-digital filter in accordance with various embodiments of the technology disclosed herein. The example filtering pixel 2200 is similar to the filtering pixel 200 discussed with respect to FIG. 2, with a different filtering capability disclosed. Like-referenced elements operate similarly to those described with respect to FIG. 2.

[0125] As illustrated in FIG. 22, the filtering pixel 2200 includes a sample-and-hold filter 2210. The sample-and-hold filter 2210 enables pseudo-digital sampling in the analog domain. The output from the buffer FET 204 (or source follower) goes through the sample-and-hold circuit 2210, which stores a sample of a previous intensity of light falling on the photodiode 201. A sample FET 2201 is included, which can be controlled to identify when a sample of the output from the buffer FET 204 is to be taken and stored in a sample capacitor 2202. The length of the sample can be controlled by controlling the operational voltage applied to the sample FET 2201. In some embodiments, the sample may be a one-time, instantaneous sample of the output from the buffer FET 204, while in other embodiments the sample FET 2201 may be held open for a duration to accumulate a longer sample. The sampling rate depends on the particular implementation and the information of the signal desired to be identified and processed. The sample-and-hold circuit 2210 enables a wide range of sampling-based filtering to be applied in the analog domain. A sample source follower 2204 serves to isolate the sample capacitor 2202 from the rest of the circuit.
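
The behavior of the sample-and-hold circuit 2210 can be sketched as a discrete-time model. This is an illustration only, assuming that the sample FET 2201 is represented by a per-step boolean gate and the sample capacitor 2202 by a single stored value; the function names, the `initial_held` parameter, and the difference filter shown as one possible sampling-based filter are hypothetical and are not taken from this specification.

```python
def sample_and_hold(buffer_output, sample_gate, initial_held=0.0):
    """Return the held value at each step.

    buffer_output: sequence of buffer FET output values
    sample_gate:   sequence of booleans, True when a sample is taken
    initial_held:  assumed capacitor value before the first sample
    """
    held = initial_held
    output = []
    for value, gate in zip(buffer_output, sample_gate):
        if gate:
            held = value      # capacitor tracks the input while sampling
        output.append(held)   # otherwise the stored sample is retained
    return output


def difference_from_sample(buffer_output, held_output):
    """One possible sampling-based filter: current value minus stored sample."""
    return [value - held for value, held in zip(buffer_output, held_output)]


if __name__ == "__main__":
    signal = [1.0, 2.0, 3.0, 4.0, 5.0]
    gate = [True, False, False, True, False]
    held = sample_and_hold(signal, gate)
    print(held)
    print(difference_from_sample(signal, held))
```

Subtracting the held sample from the live signal removes whatever was present when the sample was taken, which is one way a stored previous intensity could be used to suppress a steady background.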