Systems And Methods For Multiple Instance Learning For Classification And Localization In Biomedical Imaging

Fuchs; Thomas ; et al.

U.S. patent application number 16/362470 was filed with the patent office on 2019-09-26 for systems and methods for multiple instance learning for classification and localization in biomedical imaging. The applicant listed for this patent is Memorial Sloan Kettering Cancer Center. Invention is credited to Gabriele Campanella, Thomas Fuchs.

| Application Number | 20190295252 16/362470 |

| Document ID | / |

| Family ID | 67985406 |

| Filed Date | 2019-09-26 |

View All Diagrams

| United States Patent Application | 20190295252 |

| Kind Code | A1 |

| Fuchs; Thomas ; et al. | September 26, 2019 |

SYSTEMS AND METHODS FOR MULTIPLE INSTANCE LEARNING FOR CLASSIFICATION AND LOCALIZATION IN BIOMEDICAL IMAGING

Abstract

The present disclosure is directed to systems and methods for classifying biomedical images. A feature classifier may generate a plurality of tiles from a biomedical image. Each tile may correspond to a portion of the biomedical image. The feature classifier may select a subset of tiles from the plurality of tiles by applying an inference model. The subset of tiles may have highest scores. Each score may indicate a likelihood that the corresponding tile includes a feature indicative of the presence of the condition. The feature classifier may determine a classification result for the biomedical image by applying an aggregation model. The classification result may indicate whether the biomedical includes the presence or lack of the condition.

| Inventors: | Fuchs; Thomas; (New York, NY) ; Campanella; Gabriele; (New York, NY) | ||||||||||

| Applicant: |

|

||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| Family ID: | 67985406 | ||||||||||

| Appl. No.: | 16/362470 | ||||||||||

| Filed: | March 22, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | ||

|---|---|---|---|---|

| 62647002 | Mar 23, 2018 | |||

| 62670432 | May 11, 2018 | |||

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06N 20/00 20190101; G06K 2209/05 20130101; G06T 2207/30024 20130101; G06K 9/6277 20130101; G06K 9/6262 20130101; G16H 30/40 20180101; G06T 2207/20021 20130101; G06T 7/0012 20130101; G16H 50/30 20180101; G06K 9/6224 20130101; G06T 2207/20081 20130101; G06K 9/628 20130101; G16H 50/70 20180101; G06T 2207/30096 20130101; G06T 2207/10056 20130101; G06K 9/03 20130101; G06K 9/623 20130101; G16H 50/20 20180101; G06T 2207/20076 20130101; G06T 2207/20084 20130101 |

| International Class: | G06T 7/00 20060101 G06T007/00; G06K 9/62 20060101 G06K009/62; G06K 9/03 20060101 G06K009/03; G16H 30/40 20060101 G16H030/40; G16H 50/70 20060101 G16H050/70; G06N 20/00 20060101 G06N020/00 |

Claims

1. A method of training models for classifying biomedical images, comprising: generating, by an image classifier executing on one or more processors, a plurality of tiles from each biomedical image of a plurality of biomedical images, the plurality of biomedical images including a first biomedical image having a first label indicating a presence of a first condition and a second biomedical image having a second label indicating a lack of presence of the first condition or a presence of a second condition; establishing, by the image classifier, an inference system to determine, for each tile of the plurality of tiles in each biomedical image of the plurality of biomedical images, a score indicating a likelihood that the tile includes a feature indicative of the presence of the first condition; for the first biomedical image: selecting, by the image classifier, a first subset of tiles from the plurality of tiles having the highest scores; comparing, by the image classifier, the scores of the tiles in the first subset to a first threshold value corresponding to the presence of the first condition; and modifying, by the image classifier, the inference system responsive to determining that the scores of at least one tile of the first subset of tiles is below the first threshold value; and for the second biomedical image: selecting, by the image classifier, a second subset of tiles from the plurality of tiles having the highest scores; comparing, by the image classifier, the scores of the tiles in the second subset to a second threshold value corresponding to the lack of the presence of the first condition or the presence of the second condition; and modifying, by the image classifier, the inference system responsive to determining that the scores of at least one tile of the second subset of tiles is above the second threshold value.

2. The method of claim 1, further comprising: determining, by the image classifier, for the at least one tile of the first subset, a first error metric between the score of the at least one tile to a first value corresponding to the presence of the first condition; and wherein modifying the inference system further comprises modifying the inference system based on the first error metric of the at least one tile of the first subset; determining, by the image classifier, for the at least one tile of the second subset, a second error metric between the score of the at least one tile to a second value corresponding to the lack of the presence of the first condition; and wherein modifying the inference system further comprises modifying the inference system based on the second error metric of the at least one tile of the second subset.

3. The method of claim 1, further comprising: maintaining, by the image classifier, the inference system responsive to determining that scores of none of a plurality of tiles for a third biomedical image of the plurality of biomedical images is below the first threshold, the third biomedical image having the first label indicating the presence of the first condition; and maintaining, by the image classifier, the inference system responsive to determining that scores of none of a plurality of tiles for a fourth biomedical image of the plurality of biomedical images is below the second threshold, the fourth biomedical image having the first label indicating the lack of the presence of the first condition.

4. The method of claim 1, wherein selecting the first subset of tiles further comprises selecting a predefined first number of tiles from the plurality of tiles for the first biomedical image having the highest scores; and wherein selecting the second subset of tiles further comprises selecting a predefined second number of tiles from the plurality of tiles for the second biomedical image having the highest scores.

5. The method of claim 1, wherein establishing the inference system further comprises initializing the inference system comprising a convolutional neural network, the convolutional neural network having one or more parameters, each parameter of the one or more parameters set to a random value.

6. The method of claim 1, further comprising applying, by the image classifier, a third subset of tiles from a plurality of tiles for a third biomedical image of the plurality of biomedical images to an aggregation system to train the aggregation system based on a comparison on a label of the third biomedical image with a classification result from applying the aggregation system to third subset.

7. A method of training models for classifying features in biomedical images, comprising: identifying, by an image classifier executing on one or more processors, a subset of tiles from a plurality of tiles of a biomedical image of a plurality of biomedical images, the biomedical image having a label indicating a presence of a condition; establishing, by the image classifier, an aggregation system to determine classifications of biomedical images to indicate whether the corresponding biomedical image contains a feature indicative of the presence of the condition; determining, by the image classifier, a classification result for the biomedical image by applying the aggregation system to the subset of tiles identified from the biomedical image, the classification result indicating one of the biomedical image as containing at least one feature corresponding to the presence of the condition or the biomedical image as lacking any features corresponding to the lack the of the condition; comparing, by the image classifier, the classification result determined for the biomedical image with the label indicating the presence of the condition on the biomedical image; and modifying, by the image classifier, the aggregation system responsive to determining that the classification result from the aggregation system does not match the label for the biomedical image.

8. The method of claim 7, further comprising determining, by the image classifier, an error metric between the classification result and the label, responsive to determining that the classification result does not match the label for the biomedical image; and wherein modifying the aggregation system further comprises modifying at least one parameter of the aggregation system based on the error metric.

9. The method of claim 7, wherein establishing the aggregation system further comprises initializing the aggregation system comprising a recurrent neural network, the recurrent neural network having one or more parameters, each parameter of the one or more parameters set to a random value.

10. The method of claim 7, further comprising maintaining, by the image classifier, the aggregation system responsive to determining that a second classification result from the aggregation system for a second subset of tiles from a second biomedical image matches a second label for the second biomedical image.

11. The method of claim 7, wherein applying the aggregation system to the subset of tiles further comprises applying the subset of tiles in one of a sequential order or random order from the plurality of tiles for the biomedical image.

12. The method of claim 7, wherein identifying the subset of tiles further comprises identifying the subset of tiles from the plurality of tiles for the biomedical image selected by an inference system based on scores, each score for a corresponding tile of the subset indicating a likelihood that the corresponding tile includes a feature indicative of the presence of the condition.

13. A system for classifying biomedical images, comprising: a plurality of biomedical images maintainable on a database; an inference system maintainable on one or more processors, configured to select subsets of tiles from the plurality of biomedical images including features indicative of a presence of a first condition; an aggregation system maintainable on the one or more processors, configured to determine whether biomedical images are classified as one of including the presence of the first condition or a lack of the first condition or a presence of a second condition; a feature classifier executable on the one or more processors, configured to: generate a plurality of tiles from at least one biomedical image of the plurality of biomedical images, each tile corresponding to a portion of the biomedical image; select a subset of tiles from the plurality of tiles for the biomedical image by applying the inference system to the plurality of tiles, the subset of tiles having highest scores, each score indicating a likelihood that the corresponding tile includes a feature indicative of the presence of the first condition; and determine a classification result for the biomedical image by applying the aggregation system to the selected subset of tiles, the classification result indicating whether the biomedical includes the presence of the first condition or the lack of the condition or the presence of the second condition.

14. The system of claim 13, wherein the feature classifier is further configured to generate the plurality of tiles by using one of a plurality of defined magnification factors onto the biomedical image.

15. The system of claim 13, wherein the feature classifier is further configured to: determine, for each tile of the plurality of tiles of the biomedical image, by applying the inference system to the tile, a score indicating the likelihood that the tile includes features indicative of the presence of the first condition; and select a predefined number of tiles from the plurality of tiles having the highest scores to form the subset of tiles.

16. The system of claim 13, wherein the feature classifier is further configured to input the selected subset of tiles in sequential order or in random order in to the aggregation system to determine the classification result for the biomedical image.

17. The system of claim 13, further comprising a model trainer executable on the one or more processors, configured to: generate a plurality of tiles from each biomedical image of the plurality of biomedical images, the plurality of biomedical images including a first biomedical image having a first label indicating the presence of a condition and a second biomedical image having a second label indicating a lack of the presence of the first condition; select a first subset of tiles from the plurality of tiles of the first biomedical image having the highest scores among the plurality of tiles from the first biomedical image; select a second subset of tiles from the plurality of tiles of the second biomedical image having the highest scores among the plurality of tiles from the second biomedical image; and modify the inference system based on a first comparison between the scores of the first subset of tiles and a first value corresponding to the presence of the first condition and a second comparison between the scores of the second subset of tiles and a second value corresponding to the lack of the presence of the first condition.

18. The system of claim 17, further comprising a model trainer executable on the one or more processors, configured to: determine a first error metric based on the first comparison between the scores of the first subset of tiles and a first value corresponding to the presence of the first condition; determine a second error metric based on the second comparison between the scores of the second subset of tiles and a second value corresponding to the lack of the presence of the first condition; and modify at least one parameter of the inference system based on the first error metric and the second error metric.

19. The system of claim 13, further comprising a model trainer executable on the one or more processors, configured to: identify a subset of tiles from the plurality of tiles of a second biomedical image of the plurality of biomedical images, the second biomedical image having a label indicating the presence of the first condition; determine a second classification result for the second biomedical image by applying the aggregation system to the subset of tiles identified from the second biomedical image, the classification result indicating one of the second biomedical image as containing at least one feature corresponding to the presence of the first condition or the second biomedical image as lacking any features corresponding to the lack the of the first condition or the presence of the second condition; modify the aggregation system based on a comparison between the second classification result and the label for the second biomedical image.

20. The system of claim 13, further comprising a model trainer executable on the one or more processors, configured to: determine, subsequent to modifying the inference system, that one or more parameters of the inference system have converged relative the one or more parameters prior to the modification of the inference system; initiate training of the aggregation mode, responsive to the determination that the one or more parameters of the inference has converged.

Description

CROSS-REFERENCES TO RELATED APPLICATIONS

[0001] The present application claims the benefit of priority under 35 U.S.C. .sctn. 119(e) to U.S. Provisional Patent Application No. 62/647,002, titled "TERABYTE-SCALE DEEP MULTIPLE INSTANCE LEARNING FOR CLASSIFICATION AND LOCALIZATION IN PATHOLOGY," filed Mar. 23, 2018, and to U.S. Provisional Patent Application No. 62/670,432, titled "TERABYTE-SCALE DEEP MULTIPLE INSTANCE LEARNING FOR CLASSIFICATION AND LOCALIZATION IN PATHOLOGY," filed May 11, 2018, both of which are incorporated in their entireties.

BACKGROUND

[0002] Computer vision algorithms may be used to recognize and detect various features on digital images. Detection of features on a biomedical image may consume a significant amount of computing resources and time, due to the potentially enormous resolution and size of biomedical images.

SUMMARY

[0003] At least one aspect is directed to a method of training models for classifying biomedical images. An image classifier executing on one or more processors may generate a plurality of tiles from each biomedical image of a plurality of biomedical images. The plurality of biomedical images may include a first biomedical image and a second biomedical image. The first biomedical image may have a first label indicating a presence of a first condition and the second biomedical image may have a second label indicating a lack of presence of the first condition or a presence of a second condition. The image classifier may establish an inference system to determine, for each tile of the plurality of tiles in each biomedical image of the plurality of biomedical images, a score indicating a likelihood that the tile includes a feature indicative of the presence of the first condition. For the first biomedical image, the image classifier may select a first subset of tiles from the plurality of tiles having the highest scores. The image classifier may compare the scores of the tiles in the first subset to a first threshold value corresponding to the presence of the first condition. The image classifier may modify the inference system responsive to determining that the scores of at least one tile of the first subset of tiles is below the first threshold value. For the second biomedical image, the image classifier may select a second subset of tiles from the plurality of tiles having the highest scores. The image classifier may compare the scores of the tiles in the second subset to a second threshold value corresponding to the lack of the presence of the first condition or the presence of the second condition. The image classifier may modify the inference system responsive to determining that the scores of at least one tile of the second subset of tiles is above the second threshold value.

[0004] In some embodiments, the image classifier may determine, for the at least one tile of the first subset, a first error metric between the score of the at least one tile to a first value corresponding to the presence of the first condition. In some embodiments, modifying the inference system may include modifying the inference system based on the first error metric of the at least one tile of the first subset. In some embodiments, the image classifier may determine, for the at least one tile of the second subset, a second error metric between the score of the at least one tile to a second value corresponding to the lack of the presence of the first condition. In some embodiments, modifying the inference system may include modifying the inference system based on the second error metric of the at least one tile of the second subset.

[0005] In some embodiments, the image classifier may maintain the inference system responsive to determining that scores of none of a plurality of tiles for a third biomedical image of the plurality of biomedical images is below the first threshold. The third biomedical image may have the first label indicating the presence of the first condition. In some embodiments, the image classifier may maintain the inference system responsive to determining that scores of none of a plurality of tiles for a fourth biomedical image of the plurality of biomedical images is below the second threshold. The fourth biomedical image may have the first label indicating the lack of the presence of the first condition.

[0006] In some embodiments, selecting the first subset of tiles may include selecting a predefined first number of tiles from the plurality of tiles for the first biomedical image having the highest scores. In some embodiments, selecting the second subset of tiles may include selecting a predefined second number of tiles from the plurality of tiles for the second biomedical image having the highest scores.

[0007] In some embodiments, establishing the inference system may include initializing the inference system comprising a convolutional neural network. The convolutional neural network may have one or more parameters. Each parameter of the one or more parameters may be set to a random value. In some embodiments, the image classifier may apply a third subset of tiles from a plurality of tiles for a third biomedical image of the plurality of biomedical images to an aggregation system to train the aggregation system based on a comparison on a label of the third biomedical image with a classification result from applying the aggregation system to third subset.

[0008] At least one aspect is directed to a method of training models for classifying biomedical images. An image classifier executing on one or more processors may identify a subset of tiles from a plurality of tiles of a biomedical image of a plurality of biomedical images, the biomedical image having a label indicating a presence of a condition. The image classifier may establish an aggregation system to determine classifications of biomedical images to indicate whether the corresponding biomedical image contains a feature indicative of the presence of the condition. The image classifier may determine a classification result for the biomedical image by applying the aggregation system to the subset of tiles identified from the biomedical image. The classification result may indicate one of the biomedical image as containing at least one feature corresponding to the presence of the condition or the biomedical image as lacking any features corresponding to the lack the of the condition. The image classifier may compare the classification result determined for the biomedical image with the label indicating the presence of the condition on the biomedical image. The image classifier may modify the aggregation system responsive to determining that the classification result from the aggregation system does not match the label for the biomedical image.

[0009] In some embodiments, the image classifier may determine an error metric between the classification result and the label, responsive to determining that the classification result does not match the label for the biomedical image. In some embodiments, modifying the aggregation system may include modifying at least one parameter of the aggregation system based on the error metric.

[0010] In some embodiments, establishing the aggregation system may include initializing the aggregation system comprising a recurrent neural network. The recurrent neural network may have one or more parameters. Each parameter of the one or more parameters may be set to a random value. In some embodiments, the image classifier may maintain the aggregation system responsive to determining that a second classification result from the aggregation system for a second subset of tiles from a second biomedical image matches a second label for the second biomedical image.

[0011] In some embodiments, applying the aggregation system to the subset of tiles may include applying the subset of tiles in one of a sequential order or random order from the plurality of tiles for the biomedical image. In some embodiments, identifying the subset of tiles may include identifying the subset of tiles from the plurality of tiles for the biomedical image selected by an inference system based on scores. Each score for a corresponding tile of the subset may indicate a likelihood that the corresponding tile includes a feature indicative of the presence of the condition.

[0012] At least one aspect is directed to a system for classifying biomedical images. The system may include a plurality of biomedical images maintainable on a database. The system may include an inference system maintainable on one or more processors. The inference system may select subsets of tiles from the plurality of biomedical images including features indicative of a presence of a first condition. The system may include an aggregation system maintainable on the one or more processors. The aggregation system may determine whether biomedical images are classified as one of including the presence of the first condition or a lack of the first condition or a presence of a second condition. The system may include a feature classifier executable on the one or more processors. The feature classifier may generate a plurality of tiles from at least one biomedical image of the plurality of biomedical images. Each tile may correspond to a portion of the biomedical image. The feature classifier may select a subset of tiles from the plurality of tiles for the biomedical image by applying the inference system to the plurality of tiles. The subset of tiles may have highest scores. Each score may indicate a likelihood that the corresponding tile includes a feature indicative of the presence of the first condition. The feature classifier may determine a classification result for the biomedical image by applying the aggregation system to the selected subset of tiles. The classification result may indicate whether the biomedical includes the presence of the first condition or the lack of the first condition or the presence of the second condition.

[0013] In some embodiments, the feature classifier may generate the plurality of tiles by using one of a plurality of defined magnification factors onto the biomedical image. In some embodiments, the feature classifier may determine, for each tile of the plurality of tiles of the biomedical image, by applying the inference system to the tile, a score indicating the likelihood that the tile includes features indicative of the presence of the first condition. In some embodiments, the feature classifier may select a predefined number of tiles from the plurality of tiles having the highest scores to form the subset of tiles. In some embodiments, the feature classifier may input the selected subset of tiles in sequential order or in random order in to the aggregation system to determine the classification result for the biomedical image.

[0014] In some embodiments, the system may include a model trainer executable on the one or more processors. The model trainer may generate a plurality of tiles from each biomedical image of the plurality of biomedical images. The plurality of biomedical images may include a first biomedical image having a first label indicating the presence of the first condition and a second biomedical image having a second label indicating a lack of the presence of the first condition or the presence of the second condition. The model trainer may select a first subset of tiles from the plurality of tiles of the first biomedical image having the highest scores among the plurality of tiles from the first biomedical image. The model trainer may select a second subset of tiles from the plurality of tiles of the second biomedical image having the highest scores among the plurality of tiles from the second biomedical image. The model trainer may modify the inference system based on a first comparison between the scores of the first subset of tiles and a first value corresponding to the presence of the first condition and a second comparison between the scores of the second subset of tiles and a second value corresponding to the lack of the presence of the first condition or the presence of the second condition.

[0015] In some embodiments, the system may include a model trainer executable on the one or more processors. The model trainer may determine a first error metric based on the first comparison between the scores of the first subset of tiles and a first value corresponding to the presence of the first condition. The model trainer may determine a second error metric based on the second comparison between the scores of the second subset of tiles and a second value corresponding to the lack of the presence of the first condition or the presence of the second condition. The model trainer may modify at least one parameter of the inference system based on the first error metric and the second error metric.

[0016] In some embodiments, the system may include a model trainer executable on the one or more processors. The model trainer may identify a subset of tiles from the plurality of tiles of a second biomedical image of the plurality of biomedical images, the second biomedical image having a label indicating the presence of a first condition. The model trainer may determine a second classification result for the second biomedical image by applying the aggregation system to the subset of tiles identified from the second biomedical image. The classification result may indicate one of the second biomedical image as containing at least one feature corresponding to the presence of the first condition or the second biomedical image as lacking any features corresponding to the lack the presence of the first condition or the presence of the second condition. The model trainer may modify the aggregation system based on a comparison between the second classification result and the label for the second biomedical image.

[0017] In some embodiments, the system may include a model trainer executable on the one or more processors. The model trainer may determine, subsequent to modifying the inference system, that one or more parameters of the inference system have converged relative the one or more parameters prior to the modification of the inference system. The model trainer may initiate training of the aggregation mode, responsive to the determination that the one or more parameters of the inference has converged.

BRIEF DESCRIPTION OF THE DRAWINGS

[0018] The foregoing and other objects, aspects, features, and advantages of the disclosure will become more apparent and better understood by referring to the following description taken in conjunction with the accompanying drawings, in which:

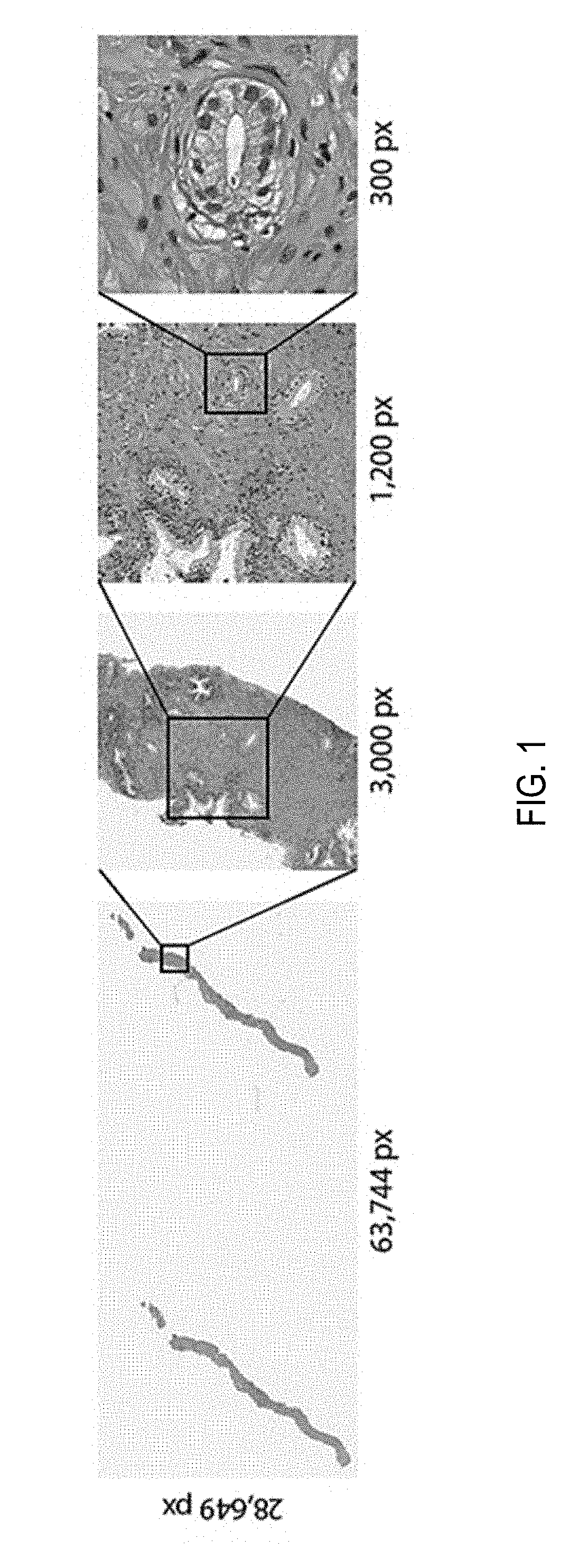

[0019] FIG. 1 depicts an example of a whole slide image (WSI) at various magnification factors;

[0020] FIG. 2 depicts a bar graph of splitting of a biopsy dataset;

[0021] FIG. 3 depicts a schema of performing multiple instance learning for classification of tumorous features on whole slide images;

[0022] FIG. 4 depicts line graphs indicating losses and validation errors;

[0023] FIG. 5 depicts an example of a whole slide image with slide tiles at various magnification factors;

[0024] FIGS. 6A-C each depict graphs of statistics on compositions of bags for training datasets;

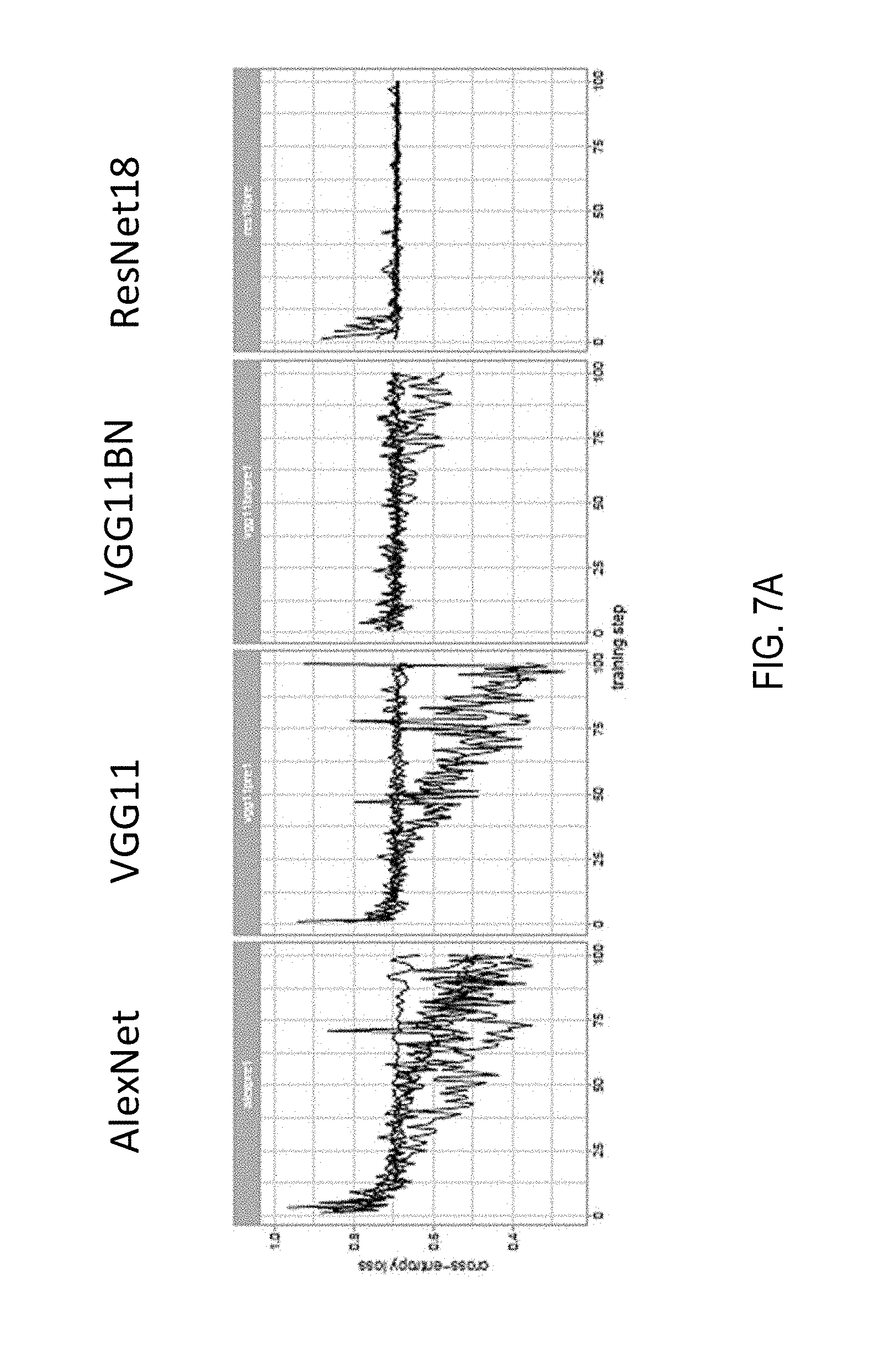

[0025] FIGS. 7A and 7B each depict graphs of performance of models in experiments;

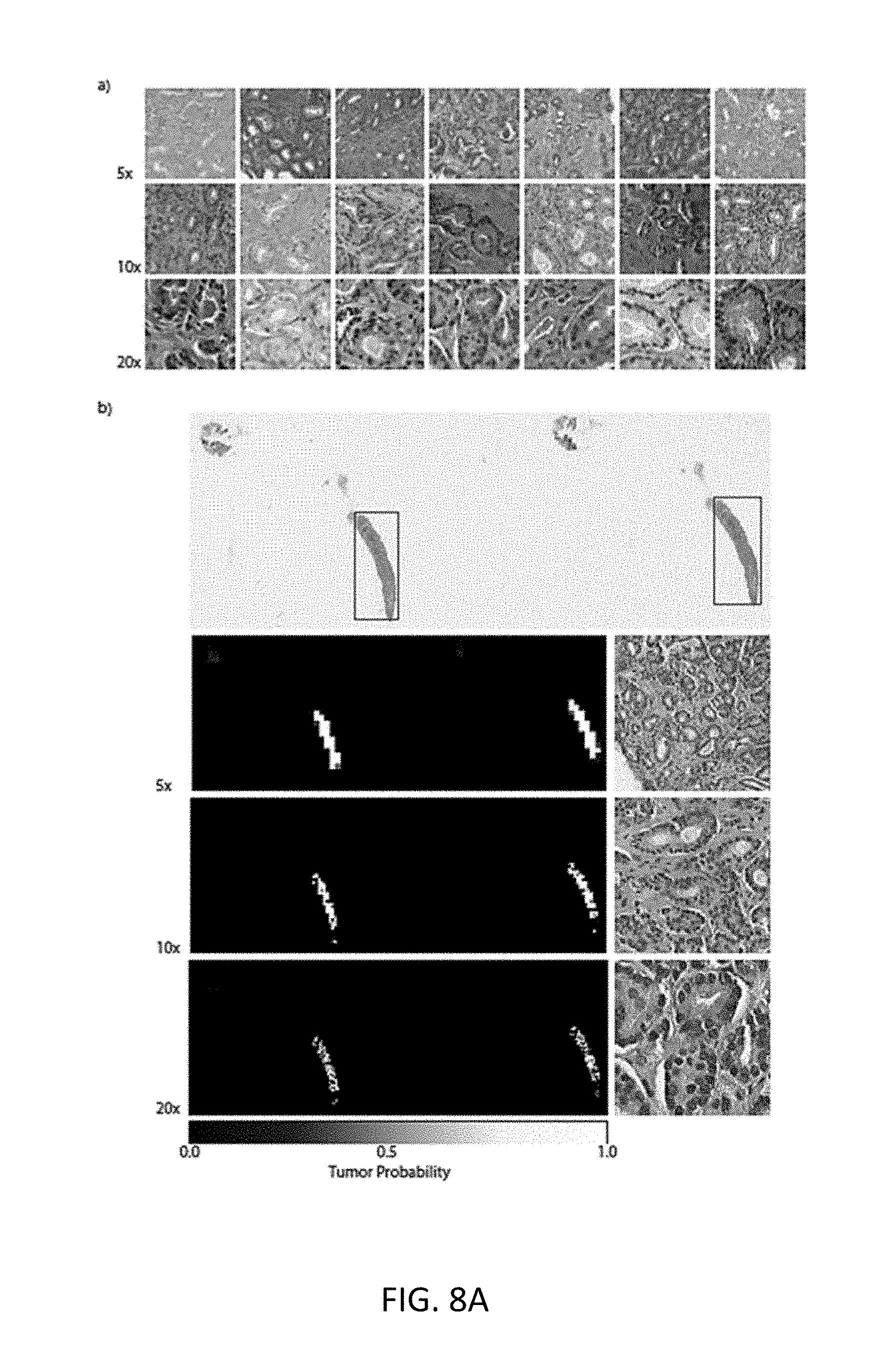

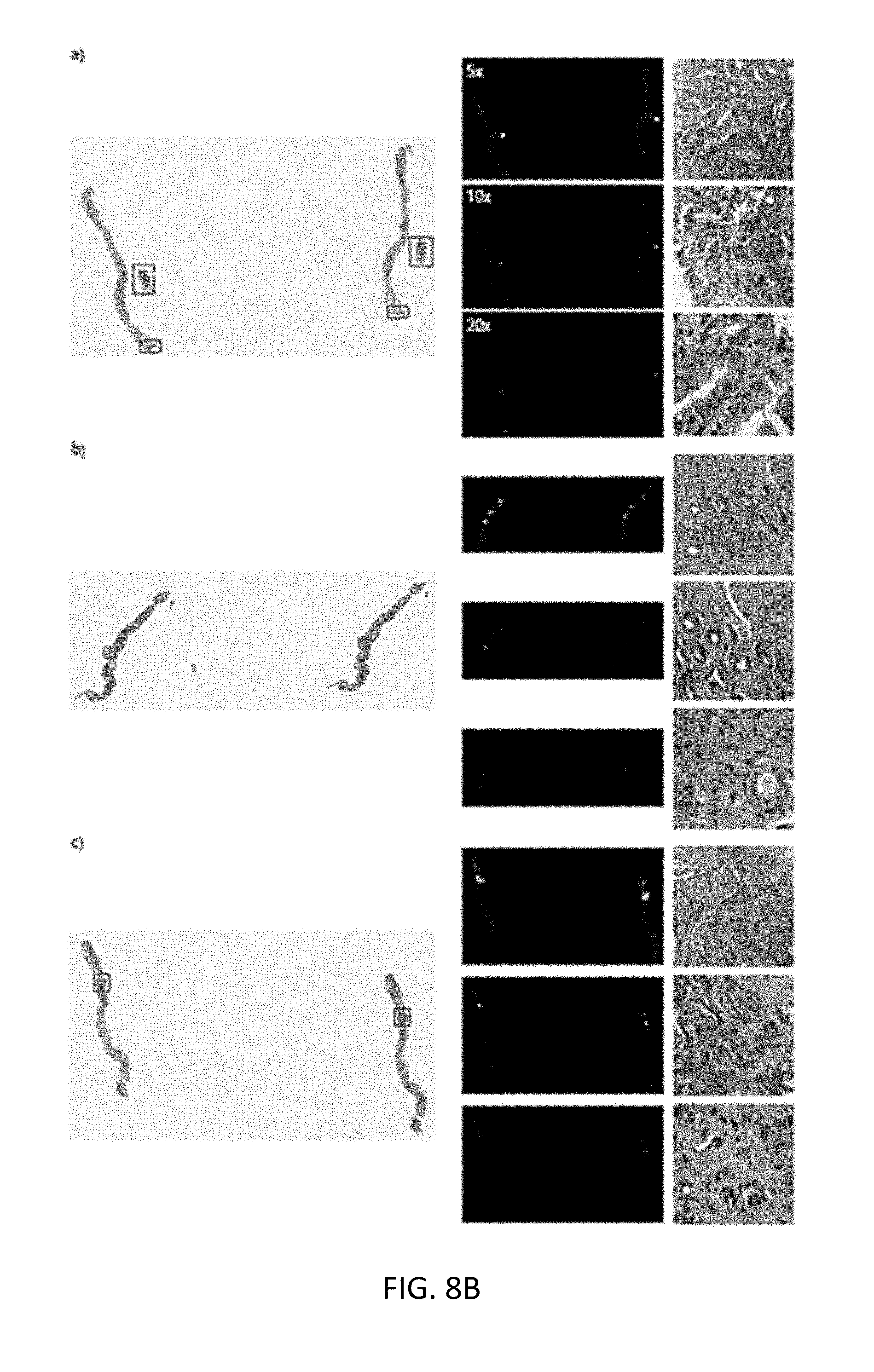

[0026] FIGS. 8A-C each depicts whole slide images with selections of features thereon using the multiple-instance learning trained model;

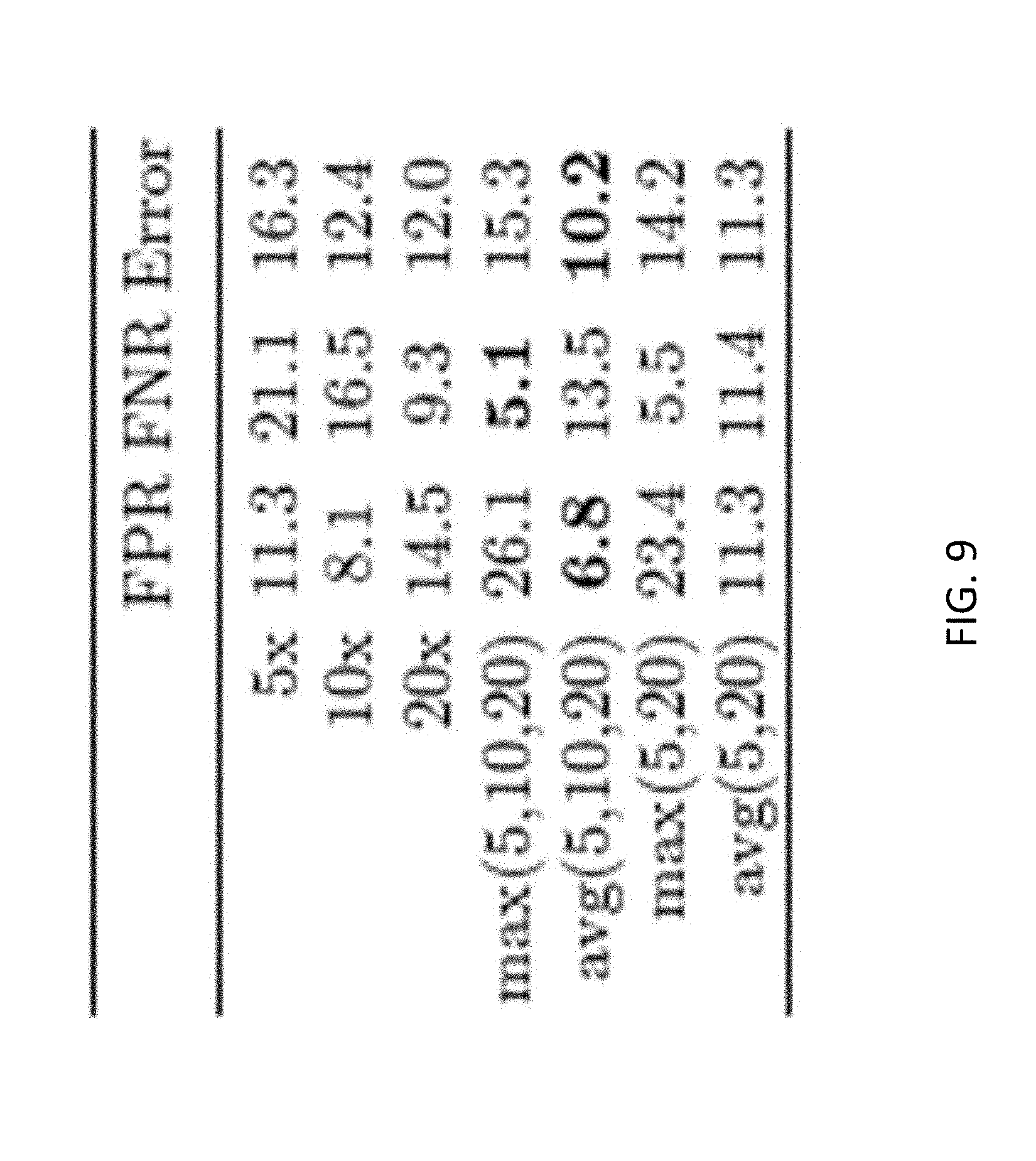

[0027] FIG. 9 depicts a table listing a performance comparison between models;

[0028] FIGS. 10A and 10B each depict line graphs of receiver operating characteristics (ROC) of the models;

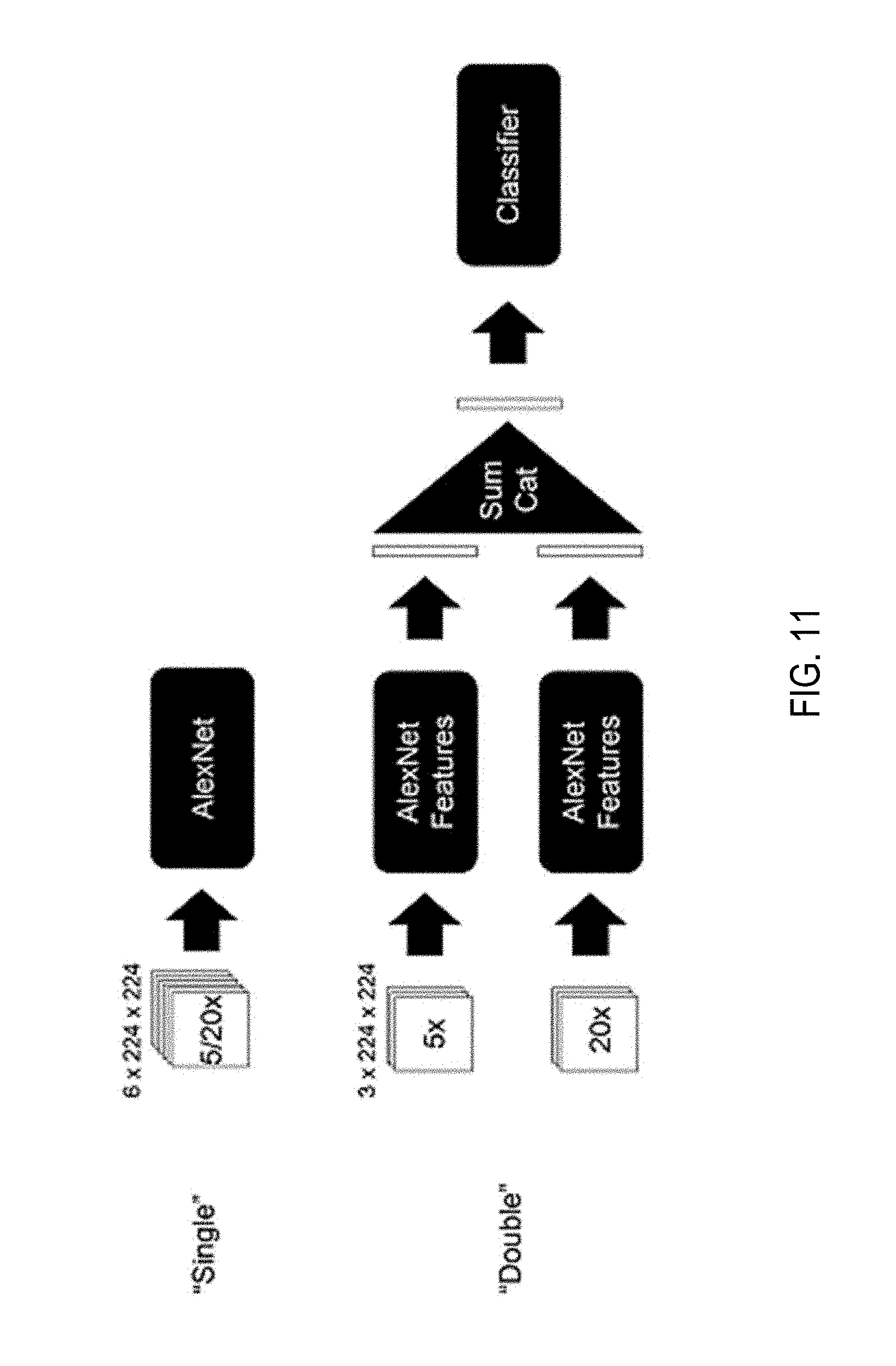

[0029] FIG. 11 depicts a schema of a model architecture multi-scale multiple instance learning experiments;

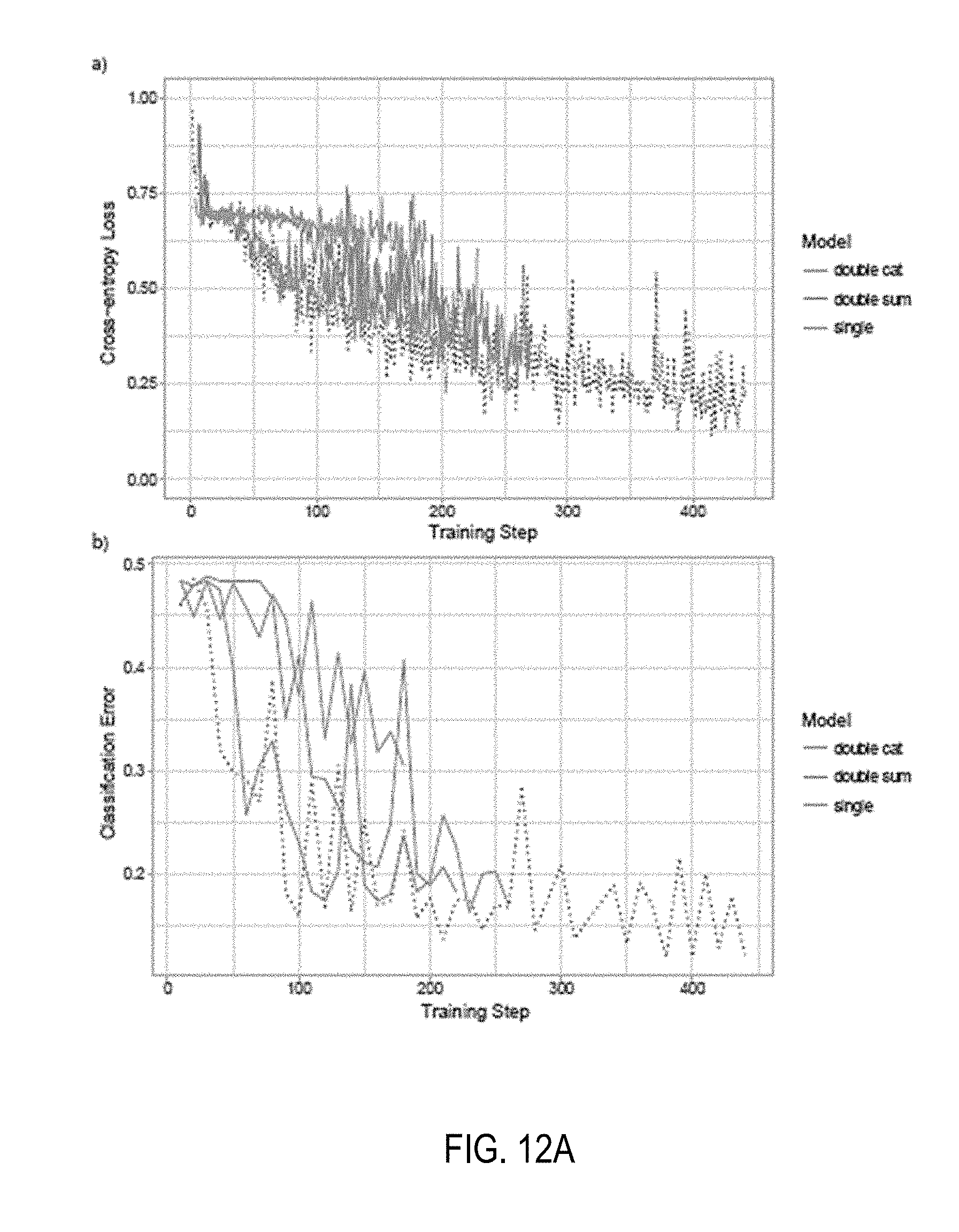

[0030] FIGS. 12A and 12B each depict line graphs showing training loss and classification error of various models;

[0031] FIG. 13 depicts confusion matrices for models on test sets;

[0032] FIG. 14 depicts line graphs of dataset size for classification performance

[0033] FIG. 15 depicts a visualization of feature space with principle component analysis (PCA) in scatter plot;

[0034] FIG. 16 depicts line graphs of receiver operating characteristics (ROC) of different models;

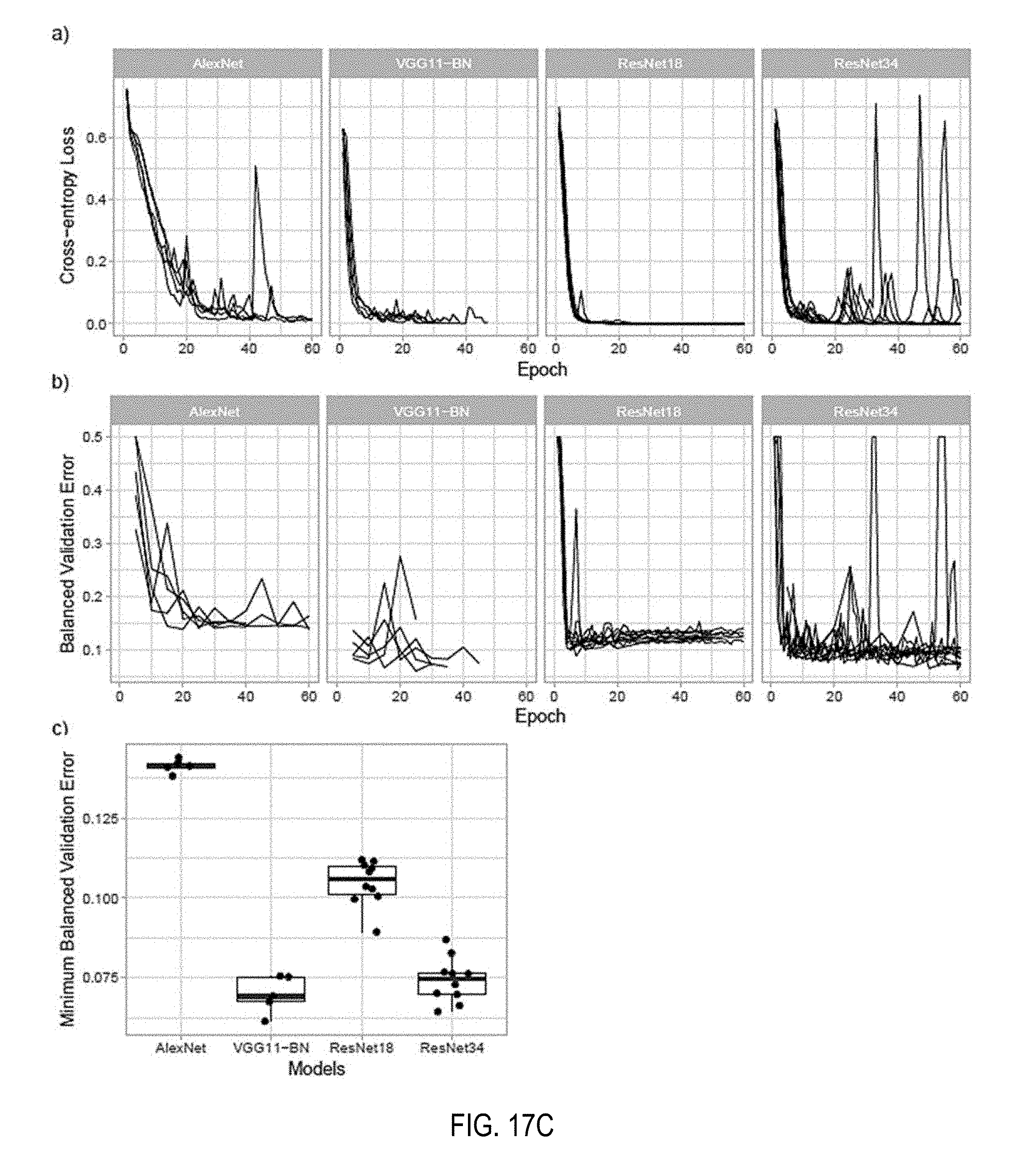

[0035] FIGS. 17A-E each depicts line graphs of comparisons of different models at various magnification factors on the whole slide images;

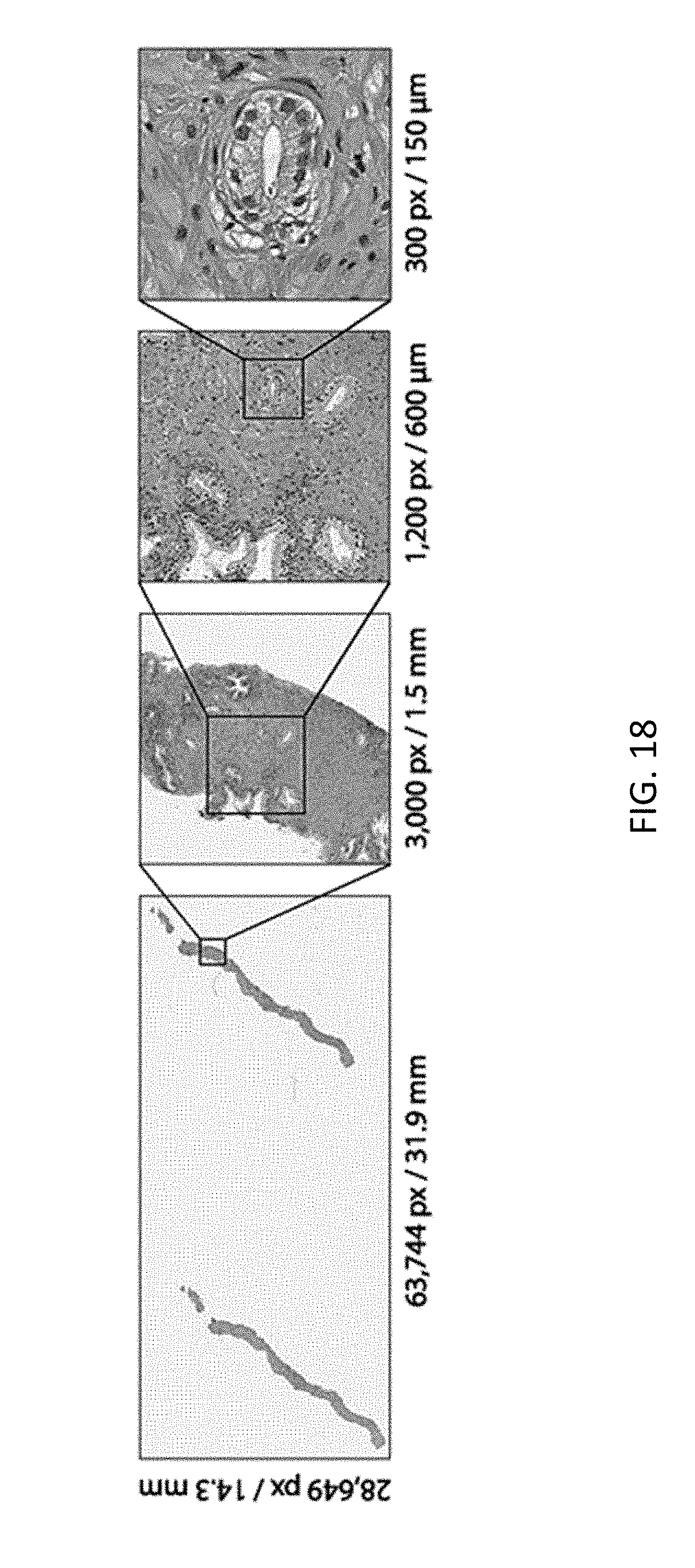

[0036] FIG. 18 depicts an example whole slide image for prostate cancer biopsy;

[0037] FIG. 19 depicts a block diagram of schema of an architecture for multiple instance learning;

[0038] FIG. 20 depicts line graphs of validation error versus a number of whole slide images in training data;

[0039] FIG. 21 depicts a representation visualization to classify tiles;

[0040] FIG. 22 depicts line graphs showing performance of various classification tasks;

[0041] FIG. 23 depicts examples of classification results using the model;

[0042] FIG. 24 depicts bar graphs juxtaposing the performance of different models;

[0043] FIG. 25 depicts graphs of decision-support in clinical practice using the model;

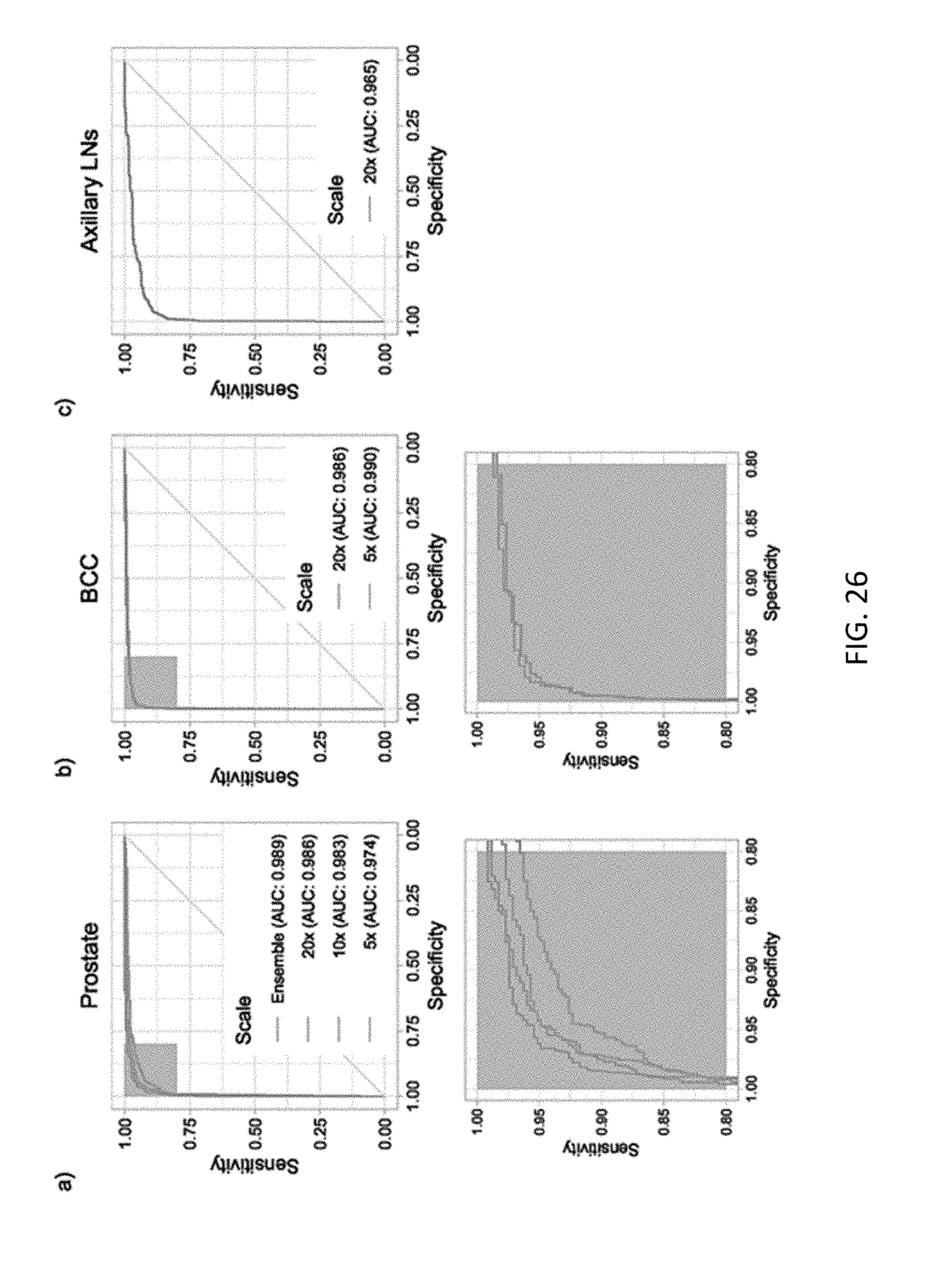

[0044] FIG. 26 depicts line graphs of classification performance for different cancer sets;

[0045] FIG. 27 depicts t-Distributed Stochastic Neighbor Embedding (t-SNE) visualization of node models;

[0046] FIG. 28 depicts line graphs of performance of model at multiple scales;

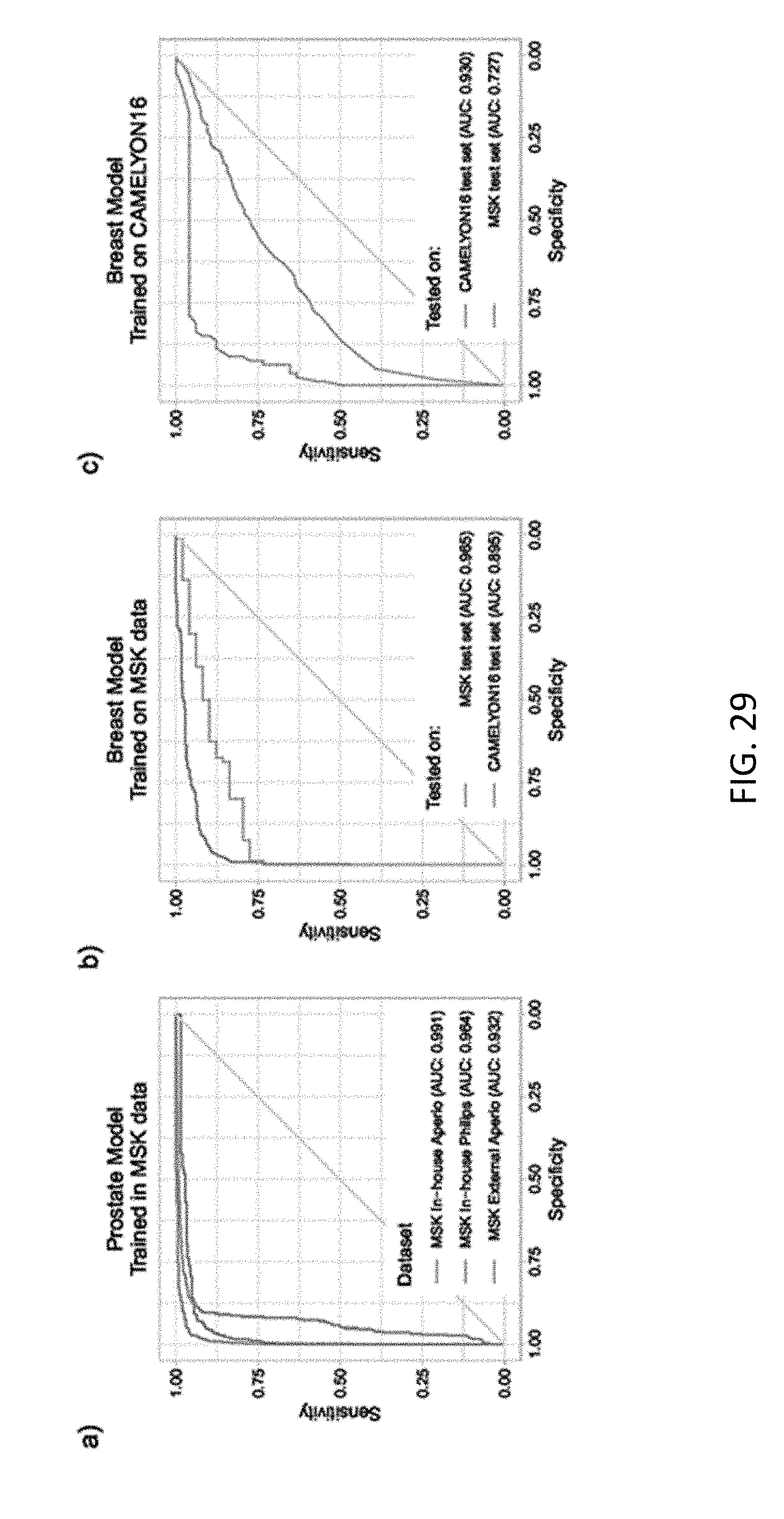

[0047] FIG. 29 depicts line graphs of receiver operating characteristic (ROC) curves of generalization experiments;

[0048] FIG. 30 depicts lien graphs of decision support with different models;

[0049] FIG. 31 depicts example slide tiled grid with no overlap;

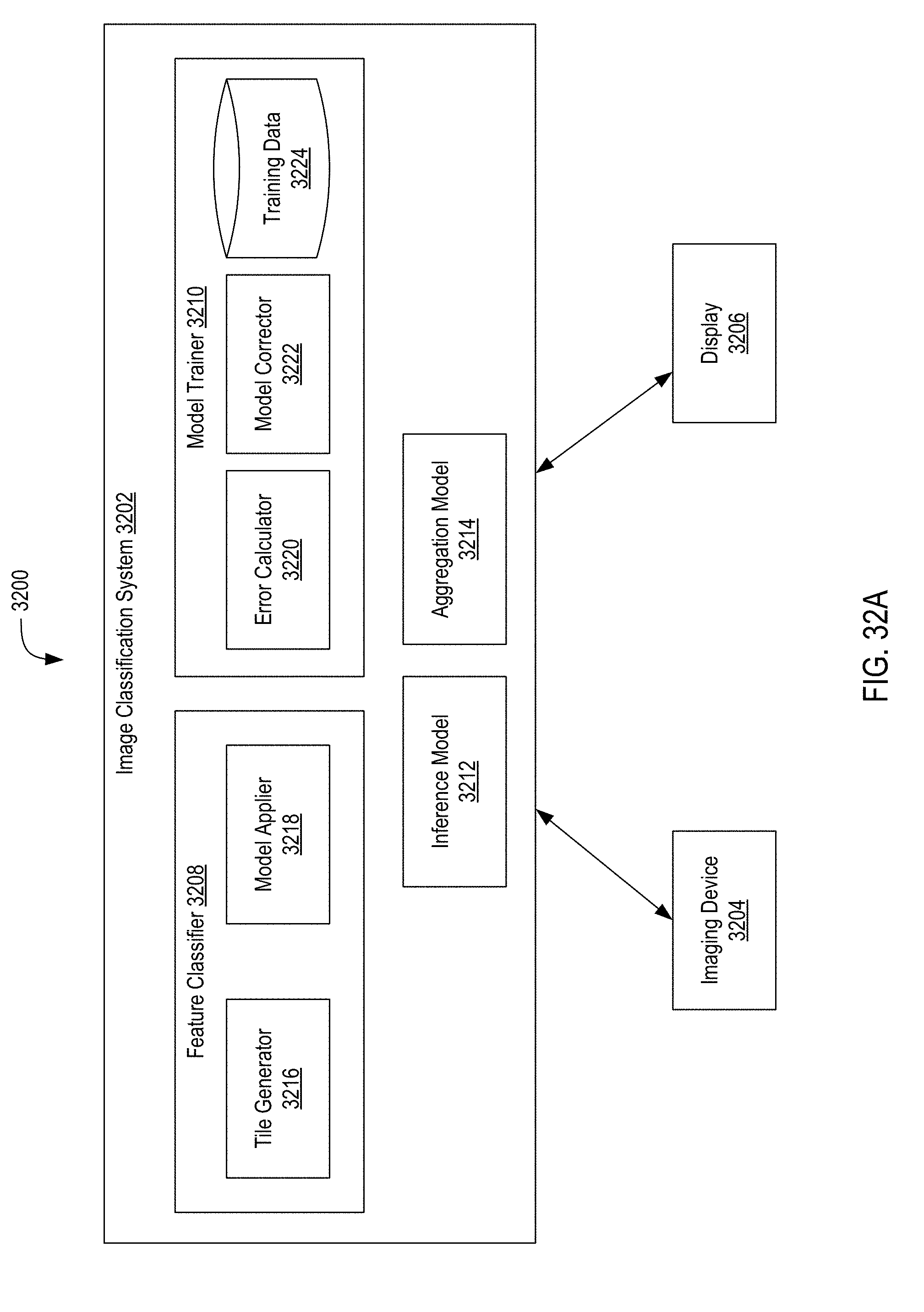

[0050] FIG. 32A depicts a block diagram of a system for classifying biomedical images and training models for classifying biomedical images using multiple-instance learning;

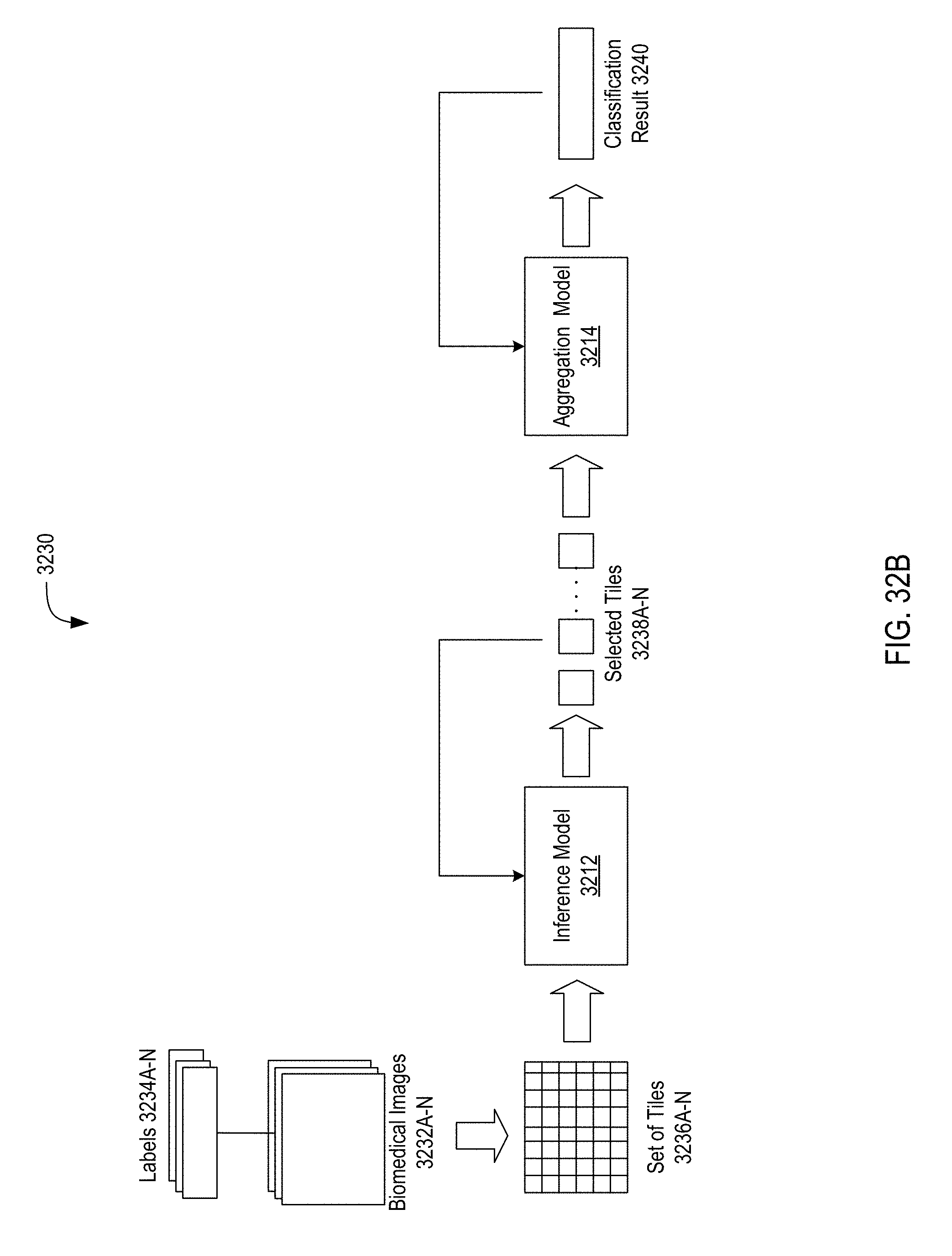

[0051] FIG. 32B depicts a process diagram of a system for classifying biomedical images and training models for classifying biomedical images using multiple-instance learning;

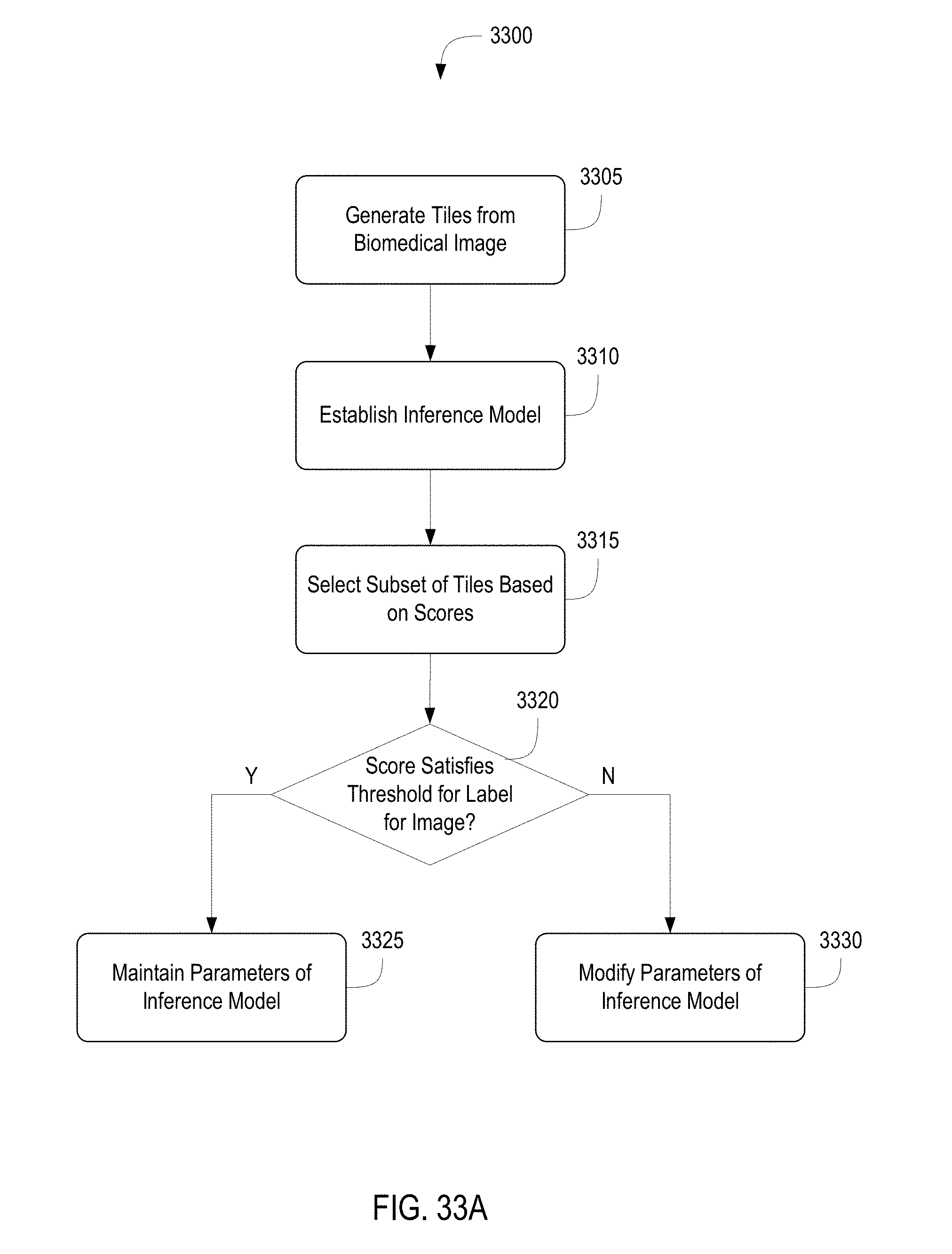

[0052] FIG. 33A depicts a flow diagram of a method of training models for classifying biomedical images using multiple-instance learning;

[0053] FIG. 33B depicts a flow diagram of a method of training models for classifying biomedical images using multiple-instance learning;

[0054] FIG. 33C depicts a flow diagram of a method of classifying biomedical images;

[0055] FIG. 34A is a block diagram depicting an embodiment of a network environment comprising client devices in communication with server devices;

[0056] FIG. 34B is a block diagram depicting a cloud computing environment comprising client devices in communication with a cloud service provider; and

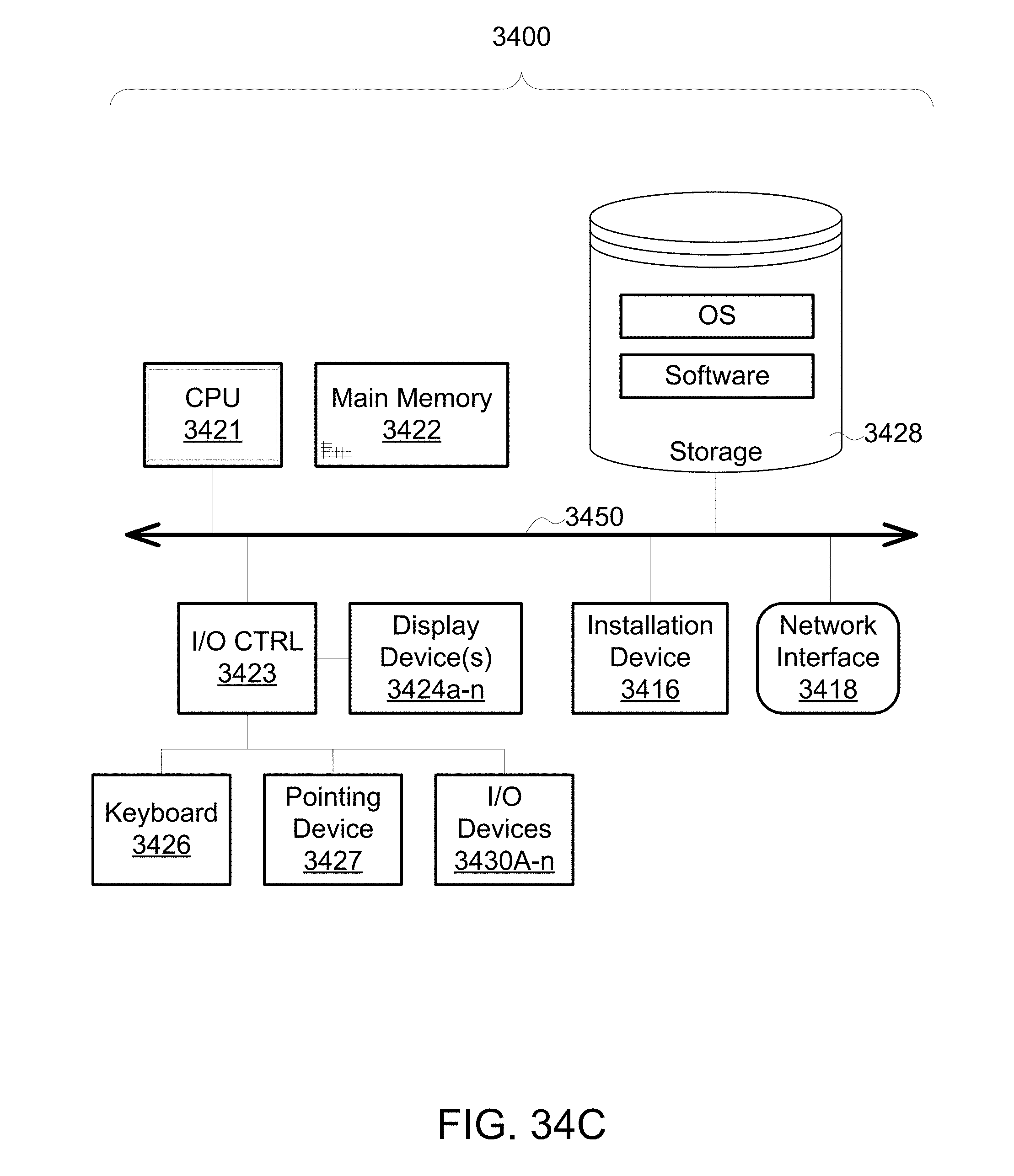

[0057] FIGS. 34C and 34D are block diagrams depicting embodiments of computing devices useful in connection with the methods and systems described herein

DETAILED DESCRIPTION

[0058] Following below are more detailed descriptions of various concepts related to, and embodiments of, inventive systems and methods for processing immobilization molds. It should be appreciated that various concepts introduced above and discussed in greater detail below may be implemented in any of numerous ways, as the disclosed concepts are not limited to any particular manner of implementation. Examples of specific implementations and applications are provided primarily for illustrative purposes.

[0059] Section A describes Terabyte-Scale Deep Multiple Instance Learning for Classification and Localization in Pathology.

[0060] Section B describes systems and methods of using two-dimensional slicing in training an encoder-decoder model for reconstructing biomedical images and applying the encoder-decoder model to reconstruct biomedical images.

[0061] Section C describes systems and methods of classifying biomedical images and training models for classifying biomedical images using multiple-instance learning.

[0062] Section D describes a network environment and computing environment which may be useful for practicing various computing related embodiments described herein.

[0063] It should be appreciated that various concepts introduced above and discussed in greater detail below may be implemented in any of numerous ways, as the disclosed concepts are not limited to any particular manner of implementation. Examples of specific implementations and applications are provided primarily for illustrative purposes.

A. Terabyte-Scale Deep Multiple Instance Learning for Classification and Localization in Pathology

1. Introduction

[0064] For some years there has been a strong push towards the digitization of pathology. The increasing size of available digital pathology data, coupled with the impressive advances that the fields of computer vision and machine learning have made in recent years, make for the perfect combination to deploy decision support systems in the clinic.

[0065] Despite few success stories, translating the achievements of computer vision to the medical domain is still far from solved. The lack of large datasets which are indispensable to learn high capacity classification models has set back the advance of computational pathology. The "CAMELYON16" challenge for metastasis detection contains one of the largest labeled datasets in the field with a total of 400 Whole Slide Images (WSIs). Such an amount of cases is extremely small compared to the millions of instances present in the ImageNet dataset. One widely adopted solution to face the scarcity of labeled examples in pathology is to take advantage of the size of each example. Pathology slides scanned at 20.times. magnification produce image files of several Giga-pixels. About 470 WSIs contain roughly the same number of pixels as the entire ImageNet dataset. By breaking the WSIs into small tiles it is possible to obtain thousands of instances per slide, enough to learn high-capacity models from a few hundred slides. Pixel-level annotations for supervised learning are prohibitively expensive and time consuming, especially in pathology. Some efforts along these lines have achieved state-of-the-art results on CAMELYON16. Despite the success on these carefully crafted datasets, the performance of these models hardly transfers to the real life scenario in the clinic because of the huge variance in real-world samples that is not captured by these small datasets.

2. Summary

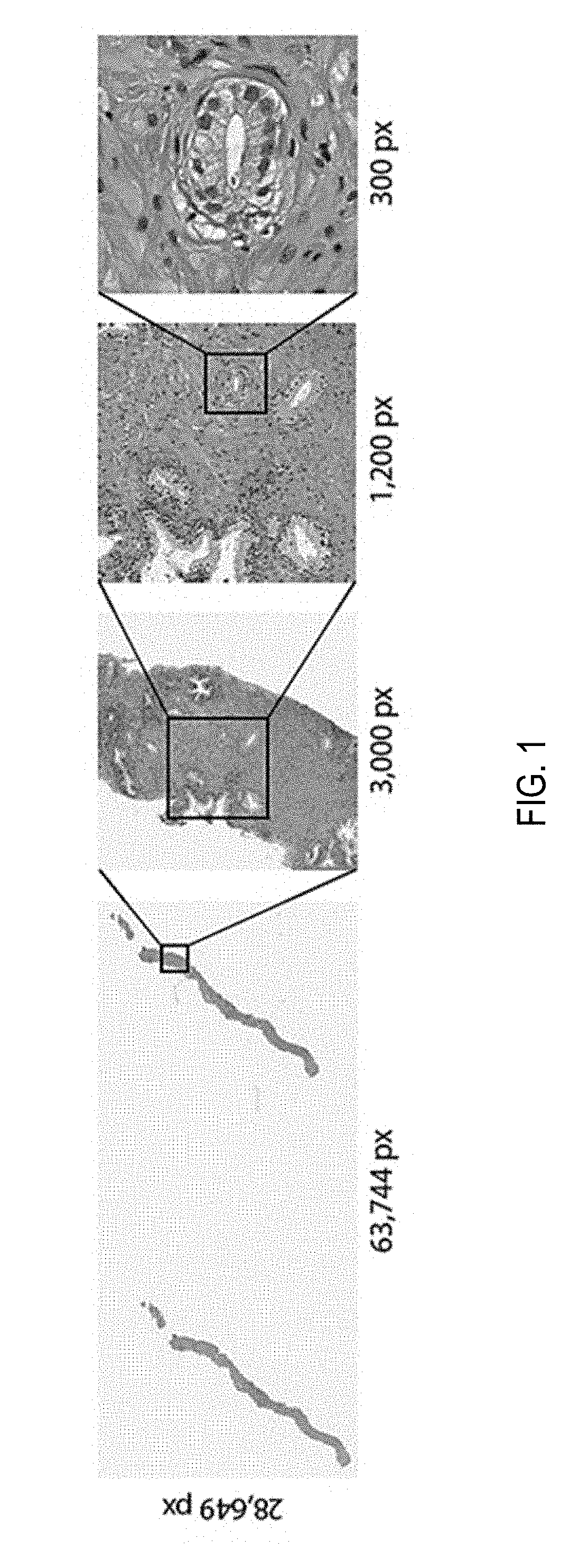

[0066] In summary, until now it was not possible to train high-capacity models at scale due to the lack of large WSI datasets. A dataset of unprecedented size in the field of computational pathology has been gathered. The data set includes over 12,000 slides from prostate needle biopsies, two orders of magnitude larger than most datasets in the field and with roughly the same number of pixels of 25 ImageNet datasets. Whole slide prostate cancer classification was chosen as a representative one in computational pathology due to its medical relevance and its computational difficulty. Prostate cancer is expected to be the leading source of new cancer cases for men and the second most frequent cause of death behind only the cancers of the lung and multiple studies have shown that prostate cancer diagnosis has a high inter- and intra-observer variability. It is important to note that the classification is frequently based on the presence of very small lesions that can comprise just a fraction of 1% of the tissue surface. Referring now to FIG. 1, depicted are whole slide images (WSI) at various magnification factors. Prostate cancer diagnosis is a difficult task. The diagnosis can be based on very small lesions. In the slide above, only about 6 small tumor glands are present. The right most image shows an example tumor gland. Its relation to the entire slide is put in evidence to reiterate the complexity of the task. The figure depicts the difficulty of the task, where only a few tumor glands concentrated in a small region of the slide determine the diagnosis.

[0067] Since the introduction of the Multiple Instance Learning (MIL) framework in 1997 there have been many efforts from both the theory and application of MIL in the computer vision literature. It has been determined that the MIL framework is very applicable to the case of WSI diagnosis and despite its success with classic computer vision algorithms, MIL has never been applied in computational pathology due, in part, to the lack of large WSI datasets. In the present disclosure, advantage is taken of a large prostate needle biopsy dataset. The present disclosure relates to a Deep Multiple Instance Learning (MIL) framework where only the whole slide class is needed to train a convolutional neural network capable of classifying digital slides on a large scale.

[0068] It is the first time pathology digital slide classification is formalized as a weakly supervised learning task under the MIL framework. Few other studies have applied MIL to the medical domain, but none in pathology. For instance, in comparison to pathology, CT slides and mammograms are much smaller and usually each image is used directly in a fully supervised approach. In previous studies applying MIL, MIL is used to enhance the classification accuracy and provide localization of the most characteristic regions in each image.

[0069] Diagnosis prediction of Whole Slide Images (WSI) can be seen as a weakly supervised task where the location of the disease within a positive slide is unknown. In this study the Multiple Instance Learning (MIL) paradigm is used to tackle the weakly supervised task of diagnosis prediction. In MIL, each WSI is a collection of small tiles. Each tile has a certain probability of being of class positive. Only if all tiles in a WSI are negative, the probability of being positive is lower than 0.5, the WSI is negative. According to MIL, learning can be achieved from the top-1 most positive tile in each WSI via a simple cross-entropy loss function and gradient descent optimization.

3. Dataset

[0070] A dataset including 12,160 needle biopsies slides scanned at 20.times. magnification, of which 2,424 are positive and 9,736 are negative is used. The diagnosis was retrieved from the original pathology reports in the Laboratory Information System (LIS) of a medical institution. Exploratory experiments were run on a subset of the full dataset including 1,759 slides split among a training set of 1,300 slides and a validation set of 459 slides. Both splits had a balanced number of positive and negative cases. The large-scale experiments were run on the entire dataset on a 70%-15%-15% random split for training, validation and testing respectively. During training, tiles are augmented on the fly with random horizontal flips and 90.degree. rotations.

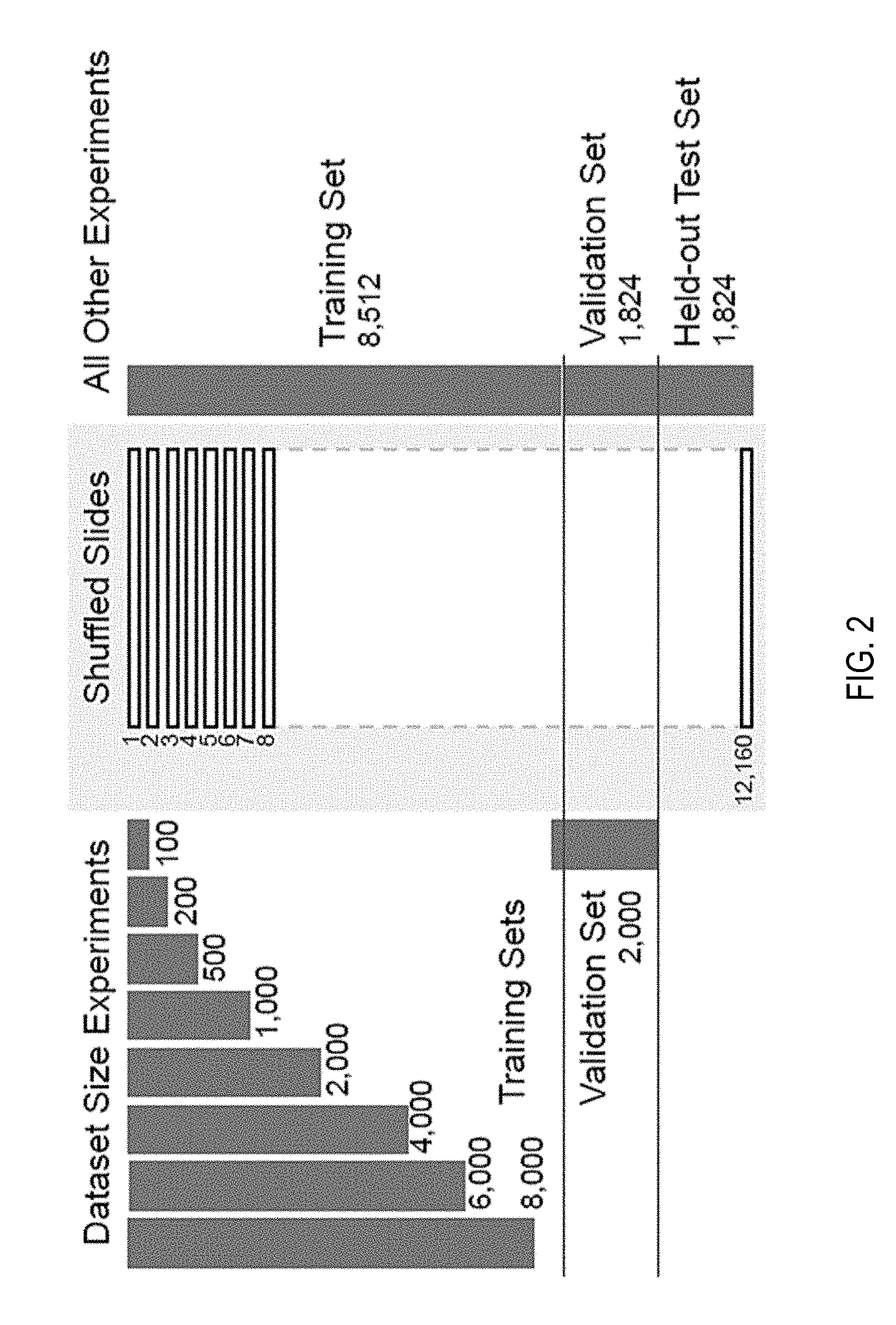

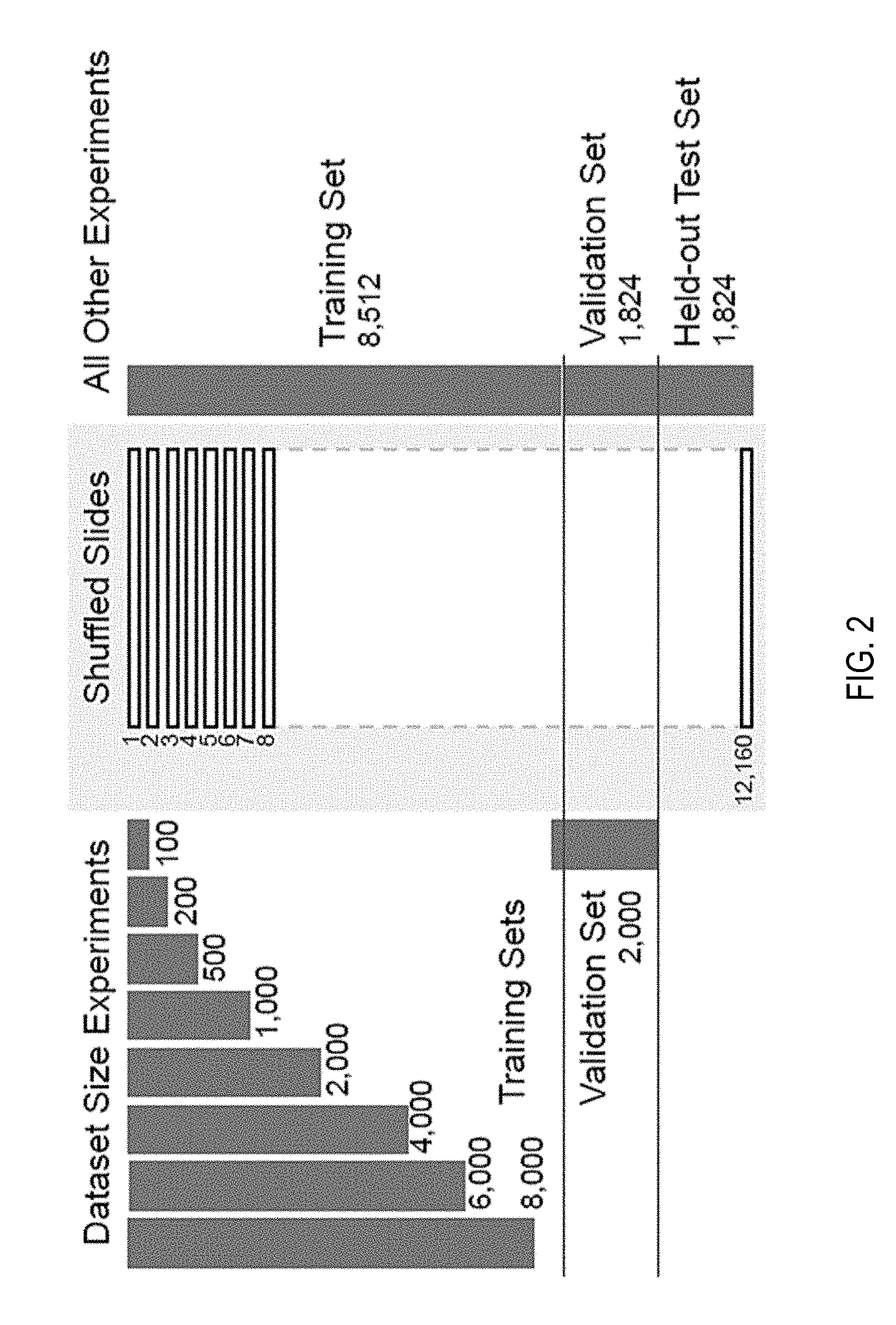

[0071] Referring now to FIG. 2, depicted are bar graphs of splitting of a biopsy dataset. The full dataset was divided into 70-15-15% splits for training, validation, and test for all experiments except the ones investigating dataset size importance. For those, out of the 85% training/validation split of the full dataset, training sets of increasing size were generated along with a common validation set. As visualized, the dataset was randomly split in training (70%), validation (15%) and testing (15%). No augmentation was performed during training. For the "dataset size importance" experiments, explained further in the Experiments section, a set of slides from the above mentioned training set were drawn to create training sets of different sizes.

4. Methods

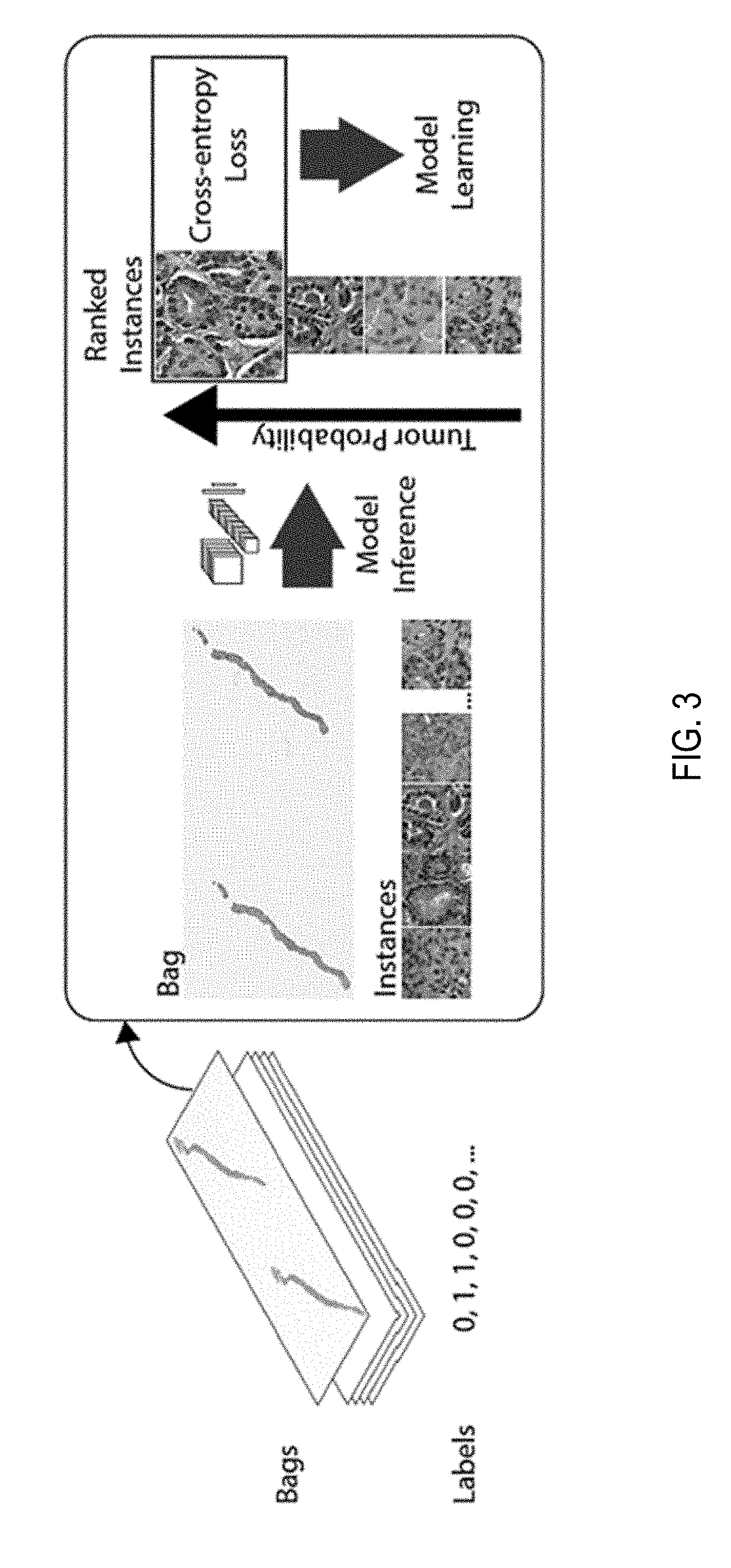

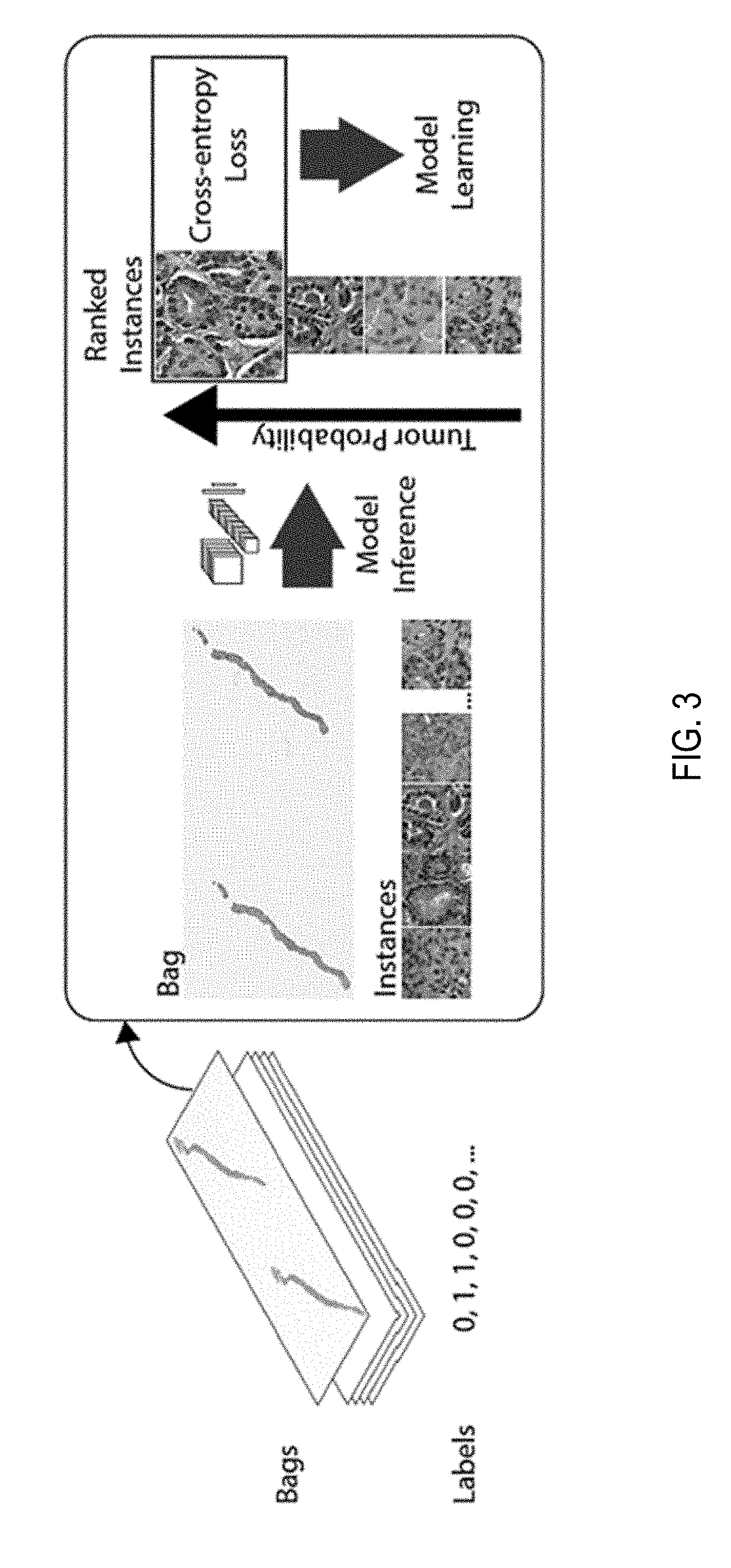

[0072] Classification of a whole digital slide based on a tile-level classifier can be formalized under the classic MIL paradigm when only the slide-level class is known and the classes of each tile in the slide are unknown. Each slide s.sub.i from the slide pool S={s.sub.i: i=1, 2, . . . n} can be considered as a bag consisting of a multitude of instances (tiles). For positive bags, it must exist at least one instance that is classified as positive by some classifier. For negative bags instead, all instances must be classified as negative. Given a bag, all instances are exhaustively classified and ranked according to their probability of being positive. If the bag is positive, the top-ranked instance should have a probability of being positive that approaches one, while if it is negative, the probability should approach zero. The complete pipeline of the method comprises the following steps: (i) tiling of each slide in the dataset; for each epoch, which consists of an entire pass through the training data, (ii) a complete inference pass through all the data; (iii) intra-slide ranking of instances; (iv) model learning based on the top-1 ranked instance for each slide.

[0073] Referring to FIG. 3, depicted is a schema of performing multiple instance learning for classification of tumorous features on whole slide images. The slide or bag consists of multiple instances. Given the current model, all the instances in the bag are used for inference. They are then ranked according to the probability of being of class positive (tumor probability). The top ranked instance is used for model learning via the standard cross-entropy loss. Unless otherwise noted a gradient step is taken every 100 randomly sampled slides and the models used in experiments is an AlexNet and VGG11 pretrained on ImageNet allowing all layers to be optimized.

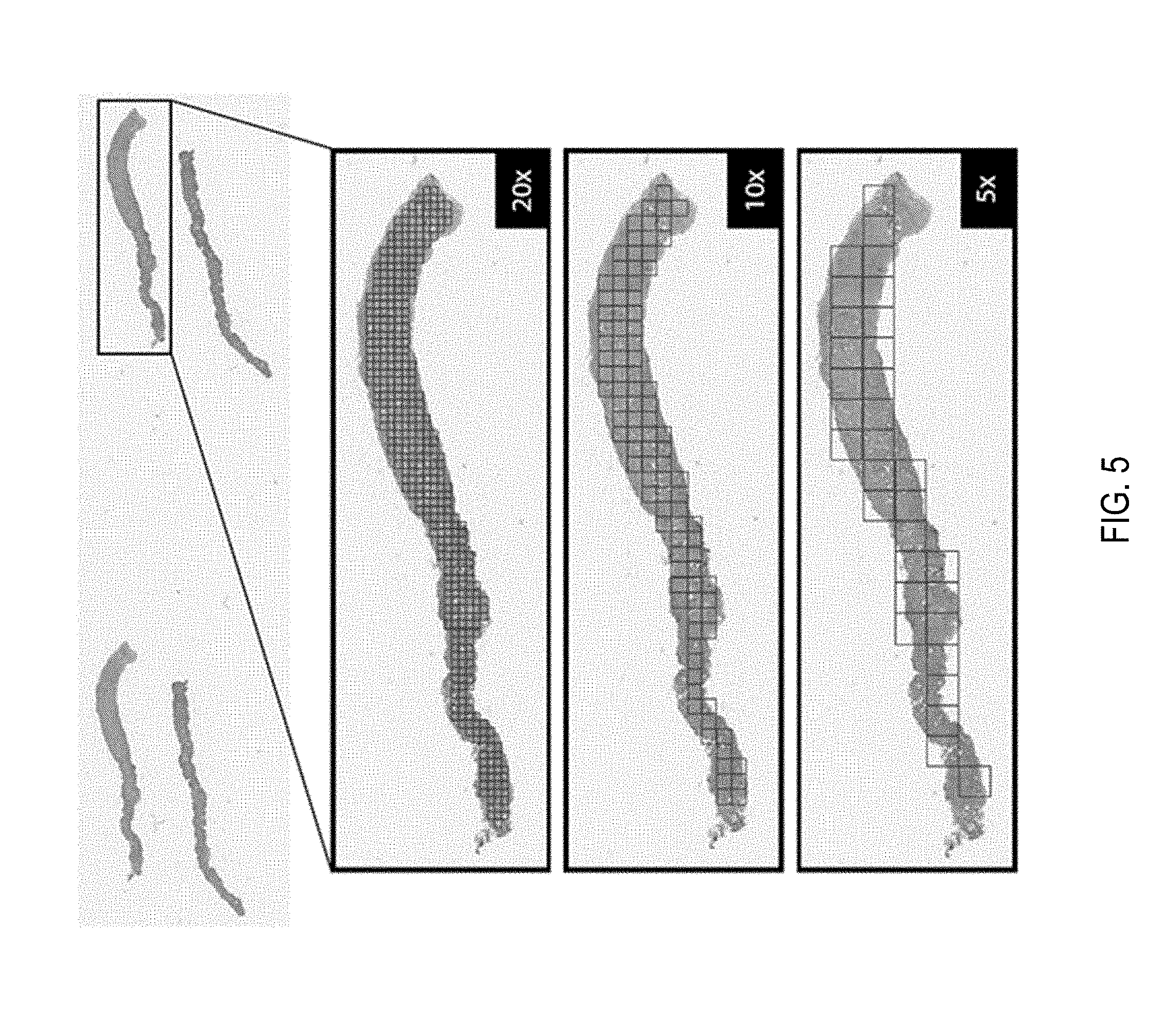

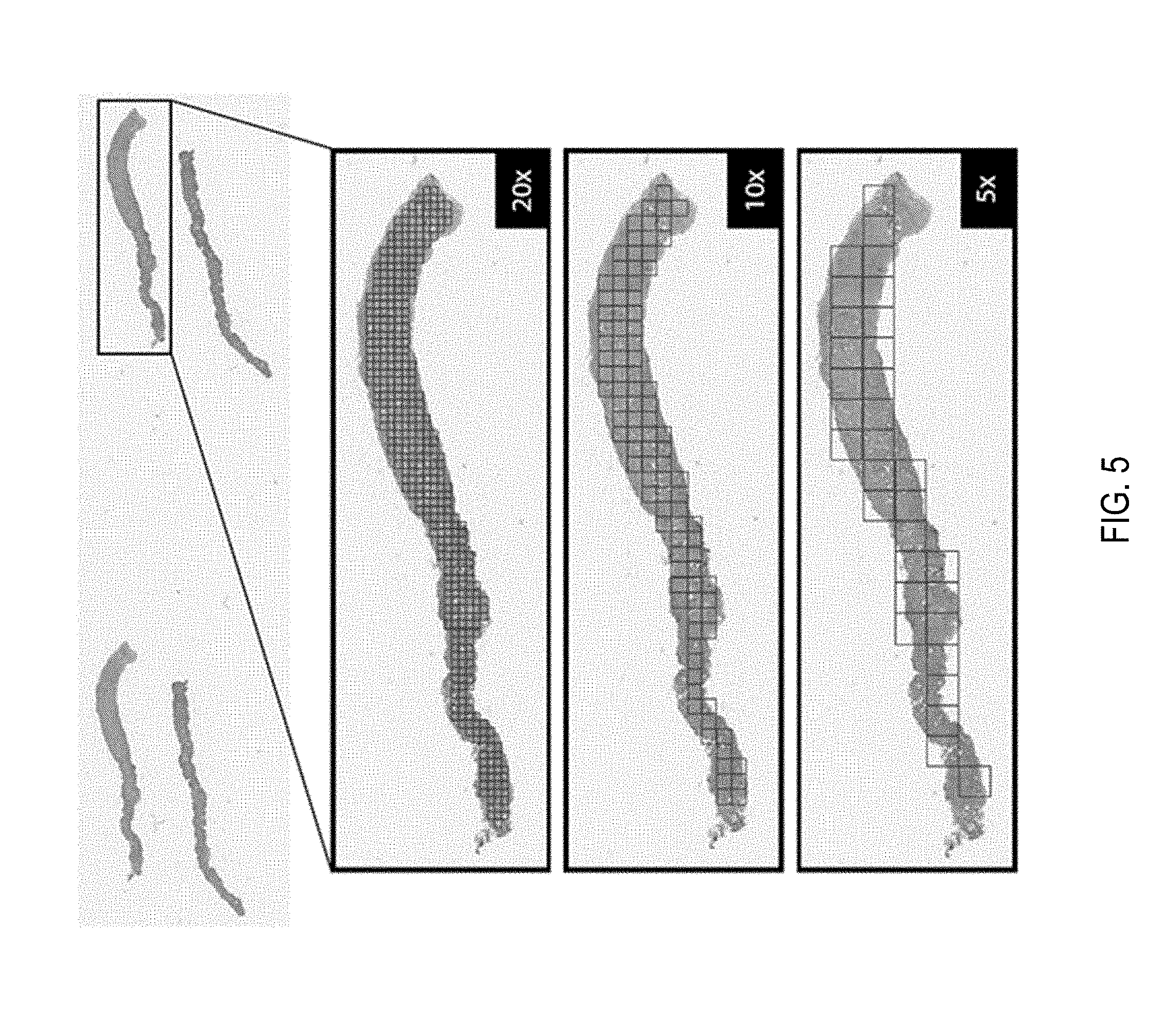

[0074] Slide Tiling: The instances are generated by tiling the slide on a grid. All the background tiles are efficiently discarded by an algorithm, reducing drastically the amount of computation per slide, since quite a big portion of it is not covered by tissue. Furthermore, tiling can be performed at different magnification levels and with various levels of overlap between adjacent tiles. In this work three magnification levels (5.times., 10.times. and 20.times.) were investigated, with no overlap for 10.times. and 20.times. magnification and with 50% overlap for 5.times. magnification. On average each slide contains about 100 non overlapping tissue tiles at 5.times. magnification and 1,000 at 20.times. magnification. More detailed information on the composition of the bags is given in FIGS. 6A-C. Given a tiling strategy and sampled slide s.sub.i, bags B={B.sub.s.sub.i: i=1, 2, . . . , n} where B.sub.s.sub.i={b.sub.i,1, b.sub.i,2, . . . , b.sub.i,m} is the bag for slide s.sub.i containing m total tiles. An example of tiling can be seen in FIG. 5.

[0075] Model Training: The model is a function f.sub..theta. with current parameters .theta. that maps input tiles b.sub.i,j to class probabilities for "negative" and "positive" classes. Given bags B a list of vectors O={o.sub.i,: i=1, 2, . . . , n} was obtained, one for each slide s.sub.i containing the probabilities of class "positive" for each tile b.sub.i,j: j=1, 2, . . . , m in B.sub.s.sub.i. The index k.sub.i of the tile was obtained within each slide which shows the highest probability of being "positive" k.sub.i=argmax(o.sub.i). The highest ranking tile in bag B.sub.s.sub.i is then b.sub.i,k. The output of the network {tilde over (y)}.sub.i=f.sub..theta.(b.sub.i,k) can be compared to y.sub.i, the target of slide s.sub.i, thorough the cross-entropy loss l as in Equation 1.

l=-w.sub.1[y.sub.i log({tilde over (y)}.sub.i)]-w.sub.0[(1-y.sub.i)log(1-{tilde over (y)}.sub.i)] (1)

[0076] Given the unbalanced frequency of classes, weights w0 and w1, for negative and positive classes respectively, can be used to give more importance to the underrepresented examples. The final loss is the weighted average of the losses over a mini-batch. Minimization of the loss is achieved via stochastic gradient descent using the Adam optimizer and learning rate 0.0001. Mini-batches of size 512 for AlexNet, 256 for ResNets and 128 for VGGs were used.

[0077] Model Testing: At test time all the instances of each slide are fed through the network. Given a threshold (usually 0.5), if at least one instance is positive then the entire slide is called positive; if all the instances are negative then the slide is negative. Accuracy, confusion matrix and ROC curve are calculated to analyze performance.

5. Exploratory Experiments

[0078] Experiments in were performed on a HPC cluster. In particular, seven NVIDIA DGX-1 workstations each containing 8 V100 Volta GPUs were used. OpenSlide was used toaccess on-the-fly the WSI files and PyTorch for data loading, building models, and training. Further data manipulation of results was performed in R.

[0079] Classic MIL: Various standard image classification models pre-trained on Imagenet under the MIL setup at 20.times. magnification and no overlap were tested. Each experiment was run 100 steps 5 times with different random initializations of the classification layers. Referring to FIG. 4, depicted are Training loss and validation error (a) and best model performance with the naive multi-scale approach (b) on the exploratory dataset. The colored ROC curves are different multi-scale modalities, which are compared to the single magnification models (dotted lines). c) Training and validation balanced error for the large-scale experiment with VGG11. d) Test set ROC curve of the best VGG11 model trained on large-scale. It was observed that not all the architectures are able to lower the loss under this optimization scheme. In particular, AlexNet was able to reduce the loss 4/5 of the time, while VGG11, which has an architecture very similar to AlexNet but contains 11 convolutional layers instead of 5, run successfully 2/5 of the time. Interestingly, adding batch normalization to VGG11 completely erases the performance seen in the standard VGG11. Finally, ResNet18 similarly to VGG11BN also gets stuck on a suboptimal minimum. Different optimizers and learning rates were also tested with similar results.

[0080] AlexNet gave the best and most reliable results and its performance was further tested under different magnifications. The MIL setup requires an exhaustive pass through every slide and thus it is quite time consuming. The experiments shown next were run for 160 hours and then stopped. FIG. 4(a) shows the training loss for the AlexNet model trained at different magnifications; to note how after 400 steps convergence has not been reached yet. FIG. 4(b) shows the overall misclassification error, the false negative rate and false positive rate for the validation set. As expected, the model originally assigns a positive label to every slide. As training proceeds, the false positive rate decreases while the false negative rate tends to increase. The best performing models on the validation set achieved 83.7, 87.6 and 88.0% accuracy for 5.times., 10.times. and 20.times. magnification respectively as seen in FIG. 4(a). 20.times. magnification seem to produce overall more false positives, while 5.times. s produces more false negatives. Finally, the models achieve 0.943, 0.935 and 0.895 AUC for 5.times., 10.times. and 20.times. magnification respectively in the ROC curves in FIG. 4(d). There seems to be quite a drop in performance at 5.times. magnification, but this may be due to the 10-fold decrease in number of patches present at 5.times. with respect to 20.times. magnification.

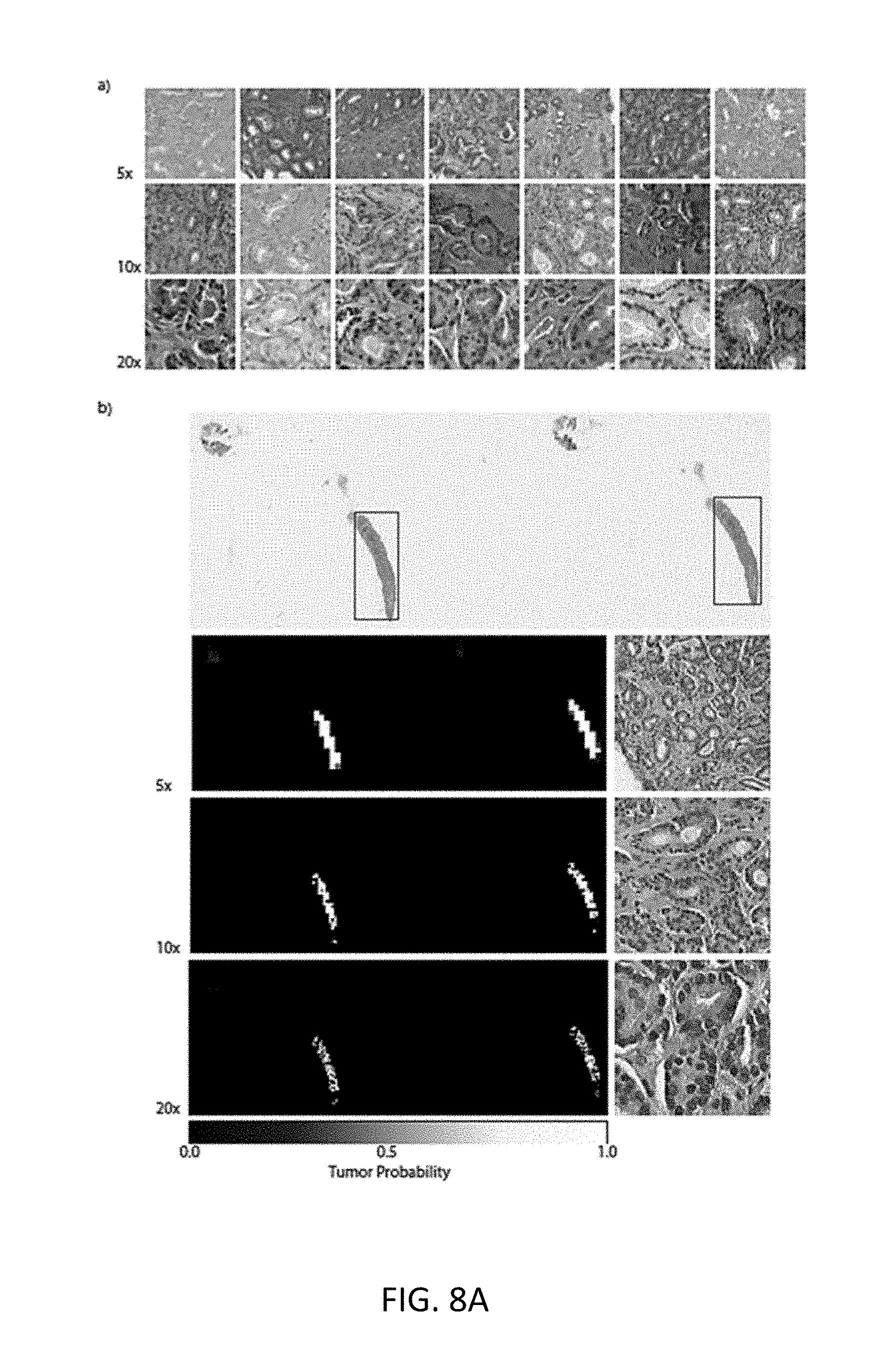

[0081] Error Analysis: Detailed analysis of the true positive cases (Referring to FIGS. 8A(a) and (b)) substantiates the hypothesis that irrespective of magnification, the attention is focused on malignant glands but based on different features which indicates that a multi-scale approach could be beneficial. Investigation of the 43 false positive slides (FIG. 8B) reveal known mimickers of prostate cancer like atrophy, adenosis and inflammation as well as seminal vesicles and colorectal tissue. The 29 false negative slides (FIG. 8C) were cases with very little tumor surface with predominant errors at 5.times.. Arguably, more training data containing more examples of mimickers would be useful to push the false positive rate down which reemphasizes the usefulness of real-world studies over curated toy datasets.

[0082] Naive multi-scale MIL. Previous results showed that many errors were not shared among the models learned at different magnifications. In addition, 5.times. and 20.times. magnifications showed complementary performance with respect to error modes. This suggests that a possible boost in performance may be possible by integrating information at different magnifications. The easiest approach is to combine the responses of the models trained at different magnifications. Here the probability of positive class of the models was combined from the previous section in four ways: (i) max(5, 10, 20), (ii) max(5,20), (iii) average(5, 10,20), (iv) average(5,20). Taking the maximum probability tends to increase the false positive rate, while drastically reducing the false negative rate. Whereas taking the average response leads to an overall lower error rate. The results shown in Table 1 and in the ROC curves in FIGS. 4(b) and 10A demonstrate the improved performance of the multi-scale approach.

[0083] Other MIL Extensions. Further experiments were performed to analyze the effect of tiling the slides with 50% overlap. The results showed only a minor improvement over the basic non overlapping approach. Given the encouraging results of the naive multi-scale approach, learning a multi-scale model was also tried with three different architectures. The experiments didn't show improved performance over previous results.

6. Large-Scale MIL

[0084] AlexNet and a VGG11 models pretrained on ImageNet on the full dataset were trained: 8,512 slides for training and 1,824 for validation. Each experiment was run 4 times to inspect the robustness to random initializations and optimization. Given the computational cost of fully inspecting every 20.times. tile in such a large dataset, the training was tested on the validation set only every 50 steps. The jobs were stopped after 160 hours completing almost 200 steps training steps. Traces of the training procedure are shown in FIGS. 4(c) and 13 (depicting confusion matrices for the best AlexNet and VGG11 models on the test set). Both AlexNet and VGG11 were able, at least in a subset of the runs, to reduce the loss during training. It is also clear that the models were still learning and that with more training the error could have decreased more. The best models, for each architecture, after 150 runs were selected to be tested on the test dataset consisting of 1,824 slides never used before, confusion matrices are shown in FIG. 19. VGG11 achieved the best performance on the test set with a balanced error rate of 13% and an AUC of 0.946 as seen in FIG. 4(d).

Weight Tuning

[0085] Needle biopsy diagnosis is an unbalanced classification task. The full dataset consists of 19.9% positive examples and 80.1% negative ones. To determine whether weighting the classification loss is beneficial, training was performed on the full dataset an AlexNet and a Resnet18 networks, both pretrained on ImageNet, with weights for the positive class w.sub.1 equal to 0.5, 0.7, 0.9, 0.95 and 0.99. The weights for both classes sum to 1, where w.sub.1=0.5 means that both classes are equally weighted. Each experiment was run five times and the best validation balanced error for each run was gathered. Training curves and validation balanced errors are reported in FIG. 24. Weights 0.9 and 0.95 were determined to give the best results. For the reminder of the experiments w.sub.1=0.9 was used.

Dataset Size Importance

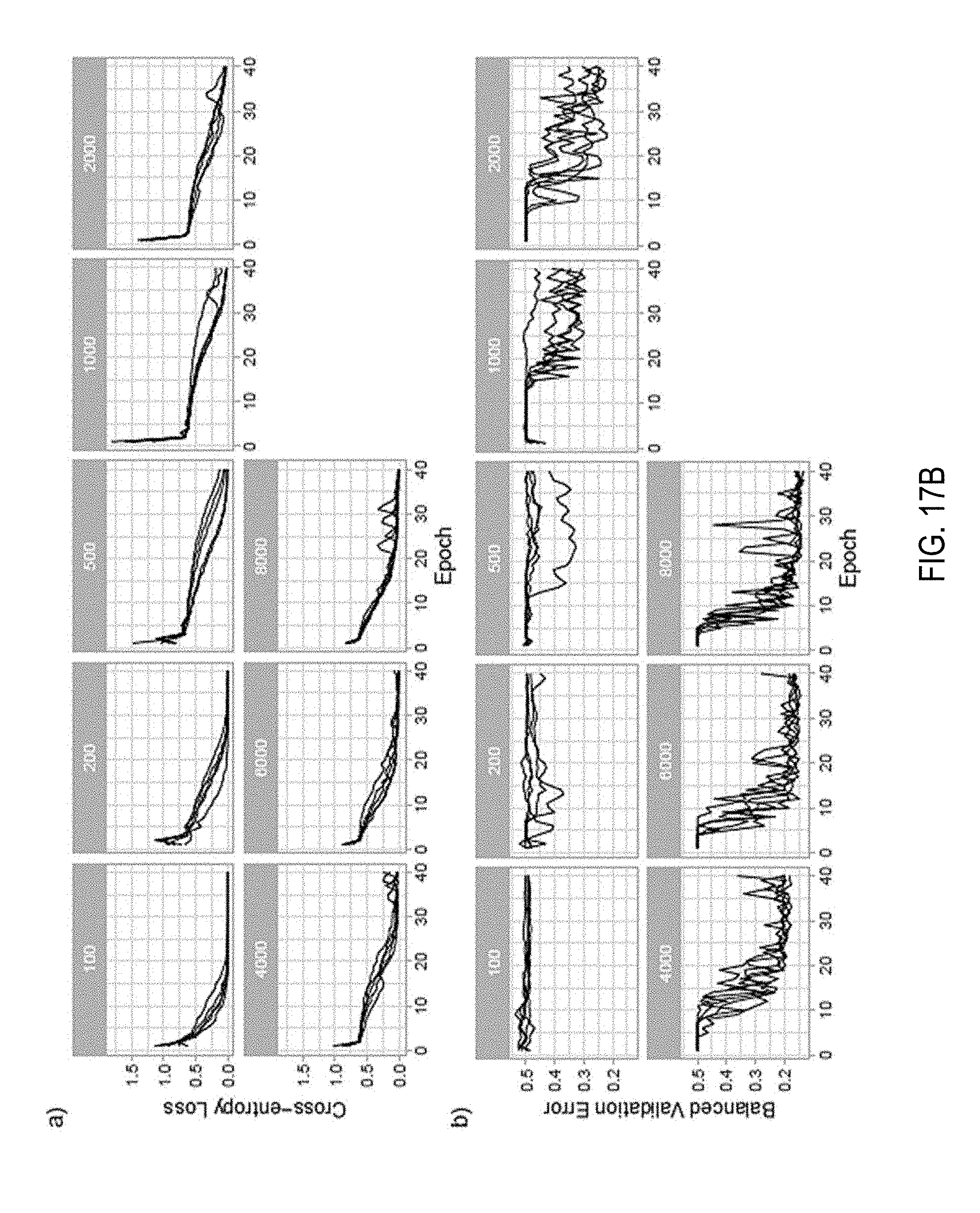

[0086] In the following set of experiments, how dataset size affects performance of a MIL based slide diagnosis task were determined. For these experiments the full dataset was split in a common validation set with 2,000 slides and training sets of different sizes: 100, 200, 500, 1,000, 2,000, 4,000, 6,000. Each bigger training dataset fully contained all previous datasets. For each condition, an AlexNet was trained five times and the best balanced errors on the common validation set are shown in FIG. 14 demonstrating how a MIL based classifier could not have been trained until now due to the lack of a large WSI dataset. Training curves and validation errors are also reported in FIG. 17B.

Model Comparison

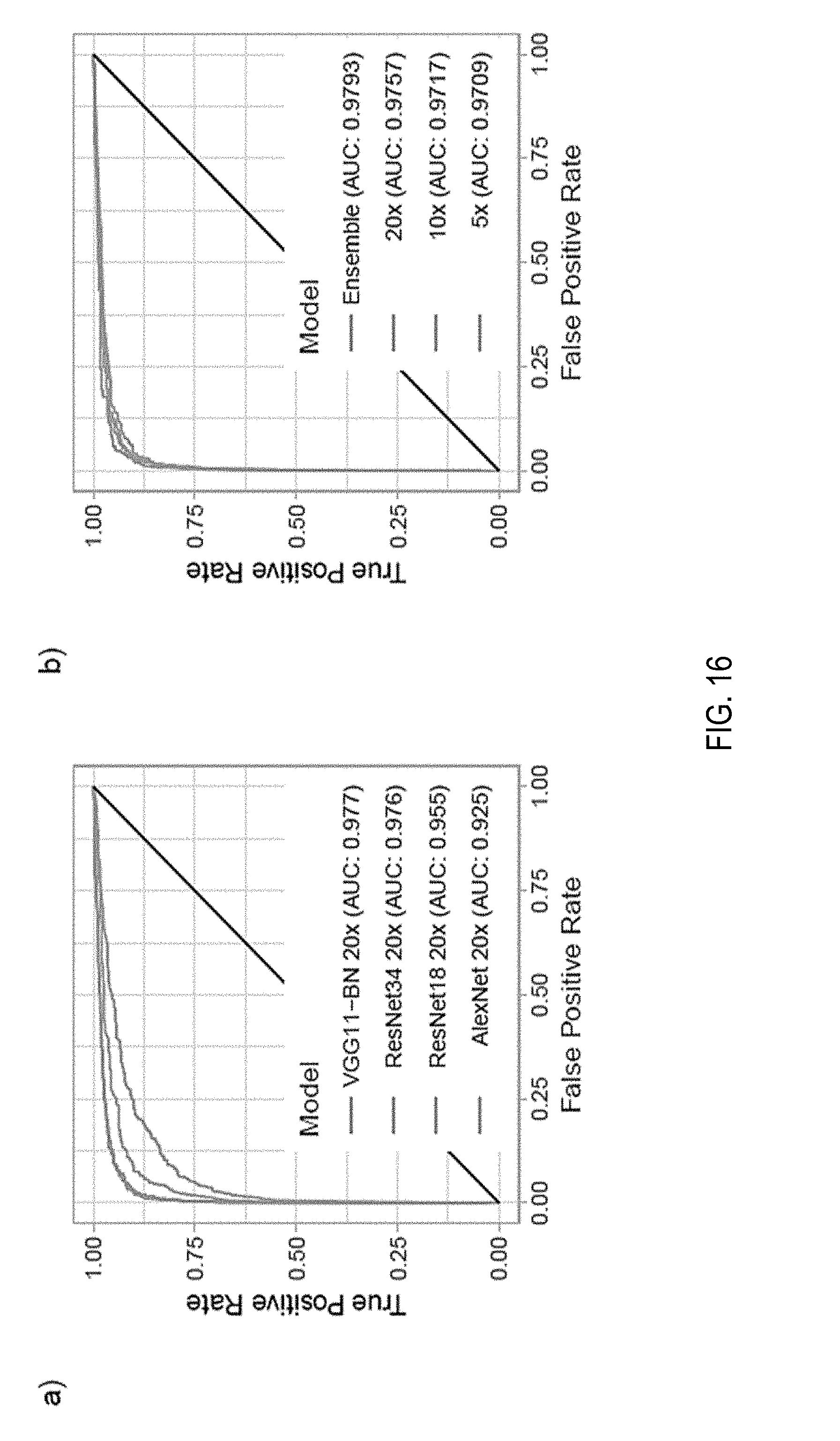

[0087] Various standard image classification models pretrained on ImageNet (AlexNet, VGG11-BN, ResNet18, Resnet34) under the MIL setup at 20.times. magnification were tested. Each experiment was run for up to 60 epochs for at least five times with different random initializations of the classification layers. In terms of balanced error on the validation set, AlexNet performed the worst, followed by the 18-layer ResNet and the 34-layer ResNet. Interestingly, the VGG11 network achieved results similar to those of the ResNet34 on this task. Training and validation results are reported in FIG. 17D.

[0088] Test Dataset Performance: For each architecture, the best model on the validation dataset was chosen for final testing. Performance was similar with the one on the validation data indicating good generalization. The best models were Resnet34 and VGG11-BN which achieved 0.976 and 0.977 AUC respectively. The ROC curves are shown in FIG. 16(a).

[0089] Error Analysis: A thorough analysis of the error modalities of the VGG11-BN model was performed by a pathologist. Of the 1,824 test slides, 55 were false positives (3.7% false positive rate) and 33 were false negatives (9.4% false negative rate). The analysis of the false positives found seven cases that were considered highly suspicious for prostate cancer. Six cases were considered "atypical", meaning that following-up with staining would have been necessary. Of the remaining false positives, 18 were a mix of known mimickers of prostate cancer: adenosis, atrophy, benign prostatic hyperplasia, and inflammation. The false negative cases were carefully inspected, but in six cases no sign of prostate cancer was found by the pathologist. The rest of the false negative cases were characterized by very low volume of cancer tissue.

[0090] Feature Embedding Visualization: Understanding what features the model uses to classify a tile is an important bottle-neck of current clinical applications of deep learning. One can gain insight by visualizing a projection of the feature space in two dimensions using dimensionality reduction techniques such as PCA. 50 tiles were sampled from each test slide, in addition to its top-ranked tile, and extracted the final feature embedding before the classification layer. Shown in FIG. 17A are the results of the ResNet34 model. From the 2D projection, a clear decision boundary between positively and negatively classified tiles can be seen. Interestingly, most of the points are clustered at the top left region where tiles are rarely top-ranked in a slide. By observing examples in this region of the PCA space, it can be determined that they are tiles containing stroma. Tiles containing glands extend along the second principal component axis, where there is a clear separation between benign and malignant glands. Other top-ranked tiles in negative slides contain edges and inked regions. The model trained only with the weak MIL assumption was still able to extract features that embed visually.

Augmentation Experiments

[0091] A small experiment with a ResNet34 model was run to determine whether augmentation of the data with rotations and flips during training could help lower the generalization error. The results are presented in FIG. 17D, showed no indication of a gain in accuracy when using augmentation.

Magnification Comparison

[0092] VGG11-BN and ResNet34 models were trained with tiles generated at 5.times. and 10.times. magnifications. Lowering the magnification led consistently to higher error rates across both models. Training curves and validation errors are shown in FIG. 17E. Ensemble models were also generated by averaging or taking the maximum response across different combinations of the three models trained at different magnifications. On the test set these naive multi-scale models outperformed the single-scale models, as can be seen in the ROC curves in FIG. 16(b). In particular, max-pooling the response of all the three models resulted in the best results with an AUC of 0.979, a balanced error of 5.8% and a false negative rate of 4.8%.

7. Conclusions

[0093] In this study the performance of convolutional neural networks under the MIL framework for WSI diagnosis was analyzed in depth. Focus was given on needle biopsies of the prostate as a complex representative task and the largest dataset in the field with 12,160 WSIs was obtained. Exploratory experiments on a subset of the data revealed that shallower networks without batch normalization, such as AlexNet and VGG11, were preferable over other architectures in this scenario. In addition, it was demonstrated that a multi-scale approach consisting of a pool of models, learned at different magnifications, can boost performance. Finally, the model was trained on the full dataset at 20.times. magnification and, while the model was only run for less than 200 steps, a balanced error rate of 13% was achieved on the best performing model and an AUC of 0.946.

[0094] The performance of the pipelines can be optimized to be able to run training in a fraction of the time. Investigation can be done on how to add supervision from a small pool of pixel-wise annotated slides to increase accuracy and achieve faster convergence. In addition, this MIL pipeline can be tested on other types of cancer to further validate the widespread applicability of the method described herein.

[0095] In addition, it was demonstrated that training on high-performing models for WSI diagnosis only using the slide-level diagnosis and no further expert annotation using the standard MIL assumption is possible. It was shown that final performance greatly depends on the dataset size. The best performing model achieved an AUC of 0.98 and a false negative rate of 4.8% on a held-out test set consisting of 1,824 slides. Given the current efforts in digitizing the pathology work-flow, approaches like these can be extremely effective in building decision support systems that can be effectively deployed in the clinic.

8. Supplemental

Slide Tiling

[0096] Referring to FIG. 5, shown is an example of a slide tiled on a grid with no overlap at different magnifications. The slide is the bag and the tiles constitute the instances of the bag. In this work instances at different magnifications are not part of the same bag. An example of a slide tiled on a grid with no overlap at different magnifications. The slide is the bag and the tiles constitute the instances of the bag. In this work instances at different magnifications are not part of the same bag.

Bag Composition

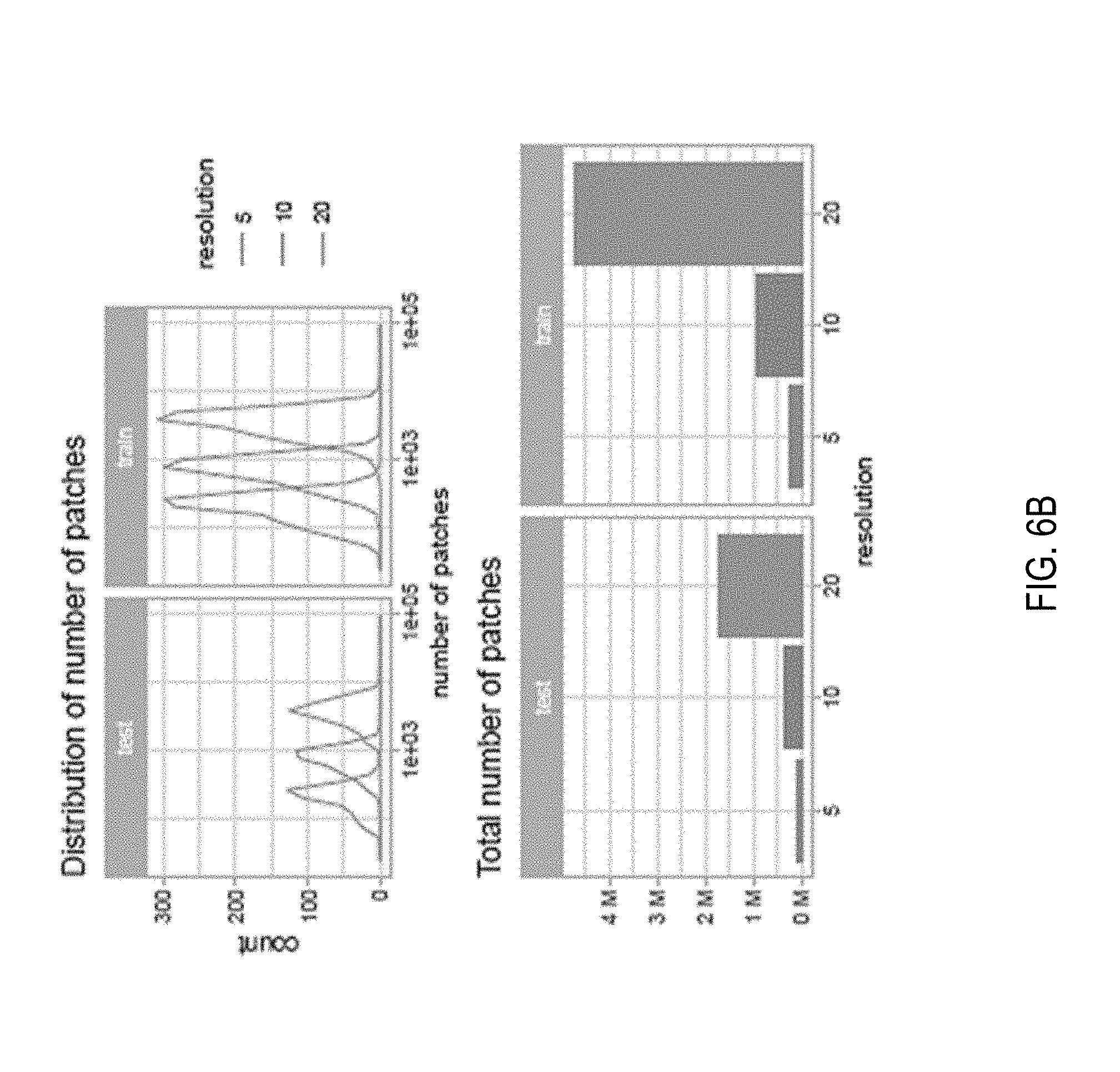

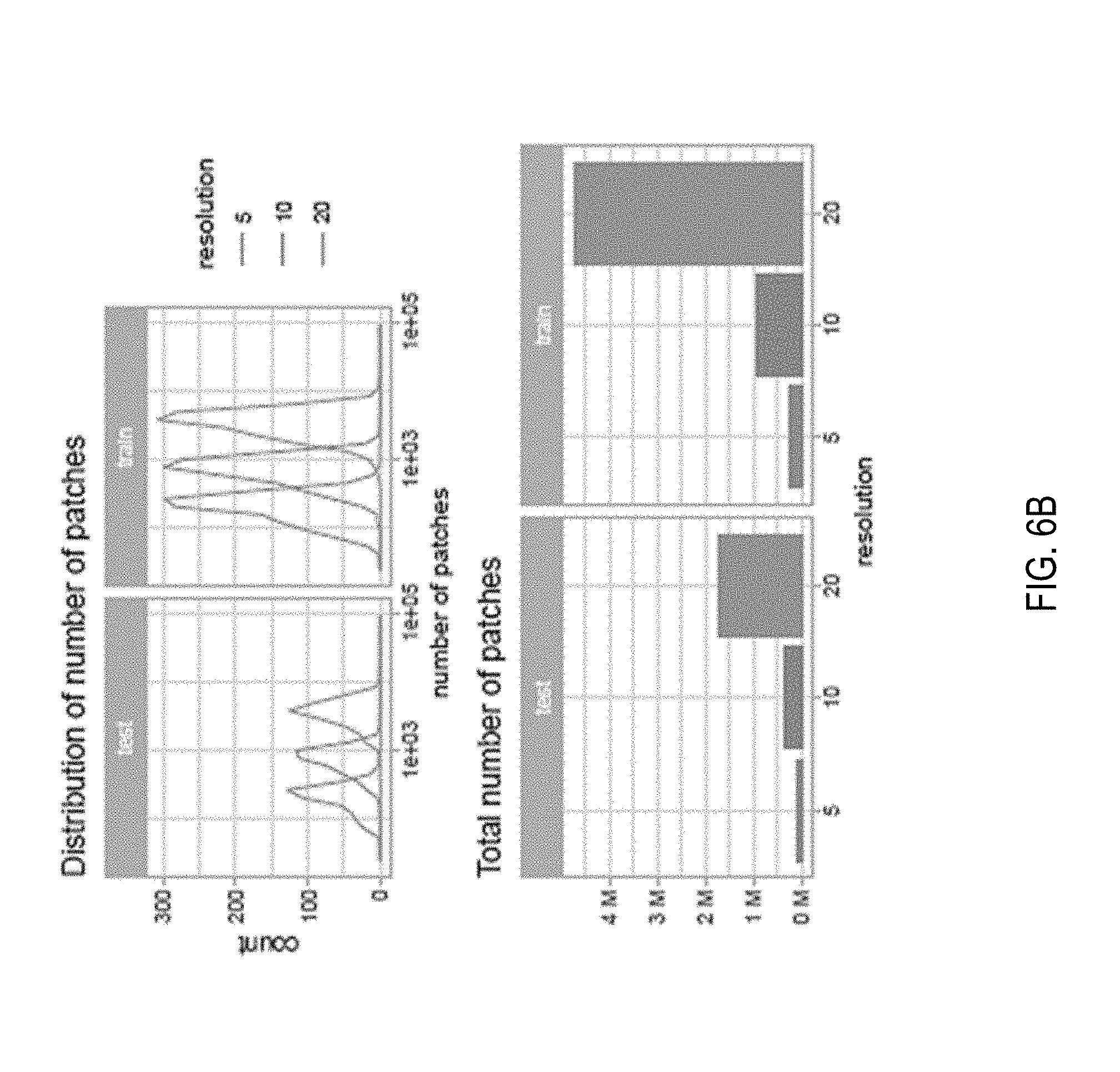

[0097] FIG. 6A illustrates some statistics on the composition of the bags for the exploratory dataset. FIG. 6B illustrates some statistics on the composition of the bags for the exploratory dataset tiled with 50% overlap. FIG. 6C illustrates some statistics on the composition of the bags for the full dataset consisting of 12,160 slides.

Architecture Comparisons

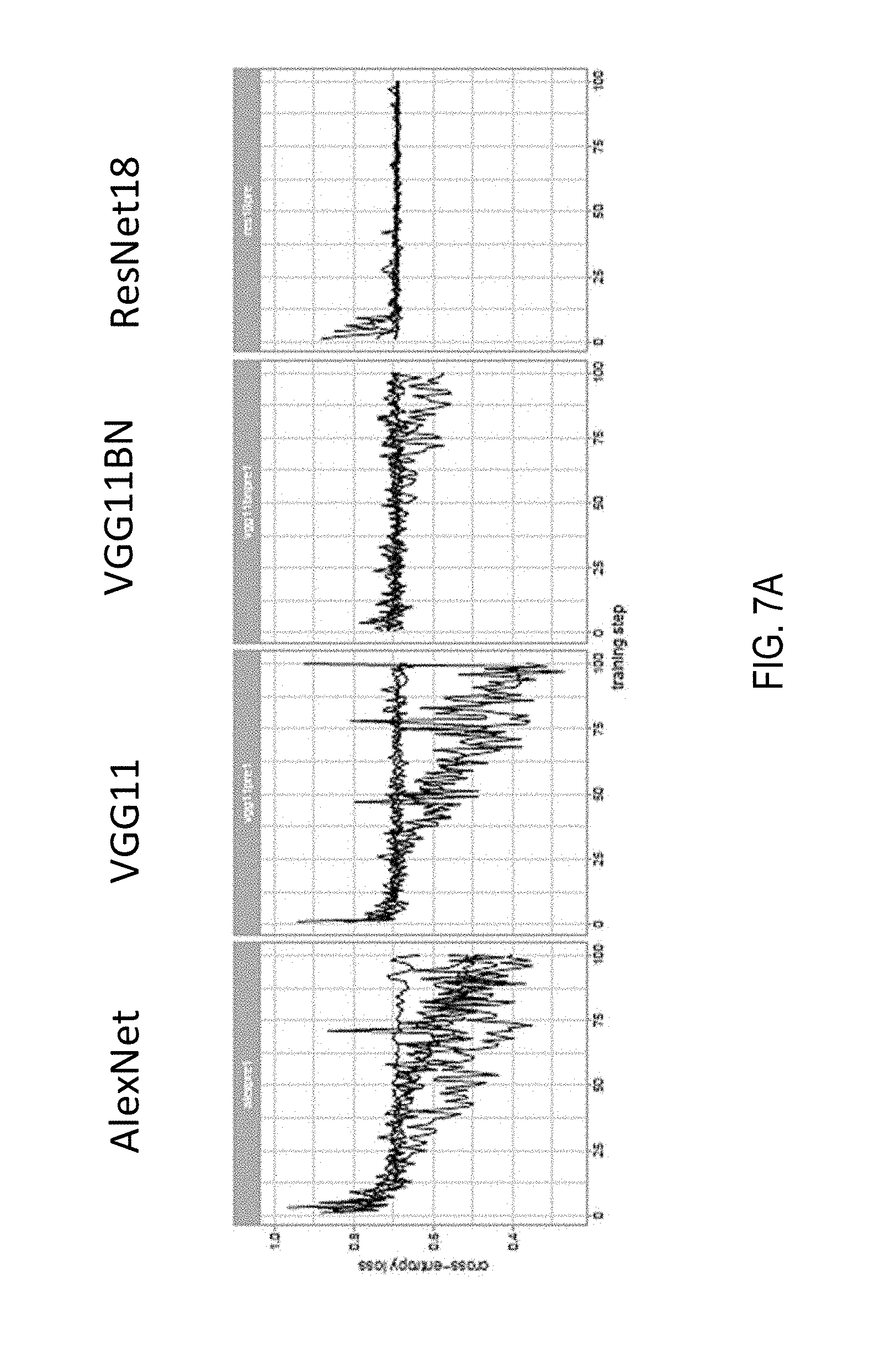

[0098] Referring now to FIG. 7A, shown are setups for exploratory experiments. Standard MIL setup at 20.times. magnification with no overlap; adam optimizer with starting learning rate of 0.0001 for 100 steps. The training loss is plotted for different architectures. To note how AlexNet and VGG11 are able to reduce the loss, while VGG11BN and ResNet18 are stuck in a suboptimal minimum.

Classic MIL AlexNet Training

[0099] Referring now to FIG. 7B, MIL training of an AlexNet at different magnifications. a) Training loss. b) Misclassification error, False Negative Rate and False Positive Rate on the validation set. c) Confusion matrices of the best models on the validation set for each magnification. d) ROC curves of the best models on the validation set for each magnification.

True Positives

[0100] Referring now to FIG. 8A, shown is a selection of true positives from the best models on the validation set. a) Tiles with highest tumor probability within their respective slides. It is clear the model reacts strongly to malignant glands at all magnifications. b) In depth analysis of a random true positive result. The red boxes on the original slide are the ground truth localization of the tumor. The heat-maps are produced at the three magnifications and their respective highest probability tiles are also shown. In some case, the heat-maps can be used for localization of the tumor.

False Positives

[0101] Referring now to FIG. 8B, shown are three examples of false positive slides on the validation set. These are all the cases that were mistakenly classified by the best models at each magnification tested. Inside the red rectangles are the tissue areas with a prostate cancer mimicker. a) The slide contains portions of seminal vesicle tissue. b) The slide presents areas of adenosis and general gland atrophy. c) The slide present areas of inflammation.

False Negatives

[0102] Referring now to FIG. 8C, shown are two examples of false negative slides on the validation set. The false negatives are in general cases where the tumor regions are particularly small.

Naive Multi-Scale Performance

[0103] Referring now to FIG. 9, shown is a table of a performance comparison of the classic MIL approach and the naive multi-scale version. A significant performance boost is observed by combining the prediction from multiple models. Referring now to FIG. 10A, shown are ROC curves for the naive multi-scale approach. The dotted lines are the ROC curves for each model alone. The performance of the three models together is improved as shown by the higher AUCs and overall error rates.

MIL with Overlap

[0104] Previous results suggested that especially for lower magnifications, tiling the slides with no overlap may be detrimental to the final performance. The experiments were repeated with 50% overlap of the tiles at every magnification. The bags at 5.times. magnification now contain several hundred instances, for a total of al-most half a million instances. The increased number of instances slows down the training considerably, especially at 20.times., where after 160 hours only little over 100 steps were completed. Only the model trained at 5.times. magnification was trained for a number of steps comparable with its non-overlap counterpart. Nonetheless, performance showed only a minor improvement with overlapping instances compared to non-overlapping instances. Training Loss, Errors on the validation dataset and other performance metrics are presented in FIG. 10B.

[0105] Referring to FIG. 10B, shown is performance of MIL trained with overlap. a) Training loss. b) Error measures on the validation set. c) ROC curves comparison with models trained without overlap. Only the 5.times. magnification model was trained long enough the be comparable with the "non-overlap" models. The overlap model trained at 5.times. magnification shows a slightly improved performance over its non-overlap counterpart.

Learned Multi-Scale Models

[0106] The results on the naive multi-scale approach are encouraging to try to learn feature at different scales within the same model. Three architectures were tested: (i) The "single" model uses as input a 6-channel image where the first three channels are for a 20.times. image and the second three channels are for a 5.times. image, both centered around the same pixel. (ii) The "double-sum" model has two parallel feature extractor, one for the 20.times. image and one for the 5.times. image. The features are then added element-wise and fed to a classifier. (iii) The "double-cat" model is very similar to the "double-sum" model but the features coming from the two streams are concatenated instead of added.

[0107] Referring now to FIG. 11, shown is a schematic of the three models. Model architectures for the learned multi-scale MIL experiments. The models receive as input a tile at 5.times. and 20.times. magnification. The tiles can be stacked into a "single" stream, or they can each go through parallel feature extractors. The features can then either be summed element-wise or concatenated before being fed to the final classifier.

[0108] The tiling for these experiments is done at 20.times. magnification without overlap, as before, but now two tiles are extracted at each time, one at 5.times. and one at 20.times.. The 5.times. tiles have 75% overlap. Referring now to FIG. 12A, shown are performance of the trained multi-scale experiments in comparison with the performance of the 20.times. magnification experiment from previous sections (dotted line). a) Training loss. b) Classification error on the validation set. The pipeline is slower than the non-multi-scale approach and fewer training steps could be completed. The performance of the "double" models is comparable to the 20.times. magnification model, while the "single" model seems to performs significantly worse. The results shown indicate that performance of the "double-sum" and "double-cat" models is comparable to that of the 20.times. magnification experiment, while the "single" model performs significantly worse. This experiment suggests that training models at different magnifications gives better results, but more experiments should be conducted to rule out the benefits of a trained multi-scale approach.

Large-Scale MIL Training

[0109] Referring now to FIG. 12B, shown are results from the large-scale training experiments on AlexNet (left column) and VGG11 (right column). Training loss and validation balanced error are plotted in the first and second rows respectively. The experiments were run 4 times each (gray traces) and the average curve is shown in red. While the AlexNet curve all show diminishing loss, in the VGG case, two of the four curves were stuck in a suboptimal minimum. The arrows point to the models chosen for the final testing on the test. Referring now to FIG. 13, shown are the confusion matrices for the best AlexNet and VGG11 models on the test set.

B. Towards Clinical-Level Decision-Support Systems in Computational Pathology

[0110] In computational pathology, the use of decision-support systems powered by state-of-the-art deep-learning solutions has been hampered by the lack of large labeled datasets. Previously, studies have relied on datasets consisting of a few hundred slides, which are not sufficient to train models that can perform in clinical practice. To overcome this bottleneck, a dataset including 44,732 whole slides from 15,187 patients was gathered across three different cancer types. Proposed is a novel deep-learning system under the multiple instance learning (MIL) assumption, where only the overall slide diagnosis is necessary for training, thus avoiding all the expensive pixel-wise annotations that are usually part of supervised learning. The proposed method works at scale and requires no dataset curation at any stage. This framework was evaluated on prostate cancer, basal cell carcinoma (BCC) and breast cancer metastases to axillary lymph nodes. It is demonstrated that classification performance with area under the curve (AUC) above 0.98 for all cancer types. In the prostate dataset, this level of accuracy translates to clinical applicability by allowing pathologists to potentially exclude 75% of slides while retaining 100% sensitivity. These results open the way for training accurate tumor classification models at unprecedented scale, laying the foundation for computational decision-support systems that can be deployed in clinical practice.

[0111] There has been a strong push towards the digitization of pathology with the birth of the new field of computational pathology. The availability of increasingly large digital pathology data, coupled with impressive advances in computer vision and machine learning in recent years, offer the perfect combination for the deployment of decision-support systems in the clinical setting. Translating these advancements in computer vision to the medical domain, and to pathology in particular, comes with challenges that remain unsolved, despite the notable success from dermatology and ophthalmology, where human level diagnosis is achieved on dermoscopy and optical coherence tomography (OCT) images, respectively. Unlike in other medical domains, the lack of large datasets which are indispensable for training high-capacity classification models, has set back the advance of computational pathology. The CAMELYON16 challenge for breast cancer metastasis detection contains one of the largest labeled datasets in the field, with a total of 400 whole-slide images (WSIs). But this amount of cases is extremely small compared to the millions of instances present in the popular ImageNet dataset. One widely adopted solution to the scarcity of labeled examples in pathology is to take advantage of the size of each example. Pathology slides scanned at 20.times. magnification produce image files of several gigapixels. About 470 WSIs scanned at 20.times. contain roughly the same number of pixels as the entire ImageNet dataset. By breaking the WSIs into small tiles, it is possible to obtain thousands of instances per slide, enough to train high-capacity models from a few hundred slides. Unfortunately, tile-level annotations are required for supervised learning, but these are prohibitively expensive and time consuming to produce, especially in pathology. There have been several efforts along these lines. Despite the success of computational algorithms on carefully crafted datasets, the performance of these models does not transfer to the real-life scenarios encountered in clinical practice because of the tremendous variance of clinical samples that is not captured in small datasets. Experiments presented in this article will substantiate this claim.

[0112] Another possibility, and the one that is thoroughly explored in this study, is to leverage the slide-level diagnosis, which is readily available from anatomic pathology laboratory information systems (LIS) or electronic health records (EHR), to train a classification model in a weakly supervised manner. Until now, training high-capacity models with clinical relevance at scale and only using slide-level supervision was not possible, due to the lack of large WSI datasets. To address this fundamental problem and to demonstrate how the proposed method can be seamlessly applied to virtually any type of cancer, three datasets of unprecedented size are gathered in the field of computational pathology: (i) a prostate core biopsy dataset consisting of 24,859 slides; (ii) a skin dataset of 9,962 slides; and (iii) a breast metastasis to lymph nodes dataset of 9,894 slides. Each one of these datasets is at least one order of magnitude larger than all other datasets in the field. In total, an equivalent number of pixels is analyzed from 88 ImageNet datasets (Table 1). It should be noted that the data were not curated. The slides in this work are representative of slides generated in a true pathology laboratory, which include common artifacts, such as air bubbles, microtomy knife slicing irregularities, fixation problems, cautery, folds, and cracks, as well as digitization artifacts, such as striping and blurred regions.

[0113] The datasets chosen represent different but complementary views of clinical practice, and offer insight into the types of challenges a flexible and robust decision support system should be able to solve. Prostate cancer, beyond its medical relevance as the leading source of new cancer cases and the second most frequent cause of death among men after lung cancers, can be diagnostically challenging, even for trained pathologists. Multiple studies have shown that prostate cancer diagnosis has a high inter- and intra-observer variability. Diagnosis is frequently based on the presence of very small lesions that comprise less than 1% of the entire tissue surface area (e.g., FIG. 18). Referring to FIG. 18, shown is a hematoxylin and eosin stained whole slide image for prostate cancer biopsy. The diagnosis can be based on very small foci of cancer that account for less than 1% of the tissue surface. In the slide above, only about 6 small tumor glands are present. The right-most image shows an example of a malignant gland. Its relation to the entire slide is put in perspective to reiterate the difficulty of the task.

[0114] For prostate cancer, making diagnosis more reproducible and aiding in the diagnosis of cases with low tumor volume are examples of how decision-support systems can improve patient care. BCC--the most common skin cancer, with approximately 4.3 million individuals diagnosed annually in the US--rarely causes metastases or death. In its most common form (e.g. nodular), pathologists can readily identify and diagnose the lesion; however, given its high frequency, the volume of cases that a pathologist must report is increasing. In this scenario, a decision support system should streamline the work of the pathologist and lead to faster diagnosis. For breast cancer metastases to lymph nodes, a clinical support system could allow for prioritization of slides with a higher probability of metastasis to be presented to the pathologist for confirmation. This assistive model would lower false negative rates and enable automation of subsequent downstream clinical tasks, such as quantification of metastatic tumor volume for clinical staging purposes. Detection of breast cancer metastasis in lymph nodes is also important because it allows directly comparison of the proposed methods to the state-of-the-art WSI classification that was established based on the CAMELYON16 challenge.

[0115] Since the introduction of the MIL framework, there have been many reports in the literature on both the theory and application of MIL in computer vision. Although it provides a good framework for weakly supervised WSI classification, and despite its success with classic computer vision algorithms, MIL has seen relatively little application in medical image analysis and computational pathology, in part due to the lack of large WSI datasets. This disclosure takes advantage of the large datasets and propose a deep MIL framework where only the whole-slide diagnosis is needed to train a decision-support system capable of classifying digital slides on a large scale with a performance in line with clinical practice.

1. Context