Electronic Apparatus And Method For Conditionally Providing Image Processing By An External Apparatus

KAMIDE; Sho ; et al.

U.S. patent application number 16/427506 was filed with the patent office on 2019-05-31 and published on 2019-09-19 for electronic apparatus and method for conditionally providing image processing by an external apparatus. This patent application is currently assigned to NIKON CORPORATION. The applicant listed for this patent is NIKON CORPORATION. Invention is credited to Yae JOTAKI, Sho KAMIDE, Kunihiro KUWANO, Takeo MOTOHASHI, Yasuyuki MOTOKI, Teppei OKUYAMA, Masakazu SEKIGUCHI.

| Application Number | 20190289242 16/427506 |


| Family ID | 51427759 |

| Filed Date | 2019-05-31 |

| United States Patent Application | 20190289242 |

| Kind Code | A1 |

| KAMIDE; Sho ; et al. | September 19, 2019 |

ELECTRONIC APPARATUS AND METHOD FOR CONDITIONALLY PROVIDING IMAGE PROCESSING BY AN EXTERNAL APPARATUS

Abstract

An electronic apparatus includes a processing unit that processes an image capture signal captured by an image capturing unit, a communication unit that is capable of transmitting the image capture signal captured by the image capturing unit to an external apparatus, and a determination unit that determines whether or not to transmit the image capture signal to the external apparatus, according to a capture setting for the image capturing unit.

| Inventors: | KAMIDE; Sho; (Yokohama-shi, JP) ; MOTOKI; Yasuyuki; (Yokohama-shi, JP) ; KUWANO; Kunihiro; (Kawasaki-shi, JP) ; OKUYAMA; Teppei; (Tokyo, JP) ; MOTOHASHI; Takeo; (Matsudo-shi, JP) ; JOTAKI; Yae; (Kawasaki-shi, JP) ; SEKIGUCHI; Masakazu; (Kawasaki-shi, JP) |

|---|---|

| Applicant: | NIKON CORPORATION |

| Assignee: | NIKON CORPORATION Tokyo JP |

| Family ID: | 51427759 |

| Appl. No.: | 16/427506 |

| Filed: | May 31, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |

|---|---|---|

| 16204163 | Nov 29, 2018 | |

| 16427506 | | |

| 15645095 | Jul 10, 2017 | 10178338 |

| 16204163 | | |

| 14768924 | Nov 6, 2015 | |

| PCT/JP2013/071711 | Aug 9, 2013 | |

| 15645095 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 1/00244 20130101; H04N 5/44 20130101; H04N 9/735 20130101; H04N 5/23206 20130101; G06T 7/13 20170101; G06K 9/4604 20130101; H04N 5/232935 20180801; H04N 5/23293 20130101; H04N 5/23245 20130101; H04N 5/23241 20130101 |

| International Class: | H04N 5/44 20060101 H04N005/44; G06T 7/13 20060101 G06T007/13; H04N 1/00 20060101 H04N001/00; H04N 5/232 20060101 H04N005/232; G06K 9/46 20060101 G06K009/46; H04N 9/73 20060101 H04N009/73 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| Feb 28, 2013 | JP | 2013-039128 |

Claims

1. An electronic apparatus comprising: an image processing unit that performs image processing; and a communication unit that is capable of communicating with a plurality of external apparatuses each of which includes a photography unit outputting first image data, wherein the communication unit receives the first image data and information related to image processing performed on the first image data from one of the plurality of external apparatuses, and the image processing unit performs image processing on the first image data received by the communication unit based on the information related to image processing to generate second image data.

2. The electronic apparatus according to claim 1, wherein the communication unit transmits the second image data to the one of the plurality of external apparatuses.

3. The electronic apparatus according to claim 1, wherein the information related to image processing is at least one of a type name of the one of the plurality of external apparatuses, a specification of the one of the plurality of external apparatuses, and a parameter of image processing performed in the one of the plurality of external apparatuses.

4. The electronic apparatus according to claim 1, wherein the image processing unit performs image processing on the first image data to generate the second image data so as to be able to be displayed in the one of the plurality of external apparatuses.

5. The electronic apparatus according to claim 1, wherein the communication unit receives apparatus information of the one of the plurality of external apparatuses and the first image data together from the one of the plurality of external apparatuses.

6. The electronic apparatus according to claim 1, wherein the information includes color information and a parameter related to white balance adjustment.

7. The electronic apparatus according to claim 1, wherein the first image data is image data outputted through movie image photography.

8. The electronic apparatus according to claim 1, further comprising a recording unit that records the second image data which has been processed by the image processing unit.

9. An image processing method comprising: communicating with a plurality of external apparatuses each of which includes a photography unit outputting first image data; receiving the first image data from one of the plurality of external apparatuses; receiving information related to image processing performed on the first image data from one of the plurality of external apparatuses; and performing image processing based on the first image data and the information related to image processing to generate second image data.

10. The image processing method according to claim 9, further comprising transmitting the second image data to the one of the plurality of external apparatuses.

11. The image processing method according to claim 9, wherein the information related to image processing is at least one of a type name of the one of the plurality of external apparatuses, a specification of the one of the plurality of external apparatuses, and a parameter of image processing performed in the one of the plurality of external apparatuses.

Description

CROSS-REFERENCE TO RELATED APPLICATION(S)

[0001] This application is a continuation of U.S. patent application Ser. No. 16/204,163 filed Nov. 29, 2018 which is a divisional of U.S. patent application Ser. No. 15/645,095 filed Jul. 10, 2017 which is a continuation application of U.S. patent application Ser. No. 14/768,924, filed on Nov. 6, 2015, which in turn is a National Phase Application of PCT/JP2013/071711, filed on Aug. 9, 2013, and claims priority to Japanese Patent Application No. 2013-039128, filed on Feb. 28, 2013, the above applications being hereby incorporated by reference in their entirety.

DESCRIPTION

Technical Field

[0002] The present invention relates to an electronic apparatus.

Background Art

[0003] A prior art digital camera system is per se known that transmits an image capture signal from an imaging element (so-called RAW data) to a server, and image processing is performed upon this image capture signal by an image processing unit that is provided in the server (for example, refer to Patent Document #1).

CITATION LIST

Patent Literature

[0004] Patent Document #1: Japanese Laid-Open Patent Publication 2003-87618.

SUMMARY OF INVENTION

Technical Problem

[0005] With the prior art technique, there has been the problem that the convenience of use of the camera is poor, since the image processing is always performed by the server.

Solution to Technical Problem

[0006] According to the 1st aspect of the present invention, an electronic apparatus comprises: a processing unit that processes an image capture signal captured by an image capturing unit; a communication unit that is capable of transmitting the image capture signal captured by the image capturing unit to an external apparatus; and a determination unit that determines whether or not to transmit the image capture signal to the external apparatus, according to a capture setting for the image capturing unit.

[0007] According to the 2nd aspect of the present invention, it is preferred that in the electronic apparatus according to the 1st aspect, the communication unit transmits information related to details of the processing by the processing unit to the external apparatus.

[0008] According to the 3rd aspect of the present invention, it is preferred that in the electronic apparatus according to the 2nd aspect, the communication unit transmits information specifying at least one of a specification and parameters of the processing unit to the external apparatus.

[0009] According to the 4th aspect of the present invention, the electronic apparatus according to any one of the 1st through 3rd aspects may further comprise a recording unit that records a movie image and a still image upon a recording medium; and the determination unit may transmit the image capture signal to the external apparatus when the recording unit is to record the movie image upon the recording medium.

[0010] According to the 5th aspect of the present invention, the electronic apparatus according to any one of the 1st through 4th aspects may further comprise a setting unit that is capable of setting the image capturing unit to a movie image mode and to a still image mode; and the determination unit may transmit the image capture signal to the external apparatus when the movie image mode is set by the setting unit.

[0011] According to the 6th aspect of the present invention, the electronic apparatus according to the 5th aspect may further comprise a display unit that displays an image processed by the external apparatus as a live view.

[0012] According to the 7th aspect of the present invention, an electronic apparatus comprises: a processing unit that processes an image capture signal captured by an image capturing unit; a communication unit that is capable of transmitting the image capture signal captured by the image capturing unit to an external apparatus; and a determination unit that determines whether or not to transmit the image capture signal to the external apparatus, according to a state of generation of heat from at least one of the image capturing unit and the processing unit.

[0013] According to the 8th aspect of the present invention, it is preferred that in the electronic apparatus according to the 7th aspect, the determination unit determines whether or not to transmit the image capture signal to the external apparatus, according to a state of generation of heat from the communication unit.

[0014] According to the 9th aspect of the present invention, the electronic apparatus according to the 7th or 8th aspect may further comprise a temperature detection unit that detects the temperature of at least one of the image capturing unit, the processing unit, and the communication unit.

[0015] According to the 10th aspect of the present invention, the electronic apparatus according to any one of the 7th through 9th aspects may further comprise a recording unit that records a movie image and a still image upon a recording medium; and the determination unit may transmit the image capture signal to the external apparatus when the recording unit is to record the movie image upon the recording medium.

[0016] According to the 11th aspect of the present invention, an electronic apparatus comprises: a processing unit that processes an image capture signal captured by an image capturing unit; a communication unit that is capable of transmitting the image capture signal captured by the image capturing unit to an external apparatus; and a determination unit that determines whether or not to transmit the image capture signal to the external apparatus, according to a state of generation of heat from the communication unit.

[0017] According to the 12th aspect of the present invention, the electronic apparatus according to the 11th aspect may further comprise a temperature detection unit that detects the temperature of the communication unit.

[0018] According to the 13th aspect of the present invention, it is preferred that in the electronic apparatus according to the 11th or 12th aspect, the communication unit transmits the image capture signal and information related to the processing unit to the external apparatus.

Advantageous Effects of Invention

[0019] According to the present invention, it is possible to provide an electronic apparatus whose convenience of use is good.

BRIEF DESCRIPTION OF DRAWINGS

[0020] FIG. 1 is a block diagram showing the structure of a photography system according to a first embodiment of the present invention;

[0021] FIG. 2 is a flow chart showing live view processing executed by a first control unit 21;

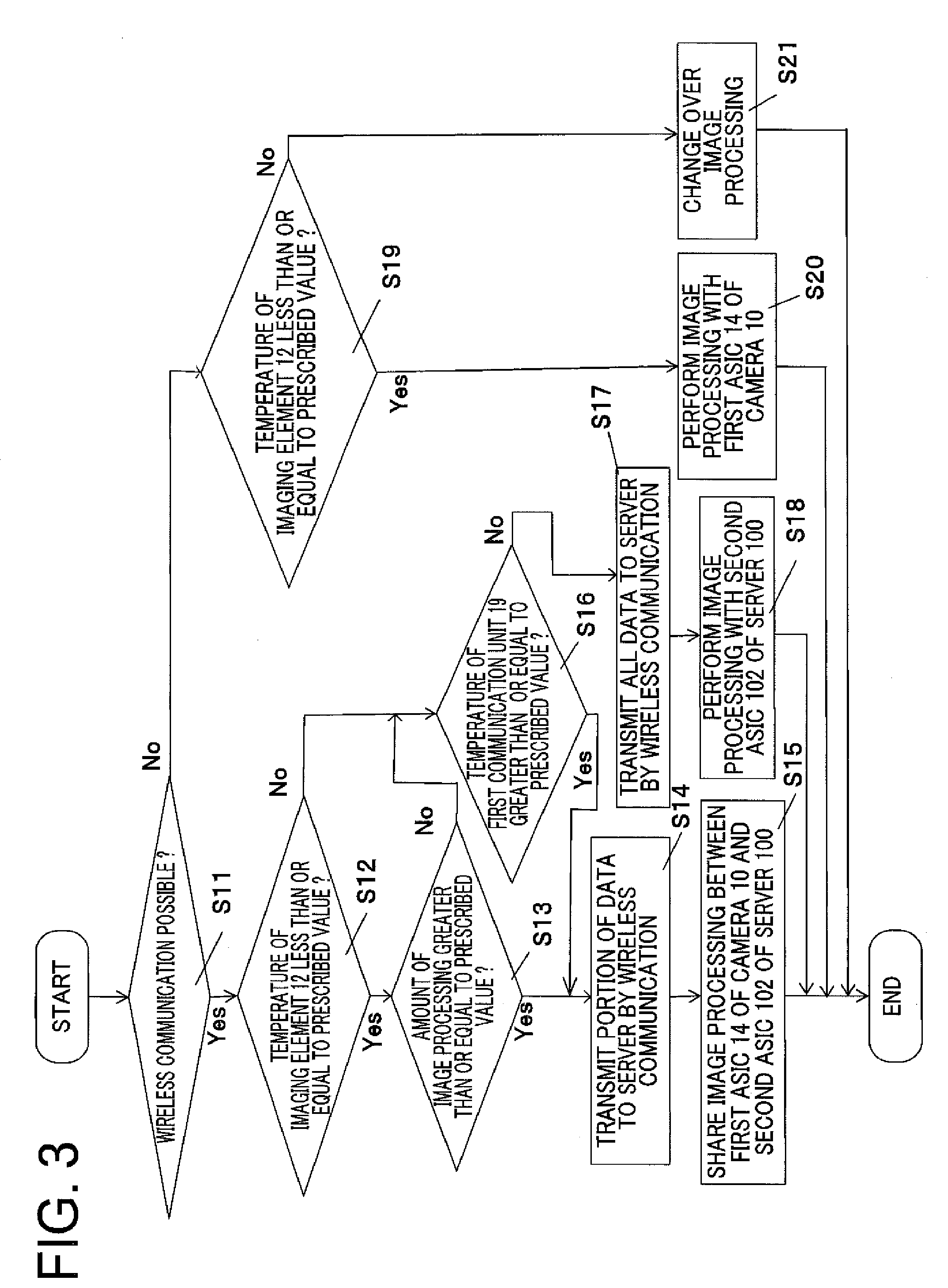

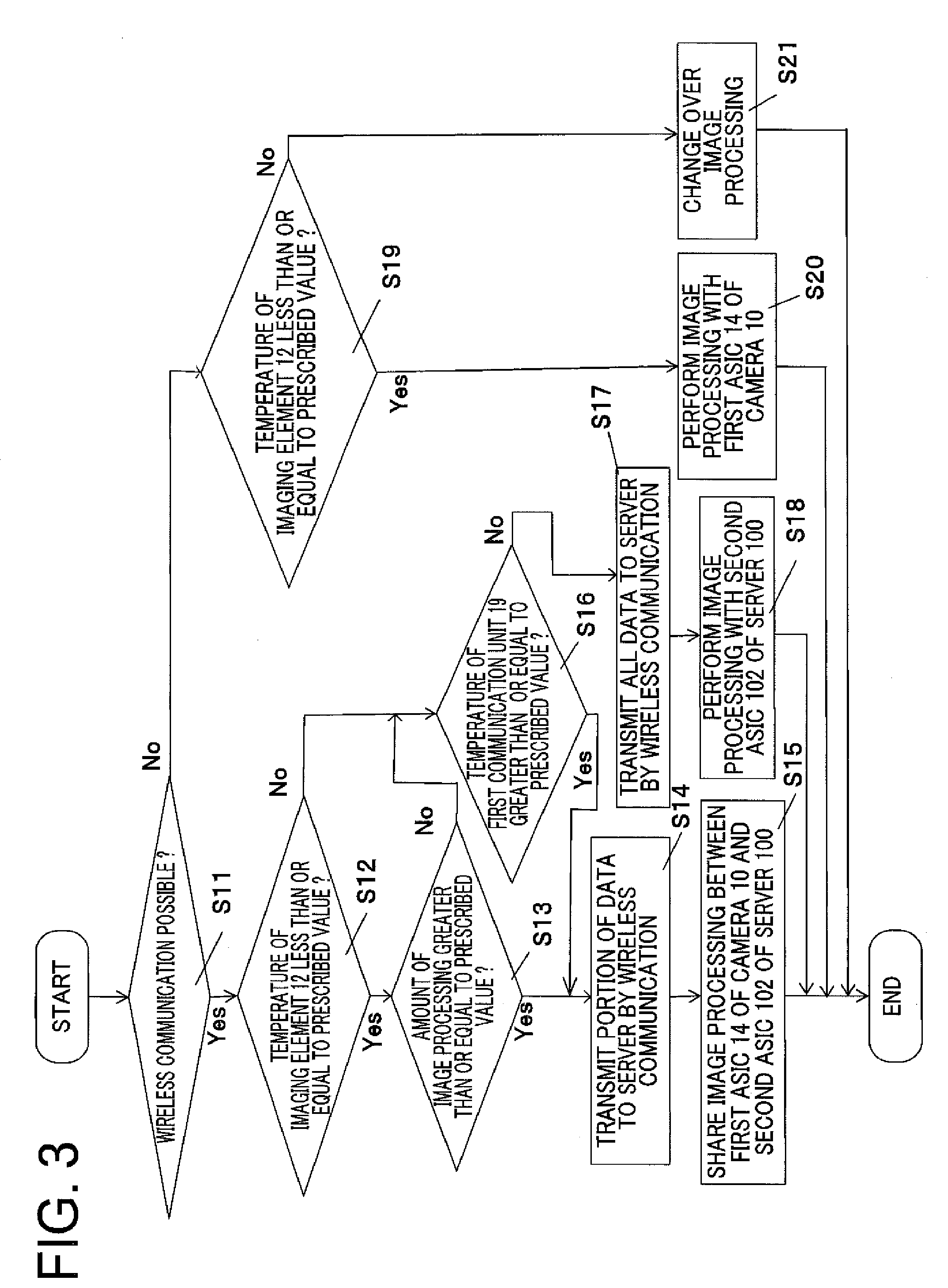

[0022] FIG. 3 is a flow chart showing live view processing executed by a first control unit 21 according to a second embodiment;

[0023] FIG. 4 is a time chart showing changeovers between a first ASIC 14 and a second ASIC 102 along with changes of temperature of an imaging element 12; and

[0024] FIG. 5 is a figure showing an example in which a plurality of cameras are connected to a single server 100.

DESCRIPTION OF EMBODIMENTS

Embodiment #1

[0025] FIG. 1 is a block diagram showing the structure of a photography system according to a first embodiment of the present invention. This photography system 1 comprises a camera 10 and a server 100. The camera 10 and the server 100 are connected together via a network 80, such as for example a LAN or a WAN, and are capable of performing mutual data communication in both directions.

[0026] The camera 10 is a so-called integrated lens type digital camera that obtains image data by capturing an image of a photographic subject that has been focused by an optical system 11 consisting of a plurality of lens groups with an imaging element 12 that may, for example, be a CMOS or a CCD or the like. The camera 10 comprises an A/D converter 13, a first ASIC 14, a display unit 15, a recording medium 16, an operation unit 17, a first memory 18, a first communication unit 19, a temperature sensor 20, and a first control unit 21.

[0027] The A/D converter 13 converts an analog image signal outputted from the imaging element 12 to a digital image signal. The first ASIC 14 is a circuit that performs image processing of various kinds (for example, color interpolation processing, tone conversion processing, image compression processing, or the like) upon the digital image signal outputted by the A/D converter 13. And the first ASIC 14 outputs the digital signal upon which it has performed the image processing described above to the display unit 15 and/or to the recording medium 16. The imaging element 12 and the first ASIC 14 are disposed close to one another within the casing of the camera 10, and the processing load upon the imaging element 12 and upon the first ASIC 14 increases during capture of a movie image or during image processing of a movie image, and accordingly the amount of heat generated raises the temperature of the imaging element 12 and the temperature of the first ASIC 14.

[0028] The display unit 15 is a display device that comprises, for example, a liquid crystal panel or the like, and displays images (still images and moving images) on the basis of digital image signals outputted by the first ASIC 14, and operating menu screens of various types and so on. And the recording medium 16 is a transportable type recording medium such as, for example, an SD card (registered trademark) or the like, and records image files on the basis of digital image signals outputted by the first ASIC 14. Furthermore, the operation unit 17 includes various operation members, such as a release switch for commanding preparatory operation for photography and photographic operation, a touch panel upon which settings of various types are established, a mode dial that selects the photographic mode, and so on. When the user operates these operation members, the operation unit 17 outputs operating signals corresponding to these operations to the first control unit 21. It should be understood that it would be acceptable to arrange for commands for photographing a still image and a movie image to be issued with the release switch, or alternatively a dedicated movie image capture switch may be provided. Moreover, the mode dial of this embodiment is capable of setting at least one of a plurality of still image modes and a movie image mode.

[0029] The first memory 18 is a non-volatile semiconductor memory such as, for example, a flash memory or the like, and stores therein in advance a control program and control parameters and so on so that the first control unit 21 controls the camera 10. The first communication unit 19 is a communication circuit that performs data communication to and from the server 100 via the network 80, for example by wireless communication. And the temperature sensor 20 is provided in the neighborhood of the imaging element 12, and detects the temperature of the imaging element 12 (in other words, the state of heat generation by the imaging element 12).

[0030] The first control unit 21 comprises a microprocessor, memory, and peripheral circuitry not shown in the figures, and provides overall control for the camera 10 by reading in and executing a predetermined control program from the first memory 18.

[0031] The server 100 comprises a second communication unit 101, a second ASIC 102, a second memory 104, and a second control unit 106. The second communication unit 101 is a communication circuit that performs data communication to and from the camera 10 via the network 80, for example by wireless communication. And the second ASIC 102 is a circuit that performs image processing similar to that performed by the first ASIC 14.

[0032] The second memory 104 is a non-volatile semiconductor memory such as, for example, a flash memory or the like, and stores therein in advance a control program and control parameters and so on so that the second control unit 106 controls the server 100. In addition to the control program and the control parameters and so on mentioned above, this second memory 104 also is capable of storing image data upon which image processing of various types has been performed by the second ASIC 102.

[0033] Next, live view display performed by this camera 10 will be explained. When the power supply of the camera 10 is in the ON state, the first control unit 21 performs so-called live view display upon the display unit 15. When performing this live view display, the first control unit 21 captures an image of the photographic subject with the imaging element 12, and outputs an analog image signal corresponding to this photographic subject image to the A/D converter 13. It should be understood that, as previously described, with the mode dial of this embodiment, it is possible to establish any one of a plurality of settings for still image photography, and to establish a setting for movie image photography. The first ASIC 14 changes the processing for live view display according to whether the mode dial is set for photography of a still image or for photography of a movie image. In concrete terms, during live viewing in a still image mode, the live view image is generated by thinning out the image captured by the imaging element 12, so that the amount of calculation by the first ASIC 14 is reduced. By contrast, during live viewing in the movie image mode, the imaging element 12 and the first ASIC 14 generate a live view image having the same resolution as the recording size for the movie image. In other words, in this embodiment, the amount of heat generated by the imaging element 12 and the first ASIC 14 becomes greater during live view in the movie image mode than during live view in a still image mode.

[0034] On the basis of the operational state of the camera 10 (i.e. whether the live viewing is in a still image mode or is in the movie image mode), the first control unit 21 determines whether to control the first ASIC 14 within the camera 10 to process this digital image signal, or to control the second ASIC 102 within the server 100 to perform this processing. And if the first control unit 21 has determined that the first ASIC 14 is to be controlled to process this digital image signal, then image data that has been produced by the first ASIC 14 performing various types of image processing upon the digital image signal (i.e. a live view image) is displayed upon the display unit 15.

[0035] On the other hand, if the first control unit 21 has determined that the second ASIC 102 is to be controlled to process the digital image signal, then the first control unit 21 transmits the digital image signal that has been outputted by the A/D converter 13 to the server 100 via the first communication unit 19. And, upon receipt of this digital image signal via the second communication unit 101, the second control unit 106 within the server 100 causes the second ASIC 102 to perform processing upon this received digital image signal. The second ASIC 102 generates image data (i.e. a live view image) by performing image processing of various types upon this digital image signal. And the second control unit 106 transmits this image data (i.e. the live view image) that has been generated by the second ASIC 102 to the camera 10 via the second communication unit 101. Upon receipt of this image data (i.e. the live view image) via the first communication unit 19, the first control unit 21 within the camera 10 displays the live view image upon the display unit 15.

[0036] FIG. 2 is a flow chart showing the live view processing executed by the first control unit 21; in this embodiment, the processing shown in this flow chart is started in the case of live view in the movie image mode. In a first step S01, the first control unit 21 determines whether or not it is possible to perform wireless communication with the first communication unit 19. If the state is such that wireless communication with the first communication unit 19 is possible, then the flow of control proceeds to step S02.

[0037] In step S02, the first control unit 21 makes a decision as to whether or not the temperature of the imaging element 12, which has been detected with the temperature sensor 20, is less than or equal to a predetermined threshold value (for example 70° C.). If the temperature of the imaging element 12 is less than or equal to the predetermined threshold value, then the flow of control proceeds to step S03. In this step S03, the first control unit 21 makes a decision as to whether or not the amount of image processing (i.e. the amount of calculation) required in order to generate image data (i.e. a live view image) will be greater than or equal to a predetermined threshold value. If the amount of image processing is greater than or equal to the predetermined threshold value, then the flow of control proceeds to step S04. It should be understood that it would also be acceptable to arrange for the predetermined threshold value for the temperature to be set in 5° C. steps, as appropriate.

[0038] In step S04, by wireless communication via the first communication unit 19, the first control unit 21 transmits a portion of the digital image signal outputted from the A/D converter 13 to the server 100. And in the next step S05 the first ASIC 14 of the camera 10 and the second ASIC 102 of the server 100 share the image processing upon the digital image signal between one another.

[0039] As a method of sharing the image processing between the first ASIC 14 and the second ASIC 102, one possibility is to repeatedly change over to processing by the second ASIC 102 after processing by the first ASIC 14 has been performed for some fixed time period. Alternatively, if the first control unit 21 displays the image data (i.e. the live view image) upon the display unit 15 at a rate of sixty frames per second, then, among the digital image signals outputted from the A/D converter 13 at the rate of sixty times per second, the first control unit 21 may output the odd numbered frames to the first ASIC 14, and may transmit the even numbered frames to the server 100 via the first communication unit 19. And in this case it is suggested that the first ASIC 14 should perform image processing upon the odd numbered frames, while the second ASIC 102 performs image processing upon the even numbered frames.
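The odd/even frame sharing described above can be illustrated with a minimal sketch. The function name `split_frames` and the representation of frames as byte strings are assumptions made for illustration only; frames are assumed to be numbered from 1 in the order in which they are outputted from the A/D converter 13.

```python
from typing import Iterable, List, Tuple


def split_frames(frames: Iterable[bytes]) -> Tuple[List[bytes], List[bytes]]:
    """Split a frame sequence into (camera_frames, server_frames).

    Frames are counted from 1, so the 1st, 3rd, 5th, ... frames are the
    odd numbered frames kept for the first ASIC 14, and the 2nd, 4th, ...
    frames are the even numbered frames sent to the server 100.
    """
    camera_frames: List[bytes] = []
    server_frames: List[bytes] = []
    for number, frame in enumerate(frames, start=1):
        if number % 2 == 1:
            camera_frames.append(frame)   # processed by the first ASIC 14
        else:
            server_frames.append(frame)   # transmitted for the second ASIC 102
    return camera_frames, server_frames


if __name__ == "__main__":
    local, remote = split_frames([b"f1", b"f2", b"f3", b"f4", b"f5"])
    print(local, remote)
```

With this split, each ASIC handles roughly half of the sixty frames generated per second.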

[0040] On the other hand, if in step S03 the amount of image processing is less than the predetermined threshold value, or if in step S02 the temperature of the imaging element 12 is greater than the predetermined threshold value, then the flow of control is transferred to step S06. In this step S06, the first control unit 21 transmits the digital image signal outputted from the A/D converter 13 to the server 100 by wireless communication via the first communication unit 19. And then in step S07 the second ASIC 102 of the server 100 performs image processing upon the digital image signal received via the second communication unit 101, and thereby generates image data (i.e. a live view image).

[0041] If in step S01, due to some reason such as, for example, the camera 10 being a long way away from the base station for wireless communication or the like, a state becomes established in which wireless communication between the camera 10 and the server 100 is not possible, then the flow of control is transferred to step S08. In this step S08, the first control unit 21 makes a decision as to whether or not the temperature of the imaging element 12 detected by the temperature sensor 20 is less than or equal to a predetermined threshold value. If the temperature of the imaging element 12 is less than or equal to the predetermined threshold value, then the flow of control proceeds to step S09. In this step S09, the first control unit 21 inputs the digital image signal outputted from the A/D converter 13 to the first ASIC 14, and generates image data (i.e. a live view image) by performing image processing upon this digital image signal with the first ASIC 14.

[0042] On the other hand, if the temperature of the imaging element 12 is greater than the predetermined threshold value, then the flow of control is transferred to step S10. In this step S10, the first control unit 21 changes over from live view in the movie image mode to live view in a still image mode, thus reducing the amount of processing by the imaging element 12 and the first ASIC 14.

[0043] As described above, in the live view image generation processing, the first control unit 21 determines whether or not to transmit the digital image signal to the server 100 by referring to three operational states of the camera 10, i.e. whether or not wireless communication with the first communication unit 19 is possible, the temperature of the imaging element 12 as detected by the temperature sensor 20, and the amount of image processing (i.e. the image processing load or the amount of calculation) to be performed by the first ASIC 14.
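The decision flow of FIG. 2 can be sketched as a single function, under stated assumptions. The names `Route` and `decide_route_fig2`, the numeric scale of `processing_amount`, and its default threshold are hypothetical; only the 70° C. example value comes from the description. The sketch also assumes that a negative decision in step S02 (imaging element hotter than the threshold) leads to step S06, which is one reading of the flow rather than a confirmed detail of the flow chart.

```python
from enum import Enum


class Route(Enum):
    SHARED = "S04-S05: camera and server share the image processing"
    SERVER = "S06-S07: server performs the image processing"
    CAMERA = "S09: camera performs the image processing"
    STILL_MODE = "S10: change over to live view in a still image mode"


def decide_route_fig2(
    wireless_ok: bool,
    sensor_temp_c: float,
    processing_amount: float,
    temp_threshold_c: float = 70.0,      # example value given for step S02
    processing_threshold: float = 1.0,   # hypothetical unit and value
) -> Route:
    if wireless_ok:                                        # step S01
        if sensor_temp_c <= temp_threshold_c:              # step S02
            if processing_amount >= processing_threshold:  # step S03
                return Route.SHARED
        # Assumption: both negative branches (S02 and S03) lead to S06.
        return Route.SERVER
    if sensor_temp_c <= temp_threshold_c:                  # step S08
        return Route.CAMERA
    return Route.STILL_MODE


if __name__ == "__main__":
    print(decide_route_fig2(True, 50.0, 2.0).value)
    print(decide_route_fig2(False, 80.0, 2.0).value)
```

Returning an enumeration value rather than acting directly keeps the three referenced operational states separate from the transmission and processing steps themselves.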

[0044] If wireless communication can be performed (i.e. if an affirmative decision is reached in step S01), then the first control unit 21 causes the second ASIC 102 in the server 100 to perform processing of the digital image signal outputted from the A/D converter 13. Since, due to this, the burden of calculation upon the first ASIC 14 is reduced and the amount of heat generated by the first ASIC 14 decreases, the rise of the temperature of the imaging element 12 is suppressed.

Embodiment #2

[0045] The photography system according to the second embodiment has a similar structure to that of the photography system according to the first embodiment, with the exception that a temperature sensor not shown in the figures is provided in the neighborhood of the first communication unit 19. This temperature sensor not shown in the figures detects the temperature of the first communication unit 19. In a similar manner to the case with the first ASIC 14, the amount of heat generated by the first communication unit 19 increases as the amount of communication (i.e. the amount of data communicated) increases, and this generated heat is transferred to the imaging element 12, which is provided within the same casing, according to the amount of communication.

[0046] In live view image generation processing, in addition to referring to three operational states of the camera 10, i.e. to whether or not wireless communication with the first communication unit 19 is possible, to the temperature of the imaging element 12 as detected by the temperature sensor 20, and to the amount of image processing (i.e. the image processing load or the amount of calculation) to be performed by the first ASIC 14, the first control unit 21 of this embodiment determines whether or not to transmit the digital image signal to the server 100 by further referring to the temperature of the first communication unit 19 as detected by a temperature sensor not shown in the figures.

[0047] FIG. 3 is a flow chart showing the live view processing executed by the first control unit 21 according to this second embodiment. In a first step S11, the first control unit 21 determines whether or not it is possible to perform wireless communication with the first communication unit 19. If the situation is such that wireless communication with the first communication unit 19 is possible, then the flow of control proceeds to step S12.

[0048] In step S12, the first control unit 21 makes a decision as to whether or not the temperature of the imaging element 12, which has been detected with the temperature sensor 20, is less than or equal to a predetermined threshold value. If the temperature of the imaging element 12 is less than or equal to the predetermined threshold value, then the flow of control proceeds to step S13. In this step S13, the first control unit 21 makes a decision as to whether or not the amount of image processing (i.e. the amount of calculation) required in order to generate image data (i.e. a live view image) will be greater than or equal to a predetermined threshold value. If the amount of image processing is greater than or equal to the predetermined threshold value, then the flow of control proceeds to step S14.

[0049] In step S14, by wireless communication via the first communication unit 19, the first control unit 21 transmits a portion of the digital image signal outputted from the A/D converter 13 to the server 100. And in the next step S15 the first ASIC 14 of the camera 10 and the second ASIC 102 of the server 100 share the image processing upon the digital image signal between one another.

[0050] On the other hand, if in step S13 the amount of image processing is less than the predetermined threshold value, or if in step S12 the temperature of the imaging element 12 is greater than the predetermined threshold value, then the flow of control is transferred to step S16. In this step S16, the first control unit 21 makes a decision as to whether or not the temperature of the first communication unit 19, which has been detected with the temperature sensor not shown in the figures, is less than or equal to a predetermined threshold value (for example, 60° C.). If the temperature of the first communication unit 19 is less than or equal to the predetermined threshold value, then the flow of control proceeds to step S17. In this step S17, the first control unit 21 transmits the digital image signal outputted from the A/D converter 13 to the server 100 by wireless communication via the first communication unit 19. And then in step S18 the second ASIC 102 of the server 100 performs image processing upon the digital image signal that has been received via the second communication unit 101, and thereby generates image data (i.e. a live view image).

[0051] If in step S16 the temperature of the first communication unit 19 is greater than the predetermined threshold value, then the flow of control proceeds to step S14. In step S14 and step S15, as already explained, the first control unit 21 transmits a portion of the digital image signal outputted from the A/D converter 13 to the server 100 by wireless communication via the first communication unit 19, and image processing is performed by being shared between the first ASIC 14 of the camera 10 and the second ASIC 102 of the server 100. This is a preventative measure in case the temperature of the first communication unit 19 should become too high. It should be understood that the threshold value for the temperature of the first communication unit 19 may be set in five .degree. C. steps, as appropriate.

[0052] Moreover, instead of performing steps S14 and S15, if the temperature of the first communication unit 19 becomes greater than or equal to a prescribed value, it may also be arranged for the first control unit 21 to stop the transmission of the digital image signal to the server 100 by the first communication unit 19, and for the first ASIC 14 to perform the image processing.

[0053] It should be understood that the processing (i.e. steps S19 through S21) that follows step S11 when the system is in a state in which wireless communication between the camera 10 and the server 100 is not possible is the same as the processing of the steps S08 through S10 of FIG. 2 explained in connection with the first embodiment, and accordingly explanation thereof is omitted.
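The flow of control of the second embodiment described above may be sketched as follows. This is only an illustrative sketch under stated assumptions, not the implementation of the embodiment: the names choose_route, Route, sensor_temp_limit_c, and workload_limit are hypothetical, and it is assumed that an affirmative decision in step S12 or in step S13 leads to step S14. Only the example value of 60°C for step S16 is taken from the text.

```python
from enum import Enum


class Route(Enum):
    LOCAL = "camera only (steps S19 through S21)"
    SHARED = "shared between camera and server (steps S14 and S15)"
    SERVER = "server only (steps S17 and S18)"


# Example threshold given in the text for the first communication unit 19.
COMM_TEMP_LIMIT_C = 60.0


def choose_route(link_up, sensor_temp_c, workload, comm_temp_c,
                 sensor_temp_limit_c, workload_limit):
    """Decide where image processing is performed (hypothetical helper)."""
    if not link_up:
        # Step S11: wireless communication is not possible.
        return Route.LOCAL
    if sensor_temp_c >= sensor_temp_limit_c:
        # Step S12 (assumed branch): the imaging element 12 is hot.
        return Route.SHARED
    if workload >= workload_limit:
        # Step S13 (assumed branch): the amount of image processing is large.
        return Route.SHARED
    if comm_temp_c <= COMM_TEMP_LIMIT_C:
        # Step S16: the communication unit is cool enough to send everything.
        return Route.SERVER
    # Step S16, negative decision: fall back to shared processing.
    return Route.SHARED
```

For example, with hypothetical limits of 50°C for the imaging element and 10 units of workload, a call such as choose_route(True, 40.0, 1, 61.0, 50.0, 10) selects shared processing, because only the communication unit exceeds its threshold.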

Embodiment #3

[0054] The photography system according to the third embodiment has a structure corresponding to that of the photography system according to the first embodiment, but with the temperature sensor 20 eliminated. During photography of a still image in a still image photographic mode, the first control unit 21 performs image processing with the first ASIC 14, while, during movie image photography in the movie image photographic mode, image processing is performed by the second ASIC 102 of the server 100. Since in this manner, in this third embodiment, the first control unit 21 determines according to the photographic mode whether image processing should be performed by the first ASIC 14 or by the second ASIC 102, accordingly it is possible to simplify the structure and the control of the photography system.
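The determination made in the third embodiment depends only upon the photographic mode, and may be sketched as follows. The names Mode and processor_for are hypothetical and are used only for illustration.

```python
from enum import Enum


class Mode(Enum):
    STILL = "still image photographic mode"
    MOVIE = "movie image photographic mode"


def processor_for(mode):
    """Return which ASIC performs image processing for the given mode."""
    if mode is Mode.STILL:
        return "first ASIC 14"    # processing stays in the camera 10
    return "second ASIC 102"      # processing is performed by the server 100
```

Since no temperature is consulted, no temperature sensor is needed for this decision.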

[0055] Variations of the following types also fall within the scope of the present invention, and moreover one or more of the following variant embodiments can also be combined with one or a plurality of the embodiments described above.

Variant Embodiment #1

[0056] In the first embodiment, for example, the live view image generated by the first ASIC 14 is outputted to the display unit 15 almost in real time. By contrast, the live view image generated by the second ASIC 102 needs to be sent via wireless communication by the first communication unit 19 and the second communication unit 101, so that some delay inevitably occurs. In order to suppress the influence of this delay, it will be acceptable to arrange for the first control unit 21 to buffer the live view image outputted by the first ASIC 14 and the live view image received by the first communication unit 19 in a memory not shown in the figures, so that the display of the live view image is always delayed by a constant time interval (for example 0.5 seconds). Due to this, the live view image that is displayed upon the display unit 15 continues to be shown smoothly, even if a delay occurs in the transmission of the live view image that is transmitted from the server 100.
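The constant display delay described above may be sketched as a simple buffer that releases each frame only after the fixed interval has elapsed since its capture. This is an illustrative sketch only: the class name FixedDelayBuffer and its methods are hypothetical, time is passed in explicitly in seconds, and it is assumed that frames are pushed in order of capture.

```python
from collections import deque


class FixedDelayBuffer:
    """Hold live view frames and release them after a constant delay."""

    def __init__(self, delay_s=0.5):
        self.delay_s = delay_s
        self._frames = deque()  # (capture_time, frame), oldest first

    def push(self, capture_time, frame):
        # Frames from either ASIC are stored with their capture time.
        self._frames.append((capture_time, frame))

    def pop_due(self, now):
        """Return the frames whose capture time is at least delay_s ago."""
        due = []
        while self._frames and self._frames[0][0] + self.delay_s <= now:
            due.append(self._frames.popleft()[1])
        return due
```

As long as the delay accompanying wireless communication stays below delay_s, a frame processed by the server 100 arrives in the buffer before it is due, so the display timing is the same whichever ASIC generated the frame.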

[0057] Furthermore, during changing over between the ASICs that are employed for generating the live view image, it would also be acceptable to arrange to provide an interval during which the first control unit 21 operates both the first ASIC 14 and the second ASIC 102. By doing this, no temporary interruption of the live view image takes place when changing over is performed. This point will now be explained in more detail in the following.

[0058] FIG. 4 is a time chart showing several changeovers between the first ASIC 14 and the second ASIC 102 along with changes of temperature of the imaging element 12. It should be understood that in FIG. 4, in order to simplify the explanation, it is supposed that the first ASIC 14 and the second ASIC 102 do not share the image processing between them in the manner of steps S04 and S05 of FIG. 2.

[0059] Image processing by the first ASIC 14 is started at the time point t1, so that live view display upon the display unit 15 starts. Subsequent to the time point t1, the first ASIC 14 generates heat by repeatedly executing image processing, so that the temperature of the imaging element 12 rises. And thereafter, at the time point t2, the temperature of the imaging element 12 as detected by the temperature sensor 20 becomes greater than the threshold value. Although the first control unit 21 starts transmission of the digital image signal outputted from the A/D converter 13 to the server 100 at this time point, the first control unit 21 causes the first ASIC 14 to continue executing the image processing in parallel. And at the time point t3, at which point a time interval has elapsed that is sufficient for absorbing the delay accompanying wireless communication, the first control unit 21 stops image processing by the first ASIC 14.

[0060] Subsequent to the time point t3, since the first ASIC 14 is not performing any processing, the amount of heat that it generates is extremely low, and therefore increase of the temperature of the imaging element 12 is prevented. As a result, at the time point t4, the temperature of the imaging element 12 as detected by the temperature sensor 20 becomes less than or equal to the threshold value. Although, corresponding thereto, the first control unit 21 resumes image processing by the first ASIC 14, transmission of the digital image signal to the server 100 is still performed in parallel therewith, in a similar manner to the case at the time point t2. And then at the time point t5, when a fixed time period has elapsed, the first control unit 21 stops the transmission of the digital image signal to the server 100. By overlapping the operations of the two ASICs in this manner when changing over between them, it is possible to keep the influence of delay in communication to a minimum.
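The overlapped changeover from the time point t1 to the time point t5 may be sketched as follows. This is an illustrative sketch and not the control program of the embodiment: the function plan_changeover is hypothetical, temperature is supplied as a list of (time, temperature) samples, and a single overlap interval is assumed for both directions of changeover, although the text does not state that the two intervals are equal.

```python
def plan_changeover(samples, threshold, overlap):
    """Return (time, local_on, remote_on) for each temperature sample.

    local_on  : the first ASIC 14 is performing image processing
    remote_on : the digital image signal is being sent to the server 100
    """
    local_on, remote_on = True, False   # t1: processing starts in the camera
    pending_stop = None                 # (stop_time, side to be stopped)
    timeline = []
    for t, temp in samples:
        # End of an overlap interval (t3 or t5): stop the outgoing side.
        if pending_stop is not None and t >= pending_stop[0]:
            if pending_stop[1] == "local":
                local_on = False
            else:
                remote_on = False
            pending_stop = None
        if pending_stop is None:
            if local_on and not remote_on and temp > threshold:
                # t2: start transmission, keep the first ASIC 14 running.
                remote_on = True
                pending_stop = (t + overlap, "local")
            elif remote_on and not local_on and temp <= threshold:
                # t4: resume the first ASIC 14, keep transmitting.
                local_on = True
                pending_stop = (t + overlap, "remote")
        timeline.append((t, local_on, remote_on))
    return timeline
```

With a threshold of 40 and an overlap of 2 time units, samples that rise above the threshold at time 1 and fall back to it at time 4 yield both sides operating during times 1 to 2 and times 4 to 5, so that at every sample at least one side is operating.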

Variant Embodiment #2

[0061] While, in FIG. 1, a photography system was shown in which the single camera 10 and the single server 100 were connected together by the network 80, it would also be possible for a plurality of cameras to be connected to a single server, and it would also be possible for a plurality of servers and a plurality of cameras to be connected together. FIG. 5 shows an example in which a plurality of cameras (a camera 10, a camera 30, and a camera 50) are connected to a single server 100.

[0062] In FIG. 5, each of the camera 10, the camera 30, and the camera 50 creates different photographic image data by performing photographic processing. In more concrete terms, the details of the image processing that each of a first ASIC 14 comprised in the camera 10, a third ASIC 34 comprised in the camera 30, and a fifth ASIC 54 comprised in the camera 50 can perform are different. Accordingly, even if the same analog image signals are outputted from their corresponding imaging elements 12, the image data generated by the first ASIC 14, the image data generated by the third ASIC 34, and the image data generated by the fifth ASIC 54 will differ from one another, for example in hue and/or texture and so on.

[0063] The server 100 shown in FIG. 5 comprises, in addition to a second ASIC 102 that is capable of performing image processing equivalent to that performed by the first ASIC 14, a fourth ASIC 112 that is capable of performing image processing equivalent to that performed by the third ASIC 34 and a sixth ASIC 122 that is capable of performing image processing equivalent to that performed by the fifth ASIC 54. In other words, for example, the image data that has been generated due to image processing by the fourth ASIC 112 is approximately the same as the image data that has been generated due to image processing by the third ASIC 34.

[0064] With the photography system having the structure described above, along with its digital image signal, the control unit of each of the cameras transmits information related to the details of the image processing executed by its ASIC to the server 100. This information may be, for example, the name of the camera type, and/or information specifying the specification of its ASIC (color, white balance, texture, and so on), and/or parameters or the like of the image processing executed by its ASIC. And, on the basis of this information related to the details of image processing that has been received, the second control unit 106 determines which ASIC is to be used for performing processing upon the digital image signal that has been received along with this information. For example, if a digital image signal has been received from the camera 30, the second control unit 106 may cause processing thereof to be performed by the fourth ASIC 112.
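The selection performed by the second control unit 106 may be sketched as a lookup keyed by the received information. This is an illustrative sketch only: the dictionary key "camera_type", the table ASIC_FOR_CAMERA, and the function select_asic are hypothetical, and only the correspondence between cameras and server-side ASICs is taken from the text.

```python
# Correspondence between each camera and the equivalent server-side ASIC.
ASIC_FOR_CAMERA = {
    "camera 10": "second ASIC 102",
    "camera 30": "fourth ASIC 112",
    "camera 50": "sixth ASIC 122",
}


def select_asic(info):
    """Choose the server-side ASIC from the information sent with the signal."""
    camera_type = info.get("camera_type")
    if camera_type not in ASIC_FOR_CAMERA:
        # No equivalent ASIC is known for this camera type.
        raise ValueError(f"unknown camera type: {camera_type!r}")
    return ASIC_FOR_CAMERA[camera_type]
```

A table keyed by the specification or the parameters of the image processing, instead of the name of the camera type, could be used in the same manner.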

Variant Embodiment #3

[0065] While, in the first embodiment described above, the temperature sensor 20 was provided in the neighborhood of the imaging element 12, and the first control unit 21 determined whether or not it was possible to transmit the digital image signal on the basis of the temperature of the imaging element 12 as detected by this temperature sensor 20, it would also be acceptable to arrange to provide the temperature sensor 20 in the neighborhood of the first ASIC 14, and to determine whether or not it is possible to transmit the digital image signal, not on the basis of the temperature of the imaging element 12, but rather on the basis of the temperature of the first ASIC 14. The same variation would be possible in the case of the second embodiment. Moreover, it would also be possible not to determine whether or not it is possible to transmit the digital image signal on the basis of the temperature detected by the temperature sensor 20, but rather on the basis of the change over time of the temperature detected by the temperature sensor 20 (i.e. on the basis of the temperature gradient). Yet further, it would also be possible to provide temperature sensors in the neighborhoods of both the imaging element 12 and the first ASIC 14.
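The determination on the basis of the temperature gradient may be sketched as follows. This is an illustrative sketch under stated assumptions: the functions temperature_gradient and should_transmit and the parameter gradient_limit are hypothetical, and the gradient is taken simply as the slope between the oldest and the newest sample, which is only one of several possible estimates.

```python
def temperature_gradient(samples):
    """Slope in degrees per second between the first and the last sample.

    samples: list of (time_s, temp_c) pairs in chronological order.
    """
    (t0, c0), (t1, c1) = samples[0], samples[-1]
    if t1 == t0:
        raise ValueError("samples must span a non-zero time interval")
    return (c1 - c0) / (t1 - t0)


def should_transmit(samples, gradient_limit):
    """Transmit to the external apparatus when the temperature rises quickly."""
    return temperature_gradient(samples) >= gradient_limit
```

A decision based on the gradient can react before the temperature itself reaches a threshold value, since a rapid rise is detected while the absolute temperature is still low.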

Variant Embodiment #4

[0066] It would also be possible for the device that is provided exterior to the camera 10 and that receives the digital image signal from the camera 10 and performs image processing thereof to be some type of external apparatus other than a server 100; for example, it could be a portable type electronic apparatus such as a personal computer or a so-called smart phone, or a tablet-type (slate-type) computer or the like.

Variant Embodiment #5

[0067] In the embodiment described above, an example was explained which was an integrated lens type digital camera. However, the present invention is not limited to this type of embodiment. For example, it would also be possible to apply the present invention to a so-called single lens reflex type digital camera whose lens is interchangeable, or to a digital camera of the interchangeable lens type that has no quick return mirror (i.e. a mirror-less camera), or to a portable type electronic apparatus such as a tablet type computer or the like. It should be understood that in the case of a single lens reflex type digital camera it would also be possible to provide a live view button or switch that performs live view display, and in this case it could be set to movie image live view or to still image live view. It would also be possible to apply the first embodiment to this case as well.

[0068] The present invention is not to be considered as being limited to the embodiments described above; provided that the particular characteristics of the present invention are preserved, other embodiments that are considered to fall within the range of the technical concept of the present invention are also included within the scope of the present invention.

[0069] The contents of the disclosure of the following application, upon which priority is claimed, are hereby incorporated herein by reference: Japanese Patent Application 2013-39,128 (filed on Feb. 28, 2013).

REFERENCE SIGNS LIST

[0070] 1: photography system; 10, 30, 50: cameras; 11: optical system; 12: imaging element; 13: A/D converter; 14: first ASIC; 15: display unit; 16: recording medium; 17: operation unit; 18: first memory; 19: first communication unit; 20: temperature sensor; 21: first control unit; 80: network; 100: server; 101: second communication unit; 102: second ASIC; 104: second memory; 106: second control unit.

* * * * *
