Device And Method Of Controlling The Device

SIM; Dae-hyun ; et al.

U.S. patent application number 16/409028 was filed with the patent office on 2019-05-10 and published on 2019-08-29 for device and method of controlling the device. The applicant listed for this patent is Samsung Electronics Co., Ltd. Invention is credited to Radoslaw ANTONIEWICZ, Remigiusz BARBACHOWSKI, Marcin DUSZA, Rafal FOLTYNIEWICZ, Roman FRACZEK, Pawel KIES, Piotr KLOS, Jakub KSIEZNIAK, Wojciech KUSMIEREK, Dae-hyun SIM, Arleta STASZUK, Krzysztof SZARZYNSKI, Mateusz ZIEMEK.

| Field | Value |
|---|---|
| United States Patent Application | 20190268418 |
| Application Number | 16/409028 |
| Family ID | 56368394 |
| Kind Code | A1 |
| Filed Date | May 10, 2019 |
| Publication Date | August 29, 2019 |
| Inventors | SIM; Dae-hyun; et al. |

DEVICE AND METHOD OF CONTROLLING THE DEVICE

Abstract

A device is provided. The device includes a detection unit configured to detect driving conditions of a vehicle, and a control unit configured to limit notifications with respect to events that occur in the device during a section according to the detected driving conditions and provide the limited notifications during the section if the driving conditions are changed, wherein the section is determined in real time according to the driving conditions of the vehicle.

| Field | Value |
|---|---|
| Inventors: | SIM; Dae-hyun; (Seoul, KR) ; STASZUK; Arleta; (Warsaw, PL) ; KSIEZNIAK; Jakub; (Pulawy, PL) ; KIES; Pawel; (Warsaw, PL) ; FOLTYNIEWICZ; Rafal; (Warsaw, PL) ; BARBACHOWSKI; Remigiusz; (Warsaw, PL) ; KUSMIEREK; Wojciech; (Warsaw, PL) ; SZARZYNSKI; Krzysztof; (Poznan, PL) ; KLOS; Piotr; (Piock, PL) ; ZIEMEK; Mateusz; (Ostroleka, PL) ; ANTONIEWICZ; Radoslaw; (Warsaw, PL) ; DUSZA; Marcin; (Warsaw, PL) ; FRACZEK; Roman; (Warsaw, PL) |
| Applicant: | Samsung Electronics Co., Ltd. |
| Family ID: | 56368394 |
| Appl. No.: | 16/409028 |
| Filed: | May 10, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 14991559 | Jan 8, 2016 | 10341442 |
| 16409028 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04W 4/46 20180201; H04L 67/125 20130101; H04M 1/72577 20130101; H04W 4/48 20180201; H04W 4/027 20130101; H04W 4/16 20130101; H04M 1/72569 20130101; H04L 67/12 20130101; B60Q 9/00 20130101; H04W 4/029 20180201; H04M 2250/12 20130101 |

| International Class: | H04L 29/08 20060101 H04L029/08; H04W 4/029 20060101 H04W004/029; H04M 1/725 20060101 H04M001/725; H04W 4/02 20060101 H04W004/02; H04W 4/16 20060101 H04W004/16 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| Jan 12, 2015 | KR | 10-2015-0004446 |

Claims

1. A device comprising: a detector configured to detect current driving conditions of a vehicle or a user; a communicator configured to communicate with the vehicle, and receive a phone call or a message from a counterpart; and at least one processor configured to: limit notifications with respect to events that occur in the device based on the current driving conditions, when the phone call or the message is received, determine a condition among a plurality of conditions for changing the current driving conditions, the condition being determined based on the current driving conditions detected by the detector and destination information configured by the user, the destination information corresponding to information regarding a predetermined location of an end of travel, acquired from an application installed in the device, the vehicle, or a wearable device connected to the device, and provide a state change guide corresponding to the condition for changing the current driving conditions, the state change guide comprising at least one of information informing the user of the current driving conditions or an action for the user to perform to change the current driving conditions, limit a receiving notification with respect to the phone call or the message based on the current driving conditions, control a speaker closer to a threat element to output the notifications, the speaker being selected among at least two speakers installed in the vehicle according to a position of the threat element, and when the current driving conditions change, provide the limited notifications.

2. The device of claim 1, wherein the at least one processor is further configured to: determine a threat level according to the current driving conditions, and limit or provide the notifications adaptively based on at least one of the determined threat level, an occurrence frequency of the events, or the counterpart.

3. The device of claim 1, wherein the at least one processor is further configured to control the communicator to provide a response message, including a current situation based on the current driving conditions, to the counterpart.

4. The device of claim 3, wherein the at least one processor is further configured to: predict a future situation based on the current driving conditions, and control the communicator to provide the response message, further including the predicted future situation, to the counterpart.

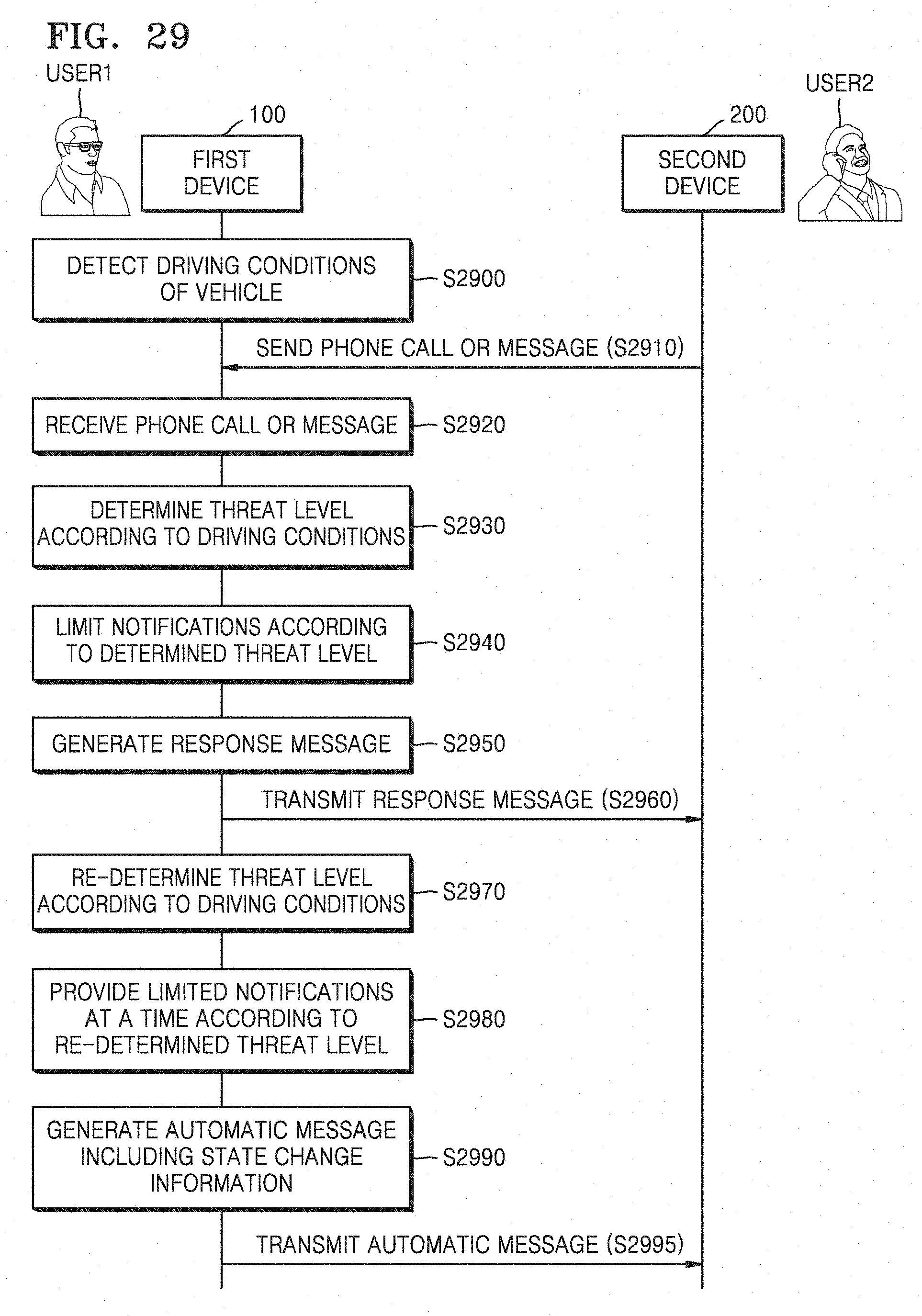

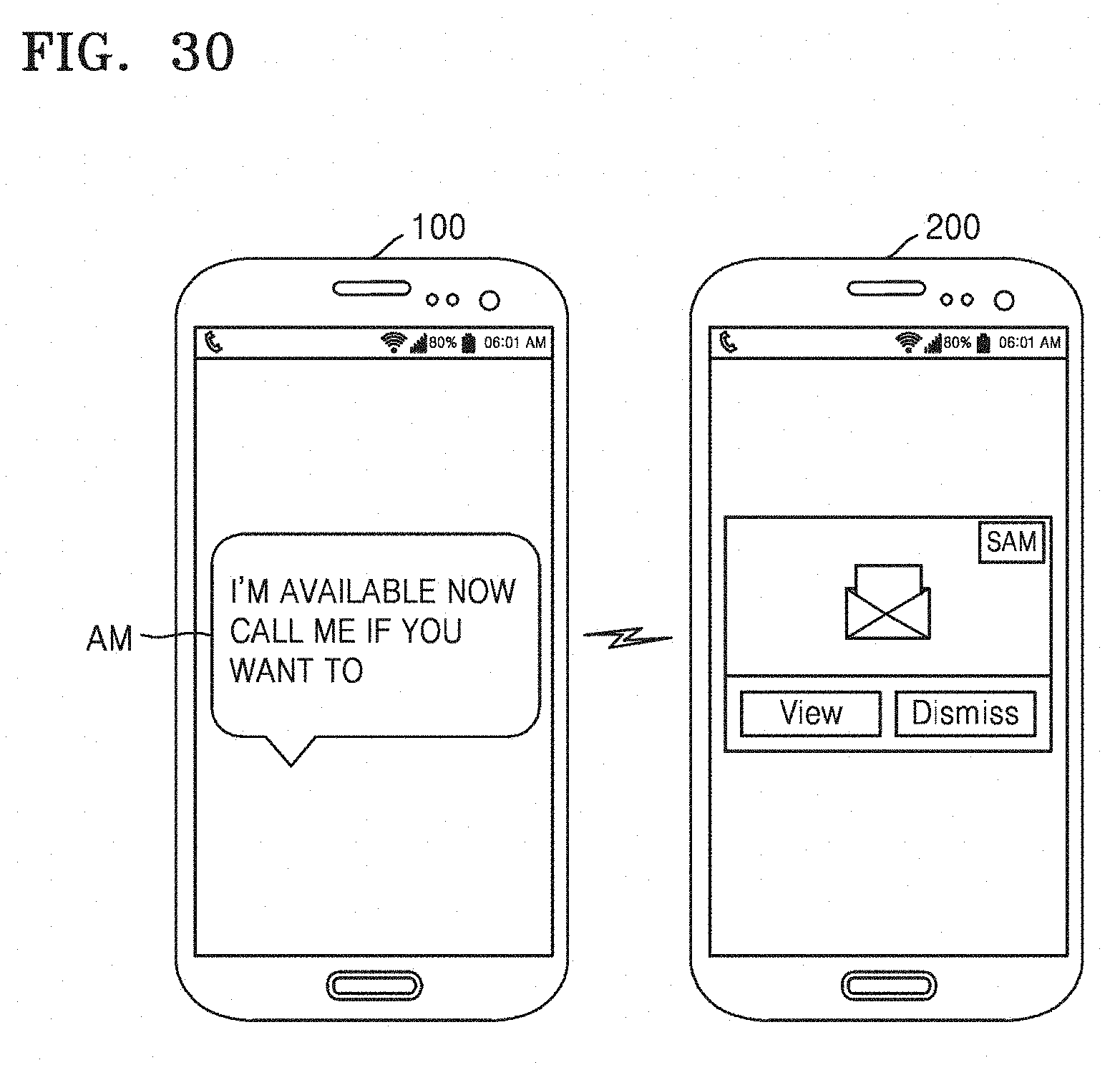

5. The device of claim 1, wherein the at least one processor is further configured to, when the current driving conditions change, control the communicator to provide an automatic message including state change information of the user to the counterpart.

6. The device of claim 1, wherein the communicator is further configured to receive information regarding the current driving conditions from at least one of a camera, a microphone, or a navigation that is installed in the vehicle.

7. The device of claim 1, wherein the current driving conditions of the vehicle include at least one of outer conditions of the vehicle, inner conditions of the vehicle, or conditions of the vehicle itself.

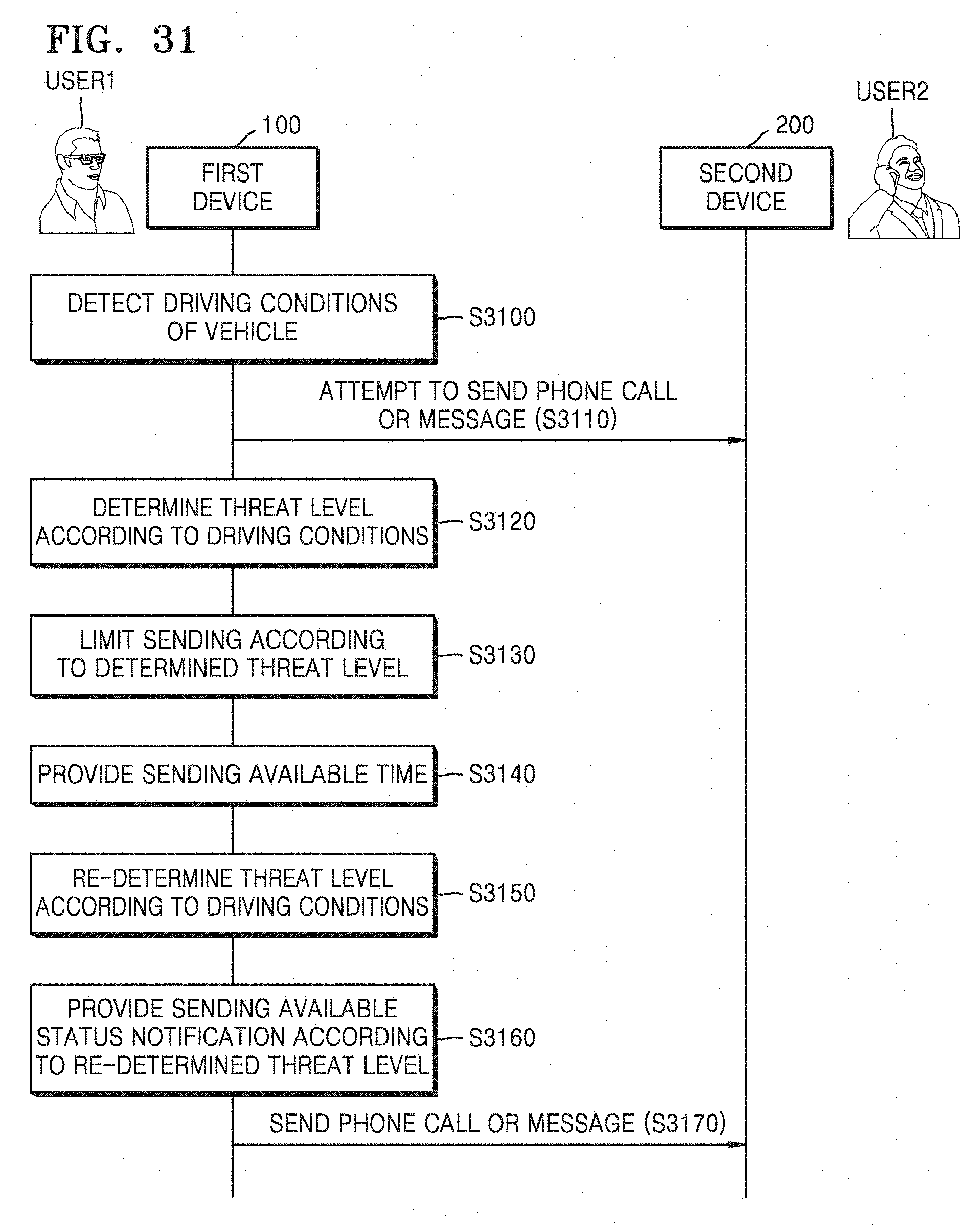

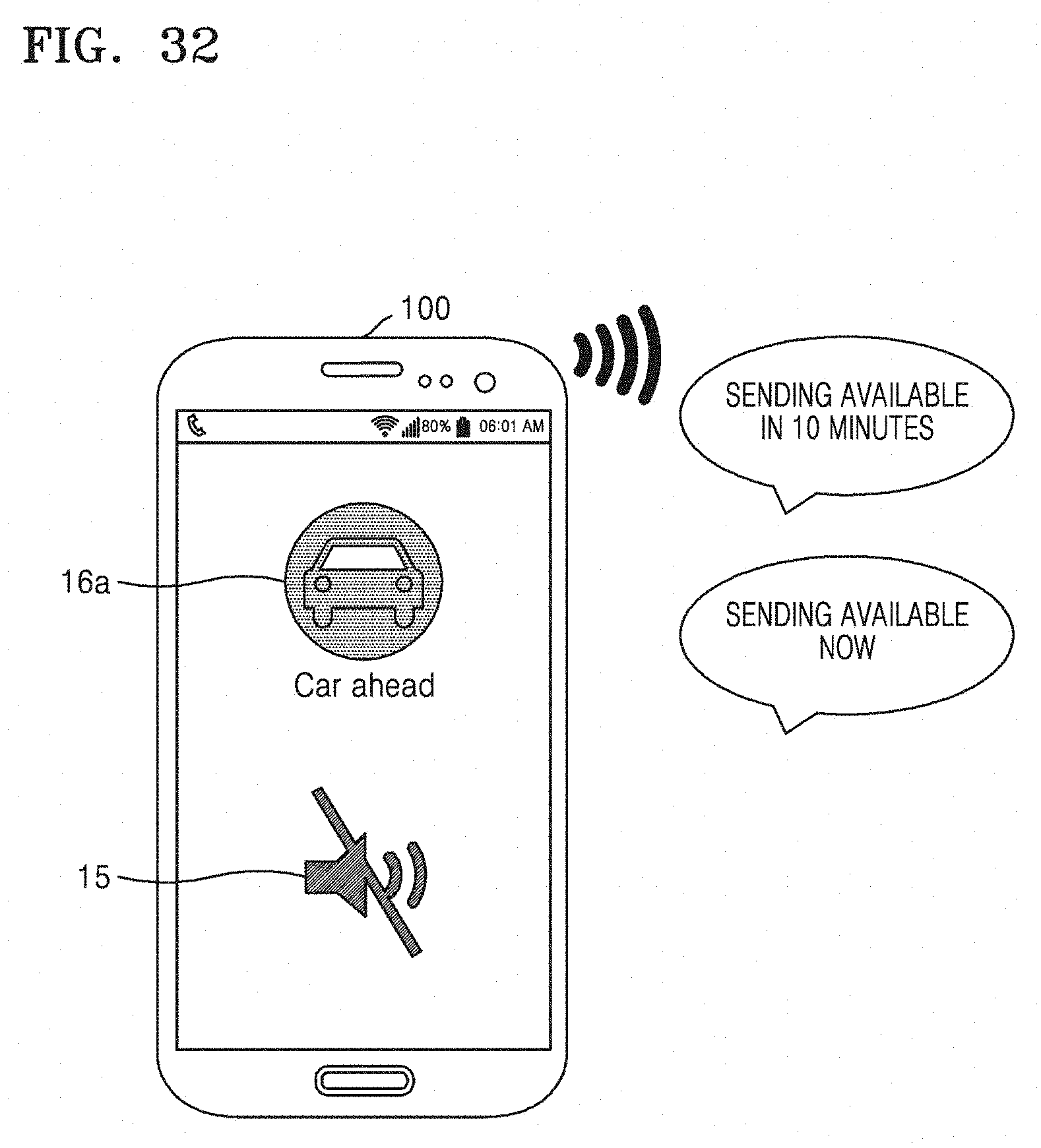

8. The device of claim 1, wherein, in a case where the user attempts to transmit a phone call or a message, the at least one processor is further configured to provide a current threat level or a transmission available time according to the current driving conditions.

9. The device of claim 8, wherein, in a case where the user attempts to transmit the phone call or the message, the at least one processor is further configured to provide the state change guide regarding the condition for changing the driving conditions.

10. The device of claim 8, wherein, when the current driving conditions change, the at least one processor is further configured to provide a notification informing a transmission available state to the user.

11. The device of claim 1, wherein, when an operation of the vehicle ends, the at least one processor is further configured to provide a reminder with respect to the events.

12. The device of claim 1, wherein the current driving conditions include at least one of inner conditions of the vehicle or conditions of the user.

13. A method of controlling a device, the device including a detector, the method comprising: detecting, by the detector, current driving conditions of a vehicle or a user; limiting notifications with respect to events that occur in the device based on the current driving conditions detected by the detector; receiving a phone call or a message from a counterpart; when the phone call or the message is received, determining a condition among a plurality of conditions for changing the current driving conditions, the condition being determined based on the current driving conditions detected by the detector and destination information configured by the user, the destination information corresponding to information regarding a predetermined location of an end of travel, acquired from an application installed in the device, the vehicle, or a wearable device connected to the device, and providing a state change guide corresponding to the condition for changing the current driving conditions, the state change guide comprising at least one of information informing the user of the current driving conditions or an action for the user to perform to change the current driving conditions; and when the current driving conditions change, providing the limited notifications, wherein the limiting of the notifications comprises limiting a receiving notification with respect to the phone call or the message, and wherein the method further comprises communicating with the vehicle to control a speaker closer to a threat element to output the notifications, the speaker being selected among at least two speakers installed in the vehicle according to a position of the threat element.

14. The method of claim 13, wherein the limiting of the notifications comprises: determining a threat level according to the current driving conditions; and limiting the notifications adaptively based on at least one of the determined threat level, an occurrence frequency of the events, or the counterpart.

15. The method of claim 13, further comprising providing a response message, including a current situation based on the current driving conditions, to the counterpart.

16. The method of claim 15, further comprising: predicting a future situation based on the current driving conditions, wherein the providing of the response message comprises providing the response message further including the predicted future situation to the counterpart.

17. The method of claim 13, further comprising, when the current driving conditions change, providing an automatic message including state change information of the user to the counterpart.

18. The method of claim 13, further comprising, in a case where the user attempts to transmit a phone call or a message, providing a current threat level or a transmission available time according to the current driving conditions.

19. The method of claim 18, further comprising, in a case where the user attempts to transmit the phone call or the message, providing the state change guide regarding the condition for changing the driving conditions.

20. The method of claim 18, further comprising, when the current driving conditions change, providing a notification informing a transmission available state to the user.

21. The method of claim 13, further comprising, when an operation of the vehicle ends, providing a reminder with respect to the events.

22. The method of claim 13, wherein the current driving conditions include at least one of inner conditions of the vehicle or conditions of the user.

23. At least one non-transitory computer readable recording medium for storing one or more programs comprising commands for executing a method of controlling a device, the device including a detector, the method comprising: detecting, by the detector, current driving conditions of a vehicle or a user; limiting notifications with respect to events that occur in the device based on the current driving conditions; receiving a phone call or a message from a counterpart; when the phone call or the message is received, determining a condition among a plurality of conditions for changing the current driving conditions, the condition being determined based on the current driving conditions and destination information configured by the user, the destination information corresponding to information regarding a predetermined location of an end of travel, acquired from an application installed in the device, the vehicle, or a wearable device connected to the device, and providing a state change guide corresponding to the condition for changing the current driving conditions, the state change guide comprising at least one of information informing the user of the current driving conditions or an action for the user to perform to change the current driving conditions; and when the current driving conditions change, providing the limited notifications, wherein the limiting of the notifications comprises limiting a receiving notification with respect to the phone call or the message, and wherein the method further comprises communicating with the vehicle to control a speaker closer to a threat element to output the notifications, the speaker being selected among at least two speakers installed in the vehicle according to a position of the threat element.

24. The at least one non-transitory computer readable recording medium of claim 23, wherein the current driving conditions include at least one of inner conditions of the vehicle or conditions of the user.

Description

CROSS-REFERENCE TO RELATED APPLICATION(S)

[0001] This application is a continuation application of prior application Ser. No. 14/991,559, filed on Jan. 8, 2016, which claims the benefit under 35 U.S.C. § 119(a) of a Korean patent application number 10-2015-0004446, filed on Jan. 12, 2015 in the Korean Intellectual Property Office, the disclosure of which is incorporated by reference herein in its entirety.

TECHNICAL FIELD

[0002] The present disclosure relates to devices and methods of controlling the devices.

BACKGROUND

[0003] Because the rapid increase in the number of vehicles has led to many vehicle accidents, measures for safe driving are required. In particular, as smart phones have become more popular and capable, drivers increasingly use them while driving, which may interfere with safe driving and cause traffic accidents. However, if communication through smart phones is completely restricted while driving, the autonomy and convenience of users are severely limited. Thus, a solution that balances safety and user convenience is required.

[0004] The above information is presented as background information only to assist with an understanding of the present disclosure. No determination has been made, and no assertion is made, as to whether any of the above might be applicable as prior art with regard to the present disclosure.

SUMMARY

[0005] Aspects of the present disclosure are to address at least the above-mentioned problems and/or disadvantages and to provide at least the advantages described below. Accordingly, an aspect of the present disclosure is to provide devices capable of supporting safe driving of drivers by selectively limiting notification of the device according to driving conditions and methods of controlling the devices.

[0006] In accordance with an aspect of the present disclosure, a device is provided. The device includes a detection unit configured to detect driving conditions of a vehicle, and a control unit configured to limit notifications with respect to events that occur in the device during a section according to the detected driving conditions and provide the limited notifications during the section if the driving conditions are changed, wherein the section is determined in real time according to the driving conditions of the vehicle.
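By way of non-limiting illustration, the limit-then-provide behavior of the control unit may be sketched as follows. The sketch assumes that a section is represented by a single boolean flag; the class name `NotificationLimiter`, its method names, and the `restricted` flag are hypothetical names introduced only for this example and do not appear in the disclosure.

```python
from collections import deque
from typing import Deque, List


class NotificationLimiter:
    """Holds back notifications while driving conditions are restrictive.

    Hypothetical sketch: the disclosure does not prescribe this structure.
    """

    def __init__(self) -> None:
        self.restricted = False            # True while inside a limited section
        self._held: Deque[str] = deque()   # notifications limited so far

    def on_event(self, notification: str) -> List[str]:
        """Return the notifications that may be shown right now."""
        if self.restricted:
            self._held.append(notification)  # limit: keep it for later
            return []
        return [notification]

    def on_driving_conditions(self, restricted: bool) -> List[str]:
        """Update the section state and release held notifications when it ends."""
        was_restricted = self.restricted
        self.restricted = restricted
        if was_restricted and not restricted:
            released = list(self._held)
            self._held.clear()
            return released
        return []


limiter = NotificationLimiter()
limiter.on_driving_conditions(restricted=True)               # section begins
shown_now = limiter.on_event("Message from Alice")           # held back
released = limiter.on_driving_conditions(restricted=False)   # conditions change
```

In this sketch the section has no fixed length: it starts and ends only when `on_driving_conditions` is called, which loosely mirrors a section determined in real time.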

[0007] The device may further include a communication unit configured to receive a phone call or a message from a counterpart, wherein the control unit limits a receiving notification with respect to the phone call or the message during the section.

[0008] The control unit may determine a threat level according to the driving conditions and limit or provide the notifications adaptively to the determined threat level, an occurrence frequency of the events, or the counterpart.
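As a hedged example, the adaptive decision may be sketched as a function of the threat level, the occurrence frequency of the events, and the counterpart. The three-level scale, the `favorites` set, and the `repeat_threshold` value below are assumptions made for illustration only; the disclosure does not fix any of them at this point.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Set


class ThreatLevel(IntEnum):
    """Assumed three-level scale (hypothetical)."""
    LOW = 0
    MEDIUM = 1
    HIGH = 2


@dataclass(frozen=True)
class Event:
    counterpart: str   # who is calling or messaging
    occurrences: int   # how many times this counterpart has tried so far


def should_notify(level: ThreatLevel, event: Event,
                  favorites: Set[str], repeat_threshold: int = 3) -> bool:
    """Decide whether a notification is provided now or limited."""
    if level == ThreatLevel.HIGH:
        return False  # limit everything while the threat is high
    if level == ThreatLevel.MEDIUM:
        # Let through selected counterparts or repeated attempts only.
        return (event.counterpart in favorites
                or event.occurrences >= repeat_threshold)
    return True       # low threat: provide all notifications
```

For example, under these assumptions a third attempt by an unlisted counterpart passes at the medium level, while the same attempt is still limited at the high level.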

[0009] The control unit, if the phone call or the message is received during the section, may provide a state change guide regarding a condition for changing the driving conditions.

[0010] The control unit may control the communication unit to provide a response message, including a current situation based on the driving conditions, to the counterpart.

[0011] The control unit may predict a future situation based on the driving conditions and control the communication unit to provide the response message, further including the predicted future situation, to the counterpart.
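A minimal sketch of how such a response message might be composed is given below. The function name `build_response_message`, the message wording, and the representation of the predicted future situation as a number of minutes are all assumptions made for this example.

```python
from typing import Optional


def build_response_message(current_situation: str,
                           minutes_until_available: Optional[int] = None) -> str:
    """Compose a reply for the counterpart (hypothetical wording).

    current_situation: short description derived from the driving conditions.
    minutes_until_available: optional predicted future situation, assumed here
        to be an estimate of when the user can respond.
    """
    message = f"I am currently {current_situation} and cannot answer."
    if minutes_until_available is not None:
        message += (f" I expect to be available in about "
                    f"{minutes_until_available} minutes.")
    return message


reply = build_response_message("driving on a highway", 10)
```

Keeping the predicted part optional reflects that the current situation alone may be sent when no prediction is available.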

[0012] The control unit may control the communication unit to provide an automatic message including state change information of a user to the counterpart if the driving conditions are changed.

[0013] The communication unit may receive information regarding the driving conditions from a camera, a microphone, or a navigation that is installed in the vehicle.

[0014] The detection unit may detect the driving conditions including outer conditions of the vehicle, inner conditions of the vehicle, conditions of the vehicle itself, or conditions of the user.

[0015] In a case where a user attempts to transmit a phone call or a message during the section, the control unit may provide a current threat level or a transmission available time according to the driving conditions.

[0016] In a case where the user attempts to transmit the phone call or the message during the section, the control unit may provide a state change guide regarding a condition for changing the driving conditions.

[0017] The control unit may provide a notification informing a transmission available state to the user if the driving conditions are changed.
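The handling of an outgoing attempt described in the preceding paragraphs may be sketched, under stated assumptions, as follows. The class `OutgoingGate`, the `notify_user` callback, the message texts, and the use of seconds for the transmission available time are hypothetical and serve only to illustrate the sequence of steps.

```python
from typing import Callable, Optional


class OutgoingGate:
    """Gates outgoing calls or messages during a restricted section (sketch)."""

    def __init__(self, notify_user: Callable[[str], None]) -> None:
        self._notify_user = notify_user
        self._restricted = False
        self._pending_attempt = False   # user tried to transmit while restricted

    def set_restricted(self, restricted: bool) -> None:
        """Update the section state; announce availability when it ends."""
        ended = self._restricted and not restricted
        self._restricted = restricted
        if ended and self._pending_attempt:
            self._pending_attempt = False
            self._notify_user("You can now place your call or send your message.")

    def attempt_transmission(self, threat_level: str,
                             seconds_until_available: Optional[int]) -> bool:
        """Return True if transmission may proceed now; otherwise inform the user."""
        if not self._restricted:
            return True
        self._pending_attempt = True
        text = f"Threat level: {threat_level}."
        if seconds_until_available is not None:
            text += (f" Transmission expected to be available in "
                     f"{seconds_until_available} s.")
        self._notify_user(text)
        return False


messages = []
gate = OutgoingGate(messages.append)
gate.set_restricted(True)
allowed = gate.attempt_transmission("high", 30)  # blocked, user is informed
gate.set_restricted(False)                       # user is told transmission is available
```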

[0018] The control unit may provide a reminder with respect to the events if an operation of the vehicle ends.

[0019] The communication unit may be able to communicate with the vehicle, and the control unit may control a speaker closer to a threat element to output the notifications, the speaker being selected among at least two speakers installed in the vehicle according to a position of the threat element.
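One possible way to select the speaker, offered only as a sketch, is to pick the speaker with the smallest straight-line distance to the threat element. The two-dimensional vehicle coordinate frame, the speaker names, and the function `select_speaker` are assumptions for this example; the disclosure does not specify how closeness is measured.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float]  # (x, y) in an assumed vehicle-centered frame


def select_speaker(speakers: Dict[str, Point], threat_position: Point) -> str:
    """Return the name of the speaker closest to the threat element."""
    if len(speakers) < 2:
        raise ValueError("at least two speakers are expected")

    def distance(name: str) -> float:
        sx, sy = speakers[name]
        return math.hypot(sx - threat_position[0], sy - threat_position[1])

    return min(speakers, key=distance)


speakers = {
    "front_left": (-1.0, 1.0),
    "front_right": (1.0, 1.0),
    "rear_left": (-1.0, -1.0),
    "rear_right": (1.0, -1.0),
}
# A threat element behind and to the right of the vehicle.
chosen = select_speaker(speakers, (3.0, -4.0))
```

Outputting the notification from the chosen speaker would then direct the user's attention toward the side of the vehicle where the threat element is located.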

[0020] In accordance with another aspect of the present disclosure, a method of controlling a device is provided. The method includes detecting driving conditions of a vehicle, limiting notifications with respect to events that occur in the device during a section according to the detected driving conditions, and providing the limited notifications during the section if the driving conditions are changed, wherein the section is determined in real time according to the driving conditions of the vehicle.

[0021] The method may further include receiving a phone call or a message from a counterpart, wherein the limiting of the notifications includes limiting a receiving notification with respect to the phone call or the message during the section.

[0022] The limiting of the notifications may include determining a threat level according to the driving conditions, and limiting the notifications adaptively to the determined threat level, an occurrence frequency of the events, or the counterpart.

[0023] The method may further include, if the phone call or the message is received during the section, providing a state change guide regarding a condition for changing the driving conditions.

[0024] The method may further include providing a response message including a current situation based on the driving conditions to the counterpart.

[0025] The method may further include predicting a future situation based on the driving conditions, wherein the providing of the response message includes providing the response message further including the predicted future situation to the counterpart.

[0026] The method may further include providing an automatic message including state change information of a user to the counterpart if the driving conditions are changed.

[0027] The method may further include, in a case where a user attempts to transmit a phone call or a message during the section, providing a current threat level or a transmission available time according to the driving conditions.

[0028] The method may further include, in a case where the user attempts to transmit the phone call or the message during the section, providing a state change guide regarding a condition for changing the driving conditions.

[0029] The method may further include providing a notification informing a transmission available state to the user if the driving conditions are changed.

[0030] The method may further include providing a reminder with respect to the events if an operation of the vehicle ends.

[0031] In accordance with another aspect of the present disclosure, a non-transitory computer readable recording medium storing one or more programs is provided. The non-transitory computer readable recording medium includes commands for executing a method of controlling a device, the method including detecting driving conditions of a vehicle, limiting notifications with respect to events that occur in the device during a section according to the detected driving conditions, and providing the limited notifications during the section if the driving conditions are changed, wherein the section is determined in real time according to the driving conditions of the vehicle.

[0032] Other aspects, advantages, and salient features of the disclosure will become apparent to those skilled in the art from the following detailed description, which, taken in conjunction with the annexed drawings, discloses various embodiments of the present disclosure.

BRIEF DESCRIPTION OF THE DRAWINGS

[0033] The above and other aspects, features, and advantages of certain embodiments of the present disclosure will be more apparent from the following description taken in conjunction with the accompanying drawings, in which:

[0034] FIG. 1 illustrates a system providing a smart driving mode according to an embodiment of the present disclosure;

[0035] FIG. 2 is a flowchart of a device control method according to an embodiment of the present disclosure;

[0036] FIG. 3 is a flowchart illustrating operations of first and second devices performed by using a device control method according to an embodiment of the present disclosure;

[0037] FIG. 4 illustrates an operation of determining a probabilistic threat level performed by a first device according to an embodiment of the present disclosure;

[0038] FIG. 5 is a graph showing a threat level determined by a first device according to an embodiment of the present disclosure;

[0039] FIG. 6A is a graph showing probable threat elements detected by a first device at a first time and threat levels with respect to the probable threat elements according to an embodiment of the present disclosure;

[0040] FIG. 6B is a graph showing probable threat elements detected by a first device at a second time and threat levels with respect to the probable threat elements according to an embodiment of the present disclosure;

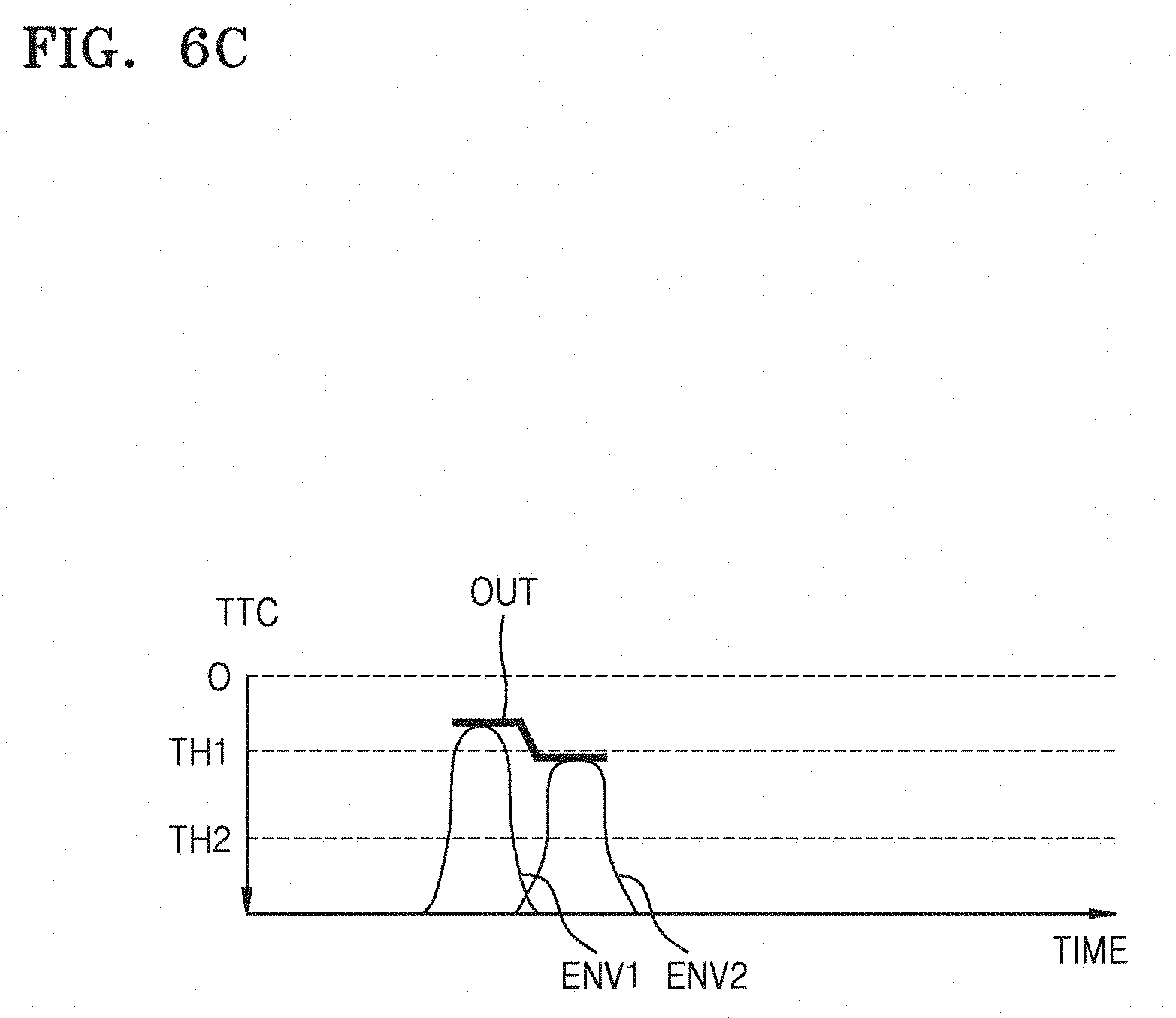

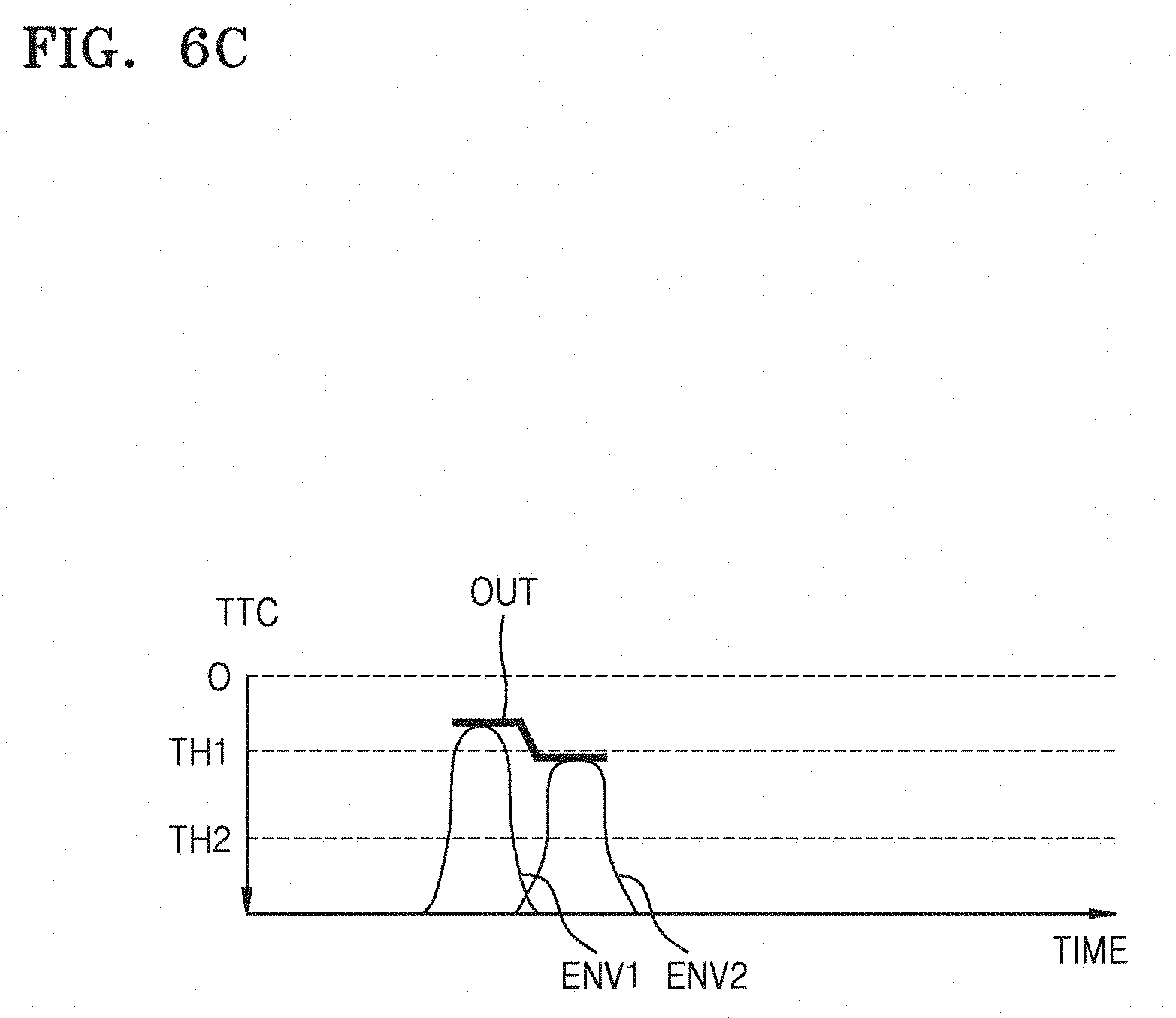

[0041] FIG. 6C illustrates a graph showing threat levels determined by a first device according to an embodiment of the present disclosure;

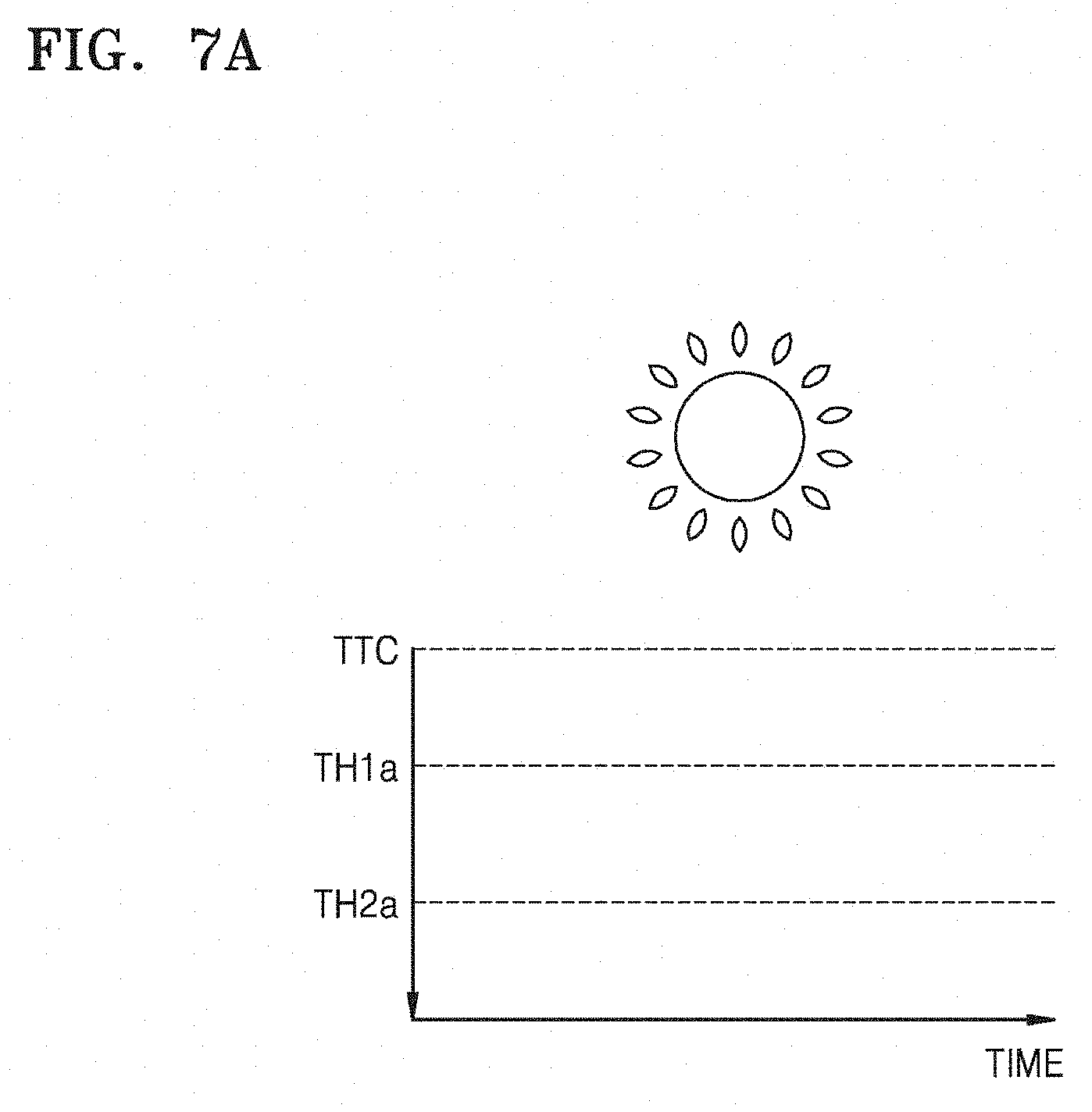

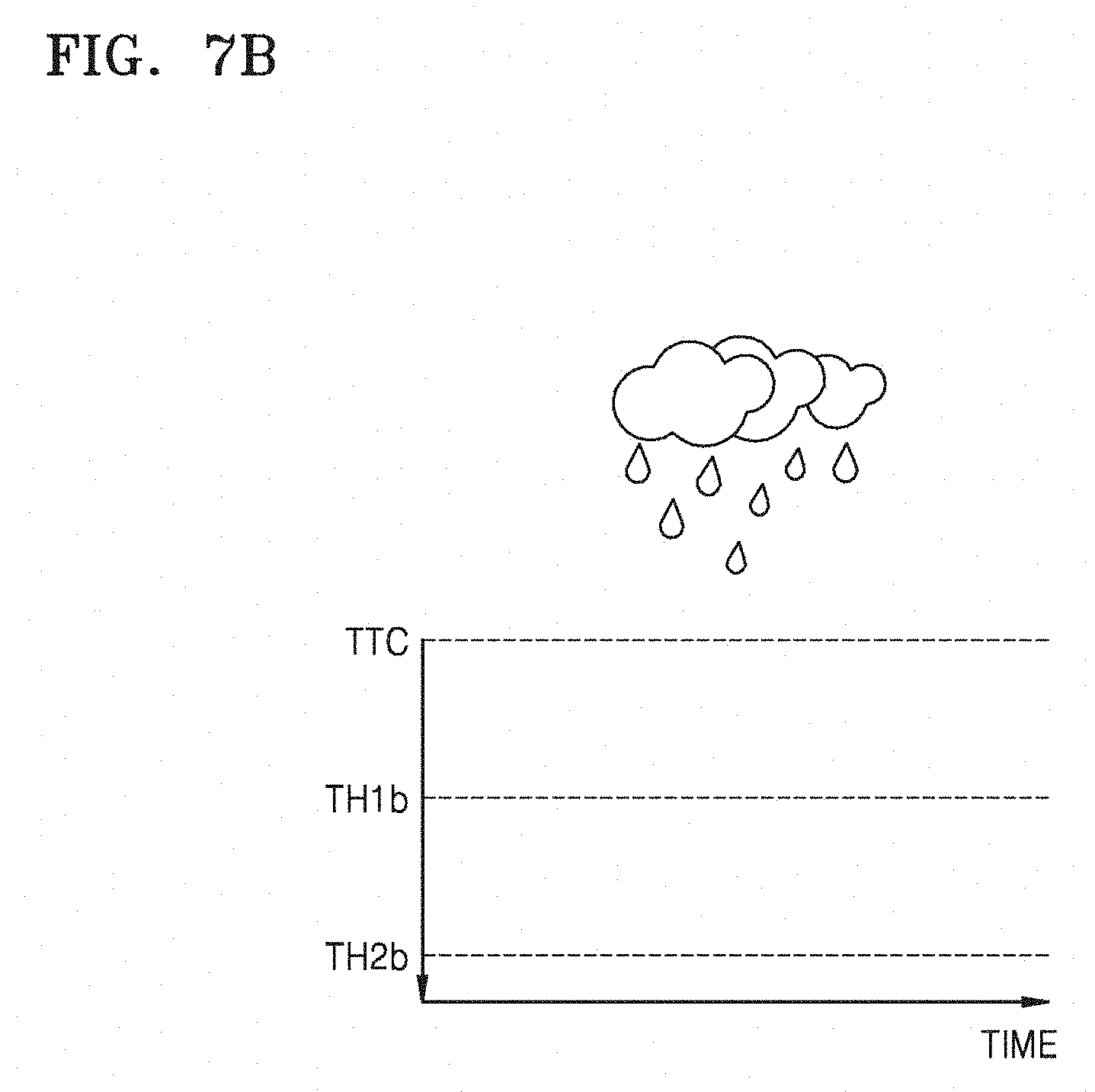

[0042] FIGS. 7A and 7B are graphs showing thresholds adaptive to an external environment determined by a first device according to an embodiment of the present disclosure;

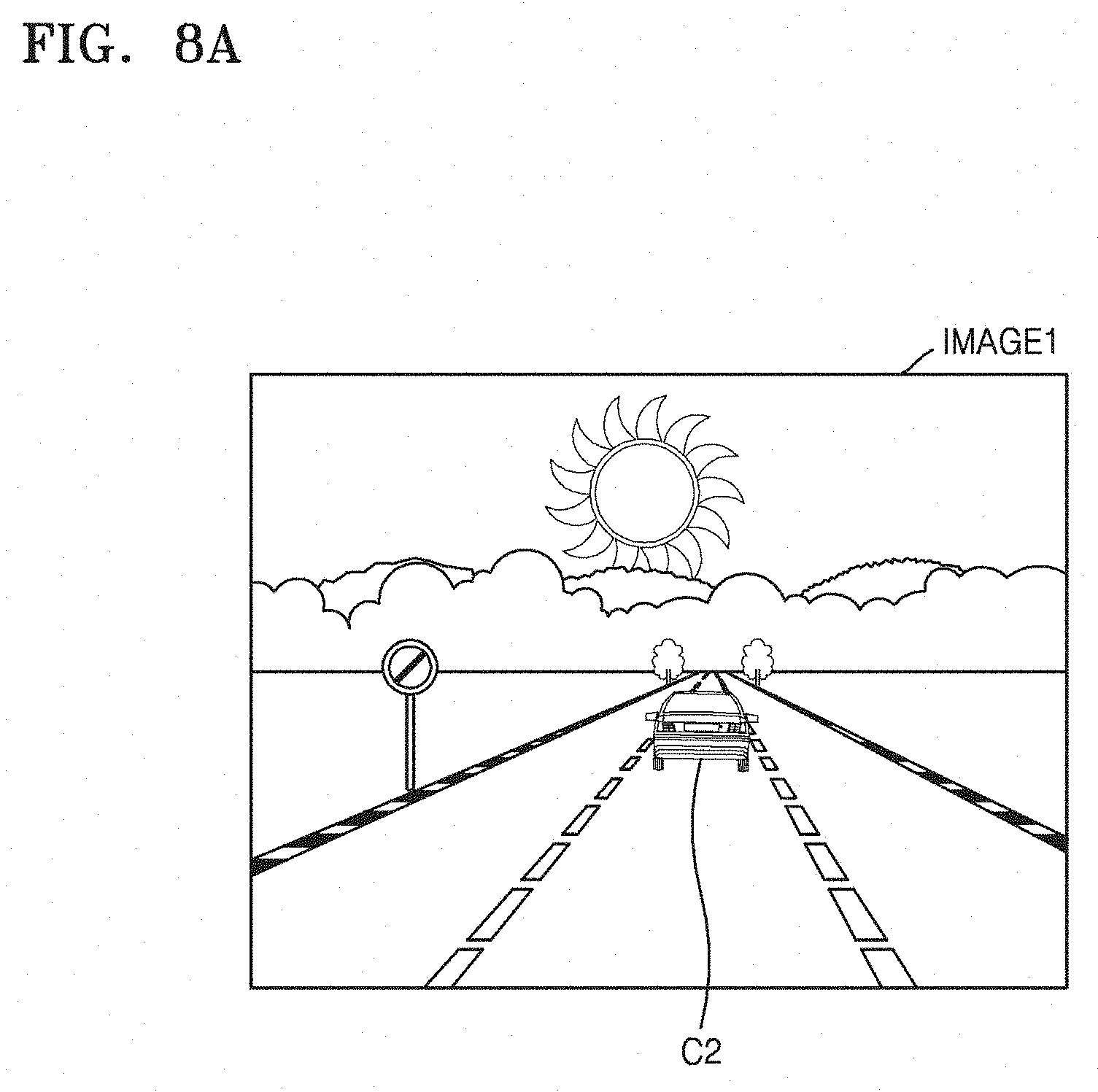

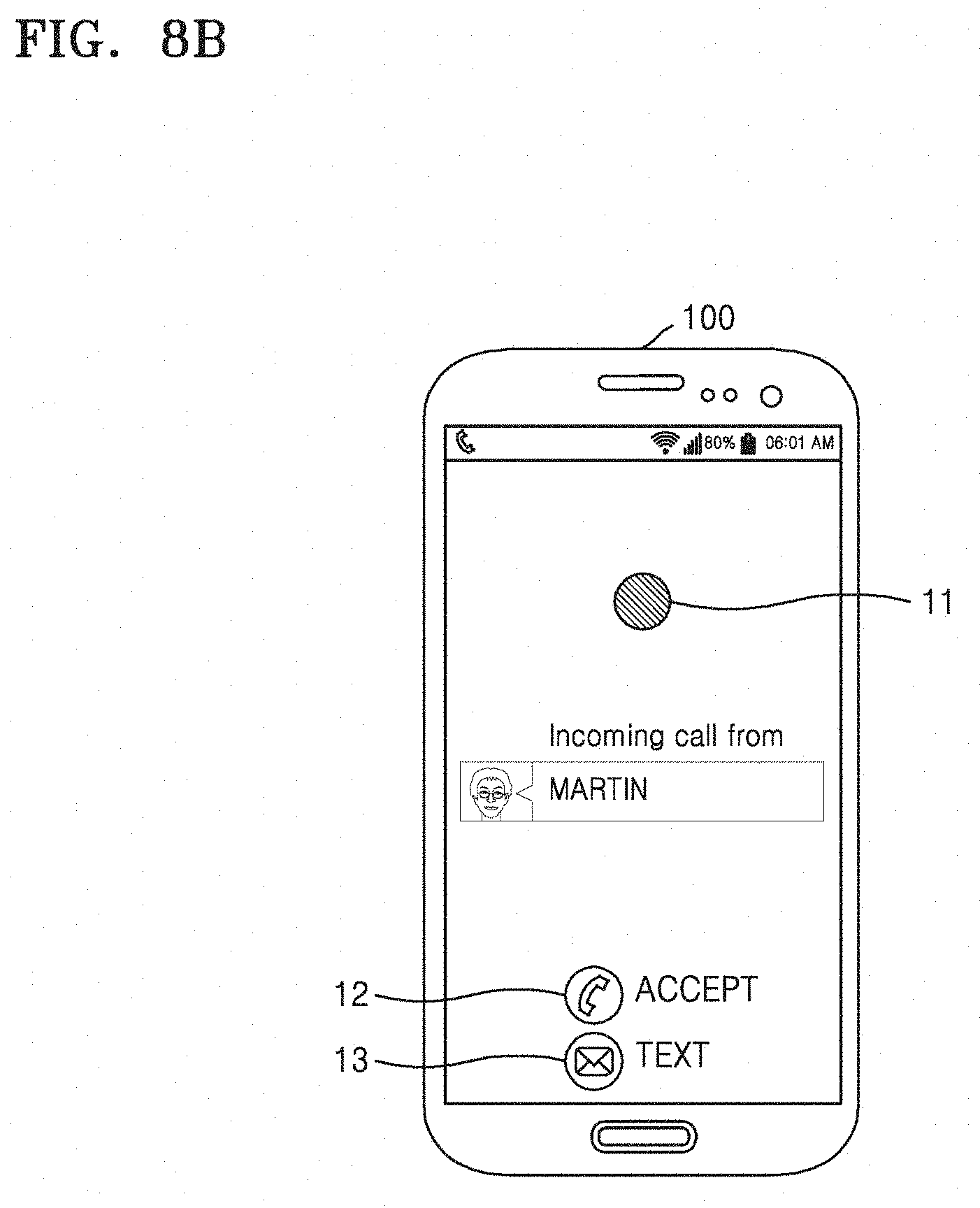

[0043] FIG. 8A illustrates first driving conditions detected by a first device according to an embodiment of the present disclosure, and FIG. 8B illustrates a threat level display of the first device according to the detected first driving conditions according to an embodiment of the present disclosure;

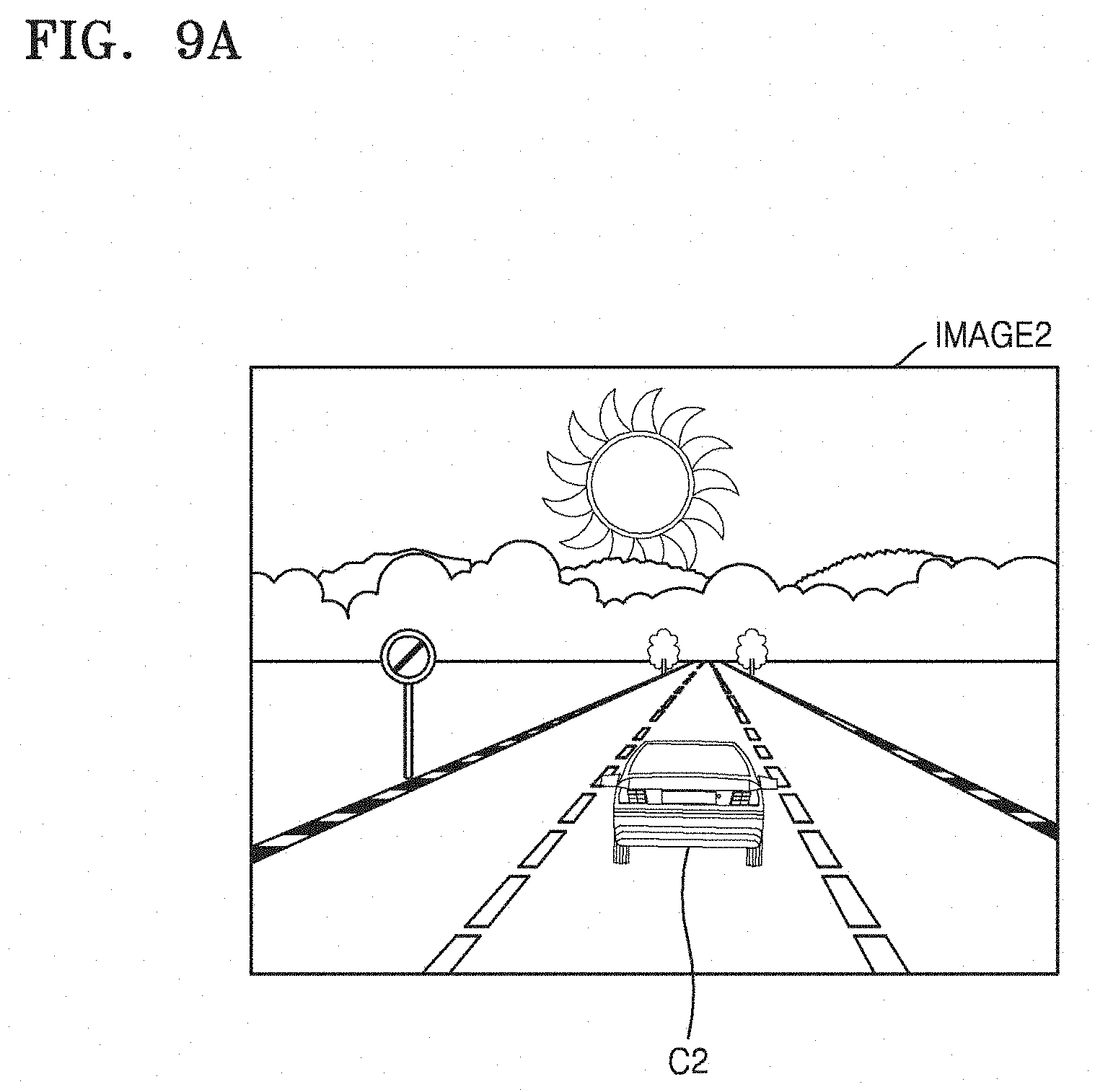

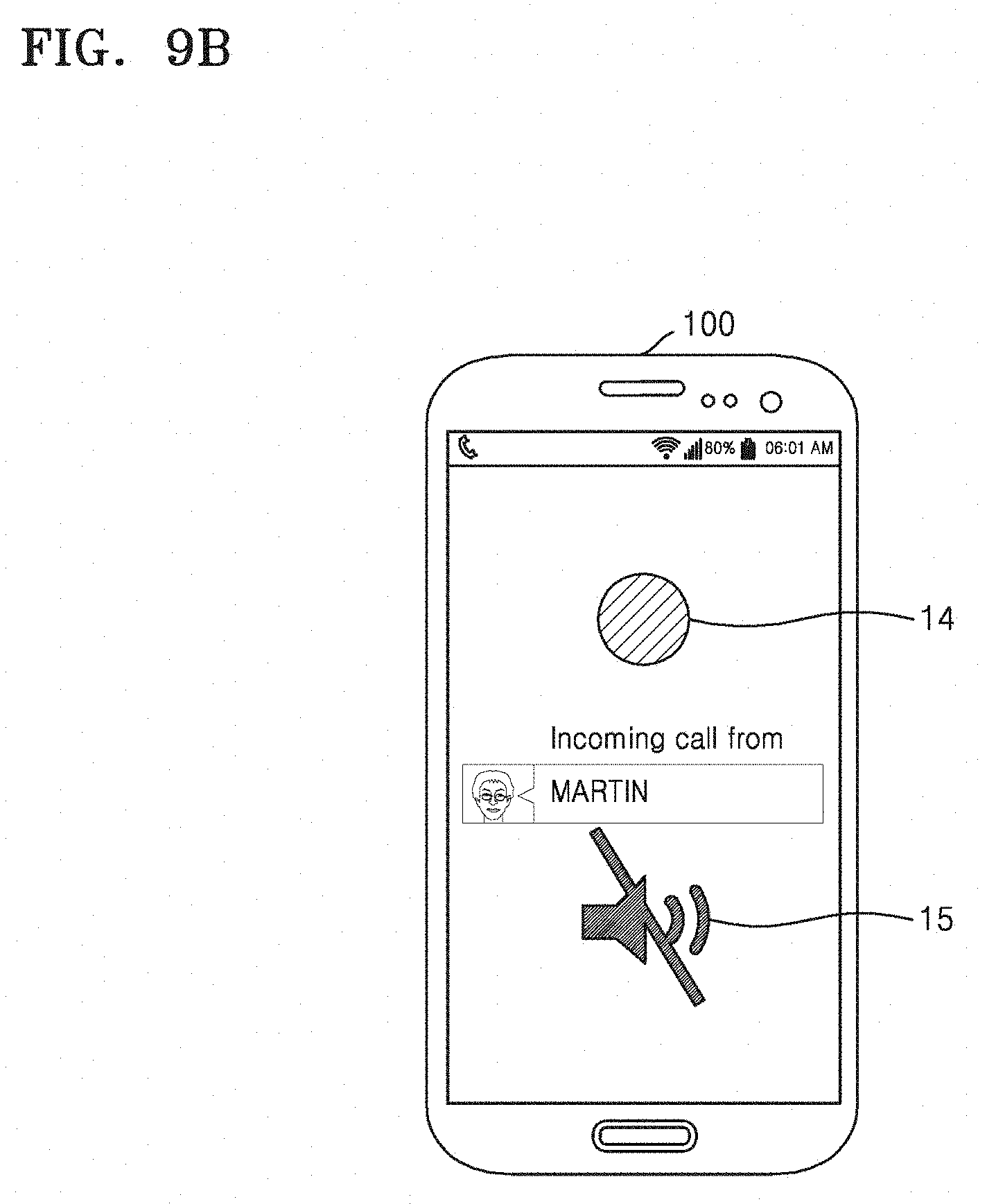

[0044] FIG. 9A illustrates second driving conditions detected by a first device according to an embodiment of the present disclosure, and FIG. 9B illustrates a threat level display of the first device according to the detected second driving conditions according to an embodiment of the present disclosure;

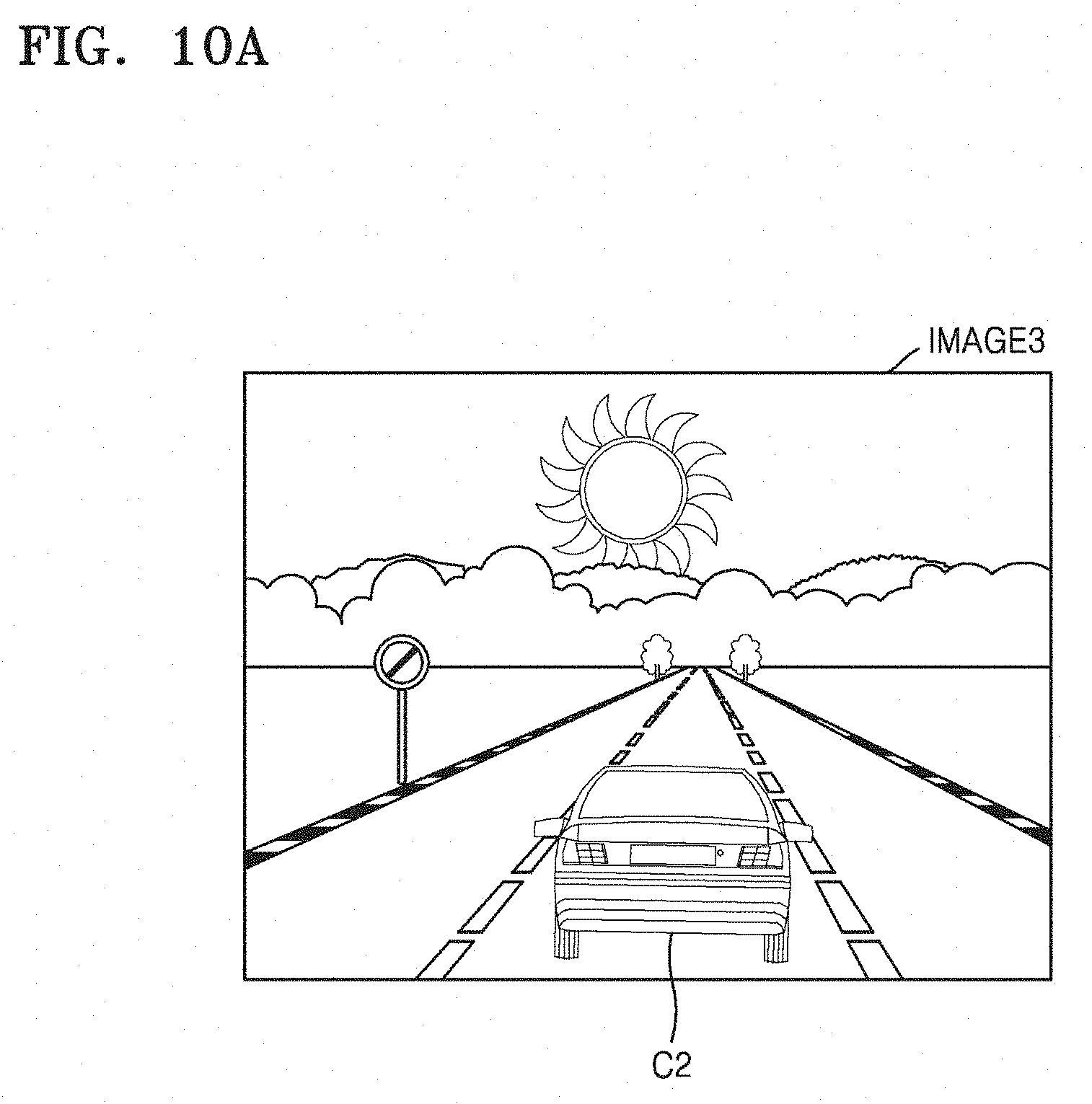

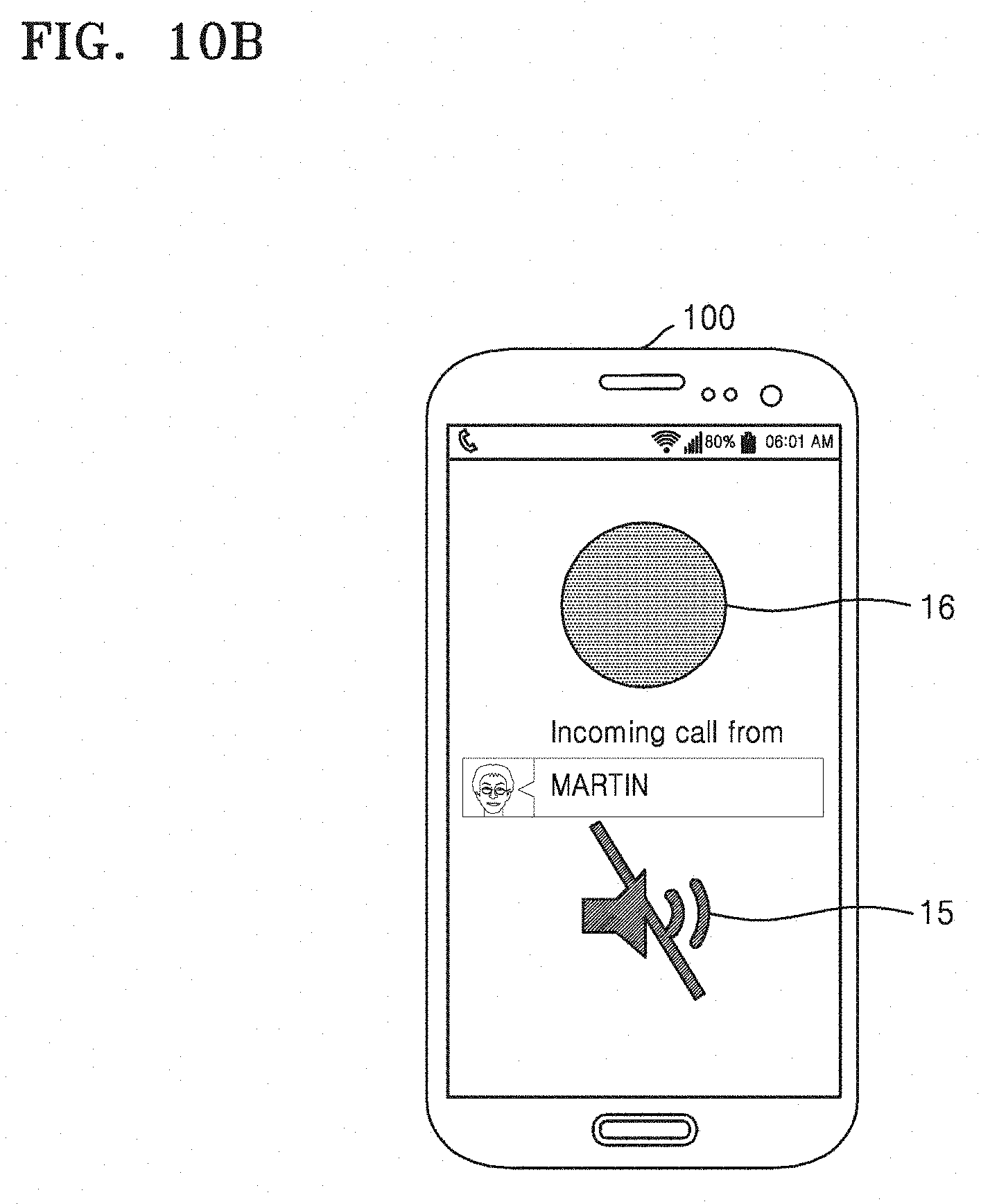

[0045] FIG. 10A illustrates third driving conditions detected by a first device according to an embodiment of the present disclosure, and FIG. 10B illustrates a threat level display of the first device according to the detected third driving conditions according to an embodiment of the present disclosure;

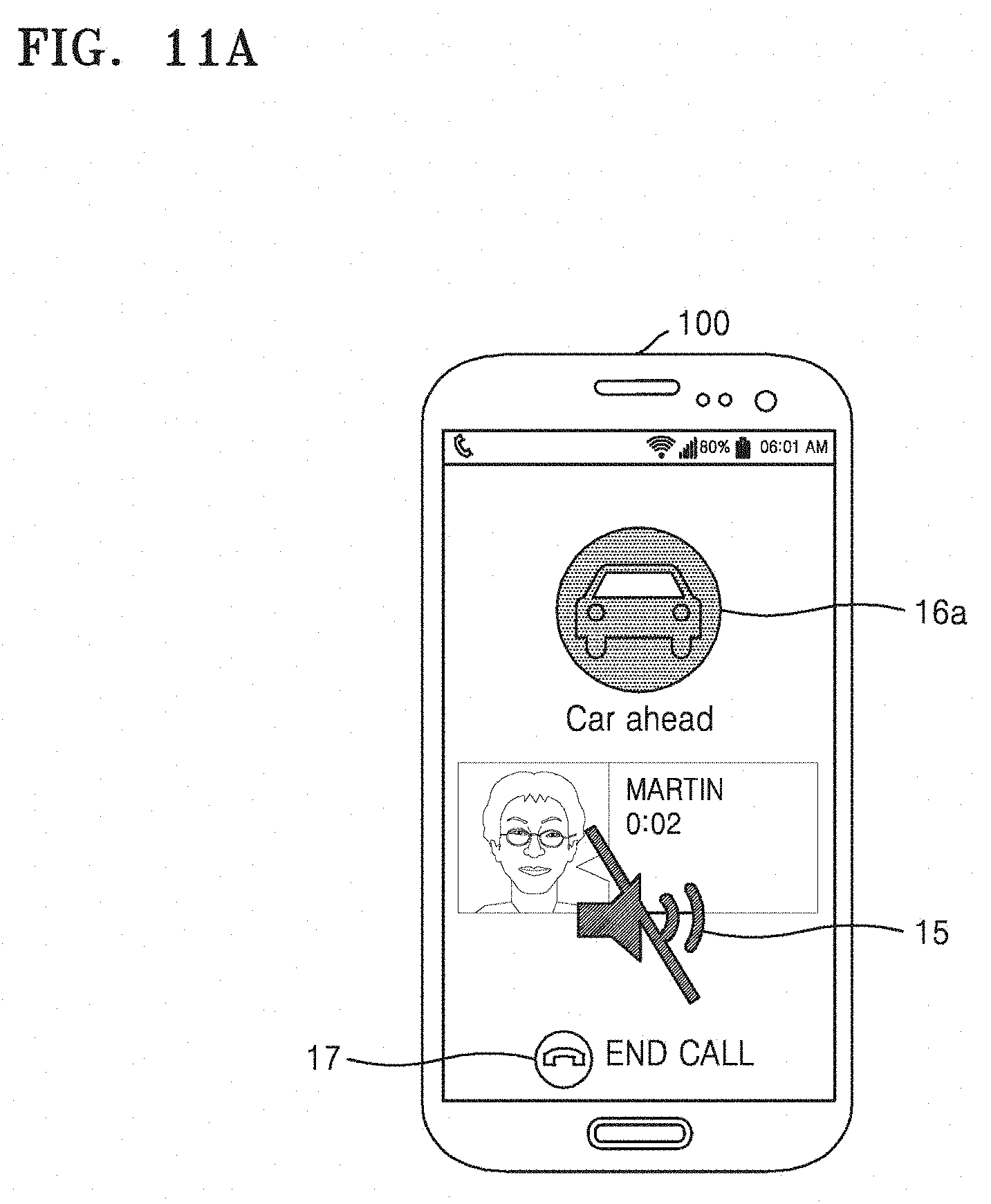

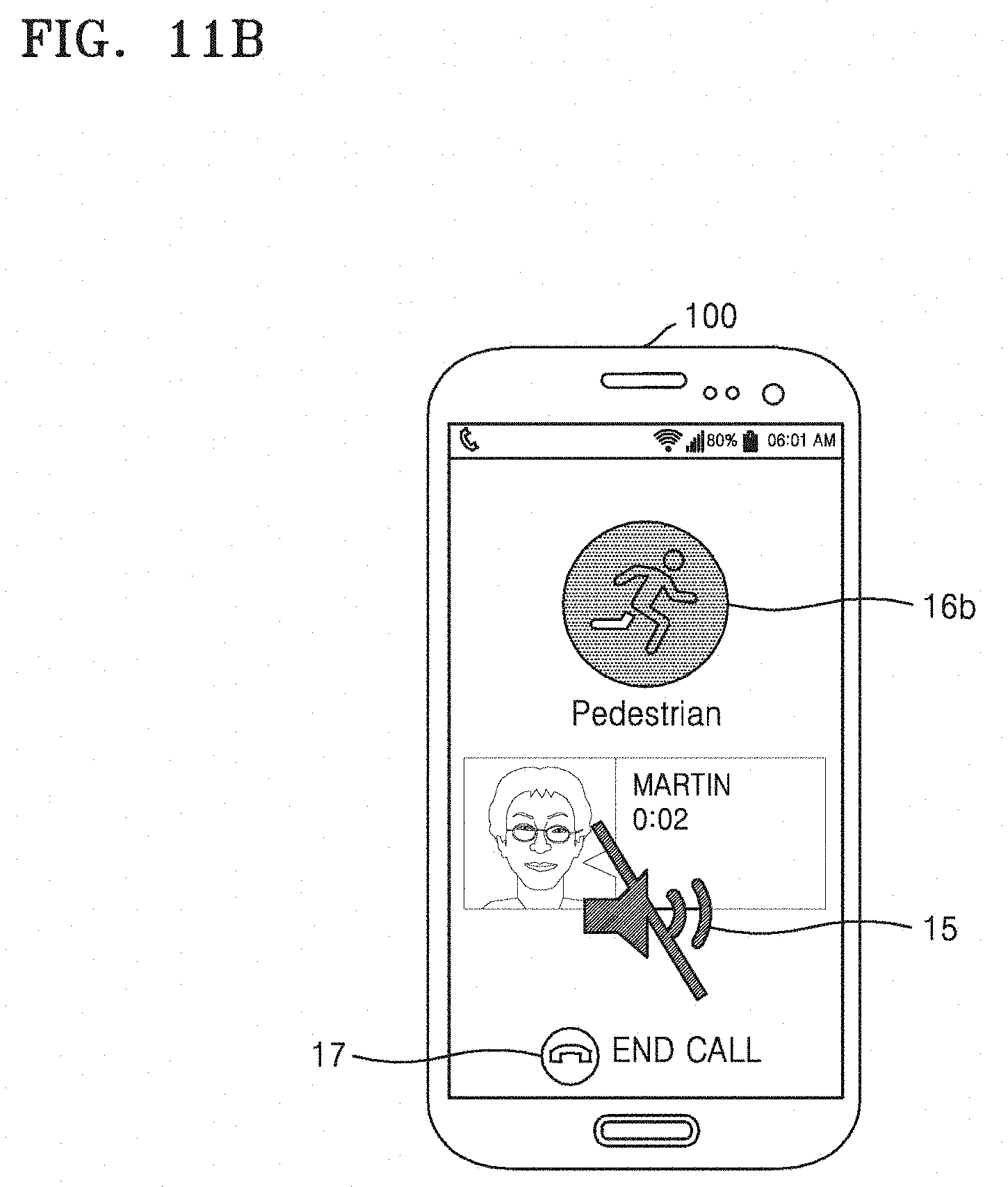

[0046] FIGS. 11A and 11B illustrate a high threat level display of a first device according to an embodiment of the present disclosure;

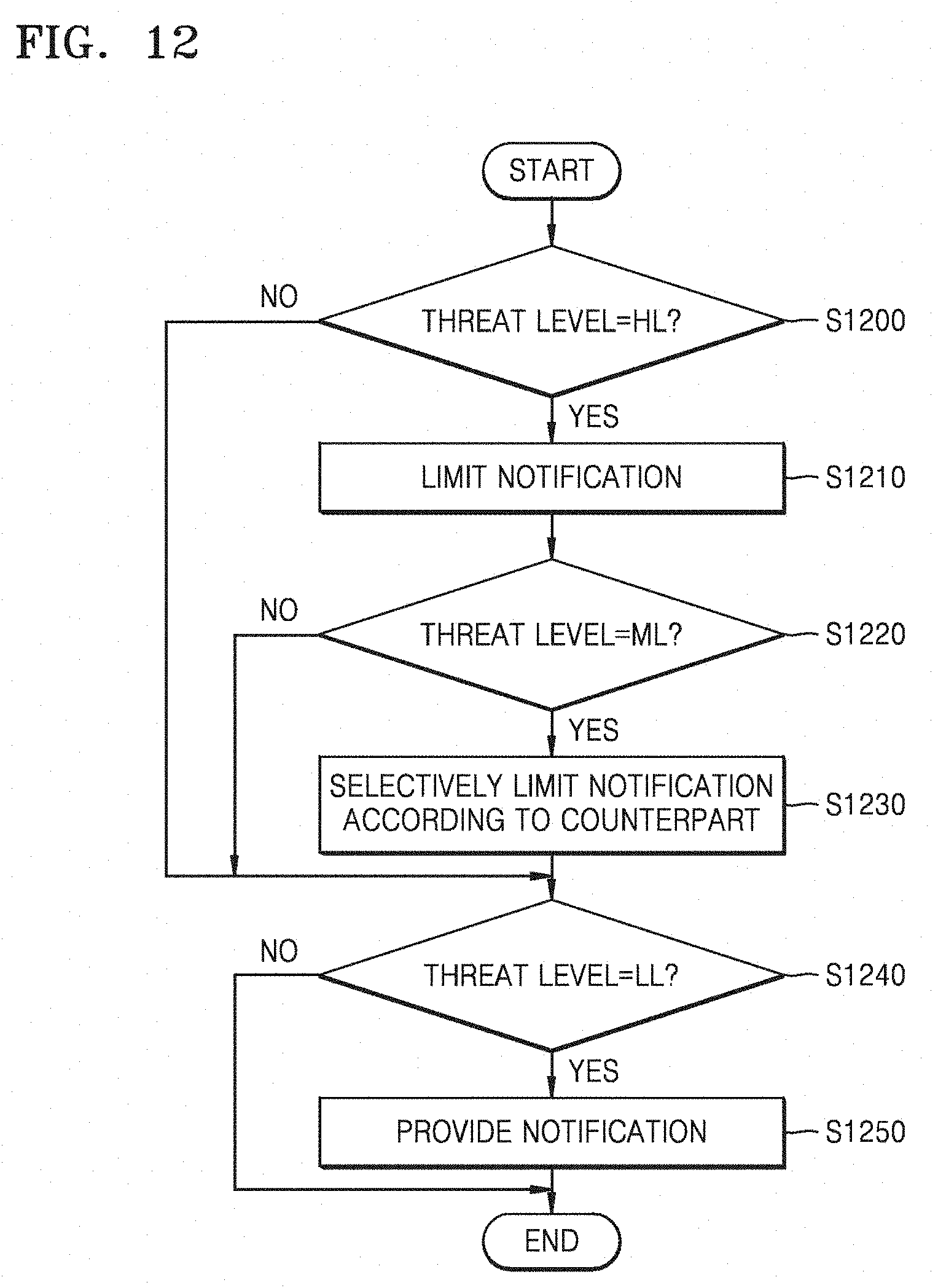

[0047] FIG. 12 is a flowchart of a notification limiting method adaptive to a plurality of threat levels performed by a first device according to an embodiment of the present disclosure;

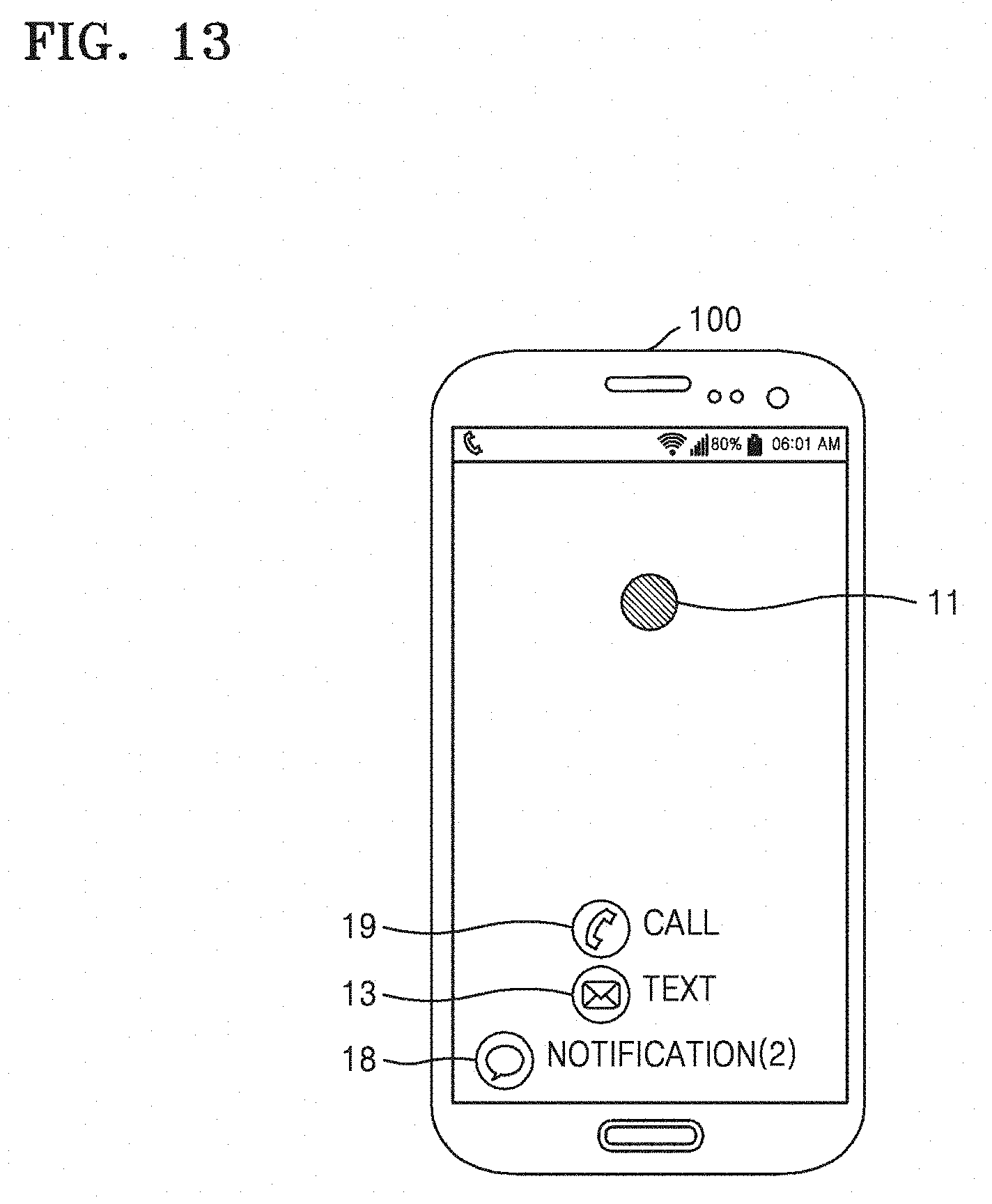

[0048] FIG. 13 illustrates a notification providing screen of a first device according to an embodiment of the present disclosure;

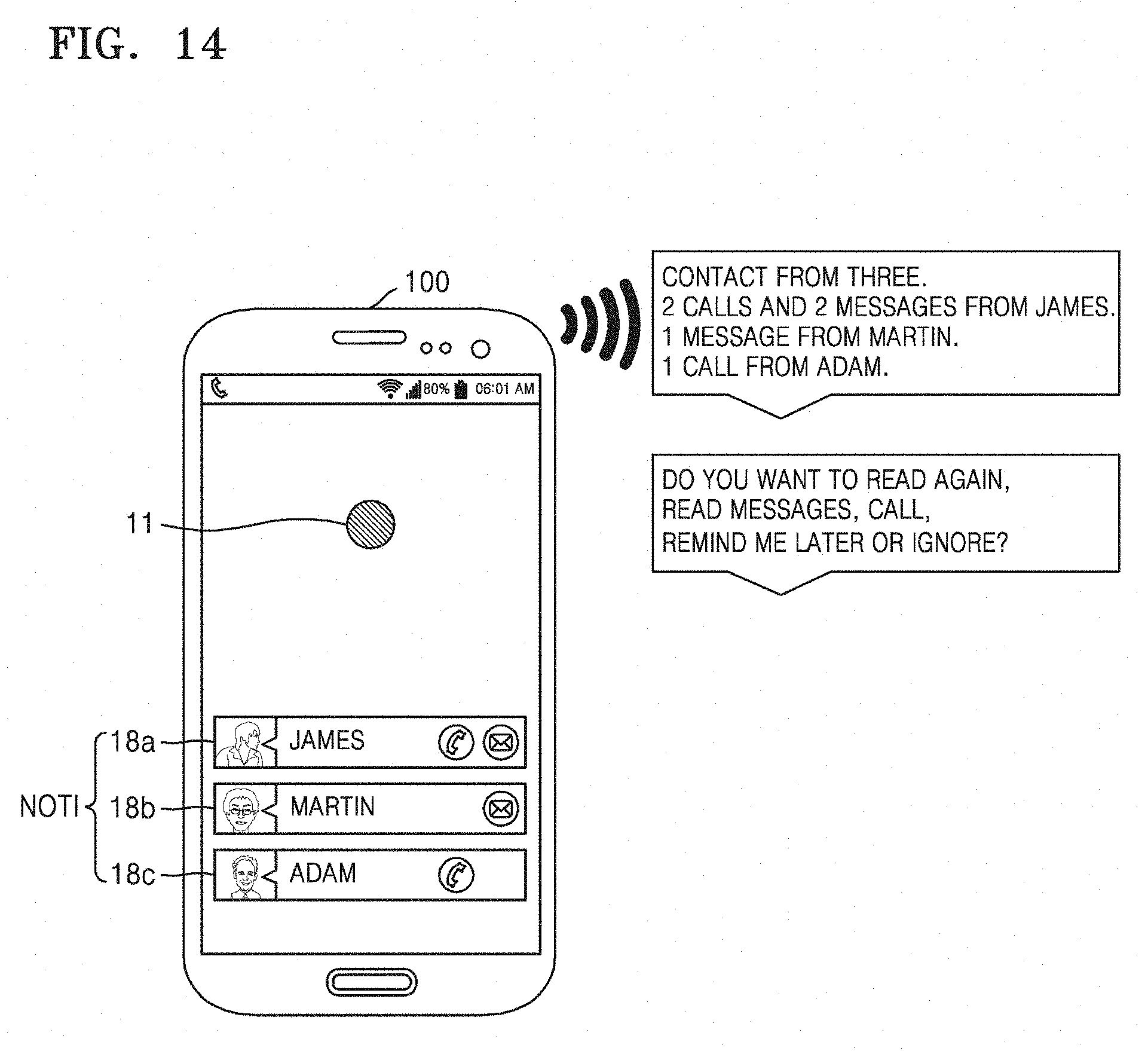

[0049] FIG. 14 illustrates a notification providing screen of a first device according to an embodiment of the present disclosure;

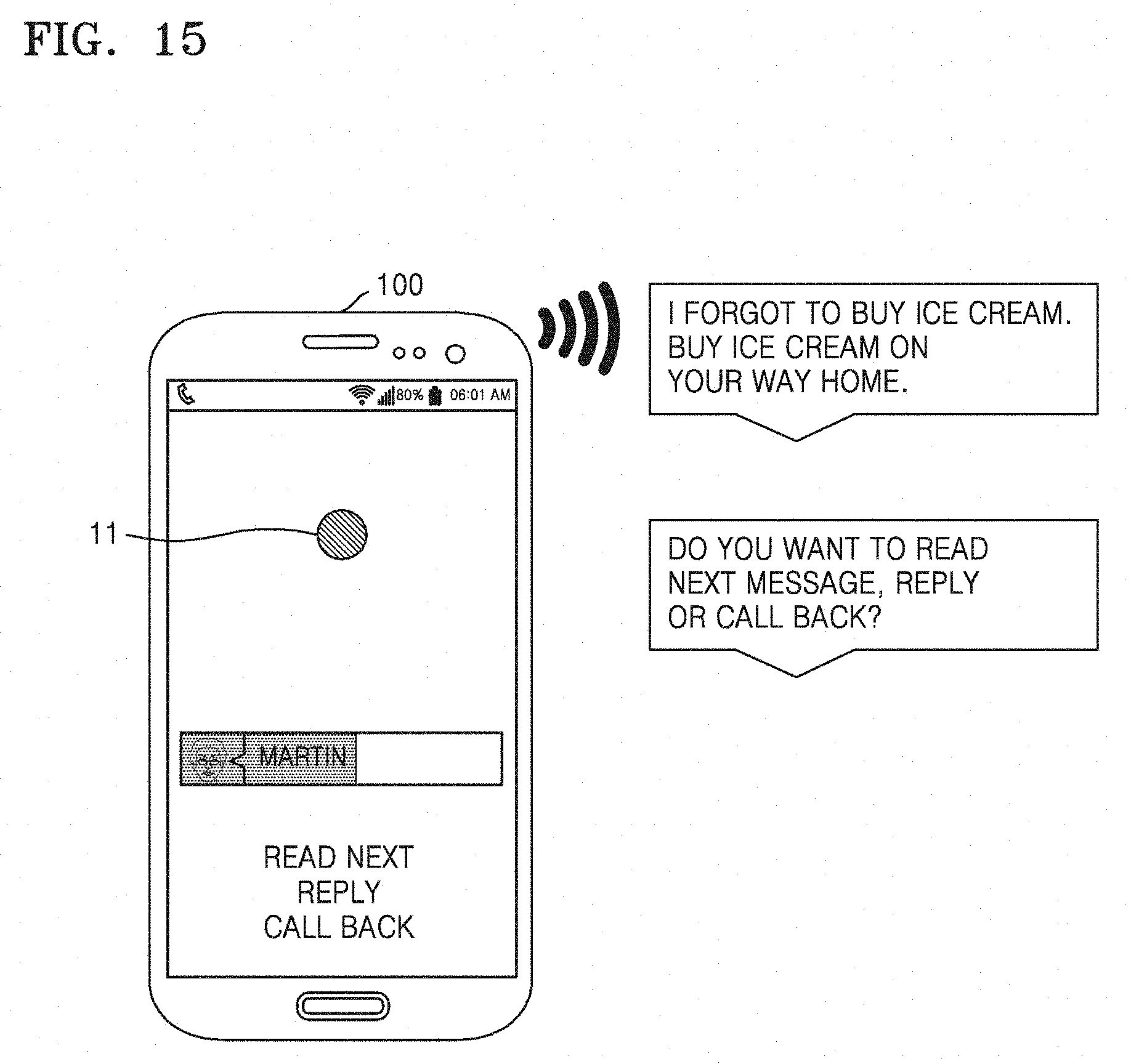

[0050] FIG. 15 illustrates providing a receiving message performed by a first device according to an embodiment of the present disclosure;

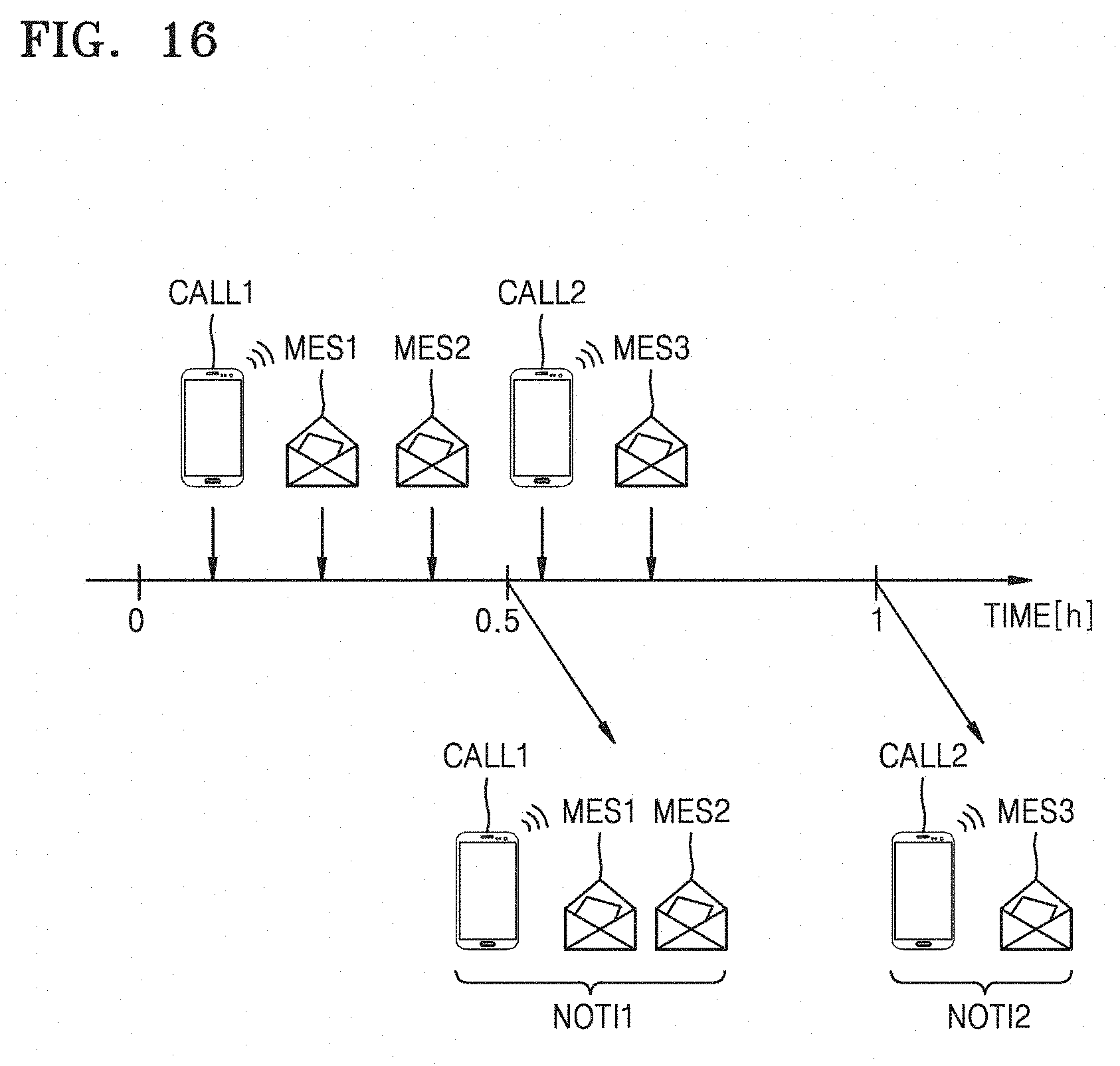

[0051] FIG. 16 illustrates a notification providing method performed by a first device according to an embodiment of the present disclosure;

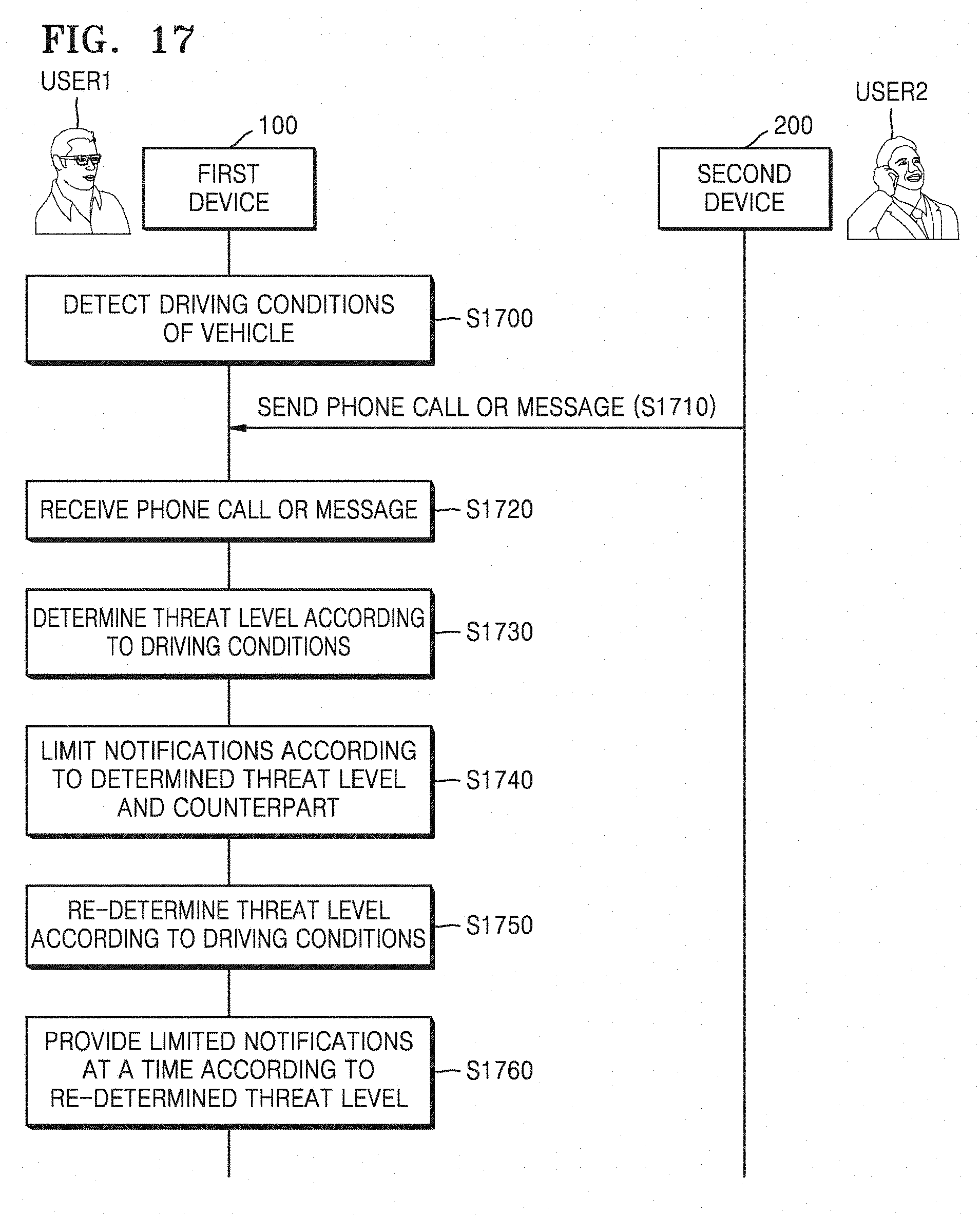

[0052] FIG. 17 is a flowchart illustrating operations of first and second devices performed by using a device control method according to an embodiment of the present disclosure;

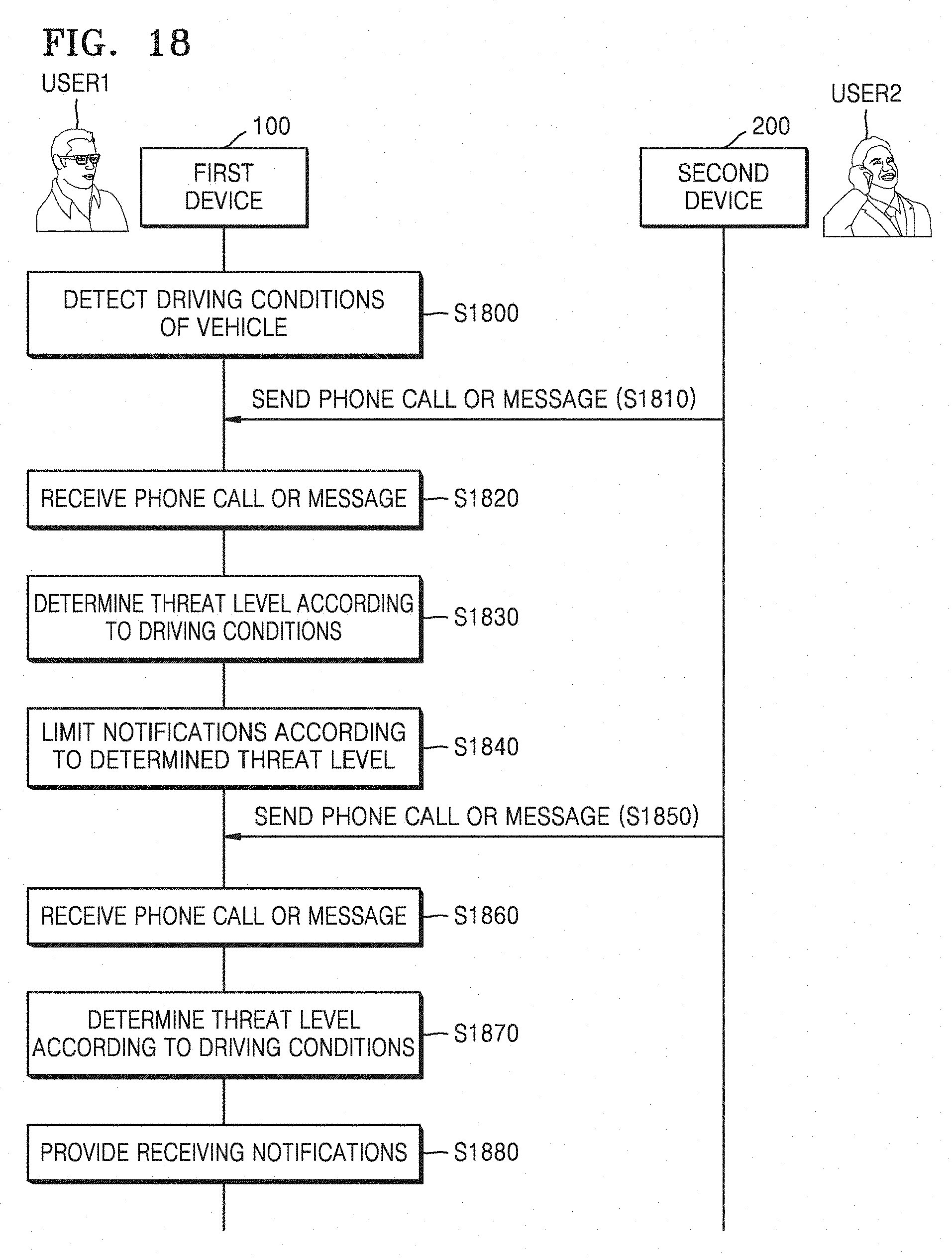

[0053] FIG. 18 is a flowchart illustrating operations of first and second devices performed by using a device control method according to an embodiment of the present disclosure;

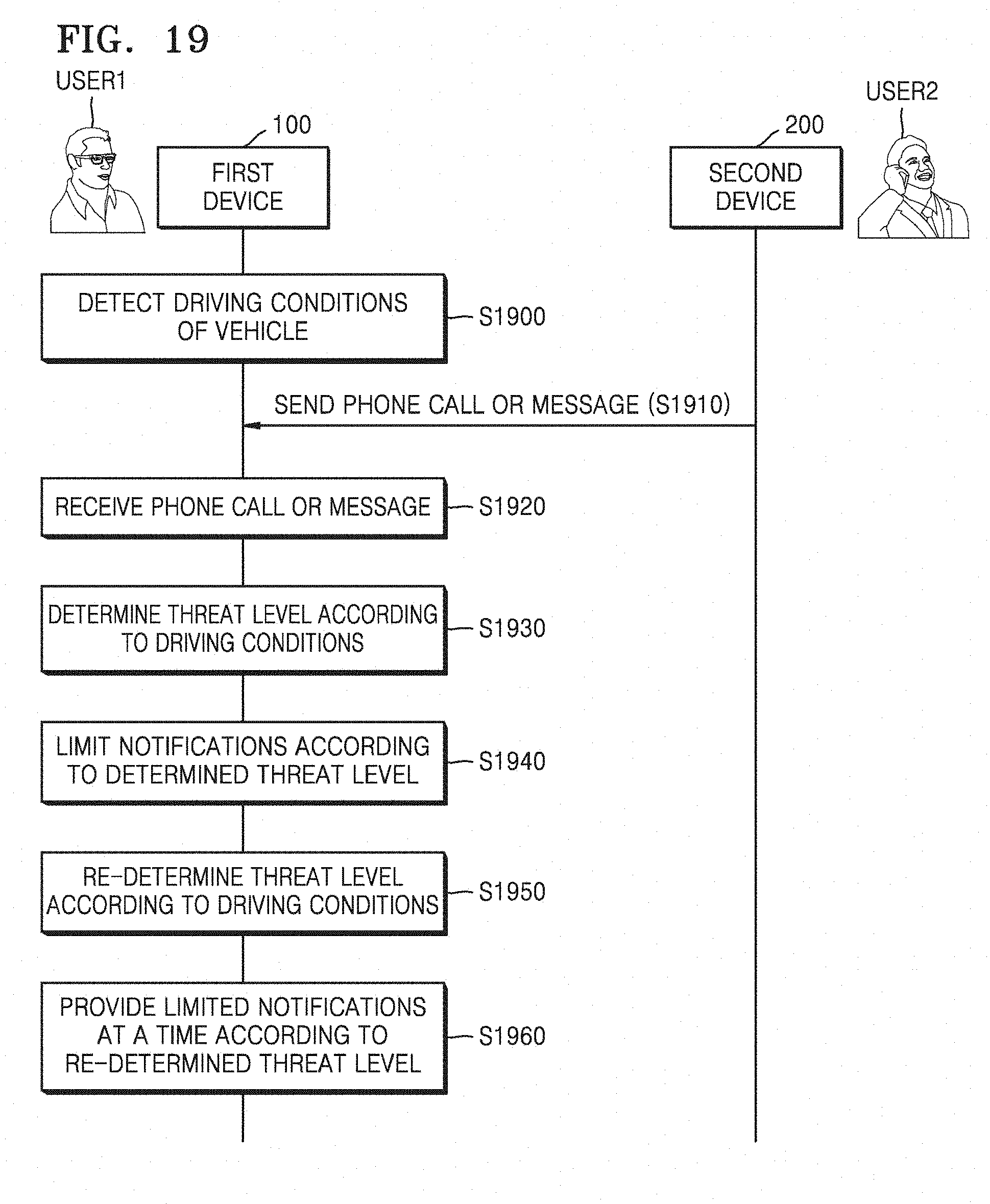

[0054] FIG. 19 is a flowchart illustrating operations of first and second devices performed by using a device control method according to an embodiment of the present disclosure;

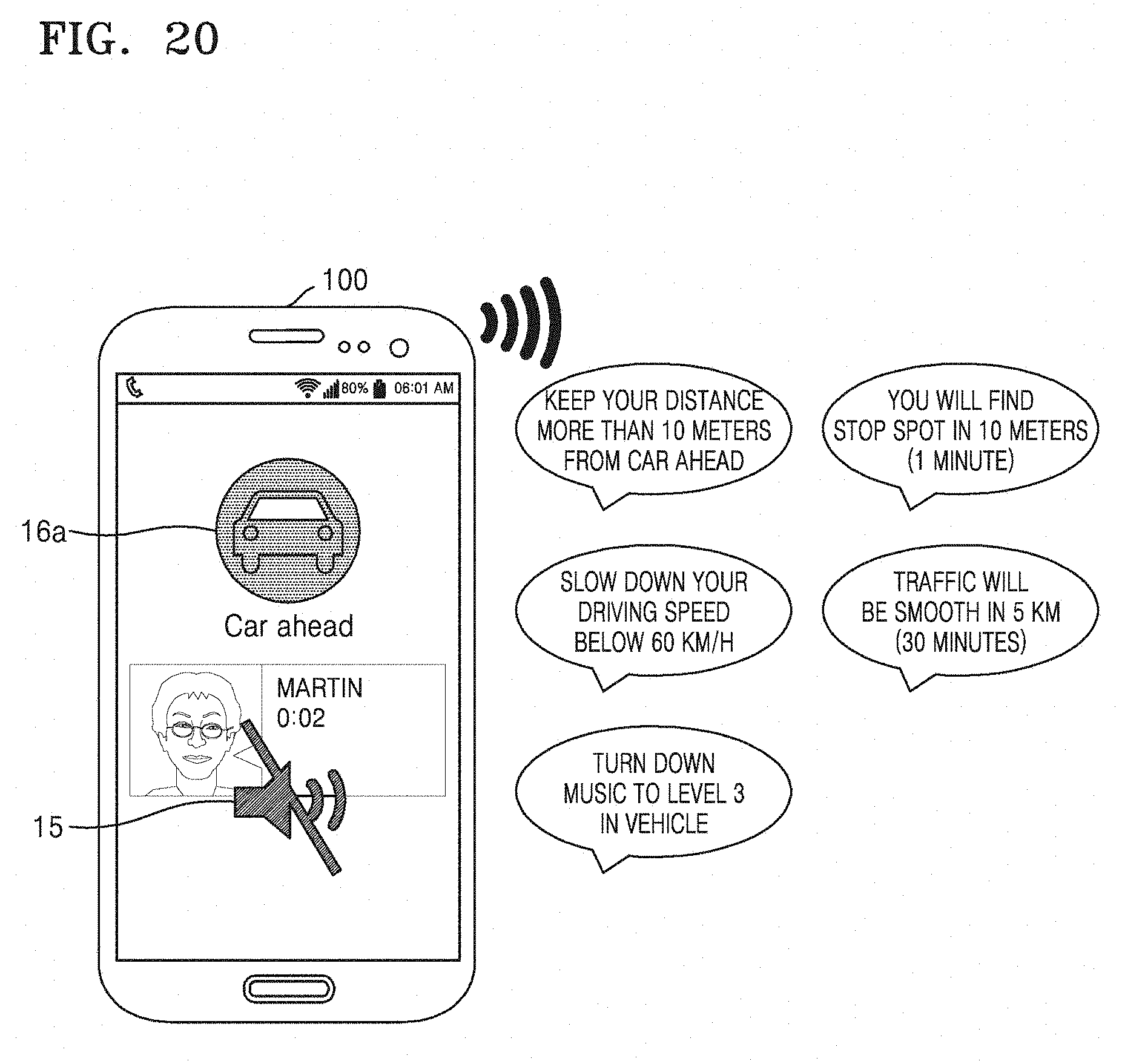

[0055] FIG. 20 illustrates a state change guide performed by a first device according to an embodiment of the present disclosure;

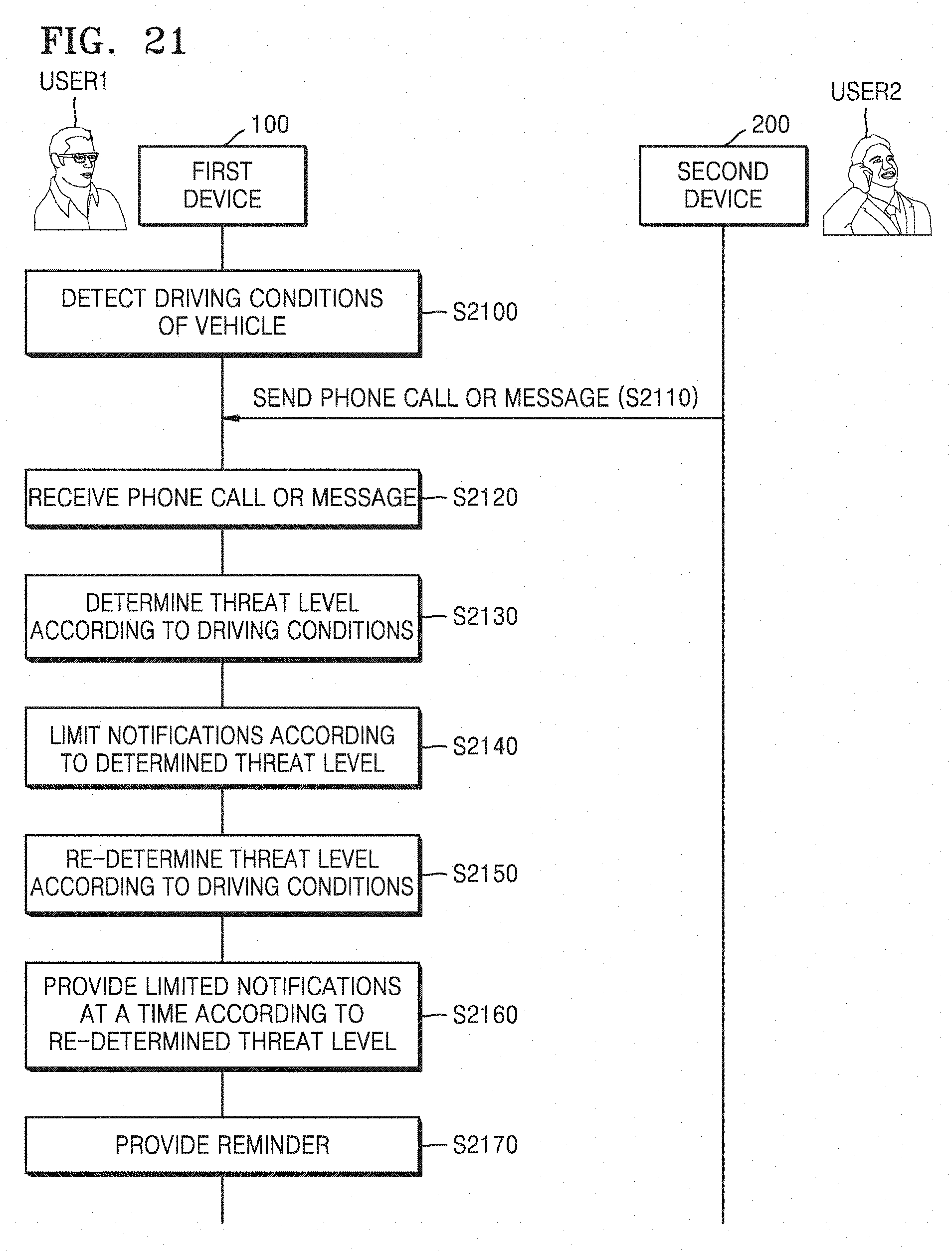

[0056] FIG. 21 is a flowchart illustrating operations of first and second devices performed by using a device control method according to an embodiment of the present disclosure;

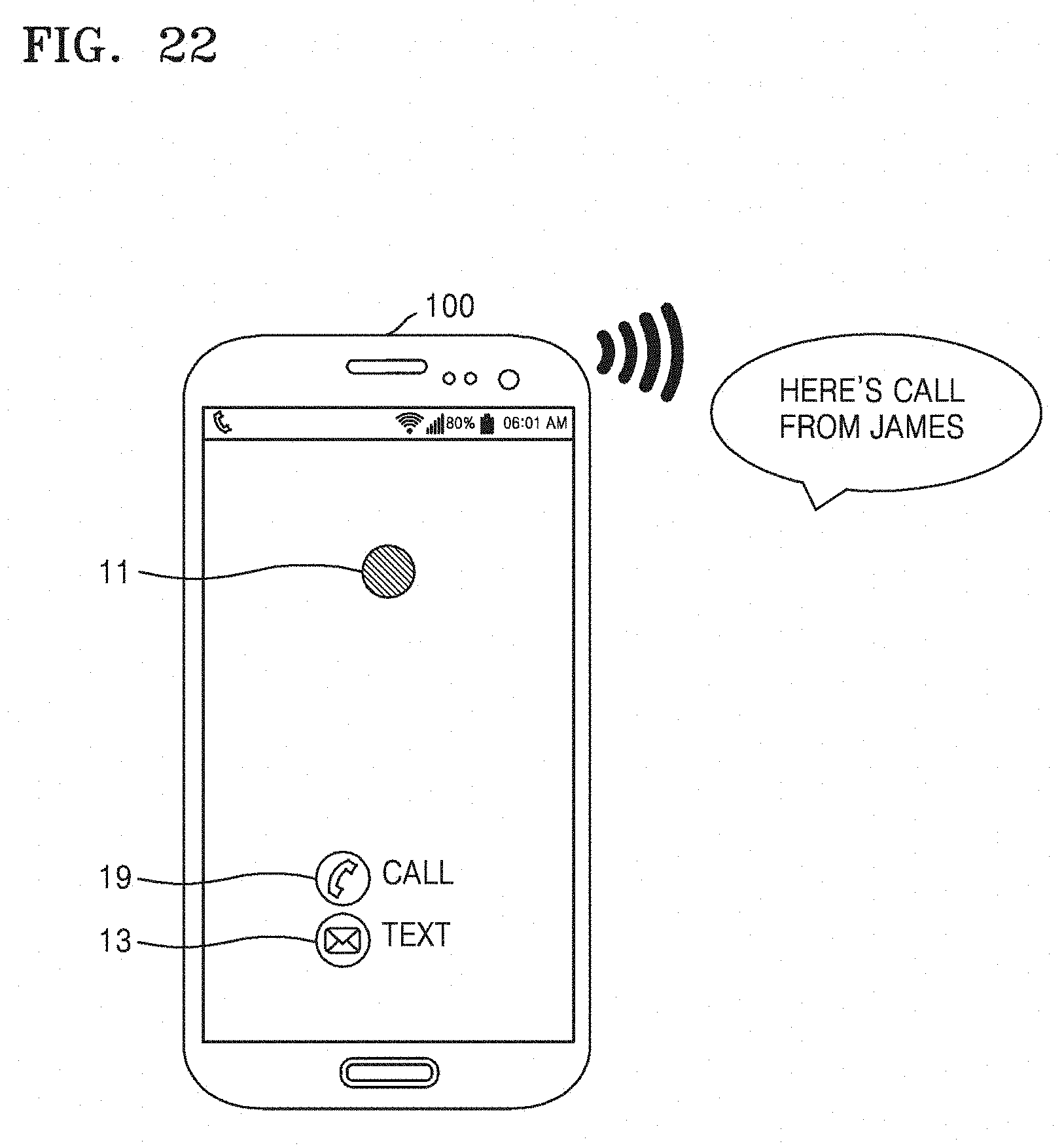

[0057] FIG. 22 illustrates providing a reminder performed by a first device according to an embodiment of the present disclosure;

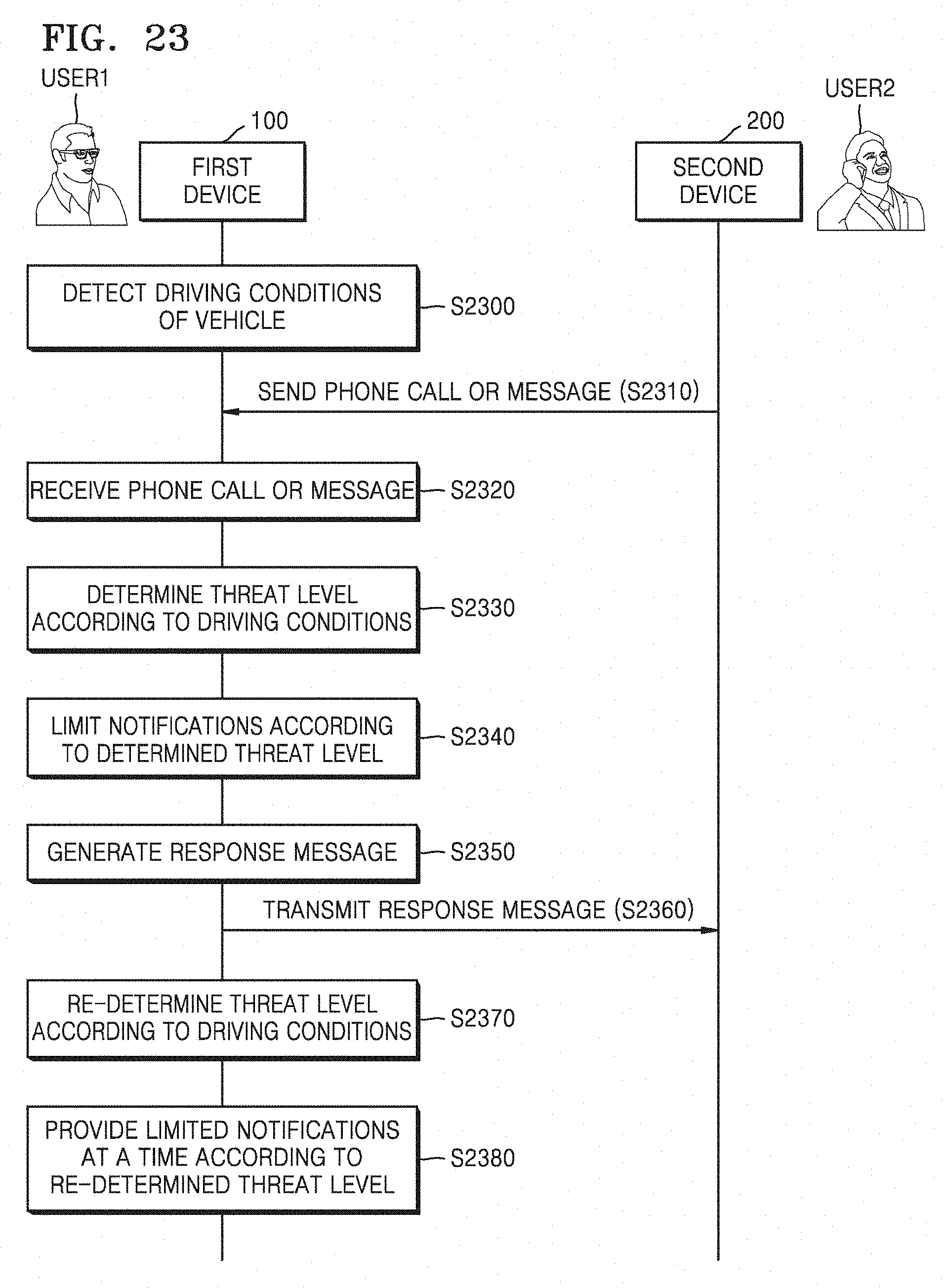

[0058] FIG. 23 is a flowchart illustrating operations of first and second devices performed by using a device control method according to an embodiment of the present disclosure;

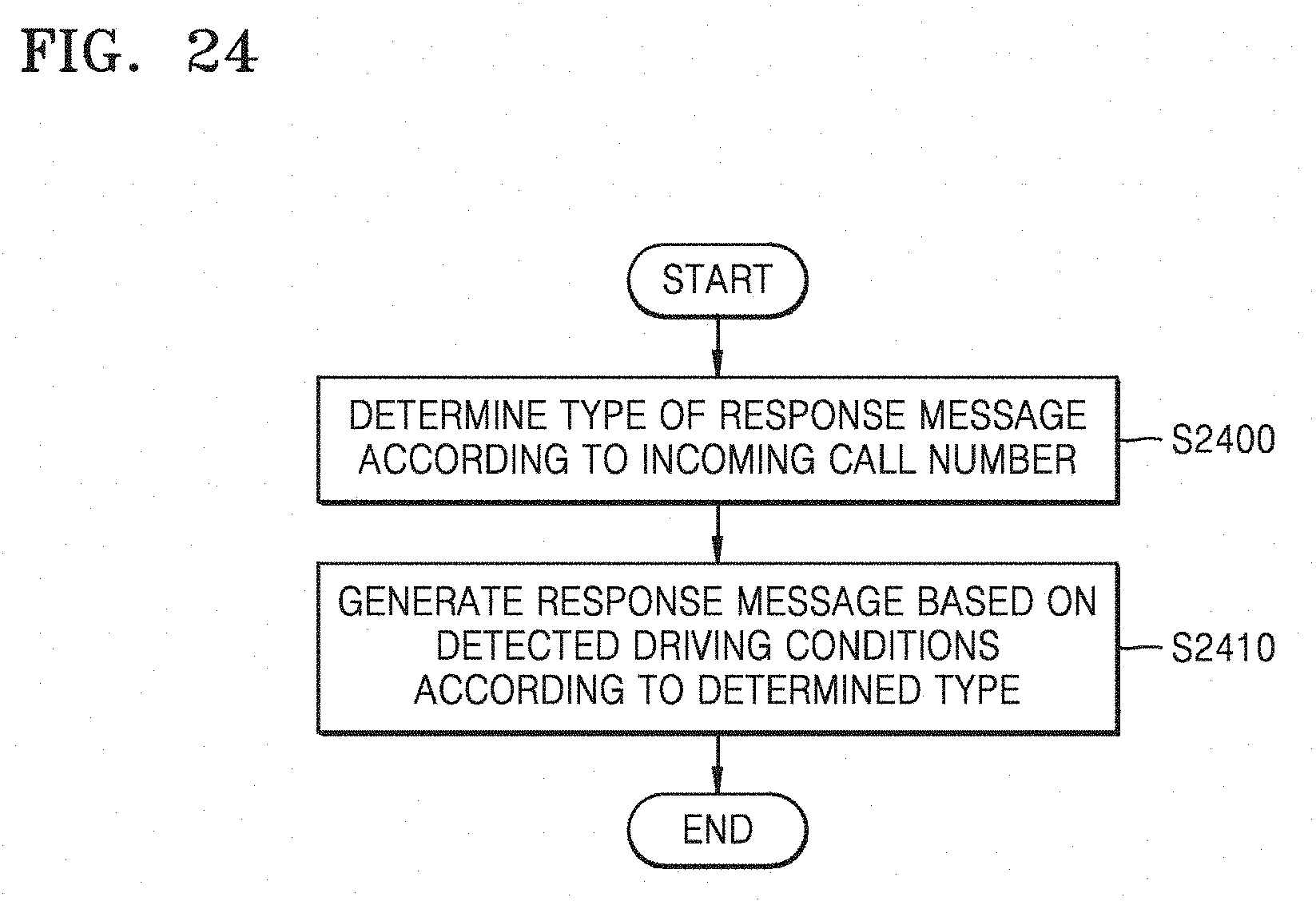

[0059] FIG. 24 is a flowchart of a response message generation method performed by a first device according to an embodiment of the present disclosure;

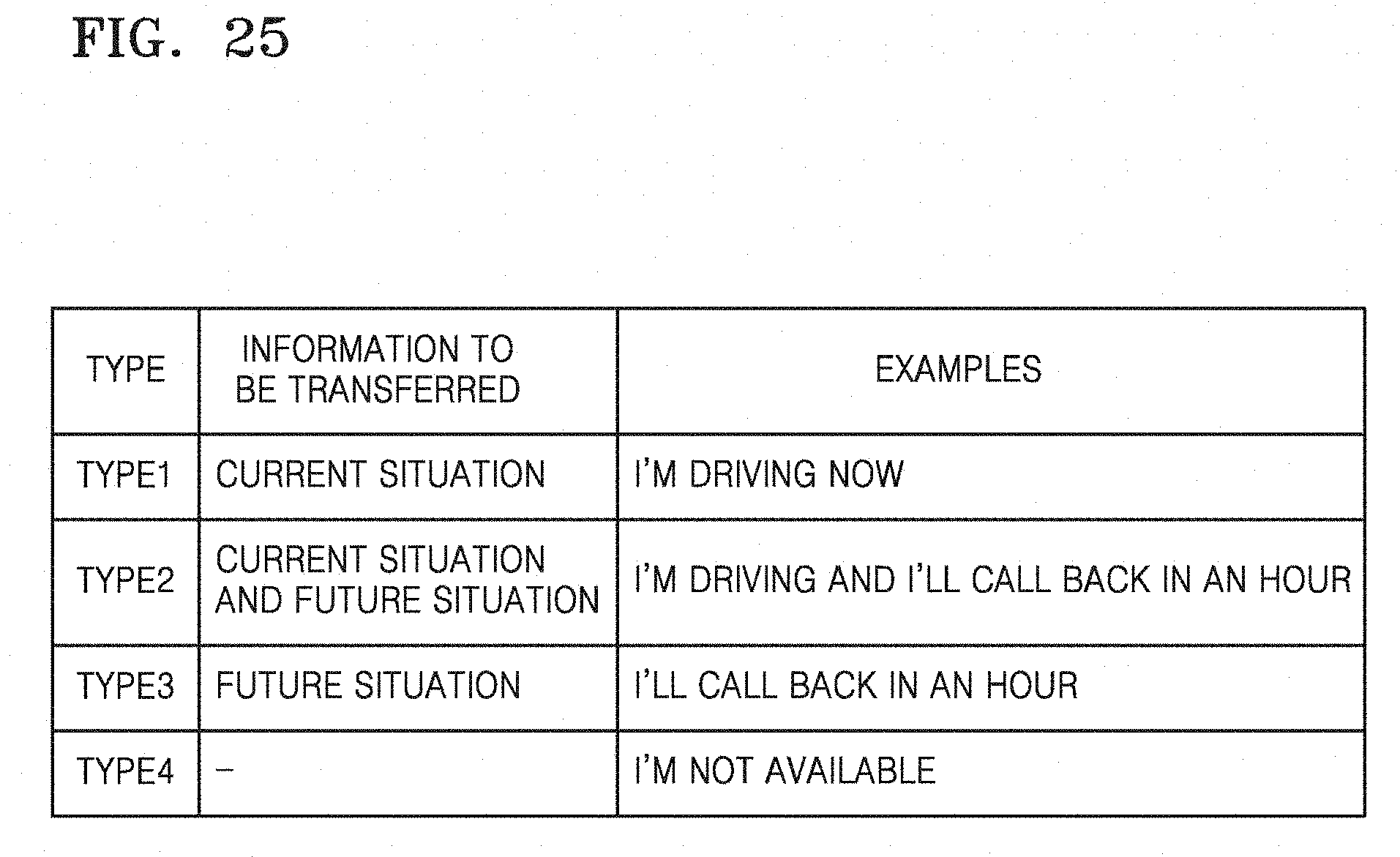

[0060] FIG. 25 is a table showing types of a response message generated by a first device according to an embodiment of the present disclosure;

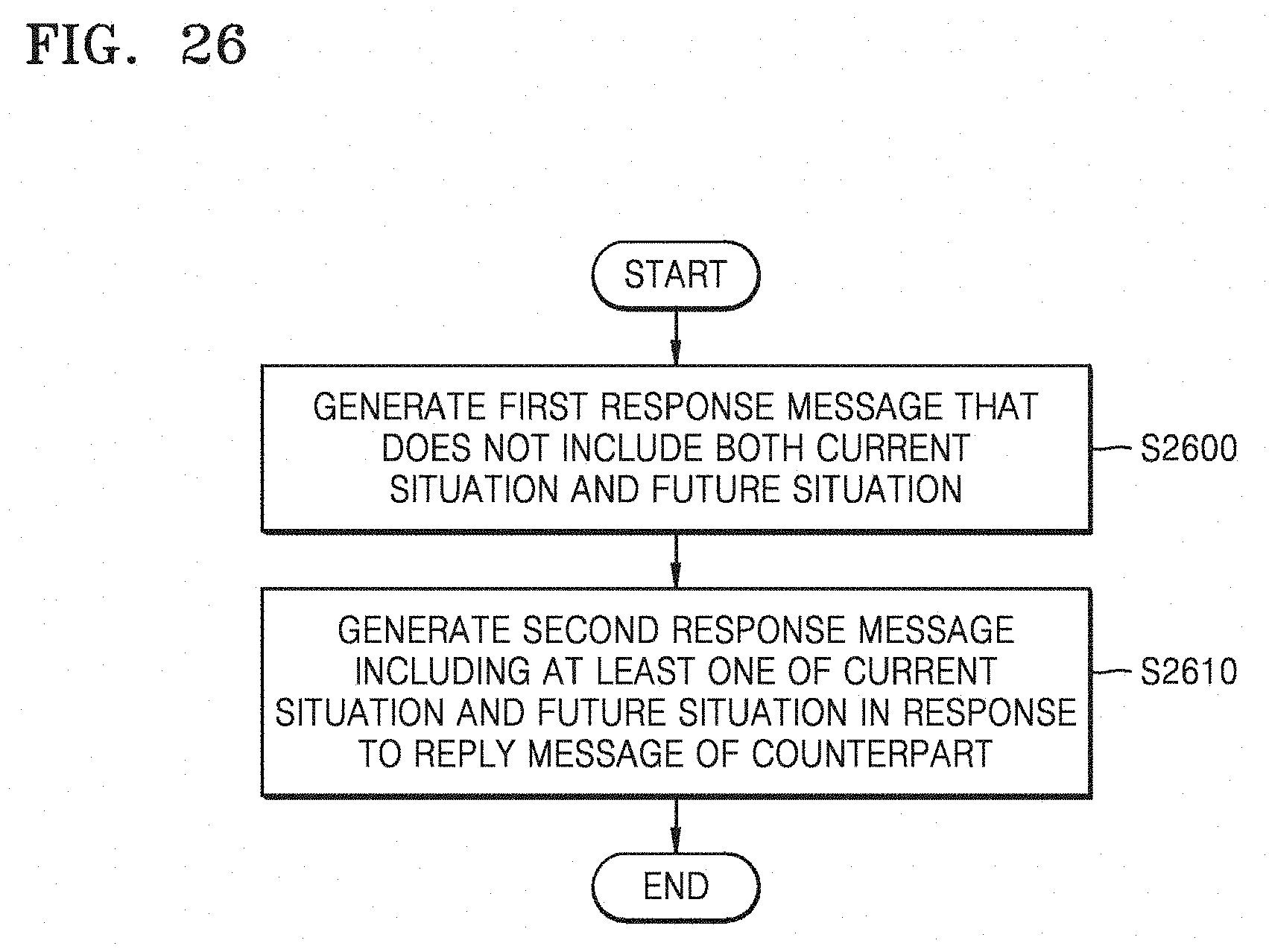

[0061] FIG. 26 is a flowchart of a response message generation method performed by a first device according to an embodiment of the present disclosure;

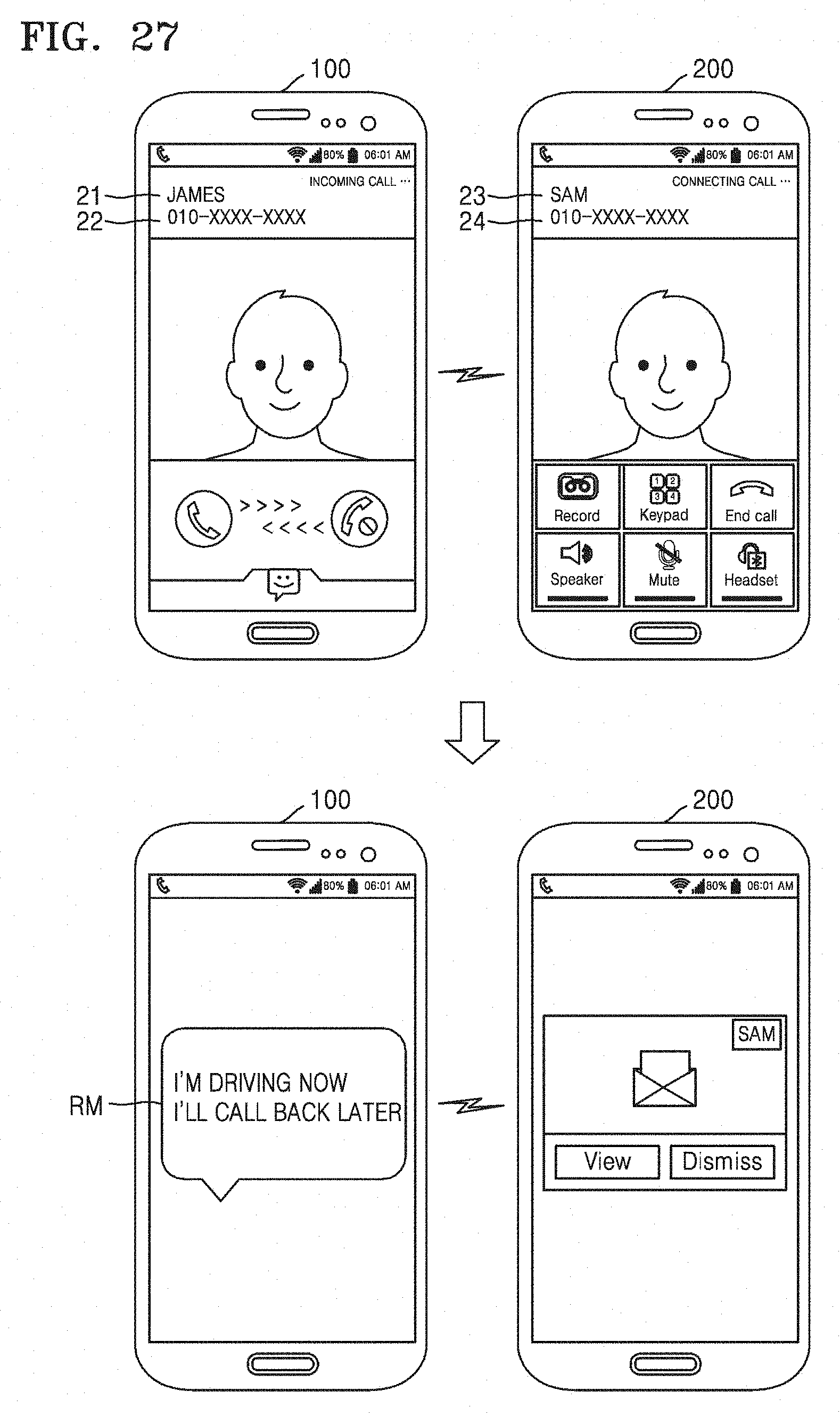

[0062] FIG. 27 illustrates an operation of providing a response message performed by a first device according to an embodiment of the present disclosure;

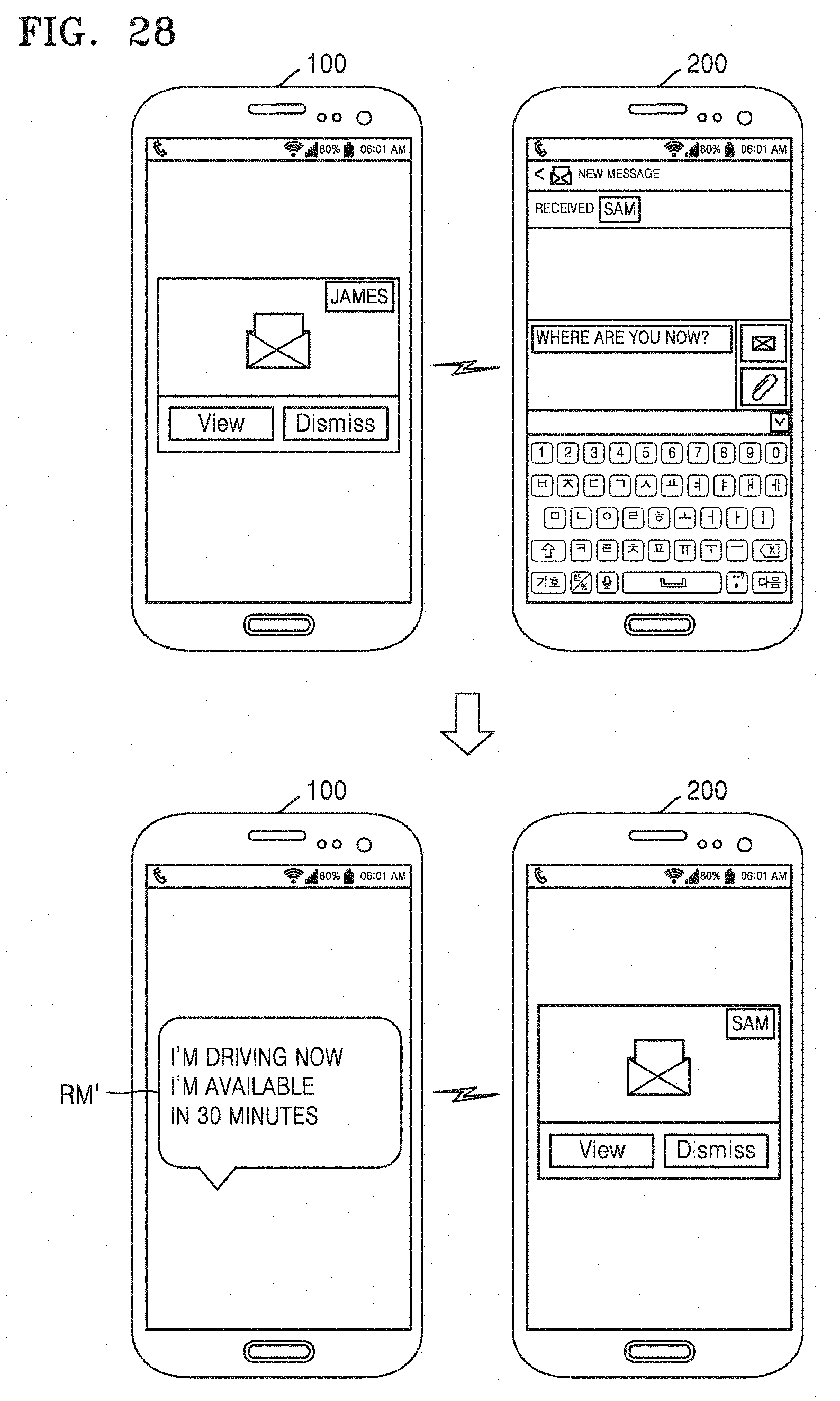

[0063] FIG. 28 illustrates an operation of providing a response message performed by a first device according to an embodiment of the present disclosure;

[0064] FIG. 29 is a flowchart illustrating operations of first and second devices performed by using a device control method according to an embodiment of the present disclosure;

[0065] FIG. 30 illustrates an operation of providing an automatic message performed by a first device according to an embodiment of the present disclosure;

[0066] FIG. 31 is a flowchart illustrating operations of first and second devices performed by using a device control method according to an embodiment of the present disclosure;

[0067] FIG. 32 illustrates an operation of providing a transmission available time performed by a first device according to an embodiment of the present disclosure;

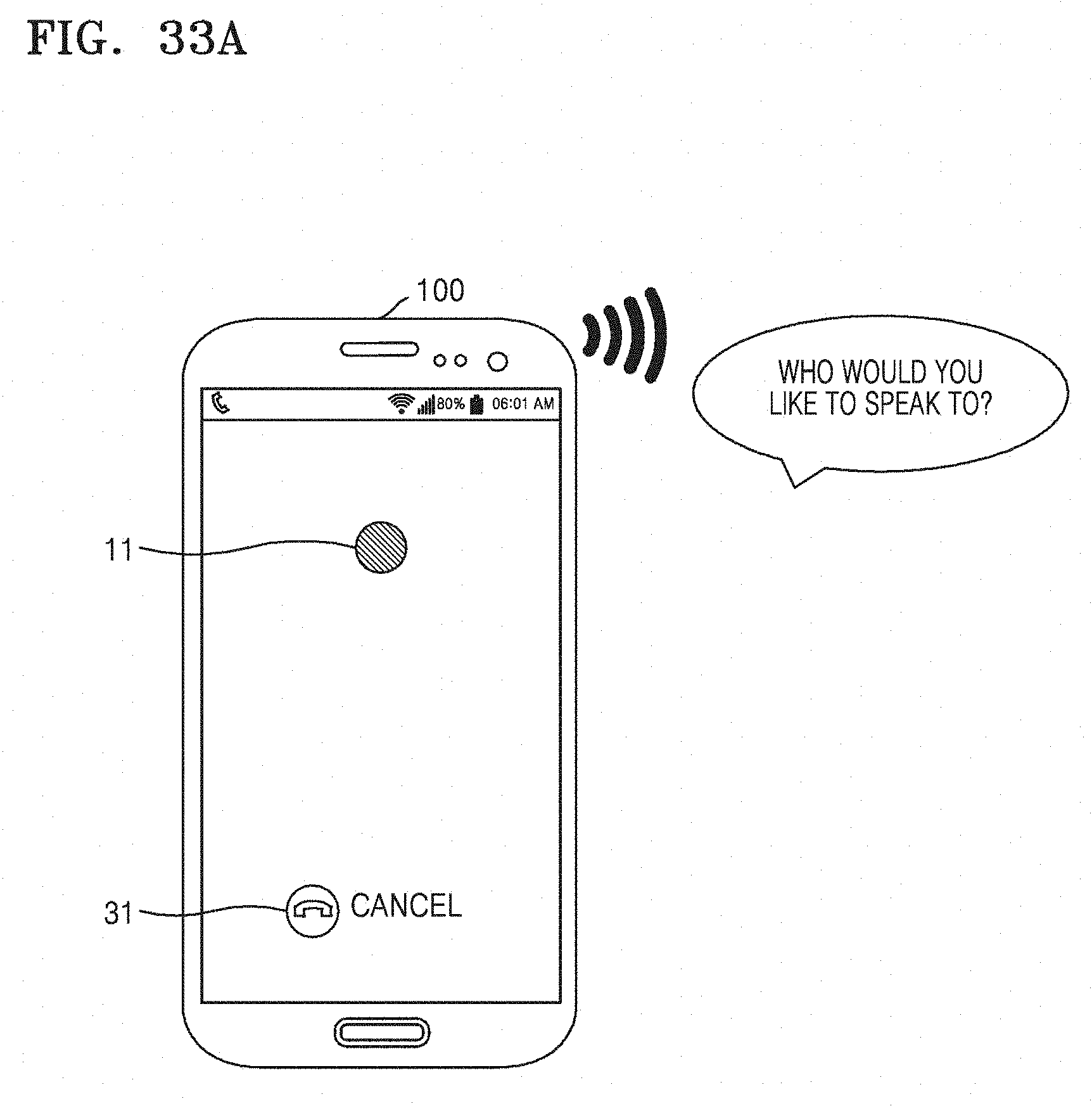

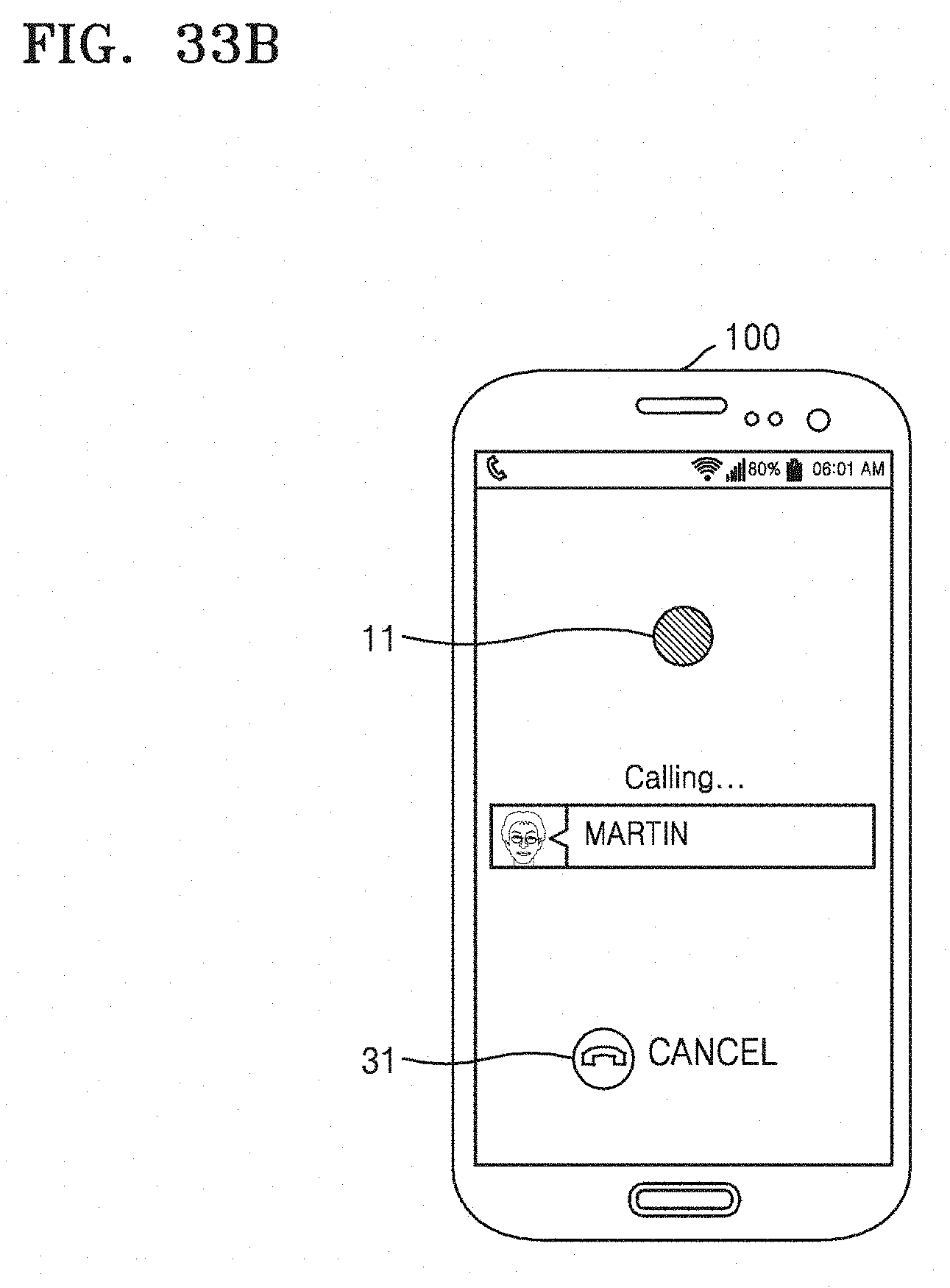

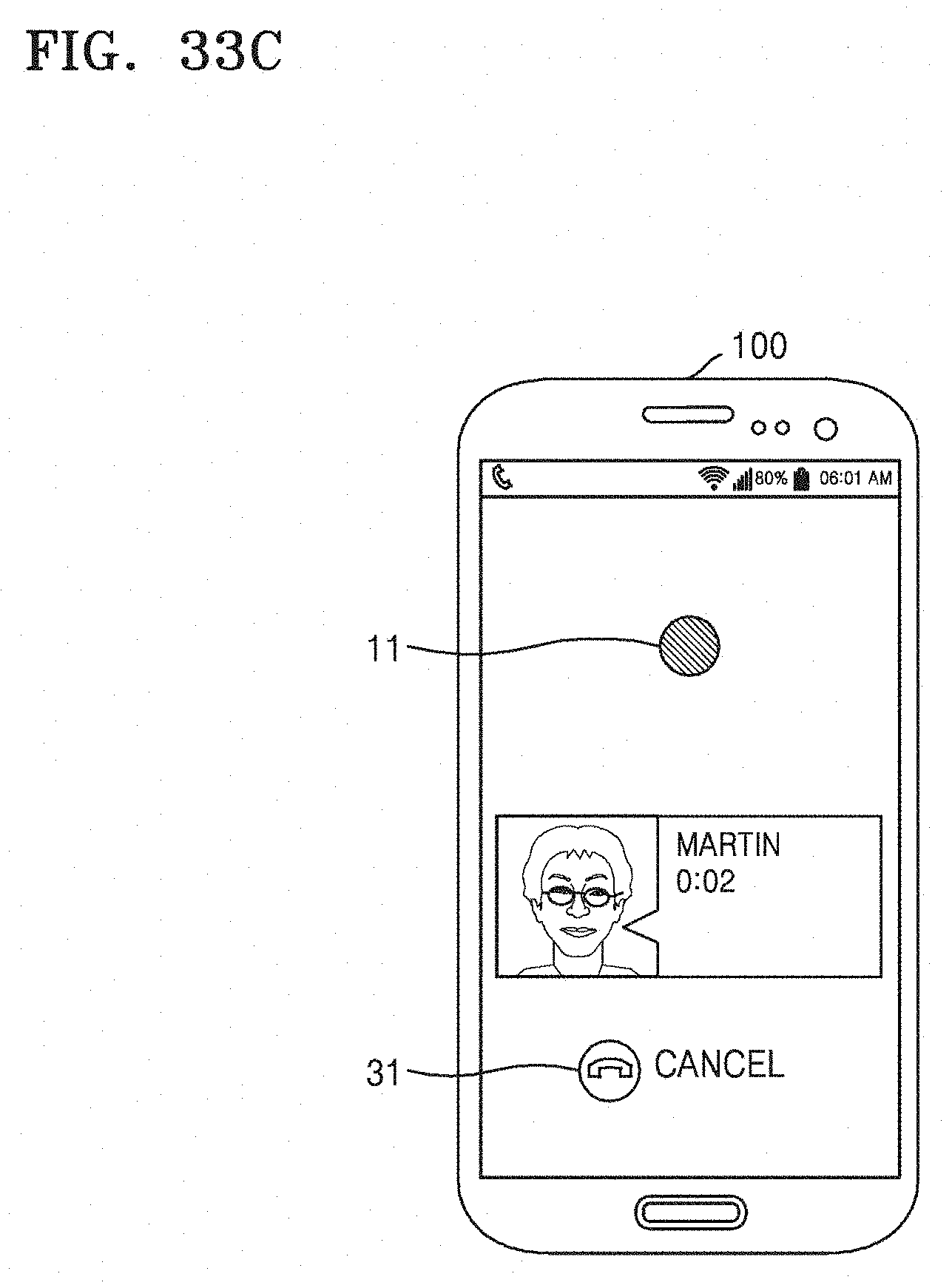

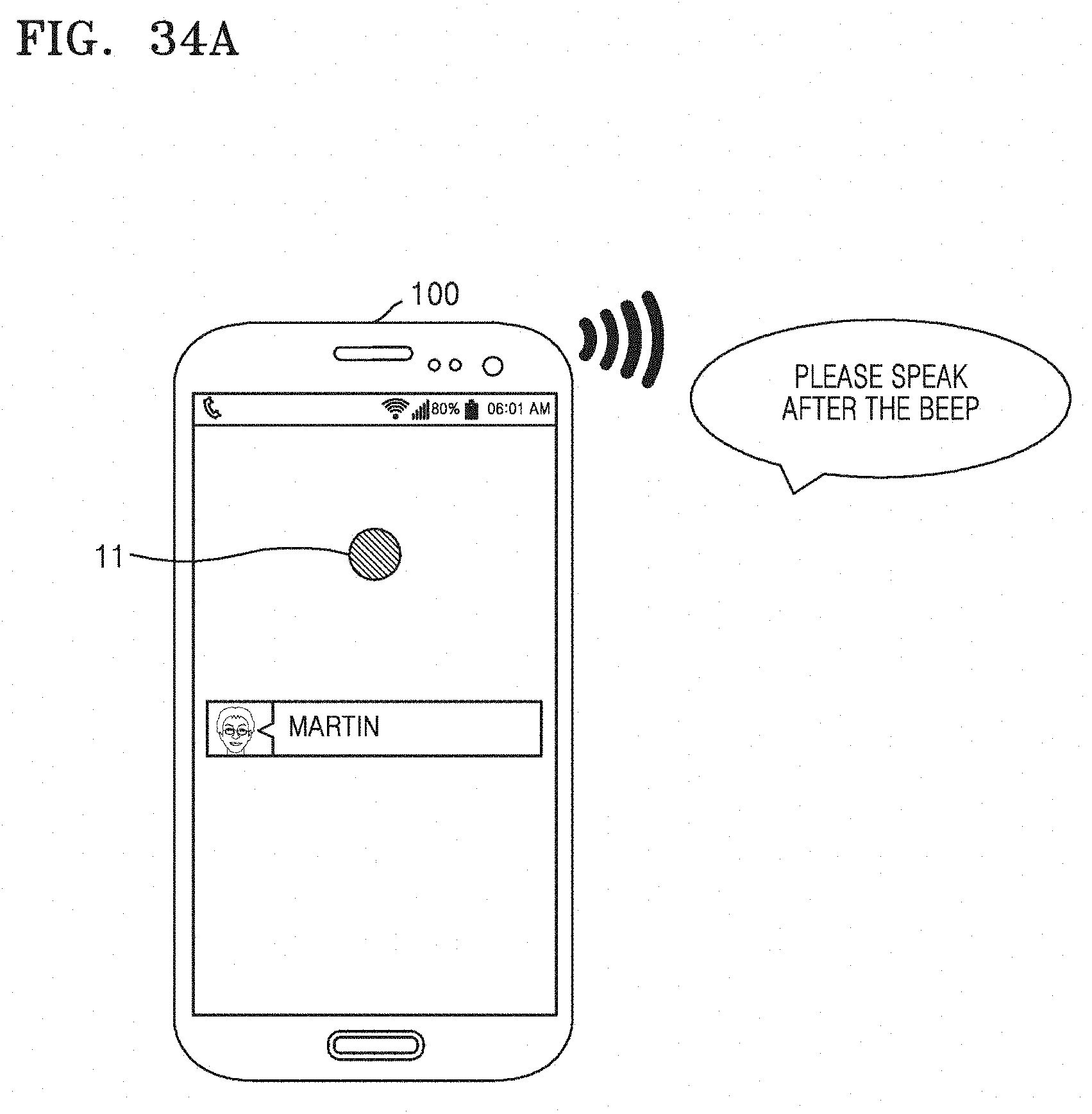

[0068] FIGS. 33A to 33C sequentially illustrate an operation of transmitting a phone call performed by a first device according to an embodiment of the present disclosure;

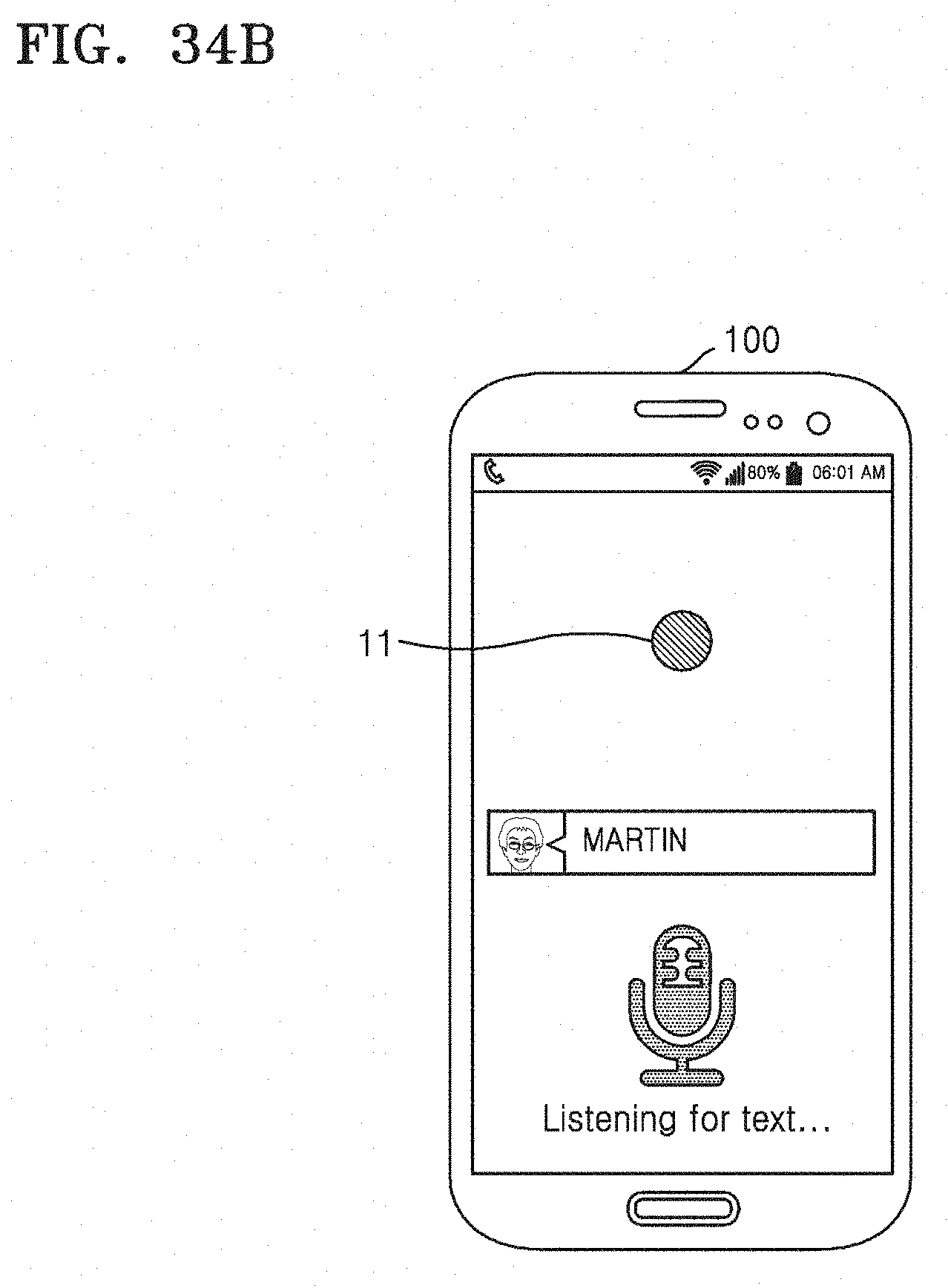

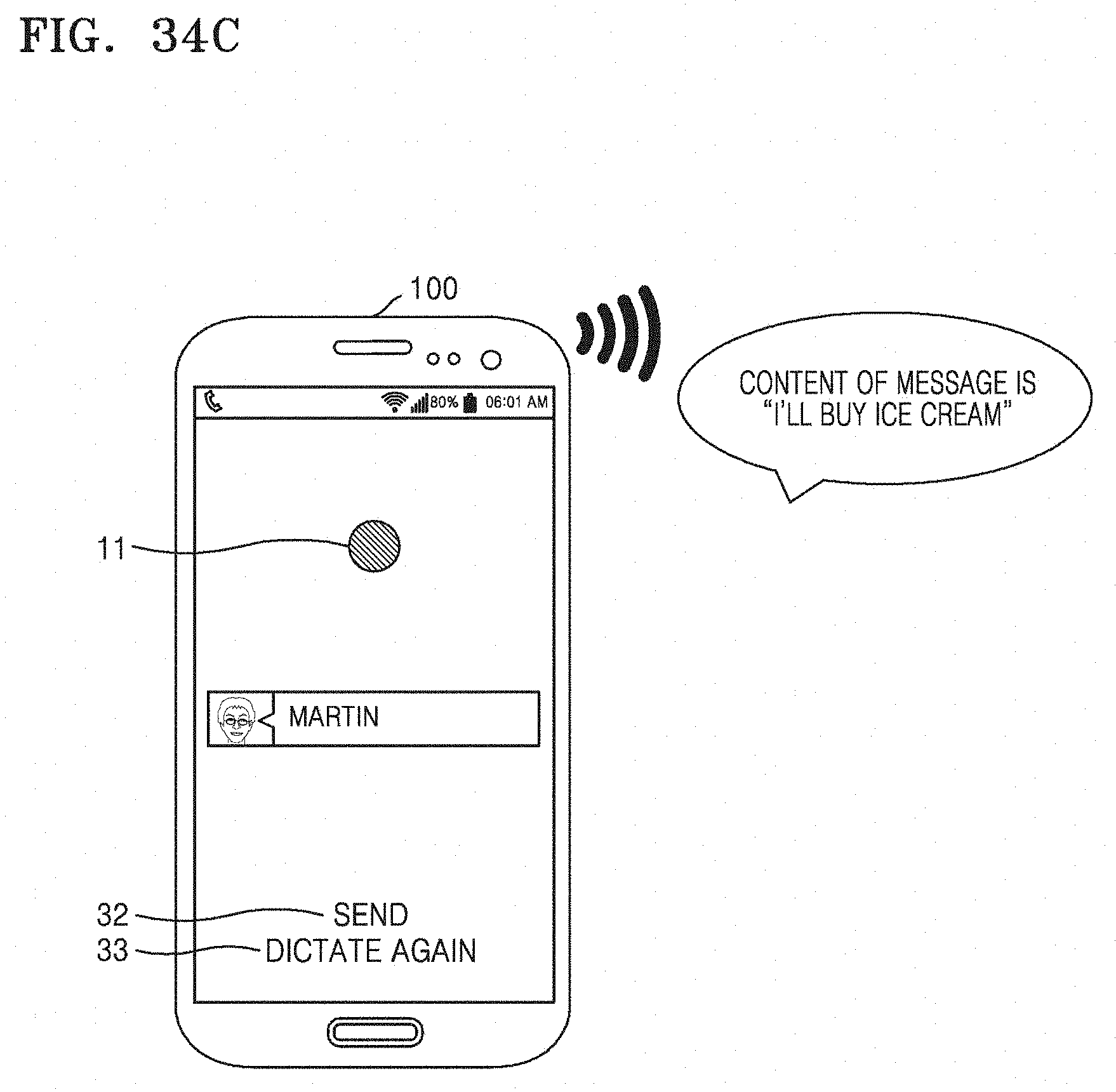

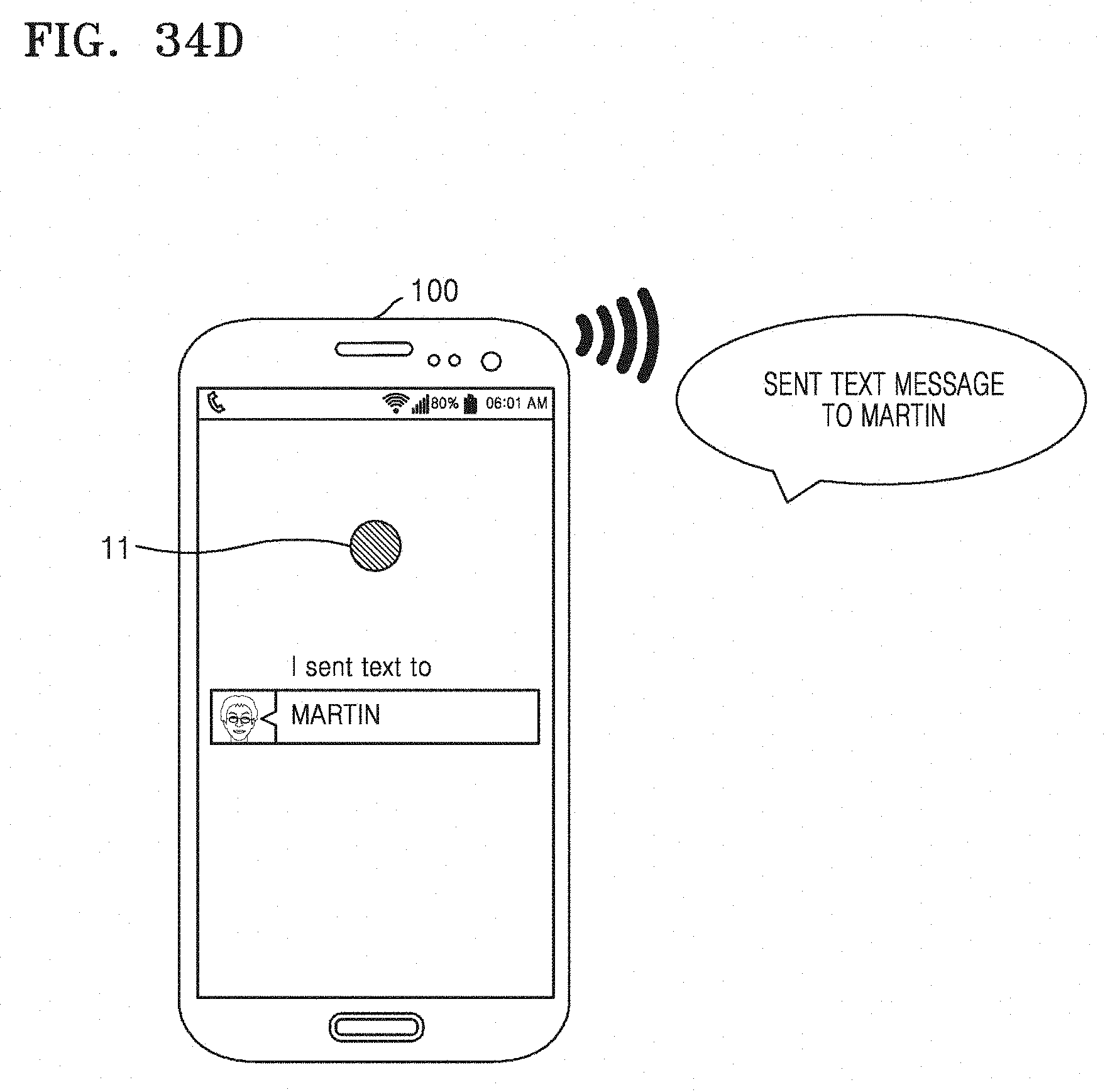

[0069] FIGS. 34A to 34D sequentially illustrate an operation of transmitting a message performed by a first device according to an embodiment of the present disclosure;

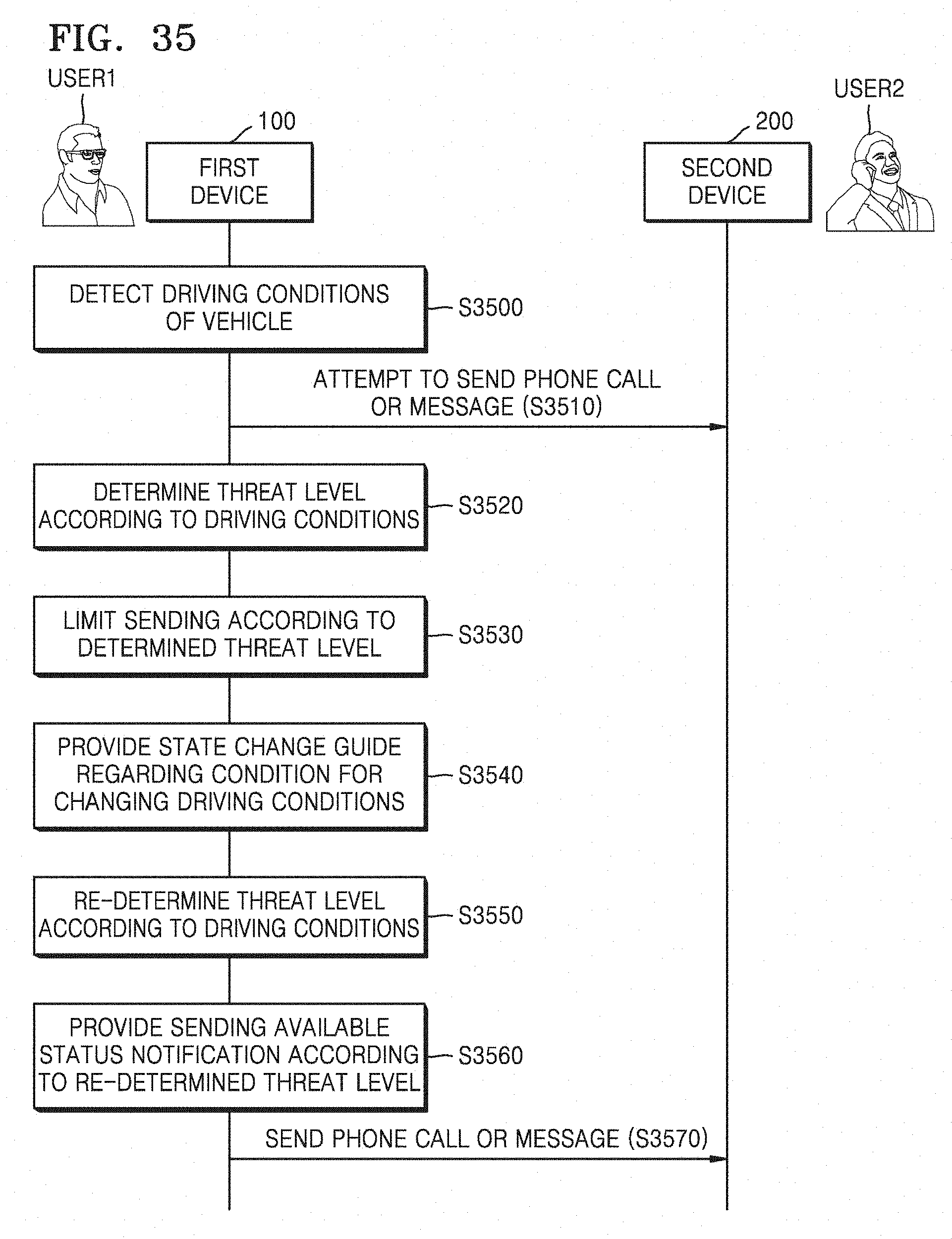

[0070] FIG. 35 is a flowchart illustrating operations of first and second devices performed by using a device control method according to an embodiment of the present disclosure;

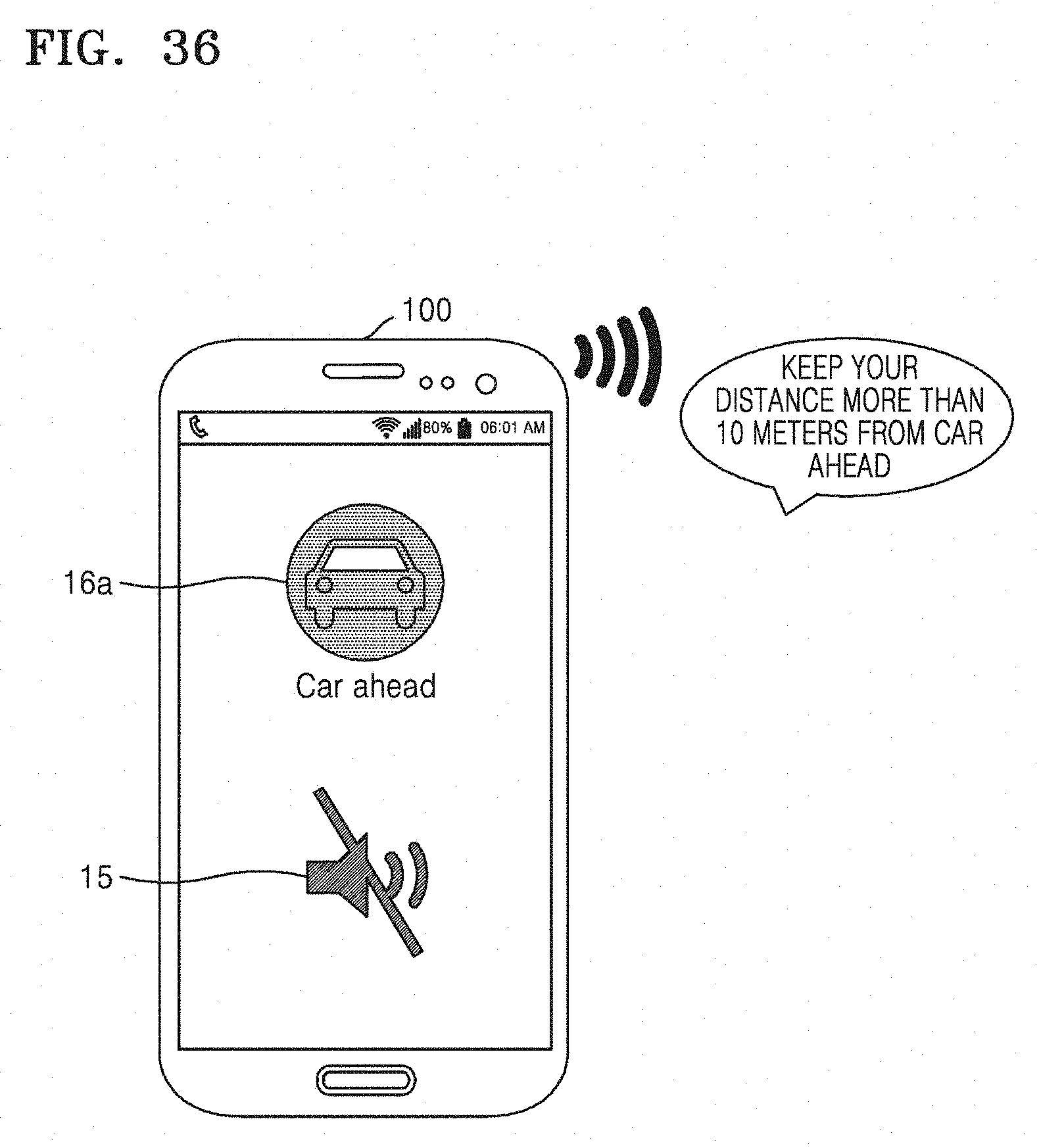

[0071] FIG. 36 illustrates an operation of providing a state change guide performed by a first device according to an embodiment of the present disclosure;

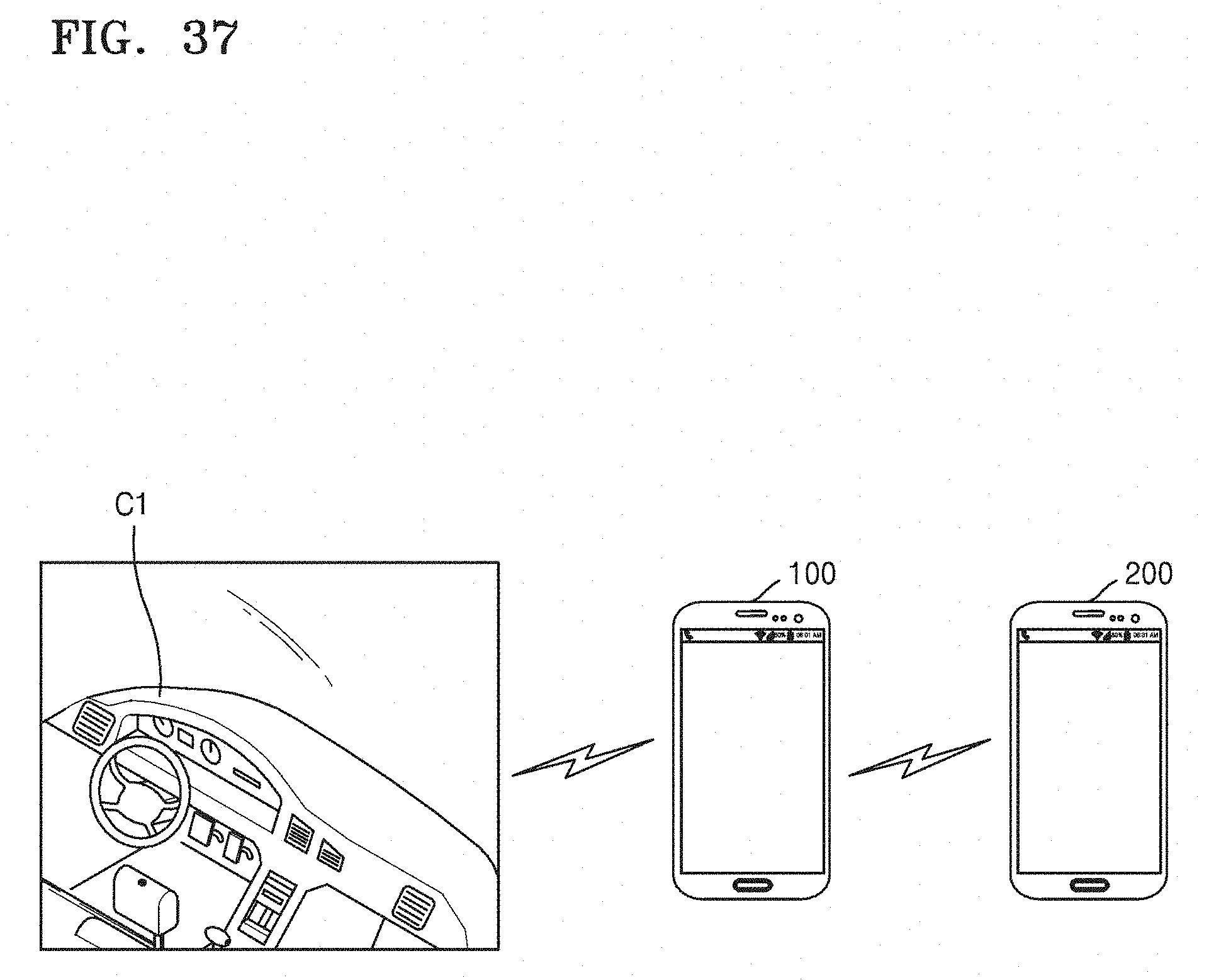

[0072] FIG. 37 illustrates a system providing a smart driving mode according to an embodiment of the present disclosure;

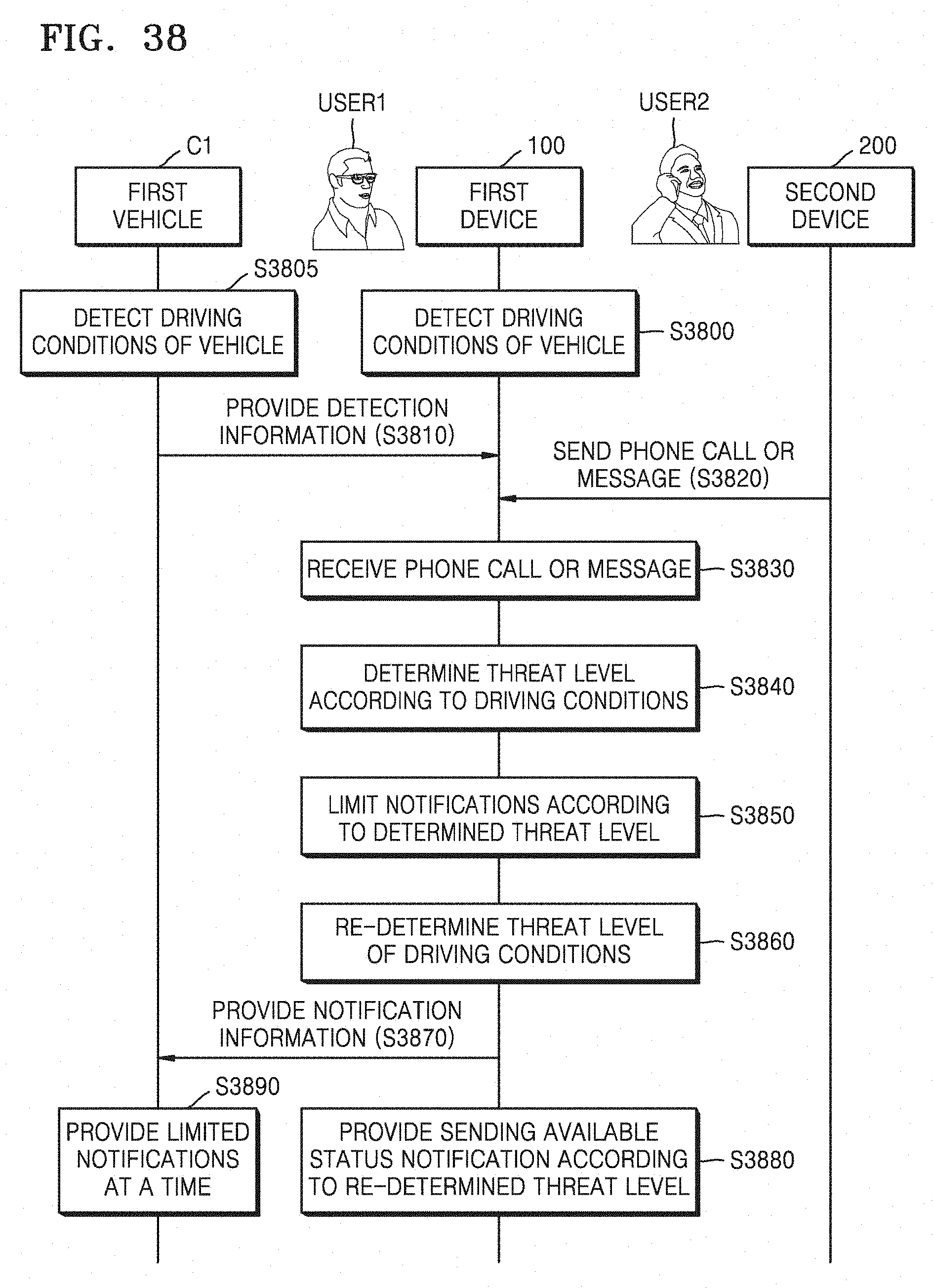

[0073] FIG. 38 is a flowchart illustrating operations of first and second devices and a first vehicle performed by using a device control method according to an embodiment of the present disclosure;

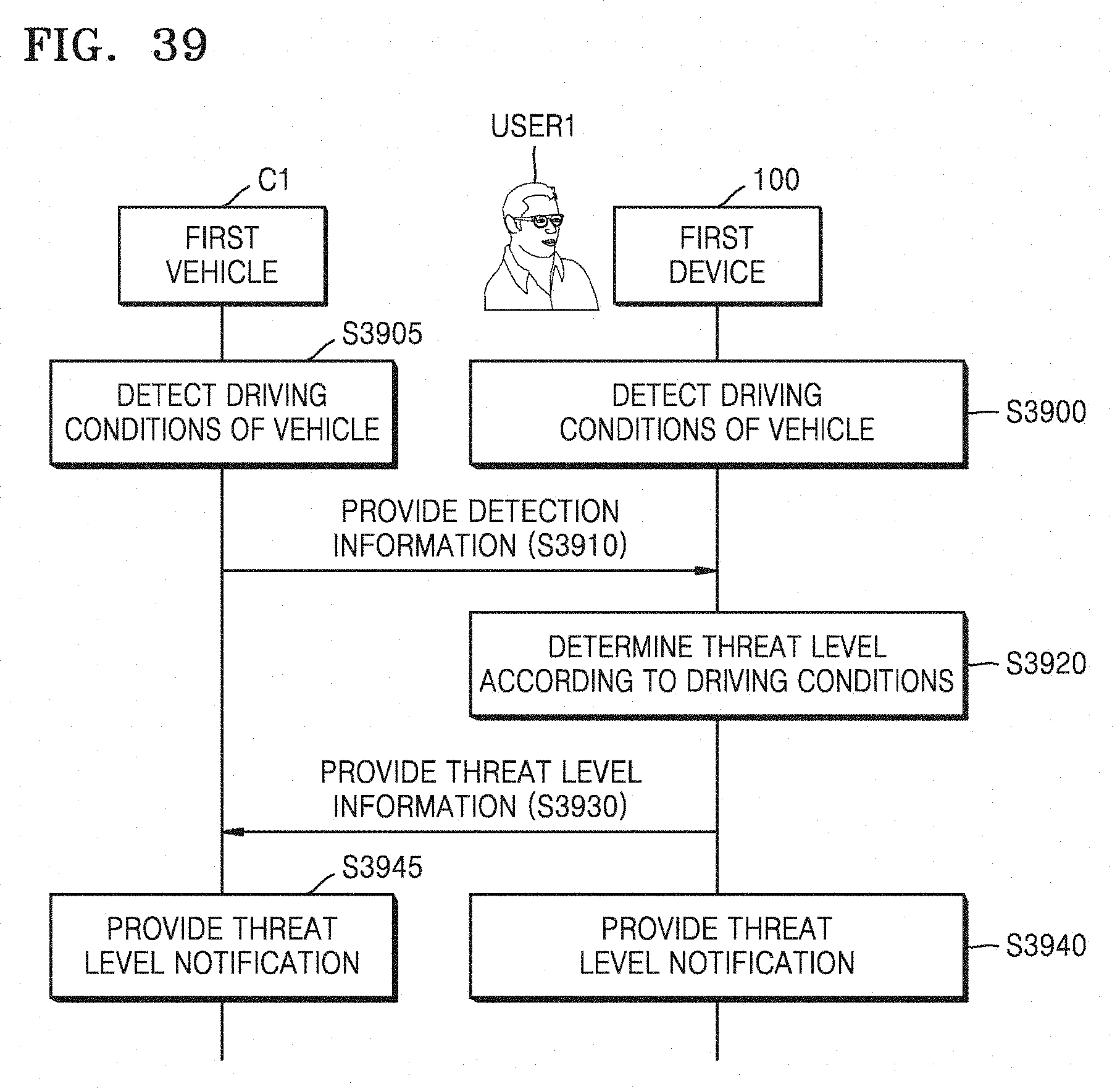

[0074] FIG. 39 is a flowchart illustrating operations of a first device and a first vehicle performed by using a device control method according to an embodiment of the present disclosure;

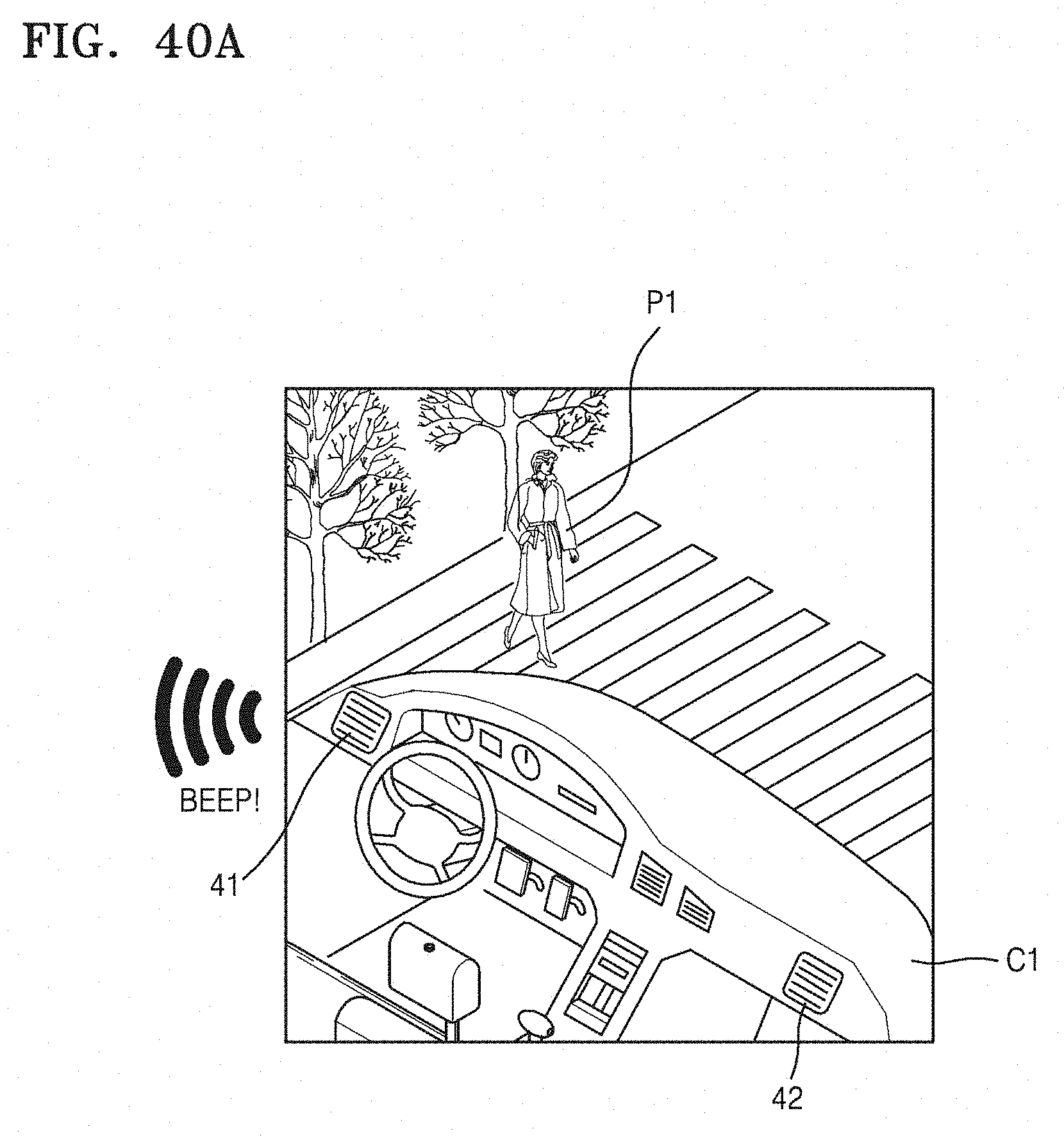

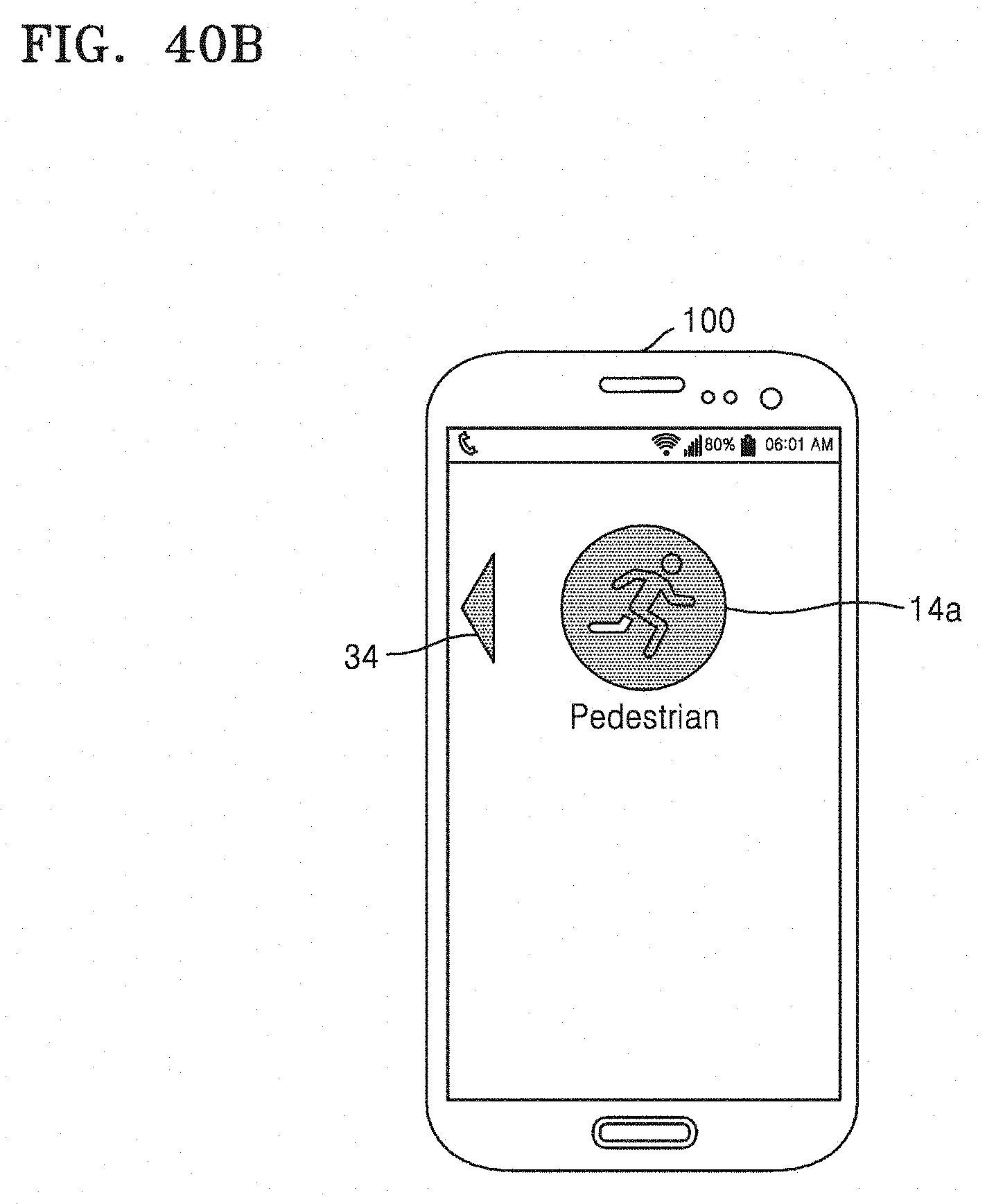

[0075] FIGS. 40A and 40B illustrate an operation of providing a threat level notification performed by a first vehicle and a first device according to an embodiment of the present disclosure;

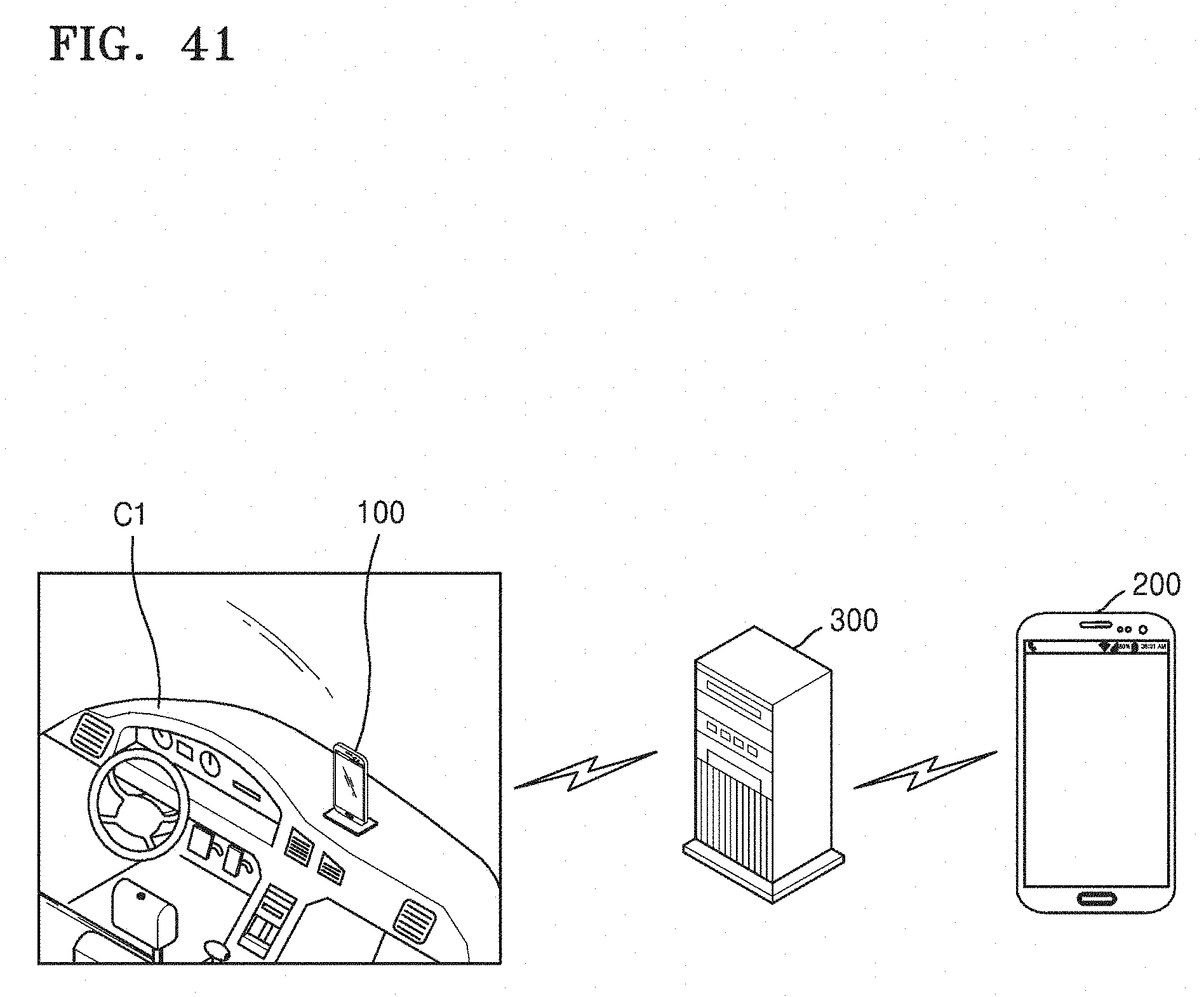

[0076] FIG. 41 illustrates a system providing a smart driving mode according to an embodiment of the present disclosure;

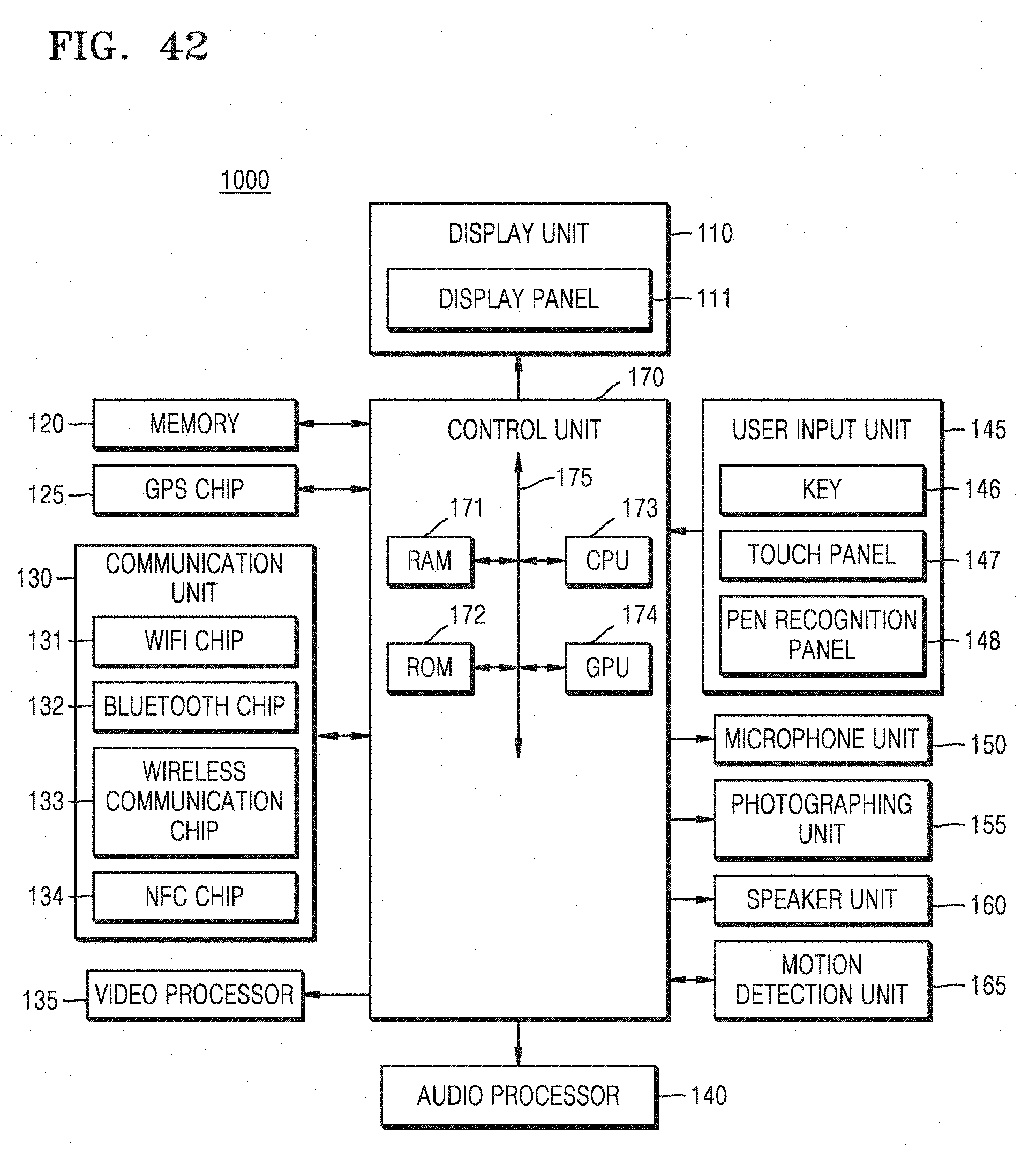

[0077] FIG. 42 is a block diagram illustrating a device according to an embodiment of the present disclosure;

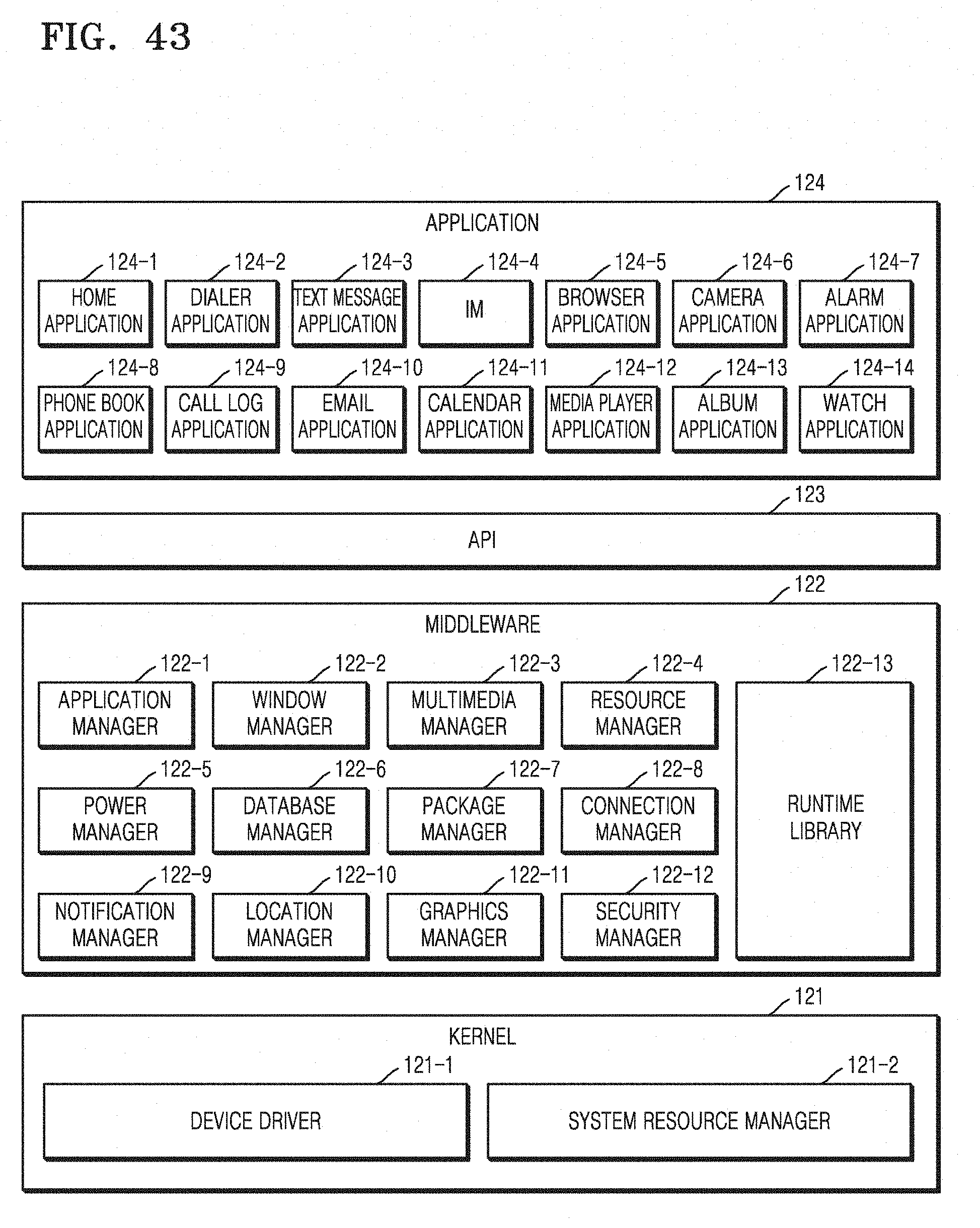

[0078] FIG. 43 is a block diagram illustrating software of a device according to an embodiment of the present disclosure;

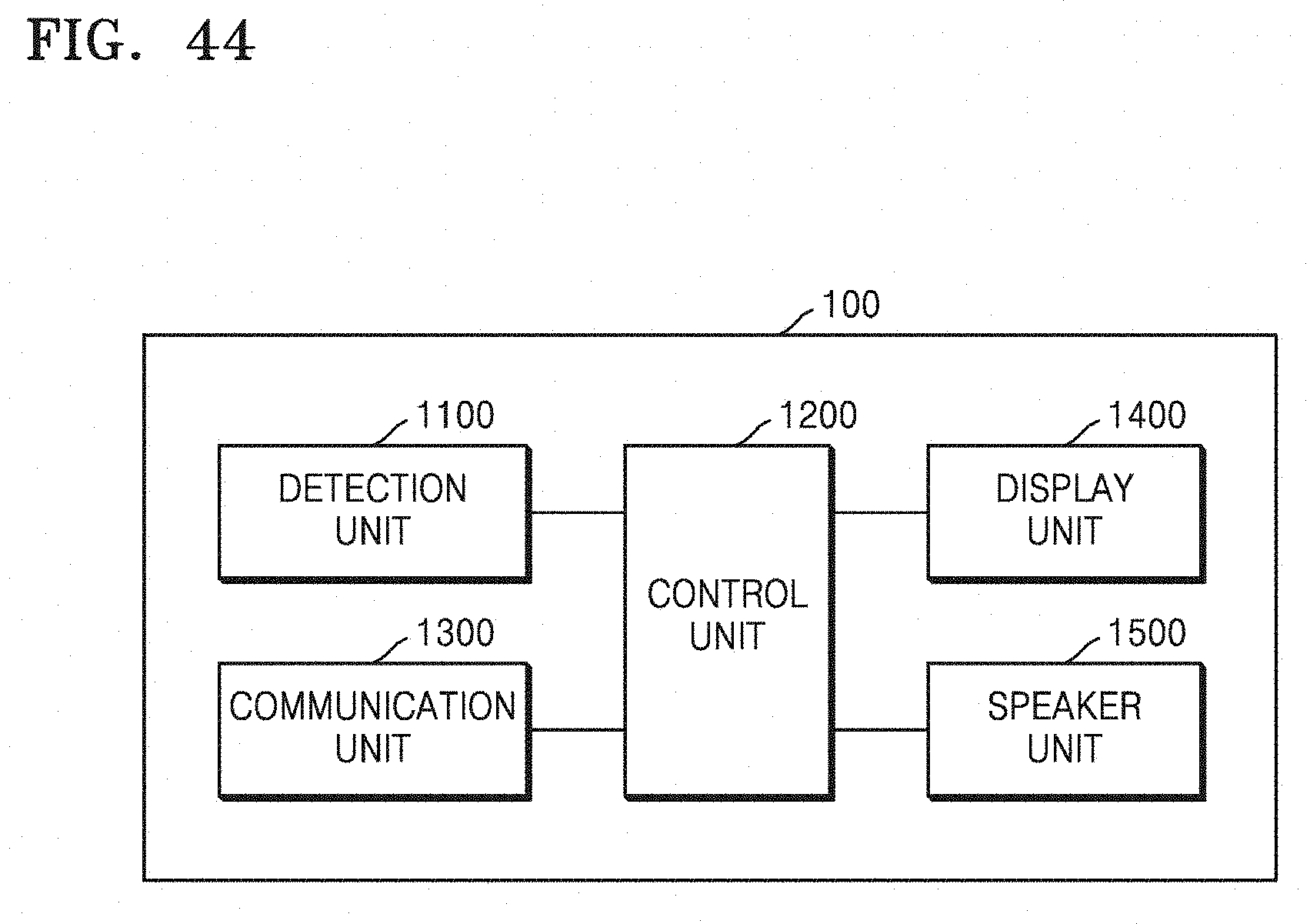

[0079] FIG. 44 is a block diagram illustrating a first device according to an embodiment of the present disclosure;

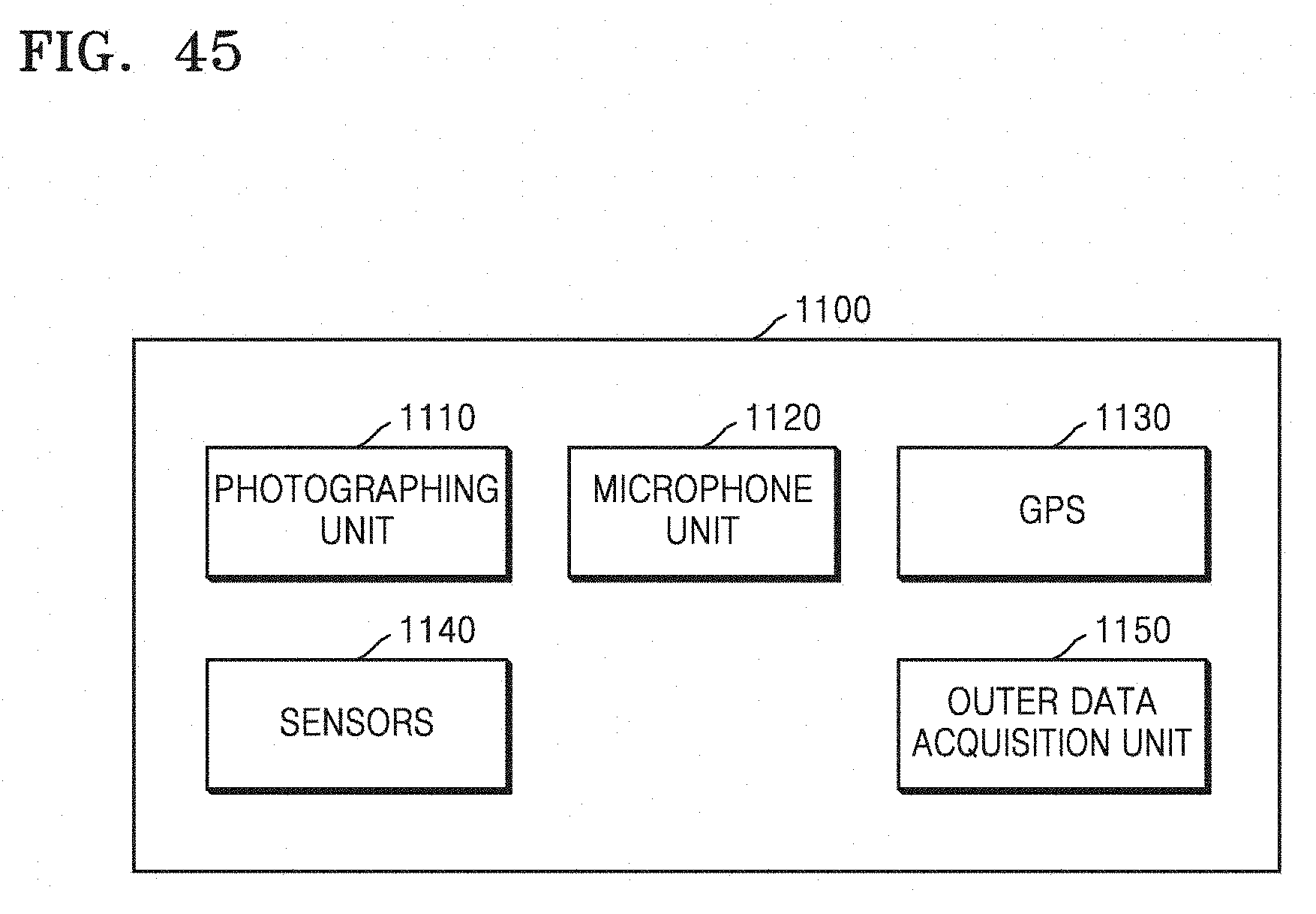

[0080] FIG. 45 is a block diagram illustrating a detection unit according to an embodiment of the present disclosure; and

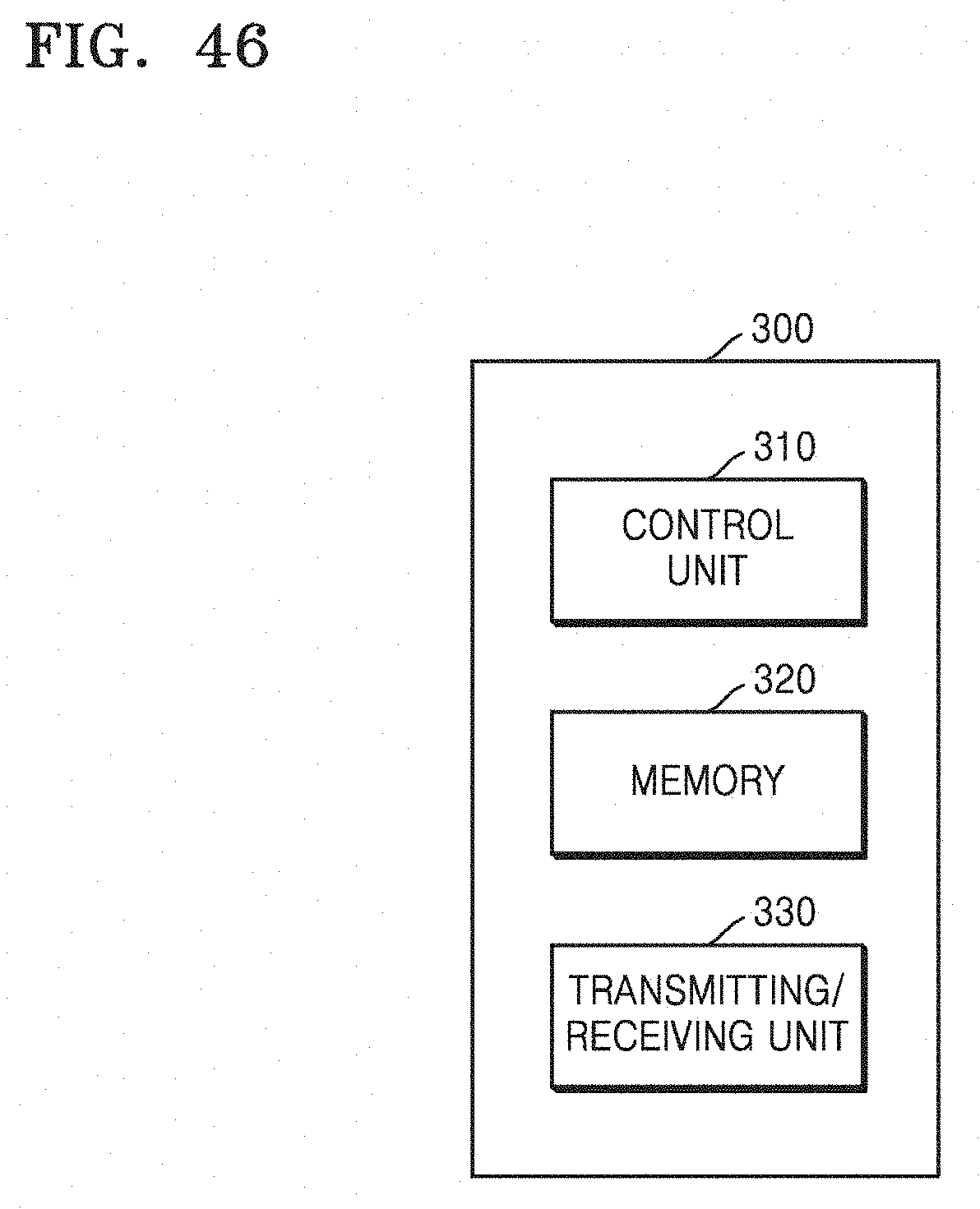

[0081] FIG. 46 is a block diagram illustrating a server according to an embodiment of the present disclosure.

[0082] Throughout the drawings, like reference numerals will be understood to refer to like parts, components, and structures.

DETAILED DESCRIPTION

[0083] The following description with reference to the accompanying drawings is provided to assist in a comprehensive understanding of various embodiments of the present disclosure as defined by the claims and their equivalents. It includes various specific details to assist in that understanding but these are to be regarded as merely exemplary. Accordingly, those of ordinary skill in the art will recognize that various changes and modifications of the various embodiments described herein can be made without departing from the scope and spirit of the present disclosure. In addition, descriptions of well-known functions and constructions may be omitted for clarity and conciseness.

[0084] The terms and words used in the following description and claims are not limited to the bibliographical meanings, but are merely used by the inventor to enable a clear and consistent understanding of the present disclosure. Accordingly, it should be apparent to those skilled in the art that the following description of various embodiments of the present disclosure is provided for illustration purposes only and not for the purpose of limiting the present disclosure as defined by the appended claims and their equivalents.

[0085] It is to be understood that the singular forms "a," "an," and "the" include plural referents unless the context clearly dictates otherwise. Thus, for example, reference to "a component surface" includes reference to one or more of such surfaces.

[0086] By the term "substantially" it is meant that the recited characteristic, parameter, or value need not be achieved exactly, but that deviations or variations, including for example, tolerances, measurement error, measurement accuracy limitations and other factors known to those of skill in the art, may occur in amounts that do not preclude the effect the characteristic was intended to provide.

[0087] Unless explicitly described to the contrary, the word "comprise" and variations, such as "comprises" or "comprising" will be understood to imply the inclusion of stated elements but not the exclusion of any other elements. In addition, the terms "-er", "-or", and "module" described in the specification mean units for processing at least one function and operation and can be implemented by hardware components or software components and combinations thereof.

[0088] Reference will now be made to various embodiments of the present disclosure, examples of which are illustrated in the accompanying drawings, wherein like reference numerals refer to like elements throughout. In this regard, the present embodiments of the present disclosure may have different forms and should not be construed as being limited to the descriptions set forth herein. Accordingly, the various embodiments of the present disclosure are merely described below, by referring to the figures, to explain aspects of the present disclosure. In the accompanying drawings, a portion irrelevant to a description of the present disclosure will be omitted for clarity. As used herein, the term "and/or" includes any and all combinations of one or more of the associated listed items.

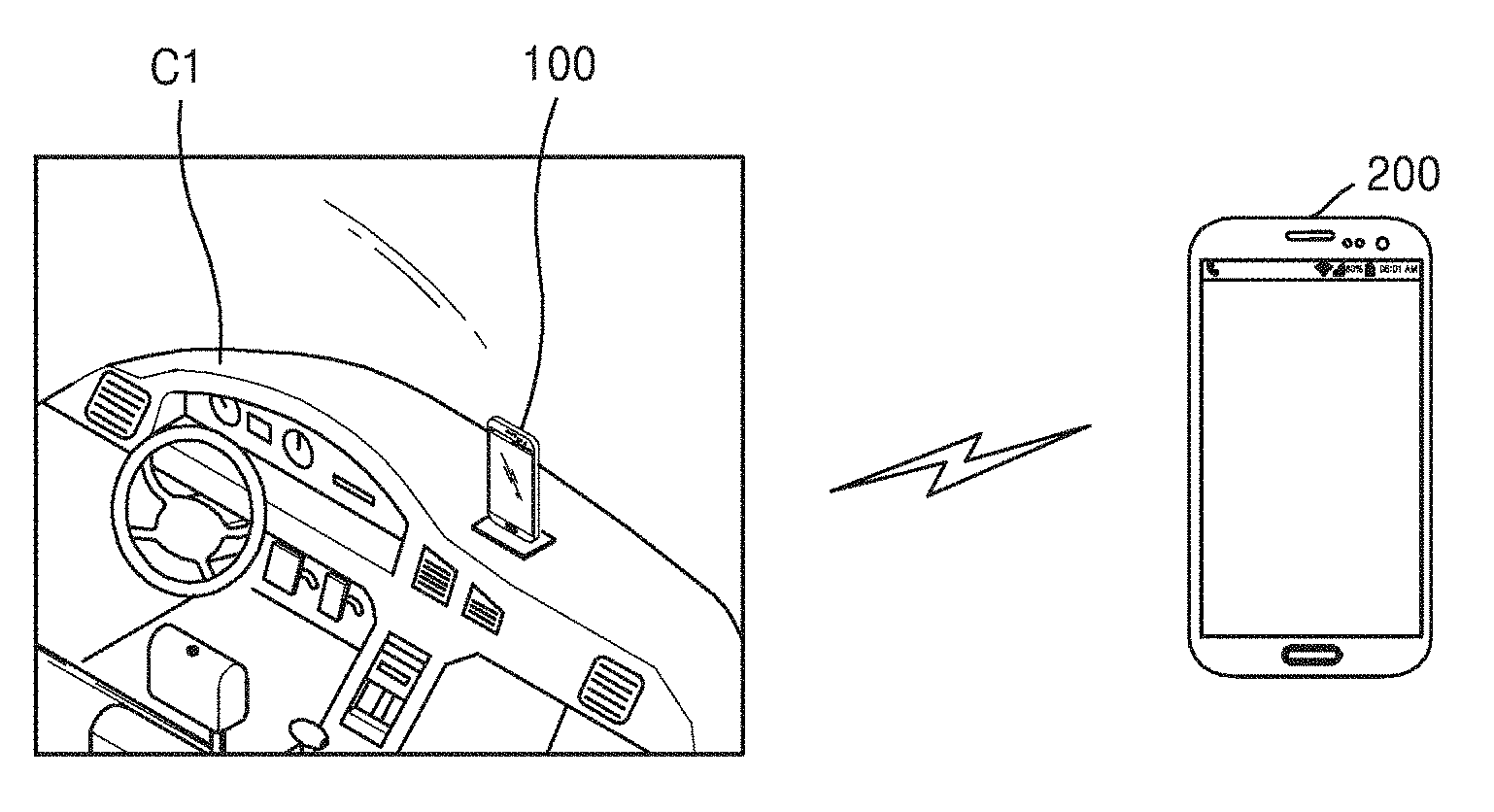

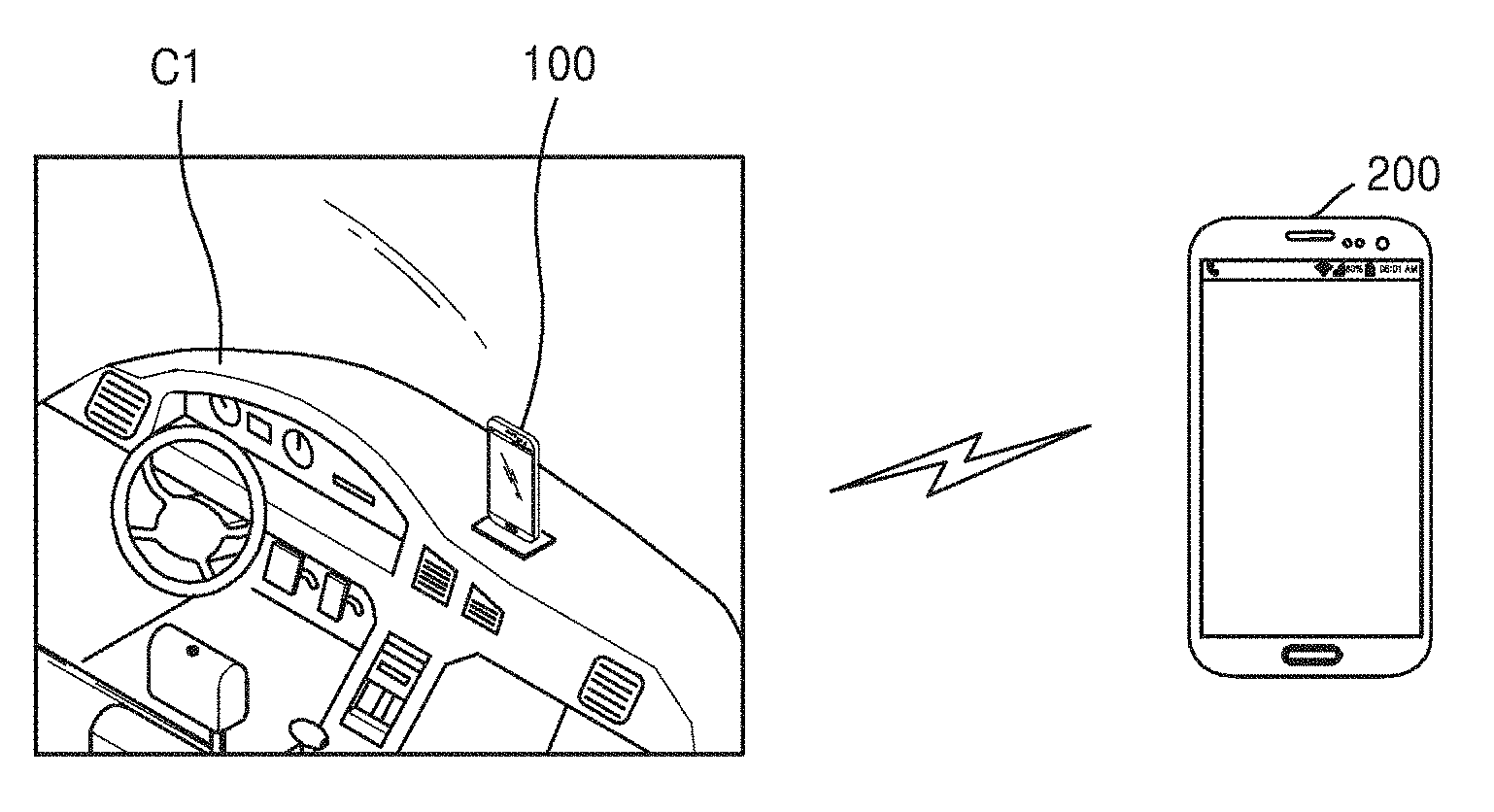

[0089] FIG. 1 illustrates a system providing a smart driving mode according to an embodiment of the present disclosure.

[0090] Referring to FIG. 1, the system providing the smart driving mode may include a first device 100 and a second device 200. The first device 100 may be positioned in a first vehicle C1. In an embodiment of the present disclosure, the first device 100 may be mounted in a cradle of the first vehicle C1. However, the various embodiments of the present disclosure are not limited thereto. The first device 100 may be positioned in an arbitrary space of the first vehicle C1.

[0091] The first and second devices 100 and 200 may be electronic devices supporting a voice call function, a video call function, or a message transmission/reception function. For example, the first and second devices 100 and 200 may be smart phones, tablet personal computers (PCs), PCs, smart televisions (TVs), mobile phones, personal digital assistants (PDAs), laptop PCs, media players, micro servers, global positioning system (GPS) devices, e-book terminals, digital broadcasting terminals, navigation devices, kiosks, moving picture experts group (phase 1 or phase 2) (MPEG-1 or MPEG-2) audio layer 3 (MP3) players, digital cameras, and other mobile or non-mobile computing devices, but are not limited thereto. Furthermore, the first and second devices 100 and 200 may include various devices capable of receiving touch inputs, such as electronic blackboards and touch tables.

[0092] According to the present embodiment of the present disclosure, the first device 100 may be a device that executes software and performs particular functions via the software. Software may be executed by one from among an application, an operating system (OS), and a middleware or a combination thereof. More particularly, a function of providing a smart driving mode according to the present embodiment may be performed by one from among an application, an OS, and a middleware. Software may be executed not only by the first device 100, but also by other devices connected to the first device 100, e.g., a wearable device, a server, a navigation device, and the like.

[0093] The term "application" refers to a set of computer programs designed to perform a particular task. The present specification may include various applications. Examples of applications may include a web browser, a camera application, a dictionary application, a translator application, a data transmission application, a music player application, a movie player application, a phone application, a message application, a social communicator application, a map application, a picture folder application, a broadcasting application, a game application, a fitness application, a payment application, a memo application, a calendar application, and a phone book application, but are not limited thereto.

[0094] "Middleware" may include a plurality of modules that are prepared in advance to provide functions that are commonly demanded by various applications. Middleware may provide functions via application program interfaces (APIs), such that applications may efficiently utilize resources in a device. For example, middleware may include at least one of a plurality of modules including an application manager, a window manager, a multimedia manager, a resource manager, a power manager, a database manager, a package manager, a connection manager, a notification manager, a location manager, a graphic manager, and a security manager. Detailed descriptions of middleware will be given below with reference to FIG. 43.

[0095] In the present embodiment of the present disclosure, a first user USER1 of the first device 100 may be a driver driving the first vehicle C1. In an embodiment of the present disclosure, the first device 100 may receive a call or a message from the second device 200. In an embodiment of the present disclosure, the first device 100 may transmit a call or a message to the second device 200. Accordingly, the first device 100 may be defined as a user device or a driver device, the second device 200 may be defined as a counterpart device, and the first vehicle C1 may be defined as a user vehicle.

[0096] In a case where a communication function is performed through the first device 100 while driving the first vehicle C1, safe driving of the first user USER1 may be interrupted according to driving conditions of the first vehicle C1. Thus, in the present embodiment of the present disclosure, the first device 100 may detect the driving conditions of the first vehicle C1, limit notifications with respect to events that occur in the first device 100 during a section according to the detected driving conditions, and, if the driving conditions are changed, provide the notifications limited during the section at a time.

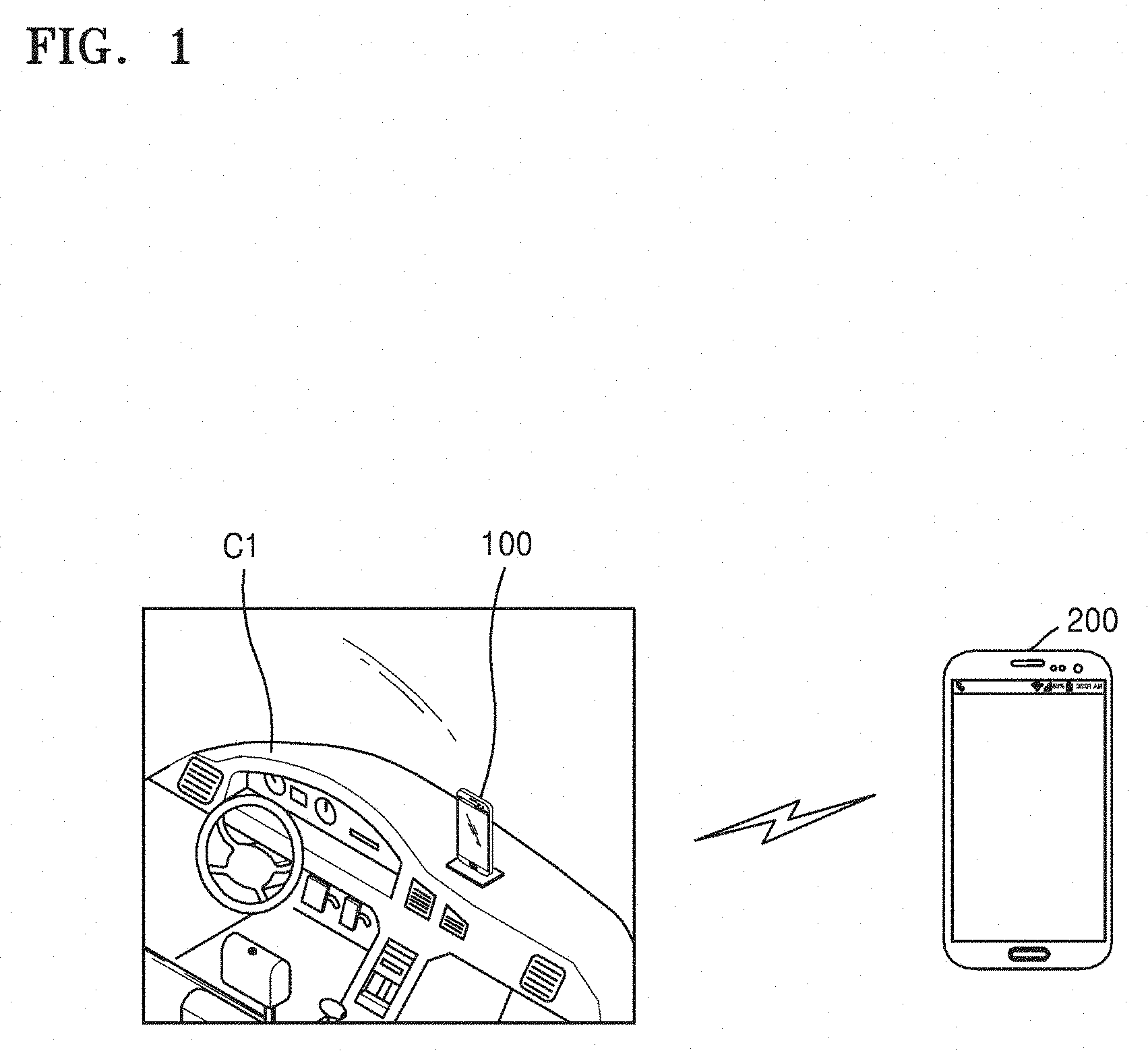

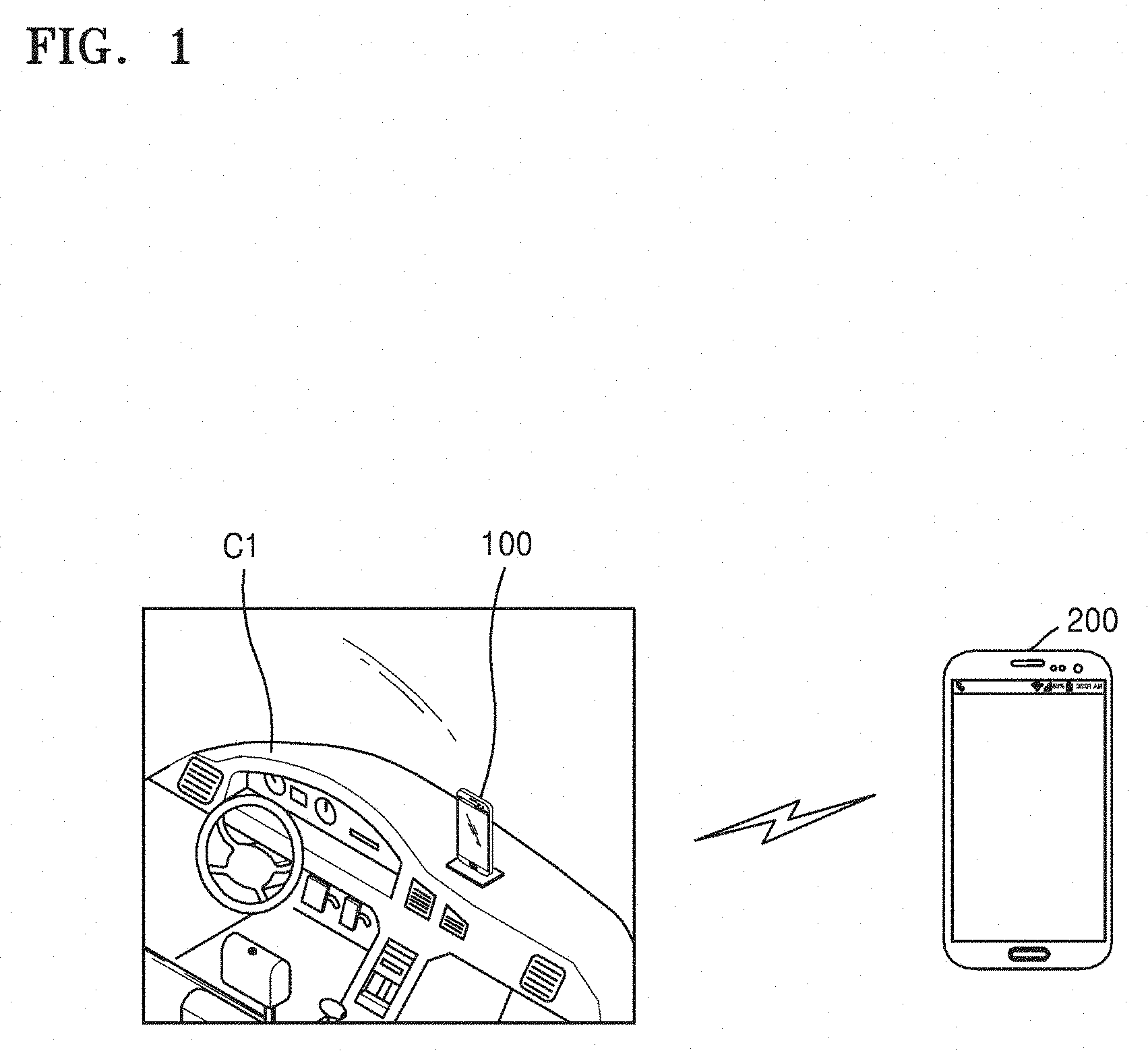

[0097] FIG. 2 is a flowchart of a device control method according to an embodiment of the present disclosure.

[0098] Referring to FIG. 2, the device control method according to the present embodiment of the present disclosure is a method for processing a phone call or a message received by a user device or a driver device and includes the following operations that are performed on the user device. For example, the device control method according to the present embodiment may include operations that are time-serially performed by the first device 100 of FIG. 1.

[0099] In operation S200, driving conditions of a vehicle may be detected. In this regard, the driving conditions of the vehicle may include at least one of outer conditions of the vehicle, inner conditions of the vehicle, conditions of the vehicle itself, and conditions of a driver. The outer conditions of the vehicle may include, for example, road conditions, such as other vehicles, pedestrians, lanes, traffic, road pavement status, and the like, or weather, and the like. The inner conditions of the vehicle may include noise inside the vehicle, and the like. The conditions of the vehicle itself may include a speed of the vehicle, and the like. The conditions of the driver may include an emotional state of the first user USER1, fatigue, attention, a driving experience, a driving concentration, and the like.

[0100] In operation S220, notifications of events that occur in a device are limited during a section according to the detected driving conditions. In this regard, the section may be determined in real time according to the driving conditions. In an embodiment of the present disclosure, an event may be a communication event, such as a phone call or a message received by the device during the section. In an embodiment of the present disclosure, the event may be a notification event provided through an application installed in the device during the section. Accordingly, based on the detected driving conditions, the device may prevent the driver from being interrupted by notifications of the device while concentration of the driver is required.

[0101] In operation S240, if the driving conditions are changed, the notifications limited during the section may be provided at a time. In this regard, the expression that the "driving conditions have been changed" indicates that the driver has entered a safe state in which the device may be used. In this regard, the expression that "notifications are provided at a time" indicates that communication events or notification events received by the device during the section are provided at a time. In the present embodiment of the present disclosure, the limited notifications may be provided as a notification packet at a time.
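Operations S200 to S240 can be illustrated with a minimal sketch, assuming that the detected driving conditions have already been reduced to a single safe/unsafe flag. The class `DeferredNotifier` and its method names are hypothetical and do not appear in the disclosure; the sketch only shows notifications being held during the section and then released together as one packet.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class DeferredNotifier:
    """Holds notifications while the driver must concentrate (hypothetical helper)."""
    limited: bool = False                              # True during a notification limit section
    held: List[str] = field(default_factory=list)      # notifications limited so far
    delivered: List[List[str]] = field(default_factory=list)  # packets shown to the driver

    def on_driving_conditions(self, safe: bool) -> None:
        # S200/S240: a change to a safe state ends the section and
        # releases everything held so far as a single packet.
        self.limited = not safe
        if safe and self.held:
            self.delivered.append(self.held)
            self.held = []

    def on_event(self, event: str) -> None:
        # S220: during the section the notification is held, not shown.
        if self.limited:
            self.held.append(event)
        else:
            self.delivered.append([event])


notifier = DeferredNotifier()
notifier.on_driving_conditions(safe=False)      # section begins
notifier.on_event("call from device 200")
notifier.on_event("message from device 200")
held_during_section = list(notifier.held)
notifier.on_driving_conditions(safe=True)       # section ends, packet is released
```

In this sketch the section has no fixed length; it lasts exactly as long as the conditions are reported as unsafe, which mirrors the real-time determination of the section described above.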

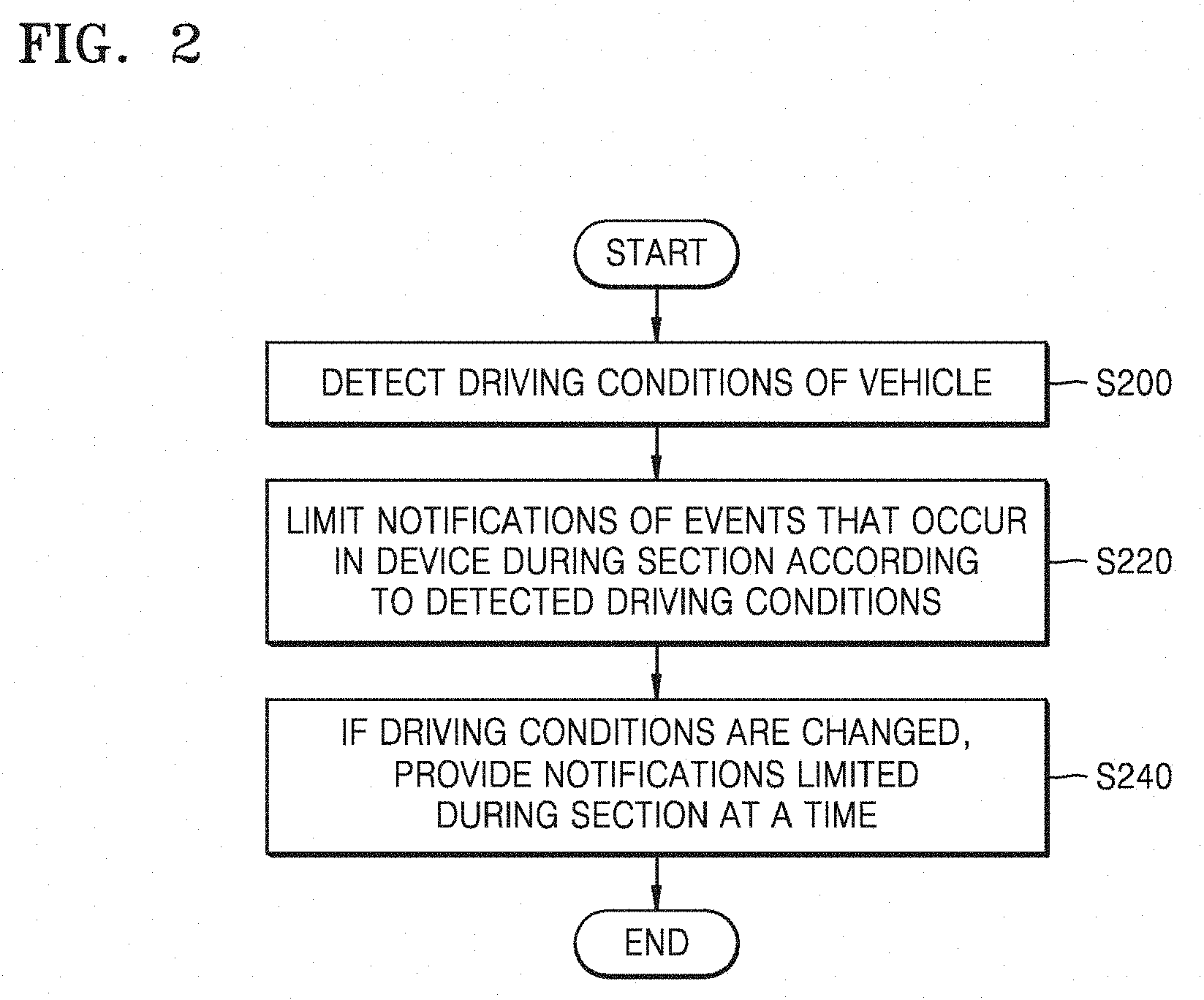

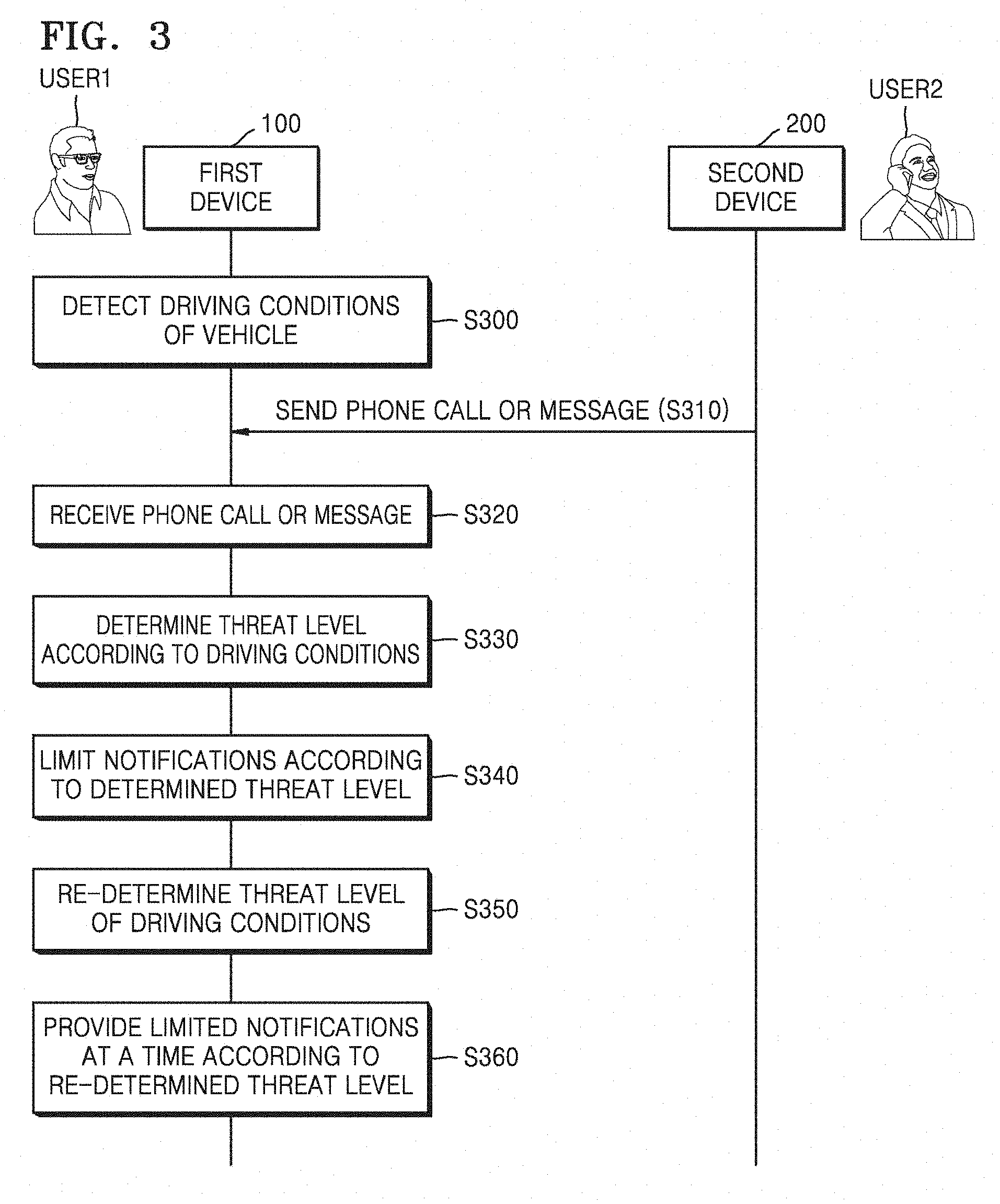

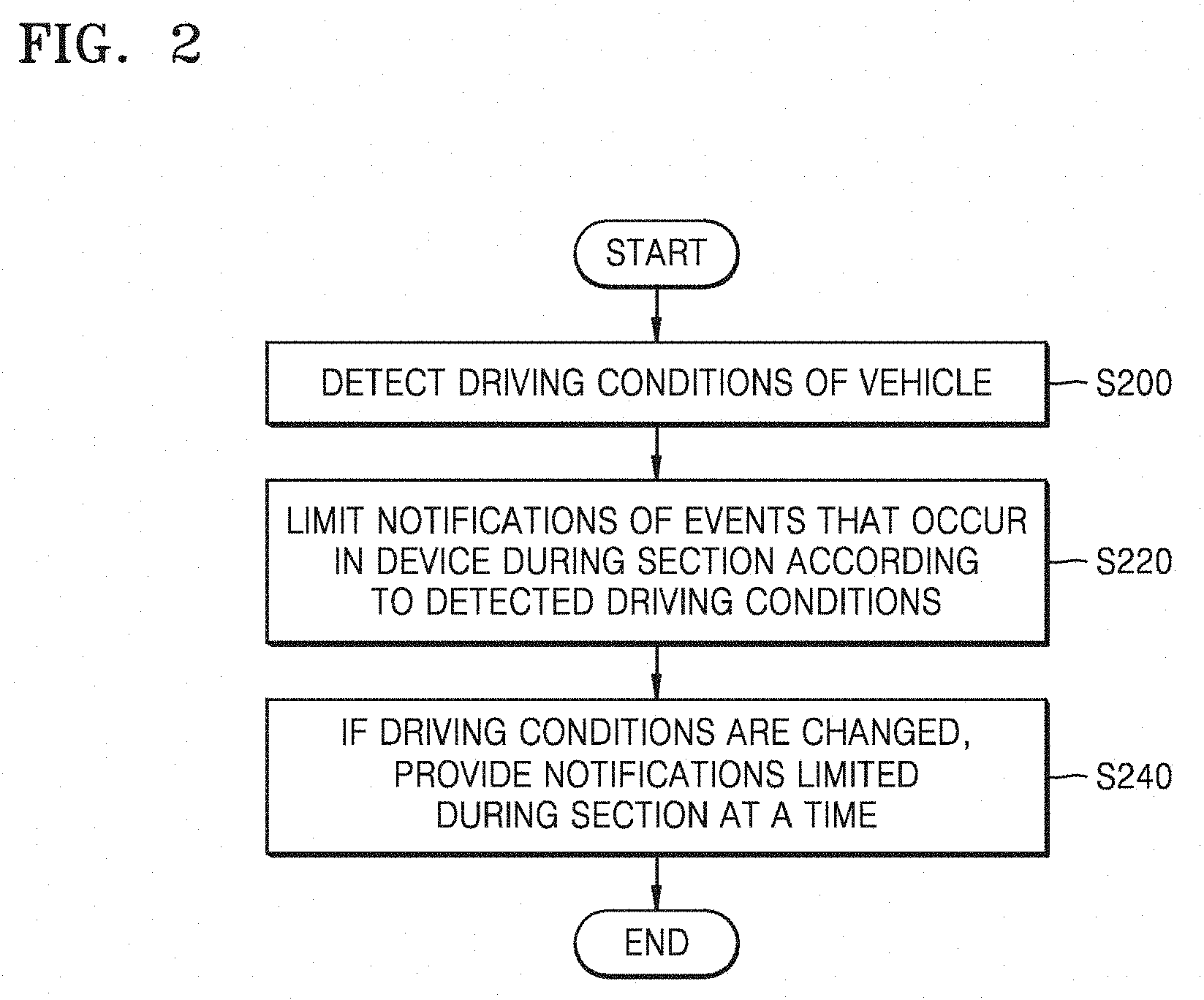

[0102] FIG. 3 is a flowchart illustrating operations of first and second devices performed by using a device control method according to an embodiment of the present disclosure.

[0103] Referring to FIG. 3, the device control method according to the present embodiment of the present disclosure may include operations that are time-serially performed by the first and second devices 100 and 200 of FIG. 1. Although omitted below, descriptions of the first and second devices 100 and 200 with reference to FIG. 1 apply to the device control method according to the present embodiment.

[0104] In operation S300, the first device 100 may detect driving conditions of the first vehicle C1. In this regard, the driving conditions of the vehicle may include at least one of outer conditions of the vehicle, inner conditions of the vehicle, conditions of the vehicle itself, and conditions of a driver.

[0105] In an embodiment of the present disclosure, the first device 100 may detect the driving conditions of the first vehicle C1 by using a camera module, a microphone module, a GPS module, various sensors, applications, and the like, of the first device 100. In an embodiment of the present disclosure, the first device 100 may be connected to the first vehicle C1 by wire or wirelessly and may detect the driving conditions of the first vehicle C1 by using a camera module, a microphone module, a GPS module, various sensors, applications, and the like, of the first vehicle C1. In an embodiment of the present disclosure, the first device 100 may be connected to a wearable device by wire or wirelessly and may detect the driving conditions of the first vehicle C1 by using a camera module, a microphone module, a GPS module, various sensors, applications, and the like, of the wearable device.

[0106] In an embodiment of the present disclosure, the first device 100 may detect the driving conditions of the first vehicle C1 by using the camera module. In more detail, the first device 100 may detect road conditions, such as vehicles, pedestrians, lanes, and the like, by using the camera module (for example, a camera module disposed at the rear side of the first device 100) acquiring an outer image of the first vehicle C1. The first device 100 may recognize an iris or head position of the first user USER1 and detect driver conditions, such as fatigue, attention, driving concentration, and the like, of the first user USER1 by using the camera module (for example, a camera module disposed at the front side of the first device 100) acquiring an inner image of the first vehicle C1.

[0107] In an embodiment of the present disclosure, the first device 100 may detect the driving conditions of the first vehicle C1 by using the microphone module. In more detail, the first device 100 may detect inner noise of the first vehicle C1 or voice of the first user USER1 by using the microphone module acquiring inner sound of the first vehicle C1.

[0108] In an embodiment of the present disclosure, the first device 100 may calculate a current position or speed of the first vehicle C1, and the like, by using the GPS module. For example, the current position may be determined as downtown, the country, highway, and the like. In an embodiment of the present disclosure, the first device 100 may calculate the current speed of the first vehicle C1, and the like, by using various sensors, such as an acceleration sensor, a magnetic sensor, a gyro sensor, and the like.

[0109] In an embodiment of the present disclosure, the first device 100 may detect the driving conditions of the first vehicle C1 with reference to information of an application that is being executed or has been executed. For example, the first device 100 may acquire information, such as the current position of the first vehicle C1, current traffic, accident black spots, and the like, by using a map application. In an embodiment of the present disclosure, the first device 100 may acquire information, such as current weather or future weather through a weather application. In an embodiment of the present disclosure, the first device 100 may acquire information relating to the driving conditions of the first vehicle C1 through a news application, an e-mail application, a social network service (SNS), and the like.

[0110] In operation S310, the second device 200 may transmit a phone call or a message to the first device 100. In operation S320, the first device 100 may receive the phone call or the message from the second device 200. In this regard, the phone call may be a phone call via a mobile communication network, a voice over internet protocol (VoIP) call, or a voice over long-term evolution (LTE) (VoLTE) call. In this regard, the message may be a short message service (SMS), a multimedia message service (MMS), or a message provided by a chatting service, such as KakaoTalk or Line, and the like.

[0111] In operation S330, the first device 100 may determine a threat level according to the driving conditions. In more detail, the first device 100 may analyze road conditions according to the detected driving conditions and determine the threat level according to a result of analysis.

[0112] An operation of the first device 100 that analyzes the road conditions will now be described. In an embodiment of the present disclosure, the first device 100 may analyze static road conditions and dynamic road conditions based on a result of detection. The first device 100 may analyze the static road conditions based on a threat element detected from a single frame of a camera and analyze the dynamic road conditions by tracking threat elements detected from continuous frames of the camera. The first device 100 may determine threats as static threats in a case where a distance from the threat element detected from the single frame to the first vehicle C1 is regarded as a threat and determine threats as dynamic threats in a case where the threat elements detected from continuous frames approach a colliding area of the first vehicle C1.

[0113] Thereafter, an operation of the first device 100 that determines the threat level will now be described. In an embodiment of the present disclosure, the first device 100 may predict a time to collision (TTC) and determine the threat level based on the predicted TTC. In more detail, the threat level may be finally determined based on detection of all threat elements that simultaneously occur, calculation of the TTC of all the detected threat elements, selection of the highest threat element, and the like. In this regard, threat elements may be defined as elements influencing the threat level, and may include, for example, a speed of the first vehicle C1, pedestrians in the colliding area, vehicles in the colliding area, a lane departure, road types (i.e., roads of the downtown or the countryside), road deterioration (i.e., road slipperiness or freezing due to rain or snow), and the like.
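The selection step described above can be sketched as follows, assuming that a TTC in seconds has already been predicted for each simultaneously detected threat element. The function name `most_urgent` and the TTC values are illustrative assumptions; the element with the smallest TTC is treated as the highest threat element.

```python
from typing import Dict, Tuple


def most_urgent(ttc_by_element: Dict[str, float]) -> Tuple[str, float]:
    """Return the threat element with the smallest predicted TTC (seconds)."""
    if not ttc_by_element:
        raise ValueError("no threat elements detected")
    name = min(ttc_by_element, key=ttc_by_element.get)
    return name, ttc_by_element[name]


# Illustrative TTC values only; the disclosure does not give numbers.
detected = {"pedestrian P1": 1.2, "pedestrian P2": 3.5, "vehicle C2": 8.0}
element, ttc = most_urgent(detected)
```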

[0114] In an embodiment of the present disclosure, the first device 100 may determine a physical threat level (i.e., an objective threat level) relating to an object. The physical threat level may be explained as urgency URG of a response or attention of the driver. The urgency URG may be determined according to the TTC (i.e., URG=1/(1+TTC)). In this case, dynamics (i.e., a distance from pedestrian, a speed of a vehicle, and the like) inferred from current static and dynamic conditions may be considered.
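The relation URG=1/(1+TTC) can be worked through with a few values. The sketch below assumes the TTC is expressed in seconds and is non-negative; the function name `urgency` is hypothetical.

```python
def urgency(ttc_seconds: float) -> float:
    """Urgency URG = 1 / (1 + TTC); 1.0 at TTC = 0, approaching 0 as TTC grows."""
    if ttc_seconds < 0:
        raise ValueError("TTC must be non-negative")
    return 1.0 / (1.0 + ttc_seconds)


urg_now = urgency(0.0)    # target already in the colliding area
urg_near = urgency(1.0)   # one second to collision
urg_far = urgency(4.0)    # four seconds to collision
```

With this form, the urgency stays within the range from 0 to 1 and falls monotonically as the predicted TTC increases.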

[0115] In an embodiment of the present disclosure, the first device 100 may determine a probabilistic threat level of an accident that has not yet occurred. In more detail, the probabilistic threat level involves a case where a detected target is not currently positioned on the colliding area of the first vehicle C1 but is predicted to immediately enter the colliding area. An operation of determining the threat level will be described in more detail with reference to FIGS. 4 to 7B below.

[0116] In operation S340, the first device 100 may limit notifications according to the determined threat level. In this regard, notifications may indicate receiving notifications of phone calls or messages. In more detail, all receiving notifications of phone calls or messages may be limited at the high threat level, and all receiving notifications of phone calls or messages may be permitted at the low threat level. Meanwhile, notifications may be selectively limited according to counterparts and receiving frequency at the middle threat level. This will be described later with reference to FIGS. 17 and 18.
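The three-level policy of operation S340 may be sketched as below. The high and low branches follow the text directly. The middle branch is only an assumption: the text states that limiting depends on counterparts and receiving frequency but does not fix the criteria here, so the `priority_senders` set and the `repeat_limit` count are hypothetical.

```python
from enum import Enum
from typing import Set


class ThreatLevel(Enum):
    LOW = "LL"
    MIDDLE = "ML"
    HIGH = "HL"


def notification_allowed(level: ThreatLevel, sender: str,
                         priority_senders: Set[str], attempts: int,
                         repeat_limit: int = 3) -> bool:
    """Decide whether a receiving notification is shown now (operation S340)."""
    if level is ThreatLevel.HIGH:
        return False          # all receiving notifications are limited
    if level is ThreatLevel.LOW:
        return True           # all receiving notifications are permitted
    # Middle level: selective by counterpart and receiving frequency.
    # Both criteria below are assumptions made for this sketch.
    return sender in priority_senders or attempts >= repeat_limit


vip = {"USER2"}
allowed_for_vip = notification_allowed(ThreatLevel.MIDDLE, "USER2", vip, attempts=1)
allowed_for_other = notification_allowed(ThreatLevel.MIDDLE, "stranger", vip, attempts=1)
```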

[0117] In operation S350, the first device 100 may re-determine the threat level of the driving conditions. In operation S360, the first device 100 may provide limited notifications at a time according to the re-determined threat level. In the present embodiment of the present disclosure, the first device 100 may provide a notification packet including all limited notifications. In more detail, in a case where the re-determined threat level is the low threat level, the first device 100 may provide receiving notifications of phone calls or messages at a time. Meanwhile, in a case where the re-determined threat level is the middle threat level, the first device 100 may selectively provide receiving notifications of phone calls or messages according to counterparts and receiving frequency. This will be described with reference to FIGS. 13 to 16 later.

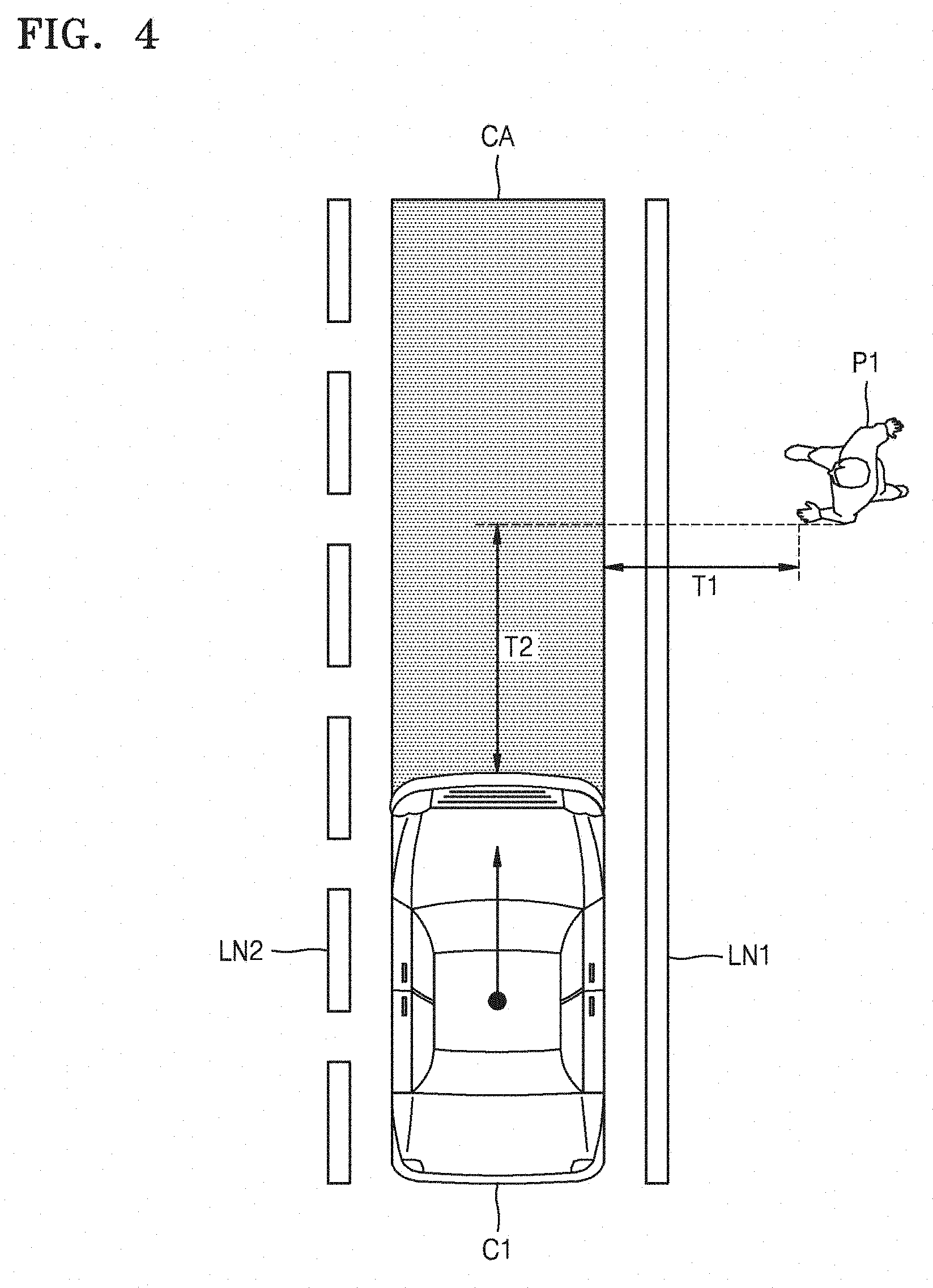

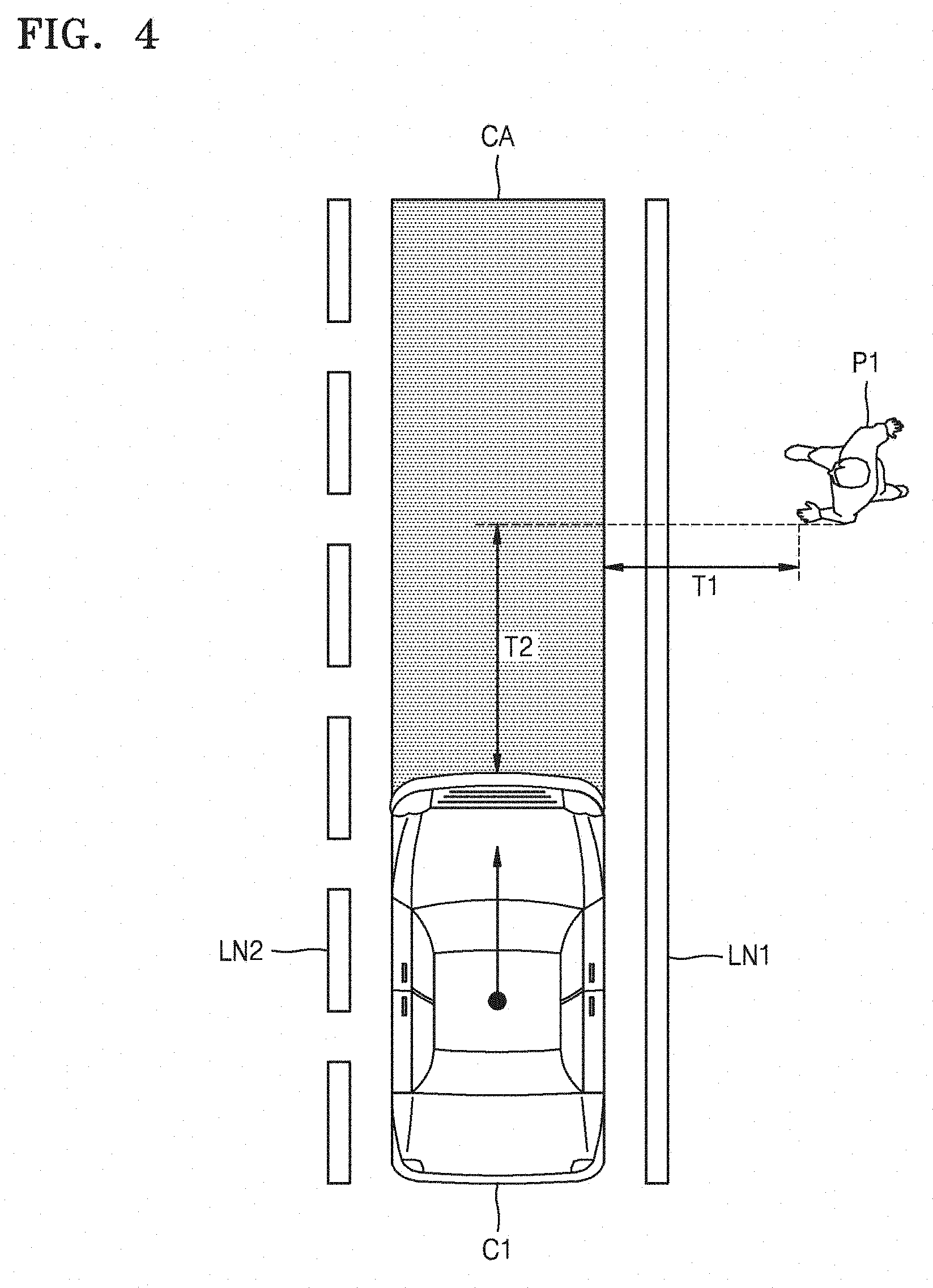

[0118] FIG. 4 illustrates an operation of determining a probabilistic threat level performed by a first device according to an embodiment of the present disclosure.

[0119] Referring to FIG. 4, the operation of determining the probabilistic threat level may be performed by, for example, the first device 100 of FIG. 1 and may correspond to, for example, operation S330 of FIG. 3. In the present example, a detected target may be a first pedestrian P1. The first pedestrian P1 is not positioned on a road between first and second lanes LN1 and LN2, i.e., in a colliding area CA, but is positioned next to the first lane LN1. The first device 100 may estimate a predicted behavior of the first pedestrian P1 and thus may indicate the probabilistic threat level as the TTC.

[0120] A worst behavior may be used when the first pedestrian P1 stops moving or a motion vector of the first pedestrian P1 is unknown. In this regard, a speed of the first pedestrian P1 may be predicted as a standard value, for example, 1 m/s or 0.5 m/s. A most probable behavior may be used in a case where the motion vector of the first pedestrian P1 is known. A first time T1 may be a time required by the first pedestrian P1 to reach the colliding area CA. A second time T2 may be defined as a required colliding time of the first vehicle C1. If the first pedestrian P1 is positioned on the colliding area CA, the first time T1 may be 0.
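The first time T1 and the second time T2 can be sketched as simple distance-over-speed estimates. This is an assumption: the text does not give formulas, and reading T2 as the time the first vehicle C1 needs to reach the point where the pedestrian would enter the colliding area CA is an interpretation. The function names and the choice of 1 m/s as the default are hypothetical; how T1 and T2 are combined into a TTC is not stated in the text and is not attempted here.

```python
import math
from typing import Optional

DEFAULT_PEDESTRIAN_SPEED_MPS = 1.0  # standard value used for the worst behavior


def time_to_reach_colliding_area(distance_to_area_m: float,
                                 speed_toward_area_mps: Optional[float]) -> float:
    """T1: time the pedestrian needs to reach the colliding area CA."""
    if distance_to_area_m <= 0:
        return 0.0  # pedestrian is already on the colliding area
    if speed_toward_area_mps is None or speed_toward_area_mps <= 0:
        # Worst behavior: the pedestrian is stopped or the motion vector is unknown.
        speed = DEFAULT_PEDESTRIAN_SPEED_MPS
    else:
        # Most probable behavior: use the known motion vector.
        speed = speed_toward_area_mps
    return distance_to_area_m / speed


def vehicle_time_to_point(distance_ahead_m: float, vehicle_speed_mps: float) -> float:
    """T2 (assumed meaning): time the vehicle needs to cover the distance ahead."""
    if vehicle_speed_mps <= 0:
        return math.inf  # a stationary vehicle never reaches the point
    return distance_ahead_m / vehicle_speed_mps


t1_unknown_motion = time_to_reach_colliding_area(2.0, None)   # 2 m away, speed unknown
t1_on_area = time_to_reach_colliding_area(0.0, None)          # already in CA
t2 = vehicle_time_to_point(30.0, 15.0)                        # 30 m ahead at 15 m/s
```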

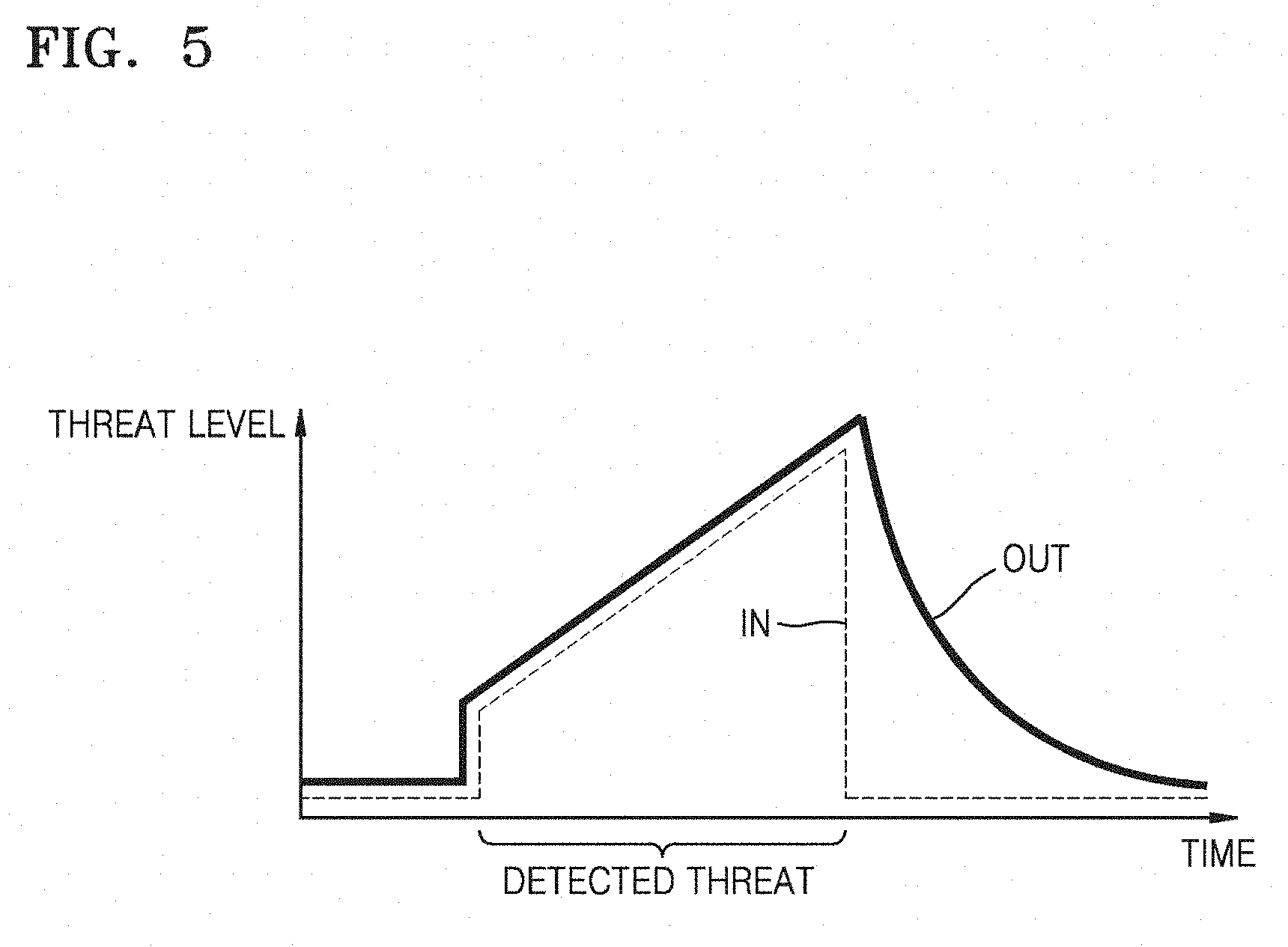

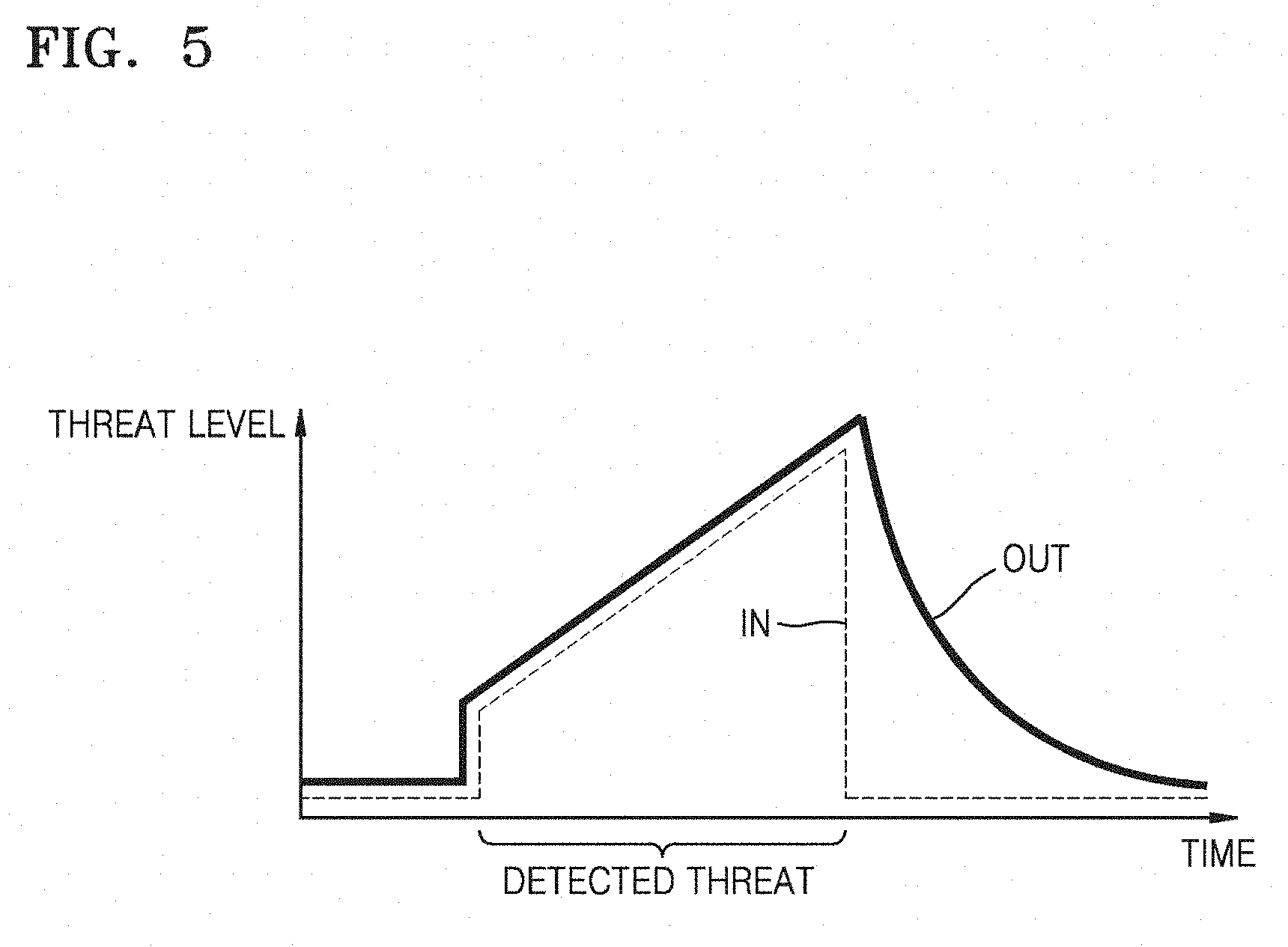

[0121] FIG. 5 is a graph showing a threat level determined by a first device according to an embodiment of the present disclosure.

[0122] Referring to FIG. 5, the threat level according to the present embodiment of the present disclosure may be determined by, for example, the first device 100, and may correspond to, for example, operation S330 of FIG. 3. In the graph of FIG. 5, an X axis indicates time, and a Y axis indicates the threat level. The first device 100 may generate an input threat level IN according to detected driving conditions, and generate an output threat level OUT based on the generated input threat level IN.

[0123] The input threat level IN may increase when there is a detected threat based on the driving conditions and rapidly decrease when the detected threat disappears. The output threat level OUT may be generated by filtering the input threat level IN by using an identity function during a section in which the input threat level IN does not decrease, and filtering the input threat level IN by using an inertial function during a section in which the input threat level IN decreases.
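The two-branch filtering can be sketched on a sampled signal. The text does not specify the inertial function, so a first-order lag with a hypothetical `decay` factor is assumed here. The sketch also compares each input sample with the previous output rather than the previous input, which is an assumption that lets the output keep falling gradually after the input has dropped.

```python
from typing import Iterable, List, Optional


def filter_threat(inputs: Iterable[float], decay: float = 0.5) -> List[float]:
    """Generate OUT from IN: follow rises immediately, follow falls gradually."""
    if not 0.0 < decay < 1.0:
        raise ValueError("decay must be between 0 and 1")
    outputs: List[float] = []
    previous: Optional[float] = None
    for level in inputs:
        if previous is None or level >= previous:
            current = level                                # identity function
        else:
            current = level + decay * (previous - level)   # assumed inertial function
        outputs.append(current)
        previous = current
    return outputs


# A threat appears at the second sample and disappears at the third.
out = filter_threat([0.0, 1.0, 0.0, 0.0])
```

Because OUT decays instead of dropping at once, the notification limit section extends briefly past the moment the detected threat disappears.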

[0124] Accordingly, the first device 100 may determine the output threat level OUT based on a threat element that is not detected but is likely to occur. Thus, the first device 100 may determine in real time a notification limit section based on the output threat level OUT and limit notifications of phone calls or messages during the notification limit section.

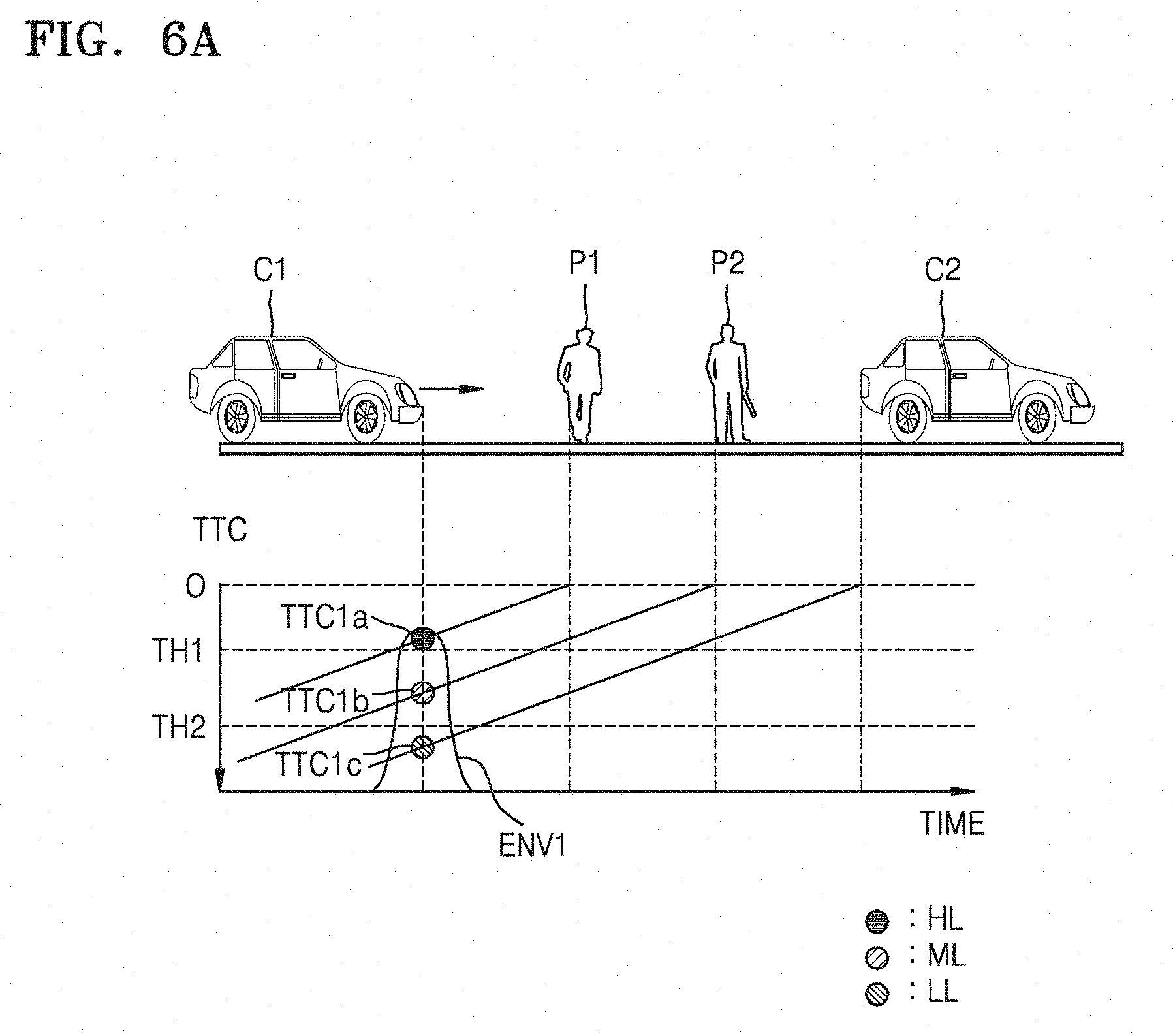

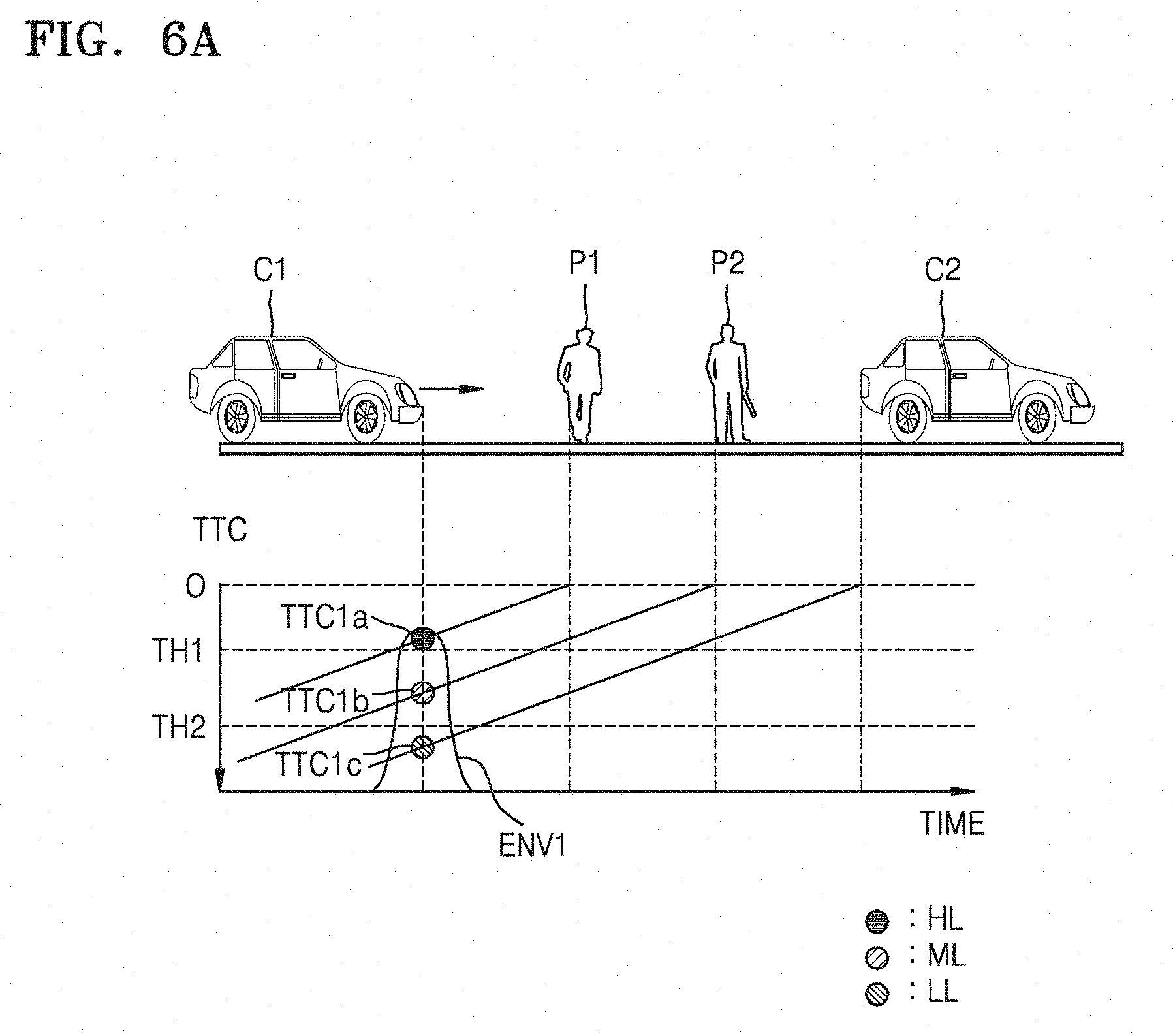

[0125] FIG. 6A is a graph showing probable threat elements detected by a first device at a first time and threat levels with respect to the probable threat elements according to an embodiment of the present disclosure.

[0126] Referring to FIG. 6A, the threat levels may be classified as at least three threat levels of a high threat level HL, a middle threat level ML, and a low threat level LL. In more detail, the first device 100 may determine the high threat level HL in a case where a predicted TTC is smaller than a first threshold TH1, determine the middle threat level ML in a case where the predicted TTC is between the first threshold TH1 and a second threshold TH2, and determine the low threat level LL in a case where the predicted TTC is greater than the second threshold TH2.
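The threshold comparison can be written as a short function. The threshold values in the usage lines are illustrative assumptions, and treating a TTC exactly equal to a threshold as the middle threat level is also an assumption, since the text only covers the strictly smaller, between, and strictly greater cases.

```python
def classify(ttc_s: float, th1_s: float, th2_s: float) -> str:
    """Map a predicted TTC to 'HL', 'ML', or 'LL' using thresholds TH1 < TH2."""
    if th1_s > th2_s:
        raise ValueError("TH1 must not exceed TH2")
    if ttc_s < th1_s:
        return "HL"   # high threat level
    if ttc_s > th2_s:
        return "LL"   # low threat level
    return "ML"       # middle threat level


TH1, TH2 = 2.0, 5.0                    # illustrative values in seconds
level_p1 = classify(1.0, TH1, TH2)     # e.g. a pedestrian very close to collision
level_p2 = classify(3.0, TH1, TH2)
level_c2 = classify(7.0, TH1, TH2)
```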

[0127] A second vehicle C2, the first pedestrian P1, and a second pedestrian P2 may be positioned on a road on which the first vehicle C1 runs. An X axis indicates time. A Y axis indicates the TTC. Since a predicted first time to collision TTC1a with respect to the first pedestrian P1 is smaller than the first threshold TH1 at the first time t1, the first device 100 may determine the high threat level HL with respect to the first pedestrian P1. Since a predicted second time to collision TTC1b with respect to the second pedestrian P2 is between the first threshold TH1 and the second threshold TH2 at the first time t1, the first device 100 may determine the middle threat level ML with respect to the second pedestrian P2. Since a predicted third time to collision TTC1c with respect to the second vehicle C2 is greater than the second threshold TH2 at the first time t1, the first device 100 may determine the low threat level LL with respect to the second vehicle C2. An envelope ENV1 indicates all threat elements at the first time t1.

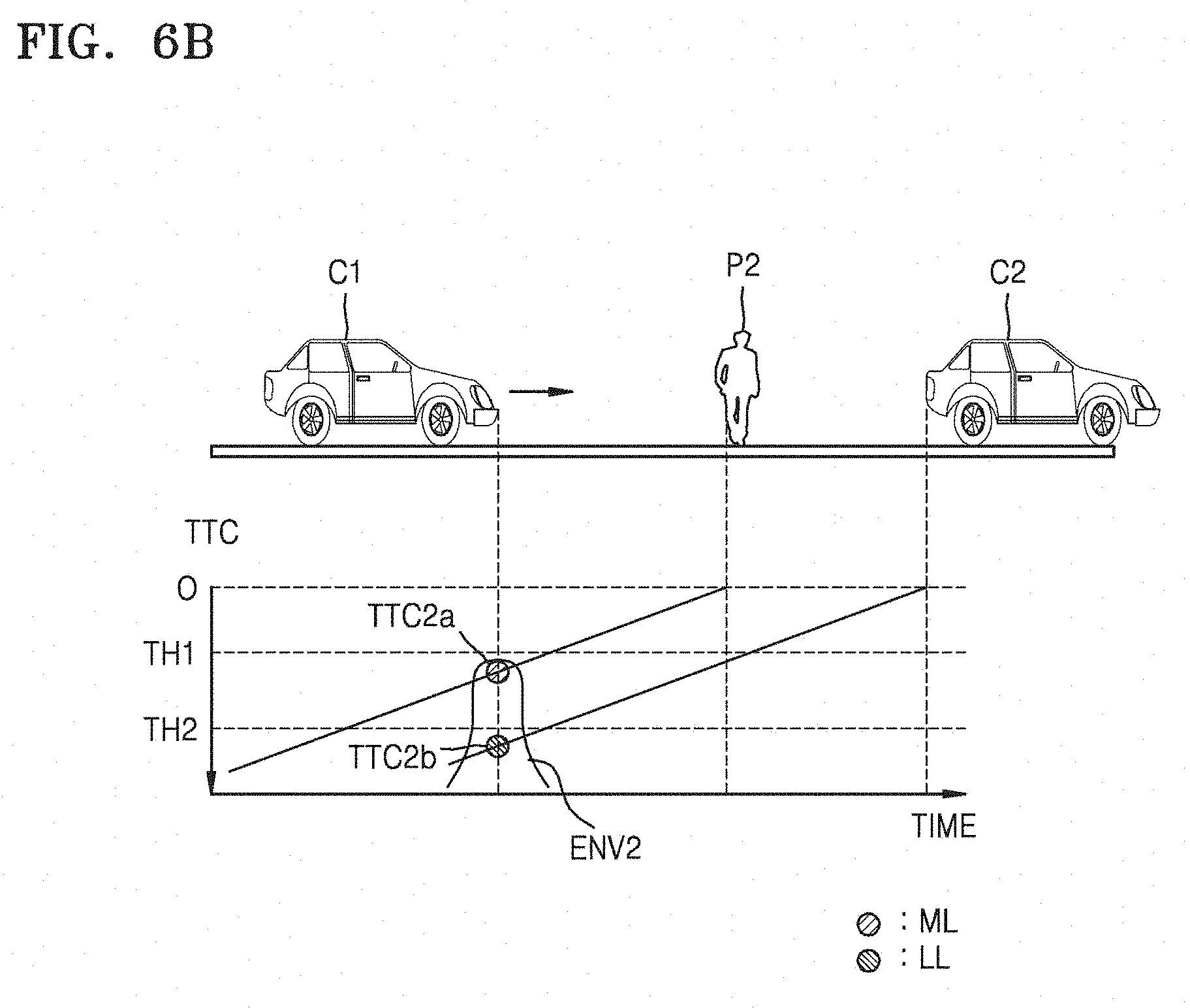

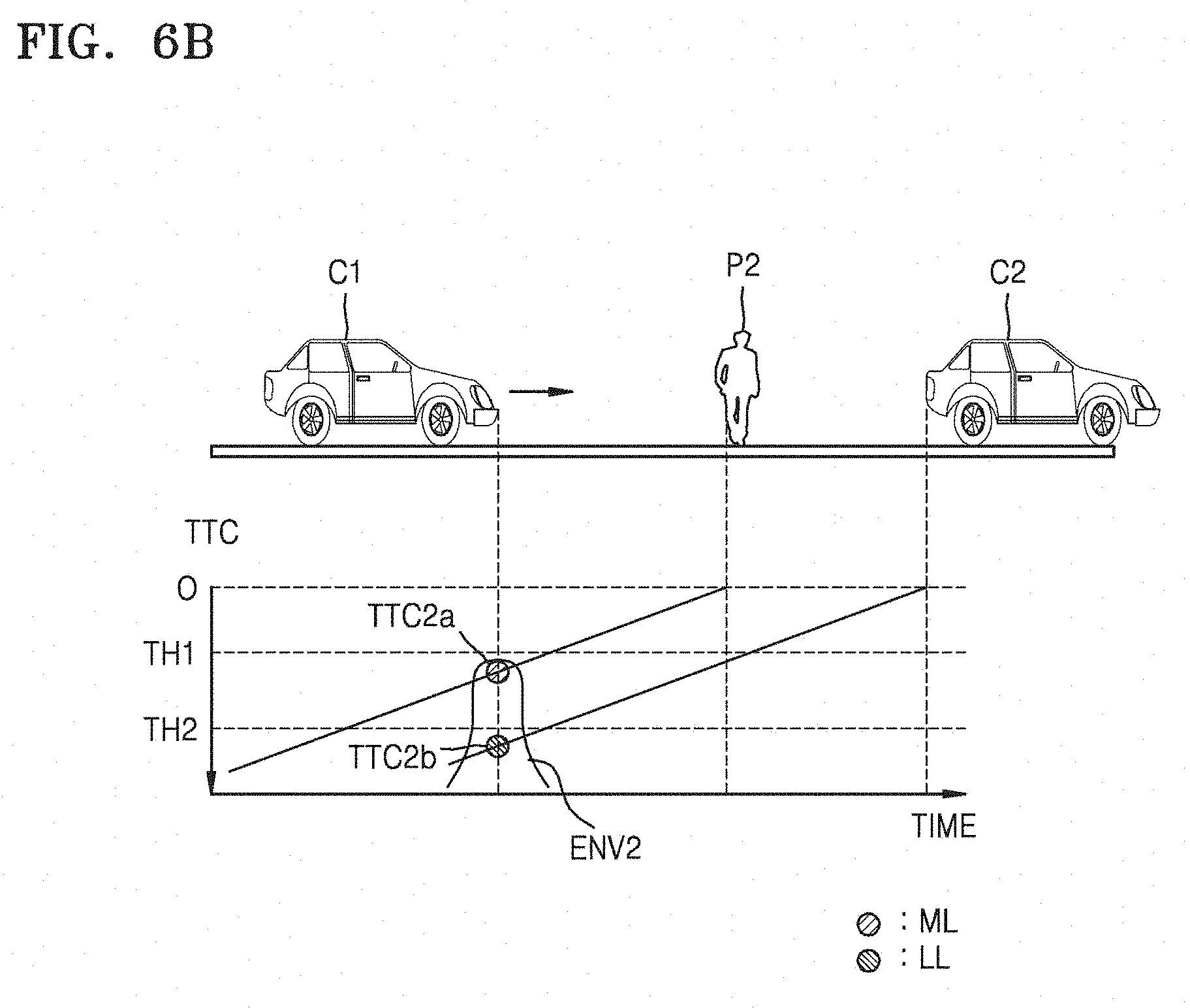

[0128] FIG. 6B is a graph showing probable threat elements detected by a first device at a second time and threat levels with respect to the probable threat elements according to an embodiment of the present disclosure.

[0129] Referring to FIG. 6B, the second vehicle C2 and the second pedestrian P2 may be positioned on a road on which the first vehicle C1 runs. An X axis indicates time. A Y axis indicates the TTC. Since a predicted first time to collision TTC2a with respect to the second pedestrian P2 is between the first threshold TH1 and the second threshold TH2 at the second time t2, the first device 100 may determine the middle threat level ML with respect to the second pedestrian P2. Since a predicted second time to collision TTC2b with respect to the second vehicle C2 is greater than the second threshold TH2 at the second time t2, the first device 100 may determine the low threat level LL with respect to the second vehicle C2. An envelope ENV2 indicates all threat elements at the second time t2.

[0130] FIG. 6C is a graph illustrating threat levels determined by a first device according to an embodiment of the present disclosure.

[0131] Referring to FIG. 6C, the first device 100 may determine the output threat level OUT according to threat levels having a highest threat level based on the envelope ENV1 at the first time t1 and the envelope ENV2 at the second time t2. For example, in a case where the first device 100 receives a phone call or a message from the second device 200 at the first time t1, since the output threat level OUT is the high threat level HL at the first time t1, the first device 100 may limit a receiving notification of the phone call or the message received from the second device 200. The first device 100 may determine the output threat level OUT in real time and provide the limited notification according to the determined output threat level OUT. In more detail, since the output threat level OUT is changed to the middle threat level ML at the second time t2, the first device 100 may provide the receiving notification of the phone call or the message received at the first time t1 from the second device 200 at the second time t2.

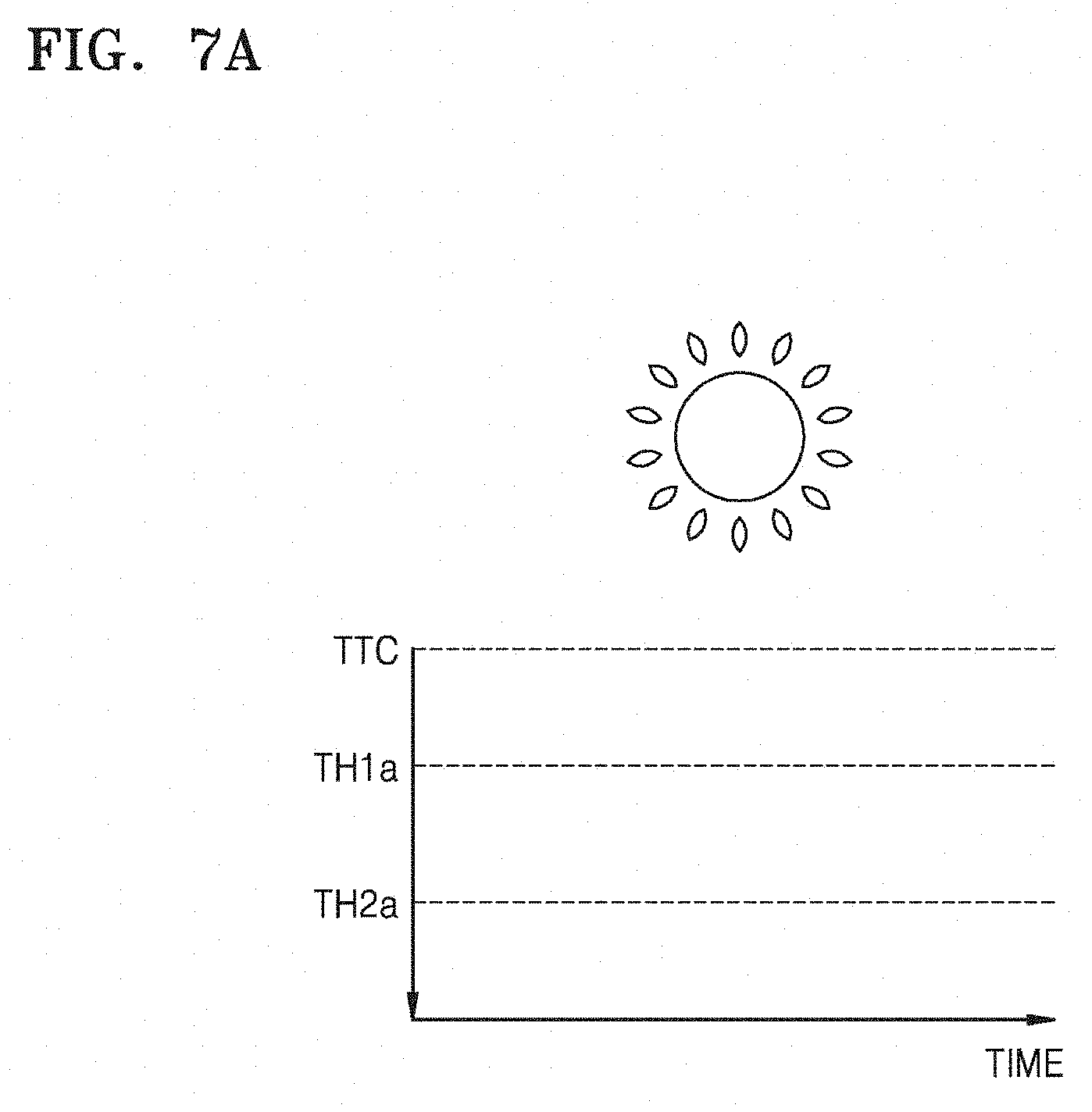

[0132] FIGS. 7A and 7B are graphs showing thresholds adaptive to an external environment determined by a first device according to an embodiment of the present disclosure.

[0133] Referring to FIGS. 7A and 7B, an X axis indicates time. A Y axis indicates the TTC. The first device 100 may acquire current weather information from, for example, a weather application, a news application, or an SNS application, and the like and configure thresholds for determining threat levels based on the acquired weather information.

[0134] In this regard, the thresholds for determining the threat levels may be configured as arbitrary TTC. In a case where a predicted TTC is below the first threshold TH1, the threat level may correspond to the high threat level HL. In a case where the predicted TTC is between the first threshold TH1 and the second threshold TH2, the threat level may correspond to the middle threat level ML. In a case where the predicted TTC exceeds the second threshold TH2, the threat level may correspond to the low threat level LL.

[0135] As shown in FIG. 7A, in a case where current weather is clear, the first device 100 may configure first and second thresholds TH1a and TH2a to be relatively small. Meanwhile, as shown in FIG. 7B, in a case where current weather is cloudy or rainy, the first device 100 may configure first and second thresholds TH1b and TH2b to be relatively great. In the case where current weather is cloudy or rainy, a reaction time of the first user USER1 may relatively increase, and a braking distance may relatively increase. Thus, the first device 100 may configure the first and second thresholds TH1b and TH2b in the case where current weather is cloudy or rainy to be sufficiently greater than the first and second thresholds TH1a and TH2a in the case where current weather is clear.
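The effect of weather-adaptive thresholds can be sketched as follows. The threshold pairs and the weather labels are hypothetical, since the text gives no numeric values; the sketch only shows that the same predicted TTC can map to a higher threat level when the thresholds are raised for cloudy or rainy weather.

```python
from typing import Tuple

# Hypothetical threshold pairs (TH1, TH2) in seconds; the text gives no values.
CLEAR_THRESHOLDS = (2.0, 5.0)   # TH1a, TH2a
WET_THRESHOLDS = (3.0, 7.5)     # TH1b, TH2b, larger to allow for reaction and braking


def thresholds_for(weather: str) -> Tuple[float, float]:
    """Pick (TH1, TH2) from a weather label such as 'clear', 'cloudy', or 'rainy'."""
    return WET_THRESHOLDS if weather in {"cloudy", "rainy"} else CLEAR_THRESHOLDS


def level_for(ttc_s: float, weather: str) -> str:
    """Classify a predicted TTC using the thresholds for the current weather."""
    th1, th2 = thresholds_for(weather)
    if ttc_s < th1:
        return "HL"
    if ttc_s > th2:
        return "LL"
    return "ML"


same_ttc = 2.5
clear_level = level_for(same_ttc, "clear")   # middle threat level
rainy_level = level_for(same_ttc, "rainy")   # high threat level
```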

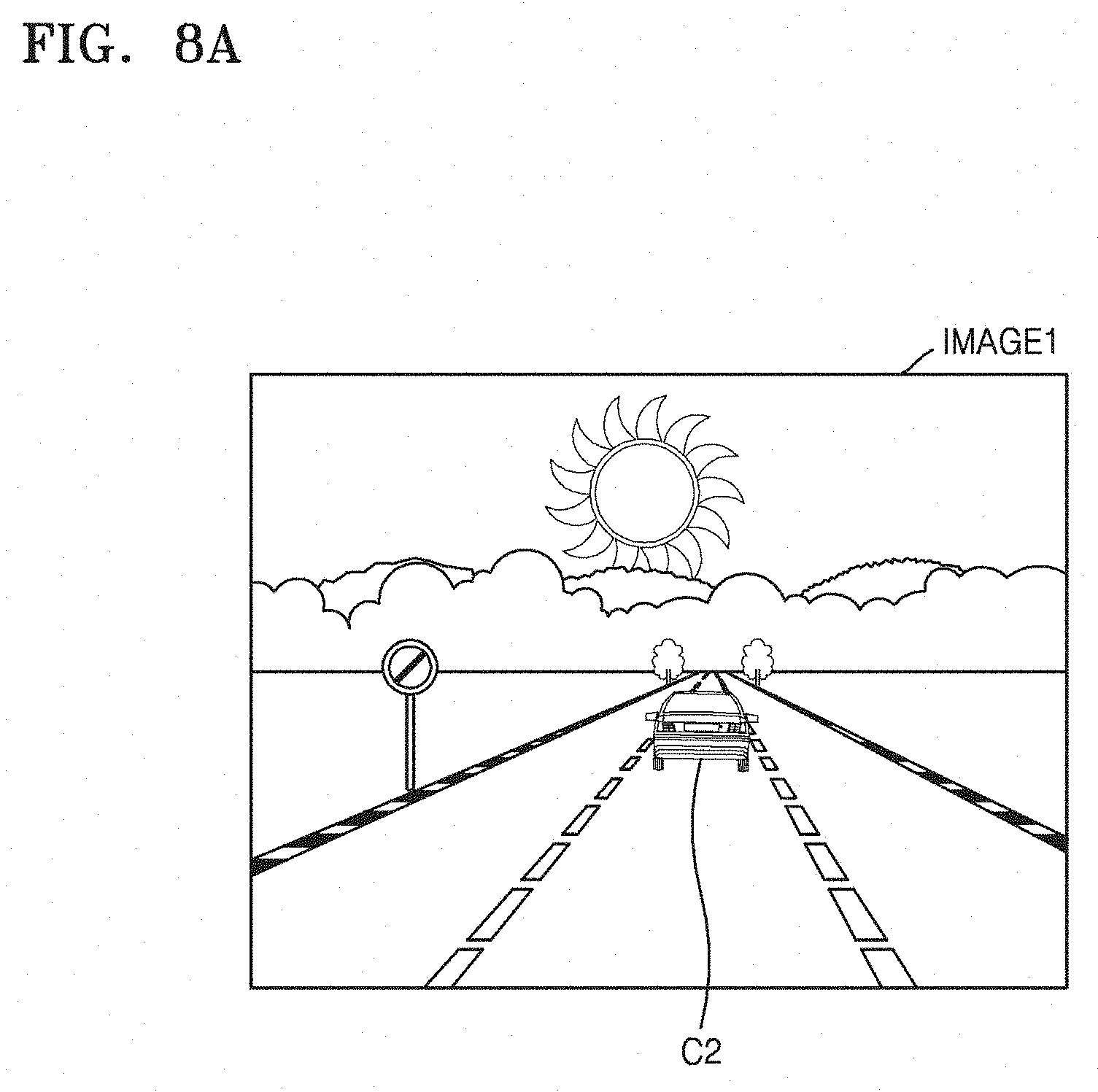

[0136] FIG. 8A illustrates first driving conditions detected by a first device according to an embodiment of the present disclosure, and FIG. 8B illustrates a threat level display of a first device according to the detected first driving conditions according to an embodiment of the present disclosure.

[0137] Referring to FIG. 8A, the first device 100 may acquire a first image IMAGE1 through a camera of the first device 100. The first image IMAGE1 indicates an image of a driving direction of the first vehicle C1 in which the first device 100 is mounted, i.e., an image at which the first user USER1 looks. The first device 100 may acquire, from the first image IMAGE1, information on a distance to the second vehicle C2. The first device 100 may predict the TTC with respect to the second vehicle C2 based on the acquired distance information and compare the predicted TTC with the first and second thresholds TH1 and TH2, thereby generating the output threat level OUT. In the present example, the first device 100 may determine driving conditions detected from the first image IMAGE1 as the low threat level LL.
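One way to predict a TTC from distance information is sketched below. The text does not state the calculation, so the approach is an assumption: the closing speed is estimated from two consecutive distance estimates, and the TTC is the current distance divided by that closing speed. The function name `predict_ttc` and its parameters are hypothetical.

```python
import math


def predict_ttc(prev_distance_m: float, distance_m: float, dt_s: float) -> float:
    """Estimate TTC from two distance estimates taken dt_s seconds apart."""
    if dt_s <= 0:
        raise ValueError("dt_s must be positive")
    closing_speed_mps = (prev_distance_m - distance_m) / dt_s
    if closing_speed_mps <= 0:
        return math.inf  # the gap is constant or growing, so no collision is predicted
    return distance_m / closing_speed_mps


ttc_closing = predict_ttc(21.0, 20.0, 0.1)   # gap shrinks by 1 m in 0.1 s
ttc_opening = predict_ttc(20.0, 21.0, 0.1)   # gap grows
```

The resulting value would then be compared with the first and second thresholds TH1 and TH2 to obtain the threat level.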

[0138] Referring to FIG. 8B, since the low threat level LL is determined, the first device 100 may provide a notification of a phone call received from the second device 200. In this regard, a user interface (UI) element indicating the low threat level LL, i.e., a low threat level display 11, may be provided as, for example, a small green circle. The first user USER1 may select accept 12 when wanting a call connection with the second device 200. Meanwhile, the first user USER1 may select message transmission 13 when transmitting a message to the second device 200.

[0139] FIG. 9A illustrates second driving conditions detected by a first device according to an embodiment of the present disclosure, and FIG. 9B illustrates a threat level display of a first device according to the detected second driving conditions according to an embodiment of the present disclosure.

[0140] Referring to FIG. 9A, the first device 100 may acquire a second image IMAGE2 through a camera of the first device 100. The first device 100 may acquire, from the second image IMAGE2, information on a distance to the second vehicle C2. The first device 100 may predict the TTC with respect to the second vehicle C2 based on the acquired distance information and compare the predicted TTC with the first and second thresholds TH1 and TH2, thereby generating the output threat level OUT. In the present example, the first device 100 may determine driving conditions detected from the second image IMAGE2 as the middle threat level ML.

[0141] Referring to FIG. 9B, since the middle threat level ML is determined, the first device 100 may limit a notification of a phone call received from the second device 200. In this regard, a UI element indicating the middle threat level ML, i.e., a middle threat level display 14, may be provided as, for example, a small yellow circle, and, in more detail, may have a greater size than that of the low threat level display 11 of FIG. 8B. The first device 100 may provide a mute display 15 indicating that the notification is limited at the middle threat level ML.

[0142] FIG. 10A illustrates third driving conditions detected by a first device according to an embodiment of the present disclosure, and FIG. 10B illustrates a threat level display of a first device according to the detected third driving conditions according to an embodiment of the present disclosure.

[0143] Referring to FIG. 10A, the first device 100 may acquire a third image IMAGE3 through a camera of the first device 100. The first device 100 may acquire, from the third image IMAGE3, information on a distance to the second vehicle C2. The first device 100 may predict the TTC with respect to the second vehicle C2 based on the acquired distance information and compare the predicted TTC with the first and second thresholds TH1 and TH2, thereby generating the output threat level OUT. In the present example, the first device 100 may determine driving conditions detected from the third image IMAGE3 as the high threat level HL.

[0144] Referring to FIG. 10B, since the high threat level HL is determined, the first device 100 may limit a notification of a phone call received from the second device 200. In this regard, a UI element indicating the high threat level HL, i.e., a high threat level display 16, may be provided as, for example, a large red circle, and, in more detail, may have a greater size than that of the middle threat level display 14 of FIG. 9B. The first device 100 may provide the mute display 15 indicating that the notification is limited at the high threat level HL. Examples of the UI element indicating the high threat level HL will be described in more detail with reference to FIGS. 11A and 11B below.

[0145] FIGS. 11A and 11B illustrate a high threat level display of a first device according to an embodiment of the present disclosure.

[0146] Referring to FIG. 11A, the first device 100 may provide a UI element including a detected threat element on a display at the high threat level HL. In the present embodiment of the present disclosure, since the detected threat element is a vehicle and a detected threat level is the high threat level HL, the first device 100 may provide the display 16a of the high threat level HL as, for example, a pictogram 16a including a vehicle shape in a large red circle.

[0147] For example, in a case where the first device 100 is on the phone with the second device 200, the first device 100 may limit a phone call at the high threat level HL. Accordingly, the first device 100 may enter the mute mode 15, and the first user USER1 may select an end call 17.

[0148] Referring to FIG. 11B, the first device 100 may provide a UI element including a detected threat element on a display at the high threat level HL. In the present embodiment of the present disclosure, since the detected threat element is a pedestrian and a detected threat level is the high threat level HL, the first device 100 may provide the display 16b of the high threat level HL as, for example, a pictogram 16b including a pedestrian shape in a large red circle.

[0149] FIG. 12 is a flowchart of a notification limiting method adaptive to a plurality of threat levels performed by a first device according to an embodiment of the present disclosure.

[0150] Referring to FIG. 12, the notification limiting method according to the present embodiment of the present disclosure may be performed by, for example, the first device 100 of FIG. 1 and may correspond to, for example, operation S350 of FIG. 3.

[0151] In operation S1200, the first device 100 may determine if a determined threat level is the high threat level HL. As a result of the determination, the first device 100 may perform operation S1210 if the determined threat level is the high threat level HL and may perform operation S1220 if the determined threat level is not the high threat level HL. In operation S1210, the first device 100 may limit a notification with respect to a phone call or message.

[0152] In operation S1220, the first device 100 may determine if the determined threat level is the middle threat level ML. As a result of the determination, the first device 100 may perform operation S1230 if the determined threat level is the middle threat level ML and may perform operation S1240 if the determined threat level is not the middle threat level ML. In operation S1230, the first device 100 may selectively limit a notification according to the second user USER2. For example, a user may configure in advance that receiving notifications are permitted for a specific sender. In a case where the phone call or message is received from a sender for whom receiving notifications are permitted, the first device 100 may permit the notification at the middle threat level ML.

[0153] In operation S1240, the first device 100 may determine if the determined threat level is the low threat level LL. As a result of determination, the first device 100 may perform operation S1250 if the determined threat level is the low threat level LL and may end a notification limiting operation if the determined threat level is not the low threat level LL. In operation S1250, the first device 100 may permit the notification with respect to the phone call or message.
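
The branching of FIG. 12 may be sketched as a single decision function. This is an illustrative sketch, not the claimed implementation: the function name `should_notify`, the string codes for the threat levels, and the `permitted_senders` set (standing in for the senders configured in advance) are hypothetical.

```python
from typing import Optional, Set


def should_notify(threat_level: str, sender: str,
                  permitted_senders: Set[str]) -> Optional[bool]:
    """Return True to permit a notification, False to limit it.

    Returns None when the level is none of HL, ML, or LL, mirroring the
    flowchart ending the limiting operation without a decision.
    """
    if threat_level == "HL":
        # Operations S1200 and S1210: limit every call or message notification.
        return False
    if threat_level == "ML":
        # Operations S1220 and S1230: limit selectively according to the sender.
        return sender in permitted_senders
    if threat_level == "LL":
        # Operations S1240 and S1250: permit the notification.
        return True
    return None
```

For example, with `permitted_senders = {"JAMES"}`, a call from JAMES would be announced at the middle threat level ML, whereas a call from ADAM would be held back until the threat level drops.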

[0154] FIG. 13 illustrates a notification providing screen of a first device according to an embodiment of the present disclosure.

[0155] Referring to FIG. 13, the notification providing screen according to the present embodiment may correspond to an embodiment of operation S360 of FIG. 3. In a case where a re-determined threat level is the low threat level LL, the first device 100 may provide the notifications 18 at once. In a case where the re-determined threat level is the low threat level LL, the first device 100 may also display that a call transmission 19 and the message transmission 13 are permitted.

[0156] FIG. 14 illustrates a notification providing screen of a first device according to an embodiment of the present disclosure.

[0157] Referring to FIG. 14, the present embodiment may be provided in a case where the first user USER1 selects the notifications 18 in FIG. 13. The first device 100 may provide, at once, a notification packet NOT1 including the notifications limited during a section. The notification packet NOT1 may include a first notification 18a, a second notification 18b, and a third notification 18c. For example, the first notification 18a may be a notification with respect to calls and messages received from JAMES, the second notification 18b may be a notification with respect to a message received from MARTIN, and the third notification 18c may be a notification with respect to a call received from ADAM.

[0158] In an embodiment of the present disclosure, the first device 100 may provide the notification packet NOT1 in sound through a speaker of the first device 100. In an embodiment of the present disclosure, the first device 100 may provide the notification packet NOT1 in sound through a speaker of the first vehicle C1. For example, the first device 100 may output "Contact from three. 2 calls and 2 messages from JAMES. 1 message from MARTIN. 1 call from ADAM" in voice through the speaker. Thereafter, the first device 100 may output a selection request of a user "Do you want to Read again, Read messages, Call, Remind me later or Ignore?" in voice through the speaker.
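
The per-sender portion of the spoken summary above may be assembled from the limited events roughly as follows. This is a hedged sketch: the event representation as `(sender, kind)` pairs and the function name `summarize_packet` are hypothetical, and the conversion of the resulting text into voice is outside the sketch.

```python
from typing import Dict, List, Tuple


def summarize_packet(events: List[Tuple[str, str]]) -> str:
    """Build per-sender summary sentences for a notification packet.

    Each event is a (sender, kind) pair, where kind is "call" or "message".
    Senders are listed in the order in which they first appear.
    """
    counts: Dict[str, Dict[str, int]] = {}
    for sender, kind in events:
        per_sender = counts.setdefault(sender, {"call": 0, "message": 0})
        per_sender[kind] += 1

    sentences = []
    for sender, per_sender in counts.items():
        # Skip kinds with no events and pluralize counts other than one.
        phrases = [
            f"{n} {kind}{'' if n == 1 else 's'}"
            for kind, n in per_sender.items()
            if n > 0
        ]
        sentences.append(" and ".join(phrases) + f" from {sender}.")
    return " ".join(sentences)
```

The returned string would then be passed to whatever text-to-speech facility the first device 100 or the first vehicle C1 provides.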

[0159] FIG. 15 illustrates providing a receiving message performed by a first device according to an embodiment of the present disclosure.

[0160] Referring to FIG. 15, an operation of providing the receiving message according to the present embodiment of the present disclosure may apply, for example, in a case where a user selects "message reading" with respect to providing a notification of FIG. 14. The first device 100 may convert text included in a message (for example, a message received from MARTIN) into voice and output the converted voice (for example, "I forgot to buy ice cream. Buy ice cream on your way home") through a speaker of the first device 100. Thereafter, the first device 100 may output a selection request of the user "Do you want to Read next message, Reply or Call back?" in voice through the speaker.

[0161] FIG. 16 illustrates a notification providing method performed by a first device according to an embodiment of the present disclosure.

[0162] Referring to FIG. 16, the first device 100 may provide notification packets NOT11 and NOT12 at a preset period or a preset time. In an embodiment of the present disclosure, if driving conditions are changed, in more detail, if a threat level is changed to the low threat level LL, the first device 100 may provide the notification packets NOT11 and NOT12 at the preset period or the preset time. In an embodiment of the present disclosure, even if the driving conditions are not changed, in more detail, even if the threat level remains the high threat level HL or the middle threat level ML, the first device 100 may provide the notification packets NOT11 and NOT12 at the preset period or the preset time.

[0163] In the present example, the preset period may be 30 minutes. In an embodiment of the present disclosure, the first device 100 may limit notifications with respect to communication events received by the first device 100, i.e., a first call CALL1 and first and second messages MES1 and MES2, for the initial 30 minutes, and provide the first notification packet NOT1 including all of the first call CALL1 and the first and second messages MES1 and MES2 after 30 minutes. Thereafter, the first device 100 may limit notifications with respect to communication events received by the first device 100, i.e., a second call CALL2 and a third message MES3, for the next 30 minutes, and provide the second notification packet NOT2 including both the second call CALL2 and the third message MES3 after those 30 minutes.

[0164] According to the present embodiment of the present disclosure, the first user USER1 may configure a notification period or a notification time in advance, and receive the notification packets NOT1 and NOT2 only at the notification period or the notification time while other notifications are limited, thereby reducing the number of times the driving of the first user USER1 is interrupted by notifications of the first device 100.
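
The periodic delivery described with reference to FIG. 16 may be sketched as grouping the limited events by the period in which they were received. The arrival minutes used in the usage note, the function name `build_packets`, and the choice to treat an event arriving exactly on a period boundary as belonging to the following period are assumptions made for illustration only.

```python
from typing import Dict, List, Tuple


def build_packets(events: List[Tuple[int, str]],
                  period_min: int = 30) -> Dict[int, List[str]]:
    """Group events into notification packets keyed by delivery minute.

    Each event is (minute_received, label). An event received during a
    period is held back and delivered when that period ends.
    """
    packets: Dict[int, List[str]] = {}
    for minute, label in sorted(events):
        # End of the period that contains this event.
        delivery_minute = (minute // period_min + 1) * period_min
        packets.setdefault(delivery_minute, []).append(label)
    return packets
```

With hypothetical arrival times `[(5, "CALL1"), (12, "MES1"), (25, "MES2"), (40, "CALL2"), (55, "MES3")]`, the sketch yields one packet at minute 30 holding CALL1, MES1, and MES2, and one packet at minute 60 holding CALL2 and MES3, matching the two packets described above.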

[0165] FIG. 17 is a flowchart illustrating operations of first and second devices performed by using a device control method according to an embodiment of the present disclosure.

[0166] Referring to FIG. 17, the device control method according to the present embodiment may be a modification of the device control method of FIG. 3 and include operations that are time-serially performed by the first and second devices 100 and 200 of FIG. 1. Although omitted below, descriptions of the first and second devices 100 and 200 with reference to FIG. 1 apply to the device control method according to the present embodiment.

[0167] In operation S1700, the first device 100 may detect driving conditions of a vehicle. In operation S1710, the second device 200 may transmit a phone call or a message to the first device 100. In operation S1720, the first device 100 may receive the phone call or the message from the second device 200. In operation S1730, the first device 100 may determine a threat level according to the driving conditions.

[0168] In operation S1740, the first device 100 may limit notifications according to the determined threat level and a counterpart. In this regard, the determined threat level may be classified as at least the high threat level HL, the middle threat level ML, and the low threat level LL. In the present embodiment of the present disclosure, in a case where the determined threat level is the high threat level HL, the first device 100 may limit notifications irrespective of the counterpart, i.e., the second user USER2. In a case where the determined threat level is the middle threat level ML, the first device 100 may selectively limit notifications according to the second user USER2. In a case where the determined threat level is the low threat level LL, the first device 100 may permit notifications irrespective of the second user USER2.

[0169] In an embodiment of the present disclosure, the first device 100 may determine a white list based on a phone book, a call history, a message transmitting/receiving list, or user settings. In the case where the determined threat level is the middle threat level ML, if the second user USER2 is included in the white list, the first device 100 may permit notifications, and, if the second user USER2 is not included in the white list, the first device 100 may limit notifications. For example, in a case where a phone call or message is received from the second user USER2 stored in a favorite list of the phone book, the first device 100 may determine that the second user USER2 is included in the white list and may permit notifications at the middle threat level ML.
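
One possible way to derive the white list is sketched below. The passage only names the sources (phone book, call history, message transmitting/receiving list, user settings); taking the union of the phone book favorites with the other three sources, and the function names `build_white_list` and `permit_at_middle_level`, are assumptions for illustration.

```python
from typing import Iterable, Set


def build_white_list(favorites: Iterable[str],
                     call_history: Iterable[str],
                     message_list: Iterable[str],
                     user_settings: Iterable[str]) -> Set[str]:
    """Combine the candidate sources into one white list.

    Assumption: a counterpart found in any one source is white-listed.
    """
    return (set(favorites) | set(call_history)
            | set(message_list) | set(user_settings))


def permit_at_middle_level(sender: str, white_list: Set[str]) -> bool:
    """At the middle threat level ML, permit only white-listed counterparts."""
    return sender in white_list
```

A stricter policy, for example requiring a minimum number of entries in the call history, could replace the plain union without changing the check performed at the middle threat level ML.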

[0170] In operation S1750, the first device 100 may re-determine the threat level according to the driving conditions. In operation S1760, the first device 100 may provide the limited notifications at once according to the re-determined threat level.

[0171] FIG. 18 is a flowchart illustrating operations of first and second devices performed by using a device control method according to an embodiment of the present disclosure.

[0172] Referring to FIG. 18, the device control method according to the present embodiment may be a modification of the device control method of FIG. 3 and include operations that are time-serially performed by the first and second devices 100 and 200 of FIG. 1. Although omitted below, descriptions of the first and second devices 100 and 200 with reference to FIG. 1 apply to the device control method according to the present embodiment.

[0173] In operation S1800, the first device 100 may detect driving conditions of a vehicle. In operation S1810, the second device 200 may transmit a phone call or a message to the first device 100. In operation S1820, the first device 100 may receive the phone call or the message from the second device 200. In operation S1830, the first device 100 may determine a threat level according to the driving conditions. In operation S1840, the first device 100 may limit notifications according to the determined threat level.

[0174] In operation S1850, the second device 200 may transmit a phone call or a message to the first device 100 again. In operation S1860, the first device 100 may receive the phone call or the message from the second device 200 again. In operation S1870, the first device 100 may determine the threat level according to the driving conditions. In operation S1880, the first device 100 may provide receiving notifications.

[0175] In the present embodiment of the present disclosure, the first device 100 may adaptively limit or provide notifications according to an occurrence frequency of events, i.e., a frequency of receiving from the second device 200. In more detail, in a case where the number of times a phone call or message is received from the same second user USER2 exceeds a preset value, the first device 100 may determine that the received phone call or message is important, and may permit, as an exception, a notification with respect to the received phone call or message. In the present embodiment of the present disclosure, in a case where the number of times of receiving from the second user USER2 is more than 2, the first device 100 may provide a receiving notification from the second device 200 at the middle threat level ML.
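
The frequency-based exception may be sketched with a per-sender counter. This is an illustrative sketch under stated assumptions: the class name `RepeatTracker` is hypothetical, the preset value is a parameter compared with a strict "exceeds" test, and the behavior at the high and low threat levels (always limit, always permit) is carried over from the earlier embodiments rather than stated in this paragraph.

```python
from collections import Counter


class RepeatTracker:
    """Count calls and messages per sender while notifications are limited."""

    def __init__(self, preset_count: int = 2) -> None:
        self.preset_count = preset_count
        self.received: Counter = Counter()

    def on_event(self, sender: str, threat_level: str) -> bool:
        """Record one received call or message.

        Returns True if a receiving notification should be provided.
        """
        self.received[sender] += 1
        if threat_level == "LL":
            return True
        if threat_level == "ML":
            # Repeated contact is treated as important once the count
            # exceeds the preset value.
            return self.received[sender] > self.preset_count
        # Assumption: at the high threat level HL, always limit.
        return False
```

With the default preset value of 2, the first two calls from the same sender at the middle threat level ML would be limited and the third would be announced; calls from other senders are counted separately.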

[0176] FIG. 19 is a flowchart illustrating operations of first and second devices performed by using a device control method according to an embodiment of the present disclosure.

[0177] Referring to FIG. 19, the device control method according to the present embodiment may be a modification of the device control method of FIG. 3 and include operations that are time-serially performed by the first and second devices 100 and 200 of FIG. 1. Although omitted below, descriptions of the first and second devices 100 and 200 with reference to FIG. 1 apply to the device control method according to the present embodiment.

[0178] In operation S1900, the first device 100 may detect driving conditions of a vehicle. In operation S1910, the second device 200 may transmit a phone call or a message to the first device 100. In operation S1920, the first device 100 may receive the phone call or the message from the second device 200. In operation S1930, the first device 100 may determine a threat level according to the driving conditions. In operation S1940, the first device 100 may limit notifications according to the determined threat level.